A Comprehensive Guide to Cell Type Annotation with scGPT: From Foundation Models to Clinical Applications

Abstract

This article provides researchers, scientists, and drug development professionals with a complete framework for implementing scGPT for single-cell RNA sequencing annotation. Covering foundational concepts, practical methodologies, troubleshooting strategies, and validation techniques, we explore how this transformer-based foundation model achieves high accuracy (up to a 99.5% F1-score in retinal cell annotation) while addressing real-world challenges such as handling unannotated datasets and optimizing for rare cell populations. The guide synthesizes the latest protocols, compares scGPT with alternative tools, and demonstrates its potential for accelerating therapeutic discovery through interpretable, biologically relevant insights.

Understanding scGPT: The Foundation Model Revolutionizing Single-Cell Biology

What is scGPT? Exploring the Transformer Architecture for Single-Cell Data

scGPT is a foundation model based on a generative pretrained transformer architecture specifically designed for single-cell multi-omics data analysis. Trained on a massive repository of over 33 million cells, this model represents a significant advancement in applying artificial intelligence to cellular biology research. By drawing parallels between language and cellular biology—where texts comprise words and cells are defined by genes—scGPT effectively distills critical biological insights concerning genes and cells. Through transfer learning, the model can be optimized for diverse downstream applications including cell type annotation, multi-batch integration, multi-omic integration, perturbation response prediction, and gene network inference, establishing itself as a versatile tool in the single-cell research landscape [1].

The emergence of scGPT marks a transformative development in the analysis of single-cell transcriptomic data. Inspired by the remarkable success of transformer architectures in natural language processing, scGPT adapts this powerful framework to decipher the complex "language" of gene expression within individual cells. At its core, the model employs a self-attention mechanism that allows it to capture intricate, context-dependent relationships between genes across diverse cell types and biological conditions. This architectural approach enables the model to learn rich, contextualized representations of cellular states from large-scale unlabeled data, mirroring how language models learn semantic relationships from vast text corpora [2] [3].

scGPT's pretraining process utilizes a masked language model objective, where portions of the gene expression profile are hidden and the model learns to predict them based on the remaining context. This self-supervised approach allows the model to develop a fundamental understanding of gene-gene interactions and regulatory relationships without requiring labeled data. The transformer architecture is particularly well-suited for this task because of its ability to handle the high-dimensional, sparse nature of single-cell RNA sequencing data while modeling complex, non-linear dependencies between genes. The model uses a gene encoder to encode gene identities, applies binning to expression values to obtain expression embeddings, and incorporates condition embeddings for specific genes, integrating these inputs through multiple transformer layers to build comprehensive cellular representations [3].
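
The per-cell value binning mentioned above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the scGPT source: the function name `bin_expression`, the default of 51 bins, and the rule that zeros stay in bin 0 while non-zero values are split into equal-frequency bins computed within each cell are choices made for this example.

```python
import numpy as np


def bin_expression(cell_values, n_bins=51):
    """Map one cell's expression values to integer bin tokens (illustrative sketch).

    Zeros stay in bin 0. Non-zero values are assigned to bins 1..n_bins-1 using
    quantile edges computed from this cell's own non-zero values, which makes the
    result insensitive to an overall rescaling of the cell's values.
    """
    values = np.asarray(cell_values, dtype=float)
    binned = np.zeros(values.shape, dtype=int)
    nonzero = values > 0
    if not nonzero.any():
        return binned
    # n_bins - 1 quantile edges spanning the cell's non-zero values
    edges = np.quantile(values[nonzero], np.linspace(0, 1, n_bins - 1))
    # digitize returns 1..len(edges) here; clip guards the upper boundary
    binned[nonzero] = np.clip(np.digitize(values[nonzero], edges), 1, n_bins - 1)
    return binned


# Usage: the largest value lands in the top bin, zeros stay at 0
print(bin_expression([0.0, 1.0, 2.0, 3.0, 0.0, 10.0], n_bins=5))
```

Because the edges are recomputed per cell, two cells that differ only by sequencing depth receive the same tokens, which is one motivation for binning rather than feeding raw values.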

Model Architecture and Technical Specifications

Core Architectural Components

scGPT incorporates several specialized components to handle the unique characteristics of single-cell data:

- Gene Encoder: Transforms gene identifiers into dense vector representations, capturing functional and structural similarities between genes.

- Expression Embedding: Processes normalized expression values through binning techniques to create continuous embeddings that represent expression levels.

- Condition Embedding: Incorporates additional experimental conditions or perturbations into the model's representation.

- Transformer Layers: Multiple layers of self-attention mechanisms that model complex dependencies between genes and capture hierarchical patterns in gene regulation.

- Pre-training Objectives: Includes masked language modeling for gene expression prediction and other self-supervised tasks that encourage the model to learn biologically meaningful representations [3].

The model modifies the standard transformer architecture to better accommodate the non-sequential nature of genomic data, where the concept of word order present in natural language does not directly apply. Instead, the model treats genes as tokens without inherent sequence but leverages the attention mechanism to learn their contextual relationships based on co-expression patterns and regulatory networks.
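
Without a positional encoding, plain self-attention treats its input as an unordered set: permuting the gene tokens simply permutes the outputs. The NumPy sketch below checks this property with random weights. It is a generic single-head attention layer written for illustration, not scGPT's implementation, and the dimensions are arbitrary.

```python
import numpy as np


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention with no positional encoding."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)  # row-wise softmax
    return weights @ v


rng = np.random.default_rng(0)
d_model = 8
genes = rng.normal(size=(5, d_model))  # 5 gene-token embeddings for one cell
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

perm = rng.permutation(5)
out = self_attention(genes, w_q, w_k, w_v)
out_shuffled = self_attention(genes[perm], w_q, w_k, w_v)

# Shuffling the input genes shuffles the outputs in exactly the same way
print(np.allclose(out[perm], out_shuffled))  # True
```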

Table 1: scGPT Technical Specifications and Performance Metrics

| Parameter Category | Specifications | Performance Metrics | Values |
|---|---|---|---|
| Training Data Scale | 33 million cells [1] | Cell Type Annotation | 99.5% F1-score on retinal data [4] |
| Architecture | Transformer-based | Batch Integration | Outperforms Harmony/scVI on complex biological batch effects [5] |
| Embedding Dimensions | 512 [6] | Perturbation Prediction | Pearson Delta: 0.641 (Adamson), 0.554 (Norman) [7] |
| Key Applications | Cell annotation, multi-omic integration, perturbation prediction | Drug Response Prediction | Superior PCC in leave-one-drug-out tests [2] |

Implementation and Scaling

scGPT demonstrates favorable scaling properties, with performance generally improving with increased model size and training data diversity. However, evaluations have shown that beyond a certain point, larger and more diverse datasets may not confer additional benefits for specific tasks. The model is implemented in PyTorch, and the referenced setup pins specific library versions (e.g., torch==2.1.2). Practical implementation involves careful preprocessing of single-cell data, including normalization, highly variable gene selection, and proper batch handling, to ensure robust performance across diverse datasets [5] [6].

scGPT for Cell Type Annotation: Protocols and Applications

End-to-End Fine-tuning Protocol

The fine-tuning protocol for scGPT enables researchers to adapt the foundation model for high-precision cell type annotation tasks. This process involves several systematic steps:

Data Preprocessing: Raw single-cell RNA sequencing data undergo quality control, normalization, and feature selection. The protocol specifically uses the Scanpy library for these tasks, selecting the top 3,000 highly variable genes with the 'seurat_v3' flavor to reduce dimensionality while preserving biological signal [6].

Model Configuration: The pretrained scGPT model is loaded with appropriate parameters, including gene vocabulary mapping and model architecture specifications. The protocol utilizes the scGPT-human checkpoint as the starting point for fine-tuning.

Fine-tuning Process: The model is trained on annotated single-cell data using transfer learning approaches. This involves freezing certain layers while updating others, or applying full fine-tuning with a low learning rate to adapt the pretrained weights to the specific cell annotation task.

Evaluation and Validation: The fine-tuned model is assessed using multiple metrics including accuracy, F1-score, and visualization techniques like UMAP to validate clustering quality. The protocol generates comprehensive outputs including embedding files, classification results, and visualizations [4] [8].
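
The accuracy and F1-score used in the evaluation step can be computed directly from predicted and reference labels. This sketch reports macro-averaged F1, which weights every cell type equally so that rare populations are not swamped by abundant ones; the protocol's exact averaging choice is not stated here, so treat macro averaging as an assumption. The function name and toy labels are illustrative. For these inputs, scikit-learn's `f1_score(..., average="macro")` should give the same value.

```python
import numpy as np


def accuracy_and_macro_f1(y_true, y_pred):
    """Return overall accuracy and the unweighted mean of per-class F1 scores."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    accuracy = float(np.mean(y_true == y_pred))
    per_class_f1 = []
    # Score every label that appears in either the reference or the predictions
    for label in np.unique(np.concatenate([y_true, y_pred])):
        tp = np.sum((y_pred == label) & (y_true == label))
        fp = np.sum((y_pred == label) & (y_true != label))
        fn = np.sum((y_pred != label) & (y_true == label))
        denom = 2 * tp + fp + fn
        per_class_f1.append(2 * tp / denom if denom else 0.0)
    return accuracy, float(np.mean(per_class_f1))


truth = ["rod", "rod", "cone", "muller_glia"]
predicted = ["rod", "cone", "cone", "muller_glia"]
acc, macro_f1 = accuracy_and_macro_f1(truth, predicted)
print(acc, round(macro_f1, 3))  # 0.75 0.778
```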

This protocol has demonstrated remarkable success in practical applications, achieving a 99.5% F1-score for retinal cell type annotation when fine-tuned on a custom retina dataset. The approach effectively handles complex tissues and rare cell populations, providing high-resolution classification that surpasses traditional methods [4].

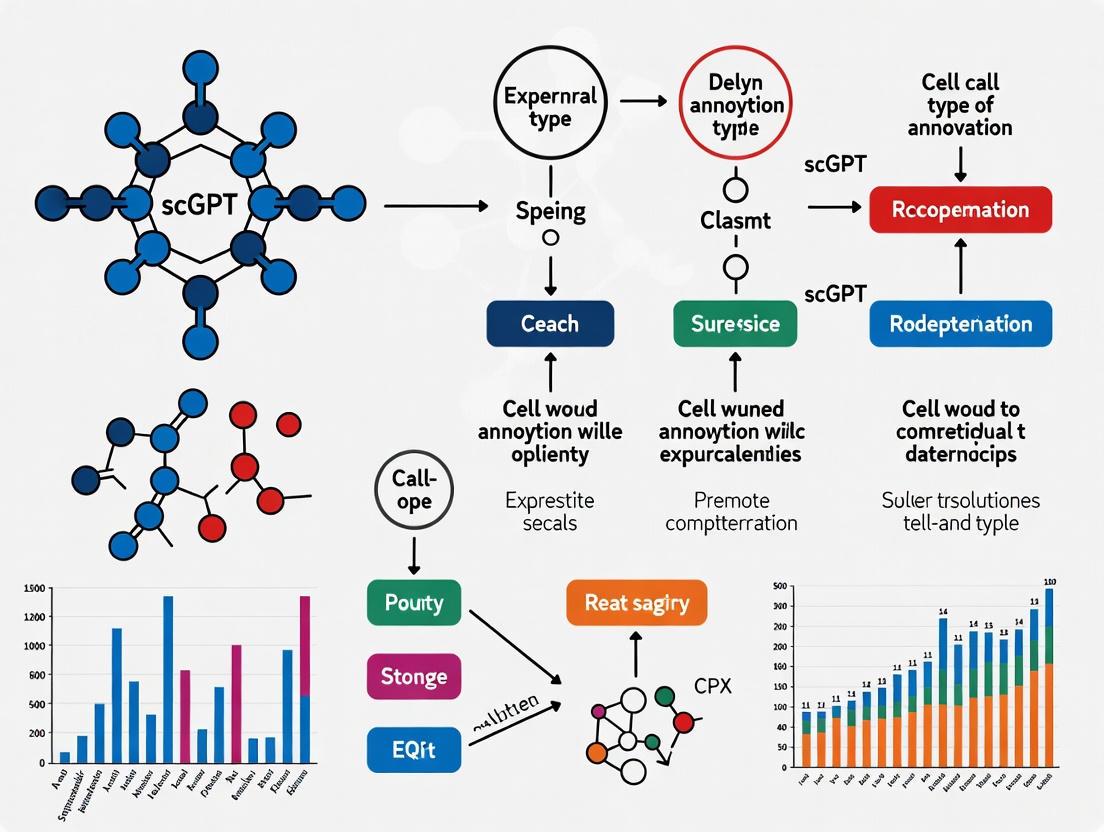

Diagram 1: scGPT Fine-tuning Workflow for Cell Type Annotation

Advanced Annotation Capabilities

scGPT excels in handling challenging annotation scenarios that often trouble traditional methods:

Rare Cell Population Identification: The model's attention mechanism and pretrained knowledge enable it to recognize subtle expression patterns characteristic of rare cell types, even with limited examples in the fine-tuning data.

Cross-Dataset Generalization: When properly fine-tuned, scGPT demonstrates robust performance across datasets generated using different technologies or originating from diverse laboratories, effectively handling batch effects and technical variations.

Resolution Adaptation: The framework supports annotation at multiple hierarchical levels, from major cell classes to fine-grained subtypes, allowing researchers to adjust annotation resolution based on biological questions and data quality.

The protocol's accessibility is enhanced through provided command-line scripts and Jupyter Notebooks, making high-precision cell type annotation available to researchers with intermediate bioinformatics skills rather than requiring deep expertise in machine learning [4] [8].

Performance Benchmarking and Comparative Analysis

Cell Type Annotation and Batch Integration

scGPT's performance has been rigorously evaluated across multiple benchmarks, demonstrating both strengths and limitations. In controlled fine-tuning scenarios, particularly for cell type annotation, the model achieves state-of-the-art results. However, zero-shot evaluations—where the model is used without task-specific training—reveal important limitations that must be considered for practical applications [5].

Table 2: Comparative Performance of scGPT Against Established Methods

| Method | Cell Type Clustering (AvgBIO) | Batch Integration (iLISI) | Perturbation Prediction (Pearson Δ) | Computational Demand |
|---|---|---|---|---|
| scGPT | Variable (dataset-dependent) [5] | Superior on complex biological batches [5] | 0.327-0.641 across datasets [7] | High (requires fine-tuning) [3] |
| Geneformer | Underperforms HVG selection [5] | Consistently ranks last [5] | Not benchmarked | Moderate |
| scVI | Consistent performance [5] | Effective on technical variation [5] | Not primary focus | Low-Moderate |
| Harmony | Good performance [5] | Struggles with Tabula Sapiens [5] | Not applicable | Low |
| HVG Selection | Outperforms foundation models [5] | Best scores across datasets [5] | Simple baseline | Minimal |

In zero-shot cell type clustering assessments, scGPT shows variable performance across datasets. It performs comparably to established methods like scVI on Tabula Sapiens, Pancreas, and PBMC datasets but underperforms relative to simpler approaches like highly variable gene (HVG) selection on others. This suggests that while pretraining provides a foundation, task-specific adaptation remains crucial for optimal performance [5].

For batch integration tasks, scGPT demonstrates particular strength in handling complex biological batch effects—such as those arising from different donors—where it outperforms both Harmony and scVI on Tabula Sapiens and Immune datasets. However, it shows limitations in correcting for batch effects between different experimental techniques, indicating that technical artifacts remain challenging [5].
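
Batch-mixing metrics such as iLISI ask how many batches are represented in each cell's local neighborhood of the integrated embedding. The sketch below is a simplified, unweighted stand-in (inverse Simpson's index over a fixed k nearest neighbors) rather than the perplexity-weighted LISI used in published benchmarks; the function name and the choice of k are assumptions. A score of 1 means neighborhoods contain a single batch, and values approaching the number of batches indicate good mixing.

```python
import numpy as np


def knn_batch_mixing(embeddings, batch_labels, k=10):
    """Mean inverse Simpson's index of batch labels among each cell's k nearest neighbors."""
    x = np.asarray(embeddings, dtype=float)
    labels = np.asarray(batch_labels)
    sq_norms = np.sum(x ** 2, axis=1)
    # Pairwise squared Euclidean distances between all cells
    dist = sq_norms[:, None] + sq_norms[None, :] - 2 * x @ x.T
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbor
    neighbors = np.argsort(dist, axis=1)[:, :k]
    scores = []
    for row in neighbors:
        _, counts = np.unique(labels[row], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))


rng = np.random.default_rng(0)
batch_a = rng.normal(0, 1, size=(20, 2))
batch_b_separate = rng.normal(100, 1, size=(20, 2))  # uncorrected: batches far apart
batch_b_mixed = rng.normal(0, 1, size=(20, 2))       # well integrated: same region
labels = ["A"] * 20 + ["B"] * 20

print(knn_batch_mixing(np.vstack([batch_a, batch_b_separate]), labels, k=5))  # 1.0
print(knn_batch_mixing(np.vstack([batch_a, batch_b_mixed]), labels, k=5))
```

Note that a high mixing score alone does not show that biological structure was preserved; benchmarks such as [5] pair it with cell-type conservation metrics.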

Perturbation Response Prediction

In predicting cellular responses to genetic perturbations, scGPT has demonstrated mixed performance. When evaluated on standard Perturb-seq benchmarks, the model achieves Pearson correlation coefficients in differential expression space ranging from 0.327 to 0.641 across different datasets. Surprisingly, even simple baseline models—such as taking the mean of training examples—can outperform scGPT in some scenarios. Similarly, random forest regressors using Gene Ontology features substantially outperform scGPT by margins of 0.098 to 0.151 in Pearson Delta metrics across benchmarks [7].

This performance gap highlights an important consideration for researchers: incorporating biologically meaningful features through simpler models may sometimes yield better results than complex foundation models, particularly when training data is limited or when specific prior knowledge is available. However, it's worth noting that using scGPT's embeddings as features in random forest models improves performance compared to the fine-tuned scGPT model itself, suggesting that the model captures valuable biological information that may not be fully utilized by its native prediction heads [7].
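
The Pearson Delta metric compares predicted and observed changes relative to unperturbed control cells rather than raw expression, so a model cannot score well merely by reproducing each gene's typical expression level. The sketch below computes it for one held-out perturbation alongside the train-mean baseline described above. The exact gene subset and control definition used in [7] may differ, and all numbers are toy values.

```python
import numpy as np


def pearson_delta(predicted, observed, control):
    """Pearson correlation between predicted and observed shifts from the control profile."""
    control = np.asarray(control, dtype=float)
    pred_delta = np.asarray(predicted, dtype=float) - control
    true_delta = np.asarray(observed, dtype=float) - control
    return float(np.corrcoef(pred_delta, true_delta)[0, 1])


def train_mean_baseline(train_profiles):
    """Baseline: predict the average post-perturbation profile seen during training."""
    return np.asarray(train_profiles, dtype=float).mean(axis=0)


control = np.array([1.0, 1.0, 1.0, 1.0])           # mean expression of control cells
train = np.array([[2.0, 0.5, 1.0, 2.5],
                  [1.8, 0.7, 1.2, 2.9]])           # profiles of training perturbations
held_out = np.array([2.1, 0.4, 1.1, 2.8])          # observed profile, unseen perturbation

baseline = train_mean_baseline(train)
print(round(pearson_delta(baseline, held_out, control), 3))
```

When perturbations share a common response program, as in this toy example, the mean baseline already correlates strongly with the truth, which is why it is a demanding reference point.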

Advanced Applications in Drug Discovery and Therapeutic Development

Drug Response Prediction

Beyond basic cell type annotation, scGPT shows significant promise in drug discovery applications, particularly in predicting cancer drug response (CDR). When integrated with graph neural networks in frameworks like DeepCDR, scGPT-derived cell embeddings enhance prediction accuracy for half-maximal inhibitory concentration (IC50) values—a critical metric for assessing drug potency and efficacy [2].

In comparative studies, scGPT-based approaches consistently outperform both the original DeepCDR framework and scFoundation-integrated variants across multiple evaluation settings, including cell line-based, cancer type-specific, and drug-specific predictions. The model demonstrates particular strength in leave-one-drug-out validation scenarios, where it must predict responses for completely unseen compounds, indicating better generalization capabilities than alternative approaches [2].

Additionally, scGPT-based models exhibit greater training stability compared to other foundation model integrations, an important practical consideration for reproducible research and deployment in resource-constrained environments. This stability, combined with competitive performance, positions scGPT as a valuable tool for prioritizing candidate therapeutics and accelerating personalized treatment strategies [2].
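
A leave-one-drug-out evaluation holds out every response measurement for one compound at a time, so the model never sees that drug during training. The generator below sketches the split logic. The record fields (`drug`, `cell_line`, `ln_ic50`) are hypothetical, and scikit-learn's `LeaveOneGroupOut` with the drug identifier as the group should produce equivalent splits.

```python
from collections import defaultdict


def leave_one_drug_out_splits(records):
    """Yield (held_out_drug, train_indices, test_indices), one split per drug."""
    indices_by_drug = defaultdict(list)
    for idx, record in enumerate(records):
        indices_by_drug[record["drug"]].append(idx)
    for drug, test_idx in sorted(indices_by_drug.items()):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(records)) if i not in held_out]
        yield drug, train_idx, test_idx


# Hypothetical response records: one row per (cell line, drug) measurement
records = [
    {"cell_line": "line_1", "drug": "drug_A", "ln_ic50": 1.2},
    {"cell_line": "line_1", "drug": "drug_B", "ln_ic50": -0.4},
    {"cell_line": "line_2", "drug": "drug_A", "ln_ic50": 0.8},
]
for drug, train_idx, test_idx in leave_one_drug_out_splits(records):
    print(drug, train_idx, test_idx)
```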

Interpretable Analysis and Target Discovery

Recent methodological advances have leveraged scGPT as a teacher model to train more interpretable architectures for therapeutic target discovery. The scKAN framework employs knowledge distillation from scGPT to a Kolmogorov-Arnold network, combining the foundation model's comprehensive biological knowledge with enhanced interpretability for identifying cell-type-specific marker genes and potential drug targets [3].

This approach demonstrates scGPT's utility not only as a direct predictive tool but also as a source of biological knowledge that can be transferred to more specialized architectures. In a case study on pancreatic ductal adenocarcinoma, gene signatures identified through this scGPT-guided approach led to a potential drug repurposing candidate, with molecular dynamics simulations supporting binding stability—showcasing a direct path from single-cell analysis to therapeutic hypothesis [3].
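
Knowledge distillation trains a student to match a teacher's softened output distribution as well as the true labels. The loss below is the generic temperature-scaled formulation (soft-target KL divergence plus hard-label cross-entropy), written in NumPy for clarity. The temperature, the weighting `alpha`, and the use of cell-type classification logits are assumptions, and scKAN's actual training objective may differ [3].

```python
import numpy as np


def softmax(logits, temperature=1.0):
    z = np.asarray(logits, dtype=float) / temperature
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def distillation_loss(student_logits, teacher_logits, labels, temperature=2.0, alpha=0.5):
    """alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * cross-entropy(labels, student)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student_soft = softmax(student_logits, temperature)
    kl = np.sum(p_teacher * (np.log(p_teacher) - np.log(p_student_soft)), axis=-1)
    soft_term = temperature ** 2 * kl.mean()  # T^2 keeps the soft term's scale comparable
    p_student = softmax(student_logits)
    rows = np.arange(len(labels))
    hard_term = -np.log(p_student[rows, labels]).mean()
    return float(alpha * soft_term + (1 - alpha) * hard_term)


teacher = np.array([[4.0, 1.0, 0.0], [0.5, 3.0, 0.2]])  # e.g. teacher classifier logits
student = np.array([[2.0, 1.5, 0.1], [0.3, 1.0, 0.9]])  # e.g. interpretable student logits
labels = np.array([0, 1])                                # true cell-type indices
print(distillation_loss(student, teacher, labels))
```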

Diagram 2: scGPT for Drug Response Prediction Framework

Practical Implementation and Research Reagents

Essential Research Reagents and Computational Tools

Successful implementation of scGPT for cell type annotation requires specific computational resources and data components:

Table 3: Essential Research Reagents and Tools for scGPT Implementation

| Resource Category | Specific Tools/Datasets | Function and Purpose |
|---|---|---|
| Pretrained Models | scGPT-human checkpoint [6] | Provides foundational knowledge from 33M cells for transfer learning |
| Data Processing | Scanpy [6], NumPy, Pandas | Handles single-cell data preprocessing, normalization, and HVG selection |
| Visualization | UMAP [6], sc.pl.umap | Generates low-dimensional embeddings and cluster visualization |
| Benchmark Datasets | Retinal cell datasets [9], Pancreas, Tabula Sapiens [5] | Provides standardized benchmarks for model evaluation and comparison |
| Evaluation Metrics | F1-score, Pearson correlation, BIO score [5] | Quantifies model performance across different task types |

Implementation Considerations

Practical deployment of scGPT requires attention to several technical considerations:

Data Compatibility: Ensure single-cell data is properly formatted as AnnData objects with correct gene annotation columns (typically "feature_name" for CELLxGENE datasets) [6].

Preprocessing Consistency: Apply consistent normalization (CPM followed by log1p transformation) and highly variable gene selection methods (Seurat v3 flavor for 3,000 genes) to maintain compatibility with the model's expected input distribution [6].

Computational Resources: The model requires substantial memory and GPU resources, particularly for fine-tuning on large datasets. One tested configuration uses 32 GB of RAM and an NVIDIA T4 GPU for standard workflows [6].

Fine-tuning Strategy: For optimal cell type annotation performance, employ progressive fine-tuning—starting with low learning rates and potentially freezing earlier layers—to adapt the foundation model to specific tissues or experimental conditions without catastrophic forgetting of pretrained knowledge [4].

The availability of comprehensive protocols, Jupyter Notebook implementations, and pretrained model checkpoints significantly lowers the barrier to entry for researchers with intermediate bioinformatics skills, making advanced transformer-based analysis accessible to broader scientific communities [4] [8].
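
The data-compatibility and preprocessing considerations above can be sketched without single-cell libraries: keep only genes the pretrained vocabulary knows, and apply CPM scaling followed by log1p. In Scanpy the normalization corresponds to `sc.pp.normalize_total(adata, target_sum=1e6)` followed by `sc.pp.log1p(adata)`. The `vocab` dictionary here is hypothetical; in practice gene symbols would come from a column such as `adata.var["feature_name"]` and token ids from the vocabulary distributed with the checkpoint. Computing library size on all measured genes before subsetting is also an assumption of this sketch.

```python
import numpy as np


def match_to_vocab(gene_names, vocab):
    """Return column indices of genes found in the vocabulary and their token ids."""
    keep = [i for i, gene in enumerate(gene_names) if gene in vocab]
    token_ids = [vocab[gene_names[i]] for i in keep]
    return np.array(keep, dtype=int), np.array(token_ids, dtype=int)


def cpm_log1p(counts):
    """Scale each cell (row) to one million total counts, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # leave all-zero cells at zero instead of dividing by 0
    return np.log1p(counts / totals * 1e6)


vocab = {"CD3E": 0, "MS4A1": 1, "LYZ": 2}            # hypothetical gene-to-token mapping
gene_names = ["CD3E", "NOT_IN_VOCAB", "MS4A1"]
counts = np.array([[1, 5, 3],
                   [0, 2, 0]])                        # cells x genes raw counts

keep, token_ids = match_to_vocab(gene_names, vocab)
normalized = cpm_log1p(counts)[:, keep]               # library size uses all measured genes
print(token_ids, normalized.round(2))
```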

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized biology by allowing researchers to probe cellular heterogeneity at an unprecedented resolution. However, the high dimensionality, sparsity, and technical noise inherent in scRNA-seq data present significant analytical challenges [10]. Inspired by breakthroughs in natural language processing (NLP), computational biologists have developed single-cell Foundation Models (scFMs)—large-scale deep learning models pre-trained on massive datasets to learn universal patterns of cellular biology [11]. These models treat individual cells as "sentences" and genes or their expression values as "words," creating a foundational understanding that can be adapted to various downstream tasks such as cell type annotation, perturbation prediction, and batch integration [11] [12].

This Application Note focuses on the transformative power of pre-training, specifically using the scGPT model as a case study. Pre-training on a corpus of over 33 million non-cancerous human cells allows scGPT to internalize the fundamental "language" of gene regulation and cellular identity [5] [11] [13]. We detail the protocols for leveraging this pre-trained biological foundation for the critical task of cell type annotation, providing researchers and drug development professionals with a robust, scalable framework to decipher complex cellular landscapes.

The Architecture of scGPT and the Pre-training Paradigm

Model Architecture and Tokenization

scGPT is built upon a transformer architecture, which uses self-attention mechanisms to weigh the importance of different genes when modeling a cell's state. A critical step in adapting transformer models to non-sequential biological data is tokenization—the process of converting raw gene expression data into discrete units the model can process [11].

Tokenization strategies used by scGPT and related scFMs typically involve:

- Gene Tokens: Each gene is represented as a unique token, analogous to a word in a sentence.

- Value Embedding: The expression value of each gene is processed through binning or a projection layer to create a value embedding.

- Rank-Based Sequencing: Since genes lack a natural order, some scFMs rank genes by their expression levels within each cell to create a deterministic input sequence [11]. scGPT instead represents expression magnitude through value binning and does not depend on gene order.

scGPT utilizes a GPT-like decoder architecture with a masked self-attention mechanism, training the model to iteratively predict masked genes based on the context of known genes in a cell [11] [12]. This process forces the model to learn the complex co-regulatory relationships between genes.
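
Rank-based ordering, as described in the Rank-Based Sequencing item, can be sketched as a stable sort of each cell's expressed genes by decreasing expression, truncated to a maximum input length. This illustrates the general rank-encoding idea and is not taken from any model's codebase; some models normalize expression (for example, across the pretraining corpus) before ranking, a step omitted here. Function and gene names are illustrative.

```python
import numpy as np


def rank_order_genes(cell_values, gene_names, max_len=None):
    """Return the names of a cell's expressed genes, highest expression first."""
    values = np.asarray(cell_values, dtype=float)
    expressed = np.flatnonzero(values > 0)  # unexpressed genes are dropped
    # Stable sort on negated values: ties keep their original column order
    order = expressed[np.argsort(-values[expressed], kind="stable")]
    if max_len is not None:
        order = order[:max_len]  # truncate to the model's context length
    return [gene_names[i] for i in order]


genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D", "GENE_E"]
print(rank_order_genes([0.0, 5.0, 2.0, 5.0, 1.0], genes))             # ['GENE_B', 'GENE_D', 'GENE_C', 'GENE_E']
print(rank_order_genes([0.0, 5.0, 2.0, 5.0, 1.0], genes, max_len=2))  # ['GENE_B', 'GENE_D']
```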

The Pre-training Corpus

The scale and diversity of the pre-training dataset are the bedrock of the model's performance. scGPT was pre-trained on a massive collection of over 33 million high-quality human cells from public resources like CELLxGENE, encompassing a wide range of tissues, cell types, and states [5] [11] [13]. This exposure allows the model to learn a robust and generalizable representation of cellular biology that is not overfitted to any specific tissue or condition.

Table 1: Key Components of the scGPT Pre-training Framework

| Component | Description | Role in Building a Biological Foundation |
|---|---|---|
| Model Architecture | Transformer-based decoder (GPT-style) | Captures complex, non-linear gene-gene interactions via self-attention mechanisms. |
| Pre-training Data | >33 million non-cancerous human cells [13] | Provides a comprehensive universe of cellular states for the model to learn from. |
| Tokenization | Gene identity + expression value embedding | Converts continuous, unordered gene expression into a structured model input. |
| Pre-training Task | Masked Gene Modeling (MGM) | Forces the model to learn internal representations of gene regulatory networks. |
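
The masked gene modeling objective in the table can be illustrated by hiding a random subset of a cell's expression values and scoring predictions only at the hidden positions. In this sketch the 15% mask ratio, the sentinel value -1, and the mean-squared-error loss are assumptions chosen for illustration; a real model would produce `predicted` from the unmasked context rather than from the toy stand-in used here.

```python
import numpy as np


def mask_expression(values, mask_ratio=0.15, mask_value=-1.0, rng=None):
    """Replace a random fraction of a cell's expression values with a sentinel."""
    rng = np.random.default_rng() if rng is None else rng
    values = np.asarray(values, dtype=float)
    n_mask = max(1, int(round(mask_ratio * values.size)))
    mask = np.zeros(values.size, dtype=bool)
    mask[rng.choice(values.size, size=n_mask, replace=False)] = True
    return np.where(mask, mask_value, values), mask


def masked_mse(predicted, target, mask):
    """Mean squared error restricted to the masked positions."""
    diff = np.asarray(predicted, dtype=float)[mask] - np.asarray(target, dtype=float)[mask]
    return float(np.mean(diff ** 2))


cell = np.arange(1.0, 21.0)                 # 20 genes with toy expression values
masked_input, mask = mask_expression(cell, rng=np.random.default_rng(0))
predicted = cell + 0.5                      # stand-in for a model's reconstruction
print(mask.sum(), masked_mse(predicted, cell, mask))  # 3 0.25
```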

Application Note: Cell Type Annotation with Pre-trained scGPT

Cell type annotation is a fundamental yet laborious step in scRNA-seq analysis. Traditional manual annotation requires expert knowledge to compare cluster-specific marker genes against canonical references, a process that is slow and difficult to scale [14] [15]. Pre-trained scGPT automates and enhances this process by leveraging its internalized knowledge of marker genes across hundreds of cell types.

Advantages Over Traditional Methods

Benchmarking studies of foundation models, including scGPT and general-purpose large language models such as GPT-4, point to several advantages:

- Accuracy: When evaluated across hundreds of tissue and cell types, GPT-4 (the backbone of tools like GPTCelltype) generates annotations with strong concordance to manual expert annotations [14]. In complex scenarios, it can even provide more granular annotations than manual methods [14].

- Robustness: In the same GPT-4 benchmark [14], the model showed considerable resilience in handling complex data, distinguishing between pure and mixed cell types with ~93% accuracy and between known and unknown cell types with ~99% accuracy, even when input gene sets were noisy or incomplete [14].

- Efficiency: The pre-trained model can be directly applied or efficiently fine-tuned for annotation, drastically reducing the need for extensive, dataset-specific training and pipeline construction [14] [11].

Table 2: Comparison of Cell Annotation Methods

| Method | Principle | Strengths | Limitations |
|---|---|---|---|
| Manual Annotation | Expert matching of marker genes to clusters. | Considered the gold standard; allows for novel cell discovery. | Labor-intensive, requires deep expertise, not scalable [15]. |
| Automatic Methods (e.g., SingleR, ScType) | Algorithmic comparison to reference datasets. | Fast, reproducible. | Performance depends on quality and comprehensiveness of reference [14]. |
| Foundation Models (scGPT) | Leverages knowledge from pre-training on millions of cells. | High accuracy, robust to noise, requires no custom reference for zero-shot tasks [14] [12]. | Requires computational resources; "black box" nature can hinder interpretation [14]. |

Experimental Protocol: Zero-Shot Cell Type Annotation

This protocol outlines the use of a pre-trained scGPT model for annotating cell types in a new scRNA-seq dataset without any further fine-tuning (zero-shot).

I. Input Data Preparation

- Data Pre-processing: Perform standard scRNA-seq analysis on your query dataset using a pipeline like Seurat or Scanpy. This includes:

- Quality Control: Filter out low-quality cells and genes.

- Normalization: Normalize the count data to account for library size.

- Differential Expression: Identify marker genes for each cell cluster. The top 10 differential genes identified by a two-sided Wilcoxon rank-sum test have been shown to be optimal for GPT-4-based annotation [14].

- Input Formatting: For each cell cluster, compile a list of the top N marker genes (e.g., 10 genes), ranked by significance or fold-change.
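
Compiling a top-N marker list per cluster can be sketched with a simple log2 fold-change ranking of each cluster against all other cells. This is a simplified stand-in for the two-sided Wilcoxon test mentioned above (in Scanpy, `sc.tl.rank_genes_groups(adata, groupby=..., method="wilcoxon")` performs that test). The function name and pseudocount are assumptions, and the input is assumed to be library-size-normalized expression with at least two clusters.

```python
import numpy as np


def top_markers(expr, clusters, gene_names, n_top=10, pseudocount=1e-3):
    """Rank genes per cluster by log2 fold change of mean expression vs. all other cells."""
    expr = np.asarray(expr, dtype=float)
    clusters = np.asarray(clusters)
    markers = {}
    for cluster in np.unique(clusters):
        in_cluster = clusters == cluster
        mean_in = expr[in_cluster].mean(axis=0)
        mean_out = expr[~in_cluster].mean(axis=0)
        log2fc = np.log2((mean_in + pseudocount) / (mean_out + pseudocount))
        order = np.argsort(-log2fc, kind="stable")[:n_top]
        markers[str(cluster)] = [gene_names[i] for i in order]
    return markers


expr = np.array([[5.0, 0.0, 1.0],
                 [4.0, 0.0, 1.0],
                 [0.0, 6.0, 1.0],
                 [0.0, 5.0, 1.0]])          # cells x genes, normalized expression
clusters = ["T", "T", "B", "B"]
genes = ["CD3E", "MS4A1", "ACTB"]
for cluster, marker_list in top_markers(expr, clusters, genes, n_top=2).items():
    print(cluster, marker_list)
```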

II. Model Inference

- Model Loading: Load the pre-trained scGPT model into your computational environment. The model can be accessed from official repositories (e.g., https://github.com/bowang-lab/scGPT).

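- Prompt Construction: In the marker-gene prompting approach benchmarked for GPT-4 [14], the marker gene list for a cluster is formatted as a text prompt, and a basic prompt strategy is sufficient [14]. Example:

- "Annotate the cell type based on the following marker genes: [Gene A], [Gene B], [Gene C], ..."

- Query Execution: Pass the constructed prompt for each cluster through the model to generate a cell type prediction. Note that scGPT itself takes gene tokens and expression values as input rather than free-text prompts, so zero-shot annotation with scGPT is typically performed by embedding the query cells and transferring labels from an annotated reference.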
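
Because scGPT operates on gene tokens and expression values rather than free text, one zero-shot route is to embed query cells with the pretrained model and transfer labels from an annotated reference embedded the same way. The sketch below assumes both embedding matrices have already been computed; it performs cosine-similarity k-nearest-neighbor voting, and the vote fraction it returns can support the uncertainty flagging described in step III. The function name and k are assumptions, and the 2-D embeddings are toy values.

```python
import numpy as np
from collections import Counter


def knn_label_transfer(query_emb, ref_emb, ref_labels, k=10):
    """Label each query cell by majority vote of its k most cosine-similar reference cells."""
    q = np.asarray(query_emb, dtype=float)
    r = np.asarray(ref_emb, dtype=float)
    q = q / np.linalg.norm(q, axis=1, keepdims=True)
    r = r / np.linalg.norm(r, axis=1, keepdims=True)
    similarity = q @ r.T  # rows: query cells, columns: reference cells
    nearest = np.argsort(-similarity, axis=1)[:, :k]
    labels, vote_fractions = [], []
    for row in nearest:
        votes = Counter(ref_labels[i] for i in row)
        label, count = votes.most_common(1)[0]
        labels.append(label)
        vote_fractions.append(count / len(row))  # low values flag ambiguous cells
    return labels, vote_fractions


ref_emb = np.array([[1.0, 0.0], [0.95, 0.05], [0.9, 0.1],
                    [0.0, 1.0], [0.05, 0.95], [0.1, 0.9]])
ref_labels = ["T cell"] * 3 + ["B cell"] * 3
query_emb = np.array([[0.9, 0.1], [0.2, 0.8]])
print(knn_label_transfer(query_emb, ref_emb, ref_labels, k=3))
```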

III. Validation and Interpretation

- Expert Validation: As with any automated method, it is crucial to validate the model's annotations. Compare the predictions with known canonical markers and the scientific literature [14].

- Handling Uncertainty: The model may indicate low confidence or provide multiple hypotheses for ambiguous clusters. These should be flagged for further biological investigation.

Diagram 1: scGPT Annotation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of scFMs like scGPT requires both computational and biological resources. The following table details key solutions for researchers embarking on this path.

Table 3: Essential Research Reagent Solutions for scGPT-Based Annotation

| Item / Resource | Function / Description | Example / Source |
|---|---|---|
| Pre-trained scGPT Model | The core AI model containing pre-trained weights from 33+ million cells. | Official scGPT GitHub repository. |
| Single-Cell Analysis Platform | Integrated environment for pre-processing, QC, and clustering of scRNA-seq data. | Seurat (R), Scanpy (Python). |
| Reference Cell Atlas | High-quality, manually curated datasets for benchmarking and validation. | HuBMAP, Human Cell Atlas, CELLxGENE [11]. |
| Marker Gene Database | Curated knowledge base of cell-type-specific markers for expert validation. | CellMarker, Annotation of Cell Types (ACT) server [15]. |
| High-Performance Computing (HPC) | Computational infrastructure with GPUs to run large transformer models. | Local cluster or cloud computing services (AWS, GCP, Azure). |

Performance Benchmarks and Comparative Analysis

Independent benchmarking studies provide a critical lens for evaluating the real-world performance of scFMs. While pre-training offers immense potential, performance varies across tasks and models.

In batch integration, which aims to remove technical artifacts while preserving biological variance, scGPT's zero-shot performance is mixed. It can outperform methods like Harmony on complex datasets with both technical and biological batch effects (e.g., Tabula Sapiens) but may be outperformed by simpler methods like Highly Variable Genes (HVG) or scVI on datasets with purely technical variation [5].

For cell type clustering, zero-shot embeddings from scGPT and other foundation models do not consistently outperform established baselines. Simpler methods like HVG selection or scVI often achieve superior performance as measured by metrics like average BIO score [5]. This highlights that the relationship between the pre-training objective (e.g., masked gene modeling) and specific downstream tasks like clustering is not always straightforward.

However, in more complex gene-level tasks, such as predicting cellular responses to perturbations, foundation models show both promise and limitations. A benchmark of post-perturbation RNA-seq prediction found that fine-tuned scGPT was surprisingly outperformed by a simple baseline model that predicts the mean of the training data [7]. Furthermore, a Random Forest model using prior biological knowledge (Gene Ontology vectors) significantly outperformed foundation models [7]. This suggests that while scFMs learn powerful representations, integrating explicit biological knowledge can be crucial for optimal performance on specific prediction tasks.

Diagram 2: Performance Comparison

Pre-training on tens of millions of cells equips models like scGPT with a powerful, generalized understanding of cellular biology, making them invaluable tools for accelerating discovery. The application of pre-trained scGPT for cell type annotation demonstrates a paradigm shift from labor-intensive, manual curation toward scalable, AI-driven biological insight.

The future of scFMs lies in addressing current limitations, such as improving zero-shot task performance, enhancing model interpretability to avoid "AI hallucination," and developing more sophisticated methods for integrating multi-omic and spatial data [14] [11] [12]. As these models evolve, they will become even more integral to unraveling cellular complexity, driving forward both basic research and therapeutic development. By adhering to the protocols and considerations outlined in this note, researchers can confidently harness the power of pre-training to illuminate the inner workings of cellular systems.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of cellular heterogeneity. While cell type annotation remains a fundamental application, modern single-cell foundation models like scGPT are engineered to extract far deeper biological insights. This application note details two of scGPT's most powerful advanced capabilities: gene regulatory network (GRN) inference and batch integration. We provide a structured overview of their performance, followed by detailed experimental protocols to guide researchers in implementing these analyses, thereby moving beyond descriptive cataloging to functional and integrative biology.

The utility of scGPT in advanced downstream tasks is demonstrated through benchmarking against specialized tools and other foundation models. The following tables summarize key performance metrics.

Table 1: Benchmarking scGPT against other single-cell Foundation Models (scFMs) on general tasks. An overall ranking score was calculated across multiple tasks and datasets, where a lower score indicates better average performance [12].

| Model Name | Pretraining Dataset Scale | Model Parameters | Overall Benchmark Ranking (Lower is Better) |
|---|---|---|---|
| scGPT | 33 million cells [16] [17] | 50 million [16] [12] | 2 |
| scFoundation | 50 million cells [18] [12] | 100 million [18] [12] | 1 |
| Geneformer | 30 million cells [18] [12] | 40 million [12] | 3 |
| UCE | 36 million cells [18] [12] | 650 million [18] [12] | 4 |
| LangCell | 27.5 million cells [12] | 40 million [12] | 5 |

Table 2: Performance of GRN inference methods on the CausalBench benchmark. The F1 score is from biology-driven evaluation, and the Mean Wasserstein-FOR Trade-off is a statistical metric (lower rank is better) [19].

| Method Category | Method Name | Biological Evaluation F1 Score | Statistical Evaluation Rank (Mean Wasserstein-FOR) |
|---|---|---|---|
| Challenge (Interventional) | Mean Difference [19] | 0.136 | 1 |
| Challenge (Interventional) | Guanlab [19] | 0.138 | 2 |
| Observational | GRNBoost | 0.129 | 3 |
| Observational | GRNBoost + TF | 0.084 | 6 |
| Interventional | GIES | 0.092 | 9 |
| Interventional | DCDI-G | 0.091 | 10 |

Protocol 1: Gene Regulatory Network Inference with scGPT

Background and Principle

Gene regulatory network inference aims to reconstruct causal interactions between transcription factors and their target genes. While specialized tools like DAZZLE [20] [21] and locaTE [22] exist, scGPT provides a foundation model-based approach. scGPT is pre-trained on over 33 million human cells using a generative pre-training objective with a specialized attention mask, learning intrinsic relationships between genes [16] [17]. This protocol leverages the model's pre-trained knowledge to infer context-specific GRNs.

Experimental Workflow

The following diagram outlines the major steps for GRN inference using scGPT:

Step-by-Step Procedure

Data Preprocessing

- Input: Raw count matrix from scRNA-seq (cells x genes).

- Quality Control: Filter out low-quality cells and genes based on standard QC metrics (mitochondrial counts, number of genes detected).

- Normalization: Normalize the count data. A common approach is to use log1p (log(1+x)) transformation [20] [21].

- Formatting: Prepare the data as an `AnnData` object, ensuring gene names are stored in `adata.var["feature_name"]` [16].
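The preprocessing steps above can be sketched on a dense cells × genes NumPy array. This is a minimal illustration rather than scGPT's own pipeline: the thresholds (`min_genes`, `max_mito_frac`, `min_cells`), the `MT-` prefix used to flag mitochondrial genes, and the counts-per-10,000 scaling applied before `log1p` are all assumptions. With scanpy, the corresponding steps are usually `sc.pp.filter_cells`, `sc.pp.filter_genes`, `sc.pp.normalize_total` and `sc.pp.log1p` on an `AnnData` object.

```python
import numpy as np


def qc_and_normalize(counts, gene_names, min_genes=200, max_mito_frac=0.2,
                     min_cells=3, target_sum=1e4):
    """QC-filter a raw cells x genes count matrix, then apply CP10K + log1p.

    All thresholds and the 'MT-' mitochondrial prefix are illustrative assumptions.
    Returns the log-normalized matrix, the kept gene names and the kept-cell mask.
    """
    counts = np.asarray(counts, dtype=float)
    gene_names = np.asarray(gene_names)

    # Cell-level QC: number of detected genes and mitochondrial read fraction.
    genes_detected = (counts > 0).sum(axis=1)
    mito_mask = np.char.startswith(gene_names.astype(str), "MT-")
    total = counts.sum(axis=1)
    mito_frac = np.divide(counts[:, mito_mask].sum(axis=1), total,
                          out=np.zeros_like(total), where=total > 0)
    keep_cells = (genes_detected >= min_genes) & (mito_frac <= max_mito_frac)
    counts = counts[keep_cells]

    # Gene-level QC: keep genes detected in at least `min_cells` of the kept cells.
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts, gene_names = counts[:, keep_genes], gene_names[keep_genes]

    # Scale each cell to `target_sum` total counts, then apply log(1 + x).
    size = counts.sum(axis=1, keepdims=True)
    normalized = counts / np.maximum(size, 1e-12) * target_sum
    return np.log1p(normalized), gene_names, keep_cells
```

The returned matrix and gene names would then be written back into an `AnnData` object (with gene symbols in `adata.var["feature_name"]`) before model loading.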

Model Loading and Fine-tuning

- Load Pre-trained Model: Initialize the scGPT model using the provided code repository and interface [16].

- Fine-tuning (Optional): For optimal performance on a specific cellular context, fine-tune scGPT on your target dataset using the provided scripts. This adapts the model's general knowledge to your specific data.

Generate Gene Embeddings

Calculate Gene-Gene Interactions

- Method A: Embedding Correlation

  - Extract the gene embedding matrix.

  - Compute the pairwise cosine similarity or Pearson correlation between all gene embeddings.

  - The resulting symmetric matrix represents association scores between genes, which can be thresholded to infer the GRN [17].

- Method B: Attention Analysis

  - Analyze the attention weights from the transformer layers. High attention scores between a pair of genes suggest a potential regulatory relationship [12].
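Method A can be sketched in a few lines of NumPy, assuming the gene embeddings have already been extracted from the model into a genes × dimensions array (the extraction call itself is not shown, and the threshold value is illustrative). Because cosine similarity is symmetric, the resulting edges are undirected associations rather than directed regulatory links.

```python
import numpy as np


def cosine_similarity_matrix(gene_emb):
    """Pairwise cosine similarity between rows of a genes x dim embedding matrix."""
    emb = np.asarray(gene_emb, dtype=float)
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    unit = emb / np.maximum(norms, 1e-12)  # guard against zero-length vectors
    return unit @ unit.T


def threshold_edges(sim, gene_names, threshold=0.5):
    """Return undirected edges (gene_i, gene_j, score) with similarity >= threshold."""
    n = sim.shape[0]
    rows, cols = np.triu_indices(n, k=1)  # upper triangle only: no self-edges, no duplicates
    mask = sim[rows, cols] >= threshold
    return [(gene_names[i], gene_names[j], float(sim[i, j]))
            for i, j in zip(rows[mask], cols[mask])]
```

In practice the threshold is often replaced by keeping the top-k partners per gene, which avoids a single global cutoff.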

Validation

- Validate the inferred network using prior knowledge from databases like KEGG or Reactome.

- For perturbation datasets, use benchmarks like CausalBench [19] to assess the accuracy of predicted causal interactions.
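A minimal way to score an inferred network against prior knowledge is to treat a pathway or transcription factor target resource as a set of reference gene pairs and measure edge overlap. The sketch below assumes those pairs have already been extracted from KEGG or Reactome (parsing is not shown), and the gene names in the example are hypothetical. It is a simple overlap check, not the CausalBench evaluation.

```python
def _as_undirected(edges):
    """Normalize edges so (A, B) and (B, A) count once; extra fields such as scores are ignored."""
    return {frozenset((a, b)) for a, b, *_ in edges if a != b}


def edge_overlap_metrics(predicted_edges, reference_edges):
    """Precision, recall and F1 of predicted undirected edges against a reference edge set."""
    pred = _as_undirected(predicted_edges)
    ref = _as_undirected(reference_edges)
    tp = len(pred & ref)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(ref) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}
```

Because curated databases are incomplete, a low precision here does not by itself show that a predicted edge is wrong.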

Protocol 2: Batch Integration with scGPT

Background and Principle

Integrating multiple scRNA-seq datasets is critical for large-scale analysis but is challenged by technical batch effects and biological differences (e.g., across species, protocols, or tissues). Methods like sysVI, a conditional VAE (cVAE) with VampPrior and cycle-consistency, have been developed to handle these "substantial batch effects" [23]. scGPT addresses this by learning a batch-invariant latent representation of cells during its pre-training, effectively aligning data from different sources into a shared space for downstream analysis [17].

Experimental Workflow

The workflow for batch integration using scGPT is illustrated below:

Step-by-Step Procedure

Data Preparation

- Input: Multiple `AnnData` objects, each representing a separate batch or dataset to be integrated.

- Metadata: Ensure that batch or sample identity is recorded as a categorical field in the `AnnData.obs` attribute.

- Common Genes: Identify a set of variable genes common across all batches for integration.
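The data preparation step amounts to an inner join on genes plus a per-cell batch label. With `AnnData` objects this is typically done with `anndata.concat(adatas, join="inner", label="batch")`, which keeps only shared genes and records the batch key in `.obs`; the NumPy sketch below spells out the same logic on plain arrays so it runs standalone. Selecting variable genes within the shared set is not shown.

```python
import numpy as np


def concat_on_common_genes(batches):
    """Stack (matrix, gene_names, batch_label) tuples on the genes they all share.

    Returns the stacked cells x genes matrix, the common gene list (in the order
    of the first batch) and one batch label per cell.
    """
    common = set(batches[0][1])
    for _, genes, _ in batches[1:]:
        common &= set(genes)  # inner join: keep genes present in every batch
    ordered = [g for g in batches[0][1] if g in common]

    blocks, labels = [], []
    for matrix, genes, label in batches:
        matrix = np.asarray(matrix)
        index = {g: i for i, g in enumerate(genes)}
        blocks.append(matrix[:, [index[g] for g in ordered]])  # reorder columns to match
        labels.extend([label] * matrix.shape[0])
    return np.vstack(blocks), ordered, labels
```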

Model Setup and Tokenization

- Load Model: Load the pre-trained scGPT model as described in Protocol 1.

- Tokenization: Use the scGPT tokenizer to convert gene expression vectors into model inputs. The input incorporates both gene expression values and their corresponding gene tokens [16].

- Condition Tokens: A key feature of scGPT is the use of condition tokens. Provide the batch labels as condition tokens (e.g., "batch_1", "batch_2") to the model. This instructs the model to explicitly account for and correct these technical variations [17].

Generate Integrated Embeddings

- Pass the tokenized data with condition tokens through the scGPT model.

- The model's transformer architecture, trained with an attention mask, generates a unified latent representation for each cell. In this space, cells cluster by type rather than by batch origin [17].
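Before batch labels such as "batch_1" and "batch_2" can serve as condition tokens, they typically need to be mapped to integer indices that look up an embedding table. The helper below is a hypothetical sketch of that mapping; how scGPT's own code consumes the resulting IDs is not shown here.

```python
import numpy as np


def encode_batch_labels(labels):
    """Map categorical batch labels to contiguous integer IDs in first-seen order."""
    categories = list(dict.fromkeys(labels))  # dict preserves insertion order
    lookup = {cat: i for i, cat in enumerate(categories)}
    ids = np.array([lookup[lab] for lab in labels], dtype=np.int64)
    return ids, categories
```

Keeping the returned `categories` list alongside the IDs makes it possible to map model outputs back to the original batch names.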

Downstream Analysis and Evaluation

- Clustering and Visualization: Use the integrated cell embeddings for UMAP/t-SNE visualization and clustering. Assess whether cell types cluster together regardless of their batch of origin.

- Evaluation Metrics: Quantify integration performance using standard metrics:

  - iLISI: Measures the mixing of batches in local neighborhoods. A higher score indicates better batch mixing [23].

  - Cell-type Level Biological Preservation: Use metrics like normalized mutual information (NMI) to ensure that biological variation (cell types) is preserved after integration [23].

  - scGraph-OntoRWR: A novel metric that evaluates whether the relationships between cell types in the integrated space are consistent with established biological ontologies [12].
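iLISI can be approximated as sketched below. For each cell, the sketch takes its k nearest neighbours in the integrated embedding and computes the inverse Simpson's index of their batch labels, 1 / Σ p_b², where p_b is the fraction of neighbours from batch b. The original LISI uses perplexity-calibrated Gaussian neighbour weights; the uniform weights and `k=15` here are simplifying assumptions, so reported numbers should come from a reference implementation (for example the LISI metrics in the scib package).

```python
import numpy as np


def simplified_ilisi(embeddings, batch_labels, k=15):
    """Mean inverse Simpson's index of batch labels over each cell's k nearest neighbours.

    Uses uniform neighbour weights (a simplification of LISI). The score ranges
    from 1 (neighbourhoods contain a single batch) to the number of batches.
    """
    X = np.asarray(embeddings, dtype=float)
    labels = np.asarray(batch_labels)
    k = min(k, X.shape[0] - 1)

    # Brute-force squared Euclidean distances; only suitable for small examples.
    sq = (X ** 2).sum(axis=1)
    dist = sq[:, None] + sq[None, :] - 2 * X @ X.T
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]

    scores = []
    for row in neighbours:
        _, counts = np.unique(labels[row], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))
```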

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key software and data resources for implementing scGPT-based GRN inference and batch integration.

| Category | Item / Resource | Function / Description | Source / Citation |
|---|---|---|---|
| Foundation Model | scGPT | Core foundation model for single-cell biology; used for both GRN inference and batch integration. | [16] [17] |
| Computational Framework | PyTDC / TDC_ML | Machine learning platform for loading, fine-tuning, and running inference with scGPT. | [16] |
| Data Structure | AnnData | Standard Python object for handling single-cell data, compatible with scGPT. | [16] |
| Benchmarking Suite | CausalBench | Benchmark for rigorously evaluating GRN inference methods on real-world perturbation data. | [19] |
| Benchmarking Suite | scGraph-OntoRWR Metric | A biology-informed metric for evaluating if cell embedding relationships match known ontology. | [12] |
| Integration Metric | iLISI | Metric to evaluate batch mixing in the integrated latent space. | [23] |
| Prior Knowledge | KEGG, Reactome | Public databases used for validating inferred gene networks against known pathways. | Common Knowledge |

Single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of cellular heterogeneity, yet interpreting this data requires accurate identification of cell types and states. scGPT has emerged as a foundational model in this domain, trained on over 33 million single-cell transcriptomes to capture universal patterns in gene expression data [3]. The model adapts the transformer architecture, originally developed for natural language processing, to decipher the complex "language" of gene regulation within cells. Unlike traditional methods that rely on predefined marker genes, scGPT aims to learn the underlying principles of gene-gene interactions and regulatory networks directly from data through self-supervised pretraining. This capability positions scGPT as a powerful tool for automated cell type annotation, enabling researchers to classify cell populations with high precision and gain biological insights into the regulatory mechanisms governing cell identity and function [24] [3].

Architectural Framework: How scGPT Models Biological Systems

Input Representation and Tokenization

scGPT processes single-cell data through a sophisticated input encoding system that transforms raw gene expression values into a structured format the transformer can understand:

- Gene Tokenization: Each gene is represented by embedding its gene ID, allowing the model to learn a unique representation for each gene [3].

- Expression Value Processing: Expression values undergo binning to obtain expression embeddings, which encode the abundance level of each transcript [3].

- Contextual Embeddings: The model incorporates condition embeddings for specific genes and integrates these embedding inputs through multiple transformer layers [3].

This multi-faceted input representation enables scGPT to capture both the identity of genes and their expression levels, creating a rich foundation for learning biological relationships.
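The expression binning described above can be sketched as per-cell quantile binning. This follows our reading of the scheme described for scGPT, in which each cell's non-zero values are split into bins holding roughly equal shares of that cell's expressed genes, while zeros keep their own bin. Treat it as an approximation: the number of bins and the tie handling at bin edges are assumptions, not the reference implementation.

```python
import numpy as np


def bin_expression(row, n_bins=5):
    """Per-cell quantile binning of one cell's expression vector.

    Zeros stay in bin 0; non-zero values are assigned to bins 1..n_bins using
    quantile edges computed from this cell's own non-zero values.
    """
    row = np.asarray(row, dtype=float)
    binned = np.zeros(row.shape, dtype=np.int64)
    nonzero = row > 0
    if not nonzero.any():
        return binned
    values = row[nonzero]
    # Interior quantile edges split the expressed genes into equal-sized groups.
    edges = np.quantile(values, np.linspace(0, 1, n_bins + 1)[1:-1])
    binned[nonzero] = np.digitize(values, edges, right=True) + 1
    return binned
```

Because the edges are recomputed for every cell, a gene's bin reflects its rank within that cell rather than its absolute count, which makes values more comparable across cells sequenced at different depths.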

Transformer Architecture and Attention Mechanisms

At the core of scGPT lies the transformer architecture, which utilizes self-attention mechanisms to model dependencies between all genes in the input sequence:

- Self-Attention Mechanism: The attention mechanism computes gene representations by weighing information from all other genes in the input sequence, learning a "global context" of gene interactions [3].

- Bidirectional Modeling: Unlike autoregressive models, scGPT employs bidirectional attention, allowing it to capture interactions between all genes simultaneously rather than in a sequential manner [25].

- Contextual Representations: Through multiple layers of transformer blocks, scGPT builds increasingly sophisticated representations of genes that incorporate contextual information from interacting genes [3].

The attention weights learned during this process are often interpreted as reflecting the strength of regulatory influences between genes, and they form the basis for attention-based inference of gene regulatory networks.
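To make the self-attention description concrete, the following sketch computes single-head scaled dot-product attention over a handful of gene tokens with NumPy. It is a simplification under stated assumptions: random projection matrices stand in for learned weights, and scGPT's multiple heads, stacked layers and the specialized attention mask used during generative pretraining are not modelled. With no mask, every gene attends to every other gene, so row i of the returned matrix shows how gene i distributes its attention.

```python
import numpy as np


def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention over gene tokens.

    X: (n_genes, d_model) token embeddings. Returns the updated representations
    and the (n_genes, n_genes) attention matrix; each row sums to 1.
    """
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(K.shape[1])
    scores -= scores.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)  # softmax over all genes
    return weights @ V, weights


rng = np.random.default_rng(0)
tokens = rng.normal(size=(4, 8))  # 4 hypothetical gene tokens, 8-dim embeddings
W_q, W_k, W_v = (rng.normal(size=(8, 8)) for _ in range(3))
updated, attention = self_attention(tokens, W_q, W_k, W_v)
```

The attention matrix is generally not symmetric, which is why attention-based network inference may retain a notion of direction that embedding similarity lacks.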

Analytical Capabilities: From Embeddings to Biological Insights

Cell Type Annotation Performance

scGPT's primary application in automated cell type annotation demonstrates substantial capabilities, though with important limitations as revealed by rigorous evaluation:

Table 1: Zero-Shot Cell Type Annotation Performance Comparison

| Method | AvgBIO Score | ASW Metric | Batch Integration | Notable Strengths |
|---|---|---|---|---|
| scGPT | Variable performance | Comparable to scVI on some datasets | Effective on complex biological batches | Strong on datasets seen during pretraining |
| Geneformer | Generally underperforms | Consistently outperformed by baselines | Poor batch effect correction | Context-aware learning |
| scVI | Consistently strong | Reference standard | Excellent technical batch correction | Probabilistic modeling |
| Harmony | Competitive | Strong performance | Mixed results on biological batches | Fast integration |
| HVG Selection | Often outperforms foundation models | Simple yet effective | Surprisingly effective in full dimensions | Computational efficiency |

In zero-shot settings where models are applied without task-specific fine-tuning, scGPT demonstrates variable performance. It performs comparably to established methods like scVI on certain datasets (Tabula Sapiens, Pancreas, and PBMC), but can be outperformed by simpler approaches like selecting Highly Variable Genes (HVG) in other cases [26]. This suggests that while scGPT captures broad biological patterns, its practical application may require validation against established baselines.

Gene Network Inference Capabilities

Beyond cell type annotation, scGPT shows promise in inferring gene regulatory networks, though emerging models suggest potential areas for improvement:

Table 2: Gene Network Inference Capabilities of Foundation Models

| Model | Training Data Scale | Architectural Innovations | Network Inference Strengths | Interpretability |
|---|---|---|---|---|
| scGPT | 33 million cells | Standard transformer with gene embedding | Captures broad gene-gene interactions | Limited by global attention context |
| scPRINT | 50 million cells | Protein embeddings + genomic location | Superior GN inference performance | Disentangled cell embeddings |
| Geneformer | ~30 million cells | Context-aware attention | Focused on regulatory relationships | Attention-based importance |

scPRINT, a more recent model, incorporates protein sequence embeddings from ESM2 and genomic location encoding, potentially providing richer biological priors for gene network inference [25]. This suggests possible evolutionary paths for enhancing scGPT's biological interpretability.

Experimental Protocols for scGPT Implementation

Protocol 1: Zero-Shot Cell Type Annotation

Purpose: To classify cell types using scGPT without task-specific fine-tuning, particularly valuable in exploratory settings where cell composition is unknown.

Materials:

- Processed scRNA-seq data (cell × gene matrix)

- Pretrained scGPT model (human or relevant species)

- Computational environment with GPU acceleration

- Python libraries: scGPT, scanpy, numpy

Procedure:

- Data Preprocessing: Normalize gene expression values using log(1+CP10K) transformation and select highly variable genes.

- Embedding Generation: Input processed data to scGPT to generate cell embeddings without fine-tuning.

- Dimensionality Reduction: Apply UMAP or t-SNE to embeddings for visualization.

- Cluster Identification: Use Leiden clustering on embeddings to identify distinct cell populations.

- Annotation: Transfer labels from reference datasets using embedding similarity or manually annotate based on marker gene expression.
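Label transfer by embedding similarity can be sketched as a k-nearest-neighbour vote, assuming both query and reference cells have already been embedded by the same model. Cosine similarity, `k=5` and simple majority voting are assumptions; more careful pipelines weight votes by similarity or flag low-confidence cells as unassigned.

```python
import numpy as np
from collections import Counter


def _unit_rows(m):
    """Scale each row to unit length so dot products become cosine similarities."""
    m = np.asarray(m, dtype=float)
    return m / np.maximum(np.linalg.norm(m, axis=1, keepdims=True), 1e-12)


def knn_label_transfer(query_emb, ref_emb, ref_labels, k=5):
    """Give each query cell the majority label of its k most similar reference cells."""
    sim = _unit_rows(query_emb) @ _unit_rows(ref_emb).T   # (n_query, n_ref)
    k = min(k, sim.shape[1])
    top = np.argsort(-sim, axis=1)[:, :k]                 # most similar first
    ref_labels = np.asarray(ref_labels, dtype=object)
    # Counter.most_common keeps first-seen order on ties, so ties favour the closer neighbour.
    return [Counter(ref_labels[idx]).most_common(1)[0][0] for idx in top]
```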

Validation: Compare clustering metrics (AvgBIO, ASW) against established baselines like scVI and Harmony to ensure biological relevance [26].
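The AvgBIO and ASW comparison can be sketched as follows, assuming (as in scib-style benchmarks) that AvgBIO is the unweighted mean of NMI and ARI between cluster assignments and cell type labels, plus the cell-type silhouette width (ASW) of the embeddings rescaled from [-1, 1] to [0, 1]. In practice the cluster assignments would come from Leiden clustering; here they are simply passed in. These NumPy implementations are for illustration; scikit-learn's `normalized_mutual_info_score`, `adjusted_rand_score` and `silhouette_score` are the usual choices.

```python
import numpy as np


def _contingency(a, b):
    """Number of samples for every (label in a, label in b) pair."""
    _, ai = np.unique(np.asarray(a), return_inverse=True)
    _, bi = np.unique(np.asarray(b), return_inverse=True)
    ai, bi = ai.ravel(), bi.ravel()
    table = np.zeros((ai.max() + 1, bi.max() + 1))
    np.add.at(table, (ai, bi), 1)
    return table


def _entropy(counts):
    p = counts[counts > 0] / counts.sum()
    return float(-np.sum(p * np.log(p)))


def nmi(labels, clusters):
    """Normalized mutual information with arithmetic-mean normalization."""
    t = _contingency(labels, clusters)
    p = t / t.sum()
    outer = p.sum(axis=1, keepdims=True) @ p.sum(axis=0, keepdims=True)
    nz = p > 0
    mi = float(np.sum(p[nz] * np.log(p[nz] / outer[nz])))
    h = (_entropy(t.sum(axis=1)) + _entropy(t.sum(axis=0))) / 2
    return mi / h if h > 0 else 1.0


def _pairs(x):
    return x * (x - 1) / 2


def ari(labels, clusters):
    """Adjusted Rand index from pair counts in the contingency table."""
    t = _contingency(labels, clusters)
    sum_ij = _pairs(t).sum()
    sum_a, sum_b = _pairs(t.sum(axis=1)).sum(), _pairs(t.sum(axis=0)).sum()
    expected = sum_a * sum_b / _pairs(t.sum())
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0
    return float((sum_ij - expected) / (max_index - expected))


def silhouette(embeddings, labels):
    """Mean silhouette width with Euclidean distance (requires at least 2 labels)."""
    X = np.asarray(embeddings, dtype=float)
    labels = np.asarray(labels)
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    widths = []
    for i in range(len(X)):
        same = labels == labels[i]
        same[i] = False
        if not same.any():
            widths.append(0.0)  # singleton label: width defined as 0
            continue
        a = d[i, same].mean()
        b = min(d[i, labels == other].mean() for other in np.unique(labels) if other != labels[i])
        widths.append((b - a) / max(a, b, 1e-12))
    return float(np.mean(widths))


def avg_bio(embeddings, cell_types, clusters):
    """Mean of NMI, ARI and rescaled cell-type ASW (assumed AvgBIO definition)."""
    asw = (silhouette(embeddings, cell_types) + 1) / 2
    return (nmi(cell_types, clusters) + ari(cell_types, clusters) + asw) / 3
```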

Protocol 2: Interpretable Gene-Gene Interaction Analysis

Purpose: To extract biologically meaningful gene-gene interactions from scGPT's attention mechanisms for regulatory network inference.

Materials:

- Fine-tuned scGPT model for specific tissue/cell type

- Gene annotation database (e.g., MSigDB, GO)

- Network visualization tools (Cytoscape, NetworkX)

- High-performance computing resources for attention weight extraction

Procedure:

- Targeted Fine-tuning: Fine-tune scGPT on cell-type-specific data to specialize attention patterns.

- Attention Extraction: Extract attention weights from all transformer layers for representative cells.

- Network Construction: Aggregate attention weights across cells and layers to build gene-gene interaction scores.

- Threshold Application: Apply statistical thresholds to identify significant interactions.

- Biological Validation: Compare identified interactions with known pathways (KEGG, Reactome) and transcription factor targets (ChIP-seq databases).
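The network construction and thresholding steps can be sketched as below, assuming the attention maps have already been extracted into an array of shape (cells, layers, heads, genes, genes); the extraction itself is not shown. Averaging over cells, layers and heads and keeping scores above a percentile cutoff are simple stand-ins for the statistical thresholds mentioned above, and symmetrizing the matrix discards any directionality in the attention.

```python
import numpy as np


def aggregate_attention(attn, percentile=95.0):
    """Aggregate attention maps into gene x gene scores and a thresholded adjacency.

    attn: array of shape (n_cells, n_layers, n_heads, n_genes, n_genes).
    Averages over cells, layers and heads, symmetrizes, zeroes the diagonal and
    keeps pairs scoring at or above the given percentile of off-diagonal values.
    """
    attn = np.asarray(attn, dtype=float)
    scores = attn.mean(axis=(0, 1, 2))          # (n_genes, n_genes)
    scores = (scores + scores.T) / 2            # treat i->j and j->i together
    np.fill_diagonal(scores, 0.0)
    off_diag = scores[~np.eye(scores.shape[0], dtype=bool)]
    cutoff = np.percentile(off_diag, percentile)
    adjacency = scores >= cutoff
    np.fill_diagonal(adjacency, False)          # no self-loops
    return scores, adjacency
```

Checking that an edge survives when the same procedure is run on separate subsets of cells or layers is one way to follow the interpretation advice on consistent patterns.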

Interpretation: Focus on consistent attention patterns across multiple layers and cells, as these likely represent robust biological relationships rather than technical artifacts.

Visualization of scGPT Workflow and Biological Insights

Diagram: scGPT Architecture and Information Flow

Diagram: Gene-Gene Interaction Network from Attention Weights

Table 3: Key Research Resources for scGPT Implementation

| Resource Category | Specific Tools/Databases | Function in Analysis | Access Considerations |
|---|---|---|---|
| Reference Data | CELLxGENE database, Tabula Sapiens, Human Cell Atlas | Pretraining data sources; reference for annotation | Publicly available; requires significant storage |
| Benchmarking Tools | BIO score, ASW metrics, batch integration scores | Evaluate model performance against baselines | Custom implementation needed |
| Computational Resources | GPU clusters (A100, H100), high-memory servers | Handle large-scale inference and training | Cloud computing or institutional HPC |
| Biological Validation | ChIP-seq databases, protein-protein interaction networks, pathway databases (KEGG, GO) | Validate biological relevance of identified interactions | Publicly available with curation needed |
| Software Libraries | scGPT codebase, PyTorch, Scanpy, Scikit-learn | Implementation of models and analysis pipelines | Open-source with specific dependency requirements |

Limitations and Future Directions

While scGPT represents a significant advance in single-cell analysis, important limitations must be acknowledged. The model's zero-shot performance can be inconsistent, and it is sometimes outperformed by simpler methods such as highly variable gene selection [26]. The global attention mechanism, while powerful for capturing context, can make it challenging to isolate cell-type-specific gene interactions from the learned representations [3]. Additionally, substantial computational resources are required for both training and fine-tuning, creating barriers to accessibility.

Emerging approaches like scKAN attempt to address these limitations by combining knowledge distillation from scGPT with Kolmogorov-Arnold Networks, providing more direct interpretability of gene-cell relationships [3]. Similarly, scPRINT introduces protein sequence embeddings and genomic location encoding to enhance biological priors for gene network inference [25]. Future iterations of scGPT may incorporate these architectural innovations to improve both performance and biological interpretability while maintaining its strengths in capturing global gene expression patterns.

scGPT represents a paradigm shift in single-cell transcriptomic analysis, offering a unified framework for cell type annotation and gene regulatory network inference. By leveraging transformer architecture and pretraining on millions of cells, it captures complex gene-gene interactions that underlie cellular identity and function. While current implementations show limitations in zero-shot settings and interpretability, the model provides a powerful foundation for biological discovery. As methodological improvements address these challenges and computational resources become more accessible, scGPT and similar foundation models are poised to become indispensable tools for researchers exploring cellular heterogeneity, with particular promise for accelerating therapeutic target discovery in disease contexts.

In single-cell RNA sequencing (scRNA-seq) analysis, foundation models like scGPT represent a transformative approach, leveraging large-scale data to learn fundamental biological principles. The "scaling law" hypothesis suggests that model performance scales predictably with increased data volume and model size. For cell type annotation—a critical task in single-cell biology—this implies that models pre-trained on massive, diverse datasets should develop more robust and generalizable representations of cellular states. This Application Note examines the relationship between data volume and model performance within the specific context of cell type annotation using scGPT, providing validated protocols and quantitative benchmarks for researchers.

Quantitative Evidence: Data Volume vs. Performance

Evaluation of scGPT variants pre-trained on datasets of different sizes reveals a complex relationship between data volume and model performance for cell type annotation tasks.

Table 1: Performance of scGPT Variants Pre-trained on Different Data Volumes

| Pre-training Dataset | Cell Count | Primary Tissue Types | Performance on PBMC (12k) | Performance on Tabula Sapiens | Performance on Immune Dataset |
|---|---|---|---|---|---|
| Random Initialization | None | None | Baseline | Baseline | Baseline |
| scGPT Kidney | 814,000 | Kidney | Moderate improvement | Limited improvement | Limited improvement |
| scGPT Blood | 10.3 million | Blood and bone marrow | Significant improvement | Moderate improvement | Moderate improvement |
| scGPT Human | 33 million | Multi-tissue, non-cancerous human cells | Significant improvement | Moderate improvement | Moderate improvement |

Data from zero-shot evaluation studies indicates that while pretraining consistently provides improvement over randomly initialized models, the relationship between data volume and performance is not strictly linear [5]. The scGPT Human model (33 million cells) shows slightly inferior performance compared to scGPT Blood (10.3 million cells) on some non-blood tissue datasets, suggesting that dataset diversity and quality may be as important as sheer volume for optimal model performance [5].

Table 2: Comparative Performance of scFMs and Baseline Methods in Cell Type Annotation

| Method | Architecture | Pre-training Data Scale | Annotation Accuracy Range | Strengths | Limitations |
|---|---|---|---|---|---|
| scGPT | Transformer-based | 33 million cells | Variable (dataset-dependent) | Multi-task capability; handles multiple omics | Inconsistent zero-shot performance |
| STAMapper | Heterogeneous GNN | Not applicable | 75/81 datasets (best accuracy) | Excellent for spatial transcriptomics; works with limited genes | Specialized for spatial data |
| AnnDictionary + LLMs | Various LLMs | Text-based knowledge | 80-90% for major cell types | No pre-training required; leverages existing knowledge | Performance varies by LLM; Claude 3.5 Sonnet best |
| HVG + Traditional ML | Traditional ML | None | Often outperforms foundation models | Simplicity; computational efficiency | Limited transfer learning capability |

Recent benchmarking studies reveal that no single foundation model consistently outperforms all others across diverse cell type annotation tasks [12]. While scGPT demonstrates robust performance in many scenarios, simpler methods sometimes exceed its performance, particularly in zero-shot settings where foundation models may face reliability challenges [5].

Experimental Protocols for Evaluating scGPT Performance

Protocol: Zero-Shot Cell Type Annotation with Pre-trained scGPT

Purpose: To evaluate scGPT's cell type annotation capability without task-specific fine-tuning, simulating real-world exploratory analysis where labeled data is unavailable.

Materials:

- Pre-trained scGPT model (available from official repositories)

- Query scRNA-seq dataset (count matrix)

- High-performance computing environment with GPU acceleration

- Python 3.8+ with scGPT, scanpy, and numpy packages

Procedure:

- Data Preprocessing:

  - Normalize the query dataset using scGPT's built-in normalization function

  - Log-transform expression values using `sc.pp.log1p()`

  - Filter genes to those present in scGPT's pre-trained gene vocabulary, then select highly variable genes (e.g., 1,200)

  - Format data into scGPT's custom data structure

- Embedding Generation:

  - Load pre-trained scGPT model weights

  - Pass preprocessed query data through the model to extract cell embeddings

  - Reduce dimensionality using UMAP or t-SNE for visualization

- Cluster Identification:

  - Perform Leiden clustering on the cell embeddings

  - Identify marker genes for each cluster using differential expression analysis

- Cell Type Prediction:

  - Use scGPT's cell type prediction head (if available), or

  - Employ k-nearest neighbors classification against reference atlases

- Validation:

  - Compare predictions with manual annotations (when available)

  - Calculate accuracy metrics (Cohen's kappa, F1 score)

Technical Notes: Zero-shot performance is highly dependent on the similarity between query data and pre-training corpus. Performance degrades significantly when cell types are underrepresented in pre-training data [5].
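The gene filtering step can be sketched as follows, assuming `vocab` is the set of gene symbols in the pretrained checkpoint's vocabulary (loading it is not shown) and that the input is already log-normalized. Ranking genes by variance is a simple stand-in for the dispersion-based selection that `sc.pp.highly_variable_genes(adata, n_top_genes=1200)` performs in scanpy.

```python
import numpy as np


def match_vocab_and_select_hvgs(log_expr, gene_names, vocab, n_top=1200):
    """Keep genes in the model vocabulary, then the n_top most variable of those.

    Variability is the variance of log-normalized expression across cells.
    Selected genes are returned in their original column order.
    """
    log_expr = np.asarray(log_expr, dtype=float)
    gene_names = np.asarray(gene_names)
    in_vocab = np.isin(gene_names, list(vocab))  # genes unknown to the model are dropped
    X, genes = log_expr[:, in_vocab], gene_names[in_vocab]
    n_top = min(n_top, X.shape[1])
    order = np.argsort(-X.var(axis=0), kind="stable")[:n_top]
    order = np.sort(order)  # restore original gene order
    return X[:, order], genes[order]
```

Checking how many query genes survive the vocabulary filter is a quick early warning for the distribution mismatch described in the technical note above.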

Protocol: Fine-tuning scGPT for Specific Cell Type Annotation Tasks

Purpose: To adapt scGPT for specialized annotation tasks where some labeled data is available, potentially overcoming zero-shot limitations.

Materials:

- Pre-trained scGPT model

- Labeled target dataset (minimum 100 cells per cell type recommended)

- Computing environment with 16GB+ GPU memory

Procedure:

- Data Preparation:

  - Split labeled data into training (80%), validation (10%), and test (10%) sets

  - Apply the same preprocessing as in the zero-shot protocol above

  - Use stratified sampling so that each split preserves the cell type proportions of the full dataset

- Model Configuration:

  - Load pre-trained scGPT weights

  - Replace the classification head with a randomly initialized layer matching the target cell type count

  - Set the learning rate to 1e-5 for pre-trained layers and 1e-4 for the classification head

- Training:

  - Freeze transformer layers for the first 5 epochs

  - Train for a maximum of 100 epochs with early stopping (patience = 10)

  - Use cross-entropy loss with class weighting for imbalanced datasets

  - Monitor validation accuracy for model selection

- Evaluation:

  - Calculate accuracy, precision, recall, and F1-score on the test set

  - Compare with baseline methods (HVG + Harmony, scVI, random forest)

  - Perform cross-validation to assess robustness
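The data splitting and class weighting steps can be sketched with NumPy. The 80/10/10 fractions follow the protocol; the rounding rule and the "balanced" inverse-frequency weighting (the same formula scikit-learn uses for `class_weight="balanced"`) are assumptions. The resulting weights could be passed to a weighted cross-entropy loss in whichever training framework is used.

```python
import numpy as np


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return sorted train/val/test index arrays that preserve class proportions."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    splits = ([], [], [])
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))  # shuffle within the class
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        splits[0].extend(idx[:n_train])
        splits[1].extend(idx[n_train:n_train + n_val])
        splits[2].extend(idx[n_train + n_val:])  # remainder goes to test
    return tuple(np.sort(np.array(s, dtype=np.int64)) for s in splits)


def inverse_frequency_weights(labels):
    """Per-class weight n_samples / (n_classes * n_in_class) for weighted cross-entropy."""
    classes, counts = np.unique(labels, return_counts=True)
    weights = len(labels) / (len(classes) * counts)
    return dict(zip(classes.tolist(), weights.tolist()))
```

Very rare classes may end up with zero cells in the validation or test split, which is one reason the protocol recommends a minimum number of cells per type.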

Technical Notes: Fine-tuning typically improves performance over zero-shot by 10-30% on target tasks but risks overfitting to specific datasets. Regularization techniques like dropout and weight decay are essential [12].

Workflow Visualization

Diagram 1: scGPT Cell Type Annotation Workflow

Table 3: Key Research Reagent Solutions for scGPT Implementation

| Resource | Type | Function in scGPT Research | Implementation Example |
|---|---|---|---|
| Pre-trained scGPT Weights | Model parameters | Foundation for transfer learning | HuggingFace model repository: scGPT-33M |
| CZ CELLxGENE | Data repository | Source of standardized scRNA-seq data for pre-training and benchmarking | Download >100 million curated cells for custom pre-training [11] |
| AnnDictionary | Software package | LLM-integrated annotation comparison and evaluation | Benchmark scGPT against commercial LLMs (Claude 3.5 Sonnet, GPT-4) [27] |
| Tabula Sapiens v2 | Reference atlas | Gold-standard dataset for evaluation | Test generalization across 15+ tissues with manual annotations [27] |
| Harmony | Integration algorithm | Baseline method for performance comparison | Assess scGPT's advantage over traditional batch correction [5] |
| STAMapper | Specialized annotation tool | Benchmark for spatial transcriptomics tasks | Compare performance on 81 spatial datasets [28] |

Performance Optimization Guidelines

Data Volume Recommendations

Based on empirical evaluations, the following data volume guidelines are recommended for scGPT implementations:

- Minimum for meaningful transfer: 500,000 cells spanning multiple tissue types

- Optimal pre-training scale: 10-30 million quality-filtered cells

- Diminishing returns threshold: Beyond 50 million cells without increased diversity

When to Choose scGPT vs. Alternatives

- Select scGPT when: Working with data similar to pre-training corpus, multiple annotation tasks needed, computational resources available

- Choose simpler methods (HVG + traditional ML) when: Limited computational resources, target dataset diverges significantly from pre-training corpus, maximum interpretability required [7] [5]

- Consider specialized tools (STAMapper) when: Working with spatial transcriptomics data, annotating rare cell types with limited markers [28]

The scaling laws for scGPT in cell type annotation demonstrate that while increased pre-training data volume generally improves performance, the relationship is nuanced. Data quality, diversity, and task-specific alignment are critical factors that can outweigh sheer volume. Researchers should carefully evaluate whether scGPT's computational requirements are justified for their specific annotation tasks, as simpler methods sometimes achieve comparable results with greater efficiency. Future developments may overcome current limitations in zero-shot reliability while maintaining the model's demonstrated strengths in multi-task learning and biological insight extraction.

Practical Implementation: From Zero-Shot Prediction to Fine-Tuned Precision

Cell type annotation is a critical step in single-cell RNA sequencing (scRNA-seq) analysis, enabling researchers to decipher cellular heterogeneity and function. The advent of single-cell foundation models (scFMs), such as scGPT, has transformed this process by leveraging large-scale pretraining on millions of cells to generate powerful cellular representations [11]. A key decision researchers face is whether to use these models in a zero-shot manner or to invest resources in fine-tuning them for a specific task. This framework provides a structured comparison, detailed protocols, and a decision guide to help researchers, scientists, and drug development professionals select and implement the optimal scGPT workflow for their cell type annotation projects.

Strategic Comparison: Zero-Shot vs. Fine-Tuned scGPT

The choice between zero-shot and fine-tuned scGPT is the foundational decision that shapes the entire annotation workflow. The table below summarizes the core characteristics, advantages, and ideal use cases for each approach.

Table 1: Strategic comparison between zero-shot and fine-tuned scGPT workflows for cell type annotation.

| Aspect | Zero-Shot Approach | Fine-Tuned Approach |
|---|---|---|
| Core Definition | Using the pretrained model directly without any further training on your data [29]. | Adapting the pretrained model on a labeled subset of your own data for a limited number of epochs [29]. |
| Technical Process | Feeding your gene expression matrix into scGPT to obtain cell embeddings or provisional labels [29]. | Starting from the pretrained backbone and training for ~5-10 epochs on a labeled reference dataset [29] [8]. |
| Primary Pros | Instant results; no requirement for GPU hardware; easily reusable across different projects [29]. | Substantial accuracy gains (+10-25 percentage points); better resolution of rare or novel cell subtypes [29] [8]. |
| Primary Cons | Can miss rare, novel, or context-specific cell states; generally shows lower macro-F1 scores on data that differs from the pretraining distribution [29] [5]. | Requires GPU access and computational resources (~20 min on 1 A100 GPU); risk of overfitting on small cohorts; adds MLOps complexity [29]. |
| Ideal Use Cases | Rapid exploration of new datasets, initial data quality assessment, projects with no labeled reference data available [29]. | Production of publication or clinical-grade annotations, analysis of complex diseases, and identification of rare cell populations [29] [8]. |

Quantitative Performance and Benchmarking

Independent evaluations and real-world applications provide critical data on the expected performance of each approach. It is crucial to understand that zero-shot performance, while convenient, may be inconsistent.

Zero-Shot Performance Evaluation

A rigorous 2025 zero-shot evaluation of scGPT and other foundation models revealed that their performance can be variable and may be outperformed by simpler, established methods [5] [26]. Key findings include:

- Cell Type Clustering: In tests across multiple datasets, zero-shot scGPT and Geneformer often performed worse than embeddings based on Highly Variable Genes (HVG) or generated by methods like scVI and Harmony, as measured by average BIO score [5] [26].

- Batch Integration: scGPT showed mixed results in correcting for batch effects. It was outperformed by scVI and Harmony on datasets with purely technical variation but showed relative strength on more complex datasets containing biological batch effects (e.g., different donors) [5] [26]. Geneformer consistently underperformed in this task [26].

Fine-Tuning Performance Gains

In contrast, task-specific fine-tuning has been demonstrated to yield significant improvements in annotation accuracy:

- Accuracy Jump: Fine-tuning scGPT on a target dataset can lead to a 10-25 percentage point increase in accuracy for complex datasets involving multiple sclerosis and tumor-infiltrating myeloid cells [29].

- Protocol Validation: An end-to-end fine-tuning protocol for retinal cell type annotation achieved a remarkable 99.5% F1-score, showcasing the potential for expert-level accuracy on specific tissues [8].

Experimental Protocols

Protocol A: Zero-Shot Cell Type Annotation

This protocol is designed for the rapid, preliminary annotation of a scRNA-seq dataset using the pre-trained scGPT model without any training [29].

- Data Preprocessing: Begin with a scRNA-seq count matrix that has been through standard preprocessing. Follow best practices for quality control (mitochondrial content, gene counts) and normalization.

- Generate Cell Embeddings: Input the normalized gene expression matrix into the pre-trained scGPT model. Execute the model in inference mode to extract a low-dimensional embedding vector for every cell.

- Downstream Clustering and Visualization: Use the scGPT-generated embeddings as input to a standard clustering algorithm (e.g., Leiden or Louvain). Visualize the resulting clusters in a two-dimensional space using UMAP or t-SNE. The clusters represent transcriptional neighborhoods.

- Provisional Labeling: For each cluster, calculate the top 10 marker genes. Input these concise marker lists into an auxiliary tool. This can be a GPT-4 API call for human-readable rationales or a fast reference-based tool like CellTypist to assign provisional cell type labels [29].
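The marker-gene step can be sketched by ranking genes by the difference in mean log-expression between each cluster and all remaining cells. This is a crude stand-in for a proper differential expression test such as scanpy's `sc.tl.rank_genes_groups`; it assumes at least two clusters, and the gene names in the example are only illustrative. The resulting lists are what would be passed to CellTypist or an LLM prompt, a step not shown here.

```python
import numpy as np


def top_markers(log_expr, gene_names, clusters, n_top=10):
    """Top n_top genes per cluster by mean log-expression difference (cluster vs. rest).

    Requires at least two clusters so that every cluster has a 'rest' group.
    """
    X = np.asarray(log_expr, dtype=float)
    gene_names = np.asarray(gene_names)
    clusters = np.asarray(clusters)
    markers = {}
    for c in np.unique(clusters):
        inside = clusters == c
        diff = X[inside].mean(axis=0) - X[~inside].mean(axis=0)
        order = np.argsort(-diff, kind="stable")[:n_top]  # largest positive difference first
        markers[c.item()] = gene_names[order].tolist()
    return markers
```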

Protocol B: Fine-Tuned Cell Type Annotation

This protocol details the process of adapting scGPT to a specific dataset to achieve high-accuracy, reliable cell type annotations, as validated in real-world applications [29] [8].

- Reference Data Curation: Assemble a high-quality, labeled reference dataset. This can be a subset of your data or an external public dataset relevant to your tissue/organ of interest. The labels must be reliable, and the dataset should be representative of the biological variation you expect to encounter, even if it only contains a few thousand cells [29].

- Data Preprocessing and Setup: Preprocess the reference data and your target query data consistently. The scGPT fine-tuning protocol involves steps for cleaning, normalizing, binning, and compressing the data into a format ready for model training and inference [8].

- Model Fine-Tuning:

  - Base Model: Load the scGPT model pre-trained on 33 million human cells [29] [8].

  - Training Loop: Fine-tune the model on your prepared reference dataset. As per established workflows, training for 5-10 epochs on a single A100 GPU (or equivalent) is typically sufficient. The goal is to specialize the model's knowledge without overfitting [29].

  - Output: The output of this stage is a custom fine-tuned scGPT model checkpoint.

- Inference and Evaluation:

  - Run the fine-tuned model on your full, unlabeled dataset (or held-out test set) to generate predictions.

  - The pipeline will output a CSV file with the predicted cell types and a UMAP visualization for clustering assessment [8].

  - If ground truth labels are available for a test set, an optional confusion matrix will be generated to quantitatively evaluate performance [8].
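If you hold out a labeled test set from your own reference, sampling each cell type separately keeps rare types represented in both the training and test partitions. The retinal training file in this protocol contains 90% of the original data. The following minimal standard-library sketch stratifies by label with a 10% test fraction. Both the stratification and the fraction are assumptions, and this is not the protocol's own splitting code. The function name `stratified_split` is hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.1, seed=0):
    """Split cell indices into (train, test), sampling each cell type separately."""
    by_type = defaultdict(list)
    for i, label in enumerate(labels):
        by_type[label].append(i)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train_idx, test_idx = [], []
    for label in sorted(by_type):
        idx = by_type[label]
        rng.shuffle(idx)
        # Keep at least one cell of every type in training so the model can learn it
        n_test = min(round(len(idx) * test_fraction), len(idx) - 1)
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Toy labels: 90 rods and 10 amacrine cells
labels = ["ROD"] * 90 + ["AC"] * 10
train, test = stratified_split(labels, test_fraction=0.1)
print(len(train), len(test))  # 90 10
```

The test set then contains one AC and nine ROD cells, mirroring the class proportions of the reference.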

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of scGPT workflows relies on several key computational "reagents" and resources.

Table 2: Essential research reagents and computational tools for scGPT cell type annotation.

| Resource / Tool | Function / Description | Relevance in Workflow |
|---|---|---|
| Pre-trained scGPT Model | The foundation model pre-trained on tens of millions of single cells, providing a universal baseline understanding of gene expression patterns [11] [8]. | Starting point for both zero-shot and fine-tuning workflows. |
| Labeled Reference Dataset | A curated single-cell dataset with validated cell type annotations. Serves as the ground truth for fine-tuning and model validation. | Essential for the fine-tuning workflow; not required for zero-shot. |
| GPU Cluster (e.g., A100) | High-performance computing hardware necessary for efficient model fine-tuning, reducing training time from days to minutes [29]. | Critical for the fine-tuning workflow; optional for zero-shot. |
| CELLxGENE Platform | A data portal and census providing unified access to millions of curated single-cell datasets, useful for finding reference data [30] [11]. | Resource for discovering and downloading high-quality reference datasets for fine-tuning. |
| Harmony / scVI | Established batch integration and dimensionality reduction tools that can serve as strong baselines for evaluating scGPT's zero-shot embedding quality [5]. | Used for performance comparison and as a complementary analysis tool. |
| Gene Set (Top 10 Markers) | A concise list of the most differentially expressed genes for a cell cluster. Focuses subsequent labeling on signature genes, reducing noise [29]. | Critical input for GPT-4 or CellTypist in the zero-shot workflow to generate accurate provisional labels. |

The choice between zero-shot and fine-tuned scGPT is not a matter of one being universally superior. It depends on aligning the model's capabilities with the project's goals and constraints. Zero-shot scGPT is a powerful, accessible tool for initial data exploration and hypothesis generation. However, researchers must be aware of its potential limitations in consistency and accuracy. For finalized analyses, publication-grade results, or studies of subtle cellular differences, task-specific fine-tuning is the recommended path because it delivers substantial gains in accuracy and reliability. By applying this decision framework and following the detailed protocols, researchers can strategically leverage scGPT to extract robust biological insights from their single-cell data.

Single-cell RNA sequencing (scRNA-seq) has revolutionized the study of cellular heterogeneity but faces significant challenges in accurately annotating cell types, especially within complex tissues and large-scale datasets. This protocol provides a comprehensive, accessible guide to fine-tuning scGPT (single-cell Generative Pretrained Transformer), a foundation model that leverages transformer-based architecture for high-precision cell type annotation. Demonstrated on a custom retina dataset, this end-to-end workflow achieves a remarkable 99.5% F1-score in classifying retinal cell types, automating key steps from data preprocessing to model evaluation. Designed for researchers with intermediate bioinformatics skills, this protocol offers an off-the-shelf solution that is both scalable and adaptable to various research contexts in neuroscience, immunology, and drug development [31] [8].

The scalability of scRNA-seq technologies has outpaced the development of analytical tools capable of handling the resulting large, complex datasets. Accurate cell type annotation is a critical step in single-cell analysis, as errors propagate through downstream analyses and can lead to incorrect biological interpretations. Foundation models like scGPT, pre-trained on millions of cells, provide a powerful starting point. These models learn generalizable representations of gene expression patterns, which can be specifically adapted or "fine-tuned" on a target dataset—such as retinal cells—to achieve exceptional annotation accuracy, even for rare cell populations [8].

The retina represents an ideal model system for demonstrating this protocol. It is a complex neural tissue composed of multiple distinct cell classes—including photoreceptors (rods and cones), bipolar cells (BCs), amacrine cells (ACs), retinal ganglion cells (RGCs), and others—each with numerous subtypes. This diversity tests the model's resolution and ability to handle fine-grained classification tasks. The fine-tuned scGPT model detailed in this protocol has been optimized to distinguish these retinal cell types with high precision, providing a template that can be adapted to other tissues and organs [9].

Key Research Reagent Solutions

The following table catalogues the essential computational materials and datasets required to implement this fine-tuning protocol.

Table 1: Essential Research Reagents and Datasets for scGPT Fine-Tuning

| Item Name | Type | Description | Function in Protocol |
|---|---|---|---|
| Pretrained scGPT Model [8] | Software Model | A foundational transformer model pre-trained on massive single-cell omics data. | Provides the base model whose parameters are updated during fine-tuning; encapsulates prior knowledge of gene expression relationships. |
| Custom Retina Dataset [9] | Training & Evaluation Data | A large-scale scRNA-seq dataset of retinal cells, split into training and multiple evaluation sets. | Serves as the target domain data for fine-tuning the model and for benchmarking its performance. |
| TRAIN_snRNA2_9M.h5ad [9] | Training Dataset | The primary training data; contains 1,327,511 cells and 36,601 genes (90% of original data). | Used to adjust the weights of the pretrained scGPT model to specialize in retinal cell annotation. |
| EVAL_snRNA_no_enriched.h5ad [9] | Evaluation Dataset | An evaluation set with no cell type enrichment; the majority of cells are ROD photoreceptors. | Tests the model's general performance across a naturally distributed cell population. |
| EVAL_snRNA_ac_enriched.h5ad [9] | Evaluation Dataset | An evaluation dataset specifically enriched for Amacrine Cells (ACs). | Tests the model's accuracy on a specific, potentially rare, cell class. |
| finetuned_AiO.zip [9] | Fine-tuned Model | A compressed file containing the fine-tuned model, vocabulary, and configuration. | Provides an optional starting point, containing a pre-fine-tuned model and its associated files for inference. |
| Jupyter Notebook [31] | Software Tool | A user-friendly notebook interface provided with the protocol. | Guides users through the fine-tuning and evaluation process with minimal Python/Linux knowledge. |

Experimental Performance and Validation

The fine-tuned scGPT model was evaluated on multiple independent datasets derived from the human retina, including samples enriched for specific cell types and samples from public sources. Performance was quantified using the F1-score, the harmonic mean of precision (P) and recall (R), F1 = 2PR / (P + R), which provides a balanced measure of classification accuracy.
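A single reported F1-score can mean different things for multi-class data. Per-class F1 values can be averaged equally across classes (macro) or weighted by each class's cell count (weighted). On retinal data dominated by rod photoreceptors, a weighted average can look high even when rarer classes perform worse. The summary here does not state which averaging the 99.5% figure uses. The following minimal standard-library sketch computes both, and the function name `f1_report` is hypothetical. It follows the same convention as common libraries, such as scikit-learn, by scoring a class with no true or predicted cells as 0.

```python
from collections import Counter


def f1_report(y_true, y_pred):
    """Return per-class F1, macro-averaged F1 and support-weighted F1."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted this class, but wrongly
            fn[truth] += 1  # missed a cell of this class

    per_class = {}
    for label in sorted(set(y_true) | set(y_pred)):
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        total = precision + recall
        # Harmonic mean of precision and recall: 2PR / (P + R)
        per_class[label] = 2 * precision * recall / total if total else 0.0

    support = Counter(y_true)
    macro = sum(per_class.values()) / len(per_class)
    weighted = sum(f1 * support[label] for label, f1 in per_class.items()) / len(y_true)
    return per_class, macro, weighted


y_true = ["ROD", "ROD", "ROD", "AC"]
y_pred = ["ROD", "ROD", "AC", "AC"]
per_class, macro, weighted = f1_report(y_true, y_pred)
print(per_class, round(macro, 3), round(weighted, 3))
```

In this toy case, ROD has P = 1 and R = 2/3 (F1 = 0.8), while AC has P = 0.5 and R = 1 (F1 ≈ 0.667). The weighted average (≈ 0.767) therefore exceeds the macro average (≈ 0.733) because rods dominate.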

Table 2: Model Performance on Retinal Cell Type Annotation

| Evaluation Dataset | Key Characteristic | Number of Cells | Reported F1-Score |
|---|---|---|---|
| Overall Performance | Aggregated across all cell types and test sets | - | 99.5% [31] [8] |
| AC-Enriched Set [9] | High abundance of Amacrine Cells | 7,070 | High Performance |
| BC-Enriched Set [9] | High abundance of Bipolar Cells | 27,293 | High Performance |
| RGC-Enriched Set [9] | High abundance of Retinal Ganglion Cells | 7,681 | High Performance |
| Public Benchmark Set [9] | Independent dataset from Hahn et al. | 4,803 | High Performance |

This evaluation demonstrates that the fine-tuning protocol produces a model capable of generalizing to new, unseen data and accurately identifying both common and rare cell populations. The consistent high performance across diverse evaluation sets underscores the robustness of the scGPT framework when applied with this protocol [9] [8].

End-to-End Fine-Tuning Workflow

The following diagram illustrates the complete workflow for fine-tuning scGPT and using it for cell type annotation, from data preparation to final output.

Data Preprocessing Module

Before fine-tuning or inference, raw scRNA-seq data must be converted into a standardized format that the scGPT model can process. This critical first step ensures data quality and consistency.

- Input Data: The protocol accepts raw gene expression count matrices from retinal scRNA-seq experiments. The provided example dataset, TRAIN_snRNA2_9M.h5ad, contains over 1.3 million cells and 36,601 genes [9].

- Processing Steps: The preprocessing pipeline involves several automated steps:

  - Data Cleaning: Filtering out low-quality cells and genes with minimal expression.

  - Normalization: Scaling counts to account for sequencing depth variation between cells.

  - Binning and Compression: The expression values are discretized (binned) and the data is compressed into an H5AD file, which is efficient for storage and subsequent loading by the scGPT pipeline [8].

- Output: The result is a preprocessed data file (.h5ad) ready for model training or evaluation.
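Binning is the step that most distinguishes scGPT input from an ordinary normalized matrix. The following minimal standard-library sketch applies two operations to a single cell. First, it performs library-size normalization followed by log1p. Second, it bins the cell's non-zero values by quantile, so every cell's values land on the same small integer scale regardless of sequencing depth. Zeros stay in bin 0. This is a simplified analogue written for illustration, not scGPT's actual preprocessing code. The defaults `target_sum=1e4` and `n_bins=51` are assumptions, so check the protocol's notebook for the values it actually uses. Quality-control filtering and H5AD writing are omitted, and the function names are hypothetical.

```python
import math
from bisect import bisect_right


def normalize_log1p(counts, target_sum=1e4):
    """Scale one cell's raw counts to a common total (target_sum), then apply log1p."""
    total = sum(counts)
    if total == 0:
        return [0.0] * len(counts)
    return [math.log1p(c * target_sum / total) for c in counts]


def bin_cell(values, n_bins=51):
    """Replace each non-zero value by an integer bin in 1..n_bins-1 based on its
    quantile among this cell's non-zero values; zeros stay in bin 0."""
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    binned = []
    for v in values:
        if v <= 0:
            binned.append(0)
            continue
        # Empirical quantile of v within this cell, in (0, 1]
        q = bisect_right(nonzero, v) / len(nonzero)
        binned.append(max(1, math.ceil(q * (n_bins - 1))))
    return binned


raw = [0, 1, 2, 3, 4]  # toy counts for a single cell
print(bin_cell(normalize_log1p(raw, target_sum=10), n_bins=5))  # [0, 1, 2, 3, 4]
```

Because bins are computed within each cell, identical values in one cell share a bin. The highest-expressed gene in every cell always lands in the top bin, which is what makes cells with different depths comparable.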

Model Fine-Tuning Module

Fine-tuning adapts the general-purpose, pre-trained scGPT model to the specific task of retinal cell type annotation. This process is more efficient than training a model from scratch and requires less data.

- Model Input: The module takes two primary inputs: the preprocessed retinal dataset and the pre-trained scGPT model [8].

- Fine-Tuning Process: The transformer-based architecture of scGPT is optimized on the retinal training data. This updates the model's parameters to learn the unique gene expression signatures that define each retinal cell type. The protocol automates the setup of the training loop, including loss function and optimizer selection.

- Outputs: The key output of this module is the fine-tuned model (e.g., best_model.pt), which is saved alongside its configuration file (dev_train_args.yml), vocabulary (vocab.json), and cell type mappings (id2type.json) [9]. This complete package is essential for running inference.
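Inference needs the whole package, so a quick pre-flight check can catch missing files before a long run starts. The following standard-library sketch also shows how predicted class indices might be mapped back to cell type names. It assumes that all four files sit in one folder and that id2type.json is a JSON object mapping integer class ids (stored as strings) to cell type names. Verify both assumptions against your own copy, for example the contents of finetuned_AiO.zip. Loading best_model.pt itself requires scGPT and PyTorch and is not shown. All function names here are hypothetical.

```python
import json
from pathlib import Path

# File names listed for the fine-tuned package; the folder layout is an assumption.
EXPECTED_FILES = ("best_model.pt", "dev_train_args.yml", "vocab.json", "id2type.json")


def missing_files(model_dir):
    """Return the expected package files that are absent from model_dir."""
    folder = Path(model_dir)
    return [name for name in EXPECTED_FILES if not (folder / name).is_file()]


def parse_id2type(text):
    """Parse id2type.json text, assumed to look like {"<class id>": "<cell type>", ...}."""
    # JSON object keys are always strings, so convert them back to integer class ids
    return {int(key): name for key, name in json.loads(text).items()}


def load_id2type(model_dir):
    """Read and parse id2type.json from the model folder."""
    return parse_id2type((Path(model_dir) / "id2type.json").read_text(encoding="utf-8"))


def decode_predictions(pred_ids, id2type):
    """Map predicted class indices (e.g. argmax of classifier logits) to cell type names."""
    return [id2type[i] for i in pred_ids]


id2type = parse_id2type('{"0": "ROD", "1": "AC", "2": "BC"}')  # toy mapping
print(decode_predictions([2, 0, 1], id2type))  # ['BC', 'ROD', 'AC']
```

If `missing_files(...)` returns a non-empty list, re-extract or re-export the package before running inference.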

Inference and Evaluation Module

This module uses the fine-tuned model to predict cell types on new, unseen retinal scRNA-seq data and evaluates its performance.

- Workflow: The inference pipeline requires a fine-tuned model and a preprocessed evaluation dataset. The model takes the gene expression profile of each cell as input and outputs a predicted cell type label [8].

- Key Outputs:

  - UMAP for Cell-Type Clustering: A two-dimensional visualization (UMAP plot) that clusters cells based on their expression profiles, colored by their predicted labels, allowing for qualitative assessment of annotation quality [8].

  - Prediction Results (CSV File): A table containing the cell barcodes and their corresponding predicted cell types, which can be used for downstream analysis [8].

  - Confusion Matrix (Optional): If the ground truth cell types for the evaluation set are known, the protocol automatically generates a confusion matrix to quantitatively compare the predictions against the true labels, enabling the calculation of metrics like the F1-score [8].
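The protocol generates these outputs automatically. The following standard-library sketch shows what the prediction table and confusion matrix contain, which can help when reproducing them for another evaluation set or extending them. The CSV column names (`barcode`, `predicted_cell_type`) and the function names are hypothetical rather than the protocol's actual format. The UMAP plot is omitted because it requires plotting libraries.

```python
import csv
import io
from collections import Counter


def predictions_csv(barcodes, predicted):
    """Return CSV text with one row per cell: barcode, predicted cell type."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["barcode", "predicted_cell_type"])  # hypothetical column names
    writer.writerows(zip(barcodes, predicted))
    return buffer.getvalue()


def confusion_matrix(y_true, y_pred):
    """Rows are true labels, columns are predicted labels, both in sorted order."""
    labels = sorted(set(y_true) | set(y_pred))
    counts = Counter(zip(y_true, y_pred))
    return labels, [[counts[(t, p)] for p in labels] for t in labels]


barcodes = ["AAAC-1", "AAAG-1", "AACT-1", "AAGT-1"]  # toy barcodes
y_true = ["ROD", "ROD", "AC", "BC"]
y_pred = ["ROD", "AC", "AC", "BC"]

print(predictions_csv(barcodes, y_pred))
labels, matrix = confusion_matrix(y_true, y_pred)
print(labels)  # ['AC', 'BC', 'ROD']
for row in matrix:
    print(row)  # [1, 0, 0] / [0, 1, 0] / [1, 0, 1]
```

Off-diagonal entries show which cell types are confused. Here, one ROD cell was predicted as AC. To save the table, write the returned text to a file opened with `newline=""` so the CSV line endings are preserved.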

Step-by-Step Experimental Protocol

Data Preparation and Configuration

- Acquire Datasets: Download the training and evaluation datasets from Zenodo (e.g.,