A Researcher's Guide to Automated Cell Type Annotation in 2025: From Foundations to LLMs

Abstract

This guide provides researchers and drug development professionals with a comprehensive tutorial on automated cell type annotation tools for single-cell RNA sequencing (scRNA-seq) data. It covers foundational concepts, explores the latest methodologies, including Large Language Models (LLMs) and semi-supervised learning, and offers practical workflows for application. The article also details strategies for troubleshooting common issues and optimizing performance, and it provides a comparative analysis of leading tools and validation frameworks to ensure biological relevance and reproducibility in biomedical research.

Understanding Automated Cell Type Annotation: Core Concepts and Why It Matters

The Critical Challenge of Cell Type Identification in Single-Cell Biology

Cell type identification, or cell type annotation, is the foundational process of classifying individual cells into distinct biological categories based on their gene expression profiles [1]. This process transforms clusters of gene expression data into meaningful biological insights, enabling researchers to understand cellular heterogeneity, compare cell populations across conditions, and perform accurate differential expression analysis within specific cell types [1]. In the era of single-cell biology, the very concept of cell identity continues to evolve and remains actively debated, with definitions now encompassing not only established cell types but also novel cell types, cell states, disease stages, and developmental trajectories [2].

The critical challenge lies in accurately assigning these identities from complex, high-dimensional transcriptomic data characterized by significant technical noise, high sparsity, and low signal-to-noise ratios [3] [4]. This challenge is compounded by the subjective nature of cell type definitions and the potential discovery of previously uncharacterized cell populations [1]. Robust cell type identification depends on multiple factors: data quality, availability of suitable reference datasets, validity of chosen marker genes, and integration of biological expertise [2]. This article examines the methodologies, computational tools, and experimental considerations essential for addressing these challenges in modern single-cell research.

Computational Methodologies for Automated Cell Type Annotation

Automated cell annotation methods have emerged to address the limitations of manual annotation, particularly as single-cell experiments routinely generate data for thousands to millions of cells [1]. These approaches can be broadly categorized into three main computational paradigms, each with distinct advantages and limitations.

Table 1: Comparison of Automated Cell Type Annotation Methods

| Approach | Description | Advantages | Limitations |
|---|---|---|---|
| Correlation-Based | Compares gene expression patterns between query data and reference datasets using similarity measures [1]. | Comprehensive annotation; flexibility with multiple references; simple and fast computation; applicable at cell and cluster levels [1]. | Performance decreases with excessive features; potential reference selection bias [1]. |
| Cluster Annotation with Marker Genes | Matches expression patterns of specific marker genes to reference cell types using curated databases [1]. | Leverages comprehensive collections of known cell type markers; enables result replication [1]. | Relies on human-curated markers; uncertain annotation with unclean query data [1]. |
| Supervised Classification | Employs machine learning models trained on reference data to predict cell types [1]. | Robust to data noise and batch effects; higher accuracy with appropriate training; handles high-dimensional data well [1]. | Computationally intensive training; requires model retraining for new data/classifications [1]. |
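As a concrete illustration of the correlation-based approach, the minimal sketch below assigns a query cluster to the reference cell type whose mean expression profile has the highest Spearman correlation with it. The gene names, expression values, and helper functions (`rank`, `spearman`, `annotate_by_correlation`) are hypothetical; published tools such as SingleR add further steps (for example, restricting the comparison to marker genes and iterative fine-tuning) that are not shown here.

```python
from typing import Dict, List, Tuple


def rank(values: List[float]) -> List[float]:
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x: List[float], y: List[float]) -> float:
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0


def spearman(x: List[float], y: List[float]) -> float:
    # Spearman correlation is the Pearson correlation of the ranks
    return pearson(rank(x), rank(y))


def annotate_by_correlation(query: Dict[str, float],
                            reference: Dict[str, Dict[str, float]]) -> Tuple[str, float]:
    """Return the reference label whose profile best correlates with the query."""
    best_label, best_rho = "", float("-inf")
    for label, profile in reference.items():
        shared = sorted(set(query) & set(profile))  # compare shared genes only
        rho = spearman([query[g] for g in shared], [profile[g] for g in shared])
        if rho > best_rho:
            best_label, best_rho = label, rho
    return best_label, best_rho


# Hypothetical mean log-expression profiles for three reference cell types
reference = {
    "T cell":   {"CD3E": 9.0, "MS4A1": 0.5, "LYZ": 1.0, "NKG7": 2.0},
    "B cell":   {"CD3E": 0.5, "MS4A1": 8.0, "LYZ": 1.0, "NKG7": 0.2},
    "Monocyte": {"CD3E": 0.3, "MS4A1": 0.4, "LYZ": 10.0, "NKG7": 0.5},
}
query_cluster = {"CD3E": 7.5, "MS4A1": 0.2, "LYZ": 1.5, "NKG7": 3.0}
label, rho = annotate_by_correlation(query_cluster, reference)
print(label, round(rho, 3))  # T cell 1.0
```

Restricting the comparison to shared genes matters in practice because query and reference gene panels rarely overlap completely; adding many uninformative genes dilutes the correlation signal, which is one reading of the "excessive features" limitation in the table.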

The Emergence of Single-Cell Foundation Models

Recent advances have introduced single-cell foundation models (scFMs) trained on massive datasets containing millions of cells [3]. These models, including Geneformer, scGPT, UCE, scFoundation, LangCell, and scCello, leverage transformer architectures adapted for biological data to learn universal representations of gene and cell relationships [3]. Benchmark studies reveal that these scFMs are robust and versatile tools for diverse applications, demonstrating particular strength in capturing biologically meaningful insights into the relational structure of genes and cells [3].

However, comprehensive benchmarking studies indicate that no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, biological interpretability requirements, and computational resources [3]. Notably, simpler machine learning models often remain more adept at efficiently adapting to specific datasets, particularly under resource constraints [3].

Table 2: Benchmark Comparison of Single-Cell Foundation Models

| Model Name | Model Parameters | Pretraining Dataset Size | Input Genes | Architecture | Key Features |
|---|---|---|---|---|---|
| Geneformer | 40 M | 30 M cells | 2048 ranked genes | Encoder | Gene ID prediction with ranking [3] |
| scGPT | 50 M | 33 M cells | 1200 HVGs | Encoder with attention mask | Multi-omic support; generative pretraining [3] |
| UCE | 650 M | 36 M cells | 1024 non-unique genes | Encoder | Protein sequence integration [3] |
| scFoundation | 100 M | 50 M cells | ~19,264 genes | Asymmetric encoder-decoder | Read-depth-aware pretraining [3] |
| LangCell | 40 M | 27.5 M cell-text pairs | 2048 ranked genes | Encoder | Incorporates cell type labels [3] |
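Geneformer and LangCell take "2048 ranked genes" rather than raw counts as input. The function below is a simplified sketch of that rank-value idea, assuming that each gene's library-size-normalized count is divided by a per-gene, corpus-level scaling factor (for Geneformer, a median expression value computed over its pretraining corpus) before ranking. The counts, medians, and the function name `rank_value_encode` are hypothetical; real tokenizers also map gene names to vocabulary IDs and handle details not shown here.

```python
from typing import Dict, List


def rank_value_encode(counts: Dict[str, int], gene_medians: Dict[str, float],
                      max_len: int = 2048) -> List[str]:
    """Order detected genes by median-scaled expression, highest first."""
    total = sum(counts.values())
    scores: Dict[str, float] = {}
    for gene, c in counts.items():
        # Skip undetected genes and genes with no corpus-level median
        if c == 0 or gene not in gene_medians:
            continue
        # Library-size scaling does not change the within-cell order; it is kept
        # only to mirror the normalize-then-rank description.
        normalized = c / total * 10_000
        # Dividing by the corpus median down-weights genes that are highly
        # expressed everywhere (e.g., housekeeping genes)
        scores[gene] = normalized / gene_medians[gene]
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:max_len]  # truncate to the model's input length


# Hypothetical counts for one cell and hypothetical corpus medians
cell = {"ACTB": 500, "CD3E": 40, "IL7R": 20, "MT-CO1": 300, "GAPDH": 0}
medians = {"ACTB": 60.0, "CD3E": 2.0, "IL7R": 1.5, "MT-CO1": 50.0, "GAPDH": 30.0}
tokens = rank_value_encode(cell, medians, max_len=3)
print(tokens)  # ['CD3E', 'IL7R', 'ACTB']
```

Although ACTB has the highest raw count, the median scaling pushes it below the more cell-type-informative CD3E and IL7R.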

Integrated Experimental and Computational Workflow

A robust cell type annotation pipeline integrates both experimental and computational best practices through sequential stages that ensure biologically meaningful results.

Experimental Protocol: Sample Preparation and Sequencing

Sample Preparation for Single-Cell RNA Sequencing

Single-Cell Suspension Preparation: Extract viable single cells from tissue using appropriate dissociation methods. For challenging tissues (frozen, fragile, or difficult to dissociate), consider single-nuclei RNA-seq (snRNA-seq) as an alternative [4]. The ideal sample delivered for 10x Genomics protocols should have 100,000+ total cells at a concentration of 1,000-1,600 cells/μL, with >90% viability and minimal debris [5].
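The delivery criteria above reduce to simple arithmetic. The sketch below checks a suspension against the thresholds quoted in the text (100,000+ cells, 1,000-1,600 cells/μL, >90% viability) and reports the implied volume; the function name and example numbers are hypothetical, and individual core facilities may set different requirements.

```python
def check_10x_sample(total_cells: int, conc_per_ul: float, viability: float) -> dict:
    """Compare a suspension with the delivery criteria quoted in the text."""
    return {
        # Volume implied by the total count and the measured concentration
        "volume_ul": total_cells / conc_per_ul,
        "enough_cells": total_cells >= 100_000,
        "concentration_ok": 1_000 <= conc_per_ul <= 1_600,
        "viability_ok": viability > 0.90,
    }


report = check_10x_sample(total_cells=150_000, conc_per_ul=1_200, viability=0.93)
print(report)
# {'volume_ul': 125.0, 'enough_cells': True, 'concentration_ok': True, 'viability_ok': True}
```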

Cell Lysis and RNA Capture: Lyse individual cells to release RNA molecules. Use poly(T)-primers to selectively capture polyadenylated mRNA while minimizing ribosomal RNA contamination [4].

Molecular Barcoding and Amplification: Convert RNA to complementary DNA (cDNA) and amplify using polymerase chain reaction (PCR) or in vitro transcription (IVT) methods [4]. Incorporate Unique Molecular Identifiers (UMIs) during reverse transcription to label individual mRNA molecules, enabling accurate quantification by correcting for PCR amplification biases [4]. In 10x Genomics protocols, all cDNA molecules from a single cell receive the same Cell Barcode, while each transcript receives a unique UMI [5].
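How cell barcodes and UMIs correct for amplification bias can be shown with a toy deduplication step: reads that share the same cell barcode, UMI, and gene are collapsed into one molecule, so PCR duplicates are counted once. This is a simplified sketch with hypothetical reads; production pipelines such as Cell Ranger also correct sequencing errors in barcodes and UMIs, which is omitted here.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Read = Tuple[str, str, str]  # (cell_barcode, umi, gene)


def count_umis(reads: Iterable[Read]) -> Dict[str, Dict[str, int]]:
    """Build a cell-by-gene count table that counts unique molecules, not reads."""
    molecules = set(reads)  # identical (barcode, UMI, gene) triples collapse to one
    counts: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for barcode, _umi, gene in molecules:
        counts[barcode][gene] += 1
    return {bc: dict(genes) for bc, genes in counts.items()}


reads = [
    ("AAACCTGA", "TTGCA", "CD3E"),
    ("AAACCTGA", "TTGCA", "CD3E"),  # PCR duplicate of the read above
    ("AAACCTGA", "GGATC", "CD3E"),  # a second CD3E molecule in the same cell
    ("AAACCTGA", "CCTAG", "IL7R"),
    ("TTTGGTCA", "TTGCA", "LYZ"),   # same UMI but different cell: distinct molecule
]
matrix = count_umis(reads)
print(sorted(matrix["AAACCTGA"].items()))  # [('CD3E', 2), ('IL7R', 1)]
```

Three CD3E reads in the first cell yield a count of two, because two of them carry the same UMI.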

Library Preparation and Sequencing: Prepare sequencing libraries using platform-specific protocols. For 3' end counting methods (e.g., 10x Genomics 3' Gene Expression), sequencing captures the 3' ends of transcripts, adjacent to the poly(A) tail, together with the cell barcode and UMI [4] [5].

Computational Protocol: Data Analysis and Annotation

Computational Analysis Pipeline for Cell Type Identification

Preprocessing and Quality Control:

- Perform rigorous quality control to filter low-quality cells and genes.

- Apply doublet detection to exclude multiplets from analysis.

- Implement batch correction to mitigate technical variations from different sample preparations or sequencing runs [2].
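A minimal sketch of the cell-level QC step, assuming per-cell counts stored as plain dictionaries. The thresholds (at least 200 detected genes, under 20% mitochondrial counts) and the `MT-` prefix convention for human mitochondrial genes are illustrative assumptions; appropriate cutoffs depend on tissue and protocol, and doublet detection and batch correction are not shown.

```python
from typing import Dict

Cell = Dict[str, int]  # gene -> UMI count


def qc_metrics(cell: Cell) -> Dict[str, float]:
    """Compute total counts, detected genes, and percent mitochondrial counts."""
    total = sum(cell.values())
    n_genes = sum(1 for c in cell.values() if c > 0)
    mito = sum(c for g, c in cell.items() if g.startswith("MT-"))  # human MT- prefix assumed
    pct_mito = 100 * mito / total if total else 0.0
    return {"total": total, "n_genes": n_genes, "pct_mito": pct_mito}


def filter_cells(cells: Dict[str, Cell], min_genes: int = 200,
                 max_pct_mito: float = 20.0) -> Dict[str, Cell]:
    """Keep cells with enough detected genes and a low mitochondrial fraction."""
    kept = {}
    for barcode, cell in cells.items():
        m = qc_metrics(cell)
        if m["n_genes"] >= min_genes and m["pct_mito"] < max_pct_mito:
            kept[barcode] = cell
    return kept


# Toy cells; min_genes is lowered to 3 because the profiles are tiny
cells = {
    "good":  {"CD3E": 5, "IL7R": 3, "ACTB": 10, "MT-CO1": 2},   # 10% mitochondrial
    "dying": {"CD3E": 1, "ACTB": 2, "MT-CO1": 7, "MT-ND1": 5},  # 80% mitochondrial
    "empty": {"ACTB": 1, "MT-CO1": 0},                           # 1 gene detected
}
kept = filter_cells(cells, min_genes=3)
print(sorted(kept))  # ['good']
```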

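Feature Selection and Clustering:

- Normalize expression values and select highly variable genes.

- Reduce dimensionality (e.g., PCA) and cluster cells with a graph-based algorithm such as Leiden or Louvain.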

Cell Type Annotation:

- Reference-Based Mapping: Align query gene expression profiles with established reference datasets (e.g., CELLxGENE, Azimuth) using tools like SingleR or scArches [2] [6]. The 10x Genomics Cell Annotation pipeline uses an approximate nearest-neighbor lookup against a reference database to assign cell types [6].

- Marker Gene Validation: Verify annotations by examining expression patterns of canonical marker genes through dot plots or violin plots [2] [1].

- Manual Refinement: Integrate biological expertise to fine-tune labels, interpret ambiguous clusters, and identify novel populations [2].
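Reference-based mapping is often implemented as a nearest-neighbor lookup. The sketch below uses exact cosine similarity over a tiny, hypothetical reference embedded in a shared low-dimensional space and takes a majority vote among the k closest reference cells. It is not the 10x Genomics or SingleR implementation; those operate on real embeddings or gene sets and, at scale, use approximate search.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def knn_transfer(query: Sequence[float],
                 reference: List[Tuple[Sequence[float], str]],
                 k: int = 3) -> Tuple[str, float]:
    """Return the majority label among the k most similar reference cells and its vote share."""
    neighbors = sorted(reference, key=lambda item: cosine(query, item[0]), reverse=True)[:k]
    votes = Counter(label for _, label in neighbors)
    label, n = votes.most_common(1)[0]
    return label, n / k  # a low vote share can flag ambiguous cells for manual review


# Hypothetical reference cells in a shared 3-dimensional embedding
reference = [
    ([1.0, 0.1, 0.0], "T cell"),
    ([0.9, 0.2, 0.1], "T cell"),
    ([0.8, 0.0, 0.2], "NK cell"),
    ([0.0, 1.0, 0.1], "B cell"),
    ([0.1, 0.9, 0.0], "B cell"),
]
label, frac = knn_transfer([0.95, 0.15, 0.05], reference, k=3)
print(label, round(frac, 2))  # T cell 0.67
```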

Biological Validation and Interpretation:

- Perform differential expression analysis within annotated cell types.

- Contextualize findings with relevant literature and functional annotations.

- Design orthogonal validation experiments (e.g., protein staining, functional assays) to confirm novel cell identities [2].

Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Single-Cell RNA Sequencing

| Reagent/Material | Function | Application Notes |
|---|---|---|
| 10x Genomics 3' Gene Expression Kit | PolyA-based capture of mRNA at 3' end for library preparation | Standard "workhorse" kit; enables feature barcoding for surface protein or sample multiplexing [5] |
| 10x Genomics 5' Gene Expression Kit | Capture at 5' end via template-switching reverse transcription | Preferred for immune profiling with V(D)J sequencing add-ons [5] |
| Unique Molecular Identifiers (UMIs) | Labels individual mRNA molecules for accurate quantification | Corrects for PCR amplification biases; essential for quantitative analysis [4] [5] |
| Cell Barcodes | Unique sequences identifying cell of origin for each transcript | Enables assignment of transcripts to individual cells [5] |
| Viability Dye | Distinguishes live from dead cells during quality control | Critical for assessing sample quality pre-sequencing [5] |
| Dissociation Enzymes | Tissue-specific cocktails for generating single-cell suspensions | The Worthington Tissue Dissociation Guide provides protocol guidance [5] |
| RNase Inhibitors | Prevents RNA degradation during sample processing | Essential for maintaining RNA integrity [5] |
| PBS with 0.04% BSA | Sample delivery buffer for 10x Genomics protocols | Free of reverse transcription inhibitors like EDTA [5] |

Advanced Applications and Future Directions

Spatial Transcriptomics Integration

Spatial transcriptomics technologies have emerged as powerful complements to scRNA-seq, preserving the spatial context of gene expression within tissues [7]. However, most sequencing-based spatial transcriptomics methods (e.g., 10x Visium) cannot achieve true single-cell resolution, instead capturing gene expression from spots containing multiple cells [7]. Computational deconvolution methods like SWOT (Spatially Weighted Optimal Transport) have been developed to address this limitation by integrating scRNA-seq data with spatial transcriptomics data to infer both cell-type composition and single-cell spatial maps [7]. These approaches employ optimal transport frameworks to learn probabilistic relationships between cells and spots, enabling researchers to map single cells to their spatial locations within tissues [7].
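To illustrate the optimal transport idea behind methods like SWOT, here is a generic entropic optimal transport (Sinkhorn) sketch that couples cells to spots given a cost matrix. It is not SWOT itself, which adds spatial weighting and other components; the cost values, the spot capacities in `b`, and the regularization strength `eps` are assumptions chosen for illustration.

```python
import math
from typing import List

Matrix = List[List[float]]


def sinkhorn(cost: Matrix, a: List[float], b: List[float],
             eps: float = 0.5, n_iter: int = 500) -> Matrix:
    """Entropic OT plan whose rows sum to a (cells) and columns to b (spots)."""
    n, m = len(a), len(b)
    # Gibbs kernel: low cost means high affinity; eps controls how diffuse the plan is
    K = [[math.exp(-cost[i][j] / eps) for j in range(m)] for i in range(n)]
    u, v = [1.0] * n, [1.0] * m
    for _ in range(n_iter):
        # Alternately rescale rows and columns to match the two marginals
        u = [a[i] / sum(K[i][j] * v[j] for j in range(m)) for i in range(n)]
        v = [b[j] / sum(K[i][j] * u[i] for i in range(n)) for j in range(m)]
    return [[u[i] * K[i][j] * v[j] for j in range(m)] for i in range(n)]


# Hypothetical cost (e.g., 1 - expression similarity) for 3 cells x 2 spots
cost = [[0.1, 0.9],
        [0.2, 0.8],
        [0.9, 0.1]]
a = [1 / 3] * 3      # each cell carries equal mass
b = [2 / 3, 1 / 3]   # assumed spot capacities (e.g., relative cell counts)
plan = sinkhorn(cost, a, b)
assignment = [max(range(len(b)), key=lambda j: row[j]) for row in plan]
print(assignment)  # [0, 0, 1]
```

Each entry of `plan` is a soft, probabilistic cell-to-spot coupling; taking the row-wise maximum is one simple way to place each cell at its most likely spot.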

Experimental Design Considerations

Proper experimental design remains critical for biologically meaningful cell type identification. Biological replicates are essential for statistical testing of differential expression or cell population changes between conditions [5]. Treating individual cells as replicates rather than accounting for sample-to-sample variation leads to sacrificial pseudoreplication, dramatically increasing false positive rates [5]. The pseudobulk approach—summing or averaging read counts within samples for each cell type before applying bulk RNA-seq differential expression methods—provides an effective correction for this problem [5].
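The pseudobulk correction amounts to summing raw counts per (sample, cell type) before any statistical test, so that each biological replicate contributes one observation per cell type. A minimal sketch with hypothetical sample IDs and counts; the downstream bulk RNA-seq differential expression test is not shown.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Each cell: (sample_id, cell_type, {gene: count})
CellRecord = Tuple[str, str, Dict[str, int]]


def pseudobulk(cells: List[CellRecord]) -> Dict[Tuple[str, str], Dict[str, int]]:
    """Sum raw counts per (sample, cell type); each key is one replicate-level profile."""
    agg: Dict[Tuple[str, str], Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for sample, cell_type, counts in cells:
        for gene, c in counts.items():
            agg[(sample, cell_type)][gene] += c
    return {key: dict(genes) for key, genes in agg.items()}


cells = [
    ("ctrl_1", "T cell", {"IFNG": 1, "CD3E": 5}),
    ("ctrl_1", "T cell", {"IFNG": 0, "CD3E": 4}),
    ("ctrl_2", "T cell", {"IFNG": 2, "CD3E": 6}),
    ("stim_1", "T cell", {"IFNG": 9, "CD3E": 5}),
    ("stim_1", "B cell", {"IFNG": 0, "MS4A1": 7}),
]
profiles = pseudobulk(cells)
print(profiles[("ctrl_1", "T cell")])  # {'IFNG': 1, 'CD3E': 9}
```

Five cells collapse into four replicate-level profiles; a bulk method would then compare, for example, control and stimulated T cell profiles with samples, not cells, as the unit of replication.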

Cell type identification remains a critical challenge and active area of innovation in single-cell biology. The integration of robust experimental protocols with advanced computational methods—from traditional correlation-based approaches to cutting-edge foundation models—enables researchers to transform high-dimensional transcriptomic data into biologically meaningful insights. As the field evolves, emerging technologies like spatial transcriptomics and long-read sequencing promise higher resolution cell type characterization, while improved benchmarking guides the selection of appropriate analytical tools for specific biological contexts. Through careful experimental design, methodological rigor, and interdisciplinary collaboration between computational and domain experts, researchers can overcome the challenges of cell type identification to advance our understanding of cellular heterogeneity in health and disease.

Cell type annotation is a critical step in the analysis of data from technologies like single-cell RNA sequencing (scRNA-seq) and spatial transcriptomics, transforming raw molecular measurements into biologically meaningful insights. Traditionally, this process has relied on manual annotation by domain experts who inspect established marker genes from literature or databases. However, this method is inherently subjective, prone to inter-observer variability, and incredibly time-consuming, often requiring 20 to 40 hours to manually annotate a typical single-cell dataset with 30 clusters [8]. In histopathology images, this problem is compounded, with inter-pathologist agreement for identifying certain cell types, like macrophages, being as low as 50% [9].

Automated cell type annotation methods have emerged to overcome these limitations, leveraging computational tools to provide scalable, objective, and reproducible cell identification. These methods are becoming an indispensable component of the single-cell data analysis pipeline, enabling researchers to handle the increasing scale and complexity of modern biological datasets [8]. This Application Note details the methodologies and protocols for implementing these automated solutions, providing a practical guide for researchers, scientists, and drug development professionals.

Key Automated Annotation Strategies and Quantitative Performance

Automated methods can be broadly categorized into several strategic approaches, each with its own strengths. The following table summarizes the core methodologies, while subsequent sections provide detailed protocols.

Table 1: Core Strategies for Automated Cell Type Annotation

| Strategy | Underlying Principle | Representative Tools | Key Advantages |
|---|---|---|---|
| Marker-Based | Uses curated lists of cell-type-specific marker genes to label cells or clusters. | SCINA, ScType, scSorter [10] [8] | Does not require a reference dataset; intuitive and interpretable. |
| Reference-Based | Transfers labels from a well-annotated reference dataset to a query dataset based on gene expression similarity. | SingleR, Seurat, Azimuth, scmap [10] [11] [8] | Leverages existing, high-quality annotations; highly accurate when reference is well-matched. |
| Supervised Classification | Trains a machine learning classifier on a labeled reference dataset, then applies it to query data. | CellTypist, scPred, MapCell [11] [8] | Creates a reusable model; can be very fast for annotating new datasets. |
| Large Language Model (LLM)-Based | Leverages pre-trained LLMs to interpret marker gene lists and contextual information from research articles for annotation. | LICT, scExtract, GPTCelltype [12] [13] | Does not require predefined references; can incorporate rich biological context. |
| Image-Based Deep Learning | Uses convolutional neural networks to classify cell types directly from histopathology images. | Custom models (e.g., combining self-supervised learning and domain adaptation) [9] | Applicable to standard H&E images; links morphology to molecular definition. |
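To make the marker-based strategy concrete, the sketch below standardizes each gene across clusters, scores every cluster by the mean z-score of each cell type's markers, and picks the highest-scoring type. This is a simplified illustration in the spirit of marker-based tools, not the exact scoring used by SCINA, ScType, or scSorter; the marker lists and expression values are hypothetical.

```python
import statistics
from typing import Dict, List

Expr = Dict[str, Dict[str, float]]  # cluster -> gene -> mean expression


def zscore_by_gene(expr: Expr) -> Expr:
    """Standardize each gene across clusters so that markers are comparable."""
    clusters = list(expr)
    genes = {g for profile in expr.values() for g in profile}
    z: Expr = {c: {} for c in clusters}
    for g in genes:
        vals = [expr[c].get(g, 0.0) for c in clusters]
        mu, sd = statistics.mean(vals), statistics.pstdev(vals)
        for c, v in zip(clusters, vals):
            z[c][g] = (v - mu) / sd if sd > 0 else 0.0
    return z


def annotate_by_markers(expr: Expr, markers: Dict[str, List[str]]) -> Dict[str, str]:
    """Assign each cluster the cell type with the highest mean marker z-score."""
    z = zscore_by_gene(expr)
    labels = {}
    for cluster, zs in z.items():
        scores = {ct: statistics.mean([zs.get(g, 0.0) for g in genes])
                  for ct, genes in markers.items()}
        labels[cluster] = max(scores, key=scores.get)
    return labels


expr = {
    "0": {"CD3E": 8.0, "MS4A1": 0.2, "LYZ": 0.5, "CD14": 0.3},
    "1": {"CD3E": 0.3, "MS4A1": 7.0, "LYZ": 0.4, "CD14": 0.2},
    "2": {"CD3E": 0.2, "MS4A1": 0.1, "LYZ": 9.0, "CD14": 6.0},
}
markers = {"T cell": ["CD3E"], "B cell": ["MS4A1"], "Monocyte": ["LYZ", "CD14"]}
labels = annotate_by_markers(expr, markers)
print(labels)  # {'0': 'T cell', '1': 'B cell', '2': 'Monocyte'}
```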

Quantitative benchmarking is essential for selecting the appropriate tool. The table below compiles performance data from recent, rigorous evaluations across different data modalities.

Table 2: Performance Benchmarking of Selected Automated Annotation Tools

| Tool / Method | Data Modality | Reported Performance | Benchmarking Context |
|---|---|---|---|
| Histopathology Image Model [9] | H&E-Stained Images | 86-89% overall accuracy in classifying 4 cell types (tumor cells, lymphocytes, neutrophils, macrophages) | Trained on 1,127,252 cells with mIF-derived labels; validated on external WSIs. |
| LICT (LLM-Based) [12] | scRNA-seq (PBMCs) | Reduced mismatch rate to 9.7% (from 21.5% with a baseline tool) | Multi-model integration strategy on highly heterogeneous data. |
| LICT (LLM-Based) [12] | scRNA-seq (Gastric Cancer) | Reduced mismatch rate to 8.3% (from 11.1% with a baseline tool) | Multi-model integration strategy on highly heterogeneous data. |
| SingleR (Reference-Based) [11] | 10x Xenium Spatial Data | Results "closely matching" manual annotation; identified as the best-performing tool. | Benchmarking of five reference-based methods on imaging-based spatial transcriptomics. |
| scExtract (LLM-Based) [13] | scRNA-seq (Various Tissues) | Higher accuracy than established methods (SingleR, scType, CellTypist) | Evaluation on 21 manually annotated datasets from cellxgene. |

Detailed Experimental Protocols

Protocol 1: Automated Cell Annotation for Histopathology Images

This protocol uses multiplexed immunofluorescence (mIF) to generate high-quality ground truth labels for training a robust deep learning model to classify cells in standard H&E images [9].

Research Reagent Solutions:

- Tissue Samples: Formalin-Fixed Paraffin-Embedded (FFPE) tissue sections, such as Tissue Microarray (TMA) cores.

- Staining Reagents: Hematoxylin and Eosin (H&E) staining kit; multiplexed immunofluorescence (mIF) staining panel with antibodies against cell lineage markers (e.g., pan-CK for tumor cells, CD3/CD20 for lymphocytes, CD66b for neutrophils, CD68 for macrophages).

- Imaging Equipment: High-throughput slide scanner capable of capturing both brightfield (H&E) and fluorescence (mIF) images.

Procedure:

- Serial Staining: Perform mIF staining for key cell lineage protein markers on an FFPE tissue section, followed by H&E staining on the same section [9].

- High-Throughput Imaging: Acquire whole-slide images for both mIF and H&E stains using a slide scanner.

- Cell Segmentation and mIF-Based Annotation:

  - Identify and segment individual cell nuclei from the mIF images.

  - Quantify protein marker intensity for each segmented cell.

  - Assign cell type labels using an unsupervised clustering algorithm (e.g., Leiden clustering) applied to the protein intensity data and nucleus size, defining major cell types (tumor, lymphocytes, etc.) based on cluster-specific marker expression profiles [9].

- Image Co-Registration:

  - Co-register the H&E and mIF images at the single-cell level. This involves an initial rigid transformation followed by a non-rigid registration to achieve precise alignment, ensuring the centroid distance between corresponding cells is less than the average nuclear diameter (e.g., < 3.1 microns) [9].

  - Visually inspect and verify the co-registration accuracy with a pathologist.

  - Transfer the cell type labels from the mIF-based annotation to the corresponding cells in the H&E image, creating a large, high-quality training dataset (e.g., >800,000 cells) [9].

- Model Training:

  - Extract single-cell image patches from the H&E images based on the segmentation masks.

  - Train a deep learning model (e.g., a convolutional neural network combining self-supervised learning and domain adaptation) using the H&E patches as input and the transferred mIF labels as ground truth. Domain adaptation techniques are critical for generalizing across data from different institutions [9].

- Validation and Application:

  - Evaluate the final model's classification accuracy (e.g., 86-89%) on held-out test sets and external validation cohorts.

  - Apply the trained model to classify cells in new H&E whole-slide images for spatial biomarker discovery.
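The label-transfer step after co-registration can be sketched as nearest-centroid matching with a distance cutoff, here using the 3.1 micron nuclear-diameter threshold quoted above. This is a hypothetical, brute-force illustration with made-up coordinates in microns. It assumes the images are already aligned, does not reproduce the registration itself, and does not enforce one-to-one matching; at whole-slide scale a spatial index (e.g., a k-d tree) would replace the linear scan.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def transfer_labels(he_cells: List[Point], mif_cells: List[Tuple[Point, str]],
                    max_dist: float = 3.1) -> List[Optional[str]]:
    """For each H&E nucleus, copy the label of the nearest mIF nucleus within max_dist."""
    labels: List[Optional[str]] = []
    for hx, hy in he_cells:
        best_label, best_d = None, float("inf")
        for (mx, my), label in mif_cells:
            d = math.hypot(hx - mx, hy - my)
            if d < best_d:
                best_label, best_d = label, d
        # Leave the cell unlabeled when no mIF nucleus is close enough
        labels.append(best_label if best_d < max_dist else None)
    return labels


he = [(10.0, 10.0), (50.0, 52.0), (100.0, 100.0)]
mif = [((11.0, 10.5), "lymphocyte"), ((49.0, 50.5), "tumor"), ((120.0, 100.0), "macrophage")]
print(transfer_labels(he, mif))  # ['lymphocyte', 'tumor', None]
```

Unmatched cells are dropped from the training set rather than guessed, which keeps the transferred labels conservative.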

Protocol 2: LLM-Assisted Annotation for Single-Cell RNA-Seq Data

This protocol leverages Large Language Models (LLMs) to automate the annotation of scRNA-seq datasets, incorporating information directly from research articles to guide the process [12] [13].

Research Reagent Solutions:

- Computational Environment: R or Python environment with access to LLM APIs (e.g., GPT-4, Claude 3) and single-cell analysis packages (e.g., Scanpy, Seurat).

- Data Inputs: Raw or processed count matrix from a scRNA-seq experiment; text content from the associated research article (Methods and Results sections).

- Reference Databases (Optional): Cell marker databases (e.g., CellMarker, PanglaoDB) or curated reference atlases (e.g., Human Cell Atlas) for validation.

Procedure:

- Preprocessing and Clustering:

  - Perform standard scRNA-seq quality control (filtering cells by gene counts, mitochondrial percentage, etc.) and normalization.

  - Conduct dimensionality reduction (PCA) and unsupervised clustering (e.g., Leiden, Louvain) to identify cell populations [13].

- LLM-Based Information Extraction:

  - Input the "Methods" section of the research article into an LLM agent (e.g., scExtract) to extract key preprocessing parameters (e.g., "% mitochondrial gene cutoff") and apply them [13].

  - Input the "Results" section to infer the expected number of cell populations or the annotation granularity intended by the original authors to guide clustering resolution [13].

- Initial Annotation with Multi-Model Integration:

  - For each cell cluster, identify the top differentially expressed genes (marker genes).

  - Input the list of marker genes for a cluster into multiple top-performing LLMs (e.g., GPT-4, Claude 3, Gemini) using a standardized prompt to generate independent cell type predictions [12].

  - Implement a multi-model integration strategy by selecting the best-performing or most consistent prediction across the LLMs, rather than simple majority voting, to yield the initial annotation [12].

- Iterative Validation and Refinement ("Talk-to-Machine"):

  - For each initial LLM-predicted cell type, query the same LLM to generate a list of representative marker genes.

  - Evaluate the expression of these validation marker genes in the original scRNA-seq dataset for the cluster in question.

  - Validation Criteria: If more than four marker genes are each expressed in more than 80% of the cells in the cluster, accept the annotation. If validation fails, generate a structured feedback prompt for the LLM containing the validation results and additional differentially expressed genes, prompting it to revise its annotation iteratively [12].

- Objective Credibility Evaluation:

  - Use the same marker gene retrieval and expression evaluation from the iterative validation step to assign a final "credibility" score to each annotation. This provides an objective metric for researchers to prioritize highly reliable annotations for downstream biological analysis [12].
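The validation rule in the iterative step can be expressed as a small function. The sketch below assumes one cluster stored as a list of per-cell gene-count dictionaries and counts a gene as "expressed" in a cell when its count is above zero. The thresholds mirror the rule stated above (more than four markers, each expressed in more than 80% of cells); the function name and data layout are hypothetical and not LICT's code.

```python
from typing import Dict, List

ClusterCells = List[Dict[str, float]]  # one gene -> count dict per cell


def passes_marker_validation(cells: ClusterCells, markers: List[str],
                             min_markers: int = 4, min_frac: float = 0.8) -> bool:
    """True if more than `min_markers` markers are expressed in more than `min_frac` of cells."""
    if not cells:
        return False
    supported = 0
    for gene in markers:
        frac = sum(1 for cell in cells if cell.get(gene, 0) > 0) / len(cells)
        if frac > min_frac:
            supported += 1
    return supported > min_markers


# Hypothetical 10-cell cluster: five markers in >= 90% of cells, CCR7 in only one
cells = [{"CD3E": 3, "CD8A": 2, "CD8B": 1, "GZMK": 1, "NKG7": 2} for _ in range(9)]
cells.append({"CD3E": 1, "CCR7": 2})
markers = ["CD3E", "CD8A", "CD8B", "GZMK", "NKG7", "CCR7"]
print(passes_marker_validation(cells, markers))  # True
print(passes_marker_validation(cells, ["CD3E", "CD8A", "CD8B", "GZMK", "CCR7"]))  # False
```

A failed check would trigger the feedback prompt described above; the fraction of supported markers could also serve as a simple credibility score.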

Successful implementation of automated annotation pipelines relies on both wet-lab reagents and computational resources.

Table 3: Essential Research Reagent Solutions for Automated Cell Annotation

| Item Name | Specifications / Examples | Primary Function in Workflow |
|---|---|---|
| FFPE Tissue Sections | Tissue Microarray (TMA) cores or whole slides. | Provides the foundational biological material for histopathology-based annotation. |
| Multiplexed IF (mIF) Staining Panel | Antibodies against cell lineage markers (e.g., pan-CK, CD3, CD20, CD66b, CD68). | Defines cell types with high specificity based on protein expression for generating ground truth data. |
| H&E Staining Kit | Standard hematoxylin and eosin staining reagents. | Creates the standard histopathology image format for which the final classification model is developed. |
| High-Throughput Slide Scanner | Scanners capable of brightfield and multichannel fluorescence imaging (e.g., Akoya Vectra, Zeiss Axio Scan). | Digitizes tissue slides at high resolution for subsequent computational analysis. |
| Curated Marker Gene Database | CellMarker 2.0, PanglaoDB, ScInfeRDB. | Provides lists of cell-type-specific genes for marker-based and LLM-based annotation methods. |
| Annotated Reference Atlas | Tabula Sapiens, Human Cell Atlas, Mouse Cell Atlas. | Serves as a gold-standard labeled dataset for reference-based and supervised classification methods. |
| LLM API Access | GPT-4, Claude 3, Gemini, or specialized models like ERNIE. | Powers the information extraction and cell type prediction in LLM-assisted annotation protocols. |
| Single-Cell Analysis Software | Scanpy (Python), Seurat (R), ScInfeR, CellTypist. | Provides the computational environment for data preprocessing, clustering, and running annotation algorithms. |

Cell identity is a fundamental concept in biology, referring to the distinctive molecular, phenotypic, and functional characteristics that define a cell's role within a multicellular organism. This identity emerges from a complex interplay of cell-intrinsic and extrinsic factors, creating a molecular profile encompassing genomics, epigenomics, transcriptomics, proteomics, and metabolomics [14]. In single-cell biology, identity is primarily delineated through two interconnected lenses: cell type and cell state.

- Cell Type: Represents a stable, reproducible identity, often defined by a core regulatory complex of transcription factors and their functional behavior in vivo or in vitro. Cell types are characterized by their ability to maintain this identity through cell divisions, such as a hematopoietic stem cell (HSC) reconstituting all blood lineages [14] [15].

- Cell State: Refers to dynamic, responsive changes in a cell's phenotype and function without a fundamental change of type. For example, a typically quiescent HSC entering the cell cycle is a state change essential for its function [14].

Resolving cellular identities is crucial for understanding normal development, tissue homeostasis, and disease. This is particularly challenging in complex organs like the human kidney, where research suggests the existence of at least 41 distinct renal cell populations and 32 non-renal populations, with more likely to be discovered [15].

Methodologies for Mapping Cell Identity

Single-cell transcriptomic sequencing (scRNA-seq) has revolutionized our ability to map cell identity by profiling gene expression in thousands of individual cells simultaneously [16] [17]. The standard workflow involves cell isolation, library preparation, sequencing, and computational analysis to cluster cells and infer identities based on gene expression patterns [14].

Experimental and Computational Workflow

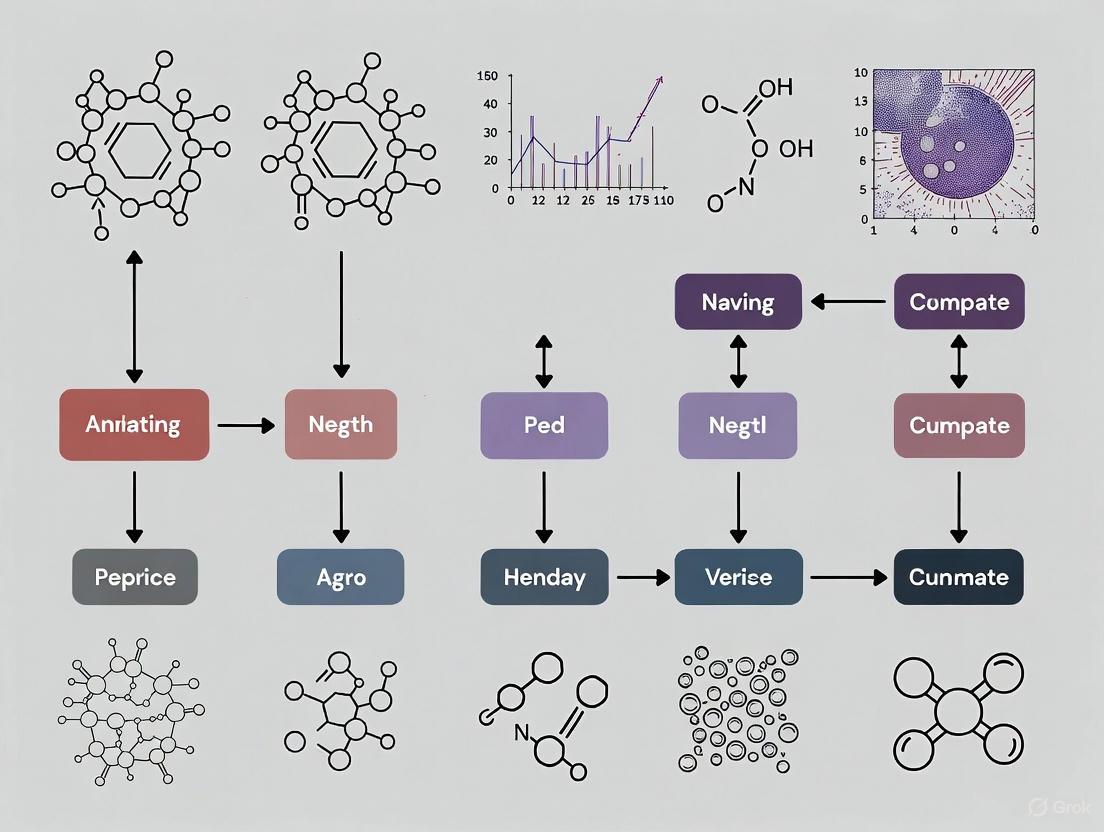

The following diagram outlines the core steps for defining cell identity using scRNA-seq, from single-cell isolation to final annotation.

Automated Cell Type Annotation Tools

A wide array of computational methods has been developed to assign cell identity from scRNA-seq data [17]. These can be broadly classified into several categories, each with specific strengths and applications. The table below summarizes the primary approaches.

Table 1: Categories of Automated Cell Type Annotation Tools

| Category | Description | Example Tools | Key Applications |
|---|---|---|---|
| Reference-Based | Compares query dataset to a pre-annotated reference dataset. | scmap, SingleR, Azimuth [16] [18] | Rapid annotation of well-characterized tissues; label transfer. |
| Marker-Based | Uses lists of marker genes associated with specific cell types. | SCINA [16] | Annotation when a high-quality reference is unavailable; hypothesis testing. |
| Large Language Model (LLM)-Based | Leverages LLMs to interpret marker genes and provide cell type labels. | AnnDictionary (Claude 3.5 Sonnet) [19] | De novo annotation from cluster markers; functional annotation of gene sets. |
| Integration-Based | Uses data integration as a form of annotation. | Harmony [16] | Annotation while correcting for technical batch effects. |

Detailed Experimental Protocols

Protocol 1: Automated Annotation with Azimuth

Azimuth is a reference-based tool that maps a query dataset against a pre-annotated reference. The following protocol is adapted for use in R [18].

Research Reagent Solutions

- Software: R (v4.2.1+) with the Seurat, Azimuth, remotes, SeuratData, and LoupeR packages.

- Input Data: A feature-barcode matrix (e.g., HDF5 file from Cell Ranger).

- Reference Dataset: A pre-annotated scRNA-seq reference (e.g., Human Lung Cell Reference from SeuratData).

Methodology

- Installation and Setup: Install necessary R packages and set the working directory.

- Load Reference and Query Data: Install the reference dataset and load the query data.

- Run Azimuth: Execute the annotation. This step can take 45-60 minutes for a large dataset.

- Extract and Refine Annotations: Azimuth provides multiple annotation levels. Extract and refine labels, for example, by replacing broad "Rare" labels with finer-grained ones.

- Export for Visualization: Use LoupeR to create a .cloupe file for visualization in Loupe Browser.

Protocol 2: A Multi-Tool Workflow for Consensus Annotation

This protocol from BaderLab recommends a three-step workflow combining automatic and manual methods for robust annotation [16].

Research Reagent Solutions

- Software: R with R Notebook; packages: SingleR, scmap, SCINA, Seurat, cerebroApp.

- Input Data: A pre-processed single-cell transcriptomic map (e.g., a Seurat object).

Methodology

- Reference-Based Automatic Annotation:

  - Use tools like scmap (cell and cluster) and SingleR to assign initial labels by comparing your data to reference datasets.

  - Use integration tools like Harmony as an alternative annotation strategy.

- Marker-Based Automatic Annotation:

  - Input lists of known marker genes for specific cell types.

  - Use the program SCINA to assign cell types based on the expression of these marker sets.

- Refining to Consensus Annotations:

  - Compare the labels generated by the multiple methods above.

  - For each cell, keep the label that most commonly occurs across the different tools to create a robust consensus.

- Manual Annotation and Verification:

  - Extract marker genes for each cluster in the query dataset using Seurat.

  - Use literature knowledge and tools like cerebroApp to compare differentially expressed genes and pathways to known biology.

  - Manually verify and refine the consensus annotations based on this evidence.
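The consensus rule (keep, for each cell, the label that occurs most often across tools) can be sketched as follows. Tool outputs are represented as hypothetical per-cell label lists that are assumed to already use harmonized label names. Ties are reported as "Unresolved" here, which is one possible policy for routing cells to manual review rather than the protocol's prescription.

```python
from collections import Counter
from typing import Dict, List


def consensus_labels(tool_labels: Dict[str, List[str]]) -> List[str]:
    """Per-cell majority vote across tools; ties are flagged for manual review."""
    result = []
    for labels in zip(*tool_labels.values()):  # one tuple of labels per cell
        counts = Counter(labels).most_common()
        if len(counts) > 1 and counts[0][1] == counts[1][1]:
            result.append("Unresolved")  # no single most common label
        else:
            result.append(counts[0][0])
    return result


tool_labels = {
    "SingleR": ["T cell", "B cell", "NK cell"],
    "scmap":   ["T cell", "B cell", "T cell"],
    "SCINA":   ["T cell", "Monocyte", "Monocyte"],
}
print(consensus_labels(tool_labels))  # ['T cell', 'B cell', 'Unresolved']
```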

Protocol 3: LLM-Assisted Annotation with AnnDictionary

AnnDictionary is a Python package that leverages LLMs for de novo annotation directly from cluster marker genes [19].

Research Reagent Solutions

- Software: Python with AnnDictionary, LangChain, and Scanpy.

- LLM Access: An API key for a supported LLM provider (e.g., OpenAI, Anthropic, Google).

- Input Data: An AnnData object with computed differentially expressed genes for clusters.

Methodology

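- Installation and Configuration: Install AnnDictionary and its dependencies, then configure access to your chosen LLM provider using its API key.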

- Data Pre-processing: Independently pre-process each tissue sample (normalize, log-transform, find high-variance genes, scale, PCA, neighborhood graph, Leiden clustering, and compute differentially expressed genes).

- Cell Type Annotation: Use AnnDictionary's functions to annotate each cluster. The package can be tissue-aware and uses chain-of-thought reasoning to compare marker gene lists.

- Label Management and Quality Control: Use AnnDictionary's label management functions to resolve syntactic differences, merge redundancies, and assess label agreement across methods or studies.

Benchmarking and Validation

Validating the performance of automated annotation tools is critical. A 2025 benchmarking study using AnnDictionary evaluated 15 major LLMs on their ability to perform de novo annotation of the Tabula Sapiens v2 atlas [19].

Table 2: Benchmarking LLM Performance in Cell Type Annotation (Adapted from [19])

| Model | Agreement with Manual Annotation | Key Strengths | Considerations |

|---|---|---|---|

| Claude 3.5 Sonnet | Highest | Accurate annotation of most major cell types (>80-90%); recovers functional annotations in >80% of test sets. | Current leader in LLM-based annotation performance. |

| Other LLMs (e.g., from OpenAI, Google, Meta) | Variable | Performance varies significantly with model size. | Inter-LLM agreement also correlates with model size; requires benchmarking for specific use cases. |

Key metrics for benchmarking include direct string comparison, Cohen's kappa (κ), and LLM-derived ratings of label quality (e.g., "perfect," "partial," or "not-matching") [19].
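Once each cluster has both a manual and an LLM label, the exact-match rate and Cohen's kappa are straightforward to compute. Kappa corrects the observed agreement p_o for the agreement p_e expected by chance from each rater's label frequencies, giving κ = (p_o − p_e) / (1 − p_e). The minimal standard-library sketch below uses invented toy labels for illustration.

```python
from collections import Counter

def exact_match_rate(pred, truth):
    """Fraction of clusters whose labels match exactly (case-insensitive)."""
    hits = sum(p.strip().lower() == t.strip().lower() for p, t in zip(pred, truth))
    return hits / len(truth)

def cohens_kappa(a, b):
    """Cohen's kappa between two equal-length label sequences."""
    n = len(a)
    if n == 0 or n != len(b):
        raise ValueError("need two non-empty sequences of equal length")
    p_o = sum(x == y for x, y in zip(a, b)) / n          # observed agreement
    ca, cb = Counter(a), Counter(b)
    p_e = sum(ca[k] * cb[k] for k in ca) / (n * n)       # chance agreement
    if p_e == 1.0:
        return 1.0  # both raters used one identical category; kappa is undefined
    return (p_o - p_e) / (1 - p_e)

manual = ["T cell", "T cell", "B cell", "B cell"]
llm    = ["T cell", "B cell", "B cell", "B cell"]
kappa = cohens_kappa(manual, llm)
match = exact_match_rate(llm, manual)
```

In this toy case, three of four labels agree (p_o = 0.75), but chance agreement is already 0.5, so kappa is only 0.5. This is why kappa is reported alongside raw string matches.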

Application Note: Resolving Kidney Cell Identity

The human kidney exemplifies the challenge of defining cell identity. Single-cell RNA sequencing studies are moving toward a consensus of an accumulated 41 renal and 32 non-renal cell populations in the adult kidney [15]. This complexity arises during development from multiple progenitor pools (metanephric mesenchyme and ureteric bud) and intricate differentiation pathways [15].

Challenges and Solutions in Kidney Research:

- Challenge: Distinguishing between closely related cell types and transitional states.

- Protocol Application: A multi-tool workflow is essential. Reference-based tools can map cells to known nephron segments, while marker-based and LLM-based methods can help identify novel or rare populations like ionocytes or tuft cells that might be misclassified in broad "Rare" categories [18] [15].

- Outcome: A more precise and comprehensive annotation of kidney cell types, which is vital for understanding kidney development, disease, and for guiding the creation of more complete and mature kidney organoids from induced pluripotent stem cells [15].

Automated cell type annotation is a critical step in the analysis of single-cell RNA sequencing (scRNA-seq) data, enabling the interpretation of cellular heterogeneity and function in development, health, and disease [17] [20]. The field has moved beyond purely manual annotation, which is subjective and time-consuming, toward computational methods that offer scalability, reproducibility, and objectivity [12] [20]. These computational approaches can be broadly categorized into three main paradigms: reference-based, marker-based, and supervised classification. Reference-based methods transfer labels from an established, annotated dataset to a new query dataset. Marker-based approaches leverage prior biological knowledge, often from literature, to assign cell identities based on the expression of known marker genes. Supervised classification methods use machine learning models trained on reference data to predict cell labels. This article provides a detailed overview of these technological approaches, framed within the context of a broader thesis on automated cell type annotation, and is tailored for researchers, scientists, and drug development professionals. We summarize quantitative data in structured tables, provide detailed experimental protocols, and visualize workflows to serve as a practical guide for implementing these methods.

Reference-Based Annotation

Reference-based annotation methods utilize pre-annotated reference datasets to label cells in a query dataset. The core assumption is that cell types present in the query data are also represented in the reference. This approach is powerful for standardizing annotations across studies and leveraging well-curated cellular atlases.

Core Concepts and Tools

The process typically involves integrating the query and reference datasets after correcting for technical batch effects. Popular tools like Seurat use an "anchor"-based integration method to find mutual nearest neighbors between datasets, facilitating label transfer [20]. Harmony is another widely used algorithm that operates in a principal component (PC) space to iteratively correct batch effects while preserving biological variation [20]. A benchmark study recommended Harmony as one of the top three batch effect removal methods for this task [20]. The recently developed LICT (LLM-based Identifier for Cell Types) tool introduces a novel reference-free approach by leveraging large language models (LLMs) to interpret marker gene lists, demonstrating high consistency with expert annotations [12].

Performance and Considerations

A key challenge for reference-based methods is their inability to identify novel cell types not present in the reference data. Performance can also diminish when annotating cell populations with low heterogeneity, as models may struggle to distinguish closely related subtypes [12]. Reference-free LLM-based annotation faces the same difficulty. When annotating low-heterogeneity datasets of human embryos and stromal cells, even top-performing LLMs such as Gemini 1.5 Pro and Claude 3 showed consistency rates with manual annotations of only 39.4% and 33.3%, respectively [12]. By contrast, performance on highly heterogeneous datasets, such as peripheral blood mononuclear cells (PBMCs) and gastric cancer samples, is generally strong [12].

Table 1: Performance of Selected Reference-Based and Supervised Annotation Tools

| Tool Name | Methodology | Key Strength(s) | Reported Performance / Consistency | Key Limitation(s) |

|---|---|---|---|---|

| Seurat | Anchor-based integration | Effective dataset integration & label transfer [20] | N/A | Limited novel cell type discovery [20] |

| Harmony | PCA-based batch correction | Top-tier batch effect removal [20] | N/A | Requires a high-quality reference [20] |

| LICT | Multi-model LLM integration | Reduces annotation uncertainty; high alignment with experts [12] | Mismatch rate of 9.7% for PBMCs [12] | Performance drops on low-heterogeneity data [12] |

| SingleR | Correlation-based | Fast and intuitive | N/A | Sensitive to reference quality and batch effects |

| scANVI | Deep generative model | Handles complex data & partial labels | N/A | High computational demand |

Experimental Protocol: Reference-Based Annotation with Harmony and LLMs

Objective: To annotate cell types in a query scRNA-seq dataset using a pre-annotated reference dataset. Inputs: A query dataset (gene expression matrix) and a reference dataset (gene expression matrix with cell type labels).

- Data Preprocessing: Normalize both query and reference datasets and identify a common set of highly variable genes (HVGs) for downstream analysis [20].

- Batch Effect Correction: Perform principal component analysis (PCA) on the combined data. Use the top 50 PCs as input for the Harmony algorithm to correct for batch effects between the query and reference datasets, resulting in a harmonized 50-dimensional embedding [20].

- Label Transfer (Traditional): Use an anchor-based method (e.g., in Seurat) on the harmonized embedding to find correspondences between query cells and reference cell types. Transfer labels from the reference to the query cells with a confidence score.

- Annotation with LICT (Alternative): a. Marker Gene Extraction: Identify top marker genes for each cell cluster in the query data. b. Multi-Model Query: Input the marker gene lists into a panel of top-performing LLMs (e.g., GPT-4, Claude 3, Gemini) using standardized prompts [12]. c. Result Integration & Validation: Employ a "talk-to-machine" strategy. The LLM is asked to provide marker genes for its predicted cell type. If more than four of these genes are expressed in at least 80% of the cluster cells, the annotation is validated. If validation fails, provide the LLM with additional differentially expressed genes (DEGs) for a re-query, iterating until a consensus is reached [12].

- Quality Control: Assess the confidence of the transferred/annotated labels. Cells with low confidence scores may require further investigation or manual annotation.

Diagram 1: Workflow for reference-based annotation showing traditional and LICT pathways.
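The label-transfer and quality-control steps can be sketched as a k-nearest-neighbor vote on the harmonized embedding. This sketch swaps in plain kNN for Seurat's anchor-based transfer. The confidence score is simply the fraction of neighbors that agree with the winning label. The `k` and `min_confidence` values are assumptions to tune per dataset.

```python
import numpy as np
from collections import Counter

def knn_label_transfer(ref_emb, ref_labels, query_emb, k=15, min_confidence=0.5):
    """Transfer labels by majority vote of the k nearest reference cells.

    Returns (labels, confidences). Cells whose confidence falls below
    `min_confidence` are labelled "Low confidence" for manual follow-up.
    """
    ref_labels = np.asarray(ref_labels)
    k = min(k, len(ref_labels))
    labels, confidences = [], []
    for cell in query_emb:
        dist = np.linalg.norm(ref_emb - cell, axis=1)   # distance to every reference cell
        nearest = np.argsort(dist)[:k]
        label, votes = Counter(ref_labels[nearest]).most_common(1)[0]
        conf = votes / k
        labels.append(str(label) if conf >= min_confidence else "Low confidence")
        confidences.append(conf)
    return labels, np.array(confidences)

# Toy 1-D "harmonized embedding": T cells near 0-2, B cells near 10-12
ref_emb = np.array([[0.0], [1.0], [2.0], [10.0], [11.0], [12.0]])
ref_labels = ["T cell"] * 3 + ["B cell"] * 3
query_emb = np.array([[0.5], [11.2], [5.8]])
pred, conf = knn_label_transfer(ref_emb, ref_labels, query_emb, k=3, min_confidence=0.7)
```

The third query cell sits between the two groups. Its neighbors split 2:1, so it falls below the threshold and is flagged rather than forced into a label.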

Marker-Based Annotation

Marker-based annotation relies on the use of known gene markers, often curated from scientific literature, to assign cell identities based on their expression patterns. This approach directly incorporates established biological knowledge.

Core Concepts and Marker Types

The fundamental principle is that specific cell types express a characteristic set of genes. The classification of biomarkers is multifaceted, encompassing genetic, epigenetic, transcriptomic, proteomic, and metabolomic markers [21]. Functional Markers (FMs) are particularly powerful, as they are derived from polymorphisms that have a demonstrated causal relationship with phenotypic trait variation, making them highly precise for selection [22]. This contrasts with Random DNA Markers (RDMs), which are associated with traits via linkage but lack a confirmed functional role, leading to a potential weakening of association over generations due to recombination [22]. With advancements in technology, many RDMs can be functionally validated and reclassified as FMs [22].

Table 2: Classification of Biomarker Types for Cell Annotation [21]

| Biomarker Type | Molecular Characteristics & Origin | Example Detection Technologies | Clinical/Biological Application Value |

|---|---|---|---|

| Genetic Biomarkers | DNA sequence variants, gene expression changes | Whole Genome Sequencing, PCR, SNP arrays | Genetic disease risk assessment, drug target screening [21] |

| Epigenetic Biomarkers | DNA methylation, histone modifications | Methylation arrays, ChIP-seq, ATAC-seq | Early cancer diagnosis, environmental exposure assessment [21] |

| Transcriptomic Biomarkers | mRNA expression profiles, non-coding RNAs | RNA-seq, microarrays, real-time qPCR | Molecular disease subtyping, treatment response prediction [21] |

| Proteomic Biomarkers | Protein expression, post-translational modifications | Mass spectrometry, ELISA, protein arrays | Disease diagnosis, prognosis evaluation, therapeutic monitoring [21] |

| Metabolomic Biomarkers | Metabolite concentration profiles | LC-MS/MS, GC-MS, NMR | Metabolic disease screening, drug toxicity evaluation [21] |

| Digital Biomarkers | Behavioral, physiological data | Wearable devices, mobile apps, IoT sensors | Chronic disease management, early warning systems [21] |

Experimental Protocol: Marker-Based Annotation Using Functional Markers

Objective: To annotate cell types by leveraging known marker genes, with a focus on validating functional markers. Inputs: A query scRNA-seq dataset (gene expression matrix) and a curated list of marker genes for expected cell types.

- Marker Gene Curation: Compile a list of candidate marker genes from public databases (e.g., CellMarker) and relevant literature. Prioritize functional markers (FMs) where available, as they provide a direct, causal link to cell identity or function [22].

- Differential Expression Analysis: For each cell cluster in the query data, perform differential expression analysis to identify genes that are significantly upregulated compared to all other clusters. Common methods include the Wilcoxon rank-sum test or model-based approaches.

- Marker Validation & Overlap: Compare the list of differentially expressed genes from the query data with the curated list of known markers. A cluster is confidently annotated if a sufficient number of its top differentially expressed genes match the known markers for a specific cell type.

- Expression Pattern Check: Visualize the expression of key marker genes using violin plots or feature plots to confirm that expression is specific to the putative cluster and not ubiquitously low or highly expressed in multiple clusters.

- Handling Novelty: Clusters that do not show strong expression for any known markers may represent novel or unknown cell states and should be flagged for further investigation.
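The overlap and novelty steps can be expressed as a simple count of the genes shared between each cluster's top-ranked DEGs (for example, from a Wilcoxon test in Scanpy or Seurat) and each curated marker set. The minimal sketch below makes several simplifying assumptions. The `top_n` and `min_overlap` thresholds are hypothetical, and ties go to the first cell type in the dictionary. Dedicated tools such as ScType use specificity-weighted scores rather than raw counts.

```python
def annotate_by_marker_overlap(cluster_degs, marker_sets, top_n=20, min_overlap=3):
    """Assign each cluster the cell type whose markers overlap most with its top DEGs.

    cluster_degs: {cluster_id: [genes ranked by differential expression]}
    marker_sets:  {cell_type: [known marker genes]}
    Returns {cluster_id: (label, overlap_count)}; weak matches become "Unknown".
    """
    results = {}
    for cluster, degs in cluster_degs.items():
        top = set(degs[:top_n])
        scores = {ct: len(top & set(markers)) for ct, markers in marker_sets.items()}
        best = max(scores, key=scores.get)
        if scores[best] < min_overlap:
            results[cluster] = ("Unknown", scores[best])   # flag as possible novel state
        else:
            results[cluster] = (best, scores[best])
    return results

marker_sets = {
    "T cell":  ["CD3D", "CD3E", "CD2", "IL7R"],
    "B cell":  ["CD79A", "MS4A1", "CD19", "CD79B"],
    "NK cell": ["NKG7", "GNLY", "KLRD1", "NCAM1"],
}
cluster_degs = {
    "0": ["CD3D", "IL7R", "CD3E", "LTB"],
    "1": ["MS4A1", "CD79A", "CD79B", "CD74"],
    "2": ["HBB", "HBA1", "ALAS2", "CA1"],
}
annotations = annotate_by_marker_overlap(cluster_degs, marker_sets)
```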

Supervised Classification

Supervised classification involves training a machine learning model on a labeled reference dataset to predict the cell types of individual cells in a query dataset. This approach directly addresses the issue of cluster impurity present in some unsupervised methods by classifying cells independently [20].

Core Concepts and Algorithms

A wide array of machine learning algorithms has been adapted for cell type classification. These include:

- Tree-based models: XGBoost (used by CaSTLe) and Random Forests (used by SingleCellNet) are powerful for structured data and can capture non-linear relationships [20].

- Support Vector Machines (SVM): Used by scPred and Moana, effective for high-dimensional data classification [20].

- Neural Networks/Deep Learning: Models like ACTINN and scDeepSort use deep learning for annotation and can handle complex patterns in large datasets [20].

- K-Nearest Neighbors (KNN): A simple yet effective algorithm used in scmap-cell and Moana, which classifies cells based on the majority vote of their nearest neighbors in the reference space [20].

The Semi-Supervised Paradigm: HiCat

A significant innovation in this space is the development of semi-supervised methods like HiCat (Hybrid Cell Annotation using Transformative embeddings), which integrate both supervised and unsupervised approaches to overcome key limitations [20]. HiCat leverages a labeled reference set but also uses the unlabeled query data to improve annotation and, crucially, to identify and differentiate between multiple novel cell types—a capability lacking in purely supervised methods [20]. Its structured pipeline involves batch effect removal with Harmony, non-linear dimensionality reduction with UMAP, unsupervised clustering, and the training of a classifier (CatBoost) on a multi-resolution feature space that combines principal components, UMAP embeddings, and cluster identities [20]. A final decision step resolves inconsistencies between supervised predictions and unsupervised clusters to produce final annotations [20].

Performance and Considerations

Purely supervised methods are constrained by the cell types present in their training data and cannot identify novel cell types. While some can assign an "unassigned" label, they generally cannot differentiate between multiple distinct unknown types [20]. In benchmark evaluations, HiCat demonstrated superior performance in both known cell type classification and novel cell type identification compared to existing methods, excelling particularly at distinguishing multiple novel cell populations [20].

Experimental Protocol: Supervised Classification with a Semi-Supervised Pipeline

Objective: To annotate cell types in a query dataset using a supervised model, while also identifying novel cell types not in the reference. Inputs: A reference dataset (gene expression matrix with labels) and a query dataset (gene expression matrix without labels).

- Data Integration and Preprocessing:

a. Common Gene Space: Identify common genes between the reference and query datasets.

b. Normalization and HVG Selection: Normalize the combined data and select Highly Variable Genes (HVGs) using a method like Seurat's FindVariableFeatures [20].

c. Batch Correction: Perform PCA on the HVGs and apply Harmony to the top 50 PCs to remove batch effects, creating a harmonized embedding for both datasets [20].

- Feature Engineering: a. Dimensionality Reduction: Apply UMAP to the harmonized 50D embedding to capture key non-linear patterns in 2D [20]. b. Unsupervised Clustering: Perform clustering (e.g., Louvain, Leiden) on the harmonized embedding to propose novel cell type candidates. c. Multi-Resolution Feature Space: Create a consolidated feature set for each cell by combining its batch-corrected PCs, UMAP coordinates, and unsupervised cluster identity [20].

- Model Training and Prediction: a. Classifier Training: Train a supervised classifier (e.g., CatBoost in HiCat, XGBoost, or Random Forest) on the multi-resolution features from the reference data [20]. b. Prediction: Use the trained model to predict cell type probabilities for each cell in the query dataset.

- Resolution of Annotations: a. Fusion with Clustering: For cells where the supervised prediction has low confidence or conflicts with the unsupervised cluster assignment, prioritize the cluster-derived label. This step is key for identifying novel cell types [20]. b. Final Assignment: Assign a final cell type label, which can be either a known type from the reference or a novel label derived from the unsupervised clusters.

Diagram 2: Semi-supervised classification workflow (e.g., HiCat) for known and novel cell type discovery.
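The feature-engineering and resolution steps of this protocol can be sketched in NumPy. The classifier itself (CatBoost, XGBoost or a random forest) is assumed to have already produced `pred_labels` and `pred_conf`. The fusion rule relabels a whole cluster as novel when most of its cells have low-confidence predictions. This rule is a simplified, hypothetical stand-in for HiCat's decision step, and its thresholds are placeholders.

```python
import numpy as np

def multi_resolution_features(pcs, umap, clusters):
    """Concatenate batch-corrected PCs, UMAP coordinates and one-hot cluster IDs."""
    clusters = np.asarray(clusters)
    cluster_ids = np.unique(clusters)
    one_hot = (clusters[:, None] == cluster_ids[None, :]).astype(float)
    return np.hstack([pcs, umap, one_hot])

def resolve_annotations(pred_labels, pred_conf, clusters, min_conf=0.6, min_frac_low=0.5):
    """Fuse supervised predictions with unsupervised clusters (simplified rule).

    If at least `min_frac_low` of a cluster's cells have confidence below
    `min_conf`, the whole cluster is labelled "Novel_<cluster>"; otherwise
    its cells keep their supervised labels.
    """
    final = np.asarray(pred_labels, dtype=object).copy()
    pred_conf = np.asarray(pred_conf, dtype=float)
    clusters = np.asarray(clusters)
    for c in np.unique(clusters):
        in_c = clusters == c
        if np.mean(pred_conf[in_c] < min_conf) >= min_frac_low:
            final[in_c] = f"Novel_{c}"
    return final.tolist()

# Toy data: 4 cells, 2 PCs, 2 UMAP dims, 2 clusters
features = multi_resolution_features(np.zeros((4, 2)), np.ones((4, 2)), [0, 0, 1, 1])
final = resolve_annotations(["T cell", "T cell", "B cell", "T cell"],
                            [0.9, 0.8, 0.3, 0.4], [0, 0, 1, 1])
```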

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Automated Cell Type Annotation

| Item / Resource | Function / Description | Example Tools / Sources |

|---|---|---|

| Annotated Reference Datasets | Pre-annotated single-cell datasets used as a ground truth for training supervised models or for reference-based transfer. | Human Cell Atlas, Mouse Cell Atlas, Allen Brain Atlas [20] |

| Marker Gene Databases | Curated collections of known cell-type-specific marker genes used for marker-based annotation and validation. | CellMarker, PanglaoDB [12] |

| Batch Effect Correction Algorithms | Computational tools to remove technical variation between datasets, enabling valid comparative analysis. | Harmony, Seurat's CCA [20] |

| Pre-trained Language Models (LLMs) | Models capable of interpreting biological context from gene lists to provide automated, reference-free annotations. | GPT-4, Claude 3, Gemini (integrated via LICT) [12] |

| Benchmark Datasets | Standardized datasets with high-quality annotations used to evaluate and compare the performance of different annotation tools. | PBMC datasets (e.g., GSE164378) [12] |

| Clustering Algorithms | Unsupervised learning methods to group cells based on gene expression similarity, forming the basis for cluster-based annotation. | Leiden, Louvain, K-means [20] |

The Emerging Role of AI, Natural Language Processing, and Large Language Models (LLMs)

Application Notes

The integration of Artificial Intelligence (AI), particularly large language models (LLMs), is revolutionizing the automated annotation of cell types in single-cell RNA sequencing (scRNA-seq) data. This paradigm shift addresses a significant bottleneck in single-cell analysis, traditionally reliant on manual expert annotation, which is time-consuming and subjective [12] [2]. LLMs, trained on vast corpora of scientific literature, can interpret lists of marker genes to propose cell type identities with remarkable accuracy, offering a scalable and consistent alternative [24] [12] [25].

Key Advancements and Performance

Recent research has demonstrated the superior performance of specialized LLM-based tools. These tools leverage strategies such as multi-model integration, iterative "talk-to-machine" refinement, and verification against curated biological databases to enhance accuracy and mitigate the risk of model "hallucination" [12] [26].

Table 1: Performance Benchmarking of Automated Cell Type Annotation Tools

| Tool Name | Core Methodology | Reported Accuracy | Key Advantage |

|---|---|---|---|

| LICT [12] | Multi-model LLM integration & credibility evaluation | ~90.3% match rate (PBMCs); ~91.7% match rate (Gastric Cancer) | Objective reliability assessment; excels in high-heterogeneity data |

| CellTypeAgent [26] | LLM candidate generation + CellxGene database verification | Outperformed GPTCelltype & CellxGene-alone across 9 datasets & 303 cell types | Effectively mitigates LLM hallucinations |

| AnnDictionary [19] | LLM-agnostic parallel backend for anndata | >80-90% accuracy for most major cell types | Unified interface for multiple LLMs; supports atlas-scale data |

| ScType [27] | Specificity scoring of marker genes from database | 98.6% accuracy (72/73 cell types) across 6 datasets | Ultra-fast, reference-free operation |

| CellAnnotator [24] | LLM interpretation of marker genes | N/A (New tool) | Integration within the scverse ecosystem |

Quantitative evaluations reveal that LLM-based annotation achieves high consistency with expert annotations. For instance, one large-scale benchmarking study found that LLM annotation of most major cell types exceeds 80-90% accuracy [19]. Another study on the LICT tool showed it reduced the mismatch rate in highly heterogeneous datasets like Peripheral Blood Mononuclear Cells (PBMCs) from 21.5% to 9.7% compared to earlier LLM methods [12]. Performance can vary with cellular heterogeneity; while LLMs excel with diverse cell populations, annotating low-heterogeneity datasets (e.g., stromal cells, embryonic cells) remains more challenging, though iterative refinement strategies can significantly improve accuracy [12].

The underlying LLM also critically impacts performance. Top-performing models identified in evaluations include Claude 3.5 Sonnet, which achieved the highest agreement with manual annotations in one study [19], as well as GPT-4 and Claude 3 [12]. The open-source model Deepseek-R1, when integrated within a verification framework like CellTypeAgent, also delivers competitive results, offering a solution for data privacy concerns [26].

Protocols

This section provides detailed methodologies for implementing two advanced LLM-driven annotation strategies: one utilizing a multi-model framework with objective credibility evaluation, and another employing a hybrid agent that combines LLM inference with database verification.

Protocol 1: Cell Type Annotation Using LICT's Multi-Model and Credibility Evaluation Strategy

LICT (Large Language Model-based Identifier for Cell Types) leverages a multi-model approach to generate robust annotations and an objective framework to assess their reliability [12].

Experimental Workflow:

The following diagram outlines the core multi-model integration and credibility evaluation workflow.

Step-by-Step Procedure:

- Input Preparation. Begin with a pre-processed scRNA-seq dataset that has been normalized and clustered using standard methods (e.g., Leiden clustering). For each cluster, compute the differentially expressed genes (DEGs) compared to all other clusters.

- Parallel LLM Annotation.

- Prompt Design: Create a standardized prompt for the LLMs. Example: "Identify the most likely cell type for a cell cluster from [Tissue] tissue of [Species] based on the following top marker genes: [List of top 10 genes]. Provide only the cell type name."

- Model Query: Submit this prompt in parallel to a panel of top-performing LLMs. The validated panel includes GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE 4.0 [12].

- Multi-Model Integration. Collect the annotations from all five LLMs. Instead of using a simple majority vote, the LICT strategy selectively combines the results, leveraging the complementary strengths of each model to generate a consolidated, high-confidence annotation list [12].

- Objective Credibility Evaluation. This critical step assesses the reliability of the proposed annotations.

- Marker Gene Retrieval: For each LLM-proposed cell type, query the same LLM to generate a list of known representative marker genes for that cell type.

- Expression Pattern Evaluation: Analyze the input scRNA-seq data to check the expression of these retrieved marker genes within the corresponding cell cluster.

- Credibility Threshold: An annotation is deemed reliable if more than four of the LLM-retrieved marker genes are expressed in at least 80% of the cells within the cluster. Otherwise, the annotation is classified as unreliable [12].

- Output and Interpretation. The final output is a list of cell type annotations for each cluster, each with a reliability flag. Researchers can proceed with high confidence for annotations marked as reliable and prioritize manual re-examination or further experimental validation for those flagged as unreliable.
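The credibility threshold translates directly into code. This minimal sketch rests on three assumptions: the data is a dense cells x genes expression matrix, a boolean mask selects the cluster, and "expressed" means a value above zero (the source does not define this cutoff). Setting `min_genes=5` encodes "more than four" genes.

```python
import numpy as np

def credibility_check(expr, cluster_mask, genes, marker_genes,
                      min_genes=5, min_frac_cells=0.8):
    """Return (is_reliable, n_supported) for one LLM-proposed annotation.

    A retrieved marker counts as supported if it is expressed (> 0) in at
    least `min_frac_cells` of the cluster's cells. Markers missing from the
    dataset count as unsupported.
    """
    gene_index = {g: i for i, g in enumerate(genes)}
    cluster_expr = expr[cluster_mask]
    n_supported = 0
    for g in marker_genes:
        if g not in gene_index:
            continue
        frac = np.mean(cluster_expr[:, gene_index[g]] > 0)
        if frac >= min_frac_cells:
            n_supported += 1
    return n_supported >= min_genes, n_supported

# Toy cluster of 10 cells
genes = ["CD3D", "CD3E", "CD2", "IL7R", "LCK", "MS4A1"]
expr = np.ones((10, len(genes)))
expr[:, 5] = 0    # MS4A1 not expressed
expr[:3, 4] = 0   # LCK detected in only 70% of cells
cluster = np.ones(10, dtype=bool)
llm_markers = ["CD3D", "CD3E", "CD2", "IL7R", "LCK", "CD28"]  # CD28 absent from data
reliable, n_supported = credibility_check(expr, cluster, genes, llm_markers)
```

Here only four markers pass the 80% criterion, so the annotation would be flagged as unreliable. Under the "talk-to-machine" strategy, that flag would prompt a re-query with additional DEGs.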

Protocol 2: Cell Type Annotation Using the CellTypeAgent Hybrid Framework

CellTypeAgent combines the powerful inference capabilities of LLMs with the empirical validation provided by a gene expression database to deliver trustworthy annotations [26].

Experimental Workflow:

The diagram below illustrates the two-stage process of candidate prediction and verification.

Step-by-Step Procedure:

- Stage 1: LLM-based Candidate Prediction.

- Input: A set of marker genes from a cell cluster, along with the species and tissue of origin.

- Prompt Formulation: Use a precise prompt to guide the LLM. The recommended format is: "Identify most likely top 3 celltypes of [tissue type] using the following markers: [marker genes]. The higher the probability, the further left it is ranked, separated by commas." [26]

- Model Execution: Run this prompt through a powerful LLM. The model o1-preview has been shown to achieve the highest accuracy, but GPT-4o and the open-source Deepseek-R1 are also effective choices [26]. The output is an ordered list of the top three most probable cell type candidates.

- Stage 2: Gene Expression-based Candidate Evaluation.

- Database Query: Take the list of candidate cell types from Stage 1 and query the CZ CELLxGENE Discover database [26]. The query is filtered by the relevant species and tissue.

- Data Extraction: For each candidate cell type, retrieve the scaled gene expression data for the input marker genes. The database provides the average expression value and the proportion of cells in which the gene is expressed.

- Candidate Scoring: Calculate the average expression value of all input marker genes for each candidate cell type within the database.

- Final Selection: Select the candidate cell type with the highest average gene expression in the CellxGene database as the final, verified annotation. This step grounds the LLM's prediction in empirical data, effectively mitigating hallucinations.
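The two CellTypeAgent stages can be sketched as plain functions. Neither the LLM call nor the CELLxGENE query is shown. `llm_reply` stands for the model's text response. `mean_expr` is a hypothetical dictionary standing in for the scaled mean expression values retrieved from CZ CELLxGENE Discover. The numbers in the example are illustrative, not real database values.

```python
def build_prompt(tissue, markers):
    """Fill the Stage 1 prompt template quoted in the protocol."""
    return (f"Identify most likely top 3 celltypes of {tissue} using the following "
            f"markers: {', '.join(markers)}. The higher the probability, the further "
            f"left it is ranked, separated by commas.")

def parse_candidates(llm_reply, n=3):
    """Split a comma-separated LLM reply into at most n ranked candidates."""
    return [c.strip() for c in llm_reply.split(",") if c.strip()][:n]

def select_candidate(candidates, markers, mean_expr):
    """Stage 2: pick the candidate with the highest average marker expression.

    mean_expr: {cell_type: {gene: scaled mean expression}}; missing genes count as 0.
    """
    def score(ct):
        values = mean_expr.get(ct, {})
        return sum(values.get(g, 0.0) for g in markers) / len(markers)
    scores = {ct: score(ct) for ct in candidates}
    return max(candidates, key=scores.get), scores

markers = ["NPHS1", "NPHS2", "PODXL"]
prompt = build_prompt("kidney", markers)
llm_reply = "Podocyte, Parietal epithelial cell, Mesangial cell"   # stand-in reply
candidates = parse_candidates(llm_reply)
mean_expr = {  # illustrative values only
    "Podocyte": {"NPHS1": 2.1, "NPHS2": 2.4, "PODXL": 2.0},
    "Parietal epithelial cell": {"PODXL": 0.8},
    "Mesangial cell": {"NPHS2": 0.1},
}
best, scores = select_candidate(candidates, markers, mean_expr)
```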

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Type | Function in Automated Annotation | Example/Reference |

|---|---|---|---|

| LLM API/Service | Computational Tool | Core engine for interpreting marker genes and proposing cell types. | OpenAI GPT-4, Anthropic Claude 3.5, Google Gemini, Deepseek-R1 [12] [26] |

| Cell Marker Database | Data Resource | Provides ground-truth gene signatures for validation and verification. | CZ CELLxGENE Discover [26], ScType Database [27], PanglaoDB [26] |

| Annotation Software | Software Package | Implements the annotation pipeline, integrating LLMs and analysis steps. | CellTypeAgent [26], LICT [12], AnnDictionary [19], CellAnnotator [24] |

| Single-Cell Analysis Suite | Software Ecosystem | Performs essential upstream data processing (clustering, DEG analysis). | Seurat [18], Scanpy (via AnnDictionary [19]) |

| Reference Atlas | Data Resource | Serves as a basis for reference-based mapping methods. | Azimuth References [18], Human Cell Atlas |

| High-Variance Gene Set | Data Feature | Identifies informative genes from the data for clustering and DEG analysis. | Standard output of Scanpy/Seurat preprocessing [19] |

Practical Workflows: A Step-by-Step Guide to Annotation Tools and Techniques

In the context of a broader thesis on automated cell type annotation tools for single-cell RNA sequencing (scRNA-seq) data, mastering the preliminary bioinformatic steps is paramount. The reliability of any downstream annotation, whether achieved through modern large language models (LLMs) like LICT or traditional reference-based methods, is entirely contingent upon the quality of the data processing pipeline [12] [2] [13]. Errors introduced at these early stages can propagate, leading to misannotation and flawed biological conclusions. This guide details the essential, sequential procedures for quality control (QC), batch effect correction, and clustering, providing a robust foundation for automated cell type annotation.

Quality Control (QC): Ensuring a High-Quality Single-Cell Dataset

Quality control is the first and most critical step in scRNA-seq analysis. Its purpose is to distinguish high-quality cells from background noise, debris, dying cells, and multiplets (droplets containing more than one cell) [28] [29]. High-quality data is the foundation of reliable cell annotation [2].

Key QC Metrics and Their Biological/Technical Interpretations

The table below summarizes the core metrics used to filter cells and recommends standard thresholds for a human PBMC dataset, which can be adapted for other sample types.

Table 1: Key Quality Control Metrics for scRNA-seq Data

| Metric | Description | Indication of Low Quality | Alternative Interpretation of High Values (e.g., Multiplets) | Recommended Filtering Threshold (Example: PBMCs) |

|---|---|---|---|---|

| UMI Counts per Cell | Total number of transcripts (or unique molecular identifiers) detected per cell. | Low counts suggest empty droplets or ambient RNA. | Very high counts may indicate multiplets. | Filter extreme outliers in the distribution [28]. |

| Genes Detected per Cell | Number of unique genes expressed per cell. | Low numbers suggest poor cell capture or broken cells. | High numbers may indicate multiplets. | Filter extreme outliers in the distribution [28]. |

| Mitochondrial Read Percentage | Proportion of reads mapping to the mitochondrial genome. | High percentage indicates cell stress or apoptosis. | Varies by cell type; can be biologically meaningful (e.g., cardiomyocytes). | <10% for PBMCs [28]. |

| Ribosomal Read Percentage | Proportion of reads mapping to ribosomal genes. | Deviations from the typical range can indicate altered metabolic states. | - | Often used as an informative metric; filtering thresholds are context-dependent. |

| Cell Counts | Number of cells recovered after initial calling. | Significantly lower than targeted cell numbers may indicate experimental issues. | Higher than expected counts with low genes/UMIs can suggest overloading. | Compare to targeted cell recovery [28]. |

Practical QC Workflow

The QC workflow involves calculating these metrics and applying filters. The following diagram illustrates the logical sequence of steps from raw data to a quality-controlled cell-by-gene matrix.

Figure 1: Sequential Workflow for scRNA-seq Quality Control.

Experimental Protocol 1: Performing Quality Control

- Process Raw Data: Align sequencing reads (FASTQ files) and generate a feature-barcode matrix using tools like the Cell Ranger multi pipeline from 10x Genomics. This step performs initial cell calling and provides a web_summary.html file for a first-pass QC check [28].

- Calculate Metrics: Using the raw or filtered matrix, compute key QC metrics for every cell barcode:

- Total UMI counts.

- Number of genes detected.

- Percentage of reads mapping to mitochondrial genes (e.g., based on a predefined list like MT-ND1, MT-ND2, etc.).

- Percentage of reads mapping to ribosomal genes.

- Visualize Distributions: Load the data and QC metrics into an interactive analysis environment (e.g., Loupe Browser, Scanpy, Seurat). Create violin plots or scatter plots to visualize the distributions of UMI counts, genes per cell, and mitochondrial percentage across all barcodes [28].

- Set Filters and Apply: Based on the visualizations and recommended thresholds (Table 1), define filtering parameters. For example, in Loupe Browser, use sliders to filter out barcodes with UMI/gene counts outside a reasonable range and those with high mitochondrial read percentages. Document all thresholds used for reproducibility [28].
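The metric calculation and filtering steps can be sketched with NumPy on a cells x genes count matrix. The sketch makes the following assumptions:

- Human gene symbols are used, with mitochondrial genes prefixed `MT-` and ribosomal protein genes prefixed `RPS`/`RPL`.
- The 10% mitochondrial cutoff is taken from the PBMC example in Table 1.
- A median-absolute-deviation (MAD) rule on log-transformed values serves as one common way to define "extreme outliers". The 5-MAD cutoff is a convention to adjust per dataset, not a recommendation from the source.

```python
import numpy as np

def qc_metrics(counts, gene_names):
    """Per-cell QC metrics from a cells x genes UMI count matrix."""
    counts = np.asarray(counts, dtype=float)
    mito = np.array([g.startswith("MT-") for g in gene_names])           # mitochondrial genes
    ribo = np.array([g.startswith(("RPS", "RPL")) for g in gene_names])  # ribosomal proteins
    total = counts.sum(axis=1)
    safe_total = np.maximum(total, 1.0)  # avoid division by zero for empty barcodes
    return {
        "total_counts": total,
        "n_genes": (counts > 0).sum(axis=1),
        "pct_mito": 100 * counts[:, mito].sum(axis=1) / safe_total,
        "pct_ribo": 100 * counts[:, ribo].sum(axis=1) / safe_total,
    }

def mad_outliers(values, n_mads=5.0):
    """Flag cells whose log1p(value) lies more than n_mads MADs from the median."""
    lv = np.log1p(np.asarray(values, dtype=float))
    dev = np.abs(lv - np.median(lv))
    mad = np.median(dev)
    if mad == 0:
        return np.zeros(lv.shape, dtype=bool)  # no spread: flag nothing
    return dev > n_mads * mad

def qc_filter(counts, gene_names, max_pct_mito=10.0, n_mads=5.0):
    """Keep cells that are not count/gene outliers and are below the mito cutoff."""
    m = qc_metrics(counts, gene_names)
    keep = (~mad_outliers(m["total_counts"], n_mads)
            & ~mad_outliers(m["n_genes"], n_mads)
            & (m["pct_mito"] < max_pct_mito))
    return keep, m

gene_names = ["GENE1", "GENE2", "GENE3", "MT-ND1", "RPL3"]
counts = np.array([
    [10, 10, 10, 1, 5],
    [12, 9, 11, 1, 5],
    [9, 11, 10, 2, 4],
    [11, 10, 9, 1, 6],
    [10, 12, 10, 1, 5],
    [5, 5, 5, 20, 2],    # high mitochondrial fraction (stressed/dying cell)
    [1, 0, 0, 0, 0],     # near-empty droplet
])
keep, metrics = qc_filter(counts, gene_names)
```

Because the MAD rule is two-sided, it removes both near-empty barcodes and unusually large ones such as putative multiplets. Record `max_pct_mito` and `n_mads` alongside the results for reproducibility.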

Batch Effect Correction: Harmonizing Multiple Datasets

Batch effects are systematic technical variations introduced when datasets are generated at different times, by different personnel, or using different sequencing lanes or protocols [29] [30]. If unaddressed, these non-biological differences can dominate the analysis, obscuring true biological signals and leading to incorrect clustering and annotation.

Multiple computational strategies exist to mitigate batch effects. The choice of method depends on the data structure and analysis goals.

Table 2: Common Batch Effect Correction Methods

| Method | Underlying Principle | Typical Use Case |
|---|---|---|
| Harmony [29] | Iterative soft clustering that encourages batch diversity within each cluster, followed by per-cluster linear correction of a low-dimensional (e.g., PCA) embedding. | Integrating multiple datasets for joint analysis. |
| MNN Correct / Seurat Integration [29] | Identifies mutual nearest neighbors (MNNs; called "anchors" in Seurat) across batches and uses them to correct the expression values. | Integrating datasets with strong batch effects. |
| ComBat-ref [30] | An advanced empirical Bayes method that uses a low-dispersion batch as a reference to adjust other batches, preserving count data structure. | Correcting batch effects for downstream differential expression analysis. |
| Scanorama-prior [13] | A modified version of Scanorama that incorporates prior cell type annotation information to guide the integration process, preserving biological diversity. | Integrating datasets that have already been automatically or manually annotated. |
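The mutual-nearest-neighbor idea behind MNN-based correction can be illustrated in a few lines. This plain-Python sketch, with hypothetical helper names, finds pairs of cells from two batches that are each among the other's nearest neighbors, then applies a deliberately simplified global shift. It is not the algorithm used by mnnCorrect or Seurat, which compute cell-specific, locally weighted corrections.

```python
import math

# Simplified sketch of mutual-nearest-neighbour (MNN) matching between two
# batches in a shared low-dimensional space (e.g. PCA coordinates).


def knn(point, others, k):
    """Indices of the k rows of `others` closest to `point` (Euclidean)."""
    order = sorted(range(len(others)), key=lambda j: math.dist(point, others[j]))
    return set(order[:k])


def mutual_nearest_neighbors(batch_a, batch_b, k=1):
    """Pairs (i, j) where a_i and b_j are each among the other's k nearest neighbours."""
    a_to_b = [knn(p, batch_b, k) for p in batch_a]
    b_to_a = [knn(q, batch_a, k) for q in batch_b]
    return sorted((i, j) for i in range(len(batch_a)) for j in a_to_b[i] if i in b_to_a[j])


def shift_batch(batch_a, batch_b, pairs):
    """Toy correction: translate batch_b by the mean (a - b) vector over MNN pairs.

    Real methods (mnnCorrect, Seurat anchors) compute locally weighted,
    cell-specific correction vectors instead of one global shift.
    """
    if not pairs:
        raise ValueError("no MNN pairs found; batches may share no cell types")
    dims = len(batch_a[0])
    mean_diff = [
        sum(batch_a[i][d] - batch_b[j][d] for i, j in pairs) / len(pairs)
        for d in range(dims)
    ]
    return [[x + mean_diff[d] for d, x in enumerate(q)] for q in batch_b]


batch_a = [(0.0, 0.0), (10.0, 10.0)]
batch_b = [(1.0, 1.0), (11.0, 11.0)]  # same two populations, offset by +1
pairs = mutual_nearest_neighbors(batch_a, batch_b)
print(pairs)                                 # [(0, 0), (1, 1)]
print(shift_batch(batch_a, batch_b, pairs))  # [[0.0, 0.0], [10.0, 10.0]]
```

Requiring the match to be mutual is what makes the approach robust: a cell type present in only one batch tends not to form MNN pairs, so it does not drive the correction.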

Protocol for Data Integration

The following workflow is recommended when combining multiple samples or datasets.

Experimental Protocol 2: Correcting Batch Effects

- Individual Preprocessing: Perform QC, normalization, and preliminary clustering on each dataset individually [28]. This ensures that low-quality cells are removed before integration.

- Select a Correction Method: Choose an appropriate method from Table 2. For general-purpose integration of unannotated datasets, Harmony or Seurat's CCA integration are standard choices. If leveraging pre-annotated data, Scanorama-prior is a powerful option [13].

- Execute Integration: Run the chosen integration algorithm. This typically generates a "corrected" dimensionality reduction (e.g., a corrected PCA) where cells from different batches are co-embedded based on biological type rather than technical origin.

- Validate Results: Assess the success of integration by visualizing the data using UMAP. Successful correction is indicated by the intermingling of cells of the same predicted type from different batches, while distinct biological populations remain separate.
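Visual inspection can be complemented by a simple numeric check. The sketch below (hypothetical function name, plain Python) computes, for each cell, the fraction of its k nearest neighbors in the integrated embedding that come from a different batch, averaged over all cells. It is a rough stand-in for established metrics such as kBET or LISI, not an implementation of them.

```python
import math

# Simple neighbourhood-based diagnostic (in the spirit of, but not identical to,
# published metrics such as kBET or LISI): for each cell, the fraction of its
# k nearest neighbours in the integrated embedding that come from another batch.


def batch_mixing_score(embedding, batches, k=5):
    """Mean cross-batch fraction among each cell's k nearest neighbours (self excluded)."""
    n = len(embedding)
    total = 0.0
    for i in range(n):
        others = sorted(
            (j for j in range(n) if j != i),
            key=lambda j: math.dist(embedding[i], embedding[j]),
        )
        neighbours = others[:k]
        total += sum(batches[j] != batches[i] for j in neighbours) / len(neighbours)
    return total / n


labels = ["A", "A", "A", "B", "B", "B"]
separated = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (10.0, 0.0), (10.1, 0.0), (10.2, 0.0)]
mixed = [(0.0, 0.0), (0.2, 0.0), (0.4, 0.0), (0.1, 0.0), (0.3, 0.0), (0.5, 0.0)]
print(batch_mixing_score(separated, labels, k=2))  # 0.0
print(batch_mixing_score(mixed, labels, k=1))      # 1.0
```

A score near 0 suggests batches remain separated, and higher values indicate mixing. Because over-correction can also raise the score by merging distinct populations, it is safer to compute it within each predicted cell type than across the whole dataset.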

Clustering: Defining Cellular Populations

Clustering is the process of grouping cells based on the similarity of their gene expression profiles, forming the putative cell populations that will be annotated [29]. The goal is to partition the data in a way that reflects the underlying biology.

The Clustering Pipeline

The standard clustering workflow builds upon the integrated data from the previous step.

Figure 2: Standard Bioinformatic Pipeline for Clustering scRNA-seq Data.

Experimental Protocol 3: Clustering Cells

- Normalization: Normalize the gene expression counts to account for differences in sequencing depth per cell (e.g., using log-normalization).

- Feature Selection: Identify a subset of "highly variable genes" (HVGs) that drive population heterogeneity. This focuses the analysis on biologically relevant signals.

- Dimensionality Reduction: Perform principal component analysis (PCA) on the HVGs to reduce noise and computational complexity.

- Graph-Based Clustering: Using the top principal components, construct a graph where cells are nodes and edges represent transcriptional similarity. Then, apply a community detection algorithm like the Leiden algorithm to identify groups of cells, or clusters [29].

- Resolution Parameter: The resolution parameter controls the granularity of clustering. A lower resolution yields broader cell types, while a higher resolution identifies finer subtypes. This parameter must be tuned to the biological context [29]. For discovering rare cell types, a higher resolution ("over-clustering") is recommended.

- Visualization: Embed the cells in two dimensions using UMAP and color them by cluster to visualize the results.
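The normalization and feature-selection steps of this protocol can be sketched as follows. This is plain Python on a toy cells-by-genes matrix with hypothetical function names. The scale factor of 10,000 matches the default of Seurat's LogNormalize, while the gene ranking uses the raw variance of normalized values, a simplification of the mean-variance models behind Seurat's "vst" method and Scanpy's highly_variable_genes. PCA, graph construction, and Leiden clustering are left to those frameworks.

```python
import math
from statistics import pvariance

# Sketch of log-normalization and a simplified highly-variable-gene ranking.
# Assumption: cells x genes counts as nested lists. HVG ranking here is plain
# variance, not the mean-variance modelling used by Seurat or Scanpy.


def log_normalize(counts, scale_factor=1e4):
    """log1p(count / cell_total * scale_factor) for every entry."""
    normalized = []
    for row in counts:
        total = sum(row)
        normalized.append(
            [math.log1p(c / total * scale_factor) if total else 0.0 for c in row]
        )
    return normalized


def top_variable_genes(normalized, gene_names, n_top):
    """Names of the n_top genes with the highest variance across cells."""
    variances = [
        pvariance([cell[g] for cell in normalized]) for g in range(len(gene_names))
    ]
    ranked = sorted(range(len(gene_names)), key=lambda g: variances[g], reverse=True)
    return [gene_names[g] for g in ranked[:n_top]]


genes = ["CD3E", "CD14", "ACTB"]
counts = [[50, 0, 50], [0, 50, 50], [25, 25, 50]]  # toy data, 3 cells
norm = log_normalize(counts)
print(top_variable_genes(norm, genes, n_top=2))  # ACTB (constant) is excluded
```

Dividing by each cell's total removes differences in sequencing depth, and the log transform dampens the influence of very highly expressed genes, so that the variance ranking reflects heterogeneity between cells rather than library size.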

The Scientist's Toolkit: Essential Research Reagents & Software

This table catalogs key computational tools and resources that form the essential toolkit for executing the foundational steps of scRNA-seq analysis.

Table 3: Key Software Tools for Foundational scRNA-seq Analysis

| Tool / Resource | Category | Function & Application |
|---|---|---|
| Cell Ranger [28] | Primary Analysis | A set of pipelines (e.g., cellranger multi) that process raw Chromium FASTQ data into aligned reads, count matrices, and preliminary clustering. |
| Loupe Browser [28] | Visualization & QC | Desktop software for interactive visualization of 10x Genomics data, enabling manual QC filtering and initial cluster exploration. |
| Scanpy / Seurat [13] [29] | Comprehensive Analysis | The standard programming frameworks (in Python and R, respectively) for all downstream steps, including normalization, HVG selection, PCA, clustering, and UMAP visualization. |
| SoupX / CellBender [28] | Ambient RNA Removal | Computational tools that estimate and subtract the profile of ambient RNA (from lysed cells) from the count matrix of genuine cells. |
| Harmony [29] | Batch Correction | An efficient integration algorithm for removing batch effects from multiple datasets in a low-dimensional space. |
| Scanorama-prior [13] | Batch Correction | An integration method that leverages prior cell type annotation information to improve batch correction while preserving biological diversity. |
| Azimuth [2] [29] | Reference Atlas | A web-based tool that uses a pre-built reference atlas to automatically project and annotate query scRNA-seq data. |
| LICT / mLLMCelltype [12] [31] | Automated Annotation | LLM-based tools that annotate cell clusters using marker genes without relying on reference datasets, leveraging models like GPT-4 and Claude 3. |

The path to reliable, automated cell type annotation is built upon the triad of rigorous quality control, effective batch effect correction, and biologically-informed clustering. Neglecting any of these steps compromises the entire analytical enterprise. By adhering to the detailed protocols and best practices outlined in this guide—from meticulously filtering cells based on QC metrics to strategically integrating datasets and tuning clustering parameters—researchers can ensure their data is primed for accurate annotation. A robust preliminary analysis pipeline ultimately unlocks the full potential of advanced annotation tools, paving the way for trustworthy biological discovery.

Cell type annotation is a fundamental step in the analysis of single-cell RNA sequencing (scRNA-seq) data, transforming clusters of cells into biologically meaningful identities based on gene expression profiles. While manual annotation using known marker genes is widely practiced, it is labor-intensive and subjective, requiring significant expert knowledge [32]. Reference-based annotation methods automate this process by leveraging previously annotated datasets to infer cell types in a new query dataset. This approach provides a more standardized, scalable, and less subjective alternative to manual methods [33] [34].

Two of the most prominent tools for reference-based annotation are SingleR and Azimuth. Both are designed to accurately identify cell types but employ different underlying methodologies and workflows. SingleR is a popular R package that performs cell-wise annotation by comparing gene expression profiles between query cells and a reference dataset using correlation metrics [35] [33]. In contrast, Azimuth, part of the Seurat ecosystem, is available as both a web application and an R package; it maps query datasets onto a pre-built reference through a pipeline that includes normalization, visualization, cell annotation, and differential expression analysis [36] [18].

The performance of these tools has been rigorously evaluated in independent studies. For example, a 2022 study comparing five annotation algorithms found that cell-based methods, including Azimuth and SingleR, confidently annotated a higher percentage of cells compared to cluster-based algorithms [32]. A 2025 benchmarking study on 10x Xenium spatial transcriptomics data further highlighted SingleR's performance, noting it was "fast, accurate and easy to use, with results closely matching those of manual annotation" [33]. The choice between tools often depends on the specific biological context, dataset characteristics, and desired level of annotation granularity.

Selecting the appropriate annotation tool is crucial for generating biologically accurate results. The table below summarizes the core characteristics of SingleR and Azimuth to guide researchers in their selection.

Table 1: Key Characteristics of SingleR and Azimuth

| Feature | SingleR | Azimuth |
|---|---|---|
| Primary Method | Correlation-based (Spearman) cell-to-cell comparison [33] | Reference-based mapping and integration [36] |
| Annotation Level | Individual cells [32] | Individual cells, with projection onto reference UMAP [36] [18] |
| Reference Flexibility | Custom references or built-in from packages like celldex [35] | Pre-built, tissue-specific references; supports custom reference creation in Seurat [36] |
| Output | Cell type labels with prediction scores; "pruned" labels for low-confidence cells [35] | Cell type labels at multiple resolutions, prediction scores, mapping scores, and UMAP projection [36] [18] |
| Ease of Use | R package with straightforward functions [35] [33] | Web application and R package; web app provides a user-friendly interface [36] |
| Ideal Use Case | Rapid, flexible cell typing with custom or standard references [33] | Standardized analysis using a curated reference, with deep integration into the Seurat ecosystem [18] |

Beyond the technical specifications, the practical performance of these tools is a key consideration. A comparative study on PBMC data from COVID-19 patients revealed that cell-based methods like Azimuth and SingleR could confidently annotate a much higher percentage of cells (up to 99.9% for Azimuth) compared to cluster-based algorithms [32]. Furthermore, a 2024 study in Nature Methods assessed the emerging use of GPT-4 for cell type annotation and, while noting its competency, contextualized its performance against established methods like SingleR [37].

Experimental Protocols and Detailed Methodologies

A Protocol for Cell Type Annotation with SingleR

SingleR operates on the principle of correlating the gene expression profile of each single cell in a query dataset with reference data from pure cell types [38]. The following step-by-step protocol is adapted for a typical scRNA-seq analysis in R.

Step 1: Environment Setup and Data Preparation

Begin by installing and loading the required R packages. The query data should be a normalized single-cell matrix.

It is critical to ensure that the reference dataset is appropriate for the biological context of the query data. For instance, a blood-based query (like PBMCs) should use a reference that contains immune cell types [35].

Step 2: Running SingleR

Execute the core SingleR function. The test argument is the normalized expression matrix of the query dataset, ref is the reference dataset object, and labels is a vector of cell type labels, one for each sample (column) in the reference.

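For each query cell, the function computes the Spearman correlation with every sample in the reference, using marker genes identified from the reference labels. The correlations are summarized per label (by default as the 80th percentile), and the label with the highest score is assigned; a fine-tuning step then repeats the comparison using only the top-scoring labels and the genes that distinguish them.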
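The scoring idea can be illustrated for a single query cell. In SingleR, the correlations between a query cell and each reference sample are summarized per label by a quantile (0.8 by default), and the best-scoring label is kept. The plain-Python sketch below reproduces only that core step, with hypothetical helper names. It skips SingleR's marker-gene selection and fine-tuning, so it should not be expected to match SingleR's output on real data.

```python
import math

# Simplified sketch of SingleR-style scoring for one query cell. Omitted for
# brevity: marker-gene selection from the reference and the fine-tuning step.


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy) if sx and sy else 0.0


def quantile(values, q):
    """Linear-interpolation quantile of a list of numbers."""
    s = sorted(values)
    pos = (len(s) - 1) * q
    lo, hi = math.floor(pos), math.ceil(pos)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def score_cell(query, ref_profiles, ref_labels, q=0.8):
    """Per-label score = q-quantile of correlations with that label's reference samples."""
    per_label = {}
    for profile, label in zip(ref_profiles, ref_labels):
        per_label.setdefault(label, []).append(spearman(query, profile))
    scores = {label: quantile(cors, q) for label, cors in per_label.items()}
    return max(scores, key=scores.get), scores


# Toy reference: 4 genes, two samples per label.
ref_profiles = [[9, 8, 1, 0], [8, 9, 0, 1], [0, 1, 9, 8], [1, 0, 8, 9]]
ref_labels = ["T cell", "T cell", "Monocyte", "Monocyte"]
label, scores = score_cell([7, 9, 2, 1], ref_profiles, ref_labels)
print(label)  # T cell
```

Using a high quantile rather than the single best match makes the per-label score less sensitive to one unusual reference sample, while still rewarding labels whose reference samples are consistently similar to the query.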

Step 3: Interpreting Results and Integrating with Seurat

The annotations object contains the final labels and diagnostic scores.

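SingleR also provides "pruned" labels, set to NA for cells whose assignments are considered unreliable [35]. By default, pruning compares each cell's score for its assigned label with the median of its scores across all labels, and removes cells for which this difference is unusually small (a low outlier, measured in median absolute deviations) relative to other cells assigned the same label. These cells should be inspected and potentially treated as "unknown" in downstream analysis.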
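As we understand the SingleR documentation for pruneScores, the default rule compares each cell's score for its assigned label against the median of its scores across all labels, then flags cells whose difference ("delta") lies more than a set number of median absolute deviations (3 by default) below the median delta of cells sharing that label. The plain-Python sketch below implements that rule under those assumptions, with hypothetical function names; it omits the package's additional options, such as a minimum difference to the next-best label.

```python
from statistics import median

# Sketch of "delta from the median" pruning for SingleR-like scores.
# Assumption: each cell's scores are a dict {label: score}; MAD is scaled by
# 1.4826, as R's mad() does by default.


def assign_with_delta(scores):
    """Best label and how far its score sits above the cell's median score."""
    best = max(scores, key=scores.get)
    return best, scores[best] - median(scores.values())


def prune_labels(cell_scores, nmads=3.0):
    """Return labels, with None where delta is a low outlier for that label."""
    assigned = [assign_with_delta(s) for s in cell_scores]
    deltas_by_label = {}
    for label, delta in assigned:
        deltas_by_label.setdefault(label, []).append(delta)
    cutoffs = {}
    for label, deltas in deltas_by_label.items():
        center = median(deltas)
        mad = 1.4826 * median(abs(d - center) for d in deltas)
        cutoffs[label] = center - nmads * mad
    return [label if delta >= cutoffs[label] else None for label, delta in assigned]


cell_scores = [
    {"T": 0.80, "B": 0.30, "Mono": 0.20},
    {"T": 0.82, "B": 0.30, "Mono": 0.20},
    {"T": 0.78, "B": 0.30, "Mono": 0.20},
    {"T": 0.80, "B": 0.30, "Mono": 0.25},
    {"T": 0.40, "B": 0.35, "Mono": 0.20},  # barely prefers T over B
]
print(prune_labels(cell_scores))  # ['T', 'T', 'T', 'T', None]
```

Judging each delta against other cells with the same label, rather than against one global cutoff, accounts for the fact that some cell types are inherently easier to distinguish than others.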

A Protocol for Cell Type Annotation with Azimuth

Azimuth uses a more complex workflow that maps the query dataset onto a pre-analyzed reference, effectively transferring annotations and visualizing the query in the context of the reference's UMAP [36]. This protocol covers both the web app and local R usage.

Step 1: Input Data Preparation for the Azimuth Web App

The Azimuth web app requires data in a specific format. The input should be an unprocessed counts matrix.

- Supported File Types: Seurat object (RDS), 10x Genomics H5, H5AD, H5Seurat, or a matrix/data.frame as RDS.

- Key Requirement: If uploading a Seurat object, it must contain an assay named 'RNA' with raw data in the 'counts' slot. Azimuth uses only the unnormalized counts for its mapping pipeline [36].

- Dataset Size: Uploads must be smaller than 1GB and contain between 100 and 100,000 cells. For larger datasets, local execution in R is recommended.
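Because the web app rejects inputs outside these limits, a quick local check can save a failed upload. The function below is a hypothetical helper, not part of Azimuth. It encodes the size and cell-count limits stated above plus a rough test that sampled values look like raw integer counts. Reading "1GB" as 1 GiB is an assumption.

```python
import os
import tempfile

# Hypothetical pre-upload check for the Azimuth web app, encoding the stated
# limits. Assumptions: "1GB" is read as 1 GiB, and `sample_values` is a sample
# of matrix entries you have already loaded (e.g. from the counts slot).
MAX_BYTES = 1024 ** 3
MIN_CELLS, MAX_CELLS = 100, 100_000


def check_azimuth_upload(path, n_cells, sample_values):
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    if os.path.getsize(path) >= MAX_BYTES:
        problems.append("file is not smaller than 1GB; run Azimuth locally in R")
    if not MIN_CELLS <= n_cells <= MAX_CELLS:
        problems.append(f"{n_cells} cells is outside the 100-100,000 range")
    if any(v < 0 or v != int(v) for v in sample_values):
        problems.append("values are not non-negative integers; upload raw counts")
    return problems


# Usage with a tiny placeholder file.
with tempfile.NamedTemporaryFile(delete=False) as fh:
    fh.write(b"placeholder")
print(check_azimuth_upload(fh.name, 5_000, [0, 3, 12]))   # []
print(len(check_azimuth_upload(fh.name, 50, [0.0, 1.7])))  # 2
os.remove(fh.name)
```

The integer check only catches obviously normalized input (fractional values); it cannot prove that a matrix of integers is truly unprocessed.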

Step 2: Executing Azimuth via the Web App

- Navigate to the Azimuth website and select a reference that matches your tissue type (e.g., "Human - PBMC").

- Upload your prepped file or use the demo dataset.

- (Optional) In the "Preprocessing" tab, filter cells based on QC metrics like gene or UMI counts.

- Click the "Map cells to reference" button to launch the analysis. A dataset of 10,000 cells typically processes in under a minute [36].

Step 3: Interpreting Azimuth Results

The app provides several tabs for exploring results: