A Strategic Framework for Risk Assessment in Pharmaceutical Manufacturing Process Changes


Abstract

This article provides a comprehensive guide to risk assessment for manufacturing process changes, specifically tailored for researchers, scientists, and drug development professionals in the pharmaceutical and biotech industries. It covers the foundational principles of risk management, explores practical methodologies like FMEA and QbD, offers strategies for troubleshooting common pitfalls, and details validation approaches using matrixing and bracketing. The content synthesizes current best practices and regulatory expectations to help professionals ensure product quality, maintain regulatory compliance, and facilitate efficient change management throughout the product lifecycle.

Understanding the Imperative: Why Risk Assessment is Non-Negotiable in Process Changes

In the manufacturing industry, risk is defined as the potential for events or actions to disrupt operational integrity, compromise product quality, or lead to non-compliance with regulatory standards, ultimately resulting in financial loss, reputational damage, or harm to human health and the environment [1]. For researchers and drug development professionals, understanding this risk landscape is paramount when evaluating manufacturing process changes, as even minor modifications can introduce unforeseen variables that affect product safety and efficacy.

A structured approach to risk management serves as both a shield against these threats and a foundation for long-term operational excellence [1]. In the highly regulated pharmaceutical and biotech sectors, this involves a multi-layered compliance framework consisting of:

- Regulatory Compliance: Adherence to mandatory requirements set by authorities such as the FDA (e.g., cGMP in 21 CFR Parts 210–211), OSHA, and the EPA [1].

- Industry Standards Compliance: Implementation of voluntary but critical frameworks like ISO 9001 (quality management), alongside GMP (Good Manufacturing Practice) standards, which are mandatory for drug products under the cGMP regulations noted above [1].

- Internal Policy Compliance: Execution of organization-specific rules and procedures that often exceed legal minimums [1].

A Categorical Framework of Manufacturing Risks

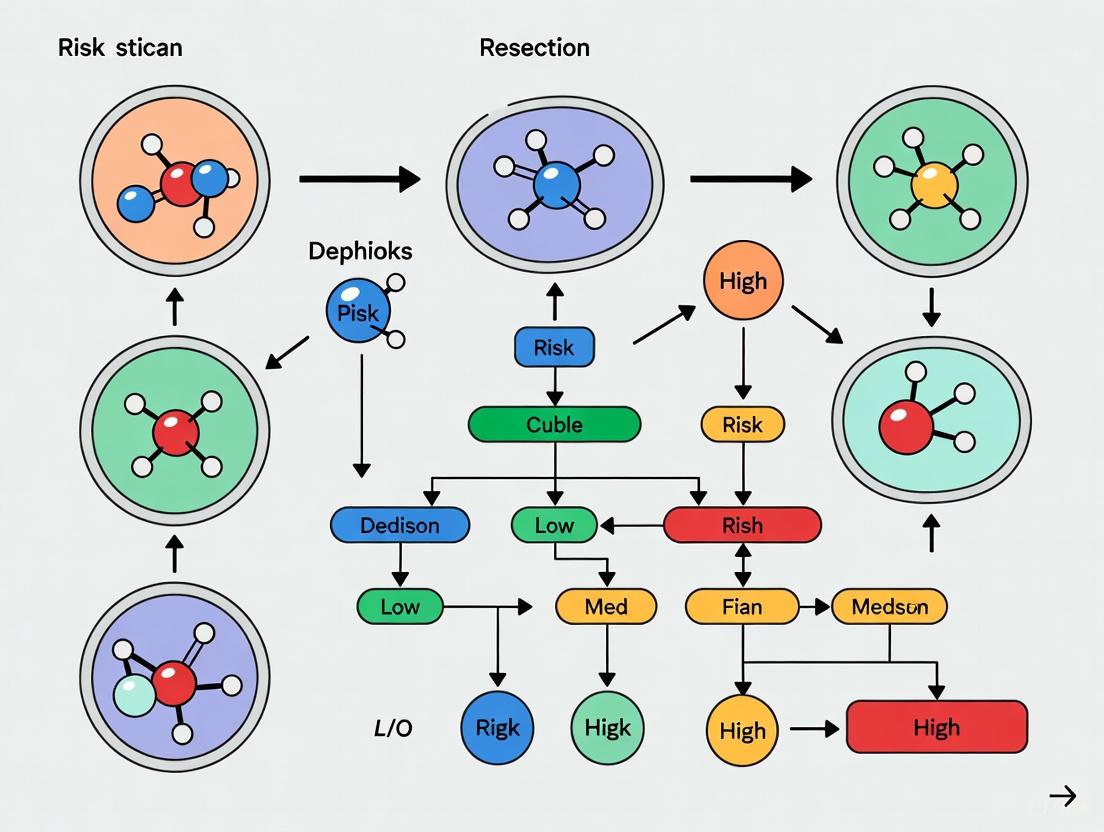

Manufacturing risks can be systematically categorized to facilitate targeted assessment and mitigation strategies. The following diagram illustrates the core risk categories and their interrelationships within the manufacturing context.

Operational Risks

Operational risks encompass threats to the daily functioning of manufacturing processes. These include:

- Equipment and Process Failures: Unplanned downtime due to machinery breakdowns or process deviations that halt production [2].

- Supply Chain Disruptions: Interruptions in the flow of raw materials and components, often caused by geopolitical events, supplier insolvency, or logistics failures [2] [3].

- Workforce Challenges: Skills gaps, labor shortages, or insufficient training that compromise production capabilities and safety protocols [2] [3].

Quality Risks

Quality risks refer to potential failures in meeting predefined product specifications and safety standards. In drug development, these are particularly critical and include:

- Product Defects: Deviations in identity, strength, quality, or purity that render products unfit for their intended use [4].

- Process Control Failures: Inadequate monitoring or control of critical process parameters leading to batch failures [1].

- Raw Material Variability: Inconsistencies in starting materials that affect final product quality and performance [4].

Compliance Risks

Compliance risks arise from failures to adhere to the layered framework of regulatory and internal standards. Key manifestations include:

- Regulatory Misalignment: Inability to adapt to evolving regulations across different markets (e.g., FDA, EMA, REACH) [1] [4].

- Documentation Gaps: Incomplete or inaccurate batch records, calibration logs, or quality control documentation that fail to demonstrate control during audits [1] [5].

- Inspection Failures: Inadequate preparedness for regulatory inspections, leading to observations, warning letters, or consent decrees [1] [4].

Quantitative Methodologies for Risk Analysis

Quantitative risk analysis provides a data-driven approach to measuring and prioritizing risks, transforming uncertainties into actionable numerical data [6]. For manufacturing process changes, these methodologies enable researchers to objectively evaluate potential impacts.

Core Quantitative Analysis Methods

| Method | Description | Application in Manufacturing | Key Outputs |
|---|---|---|---|
| Expected Monetary Value (EMV) Analysis [7] | Calculates the average outcome when future events include uncertainty. | Evaluating the financial impact of potential equipment failure or batch loss. | Prioritized risks based on financial impact. |
| Monte Carlo Simulation [6] [7] | Uses computational algorithms to simulate thousands of possible outcomes based on probability distributions for input variables. | Modeling production timeline uncertainties or yield variations for process changes. | Probability distributions of potential outcomes. |
| Decision Tree Analysis [6] [7] | Maps out all possible decision paths and outcomes in a tree-like structure. | Evaluating sequential decisions in process scale-up or technology transfer. | Visual representation of choices and consequences. |
| Sensitivity Analysis [6] [7] | Measures how uncertainty in model outputs can be apportioned to different input sources. | Identifying which process parameters most significantly impact product quality. | Tornado diagrams highlighting critical variables. |
| Three-Point Estimation [7] | Uses optimistic, pessimistic, and most likely estimates to determine expected outcomes. | Estimating validation timelines or resource requirements for process changes. | Risk-adjusted project timelines and budgets. |
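As an illustration of the three-point estimation row above, the following minimal sketch combines optimistic, most likely, and pessimistic estimates into a single expected value. It assumes the common beta-PERT weighting, (O + 4M + P) / 6, which the article does not specify; the function name `pert_estimate` and the timeline figures are hypothetical.

```python
def pert_estimate(optimistic, most_likely, pessimistic):
    """Return (expected value, approximate standard deviation).

    Assumes the beta-PERT weighting; other weightings (for example a
    simple average of the three points) are equally valid choices.
    """
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    expected = (optimistic + 4 * most_likely + pessimistic) / 6
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


# Hypothetical validation timeline for a process change, in weeks.
expected_weeks, spread_weeks = pert_estimate(8, 12, 22)
print(f"expected {expected_weeks:.1f} weeks, std dev {spread_weeks:.1f} weeks")
```

The long pessimistic tail pulls the expected duration above the most likely value, which is the kind of risk adjustment the table refers to.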

Experimental Protocol for Quantitative Risk Assessment

For researchers implementing manufacturing process changes, the following structured protocol ensures comprehensive quantitative risk analysis:

Step 1: Determine Areas of Uncertainty

- Review project objectives, scope, and constraints to identify assumptions and information gaps [7].

- Consider external factors like regulatory changes or market shifts that could impact the process [8].

- Document all potential risk variables including both internal process parameters and external environmental factors.

Step 2: Identify Risks and Their Costs

- For simple risks with consistent remediation costs, record the anticipated expense directly [7].

- For complex, variable risks, decompose them into multiple components for accurate cost estimation [7].

- Categorize costs as direct (e.g., lost materials, rework) or indirect (e.g., delayed timelines, regulatory impacts).

Step 3: Assess Probability of Occurrence

- Calculate probabilities using historical data, experimental results, and expert judgment [6].

- For novel process changes with limited historical data, employ Delphi techniques or structured expert interviews [2].

- Express probabilities as discrete values (e.g., 0.7) or probability distributions for Monte Carlo analysis.

Step 4: Calculate Expected Cost and Impact

- Compute the Expected Monetary Value (EMV) for each risk by multiplying probability by impact: EMV = Probability × Impact [7].

- For analyses involving multiple interconnected risks, use Monte Carlo simulations to model combined effects [6].

- Aggregate individual risk costs to determine the total estimated risk burden for the process change.
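Step 4 can be sketched in a few lines of Python. The example below computes the EMV of each risk, sums them into a total risk burden, and then runs a simple Monte Carlo simulation of the combined cost. The risk register, probabilities, and cost figures are hypothetical, and the simulation assumes the risks occur independently, which may not hold for genuinely interconnected risks.

```python
import random

# Hypothetical risk register: (name, probability of occurrence, cost impact in USD).
RISKS = [
    ("Batch loss during scale-up", 0.10, 500_000),
    ("Filter supplier delay", 0.30, 80_000),
    ("Revalidation overrun", 0.50, 40_000),
]


def emv(probability, impact):
    """Expected Monetary Value of a single risk: probability x impact."""
    return probability * impact


def total_emv(risks):
    """Aggregate risk burden: the sum of individual EMVs."""
    return sum(emv(p, cost) for _, p, cost in risks)


def simulate_total_cost(risks, trials=10_000, seed=0):
    """Monte Carlo sketch: in each trial every risk occurs independently
    with its own probability; returns the sorted total cost per trial."""
    rng = random.Random(seed)
    totals = [
        sum(cost for _, p, cost in risks if rng.random() < p)
        for _ in range(trials)
    ]
    return sorted(totals)


totals = simulate_total_cost(RISKS)
p90 = totals[int(0.9 * len(totals))]  # approximate 90th-percentile cost
print(f"total EMV: {total_emv(RISKS):,.0f} USD")
print(f"simulated mean: {sum(totals) / len(totals):,.0f} USD, P90: {p90:,.0f} USD")
```

The EMV gives a single average figure, while the simulated distribution shows how bad an unlucky outcome could be, which is useful when sizing contingency budgets.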

Step 5: Develop Mitigation Strategies

- Prioritize risks based on their quantified EMV values [6].

- For high-priority risks, design targeted mitigation strategies such as process controls, redundancy systems, or contingency plans [2].

- Perform cost-benefit analysis to ensure mitigation costs are proportionate to risk reduction achieved.
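The cost-benefit check in Step 5 can be expressed as a comparison between the EMV reduction a mitigation achieves and what the mitigation costs. The sketch below is one possible formulation under that assumption; the `Mitigation` class, its fields, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mitigation:
    risk: str
    impact: float              # cost if the risk occurs (USD)
    probability_before: float  # probability without the mitigation
    probability_after: float   # probability with the mitigation in place
    mitigation_cost: float     # cost of implementing the mitigation (USD)

    @property
    def emv_reduction(self) -> float:
        """Reduction in Expected Monetary Value achieved by the mitigation."""
        return (self.probability_before - self.probability_after) * self.impact

    @property
    def net_benefit(self) -> float:
        """EMV reduction minus the cost of the mitigation itself."""
        return self.emv_reduction - self.mitigation_cost


def rank_by_net_benefit(options):
    """Order mitigation options from highest to lowest net benefit."""
    return sorted(options, key=lambda m: m.net_benefit, reverse=True)


OPTIONS = [
    Mitigation("Filter supplier delay", 80_000, 0.30, 0.10, 20_000),
    Mitigation("Batch loss during scale-up", 500_000, 0.10, 0.02, 15_000),
]

for option in rank_by_net_benefit(OPTIONS):
    verdict = "proportionate" if option.net_benefit > 0 else "re-examine"
    print(f"{option.risk}: net benefit {option.net_benefit:,.0f} USD ({verdict})")
```

A negative net benefit does not automatically rule a mitigation out, since patient safety and compliance considerations are not captured by cost alone; it only flags the option for closer review.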

Diagram: Quantitative Risk Assessment Workflow for Manufacturing Process Changes. The workflow follows Steps 1 through 5 above in sequence.

The Researcher's Toolkit: Essential Solutions for Risk Assessment

Implementing robust risk assessment protocols requires specific tools and methodologies tailored to manufacturing environments. The following table details essential solutions for researchers evaluating process changes.

| Tool/Category | Function/Purpose | Application Context |
|---|---|---|
| Risk Management Software [2] [7] | Centralizes risk data, automates calculations, and generates real-time reports. | Tracking risks across multiple process change initiatives. |
| Statistical Analysis Packages [6] | Perform advanced quantitative methods including regression analysis and Monte Carlo simulation. | Modeling complex relationships between process parameters and quality attributes. |
| IoT Sensors & Monitoring [2] [3] | Capture real-time data on equipment performance, environmental conditions, and process parameters. | Continuous monitoring of critical process parameters during technology transfer. |
| AI & Predictive Analytics [2] [4] | Identify patterns in historical data to forecast potential failures or deviations. | Predicting equipment maintenance needs or quality trend deviations. |
| Data Validation Tools [5] | Ensure accuracy, completeness, and regulatory compliance of manufacturing data. | Maintaining data integrity for regulatory submissions following process changes. |
| Process Modeling Software [6] | Creates digital twins of manufacturing processes to simulate changes and impacts. | Evaluating effects of process parameter modifications before implementation. |
| Regulatory Intelligence Platforms [1] [4] | Track evolving global compliance requirements and standards. | Ensuring process changes maintain alignment with current Good Manufacturing Practices. |

Integration of Qualitative and Quantitative Approaches

While quantitative analysis provides essential numerical rigor, effective risk assessment for manufacturing process changes requires integration with qualitative methods. A combined approach leverages both expert judgment and data-driven insights for comprehensive risk management [2].

The integrated methodology follows a sequential process:

- Initial Risk Identification: Use qualitative methods (e.g., brainstorming, Delphi technique, FMEA) to identify potential risks based on expert knowledge and historical experience [2].

- Quantitative Validation: Apply statistical methods and modeling to measure the probability and impact of identified risks [2].

- Risk Prioritization: Combine qualitative and quantitative findings to create a weighted list of critical risks requiring intervention [2].

- Mitigation Planning: Design control strategies informed by both expert insight and statistical evidence of effectiveness [2].

This hybrid approach is particularly valuable for drug development professionals addressing novel manufacturing technologies where historical data may be limited but expert knowledge exists.

Emerging Trends and Future Directions

Manufacturing risk assessment is evolving rapidly, with several trends particularly relevant to pharmaceutical research and development:

Agentic AI and Autonomous Risk Management: Advanced AI systems can autonomously sense and mitigate supply chain risks, monitor equipment performance, and recommend alternative suppliers [3]. Because these systems can also quantify potential financial and operational impacts, they represent a shift from reactive to predictive risk management [3].

Regulatory Evolution: Regulatory frameworks are updated continuously; revisions such as the ongoing changes to the TSCA Risk Evaluation Framework Rule emphasize science-driven approaches and consideration of real-world exposure controls [9] [10]. Researchers must institute processes for continuous regulatory monitoring to maintain compliance during process changes.

Smart Manufacturing Investments: Adoption of smart manufacturing technologies is growing, with 80% of executives planning to allocate significant portions of their improvement budgets to smart manufacturing initiatives [3]. These technologies provide enhanced data collection capabilities that support more sophisticated quantitative risk analysis.

For drug development professionals, these trends highlight the increasing importance of digital literacy and cross-functional collaboration between scientific, operational, and data science domains when implementing manufacturing process changes.

The development and manufacturing of pharmaceuticals operate within a stringent regulatory ecosystem designed to ensure product quality, safety, and efficacy. This framework integrates foundational Current Good Manufacturing Practice (cGMP) regulations with internationally harmonized ICH guidelines, creating a comprehensive system for quality management throughout the product lifecycle. The Code of Federal Regulations (21 CFR Parts 210 and 211) establishes the minimum requirements for methods, facilities, and controls used in manufacturing, processing, and packing of drug products, rendering any non-compliant products adulterated under the Federal Food, Drug, and Cosmetic Act [11] [12]. These cGMP requirements provide the regulatory "floor" upon which more sophisticated, proactive quality systems are built.

The International Council for Harmonisation (ICH) guidelines, particularly Q8 (Pharmaceutical Development), Q9 (Quality Risk Management), and Q10 (Pharmaceutical Quality System), represent an evolution beyond basic compliance toward a more scientific and risk-based approach to quality [13] [14]. ICH Q7 specifically addresses GMP for Active Pharmaceutical Ingredients (APIs), establishing a robust quality framework that emphasizes an independent Quality Unit, rigorous documentation, and graduated GMP stringency from early processing to final purification [13]. Together, these guidelines form a cohesive structure that encourages manufacturers to move from empirical, end-product testing toward proactive, science-based manufacturing supported by thorough risk management [13]. The U.S. Food and Drug Administration (FDA) has formally incorporated these principles into its review process through internal policies that direct staff on applying ICH Q8, Q9, and Q10 during the assessment of pharmaceutical applications [15].

The Interplay Between cGMP and ICH Q9

cGMP Foundation and ICH Q9 Enhancement

The relationship between cGMP and ICH Q9 is synergistic rather than separate. While cGMP regulations establish the mandatory requirements for pharmaceutical manufacturing, ICH Q9 provides a systematic framework for implementing quality risk management that enables more effective and efficient compliance with these regulations [14]. The FDA has explicitly shifted from a purely reactive, punitive compliance model to a proactive, risk-based oversight framework championed by ICH Q9 principles [16]. This evolution recognizes that simply auditing adherence to procedures is insufficient; instead, oversight must prioritize systems that pose the greatest risk to product quality and patient safety [16].

ICH Q9 maps out a systematic approach to quality risk management (QRM) throughout the pharmaceutical product lifecycle, with the primary objective of enhancing drug and patient safety by ensuring proactive risk assessment, control, and communication [14]. The guideline operates on two fundamental principles: first, that evaluation of quality risk should be based on scientific knowledge and ultimately link to patient protection; and second, that the level of effort, formality, and documentation should be commensurate with the level of risk [14]. This risk-based approach enables manufacturers to focus resources on areas of highest impact to product quality and patient safety, creating a more robust quality system than one that merely meets minimum regulatory requirements.

The FDA's Risk-Based Inspection Approach

The FDA's adoption of ICH Q9 principles has fundamentally transformed its inspectional methodology. The agency now employs a sophisticated, data-driven approach to determine inspection frequency, depth, and focus [16]. Key factors in the agency's risk models include:

- Compliance history (number and severity of previous observations, Warning Letters)

- Product risk profile (with sterile injectables and complex dosage forms receiving heightened scrutiny)

- Time since last inspection

- Process complexity (novel technologies or high variability processes warrant more attention) [16]

This risk-based approach means that facilities manufacturing high-risk products or with problematic compliance histories can expect more frequent and thorough inspections, while well-controlled operations with robust quality risk management systems may experience less regulatory burden [16]. The FDA evaluates a company's QRM culture not by reviewing a single document, but by observing how risk principles are integrated into daily decision-making across the organization [16].

ICH Q9 (R1): Quality Risk Management Principles

The Four Components of QRM

ICH Q9 establishes a structured, cyclical process for quality risk management consisting of four core components that must be applied with rigor and consistency [16]:

Table: The Four Core Components of Quality Risk Management

| QRM Component | Description | Regulatory Focus |
|---|---|---|
| Risk Assessment | Systematic process of risk identification, analysis (evaluating likelihood and severity), and evaluation against acceptable risk levels | Inspectors examine scientific basis and comprehensiveness of risk identification using tools like Process Mapping or FMEA [16] |
| Risk Control | Decision-making to reduce risk to an acceptable level, including risk reduction actions and formal risk acceptance of residual risk | Regulators assess whether implemented controls are sufficient, justified by initial risk, and effective in practice [16] |
| Risk Communication | Sharing of risk and risk management information among internal and external stakeholders, including regulators | Ensures rationale for critical decisions is traceable, documented, and scientifically sound [16] |
| Risk Review | Monitoring output of the QRM process, revisiting risks when knowledge changes or new information emerges | System must demonstrate risk assessments are living documents reviewed per triggers like deviations, CAPAs, or changes [16] |

Key Revisions in ICH Q9 (R1)

The revision of ICH Q9 to Q9(R1), finalized by ICH in 2023, clarified several areas previously prone to misinterpretation, directly tightening regulatory expectations [16]. These clarifications include:

Degree of Formality: The revision makes explicit that the level of effort, formality, and documentation must be proportionate to the level of risk. Organizations must define and document triggers for Formal QRM (requiring cross-functional teams and established tools like FMEA) versus Informal QRM (using simpler techniques for low-complexity issues) [16]. Factors determining formality include uncertainty, importance to product quality, and complexity [16].

Managing Subjectivity: Q9(R1) emphasizes the need to minimize inherent subjectivity in risk scoring. The FDA will challenge QRM outcomes where scoring scales are not clearly defined or are inconsistently applied across departments [16]. Effective implementation requires establishing clear, defined rating criteria and utilizing cross-functional teams to pool expertise and mitigate individual bias [16].

Product Availability and Supply Chain: The revision explicitly connects quality risk to potential drug shortages, requiring that risk assessments consider the impact of failures on the availability of critical medicines [16]. This means risk assessments on single-source materials or unique manufacturing steps must include the consequence of failure leading to market disruption [16].

Diagram: ICH Q9 Quality Risk Management Process. The cyclical nature demonstrates the ongoing review and communication requirements throughout the product lifecycle.

Practical Implementation of ICH Q9

Risk Assessment Methodologies and Tools

ICH Q9's Annex I outlines several formal tools that can be applied depending on the context and risk level [14]. The selection of appropriate methodology should align with the principles of formality outlined in Q9(R1), with more complex, high-impact risks warranting more rigorous approaches:

Table: Risk Assessment Tools and Applications

| Tool/Methodology | Description | Best Application Context |
|---|---|---|
| FMEA (Failure Mode Effects Analysis) | Breaks down large complex processes into manageable steps to identify potential failures | Formal QRM for processes with moderate to high complexity and known failure modes [14] |
| FMECA (Failure Mode, Effects and Criticality Analysis) | Extends FMEA by linking severity, probability, and detectability to criticality | High-risk processes where prioritization of risks based on multiple factors is needed [14] |
| FTA (Fault Tree Analysis) | Uses a tree of failure mode combinations with logical operators to identify root causes | Complex systems with multiple potential failure pathways; useful for investigating deviations [14] |
| HACCP (Hazard Analysis and Critical Control Points) | Systematic, proactive, preventive method focusing on criticality; originally from the food industry | Processes where specific critical control points can be monitored and controlled [14] |
| HAZOP (Hazard Operability Analysis) | Structured brainstorming technique using guide words to identify deviations | Early process development where potential hazards may not be fully understood [14] |
| Risk Ranking and Filtering | Compares and prioritizes risks using factors for each risk | Portfolio-level risk management or initial screening of multiple risks [14] |
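To make the "logical operators" in Fault Tree Analysis concrete, the sketch below evaluates the probability of a top event from basic events combined through AND and OR gates. It assumes the basic events are statistically independent, which is a simplification; the fault tree, event names, and probabilities are hypothetical.

```python
from math import prod


def and_gate(*probabilities):
    """Output event occurs only if ALL input events occur (independence assumed)."""
    return prod(probabilities)


def or_gate(*probabilities):
    """Output event occurs if AT LEAST ONE input event occurs (independence assumed)."""
    return 1 - prod(1 - p for p in probabilities)


# Hypothetical tree for the top event "out-of-specification fill volume":
#   top = pump failure OR (sensor drift AND missed in-process check)
P_PUMP_FAILURE = 0.01
P_SENSOR_DRIFT = 0.05
P_MISSED_CHECK = 0.10

p_undetected_drift = and_gate(P_SENSOR_DRIFT, P_MISSED_CHECK)
p_top_event = or_gate(P_PUMP_FAILURE, p_undetected_drift)
print(f"P(top event) = {p_top_event:.5f}")
```

Walking the tree this way shows which branch dominates the top-event probability, which helps direct a deviation investigation toward the most plausible root causes.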

Knowledge Management as the Foundation

Effective quality risk management depends on objective data and institutional knowledge rather than subjective opinion. Knowledge Management (KM) serves as the foundation that transforms risk assessment from speculation to evidence-based decision making [16]. Key knowledge sources and their QRM applications include:

- Annual Product Review (APR) Trends: Provide historical data to assign Probability scores in Risk Priority Number (RPN) calculations based on actual failure rates [16]

- Post-Approval Change History: Identifies processes that have undergone multiple changes, requiring risk re-assessment [16]

- Deviation and CAPA Effectiveness Data: Used during Risk Review to verify mitigation actions successfully reduced risk as predicted [16]

- Development Studies (QbD): Provides scientific basis for determining Severity and defining Critical Quality Attributes (CQAs) and Critical Process Parameters (CPPs) [16]

Regulators expect companies to use internal data as evidence of effective risk control and proactive management. During inspections, FDA investigators will examine how knowledge management informs risk-based decisions across the quality system [16].
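As one way to ground Probability scores in Annual Product Review data, the following sketch maps an observed failure rate to an occurrence score and combines it with severity and detectability into a Risk Priority Number. The 1-5 scales, the rate thresholds in `OCCURRENCE_SCALE`, and the formula RPN = Severity × Occurrence × Detectability are assumptions chosen for illustration; each organization would define and justify its own rating criteria.

```python
# Hypothetical scale: (upper bound of observed failure rate, occurrence score).
# Rates above the last bound receive the maximum score of 5.
OCCURRENCE_SCALE = [(0.001, 1), (0.01, 2), (0.05, 3), (0.10, 4)]


def occurrence_score(failures, batches):
    """Translate a historical failure rate (e.g. from APR trends) into a 1-5 score."""
    if batches <= 0:
        raise ValueError("batches must be positive")
    rate = failures / batches
    for upper_bound, score in OCCURRENCE_SCALE:
        if rate <= upper_bound:
            return score
    return 5


def rpn(severity, occurrence, detectability):
    """Risk Priority Number on assumed 1-5 scales for each factor."""
    for value in (severity, occurrence, detectability):
        if value not in range(1, 6):
            raise ValueError("each score must be an integer from 1 to 5")
    return severity * occurrence * detectability


# Hypothetical APR data: 3 failures observed across 120 batches.
occurrence = occurrence_score(failures=3, batches=120)
print(f"occurrence score: {occurrence}, RPN: {rpn(4, occurrence, 2)}")
```

Deriving the occurrence score from recorded batch history, rather than from opinion, is one practical way to reduce the scoring subjectivity that Q9(R1) highlights.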

Post-Approval Change Protocols and Lifecycle Management

Establishing Effective Change Management

The management of post-approval changes represents a critical application of quality risk management principles. A robust change management system must balance regulatory compliance with the need for continuous improvement. The FDA recognizes that flexible regulatory approaches can be justified when manufacturers demonstrate enhanced understanding of their products and processes [15]. Examples of such flexible approaches include:

- Manufacturing process improvements without regulatory notification when operating within an approved design space [15]

- Reduced post-approval submissions through submission of change protocols ("Comparability Protocols") [15]

- In-process tests in lieu of end product testing, including real-time release testing (PAT, RTRT) approaches [15]

- Mathematical models as surrogates for traditional end product testing [15]

The FDA's 2025 draft guidance on complying with 21 CFR § 211.110 further clarifies that process monitoring and control decisions resulting in minor equipment and process adjustments typically do not need additional quality unit approval if three conditions are met: (1) the adjustments are within preestablished, scientifically justified limits; (2) these limits have been approved by the quality unit in the master production record; and (3) production data are reviewed by the quality unit before batch approval or rejection [12]. This flexibility underscores the value of establishing well-justified parameters during development.
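The three conditions above lend themselves to a simple screening check inside a change-control or batch-record tool. The sketch below is purely illustrative and is not a regulatory determination; the `Adjustment` class, its field names, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Adjustment:
    parameter: str
    new_value: float
    lower_limit: float  # preestablished, scientifically justified limit
    upper_limit: float
    limits_approved_in_master_record: bool           # condition (2)
    quality_unit_reviews_data_before_release: bool   # condition (3)


def needs_additional_quality_unit_approval(adjustment):
    """Return True unless all three conditions described in the text are met."""
    within_limits = (
        adjustment.lower_limit <= adjustment.new_value <= adjustment.upper_limit
    )  # condition (1)
    all_conditions_met = (
        within_limits
        and adjustment.limits_approved_in_master_record
        and adjustment.quality_unit_reviews_data_before_release
    )
    return not all_conditions_met


# Hypothetical adjustment to a granulation spray rate (g/min).
spray_rate = Adjustment("spray rate", 42.0, 35.0, 45.0, True, True)
print(needs_additional_quality_unit_approval(spray_rate))
```

Encoding the check this way makes the decision traceable: an adjustment that fails any one condition is routed for quality unit approval rather than silently accepted.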

Risk-Based Approach to Post-Approval Changes

Implementing an effective, risk-based change management process requires systematic assessment of each proposed change's potential impact. The following workflow illustrates a robust methodology for managing post-approval changes:

Diagram: Risk-Based Post-Approval Change Workflow. The pathway diverges based on risk classification, with corresponding regulatory requirements.

Comparability Protocols and Established Conditions

The concept of "Established Conditions" introduced in ICH Q12 (Pharmaceutical Product Lifecycle Management) provides a foundation for more predictable management of post-approval CMC changes [15]. Established Conditions are the legally binding information considered necessary to assure product quality. When combined with Comparability Protocols, which are prospective plans for managing future changes, manufacturers can create a more efficient pathway for implementing post-approval changes [15].

A well-constructed Comparability Protocol typically includes:

- Description of the proposed change(s) and manufacturing process

- Risk assessment identifying potential impact on product quality

- Studies and acceptance criteria to demonstrate comparability

- Testing protocol and analytical procedures

- Reporting mechanisms and commitments

This proactive approach to change management, when accepted by regulatory authorities, can significantly reduce the regulatory burden for post-approval changes while maintaining appropriate oversight of product quality.

Table: Key Research and Quality Management Resources

| Tool/Resource | Function/Purpose | Application Context |
|---|---|---|
| Quality Risk Management Plan | Defines triggers, methodology, and documentation requirements for Formal vs. Informal QRM | Required by Q9(R1) to ensure appropriate level of formality based on risk [16] |
| Risk Assessment Templates | Standardized formats for conducting and documenting risk assessments using FMEA, HACCP, etc. | Ensures consistency and compliance with Q9(R1) subjectivity management requirements [16] [14] |
| Knowledge Management System | Centralized repository for historical data, change history, deviation trends, and validation data | Provides objective evidence for risk scoring and demonstrates effective risk control [16] |
| Statistical Process Control Tools | Control charts, process capability analysis, and trend detection algorithms | Enables data-driven risk analysis and supports real-time release testing approaches [15] [14] |
| Change Control Software | Automated workflow for change assessment, implementation, and tracking | Ensures consistent application of risk-based approach to post-approval changes [15] |
| Design Space Documentation | Multidimensional combination of material attributes and process parameters demonstrating proven acceptable ranges | Foundation for flexible regulatory approaches and movement within design space [13] [15] |
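The Statistical Process Control row above mentions process capability analysis and control charts. A minimal sketch of both follows, assuming the conventional Cpk definition and simple mean ± 3σ limits computed from a baseline data set; production control charts typically estimate σ from moving ranges or subgroups instead. The assay values and specification limits are hypothetical.

```python
from statistics import mean, stdev


def cpk(values, lower_spec, upper_spec):
    """Process capability index from the sample mean and sample standard deviation."""
    mu, sigma = mean(values), stdev(values)
    return min(upper_spec - mu, mu - lower_spec) / (3 * sigma)


def three_sigma_limits(baseline):
    """Simplified control limits: baseline mean plus/minus three standard deviations."""
    mu, sigma = mean(baseline), stdev(baseline)
    return mu - 3 * sigma, mu + 3 * sigma


def out_of_control(new_values, baseline):
    """Return the new observations that fall outside the baseline control limits."""
    lower, upper = three_sigma_limits(baseline)
    return [v for v in new_values if not lower <= v <= upper]


# Hypothetical assay results (% label claim) from batches made before a process change.
ASSAY_BASELINE = [99.8, 100.2, 100.0, 99.9, 100.1]

print(f"Cpk: {cpk(ASSAY_BASELINE, lower_spec=99.0, upper_spec=101.0):.2f}")
print("signals after change:", out_of_control([100.1, 100.6, 99.9], ASSAY_BASELINE))
```

Comparing post-change batches against pre-change limits in this way gives an early, data-driven signal that a change has shifted the process, before any specification is actually breached.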

The modern pharmaceutical regulatory landscape requires seamless integration of foundational cGMP requirements with sophisticated quality risk management principles and proactive change management strategies. The FDA's explicit shift toward risk-based oversight, formalized through ICH Q9 implementation, represents a fundamental transformation in how manufacturers and regulators approach product quality [16]. This approach recognizes that robust, science-based risk management ultimately provides greater assurance of product quality than rigid adherence to procedural requirements alone.

Successful navigation of this landscape demands both technical understanding of regulatory requirements and practical implementation of risk-based principles throughout the product lifecycle. By establishing a comprehensive quality risk management system, leveraging knowledge management, and implementing risk-based change protocols, manufacturers can not only maintain regulatory compliance but also achieve greater operational efficiency, reduce time-to-market for improvements, and most importantly, enhance patient safety through more predictable and controlled manufacturing processes.

Within pharmaceutical manufacturing, process variability presents significant risks to product quality, regulatory compliance, and patient safety. This technical guide provides a structured framework for researchers and drug development professionals to identify, assess, and mitigate key sources of manufacturing variability. By integrating systematic risk assessment methodologies, quantitative analysis tools, and detailed experimental protocols, this work supports the development of robust, scalable manufacturing processes essential for maintaining product critical quality attributes (CQAs).

Process variability in drug manufacturing refers to the inherent fluctuations in process parameters, material attributes, and environmental conditions that can lead to deviations in product quality. Effectively managing this variability is paramount for ensuring consistent product performance and compliance with Current Good Manufacturing Practices (cGMP). A proactive approach to identifying risk triggers (the specific factors or events that initiate variability) enables the development of control strategies that keep the process in a validated state. This guide frames risk assessment not merely as a compliance exercise but as a fundamental scientific endeavor to understand process causality and build quality into pharmaceutical products from development through commercial manufacturing [17].

Foundational Risk Assessment Methodology

A disciplined, multi-step methodology is essential for systematically uncovering and evaluating the risk triggers within a manufacturing process.

Systematic Risk Assessment Steps

The core process for conducting a risk assessment is outlined in the table below [18]:

| Step | Description | Primary Outputs |
|---|---|---|
| 1. Hazard Identification | Collect information on worker routines, environment, tools, and equipment to identify potential hazards. | List of identified biological, chemical, machinery, and physical hazards [18]. |
| 2. Risk Evaluation | Determine risk level by considering severity of potential injuries and probability of occurrence. | Qualitative or quantitative risk ratings; risk scores [18]. |
| 3. Risk Control Measures | Identify strategies to eliminate or reduce risks to acceptable levels. | Hierarchy of controls: Elimination, Substitution, Engineering, Administrative, PPE [18]. |
| 4. Recording & Communication | Document findings and communicate them to all relevant stakeholders. | Formal risk assessment report; updated SOPs. |
| 5. Monitoring & Review | Periodically review risk control strategies to ensure ongoing effectiveness. | Updated risk assessments; records of monitoring activities. |

The 5x5 Risk Matrix as a Quantitative Tool

A 5x5 risk matrix is a pivotal tool for quantifying and prioritizing risks, providing a more nuanced analysis than simpler 3x3 or 4x4 matrices [19]. The matrix is defined by two axes: Probability (Likelihood) and Impact (Severity), each with five descriptive levels. The resulting risk score is calculated as: Risk Score = Severity × Probability [18] [19].

The following table details the standard levels for probability and impact used in a 5x5 risk matrix for manufacturing contexts [19]:

| Probability (Likelihood) | Description | Impact (Severity) | Description |

|---|---|---|---|

| Rare | Unlikely to happen; minor consequences. | Insignificant | No serious injuries or illnesses. |

| Unlikely | Possible to happen; moderate consequences. | Minor | Mild injuries or illnesses. |

| Moderate | Likely to happen; serious consequences. | Significant | Injuries requiring medical attention. |

| Likely | Almost sure to happen; major consequences. | Major | Irreversible injuries; constant medical attention. |

| Almost Certain | Sure to happen; major consequences. | Severe | Fatality. |

The final risk level is determined by the product of the assigned numerical values (typically 1-5 for each axis), which can be color-coded for quick visual prioritization [19]:

- 1-4 (Green - Low Risk): Acceptable; maintain control measures.

- 5-9 (Yellow - Medium Risk): Adequate; consider for further analysis.

- 10-16 (Orange - High Risk): Tolerable; requires timely review and improvement.

- 17-25 (Red - Extreme Risk): Unacceptable; cease activities and take immediate action.
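The scoring and banding rules above can be sketched in a few lines of Python. This is a minimal illustration, assuming each axis is scored as an integer from 1 to 5; the function names and the example scores are hypothetical and not taken from the cited sources.

```python
# Upper bound of each band, following the color-coded ranges listed above.
RISK_BANDS = [
    (4, "Low"),
    (9, "Medium"),
    (16, "High"),
    (25, "Extreme"),
]


def risk_score(severity: int, probability: int) -> int:
    """Risk Score = Severity x Probability, each scored 1-5 (assumed scale)."""
    for name, value in (("severity", severity), ("probability", probability)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return severity * probability


def risk_level(score: int) -> str:
    """Map a risk score (1-25) to its band."""
    if not 1 <= score <= 25:
        raise ValueError(f"score must be between 1 and 25, got {score}")
    for upper_bound, label in RISK_BANDS:
        if score <= upper_bound:
            return label
    raise AssertionError("unreachable: every score from 1 to 25 falls in a band")


# Hypothetical example: a "Major" impact (4) with "Moderate" probability (3).
score = risk_score(severity=4, probability=3)
print(score, risk_level(score))  # 12 High
```

Keeping the band boundaries in a single table makes it easier to adjust them if a site uses different acceptance thresholds.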

Manufacturing variability can be categorized into several core domains. Understanding these categories allows for targeted risk assessment and control strategy development.

Material and Supply Chain Variability

Raw material attributes are a primary source of variability in pharmaceutical processes.

- Critical Material Attributes (CMAs): Changes in the physical or chemical properties of active pharmaceutical ingredients (APIs) and excipients, such as particle size distribution, polymorphic form, moisture content, or impurity profile, can significantly impact processability and product performance.

- Supplier-Induced Variability: Inconsistent quality from different suppliers, or even between batches from the same supplier, can introduce unforeseen risks. Recent tariffs and potential bans on critical minerals from specific countries highlight the geopolitical dimension of this risk [20].

- Raw Material Testing Gaps: Inadequate characterization of raw materials or over-reliance on Certificate of Analysis (CoA) without sufficient confirmatory testing can allow problematic materials to enter the manufacturing process.

Experimental Protocol for Material Variability Assessment

Objective: To quantify the impact of a specific Critical Material Attribute (e.g., API Particle Size Distribution) on a key process performance indicator (e.g., Blend Homogeneity).

- Design of Experiments (DoE): Utilize a factorial design to systematically vary the API particle size (e.g., D10, D50, D90) across a clinically and process-relevant range.

- Process Execution: For each material variant, execute the standard powder blending process in a scaled-down model (e.g., quart-size blender) that is representative of commercial scale.

- Sampling & Analysis: Use a sampling thief and a statistically justified sampling plan to collect samples from predefined locations within the blender. Analyze samples for API content using a validated HPLC-UV method.

- Data Analysis: Calculate the Relative Standard Deviation (RSD) of API content across samples to determine blend homogeneity. Use statistical analysis (e.g., ANOVA, regression modeling) to establish a quantitative relationship between the input material attribute (particle size) and the output process performance indicator (RSD).
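The data analysis step can be illustrated with a short Python sketch that computes the RSD for each material variant and fits a straight line of RSD against particle size. The assay values, the D50 levels, and the helper names are hypothetical, and a simple least-squares line stands in for the fuller ANOVA or regression modeling a real study would use.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%) = 100 * sample standard deviation / mean."""
    return 100.0 * stdev(values) / mean(values)


def least_squares_fit(x, y):
    """Return (slope, intercept) of the ordinary least-squares line y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical API content results (% of label claim) from six thief locations,
# keyed by the D50 (micrometers) of the API lot used in each blending run.
blend_results = {
    20.0: [99.6, 100.3, 99.9, 100.4, 99.8, 100.1],
    50.0: [98.9, 101.0, 99.5, 100.8, 99.2, 100.6],
    80.0: [97.5, 102.1, 98.8, 101.9, 98.2, 101.4],
}

d50_levels = sorted(blend_results)
rsd_values = [rsd_percent(blend_results[d50]) for d50 in d50_levels]
slope, intercept = least_squares_fit(d50_levels, rsd_values)

for d50, rsd in zip(d50_levels, rsd_values):
    print(f"D50 = {d50:.0f} um -> RSD = {rsd:.2f}%")
print(f"Fitted trend: RSD = {slope:.4f} * D50 + {intercept:.3f}")
```

A positive slope in this made-up dataset would suggest that coarser API lots blend less uniformly. Whether a linear model is adequate is itself something the study would need to check.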

Process Equipment and Operational Hazards

The equipment itself and how it is operated are significant contributors to variability.

- Equipment Design and Scale-Up: Differences in equipment geometry, shear forces, and heat transfer properties between R&D, pilot, and commercial scales can lead to divergent process outcomes. Inadequate cleaning validation can also lead to cross-contamination.

- Human Factors and Training: Manual operations are susceptible to inconsistencies. Examples include variations in charging speed, sampling technique, or parameter settings on equipment HMIs. Inadequate training amplifies this risk [18].

- Machine-Related Hazards: Moving parts, sharp edges, and the potential for mechanical failure pose direct risks to both product quality and operator safety [18]. A documented Job Hazard Analysis (JHA) is critical for identifying these risks.

Environmental and Control System Fluctuations

The manufacturing environment must be actively controlled to prevent drift in product quality.

- Critical Process Parameters (CPPs): Uncontrolled or poorly controlled parameters such as temperature, pressure, flow rate, and mixing speed directly impact Critical Quality Attributes (CQAs). The table below summarizes common CPPs and their potential impact.

| Unit Operation | Critical Process Parameters (CPPs) | Potential Impact on CQAs |

|---|---|---|

| Granulation | Binder addition rate, impeller speed, granulation time | Granule density, particle size distribution, flowability |

| Compression | Compression force, feeder speed, turret speed | Tablet hardness, thickness, weight uniformity, dissolution |

| Coating | Spray rate, pan speed, inlet air temperature and volume | Coating uniformity, dissolution profile, stability |

- Facility and Utility Systems: Variations in compressed air quality, water-for-injection (WFI) conductivity, or HVAC performance (temperature, humidity, particulate counts) can compromise product quality, particularly in aseptic processing.

External and Regulatory Drivers

The external landscape presents evolving risks that must be factored into long-term process validation strategies.

- Regulatory Changes: Shifts in health regulations (e.g., potential bans on certain food colorings or additives [20]), environmental reporting requirements (e.g., SEC greenhouse gas rules, EU CSRD [20]), and tax policy (e.g., R&D expensing rules [20]) can necessitate process changes or re-validation.

- Supply Chain Policy Shifts: Government policies, such as tariffs on imported materials [20] and initiatives to onshore production of critical items like semiconductors and minerals [20], can alter material costs and availability, forcing rapid qualification of alternative sources.

- Energy and Immigration Policy: Fluctuations in energy costs due to policy changes [20] and stricter immigration enforcement impacting the labor force [20] can introduce instability to manufacturing operations.

The Hierarchy of Risk Controls for Mitigation

Once risks are identified and prioritized, a structured approach to mitigation is required. The hierarchy of controls provides a framework for selecting the most effective measures, prioritized from most to least effective [18].

Application in Pharmaceutical Development:

- Elimination/Substitution: Reformulating a product to remove a problematic excipient that is highly hygroscopic and causes variability in tablet hardness.

- Engineering Controls: Implementing Process Analytical Technology (PAT) with real-time feedback control to automatically adjust a CPP (e.g., spray rate in a fluid bed granulator) to maintain a CQA (e.g., granule moisture content).

- Administrative Controls: Updating Standard Operating Procedures (SOPs) and providing enhanced training for a high-risk manual operation.

- PPE: Requiring operators to wear appropriate gowning to protect the product from human-borne particulates in a cleanroom.

The Scientist's Toolkit: Essential Research Reagents and Materials

A systematic risk assessment relies on specific tools and materials to generate high-quality, defensible data. The following table details key items essential for conducting the experimental studies cited in this guide.

| Tool / Material | Function / Rationale | Example Application |

|---|---|---|

| Design of Experiments (DoE) Software | Enables efficient, statistically sound experimental design to model complex interactions between multiple variables with minimal experimental runs. | Identifying interaction effects between API particle size, blender speed, and blending time on blend uniformity. |

| Process Analytical Technology (PAT) Probes | Allows for real-time, in-line monitoring of Critical Quality Attributes (CQAs) and Critical Process Parameters (CPPs) without manual sampling. | NIR spectroscopy probe to monitor blend homogeneity in real-time inside a bin blender. |

| Scale-Down Model (e.g., Mini-Reactors, Lab-Scale Blenders) | Provides a representative, cost-effective system for studying process variability and establishing a design space prior to commercial-scale validation. | Using a 1-liter bioreactor to study the impact of pH and dissolved oxygen variability on cell culture titer. |

| Stable Reference Standard | A well-characterized material with consistent properties, used as a benchmark to distinguish between assay variability and true process variability. | Used as a control in every HPLC run when testing blend uniformity samples to ensure analytical method consistency. |

| Statistical Analysis Software | Provides advanced capabilities for performing multivariate analysis, regression modeling, and statistical process control (SPC) on complex datasets. | Performing ANOVA to determine the statistical significance of factors studied in a DoE on tablet compression. |

A science-based approach to identifying common risk triggers is fundamental to achieving manufacturing excellence in the pharmaceutical industry. By adopting the structured methodologies, experimental protocols, and visualization tools outlined in this guide, researchers and drug development professionals can transform risk assessment from a regulatory formality into a powerful engine for process understanding. This systematic identification of variability sources enables the design of robust control strategies, ultimately ensuring the consistent production of safe and effective medicines for patients. The iterative cycle of assessment, control, and monitoring creates a foundation for continuous process improvement and lifecycle management.

In the highly regulated pharmaceutical manufacturing industry, establishing a risk-aware culture is not merely a strategic advantage but a fundamental component of quality assurance and patient safety. The complex nature of drug development and manufacturing processes demands a proactive approach to risk management that transcends departmental boundaries and becomes embedded in the organizational fabric. This whitepaper examines how leadership commitment and cross-functional collaboration create a robust risk-aware culture, specifically within the context of manufacturing process changes. By integrating diverse expertise and fostering shared responsibility, organizations can more effectively identify, assess, and mitigate risks throughout the product lifecycle, ensuring compliance, maintaining product quality, and safeguarding public health [21] [22].

The Critical Role of Leadership in Shaping Risk Culture

Leadership commitment serves as the cornerstone for building a sustainable risk-aware culture. Through their actions, communication, and resource allocation, leaders set the organizational tone and priorities regarding risk management.

Leadership Behaviors That Foster Risk Awareness

- Leading by Example: Leaders must actively demonstrate their commitment to risk management by openly discussing risks in strategic meetings, sharing lessons learned from past failures, and prioritizing risk considerations in resource allocation decisions. When leaders acknowledge uncertainties and demonstrate thoughtful risk-taking, they create psychological safety for team members to voice concerns without fear of reprisal [23].

- Reframing Risk as Strategic Opportunity: Effective leaders differentiate between reckless risk-taking and informed strategic risks. They position risk management not as a defensive activity but as an enabler of innovation and competitive advantage. This involves approving manufacturing process changes with known, well-understood trade-offs, provided they are accompanied by transparent mitigation plans and monitoring protocols [23].

- Establishing Clear Accountability: Leaders must clearly define and communicate risk management roles and responsibilities throughout the organization. A well-defined RACI (Responsible, Accountable, Consulted, Informed) matrix ensures that everyone understands their specific risk management obligations, creating a culture of accountability rather than blame [24].
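As an illustration of how a RACI matrix can be checked for gaps, the sketch below validates a small matrix. The activities and roles are hypothetical. The rule that each activity has exactly one Accountable role and at least one Responsible role is a common RACI convention assumed here, not a requirement stated in the cited sources.

```python
from collections import Counter


def validate_raci(matrix):
    """Return a list of problems found; an empty list means the matrix passes.

    `matrix` maps each activity to a dict of {role: code}, where code is one of
    "R", "A", "C", or "I".
    """
    problems = []
    for activity, assignments in matrix.items():
        counts = Counter(assignments.values())
        if counts["A"] != 1:
            problems.append(
                f"{activity}: expected exactly one Accountable role, found {counts['A']}"
            )
        if counts["R"] < 1:
            problems.append(f"{activity}: no Responsible role assigned")
    return problems


# Hypothetical RACI matrix for a manufacturing process change.
raci = {
    "Risk assessment of proposed change": {
        "Process Engineering": "R",
        "Quality Assurance": "A",
        "Regulatory Affairs": "C",
        "Manufacturing": "I",
    },
    "Approval of mitigation plan": {
        "Process Engineering": "C",
        "Quality Assurance": "C",
        "Manufacturing": "I",
    },
}

for problem in validate_raci(raci):
    print(problem)
```

In this example the second activity is flagged twice, because nobody is Accountable or Responsible for approving the mitigation plan.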

Leadership Systems and Processes

- Resource Allocation and Support: Leaders must provide adequate resources, including tools, training, and personnel, to support effective risk management. This includes investing in modern risk assessment technologies, data analytics capabilities, and continuous training programs [25].

- Recognition and Reward Structures: Implementing formal recognition programs for employees who proactively identify and report risks reinforces desired behaviors. Such rewards can include monetary bonuses, public acknowledgment, or career advancement opportunities, signaling that risk awareness is valued within the organization [21] [23].

- Strategic Alignment: Leadership must ensure that risk management objectives are fully aligned with overarching organizational goals. This alignment helps integrate risk considerations into strategic planning and demonstrates the connection between risk awareness and business success [25].

Table 1: Leadership Practices for Establishing Risk-Aware Culture

| Leadership Practice | Key Implementation Strategies | Expected Organizational Impact |

|---|---|---|

| Visible Commitment | Active participation in risk reviews, transparent communication about risks, allocation of dedicated resources | Increased psychological safety, higher risk reporting rates, earlier risk identification |

| Strategic Risk-Taking | Evaluating risk-reward trade-offs, supporting calculated innovation, encouraging "what-if" thinking | Enhanced innovation, competitive advantage, more agile response to market changes |

| Accountability Framework | Implementing RACI matrices, defining clear risk ownership, establishing performance metrics | Clear ownership of risks, reduced siloed thinking, improved risk mitigation outcomes |

| Resource Provision | Investment in risk assessment tools, training programs, dedicated risk management personnel | Improved risk assessment capabilities, more consistent application of risk methodologies |

Cross-Functional Collaboration in Risk Management

Cross-functional collaboration breaks down organizational silos that often obscure comprehensive risk visibility. By integrating diverse perspectives and expertise, pharmaceutical manufacturers can develop more holistic approaches to risk identification and mitigation, particularly during manufacturing process changes.

Structural Foundations for Effective Collaboration

- Cross-Functional Team Composition: Establishing formal cross-functional teams with representatives from key departments—including R&D, quality assurance, regulatory affairs, manufacturing, and supply chain—ensures that risks are considered from multiple perspectives. This diversity of expertise enables more comprehensive risk identification and more effective mitigation strategies [22] [24].

- Unified Objectives and Governance: Cross-functional risk management requires clearly defined shared objectives that all participating departments understand and pursue. A clear governance structure with defined roles, effective communication channels, and executive sponsorship is essential for success [26] [24].

- Integrated Technology Platforms: Implementing common technology solutions that enable connected data sharing and workflow capabilities is crucial for breaking down information silos. Integrated platforms allow finance, operations, compliance, and manufacturing teams to access the same trusted information and collaborate effectively despite their different domain expertise [26].

Practical Implementation of Cross-Functional Risk Management

- Structured Collaboration Sessions: Facilitate regular cross-functional workshops and brainstorming sessions specifically focused on risk identification for proposed manufacturing process changes. These structured sessions should use techniques like Failure Mode and Effects Analysis (FMEA) and root cause analysis to systematically identify potential risks [22] [24].

- Shared Risk Assessment Frameworks: Adopt consistent scoring systems and assessment methodologies across all departments. Commonly used frameworks include ISO 31000 and COSO, which provide standardized approaches to evaluating risk likelihood, impact, and regulatory exposure [24].

- Integrated Monitoring and Reporting: Implement cross-departmental monitoring through shared Governance, Risk, and Compliance (GRC) software platforms that provide real-time risk tracking. Composite risk reports that combine data from all departments give leadership a comprehensive view of the organization's risk landscape [24].

Diagram 1: Cross-functional risk management workflow for process changes. This diagram illustrates the continuous, integrated process of managing risks associated with manufacturing process changes, highlighting the essential feedback loop and multi-departmental collaboration.

Quantitative Risk Assessment in Manufacturing Process Changes

Quantitative risk analysis provides a structured, data-driven approach to assess risks associated with manufacturing process changes, enabling more objective decision-making and resource prioritization.

Methodologies for Quantitative Risk Assessment

- Total Efficient Risk Priority Number (TERPN): This method integrates traditional FMEA with economic factors, enabling organizations to classify risks and identify corrective actions that provide the highest risk reduction at the lowest cost. TERPN is particularly valuable for prioritizing risk mitigation efforts in resource-constrained environments [27].

- Monte Carlo Simulation: This technique uses computational algorithms to simulate thousands of possible scenarios based on probability distributions for risk variables. It helps quantify the potential impact of uncertainties in manufacturing process parameters on critical quality attributes [6].

- Sensitivity Analysis: By varying input factors within manufacturing processes, sensitivity analysis helps determine which parameters have the greatest influence on outcomes, allowing organizations to focus their control strategies on the most critical variables [6].

- Value at Risk (VaR) Analysis: This methodology determines the maximum potential loss that could occur from a manufacturing process change at a given confidence level, helping to quantify financial exposure and inform decision-making [6].
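To make the Monte Carlo and VaR ideas above concrete, the following sketch simulates a critical quality attribute from randomly drawn process parameters, then estimates an out-of-specification probability and an empirical VaR. Every number in it is an assumption made for illustration: the parameter distributions, the linear moisture model, the specification limits, and the batch value.

```python
import math
import random


def simulate_granule_moisture(n_runs, seed=0):
    """Draw process parameters at random and return simulated moisture values (% w/w)."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    moisture = []
    for _ in range(n_runs):
        inlet_temp = rng.gauss(60.0, 2.0)   # deg C, hypothetical distribution
        spray_rate = rng.gauss(100.0, 5.0)  # g/min, hypothetical distribution
        # Hypothetical linear response model; a real one would come from DoE data.
        moisture.append(2.0 + 0.02 * (spray_rate - 100.0) - 0.05 * (inlet_temp - 60.0))
    return moisture


def fraction_outside(values, lower, upper):
    """Share of simulated values falling outside the interval [lower, upper]."""
    return sum(1 for v in values if not lower <= v <= upper) / len(values)


def value_at_risk(losses, confidence):
    """Empirical VaR (nearest-rank): the loss not exceeded in `confidence` of scenarios."""
    ordered = sorted(losses)
    rank = max(1, math.ceil(confidence * len(ordered)))
    return ordered[rank - 1]


LOWER_SPEC, UPPER_SPEC = 1.7, 2.3  # hypothetical moisture specification (% w/w)
BATCH_VALUE = 250_000.0            # hypothetical cost of losing one batch

runs = simulate_granule_moisture(10_000)
p_out_of_spec = fraction_outside(runs, LOWER_SPEC, UPPER_SPEC)
# Hypothetical loss model: an out-of-spec batch is lost entirely, otherwise no loss.
losses = [0.0 if LOWER_SPEC <= m <= UPPER_SPEC else BATCH_VALUE for m in runs]

print(f"Estimated out-of-spec probability: {p_out_of_spec:.3f}")
print(f"95% VaR per batch: {value_at_risk(losses, 0.95):,.0f}")
print(f"99% VaR per batch: {value_at_risk(losses, 0.99):,.0f}")
```

The nearest-rank percentile is one of several ways to estimate VaR from simulated losses. With an all-or-nothing loss model like this one, the result jumps between zero and the full batch value depending on the confidence level chosen.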

Implementation Framework for Quantitative Assessment

The process for implementing quantitative risk assessment for manufacturing process changes involves several key stages, each requiring specific actions and deliverables to ensure comprehensive risk evaluation.

Diagram 2: Quantitative risk assessment methodology. This workflow outlines the systematic approach to quantifying risks associated with manufacturing process changes, highlighting key analytical techniques employed at each stage.

Table 2: Quantitative Risk Assessment Techniques for Manufacturing Process Changes

| Technique | Methodology | Application Context | Key Output Metrics |

|---|---|---|---|

| TERPN | Integration of FMEA with cost-benefit analysis | Prioritizing risk mitigation actions for maximum efficiency | Risk Priority Number, Cost-Benefit Ratio, Implementation Priority Score |

| Monte Carlo Simulation | Computational simulation using random variable sampling | Modeling complex process interactions and predicting outcomes | Probability Distributions, Confidence Intervals, Likelihood of Success/Failure |

| Sensitivity Analysis | Systematic variation of input parameters to observe outcome changes | Identifying critical process parameters and their impact on quality | Tornado Diagrams, Sensitivity Indices, Critical Parameter Ranking |

| Value at Risk (VaR) | Statistical technique to quantify potential loss magnitude | Financial risk assessment of process changes | Maximum Potential Loss, Confidence Level, Time Horizon |
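The sensitivity analysis row can be illustrated with a one-at-a-time sketch: each input is moved across its range while the others stay at baseline, and inputs are ranked by the resulting swing in the output, which is the ordering a tornado diagram displays. The tablet hardness model, its coefficients, and the parameter ranges are hypothetical placeholders for a model fitted to real process data.

```python
def one_at_a_time_sensitivity(model, baseline, ranges):
    """Rank inputs by output swing when each is varied alone across its range.

    Returns a list of (input_name, swing) pairs, largest swing first.
    """
    swings = {}
    for name, (low, high) in ranges.items():
        low_output = model(**{**baseline, name: low})
        high_output = model(**{**baseline, name: high})
        swings[name] = abs(high_output - low_output)
    return sorted(swings.items(), key=lambda item: item[1], reverse=True)


def tablet_hardness(compression_force, turret_speed, feeder_speed):
    """Hypothetical linear response model for tablet hardness."""
    return 0.9 * compression_force - 0.04 * turret_speed + 0.01 * feeder_speed


baseline = {"compression_force": 12.0, "turret_speed": 40.0, "feeder_speed": 30.0}
ranges = {
    "compression_force": (10.0, 14.0),
    "turret_speed": (30.0, 50.0),
    "feeder_speed": (20.0, 40.0),
}

for name, swing in one_at_a_time_sensitivity(tablet_hardness, baseline, ranges):
    print(f"{name}: output swing {swing:.2f}")
```

One-at-a-time variation ignores interactions between parameters, so it works best as a screening step before a designed experiment.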

Practical Implementation Framework

Building the Foundation: Education and Communication

- Comprehensive Risk Training Programs: Implement regular training sessions and workshops that cover risk management principles, specific manufacturing risks, and the organization's risk framework. These programs should use real-life scenarios and simulations to build practical risk assessment skills [21].

- Cross-Functional Risk Communication Protocols: Establish clear communication channels for discussing and reporting risks, including regular cross-departmental meetings, dedicated risk reporting portals, and standardized reporting templates. This ensures that risk information flows freely across organizational boundaries [21] [24].

- Open Door Policy and Psychological Safety: Foster an environment where employees feel comfortable reporting potential risks without fear of negative repercussions. Leadership behavior that encourages questions and acknowledges reported concerns reinforces psychological safety [21] [23].

Integration into Operational Processes

- Risk-Informed Decision-Making: Embed risk assessment directly into decision-making processes for manufacturing changes. Require formal risk evaluations before approving process modifications, and ensure risk considerations are integrated into project planning and resource allocation [21].

- Regular Risk Reviews: Schedule periodic risk review meetings at appropriate frequencies (weekly for active projects, monthly for operational risks, quarterly for strategic risks) to discuss ongoing risks, review mitigation progress, and identify new emerging risks [21].

- Performance Metrics and Monitoring: Establish key risk indicators (KRIs) and other metrics to monitor the effectiveness of risk management efforts. Track leading indicators like risk identification rates and mitigation completion percentages rather than relying solely on lagging indicators like incident rates [23].

Table 3: Essential Tools and Resources for Risk Assessment in Pharmaceutical Manufacturing

| Tool/Resource | Function | Application in Risk Assessment |

|---|---|---|

| FMEA/FMECA Software | Systematic identification of potential failure modes and their effects | Analyzing manufacturing process changes for potential failure points and their impact on product quality |

| Statistical Analysis Packages | Advanced analytics for pattern recognition and predictive modeling | Identifying trends in manufacturing data, predicting potential deviations, and quantifying risk probabilities |

| Process Modeling Software | Digital simulation of manufacturing processes and workflows | Testing the impact of process changes virtually before implementation, identifying hidden risks |

| Quality Management Systems (QMS) | Integrated platforms for documenting and tracking quality events | Managing risk mitigation actions, tracking deviations, and maintaining audit trails for regulatory compliance |

| Data Visualization Tools | Creation of dashboards and visual representations of risk data | Communicating risk information effectively across functions, enabling faster risk recognition |

| Regulatory Intelligence Platforms | Monitoring and analysis of evolving regulatory requirements | Assessing compliance risks associated with manufacturing process changes across different jurisdictions |

Establishing a risk-aware culture through leadership commitment and cross-functional collaboration represents a critical success factor for pharmaceutical manufacturers implementing process changes. This integrated approach enables organizations to leverage diverse expertise, identify risks earlier, and develop more effective mitigation strategies. By embedding risk awareness into daily operations, providing comprehensive training, and implementing robust quantitative assessment methodologies, manufacturers can navigate the complexities of process changes while maintaining product quality, regulatory compliance, and patient safety. The frameworks and methodologies presented in this whitepaper provide a roadmap for researchers, scientists, and drug development professionals seeking to enhance risk management practices within their organizations, ultimately contributing to more resilient manufacturing operations and safer pharmaceutical products.

The Risk Assessment Toolkit: Proven Methodologies and Their Practical Application

In the highly regulated pharmaceutical industry, risk assessment provides a systematic framework for proactively identifying and controlling potential failures in manufacturing processes. As regulatory bodies like the U.S. Food and Drug Administration increasingly advocate for science- and risk-based approaches, selecting appropriate methodological tools has become critical for ensuring product quality, patient safety, and regulatory compliance [28]. This technical guide provides an in-depth examination of four fundamental risk assessment methodologies—FMEA, FTA, HACCP, and HAZOP—within the context of pharmaceutical manufacturing process changes.

These structured approaches enable researchers, scientists, and drug development professionals to anticipate potential failures, quantify risks, and implement effective controls before process modifications are implemented. The selection of a specific tool depends on multiple factors including the nature of the process change, regulatory requirements, resource constraints, and the type of hazards under consideration. A comparative analysis of these methodologies reveals distinct applications, strengths, and limitations that must be understood to deploy them effectively within a Quality by Design (QbD) framework for pharmaceutical development and manufacturing [29].

Core Methodologies: Principles and Components

Failure Mode and Effects Analysis (FMEA)

FMEA is a systematic, proactive approach to identifying potential failure modes within a process, product, or system and assessing their relative impact. In pharmaceutical manufacturing, FMEA focuses on reducing the risk of process or equipment failures before they affect final product quality [30]. The methodology employs several key components: Failure Mode (the manner in which a process could fail), Cause (the underlying reason for the failure), Effect (the consequence of the failure on product quality), and three quantitative metrics, namely Severity (seriousness of the effect), Occurrence (probability of the failure occurring), and Detection (likelihood of detecting the failure before impact) [30].

The critical output of FMEA is the Risk Priority Number (RPN), calculated as the product of Severity, Occurrence, and Detection scores (RPN = S × O × D). This numerical value enables prioritization of risks, with higher RPN values indicating risks that require immediate corrective actions [30]. FMEA finds particular application in pharmaceutical production, engineering, and validation activities conducted by Quality Assurance teams, where it serves as a preventive tool rather than a reactive one [30]. Recent studies in the medical device sector, however, highlight certain limitations of FMEA, noting that it focuses primarily on device functionality and risk of failure while potentially not accounting for all safety risks during normal device usage per ISO 14971:2019 requirements [31].
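The RPN calculation and ranking can be sketched as follows. The failure modes and their scores are hypothetical, and the 1 to 10 scale is a common convention assumed here, since the source does not specify one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    description: str
    severity: int    # S: seriousness of the effect (assumed 1-10 scale)
    occurrence: int  # O: probability of the failure occurring (assumed 1-10 scale)
    detection: int   # D: higher score = harder to detect before impact (assumed 1-10 scale)

    @property
    def rpn(self) -> int:
        """Risk Priority Number: RPN = S x O x D."""
        return self.severity * self.occurrence * self.detection


def prioritize(failure_modes):
    """Return failure modes sorted from highest to lowest RPN."""
    return sorted(failure_modes, key=lambda fm: fm.rpn, reverse=True)


# Hypothetical failure modes for a change to a tablet coating step.
failure_modes = [
    FailureMode("Spray nozzle blockage", severity=6, occurrence=4, detection=3),
    FailureMode("Inlet air temperature drift", severity=7, occurrence=3, detection=5),
    FailureMode("Incorrect pan speed setpoint", severity=5, occurrence=2, detection=2),
]

for fm in prioritize(failure_modes):
    print(f"RPN {fm.rpn:>3}  {fm.description}")
```

Because the RPN is a product of ordinal scores, two very different risk profiles can share the same number, so teams often review high-severity items separately regardless of RPN.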

Fault Tree Analysis (FTA)

Fault Tree Analysis employs a deductive, top-down approach to risk assessment that begins with a potential undesired event (the "top event") and works backward to identify all potential causes and their logical relationships. The methodology utilizes graphical representation with logical gates (primarily AND and OR gates) to model how basic causes combine to produce the top event [30]. Key components of FTA include the Top Event (the specific undesired system state being analyzed), Basic Causes (fundamental failures or faults that initiate the failure sequence), and Logic Gates (symbols that represent the relationships between events and causes) [30].

In pharmaceutical applications, FTA excels at evaluating how multiple failure causes can converge to produce one major failure event, making it particularly valuable for analyzing complex systems such as sterile HVAC systems, compressed air systems, and critical equipment maintenance protocols [30]. The methodology provides a clear visual representation of failure pathways, enabling development teams to identify single points of failure and potential common cause failures that might otherwise remain undetected in more linear analysis methods. The quantitative aspect of FTA allows for probability calculations when failure rate data are available for basic events, supporting more data-driven decision making for risk control strategies.
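When failure probabilities are available for basic events, the gate logic can be evaluated numerically. The sketch below assumes the basic events are statistically independent, which is a simplification that common cause failures would violate. The fault tree and its probabilities are hypothetical.

```python
from math import prod


def and_gate(probabilities):
    """Output event occurs only if all inputs occur (independent events assumed)."""
    return prod(probabilities)


def or_gate(probabilities):
    """Output event occurs if at least one input occurs (independent events assumed)."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical fault tree for the top event "loss of sterile air supply":
#   top event = HEPA filter failure OR (primary fan failure AND backup fan failure)
p_hepa_failure = 1e-3
p_primary_fan_failure = 1e-2
p_backup_fan_failure = 5e-2

p_both_fans_fail = and_gate([p_primary_fan_failure, p_backup_fan_failure])
p_top_event = or_gate([p_hepa_failure, p_both_fans_fail])

print(f"P(both fans fail) = {p_both_fans_fail:.2e}")
print(f"P(top event)      = {p_top_event:.2e}")
```

In this made-up tree the HEPA filter is a single point of failure: it feeds the OR gate directly, so its probability alone sets a floor on the top event probability.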

Hazard Analysis and Critical Control Points (HACCP)

HACCP is a structured, preventive system for managing food safety that has been adapted for pharmaceutical manufacturing, particularly in sterile production environments. The methodology addresses physical, chemical, and biological hazards by identifying and controlling critical points in the manufacturing process [30]. HACCP is built upon seven established principles: conducting a hazard analysis, determining critical control points (CCPs), establishing critical limits, implementing monitoring procedures, defining corrective actions, establishing verification procedures, and maintaining documentation [32].

The system's key components include Hazard Analysis (identification of potential hazards and control measures), Critical Control Points (steps where control can be applied to prevent or eliminate a hazard), Critical Limits (minimum/maximum values for biological, chemical, or physical parameters at CCPs), Monitoring Procedures (planned observations to assess CCP control), and Corrective Actions (procedures followed when deviations occur) [30] [32]. In pharmaceutical contexts, HACCP finds particular application in prevention and control of microbiological, chemical, and physical contamination within sterile manufacturing, water systems, and microbiology laboratories [30]. As of 2025, HACCP continues to evolve with increased emphasis on digital compliance tools, global harmonization efforts, and integration with broader Food Safety Management Systems (FSMS) such as ISO 22000 [33] [34].
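The relationship between a critical control point, its critical limits, and monitoring can be sketched as a simple data structure with a deviation check. The CCP, the limit values, and the readings below are hypothetical and are not taken from any pharmacopoeia or from the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CriticalLimit:
    ccp: str          # critical control point being monitored
    parameter: str    # monitored parameter and unit
    minimum: float    # lowest acceptable value
    maximum: float    # highest acceptable value

    def is_met(self, value: float) -> bool:
        """True when the reading lies within the critical limits (inclusive)."""
        return self.minimum <= value <= self.maximum


def find_deviations(limit, readings):
    """Return the (timestamp, value) readings that breach the critical limit."""
    return [(timestamp, value) for timestamp, value in readings if not limit.is_met(value)]


# Hypothetical CCP: return temperature of a hot water distribution loop.
loop_temperature = CriticalLimit(
    ccp="Hot water loop return",
    parameter="temperature (deg C)",
    minimum=80.0,
    maximum=90.0,
)

readings = [("08:00", 84.2), ("09:00", 79.1), ("10:00", 85.0), ("11:00", 90.6)]

for timestamp, value in find_deviations(loop_temperature, readings):
    print(f"{timestamp}: {value} outside {loop_temperature.minimum}-{loop_temperature.maximum}; "
          f"trigger corrective action for {loop_temperature.ccp}")
```

Each flagged reading is where the corrective action procedure would start and where a deviation record would be created.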

Hazard and Operability Study (HAZOP)

HAZOP represents a systematic, structured approach to identifying potential deviations from normal operating conditions and their consequences in complex processes. Originally developed for the chemical industry, HAZOP has been effectively adapted for pharmaceutical applications, particularly in active pharmaceutical ingredient (API) manufacturing and bulk drug processing [30]. The methodology employs a guide-word approach to systematically examine process parameters and identify deviations. Key components of HAZOP include Process Nodes (discrete segments of the process under examination), Parameters (relevant process variables such as flow, temperature, pressure), Guide Words (standard terms like "no," "more," "less" applied to parameters to generate deviations), Deviations (potential abnormal situations identified by combining guide words with parameters), Consequences (potential outcomes of deviations), and Safeguards (existing protective systems) [30].

HAZOP studies are typically conducted by multidisciplinary teams including process engineers, chemists, quality specialists, and operators who systematically examine each process node using the guide-word methodology. This comprehensive approach makes HAZOP particularly valuable for assessing process safety and operability during chemical or formulation processes in pharmaceutical manufacturing [30]. The methodology excels at identifying unforeseen interaction effects in complex systems and is often applied during technology transfer activities and process scale-up where understanding operational boundaries is critical to patient safety and product quality.

Comparative Analysis of Methodologies

Structured Comparison of Methodological Features

Table 1: Comparative Analysis of Risk Assessment Methodologies

| Feature | FMEA | FTA | HACCP | HAZOP |
|---|---|---|---|---|
| Primary Approach | Bottom-up (Inductive) | Top-down (Deductive) | Systematic prevention | Structured deviation analysis |
| Core Components | Failure modes, Severity, Occurrence, Detection, RPN | Top event, Logic gates, Basic causes | CCPs, Critical limits, Monitoring, Corrective actions | Guide words, Parameters, Deviations, Consequences |
| Primary Output | Risk Priority Number (RPN) | Probability of top event, Cut sets | Controlled process with validated CCPs | List of deviations with causes and consequences |
| Application Scope | Process/equipment failure risk | Multiple failure causes leading to major failure | Microbiological, chemical, physical contamination | Process safety and operability |
| Industry Sectors | Production, Engineering, Validation, QA [30] | Sterile HVAC, Compressed air, Critical equipment [30] | Sterile manufacturing, Water systems, Microbiology lab [30] | API manufacturing, Bulk drug processing, Process engineering [30] |
| Resource Intensity | Medium | Medium to High (for complex systems) | High (requires ongoing monitoring) | High (requires multidisciplinary team) |
| Regulatory Alignment | ISO 14971 (with limitations [31]) | Engineering safety standards | Codex Alimentarius, FDA FSMA [34] [32] | Process safety management standards |

Quantitative Assessment Metrics

Table 2: Risk Assessment Outputs and Applications

| Methodology | Risk Quantification Approach | Typical Application in Process Changes | Key Strengths | Key Limitations |
|---|---|---|---|---|
| FMEA | RPN (Severity × Occurrence × Detection) | Equipment changes, Process parameter modifications | Prioritizes risks numerically, Comprehensive coverage | Does not account for all safety risks during normal usage [31] |
| FTA | Probability calculation of top event | System failures, Multiple interaction failures | Handles complex interactions, Graphical visualization | Requires substantial data, Can become complex |
| HACCP | Binary determination (in/out of control) | Introduction of new process steps, Contamination control | Focused on critical points, Ongoing monitoring | Limited to specific hazard types, Requires prerequisite programs |
| HAZOP | Qualitative assessment of deviations | Process scale-up, Technology transfer | Systematic identification of deviations, Comprehensive | Time-consuming, Requires expert facilitation |
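The FTA entry in Table 2, "probability calculation of top event," can be illustrated with a small two-gate tree. This is a sketch under a strong assumption, namely that all basic events are statistically independent; the event names and probabilities are hypothetical and chosen only to make the arithmetic visible.

```python
from math import prod


def and_gate(probabilities):
    """Output event occurs only if every input occurs (independent inputs assumed)."""
    return prod(probabilities)


def or_gate(probabilities):
    """Output event occurs if at least one input occurs (independent inputs assumed)."""
    return 1 - prod(1 - p for p in probabilities)


# Hypothetical basic-event probabilities for a "loss of sterile airflow" top event.
p_hepa_leak = 0.01
p_fan_failure = 0.02
p_backup_fan_failure = 0.05

# Airflow is lost from the fans only if both the primary and backup fan fail.
p_both_fans_fail = and_gate([p_fan_failure, p_backup_fan_failure])

# Top event: a HEPA leak OR the loss of both fans.
p_top_event = or_gate([p_hepa_leak, p_both_fans_fail])
print(f"P(top event) = {p_top_event:.5f}")
```

When basic events share a common cause, the independence assumption fails and these simple gate formulas no longer apply, which is one reason the table notes that FTA requires substantial data.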

Methodological Selection Framework

Tool Selection Algorithm

The following decision pathway provides a systematic approach for researchers and drug development professionals to select the most appropriate risk assessment methodology based on specific process change characteristics and assessment objectives:
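The decision diagram itself is not reproduced in this text. As a stand-in, the sketch below is a simple lookup that restates the "Typical Application in Process Changes" column of Table 2; it is not the original pathway. The change-type labels and the `suggest_methods` function are hypothetical names introduced for this illustration.

```python
# Mapping restates the "Typical Application in Process Changes" column of Table 2.
# The keys are hypothetical labels chosen for this sketch.
CHANGE_TYPE_TO_METHOD = {
    "equipment_change": "FMEA",
    "process_parameter_modification": "FMEA",
    "system_failure": "FTA",
    "multiple_interaction_failure": "FTA",
    "new_process_step": "HACCP",
    "contamination_control": "HACCP",
    "process_scale_up": "HAZOP",
    "technology_transfer": "HAZOP",
}


def suggest_methods(change_types):
    """Return the distinct methodologies suggested for the given change types,
    in the order they are first encountered."""
    suggested = []
    for change_type in change_types:
        method = CHANGE_TYPE_TO_METHOD.get(change_type)
        if method is None:
            raise ValueError(f"Unknown change type: {change_type!r}")
        if method not in suggested:
            suggested.append(method)
    return suggested


print(suggest_methods(["process_scale_up", "contamination_control"]))
```

A change that touches several categories returns several methodologies, which reflects that the tools are complementary rather than mutually exclusive.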

Implementation Protocols

FMEA Implementation Protocol

The successful implementation of FMEA follows a structured protocol requiring cross-functional expertise:

Preparatory Phase: Define FMEA scope and boundaries. Assemble a multidisciplinary team including process engineering, quality assurance, manufacturing, and research and development. Gather all relevant process documentation including flow diagrams, control strategies, and historical quality data.

Functional Analysis: Deconstruct the process into sequential steps. For each step, identify all intended functions and requirements. This creates the foundation for identifying potential failure modes.

Failure Analysis: For each process step, systematically identify potential failure modes (ways the step could fail), potential causes of each failure mode, and potential effects on product quality or patient safety.

Risk Assessment: For each failure mode, assign Severity (S), Occurrence (O), and Detection (D) ratings on standardized scales (typically 1-10). Calculate Risk Priority Numbers (RPN = S × O × D) and prioritize failure modes for corrective actions.

Optimization Phase: Develop and implement corrective actions targeted at high RPN failure modes. Focus on reducing Occurrence through process improvements and enhancing Detection through improved controls or monitoring.

Documentation and Follow-up: Document the entire FMEA analysis. Recalculate RPN values after implementing improvements to verify risk reduction effectiveness. Integrate FMEA findings into the overall control strategy.
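The risk assessment, optimization, and follow-up steps above can be sketched in a few lines. This is an illustrative sketch, not a validated tool: the failure modes, ratings, and the action threshold of 50 are hypothetical, and the scale direction (a higher Detection rating means the failure is harder to detect) is the common convention rather than something the protocol above specifies.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FailureMode:
    step: str
    mode: str
    severity: int    # 1 (negligible) to 10 (most severe)
    occurrence: int  # 1 (rare) to 10 (frequent)
    detection: int   # 1 (almost always detected) to 10 (unlikely to be detected)

    def __post_init__(self):
        # Enforce the 1-10 rating scale mentioned in the protocol.
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    @property
    def rpn(self) -> int:
        """Risk Priority Number: S x O x D."""
        return self.severity * self.occurrence * self.detection


def prioritize(modes, action_threshold):
    """Return failure modes at or above the threshold, highest RPN first."""
    flagged = [m for m in modes if m.rpn >= action_threshold]
    return sorted(flagged, key=lambda m: m.rpn, reverse=True)


# Hypothetical failure modes and ratings, for illustration only.
modes = [
    FailureMode("Granulation", "Over-wetting", 7, 4, 5),
    FailureMode("Compression", "Tablet weight drift", 6, 3, 2),
    FailureMode("Coating", "Uneven film thickness", 5, 4, 3),
]
ACTION_THRESHOLD = 50  # hypothetical; each organization sets its own criterion

flagged = prioritize(modes, ACTION_THRESHOLD)
for m in flagged:
    print(f"{m.step}: {m.mode} (RPN {m.rpn})")

# Follow-up: re-rate the top failure mode after corrective actions lower O and D.
improved = replace(modes[0], occurrence=2, detection=3)
print(f"Granulation RPN before: {modes[0].rpn}, after: {improved.rpn}")
```

Severity is left unchanged in the re-rating because corrective actions usually reduce how often a failure occurs or how reliably it is caught, not how serious its effect would be.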

HACCP Implementation Protocol

Implementation of HACCP for pharmaceutical manufacturing requires meticulous attention to prerequisite programs and systematic analysis:

Prerequisite Programs: Establish and verify foundational programs including Good Manufacturing Practices (GMPs), Standard Operating Procedures (SOPs), supplier qualification, training, and facility maintenance. These create the basic environmental and operating conditions necessary for safe production [32].

HACCP Team Formation: Assemble a multidisciplinary team with specific knowledge and expertise appropriate to the product and process. The team should include members from microbiology, quality assurance, process engineering, and manufacturing.

Process Description: Develop comprehensive descriptions of the product and its distribution, including intended use and target patient population. Create and verify a detailed process flow diagram covering all process steps from raw materials to finished product.

Hazard Analysis: At each process step, identify potential biological, chemical, or physical hazards. Assess the severity and likelihood of each hazard and identify preventive control measures.

CCP Identification: Using a decision tree methodology, determine which process steps are Critical Control Points (CCPs), that is, steps where control is essential to prevent or eliminate a hazard or reduce it to an acceptable level.

Establish Control Parameters: For each CCP, establish critical limits, monitoring procedures, corrective actions, verification procedures, and comprehensive documentation. Implement ongoing monitoring to ensure each CCP remains under control.
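The monitoring step for a CCP reduces to checking each observation against its critical limits and flagging deviations for corrective action. The sketch below illustrates that in/out-of-control check; the CCP name, parameter, limits, and readings are all hypothetical and do not represent recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CriticalLimit:
    ccp: str
    parameter: str
    minimum: float
    maximum: float

    def in_control(self, value: float) -> bool:
        """True when the reading lies within the critical limits (inclusive)."""
        return self.minimum <= value <= self.maximum


def find_deviations(limit, readings):
    """Return (index, value) pairs for readings outside the critical limits."""
    return [(i, v) for i, v in enumerate(readings) if not limit.in_control(v)]


# Hypothetical CCP and limits, for illustration only.
loop_temperature = CriticalLimit(
    ccp="Water system distribution loop",
    parameter="temperature (deg C)",
    minimum=70.0,
    maximum=85.0,
)
readings = [80.1, 79.5, 68.9, 81.0]

for index, value in find_deviations(loop_temperature, readings):
    print(
        f"Deviation at reading {index}: {loop_temperature.parameter} = {value}; "
        "corrective action required"
    )
```

In practice each flagged deviation would trigger the documented corrective action for that CCP and be recorded for later verification.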

Advanced Applications in Pharmaceutical Development

Integration with Regulatory Frameworks

The selection and implementation of risk assessment methodologies must align with evolving regulatory expectations for pharmaceutical manufacturing. The U.S. Food and Drug Administration's Chemistry, Manufacturing, and Controls (CMC) Development and Readiness Pilot Program emphasizes science- and risk-based approaches to facilitate expedited CMC development for products with accelerated clinical timelines [28]. This regulatory initiative encourages increased sponsor-agency communication and explores risk-based approaches to streamline CMC development, directly impacting methodology selection for process changes.

Similarly, the FDA's guidance on "Expedited Programs for Serious Conditions" advocates for risk-based regulatory strategies that can be effectively supported through rigorous application of FMEA, FTA, HACCP, and HAZOP methodologies [28]. As regulatory bodies worldwide move toward harmonized standards, understanding how each methodology supports compliance with international regulations becomes increasingly important for global development programs.

Emerging Trends and Future Directions

Risk assessment methodologies continue to evolve in response to technological advancements and emerging challenges in pharmaceutical manufacturing:

Digital Integration: The movement toward digital HACCP platforms featuring real-time monitoring, automated record-keeping, and cloud-based data analytics represents a significant advancement in methodology implementation [34]. These technologies enable more dynamic risk assessment and faster response to deviations.

AI and Predictive Analytics: Artificial intelligence and machine learning are being integrated into risk assessment methodologies to enable predictive hazard analysis. AI-enhanced FMEA can potentially identify failure mode relationships that might escape traditional analysis [34].

Supply Chain Applications: Traditionally facility-focused methodologies like HACCP are expanding to encompass end-to-end supply chain risk assessment, crucial for addressing vulnerabilities in global pharmaceutical supply chains [34].

Advanced Visualization: Emerging technologies including digital twins and augmented reality are being explored for risk assessment, creating opportunities for more immersive and interactive methodology application [34].

Table 3: Tools and Resources for Risk Assessment Implementation

| Tool/Resource | Function | Application Context |
|---|---|---|
| FMEA Software Platforms | Automated RPN calculation, tracking, and reporting | Digital management of FMEA analyses for complex processes |
| HACCP Digital Monitoring Systems | Real-time CCP monitoring with automated alerts | Sterile manufacturing environments requiring continuous compliance |
| FTA Modeling Software | Graphical construction of fault trees with probability calculations | Complex system failure analysis for engineering and equipment |
| HAZOP Facilitator Tools | Structured guideword application and deviation documentation | Complex process hazard analysis in API manufacturing |
| Quality Risk Management Templates | Standardized formats for risk documentation | Regulatory submissions and internal quality systems |
| Process Mapping Software | Visual representation of manufacturing processes | Preliminary analysis for all risk assessment methodologies |
| Statistical Analysis Packages | Quantitative analysis of occurrence and detection probabilities | Data-driven risk assessment for FMEA and FTA |
| Regulatory Database Access | Current regulatory requirements and guidance | Ensuring methodology application meets compliance standards |

The selection of an appropriate risk assessment methodology represents a critical decision point in pharmaceutical process development and improvement initiatives. FMEA, FTA, HACCP, and HAZOP each offer distinct approaches, strengths, and limitations that must be carefully matched to specific assessment needs. FMEA provides comprehensive failure analysis with quantitative prioritization, FTA excels at analyzing complex system failures, HACCP delivers focused contamination control, and HAZOP offers exhaustive deviation analysis for complex processes.

Understanding the structured protocols for implementing each methodology, along with their regulatory alignment and resource requirements, enables researchers and drug development professionals to make informed selections based on specific process change characteristics. As the pharmaceutical industry continues to embrace risk-based approaches and quality by design principles, the strategic application of these methodologies will remain fundamental to ensuring product quality, patient safety, and regulatory compliance throughout the product lifecycle.

Failure Mode and Effects Analysis (FMEA) is a systematic, proactive methodology for identifying potential failures in processes, products, or services [35]. For researchers and professionals managing risk in manufacturing process changes, FMEA provides a structured framework to anticipate and mitigate potential failures before they occur, thereby enhancing reliability, safety, and quality [36]. Originally developed in the 1940s and 1950s within the military and aerospace industries, this risk analysis tool has since become a cornerstone of risk management in highly regulated sectors, including pharmaceutical development and manufacturing [36] [37].

The core value of FMEA in a research context lies in its ability to turn hindsight into foresight. It builds a culture of anticipation and prevention rather than reaction, allowing teams to understand potential failures and their impacts systematically [36]. For drug development professionals, this proactive approach is strategic, enabling the identification of vulnerabilities in process changes before they lead to costly deviations, non-conforming products, or compromised patient safety [37].

Core FMEA Types for Process Analysis

Two primary types of FMEA are most relevant to process changes:

Process FMEA (PFMEA): Discovers risks associated with process changes, including failures that impact product quality, process reliability, and safety. It analyzes potential failures derived from the 6Ms: Man, Methods, Materials, Machinery, Measurement, and Mother Earth (environmental factors) [38]. PFMEA is highly relevant for manufacturing and assembly processes, such as a tablet packaging line or a sterile filling operation [35] [39].

Design FMEA (DFMEA): Analyzes risks associated with a new, updated, or modified product design. It explores the possibility of product malfunctions, reduced product life, and safety concerns. While the focus here is on process, changes in product design (e.g., drug formulation) can necessitate process changes, making an understanding of DFMEA valuable [38].

This guide will focus primarily on the application of PFMEA for managing risks associated with manufacturing process changes.

The FMEA Methodology: A Detailed Procedural Framework