Advanced Cell Type Annotation: A Multi-Model Integration Strategy for Precision Single-Cell Analysis

Abstract

This article provides a comprehensive guide to multi-model integration strategies for cell type annotation, addressing the critical need for accuracy and robustness in single-cell genomics. It explores the foundational principles and limitations of single-model approaches before detailing specific methodological workflows for integrating diverse algorithms such as Seurat, scVI, and SingleR. A dedicated section tackles common technical challenges and optimization techniques, followed by rigorous frameworks for benchmarking and validating annotation results. Tailored for researchers and drug development professionals, this resource aims to equip readers with the knowledge to implement reliable, reproducible, and biologically meaningful cell type annotation pipelines for advancing disease research and therapeutic discovery.

Beyond Single Algorithms: Why Multi-Model Integration is Essential for Accurate Cell Typing

Application Notes

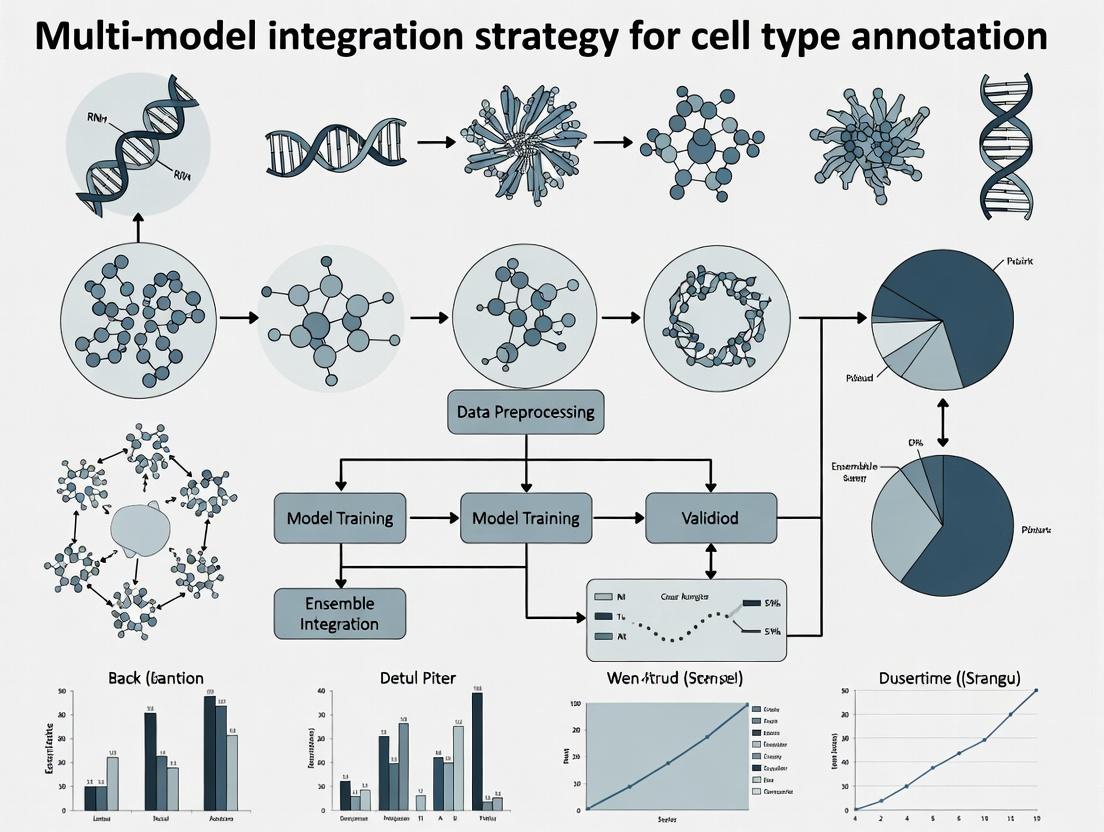

Multi-model integration strategies are essential for robust and accurate cell type annotation, a critical step in single-cell RNA sequencing (scRNA-seq) analysis. This section defines three primary integration paradigms: ensemble, hierarchical, and consensus. Each addresses the inherent limitations of individual annotation algorithms by combining their strengths.

Ensemble Strategies: This approach operates on the principle of the "wisdom of the crowd." Multiple base classifiers (e.g., SingleR, scType, scSorter) are run independently on the same query data, each drawing on its own reference dataset or marker gene set. Their individual predictions for each query cell are then aggregated by a meta-learner or a voting mechanism (e.g., majority vote, weighted vote) to produce a final, more stable annotation. Aggregation reduces variance and limits the influence of any single model's biases.
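The voting step can be sketched in a few lines of Python. This is a minimal illustration, assuming each base model has already produced one label per query cell; the model names, labels, and weights below are hypothetical, and weighting by each model's accuracy on held-out data is only one possible choice.

```python
from collections import defaultdict


def weighted_vote(predictions, weights=None):
    """Combine the labels that several base models assign to one cell.

    predictions: dict mapping model name -> predicted label.
    weights: optional dict mapping model name -> non-negative weight
        (hypothetical, e.g. each model's accuracy on held-out data).
        Models without a weight count as 1.0, so omitting `weights`
        gives a plain majority vote.
    Ties are broken alphabetically so the result is deterministic.
    """
    weights = weights or {}
    totals = defaultdict(float)
    for model, label in predictions.items():
        totals[label] += weights.get(model, 1.0)
    best = max(totals.values())
    return sorted(label for label, total in totals.items() if total == best)[0]


# Hypothetical per-cell predictions from three base models.
cell_predictions = {"SingleR": "B cell", "scType": "T cell", "scSorter": "T cell"}
plain = weighted_vote(cell_predictions)
weighted = weighted_vote(
    cell_predictions, {"SingleR": 0.95, "scType": 0.4, "scSorter": 0.4}
)
print(plain, "|", weighted)
```

With equal weights this is a simple majority vote; with weights, a single model that performed much better on held-out data can override a two-model majority, which is exactly the behavior to check carefully before adopting it.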

Hierarchical Strategies: This strategy imposes a biologically informed structure on the annotation process. Annotation proceeds in tiers, typically following a cell ontology such as the Cell Ontology (CL). A coarse-grained model first distinguishes major lineages (e.g., immune vs. epithelial cells). Specialized, fine-grained models are then applied within each branch to resolve subtypes (e.g., T cells → CD4+ T cells → regulatory T cells). Because each fine-grained model only has to separate a small set of related classes, this design tends to improve accuracy for rare or closely related subtypes.
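A hierarchical scheme reduces to walking a label tree and calling a level-specific classifier at each internal node. The sketch below assumes each classifier is a function from a cell to a child label; the single-marker rules and expression values are hypothetical stand-ins for trained models.

```python
def annotate_hierarchically(cell, classifiers, root="root"):
    """Refine a cell's label level by level along a label tree.

    classifiers: dict mapping a tree node -> function(cell) -> child node.
        A node with no entry is a leaf, which ends the descent.
    Returns the labels visited, from coarsest to finest.
    """
    path = []
    node = root
    while node in classifiers:
        node = classifiers[node](cell)
        path.append(node)
    return path


# Hypothetical single-marker rules standing in for trained models at each level.
classifiers = {
    "root": lambda c: "Immune" if c.get("PTPRC", 0) > 0 else "Epithelial",
    "Immune": lambda c: "T cell" if c.get("CD3E", 0) > 0 else "B cell",
    "T cell": lambda c: "CD4+ T cell" if c.get("CD4", 0) > 0 else "CD8+ T cell",
    "CD4+ T cell": lambda c: "Treg" if c.get("FOXP3", 0) > 0 else "Conventional CD4+ T cell",
}

cell = {"PTPRC": 5.0, "CD3E": 3.0, "CD4": 2.0, "FOXP3": 1.5}
print(annotate_hierarchically(cell, classifiers))
```

Because each level only sees the cells routed to it, the lower-level classifiers can be trained on, and specialized for, a much narrower set of classes.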

Consensus Strategies: This method reconciles outputs from diverse, often heterogeneous, annotation pipelines or databases. Instead of merging model inputs, it integrates the final predictions or confidence scores. It assigns the label with the highest agreement among sources, or uses statistical measures (e.g., entropy, clustering of predictions) to assign a consensus cell type, and highlights cells where models disagree for further scrutiny.
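One simple way to quantify disagreement between sources is the Shannon entropy of the labels they assign to a cell. The sketch below is an illustration under assumed inputs (a dict of per-cell label lists); the 1-bit review threshold is an arbitrary example value, not a recommended setting.

```python
import math
from collections import Counter


def label_entropy(labels):
    """Shannon entropy (in bits) of the labels that different sources gave one cell.

    0.0 means all sources agree; log2(number of sources) is the maximum.
    """
    counts = Counter(labels)
    n = len(labels)
    return sum(-(c / n) * math.log2(c / n) for c in counts.values())


def consensus_with_flags(cell_labels, max_entropy=1.0):
    """Return cell -> (most frequent label, entropy, needs_review)."""
    results = {}
    for cell, labels in cell_labels.items():
        label, _ = Counter(labels).most_common(1)[0]
        h = label_entropy(labels)
        results[cell] = (label, h, h > max_entropy)
    return results


# Hypothetical labels from four annotation sources.
calls = {
    "cell_1": ["T cell", "T cell", "T cell", "T cell"],
    "cell_2": ["T cell", "T cell", "T cell", "NK cell"],
    "cell_3": ["T cell", "T cell", "NK cell", "ILC"],
}
for cell, (label, h, review) in consensus_with_flags(calls).items():
    print(cell, label, round(h, 3), review)
```

Here cell_3 is flagged because its labels are spread over three categories, while cell_2 (one dissenting source) stays below the threshold.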

Quantitative Comparison of Multi-Model Integration Strategies

Table 1: Performance and Characteristics of Integration Strategies in Cell Type Annotation

| Strategy | Typical Accuracy Gain* (%) | Key Strength | Computational Cost | Best Suited For |
|---|---|---|---|---|
| Ensemble | 5-15 | Improves robustness & generalizability; reduces overfitting. | High (multiple model training) | Standardized pipelines; high-quality reference data. |
| Hierarchical | 10-25 (for fine-grained types) | Biologically interpretable; efficient for deep annotation. | Medium (sequential models) | Complex tissues with well-defined ontologies. |
| Consensus | 3-10 | Harmonizes disparate sources; identifies ambiguous cells. | Low (post-hoc analysis) | Integrating multi-database labels or legacy data. |

*Gain is relative to the median-performing base model in the test set. Performance is dataset-dependent.

Experimental Protocols

Protocol 1: Implementing an Ensemble Strategy for PBMC Annotation

Objective: To annotate human peripheral blood mononuclear cell (PBMC) scRNA-seq data using an ensemble of three classifier models.

Materials: Query scRNA-seq dataset (count matrix), reference datasets (e.g., Blueprint/ENCODE, Monaco Immune Data), high-performance computing cluster.

Procedure:

- Base Model Training: Independently run three annotation tools:
  - SingleR (v2.0.0): Run with default parameters against the `BlueprintEncodeData` reference.
  - scType (v1.0): Generate cell-type-specific gene signatures from the reference and score cells using the scType R script.
  - scANVI (scvi-tools v0.18.0): Pre-train a reference model on the `MonacoImmuneData` reference using 30 latent dimensions. (Caution: `MonacoImmuneData` from celldex contains bulk RNA-seq profiles of sorted immune populations, whereas scANVI is designed for single-cell counts, so a labeled single-cell PBMC reference is a more natural input.)

- Prediction Collection: For each query cell, compile the predicted label and (if available) the prediction score from each base model into a per-cell prediction table.

- Meta-Learning Aggregation: Train a logistic regression meta-learner (using the caret R package) on a held-out, labeled validation set. Use the prediction scores from the three base models as features to predict the final cell type label.

- Majority Vote Fallback: For cells where the meta-learner confidence is below 0.7, assign the cell type determined by a simple majority vote of the three base model labels.

- Validation: Compare ensemble predictions against manual annotation based on canonical marker genes (CD3D, MS4A1, FCGR3A, etc.) visualized on UMAP.

Diagram 1: Ensemble strategy workflow for cell annotation.
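The decision rule in the meta-learning and fallback steps can be sketched as follows. It assumes the meta-learner has already been trained (e.g., with caret) and returns per-label probabilities for each cell; returning "Unassigned" when all three base models disagree is an assumption, since the protocol does not specify that case.

```python
from collections import Counter


def ensemble_label(meta_probs, base_labels, threshold=0.7):
    """Pick the final label for one cell.

    meta_probs: dict label -> probability from an already trained
        meta-learner (assumed input; e.g. a caret logistic regression).
    base_labels: labels from the three base models for the same cell.
    Returns (label, source of the decision).
    """
    top = max(meta_probs, key=meta_probs.get)
    if meta_probs[top] >= threshold:
        return top, "meta-learner"
    # Confidence below threshold: fall back to a majority of the base models.
    label, count = Counter(base_labels).most_common(1)[0]
    if count >= 2:
        return label, "majority vote"
    # All three models disagree (assumption: leave the cell unassigned).
    return "Unassigned", "no majority"


print(ensemble_label({"CD14+ Mono": 0.91, "DC": 0.09}, ["CD14+ Mono", "CD14+ Mono", "DC"]))
print(ensemble_label({"B cell": 0.55, "T cell": 0.45}, ["T cell", "T cell", "B cell"]))
print(ensemble_label({"NK": 0.40, "T cell": 0.35, "B cell": 0.25}, ["NK", "T cell", "B cell"]))
```

Recording the decision source alongside the label makes it easy to report how many cells relied on the fallback.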

Protocol 2: Hierarchical Annotation of Mouse Brain Cortex Cells

Objective: To perform layered annotation of cell types in the mouse primary motor cortex (MOp) using a predefined ontology.

Materials: Mouse MOp scRNA-seq data (e.g., from the BRAIN Initiative Cell Census Network), Cell Ontology hierarchy for neurons and glia, marker gene lists for each ontological level.

Procedure:

- Level 1 - Major Class: Apply a broad classifier (e.g., a random forest trained on `MouseRNAseqData` from celldex) to assign each cell to a major class: "Neuron", "Oligodendrocyte", "Astrocyte", "Microglia", "Endothelial", or "Other".

- Level 2 - Subclass (Neuronal Branch): Isolate cells labeled "Neuron". Apply a neuron-specific model (e.g., scmap-cluster projection) to distinguish GABAergic from Glutamatergic neurons, keeping a "Non-neuronal" category to catch cells misassigned at Level 1.

- Level 3 - Cell Type (GABAergic Branch): Isolate cells labeled "GABAergic". Use a fine-grained, marker-based scoring method (e.g., AUCell) with a curated gene set for mouse cortical GABAergic types (e.g., Pvalb, Sst, Vip, Lamp5, Sncg) to assign final cell type labels.

- Validation at Each Level: At each hierarchical split, generate a UMAP embedding colored by the new labels and confirm separation using known level-specific marker genes.

Diagram 2: Hierarchical annotation workflow for cortical cells.
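Level 3 relies on rank-based marker scoring. The following is a simplified, AUCell-style score written from scratch for illustration: it ranks genes within a cell, tracks how many marker genes appear among the top `max_rank` genes, and normalizes the area under that recovery curve. AUCell's own implementation works on precomputed rankings and differs in details such as tie handling; the single-marker sets and expression values are hypothetical.

```python
def aucell_like_score(expression, gene_set, max_rank):
    """Simplified AUCell-style score of one gene set in one cell.

    expression: dict gene -> expression value in the cell.
    gene_set: marker genes for one cell type.
    max_rank: only genes ranked 1..max_rank contribute.
    Returns the area under the recovery curve divided by its maximum
    for a set of this size, so 1.0 means the markers are the top genes.
    """
    # Highest expression first; ties broken by gene name (AUCell breaks them randomly).
    ranked = sorted(expression, key=lambda g: (-expression[g], g))
    markers = set(gene_set)
    found = auc = best = 0
    for r, gene in enumerate(ranked[:max_rank], start=1):
        if gene in markers:
            found += 1
        auc += found                    # recovery curve value at rank r
        best += min(r, len(markers))    # value if markers filled the top ranks
    return auc / best if best else 0.0


def assign_type(expression, marker_sets, max_rank):
    """Label a cell with the type whose marker set scores highest."""
    scores = {t: aucell_like_score(expression, genes, max_rank)
              for t, genes in marker_sets.items()}
    return max(scores, key=scores.get), scores


# Hypothetical single-marker sets and expression values for one GABAergic cell.
marker_sets = {t: [t] for t in ["Pvalb", "Sst", "Vip", "Lamp5", "Sncg"]}
cell = {"Pvalb": 9.0, "Gad1": 8.0, "Snap25": 7.0, "Actb": 6.0,
        "Sst": 0.5, "Lamp5": 0.2, "Vip": 0.0, "Sncg": 0.0}
label, scores = assign_type(cell, marker_sets, max_rank=4)
print(label, scores)
```

Because the score depends only on ranks within each cell, it is insensitive to differences in sequencing depth between cells, which is one reason rank-based scoring suits fine-grained levels.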

Protocol 3: Establishing a Consensus from Disparate Annotations

Objective: To resolve conflicting cell type labels generated by four independent annotation pipelines on a pancreatic islet dataset.

Materials: Annotation label matrices from four sources (Pipeline A: Azimuth, B: scPred, C: manual marker-based, D: SCINA) and associated confidence scores (if available).

Procedure:

- Data Compilation: Create a cell-by-source matrix containing the predicted label from each of the four pipelines for every cell.

- Agreement Calculation: For each cell, calculate the degree of agreement (e.g., number of pipelines assigning the most frequent label).

- Consensus Assignment:

- Rule 1 (Full Agreement): Cells with unanimous agreement (4/4) receive that label.

- Rule 2 (Majority with High Confidence): Cells with 3/4 agreement receive the majority label if the average confidence of the agreeing pipelines is >0.8.

- Rule 3 (Arbitration): Remaining cells are flagged for "arbitration." Cluster these cells based on their gene expression (PCA -> Leiden clustering). The most frequent label from all pipelines within each arbitration cluster is assigned as the consensus.

- Output: Produce a final label vector and a "confidence" metric based on the agreement level. Highlight arbitrated cells for potential re-examination.
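The three consensus rules translate directly into code. This sketch assumes labels from the four pipelines are stored in a fixed order, that pipelines without a confidence score (such as manual annotation) contribute `None`, and that arbitration cluster IDs (e.g., from PCA and Leiden clustering) have been computed beforehand. The islet labels and scores are hypothetical.

```python
from collections import Counter


def consensus_labels(labels, confidences, arbitration_cluster, min_conf=0.8):
    """Apply Rules 1-3 to per-cell labels from four pipelines.

    labels: dict cell -> [label_A, label_B, label_C, label_D].
    confidences: dict cell -> scores in the same order, None where a
        pipeline reports no score (e.g. manual marker-based calls).
    arbitration_cluster: dict cell -> cluster ID for cells that reach
        Rule 3 (assumed precomputed, e.g. PCA -> Leiden).
    Returns dict cell -> (consensus label, rule applied).
    """
    result, pending = {}, []
    for cell, labs in labels.items():
        label, n = Counter(labs).most_common(1)[0]
        if n == len(labs):                      # Rule 1: 4/4 agreement
            result[cell] = (label, "rule 1")
            continue
        if n == len(labs) - 1:                  # Rule 2: 3/4 with high confidence
            agreeing = [c for lab, c in zip(labs, confidences[cell])
                        if lab == label and c is not None]
            if agreeing and sum(agreeing) / len(agreeing) > min_conf:
                result[cell] = (label, "rule 2")
                continue
        pending.append(cell)
    # Rule 3: pool all pipeline labels of the remaining cells per cluster.
    pooled = {}
    for cell in pending:
        pooled.setdefault(arbitration_cluster[cell], Counter()).update(labels[cell])
    for cell in pending:
        result[cell] = (pooled[arbitration_cluster[cell]].most_common(1)[0][0], "rule 3")
    return result


labels = {
    "c1": ["alpha", "alpha", "alpha", "alpha"],
    "c2": ["beta", "beta", "beta", "delta"],
    "c3": ["beta", "beta", "beta", "delta"],
    "c4": ["alpha", "delta", "delta", "gamma"],
    "c5": ["delta", "alpha", "delta", "beta"],
}
confidences = {
    "c1": [0.9, 0.8, None, 0.7],
    "c2": [0.9, 0.85, None, 0.6],
    "c3": [0.7, 0.75, None, 0.9],
    "c4": [0.5, 0.5, None, 0.5],
    "c5": [0.5, 0.5, None, 0.5],
}
clusters = {"c3": 0, "c4": 1, "c5": 1}
for cell, (label, rule) in consensus_labels(labels, confidences, clusters).items():
    print(cell, label, rule)
```

The rule name returned with each label can serve directly as the agreement-based confidence metric requested in the Output step.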

The Scientist's Toolkit: Research Reagent Solutions for Multi-Model Integration

Table 2: Essential Resources for Multi-Model Cell Annotation Research

| Item Name / Resource | Provider / Package | Primary Function in Integration Strategy |
|---|---|---|
| SingleR (R/Bioconductor) | D. Aran et al. | A key base classifier for Ensemble and Hierarchical strategies, providing fast, reference-based annotation with confidence scores. |
| celldex (R/Bioconductor) | A. Lun et al. | Provides standardized, curated reference datasets, mostly bulk expression profiles (e.g., Human Primary Cell Atlas, MouseRNAseqData), for training or scoring models in any strategy. |
| AUCell (R/Bioconductor) | S. Aibar et al. | Enables marker-based scoring for fine-grained levels in Hierarchical strategies or as a base model in Ensemble approaches. |
| Seurat (R) | Satija Lab | The foundational toolkit for scRNA-seq analysis; used for data preprocessing, visualization, and as a platform to run and compare multiple integration strategies. |
| Scanpy (Python) | Theis Lab | Python analogue to Seurat; its AnnData format is shared with scvi-tools, which provides deep learning-based models (e.g., scANVI) for an ensemble workflow. |
| Harmony (R/Python) | I. Korsunsky et al. | A batch-integration tool that does not annotate cells itself but helps harmonize query data with a reference, which can improve the performance of downstream models. |
| Cell Ontology (CL) | OBO Foundry | Provides the structured, controlled vocabulary that directly informs the tree-like design of Hierarchical annotation strategies. |
| Azimuth (Web App/Shiny) | Satija Lab | A pre-built, application-specific pipeline whose outputs can be incorporated as one source in a Consensus strategy. |
| scikit-learn (Python) | Pedregosa et al. | Provides the machine learning algorithms (e.g., logistic regression meta-learner, random forest) used to build aggregation layers in Ensemble strategies. |

Application Notes

The Role of Key Data Types in Multi-Model Integration

Cell type annotation in single-cell RNA sequencing (scRNA-seq) research has evolved from manual, marker-based approaches to automated, integrative strategies. The integration of three primary input data types—raw scRNA-seq data, curated reference atlases, and structured prior knowledge—forms the cornerstone of modern multi-model annotation frameworks. These data types compensate for each other's limitations: scRNA-seq provides the unlabeled query data, reference atlases offer validated cell-type signatures, and prior knowledge (e.g., marker gene databases, ontological relationships) guides and constrains biologically plausible annotations. Current research trends emphasize the development of algorithms that dynamically weight the contribution of each data type based on dataset quality and congruence.

Table 1: Quantitative Comparison of Key Input Data Types

| Data Type | Typical Size/Scale | Key Metrics (Completeness, Resolution) | Common File Formats | Primary Use in Annotation |
|---|---|---|---|---|
| scRNA-seq (Query) | 10^3 - 10^6 cells | Median genes/cell: 1k-5k; Sequencing depth: 20k-100k reads/cell | H5AD (AnnData), MTX, LOOM | Provides the target transcriptomes for classification. |
| Reference Atlases | 10^5 - 10^7 cells (aggregated) | Cell types: 50-500; Annotation confidence scores; Cross-dataset batch metrics | H5AD, Seurat Object (.rds), CELLxGENE Census | Serves as a labeled training set for supervised or transfer learning. |
| Prior Knowledge | 100s - 1000s of terms/genes | Marker gene specificity scores; Ontology hierarchy depth (e.g., CL, UBERON) | GMT, JSON, OBO, TSV | Constrains predictions, resolves ambiguities, enables label transfer. |

Protocols for Data Integration

Protocol 2.1: Pre-processing and Quality Control of scRNA-seq Query Data

Objective: To generate a high-quality, normalized count matrix from raw sequencing reads suitable for integration with reference data.

- Demultiplexing & Alignment: Use `cellranger` (10x Genomics) or `kb-python` to align FASTQ files to a reference genome (e.g., GRCh38). Output: BAM files from Cell Ranger; kb-python pseudoaligns reads and outputs BUS files and count matrices instead.

- Gene-Count Matrix Generation: Generate a filtered feature-barcode matrix, retaining cells with >500 and <7500 detected genes and a mitochondrial read fraction <20%.

- Normalization & Scaling: Use Scanpy (`sc.pp.normalize_total` to 10^4 counts/cell, followed by `sc.pp.log1p` and `sc.pp.scale`) or Seurat (`NormalizeData`, `ScaleData`).

- Highly Variable Gene Selection: Identify 2000-3000 HVGs using `sc.pp.highly_variable_genes` (Seurat: `FindVariableFeatures`).

- Doublet Detection: Apply Scrublet or DoubletFinder to predict and remove doublets. The expected doublet rate increases with the number of cells loaded.

- Output: An `AnnData` object or Seurat assay containing the normalized, scaled, and HVG-subsetted query matrix.
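The QC thresholds and the normalize-then-log step above can be expressed for a single cell as below. This is a plain-Python illustration of what `sc.pp.normalize_total(adata, target_sum=1e4)` followed by `sc.pp.log1p(adata)` computes per cell; in practice these functions run on the whole sparse matrix. The function names and example values are hypothetical.

```python
import math


def passes_qc(n_genes, mito_fraction, min_genes=500, max_genes=7500, max_mito=0.20):
    """Cell-level filter from the protocol: 500 < genes < 7500 and mito < 20%."""
    return min_genes < n_genes < max_genes and mito_fraction < max_mito


def normalize_log1p(counts, target_sum=1e4):
    """Scale one cell's counts to `target_sum`, then apply natural log1p.

    Mirrors, per cell, sc.pp.normalize_total(adata, target_sum=1e4)
    followed by sc.pp.log1p(adata). Assumes the cell has nonzero counts,
    which QC filtering guarantees.
    """
    total = sum(counts)
    return [math.log1p(c * target_sum / total) for c in counts]


# Hypothetical per-cell QC metrics: (detected genes, mitochondrial fraction).
cells = {"cell_1": (2100, 0.04), "cell_2": (320, 0.03),
         "cell_3": (2600, 0.31), "cell_4": (8200, 0.02)}
kept = [name for name, (genes, mito) in cells.items() if passes_qc(genes, mito)]
normalized = normalize_log1p([10, 30, 60])
print(kept, [round(v, 3) for v in normalized])
```

Note that Scanpy's `normalize_total` defaults to the median total count when `target_sum` is not given, so the 10^4 target has to be set explicitly to match Seurat's default scale factor.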

Protocol 2.2: Harmonizing Query Data with a Reference Atlas

Objective: To correct for technical batch effects between query and reference, enabling direct comparison.

- Reference Selection: Download a pre-processed, annotated reference (e.g., from CELLxGENE, Human Cell Landscape) matching the biological context.

- Feature Intersection: Find the union or intersection of HVGs between query and reference. Common practice: take the intersection of HVGs (≈1500 genes).

- Batch Integration: Apply a nearest-neighbor-based method (Scanorama, which stitches datasets using mutual nearest neighbors, or BBKNN, which builds a batch-balanced k-nearest-neighbor graph) or a neural network-based method (scVI, scANVI). In Seurat, use `FindTransferAnchors` followed by `MapQuery`.

- Joint Embedding: Generate a joint UMAP or t-SNE embedding of the integrated query and reference cells to assess mixing visually.

- Output: An integrated low-dimensional embedding (PCA, CCA, or latent space) and, for methods that return one, a corrected expression matrix for downstream annotation.
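Once query and reference share a batch-corrected embedding, labels can be transferred by nearest-neighbor voting. The sketch below is a deliberate simplification: Seurat's `TransferData` weights anchors and scANVI predicts labels from its latent model, whereas this version takes an unweighted majority of the k nearest reference cells. The coordinates and labels are hypothetical.

```python
import math
from collections import Counter


def knn_transfer(query, reference, ref_labels, k=3):
    """Label query cells by majority vote of their k nearest reference cells.

    query, reference: lists of coordinates in a shared, batch-corrected
        embedding (assumed to come from Harmony, scVI, MapQuery, etc.).
    Returns (label, fraction of the k neighbors that voted for it) per cell.
    """
    out = []
    for q in query:
        nearest = sorted(range(len(reference)),
                         key=lambda i: math.dist(q, reference[i]))[:k]
        label, votes = Counter(ref_labels[i] for i in nearest).most_common(1)[0]
        out.append((label, votes / len(nearest)))
    return out


# Hypothetical 2-D joint embedding: a T-cell group (with one NK cell nearby) and a B-cell group.
reference = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.3, 0.3),
             (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
ref_labels = ["T cell", "T cell", "T cell", "NK cell", "B cell", "B cell", "B cell"]
query = [(0.05, 0.05), (4.9, 5.0), (0.25, 0.25)]
print(knn_transfer(query, reference, ref_labels))
```

The vote fraction gives a crude per-cell confidence: the third query cell sits between T and NK reference cells and receives a lower value than the other two.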

Protocol 2.3: Incorporating Prior Knowledge via Marker Gene Databases

Objective: To utilize known cell-type signatures to guide, validate, or refine algorithmic annotations.

- Resource Curation: Compile marker lists from resources like CellMarker, PanglaoDB, or tissue-specific reviews into a structured GMT file.

- Signature Scoring: Calculate per-cell scores for each prior marker set using `AddModuleScore` (Seurat) or `sc.tl.score_genes` (Scanpy). Alternatively, use AUCell for a rank-based approach.

- Constraint in Model Training: For a new model, use prior markers to define the label set or as a regularization term in the loss function (e.g., penalizing predictions inconsistent with high-scoring markers).

- Post-hoc Reconciliation: Resolve conflicts between model predictions and prior knowledge by prioritizing predictions supported by high marker expression (e.g., a cell predicted as "oligodendrocyte" that strongly expresses the neuronal markers SYT1 and SNAP25 should be re-evaluated).

- Output: A prior-knowledge score matrix and/or a refined, biologically consistent annotation label for each query cell.
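The post-hoc reconciliation step can be sketched as a flagging rule: keep the model's label, but flag the cell when another type's marker score exceeds the predicted type's score by more than a margin. The score source (module or AUCell scores on a common scale) and the 0.2 margin are assumptions for illustration.

```python
def reconcile(predicted, marker_scores, margin=0.2):
    """Flag cells whose predicted label conflicts with marker evidence.

    predicted: dict cell -> label from the annotation model.
    marker_scores: dict cell -> {label: marker score}, e.g. module or
        AUCell scores on a common scale (assumed input).
    margin: hypothetical minimum score gap that triggers a flag.
    Returns dict cell -> (predicted label, flagged, best-scoring marker label).
    """
    out = {}
    for cell, label in predicted.items():
        scores = marker_scores[cell]
        best = max(scores, key=scores.get)
        gap = scores[best] - scores.get(label, 0.0)
        out[cell] = (label, best != label and gap > margin, best)
    return out


# Hypothetical example: cell_1 is called an oligodendrocyte but scores as a neuron.
predicted = {"cell_1": "Oligodendrocyte", "cell_2": "Neuron", "cell_3": "Astrocyte"}
marker_scores = {
    "cell_1": {"Neuron": 0.90, "Oligodendrocyte": 0.10, "Astrocyte": 0.05},
    "cell_2": {"Neuron": 0.80, "Oligodendrocyte": 0.05, "Astrocyte": 0.02},
    "cell_3": {"Neuron": 0.50, "Oligodendrocyte": 0.05, "Astrocyte": 0.40},
}
for cell, result in reconcile(predicted, marker_scores).items():
    print(cell, result)
```

Flagging rather than overwriting keeps the model output auditable; cell_3 is not flagged because the gap between the two competing scores is within the margin.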

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Integrated Annotation

| Item/Category | Example Product/Software | Function in Protocol |
|---|---|---|
| Single-Cell Library Prep Kit | 10x Genomics Chromium Next GEM Single Cell 3’ Kit | Generates barcoded cDNA libraries from single cells for scRNA-seq query data input. |
| Reference Atlas Database | CELLxGENE Census, Human Cell Atlas Data Portal | Provides pre-annotated, harmonized single-cell datasets for use as a reference standard. |
| Prior Knowledge Database | CellMarker 2.0, PanglaoDB, Cell Ontology (CL) | Supplies curated cell-type marker genes and ontological relationships for model guidance. |
| Bioinformatics Pipeline | Scanpy (Python), Seurat (R), scvi-tools | Provides core functions for normalization, integration, and analysis of single-cell data. |
| Batch Correction Tool | scANVI, Harmony, BBKNN | Algorithms specifically designed to integrate query and reference datasets by removing technical variation. |
| Cell Annotation Algorithm | SingleR, SCINA, CellTypist | Supervised or knowledge-based classifiers that assign cell-type labels using reference/prior data. |
| Visualization Software | CELLxGENE Explorer, UCSC Cell Browser | Enables interactive exploration of integrated query+reference datasets and annotation results. |
| High-Performance Computing | Cloud (AWS/GCP) or local cluster with 32+ cores, 128GB+ RAM | Necessary for processing large-scale scRNA-seq and reference atlas data within a practical timeframe. |

Common Single-Model Tools (Seurat, Scanpy, SingleR) and Their Inherent Limitations

Within the broader thesis advocating a multi-model integration strategy for cell type annotation, it is essential to first understand the capabilities and, critically, the limitations of the foundational single-model tools that dominate the field. This document provides detailed application notes and experimental protocols for three cornerstone tools: Seurat, Scanpy, and SingleR. Their individual strengths have propelled single-cell RNA sequencing (scRNA-seq) analysis, yet their inherent biases and methodological constraints underscore the need for integrative approaches to achieve robust, biologically verified cell type classification.

Application Notes & Quantitative Comparison

Seurat: A Comprehensive Toolkit for QC, Analysis, and Exploration

Seurat (R package) is an end-to-end analysis suite for scRNA-seq data. Its standard workflow includes quality control, normalization, feature selection, dimensionality reduction, clustering, and differential expression.

Inherent Limitations:

- Batch Effect Correction: While `IntegrateData()` (CCA, RPCA) is powerful, its performance is sensitive to parameter selection (e.g., `dims`, `k.anchor`), and it can over-correct, removing biological signal.

- Cluster Resolution: Graph-based clustering (Louvain/Leiden) relies on a user-defined "resolution" parameter, which is arbitrary and can lead to over- or under-clustering without biological ground truth.

- Annotation Reliance: Primary marker gene identification is comparative (FindAllMarkers), requiring prior biological knowledge for interpretation and prone to missing novel or rare cell types.

Scanpy: Scalable Python-Based Analysis

Scanpy is the Python analog to Seurat, offering highly scalable and interoperable data structures (AnnData) and a similar core workflow for preprocessing, clustering, and trajectory inference.

Inherent Limitations:

- Normalization Bias: Default normalization (`sc.pp.normalize_total`) assumes total count variation is technical, which may not hold in biologically heterogeneous samples.

- High-Dimensional Neighbors: The k-nearest neighbor (k-NN) graph, which underlies clustering and UMAP, is highly sensitive to the choice of distance metric and the number of neighbors (`n_neighbors`), and this choice influences all downstream results.

- Black-Box Visualizations: UMAP/t-SNE embeddings are stochastic and can produce visually compelling but misleading separations that are misinterpreted as distinct cell types.

SingleR: Reference-Based Automated Annotation

SingleR automates annotation by comparing each cell in a test scRNA-seq dataset with a labeled reference (bulk or single-cell expression data), using Spearman correlations computed over marker genes, followed by an iterative fine-tuning step.

Inherent Limitations:

- Reference Dependence: Accuracy is entirely constrained by the quality, completeness, and relevance of the reference dataset. Poor matches lead to low-confidence or incorrect labels.

- Cellular Resolution: Struggles to distinguish closely related cell subtypes (e.g., naive vs. memory T cells) if the reference lacks definitive markers for them.

- Technical Artifact Propagation: If the reference contains batch effects or different technological platforms, these are propagated to the query annotation.
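To make SingleR's core idea concrete, the sketch below assigns a cell to the reference label whose profile has the highest Spearman correlation with it. SingleR itself restricts correlations to marker genes, summarizes them across each label's reference samples, and then fine-tunes among the top labels; none of that is reproduced here, and the five-gene profiles are hypothetical. The sketch also illustrates the reference-dependence limitation: a cell type absent from `reference_profiles` can never be returned.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for t in range(i, j + 1):
            ranks[order[t]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


def correlate_to_reference(cell, reference_profiles):
    """Return the best-matching label and all per-label correlations."""
    scores = {label: spearman(cell, profile)
              for label, profile in reference_profiles.items()}
    return max(scores, key=scores.get), scores


# Hypothetical expression over the same five genes: CD3D, CD3E, MS4A1, CD79A, LYZ.
reference_profiles = {"T cell": [9, 8, 1, 1, 0], "B cell": [1, 0, 9, 8, 2]}
query_cell = [7, 6, 0, 1, 0]
label, scores = correlate_to_reference(query_cell, reference_profiles)
print(label, {k: round(v, 3) for k, v in scores.items()})
```

Rank-based correlation is robust to scale differences between platforms (e.g., bulk reference vs. single-cell query), but it cannot correct for genes whose relative ordering is itself distorted by batch effects.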

Table 1: Quantitative Comparison of Tool Limitations (Representative Data)

| Tool | Core Function | Key Limiting Parameter | Typical Impact on Annotation | Reported Discrepancy Rate* |
|---|---|---|---|---|
| Seurat | Unsupervised Clustering | Clustering Resolution | Can split/merge true cell types | 15-25% (vs. IHC validation) |
| Scanpy | Dimensionality Reduction & Graph Clustering | n_neighbors (k-NN graph) | Alters cluster topology & boundaries | Similar variance to Seurat |
| SingleR | Supervised Label Transfer | Reference Dataset Choice | Mislabels novel/unrepresented types | 10-30% (dependent on reference) |

*Discrepancy Rate: Estimated from literature for labels conflicting with orthogonal protein or functional assays. Highlights need for multi-tool consensus.

Experimental Protocols

Protocol A: Seurat-Based Cluster Annotation with Post-Hoc Marker Validation

Objective: To identify cell populations from a PBMC 3k dataset and annotate them using canonical marker genes.

Materials: Seurat v5 R package, PBMC3K dataset.

Procedure:

- Load & QC: Create Seurat object, filter cells with >5% mitochondrial counts or <200 features.

- Normalize & Scale: `NormalizeData()` (log normalization), `FindVariableFeatures()` (vst method), `ScaleData()`.

- Linear Dimensional Reduction: Run PCA (`RunPCA()`) and select PCs based on the elbow plot (`ElbowPlot()`).

- Cluster: `FindNeighbors()` (first 10 PCs), `FindClusters()` (resolution = 0.5).

- Non-linear Reduction: `RunUMAP()` (dims = 1:10).

- Differential Expression & Annotation: `FindAllMarkers()` (min.pct = 0.25). Manually annotate clusters by their markers, for example: Cluster 0 (CD3D+, CD3E+) → T cells; Cluster 1 (CD79A+, MS4A1+) → B cells; Cluster 2 (CD14+, LYZ+) → CD14+ Monocytes. Cluster numbering can change between runs and software versions, so match clusters to markers rather than assuming fixed cluster IDs.

- Visual Validation: `VlnPlot()` or `FeaturePlot()` for marker genes.

Protocol B: Scanpy Workflow with Leiden Clustering

Objective: Reproduce clustering in Python and export results for integration.

Materials: Scanpy v1.9 package, AnnData object of PBMC data.

Procedure:

- Preprocessing: `sc.pp.filter_cells(adata, min_genes=200)`, `sc.pp.filter_genes(adata, min_cells=3)`, `sc.pp.normalize_total(adata)`, `sc.pp.log1p(adata)`, `sc.pp.highly_variable_genes(adata)`.

- PCA & Neighbor Graph: `sc.tl.pca(adata)`, `sc.pp.neighbors(adata, n_neighbors=10, n_pcs=10)`.

- Clustering & UMAP: `sc.tl.leiden(adata, resolution=0.5)`, `sc.tl.umap(adata)`.

- Marker Detection: `sc.tl.rank_genes_groups(adata, groupby='leiden', method='wilcoxon')`.

- Data Export: Save `adata.obs['leiden']` and `adata.obsm['X_umap']` for cross-tool comparison.
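For the cross-tool comparison, the adjusted Rand index (ARI) measures agreement between two clusterings regardless of how clusters are numbered. The following is a from-scratch implementation of the standard (Hubert-Arabie) ARI, which should agree with `sklearn.metrics.adjusted_rand_score`; it assumes both label lists are aligned by cell barcode and contain at least two cells.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand index between two clusterings of the same cells."""
    n = len(labels_a)
    sum_pairs = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    maximum = (sum_a + sum_b) / 2
    if maximum == expected:          # degenerate case, e.g. one cluster each
        return 1.0
    return (sum_pairs - expected) / (maximum - expected)


# Hypothetical cluster IDs for the same six cells from Seurat and Scanpy.
seurat_clusters = ["0", "0", "1", "1", "2", "2"]
leiden_clusters = ["a", "a", "b", "b", "c", "c"]
print(adjusted_rand_index(seurat_clusters, leiden_clusters))  # identical up to renaming
print(adjusted_rand_index([0, 0, 1, 1], [0, 0, 1, 2]))        # one cluster split in two
```

An ARI well below 1 between Seurat and Scanpy runs on the same cells is a direct, quantitative signal of the resolution and `n_neighbors` sensitivity described above.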

Protocol C: SingleR Annotation with Human Primary Cell Atlas (HPCA) Reference

Objective: Automatically annotate clusters from Protocol A/B using a reference database.

Materials: SingleR R package, celldex package (for HPCA reference).

Procedure:

- Reference Loading: `library(celldex); ref <- HumanPrimaryCellAtlasData()`.

- Data Preparation: Extract the normalized log-expression matrix from the Seurat/Scanpy object.

- Label Transfer: `pred <- SingleR(test = test_matrix, ref = ref, labels = ref$label.main)`.

- Integration with Clusters: Compare `pred$labels` with cluster IDs from Seurat/Scanpy and assess per-cluster label consistency.

- Confidence Evaluation: Examine `pred$pruned.labels` (NA for low-confidence calls) and the per-cell scores (`pred$scores`) to flag low-confidence annotations.
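Per-cluster label consistency can be summarized as the most frequent SingleR label in each cluster and the fraction of cells carrying it. In this sketch, pruned (NA) labels are represented as `None` and counted as their own category, so clusters dominated by low-confidence calls stand out; the cluster IDs and labels are hypothetical.

```python
from collections import Counter, defaultdict


def cluster_label_consistency(cluster_ids, cell_labels):
    """Most frequent label per cluster and the fraction of cells carrying it.

    cluster_ids: cluster ID per cell (e.g. Seurat or Leiden clusters).
    cell_labels: SingleR label per cell, aligned with cluster_ids; pruned
        (NA) labels are given as None and counted as their own category.
    """
    by_cluster = defaultdict(list)
    for cluster, label in zip(cluster_ids, cell_labels):
        by_cluster[cluster].append(label)
    summary = {}
    for cluster, labels in by_cluster.items():
        label, count = Counter(labels).most_common(1)[0]
        summary[cluster] = (label, count / len(labels))
    return summary


# Hypothetical HPCA main labels for seven cells in three clusters.
clusters = ["0", "0", "0", "1", "1", "2", "2"]
pruned_labels = ["T_cells", "T_cells", "NK_cell", "B_cell", "B_cell", None, None]
summary = cluster_label_consistency(clusters, pruned_labels)
for cluster, (label, fraction) in summary.items():
    print(cluster, label, round(fraction, 2))
```

Clusters with a low majority fraction, or with `None` as the majority, are natural candidates for the multi-model follow-up discussed in this section.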

Visualizations

Title: Single-Model Annotation Workflows and Their Limitations

Title: Resolving Annotation Conflicts via Multi-Model Consensus

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Context | Example / Specification |
|---|---|---|
| 10x Genomics Chromium | Single-cell partitioning & barcoding for library prep. | 3’ Gene Expression v3.1 kit. Essential for generating the input UMI matrix. |
| Cell Ranger | Primary analysis pipeline for demultiplexing, alignment, and feature counting from 10x data. | cellranger count (v7.x). Outputs the raw count matrix analyzed by Seurat/Scanpy. |
| Human Primary Cell Atlas (HPCA) | A curated reference of bulk (microarray) expression profiles for human primary cell types. | Accessed via the celldex R package. Serves as the reference for SingleR in Protocol C. |
| Mouse Cell Atlas (MCA) | A large-scale scRNA-seq reference for mouse tissues. | Alternative reference for murine studies in SingleR or for comparative mapping. |
| CITE-seq Antibody Panel | Protein surface marker detection alongside transcriptome. | TotalSeq-B from BioLegend. Provides orthogonal protein validation for cluster annotations. |
| SeuratDisk | R/Python interoperability tool. | Converts Seurat objects (.rds) to Scanpy’s AnnData format (.h5ad) for cross-software workflows. |
| SCTransform Normalization | An alternative normalization/variance stabilization method in Seurat. | SCTransform() function. Often used in place of standard log-normalization to improve downstream integration. |

Accurate cell type annotation is the cornerstone of single-cell and spatial genomics, impacting disease research and drug development. Biological noise (stochastic gene expression, cellular state transitions, and microenvironmental heterogeneity) is confounded with technical noise from batch effects, sequencing depth, and platform-specific artifacts. This confluence obscures true biological signals, driving the need for a multi-model integration strategy to achieve robust, reproducible annotations.

The following table summarizes key quantitative metrics for noise sources derived from recent studies (2023-2024).

Table 1: Quantitative Impact of Noise Sources on scRNA-seq Data

| Noise Category | Specific Source | Typical Impact Metric (Range) | Effect on Cell Type Annotation |
|---|---|---|---|
| Biological | Stochastic Transcription | Coefficient of Variation (CV): 20-40% | Masks subtle subtype differences; inflates perceived heterogeneity. |
| Biological | Cell Cycle Phase | % Variance Explained: 5-15% (per PC) | Creates artificial clusters; confounds disease vs. normal states. |
| Biological | Metabolic/Stress State | % of DEGs attributed: 10-30% | Obscures genuine lineage-defining markers. |
| Technical | Library Size (Depth) | Correlation (r) with PC1: 0.3-0.7 | Drives major batch-associated clustering artifacts. |
| Technical | Batch Effect (Platform) | Silhouette Width by Batch: >0.2 (highly separated) | Causes false cluster splits; integration is mandatory for meta-analysis. |
| Technical | Ambient RNA Contamination | % of Reads in Empty Droplets: 2-10% | Introduces spurious gene expression, especially for rare cell types. |
| Technical | Multiplexing (Cell Hashing) | Doublet Rate: 2-8% (commercial kits) | Creates hybrid expression profiles, leading to erroneous novel types. |

Integrated Experimental Protocol for Noise-Aware Cell Typing

This protocol outlines a multi-modal integration workflow designed to disentangle biological signals from technical noise.

Protocol: Multi-Modal Single-Cell Integration for Robust Annotation

Objective: To annotate cell types from a multi-sample, potentially multi-platform single-cell study by integrating gene expression (GEX) and surface protein (CITE-seq) data while correcting for technical variance.

Materials & Equipment:

- Single-cell suspension(s)

- Chromium Controller & Chip B (10x Genomics)

- Feature Barcoding Kit (10x Genomics, Cat. # PN-1000260) for CITE-seq

- TotalSeq-B Antibodies (BioLegend) - Human Immune Panel (50 antibodies)

- Cell Ranger (v7.1+), Seurat (v5.0), Scanorama, scVI pipelines

- High-performance computing cluster (Linux, >32 GB RAM recommended)

Procedure:

- Sample Preparation & Multiplexing:

- Label 1x10^6 cells per sample with a unique TotalSeq-B Cell Hashtag Antibody (e.g., BioLegend Cat. #394661, 394663) for 30 minutes on ice. Wash twice.

- Pool all hashed samples into a single tube.

- Label the pooled cell suspension with the TotalSeq-B Antibody Panel (50 antibodies) for 30 minutes on ice. Wash twice.

- Proceed to GEX library and Feature Barcode (Antibody) library generation per 10x Genomics Feature Barcoding protocol.

Sequencing & Primary Data Processing:

- Sequence libraries to a minimum median depth of 20,000 reads/cell for GEX and 5,000 reads/cell for ADT (antibody-derived tags).

- Run `cellranger multi` (10x Genomics) to align reads, count features, and perform basic filtering.

Multi-Modal Data Integration & Noise Correction (Seurat-centric Workflow):

Create Object & Quality Control:

Normalize & Scale Independent Assays:

Anchor-Based Integration (Correcting Batch/Technical Noise):

- Split object by sample of origin (hashtag).

- Find integration anchors using 3000 variable features from GEX data.

- Integrate the GEX data, creating a batch-corrected expression matrix.

Multi-Modal Clustering & Annotation:

- Run PCA on integrated GEX data, then construct a weighted nearest neighbor (WNN) graph that combines information from GEX PCA and ADT PCA.

Annotation & Biological Noise Assessment:

- Use the co-embedding of GEX and ADT in WNN UMAP to identify clusters.

- Cross-reference clusters with canonical marker genes and protein expression.

- Use `CellCycleScoring()` and regress out the difference between the S and G2M scores if the cell cycle is a dominant but biologically irrelevant source of variation.
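Regressing out a covariate amounts to fitting a linear model per gene and keeping the residuals. In Seurat this is typically done by storing `S.Score - G2M.Score` as a metadata column (e.g., `CC.Difference`) and passing it to `ScaleData(vars.to.regress = ...)`. The sketch below shows only the regression for one gene against one covariate and omits the subsequent scaling; the values are hypothetical.

```python
def regress_out(values, covariate):
    """Residuals after an ordinary least-squares fit of values ~ covariate.

    values: expression of one gene across cells.
    covariate: per-cell covariate, e.g. S.Score - G2M.Score.
    """
    n = len(values)
    mean_x = sum(covariate) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in covariate)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(covariate, values))
    slope = sxy / sxx if sxx else 0.0   # constant covariate: nothing to remove
    intercept = mean_y - slope * mean_x
    return [y - (intercept + slope * x) for x, y in zip(covariate, values)]


# Hypothetical per-cell cell-cycle scores and one gene's expression.
s_score = [0.6, 0.2, -0.1, -0.3]
g2m_score = [-0.2, 0.1, 0.4, 0.5]
cc_difference = [s - g for s, g in zip(s_score, g2m_score)]
gene = [3.1, 2.2, 1.4, 0.9]
residuals = regress_out(gene, cc_difference)
print([round(r, 3) for r in residuals])
```

Regressing the S/G2M difference, rather than each score separately, removes variation between cycling phases while keeping the signal that separates cycling from non-cycling cells.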

Validation:

- Validate annotations using an independent, publicly annotated reference with SingleR.

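- Assess cluster purity and batch mixing via the Local Inverse Simpson's Index (LISI). Raw LISI ranges from 1 to the number of categories: target a batch LISI (iLISI) close to the number of batches (or >0.8 when scaled to [0, 1], as in scIB) for good mixing, and a cell type LISI (cLISI) <1.5, i.e., close to 1, for distinct populations.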
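The LISI targets above can be checked with a simplified score. Harmony's LISI weights each neighbor with a Gaussian kernel calibrated by a perplexity parameter; this sketch instead computes an unweighted inverse Simpson index over each cell's k nearest neighbors, so its values should be read qualitatively. A score near 1 means a neighborhood dominated by one category; the maximum is the number of categories (or k, if smaller). The toy embedding is hypothetical.

```python
import math
from collections import Counter


def simple_lisi(embedding, labels, k=3):
    """Unweighted inverse Simpson index of `labels` among each cell's k nearest neighbors.

    embedding: list of coordinates (e.g. integrated PCA or WNN UMAP).
    labels: batch labels (for iLISI) or cell-type labels (for cLISI).
    The cell itself is excluded from its own neighborhood.
    """
    scores = []
    for i, point in enumerate(embedding):
        others = [j for j in range(len(embedding)) if j != i]
        nearest = sorted(others, key=lambda j: math.dist(point, embedding[j]))[:k]
        counts = Counter(labels[j] for j in nearest)
        simpson = sum((c / len(nearest)) ** 2 for c in counts.values())
        scores.append(1.0 / simpson)
    return scores


# Hypothetical embedding: two tight groups of four cells, far apart.
embedding = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 0), (10, 1), (11, 0), (11, 1)]
mixed_batches = ["a", "b", "b", "a", "a", "b", "b", "a"]
separate_batches = ["a"] * 4 + ["b"] * 4
print(simple_lisi(embedding, mixed_batches))     # batches interleaved within groups
print(simple_lisi(embedding, separate_batches))  # each group is a single batch
```

Running the same function with cell-type labels instead of batch labels gives the cLISI side of the check.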

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Research Reagents for Noise-Aware Single-Cell Studies

| Item (Example Product) | Primary Function in Noise Mitigation |
|---|---|
| TotalSeq-B Antibodies (BioLegend) | Multiplexed surface protein detection (CITE-seq). Provides orthogonal data layer to RNA, stabilizing annotations against transcriptional noise. |
| Cell Multiplexing Oligos (CMO)/Hashtags (10x Genomics) | Sample multiplexing. Enables pooling prior to library prep, minimizing technical batch effects and controlling for ambient RNA. |
| Cell Surface Marker Panels (BD Rhapsody) | Pre-designed panels for focused phenotype confirmation. Reduces dimensionality, focusing analysis on biologically relevant signals. |
| Doublet Removal Beads (BioLegend) | Physical removal of doublets. Reduces rate of artifactual hybrid cell types from technical origin. |
| Nuclei Isolation Kits (Sigma NUC201) | For frozen tissue. Standardizes input material, reducing technical noise from dissociation variability. |
| ERCC Spike-In Mix (Thermo Fisher) | External RNA controls. Quantifies technical noise amplitude and enables absolute molecular count calibration. |
| Viability Dyes (DAPI, Propidium Iodide) | Dead cell exclusion. Removes a major source of ambient RNA release and non-specific binding. |

Visualization of Integrated Analysis Workflow

Title: Integrated Multi-Modal Analysis Workflow

Title: Noise Sources and Integrated Mitigation Path

Building Your Annotation Pipeline: A Step-by-Step Guide to Multi-Model Integration

Effective multi-model integration for cell type annotation requires a foundational step where input data is standardized and features are selected to ensure compatibility across diverse computational models. This step mitigates batch effects, reduces dimensionality, and aligns feature spaces, enabling robust ensemble predictions and meta-analyses crucial for research and drug development.

Current Methodological Framework: A Synthesis from Recent Literature

Contemporary strategies emphasize creating a unified, model-agnostic input layer. A review of recent publications on PubMed and bioRxiv (searched on 2023-10-27) indicates the following consensus protocols and key quantitative benchmarks.

Table 1: Summary of Common Preprocessing & Feature Selection Methods

| Method Category | Specific Technique | Primary Function | Typical Output Impact (Dataset: 10x PBMC) |
|---|---|---|---|
| Quality Control | Scrublet (doublet detection) | Remove technical multiplets | ~5-10% cell removal |
| | Mitochondrial gene % filter | Remove low-viability cells | ~5-15% cell removal |
| | Count depth filter | Remove empty droplets / low-quality cells | ~3-8% cell removal |
| Normalization | SCTransform (sctransform) | Stabilizes variance, removes sequencing depth effect | 3,000 variable features by default |
| | LogNormalize (Seurat) | Scales counts to 10,000 per cell, then log-transforms (log1p) | Preserves all features |
| | TF-IDF (for ATAC-seq) | Term frequency-inverse document frequency weighting | Highlights distinct peaks |
| Integration & Batch Correction | Harmony | Removes batch effects, integrates datasets | KNN graph accuracy >95% |
| | Seurat CCA (anchor-based) | Identifies cross-dataset cell pairs | Alignment score >0.8 |
| | Scanorama | Unsupervised integration | Batch mixing metric >0.9 |
| Feature Selection | Highly Variable Gene (HVG) selection | Identifies biologically relevant genes | Top 2000-5000 genes retained |
| | Principal Component Analysis (PCA) | Linear dimensionality reduction | Top 30-50 PCs explain >80% variance |
| | Deviance-based selection | Selects genes with high cell-to-cell variation | Top 1000-3000 features |

Table 2: Quantitative Benchmarks for Model Compatibility

| Metric | Description | Target Range for Compatibility | Measurement Tool |
|---|---|---|---|
| Silhouette Score (Batch) | Batch mixing within cell-type clusters; scIB reports it rescaled as 1 - \|silhouette\| so that values near 1 indicate good mixing | >0.7 (indicating minimal batch effect) | scib.metrics.silhouette_batch |
| k-Nearest Neighbor (kNN) Purity | Fraction of a cell's neighbors from the same batch, compared between original and corrected space | <0.2 (post-correction) | scib.metrics |
| Feature Correlation (Cross-Model) | Correlation of selected HVGs between two processed datasets | Pearson's r > 0.85 | HVGs from Seurat::FindVariableFeatures; correlation computed separately (e.g., cor() in R) |
| Dimensionality Retention | % of original biological variance retained in selected PCs | >70% | Scree plot / elbow method |

Detailed Experimental Protocols

Protocol 3.1: Unified Single-Cell RNA-seq Preprocessing for Multi-Model Input

Objective: Generate a cleaned, normalized, and batch-corrected count matrix from raw gene-cell UMI data suitable for input to annotation models (e.g., scPred, SingleR, CellTypist).

Materials:

- Raw UMI count matrix (cells x genes).

- Associated metadata (sample, batch, donor).

Procedure:

- Quality Control & Filtering:

a. Calculate quality metrics: `nCount_RNA`, `nFeature_RNA`, `percent.mt`.

b. Apply filters: `nFeature_RNA` between 200 and 6000, `percent.mt` < 15%.

c. Run doublet detection (Scrublet) and remove predicted doublets (doublet score > 0.25).

- Normalization & HVG Selection (using Seurat R package v4):

a. Normalize data using `SCTransform(assay = "RNA", vars.to.regress = "percent.mt")`.

b. Alternatively, for log-normalization: `NormalizeData()` followed by `FindVariableFeatures(selection.method = "vst", nfeatures = 3000)`.

- Integration (if multiple batches):

a. For SCTransform-normalized data: select features with `SelectIntegrationFeatures()`, run `PrepSCTIntegration()` on the object list, then `FindIntegrationAnchors()` and `IntegrateData()`, passing `normalization.method = "SCT"` to both.

b. For Harmony integration: run PCA (`RunPCA()`), then `RunHarmony(group.by.vars = "batch_id")`.

- Dimensionality Reduction & Final Feature Set Export:

a. Run PCA on the integrated (or normalized) data (`RunPCA(npcs = 50)`).

b. Determine significant PCs using an elbow plot of the PC standard deviations.

c. Export the top N (e.g., 30) PCs, or the corresponding Harmony embeddings if Harmony was used, as the primary feature matrix for model training.

d. For gene-based models: export the normalized, batch-corrected expression matrix of the top 3000 HVGs.
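The QC thresholds in the first step of Protocol 3.1 can be expressed as a small, testable filter. This is a minimal stdlib-only sketch, not the Seurat or Scrublet implementation: it assumes the per-cell metrics (detected genes, mitochondrial and total UMIs, Scrublet score) were computed upstream, and it treats "between 200 and 6000" as exclusive bounds, which is an assumption.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    barcode: str
    n_feature: int        # nFeature_RNA: number of genes detected in the cell
    mt_counts: int        # UMIs mapping to mitochondrial genes
    total_counts: int     # nCount_RNA: total UMIs in the cell
    doublet_score: float  # Scrublet doublet score for the cell

    @property
    def percent_mt(self) -> float:
        """percent.mt: share of UMIs from mitochondrial genes, in percent."""
        return 100.0 * self.mt_counts / self.total_counts if self.total_counts else 0.0


def passes_qc(cell: CellQC, min_feat: int = 200, max_feat: int = 6000,
              max_mt: float = 15.0, max_doublet: float = 0.25) -> bool:
    """Apply the Protocol 3.1 thresholds (nFeature bounds treated as exclusive)."""
    return (min_feat < cell.n_feature < max_feat
            and cell.percent_mt < max_mt
            and cell.doublet_score <= max_doublet)


def filter_cells(cells: list[CellQC], **thresholds) -> tuple[list[CellQC], float]:
    """Return the retained cells and the fraction of cells removed."""
    kept = [c for c in cells if passes_qc(c, **thresholds)]
    removed_fraction = 1 - len(kept) / len(cells) if cells else 0.0
    return kept, removed_fraction


if __name__ == "__main__":
    cells = [
        CellQC("AAACCTG", n_feature=1500, mt_counts=200, total_counts=5000, doublet_score=0.05),
        CellQC("AAACGGG", n_feature=150, mt_counts=10, total_counts=400, doublet_score=0.02),
        CellQC("AAAGATG", n_feature=3200, mt_counts=2200, total_counts=10000, doublet_score=0.10),
        CellQC("AAAGCAA", n_feature=5000, mt_counts=500, total_counts=20000, doublet_score=0.41),
    ]
    kept, removed = filter_cells(cells)
    print([c.barcode for c in kept], f"{removed:.0%} removed")
```

Keeping the thresholds as keyword arguments makes it easy to report how the removal rate in Table 1 changes under stricter or looser cut-offs.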

Protocol 3.2: Cross-Modal Feature Alignment (CITE-seq / Multi-omics)

Objective: Align protein (ADT) and gene expression (GEX) features into a coherent feature space for multimodal annotation models.

Procedure:

- Independent Processing:

a. Process the GEX channel per Protocol 3.1.

b. Process ADT data: `NormalizeData(assay = "ADT", normalization.method = "CLR", margin = 2)`.

- Feature Selection & Concatenation:

a. Select the top 2000 HVGs from GEX.

b. Select all ADT features or apply variance filtering (top 100).

c. Create a combined feature matrix by scaling and concatenating the two matrices (genes + proteins).

- Joint Embedding (Alternative):

a. Use a multimodal integration method (e.g., totalVI or WNN in Seurat).

b. Construct a weighted nearest neighbor graph based on both GEX and ADT modalities.

c. Derive a joint low-dimensional embedding for use as features in downstream models.
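The CLR-plus-concatenation route in Protocol 3.2 can be sketched without any single-cell library. The CLR function below follows, to our understanding, the per-cell formula Seurat applies with `margin = 2`, log1p(x / exp(mean(log1p(x)))); the choice of per-feature z-scoring as the "scaling" step is an assumption, and inputs are plain cells x features lists.

```python
import math
from statistics import mean, pstdev


def clr_per_cell(adt_counts: list[float]) -> list[float]:
    """Centered log-ratio transform of one cell's ADT counts across all proteins."""
    geo_mean = math.exp(sum(math.log1p(x) for x in adt_counts) / len(adt_counts))
    return [math.log1p(x / geo_mean) for x in adt_counts]


def zscore_columns(matrix: list[list[float]]) -> list[list[float]]:
    """Scale every feature (column) to mean 0 and SD 1 across cells."""
    scaled_cols = []
    for col in zip(*matrix):
        mu, sd = mean(col), pstdev(col)
        scaled_cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled_cols)]


def combine_modalities(gex: list[list[float]],
                       adt_counts: list[list[float]]) -> list[list[float]]:
    """Return a cells x (genes + proteins) matrix of scaled GEX and CLR-normalized ADT."""
    adt_clr = [clr_per_cell(cell) for cell in adt_counts]
    return [g + a for g, a in zip(zscore_columns(gex), zscore_columns(adt_clr))]
```

Scaling each modality separately before concatenation keeps the ~100 protein features from being swamped by the 2000 gene features on raw magnitude alone.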

Mandatory Visualizations

Workflow for Multi-Model Feature Preparation

Multi-Model Input from Unified Features

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources

| Item / Solution | Function in Preprocessing/Feature Selection | Typical Usage / Example |
|---|---|---|
| Seurat (R) | Comprehensive toolkit for QC, normalization, integration, and feature selection. | Seurat::SCTransform(), FindIntegrationAnchors() |
| Scanpy (Python) | Scalable Python-based single-cell analysis with efficient algorithms. | scanpy.pp.highly_variable_genes(), scanpy.external.pp.harmony_integrate() |
| Harmony | Fast, sensitive batch correction algorithm for integration. | harmony::RunHarmony() in Seurat or standalone. |
| Scrublet | Computational doublet detection in single-cell RNA-seq data. | scrublet.Scrublet() on raw count matrix. |
| scib (single-cell integration benchmarking) | Suite of metrics to evaluate integration and batch correction quality. | Used to calculate the batch silhouette score and kNN-based mixing metrics. |
| UCSC Cell Browser | Visualization tool to explore preprocessed datasets and selected feature expression. | Hosting integrated datasets for collaborative review. |
| Scater/SingleCellExperiment | R/Bioconductor framework for structured, reproducible single-cell data containers. | Holding processed data, ensuring format consistency for model input. |

Within the multi-model integration strategy for cell type annotation, the parallel application of complementary annotation paradigms—supervised, unsupervised, and reference-based—mitigates the limitations inherent in any single approach. This protocol details a robust framework for executing these methods in parallel, enabling cross-validation and the generation of a high-confidence consensus annotation. This step is critical for enhancing the reliability of downstream analyses in research and drug development pipelines.

Application Notes & Comparative Analysis

Parallel annotation leverages the strengths of each method: supervised classifiers for known cell types, unsupervised clustering for novel populations, and reference-based mapping for consistency with existing atlas data. The quantitative outputs from each stream are integrated to resolve ambiguous labels and identify discordances requiring expert review.

Table 1: Comparative Summary of Parallel Annotation Tools (as of 2024)

| Tool Category | Example Tools (Current) | Primary Input | Key Output | Strengths | Limitations |
|---|---|---|---|---|---|
| Supervised | scANVI (v0.20.0), SingleR (v2.4.0), SVM classifier | Normalized count matrix; Pre-defined training labels | Cell-type predictions with scores | High accuracy for known types; Fast | Cannot identify novel types; Training-data dependent |
| Unsupervised | Leiden, Louvain, SC3 (v1.30.0) | Normalized & scaled matrix; PCA/Harmony embeddings | Cluster assignments | Discovery of novel populations; Data-driven | Biologically irrelevant clusters possible |
| Reference-Based | Azimuth (v0.6.0), Symphony (v1.1), CellTypist (v2.0) | Query dataset; Pre-built reference atlas (e.g., HuBMAP) | Annotation & mapping scores | Standardized nomenclature; Leverages public data | Reference bias; Species/tissue specificity |
| Consensus | COCOS (v1.0.2), scConsensus (v0.1.5) | Outputs from ≥2 parallel methods | Unified annotation & confidence metrics | Resolves conflicts; Increases robustness | Computationally intensive |

Experimental Protocols

Protocol 3.1: Parallel Annotation Workflow for Single-Cell RNA-Seq Data

Objective: To generate and integrate cell-type annotations from supervised, unsupervised, and reference-based methods applied to a single-cell gene expression matrix.

Materials:

- Processed single-cell RNA-seq data (Seurat or AnnData object).

- High-performance computing environment (R≥4.3, Python≥3.10).

- Reference atlas (e.g., Tabula Sapiens, Allen Brain Cell Atlas) in compatible format.

Procedure:

A. Input Preparation (Day 1)

- Data Normalization: Use `SCTransform` (Seurat), or `pp.normalize_total` followed by `pp.log1p` (Scanpy), to normalize counts.

- Feature Selection: Identify the top 3000 highly variable genes.

- Dimensionality Reduction: Perform PCA (50 components) followed by UMAP/t-SNE for visualization. Use Harmony or BBKNN if batch correction is needed.

B. Parallel Annotation Execution (Day 1-2)

Run the following three pipelines in parallel.

Supervised Annotation (SingleR Protocol):

Unsupervised Clustering (Leiden Algorithm Protocol):

Reference-Based Mapping (Azimuth Protocol):

C. Consensus Integration & Resolution (Day 2-3)

- Concordance Analysis: Create a confusion matrix comparing labels from all three methods per cell.

- Confidence Filtering: For each cell, retain annotations where at least two methods agree and prediction scores are >0.8.

- Adjudication of Discordants: For cells with conflicting labels, perform manual assessment based on:

- Expression of canonical marker genes.

- Cluster membership in unsupervised analysis.

- Mapping score metrics from reference-based method.

- Final Annotation Table: Generate a final .csv file with columns: `Cell_Barcode`, `Supervised_Label`, `Unsupervised_Cluster`, `Reference_Label`, `Consensus_Label`, `Confidence_Score`.
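The consensus-integration steps in part C can be sketched one cell at a time. This stdlib-only illustration rests on stated assumptions: `CLUSTER_TO_LABEL` is a hypothetical mapping from Leiden cluster IDs to provisional labels (clusters carry no cell-type name or prediction score of their own), and `Confidence_Score` is taken here as the fraction of the three methods that agree, which is our choice rather than a prescribed definition. Cells failing the filter are labeled "Unresolved" for manual adjudication.

```python
import csv
import io
from collections import Counter

# Hypothetical cluster -> label mapping, e.g. assigned from cluster marker genes.
CLUSTER_TO_LABEL = {"0": "CD4+ T cell", "1": "B cell", "2": "NK cell"}

FIELDS = ["Cell_Barcode", "Supervised_Label", "Unsupervised_Cluster",
          "Reference_Label", "Consensus_Label", "Confidence_Score"]


def consensus_for_cell(sup_label, sup_score, cluster, ref_label, ref_score,
                       cluster_map=CLUSTER_TO_LABEL, min_score=0.8):
    """Keep a label if >= 2 of 3 methods agree and every agreeing scored method exceeds min_score."""
    votes = [(sup_label, sup_score),
             (cluster_map.get(cluster, "Unmapped"), None),  # clusters have no score
             (ref_label, ref_score)]
    label, n_agree = Counter(lab for lab, _ in votes).most_common(1)[0]
    agreeing_scores = [s for lab, s in votes if lab == label and s is not None]
    confidence = round(n_agree / len(votes), 2)
    if n_agree >= 2 and all(s > min_score for s in agreeing_scores):
        return label, confidence
    return "Unresolved", confidence  # route to manual adjudication


def annotation_table(cells) -> str:
    """cells: iterable of (barcode, sup_label, sup_score, cluster, ref_label, ref_score)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for barcode, sup_label, sup_score, cluster, ref_label, ref_score in cells:
        label, conf = consensus_for_cell(sup_label, sup_score, cluster, ref_label, ref_score)
        writer.writerow({"Cell_Barcode": barcode, "Supervised_Label": sup_label,
                         "Unsupervised_Cluster": cluster, "Reference_Label": ref_label,
                         "Consensus_Label": label, "Confidence_Score": conf})
    return buffer.getvalue()
```

Writing the result through `csv.DictWriter` keeps the column order fixed to the six columns listed above; in practice the string would be written to a .csv file.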

Diagrams

Diagram 1: Parallel Annotation Workflow Architecture

Title: Parallel Cell Annotation Strategy Flowchart

Diagram 2: Consensus Label Decision Logic

Title: Logic for Resolving Annotation Conflicts

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Parallel Annotation Experiments

| Item | Supplier/Resource | Function in Protocol | Critical Parameters |
|---|---|---|---|
| celldex R Package | Bioconductor | Provides curated reference datasets (e.g., Blueprint, ENCODE, HumanPrimaryCellAtlas) for SingleR and similar tools. | Version (≥1.12.0); Reference tissue/cell type relevance. |
| Azimuth Web Application | Satija Lab / Chan Zuckerberg Initiative | Cloud-based platform for reference-based mapping using pre-built, optimized atlases. | Reference version (e.g., Azimuth Human PBMC v2.0); Minimum sequencing depth requirements. |
| Scanpy Python Toolkit | Theis Lab (GitHub) | Comprehensive pipeline for unsupervised analysis: clustering (Leiden), visualization, and marker detection. | Leiden resolution parameter; Choice of HVGs. |
| Seurat R Toolkit | Satija Lab (CRAN) | Integrative analysis environment capable of running all three parallel streams and consensus building. | Version (≥5.1.0); SCT normalization compatibility. |
| Tabula Sapiens Atlas | Chan Zuckerberg CELLxGENE | A comprehensive, multi-tissue human cell reference for reference-based mapping and validation. | Data release version (e.g., 2024 update); File format (.h5ad). |
| COCOS R Package | Bioconductor (Development) | Tool specifically designed for computing consensus labels from multiple annotation sources. | Agreement metric (e.g., Jaccard index); Confidence weighting scheme. |

Within the multi-model integration strategy for cell type annotation, the construction of a robust consensus matrix is a critical step. This phase integrates predictions from multiple independent annotation models (e.g., SingleR, scPred, Seurat's label transfer, and a custom neural network) to resolve discordances and increase confidence. Cross-validation and overlap analysis statistically evaluate the agreement between models, transforming individual predictions into a unified, reliable consensus annotation. This protocol details the methodological pipeline, from data preparation to final matrix generation, essential for high-stakes research in drug development and translational science.

Theoretical Framework and Workflow

The consensus strategy mitigates the inherent biases of any single algorithm. Cross-validation, performed within each model's training phase, assesses generalizability, while overlap analysis quantifies inter-model agreement on a per-cell basis. A high-agreement cell receives a confident label; a low-agreement cell is flagged for manual review or classified as "Uncertain." The final output is a consensus matrix where rows are cells, columns are cell type labels (including an "Uncertain" class), and values represent the proportion of votes for each assignment.

Logical Workflow Diagram

Title: Consensus Matrix Generation from Multi-model Predictions

Detailed Experimental Protocols

Protocol 1: k-Fold Cross-Validation for Individual Model Assessment

Purpose: To evaluate and ensure the reliability of each base annotation model before inclusion in the consensus pipeline.

- Input Preparation: For each supervised model (e.g., scPred), use the labeled reference dataset. Let N be the total number of reference cells.

- Data Partitioning: Randomly shuffle and split the reference data into k = 5 or k = 10 disjoint subsets (folds) of approximately equal size.

- Iterative Training & Validation: For each fold i (where i = 1 to k):

a. Designate fold i as the validation set.

b. Combine the remaining k-1 folds to form the training set.

c. Train the model from scratch on the training set.

d. Apply the trained model to predict labels for the validation set (fold i).

e. Store the prediction and the ground truth label for each cell in fold i.

- Performance Aggregation: After all k iterations, compile the predictions for all N cells. Calculate performance metrics (see Table 1).

- Final Model Training: Train a final instance of the model using the entire reference dataset for subsequent use in the consensus pipeline.
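The k-fold loop in Protocol 1 can be sketched independently of any particular annotation tool. In this stdlib-only version, `fit` and `predict` are caller-supplied callables; the nearest-centroid classifier is a hypothetical stand-in so the sketch runs end to end, not one of the models named in this protocol. Folds are not stratified by cell type here, which is a simplification.

```python
import random


def kfold_indices(n: int, k: int = 5, seed: int = 0):
    """Shuffle 0..n-1 and yield (train, validation) index lists for k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [sorted(idx[i::k]) for i in range(k)]
    for i in range(k):
        train = sorted(j for f, fold in enumerate(folds) if f != i for j in fold)
        yield train, folds[i]


def cross_validate(X, y, fit, predict, k=5, seed=0):
    """Return out-of-fold predictions for every reference cell."""
    preds = [None] * len(y)
    for train, val in kfold_indices(len(y), k, seed):
        model = fit([X[i] for i in train], [y[i] for i in train])  # fresh model per fold
        for i, label in zip(val, predict(model, [X[i] for i in val])):
            preds[i] = label
    return preds


# Hypothetical stand-in classifier (nearest centroid) used only for illustration.
def fit_centroids(X, y):
    sums, counts = {}, {}
    for x, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(x))
        for d, v in enumerate(x):
            acc[d] += v
        counts[label] = counts.get(label, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}


def predict_centroids(centroids, X):
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))
    return [min(centroids, key=lambda lab: sq_dist(x, centroids[lab])) for x in X]
```

The out-of-fold predictions returned by `cross_validate` are what the Performance Aggregation step scores against the ground truth labels.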

Protocol 2: Overlap Analysis and Consensus Matrix Generation

Purpose: To integrate predictions from M validated models into a single, confident annotation matrix.

- Prediction Collection: Apply each of the M final models to the target unlabeled (query) dataset. Store each model's predicted label for each of the C target cells in a C x M prediction matrix.

- Agreement Calculation: For each target cell j:

a. Tally the votes: count how many models assigned cell j to each cell type.

b. Calculate the Consensus Score (CS) for the top-voted label: CS_j = V_max / M, where V_max is the highest vote count for that cell.

c. Identify the Consensus Label: the cell type receiving V_max votes (a plurality). A tie triggers a predefined rule (e.g., prioritize the label from the model with the highest cross-validation F1-score).

- Threshold Application: Apply a confidence threshold τ (typically τ = 0.6). With M = 4 models, this means a label needs at least 3 of 4 votes.

a. If CS_j ≥ τ, assign the consensus label to cell j.

b. If CS_j < τ, assign cell j to an "Uncertain / Low Confidence" category.

- Matrix Construction: Generate the final consensus matrix with dimensions C x (T+1), where T is the number of unique cell types. Each entry (j, t) contains the proportion of models (0 to 1) that assigned cell j to type t. An additional column holds CS_j.
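Protocol 2 can be sketched in a few lines of stdlib Python. The sketch assumes `predictions` maps each cell ID to a list of M labels in the same order as `model_f1`, and uses the F1 list only for the tie rule described above; the extra "Label" key holding the thresholded assignment is a convenience added for illustration, alongside the T proportion columns and the CS column.

```python
from collections import Counter


def consensus_matrix(predictions, cell_types, model_f1, tau=0.6):
    """Build per-cell vote proportions, Consensus Score (CS) and thresholded label.

    predictions: dict cell_id -> list of M labels, ordered like model_f1.
    model_f1: cross-validation F1 per model, used only to break ties.
    """
    m = len(model_f1)
    by_f1 = sorted(range(m), key=lambda i: model_f1[i], reverse=True)
    matrix = {}
    for cell, labels in predictions.items():
        counts = Counter(labels)
        v_max = max(counts.values())
        tied = {t for t, v in counts.items() if v == v_max}
        # Tie rule: take the label of the best-F1 model among the tied labels.
        top = next(labels[i] for i in by_f1 if labels[i] in tied)
        cs = v_max / m
        row = {t: counts.get(t, 0) / m for t in cell_types}  # T proportion columns
        row["CS"] = cs                                        # the (T+1)-th column
        row["Label"] = top if cs >= tau else "Uncertain"
        matrix[cell] = row
    return matrix
```

With four models and τ = 0.6, a 2-2 tie always scores CS = 0.5 and is labeled "Uncertain", so the F1 tie rule only changes the outcome when τ is set at or below 0.5.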

Data Presentation and Analysis

Table 1: Exemplar Cross-Validation Metrics for Base Models (Simulated Data)

| Model Name | Avg. Accuracy (%) | Avg. Weighted F1-Score | Avg. Cohen's Kappa | Time per Fold (min) | Suitable for Consensus? |
|---|---|---|---|---|---|
| SingleR (Human) | 92.4 ± 2.1 | 0.921 | 0.901 | 12.5 | Yes |
| scPred | 88.7 ± 3.5 | 0.883 | 0.862 | 8.2 | Yes |
| Seurat Label Transfer | 85.1 ± 4.2 | 0.842 | 0.818 | 6.8 | Yes (with review) |
| Custom CNN | 90.5 ± 3.8 | 0.898 | 0.881 | 22.7 | Yes |
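The three agreement metrics in Table 1 follow standard definitions. The stdlib sketch below spells them out; scikit-learn's `accuracy_score`, `f1_score(..., average="weighted")` and `cohen_kappa_score` are the usual library equivalents. Edge cases such as chance agreement of exactly 1 (which makes kappa undefined) are not handled here.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted label matches the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def weighted_f1(y_true, y_pred):
    """Per-class F1 averaged with weights equal to each class's true support."""
    support = Counter(y_true)
    total = 0.0
    for label, n in support.items():
        tp = sum(t == p == label for t, p in zip(y_true, y_pred))
        fp = sum(p == label and t != label for t, p in zip(y_true, y_pred))
        fn = n - tp
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        total += f1 * n
    return total / len(y_true)


def cohens_kappa(y_true, y_pred):
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(y_true)
    p_o = accuracy(y_true, y_pred)
    true_counts, pred_counts = Counter(y_true), Counter(y_pred)
    p_e = sum(true_counts[lab] * pred_counts[lab] for lab in true_counts) / (n * n)
    return (p_o - p_e) / (1 - p_e)
```

Kappa is lower than accuracy whenever some agreement is expected by chance, which is why the kappa column in Table 1 sits below the accuracy column for every model.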

Table 2: Consensus Matrix Output Summary (Example: 10,000 Cells)

| Consensus Category | Cell Count | Percentage of Total | Avg. Consensus Score | Next Action |
|---|---|---|---|---|
| High Confidence (CS ≥ 0.8) | 7,850 | 78.5% | 0.93 | Proceed to downstream analysis. |
| Medium Confidence (0.6 ≤ CS < 0.8) | 1,620 | 16.2% | 0.67 | Include but flag for validation. |
| Low Confidence / Uncertain (CS < 0.6) | 530 | 5.3% | 0.42 | Manual inspection & marker gene check. |

Consensus Decision Logic Diagram

Title: Decision Logic for Consensus Annotation per Cell

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Packages for Consensus Analysis

| Item/Package | Primary Function | Key Application in Protocol |
|---|---|---|
| Seurat (v5+) | Single-cell analysis toolkit. | Data preprocessing, integration, and running its built-in label transfer model as one base classifier. |
| SingleR | Reference-based annotation. | Provides a robust, correlation-based prediction vector for the consensus pipeline. |
| scPred | Supervised machine learning for scRNA-seq. | Trains on reference data to make probabilistic predictions for inclusion in overlap analysis. |
| Scikit-learn | Machine learning library in Python. | Used for implementing k-fold cross-validation, calculating metrics (F1, Kappa), and building custom ensembles. |
| R data.frame / matrix (or pandas DataFrame) | Core tabular data structures. | The consensus matrix is stored as a data frame (cells x types) for efficient downstream analysis. |
| Harmony/BBKNN | Batch correction tools. | Critical for integrating reference and query datasets if batch effects are present before model application. |

Application Notes

Within a multi-model integration strategy for cell type annotation, Step 4 is the critical decision fusion layer. Individual models (e.g., single-cell reference mapping, marker-based classifiers, de novo clustering) often produce conflicting or probabilistic predictions for each cell. Ensemble learning and voting systems provide a principled, quantitative framework to synthesize these diverse predictions into a single, robust, and consensus cell type label, thereby increasing annotation accuracy, confidence, and reproducibility.

Key principles include:

- Diversity Utilization: Leverages the strengths of different algorithmic approaches (e.g., Seurat, SCINA, SingleR, scType) to compensate for individual weaknesses.

- Confidence Calibration: Integrates model-specific confidence scores (e.g., prediction p-values, correlation coefficients) to weight votes.

- Handling Ambiguity: Explicitly identifies cells where consensus is low, flagging them for expert review or assignment to an "Uncertain" or "Multiplet" class.

- Scalability: The voting protocol is automatable, enabling consistent annotation across large-scale datasets and multiple experiments.

Table 1: Comparison of Common Voting Schemes for Cell Type Annotation

| Voting Scheme | Description | Advantage | Disadvantage | Best Use Case |
|---|---|---|---|---|
| Majority (Plurality) Voting | Each model gets one vote; the most frequent label wins. | Simple, intuitive, no need for confidence scores. | Ignores model confidence; ties can occur. | Initial integration of equally trusted, discrete-output models. |
| Weighted Voting | Votes are weighted by model-specific confidence scores. | Reflects prediction certainty; can outperform majority vote. | Requires calibrated, comparable confidence metrics. | Integrating models that output reliable scores (e.g., p-values, correlations). |
| Maximum Probability Sum | Sums the probabilities for each label across all probabilistic models; highest sum wins. | Fully utilizes probabilistic information. | Requires all models to output calibrated probabilities for all classes. | Ensemble of classifiers with probabilistic outputs (e.g., random forest, logistic regression). |
| Meta-Classifier | A supervised learner (e.g., logistic regression) is trained on the predictions of base models. | Can learn complex, non-linear relationships between model predictions. | Requires a separate, high-quality training set with ground truth. | When a robustly annotated "gold-standard" subset of the data is available. |
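The difference between plurality voting and the maximum probability sum in Table 1 is easiest to see on a single cell. In this minimal sketch (returning `None` on a plurality tie is our convention), three models each lean slightly toward "T" except one that strongly prefers "B"; plurality voting on the argmax labels picks "T", while summing probabilities picks "B".

```python
from collections import Counter


def majority_vote(labels):
    """Plurality vote over discrete labels; returns None on a tie."""
    counts = Counter(labels)
    top = max(counts.values())
    winners = [lab for lab, c in counts.items() if c == top]
    return winners[0] if len(winners) == 1 else None


def probability_sum(prob_dicts):
    """Sum per-label probabilities across models; return the winner and the totals."""
    totals = {}
    for probs in prob_dicts:
        for label, p in probs.items():
            totals[label] = totals.get(label, 0.0) + p
    return max(totals, key=totals.get), totals


probs = [{"T": 0.60, "B": 0.40}, {"T": 0.30, "B": 0.70}, {"T": 0.55, "B": 0.45}]
argmax_labels = [max(p, key=p.get) for p in probs]  # ["T", "B", "T"]
print(majority_vote(argmax_labels), probability_sum(probs)[0])
```

This is the trade-off listed in the table: the probability sum uses more information, but only if the probabilities are calibrated and comparable across models.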

Experimental Protocols

Protocol 4.1: Implementation of a Weighted Voting System for scRNA-seq Annotation

Objective: To generate a consensus cell type label by integrating predictions from three distinct annotation models.

Materials: See "The Scientist's Toolkit" below. Input Data: A gene expression matrix (cells x genes) and the prediction outputs from three independent annotation tools.

Procedure:

- Model Execution & Output Standardization:

a. Run your preprocessed single-cell data through three chosen annotation methods (e.g., SingleR, Seurat label transfer, and a marker-based classifier such as SCINA).

b. For each cell i and each model m, standardize the output to a tuple (Predicted_Label, Confidence_Score).

c. Map all confidence scores to a common scale (e.g., 0 to 1). For p-values, use 1 - p. For correlation scores, apply min-max normalization.

- Vote Aggregation Table Construction:

a. For each cell, create a table aggregating all model predictions. Example for Cell_001:

Table 2: Vote Aggregation for Cell_001

| Model | Predicted Label | Normalized Confidence |
|---|---|---|
| SingleR | CD4+ T cell | 0.95 |
| Seurat Transfer | CD8+ T cell | 0.87 |
| SCINA | CD4+ T cell | 0.78 |

- Weighted Vote Calculation:

a. For each unique label proposed for the cell, sum the confidence scores of all models that voted for it:

Score(CD4+ T cell) = 0.95 + 0.78 = 1.73

Score(CD8+ T cell) = 0.87

b. The label with the highest aggregate score is assigned as the Consensus Label.

- Consensus Confidence & Conflict Flagging:

a. Calculate a Consensus Confidence metric: (Top_Score / Total_Confidence_Sum) × 100. For Cell_001: (1.73 / (0.95 + 0.87 + 0.78)) × 100 = (1.73 / 2.60) × 100 ≈ 66.5%.

b. Define a threshold (e.g., 60%). Cells below this threshold are flagged as "Low Consensus" for manual inspection.

c. Flag cells where the aggregate scores of the top two labels differ by less than a defined margin (e.g., < 0.2).

- Final Assignment Output:

a. Generate a final annotation table for all cells, with columns: Cell_ID, Consensus_Label, Consensus_Confidence, Flag.
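Protocol 4.1 can be condensed into two functions: one that rescales a model's raw scores onto 0 to 1, and one that aggregates the per-cell votes and applies both flags. This is a stdlib-only sketch; the flag strings ("Low Consensus", "Small Margin", "OK") and the convention that min-max scaling of constant scores yields 1.0 are assumptions made for illustration.

```python
def normalize_scores(scores, kind):
    """Map one model's raw scores (across cells) onto a 0-1 scale."""
    if kind == "pvalue":
        return [1.0 - p for p in scores]
    if kind == "correlation":
        lo, hi = min(scores), max(scores)
        return [(s - lo) / (hi - lo) if hi > lo else 1.0 for s in scores]
    return list(scores)  # assumed to be on a 0-1 scale already


def weighted_vote(predictions, min_confidence=60.0, min_margin=0.2):
    """predictions: list of (model, label, normalized_confidence) for one cell."""
    totals = {}
    for _model, label, conf in predictions:
        totals[label] = totals.get(label, 0.0) + conf
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    top_label, top_score = ranked[0]
    consensus_conf = 100.0 * top_score / sum(totals.values())
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    flags = []
    if consensus_conf < min_confidence:
        flags.append("Low Consensus")
    if top_score - runner_up < min_margin:
        flags.append("Small Margin")
    return {"Consensus_Label": top_label,
            "Consensus_Confidence": round(consensus_conf, 1),
            "Flag": ";".join(flags) or "OK"}


cell_001 = [("SingleR", "CD4+ T cell", 0.95),
            ("Seurat Transfer", "CD8+ T cell", 0.87),
            ("SCINA", "CD4+ T cell", 0.78)]
print(weighted_vote(cell_001))
```

For Cell_001 this reproduces the worked example: CD4+ T cell wins with a Consensus Confidence of 66.5%, and the 0.86 margin over CD8+ T cell clears the 0.2 threshold, so the cell is not flagged.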

Protocol 4.2: Benchmarking Ensemble Performance

Objective: To quantitatively assess the improvement of the ensemble over individual models.

Procedure:

- Ground Truth Acquisition: Use a dataset with manually curated, high-confidence labels, or a publicly available benchmark with FACS-sorted labels.

- Baseline Accuracy: Calculate the per-cell and per-class annotation accuracy of each individual model against the ground truth.

- Ensemble Accuracy: Calculate the accuracy of the consensus labels generated in Protocol 4.1.

- Statistical Comparison: Use McNemar's test on paired per-cell correctness to determine whether the ensemble's accuracy differs significantly from that of the best standalone model, and report macro-averaged F1-scores alongside it to capture per-class performance.
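McNemar's test compares two classifiers on the same cells using only the discordant pairs: b (ensemble correct, best single model wrong) and c (the reverse). A minimal sketch using the continuity-corrected statistic (|b - c| - 1)^2 / (b + c) and its 1-degree-of-freedom chi-square p-value, computed via `math.erfc`; for small b + c an exact binomial test is usually preferred.

```python
import math


def mcnemar(y_true, pred_a, pred_b):
    """Continuity-corrected McNemar test; returns (b, c, statistic, p_value)."""
    b = sum(a == t and p != t for t, a, p in zip(y_true, pred_a, pred_b))  # A right, B wrong
    c = sum(a != t and p == t for t, a, p in zip(y_true, pred_a, pred_b))  # A wrong, B right
    if b + c == 0:
        return b, c, 0.0, 1.0  # no discordant cells: no evidence of a difference
    stat = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    p_value = math.erfc(math.sqrt(stat / 2))  # survival function of chi-square, df = 1
    return b, c, stat, p_value


# A = ensemble, B = best standalone model, on 20 ground-truth cells.
truth = ["A"] * 20
ensemble = ["A"] * 10 + ["B"] * 2 + ["A"] * 8
best_single = ["B"] * 10 + ["A"] * 2 + ["A"] * 8
print(mcnemar(truth, ensemble, best_single))
```

Cells that both models classify correctly (or both incorrectly) do not contribute, which is why the test suits paired per-cell comparisons better than comparing two overall accuracy figures.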

Mandatory Visualizations

Diagram Title: Ensemble Voting Workflow for Cell Annotation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for Ensemble Annotation

| Item | Function & Purpose | Example/Note |
|---|---|---|
| scRNA-seq Analysis Suites | Provide built-in annotation functions and export prediction results for voting. | Seurat (Seurat objects in R), Bioconductor tools (SingleCellExperiment in R), Scanpy (AnnData in Python). |
| Specialized Annotation Packages | Serve as diverse base models for the ensemble. | SingleR (reference-based), SCINA (marker-based), scType (marker-based), scANVI (neural network). |
| Benchmark Datasets | Provide high-quality ground truth for training meta-classifiers or benchmarking. | Human Cell Atlas data, PBMC datasets with CITE-seq protein validation, mouse brain atlas data. |
| High-Performance Computing (HPC) Environment | Enables parallel execution of multiple annotation models on large datasets. | Slurm cluster, cloud computing instances (AWS, GCP). |
| Containerization Software | Ensures reproducibility of the entire multi-model pipeline across systems. | Docker, Singularity/Apptainer. |
| Consensus Labeling Script | Custom script (R/Python) implementing the voting logic and metrics calculation. | Must handle input parsing, vote aggregation, threshold application, and output generation. |

Within the broader thesis on a Multi-model Integration Strategy for Cell Type Annotation Research, this case study demonstrates the critical translation of computational deconvolution predictions into biologically and clinically actionable insights. Deconvolution of bulk RNA-seq data from the tumor microenvironment (TME) is a prime application where integrating results from multiple algorithms (e.g., CIBERSORTx, EPIC, quanTIseq) with single-cell RNA-seq atlases and spatial transcriptomics validation is essential to overcome the limitations of any single method and achieve robust, reproducible cell type quantification.

Case Study: Deconvolving Immune-Cold vs. Immune-Hot Tumors

A representative study was designed to profile the TME of non-small cell lung cancer (NSCLC) samples to identify compositional drivers of immunotherapy response.

2.1 Data Acquisition & Preprocessing:

- Source Data: Publicly available bulk RNA-seq data (TPM normalized) from the TCGA-LUAD cohort (n=500) and a paired single-cell RNA-seq atlas (n=24 patients).

- Clinical Annotation: Samples were stratified by inferred immunotherapy response phenotype: "Immune-Hot" (high CD8+ T cell infiltration, PD-L1 positive) vs. "Immune-Cold" (low lymphocytic infiltration, stromal-rich).

- Reference Signature Matrix: A custom LM22-like signature matrix was generated from the integrated single-cell atlas, featuring 25 distinct cell states.

2.2 Multi-Model Deconvolution Execution: Three established deconvolution tools were run in parallel on the bulk RNA-seq data using the custom signature matrix.

Table 1: Key Output Metrics from Deconvolution Algorithms (Average Cell Fraction % in Immune-Hot Tumors, n=250)

| Cell Type | CIBERSORTx (p<0.01) | EPIC | quanTIseq | Consensus Mean ± SD |
|---|---|---|---|---|
| CD8+ Exhausted T Cells | 12.5 | 9.8 | 11.2 | 11.2 ± 1.4 |
| Regulatory T Cells (Tregs) | 6.3 | 7.1 | 5.9 | 6.4 ± 0.6 |
| M2-like Macrophages | 8.2 | 15.5 | 9.5 | 11.1 ± 3.9 |
| Cancer-Associated Fibroblasts | 5.1 | 18.3 | 7.8 | 10.4 ± 7.0 |
| B Cells | 9.4 | 4.2 | 8.1 | 7.2 ± 2.7 |

Table 2: Algorithm Comparison & Discrepancy Highlight

| Algorithm | Underlying Method | Strengths | Noted Discrepancy in Case Study |
|---|---|---|---|
| CIBERSORTx | ν-Support Vector Regression | Robust noise handling, p-value estimation. | Underestimated stromal fractions (CAFs). |
| EPIC | Constrained least squares regression | Accounts for uncharacterized cell types (other). | Overestimated macrophage and CAF fractions. |
| quanTIseq | Constrained linear regression | Calibrated for immune cell quantification. | Provided intermediate estimates. |

2.3 Integration & Validation: A consensus score was calculated for each cell type by taking the mean of the outputs from the three tools, excluding outliers. Discrepancies for M2 Macrophages and CAFs (high standard deviation) were resolved by refereeing against:

- Single-Cell Atlas Mapping: Digital cytometry confirmed CAF fractions were closer to CIBERSORTx/quanTIseq estimates.

- Spatial Validation: Multiplex immunofluorescence (mIF) on a tissue microarray (TMA) of 50 matched samples validated the consensus fractions. A high correlation was observed for CD8+ T cells (Pearson r=0.88, p<0.001) and CAFs (r=0.79, p<0.001).
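The consensus step and the high-SD trigger for refereeing can be sketched from the Table 1 values. The case study does not specify its outlier-exclusion rule, so this stdlib sketch computes the plain mean and sample SD across the three tools (which is what the Table 1 consensus column reports) and flags cell types whose SD exceeds a hypothetical threshold of 3 percentage points; with these numbers, exactly the two discordant populations named above are flagged.

```python
from statistics import mean, stdev

# Per-tool estimates (% of cells) from Table 1: CIBERSORTx, EPIC, quanTIseq.
FRACTIONS = {
    "CD8+ Exhausted T Cells": [12.5, 9.8, 11.2],
    "Regulatory T Cells (Tregs)": [6.3, 7.1, 5.9],
    "M2-like Macrophages": [8.2, 15.5, 9.5],
    "Cancer-Associated Fibroblasts": [5.1, 18.3, 7.8],
    "B Cells": [9.4, 4.2, 8.1],
}


def summarize(fractions, sd_threshold=3.0):
    """Return cell type -> (mean, sample SD, needs_refereeing); threshold is hypothetical."""
    summary = {}
    for cell_type, values in fractions.items():
        m, s = mean(values), stdev(values)  # sample SD (n - 1 denominator)
        summary[cell_type] = (round(m, 1), round(s, 1), s > sd_threshold)
    return summary


for cell_type, (m, s, flag) in summarize(FRACTIONS).items():
    print(f"{cell_type}: {m} ± {s}{'  -> referee' if flag else ''}")
```

An absolute SD threshold is the simplest choice; a relative measure such as the coefficient of variation would also flag B cells here, so the criterion should be fixed before looking at the validation data.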

Detailed Experimental Protocols

3.1 Protocol: Generation of a Custom scRNA-seq Derived Signature Matrix

- Load Seurat Object: Processed scRNA-seq data containing annotated cell types.

- Subset & Aggregate: Isolate populations of interest. For each population, aggregate raw counts across all cells within a sample to create "pseudo-bulk" profiles.

- Filter Genes: Retain genes with average expression > 1 CPM in at least one cell population.

- Calculate Marker Expression: For each gene and cell type, compute the average log2(CPM) and the proportion of cells expressing it.

- Select Signature Genes: Identify genes that are uniquely expressed: (avg_log2FC > 2) & (pct.1 > 0.6) & (pct.2 < 0.2), where pct.1/pct.2 are the expression proportions in the target/other populations.

- Construct Matrix: Create a matrix of signature genes (rows) by cell types (columns), filled with average expression values in non-log linear space (e.g., CPM), since CIBERSORTx expects non-log-transformed input. Save as a tab-delimited .txt file.
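The CPM, gene-selection and export steps of Protocol 3.1 can be sketched as three small functions. This stdlib-only sketch assumes the per-gene statistics (`avg_log2FC`, `pct.1`, `pct.2`) were already computed for one target population versus all others, as Seurat's marker tests report them; the "Gene" header for the first column is a placeholder, not a documented CIBERSORTx requirement.

```python
def cpm(counts: dict[str, float]) -> dict[str, float]:
    """Counts-per-million for one pseudo-bulk profile (gene -> raw count)."""
    total = sum(counts.values())
    return {gene: 1e6 * c / total for gene, c in counts.items()}


def select_signature_genes(stats, min_log2fc=2.0, min_pct1=0.6, max_pct2=0.2):
    """stats: gene -> {"avg_log2FC", "pct.1", "pct.2"} for one target population."""
    return sorted(g for g, s in stats.items()
                  if s["avg_log2FC"] > min_log2fc
                  and s["pct.1"] > min_pct1
                  and s["pct.2"] < max_pct2)


def signature_matrix_tsv(profiles, genes):
    """profiles: cell type -> {gene: linear-scale expression}; returns tab-delimited text."""
    cell_types = sorted(profiles)
    lines = ["\t".join(["Gene"] + cell_types)]  # placeholder header for the gene column
    for g in genes:
        row = [f"{profiles[t].get(g, 0.0):.2f}" for t in cell_types]
        lines.append("\t".join([g] + row))
    return "\n".join(lines) + "\n"
```

The log2 values are used only for selecting genes; the exported matrix stays in linear CPM space, consistent with the non-log input expected by CIBERSORTx.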

3.2 Protocol: Multiplex Immunofluorescence (mIF) for Spatial Validation

- Tissue Preparation: 5µm FFPE tissue sections mounted on charged slides. Bake at 60°C for 1 hour.

- Deparaffinization & Antigen Retrieval:

- Immerse slides in xylene (3x, 5 min each), followed by ethanol gradient (100%, 95%, 70%, 5 min each).

- Perform heat-induced epitope retrieval in Tris-EDTA buffer (pH 9.0) at 97°C for 20 min in a pressure cooker.

- Cool slides for 30 min at RT, then wash in PBS + 0.025% Triton X-100.

- Cyclic Staining (Phenocycler-Flex/CODEX system):

- Blocking: Incubate with 3% BSA / 5% normal goat serum for 1 hour at RT.

- Primary Antibody Incubation: Apply antibody cocktail (see Toolkit) overnight at 4°C.

- Secondary Incubation: Apply secondary detection reagents (e.g., the Opal system: an HRP-conjugated polymer followed by a tyramide-coupled fluorophore) for 1 hour at RT.

- Imaging: Acquire whole-slide fluorescence images at 20x magnification using specified filter sets.

- Stripping: Elute antibodies using a low-pH glycine buffer (pH 2.0) or denaturing solution for the next cycle.

- Repeat Cycles for all antibody targets (typically 5-7 cycles).

- Image Analysis & Quantification:

- Registration & Composite: Align images from all cycles using DAPI nuclei signal.

- Cell Segmentation: Use DAPI to identify nuclei, then expand cytoplasm boundaries (e.g., using pan-cytokeratin or membrane markers).

- Cell Phenotyping: Apply a random forest classifier trained on marker intensity profiles to assign each cell a type.

- Spatial Analysis: Calculate cell fractions per tissue core and compute spatial metrics (nearest neighbor distances, clustering).

Visualizations

TME Deconvolution & Validation Workflow

Multi-Model Integration Strategy Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for TME Deconvolution & Validation

| Item | Supplier Examples | Function in Protocol |
|---|---|---|
| FFPE Tissue Sections | Institutional Biobank | Primary source material for bulk RNA extraction and spatial validation. |
| RNeasy FFPE Kit | Qiagen | Extracts high-quality total RNA from FFPE tissue for bulk sequencing. |
| Chromium Next GEM Chip | 10x Genomics | Part of the single-cell platform to generate the reference scRNA-seq atlas. |
| Cell Ranger Software | 10x Genomics | Processes raw sequencing data into gene-cell count matrices. |
| CIBERSORTx License | Stanford University | Provides access to the deconvolution algorithm and signature matrix tools. |
| Opal 7-Color IHC Kit | Akoya Biosciences | Fluorophore conjugation system for multiplex immunofluorescence staining. |
| Anti-human CD8 (clone C8/144B) | Abcam, CST | Primary antibody to label cytotoxic T cells in mIF validation. |
| Anti-human α-SMA (clone 1A4) | Abcam, Dako | Primary antibody to label Cancer-Associated Fibroblasts in mIF. |
| Anti-human CD163 (clone 10D6) | Thermo Fisher | Primary antibody to label M2-like macrophages in mIF. |
| Phenochart / inForm Software | Akoya Biosciences | For whole-slide image analysis, cell segmentation, and phenotyping. |

Solving Common Pitfalls: How to Debug and Optimize Your Integrated Annotation Workflow

Application Notes on Multi-Model Integration for Cell Type Annotation

In the strategic integration of multiple computational models for cell type annotation, inter-model disagreement is not a failure but a critical source of biological and technical insight. Resolving these conflicts to approach ground truth requires a systematic, experimental, and integrative protocol. These notes outline a framework for diagnosing disagreement, leveraging current best practices and resources.

Quantifying and Categorizing Disagreement

Initial analysis requires quantifying the level and nature of disagreement across models. Common metrics are summarized below.

Table 1: Quantitative Metrics for Model Disagreement Analysis

| Metric | Calculation/Description | Interpretation |
|---|---|---|
| Annotation Concordance | Percentage of cells on which all N models (or a chosen k of N) agree. | Low concordance flags high-ambiguity cells or populations. |
| Model Confidence Score | Per-cell probability or score from each model (e.g., Seurat `prediction.score.max` from label transfer; scANVI class probabilities from `predict(soft=True)`). | Low confidence from a model suggests its prediction is less reliable for that cell. |
| Entropy of Predictions | Shannon entropy of the distribution of labels assigned to each cell across models. | High entropy indicates high disagreement/uncertainty. |
| Differential Gene Expression | Log2 fold-change and adjusted p-value for genes in disagreed vs. agreed cell sets. | Identifies marker genes that may define novel subtypes or states. |
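The concordance and entropy metrics in Table 1 can be computed directly from per-cell label vectors. The sketch below is a minimal, dependency-free illustration. It assumes that every model's predictions are already aligned to the same cell order. The function name `disagreement_metrics` and the toy labels are hypothetical.

```python
import math
from collections import Counter


def disagreement_metrics(predictions):
    """Per-cell agreement statistics across annotation models.

    predictions: dict mapping model name -> list of labels, one per cell,
    with all lists in the same cell order (assumed).
    Returns one (unanimous, majority_fraction, entropy_bits) tuple per cell.
    """
    models = list(predictions)
    n_cells = len(predictions[models[0]])
    results = []
    for i in range(n_cells):
        labels = [predictions[m][i] for m in models]
        counts = Counter(labels)
        probs = [c / len(labels) for c in counts.values()]
        # Shannon entropy in bits: 0 when all models agree, log2(N) when all N differ
        entropy = sum(p * math.log2(1 / p) for p in probs)
        results.append((len(counts) == 1, max(probs), entropy))
    return results


# Hypothetical predictions for three cells from three models
preds = {
    "seurat": ["T cell", "B cell", "NK cell"],
    "singler": ["T cell", "B cell", "T cell"],
    "scanvi": ["T cell", "Monocyte", "B cell"],
}
metrics = disagreement_metrics(preds)
# Annotation concordance: percentage of cells on which all models agree
concordance = 100 * sum(unanimous for unanimous, _, _ in metrics) / len(metrics)
```

Cells with high entropy or a low majority fraction are the ones passed to the tiered resolution in Protocol 1.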

Core Experimental Protocol for Ground Truth Determination

Protocol 1: Hierarchical Resolution of Model Conflict

Objective: To resolve conflicting annotations through a tiered decision framework.

- Input Preparation: Generate cell type predictions for a single-cell RNA-seq dataset using at least three independent annotation tools (e.g., Seurat label transfer, SingleR, SCINA, scANVI).

- Disagreement Flagging: Identify all cells where there is not unanimous agreement among models. Calculate per-cell entropy (Table 1).

- Tier 1 - Consensus Filter: For cells with a majority consensus (e.g., 2 of 3 models agree), adopt the consensus label. If the dissenting model had high confidence, flag the majority call for expert review.

- Tier 2 - High-Confidence Override: For cells with no consensus, compare model confidence scores. Tools report confidence differently, so first rescale the scores to a comparable 0-1 range. If one model's score exceeds a predefined threshold (e.g., >0.95) while all others are low (<0.7), adopt that model's label.

- Tier 3 - Marker-Based Refereeing: For remaining conflicts, perform differential expression (DE) analysis. Compare the expression of canonical lineage or type-specific marker genes (from authoritative sources like CellMarker 2.0) for the candidate cell types. The annotation best supported by the cell's marker gene expression profile is adopted.

- Tier 4 - Unresolved Category Assignment: Cells unresolved after Tier 3 are assigned to an "Ambiguous" or "Novel" category for downstream experimental validation.
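As a minimal sketch, the four tiers of Protocol 1 can be written as a per-cell decision function. The sketch makes two assumptions. First, confidence scores have already been rescaled to a common 0-1 range. Second, a separate step supplies a Tier 3 marker-support score for each candidate label. The names `resolve_cell` and `marker_scores` are hypothetical. The protocol text does not specify two details, so they are design choices made here: Tier 1 requires a strict majority, and Tier 3 accepts a label only when its marker score is uniquely the best.

```python
from collections import Counter


def resolve_cell(labels, confidences, marker_scores=None, high=0.95, low=0.7):
    """Apply the four tiers of Protocol 1 to a single cell.

    labels: dict model -> predicted label.
    confidences: dict model -> confidence, assumed already rescaled to [0, 1].
    marker_scores: optional dict label -> marker-support score for this cell
        (hypothetical Tier 3 input, e.g. a mean z-score of canonical markers);
        labels missing from it are scored 0.0.
    Returns (final_label, tier, flag_for_review).
    """
    counts = Counter(labels.values())
    top_label, top_count = counts.most_common(1)[0]

    # Tier 1: strict majority consensus; flag if a dissenter was highly confident
    if top_count > len(labels) / 2:
        dissenters = [m for m, lab in labels.items() if lab != top_label]
        return top_label, 1, any(confidences[m] > high for m in dissenters)

    # Tier 2: exactly one model above `high` while every other model is below `low`
    confident = [m for m in labels if confidences[m] > high]
    if len(confident) == 1:
        if all(confidences[m] < low for m in labels if m != confident[0]):
            return labels[confident[0]], 2, False

    # Tier 3: marker-based refereeing; requires a uniquely best-supported label
    # (at least two candidate labels exist here because Tier 1 failed)
    if marker_scores:
        ranked = sorted(((marker_scores.get(lab, 0.0), lab) for lab in counts), reverse=True)
        if ranked[0][0] > ranked[1][0]:
            return ranked[0][1], 3, False

    # Tier 4: unresolved, sent to the "Ambiguous" bucket for experimental validation
    return "Ambiguous", 4, True


labels = {"seurat": "T cell", "singler": "NK cell", "scanvi": "B cell"}
conf = {"seurat": 0.97, "singler": 0.55, "scanvi": 0.60}
print(resolve_cell(labels, conf))  # ('T cell', 2, False)
```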

Visualization of the Diagnostic Workflow

Tiered Workflow for Resolving Model Conflict

Table 2: Essential Research Reagents & Solutions for Validation

| Item | Function & Relevance |
|---|---|
| 10x Genomics Feature Barcoding (e.g., Cell Surface Protein, CRISPR Screening) | Provides independent protein-level or perturbation-based cell identity data to adjudicate RNA-based model conflicts. |
| Multiplexed Fluorescence In Situ Hybridization (FISH) (e.g., RNAscope, MERFISH) | Enables spatial validation of predicted cell types and examination of contested cells in tissue context. |
| Validated Antibody Panels for Flow Cytometry/CITE-seq | Allows orthogonal protein expression profiling to confirm or refute transcriptomic annotations. |
| Reference Atlases with Linked Epigenomics (e.g., ENCODE, Roadmap Epigenomics) | Provides chromatin accessibility data to assess whether promoter/enhancer regions of marker genes are open in contested cells. |
| Cell Type-Specific Reporter Lines or Perturbation Vectors (CRISPRi/a) | Functional tools to isolate or manipulate predicted cell populations for phenotypic validation. |

Advanced Protocol: Iterative Integration with Experimental Validation

Protocol 2: Iterative Closed-Loop Refinement

Objective: To use model disagreements to drive targeted experiments, creating a self-improving annotation system.

- Identify Candidate Novel Populations: Apply Protocol 1. Isolate cells assigned to the "Ambiguous/Novel" category (Tier 4 output) via FACS or computational selection.

- Targeted Molecular Assay: Perform deep, targeted RNA-seq or ATAC-seq on these isolated cells to obtain high-quality molecular profiles.

- Differential Analysis & Marker Discovery: Compare deep profiles to all resolved cell types. Identify unique marker genes and regulatory elements.

- Reference Atlas Augmentation: Integrate these new high-confidence profiles and their validated markers as a new "cell type" entry in the project's custom reference atlas.

- Model Retraining & Re-annotation: Retrain the integrated models (e.g., scANVI) on the augmented reference and re-annotate the original dataset. The previously conflicting cells should now be assigned with higher confidence. Any cells that remain ambiguous re-enter the loop.

Closed-Loop Iterative Refinement of Ground Truth

Handling Low-Quality Cells and Doublets in an Integrated Framework

Cell type annotation in single-cell RNA sequencing (scRNA-seq) is a cornerstone of modern genomics. It is crucial for understanding tissue heterogeneity and disease mechanisms and for discovering therapeutic targets. A robust multi-model annotation strategy depends on high-quality input data. Two kinds of artifacts introduce severe noise. Low-quality cells have compromised RNA content. Doublets/multiplets are two or more cells captured within a single droplet or well. Both lead to misannotation, spurious cluster formation, and erroneous biological conclusions. Handling these artifacts is therefore not merely a preprocessing step but an integral component of the analytical framework. It ensures that downstream models (reference-based, marker-based, or deep learning) operate on faithful biological signals.

Quantitative Metrics for Identifying Artifacts

Low-Quality Cell Indicators

Low-quality cells often result from apoptosis, necrosis, or mechanical stress. They are identified via thresholds on the following metrics, typically visualized in violin plots.

Table 1: Key Metrics for Low-Quality Cell Identification

| Metric | Description | Typical Exclusion Threshold (3' scRNA-seq) | Likely Cause |
|---|---|---|---|
| Unique Gene Count (nFeature_RNA) | Number of unique genes detected per cell. | < 500-1,000 (lower bound) | Loss of cytoplasmic RNA. |
| Total UMI Count (nCount_RNA) | Total number of transcripts (UMIs) per cell. | < 1,000-2,000 (lower bound) | Technical failure or dead cell. |
| Mitochondrial Gene Percentage (percent.mt) | Percentage of counts mapping to mitochondrial genes. | > 10-20% (upper bound) | Cellular stress/apoptosis. |
| Ribosomal Protein Gene Percentage (percent.rb) | Percentage of counts from ribosomal protein genes. | Extremely high or low values | Altered metabolic state. |
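The thresholds in Table 1 translate into simple per-cell flags. The sketch below assumes that each cell's counts are held as a plain gene-to-UMI mapping. It also assumes that mitochondrial genes carry an `MT-` prefix, which is human naming; matching is case-insensitive, so the mouse `mt-` convention is also caught. The defaults are the lenient ends of the table's ranges and are placeholders, not recommendations. In practice, Seurat or Scanpy compute these metrics on sparse matrices. The function name `qc_flags` is hypothetical.

```python
def qc_flags(counts, min_genes=500, min_umis=1000, max_pct_mt=20.0, mt_prefix="MT-"):
    """Flag low-quality cells using the Table 1 metrics.

    counts: dict cell_id -> dict gene -> UMI count (assumed layout).
    Returns dict cell_id -> list of failed metrics (an empty list means the cell passes).
    """
    flags = {}
    for cell, genes in counts.items():
        n_umi = sum(genes.values())                        # nCount_RNA
        n_genes = sum(1 for v in genes.values() if v > 0)  # nFeature_RNA
        # Case-insensitive prefix match, so "MT-" also catches mouse "mt-" genes
        mt_umi = sum(v for g, v in genes.items() if g.upper().startswith(mt_prefix.upper()))
        pct_mt = 100.0 * mt_umi / n_umi if n_umi else 0.0  # percent.mt
        failed = []
        if n_genes < min_genes:
            failed.append("nFeature_RNA")
        if n_umi < min_umis:
            failed.append("nCount_RNA")
        if pct_mt > max_pct_mt:
            failed.append("percent.mt")
        flags[cell] = failed
    return flags


# Toy cells: one healthy, one with high mitochondrial load, one near-empty droplet
cells = {
    "healthy": {f"G{i}": 2 for i in range(600)},
    "stressed": {**{f"G{i}": 2 for i in range(600)}, "MT-CO1": 600},
    "empty": {f"G{i}": 1 for i in range(100)},
}
flags = qc_flags(cells)
keep = [cell for cell, failed in flags.items() if not failed]
```

Keeping the list of failed metrics, rather than a single pass/fail bit, makes it easy to see which threshold drives most exclusions before the thresholds are tuned.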

Doublet/Multiplet Indicators

Doublets often show anomalously high gene and UMI counts and may co-express mutually exclusive marker genes (e.g., the T-cell marker CD3E together with the B-cell marker CD79A).

Table 2: Strategies for Doublet Detection

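| Method | Principle | Implementation | Key Output |
|---|---|---|---|
| Expected Doublet Rate | Theoretical rate based on the number of cells loaded/recovered. | Roughly 0.8-1% per 1,000 recovered cells (10x Genomics; chemistry-dependent). | Baseline for filtering. |
| Scrublet | Simulates doublets in silico by combining random pairs of observed cells, then scores each cell by the density of simulated doublets among its nearest neighbors. | `scrublet.Scrublet()` followed by `scrub_doublets()` | Doublet score and predicted doublet call per cell. |
| DoubletFinder | Artificial nearest-neighbor classification: the proportion of artificial doublets among each cell's nearest neighbors (pANN). | `doubletFinder_v3()` | pANN score and doublet/singlet classification. |
| Demuxlet (for SNP data) | Uses genotype (SNP) information to assign cells from multiplexed, genetically distinct samples. | demuxlet | Best-guess sample identity and singlet/doublet call. |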
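To make Table 2 concrete, the sketch below does two things. First, it estimates an expected doublet count from a rate that grows linearly with cell number. Second, it computes a toy version of DoubletFinder's pANN statistic. Artificial doublets are created by averaging random pairs of real profiles. Each real cell is then scored by the fraction of artificial doublets among its k nearest neighbors. This is a simplified illustration under stated assumptions, not the DoubletFinder implementation. It skips normalization and PCA, uses Euclidean distance on raw values, and takes a placeholder rate of 0.8% per 1,000 cells. The function names are hypothetical.

```python
import math
import random


def expected_doublets(n_cells, rate_per_1000=0.008):
    """Expected doublet count, assuming the rate grows linearly with cell number.
    rate_per_1000 is a placeholder; take the value from your platform's documentation."""
    rate = rate_per_1000 * n_cells / 1000
    return round(rate * n_cells)


def pann_scores(profiles, n_artificial=None, k=5, seed=0):
    """Toy pANN: fraction of artificial doublets among each real cell's k nearest
    neighbours (Euclidean distance on raw profiles; no normalization or PCA)."""
    rng = random.Random(seed)
    n = len(profiles)
    n_artificial = n_artificial or n
    artificial = []
    for _ in range(n_artificial):
        a, b = rng.sample(range(n), 2)  # two distinct real cells
        artificial.append([(x + y) / 2 for x, y in zip(profiles[a], profiles[b])])
    # Real cells occupy the first n slots of the pool, artificial doublets the rest
    pool = [(p, False) for p in profiles] + [(p, True) for p in artificial]
    scores = []
    for i, cell in enumerate(profiles):
        dists = [(math.dist(cell, other), is_art)
                 for j, (other, is_art) in enumerate(pool) if j != i]
        dists.sort(key=lambda t: t[0])  # stable sort: ties keep pool order
        scores.append(sum(is_art for _, is_art in dists[:k]) / k)
    return scores


# Toy data: 10 identical "A" cells, 10 identical "B" cells, and one A+B doublet
profiles = [[10.0, 0.0]] * 10 + [[0.0, 10.0]] * 10 + [[5.0, 5.0]]
scores = pann_scores(profiles, n_artificial=100, k=5)
```

The heterotypic doublet sits where averaged A+B profiles land, so its neighborhood is dominated by artificial doublets. The singlets are surrounded by other real cells of their own type. Homotypic doublets (two cells of the same type) resemble singlets and are largely invisible to this kind of approach.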

Integrated Protocol: An End-to-End Workflow

This protocol integrates quality control (QC) and doublet removal into a Seurat-based pipeline, ensuring seamless preparation for multi-model annotation.

Protocol 3.1: Integrated Filtering and Doublet Detection Workflow

I. Initial Processing and QC Metric Calculation

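- Load Data: Create a Seurat object (`CreateSeuratObject`) from the raw count matrix. Retain all genes and cells initially.

- Calculate QC Metrics: Compute the percentage of mitochondrial counts per cell, e.g., `seurat_obj[["percent.mt"]] <- PercentageFeatureSet(seurat_obj, pattern = "^MT-")` for human data (`"^mt-"` for mouse). nFeature_RNA and nCount_RNA are computed automatically when the object is created.

- Visualize Metrics: Use `VlnPlot(seurat_obj, features = c("nFeature_RNA", "nCount_RNA", "percent.mt"))` to assess the distributions.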

II. Knee-Plot & Threshold Determination

- Strategy: Plot nFeature_RNA against nCount_RNA (e.g., with Seurat's `FeatureScatter`). Low-quality cells often appear as a "cloud" below the main distribution. For additional context, use the DropletUtils package (`barcodeRanks()`) on the unfiltered barcode matrix to generate a barcode rank plot and identify its knee and inflection points.

- Apply Thresholds: Filter cells based on Table 1. Thresholds are experiment-specific.
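For a quick sanity check, the knee of a barcode rank plot can be approximated without DropletUtils. The sketch below uses a swapped-in heuristic. It takes the point on the log-log rank curve that lies farthest from the chord joining the curve's endpoints. This is not the algorithm `barcodeRanks()` uses. `knee_point` is a hypothetical name. The function expects per-barcode UMI totals from the unfiltered matrix, with at least two barcodes that have nonzero counts.

```python
import math


def knee_point(umi_totals):
    """Approximate knee of a barcode rank plot (heuristic, not DropletUtils).

    On the log10(rank) vs log10(total UMI) curve, return the barcode whose point
    lies farthest from the straight line joining the first and last points.
    Returns (rank, umi_total_at_knee).
    """
    totals = sorted((t for t in umi_totals if t > 0), reverse=True)
    xs = [math.log10(r) for r in range(1, len(totals) + 1)]
    ys = [math.log10(t) for t in totals]
    x0, y0, x1, y1 = xs[0], ys[0], xs[-1], ys[-1]
    norm = math.hypot(x1 - x0, y1 - y0)
    best_i, best_d = 0, -1.0
    for i, (x, y) in enumerate(zip(xs, ys)):
        # Perpendicular distance from (x, y) to the chord through the endpoints
        d = abs((y1 - y0) * x - (x1 - x0) * y + x1 * y0 - y1 * x0) / norm
        if d > best_d:
            best_i, best_d = i, d
    return best_i + 1, totals[best_i]


# Toy barcodes: 1,000 cell-containing droplets and 9,000 near-empty droplets
rank, umi_cutoff = knee_point([5000] * 1000 + [50] * 9000)
```

Real rank curves are smoother than this toy example, so the heuristic knee should be treated as context for choosing thresholds rather than as a threshold in itself.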

III. Doublet Detection and Removal (Post-Normalization)

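- Normalize and Scale: Perform standard log-normalization and scaling on the filtered object.

- Dimensionality Reduction: Run PCA (`RunPCA`).

- Run DoubletFinder: Choose the pK parameter with a parameter sweep (`paramSweep_v3()`, `summarizeSweep()`, `find.pK()`). Estimate the expected number of doublets (nExp) from the expected doublet rate (Table 2). Then run `doubletFinder_v3()` on the selected principal components. Run it separately for each sample or capture lane rather than on aggregated data.

- Remove Predicted Doublets: Subset the object to retain only cells classified as "Singlet".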

IV. Final Clean Dataset for Annotation

The resulting object is now primed for clustering (FindNeighbors, FindClusters, RunUMAP) and subsequent multi-model annotation using tools like SingleR, scType, or scANVI.

Integrated QC and Doublet Removal Workflow

The Scientist's Toolkit: Essential Reagents & Software

Table 3: Key Research Reagent Solutions & Computational Tools

| Item | Function/Description | Example Product/Software |
|---|---|---|
| Viability Stain | Distinguishes live from dead cells before library preparation. | AO/PI, DAPI, 7-AAD, Trypan Blue. |
| Cell Hashtag Oligos (HTOs) | Label samples with oligo-tagged antibodies for multiplexing, enabling post-hoc identification of cross-sample doublets from hashtag counts. | BioLegend TotalSeq-A/B/C antibodies. |
| Single Cell 3' Reagent Kits | Generate barcoded scRNA-seq libraries. | 10x Genomics Chromium Next GEM. |
| scRNA-seq Analysis Suite | Comprehensive toolkit for QC, analysis, and visualization. | Seurat (R) or Scanpy (Python). |
| Doublet Detection Software | Algorithmically identifies doublets from expression data. | DoubletFinder, Scrublet. |
| Reference Atlas | High-quality, annotated dataset for reference-based annotation. | Human Cell Landscape (HCL), Mouse Cell Atlas (MCA). |

Logical Framework within Multi-Model Annotation Strategy

The handling of artifacts is the critical first layer in a multi-layered, consensus annotation strategy. Clean data feeds into parallel annotation models whose results are integrated for a final, robust call.

QC as Foundation for Multi-Model Annotation

Advanced Considerations & Validation Protocol

Protocol 6.1: Experimental Validation of Doublets via Sample Multiplexing

This protocol uses Cell Hashing with HTOs to ground-truth doublet detection algorithms.

Materials: TotalSeq hashtag antibodies, cell multiplexing pool, scRNA-seq kit with feature barcoding capability.

Procedure:

- Label Cells: Incubate cells from up to 12 different samples with unique Hashtag antibodies.

- Pool and Load: Pool all labeled samples into a single suspension and load onto the Chromium chip.

- Sequencing: Run with standard gene expression and HTO library preparation.

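- HTO Demultiplexing: Use `HTODemux()` in Seurat to classify cells by sample origin.

- Identify Inter-Sample Doublets: Cells with high counts for more than one HTO (classified as "Doublet") serve as ground-truth doublets. Doublets formed by two cells from the same sample carry a single hashtag and cannot be detected this way.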

- Benchmark: Compare algorithm-predicted doublets (from Protocol 3.1, Step III) against these ground-truth doublets to calculate precision/recall and optimize algorithm parameters.
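The benchmarking step reduces to set comparisons between predicted doublets and hashtag-derived doublets. The sketch below is minimal. It assumes both are available as sets of cell barcodes and that the algorithm emits a per-cell doublet score. `benchmark_doublets` and `best_threshold` are hypothetical helpers. Hashing only reveals inter-sample doublets. A predicted doublet that is genuinely a same-sample doublet therefore counts as a false positive, so the measured precision is a lower bound.

```python
def benchmark_doublets(predicted, ground_truth):
    """Precision, recall and F1 of predicted doublets vs. hashtag-derived doublets.
    Both arguments are sets of cell barcodes."""
    tp = len(predicted & ground_truth)  # called by the algorithm and confirmed by hashing
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(ground_truth) if ground_truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


def best_threshold(scores, ground_truth, thresholds):
    """Return (threshold, F1) for the score cutoff with the highest F1."""
    best = None
    for t in thresholds:
        predicted = {cell for cell, s in scores.items() if s >= t}
        f1 = benchmark_doublets(predicted, ground_truth)[2]
        if best is None or f1 > best[1]:
            best = (t, f1)
    return best


# Toy example with hypothetical barcodes and doublet scores
p, r, f1 = benchmark_doublets({"a", "b", "c", "d"}, {"b", "c", "e"})
scores = {"a": 0.9, "b": 0.8, "c": 0.3, "d": 0.1, "e": 0.7}
print(best_threshold(scores, {"a", "b", "e"}, [0.2, 0.5, 0.85]))  # (0.5, 1.0)
```

Before comparing, restrict both sets to the same cells, for example by excluding cells that HTODemux classifies as "Negative".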

Within multi-model integration strategies for cell type annotation, running several algorithms (such as SingleR, scCATCH, Seurat, and SCINA) is computationally intensive. Optimizing resources and runtime is critical for scalability and reproducibility in atlas-scale studies. These notes outline application notes and protocols for achieving this optimization.

Table 1 summarizes indicative computational characteristics of common single-cell annotation tools for a dataset of roughly 10,000 cells and shows how heterogeneous their resource demands are. The figures are approximate. Actual runtime and memory use depend on hardware, reference size, and parameter settings.

Table 1: Computational Characteristics of Selected Cell Annotation Algorithms

| Algorithm | Typical Runtime (10k cells) | Recommended RAM | CPU Cores Utilized | Parallelization Support | Key Computational Bottleneck |
|---|---|---|---|---|---|
| SingleR (Reference-based) | 2-5 minutes | 8-16 GB | 1 (multi-core for ref) | Yes (cell-level) | Reference correlation matrix calculation |
| Seurat (Cluster + Marker) | 15-30 minutes | 16-32 GB | Multiple | Yes (integrated analysis) | PCA, clustering, differential expression |
| scCATCH (Marker-based) | 1-3 minutes | 4-8 GB | 1 | Limited | Tissue-specific marker database lookup |
| SCINA (Signature-based) | 1-2 minutes | 4-8 GB | 1 | No | Semi-supervised model fitting |
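One way to act on the RAM column of Table 1 is to group tools into concurrent "waves" that fit a machine's memory budget. Each wave runs in parallel (for example, as separate R processes), and the waves run one after another. The sketch below uses first-fit decreasing bin packing, a swapped-in heuristic rather than a method from the source. `schedule_waves` is a hypothetical name. The RAM figures are the upper ends of the table's ranges.

```python
def schedule_waves(ram_gb, budget_gb):
    """Group tools into waves whose summed RAM need fits within budget_gb.

    First-fit decreasing: tools are sorted by RAM need (largest first) and each
    is placed into the first existing wave with enough headroom.
    """
    waves = []  # each wave is [used_gb, [tool, ...]]
    for tool, need in sorted(ram_gb.items(), key=lambda kv: kv[1], reverse=True):
        if need > budget_gb:
            raise ValueError(f"{tool} needs {need} GB, above the {budget_gb} GB budget")
        for wave in waves:
            if wave[0] + need <= budget_gb:
                wave[0] += need
                wave[1].append(tool)
                break
        else:  # no existing wave had room, so open a new one
            waves.append([need, [tool]])
    return [tools for _, tools in waves]


# Upper ends of the "Recommended RAM" column in Table 1
ram = {"SingleR": 16, "Seurat": 32, "scCATCH": 8, "SCINA": 8}
print(schedule_waves(ram, budget_gb=32))  # [['Seurat'], ['SingleR', 'scCATCH', 'SCINA']]
```

With a 32 GB budget, Seurat runs alone and the three lighter tools share a second wave. Raising the budget to 64 GB places all four in one wave. CPU cores could be treated the same way, as a second capacity dimension.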