Advanced Strategies for Novel and Rare Cell Type Annotation: From LLMs to Spatial Mapping

Abstract

Accurately annotating novel and rare cell populations remains a significant challenge in single-cell RNA sequencing analysis. This article provides a comprehensive guide for researchers and drug development professionals, exploring the evolution from traditional marker-based methods to cutting-edge computational approaches. We cover foundational concepts of cell identity, evaluate emerging methods like large language models (LLMs) and graph neural networks, address troubleshooting for low-heterogeneity datasets, and establish validation frameworks for annotation reliability. By synthesizing the latest advancements in AI-powered annotation tools and spatial transcriptomics integration, this resource aims to equip scientists with practical strategies to overcome annotation bottlenecks and accelerate discoveries in cellular biology and therapeutic development.

Defining Cell Identity: From Traditional Concepts to Modern Single-Cell Paradigms

The definition of a "cell type" has undergone a profound evolution, transitioning from historical classifications based solely on morphology and location to a modern, multimodal understanding that integrates molecular, functional, and spatial characteristics. This paradigm shift is largely driven by the advent of single-cell technologies, particularly single-cell RNA sequencing (scRNA-seq), which have revealed an unprecedented degree of cellular heterogeneity within tissues once considered uniform [1]. For researchers investigating novel or rare cell populations, this new framework is critical. Accurate cell type annotation serves as the foundational step for understanding cellular function in health and disease, deciphering disease mechanisms, and identifying novel therapeutic targets [1] [2].

The central challenge in modern cell type annotation lies in synthesizing information from various modalities (morphology, marker genes, and transcriptomic states) into a coherent and biologically meaningful definition. While traditional biologists defined cell types based on morphology (e.g., eosinophil granulocytes) and physiology, the advent of antibody labeling introduced surface markers as a key identifier [3]. Today, in the era of single-cell biology, cell identity is understood as a dynamic interplay of these factors, where transcriptomic profiles can reveal not only established types but also novel cell types, transitional states, and disease-associated alterations [3]. This guide provides an in-depth technical overview of the evolving definition of cell type, framing it within the practical context of annotating novel and rare cell populations for research and drug discovery.

The Historical Perspective: From Morphology to Molecular Markers

The journey to define cell types began with visible characteristics. Morphology and location were the primary criteria; neurons were classified based on the structure of their dendrites and axons, while glial cells were categorized by their physical appearance and anatomical position in the nervous system [1] [3]. This perspective was complemented by physiological roles, such as designating a cell as a "stem cell" based on its function rather than its molecular makeup.

The field transformed with the ability to detect specific proteins. Antibody-based labeling of cell surface and intracellular markers enabled a higher-resolution classification. This period established the critical concept of canonical markers: genes, or the proteins they encode, whose expression reliably defines a specific cell lineage or type, such as PECAM1 for endothelial cells [3]. Although powerful, this approach was limited by the availability and specificity of antibodies and offered a relatively static view of cellular identity. It lacked the capacity to capture the full molecular complexity underlying cellular function or to easily discover entirely new cell categories.

The Single-Cell Revolution: Transcriptomic States and Cellular Heterogeneity

The development of scRNA-seq marked a watershed moment, moving cell typing from a pre-defined, protein-centric view to an unsupervised, genome-wide profiling of cellular identities. This technology allows for the high-resolution molecular profiling of individual cells, revealing cellular heterogeneity, lineage dynamics, and disease-associated states that are invisible to bulk measurement techniques [1].

Large-scale brain-mapping initiatives like the NIH’s BRAIN Initiative have identified hundreds of novel cell types, yet their functional roles in health and disease often remain uncharacterized [1]. scRNA-seq facilitates the discovery of novel cell types based on distinct transcriptomic signatures and the delineation of cell states—transient, often reversible conditions such as activation, stress, or metabolic phases—within a single cell type [3]. Furthermore, it enables the reconstruction of developmental trajectories, allowing researchers to map the progression from a progenitor to a mature cell type using computational methods like trajectory and pseudotime analysis [3].

Table 1: Key Single-Cell Technologies for Defining Transcriptomic States

| Technology | Key Principle | Application in Cell Typing | Considerations |
|---|---|---|---|
| scRNA-seq [1] | Captures the transcriptome of individual cells using droplet-based microfluidics and molecular barcoding. | Identifying novel cell types, characterizing cellular heterogeneity, and analyzing differential gene expression. | Requires fresh tissue; can be costly for very large numbers of cells. |
| snRNA-seq [1] | Sequences RNA from the nuclei of individual cells. | Analyzing frozen or archived post-mortem tissues, particularly effective for complex cell types like neurons. | May lack certain cytoplasmic transcripts, but reliably replicates scRNA-seq findings. |
| Spatial Transcriptomics [1] | Determines gene expression profiles while retaining the spatial coordinates of cells within a tissue. | Mapping the spatial organization of cell types identified by scRNA-seq and understanding tissue microarchitecture. | Resolves the loss of spatial context inherent in dissociated scRNA-seq. |
| Multi-omics Integration [1] | Combines scRNA-seq with other data types, such as proteomics, chromatin accessibility (ATAC-seq), or electrophysiology. | Provides a more comprehensive view of cellular identity by linking transcriptome to function, morphology, and epigenome. | Technically complex and requires advanced computational integration methods. |

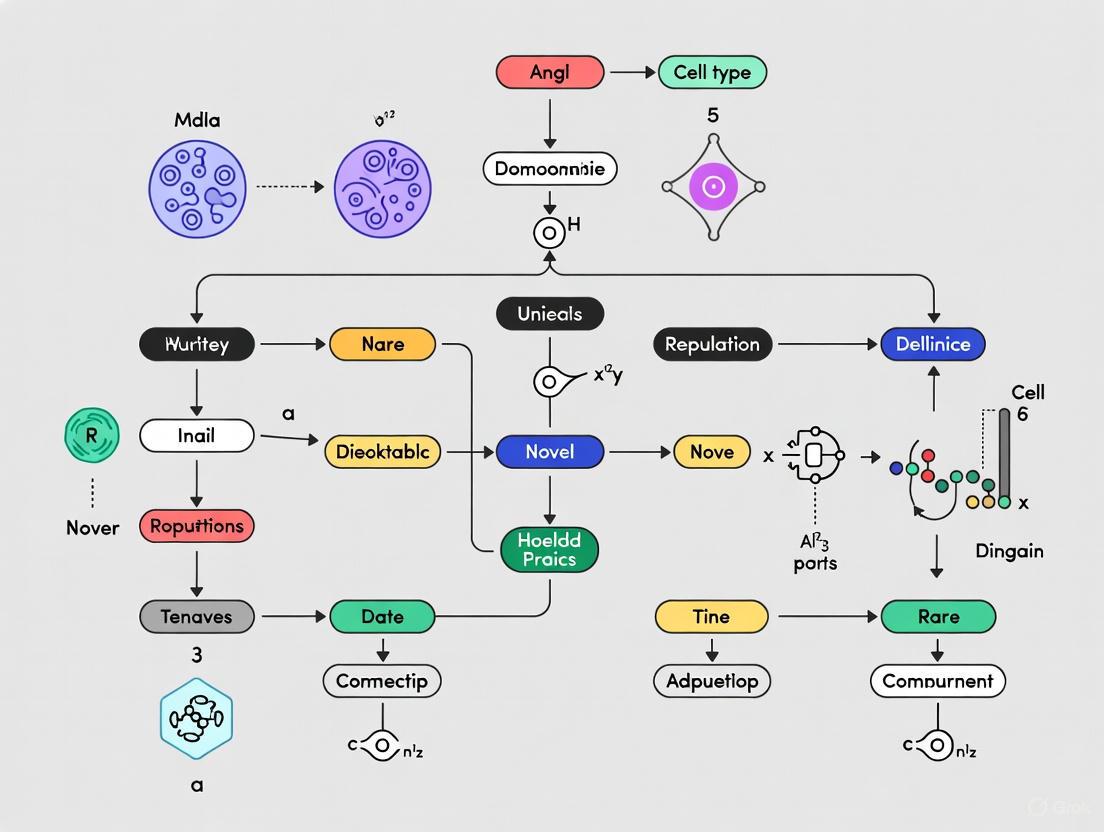

The following workflow diagram illustrates how these single-cell technologies are integrated in a typical study to define cell types, from sample preparation to final annotation.

A Multimodal Framework: Integrating Data for Confident Annotation

The most robust and modern definitions of cell type emerge from the integration of multiple data modalities. Relying on a single data type, such as transcriptomics alone, can lead to misclassification or an incomplete understanding of a cell's identity. A multimodal framework leverages the complementary strengths of each data type to create a definitive classification.

Initiatives like the Allen Institute's Brain Cell Atlas exemplify this approach. They characterize cell types using a combination of single-cell transcriptomics, DNA methylation patterns, cellular morphology and projections, patch-seq (linking transcriptomics with electrophysiology), and inter-areal circuit mapping [4]. This integration connects molecular signatures with cellular function and spatial context, refining our understanding of brain circuits and functional organization [1] [4].

A powerful application of this integrated approach is predicting one cellular characteristic from another. For instance, MorphDiff is a transcriptome-guided latent diffusion model that simulates high-fidelity cell morphological responses to drug or genetic perturbations [5]. By using the perturbed L1000 gene expression profile as a condition, it can generate the corresponding cell morphology images, demonstrating a tangible link between transcriptomic and morphological states. This is particularly valuable for phenotypic drug discovery, as it allows for in-silico prediction of morphological changes under thousands of unseen perturbations, accelerating Mechanism of Action (MOA) identification [5].

Table 2: Core Components of a Multimodal Cell Type Definition

| Modality | Description | Contribution to Cell Type Definition | Example Tools/Assays |
|---|---|---|---|
| Transcriptomics | Genome-wide measurement of gene expression. | Provides the primary molecular signature for unsupervised clustering and identification of novel types. | scRNA-seq, snRNA-seq, Spatial Transcriptomics (MERFISH) [1] [4]. |
| Morphology | Quantitative analysis of a cell's physical structure and shape. | Offers a direct, visual correlate of cellular identity and state, often linked to function. | Cell Painting [5], fluorescence microscopy, computational image analysis (CellProfiler, DeepProfiler) [5]. |
| Proteomics & Surface Markers | Detection and quantification of proteins, especially cell surface antigens. | Enables validation, sorting, and functional characterization of populations identified by transcriptomics. | Flow cytometry, mass cytometry (CyTOF), immunohistochemistry. |
| Epigenomics | Profiling of chromatin accessibility and DNA methylation states. | Reveals the regulatory landscape that controls gene expression and defines lineage potential. | scATAC-seq, DNA methylation sequencing. |
| Electrophysiology | Measurement of the electrical properties of cells. | Critically defines functional identity for excitable cells like neurons and cardiomyocytes. | Patch-seq [1] [4]. |
| Spatial Context | Locating a cell within the architecture of a tissue. | Identifies cellular niches and interactions, crucial for understanding tissue function. | Spatial transcriptomics, MERSCOPE [4], in situ hybridization. |

Methodologies and Best Practices for Cell Type Annotation

The Annotation Workflow

Assigning cell type identities to clusters derived from scRNA-seq data is a central challenge. A robust, multi-step process is recommended to ensure accuracy and biological relevance [3].

- In-depth Preprocessing: This foundational step involves rigorous quality control to filter out low-quality cells or doublets, batch effect correction to mitigate technical variation, and preliminary clustering to group cells with similar transcriptomic profiles [3].

- Reference-based Annotation: Cell clusters are mapped to known cell types using established reference datasets and atlases. Tools such as SingleR or Azimuth can provide annotations at different levels of resolution [3]. It is considered a best practice to use multiple reference datasets to generate a robust consensus annotation.

- Manual Refinement: Automated methods require refinement through expert curation. This involves verifying the expression patterns of canonical marker genes, performing differential gene expression analyses to detect unique signatures, and consulting relevant literature. At this stage, the researcher's biological expertise is crucial for interpreting ambiguous clusters or edge cases [3].
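The consensus step in reference-based annotation can be sketched as a simple majority vote. This is a minimal illustration only: the function name `consensus_label`, the `min_agreement` threshold, and the per-cluster calls are hypothetical and are not taken from SingleR, Azimuth, or any cited tool.

```python
from collections import Counter

def consensus_label(labels, min_agreement=0.5):
    """Return the majority label across references, or 'ambiguous'.

    `labels` holds one call per reference dataset for a single cluster.
    `min_agreement` is an illustrative threshold, not a published default.
    """
    counts = Counter(labels)
    label, votes = counts.most_common(1)[0]
    # Require more than `min_agreement` of the references to agree.
    if votes / len(labels) > min_agreement:
        return label
    return "ambiguous"

# Hypothetical calls from three reference atlases for three clusters.
calls = {
    "cluster_0": ["T cell", "T cell", "NK cell"],
    "cluster_1": ["B cell", "B cell", "B cell"],
    "cluster_2": ["Monocyte", "Dendritic cell", "Macrophage"],
}
consensus = {cluster: consensus_label(v) for cluster, v in calls.items()}
```

Clusters flagged as ambiguous are natural candidates for the manual refinement step, since disagreement between references may indicate either a poorly represented population or a genuinely novel one.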

Ensuring Reliability with Advanced Computational Tools

The reliability of annotation is paramount, especially for novel or rare populations. New computational tools are being developed to address the limitations of manual and purely reference-based methods.

- scSCOPE: This R-based platform addresses the lack of consistency in traditional differential expression analysis for marker gene identification. It utilizes stabilized LASSO feature selection, bootstrapped co-expression networks, and pathway enrichments to identify reproducible and functionally relevant marker genes across multiple datasets [6].

- LICT (LLM-based Identifier for Cell Types): This tool leverages large language models (LLMs) to provide an objective, reference-free framework for annotation. LICT employs a multi-model integration strategy, a "talk-to-machine" iterative feedback loop, and an objective credibility evaluation based on marker gene expression within the input dataset. This approach has been shown to reduce mismatch rates and provide greater annotation confidence, particularly in challenging low-heterogeneity datasets where expert annotation can be variable [2].
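The idea of an objective credibility evaluation based on marker gene expression can be sketched as follows. This is not LICT's implementation: the function name `marker_credibility`, the default thresholds (`min_fraction`, `min_markers`), and the toy counts are assumptions chosen for illustration.

```python
def marker_credibility(expression, markers, min_fraction=0.8, min_markers=4):
    """Check whether a proposed annotation is supported by the data.

    expression: dict mapping gene -> list of counts, one per cell in the cluster.
    markers: marker genes proposed for the candidate cell type.
    Returns (is_credible, supported_markers). Thresholds are illustrative.
    """
    supported = []
    for gene in markers:
        counts = expression.get(gene)
        if not counts:
            continue  # gene not measured in this dataset
        # Fraction of cells in the cluster with any detected expression.
        fraction = sum(1 for c in counts if c > 0) / len(counts)
        if fraction >= min_fraction:
            supported.append(gene)
    return len(supported) >= min_markers, supported

# Toy cluster of five cells with hypothetical counts.
cluster_expr = {
    "CD3D": [1, 2, 1, 3, 1],
    "CD3E": [1, 0, 2, 1, 1],
    "CD2":  [0, 0, 1, 1, 1],
    "IL7R": [2, 1, 1, 1, 0],
    "TRAC": [1, 1, 1, 1, 1],
}
credible, supported = marker_credibility(
    cluster_expr, ["CD3D", "CD3E", "CD2", "IL7R", "TRAC"]
)
```

Because the check uses only the input dataset, it can be applied to any annotation source, including expert labels, which is what makes it usable as a reference-free reliability signal.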

The diagram below illustrates the innovative "talk-to-machine" strategy used by LICT for reliable, iterative cell type annotation.

Success in characterizing novel cell types depends on leveraging a suite of open-access data resources, analytical tools, and experimental reagents.

Table 3: Essential Research Reagent Solutions for Cell Type Research

| Item | Function/Description | Example Use Case |
|---|---|---|
| Reference Atlases | Large, publicly available datasets that serve as a ground truth for annotation. | Aligning novel scRNA-seq data to established types in the Allen Brain Cell Atlas [4] or Human Cell Atlas. |
| Annotation Software | Computational tools for classifying cell clusters. | Using Seurat, SingleR, or Azimuth for reference-based annotation, and LICT [2] or scSCOPE [6] for enhanced reliability. |
| Cell Lines & Model Organisms | Genetically tractable systems for functional validation. | Using C. elegans [7] or humanized mouse models [8] to study the role of a gene in a specific cell type. |
| Validated Antibodies | Reagents for detecting protein markers identified via transcriptomics. | Confirming surface protein expression on a putative novel immune cell type via flow cytometry. |
| Perturbation Tools | Methods for altering gene function (CRISPR, RNAi) to test hypotheses. | Determining if the loss of a gene disrupts the development or function of a specific neuronal cell type. |
| Functional Assay Kits | Assays for measuring specific cellular behaviors (proliferation, metabolism). | Characterizing the functional state of a rare cell population isolated from a tumor. |

The definition of a cell type has evolved from a simple, morphology-based label to a complex, multidimensional identity integrating transcriptomic, proteomic, spatial, and functional data. This refined understanding is fundamental for researching novel and rare cell populations, as it provides a robust framework for their annotation and functional characterization. The future of cell typology will be shaped by the continued development of high-throughput multimodal technologies, more sophisticated and integrated computational models like MorphDiff [5], and the creation of comprehensive, standardized reference atlases across tissues, developmental stages, and disease states.

For the research community, this means that best practices must now involve a combinatorial approach. No single method is sufficient. Instead, confidence in cell type identification is achieved by converging evidence from transcriptomic clustering, marker gene validation, spatial localization, and functional assessment. As these tools and datasets become more accessible, they are likely to accelerate the discovery of novel cell types involved in disease and open new therapeutic opportunities in drug development.

The identification and characterization of novel cell populations represents a fundamental challenge and opportunity in single-cell biology. As single-cell RNA-sequencing (scRNA-seq) technologies have matured, they have revealed an unprecedented view of cellular heterogeneity within tissues and organs. The definition of a cell type itself remains complex, as cells can be categorized based on diverse phenotypic properties including molecular profiles, morphological features, physiological characteristics, and functional roles [9]. In practice, cell types are typically grouped based on shared properties that distinguish them from other cells, though establishing consistent boundaries between types remains challenging due to the continuous nature of some cellular states [9].

Within this framework, novel cell populations emerge through several paradigms: as established types previously masked by bulk analysis methods, as rare states occurring at low frequencies within larger populations, and as disease-specific subtypes that arise or become altered in pathological conditions. The resolution of single-cell technologies allows researchers to identify and characterize these novel populations in ways that were previously impossible with traditional bulk RNA-sequencing methods [10]. This technical guide explores these categories, their methodological requirements, and their implications for biomedical research and therapeutic development, framed within the critical context of cell type annotation for novel and rare cell population research.

Established Novel Cell Types: Uncovering Hidden Diversity

Established novel cell types represent populations with distinct molecular and functional characteristics that were previously unrecognized in tissue taxonomies. These populations are typically identified through unsupervised clustering of scRNA-seq data, where cells group based on transcriptional similarity, revealing previously hidden cellular diversity [10].

Methodological Approaches for Discovery

The primary method for discovering established novel cell types involves unsupervised clustering of scRNA-seq data, followed by differential expression analysis to identify marker genes that define each cluster [10] [9]. Additional validation through in situ hybridization or immunofluorescence confirms the spatial localization and distinct identity of these populations [11].
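The marker identification step can be illustrated with a minimal log fold-change ranking. This sketch assumes cluster labels are already available and uses toy values with placeholder gene names. Production analyses typically add a statistical test (for example a Wilcoxon rank-sum test) and multiple-testing correction, which are omitted here.

```python
import math
from statistics import mean

def rank_markers(expr, clusters, target, pseudocount=1.0):
    """Rank genes by log2 fold change of `target` cluster versus all other cells.

    expr: dict mapping gene -> list of normalized values, one per cell.
    clusters: list of cluster labels, aligned with the cell order in `expr`.
    """
    scores = {}
    for gene, values in expr.items():
        inside = [v for v, c in zip(values, clusters) if c == target]
        outside = [v for v, c in zip(values, clusters) if c != target]
        # The pseudocount avoids division by zero for unexpressed genes.
        ratio = (mean(inside) + pseudocount) / (mean(outside) + pseudocount)
        scores[gene] = math.log2(ratio)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Toy data: six cells in two clusters, three placeholder genes.
clusters = ["A", "A", "A", "B", "B", "B"]
expr = {
    "GeneX": [7, 7, 7, 1, 1, 1],        # enriched in cluster A
    "GeneY": [1, 1, 1, 7, 7, 7],        # enriched in cluster B
    "Housekeeping": [5, 5, 5, 5, 5, 5],  # uninformative
}
ranked = rank_markers(expr, clusters, target="A")
```

Ranking by effect size alone ignores variance and sample size, which is why the workflow table lists both statistical thresholds and effect size measures as considerations.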

Table 1: Experimental Workflow for Identifying Established Novel Cell Types

| Step | Method | Purpose | Key Considerations |
|---|---|---|---|
| 1. Data Generation | Single-cell RNA-sequencing | Comprehensive transcriptome profiling | Cell viability, sequencing depth, number of cells |
| 2. Clustering | Unsupervised algorithms (e.g., Leiden, Louvain) | Identify groups of transcriptionally similar cells | Resolution parameters, batch effects |
| 3. Marker Identification | Differential expression analysis | Find genes specific to each cluster | Statistical thresholds, effect size measures |
| 4. Annotation | Comparison to reference datasets | Preliminary cell type assignment | Context appropriateness, species differences |
| 5. Validation | In situ hybridization, Immunofluorescence | Spatial confirmation of novel types | Probe/antibody specificity, tissue preservation |

A compelling example comes from the mouse crista ampullaris, where scRNA-seq analysis revealed previously undefined support cell subtypes and transitional states during development. Researchers identified two distinct support cell clusters (Id1-high and Srxn1-high) with different developmental trajectories and proportional changes during maturation [11]. This discovery was enabled by the comprehensive profiling of individual cells across multiple developmental timepoints (E16, E18, P3, and P7), followed by trajectory analysis that positioned these populations along a differentiation continuum.

Bioinformatic Validation Strategies

Confirming novel cell types requires multiple lines of computational evidence:

- RNA velocity analysis to determine developmental trajectories

- Cross-species comparison to assess evolutionary conservation

- Gene set enrichment analysis to identify activated pathways and regulatory programs

- Integration with epigenomic data to confirm regulatory landscapes

These approaches collectively transform transcriptomic clusters into biologically meaningful cell types with distinct functional attributes and developmental relationships [11] [9].

Rare Cell Populations: Technical Challenges and Solutions

Rare cell populations are typically defined as representing less than 0.01% of the total cellular population [12]. Examples include circulating tumor cells, antigen-specific lymphocytes, hematopoietic stem cells, and circulating fetal cells in maternal blood [12]. These populations often possess critical functional importance despite their low frequency, making their detection and characterization essential for understanding tissue homeostasis, immune responses, and disease mechanisms.

Technical Limitations in Rare Cell Detection

The analysis of rare cell populations faces several significant challenges:

- Statistical limitations from insufficient event counts for robust analysis

- Background interference from more abundant cell types

- Marker complexity requiring multiple parameters for accurate identification

- Sample preparation artifacts that may preferentially lose rare populations

These challenges necessitate specialized methodological approaches to ensure rare populations are preserved, enriched, and accurately measured [12].

Advanced Technologies for Rare Cell Analysis

Table 2: Technical Solutions for Rare Cell Population Analysis

| Technology | Principle | Application | Benefits |
|---|---|---|---|
| Acoustic Focusing Flow Cytometry | Ultrasonic waves focus cells for laser interrogation | High-throughput analysis of rare cells | Increased acquisition speed, reduced clogging |
| Magnetic Enrichment | Antibody-conjugated beads bind surface markers | Pre-enrichment of target populations | 100-1000x concentration of rare cells |
| High-Parameter Panels | 10+ markers analyzed simultaneously | Precise identification of rare subsets | Improved specificity through multidimensional gating |
| Viability Preservation Reagents | Enhanced tissue dissociation protocols | Maintain cell integrity during preparation | Higher recovery of sensitive rare populations |

Advanced flow cytometry platforms employing acoustic focusing technology enable higher analysis speeds, allowing the processing of millions of cells to capture sufficient numbers of rare events for statistical significance [12]. This is particularly valuable when working with dilute samples such as cerebrospinal fluid or blood, where target cells may be both rare and limited in total sample volume.

Complementing these analytical advances, sample preparation methods have been optimized to preserve rare cell populations. Reagents such as Thermo Fisher Scientific's High-Yield Lyze and BD Biosciences' Horizon Dri Tumor & Tissue Dissociation Reagent specifically aim to maximize cell yields while minimizing the loss of rare populations during processing [12].

Disease-Specific Subtypes: Linking Cellular States to Pathology

Disease-specific subtypes represent cellular subpopulations that emerge or become altered in pathological conditions, offering insights into disease mechanisms and potential therapeutic targets. These subtypes may reflect cellular responses to disease, drivers of pathology, or resistance mechanisms to treatment.

Computational Frameworks for Subtype Identification

The identification of disease-specific subtypes requires specialized computational approaches that preserve heterogeneity while distinguishing disease-relevant features. The sc-linker framework integrates scRNA-seq data with epigenomic maps and genome-wide association study (GWAS) summary statistics to infer cell types and cellular processes through which genetic variants influence disease [13]. This method employs three types of gene programs: (1) cell-type-specific signatures, (2) disease-dependent signatures within cell types, and (3) cellular processes that vary within and/or across cell types [13].

Another innovative approach, PHet (Preserving Heterogeneity), uses iterative subsampling and differential analysis of interquartile range to identify features that maintain sample heterogeneity while distinguishing known disease states [14]. This method specifically addresses the limitation of conventional feature selection approaches that often prioritize discriminative features at the expense of heterogeneity, thereby masking biologically relevant subtypes [14].
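The core idea of scoring features by the difference in interquartile range between conditions, averaged over random subsamples, can be sketched as below. This is a heavily simplified illustration and not the PHet algorithm itself: the function names, the subsampling fraction, the iteration count, and the toy values are all assumptions.

```python
import random
from statistics import mean, quantiles

def iqr(values):
    """Interquartile range using the statistics module's default quartiles."""
    q1, _, q3 = quantiles(values, n=4)
    return q3 - q1

def subsampled_delta_iqr(control, case, n_iter=50, fraction=0.8, seed=0):
    """Average |IQR(case) - IQR(control)| per feature over random subsamples.

    control, case: dicts mapping feature -> list of values per sample.
    """
    rng = random.Random(seed)
    scores = {}
    for feature in control:
        deltas = []
        for _ in range(n_iter):
            k_ctrl = max(2, int(len(control[feature]) * fraction))
            k_case = max(2, int(len(case[feature]) * fraction))
            ctrl_sub = rng.sample(control[feature], k_ctrl)
            case_sub = rng.sample(case[feature], k_case)
            deltas.append(abs(iqr(case_sub) - iqr(ctrl_sub)))
        scores[feature] = mean(deltas)
    return scores

# Toy data: "bimodal" splits the case group into two hidden subtypes.
control = {
    "stable":  [5, 5, 6, 5, 6, 5, 6, 5, 5, 6],
    "bimodal": [5, 5, 6, 5, 6, 5, 6, 5, 5, 6],
}
case = {
    "stable":  [5, 6, 5, 6, 5, 5, 6, 5, 6, 5],
    "bimodal": [1, 1, 1, 1, 1, 9, 9, 9, 9, 9],
}
scores = subsampled_delta_iqr(control, case)
```

In the toy data the `bimodal` feature has the same mean in both conditions, so a mean-based test would not select it, whereas its spread changes sharply in the case group. That is the kind of heterogeneity-carrying feature such a score is meant to retain.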

Diagram 1: The sc-linker workflow for identifying disease-critical cell types

Applications in Disease Research

The power of disease-specific subtype analysis is illustrated in multiple disease contexts:

- In Alzheimer's disease, single-cell analysis has revealed transcriptionally distinct subpopulations of major brain cell types linked to pathology involving myelination, inflammation, and neuron survival [14].

- In ulcerative colitis, a disease-dependent M cell program has been identified, suggesting a previously unappreciated role for this epithelial cell subset in disease pathogenesis [13].

- In multiple sclerosis, a disease-specific complement cascade process has been discovered, highlighting novel mechanisms of immune-mediated damage [13].

- In pulmonary fibrosis, new pathological subtypes of epithelial cells and fibroblasts have been recognized as highly enriched in diseased tissue [14].

These discoveries demonstrate how disease-specific subtypes can reveal cellular processes central to pathogenesis, potentially informing targeted therapeutic development.

Annotation Tools for Novel and Rare Population Identification

Accurate cell type annotation is critical for the reliable identification of novel and rare cell populations. Recent advances have introduced both reference-based and reference-free approaches to improve annotation accuracy.

Reference-Based Annotation Tools

Reference-based methods leverage existing annotated datasets to classify cells in new experiments. Northstar enables automatic classification of both known and novel cell types from tumor samples by using atlas data as landmarks while simultaneously identifying new cell states such as malignancies [15]. This approach employs a similarity graph that connects either two cells with similar expression from the new dataset or a new cell with an atlas cell type, with clustering that prevents atlas nodes from merging or splitting [15].

The advantage of this approach is its ability to place new data within the context of existing biological knowledge while still allowing for the discovery of previously unannotated populations. In glioblastoma analysis, Northstar correctly identified neoplastic cells while maintaining accurate classification of known healthy brain cell types, demonstrating its utility in complex disease environments with mixed cellular populations [15].
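The general principle of classifying against an atlas while leaving room for unannotated populations can be illustrated with a nearest-centroid classifier that rejects poor matches. This is deliberately a simpler, swapped-in technique and not Northstar's graph-based clustering. The function names, the `min_corr` threshold, and the toy expression vectors are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length expression vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def assign_or_flag(cell, atlas_centroids, min_corr=0.8):
    """Assign a cell to its best-matching atlas type, or flag it as unassigned.

    atlas_centroids: dict mapping cell type -> average expression vector.
    min_corr is an illustrative rejection threshold.
    """
    best_type, best_corr = None, -2.0  # below any possible correlation
    for cell_type, centroid in atlas_centroids.items():
        r = pearson(cell, centroid)
        if r > best_corr:
            best_type, best_corr = cell_type, r
    label = best_type if best_corr >= min_corr else "unassigned"
    return label, best_corr

# Toy atlas with two cell types measured over four genes.
atlas = {"astrocyte": [9, 1, 0, 1], "neuron": [0, 8, 1, 1]}
known_label, known_corr = assign_or_flag([8, 2, 0, 1], atlas)
novel_label, novel_corr = assign_or_flag([1, 1, 9, 1], atlas)
```

Cells left unassigned would then be clustered among themselves to look for candidate novel states. A graph-based method goes further by letting new cells cluster jointly with atlas landmarks rather than applying a fixed per-cell threshold.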

Large Language Model-Based Annotation

The emergence of large language models (LLMs) has introduced novel approaches to cell type annotation. LICT (Large Language Model-based Identifier for Cell Types) leverages multiple LLMs through a "talk-to-machine" approach that iteratively enriches model input with contextual information [2]. This system employs three complementary strategies:

- Multi-model integration that selects the best-performing results from multiple LLMs

- Iterative feedback that incorporates marker gene validation results

- Objective credibility evaluation that assesses annotation reliability based on marker gene expression [2]

This approach addresses limitations in both manual annotation (subjectivity, expertise dependency) and automated reference-based methods (reference bias, limited generalizability) [2]. Validation across diverse datasets shows particularly strong performance in highly heterogeneous samples, though challenges remain in low-heterogeneity environments [2].

The Scientist's Toolkit: Essential Reagents and Computational Tools

Table 3: Research Reagent Solutions for Novel Cell Population Analysis

| Reagent/Tool | Function | Application Context |
|---|---|---|
| High-Yield Lyze (Thermo Fisher) | Red blood cell removal with rare cell preservation | Blood and bone marrow samples |
| Horizon Dri TTDR (BD Biosciences) | Tissue dissociation with minimal epitope damage | Solid tumor and tissue samples |
| Muse Count & Viability (Luminex) | Assessment of cell viability and concentration | Quality control during sample preparation |
| Viobility Fixable Dyes (Miltenyi) | Viability staining for fixed cells | Flow cytometry panel design |
| FluoroFinder Spectra Viewer | Fluorophore comparison across suppliers | Multiplex panel design optimization |
| PHet Algorithm | Heterogeneity-preserving feature selection | Disease subtype discovery |
| Northstar | Atlas-guided cell type classification | Tumor microenvironment analysis |
| sc-linker Framework | Integration of scRNA-seq with genetics | Cell type-disease relationship mapping |

Experimental Design Considerations for Novel Population Discovery

Research focused on novel cell population identification requires careful experimental design to ensure biological validity and technical reliability.

Sample Size and Replication

The detection of rare populations requires adequate cell numbers for statistical significance. For populations representing 0.01% frequency, analyzing 1 million cells would yield approximately 100 target cells, which may still be insufficient for robust characterization. Including biological replicates (multiple donors, independent experiments) is essential to distinguish consistent populations from technical artifacts or individual variation [12].
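The arithmetic behind this estimate, and the sampling uncertainty around it, can be made explicit with a short calculation. The sketch below assumes cells are sampled independently and uses a Poisson approximation to the binomial. The function names and the 95% confidence target are illustrative choices.

```python
import math

def expected_rare_cells(total_cells, frequency):
    """Expected number of rare cells among `total_cells` sampled cells."""
    return total_cells * frequency

def prob_at_least(k, total_cells, frequency):
    """Poisson approximation to P(X >= k), X ~ Binomial(total_cells, frequency)."""
    lam = total_cells * frequency
    # Accumulate P(X = i) for i < k, updating terms iteratively
    # to avoid computing large factorials.
    term = math.exp(-lam)
    cumulative = 0.0
    for i in range(k):
        cumulative += term
        term *= lam / (i + 1)
    return 1.0 - cumulative

def cells_needed(k, frequency, confidence=0.95, step=10_000):
    """Smallest multiple of `step` cells giving P(X >= k) >= confidence."""
    n = step
    while prob_at_least(k, n, frequency) < confidence:
        n += step
    return n

frequency = 0.0001  # 0.01% of cells
expected = expected_rare_cells(1_000_000, frequency)   # about 100 cells
p_100 = prob_at_least(100, 1_000_000, frequency)
n_required = cells_needed(100, frequency)
```

Because the expected count is only an average, analyzing exactly 1 million cells gives roughly even odds of actually capturing 100 or more target cells. Reaching that number with high confidence requires sampling noticeably more cells, before accounting for any losses during sample preparation.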

Multi-Omic Integration

Combining transcriptomic data with additional modalities strengthens novel cell type validation:

- Epigenomic profiling (ATAC-seq) reveals regulatory landscapes supporting transcriptional identities

- Spatial transcriptomics confirms tissue context and organizational relationships

- Protein measurement (CITE-seq) validates transcriptional signatures at the functional level

Integrative analysis across these modalities provides compelling evidence for genuinely distinct cell types rather than transient transcriptional states [9].

Functional Validation

Ultimately, putative novel cell populations require functional validation through:

- In vitro culture and functional assays

- Lineage tracing and fate mapping in model organisms

- Genetic perturbation to determine essential functions

- Therapeutic manipulation in disease models

These approaches transform descriptive categorizations into biologically meaningful cell types with defined functional roles in tissue homeostasis, development, and disease [11] [9].

The categorization of novel cell populations into established types, rare states, and disease-specific subtypes provides a conceptual framework for navigating the complex landscape of cellular heterogeneity revealed by single-cell technologies. Each category presents distinct technical challenges and requires specialized methodological approaches for reliable identification and characterization. As annotation methods evolve—particularly through the integration of large language models and more sophisticated reference atlases—the resolution at which we can define these populations continues to increase. This progress deepens our understanding of basic biology while simultaneously revealing novel therapeutic targets and diagnostic opportunities for human disease. The continued refinement of these approaches promises to further unravel the complexity of cellular ecosystems in health and disease.

The comprehensive characterization of cellular landscapes using single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of complex tissues. A pivotal challenge in this domain is the accurate annotation of rare cell types—low-abundance populations critically important for disease pathogenesis and biological processes such as angiogenesis and immune response mediation. These rare cells, which can constitute fewer than 1 in 100,000 cells in samples like peripheral blood mononuclear cells (PBMCs), exhibit minimal transcriptional differences from major populations and are frequently absent from reference atlases. This technical whitepaper examines the core challenges of low heterogeneity and limited reference data, evaluates current computational and experimental methodologies, and provides a detailed framework of experimental protocols and reagent solutions to advance the study of novel and rare cell populations for researchers and drug development professionals.

Rare cell types, despite their low abundance, play disproportionately significant roles in health and disease. Their functions range from mediating key immune responses to driving cancer metastasis, as seen with circulating tumor cells (CTCs). The accurate identification of these cells is not merely a technical exercise but a fundamental requirement for understanding cellular mechanisms and developing targeted therapies [16]. However, two interconnected technical challenges severely hamper this endeavor: low heterogeneity and limited reference data.

Low heterogeneity refers to the minimal transcriptional differences that distinguish rare cells from more abundant neighboring populations. This subtlety often causes them to be overlooked during standard clustering analyses. Limited reference data exacerbates this problem, as rare cell types are frequently missing from single-cell reference profiles used to deconvolve bulk data or annotate new datasets. This absence occurs for multiple reasons, including cell loss during tissue dissociation procedures—where fragile, adherent, or large cells exhibit low capture efficiency—and the simple fact that many rare states are not represented in existing atlases [17] [18]. The resulting incomplete annotations distort biological interpretation and impede the discovery of critical, yet elusive, cellular players.

Core Technical Challenges

The Problem of Low Heterogeneity and Subtle Transcriptomic Signals

The primary challenge in identifying rare cells lies in their faint transcriptional signature against a high background of major cell types. Traditional clustering methods, which rely on global gene expression patterns to partition cells, often fail to resolve these rare populations. Rare cells may be grouped within larger clusters, where their unique signals are averaged out and lost. This is particularly problematic for cells in transitional states or those with highly similar expression profiles to dominant lineages [16]. Furthermore, standard dimensionality reduction techniques like PCA may prioritize major sources of variation, effectively obscuring the differential signals that are crucial for spotting rare entities.

The Impact of Missing Reference Data on Deconvolution

The deconvolution of bulk RNA-seq data with single-cell references is a powerful method for inferring cell-type proportions in complex tissues. However, this approach fundamentally assumes the reference contains every cell type present in the bulk sample. When this assumption fails, and cell types are missing from the reference, deconvolution accuracy plummets. Performance degradation is influenced by both the number of missing cell types and their transcriptional similarity to cell types that remain in the reference. The missing proportions are often incorrectly redistributed among phylogenetically or functionally related cell types present in the reference, leading to biologically misleading conclusions [17].

Notably, this missing information is not entirely lost. Evidence of missing cell types can be detected in the residuals—the differences between the original bulk data and the bulk data recreated using the deconvolution results and the incomplete reference. Studies have shown that applying techniques like non-negative matrix factorization (NMF) to these residuals can recover expression profiles highly correlated with the missing cell types, pointing toward potential computational solutions [17].

Benchmarking Computational Method Performance

To address the challenge of low heterogeneity, specialized computational methods have been developed. A recent benchmark study evaluated 10 state-of-the-art algorithms on 25 real-world scRNA-seq datasets, using the F1 score for rare cell types as a primary metric to balance precision and sensitivity [16].
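As a reminder of what the benchmark metric measures, the following minimal sketch computes precision, recall, and F1 for a set of cells called rare. The function name and the cell barcodes are hypothetical and are not taken from the benchmark study.

```python
def rare_cell_f1(true_rare, predicted_rare):
    """Return (precision, recall, F1) for cells called 'rare'.

    Both arguments are collections of cell identifiers.
    """
    true_rare, predicted_rare = set(true_rare), set(predicted_rare)
    true_positives = len(true_rare & predicted_rare)
    precision = true_positives / len(predicted_rare) if predicted_rare else 0.0
    recall = true_positives / len(true_rare) if true_rare else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Toy example with made-up barcodes: 4 truly rare cells, 3 cells called rare.
truth = {"c1", "c2", "c3", "c4"}
calls = {"c1", "c2", "c9"}
precision, recall, f1 = rare_cell_f1(truth, calls)
print(f"precision={precision:.3f} recall={recall:.3f} F1={f1:.3f}")
```

Because F1 is the harmonic mean of precision and recall, a method cannot score well by flagging many cells as rare (high recall, low precision) or by flagging only a few very confident cells (high precision, low recall).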

Table 1: Performance Benchmark of Rare Cell Identification Methods

| Method | Overall F1 Score | Key Algorithmic Approach |
|---|---|---|
| scCAD | 0.4172 | Cluster decomposition-based anomaly detection |
| SCA | 0.3359 | Surprisal component analysis (dimensionality reduction) |
| CellSIUS | 0.2812 | Identifies rare sub-clusters via bimodal marker expression |
| FiRE | 0.2461 | Sketching-based rareness scoring in highly variable gene space |
| GapClust | 0.2339 | K-nearest neighbor distance variation in PCA space |

The superior performance of scCAD (Cluster decomposition-based Anomaly Detection) highlights the effectiveness of its iterative strategy. Unlike methods that rely on one-time clustering, scCAD uses ensemble feature selection to preserve differential signals and then iteratively decomposes major clusters based on the strongest differential signals within each cluster. After decomposition and merging, it calculates an independence score for each cluster to quantify its rarity, which separates rare cell types that are initially entangled with major populations [16].

Advanced Experimental and Computational Protocols

An Iterative Clustering Workflow for Rare Cell Detection: scCAD

The following diagram illustrates the scCAD algorithm's workflow for rare cell identification.

Protocol Details:

- Initial Clustering (I-clusters): Perform standard clustering (e.g., Leiden algorithm) on the global gene expression profile to define initial major cell populations.

- Ensemble Feature Selection: Combine the most important genes identified using initial cluster labels and a random forest model. This step maximizes the preservation of differentially expressed (DE) genes critical for distinguishing rare types, moving beyond reliance solely on highly variable genes.

- Iterative Cluster Decomposition (D-clusters): For each major cluster, iteratively perform sub-clustering based on the most differential signals (genes) within that cluster. This process recursively breaks down heterogeneous clusters until no further substructure can be reliably identified.

- Cluster Merging (M-clusters): To improve computational efficiency, merge D-clusters that have the closest Euclidean distance between their centers, forming a set of merged clusters (M-clusters).

- Differential Expression and Anomaly Scoring: For each M-cluster, perform DE analysis to generate a candidate gene list. Then, using this list, run an isolation forest model to calculate an anomaly score for every cell.

- Rare Cluster Identification: Calculate an "independence score" for each M-cluster by measuring the overlap between its cells and the cells flagged as highly anomalous. Clusters with high independence scores, indicating a unique profile not shared by others, are reported as potential rare cell types [16].
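The final scoring step can be illustrated with a small sketch. The text above does not give the exact formula, so the definition used here is an assumption: the score is the fraction of a cluster's cells that fall among the top-k most anomalous cells, with k equal to the cluster size. The anomaly scores are supplied directly as toy values instead of being computed with an isolation forest.

```python
def independence_score(cluster_cells, anomaly_scores):
    """Overlap between a cluster and the most anomalous cells.

    Assumed definition (not confirmed by the source text): the fraction of the
    cluster's cells that appear among the top-k anomalous cells, where k is
    the cluster size. `anomaly_scores` maps cell id -> score (higher = more
    anomalous).
    """
    cluster_cells = set(cluster_cells)
    k = len(cluster_cells)
    if k == 0:
        return 0.0
    ranked = sorted(anomaly_scores, key=anomaly_scores.get, reverse=True)
    top_k = set(ranked[:k])
    return len(cluster_cells & top_k) / k


# Toy anomaly scores for six cells (hypothetical values).
scores = {"a": 0.9, "b": 0.8, "c": 0.2, "d": 0.1, "e": 0.15, "f": 0.3}
rare_candidate = {"a", "b"}
major_cluster = {"c", "d", "e", "f"}

print(independence_score(rare_candidate, scores))  # all of its cells are top-ranked
print(independence_score(major_cluster, scores))   # only part of the cluster is top-ranked
```

In this toy case the two-cell cluster coincides with the two most anomalous cells, so it would be reported as a rare candidate, while the larger cluster would not.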

Recovering Missing Cell Types from Deconvolution Residuals

When dealing with an incomplete reference, the following protocol can help detect and characterize cell types missing from the deconvolution reference.

Protocol Details:

- Pseudobulk Generation & Deconvolution: Generate simulated bulk data (pseudobulks) from a ground-truth single-cell dataset. Use a deconvolution method (e.g., NNLS, CIBERSORTx, BayesPrism) with a deliberately incomplete cell reference (missing one or more known cell types) to estimate proportions.

- Residual Calculation: Compute the residual matrix by subtracting the recreated bulk (the product of the estimated proportions and the reference profile) from the original pseudobulk data:

Residuals = Original Pseudobulk - (Estimated Proportions × Reference Profile)

- Residual Factorization and Analysis: Apply a dimensionality reduction technique like Non-negative Matrix Factorization (NMF) to the residual matrix. This uncovers latent structures or patterns within the residuals.

- Missing Type Correlation: Plot the resulting NMF factors against the true proportions of the cell types that were missing from the reference. Studies have consistently found these factors to be highly correlated with the missing cell-type proportions, confirming that their signal persists in the residuals and is theoretically recoverable [17].
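The residual calculation in this protocol can be sketched in a few lines of plain Python. Everything in the example is a toy assumption: three cell types with one marker gene each, a reference that omits the third type, and "estimated" proportions obtained by renormalizing the true proportions over the known types as a stand-in for real deconvolution output. The NMF step is left out.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def residuals(bulk, proportions, reference):
    """Residuals = bulk - proportions x reference.

    bulk: samples x genes; proportions: samples x cell types;
    reference: cell types x genes.
    """
    recreated = matmul(proportions, reference)
    return [[o - r for o, r in zip(o_row, r_row)]
            for o_row, r_row in zip(bulk, recreated)]


# Toy ground truth: each cell type expresses one marker gene.
full_reference = [[10, 0, 0],   # type A
                  [0, 10, 0],   # type B
                  [0, 0, 10]]   # rare type, missing from the reference below
true_props = [[0.6, 0.4, 0.0],
              [0.5, 0.3, 0.2],
              [0.4, 0.2, 0.4]]
pseudobulk = matmul(true_props, full_reference)

# Incomplete reference and stand-in proportion estimates (renormalized truth).
incomplete_reference = full_reference[:2]
estimated = [[p[0] / (p[0] + p[1]), p[1] / (p[0] + p[1])] for p in true_props]

res = residuals(pseudobulk, estimated, incomplete_reference)
rare_gene_residual = [row[2] for row in res]
print(rare_gene_residual)  # grows with the proportion of the missing type
```

In this constructed example the residual for the missing type's marker gene tracks its true proportion across samples, which is the kind of signal the factorization step is meant to pick up.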

Transcript-Specific Enrichment for Rare Cell Profiling

For targeted experimental profiling, PERFF-seq (Programmable Enrichment via RNA FlowFISH by sequencing) enables the isolation of rare populations based on specific RNA transcripts.

Protocol Details:

- Design and Hybridization: Design fluorescence in situ hybridization (FISH) probes against the RNA transcripts that define the rare cell state of interest.

- Programmable Sorting: Use the fluorescence signal from these RNA probes as a flow cytometry readout to sort subpopulations by the abundance of the target transcripts, without relying on cell surface markers or antibodies.

- Downstream Sequencing: Perform high-throughput scRNA-seq on the enriched cell population. This method has been successfully applied to immune populations and fresh-frozen/FFPE brain tissue to uncover phenotypic heterogeneity in rare cells that would be impossible to profile otherwise [19].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful rare cell annotation requires a combination of wet-lab reagents and computational tools.

Table 2: Key Research Reagent Solutions for Rare Cell Analysis

| Item / Solution | Function / Application | Example Use Case |
|---|---|---|
| PERFF-seq Probes | Transcript-specific enrichment via RNA FlowFISH; enables sorting of nuclei or cells based on intracellular RNA. | Profiling rare cell states in FFPE tissue where surface protein markers are unavailable [19]. |
| Gentle Dissociation Kits | Optimized enzymatic blends (e.g., with ROCK inhibitors) to maximize viability of fragile cells during tissue processing. | Preventing the loss of sensitive cell types (e.g., adipocytes) from single-cell suspensions [17] [18]. |
| Droplet-based scRNA-seq Kits | High-throughput single-cell partitioning and barcoding (e.g., 10x Genomics). | Large-scale cellular atlas construction to capture low-frequency cell types [18]. |
| scCAD Algorithm | Iterative cluster decomposition and anomaly detection for rare cell identification in silico. | Identifying rare circulating tumor cells (CTCs) in complex PBMC datasets [16]. |
| AnnDictionary Package | LLM-provider-agnostic Python package for automated de novo cell type annotation using marker genes. | Standardizing and scaling annotation across large, multi-tissue atlases [20]. |

Emerging Frontiers and Future Directions

The field is rapidly evolving with several promising trends. The integration of large language models (LLMs) for de novo cell type annotation shows increasing accuracy, with models like Claude 3.5 Sonnet achieving over 80-90% accuracy for most major cell types. Tools like AnnDictionary consolidate this functionality, allowing for tissue-aware annotation and gene set functional analysis, though performance varies with model size [21] [20].

Furthermore, multi-modal integration of transcriptomic data with epigenetic data (e.g., scATAC-seq) and spatial context (spatial transcriptomics) provides a more comprehensive view, helping to validate the identity and function of rare cells within their native tissue architecture [18] [16]. These advances, combined with the robust experimental and computational protocols outlined herein, provide a powerful framework for overcoming the critical challenges of low heterogeneity and limited reference data, ultimately illuminating the once-invisible world of rare cell biology.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to investigate cellular heterogeneity in complex biological systems, providing unprecedented resolution to study gene expression profiles at the individual cell level [22]. The process of assigning cell type identities—known as cell type annotation—represents one of the most critical and challenging steps in the scRNA-seq analysis pipeline. For researchers investigating novel or rare cell populations, robust annotation is particularly vital as it transforms clusters of gene expression data into meaningful biological insights that can drive drug discovery and therapeutic development [3].

The fundamental challenge in cell type annotation stems from the nature of cellular identity itself. Biologists traditionally defined cell types by morphology and physiology, later incorporating cell surface markers with the advent of antibody labeling. Now, in the era of single-cell biology, cell types are increasingly defined by their gene expression profiles, though this concept remains actively debated and continuously evolving [3]. The process is further complicated when studying rare cell populations, which may represent transitional states, novel cell types, or disease-specific subpopulations with significant clinical implications.

This technical guide provides a comprehensive framework for the single-cell annotation workflow, with particular emphasis on strategies optimized for identifying and characterizing novel or rare cell populations. We will explore integrated approaches that combine computational rigor with biological validation to ensure annotations are both technically sound and biologically meaningful.

Foundations of Single-Cell Annotation

Defining Cell Identity in the Single-Cell Era

Before embarking on annotation, it is essential to understand what constitutes a "cell type" in transcriptomic space. Cell identity in scRNA-seq data typically falls into one of several categories:

- Established cell types: Well-characterized populations with distinct marker genes (e.g., PECAM1 for endothelial cells) [3]

- Novel cell types: Biologically distinct clusters without clear matches in existing references

- Cell states and disease stages: Cellular phenotypes reflecting response to perturbation, activation, stress, or pathology

- Developmental stages: Positions along a differentiation continuum from progenitor to mature cell types [3]

For rare cell populations, these distinctions can become blurred, as these populations may represent transient states, intermediate differentiation stages, or previously uncharacterized cell types with specialized functions.

Experimental Design Considerations for Rare Cell Populations

The ability to successfully identify and annotate rare cell populations begins with appropriate experimental design. Choice of sequencing platform significantly impacts detection sensitivity, with each method offering distinct trade-offs between cell throughput, transcriptional coverage, and cost [23].

Table 1: scRNA-seq Platform Selection for Rare Cell Populations

| Platform Type | Throughput | Sensitivity | Best Use Cases for Rare Cells |
|---|---|---|---|
| Droplet-based (10x Genomics, Drop-Seq) | High (thousands to millions of cells) | Moderate (detects highly expressed genes) | Initial discovery phase; identifying rare populations within complex tissues |
| Microfluidic (Fluidigm C1) | Low to medium (hundreds to thousands of cells) | High (full-length transcript coverage) | Targeted analysis of pre-enriched populations; in-depth characterization |
| Plate-based with FACS | Flexible (depends on sorting strategy) | High (full-length transcripts) | Analysis of pre-defined rare populations using known surface markers |
| Split-pool combinatorial indexing | Very high (millions of cells) | Lower than other methods | Extremely rare populations across large sample sizes |

For rare cell populations specifically, a two-phase approach often proves effective: an initial high-throughput droplet-based screen to identify rare populations of interest, followed by targeted higher-sensitivity sequencing of sorted cells for deeper characterization [23].

The Integrated Annotation Workflow

A robust annotation strategy employs multiple complementary approaches to overcome the limitations of any single method. The integrated workflow presented below maximizes confidence in annotation results, particularly crucial when working with novel or rare cell populations.

Data Preprocessing: Building the Foundation

High-quality annotation requires meticulous data preprocessing. This begins with rigorous quality control to filter out low-quality cells, doublets, and background noise that could obscure rare populations [3]. Standard preprocessing includes:

- Quality control: Filtering based on unique gene counts, total UMIs, and mitochondrial percentage

- Normalization: Accounting for sequencing depth variation between cells (e.g., using SCnorm or regularized negative binomial regression) [24]

- Feature selection: Identifying highly variable genes that drive biological heterogeneity

- Dimensionality reduction: Applying PCA to capture major sources of transcriptomic variation

- Clustering: Grouping cells with similar expression profiles using algorithms such as Louvain or Leiden

For rare cell populations, specific considerations include adjusting clustering parameters to increase resolution and applying specialized doublet detection methods, as doublets can be misinterpreted as rare populations [24].
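The quality-control step listed above can be sketched as a simple per-cell filter. The thresholds and field names are illustrative assumptions, not recommendations from the cited sources; appropriate cutoffs depend on tissue and platform, and overly strict cutoffs can remove genuine rare cells.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    barcode: str
    n_genes: int      # number of genes detected in the cell
    total_umis: int   # total UMI count
    mito_umis: int    # UMIs assigned to mitochondrial genes


def passes_qc(cell, min_genes=200, min_umis=500, max_mito_frac=0.2):
    """Keep a cell only if it clears all three (assumed) thresholds."""
    if cell.total_umis == 0:
        return False
    mito_frac = cell.mito_umis / cell.total_umis
    return (cell.n_genes >= min_genes
            and cell.total_umis >= min_umis
            and mito_frac <= max_mito_frac)


# Hypothetical cells: one healthy, one low-complexity, one with high mito content.
cells = [
    CellQC("AAAC", n_genes=1500, total_umis=4000, mito_umis=200),
    CellQC("AAAG", n_genes=150, total_umis=300, mito_umis=10),
    CellQC("AAAT", n_genes=900, total_umis=2000, mito_umis=900),
]
kept = [c.barcode for c in cells if passes_qc(c)]
print(kept)
```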

Manual Marker-Based Annotation

The classical approach to annotation relies on known marker genes from literature or previous studies. This method involves identifying highly expressed genes in each cluster and matching them to established cellular markers.

Table 2: Example Marker Genes for Hematopoietic Cells [25]

| Cell Type | Key Marker Genes | Negative Markers | Notes |
|---|---|---|---|
| CD14+ Mono | FCN1, CD14 | - | Classic monocyte markers |
| CD16+ Mono | TCF7L2, FCGR3A, LYN | - | Non-classical monocytes |
| cDC1 | CLEC9A, CADM1 | - | Conventional DC type 1 |
| NK cells | GNLY, NKG7, CD247 | - | Natural killer cells |
| Plasma cells | MZB1, HSP90B1, PRDM1 | - | Antibody-secreting cells |
| Proerythroblast | CDK6, SYNGR1 | HBM, GYPA | Early erythroid precursors |

When working with rare populations, manual annotation requires particular caution. Sparsity of single-cell data means that a rare cell might not express a key marker even if it is part of that cell type. Examining expression patterns across entire clusters rather than individual cells provides more robust annotation [25].
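To illustrate why cluster-level evidence is more robust than per-cell marker checks, the sketch below scores a cluster by the fraction of its cells that express each marker. The marker lists come from the table above; the scoring scheme, function names, and count values are assumptions made for illustration.

```python
MARKERS = {
    "CD14+ Mono": ["FCN1", "CD14"],
    "NK cells": ["GNLY", "NKG7", "CD247"],
    "Plasma cells": ["MZB1", "HSP90B1", "PRDM1"],
}


def fraction_expressing(cells, gene):
    """Fraction of cells in the cluster with a nonzero count for `gene`."""
    return sum(1 for counts in cells if counts.get(gene, 0) > 0) / len(cells)


def score_cluster(cells, markers=MARKERS):
    """Average, per cell type, the fraction of cells expressing each marker."""
    return {
        cell_type: sum(fraction_expressing(cells, g) for g in genes) / len(genes)
        for cell_type, genes in markers.items()
    }


# A small hypothetical cluster; each dict holds one cell's nonzero counts.
# The second cell shows dropout of GNLY, and the fourth expresses only GNLY.
cluster = [
    {"GNLY": 5, "NKG7": 2},
    {"NKG7": 1, "CD247": 1},
    {"GNLY": 3, "NKG7": 4, "CD247": 2},
    {"GNLY": 1},
]
scores = score_cluster(cluster)
best = max(scores, key=scores.get)
print(best, round(scores[best], 3))
```

No single cell in this toy cluster would pass a strict "all markers present" rule except the third, yet the cluster as a whole points clearly to one label.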

Reference-Based Automated Annotation

Automated methods compare query datasets to existing annotated references, leveraging large-scale annotation efforts such as the Human Cell Atlas. These approaches have gained popularity due to their scalability and reproducibility [26].

Popular reference-based tools include:

- Azimuth: Web-based application using Seurat algorithms, supporting various human and mouse tissues [26]

- SingleR: Correlation-based method comparing cells to reference datasets

- CellTypist: Python-based tool with pre-trained models on multiple tissues

For rare cell populations, reference-based methods may struggle if the rare population is absent from or poorly represented in reference datasets. Using multiple complementary references and setting appropriate confidence thresholds improves detection of novel populations [26].
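The idea of a confidence threshold can be sketched independently of any particular tool. The function below is hypothetical and does not reproduce how Azimuth, SingleR, or CellTypist compute or apply their scores; it only shows the general pattern of leaving a cell unassigned when the best reference match is weak or barely better than the runner-up.

```python
def label_with_threshold(scores, min_score=0.5, min_margin=0.1):
    """Return the best-scoring label, or 'unassigned' if the call is ambiguous.

    scores: dict mapping reference label -> similarity or probability.
    min_score and min_margin are illustrative defaults, not tool settings.
    """
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    best_label, best_score = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    if best_score < min_score or best_score - runner_up < min_margin:
        return "unassigned"
    return best_label


print(label_with_threshold({"T cell": 0.90, "B cell": 0.30}))   # confident call
print(label_with_threshold({"T cell": 0.45, "B cell": 0.40}))   # weak best match
print(label_with_threshold({"T cell": 0.80, "B cell": 0.75}))   # too close to call
```

Cells that end up unassigned across several references are natural candidates for follow-up as potentially novel populations.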

Specialized Approaches for Rare Cell Identification

Rare cell populations require specialized computational approaches beyond standard annotation workflows:

- Multi-resolution clustering: Performing clustering at different resolutions to identify consistent rare subpopulations

- Outlier detection: Identifying cells that consistently fall outside main populations across multiple analyses

- Density-based clustering: Using algorithms like DBSCAN that can identify low-density clusters

- Trajectory analysis: Inferring differentiation pathways to identify rare intermediate states [3]

These specialized approaches help overcome the limitations of standard clustering algorithms, which often prioritize dominant populations at the expense of rare ones.
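As one possible reading of the multi-resolution idea listed above, the sketch below flags cells that fall into a small cluster at every clustering resolution tested. The size cutoff, function name, and toy labelings are assumptions; in practice the labelings would come from repeated Leiden or Louvain runs at different resolution settings.

```python
from collections import Counter


def consistently_rare_cells(labelings, max_fraction=0.1):
    """Cells assigned to a small cluster in every labeling.

    labelings: list of dicts (one per resolution) mapping cell id -> cluster.
    A cluster counts as small if it holds at most `max_fraction` of all cells.
    """
    flagged = None
    for labels in labelings:
        sizes = Counter(labels.values())
        n_cells = len(labels)
        small = {cell for cell, cluster in labels.items()
                 if sizes[cluster] / n_cells <= max_fraction}
        flagged = small if flagged is None else flagged & small
    return flagged or set()


# Toy data: 40 cells; c0 and c1 form a small cluster at both resolutions,
# while c2 splits off on its own only at the higher resolution.
cells = [f"c{i}" for i in range(40)]
low_res = {c: ("2" if i < 2 else "0" if i <= 20 else "1")
           for i, c in enumerate(cells)}
high_res = dict(low_res)
high_res["c2"] = "6"

rare = consistently_rare_cells([low_res, high_res])
print(sorted(rare))
```

Requiring consistency across resolutions filters out singletons that appear only when the data are over-clustered.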

A successful annotation project leverages multiple complementary resources. The table below summarizes key databases and tools particularly valuable for rare population analysis.

Table 3: Essential Resources for Cell Type Annotation [25] [26]

| Resource Name | Type | Scope | Key Features for Rare Cells |
|---|---|---|---|
| CellMarker 2.0 | Marker Database | Human, mouse | Manually curated from >100k publications; includes non-coding RNAs |
| Azimuth | Reference Atlas | Human, mouse tissues | Web-based; multiple tissue references; confidence scores |
| Tabula Muris | Reference Data | Mouse organs | 20 different mouse organs; foundational dataset |
| Tabula Sapiens | Reference Data | Human atlas | 28 human organs from 24 subjects; web-based application |
| MSigDB C8/M8 | Curated Gene Sets | Human/mouse tissue | Curated cell type signature genes; usable via GSEA |
| CellTypist | Automated Tool | Multiple tissues | Pre-trained models; Python integration |
| ScArches | Reference Mapping | Multiple species | Transfer learning approach for atlas-level integration |

Each resource has particular strengths for rare cell investigation. CellMarker 2.0's extensive curation helps identify markers for poorly characterized populations, while Azimuth's confidence scores help flag cells with ambiguous assignments that might represent novel populations [26].

Biological Interpretation and Validation

From Annotation to Biological Insight

Successful annotation enables deeper biological interpretation, particularly for rare populations with potential clinical significance. Key analysis pathways include:

- Differential expression: Comparing rare populations to dominant populations to identify uniquely enriched pathways

- Cell-cell communication: Inferring interaction networks between rare populations and their microenvironment

- Regulatory network analysis: Identifying transcription factors driving rare population identity

- Spatial contextualization: Mapping rare populations within tissue architecture using spatial transcriptomics

For drug development professionals, understanding the functional role of rare populations is particularly valuable, as these populations may represent treatment-resistant cells, disease-initiating stem cells, or key immune modulators [27].

Validation Strategies

Annotation conclusions require rigorous validation, especially when claiming novel or rare populations:

- Orthogonal validation: Confirming protein expression of identified markers via cytometry or immunohistochemistry

- Functional assays: Testing purified populations for proposed cellular functions

- Spatial validation: Confirming tissue localization through spatial transcriptomics or multiplexed imaging

- Perturbation studies: Manipulating candidate regulators to test their necessity for population identity

As emphasized by single-cell experts, "the best practice is to follow up scRNA-seq experiments with validation experiments of another nature to further characterize the cells in your sample" [3].

Future Directions and Emerging Technologies

The field of cell type annotation is rapidly evolving, with several emerging technologies promising to enhance rare population characterization:

- Single-cell long-read sequencing: Enables isoform-level transcriptomic profiling, providing higher resolution than conventional gene expression-based methods [21]

- Multi-omics integration: Combining transcriptomic, epigenetic, and proteomic measurements at single-cell resolution

- Spatial transcriptomics: Mapping rare populations within tissue context to understand niche interactions

- AI and large language models: Enhancing annotation accuracy and scalability through natural language processing of scientific literature [21]

These technologies are particularly promising for rare cell research, as they provide additional layers of evidence to support the identity and function of poorly characterized populations.

The single-cell annotation workflow represents a critical bridge from raw sequencing data to biological insight. For researchers focused on novel or rare cell populations, success depends on implementing an integrated strategy that combines multiple annotation approaches, leverages specialized resources, and incorporates rigorous validation. As single-cell technologies continue to advance, annotation methods will undoubtedly become more refined, enabling increasingly precise characterization of rare populations with potential significance for basic biology and therapeutic development.

Cutting-Edge Annotation Tools: Leveraging AI, LLMs, and Spatial Mapping

The identification and characterization of cell types through single-cell RNA sequencing (scRNA-seq) represents a fundamental challenge in modern biology, particularly when investigating novel or rare cell populations. Traditional cell type annotation is a laborious, time-consuming process requiring human experts to compare highly expressed genes in each cell cluster with canonical cell type marker genes [28]. While automated methods have been developed, manual annotation using marker genes remains widely used despite its limitations in scalability and reproducibility [28]. The emergence of large language models (LLMs) has revolutionized this field by providing accurate, scalable alternatives that can considerably reduce the effort and expertise required for cell type annotation [28] [21].

These models leverage the vast biological knowledge encoded during pre-training on diverse textual corpora to interpret marker gene signatures and assign cell type labels with remarkable accuracy. For researchers investigating rare cell populations—such as stem cells, rare immune subsets, or disease-specific aberrant cells—LLM-powered annotation offers particular promise by providing consistent, reproducible classifications even when expert knowledge may be limited or unavailable. This technical guide examines three key implementations—GPTCelltype, LICT (integrated within AnnDictionary), and CellAnnotator (from scExtract)—that represent the cutting edge in automated cell type annotation, with a specific focus on their application to novel and rare cell population research.

Comparative Analysis of LLM-Based Annotation Tools

Performance Metrics Across Implementation Platforms

Table 1: Quantitative Performance Comparison of LLM-Based Cell Annotation Tools

| Tool | Underlying LLM | Reported Agreement with Manual Annotation | Key Strengths | Limitations |
|---|---|---|---|---|
| GPTCelltype | GPT-4 | Over 75% full or partial match in most tissues and studies [28] | High accuracy with literature-based marker genes; Robustness in complex scenarios [28] | Struggles with B lymphoma; Lower performance in small populations [28] |
| LICT (via AnnDictionary) | Claude 3.5 Sonnet | Over 80-90% accuracy for major cell types [20] | Multi-LLM support; Automatic cluster resolution; Chain-of-thought reasoning [20] | Performance varies with model size; Inter-LLM agreement inconsistencies [20] |
| CellAnnotator (via scExtract) | Multiple LLMs | Higher accuracy than established methods across tissues [29] | Article background integration; Prior-informed multi-dataset integration [29] | Sensitivity to annotation errors in integration phase [29] |

Technical Specifications and Operational Characteristics

Table 2: Technical Implementation Details of LLM Annotation Tools

| Characteristic | GPTCelltype | LICT (AnnDictionary) | CellAnnotator (scExtract) |
|---|---|---|---|
| Implementation Platform | R software package [28] | Python (built on AnnData and LangChain) [20] | Python (built on scanpy) [29] |
| LLM Flexibility | Specific to GPT series [28] | Supports 15+ LLMs with 1-line configuration switch [20] | Optimized for three model providers with cost-effective large-scale queries [29] |
| Input Requirements | Top 10 differential genes (Wilcoxon test recommended) [28] | Differential genes from unsupervised clustering [20] | Raw expression matrices + article content [29] |
| Cost Considerations | ~$0.1 for all queries in original study; $20 monthly web portal fee [28] | Varies by selected LLM provider [20] | Priced ≤$5.00 per 1M tokens [29] |
| Reproducibility | 85% identical annotations for same marker genes [28] | Not explicitly reported | Stepwise integration reduces output variations [29] |

Experimental Protocols and Methodologies

Benchmarking Frameworks for Annotation Accuracy

The evaluation of LLM-based annotation tools follows rigorous benchmarking protocols to ensure reliable performance assessment, particularly for rare cell type identification. The standard evaluation methodology involves:

Dataset Collection and Curation: For comprehensive benchmarking, researchers collect multiple annotated datasets spanning various tissues, species, and conditions. The GPTCelltype study, for instance, evaluated performance across ten datasets covering five species and hundreds of tissue and cell types, including both normal and cancer samples [28]. Similarly, AnnDictionary benchmarks utilized the Tabula Sapiens v2 single-cell transcriptomic atlas, processing each tissue independently [20].

Pre-processing Pipeline: Consistent pre-processing is critical for fair comparisons. The standard workflow includes:

- Normalization and log-transformation of raw counts

- Selection of high-variance genes

- Dimensionality reduction via PCA

- Neighborhood graph calculation

- Clustering using algorithms like Leiden

- Differential gene expression analysis for each cluster [20]

Accuracy Assessment Metrics: Multiple complementary metrics are employed:

- Direct string comparison between automated and manual annotations

- Cohen's kappa (κ) for inter-annotator agreement assessment

- LLM-derived ratings where models evaluate match quality (perfect, partial, or not-matching) [20]

- Binary classification (yes/no) for match determination [20]
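Of the metrics listed above, Cohen's kappa is the one with a closed-form definition, κ = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance. A minimal sketch follows; the label lists are made-up examples.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: product of the two annotators' label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical per-cluster labels from a manual annotator and an LLM.
manual = ["T", "T", "B", "B", "NK", "NK"]
llm = ["T", "T", "B", "NK", "NK", "NK"]
kappa = cohens_kappa(manual, llm)
print(round(kappa, 3))
```

Unlike raw string agreement, kappa discounts matches that would occur by chance when a few labels dominate, which matters in datasets where most clusters belong to a handful of major cell types.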

Rare Cell Population Considerations: For evaluating performance on rare populations, studies often simulate challenging scenarios by:

- Creating mixed cell type conditions

- Introducing unknown cell types not in training data

- Artificially reducing cluster sizes to test small population robustness [28]

GPTCelltype Implementation Protocol

The GPTCelltype implementation follows a systematic protocol optimized for accurate cell type annotation:

Input Optimization:

- Utilize top 10 differential genes ranked by P-values

- Employ two-sided Wilcoxon test for differential gene identification

- Apply basic prompt strategy without complex reasoning steps [28]

Query Execution:

- Interface with GPT-4 via specialized R package

- Structure queries to include marker gene lists with appropriate context

- Process hundreds of cell types across multiple tissues in parallel [28]

Validation and Quality Control:

- Compare GPT-4 annotations with manual expert annotations

- Identify discordant cases for expert review

- Assess potential AI hallucination through marker gene verification [28]
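The input-optimization step of this protocol can be sketched as follows: rank each cluster's differential genes by P-value, keep the top 10, and assemble them into a single query. The prompt wording, function names, and P-values are illustrative assumptions, not the verbatim GPTCelltype prompt, and no call to an LLM is made.

```python
def top_markers(de_results, n=10):
    """Return up to n gene names with the smallest P-values.

    de_results: list of (gene, p_value) tuples for one cluster.
    """
    return [gene for gene, _ in sorted(de_results, key=lambda r: r[1])[:n]]


def build_prompt(cluster_markers, tissue):
    """Assemble one text query listing the marker genes for every cluster."""
    lines = [
        f"Identify cell types of {tissue} cells using the following markers. "
        "Identify one cell type for each row. Only provide the cell type name."
    ]
    for cluster_id, genes in cluster_markers.items():
        lines.append(f"{cluster_id}: {', '.join(genes)}")
    return "\n".join(lines)


# Hypothetical differential expression results (gene, P-value) per cluster.
de = {
    "cluster_0": [("NKG7", 1e-40), ("GNLY", 1e-52), ("CD247", 1e-12)],
    "cluster_1": [("MZB1", 1e-30), ("PRDM1", 1e-8), ("HSP90B1", 1e-15)],
}
markers = {cluster_id: top_markers(results) for cluster_id, results in de.items()}
prompt = build_prompt(markers, tissue="human PBMC")
print(prompt)
```

Sending all clusters in one query keeps the number of requests low; the returned labels would then be compared against expert annotations as described in the validation step.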

AnnDictionary with LICT Methodology

The AnnDictionary framework implements LICT through a sophisticated, multi-step protocol:

Parallel Processing Backend:

- Utilize AdataDict class for handling multiple anndata objects

- Implement fapply method for multithreaded operations

- Incorporate error handling and retry mechanisms for robust large-scale processing [20]
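The combination of multithreading with retries can be sketched generically with the Python standard library. This is not AnnDictionary's implementation: `with_retries`, `apply_parallel`, and the flaky annotation function are hypothetical names used only to show the pattern of applying one function to many per-tissue objects while tolerating transient failures.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def with_retries(func, max_attempts=3, base_delay=0.0):
    """Wrap func so that failures are retried with exponential backoff."""
    def wrapped(*args, **kwargs):
        for attempt in range(1, max_attempts + 1):
            try:
                return func(*args, **kwargs)
            except Exception:
                if attempt == max_attempts:
                    raise
                time.sleep(base_delay * 2 ** (attempt - 1))
    return wrapped


def apply_parallel(func, items_by_key, max_workers=4):
    """Apply func to every value of a dict in a thread pool; return a dict."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {key: pool.submit(func, value)
                   for key, value in items_by_key.items()}
        return {key: future.result() for key, future in futures.items()}


# Stand-in for an LLM call that fails once per tissue before succeeding.
attempts = {}


def flaky_annotate(tissue):
    attempts[tissue] = attempts.get(tissue, 0) + 1
    if attempts[tissue] == 1:
        raise RuntimeError("transient failure")
    return f"annotated:{tissue}"


results = apply_parallel(with_retries(flaky_annotate),
                         {"lung": "lung", "liver": "liver"})
print(results)
```

Threads suit this workload because LLM requests are network-bound; the retry wrapper keeps a single rate-limit error from aborting an atlas-scale run.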

Flexible LLM Integration:

- Configure the LLM backend with a single line of code via configure_llm_backend()

- Support for multiple commercial providers (OpenAI, Anthropic, Google, Meta)

- Compatibility with Amazon Bedrock models (Mistral, Titan, Cohere) [20]

Advanced Annotation Techniques:

- Tissue-aware annotation at user's discretion

- Chain-of-thought reasoning for comparing multiple marker gene lists

- Contextual subtype identification with parent cell type information

- Expected cell type guidance to refine annotations [20]

scExtract with CellAnnotator Workflow

The scExtract framework implements CellAnnotator through a comprehensive automated pipeline:

Article-Based Processing:

- Extract methodological parameters directly from research articles

- Emulate human researcher workflow using scanpy framework

- Implement filtering criteria described in methods sections (e.g., mitochondrial gene thresholds) [29]

Intelligent Clustering:

- Extract explicit cluster group numbers from article text when available

- Infer appropriate clustering granularity from article content and biological context

- Leverage authors' prior knowledge for biologically meaningful clustering [29]

Prior-Informed Annotation:

- Incorporate article background knowledge during annotation phase

- Generate characteristic marker gene sets based on tissue and cell type information

- Optimize initial annotations by querying expression levels of inferred marker genes [29]

Multi-Dataset Integration:

- Apply cellhint-prior for cell type harmonization across datasets

- Utilize scanorama-prior for embedding-level integration with annotation similarity weighting

- Implement conservative prior incorporation to mitigate error propagation [29]

Workflow Visualization

[Figure: LLM-Based Cell Type Annotation Workflow]

Integration Architectures for Multi-Dataset Analysis

Prior-Informed Integration Framework

[Figure: Prior-Informed Multi-Dataset Integration]

Table 3: Key Research Reagent Solutions for LLM-Enhanced Cell Annotation

| Resource Category | Specific Tool/Platform | Function in Annotation Pipeline | Application to Rare Cell Research |
|---|---|---|---|
| Reference Databases | cellxgene [29] | Largest literature-curated single-cell database with 1458+ datasets (as of 2024) | Provides baseline annotations for comparison with rare populations |
| Differential Analysis Tools | Seurat (Wilcoxon test) [28] | Identifies significantly expressed genes for cell clusters | Enables detection of subtle expression patterns in rare cells |
| Multi-LLM Platforms | AnnDictionary [20] | Unified interface for 15+ LLMs with one-line configuration switching | Allows benchmarking multiple models on challenging rare cell annotations |
| Integration Frameworks | scanorama-prior & cellhint-prior [29] | Annotation-aware batch correction preserving biological diversity | Prevents over-integration of dataset-specific rare populations |
| Benchmarking Resources | Tabula Sapiens v2 [20] | Comprehensive single-cell atlas for validation studies | Provides ground truth for major cell types while highlighting unknowns |
| Automated Extraction | scExtract [29] | LLM-based processing of research articles for methodological parameters | Extracts rare cell descriptions from literature for informed annotation |

Advancements in Rare and Novel Cell Population Research

The application of LLM-based annotation tools has yielded significant advancements in the identification and characterization of rare and novel cell populations. These tools address specific challenges in rare cell research through several mechanisms:

Enhanced Sensitivity to Subtle Expression Patterns: GPT-4 has proved particularly effective at distinguishing closely related cell types. For example, where manual annotation used the broad label "stromal cells", GPT-4 provided finer granularity by separating fibroblasts from osteoblasts based on type I collagen gene expression [28]. This sensitivity to subtle expression differences is critical for identifying novel cell states within heterogeneous populations.

Robustness in Challenging Scenarios: Systematic evaluations show that LLM-based annotation remains reliable under conditions relevant to rare cell studies. GPT-4 achieves 93% accuracy in distinguishing pure from mixed cell types and 99% accuracy in differentiating known from unknown cell types [28]. This capability is essential for recognizing potentially novel populations that do not match established classifications.

Multi-Dataset Consistency: Tools like scExtract enable the construction of comprehensive atlases by integrating multiple datasets while preserving rare population identities. In one demonstration, scExtract integrated 14 skin scRNA-seq datasets into a unified atlas of 440,000 cells, which made it possible to identify a characteristic expansion of proliferating keratinocyte clusters in psoriasis [29]. This approach helps prevent the masking of rare populations that can occur with standard integration methods.

Context-Aware Annotation: The incorporation of article-specific information in scExtract allows the system to leverage authors' specialized knowledge about unusual or rare cell populations described in methods sections, leading to more accurate annotations that align with biological context [29].

Future Directions and Implementation Considerations

As LLM-based annotation approaches mature, several considerations emerge for researchers implementing these tools, particularly for rare cell population studies:

Training Data Limitations: Models trained on data predating September 2021 may lack knowledge of newly discovered cell types, necessitating caution when interpreting results for novel populations [28]. Fine-tuning with updated reference marker gene lists represents a promising approach to address this limitation.

Validation Imperatives: The undisclosed nature of LLM training corpora makes verification of annotation bases challenging, requiring human expert validation to ensure quality and reliability, especially for rare cell types [28]. Implementation of systematic validation workflows is essential.

Scalability and Cost Management: While LLM annotation substantially reduces manual effort, large-scale applications require cost management strategies. GPTCelltype cost approximately $0.1 for all queries in the original study, and scExtract uses models priced at ≤$5.00 per 1M tokens, so per-query costs are low, but atlas-scale projects still call for thoughtful budgeting [28] [29].
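A back-of-the-envelope budget can be computed directly from token counts and a per-token price. The sketch below is illustrative only: the function name `estimate_cost_usd`, the query count, and the tokens-per-query figure are hypothetical assumptions, and only the $5.00 per 1M tokens ceiling comes from the text above.

```python
def estimate_cost_usd(
    n_queries: int, tokens_per_query: int, usd_per_million_tokens: float
) -> float:
    """Estimate API cost as total tokens times the price per token."""
    if n_queries < 0 or tokens_per_query < 0 or usd_per_million_tokens < 0:
        raise ValueError("inputs must be non-negative")
    total_tokens = n_queries * tokens_per_query
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical atlas-scale run: 2,000 cluster queries at roughly
# 1,500 tokens each (prompt plus reply), priced at $5.00 per 1M tokens.
atlas_cost = estimate_cost_usd(2_000, 1_500, 5.00)
print(f"Estimated cost: ${atlas_cost:.2f}")
```

Real costs depend on the provider's separate input and output token prices and on how many retries or refinement rounds a workflow performs, so an estimate like this is best treated as a lower bound.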

Error Propagation in Integration: Prior-informed integration methods like scanorama-prior show sensitivity to annotation errors, necessitating conservative approaches to prior incorporation and implementation of error-correction mechanisms such as cellhint-prior's uncertainty-based weighting [29].

The rapid evolution of LLM capabilities suggests continued improvement in cell type annotation accuracy, particularly for challenging rare populations. As these tools become more sophisticated in leveraging contextual information and handling complex multi-dataset integrations, they promise to significantly accelerate the discovery and characterization of novel cell types across diverse biological systems and disease contexts.

Cell type annotation represents a critical bottleneck in single-cell RNA sequencing (scRNA-seq) analysis, particularly for novel or rare cell populations that lack established reference data. Traditional methods, whether manual expert annotation or automated reference-based tools, suffer from significant limitations, including subjectivity, reference dependency, and inconsistent accuracy when confronting unknown cell types. Large language models (LLMs) offer a promising alternative: their extensive training on biological literature lets them interpret marker genes and propose cell type labels. However, individual LLMs exhibit substantial performance variability, and their effectiveness diminishes notably on less heterogeneous datasets, such as rare cell populations. To address these challenges, multi-model integration strategies combine the complementary strengths of multiple LLMs through consensus-based approaches, which enhances annotation accuracy, reduces individual model bias, and provides uncertainty quantification for downstream analysis.

Multi-LLM Integration Fundamentals

Multi-LLM integration for cell type annotation operates on the principle that different language models possess complementary strengths and knowledge bases derived from their distinct training data and architectural approaches. By combining predictions from multiple models, researchers can overcome the limitations of any single model and achieve more reliable, accurate annotations. This approach is particularly valuable for rare cell population research, where traditional annotation methods often fail due to limited reference data and subtle marker gene expression patterns.

The foundational methodology involves submitting the same set of marker genes or differential expression patterns to multiple LLMs simultaneously, then implementing a consensus mechanism to determine the final annotation. This strategy differs fundamentally from simply selecting the "best-performing" individual model, as it actively leverages the diverse reasoning pathways of different AI systems. Experimental validation demonstrates that this multi-model approach significantly reduces annotation mismatch rates—from 21.5% to 9.7% for highly heterogeneous datasets like PBMCs, and dramatically improves match rates for low-heterogeneity datasets like embryonic cells, where performance improvements of 16-fold over single-model approaches have been documented [2].
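One simple way to realize such a consensus mechanism is a plurality vote with an accompanying uncertainty score. The Python sketch below is an assumption made for illustration, not the selection rule of any framework cited here: `consensus_annotation` is a hypothetical helper, labels are compared after basic normalization only, and the model names are placeholders. It returns the most common label, the proportion of models that agree with it, and the Shannon entropy of the vote distribution.

```python
from collections import Counter
from math import log2
from typing import Dict, Tuple


def consensus_annotation(predictions: Dict[str, str]) -> Tuple[str, float, float]:
    """Return (plurality label, consensus proportion, vote entropy in bits).

    predictions maps a model name to the label it proposed for one cluster.
    Ties are resolved in favor of the label that appears first.
    """
    if not predictions:
        raise ValueError("at least one prediction is required")
    votes = Counter(" ".join(label.lower().split()) for label in predictions.values())
    total = sum(votes.values())
    label, count = votes.most_common(1)[0]
    proportion = count / total
    entropy = sum(-(c / total) * log2(c / total) for c in votes.values())
    return label, proportion, entropy


# Hypothetical predictions from three placeholder models for one cluster.
cluster_votes = {
    "model_a": "T cell",
    "model_b": "t cell",
    "model_c": "NK cell",
}
label, proportion, entropy = consensus_annotation(cluster_votes)
print(label, round(proportion, 2), round(entropy, 2))
```

A low consensus proportion or a high entropy marks a cluster as contested, which is a natural trigger for expert review or for an additional round of querying.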

Performance Benchmarking: Quantitative Comparison of LLM Annotation Capabilities

Rigorous benchmarking studies have evaluated the performance of various LLMs on cell type annotation tasks across diverse biological contexts. The table below summarizes the performance of top-performing individual LLMs based on agreement with manual annotations across multiple dataset types:

Table 1: Performance of Individual LLMs on Cell Type Annotation Tasks

| LLM Model | PBMC Dataset Agreement | Gastric Cancer Dataset Agreement | Human Embryo Dataset Agreement | Stromal Cells Dataset Agreement |
|---|---|---|---|---|
| Claude 3 | Highest overall performance | Strong performance | Moderate performance | 33.3% consistency |
| Gemini 1.5 Pro | Strong performance | Strong performance | 39.4% consistency | Moderate performance |
| GPT-4 | Strong performance | Strong performance | Lower performance | Lower performance |
| LLaMA 3 | Moderate performance | Moderate performance | Lower performance | Lower performance |
| ERNIE 4.0 | Moderate performance | Moderate performance | Lower performance | Lower performance |

When these individual models are integrated through multi-model consensus approaches, the resulting systems demonstrate markedly improved performance:

Table 2: Multi-Model Integration Performance Improvements

| Dataset Type | Single-Model Result | Multi-Model Result | Improvement |
|---|---|---|---|
| PBMCs (High heterogeneity) | 21.5% mismatch rate (GPT-4) | 9.7% mismatch rate | 55% reduction in mismatches |
| Gastric Cancer (High heterogeneity) | 11.1% mismatch rate (GPT-4) | 8.3% mismatch rate | 25% reduction in mismatches |
| Human Embryo (Low heterogeneity) | Very low match rate | 48.5% match rate | 16-fold increase in match rate |
| Stromal Cells (Low heterogeneity) | Very low match rate | 43.8% match rate | Significant increase in match rate |
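The percentage reductions in the Improvement column are relative, not absolute, changes in mismatch rate. A short Python check, using a hypothetical helper `relative_reduction`, reproduces them from the rates reported in the table.

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative reduction from before to after, as a fraction of before."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Mismatch rates (in percent) taken from Table 2.
pbmc = relative_reduction(21.5, 9.7)
gastric = relative_reduction(11.1, 8.3)
print(f"PBMC: {pbmc:.0%}, gastric cancer: {gastric:.0%}")
```

In absolute terms the same changes are 11.8 and 2.8 percentage points, so the relative figures should be read alongside the underlying rates.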

Recent implementations of multi-LLM frameworks have achieved remarkable accuracy levels. The mLLMCelltype framework, which integrates predictions from 10+ LLM providers including OpenAI GPT-5/4.1, Anthropic Claude series, Google Gemini-2.0, and specialized models, reports 95% annotation accuracy through optimized consensus algorithms while reducing API costs by 70-80% compared to single-model approaches [30]. Similarly, benchmark studies using AnnDictionary for de novo cell type annotation found that multi-model strategies consistently outperformed individual models, with Claude 3.5 Sonnet showing particularly high agreement with manual annotations [20].

Methodological Framework: Implementation Strategies for Multi-Model Integration

Core Integration Strategies

Advanced multi-LLM implementations employ three sophisticated strategies to enhance annotation reliability:

Strategy I: Multi-Model Integration - This approach selects the best-performing results from multiple LLMs rather than relying on simple majority voting. The process involves parallel querying of multiple models with the same marker gene set, followed by intelligent selection of the most consistent annotations. This strategy has proven particularly effective for low-heterogeneity datasets where individual models struggle, increasing match rates from single-digit percentages to 48.5% for embryonic data and 43.8% for fibroblast data [2].

Strategy II: "Talk-to-Machine" Iterative Refinement - This human-computer interaction process creates a feedback loop between the researcher and the LLM ensemble. The methodology involves: (1) marker gene retrieval from the LLM based on initial annotations; (2) expression pattern evaluation within the dataset; (3) validation against predefined thresholds (e.g., >4 marker genes expressed in ≥80% of cells); and (4) structured feedback with additional differentially expressed genes for re-querying failed annotations. This iterative approach has increased full match rates to 69.4% for gastric cancer data while reducing mismatches to 2.8% [2].
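The validation step in this loop, checking whether more than four retrieved marker genes are expressed in at least 80% of a cluster's cells, can be sketched as follows. This is a simplified Python illustration under stated assumptions: `validate_annotation` is a hypothetical helper, expression is passed as plain lists of per-cell values for one cluster, and a gene counts as expressed in a cell when its value is above zero.

```python
from typing import Dict, List, Sequence, Tuple


def validate_annotation(
    expression: Dict[str, Sequence[float]],
    markers: List[str],
    min_fraction: float = 0.8,
    more_than: int = 4,
) -> Tuple[bool, List[str]]:
    """Check an annotation against the marker-expression threshold.

    expression maps a gene to its per-cell values within one cluster.
    Returns whether the annotation passes and which markers supported it.
    """
    supported = []
    for gene in markers:
        values = expression.get(gene, [])
        if len(values) == 0:
            continue  # gene absent from the dataset: cannot support the label
        fraction = sum(v > 0 for v in values) / len(values)
        if fraction >= min_fraction:
            supported.append(gene)
    return len(supported) > more_than, supported


# Hypothetical cluster of five cells; G1-G5 are broadly expressed, G6 is not.
cluster_expr = {f"G{i}": [1.0, 2.0, 0.5, 1.5, 3.0] for i in range(1, 6)}
cluster_expr["G6"] = [1.0, 1.0, 0.0, 0.0, 0.0]
passed, supporting = validate_annotation(
    cluster_expr, ["G1", "G2", "G3", "G4", "G5", "G6"]
)
print(passed, supporting)
```

Annotations that fail the check would be sent back to the LLM together with additional differentially expressed genes, as described in step (4).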