Analytical Methods for Product Comparability Testing: A 2025 Guide for Drug Development Scientists

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the evolving landscape of analytical methods for product comparability testing. Covering topics from the foundational principles established in ICH Q5E to the 2025 FDA draft guidance on biosimilars, it explores methodological applications for complex products like cell and gene therapies, troubleshooting strategies for expedited programs, and modern validation approaches under ICH Q14. The content synthesizes current regulatory expectations, advanced statistical techniques like equivalence testing, and risk-based frameworks to help scientists design robust comparability studies that ensure product quality while accelerating patient access to critical therapies.

Understanding Comparability: Regulatory Foundations and Scientific Principles

In the biopharmaceutical industry, comparability is the systematic process of gathering and evaluating scientific data to demonstrate that a pre-change and post-change product have a highly similar quality profile, with no adverse impact on safety or efficacy [1]. This foundational concept is critical throughout a product's lifecycle, from early development to post-marketing authorization, and across two primary scenarios: assessing the impact of manufacturing process changes for an originator product, and demonstrating biosimilarity between a proposed biosimilar and its reference biologic product [2] [1].

The fundamental principle underlying comparability is that a comprehensive analytical comparison can often substitute for additional non-clinical or clinical studies, saving significant resources and time while accelerating patient access to vital medicines [1]. With expensive biologic medications accounting for only 5% of U.S. prescriptions but 51% of total drug spending as of 2024, efficient comparability pathways are essential for healthcare sustainability [3].

Regulatory Framework and Evolution

The regulatory landscape for comparability has evolved significantly, with major agencies including the U.S. Food and Drug Administration (FDA), European Medicines Agency (EMA), and others establishing pathways for biosimilar approval and process change evaluation [2]. The Biologics Price Competition and Innovation Act (BPCIA) of 2010 established the formal biosimilar pathway in the United States [3].

A significant recent development is the regulatory shift toward waiving comparative efficacy studies (CES) in biosimilar development. As of 2025, the FDA, EMA, and the UK's MHRA have all issued guidance acknowledging that modern analytical technologies, when coupled with pharmacokinetic studies, are often more sensitive than clinical trials for detecting product differences [4]. This represents a major advancement from the 2015 regulatory stance, reflecting two decades of accumulated experience showing that CES "consistently failed to yield clinically differentiating insights" [4].

Table 1: Global Regulatory Landscape for Biosimilar Approvals (as of 2021) [2]

| Region | Regulatory Agency | Year Pathway Established | Biosimilar Approvals (to date) |
|---|---|---|---|
| European Union | European Medicines Agency (EMA) | 2005 | 69 |
| United States | Food and Drug Administration (FDA) | 2015 | 34 |
| Japan | Pharmaceuticals and Medical Devices Agency | 2009 | 28 |
| India | Central Drugs Standard Control Organization | 2016 (revised) | 103 |
| Canada | Health Canada | 2016 | 26 |

Critical Quality Attributes (CQAs) for Biologics

For recombinant monoclonal antibodies and other biologics, Critical Quality Attributes (CQAs) are molecular properties that must be maintained within appropriate limits to ensure product safety and efficacy [2]. These attributes exhibit inherent variability due to the complex nature of biological manufacturing systems and the presence of numerous post-translational modifications (PTMs) [1].

A thorough understanding of CQAs and their structure-function relationships is essential for designing meaningful comparability studies. The risk assessment for comparability should focus on attributes most likely to be affected by process changes and those with potential impact on safety and efficacy [1].

Table 2: Key Quality Attributes for Recombinant Monoclonal Antibodies and Their Potential Impact [1]

| Attribute Category | Specific Modifications | Potential Impact on Safety/Efficacy |
|---|---|---|
| N-terminal modifications | Pyroglutamate formation, leader sequence retention, truncation | Generally low risk; generate charge variants but minimal impact on efficacy |
| C-terminal modifications | Lysine removal, amidation, truncation | Low risk; charge variants with minimal clinical impact |
| Fc-glycosylation | Sialic acid, α-1,3 Gal, terminal Gal, absence of core fucosylation, high mannose | High risk; can affect immunogenicity, ADCC, CDC, and half-life |
| Charge variants | Deamidation, isomerization, succinimide formation | Medium-high risk; modifications in CDR can decrease potency |
| Oxidation | Methionine, Tryptophan oxidation | Medium risk; can decrease potency and affect half-life |
| Aggregation | Soluble and insoluble aggregates | High risk; can cause immunogenicity and loss of efficacy |

Statistical Approaches for Comparability Assessment

A risk-based, three-tiered statistical approach is recommended for demonstrating comparability of biosimilars and process-changed products [5]. This framework ensures that the level of statistical rigor is commensurate with the attribute's criticality and potential impact on product quality.

Tier 1: Equivalence Testing for Critical Attributes

Tier 1 represents the most rigorous statistical assessment and is applied to critical quality attributes (CQAs) with potential impact on clinical performance [5]. The two primary statistical methods for Tier 1 are:

Equivalence Testing (TOST): Using the two one-sided t-tests (TOST) procedure to demonstrate that the difference between reference and test articles falls within a pre-defined equivalence margin [5]. The acceptance criteria are risk-based, with higher-risk attributes allowing only small practical differences.

K Sigma Comparison: A simpler approach that calculates a z-score as the mean difference between test and reference articles divided by the reference standard deviation. Acceptance criteria are typically set at ≤1.5 K sigma, that is, a mean difference of no more than 1.5 reference standard deviations [5].

For both approaches, minimum sample sizes of three or more lots each of reference and test products are recommended, with multiple measurements per lot (3-6) to understand analytical method variability [5].
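As a rough illustration of the K sigma comparison described above, the sketch below computes the statistic from lot-level results. The function name, the example potency values, the use of the absolute difference, and the choice of the sample standard deviation of the reference lots are all assumptions made for illustration; they are not taken from [5].

```python
from statistics import mean, stdev

def k_sigma(reference, test):
    """Absolute mean difference (test - reference) in units of the reference SD."""
    return abs(mean(test) - mean(reference)) / stdev(reference)

# Hypothetical relative potency results (%), one value per lot
reference_lots = [98.0, 100.0, 102.0]
test_lots = [99.0, 101.0, 103.0]

k = k_sigma(reference_lots, test_lots)
print(f"K sigma = {k:.2f}, within 1.5 K sigma: {k <= 1.5}")
```

With only three lots the reference standard deviation is itself poorly estimated, which is one reason the text recommends multiple measurements per lot.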

Tier 2: Range Testing for Less Critical Attributes

Tier 2 assessment applies to in-process controls or less critical quality attributes using range tests [5]. The methodology involves:

- Fitting reference lot data to an appropriate distribution (normal, gamma, Weibull)

- Setting limits at either 99% (2.576 K sigma) or 99.73% (3 K sigma)

- Demonstrating that a predefined percentage (85-95%, based on risk) of test article measurements fall within the reference limits [5]
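The three steps above can be sketched for the simplest case, where the reference data are assumed to be normally distributed. The function name, the data, and the 90% pass threshold are hypothetical; gamma or Weibull fits would need a different limit calculation.

```python
from statistics import NormalDist, mean, stdev

def range_test(reference, test, coverage=0.9973, required_fraction=0.90):
    """Normal-theory range test: return (lower, upper, fraction_within, passed)."""
    mu, sigma = mean(reference), stdev(reference)
    # Two-sided multiplier: about 3 for 99.73% coverage, about 2.576 for 99%
    k = NormalDist().inv_cdf(0.5 + coverage / 2)
    lower, upper = mu - k * sigma, mu + k * sigma
    within = sum(lower <= x <= upper for x in test) / len(test)
    return lower, upper, within, within >= required_fraction

# Hypothetical measurements of a Tier 2 attribute
reference = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0]
test = [10.0, 10.3, 9.7, 10.1, 10.5, 9.9, 10.2, 10.0, 9.8, 10.4]

lower, upper, within, passed = range_test(reference, test)
print(f"limits = ({lower:.3f}, {upper:.3f}), within = {within:.0%}, pass = {passed}")
```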

Tier 3: Graphical Comparison for Monitored Attributes

Tier 3 represents the least rigorous approach, used for attributes that are simply monitored during production or where quantitative analysis is impractical [5]. This typically involves side-by-side graphical comparisons or overlays of molecular structures, growth curves, or sensor profiles without formal acceptance criteria [5].

Experimental Design and Protocols

Analytical Comparability Study Design

A well-designed comparability study should generate data demonstrating that the analytical procedure performance characteristics (APPCs) of two methods are comparable [6]. The European Pharmacopoeia chapter 5.27 recommends equivalence testing that generates comparable data for relevant APPCs, with acceptance criteria defined prior to study execution [6].

For quantitative tests, the study should evaluate accuracy and precision across the measurement range, with potential additional assessment of specificity/selectivity depending on the intended use [6]. The mean results of the two procedures should differ by no more than a predefined amount, which is assessed by checking that the confidence interval for the difference in means, at an appropriate confidence level, lies within the predefined acceptance limits [6].

Orthogonal Analytical Methods

Implementation of orthogonal analytical tools - methods differing in their physicochemical or biological principles - is invaluable for unambiguous demonstration of comparability [2]. This approach is particularly important for dynamic attributes that cannot be completely characterized by a single technique.

For size variants, orthogonal techniques cover the breadth of the size range (soluble aggregates < sub-visible < visible < insoluble aggregates) and independently quantify aggregates within the same size range [2]. Similarly, orthogonal methods are essential for characterizing higher-order structure (HOS), glycosylation, and charge variants [2].

Sample and Study Design Considerations

Appropriate study design is critical for meaningful comparability assessment:

- Sample Sizes: Minimum of three lots each for reference and test products, with 3-6 measurements per lot to understand analytical variability [5]

- Power Analysis: Study design should include sample size and power analysis to ensure adequate power to detect meaningful differences [5]

- Sample Uniformity: Evaluation of sample uniformity is desirable though not always required [5]
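One way to approach the sample size question is the one-sided approximation n = (t_{1-α} + t_{1-β})² (s/δ)², which this guide quotes later from [9]. Because the t quantiles depend on n, the sketch below searches for the smallest n that satisfies the inequality. The function name, the default α and power, and the example values of s and δ are assumptions; SciPy is assumed to be available.

```python
from scipy import stats

def min_sample_size(s, delta, alpha=0.05, power=0.80, n_max=1000):
    """Smallest n with n >= (t_{1-alpha} + t_{1-beta})^2 * (s/delta)^2, using df = n - 1."""
    beta = 1.0 - power
    for n in range(2, n_max + 1):
        df = n - 1
        t_sum = stats.t.ppf(1 - alpha, df) + stats.t.ppf(1 - beta, df)
        if n >= (t_sum * s / delta) ** 2:
            return n
    raise ValueError("no sample size up to n_max satisfies the criterion")

# Hypothetical inputs: method SD of 0.10 and a difference of 0.15 to be detected
n = min_sample_size(s=0.10, delta=0.15)
print(f"minimum n = {n}")
```

A smaller δ or a larger s drives n up quickly, so the variability estimate used here should come from real method performance data rather than a guess.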

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Comparability Studies

| Reagent/Material | Function in Comparability Assessment |
|---|---|
| Reference Standard | Well-characterized material serving as benchmark for comparison studies; typically the originator product for biosimilars or pre-change material for process comparisons [1] |
| Orthogonal Analytical Columns | Chromatography resins with different separation mechanisms (e.g., ion exchange, hydrophobic interaction, size exclusion) for comprehensive characterization of charge variants, aggregates, and hydrophobicity profiles [2] |
| Cell-Based Assay Reagents | Reporter cells, cytokines, and detection antibodies for functional potency assays that reflect mechanism of action [4] |
| Mass Spectrometry Standards | Isotopically-labeled internal standards for precise quantification of post-translational modifications, glycan profiling, and sequence variant analysis [2] [1] |
| Forced Degradation Reagents | Chemicals for intentional stress studies (e.g., hydrogen peroxide for oxidation, buffers at various pH for deamidation) to understand degradation pathways and compare stability profiles [1] |

Case Study: Protocol for Monoclonal Antibody Comparability

Study Objective

To demonstrate comparability between a recombinant monoclonal antibody produced pre- and post-manufacturing process change through comprehensive analytical characterization focusing on critical quality attributes.

Materials and Methods

- Reference Material: Three consecutive lots of pre-change drug substance

- Test Material: Three consecutive lots of post-change drug substance

- Controls: Appropriate system suitability and assay controls

Experimental Protocol

Step 1: Primary Structure Analysis

- Perform intact mass analysis by LC-MS using reversed-phase UPLC coupled to Q-TOF mass spectrometer

- Execute peptide mapping with tryptic digestion followed by LC-MS/MS analysis

- Calculate sequence coverage and identify post-translational modifications
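The sequence coverage calculation in Step 1 can be sketched as the percentage of residues covered by at least one identified peptide. The function name, the short sequence fragment, and the peptide list are hypothetical; in practice the peptide identifications come from the LC-MS/MS search software.

```python
def sequence_coverage(protein, peptides):
    """Percentage of residues in `protein` covered by at least one peptide."""
    covered = [False] * len(protein)
    for pep in peptides:
        start = protein.find(pep)
        while start != -1:  # mark every occurrence of the peptide
            for i in range(start, start + len(pep)):
                covered[i] = True
            start = protein.find(pep, start + 1)
    return 100.0 * sum(covered) / len(protein)

# Hypothetical 25-residue fragment and one identified tryptic peptide
protein = "EVQLVESGGGLVQPGGSLRLSCAAS"
peptides = ["EVQLVESGGGLVQPGGSLR"]

coverage = sequence_coverage(protein, peptides)
print(f"sequence coverage = {coverage:.1f}%")
```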

Step 2: Higher-Order Structure Assessment

- Conduct far-UV and near-UV circular dichroism spectroscopy

- Perform differential scanning calorimetry to determine thermal transition profiles

- Analyze by Fourier-transform infrared spectroscopy

Step 3: Charge Variant Analysis

- Run capillary isoelectric focusing with whole column imaging detection

- Perform cation exchange chromatography using linear pH gradient elution

- Calculate relative percentages of main species, acidic, and basic variants
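The relative percentage calculation in Step 3 is a simple area normalization. The sketch below assumes integrated peak areas have already been grouped into acidic, main, and basic regions; the function name and the area values are hypothetical.

```python
def relative_percentages(peak_areas):
    """Convert grouped peak areas into percentages of the total integrated area."""
    total = sum(peak_areas.values())
    if total <= 0:
        raise ValueError("total peak area must be positive")
    return {name: 100.0 * area / total for name, area in peak_areas.items()}

# Hypothetical integrated areas from a cation exchange chromatogram
areas = {"acidic": 180.0, "main": 720.0, "basic": 100.0}

pct = relative_percentages(areas)
for name, value in pct.items():
    print(f"{name}: {value:.1f}%")
```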

Step 4: Size Variant Profiling

- Analyze by size exclusion chromatography with multi-angle light scattering detection

- Perform capillary electrophoresis-SDS under reducing and non-reducing conditions

- Use analytical ultracentrifugation for quantification of high molecular weight species

Step 5: Glycan Analysis

- Release N-glycans using PNGase F digestion

- Label released glycans with 2-AB fluorescent tag

- Analyze by HILIC-UPLC with fluorescence detection

- Perform exoglycosidase digestion for structural confirmation

Step 6: Biological Function Assessment

- Conduct cell-based potency assays reflecting mechanism of action

- Perform binding assays using surface plasmon resonance

- Evaluate Fc receptor binding using ELISA-based methods

Acceptance Criteria

Based on the risk-based tiered approach [5]:

- Tier 1 (Critical Potency Attributes): Equivalence testing, with the 90% confidence interval for the mean difference falling within ±1.5 SD of the reference

- Tier 2 (Structural Attributes): 90% of test results within 3σ range of reference distribution

- Tier 3 (Other Attributes): Graphical similarity with no qualitative differences
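The Tier 1 criterion can be sketched as a confidence interval check on the difference in means, with the margin set to 1.5 times the reference standard deviation. The Welch (unequal-variance) interval, the function name, and the data are assumptions chosen for illustration, and SciPy is assumed to be available. The example uses ten hypothetical results per arm, since an interval built from very few values tends to be wide.

```python
import numpy as np
from scipy import stats

def tier1_equivalence(reference, test, k=1.5, conf=0.90):
    """Check whether the CI for (test mean - reference mean) lies within +/- k * reference SD."""
    ref = np.asarray(reference, dtype=float)
    tst = np.asarray(test, dtype=float)
    margin = k * ref.std(ddof=1)
    diff = tst.mean() - ref.mean()
    # Welch (unequal-variance) standard error and degrees of freedom
    v_ref = ref.var(ddof=1) / ref.size
    v_tst = tst.var(ddof=1) / tst.size
    se = np.sqrt(v_ref + v_tst)
    df = (v_ref + v_tst) ** 2 / (v_ref ** 2 / (ref.size - 1) + v_tst ** 2 / (tst.size - 1))
    t_crit = stats.t.ppf(1 - (1 - conf) / 2, df)
    lower, upper = diff - t_crit * se, diff + t_crit * se
    return lower, upper, margin, bool(-margin < lower and upper < margin)

# Hypothetical relative potency results (%)
reference = [98, 99, 100, 101, 102, 99, 100, 101, 100, 100]
test = [99, 100, 101, 102, 100, 101, 100, 99, 101, 100]

lower, upper, margin, equivalent = tier1_equivalence(reference, test)
print(f"90% CI = ({lower:.2f}, {upper:.2f}), margin = +/-{margin:.2f}, equivalent = {equivalent}")
```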

The demonstration of comparability through rigorous analytical assessment represents a cornerstone of modern biopharmaceutical development. The evolving regulatory landscape, particularly the move toward waiving comparative efficacy studies based on comprehensive analytical similarity, underscores the critical importance of well-designed comparability protocols [4]. By implementing a risk-based approach that leverages orthogonal analytical methods and appropriate statistical analyses, developers can efficiently navigate both manufacturing changes and biosimilar development while ensuring continuous supply of safe and effective biologic therapies to patients.

The regulatory landscape for demonstrating product comparability, particularly for biosimilars, is undergoing a significant transformation. The U.S. Food and Drug Administration (FDA) issued new draft guidance in October 2025 proposing major updates to simplify biosimilarity studies and reduce unnecessary clinical testing [7]. The guidance, titled "Scientific Considerations in Demonstrating Biosimilarity to a Reference Product: Updated Recommendations for Assessing the Need for Comparative Efficacy Studies," signals that FDA may no longer routinely require comparative efficacy studies (CES) when other evidence provides sufficient assurance of biosimilarity [7]. This evolution reflects FDA's growing confidence that modern analytical technologies can now structurally characterize highly purified therapeutic proteins and model in vivo functional effects with a high degree of specificity and sensitivity [7]. The shift places comparative analytical assessment (CAA) at the forefront of demonstrating product comparability, making sophisticated analytical methods more critical than ever for pharmaceutical developers.

The 2025 Guidance: Key Changes and Regulatory Implications

Streamlined Approach for Therapeutic Protein Products

FDA's updated regulatory position represents a fundamental shift from its 2015 approach. Whereas the previous guidance expected a CES unless the sponsor could scientifically justify why one was unnecessary, the 2025 draft guidance establishes a new streamlined approach where CES "may not be necessary" for certain therapeutic protein products (TPPs) [7]. This reversal stems from the agency's "significant experience in evaluating data from comparative analytical and clinical studies" over the past decade [7].

Under the new framework, a CES may be waived when three key conditions are met:

- The biosimilar and reference product "are manufactured from clonal cell lines, are highly purified, and can be well-characterized analytically"

- The relationship between quality attributes and clinical efficacy is generally well understood for the reference product and "can be evaluated by assays included in the CAA"

- An appropriately designed human pharmacokinetic (PK) similarity study and immunogenicity assessment can address residual uncertainty [7]

Exceptions Where CES May Still Be Required

The guidance does identify specific circumstances where a CES may still be necessary, including:

- Biologics with limited structural understanding

- Products where analytical assays cannot fully evaluate functional effects

- Locally acting products such as intravitreally administered products where comparative PK data are "not feasible or clinically relevant" [7]

This refined approach aligns with similar advancements in Europe, where the EMA has also proposed relying more on advanced analytical and pharmacokinetic data, potentially harmonizing global requirements for demonstrating biosimilarity [7].

Analytical Method Comparability: Foundation of the New Paradigm

Defining Comparability and Equivalency

With analytical data taking center stage in the revised regulatory framework, proper understanding and execution of analytical method comparability becomes paramount. Within the pharmaceutical industry, two key concepts govern method comparisons: analytical method comparability refers to broader studies evaluating similarities and differences in method performance characteristics (accuracy, precision, specificity, detection limit, and quantitation limit), while analytical method equivalency specifically evaluates whether a new method can generate equivalent results to an existing method [8].

The European Pharmacopoeia chapter 5.27, "Comparability of alternative analytical procedures," formalizes this approach, stating that "the final responsibility for the demonstration of comparability lies with the user and the successful outcome of the process needs to be demonstrated and documented to the satisfaction of the competent authority" [6].

Risk-Based Approach to Method Changes

A risk-based approach is recommended for managing analytical method changes, particularly for HPLC assay and impurities methods in registration and post-approval stages [8]. The extent of comparability testing should correspond to the risk level of the method change:

Table: Risk-Based Assessment for Analytical Method Changes

| Risk Level | Type of Method Change | Comparability Testing Approach |
|---|---|---|
| Low Risk | Changes within USP <621> Chromatography ranges; changes within established robustness ranges | Method validation only; no equivalency study needed |
| Medium Risk | Change in LC stationary phase chemistry; implementation of UHPLC for HPLC methods | Side-by-side result comparison with predefined acceptance criteria |
| High Risk | Change in separation mechanism (e.g., normal-phase to reversed-phase); change in detection technique | Formal statistical demonstration of equivalency with comprehensive data package |

Industry surveys indicate that 68% of pharmaceutical companies differentiate between comparability and equivalency concepts, while 79% lack specific SOPs for analytical method comparability, highlighting the need for more standardized approaches [8].

Experimental Protocols for Analytical Comparability

Equivalence Testing Framework

The comparability study aims to evaluate whether the results and performance of an alternative analytical procedure are comparable to those of the pharmacopoeial or reference procedure [6]. This typically involves equivalence testing that generates comparable data for the analytical procedure performance characteristics (APPCs) of both procedures.

For quantitative tests, the accuracy and precision across the measurement range should be evaluated, along with other APPCs such as specificity/selectivity, depending on the intended use [6]. A study protocol containing the tests and acceptance criteria for comparing relevant APPCs must be established before study initiation.

The United States Pharmacopeia (USP) chapter <1033> clearly states the preference for equivalence testing over significance testing: "This is a standard statistical approach used to demonstrate conformance to expectation and is called an equivalence test. It should not be confused with the practice of performing a significance test, such as a t-test, which seeks to establish a difference from some target value" [9].

Implementing the Two One-Sided Tests (TOST) Procedure

The two one-sided tests (TOST) approach, here based on t-tests, is the standard statistical method for demonstrating comparability through equivalence testing [9]. The following protocol outlines the step-by-step procedure:

Protocol: Equivalence Testing for Analytical Method Comparability

Define Acceptance Criteria: Prior to study initiation, establish risk-based equivalence margins. For a pH method with specifications of 7-8 and medium risk, equivalence margins might be set at ±0.15 (15% of tolerance) [9].

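Determine Sample Size: Calculate the minimum sample size needed to achieve sufficient statistical power (typically 80-90%). For a single mean comparison with alpha = 0.1 (0.05 for each one-sided test), the minimum sample size is 13, with 15 recommended to provide adequate power [9]. The sample size formula for one-sided tests is $n = (t_{1-\alpha} + t_{1-\beta})^2 (s/\delta)^2$, where $s$ is the estimated standard deviation, $\delta$ is the difference to be detected, and $t_{1-\alpha}$ and $t_{1-\beta}$ are quantiles of the t-distribution.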

Prepare Study Materials: Include a minimum of three lots of material representing expected variability. For chromatography methods, ensure samples cover the specification range.

Execute Testing: Perform side-by-side analysis using both methods. For HPLC/UHPLC methods, analyze identical sample preparations using both systems.

Statistical Analysis: Conduct TOST analysis using the following procedure:

- Subtract reference method measurements from alternative method results

- Perform two one-sided t-tests against the lower and upper equivalence margins

- Calculate p-values for both tests

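- Equivalence is demonstrated if both p-values are <0.05, which is equivalent to the 90% confidence interval for the mean difference lying entirely within the equivalence margins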

Document Results: Report confidence intervals and calculate potential out-of-specification (OOS) rates associated with measured differences.
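The statistical analysis step of this protocol can be sketched for paired measurements, where each sample is analyzed by both methods. The function name and the pH readings are hypothetical, the ±0.15 margin follows the pH example above, and SciPy is assumed to be available.

```python
import numpy as np
from scipy import stats

def tost_paired(reference, alternative, lower, upper, alpha=0.05):
    """TOST on paired differences (alternative - reference) against margins [lower, upper]."""
    d = np.asarray(alternative, dtype=float) - np.asarray(reference, dtype=float)
    df = d.size - 1
    mean_d = d.mean()
    se = d.std(ddof=1) / np.sqrt(d.size)
    # Test 1: H0 mean_d <= lower, rejected when the difference is clearly above the lower margin
    p_lower = stats.t.sf((mean_d - lower) / se, df)
    # Test 2: H0 mean_d >= upper, rejected when the difference is clearly below the upper margin
    p_upper = stats.t.cdf((mean_d - upper) / se, df)
    t_crit = stats.t.ppf(1 - alpha, df)
    ci = (mean_d - t_crit * se, mean_d + t_crit * se)  # 90% CI when alpha = 0.05
    return {"mean_diff": mean_d, "p_lower": p_lower, "p_upper": p_upper,
            "ci": ci, "equivalent": bool(max(p_lower, p_upper) < alpha)}

# Hypothetical pH readings for 15 samples measured by both methods
ref = [7.42, 7.51, 7.48, 7.55, 7.47, 7.50, 7.53, 7.46, 7.49, 7.52, 7.44, 7.51, 7.50, 7.48, 7.54]
alt = [7.44, 7.50, 7.51, 7.55, 7.48, 7.48, 7.55, 7.47, 7.49, 7.55, 7.43, 7.53, 7.51, 7.48, 7.56]

result = tost_paired(ref, alt, lower=-0.15, upper=0.15)
print(result["equivalent"], result["ci"])
```

Reporting the confidence interval alongside the two p-values makes it easier to see how much of the margin the observed difference consumes.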

Table: Risk-Based Acceptance Criteria for Equivalence Testing

| Risk Level | Typical Acceptance Criteria (% of tolerance) | Application Examples |
|---|---|---|
| High Risk | 5-10% | Sterility testing, potency methods, impurity methods for narrow therapeutic index drugs |
| Medium Risk | 11-25% | Assay methods, dissolution testing, pH measurement |
| Low Risk | 26-50% | Identity tests, physical tests |
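To show how the percentages in this table translate into an equivalence margin, the sketch below multiplies the specification tolerance by the chosen fraction and checks that the fraction sits in the band for the stated risk level. The function and dictionary names are hypothetical; the bands are copied from the table and the numbers reproduce the pH example (specification 7-8, medium risk, 15% of tolerance).

```python
# Fraction-of-tolerance bands taken from the risk table above
RISK_FRACTION_RANGES = {
    "high": (0.05, 0.10),
    "medium": (0.11, 0.25),
    "low": (0.26, 0.50),
}

def equivalence_margin(lsl, usl, fraction, risk):
    """Return the +/- margin as a fraction of the specification tolerance (usl - lsl)."""
    low, high = RISK_FRACTION_RANGES[risk]
    if not low <= fraction <= high:
        raise ValueError(f"{fraction:.0%} is outside the {risk}-risk band {low:.0%}-{high:.0%}")
    return fraction * (usl - lsl)

margin = equivalence_margin(lsl=7.0, usl=8.0, fraction=0.15, risk="medium")
print(f"equivalence margin = +/-{margin:.2f} pH units")
```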

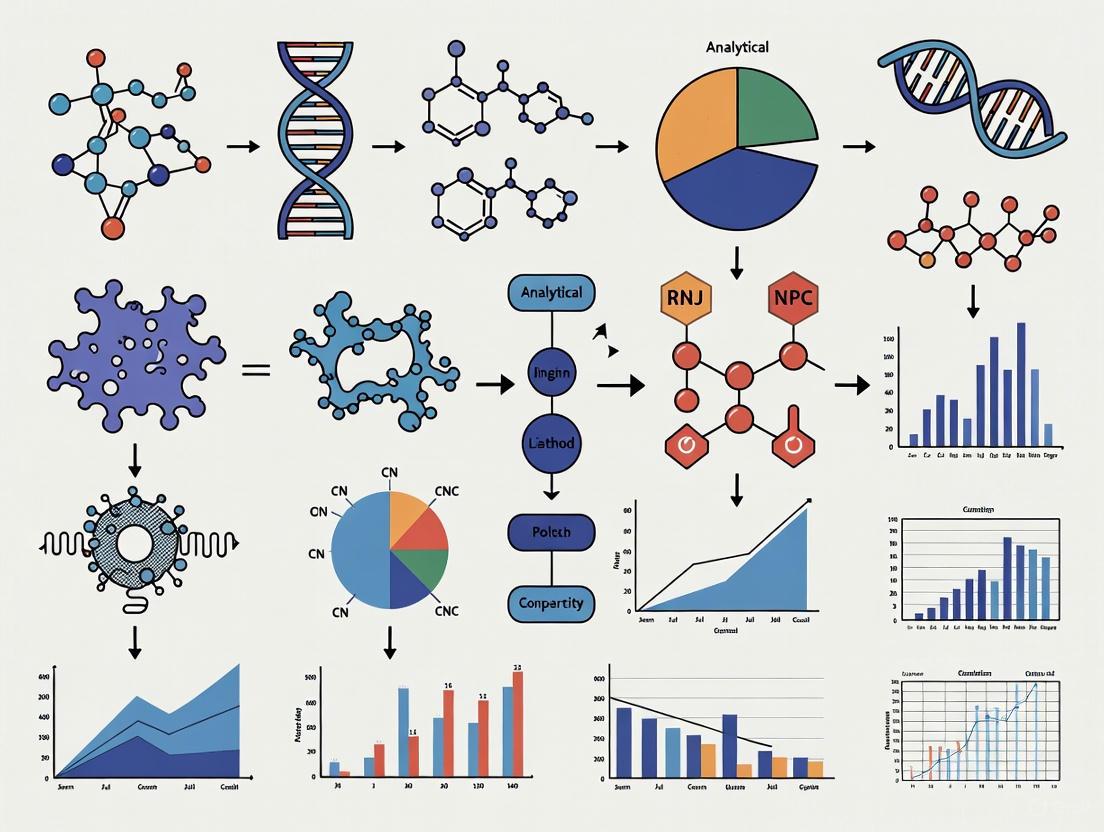

The experimental workflow for establishing analytical method comparability can be visualized as follows:

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing successful comparability studies requires specific reagents and materials designed to ensure analytical precision and reproducibility. The following table details essential research reagent solutions for analytical method comparability studies:

Table: Essential Research Reagent Solutions for Analytical Comparability

| Reagent/Material | Function in Comparability Studies | Key Specifications |
|---|---|---|
| Reference Standards | Serves as primary comparator for qualitative and quantitative assessments; essential for system suitability and method validation | Certified purity with comprehensive characterization data; traceable to recognized pharmacopoeial standards |
| System Suitability Test Mixtures | Verifies chromatographic system resolution, precision, and sensitivity before comparability testing | Contains all critical analytes at specified concentrations; stable under defined storage conditions |
| Column Evaluation Kits | Assesses chromatographic performance across different stationary phases during method transfer | Includes multiple column chemistries with tested reference compounds; provides performance comparisons |
| Stability-Indicating Solution | Demonstrates method specificity and ability to detect degradants in forced degradation studies | Contains drug substance with characterized degradants at known concentrations |
| Quality Control Materials | Monitors analytical performance throughout the comparability study | Represents product composition with established target values and acceptance ranges |

Regulatory Convergence: FDA's Broader 2025 Guidance Agenda

The paradigm shift in biosimilar development reflects a broader regulatory evolution evident across FDA's 2025 guidance agenda. The Center for Biologics Evaluation and Research (CBER) has announced plans for multiple new and revised guidances across therapeutic areas, including:

- "Potency Assurance for Cellular and Gene Therapy Products" (new in 2025) [10]

- "Post Approval Methods to Capture Safety and Efficacy Data for Cell and Gene Therapy Products" (new in 2025) [10]

- "Recommendations for Validation and Implementation of Alternative Microbial Methods for Testing of Biologics" (new in 2025) [10]

Similarly, the FDA's Human Foods Program has published its 2025 guidance agenda, including new topics such as "Action Level for Opiate Alkaloids on Poppy Seeds" and "Food Colors Derived from Natural Sources" [11]. This demonstrates a consistent trend toward streamlined regulatory approaches that emphasize efficient product development while maintaining rigorous safety and efficacy standards.

The statistical framework for equivalence testing can be visualized through the following decision process:

The FDA's 2025 draft guidance represents a watershed moment in regulatory thinking, formally recognizing that advanced analytical methods can provide more sensitive detection of product differences than clinical efficacy studies for certain well-characterized biologics. This evolution in regulatory science places robust analytical development and statistically sound comparability protocols at the center of biosimilar development.

To successfully implement this new paradigm, pharmaceutical developers should:

- Invest in state-of-the-art analytical technologies with appropriate validation

- Develop expertise in equivalence testing methodologies and statistical analysis

- Implement risk-based approaches to method changes and comparability assessments

- Engage in early dialogue with FDA to confirm alignment on evidence requirements

- Strengthen quality by design principles throughout product development

As regulatory agencies worldwide continue to refine their approaches based on scientific advances, the emphasis on analytical comparability is likely to expand to additional product categories, making these methodologies increasingly essential for efficient pharmaceutical development while ensuring product quality, safety, and efficacy.

In the development and lifecycle management of biotechnological and biological products, manufacturing process changes are inevitable. Product comparability testing is the critical scientific exercise that ensures these changes do not adversely impact the quality, safety, and efficacy of a drug product [12] [13]. This process relies on a robust analytical framework, guided by key regulatory documents. This article details the application of three cornerstone guidances—ICH Q5E, FDA Comparability Protocols, and ICH Q14—in establishing a scientific and risk-based approach to comparability for researchers and drug development professionals.

The following guidances provide a complementary framework for managing post-approval changes and analytical procedures.

Table 1: Key Guidance Documents for Comparability and Analytical Development

| Guidance Document | Scope & Primary Focus | Key Principles for Comparability |
|---|---|---|
| ICH Q5E (June 2005) [13] | Provides principles for assessing comparability of biotechnological/biological products before and after a manufacturing process change for drug substance or drug product [12]. | • Main emphasis is on quality aspects [13]. • Does not prescribe specific analytical, nonclinical, or clinical strategies [13]. • Assists in collecting technical information to prove no adverse impact from the change [12]. |
| FDA Comparability Protocols (Oct 2022) [14] | A CP is a comprehensive, prospectively written plan for assessing the effect of a proposed postapproval CMC change for an NDA, ANDA, or BLA [14]. | • A submission that outlines the planned change and studies to evaluate it [14]. • Describes the change and the analytical, nonclinical, and clinical studies to demonstrate no adverse effect on identity, strength, quality, purity, or potency [14]. |
| ICH Q14 (March 2024) [15] | Defines scientific and risk-based approaches for analytical procedure development and lifecycle management for drug substances and products [16]. | • Aims to facilitate more efficient, science-based, and risk-based postapproval change management of analytical procedures [15]. • Enables better analytical procedure control strategy, which is fundamental to generating reliable comparability data [16]. |

Experimental Protocols for Comparability Studies

A comprehensive comparability study is multi-faceted, relying on analytical data as its foundation. The following protocols, aligned with regulatory expectations, provide a structured approach.

Protocol for a Pre-Change Analytical Comparability Study

This protocol is designed to generate head-to-head comparison data between pre-change and post-change drug substance, as guided by ICH Q5E [12].

Objective: To demonstrate that a manufacturing process change does not adversely affect the critical quality attributes (CQAs) of the drug substance.

Materials and Reagents:

- Reference Standard: Pre-change drug substance, fully characterized and representative of material used in nonclinical and clinical studies that supported product approval.

- Test Article: Post-change drug substance manufactured using the modified process.

- Analytical Reagents: As specified by the validated methods in the control strategy (e.g., HPLC-grade solvents, reference standards, cell-based assay reagents).

Methodology:

- Study Design: A side-by-side analysis of at least three independent lots each of pre-change and post-change drug substance.

- Test Methods: Employ a suite of orthogonal analytical techniques to assess CQAs. The Analytical Procedure Control Strategy should be developed per ICH Q14 principles [16].

  - Identity/Primary Structure: Peptide mapping, mass spectrometry, amino acid analysis.

  - Purity/Impurities: Size exclusion HPLC (for aggregates), ion-exchange HPLC (for charge variants), reversed-phase HPLC (for product-related impurities), capillary electrophoresis.

  - Potency: A validated cell-based bioassay or binding assay that reflects the mechanism of action.

  - Other Quality Attributes: Glycosylation profile, secondary/tertiary structure (by circular dichroism or FTIR), subvisible particle count.

- Data Analysis: Data should be evaluated for statistical significance and, more importantly, for clinical relevance. Establish pre-defined acceptance criteria based on knowledge of the reference material and process capability. ICH Q5E emphasizes that the lot-to-lot variability of the pre-change material should be considered when setting these criteria [12].

Protocol for an Analytical Procedure Transfer (as a Post-Approval Change)

This protocol exemplifies a change that can be managed under a Comparability Protocol as per FDA guidance [14] and developed under ICH Q14 [15].

Objective: To qualify an alternate testing site to perform a validated analytical procedure, ensuring the procedure remains in a state of control and generates reliable data for comparability assessments.

Materials and Reagents:

- Validation Protocol: Document detailing the experimental plan and acceptance criteria.

- Test Samples: Drug substance/product samples with known and well-characterized attributes.

- System Suitability Standards: As defined in the original analytical procedure.

Methodology:

- Documentation Transfer: The transferring site provides the analytical procedure, validation report, and known performance characteristics to the receiving site.

- Training: Analysts at the receiving site are trained on the procedure by subject matter experts from the transferring site.

- Experimental Phase: The receiving site performs the procedure per the following design, which is prospectively defined in the Comparability Protocol [14]:

- Pre-Study: Both sites perform system suitability tests to ensure instrument performance.

- Analysis: The receiving site analyzes a minimum of three lots of the product in triplicate on three separate days (intermediate precision study design).

- Comparison: Results for key parameters (e.g., assay potency, related substances) are statistically compared against data generated by the transferring site and/or pre-defined acceptance criteria (e.g., % difference, statistical equivalence).
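The statistical equivalence comparison mentioned in the last step can be sketched as two one-sided tests (TOST), expressed through the 90% confidence interval of the difference between site means. This is one possible implementation under stated assumptions: the site data are hypothetical, the ±2.0 margin is an assumed pre-defined criterion, a pooled variance is assumed, and the t quantile is passed in from a t table rather than computed.

```python
from math import sqrt
from statistics import mean, variance

def tost_two_sites(sending, receiving, margin, t_crit):
    """Equivalence check via the 90% CI of the difference in site means.

    margin : equivalence margin in the units of the result (assumed, pre-defined)
    t_crit : one-sided 95% t quantile for df = n1 + n2 - 2 (from a t table)
    Returns (difference, ci_low, ci_high, equivalent).
    """
    n1, n2 = len(sending), len(receiving)
    diff = mean(receiving) - mean(sending)
    # Pooled variance assumes similar variability at both sites
    pooled = ((n1 - 1) * variance(sending)
              + (n2 - 1) * variance(receiving)) / (n1 + n2 - 2)
    se = sqrt(pooled * (1 / n1 + 1 / n2))
    ci_low, ci_high = diff - t_crit * se, diff + t_crit * se
    equivalent = -margin < ci_low and ci_high < margin
    return diff, ci_low, ci_high, equivalent

# Hypothetical potency results (% of label claim), nine per site
sending = [99.8, 100.4, 100.1, 99.5, 100.9, 100.2, 99.9, 100.6, 100.0]
receiving = [100.5, 101.0, 100.2, 100.8, 99.9, 100.7, 101.2, 100.4, 100.6]

# t(0.95, df=16) = 1.746
diff, ci_low, ci_high, equivalent = tost_two_sites(
    sending, receiving, margin=2.0, t_crit=1.746
)
print(f"Difference: {diff:.2f}, 90% CI: ({ci_low:.2f}, {ci_high:.2f})")
print("Equivalent within margin:", equivalent)
```

Unlike a significance test, this design places the burden of proof on demonstrating similarity: noisy or sparse data widen the interval and make it harder, not easier, to pass.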

Diagram 1: Analytical Procedure Transfer Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

A successful comparability study depends on high-quality, well-characterized reagents and materials.

Table 2: Essential Research Reagent Solutions for Comparability Testing

| Research Reagent / Material | Function in Comparability Studies |
|---|---|
| Well-Characterized Reference Standard | Serves as the primary benchmark for assessing the quality of post-change material. Its well-defined profile is the basis for setting acceptance criteria [12]. |
| Cell-Based Bioassay Systems | Measures the biological activity (potency) of the product, which is a critical quality attribute that must be maintained after a process change [13]. |
| Orthogonal Chromatography Columns | Different separation mechanisms (e.g., SEC, IEX, RP-HPLC) are required to comprehensively assess purity, impurity profiles, and identity. |
| Highly Purified Analytical Reagents | Essential for achieving the sensitivity, specificity, and reproducibility required by analytical procedures to detect subtle differences. |
| Stable & Traceable Reference Materials | Used for system suitability testing and calibration to ensure the analytical procedure is in a state of control throughout the comparability study [16]. |

Integrated Workflow for Managing a Manufacturing Change

Navigating a manufacturing change requires a strategic integration of regulatory planning and scientific experimentation, drawing on all three guidance documents discussed above: ICH Q5E, the FDA Comparability Protocol guidance, and ICH Q14.

Diagram 2: Integrated Change Management Workflow

The successful demonstration of product comparability following a manufacturing change is a cornerstone of the product lifecycle. It requires a deep scientific understanding of the product and its process, which is guided by a robust regulatory framework. ICH Q5E establishes the foundational principles for the comparability exercise itself [12] [13]. The FDA Comparability Protocol provides a strategic mechanism for proactively planning and gaining regulatory agreement on these changes [14]. Finally, ICH Q14 equips scientists with modern, science-based approaches for developing and maintaining the analytical procedures that generate the high-quality data essential for any comparability decision [15] [16]. By integrating these three guidances, sponsors can adopt a systematic, efficient, and defensible approach to managing manufacturing changes, ultimately ensuring the consistent quality of biotechnological and biological products for patients.

The totality of evidence approach represents a foundational paradigm in the development and evaluation of biological products, including biosimilars and products undergoing manufacturing changes. This systematic framework involves the integrated assessment of analytical, non-clinical, and clinical data to demonstrate that no clinically meaningful differences exist between products in terms of safety, purity, and potency [17]. Regulatory agencies worldwide employ this comprehensive approach when making determinations about product comparability, biosimilarity, and the extrapolation of indications without the need for redundant clinical studies [18] [19].

The philosophy underlying this approach recognizes that while a single study might provide valuable information, regulatory decisions are based on the collective evidence derived from all available data sources [19]. This review examines the components, methodologies, and strategic implementation of the totality of evidence approach within product comparability testing, providing researchers and drug development professionals with practical guidance for its application.

Theoretical Framework and Regulatory Foundation

Historical Evolution and Regulatory Principles

The totality of evidence approach has evolved alongside advancements in analytical technologies and regulatory science. Historically, biological products were defined primarily by their manufacturing processes due to limited characterization capabilities [18]. With improvements in production methods and analytical techniques, regulators have developed more sophisticated frameworks for assessing product comparability after manufacturing changes [18] [1].

The International Council for Harmonisation (ICH) Q5E guideline provides the primary global framework for comparability assessments, requiring evaluation of relevant quality attributes to exclude adverse impacts on product safety and efficacy [20]. The U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) have adopted similar approaches, emphasizing scientific understanding of the relationship between quality attributes and their impact on safety and efficacy [1].

The Totality of Evidence Concept

The totality of evidence approach operates on the principle that comprehensive data from multiple sources, when considered together, provide sufficient assurance of product comparability or biosimilarity. This represents a holistic alternative to relying solely on any single type of evidence, whether analytical, non-clinical, or clinical [17]. The approach follows a stepwise assessment beginning with extensive analytical characterization, progressing through functional and non-clinical studies, and concluding with targeted clinical evaluations [17].

Table 1: Core Components of the Totality of Evidence Approach

| Evidence Category | Key Elements | Regulatory Purpose |
|---|---|---|
| Analytical | Structural characterization, physicochemical properties, functional activities | Demonstrate high similarity at molecular and functional levels |
| Non-Clinical | In vitro and in vivo studies, toxicological assessments | Bridge to clinical studies and assess potential safety concerns |
| Clinical | Pharmacokinetics, pharmacodynamics, immunogenicity, efficacy, safety | Confirm similarity in human subjects and identify any clinical impacts |

Analytical Comparability Assessment

Fundamental Principles and Methodologies

Analytical comparability forms the foundation of the totality of evidence approach, requiring comprehensive structural and functional characterization to demonstrate that products are highly similar despite manufacturing changes or different development pathways [1]. The assessment should be both targeted (measuring differences in potentially affected quality attributes) and broad (allowing detection of unexpected consequences) [20].

The risk-based approach to analytical comparability begins with identifying critical quality attributes (CQAs) that may affect safety and efficacy [20]. Understanding the structure-function relationship provides the scientific rationale for establishing comparability and helps predict the impact of process changes on product quality [1].

Key Analytical Techniques and Quality Attributes

Recombinant monoclonal antibodies (mAbs) are complex glycoproteins with significant heterogeneity due to various post-translational modifications (PTMs) and degradation events occurring throughout manufacturing [1]. Successful comparability studies require both general knowledge of mAbs and specific understanding of the molecule obtained through analytical characterization.

Table 2: Key Analytical Techniques for Assessing Monoclonal Antibody Quality Attributes

| Quality Attribute Category | Specific Attributes | Analytical Techniques | Potential Impact |
|---|---|---|---|
| Structural Characteristics | Amino acid sequence, Primary structure, Higher-order structure | Mass spectrometry, Circular dichroism, NMR | Ensures proper folding and structural integrity |
| Charge Variants | N-terminal pyroglutamate, C-terminal lysine, Deamidation | Ion-exchange chromatography, Capillary isoelectric focusing | May affect stability and biological activity |
| Post-translational Modifications | Glycosylation patterns, Oxidation, Glycation | LC-MS, HILIC, CE-LIF | Impacts effector functions, pharmacokinetics, and immunogenicity |
| Impurities and Aggregates | Product-related variants, Process-related impurities | Size-exclusion chromatography, CE-SDS | Potential immunogenicity concerns |
| Functional Properties | Binding affinity, Fc effector functions, Potency | ELISA, SPR, Cell-based bioassays | Direct impact on mechanism of action and efficacy |

Experimental Protocol: Comprehensive Structural and Functional Characterization

Objective: To demonstrate analytical similarity between pre-change and post-change biological products through extensive physicochemical and functional analyses.

Materials and Equipment:

- Reference standard and test articles

- Ultra-high-performance liquid chromatography (UHPLC) systems

- Mass spectrometers (LC-MS, HRMS)

- Circular dichroism spectrometer

- Surface plasmon resonance (SPR) instrumentation

- Cell culture facilities for bioassays

Procedure:

Primary Structure Analysis:

- Perform intact mass analysis by LC-MS under non-denaturing and denaturing conditions

- Conduct peptide mapping with tryptic digestion followed by LC-MS/MS to confirm amino acid sequence and identify post-translational modifications

- Quantify N-terminal pyroglutamate and C-terminal lysine variants using charge-based separation methods

Higher-Order Structure Assessment:

- Analyze secondary structure using far-UV circular dichroism spectroscopy

- Evaluate tertiary structure using near-UV circular dichroism and intrinsic fluorescence spectroscopy

- Assess thermal stability by differential scanning calorimetry

Product-Related Impurity Profiling:

- Quantify aggregates and fragments by size-exclusion chromatography coupled with multi-angle light scattering (SEC-MALS)

- Analyze charge variants using capillary isoelectric focusing (cIEF) or cation-exchange chromatography (CEX)

- Characterize glycosylation patterns by releasing N-glycans followed by HILIC-UPLC or CE-LIF analysis

Functional Characterization:

- Determine binding affinity to target antigens using surface plasmon resonance

- Measure antibody-dependent cell-mediated cytotoxicity (ADCC) and complement-dependent cytotoxicity (CDC) using cell-based reporter assays

- Evaluate Fab-mediated neutralization potency using relevant cell-based bioassays

Data Analysis and Interpretation:

- Compare test results to pre-defined acceptance criteria based on reference product characterization

- Employ statistical methods to determine if observed differences are within qualified ranges

- Integrate all analytical data to form a comprehensive assessment of similarity

Non-Clinical Assessment

Role in the Totality of Evidence

Non-clinical studies provide a critical bridge between analytical characterization and clinical evaluation within the totality of evidence approach [17]. These assessments focus on functional properties related to the mechanism of action and may include in vitro binding assays, cell-based potency assays, and animal studies where appropriate [17] [1].

The level of non-clinical testing depends on the nature of the product, the extent of characterization, and the demonstrated analytical similarity. When comprehensive analytical and in vitro functional data provide sufficient reassurance of similarity, in vivo non-clinical studies may be reduced or omitted [1].

Experimental Protocol: Mechanism of Action Assessment

Objective: To demonstrate functional similarity through comprehensive in vitro studies evaluating binding properties and biological activities relevant to the mechanism of action.

Materials:

- Reference and test product samples

- Target antigens and receptors

- Relevant cell lines expressing target receptors

- Fcγ receptors (FcγRI, FcγRIIa, FcγRIIb, FcγRIIIa)

- Complement component C1q

- ELISA plates and reagents

- Flow cytometer

- Surface plasmon resonance instrument

Procedure:

Binding Affinity and Kinetics:

- Immobilize target antigen on SPR sensor chip

- Inject serial dilutions of reference and test products over chip surface

- Determine association rate (ka), dissociation rate (kd), and equilibrium dissociation constant (KD) using appropriate fitting models

Cell-Based Binding:

- Culture cells expressing target antigen

- Incubate cells with reference and test products across concentration range

- Detect bound antibody using fluorescently-labeled secondary antibody

- Analyze by flow cytometry to determine EC50 values

Fc Effector Function Assessment:

- Evaluate FcγR binding using ELISA or SPR-based methods

- Measure antibody-dependent cellular phagocytosis (ADCP) using macrophage-like cell lines and fluorescently-labeled target cells

- Assess complement-dependent cytotoxicity (CDC) by incubating target cells with antibodies and complement source, then quantifying cell viability

Data Interpretation:

- Compare dose-response curves and potency values between reference and test products

- Calculate relative potency with 95% confidence intervals

- Demonstrate that potency ratios fall within pre-defined equivalence margins (typically 0.8-1.25)
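The relative potency calculation in the data interpretation steps can be sketched as follows. The example assumes that each independent assay run has already produced a relative potency estimate (test/reference), for instance from a parallel-line or 4PL fit, and that the estimates are approximately log-normal. The run values and function names are hypothetical, and the t quantile is supplied from a t table.

```python
from math import exp, log, sqrt
from statistics import mean, stdev

def relative_potency_ci(rp_values, t_crit):
    """Geometric mean relative potency with a two-sided confidence interval.

    rp_values : relative potency estimates from independent assay runs
    t_crit    : two-sided 95% t quantile for df = n - 1 (from a t table)
    Returns (geometric_mean, ci_low, ci_high).
    """
    logs = [log(v) for v in rp_values]
    n = len(logs)
    m = mean(logs)
    se = stdev(logs) / sqrt(n)
    return exp(m), exp(m - t_crit * se), exp(m + t_crit * se)

def within_margins(ci_low, ci_high, lower=0.8, upper=1.25):
    """True if the whole confidence interval lies inside the margins."""
    return lower <= ci_low and ci_high <= upper

# Hypothetical relative potencies from six independent runs
rp = [0.96, 1.04, 0.99, 1.08, 0.93, 1.02]

# t(0.975, df=5) = 2.571
gm, rp_low, rp_high = relative_potency_ci(rp, t_crit=2.571)
print(f"Geometric mean RP: {gm:.3f}, 95% CI: ({rp_low:.3f}, {rp_high:.3f})")
print("Within 0.8-1.25:", within_margins(rp_low, rp_high))
```

Working on the log scale keeps the interval symmetric around the ratio in multiplicative terms, which is why the margins 0.8 and 1.25 are reciprocals of each other.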

Clinical Assessment

Clinical Components of the Totality of Evidence

Clinical evaluations within the totality of evidence approach are targeted and focused, designed to resolve any residual uncertainty remaining after analytical and functional assessments [17]. The extent of clinical testing depends on the level of similarity established through prior characterizations and the product's complexity [21].

Clinical comparability assessments typically include pharmacokinetic studies to demonstrate similar exposure, pharmacodynamic studies where relevant biomarkers exist, immunogenicity assessment, and confirmatory efficacy and safety studies in sensitive patient populations [17].

Experimental Protocol: Clinical Pharmacokinetic and Immunogenicity Study

Objective: To demonstrate similar pharmacokinetic profiles and comparable immunogenicity between reference and test products in healthy volunteers or patients.

Study Design:

- Randomized, parallel-group or crossover design

- Single-dose or multiple-dose administration depending on product characteristics

- Sensitive population capable of detecting potential differences

Participants:

- Healthy volunteers or patients with the condition of interest

- Adequate sample size to provide sufficient power for equivalence testing

- Key inclusion criteria: age, weight, specific disease characteristics if applicable

- Key exclusion criteria: prior exposure to similar products, underlying conditions affecting PK

Procedures:

Pharmacokinetic Sampling:

- Obtain serial blood samples pre-dose and at specified timepoints post-dose

- For single-dose study: 10-15 timepoints spanning 5 elimination half-lives

- For multiple-dose study: intensive sampling after first dose and sparse sampling during steady state

Immunogenicity Assessment:

- Collect samples for anti-drug antibody (ADA) detection at baseline and specified intervals

- Use validated immunoassay for ADA detection

- For ADA-positive samples, perform neutralizing antibody (NAb) assays

Analytical Methods:

- Use validated bioanalytical method (e.g., ELISA, ECL) for drug concentration quantification

- Employ validated immunogenicity assays following current regulatory guidance

Endpoint Assessment:

Table 3: Key Clinical Pharmacokinetic Parameters for Comparability Assessment

| PK Parameter | Definition | Assessment Method | Acceptance Criteria |
|---|---|---|---|
| AUC0-t | Area under the concentration-time curve from time zero to last measurable timepoint | Non-compartmental analysis | 90% CI within 80-125% |
| AUC0-∞ | Area under the concentration-time curve from time zero extrapolated to infinity | Non-compartmental analysis | 90% CI within 80-125% |
| Cmax | Maximum observed concentration | Non-compartmental analysis | 90% CI within 80-125% |
| tmax | Time to reach maximum concentration | Non-compartmental analysis | Non-significant difference |
| t1/2 | Terminal elimination half-life | Non-compartmental analysis | No clinically meaningful difference |
| Immunogenicity Incidence | Proportion of subjects developing anti-drug antibodies | Immunoassay | No clinically meaningful difference in incidence, timing, or neutralizing capacity |
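For readers less familiar with non-compartmental analysis, the sketch below shows how the exposure parameters in Table 3 might be derived from a single concentration-time profile. It uses the linear trapezoidal rule for AUC0-t and the standard extrapolation AUC0-∞ = AUC0-t + Clast/λz. The profile, the value of λz, and the function names are hypothetical. In practice λz is estimated by log-linear regression of the terminal phase, and validated software is used.

```python
from math import log

def auc_linear_trapezoid(times, conc):
    """AUC0-t by the linear trapezoidal rule (times in ascending order)."""
    return sum(
        (t2 - t1) * (c1 + c2) / 2
        for t1, t2, c1, c2 in zip(times, times[1:], conc, conc[1:])
    )

def cmax_tmax(times, conc):
    """Return (Cmax, tmax); the first maximum is used if there are ties."""
    c = max(conc)
    return c, times[conc.index(c)]

def auc_to_infinity(auc_0_t, c_last, lambda_z):
    """AUC0-inf = AUC0-t + Clast / lambda_z."""
    return auc_0_t + c_last / lambda_z

def half_life(lambda_z):
    """Terminal half-life t1/2 = ln(2) / lambda_z."""
    return log(2) / lambda_z

# Hypothetical profile: time in hours, concentration in ug/mL
times = [0, 1, 2, 4, 8, 24]
conc = [0.0, 4.0, 6.0, 5.0, 3.0, 1.0]
lambda_z = 0.05  # assumed terminal rate constant (1/h)

auc_t = auc_linear_trapezoid(times, conc)
cmax, tmax = cmax_tmax(times, conc)
auc_inf = auc_to_infinity(auc_t, conc[-1], lambda_z)
print(f"AUC0-t = {auc_t}, Cmax = {cmax}, tmax = {tmax}")
print(f"AUC0-inf = {auc_inf:.1f}, t1/2 = {half_life(lambda_z):.1f} h")
```

The per-subject values would then be log-transformed and compared between arms, with the 90% confidence interval of the geometric mean ratio assessed against the 80-125% limits.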

Integrated Data Assessment and Decision Framework

The Totality of Evidence Integration Process

The final assessment of comparability or biosimilarity requires integrated evaluation of all generated data, considering the collective evidence rather than individual study results in isolation [17] [20]. This holistic approach acknowledges that minor differences in certain quality attributes may be acceptable if balanced by other data demonstrating similar safety and efficacy profiles [1].

The weight assigned to each piece of evidence varies depending on the quality of the studies, the clinical and regulatory context, and the potential impact on patient outcomes [19]. Regulatory agencies evaluate whether the totality of the evidence provides sufficient assurance that there are no clinically meaningful differences between the products [17] [18].

Risk-Based Decision Framework

A systematic risk-based approach provides a structured methodology for comparability assessments throughout the product lifecycle [21] [20]. This framework evaluates the potential impact of manufacturing changes on product quality, safety, and efficacy, guiding the extent of comparability testing required.

Diagram: Risk-Based Comparability Assessment Framework. This decision framework outlines a systematic approach for evaluating manufacturing changes throughout the product lifecycle.

Emerging Approaches and Future Directions

The application of the totality of evidence approach continues to evolve with scientific advancements and regulatory experience. Emerging approaches include:

Model-Informed Drug Development (MIDD): Utilizing quantitative methods such as population pharmacokinetic modeling and exposure-response analysis to support comparability determinations with reduced clinical data requirements [21] [22].

Real-World Evidence (RWE): Incorporating data from clinical practice to complement evidence from randomized controlled trials, particularly for post-market effectiveness evaluation [19].

Advanced Analytics: Implementing artificial intelligence and machine learning approaches to enhance process understanding and predict the impact of manufacturing changes on product quality [21] [22].

Essential Research Reagent Solutions

Successful implementation of the totality of evidence approach requires access to high-quality, well-characterized research reagents and analytical tools. The following table outlines essential materials for comparability assessment:

Table 4: Essential Research Reagent Solutions for Comparability Assessment

| Reagent Category | Specific Examples | Function in Comparability Assessment |
|---|---|---|
| Reference Standards | WHO International Standards, USP Reference Standards, In-house primary reference | Provide benchmarks for analytical and biological comparisons |
| Characterized Cell Lines | Reporter gene cell lines, ADCC/ADCP effector cells, Target-expressing cells | Enable functional assessment of mechanism of action and effector functions |
| Recombinant Antigens | Soluble targets, Receptor extracellular domains, Fcγ receptors | Facilitate binding affinity and kinetics measurements |
| Affinity Capture Reagents | Anti-idiotypic antibodies, Protein A/G/L resins, Antigen-conjugated matrices | Support purification and characterization of product variants |
| Detection Systems | Labeled secondary antibodies, Enzyme substrates, Electrochemiluminescence reagents | Enable quantification and comparison of functional activities |
| Chromatography Resins | Size-exclusion, Ion-exchange, Hydrophobic interaction, Protein A affinity | Separate and characterize product variants and impurities |
| Mass Spec Standards | Proteolytic enzymes, Isotopic labels, Calibration standards | Enable structural characterization and post-translational modification analysis |

The totality of evidence approach provides a systematic framework for integrating analytical, non-clinical, and clinical data to demonstrate product comparability or biosimilarity. This comprehensive methodology requires robust scientific justification at each step, with decisions guided by thorough product and process knowledge [17] [20].

Successful implementation depends on strategic study design, appropriate analytical methods, and integrated data interpretation that collectively provide sufficient assurance of product similarity without clinically meaningful differences [1] [20]. As regulatory science advances, emerging approaches including model-informed drug development and real-world evidence are increasingly complementing traditional methodologies within the totality of evidence paradigm [19] [22].

For researchers and drug development professionals, understanding and properly applying this approach is essential for efficient product development and lifecycle management, ultimately benefiting patients through accelerated access to high-quality biological therapies.

The adoption of risk-based frameworks represents a paradigm shift in pharmaceutical development, moving quality control from reactive testing to proactive, science-based assurance. These systematic approaches enable researchers to identify, evaluate, and prioritize factors that potentially impact critical quality attributes (CQAs) of drug products, particularly safety, purity, and potency. Underpinned by regulatory guidelines such as ICH Q9 and ICH Q14, risk-based methodologies provide a structured foundation for making informed decisions throughout the product lifecycle [23]. This strategic focus allows organizations to concentrate resources on high-risk areas, thereby enhancing development efficiency, strengthening regulatory compliance, and ultimately ensuring patient safety.

Within analytical method development for product comparability testing, risk-based frameworks deliver a systematic mechanism for evaluating how process changes or analytical procedure variations might influence the assessment of safety, purity, and potency. The Quality by Design (QbD) principle, which leverages risk-based design to align methods with CQAs, is fundamental to this approach [23]. By implementing Design of Experiments (DoE) and establishing Method Operable Design Regions (MODRs), developers can create robust, well-understood methods capable of detecting meaningful changes in product quality attributes [23]. This evidence-based strategy is particularly crucial for complex modalities such as biologics and cell and gene therapies, where traditional testing approaches may be insufficient to fully characterize product comparability.

Core Principles and Regulatory Foundation

Risk assessment in pharmaceutical development follows a systematic lifecycle encompassing risk identification, analysis, evaluation, control, and review [24] [25]. The International Council for Harmonisation (ICH) guidelines provide the foundational structure for these activities, with ICH Q9 formalizing principles for quality risk management and ICH Q14 offering detailed guidance on analytical procedure development [23]. These frameworks emphasize science-based decision-making and require meticulous documentation and transparency throughout the risk management process.

A pivotal concept in modern risk management is the distinction between qualitative and quantitative approaches, each offering distinct advantages for different assessment scenarios. Qualitative risk analysis relies on expert judgment and descriptive scales to prioritize risks based on their potential impact and likelihood of occurrence, typically using simple rating scales or probability/impact matrices [24] [25]. This approach is particularly valuable for emerging risks, complex scenarios with interconnected variables, or situations lacking historical data [25]. Conversely, quantitative risk analysis employs numerical data and statistical models to provide objective, measurable risk assessments, generating outputs such as probabilities, financial impacts, and confidence intervals [26] [24]. This method is ideal for data-rich environments where precise, financially-grounded decisions are required.

Table 1: Comparison of Qualitative and Quantitative Risk Assessment Approaches

| Feature | Qualitative Risk Assessment | Quantitative Risk Assessment |
|---|---|---|
| Approach | Descriptive, expert judgment, scenario-based [24] [25] | Numerical, data-driven, statistical models [26] [24] |
| Execution | Faster to implement, adaptable to changing conditions [25] | Resource-intensive, requires specialized expertise [24] [25] |
| Output | Risk rankings, visual matrices, descriptive reports [25] | Probabilities, financial metrics, confidence intervals [26] [27] |
| Strengths | Quick, flexible, easy to communicate across teams [25] | High precision, supports data-driven decision-making [26] [24] |
| Limitations | Subjective, depends on expert judgment, less precise [25] [27] | Data-dependent, may miss emerging risks without historical data [24] [25] |
| Best Use Cases | Emerging risks, complex scenarios, limited historical data [25] | Financial analysis, planning, data-rich environments [24] [25] |

Regulatory agencies including the FDA and EMA increasingly mandate risk-based approaches, with the ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, and Accurate, plus Complete, Consistent, Enduring, and Available) ensuring data integrity throughout the risk assessment process [23]. The harmonization of global regulatory expectations through initiatives like ICH Q2(R2) and Q14 further enables consistent implementation of risk-based frameworks across multinational development programs [23].

Implementation Workflow and Methodology

Implementing a comprehensive risk-based framework follows a logical sequence from planning through continuous monitoring.

Diagram: Key stages of the risk-based framework implementation workflow

Risk Identification and Assessment Protocol

Objective: Systematically identify and document potential risks to product safety, purity, and potency during analytical method development and comparability studies.

Materials and Equipment:

- Risk Assessment Team: Cross-functional experts (Analytical Development, Quality Assurance, Regulatory Affairs, Manufacturing)

- Documentation Tools: Electronic data capture systems compliant with ALCOA+ principles [23]

- Historical Data: Previous risk assessments, method validation reports, stability data, comparability study results

Procedure:

- Define the Scope and Context: Clearly establish the boundaries of the assessment, including the specific analytical method, product type, and stage of development [24].

- Form a Cross-Functional Team: Assemble experts from relevant disciplines to ensure comprehensive risk identification [23].

- Identify Potential Risks: Utilize structured approaches such as:

- Brainstorming sessions guided by experience and historical data

- Review of similar methods and products for known risk factors

- Analysis of process flow diagrams to identify vulnerability points

- Document Risks in a Register: Create a comprehensive risk register detailing each identified risk, its potential causes, and preliminary categorization.

Risk Analysis and Evaluation Protocol

Objective: Analyze identified risks to determine their potential impact on safety, purity, and potency, and prioritize them for further action.

Materials and Equipment:

- Risk Matrix: Defined scales for probability and impact

- Assessment Tools: Software for qualitative scoring or statistical analysis

- Historical Data: Method performance data, quality control records

Procedure:

- Qualitative Analysis:

- For each risk, assign probability (likelihood of occurrence) and impact (severity of effect) ratings using predefined scales (e.g., 1-5 or High/Medium/Low) [24].

- Calculate risk priority by multiplying probability and impact scores.

- Plot risks on a risk matrix to visualize prioritization.

- Quantitative Analysis (for high-priority risks):

- Collect historical data on failure rates, method performance, or quality attributes.

- Apply quantitative methods such as:

- Monte Carlo Simulation: Uses repeated random sampling to model probability distributions of potential outcomes [26] [24].

- Expected Monetary Value (EMV) Analysis: Calculates the average outcome when future scenarios include uncertainty [24].

- Annualized Loss Expectancy (ALE): Determines expected monetary loss per year (ALE = Single Loss Expectancy × Annualized Rate of Occurrence) [24] [27].

- Risk Evaluation:

- Compare analyzed risks against predefined risk acceptance criteria.

- Categorize risks as acceptable, requires control, or unacceptable.

- Document justification for all risk categorization decisions.
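The qualitative scoring and evaluation steps above can be sketched as a small risk register calculation. The 1-5 scales follow the example in the procedure. The category cut-offs, risk names, and ratings are assumptions made for illustration, since acceptance criteria must be pre-defined by each organization.

```python
def risk_priority(probability, impact):
    """Risk priority score = probability x impact, each rated 1-5."""
    if not (1 <= probability <= 5 and 1 <= impact <= 5):
        raise ValueError("ratings must be on the 1-5 scale")
    return probability * impact

def categorize(score, accept_below=6, unacceptable_from=15):
    """Map a score to a category; the cut-offs are assumed examples."""
    if score < accept_below:
        return "acceptable"
    if score >= unacceptable_from:
        return "unacceptable"
    return "requires control"

# Hypothetical risk register entries for an analytical method
register = [
    {"risk": "Column lot variability", "probability": 3, "impact": 3},
    {"risk": "Reference standard degradation", "probability": 2, "impact": 5},
    {"risk": "Analyst pipetting error", "probability": 2, "impact": 2},
    {"risk": "Bioassay cell line drift", "probability": 4, "impact": 4},
]

for entry in register:
    entry["score"] = risk_priority(entry["probability"], entry["impact"])
    entry["category"] = categorize(entry["score"])

# Highest-priority risks first
ranked = sorted(register, key=lambda e: e["score"], reverse=True)
for entry in ranked:
    print(f'{entry["score"]:>2}  {entry["category"]:<17} {entry["risk"]}')
```

Keeping the cut-offs as named parameters makes the acceptance criteria explicit and easy to document alongside the justification for each categorization.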

Table 2: Quantitative Risk Assessment Formulas and Applications

| Method | Formula/Approach | Application in Pharmaceutical Development |
|---|---|---|
| Annualized Loss Expectancy (ALE) | ALE = SLE × ARO, where SLE = Asset Value × Exposure Factor [24] [27] | Quantifying financial impact of method failures, instrument downtime, or batch rejection |
| Monte Carlo Simulation | Repeated random sampling to model probability of different outcomes under uncertainty [26] [24] | Predicting method robustness under variable conditions; modeling impact of process parameters on CQAs |
| Expected Monetary Value (EMV) | EMV = Probability × Impact (in monetary terms) [24] | Comparing risk mitigation options for analytical method controls; cost-benefit analysis of additional testing |
| Three-Point Estimate | Estimate = (Optimistic + 4×Most Likely + Pessimistic) ÷ 6 [24] | Estimating method validation timelines; predicting stability testing outcomes |
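The closed-form entries in the table translate directly into code. The sketch below implements the ALE, EMV, and three-point formulas as written. The monetary figures, rates, and timelines are hypothetical, and summing EMV over several scenarios is a common generalization assumed here rather than something stated in the table.

```python
def single_loss_expectancy(asset_value, exposure_factor):
    """SLE = asset value x exposure factor (fraction of value lost)."""
    return asset_value * exposure_factor

def annualized_loss_expectancy(sle, aro):
    """ALE = SLE x annualized rate of occurrence (events per year)."""
    return sle * aro

def expected_monetary_value(scenarios):
    """Sum of probability x monetary impact over (probability, impact) pairs."""
    return sum(p * impact for p, impact in scenarios)

def three_point_estimate(optimistic, most_likely, pessimistic):
    """(Optimistic + 4 x most likely + pessimistic) / 6."""
    return (optimistic + 4 * most_likely + pessimistic) / 6

# Hypothetical: a batch worth 200,000 loses half its value on a method
# failure, and such a failure is expected once every five years.
sle = single_loss_expectancy(200_000, 0.5)
ale = annualized_loss_expectancy(sle, 0.2)

# Hypothetical: 10% chance of a 50,000 loss, 90% chance of no loss
emv = expected_monetary_value([(0.1, -50_000), (0.9, 0)])

# Hypothetical validation timeline in weeks
weeks = three_point_estimate(optimistic=4, most_likely=6, pessimistic=14)

print(f"SLE = {sle:.0f}, ALE = {ale:.0f}, EMV = {emv:.0f}, weeks = {weeks:.1f}")
```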

Analytical Quality by Design (AQbD) Implementation Protocol

Objective: Implement AQbD principles to build quality into analytical methods rather than testing it post-development, ensuring methods remain robust for comparability assessment.

Materials and Equipment:

- Design of Experiments (DoE) software

- Analytical instruments with computerized data acquisition

- Statistical analysis software

Procedure:

- Define Analytical Target Profile (ATP): Clearly articulate the required performance characteristics of the analytical method.

- Identify Critical Method Parameters (CMPs): Through risk assessment, identify method parameters that may impact the ATP.

- Develop the Method Operable Design Region (MODR):

- Utilize DoE to systematically evaluate the relationship between CMPs and method performance.

- Establish a MODR within which method parameters can be adjusted without requiring revalidation [23].

- Control Strategy:

- Implement a control strategy based on understanding gained through risk assessment and DoE.

- Establish procedure performance controls, system suitability tests, and continuous monitoring plans.
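As an illustration of the DoE step, the sketch below enumerates a two-level full factorial design over three critical method parameters and checks whether a proposed set of operating conditions lies inside an MODR. The parameter names and levels are hypothetical. Treating the full studied range as the MODR is an assumption that only holds if every run met the ATP. Dedicated DoE software would normally also handle randomization, center points, and model fitting.

```python
from itertools import product

def full_factorial(factors):
    """Return every combination of factor levels as a list of run dicts."""
    names = list(factors)
    return [
        dict(zip(names, levels))
        for levels in product(*(factors[name] for name in names))
    ]

def in_modr(settings, modr):
    """True if every parameter lies within its (low, high) MODR bounds."""
    return all(
        modr[name][0] <= value <= modr[name][1]
        for name, value in settings.items()
    )

# Hypothetical critical method parameters for an HPLC method
factors = {
    "column_temp_C": [28, 32],
    "flow_mL_min": [0.9, 1.1],
    "mobile_phase_pH": [6.8, 7.2],
}

runs = full_factorial(factors)  # 2 x 2 x 2 = 8 runs
for i, run in enumerate(runs, start=1):
    print(i, run)

# Assumed MODR: the studied range of each parameter
modr = {name: (min(levels), max(levels)) for name, levels in factors.items()}
proposed = {"column_temp_C": 30, "flow_mL_min": 1.0, "mobile_phase_pH": 7.0}
print("Proposed settings inside MODR:", in_modr(proposed, modr))
```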

The following diagram illustrates the AQbD lifecycle management approach:

Application to Product Comparability Testing

In product comparability testing, risk-based frameworks provide a structured approach to evaluate the impact of manufacturing process changes on safety, purity, and potency. The framework ensures that analytical methods are sufficiently sensitive and specific to detect clinically relevant differences in product quality attributes.

Comparability Risk Assessment Protocol

Objective: Assess the impact of manufacturing changes on product CQAs through appropriate analytical methods.

Materials and Equipment:

- Pre-change and post-change product samples

- Validated analytical methods

- Statistical analysis software

Procedure:

- Identify Manufacturing Changes: Document all process changes, including scale, equipment, site, or raw material modifications.

- Link Changes to Potential Impact on CQAs: Using risk assessment, evaluate how each change might affect product CQAs.

- Select Appropriate Analytical Methods: Based on the risk assessment, select methods capable of detecting potential impacts on CQAs.

- Design Comparability Study:

  - Include sufficient sample numbers to provide statistical power.

  - Utilize orthogonal methods for high-risk attributes.

  - Implement additional testing for attributes identified as high-risk.

- Execute Study and Interpret Results:

  - Apply statistical tests appropriate for the data type and distribution.

  - Use equivalence testing where applicable.

  - Document any observed differences and assess their clinical relevance.
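
As one way to picture the equivalence-testing step, the sketch below applies the two one-sided tests (TOST) procedure in its confidence-interval form: equivalence is concluded at α = 0.05 when the 90% confidence interval for the mean difference lies entirely inside the equivalence margin. The data, the ±1.0 margin, and the function name are invented for illustration; the sketch also assumes equal variances (pooled standard error) and takes the t critical value from the caller, for example from a t-table or `scipy.stats.t.ppf(0.95, df)`.

```python
from math import sqrt
from statistics import mean, variance

def tost_equivalence(pre, post, margin, t_crit):
    """TOST via the equivalent confidence-interval check.

    pre, post : results for one CQA from pre- and post-change lots
    margin    : equivalence margin in the attribute's units (must be justified)
    t_crit    : one-sided t critical value for alpha and df = n1 + n2 - 2
    """
    n1, n2 = len(pre), len(post)
    diff = mean(post) - mean(pre)
    # Pooled variance assumes the two groups share a common variance.
    pooled_var = ((n1 - 1) * variance(pre) + (n2 - 1) * variance(post)) / (n1 + n2 - 2)
    se = sqrt(pooled_var * (1 / n1 + 1 / n2))
    lower, upper = diff - t_crit * se, diff + t_crit * se
    return diff, (lower, upper), (-margin < lower and upper < margin)

# Invented purity results (% main peak) for six lots per arm.
pre_change = [98.1, 99.0, 98.6, 99.3, 98.8, 98.4]
post_change = [98.5, 98.9, 99.1, 98.7, 99.4, 98.6]

# One-sided critical value for alpha = 0.05 and df = 10, from a t-table.
T_CRIT_DF10 = 1.812

diff, ci, equivalent = tost_equivalence(pre_change, post_change,
                                        margin=1.0, t_crit=T_CRIT_DF10)
print(f"difference = {diff:.3f}, 90% CI = ({ci[0]:.3f}, {ci[1]:.3f}), "
      f"equivalent = {equivalent}")
```

The margin drives the conclusion: the same data that pass with a margin of 1.0 would fail with a margin of 0.5, which is why the margin should be set from product knowledge before the study is run.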

Case Study: Risk-Based Method Migration for HPLC Analysis

Background: A contract research organization needed to migrate validated HPLC methods from aging instrumentation to new platforms without requiring full revalidation [28].

Risk Assessment Approach:

- Specification Comparison: Conducted detailed comparison of technical specifications between legacy and new HPLC systems.

- Risk Identification: Identified potential variables including injection volume accuracy, delay volume, detector linearity, and dwell volume [28].

- Control Strategy: Established system suitability criteria and allowable adjustment ranges for method parameters.

Experimental Verification:

- Ran identical methods on both systems using the same column, mobile phase, and samples.

- Compared critical performance parameters: retention time (≤3% difference) and peak area precision (≤1% RSD difference) [28].

- Demonstrated equivalence without method revalidation, saving significant time and resources.

Outcome: Successful migration of multiple methods with maintained data quality and regulatory compliance [28].
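
The acceptance check used in the experimental verification can be expressed as a short sketch. The injection data and function names are hypothetical, and the reading of "≤1% RSD difference" as an absolute difference between the two systems' peak-area %RSD values is an assumption rather than something stated in the case study.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Relative standard deviation in percent."""
    return 100 * stdev(values) / mean(values)

def migration_check(legacy_rt, new_rt, legacy_area, new_area,
                    max_rt_diff_pct=3.0, max_rsd_diff=1.0):
    """Compare replicate injections from the legacy and new HPLC systems."""
    rt_diff_pct = 100 * abs(mean(new_rt) - mean(legacy_rt)) / mean(legacy_rt)
    # Assumed interpretation: absolute difference between the two %RSD values.
    rsd_diff = abs(percent_rsd(new_area) - percent_rsd(legacy_area))
    return {
        "rt_diff_pct": rt_diff_pct,
        "rsd_diff": rsd_diff,
        "pass": rt_diff_pct <= max_rt_diff_pct and rsd_diff <= max_rsd_diff,
    }

# Invented replicate injections (retention time in min, peak area in mAU*s).
legacy_rt = [5.20, 5.22, 5.21, 5.19, 5.20, 5.21]
new_rt = [5.10, 5.11, 5.09, 5.10, 5.12, 5.10]
legacy_area = [1002, 998, 1005, 995, 1001, 999]
new_area = [1010, 1004, 1012, 1001, 1008, 1005]

result = migration_check(legacy_rt, new_rt, legacy_area, new_area)
print(result)
```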

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Risk-Based Analytical Development

| Reagent/Category | Function in Risk-Based Analysis | Application Examples |

|---|---|---|

| High-Resolution Mass Spectrometry (HRMS) | Provides high sensitivity and specificity for identifying and quantifying trace-level impurities and degradants [23] | Monitoring process-related impurities; characterizing degradation products affecting potency and safety |

| Multi-Attribute Methods (MAM) | Consolidates measurement of multiple quality attributes into a single assay, reducing analytical variability [23] | Simultaneous monitoring of product quality attributes (e.g., oxidation, deamidation) in biologics comparability |

| Process Analytical Technology (PAT) | Enables real-time monitoring of critical process parameters, facilitating quality by design [23] | In-line monitoring during manufacturing to ensure consistent product quality and enable real-time release |

| Reference Standards & Controls | Provide benchmarks for method qualification and validation, ensuring data comparability across studies [28] | System suitability testing; assay qualification; demonstrating method robustness for comparability assessment |

| Advanced Chromatography Columns | Specialized stationary phases designed for specific separation challenges improve method resolution and reliability [28] | Separating complex mixtures of product-related variants; resolving closely eluting impurities |

Risk-based frameworks provide an essential foundation for assessing the impact of analytical method variability on the evaluation of product safety, purity, and potency. By implementing systematic risk assessment protocols, including both qualitative and quantitative approaches, researchers can make science-based decisions that enhance method robustness and reliability. The integration of Analytical Quality by Design (AQbD) principles and lifecycle management approaches ensures that methods remain fit-for-purpose throughout their use in product comparability testing [23]. As regulatory expectations evolve, evidenced by emerging guidelines such as ICH Q14, the adoption of comprehensive risk-based frameworks becomes increasingly critical for successful drug development and regulatory approval.

Practical Applications: Analytical Techniques and Study Designs for Different Product Types

In the development of biopharmaceuticals, orthogonal methods are defined as different analytical techniques that are used to measure the same Critical Quality Attribute (CQA) but are based on distinct measurement principles [29]. The primary goal of employing orthogonal methods is to obtain a more accurate and reliable description of a single, critical property by controlling for the systematic error or bias inherent in any individual analytical technique [29]. This approach is fundamental to building a comprehensive "totality of evidence" for demonstrating product quality, consistency, and comparability, especially following manufacturing changes [30] [31].

The complexity of biotherapeutics, including monoclonal antibodies, gene therapies like Adeno-associated viruses (AAVs), and other biologic products, means that a single analytical method often cannot provide a complete picture of all relevant attributes [29]. Orthogonal strategies are particularly crucial for product characterization and analytical controls, as they play a significant role in ensuring product quality and continuity of clinical trial material supply [30]. Regulatory guidance emphasizes the use of orthogonal, state-of-the-art analytical methods for the comprehensive physicochemical and functional characterization required to support biosimilarity claims or to demonstrate comparability after process changes [31].

Key Orthogonal Techniques for Critical Quality Attributes

Particle Analysis and Quantification

The characterization of particles, including protein aggregates and other subvisible particles, is a key CQA for many biopharmaceutical products, as their presence can impact product safety and efficacy. The following table summarizes common orthogonal techniques used for particle analysis:

Table 1: Orthogonal Methods for Particle Analysis and Quantification

| CQA | Method 1 | Method 1 Principle | Method 2 | Method 2 Principle | Application Context |

|---|---|---|---|---|---|

| Subvisible Particle Size & Concentration | Flow Imaging Microscopy (FIM) | Digital imaging and morphological analysis of individual particles [29] | Light Obscuration (LO) | Measurement of light blocked by particles passing through a sensor [29] | Provides accurate size/count of protein aggregates while ensuring compliance with pharmacopeia (e.g., USP <788>) [29] |

| AAV Capsid Content (Full/Empty Ratio) | Quantitative Transmission Electron Microscopy (QuTEM) | Direct visualization and quantification of capsids based on internal density in native state [32] | Analytical Ultracentrifugation (AUC) | Separation based on sedimentation velocity in a centrifugal field [32] | Critical for gene therapy efficacy; QuTEM offers superior granularity and structural integrity preservation [32] |

| AAV Capsid Content | Mass Photometry (MP) | Measures mass of individual particles by light scattering [32] | SEC-HPLC | Separation by hydrodynamic size using chromatography [32] | Assesses encapsidation efficiency for AAV vectors produced with different transgenes (e.g., scAAV, ssAAV) [32] |

Structural and Functional Characterization

For biologics and biosimilars, demonstrating similarity in structure, function, and pharmacokinetics through exhaustive orthogonal studies is now the core requirement for approval, often replacing the need for large Phase III clinical trials [31]. The following workflow illustrates a strategic approach to implementing orthogonal methods for comparability assessment:

The specific methods chosen are highly dependent on the product and the CQA being measured. For instance, a biosimilar development program must assess primary to quaternary structure, post-translational modifications, and product-related variants using orthogonal techniques [31]. This rigorous analytical comparison forms the foundation for demonstrating biosimilarity, supported by pharmacokinetic (PK) and pharmacodynamic (PD) studies [31].

Detailed Experimental Protocols

Protocol: Orthogonal Analysis of AAV Capsid Content

Objective: To accurately quantify the ratio of full, partial, and empty capsids in an AAV vector preparation using two orthogonal methods: Quantitative Transmission Electron Microscopy (QuTEM) and Analytical Ultracentrifugation (AUC) [32].

Materials:

- Purified AAV sample (e.g., ssAAV or scAAV)

- Negative stain (e.g., uranyl acetate)

- TEM grids

- AUC cells

- Phosphate Buffered Saline (PBS)

Method 1: Quantitative Transmission Electron Microscopy (QuTEM)

- Sample Preparation: Apply a diluted AAV sample to a TEM grid and negatively stain according to standard protocols.

- Imaging: Capture a minimum of 100 representative digital images at a specified magnification (e.g., 50,000x) using the TEM.

- Image Analysis: Analyze images using specialized software to classify capsids based on internal electron density.

- Classification & Quantification: Classify each capsid as "full" (high internal density), "empty" (low internal density), or "partial" (intermediate density). Calculate the percentage of each population from the total counted capsids.

Method 2: Analytical Ultracentrifugation (AUC)

- Sample Loading: Load the AAV sample and a reference buffer into double-sector AUC cells.

- Centrifugation: Place cells in an analytical ultracentrifuge and run at a rotor speed suited to AAV sedimentation (e.g., 15,000 rpm) at a controlled temperature (e.g., 20°C).

- Data Acquisition: Use UV/Vis absorbance or interference optics to monitor the sedimentation of the sample over time.

- Data Analysis: Analyze the sedimentation velocity data using a model that distinguishes species based on their sedimentation coefficients. Integrate the peaks corresponding to full, partial, and empty capsids to determine their relative proportions.

Data Correlation and Interpretation: Compare the percentage of full capsids obtained from QuTEM and AUC. Close agreement between the two results (e.g., within 5-10%) supports the accuracy of the measurement and increases confidence in product quality [32].
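
The comparison step can be sketched as follows. The capsid counts and integrated AUC peak areas are invented, the function names are hypothetical, and the tolerance is interpreted here as a difference in percentage points, which is an assumption. The sketch also ignores any detector-response correction that may be needed before AUC peak areas are read as particle proportions.

```python
def population_percentages(amounts):
    """Convert counts or integrated peak areas into percentages of the total."""
    total = sum(amounts.values())
    return {name: 100 * value / total for name, value in amounts.items()}

def is_concordant(pct_a, pct_b, tolerance_points):
    """True when two percentages differ by no more than the tolerance."""
    return abs(pct_a - pct_b) <= tolerance_points

# Invented QuTEM classification counts (number of capsids per class).
qutem_counts = {"full": 720, "partial": 80, "empty": 200}
# Invented AUC integrated peak areas (arbitrary units).
auc_areas = {"full": 340, "partial": 50, "empty": 110}

qutem_pct = population_percentages(qutem_counts)
auc_pct = population_percentages(auc_areas)

agreement = is_concordant(qutem_pct["full"], auc_pct["full"], tolerance_points=5.0)
print(f"QuTEM full = {qutem_pct['full']:.1f}%, AUC full = {auc_pct['full']:.1f}%, "
      f"concordant = {agreement}")
```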

Protocol: Orthogonal Subvisible Particle Analysis

Objective: To determine the concentration and size distribution of subvisible particles (2-100 μm) in a biotherapeutic product using Flow Imaging Microscopy (FIM) and Light Obscuration (LO) [29].

Materials:

- Biotherapeutic drug product

- Appropriate diluent (if needed)

- Syringes

- Flow cell

Method 1: Flow Imaging Microscopy (FIM)

- System Setup: Prime the flow path and ensure background particle counts are within acceptable limits.

- Sample Analysis: Draw the sample through a flow cell and automatically capture digital images of each particle.

- Image Analysis: Software analyzes the images to determine particle size (based on equivalent circular diameter) and concentration. Morphological parameters (e.g., aspect ratio, transparency) can also be extracted.

- Reporting: Report the particle concentration per mL in size bins (e.g., 2-5 μm, 5-10 μm, 10-25 μm, ≥25 μm).

Method 2: Light Obscuration (LO)

- System Setup: Flush the system and perform a blank run to ensure cleanliness.

- Volume Calibration: Calibrate the sensor for the specific volume of sample analyzed.

- Sample Analysis: Pump the sample through a sensor where each particle blocks a proportional amount of light. The instrument counts and sizes particles based on the signal drop.

- Reporting: Report the particle concentration per mL in the same size bins as the FIM analysis.

Data Correlation and Interpretation: FIM data often provides a more accurate count and morphological insight for proteinaceous particles, while LO data is typically required for regulatory compliance with compendial standards [29]. The results should be compared to understand the nature of the particles present.
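
Reporting both methods in the same size bins is what makes the comparison possible. The sketch below shows one way to bin individual particle diameters and convert counts to concentration per mL; the diameters, the analyzed volume, and the function name are hypothetical, and the bin edges follow the example bins given in the reporting step.

```python
from bisect import bisect_right

def bin_concentrations(diameters_um, volume_ml, edges=(2, 5, 10, 25)):
    """Particle concentration (particles/mL) per size bin.

    Bins are [2, 5), [5, 10), [10, 25) and >= 25 um; particles below the
    lowest edge are ignored.
    """
    labels = [f"{lo}-{hi} um" for lo, hi in zip(edges, edges[1:])]
    labels.append(f">={edges[-1]} um")
    counts = dict.fromkeys(labels, 0)
    for diameter in diameters_um:
        if diameter < edges[0]:
            continue
        index = bisect_right(edges, diameter) - 1
        counts[labels[index]] += 1
    return {label: n / volume_ml for label, n in counts.items()}

# Invented equivalent circular diameters (um) from a 0.5 mL analysis.
fim_diameters = [1.5, 2.0, 3.1, 4.9, 5.0, 7.2, 12.0, 25.0, 40.0]
fim_report = bin_concentrations(fim_diameters, volume_ml=0.5)
for label, conc in fim_report.items():
    print(f"{label}: {conc:.1f} particles/mL")
```

Applying the same function to the LO sizing data yields directly comparable bins, so differences between the two methods can be examined bin by bin.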

The Scientist's Toolkit: Research Reagent Solutions

The successful implementation of orthogonal methods relies on high-quality reagents and standards. The following table details essential materials for the featured experiments.

Table 2: Essential Research Reagents and Materials for Orthogonal Characterization