Automated Cell Type Annotation: A Comprehensive Guide for Single-Cell RNA-Seq Analysis

This article provides a comprehensive guide to automated cell type annotation methods for single-cell RNA sequencing (scRNA-seq) data, tailored for researchers, scientists, and drug development professionals.


Abstract

This article provides a comprehensive guide to automated cell type annotation methods for single-cell RNA sequencing (scRNA-seq) data, tailored for researchers, scientists, and drug development professionals. We cover the foundational principles and necessity of automation, detail major methodological approaches and their practical application in pipelines like Seurat and Scanpy, address common challenges and optimization strategies for robust results, and compare leading tools and validation frameworks. The goal is to equip users with the knowledge to select, implement, and validate appropriate automated annotation workflows to accelerate discovery in biomedical and clinical research.

What is Automated Cell Annotation and Why is it Essential for Modern Biology?

The Bottleneck of Manual Annotation in the Era of Large-Scale scRNA-seq

The advent of large-scale single-cell RNA sequencing (scRNA-seq) has fundamentally transformed our ability to dissect cellular heterogeneity. It has also exposed a critical and unsustainable bottleneck: manual cell type annotation. Research on automated cell type annotation addresses this bottleneck by replacing subjective, labor-intensive manual labeling with scalable, reproducible, and knowledge-driven computational pipelines. For researchers, scientists, and drug development professionals, overcoming this bottleneck is essential to using atlas-scale data for biomarker discovery and therapeutic targeting.

The Scale of the Problem: Quantitative Data

The manual annotation process, typically involving the visual inspection of 2D embeddings (e.g., UMAP, t-SNE) and cross-referencing with known marker genes, becomes intractable with modern datasets. The following table summarizes the quantitative challenge.

Table 1: The Scaling Problem of Manual vs. Automated Annotation

| Metric | Traditional Study (Pre-2018) | Modern Atlas (Post-2020) | Implication for Manual Work |

|---|---|---|---|

| Number of Cells | 10^3 - 10^4 | 10^5 - 10^7 | Weeks to months of expert time required. |

| Number of Cell Clusters/States | 5 - 20 | 50 - 500+ | Human cognitive load exceeded; inconsistency rises. |

| Annotation Time per Cluster | ~30-60 minutes | ~15-30 minutes (with complexity) | Total time investment becomes prohibitive. |

| Inter-Annotator Reproducibility | Moderate (κ ~0.6-0.8) | Low (κ can be <0.5) | Results are subjective and non-standardized. |

| Reference Data Required | Limited public data | Curated, multimodal reference atlases | Manual integration of multiple knowledge sources is slow. |
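
The inter-annotator reproducibility figures in Table 1 are Cohen's kappa values, which correct raw agreement for the agreement expected by chance. The following is a minimal sketch of that calculation; the two expert label lists are hypothetical and serve only to illustrate the arithmetic.

```python
from collections import Counter

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same clusters."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of clusters given the same label.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, from each annotator's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)

# Hypothetical labels from two experts for the same ten clusters.
expert_1 = ["T", "T", "B", "B", "NK", "Mono", "Mono", "DC", "T", "B"]
expert_2 = ["T", "NK", "B", "B", "NK", "Mono", "DC", "DC", "T", "T"]
kappa = cohen_kappa(expert_1, expert_2)
print(f"kappa = {kappa:.2f}")
```

Here the experts agree on 7 of 10 clusters, yet kappa is lower than 0.7 because some of that agreement would be expected by chance alone.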

Core Methodologies in Automated Annotation

Automated methods can be categorized by their approach. Below are detailed protocols for key experimental strategies cited in current research.

Protocol: Supervised Classification with a Pre-trained Model

- Objective: To assign cell type labels to a new query dataset using a labeled reference model.

- Materials: Query dataset (count matrix), pre-trained classifier (e.g., from scANVI, scPred, or SingleR), reference label set.

- Procedure:

- Data Preprocessing: Log-normalize the query data. Select features (genes) that match the feature space of the pre-trained model (typically variable genes from the reference).

- Feature Scaling: Scale the query data to have zero mean and unit variance, using parameters derived from the reference training set to avoid bias.

- Label Prediction: Input the processed query data into the pre-trained model. The model outputs predicted class probabilities for each cell.

- Thresholding & Assignment: Assign the cell type label corresponding to the highest probability. Optionally, apply a probability threshold (e.g., >0.7) to mark low-confidence predictions as "Unassigned."

- Validation: Manually inspect the "Unassigned" cells and high-confidence predictions for known marker expression to assess biological plausibility.
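
The feature scaling and thresholding steps above can be sketched as follows. This is an illustration only: the probability dictionaries stand in for the output of whichever pre-trained classifier is used, and the function names are hypothetical rather than part of scANVI, scPred, or SingleR.

```python
def scale_with_reference(cell, ref_mean, ref_std):
    """Z-score one cell's expression using reference-derived mean and std."""
    return [(x - m) / s if s > 0 else 0.0
            for x, m, s in zip(cell, ref_mean, ref_std)]

def assign_label(probabilities, threshold=0.7):
    """Return the top-probability label, or 'Unassigned' below the threshold."""
    label, prob = max(probabilities.items(), key=lambda kv: kv[1])
    return (label, prob) if prob > threshold else ("Unassigned", prob)

# Hypothetical classifier output for three query cells.
predicted = [
    {"T cell": 0.92, "B cell": 0.05, "NK cell": 0.03},
    {"T cell": 0.48, "B cell": 0.07, "NK cell": 0.45},
    {"T cell": 0.10, "B cell": 0.85, "NK cell": 0.05},
]
calls = [assign_label(p) for p in predicted]
for label, prob in calls:
    print(label, prob)
```

The second cell is split almost evenly between two types, so it falls below the 0.7 threshold and is routed to manual inspection rather than forced into a label.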

Protocol: Label Transfer via Similarity Scoring

- Objective: To annotate query cells by finding the most similar cell or cluster in a comprehensive reference atlas.

- Materials: Query dataset, reference dataset with labels (e.g., Human Cell Atlas, Mouse Brain Atlas), correlation or distance metric.

- Procedure:

- Reference Alignment: Choose an appropriate reference. Harmonize query and reference data using batch correction tools (e.g., Harmony, BBKNN, SCTransform) or within a common embedding space (e.g., PCA, CCA).

- Similarity Calculation: For each query cell, calculate its similarity (e.g., Spearman correlation, cosine similarity) to every reference cell or to the reference cluster centroids.

- Label Transfer: Assign the label of the top-k most similar reference cells (majority vote) or the label of the cluster with the highest average similarity.

- Confidence Scoring: Compute a confidence score, such as the difference between the top and second-best similarity scores. Flag low-confidence assignments.
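
As a minimal sketch of the similarity, transfer, and confidence steps, the code below correlates one query cell with reference cluster centroids using Spearman correlation and reports the margin between the best and second-best score. The centroid values, the `min_margin` cutoff of 0.1, and the function names are assumptions chosen for illustration; harmonization is assumed to have been done beforehand.

```python
import math

def ranks(values):
    """Average ranks (1-based), with ties sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation, computed as the Pearson correlation of ranks."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0

def transfer_label(query_cell, centroids, min_margin=0.1):
    """Label a cell by its best-correlated centroid (needs >= 2 centroids)."""
    scores = sorted(((spearman(query_cell, c), name)
                     for name, c in centroids.items()), reverse=True)
    (best, label), (second, _) = scores[0], scores[1]
    margin = best - second
    return label, margin, margin < min_margin  # True flags low confidence

# Hypothetical centroids over five shared genes.
centroids = {
    "T cell": [5, 4, 0, 0, 1],
    "B cell": [0, 1, 5, 4, 1],
    "Monocyte": [1, 0, 1, 0, 6],
}
query = [4, 5, 0, 1, 1]
label, margin, low_confidence = transfer_label(query, centroids)
print(label, round(margin, 2), low_confidence)
```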


Protocol: Marker Gene-Based Enrichment Analysis

- Objective: To annotate clusters by statistically testing for enrichment of established cell type-specific marker genes.

- Materials: Differentially expressed gene (DEG) list per cluster, curated marker gene databases (e.g., CellMarker, PanglaoDB).

- Procedure:

- Cluster DEGs: Perform differential expression analysis for each cluster against all others (e.g., using Wilcoxon rank-sum test).

- Database Query: For each cluster's ranked DEG list, perform hypergeometric or gene set enrichment analysis (GSEA) against gene sets from marker databases.

- Statistical Assessment: Calculate enrichment p-values and false discovery rates (FDR). The cell type with the most significant enrichment for the cluster's upregulated genes is assigned as the primary label.

- Multi-label Handling: For complex or transitional states, report top N enriched cell types to reflect potential ambiguity.
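
The hypergeometric variant of the enrichment step can be sketched as below. The gene identifiers and marker sets are synthetic placeholders, not entries from CellMarker or PanglaoDB, and the sketch omits the FDR correction that a real analysis would apply across all tested cell types.

```python
from math import comb

def hypergeom_pvalue(overlap, n_degs, n_markers, n_background):
    """P(X >= overlap) when n_degs genes are drawn from n_background genes,
    of which n_markers belong to the marker set."""
    max_overlap = min(n_degs, n_markers)
    total = comb(n_background, n_degs)
    tail = sum(comb(n_markers, k) * comb(n_background - n_markers, n_degs - k)
               for k in range(overlap, max_overlap + 1))
    return tail / total

def rank_cell_types(cluster_degs, marker_sets, background):
    """Return (p_value, cell_type, overlap) tuples, most significant first."""
    universe = set(background)
    degs = set(cluster_degs) & universe
    results = []
    for cell_type, markers in marker_sets.items():
        markers = set(markers) & universe
        overlap = len(degs & markers)
        p = hypergeom_pvalue(overlap, len(degs), len(markers), len(universe))
        results.append((p, cell_type, overlap))
    return sorted(results)

# Synthetic example: 1000 background genes, two 10-gene marker sets.
background = [f"G{i}" for i in range(1000)]
marker_sets = {"T cell": background[0:10], "B cell": background[10:20]}
# 20 upregulated genes, 6 of which are T-cell markers.
cluster_degs = background[0:6] + background[500:514]
ranked = rank_cell_types(cluster_degs, marker_sets, background)
for p, cell_type, overlap in ranked:
    print(cell_type, overlap, p)
```

Reporting the full ranked list, rather than only the top entry, supports the multi-label handling described in the last step.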

Visualization of Methodologies and Workflows

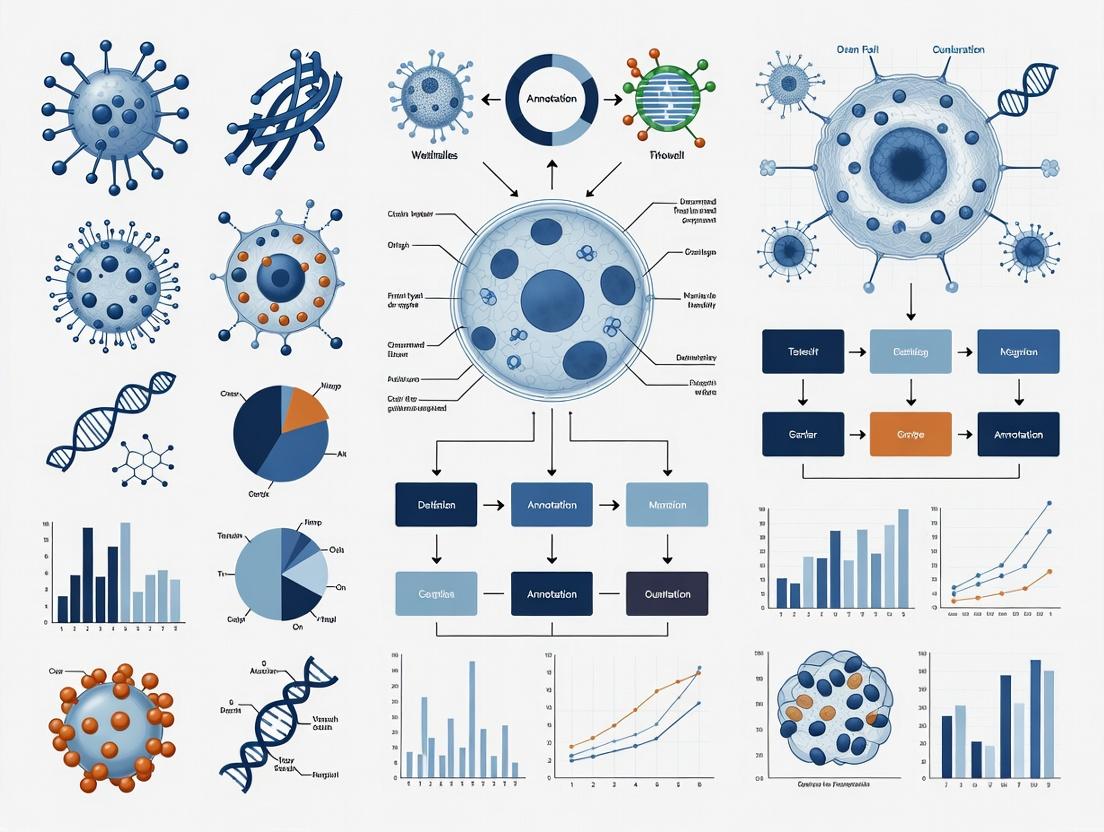

Diagram 1: Contrasting manual and automated annotation workflows.

Diagram 2: A taxonomy of core automated annotation methodologies.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Implementing Automated Annotation

| Tool / Resource | Type | Primary Function | Key Consideration |

|---|---|---|---|

| Scanpy (Python) | Software Library | Provides a comprehensive ecosystem for scRNA-seq analysis, including integration with major Python annotation tools (e.g., scANVI, CellTypist). | A de facto standard for flexible, programmatic analysis in Python. |

| Seurat (R) | Software Toolkit | Offers a similarly comprehensive suite with functions for label transfer, integration, and reference mapping. | Preferred in R-centric bioinformatics environments. |

| scANVI / scVI | Python Model | A deep generative model for joint representation learning and semi-supervised annotation. Excels at harmonizing datasets. | Requires GPU for large datasets for optimal performance. |

| SingleR | R Package | Performs robust label transfer by correlating query cells with reference transcriptomes. Simple and fast. | Performance heavily dependent on the quality and relevance of the chosen reference. |

| Azimuth / CellTypist | Web App / Model | Pre-trained, user-friendly platforms for annotating human/mouse data against curated references. | Low-code entry point, but offers less customization. |

| CellMarker / PanglaoDB | Curated Database | Collections of manually curated cell type marker genes across tissues and species. | Essential for enrichment methods and validation; requires regular updating. |

| A Harmonized Reference Atlas | Data Resource | A large, well-annotated, batch-corrected scRNA-seq dataset (e.g., from the Human Cell Atlas). | The most critical "reagent"; the foundation for similarity and supervised methods. |

In research on automated cell type annotation methods, defining the core attributes of an "automated" process is fundamental. This whitepaper provides a technical deconstruction of the term, moving beyond simple automation of manual steps to describe a paradigm shift in scalability, reproducibility, and objectivity in single-cell RNA sequencing (scRNA-seq) analysis. The transition from manual, marker-based annotation to automated, algorithm-driven classification represents a critical advance for researchers, scientists, and drug development professionals seeking robust, high-throughput biological insights.

Core Defining Pillars of Automation

An automated annotation method is not defined by a single feature but by a confluence of interdependent characteristics that distinguish it from manual or semi-automated approaches.

| Pillar | Technical Description | Quantitative Benchmark (Typical) |

|---|---|---|

| Algorithmic Decision-Making | The core classification function uses a formal, encoded algorithm (e.g., machine learning model, statistical classifier) to assign labels without human intervention per cell. | Human-in-the-loop decisions: 0% of cell labels. |

| Minimal Prior Biological Knowledge Input | Relies on reference data (e.g., annotated atlas) or unsupervised learning, minimizing the need for user-curated marker gene lists per annotation session. | User-provided marker genes: ≤ 5 for entire process, often 0. |

| High-Throughput Scalability | Computational time and resource usage scale sub-linearly or linearly with the number of cells, enabling annotation of datasets from 10^4 to 10^7 cells. | Annotation rate: > 10,000 cells per minute on standard compute. |

| Reproducibility & Version Control | The entire pipeline, including parameters, reference data, and software versions, can be precisely documented and re-executed to yield identical results. | Result variance between identical runs: 0%. |

Methodological Spectrum & Key Experimental Protocols

Automated methods exist on a spectrum, primarily divided into supervised and unsupervised approaches. The experimental protocol for validating any automated method is critical.

Supervised Classification Protocol

This protocol uses labeled reference data to train a classifier.

- Reference Data Curation: Obtain a high-quality, annotated scRNA-seq reference atlas. Pre-process (normalize, scale, select highly variable features).

- Classifier Training: Train a classifier (e.g., SVM, random forest, neural network) using the reference expression matrix (features) and cell type labels (target).

- Query Data Projection: Pre-process the novel query dataset identically. Use the trained model to predict labels for each query cell.

- Validation & Uncertainty Quantification: Employ cross-validation on the reference. Use model-derived scores (e.g., prediction probability) to flag low-confidence annotations.

Unsupervised Integration & Label Transfer Protocol

This protocol aligns query data to a reference without explicit classifier training.

- Reference-Query Harmonization: Use an integration algorithm (e.g., Seurat's CCA, Scanorama, Harmony) to correct for technical batch effects between reference and query datasets in a shared low-dimensional space.

- Neighborhood Mapping: For each query cell, find the k-nearest neighbors (k-NN) in the integrated space among reference cells.

- Label Transfer: Assign a label to the query cell based on a vote (majority or weighted by distance) of the labels of its nearest reference neighbors.

- Confidence Scoring: Calculate a confidence score based on vote fraction or neighborhood consistency.
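
The neighborhood mapping, label transfer, and confidence scoring steps can be sketched as a k-nearest-neighbor vote. The sketch assumes the reference and query cells already share an integrated low-dimensional space; the 2D coordinates and the function name `knn_transfer` are illustrative only.

```python
import math
from collections import Counter

def knn_transfer(query_point, ref_points, ref_labels, k=5):
    """Majority-vote label from the k nearest reference cells."""
    distances = sorted(
        (math.dist(query_point, p), label)
        for p, label in zip(ref_points, ref_labels)
    )
    neighbors = distances[:k]
    votes = Counter(label for _, label in neighbors)
    label, count = votes.most_common(1)[0]
    # Vote fraction serves as a simple confidence score.
    return label, count / len(neighbors)

# Hypothetical integrated 2D embedding of six reference cells.
ref_points = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1),
              (5.0, 5.0), (5.1, 4.9), (4.9, 5.2)]
ref_labels = ["T", "T", "T", "B", "B", "B"]

clear_call = knn_transfer((0.1, 0.1), ref_points, ref_labels, k=3)
borderline = knn_transfer((2.6, 2.6), ref_points, ref_labels, k=5)
print(clear_call, borderline)
```

A query cell lying between two populations receives a split vote, and its lower vote fraction is what a downstream filter would use to flag it.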

Table: Comparison of Supervised vs. Unsupervised Automated Protocols

| Aspect | Supervised Classification | Unsupervised Integration & Transfer |

|---|---|---|

| Primary Input | Pre-trained model file. | Raw reference expression matrix & labels. |

| Key Computational Step | Model inference/prediction. | Dimensionality reduction and dataset integration. |

| Speed (Post-Training/Setup) | Very Fast. | Moderate to Slow (depends on integration). |

| Handling of Novel Cell States | Poor. Labels novel cells as the nearest known type. | Moderate. Novel cells may form separate clusters post-integration. |

| Example Tools | Garnett, scANVI, CellTypist. | Seurat v3+, SingleR, Symphony. |

Visualizing the Automated Annotation Workflow

Diagram Title: Automated Cell Annotation Core Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Reagents & Materials for scRNA-seq Annotation Validation

| Item | Function in Validation | Example Product/Catalog |

|---|---|---|

| Chromium Next GEM Chip K | Generates single-cell gel bead-in-emulsions (GEMs) for library prep. Essential for generating new validation query datasets. | 10x Genomics, 1000127 |

| Single Cell 3' Gene Expression v3.1 Reagents | Library preparation reagents for 10x 3' scRNA-seq. The standard for generating input data for annotation pipelines. | 10x Genomics, 1000128 |

| Cell Hashing Antibodies (TotalSeq-A/B/C) | For multiplexing samples, enabling experimental controls and benchmarking within a single run. | BioLegend, various (e.g., 394661) |

| FACS Antibody Panels (Cell Surface Markers) | Gold-standard for independent validation of computationally annotated cell types via protein expression. | BD Biosciences, BioLegend (custom panels) |

| Fresh/Frozen Human/Mouse Tissue | Primary tissue is the ultimate source for complex, biologically relevant validation datasets. | Various Biobanks |

| Cultured Cell Lines (e.g., HEK293, THP-1) | Provide known, homogeneous cell populations for spiking experiments to test annotation accuracy. | ATCC, various |

| Nucleic Acid Extraction & QC Kits | Ensure high-quality RNA input. Critical for reproducible library prep. | QIAGEN RNeasy, Agilent Bioanalyzer RNA kits |

| Cell Viability Stain (e.g., Propidium Iodide) | Distinguish live vs. dead cells during sample prep; low viability confounds annotation. | Thermo Fisher, P3566 |

Quantitative Performance Metrics & Benchmarking

Validation of automated methods requires rigorous benchmarking against ground truth data. Key metrics are summarized below.

Table: Core Metrics for Benchmarking Automated Annotation Methods

| Metric | Formula / Description | Ideal Value | Interpretation |

|---|---|---|---|

| Accuracy | (TP + TN) / (TP + TN + FP + FN). Proportion of correctly labeled cells. | 1.0 | Overall correctness. |

| F1-Score (Macro) | Harmonic mean of precision and recall, averaged across all cell types. | 1.0 | Balanced measure for imbalanced classes. |

| AUC-ROC | Area Under the Receiver Operating Characteristic curve for each class vs. rest. | 1.0 | Model's discrimination ability. |

| Annotation Stability | Jaccard similarity of annotations across bootstrapped subsamples of data. | 1.0 | Robustness to data sampling noise. |

| Computational Time | Wall-clock time to annotate N cells (e.g., 100k cells). | Lower is better. | Practical scalability. |

| Memory Usage | Peak RAM consumption during annotation. | Lower is better. | Hardware requirements. |
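
To make the difference between accuracy and macro F1 concrete, the sketch below computes both for a hypothetical, imbalanced example in which a rare cell type is missed entirely. One assumption to note: the set of classes is taken from the ground-truth labels only.

```python
def accuracy(y_true, y_pred):
    """Proportion of cells whose predicted label matches the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over the classes in y_true."""
    scores = []
    for c in sorted(set(y_true)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class is never hit.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Hypothetical result: the rare pDC population is labeled as T cells.
y_true = ["T"] * 8 + ["pDC"] * 2
y_pred = ["T"] * 10
acc = accuracy(y_true, y_pred)
f1 = macro_f1(y_true, y_pred)
print(f"accuracy = {acc:.2f}, macro F1 = {f1:.2f}")
```

Accuracy stays high because the common type dominates, while macro F1 drops sharply, which is why it is the more informative metric when cell types are imbalanced.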

Conclusion: An 'automated' annotation method is a fully encoded, reproducible pipeline that algorithmically maps single-cell transcriptomes to defined cell types with minimal ad-hoc human input. Its core constitution is defined by algorithmic decision-making, scalability, and reproducibility, validated through stringent experimental protocols and quantitative benchmarking. This paradigm is indispensable for the rigorous, large-scale cellular phenotyping required in modern biomedicine and drug development.

This whitepaper forms part of a broader body of research on automated cell type annotation methods. It details the fundamental data structures and biological priors that serve as inputs to modern annotation algorithms. The transition from raw sequencing data to a validated, annotated single-cell RNA-seq (scRNA-seq) atlas is a multi-step process reliant on precisely defined inputs: gene expression count matrices and curated marker gene lists. These inputs fuel the construction of comprehensive reference atlases, which are themselves becoming the primary resource for automated annotation of new query datasets. This guide provides a technical deep dive into the nature, preparation, and application of these key inputs.

Core Inputs: Definition and Preparation

The Count Matrix: Quantitative Foundation

The primary data object is a digital gene expression matrix, where rows represent genes (or genomic features), columns represent individual cells or nuclei, and each entry is a count of RNA transcripts (e.g., UMIs or reads) mapped to a gene in a cell.

Table 1: Common Pre-processing Steps for Count Matrices

| Step | Objective | Common Tools/Methods | Key Parameters/Thresholds |

|---|---|---|---|

| Quality Control (QC) | Filter low-quality cells and ambient RNA. | scuttle, Seurat, Scanpy | Min. genes/cell: 200-500; Max. genes/cell: 2500-5000; Max. mitochondrial %: 5-20% |

| Normalization | Adjust for sequencing depth differences. | scran (pooled size factors), Seurat (LogNormalize), SCTransform | Scale factor: 10,000 (CP10K), followed by log1p transformation. |

| Feature Selection | Identify highly variable genes (HVGs) for downstream analysis. | Seurat (FindVariableFeatures), Scanpy (pp.highly_variable_genes) | Top 2000-5000 HVGs; variance-stabilizing transformation. |

| Integration | Remove batch effects across samples. | Harmony, BBKNN, Seurat CCA, Scanorama | Corrects for technical variation while preserving biological signal. |
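
The QC and normalization rows of the table above can be illustrated with a small, library-free sketch. It assumes human gene symbols, where mitochondrial genes carry the "MT-" prefix, and lowers the gene-count thresholds so that a four-gene toy example can pass; real analyses would use the Seurat, Scanpy, or scran functions listed in the table.

```python
import math

def passes_qc(counts, gene_names, min_genes=200, max_genes=5000,
              max_mito_pct=20.0):
    """Apply per-cell QC thresholds to one cell's raw counts."""
    total = sum(counts)
    if total == 0:
        return False
    n_genes = sum(c > 0 for c in counts)
    # Human mitochondrial genes conventionally carry the "MT-" prefix.
    mito = sum(c for c, g in zip(counts, gene_names) if g.startswith("MT-"))
    mito_pct = 100.0 * mito / total
    return min_genes <= n_genes <= max_genes and mito_pct <= max_mito_pct

def log_normalize(counts, scale_factor=10_000):
    """Scale a cell's counts to a fixed total (CP10K), then apply log1p."""
    total = sum(counts)
    if total == 0:
        return [0.0] * len(counts)
    return [math.log1p(c / total * scale_factor) for c in counts]

# Toy example: four genes, two cells (thresholds lowered for the toy size).
genes = ["CD3E", "MS4A1", "ACTB", "MT-CO1"]
healthy = [12, 0, 30, 3]   # about 7% mitochondrial counts
dying = [1, 0, 2, 27]      # 90% mitochondrial counts
kept = [c for c in (healthy, dying)
        if passes_qc(c, genes, min_genes=2, max_genes=4, max_mito_pct=20.0)]
normalized = [log_normalize(c) for c in kept]
print(len(kept), normalized)
```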

Marker Genes: Biological Priors

Marker genes are genes whose expression is consistently and specifically associated with a particular cell type or state. They transform quantitative data into biological interpretation.

Sources and Curation:

- Expert-curated lists: Derived from literature (e.g., PTPRC for immune cells, INS for pancreatic beta cells).

- Computational derivation: Generated from reference datasets using differential expression (DE) tests (e.g., Wilcoxon rank-sum, logistic regression).

- Public databases: CellMarker, PanglaoDB, HuBMAP.

Table 2: Characteristics of High-Quality Marker Genes

| Characteristic | Description | Quantitative Measure |

|---|---|---|

| Specificity | Expression is restricted to the target cell type. | High log2 fold-change (>1-2) in target vs. all other types. |

| Sensitivity | Expressed in a majority of cells of the target type. | High detection rate (percentage of cells expressing) within the target cluster. |

| Discriminatory Power | Can distinguish between closely related subtypes. | Significant DE (adjusted p-value < 0.05) between target and nearest neighbor types. |
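
Two of the quantitative measures in Table 2, log2 fold-change and detection rate, can be computed for a single candidate gene as sketched below. The expression values are hypothetical normalized (non-log) values, the pseudocount of 1 is an assumption made to avoid division by zero, and the differential expression test behind the adjusted p-value is left out.

```python
import math

def marker_stats(expr_target, expr_other, pseudocount=1.0):
    """Specificity and sensitivity measures for one gene.

    expr_target: expression of the gene in cells of the target type.
    expr_other:  expression of the gene in all other cells.
    """
    mean_target = sum(expr_target) / len(expr_target)
    mean_other = sum(expr_other) / len(expr_other)
    log2fc = math.log2((mean_target + pseudocount) / (mean_other + pseudocount))
    pct_target = sum(x > 0 for x in expr_target) / len(expr_target)
    pct_other = sum(x > 0 for x in expr_other) / len(expr_other)
    return {"log2fc": log2fc, "pct_target": pct_target, "pct_other": pct_other}

# Hypothetical expression of one gene in 5 target cells and 10 other cells.
target_cells = [4.0, 6.0, 5.0, 0.0, 5.0]
other_cells = [0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
stats = marker_stats(target_cells, other_cells)
print(stats)
```

A gene with a high fold-change, a high detection rate in the target type, and a low detection rate elsewhere satisfies both the specificity and sensitivity criteria.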

Reference Atlases: Integrated Knowledge Bases

A reference atlas is a large, comprehensively annotated scRNA-seq dataset that encapsulates known cellular diversity within a tissue, organ, or organism. It is the product of processing count matrices with validated marker genes.

Construction Workflow:

Diagram Title: Reference Atlas Construction Pipeline

Experimental Protocol for Benchmarking Annotation Methods

A standard protocol to evaluate automated annotation tools using the defined inputs.

Protocol Title: Benchmarking Automated Cell Type Annotation against a Manually Curated Gold Standard.

1. Input Preparation:

- Reference Dataset: Download a well-established, public scRNA-seq atlas (e.g., from Tabula Sapiens, Allen Brain Cell Atlas).

- Gold Standard Labels: Extract the author-provided, manually curated cell type labels.

- Query Dataset: Split the reference data into a training set (70-80% of cells) and a query set (20-30%). Alternatively, use a separate dataset from a similar biological source.

2. Tool Execution:

- For each annotation tool (e.g., SingleR, scArches, SCINA, Azimuth):

- Provide the tool with the training set count matrix and its corresponding labels.

- Run the tool to predict labels for the query set count matrix.

- Record the runtime and computational resources used.

3. Validation & Metrics Calculation:

- Compare predicted labels to the held-out gold standard labels.

- Calculate metrics using scikit-learn or similar:

- Overall Accuracy: Proportion of correctly labeled cells.

- F1-Score (per class): Harmonic mean of precision and recall for each cell type.

- Confusion Matrix: Visualize systematic misclassifications.
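
The hold-out split in step 1 and the confusion matrix in step 3 can be sketched without external libraries as follows. The split is stratified by cell type, which is an assumption beyond what the protocol states, and the labels are synthetic.

```python
import random
from collections import Counter, defaultdict

def stratified_split(labels, query_fraction=0.25, seed=0):
    """Return (train_idx, query_idx), holding out a share of each cell type."""
    rng = random.Random(seed)
    by_type = defaultdict(list)
    for i, label in enumerate(labels):
        by_type[label].append(i)
    train, query = [], []
    for indices in by_type.values():
        rng.shuffle(indices)
        n_query = max(1, round(len(indices) * query_fraction))
        query.extend(indices[:n_query])
        train.extend(indices[n_query:])
    return sorted(train), sorted(query)

def confusion_matrix(y_true, y_pred):
    """Nested counts: matrix[true_label][predicted_label]."""
    matrix = defaultdict(Counter)
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix

# Synthetic gold-standard labels for 68 reference cells.
labels = ["T"] * 40 + ["B"] * 20 + ["NK"] * 8
train_idx, query_idx = stratified_split(labels, query_fraction=0.25)

# Hypothetical predictions for five held-out cells.
y_true = ["T", "T", "NK", "NK", "B"]
y_pred = ["T", "NK", "NK", "T", "B"]
cm = confusion_matrix(y_true, y_pred)
for true_label, row in cm.items():
    print(true_label, dict(row))
```

Off-diagonal counts, such as T cells predicted as NK cells, point to systematic confusions between related types that a single accuracy number would hide.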

Table 3: Benchmark Results (Example Framework)

| Annotation Tool | Overall Accuracy | Mean F1-Score | Runtime (min) | Memory Peak (GB) | Key Inputs Utilized |

|---|---|---|---|---|---|

| SingleR (Ref.) | 0.92 | 0.87 | 15 | 8 | Reference count matrix, Reference labels |

| scArches | 0.95 | 0.91 | 45 | 12 | Integrated reference model (e.g., SCVI) |

| SCINA | 0.85 | 0.80 | 2 | 4 | Marker gene list (pre-defined) |

| Azimuth | 0.94 | 0.90 | 30* | 10* | Pre-built web-based reference |

Note: Values marked with * include network latency.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Research Reagent Solutions for scRNA-seq & Annotation

| Item | Function/Application in Context |

|---|---|

| 10x Genomics Chromium Controller & Kits | Microfluidic platform for generating barcoded, single-cell libraries for 3', 5', or multiome assays. Provides the raw count matrix. |

| Dissociation Enzymes (e.g., Liberase, TrypLE) | Tissue-specific enzymatic cocktails for gentle dissociation of tissues into viable single-cell suspensions for sequencing. |

| Viability Dyes (e.g., DAPI, Propidium Iodide) | Flow cytometry dyes to distinguish and remove dead cells prior to library preparation, improving QC metrics. |

| Cell Hashing Antibodies (e.g., TotalSeq-A/B/C) | Antibody-oligonucleotide conjugates for multiplexing samples, allowing batch effects to be identified and corrected during integration. |

| Pre-built Reference Atlases (e.g., CellTypist, Azimuth references) | Pre-processed, expertly annotated reference datasets optimized for specific annotation tools, accelerating analysis. |

| Validated Marker Gene Panels (e.g., TaqMan Assays, NanoString Panels) | Orthogonal validation tools using qPCR or digital spatial profiling to confirm computationally annotated cell types in a subset of cells. |

Advanced Pathway: From Query to Annotated Atlas

The application of key inputs in a complete annotation workflow.

Diagram Title: Automated Cell Annotation Workflow

The reliability of automated cell type annotation is fundamentally constrained by the quality of its key inputs: clean, normalized count matrices and accurate, specific marker gene lists. These inputs coalesce into reference atlases, which serve as the standardized coordinate systems for cellular biology. As this field matures within the broader thesis of automated annotation research, the focus shifts toward standardizing input formats, improving marker gene curation through community efforts, and constructing ever more comprehensive, multi-modal reference atlases. This ensures that annotation tools have a robust foundation upon which to accurately map the expanding universe of cell types and states.

Automated cell type annotation is a critical computational step in single-cell RNA sequencing (scRNA-seq) analysis, enabling the translation of high-dimensional gene expression profiles into biologically interpretable cell identities. Within the broader field of automated cell type annotation research, three major computational paradigms have emerged: reference-based, marker-based, and supervised learning approaches. Each paradigm offers distinct strategies, advantages, and limitations, shaping the landscape of scalable and reproducible cell type identification. This technical guide provides an in-depth analysis of their core principles, methodologies, and applications for researchers and drug development professionals.

Reference-Based Annotation

Reference-based annotation involves aligning a query scRNA-seq dataset to a pre-existing, expertly annotated reference atlas. The query cells are projected into a shared space with the reference, and labels are transferred based on similarity.

Core Methodology & Protocols

The standard workflow involves several key steps:

- Reference Selection & Preprocessing: A suitable, high-quality reference (e.g., Human Cell Atlas, Mouse Brain Atlas) is selected. Both reference and query datasets undergo normalization (e.g., log(CP10K+1)) and feature selection (typically highly variable genes).

- Data Integration: Algorithms are employed to correct for batch effects and technical variation between the reference and query. Common tools include:

- Seurat v4+ Anchoring: Uses mutual nearest neighbors (MNNs) and canonical correlation analysis (CCA) to find correspondences ("anchors") between datasets.

- scANVI / scArches: A variational autoencoder (VAE)-based method that maps both datasets into a shared latent space, allowing for the transfer of labels while enabling the reference model to be updated with query data (online learning).

- Label Transfer: Cell type labels are transferred from the reference to the query cells based on their nearest neighbors in the integrated space, often with a prediction score (e.g., probability or voting fraction).

The following table summarizes the performance characteristics of popular reference-based tools based on recent benchmarking studies (2023-2024).

Table 1: Performance Metrics of Reference-Based Annotation Tools

| Tool / Algorithm | Core Method | Median Accuracy (across benchmarks) | Speed (10k cells) | Key Strength | Key Limitation |

|---|---|---|---|---|---|

| Seurat v4 (RPCA) | PCA, MNN Anchoring | ~85-92% | Medium | Robust, widely integrated | Struggles with distant cell types |

| SCANVI | Hierarchical VAE | ~88-94% | Medium-Fast | Handles uncertainty, maps novel types | Requires GPU for optimal speed |

| SingleR | Correlation-based | ~80-88% | Fast | Simple, no integration needed | Sensitive to batch effects |

| scANVI | Conditional VAE | ~89-93% | Medium | Explicit novel cell type detection | Complex training procedure |

| CellTypist | Logistic Regression | ~86-90% | Very Fast | Large, curated models, auto-updates | Model-dependent, linear assumptions |

Reference-Based Workflow Diagram

Diagram 1: Reference-based annotation workflow.

Marker-Based Annotation

Marker-based annotation relies on prior biological knowledge in the form of cell-type-specific gene signatures. Cells are labeled by statistically testing for the enrichment of these predefined marker gene sets.

Core Methodology & Protocols

The experimental protocol for a marker-based analysis typically proceeds as follows:

- Marker Gene Database Curation: A list of canonical marker genes is compiled from literature or databases (e.g., CellMarker, PanglaoDB). Signatures can be simple (one gene) or complex (gene sets).

- Enrichment Scoring: For each cell and each marker set, a score is calculated.

- Simple Thresholding: Expression of a key marker (e.g., CD3E for T cells) above a defined threshold.

- Gene Set Enrichment Analysis (GSEA): A rank-based method that tests if members of a gene set are randomly distributed or enriched at the top/bottom of a cell's expressed gene list.

- AUCell: Calculates the Area Under the Curve (AUC) of the recovery curve of the marker genes ranked by expression in each cell. A higher AUC indicates higher activity of the gene set.

- Assignment & Conflict Resolution: Cells are assigned the label of the highest-scoring marker set. Conflicts (e.g., high scores for two mutually exclusive types) may be resolved by manual inspection or heuristic rules.
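
The scoring and assignment steps can be sketched with a simplified recovery-curve score in the spirit of AUCell. This is not the AUCell implementation: ties are not broken randomly, the `top_fraction` and `min_margin` values are arbitrary assumptions, and the expression profile is synthetic.

```python
def recovery_auc(expression, marker_genes, top_fraction=0.05):
    """Simplified recovery-curve score in [0, 1] for one cell.

    expression: dict mapping gene name to expression value.
    """
    ranked = sorted(expression, key=expression.get, reverse=True)
    max_rank = max(1, int(len(ranked) * top_fraction))
    markers = set(marker_genes) & set(expression)
    if not markers:
        return 0.0
    recovered, area = 0, 0
    for gene in ranked[:max_rank]:
        # Count a marker only if it is actually expressed in this cell.
        if gene in markers and expression[gene] > 0:
            recovered += 1
        area += recovered
    # Best case: every marker sits at the very top of the ranking.
    best = sum(min(r, len(markers)) for r in range(1, max_rank + 1))
    return area / best

def annotate_cell(expression, marker_sets, top_fraction=0.05, min_margin=0.1):
    """Assign the highest-scoring type; flag ties and all-zero scores."""
    scores = {cell_type: recovery_auc(expression, genes, top_fraction)
              for cell_type, genes in marker_sets.items()}
    ordered = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    if ordered[0][1] == 0:
        return "Unassigned", scores
    if len(ordered) > 1 and ordered[0][1] - ordered[1][1] < min_margin:
        return "Ambiguous", scores
    return ordered[0][0], scores

# Synthetic cell: strong T-cell markers, silent B-cell markers, 35 filler genes.
cell = {"CD3E": 9.0, "CD3D": 8.0, "CD2": 7.0, "MS4A1": 0.0, "CD79A": 0.0}
cell.update({f"G{i}": float(i % 5 + 1) for i in range(35)})
marker_sets = {"T cell": ["CD3E", "CD3D", "CD2"], "B cell": ["MS4A1", "CD79A"]}
label, scores = annotate_cell(cell, marker_sets, top_fraction=0.25)
print(label, scores)
```

Returning "Ambiguous" instead of the top label is one possible heuristic for the conflict-resolution step; such cells would then go to manual inspection.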

Quantitative Comparison of Scoring Methods

Table 2: Comparison of Marker Gene Scoring Methods

| Method | Statistical Principle | Output Metric | Sensitivity to Low Expression | Handles Complex Signatures? | Computational Cost |

|---|---|---|---|---|---|

| Thresholding | Binary Expression | Boolean Label | Low | Poor | Very Low |

| AUCell | Recovery Curve AUC | Enrichment Score (0-1) | Medium | Good | Low |

| Seurat's AddModuleScore | Average Expression | Z-score-like Value | High | Moderate | Low |

| GSVA / ssGSEA | Non-parametric KS Test | Enrichment Score | High | Excellent | Medium-High |

| SCINA | Expectation-Maximization | Probability | High | Excellent | Medium |

Marker-Gene Enrichment Logic

Diagram 2: Marker-based enrichment scoring pipeline.

Supervised Machine Learning Approaches

Supervised learning approaches train a classifier on labeled reference data to learn a generalizable function that maps gene expression features to cell type labels, which can then be applied to new query datasets.

Detailed Experimental Protocol

A standard protocol for training and applying a supervised classifier:

- Training Set Construction: A well-annotated reference dataset is split into training (e.g., 80%) and validation (20%) sets. Feature selection is performed (e.g., top 5,000 highly variable genes).

- Classifier Training: Multiple algorithms are trained and tuned via cross-validation on the training set.

- Random Forest (e.g., SingleCellNet): Trains an ensemble of decision trees on random subsets of features and data. Relatively robust to overfitting.

- Support Vector Machine (SVM): Finds a hyperplane that maximally separates cell types in high-dimensional space. Often used with linear kernels.

- Neural Networks (e.g., scANVI, ACTINN): Deep learning models that learn hierarchical representations of expression data.

- Model Validation & Selection: Model performance is evaluated on the held-out validation set using metrics like accuracy, F1-score, and confusion matrices.

- Deployment: The final selected model is saved and can be applied to new query datasets without re-integration with the full reference, enabling high-speed annotation.
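
The train, validate, and deploy cycle above can be sketched end to end with a deliberately simple model. A nearest-centroid classifier stands in here for the random forest, SVM, or neural network named in the protocol, and the two-gene data set is hypothetical; the point is the workflow, not the classifier.

```python
import json
import math

def train_centroids(features, labels):
    """Fit a nearest-centroid model: one mean expression vector per cell type."""
    sums, counts = {}, {}
    for x, y in zip(features, labels):
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, value in enumerate(x):
            acc[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}

def predict(model, x):
    """Return the cell type whose centroid is closest to x."""
    return min(model, key=lambda y: math.dist(model[y], x))

# Hypothetical two-gene reference, split into training and validation cells.
train_x = [[5.0, 0.2], [6.1, 0.9], [0.3, 5.2], [1.0, 6.0]]
train_y = ["T cell", "T cell", "B cell", "B cell"]
valid_x = [[5.5, 0.5], [0.5, 5.5]]
valid_y = ["T cell", "B cell"]

model = train_centroids(train_x, train_y)
valid_accuracy = sum(predict(model, x) == y
                     for x, y in zip(valid_x, valid_y)) / len(valid_y)

# Deployment: serialize the model, reload it, and annotate a new query cell
# without access to the original reference data.
saved = json.dumps(model)
restored = json.loads(saved)
query_label = predict(restored, [4.8, 1.1])
print(valid_accuracy, query_label)
```

Because only the serialized model is needed at query time, annotation does not require re-integration with the full reference, which is the speed advantage the deployment step describes.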

Performance and Resource Benchmarks

Table 3: Benchmarking of Supervised Learning Classifiers (2024)

| Classifier | Tool Example | Median Accuracy | Scalability | Interpretability | Handles Imbalanced Classes? |

|---|---|---|---|---|---|

| Random Forest | SingleCellNet | 87-93% | High | High (Feature Importance) | Moderate |

| Linear SVM | SVM-Rejection | 85-90% | Very High | Low | Poor |

| Neural Network | ACTINN, scANVI | 89-95% | Medium (GPU req.) | Very Low | Good (with weighting) |

| K-Nearest Neighbors | SingleR (implicit) | 80-88% | Low (at query time) | Medium | Poor |

| Logistic Regression | CellTypist, Garnett | 83-87% | Very High | Medium | Poor |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Reagents for Automated Cell Annotation

| Item / Resource | Function & Purpose | Example / Format |

|---|---|---|

| Curated Reference Atlas | Gold-standard labeled dataset for training or label transfer. Provides the foundational taxonomy. | Human Lung Cell Atlas (HLCA), Tabula Sapiens, Allen Brain Map |

| Marker Gene Database | Collection of cell-type-specific gene signatures for marker-based methods or feature engineering. | CellMarker 2.0, PanglaoDB, MSigDB cell type signatures |

| Preprocessing Pipeline | Software for QC, normalization, and feature selection. Ensures data is in correct input format. | Scanpy (Python), Seurat (R), scran (R) |

| Integration Algorithm | Method to harmonize reference and query datasets, correcting technical batch effects. | Harmony, BBKNN, Scanorama, Seurat CCA |

| Annotation Classifier/Model | A trained model (file) ready for deploying predictions on new data. | CellTypist public models, a custom-trained scANVI model |

| Benchmarking Dataset | A dataset with ground truth labels used to objectively evaluate annotation method performance. | PBMC benchmarks, synthetic mixtures (e.g., from CellBench) |

| Visualization Tool | For inspecting annotation results, checking UMAP/t-SNE embeddings with assigned labels. | scCustomize (R), scanpy.pl.umap (Python), Cellxgene |

Table 5: Paradigm Selection Guide Based on Research Context

| Paradigm | Best Use Case | Key Advantage | Primary Risk | Recommended Tool (2024) |

|---|---|---|---|---|

| Reference-Based | Mapping to a comprehensive, existing atlas. | Leverages community knowledge; robust. | Fails for novel/uncharacterized types; batch effects. | scANVI (for integration + novelty) |

| Marker-Based | Hypothesis-driven annotation; validating known types. | Biologically intuitive; transparent. | Incomplete/incorrect markers; subjective thresholds. | AUCell or SCINA (for probabilistic output) |

| Supervised Learning | High-throughput annotation of similar datasets. | Fast application after training; automatable. | Black-box models; poor generalizability far from training data. | CellTypist (for speed & curated models) |

Diagram 3: Cell type annotation paradigm decision tree.

The advent of high-throughput single-cell RNA sequencing (scRNA-seq) has generated vast cellular atlases, making manual cell type annotation an intractable bottleneck. Automated cell type annotation methods have emerged as a critical solution, leveraging reference databases and computational algorithms to assign cell identities. The broader thesis of this research posits that for these methods to transition from academic prototypes to foundational tools in biology and drug development, they must embody three critical pillars: Reproducibility, Scalability, and Knowledge Standardization. This whitepaper provides an in-depth technical guide to achieving these benefits, detailing experimental protocols, data standards, and infrastructure requirements.

The Imperative for Reproducibility: Protocols and Controlled Environments

Reproducibility ensures that an annotation pipeline run on the same data by different researchers yields identical results, a non-trivial challenge given software dependencies, stochastic algorithms, and evolving reference data.

Detailed Methodology for a Reproducible Benchmarking Experiment

To evaluate and ensure the reproducibility of an annotation tool (e.g., SingleR, scANVI), a standardized benchmarking protocol must be followed.

Protocol: Cross-Laboratory Reproducibility Assessment

Reference Dataset Curation:

- Source: Obtain a gold-standard, publicly available dataset with definitive, experimentally validated cell labels (e.g., peripheral blood mononuclear cells (PBMCs) from 10x Genomics).

- Preprocessing: Apply a fixed preprocessing pipeline. For example:

  - Tool: `scanpy` in Python.

  - Steps: Cell filtering (>200 genes/cell, <20% mitochondrial reads), log-normalization (10,000 counts/cell), and identification of 2,000 highly variable genes.

- Code Immortalization: The exact script, with all parameters, is version-controlled in a Git repository and assigned a DOI via Zenodo.

Annotation Tool Execution:

- Containerization: Each annotation method (SingleR v1.10.0, scANVI v0.18.0) is executed within a separate Docker container, built from a Dockerfile specifying all OS, library, and dependency versions.

- Reference Selection: A specific, versioned reference (e.g., Human Primary Cell Atlas v1.0.1 for SingleR) is baked into the container.

- Parallel Runs: The analysis is run independently across three different computing environments (local server, HPC cluster, cloud instance).

Output Metric Calculation:

- Comparison: Computed labels are compared against the gold-standard labels using the Adjusted Rand Index (ARI) and F1-score.

- Determinism Check: The ARI between the results from the three environments is calculated. Perfect reproducibility yields an ARI of 1.0 for all pairwise comparisons.
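The determinism check can be sketched as follows: compute the Adjusted Rand Index for every pair of environments and require each value to be 1.0. This is a minimal plain-Python implementation of the standard ARI formula; the `runs` dictionary and its labels are hypothetical placeholders for the three environments' outputs.

```python
from collections import Counter
from itertools import combinations
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same cells."""
    if len(labels_a) != len(labels_b):
        raise ValueError("labelings must cover the same cells")
    total_pairs = comb(len(labels_a), 2)
    if total_pairs == 0:
        return 1.0  # fewer than two cells: nothing to disagree on
    sum_joint = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / total_pairs
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both labelings are one cluster
    return (sum_joint - expected) / (max_index - expected)

# Hypothetical per-environment outputs for the same five cells.
runs = {
    "local": ["B", "B", "T", "T", "NK"],
    "hpc":   ["B", "B", "T", "T", "NK"],
    "cloud": ["B", "T", "T", "T", "NK"],
}
pairwise = {
    (a, b): adjusted_rand_index(runs[a], runs[b])
    for a, b in combinations(runs, 2)
}
reproducible = all(abs(v - 1.0) < 1e-12 for v in pairwise.values())
print(pairwise, reproducible)
```

Because ARI is invariant to label renaming, it is suited to comparing runs even when a tool emits cluster identifiers rather than fixed cell type names.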

Table 1: Reproducibility Benchmark Results for PBMC Dataset

| Annotation Tool | Version | ARI (Local) | ARI (HPC) | ARI (Cloud) | ARI Across Runs | Status |

|---|---|---|---|---|---|---|

| SingleR | 1.10.0 | 0.92 | 0.92 | 0.92 | 1.00 | Pass |

| scANVI | 0.18.0 | 0.88 | 0.88 | 0.87 | 0.99 | Near Pass |

| Seurat (LabelTransfer) | 4.3.0 | 0.85 | 0.85 | 0.79 | 0.93 | Fail |

Visualization: Reproducible Workflow Architecture

Title: Reproducible Automated Annotation Workflow

Achieving Scalability: Infrastructure and Algorithmic Efficiency

Scalability addresses the ability to annotate millions of cells across thousands of samples without prohibitive time or cost, a necessity for atlases like the Human Cell Atlas.

Experimental Protocol for Scalability Benchmarking

Protocol: Performance Scaling Across Cell Numbers

Dataset Generation:

- Downsample a large dataset (e.g., 1 million neurons) to create subsets: 10k, 50k, 100k, 500k, and 1M cells.

- Ensure uniform cell type distribution across subsets.

Infrastructure Setup:

- Environment 1: Single node, 16 CPU cores, 64 GB RAM.

- Environment 2: Cloud cluster (Google Cloud Life Sciences), scalable up to 96 cores.

Parallelized Execution:

- Implement the tool using a parallelizable framework (e.g., `scanpy` for CPU, `cuml` for GPU acceleration).

- For the cloud run, split the 1M cell dataset into 10 partitions of 100k cells each, annotate in parallel, and merge results.

Metrics Collection:

- Record wall-clock time and peak memory usage for each run.

- Calculate cost for the cloud environment.
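The partition, annotate, merge, and measure steps of this protocol can be sketched with the Python standard library. In this sketch `annotate_partition` is a hypothetical stand-in for a real annotation call, and the thread pool only illustrates the control flow; a production run would more likely use separate processes or a workflow manager.

```python
import time
import tracemalloc
from concurrent.futures import ThreadPoolExecutor

def annotate_partition(cells):
    """Hypothetical stand-in for annotating one partition of cells."""
    return ["T cell" if score > 0.5 else "B cell" for score in cells]

def partition(items, n_parts):
    """Split items into n_parts contiguous chunks of near-equal size."""
    size, extra = divmod(len(items), n_parts)
    chunks, start = [], 0
    for i in range(n_parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks

def annotate_in_parallel(cells, n_parts=10, max_workers=4):
    """Annotate partitions concurrently; return labels, seconds, peak bytes."""
    tracemalloc.start()
    t0 = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map keeps partition order, so merged labels align with cells.
        parts = list(pool.map(annotate_partition, partition(cells, n_parts)))
    labels = [label for part in parts for label in part]
    elapsed = time.perf_counter() - t0
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return labels, elapsed, peak_bytes

# Toy "cells": one made-up score per cell.
cells = [(i % 10) / 10 for i in range(1000)]
labels, elapsed, peak_bytes = annotate_in_parallel(cells)
print(len(labels), f"{elapsed:.4f} s", peak_bytes, "bytes")
```

Note that `tracemalloc` only sees memory allocated by Python objects, so for tools backed by native libraries a system-level measurement would be needed instead.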

Table 2: Scalability Benchmark of Annotation Tools (Single Node, 16 Cores)

| Tool | Time (10k cells) | Time (100k cells) | Time (1M cells) | Memory (1M cells) | Scaling Efficiency |

|---|---|---|---|---|---|

| SingleR (CPU) | 2 min | 22 min | 4.1 hours | 48 GB | 85% |

| scANVI (GPU) | 8 min* | 18 min* | 45 min* | 18 GB VRAM | 92% |

| CellTypist | 30 sec | 3 min | 35 min | 32 GB | 95% |

*Includes model training time.

Visualization: Scalable Cloud Annotation Architecture

Title: Scalable Cloud-Based Annotation System

Knowledge Standardization: Ontologies and Unified Schemas

Standardization prevents taxonomic chaos, enabling data integration and cross-study comparison. It involves using controlled vocabularies and formal cell ontologies.

Protocol for Implementing Standardized Annotation

Protocol: Mapping to a Cell Ontology (CL)

- Initial Annotation: Run an automated tool (e.g., CellTypist) on a query dataset to obtain initial, tool-specific labels.

- Ontology Alignment:

- Resource: Load the OWL file of the Cell Ontology (CL), in which every cell type carries a stable identifier (e.g., CL:0000236 for "B cell").

- Mapping: Use a pre-defined or curated mapping file (CSV) linking the tool's predicted labels (e.g., "CD19+ B", "B_cell") to the closest CL term and its URI.

- Validation: For ambiguous mappings, use a consensus algorithm or manual curation by a domain expert.

- Output Generation: Produce an `AnnData` object where the `obs` column `"cell_type"` contains the CL term, and `"cell_ontology_id"` contains the CL URI.
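The mapping step can be illustrated with a small lookup table. This is a sketch under stated assumptions: `LABEL_TO_CL` stands in for the curated CSV mapping file, the input records are invented, and plain dictionaries are used in place of an actual AnnData `obs` table. Unmapped labels are collected for expert review rather than guessed.

```python
# Stand-in for the curated mapping file: tool label -> (CL name, CL ID).
LABEL_TO_CL = {
    "CD19+ B": ("B cell", "CL:0000236"),
    "B_cell": ("B cell", "CL:0000236"),
    "T_cell": ("T cell", "CL:0000084"),
}

def standardize(predictions, mapping, tool, reference_db):
    """Map tool-specific labels to CL terms; return rows and unmapped labels."""
    rows, unmapped = [], []
    for pred in predictions:
        if pred["label"] in mapping:
            name, cl_id = mapping[pred["label"]]
        else:
            name, cl_id = "unknown", ""
            unmapped.append(pred["label"])
        rows.append({
            "cell_type": name,
            "cell_ontology_id": cl_id,
            "annotation_tool": tool,
            "annotation_score": pred["score"],
            "reference_db": reference_db,
        })
    return rows, unmapped

# Invented tool output for three cells.
predictions = [
    {"label": "CD19+ B", "score": 0.956},
    {"label": "T_cell", "score": 0.871},
    {"label": "Mystery", "score": 0.402},
]
rows, unmapped = standardize(
    predictions, LABEL_TO_CL, "CellTypist v1.0", "Immune Cell Atlas v2.0"
)
print(rows[0], unmapped)
```

Keeping the mapping in a separate, versioned table means the same tool output can be re-standardized when the ontology is updated.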

Table 3: Standardized Output Schema for Annotated Data (AnnData)

| Field (obs) | Data Type | Example Value | Description |

|---|---|---|---|

| cell_type | string | "native cell" | Human-readable, ontology-derived name. |

| cell_ontology_id | string | "CL:0000003" | Unique identifier from Cell Ontology. |

| annotation_tool | string | "CellTypist v1.0" | Tool and version used. |

| annotation_score | float | 0.956 | Confidence score from the tool. |

| reference_db | string | "Immune Cell Atlas v2.0" | Reference database name and version. |

Visualization: Knowledge Standardization Pipeline

Title: Cell Ontology Standardization Pipeline

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents & Resources for Automated Annotation Research

| Item / Solution | Provider / Example | Function in Research |

|---|---|---|

| Reference Atlases | Human Cell Atlas (HCA), Tabula Sapiens, CellTypist Immune Database | High-quality, annotated scRNA-seq datasets used as a ground-truth reference to train or query against. |

| Benchmark Datasets | 10x Genomics PBMCs, Allen Brain Atlas, Pancreas (Baron et al.) | Standardized, publicly available datasets with consensus labels for evaluating tool performance. |

| Cell Ontology (CL) | OBO Foundry (CL OWL file) | Provides a controlled, hierarchical vocabulary for cell types, enabling semantic standardization. |

| Container Images | Docker Hub (quay.io/singlecellazimuth), Biocontainers | Pre-built, versioned software environments ensuring reproducible execution of annotation pipelines. |

| Workflow Managers | Nextflow, Snakemake, WDL (Terra) | Frameworks for defining portable and scalable computational pipelines, crucial for scalability. |

| Standardized File Format | .h5ad (AnnData), .loom, .rds (Seurat) | Interoperable data structures that preserve cell metadata, counts, and annotations across tools. |

| Benchmarking Suites | scIB (scib-metrics), scAnnotationBenchmark | Curated sets of metrics and scripts for quantitatively comparing annotation methods. |

A Practical Guide to Major Automated Annotation Methods and Tools

Within the broader study of automated cell type annotation methods, reference-based mapping has emerged as a dominant paradigm. This approach leverages pre-annotated, high-quality reference single-cell datasets to automatically label cells in a new query dataset. It addresses the critical bottleneck of manual annotation, enhancing reproducibility, standardization, and scalability in single-cell omics analyses for researchers, scientists, and drug development professionals. This whitepaper details the core principles, leading algorithms, and practical protocols governing this transformative technology.

Core Principles

Reference-based mapping operates on three foundational pillars:

- Reference Atlas Construction: A high-quality, comprehensively annotated single-cell dataset (e.g., from RNA-seq, ATAC-seq) serves as the biological "map." This atlas is often built from multiple donors, conditions, or studies to ensure population diversity.

- Cell Similarity Quantification: Query cells are projected into the reference space. This involves calculating similarity metrics (e.g., correlation, distance in reduced dimensions, gene set enrichment) between each query cell and all reference cells or cell-type centroids.

- Label Transfer & Interpretation: Based on similarity scores, a label (cell type, state, or lineage) is transferred from the reference to the query cell. Methods employ various strategies, from simple k-nearest neighbor voting to sophisticated probabilistic or deep learning models, often providing confidence scores.
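The second and third pillars can be illustrated with the simplest strategy mentioned above, k-nearest neighbor voting. This is a minimal sketch assuming cells are already embedded in a shared low-dimensional space; the coordinates, labels, and function name are invented for illustration.

```python
from collections import Counter
from math import dist

def knn_transfer(query_point, ref_points, ref_labels, k=3):
    """Label a query cell by majority vote among its k nearest reference cells.

    Returns (label, confidence), where confidence is the winning vote share.
    """
    order = sorted(range(len(ref_points)),
                   key=lambda i: dist(query_point, ref_points[i]))
    neighbors = order[:k]
    votes = Counter(ref_labels[i] for i in neighbors)
    label, count = votes.most_common(1)[0]
    return label, count / len(neighbors)

# Toy reference embedding (e.g., two principal components per cell).
ref_points = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5)]
ref_labels = ["B", "B", "B", "T", "T", "T"]

print(knn_transfer((0.2, 0.1), ref_points, ref_labels, k=3))
print(knn_transfer((1.0, 1.0), ref_points, ref_labels, k=5))
```

The vote share doubles as a crude confidence score: a cell whose neighbors disagree can be flagged for review instead of being labeled outright.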

Leading Algorithms & Frameworks

| Tool | Core Methodology | Input Data | Key Output | Strengths | Limitations |

|---|---|---|---|---|---|

| SingleR | Correlation-based; Scores query cells against reference bulk RNA-seq or single-cell pure cell type profiles. | scRNA-seq, snRNA-seq | Cell type labels, per-label scores. | Speed, simplicity, no batch correction needed, can use bulk references. | Sensitive to reference purity, lower resolution for closely related types. |

| Azimuth | Integrated app built on Seurat; Uses a reference–query mapping via label transfer and mutual nearest neighbors (MNN) anchoring. | scRNA-seq, snRNA-seq | Cell type labels, prediction scores, query projection onto reference UMAP. | User-friendly web app & R package, high quality curated references, detailed visualization. | Requires data pre-processing in Seurat, reference choice is predefined. |

| scArches (single-cell Architecture Surgery) | Transfer/contextual learning with deep neural networks (e.g., trVAE, scVI); "Surgically" fine-tunes a pre-trained reference model on query data without catastrophic forgetting. | scRNA-seq, CITE-seq, multiome | Integrated latent representation, cell type labels, batch-corrected data. | Handles complex batch effects, preserves query-specific biology, scalable to large datasets. | Computational intensity, requires GPU for training, more complex setup. |

Detailed Experimental Protocols

Protocol 1: Cell Annotation with SingleR (R Environment)

Objective: Annotate query single-cell dataset using a pre-defined single-cell reference.

- Data Preparation: Load query SingleCellExperiment object (`query_sce`). Load reference SingleCellExperiment object (`ref_sce`) with labels in `colData`.

- Gene Alignment: Subset both objects to the intersection of common genes using `rownames`.

- Annotation Execution: Run SingleR: `pred <- SingleR(test = query_sce, ref = ref_sce, labels = ref_sce$celltype)`.

- Result Interpretation: Access primary labels with `pred$labels`. Examine per-cell tuning scores (`pred$tuning.scores`) or visualize with `plotScoreHeatmap(pred)` to assess confidence.
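To make the correlation-based idea behind this protocol concrete, the sketch below scores one query cell against per-label reference profiles using Spearman rank correlation and picks the best-scoring label. It is written in Python for illustration only and is not the SingleR implementation: marker gene selection and iterative fine-tuning are omitted, and the profiles are invented.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5 if vx and vy else 0.0

def spearman(x, y):
    """Spearman correlation is Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def score_cell(cell, reference_profiles):
    """Return (best label, {label: score}) for one query cell."""
    scores = {lab: spearman(cell, prof) for lab, prof in reference_profiles.items()}
    return max(scores, key=scores.get), scores

# Invented expression over the same five genes, in the same order.
reference_profiles = {"B cell": [9, 8, 1, 0, 2], "T cell": [1, 0, 9, 8, 2]}
best, scores = score_cell([7, 9, 0, 1, 3], reference_profiles)
print(best, scores)
```

Rank-based correlation is a natural choice here because it is insensitive to monotone differences in scale between query and reference, which is one reason such methods can tolerate some batch effects.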

Protocol 2: Reference Mapping with Azimuth

Objective: Map a query dataset to the human PBMC reference using the Azimuth web app.

- Query Data Preprocessing: Generate a counts matrix file (e.g., `.h5` format). Ensure gene identifiers are HGNC symbols.

- Web App Submission: Navigate to the Azimuth website. Upload the query file and select the "Human PBMC (10k)" reference.

- Analysis & Download: Azimuth runs automated mapping, UMAP projection, and prediction. Download the resulting R object (`azimuth_results.rds`) containing predicted labels, scores, and visualization anchors.

- Integration in Seurat: Load the object into R and use `Seurat::MapQuery` to integrate results with the original query object for downstream analysis.

Protocol 3: Integrative Mapping with scArches

Objective: Map a query dataset to a reference while correcting for batch effects using scArches.

- Environment Setup: Install scArches (`pip install scarches`). Ensure PyTorch is available, preferably with GPU support.

- Reference Model Loading: Load a pre-trained conditional Variational Autoencoder (cVAE) model (e.g., `ref_model.h5`) trained on the reference data.

- Surgery & Training: Perform "surgery" to add query-specific conditions to the model: `model.surgery(query_data)`. Fine-tune the model on the query data only for a limited number of epochs.

- Latent Space & Annotation: Extract the integrated latent representation (`latent = model.get_latent_representation()`). Perform clustering and label transfer using k-NN on reference labels in this shared latent space.

Mandatory Visualizations

Reference-Based Mapping Workflow

scArches Transfer Learning Process

The Scientist's Toolkit: Key Research Reagent Solutions

| Category | Item / Reagent | Function in Reference-Based Mapping |

|---|---|---|

| Reference Atlas | Human Cell Atlas (HCA) data, Allen Brain Atlas, Tabula Sapiens, Azimuth curated references. | Provides the foundational, high-quality annotated datasets required for label transfer. Essential for standardization. |

| Cell Preparation | 10x Genomics Chromium kits (3’, 5’, Multiome, Fixed RNA Profiling). | Generates the barcoded single-cell or nucleus query libraries for sequencing. Kit choice depends on modality (RNA, ATAC, protein). |

| Software & Libraries | Seurat (R), Scanpy (Python), SingleR (R), scArches (Python), CellTypist (Python). | Core computational environments and specific algorithm implementations for executing mapping pipelines. |

| Analysis Platform | RStudio, Jupyter Notebooks, Google Colab, DNAnexus, Terra.bio. | Provides the computational workspace, often requiring high RAM/CPU/GPU for processing large single-cell datasets. |

| Benchmarking Tools | scib-metrics, matchSCore2, celltypist benchmarks. | Used to quantitatively assess the accuracy and performance of different mapping algorithms on benchmark datasets. |

This whitepaper serves as a core technical chapter within the broader study of automated cell type annotation methods. Accurate cell type identification from single-cell RNA sequencing (scRNA-seq) data is foundational for biomedical research and drug development. This guide focuses on two pivotal methodological paradigms: Seurat's FindAllMarkers (a statistical differential expression approach that requires no prior marker knowledge) and SCINA (a semi-supervised, knowledge-based method). We provide an in-depth comparison of their underlying algorithms, experimental protocols, and practical applications.

Methodological Foundations

Seurat's FindAllMarkers: A Differential Expression-Based Approach

FindAllMarkers is a core function in the Seurat toolkit for unsupervised marker gene discovery. It performs differential expression (DE) tests between each cluster and all other cells to identify the genes that distinguish that cluster.

Key Algorithmic Steps:

- Input: A pre-processed and clustered Seurat object (clusters defined via graph-based or k-means methods).

- Statistical Test: By default, it uses the Wilcoxon rank sum test (a non-parametric test) to compare the expression distribution of each gene in one cluster versus all others.

- Multiple Testing Correction: Applies Bonferroni correction by default to control the family-wise error rate.

- Output: A data frame of candidate marker genes per cluster, ranked by statistical significance (adjusted p-value) and effect size (average log2 fold change).
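The test, correction, and ranking steps above can be sketched in plain Python. This is an illustration of the statistics, not Seurat's implementation: the rank-sum p-value uses a normal approximation without tie or continuity correction, the fold change is a simple ratio of means with a pseudocount of 1 (an assumption), and the gene values are invented.

```python
from math import erfc, log2, sqrt
from statistics import mean

def rank_sum_pvalue(x, y):
    """Two-sided Wilcoxon rank-sum p-value via the normal approximation."""
    pooled = sorted([(v, True) for v in x] + [(v, False) for v in y],
                    key=lambda pair: pair[0])
    rank_sum_x, i = 0.0, 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean 1-based rank of this tie block
        rank_sum_x += avg_rank * sum(1 for k in range(i, j + 1) if pooled[k][1])
        i = j + 1
    n1, n2 = len(x), len(y)
    u = rank_sum_x - n1 * (n1 + 1) / 2
    z = (u - n1 * n2 / 2) / sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    return erfc(abs(z) / sqrt(2))

def find_markers(cluster_expr, rest_expr, pseudocount=1.0):
    """Rank genes for one cluster versus all other cells."""
    n_tests = len(cluster_expr)
    rows = []
    for gene, inside in cluster_expr.items():
        outside = rest_expr[gene]
        p = rank_sum_pvalue(inside, outside)
        rows.append({
            "gene": gene,
            "p_adj": min(1.0, p * n_tests),  # Bonferroni correction
            "avg_log2FC": log2((mean(inside) + pseudocount)
                               / (mean(outside) + pseudocount)),
        })
    return sorted(rows, key=lambda r: (r["p_adj"], -r["avg_log2FC"]))

# Invented expression values: {gene: [value per cell]}.
cluster_expr = {"CD79A": [5, 6, 7, 8, 9], "ACTB": [5, 6, 5, 6, 5]}
rest_expr = {"CD79A": [0, 1, 0, 1, 2], "ACTB": [6, 5, 6, 5, 6]}
markers = find_markers(cluster_expr, rest_expr)
print(markers)
```

Bonferroni correction multiplies each p-value by the number of tests, which is conservative but simple; it explains why marker lists shrink as more genes are tested.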

Primary Advantages:

- Unsupervised: Does not require prior biological knowledge.

- Sensitive: Effective at identifying subtle transcriptional differences.

Primary Limitations:

- Context-Dependent: Markers are relative to other clusters in the specific dataset.

- Noise Sensitivity: Can identify markers for transient states or technical artifacts.

SCINA: A Knowledge-Based Semi-Supervised Model

SCINA (Semi-supervised Category Identification and Assignment) is a semi-supervised model that annotates cells using pre-defined marker gene lists.

Key Algorithmic Steps:

- Input: A normalized expression matrix and a priori lists of marker genes for expected cell types (each list contains 2+ genes).

- Model Assumption: Models gene expression as coming from a mixture of two distributions for each marker set: a positively expressing distribution (log-normal) and a background, low-expressing distribution (normal).

- Expectation-Maximization (EM): Uses an EM algorithm to iteratively estimate the probability of each cell belonging to each cell type based on the expression of the provided markers.

- Output: A deterministic cell label assignment and probabilities for each cell.
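The Expectation-Maximization step can be illustrated on a deliberately reduced problem: a single per-cell marker score modeled as a mixture of a "low" and a "high" component. This sketch assumes two normal components and one signature, which is a simplification and not the SCINA implementation; the scores are invented.

```python
from math import exp, pi, sqrt

def normal_pdf(x, mu, sd):
    """Density of a normal distribution with mean mu and std dev sd."""
    return exp(-0.5 * ((x - mu) / sd) ** 2) / (sd * sqrt(2 * pi))

def em_two_component(scores, n_iter=50, min_sd=0.05):
    """Return P(high-expressing component) per cell after EM fitting."""
    mu_lo, mu_hi = min(scores), max(scores)
    sd_lo = sd_hi = ((mu_hi - mu_lo) or 1.0) / 4
    w_hi = 0.5
    post = [0.5] * len(scores)
    for _ in range(n_iter):
        # E-step: posterior probability that each cell is "high".
        post = []
        for x in scores:
            hi = w_hi * normal_pdf(x, mu_hi, sd_hi)
            lo = (1 - w_hi) * normal_pdf(x, mu_lo, sd_lo)
            post.append(hi / (hi + lo) if hi + lo > 0 else 0.5)
        # M-step: re-estimate weights, means, and standard deviations.
        n_hi = sum(post)
        n_lo = len(scores) - n_hi
        if n_hi < 1e-9 or n_lo < 1e-9:
            break  # one component became empty; keep last posteriors
        mu_hi = sum(p * x for p, x in zip(post, scores)) / n_hi
        mu_lo = sum((1 - p) * x for p, x in zip(post, scores)) / n_lo
        sd_hi = max(min_sd, sqrt(sum(p * (x - mu_hi) ** 2
                                     for p, x in zip(post, scores)) / n_hi))
        sd_lo = max(min_sd, sqrt(sum((1 - p) * (x - mu_lo) ** 2
                                     for p, x in zip(post, scores)) / n_lo))
        w_hi = n_hi / len(scores)
    return post

# Invented marker scores: five background cells, four expressing cells.
scores = [0.1, 0.2, 0.0, 0.3, 0.1, 4.8, 5.1, 5.0, 4.9]
posteriors = em_two_component(scores)
labels = ["positive" if p > 0.5 else "background" for p in posteriors]
print(labels)
```

Thresholding the posterior at 0.5 gives the deterministic label, while the posterior itself serves as the per-cell probability mentioned in the output step.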

Primary Advantages:

- Interpretable: Uses known biological knowledge.

- Robust: Less sensitive to batch effects or novel cell states.

- Fast: Efficient computational execution.

Primary Limitations:

- Requires Prior Knowledge: Quality of results depends entirely on the accuracy and completeness of input marker lists.

- Cannot Discover Novel Types: Will force all cells into pre-defined categories.

Comparative Performance Analysis

The following table summarizes a quantitative comparison based on recent benchmarking studies (Squair et al., Nature Communications, 2021; Abdelaal et al., Genome Biology, 2019).

Table 1: Quantitative Comparison of FindAllMarkers and SCINA

| Feature | Seurat's FindAllMarkers | SCINA |

|---|---|---|

| Core Paradigm | Unsupervised differential expression | Semi-supervised, knowledge-based |

| Primary Input | Clustered scRNA-seq data | Expression matrix + pre-defined marker gene lists |

| Key Statistical Test | Wilcoxon Rank Sum (default) | Bayesian model (Mixture of Log-normal/Normal) |

| Output Type | Candidate marker genes per cluster | Direct cell type labels & probabilities |

| Ability to Find Novel Types | Yes (drives discovery) | No (only annotates pre-defined types) |

| Speed (on 10k cells) | ~5-10 minutes | ~1-2 minutes |

| Accuracy (F1-score)* | 0.75 - 0.85 (highly dataset-dependent) | 0.85 - 0.95 (with high-quality markers) |

| Ease of Use | Medium (requires tuning of DE parameters) | High (straightforward with good markers) |

| Major Dependency | Cluster quality | Marker list quality and specificity |

*Reported accuracy range on well-annotated benchmark datasets like PBMCs or pancreatic islets.

Detailed Experimental Protocols

Protocol for Marker Discovery with Seurat's FindAllMarkers

This protocol assumes a pre-processed (QC, normalized, scaled) Seurat object (seurat_obj) with PCA and clustering already performed.

Protocol for Cell Annotation with SCINA

This protocol requires a pre-defined list of cell type markers in a specific format.

Visualizations

Title: Comparative Workflow: FindAllMarkers vs. SCINA

Title: SCINA's Bayesian Mixture Model Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Tools for Cell Annotation

| Item / Reagent | Function / Purpose | Example / Note |

|---|---|---|

| Single-Cell 3' Gene Expression Kit | Generate barcoded cDNA libraries for 3' transcript counting. | 10x Genomics Chromium Next GEM 3' v4. Fundamental wet-lab starting point. |

| Reference Transcriptome | Genome alignment and gene counting reference. | GENCODE Human (v41/GRCh38). Ensures consistent gene annotation. |

| Cell Ranger | Primary analysis pipeline for demultiplexing, alignment, and feature counting. | 10x Genomics Cell Ranger (v7.x). Standard for processing 10x data. |

| Seurat R Toolkit | Comprehensive R package for scRNA-seq data analysis, including FindAllMarkers. | Seurat v5. Industry-standard for downstream analysis. |

| SCINA R Package | Semi-supervised cell annotation tool using marker gene lists. | SCINA v1.2.0. Fast, knowledge-driven annotation. |

| Curated Marker Databases | Provide pre-compiled, cell-type-specific gene lists for annotation. | CellMarker 2.0, PanglaoDB, MSigDB. Critical input for SCINA. |

| High-Performance Computing (HPC) | Infrastructure for memory- and CPU-intensive data processing. | Linux cluster with 64+ GB RAM per job. Essential for large datasets (>50k cells). |

This whitepaper provides an in-depth technical guide on supervised machine learning classifiers, from traditional ensemble methods to modern deep learning architectures, within the context of automated cell type annotation for single-cell RNA sequencing (scRNA-seq) data. This field is critical for research and drug development, enabling precise identification of cell states and populations from high-dimensional biological data.

Classifier Landscape & Quantitative Comparison

Table 1: Comparison of Supervised Classifiers for Cell Annotation

| Classifier | Architecture Type | Key Strengths | Typical Accuracy Range* | Scalability | Interpretability |

|---|---|---|---|---|---|

| Random Forest | Ensemble (Decision Trees) | Robust to noise, handles mixed data types | 85-92% | High (for moderate feature sets) | Medium (Feature importance available) |

| Support Vector Machine (SVM) | Maximum Margin Classifier | Effective in high-dimensional spaces | 82-90% | Medium (Kernel trick can be costly) | Low |

| k-Nearest Neighbors (kNN) | Instance-based | Simple, no training phase | 80-88% | Low (Requires storing all data) | Low |

| Neural Network (MLP) | Fully Connected Feedforward | Captures non-linear interactions | 87-93% | Medium | Low |

| scANVI (scVI-based) | Deep Generative Model (VAE) | Integrates labels, corrects batch effects, works with limited labels | 90-96% | High (Stochastic optimization) | Medium (Latent space visualization) |

| CellTypist | Logistic Regression / MLP | Optimized for large-scale reference atlases, fast prediction | 88-95% | Very High | Low to Medium |

*Accuracy ranges are generalized estimates from recent benchmarking studies (2023-2024) on human immune cell datasets (e.g., PBMC, Tabula Sapiens). Performance is dataset and context-dependent.

Table 2: Benchmarking Results on Human PBMC 10x Genomics Data

| Model | Test Accuracy (%) | Macro F1-Score | Training Time (min) | Reference Memory (GB) |

|---|---|---|---|---|

| Random Forest (500 trees) | 89.7 | 0.885 | 12.5 | 1.2 |

| SVM (RBF kernel) | 87.2 | 0.861 | 45.3 | 0.8 |

| CellTypist (default) | 93.1 | 0.925 | 8.2 | 4.5 |

| scANVI (with 50% labels) | 94.8 | 0.940 | 110.0 | 2.1 |

Detailed Methodologies & Experimental Protocols

Protocol: Building a Random Forest Classifier for Cell Annotation

Aim: To annotate cell types using a reference scRNA-seq dataset.

- Data Preprocessing: Start with a reference count matrix (cells x genes). Perform library size normalization (e.g., 10,000 counts per cell) and log1p transformation. Select highly variable genes (HVGs, ~2000-5000).

- Feature Engineering: Use the expression levels of HVGs as features. Optionally, add prior knowledge markers as features.

- Model Training: Using scikit-learn's `RandomForestClassifier`, train with parameters: `n_estimators=500`, `max_features='sqrt'`, `class_weight='balanced'`. Use 70-80% of reference data for training.

- Validation & Tuning: Perform k-fold cross-validation (k=5). Tune `max_depth` and `min_samples_leaf` to prevent overfitting.

- Prediction on Query Data: Apply the same preprocessing to the query dataset. Use the trained model's `.predict_proba()` to get per-cell class probabilities.
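The preprocessing step of this protocol must be applied identically to reference and query data, so it helps to see exactly what it computes. The sketch below implements library-size normalization, log1p transformation, and a naive variance-based gene ranking in plain Python. The variance ranking is a simplified stand-in for the dispersion-based HVG selection used by real toolkits, and the count matrix is invented.

```python
from math import log1p
from statistics import pvariance

def normalize_log1p(counts, target_sum=10_000):
    """Scale one cell's counts to target_sum total, then apply log(1 + x)."""
    total = sum(counts)
    if total == 0:
        return [0.0] * len(counts)
    return [log1p(c * target_sum / total) for c in counts]

def top_variable_genes(matrix, n_top):
    """Indices of the n_top genes with highest variance across cells.

    matrix is a list of cells, each a list of normalized gene values.
    """
    n_genes = len(matrix[0])
    variances = [pvariance([cell[g] for cell in matrix]) for g in range(n_genes)]
    return sorted(range(n_genes), key=lambda g: variances[g], reverse=True)[:n_top]

# Invented raw counts: 3 cells x 3 genes (gene 2 is uninformative).
raw = [[10, 0, 5], [0, 10, 5], [5, 5, 5]]
normalized = [normalize_log1p(cell) for cell in raw]
hvg_idx = top_variable_genes(normalized, n_top=2)
features = [[cell[g] for g in hvg_idx] for cell in normalized]
print(hvg_idx, features[0])
```

The gene indices selected on the reference should be stored and reused for the query, since recomputing them on the query would change the classifier's feature space.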

Protocol: Training and Using the scANVI Model

Aim: To perform semi-supervised, integrative cell annotation across datasets.

- Data Integration: Load reference dataset with labels and unlabeled query dataset(s). Use `scanpy` for preliminary QC.

- Model Setup: In `scvi-tools`, set up the `scANVI` model. This builds upon the `scVI` generative model: `X ~ NegativeBinomial(l, p)` where `l` is library size and `p` is determined by a neural network from latent variables `z`.

- Semi-supervised Training:

  - Stage 1: Train the base `scVI` model on the combined data (labeled + unlabeled) in an unsupervised manner to learn a shared latent representation.

  - Stage 2: Initialize `scANVI` with the pre-trained `scVI` weights. Train with the reference labels, using the loss: `L_scANVI = L_scVI + α * L_classification`, where `α` is a weighting term.

- Annotation Transfer: The trained model can:

  - Predict labels for unlabeled cells (`model.predict()`).

  - Output integrated latent representations for visualization (`model.get_latent_representation()`).

  - Impute denoised gene expression.
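The Stage 2 objective, a generative loss plus a weighted classification term, can be written out numerically. The sketch below only illustrates the formula as stated in the protocol: the generative loss is passed in as a number, the classification term is mean cross-entropy over labeled cells, and all names are hypothetical rather than taken from scvi-tools.

```python
from math import log

def cross_entropy(class_probs, true_index):
    """Negative log-probability assigned to the true class."""
    return -log(max(class_probs[true_index], 1e-12))

def scanvi_style_loss(l_scvi, class_probs, true_indices, alpha):
    """L = L_scVI + alpha * L_classification.

    true_indices holds the class index for labeled cells and None for
    unlabeled cells, which contribute nothing to the classification term.
    """
    labeled = [(p, y) for p, y in zip(class_probs, true_indices) if y is not None]
    if labeled:
        l_cls = sum(cross_entropy(p, y) for p, y in labeled) / len(labeled)
    else:
        l_cls = 0.0
    return l_scvi + alpha * l_cls

# Two cells, two classes: the first is labeled (class 0), the second is not.
probs = [[0.5, 0.5], [0.9, 0.1]]
loss = scanvi_style_loss(l_scvi=10.0, class_probs=probs,
                         true_indices=[0, None], alpha=2.0)
print(loss)
```

A larger `alpha` pushes the latent space to separate labeled cell types more strongly, at the cost of fitting the expression data slightly less well.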

Protocol: Large-Scale Annotation with CellTypist

Aim: Rapid annotation of millions of cells using a pre-trained model from a curated atlas.

- Model Download: Download a pre-trained model (e.g., "Immune_All_Low.pkl" from the CellTypist repository) containing immune cell classifiers.

- Standardized Input: Prepare query data as an `AnnData` object with genes as variables. Gene names should match the model's expected features.

- Run Prediction: Use `celltypist.annotate(adata, model='Immune_All_Low.pkl', majority_voting=True)`. The `majority_voting` option refines labels based on cell neighborhood.

- Result Interpretation: Output includes predicted labels, confidence scores, and potential cross-labeling. Results can be visualized via UMAP.

Visualizations

Diagram 1: Supervised Cell Annotation Workflow

Diagram 2: scANVI Model Architecture & Data Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Automated Cell Annotation

| Item / Reagent | Provider / Package | Function in Workflow |

|---|---|---|

| 10x Genomics Chromium | 10x Genomics | Platform for generating high-quality single-cell gene expression libraries (reference/query data). |

| Cell Ranger | 10x Genomics | Software suite for demultiplexing, barcode processing, and initial count matrix generation. |

| Scanpy / AnnData | Theis Lab / scverse | Primary Python toolkit and data structure for scRNA-seq analysis, including preprocessing and visualization. |

| scikit-learn | Inria Foundation | Core library providing implementations of Random Forest, SVM, and other classic ML classifiers. |

| scvi-tools | Yosef Lab / scverse | PyTorch-based package for probabilistic modeling, containing the scVI and scANVI models. |

| CellTypist | Teichmann Lab, Sanger | Optimized package and repository of pre-trained models for rapid, large-scale cell annotation. |

| UMI-tools | CGAT Oxford | For accurate UMI deduplication, ensuring clean count matrices for model input. |

| Seurat | Satija Lab | Alternative comprehensive R toolkit, often used for integrated analysis and label transfer functions. |

| Benchmarking Datasets (e.g., Tabula Sapiens, PBMC datasets) | CZ Biohub, 10x | Gold-standard, well-annotated reference atlases for model training and validation. |

Within the broader study of automated cell type annotation methods, the transition from purely manual, marker-based annotation to automated, scalable methodologies represents a critical evolution. Unsupervised and hybrid approaches, specifically cluster-guided annotation and consensus strategies, address fundamental challenges of scalability, reproducibility, and bias in single-cell RNA sequencing (scRNA-seq) analysis. This whitepaper provides a technical guide to these methodologies, detailing their implementation, experimental validation, and application in biomedical research and drug development.

Foundational Concepts

The Annotation Challenge

Single-cell datasets routinely contain tens to hundreds of thousands of cells. Manual annotation relies on expert knowledge of canonical marker genes, a process that is time-consuming, subjective, and difficult to scale. Unsupervised learning methods, primarily clustering, group cells based on transcriptional similarity without prior labels. These clusters then serve as the substrate for annotation.

From Unsupervised to Hybrid

Purely unsupervised annotation assigns labels by comparing cluster-specific gene expression to external reference data. Hybrid approaches integrate this with supervised learning, using the clusters to guide label transfer or to build consensus from multiple annotation algorithms, improving accuracy and robustness.

Core Methodologies & Experimental Protocols

Cluster-Guided Annotation Workflow

This protocol leverages unsupervised clustering as a first step to define the biological context before label transfer.

Experimental Protocol:

- Data Preprocessing: Log-normalize the query scRNA-seq count matrix. Select highly variable genes (HVGs).

- Unsupervised Clustering: Perform dimensionality reduction (PCA, followed by UMAP or t-SNE). Apply a graph-based clustering algorithm (e.g., Leiden, Louvain) at a chosen resolution to partition cells into `k` distinct clusters.

- Differential Expression (DE) Analysis: For each cluster `i`, identify marker genes by comparing its expression profile against all other cells. Use a DE test (Wilcoxon rank-sum) with FDR correction.

- Preliminary Cluster Characterization: Use the top `n` marker genes per cluster for enrichment analysis against gene ontologies (GO) and public cell-type databases (e.g., CellMarker, PanglaoDB) to assign a provisional biological identity.

- Reference-Based Label Transfer: Align query clusters to a curated reference atlas. Using a supervised classifier (e.g., single-cell SVM, random forest) or correlation-based method, transfer labels from the reference to each cluster as a whole, or to individual cells within clusters. The cluster boundaries act as a "guide," allowing the rejection of low-confidence transfers that are inconsistent within a cluster.

- Resolution Tuning: Iterate clustering at different resolutions. Higher resolutions yield more, finer clusters, potentially separating subtypes. The optimal resolution balances cluster purity (homogeneous cell type) with biological relevance.

Diagram Title: Cluster-Guided Annotation Workflow

Consensus Annotation Strategy

Consensus methods aggregate predictions from multiple independent annotation algorithms or references to produce a unified, more reliable label.

Experimental Protocol:

- Multi-Algorithm Annotation: Apply `m` distinct annotation tools (e.g., SingleR, scType, scSorter, Seurat's label transfer) to the same query dataset. Each tool produces a vector of predicted labels (L1, L2, ..., Lm).

- Cluster-Level Consensus (Recommended): Perform unsupervised clustering on the query data. For each cluster `j`, collect all predicted labels for its constituent cells from all `m` tools. The consensus label for cluster `j` is determined by a voting mechanism:

  - Majority Vote: The most frequent label across all tools and all cells in the cluster.

  - Weighted Vote: Tools are weighted by their pre-evaluated accuracy on benchmark datasets.

  - Thresholding: A label is assigned only if it appears above a predefined frequency threshold (e.g., >70% agreement); otherwise, the cluster is marked "Unknown" for expert review.

- Cell-Level Consensus: Alternatively, compute a consensus label per cell, though this is noisier. Methods include leveraging the cluster-level result or using ensemble learning.

- Conflict Resolution & Confidence Scoring: Calculate a confidence score per cluster (e.g., entropy of votes, percentage agreement). Flag low-confidence clusters for manual inspection using their marker genes.
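The voting and confidence-scoring steps above can be sketched for a single cluster as follows. This is an illustrative implementation under stated assumptions: the tool names (`tool_a`, `tool_b`, `tool_c`), the weights, and the function name `consensus_for_cluster` are hypothetical, and the 0.7 cutoff mirrors the ">70% agreement" example in the protocol.

```python
import math
from collections import defaultdict


def consensus_for_cluster(tool_predictions, tool_weights=None, min_agreement=0.7):
    """Return (consensus label, agreement fraction, vote entropy in bits).

    tool_predictions: dict mapping tool name -> labels for the cluster's cells
    tool_weights:     optional dict mapping tool name -> weight (e.g., benchmark
                      accuracy); None means an unweighted majority vote
    """
    votes = defaultdict(float)
    for tool, labels in tool_predictions.items():
        weight = 1.0 if tool_weights is None else tool_weights[tool]
        for label in labels:
            votes[label] += weight

    total = sum(votes.values())
    fractions = {label: v / total for label, v in votes.items()}
    top_label = max(fractions, key=fractions.get)

    # Shannon entropy of the vote distribution: 0 bits means unanimous.
    entropy = -sum(p * math.log2(p) for p in fractions.values() if p > 0)

    # Thresholding: keep the label only if agreement exceeds the cutoff.
    label = top_label if fractions[top_label] > min_agreement else "Unknown"
    return label, fractions[top_label], entropy


# Hypothetical per-cell predictions for one four-cell cluster.
predictions = {
    "tool_a": ["T cell", "T cell", "T cell", "T cell"],
    "tool_b": ["T cell", "T cell", "T cell", "NK cell"],
    "tool_c": ["T cell", "T cell", "NK cell", "NK cell"],
}
unweighted = consensus_for_cluster(predictions)
weighted = consensus_for_cluster(
    predictions, tool_weights={"tool_a": 1.0, "tool_b": 1.0, "tool_c": 3.0}
)
print(unweighted, weighted)
```

With equal weights, "T cell" receives 9 of 12 votes (75%) and is accepted. When the dissenting tool is weighted three times as heavily, agreement falls to 65% and the cluster is marked "Unknown", which is the case the protocol routes to expert review.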

Diagram Title: Consensus Annotation Strategy Flow

Performance Data & Validation

Recent benchmark studies quantify the performance of hybrid approaches against purely manual and purely supervised methods.

Table 1: Performance Comparison of Annotation Strategies (Synthetic Benchmark Dataset)

| Annotation Strategy | Average Accuracy (F1-Score) | Robustness to Noise | Scalability (Cells/sec) | Required Expert Input |

|---|---|---|---|---|

| Purely Manual (Expert) | 0.85 - 0.95* | High | Very Low | Extensive |

| Purely Supervised (SingleR) | 0.78 - 0.88 | Medium | High | Low (Reference Only) |

| Cluster-Guided (e.g., Seurat v5) | 0.89 - 0.93 | High | Medium | Moderate |

| Consensus (3-algorithm) | 0.91 - 0.95 | Very High | Medium-Low | Low |

| Unsupervised Only (Markers) | 0.65 - 0.80 | Low | Medium | High |

*Accuracy is context-dependent and high only for well-known cell types.

Validation Protocol:

- Ground Truth Datasets: Use publicly available datasets with gold-standard labels (e.g., cell hashing or multiplexed FACS-sorted populations).

- Simulation: Use tools like splatter to simulate scRNA-seq data with known cell types and introduced technical noise (dropout, batch effects).

- Metrics: Calculate per-cell and per-cluster accuracy (F1-score, Adjusted Rand Index). Measure robustness by progressively adding noise and tracking accuracy decay.

- Biological Validation: For novel or low-confidence labels, validate in situ with multiplexed FISH (e.g., MERFISH), or confirm surface protein expression with CITE-seq.
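As a concrete illustration of the metrics step, the sketch below computes per-class and macro-averaged F1 from ground-truth and predicted labels. The function names and the toy label vectors are hypothetical; in practice an established library implementation would usually be preferred.

```python
def f1_per_class(true_labels, predicted_labels):
    """Per-class F1 = 2*TP / (2*TP + FP + FN), over classes in the ground truth."""
    pairs = list(zip(true_labels, predicted_labels))
    scores = {}
    for cell_type in sorted(set(true_labels)):
        tp = sum(t == cell_type and p == cell_type for t, p in pairs)
        fp = sum(t != cell_type and p == cell_type for t, p in pairs)
        fn = sum(t == cell_type and p != cell_type for t, p in pairs)
        denom = 2 * tp + fp + fn
        scores[cell_type] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(true_labels, predicted_labels):
    """Unweighted mean of per-class F1, so rare cell types count equally."""
    scores = f1_per_class(true_labels, predicted_labels)
    return sum(scores.values()) / len(scores)


# Toy ground truth and predictions; "Unknown" counts as a miss for NK.
truth = ["B", "B", "B", "T", "T", "T", "NK", "NK"]
predicted = ["B", "B", "T", "T", "T", "T", "NK", "Unknown"]
per_class = f1_per_class(truth, predicted)
overall = macro_f1(truth, predicted)
print(per_class, round(overall, 3))
```

Macro averaging is used here because it weights rare populations the same as abundant ones; a cell-weighted average would instead be dominated by the largest cell types. Repeating the calculation on progressively noisier simulated data gives the accuracy-decay curve described in the metrics step.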

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Reagents for Implementation

| Item / Solution | Function in Protocol | Example Product/Software |

|---|---|---|

| Single-Cell 3' Library Kit | Generate barcoded scRNA-seq libraries from cell suspensions. | 10x Genomics Chromium Next GEM Single Cell 3' |

| Cell Hash Tag Oligos (HTOs) | Multiplex samples, enabling doublet detection and batch correction. | BioLegend TotalSeq-A Antibodies |

| CITE-seq Antibody Panel | Simultaneously profile surface protein expression alongside transcriptome. | BioLegend TotalSeq-C Antibody Panels |

| Reference Atlas | Curated, high-quality labeled dataset for supervised label transfer. | Human Cell Landscape, Mouse RNA-seq atlas, Azimuth references |

| Clustering Algorithm | Perform unsupervised grouping of cells based on gene expression. | Leiden (igraph), Louvain (Seurat/Scanpy) |

| Annotation Algorithms | Execute individual label prediction methods for consensus. | SingleR (R), scType (R/Python), scSorter (R) |

| Consensus Framework | Integrate multiple predictions and execute voting logic. | Custom script (R/Python), SC3 (for clustering consensus) |

| Visualization Tool | Visualize clusters and annotated results in 2D/3D. | Uniform Manifold Approximation and Projection (UMAP), t-SNE |

Signaling Pathway Context in Annotation

Cell type identity and state are reflected in the activity of signaling pathways, so an annotation can be cross-checked by testing for pathway activity among a cluster's marker genes.

Example: PI3K-Akt Pathway in T Cell Activation

An unsupervised cluster expressing high CD3E, CD28, and IL2RA may be annotated as "Activated T cells." This annotation is supported, though not proven, by enrichment of PI3K-Akt signaling genes (PIK3CD, AKT1, MTOR) in the cluster's marker list.

Diagram Title: PI3K-Akt Pathway in T Cell Activation
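One common way to test whether pathway genes are over-represented in a marker list is a one-sided hypergeometric test. The sketch below is a minimal version using only the standard library; the function name and the gene counts in the usage example are hypothetical and chosen purely for illustration.

```python
from math import comb


def hypergeom_enrichment_p(n_background, n_pathway, n_markers, n_overlap):
    """One-sided hypergeometric p-value P(X >= n_overlap).

    n_background: number of genes in the tested universe
    n_pathway:    pathway genes present in that universe
    n_markers:    size of the cluster's marker list
    n_overlap:    marker genes that belong to the pathway
    """
    max_overlap = min(n_pathway, n_markers)
    total = comb(n_background, n_markers)
    # Sum the probability of every overlap at least as large as observed.
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_markers - k)
        for k in range(n_overlap, max_overlap + 1)
    )
    return tail / total


# Hypothetical counts: 8 of 100 marker genes fall in a 350-gene pathway,
# against a background of 20,000 genes (expected overlap is about 1.75).
p_value = hypergeom_enrichment_p(20000, 350, 100, 8)
print(f"enrichment p-value: {p_value:.2e}")
```

When many pathways are tested per cluster, the resulting p-values should be corrected for multiple testing, in the same way as the FDR correction applied to marker genes.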

Cluster-guided and consensus strategies represent a hybrid paradigm in automated cell type annotation. By combining the data-driven structure of unsupervised clustering with the predictive power of supervised learning, these methods enhance accuracy, manage uncertainty, and provide a structured framework for expert intervention. For researchers and drug developers, adopting these approaches enables more reproducible, scalable, and biologically grounded analysis of single-cell data, accelerating discoveries in disease mechanisms and therapeutic targets.

This whitepaper serves as a core technical chapter in a broader thesis introducing automated cell type annotation methods. As single-cell RNA sequencing (scRNA-seq) becomes ubiquitous in biomedical research and drug development, the manual annotation of cell clusters has emerged as a critical bottleneck. It is subjective, time-consuming, and does not scale to large datasets or multi-omics integration. Automated annotation methods promise reproducibility, scalability, and the ability to leverage accumulated biological knowledge from reference atlases. This guide provides a detailed, comparative implementation protocol for two leading computational ecosystems: Seurat (R) and Scanpy (Python).

Automated methods generally fall into three categories: label transfer, marker-based, and gene set enrichment-based. The choice depends on reference data availability and annotation granularity.

Table 1: Comparison of Primary Automated Annotation Methods

| Method Type | Key Principle | Representative Tools | Best Use Case |

|---|---|---|---|

| Label Transfer | Projects labels from a reference to a query dataset by finding mutual nearest neighbors (MNNs) or correlation in shared feature space. | Seurat's FindTransferAnchors/TransferData; Scanpy's scanpy.tl.ingest | When a high-quality, curated reference atlas exists for a similar tissue/species. |

| Marker-Based | Scores cells based on the expression of predefined cell-type-specific marker gene sets. | Seurat's AddModuleScore; Scanpy's scanpy.tl.score_genes | When well-established marker genes are known but a full reference matrix is not available. |

| Enrichment-Based | Uses statistical tests (e.g., hypergeometric) to assess enrichment of cell-type-specific gene signatures from databases. | AUCell (R/Python); Garnett (R) | For interpreting clusters against large, curated databases like CellMarker, PanglaoDB. |

Experimental Protocols for Key Annotation Workflows

Protocol 3.1: Reference-Based Label Transfer with Seurat (v5+)

Objective: To annotate a query PBMC dataset using the human PBMC reference from Azimuth.

Materials (Research Reagent Solutions):

- Input Data: Query Seurat object (query_pbmc) containing normalized log-counts.

- Reference Data: Preprocessed Azimuth reference (azimuth.ref) loaded as a Seurat object.

- Software: Seurat v5, SeuratDisk, Azimuth R package.

Methodology:

- Data Preprocessing: Ensure the query object is normalized (NormalizeData) and variable features are identified (FindVariableFeatures). Scale the data (ScaleData).

- Find Transfer Anchors: Identify batch-invariant correspondences (anchors) between reference and query using FindTransferAnchors.

- Transfer Labels: Transfer cell type annotations at the desired level (e.g., l2) using TransferData.

- Integrate & Visualize: The predicted labels are stored in query_pbmc$predicted.celltype.l2. Visualize using DimPlot.

Diagram 1: Seurat Label Transfer Workflow

Protocol 3.2: Marker-Based Scoring with Scanpy

Objective: To score T cell subtypes in a tumor microenvironment dataset using canonical marker genes.

Materials (Research Reagent Solutions):

- Input Data: AnnData object (adata) with preprocessed, log-normalized counts.

- Marker Gene Lists: Curated lists (e.g., CD4+ T cell: ["CD3D", "CD4", "IL7R"]; CD8+ T cell: ["CD3D", "CD8A", "CD8B", "GZMK"]; Treg: ["FOXP3", "IL2RA"]).

- Software: Scanpy v1.9, NumPy, Pandas.

Methodology:

- Prepare Marker Dictionary: Define a Python dictionary for cell type and gene sets.

- Score Cells: Calculate the average expression of each gene set, corrected for background.

- Assign Provisional Labels: Assign each cell the label of its highest scoring set, if above a threshold (e.g., 75th percentile).

- Visualize: Use sc.pl.umap colored by 'predicted_label' or individual scores.
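The scoring and assignment logic of this protocol can be sketched without any dependencies, as below. This is a simplified stand-in rather than Scanpy's implementation: `scanpy.tl.score_genes` draws an expression-matched random control gene set, whereas this sketch subtracts the mean of a fixed, hypothetical background list (`BG1`, `BG2`). The expression values and the fixed cutoff of 0.5 are also assumptions; the protocol suggests a percentile-based threshold instead.

```python
from statistics import mean

# Marker dictionary taken from the materials list above.
marker_sets = {
    "CD4+ T cell": ["CD3D", "CD4", "IL7R"],
    "CD8+ T cell": ["CD3D", "CD8A", "CD8B", "GZMK"],
    "Treg": ["FOXP3", "IL2RA"],
}


def score_cell(expression, markers, background_genes):
    """Mean marker expression minus mean background expression for one cell."""
    present = [g for g in markers if g in expression]
    if not present:
        return 0.0
    return mean(expression[g] for g in present) - mean(
        expression[g] for g in background_genes
    )


def assign_label(expression, marker_sets, background_genes, threshold):
    """Label a cell by its highest-scoring set, if the score clears the threshold."""
    scores = {
        cell_type: score_cell(expression, markers, background_genes)
        for cell_type, markers in marker_sets.items()
    }
    best = max(scores, key=scores.get)
    label = best if scores[best] > threshold else "Unassigned"
    return label, scores


# Hypothetical log-normalized expression for two cells.
background = ["BG1", "BG2"]
cell_a = {"CD3D": 2.0, "CD4": 1.5, "IL7R": 2.5, "CD8A": 0.0, "CD8B": 0.0,
          "GZMK": 0.1, "FOXP3": 0.0, "IL2RA": 0.2, "BG1": 0.5, "BG2": 0.5}
cell_b = {"CD3D": 0.2, "CD4": 0.0, "IL7R": 0.1, "CD8A": 0.0, "CD8B": 0.0,
          "GZMK": 0.0, "FOXP3": 0.0, "IL2RA": 0.0, "BG1": 0.5, "BG2": 0.5}

label_a, scores_a = assign_label(cell_a, marker_sets, background, threshold=0.5)
label_b, scores_b = assign_label(cell_b, marker_sets, background, threshold=0.5)
print(label_a, label_b)
```

Because shared markers such as CD3D raise the score of every T cell set, the comparison between sets, rather than any single score, is what separates the subtypes. Cells that clear no threshold stay "Unassigned" instead of being forced into the nearest label.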

Diagram 2: Scanpy Marker Scoring Logic

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools & Resources for Automated Annotation

| Item | Function/Description | Example/Provider |

|---|---|---|

| Curated Reference Atlases | High-quality, manually annotated datasets used as ground truth for label transfer. | Human: Azimuth (PBMC, Cortex), CellxGene Census. Mouse: Tabula Muris, Allen Brain Map. |

| Marker Gene Databases | Collections of cell-type-specific gene signatures compiled from literature. | PanglaoDB, CellMarker, MSigDB cell type signatures. |

| Annotation Software Packages | Core algorithms implementing label transfer, scoring, and enrichment. | R: Seurat, SingleR, Garnett. Python: Scanpy (ingest), scANVI, scType. |

| Cross-Platform Converters | Tools to convert data objects between R (Seurat) and Python (Scanpy) ecosystems. | SeuratDisk (for .h5Seurat/.h5ad), anndata2ri, sceasy. |

| Benchmarking Frameworks | Systems to evaluate the accuracy and robustness of annotation predictions. | scRNA-seq benchmark studies (e.g., by Tian et al., 2021). |

Validation and Quality Control Protocol

Objective: To assess the confidence of automated annotations.

Methodology:

- Prediction Score Thresholding: Use the prediction scores from label transfer (e.g., query_pbmc$predicted.celltype.l2.score) to filter out low-confidence assignments (<0.5).

- Differential Expression (DE) Verification: For each predicted cluster, perform DE analysis against all others. Check for upregulation of expected marker genes.

- Visual Concordance: Ensure cells with the same label co-localize in UMAP/t-SNE space. Investigate mixed clusters.

- Cross-Validation: Use a hierarchical approach: annotate at broad levels (e.g., celltype.l1) first, then subset and re-annotate for granularity.
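The prediction-score thresholding step reduces to a simple filter once labels and scores are exported from the annotation tool. The sketch below assumes they are available as two parallel lists; the function name, the example labels and scores, and the "Unknown" fallback are hypothetical, while the 0.5 cutoff follows the protocol.

```python
def apply_score_threshold(labels, scores, min_score=0.5, fallback="Unknown"):
    """Replace labels whose prediction score is below min_score.

    Returns the filtered labels and the fraction of cells retained.
    """
    filtered = [
        label if score >= min_score else fallback
        for label, score in zip(labels, scores)
    ]
    retained = sum(score >= min_score for score in scores) / len(scores)
    return filtered, retained


# Hypothetical transferred labels and their prediction scores.
labels = ["CD4 T", "CD8 T", "NK", "B"]
scores = [0.92, 0.41, 0.50, 0.77]
filtered, retained = apply_score_threshold(labels, scores)
print(filtered, retained)
```

Tracking the retained fraction per sample or per cluster helps distinguish a few ambiguous cells from a whole population that is missing from the reference.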

Integrating automated annotation into Seurat and Scanpy workflows standardizes cell typing, enhances reproducibility, and accelerates the analysis pipeline—a critical advancement for translational research and drug development. The choice between reference-based transfer and marker-based scoring is context-dependent. Successful implementation requires careful selection of reference data, rigorous validation through QC steps, and an understanding that these methods are tools to augment, not wholly replace, expert biological interpretation. This protocol provides a foundational framework for their adoption.

Solving Common Challenges: How to Optimize Your Automated Annotation Pipeline

This whitepaper addresses critical challenges in automated cell type annotation for single-cell RNA sequencing (scRNA-seq) data, framed within a broader thesis on the development of robust annotation methods. As the field transitions from manual curation to automated pipelines, two major obstacles emerge: (1) reliance on low-quality or incomplete reference datasets, and (2) pervasive technical batch effects that confound cross-dataset analysis. Successfully navigating these issues is paramount for researchers, scientists, and drug development professionals who depend on accurate cell type identification to draw biologically and clinically meaningful conclusions.

The Problem of Low-Quality Reference Datasets

A reference dataset's quality dictates the upper limit of annotation accuracy. Low-quality references suffer from incomplete cell type representation, poor cell type label resolution, high ambient RNA, or inadequate sequencing depth.

Quantitative Impact of Reference Quality

Table 1: Impact of Reference Dataset Quality on Annotation Accuracy (Benchmark Data)

| Reference Quality Metric | High-Quality Reference (F1-Score) | Low-Quality Reference (F1-Score) | Performance Drop |

|---|---|---|---|

| Cell Type Completeness | 0.92 | 0.71 | 22.8% |

| Label Specificity | 0.89 | 0.65 | 27.0% |