Batch Effect Correction for Cross-Dataset Annotation: A Comprehensive Guide for Biomedical Research



Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying batch effect correction to enable reliable cross-dataset annotation. Covering foundational concepts to advanced validation strategies, it details why technical variations confound integrated analyses and how modern algorithms—from reference-based scaling to deep learning models—can mitigate these issues. Readers will gain practical insights for selecting, troubleshooting, and benchmarking correction methods across diverse data types, including transcriptomics, proteomics, and microbiome data, to ensure biological signals are preserved and translational research is accelerated.

Understanding Batch Effects: The Hidden Challenge in Data Integration

In molecular biology, a batch effect occurs when non-biological factors in an experiment introduce systematic changes in the data [1]. These technical variations are unrelated to the scientific variables under investigation but can correlate with outcomes of interest, leading to inaccurate conclusions and misleading biological interpretations [2] [1].

Batch effects represent a pervasive challenge in high-throughput technologies, affecting data from microarrays, mass spectrometers, second-generation sequencing, and other omics platforms [2]. The fundamental issue arises because measurements are affected by laboratory conditions, reagent lots, personnel differences, and other technical variables that create subgroups of measurements with qualitatively different behavior across experimental conditions [2].

Core Definitions and Characteristics

Multiple definitions exist for batch effects, reflecting their complex nature. One comprehensive definition describes batch effects as "the systematic technical differences when samples are processed and measured in different batches and which are unrelated to any biological variation recorded during the experiment" [1]. The critical characteristic is that these effects are non-biological in origin but can powerfully impact study outcomes.

Batch effects introduce significant heterogeneity into high-dimensional data, complicating accurate analysis [3]. In gene expression studies, the greatest source of differential expression is nearly always across batches rather than across biological groups, which can lead to confusing or incorrect biological conclusions due to the influence of technical artefacts [2].

Understanding the origins of batch effects is essential for both prevention and correction. These technical variations can arise from numerous sources throughout the experimental workflow.

Table 1: Common Sources of Batch Effects in High-Throughput Experiments

| Source Category | Specific Examples | Impact Level |
|---|---|---|
| Temporal Factors | Processing date, Time of day, Seasonal variations | High [2] [1] |
| Personnel Factors | Different technicians, Individual handling techniques | Moderate to High [2] [1] |
| Reagent Factors | Different lots, Different vendors, Preparation differences | High [2] [1] |
| Instrumentation | Different machines, Calibration differences, Maintenance cycles | High [1] |
| Environmental Conditions | Laboratory temperature, Humidity, Atmospheric ozone levels | Variable [2] [1] |
| Protocol Variations | Minor technique differences, Protocol deviations | Moderate [4] |

The processing group and date are often used as surrogates for accounting for batch effects, but in a typical experiment, these are probably only proxies for other sources of variation, such as ozone levels, laboratory temperatures, and reagent quality [2]. Many possible sources of batch effects are not recorded, leaving data analysts with just processing group and date as surrogates [2].

Detection and Visualization Methods

Identifying batch effects requires a combination of visual and statistical approaches. Proper detection is crucial for determining appropriate correction strategies.

Principal Component Analysis (PCA)

PCA is one of the most common methods for detecting batch effects. It projects the data onto orthogonal axes (principal components) ordered by the amount of variance each explains, so the dominant patterns shared across features appear in the first few components [2] [3]. When batch effects are present, these leading components often correlate more strongly with batch variables than with the biological variables of interest.

In numerous studies of public data, principal components have been found to be highly correlated with batch surrogates such as processing date. For example, in one analysis of nine published datasets, the first principal component showed correlations with date surrogates ranging from 0.570 to 0.922 [2].
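The check described above can be sketched in a few lines. The following is a minimal, standard-library-only illustration, not the procedure used in the cited analysis: it approximates the first principal component by power iteration and correlates its sample scores with a batch surrogate. The toy matrix, the `batch` and `group` labels, and all function names are hypothetical.

```python
import math
import random

def center_columns(matrix):
    """Subtract each feature's mean so PCA captures variation, not offsets."""
    n = len(matrix)
    means = [sum(col) / n for col in zip(*matrix)]
    return [[v - m for v, m in zip(row, means)] for row in matrix]

def first_pc_scores(matrix, iters=200, seed=0):
    """Approximate PC1 sample scores (up to sign and scale) by power
    iteration on the sample-by-sample Gram matrix of the centered data."""
    centered = center_columns(matrix)
    n = len(centered)
    gram = [[sum(a * b for a, b in zip(centered[i], centered[j]))
             for j in range(n)] for i in range(n)]
    rng = random.Random(seed)
    u = [rng.random() - 0.5 for _ in range(n)]
    for _ in range(iters):
        v = [sum(g * x for g, x in zip(row, u)) for row in gram]
        norm = math.sqrt(sum(x * x for x in v))
        u = [x / norm for x in v]
    return u

def pearson(a, b):
    """Plain Pearson correlation between two equal-length sequences."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

# Hypothetical toy data: rows are samples, columns are features.
# The last three samples carry a large shift on every feature (batch 1).
expression = [
    [1.0, 2.0, 3.0, 4.0],
    [1.5, 2.1, 2.9, 4.1],
    [0.9, 1.9, 3.1, 3.9],
    [4.6, 5.0, 6.1, 7.0],
    [4.0, 5.1, 5.9, 7.1],
    [4.4, 4.9, 6.0, 6.9],
]
batch = [0, 0, 0, 1, 1, 1]   # batch surrogate, e.g. processing date order
group = [0, 1, 0, 1, 0, 1]   # biological condition of interest

pc1 = first_pc_scores(expression)
r_batch = pearson(pc1, batch)
r_group = pearson(pc1, group)
print(f"|r(PC1, batch)| = {abs(r_batch):.3f}, |r(PC1, group)| = {abs(r_group):.3f}")
```

Only the absolute value of the correlation is meaningful here, because the sign of a principal component is arbitrary. In practice a numerical library would replace the hand-written power iteration.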

Quantitative Metrics for Batch Effect Assessment

Several statistical metrics have been developed to quantify batch effects:

- Signal-to-Noise Ratio (SNR): Measures the ability to separate distinct biological groups when multiple batches of data are integrated [4]

- Relative Correlation (RC) Coefficient: Assesses consistency between a dataset and reference datasets in terms of fold changes [4]

- k-nearest neighbor Batch Effect Test (kBET): Tests whether the batch composition in each cell's local neighborhood matches the overall batch composition, indicating how well batches are mixed locally [5]

- Average Silhouette Width (ASW): Quantifies the degree of batch mixing versus biological grouping [5]
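As an illustration of the last metric, the sketch below computes an average silhouette width with the standard library only, once with batch labels and once with biological labels. It assumes Euclidean distance on a low-dimensional embedding and at least two distinct labels; the toy coordinates and function names are hypothetical and not taken from any cited tool.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def average_silhouette_width(points, labels):
    """Mean silhouette over all points; assumes at least two distinct labels.
    Points that are alone in their cluster get a silhouette of 0."""
    n = len(points)
    clusters = sorted(set(labels))
    widths = []
    for i in range(n):
        mean_dist = {}
        for c in clusters:
            members = [j for j in range(n) if labels[j] == c and j != i]
            if members:
                mean_dist[c] = sum(euclidean(points[i], points[j])
                                   for j in members) / len(members)
        own = labels[i]
        if own not in mean_dist:
            widths.append(0.0)
            continue
        a = mean_dist[own]                                  # cohesion
        b = min(d for c, d in mean_dist.items() if c != own)  # separation
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / n

# Hypothetical 2-D embedding in which samples separate by batch, not by group.
embedding = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
batch_labels = ["b1", "b1", "b2", "b2"]
group_labels = ["ctrl", "case", "ctrl", "case"]

asw_batch = average_silhouette_width(embedding, batch_labels)
asw_group = average_silhouette_width(embedding, group_labels)
print(f"ASW by batch: {asw_batch:.3f}, ASW by group: {asw_group:.3f}")
```

A batch ASW close to 1 together with a low or negative biological ASW, as in this toy case, would suggest that samples cluster by batch; after a successful correction one would expect the pattern to reverse.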

Visualization Techniques

Table 2: Visualization Methods for Batch Effect Detection

| Method | Application | Strengths | Limitations |

|---|---|---|---|

| PCA Plots | General high-throughput data | Captures major sources of variation, Widely implemented | May miss subtle batch effects, Limited to global patterns [3] |

| t-SNE Plots | Single-cell data, Complex datasets | Captures nonlinear relationships, Good for visualization | Computational intensity, Stochastic nature [4] |

| UMAP Plots | Large-scale datasets, Single-cell data | Preserves global and local structure, Scalability | Parameter sensitivity [5] |

| Sample Boxplots | Distribution assessment | Simple implementation, Shows global distribution differences | May miss feature-specific effects, Less sensitive [3] |

| Hierarchical Clustering | Sample relationships | Visualizes sample groupings, Intuitive interpretation | Distance metric dependence [2] |

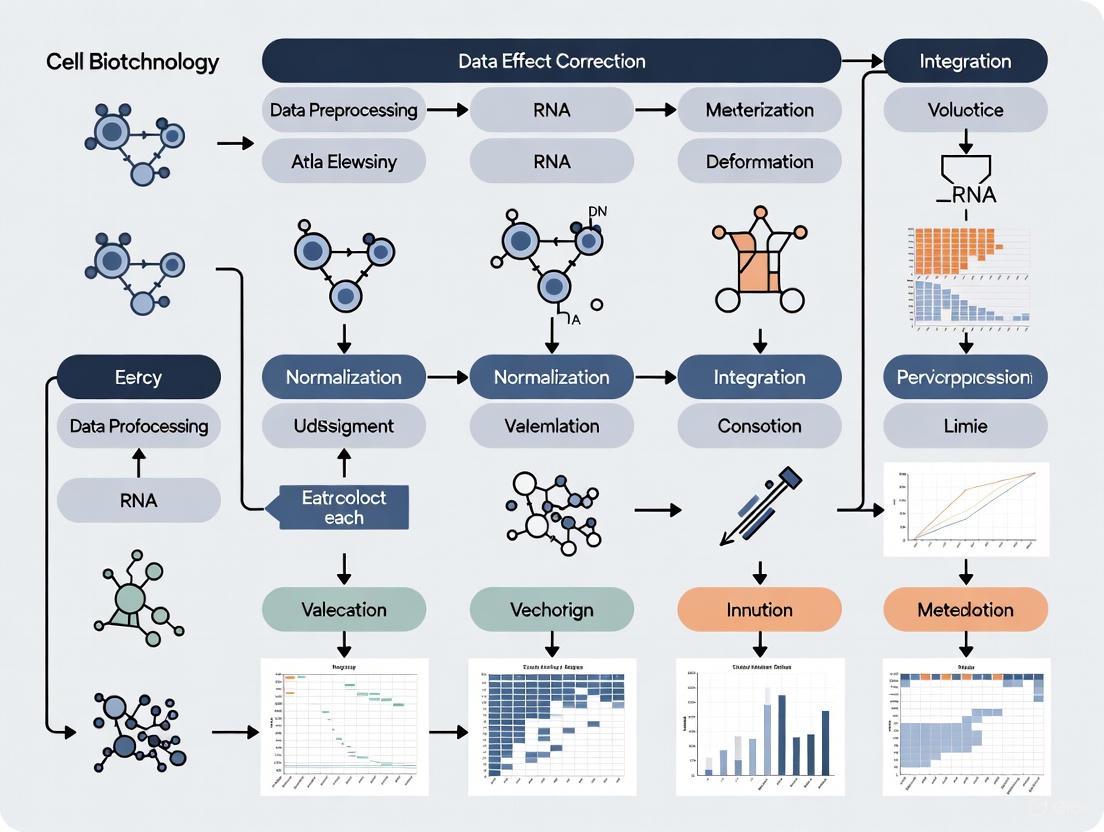

Figure 1: Workflow for batch effect detection and assessment in high-throughput data.

Batch Effect Correction Algorithms (BECAs)

Multiple computational approaches have been developed to correct for batch effects, each with different underlying assumptions and applications.

Algorithm Categories and Methodologies

Empirical Bayes Methods (ComBat)

ComBat uses an empirical Bayes framework to adjust for batch effects, making it particularly effective with small batch sizes [1] [3]. The method models batch effects as additive and multiplicative and pools information across features to improve estimation [1].
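The additive-and-multiplicative model can be illustrated with a deliberately simplified location/scale adjustment for a single feature. This sketch is not ComBat: it omits the empirical Bayes shrinkage and any biological covariates, and it assumes every batch has at least two values with non-zero spread. The function name and toy values are hypothetical.

```python
import math
import statistics

def location_scale_adjust(values, batches):
    """Adjust one feature across batches: remove each batch's mean shift
    (additive effect) and rescale its spread (multiplicative effect) to the
    pooled within-batch standard deviation. No empirical Bayes shrinkage."""
    grand_mean = statistics.fmean(values)
    by_batch = {}
    for v, b in zip(values, batches):
        by_batch.setdefault(b, []).append(v)
    stats = {b: (statistics.fmean(vs), statistics.stdev(vs))
             for b, vs in by_batch.items()}
    pooled_var = sum((len(vs) - 1) * stats[b][1] ** 2
                     for b, vs in by_batch.items()) / (len(values) - len(by_batch))
    pooled_sd = math.sqrt(pooled_var)
    return [grand_mean + pooled_sd * (v - stats[b][0]) / stats[b][1]
            for v, b in zip(values, batches)]

# Hypothetical feature measured in two batches with different offset and spread.
values = [1.0, 2.0, 3.0, 11.0, 13.0, 15.0]
batches = ["A", "A", "A", "B", "B", "B"]
adjusted = location_scale_adjust(values, batches)
print([round(v, 3) for v in adjusted])
```

Because this adjustment forces every batch to the same mean and spread, it would also erase a true biological difference if biological groups were confounded with batch, which is one reason covariates and study design matter when applying such methods.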

Ratio-Based Methods (Ratio-G)

Ratio-based approaches scale absolute feature values of study samples relative to those of concurrently profiled reference materials [4]. This method has proven particularly effective when batch effects are completely confounded with biological factors of interest [4].

Dimension Reduction Methods (Harmony)

Harmony uses an iterative process of clustering, integration, and correction to remove batch effects while preserving biological variation [4] [5]. It works by projecting data into a reduced dimension space and correcting embeddings.

Surrogate Variable Analysis (SVA)

SVA estimates hidden factors, including batch effects and other unwanted variations, without requiring prior knowledge of batch identities [3] [4]. It is particularly useful when the sources of technical variation are unknown or unrecorded.

Remove Unwanted Variation (RUV)

RUV methods use control genes or samples to estimate and remove unwanted variation [3]. Different variants include RUVg (using control genes), RUVs (using replicate samples), and RUVr (using residuals) [4].

Comparative Performance of BECAs

Table 3: Performance Comparison of Batch Effect Correction Algorithms

| Algorithm | Underlying Method | Best Application Scenario | Strengths | Limitations |
|---|---|---|---|---|
| ComBat | Empirical Bayes | Known batch effects, Balanced designs | Handles small batches, Established method | Assumes balanced design, May over-correct [3] [4] |
| Ratio-Based | Reference scaling | Confounded designs, Multi-omics studies | Works in confounded scenarios, Simple implementation | Requires reference materials [4] |
| Harmony | Dimension reduction | Single-cell data, Large datasets | Preserves biological variance, Good performance | Computational complexity [4] [5] |
| SVA | Surrogate variable estimation | Unknown batch factors, Complex designs | No prior batch info needed, Flexible | May capture biological signal [3] [4] |
| RUV Series | Control features | Designed experiments, With controls | Uses negative controls, Multiple variants | Requires appropriate controls [3] [4] |
| limma | Linear models | Simple batch effects, Microarray data | Fast, Established methodology | Limited to simple cases [3] |

Recent comprehensive assessments, such as those performed in the Quartet Project, have demonstrated that ratio-based methods often outperform other approaches, particularly in confounded scenarios where biological factors and batch factors are completely mixed [4]. In these evaluations, ratio-based scaling showed superior performance in terms of the accuracy of identifying differentially expressed features, the robustness of predictive models, and the ability to accurately cluster cross-batch samples into their correct donors [4].

Experimental Protocols for Batch Effect Management

Reference Material-Based Ratio Protocol

Purpose: To effectively correct batch effects in confounded experimental designs using reference materials [4].

Materials and Reagents:

- Reference materials (e.g., Quartet multiomics reference materials)

- Study samples

- Platform-specific profiling reagents

- Normalization controls

Procedure:

- Experimental Design: Include appropriate reference materials in each batch of experiments

- Sample Processing: Process reference materials alongside study samples using identical protocols

- Data Generation: Generate raw data for both reference and study samples

- Ratio Calculation: For each feature, calculate ratio values using the formula:

Ratio_sample = Value_sample / Value_reference

- Data Transformation: Use ratio-scaled values for downstream analysis

- Quality Assessment: Evaluate correction effectiveness using PCA and clustering
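The ratio calculation in this procedure can be sketched as follows. This is a minimal illustration that assumes one reference profile per batch and a nested-dictionary layout; the data structure, feature names, and function name are hypothetical, and features with a missing or zero reference value are simply skipped.

```python
def ratio_scale(batches):
    """For every batch, divide each study sample's feature value by the value
    of the same feature in that batch's reference material."""
    scaled = {}
    for batch_id, batch in batches.items():
        reference = batch["reference"]
        for sample_id, features in batch["samples"].items():
            scaled[sample_id] = {
                name: value / reference[name]
                for name, value in features.items()
                if reference.get(name)  # skip missing or zero reference values
            }
    return scaled

# Hypothetical example: batch_2 measures everything twice as high as batch_1.
batches = {
    "batch_1": {
        "reference": {"gene_a": 10.0, "gene_b": 20.0},
        "samples": {"s1": {"gene_a": 20.0, "gene_b": 10.0}},
    },
    "batch_2": {
        "reference": {"gene_a": 20.0, "gene_b": 40.0},
        "samples": {"s2": {"gene_a": 40.0, "gene_b": 20.0}},
    },
}

ratios = ratio_scale(batches)
print(ratios)
```

In this toy case the two samples end up with identical ratio profiles even though their raw values differ by a factor of two, because the batch-specific factor appears in both numerator and denominator. If several reference replicates are profiled per batch, one reasonable choice would be to average them before scaling.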

Validation:

- Assess biological group separation using SNR metrics

- Evaluate reproducibility using RC coefficients

- Verify classification accuracy after integration [4]

Computational Correction Protocol for Known Batch Effects

Purpose: To remove batch effects when batch information is known and documented.

Materials:

- Normalized data matrix

- Batch information metadata

- Statistical software (R, Python)

Procedure:

- Data Preparation: Import normalized data and batch information

- Algorithm Selection: Choose appropriate BECA based on experimental design

- Parameter Optimization: Adjust algorithm-specific parameters

- Batch Correction: Apply selected BECA to data matrix

- Visual Assessment: Generate PCA plots pre- and post-correction

- Statistical Validation: Calculate batch effect metrics (kBET, ASW)

- Biological Preservation: Verify retention of biological signal

Technical Notes:

- For ComBat: Rely on its empirical Bayes pooling of information across features when batch sizes are small

- For Harmony: Adjust clustering parameters for optimal integration

- Always compare pre- and post-correction results [3] [4]

Figure 2: Reference material-based ratio correction workflow for batch effects.

Research Reagent Solutions

Table 4: Essential Reagents and Resources for Batch Effect Management

| Resource | Function | Application Context |
|---|---|---|
| Reference Materials | Provides standardization baseline | Cross-batch normalization, Quality control [4] |
| Control Genes/Samples | Estimates unwanted variation | RUV methods, Quality assessment [3] |
| Standardized Reagents | Minimizes technical variation | Experimental consistency, Reproducibility [2] |
| QC Metrics Tools | Assesses data quality | Pre-correction evaluation, Post-correction validation [3] [4] |
| Batch Tracking Systems | Documents batch information | Metadata collection, Covariate adjustment [2] |

Computational Tools and Software

R/Bioconductor Packages:

- sva: Implements surrogate variable analysis and ComBat [1]

- limma: Contains removeBatchEffect() function for linear model-based correction [3]

- RUVSeq: Provides multiple RUV methods for batch correction [3] [4]

- Harmony: Enables integration of datasets using dimension reduction [4]

Python Packages:

- scanpy: Includes batch correction tools for single-cell data [5]

- scvi-tools: Implements deep learning approaches for batch integration [5]

Evaluation Frameworks:

- SelectBCM: Helps select appropriate batch correction methods [3]

- kBET: Provides quantitative assessment of batch effect removal [5]

Batch effects remain a critical challenge in high-throughput data analysis, particularly as studies increase in scale and complexity. The comprehensive assessment of correction methods demonstrates that ratio-based approaches using reference materials provide particularly robust solutions, especially in confounded scenarios where biological and technical variables are completely mixed [4].

Future directions in batch effect management include the development of artificial intelligence and deep learning approaches that can automatically detect and correct for technical variations [5]. As multiomics studies become more prevalent, methods that can simultaneously handle batch effects across different data types will be increasingly valuable [4] [5]. Furthermore, the creation of standardized reference materials and benchmarking frameworks will enhance our ability to compare and validate correction methods across diverse experimental contexts [4].

Effective batch effect management requires careful consideration of both experimental design and computational correction strategies. By implementing robust protocols and selecting appropriate correction algorithms based on specific experimental scenarios, researchers can significantly enhance the reliability and reproducibility of their high-throughput data analyses.

The Critical Impact on Cross-Dataset Annotation and Drug Discovery

In modern drug discovery, the integration of large-scale biological data from multiple sources—such as genomics, transcriptomics, proteomics, and metabolomics—has become fundamental for understanding complex disease mechanisms and identifying novel therapeutic targets [6] [7]. However, this data integration introduces significant technical challenges, primarily due to batch effects—non-biological variances caused by differences in experimental protocols, measurement technologies, or laboratory conditions [8]. These technical artifacts obscure biological signals, compromise data quality, and ultimately hinder the reproducibility of scientific findings [9] [10]. The field of cross-dataset annotation specifically addresses these challenges by developing computational methods to harmonize heterogeneous datasets, enabling biologically meaningful comparisons and meta-analyses [8]. This application note examines the critical impact of batch effect correction on cross-dataset annotation, providing detailed protocols and resources to enhance data integration workflows in pharmaceutical research and development.

Quantitative Comparison of Batch Effect Correction Methods

Table 1: Performance Comparison of BERT versus HarmonizR on Simulated Data

| Performance Metric | BERT | HarmonizR (Full Dissection) | HarmonizR (Blocking - 4 Batches) |
|---|---|---|---|
| Numeric Value Retention | Retains all values (0% loss) | Up to 27% data loss | Up to 88% data loss |
| Runtime Improvement | Up to 11× faster (baseline: HarmonizR) | Baseline | Slower than BERT |
| Average Silhouette Width (ASW) Improvement | Up to 2× improvement for imbalanced conditions | Lower than BERT | Lower than BERT |
| Handling of Incomplete Data | Directly processes incomplete omic profiles | Requires matrix dissection, introducing data loss | Uses blocking approach, introducing high data loss |

The quantitative comparison reveals that the Batch-Effect Reduction Trees (BERT) algorithm significantly outperforms the previously available HarmonizR framework across multiple performance metrics [8]. BERT's key advantage lies in its ability to retain up to five orders of magnitude more numeric values by avoiding the data removal strategies employed by HarmonizR. This superior data retention is crucial in drug discovery applications where sample sizes are often limited and each data point carries significant value [10]. Furthermore, BERT's computational efficiency, with up to 11× runtime improvement, enables researchers to process large-scale multi-omics datasets more effectively, accelerating the drug discovery pipeline [8]. The method's consideration of covariates and reference measurements also provides up to 2× improvement in Average-Silhouette-Width for severely imbalanced or sparsely distributed conditions, enhancing its utility for real-world datasets with complex experimental designs [8].

Protocols for Batch Effect Correction in Multi-Omic Studies

Protocol 1: Batch-Effect Reduction Trees (BERT) Workflow

The BERT framework provides a robust methodology for integrating incomplete omic profiles while addressing technical variances. The following protocol outlines its key implementation steps [8]:

- Input Data Preparation: Format input data as a data.frame or SummarizedExperiment object. Ensure that all categorical covariates (e.g., biological conditions like sex, disease status) are properly annotated for each sample.

- Data Pre-processing: Remove singular numerical values from individual batches (affecting typically ≪1% of available numerical values) to meet the requirement that each batch exhibits at least two numerical values per feature for the underlying ComBat or limma algorithms.

- Tree Construction and Parallelization: Decompose the data integration task into a binary tree structure. Configure parallel processing parameters (number of processes P, reduction factor R, and sequential batch threshold S) to optimize computational efficiency based on dataset size.

- Pairwise Batch-Effect Correction: For each pair of batches in the tree:

- Apply ComBat or limma to features with sufficient numerical data (≥2 values per batch).

- Propagate features with values from only one batch to the next tree level without modification.

- Reference-Based Correction (Optional): For datasets with known covariate levels for only a subset of samples, identify these as references. Use a custom limma implementation to estimate batch effects among references, then apply these estimates to correct both reference and non-reference samples.

- Quality Control and Output: Compute quality control metrics, including Average Silhouette Width (ASW) for biological conditions and batch of origin, to assess integration performance. Return the integrated dataset in the same format and order as the original input.
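The tree-wise pairing in this protocol can be sketched as below. This is an illustrative reduction only, not the BERT implementation: the pairwise step is a simple per-feature mean alignment standing in for ComBat or limma, covariates, references, and the parallelization parameters (P, R, S) are not modeled, and sample identifiers are assumed to be unique across batches. All names and the toy data are hypothetical.

```python
from statistics import fmean

def feature_values(batch, feature):
    """All observed values of one feature within a batch (missing ones skipped)."""
    return [sample[feature] for sample in batch.values() if feature in sample]

def correct_pair(left, right, min_values=2):
    """Merge two batches. Features with at least `min_values` observations in
    both batches are mean-aligned (a stand-in for ComBat/limma); all other
    features are propagated to the next tree level unchanged."""
    features = {f for b in (left, right) for sample in b.values() for f in sample}
    merged = {sid: dict(vals) for b in (left, right) for sid, vals in b.items()}
    for f in features:
        lv, rv = feature_values(left, f), feature_values(right, f)
        if len(lv) < min_values or len(rv) < min_values:
            continue  # insufficient data in one batch: keep values as they are
        target = fmean(lv + rv)
        for batch, observed in ((left, lv), (right, rv)):
            shift = target - fmean(observed)
            for sid, sample in batch.items():
                if f in sample:
                    merged[sid][f] = sample[f] + shift
    return merged

def reduce_tree(batches):
    """Correct and merge batches pairwise, level by level, until one remains."""
    level = list(batches)
    while len(level) > 1:
        nxt = [correct_pair(level[i], level[i + 1])
               for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:
            nxt.append(level[-1])  # unpaired batch moves up unchanged
        level = nxt
    return level[0]

# Hypothetical incomplete profiles: batch 2 never measured feature g2.
batch_1 = {"s1": {"g1": 1.0, "g2": 5.0}, "s2": {"g1": 3.0, "g2": 7.0}}
batch_2 = {"s3": {"g1": 11.0}, "s4": {"g1": 13.0}}
batch_3 = {"s5": {"g1": 21.0, "g2": 1.0}, "s6": {"g1": 23.0, "g2": 3.0}}

integrated = reduce_tree([batch_1, batch_2, batch_3])
for sample_id, profile in integrated.items():
    print(sample_id, profile)
```

Note that no measured value is discarded: feature g2 is absent for s3 and s4 in the output only because it was never measured there, which mirrors the value-retention property described for BERT.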

Protocol 2: Data Consistency Assessment with AssayInspector

Prior to data integration, a systematic consistency assessment is crucial. The AssayInspector tool provides a standardized protocol for evaluating dataset compatibility [10]:

- Data Collection and Curation: Gather molecular property datasets from multiple public sources (e.g., TDC, ChEMBL, DrugBank). Standardize compound identifiers and endpoint annotations to ensure comparability.

- Statistical Characterization: Generate a comprehensive summary report including:

- Descriptive statistics (mean, standard deviation, quartiles) for regression endpoints

- Class counts and ratios for classification tasks

- Statistical comparisons using Kolmogorov-Smirnov test (regression) or Chi-square test (classification)

- Within- and between-source molecular similarity calculations using Tanimoto Coefficient or Euclidean distance

- Visualization and Discrepancy Detection: Create visualization plots to identify inconsistencies:

- Property distribution plots to highlight significantly different distributions

- Chemical space analysis using UMAP dimensionality reduction

- Dataset intersection diagrams to examine molecular overlap

- Feature similarity plots to detect deviant data sources

- Insight Report Generation: Analyze outputs to identify:

- Dissimilar datasets based on descriptor profiles

- Conflicting datasets with differing annotations for shared molecules

- Divergent datasets with low molecular overlap

- Redundant datasets with high proportion of shared molecules

- Informed Data Integration: Use the assessment report to make data-driven decisions about which datasets to aggregate, exclude, or process separately before model training.
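Two of the statistics named in this protocol are simple enough to sketch directly. The code below is a standard-library illustration and does not use or reproduce the AssayInspector API: it computes the two-sample Kolmogorov-Smirnov statistic (without a p-value) and the Tanimoto coefficient on fingerprints represented as sets of on-bit indices. The endpoint values and fingerprints are hypothetical.

```python
import bisect

def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest absolute gap
    between the empirical cumulative distribution functions of a and b."""
    a, b = sorted(a), sorted(b)

    def ecdf(sorted_vals, x):
        return bisect.bisect_right(sorted_vals, x) / len(sorted_vals)

    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in set(a) | set(b))

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits.
    Two empty fingerprints are scored 0.0 here (a convention chosen for
    this sketch)."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

# Hypothetical regression endpoint reported by two sources.
source_a = [1.0, 2.0, 3.0]
source_b = [10.0, 11.0, 12.0]
ks_same = ks_statistic(source_a, source_a)
ks_diff = ks_statistic(source_a, source_b)

# Hypothetical fingerprints as sets of on-bit indices.
similarity = tanimoto({1, 2, 3}, {2, 3, 4})

print(f"KS (same source): {ks_same}, KS (different sources): {ks_diff}")
print(f"Tanimoto similarity: {similarity}")
```

A KS statistic near 1 for the same endpoint across two sources would flag a distributional misalignment worth inspecting before the datasets are aggregated.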

Workflow Visualization

Diagram 1: Integrated workflow for batch effect correction and data consistency assessment in cross-dataset annotation.

Diagram 2: BERT's binary tree structure for hierarchical batch-effect correction.

Research Reagent Solutions

Table 2: Essential Computational Tools and Data Resources for Cross-Dataset Annotation

| Resource Name | Type | Primary Function | Application in Drug Discovery |
|---|---|---|---|
| BERT (Batch-Effect Reduction Trees) [8] | Algorithm | High-performance data integration of incomplete omic profiles | Integrating heterogeneous transcriptomic, proteomic, and metabolomic datasets |
| AssayInspector [10] | Software Package | Data consistency assessment and visualization | Identifying distributional misalignments in ADME datasets prior to modeling |
| Therapeutic Data Commons (TDC) [10] | Database | Curated benchmarks for therapeutic ML | Accessing standardized ADME and physicochemical property datasets |
| ChEMBL [7] | Database | Bioactive drug-like small molecules | Retrieving drug-target interaction data and bioactivity measurements |
| DrugBank [7] | Database | Comprehensive drug and target information | Validating drug-target networks and polypharmacology profiles |
| ADMETlab 3.0 [10] | Web Platform | ADMET property prediction | Benchmarking experimental PK parameters against computational predictions |

The integration of these computational resources creates a powerful ecosystem for addressing batch effects in pharmaceutical research. BERT provides the core algorithmic framework for handling technical variance in multi-omics data, which is particularly valuable when studying complex diseases requiring systems-level approaches [8] [7]. AssayInspector complements this by enabling proactive quality assessment before data integration, helping researchers identify and address dataset discrepancies that could compromise model performance [10]. The combination of these tools with curated biological databases creates a robust infrastructure for reliable cross-dataset annotation, ultimately enhancing the predictive accuracy of ML models in critical areas such as multi-target drug discovery and preclinical safety assessment [7] [10].

In the context of cross-dataset annotation research, batch effects are systematic sources of technical variation introduced during the lifecycle of a sample, from collection to data generation [11]. These non-biological variations arise from differences in sequencing protocols, laboratory conditions, and sample processing methods, posing a significant challenge for data integration and reproducibility [3] [11]. When uncorrected, batch effects can obscure true biological signals, lead to false associations, and ultimately result in misleading scientific conclusions and irreproducible findings [11] [4]. The profound negative impact of batch effects has been documented in severe cases, including incorrect patient classification in clinical trials and retraction of high-profile scientific articles [11]. This application note details the common sources of these technical variations and provides structured guidance for their identification and mitigation within experimental workflows.

The table below categorizes and describes major sources of batch effects, highlighting the stage at which they are introduced and their prevalence across omics types.

Table 1: Common Sources of Batch Effects in Omics Studies

| Source Category | Experimental Stage | Affected Omics Types | Description of Effect |
|---|---|---|---|
| Flawed Study Design | Study Design | Common | Non-randomized sample collection or selection based on specific characteristics (e.g., age, gender) confounds technical and biological factors [11]. |
| Sample Storage Conditions | Sample Preparation & Storage | Common | Variations in storage temperature, duration, and number of freeze-thaw cycles alter the integrity of mRNA, proteins, and metabolites [11]. |
| Protocol Procedure Variations | Sample Preparation | Common | Differences in standard protocols (e.g., centrifugal force, time/temperature before centrifugation) cause significant changes in analyte quality [11]. |
| Reagent Lot Variability | Wet-Lab Processing | Common | Different lots of key reagents (e.g., fetal bovine serum) introduce systematic shifts in measurements, potentially causing irreproducible results [11]. |
| Personnel and Equipment | Wet-Lab Processing | Common | Changes in handling personnel or the use of different machines/instruments introduce technical bias [3] [12]. |
| Sequencing Platform and Multiplexing | Sequencing | Genomics, Transcriptomics | Using different sequencing platforms or non-uniform multiplexing strategies across flow cells introduces technical variation [12] [13]. |

Experimental Protocols for Batch Effect Assessment and Mitigation

Protocol: A Beginner-Friendly RNA-Seq Data Processing Workflow

This protocol provides a step-by-step guide for analyzing next-generation sequencing (NGS) data, from raw data to differentially expressed genes, which is a foundational process for identifying batch effects [14].

- Step 1: Quality Control

- Tool: FastQC [14].

- Method: Run the tool on raw .fastq files to assess sequence quality, per base sequence content, GC content, overrepresented sequences, and adapter contamination.

- Step 2: Trimming of Reads

- Tool: Trimmomatic [14].

- Method: Remove low-quality bases, adapter sequences, and other Illumina-specific artifacts from the raw reads based on the quality report from Step 1.

- Step 3: Read Alignment

- Tool: HISAT2 (a fast spliced aligner with low memory requirements) [14].

- Method: Map the trimmed reads to a reference genome to determine their genomic origin.

- Step 4: Gene Quantification

- Method: Count the number of reads aligned to each gene feature in the annotation file, generating a count matrix for downstream analysis.

- Step 5: Differential Expression and Visualization

- Environment: R (via RStudio) [14].

- Method: Using the count matrix, perform differential expression analysis to identify genes with significant expression changes between conditions. Visualize results using statistical and graphical tools such as heatmaps and volcano plots.

This workflow yields output files including count files, ordered lists of differentially expressed genes (DEGs), and visualization plots, which are primary inputs for batch effect diagnostics [14].

Protocol: Reference Material-Based Ratio Method for Confounded Batch Effects

The reference-material-based ratio method is particularly effective when biological groups are completely confounded with batch (e.g., all samples from Group A are processed in Batch 1, and all from Group B in Batch 2) [4].

- Step 1: Selection and Incorporation of Reference Materials

- Material: Integrate one or more well-characterized multiomics reference materials (e.g., Quartet Project reference materials from matched cell lines) into every batch of the study [4].

- Method: Process the reference materials concurrently with the study samples using the exact same protocols and conditions.

- Step 2: Data Generation and Feature Extraction

- Method: Generate absolute feature values (e.g., gene expression counts, protein abundances) for both the study samples and the reference material(s) in each batch.

- Step 3: Ratio-Based Scaling

- Calculation: For each feature (e.g., gene) in every study sample, transform the absolute value into a ratio by scaling it relative to the corresponding feature value in the concurrently profiled reference material. This can be expressed as:

Ratio = Feature_value_study_sample / Feature_value_reference_material [4].

- Step 4: Data Integration and Analysis

- Method: Use the resulting ratio-scaled data for all downstream integrative analyses. This transformation effectively removes batch-specific technical variations, allowing for a more accurate comparison of biological differences across batches [4].
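A short derivation may help show why the ratio transformation can work even when batch and biological group are completely confounded. It rests on a simplifying assumption that is not stated in the source: within batch $b$, the technical effect acts on feature $j$ as a purely multiplicative factor $\beta_j^{(b)}$ shared by all samples in that batch, including the reference material. Here $\mu_{ij}$ denotes the true level of feature $j$ in study sample $i$ and $\rho_j$ the true level in the reference material; all symbols are introduced for illustration only.

$$
x_{ij}^{(b)} = \beta_j^{(b)}\,\mu_{ij}, \qquad r_j^{(b)} = \beta_j^{(b)}\,\rho_j
\quad\Longrightarrow\quad
\frac{x_{ij}^{(b)}}{r_j^{(b)}} = \frac{\mu_{ij}}{\rho_j}
$$

Under this assumption the batch factor cancels without ever being estimated from the study samples, so the result does not depend on how biological groups are distributed across batches. Any technical effect that is not shared between reference and study samples, or that is not multiplicative, would remain in the ratio.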

Table 2: Key Research Reagent Solutions for Batch Effect Mitigation

| Reagent/Material | Function in Batch Control | Application Example |
|---|---|---|
| Quartet Project Reference Materials | Provides a stable, multiomics benchmark for ratio-based scaling across batches and labs [4]. | Correcting batch effects in large-scale transcriptomics, proteomics, and metabolomics studies [4]. |
| Common Reference Sample(s) | Acts as an internal standard for data normalization, enabling correction when commercial reference materials are not available [4]. | Scaling feature values of study samples relative to a common control sample processed in every batch. |
| NMD Inhibitors (e.g., Cycloheximide - CHX) | Inhibits nonsense-mediated decay (NMD), preventing the degradation of aberrant transcripts and allowing for the detection of disease-causing splicing variants [15]. | RNA-seq analysis on peripheral blood mononuclear cells (PBMCs) to uncover splicing defects in rare genetic disorders [15]. |
| Standardized Reagent Lots | Minimizes technical variability arising from differences in reagent composition and performance between lots [11] [12]. | Using the same lot of fetal bovine serum (FBS) or reverse transcriptase enzyme across a multi-batch experiment. |

Logical Workflow for Batch Effect Management

The following diagram illustrates a logical workflow for diagnosing and correcting batch effects, integrating both preventative wet-lab strategies and computational corrections.

Diagram 1: A workflow for managing batch effects from experimental design to data validation.

Effective management of batch effects originating from sequencing protocols, laboratory conditions, and sample processing is not merely a data preprocessing step but a fundamental requirement for robust cross-dataset annotation research. A successful strategy combines rigorous experimental design with appropriate computational correction. Proactive prevention through standardized protocols and reference materials significantly reduces the technical burden downstream. When correction is necessary, the choice of algorithm must be guided by the study design, with the reference-material-based ratio method offering a powerful solution for the challenging confounded scenarios often encountered in real-world research. By systematically implementing these protocols and validations, researchers can ensure the reliability, reproducibility, and biological validity of their integrated omics data.

In high-dimensional biomedical research, the integrity of study conclusions is profoundly influenced by the initial study design, specifically the distribution of samples across batches. A balanced design is one where samples from all biological groups or conditions of interest are evenly distributed across all processing batches [4]. In this ideal scenario, technical variations (batch effects) are not systematically associated with any biological factor, allowing for their separation during analysis. In contrast, a confounded design occurs when biological groups are processed in completely separate batches; for instance, all samples from 'Group A' are processed in 'Batch 1', while all samples from 'Group B' are processed in 'Batch 2' [4]. This confounding makes it nearly impossible to distinguish true biological differences from technical artifacts, because batch membership and biological group are perfectly correlated.

The distinction between these designs is critical for batch effect correction. In a balanced design, technical bias is independent of biological signals, enabling many batch-effect correction algorithms (BECAs) to function effectively [4]. Conversely, in a confounded scenario, most standard BECAs risk removing the biological signal of interest along with the technical noise, leading to false negatives and misleading conclusions [4]. Therefore, understanding and diagnosing the nature of your study design is the essential first step in selecting an appropriate data integration strategy.

Key Concepts and Definitions

The Nature of Batch Effects

Batch effects are systematic sources of heterogeneity introduced into data by technical factors unrelated to the biological subject of study [3]. These can include:

- Different machines or instruments

- Variations in reagent lots

- Changes in environmental conditions

- Different handling personnel [3]

These effects are pervasive in any domain reliant on instrumentation and high-dimensional data, including transcriptomics, proteomics, metabolomics, and other omics fields [3] [4]. Their impact is not trivial; they can introduce skewed variations that lead to false associations, misunderstandings about disease progression, and in severe cases, inaccurate drug target identification or wrong diagnoses [3]. In one notable example, gene expression signatures in an ovarian cancer study were falsely identified due to uncorrected batch effects, ultimately contributing to the study's retraction [3].

Table 1: Characteristics of Batch Effect Types

| Batch Effect Type | Description | Impact on Data |

|---|---|---|

| Additive | A constant value is added to measurements in a batch [3]. | Shifts the mean of all features in a batch. |

| Multiplicative | Measurements in a batch are scaled by a constant factor [3]. | Scales the variance of features in a batch. |

| Mixed | A combination of both additive and multiplicative effects [3]. | Alters both the mean and variance of the data. |
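The three effect types in Table 1 can be illustrated with a small simulation. This is a minimal sketch on made-up data: the offset of 2.0 and the scaling factor of 1.5 are arbitrary illustrative values, not estimates from any real platform.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical feature measured in 200 samples of one batch (arbitrary units).
clean = rng.normal(loc=10.0, scale=1.0, size=200)

additive = clean + 2.0          # constant offset added to every measurement
multiplicative = clean * 1.5    # every measurement scaled by a constant factor
mixed = clean * 1.5 + 2.0       # both effects combined


def summarize(name, values):
    """Print the sample mean and standard deviation of one simulated batch."""
    print(f"{name:>14}: mean={values.mean():.2f}, sd={values.std(ddof=1):.2f}")


for name, values in [
    ("clean", clean),
    ("additive", additive),
    ("multiplicative", multiplicative),
    ("mixed", mixed),
]:
    summarize(name, values)
```

In this toy setting the additive effect moves the mean and leaves the spread unchanged, while the multiplicative factor rescales the spread (and, because the feature mean is non-zero, the mean as well).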

Balanced vs. Confounded Designs: A Formal Distinction

The core difference between balanced and confounded designs lies in the separability of biological and technical variance.

Balanced Design: An experimental setup where all treatment groups have an equal number of observations, and crucially, all biological groups are represented equally across all batches [16] [4]. This balance ensures that comparisons between groups are fair and unbiased [16]. The primary advantage is that biological factors and technical (batch) factors are independent, allowing variance to be cleanly decomposed into its individual contributions without confounding [17] [18].

Confounded Design: An experimental scenario where one or more biological factors of interest are completely or highly correlated with batch factors [4]. This is a common problem in longitudinal or multi-center studies where practical constraints force all samples from one clinical site or time point into a single batch. In this case, the effects of biology and batch are mixed, and standard correction methods struggle to disentangle them without potentially removing the biological signal [4].

Diagram 1: Core differences between balanced and confounded designs.

Implications for Batch Effect Correction Strategy

The structure of a study's design dictates the feasibility and success of different batch effect correction strategies. The following table summarizes the core performance implications.

Table 2: Correction Algorithm Performance by Design Type

| Correction Algorithm | Performance in Balanced Design | Performance in Confounded Design |

|---|---|---|

| Per Batch Mean-Centering (BMC) | Effective [4] | Fails (removes biological signal) [4] |

| ComBat | Effective [4] | Fails (removes biological signal) [4] |

| Harmony | Effective [4] | Fails (removes biological signal) [4] |

| SVA/RUVseq | Effective [4] | Fails (removes biological signal) [4] |

| Ratio-Based (e.g., Ratio-G) | Effective [4] | Remains Effective [4] |

As Table 2 shows, the ratio-based method is the only approach in this comparison that remains effective in a completely confounded scenario. This is because it uses a stable reference point, namely concurrently profiled reference material(s), to scale the data, thereby correcting for technical variation without relying on the distribution of biological groups across batches [4].
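A toy simulation can make the failure of per-batch mean-centering under confounding concrete. The sketch below uses invented numbers (a true group difference of 3 units and batch offsets of +1 and -1) and a single feature; it is an illustration of the argument, not a reproduction of the benchmark in [4].

```python
import numpy as np

rng = np.random.default_rng(1)
n = 50
# Simulated single feature: Group B is truly about 3 units higher than Group A.
group_a = rng.normal(5.0, 0.5, n)
group_b = rng.normal(8.0, 0.5, n)

# Fully confounded design: all of Group A is in batch 1 (offset +1),
# all of Group B is in batch 2 (offset -1).
batch1 = group_a + 1.0
batch2 = group_b - 1.0

# Per-batch mean-centering (BMC): subtract each batch's own mean.
bmc1 = batch1 - batch1.mean()
bmc2 = batch2 - batch2.mean()

true_diff = group_b.mean() - group_a.mean()
observed_diff = batch2.mean() - batch1.mean()
bmc_diff = bmc2.mean() - bmc1.mean()

print(f"true group difference:      {true_diff:.2f}")
print(f"observed (batch-distorted): {observed_diff:.2f}")
print(f"after mean-centering:       {bmc_diff:.2f}")
```

Because each batch contains only one group, subtracting the batch mean also subtracts the group mean, so the group difference after centering is zero up to floating-point error.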

The Critical Role of Reference Materials

The ratio-based method's success hinges on the use of reference materials. These are well-characterized control samples derived from a stable source (e.g., immortalized cell lines) that are profiled alongside study samples in every batch [4]. The expression profile of each study sample is then transformed to a ratio-based value using the data from the reference sample as a denominator. This scaling normalizes the data, effectively canceling out batch-specific technical noise [4].

Diagram 2: Ratio-based correction workflow using reference materials.

Experimental Protocols for Design Evaluation and Correction

Protocol 1: Diagnosing Design Balance and Confounding

Objective: To quantitatively assess whether a dataset exhibits a balanced or confounded structure.

Reagents/Materials: Multi-batch dataset with known batch and biological group labels.

- Data Preparation: Compile a metadata table with columns for Sample_ID, Biological_Group, and Batch.

- Create Contingency Table: Generate a cross-tabulation of the counts of samples per biological group in each batch.

- Visual Inspection: Create a stacked bar plot where each bar represents a batch, and segments within the bar represent the count of samples from each biological group. A balanced design will show bars of similar height with a similar distribution of segments. A confounded design will show different biological groups dominating different batches.

- Quantitative Metric - Signal-to-Noise Ratio (SNR): Calculate the SNR. A low SNR after attempting standard correction can indicate a confounded structure where biological signal is being removed as noise [4].
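The contingency-table step can be sketched with the Python standard library alone. The function names and the three labels returned by `classify_design` are illustrative assumptions; the rule used here (every batch contains exactly one group means "confounded", equal non-zero counts in every batch means "balanced") is a simple heuristic, not a formal test.

```python
from collections import Counter


def contingency(metadata):
    """Count samples per (batch, group) from (sample_id, group, batch) rows."""
    return Counter((batch, group) for _sample_id, group, batch in metadata)


def classify_design(metadata):
    """Return batches, groups, the batch x group count table, and a heuristic label."""
    counts = contingency(metadata)
    batches = sorted({b for b, _ in counts})
    groups = sorted({g for _, g in counts})
    table = [[counts.get((b, g), 0) for g in groups] for b in batches]
    groups_per_batch = [sum(c > 0 for c in row) for row in table]
    if len(groups) > 1 and all(n == 1 for n in groups_per_batch):
        label = "confounded"          # batch fully determines the group
    elif all(len(set(row)) == 1 and row[0] > 0 for row in table):
        label = "balanced"            # equal counts of every group in every batch
    else:
        label = "partially balanced"  # anything in between
    return batches, groups, table, label


# Hypothetical metadata rows: (Sample_ID, Biological_Group, Batch).
balanced = [("s1", "A", "b1"), ("s2", "B", "b1"), ("s3", "A", "b2"), ("s4", "B", "b2")]
confounded = [("s1", "A", "b1"), ("s2", "A", "b1"), ("s3", "B", "b2"), ("s4", "B", "b2")]

for name, rows in [("balanced example", balanced), ("confounded example", confounded)]:
    batches, groups, table, label = classify_design(rows)
    print(name, "->", label, table)
```

The "partially balanced" outcome is where visual inspection of the stacked bar plot is most useful, since the degree of imbalance matters more than the label.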

Protocol 2: Reference-Material-Based Ratio Correction

Objective: To correct for batch effects in both balanced and confounded designs using a ratio-based method.

Reagents/Materials:

- Study samples distributed across multiple batches.

- Certified reference material (e.g., Quartet Project reference materials for multiomics) profiled in every batch [4].

- Concurrent Profiling: In each batch, profile all study samples alongside one or more replicates of the chosen reference material (RM).

- Data Matrix Generation: For each omics platform, generate a data matrix (e.g., gene expression counts) for both study samples and the RM from all batches.

- Ratio Calculation: For each feature (e.g., gene) in every study sample, calculate a ratio value:

Ratio_Sample = Raw_Value_Sample / Raw_Value_RM

where Raw_Value_RM is typically the mean or median value of the RM replicates within the same batch.

- Data Integration: The resulting ratio-scale matrices from all batches can be combined into a single, batch-corrected dataset for downstream analysis.
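The ratio calculation and integration steps can be sketched in NumPy as follows. The function name `ratio_correct`, its argument layout (samples in rows, features in columns), and the use of the mean rather than the median of the RM replicates are assumptions made for illustration; the toy values simulate a batch that doubles every measurement.

```python
import numpy as np


def ratio_correct(values, batches, is_reference):
    """Divide each sample by the per-feature mean of the RM replicates in its batch.

    values: (n_samples, n_features) array of positive raw values.
    batches: (n_samples,) array of batch labels.
    is_reference: (n_samples,) boolean array marking reference-material replicates.
    Returns the ratio-scaled study samples and their batch labels.
    """
    values = np.asarray(values, dtype=float)
    batches = np.asarray(batches)
    is_reference = np.asarray(is_reference, dtype=bool)
    corrected = np.empty_like(values)
    for batch in np.unique(batches):
        in_batch = batches == batch
        rm = values[in_batch & is_reference]
        if rm.shape[0] == 0:
            raise ValueError(f"batch {batch!r} has no reference replicate")
        corrected[in_batch] = values[in_batch] / rm.mean(axis=0)
    study = ~is_reference
    return corrected[study], batches[study]


# Toy data: one study sample and one RM replicate per batch, two features.
# Batch 2 measures everything at twice the level of batch 1.
raw = [[10.0, 20.0],   # study sample, batch 1
       [5.0, 10.0],    # reference material, batch 1
       [20.0, 40.0],   # same biology as the first sample, batch 2
       [10.0, 20.0]]   # reference material, batch 2
ratios, labels = ratio_correct(raw, [1, 1, 2, 2], [False, True, False, True])
print(ratios)
```

After scaling, the two study samples carry identical ratio values even though their raw values differed by a factor of two.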

Protocol 3: Downstream Sensitivity Analysis for BECA Selection

Objective: To empirically evaluate the performance of different BECAs on a specific dataset, ensuring robustness of findings [3].

- Data Splitting: If batches are comparable, split the data into its individual batches.

- Establish Reference Sets: Perform differential expression analysis (DEA) on each batch individually. Combine all unique differentially expressed (DE) features into a union set. Also, identify features that are DE in all batches as a high-confidence intersect set.

- Apply Multiple BECAs: Apply a variety of BECAs (e.g., ComBat, SVA, Ratio-G) to the original, integrated dataset.

- DEA on Corrected Data: Perform DEA on each batch-corrected dataset to get a new set of DE features for each BECA.

- Calculate Performance Metrics: For each BECA, calculate the recall (percentage of the reference union set correctly identified) and false positive rate. A reliable BECA should have high recall and a low false positive rate. Furthermore, check that the high-confidence intersect set is largely preserved after correction.
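The performance metrics in the last step reduce to set arithmetic. In the sketch below, the function name and the exact false positive rate definition (features called DE after correction but absent from the union set, divided by all features outside the union set) are assumptions, since the protocol does not fix a formula; the gene names are placeholders.

```python
def beca_performance(de_per_batch, de_after_correction, all_features):
    """Compare DE calls made after correction against per-batch reference sets."""
    union = set().union(*de_per_batch)                     # DE in at least one batch
    intersect = set.intersection(*map(set, de_per_batch))  # DE in every batch
    called = set(de_after_correction)
    negatives = set(all_features) - union                  # never DE in any batch
    nan = float("nan")
    return {
        "recall": len(called & union) / len(union) if union else nan,
        "false_positive_rate": len(called - union) / len(negatives) if negatives else nan,
        "intersect_retained": len(called & intersect) / len(intersect) if intersect else nan,
    }


# Hypothetical example with ten features and two batches.
features = [f"g{i}" for i in range(1, 11)]
per_batch = [{"g1", "g2", "g3"}, {"g2", "g3", "g4"}]
after_beca = {"g2", "g3", "g4", "g9"}

result = beca_performance(per_batch, after_beca, features)
print(result)
```

Running the same function once per BECA gives a small table of recall, false positive rate, and intersect retention that can be compared side by side.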

Table 3: The Scientist's Toolkit: Essential Reagents and Algorithms

| Tool Category | Specific Item | Function & Application Note |

|---|---|---|

| Reference Materials | Quartet Project Reference Materials (D5, D6, F7, M8) [4] | Matched DNA, RNA, protein, and metabolite materials from a single family. Note: Use as an internal scaling control for ratio-based correction. |

| Batch Effect Correction Algorithms (BECAs) | Ratio-Based Scaling (Ratio-G) [4] | Primary choice for confounded designs. Scales study sample data relative to reference material data. |

| Batch Effect Correction Algorithms (BECAs) | ComBat [3] [4] | Effective for balanced designs. Uses an empirical Bayes framework to adjust for batch. |

| Batch Effect Correction Algorithms (BECAs) | Harmony [4] | Effective for balanced designs. Uses PCA-based integration. |

| Evaluation & Metrics | SelectBCM [3] | A method to rank BECAs based on multiple evaluation metrics. Note: Inspect raw metrics, not just ranks. |

| Evaluation & Metrics | Signal-to-Noise Ratio (SNR) [4] | Metric to quantify the ability to separate biological groups after integration. |

| Evaluation & Metrics | HVG Union & Intersect Metric [3] | Uses highly variable genes to assess the impact of BECAs on biological heterogeneity. |

The choice between a balanced and confounded study design has profound implications for the success of downstream data integration and the validity of scientific conclusions. While balanced designs offer flexibility in choosing correction algorithms and are the gold standard, the practical realities of large-scale multiomics studies often lead to confounded scenarios. In these cases, the ratio-based correction method, underpinned by the use of stable reference materials, has been demonstrated to be a robust and effective strategy, outperforming other popular algorithms. By proactively designing studies with balance in mind, diligently diagnosing the structure of existing datasets, and implementing a reference-material-based correction protocol, researchers can significantly enhance the reliability and reproducibility of their findings in cross-dataset annotation research.

Assessing Batch Effect Strength Before Correction

Batch effects are systematic technical variations introduced during high-throughput data generation that are unrelated to the biological conditions of interest. These non-biological variations can arise from multiple sources, including different instrumentation, reagent lots, handling personnel, laboratory conditions, and sequencing protocols [3] [19]. In cross-dataset annotation research, where the goal is to transfer cell type labels from well-annotated reference datasets to new target datasets, accurately assessing batch effect strength before applying any correction is a critical first step that directly impacts annotation accuracy [20].

Failure to properly evaluate batch effect magnitude can lead to either under-correction, where technical variations obscure true biological signals, or over-correction, where genuine biological information is inadvertently removed [21] [19]. Both scenarios can compromise downstream analyses, potentially leading to incorrect cell type assignments in single-cell RNA sequencing (scRNA-seq) studies and ultimately misleading biological interpretations [20]. This protocol provides comprehensive guidance for systematically evaluating batch effect strength using both quantitative metrics and visualization approaches, specifically tailored for researchers working in cross-dataset annotation pipelines.

Quantitative Metrics for Batch Effect Assessment

A diverse array of quantitative metrics has been developed to objectively measure batch effect strength across different data types and experimental designs. These metrics operate at various levels—global, cell type-specific, and cell-specific—each providing complementary insights into the nature and extent of batch-related technical variation.

Table 1: Quantitative Metrics for Assessing Batch Effect Strength

| Metric Name | Level | Basis | Interpretation | Best Use Cases |

|---|---|---|---|---|

| Principal Component Regression (PCR) | Global | PCA | Correlation of batch variable with PCs weighted by variance | Initial screening for major batch effects |

| Cell-specific Mixing Score (cms) | Cell-specific | knn, PCA | P-value for differences in batch-specific distance distributions | Detecting local batch bias; single-cell data |

| Local Inverse Simpson's Index (LISI) | Cell-specific | knn | Effective number of batches in neighborhood | Evaluating local batch mixing |

| k-nearest neighbour Batch Effect (kBET) | Cell type-specific | knn | P-value for deviation from expected batch proportions | Assessing batch balance within cell types |

| Average Silhouette Width (ASW) | Cell type-specific | PCA | Relationship of within and between batch-cluster distances | Measuring cell type separation by batch |

| Graph Connectivity | Cell type-specific | knn-graph | Fraction of directly connected cells within cell type graphs | Evaluating preservation of cell type relationships |

Global Metrics

Global metrics provide an overall assessment of batch effect strength across the entire dataset. Principal Component Regression (PCR) quantifies the proportion of variance in principal components (PCs) attributable to batch effects by calculating the correlation between batch variables and PCs weighted by their variance [22]. This metric is particularly useful for initial screening to identify datasets where batch effects represent a major source of variation.

Cell Type-Specific Metrics

Cell type-specific metrics evaluate how batch effects manifest within specific cell populations. The k-nearest neighbour Batch Effect test (kBET) tests whether batch proportions in local neighborhoods match expected distributions, with significant p-values indicating problematic batch effects [22]. Average Silhouette Width (ASW) measures the degree to which samples cluster by batch rather than by biological group, with values closer to 1 indicating strong batch separation [22]. Graph Connectivity assesses whether cells of the same type remain connected in nearest-neighbor graphs despite originating from different batches [22].

Cell-Specific Metrics

Cell-specific metrics provide fine-grained assessment of batch mixing at the individual cell level. The Cell-specific Mixing Score (cms) tests whether distance distributions to a cell's k-nearest neighbors differ significantly across batches using the Anderson-Darling test, effectively detecting local batch bias [22]. Local Inverse Simpson's Index (LISI) calculates the effective number of batches represented in each cell's neighborhood, with higher values indicating better mixing [22].
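The idea behind LISI can be sketched with a simplified, unweighted variant: for each cell, take its k nearest neighbors and compute the inverse Simpson's index of their batch proportions. This is an assumption-laden illustration; the published LISI weights neighbors with a perplexity-based kernel, which is omitted here, and the brute-force distance matrix is only suitable for small data.

```python
import numpy as np


def simple_lisi(embedding, batches, k=15):
    """Unweighted inverse Simpson's index of batch labels among k nearest neighbors."""
    embedding = np.asarray(embedding, dtype=float)
    batches = np.asarray(batches)
    # Pairwise squared Euclidean distances (brute force, small data only).
    diff = embedding[:, None, :] - embedding[None, :, :]
    dist = (diff ** 2).sum(axis=-1)
    np.fill_diagonal(dist, np.inf)  # exclude each cell from its own neighborhood
    neighbors = np.argsort(dist, axis=1)[:, :k]
    labels = np.unique(batches)
    scores = np.empty(len(embedding))
    for i, idx in enumerate(neighbors):
        props = np.array([(batches[idx] == b).mean() for b in labels])
        scores[i] = 1.0 / np.sum(props ** 2)
    return scores


rng = np.random.default_rng(0)
labels = np.array([0] * 50 + [1] * 50)
mixed = rng.normal(size=(100, 2))            # both batches share one distribution
separated = mixed + labels[:, None] * 20.0   # second batch shifted far away

mixed_scores = simple_lisi(mixed, labels, k=10)
separated_scores = simple_lisi(separated, labels, k=10)
print(f"well mixed: mean score {mixed_scores.mean():.2f} (maximum is 2 for two batches)")
print(f"separated:  mean score {separated_scores.mean():.2f}")
```

With two batches the score ranges from 1 (all neighbors from a single batch) to 2 (neighbors split evenly), which matches the "effective number of batches" reading.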

Experimental Protocol for Batch Effect Assessment

Pre-assessment Data Processing

Batch Effect Assessment Workflow

Before calculating batch effect metrics, proper data preprocessing is essential. Begin with the raw feature matrix (e.g., gene expression counts) and apply appropriate normalization methods such as library size normalization (CPM, TMM) for bulk RNA-seq or more specialized methods for single-cell data [23]. Incorporate batch annotation metadata, which should include comprehensive information about technical variables such as sequencing date, platform, laboratory, and operator. For high-dimensional data, perform feature selection to retain biologically informative features—typically highly variable genes (HVGs) in transcriptomic studies [3]. Finally, apply dimensionality reduction techniques (PCA, UMAP, t-SNE) to generate low-dimensional embeddings that preserve meaningful biological variation while reducing computational complexity for subsequent metric calculations [3] [22].

Step-by-Step Metric Implementation Protocol

Data Input Preparation

- Format data as a features × observations matrix (e.g., genes × cells)

- Ensure batch labels are encoded as categorical variables

- For supervised metrics, compile cell type annotations

Global Assessment with PCR

- Perform PCA on normalized data

- Fit regression models between principal components and batch labels

- Calculate variance explained by batch effects:

batch_variance = sum(PC_variance * R²) / total_variance

- Values >10% indicate substantial batch effects requiring correction
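The PCR steps above can be sketched in NumPy. Several choices here are assumptions: the function name, the samples × features orientation (the transpose of the features × observations layout described earlier), the default of 10 PCs, and taking `total_variance` as the variance of the retained PCs rather than of the full matrix. For a categorical batch label, the R² of regressing a PC on batch equals the between-batch sum of squares divided by the total sum of squares, which is what the loop computes.

```python
import numpy as np


def pcr_batch_variance(X, batches, n_pcs=10):
    """Fraction of variance in the first n_pcs principal components explained by batch.

    X: (n_samples, n_features) normalized matrix; batches: (n_samples,) labels.
    """
    X = np.asarray(X, dtype=float)
    batches = np.asarray(batches)
    Xc = X - X.mean(axis=0)                        # center each feature
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    n_pcs = min(n_pcs, len(S))
    scores = U[:, :n_pcs] * S[:n_pcs]              # PC coordinates of each sample
    pc_var = S[:n_pcs] ** 2 / (X.shape[0] - 1)     # variance carried by each PC
    r2 = np.empty(n_pcs)
    for j in range(n_pcs):
        pc = scores[:, j]
        total_ss = np.sum((pc - pc.mean()) ** 2)
        between_ss = sum(
            (batches == b).sum() * (pc[batches == b].mean() - pc.mean()) ** 2
            for b in np.unique(batches)
        )
        r2[j] = between_ss / total_ss if total_ss > 0 else 0.0
    return float(np.sum(pc_var * r2) / np.sum(pc_var))


rng = np.random.default_rng(0)
batches = np.array(["b1"] * 30 + ["b2"] * 30)
noise = rng.normal(size=(60, 20))                       # no batch effect
shifted = noise + (batches == "b2")[:, None] * 3.0      # batch 2 shifted on every feature

print(f"no batch effect:     {pcr_batch_variance(noise, batches):.3f}")
print(f"strong batch effect: {pcr_batch_variance(shifted, batches):.3f}")
```

On the simulated data the shifted matrix yields a value far above the 10% rule of thumb, while the pure-noise matrix stays close to zero.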

Local Mixing Evaluation with cms

- Compute k-nearest neighbors (k=50-100 typically) in PCA space

- For each cell, calculate batch-specific distance distributions to its neighbors

- Apply Anderson-Darling test to compare distance distributions across batches

- Compute p-values for each cell, with low p-values indicating poor local mixing

Batch Balance Assessment with kBET

- Randomly sample cells (typically 10-20% of dataset)

- For each sampled cell, test if batch proportions in its neighborhood match expected distribution using Pearson's chi-squared test

- Report rejection rate across all samples, with high rejection rates (>0.5) indicating significant batch effects

Integration of Multiple Metrics

- Compute at least one metric from each category (global, cell type-specific, cell-specific)

- Create a comprehensive assessment report highlighting consistent findings across metrics

- Use metric outcomes to guide selection of appropriate correction strategies

Visualization Approaches for Batch Effects

Visualization provides critical complementary assessment to quantitative metrics by enabling researchers to intuitively understand batch effect patterns.

Standard Visualization Techniques

Principal Component Analysis (PCA) plots colored by batch membership represent the most straightforward visualization approach, where clear separation of batches along principal components indicates substantial batch effects [3]. However, PCA may miss subtle batch effects that don't align with the main axes of variation. t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) provide alternative visualizations that can often reveal more complex batch effect structures, though these methods prioritize local structure and may introduce artifacts [22].

Advanced Visualization Strategies

Sample boxplots comparing feature distributions across batches can reveal systematic shifts in data distributions, though they are most suitable for identifying large-scale batch effects [3]. For large datasets, density plots showing the distribution of cells from different batches in low-dimensional space can highlight regions with poor batch mixing. Additionally, before-and-after correction visualizations using the same dimensionality reduction coordinates provide intuitive assessment of correction effectiveness.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| CellMixS | R/Bioconductor package | Calculate cell-specific batch mixing scores (cms) | Single-cell RNA-seq data |

| Harmony | Integration algorithm | Batch effect correction using iterative clustering | Multiple data types; good performance in benchmarks |

| Seurat | R toolkit | Single-cell analysis including integration methods | Single-cell genomics |

| scVI | Python package | Variational autoencoder for single-cell data | Large-scale single-cell datasets |

| ComBat | R/sva package | Empirical Bayes framework for batch adjustment | Bulk and single-cell transcriptomics |

| Reference Materials | Physical standards | Control for technical variation across batches | Multi-omics studies |

Special Considerations for Cross-Dataset Annotation

In cross-dataset annotation research, where the goal is to transfer cell type labels from reference to target datasets, special considerations apply when assessing batch effects. The presence of cell types in one dataset that are absent in another can complicate batch effect assessment, as some metrics may interpret novel cell types as batch effects [21]. Additionally, when batch effects show strong cell type specificity—affecting some cell populations more than others—standard global metrics may underestimate the problem for affected cell types [22].

For cross-dataset annotation applications, it is particularly important to evaluate whether batch effects are substantially larger between datasets than within datasets. This can be assessed by comparing distances between samples of the same cell type across different batch effect scenarios [21]. Furthermore, when biological and technical factors are completely confounded (e.g., all samples from one condition processed in a single batch), reference-material-based approaches such as ratio-based correction methods may be necessary for accurate assessment [4].

Systematic assessment of batch effect strength prior to correction ensures that researchers select appropriate correction strategies, avoid both under- and over-correction, and ultimately achieve more reliable cross-dataset annotations in single-cell and other omics studies.

Batch Correction Algorithms: From Theory to Practical Implementation

Batch effects are systematic non-biological variations that can be introduced into datasets during sample processing, sequencing, or analysis across different batches, platforms, or laboratories. These technical artifacts can compromise data reliability, obscure true biological signals, and significantly hinder cross-dataset comparisons and integrative analyses. In the context of cross-dataset annotation research, where the goal is to leverage existing annotated data to label new datasets, effectively mitigating batch effects is paramount for achieving accurate and reproducible results. Computational batch effect correction methods have become essential tools for ensuring that observed differences in data truly reflect biological phenomena rather than technical variations. This overview categorizes the major algorithm families, provides detailed experimental protocols, and offers a practical toolkit for researchers engaged in batch-sensitive omics studies.

Algorithm Family Classification and Characteristics

Batch effect correction algorithms can be broadly categorized into three major families based on their underlying mathematical frameworks and correction strategies. Each approach possesses distinct strengths, limitations, and optimal use cases, which researchers must consider when designing cross-dataset annotation workflows.

Table 1: Major Algorithm Families for Batch Effect Correction

| Algorithm Family | Core Methodology | Key Variations | Primary Applications | Notable Examples |

|---|---|---|---|---|

| Linear Models | Statistical adjustment using parametric and non-parametric frameworks | Empirical Bayes, Negative Binomial models, Covariate adjustment | Bulk RNA-seq, Differential expression analysis | ComBat, ComBat-seq, ComBat-ref, removeBatchEffect, RUVSeq |

| Deep Learning | Non-linear feature learning via neural networks | Adversarial learning, Metric learning, Autoencoders, Cycle-consistency | scRNA-seq integration, Multi-omics, Complex batch effects | scDML, scVI, scANVI, SCALEX, sysVI, SpaCross, Cell BLAST |

| Reference-Based Methods | Scaling relative to concurrently profiled reference standards | Ratio-based transformation, Reference batch alignment | Multi-batch studies, Confounded designs, Quality control | Ratio-based scaling, Ratio-G, ComBat-ref (with reference) |

Linear Model-Based Methods

Linear model-based approaches constitute some of the earliest and most widely adopted methods for batch effect correction. These methods operate by statistically modeling the observed data to partition variation into biological signals of interest and technical batch artifacts.

2.1.1 Core Principles and Variations

Linear methods assume that batch effects represent systematic, additive or multiplicative shifts in measurements that can be estimated and removed. The ComBat family of algorithms employs an empirical Bayes framework to correct for both location and scale parameters of distribution, effectively shrinking batch effect parameters toward the overall mean for improved stability, particularly with small sample sizes [24]. For RNA-seq count data, ComBat-seq utilizes a negative binomial generalized linear model to preserve the integer nature of count data during adjustment, making it more suitable for downstream differential expression analysis [24]. Recent refinements like ComBat-ref introduce strategic reference batch selection, choosing the batch with the smallest dispersion as an anchor and adjusting other batches toward this reference, which demonstrates superior performance in maintaining statistical power for differential expression detection [24].

Alternative linear approaches include adding batch as a covariate in differential expression tools like edgeR and DESeq2, or using factor-based methods like Surrogate Variable Analysis (SVA) and Remove Unwanted Variation (RUV) to model unmeasured technical factors [24] [25]. The rescaleBatches function in the batchelor package implements a linear regression-based approach on log-expression values, scaling batch-specific means downward to the lowest mean across batches to mitigate variance differences [25].

2.1.2 Experimental Protocol for Linear Model Applications

Protocol 1: Applying ComBat-ref for RNA-seq Data

- Input Preparation: Format your RNA-seq data as a raw count matrix with genes as rows and samples as columns. Prepare metadata indicating batch membership and biological conditions.

- Dispersion Estimation: For each batch, estimate gene-wise dispersions using established methods (e.g., via edgeR or DESeq2).

- Reference Batch Selection: Calculate the average dispersion for each batch and select the batch with the minimum average dispersion as the reference.

- Parameter Estimation: Using a negative binomial generalized linear model (GLM), estimate the global gene expression ($\alpha_g$), batch effect ($\gamma_{ig}$), and biological condition effect ($\beta_{c_j g}$) parameters:

$$\log \mu_{ijg} = \alpha_g + \gamma_{ig} + \beta_{c_j g} + \log N_j$$

where $\mu_{ijg}$ is the expected count for gene $g$ in sample $j$ from batch $i$, and $N_j$ is the library size.

- Data Adjustment: For non-reference batches, adjust the expected counts toward the reference batch:

$$\log \tilde{\mu}_{ijg} = \log \mu_{ijg} + \gamma_{1g} - \gamma_{ig}$$

where $\gamma_{1g}$ is the batch effect parameter for the reference batch.

- Count Adjustment: Generate adjusted counts by matching the cumulative distribution function (CDF) of the original negative binomial distribution to the CDF of the adjusted distribution, preserving the count nature of the data.

- Output: The final output is a batch-corrected integer count matrix ready for downstream differential expression analysis.
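The dispersion estimation and reference batch selection steps can be sketched as follows. This is a deliberately simplified stand-in: it uses a method-of-moments estimate of the negative binomial dispersion (variance = mean + dispersion × mean²) and ignores library size and biological condition, whereas the protocol calls for established estimators such as those in edgeR or DESeq2. All function names and the simulated batches are hypothetical.

```python
import numpy as np


def mean_dispersion(counts):
    """Average method-of-moments NB dispersion for one batch (genes x samples)."""
    counts = np.asarray(counts, dtype=float)
    mu = counts.mean(axis=1)
    var = counts.var(axis=1, ddof=1)
    expressed = mu > 0
    # Solve var = mu + phi * mu^2 for phi; clip negative estimates to zero.
    phi = np.clip((var[expressed] - mu[expressed]) / mu[expressed] ** 2, 0.0, None)
    return float(phi.mean())


def select_reference_batch(counts_by_batch):
    """Return the batch name with the smallest average dispersion, plus all estimates."""
    dispersions = {name: mean_dispersion(c) for name, c in counts_by_batch.items()}
    return min(dispersions, key=dispersions.get), dispersions


def simulate_counts(rng, mu, phi, shape):
    """Draw NB counts with mean mu and dispersion phi (simulation helper)."""
    n = 1.0 / phi
    return rng.negative_binomial(n, n / (n + mu), size=shape)


rng = np.random.default_rng(0)
data = {
    "batch_A": simulate_counts(rng, mu=100.0, phi=0.05, shape=(200, 8)),  # low noise
    "batch_B": simulate_counts(rng, mu=100.0, phi=0.5, shape=(200, 8)),   # high noise
}
reference, estimates = select_reference_batch(data)
print("reference batch:", reference)
print({name: round(value, 3) for name, value in estimates.items()})
```

In a real analysis the dispersion estimates would come from the GLM fit, so this sketch only illustrates the selection rule, not the estimator.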

Deep Learning-Based Methods

Deep learning approaches have emerged as powerful alternatives for handling complex, non-linear batch effects that challenge traditional linear methods, particularly in single-cell genomics and spatially resolved transcriptomics.

2.2.1 Core Architectures and Learning Strategies

Deep learning frameworks leverage neural networks to learn low-dimensional, batch-invariant representations of high-dimensional omics data. Variational autoencoders (VAEs), such as those implemented in scVI and scANVI, project data into a latent space while conditioning on batch information to remove technical variation [26] [21]. Adversarial learning methods, including domain adaptation networks and GAN-based frameworks, employ a discriminator network that competes with the feature extractor to generate embeddings indistinguishable across batches [20] [27]. Deep metric learning approaches, exemplified by scDML, utilize triplet loss functions to minimize distances between cells of the same type across batches while maximizing distances between different cell types in the latent space [28]. More recent innovations incorporate cycle-consistency constraints (as in sysVI) and masked self-supervised learning (as in SpaCross) to enhance representation robustness and preserve biological signals during integration [29] [21].

2.2.2 Experimental Protocol for Deep Learning Applications

Protocol 2: Implementing scDML for Single-Cell Data Integration

- Data Preprocessing: Normalize the raw count matrix using standard scRNA-seq workflows (e.g., SCANPY). Apply log1p transformation, identify highly variable genes, and scale the data.

- Initial Clustering: Perform graph-based clustering at high resolution on the principal component analysis (PCA) embedding of the concatenated datasets to obtain initial, fine-grained clusters that potentially capture rare cell types.

- Similarity Matrix Construction: Compute a symmetric similarity matrix between clusters using k-nearest neighbor (KNN) and mutual nearest neighbor (MNN) information within and between batches.

- Cluster Merging: Apply a hierarchical clustering-based merging criterion to consolidate over-clustered groups. The number of final clusters can be determined by known cell type numbers or optimization metrics.

- Triplet Selection: For deep metric learning, form triplets (anchor, positive, negative) where the anchor and positive are cells of the same cluster from different batches, and the negative is a cell from a different cluster.

- Model Training: Train a deep neural network using triplet loss to minimize the distance between anchor-positive pairs while maximizing the distance between anchor-negative pairs in the learned embedding space.

- Embedding Extraction: The final output is a low-dimensional, batch-corrected embedding that can be used for visualization, clustering, and downstream analysis.
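The triplet objective used in the training step can be written in a few lines. The sketch below is the generic margin-based triplet loss with Euclidean distance; the margin of 1.0 and the distance choice are assumptions, and the exact loss used inside scDML may differ.

```python
import numpy as np


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Mean margin-based triplet loss over rows of embedding vectors.

    Each row of anchor/positive/negative is one cell's embedding. The loss is zero
    once the negative is at least `margin` farther from the anchor than the positive.
    """
    anchor = np.asarray(anchor, dtype=float)
    positive = np.asarray(positive, dtype=float)
    negative = np.asarray(negative, dtype=float)
    d_pos = np.linalg.norm(anchor - positive, axis=1)
    d_neg = np.linalg.norm(anchor - negative, axis=1)
    return float(np.maximum(d_pos - d_neg + margin, 0.0).mean())


anchor = [[0.0, 0.0]]
same_cluster_other_batch = [[0.0, 1.0]]   # distance 1 from the anchor
different_cluster = [[3.0, 4.0]]          # distance 5 from the anchor

good = triplet_loss(anchor, same_cluster_other_batch, different_cluster)
bad = triplet_loss(anchor, different_cluster, same_cluster_other_batch)
print(f"well-separated embedding: loss = {good}")
print(f"swapped roles:            loss = {bad}")
```

Minimizing this quantity over many triplets pulls same-cluster cells from different batches together while pushing different clusters apart, which is the behavior the protocol describes.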

Figure 1: scDML Workflow for Single-Cell Data Integration. The diagram outlines the key steps in implementing the scDML algorithm for batch effect correction in single-cell RNA sequencing data.

Reference-Based Methods

Reference-based correction methods offer a conceptually distinct approach by leveraging commonly profiled reference materials to standardize measurements across batches.

2.3.1 Core Principles and Variations

The fundamental principle of reference-based methods involves transforming absolute feature values into relative measurements scaled to concurrently profiled reference standards. The ratio-based method (Ratio-G) converts expression values to ratios relative to a common reference sample analyzed within the same batch [4]. In study designs where a specific batch demonstrates superior data quality (e.g., lowest dispersion), algorithms like ComBat-ref can be adapted to use this batch as a reference for aligning all other batches [24]. For large-scale multi-omics studies, dedicated reference material sets (e.g., the Quartet Project reference materials) can be profiled across all batches to establish standardized scaling factors [4].

2.3.2 Experimental Protocol for Reference-Based Applications

Protocol 3: Implementing Ratio-Based Correction with Reference Materials

- Reference Material Selection: Choose appropriate, well-characterized reference materials (e.g., commercial reference standards or internal control samples) that will be profiled in every experimental batch.

- Concurrent Profiling: In each batch, process both the study samples and the selected reference material(s) using identical experimental protocols.

- Reference Value Calculation: For each feature (gene, protein, metabolite) in each batch, compute the average expression value across technical replicates of the reference material.

- Ratio Transformation: Transform the absolute expression values of study samples to ratios relative to the reference value within the same batch:

$$\mathrm{Ratio}_{ijg} = \frac{\mathrm{Value}_{ijg}}{\mathrm{Reference}_{ig}}$$

where $\mathrm{Value}_{ijg}$ is the absolute value of feature g in sample j from batch i, and $\mathrm{Reference}_{ig}$ is the reference value for feature g in batch i.

- Data Integration: The resulting ratio-scaled values can be directly integrated across batches for consolidated analysis, as they are normalized to the batch-specific reference standard.
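As a minimal sketch of Protocol 3, the ratio transformation can be written in a few lines of NumPy. The function name `ratio_correct`, the array layout (samples by features), and the toy values are assumptions for illustration; the reference value is taken as the mean over reference replicates within each batch, as described above.

```python
import numpy as np

def ratio_correct(values, batch, is_reference):
    """Scale each study sample by the mean reference profile of its own batch.

    values:       (n_samples, n_features) absolute feature values
    batch:        (n_samples,) batch label per sample
    is_reference: (n_samples,) True for reference-material replicates
    Returns ratios for the study (non-reference) samples, in input order.
    """
    values = np.asarray(values, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    ratios = np.full(values.shape, np.nan)
    for b in np.unique(batch):
        in_batch = batch == b
        ref_rows = values[in_batch & is_reference]
        if ref_rows.shape[0] == 0:
            raise ValueError(f"batch {b!r} has no reference replicates")
        reference = ref_rows.mean(axis=0)          # Reference_ig
        ratios[in_batch] = values[in_batch] / reference
    return ratios[~is_reference]

# Toy example (hypothetical numbers): batch "B" measures everything 2x higher
values = np.array([
    [10.0, 20.0],   # batch A, reference replicate 1
    [10.0, 20.0],   # batch A, reference replicate 2
    [20.0, 10.0],   # batch A, study sample
    [20.0, 40.0],   # batch B, reference replicate 1
    [20.0, 40.0],   # batch B, reference replicate 2
    [40.0, 20.0],   # batch B, study sample
])
batch = np.array(["A", "A", "A", "B", "B", "B"])
is_reference = np.array([True, True, False, True, True, False])

corrected = ratio_correct(values, batch, is_reference)
```

Because the batch factor multiplies study and reference samples alike, it cancels in the ratio, and the two study samples end up with identical ratio profiles. In practice, ratios are often log-transformed before downstream analysis, and features with near-zero reference values need separate handling; neither step is shown here.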

Performance Benchmarking and Quantitative Comparisons

Rigorous benchmarking studies provide critical insights into the relative performance of different algorithm families under various experimental scenarios. Understanding these performance characteristics is essential for selecting appropriate methods for specific research contexts.

Table 2: Performance Comparison of Batch Effect Correction Methods

| Method | Algorithm Family | Batch Correction Strength (iLISI) | Biological Conservation (ASW_celltype) | Rare Cell Type Preservation | Computational Efficiency |
|---|---|---|---|---|---|
| ComBat-ref | Linear Model | High | High [24] | Moderate | High |
| Harmony | Linear Model | High | Moderate [26] | Low | High |
| scVI | Deep Learning | Moderate | High [26] | Moderate | Moderate |
| scDML | Deep Learning | High | High [28] | High | Moderate |
| scANVI | Deep Learning | High | High [26] | High | Low |
| sysVI (VAMP+CYC) | Deep Learning | High | High [21] | High | Moderate |
| Ratio-Based | Reference-Based | High | High [4] | High | High |

Key benchmarking findings reveal that linear methods like ComBat-ref demonstrate exceptional performance in bulk RNA-seq analyses, maintaining high sensitivity and specificity in differential expression detection even with significant batch effect challenges [24]. For single-cell data integration, deep learning approaches generally outperform other families, with scDML showing particular strength in preserving rare cell types that are often lost by other methods [28]. In confounded experimental designs where biological groups are completely confounded with batch groups, reference-based ratio methods demonstrate superior reliability compared to other approaches, effectively distinguishing technical artifacts from biological signals [4]. Recent innovations in deep learning, such as the combination of VampPrior with cycle-consistency constraints in sysVI, address limitations of earlier approaches that often sacrificed biological information when increasing batch correction strength [21].

Successful implementation of batch effect correction strategies requires both computational tools and well-characterized experimental resources. The following table summarizes key reference materials, databases, and software packages, together with their applications in batch effect correction workflows.

Table 3: Essential Research Reagents and Computational Tools

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| Quartet Reference Materials | Reference Material | Provides multi-omics standards for cross-batch normalization | Bulk transcriptomics, proteomics, metabolomics studies [4] |
| Animal Cell Atlas (ACA) | Reference Database | Curated scRNA-seq database with structured cell type annotations | Reference-based cell type annotation [27] |
| Cell BLAST | Computational Tool | Adversarial domain adaptation for query-to-reference mapping | Cross-dataset cell type annotation [27] |
| scvi-tools | Software Package | Implements variational autoencoders for single-cell data | Deep learning-based data integration [26] |
| batchelor | Software Package | Provides multiple batch correction methods for single-cell data | Linear model and rescaling approaches [25] |

The three major algorithm families for batch effect correction—linear models, deep learning, and reference-based methods—each offer distinct advantages for specific research scenarios in cross-dataset annotation. Linear models provide statistically robust, interpretable correction for bulk omics data. Deep learning methods excel at handling complex, non-linear batch effects in high-dimensional single-cell and spatial transcriptomics. Reference-based approaches offer unparalleled reliability in confounded experimental designs. Future methodological development will likely focus on hybrid approaches that combine strengths from multiple families, improved preservation of subtle biological variations, and specialized algorithms for emerging technologies such as multi-omics integration and spatially resolved transcriptomics. As the scale and complexity of biological datasets continue to grow, the strategic selection and implementation of appropriate batch effect correction methods will remain fundamental to ensuring the validity and reproducibility of cross-dataset comparative analyses.

The integration of multiple datasets is a cornerstone of modern biological research, enabling cross-condition comparisons, population-level analyses, and the construction of large-scale reference atlases. However, this integration is often compromised by batch effects—systematic technical variations that arise when samples are processed in different batches, using different protocols, or across different biological systems. These effects can confound biological signals, leading to inaccurate conclusions and reduced reliability of downstream analyses. In single-cell RNA sequencing (scRNA-seq), this problem is particularly acute when integrating datasets with substantial batch effects, such as those originating from different species (e.g., mouse vs. human), different model systems (e.g., organoids vs. primary tissue), or different sequencing technologies (e.g., single-cell vs. single-nuclei RNA-seq) [30].

Conditional Variational Autoencoders (cVAEs) have emerged as a powerful framework for addressing these challenges. A cVAE is a generative model that extends the standard Variational Autoencoder (VAE) by conditioning both the encoder and decoder on additional information, such as batch labels or other covariates. This architecture enables the model to learn a latent representation of the data that effectively disentangles biological signals from technical artifacts. During training, the cVAE learns to reconstruct its input while regularizing the latent space to approximate a prior distribution, typically a standard Gaussian. The Kullback-Leibler (KL) divergence term in the loss function measures how much the learned latent distributions deviate from this prior, serving as a form of regularization [31].
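To make the role of the KL term concrete, the following sketch computes the closed-form KL divergence between a diagonal Gaussian posterior and the standard Gaussian prior, and combines it with a reconstruction term. The squared-error reconstruction, the `kl_weight` argument, and the function names are assumptions for illustration; the conditioning on batch labels lives inside the encoder and decoder networks, which are not modeled here.

```python
import numpy as np

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ) for each cell (row)."""
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=1)

def cvae_loss(x, x_recon, mu, log_var, kl_weight=1.0):
    """Mean over cells of reconstruction error plus weighted KL term.

    The squared error corresponds to a Gaussian likelihood up to constants.
    """
    recon = np.sum((x - x_recon) ** 2, axis=1)
    kl = kl_to_standard_normal(mu, log_var)
    return float(np.mean(recon + kl_weight * kl))

# Toy posterior parameters for two cells in a 2-d latent space
mu = np.array([[0.0, 0.0], [1.0, 0.0]])
log_var = np.zeros((2, 2))
kl = kl_to_standard_normal(mu, log_var)
```

The first cell's posterior coincides with the prior, so its KL term vanishes; shifting the mean away from zero is penalized. Raising `kl_weight` pulls all posteriors toward the prior regardless of whether the variation they encode is technical or biological.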

Despite their promise, standard cVAE-based integration methods exhibit significant limitations when confronted with substantial batch effects. Increasing KL regularization strength often removes both technical and biological variation without discrimination, while adversarial learning approaches—which aim to make batch origins indistinguishable in the latent space—can inadvertently mix embeddings of unrelated cell types, especially when cell type proportions are unbalanced across batches [30]. These shortcomings highlight the need for more sophisticated integration strategies that can robustly correct for batch effects while preserving delicate biological signals.

The sysVI Framework: Advanced cVAE for Substantial Batch Effects

Core Innovations: VampPrior and Cycle-Consistency

The sysVI model represents a significant advancement in cVAE-based integration by incorporating two key innovations: the VampPrior and latent cycle-consistency constraints. These components work in concert to overcome the limitations of traditional cVAE approaches when handling substantial batch effects [30] [32].

The VampPrior (Variational Mixture of Posteriors Prior) replaces the standard Gaussian prior typically used in VAEs with a more flexible, multi-modal distribution. This prior is defined as a mixture of variational posteriors, with components corresponding to pseudo-inputs that are learned during training. In the context of scRNA-seq integration, this flexible prior helps preserve biological heterogeneity that might otherwise be collapsed by a restrictive Gaussian prior, particularly important for maintaining subtle cell state differences across systems [30].
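A minimal sketch of the prior density may help here. In a VampPrior, each mixture component is the encoder's posterior for one learned pseudo-input; the sketch below takes the resulting component means and log-variances as given and assumes equal mixture weights, which may differ from the sysVI implementation. Function names are hypothetical.

```python
import numpy as np

def log_gaussian_diag(z, mu, log_var):
    """Log density of each z (n, d) under each diagonal Gaussian (k, d) -> (n, k)."""
    diff = z[:, None, :] - mu[None, :, :]
    per_dim = np.log(2.0 * np.pi) + log_var[None, :, :] + diff**2 / np.exp(log_var)[None, :, :]
    return -0.5 * per_dim.sum(axis=2)

def vamp_prior_log_prob(z, comp_mu, comp_log_var):
    """log p(z) = log( (1/K) * sum_k N(z; mu_k, diag(exp(log_var_k))) ).

    comp_mu and comp_log_var stand in for the encoder outputs on K pseudo-inputs.
    Uses a log-sum-exp for numerical stability.
    """
    log_comp = log_gaussian_diag(z, comp_mu, comp_log_var)          # (n, K)
    m = log_comp.max(axis=1, keepdims=True)
    log_sum = m[:, 0] + np.log(np.exp(log_comp - m).sum(axis=1))
    return log_sum - np.log(comp_mu.shape[0])

# Two well-separated components in a 1-d latent space (toy values)
comp_mu = np.array([[-3.0], [3.0]])
comp_log_var = np.zeros((2, 1))
z = np.array([[3.0]])

log_p_mixture = vamp_prior_log_prob(z, comp_mu, comp_log_var)
log_p_standard = vamp_prior_log_prob(z, np.zeros((1, 1)), np.zeros((1, 1)))
```

A latent point sitting on one of the modes is far more probable under the mixture than under a single standard Gaussian, which is why a multi-modal prior penalizes distinct cell populations less for staying apart.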

Latent cycle-consistency constraints introduce an additional loss term that encourages consistent mapping of biologically similar cells across different systems (batches). Specifically, when a cell from one system is encoded to the latent space and then decoded to another system, the resulting representation should map back to the original cell's identity when cycled through the latent space again. This cycle-consistency loss actively pushes together cells from different systems that share biological similarity, without requiring adversarial training that can remove biological signals [30].
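The cycle described above (encode, decode into the other system, encode again, compare latent codes) can be sketched with toy linear maps. This is an illustration of the idea only: the linear encoder and decoder, the system-specific shift vectors, and the squared Euclidean distance are assumptions, not the sysVI architecture or its exact distance function.

```python
import numpy as np

def encode(x, system, w_enc, enc_shift):
    """Toy conditional encoder: remove a system-specific shift, then map linearly."""
    return (x - enc_shift[system]) @ w_enc

def decode(z, system, w_dec, dec_shift):
    """Toy conditional decoder: map linearly, then add the system-specific shift."""
    return z @ w_dec + dec_shift[system]

def cycle_consistency_loss(x, system, other, w_enc, w_dec, enc_shift, dec_shift):
    """Mean squared distance between a cell's latent code and its cycled code."""
    z = encode(x, system, w_enc, enc_shift)               # original latent code
    x_other = decode(z, other, w_dec, dec_shift)          # cell "translated" to the other system
    z_cycle = encode(x_other, other, w_enc, enc_shift)    # re-encoded latent code
    return float(np.mean(np.sum((z - z_cycle) ** 2, axis=1)))

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 3))                  # 5 cells, 3 features (toy data)
eye = np.eye(3)
shifts = {"mouse": np.zeros(3), "human": np.array([2.0, 0.0, -1.0])}

# Encoder and decoder agree on the system shifts: the cycle is consistent
consistent = cycle_consistency_loss(x, "mouse", "human", eye, eye, shifts, shifts)

# Encoder misjudges the human shift by one unit in the first feature
bad_enc_shifts = {"mouse": np.zeros(3), "human": np.array([1.0, 0.0, -1.0])}
inconsistent = cycle_consistency_loss(x, "mouse", "human", eye, eye, bad_enc_shifts, shifts)
```

When the encoder and decoder treat the system covariate consistently, the cycled latent code matches the original and the loss is zero; any system-dependent distortion shows up as a positive penalty that training can reduce.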

Table: Core Components of the sysVI Framework

| Component | Standard cVAE | sysVI Implementation | Functional Benefit |
|---|---|---|---|
| Prior Distribution | Standard Gaussian | VampPrior (Mixture of Posteriors) | Preserves multi-modal biological heterogeneity |
| Integration Mechanism | KL regularization | Cycle-consistency constraints | Actively aligns similar cells across systems |
| Batch Alignment | Adversarial learning (in some implementations) | Explicit cycle-consistency loss | Prevents mixing of unrelated cell types |
| Biological Preservation | Limited by prior flexibility | Enhanced by flexible prior and targeted alignment | Maintains subtle cell state differences |

sysVI Performance and Comparative Evaluation

sysVI has been rigorously evaluated across multiple challenging integration scenarios, including cross-species (mouse-human pancreatic islets), cross-technology (single-cell vs. single-nuclei RNA-seq from adipose tissue), and cross-system (retinal organoids vs. primary tissue) datasets. In these evaluations, sysVI demonstrated superior performance compared to existing methods in both batch correction and biological preservation [30].

Quantitative assessment using metrics such as graph integration local inverse Simpson's index (iLISI) for batch mixing and normalized mutual information (NMI) for cell type conservation revealed that sysVI successfully integrates datasets with substantial batch effects while maintaining higher biological fidelity than approaches relying solely on KL regularization tuning or adversarial learning. Notably, sysVI avoided the problematic behaviors observed in other methods: it did not collapse meaningful dimensions (as occurred with high KL regularization) and did not mix unrelated cell types with unbalanced proportions across batches (as occurred with adversarial approaches) [30].
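The core quantity behind iLISI is the inverse Simpson's index of batch labels in each cell's neighborhood. The sketch below is a deliberately simplified version: it uses unweighted k-nearest neighbors on a dense distance matrix, whereas published LISI implementations use perplexity-based neighbor weights and benchmarking pipelines typically rescale the score. The function name and toy data are assumptions.

```python
import numpy as np

def knn_inverse_simpson(emb, labels, k):
    """Per-cell inverse Simpson's index of `labels` among the k nearest neighbors.

    Returns values between 1 (neighbors all share one label) and the number
    of distinct labels (neighbors evenly split across labels).
    """
    dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)                 # exclude each cell from its own neighborhood
    neighbors = np.argsort(dist, axis=1)[:, :k]
    categories = np.unique(labels)
    scores = np.empty(len(emb))
    for i, idx in enumerate(neighbors):
        p = np.array([(labels[idx] == c).mean() for c in categories])
        scores[i] = 1.0 / np.sum(p**2)
    return scores

# Poorly integrated toy embedding: the two batches sit far apart
separated_emb = np.array([0, 1, 2, 3, 100, 101, 102, 103], dtype=float)[:, None]
separated_batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
separated_scores = knn_inverse_simpson(separated_emb, separated_batch, k=3)

# Well-mixed toy embedding: batches alternate along a line
mixed_emb = np.arange(8, dtype=float)[:, None]
mixed_batch = np.array([0, 1, 0, 1, 0, 1, 0, 1])
mixed_scores = knn_inverse_simpson(mixed_emb, mixed_batch, k=4)
```

Applied to batch labels, higher scores indicate better mixing. The same function applied to cell type labels gives the complementary view, where values near 1 indicate that cell types remain locally pure.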

Table: Performance Comparison of Integration Methods on Challenging Datasets

| Method | Batch Correction (iLISI) | Biological Preservation (NMI) | Notable Limitations |
|---|---|---|---|
| Standard cVAE | Moderate | Moderate | Removes biological signal with increased KL weight |
| cVAE + Adversarial | High | Low to Moderate | Mixes unrelated cell types with unbalanced proportions |
| GLUE | High | Low to Moderate | Mixes delta, acinar, and immune cells in pancreas data |
| sysVI (VAMP + CYC) | High | High | Maintains cell type integrity while achieving integration |

Experimental Protocols for sysVI Implementation

Data Preprocessing and Setup

Proper data preprocessing is critical for successful integration with sysVI. The following protocol outlines the essential steps for preparing scRNA-seq data:

Normalization and Transformation: Normalize each cell to a fixed total count, then log-transform. This step matters because the model assumes a Gaussian noise distribution for the input features [33].

Feature Selection: Identify highly variable genes (HVGs) separately within each system (e.g., species) using within-system batches as the batch_key. Start with genes present in all systems, then take the intersection of HVGs across systems to obtain approximately 2000 shared HVGs [33].

Covariate Specification: Define the primary batch_key covariate representing the "system" (e.g., species, technology). Additional categorical covariates (e.g., samples within systems) can also be specified for correction. For multiple system types (e.g., both species and technology), create combined system labels (e.g., "mouse-nuclei", "human-cell") [33].

Data Setup with scvi-tools:
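The original code for this step is not reproduced here. The following is a hedged sketch of what the setup could look like, assuming a recent scvi-tools release that provides `scvi.external.SysVI` with a `setup_anndata` class method accepting `batch_key` and `categorical_covariate_keys`. The wrapper function, its name, and the column names `"system"` and `"batch"` are hypothetical; check the scvi-tools documentation for the installed version before relying on the exact arguments.

```python
def setup_for_sysvi(adata, system_key="system", covariate_keys=("batch",)):
    """Register an AnnData object for sysVI (sketch; API assumed from scvi-tools docs).

    adata:          AnnData with normalized, log-transformed expression in .X,
                    already subset to the shared highly variable genes
    system_key:     .obs column holding the "system" label (e.g. species)
    covariate_keys: additional categorical .obs columns to correct for
    """
    # Imported lazily so that defining this function does not require scvi-tools
    from scvi.external import SysVI

    SysVI.setup_anndata(
        adata=adata,
        batch_key=system_key,
        categorical_covariate_keys=list(covariate_keys),
    )
    return adata
```

Usage would be `adata = setup_for_sysvi(adata)` on an object whose `.obs` contains the named columns.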

Model Training and Configuration

The training process requires careful configuration of model architecture and loss weights:

Model Initialization:
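The original initialization code is not reproduced here. As a hedged sketch, assuming the `scvi.external.SysVI` interface of recent scvi-tools releases (a `prior` argument on the constructor, loss weights passed through `plan_kwargs`, and the cycle-consistency weight named `z_distance_cycle_weight`), initialization and training might look as follows. The wrapper function and its default values are hypothetical, and the AnnData object is assumed to have been registered with `SysVI.setup_anndata` beforehand.

```python
def train_sysvi(adata, cycle_weight=5.0, max_epochs=200):
    """Initialize and train a sysVI model, then return it with the latent embedding.

    Sketch only: argument names follow the scvi-tools documentation as far as
    known and should be checked against the installed version.
    """
    # Imported lazily so that defining this function does not require scvi-tools
    from scvi.external import SysVI

    # VampPrior requested explicitly rather than relying on the default
    model = SysVI(adata=adata, prior="vamp")

    # Loss weights are passed to the training plan
    model.train(
        max_epochs=max_epochs,
        plan_kwargs={"z_distance_cycle_weight": cycle_weight},
    )

    # Batch-corrected low-dimensional embedding, one row per cell
    latent = model.get_latent_representation(adata=adata)
    return model, latent
```

The returned embedding can be stored in `adata.obsm` and used for neighbor graphs, clustering, and visualization.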

Loss Weight Configuration: The key hyperparameters for controlling the integration behavior are the KL loss weight and the cycle-consistency loss weight. Empirical testing suggests:

- Cycle-consistency weight (z_distance_cycle_weight): Typically between 2 and 10, though values up to 50 may be beneficial for particularly challenging integrations