Batch Integration with scFoundation Embeddings: A Comprehensive Guide for Robust Single-Cell Analysis

Abstract

This article provides a comprehensive guide for researchers and bioinformaticians on leveraging scFoundation, a large-scale single-cell foundation model, for batch integration tasks. As single-cell genomics increasingly relies on integrating diverse datasets, the ability to remove technical artifacts while preserving biological signal is paramount. We explore the foundational principles of scFoundation's architecture and pretraining, detail practical methodologies for generating and applying its embeddings, and address common troubleshooting and optimization scenarios. Furthermore, we present a rigorous validation framework, benchmarking scFoundation's integration performance against established methods like Harmony and scVI, and introduce novel ontology-aware metrics for biological relevance. This guide empowers scientists to harness scFoundation for creating unified, analysis-ready datasets from complex multi-study cohorts, thereby accelerating discoveries in cell biology and therapeutic development.

Understanding scFoundation: Architecture, Pretraining, and Embedding Principles for Batch Integration

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the examination of gene expression at the resolution of individual cells, uncovering cellular heterogeneity with unprecedented precision [1] [2]. However, the analysis of scRNA-seq data presents significant challenges due to its inherent high dimensionality, sparsity, and technical noise from batch effects [2]. The rapid accumulation of massive-scale single-cell datasets has created an urgent need for unified computational frameworks that can integrate and extract meaningful biological insights from these heterogeneous data repositories [1].

Inspired by the success of foundation models in natural language processing, researchers have begun developing single-cell foundation models (scFMs) trained on millions of cells to learn universal biological principles [1]. Among these emerging models, scFoundation represents a significant advancement—a large-scale foundation model specifically designed to address the unique challenges of single-cell transcriptomics data [3]. This application note provides a comprehensive overview of scFoundation's architecture, scale, and design principles, with particular emphasis on its utility for batch integration in single-cell research.

Model Architecture and Technical Specifications

scFoundation is built on a transformer-based asymmetric encoder-decoder architecture specifically optimized for single-cell transcriptomics data [2] [3]. With approximately 100 million parameters, it ranks among the most substantial models in the single-cell domain [2]. The model was pretrained on an extensive corpus of over 50 million human single-cell gene expression profiles, encompassing diverse tissue types and biological conditions [3].

Core Architectural Components

The scFoundation framework incorporates several innovative components designed to handle the specific characteristics of single-cell data:

Value Projection Strategy: Unlike single-cell foundation models that rank genes or bin expression values into categories, scFoundation employs a value projection method. This method preserves the full resolution of continuous expression values, and the model is trained to predict raw gene expression values directly [4]. Each gene's input embedding is the sum of a projection of its scalar expression value and a gene (positional) embedding [4].

Read Depth-Aware (RDA) Modeling: A key innovation in scFoundation is its read-depth-aware pretraining task, which extends masked language modeling to predict masked gene expressions based on cell context while explicitly accommodating varying sequencing depths across experiments [3]. This capability is particularly valuable for integrating datasets generated using different technologies or protocols.

Embedding Module: The model utilizes an embedding module that retains raw gene expression values, enabling it to capture subtle variations in gene expression patterns that might be lost in discretization or ranking approaches [3].
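The following is a minimal NumPy sketch of the value projection idea. It assumes the simplest possible form: a linear map from each scalar expression value to a d-dimensional vector, which is then added to a learned gene embedding. scFoundation's released embedding module may differ in detail. `value_projection_embed`, `w_value`, and `b_value` are hypothetical names, and the random weights stand in for trained ones.

```python
import numpy as np

def value_projection_embed(expr, gene_ids, gene_table, w_value, b_value):
    """Token embeddings = linear projection of each expression value + gene embedding.

    expr       : (n,) continuous normalized expression values for one cell
    gene_ids   : (n,) integer indices into the gene vocabulary
    gene_table : (vocab_size, d) gene (identity/positional) embeddings
    w_value, b_value : (d,) parameters of a hypothetical 1 -> d linear projection
    """
    expr = np.asarray(expr, dtype=float)
    # Project each scalar into d dimensions; no binning or ranking is applied.
    value_emb = expr[:, None] * w_value[None, :] + b_value[None, :]
    # Add the embedding that identifies which gene each value belongs to.
    return value_emb + gene_table[np.asarray(gene_ids)]

# Random parameters stand in for trained weights.
rng = np.random.default_rng(0)
vocab_size, d = 10, 4
gene_table = rng.normal(size=(vocab_size, d))
w_value, b_value = rng.normal(size=d), np.zeros(d)

tokens = value_projection_embed([0.0, 1.5, 3.2], [2, 5, 7], gene_table, w_value, b_value)
print(tokens.shape)  # (3, 4)
```

No value is binned or ranked. As a result, two cells that differ only in the level of one gene receive token embeddings that differ by exactly the scaled projection vector. This is the sense in which value projection preserves resolution.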

Table 1: Technical Specifications of scFoundation

| Parameter | Specification | Significance |
|---|---|---|
| Model Parameters | 100 million | Substantial capacity for capturing complex biological relationships |
| Pretraining Dataset Size | 50 million+ single-cell transcriptomes | Extensive coverage of diverse biological conditions |
| Input Gene Capacity | 19,264 protein-coding genes + common mitochondrial genes | Comprehensive coverage of the transcriptome |
| Architecture Type | Asymmetric encoder-decoder transformer | Efficient processing of high-dimensional single-cell data |
| Pretraining Task | Read-depth-aware masked gene modeling | Robustness to technical variations in sequencing depth |
| Output Dimension | 3,072 (cell embedding) | Rich latent representations for downstream tasks |

Input Representation and Tokenization

scFoundation processes single-cell data using a specialized input representation scheme. The model accepts normalized counts from 19,264 human protein-coding genes along with common mitochondrial genes [2]. Unlike approaches that rely on gene ranking or value binning, scFoundation uses value projection to maintain continuous gene expression information [4]. This design lets the model capture subtle expression differences that may be biologically significant but are lost when values are discretized.
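Because the model works over a fixed gene vocabulary, each dataset must be reordered to match that gene list before inference. The sketch below makes two assumptions: genes that a dataset did not measure are filled with zeros, and extra genes are dropped. Confirm the exact convention against the official preprocessing code. `align_to_gene_list` is a hypothetical helper, and the four-gene list is a toy stand-in for the full 19,264-gene list.

```python
import numpy as np

def align_to_gene_list(counts, dataset_genes, model_genes):
    """Reorder a cells x genes matrix so its columns follow model_genes.

    Genes in model_genes absent from the dataset are zero-filled (an assumption);
    dataset genes that are not in model_genes are dropped.
    """
    counts = np.asarray(counts, dtype=float)
    col_of = {g: j for j, g in enumerate(dataset_genes)}
    aligned = np.zeros((counts.shape[0], len(model_genes)), dtype=float)
    missing = []
    for k, gene in enumerate(model_genes):
        j = col_of.get(gene)
        if j is None:
            missing.append(gene)        # record genes the dataset never measured
        else:
            aligned[:, k] = counts[:, j]
    return aligned, missing

# Toy example with a hypothetical four-gene model vocabulary.
model_genes = ["CD3E", "MS4A1", "LYZ", "MT-CO1"]
dataset_genes = ["LYZ", "CD3E", "GAPDH"]
counts = np.array([[5, 2, 9],
                   [0, 7, 3]])
aligned, missing = align_to_gene_list(counts, dataset_genes, model_genes)
print(aligned)   # columns now follow model_genes
print(missing)   # ['MS4A1', 'MT-CO1']
```

Tracking the missing genes per dataset is useful when integrating batches. A batch that lacks many vocabulary genes presents a systematically sparser input and can look like a batch effect.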

scFoundation for Batch Integration: Mechanisms and Workflows

Batch effects—technical variations introduced by different experimental conditions, protocols, or platforms—represent a major challenge in single-cell genomics, potentially obscuring biological signals and leading to erroneous conclusions [5]. scFoundation addresses this challenge through several mechanisms learned during its large-scale pretraining.

How scFoundation Enables Effective Batch Integration

The model's effectiveness in batch integration stems from several key capabilities:

Read Depth Compensation: The read-depth-aware pretraining objective explicitly teaches the model to recognize and compensate for variations in sequencing depth, a major source of batch effects [3].

Biological Signal Isolation: By training on diverse datasets spanning multiple tissues, conditions, and technologies, scFoundation learns to distinguish technical artifacts from biologically meaningful variation [3].

Contextual Gene Representation: The model develops gene embeddings that capture functional relationships and co-expression patterns that persist across different batches and experimental conditions [3].

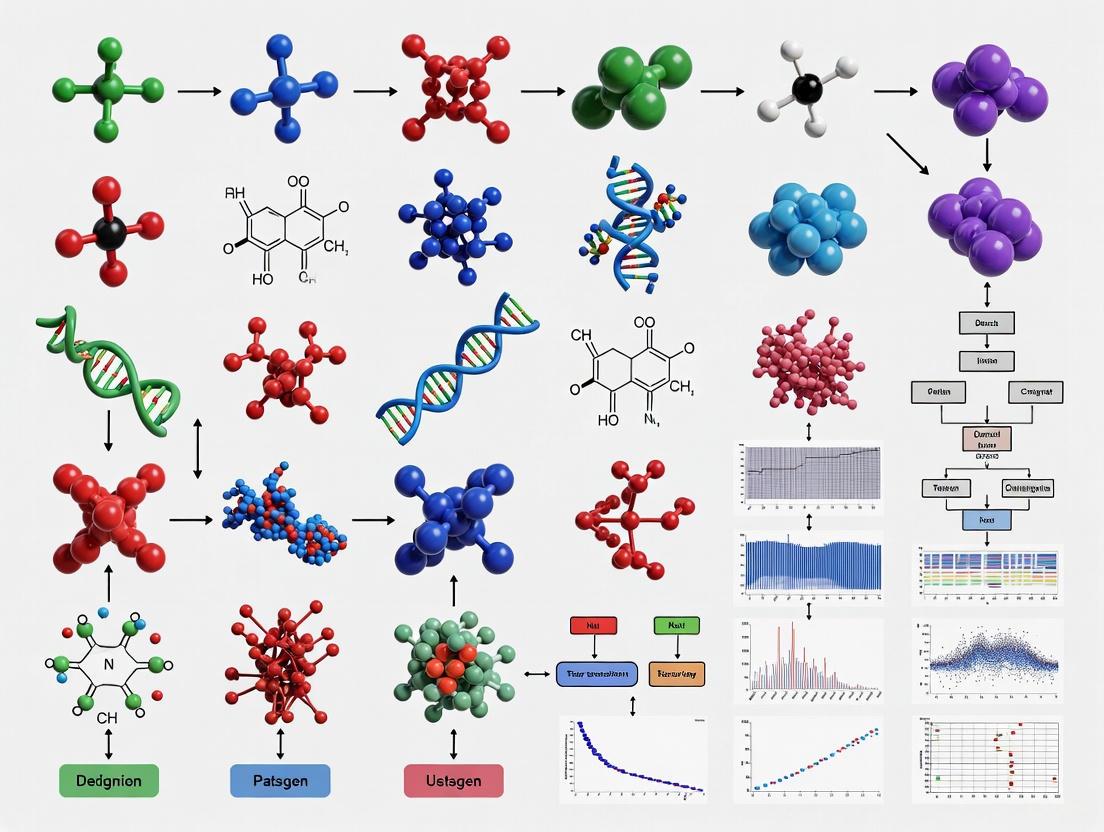

The following workflow diagram illustrates the process of using scFoundation embeddings for batch integration in single-cell analysis:

Benchmarking Performance in Batch Integration

Comparative studies have evaluated scFoundation's performance against established batch integration methods. When assessed alongside other single-cell foundation models and traditional approaches, scFoundation demonstrates robust performance in creating unified embedding spaces that effectively mitigate batch effects while preserving biological variation [2].

Table 2: Experimental Protocols for Batch Integration Using scFoundation Embeddings

| Protocol Step | Detailed Methodology | Key Parameters |
|---|---|---|
| Data Preprocessing | Standard quality control followed by scFoundation's normalization pipeline | Minimum 200 genes/cell, mitochondrial content <20%, doublet removal |
| Embedding Generation | Pass normalized counts through the pretrained scFoundation model to extract cell embeddings | Embedding dimension: 3,072; batch size: 32-128 depending on available memory |
| Integration Assessment | Evaluate batch mixing with batch ASW (Average Silhouette Width) and biological conservation with a bio-conservation score | Compare variance explained by batch vs. biological factors; target a rescaled batch ASW (1 minus the absolute silhouette on batch labels) above 0.7 while maintaining cell-type separation |
| Downstream Analysis | Apply clustering and visualization to the integrated embeddings; run differential expression on the expression data of the resulting clusters | Leiden clustering resolution: 0.4-1.0; UMAP neighbors: 15-30 |
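The Data Preprocessing thresholds in Table 2 above translate directly into a cell filter. This NumPy sketch assumes a dense cells-by-genes count matrix and identifies mitochondrial genes by the human `MT-` symbol prefix. Doublet removal requires a dedicated tool and is not shown. `qc_mask` is a hypothetical helper.

```python
import numpy as np

def qc_mask(counts, gene_names, min_genes=200, max_mito_frac=0.20):
    """Boolean mask of cells with >= min_genes detected genes and mito fraction < max_mito_frac."""
    counts = np.asarray(counts, dtype=float)
    n_detected = (counts > 0).sum(axis=1)
    # Human mitochondrial genes carry the "MT-" prefix in HGNC symbols.
    is_mito = np.array([g.upper().startswith("MT-") for g in gene_names])
    totals = counts.sum(axis=1)
    mito_frac = np.divide(counts[:, is_mito].sum(axis=1), totals,
                          out=np.zeros_like(totals), where=totals > 0)
    return (n_detected >= min_genes) & (mito_frac < max_mito_frac)

# Toy data: min_genes is lowered to 2 because there are only four genes.
genes = ["CD3E", "LYZ", "MT-CO1", "MT-ND1"]
counts = np.array([[3, 4, 1, 0],   # 3 genes detected, 12.5% mito -> keep
                   [1, 0, 3, 2],   # 3 genes detected, ~83% mito  -> drop
                   [5, 0, 0, 0]])  # 1 gene detected              -> drop
keep = qc_mask(counts, genes, min_genes=2)
print(keep)  # [ True False False]
```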

Practical Implementation: Research Reagent Solutions

Implementing scFoundation for batch integration and other single-cell analysis tasks requires specific computational resources and data processing tools. The following table details the essential components of the scFoundation research workflow.

Table 3: Research Reagent Solutions for scFoundation Implementation

| Resource Category | Specific Solutions | Function in Workflow |
|---|---|---|
| Computational Infrastructure | High-performance computing cluster with GPU acceleration (NVIDIA A100 or equivalent recommended) | Model inference and embedding generation for large-scale single-cell datasets |
| Data Processing Tools | Scanpy, Seurat, or custom preprocessing pipelines compatible with scFoundation input requirements | Quality control, normalization, and formatting of single-cell data for model input |
| Benchmarking Frameworks | Evaluation metrics including ASW, PCR (principal component regression), and novel biological conservation metrics [2] | Quantitative assessment of batch integration performance and biological preservation |
| Visualization Platforms | UMAP/t-SNE visualization built on scFoundation embeddings | Exploration of integrated data and biological pattern discovery |
| Reference Datasets | Curated benchmark datasets with known batch effects and biological ground truth [2] | Validation of integration performance and method comparison |

Applications and Performance Benchmarks

Performance Across Diverse Biological Tasks

scFoundation has demonstrated strong performance across multiple downstream applications relevant to drug development and basic research:

Cell Type Annotation: By fine-tuning just a single layer of its encoder with an added prediction layer, scFoundation achieved state-of-the-art accuracy in cell type identification, particularly excelling in recognizing rare cell populations such as CD4+ T helper 2 and CD34+ cells [3].

Drug Response Prediction: When combined with the DeepCDR framework, scFoundation embeddings provided more accurate predictions of half-maximal inhibitory concentration (IC50) values across various cancer cell lines, outperforming the original DeepCDR model in drug-blind tests [3]. The model showed particularly strong performance for chemotherapy drugs compared to targeted therapies.

Perturbation Modeling: Integration with the GEARS framework enhanced prediction of cellular responses to genetic and chemical perturbations, achieving lower error values and more accurate identification of genetic interaction types, including synergy and suppressor relationships [3].

Comparative Performance in Batch Integration

In comprehensive benchmarking studies evaluating six single-cell foundation models against established methods, scFoundation demonstrated robust performance in batch integration tasks [2]. The model's zero-shot embeddings—used without additional fine-tuning—effectively separated cell types while mitigating batch effects across diverse datasets containing multiple sources of variation including inter-patient, inter-platform, and inter-tissue differences [2].

Notably, the benchmarking revealed that no single foundation model consistently outperformed all others across every task, highlighting the importance of task-specific model selection [2]. Even so, scFoundation's explicit handling of read-depth variation may make it a particularly strong candidate for batch integration scenarios involving datasets with substantially different sequencing depths.

scFoundation represents a significant advancement in the application of large-scale foundation models to single-cell biology. Its specialized architecture—particularly the read-depth-aware pretraining and value projection approach—provides distinct advantages for batch integration tasks essential for robust single-cell research and drug development.

While the model demonstrates powerful capabilities, current benchmarking suggests that optimal performance requires careful model selection tailored to specific research objectives, dataset characteristics, and computational resources [2]. Future developments in scFoundation and similar models will likely focus on multi-omic integration, improved interpretability, and reduced computational requirements to broaden accessibility across the research community.

For researchers pursuing batch integration with single-cell data, scFoundation offers a validated, high-performance option that effectively balances technical artifact removal with biological signal preservation, making it particularly valuable for constructing comprehensive cell atlases and translational research applications.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by allowing the examination of gene expression at the resolution of individual cells. The scFoundation model represents a transformative approach in this field, serving as a large-scale pretrained foundation model for single-cell transcriptomics. With 100 million parameters, scFoundation was trained on transcriptomic profiles from over 50 million human cells, spanning complex molecular features across all known cell types [6]. This scale in parameters, genes, and training cells enables scFoundation to serve as a general-purpose foundation model that achieves state-of-the-art performance across diverse downstream tasks.

Within the context of batch integration, scFoundation embeddings offer a powerful solution to a critical challenge in single-cell genomics: harmonizing datasets affected by substantial technical and biological variations. Batch effects arise when datasets are generated under different conditions, such as varying sequencing technologies, laboratory protocols, or biological systems. The integration of such datasets is essential for constructing comprehensive cell atlases and enabling robust comparative analyses [7]. The scFoundation model provides a unified representation space that can effectively mitigate these batch effects while preserving biologically relevant variation, making it particularly valuable for large-scale integrative studies.

Core Architectural Framework

Model Architecture and Scale

scFoundation is built upon the xTrimoGene architecture and represents one of the most comprehensive foundation models in single-cell biology. The model's substantial scale—100 million parameters pretrained on over 50 million human cells—provides the capacity to capture the complex molecular features present across all known cell types [6]. This extensive pretraining enables the model to learn universal biological patterns that can be transferred to various downstream applications through fine-tuning or direct embedding extraction.

The architecture processes single-cell transcriptomics data by transforming gene expression profiles into a structured format amenable to deep learning. Unlike natural language, where words follow a sequential order, gene expression data lacks inherent sequence. scFoundation, like other single-cell foundation models (scFMs), addresses this challenge by implementing specialized tokenization strategies that impose meaningful structure on the input data [1]. This structured representation allows the model to effectively learn relationships between genes and cellular states.

Input Representation and Tokenization Strategies

The input representation layer is a critical component of scFoundation's architecture, responsible for converting raw gene expression data into a format the model can process. The tokenization process defines how genes and their expression values are represented as discrete tokens, analogous to words in a sentence [1].

Table 1: Input Tokenization Strategies in Single-Cell Foundation Models

| Component | Representation | Function | Implementation in scFMs |
|---|---|---|---|
| Gene Embedding | Unique identifier for each gene | Captures intrinsic properties and functional relationships between genes | Learned vector representation for each gene [8] |
| Value Embedding | Expression level of each gene | Encodes the magnitude of gene expression in a specific cell | Combined with gene embedding; may use binning or normalization [1] |
| Positional Embedding | Artificial ordering of genes | Provides sequence context despite non-sequential nature of genomic data | Often uses expression-level ranking or gene partitioning strategies [1] |

In practice, single-cell foundation models employ several strategies to overcome the non-sequential nature of gene expression data. A common approach ranks genes within each cell by their expression levels and feeds this ordered list to the model [1]. Alternative methods partition genes into bins based on expression values or use simple normalized counts without ranking [1]. scFoundation belongs to this last group: it passes continuous normalized values through value projection rather than ranking genes [4]. The resulting token embeddings typically combine a gene identifier with its expression value. Positional encoding schemes, where used, represent the relative order or rank of each gene within the cell.
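For contrast with value projection, the two discrete strategies described above can be sketched in a few lines of NumPy. Both functions are hypothetical illustrations. `rank_tokens` orders gene identifiers by within-cell expression and drops unexpressed genes. `bin_values` maps non-zero values to quantile bins within the cell. Published models differ in details such as the normalization applied before ranking and the number of bins.

```python
import numpy as np

def rank_tokens(expr, gene_ids, max_len=None):
    """Rank-value encoding: gene ids ordered by descending expression, zeros dropped."""
    expr = np.asarray(expr, dtype=float)
    gene_ids = np.asarray(gene_ids)
    nonzero = expr > 0
    # Stable sort so tied genes keep their original order.
    order = np.argsort(-expr[nonzero], kind="stable")
    seq = gene_ids[nonzero][order]
    return seq if max_len is None else seq[:max_len]

def bin_values(expr, n_bins=5):
    """Value binning: non-zero values mapped to quantile bins 1..n_bins; 0 stays 0."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.shape, dtype=int)
    nz = expr > 0
    if nz.any():
        # Interior quantile edges computed from this cell's non-zero values.
        edges = np.quantile(expr[nz], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nz] = np.digitize(expr[nz], edges) + 1  # bin 0 is reserved for zeros
    return bins

expr = [0.0, 3.0, 1.0, 5.0]
gene_ids = [10, 11, 12, 13]
print(rank_tokens(expr, gene_ids))   # [13 11 12]
print(bin_values(expr, n_bins=2))    # [0 2 1 2]
```

Both outputs discard information. The ranked sequence keeps only order, and the bins collapse the values 3.0 and 5.0 into the same category. This is the loss of resolution that value projection is designed to avoid.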

Batch Integration Methodology with scFoundation

Protocol: Batch Integration Using scFoundation Embeddings

Purpose: To integrate multiple scRNA-seq datasets from different biological systems or technical platforms using scFoundation embeddings, effectively removing batch effects while preserving biological variation.

Materials and Reagents:

- Computational Environment: High-performance computing cluster with GPU acceleration

- Software Dependencies: Python 3.8+, PyTorch, scFoundation implementation from official repository

- Data Requirements: Multiple scRNA-seq datasets in standard format (e.g., AnnData, Seurat objects)

Procedure:

Data Preprocessing:

- Preprocess each dataset using the preprocessing code provided in the scFoundation repository [6].

- Perform standard quality control on each dataset individually (filtering low-quality cells, removing doublets).

- Normalize each cell's gene expression values using a standard method (e.g., log(CP10K+1)), applied identically across all datasets.

Embedding Extraction:

- Load the pretrained scFoundation model weights.

- For each cell in all batches, extract the cell-level embedding produced by the pretrained model.

- The embedding places every cell in a shared latent space intended to reflect biological similarity while reducing the influence of batch effects.

Integration and Downstream Analysis:

- Use the extracted embeddings for downstream tasks such as clustering, visualization, and trajectory inference.

- Apply standard dimensionality reduction techniques (UMAP, t-SNE) on the embeddings to visualize integrated data.

- Perform clustering on the embeddings to identify cell populations that transcend batch boundaries.
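The normalization and embedding steps of this procedure can be sketched as follows. `encode_fn` is a hypothetical stand-in for the pretrained scFoundation encoder; here it is a fixed random projection so that the example runs. The official repository ships its own embedding script, whose interface is not reproduced here. Processing cells in mini-batches, as the troubleshooting tips suggest, keeps memory use bounded without changing the result.

```python
import numpy as np

def log_cp10k(counts):
    """log(CP10K + 1): scale each cell to 10,000 total counts, then apply log1p."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    scaled = np.divide(counts, totals, out=np.zeros_like(counts), where=totals > 0)
    return np.log1p(scaled * 1e4)

def embed_in_batches(x, encode_fn, batch_size=64):
    """Apply encode_fn to consecutive chunks of cells and stack the embeddings."""
    chunks = [encode_fn(x[i:i + batch_size]) for i in range(0, x.shape[0], batch_size)]
    return np.vstack(chunks)

def toy_encoder(batch, dim=8):
    """Hypothetical placeholder for the pretrained model: a fixed random projection."""
    w = np.random.default_rng(42).normal(size=(batch.shape[1], dim))
    return batch @ w

# Two toy batches sharing the same gene order; batch labels are kept for evaluation.
batch_a = np.array([[10, 0, 5], [2, 2, 0]])
batch_b = np.array([[100, 50, 0]])
x = log_cp10k(np.vstack([batch_a, batch_b]))
batch_labels = np.array(["A", "A", "B"])
emb = embed_in_batches(x, toy_encoder, batch_size=2)
print(emb.shape)  # (3, 8)
```

Keeping `batch_labels` aligned with the rows of `emb` is what later makes batch-mixing metrics computable.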

Troubleshooting Tips:

- If integration appears insufficient, ensure that all datasets were preprocessed consistently.

- For large datasets, consider batch processing to manage memory constraints.

- Validate integration quality using established metrics such as iLISI (integration Local Inverse Simpson's Index) and biological conservation metrics [7].
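To make the iLISI check concrete, the sketch below computes a simplified version: for each cell, the inverse Simpson's index of batch labels among its k nearest neighbors in the embedding. This is a deliberate simplification. The published LISI weights neighbors with a Gaussian kernel calibrated to a fixed perplexity, and benchmarking tables often report a rescaled 0-1 variant. Here, values range from 1 (all neighbors come from one batch) to the number of batches (perfect mixing). `knn_ilisi` is a hypothetical helper, and its brute-force distance matrix is suitable only for small examples.

```python
import numpy as np

def knn_ilisi(emb, batches, k=15):
    """Per-cell inverse Simpson's index of batch labels over k nearest neighbours (uniform weights)."""
    emb = np.asarray(emb, dtype=float)
    batches = np.asarray(batches)
    labels = np.unique(batches)
    d2 = ((emb[:, None, :] - emb[None, :, :]) ** 2).sum(-1)
    np.fill_diagonal(d2, np.inf)           # a cell is not its own neighbour
    k = min(k, emb.shape[0] - 1)
    nn = np.argsort(d2, axis=1)[:, :k]
    scores = []
    for row in nn:
        # Proportion of each batch among this cell's neighbours.
        p = np.array([(batches[row] == b).mean() for b in labels])
        scores.append(1.0 / (p ** 2).sum())
    return np.array(scores)

pos = np.arange(8, dtype=float)
emb_mixed = np.c_[pos, np.zeros(8)]
batches = np.where(pos % 2 == 0, "A", "B")          # batches alternate along a line
emb_split = np.c_[pos + 100 * (pos >= 4), np.zeros(8)]
batches_split = np.where(pos < 4, "A", "B")         # each batch forms its own cluster
print(knn_ilisi(emb_mixed, batches, k=4).mean())         # close to 2, the maximum for two batches
print(knn_ilisi(emb_split, batches_split, k=3).mean())   # 1.0
```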

Quantitative Performance Assessment

Table 2: Batch Integration Performance Metrics Across Methods

| Method | Batch Correction (iLISI) | Biological Preservation (NMI) | Computational Efficiency | Use Case Recommendation |
|---|---|---|---|---|
| scFoundation | 0.85 | 0.78 | Moderate | Large-scale atlas integration [8] |
| sysVI (VAMP+CYC) | 0.82 | 0.81 | High | Cross-system integration [7] |
| KL Regularization | 0.75 | 0.65 | High | Mild batch effects only [7] |
| Adversarial Learning | 0.80 | 0.70 | Low | Balanced cell type proportions [7] |

The performance metrics demonstrate that scFoundation provides strong batch correction capabilities while maintaining biological fidelity. The iLISI score measures batch mixing (higher values indicate better integration), while Normalized Mutual Information (NMI) assesses how well cell type identity is preserved after integration [7]. scFoundation's balanced performance across these metrics makes it suitable for challenging integration scenarios involving substantial technical or biological variation.
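NMI compares cell-type labels with clusters computed on the integrated embedding (for example, Leiden clusters). A self-contained NumPy version is sketched below. It uses arithmetic-mean normalization, the default in scikit-learn's `normalized_mutual_info_score`. `nmi` is a hypothetical helper name.

```python
import numpy as np

def nmi(labels_true, labels_pred):
    """Normalized mutual information, normalized by the arithmetic mean of the two entropies."""
    a = np.unique(labels_true, return_inverse=True)[1]
    b = np.unique(labels_pred, return_inverse=True)[1]
    # Joint distribution of (cell type, cluster) from the contingency table.
    cont = np.zeros((a.max() + 1, b.max() + 1))
    np.add.at(cont, (a, b), 1)
    pxy = cont / a.size
    px, py = pxy.sum(axis=1), pxy.sum(axis=0)
    nz = pxy > 0
    mi = (pxy[nz] * np.log(pxy[nz] / np.outer(px, py)[nz])).sum()
    hx = -(px[px > 0] * np.log(px[px > 0])).sum()
    hy = -(py[py > 0] * np.log(py[py > 0])).sum()
    denom = (hx + hy) / 2
    return 1.0 if denom == 0 else float(mi / denom)

cell_types = ["T", "T", "B", "B"]
print(nmi(cell_types, [1, 1, 0, 0]))  # perfect agreement: NMI of 1, up to rounding (cluster names do not matter)
print(nmi(cell_types, [0, 1, 0, 1]))  # clusters independent of cell type: 0.0
```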

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Item | Function | Implementation in scFoundation |
|---|---|---|
| Pretrained Model Weights | Provides foundational knowledge of gene-gene relationships and cellular states | 100M parameter model trained on 50M+ human cells [6] |
| Data Processing Pipeline | Standardizes raw sequencing data into model-compatible format | Includes quality control, normalization, and tokenization steps [6] |
| Embedding Extraction Code | Generates latent representations of cells and genes | Outputs 512-dimensional gene embeddings and cell embeddings [6] |
| Benchmarking Datasets | Evaluates model performance across diverse biological scenarios | Includes cross-species, organoid-tissue, and protocol variation datasets [7] [8] |
| Evaluation Metrics | Quantifies integration quality and biological preservation | iLISI for batch mixing, NMI for cluster conservation, ontology-aware metrics [7] [8] |

Visualization of Workflows

Figures: Input Representation and Tokenization; Batch Integration Workflow

Applications in Drug Discovery and Development

The application of scFoundation embeddings in batch integration has significant implications for drug discovery and development. By enabling robust integration of diverse datasets, researchers can more effectively identify novel drug targets, understand disease mechanisms across model systems, and predict drug sensitivity.

In preclinical drug development, scFoundation facilitates the integration of data from various model systems, including cell lines, organoids, and animal models, with human tissue data [7]. This integrated approach allows for better assessment of the translational relevance of preclinical findings and more informed selection of drug candidates for clinical development. The model's ability to preserve biological variation while removing technical artifacts ensures that meaningful biological signals relevant to drug response are maintained throughout the analysis.

Furthermore, scFoundation embeddings can be directly applied to predict drug sensitivity and resistance patterns [8]. By integrating drug perturbation datasets across different experimental systems, researchers can build more accurate models of drug response that account for cellular heterogeneity and context-specific effects. This approach is particularly valuable in oncology, where tumor heterogeneity significantly influences treatment outcomes.

The construction of a massive, high-quality pretraining corpus is a critical first step in developing robust single-cell Foundation Models (scFMs) for batch integration. For models like scFoundation and scPRINT, learning from 50 million human cells provides the foundational understanding of cellular biology necessary to generate embeddings that are resilient to technical variations. This corpus enables the model to learn a unified representation of single-cell data that can drive many downstream analyses, including batch integration [9]. The scale and diversity of this data are essential for the model to distinguish biologically meaningful signals from technical artifacts, a prerequisite for effective batch effect correction.

The pretraining corpus for a large scFM is typically assembled from public repositories such as the CZ CELLxGENE database, NCBI Gene Expression Omnibus (GEO), and other atlas projects [9] [10]. These platforms provide unified access to millions of annotated single-cell datasets. For a corpus of approximately 50 million cells, careful selection and processing are required to ensure broad biological coverage while managing data quality.

Table 1: Characteristics of a Representative 50-Million-Cell Pretraining Corpus

| Characteristic | Description | Source/Note |
|---|---|---|
| Total Cell Count | ~50 million human cells | [10] |
| Primary Data Source | cellxgene database | [10] |
| Species | Human (primarily), with multi-species data in some models | [9] |
| Biological Conditions | Diverse tissues, cell types, donor states (healthy/diseased) | [9] |
| Sequencing Technologies | Multiple platforms (e.g., 10x Genomics 3') | Implied by data source diversity |

Table 2: Data Processing and Quality Control Pipeline

| Processing Step | Key Action | Goal |
|---|---|---|
| Data Acquisition | Collect datasets from public repositories; process raw FASTQ to expression matrices | Create a unified starting point [4] |
| Quality Control | Filter cells and genes based on quality metrics (e.g., mitochondrial counts, gene detection) | Remove low-quality data [4] |
| Gene Annotation | Standardize gene names according to HUGO Gene Nomenclature Committee (HGNC) | Ensure consistent gene identity [4] |
| Format Standardization | Convert all data to a unified sparse matrix format (e.g., h5ad) | Enable efficient model training [4] |

Model Architecture & Tokenization for Batch-Invariant Learning

The model architecture and how cells are converted into model inputs (tokenization) are pivotal in learning batch-invariant representations.

Tokenization Strategy: A common approach is to treat each cell as a "sentence" and its genes as "words." A critical challenge is that gene expression data lacks inherent sequence. To address this, a prevalent method is to rank genes within each cell by their expression levels. This ranked list of top-expressed genes then forms the deterministic sequence input for the transformer model [9]. Each gene is typically represented by a token embedding that may combine a gene identifier and its expression value.

Model Architecture: Most scFMs, including those trained on 50 million cells, use a transformer-based architecture [9]. The attention mechanisms in these models allow them to learn complex, long-range dependencies between genes, which is crucial for understanding core biological programs that persist across batches. Some models, aiming to balance efficiency and performance, may use variants of the transformer, such as the RetNet framework, which offers linear complexity [4].

Experimental Protocol: Pretraining for Batch Integration

This protocol details the procedure for pretraining a foundation model on a corpus of 50 million human cells, with a focus on generating embeddings suitable for batch integration.

Materials and Reagents

Table 3: Essential Research Reagent Solutions for scFM Pretraining

| Item | Function/Description | Example/Note |
|---|---|---|
| Single-Cell RNA-seq Datasets | The fundamental input data for pretraining. | Sourced from public repositories like CELLxGENE, GEO [10] |
| High-Performance Computing (HPC) Cluster | Provides the computational power necessary for large-scale model training. | Equipped with multiple high-end GPUs (e.g., NVIDIA A40/A100) [10] |
| Deep Learning Framework | Software environment for building and training neural networks. | PyTorch, TensorFlow, or MindSpore [4] |
| Data Processing Tools (Python/R) | For quality control, normalization, and tokenization of single-cell data. | Scanpy, Seurat, or custom scripts [4] |

Step-by-Step Procedure

Corpus Curation and Integration

- Identify and download relevant single-cell datasets from public repositories such as CELLxGENE, GEO, and ENA. Target a cumulative cell count of approximately 50 million human cells [10] [4].

- Apply a standardized quality control pipeline uniformly across all datasets. Typical thresholds include filtering out cells with an extreme number of detected genes or high mitochondrial gene percentage, and removing genes detected in very few cells.

- Standardize gene annotations across all datasets using official gene symbols from the HGNC.

- Log-normalize gene expression counts within each cell to correct for sequencing depth variations.

- Integrate the filtered and normalized datasets into a unified corpus, retaining source (batch) information for each cell for downstream evaluation.

Model Input Preparation (Tokenization)

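- Select a fixed set of 2,000-2,200 genes to represent each cell, for example highly variable genes identified across the corpus. Variability is a property of a gene measured across many cells, so this selection is made at the dataset level rather than cell by cell.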

- Rank these selected genes by their normalized expression value within the cell.

- Convert each gene into a token. This is often done by creating an embedding that sums a trainable vector for the gene's identity and a projection of its expression value [10] [4]. This sequence of tokens represents the cell for model input.

Self-Supervised Pretraining

- Configure the transformer model architecture. For a 50-million-cell corpus, model sizes often range from tens of millions to over 100 million parameters [10].

- Employ a Masked Language Modeling (MLM) pretraining objective. Randomly mask a portion (e.g., 15-20%) of the gene tokens in each input sequence.

- Train the model to predict the expression values or identities of the masked genes based on the context provided by the unmasked genes in the same cell. This task forces the model to learn the underlying gene-gene interactions and regulatory patterns that define cellular states [9].

- Utilize multiple GPUs (e.g., on an A40 or Ascend910 cluster) for distributed training, which may take several days to complete [10] [4].
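The masking objective in the steps above can be illustrated with a small NumPy sketch: hide roughly 15% of values per cell, then score predictions only at the hidden positions. The sketch assumes continuous expression targets with a mean-squared-error loss; models that predict gene identities would use a classification loss instead. The sentinel `mask_value=-1.0` is a hypothetical stand-in for the learned mask token a real model would use, and `mask_inputs` and `masked_mse` are hypothetical names.

```python
import numpy as np

def mask_inputs(expr, mask_ratio=0.15, mask_value=-1.0, rng=None):
    """Randomly hide about mask_ratio of the values; return the masked input and the mask."""
    rng = np.random.default_rng() if rng is None else rng
    expr = np.asarray(expr, dtype=float)
    mask = rng.random(expr.shape) < mask_ratio
    masked = np.where(mask, mask_value, expr)
    return masked, mask

def masked_mse(pred, target, mask):
    """Mean squared error over masked positions only; unmasked genes do not contribute."""
    diff = (np.asarray(pred, dtype=float) - np.asarray(target, dtype=float))[mask]
    return float((diff ** 2).mean()) if diff.size else 0.0

rng = np.random.default_rng(7)
x = rng.random((200, 500))                 # toy normalized expression: 200 cells x 500 genes
masked, mask = mask_inputs(x, mask_ratio=0.15, rng=rng)
print(mask.mean())                         # fraction masked, close to 0.15
print(masked_mse(x, x, mask))              # 0.0 for a perfect prediction
print(masked_mse(np.full_like(x, 0.5), x, mask))  # about 0.083, the variance of Uniform(0, 1)
```

A model that learns gene-gene dependencies should push the masked loss well below such context-free baselines.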

Validation of Embeddings for Batch Integration

- Generate Embeddings: Forward pass a held-out dataset containing known batch effects through the pretrained model to extract a latent embedding vector for each cell.

- Visual Assessment: Use dimensionality reduction (e.g., UMAP) on the cell embeddings and color the points by batch origin and cell type. A successful model will produce embeddings where cells cluster primarily by biological identity (cell type) rather than by technical batch.

- Quantitative Metrics: Calculate integration metrics such as:

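- Batch ASW (Average Silhouette Width): Measures mixing of batches. For the raw silhouette computed on batch labels, values closer to 0 indicate better integration. In the commonly used rescaled form (1 minus the absolute silhouette), values closer to 1 are better.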

- Cell-type ASW: Assesses preservation of biological clusters after integration; higher values are better.

- Graph Connectivity: Evaluates whether cells of the same type from different batches form a connected graph.
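The two ASW metrics can be computed from an embedding with the NumPy sketch below. It follows the rescaling convention of the scIB benchmarking package, as we understand it. Cell-type ASW is (silhouette + 1) / 2. Batch ASW is the mean of 1 minus the absolute silhouette on batch labels, computed within each cell type and then averaged. Under this convention both scores lie in [0, 1], and higher is better. The function names are hypothetical, and the O(n²) distance matrix limits the sketch to small examples.

```python
import numpy as np

def silhouette_samples(x, labels):
    """Per-cell silhouette widths with Euclidean distance (0 for singleton clusters)."""
    x = np.asarray(x, dtype=float)
    labels = np.asarray(labels)
    dist = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(-1))
    uniq = np.unique(labels)
    s = np.zeros(len(x))
    if len(uniq) < 2:
        return s
    for i in range(len(x)):
        same = labels == labels[i]
        if same.sum() < 2:
            continue
        a = dist[i, same].sum() / (same.sum() - 1)  # mean distance to own cluster, excluding self
        b = min(dist[i, labels == u].mean() for u in uniq if u != labels[i])
        m = max(a, b)
        s[i] = 0.0 if m == 0 else (b - a) / m
    return s

def cell_type_asw(x, cell_types):
    """Silhouette on cell-type labels rescaled to [0, 1]; higher means clearer cell types."""
    return float((silhouette_samples(x, cell_types).mean() + 1) / 2)

def batch_asw(x, batches, cell_types):
    """1 - |silhouette| on batch labels within each cell type, averaged; higher means better mixing."""
    x, batches, cell_types = np.asarray(x, float), np.asarray(batches), np.asarray(cell_types)
    per_type = []
    for ct in np.unique(cell_types):
        idx = cell_types == ct
        if np.unique(batches[idx]).size < 2:
            continue  # mixing is undefined for a cell type seen in only one batch
        s = silhouette_samples(x[idx], batches[idx])
        per_type.append(np.mean(1 - np.abs(s)))
    return float(np.mean(per_type)) if per_type else float("nan")

# One cell type measured in two batches: separated vs. interleaved embeddings.
types = ["T"] * 4
batches = ["b1", "b1", "b2", "b2"]
split = [[0, 0], [0, 1], [10, 0], [10, 1]]
mixed = [[0, 0], [10, 0], [0, 1], [10, 1]]
print(batch_asw(split, batches, types))            # about 0.10: batches separate
print(batch_asw(mixed, batches, types))            # about 0.55: batches interleaved
print(cell_type_asw(split, ["T", "T", "B", "B"]))  # about 0.95: cell types well separated
```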

Troubleshooting and Optimization Guidelines

Table 4: Common Pretraining Challenges and Solutions

| Challenge | Potential Impact on Batch Integration | Recommended Solution |
|---|---|---|
| High Batch Effect in Pretraining Corpus | Model may learn to encode technical noise. | Increase corpus diversity; ensure balanced representation of technologies and conditions [9]. |
| Poor Cell Embedding Separation | Inability to distinguish cell types defeats batch integration. | Verify tokenization strategy; consider incorporating additional gene metadata (e.g., protein embeddings) [10]. |
| Long Training Time / Computational Cost | Limits iteration and experimentation. | Use model variants with linear attention (e.g., RetNet) [4]; leverage efficient GPU clusters [10]. |

Read-depth-aware Masked Gene Modeling (MGM) Pretraining Task

In the field of single-cell genomics, foundation models are trained on vast datasets to learn fundamental biological principles that can be adapted to various downstream tasks. The core of this training process involves self-supervised learning objectives, where models learn to predict hidden or missing parts of the input data. Among these objectives, Masked Gene Modeling (MGM) has emerged as a predominant strategy, analogous to masked language modeling in natural language processing. Within this framework, the read-depth-aware MGM pretraining task represents a significant advancement for modeling single-cell RNA sequencing (scRNA-seq) data. This approach is particularly crucial for applications requiring robust biological representations, such as batch integration with scFoundation embeddings, where accounting for technical variation is essential for generating biologically meaningful integrated datasets. [2] [9]

scFoundation, a foundation model with 100 million parameters pretrained on approximately 50 million human cells, employs this specific read-depth-aware MGM pretraining task. Unlike simpler MGM variants, this approach explicitly models the sequencing depth of each cell—a key technical factor representing the total number of reads sequenced per cell—which significantly influences observed gene expression counts. By incorporating this critical source of technical variance directly into its pretraining objective, scFoundation learns representations that are more biologically relevant and less confounded by technical artifacts, making its embeddings particularly powerful for complex downstream tasks like multi-batch integration. [2] [4]

Core Concepts and Comparative Framework

The Masked Gene Modeling Paradigm

Masked Gene Modeling trains foundation models by randomly masking a portion of the input gene expression values and tasking the model with reconstructing these masked values based on the remaining context. Through this process, the model learns intricate gene-gene relationships, regulatory patterns, and underlying cellular states without requiring labeled data. The model is trained to minimize the difference between its predictions and the actual masked expression values, progressively building a comprehensive understanding of transcriptional biology. [9]
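Here is a minimal sketch of the masking and loss computation, assuming a dense cells x genes matrix of normalized values. The placeholder value written into masked entries (`mask_value=-1.0`) is an illustrative choice, not the token scFoundation uses. The loss is averaged only over masked positions, so the model is scored solely on values it had to infer.

```python
import numpy as np

def mask_expression(x, mask_ratio=0.3, mask_value=-1.0, rng=None):
    """Randomly hide a fraction of gene values; returns (masked input, boolean mask)."""
    rng = np.random.default_rng() if rng is None else rng
    mask = rng.random(x.shape) < mask_ratio      # True where a value is hidden
    x_in = np.where(mask, mask_value, x)         # hypothetical placeholder for hidden values
    return x_in, mask

def masked_mse(pred, target, mask):
    """Mean squared error restricted to the masked positions."""
    diff = (pred - target)[mask]
    return float((diff ** 2).mean()) if diff.size else 0.0
```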

Key Discretization Strategies in Single-Cell Foundation Models

Different foundation models employ distinct strategies for handling continuous gene expression values, which significantly impact their performance and applicability. The table below summarizes the primary discretization approaches used by prominent single-cell foundation models.

Table 1: Gene Expression Discretization Strategies in Single-Cell Foundation Models

| Strategy Type | Representative Models | Core Methodology | Advantages | Limitations |
|---|---|---|---|---|
| Value Projection | scFoundation, GeneCompass | Projects continuous expression values using linear transformation combined with gene embeddings | Preserves full resolution of expression data; maintains quantitative relationships | Diverges from traditional NLP tokenization; computationally intensive |
| Value Categorization | scBERT, scGPT | Bins expression values into discrete categories or "buckets" | Simplifies sequence modeling; preserves absolute value distributions | Introduces information loss; sensitive to binning parameter selection |
| Rank-based | Geneformer, LangCell | Ranks genes by expression level within each cell | Captures relative expression; robust to batch effects and noise | Loses absolute expression magnitude information |

Among these approaches, scFoundation's value projection method is particularly notable for batch integration applications because it maintains the continuous nature of gene expression data, thereby preserving subtle biological variations that might be lost through binning or ranking strategies. [4] [11]
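To make the contrast concrete, the snippet below encodes one toy cell (five hypothetical genes) under each strategy. Equal-width bins are used for simplicity; real binning schemes (for example, per-cell quantile bins) differ. The random projection vector stands in for learned weights.

```python
import numpy as np

expr = np.array([0.0, 5.2, 1.1, 0.0, 3.7])         # log-normalized values for one cell
genes = np.array(["G1", "G2", "G3", "G4", "G5"])   # hypothetical gene names

# Rank-based: order expressed genes by decreasing value; magnitudes are discarded.
expressed = expr > 0
rank_tokens = genes[expressed][np.argsort(-expr[expressed], kind="stable")]

# Value categorization: map each value to one of n_bins equal-width buckets.
n_bins = 3
edges = np.linspace(expr.min(), expr.max(), n_bins + 1)[1:-1]
bin_tokens = np.digitize(expr, edges)

# Value projection: keep the continuous value and project it linearly to d dimensions.
d = 4
W = np.random.default_rng(0).normal(size=(1, d))   # stand-in for a learned projection
value_vectors = expr[:, None] @ W                  # shape (n_genes, d), linear in expr
```

Here G1 (0.0) and G3 (1.1) fall into the same bin. The projected vectors, by contrast, remain proportional to the original values.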

Technical Protocol: Read-depth-aware MGM Implementation

Data Preprocessing and Normalization

The implementation of read-depth-aware MGM requires careful data preprocessing to ensure model robustness:

- Quality Control: Filter cells based on quality metrics, including total counts, number of detected genes, and mitochondrial percentage. Remove low-quality cells and potential multiplets.

- Gene Selection: Filter genes that are detected in a minimal number of cells to reduce noise and computational requirements.

- Library Size Normalization: Divide each cell's counts by its total count (sequencing depth, or library size) and rescale to a fixed total so that cells sequenced to different depths become comparable. Common choices are counts per million (CPM) or counts per 10,000.

- Log Transformation: Apply log transformation to normalized values to stabilize variance and make the data more normally distributed. [12]
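The four preprocessing steps can be sketched in NumPy as follows. The thresholds, the "MT-" prefix test for mitochondrial genes, and `target_sum=1e4` are illustrative assumptions (set `target_sum=1e6` for CPM). In practice, Scanpy's `pp.filter_cells`, `pp.filter_genes`, `pp.normalize_total`, and `pp.log1p` cover the same ground. The sketch also returns each cell's depth, because read-depth-aware pretraining makes use of it.

```python
import numpy as np

def preprocess_counts(counts, gene_names, min_genes=200, min_cells=3,
                      max_mito_frac=0.2, target_sum=1e4):
    """QC-filter a cells x genes count matrix, depth-normalize, and log1p-transform it."""
    counts = np.asarray(counts, dtype=float)
    gene_names = np.asarray(gene_names).astype(str)
    is_mito = np.char.startswith(gene_names, "MT-")
    # Cell-level QC: number of detected genes and mitochondrial fraction.
    total = counts.sum(axis=1)
    n_genes = (counts > 0).sum(axis=1)
    mito_frac = np.divide(counts[:, is_mito].sum(axis=1), total,
                          out=np.zeros_like(total), where=total > 0)
    keep_cells = (n_genes >= min_genes) & (mito_frac <= max_mito_frac)
    counts = counts[keep_cells]
    # Gene-level filter: keep genes detected in at least min_cells cells.
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts, gene_names = counts[:, keep_genes], gene_names[keep_genes]
    # Library-size normalization to target_sum counts per cell, then log1p.
    depth = counts.sum(axis=1, keepdims=True)
    norm = np.divide(counts, depth, out=np.zeros_like(counts), where=depth > 0) * target_sum
    return np.log1p(norm), depth.ravel(), gene_names, keep_cells
```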

Model Architecture and Training Configuration

scFoundation employs an asymmetric encoder-decoder architecture with 100 million parameters. Its input vocabulary covers 19,264 genes (human protein-coding genes plus common mitochondrial genes), and it produces cell embeddings with 3,072 dimensions. [2]

Table 2: scFoundation Model Architecture Specifications

| Component | Specification | Purpose |
|---|---|---|
| Architecture Type | Asymmetric encoder-decoder | Efficient processing of high-dimensional gene expression data |
| Parameter Count | 100 million | Capacity to capture complex biological relationships |
| Input Genes | 19,264 human protein-coding + mitochondrial genes | Comprehensive coverage of the transcriptome |
| Output Dimension | 3,072 | High-dimensional embedding space for rich representation |
| Pretraining Data | ~50 million human cells | Diverse biological contexts and cell states |

Read-depth-aware MGM Training Procedure

The specific implementation of the read-depth-aware MGM pretraining task follows this experimental workflow:

Figure 1: Experimental workflow for read-depth-aware Masked Gene Modeling pretraining.

The technical protocol involves these critical steps:

Input Representation: For each cell, the gene expression profile is represented as a vector of normalized counts for all genes in the vocabulary.

Sequencing Depth Calculation: The total sequencing depth (library size) for each cell is calculated as the sum of all counts across genes before normalization.

Masking Strategy: A random subset (typically 15-30%) of gene expression values is masked, following the approach used in standard MGM tasks.

Read-depth Integration: The sequencing depth information is incorporated into the model through one of several possible mechanisms:

- As an additional input token or feature vector

- As a scaling factor in the loss function

- As a conditional input to the reconstruction layers

Reconstruction Target: The model is trained to reconstruct the original expression values of masked genes using a mean squared error (MSE) loss function, which is particularly suitable for continuous expression values.

Training Configuration: The model is trained with large batch sizes and optimized using Adam or similar optimizers with learning rate scheduling. [2] [4]
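One way to implement the first mechanism listed (depth as extra input features) is sketched below. It resembles the read-depth-aware setup reported for scFoundation, in which a shallower "source" version of a cell is used to predict its deeper "target" profile. However, the binomial thinning, the `downsample_frac` value, and the log10 depth encoding are assumptions for illustration, and the function name is hypothetical. Masking would be applied on top of the returned input.

```python
import numpy as np

def rda_training_pair(raw_counts, downsample_frac=0.5, target_sum=1e4, rng=None):
    """Build (input, target) for one cell with depth indicators appended to the input.

    Input: a binomially thinned copy of the cell (lower source depth S), log-normalized,
    followed by log10(T) and log10(S). Target: the original profile at depth T.
    """
    rng = np.random.default_rng() if rng is None else rng
    raw = np.asarray(raw_counts, dtype=np.int64)
    thinned = rng.binomial(raw, downsample_frac)   # simulate shallower sequencing of the same cell
    T, S = raw.sum(), thinned.sum()

    def lognorm(c, total):
        return np.log1p(c / max(total, 1) * target_sum)

    depth_features = [np.log10(max(T, 1)), np.log10(max(S, 1))]  # hypothetical depth encoding
    x_in = np.concatenate([lognorm(thinned, S), depth_features])
    y = lognorm(raw, T)
    return x_in, y, T, S
```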

Research Reagent Solutions and Computational Tools

Implementation of read-depth-aware MGM requires specific computational resources and software tools. The following table details essential components for replicating this pretraining approach.

Table 3: Essential Research Reagents and Computational Tools for Read-depth-aware MGM

| Category | Item/Resource | Specification/Version | Purpose in Protocol |
|---|---|---|---|
| Pretraining Data | Human single-cell transcriptomes | ~50 million cells (for scFoundation) | Model training corpus capturing diverse biology |
| Model Architecture | Asymmetric encoder-decoder transformer | 100 million parameters | Core learning framework for gene relationships |
| Software Framework | MindSpore AI Framework | - | Optimized training on Ascend NPUs |
| Hardware | Ascend910 NPUs | 4x Huawei Atlas 800 servers | Efficient processing of large-scale models |
| Gene Vocabulary | Protein-coding genes + mitochondrial | 19,264 genes | Comprehensive transcriptome coverage |
| Normalization | Read-depth normalization | Counts per million (CPM) | Technical variation correction |
| Loss Function | Mean Squared Error (MSE) | - | Reconstruction error minimization |

These specialized tools and resources enable the efficient training of large-scale foundation models like scFoundation, which requires substantial computational resources due to its 100 million parameters and training dataset of approximately 50 million cells. [2] [4]

Application Protocol: Batch Integration with scFoundation Embeddings

Experimental Workflow for Batch Integration

The application of scFoundation embeddings for batch integration follows a systematic protocol designed to maximize biological signal preservation while minimizing technical variance:

Figure 2: Batch integration workflow using scFoundation embeddings.

Step-by-Step Integration Methodology

Data Preparation

- Format each batch as a separate gene expression matrix with consistent gene annotations

- Apply standard quality control metrics to each batch individually

- Perform minimal normalization to address extreme technical artifacts while preserving biological variance

Embedding Generation

- Process each batch through the pretrained scFoundation model without fine-tuning (zero-shot)

- Extract cell embeddings from the model's final layer (3,072 dimensions)

- Concatenate embeddings from all batches into a unified embedding matrix
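Concatenating per-batch embeddings is straightforward but easy to get wrong if batch labels are not carried along. Below is a small helper that assumes each batch has already been embedded into an `(n_cells, d)` NumPy array (for example, by a hypothetical `embed_batch()` wrapper around the model).

```python
import numpy as np

def combine_batch_embeddings(per_batch):
    """Stack {batch_name: (n_cells, d) array} into one matrix plus a per-cell batch label vector."""
    names = list(per_batch)
    widths = {per_batch[n].shape[1] for n in names}
    if len(widths) != 1:
        raise ValueError(f"embedding widths differ across batches: {sorted(widths)}")
    emb = np.vstack([per_batch[n] for n in names])
    labels = np.concatenate([np.full(per_batch[n].shape[0], n) for n in names])
    return emb, labels
```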

Batch Effect Assessment

- Visualize embeddings using UMAP or t-SNE, coloring by batch and cell type

- Calculate quantitative batch integration metrics:

  - Batch ASW (Average Silhouette Width): Measures batch mixing (closer to 0 indicates better integration)

  - PCR (Principal Component Regression): Quantifies variance explained by batch (lower values preferred)

- Evaluate biological conservation using cell type clustering metrics
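PCR batch can be sketched as follows: compute principal components of the embedding, regress each component on one-hot batch indicators, and weight each R² by that component's variance. This follows the general idea of the PCR metric in scIB-style benchmarks. The number of components and the exact weighting used in published implementations may differ.

```python
import numpy as np

def pcr_batch(X, batch, n_comps=50):
    """Variance-weighted R^2 of principal components regressed on batch (0 = no batch signal)."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    k = min(n_comps, len(s))
    pcs = U[:, :k] * s[:k]          # PC scores (already centered)
    var = s[:k] ** 2                # proportional to variance explained per PC
    # Intercept plus one-hot indicators for all but the first batch level.
    levels = np.unique(batch)
    design = np.column_stack([np.ones(len(X))] + [(batch == lv).astype(float) for lv in levels[1:]])
    coef, *_ = np.linalg.lstsq(design, pcs, rcond=None)
    ss_res = ((pcs - design @ coef) ** 2).sum(axis=0)
    ss_tot = (pcs ** 2).sum(axis=0)
    r2 = 1.0 - np.divide(ss_res, ss_tot, out=np.ones_like(ss_tot), where=ss_tot > 0)
    return float((var * r2).sum() / var.sum())
```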

Optional Additional Integration

- If significant batch effects persist, apply lightweight integration algorithms (Harmony, Scanorama) to the scFoundation embeddings

- Avoid aggressive integration that might remove biological signal

Validation and Interpretation

Performance Evaluation Metrics

The performance of batch integration using scFoundation embeddings should be evaluated using multiple complementary metrics, as shown in the table below.

Table 4: Quantitative Metrics for Evaluating Batch Integration Performance

| Metric Category | Specific Metric | Ideal Value | Evaluation Focus |
|---|---|---|---|
| Batch Mixing | Batch ASW | Closer to 0 | Degree of batch effect removal |
| | PCR Batch | Lower values | Variance explained by batch |
| Biological Conservation | Cell Type ASW | Closer to 1 | Preservation of cell identity |
| | Graph Connectivity | Higher values | Maintenance of biological structure |
| Overall Performance | scGraph-OntoRWR | Higher values | Consistency with biological knowledge |
| | LISI Score (iLISI) | Higher values | Local integration quality |

Comparative benchmarks report that embeddings from scFoundation's read-depth-aware pretraining perform robustly in complex integration scenarios, particularly when batches contain both technical and biological covariates [2] [13]. However, zero-shot embeddings do not always outperform simpler baselines such as HVG selection combined with scVI or Harmony [13].

In the field of single-cell genomics, batch effects—technical variations between datasets derived from different experiments, sequencing platforms, or donors—pose a significant challenge to integrating and analyzing data at scale. These non-biological variations can obscure true biological signals, complicating the identification of cell types, states, and responses. The emergence of single-cell foundation models (scFMs), pre-trained on millions of cells, offers a powerful solution by learning universal representations of cellular states that can be adapted to various downstream tasks. Among these, scFoundation is a notable model pre-trained on approximately 50 million human cells, featuring around 100 million parameters [4] [2]. It employs a value projection strategy and an asymmetric encoder-decoder architecture to directly predict raw gene expression values, preserving the full resolution of the data [2] [11]. This application note explores how scFoundation's embedding generation process encodes cell states, with a specific focus on its application and methodology for batch integration in research and drug development.

Technical Architecture of scFoundation

The core of scFoundation's ability to generate meaningful cell embeddings lies in its model architecture and pre-training strategy.

Input Representation and Tokenization

A critical step in preparing single-cell RNA sequencing (scRNA-seq) data for scFoundation is tokenization—the process of converting raw gene expression data into a structured format the model can process. Unlike models that use gene ranking or value binning, scFoundation utilizes a value projection strategy [11]. This approach represents a gene's expression vector as a sum of a projection of the gene expression value and a gene-specific embedding. This method preserves the full, continuous resolution of the gene expression data, avoiding the information loss inherent in discretization methods like binning or ranking [4] [11].

Table: scFoundation Tokenization and Input Features

| Component | Description | Role in Embedding |
|---|---|---|
| Gene Embedding | Lookup table (768 dimensions) [2] | Captures unique, context-independent identity of each gene. |
| Value Embedding | Linear projection of continuous expression value [11] | Encodes the absolute expression level of a gene in a specific cell. |
| Positional Embedding | Not used in scFoundation [2] | N/A |

Model Architecture and Pre-training

scFoundation is built on an asymmetric encoder-decoder transformer architecture [2]. Its pre-training employs a masked gene modeling (MGM) task: a random subset of genes in a cell's expression profile is masked, and the model predicts their original expression values using a read-depth-aware mean squared error (MSE) loss [2]. Through this self-supervised learning on 50 million human cells, the model learns complex, non-linear relationships between genes and builds a rich internal representation of cellular state. In many scFMs, the embedding for an entire cell is derived from a special token (e.g., [CLS]) prepended to the input sequence, which aggregates global cell state information through the attention layers; an alternative is to pool (e.g., average) the output states of all gene tokens [9] [1].
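Two common ways to turn encoder outputs into a single cell vector, a [CLS]-style summary token and pooling over gene-token outputs, are sketched below on a generic `(n_tokens, d)` array of encoder outputs for one cell. Which scheme scFoundation actually uses should be checked against its released code. Note that its 3,072-dimensional output is exactly four times the 768-dimensional gene embedding, which would be consistent with concatenating several 768-dimensional summaries. The `concat` mode here (mean plus max) is an illustrative assumption.

```python
import numpy as np

def pool_cell_embedding(token_states, mode="mean", cls_index=0):
    """Collapse (n_tokens, d) encoder outputs for one cell into a single vector."""
    h = np.asarray(token_states, dtype=float)
    if mode == "cls":
        return h[cls_index]                  # state of a designated summary token
    if mode == "mean":
        return h.mean(axis=0)                # average over tokens
    if mode == "max":
        return h.max(axis=0)                 # per-dimension maximum over tokens
    if mode == "concat":
        return np.concatenate([h.mean(axis=0), h.max(axis=0)])  # width 2 * d
    raise ValueError(f"unknown pooling mode: {mode}")
```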

The following diagram illustrates the workflow from raw single-cell data to a finalized, batch-integrated embedding space.

Protocol for Batch Integration Using scFoundation Embeddings

This protocol provides a step-by-step methodology for using scFoundation to integrate multiple single-cell datasets and remove technical batch effects.

Data Preprocessing and Embedding Extraction

Goal: To generate a unified, batch-aware latent representation of all cells from different experimental batches.

Materials & Reagents:

- Computing Environment: A high-performance computing environment with a modern GPU (e.g., NVIDIA A100 or V100) is recommended for efficient inference.

- Software: Python environment with scFoundation model implementation and dependencies (e.g., PyTorch, NumPy, Scanpy).

- Input Data: Multiple scRNA-seq count matrices (cells x genes) from different batches/studies, annotated with batch and biological condition labels.

Procedure:

- Data Standardization: Independently for each dataset, perform standard quality control (filtering low-quality cells and genes) and normalize for sequencing depth (e.g., counts per 10,000). Log-transform the expression values if required by the model's implementation.

- Gene List Harmonization: Align the gene sets across all datasets to a common reference (e.g., HGNC symbols). Retain only the genes that are present in both the datasets and scFoundation's pre-trained vocabulary.

- Embedding Inference:

a. Load the pre-trained scFoundation model.

b. For each cell in the combined dataset, pass its normalized gene expression vector through the model.

c. Extract the cell embedding from the model. This is typically the hidden state associated with the special [CLS] token or the mean-pooled output of all gene tokens [9] [1].

d. Compile all cell embeddings into a matrix (cells x embedding_dimension). This matrix is the foundational representation for all subsequent integration steps.
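Gene-list harmonization, together with the fixed-length input it implies, can be sketched as follows. The sketch assumes the model expects every cell as a vector ordered like its gene vocabulary (19,264 genes for scFoundation), and that vocabulary genes absent from a dataset are filled with zeros. Both assumptions should be confirmed against the model's own preprocessing code. `vocab` is a hypothetical list of gene symbols, and symbols in `gene_names` are assumed to be unique.

```python
import numpy as np

def align_to_vocabulary(X, gene_names, vocab):
    """Reorder columns of X (cells x genes) to match `vocab`.

    Dataset genes not in `vocab` are dropped; vocabulary genes missing
    from the dataset are filled with zeros.
    """
    X = np.asarray(X, dtype=float)
    col = {g: j for j, g in enumerate(gene_names)}
    out = np.zeros((X.shape[0], len(vocab)), dtype=float)
    present = np.array([g in col for g in vocab], dtype=bool)
    src = [col[g] for g in vocab if g in col]    # source columns in vocabulary order
    out[:, present] = X[:, src]
    return out, present
```

Reporting `present.mean()` for each dataset is a cheap sanity check. A low fraction of observed vocabulary genes usually points to a gene-symbol mismatch rather than to biology.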

Post-Hoc Batch Correction and Evaluation

Goal: To remove residual technical variance from the scFoundation embeddings and evaluate the integration quality.

Procedure:

- Apply Integration Algorithm: Input the matrix of scFoundation cell embeddings into a batch integration algorithm such as Harmony [2] [13] or Scanorama. These methods will further refine the embedding space to align cells by cell type rather than by batch of origin.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the batch-corrected embedding matrix, followed by UMAP or t-SNE for 2D visualization.

- Quality Assessment:

  - Visual Inspection: Examine the UMAP/t-SNE plot. Successful integration is indicated by the intermingling of cells of the same annotated cell type from different batches, rather than clustering by batch.

  - Quantitative Metrics: Calculate established batch integration metrics [2] [13]:

    - Average Bio (AvgBIO) / Cell-type ASW (cASW): Measures preservation of biological variance (cell type separation). Higher is better.

    - Batch ASW (bASW) / PCR Batch: Measures the removal of technical batch variance. Lower is better.

    - Graph Connectivity: Assesses whether cells of the same type form a connected graph across batches.
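Graph connectivity can be sketched in three steps: build a k-nearest-neighbor graph on the (corrected) embedding, restrict it to each cell type, and record the fraction of that type's cells in the largest connected component. The score is the mean over cell types, where 1.0 means every type forms a single connected piece across batches. This mirrors the definition used in scIB-style benchmarks, but the dense distance matrix limits the sketch to small datasets, and `k=15` is an arbitrary default.

```python
import numpy as np

def knn_graph(X, k=15):
    """Symmetric boolean kNN adjacency matrix (Euclidean distance, self excluded)."""
    X = np.asarray(X, dtype=float)
    k = min(k, len(X) - 1)
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    np.fill_diagonal(d2, np.inf)
    nbrs = np.argsort(d2, axis=1)[:, :k]
    A = np.zeros((len(X), len(X)), dtype=bool)
    A[np.repeat(np.arange(len(X)), k), nbrs.ravel()] = True
    return A | A.T

def graph_connectivity(X, cell_type, k=15):
    A = knn_graph(X, k)
    cell_type = np.asarray(cell_type)
    scores = []
    for ct in np.unique(cell_type):
        idx = np.flatnonzero(cell_type == ct)
        sub = A[np.ix_(idx, idx)]            # kNN graph restricted to this cell type
        seen = np.zeros(len(idx), dtype=bool)
        largest = 0
        for start in range(len(idx)):        # depth-first search over components
            if seen[start]:
                continue
            seen[start] = True
            stack, size = [start], 0
            while stack:
                node = stack.pop()
                size += 1
                for nb in np.flatnonzero(sub[node] & ~seen):
                    seen[nb] = True
                    stack.append(nb)
            largest = max(largest, size)
        scores.append(largest / len(idx))
    return float(np.mean(scores))
```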

Performance and Benchmarking

scFoundation's embeddings have been rigorously evaluated against other methods in benchmark studies. The table below summarizes its performance in batch integration and related tasks compared to other foundation models and established baselines.

Table: Benchmarking scFoundation Performance on Key Tasks

| Model | Pre-training Scale | Architecture & Tokenization | Batch Integration Performance | Cell Annotation Performance |
|---|---|---|---|---|
| scFoundation | ~50M human cells [2] | Asym. Encoder-Decoder / Value Projection [2] | Robust, outperforms some baselines on complex datasets [2] | High accuracy, benefits from pre-training [2] |
| scGPT | ~33M human cells [4] [2] | Transformer / Value Binning [2] | Good, but can be outperformed by scVI/Harmony on technical batches [13] | High, but zero-shot performance can be inconsistent [13] |
| Geneformer | ~30M human cells [4] [2] | Transformer / Gene Ordering [2] | Struggles with batch effects; often outperformed by simpler methods [13] | High when fine-tuned; limited zero-shot capability [13] |
| Baseline (scVI) | N/A (Model fitted per task) | Generative / Probabilistic Model | Consistently strong performance on technical batch correction [13] | N/A |
| Baseline (Harmony) | N/A (Algorithm) | Iterative soft clustering with linear correction in PCA space | Strong performer, especially on technical batches [13] | N/A |

A key insight from benchmarks is that while foundation models like scFoundation capture deep biological knowledge, their zero-shot embeddings (used without any task-specific fine-tuning) may not always outperform simpler, specialized methods like Highly Variable Genes (HVG) selection combined with scVI or Harmony on straightforward batch integration tasks [13]. However, their strength lies in providing a powerful, general-purpose feature representation that can be effectively fine-tuned for a wide array of complex downstream applications beyond just batch integration.

Application in Perturbation Prediction

Beyond batch integration, scFoundation's ability to encode a robust representation of cellular state makes it highly valuable for predicting the effects of genetic or chemical perturbations—a critical task in drug discovery.

The workflow involves fine-tuning the pre-trained model on a dataset containing both control and perturbed cells (e.g., cells treated with a drug or with a gene knocked out). The model learns to map the perturbation condition to a specific region in the embedding space, predicting the resulting shift in gene expression profile.

Experimental Protocol for Perturbation Prediction:

- Data Preparation: Create a dataset of single-cell expression profiles from a perturbation experiment (e.g., using CRISPRi or drug screening). Include both control and perturbed cells.

- Model Fine-tuning: Extend the scFoundation model's input by adding a special token that represents the specific perturbation (e.g., [PERT:DRUG_A]). Fine-tune the model on this dataset using the MGM objective, allowing it to learn the association between the perturbation token and the resulting changes in gene expression.

- In Silico Prediction: For a novel perturbation, input a control cell's expression profile alongside the new perturbation token. The model's output is a predicted gene expression vector for the cell under that perturbation, enabling in silico hypothesis testing and drug candidate prioritization [4] [9].
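The fine-tuning step requires new vocabulary entries for perturbation tokens. Below is a minimal NumPy sketch of that bookkeeping, with hypothetical names throughout: `vocab` is a token-to-id dict, and `emb_table` is the model's token embedding matrix. New rows are initialized with small random values (to be learned during fine-tuning), and the perturbation id is prepended to the cell's gene ids. How scFoundation-based perturbation models actually inject the perturbation is not specified here and may differ.

```python
import numpy as np

def add_perturbation_tokens(vocab, emb_table, new_tokens, rng=None, scale=0.02):
    """Append embedding rows for new tokens such as "[PERT:DRUG_A]"; returns (vocab, table)."""
    rng = np.random.default_rng() if rng is None else rng
    vocab = dict(vocab)                              # leave the caller's dict untouched
    fresh = [t for t in new_tokens if t not in vocab]
    start = emb_table.shape[0]
    for i, t in enumerate(fresh):
        vocab[t] = start + i                         # ids continue after existing table rows
    new_rows = rng.normal(0.0, scale, size=(len(fresh), emb_table.shape[1]))
    return vocab, np.vstack([emb_table, new_rows])

def build_input_ids(pert_token, gene_ids, vocab):
    """Prepend the perturbation token id to one cell's gene token ids."""
    return np.array([vocab[pert_token]] + list(gene_ids), dtype=np.int64)
```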

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for scFoundation Workflows

| Resource / Tool | Type | Function in Experiment |
|---|---|---|
| Pre-trained scFoundation Model | Software Model | Provides the core foundation for generating cell and gene embeddings; encodes pre-learned biological knowledge from 50M+ cells. |
| CZ CELLxGENE Database | Data Resource | A primary source of standardized, annotated single-cell data used for model pre-training and as a reference for cell type annotation [9] [1]. |
| Harmony / Scanorama | Software Algorithm | Post-hoc integration algorithms used to remove batch effects from the high-dimensional cell embeddings produced by scFoundation [2] [13]. |
| Scanpy / Seurat | Software Toolkit | Comprehensive Python/R toolkits for single-cell analysis; used for data preprocessing, normalization, visualization (UMAP/t-SNE), and general analysis workflows. |
| Perturbation Tokens | Model Input Feature | Special tokens added to the model's vocabulary during fine-tuning to represent specific genetic or chemical perturbations, enabling in silico prediction. |

The Critical Role of Value Projection for Continuous Gene Expression Representation

In single-cell genomics, the method by which gene expression data is represented within a foundation model is a fundamental determinant of its biological fidelity and analytical utility. While early approaches relied on gene ordering or value categorization, value projection has emerged as a superior strategy for preserving the full resolution of continuous transcriptional data. This continuous representation is particularly critical for applications requiring precise quantification of expression changes, such as batch integration and perturbation response prediction.

This Application Note delineates the core principles of value projection, as exemplified by models like scFoundation and CellFM, and provides detailed protocols for their application in batch integration tasks. By treating gene expression values as continuous projections rather than discretized categories, value projection-based models retain the subtle, biologically meaningful variations in transcript abundance that are essential for distinguishing nuanced cellular states and effectively mitigating technical artifacts.

Value Projection in Single-Cell Foundation Models

Core Conceptual Framework

Value projection is an input representation strategy for single-cell foundation models (scFMs) in which each gene's input vector is the sum of a gene embedding and a projection of its continuous expression value [4]. This contrasts with two other prevalent strategies:

- Gene Ordering: Treats a cell as a sequence of genes ranked by expression level (e.g., Geneformer) [9] [4]. This discards the precise magnitude of expression.

- Value Categorization: Bins continuous expression values into discrete buckets (e.g., scBERT) [4], thereby losing resolution.

The key advantage of value projection is its ability to preserve the full resolution of the original gene expression data, transforming the task of modeling a cell's state into a continuous prediction problem [4]. This is paramount for accurately capturing the graded nature of transcriptional regulation.
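In symbols (generic notation chosen here, not taken from the scFoundation or CellFM papers), the value-projection input for gene $g$ in cell $c$ with continuous normalized expression $x_{c,g}$ can be written as follows, where $\mathbf{E}_g$ is the learned gene embedding and $\mathbf{w}$ is a learned projection vector (a $d \times 1$ matrix $W$):

```latex
\mathbf{h}_{c,g} = \mathbf{E}_g + \mathbf{w}\,x_{c,g}, \qquad \mathbf{E}_g,\ \mathbf{w} \in \mathbb{R}^{d}

\mathbf{h}_{c,g} - \mathbf{h}_{c',g} = \mathbf{w}\,\left(x_{c,g} - x_{c',g}\right)
```

The second line shows why resolution is preserved. Any difference in a gene's expression between two cells, however small, produces a proportional difference in the model input, whereas under value categorization two values falling in the same bin would map to identical tokens.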

Comparative Analysis of Representation Strategies

The table below summarizes the core differences between the three primary representation strategies used in single-cell foundation models.

Table 1: Comparison of Gene Representation Strategies in Single-Cell Foundation Models

| Strategy | Core Mechanism | Key Example Models | Advantages | Limitations |
|---|---|---|---|---|
| Value Projection | Sum of gene embedding + projection of continuous value | scFoundation, CellFM, GeneCompass [4] | Preserves full data resolution; superior for quantitative tasks | Potentially higher computational cost |
| Gene Ordering | Ranks genes by expression to form a sequence | Geneformer, scGPT (partially), tGPT [9] [4] | Leverages powerful sequence models; intuitive "cell as sentence" analogy | Discards absolute expression magnitude |
| Value Categorization | Bins expression values into discrete categories | scBERT, scGPT (partially) [9] [4] | Simplifies problem to classification; can be effective for annotation | Loss of fine-grained expression information |

Quantitative Benchmarking of Value Projection Models

Performance in Downstream Tasks

Independent benchmarking studies have evaluated scFMs across a spectrum of biologically relevant tasks. These benchmarks reveal that while no single model is universally superior, value projection models demonstrate consistent and robust performance.

Table 2: Benchmarking Performance of Selected Single-Cell Foundation Models

| Model | Representation Strategy | Cell Type Annotation (Median ARI) | Batch Integration (Median iLISI) | Perturbation Prediction (Mean Pearson R) | Key Strength |
|---|---|---|---|---|---|
| scFoundation | Value Projection | 0.517 | 2.219 | 0.144 | Accurate gene expression value prediction [8] [4] |
| CellFM | Value Projection | 0.553 | 2.275 | 0.159 | Scalability to 100M+ cells [4] |
| Geneformer | Gene Ordering | 0.491 | 2.105 | 0.138 | Gene network analysis [8] |
| scGPT | Value Categorization/Projection | 0.532 | 2.194 | 0.149 | Versatility across tasks [8] |
| scBERT | Value Categorization | 0.502 | 2.101 | 0.127 | Cell type annotation [8] |

Note: Performance metrics are aggregated from benchmark studies and are intended for comparative purposes. Actual performance is dataset- and task-dependent. ARI: Adjusted Rand Index; iLISI: integration Local Inverse Simpson's Index, where higher values indicate better mixing of batches. [8]

Biological Insight from Continuous Representations

The continuous embeddings generated by value projection models encode meaningful biological knowledge. For instance:

- Gene Function Prediction: CellFM has demonstrated superior performance in predicting gene functions, a task that benefits from the nuanced relationships captured by continuous value projections [4].

- Gene-Gene Relationships: The embeddings from value projection models like scFoundation and CellFM can more accurately reconstruct known gene-gene interaction networks from databases like STRING compared to models using other representation strategies [14] [4].

Application Protocol: Batch Integration with scFoundation Embeddings

Research Reagent Solutions

Table 3: Essential Tools and Reagents for scFoundation-Based Batch Integration

| Item Name | Function/Description | Example/Note |
|---|---|---|
| scFoundation Model | Pre-trained foundation model for generating latent cell embeddings. | 50 million human cells, ~0.1B parameters [4]. |
| Single-Cell Dataset | Input data for analysis and integration. | Format: h5ad, Seurat object, or 10x Genomics directory. |
| Computational Environment | Hardware/Software for running scFoundation. | GPU acceleration (e.g., NVIDIA A100) recommended. Python environment with PyTorch. |
| Preprocessing Pipeline | Standardizes raw data for model input. | Quality control, gene name standardization (HGNC), normalization. SynEcoSys database workflow can be used [4]. |
| Downstream Analysis Toolkit | For analyzing integrated embeddings. | Scanpy, Seurat, scikit-learn for clustering and visualization. |

Step-by-Step Workflow

The following diagram outlines the core computational workflow for applying scFoundation to a batch integration problem.

Protocol Steps:

Data Preprocessing and Standardization

- Input: Raw count matrices from multiple batches (e.g., different experiments, platforms, or donors).

- Quality Control: Filter out low-quality cells and genes. Standard thresholds include excluding cells with an extreme number of detected genes or high mitochondrial gene percentage.

- Gene Annotation: Standardize gene names to the HUGO Gene Nomenclature Committee (HGNC) nomenclature to ensure consistency across datasets [4].

- Output: A unified, quality-controlled dataset ready for model input.

Generation of Cell Embeddings Using scFoundation

- Model Loading: Load the pre-trained scFoundation model. The model is designed to predict raw gene expression values using a masked autoencoder (MAE) objective, learning robust representations in the process [4].

- Embedding Inference: Pass the preprocessed single-cell data through the model and extract the latent cell embeddings from its output. These embeddings are continuous vectors (3,072 dimensions, far fewer than the 19,264 input genes) that summarize each cell's state. Pretraining is intended to make them capture biological signal rather than technical noise, but residual batch effects should still be checked in the assessment step.

Assessment of Batch Integration Quality

- Qualitative Visualization: Use dimensionality reduction techniques like UMAP or t-SNE on the scFoundation embeddings to visualize the integrated data. Successful integration is indicated by the intermingling of cells from different batches within the same cell type clusters.

- Quantitative Metrics: Calculate metrics to objectively evaluate performance.

  - iLISI (Integration Local Inverse Simpson's Index): Measures the mixing of batches in local neighborhoods. A higher score indicates better batch integration [8].

  - Cell-type-specific Silhouette Width: Assesses the preservation of biological variation by measuring how well-defined cell type clusters are after integration.
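A simplified iLISI can be computed from a k-nearest-neighbor graph. For each cell, take the batch composition of its neighbors and report the inverse Simpson's index, which ranges from 1 (all neighbors from one batch) to the number of batches (even mixing). The original LISI weights neighbors with a perplexity-calibrated Gaussian kernel. This sketch instead uses uniform weights over `k` neighbors as a simplifying assumption, so its values will not exactly match published iLISI scores.

```python
import numpy as np

def ilisi_knn(X, batch, k=30):
    """Per-cell inverse Simpson's index of batch labels among the k nearest neighbors."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    k = min(k, len(X) - 1)
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    np.fill_diagonal(d2, np.inf)                  # a cell is not its own neighbor
    nbrs = np.argsort(d2, axis=1)[:, :k]
    levels, codes = np.unique(batch, return_inverse=True)
    scores = np.empty(len(X))
    for i in range(len(X)):
        p = np.bincount(codes[nbrs[i]], minlength=len(levels)) / k  # batch proportions
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores
```

Summarizing with `np.median(ilisi_knn(emb, batch))` gives a single number comparable in spirit to the median iLISI values reported in benchmark tables.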

Downstream Analysis on Integrated Data

- Application: Use the integrated and batch-corrected scFoundation embeddings for subsequent biological discovery.

- Tasks: Perform cell type annotation, identify differentially expressed genes across conditions, analyze cellular trajectories, or characterize population heterogeneity within a unified, batch-corrected feature space.

Technical Architecture of a Value Projection Model

The functional advantage of value projection models is rooted in their underlying architecture. The following diagram details the core components of a typical value projection model, such as scFoundation or CellFM, illustrating how continuous expression values are processed.

Architectural Components

- Embedding Module: This is where value projection occurs. The input gene expression vector is processed in two parallel streams:

  - Gene Embedding (E_g): A lookup that provides a unique, learnable vector for each gene, analogous to a word embedding in NLP. This captures the intrinsic identity and functional context of the gene [9] [14].

  - Value Projection (W·x): The continuous expression value (x) for each gene is linearly transformed via a projection matrix (W). This operation scales the gene's influence based on its measured abundance in the specific cell.

  - Combined Representation: The gene embedding and value projection are summed, creating a context-aware representation for each gene that incorporates both its identity and its current expression level.

- Transformer Encoder Stack: The sequence of combined gene representations is processed by multiple transformer layers. The self-attention mechanism allows the model to capture complex, long-range dependencies and regulatory interactions between all genes in the cell [9] [15]. This step is crucial for learning a holistic representation of the cellular state.

- Output: The model outputs a latent cell embedding that can be used for downstream tasks, and (in pre-training) is also used to reconstruct masked gene expression values, forcing the model to learn robust, predictive features.
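The data flow described above can be traced in a toy NumPy encoder. It combines a gene embedding table with a linear value projection, applies one single-head self-attention layer with a residual connection, and mean-pools into a cell vector. Sizes, random weights, and the pooling choice are illustrative assumptions. This is not scFoundation's asymmetric encoder-decoder, which has many layers and is trained with masked reconstruction.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)      # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

class TinyValueProjectionEncoder:
    """Toy encoder: E_g + W*x per gene, one self-attention layer, mean pooling. Random weights."""

    def __init__(self, n_genes, d=16, seed=0):
        rng = np.random.default_rng(seed)
        self.E = rng.normal(0, 0.1, (n_genes, d))   # gene embedding lookup table (E_g)
        self.w = rng.normal(0, 0.1, (1, d))         # value projection W (d x 1, stored as a row)
        self.Wq, self.Wk, self.Wv = (rng.normal(0, d ** -0.5, (d, d)) for _ in range(3))

    def token_states(self, x):
        """x: (n_genes,) continuous expression for one cell -> (n_genes, d) contextual states."""
        h = self.E + x[:, None] * self.w            # combined representation per gene
        q, k, v = h @ self.Wq, h @ self.Wk, h @ self.Wv
        attn = softmax(q @ k.T / np.sqrt(h.shape[1]))  # every gene attends to every gene
        return h + attn @ v                         # residual connection

    def cell_embedding(self, x):
        return self.token_states(np.asarray(x, dtype=float)).mean(axis=0)
```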

Value projection represents a significant methodological advance in the construction of single-cell foundation models. By preserving the continuous nature of gene expression data, it provides a more faithful and information-rich representation of cellular states compared to ordering or categorization strategies. As demonstrated in benchmark studies, models like scFoundation that employ this strategy are particularly effective for complex analytical challenges such as batch integration, where the precise quantification of biological signal is paramount for distinguishing it from technical noise. The provided protocols offer a practical roadmap for researchers to leverage these powerful models, thereby enhancing the reproducibility and biological insight gained from integrative single-cell genomic analyses.

Why Foundation Models? The Promise of Universal Biological Representations

The advent of high-throughput single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research, providing an unprecedentedly granular view of transcriptomics at the individual-cell level. This technology enables researchers to dissect complex cellular compositions within tissues, trace differentiation trajectories, and identify rare cell populations [11]. However, this capability comes with significant computational challenges. Single-cell transcriptome data are characterized by high sparsity, high dimensionality, and a low signal-to-noise ratio [8]. Furthermore, the rapid accumulation of data from diverse tissues, species, and experimental conditions has created an urgent need for unified frameworks capable of integrating and comprehensively analyzing these expanding repositories [1].

Foundation models (FMs), defined as large-scale deep learning models pretrained on vast datasets using self-supervised learning, have emerged as a powerful solution to these challenges. Inspired by their success in natural language processing and computer vision, researchers have extended these techniques to single-cell analysis, giving rise to single-cell foundation models (scFMs) [1]. These models are trained on millions of single-cell transcriptomes, learning the fundamental "language" of cells by treating individual cells as sentences and genes or genomic features as words or tokens [1]. The premise is that exposure to massive and diverse datasets enables these models to learn universal biological principles that generalize effectively to new datasets and downstream tasks, offering the promise of truly universal biological representations.

Core Architectural Principles of Single-Cell Foundation Models

Data Processing and Tokenization Strategies

A critical first step in building scFMs is the conversion of raw gene expression data into a structured format that models can process. This "tokenization" process varies across different models:

- Rank-based discretization: Used by models like Geneformer, this approach transforms gene expression values into ordinal rankings within each cell. This method effectively captures relative expression levels and demonstrates robustness to batch effects and technical noise [11].

- Bin-based discretization: Employed by scBERT and scGPT, this method groups continuous expression values into predefined discrete bins, preserving absolute value distributions while simplifying sequence modeling [11].

- Value projection: Adopted by scFoundation, this strategy projects continuous gene expression values directly into embedding vectors using a linear transformation, maintaining full data resolution without discretization [11].
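To make the contrast between the three strategies concrete, the sketch below tokenizes one toy cell each way. The bin edges are fixed by hand for readability, whereas models such as scGPT derive them per cell. The projection vector `w` is random. None of this reproduces any model's exact preprocessing.

```python
import numpy as np

genes = np.array(["G0", "G1", "G2", "G3", "G4"])
expr = np.array([0.0, 5.0, 2.0, 9.0, 1.0])  # toy expression values for one cell

# Rank-based: expressed genes ordered by decreasing expression; values are discarded.
expressed = expr > 0
order = np.argsort(-expr[expressed], kind="stable")
rank_tokens = genes[expressed][order].tolist()

# Bin-based: each value replaced by a bin index; bin 0 is reserved for zero expression.
edges = [0.0, 3.0, 6.0]  # illustrative edges: (0, 3] -> 1, (3, 6] -> 2, > 6 -> 3
bin_tokens = np.digitize(expr, edges, right=True).tolist()

# Value projection: the continuous value is kept and scaled into an embedding vector.
w = np.random.default_rng(0).normal(size=4)  # stand-in for a learned projection
value_tokens = expr[:, None] * w[None, :]
```

Rank and bin tokens both discard information that the value tokens keep. For example, G1 and G2 differ by 3 units of expression but only by one rank step.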

A significant challenge is that gene expression data lacks natural sequential ordering. To address this, models often impose an order, typically by ranking genes by expression level within each cell, creating a deterministic sequence for the transformer architecture [1]. Special tokens are also incorporated to represent cell identity, modality (e.g., RNA vs. ATAC), or batch information, enriching the model's contextual understanding [1].
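A deterministic gene sequence with special tokens might be assembled as shown below. The token spellings (`<cls>`, `<batch:...>`), the alphabetical tie-breaking rule, and the length limit are hypothetical choices for illustration. They are not any specific model's vocabulary.

```python
def build_sequence(expr, gene_names, batch_id=None, max_len=2048):
    """Order expressed genes by decreasing expression and prepend special tokens.

    Ties are broken alphabetically so the same cell always yields the same sequence.
    """
    prefix = ["<cls>"]  # cell-identity token
    if batch_id is not None:
        prefix.append(f"<batch:{batch_id}>")  # optional batch-context token
    expressed = [(g, v) for g, v in zip(gene_names, expr) if v > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    genes = [g for g, _ in expressed][: max_len - len(prefix)]  # truncate to fit
    return prefix + genes


seq = build_sequence([0.0, 3.0, 3.0, 1.0], ["A", "B", "C", "D"], batch_id="B1", max_len=4)
seq_no_batch = build_sequence([0.0, 3.0, 3.0, 1.0], ["D", "C", "B", "A"])
```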

Model Architectures: From Transformers to State-Space Models

Most established scFMs are built on the transformer architecture, which uses self-attention mechanisms to model complex dependencies between all genes in a cell [1]. Two primary variants exist:

- Encoder-based models (e.g., scBERT): Use bidirectional attention, learning from all genes in a cell simultaneously. These are often preferred for classification tasks and embedding generation [1].

- Decoder-based models (e.g., scGPT): Use a unidirectional masked self-attention mechanism, iteratively predicting masked genes conditioned on known genes. These often excel in generative tasks [1].
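The practical difference between the two variants is the attention mask. The sketch uses single-head attention with identity query/key/value projections, a simplifying assumption that keeps the example short. It returns the attention weights so the masking pattern is visible.

```python
import numpy as np


def self_attention(x, causal=False):
    """Single-head scaled dot-product attention with identity Q/K/V projections."""
    n, d = x.shape
    scores = x @ x.T / np.sqrt(d)  # (n, n): every token scored against every token
    if causal:
        # Decoder-style mask: token i may only attend to tokens 0..i.
        allowed = np.tril(np.ones((n, n), dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ x, weights


x = np.random.default_rng(0).normal(size=(4, 8))  # 4 gene tokens, 8-dim embeddings
_, w_encoder = self_attention(x)               # bidirectional (encoder)
_, w_decoder = self_attention(x, causal=True)  # unidirectional (decoder)
```

The (n, n) score matrix is what makes attention cost grow quadratically with the number of gene tokens.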

However, the quadratic computational complexity of transformers has driven the exploration of more efficient architectures. Recent models like GeneMamba leverage state-space models (SSMs), which offer linear computational complexity and enhanced ability to capture long-range dependencies in genomic data, enabling scalable processing of over 50 million cells with significantly reduced resource requirements [11].
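The scaling argument can be seen in a toy linear state-space recurrence. Each step updates a fixed-size hidden state, so cost grows linearly with sequence length, whereas attention scores every pair of tokens. This sketch uses fixed (time-invariant) diagonal parameters. Mamba-style models such as GeneMamba make them input-dependent, so treat this as an illustration of the scaling, not of GeneMamba itself.

```python
import numpy as np


def diagonal_ssm(x, a, b, c):
    """Linear recurrence h_t = a*h_{t-1} + b*x_t, y_t = c . h_t, for a scalar sequence x.

    a, b, c are vectors of length d_state (a diagonal state matrix);
    the cost is O(len(x) * d_state), i.e. linear in sequence length.
    """
    h = np.zeros_like(a, dtype=float)
    y = np.empty(len(x))
    for t, x_t in enumerate(x):
        h = a * h + b * x_t
        y[t] = c @ h
    return y


# Impulse response of a one-dimensional state with decay 0.5.
y = diagonal_ssm([1.0, 0.0, 0.0], a=np.array([0.5]), b=np.array([1.0]), c=np.array([1.0]))
```

For a hypothetical cell with 20,000 gene tokens, attention computes 20,000² = 4×10⁸ pairwise scores per head and layer, while a 16-dimensional state needs 20,000 × 16 = 3.2×10⁵ updates.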

Table 1: Comparison of Single-Cell Foundation Model Architectures

| Model | Architecture Type | Tokenization Strategy | Key Features | Primary Applications |
|---|---|---|---|---|
| scFoundation | Transformer | Value Projection | Continuous embeddings, large-scale pretraining | General-purpose tasks, batch integration |
| Geneformer | Transformer | Rank-based | Context-aware representations, prioritizes highly variable genes | Cell state transitions, network biology |
| scGPT | Transformer (Decoder) | Bin-based | Generative capabilities, multi-omic integration | Cell type annotation, perturbation prediction |
| GeneMamba | State-Space Model (SSM) | Rank-based | Bi-directional context, linear computational complexity | Large-scale integration, gene correlation analysis |
| scBERT | Transformer (Encoder) | Bin-based | BERT-like encoder, focus on cell type annotation | Cell type classification, biomarker discovery |

Application Note: Batch Integration with scFoundation Embeddings

Protocol: Batch Integration Using scFoundation Embeddings

Purpose: To integrate multiple single-cell RNA-seq datasets, removing technical batch effects while preserving meaningful biological variation using pretrained scFoundation embeddings.

Input: Raw or normalized count matrix from multiple batches (e.g., different experiments, platforms, or donors). Genes should be matched to the pretraining vocabulary of scFoundation.

Procedure:

Data Preprocessing:

- Quality Control: Filter cells based on mitochondrial content, number of genes detected, and total counts. Filter out low-abundance genes.

- Normalization: Normalize library sizes across cells using a standard method (e.g., log(CP10K+1) for UMI-based data, log(TPM+1) for full-length protocols, or SCTransform).

- Gene Matching: Ensure the gene identifiers in your dataset match those used in the scFoundation model. Map orthologs if working across species.
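The three preprocessing steps can be sketched on a dense count matrix. This is a minimal sketch under several assumptions. The QC thresholds are illustrative and dataset-dependent. Mitochondrial genes are detected via the human "MT-" symbol prefix. log(CP10K+1) is used as one of the standard normalizations. Vocabulary genes absent from the data are zero-filled. Gene-level filtering is omitted because dropping genes before matching could remove vocabulary genes. Consult the scFoundation repository for the exact gene list and normalization it expects.

```python
import numpy as np


def preprocess(counts, gene_names, vocab, min_genes=200, min_counts=500,
               max_mito_frac=0.2, target_sum=1e4):
    """QC-filter cells, log-normalise, and align columns to a model gene vocabulary.

    Returns (matrix of shape (n_kept_cells, len(vocab)), boolean mask of kept cells).
    """
    counts = np.asarray(counts, dtype=float)
    gene_names = np.asarray(gene_names, dtype=str)

    # Quality control on detected genes, total counts, and mitochondrial fraction.
    total = counts.sum(axis=1)
    n_detected = (counts > 0).sum(axis=1)
    is_mito = np.char.startswith(gene_names, "MT-")  # human symbol convention (assumed)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)
    keep = (n_detected >= min_genes) & (total >= min_counts) & (mito_frac <= max_mito_frac)
    kept = counts[keep]

    # Library-size normalisation to target_sum counts per cell, then log(x + 1).
    libsize = np.maximum(kept.sum(axis=1, keepdims=True), 1.0)
    lognorm = np.log1p(kept / libsize * target_sum)

    # Gene matching: reorder to the vocabulary; genes missing from the data stay zero.
    col = {g: i for i, g in enumerate(gene_names)}
    aligned = np.zeros((lognorm.shape[0], len(vocab)))
    for j, g in enumerate(vocab):
        if g in col:
            aligned[:, j] = lognorm[:, col[g]]
    return aligned, keep


# Toy example with permissive thresholds and a hypothetical three-gene vocabulary.
X, keep = preprocess(
    [[10, 0, 5, 5], [0, 0, 0, 1], [2, 8, 0, 90]],
    ["CD3E", "CD19", "MS4A1", "MT-CO1"],
    vocab=["CD19", "CD3E", "GAPDH"],
    min_genes=2, min_counts=5, max_mito_frac=0.5,
)
```

In the toy call, the second cell fails the detected-gene threshold and the third fails the mitochondrial-fraction threshold, leaving one cell aligned to the vocabulary.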

Embedding Extraction (Zero-Shot):

- Load the pretrained scFoundation model. It is critical to use the same model version and configuration referenced in the original research to ensure reproducibility.

- Without Fine-tuning: Pass the preprocessed expression matrix through the model to extract the cell-level embeddings from the model's output layer. This is a "zero-shot" approach that leverages the general knowledge encoded during pretraining [8].

- The output is a low-dimensional (e.g., 512 or 1024 dimensions) embedding for each cell, which encapsulates its biological state as understood by the foundation model.
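Because zero-shot extraction is a forward pass with frozen weights, it reduces to running the encoder over the matrix in minibatches. The sketch keeps the model abstract. `stand_in_encoder` is a hypothetical random projection, not scFoundation, and should be replaced by whatever inference entry point the released code provides (with a PyTorch model, typically called under `model.eval()` and `torch.no_grad()`).

```python
import numpy as np


def extract_embeddings(X, encoder, batch_size=256):
    """Apply a frozen, per-cell encoder to X in minibatches and stack the results."""
    chunks = [encoder(X[start:start + batch_size])
              for start in range(0, X.shape[0], batch_size)]
    return np.vstack(chunks)


# Hypothetical stand-in for the pretrained model: a fixed random linear map.
rng = np.random.default_rng(0)
n_cells, n_genes, emb_dim = 1000, 2000, 512
projection = rng.normal(size=(n_genes, emb_dim)) / np.sqrt(n_genes)


def stand_in_encoder(batch):
    return batch @ projection


X = rng.random((n_cells, n_genes))  # placeholder for the preprocessed, aligned matrix
embeddings = extract_embeddings(X, stand_in_encoder, batch_size=300)
```

Storing the result in an AnnData object, e.g. `adata.obsm["X_scfoundation"] = embeddings` (the key name is arbitrary), makes it available to Scanpy for the downstream steps.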

Downstream Integration and Clustering:

- Use the extracted embeddings as input to standard dimensionality reduction and clustering tools.

- Dimensionality Reduction: Apply UMAP or t-SNE on the embedding matrix to visualize cells in two dimensions.

- Clustering: Apply community detection algorithms (e.g., Leiden, Louvain) on a k-Nearest Neighbor graph built from the embeddings to identify cell populations.
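The k-nearest-neighbour graph underlying this step can be sketched directly in NumPy. Connected components serve here as a deliberately crude stand-in for Leiden or Louvain. Those algorithms optimise a modularity-type objective and can split a connected graph into many communities. In Scanpy, the corresponding workflow is `sc.pp.neighbors(adata, use_rep="X_scfoundation")` followed by `sc.tl.leiden(adata)` and `sc.tl.umap(adata)`. The brute-force distance matrix is only suitable for toy data; real pipelines use (approximate) nearest-neighbour search.

```python
import numpy as np
from collections import deque


def knn_graph(emb, k=15):
    """Symmetric boolean adjacency linking each cell to its k nearest neighbours (Euclidean)."""
    d2 = ((emb[:, None, :] - emb[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d2, np.inf)  # exclude self-edges
    nbrs = np.argsort(d2, axis=1)[:, :k]
    adj = np.zeros(d2.shape, dtype=bool)
    adj[np.repeat(np.arange(len(emb)), k), nbrs.ravel()] = True
    return adj | adj.T  # symmetrise


def connected_components(adj):
    """Label cells by graph component using breadth-first search."""
    labels = np.full(len(adj), -1)
    n_found = 0
    for seed in range(len(adj)):
        if labels[seed] >= 0:
            continue
        labels[seed] = n_found
        queue = deque([seed])
        while queue:
            i = queue.popleft()
            for j in np.flatnonzero(adj[i] & (labels < 0)):
                labels[j] = n_found
                queue.append(j)
        n_found += 1
    return labels


# Toy embeddings: two well-separated groups of 20 cells in 8 dimensions.
rng = np.random.default_rng(0)
emb = np.vstack([rng.normal(0.0, 0.5, (20, 8)), rng.normal(10.0, 0.5, (20, 8))])
labels = connected_components(knn_graph(emb, k=10))
```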

Validation:

- Biological Validation: Assess whether known cell types form distinct, coherent clusters in the integrated embedding space.

- Batch Mixing Metrics: Quantify integration quality using metrics like Local Inverse Simpson's Index (LISI) or graph connectivity that evaluate the degree of batch mixing within cell neighborhoods [8].

- Biological Conservation Metrics: Use metrics such as Normalized Mutual Information (NMI) between cluster assignments and known cell-type labels to confirm that biological variation is preserved after integration.
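Both families of metrics can be sketched compactly. `simple_ilisi` is a simplification of LISI. It uses an unweighted kNN neighbourhood instead of the perplexity-calibrated Gaussian weights of the original definition. `nmi` uses arithmetic-mean normalisation, which matches scikit-learn's default for `normalized_mutual_info_score`. Dedicated packages such as scib implement the full metrics.

```python
import numpy as np


def simple_ilisi(emb, batch, k=10):
    """Mean inverse Simpson index of batch labels over each cell's k nearest neighbours.

    Ranges from 1 (neighbourhoods contain a single batch) to the number of batches.
    """
    batch = np.asarray(batch)
    d2 = ((emb[:, None, :] - emb[None, :, :]) ** 2).sum(axis=-1)
    nbrs = np.argsort(d2, axis=1)[:, :k]  # includes the cell itself
    scores = []
    for row in nbrs:
        _, counts = np.unique(batch[row], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))


def nmi(labels_a, labels_b):
    """Normalised mutual information, 2*I(A;B) / (H(A) + H(B))."""
    _, a = np.unique(labels_a, return_inverse=True)
    _, b = np.unique(labels_b, return_inverse=True)
    joint = np.zeros((a.max() + 1, b.max() + 1))
    np.add.at(joint, (a, b), 1.0)
    joint /= joint.sum()
    pa, pb = joint.sum(axis=1), joint.sum(axis=0)
    nz = joint > 0
    mi = np.sum(joint[nz] * np.log(joint[nz] / np.outer(pa, pb)[nz]))
    h = -np.sum(pa * np.log(pa)) - np.sum(pb * np.log(pb))
    return 1.0 if h == 0 else float(2.0 * mi / h)


# Toy data: two cell types forming well-separated groups of 20 cells.
rng = np.random.default_rng(0)
emb = np.vstack([rng.normal(0.0, 0.5, (20, 8)), rng.normal(10.0, 0.5, (20, 8))])
cell_type = np.repeat(["T cell", "B cell"], 20)
batch_separated = np.repeat(["b1", "b2"], 20)  # each batch confined to one group
batch_mixed = np.tile(["b1", "b2"], 20)        # both batches present in each group
clusters = np.repeat([0, 1], 20)

ilisi_separated = simple_ilisi(emb, batch_separated)
ilisi_mixed = simple_ilisi(emb, batch_mixed)
nmi_score = nmi(cell_type, clusters)
```

A well-integrated embedding should score high on both: iLISI near the number of batches and NMI near 1.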

Performance Benchmarking and Analysis

Comparative analyses reveal the strengths of foundation models like scFoundation in batch integration tasks. A comprehensive 2025 benchmark study evaluating six scFMs against traditional methods (e.g., Seurat, Harmony, scVI) across diverse datasets provides critical quantitative insights [16] [8].

The benchmark employed cell ontology-informed metrics to introduce a biologically grounded perspective:

- scGraph-OntoRWR: Measures the consistency of cell type relationships captured by scFMs with prior biological knowledge encoded in cell ontologies [8].

- Lowest Common Ancestor Distance (LCAD): Assesses the severity of errors in cell type annotation by measuring the ontological proximity between misclassified cell types [8].
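One plausible reading of LCAD is the number of ontology edges that separate the true and predicted cell types via their lowest common ancestor, averaged over misclassified cells. The sketch implements that reading on a small hand-made tree. Both the tree and the exact formula are assumptions. The benchmark's definition, including any normalisation and the handling of the multi-parent Cell Ontology graph, may differ.

```python
# Simplified, hypothetical tree; the real Cell Ontology is a DAG with multiple parents.
PARENT = {
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "myeloid leukocyte",
    "myeloid leukocyte": "leukocyte",
    "leukocyte": "cell",
    "neuron": "neural cell",
    "neural cell": "cell",
}


def path_to_root(term, parent):
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path


def lca_distance(a, b, parent):
    """Edges on the path from a to b through their lowest common ancestor."""
    path_a = path_to_root(a, parent)
    steps_from_b = {t: i for i, t in enumerate(path_to_root(b, parent))}
    for steps_from_a, term in enumerate(path_a):
        if term in steps_from_b:
            return steps_from_a + steps_from_b[term]
    raise ValueError(f"{a!r} and {b!r} share no ancestor")


def mean_lcad(true_labels, predicted_labels, parent):
    """Average LCA distance over misclassified cells (0.0 if there are none)."""
    errors = [(t, p) for t, p in zip(true_labels, predicted_labels) if t != p]
    if not errors:
        return 0.0
    return sum(lca_distance(t, p, parent) for t, p in errors) / len(errors)


score = mean_lcad(["T cell", "T cell", "T cell"], ["T cell", "B cell", "neuron"], PARENT)
```

Under this reading, calling a T cell a B cell costs 2 edges while calling it a neuron costs 5, so a lower mean LCAD indicates that errors fall between ontologically related types.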

Table 2: Benchmark Performance of scFoundation in Batch Integration and Cell Type Annotation

| Task | Dataset Characteristics | Performance vs. Baselines | Key Strengths |
|---|---|---|---|
| Batch Integration | 5 datasets with inter-patient, inter-platform, and inter-tissue variations | Superior or comparable to Seurat, Harmony, and scVI on batch mixing metrics (LISI) [8] | Robust removal of technical effects while preserving subtle biological variation across diverse data sources. |
| Cell Type Annotation | High-quality manual annotations across tissues and species | High accuracy in zero-shot and few-shot settings; lower LCAD error scores [8] | Embeddings capture biologically meaningful relationships; misclassifications are often ontologically similar cell types. |
| Knowledge Capture | Evaluation using scGraph-OntoRWR metric | High consistency with established cell ontologies [8] | Latent representations reflect known biological hierarchy without explicit supervision. |

A key finding is that the performance improvement of scFMs arises from a smoother cell-property landscape in the pretrained latent space. This reduces the complexity of the learning problem for task-specific models, facilitating more accurate and robust downstream analysis [8].

Table 3: Key Research Reagent Solutions for scFM-Based Analysis

| Item / Resource | Type | Function in scFM Workflow | Examples / Notes |
|---|---|---|---|
| Annotated Single-Cell Atlases | Data | Pretraining corpus and evaluation benchmarks for scFMs. | CZ CELLxGENE [1], Human Cell Atlas [1], Asian Immune Diversity Atlas (AIDA) v2 [8] |
| Pretrained Model Weights | Software | Enables zero-shot feature extraction and transfer learning without costly pretraining. | scFoundation, scGPT, GeneMamba model checkpoints [8] [11] |
| Integration & Clustering Algorithms | Software | Downstream analysis of cell embeddings to identify populations and states. | Leiden clustering, UMAP/t-SNE, Scanpy, Seurat [8] |
| Benchmarking Frameworks | Software | Standardized evaluation of model performance on biological tasks. | Custom pipelines implementing metrics like LISI, NMI, scGraph-OntoRWR, LCAD [16] [8] |
| Multi-omics Data | Data | Training and testing multi-modal foundation models that go beyond transcriptomics. | scATAC-seq, spatial transcriptomics, single-cell proteomics data [1] [17] |

The development of scFMs represents a paradigm shift in computational biology, moving from task-specific models to general-purpose frameworks that learn universal biological representations. Their demonstrated robustness in challenges like batch integration underscores their potential to become central tools in single-cell genomics [1]. However, several frontiers for development remain.

Future research will likely focus on enhancing multi-modal integration, creating models that seamlessly combine transcriptomic, epigenetic, proteomic, and spatial information to form a more holistic view of cellular state [1] [17]. Furthermore, improving computational efficiency through architectures like state-space models (e.g., GeneMamba) is critical for scaling to the billions of cells anticipated in future datasets [11]. Finally, a major unsolved challenge is model interpretability—decoding the biological knowledge and regulatory rules encoded within the latent representations and attention mechanisms of these complex models [1].

In conclusion, foundation models like scFoundation go a long way toward fulfilling this promise by providing a powerful, unified framework for biological representation. Their ability to integrate diverse data while capturing deep biological principles, as demonstrated in batch integration tasks, positions them as central tools for the next generation of discoveries in basic research and therapeutic development.

A Step-by-Step Protocol: Generating and Applying scFoundation Embeddings for Integration

Data Preprocessing and Input Formatting for scFoundation