Benchmarking Cell Type Annotation Accuracy: A 2025 Guide to Methods, Tools, and Best Practices

Accurate cell type annotation is a critical, yet challenging, step in single-cell RNA sequencing analysis.

Abstract

Accurate cell type annotation is a critical, yet challenging, step in single-cell RNA sequencing analysis. This article provides a comprehensive benchmark and practical guide for researchers and drug development professionals, exploring the evolving landscape of annotation methodologies. We cover foundational concepts, from manual expert annotation to the rise of large language models (LLMs) like Claude 3.5 Sonnet and GPT-4. The guide delves into the application and performance of diverse computational tools, including reference-based methods like SingleR and Azimuth, and novel LLM-based platforms such as AnnDictionary and LICT. We further address key troubleshooting strategies for low-heterogeneity datasets and data sparsity, and present a rigorous comparative analysis of accuracy, robustness, and computational efficiency across platforms. This synthesis offers actionable insights for selecting optimal annotation strategies to enhance reproducibility and discovery in biomedical research.

The Foundation of Cell Identity: From Manual Curation to AI-Powered Annotation

Cell type annotation is a cornerstone of single-cell RNA sequencing (scRNA-seq) analysis, enabling biological discoveries and deepening our understanding of tissue biology [1]. It transforms high-dimensional gene expression data into biologically meaningful cell identities, providing the basis for exploring cellular diversity and functional differences and for gaining insight into biological processes and disease mechanisms [1]. The rapid accumulation of single-cell transcriptomic data has given researchers unprecedented resources for inferring cell types and has spurred the development of numerous annotation methods [2]. Precision at this step matters because errors propagate through all downstream analyses, including cellular heterogeneity assessment, differential expression testing, cell-cell communication inference, and trajectory analysis, and can compromise biological interpretations and therapeutic discoveries.

The field has witnessed an evolution from traditional wet-lab approaches, such as immunohistochemistry and fluorescence-activated cell sorting—which offer reliability but suffer from lengthy development cycles and high costs—to computational methods that effectively identify and differentiate between various cell types and states by analyzing mRNA levels in individual cells [2]. These computational approaches leverage gene expression profiles derived from transcriptomic data, utilizing strategies including marker gene identification, correlation-based matching, supervised learning, and more recently, large language models and deep learning techniques [2]. As single-cell technologies continue to advance, generating data with increasing dimensionality and sparsity, the challenge of accurate cell type annotation intensifies, necessitating robust benchmarking frameworks and sophisticated methodological comparisons to guide researchers in selecting appropriate tools for their specific biological contexts.

Methodological Landscape: A Comparative Analysis of Annotation Approaches

Computational methods for cell type annotation have diversified significantly to address varying research needs and data availability. These approaches can generally be classified into four main categories based on their underlying principles and application requirements, each with distinct strengths and limitations for specific research scenarios [2].

Table 1: Comparison of Major Cell Type Annotation Method Categories

| Method Category | Principle | Representative Tools | Advantages | Limitations |
|---|---|---|---|---|
| Specific Gene Expression-Based | Uses known marker genes to manually label cells via characteristic expression patterns | CellMarker, PanglaoDB | Simple, interpretable, requires no reference data | Limited to known markers, prone to bias, labor-intensive |
| Reference-Based Correlation | Categorizes unknown cells based on similarity to pre-constructed reference libraries | SingleR, Azimuth, scmap | High accuracy with good references, standardized | Reference-dependent, batch effects problematic |
| Data-Driven Reference | Trains classification models on pre-labeled cell type datasets | scPred, scSemiGAN | Can learn complex patterns, handles large datasets well | Requires extensive labeled data, training complexity |
| Large-Scale Pretraining | Uses unsupervised learning on large data to capture deep gene-cell relationships | scGPT, scBERT, Geneformer | Handles novel cell types, minimal downstream training | Computational intensity, resource demands |

Traditional Methods and Their Evolution

Reference-based correlation methods represent some of the most widely adopted approaches for cell type annotation. These methods function by comparing the gene expression profiles of unannotated cells against comprehensively labeled reference datasets, assigning cell type identities based on similarity metrics. For example, SingleR employs correlation analysis between query cells and reference data, while Azimuth builds on this approach with integrated preprocessing and visualization capabilities [3]. The performance of these methods heavily depends on reference quality and compatibility, with studies demonstrating that SingleR produces results closely matching manual annotation in spatial transcriptomics data, making it particularly valuable for imaging-based platforms like Xenium with limited gene panels [3].
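The correlation idea behind this family of methods can be illustrated with a minimal sketch. It assumes a toy reference of per-cell-type average expression profiles over four genes and assigns a query cell the label with the highest Spearman correlation. The gene order, the expression values, and the helper names (`ranks`, `spearman`, `assign_label`) are illustrative assumptions; this is not SingleR's implementation, which additionally restricts the comparison to marker genes and fine-tunes its calls iteratively.

```python
from statistics import mean


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation is the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


def assign_label(query, reference_profiles):
    """Score the query against every reference profile and pick the best."""
    scores = {label: spearman(query, profile)
              for label, profile in reference_profiles.items()}
    return max(scores, key=scores.get), scores


# Toy data (assumed values); gene order: CD3E, CD19, LYZ, NKG7.
reference_profiles = {
    "T cell": [9.0, 0.1, 0.5, 2.0],
    "B cell": [0.2, 8.5, 0.4, 0.1],
    "Monocyte": [0.1, 0.2, 9.5, 0.3],
}
query_cell = [7.0, 0.0, 1.0, 3.0]

label, scores = assign_label(query_cell, reference_profiles)
print(label, scores)
```

Because the score depends only on gene ranks, it is insensitive to monotonic differences in scale between query and reference, which is one reason rank-based correlation is a common choice when the two come from different platforms.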

Simultaneously, specific gene expression-based methods continue to evolve, leveraging curated marker gene databases such as CellMarker 2.0 and PanglaoDB, which catalog cell-specific genes across numerous tissue types and species [2]. These resources provide vital support for innovation in single-cell research, though they face limitations including incomplete coverage of certain marker genes, outdated data, and inconsistencies across samples, which restrict their performance when handling novel cell types or rare cell populations [2]. The dynamic updating of these databases through integration of deep learning-derived gene importance scores with biological validation represents a promising direction for enhancing their utility in single-cell annotation.

The Rise of Deep Learning and Large Language Models

Deep learning approaches have revolutionized cell type annotation by extracting informative features from noisy, sparse, and high-dimensional scRNA-seq datasets [1]. Transformer-based models like scTrans employ sparse attention mechanisms to utilize all non-zero genes, effectively reducing input data dimensionality while minimizing information loss—addressing a critical limitation of highly variable gene selection strategies that potentially overlook crucial information contained in low-variability genes [1]. These models demonstrate strong robustness and generalization capabilities, accurately annotating cells in novel datasets and generating high-quality representations essential for precise clustering and trajectory analysis [1].

Large language models (LLMs) have emerged as powerful tools for automating single-cell analysis based on marker genes [4]. Tools like AnnDictionary consolidate multiple LLM providers into a unified framework, enabling de novo cell type annotation where gene lists are derived directly from unsupervised clustering rather than curated gene lists—a potentially more challenging task due to unknown signal and noise that may affect the annotation process [4]. Benchmarking studies reveal significant variability in LLM performance, with Claude 3.5 Sonnet demonstrating the highest agreement with manual annotation, recovering close matches of functional gene set annotations in over 80% of test sets [4]. However, performance diminishes when annotating less heterogeneous datasets, highlighting the importance of multi-model integration strategies to enhance annotation reliability [5].

Benchmarking Experimental Data: Quantitative Performance Comparisons

Rigorous benchmarking of annotation methods provides crucial insights for researchers selecting appropriate tools. Recent evaluations across diverse biological contexts reveal significant performance variations among methods, with optimal tool selection dependent on data characteristics and research objectives.

Method Performance Across Datasets

Comprehensive benchmarking studies evaluate annotation methods using metrics such as accuracy, consistency with manual annotations, computational efficiency, and robustness to technical artifacts. These assessments typically employ diverse scRNA-seq datasets representing various biological contexts—from normal physiology and developmental stages to disease states and low-heterogeneity cellular environments—to thoroughly challenge method capabilities.

Table 2: Performance Comparison of Cell Type Annotation Methods Across Experimental Datasets

| Method | PBMC Accuracy | Gastric Cancer Accuracy | Embryo Data Consistency | Stromal Cells Consistency | Computational Efficiency |
|---|---|---|---|---|---|
| LLM-Based (LICT) | 90.3% | 91.7% | 48.5% | 43.8% | Medium |
| scTrans | 94.2%* | 93.1%* | N/A | N/A | High |
| SingleR | 92.5%* | N/A | N/A | N/A | High |
| Azimuth | 91.8%* | N/A | N/A | N/A | Medium |
| GPT-4 Only | 78.5% | 88.9% | 39.4% | 33.3% | Medium |
| Manual Annotation | Reference | Reference | Reference | Reference | Low |

Note: Values marked with * are estimated from method descriptions where exact values were not provided in the source material. N/A indicates insufficient data for comparison.

The multi-model integration strategy implemented in LICT (Large Language Model-based Identifier for Cell Types) demonstrates significant improvements over single-model approaches, particularly for challenging low-heterogeneity datasets. This strategy reduces mismatch rates from 21.5% to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data compared to GPTCelltype [5]. For low-heterogeneity datasets like embryonic cells and fibroblasts, the improvement is even more pronounced, with match rates increasing to 48.5% for embryo and 43.8% for fibroblast data [5]. The "talk-to-machine" strategy further enhances performance through iterative human-computer interaction, increasing full match rates to 34.4% for PBMC and 69.4% for gastric cancer in highly heterogeneous datasets [5].
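The mismatch rates quoted above and the PBMC and gastric cancer accuracies in Table 2 are two views of the same numbers, assuming accuracy is simply 100% minus the mismatch rate. The short check below makes that arithmetic explicit; the variable names are illustrative, and the percentages are the ones reported in the text.

```python
# Mismatch rates (%) as reported in the text for single-model vs. multi-model annotation.
mismatch_rates = {
    "PBMC": {"single_model": 21.5, "multi_model": 9.7},
    "gastric cancer": {"single_model": 11.1, "multi_model": 8.3},
}

# Match rate is the complement of the mismatch rate.
match_rates = {
    dataset: {strategy: round(100 - rate, 1) for strategy, rate in rates.items()}
    for dataset, rates in mismatch_rates.items()
}

# Absolute reduction in mismatch rate, in percentage points.
reductions = {
    dataset: round(rates["single_model"] - rates["multi_model"], 1)
    for dataset, rates in mismatch_rates.items()
}

print(match_rates)
print(reductions)
```

The resulting match rates (78.5% and 90.3% for PBMC, 88.9% and 91.7% for gastric cancer) correspond to the GPT-4 Only and LICT rows of Table 2.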

Spatial Transcriptomics Applications

The application of reference-based annotation methods to imaging-based spatial transcriptomics data presents unique challenges due to limited gene panels. A recent benchmarking study evaluating five reference-based methods (SingleR, Azimuth, RCTD, scPred, and scmap-cell) on Xenium data of human breast cancer found that SingleR performed best: it was fast, accurate, and easy to use, with results closely matching manual annotation [3]. This performance advantage stems from SingleR's correlation-based approach, which proves more robust to the technical noise and sparsity characteristic of spatial data than more complex models that require extensive parameter tuning.

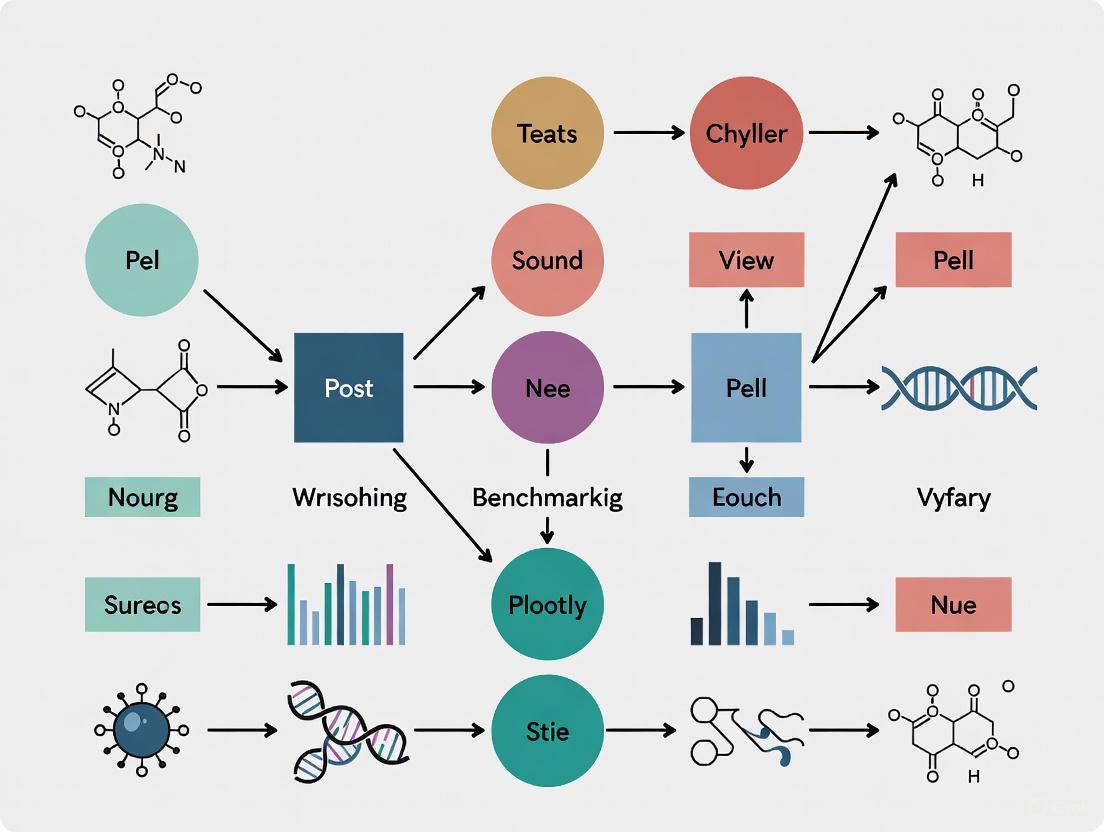

Figure 1: Benchmarking Workflow for Cell Type Annotation Methods

Experimental Protocols: Methodologies for Rigorous Benchmarking

Benchmarking Framework Design

Comprehensive evaluation of annotation methods requires standardized workflows and metrics. The single-cell integration benchmarking (scIB) framework provides quantitative evaluations focusing on two key areas: batch correction and biological conservation based on batch and cell-type labels [6]. However, this framework has limitations in fully capturing unsupervised intra-cell-type variation, prompting the development of enhanced metrics that better assess biological signal preservation [6]. These refined metrics incorporate intra-cell-type biological conservation, validated with multi-layered annotations from the Human Lung Cell Atlas (HLCA) and the Human Fetal Lung Cell Atlas [6].

For LLM-based annotation benchmarking, standardized protocols employ metrics including direct string comparison, Cohen's kappa (κ), and LLM-derived ratings where models assess whether automatically generated labels match manual labels, providing binary yes/no answers or quality ratings (perfect, partial, or not-matching) [4]. These evaluations typically utilize diverse biological contexts—normal physiology (PBMCs), developmental stages (human embryos), disease states (gastric cancer), and low-heterogeneity cellular environments (stromal cells)—to thoroughly challenge method capabilities across research scenarios [5].
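Of the metrics listed above, Cohen's kappa is the easiest to compute by hand: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each annotator's label frequencies. The sketch below is a minimal illustration on toy labels; the function name and example labels are assumptions, and it treats labels as exact strings, so synonymous names would first need to be harmonized.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two label sequences over the same cells or clusters."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of marginal label frequencies, summed over labels.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Toy example (assumed labels): manual vs. automated cluster annotations.
manual = ["T cell", "T cell", "B cell", "B cell", "Monocyte", "Monocyte"]
automated = ["T cell", "T cell", "B cell", "Monocyte", "Monocyte", "Monocyte"]

kappa = cohens_kappa(manual, automated)
print(round(kappa, 3))
```

Here five of six labels agree (p_o = 5/6) and chance agreement is p_e = 1/3, giving κ = 0.75.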

Data Preprocessing Protocols

The preprocessing pipeline in single-cell data analysis forms the foundation for ensuring annotation accuracy. Standard protocols include quality control (QC) through evaluation of metrics such as the number of detected genes, total molecule count, and the proportion of mitochondrial gene expression, effectively eliminating low-quality cells and technical artifacts [2]. Data filtering further refines datasets by removing noise samples, including doublets or high-noise cells, with methods like scDblFinder specifically designed for doublet prediction [3].
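A minimal sketch of the QC step follows, assuming per-cell summaries are already available as dictionaries. The thresholds (200 to 6000 detected genes, at least 500 molecules, at most 20% mitochondrial counts) and the field names are illustrative assumptions, not values taken from the cited studies; appropriate cutoffs depend on tissue and platform.

```python
def passes_qc(cell, min_genes=200, max_genes=6000,
              min_counts=500, max_mito_fraction=0.2):
    """Return True if a cell passes simple QC thresholds (assumed defaults)."""
    total = cell["total_counts"]
    if total < min_counts:
        return False  # too few molecules: likely empty droplet or debris
    if not (min_genes <= cell["n_genes"] <= max_genes):
        return False  # too few genes (low quality) or too many (possible doublet)
    mito_fraction = cell["mito_counts"] / total
    return mito_fraction <= max_mito_fraction  # high values suggest damaged cells


# Toy per-cell summaries (assumed values).
cells = [
    {"id": "cell_1", "n_genes": 1800, "total_counts": 5200, "mito_counts": 260},
    {"id": "cell_2", "n_genes": 150, "total_counts": 300, "mito_counts": 20},
    {"id": "cell_3", "n_genes": 2100, "total_counts": 6000, "mito_counts": 2400},
    {"id": "cell_4", "n_genes": 7500, "total_counts": 40000, "mito_counts": 1200},
]

kept = [cell["id"] for cell in cells if passes_qc(cell)]
print(kept)
```

A simple upper bound on detected genes is only a crude proxy for doublets; dedicated tools such as scDblFinder, mentioned above, model doublets explicitly.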

For spatial transcriptomics data, specialized processing approaches address platform-specific characteristics. Analysis of Xenium data typically skips feature selection steps due to limited gene panels (several hundred genes), utilizing all genes for data scaling rather than selecting highly variable genes [3]. Normalization approaches also require adjustment for spatial data characteristics, with methods like SCTransform in Seurat providing effective normalization for reference preparation in Azimuth workflows [3].

Successful cell type annotation requires leveraging specialized computational resources and biological databases. These tools form the essential toolkit for researchers implementing annotation workflows across diverse experimental contexts.

Table 3: Essential Research Reagents and Resources for Cell Type Annotation

| Resource Category | Specific Resource | Function | Application Context |
|---|---|---|---|
| Marker Gene Databases | CellMarker 2.0 | Provides curated cell-specific marker genes | Manual annotation, validation |
| Reference Atlases | Human Cell Atlas (HCA) | Comprehensive reference of human cells | Reference-based annotation |
| Processing Tools | Seurat | Standardized pipeline for scRNA-seq analysis | Data preprocessing, normalization |
| Annotation Algorithms | SingleR | Fast correlation-based cell type assignment | General-purpose annotation |
| Deep Learning Frameworks | scTrans | Transformer-based annotation with sparse attention | Large-scale, high-accuracy annotation |
| Spatial Transcriptomics Tools | RCTD | Cell type decomposition for spatial data | Spatial transcriptomics annotation |
| LLM Integration Platforms | AnnDictionary | Unified interface for multiple LLM providers | De novo annotation, label management |

Public databases provide vital support for innovation and exploration in single-cell research. The Human Cell Atlas (HCA) offers multi-organ datasets across 33 organs, while the Mouse Cell Atlas (MCA) covers 98 major cell types in mouse models [2]. Specialized resources like the Allen Brain Atlas focus on neuronal cell types, containing 69 distinct neuronal classifications across human and mouse species [2]. These reference atlases enable robust annotation through correlation-based methods and facilitate cross-species comparisons essential for translational research.

For marker-based approaches, databases like PanglaoDB and CellMarker 2.0 catalog cell-specific genes, with CellMarker 2.0 containing markers for 467 human and 389 mouse cell types [2]. CancerSEA specializes in cancer functional states, providing markers across 14 distinct cancer phenotypes [2]. These resources continue to evolve through integration with deep learning-derived gene importance scores, expanding their coverage of novel cell types and rare cell populations.

Computational Frameworks and Platforms

The AnnDictionary package represents a significant advancement in LLM integration for cell type annotation, providing a unified backend for parallel processing of multiple AnnData objects through a simplified interface [4]. Built on top of AnnData and LangChain, it supports all common LLM providers while requiring just one line of code to configure or switch the LLM backend [4]. This flexibility enables researchers to leverage the complementary strengths of multiple models, with benchmarking revealing that Claude 3.5 Sonnet achieves the highest agreement with manual annotation, while other models like GPT-4 and Gemini offer distinct advantages for specific cell types or tissues [4].

Deep learning frameworks like scTrans address critical challenges in single-cell analysis by mapping genes to high-dimensional vector spaces and leveraging sparse attention based on Transformer architecture to aggregate genes of non-zero value for representation learning [1]. This approach mitigates problems of information loss and batch effects associated with highly variable gene selection strategies while reducing computational and hardware burdens [1]. The method employs a two-stage process involving pre-training through unsupervised contrastive learning to exploit unlabeled data, followed by fine-tuning with labeled data for supervised learning, resulting in a robust tool for cell type annotation and feature extraction [1].

Integration and Interpretation: Navigating Annotation Challenges

Addressing Technical Variability

Technical variability introduced by different sequencing platforms profoundly impacts annotation outcomes. Platforms such as 10x Genomics and Smart-seq exhibit distinct data characteristics due to differences in their sequencing principles [2]. The 10x Genomics platform employs droplet-based encapsulation for high-throughput sequencing, enabling rapid profiling of large cell populations but often resulting in higher data sparsity, potentially hindering detection of key marker genes for rare cell types [2]. In contrast, Smart-seq utilizes a full-transcriptome amplification strategy, detecting more genes with higher sensitivity, which aids in identifying rare transcripts but may reveal finer-grained cell subpopulations that exceed the classification capacity of pre-trained models [2].

These technical differences exacerbate key challenges in scRNA-seq analysis, including sparsity, heterogeneity, and batch effects. In cross-platform applications, these factors frequently result in inconsistent annotation performance, contributing to reduced model stability in diverse data environments [2]. Effective preprocessing strategies, such as batch correction or cross-platform normalization, are essential for mitigating these systemic biases and improving model generalization ability across experimental contexts.

Credibility Assessment and Validation

Discrepancies between automated and manual annotations do not necessarily indicate reduced reliability of computational methods. Manual annotations often exhibit inter-rater variability and systematic biases, particularly in datasets with ambiguous cell clusters [5]. Objective credibility evaluation strategies address this challenge by assessing annotation reliability through marker gene validation—retrieving representative marker genes for each predicted cell type and evaluating their expression patterns within corresponding cell clusters [5]. An annotation is deemed reliable if more than four marker genes are expressed in at least 80% of cells within the cluster, providing a reference-free, unbiased validation approach [5].
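The threshold rule described above can be sketched as a small function. The sketch assumes that "expressed" means a non-zero value in a cell and that expression is available as per-gene lists of per-cell values for one cluster; the function name, the data layout, and the example genes are illustrative and do not reproduce the LICT implementation.

```python
def is_credible(cluster_expression, marker_genes,
                marker_threshold=4, min_cell_fraction=0.8):
    """Apply the rule: more than `marker_threshold` marker genes must each be
    expressed (assumed: value > 0) in at least `min_cell_fraction` of cells."""
    supported = []
    for gene in marker_genes:
        values = cluster_expression.get(gene)
        if not values:
            continue  # marker not measured in this dataset
        fraction = sum(v > 0 for v in values) / len(values)
        if fraction >= min_cell_fraction:
            supported.append(gene)
    return len(supported) > marker_threshold, supported


# Toy cluster of 10 cells (assumed values) annotated as "T cell".
cluster_expression = {
    "CD3D": [2, 1, 3, 1, 2, 4, 1, 2, 3, 1],  # 10/10 cells
    "CD3E": [1, 2, 0, 1, 3, 2, 1, 1, 2, 1],  # 9/10 cells
    "CD2":  [1, 0, 1, 2, 0, 1, 1, 3, 1, 2],  # 8/10 cells
    "IL7R": [0, 1, 1, 2, 1, 0, 2, 1, 1, 3],  # 8/10 cells
    "TRAC": [3, 2, 1, 1, 2, 2, 1, 4, 1, 2],  # 10/10 cells
    "CCR7": [0, 1, 0, 2, 0, 1, 0, 1, 0, 1],  # 5/10 cells
}
markers = ["CD3D", "CD3E", "CD2", "IL7R", "TRAC", "CCR7"]

credible, supported = is_credible(cluster_expression, markers)
print(credible, supported)
```

In this toy cluster five markers clear the 80% bar, so the annotation counts as credible; dropping any one of them would leave four, which fails the "more than four" requirement.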

In comparative evaluations, LLM-generated annotations frequently outperform manual annotations in credibility assessments, particularly for low-heterogeneity datasets. In embryonic cell data, 50% of mismatched LLM-generated annotations were deemed credible compared to only 21.3% for expert annotations, while for stromal cell datasets, 29.6% of LLM-generated annotations met credibility thresholds compared to none of the manual annotations [5]. These findings highlight the limitations of relying solely on expert judgment and demonstrate the value of objective evaluation frameworks for identifying reliably annotated cell types for downstream analysis.

Figure 2: Challenges and Solutions in Cell Type Annotation

Cell type annotation remains a complex but non-negotiable component of single-cell biology, with methodological advancements progressively enhancing accuracy, efficiency, and reproducibility. The integration of multi-model strategies, interactive validation approaches, and objective credibility assessment frameworks represents a paradigm shift from reliance on single-method annotations toward consensus-based, empirically validated cell type identification. As the field continues to evolve, the convergence of deep learning architectures with biologically informed benchmarking standards promises to address persistent challenges including technical variability, rare cell type identification, and spatial context integration.

For researchers and drug development professionals, method selection must align with specific research contexts—with correlation-based methods like SingleR offering speed and accuracy for standard applications, transformer-based approaches like scTrans providing robustness for large-scale studies, and LLM-integrated platforms like AnnDictionary enabling de novo annotation for exploratory research. Through continued benchmarking efforts and method development, the field moves closer to comprehensive cellular cartography that faithfully represents biological complexity while powering discoveries in basic research and therapeutic development.

Cell type annotation, the process of identifying and labeling individual cells based on their molecular profiles, represents a fundamental step in single-cell RNA sequencing (scRNA-seq) analysis. This field has undergone a dramatic transformation, evolving from reliance on specialized expert knowledge to the emergence of sophisticated computational automation. This evolution has been driven by the exponential growth in data volume and complexity, which has rendered purely manual approaches increasingly impractical for large-scale studies. Traditionally, researchers manually annotated cell types using well-known and established biomarkers obtained from literature or databases, visualizing marker expression at the cluster level to assign cell identities. While invaluable, this process was inherently subjective, prone to inter-annotator variation, and tremendously time-consuming, taking an estimated 20 to 40 hours to manually annotate a typical dataset with 30 clusters [7].

The limitations of manual annotation catalyzed the development of automated computational methods, creating a new paradigm that emphasizes scalability, reproducibility, and objectivity. Automated cell type annotation has now become an indispensable component of the single-cell data analysis pipeline, enabling researchers to decipher the cellular composition of complex tissues with unprecedented speed and consistency [7]. This guide provides a comprehensive comparison of these evolving methodologies, benchmarking their performance within the broader context of accuracy, efficiency, and applicability to modern genomic research. We synthesize evidence from recent benchmarking studies to objectively evaluate the current landscape of annotation tools, from reference-based methods to the cutting-edge application of large language models (LLMs).

From Manual Curation to Computational Automation

The journey of cell type annotation reflects a broader trend in biology towards data-driven, computational discovery. The initial paradigm, rooted in deep biological expertise, has been progressively augmented and, in many cases, supplanted by algorithmic approaches.

The Era of Expert Knowledge and Marker Genes

The foundation of traditional annotation rests on manual curation and marker gene expression. Researchers used known marker genes—such as CD3 for T cells and CD19 for B cells—to identify cell types by investigating their expression patterns across cell clusters [2] [7]. This method leveraged rich, context-specific knowledge from scientific literature and specialized biological databases like CellMarker and PanglaoDB [2]. Its primary strength was the deep contextual understanding that human experts bring to the task, allowing for the interpretation of nuanced or ambiguous expression patterns. However, this approach was severely limited by its subjectivity, low throughput, and poor scalability, making it unsuitable for the vast datasets generated by modern sequencing technologies [7].

The Rise of Computational Automation

To overcome these limitations, the field developed three major classes of computational annotation tools, each with distinct operational principles:

- Marker Gene Database-Based Methods (e.g., scCATCH, SCSA): These tools use curated lists of marker genes from cell atlases and databases. They employ scoring systems based on marker expression to perform annotation, typically at the cluster level [7].

- Correlation-Based Methods (e.g., SingleR, scmap-cell): These methods measure the similarity between a query dataset and a pre-annotated reference dataset (either bulk RNA-seq or labeled scRNA-seq data) using metrics such as Spearman correlation or cosine similarity. Each query cell is assigned the reference label with the highest similarity [3] [7].

- Supervised Classification Methods (e.g., CellTypist, MapCell): Using machine learning algorithms, these tools train classifiers on labeled reference scRNA-seq datasets. The trained models are then applied to predict cell types in new query datasets. MapCell, for instance, uses a Siamese neural network for this purpose [7].

The core advantage of these automated methods is their ability to perform annotation in a relatively short time, providing consistent results and increasing reproducibility [7]. However, their performance is contingent on the quality of the underlying marker genes or reference datasets.

The Emergence of Large Language Models

The most recent evolutionary leap involves the application of large language models (LLMs). While not designed specifically for biology, LLMs like GPT-4 and Claude 3 can autonomously perform cell type annotation without domain-specific reference datasets by processing marker gene lists through standardized prompts [4] [8]. Tools like AnnDictionary and LICT (LLM-based Identifier for Cell Types) leverage this capability, offering a flexible, reference-free approach to annotation [4] [8]. AnnDictionary, for example, is an LLM-provider-agnostic Python package that consolidates automated cell type annotation and biological process inference into a single tool, requiring just one line of code to configure or switch the LLM backend [4]. These models represent a move towards a more generalized form of biological reasoning, though their performance can vary significantly based on the model and the task complexity.
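To make "processing marker gene lists through standardized prompts" concrete, the sketch below assembles a prompt from the top marker genes of each cluster. The prompt wording, the function name, and the example genes are hypothetical; this is not the prompt used by AnnDictionary, LICT, or any other published tool, and the call to an LLM provider is deliberately left out.

```python
def build_annotation_prompt(cluster_markers, tissue, top_n=10):
    """Build a plain-text prompt listing the top marker genes per cluster.

    cluster_markers maps a cluster ID to its marker genes, ranked from
    strongest to weakest (for example, by differential expression).
    """
    lines = [
        f"Identify the cell type of each cluster from {tissue} "
        "using the marker genes below.",
        "Reply with one cell type name per line, in cluster order.",
    ]
    for cluster_id, genes in sorted(cluster_markers.items()):
        lines.append(f"Cluster {cluster_id}: {', '.join(genes[:top_n])}")
    return "\n".join(lines)


# Toy marker lists (assumed values), as might come from unsupervised clustering.
markers_by_cluster = {
    "0": ["CD3D", "CD3E", "IL7R", "CCR7"],
    "1": ["CD79A", "MS4A1", "CD19", "IGHM"],
}

prompt = build_annotation_prompt(markers_by_cluster, tissue="peripheral blood", top_n=3)
print(prompt)
```

Keeping the prompt template fixed across clusters and models is what makes results comparable between LLMs; only the gene lists and tissue context change.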

The progression of these paradigms is visually summarized in the following workflow:

Benchmarking Annotation Performance: A Quantitative Comparison

Recent studies have conducted rigorous benchmarking to evaluate the performance of various annotation methodologies, providing crucial data for researchers to select the most appropriate tool.

Performance of Reference-Based Methods on Spatial Transcriptomics Data

A 2025 benchmark study evaluated five reference-based annotation methods on 10x Xenium spatial transcriptomics data from human HER2+ breast cancer, using a paired single-nucleus RNA sequencing (snRNA-seq) profile as the reference. The study compared their performance against manual annotation based on marker genes. The results, summarized in the table below, found that SingleR was the best-performing tool, being fast, accurate, and easy to use, with results most closely matching manual annotation [3].

Table 1: Benchmarking Reference-Based Cell Type Annotation Methods on 10x Xenium Data

| Annotation Method | Underlying Principle | Key Performance Finding | Ease of Use |
|---|---|---|---|
| SingleR | Correlation-based | Best performing, fast, and accurate | Easy |
| Azimuth | Reference-based | Evaluated for accuracy and running time | Integrated in Seurat |
| RCTD | Reference-based | Requires extensive parameter adjustment | Complex |
| scPred | Supervised classification | Performance compared to manual annotation | Requires model training |
| scmap-cell | Correlation-based | Predicts based on similarity to reference | Cell-level annotation |

Performance of Large Language Models in De Novo Annotation

A landmark benchmarking study using the AnnDictionary package provided the first comprehensive evaluation of LLMs for de novo cell-type annotation—a challenging task where gene lists are derived directly from unsupervised clustering rather than being curated. The study, which analyzed the Tabula Sapiens v2 atlas, revealed that performance varies greatly with model size. It found that for most major cell types, LLM annotation can be more than 80-90% accurate [4]. Specifically, Claude 3.5 Sonnet demonstrated the highest agreement with manual annotation and recovered close matches of functional gene set annotations in over 80% of test sets [4].

Another study developed LICT, which employs a multi-model integration strategy to leverage the complementary strengths of multiple LLMs (including GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE). This approach significantly enhanced performance, particularly for low-heterogeneity datasets like human embryos and stromal cells, where it increased the match rate with manual annotations to 48.5% and 43.8%, respectively—a substantial improvement over using a single model [8]. The study also implemented a "talk-to-machine" strategy, an iterative feedback process that further boosted the full match rate with manual annotations to 69.4% in a gastric cancer dataset [8].

Table 2: Benchmarking LLM-Based Cell Type Annotation Methods

| LLM Tool / Model | Key Strategy | Reported Performance | Applicable Context |
|---|---|---|---|
| Claude 3.5 Sonnet | N/A (Standalone Model) | >80-90% accuracy for major types; Highest agreement with manual annotation [4] | De novo annotation |
| LICT | Multi-model integration | Increased match rate to 48.5% (embryo) & 43.8% (fibroblast) vs. single model [8] | Low-heterogeneity datasets |
| LICT | "Talk-to-machine" iterative feedback | 69.4% full match rate in gastric cancer data [8] | Refining ambiguous annotations |
| GPT-4, LLaMA-3, etc. | Individual model use | Performance varies significantly with model size and heterogeneity of data [4] [8] | General use, high-heterogeneity data |

The following table synthesizes the core characteristics of the three major annotation paradigms, highlighting their key features and trade-offs.

Table 3: Comparative Analysis of Cell Type Annotation Paradigms

| Feature | Manual Annotation | Traditional Automated Methods | LLM-Based Annotation |
|---|---|---|---|
| Primary Basis | Expert knowledge & marker genes [7] | Reference datasets & marker databases [7] | Pre-trained biological knowledge [4] |
| Scalability | Low (20-40 hours for 30 clusters) [7] | High | Very High |
| Reproducibility | Low (Subjective) [7] | High | High |
| Accuracy (Context-Dependent) | High for known cell types with clear markers | Moderate to High, depends on reference quality [3] [7] | 80-90% for major types, varies by model [4] |
| Key Limitation | Time-consuming, subjective, not scalable [7] | Constrained by reference data quality/scope [8] [7] | Performance varies; can struggle with low-heterogeneity data [8] |
| Ideal Use Case | Small datasets, novel cell types, final validation | Large-scale studies with high-quality references | Rapid, reference-free annotation, data integration |

Experimental Protocols in Benchmarking Studies

To ensure the reproducibility of the benchmarking data presented, this section outlines the core experimental protocols employed in the cited studies. Adhering to standardized workflows is critical for generating comparable and reliable annotation results.

General scRNA-seq Data Preprocessing Workflow

A typical preprocessing pipeline for scRNA-seq data before annotation involves several key steps to ensure data quality, as derived from common practices in the field [4] [3] [2]:

- Quality Control (QC): Cells are filtered based on metrics like the number of detected genes, total molecule count (UMIs), and the proportion of mitochondrial gene expression to remove low-quality cells and technical artifacts [2].

- Normalization: Data is normalized to account for differences in sequencing depth between cells, for example, using the NormalizeData function in Seurat [3].

- Feature Selection: Highly variable genes are selected (e.g., top 1000-2000 genes) to focus on biologically relevant signals [3].

- Scaling: The expression value of each gene is scaled and centered.

- Dimensionality Reduction and Clustering: Principal Component Analysis (PCA) is performed, followed by the construction of a neighborhood graph and clustering using algorithms like Leiden. Differentially expressed genes (DEGs) for each cluster are then computed for downstream annotation [4].
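The first two steps above can be sketched without any single-cell library. This is a minimal illustration only: the function names, the toy counts, and the thresholds (`min_genes`, `max_mito_frac`) are assumptions made for the example, not values taken from the cited studies. The normalization follows the common log-normalization recipe (scale each cell to 10,000 total counts, then apply log1p); real analyses would use Seurat or Scanpy.

```python
import math

def qc_filter(cells, min_genes=200, max_mito_frac=0.2):
    """Keep cells with enough detected genes and a low mitochondrial fraction.

    `cells` maps a cell ID to a dict of gene -> raw UMI count.
    Both thresholds are illustrative assumptions.
    """
    kept = {}
    for cell_id, counts in cells.items():
        total = sum(counts.values())
        if total == 0:
            continue  # empty droplet: nothing to keep
        n_genes = sum(1 for c in counts.values() if c > 0)
        # Human mitochondrial genes conventionally carry the "MT-" prefix.
        mito = sum(c for g, c in counts.items() if g.upper().startswith("MT-"))
        if n_genes >= min_genes and mito / total <= max_mito_frac:
            kept[cell_id] = counts
    return kept

def log_normalize(counts, scale_factor=10_000):
    """Depth-normalize one cell to `scale_factor` total counts, then log1p."""
    total = sum(counts.values())
    return {g: math.log1p(c / total * scale_factor) for g, c in counts.items()}

# Toy data with deliberately low thresholds so the example stays small.
cells = {
    "cell_a": {"CD3E": 5, "CD8A": 3, "MT-CO1": 1},
    "cell_b": {"CD3E": 1, "MT-CO1": 9},  # 90% mitochondrial reads
}
kept = qc_filter(cells, min_genes=2, max_mito_frac=0.2)
normalized = {cid: log_normalize(c) for cid, c in kept.items()}
```

In this toy input, `cell_b` is removed because most of its counts are mitochondrial, which is the usual signature of a damaged cell.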

This standard workflow is visualized in the following diagram:

Protocol for Benchmarking LLMs with AnnDictionary

The 2025 benchmarking study using AnnDictionary followed this specific protocol [4]:

- Data: The Tabula Sapiens v2 single-cell transcriptomic atlas was used.

- Pre-processing: Each tissue was processed independently. Data were normalized and log-transformed, highly variable genes were identified, and the data were then scaled. PCA was performed, the neighborhood graph was calculated, and cells were clustered with the Leiden algorithm. Differentially expressed genes for each cluster were computed.

- Annotation: LLMs were used to annotate each cluster with a cell type label based on its top differentially expressed genes. The same LLM was then used to review its labels to merge redundancies and fix spurious verbosity.

- Evaluation: Agreement with manual annotation was assessed using direct string comparison, Cohen’s kappa (κ), and two different LLM-derived rating systems (binary match/no-match and perfect/partial/not-matching quality rating).
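Two of the agreement metrics named in the evaluation step, direct string comparison and Cohen's kappa, are simple enough to sketch directly. The code below is an illustrative implementation, not the benchmark's own; the case-insensitive comparison in `exact_match_rate` is an assumption about how labels might be normalized.

```python
from collections import Counter

def exact_match_rate(manual, predicted):
    """Fraction of clusters whose labels match as strings.

    Lower-casing and stripping whitespace is an assumed normalization.
    """
    pairs = list(zip(manual, predicted))
    hits = sum(m.strip().lower() == p.strip().lower() for m, p in pairs)
    return hits / len(pairs)

def cohens_kappa(manual, predicted):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(manual)
    observed = sum(m == p for m, p in zip(manual, predicted)) / n
    manual_freq = Counter(manual)
    pred_freq = Counter(predicted)
    # Chance agreement: both annotators pick the same label independently.
    expected = sum(manual_freq[label] * pred_freq[label] for label in manual_freq) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

manual = ["T cell", "T cell", "B cell", "B cell"]
llm = ["T cell", "B cell", "B cell", "B cell"]
match_rate = exact_match_rate(manual, llm)  # 3 of 4 labels agree
kappa = cohens_kappa(manual, llm)           # (0.75 - 0.5) / (1 - 0.5)
```

Kappa is lower than the raw match rate here because half of the observed agreement would be expected by chance given how often each label is used.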

Protocol for LICT's Multi-Model and "Talk-to-Machine" Strategies

The LICT tool introduced and benchmarked several advanced strategies [8]:

- Multi-Model Integration Strategy: Instead of relying on a single LLM, the best-performing results from five top-performing LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0) were selected to leverage their complementary strengths.

- "Talk-to-Machine" Strategy: This is an iterative human-computer interaction process:

  - The LLM provides a list of representative marker genes for its predicted cell type.

  - The expression of these genes is evaluated in the corresponding cluster.

  - If more than four marker genes are expressed in ≥80% of cells, the annotation is validated. Otherwise, it fails.

  - For failed validations, a feedback prompt with the validation results and additional DEGs is sent back to the LLM to revise or confirm its annotation.

- Objective Credibility Evaluation: This strategy assesses annotation reliability based on the expression of LLM-retrieved marker genes within the input dataset itself, providing a reference-free measure of confidence.
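The validation rule at the heart of the "talk-to-machine" loop and the credibility evaluation can be written as a short check. This is a sketch under stated assumptions: the function name and data layout are hypothetical, and a gene is treated as "expressed" in a cell when its count is above zero, which the text does not specify.

```python
def validate_annotation(marker_genes, cluster_cells, min_markers=5, min_cell_frac=0.8):
    """Apply the rule described above to one cluster.

    `cluster_cells` is a list of dicts (gene -> count), one per cell.
    "More than four" marker genes is encoded as min_markers=5.
    Treating count > 0 as "expressed" is an assumption of this sketch.
    """
    n_cells = len(cluster_cells)
    passing = []
    for gene in marker_genes:
        expressing = sum(1 for cell in cluster_cells if cell.get(gene, 0) > 0)
        if n_cells and expressing / n_cells >= min_cell_frac:
            passing.append(gene)
    return len(passing) >= min_markers, passing

# Toy cluster of five cells: gene A is in every cell, B-E in four of five.
cluster = [{"A": 1, "B": 1, "C": 1, "D": 1, "E": 1} for _ in range(4)] + [{"A": 1}]

is_valid, supported = validate_annotation(["A", "B", "C", "D", "E", "F"], cluster)
too_few, _ = validate_annotation(["A", "B", "C", "D"], cluster)
```

When the check fails, the list of genes that did pass is the kind of evidence that could be placed in the feedback prompt alongside additional DEGs.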

Successful cell type annotation, whether manual or computational, relies on a foundation of key biological databases, software tools, and reference datasets. The table below catalogs essential "research reagent solutions" for annotation workflows.

Table 4: Essential Research Reagents & Resources for Cell Type Annotation

| Resource Name | Type | Primary Function in Annotation | Relevant Context |
|---|---|---|---|
| CellMarker 2.0 [2] | Marker Gene Database | Provides curated list of cell marker genes for manual and marker-based automated annotation. | Manual, Marker-Based Automation |
| PanglaoDB [2] | Marker Gene Database | Serves as a curated database of marker genes for cell type identification. | Manual, Marker-Based Automation |
| Human Cell Atlas (HCA) [2] | scRNA-seq Reference Atlas | Provides a multi-organ, annotated single-cell dataset for use as a reference in correlation-based and supervised methods. | Reference-Based Automation |
| Tabula Sapiens [4] | scRNA-seq Reference Atlas | A comprehensive, multi-tissue human cell atlas used for benchmarking and as a reference. | Benchmarking, Reference |
| SingleR [3] [7] | Software Tool (R) | Performs correlation-based cell type annotation using reference datasets. | Reference-Based Automation |
| CellTypist [7] | Software Tool (Python) | A supervised classification tool that uses logistic regression for automated annotation. | Supervised Automation |
| AnnDictionary [4] | Software Tool (Python) | An LLM-provider-agnostic package for automated cell type and gene set annotation. | LLM-Based Annotation |
| LICT [8] | Software Tool | Leverages multiple LLMs and a "talk-to-machine" strategy for reference-free annotation. | LLM-Based Annotation |

The evolution of cell type annotation from a purely expert-driven activity to a highly automated computational task underscores a broader transformation in biological research. The benchmarking data clearly demonstrates that computational methods, including both traditional reference-based tools and emerging LLM-based approaches, now offer a powerful combination of speed, scalability, and accuracy that is essential for navigating the scale of modern single-cell datasets. While manual annotation retains its value for validating complex cases and novel discoveries, it is no longer feasible as the primary method for large-scale studies.

The future of cell type annotation lies in hybrid, intelligent systems. The "talk-to-machine" strategy of LICT exemplifies this direction, creating an interactive loop between human expertise and computational power [8]. Furthermore, the integration of deep learning for dynamic updates of marker gene databases will help address the current limitations of static references [2]. As these tools continue to mature, they will move from simply classifying known cell types to the more ambitious task of discovering and defining novel cell states in an open-world context, ultimately deepening our understanding of cellular heterogeneity in health and disease. For researchers, the key to success will be a critical and informed approach to tool selection, guided by robust benchmarking studies and a clear understanding of the strengths and limitations of each annotation paradigm.

Cell type annotation is a critical step in the analysis of single-cell RNA sequencing (scRNA-seq) and spatial transcriptomics data, enabling researchers to decipher cellular heterogeneity and function within complex tissues [2]. The accuracy of this process directly impacts downstream biological interpretations, making the benchmarking of annotation methods a cornerstone of reproducible single-cell research. Computational approaches for annotation have evolved significantly and now fall primarily into three broad categories: reference-based correlation methods, supervised learning (data-driven) methods, and Large Language Model (LLM)-based methods. Each category employs distinct mechanisms and exhibits unique strengths and limitations, necessitating a systematic comparison to guide researchers in selecting appropriate tools for their specific experimental contexts. This guide objectively compares the performance of these methodologies based on recent benchmarking studies, providing a framework for evaluating cell type annotation accuracy.

Method Categories and Core Mechanisms

Reference-Based Correlation Methods

Reference-based methods classify unknown cells by comparing their gene expression profiles to a pre-constructed reference dataset of known cell types. The core principle involves calculating similarity scores (e.g., correlation coefficients) between a query cell and all reference cells or cell types.

- Representative Tools: SingleR, Azimuth, RCTD, scmap, scPred [3] [9].

- Typical Workflow: A high-quality, pre-annotated scRNA-seq dataset serves as the reference. The gene expression profile of each query cell is compared to the reference, and the cell type label of the best-matching reference cell or cell-type average is assigned to the query cell [2].

- Key Characteristics: These methods are highly dependent on the quality and comprehensiveness of the reference data. They perform well when the query data is biologically similar to the reference but struggle with novel cell types not present in the reference.
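The typical workflow above reduces to "score the query against each reference profile, then take the best match". The sketch below illustrates that idea with Pearson correlation against cell-type average profiles. It is a deliberate simplification and not the algorithm of SingleR or any other named tool; the function names and the toy expression values are assumptions.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length numeric lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def annotate_by_correlation(query, reference_profiles, genes):
    """Assign the label of the best-correlated reference cell-type average."""
    q = [query.get(g, 0.0) for g in genes]
    scores = {
        label: pearson(q, [profile.get(g, 0.0) for g in genes])
        for label, profile in reference_profiles.items()
    }
    best = max(scores, key=scores.get)
    return best, scores

# Toy reference: average normalized expression per cell type.
reference = {
    "T cell": {"CD3E": 5.0, "CD19": 0.0, "LYZ": 0.2},
    "B cell": {"CD3E": 0.0, "CD19": 5.0, "LYZ": 0.1},
}
genes = ["CD3E", "CD19", "LYZ"]
label, scores = annotate_by_correlation({"CD3E": 4.0, "CD19": 0.5}, reference, genes)
```

Because the label always comes from the reference, a query cell of a type absent from `reference` still receives the closest available label, which is the novel-cell-type limitation noted above.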

Supervised Learning (Data-Driven) Methods

Supervised methods involve training a classification model on a labeled reference dataset to learn the gene expression patterns characteristic of each cell type. The trained model is then used to predict cell labels for query datasets.

- Representative Tools: Support Vector Machines (SVM), scPred, CellTypist [10] [11].

- Typical Workflow: A classifier is trained on a labeled reference dataset, where the features are gene expression values and the labels are cell types. This model captures the decision boundaries between different cell types in high-dimensional space and applies them to classify cells in new, unlabeled query data [2] [10].

- Key Characteristics: A benchmark study of 22 classifiers found that general-purpose classifiers like SVM achieved top performance [10]. These models can be sensitive to batch effects between the reference and query data and require retraining when new reference data becomes available.

Large Language Model (LLM)-Based Methods

A recent innovation involves leveraging the biological knowledge encoded within large language models. These methods do not rely on a reference expression matrix; instead, they treat cell type annotation as a natural language processing task, using marker gene lists as input "prompts" to infer cell identities.

- Representative Tools: LICT, AnnDictionary, scExtract, GCTHarmony [8] [4] [11].

- Typical Workflow: The top differentially expressed genes from a cell cluster are fed into an LLM via a structured prompt, asking the model to infer the most likely cell type based on its internal knowledge of marker genes [8] [4]. Advanced strategies like multi-model integration and iterative "talk-to-machine" feedback loops are used to improve accuracy [8].

- Key Characteristics: LLM-based methods are reference-free, reducing bias from incomplete reference datasets. They show particular promise for annotating novel or rare cell types and for harmonizing inconsistent annotations across studies [12] [11].
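The prompt-construction step in the workflow above might look like the following. The wording of the prompt, the function name, and the `n_genes` default are all hypothetical; none of them is taken from LICT, AnnDictionary, or any other tool, and no LLM API call is made.

```python
def build_annotation_prompt(cluster_id, top_genes, tissue=None, n_genes=10):
    """Turn a cluster's top DEGs into a structured annotation prompt.

    The prompt text is an illustrative assumption, not a published template.
    """
    genes = ", ".join(top_genes[:n_genes])
    context = f" from {tissue}" if tissue else ""
    return (
        f"You are annotating single-cell RNA-seq clusters{context}.\n"
        f"Cluster {cluster_id} top differentially expressed genes: {genes}.\n"
        "Reply with the single most likely cell type name only."
    )

prompt = build_annotation_prompt(
    cluster_id=3,
    top_genes=["CD3E", "CD3D", "IL7R", "CCR7", "TRAC"],
    tissue="human blood",
    n_genes=3,
)
```

Constraining the reply format in the prompt makes the returned label easier to compare against manual annotations afterwards.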

The following diagram illustrates the core workflow for each of these three methodological categories.

Performance Benchmarking and Quantitative Comparison

Performance on Standard Single-Cell RNA-seq Data

Benchmarking studies across diverse tissues and species reveal how each method category performs under different conditions. The following table summarizes key quantitative findings from recent large-scale evaluations.

Table 1: Performance Comparison of Cell Type Annotation Method Categories

| Method Category | Representative Tool | Reported Accuracy / Agreement | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Reference-Based | SingleR | High agreement with manual annotation on Xenium data [3] | Fast, easy to use, leverages well-curated references | Performance depends on reference quality; fails on novel cell types |
| Supervised Learning | Support Vector Machine (SVM) | Overall best performance in 22-method benchmark [10] | High accuracy on known cell types; robust classification | Requires retraining for new data; sensitive to batch effects |
| LLM-Based | LICT (Multi-model) | Mismatch rate reduced to 9.7% (vs. 21.5% for GPTCelltype) in PBMC data [8] | Reference-free; identifies novel cell types; high interpretability | Performance drops in low-heterogeneity data [8] |
| LLM-Based | Claude 3.5 Sonnet (via AnnDictionary) | >80-90% accuracy for major cell types; highest agreement in benchmark [4] | Excellent at de novo annotation; integrates with Scanpy | Cost per query (though minimal); potential for "hallucination" |

Performance on Spatial Transcriptomics Data

The performance of these methods extends to imaging-based spatial transcriptomics platforms like the 10x Xenium, which profile a smaller panel of genes. A dedicated benchmark study compared five reference-based methods on human breast cancer Xenium data, using a paired single-nucleus RNA-seq dataset as a reference.

Table 2: Benchmarking Reference-Based Methods on 10x Xenium Data [3]

| Method | Agreement with Manual Annotation | Key Findings |
|---|---|---|
| SingleR | High | Best performing tool: fast, accurate, and easy to use, with results closely matching manual annotation. |
| Azimuth | Moderate | Requires specific reference preparation but integrates well with Seurat pipeline. |
| RCTD | Moderate | Designed for spatial data but requires extensive parameter adjustment for Xenium. |
| scPred | Moderate | Accuracy depends on model training; can capture dataset-specific features. |
| scmapCell | Lower | Quick but less accurate compared to other methods in this benchmark. |

Advanced LLM Strategies and Their Impact

To address inherent limitations, advanced LLM strategies have been developed, showing measurable improvements in annotation reliability.

Table 3: Impact of Advanced Strategies in LLM-based Annotation [8]

| Strategy | Description | Performance Improvement |
|---|---|---|
| Multi-Model Integration | Combines annotations from multiple LLMs (e.g., GPT-4, Claude 3, Gemini) to leverage complementary strengths. | Reduced mismatch rate in PBMC data from 21.5% to 9.7%. Increased match rate in low-heterogeneity embryo data to 48.5%. |
| "Talk-to-Machine" | An iterative feedback loop where the LLM's initial annotation is validated against marker gene expression and re-queried with additional evidence. | Increased full match rate in gastric cancer data to 69.4% (from baseline). Improved full match rate in embryo data by 16-fold compared to using GPT-4 alone. |
| Objective Credibility Evaluation | Assesses annotation reliability by checking whether more than four LLM-provided marker genes are expressed in at least 80% of cluster cells. | Provided a framework to objectively assess reliability, proving more credible than manual annotations in some low-heterogeneity datasets. |
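One way to picture multi-model integration is as a per-cluster selection among candidate labels. The source states only that the best-performing result across models is kept; the selection rule used here (prefer the label with the most validated marker genes) is an assumption of this sketch, as are the function name and the toy scores.

```python
def integrate_models(candidates):
    """Pick one label for a cluster from several models' suggestions.

    `candidates` maps model name -> (label, n_validated_markers).
    Ranking by validated marker count is an assumed rule, not LICT's
    documented procedure. Ties go to the first model listed.
    """
    best_model = max(candidates, key=lambda name: candidates[name][1])
    label, _ = candidates[best_model]
    return best_model, label

# Hypothetical per-cluster outputs from three models.
cluster_candidates = {
    "model_a": ("fibroblast", 3),
    "model_b": ("myofibroblast", 6),
    "model_c": ("stromal cell", 6),
}
chosen_model, chosen_label = integrate_models(cluster_candidates)
```

A selection rule of this kind lets a model that is weak overall still contribute on the clusters where its answer is best supported by the data.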

Detailed Experimental Protocols from Key Studies

Benchmarking Protocol for Reference-Based Methods on Xenium Data

The following workflow was used to benchmark reference-based annotation methods on 10x Xenium data, providing a reproducible template for spatial transcriptomics method evaluation [3]:

- Data Collection: Acquire Xenium data and a paired single-nucleus RNA sequencing (snRNA-seq) dataset from the same sample to serve as the reference.

- Reference Preparation: Process the snRNA-seq data using a standard Seurat pipeline, including quality control (removing unannotated cells and doublets), normalization, scaling, and dimensionality reduction (PCA, UMAP). Cell types are confirmed using known marker genes and, for cancer datasets, copy number variation (CNV) analysis tools like inferCNV.

- Query Processing: Process the Xenium data similarly, filtering out unlabeled cells and normalizing counts. Due to the small gene panel, the feature selection step is often skipped, and all genes are used for scaling.

- Cell Type Prediction: Apply each reference-based method (SingleR, Azimuth, RCTD, scPred, scmapCell) using the prepared snRNA-seq reference to predict cell types in the Xenium data.

- Performance Evaluation: Compare the composition of predicted cell types from each method against the gold standard of manual annotation based on marker genes. Accuracy is assessed by the degree of concordance in cell type proportions and labels.
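The final evaluation step compares predicted and manually annotated cell type compositions. A minimal sketch of such a comparison is shown below, using total variation distance between the two sets of proportions plus a per-cell agreement rate. These particular metrics are chosen for illustration and are not necessarily those used in the cited benchmark.

```python
from collections import Counter

def proportions(labels):
    """Fraction of cells assigned to each cell type."""
    counts = Counter(labels)
    n = len(labels)
    return {label: count / n for label, count in counts.items()}

def composition_distance(predicted, manual):
    """Total variation distance between two cell type compositions.

    0 means identical proportions; 1 means no overlap at all.
    """
    p, q = proportions(predicted), proportions(manual)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))

def label_agreement(predicted, manual):
    """Fraction of cells whose predicted label equals the manual label."""
    return sum(a == b for a, b in zip(predicted, manual)) / len(manual)

manual = ["T", "T", "B", "B"]
predicted = ["T", "T", "T", "B"]
distance = composition_distance(predicted, manual)  # 0.5 * (0.25 + 0.25)
agreement = label_agreement(predicted, manual)      # 3 of 4 cells
```

Reporting both values is useful because two methods can produce similar overall proportions while disagreeing on which individual cells carry each label.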

Benchmarking Protocol for LLM-based De Novo Annotation

The protocol for evaluating LLMs on de novo cell type annotation, which uses gene lists from unsupervised clustering, highlights the unique aspects of testing reference-free methods [4]:

- Data Pre-processing: Independently process each tissue dataset from a source like Tabula Sapiens v2. This includes normalization, log-transformation, identification of high-variance genes, scaling, PCA, neighborhood graph calculation, and clustering using the Leiden algorithm.

- Differentially Expressed Gene (DEG) Calculation: Compute the top differentially expressed genes for each cluster, which will serve as the input for the LLMs.

- LLM Annotation: Use a standardized framework (e.g., AnnDictionary) to prompt various LLMs with the list of top DEGs for each cluster and request a cell type label.

- Label Consolidation: Have the same LLM review its initial labels to merge redundancies and correct verbose or incorrect annotations, creating a finalized label set.

- Agreement Assessment: Evaluate performance using multiple metrics:

  - Direct String Match: Treating exact string matches as correct.

  - Cohen's Kappa (κ): Measuring inter-annotator agreement between the LLM and manual annotations.

  - LLM-as-a-Judge: Using an LLM to rate the quality of the match (e.g., perfect, partial, or not-matching) between automatic and manual labels.

Successful cell type annotation relies on a foundation of high-quality data and software tools. The table below lists key resources mentioned across the benchmarking studies.

Table 4: Essential Resources for Cell Type Annotation Research

| Resource Name | Type | Primary Function in Annotation | Relevant Context |
|---|---|---|---|
| 10x Genomics Xenium | Spatial Transcriptomics Platform | Generates imaging-based spatial transcriptomics data at single-cell resolution. | Common platform for benchmarking spatial annotation methods [3]. |
| Tabula Sapiens | scRNA-seq Reference Atlas | A comprehensive, multi-tissue human cell atlas used as a benchmark dataset. | Used for large-scale benchmarking of LLM performance [4]. |
| CellMarker / PanglaoDB | Marker Gene Database | Curated collections of cell-type-specific marker genes. | Used for manual annotation and validating LLM predictions [2]. |
| Seurat | R Toolkit | Comprehensive toolkit for single-cell data analysis, including reference-based mapping. | Used in the preprocessing and analysis pipeline for benchmarking [3]. |
| Scanpy | Python Toolkit | A scalable toolkit for analyzing single-cell gene expression data, similar to Seurat. | Forms the computational backbone for many analysis workflows, including scExtract [11]. |
| Cell Ontology (CL) | Standardized Vocabulary | A structured, controlled ontology for cell types. | Used by tools like GCTHarmony to standardize and harmonize cell type labels across studies [12]. |
| cellxgene | Data Platform | A crowdsourced platform hosting numerous curated single-cell datasets. | Sourced for manually annotated datasets to evaluate automated annotation accuracy [11]. |

Integrated Workflow for Annotation and Harmonization

Frameworks like scExtract demonstrate how LLMs can be integrated into a fully automated pipeline that goes beyond annotation to include data integration. The following diagram outlines this sophisticated multi-stage process.

The benchmarking data clearly demonstrates that the optimal choice of cell type annotation method is context-dependent. Reference-based methods like SingleR are fast and reliable when a high-quality, biologically relevant reference dataset is available, making them excellent for routine analyses. Supervised learning methods can achieve high accuracy but are constrained by the need for labeled training data and are susceptible to batch effects. The emergent category of LLM-based methods offers a powerful, reference-free alternative that excels at de novo annotation and shows remarkable promise for standardizing annotations across studies, though it requires strategies to mitigate inaccuracies in low-heterogeneity contexts and manage operational costs.

For researchers embarking on large-scale integrative studies, a hybrid approach may be most effective: using LLM-based tools for initial discovery and annotation, followed by reference-based or supervised methods for validation and refinement within a well-defined cellular hierarchy. As the field progresses, the integration of these methodologies into unified, automated pipelines will continue to enhance the accuracy, reproducibility, and depth of cellular insights derived from single-cell and spatial genomics.

Impact of Sequencing Platforms and Data Quality on the Foundational Reliability of Annotation

The foundational reliability of cell type annotation is a critical prerequisite for valid biological interpretation in single-cell genomics. This reliability is intrinsically governed by two fundamental factors: the technical characteristics of the sequencing platform used to generate the data and the inherent quality of the resulting data upon which computational annotation methods operate. As single-cell RNA sequencing (scRNA-seq) and spatial transcriptomics technologies evolve, researchers are presented with a diverse array of platform choices, each with distinct performance characteristics that systematically influence downstream annotation outcomes [2]. The burgeoning development of computational annotation methods—ranging from reference-based correlation approaches to large language model (LLM)-based strategies—further compounds the need for a rigorous comparative framework [2]. This guide provides an objective comparison of sequencing technologies and their cascading effects on data quality, culminating in empirically grounded recommendations for optimizing annotation reliability within a comprehensive benchmarking paradigm.

Sequencing Platform Landscape: Technical Characteristics and Performance Trade-offs

Sequencing technologies fall into three primary categories: second-generation sequencing (SGS), third-generation sequencing (TGS), and emerging spatial transcriptomics platforms. Each category exhibits distinct error profiles, throughput capabilities, and cost structures that directly impact their suitability for cell type annotation workflows.

Table 1: Comparison of Major Sequencing Platforms for Single-Cell Analysis

| Platform | Technology Generation | Read Length | Key Strengths | Key Limitations | Primary Error Type | Reported Error Rate |
|---|---|---|---|---|---|---|
| Illumina [13] [14] | SGS | Short (36-300 bp) | High accuracy, low cost per cell, high throughput | Short reads struggle with repetitive regions, GC bias | Substitution | ~0.1% [14] |
| MGI DNBSEQ-T7 [14] | SGS | Short | Cost-effective, accurate | Similar limitations to Illumina platforms | Substitution | Similar to Illumina |
| PacBio SMRT [13] | TGS | Long (avg. 10,000-25,000 bp) | Resolves complex genomic regions, isoform detection | Higher cost per cell, lower throughput | Insertion-Deletion (Indel) | 5-20% [14] |
| Oxford Nanopore [13] | TGS | Long (avg. 10,000-30,000 bp) | Ultra-long reads, real-time analysis | Highest raw error rate | Insertion-Deletion (Indel) | Up to 15% (1D read) [13] |
| 10x Xenium [3] | Imaging-based Spatial | Targeted (300-500 genes) | Single-cell spatial resolution, preserves tissue architecture | Limited to predefined gene panel | Imaging-based | Technology-dependent |

The choice between SGS and TGS involves fundamental trade-offs. SGS platforms like Illumina NovaSeq 6000 and MGI DNBSEQ-T7 provide highly accurate reads (up to 99.5% accuracy) but produce short fragments that cannot resolve complex genomic regions, potentially leading to misassembly and ambiguous cell type assignments [14]. Conversely, TGS platforms from PacBio and Oxford Nanopore generate reads long enough to span repetitive elements and identify novel isoforms—critical for distinguishing closely related cell types—but at the cost of higher error rates (5-20%) that can introduce noise into gene expression counts [13] [14]. Spatial transcriptomics platforms like 10x Xenium add dimensional context but are constrained by targeted gene panels that may omit cell-type-specific markers [3] [15].
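A short calculation makes the read-length versus error-rate trade-off concrete. The sketch below uses per-base error rates and read lengths of the order reported in the table above and assumes errors occur independently at each base, which is a simplification; the function names are illustrative.

```python
def expected_errors(read_length_bp, error_rate):
    """Expected number of erroneous bases in one read."""
    return read_length_bp * error_rate

def prob_error_free(read_length_bp, error_rate):
    """Probability that a read has no errors, assuming independent errors."""
    return (1.0 - error_rate) ** read_length_bp

# Short read: 150 bp at ~0.1% per-base error.
short_errors = expected_errors(150, 0.001)
short_clean = prob_error_free(150, 0.001)

# Long read: 10,000 bp at 5% per-base error (low end of the reported range).
long_errors = expected_errors(10_000, 0.05)
long_clean = prob_error_free(10_000, 0.05)
```

Under these assumptions a short read carries well under one expected error, while a raw long read carries hundreds, which is why long-read expression counts usually rely on error correction or consensus before quantification.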

The Data Quality Pathway: From Sequencing Output to Annotation Input

Sequencing outputs undergo extensive preprocessing before annotation, with data quality at each stage directly determining annotation fidelity. The following diagram illustrates the core pathway from raw sequencing data to annotated cells, highlighting key data quality checkpoints that influence reliability.

Critical data quality metrics established during preprocessing directly mediate how sequencing platform characteristics ultimately impact annotation. Sequencing depth must be sufficient to capture true biological heterogeneity rather than technical noise; inadequate depth disproportionately affects rare cell type detection [2]. Batch effects introduced by platform-specific protocols or processing dates can create artificial clusters that are misinterpreted as distinct cell types [2]. Gene detection rates vary substantially between platforms—10x Genomics typically exhibits higher sparsity than Smart-seq2—affecting the reliability of marker gene detection [2]. Finally, data integration across platforms remains challenging, as technical variance can obscure biologically meaningful differences essential for precise annotation [2].

Benchmarking Annotation Method Performance Across Data Contexts

The performance of cell type annotation methods varies significantly based on the data context, particularly the heterogeneity of cell populations and the technological origin of the data. The following experimental data, synthesized from recent large-scale benchmarks, reveals critical patterns in method reliability.

Table 2: Annotation Method Performance Across Experimental Contexts

| Annotation Method | Category | High Heterogeneity Performance | Low Heterogeneity Performance | Key Strengths | Notable Limitations |
|---|---|---|---|---|---|
| STAMapper [15] | Neural Network | Highest accuracy (Benchmark leader) | Maintains superior performance even with <200 genes | Robust to poor sequencing quality, identifies rare types | Computational complexity for very large datasets |
| scANVI [15] | Deep Learning | Second-best overall accuracy | Good performance with >200 genes | Handles complex integration tasks | Performance drops with <200 genes |
| SingleR [3] | Reference-based | Closely matches manual annotation | Not specifically reported | Fast, accurate, easy to use | Reference quality dependency |
| RCTD [15] | Reference-based | Good performance with >200 genes | Weaker performance with <200 genes | Accounts for platform effects | Struggles with very sparse data |
| LICT (LLM Integration) [8] | Large Language Model | Mismatch reduced to 9.7% (PBMC) | Match rate ~48.5% (embryo data) | Reduces uncertainty via multi-model consensus | Depends on quality of marker gene prompts |
| Claude 3.5 Sonnet [4] | Large Language Model | >80-90% accuracy for major types | Not specifically reported | Highest agreement with manual annotation | Performance varies with model size |

The experimental protocols for these benchmarks typically involve several standardized steps. For method benchmarking, researchers use well-annotated reference datasets like Tabula Sapiens [4] or peripheral blood mononuclear cells (PBMCs) [8] as ground truth. The annotation process involves normalizing data, selecting highly variable genes, performing dimensionality reduction (PCA), clustering (e.g., with Leiden algorithm), and then applying annotation methods to assign cell type labels based on differentially expressed genes [4]. Performance is quantified using metrics like accuracy, Cohen's kappa, F1-score, and agreement with manual annotations [4] [8] [15].
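Of the metrics listed above, the F1-score is the one most sensitive to rare cell types when it is macro-averaged, because every cell type counts equally regardless of how many cells it contains. The following is a minimal sketch of macro-F1 for annotation labels; the function name and toy labels are illustrative.

```python
def macro_f1(true_labels, pred_labels):
    """Unweighted mean of per-cell-type F1 scores."""
    labels = sorted(set(true_labels) | set(pred_labels))
    pairs = list(zip(true_labels, pred_labels))
    scores = []
    for label in labels:
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the label never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

truth = ["T", "T", "B", "B"]
preds = ["T", "B", "B", "B"]
score = macro_f1(truth, preds)  # mean of 2/3 (T) and 4/5 (B)
```

A method that labels every cell with the majority type can still reach high accuracy on an imbalanced dataset, but its macro-F1 will be low because the missed types each contribute a score of zero.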

A particularly insightful finding comes from the benchmarking of LLM-based annotation methods like AnnDictionary and LICT, which employ sophisticated strategies to enhance reliability. The following diagram illustrates the multi-model integration approach used by LICT, which demonstrates how combining multiple LLMs can produce more reliable annotations than any single model.

The "talk-to-machine" strategy represents another innovative approach to improving annotation reliability. This iterative human-computer interaction process involves the model retrieving marker genes for its predicted cell type, validating their expression in the dataset, and receiving feedback to refine inaccurate annotations. When applied to challenging low-heterogeneity datasets, this strategy improved the full match rate with manual annotations by 16-fold for embryo data compared to using GPT-4 alone [8].

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Successful cell type annotation requires both wet-lab reagents and computational tools. The following table catalogues essential solutions for ensuring annotation reliability throughout the experimental workflow.

Table 3: Essential Research Reagent Solutions for Cell Type Annotation

| Resource/Solution | Type | Primary Function | Key Features | Reference |
|---|---|---|---|---|
| 10x Genomics Platform | Wet-lab Technology | Single-cell library preparation | High-throughput cell partitioning, widely adopted | [3] [2] |
| PanglaoDB | Database | Marker gene reference | Curated marker genes for 155 cell types | [2] |
| CellMarker 2.0 | Database | Marker gene reference | Expanded database covering human and mouse | [2] |
| Tabula Sapiens | Reference Data | Annotation ground truth | Multi-tissue, well-annotated scRNA-seq atlas | [4] |
| Azimuth Reference | Computational Tool | Reference-based annotation | Pre-trained models for cell type prediction | [3] [16] |
| AnnDictionary | Computational Tool | LLM-based annotation | Multi-LLM support, de novo annotation | [4] |
| STAMapper | Computational Tool | Spatial annotation | Graph neural network for label transfer | [15] |
| SingleR | Computational Tool | Reference-based annotation | Fast correlation-based method | [3] |
| ScaleBio Human Blood | Reference Data | Annotation benchmark | High-quality annotations for immune cells | [16] |
| Bluster R Package | Computational Tool | Clustering assessment | Evaluates clustering quality metrics | [16] |

Based on comprehensive benchmarking evidence, annotation reliability fundamentally depends on aligning sequencing platform capabilities with biological question requirements. For heterogeneous cell populations like immune cells, most modern annotation methods perform adequately when applied to data from either SGS or TGS platforms. However, for low-heterogeneity samples or fine subtype discrimination, TGS platforms that capture isoform diversity provide significant advantages despite their higher error rates. The emerging consensus indicates that multi-algorithm approaches—particularly those incorporating LLMs with traditional reference-based methods—deliver superior reliability compared to any single method. Furthermore, spatial transcriptomics annotation benefits disproportionately from specialized tools like STAMapper that explicitly model spatial relationships. Ultimately, foundational reliability is achievable through strategic platform selection coupled with method benchmarking on data representative of the specific biological context under investigation.

A Practical Toolkit: Applying Reference-Based and LLM-Driven Annotation Methods

Spatial transcriptomics has revolutionized biological research by enabling the profiling of gene expression within the context of tissue architecture. Imaging-based spatial technologies, such as the 10x Xenium platform, can achieve single-cell resolution but typically profile only several hundred genes, making accurate cell type annotation both crucial and challenging [17]. While many reference-based cell type annotation tools have been developed for single-cell RNA sequencing (scRNA-seq) and sequencing-based spatial transcriptomics data, their performance on imaging-based spatial transcriptomics data remained insufficiently studied until recently [17] [9].

This benchmarking guide objectively compares the performance of four prominent reference-based cell type annotation tools—SingleR, Azimuth, scPred, and RCTD—when applied to imaging-based spatial transcriptomics data. We focus specifically on their application to 10x Xenium data from human breast cancer samples, providing researchers with experimental data and practical insights to inform their analytical choices.

Experimental Design and Methodology

Data Collection and Processing

The benchmarking study utilized public Xenium and single-cell data of human HER2+ breast cancer from 10x Genomics [17]. The dataset included:

- Xenium data: Two replicate samples (sample 1 and sample 2)

- Reference data: Paired 10x Flex single-nucleus RNA sequencing (snRNA-seq) data from sample 1

- Quality control: Cells without 10x-provided cell type annotation were removed, and potential doublets were predicted and eliminated using scDblFinder to ensure reference data quality [17]

For the snRNA-seq reference data analysis, researchers followed the standard Seurat (v4.3.0) pipeline, which included normalization, highly variable gene selection, scaling, principal component analysis (PCA), and uniform manifold approximation and projection (UMAP) [17]. Tumor cells were specifically annotated based on copy number variation (CNV) analysis using inferCNV, comparing the expression of genes across chromosomal positions in the snRNA-seq data against a normal reference scRNA-seq dataset from human breast tissue [17].

Cell Type Annotation Methods

The benchmarking study compared five reference-based methods against manual annotation based on marker genes. This guide focuses on four of these tools, which represent diverse algorithmic approaches to cell type annotation:

- SingleR: A correlation-based method that predicts cell types by comparing each query cell's expression profile with labeled reference profiles, using Spearman rank correlation computed over marker genes [17] [18]

- Azimuth: A reference-mapping tool built on Seurat that normalizes the query with SCTransform, transfers labels from an annotated reference, and projects query cells onto the reference UMAP [17] [18]

- scPred: A machine learning-based method that trains classification models on reference data for cell type prediction [17]

- RCTD (Robust Cell Type Decomposition): A regression framework designed for spatial transcriptomics data that learns cell-type expression profiles from the reference and accounts for platform effects between reference and query [17] [18] [19]
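
To make the correlation-based idea concrete, the following sketch assigns each query cell the reference label whose profile has the highest Spearman correlation with it. It is a simplified illustration and not the SingleR implementation: it omits marker-gene selection and any refinement steps, and the function names, gene values, and labels are hypothetical.

```python
from statistics import mean


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation computed as the Pearson correlation of ranks."""
    return pearson(ranks(x), ranks(y))


def annotate(query_cells, reference_profiles):
    """Label each query cell with the best-correlated reference profile."""
    labels = []
    for cell in query_cells:
        scores = {label: spearman(cell, profile)
                  for label, profile in reference_profiles.items()}
        labels.append(max(scores, key=scores.get))
    return labels


# Toy data: five genes shared by query and reference (values are made up).
reference_profiles = {
    "T cell": [9.0, 8.0, 0.5, 0.2, 1.0],
    "Epithelial": [0.3, 0.1, 7.0, 9.0, 2.0],
}
query_cells = [
    [5.0, 4.0, 0.0, 1.0, 0.5],
    [0.0, 1.0, 3.0, 6.0, 2.0],
]
predicted = annotate(query_cells, reference_profiles)
print(predicted)
```

Because the comparison depends only on the rank order of the genes present in both datasets, this kind of scoring still works when the query covers only a few hundred genes.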

Each method was applied to the Xenium data using the prepared snRNA-seq reference data with default parameters unless otherwise specified. For RCTD, specific parameters were adjusted to retain all cells in the Xenium data (UMI_min, counts_MIN, gene_cutoff, fc_cutoff, and fc_cutoff_reg set to 0; UMI_min_sigma set to 1; CELL_MIN_INSTANCE set to 10) [17].

Performance Evaluation Framework

The performance of each reference-based annotation method was evaluated by comparing its results with manual annotation based on marker genes, which served as the benchmark. The evaluation considered:

- Accuracy: How closely the automated annotations matched manual annotations

- Composition: The distribution of predicted cell types compared to manual annotation

- Running time: Computational efficiency of each method

- Ease of use: Implementation complexity and required parameter tuning
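
The first two criteria can be computed directly from paired label vectors. The sketch below is a minimal illustration, assuming each cell has one manual label and one predicted label; the function names and toy labels are hypothetical and are not taken from the benchmarking study.

```python
from collections import Counter


def accuracy(predicted, manual):
    """Fraction of cells whose predicted label equals the manual label."""
    if not manual or len(predicted) != len(manual):
        raise ValueError("label lists must be non-empty and of equal length")
    matches = sum(p == m for p, m in zip(predicted, manual))
    return matches / len(manual)


def composition(labels):
    """Proportion of cells assigned to each cell type."""
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def composition_shift(predicted, manual):
    """Predicted minus manual proportion for every cell type seen in either."""
    pred, man = composition(predicted), composition(manual)
    return {label: pred.get(label, 0.0) - man.get(label, 0.0)
            for label in sorted(set(pred) | set(man))}


# Toy labels for six cells (made up for illustration).
manual = ["Tumor", "Tumor", "T cell", "Stromal", "T cell", "Tumor"]
predicted = ["Tumor", "Tumor", "T cell", "Tumor", "T cell", "Stromal"]

acc = accuracy(predicted, manual)
shift = composition_shift(predicted, manual)
print(f"accuracy = {acc:.2f}")
print(shift)
```

In this toy case the predicted composition matches the manual one exactly even though two of the six cells are mislabeled, which is why composition and per-cell accuracy are worth reporting separately.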

Table 1: Key Experimental Components in the Benchmarking Workflow

| Component | Description | Function in Study |

|---|---|---|

| 10x Xenium Human Breast Cancer Data | Imaging-based spatial transcriptomics data with ~500 genes | Serves as query dataset for method evaluation [17] |

| 10x Flex snRNA-seq Data | Single-nucleus RNA sequencing data from same sample | Provides reference labels for cell type prediction [17] |

| Seurat v4.3.0 | R toolkit for single-cell genomics | Primary environment for data processing and analysis [17] |

| scDblFinder | R package for doublet detection | Identifies and removes potential doublets from reference data [17] |

| inferCNV | R package for copy number variation analysis | Distinguishes tumor cells from normal cells in reference [17] |

Figure 1: Experimental workflow for benchmarking cell type annotation methods, illustrating the sequential process from data collection through to final evaluation.

Performance Comparison Results

Accuracy and Qualitative Assessment

The benchmarking study revealed significant differences in performance among the four methods when applied to Xenium spatial transcriptomics data. SingleR emerged as the most accurate method, with results most closely matching manual annotation based on marker genes [17]. The performance hierarchy was consistent across different evaluation metrics, with SingleR demonstrating superior accuracy in predicting cell type compositions that aligned with biological expectations derived from manual annotation.

Notably, the performance differences were attributed to the distinct algorithmic approaches of each method and how effectively they handled the specific challenges of imaging-based spatial data, particularly the limited gene panels typically comprising only several hundred genes [17]. SingleR's correlation-based approach proved particularly robust to these constraints, while other methods showed varying degrees of sensitivity to the platform-specific characteristics.

Table 2: Performance Comparison of Reference-Based Cell Type Annotation Methods

| Method | Overall Performance | Key Strengths | Key Limitations | Implementation |

|---|---|---|---|---|

| SingleR | Best performing - fast, accurate, easy to use [17] | High accuracy matching manual annotation; minimal parameter tuning [17] | Less effective with poorly curated references | R (SingleR package) |

| Azimuth | Moderate performance | Integrated with Seurat workflow; web application available [18] | Requires specific reference preparation [17] | R/Web (Azimuth) |

| scPred | Moderate performance | Machine learning approach; flexible framework [17] | Performance dependent on training data quality | R (scPred package) |

| RCTD | Variable performance | Specifically designed for spatial data; accounts for platform effects [17] [19] | Requires parameter adjustment for Xenium data [17] | R (spacexr package) |

Technical and Practical Considerations

Beyond raw accuracy, the benchmarking study evaluated several practical aspects of implementing these methods in research workflows:

Computational Efficiency: SingleR was notably fast in addition to being accurate, making it suitable for large-scale analyses [17]. The running times for all methods were quantified, with significant variations observed based on the algorithmic complexity and implementation optimizations of each tool.

Ease of Implementation: SingleR was characterized as "easy to use" with minimal parameter tuning required, lowering the barrier for researchers with limited computational expertise [17]. Azimuth benefits from integration with the widely-used Seurat ecosystem but requires specific reference preparation steps [17] [18]. RCTD demanded the most significant parameter adjustments to accommodate the characteristics of Xenium data, particularly to retain all cells during analysis [17].

Reference Data Requirements: All methods performed best with high-quality reference data. The study emphasized the importance of proper reference preparation, including doublet removal and accurate cell type annotation, as a critical factor influencing method performance [17]. The use of paired snRNA-seq data from the same sample minimized technical variability between reference and query datasets, providing ideal conditions for evaluation.

Discussion and Research Implications

Interpretation of Performance Differences

The superior performance of SingleR in annotating Xenium data can be attributed to its correlation-based algorithm, which appears robust to the limited gene panels characteristic of imaging-based spatial technologies. By comparing the correlation of gene expression patterns between query cells and reference cell types, SingleR effectively leverages the most informative genes within the panel without requiring complete transcriptome coverage.

RCTD's variable performance highlights the challenge of adapting methods designed for sequencing-based spatial technologies to imaging-based platforms. While RCTD incorporates specific considerations for spatial data, its regression-based framework may be more sensitive to the gene panel size and composition [17] [19]. The requirement for extensive parameter adjustments to process Xenium data suggests that default settings optimized for other platforms may not transfer directly to imaging-based technologies.

Best Practices for Spatial Cell Type Annotation

Based on the benchmarking results, researchers working with Xenium data should consider the following best practices:

Reference Data Preparation

- Use paired reference data from the same sample when possible to minimize batch effects

- Implement rigorous quality control, including doublet detection and removal

- Employ complementary analyses (e.g., inferCNV for tumor/normal classification) to validate reference annotations [17]

Method Selection Considerations

- For most Xenium applications, SingleR provides the optimal balance of accuracy, speed, and ease of use

- When working with well-established tissue types for which Azimuth references are available, Azimuth may offer streamlined integration with Seurat workflows

- For studies specifically focused on spatial patterns of rare cell types, testing multiple methods is recommended

Validation Strategies

- Always include manual annotation based on marker genes as a benchmark when evaluating new methods or applications

- Compare the spatial distributions of annotated cell types to histological features and known biological patterns

- Utilize method-specific diagnostic outputs (e.g., confidence scores) to identify potentially problematic annotations
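
As one way to act on per-cell diagnostic scores, the sketch below flags cells whose best label barely beats the runner-up. It assumes the annotation tool can export a score for every candidate label per cell; the function name, the `min_margin` threshold of 0.05, and the example scores are illustrative assumptions, not defaults of any specific package.

```python
def flag_low_confidence(score_table, min_margin=0.05):
    """Return IDs of cells whose top two label scores differ by < min_margin.

    score_table maps a cell ID to a dict of {label: score}. Cells with a
    single candidate label are never flagged; cells with no scores always are.
    """
    flagged = []
    for cell_id, scores in score_table.items():
        ranked = sorted(scores.values(), reverse=True)
        if not ranked:
            flagged.append(cell_id)
            continue
        margin = ranked[0] - ranked[1] if len(ranked) > 1 else float("inf")
        if margin < min_margin:
            flagged.append(cell_id)
    return flagged


# Hypothetical per-label scores for three cells.
score_table = {
    "cell_1": {"Tumor": 0.82, "Stromal": 0.41, "T cell": 0.10},
    "cell_2": {"Tumor": 0.55, "Stromal": 0.53, "T cell": 0.20},
    "cell_3": {"T cell": 0.90},
}
ambiguous = flag_low_confidence(score_table)
print(ambiguous)
```

Flagged cells are candidates for manual inspection against marker genes or histology rather than automatic removal, and the threshold should be tuned on the dataset at hand.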

Figure 2: Logical relationship between spatial data challenges, computational strategies, and desired outcomes in cell type annotation, illustrating how different methods address specific analytical problems.

Emerging Methods and Future Directions

While this guide focuses on established reference-based methods, emerging approaches show promise for spatial cell type annotation. STAMapper, a heterogeneous graph neural network method, has demonstrated superior performance in annotating single-cell spatial transcriptomics data from various technologies, particularly for datasets with fewer than 200 genes [15]. Additionally, BANKSY, a spatially-aware clustering algorithm, represents a complementary approach that unifies cell typing and tissue domain segmentation by incorporating neighborhood transcriptome information [20].

Future benchmarking studies would benefit from including these newer algorithms and evaluating performance across a wider range of tissue types, experimental conditions, and spatial technologies. The rapid evolution of both spatial transcriptomics platforms and computational methods necessitates ongoing assessment of annotation tools to provide researchers with current, evidence-based recommendations.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Spatial Transcriptomics Annotation

| Tool/Resource | Category | Specific Function | Implementation Notes |

|---|---|---|---|

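| Seurat | Analysis Toolkit | Comprehensive environment for single-cell and spatial data analysis | Primary environment for data processing [17]; Azimuth and scPred operate on Seurat objects, while SingleR is a Bioconductor package that can be run on expression data exported from Seurat |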

| SingleR Package | Annotation Method | Reference-based cell type annotation using correlation | Best performer on Xenium data in this benchmark; minimal parameter tuning required [17] |

| spacexr (RCTD) | Annotation Method | Cell type decomposition for spatial transcriptomics | Requires parameter adjustment for Xenium; designed for spatial data [17] [19] |

| scPred Package | Annotation Method | Machine learning-based cell type prediction | Flexible framework; performance dependent on training data [17] |

| Azimuth | Annotation Method | Web-based and R-based reference mapping | Integrated with Seurat; requires specific reference preparation [17] [18] |

| scDblFinder | Quality Control | Doublet detection in single-cell data | Essential for reference data curation [17] |

| inferCNV | Analysis Tool | Copy number variation analysis | Critical for distinguishing tumor cells in cancer studies [17] |