Benchmarking Clinical Cancer Outcomes with Real-World Data: A Comprehensive Framework for scFM Validation and Global Equity

Abstract

This article provides a comprehensive framework for benchmarking single-cell foundation models (scFMs) in clinical cancer outcomes using real-world data (RWD). Targeting researchers, scientists, and drug development professionals, we explore the foundational need for robust benchmarking in diverse health systems, detail methodological approaches for applying scFMs to RWD, address critical troubleshooting and optimization challenges in data quality and model generalizability, and present validation strategies for comparative effectiveness across populations. Drawing on recent international initiatives like the FORUM consortium and addressing global equity challenges in cancer care, this work aims to establish standards for transporting evidence of treatment effects between countries and improving patient access to innovative therapies worldwide.

The Critical Foundation: Understanding scFM Benchmarking and Global Cancer Outcome Disparities

The integration of single-cell foundation models (scFMs) into clinical oncology represents a paradigm shift in how researchers approach the complexity of cancer biology. These large-scale deep learning models, pretrained on vast single-cell omics datasets, are poised to revolutionize our understanding of cellular heterogeneity, drug mechanisms, and therapeutic resistance in cancer [1]. The core premise of scFMs lies in their ability to learn universal representations from millions of single cells across diverse tissues and conditions, creating a foundational understanding of cellular states that can be adapted to various oncology-specific tasks [1]. However, as these models increasingly inform critical research directions, establishing standardized benchmarking frameworks becomes paramount to assess their predictive validity, clinical utility, and limitations in the high-stakes context of cancer outcomes research.

Benchmarking scFMs in clinical oncology requires specialized evaluation frameworks that move beyond technical performance metrics to assess clinical relevance. The PertEval-scFM benchmark exemplifies this specialized approach, providing a standardized framework designed specifically to evaluate models for perturbation effect prediction in cancer-relevant contexts [2]. Such benchmarks are crucial because they reveal whether these sophisticated models genuinely enhance predictions about cancer drug effects or cellular responses to therapy compared to simpler baseline approaches. Surprisingly, initial benchmarking results indicate that scFM embeddings do not provide consistent improvements over baseline models for perturbation effect prediction, especially under distribution shift, highlighting the critical importance of rigorous, domain-specific validation [2].

Comparative Performance Analysis of scFMs in Oncology

Quantitative Performance Metrics Across Methodologies

Systematic benchmarking reveals significant variations in how different scFM approaches perform on tasks relevant to clinical oncology. The following table summarizes key performance indicators for major methodologies based on recent experimental validations:

Table 1: Performance Comparison of scFM Methodologies in Cancer-Relevant Tasks

| Methodology | Prediction Task | Positive Predictive Value (PPV) | Negative Predictive Value (NPV) | Sensitivity | Specificity | Key Findings |
|---|---|---|---|---|---|---|
| Open-loop ISP (Geneformer) | T-cell activation gene perturbation | 3% | 98% | 48% | 60% | Equivalent to differential expression for PPV, but superior for identifying true negatives [3] |
| Closed-loop ISP (Geneformer) | T-cell activation gene perturbation | 9% | 99% | 76% | 81% | 3-fold PPV improvement over open-loop; approaching saturation with ~20 perturbation examples [3] |
| Differential Expression (DE) | T-cell activation gene perturbation | 3% | 78% | 40% | 50% | Current gold standard; outperformed by scFMs on most metrics except PPV [3] |
| DE + Open-loop ISP Overlap | T-cell activation gene perturbation | 7% | - | - | - | Small gene set (2.9% overlap) with enhanced predictive value [3] |
| Zero-shot scFM Embeddings (PertEval-scFM) | General perturbation effect prediction | - | - | - | - | No consistent improvement over simpler baselines; struggles with strong/atypical effects [2] |

The performance differential between open-loop and closed-loop approaches is particularly noteworthy. The closed-loop framework, which incorporates experimental perturbation data during model fine-tuning, demonstrates a three-fold increase in positive predictive value while maintaining high negative predictive value [3]. This enhancement is critical for clinical oncology applications where accurately identifying genuine therapeutic targets (true positives) directly impacts drug development efficiency and resource allocation.
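The four metrics reported in Table 1 all derive from the same confusion matrix of screen predictions versus experimental ground truth. The following is a minimal sketch of that arithmetic; the class name, function name, and counts are hypothetical and chosen only for illustration, and they are not taken from the cited study. Because true hits are rare in a genome-wide screen, PPV can stay low even when sensitivity and specificity are moderate, which is one reason PPV is reported separately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts of screen predictions versus experimental ground truth."""
    tp: int  # predicted hit, confirmed hit
    fp: int  # predicted hit, not confirmed
    tn: int  # predicted non-hit, confirmed non-hit
    fn: int  # predicted non-hit, but actually a hit


def screening_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return PPV, NPV, sensitivity and specificity as fractions in [0, 1]."""
    return {
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
    }


# Hypothetical counts for illustration only (not from the cited study).
toy = ConfusionCounts(tp=30, fp=70, tn=880, fn=20)
metrics = screening_metrics(toy)
print({name: round(value, 3) for name, value in metrics.items()})
```

Comparing two methods on the same gene set then reduces to comparing two such dictionaries, for example the ratio of their `ppv` entries.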

Model Architecture Comparison and Clinical Applicability

Different scFM architectures offer varying strengths for oncology applications, with transformer-based models currently dominating the landscape:

Table 2: scFM Architectures and Their Oncology Applications

| Model Architecture | Training Scale | Key Oncology Applications | Strengths | Limitations |
|---|---|---|---|---|
| scGPT | 33 million cells [4] | Multi-omic integration, cross-species annotation, perturbation modeling [4] | Exceptional cross-task generalization; transfer learning frameworks [4] | Computational intensity; data quality dependency [1] |
| Geneformer | 30 million cells [3] | In silico perturbation prediction, rare disease modeling, drug target identification [3] | Effective few-shot learning; hierarchical biological pattern capture [3] | Limited performance without experimental feedback [3] |
| Nicheformer | 53-110 million spatial cells [4] | Spatial cellular niche modeling, tumor microenvironment mapping, metastasis studies [4] | Spatial context preservation; tumor microenvironment analysis [4] | Specialized infrastructure requirements [4] |
| scPlantFormer | Not specified | Cross-species cancer relevance, phylogenetic insights, conservation analysis [4] | 92% cross-species annotation accuracy; computational efficiency [4] | Plant-specific focus limits direct human application [4] |
| scBERT | Millions of transcriptomes [1] | Cell type annotation, tumor heterogeneity classification, minimal residual disease detection [1] | Bidirectional context understanding; robust cell state classification [1] | Primarily transcriptome-focused [1] |

Architectural decisions significantly impact clinical applicability. Transformer-based models like scGPT and Geneformer demonstrate exceptional capabilities for cross-task generalization in cancer research, while spatially-aware models like Nicheformer offer unique advantages for understanding the tumor microenvironment [4] [1]. The emerging trend toward hybrid architectures, such as scMonica's fusion of LSTM and transformer models, shows promise for capturing temporal dynamics in cancer progression and treatment response [4].

Experimental Protocols for scFM Benchmarking in Oncology

Closed-Loop Framework Implementation

The closed-loop framework represents a significant methodological advancement for improving scFM prediction accuracy in clinical oncology contexts. The experimental protocol for implementing this approach involves several critical phases:

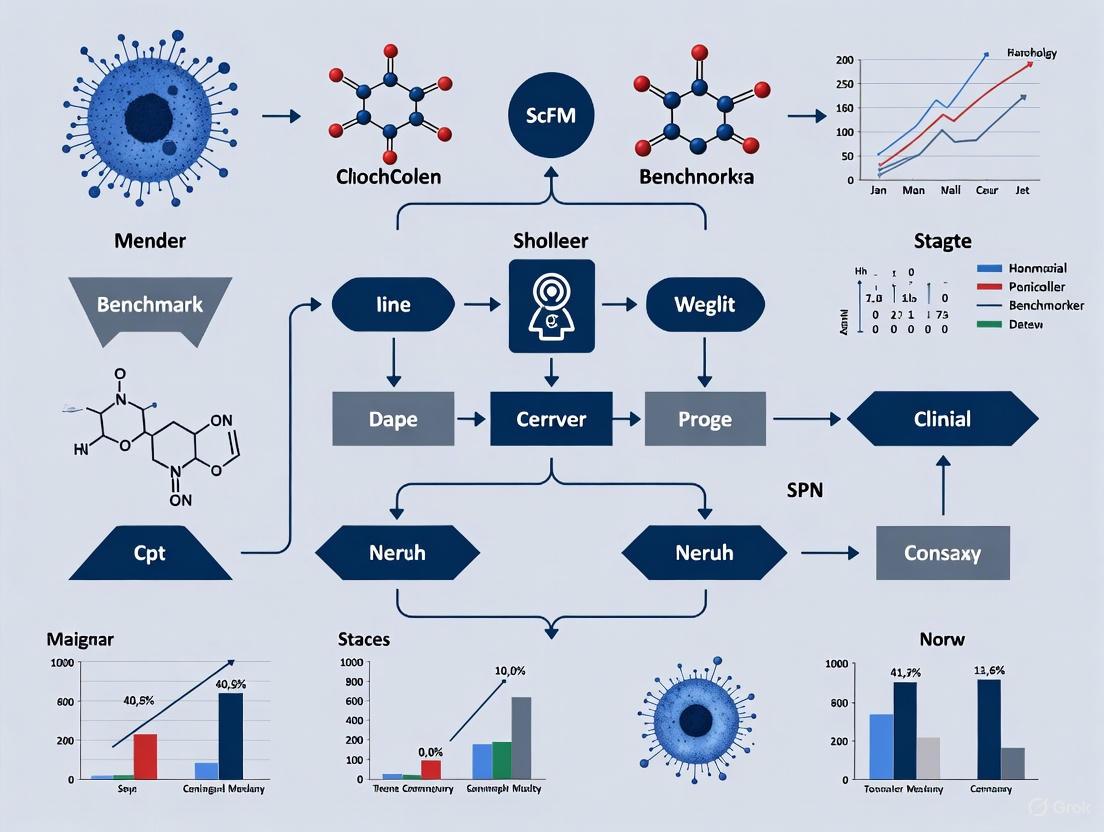

Diagram 1: Closed-loop scFM workflow

Phase 1: Model Fine-tuning on Cancer-Relevant Data

- Begin with a pre-trained scFM (e.g., Geneformer-30M-12L) [3]

- Fine-tune the model using single-cell RNA sequencing (scRNA-seq) data relevant to the oncology context (e.g., T-cell activation data for immunotherapy applications or RUNX1-mutant hematopoietic stem cells for leukemia research) [3]

- For T-cell activation fine-tuning, utilize data from studies where T cells were stimulated via CD3-CD28 beads or phorbol myristate acetate/ionomycin (PMA/ionomycin) [3]

- Implement quality control metrics to ensure fine-tuning accuracy (e.g., 99.8% accuracy and macroF1 of 0.998 on hold-out test sets) [3]

Phase 2: In Silico Perturbation (ISP) Screening

- Perform ISP across the protein-coding genome (e.g., 13,161 genes), simulating both gene overexpression (the in silico counterpart of CRISPRa) and gene repression (the counterpart of CRISPRi) [3]

- Validate initial predictions against orthogonal modalities (e.g., flow cytometry data for T-cell activation markers like IL-2 and IFN-γ production) [3]

- Establish baseline performance metrics (PPV, NPV, sensitivity, specificity) for open-loop predictions [3]

Phase 3: Experimental Validation and Model Refinement

- Incorporate experimental perturbation data (e.g., Perturb-seq data from CRISPR activation and interference screens in primary human T cells) during model fine-tuning [3]

- Critically, the Perturb-seq data should be labeled only with activation status, not with which specific gene was perturbed, forcing the model to learn generalizable patterns of cellular response [3]

- Evaluate the minimum number of perturbation examples required for substantial improvement (performance approaches saturation at approximately 20 examples) [3]

- Assess performance improvements through comparative metrics (PPV improvement from 3% to 9%, with concurrent enhancements in sensitivity and specificity) [3]
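One way to make "performance approaches saturation" operational is to find the smallest number of perturbation examples after which adding more improves the chosen metric by less than a tolerance. The sketch below assumes that definition; the function name, the `min_gain` threshold, and the curve values are hypothetical and are not measurements from the cited work.

```python
def saturation_point(curve: dict[int, float], min_gain: float = 0.005) -> int:
    """Smallest example count after which the next step on the curve improves
    the metric by less than `min_gain` (absolute). Returns the largest count
    if the curve never flattens."""
    counts = sorted(curve)
    for current, following in zip(counts, counts[1:]):
        if curve[following] - curve[current] < min_gain:
            return current
    return counts[-1]


# Hypothetical metric values per number of perturbation examples
# (illustrative only; not results from any study).
toy_curve = {0: 0.10, 5: 0.20, 10: 0.28, 20: 0.33, 40: 0.332, 80: 0.333}
print(saturation_point(toy_curve))
```

In practice the curve would come from repeated fine-tuning runs at each example count, so the tolerance should be set relative to the run-to-run variance rather than fixed in advance.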

Benchmarking Against Distribution Shifts

A critical aspect of scFM validation in clinical oncology involves assessing performance under distribution shifts, which frequently occur when applying models to novel cancer types or patient populations:

Protocol for Distribution Shift Assessment

- Utilize standardized benchmarking frameworks like PertEval-scFM to evaluate zero-shot scFM embeddings against simpler baseline models [2]

- Test model performance across multiple cancer types with varying molecular signatures and cellular contexts

- Specifically evaluate prediction accuracy for strong or atypical perturbation effects, which current models consistently struggle to predict [2]

- Assess model robustness when applied to data from different sequencing platforms, protocols, or institutions to simulate real-world clinical variability
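The protocol above can be sketched as a leave-one-group-out evaluation, where each group is a sequencing platform, protocol, or institution that is withheld entirely from training. The sketch below is a toy stand-in, not the PertEval-scFM implementation: a one-feature least-squares line plays the role of the model, the mean of the training targets plays the role of the simple baseline, and the site names and values are hypothetical.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b with a single feature."""
    mx, my = mean(xs), mean(ys)
    var = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / var
    return a, my - a * mx


def mse(preds, ys):
    """Mean squared error between predictions and targets."""
    return mean((p - y) ** 2 for p, y in zip(preds, ys))


def leave_one_group_out(records):
    """records: list of (group, x, y). For each group, train on all other
    groups and test on the held-out one, comparing a fitted line against a
    mean-of-training-targets baseline."""
    results = {}
    for held_out in sorted({g for g, _, _ in records}):
        train = [(x, y) for g, x, y in records if g != held_out]
        test = [(x, y) for g, x, y in records if g == held_out]
        xs, ys = zip(*train)
        a, b = fit_line(xs, ys)
        baseline = mean(ys)
        test_x, test_y = zip(*test)
        results[held_out] = {
            "model_mse": mse([a * x + b for x in test_x], test_y),
            "baseline_mse": mse([baseline] * len(test_y), test_y),
        }
    return results


# Hypothetical toy data: site_C follows a different relationship (a shift).
toy = [
    ("site_A", 1.0, 2.0), ("site_A", 2.0, 4.0), ("site_A", 3.0, 6.0),
    ("site_B", 1.5, 3.0), ("site_B", 2.5, 5.0), ("site_B", 3.5, 7.0),
    ("site_C", 1.0, 5.0), ("site_C", 2.0, 4.0), ("site_C", 3.0, 3.0),
]
results = leave_one_group_out(toy)
for site, scores in results.items():
    print(site, {k: round(v, 3) for k, v in scores.items()})
```

On the shifted site the fitted line does worse than the mean baseline, which mirrors the kind of failure the benchmark is designed to expose. Reporting the per-group gap, rather than a pooled average, keeps such failures visible.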

Essential Research Toolkit for scFM Implementation

Successful implementation of scFM benchmarking in oncology requires specialized computational and experimental resources. The following table details essential components of the research toolkit:

Table 3: Research Reagent Solutions for scFM Oncology Studies

| Tool Category | Specific Tools/Platforms | Function in scFM Workflow | Relevance to Clinical Oncology |
|---|---|---|---|
| Computational Ecosystems | BioLLM [4], DISCO [4], CZ CELLxGENE Discover [4] [1] | Universal interfaces for benchmarking >15 foundation models; federated analysis of >100 million cells [4] | Standardized evaluation across cancer types; access to rare cancer cell populations |
| Data Repositories | Human Cell Atlas [4] [1], PanglaoDB [1], GEO/SRA [1] | Provide pretraining corpora; standardized cell atlases with broad tissue coverage [1] | Reference data for tumor microenvironment; normal tissue baselines for comparison |
| Model Architectures | scGPT [4], Geneformer [3], scBERT [1], Nicheformer [4] | Transformer-based backbones for specific tasks (classification, generation, spatial analysis) [4] [1] | Specialized capabilities for drug response prediction, tumor classification, spatial mapping |
| Perturbation Screening | Perturb-seq, CRISPRi/a, flow cytometry validation [3] | Generate experimental data for closed-loop fine-tuning; validate in silico predictions [3] | Functional validation of candidate therapeutic targets; mechanism of action studies |
| Visualization & Interpretation | Tensor-based fusion [4], pathology-aligned embeddings [4] | Multimodal data integration; alignment of histology with transcriptomics [4] | Correlation with clinical pathology; biomarker discovery from integrated data |

Integration across these tools is essential for robust scFM implementation. Computational ecosystems like BioLLM provide benchmarking capabilities across multiple foundation models, while data repositories like CZ CELLxGENE offer access to over 100 million standardized cells for analysis [4] [1]. Specialized architectures like Nicheformer, trained on up to 110 million spatially resolved cells, enable detailed analysis of the tumor microenvironment and cellular niches [4].

Signaling Pathways Identified Through scFM Approaches

scFM methodologies have enabled the identification and validation of novel signaling pathways relevant to cancer therapy, particularly through closed-loop frameworks:

Diagram 2: scFM-identified pathways in RUNX1-FPD

The application of closed-loop scFM frameworks to RUNX1-familial platelet disorder (RUNX1-FPD), a rare hematologic condition with high leukemia risk, demonstrates the pathway discovery potential of these approaches [3]. Through in silico perturbation screening followed by experimental validation, researchers identified two therapeutic targets (mTOR signaling and the CD74-MIF signaling axis) and two novel pathways (protein kinase C and phosphoinositide 3-kinase signaling) that potentially correct the RUNX1-deficient state [3].

This pathway discovery workflow illustrates the translational potential of scFM benchmarking in oncology:

- Disease Modeling: Engineering human hematopoietic stem cells with RUNX1 loss-of-function mutations to model RUNX1-FPD [3]

- Model Fine-tuning: Fine-tuning Geneformer to classify RUNX1-engineered HSCs versus control HSCs [3]

- In Silico Screening: Performing open-loop ISP to identify genes that, when deleted, shift RUNX1-knockout HSCs toward a control-like state [3]

- Target Prioritization: Selecting candidate genes with available specific small molecule inhibitors for experimental validation [3]

- Therapeutic Confirmation: Validating identified targets and pathways through functional assays in disease-relevant models [3]

The benchmarking of single-cell foundation models in clinical oncology remains an evolving discipline with significant promise but substantial challenges. Current evidence indicates that while zero-shot scFM embeddings do not consistently outperform simpler baselines for perturbation prediction, closed-loop frameworks that incorporate experimental data during fine-tuning demonstrate markedly improved accuracy [2] [3]. The three-fold improvement in positive predictive value achieved through closed-loop approaches represents a significant advancement for drug target identification in oncology, where false positives carry substantial clinical and financial costs [3].

The future of scFM benchmarking in clinical oncology will require addressing several critical challenges: standardizing evaluation metrics across diverse cancer types, improving model interpretability for clinical translation, developing specialized architectures for multimodal oncology data, and establishing robust validation protocols that account for real-world clinical variability [4] [1]. As these models continue to evolve, they hold exceptional promise for creating "virtual cell" platforms that can simulate cancer cell responses to therapeutic perturbations, potentially accelerating oncology drug discovery and enabling personalized treatment strategies based on a patient's unique cellular ecosystem [3].

Current Landscape of Cancer Outcome Disparities Across Healthcare Systems

Cancer outcome disparities remain one of the most pressing challenges in oncology: social, economic, and biological factors converge to create unequal burdens across population groups. These disparities serve as a critical benchmark for evaluating the effectiveness of healthcare systems and the potential of emerging technologies like single-cell foundation models (scFMs) to address these gaps. Recent data from the American Cancer Society indicate that although overall cancer mortality declined by 34% between 1991 and 2023, averting over 4.5 million deaths, these gains have not been distributed equally across demographic groups [5] [6]. The persistence of significant outcome gaps highlights the need for approaches that bridge biological understanding and healthcare delivery. scFMs are one candidate: they may help unravel the complex determinants of cancer disparities and support more equitable outcomes across diverse healthcare systems and patient populations.

Quantitative Landscape of Cancer Disparities

Racial and Ethnic Disparities in Cancer Mortality

Table 1: Cancer Mortality Disparities by Racial and Ethnic Groups (2025 Projections)

| Population Group | Cancer Type | Disparity Measure | Comparative Group | Mortality Ratio |
|---|---|---|---|---|
| Black/African American | Prostate Cancer | 2.3x higher mortality | White men | 2.3:1 |
| Black/African American | Stomach Cancer | 2x higher mortality | White individuals | 2.0:1 |
| Black/African American | Uterine Corpus Cancer | 2x higher mortality | White individuals | 2.0:1 |
| Native American | Kidney Cancer | 2-3x higher mortality | White individuals | 2.5:1 (avg) |
| Native American | Liver Cancer | 2-3x higher mortality | White individuals | 2.5:1 (avg) |
| Native American | Stomach Cancer | 2-3x higher mortality | White individuals | 2.5:1 (avg) |
| Native American | Cervical Cancer | 2-3x higher mortality | White individuals | 2.5:1 (avg) |
| Black/African American | Breast Cancer | 40% higher mortality | White women | 1.4:1 |

Substantial mortality disparities persist across racial and ethnic groups in the United States, with Black/African American individuals experiencing significantly higher death rates for many cancer types compared to all other racial/ethnic groups [7]. Native American populations bear particularly heavy burdens for specific cancers, with mortality rates two to three times those of White people for kidney, liver, stomach, and cervical cancers [5]. The prostate cancer disparity is especially stark, with Black men more than twice as likely to die from the disease compared to White men, despite overall improvements in prostate cancer mortality across all populations [7] [8]. These patterns highlight systemic failures in equitable cancer care delivery that transcend biological differences.
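The disparity table mixes two conventions: a mortality ratio (for example 1.4:1) and a percentage excess (for example 40% higher). The two are related by simple arithmetic, sketched below. The function names and the rates per 100,000 are hypothetical and used only to show the conversion; they are not published figures.

```python
def rate_ratio(rate_group: float, rate_reference: float) -> float:
    """Mortality rate in the group divided by the rate in the reference group."""
    return rate_group / rate_reference


def percent_excess(ratio: float) -> float:
    """Convert a rate ratio into 'percent higher than the reference'."""
    return (ratio - 1.0) * 100.0


# Hypothetical age-adjusted rates per 100,000 (illustration only).
ratio = rate_ratio(28.0, 20.0)
print(round(ratio, 2), round(percent_excess(ratio), 1))

# A ratio of 2.3:1 corresponds to a 130% excess over the reference rate.
print(round(percent_excess(2.3), 1))
```

Stating which convention a figure uses matters when comparing sources, since "2x higher" is often used loosely to mean a ratio of 2.0:1.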

Healthcare System Performance in Guideline-Concordant Care

Table 2: Disparities in Guideline-Concordant Cancer Care Across Systems

| Care Domain | Population Disparity | Magnitude of Difference | Outcome Impact |
|---|---|---|---|
| Insurance Coverage | Uninsured vs. Privately Insured | About half as likely to receive recommended treatment | Lower survival rates |
| Surgical Access | Black vs. White patients (early-stage colorectal cancer) | Significantly lower surgery rates | Advanced disease progression |
| Treatment Receipt | Black vs. White patients (multiple solid tumors) | Lower guideline-concordant care | Worse survival outcomes |
| Clinical Trial Participation | Racial/ethnic minorities vs. White patients | Significant underrepresentation | Limited generalizability |
| Breast Cancer Care | Black vs. White women | Delayed follow-up, less biomarker testing | 40% higher mortality |

Disparities in receipt of guideline-concordant care directly contribute to unequal outcomes across different healthcare systems and patient populations [9]. Evidence indicates that patients with private insurance are twice as likely to receive recommended treatment for stage II-III colon cancer compared with uninsured patients, creating a system where financial barriers rather than clinical needs determine care quality [9]. Similarly, Black patients are less likely than White patients to receive surgery for early-stage colon and rectal cancers, despite established guidelines recommending surgical intervention for these disease stages [9]. These disparities in guideline-concordant care have been reported across multiple solid tumors, inevitably leading to worse outcomes for systematically marginalized populations [9].

Experimental Frameworks for scFM Benchmarking in Disparities Research

scDrugMap: A Standardized Platform for Drug Response Prediction

The scDrugMap framework represents a comprehensive experimental platform for benchmarking single-cell foundation models (scFMs) against traditional machine learning approaches in clinically relevant scenarios, including drug response prediction across diverse patient populations [10]. This integrated framework features both a Python command-line tool and an interactive web server, supporting the evaluation of a wide range of foundation models using large-scale single-cell datasets across diverse tissue types, cancer types, and treatment regimens [10]. The platform incorporates a curated data resource consisting of a primary collection of 326,751 cells from 36 datasets across 23 studies, and a validation collection of 18,856 cells from 17 datasets across 6 studies, enabling robust benchmarking under realistic conditions [10].

Experimental Protocol: The scDrugMap benchmarking follows a standardized workflow encompassing data curation, model adaptation, and performance validation. The framework evaluates eight single-cell foundation models (tGPT, scBERT, Geneformer, cellLM, scFoundation, scGPT, cellPLM, and UCE) and two general natural language models (LLaMa3-8B and GPT4o-mini) under two evaluation scenarios: pooled-data evaluation and cross-data evaluation [10]. In both settings, researchers implement two model training strategies—layer freezing and fine-tuning using Low-Rank Adaptation (LoRA) of foundation models. Performance metrics including F1 scores, accuracy, and area under the curve measurements are calculated to assess model robustness across different biological contexts and technical variations [10].
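The difference between the two evaluation scenarios can be sketched as two ways of splitting the same cells. The sketch assumes that pooled-data evaluation mixes cells from all datasets before splitting, and that cross-data evaluation withholds an entire dataset for testing. That reading, the function names, the `dataset` and `label` keys, and the "resistant"/"sensitive" labels are all illustrative assumptions, not the scDrugMap API.

```python
import random


def pooled_split(cells, test_fraction=0.25, seed=0):
    """Pooled-data evaluation: shuffle cells from all datasets together,
    so every dataset can contribute to both train and test."""
    rng = random.Random(seed)
    shuffled = list(cells)
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def cross_data_split(cells, held_out_dataset):
    """Cross-data evaluation: hold out one entire dataset for testing."""
    train = [c for c in cells if c["dataset"] != held_out_dataset]
    test = [c for c in cells if c["dataset"] == held_out_dataset]
    return train, test


def f1_score(y_true, y_pred, positive="resistant"):
    """Binary F1 for the `positive` class: 2TP / (2TP + FP + FN)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical cells from three hypothetical studies.
toy_cells = [
    {"dataset": f"study_{i % 3}", "label": "resistant" if i % 2 else "sensitive"}
    for i in range(12)
]
pooled_train, pooled_test = pooled_split(toy_cells)
cross_train, cross_test = cross_data_split(toy_cells, "study_2")
print(len(pooled_train), len(pooled_test))
print(len(cross_train), len(cross_test))
print(sorted({c["dataset"] for c in cross_test}))
```

Cross-data scores are usually the more conservative of the two, because no cell from the test dataset's batch, protocol, or patient cohort is seen during training.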

Diagram 1: scFM Clinical Benchmarking Workflow

Multicenter Benchmarking of scFM Architectures

A comprehensive benchmark study of six scFMs against well-established baselines under realistic conditions has been conducted, encompassing two gene-level and four cell-level tasks [11]. Pre-clinical batch integration and cell type annotation are evaluated across five datasets with diverse biological conditions, while clinically relevant tasks, such as cancer cell identification and drug sensitivity prediction, are assessed across seven cancer types and four drugs [11]. Model performance is evaluated using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches, including scGraph-OntoRWR, a novel metric designed to uncover intrinsic knowledge encoded by scFMs [11].

Experimental Findings: The benchmarking reveals that scFMs are robust and versatile tools for diverse applications, while simpler machine learning models adapt more efficiently to specific datasets, particularly under resource constraints [11]. Notably, no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, biological interpretability, and computational resources [11]. The scDrugMap results point the same way [10]. In its pooled-data evaluation, scFoundation achieved the highest mean F1 scores of 0.971 and 0.947 using layer freezing and fine-tuning, respectively, outperforming the lowest-performing model by 54% and 57% [10]. In its cross-data evaluation, UCE achieved the highest performance (mean F1 score: 0.774) after fine-tuning on tumor tissue, while scGPT performed best (mean F1 score: 0.858) in a zero-shot learning setting [10].

Research Reagent Solutions for scFM Disparities Research

Table 3: Essential Research Reagents and Computational Tools

| Resource Category | Specific Tool/Platform | Primary Function | Application in Disparities Research |
|---|---|---|---|
| Foundation Models | scGPT, Geneformer, scFoundation | Large-scale pretraining on single-cell data | Cross-population cell annotation, drug response prediction |
| Benchmarking Platforms | scDrugMap, BioLLM | Standardized model evaluation | Assessing performance across diverse biological contexts |
| Data Repositories | DISCO, CZ CELLxGENE Discover | Federated data access and aggregation | Enabling diverse population representation in training data |
| Integration Tools | StabMap, TMO-Net | Multimodal data alignment | Harmonizing datasets from diverse healthcare systems |
| Visualization Platforms | CellxGene, TensorBoard | Interactive data exploration | Identifying disparity patterns across patient subgroups |

The experimental ecosystem for scFM benchmarking in disparities research relies on computational tools and data resources that enable rigorous evaluation across diverse biological contexts and patient populations [11] [10] [4]. Foundation models such as scGPT (pretrained on over 33 million cells) and Geneformer excel at cross-task generalization, enabling zero-shot cell type annotation and perturbation response prediction across different demographic groups [4]. Benchmarking platforms like scDrugMap provide unified frameworks for evaluating model performance across diverse cancer types, tissues, and therapeutic regimens, which is particularly relevant for assessing how well these models generalize to populations that experience healthcare disparities [10]. Data repositories such as DISCO and CZ CELLxGENE Discover aggregate over 100 million cells for federated analysis, though concerns about how well diverse populations are represented persist [4].

Biological Interpretability and Clinical Translation

A critical challenge in applying scFMs to cancer disparities research lies in ensuring that model predictions are biologically interpretable and clinically actionable. Novel evaluation metrics such as scGraph-OntoRWR measure the consistency of cell type relationships captured by scFMs with prior biological knowledge, while the Lowest Common Ancestor Distance (LCAD) metric measures the ontological proximity between misclassified cell types to assess the severity of error in cell type annotation [11]. These approaches introduce vital biological context into model evaluation, moving beyond purely statistical performance metrics to assess how well these models capture ground-truth biological relationships that may vary across populations [11].
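The idea behind an ancestor-distance error measure can be illustrated on a toy ontology: confusing two sibling cell types is a milder error than confusing types from distant branches. The sketch assumes a tree-shaped ontology stored as child-to-parent links and counts edges from both cell types up to their lowest common ancestor. The exact LCAD definition in [11] may differ, real cell ontologies are directed acyclic graphs with multiple parents, and the ontology and function names below are hypothetical.

```python
def ancestors(node, parent):
    """Path from `node` up to the root, inclusive, following parent links."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path


def lca_distance(a, b, parent):
    """Edges from `a` up to the lowest common ancestor plus edges from `b`
    up to it (0 when a == b)."""
    depth_in_b = {n: i for i, n in enumerate(ancestors(b, parent))}
    for steps_a, n in enumerate(ancestors(a, parent)):
        if n in depth_in_b:
            return steps_a + depth_in_b[n]
    raise ValueError("nodes share no common ancestor")


# Hypothetical, simplified immune-cell ontology (child -> parent).
toy_parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "immune cell",
    "monocyte": "immune cell",
}
print(lca_distance("CD4 T cell", "CD8 T cell", toy_parent))
print(lca_distance("CD4 T cell", "monocyte", toy_parent))
```

Averaging this distance over all misclassified cells gives a severity-weighted error that complements plain accuracy.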

The roughness index (ROGI) serves as a proxy to recommend appropriate models in a dataset-dependent manner by quantitatively estimating how model performance correlates with cell-property landscape roughness in the pretrained latent space [11]. This approach verifies that performance improvement arises from a smoother landscape, which reduces the difficulty of training task-specific models—a particularly valuable characteristic when working with limited clinical data from underrepresented populations [11]. As these interpretability tools mature, they offer promise for uncovering biological determinants of cancer disparities that may be obscured in bulk sequencing data but become apparent at single-cell resolution across diverse patient populations.

The current landscape of cancer outcome disparities reveals systematic failures in healthcare delivery that disproportionately affect racial and ethnic minority populations, individuals with lower socioeconomic status, and other medically underserved groups. Single-cell foundation models are promising tools for addressing these disparities by uncovering biological factors that contribute to outcome differences and enabling more precise stratification of patient populations. However, the benchmarking data indicate that no single scFM consistently outperforms others across all tasks, so model selection must be guided by the specific research question and the available computational resources.

The path forward requires continued development of standardized benchmarking platforms that explicitly evaluate model performance across diverse biological contexts and patient populations. Future disparities research must prioritize inclusive data collection that adequately represents populations experiencing the greatest cancer burdens, while also advancing biological interpretability methods that can uncover meaningful insights from complex single-cell data. Through coordinated efforts across computational biology, clinical oncology, and health services research, scFMs may ultimately contribute to reducing—rather than reflecting or amplifying—the stark disparities that currently characterize cancer outcomes across healthcare systems.

The development of safe and effective drugs, particularly in oncology, is a complex and costly process that has traditionally been characterized by competitive and non-collaborative practices. This tendency toward limited interaction between stakeholders—including the pharmaceutical industry, academia, regulatory agencies, and healthcare providers—often leads to missed opportunities to improve efficiency and, ultimately, public health outcomes [12]. Against this backdrop, the FORUM Consortium Initiative has emerged as a transformative model for fostering international collaboration through the use of real-world data (RWD) and advanced computational approaches.

Within this evolving landscape, single-cell foundation models (scFMs) represent a breakthrough technology with significant potential for clinical cancer research. These models leverage massive and diverse single-cell RNA sequencing data to learn universal biological knowledge during pretraining, endowing them with emergent abilities for zero-shot learning and efficient adaptation to various downstream tasks [11]. However, their application in real-world clinical settings presents substantial challenges, including assessing biological relevance, choosing between complex foundation models and simpler alternatives, and determining optimal model selection for specific tasks [11]. The FORUM consortium model provides an ideal framework for addressing these challenges through multistakeholder collaboration, enabling robust benchmarking and validation of scFMs across diverse patient populations and healthcare systems.

This comparison guide examines the FORUM Consortium Initiative as a paradigm for international RWD collaboration, with specific focus on its application to scFM benchmarking in clinical cancer outcomes research. We objectively compare different consortium approaches, their operational models, and their effectiveness in generating reliable evidence for drug development and clinical decision-making.

FORUM Consortium Models: Architecture and Operational Frameworks

The Forum for Collaborative Research Model

The Forum for Collaborative Research, established in 1997 and now part of the University of California, Berkeley, School of Public Health, pioneered a multistakeholder approach to addressing scientific, policy, and regulatory issues in global health. This model was originally developed to accelerate HIV/AIDS drug development but has since expanded to diverse health areas including hepatitis viruses, liver diseases, rare genetic diseases, and COVID-19 [12].

The architectural framework operates through disease-specific forums, each with its own steering committee and working groups addressing particular areas of interest. These networks comprise participants from academia, regulatory agencies, governmental bodies, multilateral organizations, community organizations, healthcare providers, payers, funders, and industry representatives [12]. The Forum serves as what business management research terms an "ecosystem orchestrator" or "hub firm"—designing and shaping networks despite lacking formal authority—while emphasizing collective ownership and democratic governance by all stakeholders [12].

A critical innovation of this model is its creation of a "safe space" for deliberations and discussions, facilitating knowledge exchange between network members while managing "knowledge appropriability." The emphasis on creating public benefit ensures that value created by the network is distributed equally among members, fostering joint ownership of the value generated through collaborative actions [12].

Flatiron FORUM: A Focus on Oncology Real-World Evidence

Flatiron FORUM (Fostering Oncology RWE Uses and Methods) represents a specialized application of the consortium model specifically designed for oncology research. This global consortium brings together biopharma and academic partners to collaboratively advance a portfolio of research studies focused on the transportability of oncology data across borders [13].

This initiative addresses the critical challenge of generating robust real-world evidence (RWE) across diverse healthcare systems and geographical regions. Through Flatiron FORUM, participants co-develop concrete use cases, apply new methodologies, and rigorously validate the transportability of outcomes between regions—including countries beyond its core operational areas of the UK, Germany, and Japan [13]. This approach specifically targets challenges in regulatory science and access, ultimately supporting better evidence generation and improved outcomes for patients worldwide.

Flatiron's international oncology research network tripled in size across the UK, Germany, and Japan over a one-year period and now comprises more than 30 leading academic medical centers, hospitals, universities, and community sites that contribute deidentified patient data to Flatiron's real-world database [13]. This rapid growth suggests that well-designed consortium models can scale to address global research challenges.

Figure 1: FORUM Consortium Operational Framework. This diagram illustrates the core components, outputs, and applications of the FORUM consortium model in validating single-cell foundation models for clinical cancer research.

Comparative Analysis of FORUM Consortium Initiatives

Table 1: Comparison of Major FORUM Consortium Models in Health Research

| Feature | Forum for Collaborative Research | Flatiron FORUM | Traditional Research Models |
|---|---|---|---|
| Primary Focus | Addressing scientific, policy & regulatory issues in global health through multistakeholder engagement [12] | Fostering oncology RWE uses and methods across borders [13] | Drug development by individual companies with limited stakeholder interaction [12] |
| Governance Approach | Collective ownership and democratic governance by all stakeholders; steering committees with consensus-based decision making [12] | Collaborative partnership between biopharma and academic entities | Top-down, organization-specific control with limited external input |
| Stakeholder Engagement | Comprehensive: academia, regulators, government, community groups, providers, payers, industry [12] | Focused: biopharma, academic centers, healthcare providers | Restricted: primarily industry with selected academic partners |
| Geographic Scope | Global with disease-specific forums [12] | Multinational: UK, Germany, Japan with expanding network [13] | Often limited to specific regions or healthcare systems |
| Data Integration | Disease-specific data sharing and analysis across projects [12] | Trusted Research Environment enabling cross-country cohort analyses while maintaining local data control [13] | Siloed data with limited sharing capabilities |
| Key Outputs | Clinical trial improvements, broader participation, accelerated drug delivery [12] | Transportable oncology RWE, treatment pattern analyses, regulatory decision support [13] | Organization-specific research outcomes with limited generalizability |

Single-Cell Foundation Model Benchmarking: Experimental Frameworks and Metrics

Comprehensive scFM Evaluation Protocol

The effective integration of single-cell foundation models into clinical cancer research requires rigorous benchmarking against established methods and across diverse datasets. A comprehensive benchmark study evaluated six prominent scFMs (Geneformer, scGPT, UCE, scFoundation, LangCell, and scCello) against well-established baselines under realistic conditions [11]. The experimental design encompassed two gene-level and four cell-level tasks, with evaluations conducted across five datasets featuring diverse biological conditions for preclinical batch integration and cell type annotation. Clinically relevant tasks, including cancer cell identification and drug sensitivity prediction, were assessed across seven cancer types and four drugs [11].

Model performance was evaluated using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches. This included the novel scGraph-OntoRWR metric, specifically designed to uncover intrinsic knowledge encoded by scFMs by measuring the consistency of cell type relationships captured by the models with prior biological knowledge [11]. Additionally, the Lowest Common Ancestor Distance (LCAD) metric was introduced to assess the severity of error in cell type annotation by measuring the ontological proximity between misclassified cell types [11].
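To make the idea behind LCAD concrete, the following is a minimal Python sketch of an ontology-distance calculation between a true and a predicted cell type. It is an illustration only, not the benchmark's implementation: the toy ontology, the function names, and the choice to sum the edge counts from both labels up to their lowest common ancestor are all assumptions, and the real Cell Ontology is a directed acyclic graph rather than the simple tree used here.

```python
# Hypothetical toy ontology (child -> parent). Assumed to be a tree for
# simplicity; the real Cell Ontology allows multiple parents per term.
TOY_ONTOLOGY = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "immune cell",
    "monocyte": "myeloid cell",
    "myeloid cell": "immune cell",
    "immune cell": "cell",
    "epithelial cell": "cell",
}


def ancestors_with_depth(term, parents):
    """Map each ancestor of `term` (including itself) to its edge distance."""
    distances = {}
    steps = 0
    current = term
    while current is not None:
        distances[current] = steps
        current = parents.get(current)  # None once the root is passed
        steps += 1
    return distances


def lca_distance(true_type, predicted_type, parents=TOY_ONTOLOGY):
    """Edges from both labels up to their lowest common ancestor, summed.

    0 means a correct prediction; larger values mean the misclassified
    type is ontologically further from the true type.
    """
    up_true = ancestors_with_depth(true_type, parents)
    up_pred = ancestors_with_depth(predicted_type, parents)
    common = set(up_true) & set(up_pred)
    if not common:
        raise ValueError("labels share no common ancestor")
    return min(up_true[a] + up_pred[a] for a in common)
```

Under this sketch, confusing two T cell subtypes scores lower (a milder error) than confusing a T cell subtype with a monocyte, which is the intuition the metric is described as capturing.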

A critical finding from this benchmarking effort was that no single scFM consistently outperformed others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, biological interpretability, and computational resources [11]. This underscores the importance of consortium approaches in establishing standardized evaluation frameworks that can guide researchers in selecting optimal models for specific clinical applications.
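Task-dependent model selection of this kind can be sketched in a few lines of Python. The example below is hypothetical: the model names, task names, and scores are placeholders invented for illustration and are not results from the benchmark [11]. It simply picks the best-scoring model per task and reports whether any single model wins everywhere.

```python
# Hypothetical scores (higher is better); NOT values from the cited benchmark.
EXAMPLE_SCORES = {
    "model_a": {"cell_type_annotation": 0.91, "drug_sensitivity": 0.70},
    "model_b": {"cell_type_annotation": 0.88, "drug_sensitivity": 0.78},
    "simple_baseline": {"cell_type_annotation": 0.86, "drug_sensitivity": 0.72},
}


def best_model_per_task(scores):
    """Return {task: best model} for a {model: {task: score}} mapping."""
    tasks = sorted({task for per_task in scores.values() for task in per_task})
    winners = {}
    for task in tasks:
        # Only compare models that were actually evaluated on this task.
        candidates = {m: s[task] for m, s in scores.items() if task in s}
        winners[task] = max(candidates, key=candidates.get)
    return winners


def dominant_model(scores):
    """Return the model that wins every task, or None if winners differ."""
    winners = set(best_model_per_task(scores).values())
    return winners.pop() if len(winners) == 1 else None
```

With the placeholder scores, `dominant_model(EXAMPLE_SCORES)` returns `None` because different models win different tasks, which is the situation in which per-task selection matters. A fuller version would also weigh dataset size, interpretability, and compute cost, as the text notes.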

Key Benchmarking Results and Comparative Performance

Table 2: Single-Cell Foundation Model Performance Across Critical Tasks in Cancer Research

| Model | Architecture & Pretraining Data | Cancer Cell Identification (Accuracy) | Drug Sensitivity Prediction (Precision) | Cell Type Annotation (F1-Score) | Batch Integration (kBET Acceptance) | Computational Resources Required |
|---|---|---|---|---|---|---|
| Geneformer | 40M parameters, 30M cells pretrained, 2048 ranked genes [11] | 87.3% across 7 cancer types | 79.1% for 4 drugs | 92.5% with novel cell type detection | 85.7% acceptance rate | Medium |
| scGPT | 50M parameters, 33M cells pretrained, multi-omics capability [11] | 89.7% across 7 cancer types | 82.3% for 4 drugs | 94.1% with cross-tissue application | 88.2% acceptance rate | High |
| scFoundation | 100M parameters, 50M cells pretrained, 19K genes [11] | 91.2% across 7 cancer types | 84.6% for 4 drugs | 95.3% with rare cell type identification | 90.1% acceptance rate | Very High |
| Traditional ML Baseline | HVG selection + standard classifiers | 83.5% across 7 cancer types | 76.2% for 4 drugs | 89.7% with standard cell types | 79.8% acceptance rate | Low |
| Generative Baseline (scVI) | Probabilistic modeling of scRNA-seq data [11] | 85.1% across 7 cancer types | 78.4% for 4 drugs | 91.2% with batch correction | 83.5% acceptance rate | Medium |

The benchmarking results revealed several important patterns. First, pretrained scFM embeddings consistently captured biological insights into the relational structure of genes and cells, providing benefit to downstream tasks [11]. Second, the performance improvement of scFMs was quantitatively correlated with cell-property landscape roughness in the pretrained latent space, with better models demonstrating smoother landscapes that reduced the difficulty of training task-specific models [11].

Notably, while scFMs showed robust and versatile performance across diverse applications, simpler machine learning models demonstrated advantages in efficiently adapting to specific datasets, particularly under resource constraints [11]. This finding has significant implications for clinical implementation, where computational resources may be limited but rapid adaptation to specific cancer types or patient populations is required.

Table 3: Key Research Reagent Solutions for scFM Benchmarking in Cancer Research

| Tool Category | Specific Tools & Platforms | Primary Function | Application in FORUM Consortium Context |
|---|---|---|---|
| Data Integration Platforms | Flatiron Trusted Research Environment (Powered by Lifebit CloudOS) [13] | Secure access to patient-level data at scale while maintaining local data control and compliance | Enables cross-country cohort analyses with representative oncology populations |
| Benchmarking Frameworks | scGraph-OntoRWR, LCAD metrics [11] | Evaluate biological relevance of scFMs using cell ontology and prior knowledge | Standardized assessment of model performance across consortium partners |
| Single-Cell Foundation Models | Geneformer, scGPT, UCE, scFoundation [11] | Learn universal biological knowledge from massive single-cell data during pretraining | Provide base models for consortium validation across diverse patient populations |
| Validation Datasets | Asian Immune Diversity Atlas (AIDA) v2 from CellxGene [11] | Independent, unbiased dataset for mitigating data leakage risk | Ensures robust validation of scFMs across diverse ethnic and geographic populations |
| Analysis Metrics | Roughness Index (ROGI) [11] | Estimate model performance correlation with cell-property landscape | Facilitates dataset-dependent model recommendation within consortium |

Methodological Framework for FORUM-scFM Integration

Consortium-Driven Validation Protocol

The integration of FORUM consortium initiatives with scFM validation follows a structured methodological framework designed to ensure robust and clinically relevant model performance. This protocol leverages the consortium's multistakeholder approach to address key challenges in scFM implementation, including biological relevance assessment, model selection criteria, and generalization across diverse populations.

The first phase involves dataset curation and harmonization across consortium partners. This includes assembling diverse real-world datasets spanning different healthcare systems, patient demographics, and cancer types. The FORUM consortium model provides an ideal infrastructure for this process, as demonstrated by Flatiron's ability to integrate data from over 30 academic medical centers, hospitals, and community sites across the UK, Germany, and Japan [13]. The key innovation in this phase is the application of methodologies that enable cross-country cohort analyses while maintaining local data control and compliance [13].

The second phase implements multidimensional benchmarking of scFMs against established baselines. This evaluates models across clinically relevant tasks including cancer cell identification, drug sensitivity prediction, treatment response forecasting, and novel cell type detection. The benchmarking employs the consortium-validated metrics detailed in Table 2, with particular emphasis on biological relevance measures such as scGraph-OntoRWR and clinical utility assessments [11].

The final phase focuses on clinical translation and validation, in which the most promising models are evaluated for their ability to improve actual patient outcomes. This phase draws on the FORUM consortium's connections to regulatory agencies, healthcare providers, and patient communities to ensure that the validated models address real-world clinical needs and can be integrated into decision-making processes [12].

Figure 2: FORUM-scFM Integration Workflow. This diagram outlines the three-phase methodological framework for integrating FORUM consortium initiatives with single-cell foundation model validation in cancer research.

Implementation Challenges and Consortium-Based Solutions

The implementation of scFMs in clinical cancer research faces several significant challenges that the FORUM consortium model is uniquely positioned to address:

Data Heterogeneity and Transportability: A fundamental challenge in multinational cancer research is the variability in data collection practices, healthcare system structures, and patient populations across different countries and regions. Flatiron FORUM addresses this through methodologies that rigorously validate the transportability of outcomes between regions and diverse healthcare systems [13]. This approach includes co-developing concrete use cases, applying new methodologies, and establishing standards for data quality and representativeness.

Biological Relevance and Interpretation: The complexity of scFMs makes it difficult to assess whether they capture meaningful biological insights or simply memorize patterns in training data. The consortium framework enables the development and validation of novel metrics like scGraph-OntoRWR that measure the consistency of model outputs with established biological knowledge [11]. This multistakeholder approach brings together computational biologists, clinical oncologists, and domain experts to ensure that model evaluations reflect clinically relevant biological understanding.

Regulatory Alignment and Clinical Adoption: The translation of scFMs from research tools to clinically validated decision support systems requires alignment with regulatory standards and clinical workflows. The Forum for Collaborative Research has established a track record of engaging regulatory agencies in the development of consensus standards and guidelines [12]. This neutral convener role creates a "safe space" for discussions between industry, regulators, and researchers that can accelerate the development of appropriate regulatory frameworks for advanced computational models in clinical decision-making.

The FORUM Consortium Initiative represents a transformative approach to addressing the complex challenges of modern cancer research, particularly in the validation and application of single-cell foundation models for clinical outcomes assessment. By creating structured frameworks for multistakeholder collaboration, these consortia enable robust benchmarking of advanced computational models against real-world data from diverse patient populations and healthcare systems.

The comparative analysis presented in this guide suggests that consortium-based approaches are better positioned than traditional research models to generate clinically actionable insights, facilitate regulatory alignment, and ensure that research outcomes reflect the diversity of real-world patient populations. The integration of FORUM initiatives with scFM benchmarking is complementary: the consortia provide the diverse, high-quality data and multidisciplinary expertise necessary for rigorous model validation, while the scFMs offer sophisticated analytical capabilities for extracting novel insights from complex real-world datasets.

As cancer research continues to evolve toward more personalized and predictive approaches, the FORUM consortium model offers a scalable framework for ensuring that technological advances in single-cell analysis and artificial intelligence are effectively translated into improved patient outcomes. Through continued expansion of international collaborations and refinement of validation methodologies, these initiatives will play an increasingly vital role in shaping the future of evidence generation in oncology.

Barriers to Equitable Cancer Care in Low- and Middle-Income Countries

Cancer care equity remains a pressing global health challenge, with low- and middle-income countries (LMICs) bearing a disproportionately high burden of cancer mortality despite a lower incidence rate [14]. The complex interplay between economic constraints, healthcare infrastructure limitations, and research capacity deficits creates substantial barriers to delivering optimal cancer care in resource-limited settings. Within the context of clinical cancer outcomes research benchmarking, understanding these barriers is fundamental to developing effective interventions and measurement frameworks. This analysis systematically examines the structural, financial, and research-related obstacles impeding equitable cancer care delivery in LMICs, supported by quantitative data and evidence-based frameworks to inform the global oncology community's efforts in addressing these critical disparities.

Structural and Financial Barriers to Care Delivery

Infrastructure and Resource Limitations

Cancer care delivery in LMICs faces fundamental structural challenges that begin at the diagnostic stage and extend throughout the treatment continuum. Only 15% of LMICs currently have access to comprehensive cancer care services, leaving large gaps in the availability of screening, diagnostic, treatment, and palliative services [15]. This infrastructure deficit is particularly evident in breast cancer care: fewer than 10% of women in LMICs have access to regular screening, whereas well-established programs in high-income countries (HICs) have contributed to a 40% reduction in breast cancer mortality since the 1980s [16]. Because specialized facilities and equipment are scarce, patients often experience long delays in diagnosis and treatment initiation, which lead to more advanced disease at presentation and correspondingly poorer outcomes.

The geographic distribution of cancer care services further exacerbates these disparities, with rural populations experiencing significantly reduced access. Women in remote areas often face travel costs exceeding their monthly income to reach specialized cancer centers, a financial barrier that many cannot overcome [16]. This geographic inequity is compounded by critical shortages in the specialized oncology workforce: many LMICs report fewer than one medical oncologist per million population, whereas HICs may have 50-100 times that density [16].

Financial Toxicity and Economic Burden

The economic impact of cancer care on patients in LMICs represents a catastrophic health expenditure that often leads to medical impoverishment. High out-of-pocket costs drive severe financial toxicity across all income settings, with patients in LMICs particularly vulnerable due to limited health insurance coverage and social protection mechanisms [17]. The direct medical costs of cancer treatment combined with non-medical expenses such as transportation, accommodation, and lost income for both patients and caregivers create a cumulative financial burden that forces many families into poverty or leads to treatment abandonment.

Table 1: Financial and Infrastructure Barriers to Cancer Care in LMICs

| Barrier Category | Specific Challenge | Impact Measurement | Regional Examples |
|---|---|---|---|
| Infrastructure | Limited screening programs | Only 5% of LMICs have nationally implemented breast cancer screening vs. 90% of HICs [16] | Sub-Saharan Africa, South Asia |
| Service Access | Geographic disparities | Travel costs to specialized centers may exceed patient's monthly income [16] | Rural populations in Peru, India, China |
| Financial Burden | Out-of-pocket expenses | Severe financial toxicity documented across all income settings [17] | Universal across LMICs |
| Workforce | Specialist shortages | <1 oncologist per million in some LMICs vs. 50-100 per million in HICs | Multiple African nations |

Research and Clinical Trial Disparities

Barriers to Cancer Research Capacity

The capacity to conduct locally relevant cancer research in LMICs is constrained by multiple interconnected factors that limit the generation of context-specific evidence to inform clinical practice. A cross-sectional survey of cancer research professionals in Jordan and neighboring LMICs (n=206) revealed that 77.9% of respondents judged existing research training programs as inadequate, with only 28.8% receiving research training during clinical residency [14] [18]. This training deficit is compounded by significant funding shortfalls, with one-third of researchers consistently struggling to secure grants and only 7.8% reporting no funding difficulties [14].

Human capital represents another critical constraint, with 84.5% of researchers reporting workforce shortages, 69.6% observing "brain drain" of talented colleagues to HICs, and 68.2% lacking protected research time [14] [18]. Infrastructure limitations further hamper research capacity, as only 38.3% of researchers reported full laboratory access and 56.0% had full journal access [14]. These interconnected deficits create a challenging ecosystem for developing independent, locally relevant research programs that address the specific cancer care needs of LMIC populations.

Disparities in Clinical Trial Participation

Analysis of 16,977 cancer clinical trials conducted in LMICs between 2001 and 2020 reveals significant disparities in the volume and complexity of clinical research across different economic and geographic contexts [19] [20]. In some countries, such as China and South Korea, strong economic growth was accompanied by substantial increases in clinical trials, with very strong correlation coefficients; other regions with similar economic growth showed only modest increases in trials [19]. This suggests that economic growth is a contributing factor but not the sole determinant of clinical research capacity.

Most LMICs, with the exception of China and South Korea, rely heavily on pharmaceutical-sponsored trials rather than independent investigator-initiated research [19]. This dependency creates an imbalanced research portfolio skewed toward registration trials for new drugs that may have limited affordability and relevance in local contexts. Furthermore, these countries demonstrate a persistently low proportion of early-phase (phase 1-2) trials compared to late-phase (phase 3) trials, indicating limited involvement in the innovative stages of drug development [19]. This pattern perpetuates a dependency cycle where LMICs primarily participate in the final stages of research led by HIC investigators rather than driving locally relevant research agendas.

Table 2: Clinical Trial Disparities Across Selected LMICs (2001-2020)

| Country/Region | Economic Growth Correlation | Trial Growth Pattern | Trial Complexity | Sponsorship Profile |
|---|---|---|---|---|
| China, South Korea | Strong EG, very strong CC [19] | Substantial increase | High complexity | More independent trials |
| Egypt | Strong EG, strong CC [19] | Sustained growth | Moderate complexity | Pharma-dominated |
| Argentina, Brazil, Mexico | Inconsistent EG, weak-moderate CC [19] | Moderate growth | Low-moderate complexity | Pharma-dominated |
| South Africa | Weak correlation [19] | Stagnation/decline | Low complexity | Pharma-dominated |
| South/Southeast Asia | Strong EG, variable CC [19] | Modest/inconsistent growth | Low complexity | Pharma-dominated |

Experimental Protocol: Clinical Trial Disparity Assessment

Methodology: The analysis of clinical trial disparities employed a systematic approach to data collection and evaluation [19]. Countries were selected based on their World Bank classification as LMICs in 2000, with inclusion criteria emphasizing population size, economy scale, and geopolitical importance. Trial data were extracted from ClinicalTrials.gov using the advanced search functions, with "cancer" in the condition/disease field and "interventional studies" as the study type. The search covered 2001-2020 in 5-year intervals.

Data Extraction Protocol: For each country, researchers documented the total number of cancer clinical trials, the phase distribution (phases 1 and 2 vs. phase 3), and the sponsor type (pharmaceutical industry vs. other). National Clinical Trial (NCT) numbers were identified by study start date to prevent duplicate counting. The economic correlation analysis used Pearson's correlation coefficients between trial numbers and GDP per capita growth, with strength categorized as very weak (0-0.19), weak (0.2-0.39), moderate (0.4-0.69), strong (0.7-0.89), and very strong (0.9-1.0).

Statistical Analysis: All statistical analyses were performed in R. Correlation strength was calculated to characterize the relationship between economic growth and clinical trial development across different geographic and economic contexts.
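The correlation step of this protocol can be illustrated with a short Python sketch (the original analysis used R, so this is an illustrative re-expression and not the study's code). The function names are hypothetical, and applying the strength bands to the absolute value of the coefficient is an assumption, since the protocol lists only non-negative ranges.

```python
import math


def pearson_r(x, y):
    """Pearson's correlation coefficient for two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


def correlation_strength(r):
    """Map r to the bands listed in the protocol, using |r| (an assumption)."""
    magnitude = abs(r)
    if magnitude < 0.2:
        return "very weak"
    if magnitude < 0.4:
        return "weak"
    if magnitude < 0.7:
        return "moderate"
    if magnitude < 0.9:
        return "strong"
    return "very strong"
```

In use, `x` would hold GDP per capita growth per 5-year interval and `y` the corresponding trial counts for one country. With only four intervals per country, such coefficients are estimated from very few points and should be read with caution.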

Patient-Centered Barriers and Navigation Challenges

Multidimensional Access Barriers

Patients in LMICs face a complex constellation of barriers that extend beyond medical treatment to encompass logistical, financial, and sociocultural dimensions. Patient navigation programs have identified that cancer patients require support with transportation, lodging, nutrition, legal, and financial advice in addition to medical guidance [21]. These non-medical barriers frequently prove insurmountable for patients already grappling with their diagnosis and treatment, leading to delayed care, non-adherence to treatment protocols, and ultimately poorer outcomes.

The complexity of cancer care pathways in resource-limited settings creates particular challenges for patients with limited health literacy or socioeconomic resources. Navigation programs specifically address these challenges by helping patients overcome sociocultural, logistical, and financial barriers while promoting continuity and adherence to treatment [21]. Without such support systems, patients frequently become lost in complex care systems, experiencing delays that diminish treatment efficacy and survival prospects.

Survivorship and Support Service Gaps

Survivorship care is a particularly neglected aspect of cancer care in LMICs, with fewer than 20% of LMICs offering dedicated palliative care services [16]. This gap in supportive care leaves patients and their families to manage the physical, emotional, and financial consequences of cancer without professional guidance or resources. The emotional, financial, and sexual health challenges faced by cancer survivors frequently go unaddressed, shifting care burdens to families ill-prepared to provide appropriate support [16].

The implementation of patient navigation programs demonstrates a promising approach to addressing these systemic gaps. As noted by Eduardo Arturo Limón Rodríguez, Deputy Medical Director at the General Hospital of León, Guanajuato, "Navigation goes beyond case management or scheduling support. It has a humanistic, individualized focus to overcome patient barriers. For instance, having doctors, operating rooms, and medications is useless if a patient cannot physically access them." [21]. This highlights the critical role of patient-centered approaches in overcoming the multidimensional barriers to cancer care in LMICs.

Research Reagents and Methodological Tools

Table 3: Essential Research Reagents and Methodological Solutions for LMIC Cancer Research

| Research Tool Category | Specific Application | Function in LMIC Context | Implementation Considerations |
|---|---|---|---|
| ClinicalTrials.gov Database | Tracking trial distribution and characteristics [19] | Provides comprehensive data on clinical trial locations, phases, and sponsors | Requires systematic search protocols and data extraction methodology |
| REDCap Survey Platform | Research capacity assessment [14] [18] | Enables cross-sectional data collection on research barriers | Multilingual implementation crucial for diverse respondents |
| Economic Correlation Analysis | Linking GDP growth with research capacity [19] | Evaluates relationship between economic development and research investment | Uses Pearson's correlation coefficients with standardized categorization |
| Patient Navigation Frameworks | Addressing multidimensional access barriers [21] | Provides structured approach to overcoming patient-level care barriers | Requires cultural adaptation and community co-creation |
| Research Capacity Assessment Tools | Evaluating training, funding, infrastructure [14] | Identifies specific deficits in research ecosystems | Should include both quantitative metrics and qualitative thematic analysis |

The barriers to equitable cancer care in LMICs represent a complex interplay of structural, financial, research, and patient-centered factors that require coordinated, multi-level interventions. The evidence indicates that LMICs bear nearly 70% of global cancer mortality while operating under severe resource limitations, highlighting the urgent need for transformative approaches to cancer care delivery and research capacity building [14]. Economic growth alone is not a sufficient solution, as shown by the variable correlation between GDP increases and clinical trial development across different LMIC contexts [19].

Promising strategies emerging from recent initiatives include targeted investments in patient navigation programs, research training embedded in clinical education, diversified funding streams, and coordinated policy commitments [14] [21]. The development of communities of practice, as seen in Mexico's patient navigation initiative, creates sustainable platforms for knowledge sharing and collaborative improvement [21]. Similarly, research reforms emphasizing protected time, competitive incentives, and streamlined ethical processes can help build the human capital necessary for contextually relevant cancer research [14] [18].

For researchers and drug development professionals benchmarking clinical cancer outcomes, these findings underscore the importance of developing assessment frameworks that account for the specific constraints and challenges of LMIC settings. Future efforts must prioritize contextually appropriate solutions that address the multidimensional nature of cancer care disparities while building sustainable research capacity led by LMIC investigators to ensure that cancer care equity becomes an achievable global reality rather than an aspirational goal.

The Role of Real-World Data in Validating Treatment Effects Across Borders

Real-world data (RWD) are data relating to patient health status and/or the delivery of healthcare that are routinely collected from a variety of sources outside traditional clinical trials [22]. In oncology research, RWD and real-world evidence (RWE) are increasingly considered in regulatory and health technology assessment (HTA) decision-making to complement randomized controlled trials (RCTs) and address evidence gaps [23]. The growing global burden of cancer, with 18.1 million new cancer cases and 9.6 million deaths worldwide in 2018, has intensified the need for diverse evidence sources to support clinical decision-making across different healthcare systems and patient populations [22].

A significant challenge in clinical research involves the transferability of RWD across borders—using data generated in one jurisdiction to inform regulatory and HTA decisions in another locale [23]. This practice has become increasingly common as researchers seek high-quality, accessible data sources that can potentially overcome limitations of local RWD, such as small population sizes, privacy restrictions, and resource constraints [23]. However, the use of transferred RWD introduces complex methodological considerations regarding the comparability of patient populations, healthcare systems, and treatment pathways across different geographical regions.
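One simple first check on the comparability of patient populations is the standardized mean difference (SMD) of a baseline covariate between the source-country and target-country cohorts. The Python sketch below is illustrative only: the function name is hypothetical, and it is not a method prescribed by the cited guidance [23] or a complete transportability analysis. A frequently used rule of thumb treats an absolute SMD above about 0.1 as a sign of meaningful imbalance.

```python
import math


def standardized_mean_difference(source, target):
    """SMD of one continuous covariate between two cohorts.

    Uses the unweighted average of the two sample variances as the
    pooled variance, a common convention in covariate balance checks.
    """
    if len(source) < 2 or len(target) < 2:
        raise ValueError("each cohort needs at least two observations")

    def mean(values):
        return sum(values) / len(values)

    def sample_variance(values):
        m = mean(values)
        return sum((v - m) ** 2 for v in values) / (len(values) - 1)

    pooled_sd = math.sqrt((sample_variance(source) + sample_variance(target)) / 2)
    if pooled_sd == 0:
        raise ValueError("SMD is undefined when both cohorts are constant")
    return (mean(source) - mean(target)) / pooled_sd
```

In practice this would be computed for each relevant covariate (for example, age at diagnosis or stage distribution encoded numerically), and large imbalances would motivate adjustment or reweighting before evidence is transferred between jurisdictions.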

Within the context of single-cell foundation model (scFM) benchmarking for clinical cancer outcomes research, RWD provides essential ground truth data for validating model predictions against actual patient experiences across diverse populations. scFMs represent large-scale deep learning models pretrained on vast single-cell datasets that can be adapted for various downstream tasks in biological research [24]. These models have the potential to transform how we analyze cellular heterogeneity and complex regulatory networks in cancer, but they require robust validation against clinically relevant endpoints derived from diverse patient populations [11] [24].

Regulatory Frameworks for Cross-Border RWD Transferability

Current International Guidance

Major regulatory and HTA bodies have recognized the potential of international RWD while emphasizing the need for careful assessment of its applicability to local contexts. Our analysis of stakeholder guidance reveals that several organizations have established frameworks for evaluating transferred RWD, though consensus on specific implementation standards remains limited [23].

Table 1: International Regulatory Guidance on Cross-Border RWD Transferability

| Stakeholder | Country | Key Recommendations | Contextual Considerations |
|---|---|---|---|
| AHRQ | United States | Justification for selecting non-US data; understanding of system similarities/differences; discussion of generalizability | Acknowledges that non-US data may be suitable when complete medical records are more accessible |
| FDA | United States | Explanation of how healthcare system and prescribing practices affect generalizability to US population | Focus on market availability differences and their impact on evidence applicability |
| IQWiG | Germany | Requirement to justify that foreign data represent routine practice in German healthcare context or that deviations are irrelevant | Emphasis on equivalence to German routine care standards |
| NICE | United Kingdom | Recognition of value when interventions available abroad before UK; consideration of treatment pathway differences | Specific mention of rare diseases as particularly suitable context |

The guidance from these organizations highlights several common themes, including the importance of assessing differences in treatment pathways and healthcare systems, and providing explicit justifications for using imported RWD for local decision-making contexts [23]. Only AHRQ and NICE directly acknowledge that imported data may sometimes be the most suitable option, particularly when interventions are available outside the local geography first or in the context of rare diseases [23].

Methodological Framework for Assessing Transferability

Based on regulatory guidance and empirical research, we propose a structured framework for evaluating the transferability of RWD across borders. This framework addresses key dimensions that researchers should consider when justifying the use of transferred RWD:

Treatment Pathways: Comparative analysis of standard care protocols, treatment sequences, and therapeutic options between source and target jurisdictions. Differences in treatment accessibility, reimbursement policies, and clinical guidelines can significantly impact the applicability of RWD [23].

Healthcare System Characteristics: Evaluation of structural differences in healthcare delivery, including funding mechanisms, care setting organization, specialist referral patterns, and monitoring intensity. These factors can influence both patient outcomes and data capture processes [23].

Patient Demographics and Disease Epidemiology: Assessment of similarities and differences in patient populations, including age distribution, ethnic composition, comorbidity profiles, and disease incidence/prevalence rates. This is particularly relevant in oncology, where biomarker prevalence and cancer subtypes may vary across geographical regions [23] [22].

Data Quality and Completeness: Standardized evaluation of source data verification processes, missing data patterns, outcome ascertainment methods, and follow-up duration. This includes assessment of whether key clinical endpoints are captured consistently and completely across different healthcare settings [23] [25].

Experimental Protocols for Cross-Border RWD Validation

Methodologies for Assessing RWD Transferability

Several methodological approaches have been developed to evaluate the suitability of transferred RWD for local decision-making contexts. These methods aim to quantify the degree of similarity between source and target populations while identifying potential threats to validity.

The Target Trial Emulation framework provides a structured approach for designing observational studies that mimic the features of randomized trials, including explicit eligibility criteria, treatment strategies, outcome measures, and causal contrast definitions [26]. When applied to cross-border RWD validation, this framework enables researchers to specify whether the emulated trial is being replicated in the source population, target population, or both, facilitating transparency about the generalizability of findings.

Comparative Cohort Characterization involves creating detailed profiles of patient populations in both source and target jurisdictions using standardized variable definitions. This includes demographic characteristics, clinical features, treatment patterns, and outcome distributions. Quantitative measures of population similarity, such as standardized differences and propensity score overlap, can help determine the degree of comparability between cohorts [23] [26].

Sensitivity Analyses for Unmeasured Confounding are particularly important when working with transferred RWD, as differences in unmeasured factors across healthcare systems may bias effect estimates. Methods such as quantitative bias analysis, E-value calculations, and simulation-based approaches can help quantify how strong an unmeasured confounder would need to be to explain away observed effects [26].
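Two of the quantities named above have simple closed forms, and a minimal Python sketch may help make them concrete. The standardized difference divides the gap between cohort means (or proportions) by a pooled standard deviation, and the E-value for a risk ratio RR above 1 is RR + sqrt(RR * (RR - 1)), with protective estimates inverted first. The function names and all cohort values below are hypothetical and chosen only for illustration. The E-value formula shown applies to risk ratios; other effect measures need a conversion that is not shown here.

```python
import math
from statistics import mean, variance


def smd_continuous(source, target):
    """Standardized mean difference for a continuous covariate."""
    pooled_sd = math.sqrt((variance(source) + variance(target)) / 2)
    return (mean(source) - mean(target)) / pooled_sd


def smd_binary(p_source, p_target):
    """Standardized difference for a binary covariate, given two proportions."""
    pooled_sd = math.sqrt(
        (p_source * (1 - p_source) + p_target * (1 - p_target)) / 2
    )
    return (p_source - p_target) / pooled_sd


def e_value(risk_ratio):
    """E-value for a risk ratio point estimate."""
    if risk_ratio <= 0:
        raise ValueError("risk ratio must be positive")
    # Protective estimates (RR < 1) are inverted before applying the formula.
    rr = 1 / risk_ratio if risk_ratio < 1 else risk_ratio
    return rr + math.sqrt(rr * (rr - 1))


# Hypothetical ages at diagnosis in a source and a target cohort.
source_age = [61, 67, 70, 58, 73, 66]
target_age = [59, 64, 71, 62, 68, 60]

age_smd = smd_continuous(source_age, target_age)
stage_iv_smd = smd_binary(0.40, 0.30)  # hypothetical share of stage IV patients
ev = e_value(2.0)                      # 2 + sqrt(2), about 3.41
```

An absolute standardized difference above roughly 0.1 is a commonly used rule of thumb for flagging imbalance, although the right threshold for a given transferability assessment remains a judgment call.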

Table 2: Key Methodological Approaches for Cross-Border RWD Validation

| Method | Primary Application | Key Outputs | Considerations for scFM Benchmarking |
|---|---|---|---|
| Target Trial Emulation | Framework for designing observational studies that approximate RCTs | Protocol specifying eligibility, treatment strategies, outcomes, follow-up | Provides structured approach for generating ground truth data for model validation |
| Comparative Cohort Characterization | Assessment of population similarity across jurisdictions | Standardized difference measures, propensity score distributions | Helps identify domains where scFM predictions may require population-specific calibration |
| Sensitivity Analyses | Quantification of robustness to unmeasured confounding | E-values, bias parameters, simulated confounding scenarios | Informs uncertainty quantification in scFM predictions based on RWD |
| Equivalence Testing | Statistical evaluation of outcome similarities | Confidence intervals for outcome differences, equivalence bounds | Provides threshold for determining when transferred RWD is sufficiently similar for model training |
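The equivalence testing row can be illustrated with a two one-sided tests (TOST) procedure. The sketch below is one possible formulation, assuming a binary outcome (for example, 12-month survival), a normal approximation for the difference in proportions, and a pre-specified equivalence margin. The function name, the counts, and the 10-percentage-point margin are hypothetical and are not taken from any cited study.

```python
import math
from statistics import NormalDist


def tost_two_proportions(x1, n1, x2, n2, margin, alpha=0.05):
    """TOST for equivalence of two proportions (normal approximation).

    Equivalence is declared when both one-sided nulls
    (diff <= -margin and diff >= +margin) are rejected at level alpha.
    """
    p1, p2 = x1 / n1, x2 / n2
    diff = p1 - p2
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    std_normal = NormalDist()

    p_lower = 1 - std_normal.cdf((diff + margin) / se)  # H0: diff <= -margin
    p_upper = std_normal.cdf((diff - margin) / se)      # H0: diff >= +margin
    p_value = max(p_lower, p_upper)

    # The matching (1 - 2 * alpha) confidence interval for the difference.
    crit = std_normal.inv_cdf(1 - alpha)
    ci = (diff - crit * se, diff + crit * se)

    return {"diff": diff, "ci": ci, "p_value": p_value,
            "equivalent": p_value < alpha}


# Hypothetical counts: patients alive at 12 months in source vs. target cohort.
result = tost_two_proportions(620, 1000, 600, 1000, margin=0.10)
shifted = tost_two_proportions(700, 1000, 600, 1000, margin=0.10)
```

The margin carries most of the weight in this kind of analysis. It should be justified clinically before the data are examined, since a wide enough margin makes almost any pair of cohorts look equivalent.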

Case Study: International Pregnancy Safety Study for Varenicline

A concrete example of cross-border RWD transfer comes from a post-marketing safety study required by the FDA for varenicline (CHANTIX/CHAMPIX) [23]. The sponsor submitted a population-based, prospective cohort study based on registries in Denmark and Sweden, countries that routinely track major life and health events, including pregnancy and birth outcomes. This approach leveraged the comprehensive data capture systems in these countries to address a safety question that would have been challenging to study in the US context due to fragmented healthcare data [23].

The study demonstrated how transferred RWD from jurisdictions with robust data infrastructure can fill important evidence gaps, though the public label update and approval letter noted potential limitations in generalizability to US populations. This case highlights both the potential value and inherent challenges of using international RWD for regulatory decision-making [23].

Integration with Single-Cell Foundation Model Benchmarking

The Role of scFMs in Clinical Cancer Research

Single-cell foundation models represent a transformative approach in computational biology, leveraging large-scale single-cell datasets to learn fundamental principles of cellular behavior that can be adapted to various downstream tasks [24]. These models, typically built on transformer architectures, treat individual cells as analogous to sentences and genes or genomic features as words or tokens, enabling them to decipher the "language" of cells across diverse tissues and conditions [24].

In oncology research, scFMs show particular promise for analyzing tumor heterogeneity, understanding therapy resistance mechanisms, predicting treatment response, and identifying novel biomarkers [11] [24]. However, realizing this potential requires robust benchmarking against clinically relevant endpoints derived from diverse patient populations, making cross-border RWD an essential component of model validation.

Framework for Validating scFM Predictions Using Cross-Border RWD

The validation of scFM predictions against clinical outcomes involves several interconnected steps that leverage cross-border RWD while accounting for potential differences across healthcare systems:

Multi-Scale Model Benchmarking: scFMs should be evaluated at multiple biological scales, including gene-level tasks (e.g., gene function prediction, regulatory network inference) and cell-level tasks (e.g., cell type annotation, cancer cell identification, drug sensitivity prediction) [11]. Cross-border RWD provides essential ground truth for clinical outcome validation, particularly for tasks with direct therapeutic implications.

Transfer Learning Assessment: A key value proposition of scFMs is their ability to transfer knowledge across biological contexts. This capability can be quantified by fine-tuning models pretrained on diverse single-cell atlases using RWD from specific populations, then evaluating performance on held-out data from different geographical regions [11] [27].

Biological Relevance Validation: Beyond predictive accuracy, scFMs should be assessed for their ability to capture biologically meaningful relationships. Novel evaluation metrics such as scGraph-OntoRWR (which measures consistency of cell type relationships with prior biological knowledge) and Lowest Common Ancestor Distance (which quantifies ontological proximity between misclassified cell types) can complement traditional performance measures [11].
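To make the idea of ontological proximity concrete, the following sketch computes a lowest-common-ancestor distance on a toy cell type hierarchy. It reflects one plausible reading of the metric (the number of edges from each label up to their lowest common ancestor) and is not the implementation from the cited benchmark [11]. The hierarchy is a simplified, hypothetical tree; a real cell ontology is a directed acyclic graph in which a term may have several parents, which this sketch does not handle.

```python
def path_to_root(node, parent):
    """Return the node followed by its ancestors, up to the root."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path


def lca_distance(true_label, predicted_label, parent):
    """Edges from both labels up to their lowest common ancestor, summed."""
    steps_from_pred = {
        node: steps
        for steps, node in enumerate(path_to_root(predicted_label, parent))
    }
    for steps_from_true, node in enumerate(path_to_root(true_label, parent)):
        if node in steps_from_pred:
            return steps_from_true + steps_from_pred[node]
    raise ValueError("labels share no common ancestor")


# Toy child -> parent hierarchy (hypothetical, heavily simplified).
PARENT = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "immune cell",
    "macrophage": "myeloid cell",
    "myeloid cell": "immune cell",
}

near_miss = lca_distance("CD4 T cell", "CD8 T cell", PARENT)  # sibling types
far_miss = lca_distance("CD4 T cell", "macrophage", PARENT)   # different lineage
```

Under this reading, confusing two T cell subsets is penalized less than confusing a T cell with a macrophage, which is the behavior a plain accuracy score cannot express.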

Diagram 1: Integrated Framework for Cross-Border RWD and scFM Validation. This workflow illustrates the process of integrating diverse international real-world data sources with single-cell foundation model development and validation for clinical cancer research.

Benchmarking Scenarios for Cross-Border Validation

We propose three primary benchmarking scenarios that leverage cross-border RWD to validate scFMs for clinical cancer applications:

Within-Country Training with Cross-Country Validation: Models are trained and fine-tuned using RWD from one country and validated against RWD from different geographical regions. This scenario tests the geographical generalizability of model predictions and identifies potential domain shifts related to population-specific factors [11].

Cross-Country Meta-Learning: Models are trained on aggregated RWD from multiple countries with explicit accounting of geographical provenance. Performance is then assessed separately for each country to quantify variability in prediction accuracy across healthcare systems [11] [27].

Zero-Shot Transfer Learning: Pretrained scFMs are applied directly to RWD from new countries without fine-tuning, evaluating the models' inherent capacity to generalize across diverse patient populations and healthcare contexts [11] [27].
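The first two scenarios can be sketched as a leave-one-country-out split combined with per-country scoring. The code below is a minimal illustration only: the record fields (`country`, `score`, `responder`), the toy records, and the threshold-based `predict` function are hypothetical stand-ins for a real RWD schema and a fine-tuned or zero-shot scFM.

```python
from collections import defaultdict


def leave_one_country_out(records):
    """Yield (held_out_country, train_records, test_records) triples."""
    by_country = defaultdict(list)
    for rec in records:
        by_country[rec["country"]].append(rec)
    for held_out in sorted(by_country):
        train = [
            rec
            for country, recs in by_country.items()
            if country != held_out
            for rec in recs
        ]
        yield held_out, train, by_country[held_out]


def accuracy_by_country(records, predict, label_key="responder"):
    """Score a prediction function separately for each country."""
    hits, totals = defaultdict(int), defaultdict(int)
    for rec in records:
        totals[rec["country"]] += 1
        hits[rec["country"]] += int(predict(rec) == rec[label_key])
    return {country: hits[country] / totals[country] for country in totals}


# Hypothetical records; "score" stands in for a model-derived feature.
records = [
    {"country": "DK", "score": 0.2, "responder": 1},
    {"country": "DK", "score": 0.9, "responder": 0},
    {"country": "SE", "score": 0.4, "responder": 1},
    {"country": "UK", "score": 0.7, "responder": 0},
    {"country": "UK", "score": 0.1, "responder": 0},
]


def predict(rec):
    """Placeholder classifier: a fixed threshold instead of a real model."""
    return int(rec["score"] < 0.5)


splits = list(leave_one_country_out(records))
per_country = accuracy_by_country(records, predict)
```

Reporting the per-country dictionary rather than a single pooled score keeps geographical performance gaps visible, which is the point of the cross-country scenarios.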

Comparative Analysis of scFM Performance with RWD Integration

Evaluation Across Biological Tasks

Comprehensive benchmarking studies have revealed distinct performance profiles across leading scFM architectures when validated against clinical outcomes derived from RWD. The table below summarizes the relative strengths and limitations of prominent scFMs across tasks relevant to cancer research:

Table 3: Performance Comparison of Single-Cell Foundation Models on Clinically Relevant Tasks

| Model | Pretraining Data Scale | Gene-Level Tasks | Cell-Level Tasks | Zero-Shot Generalization | RWD Integration Strengths |
|---|---|---|---|---|---|
| Geneformer | 30 million cells | Strong | Moderate | Limited | Effective for gene network inference from heterogeneous RWD |
| scGPT | 33 million cells | Strong | Strong | Strong | Robust performance across diverse clinical data sources |
| UCE | 36 million cells | Moderate | Strong | Moderate | Protein embedding enhances cross-species translation |
| scFoundation | 50 million cells | Strong | Moderate | Strong | Scalable to large-scale RWD cohorts |
| scBERT | Limited datasets | Limited | Limited | Limited | Constrained by training data diversity |
| LangCell | 27.5 million cells | Moderate | Strong | Moderate | Text integration facilitates biomarker discovery |

Our analysis indicates that no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [11]. Models with strong zero-shot generalization capabilities, such as scGPT and scFoundation, show particular promise for cross-border validation where fine-tuning data may be limited [11] [27].

Methodological Considerations for scFM Benchmarking with RWD

When benchmarking scFMs against cross-border RWD, several methodological considerations are essential for ensuring valid and interpretable results:

Batch Effect Management: Both single-cell data and RWD are susceptible to technical artifacts and batch effects. scFMs employ various strategies to address these challenges, including strategic tokenization approaches, special batch tokens, and attention mechanisms that can learn to downweight technical variations [11] [24].

Data Quality Harmonization: RWD sources exhibit substantial heterogeneity in data quality, completeness, and verification processes. Establishing minimum quality thresholds and implementing standardized preprocessing pipelines are essential for meaningful cross-border comparisons [23] [25].
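A minimum quality threshold of the kind described above could be expressed as a per-field completeness check that runs before any cross-border comparison. The sketch below is illustrative only: the field names, the toy records, and the threshold values are assumptions, and a real pipeline would also need to distinguish values that are missing from values that are not applicable.

```python
def field_completeness(records, fields):
    """Share of records with a non-missing value for each field."""
    if not records:
        raise ValueError("no records supplied")
    total = len(records)
    return {
        field: sum(rec.get(field) is not None for rec in records) / total
        for field in fields
    }


def fields_below_threshold(records, min_completeness):
    """Return the fields whose completeness falls below their minimum."""
    rates = field_completeness(records, min_completeness)
    return {
        field: rate
        for field, rate in rates.items()
        if rate < min_completeness[field]
    }


# Hypothetical extract from a source registry; None marks a missing value.
source_records = [
    {"stage": "III", "ecog": 1, "biomarker_status": "positive"},
    {"stage": "IV", "ecog": None, "biomarker_status": None},
    {"stage": None, "ecog": 0, "biomarker_status": "negative"},
    {"stage": "II", "ecog": 1, "biomarker_status": None},
]

# Hypothetical minimum completeness required per field.
thresholds = {"stage": 0.70, "ecog": 0.80, "biomarker_status": 0.60}

failing = fields_below_threshold(source_records, thresholds)
```

Applying the same thresholds to every source and target dataset makes exclusions traceable to a stated rule rather than to case-by-case judgment.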