Benchmarking Perturbation Effect Prediction: Protocols, Pitfalls, and Future Directions for Computational Biology

This article provides a comprehensive guide to benchmarking protocols for computational models that predict cellular responses to genetic and chemical perturbations.


Abstract

This article provides a comprehensive guide to benchmarking protocols for computational models that predict cellular responses to genetic and chemical perturbations. As deep learning foundation models promise to revolutionize drug discovery and functional genomics, rigorous and standardized evaluation is paramount. We explore the foundational concepts and critical need for benchmarking, detail the methodological pipeline from data embedding to aggregation, address common troubleshooting and optimization challenges, and present a comparative analysis of current model performance against simple baselines. Designed for researchers, scientists, and drug development professionals, this review synthesizes recent benchmarking studies to offer actionable insights for developing, evaluating, and selecting the most robust prediction tools.

Laying the Groundwork: Why Benchmarking is Critical in Perturbation Biology

Defining the Benchmarking Challenge in Perturbation Prediction

The ability to accurately predict cellular responses to genetic and chemical perturbations represents a cornerstone goal in computational biology, with profound implications for therapeutic discovery and fundamental biological understanding. Recent advances have spawned numerous deep-learning foundation models trained on millions of single cells, promising to learn generalizable representations that enable prediction of perturbation effects [1] [2]. However, comprehensive benchmarking reveals a significant gap between these promises and current capabilities, as sophisticated models consistently fail to outperform deliberately simple baselines [1] [3]. This challenge defines a critical juncture in the field, where standardized evaluation protocols, rigorous benchmarking frameworks, and community-wide initiatives are urgently needed to direct methodological progress toward biologically meaningful predictions.

Quantitative Benchmarking of Model Performance

Performance Gaps Between Foundation Models and Simple Baselines

Recent systematic evaluations demonstrate that state-of-the-art foundation models for perturbation prediction consistently underperform simple statistical and machine learning approaches across diverse datasets and evaluation metrics. These findings challenge the prevailing narrative of deep learning superiority in this domain.

Table 1: Comparative Performance of Perturbation Prediction Models (Pearson Delta Metric)

| Model Category | Model Name | Adamson Dataset | Norman Dataset | Replogle K562 | Replogle RPE1 |

|---|---|---|---|---|---|

| Foundation Models | scGPT | 0.641 | 0.554 | 0.327 | 0.596 |

| Foundation Models | scFoundation | 0.552 | 0.459 | 0.269 | 0.471 |

| Simple Baselines | Train Mean | 0.711 | 0.557 | 0.373 | 0.628 |

| Simple Baselines | Additive Model | - | - | - | - |

| ML with Prior Knowledge | Random Forest + GO | 0.739 | 0.586 | 0.480 | 0.648 |

| ML with Prior Knowledge | Random Forest + scGPT embeddings | 0.727 | 0.583 | 0.421 | 0.635 |

As illustrated in Table 1, even the simplest baseline—predicting the mean expression from training samples—consistently outperforms foundation models across multiple datasets [2]. Furthermore, standard machine learning approaches incorporating biologically meaningful features, such as Gene Ontology annotations, achieve superior performance compared to foundation models fine-tuned on perturbation data [2].
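To make the metric in Table 1 concrete, the following is a minimal sketch of a Pearson delta score and the train-mean baseline. It assumes that Pearson delta is the Pearson correlation between predicted and observed expression changes relative to control, computed on pseudobulk profiles. The function names and the synthetic data are illustrative and do not come from the cited benchmarks.

```python
import numpy as np

def pearson_delta(pred, truth, control):
    """Pearson correlation between predicted and observed changes vs. control."""
    d_pred = np.asarray(pred, dtype=float) - control
    d_true = np.asarray(truth, dtype=float) - control
    return float(np.corrcoef(d_pred, d_true)[0, 1])

def train_mean_prediction(train_profiles):
    """Train-mean baseline: average profile over all training perturbations."""
    return np.asarray(train_profiles, dtype=float).mean(axis=0)

# Synthetic example: every perturbation shares a common response plus noise.
rng = np.random.default_rng(0)
n_genes = 200
control = rng.normal(5.0, 1.0, n_genes)
shared = rng.normal(0.0, 0.5, n_genes)
train = np.stack(
    [control + shared + rng.normal(0.0, 0.2, n_genes) for _ in range(20)]
)
test_truth = control + shared + rng.normal(0.0, 0.2, n_genes)

baseline_pred = train_mean_prediction(train)
score = pearson_delta(baseline_pred, test_truth, control)
print(f"Pearson delta of the train-mean baseline: {score:.3f}")
```

In this toy setting the train mean scores well because most of the signal is shared across perturbations, which is one plausible reason such a baseline is hard to beat on datasets with low perturbation-specific variance.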

Benchmark Datasets and Key Characteristics

The evaluation of perturbation prediction models relies on standardized datasets that capture diverse perturbation modalities and cellular contexts.

Table 2: Key Benchmark Datasets for Perturbation Prediction

| Dataset | Perturbation Type | Cell Line/Type | Single Perturbations | Double Perturbations | Total Cells |

|---|---|---|---|---|---|

| Norman et al. | CRISPRa | K562 | 100 | 124 | 91,205 |

| Adamson et al. | CRISPRi | K562 | Individual genes | None | 68,603 |

| Replogle et al. | CRISPRi | K562, RPE1 | Genome-wide | None | ~162,750 each |

| Srivatsan et al. | Chemical | 3 cell lines | 188 | None | 178,213 |

| Frangieh et al. | Genetic | 3 cell types | 248 | None | 218,331 |

These datasets enable evaluation under two primary scenarios: perturbation generalization (predicting effects of unseen perturbations in familiar cellular contexts) and cellular context generalization (predicting effects of known perturbations in unseen cell types or conditions) [4] [5]. Current evidence suggests that while foundation models may excel at the former, simpler approaches often outperform at the more challenging cellular context generalization task [5].
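The two evaluation scenarios differ only in what is held out. The sketch below illustrates both splits on a toy list of records; the field names `perturbation` and `context`, the gene labels, and the helper functions are hypothetical and not taken from any of the cited benchmarking platforms.

```python
import random

def perturbation_split(records, test_fraction=0.25, seed=0):
    """Hold out whole perturbations; every cell context stays in training."""
    perts = sorted({r["perturbation"] for r in records})
    rng = random.Random(seed)
    rng.shuffle(perts)
    n_test = max(1, int(len(perts) * test_fraction))
    held_out = set(perts[:n_test])
    train = [r for r in records if r["perturbation"] not in held_out]
    test = [r for r in records if r["perturbation"] in held_out]
    return train, test

def context_split(records, held_out_context):
    """Hold out one cell context; its perturbations are seen in other contexts."""
    train = [r for r in records if r["context"] != held_out_context]
    test = [r for r in records if r["context"] == held_out_context]
    return train, test

records = [
    {"perturbation": p, "context": c}
    for p in ("geneA", "geneB", "geneC", "geneD")
    for c in ("K562", "RPE1")
]
pert_train, pert_test = perturbation_split(records)
ctx_train, ctx_test = context_split(records, "RPE1")
```

Keeping the two splits as separate functions makes it harder to leak a held-out perturbation or context into training by accident.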

Experimental Protocols for Benchmarking

Protocol 1: Double Perturbation Effect Prediction

Objective: To evaluate model performance in predicting transcriptome changes after combinatorial genetic perturbations.

Materials:

- Norman et al. dataset (100 single gene perturbations + 124 paired perturbations in K562 cells)

- 19,264 gene expression measurements per perturbation

- Control condition (no perturbation) expression data

Methodology:

- Data Partitioning: Fine-tune models on all 100 single perturbations and 62 randomly selected double perturbations. Reserve the remaining 62 double perturbations for testing. Repeat this process across five random partitions for robustness [1].

- Model Training: Implement the foundation models (scGPT, scFoundation, scBERT, Geneformer, UCE) and the other deep learning models (GEARS, CPA) according to the authors' specifications, with the recommended hyperparameters.

- Baseline Comparison: Include two simple baselines:

  - No-change model: Always predicts control condition expression.

  - Additive model: Predicts the sum of the individual logarithmic fold changes (LFCs) for each gene in double perturbations [1].

- Evaluation Metrics: Calculate L2 distance between predicted and observed expression values for the 1,000 most highly expressed genes. Supplement with Pearson delta measure and L2 distances for most differentially expressed genes.

Expected Results: Foundation models typically exhibit prediction errors substantially higher than the additive baseline, with limited capacity to predict genetic interactions beyond buffering effects [1].
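The two baselines and the L2 metric in Protocol 1 can be sketched in a few lines. The example assumes log-scale pseudobulk vectors and selects the most highly expressed genes by control expression, which is an assumption about how that ranking is done. The data are synthetic and the toy example uses 10 genes instead of 1,000.

```python
import numpy as np

def no_change_prediction(control):
    """No-change baseline: the double perturbation looks like control."""
    return control.copy()

def additive_prediction(control, single_a, single_b):
    """Additive baseline: control plus the two single-perturbation LFCs."""
    return control + (single_a - control) + (single_b - control)

def l2_top_expressed(pred, observed, control, n_top=1000):
    """L2 distance restricted to the n_top genes with highest control expression."""
    top = np.argsort(control)[::-1][:n_top]
    return float(np.linalg.norm(pred[top] - observed[top]))

# Synthetic double perturbation that is additive up to small noise.
rng = np.random.default_rng(1)
n_genes = 50
control = rng.uniform(0.0, 8.0, n_genes)
lfc_a = rng.normal(0.0, 0.5, n_genes)
lfc_b = rng.normal(0.0, 0.5, n_genes)
single_a, single_b = control + lfc_a, control + lfc_b
observed_double = control + lfc_a + lfc_b + rng.normal(0.0, 0.05, n_genes)

err_additive = l2_top_expressed(
    additive_prediction(control, single_a, single_b), observed_double, control, n_top=10
)
err_no_change = l2_top_expressed(
    no_change_prediction(control), observed_double, control, n_top=10
)
print(f"additive: {err_additive:.3f}  no-change: {err_no_change:.3f}")
```

Any model under evaluation can be scored with the same `l2_top_expressed` call, so that all methods are compared on an identical gene set.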

Protocol 2: Unseen Perturbation Prediction

Objective: To assess model generalization to entirely novel perturbations not seen during training.

Materials:

- Replogle et al. CRISPRi datasets (K562 and RPE1 cell lines)

- Adamson et al. CRISPRi dataset (K562 cells)

- Linear baseline model components

Methodology:

- Data Preparation: Process single-cell data to pseudobulk expression profiles by averaging gene expression across cells for each perturbation condition.

- Embedding Generation:

  - For read-out genes: Create K-dimensional vectors using dimension-reducing embeddings of the training data or external sources.

  - For perturbations: Create L-dimensional vectors using similar approaches.

- Linear Model Implementation: Solve $\operatorname*{argmin}_{W} \lVert Y_{\text{train}} - (G W P^{T} + b) \rVert_2^2$, where $Y_{\text{train}}$ is the training data matrix, $G$ is the gene embedding matrix, $P$ is the perturbation embedding matrix, $W$ is the learned weight matrix, and $b$ is the vector of row means of $Y_{\text{train}}$ [1].

- Comparison Framework: Evaluate foundation models against (1) mean prediction baseline (b) and (2) linear model with embeddings derived from training data.

- Cross-cell Line Validation: Test transfer learning performance by pretraining on K562 data and evaluating on RPE1 data, and vice versa.

Expected Results: Simple linear models typically match or exceed foundation model performance, with the strongest results emerging from linear models using perturbation embeddings pretrained on relevant perturbation data [1].
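The data preparation and embedding steps of Protocol 2 can be sketched as follows. This example assumes a dense cells-by-genes matrix with one perturbation label per cell, and it derives both embeddings from a truncated SVD of the row-centered training matrix, using the same dimension `k` for genes and perturbations as a simplification. The function names are illustrative.

```python
import numpy as np

def pseudobulk(cells_by_genes, labels):
    """Average expression across cells for each perturbation label.

    Returns a genes x perturbations matrix and the ordered label list.
    """
    labels = np.asarray(labels)
    conditions = sorted(set(labels.tolist()))
    profiles = [cells_by_genes[labels == c].mean(axis=0) for c in conditions]
    return np.stack(profiles, axis=1), conditions

def pca_embeddings(y_train, k):
    """Rank-k gene and perturbation embeddings from the centered training data."""
    b = y_train.mean(axis=1, keepdims=True)       # row means of Y_train
    u, s, vt = np.linalg.svd(y_train - b, full_matrices=False)
    gene_emb = u[:, :k] * s[:k]                   # G: genes x k
    pert_emb = vt[:k].T                           # P: perturbations x k
    return gene_emb, pert_emb, b

# Synthetic data: 6 perturbations, 10 cells each, 12 genes.
rng = np.random.default_rng(2)
labels = np.repeat([f"pert{i}" for i in range(6)], 10)
cells = rng.normal(size=(60, 12))

y_train, conditions = pseudobulk(cells, labels)
G, P, b = pca_embeddings(y_train, k=3)
```

Embeddings from external sources, such as a pretrained model or another perturbation dataset, would replace `G` or `P` here without changing the rest of the pipeline.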

Protocol 3: Genetic Interaction Prediction

Objective: To quantify model capability in identifying synergistic, buffering, or opposite genetic interactions.

Materials:

- Norman et al. double perturbation dataset

- Established genetic interaction classification framework

Methodology:

- Interaction Identification: Using full dataset, identify genetic interactions where double perturbation phenotypes differ from additive expectation more than expected under a Normal distribution null model (5,035 interactions at 5% FDR in original study) [1].

- Prediction Generation: For each model, compute difference between predicted expression and additive expectation across 1,000 read-out genes and 62 held-out double perturbations.

- Threshold Sweep: Vary interaction detection threshold D to generate true-positive rate (TPR) and false discovery proportion curves.

- Interaction Classification: Categorize predicted interactions as:

  - Buffering: Combined effect is less than expected

  - Synergistic: Combined effect is greater than expected

  - Opposite: Combined effect opposes individual effects

- Accuracy Assessment: Calculate precision of interaction type predictions across classifications.

Expected Results: Most models predominantly predict buffering interactions, with limited success in identifying synergistic relationships. Foundation models typically fail to outperform the no-change baseline in interaction prediction [1].
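The threshold sweep and the interaction categories can be illustrated with a small sketch. It assumes that each entry is a predicted deviation from the additive expectation for one gene and perturbation pair, together with a boolean flag for whether a true interaction was identified. The classification rule is a simplified reading of the three categories above, and the numbers are made up for illustration.

```python
import numpy as np

def sweep_thresholds(pred_deviation, is_true_interaction, thresholds):
    """Call |deviation| >= D an interaction; return (D, TPR, FDP) per threshold."""
    dev = np.abs(np.asarray(pred_deviation, dtype=float))
    truth = np.asarray(is_true_interaction, dtype=bool)
    rows = []
    for d in thresholds:
        called = dev >= d
        tp = int(np.sum(called & truth))
        n_called = int(called.sum())
        tpr = tp / max(int(truth.sum()), 1)
        fdp = (n_called - tp) / n_called if n_called else 0.0
        rows.append((float(d), tpr, fdp))
    return rows

def classify_interaction(observed_lfc, additive_lfc):
    """Simplified rule: opposite sign, larger magnitude, or smaller magnitude."""
    if observed_lfc * additive_lfc < 0:
        return "opposite"
    if abs(observed_lfc) > abs(additive_lfc):
        return "synergistic"
    return "buffering"

# Illustrative values only.
pred_deviation = [0.9, 0.1, -0.7, 0.05, 0.4, -0.02]
truth = [True, False, True, False, False, False]
rows = sweep_thresholds(pred_deviation, truth, thresholds=[0.0, 0.3, 0.8])
for d, tpr, fdp in rows:
    print(f"D={d:.1f}  TPR={tpr:.2f}  FDP={fdp:.2f}")
```

Plotting TPR against FDP over a fine grid of `D` gives the curve used to compare models; a model that never deviates from the additive expectation calls no interactions at any positive threshold.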

Visualization of Benchmarking Workflows

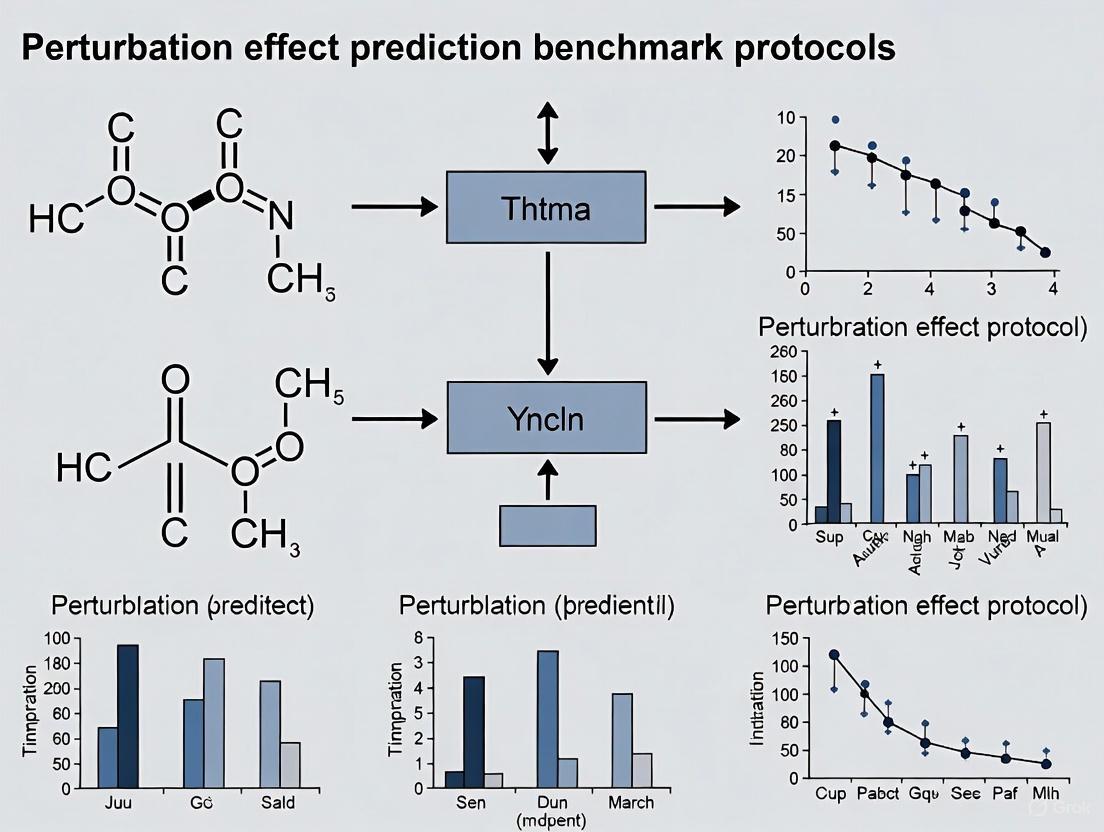

Figure 1: Comprehensive Benchmarking Workflow for Perturbation Prediction Models

Figure 2: Model Comparison Framework for Perturbation Prediction

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagents and Computational Platforms

| Resource Name | Type | Primary Function | Application Context |

|---|---|---|---|

| Perturb-seq Data | Experimental Dataset | Provides single-cell readouts of genetic perturbations | Model training and validation |

| scGPT | Foundation Model | Gene embedding and perturbation prediction | Benchmarking baseline |

| scFoundation | Foundation Model | Pretrained single-cell model for perturbation effect prediction | Benchmarking baseline |

| GEARS | Specialized Model | Predicts combinatorial perturbation effects | Double perturbation benchmarks |

| Additive Model | Simple Baseline | Sum of individual perturbation effects | Performance comparison baseline |

| Train Mean | Simple Baseline | Average of training samples | Minimal performance benchmark |

| scPerturBench | Benchmarking Platform | Reproducible evaluation of 27 methods | Standardized model comparison |

| PerturBench | Benchmarking Framework | Modular model development and evaluation | Community benchmarking standard |

| Virtual Cell Challenge | Competition Platform | Accelerates model development through prizes | Community-driven progress |

| bioLord-emCell | Generalization Framework | Improves cross-context prediction via cell line embedding | Cellular context generalization |

Community Initiatives and Future Directions

The recognition of benchmarking challenges has spurred community-wide initiatives to establish standards and accelerate progress. The Arc Institute's Virtual Cell Challenge represents a landmark effort, providing standardized datasets, evaluation metrics, and a competitive framework with a $100,000 grand prize [6]. This initiative mirrors the successful CASP competition in protein structure prediction that ultimately enabled breakthroughs like AlphaFold.

Concurrently, comprehensive benchmarking platforms such as scPerturBench and PerturBench have emerged, enabling reproducible evaluation of up to 27 perturbation prediction methods across 29 datasets with multiple evaluation metrics [4] [5]. These platforms address critical limitations in current benchmarking practices, including the low perturbation-specific variance in commonly used datasets and the inadequate evaluation of model generalizability across cellular contexts [2].

Future progress will depend on developing more biologically realistic evaluation tasks, creating higher-quality datasets with greater perturbation diversity, and establishing rigorous standards for model comparison that prioritize real-world application scenarios. The field must also address the persistent gap between model performance on in-distribution versus out-of-distribution predictions, particularly for therapeutic applications where generalization to novel cellular contexts is essential [4] [5].

Perturbation modeling encompasses computational methods designed to predict the effects of experimental interventions, or "perturbations," on biological systems. In the context of drug discovery and functional genomics, these perturbations can be genetic (e.g., CRISPR-based gene knockouts) or chemical (e.g., drug treatments) [7] [8]. The primary goal is to use in silico models to predict system-level outcomes, such as changes in gene expression or cell morphology, thereby accelerating therapeutic discovery and reducing the need for exhaustive physical screening [8] [9].

A core challenge is the combinatorial explosion of possible interventions; for instance, the number of potential two-drug combinations is immense, making empirical testing infeasible [10]. Furthermore, the effect of a perturbation is highly context-dependent, varying by biological model system, experimental protocol, and measurement technology [9]. Modern computational approaches, including machine learning and deep generative models, are being developed to disentangle these factors and predict the outcomes of both single and combinatorial perturbations [11] [8].

Core Concepts and Definitions

Perturbation Units

In single-cell perturbation studies, a "Perturbation Unit" is the fundamental entity whose effect is being measured. This is often defined by the experimental technology and the nature of the intervention.

- Genetic Perturbation Unit: A single guide RNA (sgRNA) targeting a specific gene for knockout or activation, as used in technologies like Perturb-seq and CROP-seq [8]. In double-gene perturbation studies, the unit can be a combination of two sgRNAs [1].

- Chemical Perturbation Unit: A specific compound, often represented by its chemical structure (e.g., SMILES string) or a unique barcode linked to the drug molecule [12] [10]. In CP-seq, oligonucleotide barcodes are used to tag and identify different drugs [10].

Perturbation Maps

A "Perturbation Map" is a comprehensive representation of the system-wide changes induced by a perturbation. It serves as a key output for understanding and comparing perturbation effects.

- Transcriptomic Perturbation Map: A high-dimensional vector representing gene expression changes across many genes (e.g., the entire transcriptome or a selected subset like the L1000 genes) following a perturbation [12] [8].

- Morphological Perturbation Map: A representation of phenotypic changes, often derived from high-content imaging (e.g., Cell Painting). This can be a set of hand-crafted morphological features from CellProfiler or a latent representation from a deep learning model like MorphDiff [12].

- Perturbation Embedding: A low-dimensional, latent vector that encapsulates the essence of a perturbation's effect, learned by models like the Compositional Perturbation Autoencoder (CPA) or the Large Perturbation Model (LPM) to facilitate comparison and prediction [8] [9].

Key Prediction Tasks in Perturbation Biology

Computational models are applied to several critical tasks for predicting perturbation effects.

- Perturbation Response Prediction: This involves forecasting the omics signature (e.g., transcriptome) of a cell or population after a specific perturbation. Predictions are evaluated by correlating predicted features with true experimental values [7].

- Combinatorial Perturbation Prediction: A central task is predicting the effect of new perturbation combinations (e.g., drug pairs or double-gene knockouts) using data only from single perturbations. This "multiplies the utility of existing datasets" by enabling in-silico screening of vast combinatorial spaces [8].

- Target and Mechanism Identification: This task uses omics measurements to predict the targets and Mechanisms of Action (MOAs) of uncharacterized perturbations, such as novel compounds [7].

- Cross-Context Prediction: This advanced task involves generalizing predictions across different biological contexts, such as predicting a perturbation's effect in a new cell type or for a new drug dosage, which is crucial for translating findings from model systems to humans [9].

Quantitative Benchmarking of Prediction Models

The performance of perturbation prediction models is quantitatively evaluated on specific tasks, such as predicting gene expression changes after single or double genetic perturbations. Benchmarks often compare complex deep learning models against simple baselines.

Table 1: Benchmarking Model Performance on Double-Gene Perturbation Prediction (Norman et al. dataset)

| Model Category | Specific Model | Key Feature | Performance vs. Additive Baseline |

|---|---|---|---|

| Simple Baseline | Additive Model | Sums individual logarithmic fold changes (LFCs) | Reference [1] |

| Simple Baseline | No Change Model | Predicts control condition expression | Worse [1] |

| Deep Learning | GEARS | Uses knowledge graph of gene-gene relationships | Worse [1] |

| Deep Learning | scGPT | Single-cell foundation model | Worse [1] |

| Deep Learning | scFoundation | Single-cell foundation model | Worse [1] |

Table 2: Performance on Single-Gene Perturbation Prediction (Pearson Correlation)

| Model | Sciplex2 (Continuous) | Replogle (Continuous) | Norman (Continuous) |

|---|---|---|---|

| GPerturb-Gaussian | 0.988 | 0.981 | 0.979 [11] |

| CPA-mlp | 0.980 | - | - [11] |

| GEARS | 0.977 | 0.977 | 0.974 [11] |

Detailed Experimental Protocols

Protocol: Prioritizing Cell Type Response with Augur

Application Note: This protocol uses Augur to identify which cell types within a heterogeneous sample are most affected by a perturbation, based on single-cell RNA sequencing (scRNA-seq) data [7].

Materials:

- Software: pertpy (a perturbation analysis toolbox in Python).

- Input Data: An AnnData object containing scRNA-seq counts and metadata with cell type annotations and perturbation labels (e.g., 'control' vs 'stimulated').

Methodology:

- Data Import and Preparation: Load the scRNA-seq dataset (e.g., the Kang 2018 PBMC dataset). Ensure the metadata contains a column for cell type (`cell_type_col`) and a column for the experimental condition (`label_col`).

- Initialize Augur: Create an Augur object, selecting a machine learning estimator appropriate for the data type. For categorical conditions (control/stimulated), a random forest classifier is recommended.

- Data Loading: Format the AnnData object for Augur.

- Model Training and Prediction: Run the Augur prediction. Use the original Augur feature selection (`select_variance_features=True`) for general use. The `subsample_size` parameter can be adjusted for resolution.

- Interpretation: The primary output is `v_results['summary_metrics']`, which contains the Augur score for each cell type. Cell types with higher Augur scores are more responsive to the perturbation, meaning their transcriptomic state is more separable between control and perturbed conditions [7].
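The idea behind the Augur score, separability of control and perturbed cells within each cell type, can be illustrated without the toolbox. The sketch below is not the pertpy API: it swaps Augur's random forest for a nearest-centroid direction classifier and reports a cross-validated AUC per cell type on synthetic data. All names are hypothetical.

```python
import numpy as np

def auc_from_scores(scores, labels):
    """AUC via the rank-sum statistic; labels are 0/1 and ties are not handled."""
    order = np.argsort(scores)
    ranks = np.empty(len(scores), dtype=float)
    ranks[order] = np.arange(1, len(scores) + 1)
    n_pos = int(labels.sum())
    n_neg = len(labels) - n_pos
    return (ranks[labels == 1].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def separability_auc(x, y, n_folds=3, seed=0):
    """Cross-validated AUC of projecting cells onto the class-centroid difference."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    aucs = []
    for test_idx in folds:
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[test_idx] = False
        direction = (
            x[train_mask & (y == 1)].mean(axis=0)
            - x[train_mask & (y == 0)].mean(axis=0)
        )
        aucs.append(auc_from_scores(x[test_idx] @ direction, y[test_idx]))
    return float(np.mean(aucs))

# Synthetic cell types: one shifts three genes under stimulation, one does not.
rng = np.random.default_rng(3)
y = np.repeat([0, 1], 30)                 # 0 = control, 1 = stimulated
shift = np.zeros(10)
shift[:3] = 2.0
cell_types = {
    "responsive": rng.normal(size=(60, 10)) + np.outer(y, shift),
    "unresponsive": rng.normal(size=(60, 10)),
}
scores = {name: separability_auc(x, y) for name, x in cell_types.items()}
print(scores)
```

As with the Augur score, a value near 0.5 indicates that the condition cannot be recovered from expression in that cell type, and a value near 1 indicates a strong response.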

Protocol: Predicting Combinatorial Perturbations with a Linear Model

Application Note: This protocol details a simple yet powerful linear model approach for predicting the transcriptomic outcomes of unseen single or double genetic perturbations, which can serve as a strong baseline [1].

Materials:

- Input Data: A gene expression matrix (Ytrain) with rows as genes and columns as perturbation conditions (pseudobulk profiles).

- Embeddings: Matrices G (gene embeddings) and P (perturbation embeddings). These can be learned from the training data or obtained from pre-trained models.

Methodology:

- Problem Formulation: The goal is to predict the expression vector for a set of "read-out" genes under a new perturbation.

- Model Architecture: A linear model is defined as `Y_pred = G * W * P^T + b`, where:

  - G is a K-dimensional embedding for each read-out gene.

  - P is an L-dimensional embedding for each perturbation.

  - W is a K x L matrix of weights to be learned.

  - b is a bias vector, typically the mean expression across training perturbations.

- Model Training: The weight matrix W is learned by solving the optimization problem $\operatorname*{argmin}_{W} \lVert Y_{\text{train}} - (G W P^{T} + b) \rVert_2^2$ [1].

- Prediction: For a new perturbation with embedding `p_new`, the predicted expression is `y_new = G * W_hat * p_new.T + b`.

- Validation: This linear model, especially when using perturbation embeddings P pre-trained on a large-scale atlas (e.g., from the Replogle dataset), has been shown to outperform or match the performance of several more complex deep learning models in predicting unseen perturbations [1].
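The training and prediction steps above can be sketched with NumPy. The example assumes an unregularized least-squares fit, for which the minimizer has the closed form `W = pinv(G) @ (Y_train - b) @ pinv(P).T`; the cited study may use a regularized variant. The embeddings and data are synthetic, and the perturbation embeddings are centered so that the row means of `Y_train` equal the bias exactly.

```python
import numpy as np

def fit_linear_perturbation_model(y_train, G, P):
    """Least-squares W for Y_train ~ G W P^T + b, with b the row means of Y_train."""
    b = y_train.mean(axis=1, keepdims=True)
    W = np.linalg.pinv(G) @ (y_train - b) @ np.linalg.pinv(P).T
    return W, b

def predict_perturbation(G, W, p_new, b):
    """Predicted expression vector for one new perturbation embedding."""
    return G @ W @ p_new + b[:, 0]

# Synthetic, noise-free example: 30 genes (K=4), 12 perturbations (L=3).
rng = np.random.default_rng(5)
G = rng.normal(size=(30, 4))
P = rng.normal(size=(12, 3))
P -= P.mean(axis=0)                      # center so row means of G W P^T vanish
W_true = rng.normal(size=(4, 3))
b_true = rng.normal(5.0, 1.0, size=(30, 1))
y_train = G @ W_true @ P.T + b_true

W_hat, b_hat = fit_linear_perturbation_model(y_train, G, P)

p_new = rng.normal(size=3)               # embedding of an unseen perturbation
y_new = predict_perturbation(G, W_hat, p_new, b_hat)
```

Because the fit reduces to two pseudoinverses, this baseline trains in well under a second on typical pseudobulk matrices, which makes it cheap to include in every benchmark run.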

Protocol: Predicting Cell Morphology with MorphDiff

Application Note: This protocol uses MorphDiff, a transcriptome-guided latent diffusion model, to simulate high-fidelity cell morphological responses to unseen genetic or drug perturbations [12].

Materials:

- Paired Datasets: Cell morphology images (e.g., from Cell Painting) and corresponding L1000 gene expression profiles for the same perturbations.

- Software: MorphDiff model implementation.

Methodology:

- Data Compression (MVAE): A Morphology Variational Autoencoder (MVAE) is trained to compress high-dimensional, five-channel Cell Painting images into low-dimensional latent representations. The MVAE consists of an encoder (`E`) and a decoder (`D`).

  - Encoder: `z = E(I)`, where `I` is the input image and `z` is its latent code.

  - Decoder: `I_recon = D(z)` reconstructs the image from the latent code.

- Latent Diffusion Model (LDM) Training: A diffusion model is trained to generate the morphological latent codes `z` conditioned on the perturbed L1000 gene expression profile `c`.

  - Noising Process: Gaussian noise is added to a ground-truth latent `z_0` over `T` steps to produce a completely noisy latent `z_T`.

  - Denoising Process: A U-Net (`U_θ`) is trained to predict the noise in `z_t` at each step `t`, conditioned on `c`. The training objective is `L = E || ε - U_θ(z_t, t, c) ||^2`.

- Prediction/Inference: The trained model can be used in two modes:

  - G2I (Gene-to-Image): A random noise vector `z_T` is iteratively denoised by the LDM using a target gene expression profile `c` to generate a novel morphological latent code `z_0`, which is then decoded into an image.

  - I2I (Image-to-Image): An unperturbed cell image is encoded to `z_0`, noise is added to create `z_t`, and the LDM denoises it conditioned on a perturbed gene expression profile `c`, effectively transforming the morphology from unperturbed to perturbed.

- Validation: The generated morphologies are evaluated using image quality metrics and their utility in downstream tasks like Mechanism of Action (MOA) retrieval, where they have been shown to achieve accuracy comparable to ground-truth morphology [12].
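The noising process and the training objective can be illustrated numerically. The sketch assumes the standard DDPM closed form `z_t = sqrt(abar_t) * z_0 + sqrt(1 - abar_t) * eps` with a linear beta schedule; whether MorphDiff uses this exact schedule is an assumption. The denoiser is a placeholder that returns zeros, standing in for the conditional U-Net.

```python
import numpy as np

def linear_beta_schedule(n_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances (assumed DDPM-style linear schedule)."""
    return np.linspace(beta_start, beta_end, n_steps)

def add_noise(z0, t, alpha_bar, rng):
    """Sample z_t directly from z_0 and return the noise that was added."""
    eps = rng.normal(size=z0.shape)
    z_t = np.sqrt(alpha_bar[t]) * z0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return z_t, eps

def denoising_loss(eps, eps_pred):
    """Mean squared error between true and predicted noise."""
    return float(np.mean((eps - eps_pred) ** 2))

def dummy_denoiser(z_t, t, c):
    """Placeholder for U_theta(z_t, t, c); a real model would use t and c."""
    return np.zeros_like(z_t)

rng = np.random.default_rng(4)
alpha_bar = np.cumprod(1.0 - linear_beta_schedule(1000))

z0 = rng.normal(size=(8, 16))            # batch of morphology latents
c = rng.normal(size=(8, 32))             # stand-in for L1000 condition vectors
t = 999                                  # final step: almost pure noise
z_t, eps = add_noise(z0, t, alpha_bar, rng)
loss = denoising_loss(eps, dummy_denoiser(z_t, t, c))
print(f"alpha_bar[T-1]={alpha_bar[-1]:.2e}  loss={loss:.3f}")
```

In I2I mode, the same `add_noise` step would be applied to the encoded unperturbed image at an intermediate `t`, so that some of the original morphology is retained before conditional denoising.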

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents and Materials for Perturbation Experiments

| Reagent / Material | Function | Example Use Case |

|---|---|---|

| sgRNA Library | Targets genes for knockout/activation in pooled CRISPR screens. | Genetic perturbation in Perturb-seq [1]. |

| Oligo-Barcoded Drugs | Drugs conjugated with unique DNA barcodes for multiplexed tracking. | Combinatorial drug screening in CP-seq [10]. |

| Concanavalin A (ConA)-Oligo Conjugate | Linker to tag drug barcodes to cell membranes. | Cell labeling in CP-seq workflow [10]. |

| L1000 Assay | A low-cost, high-throughput gene expression profiling method. | Provides transcriptomic conditioning for MorphDiff [12]. |

| Cell Painting Assay | A high-content imaging assay using fluorescent dyes to label cell components. | Generates ground-truth morphology data for training models like MorphDiff [12]. |

| Microwell Array Chip | Microfluidic device for high-throughput droplet pairing and cell processing. | Enables combinatorial perturbation in CP-seq [10]. |

Within the field of genetic perturbation effect prediction, a critical yet often overlooked benchmark protocol involves comparison against deliberately simple baselines. The emergence of complex deep learning foundation models promises to learn generalizable representations of single-cell data for predicting transcriptome changes after genetic perturbations [1]. However, rigorous benchmarking consistently reveals that these sophisticated models frequently fail to outperform simple mean prediction or additive effect models [1]. This protocol document outlines standardized methodologies for benchmarking perturbation prediction models against these simple baselines, ensuring robust evaluation within therapeutic development pipelines.

Quantitative Performance Comparison

Benchmarking Results Across Model Architectures

Table 1: Performance comparison of deep learning models versus simple baselines on perturbation prediction tasks

| Model Category | Specific Model | Performance Metric | Result vs. Baseline | Dataset |

|---|---|---|---|---|

| Foundation Models | scGPT, scFoundation | L2 distance, Pearson delta | Underperformed additive baseline | Norman et al. [1] |

| Specialized DL | GEARS, CPA | Prediction Error | Higher error than additive model | Norman et al. [1] |

| Simple Baselines | Additive Model | L2 Distance | Best Performance | Norman et al. [1] |

| Simple Baselines | Mean Prediction | Correlation | Competitive with DL models | Replogle et al. [1] |

| Gaussian Process | GPerturb-Gaussian | Pearson Correlation | 0.981 (Competitive with CPA) | Replogle [11] |

| Classical GAM | GAM vs GLM | AIC, R-squared | Better performance than GLM | Epidemiology Study [13] |

Systematic Review Evidence on Model Performance

Table 2: GAMs vs. neural networks across 430 datasets (systematic review findings)

| Data Characteristic | Generalized Additive Model Performance | Neural Network Performance |

|---|---|---|

| Overall (430 datasets) | No consistent superiority for either approach [14] | No consistent superiority for either approach [14] |

| Smaller sample sizes | Remains competitive [14] | Tends to underperform [14] |

| Larger datasets with more predictors | Less advantage [14] | Tends to outperform [14] |

| Interpretability | High - retains transparent, additive structure [14] | Low - "black box" algorithms [14] |

| Key Advantage | Interpretability with modest performance trade-off [14] | Predictive performance in large-data settings [14] |

Experimental Protocols

Core Benchmarking Protocol for Perturbation Effect Prediction

Objective: Systematically evaluate the performance of complex perturbation prediction models against simple baselines.

Materials:

- Single-cell RNA sequencing dataset with genetic perturbation data

- Computational resources for model training and inference

- Implementation of simple baseline models (additive, mean)

- Implementation of complex models (foundation models, specialized DL)

Procedure:

- Data Preparation:

  - Utilize publicly available perturbation datasets (e.g., Norman et al., Replogle et al., Adamson et al.)

  - Partition data into training and test sets, ensuring held-out double perturbations for evaluation

  - For double perturbation prediction, hold out 62 double perturbations for testing [1]

- Baseline Model Implementation:

  - Additive model: predict the sum of the individual logarithmic fold changes for each double perturbation [1]

  - Mean model: predict the mean expression across the training perturbations [1]

- Complex Model Setup:

  - Fine-tune foundation models (scGPT, scFoundation) on single and double perturbations

  - Configure specialized models (GEARS, CPA) according to recommended settings

  - Ensure comparable training data access across all models

- Evaluation Metrics:

  - Calculate L2 distance between predicted and observed expression values

  - Compute Pearson correlation between predictions and ground truth

  - Assess genetic interaction prediction performance (true-positive rate, false discovery proportion)

  - For systematic comparisons, use RMSE, R², and AUC where appropriate [14]

- Statistical Analysis:

  - Perform multiple runs with different random partitions (minimum 5 replicates)

  - Compare performance distributions using appropriate statistical tests

  - Report effect sizes and confidence intervals for performance differences
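The statistical analysis step can be sketched as a paired comparison across partitions. The example uses a percentile bootstrap on the per-partition difference in L2 error, which is one reasonable choice rather than the method of any cited study. The error values are hypothetical and serve only to show the calculation.

```python
import random
import statistics

def paired_bootstrap_ci(errors_model, errors_baseline, n_boot=2000, alpha=0.05, seed=0):
    """Mean paired difference (model - baseline) with a percentile bootstrap CI."""
    diffs = [m - b for m, b in zip(errors_model, errors_baseline)]
    rng = random.Random(seed)
    boot_means = sorted(
        statistics.fmean(rng.choices(diffs, k=len(diffs))) for _ in range(n_boot)
    )
    lo = boot_means[int((alpha / 2) * n_boot)]
    hi = boot_means[int((1 - alpha / 2) * n_boot) - 1]
    return statistics.fmean(diffs), (lo, hi)

# Hypothetical L2 errors from five random partitions (illustrative numbers only).
model_l2 = [4.1, 3.9, 4.4, 4.0, 4.2]
additive_l2 = [3.2, 3.1, 3.5, 3.0, 3.3]

mean_diff, (ci_low, ci_high) = paired_bootstrap_ci(model_l2, additive_l2)
print(f"mean difference = {mean_diff:.2f}, 95% CI = ({ci_low:.2f}, {ci_high:.2f})")
```

Pairing by partition removes the variance caused by some splits being harder than others. With only five replicates the interval is coarse, so it is best read alongside the raw per-partition values.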

Figure 1: Workflow for perturbation prediction benchmarking protocol

Protocol for GAM Implementation and Benchmarking

Objective: Implement and evaluate Generalized Additive Models as interpretable alternatives to complex neural networks.

Theoretical Background: GAMs extend generalized linear models by replacing linear terms with smooth non-linear functions, maintaining interpretability through additive structure [14]. The model takes the form $g(\mu) = \beta_0 + \sum_{j=1}^{p} s_j(x_j)$, where $\mu = E(Y \mid x_1, \ldots, x_p)$, $g$ is the link function (the identity for Gaussian responses), and the $s_j$ are smooth functions of the explanatory variables [15].

Materials:

- R statistical software environment

- `mgcv` package for GAM implementation

- Dataset with continuous or binary response variables

Procedure:

- Model Specification:

  - Use the `gam()` function from the `mgcv` package

  - Specify smooth terms using the `s()` function: `gam(response ~ s(predictor1) + s(predictor2), data=dataset)`

  - Select appropriate basis functions (e.g., `bs="cr"` for cubic regression splines) [16]

- Model Fitting:

  - Use Restricted Maximum Likelihood (REML) for smoothness parameter estimation

  - Specify appropriate link functions (e.g., logit for binary outcomes) [15]

- Model Evaluation:

- Interpretation:

  - Visualize smooth component functions to understand non-linear relationships

  - Evaluate statistical significance of smooth terms from model summary

  - Compare feature importance with complex models

Figure 2: Generalized Additive Model structure and interpretability

Research Reagent Solutions

Table 3: Essential computational tools and datasets for perturbation benchmarking

| Resource Type | Specific Resource | Application in Research | Key Features/Benefits |

|---|---|---|---|

| Perturbation Datasets | Norman et al. dataset [1] | Double perturbation benchmarking | 100 single + 124 double gene perturbations in K562 cells |

| Perturbation Datasets | Replogle et al. data [1] | Unseen perturbation prediction | CRISPRi data from K562 and RPE1 cell lines |

| Software Packages | mgcv R package [16] | GAM implementation | Comprehensive GAM modeling with multiple smoother options |

| Software Packages | scGPT, scFoundation [1] | Foundation model benchmarking | Pretrained single-cell foundation models |

| Benchmarking Tools | Custom linear baselines [1] | Critical performance comparison | Simple additive and mean prediction models |

| Benchmarking Tools | GPerturb model [11] | Gaussian process benchmarking | Sparse, interpretable perturbation effects with uncertainty |

| Evaluation Metrics | L2 distance [1] | Prediction accuracy | Measures deviation from observed expression values |

| Evaluation Metrics | Genetic interaction detection [1] | Biological mechanism assessment | Identifies synergistic/antagonistic gene interactions |

Discussion and Implementation Guidelines

The consistent finding that simple baselines remain competitive with complex models has profound implications for perturbation effect prediction in therapeutic development. Researchers should implement these benchmarking protocols as mandatory steps in model evaluation pipelines.

Key Recommendations:

- Always include simple baselines (additive and mean models) in perturbation prediction studies

- Prioritize interpretable models like GAMs when working with smaller sample sizes

- Evaluate the trade-off between interpretability and performance for each specific application

- Allocate computational resources efficiently based on demonstrated performance benefits

The evidence suggests that GAMs and neural networks should be viewed as complementary rather than competing approaches [14]. For many tabular data applications in pharmaceutical research, the performance trade-off is modest, and interpretability may strongly favor GAMs [14]. These protocols provide a framework for making evidence-based decisions in model selection for perturbation prediction tasks.

Accurately predicting the effects of genetic perturbations is a central challenge in computational biology, with significant implications for drug discovery and therapeutic development. The evaluation of predictive models, however, has been hampered by a lack of standardized benchmarking protocols. This application note outlines a proposed universal framework for map building: the EFAAR pipeline, named for its five computational steps (Embedding, Filtering, Aligning, Aggregating, and Relating) [23]. Applied here to perturbation effect prediction benchmarking, the EFAAR pipeline provides structured methodologies and quantitative standards for impartially assessing model performance, and thereby for directing and evaluating method development in a field where complex deep-learning models have not yet consistently outperformed simple linear baselines [1].

Quantitative Benchmarking of Model Performance

A core component of the EFAAR pipeline is the rigorous, quantitative comparison of prediction models against deliberately simple baselines. The following table summarizes key performance metrics from a landmark benchmark study that evaluated five foundation models and two other deep learning models [1].

Table 1: Performance Summary of Perturbation Prediction Models vs. Baselines

| Model / Baseline Name | Primary Function | Performance on Double Perturbations (L2 Distance) | Performance on Unseen Perturbations | Ability to Predict Genetic Interactions |

|---|---|---|---|---|

| Additive Baseline | Predicts sum of individual logarithmic fold changes (LFCs) | Best Performance (Lowest L2 distance) | Not Applicable (Requires single-gene data) | None (By definition) |

| No Change Baseline | Predicts same expression as control condition | Outperformed by Additive Baseline | Comparable or better than deep learning models [1] | Not better than random |

| GEARS | Deep-learning for perturbation prediction | Higher L2 distance than baselines | Did not consistently outperform linear model or mean baseline [1] | Mostly predicted buffering interactions; rare correct synergistic predictions |

| scGPT | Single-cell foundation model | Higher L2 distance than baselines | Outperformed by linear model with its own embeddings [1] | Predictions showed little variation across perturbations |

| scFoundation | Single-cell foundation model | Higher L2 distance than baselines | Not included in unseen perturbation benchmark [1] | Predictions varied less than ground truth |

| CPA | Deep-learning for perturbation prediction | Higher L2 distance than baselines | Not designed for unseen perturbations [1] | Not reported |

| Linear Model with Embeddings | Simple linear decoder with pretrained embeddings | Not Applicable | Performance matched or exceeded original deep-learning models [1] | Not Applicable |
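The two simplest baselines in the table can be written in a few lines. The sketch below is illustrative only: it assumes log-scale pseudobulk profiles stored as NumPy vectors, and the function names and toy values are hypothetical rather than taken from the benchmark study.

```python
import numpy as np

def additive_prediction(control, single_a, single_b):
    """Additive baseline: control plus the summed log fold changes of both singles."""
    lfc_a = single_a - control
    lfc_b = single_b - control
    return control + lfc_a + lfc_b

def l2_distance(predicted, observed, gene_idx=None):
    """Euclidean distance between profiles, optionally restricted to a gene subset."""
    if gene_idx is not None:
        predicted, observed = predicted[gene_idx], observed[gene_idx]
    return float(np.linalg.norm(predicted - observed))

# Toy log-scale profiles over four genes (made-up values for illustration).
control = np.array([1.0, 2.0, 3.0, 4.0])
single_a = np.array([1.5, 2.0, 3.0, 4.0])     # perturbing A raises gene 0
single_b = np.array([1.0, 2.0, 2.0, 4.0])     # perturbing B lowers gene 2
observed_ab = np.array([1.5, 2.0, 2.0, 4.5])  # double perturbation; gene 3 is non-additive

additive = additive_prediction(control, single_a, single_b)
no_change = control.copy()  # "no change" baseline: predict the control profile

err_additive = l2_distance(additive, observed_ab)
err_no_change = l2_distance(no_change, observed_ab)

# Restricting to the most highly expressed control genes (top 2 of 4 here).
top_genes = np.argsort(control)[::-1][:2]
err_additive_top = l2_distance(additive, observed_ab, top_genes)
```

In this toy case the additive prediction misses only the non-additive shift in the last gene, which is exactly the kind of residual a genetic-interaction analysis looks for.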

EFAAR Experimental Protocols

Protocol 1: Benchmarking Double Perturbation Predictions

Objective: To evaluate model performance in predicting transcriptome-wide expression changes following double gene perturbations.

Materials:

- Norman et al. CRISPR activation dataset (K562 cells) [1].

- 100 single-gene perturbations, 124 double-gene perturbations.

- Expression data for 19,264 genes per perturbation.

Methodology:

- Data Partitioning: Randomly split the 124 double perturbations into a training set (62 pairs) and a held-out test set (the remaining 62 pairs). Include all 100 single perturbations in the training data. Repeat this process five times with different random seeds for robustness.

- Model Fine-tuning: Fine-tune the candidate models (e.g., scGPT, GEARS, scFoundation) on the combined single and double perturbation training set.

- Prediction & Evaluation: On the held-out test set, compute the L2 distance between the predicted and observed expression values. Focus analysis on the 1,000 most highly expressed genes. Supplementary analyses should include Pearson delta measure and L2 distances for the n most highly expressed or differentially expressed genes.

- Genetic Interaction Analysis: Identify true genetic interactions from the full dataset using a null model with a Normal distribution (e.g., at a 5% FDR). For model predictions, calculate the difference between the predicted expression and the additive expectation for each double perturbation. Vary the threshold D to call a predicted interaction and plot the true-positive rate (TPR) against the false discovery proportion (FDP).
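The threshold sweep in the genetic interaction step can be sketched as follows. This is a minimal illustration under stated assumptions: an interaction is called when the absolute deviation of the prediction from the additive expectation is at least D, and the boolean ground-truth labels are taken as given. The function name and the toy numbers are hypothetical.

```python
import numpy as np

def tpr_fdp_curve(pred_deviation, is_true_interaction, thresholds):
    """For each threshold D, call an interaction where |prediction - additive| >= D
    and return (D, true-positive rate, false discovery proportion)."""
    abs_dev = np.abs(pred_deviation)
    n_true = int(is_true_interaction.sum())
    rows = []
    for d in thresholds:
        called = abs_dev >= d
        tp = int(np.sum(called & is_true_interaction))
        fp = int(np.sum(called & ~is_true_interaction))
        tpr = tp / n_true if n_true else 0.0
        fdp = fp / (tp + fp) if (tp + fp) else 0.0
        rows.append((float(d), tpr, fdp))
    return rows

# Predicted deviation from the additive expectation for six gene/pair entries.
pred_deviation = np.array([0.9, -0.7, 0.1, 0.05, -0.4, 0.02])
# Ground-truth interaction calls for the same entries (assumed precomputed).
is_true = np.array([True, True, False, False, False, True])

curve = tpr_fdp_curve(pred_deviation, is_true, thresholds=[0.5, 0.3, 0.0])
for d, tpr, fdp in curve:
    print(f"D={d:.2f}  TPR={tpr:.2f}  FDP={fdp:.2f}")
```

Plotting TPR against FDP across a fine grid of thresholds gives the curve described in the protocol; a model whose predictions barely deviate from the additive expectation will trace a curve close to that of random calls.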

Protocol 2: Benchmarking Unseen Perturbation Predictions

Objective: To assess model generalization by predicting effects of single-gene perturbations not seen during training.

Materials:

- Replogle et al. CRISPRi datasets (K562 and RPE1 cells) [1].

- Adamson et al. dataset (K562 cells) [1].

Methodology:

- Baseline Establishment: Implement two simple baselines:

- Mean Baseline: Predicts the average expression across all training perturbations for each gene [1].

- Linear Model: Solve for the matrix W in \( \operatorname{argmin}_{\mathbf{W}} \left\| \mathbf{Y}_{\text{train}} - (\mathbf{G}\mathbf{W}\mathbf{P}^T + \mathbf{b}) \right\|_2^2 \), where G is a gene embedding matrix, P is a perturbation embedding matrix, and b is the vector of row means of the training data Y_train [1].

- Cross-Cell Line Validation: For a stringent test, use the K562 cell line data as the training set to predict effects in the RPE1 cell line, and vice-versa.

- Embedding Transfer Test: Extract the pretrained gene embedding matrix G from foundation models (e.g., scFoundation, scGPT) and the perturbation embedding matrix P from models like GEARS. Use these in the linear model described under Baseline Establishment and compare performance against the models' native decoders and the simple baselines.

- Performance Analysis: Evaluate prediction accuracy, noting that pretraining on large-scale single-cell atlases may offer less benefit than pretraining on targeted perturbation data [1].
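The mean baseline and the linear model from the Baseline Establishment step can be sketched with NumPy. This is an assumption-laden illustration rather than the reference implementation: W is obtained with pseudo-inverses (a minimum-norm least-squares solution), the matrix shapes are chosen for the example, and the embeddings are random placeholders.

```python
import numpy as np

def fit_linear_baseline(Y_train, G, P):
    """Least-squares fit of W in Y_train ~ G @ W @ P.T + b.

    Y_train: genes x perturbations, G: genes x K, P: perturbations x L.
    b holds the row means of Y_train, as defined in the text.
    """
    b = Y_train.mean(axis=1, keepdims=True)
    # Pseudo-inverse solver is an assumption made for this sketch.
    W = np.linalg.pinv(G) @ (Y_train - b) @ np.linalg.pinv(P.T)
    return W, b

def predict_linear(G, W, P_new, b):
    """Predicted expression (genes x new perturbations) from perturbation embeddings."""
    return G @ W @ P_new.T + b

rng = np.random.default_rng(0)
n_genes, n_perts, K, L = 50, 20, 5, 4
G = rng.normal(size=(n_genes, K))   # placeholder gene embeddings
P = rng.normal(size=(n_perts, L))   # placeholder perturbation embeddings
Y_train = (G @ rng.normal(size=(K, L)) @ P.T + 2.0
           + 0.1 * rng.normal(size=(n_genes, n_perts)))

W, b = fit_linear_baseline(Y_train, G, P)
fit_err = np.linalg.norm(Y_train - predict_linear(G, W, P, b))
# Mean baseline: the same average profile b for every perturbation.
mean_err = np.linalg.norm(Y_train - b)
```

Because W = 0 reproduces the mean baseline, the fitted linear model can never have a larger training error than the mean baseline; the informative comparison is therefore on held-out perturbations, where `predict_linear` is called with the embeddings of unseen perturbations.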

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Tools for Perturbation Prediction Benchmarking

| Item / Resource | Function in the Protocol | Example Sources / Identifiers |

|---|---|---|

| CRISPR Activation (CRISPRa) Dataset | Provides ground truth data for model training and testing on gene upregulation. | Norman et al. 2019 [1] |

| CRISPR Interference (CRISPRi) Dataset | Provides ground truth data for benchmarking predictions on unseen gene perturbations. | Replogle et al. 2022; Adamson et al. 2016 [1] |

| Linear Regression Model | Serves as a critical, high-performance baseline; implementation is essential for fair model comparison. | Python: scikit-learn |

| Gene Ontology (GO) Annotations | Used by some models (e.g., GEARS) for extrapolation to unseen perturbations based on functional similarity. | Gene Ontology Resource [1] |

| Pretrained Model Embeddings | Gene and perturbation vector representations that can be used with a linear decoder for prediction. | Extracted from scGPT, scFoundation, or GEARS [1] |

EFAAR Pipeline Workflow Visualization

The following diagram illustrates the logical workflow and decision points of the proposed EFAAR pipeline for benchmarking perturbation prediction models.

The EFAAR pipeline establishes a universal framework for mapping the capabilities and limitations of perturbation prediction models. By mandating comparison against deliberately simple baselines (additive, mean, and linear models) and providing standardized protocols for double and unseen perturbation benchmarks, it introduces much-needed rigor into the field. The consistent finding that complex foundation models do not yet outperform simple linear models [1] underscores the critical importance of such a framework. Adopting the EFAAR pipeline will enable researchers, scientists, and drug development professionals to direct resources more effectively, ultimately accelerating progress toward the foundational goal of generalizable prediction of genetic perturbation effects.

Accurately predicting cellular responses to genetic and chemical perturbations is a fundamental challenge in computational biology, with significant implications for understanding disease mechanisms and accelerating therapeutic discovery [17] [2]. The field has witnessed the development of numerous deep learning models, including transformer-based foundation models, designed to predict post-perturbation gene expression profiles [17] [1]. However, recent rigorous benchmarking studies have revealed that these complex models often fail to outperform deliberately simple baseline methods, highlighting a critical need for robust, standardized evaluation frameworks [17] [1]. This application note provides a comprehensive overview of key public datasets, benchmarking resources, and experimental protocols essential for researchers developing and evaluating perturbation effect prediction models. The standardized benchmarking approaches detailed herein enable meaningful comparisons across methods and help direct future development toward biologically relevant improvements rather than incremental metric optimization.

Key Public Datasets for Perturbation Modeling

Several large-scale perturbation datasets serve as community standards for benchmarking prediction models. These datasets typically employ CRISPR-based interventions coupled with single-cell RNA sequencing readouts.

Table 1: Key Public Perturbation-Seq Datasets for Benchmarking

| Dataset Name | Perturbation Type | Cell Line | Perturbation Scale | Key Features | Primary Application |

|---|---|---|---|---|---|

| Adamson et al. [17] [2] | CRISPRi (single) | K562 | 68,603 single cells | Single perturbations | Baseline response prediction |

| Norman et al. [17] [1] | CRISPRa (single/dual) | K562 | 91,205 single cells | Combinatorial perturbations | Genetic interaction prediction |

| Replogle et al. (K562) [17] [18] | CRISPRi (genome-wide) | K562 | 162,751 single cells | Genome-wide single perturbations | Unseen perturbation prediction |

| Replogle et al. (RPE1) [17] [18] | CRISPRi (genome-wide) | RPE1 | 162,733 single cells | Genome-wide single perturbations | Cross-cell line generalization |

| Connectivity Map (CMap) [19] | Chemical/Genetic | Multiple | ~1.5M gene expression profiles | Multi-modal perturbations | Drug discovery & mechanism of action |

Dataset Selection Considerations

When selecting datasets for benchmarking, researchers should consider the perturbation type (CRISPRi, CRISPRa, knockout, or chemical), cell line context, and the specific prediction task being evaluated. The Perturbation Exclusive (PEX) setup assesses a model's ability to predict effects of novel perturbations in familiar cell types, while the Cell Exclusive (CEX) setup evaluates prediction of known perturbations in novel cell types [17]. Current benchmarks predominantly focus on PEX evaluation using Perturb-seq datasets with diverse genetic perturbations in single cell lines [17]. For combinatorial perturbation prediction, the Norman dataset provides both single and double perturbations, enabling assessment of genetic interaction predictions [1]. The Replogle dataset offers genome-scale perturbation data across two distinct cell lines (K562 and RPE1), facilitating evaluation of cross-cell-line generalization [17] [18].

Standardized Benchmarking Suites

The community has developed several comprehensive benchmarking suites to address the challenges of reproducible evaluation in perturbation modeling.

Table 2: Benchmarking Frameworks and Resources

| Resource Name | Main Focus | Key Features | Supported Tasks | Access |

|---|---|---|---|---|

| CausalBench [18] | Network inference | Biologically-motivated metrics, distribution-based interventional measures | Causal network inference from perturbation data | Openly available suite |

| CZI Benchmarking Suite [20] | Virtual cell models | Community-driven, multiple metrics per task, no-code web interface | Perturbation expression prediction, cell type classification | Freely available platform |

| EFAAR Pipeline [21] [22] | Perturbative map building | Standardized framework for constructing maps from perturbation data | Biological relationship identification, perturbation signal assessment | Open-source codebase |

Benchmarking Metrics and Evaluation Strategies

Proper metric selection is critical for meaningful benchmark comparisons. For perturbation effect prediction, key metrics include:

- Differential Expression Correlation: Pearson correlation in differential expression space (perturbed minus control profile) provides a more meaningful assessment than raw expression correlation [17] [2].

- Top DE Gene Performance: Evaluation focused on the top 20 differentially expressed genes emphasizes capture of most significant transcriptional changes [17].

- Genetic Interaction Prediction: For combinatorial perturbations, assessment of ability to predict non-additive effects (synergistic, buffering, or opposite interactions) [1].

- Perturbation Signal Metrics: Consistency and magnitude of individual perturbation representations in embedding spaces [22].

- Biological Relationship Benchmarks: Evaluation of ability to recapitulate known biological relationships from annotated sources [22].
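The first two metrics in the list can be illustrated with a short sketch. It assumes pseudobulk profiles as NumPy vectors and, as a simplification, ranks "top differentially expressed" genes by absolute observed change rather than by a formal differential expression test. The function name and toy data are hypothetical.

```python
import numpy as np

def pearson_delta(predicted, observed, control, top_k=None):
    """Pearson correlation in differential expression space (profile minus control).

    If top_k is given, only the genes with the largest absolute observed change
    are used (a simplification of a proper differential expression ranking).
    """
    delta_pred = predicted - control
    delta_obs = observed - control
    if top_k is not None:
        idx = np.argsort(np.abs(delta_obs))[::-1][:top_k]
        delta_pred, delta_obs = delta_pred[idx], delta_obs[idx]
    return float(np.corrcoef(delta_pred, delta_obs)[0, 1])

n_genes = 200
control = np.linspace(0.0, 5.0, n_genes)
effect = np.zeros(n_genes)
effect[::10] = np.tile([1.0, -1.0], 10)   # 20 genes respond to the perturbation
observed = control + effect

# A prediction that is essentially the control profile plus small noise.
rng = np.random.default_rng(0)
predicted = control + 0.05 * rng.normal(size=n_genes)

raw_r = float(np.corrcoef(predicted, observed)[0, 1])
delta_r = pearson_delta(predicted, observed, control)
perfect_top20 = pearson_delta(observed, observed, control, top_k=20)
```

Here the raw-expression correlation is high even though the prediction ignores the perturbation entirely, while the correlation in differential expression space is close to zero, which is why the latter is the more discriminative metric.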

Recent benchmarks have established that even simple baseline models—such as predicting the mean of training examples or using an additive model of logarithmic fold changes—can outperform complex foundation models [17] [1]. This underscores the importance of including appropriate baselines in benchmarking protocols.

Experimental Protocols for Perturbation Prediction Benchmarking

Standard Workflow for Model Evaluation

Figure 1: Standard workflow for perturbation prediction benchmarking, covering key stages from data selection to biological validation.

Protocol 1: Benchmarking Post-Perturbation RNA-seq Prediction

This protocol outlines the evaluation procedure for models predicting transcriptome changes after genetic perturbations, adapted from established benchmarking studies [17] [2].

Materials:

- Perturb-seq dataset (e.g., Norman, Adamson, or Replogle)

- Control gene expression profiles

- Computing environment with appropriate deep learning frameworks

- Benchmarking suite (e.g., CZI benchmarking tools)

Procedure:

Data Preparation and Splitting

- Download and preprocess selected Perturb-seq dataset

- Implement Perturbation Exclusive (PEX) splitting: ensure test perturbations are completely unseen during training

- Generate pseudo-bulk expression profiles by averaging single-cell expression for each perturbation

- For combinatorial perturbations, stratify the test combinations by whether 0, 1, or 2 of their constituent single perturbations were seen during training

Baseline Model Implementation

- Implement Train Mean baseline: predict average pseudo-bulk expression profiles from training dataset

- Implement additive baseline: sum logarithmic fold changes for combinatorial perturbations

- Implement Random Forest Regressor with Gene Ontology features as biologically-informed baseline

Foundation Model Fine-tuning

- Initialize pre-trained foundation models (scGPT, scFoundation, or others)

- Follow authors' recommended fine-tuning procedures for perturbation data

- Use consistent training-validation splits across all models

Evaluation and Metric Calculation

- Generate predictions at single-cell level and aggregate to pseudo-bulk profiles

- Calculate Pearson correlation in differential expression space (Pearson Delta)

- Evaluate performance on top 20 differentially expressed genes

- For combinatorial perturbations, assess genetic interaction prediction accuracy

Statistical Analysis

- Perform multiple runs with different random seeds (minimum 5 repetitions)

- Compare model performances using appropriate statistical tests

- Evaluate whether foundation models significantly outperform simple baselines
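The data preparation steps of this procedure (a Perturbation Exclusive split followed by pseudo-bulking) might look like the sketch below. It is a minimal illustration with hypothetical names: cells are assumed to carry a perturbation label per row, control cells are assumed to be labeled "control" and kept in the training set, and the expression matrix is random toy data.

```python
import numpy as np

def pex_split(labels, test_fraction=0.25, seed=0, control_label="control"):
    """Split at the perturbation level so that test perturbations never occur in training."""
    rng = np.random.default_rng(seed)
    perts = np.unique(labels)
    perts = rng.permutation(perts[perts != control_label])
    n_test = max(1, int(round(test_fraction * len(perts))))
    test_perts = set(perts[:n_test].tolist())
    train_perts = set(perts[n_test:].tolist())
    return train_perts, test_perts

def pseudobulk(expr, labels):
    """Average single-cell profiles (cells x genes) for each perturbation label."""
    return {str(p): expr[labels == p].mean(axis=0) for p in np.unique(labels)}

labels = np.array(["control"] * 4 + ["A"] * 3 + ["B"] * 3 + ["C"] * 3 + ["D"] * 3)
expr = np.random.default_rng(1).normal(size=(len(labels), 5))  # toy cells x genes

train_perts, test_perts = pex_split(labels)
train_mask = np.isin(labels, list(train_perts) + ["control"])
train_profiles = pseudobulk(expr[train_mask], labels[train_mask])
test_profiles = pseudobulk(expr[~train_mask], labels[~train_mask])
```

Repeating the split with several values of `seed` gives the multiple runs called for under Statistical Analysis, and checking that no test label appears among the keys of `train_profiles` is a cheap guard against leakage.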

Troubleshooting:

- Low variance in benchmark datasets may complicate performance assessment; consider dataset selection carefully [17]

- Ensure proper implementation of pseudo-bulking as this affects metric calculation

- Validate that PEX splitting correctly excludes test perturbations from training

Protocol 2: Network Inference from Perturbation Data

This protocol describes the evaluation of causal network inference methods using the CausalBench framework [18].

Materials:

- CausalBench benchmarking suite

- Large-scale perturbation datasets (e.g., Replogle K562 and RPE1)

- Network inference methods (observational and interventional)

Procedure:

Data Preparation

- Load and preprocess single-cell perturbation datasets

- Separate observational (control) and interventional (perturbed) data

- Format data according to CausalBench specifications

Method Implementation

- Implement observational baselines (PC, GES, NOTEARS variants)

- Implement interventional methods (GIES, DCDI variants)

- Include recent challenge methods (Mean Difference, Guanlab, Catran)

Evaluation

- Run biological evaluation using known biological relationships as approximate ground truth

- Perform statistical evaluation using Mean Wasserstein distance and False Omission Rate (FOR)

- Assess trade-off between precision and recall across methods

Analysis

- Determine whether methods effectively leverage interventional information

- Evaluate scalability to large-scale perturbation data

- Identify methodological limitations and opportunities for improvement
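To make the statistical evaluation step more concrete, the sketch below computes a mean Wasserstein distance over predicted edges. It is not the CausalBench API: the data layout (dictionaries keyed by gene and by edge), the function names, and the toy values are assumptions made for illustration, and the one-dimensional distance is implemented directly with NumPy.

```python
import numpy as np

def wasserstein_1d(u, v):
    """First Wasserstein distance between two 1D empirical distributions."""
    u_sorted, v_sorted = np.sort(u), np.sort(v)
    all_values = np.sort(np.concatenate([u_sorted, v_sorted]))
    deltas = np.diff(all_values)
    # Empirical CDFs of both samples evaluated on the pooled support.
    u_cdf = np.searchsorted(u_sorted, all_values[:-1], side="right") / len(u_sorted)
    v_cdf = np.searchsorted(v_sorted, all_values[:-1], side="right") / len(v_sorted)
    return float(np.sum(np.abs(u_cdf - v_cdf) * deltas))

def mean_wasserstein(edges, control_expr, perturbed_expr):
    """Average, over predicted edges (source, target), of the distance between the
    target's expression in control cells and in cells where the source was perturbed."""
    dists = [wasserstein_1d(control_expr[tgt], perturbed_expr[(src, tgt)])
             for src, tgt in edges]
    return float(np.mean(dists))

# Toy data: perturbing A shifts B strongly and leaves C unchanged.
control_expr = {"B": np.array([0.0, 1.0, 3.0]), "C": np.array([1.0, 2.0, 3.0])}
perturbed_expr = {("A", "B"): np.array([5.0, 6.0, 8.0]),
                  ("A", "C"): np.array([1.0, 2.0, 3.0])}

score = mean_wasserstein([("A", "B"), ("A", "C")], control_expr, perturbed_expr)
```

A higher mean distance indicates that the predicted edges correspond, on average, to stronger interventional effects; the unsupported edge A to C contributes zero and pulls the average down.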

Troubleshooting:

- Ensure proper handling of both observational and interventional data

- Validate that evaluation metrics align with biological relevance

- Address scalability issues that may limit method performance on large datasets

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Perturbation Benchmarking

| Reagent / Resource | Type | Function | Example Sources/Implementations |

|---|---|---|---|

| Perturb-seq Datasets | Data | Provide single-cell resolution transcriptomic responses to genetic perturbations | Adamson, Norman, Replogle datasets |

| Connectivity Map (CMap) [19] | Data | Catalog of cellular signatures from chemical and genetic perturbations | LINCS Consortium, CLUE platform |

| EFAAR Pipeline [21] [22] | Computational | Standardized framework for building perturbative maps from genome-scale data | Recursion Pharmaceuticals codebase |

| CausalBench Suite [18] | Computational | Benchmarking network inference methods on real-world interventional data | Openly available GitHub repository |

| CZI Benchmarking Tools [20] | Computational | Community-driven benchmarking for virtual cell models | CZI Virtual Cell Platform |

| Gene Ontology Annotations | Knowledge Base | Biological prior knowledge for feature engineering in baseline models | Gene Ontology Consortium |

| scGPT/scFoundation | Model | Pre-trained foundation models for single-cell biology | Published implementations with pre-trained weights |

| CORUM Database | Reference | Manually annotated protein complexes for biological validation | CORUM database |

Analysis and Interpretation of Benchmark Results

Critical Considerations for Benchmark Interpretation

When analyzing benchmarking results, several critical factors must be considered to ensure biologically meaningful interpretations:

- Dataset Limitations: Current Perturb-seq benchmarks exhibit low perturbation-specific variance, potentially limiting their ability to discriminate model performance [17]. This may explain why simple baselines can outperform complex foundation models.

- Metric Sensitivity: Raw gene expression space correlations (>0.95) often fail to distinguish model performance, while differential expression space correlations provide more discriminative power [17].

- Biological Relevance: Benchmark performance should be contextualized with biological validation, such as recapitulation of known pathways or protein complexes [22].

- Generalization Assessment: Evaluate model performance across multiple cell lines and perturbation types to assess robustness beyond narrow benchmark settings.

Expected Results and Performance Patterns

Based on recent comprehensive benchmarks, researchers should expect the following patterns:

- Simple baseline models (Train Mean, additive) often compete with or outperform foundation models in current benchmark settings [17] [1].

- Random Forest models with biological prior knowledge (Gene Ontology features) typically outperform foundation models by significant margins [17] [2].

- Using foundation model embeddings as features in traditional machine learning models can improve performance compared to the end-to-end fine-tuned foundation models [17].

- Most models struggle to predict genetic interactions accurately, particularly synergistic interactions [1].

- Pretraining on perturbation data generally provides more benefit than pretraining on single-cell atlas data alone [1].

Future Directions in Perturbation Benchmarking

The field of perturbation effect prediction is rapidly evolving, with several promising directions for benchmark development:

- Multi-modal Integration: Future benchmarks should incorporate diverse data modalities beyond transcriptomics, including imaging and proteomic readouts [21] [22].

- Dynamic Perturbation Modeling: Current benchmarks focus on static endpoints; temporal perturbation responses would provide more challenging evaluation scenarios.

- Cross-cell-type Generalization: Enhanced evaluation of model transferability across diverse cellular contexts and conditions.

- Experimental Design Integration: Benchmarks that evaluate how well models can guide optimal perturbation selection for experimental design.

As benchmarking methodologies mature, they will play an increasingly critical role in guiding the development of biologically relevant models that can truly advance our understanding of cellular mechanisms and accelerate therapeutic discovery.

Building and Executing a Robust Benchmarking Pipeline

The EFAAR framework provides a standardized, systematic pipeline for constructing and benchmarking perturbative "maps of biology," which unify data from genetic or chemical manipulations into relatable embedding spaces [23]. These maps are critical tools in functional genomics and drug discovery, enabling the prediction of perturbation effects by capturing known biological relationships and uncovering novel associations in an unbiased manner [21] [23]. The framework's name is an acronym for its five core computational steps: Embedding, Filtering, Aligning, Aggregating, and Relating [23]. This structured approach addresses the significant challenge of analyzing high-dimensional perturbation data from diverse technologies—such as CRISPR-Cas9 knockout, CRISPRi knockdown, and compound treatment—across various readouts, including cellular microscopy and RNA-sequencing [23]. By establishing a common vocabulary and a modular, open-source codebase, EFAAR facilitates the comparison and optimization of computational pipelines, which is essential for accumulating knowledge and demonstrating the practical relevance of predictive models in perturbation effect research [24] [23].

Table: Core Components of the EFAAR Framework

| Component | Primary Function | Key Inputs | Key Outputs |

|---|---|---|---|

| Embedding | Reduces high-dimensional assay data into tractable numeric representations. | Raw assay data (e.g., images, transcript counts). | Feature vectors or embeddings for each perturbation unit. |

| Filtering | Removes perturbation units that fail quality control metrics. | All generated embeddings. | A curated set of high-quality perturbation units. |

| Aligning | Corrects for technical batch effects and unintended experimental variation. | Curated embeddings from multiple batches. | Batch-corrected, aligned embeddings. |

| Aggregating | Combines replicate units to create a robust profile for each perturbation. | Aligned embeddings from replicate units. | A single, aggregated embedding per perturbation. |

| Relating | Quantifies the similarity between different perturbation profiles. | All aggregated perturbation embeddings. | A similarity matrix or map of biological relationships. |

Detailed Breakdown of EFAAR Components

Embedding

The Embedding step transforms high-dimensional, raw assay data into compact, information-rich numeric representations, making downstream analysis computationally tractable [23]. A "perturbation unit" is the fundamental experimental entity, which can be a single cell in pooled screens or a well containing hundreds of cells in arrayed settings [23]. The specific embedding methodology is highly dependent on the data modality. For morphological data from cellular imaging, embeddings can be extracted using feature engineering software like CellProfiler or, more powerfully, from intermediate layers of deep neural networks [23]. For transcriptomic data from RNA-sequencing, linear methods like Principal Component Analysis (PCA) or non-linear neural network-based approaches are commonly employed [23]. The quality of this initial embedding is paramount, as it sets the foundation for all subsequent analysis and the ultimate biological relevance of the map.

Filtering

Filtering is a critical quality control step to remove perturbation units that do not meet predefined quality criteria, thereby reducing noise and enhancing the reliability of the final map [23]. This step can be executed at multiple stages of the pipeline, both pre- and post-embedding. Filtering criteria are often based on metrics that reflect data quality or experimental success. For instance, in image-based screens, units with low cell counts or poor staining quality can be excluded. In single-cell transcriptomic data, cells with an unusually low number of detected genes or a high percentage of mitochondrial reads are typically filtered out. This process ensures that only high-quality, reliable data proceeds through the pipeline, which is crucial for building a map that accurately reflects true biological signal rather than technical artifacts.

Aligning

The Aligning step corrects for batch effects, which are systematic technical biases introduced when experiments are conducted across different plates, dates, or instrument configurations [23]. These biases can confound biological signals if not properly addressed. The EFAAR framework incorporates several alignment strategies. A baseline approach uses control perturbation units within each batch to center and scale features. More advanced linear methods, such as Typical Variation Normalization (TVN), can align both the first-order statistics and the covariance structures of the data [23]. For more complex batch effects, non-linear methods based on nearest-neighbor matching or deep learning models like variational autoencoders have proven highly effective for both transcriptomic and image data [23]. Instance Normalization, which normalizes features within individual samples, is another valuable technique for mitigating bias in image-based datasets [23].
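The baseline alignment approach, centering and scaling features with the control units of each batch, can be sketched as follows. The function name and array layout are hypothetical, and the sketch assumes every batch contains at least a few control units.

```python
import numpy as np

def center_scale_by_controls(emb, batch, is_control, eps=1e-8):
    """Standardize each batch using the mean and standard deviation of its control units.

    emb: units x features, batch: batch label per unit, is_control: boolean per unit.
    """
    aligned = np.empty_like(emb, dtype=float)
    for b in np.unique(batch):
        in_batch = batch == b
        ctrl = emb[in_batch & is_control]
        mu = ctrl.mean(axis=0)
        sd = ctrl.std(axis=0)
        aligned[in_batch] = (emb[in_batch] - mu) / (sd + eps)
    return aligned

rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 50)
is_control = np.tile([True, False], 50)
# Second batch carries an additive technical offset of +10 on every feature.
emb = rng.normal(size=(100, 3)) + 10.0 * batch[:, None]

aligned = center_scale_by_controls(emb, batch, is_control)
```

After this step the controls of every batch sit at the origin with unit scale, so remaining differences between perturbed units are expressed relative to their own batch's controls. Methods such as TVN go further by also aligning covariance structure, which this sketch does not attempt.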

Aggregating

In the Aggregating step, multiple replicate units representing the same targeted perturbation (e.g., the same gene knockout) are combined to create a single, robust embedding profile for that perturbation [23]. This step is essential for increasing the signal-to-noise ratio and providing a stable estimate of the perturbation's effect. The aggregation function must be chosen carefully. Common approaches include taking the mean or median across replicate embeddings. The choice between robust aggregation (like median) versus standard aggregation (like mean) can significantly impact the map's resilience to outliers. In single-cell data, where a single perturbation is applied to many cells, aggregation is necessary to move from a cell-level profile to a perturbation-level profile, which is the fundamental unit of the final map.

Relating

The final step, Relating, involves computing a quantitative measure of similarity between all pairs of aggregated perturbation embeddings, thereby constructing the actual "map" [23]. This similarity matrix functions as a quantitative backbone of biological relationships, where perturbations with similar functional impacts are positioned close to one another in the map space. Common metrics for relating perturbations include Pearson or Spearman correlation, cosine similarity, and Euclidean distance. The resulting map can then be visualized using dimensionality reduction techniques like UMAP or t-SNE, allowing researchers to explore clusters of biologically related perturbations, such as genes in the same protein complex or compounds with similar mechanisms of action [23].

Benchmarking and Evaluation of EFAAR Maps

Rigorous benchmarking is indispensable for assessing the quality and biological relevance of maps constructed using the EFAAR pipeline. Without standardized evaluation, comparing the performance of different maps or computational choices becomes meaningless [24] [23]. The EFAAR benchmarking framework introduces two primary classes of benchmarks to systematically quantify map utility.

Perturbation Signal Benchmarks assess the effect and consistency of individual perturbations within the map. They answer the fundamental question of whether a specific perturbation (e.g., a gene knockout) produces a detectable and reproducible signal compared to negative controls. Key metrics include the separation between positive and negative control perturbations and the reproducibility of signals across experimental replicates.

Biological Relationship Benchmarks evaluate the map's ability to recapitulate known, annotated biological relationships from public databases [23]. The underlying hypothesis is that a high-quality map should successfully group perturbations with known functional connections. These benchmarks leverage several annotation sources:

- Protein Complexes (CORUM): Measures the map's ability to cluster genes encoding proteins that belong to the same experimentally-validated complex [23].

- Protein-Protein Interactions (HuMAP): Tests the recovery of known physical interactions between proteins [23].

- Pathways (Reactome): Evaluates whether genes involved in the same biological pathway are positioned closely in the map [23].

- Signaling Networks (SIGNOR): Assesses the mapping of causal, directed signaling relationships [23].
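One way such a biological relationship benchmark can be scored is as recall of annotated pairs among the most extreme pairwise similarities. The sketch below assumes cosine similarity, a two-sided 5% cutoff, and hypothetical gene names; it illustrates the idea and should not be read as the exact scoring used in the cited benchmarks.

```python
import numpy as np

def cosine_similarity_matrix(X):
    """Pairwise cosine similarity between rows of X (perturbations x features)."""
    Xn = X / np.linalg.norm(X, axis=1, keepdims=True)
    return Xn @ Xn.T

def relationship_recall(sim, names, known_pairs, pct=5.0):
    """Fraction of annotated pairs whose similarity lies in the bottom or top
    `pct` percent of all pairwise similarities. Pairs absent from the map are skipped."""
    iu = np.triu_indices(len(names), k=1)
    lo, hi = np.percentile(sim[iu], [pct, 100.0 - pct])
    index = {n: i for i, n in enumerate(names)}
    hits, total = 0, 0
    for a, b in known_pairs:
        if a in index and b in index:
            total += 1
            s = sim[index[a], index[b]]
            hits += int(s <= lo or s >= hi)
    return hits / total if total else float("nan")

rng = np.random.default_rng(0)
names = [f"g{i}" for i in range(20)]          # placeholder perturbation names
X = rng.normal(size=(20, 16))                 # placeholder aggregated embeddings
X[1] = X[0] + 0.01 * rng.normal(size=16)      # g0 and g1 mimic members of one complex

sim = cosine_similarity_matrix(X)
recall = relationship_recall(sim, names, [("g0", "g1"), ("g0", "not_in_map")])
```

In practice `known_pairs` would be derived from an annotation source such as CORUM or Reactome, and the recall would be compared against the value expected by chance for the chosen cutoff.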

Table: EFAAR Map Performance Across Diverse Datasets and Annotations

| Dataset (Perturbation Type; Readout) | CORUM | HuMAP | Reactome | SIGNOR |

|---|---|---|---|---|

| RxRx3 (CRISPR-Cas9; Morphological Images) | 0.556 | 0.200 | 0.154 | Information missing |

| GWPS (CRISPRi; Transcriptomic) | Information missing | Information missing | Information missing | Information missing |

| cpg0016 (CRISPR-Cas9; Morphological Images) | 0.333 | 0.133 | 0.108 | Information missing |

| OpenPhenom (Phenotypic Screening) | 0.333 | 0.133 | 0.108 | Information missing |

Note: Performance metrics represent the ability to recover known biological relationships from respective annotation databases. Higher values indicate better performance. Data adapted from benchmarking studies [25] [23].

Experimental Protocol for Map Construction and Validation

Protocol: Constructing a Perturbative Map from a Transcriptomic Dataset

This protocol outlines the steps for building a perturbative map from a single-cell transcriptomic dataset, such as one generated using CRISPRi/Perturb-seq.

I. Preprocessing and Embedding

- Data Input: Begin with a single-cell RNA-seq count matrix (cells x genes) where each cell is annotated with its respective genetic perturbation (e.g., sgRNA identity).

- Normalization: Normalize the count data for each cell using a standard method (e.g., library size normalization and log-transformation).

- Embedding:

- Perform dimensionality reduction on the normalized count matrix using Principal Component Analysis (PCA). Retain the top 50 to 100 principal components (PCs).

- Alternatively, use a neural network-based method (e.g., a variational autoencoder) to generate a non-linear embedding of each cell. The output is a matrix where each row (a cell) is represented by a low-dimensional vector.

II. Quality Control and Filtering

- Cell-level Filtering: Filter out cells that are potential outliers. Common criteria include:

- Number of detected genes per cell (remove cells below the 2.5th or above the 97.5th percentile).

- Percentage of mitochondrial reads (set a threshold, e.g., <20%).

- For pooled screens, filter cells with low UMI counts or those not confidently assigned to a perturbation.

- Perturbation-level Filtering: After cell-level filtering and before aggregation, exclude perturbations that retain fewer than a predetermined number of high-quality cells (e.g., < 30 cells) to ensure robust aggregation.
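A minimal sketch of the cell-level and perturbation-level filters follows. The default thresholds mirror the example values in the protocol; the function names, the per-cell summary arrays (`n_genes`, `pct_mito`), and the toy labels are hypothetical.

```python
import numpy as np

def filter_cells(n_genes, pct_mito, lo_pct=2.5, hi_pct=97.5, max_mito=20.0):
    """Boolean mask of cells passing gene-count and mitochondrial-read filters."""
    n_genes = np.asarray(n_genes, dtype=float)
    pct_mito = np.asarray(pct_mito, dtype=float)
    lo, hi = np.percentile(n_genes, [lo_pct, hi_pct])
    return (n_genes >= lo) & (n_genes <= hi) & (pct_mito < max_mito)

def filter_perturbations(labels, cell_mask, min_cells=30):
    """Extend the mask to drop perturbations with too few passing cells."""
    labels = np.asarray(labels)
    names, counts = np.unique(labels[cell_mask], return_counts=True)
    keep = names[counts >= min_cells]
    return cell_mask & np.isin(labels, keep)

# Toy screen: two well-covered perturbations and one with only five cells.
rng = np.random.default_rng(0)
labels = np.array(["GENE_A"] * 500 + ["GENE_B"] * 495 + ["GENE_RARE"] * 5)
n_genes = rng.integers(500, 5000, size=labels.size)
pct_mito = rng.uniform(0.0, 30.0, size=labels.size)
mask = filter_perturbations(labels, filter_cells(n_genes, pct_mito))
```

The perturbation-level count is taken after the cell-level mask, so a perturbation is judged on the cells that will actually enter aggregation.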

III. Batch Alignment

- Identify Batches: Define batches based on experimental variables (e.g., sequencing lane, sample processing date).

- Apply Alignment Method:

- Use a linear method such as Typical Variation Normalization (TVN), which aligns the covariance structures of different batches toward a common target using control cells [23].

- Alternatively, employ a non-linear method like Harmony or Scanorama, which integrate cells across batches based on the similarity of their embedding profiles [23].
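The idea behind control-based linear alignment can be illustrated with a simplified sketch: within each batch, center on the control cells and whiten with their covariance, so that controls from every batch share zero mean and identity covariance. This is not the full TVN procedure from [23], only a reduced stand-in; the function name, the `eps` regularizer, and the simulated batch shift are assumptions.

```python
import numpy as np

def whiten_by_controls(emb, batch, is_control, eps=1e-6):
    """Per batch: center on controls and whiten with the control covariance."""
    emb = np.asarray(emb, dtype=float)
    batch = np.asarray(batch)
    is_control = np.asarray(is_control, dtype=bool)
    aligned = np.empty_like(emb)
    for b in np.unique(batch):
        in_batch = batch == b
        ctrl = emb[in_batch & is_control]
        mu = ctrl.mean(axis=0)
        cov = np.cov(ctrl, rowvar=False) + eps * np.eye(emb.shape[1])
        evals, evecs = np.linalg.eigh(cov)
        # Symmetric inverse square root of the control covariance.
        whitener = evecs @ np.diag(evals ** -0.5) @ evecs.T
        aligned[in_batch] = (emb[in_batch] - mu) @ whitener
    return aligned

# Toy data: batch b2 is a shifted, rescaled copy of the same distribution.
rng = np.random.default_rng(0)
batch = np.repeat(["b1", "b2"], 300)
is_control = np.tile(np.arange(300) < 100, 2)
emb = rng.normal(size=(600, 5))
emb[batch == "b2"] = emb[batch == "b2"] * 3.0 + 5.0
aligned = whiten_by_controls(emb, batch, is_control)
```

The transform is estimated from controls only but applied to every cell in the batch, which is what lets perturbed cells keep their deviation from the control state.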

IV. Replicate Aggregation

- Group Cells: For each unique genetic perturbation (e.g., target gene), group all cells that have passed the previous filtering and alignment steps.

- Compute Aggregate Profile: Calculate the median profile across the embedding vectors of all cells within the same perturbation group. The median is preferred over the mean for its robustness to outliers. This results in one consolidated embedding vector per perturbation.
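As a small worked example of the aggregation step, the sketch below computes a coordinate-wise median per perturbation. The function name and the toy values are illustrative; one outlier cell is included on purpose to show why the median is the more robust choice.

```python
import numpy as np

def aggregate_median(emb, labels):
    """Return perturbation names and one coordinate-wise median vector per name."""
    emb = np.asarray(emb, dtype=float)
    labels = np.asarray(labels)
    names = np.unique(labels)
    profiles = np.vstack([np.median(emb[labels == n], axis=0) for n in names])
    return names, profiles

# Three GENE_A cells (the third is an outlier) and two GENE_B cells.
emb = np.array([[1.0, 2.0], [3.0, 4.0], [100.0, -50.0], [0.0, 0.0], [2.0, 2.0]])
labels = np.array(["GENE_A", "GENE_A", "GENE_A", "GENE_B", "GENE_B"])
names, profiles = aggregate_median(emb, labels)
```

Here the GENE_A median stays at (3, 2), whereas the mean would be pulled to roughly (34.7, -14.7) by the single outlier cell.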

V. Relating and Map Generation

- Compute Similarity: Calculate a perturbation-by-perturbation similarity matrix. Use a correlation metric (e.g., Spearman rank correlation) computed between all pairs of the aggregated perturbation embeddings.

- Visualize the Map: Generate a two-dimensional representation with a visualization algorithm such as UMAP or t-SNE, supplying the pairwise similarity matrix converted to distances (e.g., 1 − correlation) as precomputed input.
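The relating step can be sketched as a rank transform followed by Pearson correlation, which is equivalent to Spearman correlation when there are no ties. The function names are hypothetical, and the tie handling is a simplification that is usually acceptable for continuous embeddings.

```python
import numpy as np

def spearman_similarity(profiles):
    """Spearman correlation between rows (ties are broken by position)."""
    profiles = np.asarray(profiles, dtype=float)
    ranks = profiles.argsort(axis=1).argsort(axis=1).astype(float)
    return np.corrcoef(ranks)

def similarity_to_distance(sim):
    """Map correlations in [-1, 1] to distances in [0, 2]."""
    dist = 1.0 - sim
    np.fill_diagonal(dist, 0.0)
    return dist

# Three toy aggregated profiles: the second tracks the first, the third reverses it.
profiles = np.array([[1.0, 2.0, 3.0, 4.0],
                     [10.0, 20.0, 30.0, 40.0],
                     [4.0, 3.0, 2.0, 1.0]])
sim = spearman_similarity(profiles)
dist = similarity_to_distance(sim)
```

A distance matrix of this form can be passed to embedding tools that accept precomputed distances, for example with `metric="precomputed"` in umap-learn or scikit-learn's t-SNE.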

VI. Benchmarking and Validation

- Run Benchmarks: Execute the perturbation signal and biological relationship benchmarks using the provided codebase [23].

- Validate Findings:

- Examine if perturbations targeting genes in the same protein complex (e.g., the Integrator complex) cluster together in the map.

- For novel predictions (e.g., an uncharacterized gene clustering with a specific complex), plan orthogonal experiments (e.g., co-immunoprecipitation) for functional validation.
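To make the biological relationship benchmark concrete, the sketch below computes a recall-style score: the fraction of annotated gene pairs whose similarity falls in either extreme tail of all pairwise similarities. The 5% two-sided threshold, the function name, and the toy gene names are assumptions for illustration; the codebase referenced in [23] should be consulted for the exact metric definition.

```python
import numpy as np

def pairwise_recall(sim, names, known_pairs, tail=0.05):
    """Fraction of annotated pairs with similarity in either tail of all pairs."""
    index = {n: i for i, n in enumerate(names)}
    iu = np.triu_indices(len(names), k=1)
    lo, hi = np.quantile(sim[iu], [tail, 1.0 - tail])
    pair_sims = np.array([sim[index[a], index[b]]
                          for a, b in known_pairs if a in index and b in index])
    if pair_sims.size == 0:
        return float("nan")
    return float(np.mean((pair_sims <= lo) | (pair_sims >= hi)))

# Toy similarity matrix over 20 hypothetical genes.
n = 20
names = [f"g{i}" for i in range(n)]
iu = np.triu_indices(n, k=1)
sim = np.eye(n)
sim[iu] = np.linspace(-0.5, 0.5, iu[0].size)
sim = sim + sim.T - np.eye(n)
sim[0, 1] = sim[1, 0] = 0.99          # one strongly related annotated pair
known_pairs = [("g0", "g1"), ("g2", "g3")]
recall = pairwise_recall(sim, names, known_pairs)
```

Counting both tails treats strong anti-correlation as signal too, which matters when perturbing two members of a pathway produces opposite phenotypes.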

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, datasets, and computational tools essential for conducting research involving the EFAAR framework and perturbative map building.

Table: Research Reagent Solutions for Perturbative Mapping

| Item Name | Type | Function/Application | Example/Source |

|---|---|---|---|

| CRISPRi/a Library | Molecular Reagent | Enables targeted genetic knockdown (CRISPRi) or activation (CRISPRa) for large-scale perturbation. | Genome-wide libraries (e.g., Dolcetto for CRISPRi, Calabrese for CRISPRa). |

| Perturb-seq Dataset | Data Resource | Provides single-cell transcriptomic readouts for genetic perturbations, serving as primary input for map building. | Data from studies like Replogle et al. (2022) [23]. |

| RxRx3 Dataset | Data Resource | A large-scale morphological dataset of genetic perturbations in HUVEC cells, with deep neural network embeddings provided. | Recursion Pharmaceuticals [21] [23]. |

| CellProfiler | Software | Open-source tool for extracting quantitative morphological features from cellular images for the Embedding step. | cellprofiler.org [23] |

| EFAAR Codebase | Software | Public code repository containing the pipeline for map building and benchmarking, ensuring reproducibility. | github.com/recursionpharma/EFAAR_benchmarking [23] |

| CORUM Database | Data Resource | A curated database of manually annotated protein complexes for Biological Relationship Benchmarking. | corum.uni-muenchen.de [23] |

| HuMAP Database | Data Resource | A comprehensive map of physically interacting human proteins used for benchmark validation. | humap2.proteincomplexes.org [25] [23] |

| Reactome | Data Resource | An open-source, open-access, manually curated pathway database used for functional benchmark validation. | reactome.org [23] |

Embedding Strategies for High-Dimensional Assay Data (PCA, VAEs, Neural Networks)

The shift towards high-dimensional phenotypic assays in genomics and drug discovery necessitates robust dimensionality reduction techniques to extract meaningful biological insights. This protocol details a standardized framework for benchmarking embedding strategies—including Principal Component Analysis (PCA), Non-negative Matrix Factorization (NMF), Autoencoders (AE), and Variational Autoencoders (VAE)—within perturbation effect prediction studies. We provide application notes and step-by-step methodologies for employing these techniques to transform high-dimensional assay data into tractable embeddings, evaluate their performance using novel biological metrics, and integrate them into downstream predictive models for therapeutic target discovery.

Core Embedding Strategies: Mathematical Frameworks and Applications

Dimensionality reduction is a cornerstone of modern computational biology, transforming high-dimensional gene-expression or cellular image data into compact, informative embeddings for downstream analysis [26]. The choice of embedding strategy influences all subsequent findings, from cluster identification to biological interpretation.

Table 1: Core Dimensionality Reduction Techniques for High-Dimensional Assay Data

| Method | Category | Core Objective Function | Key Strengths | Key Limitations | Ideal Use Cases |

|---|---|---|---|---|---|

| PCA | Linear | max tr(WᵀXᵀXW) subject to WᵀW = I (X centered) | Computational efficiency, interpretability, maximizes variance [26] [27] | Limited to linear associations [26] [27] | Fast baseline analysis, initial data exploration |

| NMF | Linear | min ‖X - ZWᵀ‖²_F subject to Z ≥ 0, W ≥ 0 [26] | Parts-based, additive representations; yields interpretable gene signatures [26] [27] | Cannot model nonlinear interactions [26] | Identifying co-expressed gene programs, interpretable domain discovery |

| Autoencoder | Nonlinear | min ‖X - g_φ(f_θ(X))‖²_F [26] | Flexible, can capture complex nonlinear manifolds in data [26] [22] | Risk of overfitting; representations can be less interpretable [26] | Learning complex phenotypic patterns from image or expression data |

| Variational Autoencoder | Nonlinear | Evidence Lower Bound (ELBO): E[log p_φ(x∣z)] - KL(q_θ(z∣x) ‖ p(z)) [26] | Probabilistic, regularized latent space; good for denoising and disentanglement [26] [27] | Higher computational demand; requires careful tuning [26] | Data imputation, augmentation, learning robust representations for integration |
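For the variational autoencoder row of Table 1, the ELBO has a closed form when the encoder is a diagonal Gaussian and the prior is a standard normal. The sketch below evaluates the negative ELBO under those assumptions, plus a unit-variance Gaussian decoder so that the reconstruction term reduces to half the squared error up to an additive constant. The function names and these distributional choices are assumptions, not details taken from [26].

```python
import numpy as np

def gaussian_kl(mu, logvar):
    """KL(N(mu, diag(exp(logvar))) || N(0, I)), summed over latent dimensions."""
    return -0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar), axis=1)

def negative_elbo(x, x_recon, mu, logvar):
    """Per-sample loss: half squared reconstruction error plus the KL term."""
    recon = 0.5 * np.sum((x - x_recon) ** 2, axis=1)
    return recon + gaussian_kl(mu, logvar)

# Two toy samples with a 2-dimensional latent space.
mu = np.array([[0.0, 0.0], [1.0, 0.0]])
logvar = np.zeros((2, 2))
x = np.ones((2, 3))
kl = gaussian_kl(mu, logvar)
loss = negative_elbo(x, np.zeros((2, 3)), mu, logvar)
```

The KL term vanishes only when the encoder output matches the prior exactly, which is the regularization that distinguishes the VAE from the plain autoencoder objective.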

Benchmarking Protocol for Embedding Evaluation

A critical phase in perturbation analysis is the systematic evaluation of embedding quality, moving beyond mere reconstruction error to biologically-grounded metrics.

Experimental Setup and Workflow

The following workflow, termed the EFAAR pipeline (Embedding, Filtering, Aligning, Aggregating, Relating), standardizes the construction of perturbative maps from raw assay data [22].

Protocol 2.1: EFAAR Pipeline Execution

Embedding:

- Input: Normalized cell-by-gene expression matrix X ∈ ℝ^(n×d) or high-dimensional image features.

- Procedure: Apply one or more dimensionality reduction techniques (see Table 1) to obtain low-dimensional embeddings Z ∈ ℝ^(n×k), where k ≪ d. Systematically vary the latent dimension k (e.g., from 5 to 40) [26].

- Output: Low-dimensional embeddings for each perturbation unit (cell or well).


Filtering:

- Procedure: Remove perturbation units that fail quality control. Criteria can include:

- Cells with low mRNA UMI counts or high mitochondrial gene percentage.

- Wells with extreme pixel intensity values.

- Cells or wells identified as outliers by multivariate analysis.

- Cells transduced with multiple guide RNAs in pooled screens [22].


Aligning (Batch Effect Correction):

- Procedure: Apply batch effect correction methods to remove technical variation.

- For linear correction: Use negative control perturbation units per batch to center and scale features.

- For gene expression: Use methods like ComBat [22] or mutual nearest neighbors (MNN) [22].

- For deep learning approaches: Use variational autoencoders that explicitly model batch as a covariate [22] [27].


Aggregating:

- Procedure: Combine replicate units (technical or biological) for each perturbation.

- Common method: Compute the coordinate-wise mean or median of the aligned embeddings for all units representing the same perturbation (e.g., the same gene knockout).

- Robust method: For datasets prone to outliers, use the Tukey median [22].

- Output: A single, aggregated embedding vector for each unique perturbation.


Relating:

- Procedure: Compute similarity or distance measures between aggregated perturbation embeddings.

- Common metrics: Euclidean distance, cosine similarity, or Pearson correlation.

- Downstream Analysis: Use the resulting distance matrix for clustering, or as input to further dimensionality reduction (e.g., UMAP) for visualization [22].

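For the Embedding step of Protocol 2.1, the sweep over the latent dimension k can be sketched with PCA as the embedding method, recording the cumulative explained variance at each k. The function name and the simulated data (10 latent factors plus noise) are assumptions; the same loop structure would apply to NMF or autoencoders with a different scoring function.

```python
import numpy as np

def explained_variance_by_k(x, ks):
    """Cumulative fraction of variance captured by the top-k principal components."""
    centered = x - x.mean(axis=0, keepdims=True)
    s = np.linalg.svd(centered, compute_uv=False)
    ratio = np.cumsum(s ** 2) / np.sum(s ** 2)
    return {k: float(ratio[min(k, len(ratio)) - 1]) for k in ks}

# Simulated matrix driven by 10 latent factors plus small noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(300, 10))
x = latent @ rng.normal(size=(10, 200)) + 0.1 * rng.normal(size=(300, 200))
scores = explained_variance_by_k(x, ks=range(5, 45, 5))
```

In this toy setting the curve should flatten near k = 10, the true number of factors, which is the kind of elbow a sweep over k is meant to reveal.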

Quantitative and Biological Benchmarking Metrics

Table 2: Benchmarking Metrics for Embedding Quality Assessment

| Metric Category | Specific Metric | Description | Interpretation |

|---|---|---|---|

| Reconstruction Fidelity | Mean Squared Error (MSE) | Average squared difference between original and reconstructed data [26]. | Lower values indicate better reconstruction. |

| Reconstruction Fidelity | Explained Variance | Proportion of variance in the original data captured by the embedding [26]. | Higher values are better. |

| Clustering Quality | Silhouette Score | Measures how similar a cell is to its own cluster compared to other clusters [26]. | Higher scores (closer to 1) indicate better-defined clusters. |

| Clustering Quality | Davies-Bouldin Index (DBI) | Average similarity between each cluster and its most similar one [26]. | Lower values indicate better cluster separation. |

| Biological Coherence | Cluster Marker Coherence (CMC) | Fraction of cells in a cluster expressing its designated marker genes [26]. | Higher values indicate clusters are biologically homogeneous. |

| Biological Coherence | Marker Exclusion Rate (MER) | Fraction of cells that would express another cluster's markers more strongly [26]. | Lower values indicate fewer misassigned cells. A high MER can guide post-hoc refinement. |

| Perturbation Signal | Perturbation Consistency | Measures the reproducibility of the embedding for replicate perturbations [22]. | Higher consistency indicates a more robust method. |

| Biological Relationship | Protein Complex Recapitulation | Assesses if known protein complex members are positioned closely in the embedding space [22]. | Successful methods place known interactors near each other. |
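As an example of how one of the clustering-quality metrics in Table 2 is computed, the sketch below implements the silhouette score with Euclidean distance in plain NumPy. It assumes at least two clusters and builds the full pairwise distance matrix, so it is suited to small illustrations only; library implementations such as `sklearn.metrics.silhouette_score` are preferable on real data.

```python
import numpy as np

def silhouette_score(emb, labels):
    """Mean silhouette over all points, using Euclidean distance."""
    emb = np.asarray(emb, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(emb))
    for i in range(len(emb)):
        same = labels == labels[i]
        n_same = same.sum()
        if n_same == 1:
            continue  # singleton clusters score 0 by convention
        a = dist[i, same].sum() / (n_same - 1)  # self-distance is zero
        b = min(dist[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

# Two tight, well-separated groups of points.
emb = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
good = silhouette_score(emb, np.array([0, 0, 1, 1]))
bad = silhouette_score(emb, np.array([0, 1, 0, 1]))
```

Labels that match the geometry give a score close to 1, while labels that cut across the two groups give a negative score.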

Protocol 2.2: MER-Guided Cluster Refinement

A high MER score indicates potential cell misassignment. This protocol details a post-processing step to improve cluster biological fidelity [26].

- Initial Clustering: Perform clustering (e.g., Leiden, K-means) on the low-dimensional embeddings Z to obtain initial cluster labels.

- Marker Gene Identification: For each initial cluster, identify significantly upregulated marker genes.

- MER Calculation: For each cell, calculate the aggregate expression of its own cluster's marker genes and of every other cluster's marker genes. Flag the cell if another cluster's markers score higher than its own; the MER is the fraction of flagged cells.

- Cell Reassignment: Reassign flagged cells to the cluster whose markers they express most strongly.

- Validation: Recalculate CMC and other clustering metrics post-reassignment. Benchmarking shows this can improve CMC scores by up to 12% on average [26].
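The MER calculation and reassignment steps can be sketched as follows. The marker sets are taken as given (marker identification is not implemented here), the aggregate score is assumed to be the mean expression over a cluster's marker genes, and the function name and toy matrix are hypothetical rather than drawn from [26].

```python
import numpy as np

def mer_refine(expr, labels, markers):
    """Return (MER, refined labels).

    expr: cells x genes normalized expression; labels: integer cluster ids;
    markers: dict mapping cluster id -> list of marker gene column indices.
    """
    expr = np.asarray(expr, dtype=float)
    labels = np.asarray(labels)
    clusters = np.array(sorted(markers))
    # Mean marker expression of every cell for every cluster's marker set.
    scores = np.column_stack([expr[:, markers[c]].mean(axis=1) for c in clusters])
    own = scores[np.arange(len(labels)), np.searchsorted(clusters, labels)]
    flagged = scores.max(axis=1) > own   # another cluster's markers score higher
    best = clusters[scores.argmax(axis=1)]
    return float(flagged.mean()), np.where(flagged, best, labels)

# Four cells, four genes; the third cell is assigned to the wrong cluster.
expr = np.array([[5.0, 5.0, 0.0, 0.0],
                 [4.0, 6.0, 1.0, 0.0],
                 [0.0, 1.0, 5.0, 5.0],
                 [0.0, 0.0, 6.0, 4.0]])
labels = np.array([0, 0, 0, 1])
markers = {0: [0, 1], 1: [2, 3]}
mer, refined = mer_refine(expr, labels, markers)
```

Using a strict inequality means a cell whose own markers tie with another cluster's is left in place, which avoids reassigning cells on ambiguous evidence.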

Application in Predictive Modeling: The PDGrapher Framework