Benchmarking scGPT vs. scFoundation: A Comprehensive Performance Analysis for Single-Cell Biology


Abstract

This article provides a systematic evaluation of two leading single-cell foundation models, scGPT and scFoundation, based on the latest benchmarking studies. It explores their foundational concepts and architectures, examines their methodological applications in tasks like drug response prediction and perturbation modeling, identifies key performance limitations and optimization strategies, and delivers a rigorous comparative analysis across multiple biological contexts. Aimed at researchers, scientists, and drug development professionals, this review synthesizes critical insights to guide model selection and application, highlighting current challenges and future directions for integrating AI into biomedical research.

Understanding scGPT and scFoundation: Core Architectures and Pretraining Paradigms

Defining Single-Cell Foundation Models (scFMs) and Their Role in Biology

Table of Contents

- Introduction to scFMs

- Head-to-Head Performance Comparison

- Experimental Protocols for Benchmarking

- Visualizing the scFM Workflow

- The Scientist's Toolkit: Essential Research Reagents & Materials

Single-cell Foundation Models (scFMs) are large-scale deep learning models, typically based on transformer architectures, that are pretrained on vast datasets of single-cell RNA sequencing (scRNA-seq) data [1]. The core concept draws an analogy from natural language processing: treating a cell as a "sentence" and its constituent genes as "words" [1] [2]. By training on millions of cells across diverse tissues, conditions, and species, these models aim to learn fundamental principles of cellular biology and gene-gene interactions in a self-supervised manner [3] [1]. This pretraining allows scFMs to develop rich, internal representations of biological knowledge, which can then be adapted—or fine-tuned—for a wide array of downstream tasks without the need to train a new model from scratch for each specific application [1].

The emergence of scFMs addresses critical challenges in single-cell data analysis, including the characteristically high sparsity, high dimensionality, and technical noise of transcriptome data [3] [4]. They offer a promising unified framework for integrating and comprehensively analyzing the rapidly expanding repositories of single-cell data [1]. Two prominent examples of such models are scGPT and scFoundation, which have been the subject of extensive benchmarking studies to evaluate their respective strengths and limitations [3] [5] [6].

Head-to-Head Performance Comparison

Comprehensive benchmarking reveals that no single scFM consistently outperforms all others across every possible task [3] [6]. Model performance is highly dependent on the specific downstream application, dataset size, and the biological question being asked. The following tables summarize the comparative performance of scGPT and scFoundation across key biological tasks, based on recent, rigorous evaluations.

Table 1: Performance Comparison on Cell-Level Tasks

| Task | Description | scGPT Performance | scFoundation Performance | Key Findings |
|---|---|---|---|---|
| Cell Type Annotation | Classifying cell identity from gene expression. | Superior in zero-shot settings; achieves better cell type separation in embeddings [6]. | Competitive, but generally outperformed by scGPT in independent benchmarks [6]. | scGPT's architecture is particularly proficient at preserving biologically relevant information, enhancing cell type clustering [6]. |
| Batch Integration | Correcting for technical variations between datasets. | Superior at removing batch effects while preserving biological variation in zero-shot tasks [3] [6]. | Effective at distinguishing certain cell types, but generally less effective at batch correction than scGPT [6]. | A unified framework found scGPT outperformed other models, including scFoundation, on batch-effect-removal metrics [6]. |
| Cancer Cell Identification | Identifying malignant cells within a tumor microenvironment. | Robust and versatile performance across diverse applications and cancer types [3]. | Robust and versatile performance across diverse applications and cancer types [3]. | Both models demonstrated utility in this clinically relevant task, with no single model being a clear winner in all contexts [3]. |

Table 2: Performance Comparison on Gene-Level and Perturbation Tasks

| Task | Description | scGPT Performance | scFoundation Performance | Key Findings |
|---|---|---|---|---|
| Perturbation Prediction | Predicting gene expression changes after a genetic or chemical intervention. | Underperformed compared to simpler baseline models (e.g., Random Forest with GO features) [5]. | Underperformed compared to simpler baseline models, including the "Train Mean" baseline [5]. | A key study found that even the simplest baseline model (predicting the mean of training data) could outperform these foundation models on certain Perturb-seq benchmarks [5]. |
| Gene Function Prediction | Inferring gene function and relationships from embeddings. | Strong capabilities, benefiting from effective pretraining strategies [6]. | Strong capabilities in gene-level tasks [6]. | Both models automatically learn a gene embedding matrix that can be leveraged for predicting biological relationships [3] [6]. |
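Gene-level evaluation usually asks whether genes that sit close together in embedding space are also related in known biological databases. The sketch below shows only the similarity-ranking step, assuming the gene embedding matrix has already been extracted from a model into a NumPy array (extraction is model-specific and not shown). The function `nearest_genes`, the gene names, and the toy vectors are hypothetical.

```python
import numpy as np


def nearest_genes(embeddings: np.ndarray, gene_names: list, query: str, k: int = 3) -> list:
    """Return the k genes whose embeddings are most cosine-similar to `query`."""
    # Normalise each row to unit length so that a dot product equals cosine similarity
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)
    sims = unit @ unit[gene_names.index(query)]
    # Rank all genes by similarity (highest first), then drop the query gene itself
    ranked = [gene_names[i] for i in np.argsort(-sims)]
    return [g for g in ranked if g != query][:k]


# Toy 2-D embeddings for four hypothetical genes
names = ["gene_a", "gene_b", "gene_c", "gene_d"]
emb = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]])
neighbours = nearest_genes(emb, names, "gene_a", k=2)
```

In a real evaluation, the returned neighbours would then be checked against curated annotations such as shared Gene Ontology terms.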

Experimental Protocols for Benchmarking

The performance data presented in the previous section are derived from standardized benchmarking frameworks designed to ensure a fair and rigorous comparison. The core methodology involves a "zero-shot" or "fine-tuning" evaluation of the model's learned representations on specific, held-out downstream tasks [3] [6].

Benchmarking Workflow

A typical benchmarking pipeline involves several critical stages:

- Feature Extraction: Zero-shot cell or gene embeddings are extracted from the pre-trained scFMs without any further task-specific training. This tests the intrinsic biological knowledge captured during pre-training [3] [4].

- Downstream Task Execution: These embeddings are then used as input to various downstream tasks. For cell-level tasks like annotation, a simple classifier (e.g., logistic regression) is often trained on the embeddings. For gene-level tasks, the similarity between gene embeddings is evaluated against known biological databases [3].

- Performance Quantification: Model performance is evaluated using a battery of metrics. These can include standard metrics like clustering accuracy, as well as novel, biology-informed metrics like scGraph-OntoRWR (which measures consistency of cell-type relationships with prior biological knowledge) and Lowest Common Ancestor Distance (LCAD) (which assesses the severity of cell type misannotation errors) [3] [4].
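A minimal sketch of the downstream-task step for cell-level annotation, assuming zero-shot embeddings are already available as a NumPy array. To keep it dependency-free, a nearest-centroid classifier is swapped in for the logistic regression mentioned above; the embeddings and labels are synthetic, and the function names are hypothetical.

```python
import numpy as np


def fit_centroids(emb, labels):
    """Compute one mean embedding (centroid) per cell type from frozen embeddings."""
    classes = np.unique(labels)
    centroids = np.stack([emb[labels == c].mean(axis=0) for c in classes])
    return classes, centroids


def predict(emb, classes, centroids):
    """Assign each cell the label of its nearest centroid (squared Euclidean distance)."""
    dists = ((emb[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[dists.argmin(axis=1)]


rng = np.random.default_rng(0)
# Synthetic stand-ins for zero-shot embeddings: two well-separated cell types in 8 dimensions
train_x = np.vstack([rng.normal(0.0, 0.1, (20, 8)), rng.normal(1.0, 0.1, (20, 8))])
train_y = np.array(["T cell"] * 20 + ["B cell"] * 20)
test_x = np.vstack([rng.normal(0.0, 0.1, (5, 8)), rng.normal(1.0, 0.1, (5, 8))])
test_y = np.array(["T cell"] * 5 + ["B cell"] * 5)

classes, centroids = fit_centroids(train_x, train_y)
accuracy = float((predict(test_x, classes, centroids) == test_y).mean())
```

Because the encoder stays frozen, any accuracy achieved here reflects structure already present in the pretrained embeddings rather than task-specific training of the model itself.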

Key Evaluation Metrics

Benchmarking studies employ a diverse set of metrics to holistically assess model performance [3]:

- Cell Embedding Quality: Measured by the Average Silhouette Width (ASW), which indicates how well separated different cell types are in the latent space [6].

- Biological Fidelity: Evaluated through gene regulatory network (GRN) analysis and the novel ontology-based metrics (scGraph-OntoRWR, LCAD) that compare model outputs to established biological knowledge [3] [6].

- Prediction Accuracy: For tasks like cell annotation and perturbation prediction, standard classification and regression metrics are used, such as Pearson correlation between predicted and actual pseudo-bulk expression profiles in differential expression space [5].
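The ASW can be computed directly from its definition: for each cell, a is the mean distance to other cells of the same type, b is the mean distance to the nearest other cell type, and s = (b - a) / max(a, b). The NumPy sketch below implements that definition with Euclidean distance on toy data. It is an illustration, not the scoring code of any specific benchmark; some benchmarking pipelines additionally rescale the score to [0, 1].

```python
import numpy as np


def average_silhouette_width(emb: np.ndarray, labels: np.ndarray) -> float:
    """Mean silhouette s(i) = (b - a) / max(a, b) over all cells, Euclidean distance."""
    # Full pairwise distance matrix: fine for small examples, O(n^2) memory in general
    d = np.sqrt(((emb[:, None, :] - emb[None, :, :]) ** 2).sum(axis=2))
    scores = []
    for i in range(len(emb)):
        same = labels == labels[i]
        same[i] = False  # exclude the cell itself from its own cluster
        if not same.any():
            scores.append(0.0)  # common convention: singleton clusters score 0
            continue
        a = d[i, same].mean()
        b = min(d[i, labels == c].mean() for c in np.unique(labels) if c != labels[i])
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))


points = np.array([[0.0], [0.1], [5.0], [5.1]])
good = average_silhouette_width(points, np.array([0, 0, 1, 1]))  # labels match the clusters
bad = average_silhouette_width(points, np.array([0, 1, 0, 1]))   # labels cut across clusters
```

Well-separated, correctly labelled clusters score close to 1, while labels that cut across the natural clusters push the score below 0.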

Visualizing the scFM Workflow

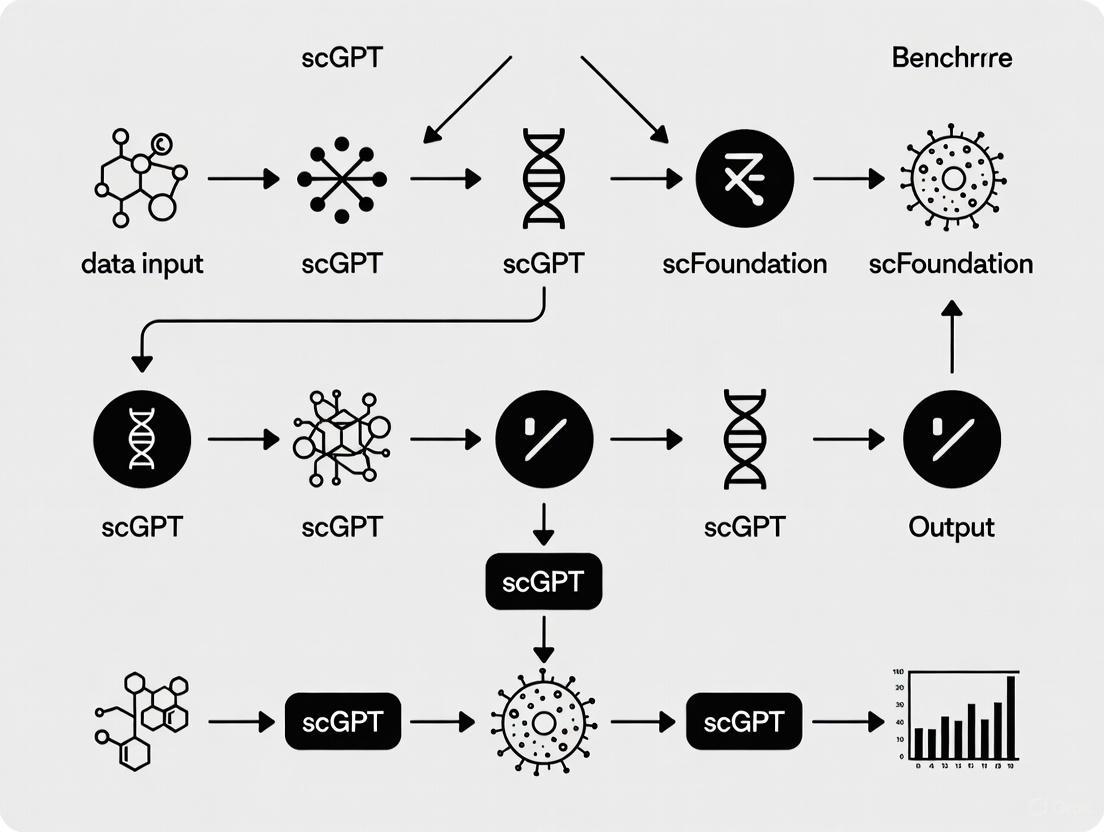

The diagram below illustrates the standard lifecycle of a single-cell Foundation Model, from pretraining on large-scale data to application on downstream biological tasks.

Lifecycle of a Single-Cell Foundation Model

The Scientist's Toolkit: Essential Research Reagents & Materials

Successfully applying and benchmarking scFMs requires a combination of computational tools, software frameworks, and curated biological data resources. The following table details key components of the modern computational biologist's toolkit for working with models like scGPT and scFoundation.

Table 3: Essential Resources for scFM Research

| Category | Item / Tool | Function & Description |
|---|---|---|
| Software & Frameworks | BioLLM | A unified framework that standardizes the deployment of various scFMs (like scGPT and scFoundation) through consistent APIs, enabling seamless model switching and comparative benchmarking [6]. |
| Data Resources | CZ CELLxGENE | A curated atlas and database that provides unified access to millions of annotated single-cell datasets, often used for model pretraining and as a source of high-quality, independent validation data [3] [1]. |
| Data Resources | Perturb-seq Datasets | High-throughput single-cell datasets combining CRISPR-based genetic perturbations with sequencing. They serve as the primary benchmark for evaluating a model's ability to predict cellular responses to genetic interventions [5]. |
| Baseline Models | Traditional ML Models (e.g., RF, kNN) | Simple machine learning models like Random Forest (RF) and k-Nearest Neighbors (kNN) are used as critical baselines. They help determine if the complexity of a foundation model provides a tangible performance benefit for a given task [3] [5]. |
| Evaluation Metrics | Cell Ontology-Informed Metrics (e.g., LCAD) | Novel metrics that incorporate prior biological knowledge from cell ontologies to assess whether model errors are biologically reasonable (e.g., misclassifying a T-cell as a B-cell is less severe than misclassifying it as a neuron) [3] [4]. |
| Gene Embedding Baselines | Functional Representation of Gene Signatures (FRoGS) | An alternative method for generating gene embeddings via random walks on a biological hypergraph. Used as a baseline to evaluate the quality of gene representations learned by scFMs [3]. |
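One plausible reading of a lowest-common-ancestor distance is the number of ontology edges separating the true and predicted labels via their lowest common ancestor. The sketch below assumes that definition and a tiny hand-written tree. The real Cell Ontology is a directed acyclic graph and the benchmark's exact formula may differ, so treat the function names and the toy ontology as hypothetical.

```python
def ancestors(term, parent):
    """Path from a term up to the root, including the term itself."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path


def lca_distance(true_type, predicted, parent):
    """Edges from each label up to their lowest common ancestor, summed."""
    up_true = ancestors(true_type, parent)
    up_pred = ancestors(predicted, parent)
    # First ancestor of the true label that is also an ancestor of the prediction;
    # assumes both terms share a root, otherwise next() raises StopIteration
    lca = next(t for t in up_true if t in up_pred)
    return up_true.index(lca) + up_pred.index(lca)


# Hypothetical child -> parent map standing in for a cell ontology
toy_ontology = {
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "leukocyte": "cell",
    "neuron": "neural cell",
    "neural cell": "cell",
}
```

Under this toy ontology, calling a T cell a B cell costs 2 edges while calling it a neuron costs 5, matching the intuition in the table that the latter error is more severe.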

Single-cell RNA sequencing (scRNA-seq) has revolutionized biology by allowing researchers to probe cellular heterogeneity at an unprecedented resolution. The emergence of single-cell foundation models (scFMs), inspired by breakthroughs in large language models (LLMs), represents a paradigm shift in how this complex data is analyzed. These models, pre-trained on massive collections of single-cell data, aim to learn universal patterns of cellular biology that can be adapted to diverse downstream tasks. Among these, scGPT and scFoundation have emerged as prominent transformer-based models. This guide provides an objective comparison of their performance, underpinned by experimental data from recent benchmarking studies, to inform researchers and drug development professionals about their respective strengths and limitations.

Architectural and Methodological Deep Dive

Core Architectures of scGPT and scFoundation

The design philosophies of scGPT and scFoundation, while both rooted in transformer architecture, differ in ways that influence their capabilities and performance.

scGPT leverages a generative pre-trained transformer architecture, specifically designed to handle the non-sequential nature of gene expression data [1] [7]. Its input processing creates a composite embedding for each gene by combining its identity (a unique gene token) and its expression value (often binned into discrete values) [8]. A key innovation is its use of a specialized attention mask within its transformer blocks, which allows for generative pre-training on gene expression profiles without relying on a fixed gene order [2] [7]. scGPT was pre-trained on a massive corpus of over 33 million human cells from 51 organs and 441 studies, collated from the CELLxGENE collection [9] [10] [2].
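The composite gene-plus-value input can be sketched as follows. This assumes per-cell quantile binning of non-zero expression values, with zeros kept in bin 0, and sums a gene-identity lookup with a bin lookup. The bin count, embedding size, random tables, and the function name `bin_expression` are illustrative assumptions rather than scGPT's actual configuration or code.

```python
import numpy as np


def bin_expression(row: np.ndarray, n_bins: int = 51) -> np.ndarray:
    """Map one cell's expression vector to integer bins; zero values stay in bin 0."""
    binned = np.zeros(row.shape, dtype=np.int64)
    nonzero = row > 0
    if nonzero.any():
        # Bin edges are quantiles of this cell's own non-zero values
        edges = np.quantile(row[nonzero], np.linspace(0, 1, n_bins - 1))
        # np.digitize yields ids in 1..n_bins-1 for values inside [edges[0], edges[-1]]
        binned[nonzero] = np.digitize(row[nonzero], edges)
    return binned


rng = np.random.default_rng(0)
n_genes, n_bins, d_model = 5, 51, 8
gene_table = rng.normal(size=(n_genes, d_model))   # one row per gene token (hypothetical vocab)
value_table = rng.normal(size=(n_bins, d_model))   # one row per expression bin

row = np.array([0.0, 3.0, 1.0, 0.0, 7.0])
bins = bin_expression(row, n_bins)
# Composite input: gene identity embedding + binned value embedding, one vector per gene
tokens = gene_table[np.arange(n_genes)] + value_table[bins]
```

Binning per cell means a given bin index reflects a gene's relative position within that cell's expression distribution, which reduces sensitivity to differences in sequencing depth between cells.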

scFoundation, in contrast, employs an asymmetric encoder-decoder architecture [4]. It is designed to process a much larger input gene set, encompassing nearly all ~19,000 human protein-encoding genes along with common mitochondrial genes [5] [4]. Its pre-training strategy incorporates a read-depth-aware masked gene modeling (MGM) objective, using a mean squared error (MSE) loss to reconstruct masked gene expressions [4].
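By contrast, a value-projection input keeps expression continuous. The simplified sketch below multiplies each gene's scalar value into a learned vector, adds a gene-identity embedding, and scores reconstruction with MSE over masked genes only. The random weights, the zero placeholder used for masked inputs, and the constant stand-in prediction are hypothetical; the read-depth-aware part of scFoundation's objective and the encoder-decoder itself are not modelled.

```python
import numpy as np

rng = np.random.default_rng(0)
n_genes, d_model = 6, 4
gene_table = rng.normal(size=(n_genes, d_model))  # gene identity embeddings (hypothetical)
w_value = rng.normal(size=(1, d_model))           # projects a scalar value to d_model dims


def embed(expr: np.ndarray) -> np.ndarray:
    """Value projection: scale a learned vector by the continuous value (no binning)."""
    return gene_table + expr[:, None] * w_value


def masked_mse(pred: np.ndarray, target: np.ndarray, mask: np.ndarray) -> float:
    """MSE over masked positions only, as in a masked-gene-modeling objective."""
    return float(((pred[mask] - target[mask]) ** 2).mean())


expr = np.log1p(np.array([0.0, 2.0, 5.0, 0.0, 1.0, 9.0]))
mask = np.zeros(n_genes, dtype=bool)
mask[[1, 4]] = True                          # hide two genes from the model
x = embed(np.where(mask, 0.0, expr))         # placeholder: masked values zeroed before embedding
# x would be fed to the encoder; here a constant stands in for the model's reconstruction
pred = np.full(n_genes, expr[~mask].mean())
loss = masked_mse(pred, expr, mask)
```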

Table: Architectural Comparison of scGPT and scFoundation

| Feature | scGPT | scFoundation |
|---|---|---|
| Core Architecture | GPT-like (Decoder-based) | Asymmetric Encoder-Decoder |
| Model Parameters | ~50 million [4] | ~100 million [4] |
| Pre-training Dataset Size | ~33 million cells [4] [10] | ~50 million cells [4] |
| Input Gene Handling | ~1,200 Highly Variable Genes (HVGs) [4] | ~19,264 protein-encoding genes [4] |
| Value Embedding | Expression value binning [8] | Value projection [4] |
| Positional Embedding | Not used [4] | Not used [4] |

Experimental Workflow for Benchmarking Foundation Models

The evaluation of scFMs like scGPT and scFoundation follows a structured pipeline to ensure fair and informative comparisons. The following diagram visualizes a typical benchmarking workflow as implemented in frameworks like BioLLM [4] [6].

Diagram: Benchmarking Workflow for Single-Cell Foundation Models

Performance Benchmarking Across Key Tasks

Cell-Level Tasks: Embedding and Annotation

A primary application of scFMs is to generate meaningful representations (embeddings) of cells that capture biological state, which is crucial for tasks like cell type annotation and batch integration.

In a comprehensive benchmark conducted with the BioLLM framework, which evaluated zero-shot cell embeddings using metrics such as the Average Silhouette Width (ASW) to measure how well cell types separate, scGPT consistently outperformed the other models, including scFoundation [6]. scGPT's embeddings separated cell types more clearly in visualizations and integrated data across batches more effectively, although, like the other models, it struggled to correct strong batch effects arising from different sequencing technologies [6]. A separate study reported that fine-tuned scGPT outperformed Geneformer in cell type annotation, while noting that inconsistent results across studies underline the importance of proper adaptation techniques such as Parameter-Efficient Fine-Tuning (PEFT) [8].

Perturbation Response Prediction

Predicting cellular transcriptional responses to genetic perturbations is a rigorous test of a model's grasp of gene regulatory mechanics. A dedicated benchmarking study yielded surprising results [5].

The study evaluated models on their ability to predict post-perturbation gene expression profiles (in differential expression space) across four Perturb-seq datasets. The results demonstrated that even a simple baseline model (Train Mean), which predicts the average expression profile from the training data, could outperform the fine-tuned foundation models. More notably, a Random Forest (RF) regressor using prior biological knowledge like Gene Ontology (GO) vectors outperformed scGPT by a large margin [5].
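The Train Mean baseline and the Pearson correlation in differential expression space (Pearson Delta) are simple enough to write out. The sketch below assumes pseudo-bulk profiles are already available as NumPy vectors over the same genes; the function name and all numbers are invented for illustration.

```python
import numpy as np


def pearson_delta(pred, truth, control):
    """Pearson correlation of predicted vs. observed change from the control profile."""
    return float(np.corrcoef(pred - control, truth - control)[0, 1])


control = np.array([5.0, 3.0, 2.0, 1.0])          # mean control (unperturbed) profile
train_profiles = np.array([                       # pseudo-bulk profiles of seen perturbations
    [6.0, 2.5, 2.0, 1.5],
    [5.5, 2.0, 2.5, 1.0],
    [6.5, 3.0, 1.5, 1.5],
])
train_mean = train_profiles.mean(axis=0)          # same prediction for every unseen perturbation
unseen_truth = np.array([6.2, 2.4, 2.1, 1.4])     # observed profile of an unseen perturbation
score = pearson_delta(train_mean, unseen_truth, control)
```

The toy example hints at why such a baseline can be hard to beat: when many perturbations shift expression in a similar direction relative to control, a single averaged profile already correlates strongly with each of them.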

Table: Performance in Perturbation Prediction (Pearson Correlation in Differential Expression Space)

| Model | Adamson Dataset | Norman Dataset | Replogle (K562) | Replogle (RPE1) |
|---|---|---|---|---|
| Train Mean (Baseline) | 0.711 | 0.557 | 0.373 | 0.628 |
| scGPT (Fine-tuned) | 0.641 | 0.554 | 0.327 | 0.596 |
| scFoundation (Fine-tuned) | 0.552 | 0.459 | 0.269 | 0.471 |
| RF with GO Features | 0.739 | 0.586 | 0.480 | 0.648 |
| RF with scGPT Embeddings | 0.727 | 0.583 | 0.421 | 0.635 |

An important finding was that using the pre-trained embeddings from scGPT as features for a Random Forest model led to better performance than using the fine-tuned scGPT model itself, though it still fell short of the RF model with GO features [5]. This suggests that while scGPT's embeddings contain valuable biological information, the full fine-tuning pipeline may not be leveraging it optimally for this specific task.

Drug Response Prediction

The application of scFMs to predict patient-specific or cell-specific responses to drugs is highly relevant for therapeutic development. The scDrugMap benchmark provides insights here [11].

In pooled-data evaluation (training and testing on mixed datasets), scFoundation achieved the best performance, with a mean F1 score of 0.971, outperforming other models by a significant margin [11]. However, in the more challenging cross-data evaluation (testing on datasets not seen during training), which better assesses model generalizability, scGPT excelled in zero-shot learning (mean F1: 0.858), while another model, UCE, performed best after fine-tuning [11]. This indicates a trade-off: scFoundation may achieve higher peak performance on familiar data distributions, while scGPT shows stronger inherent generalization in some contexts.

The Scientist's Toolkit: Essential Research Reagents

Benchmarking studies rely on a suite of computational tools and data resources. The table below details key components used in the evaluations discussed.

Table: Key Reagents for Single-Cell Foundation Model Research

| Reagent / Resource | Type | Function in Research |
|---|---|---|
| Perturb-seq Datasets [5] | Experimental Data | Provides ground-truth data (genetic perturbation + scRNA-seq) for evaluating model predictions of causal cellular responses. |
| CELLxGENE Atlas [9] [2] | Data Repository | A primary source of millions of curated single-cell datasets used for pre-training and as a reference for model applications. |
| BioLLM Framework [6] | Software Tool | A unified framework that standardizes the integration, fine-tuning, and evaluation of different scFMs, ensuring fair comparisons. |
| Gene Ontology (GO) Vectors [5] | Prior Knowledge | Structured, biologically grounded feature sets used to build powerful baseline models for tasks like perturbation prediction. |
| Parameter-Efficient Fine-Tuning (PEFT) [8] | Computational Method | Adaptation techniques like LoRA that efficiently tailor large scFMs to new tasks, reducing computational cost and catastrophic forgetting. |
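LoRA, the PEFT technique named in the table, freezes a pretrained weight matrix W and learns a low-rank update B·A on top of it. The NumPy sketch below shows one adapted linear layer. The 512-dimensional layer, rank 8, scaling factor, and initialisation are illustrative assumptions, not settings taken from any of the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, rank, alpha = 512, 512, 8, 16

W = rng.normal(size=(d_out, d_in))               # frozen pretrained weight (not updated)
A = rng.normal(scale=0.01, size=(rank, d_in))    # trainable down-projection
B = np.zeros((d_out, rank))                      # trainable up-projection, zero-initialised


def lora_forward(x: np.ndarray) -> np.ndarray:
    """Frozen path plus scaled low-rank update; only A and B would receive gradients."""
    return x @ W.T + (alpha / rank) * (x @ A.T @ B.T)


x = rng.normal(size=(2, d_in))
full_params = W.size              # parameters a full fine-tune of this layer would update
lora_params = A.size + B.size     # parameters the adapter actually trains
```

Here the adapter trains 8,192 parameters against 262,144 in the frozen matrix (about 3%), and because B starts at zero the adapted layer initially reproduces the pretrained output exactly.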

The benchmarking data reveals that the competition between scGPT and scFoundation is not a simple matter of one being universally superior. Instead, each model demonstrates distinct strengths, a finding consistent with a broader benchmark concluding that "no single scFM consistently outperforms others across all tasks" [4].

- scGPT shows robust and often superior performance in cell-level tasks like annotation and batch integration, generates high-quality zero-shot embeddings, and generalizes well in challenging cross-data drug response prediction [6] [11]. Its architecture appears well-suited for learning generalizable representations of cellular state.

- scFoundation can achieve top-tier performance on specific prediction tasks when data conditions are favorable, as seen in the pooled-data drug response benchmark [11]. Its capacity to process a full gene set may provide an advantage in certain contexts.

A critical insight from the perturbation prediction benchmarks is that foundation models do not always outperform simpler, biologically-informed baseline models [5]. This highlights the necessity of including such baselines in evaluations to properly assess the value added by these large-scale models.

For researchers, the choice between scGPT, scFoundation, or a simpler alternative should be guided by the specific downstream task, dataset size, available computational resources, and the need for biological interpretability. As the field matures, standardized frameworks like BioLLM and continued rigorous benchmarking will be essential for guiding the effective application of these powerful tools in biological discovery and drug development.

A Benchmarking Perspective on Single-Cell Foundation Models

The development of single-cell foundation models (scFMs) represents a significant push in computational biology, aiming to leverage large-scale datasets to build models that can generalize across diverse biological tasks. Among these, scFoundation is a prominent model that utilizes an asymmetric transformer architecture and was pre-trained on approximately 50 million human cells [12] [13] [4]. This guide objectively situates scFoundation's performance within the competitive landscape of single-cell foundation models, focusing on direct comparisons with alternatives like scGPT, Geneformer, and UCE, based on recent, rigorous benchmarking studies.

Model Architecture & Pretraining

The performance of any foundation model is fundamentally shaped by its architectural choices and the scale of its training data.

scFoundation: Employs an asymmetric encoder-decoder transformer architecture and is categorized as a value projection-based model [4]. This approach aims to preserve the full resolution of gene expression data by directly predicting raw expression values. Its pretraining was conducted on a corpus of around 50 million human cells, resulting in a model with ~100 million parameters [12] [4]. The pretraining task was a read-depth-aware masked gene modeling (MGM) objective, optimized using a Mean Squared Error (MSE) loss [4].

scGPT: Unlike scFoundation, scGPT discretizes expression rather than projecting it: it segments gene expression values into bins and processes them with stacked transformer blocks that use a specialized attention mask [13] [4]. Its masked-value prediction is nonetheless treated as a regression task. It was pretrained on over 33 million non-cancerous human cells and has ~50 million parameters [12] [4]. Its pretraining combines generative objectives with iterative masked gene modeling (MGM).

Geneformer: This model adopts a different strategy, based on the ordering of genes by expression level [13] [4]. It is a rank-based model that learns by predicting the identity of masked genes within each cell's rank-ordered gene sequence. Geneformer was trained on 30 million cells from humans and mice and has a smaller architecture with 40 million parameters [13] [4]. Its pretraining uses MGM with a Cross-Entropy (CE) loss for gene identity prediction.
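Rank-based tokenization can be illustrated as follows. This sketch assumes each cell's counts are depth-normalised, divided by a per-gene median taken across the pretraining corpus, and then ordered from highest to lowest, with unexpressed genes dropped. The medians, gene ids, and function name are hypothetical, and details of Geneformer's actual pipeline may differ.

```python
import numpy as np


def rank_value_tokens(counts: np.ndarray, gene_medians: np.ndarray, gene_ids: np.ndarray) -> np.ndarray:
    """Order a cell's expressed genes from highest to lowest normalised expression."""
    norm = counts / counts.sum()            # depth normalisation within the cell
    scaled = norm / gene_medians            # de-emphasise genes that are high in every cell
    expressed = np.flatnonzero(counts > 0)  # unexpressed genes produce no token
    order = expressed[np.argsort(-scaled[expressed], kind="stable")]
    return gene_ids[order]


counts = np.array([10.0, 0.0, 5.0, 20.0])
medians = np.array([1.0, 1.0, 1.0, 5.0])    # hypothetical corpus-wide medians
ids = np.array(["g0", "g1", "g2", "g3"])
sentence = rank_value_tokens(counts, medians, ids)
```

In the example, g3 has the most raw counts but is ranked last because its corpus-wide median is high, which is how this kind of scheme de-emphasises ubiquitously expressed genes. The resulting order, rather than the expression values themselves, is what the model sees.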

UCE (Universal Cell Embedding): Distinguished by its use of protein language model embeddings from ESM-2 as gene representations, UCE is a massive model with 650 million parameters [14] [4]. It was pretrained on 36 million cells and uses a modified MGM task with a binary cross-entropy loss to predict whether a gene is expressed or not [4].

The following diagram summarizes the core pretraining workflow common to these models, highlighting key steps like tokenization and the masked gene modeling objective.

Performance Benchmarking Across Key Tasks

Independent benchmarks have revealed that no single model consistently dominates across all tasks. The table below summarizes the comparative performance of scFoundation against its peers in several critical applications.

| Task | Top Performing Model(s) | scFoundation's Performance & Notes |
|---|---|---|
| Perturbation Response Prediction | Random Forest with GO features, Additive baseline model [5] [14] | Underperformed against a simple baseline that predicts the mean of training data [5] [14]. |
| Drug Response Prediction | scFoundation (pooled-data), UCE (cross-data) [11] | Achieved top performance (mean F1: 0.971) when data is pooled; less dominant in cross-data settings [11]. |
| Zero-Shot Cell Type Clustering | HVG selection, scVI, Harmony [15] [16] | Not among top performers; simpler methods like Highly Variable Genes (HVG) selection outperformed foundation models [15] [16]. |
| Zero-Shot Batch Integration | HVG selection, scVI, Harmony [15] [16] | Not among top performers. Geneformer consistently ranked last, while scGPT showed mixed results [15] [16]. |
| Gene Function Prediction | CellFM, scGPT [12] [17] | CellFM, a newer model, reported improvements. scGPT also showed strong capabilities [12] [17]. |

Deep Dive: Perturbation Prediction Benchmark

Predicting a cell's transcriptomic response to a genetic perturbation is a key test for a model's understanding of regulatory biology. Recent benchmarks have yielded critical insights.

Experimental Protocol [5] [14]:

- Datasets: Models are evaluated on Perturb-seq datasets (e.g., Adamson, Norman, Replogle), which measure gene expression in single cells after CRISPR-based gene knockdown or overexpression.

- Task: The model is fine-tuned on a set of seen perturbations and must predict the gene expression profile for unseen perturbations (Perturbation Exclusive, or PEX, setup).

- Baselines: Performance is compared against deliberately simple models, including:

  - Train Mean: Predicts the average expression profile from the training data.

  - Additive Model: For double perturbations, predicts the sum of the individual logarithmic fold changes.

  - Linear Models: Utilize pre-defined biological features like Gene Ontology (GO) vectors.

- Evaluation: Predictions are compared to ground truth using metrics like Pearson correlation in the differential expression space (Pearson Delta) and L2 distance for the most highly expressed or differentially expressed genes.
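The additive baseline needs no training beyond the two single-perturbation profiles. Assuming profiles are already on a log scale, a log fold change is simply a difference from control, so the sketch below sums the two changes and adds them back to the control profile. The function name and toy values are invented for illustration.

```python
import numpy as np


def additive_prediction(ctrl, single_a, single_b):
    """Predict a double perturbation from two single perturbations (log-scale profiles)."""
    lfc_a = single_a - ctrl  # log fold change of perturbation A vs. control
    lfc_b = single_b - ctrl  # log fold change of perturbation B vs. control
    return ctrl + lfc_a + lfc_b


ctrl = np.array([2.0, 1.0, 0.5])
pred_ab = additive_prediction(ctrl, np.array([3.0, 1.0, 0.5]), np.array([2.0, 0.0, 1.5]))
```

Any genetic interaction between the two targets (synergy or buffering) is, by construction, invisible to this baseline, which is what makes it a useful reference point for models that claim to learn such interactions.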

Key Findings [5] [14]:

- A Random Forest regressor using Gene Ontology (GO) features significantly outperformed both scFoundation and scGPT.

- The simplest baseline, the Train Mean model, surprisingly surpassed the performance of the fine-tuned foundation models.

- When the gene embeddings learned by scFoundation during pre-training were used as features in a Random Forest model, performance improved over the fine-tuned scFoundation model, suggesting that pre-training does capture some useful biological information but that the model's full architecture may not be leveraging it optimally for this task.

The logical flow of this benchmarking process is outlined below.

The Scientist's Toolkit

The following table details key resources and computational tools referenced in the benchmarking of single-cell foundation models.

| Research Reagent / Resource | Function in Evaluation |
|---|---|
| Perturb-seq Datasets (Adamson, Norman, Replogle) | Provides ground-truth experimental data for benchmarking genetic perturbation prediction models [5] [14]. |
| Gene Ontology (GO) Annotations | A source of biologically meaningful features used in simple baseline models (e.g., Random Forest) to compete against foundation models [5]. |
| BioLLM Framework | A unified software framework that provides standardized APIs for integrating and evaluating different scFMs, ensuring fair comparisons [17]. |
| Highly Variable Genes (HVG) | A simple, traditional feature selection method in single-cell analysis that serves as a strong baseline in zero-shot tasks like clustering and batch correction [15] [16]. |
| Harmony & scVI | Established, specialized algorithms for single-cell data integration (batch correction) and analysis. Used as baseline benchmarks for cell-level tasks [15] [4] [16]. |

In conclusion, benchmarking studies reveal a nuanced picture of the current capabilities of scFoundation and its peers. While these models represent a significant architectural achievement, they do not consistently outperform simpler, often biologically-informed, baseline methods on critical tasks like perturbation prediction and zero-shot analysis [5] [14] [15]. This indicates that the goal of a generalized, out-of-the-box foundation model that fully captures the complexity of cellular biology remains an active challenge.

The choice of model is highly task-dependent. For instance, while scFoundation excelled in one drug response prediction benchmark [11], it was less competitive in perturbation prediction [5] [14]. The field is maturing with the development of standardized evaluation frameworks like BioLLM [17], which will be crucial for guiding future development. The path forward likely involves not only scaling model and dataset size but also more effectively integrating prior biological knowledge to build models that offer robust, generalizable, and biologically plausible predictions.

In single-cell RNA sequencing (scRNA-seq) data, genes do not possess a natural sequential order, unlike words in a sentence. This fundamental difference presents a significant challenge for applying transformer-based architectures, which were originally designed for sequentially ordered text. Treating a cell's gene expression profile as a "sentence" requires converting genes and their expression values into discrete input tokens, a process known as tokenization; some models additionally impose an artificial order, for example by ranking genes by expression level. How different foundation models approach this tokenization problem directly affects their ability to capture biological relationships and predict cellular behavior.

This guide objectively compares the performance of two prominent single-cell foundation models—scGPT and scFoundation—within the broader context of benchmarking research. By examining their tokenization strategies, architectural implementations, and experimental outcomes, we provide researchers and drug development professionals with critical insights for model selection in biological discovery and therapeutic applications.

Model Architectures and Tokenization Strategies

Table 1: Fundamental Characteristics of scGPT and scFoundation

| Characteristic | scGPT | scFoundation |
|---|---|---|
| Primary Architecture | GPT-like decoder | Asymmetric encoder-decoder |
| Model Parameters | ~50 million | ~100 million |
| Pretraining Dataset Size | ~33 million cells | ~50 million cells |
| Input Gene Capacity | 1,200 highly variable genes (HVGs) | 19,264 protein-encoding genes |
| Value Representation | Value binning | Value projection |
| Positional Embedding | Not used | Not used |
| Gene Symbol Embedding | Lookup Table (512 dimensions) | Lookup Table (768 dimensions) |

Tokenization Approaches in Practice

Tokenization strategies differ markedly between models, significantly influencing their biological representations:

scGPT employs a highly variable gene selection approach, focusing computational resources on 1,200 genes with the most variable expression across cells. It uses value binning to transform continuous expression values into discrete tokens and does not incorporate positional embeddings, treating the gene set as a bag-of-words rather than an ordered sequence [4].
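A minimal dispersion-based HVG selector is sketched below, assuming a cells x genes matrix of normalised expression. It ranks genes by variance divided by mean; Scanpy's `scanpy.pp.highly_variable_genes` offers more refined variants. Selecting 1,200 genes as in scGPT's setting would correspond to `n_top=1200` here. The function name `select_hvgs` is hypothetical.

```python
import numpy as np


def select_hvgs(expr: np.ndarray, n_top: int) -> np.ndarray:
    """Return sorted column indices of the n_top genes with the highest variance / mean."""
    mean = expr.mean(axis=0)
    var = expr.var(axis=0)
    # Genes with zero mean get dispersion 0 instead of a division-by-zero warning
    dispersion = np.divide(var, mean, out=np.zeros_like(mean), where=mean > 0)
    return np.sort(np.argsort(-dispersion)[:n_top])


# Toy matrix: 4 cells x 4 genes (constant, variable, silent, highly variable)
expr = np.array([
    [1.0, 0.0, 0.0, 0.0],
    [1.0, 2.0, 0.0, 4.0],
    [1.0, 0.0, 0.0, 0.0],
    [1.0, 2.0, 0.0, 4.0],
])
hvg_idx = select_hvgs(expr, n_top=2)
```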

scFoundation utilizes a comprehensive gene representation, incorporating nearly all protein-encoding genes. This provides a more complete biological picture but increases computational complexity. Like scGPT, it forgoes positional embeddings, instead using a value projection system to handle continuous expression data [5] [4].

Benchmarking Performance on Perturbation Prediction

Experimental Protocols for Perturbation Prediction

Benchmarking studies employed standardized experimental protocols to evaluate model performance on predicting transcriptomic changes after genetic perturbations:

Datasets: Models were evaluated on multiple Perturb-seq datasets, including Adamson (68,603 cells with CRISPRi), Norman (91,205 cells with CRISPRa), and Replogle (K562 and RPE1 cell lines, ~162,000 cells each) [5].

Training Setup: Foundation models were fine-tuned according to authors' specifications using a perturbation-exclusive (PEX) setup, where models were evaluated on their ability to predict effects of completely unseen perturbations [5].

Baseline Models: Simple baseline models including Train Mean (predicting average expression from training data), Elastic-Net Regression, k-Nearest Neighbors, and Random Forest regressors were implemented for comparison [5].

Evaluation Metrics: Performance was assessed using Pearson correlation in differential expression space (Pearson Delta) and accuracy in predicting top 20 differentially expressed genes, with pseudo-bulk profiles created by averaging single-cell predictions [5].
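The pseudo-bulk and top-gene steps can be sketched as below. The overlap score is one possible way to measure accuracy on the top 20 differentially expressed genes, assumed here for illustration; the cited benchmark's exact definition may differ. Function names and data are hypothetical.

```python
import numpy as np


def pseudo_bulk(cells: np.ndarray) -> np.ndarray:
    """Average single-cell profiles (cells x genes) into one pseudo-bulk profile."""
    return cells.mean(axis=0)


def top_k_de_overlap(pred_bulk, true_bulk, control_bulk, k=20):
    """Fraction of the true top-k changed genes that also appear in the predicted top-k."""
    # Rank genes by absolute change relative to control
    true_top = set(np.argsort(-np.abs(true_bulk - control_bulk))[:k].tolist())
    pred_top = set(np.argsort(-np.abs(pred_bulk - control_bulk))[:k].tolist())
    return len(true_top & pred_top) / k


n_genes = 50
control = np.zeros(n_genes)
truth = np.zeros(n_genes)
truth[:20] = np.arange(1, 21)                 # genes 0-19 change, the rest do not
pred_cells = np.stack([truth - 0.5, truth + 0.5])  # predicted single cells, averaged before scoring
wrong = np.zeros(n_genes)
wrong[30:] = np.arange(1, 21)                 # a prediction that moves genes 30-49 instead

good_overlap = top_k_de_overlap(pseudo_bulk(pred_cells), truth, control)
bad_overlap = top_k_de_overlap(wrong, truth, control)
```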

Quantitative Performance Comparison

Table 2: Performance Comparison on Perturbation Prediction (Pearson Delta)

| Model | Adamson | Norman | Replogle K562 | Replogle RPE1 |
|---|---|---|---|---|
| Train Mean (Baseline) | 0.711 | 0.557 | 0.373 | 0.628 |
| scGPT | 0.641 | 0.554 | 0.327 | 0.596 |
| scFoundation | 0.552 | 0.459 | 0.269 | 0.471 |
| Random Forest + GO | 0.739 | 0.586 | 0.480 | 0.648 |
| Random Forest + scGPT Embeddings | 0.727 | 0.583 | 0.421 | 0.635 |

Performance Gap: The simplest baseline, Train Mean, scored higher than both foundation models on all four benchmark datasets [5]:

- Train Mean: 0.711, 0.557, 0.373 and 0.628 (Pearson Delta).
- scGPT: 0.641, 0.554, 0.327 and 0.596.
- scFoundation: 0.552, 0.459, 0.269 and 0.471.

On the Norman dataset, Train Mean's margin over scGPT is only 0.003.

Biological Prior Knowledge Integration: Random Forest models incorporating Gene Ontology (GO) features substantially outperformed all foundation models (0.739, 0.586, 0.480, 0.648), suggesting that explicit biological knowledge may be more valuable than representations learned through pretraining [5].

Embedding Utility: When scGPT's pretrained gene embeddings were used as features in Random Forest models, performance exceeded that of the fine-tuned scGPT model itself (0.727 vs. 0.641 on the Adamson dataset). This indicates that the embeddings capture biologically relevant information that scGPT's native architecture may underutilize [5].
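The feature-based baselines follow a simple pattern. Each perturbed gene is described by a feature vector, such as binary GO-term membership or a pretrained gene embedding like scGPT's. The observed expression change is then regressed on that vector. The sketch below uses scikit-learn's `RandomForestRegressor` on synthetic data. The dimensions, data and split are illustrative assumptions, not the setup used in [5].

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)

# Synthetic toy data, one row per perturbed gene:
#   X[i] = feature vector of perturbed gene i (e.g. binary GO-term membership,
#          or a pretrained gene embedding)
#   Y[i] = observed pseudo-bulk expression change (delta) across all genes
n_perturbed, n_features, n_genes = 40, 16, 50
X = rng.integers(0, 2, size=(n_perturbed, n_features)).astype(float)
Y = X @ rng.normal(size=(n_features, n_genes)) + 0.1 * rng.normal(size=(n_perturbed, n_genes))

# Perturbation-exclusive split: the last 10 perturbations are never seen in training.
train, test = np.arange(30), np.arange(30, 40)
model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(X[train], Y[train])    # multi-output regression: one target per gene
Y_pred = model.predict(X[test])  # (10, 50) predicted deltas for unseen perturbations

# Pearson Delta for each held-out perturbation
scores = [np.corrcoef(Y_pred[i], Y[test][i])[0, 1] for i in range(len(test))]
print("mean Pearson Delta:", np.round(np.mean(scores), 3))
```

Swapping the GO feature matrix for a matrix of pretrained gene embeddings changes only `X`. This is what makes such baselines a direct test of how much biological signal the embeddings carry, independent of the transformer.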

Zero-Shot Performance and Batch Integration

Experimental Protocols for Zero-Shot Evaluation

Evaluation Setting: Models were assessed without any task-specific fine-tuning to measure the generalizable biological knowledge acquired during pretraining [15].

Tasks: Cell type clustering and batch integration across multiple datasets including Tabula Sapiens, Pancreas, and PBMC datasets [15].

Baselines: Compared against established methods including Highly Variable Genes (HVG) selection, Harmony, and scVI [15].

Metrics: Average BIO score (AvgBio) for clustering quality, and the principal component regression (PCR) score for batch integration, which reflects how much batch-associated variance is removed [15].
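A minimal sketch of the AvgBio clustering score follows, with these assumptions:

- AvgBio takes its common definition: the mean of normalized mutual information (NMI), adjusted Rand index (ARI), and a cell-type silhouette width rescaled to [0, 1].
- Clusters come from k-means for simplicity. scIB-style pipelines typically use Leiden clustering, so absolute values will differ from published ones.
- `avg_bio` is a hypothetical helper name.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import (adjusted_rand_score, normalized_mutual_info_score,
                             silhouette_score)

def avg_bio(embedding: np.ndarray, cell_types: np.ndarray, seed: int = 0) -> float:
    """Mean of NMI, ARI and rescaled cell-type silhouette width for an embedding.

    Clusters come from k-means with k equal to the number of annotated cell
    types (a simplification of the Leiden-based clustering used in scIB-style
    evaluations).
    """
    k = len(np.unique(cell_types))
    clusters = KMeans(n_clusters=k, n_init=10, random_state=seed).fit_predict(embedding)
    nmi = normalized_mutual_info_score(cell_types, clusters)
    ari = adjusted_rand_score(cell_types, clusters)
    asw = (silhouette_score(embedding, cell_types) + 1) / 2  # rescale [-1, 1] to [0, 1]
    return float(np.mean([nmi, ari, asw]))

# Toy embedding: two tight, well-separated groups of annotated cells.
rng = np.random.default_rng(0)
emb = np.vstack([rng.normal(0, 0.1, (50, 2)), rng.normal(5, 0.1, (50, 2))])
types = np.array(["T cell"] * 50 + ["B cell"] * 50)
print(round(avg_bio(emb, types), 3))
```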

Zero-Shot Performance Results

Table 3: Zero-Shot Performance Comparison Across Tasks

| Model | Cell Type Clustering (AvgBio) | Batch Integration (PCR) | Generalization to Unseen Data |
|---|---|---|---|
| scGPT | Variable, outperformed by HVG and scVI on most datasets | Moderate, outperforms Harmony and scVI on some complex datasets | Inconsistent, no clear advantage over baselines |
| scFoundation | Not fully evaluated in zero-shot setting | Not fully evaluated in zero-shot setting | Limited evaluation available |
| Geneformer | Consistently outperformed by all baselines | Poor, shows inadequate batch mixing | Fails to generalize effectively |
| HVG (Baseline) | Best performing across most datasets | Best batch integration scores | Consistent performance across datasets |

Cell Type Clustering: In zero-shot settings, both scGPT and Geneformer were generally outperformed by simple Highly Variable Genes selection and established methods like Harmony and scVI across multiple datasets. HVG achieved the best clustering performance, indicating that foundation models do not necessarily provide superior cell embeddings without fine-tuning [15].

Batch Integration: scGPT showed mixed results, outperforming Harmony and scVI on complex datasets with both technical and biological batch effects, but underperforming on datasets with purely technical variation. Geneformer consistently ranked last in batch integration capabilities, with its embeddings often showing higher batch effect retention than the original data [15].

Pretraining Impact: Evaluation of different scGPT variants (random, kidney-specific, blood-specific, human) demonstrated that pretraining provides clear improvements over random initialization, but larger and more diverse pretraining datasets do not consistently confer additional benefits, suggesting diminishing returns to scale [15].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Tools and Resources for scFM Research

| Resource | Type | Primary Function | Relevance to Tokenization |
|---|---|---|---|
| Perturb-seq Datasets | Experimental Data | Provides ground truth for perturbation effects | Enables evaluation of tokenization strategies on functional outcomes |
| Gene Ontology (GO) | Biological Database | Structured knowledge base of gene functions | Provides biological priors for comparison with learned representations |
| CZ CELLxGENE | Data Platform | Standardized access to >100M single cells | Pretraining resource for developing tokenization approaches |
| HVG Selection | Computational Method | Identifies genes with high variability | Basis for scGPT's token reduction strategy |
| Random Forest Regression | Machine Learning Model | Baseline for prediction tasks | Tests biological relevance of gene embeddings independent of transformer architecture |
| Pearson Delta Metric | Evaluation Metric | Correlates predicted vs. actual differential expression | Quantifies performance of different tokenization schemes |

The benchmarking data reveals several critical considerations for researchers and drug development professionals:

Simplicity Versus Complexity: Simple baseline models consistently match or outperform sophisticated foundation models in perturbation prediction tasks. The "Train Mean" baseline surprisingly exceeded both scGPT and scFoundation performance, suggesting that current foundation models may not be capturing meaningful perturbation-specific signals beyond basic averaging approaches [5] [14].

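Tokenization Impact: The choice of tokenization strategy may influence model performance. scGPT focuses on 1,200 highly variable genes, while scFoundation includes 19,264 genes. scGPT outperformed scFoundation on all four perturbation datasets, which is consistent with careful gene selection mattering more than comprehensive gene inclusion. However, these benchmarks compare complete models and do not isolate tokenization from other differences in architecture and pretraining [5] [4].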

Embedding Utility Versus Architecture: The strong performance of Random Forest models using scGPT's embeddings suggests that the pretrained gene representations capture biologically meaningful information, but this potential may not be fully leveraged within the transformer architecture itself [5].

Zero-Shot Limitations: Both scGPT and Geneformer show inconsistent zero-shot performance, indicating that their pretraining objectives may not optimally align with downstream biological tasks without fine-tuning [15].

For researchers selecting models for drug development applications, these findings suggest that foundation models should be evaluated against simple baselines specific to each use case. While scGPT and scFoundation represent significant engineering achievements, their practical advantage over simpler, more interpretable methods remains uncertain for critical applications like perturbation prediction. Future development should focus on better alignment between tokenization strategies, pretraining objectives, and biologically meaningful outcomes.

In the development of single-cell foundation models (scFMs) like scGPT and scFoundation, the choice of pretraining data is a fundamental determinant of model performance. These models are trained on vast collections of single-cell data to learn the "language of cells," with the goal of generating accurate predictions for downstream tasks, such as forecasting cellular responses to genetic perturbations [1]. However, recent rigorous benchmarks reveal a surprising trend: these complex models often fail to outperform simple baseline methods on key predictive tasks [18] [14]. This guide objectively compares the major data sources and examines the experimental evidence benchmarking the performance of models built upon them.

Single-cell foundation models rely on large-scale, curated data repositories for pretraining. The table below summarizes the key characteristics of the primary data sources available.

| Atlas Name | # Cells | Lead Organization | # Species | Primary URL |
|---|---|---|---|---|
| CZ CELLxGENE Discover | 112.8 M | Chan Zuckerberg Initiative (CZI) | 7 | https://cellxgene.cziscience.com/ |
| Human Cell Atlas (HCA) | 65.4 M | HCA Consortium | 1 | https://data.humancellatlas.org/ |
| DISCO | 125.6 M | Singapore Immunology Network | 1 | https://www.immunesinglecell.org |
| Single Cell Portal | 57.6 M | Broad Institute | 18 | https://singlecell.broadinstitute.org/ |
| Single Cell Expression Atlas | 13.5 M | EMBL-EBI | 21 | https://www.ebi.ac.uk/gxa/sc/home |
| Allen Brain Cell Atlas | 4.0 M | Allen Institute | 1 | https://portal.brain-map.org/ |

Source: Adapted from PMC [7]. Note: Cell counts are approximate and as of the time of writing.

Platforms like CZ CELLxGENE provide unified access to millions of annotated single-cell datasets, serving as a cornerstone for the scFM ecosystem [1] [19]. The Human Cell Atlas (HCA) is another monumental project that aggregates data from thousands of studies, regularly updating its portal with new and updated projects [20]. Public repositories such as the Gene Expression Omnibus (GEO) and the Sequence Read Archive (SRA) host thousands of individual studies, which researchers then integrate into large training corpora [1].

Benchmarking Performance: scGPT vs. scFoundation

Despite their sophisticated architecture and pretraining on massive datasets, both scGPT and scFoundation have been shown to underperform compared to simpler models in predicting post-perturbation gene expression. The following table summarizes key quantitative results from independent benchmarks.

| Model / Baseline | Performance Summary (Pearson Delta, Differential Expression) | Key Benchmarking Finding |
|---|---|---|
| scGPT | Adamson: 0.641, Norman: 0.554, Replogle K562: 0.327, Replogle RPE1: 0.596 [18] | Underperformed versus simple mean baseline and random forest models. |
| scFoundation | Adamson: 0.552, Norman: 0.459, Replogle K562: 0.269, Replogle RPE1: 0.471 [18] | Underperformed versus simple mean baseline and random forest models. |
| Train Mean (Baseline) | Adamson: 0.711, Norman: 0.557, Replogle K562: 0.373, Replogle RPE1: 0.628 [18] | The simplest baseline, which predicts the average expression from training data, outperformed both foundation models. |
| Random Forest + GO Features | Adamson: 0.739, Norman: 0.586, Replogle K562: 0.480, Replogle RPE1: 0.648 [18] | Outperformed foundation models by a large margin by using biologically meaningful features. |
| Additive Model (Baseline) | Outperformed all deep learning models in predicting double perturbation effects [14]. | A simple baseline that sums the effects of single perturbations was not beaten by any complex model. |
| Linear Model with Pretrained Embeddings | Performed as well as or better than scGPT and GEARS with their built-in decoders [14]. | Using embeddings from scFMs in a simple linear model was more effective than using the models' own complex architectures. |

A study published in Nature Methods (2025) reached a similarly stark conclusion. It found that no deep-learning model could consistently outperform the simple mean prediction or a linear model in predicting the effects of unseen single-gene perturbations [14]. For double-gene perturbations, even the simplistic "additive" baseline model, which sums the effects of two single perturbations, proved superior to all foundation models [14].

Experimental Protocols in Benchmarking Studies

To ensure fair comparisons, independent benchmarks have employed rigorous and consistent methodologies.

- Datasets: Benchmarks typically use well-established Perturb-seq datasets, including:

  - Adamson et al.: 68,603 single cells with single-guide CRISPRi perturbations in K562 cells [18] [14].

  - Norman et al.: 91,205 single cells with single and dual CRISPRa (overexpression) perturbations in K562 cells [18] [14].

  - Replogle et al.: Over 160,000 single cells each from genome-wide CRISPRi screens in K562 and RPE1 cell lines [18] [14].

- Evaluation Metrics:

  - Pearson Delta: The Pearson correlation between the predicted and ground-truth differential expression profiles (perturbed vs. control). This is considered more meaningful than correlation in raw expression space [18]. Raw-space correlation is dominated by each gene's baseline expression level, which perturbed and control cells largely share.

  - L2 Distance: The Euclidean distance between predicted and observed expression values for the top highly expressed or differentially expressed genes [14].

- Benchmarking Setup: Models are evaluated in a Perturbation Exclusive (PEX) setting, which tests their ability to generalize to unseen perturbations. Models are fine-tuned on one set of perturbations and then evaluated on a held-out set [18] [14].
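The L2 metric can be sketched in a few lines. The sketch makes these assumptions, which are illustrative rather than the exact procedure of [14]:

- Pseudo-bulk vectors are available for the prediction, the observation and the control.
- Genes are selected by their expression in the control profile.
- The helper name `l2_top_expressed` is hypothetical.

```python
import numpy as np

def l2_top_expressed(pred: np.ndarray, observed: np.ndarray,
                     control: np.ndarray, n_top: int = 1000) -> float:
    """Euclidean distance between predicted and observed pseudo-bulk profiles,
    computed over the n_top genes most highly expressed in the control profile."""
    top = np.argsort(control)[::-1][:n_top]
    return float(np.linalg.norm(pred[top] - observed[top]))

pred = np.array([0.0, 4.0, 3.0])
observed = np.array([3.0, 0.0, 3.0])
control = np.array([1.0, 2.0, 3.0])
print(l2_top_expressed(pred, observed, control, n_top=2))  # 4.0
```

Unlike Pearson Delta, L2 is sensitive to the scale of the predicted changes, so the two metrics can rank models differently.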

Diagram of the scFM pretraining and benchmarking workflow.

The Scientist's Toolkit: Key Research Reagents & Solutions

The following table details essential resources and their functions in this field, from data portals to computational tools.

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| CELLxGENE Discover | Data Portal | Provides unified access to over 100 million curated single-cells for discovery and analysis [1] [19]. |

| HCA Data Portal | Data Portal | Centralized platform to explore and download data from the Human Cell Atlas project [20]. |

| Perturb-seq | Experimental Technology | Combines CRISPR-based genetic perturbations with single-cell RNA sequencing to generate ground-truth data for benchmarking [18]. |

| Gene Ontology (GO) | Knowledge Base | Provides structured biological knowledge features (e.g., functional annotations) that can be used to build highly predictive baseline models [18]. |

| Random Forest Regressor | Computational Model | A classic machine learning algorithm that, when provided with GO features, has been shown to outperform complex foundation models [18]. |

| Linear Model with Embeddings | Computational Model | A simple model that uses pretrained gene embeddings from scFMs as input, often outperforming the original complex models [14]. |

In conclusion, while data sources like CELLxGENE and the Human Cell Atlas are invaluable for pretraining scFMs, current evidence indicates that the models themselves may not yet be leveraging this data effectively for perturbation prediction. Researchers should consider these benchmarking results and the power of simple, biologically-informed baselines when designing and evaluating their own studies.

Practical Applications: From Drug Response to Perturbation Prediction

The accurate prediction of drug response is a critical challenge in modern oncology, directly impacting the development of effective cancer therapies and the understanding of drug resistance mechanisms. Single-cell RNA sequencing (scRNA-seq) technology has emerged as a powerful tool for characterizing the cellular heterogeneity that underpins varying treatment outcomes [21]. Recently, large-scale foundation models pre-trained on massive biological datasets have shown potential for enhancing single-cell analysis. This guide provides an objective performance comparison of two prominent foundation models—scGPT and scFoundation—within the scDrugMap benchmarking framework, offering researchers evidence-based insights for model selection in drug response prediction tasks.

The scDrugMap framework represents the first comprehensive benchmark for evaluating large foundation models on drug response prediction using single-cell data. It incorporates a curated resource of over 326,000 cells from 36 datasets across 23 studies, spanning diverse cancer types, tissues, and treatment regimens [11] [22] [21]. The framework evaluates models under two distinct scenarios—pooled-data evaluation and cross-data evaluation—implementing both layer freezing and Low-Rank Adaptation (LoRA) fine-tuning strategies [21].

Table 1: Overall Performance Comparison of scGPT and scFoundation in scDrugMap Benchmark

| Model | Pooled-Data Evaluation (F1 Score) | Cross-Data Evaluation (F1 Score) | Key Strengths |
|---|---|---|---|
| scFoundation | 0.971 (layer freezing); 0.947 (fine-tuning) | Not reported | Excels in pooled-data scenarios with extensive training data |
| scGPT | Not best performer | 0.858 (zero-shot) | Superior cross-dataset generalization with zero-shot learning |
| UCE | Not best performer | 0.774 (fine-tuning on tumor tissue) | Strong performance after fine-tuning on specific tissue types |

Table 2: Detailed Performance Metrics Across Evaluation Settings

| Evaluation Scenario | Training Strategy | scFoundation Performance | scGPT Performance | Top Performing Model |
|---|---|---|---|---|
| Pooled-Data | Layer Freezing | 0.971 (F1) | Lower than scFoundation | scFoundation |
| Pooled-Data | LoRA Fine-tuning | 0.947 (F1) | Lower than scFoundation | scFoundation |
| Cross-Data | Zero-Shot Learning | Lower than scGPT | 0.858 (F1) | scGPT |
| Cross-Data | Fine-tuning | Not best performer | Lower than UCE | UCE (0.774 F1) |

Comparative Analysis of Model Performance

Pooled-Data Evaluation

In the pooled-data evaluation scenario, where models are trained and tested on aggregated data from multiple studies, scFoundation demonstrated superior performance compared to all other models, including scGPT [11] [21]. scFoundation achieved the highest mean F1 scores of 0.971 with layer freezing and 0.947 with fine-tuning, outperforming the lowest-performing model by over 50% [21]. This indicates that scFoundation excels in contexts where substantial training data from multiple sources is available, effectively leveraging its pre-training on large-scale single-cell data.

Cross-Data Evaluation

In cross-data evaluation, where models are tested independently on datasets from individual studies to assess generalization capabilities, scGPT demonstrated superior performance in zero-shot learning with a mean F1 score of 0.858 [21]. This highlights scGPT's stronger generalization to unseen data distributions without additional training. After fine-tuning on tumor tissue, UCE achieved the highest performance (mean F1: 0.774) in this setting [21], suggesting that model performance is highly dependent on both the base architecture and the adaptation strategy.

Critical Perspectives on Foundation Model Performance

Independent benchmarking studies beyond scDrugMap have revealed important limitations in current foundation models for biological prediction tasks. Research published in Nature Methods found that neither scGPT nor scFoundation outperformed deliberately simple baselines for predicting genetic perturbation effects [14]. Simple models—including taking the mean of training examples or using basic machine learning models with biologically meaningful features—often outperformed these foundation models by a substantial margin [5] [14].

Similarly, zero-shot evaluations published in Genome Biology demonstrated that both scGPT and Geneformer underperform simpler methods like highly variable gene selection and established integration tools (Harmony, scVI) on tasks including cell type clustering and batch integration [16] [15]. These findings highlight that while foundation models show promise, their practical utility for drug response prediction requires careful validation against simpler alternatives.

scDrugMap Experimental Framework

Datasets and Curation

The scDrugMap framework incorporates a primary collection of 326,751 single tumor cells from 36 scRNA-seq datasets across 23 studies, with representation of 14 cancer types, 3 therapy categories (targeted therapy, chemotherapy, immunotherapy), and multiple tissue types (cell lines, bone marrow aspirates, tumor tissue, PBMCs) [21]. An independent validation collection includes 18,856 cells from 17 datasets across 6 studies [21]. This comprehensive coverage ensures robust benchmarking across diverse biological contexts.

Model Training and Adaptation Strategies

scDrugMap implements two primary adaptation strategies for foundation models:

- Layer Freezing: The pre-trained model weights are kept fixed while only task-specific heads are trained, preserving the knowledge acquired during pre-training.

- Low-Rank Adaptation (LoRA): A parameter-efficient fine-tuning method that introduces trainable low-rank matrices into the model architecture, enabling effective adaptation with minimal computational overhead [21].
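The LoRA update can be written as a frozen weight matrix plus a scaled low-rank product. The NumPy sketch below shows only the forward pass and the parameter savings. It follows the formulation of the original LoRA paper: B is initialized to zero and the update is scaled by alpha / r. It is not scDrugMap's implementation, and the class name, rank and scaling value are illustrative.

```python
import numpy as np

class LoRALinear:
    """Linear layer with a frozen weight W and a trainable low-rank update.

    Output: x @ W.T + (alpha / r) * x @ A.T @ B.T, with A of shape (r, d_in)
    and B of shape (d_out, r). Only A and B would be updated during fine-tuning.
    """

    def __init__(self, W: np.ndarray, r: int, alpha: float, seed: int = 0):
        rng = np.random.default_rng(seed)
        d_out, d_in = W.shape
        self.W = W                                      # frozen pretrained weight
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # small random init
        self.B = np.zeros((d_out, r))                   # zero init: no change at start
        self.scale = alpha / r

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # x: (batch, d_in) -> (batch, d_out)
        return x @ self.W.T + self.scale * (x @ self.A.T) @ self.B.T

    def n_trainable(self) -> int:
        return self.A.size + self.B.size

rng = np.random.default_rng(1)
W = rng.normal(size=(64, 64))
layer = LoRALinear(W, r=4, alpha=8.0)
x = rng.normal(size=(2, 64))
print(layer.n_trainable(), W.size)  # 512 4096
```

Because B starts at zero, the adapted layer initially reproduces the pretrained layer exactly. Only the r * (d_in + d_out) entries of A and B would then be trained, here 512 instead of 4,096.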

Evaluation Metrics and Protocol

The benchmarking protocol employs the F1 score as the primary metric, providing a balanced measure of precision and recall for drug response prediction [21]. The evaluation follows rigorous data splitting strategies appropriate for each scenario, with cross-validation in pooled-data settings and leave-one-dataset-out validation in cross-data settings to ensure reliable performance estimation.
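Layer freezing combined with the cross-data protocol can be sketched as a linear probe:

- Embeddings from a frozen model are treated as fixed features.
- A small classification head is trained on all datasets but one.
- F1 is computed on the held-out dataset.

The logistic-regression head, the binary responder label and the helper name are assumptions for illustration. scDrugMap's own heads and splits may differ.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import LeaveOneGroupOut

def leave_one_dataset_out_f1(emb, labels, dataset_ids):
    """Train a linear head on frozen embeddings, holding out one dataset at a time.

    emb: (n_cells, d) embeddings from a frozen foundation model
    labels: 0/1 drug-response labels (e.g. resistant vs. sensitive)
    dataset_ids: dataset of origin for each cell
    Returns {held_out_dataset: F1 score on that dataset}.
    """
    scores = {}
    for train_idx, test_idx in LeaveOneGroupOut().split(emb, labels, groups=dataset_ids):
        head = LogisticRegression(max_iter=1000)  # the only trained component
        head.fit(emb[train_idx], labels[train_idx])
        held_out = str(dataset_ids[test_idx][0])
        scores[held_out] = f1_score(labels[test_idx], head.predict(emb[test_idx]))
    return scores

rng = np.random.default_rng(0)
emb = rng.normal(size=(180, 8))            # stand-in for frozen cell embeddings
labels = (emb[:, 0] > 0).astype(int)       # toy responder label
datasets = np.repeat(["study_A", "study_B", "study_C"], 60)
print(leave_one_dataset_out_f1(emb, labels, datasets))
```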

Research Reagent Solutions

Table 3: Essential Research Resources for scDrugMap-Style Benchmarking

| Resource Category | Specific Examples | Function in Research |
|---|---|---|
| Single-Cell Foundation Models | scFoundation, scGPT, UCE, scBERT, Geneformer, cellLM, cellPLM | Base pre-trained models for transfer learning and zero-shot evaluation |
| Large Language Models | LLaMA3-8B, GPT-4o-mini | General-purpose models adaptable for biological sequence analysis |
| Training Adaptation Methods | Low-Rank Adaptation (LoRA), Layer Freezing | Parameter-efficient fine-tuning strategies for model specialization |
| Computational Frameworks | scDrugMap (Python CLI & Web Server), BioLLM | Standardized interfaces for model integration and evaluation |
| Benchmark Datasets | Primary Collection (326,751 cells), Validation Collection (18,856 cells) | Curated single-cell data with drug response annotations for training and testing |
| Evaluation Metrics | F1 Score, Pearson Correlation, Differential Expression Analysis | Quantitative performance assessment for model comparison |

The scDrugMap benchmarking framework provides comprehensive evidence that scFoundation and scGPT offer distinct strengths for drug response prediction. The optimal choice depends on the research context and application requirements. scFoundation performs best in pooled-data scenarios where substantial training data is available, while scGPT excels in cross-data evaluation through stronger zero-shot generalization. However, independent studies of related tasks, such as perturbation prediction and zero-shot cell clustering, show that simpler models often match or outperform these foundation models. This underscores the importance of benchmarking against appropriate baselines. Researchers should select models based on their specific use case, data availability, and generalization requirements. They should also remain critical of model claims and validate performance against simpler alternatives.

Predicting Cellular Responses to Genetic Perturbations with Perturb-Seq Data

The ability to accurately predict cellular responses to genetic perturbations is a cornerstone of functional genomics and therapeutic discovery. Technologies like Perturb-seq, which combines CRISPR-based interventions with single-cell RNA sequencing, have generated vast datasets detailing these responses. In response, the computational biology community has developed sophisticated "foundation" models, pre-trained on millions of single-cell transcriptomes, to tackle this prediction problem. Two prominent models, scGPT and scFoundation, have emerged as state-of-the-art candidates. However, rigorous and independent benchmarking is crucial to validate their performance claims. This guide synthesizes evidence from recent, comprehensive studies to objectively compare the predictive performance of these foundation models against each other and, importantly, against simpler baseline approaches. The overarching finding across multiple independent investigations is that despite their complexity and computational cost, these foundation models currently fail to consistently outperform deliberately simple baselines, highlighting significant challenges and opportunities for improvement in the field.

Performance Comparison: Foundation Models vs. Baseline Approaches

Recent benchmark studies have systematically evaluated scGPT and scFoundation against a range of simpler models on the task of predicting post-perturbation gene expression profiles. The consistent result is that foundation models are often outperformed by straightforward alternatives.

Table 1: Performance Comparison on Perturbation Prediction Tasks (Pearson Correlation in Differential Expression Space)

| Model / Dataset | Adamson et al. | Norman et al. | Replogle (K562) | Replogle (RPE1) |
|---|---|---|---|---|
| Train Mean (Baseline) | 0.711 | 0.557 | 0.373 | 0.628 |
| scGPT | 0.641 | 0.554 | 0.327 | 0.596 |
| scFoundation | 0.552 | 0.459 | 0.269 | 0.471 |
| Random Forest (GO Features) | 0.739 | 0.586 | 0.480 | 0.648 |

Data Source: [5]

The data reveals that the simplest baseline, which predicts the average expression profile from the training data ("Train Mean"), reliably outperforms both scGPT and scFoundation across multiple datasets [5]. Even more notably, a standard Random Forest model using Gene Ontology (GO) biological pathway features as input "outperformed foundation models by a large margin" [5]. This suggests that incorporating structured biological prior knowledge can be more effective than the representations learned through the foundation models' pre-training on vast amounts of single-cell data.
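The Train Mean baseline is short enough to state in full. This sketch assumes, rather than takes from [5], that the baseline averages per-perturbation pseudo-bulk profiles (one vector per training perturbation) rather than individual cells. It returns that average for every held-out perturbation. The gene names are placeholders.

```python
import numpy as np

def train_mean_baseline(train_profiles: dict, test_perturbations: list) -> dict:
    """Predict one shared profile for every unseen perturbation: the average of
    the training perturbations' pseudo-bulk expression profiles."""
    mean_profile = np.mean(np.stack(list(train_profiles.values())), axis=0)
    return {name: mean_profile.copy() for name in test_perturbations}

train_profiles = {"GENE_A": np.array([0.0, 2.0]), "GENE_B": np.array([2.0, 4.0])}
pred = train_mean_baseline(train_profiles, ["GENE_C", "GENE_D"])
print(pred["GENE_C"])  # [1. 3.]
```

The baseline predicts the same profile for every unseen perturbation. Its strong Pearson Delta scores therefore suggest that a large share of the response in these datasets is common across perturbations.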

In a separate study published in Nature Methods, a simple additive model—which sums the individual logarithmic fold changes of single perturbations to predict the effect of a double perturbation—proved superior to five foundation models and two other deep learning approaches [14]. Furthermore, when tasked with predicting genetic interactions (where the effect of a double perturbation is non-additive), none of the deep learning models performed better than a "no change" baseline that always predicts the control condition [14].
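The two baselines in that study are simple enough to write down directly. The sketch below assumes that per-gene log fold changes relative to control are already computed. `interaction_score` is a hypothetical helper that measures how far an observed double perturbation departs from additivity. That departure is what a genetic-interaction predictor must capture to beat the "no change" baseline.

```python
import numpy as np

def additive_prediction(lfc_a: np.ndarray, lfc_b: np.ndarray) -> np.ndarray:
    """Predict the double-perturbation log fold change as the sum of the singles."""
    return lfc_a + lfc_b

def no_change_prediction(n_genes: int) -> np.ndarray:
    """Always predict the control condition, i.e. zero log fold change."""
    return np.zeros(n_genes)

def interaction_score(lfc_ab_observed: np.ndarray, lfc_a: np.ndarray,
                      lfc_b: np.ndarray) -> np.ndarray:
    """Per-gene deviation of the observed double effect from additivity;
    genes with large absolute values are candidate genetic interactions."""
    return lfc_ab_observed - additive_prediction(lfc_a, lfc_b)

lfc_a = np.array([1.0, 0.0, -1.0])
lfc_b = np.array([0.5, 0.5, 0.0])
observed_ab = np.array([1.5, 2.5, -1.0])
print(interaction_score(observed_ab, lfc_a, lfc_b))  # [0. 2. 0.]
```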

Detailed Experimental Protocols for Benchmarking

Understanding the methodology behind these benchmarks is critical for interpreting the results and for researchers aiming to conduct their own evaluations.

The benchmarks rely on publicly available Perturb-seq datasets, which use CRISPR to perturb genes and single-cell RNA sequencing to measure the transcriptional outcome. Key datasets include:

- Adamson et al.: 68,603 single K562 cells with single-gene CRISPRi perturbations [5] [14].

- Norman et al.: 91,205 single K562 cells with single and dual-gene CRISPRa (overexpression) perturbations [5] [14].

- Replogle et al. (K562 & RPE1): Two datasets each with over 160,000 single cells from genome-wide CRISPRi screens in different cell lines [5] [14].

For evaluation, single-cell predictions are typically aggregated by perturbation to create pseudo-bulk expression profiles, which are compared to the ground truth pseudo-bulk profiles [5].

Evaluation Metrics and Task Formulation

The core evaluation metric is often the Pearson correlation, calculated in two key spaces:

- Differential Expression Space (Pearson Delta): The correlation between the predicted and actual change in gene expression (perturbed vs. control). This is considered more informative than raw expression space, as it focuses on the perturbation-specific effect [5].

- Performance on Top DE Genes: The correlation is also calculated specifically for the top 20 differentially expressed (DE) genes to assess the model's ability to capture the most significant changes [5].

The primary task is Perturbation Exclusive (PEX) prediction, where the model's ability to generalize to the effects of completely unseen perturbations or combinations is tested [5] [23].
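A perturbation-exclusive split is made over perturbations rather than cells, so no cell from a test perturbation appears in training. This minimal sketch assumes control cells are labelled "ctrl" and always kept in the training set. The helper name, test fraction and gene names are illustrative placeholders.

```python
import numpy as np

def pex_split(perturbation_labels, test_fraction=0.2, seed=0):
    """Split cells by perturbation so that test perturbations never occur in training.

    perturbation_labels: one perturbation name per cell; control cells are
    assumed to be labelled "ctrl" and are always kept in the training set.
    Returns boolean masks (train_mask, test_mask) over cells.
    """
    labels = np.asarray(perturbation_labels)
    perturbations = np.unique(labels[labels != "ctrl"])
    rng = np.random.default_rng(seed)
    n_test = max(1, int(round(test_fraction * len(perturbations))))
    test_perturbations = rng.choice(perturbations, size=n_test, replace=False)
    test_mask = np.isin(labels, test_perturbations)
    return ~test_mask, test_mask

labels = ["ctrl"] * 4 + ["KLF1"] * 3 + ["GATA1"] * 3 + ["TAL1"] * 3 + ["SPI1"] * 3 + ["CEBPA"] * 3
train_mask, test_mask = pex_split(labels, test_fraction=0.4)
labels_arr = np.asarray(labels)
print("held-out perturbations:", sorted(set(labels_arr[test_mask])))
```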

Model Training and Fine-tuning

For the foundation models, the standard protocol involves taking a model that has been pre-trained on a large corpus of single-cell data (often >10 million cells) and then fine-tuning it on the specific perturbation dataset of interest. The baseline models, such as Random Forest or k-Nearest Neighbors, are trained directly on the perturbation data using features derived from biological databases or the foundation models' own gene embeddings [5].
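The k-nearest-neighbors baseline can be sketched as follows, with these assumptions:

- Each perturbation is represented by an embedding of the perturbed gene, such as a pretrained scGPT gene embedding or a GO feature vector.
- Similarity between perturbations is cosine similarity.
- The helper name, k and the averaging rule are illustrative.

```python
import numpy as np

def knn_delta_prediction(query_emb, train_embs, train_deltas, k=3):
    """Predict an unseen perturbation's delta profile as the mean delta of the
    k training perturbations whose perturbed-gene embeddings have the highest
    cosine similarity to the query.

    query_emb: (d,) embedding of the unseen perturbed gene
    train_embs: (n, d) embeddings of perturbed genes seen in training
    train_deltas: (n, n_genes) observed pseudo-bulk deltas for those genes
    """
    q = query_emb / np.linalg.norm(query_emb)
    t = train_embs / np.linalg.norm(train_embs, axis=1, keepdims=True)
    sims = t @ q
    nearest = np.argsort(sims)[::-1][:k]
    return train_deltas[nearest].mean(axis=0)

train_embs = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]])
train_deltas = np.array([[1.0, 1.0], [3.0, 3.0], [100.0, 100.0]])
print(knn_delta_prediction(np.array([1.0, 0.0]), train_embs, train_deltas, k=2))  # [2. 2.]
```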

Visualizing the Benchmarking Workflow

The following diagram illustrates the standard workflow for training and evaluating perturbation response prediction models, as used in the cited benchmarks.

Diagram 1: Benchmarking Workflow for Perturbation Prediction Models. This workflow compares foundation models (fine-tuned on Perturb-seq data) against baseline models trained directly on the data with biological features. Performance is evaluated by comparing predictions to the experimental ground truth.

Successful perturbation modeling relies on a combination of computational tools and curated biological datasets. The table below details essential "research reagents" for this field.

Table 2: Essential Research Reagents for Perturbation Modeling

| Resource Name | Type | Primary Function in Perturbation Modeling |
|---|---|---|
| Perturb-seq Datasets (Adamson, Norman, Replogle) | Experimental Data | Provides the ground truth data of gene expression responses to genetic perturbations, used for model training and benchmarking [5] [14]. |
| Gene Ontology (GO) / KEGG / Reactome | Biological Database | Curated knowledge bases of biological pathways and functions. Used as informative features for baseline machine learning models [5]. |
| CollecTRI | Biological Database | A comprehensive gene regulatory network resource. Used to evaluate the biological meaningfulness of learned gene embeddings [5]. |
| PerturBench | Computational Framework | A modular codebase for standardized development and evaluation of perturbation prediction models, ensuring fair comparisons [23]. |
| BioLLM | Computational Framework | A unified interface that integrates diverse single-cell foundation models (scGPT, Geneformer, scFoundation), streamlining their application and evaluation [17]. |

The independent benchmarking of scGPT and scFoundation reveals a critical and consistent finding: as of early 2025, these complex foundation models do not surpass the performance of simple baseline methods for predicting cellular responses to genetic perturbations. Models that predict the average training response or use off-the-shelf biological features in a Random Forest regressor consistently set a high bar. This does not negate the potential of the foundation model approach but underscores that the field is still in its early stages. Future progress will likely depend on improved model architectures, more effective pre-training strategies, and the development of benchmarking standards that more accurately reflect the complex biological reality of perturbation responses. For researchers and drug developers, the current evidence strongly suggests that simpler, interpretable models should be included as robust baselines in any project aiming to predict genetic perturbation effects.

The advent of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, offering unprecedented opportunities to advance cancer drug response prediction (DRP). Models like scGPT and scFoundation are built on transformer architectures pretrained on millions of single cells. They promise to capture universal biological principles that can be specialized for downstream tasks like DRP [1]. These models use tokenization strategies in which genes become input tokens, analogous to words in a sentence, with expression values providing additional context [4]. The premise is that exposure to diverse cellular states across tissues and conditions lets these models learn generalized representations of cellular behavior. Those representations could then improve predictive accuracy in drug response frameworks such as DeepCDR.

However, integrating these powerful models into existing DRP pipelines requires careful benchmarking to identify their relative strengths, limitations, and optimal implementation strategies. Recent comprehensive evaluations reveal a complex performance landscape where scFMs demonstrate significant potential but also notable limitations compared to simpler approaches [5] [14] [4]. This comparison guide provides an objective assessment of scGPT versus scFoundation performance to inform effective integration with DeepCDR frameworks, supported by experimental data and implementation protocols.

Performance Benchmarking: Quantitative Comparison

Perturbation Response Prediction

Accurately predicting cellular responses to genetic and chemical perturbations is fundamental to DRP. Benchmarking studies directly compared scGPT and scFoundation against baseline models for predicting transcriptome changes after single and double genetic perturbations using Perturb-seq datasets (Adamson, Norman, and Replogle) [5] [14].

Table 1: Performance Comparison in Perturbation Prediction (Pearson Delta Metric)

| Model | Adamson Dataset | Norman Dataset | Replogle K562 | Replogle RPE1 |
|---|---|---|---|---|
| Train Mean (Baseline) | 0.711 | 0.557 | 0.373 | 0.628 |
| scGPT | 0.641 | 0.554 | 0.327 | 0.596 |
| scFoundation | 0.552 | 0.459 | 0.269 | 0.471 |
| Random Forest with GO | 0.739 | 0.586 | 0.480 | 0.648 |

Surprisingly, even simple baseline models like Train Mean (predicting the average of training examples) outperformed both foundation models across all datasets [5]. Similarly, a linear baseline model that sums individual logarithmic fold changes for double perturbations substantially outperformed scGPT, scFoundation, and other deep learning models [14]. Random Forest models incorporating biological prior knowledge (Gene Ontology features) achieved the best performance, surpassing scGPT by a large margin [5].
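The baselines that set this bar are simple enough to express in a few lines. The sketch below assumes each perturbation is summarized as a pseudobulk, log-normalized mean expression vector over the same genes. The function names are hypothetical and this is not code from the cited studies; it only shows what "Train Mean", "No Change", and the additive double-perturbation model compute.

```python
import numpy as np


def train_mean_baseline(train_profiles: np.ndarray) -> np.ndarray:
    """Predict the average post-perturbation profile over all training perturbations.

    train_profiles: (n_train_perturbations, n_genes) log-normalized pseudobulk profiles.
    """
    return train_profiles.mean(axis=0)


def no_change_baseline(control_profile: np.ndarray) -> np.ndarray:
    """Predict that the perturbation leaves the control profile unchanged."""
    return control_profile.copy()


def additive_double_baseline(
    control_log: np.ndarray, single_a_log: np.ndarray, single_b_log: np.ndarray
) -> np.ndarray:
    """Predict a double perturbation A+B by summing the two single-perturbation
    log fold changes (each measured relative to control) on top of control."""
    lfc_a = single_a_log - control_log
    lfc_b = single_b_log - control_log
    return control_log + lfc_a + lfc_b


ctrl = np.array([1.0, 2.0, 3.0])
print(additive_double_baseline(ctrl, np.array([1.5, 2.0, 2.0]), np.array([1.0, 3.0, 3.0])))
# prints [1.5 3.  2. ]
```

None of these baselines learns anything about the perturbed gene itself, which is why their strong scores are informative about the benchmark as much as about the models.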

Zero-Shot Capabilities and Generalizability

For practical implementation in DRP pipelines, zero-shot performance (without task-specific fine-tuning) is crucial for exploratory applications where labeled data is limited. Evaluation of zero-shot capabilities for cell type annotation and batch integration revealed important limitations:

Table 2: Zero-Shot Performance Across Biological Tasks

| Model | Cell Type Annotation (AvgBIO) | Batch Integration | Biological Relevance |
|---|---|---|---|
| scGPT | Inconsistent; outperformed by scVI and Harmony on most datasets | Moderate technical batch correction; struggles with biological variation | Captures some biological pathways |
| Geneformer | Consistently outperformed by simple HVG selection | Poor performance; embeddings often dominated by batch effects | Limited biological relevance in embeddings |
| scFoundation | Not extensively evaluated in zero-shot | Not extensively evaluated in zero-shot | Gene embeddings show biological utility |

In zero-shot cell type clustering, both scGPT and Geneformer were consistently outperformed by established methods like Harmony, scVI, and even simple highly variable genes (HVG) selection [15]. Notably, selecting HVGs achieved the best batch integration scores across all datasets, highlighting the performance gap for foundation models in zero-shot settings [15].
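To make the HVG baseline concrete, the sketch below library-size normalizes a counts matrix, applies `log1p`, and keeps the genes with the highest variance. This is a simplification chosen for illustration: tools such as Scanpy rank genes by dispersion-based criteria rather than raw variance, and the function names and the `n_top=2000` default here are assumptions, not the settings used in [15].

```python
import numpy as np


def log_normalize(counts: np.ndarray, target_sum: float = 1e4) -> np.ndarray:
    """Scale each cell (row) to target_sum total counts, then apply log1p."""
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # avoid division by zero for empty cells
    return np.log1p(counts / totals * target_sum)


def select_hvgs(log_expr: np.ndarray, n_top: int) -> np.ndarray:
    """Return column indices of the n_top genes with the highest variance across cells."""
    variances = log_expr.var(axis=0)
    return np.argsort(variances)[::-1][:n_top]


def hvg_embedding(counts: np.ndarray, n_top: int = 2000):
    """Use the HVG-restricted, log-normalized matrix directly as a cell embedding."""
    log_expr = log_normalize(counts.astype(float))
    idx = select_hvgs(log_expr, min(n_top, log_expr.shape[1]))
    return log_expr[:, idx], idx
```

The resulting matrix can then be clustered or scored with the same integration metrics as a foundation-model embedding, which is what makes it a fair zero-shot baseline.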

Experimental Protocols and Methodologies

Benchmarking Framework Design

To ensure reproducible evaluation of scFMs for DRP applications, researchers should implement standardized benchmarking protocols mirroring recent comprehensive studies:

Data Preparation and Partitioning:

- Utilize standardized Perturb-seq datasets (Adamson, Norman, Replogle) for genetic perturbation prediction [5] [14]

- Implement rigorous train-test splits focusing on perturbation-exclusive (PEX) settings where models predict effects of completely unseen perturbations [5]

- For drug response prediction, employ GDSC and CCLE datasets with multiple splitting strategies (mask-cells, mask-drugs, mask-pairs) to assess generalizability [24]
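The three DRP splitting strategies differ only in what is held out. The sketch below assumes the data is a list of `(cell_line, drug)` pairs with measured responses; `split_pairs` is a hypothetical helper, and the 20% test fraction and seeding are illustrative choices rather than the protocol of [24].

```python
import numpy as np


def split_pairs(pairs, strategy, test_frac=0.2, seed=0):
    """Split (cell_line, drug) pairs into train/test index lists.

    mask-pairs: hold out random pairs (cell lines and drugs may recur in test).
    mask-cells: hold out whole cell lines, so every test cell line is unseen.
    mask-drugs: hold out whole drugs, so every test drug is unseen.
    """
    rng = np.random.default_rng(seed)
    if strategy == "mask-pairs":
        order = rng.permutation(len(pairs))
        n_test = int(round(test_frac * len(pairs)))
        test = set(order[:n_test].tolist())
    elif strategy in ("mask-cells", "mask-drugs"):
        key = 0 if strategy == "mask-cells" else 1
        entities = sorted({p[key] for p in pairs})
        n_test = max(1, int(round(test_frac * len(entities))))
        held_out = set(rng.choice(entities, size=n_test, replace=False).tolist())
        test = {i for i, p in enumerate(pairs) if p[key] in held_out}
    else:
        raise ValueError(f"unknown strategy: {strategy!r}")
    train_idx = [i for i in range(len(pairs)) if i not in test]
    return train_idx, sorted(test)
```

The perturbation-exclusive (PEX) setting for Perturb-seq follows the same logic as `mask-drugs`: whole perturbations are held out so the model never sees them during training.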

Evaluation Metrics:

- Primary: Pearson correlation in differential expression space (Pearson Delta) focusing on top differentially expressed genes [5]

- Secondary: L2 distance for most highly expressed genes, genetic interaction detection capability [14]

- Additional: Batch integration metrics (ASW, BIO scores) for zero-shot evaluation [15]
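Pearson Delta correlates predicted and observed *changes* from control rather than raw expression. The sketch below computes it, optionally restricted to the top-k genes. One assumption to flag: it ranks "top differentially expressed" genes by absolute observed change, whereas published benchmarks typically take the top DE genes from a statistical test. The L2 helper for the most highly expressed genes is likewise an illustrative sketch.

```python
import numpy as np


def pearson_delta(pred, obs, ctrl, top_k=None):
    """Pearson correlation between (pred - ctrl) and (obs - ctrl).

    If top_k is given, only the top_k genes with the largest absolute observed
    change are used (a proxy for the top differentially expressed genes).
    """
    pred_delta = pred - ctrl
    obs_delta = obs - ctrl
    if top_k is not None:
        idx = np.argsort(np.abs(obs_delta))[::-1][:top_k]
        pred_delta, obs_delta = pred_delta[idx], obs_delta[idx]
    return float(np.corrcoef(pred_delta, obs_delta)[0, 1])


def l2_top_expressed(pred, obs, top_k=20):
    """Euclidean distance between prediction and observation on the top_k most highly expressed genes."""
    idx = np.argsort(obs)[::-1][:top_k]
    return float(np.linalg.norm(pred[idx] - obs[idx]))
```

Note that a prediction identical to control has zero variance in delta space, so its Pearson Delta is undefined (NumPy returns `nan` with a warning).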

Baseline Models:

- Include simple baselines (Train Mean, No Change, Additive Model) [5] [14]

- Implement traditional machine learning models (Random Forest with biological features) [5]

- Compare against specialized DRP models (GraphTCDR, SubCDR) [25] [26]

Model Integration Strategies

Feature Extraction Approach: Rather than using scFMs as end-to-end predictors, extract gene and cell embeddings as features for traditional machine learning models. Random Forest models using scGPT embeddings achieved better performance than fine-tuned scGPT itself, though still underperforming compared to biological prior knowledge features [5].

Hybrid Prediction Framework: Implement ensemble approaches combining scFM embeddings with biological knowledge features. Studies show that incorporating Gene Ontology vectors and pathway information significantly boosts prediction accuracy compared to using foundation model outputs alone [5] [4].
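Both the feature-extraction and hybrid strategies reduce to choosing the input matrix for a conventional regressor. The sketch below uses scikit-learn's `RandomForestRegressor` (which supports multi-output targets) with one row per perturbed gene. All data here is synthetic: the random `gene_embeddings` stand in for scGPT gene embeddings and the random `go_vectors` stand in for Gene Ontology annotations. The sketch shows the mechanics only and supports no performance conclusion.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def build_hybrid_features(embeddings: np.ndarray, go_vectors: np.ndarray) -> np.ndarray:
    """Concatenate foundation-model embeddings with GO indicator vectors."""
    return np.hstack([embeddings, go_vectors])


def fit_and_predict(features, targets, train_idx, test_idx, seed=0):
    """Fit a multi-output Random Forest on training perturbations, predict held-out ones."""
    model = RandomForestRegressor(n_estimators=100, random_state=seed)
    model.fit(features[train_idx], targets[train_idx])
    return model.predict(features[test_idx])


# Synthetic placeholders (hypothetical): one row per perturbed gene.
rng = np.random.default_rng(0)
n_perts, emb_dim, n_go_terms, n_genes = 60, 16, 30, 50
gene_embeddings = rng.normal(size=(n_perts, emb_dim))  # stand-in for scGPT gene embeddings
go_vectors = rng.integers(0, 2, size=(n_perts, n_go_terms)).astype(float)  # stand-in GO terms
deltas = 0.1 * go_vectors @ rng.normal(size=(n_go_terms, n_genes))  # stand-in expression changes

# Held-out rows are unseen perturbations, as in the PEX setting.
train_idx, test_idx = np.arange(45), np.arange(45, n_perts)
hybrid = build_hybrid_features(gene_embeddings, go_vectors)
predictions = {
    name: fit_and_predict(X, deltas, train_idx, test_idx)
    for name, X in [("embedding", gene_embeddings), ("go", go_vectors), ("hybrid", hybrid)]
}
```

The predictions for each feature set can then be scored with Pearson Delta against the held-out observations to compare embedding-only, prior-knowledge-only, and hybrid inputs on equal footing.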

Diagram 1: Enhanced DeepCDR Integration Framework. This workflow combines foundation model embeddings with traditional machine learning and biological prior knowledge for improved drug response prediction.

Technical Specifications and Implementation

Architectural Comparison

Understanding the fundamental architectural differences between scGPT and scFoundation is essential for effective integration:

Table 3: Model Architectures and Training Specifications

| Parameter | scGPT | scFoundation |
|---|---|---|
| Architecture | GPT-style decoder with unidirectional attention | BERT-style encoder with bidirectional attention |
| Parameters | ~50 million | ~100 million |
| Pretraining Data | 33 million non-cancerous human cells | 50 million single cells |
| Input Genes | 1,200 highly variable genes | 19,264 protein-encoding genes |
| Tokenization | Value binning combined with gene embeddings | Gene embeddings with value projection |
| Positional Encoding | Not used | Not used |
| Primary Pretraining Task | Iterative masked gene modeling with MSE loss | Read-depth-aware masked gene modeling |

scGPT employs a GPT-style decoder architecture pretrained on 33 million non-cancerous human cells, using value binning for expression levels and focusing on highly variable genes [4] [1]. In contrast, scFoundation utilizes a BERT-style encoder trained on 50 million cells with nearly complete gene coverage, implementing read-depth-aware masking during pretraining [4].
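The two tokenization styles in Table 3 differ mainly in how the expression value is turned into model input. The sketch below contrasts per-cell quantile binning (the idea behind scGPT's value binning: zeros stay in bin 0, and non-zero values are binned by quantiles of that cell's own non-zero values) with a continuous value projection. The projection is a deliberate simplification: a linear map with random weights stands in for scFoundation's learned value embedding, which is more elaborate. The default of 51 bins is also an assumption.

```python
import numpy as np


def bin_expression(cell: np.ndarray, n_bins: int = 51) -> np.ndarray:
    """Map one cell's expression vector to integer bins.

    Zeros stay in bin 0; non-zero values are assigned to bins 1..n_bins-1 using
    quantile edges computed from this cell's non-zero values only, so the
    binning is insensitive to the cell's overall sequencing depth.
    """
    binned = np.zeros(cell.shape, dtype=np.int64)
    nonzero = cell > 0
    if not nonzero.any():
        return binned
    edges = np.quantile(cell[nonzero], np.linspace(0, 1, n_bins - 1))
    binned[nonzero] = np.digitize(cell[nonzero], edges)
    return binned


def project_values(cell: np.ndarray, dim: int = 8, seed: int = 0) -> np.ndarray:
    """Simplified continuous value embedding: a linear map from each scalar
    expression value to a dim-dimensional vector (random weights stand in for
    learned ones)."""
    rng = np.random.default_rng(seed)
    weight = rng.normal(size=dim)
    bias = rng.normal(size=dim)
    return cell[:, None] * weight[None, :] + bias[None, :]


cell = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 0.0])
print(bin_expression(cell, n_bins=5))  # prints [0 1 2 3 4 0]
```

In either case the value representation is combined with a per-gene embedding to form each token; binning discards magnitude beyond the bin index, while projection keeps the value continuous.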

Research Reagent Solutions

Table 4: Essential Research Resources for scFM Integration

| Resource | Type | Function in DRP Research |
|---|---|---|
| GDSC Database | Drug screening dataset | Primary source of drug response data (IC50 values) for model training and validation |
| CCLE | Cell line database | Provides multi-omics profiles of cancer cell lines for feature generation |
| Perturb-seq Datasets | Genetic perturbation data | Enables model benchmarking for perturbation response prediction |
| PubChem | Chemical database | Source of drug molecular representations (fingerprints, SMILES strings) |
| Gene Ontology | Biological knowledge base | Provides prior knowledge features for enhancing prediction accuracy |
| BioLLM Framework | Software framework | Standardized APIs for integrating and evaluating multiple scFMs |

Integration Recommendations for DeepCDR

Practical Implementation Guidelines

Based on comprehensive benchmarking evidence, the following integration approaches are recommended:

Prioritize Feature Extraction over End-to-End Learning: Instead of using scFMs as complete DRP solutions, extract their gene and cell embeddings as input features for established DeepCDR architectures. Experimental results demonstrate that Random Forest models using scGPT embeddings outperformed fine-tuned scGPT while maintaining computational efficiency [5].

Implement Ensemble Strategies: Combine foundation model outputs with biological prior knowledge. Studies consistently show that models incorporating Gene Ontology features and pathway information achieve superior performance compared to standalone scFM predictions [5] [25].

Leverage scGPT for Blood-Derived Cancers: scGPT demonstrates stronger performance on blood and bone marrow datasets compared to other tissue types, suggesting prioritized integration for hematological malignancies [15].

Utilize scFoundation for Comprehensive Gene Coverage: When full transcriptome analysis is required, scFoundation's coverage of 19,264 protein-encoding genes provides advantages over scGPT's highly-variable-gene approach [4].
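Using a fixed full-gene vocabulary means every dataset must be reindexed to the model's gene order before inference. The sketch below assumes a common approach, zero-filling genes the dataset did not measure and reporting which ones were filled; `align_to_vocabulary` is a hypothetical helper, and the real vocabulary would be the model's published gene list rather than the toy one used here.

```python
import numpy as np


def align_to_vocabulary(expr: np.ndarray, dataset_genes, vocab):
    """Reorder the columns of expr (cells x dataset_genes) to match vocab.

    Genes in vocab that the dataset lacks are zero-filled; the boolean mask
    `present` records which vocabulary genes were actually measured.
    """
    column_of = {g: i for i, g in enumerate(dataset_genes)}
    aligned = np.zeros((expr.shape[0], len(vocab)), dtype=expr.dtype)
    present = np.zeros(len(vocab), dtype=bool)
    for j, gene in enumerate(vocab):
        i = column_of.get(gene)
        if i is not None:
            aligned[:, j] = expr[:, i]
            present[j] = True
    return aligned, present
```

Checking the fraction of `present` genes before running a full-coverage model is a cheap way to tell whether a dataset actually benefits from it; a dataset measuring few of the vocabulary genes gains little over an HVG-based input.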

Limitations and Alternative Approaches

Despite their theoretical promise, current scFMs show consistent limitations that warrant consideration:

Simplicity-Performance Paradox: Across multiple benchmarks, simple baseline models consistently matched or outperformed sophisticated foundation models. The "additive model" for genetic interactions and "train mean" for perturbation response provided competitive baselines [5] [14].

Specialized DRP Model Superiority: Models specifically designed for drug response prediction, such as GraphTCDR (utilizing heterogeneous graph neural networks) and SubCDR (employing subcomponent-guided deep learning), demonstrated superior performance compared to general-purpose scFMs [25] [26].

Computational Efficiency Trade-offs: The substantial computational resources required for scFM fine-tuning may not be justified given their current performance limitations, especially when simpler models achieve comparable or better results [14].

Diagram 2: Model Selection Framework for DRP. This decision flow prioritizes simpler, biologically-informed approaches based on benchmarking evidence, with foundation models reserved for specialized cases.

Integration of single-cell foundation models with DeepCDR frameworks offers promising avenues for enhancing cancer drug response prediction, but requires careful, evidence-based implementation. Current benchmarking reveals that while scGPT and scFoundation provide valuable biological representations, they rarely outperform simpler approaches as end-to-end solutions and show significant limitations in zero-shot settings.

For immediate DeepCDR enhancement, a hybrid approach leveraging scGPT embeddings as input features to traditional machine learning models, augmented with biological prior knowledge, represents the most promising integration path. This strategy combines the representation learning capabilities of foundation models with the proven predictive power of established DRP methodologies. As the scFM field rapidly evolves, continued rigorous benchmarking against simple baselines remains essential to distinguish genuine algorithmic advances from incremental improvements that fail to translate to practical predictive performance.

Within the rapidly evolving field of single-cell biology, foundation models pretrained on millions of cells promise to serve as versatile tools for a wide array of downstream tasks. The "pre-train then fine-tune" paradigm aims to capture universal patterns of gene regulation and cell behavior, which can then be efficiently adapted to specific applications. This guide provides an objective, data-driven comparison of two prominent foundation models, scGPT and scFoundation, focusing on their performance across three critical tasks (cell type annotation, batch correction, and gene network inference), with perturbation effect prediction included as an additional point of comparison. The analysis is framed within the broader context of benchmarking studies that seek to evaluate whether these complex models deliver tangible advantages over simpler, more established computational methods. The findings summarized here are based on recent peer-reviewed literature and preprints that have conducted rigorous, multi-faceted benchmarks.

Performance Comparison Tables

The following tables summarize the quantitative performance of scGPT and scFoundation against various baseline models across different tasks. The data is aggregated from multiple large-scale benchmarking studies.

Table 1: Performance on Perturbation Effect Prediction (Differential Expression Space)

| Model | Adamson Dataset (Pearson Delta) | Norman Dataset (Pearson Delta) | Replogle K562 (Pearson Delta) | Replogle RPE1 (Pearson Delta) |
|---|---|---|---|---|
| Train Mean (Simplest Baseline) | 0.711 | 0.557 | 0.373 | 0.628 |
| scGPT | 0.641 | 0.554 | 0.327 | 0.596 |
| scFoundation | 0.552 | 0.459 | 0.269 | 0.471 |
| Random Forest with GO Features | 0.739 | 0.586 | 0.480 | 0.648 |

Table 2: Zero-Shot Performance on Cell-Level Tasks [4] [15]

| Model | Cell Type Annotation (AvgBio Score) | Batch Integration (iLISI Score) | Computational Resources |
|---|---|---|---|
| scGPT | Variable; outperformed by scVI and Harmony on most datasets [15] | Good on complex datasets with biological batch effects [15] | 50 M parameters [4] |
| scFoundation | Information missing | Information missing | 100 M parameters [4] |
| Geneformer | Consistently outperformed by simpler baselines [15] | Poor; often worsened batch effects [15] | 40 M parameters [4] |
| Baseline: scVI / Harmony | Consistently high performance [15] | Consistently high performance [15] | Lower resource requirements |

Table 3: Performance on Gene Network Inference [27]

| Model Category | Representative Methods | Precision | Recall | Leverages Interventional Data |
|---|---|---|---|---|
| Observational Methods | PC, GES, NOTEARS | Low to Moderate | Low to Moderate | No |
| Interventional Methods | GIES, DCDI | Low to Moderate | Low to Moderate | Yes, but with limited benefit |
| Challenge-Winning Methods | Mean Difference, Guanlab | High | High | Yes |
| Foundation Models | scGPT, scFoundation | Not consistently top-ranked [27] | Not consistently top-ranked [27] | Information missing |
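The "Mean Difference" family in Table 3 scores a candidate edge from gene *i* to gene *j* by how much gene *j*'s mean expression shifts when gene *i* is perturbed, relative to control cells. The sketch below illustrates that general idea under stated assumptions (absolute mean shift, self-edges ignored, one intervention matrix per perturbed gene). It is not the code of any challenge submission, and the function names are hypothetical.

```python
import numpy as np


def mean_difference_scores(control: np.ndarray, interventions: dict) -> np.ndarray:
    """Score directed edges i -> j by |mean_j(cells with gene i perturbed) - mean_j(control)|.

    control: (n_control_cells, n_genes) expression matrix.
    interventions: maps a perturbed gene's column index to the
        (n_cells_i, n_genes) matrix of cells carrying that perturbation.
    """
    n_genes = control.shape[1]
    control_mean = control.mean(axis=0)
    scores = np.zeros((n_genes, n_genes))
    for i, cells in interventions.items():
        scores[i] = np.abs(cells.mean(axis=0) - control_mean)
    np.fill_diagonal(scores, 0.0)  # a gene's effect on itself is not a network edge
    return scores


def top_edges(scores: np.ndarray, k: int):
    """Return the k highest-scoring (source, target) gene index pairs."""
    flat = np.argsort(scores, axis=None)[::-1][:k]
    return [tuple(int(v) for v in np.unravel_index(f, scores.shape)) for f in flat]
```

Its simplicity is the point of comparison: it uses interventional data directly, which is one plausible reason such methods rank above models that cannot exploit it.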

Experimental Protocols