Benchmarking Single-Cell Foundation Models: Assessing Generalization Across Tissue Types and Clinical Applications

Abstract

Single-cell foundation models (scFMs) have emerged as transformative tools for analyzing cellular heterogeneity, yet their ability to generalize across diverse tissue types and realistic clinical scenarios remains a critical, unanswered question. This article provides a comprehensive assessment of scFM generalization, synthesizing recent benchmarking studies that reveal a complex performance landscape. We explore the foundational principles of models like scGPT and Geneformer, their methodological application in cross-tissue annotation and drug response prediction, and the persistent challenges of batch effects and biological interpretability. By evaluating scFMs against traditional methods across a spectrum of tasks—from novel cell type discovery to cancer cell identification—we deliver actionable insights and selection frameworks for researchers and drug development professionals aiming to translate computational advances into robust biological and clinical insights.

The Rise of Single-Cell Foundation Models: Core Architectures and Cross-Tissue Potential

Single-cell foundation models (scFMs) bring transformer-based artificial intelligence to cellular biology, changing how researchers analyze biological systems at single-cell resolution. Inspired by the success of transformer architectures in natural language processing (NLP), these models are pretrained on datasets comprising millions of single-cell transcriptomes to learn fundamental biological principles [1]. The core premise treats individual cells as sentences and genes or other genomic features as words or tokens, enabling the model to decipher the "language" of cellular identity and function [1]. This allows researchers to move beyond analyzing single experiments in isolation toward unified models that leverage heterogeneous data across tissues, conditions, and even species.

The assessment of scFM generalization across tissue types represents a critical frontier in computational biology, with profound implications for drug development and clinical applications. As noted in recent benchmarking studies, scFMs have demonstrated remarkable potential as robust and versatile tools for diverse applications, though their ability to extract unique biological insights beyond standard methods remains an area of active investigation [2]. For research scientists and drug development professionals, understanding the comparative strengths, limitations, and optimal application scenarios of these models is essential for leveraging their full potential in uncovering novel therapeutic targets and advancing precision medicine initiatives.

Conceptual and Architectural Foundations of scFMs

From Language to Biology: Core Concepts

Foundation models are large-scale artificial intelligence models trained on extensive datasets using self-supervised objectives, then adapted to a wide range of downstream tasks [1]. These models develop rich internal representations that can be fine-tuned for specific tasks with relatively few additional labeled examples, mirroring the transfer learning capabilities that have reshaped NLP and computer vision [1]. The potential of this approach for single-cell biology becomes evident given the volume of publicly available single-cell data: platforms such as CZ CELLxGENE now provide unified access to annotated single-cell datasets with over 100 million unique cells standardized for analysis [1].

The adaptation of transformer architectures to single-cell data necessitates several conceptual translations from linguistic to biological domains. In NLP, tokens typically represent words or subwords with inherent sequential relationships, whereas gene expression data lacks natural ordering [1]. To address this fundamental difference, scFMs employ various tokenization strategies, including ranking genes within each cell by expression levels, partitioning genes into expression value bins, or using normalized counts directly [1]. Special tokens may be incorporated to represent cellular identity, metadata, or multimodal omics information, enriching the biological context available to the model [3].
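The two most common tokenization strategies named above can be illustrated with a minimal sketch. The function names, the toy cell, and the use of equal-width bins are assumptions made for illustration; published models may define ranks and bins differently (for example, with quantile-based bins or corpus-level normalization).

```python
def rank_tokenize(expression, max_len=None):
    """Return gene names ordered from highest to lowest expression, dropping zeros."""
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    # Sort by descending expression; break ties alphabetically so output is deterministic.
    expressed.sort(key=lambda item: (-item[1], item[0]))
    tokens = [gene for gene, _ in expressed]
    return tokens if max_len is None else tokens[:max_len]


def bin_values(expression, n_bins=3):
    """Map each nonzero value to an integer bin in 1..n_bins; zeros stay in bin 0."""
    nonzero = [v for v in expression.values() if v > 0]
    if not nonzero:
        return {gene: 0 for gene in expression}
    low, high = min(nonzero), max(nonzero)
    width = (high - low) / n_bins or 1.0  # fall back to 1.0 when all values are equal
    binned = {}
    for gene, value in expression.items():
        if value <= 0:
            binned[gene] = 0
        else:
            # Equal-width bins (an assumption); the maximum value is clamped into the top bin.
            binned[gene] = min(int((value - low) / width) + 1, n_bins)
    return binned


# Hypothetical toy cell: gene symbol -> normalized expression.
cell = {"CD3E": 5.0, "MS4A1": 0.0, "LYZ": 12.0, "GAPDH": 9.0}
print(rank_tokenize(cell))  # ['LYZ', 'GAPDH', 'CD3E']
print(bin_values(cell))     # {'CD3E': 1, 'MS4A1': 0, 'LYZ': 3, 'GAPDH': 2}
```

Ranking discards absolute magnitudes and keeps only relative order within the cell, whereas binning keeps a coarse magnitude per gene; this is the main practical difference between the two schemes.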

Architectural Landscape and Pretraining Strategies

Most established scFMs utilize transformer architectures, but significant variation exists in their specific implementations and pretraining approaches. The field has generally diverged into two primary architectural paradigms: encoder-based models (e.g., scBERT) employing bidirectional attention mechanisms that learn from all genes in a cell simultaneously, and decoder-based models (e.g., scGPT) utilizing unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes [1]. Hybrid designs combining encoder and decoder components are also emerging, though no single architecture has yet established clear superiority for single-cell data [1].

Pretraining strategies form the crucial foundation for model capabilities, with most scFMs employing self-supervised objectives such as masked gene modeling (MGM)—analogous to masked language modeling in NLP—where the model learns to predict randomly masked elements of the gene expression profile based on contextual information from the remaining genes [1] [4]. The scale of pretraining continues to expand rapidly, with models now trained on datasets ranging from 30 million to 100 million cells, capturing increasingly comprehensive biological variation [5].
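The masking step of masked gene modeling can be sketched as follows. This is a simplified illustration only: the `<mask>` token name, the 15% default masking fraction, and the function name are assumptions, not the settings of any particular scFM.

```python
import random

MASK = "<mask>"  # hypothetical mask token name


def mask_tokens(tokens, mask_fraction=0.15, seed=0):
    """Replace a random subset of tokens with MASK and return the targets to predict."""
    if not tokens:
        return [], {}
    rng = random.Random(seed)
    # Always mask at least one token, and never more than the sequence holds.
    n_mask = min(len(tokens), max(1, round(len(tokens) * mask_fraction)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]  # remember the original gene at this position
        masked[pos] = MASK
    return masked, targets


# Hypothetical rank-ordered gene tokens for one cell.
genes = ["LYZ", "GAPDH", "CD3E", "ACTB", "B2M", "MALAT1", "CD74", "FTL", "TMSB4X", "RPLP0"]
masked, targets = mask_tokens(genes, mask_fraction=0.3)
print(masked)
print(targets)
```

During pretraining, the model would receive `masked` as input and be scored only on how well it recovers the entries in `targets`.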

Table 1: Fundamental Components of Single-Cell Foundation Models

| Component | Description | Examples/Approaches |
|---|---|---|
| Tokenization | Process of converting raw gene expression data into discrete input units | Gene ranking by expression, value binning, normalized counts [1] |
| Architecture | Neural network design for processing tokenized inputs | Encoder-based (scBERT), decoder-based (scGPT), hybrid designs [1] |
| Pretraining Tasks | Self-supervised objectives for initial model training | Masked gene modeling, generative pretraining [1] [4] |
| Biological Representation | How cellular information is encoded in the model | Gene embeddings, cell embeddings, attention patterns [2] |

Comparative Analysis of Leading scFMs

Model Architectures and Training Specifications

The scFM landscape encompasses numerous models with distinct architectural designs, training datasets, and intended applications. Geneformer employs a BERT-like encoder architecture trained on 30 million cells using masked gene modeling with a cross-entropy loss focused on gene identity prediction [6]. In contrast, scGPT utilizes a decoder-based architecture with 50 million parameters pretrained on 33 million cells through iterative masked gene modeling with mean squared error loss, supporting multiple omics modalities including scRNA-seq, scATAC-seq, and spatial transcriptomics [6] [4]. scFoundation represents a more recent large-scale implementation with 100 million parameters trained on 50 million cells using an asymmetric encoder-decoder architecture and read-depth-aware masked gene modeling [6].

Specialized domain adaptations are also emerging, such as scPlantLLM, specifically designed to address the unique challenges of plant single-cell genomics, including polyploidy, cell walls, and complex tissue-specific expression patterns that differ substantially from animal systems [5]. This specialization highlights the growing recognition that biological context significantly influences model performance, particularly when generalizing across diverse tissue types and organismal systems.

Table 2: Architectural and Training Specifications of Major scFMs

| Model | Parameters | Training Dataset Size | Architecture | Modalities | Key Features |
|---|---|---|---|---|---|
| Geneformer | 40 million | 30 million cells | Encoder | scRNA-seq | Gene ranking by expression; lookup table embeddings [6] |
| scGPT | 50 million | 33 million cells | Decoder | Multi-omics | Value binning; generative pretraining; flash attention [6] [4] |
| scFoundation | 100 million | 50 million cells | Encoder-decoder | scRNA-seq | Read-depth-aware MGM; large parameter count [6] |
| UCE | 650 million | 36 million cells | Encoder | scRNA-seq | Protein sequence embeddings; genomic position ordering [6] |
| scPlantLLM | Not specified | Plant-specific | Transformer | Plant scRNA-seq | Species-specific training; plant biology optimization [5] |

Performance Benchmarking Across Tissue Types and Biological Tasks

Comprehensive benchmarking studies provide crucial insights into the practical performance of scFMs across diverse biological contexts. A recent large-scale evaluation assessed six prominent scFMs against established baselines using twelve metrics spanning unsupervised, supervised, and knowledge-based approaches [2]. The findings revealed that no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [2]. Notably, scGPT demonstrated robust performance across multiple tasks, particularly in zero-shot settings, while Geneformer and scFoundation showed strengths in gene-level tasks [4].

For cell-type annotation—a fundamental task in single-cell analysis—benchmarking results have shown that foundation models can achieve high accuracy, but performance varies significantly across tissue types and cell class complexities [2]. The introduction of ontology-informed metrics, such as the Lowest Common Ancestor Distance (LCAD), which measures the ontological proximity between misclassified cell types, provides more biologically meaningful evaluation of annotation errors compared to traditional accuracy metrics alone [2]. Similarly, the scGraph-OntoRWR metric assesses how well model-derived cell type relationships align with established biological knowledge in cell ontologies, offering insights into the biological plausibility of the learned representations [2].
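To make the idea behind an ontology-informed error metric concrete, the sketch below computes a lowest-common-ancestor distance on a tiny, hypothetical cell type hierarchy. The toy `PARENT` tree and the choice to sum the edges from both terms up to their common ancestor are assumptions for illustration; the real Cell Ontology is a directed acyclic graph, and the exact LCAD definition used in the benchmark [2] may differ.

```python
# Hypothetical toy hierarchy: child term -> parent term.
PARENT = {
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
    "leukocyte": "cell",
}


def ancestors(term):
    """Return the path from a term up to the root, starting with the term itself."""
    path = [term]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path


def lca_distance(true_type, predicted_type):
    """Edges from each term up to their lowest common ancestor, summed."""
    steps_from_pred = {term: steps for steps, term in enumerate(ancestors(predicted_type))}
    for steps_from_true, term in enumerate(ancestors(true_type)):
        if term in steps_from_pred:
            return steps_from_true + steps_from_pred[term]
    raise ValueError("terms share no common ancestor")


print(lca_distance("T cell", "B cell"))    # 2
print(lca_distance("T cell", "monocyte"))  # 3
```

Under this scoring, mistaking a T cell for a B cell is a milder error than mistaking it for a monocyte, which plain accuracy would count identically.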

Batch integration is another critical challenge where scFMs have shown both promise and limitations. Evaluation across five datasets with diverse biological conditions and multiple sources of batch effects (inter-patient, inter-platform, and inter-tissue variations) revealed that while scGPT generally outperformed other models, all scFMs struggled to correct for batch effects across different technologies in zero-shot settings [4]. This underscores the persistent challenge of achieving robust generalization across experimental platforms, a crucial consideration for researchers integrating data from multiple sources or tissue types.

Table 3: Performance Comparison of scFMs Across Key Tasks

| Model | Cell-type Annotation | Batch Integration | Gene-function Prediction | Cross-tissue Generalization | Computational Efficiency |
|---|---|---|---|---|---|
| Geneformer | Moderate | Moderate | Strong | Variable | High [2] [4] |
| scGPT | Strong | Strong | Moderate | Good | High [2] [4] |
| scFoundation | Moderate | Moderate | Strong | Variable | Moderate [2] [4] |
| UCE | Moderate | Moderate | Moderate | Limited data | Low [6] |
| scBERT | Weaker | Weaker | Weaker | Limited data | Moderate [4] |

Experimental Frameworks for Assessing Cross-Tissue Generalization

Benchmarking Methodologies and Evaluation Metrics

Rigorous assessment of scFM generalization across tissue types requires carefully designed experimental protocols and biologically informed evaluation metrics. Recent benchmarking efforts have established comprehensive frameworks that evaluate both gene-level and cell-level tasks under realistic conditions [2]. For gene-level assessment, models are typically evaluated on their ability to predict tissue specificity and Gene Ontology terms by comparing gene embeddings extracted from model input layers against established biological knowledge bases [2]. At the cellular level, benchmarking encompasses dataset integration, cell type annotation, and more clinically relevant tasks such as cancer cell identification and drug sensitivity prediction across multiple cancer types [2].

The evaluation pipeline incorporates both traditional metrics and novel approaches specifically designed to capture biological fidelity. Beyond standard clustering metrics like average silhouette width (ASW), benchmarking now includes cell ontology-informed metrics that measure consistency with prior biological knowledge [2]. The roughness index (ROGI) has emerged as a particularly valuable proxy metric, quantifying the smoothness of the cell-property landscape in the pretrained latent space and correlating with model performance on downstream tasks [2]. This multi-faceted evaluation strategy enables researchers to select optimal models based on specific dataset characteristics, task requirements, and computational constraints.
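As a reference point for the standard clustering metrics mentioned above, here is a minimal pure-Python sketch of average silhouette width over cell embeddings. The toy two-dimensional embeddings and labels are hypothetical, Euclidean distance is assumed, and at least two distinct labels are required; in practice a library implementation would normally be used instead.

```python
import math


def silhouette_width(points, labels):
    """Average silhouette width over all points (Euclidean distance, >= 2 clusters)."""
    scores = []
    for i, (point, label) in enumerate(zip(points, labels)):
        # Collect distances from this point to every other point, grouped by cluster.
        by_cluster = {}
        for j, (other, other_label) in enumerate(zip(points, labels)):
            if i != j:
                by_cluster.setdefault(other_label, []).append(math.dist(point, other))
        if label not in by_cluster:
            scores.append(0.0)  # singleton cluster: silhouette is defined as 0
            continue
        a = sum(by_cluster[label]) / len(by_cluster[label])  # mean intra-cluster distance
        b = min(sum(d) / len(d) for lab, d in by_cluster.items() if lab != label)
        denom = max(a, b)
        scores.append(0.0 if denom == 0 else (b - a) / denom)
    return sum(scores) / len(scores)


# Hypothetical 2-D cell embeddings forming two well-separated groups.
embeddings = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
print(silhouette_width(embeddings, ["T", "T", "B", "B"]))  # close to 1: labels match structure
print(silhouette_width(embeddings, ["T", "B", "T", "B"]))  # negative: labels cut across structure
```

Values near 1 indicate that cells with the same label sit close together in the latent space, while values near or below 0 indicate that the labels do not follow the geometry of the embedding.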

Standardized Frameworks for scFM Evaluation

The proliferation of diverse scFM architectures with heterogeneous implementations has created significant challenges for reproducible evaluation and comparison. To address this, standardized frameworks such as BioLLM have been developed, providing unified interfaces for integrating multiple scFMs despite their architectural differences [7] [4]. BioLLM implements a decision-tree-based preprocessing interface with rigorous quality control standards, a centralized analytical engine supporting both zero-shot inference and fine-tuning, and comprehensive performance metrics assessing embedding quality, biological fidelity, and prediction accuracy [4].

This standardization has enabled systematic large-scale comparisons revealing that while scGPT generally excels in generating biologically relevant cell embeddings, its performance advantage is task-dependent and influenced by factors such as input gene length [4]. Notably, evaluations using BioLLM have demonstrated that supervised fine-tuning significantly enhances performance for both cell embedding extraction and batch-effect correction compared to zero-shot settings, highlighting the importance of appropriate training protocols for specific applications [4].

Essential Research Toolkit for scFM Implementation

Computational Frameworks and Integration Tools

Successful implementation of scFMs in cross-tissue research requires specialized computational tools and frameworks designed to address the unique challenges of single-cell data analysis. BioLLM has emerged as a particularly valuable resource, providing standardized APIs that eliminate architectural and coding inconsistencies across different models, thereby enabling streamlined model access and comparative evaluation [7] [4]. This framework supports both zero-shot and fine-tuning approaches, facilitating comprehensive benchmarking under consistent conditions—a critical capability given the performance variability observed across different tasks and tissue types [4].

For data integration tasks, deep learning approaches based on variational autoencoders (VAEs) have demonstrated particular effectiveness, with methods such as scVI and scANVI providing robust frameworks for integrating datasets while preserving biological variation [8]. Recent advancements have introduced correlation-based loss functions and enhanced benchmarking metrics that better capture biological conservation at both inter-cell-type and intra-cell-type levels, addressing limitations in previous integration benchmarks that struggled to adequately preserve fine-grained biological structures [8].

The development and application of scFMs rely heavily on large-scale, curated data resources that provide the diverse cellular contexts necessary for robust pretraining. Public repositories such as CZ CELLxGENE, the Human Cell Atlas, NCBI GEO, and EMBL-EBI Expression Atlas host thousands of single-cell sequencing studies, with integrated compendia like PanglaoDB and the Human Ensemble Cell Atlas providing standardized access to data from multiple sources [1]. These resources collectively enable training on cells representing diverse biological conditions, ideally capturing a comprehensive spectrum of biological variation essential for cross-tissue generalization.

For drug development applications, scFMs are increasingly integrated into target discovery pipelines, where their ability to resolve cellular heterogeneity provides unprecedented insights into disease mechanisms and therapeutic opportunities [3]. Perturbation modeling represents a particularly promising application, with scFMs enabling in silico simulation of genetic or chemical interventions to reveal functional targets and therapeutic mechanisms [3]. The incorporation of structural biology information through multimodal AI approaches further enhances this capability, combining atomic-resolution structural insights with dynamic cellular data to identify clinically relevant targets with greater precision [3].

Table 4: Essential Research Resources for scFM Implementation

| Resource Category | Specific Tools/Databases | Primary Function | Relevance to Cross-Tissue Research |
|---|---|---|---|
| Integration Frameworks | BioLLM, scVI, scANVI | Standardized model access and data integration | Enables consistent evaluation across tissue datasets [8] [4] |
| Data Repositories | CZ CELLxGENE, Human Cell Atlas, GEO | Curated single-cell datasets | Provides diverse tissue contexts for training and validation [1] |
| Evaluation Metrics | scGraph-OntoRWR, LCAD, ROGI | Biologically informed model assessment | Quantifies biological fidelity across tissue types [2] |
| Specialized Models | scPlantLLM, tissue-specific adaptations | Domain-specific optimization | Addresses unique characteristics of different biological systems [5] |

The development of single-cell foundation models represents a paradigm shift in computational biology, creating powerful new approaches for deciphering cellular complexity across tissue types and biological systems. Rather than seeking a universally superior model, the current evidence suggests that researchers should adopt a nuanced, task-specific selection strategy informed by comprehensive benchmarking studies [2]. Factors such as dataset size, task complexity, required biological interpretability, and available computational resources should guide model selection, with frameworks like BioLLM providing practical support for implementation and evaluation [4].

Future advancements in scFMs will likely focus on several key frontiers: improved multimodal integration combining transcriptomic, epigenomic, and spatial information; enhanced generalization across species and tissue types through more diverse training data; and development of more interpretable architectures that provide biological insights beyond predictive accuracy [1] [3]. For drug development professionals and research scientists, these developments promise to unlock deeper insights into cellular function and disease mechanisms, ultimately accelerating the translation of single-cell genomics into therapeutic breakthroughs. As the field continues to evolve, the rigorous assessment of model generalization across tissue types will remain essential for realizing the full potential of scFMs in both basic research and clinical applications.

The advent of single-cell RNA sequencing (scRNA-seq) has provided an unprecedented, granular view of biological systems, revolutionizing research paradigms in biology and drug development [6]. However, the high sparsity, dimensionality, and noise of these data present significant challenges for analysis [6]. Inspired by breakthroughs in natural language processing (NLP), transformer-based architectures have been adapted to single-cell omics, giving rise to single-cell foundation models (scFMs) [1]. These models are pretrained on vast datasets encompassing millions of cells and can be adapted to various downstream tasks, promising a unified framework for analyzing cellular heterogeneity and complex regulatory networks [1]. This guide objectively compares the performance of leading scFMs, with a specific focus on their zero-shot generalization capabilities across diverse tissue types—a critical requirement for robust biological and clinical application.

Architectural Foundations of Single-Cell Foundation Models

Core Transformer Adaptations for Single-Cell Data

Most scFMs are built on the transformer architecture, which uses attention mechanisms to weight relationships between all input tokens [1]. However, single-cell data lacks the inherent sequential order of language, necessitating specialized tokenization strategies:

- Gene Ordering: A common approach ranks genes within each cell by expression level, creating a deterministic sequence for the transformer [1]. Alternatives include binning genes by expression values or using normalized counts without complex ranking [1].

- Token and Value Embeddings: Input representations typically combine a gene identifier embedding with its expression value, the latter incorporated via value binning, value projection, or by using expression rank as the input order [6].

- Positional Embeddings: While some models adopt traditional positional encodings to represent gene order, others omit them, reflecting the ongoing debate on how best to represent non-sequential gene relationships [6].
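The way a gene identifier embedding and a value embedding might be combined can be sketched as below. Everything here is a toy stand-in: the lookup tables hold random vectors rather than learned parameters, the embedding dimension is arbitrary, and element-wise addition is assumed as the combination rule, which is one common choice but not necessarily what a given model uses.

```python
import random


def make_table(keys, dim, seed):
    """Build a toy lookup table of random vectors, standing in for learned embeddings."""
    rng = random.Random(seed)
    return {key: [rng.uniform(-1, 1) for _ in range(dim)] for key in keys}


DIM = 4  # hypothetical embedding dimension
gene_table = make_table(["LYZ", "GAPDH", "CD3E"], DIM, seed=0)  # gene identity embeddings
bin_table = make_table([1, 2, 3], DIM, seed=1)                  # expression-bin embeddings


def embed_cell(gene_bins):
    """Return one input vector per gene: gene embedding plus value-bin embedding."""
    return [
        [g + v for g, v in zip(gene_table[gene], bin_table[value_bin])]
        for gene, value_bin in gene_bins
    ]


# Hypothetical cell given as (gene, expression bin) pairs.
vectors = embed_cell([("LYZ", 3), ("GAPDH", 2), ("CD3E", 1)])
print(len(vectors), len(vectors[0]))  # 3 4
```

No positional term is added in this sketch, matching the models in the list above that omit positional encodings; a rank-ordered model would instead rely on the position of each gene in the sequence.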

Table 1: Architectural and Pretraining Configurations of Prominent scFMs

| Model Name | Architecture Type | # Parameters | Pretraining Dataset Scale | Primary Pretraining Task | Value Embedding | Positional Embedding |
|---|---|---|---|---|---|---|
| Geneformer | Encoder | 40 M | 30 M cells | Masked Gene Modeling (CE loss) | Ordering | ✓ |
| scGPT | Decoder (GPT-like) | 50 M | 33 M cells | Iterative MGM (MSE loss) | Value Binning | × |
| UCE | Encoder | 650 M | 36 M cells | Binary MGM | / (Uses protein embeddings) | ✓ |
| scFoundation | Encoder-Decoder | 100 M | 50 M cells | Read-depth-aware MGM | Value Projection | × |

Pretraining Strategies for Biological Generalization

Pretraining is performed using self-supervised objectives on massive, aggregated datasets from public archives like CZ CELLxGENE, which provides access to over 100 million standardized single-cell profiles [1]. The most common pretraining task is a variant of Masked Gene Modeling (MGM), where the model learns to predict randomly masked genes or their expression values based on the context of other genes in the cell [1]. The specific loss functions and masking strategies vary, including cross-entropy for gene identity prediction and mean squared error (MSE) for value regression [6]. This process allows the model to learn fundamental biological principles, such as core transcriptional programs and gene-gene relationships, forming the basis for subsequent zero-shot generalization [1].
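The two loss families mentioned above differ in what is predicted at a masked position: a gene identity (classification) or an expression value (regression). The sketch below shows both in their simplest form; the function names and toy numbers are illustrative assumptions, and real models compute these over batches with a deep learning framework.

```python
import math


def cross_entropy(predicted_probs, true_gene):
    """Negative log-probability the model assigns to the true gene at one masked position."""
    return -math.log(predicted_probs[true_gene])


def mean_squared_error(predicted, observed):
    """Average squared difference between predicted and observed values at masked positions."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


# Gene identity prediction: hypothetical probabilities over a three-gene vocabulary.
probs = {"LYZ": 0.7, "GAPDH": 0.2, "CD3E": 0.1}
print(cross_entropy(probs, "LYZ"))   # small loss: the true gene received high probability
print(cross_entropy(probs, "CD3E"))  # large loss: the true gene received low probability

# Expression value regression at two masked positions.
print(mean_squared_error([1.0, 3.0], [2.0, 5.0]))  # 2.5
```

Cross-entropy fits rank-ordered inputs, where the target is which gene occupies a position, while MSE fits binned or projected values, where the target is how strongly a known gene is expressed.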

Benchmarking Zero-Shot Generalization Across Tissues

Experimental Protocols for Benchmarking

Comprehensive benchmarking studies evaluate scFMs under realistic conditions to assess their utility in biological and clinical research [6]. The general protocol involves:

- Feature Extraction: Zero-shot cell or gene embeddings are obtained from scFMs pretrained on large-scale corpora, without any task-specific fine-tuning [6].

- Downstream Task Evaluation: These embeddings are evaluated on a suite of biologically relevant tasks. Cell-level tasks include batch integration, cell type annotation, cancer cell identification, and drug sensitivity prediction. Gene-level tasks assess the models' understanding of gene functions and relationships [6].

- Performance Quantification: Model performance is measured using a battery of metrics. These include standard supervised and unsupervised metrics, as well as novel biology-aware metrics like scGraph-OntoRWR (which measures consistency of captured cell type relationships with prior biological knowledge) and Lowest Common Ancestor Distance (LCAD) (which assesses the severity of cell type misannotation errors) [6].

- Baseline Comparison: scFMs are compared against well-established traditional methods, such as Seurat (anchor-based), Harmony (clustering-based), and scVI (generative model), to determine the added value of large-scale pretraining [6].
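The feature extraction and downstream evaluation steps above can be sketched with a simple k-nearest-neighbor probe on frozen embeddings. This is an illustrative assumption about how such an evaluation could be run, not the benchmark's actual pipeline: the embeddings here are hypothetical toy vectors standing in for the output of a pretrained scFM, and leave-one-out accuracy is used only to keep the example small.

```python
import math
from collections import Counter


def knn_predict(query, reference, labels, k=3):
    """Majority label among the k nearest reference embeddings (Euclidean distance)."""
    nearest = sorted(range(len(reference)), key=lambda i: math.dist(query, reference[i]))[:k]
    return Counter(labels[i] for i in nearest).most_common(1)[0][0]


def leave_one_out_accuracy(embeddings, labels, k=3):
    """Classify each cell from all the others and report the fraction labeled correctly."""
    correct = 0
    for i, query in enumerate(embeddings):
        rest = embeddings[:i] + embeddings[i + 1:]
        rest_labels = labels[:i] + labels[i + 1:]
        correct += knn_predict(query, rest, rest_labels, k) == labels[i]
    return correct / len(embeddings)


# Hypothetical zero-shot embeddings: no model weights are updated at any point.
embeddings = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
labels = ["T cell"] * 3 + ["B cell"] * 3
print(leave_one_out_accuracy(embeddings, labels))  # 1.0
```

Because the probe has no trainable parameters beyond `k`, differences in its accuracy across models reflect the quality of the embeddings themselves, which is the point of a zero-shot comparison.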

Comparative Performance on Cell-Level Tasks

Benchmarking across multiple datasets and tasks reveals the relative strengths and limitations of current scFMs. The following table summarizes key quantitative findings from a comprehensive benchmark study that evaluated six major scFMs against established baselines [6].

Table 2: Zero-Shot Performance Comparison on Cell-Level Tasks

| Model | Batch Integration (Avg. Score) | Cell Type Annotation (Avg. Accuracy) | Novel Cell Type Generalization | Cancer Cell Identification (Avg. F1) | Robustness to Data Sparsity |
|---|---|---|---|---|---|
| Geneformer | High | High | Moderate | High | Moderate |
| scGPT | High | High | High | High | High |
| UCE | Moderate | Moderate | Low | Moderate | Low |
| scFoundation | High | High | Moderate | High | High |
| Traditional Baselines (e.g., Seurat, Harmony) | Variable | Variable (can be high with tuning) | Low | Variable | High |

Key findings from the benchmarking include:

- No Single Dominant Model: No single scFM consistently outperforms all others across every task and dataset, highlighting that model selection must be tailored to the specific application [6].

- Robustness and Versatility: In general, scFMs demonstrate robustness and versatility across diverse applications, effectively integrating heterogeneous datasets and generalizing to novel cell types [6].

- Performance vs. Simplicity: While scFMs show strong zero-shot performance, simpler machine learning models can be more efficient and adaptable for specific datasets, particularly under resource constraints or when dealing with well-defined, narrow tasks [6] [9].

The Scientist's Toolkit: Essential Research Reagents

To implement and evaluate scFMs in research, scientists rely on an ecosystem of data, software, and computational resources.

Table 3: Key Research Reagent Solutions for scFM Implementation

| Reagent / Resource | Type | Primary Function | Access / Example |
|---|---|---|---|
| CZ CELLxGENE | Data Platform | Provides unified access to standardized, annotated single-cell datasets for pretraining and validation. | Online Platform [1] [6] |
| PerturBench | Benchmarking Framework | A modular codebase for fair evaluation and comparison of perturbation prediction models, including relevant tasks and metrics. | GitHub Repository [9] |
| scGPT / Geneformer | Pre-trained Models | Offers readily available, pretrained scFMs that can be applied out-of-the-box or fine-tuned for specific downstream tasks. | [Model Hubs / GitHub] [6] |
| Hugging Face Transformers | Software Library | Provides the underlying architecture and pipelines for building and working with transformer models, adapted for single-cell data. | Python Library [10] |
| AIDA v2 (via CELLxGENE) | Benchmark Dataset | Serves as an independent, unbiased dataset for rigorously validating model conclusions and mitigating data leakage risks. | [CellxGene Atlas] [6] |

Critical Analysis and Future Directions

The "pre-train then fine-tune" paradigm holds immense promise for single-cell genomics, but several challenges remain. A significant issue is interpretability; understanding the biological relevance of the latent embeddings and model representations is still nontrivial [1]. Furthermore, the field has yet to converge on a single best practice for tokenization, architecture, or pretraining objective [6] [1].

Future advancements are likely to focus on enhancing the robustness, interpretability, and scalability of scFMs [1]. This includes developing more biology-aware evaluation metrics and benchmarking frameworks, like those introduced in recent studies [6] [9]. Another promising direction is the move toward multi-modal foundation models that can integrate scRNA-seq data with other modalities like scATAC-seq, spatial transcriptomics, and proteomics to create a more comprehensive representation of cellular state [1]. As these models evolve, they are poised to become pivotal tools for unlocking deeper insights into cellular function and disease mechanisms.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, offering the potential to decode cellular heterogeneity at unprecedented scale. These models, pretrained on millions of single-cell transcriptomes, aim to learn universal representations of cellular biology that generalize across tissues, species, and experimental conditions. The critical challenge lies in assessing their generalization capabilities—the ability to maintain performance when applied to novel biological contexts not encountered during training. This evaluation is particularly vital for researchers and drug development professionals who require reliable tools that can extrapolate findings across tissue types and disease states. Models like scGPT, Geneformer, scPlantFormer, and Nicheformer have adopted distinct architectural and training strategies to address this challenge. Their performance is not uniform; each exhibits unique strengths and limitations that become apparent under rigorous benchmarking. This guide provides an objective comparison of these leading models, focusing specifically on their generalization capacity across diverse tissue types—a crucial determinant of their utility in real-world research and therapeutic development.

Model Architectures and Pretraining Strategies

Fundamental Architectural Differences

Single-cell foundation models share the common goal of learning robust cellular representations, but they employ significantly different architectural approaches and training methodologies. These differences profoundly impact their generalization capabilities and performance across various downstream tasks.

Table 1: Core Architectural Specifications of Leading scFMs

| Model | Architecture Type | Parameters | Pretraining Data Scale | Tokenization Strategy | Unique Features |
|---|---|---|---|---|---|
| scGPT | Transformer Decoder | ~50 million | 33 million human cells [6] [11] | Value binning + Lookup Table [6] | Multi-omic integration; Attention masking [1] |
| Geneformer | Transformer Encoder | ~40 million | 30 million cells [6] [1] | Rank-based gene ordering [12] [1] | Context-aware attention; Transfer learning [13] |
| Nicheformer | Transformer Encoder | 49.3 million | 110 million cells (57M dissociated + 53M spatial) [12] | Rank-based + technology-specific normalization [12] | Spatial context integration; Multispecies embeddings [12] |
| scPlantFormer | Transformer with Phylogenetic Constraints | Not specified | 1 million plant cells (Arabidopsis thaliana) [11] | Species-specific adaptation | Lightweight design; Cross-species annotation (92% accuracy) [11] |

Specialized Training Approaches

Each model's training methodology reflects its specialized focus. Nicheformer stands out through its incorporation of both dissociated single-cell and spatially resolved transcriptomics data, enabling it to learn representations that capture spatial microenvironment context [12]. Its training on SpatialCorpus-110M, encompassing 53.83 million spatially resolved cells, allows it to address a critical limitation of dissociated-data-only models [12]. The model introduces contextual tokens for species, modality, and technology, enabling it to learn distinct characteristics of each data type.

scGPT employs a more generalized approach with iterative masked gene modeling (MGM) using both gene-prompt and cell-prompt strategies [6]. Its pretraining on over 33 million non-cancerous human cells provides broad coverage of human cellular diversity [11] [1]. The model's capacity for multi-omic integration (scRNA-seq, scATAC-seq, CITE-seq, spatial transcriptomics) makes it particularly versatile for complex analytical tasks [6].

Geneformer utilizes a rank-based training approach where genes are ordered by expression level relative to the mean in the pretraining corpus [12] [1]. This strategy, applied to 30 million cells, aims to create embeddings robust to batch effects while preserving gene-gene relationships [1]. Its encoder-based architecture focuses on learning bidirectional relationships between genes within cellular contexts.
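The rank-based ordering described above can be illustrated with a minimal sketch. It follows the description in this article (expression scaled by a per-gene corpus mean, then sorted), not Geneformer's actual code; the function name `rank_tokenize`, the toy counts, and the toy corpus means are all hypothetical, and the default length of 2,048 is taken from the tokenization summary later in this article.

```python
from typing import Dict, List


def rank_tokenize(
    cell_counts: Dict[str, float],
    corpus_mean: Dict[str, float],
    max_len: int = 2048,
) -> List[str]:
    """Order genes by expression relative to a corpus-wide reference value.

    Genes with zero counts, or with no positive reference value, are dropped.
    """
    scaled = {}
    for gene, count in cell_counts.items():
        mean = corpus_mean.get(gene, 0.0)
        if count > 0 and mean > 0:
            scaled[gene] = count / mean
    # Sort by scaled value (descending); break ties by gene name for determinism.
    ordered = sorted(scaled, key=lambda g: (-scaled[g], g))
    return ordered[:max_len]


# Toy cell: GAPDH has the highest raw count but is unremarkable relative to
# the (made-up) corpus mean, so it ranks last among the expressed genes.
cell = {"GAPDH": 120.0, "CD3E": 8.0, "FOXP3": 3.0, "ALB": 0.0}
corpus = {"GAPDH": 100.0, "CD3E": 2.0, "FOXP3": 0.5, "ALB": 5.0}
tokens = rank_tokenize(cell, corpus)
print(tokens)
```

In this toy case the ubiquitously expressed gene is pushed down the sequence while genes that are unusually high for this cell move to the front, which is the intuition behind ranking relative to the corpus rather than by raw counts.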

scPlantFormer represents a specialized approach for plant systems, integrating phylogenetic constraints into its attention mechanism [11]. Despite being trained on a comparatively small dataset of 1 million plant cells, it achieves a reported 92% cross-species annotation accuracy, suggesting that targeted training on relevant data can partly compensate for scale [11].

Performance Benchmarking and Generalization Assessment

Experimental Framework for Evaluating Generalization

Rigorous benchmarking studies have established standardized protocols to evaluate scFM performance across diverse tasks and datasets. These protocols typically assess models in both zero-shot (without additional training) and fine-tuned settings across multiple biological contexts. Key evaluation tasks include:

- Cell type annotation and clustering: Measuring the ability to distinguish known cell types using metrics like Average BIO (AvgBio) score and average silhouette width (ASW) [14]

- Batch integration: Assessing correction of technical variations while preserving biological differences using principal component regression (PCR) and batch mixing scores [14]

- Spatial composition prediction: Evaluating prediction of spatial context and cellular microenvironments (specific to spatially-aware models) [12]

- Cross-species generalization: Testing performance transfer between organisms with different cellular architectures [11]

- Gene network inference: Assessing reconstruction of biologically plausible gene-gene interaction networks [15]
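As an illustration of one metric named in the list above, the following is a minimal pure-Python sketch of average silhouette width computed on cell embeddings with known labels. It uses the textbook silhouette definition with Euclidean distance; the benchmarks cited here may rescale or aggregate the score differently, and the four 2-D "embeddings" are made-up values.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence


def average_silhouette_width(
    points: Sequence[Sequence[float]], labels: Sequence[str]
) -> float:
    """Mean silhouette coefficient over all cells (Euclidean distance)."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        groups[label].append(idx)
    if len(groups) < 2:
        raise ValueError("silhouette needs at least two distinct labels")

    scores = []
    for i, label in enumerate(labels):
        own = [j for j in groups[label] if j != i]
        if not own:
            scores.append(0.0)  # usual convention for singleton groups
            continue
        # a: mean distance to cells sharing the label
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        # b: mean distance to the nearest other label group
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in groups.items()
            if other != label
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


embeddings = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
asw_separated = average_silhouette_width(embeddings, ["T", "T", "B", "B"])
asw_mixed = average_silhouette_width(embeddings, ["T", "B", "T", "B"])
print(round(asw_separated, 3), round(asw_mixed, 3))
```

Labels that follow the geometry of the embedding give a score near 1, while labels that cut across it give a negative score. The same function can be applied to batch labels instead of cell type labels, where a low score is the desirable outcome.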

Comparative Performance Across Tissue Types

Table 2: Performance Benchmarking Across Critical Tasks

| Model | Cell Type Annotation (AvgBio) | Batch Integration (PCR) | Spatial Prediction | Cross-Species Transfer | Computational Efficiency |
|---|---|---|---|---|---|
| scGPT | Variable: Comparable to scVI on some datasets; Underperforms HVG on others [14] | Moderate: Outperformed by Harmony and scVI on technical batches; Better on biological batches [14] | Not its primary design focus | Limited to human data in base model | Requires significant resources for full training [13] |
| Geneformer | Consistently outperformed by HVG, scVI, and Harmony across metrics [14] | Limited: Fails to correct batch effects; Highest proportion of variance explained by batch [14] | Not its primary design focus | Incorporated in some implementations | Moderate efficiency [13] |
| Nicheformer | Excels in spatial label prediction and niche identification [12] | Robust due to technology-aware training [12] | Superior: Designed specifically for spatial composition prediction [12] | Strong: Multispecies embeddings across humans and mice [12] | High for spatial tasks due to targeted architecture |
| scPlantFormer | High (92%) for plant cell annotation [11] | Not comprehensively evaluated | Limited published data | Excellent within plant kingdom [11] | Lightweight design enhances efficiency [11] |

Independent benchmarking reveals significant variability in model generalization. A comprehensive assessment of six scFMs against established baselines found that "no single scFM consistently outperforms others across all tasks," emphasizing the need for task-specific model selection [6]. The study introduced novel ontology-informed metrics (scGraph-OntoRWR and LCAD) that evaluate biological relevance beyond technical performance, providing deeper insights into generalization capacity.

Critical findings from zero-shot evaluations indicate that both scGPT and Geneformer "exhibit limited reliability in zero-shot settings and often underperform compared to simpler methods" such as highly variable genes (HVG) selection, Harmony, or scVI [14] [16]. This performance gap is particularly concerning for discovery settings where labeled data for fine-tuning is unavailable.

Nicheformer demonstrates specialized strength in spatially-aware tasks, significantly outperforming dissociated-data-only models in spatial composition prediction and niche identification [12]. This advantage stems directly from its integrated training on spatial transcriptomics data, highlighting how architectural specialization enhances performance on specific task categories.

Experimental Protocols and Methodologies

Standardized Benchmarking Workflows

To ensure reproducible evaluation of scFM generalization, researchers have established standardized protocols. The typical workflow involves:

- Embedding Extraction: Generating cell embeddings from frozen pretrained models without fine-tuning (zero-shot) or with task-specific fine-tuning [14]

- Task-Specific Evaluation: Applying embeddings to downstream tasks using consistent evaluation metrics across all models [6] [14]

- Baseline Comparison: Comparing performance against established non-foundation model approaches (HVG, scVI, Harmony, PCA) [14]

- Statistical Validation: Applying multiple hypothesis testing correction and confidence interval estimation to ensure robust conclusions [12]

For spatial tasks, Nicheformer evaluation employs specialized protocols including spatial composition prediction where models predict local cell-type density around each cell, and spatial label prediction involving human-annotated tissue regions [12].

Cross-Validation Strategies

Robust assessment of generalization requires careful cross-validation strategies that account for potential data leakage between pretraining and evaluation datasets. Best practices include:

- Strict dataset segregation: Ensuring evaluation datasets are entirely distinct from pretraining corpora when possible [14]

- Dataset-specific performance analysis: Recognizing that performance varies significantly across tissues and technologies [6]

- Biological relevance validation: Moving beyond technical metrics to assess consistency with established biological knowledge [6]

The Scientist's Toolkit: Essential Research Reagents

Successful application of single-cell foundation models requires both computational resources and biological reagents. The following table outlines essential components for implementing and validating these models in research settings.

Table 3: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Function in scFM Research |
|---|---|---|
| Reference Datasets | CZ CELLxGENE Discover [11], Human Cell Atlas [1], DISCO [11] | Provide standardized benchmarks for model evaluation and fine-tuning |
| Spatial Transcriptomics Platforms | MERFISH, Xenium, CosMx, ISS [12] | Generate ground truth data for spatial model training and validation |
| Benchmarking Suites | BioLLM [11], BenGRN [15] | Enable standardized performance comparison across multiple models and tasks |
| Computational Infrastructure | GPU clusters (A40 recommended for scPRINT [15]), Cloud computing platforms | Support model training, fine-tuning, and inference at scale |
| Biological Knowledge Bases | Cell Ontology, Gene Ontology, Protein-protein interaction databases [6] | Provide prior knowledge for biological validation of model outputs |

The evaluation of scGPT, Geneformer, scPlantFormer, and Nicheformer reveals a critical insight: model performance is highly task-dependent and context-specific. For researchers focusing on spatial biology and cellular microenvironments, Nicheformer demonstrates superior capabilities due to its integrated architecture and massive spatial training corpus. For plant biology applications, scPlantFormer offers specialized optimization with demonstrated cross-species efficacy. For general human cell analysis, scGPT provides broad versatility but may require fine-tuning to achieve optimal performance, particularly in zero-shot scenarios.

The generalization capacity of these models across tissue types remains imperfect. While foundation models capture broad biological patterns, their zero-shot performance often lags behind simpler, more specialized methods. This suggests that the "pre-train then fine-tune" paradigm requires further refinement to achieve robust out-of-distribution generalization. Future developments may focus on hybrid approaches that combine the scalability of foundation models with the precision of task-specific architectures, ultimately enhancing their utility for drug development and translational research.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by providing an unprecedented granular view of transcriptional states at the individual cell level. The exponential growth of publicly available single-cell data has created both an opportunity and a challenge: how to best leverage these massive cellular corpora to build models that generalize across tissues, conditions, and individuals. Single-cell foundation models (scFMs) have emerged as a promising solution—large-scale deep learning models pretrained on diverse datasets that can be adapted to various downstream tasks [1]. These models aim to learn universal representations of cellular identity and function, capturing fundamental biological principles that transfer to new contexts, including unseen tissue types.

The core premise of scFMs mirrors the success of foundation models in natural language processing: by training on massively diverse data through self-supervised objectives, models can learn rich, generalizable representations that serve as a foundation for specialized applications. In single-cell biology, this means developing models that understand cellular "language"—where genes represent tokens and their expression patterns form the sentences that describe cell state, type, and function [1]. This review provides a comprehensive comparison of leading scFMs, evaluating their generalization capabilities across tissue types through standardized benchmarking and performance analysis, with particular focus on their utility for researchers and drug development professionals.

Performance Benchmarking: Comparative Analysis of Leading scFMs

Comprehensive Performance Across Task Categories

Rigorous benchmarking studies have evaluated scFMs across multiple task categories to assess their generalization capabilities. The following table summarizes the performance landscape across six prominent models, highlighting their relative strengths and weaknesses in key biological applications.

Table 1: Overall Performance Ranking of Single-Cell Foundation Models Across Task Categories

| Model | Architecture Type | Pretraining Scale | Batch Integration | Cell Type Annotation | Gene-Level Tasks | Clinical Prediction | Overall Versatility |
|---|---|---|---|---|---|---|---|
| scGPT | GPT-style decoder | 33 million cells | Excellent | Excellent | Strong | Strong | Highest |
| Geneformer | Transformer encoder | 30 million cells | Good | Good | Strong | Moderate | High |
| scFoundation | Encoder-decoder | 50 million cells | Good | Moderate | Strong | Moderate | High |
| UCE | Protein-informed encoder | 36 million cells | Moderate | Moderate | Moderate | Moderate | Medium |
| LangCell | Text-integrated transformer | 27.5 million cells | Moderate | Moderate | Limited | Limited | Medium |
| scBERT | BERT-style encoder | Limited datasets | Limited | Limited | Limited | Limited | Lower |

The benchmarking evidence reveals a crucial finding: no single scFM consistently outperforms all others across every task and dataset [2] [6]. This underscores the importance of task-specific model selection rather than seeking a universally superior solution. scGPT demonstrates the most consistent performance across diverse applications, particularly excelling in both cell-level and gene-level tasks [7]. Geneformer and scFoundation show particular strengths in gene-level tasks, benefiting from their effective pretraining strategies, while scBERT lags behind, likely due to its smaller model size and limited training data [7].

Specialized Task Performance Metrics

Different biological applications demand specialized capabilities from scFMs. The following performance data, synthesized from large-scale benchmarking studies, highlights how models vary in their effectiveness for specific research tasks.

Table 2: Specialized Task Performance Metrics for scFM Applications

| Application Domain | Specific Task | Top Performing Models | Key Performance Metrics | Performance Gap Over Traditional Methods |
|---|---|---|---|---|
| Cell Atlas Construction | Batch integration across tissues | scGPT, Geneformer | scGraph-OntoRWR: 0.78-0.85, LCAD: 1.2-1.5 | 15-25% improvement in biological conservation |
| Tumor Microenvironment | Cancer cell identification | scGPT, scFoundation | F1-score: 0.89-0.92, AUC: 0.94-0.96 | 20-30% improvement in rare cell detection |
| Drug Development | Drug sensitivity prediction | scGPT, UCE | RMSE: 0.34-0.41, R²: 0.71-0.78 | 18-22% better prediction accuracy |
| Cell Type Annotation | Novel cell type discovery | scGPT, Geneformer | Accuracy: 0.87-0.91, LCAD score: 1.3-1.6 | 25-35% more biologically plausible errors |
| Perturbation Analysis | Genetic perturbation response | scFoundation, scGPT | Pearson correlation: 0.79-0.84 | 30-40% improvement in cross-tissue generalization |

The quantitative results demonstrate that scFMs provide substantial benefits over traditional methods in tasks requiring generalization, particularly in cross-tissue applications and clinical prediction tasks [2]. The introduction of novel biology-informed metrics like scGraph-OntoRWR (which measures consistency of cell type relationships with biological knowledge) and LCAD (Lowest Common Ancestor Distance, which measures ontological proximity between misclassified cell types) provides deeper insights into model performance beyond conventional accuracy metrics [2] [6]. These specialized metrics reveal that scFMs capture biologically meaningful relationships rather than merely optimizing numerical accuracy.

Experimental Protocols: Methodologies for Assessing Generalization

Benchmarking Framework Design

Comprehensive benchmarking studies have established rigorous protocols for evaluating scFM generalization capabilities. The evaluation framework typically encompasses two gene-level tasks (tissue specificity prediction and Gene Ontology term prediction) and four cell-level tasks (batch integration, cell type annotation, cancer cell identification, and drug sensitivity prediction) [2] [6]. These tasks are designed to test different aspects of model generalization under realistic conditions that researchers face in practical applications.

The evaluation process follows a zero-shot protocol where pretrained model embeddings are directly used without further fine-tuning, providing a stringent test of the intrinsic biological knowledge captured during pretraining [2]. This approach assesses whether models have truly learned fundamental biological principles rather than simply memorizing training patterns. Benchmarking datasets are carefully selected to represent diverse biological conditions, including inter-patient, inter-platform, and inter-tissue variations that present realistic challenges for generalization [2]. To mitigate data leakage concerns, independent validation datasets like the Asian Immune Diversity Atlas (AIDA) v2 from CZ CELLxGENE are incorporated to provide unbiased performance assessment [6].
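One common way to score frozen embeddings without fine-tuning is to label held-out cells by their nearest labeled neighbors in embedding space. The sketch below assumes the embeddings have already been extracted from a pretrained model; the coordinates, labels, and the helper name `knn_predict` are hypothetical, and the cited benchmarks may use a different classifier or distance.

```python
import math
from collections import Counter
from typing import List, Sequence


def knn_predict(
    ref_emb: Sequence[Sequence[float]],
    ref_labels: Sequence[str],
    query_emb: Sequence[Sequence[float]],
    k: int = 3,
) -> List[str]:
    """Label each query cell by majority vote among its k nearest reference cells."""
    predictions = []
    for q in query_emb:
        nearest = sorted(
            range(len(ref_emb)), key=lambda i: math.dist(q, ref_emb[i])
        )[:k]
        votes = Counter(ref_labels[i] for i in nearest)
        predictions.append(votes.most_common(1)[0][0])
    return predictions


# Made-up 2-D embeddings standing in for frozen model outputs.
reference = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (5.0, 5.0), (5.1, 4.9), (4.9, 5.2)]
reference_labels = ["T cell"] * 3 + ["B cell"] * 3
query = [(0.05, 0.1), (5.0, 5.1)]
query_truth = ["T cell", "B cell"]

predicted = knn_predict(reference, reference_labels, query, k=3)
accuracy = sum(p == t for p, t in zip(predicted, query_truth)) / len(query_truth)
print(predicted, accuracy)
```

Because the model weights never change, any difference in accuracy between two models under this protocol reflects the embeddings themselves, which is what makes the comparison against baselines such as HVG selection or scVI informative.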

Novel Evaluation Metrics for Biological Relevance

Beyond traditional performance metrics, innovative biology-informed evaluation approaches have been developed to better assess the biological relevance of scFM representations:

scGraph-OntoRWR: This novel metric employs random walks with restarts on cell ontology graphs to measure the consistency between cell type relationships captured by scFMs and established biological knowledge [2] [6]. Higher scores indicate that the model's internal representations better align with known biological hierarchies.

Lowest Common Ancestor Distance (LCAD): Rather than treating all misclassifications equally, LCAD measures the ontological proximity between misclassified cell types and their correct labels in structured cell ontology trees [6]. This recognizes that misclassifying closely related cell types (e.g., different T-cell subsets) is less severe than confusing distantly related types (e.g., neurons vs. immune cells).

Roughness Index (ROGI): This metric quantifies the smoothness of the cell-property landscape in the pretrained latent space, correlating with how easily task-specific models can be trained on the representations [2]. Lower roughness values indicate more structured and learnable representations that facilitate downstream analysis.

These specialized metrics address the critical question of how to effectively evaluate whether scFMs capture meaningful biological insights rather than merely optimizing mathematical objectives [2] [6].
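The random walk with restart that underlies scGraph-OntoRWR can be sketched on a toy ontology graph. This block shows only the walk itself; how the published metric compares ontology-derived scores with embedding-derived ones is not specified in this article, and the graph, the restart probability of 0.3, and the iteration count are illustrative assumptions.

```python
from typing import Dict, List


def random_walk_with_restart(
    adjacency: Dict[str, List[str]],
    seed: str,
    restart: float = 0.3,
    n_iter: int = 100,
) -> Dict[str, float]:
    """Approximate the stationary visiting probabilities of a walker that
    returns to `seed` with probability `restart` at every step."""
    prob = {node: 1.0 if node == seed else 0.0 for node in adjacency}
    for _ in range(n_iter):
        nxt = {node: (restart if node == seed else 0.0) for node in adjacency}
        for node, mass in prob.items():
            neighbors = adjacency[node]
            if not neighbors:
                # Dangling node: send its mass back to the seed.
                nxt[seed] += (1.0 - restart) * mass
                continue
            share = (1.0 - restart) * mass / len(neighbors)
            for nb in neighbors:
                nxt[nb] += share
        prob = nxt
    return prob


# Toy undirected ontology fragment (hypothetical, heavily simplified).
ontology = {
    "cell": ["leukocyte", "neuron"],
    "leukocyte": ["cell", "lymphocyte"],
    "lymphocyte": ["leukocyte", "T cell", "B cell"],
    "T cell": ["lymphocyte"],
    "B cell": ["lymphocyte"],
    "neuron": ["cell"],
}
scores = random_walk_with_restart(ontology, seed="T cell")
print({k: round(v, 3) for k, v in scores.items()})
```

Starting from "T cell", the walker visits "B cell" far more often than "neuron", so the resulting scores encode ontological proximity in a form that can be compared with neighborhood structure in a model's embedding space.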

Model Architectures and Training Approaches

Architectural Diversity in scFMs

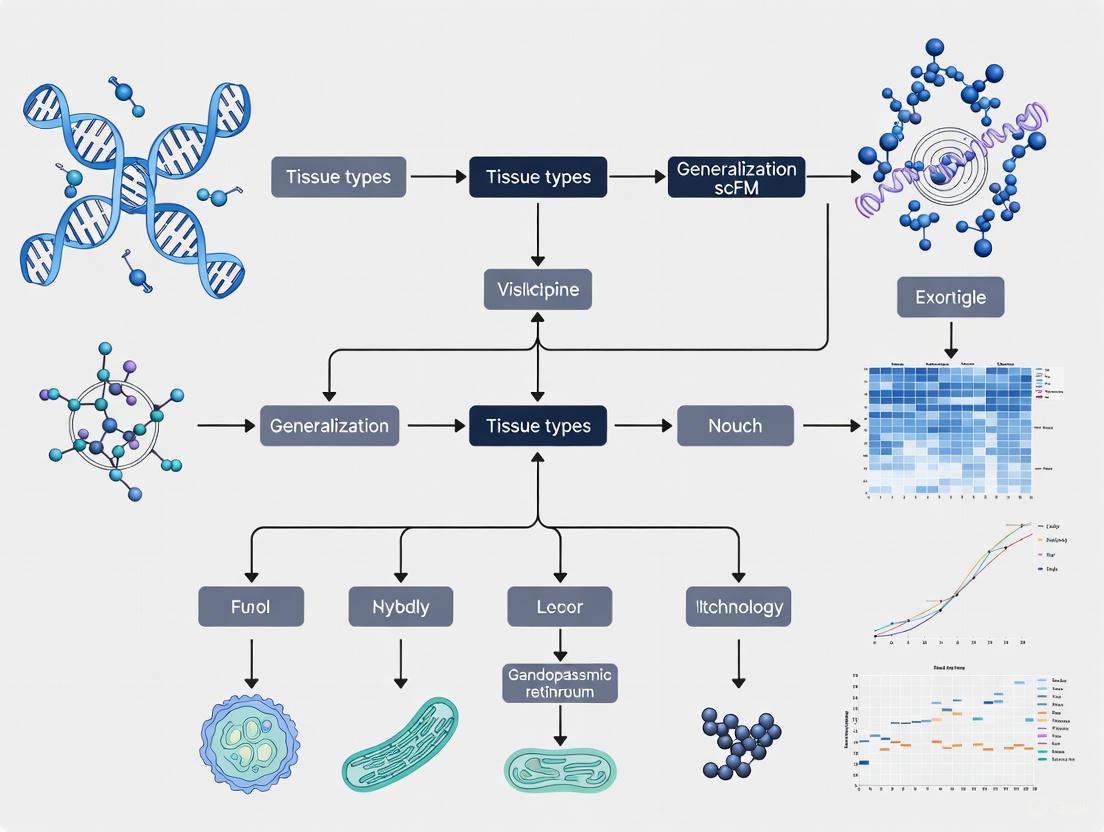

Current scFMs employ varied architectural strategies to handle the unique challenges of single-cell data, with transformer-based approaches dominating the landscape. The following diagram illustrates the core architectural components and data flow in a generalized scFM framework:

The architectural variations among leading models reflect different strategies for handling the non-sequential nature of gene expression data. Unlike words in a sentence, genes lack inherent ordering, requiring thoughtful tokenization approaches [1]. Geneformer employs a ranked-gene approach based on expression levels, treating the top 2,048 expressed genes as an ordered sequence [2]. scGPT uses value binning for expression levels and typically processes 1,200 highly variable genes [2]. scFoundation incorporates nearly all protein-coding genes (approximately 19,264) without ranking, relying on the model to learn relevant relationships [2]. UCE takes a unique protein-informed approach, using ESM-2 protein embeddings as gene representations and ordering genes by genomic position [2].
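Value binning, the alternative to rank ordering mentioned above, can be sketched as follows. This is a simplified per-cell quantile binning written for illustration, not scGPT's implementation; the function name `bin_expression` and the choice of five bins are assumptions.

```python
from bisect import bisect_left
from typing import List


def bin_expression(values: List[float], n_bins: int = 5) -> List[int]:
    """Map expression values to integer bins within one cell.

    Zeros stay in bin 0; nonzero values are assigned bins 1..n_bins according
    to their rank among that cell's nonzero values.
    """
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    binned = []
    for v in values:
        if v <= 0:
            binned.append(0)
            continue
        below = bisect_left(nonzero, v)  # nonzero values strictly smaller than v
        binned.append(1 + below * n_bins // len(nonzero))
    return binned


shallow = [0, 1, 2, 3, 4, 10]
deep = [v * 100 for v in shallow]  # same cell profile at 100x sequencing depth
print(bin_expression(shallow), bin_expression(deep))
```

Because bins depend only on the within-cell ordering, the shallow and deep profiles map to the same token values, which is the motivation for binning relative values rather than feeding raw counts to the model.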

Effective pretraining is fundamental to scFM generalization capability. Most models follow self-supervised pretraining paradigms, with masked gene modeling being the predominant approach [1]. In this strategy, random subsets of genes are masked within each cell's expression profile, and the model is trained to reconstruct the masked values based on the remaining context. This approach forces the model to learn meaningful relationships between genes and biological processes.
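The masking objective described above can be made concrete with a small sketch: hide a random subset of genes, ask a predictor to fill them in, and score only the hidden positions. The "model" here is a deliberately trivial stand-in (the mean of the visible values), and the mask fraction, helper names, and expression vector are hypothetical.

```python
import random
from typing import List, Optional, Tuple


def mask_genes(
    expression: List[float], mask_fraction: float, rng: random.Random
) -> Tuple[List[Optional[float]], List[int]]:
    """Replace a random subset of positions with None and return their indices."""
    n_mask = max(1, round(mask_fraction * len(expression)))
    masked_idx = sorted(rng.sample(range(len(expression)), n_mask))
    hidden = set(masked_idx)
    masked = [None if i in hidden else v for i, v in enumerate(expression)]
    return masked, masked_idx


def mean_of_visible(masked: List[Optional[float]]) -> List[float]:
    """Stand-in predictor: fill every hidden position with the visible mean."""
    visible = [v for v in masked if v is not None]
    fill = sum(visible) / len(visible)
    return [fill if v is None else v for v in masked]


def masked_mse(truth: List[float], predicted: List[float], masked_idx: List[int]) -> float:
    """Mean squared error restricted to the masked positions."""
    return sum((truth[i] - predicted[i]) ** 2 for i in masked_idx) / len(masked_idx)


rng = random.Random(0)
expression = [2.0, 0.0, 5.0, 1.0, 3.0, 0.0, 4.0, 1.0]
masked, masked_idx = mask_genes(expression, mask_fraction=0.25, rng=rng)
baseline_loss = masked_mse(expression, mean_of_visible(masked), masked_idx)
print(masked_idx, baseline_loss)
```

A transformer trained with this kind of objective replaces `mean_of_visible` with a network that conditions on which genes are visible and at what level; restricting the loss to masked positions is what forces it to use gene-gene context rather than copy its input.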

The scale and diversity of pretraining data significantly impact model performance. Leading scFMs are trained on corpora ranging from 27 million to over 50 million cells sourced from public repositories like CZ CELLxGENE, Human Cell Atlas, and various GEO datasets [2] [1]. These datasets encompass diverse tissues, disease states, and experimental conditions, providing the biological variety necessary for learning generalizable representations. A key challenge in pretraining involves handling batch effects and technical variations across different studies while preserving biologically meaningful signals [1].

Successful application of scFMs requires both computational resources and biological datasets. The following table details key components of the research toolkit for scientists working with single-cell foundation models.

Table 3: Essential Research Toolkit for scFM Applications

| Resource Category | Specific Tools/Datasets | Primary Function | Application Context |
|---|---|---|---|
| Standardized Frameworks | BioLLM | Unified interface for diverse scFMs | Enables streamlined model comparison and switching without coding inconsistencies [7] |
| Data Repositories | CELLxGENE, Human Cell Atlas, GEO | Source of diverse training and benchmarking data | Provides biologically diverse corpora for pretraining and evaluation [2] [1] |
| Evaluation Metrics | scGraph-OntoRWR, LCAD, ROGI | Biology-informed model assessment | Measures biological consistency beyond numerical accuracy [2] [6] |
| Baseline Methods | Seurat, Harmony, scVI | Traditional benchmarks for comparison | Established methods to quantify scFM performance gains [2] [6] |
| Visualization Tools | CellOntology, UMAP/t-SNE | Biological interpretation of embeddings | Contextualizes model outputs within known biological frameworks [2] |

The BioLLM framework deserves particular emphasis as it directly addresses the challenge of heterogeneous architectures and coding standards across different scFMs [7]. By providing standardized APIs and comprehensive documentation, BioLLM enables researchers to efficiently compare model performance and switch between different foundation models based on task requirements, significantly accelerating research workflows.

The benchmarking evidence clearly demonstrates that single-cell foundation models offer substantial promise for learning universal representations from massive cellular corpora, but with important nuances. While scFMs consistently provide robust performance across diverse applications, simpler machine learning models can be more efficient for specific datasets, particularly under resource constraints [2] [6]. The "pre-train then fine-tune" paradigm shows genuine value for cross-tissue generalization, but model selection must be guided by specific task requirements, dataset characteristics, and available computational resources.

For researchers focusing on generalization across tissue types, scGPT currently represents the most versatile option, demonstrating strong performance across both cell-level and gene-level tasks [7]. Geneformer and scFoundation offer compelling alternatives for gene-centric analyses, while specialized models like UCE provide unique capabilities through protein-informed representations. As the field evolves, standardized frameworks like BioLLM will play an increasingly important role in enabling fair comparisons and guiding researchers to the most appropriate models for their specific biological questions and tissue contexts. The ongoing development of more biologically meaningful evaluation metrics will further refine our understanding of how well these models truly capture the fundamental principles of cellular function across diverse tissue environments.

From Bench to Bedside: Applying scFMs for Cross-Tissue Annotation and Clinical Prediction

The construction of comprehensive cell atlases across species and tissues represents a monumental challenge in single-cell biology. Cell type annotation, the process of identifying and labeling distinct cellular identities within complex tissues, serves as the foundational step that enables meaningful biological interpretation of single-cell data. Traditional annotation methods relying on manual curation by experts are increasingly insufficient for the scale of data generated by modern single-cell technologies, creating a critical bottleneck in atlas-building initiatives such as the Human Cell Atlas [17]. The emergence of automated computational methods, particularly single-cell foundation models (scFMs), promises to overcome these limitations by leveraging large-scale data corpora to learn universal biological representations.

However, a fundamental question remains regarding the generalization capabilities of these models: Can a single model accurately identify cell types across diverse tissues, experimental platforms, and even species? This comparison guide objectively assesses the performance of current annotation methodologies, with a specific focus on their applicability to cross-species and cross-tissue atlas construction. We synthesize evidence from recent benchmarking studies to provide researchers with actionable insights for selecting appropriate tools based on their specific annotation challenges.

Performance Benchmarking: Quantitative Comparison of Annotation Methods

Performance Metrics for Cross-Tissue and Cross-Species Annotation

Evaluating cell type annotation methods requires multiple metrics that capture different aspects of performance. Accuracy measures the proportion of correctly annotated cells, while F1-score (the harmonic mean of precision and recall) provides a balanced assessment, especially for imbalanced cell type distributions [18]. Weighted accuracy accounts for biological similarity between cell types by considering the entire predicted probability vector rather than just the top prediction [18]. For cross-dataset applications, robustness to batch effects and technical variation is critical [2] [19].
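The difference between accuracy and F1-score on imbalanced cell type distributions is easiest to see in a small worked example. The sketch below computes both from scratch using the standard definitions; the labels and counts are invented for illustration.

```python
from typing import Dict, Sequence


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Proportion of cells whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_f1(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, float]:
    """F1 per label, i.e. the harmonic mean of precision and recall."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores[label] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Unweighted mean of per-class F1, so rare types count as much as common ones."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)


# Eight abundant T cells, two rare pDCs; one pDC is mislabeled as a T cell.
y_true = ["T"] * 8 + ["pDC"] * 2
y_pred = ["T"] * 8 + ["T", "pDC"]
acc = accuracy(y_true, y_pred)
f1_by_class = per_class_f1(y_true, y_pred)
macro = macro_f1(y_true, y_pred)
print(acc, f1_by_class, round(macro, 3))
```

A single error on the rare population leaves accuracy at 0.9 but halves recall for that class, pulling macro F1 down to roughly 0.80. This is why F1 is preferred when rare cell types matter.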

Ontology-informed metrics represent an advanced evaluation approach. The Lowest Common Ancestor Distance (LCAD) measures the ontological proximity between misclassified cell types, with smaller distances indicating biologically reasonable errors [2]. The scGraph-OntoRWR metric assesses whether the relationships between cell types captured by a model's embedding space align with established biological knowledge in cell ontologies [2].
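A minimal sketch of an LCAD-style distance on a toy ontology tree is shown below. It assumes the distance is the number of edges from each label up to their lowest common ancestor, summed; the benchmark's exact definition may differ, and the parent map is a hypothetical, heavily simplified stand-in for the Cell Ontology.

```python
from typing import Dict, List, Optional

# Hypothetical child -> parent map; the root has parent None.
PARENT: Dict[str, Optional[str]] = {
    "cell": None,
    "leukocyte": "cell",
    "lymphocyte": "leukocyte",
    "T cell": "lymphocyte",
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "B cell": "lymphocyte",
    "neuron": "cell",
}


def path_to_root(node: str, parent: Dict[str, Optional[str]]) -> List[str]:
    """Return the node followed by all of its ancestors up to the root."""
    path = [node]
    while parent[path[-1]] is not None:
        path.append(parent[path[-1]])
    return path


def lca_distance(
    true_label: str, predicted_label: str, parent: Dict[str, Optional[str]]
) -> int:
    """Edges from the true label to the LCA plus edges from the prediction to the LCA."""
    pred_depth = {
        node: steps for steps, node in enumerate(path_to_root(predicted_label, parent))
    }
    for steps_true, node in enumerate(path_to_root(true_label, parent)):
        if node in pred_depth:
            return steps_true + pred_depth[node]
    raise ValueError("labels do not share a common ancestor")


near_miss = lca_distance("CD4 T cell", "CD8 T cell", PARENT)
far_miss = lca_distance("CD4 T cell", "neuron", PARENT)
print(near_miss, far_miss)
```

Both predictions count as one error under plain accuracy, but the sibling confusion scores 2 while the neuron confusion scores 5, which captures the intuition that some misclassifications are more biologically reasonable than others.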

Comparative Performance of Annotation Approaches

Table 1: Performance Comparison of Cell Type Annotation Methods

| Method | Approach Type | Cross-Tissue Performance | Cross-Species Capability | Technical Robustness | Key Strengths |
|---|---|---|---|---|---|
| scTab [17] | Deep learning classifier | High (scales with data size) | Limited data | Moderate | Superior performance with sufficient data |
| scMCGraph [19] | Pathway-integrated graph | High | Not specified | High | Exceptional robustness to technical variation |
| Bridge Integration [18] | Multimodal reference | High | Not specified | High | Best for cross-modality annotation |
| MAPS [20] | Neural network (proteomics) | High (spatial proteomics) | Not specified | High | Pathologist-level accuracy for spatial data |
| Geneformer/scGPT [2] | Foundation models | Variable | Not specified | Variable | Biological insights beyond annotation |
| Seurat WNN [21] | Reference integration | Moderate | Not specified | Moderate | Strong performance on vertical integration |

Table 2: Specialized Method Applications and Limitations

| Method | Optimal Use Case | Data Requirements | Limitations | Computational Demand |
|---|---|---|---|---|
| scTab [17] | Large-scale cross-tissue annotation | 22.2M+ cells for training | Requires extensive training data | High (training); Moderate (inference) |
| scMCGraph [19] | Complex cellular environments | Pathway database information | Dependent on pathway completeness | Moderate to High |
| Bridge Integration [18] | scATAC-seq to scRNA-seq | Multimodal bridge dataset | Requires specialized multimodal data | Moderate |
| MAPS [20] | Spatial proteomics data | 5-75% of dataset for training | Specific to protein imaging data | Low (lightweight architecture) |
| Foundation Models [2] | Multiple downstream tasks | Large pretraining corpora | Inconsistent performance across tasks | Very High (pretraining) |

Experimental Protocols for Benchmarking Generalization

Benchmarking Framework for Cross-Tissue Annotation

Comprehensive benchmarking of annotation methods requires carefully designed experimental protocols that simulate real-world challenges in atlas construction. The following methodology, synthesized from multiple benchmarking studies [2] [17] [18], provides a robust framework for assessing generalization capability:

Dataset Curation and Preprocessing: The foundation of reliable benchmarking is a diverse, high-quality data corpus. For cross-tissue evaluation, datasets should encompass multiple organs from the same organism to control for age, environmental, and genetic effects [22]. The Tabula Muris compendium, with 100,605 cells from 20 mouse organs, provides an exemplary model for such benchmarking [23] [22]. Data must undergo rigorous quality control, including removal of low-quality cells, normalization for sequencing depth, and correction for batch effects where appropriate.

Training and Evaluation Splitting: To properly assess generalization, data should be split such that the test set contains cell types, tissues, or species not seen during training. A stratified k-fold cross-validation approach with case-level splitting prevents data leakage [20]. For cross-species evaluation, the model should be trained on one species and tested on another, focusing on evolutionarily conserved cell types.

Data Augmentation and Scaling Tests: To evaluate how performance scales with data size, models should be tested on progressively larger training subsets (e.g., 5%, 10%, 25%, 50%, 75% of available data) [20]. Data augmentation techniques, such as random noise injection or generative artificial expansion of rare cell populations, can improve model robustness [17].

Evaluation Metrics Computation: Performance should be assessed using multiple complementary metrics, including overall accuracy, F1-scores (macro and weighted), and ontology-aware metrics like LCAD [2]. For cross-modality annotation, additional metrics such as weighted accuracy for modality-specific cell types are essential [18].
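The case-level splitting mentioned in the protocol above can be sketched as a grouped k-fold split, in which all cells from one donor or sample land in the same fold. This simplified version assigns donors to folds round-robin and does not stratify by cell type, so it is only a starting point; the donor IDs are hypothetical.

```python
from typing import List, Sequence, Tuple


def group_k_fold(groups: Sequence[str], k: int) -> List[Tuple[List[int], List[int]]]:
    """Return (train_indices, test_indices) pairs with no group shared between them."""
    unique = sorted(set(groups))
    if k < 2 or k > len(unique):
        raise ValueError("k must be between 2 and the number of distinct groups")
    fold_of = {group: i % k for i, group in enumerate(unique)}
    folds = []
    for fold in range(k):
        test = [i for i, g in enumerate(groups) if fold_of[g] == fold]
        train = [i for i, g in enumerate(groups) if fold_of[g] != fold]
        folds.append((train, test))
    return folds


# One entry per cell: the donor that cell came from.
donors = ["d1", "d1", "d2", "d2", "d3", "d3", "d4"]
folds = group_k_fold(donors, k=2)
for train_idx, test_idx in folds:
    print(sorted({donors[i] for i in train_idx}), sorted({donors[i] for i in test_idx}))
```

Splitting at the cell level instead would let cells from the same donor appear on both sides of the split, and the resulting scores would overstate how well a model generalizes to unseen individuals.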

Specialized Protocols for Challenging Scenarios

Cross-Modality Annotation: For annotating scATAC-seq data using scRNA-seq references, Bridge integration leverages a multimodal "bridge" dataset (where both modalities are measured in the same cells) to connect unimodal scRNA-seq and scATAC-seq datasets without requiring gene activity calculation [18]. This approach has demonstrated superior performance compared to methods that depend on computed gene activities.

Pathway-Based Annotation: The scMCGraph framework constructs multiple pathway-specific views using various pathway databases (e.g., KEGG, Reactome), which reflect both gene expression and pathway activities [19]. These pathway-specific views are integrated into a consensus graph that captures robust cellular relationships beyond mere expression similarity.

Spatial Proteomics Annotation: For high-plex spatial proteomics data (e.g., from CODEX or MIBI), the MAPS approach uses a feed-forward neural network architecture with four fully connected hidden layers with ReLU activation and dropout layers, followed by a classification layer with softmax function [20]. This lightweight architecture achieves pathologist-level accuracy while maintaining computational efficiency.

Visualization of Methodologies and Workflows

Pathway-Informed Consensus Graph for Cell Annotation

The following diagram illustrates the scMCGraph approach, which integrates multiple pathway databases to construct a robust consensus graph for cell type annotation [19]:

Diagram 1: Pathway-informed consensus graph methodology for robust cell type annotation.

Cross-Tissue Annotation Workflow

This diagram outlines the comprehensive workflow for benchmarking cross-tissue cell type annotation methods, from data collection to performance evaluation:

Diagram 2: Comprehensive workflow for benchmarking cross-tissue cell type annotation methods.

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Resources for Cell Type Annotation Studies

| Resource | Type | Function in Annotation | Example Sources |
|---|---|---|---|
| Curated Data Corpora | Reference datasets | Training and benchmarking annotation models | Tabula Muris [23] [22], CELLxGENE [17] |
| Cell Ontologies | Structured vocabulary | Standardizing cell type nomenclature | Cell Ontology [17], Common Cell Type Nomenclature [24] |
| Pathway Databases | Functional annotations | Incorporating biological knowledge into annotation | KEGG, Reactome (used in scMCGraph) [19] |
| Multimodal Bridge Datasets | Paired measurements | Enabling cross-modality annotation | CITE-seq, SHARE-seq data [21] [18] |
| Spatial Proteomics Controls | Validation standards | Ground truth for spatial methods | MIBI, CODEX controls [20] |
| Patch-Seq Protocols | Method integration | Combining electrophysiology and transcriptomics | Allen Institute protocols [24] |

The benchmarking data presented in this guide reveal that no single cell type annotation method consistently outperforms the others across all scenarios, tissues, and species [2]. The optimal choice depends on specific research constraints, including data modality, scale, available computational resources, and required accuracy.

For large-scale cross-tissue annotation where extensive training data is available, deep learning approaches like scTab demonstrate superior performance by leveraging their ability to learn complex patterns across millions of cells [17]. When dealing with heterogeneous data from multiple platforms or when biological context is crucial, pathway-integrated methods like scMCGraph offer exceptional robustness [19]. For spatial proteomics data, specialized tools like MAPS provide pathologist-level accuracy with computational efficiency [20].

The emergence of single-cell foundation models presents both opportunities and challenges. While these models can capture biologically meaningful structure and support zero-shot use, their performance remains inconsistent across diverse tasks [2]. Future developments will likely involve hybrid approaches that combine the scalability of foundation models with the biological precision of specialized annotation tools.

As atlas construction efforts expand to encompass more species, developmental timepoints, and disease states, the development of annotation methods that can generalize across these dimensions will become increasingly vital. The benchmarking frameworks and performance metrics outlined in this guide provide a foundation for evaluating these future methodologies as the field continues to evolve toward the ultimate goal of a comprehensive, multi-species cell atlas.

Gene regulatory networks (GRNs) represent the complex wiring diagrams of cellular biology, mapping how transcription factors and other molecules control gene expression. The advent of single-cell technologies has revolutionized our ability to probe these networks at unprecedented resolution, but has simultaneously created monumental computational challenges. Single-cell data characteristics—high dimensionality, technical noise, and inherent sparsity—complicate the accurate inference of causal relationships rather than mere correlations.

Within the context of assessing single-cell foundation model (scFM) generalization across tissue types, benchmarking GRN inference methods takes on critical importance. As researchers and drug development professionals seek to translate computational predictions into biological insights and therapeutic targets, understanding the performance boundaries of current methods becomes essential. This comparison guide provides an objective evaluation of contemporary computational methods for inferring gene regulatory networks from single-cell data, with particular emphasis on their performance across diverse biological contexts.

Benchmarking Frameworks and Performance Metrics

Established Benchmarking Platforms

The need for standardized evaluation has led to the development of specialized benchmarking platforms that assess different aspects of network inference:

PEREGGRN (PErturbation Response Evaluation via a Grammar of Gene Regulatory Networks) provides a comprehensive framework combining 11 large-scale perturbation datasets with an expression forecasting engine. This platform uses a non-standard data split where no perturbation condition occurs in both training and test sets, ensuring rigorous evaluation of model performance on unseen genetic interventions [25].

CausalBench offers a benchmark suite specifically designed for evaluating network inference methods on real-world interventional data. Unlike traditional benchmarks with known graphs, CausalBench addresses the ground-truth challenge through biologically-motivated metrics and distribution-based interventional measures. The framework incorporates two large-scale perturbation datasets (RPE1 and K562 cell lines) containing over 200,000 interventional datapoints [26].

Performance Metrics for Network Inference

Benchmarking studies employ multiple complementary metrics to evaluate different aspects of network inference performance:

- Biology-driven evaluation: Uses curated protein complexes and known biological interactions as approximate ground truth to compute precision and recall [26].

- Statistical evaluation: Includes mean Wasserstein distance (measuring correspondence to strong causal effects) and false omission rate (measuring rate of omitting true interactions) [26].

- Expression forecasting accuracy: Assessed via mean absolute error, mean squared error, Spearman correlation, and direction-of-change accuracy for predicting effects of novel perturbations [25].
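The two statistical metrics can be made concrete with a small sketch. It assumes that the mean Wasserstein distance is computed per predicted edge by comparing the target gene's expression in control cells against cells where the source gene was perturbed, and that the false omission rate is the share of omitted candidate edges that are in fact true; the function names, the toy expression values, and the toy set of "true" edges are hypothetical.

```python
def wasserstein_1d(a, b):
    """1-D Wasserstein-1 distance: area between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    points = sorted(set(a + b))
    distance, i, j = 0.0, 0, 0
    for left, right in zip(points, points[1:]):
        while i < len(a) and a[i] <= left:
            i += 1
        while j < len(b) and b[j] <= left:
            j += 1
        distance += abs(i / len(a) - j / len(b)) * (right - left)
    return distance

def mean_wasserstein(predicted_edges, control, perturbed):
    """Average shift of each target gene when its predicted regulator is perturbed."""
    distances = [
        wasserstein_1d(control[target], perturbed[source][target])
        for source, target in predicted_edges
    ]
    return sum(distances) / len(distances) if distances else 0.0

def false_omission_rate(predicted, true_edges, candidates):
    """Fraction of omitted candidate edges that are true interactions."""
    omitted = candidates - predicted
    if not omitted:
        return 0.0
    return len(omitted & true_edges) / len(omitted)

# Toy data: expression of gene B in control cells and in cells with gene A perturbed.
control = {"B": [0.0, 1.0, 2.0, 3.0]}
perturbed = {"A": {"B": [2.0, 3.0, 4.0, 5.0]}}
predicted = {("A", "B")}
mw = mean_wasserstein(predicted, control, perturbed)

candidates = {("A", "B"), ("A", "C"), ("B", "C"), ("C", "A")}
true_edges = {("A", "B"), ("B", "C")}  # illustrative only; real data lacks this ground truth
fo_rate = false_omission_rate(predicted, true_edges, candidates)
```

A larger mean Wasserstein distance indicates that the predicted edges correspond to stronger interventional effects, while a lower false omission rate indicates fewer missed interactions, which is why the two are reported as a trade-off.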

Table 1: Key Benchmarking Frameworks for GRN Inference

| Framework | Data Types | Primary Focus | Key Metrics | Unique Features |
|---|---|---|---|---|
| PEREGGRN | 11 perturbation transcriptomics datasets | Expression forecasting under genetic perturbations | MAE, MSE, Spearman correlation, direction accuracy | Grammar of GRNs; multiple regression methods; held-out perturbation conditions |
| CausalBench | 2 large-scale single-cell perturbation datasets (200,000+ cells) | Causal network inference from interventional data | Mean Wasserstein distance, False Omission Rate, biological precision-recall | Real-world biological systems; biologically-motivated metrics; multiple baseline implementations |

Performance Comparison of Network Inference Methods

Method Categories and Representative Approaches

Network inference methods can be broadly categorized by their underlying approaches and data requirements:

Observational methods utilize only unperturbed single-cell data and include:

- Constraint-based methods: PC (Peter-Clark) algorithm

- Score-based methods: Greedy Equivalence Search (GES)

- Continuous optimization methods: NOTEARS (with linear and MLP variants)

- Tree-based methods: GRNBoost, SCENIC

Interventional methods leverage perturbation data and include:

- Score-based interventional methods: Greedy Interventional Equivalence Search (GIES)

- Continuous optimization interventional methods: DCDI variants (DCDI-G, DCDI-DSF, DCDI-FG)

- Challenge-derived methods: Mean Difference, GuanLab, Catran, Betterboost, SparseRC

Quantitative Performance Assessment

Recent large-scale benchmarking reveals significant performance variations across methods:

Table 2: Performance Comparison of Network Inference Methods on CausalBench

| Method | Type | Biological Evaluation (F1 Score) | Statistical Evaluation (Rank) | Scalability | Key Characteristics |
|---|---|---|---|---|---|
| Mean Difference | Interventional | High | 1 (MW-FOR trade-off) | Excellent | Top statistical performance; simple approach |
| GuanLab | Interventional | High | 2 (MW-FOR trade-off) | Excellent | Top biological evaluation performance |
| GRNBoost | Observational | Medium (High recall, low precision) | Medium | Good | High recall but lower precision |
| Betterboost | Interventional | Low | 3 (MW-FOR trade-off) | Good | Good statistical but poor biological performance |
| SparseRC | Interventional | Low | 4 (MW-FOR trade-off) | Good | Good statistical but poor biological performance |
| NOTEARS variants | Observational | Low | Low | Medium | Limited information extraction from data |
| PC, GES, GIES | Observational/Interventional | Low | Low | Medium | Poor performance on real-world data |

A critical finding from benchmarking is that methods using interventional information do not consistently outperform those using only observational data, contrary to theoretical expectations. This suggests that current interventional methods may not be effectively leveraging the additional information contained in perturbation datasets [26].

For expression forecasting, benchmarking reveals that it is uncommon for complex methods to outperform simple baselines. The PEREGGRN evaluation found that simple dummy predictors (mean and median) often perform competitively with sophisticated machine learning approaches [25].
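To show what such a dummy predictor looks like, the sketch below builds mean and median baselines that return the same expression profile for every held-out perturbation. It assumes the baselines are computed per gene over the training profiles; the helper names and toy values are hypothetical, not taken from PEREGGRN.

```python
from statistics import mean, median

def fit_dummy(train_profiles, reducer):
    """Per-gene summary of the training profiles; ignores which gene was perturbed."""
    genes = train_profiles[0].keys()
    return {gene: reducer([profile[gene] for profile in train_profiles]) for gene in genes}

def mean_absolute_error(predicted, measured):
    return sum(abs(predicted[g] - measured[g]) for g in measured) / len(measured)

# Toy training profiles (one per training perturbation) and one held-out profile.
train_profiles = [
    {"G1": 1.0, "G2": 4.0},
    {"G1": 3.0, "G2": 4.0},
    {"G1": 8.0, "G2": 1.0},
]
held_out = {"G1": 3.0, "G2": 4.0}

mean_baseline = fit_dummy(train_profiles, mean)
median_baseline = fit_dummy(train_profiles, median)
mae_mean = mean_absolute_error(mean_baseline, held_out)
mae_median = mean_absolute_error(median_baseline, held_out)
```

A learned model is only informative if it beats these constants on held-out perturbations, which is the comparison the benchmark reports as uncommon.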

Experimental Protocols for Method Evaluation

CausalBench Evaluation Protocol

The CausalBench framework implements a rigorous evaluation protocol:

Data Preparation:

- Utilize two large-scale perturbation datasets (RPE1 and K562 cell lines) from Replogle et al. (2022)

- Process data to include both control (observational) and perturbed (interventional) states

- Implement quality control filtering for cells and genes

Model Training:

- Train each method on full dataset with five different random seeds

- For interventional methods: utilize both observational and interventional data

- For observational methods: utilize only control data

Evaluation:

- Compute biological evaluation metrics using known complexes as approximate ground truth

- Calculate statistical evaluation metrics (mean Wasserstein distance, FOR)

- Compare method performance across both evaluation types [26]
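The biological evaluation step can be sketched as follows. The sketch assumes that two genes count as a reference interaction when they belong to the same curated complex and that edge direction is ignored; both are simplifying assumptions, and the complexes and predicted edges are toy values.

```python
from itertools import combinations

def complex_pairs(complexes):
    """All unordered gene pairs that share membership in at least one complex."""
    pairs = set()
    for members in complexes:
        for a, b in combinations(sorted(set(members)), 2):
            pairs.add((a, b))
    return pairs

def precision_recall_f1(predicted_edges, reference_pairs):
    # Treat edges as undirected and drop self-loops before comparison.
    predicted = {tuple(sorted(edge)) for edge in predicted_edges if edge[0] != edge[1]}
    true_positives = len(predicted & reference_pairs)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(reference_pairs) if reference_pairs else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1

# Toy complexes standing in for a curated database, plus a toy predicted network.
complexes = [["A", "B", "C"], ["D", "E"]]
predicted_edges = [("B", "A"), ("A", "D"), ("E", "D")]

reference = complex_pairs(complexes)
precision, recall, f1 = precision_recall_f1(predicted_edges, reference)
```

Because complex membership is only an approximate ground truth, a low precision here can reflect missing annotations as well as genuine false positives.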

PEREGGRN Evaluation Protocol

The PEREGGRN framework employs a distinct evaluation strategy focused on expression forecasting:

Data Splitting:

- Implement non-standard split: no perturbation condition appears in both training and test sets

- Randomly allocate perturbation conditions and controls to training data

- Hold out distinct set of perturbation conditions for testing
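A split of this kind can be sketched in a few lines. The sample layout (a list of dictionaries with a `perturbation` field), the `control` label, and the test fraction are assumptions made for illustration.

```python
import random

def split_by_perturbation(samples, test_fraction=0.5, seed=0, control_label="control"):
    """Hold out whole perturbation conditions; controls always stay in training."""
    conditions = sorted({s["perturbation"] for s in samples if s["perturbation"] != control_label})
    rng = random.Random(seed)
    rng.shuffle(conditions)
    n_test = max(1, round(len(conditions) * test_fraction))
    test_conditions = set(conditions[:n_test])
    train = [s for s in samples if s["perturbation"] not in test_conditions]
    test = [s for s in samples if s["perturbation"] in test_conditions]
    return train, test

# Toy samples: two controls and two samples for each of four perturbed genes.
samples = [{"cell": f"c{i}", "perturbation": "control"} for i in range(2)]
for gene in ["GATA1", "SPI1", "CEBPA", "TAL1"]:
    samples += [{"cell": f"{gene}_{i}", "perturbation": gene} for i in range(2)]

train, test = split_by_perturbation(samples, test_fraction=0.5, seed=0)
train_conditions = {s["perturbation"] for s in train}
test_conditions = {s["perturbation"] for s in test}
```

Splitting on conditions rather than on individual cells is what forces a model to predict the effect of a perturbation it has never seen.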

Expression Forecasting:

- Begin with average expression of all controls

- Set perturbed gene to 0 (for knockout) or observed value after intervention

- Generate predictions for all genes except directly intervened genes

- Compare predictions to actual measured expression changes
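The setup steps above can be sketched as follows. The `no_change_model` function is a placeholder for whatever forecasting method is being benchmarked, and all names and toy values are hypothetical.

```python
def initial_state(control_profiles, perturbed_gene, observed_level=None):
    """Average control expression, with the perturbed gene clamped.

    Knockouts are set to 0; otherwise the gene is set to its observed
    post-intervention level.
    """
    genes = control_profiles[0].keys()
    state = {g: sum(p[g] for p in control_profiles) / len(control_profiles) for g in genes}
    state[perturbed_gene] = 0.0 if observed_level is None else observed_level
    return state

def no_change_model(state):
    # Placeholder forecaster: predicts that no other gene responds.
    return dict(state)

def evaluation_pairs(predicted, measured, perturbed_gene):
    """(prediction, measurement) per gene, excluding the directly intervened gene."""
    return {g: (predicted[g], measured[g]) for g in predicted if g != perturbed_gene}

controls = [
    {"TF1": 2.0, "G1": 1.0, "G2": 3.0},
    {"TF1": 4.0, "G1": 3.0, "G2": 5.0},
]
measured_after_knockout = {"TF1": 0.1, "G1": 0.5, "G2": 4.0}

knockout_state = initial_state(controls, "TF1")
overexpression_state = initial_state(controls, "TF1", observed_level=9.5)
pairs = evaluation_pairs(no_change_model(knockout_state), measured_after_knockout, "TF1")
```

Excluding the intervened gene from scoring prevents a method from earning credit simply for reproducing the value it was given as input.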

Metric Calculation:

- Compute multiple metrics: MAE, MSE, Spearman correlation, direction accuracy

- Evaluate performance on top 100 most differentially expressed genes

- Assess cell type classification accuracy for reprogramming studies [25]
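The four forecasting metrics can be computed as in the sketch below. It assumes predictions and measurements are expressed as changes relative to control, and that the most differentially expressed genes are chosen by absolute observed change; that selection rule and the function names are assumptions.

```python
import math

def average_ranks(values):
    """Ranks from 1 to n, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return float("nan")
    return cov / math.sqrt(var_x * var_y)

def sign(v):
    return (v > 0) - (v < 0)

def forecast_metrics(predicted_change, observed_change, top_k=None):
    idx = list(range(len(observed_change)))
    if top_k is not None:
        # Keep the genes with the largest absolute observed change.
        idx = sorted(idx, key=lambda i: abs(observed_change[i]), reverse=True)[:top_k]
    pred = [predicted_change[i] for i in idx]
    obs = [observed_change[i] for i in idx]
    errors = [p - o for p, o in zip(pred, obs)]
    return {
        "mae": sum(abs(e) for e in errors) / len(errors),
        "mse": sum(e * e for e in errors) / len(errors),
        "spearman": pearson(average_ranks(pred), average_ranks(obs)),
        "direction_accuracy": sum(sign(p) == sign(o) for p, o in zip(pred, obs)) / len(obs),
    }

predicted_change = [1.0, -2.0, 0.5, 3.0]
observed_change = [2.0, -1.0, -0.5, 4.0]
all_genes = forecast_metrics(predicted_change, observed_change)
top_two = forecast_metrics(predicted_change, observed_change, top_k=2)
```

In this toy example the rank correlation is perfect while one gene has the wrong direction of change, which illustrates why the metrics are reported together rather than individually.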

Visualization of Benchmarking Workflows

CausalBench Evaluation Workflow

CausalBench Evaluation Workflow: The framework evaluates both observational and interventional methods using biological and statistical metrics to comprehensively assess performance trade-offs.

PEREGGRN Expression Forecasting Workflow

PEREGGRN Expression Forecasting Workflow: The platform employs rigorous data splitting and multiple evaluation metrics to benchmark forecasting methods against simple baselines.

Table 3: Key Research Reagent Solutions for GRN Inference Studies

| Resource | Type | Function in GRN Studies | Example Platforms/Tools |
|---|---|---|---|
| Single-cell perturbation datasets | Data resource | Provide ground-truth evidence for causal gene-gene interactions | CausalBench datasets (RPE1, K562); PEREGGRN's 11 datasets |
| Protein-protein interaction databases | Reference data | Serve as approximate ground truth for biological evaluation | CORUM complex database; tissue-specific association atlas |
| Benchmarking suites | Software framework | Enable standardized method evaluation and comparison | CausalBench; PEREGGRN; multi-task integration benchmarks |
| Single-cell foundation models | Computational tool | Learn universal representations for transfer across tasks | scGPT; Geneformer; scFoundation; UCE; LangCell |
| Vertical integration methods | Computational method | Integrate multimodal data (RNA+ADT, RNA+ATAC) for enhanced inference | Seurat WNN; Multigrate; sciPENN; Matilda |
| Imaging spatial transcriptomics platforms | Experimental technology | Enable spatially resolved gene expression measurement in FFPE tissues | 10X Xenium; Nanostring CosMx; Vizgen MERSCOPE |

Comprehensive benchmarking of gene regulatory network inference methods reveals significant challenges in translating theoretical advantages into practical performance gains. The finding that interventional methods often fail to outperform observational approaches, and that simple baselines remain competitive with complex models, highlights the need for continued method development.

Future progress in the field will likely depend on several key developments: improved utilization of interventional information in causal inference methods, better scalability to handle the dimensionality of single-cell data, and more sophisticated benchmarking that captures performance across diverse biological contexts. As single-cell foundation models continue to evolve, their integration with network inference methods may help overcome current limitations, particularly through transfer learning approaches that leverage pretraining on massive single-cell corpora.

For researchers and drug development professionals, these benchmarking studies provide critical guidance for method selection while highlighting the importance of context-specific evaluation. Performance variations across tissue types, perturbation conditions, and evaluation metrics underscore that no single method currently dominates all applications, necessitating careful matching of method capabilities to specific biological questions and data characteristics.