Benchmarking Traditional vs. AI Annotation for Drug Discovery: A 2025 Guide for Biomedical Researchers

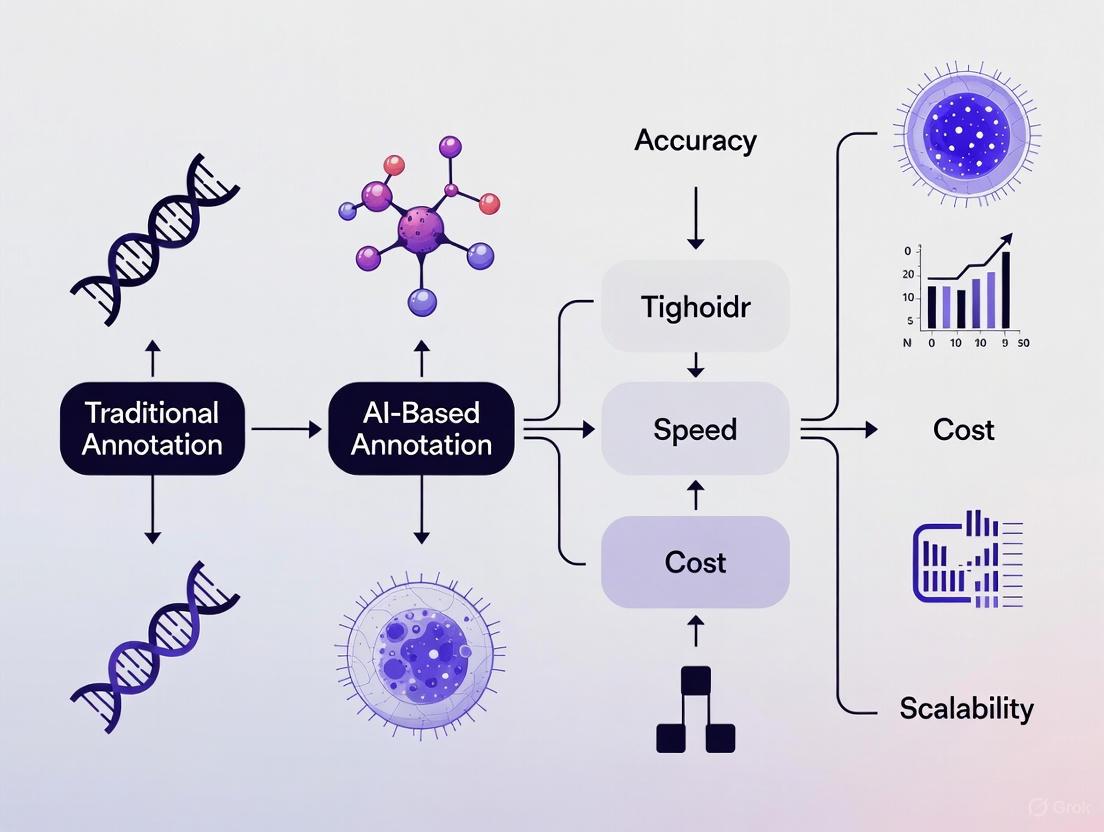



Abstract

This article provides a comprehensive benchmark analysis for researchers and scientists in drug development, comparing traditional manual and modern AI-powered data annotation methods. It explores the foundational principles of both approaches, details their practical application in biomedical research pipelines, and offers strategies for troubleshooting and optimization. A final validation framework synthesizes key performance metrics—accuracy, speed, cost, and scalability—to guide the selection of the optimal annotation strategy for specific projects, from target identification to clinical candidate optimization.

The Foundational Principles of Data Annotation in Biomedical AI

Why Data Annotation is the Bedrock of AI in Drug Development

In the rapidly evolving field of AI-driven drug development, high-quality annotated data is the fundamental component that powers machine learning models. The transition from traditional, labor-intensive methods to AI-accelerated discovery is entirely dependent on the accuracy, volume, and context of the training data. This guide benchmarks traditional manual data annotation against modern AI-assisted methods, providing researchers and scientists with a structured comparison to inform their experimental design and platform selection.

The Indispensable Role of Data in AI-Driven Drug Discovery

Artificial Intelligence has demonstrably compressed drug discovery timelines, with platforms like Exscientia and Insilico Medicine advancing candidates from target identification to Phase I trials in as little as 18 months—a fraction of the traditional 5-year timeline [1]. This acceleration is contingent upon AI models that can accurately predict molecular behavior, identify viable drug targets, and optimize lead compounds. The performance of these models is a direct function of their training data [2].

Data annotation, or data labeling, is the process of meticulously tagging raw data—such as molecular structures, medical images, and clinical trial text—to create structured, machine-readable datasets. In drug development, this can involve classifying protein structures, annotating toxicity in cellular images, or labeling patient responses in electronic health records. Without this foundational layer of high-quality, context-rich data, even the most sophisticated algorithms fail to deliver reliable or translatable results, a principle often summarized as "garbage in, garbage out" [2]. The global market for data annotation tools, projected to grow from $1.9 billion in 2024 to $6.2 billion by 2030, underscores its critical and expanding role in the AI ecosystem [3].

Benchmarking Annotation Methods: A Quantitative Comparison

The choice between annotation methodologies involves a critical trade-off between accuracy, speed, and cost. The following section provides a structured, data-driven comparison to guide this decision.

Experimental Protocol for Benchmarking Annotation Methods

A robust comparison of annotation methods involves a standardized experimental setup to ensure validity and reproducibility.

- Objective: To quantitatively compare the performance metrics of Manual, AI-Assisted, and Hybrid data annotation workflows within a drug development context.

- Dataset: A curated set of 10,000 high-resolution histopathology images requiring segmentation of tumor regions. A pre-validated "gold standard" subset of 1,000 images is used for accuracy calibration.

- Annotation Teams: Three separate teams of equal size (n=5 annotators) are assigned to one of the three methodologies. All annotators undergo standardized training on the task and labeling ontology.

- Key Performance Indicators (KPIs): The primary metrics measured are Annotation Speed (images/hour), Annotation Accuracy (F1-score against the gold standard), and Cost per Annotation. Accuracy is measured upon completion of the entire dataset.

- Tools: The teams use platforms representative of each method: a manual interface, an AI-assisted platform with pre-labeling capabilities, and a hybrid system that integrates both.
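This protocol scores accuracy as an F1-score against the gold standard on a segmentation task. At the pixel level, F1 for a pair of binary masks is mathematically identical to the Dice coefficient. The sketch below is a minimal illustration that assumes masks arrive as equally sized 2D lists of 0/1 values. `mask_f1` and `dataset_f1` are hypothetical helper names. Averaging per image, rather than pooling all pixels, is also an assumption, because the protocol does not say which aggregation it uses.

```python
def mask_f1(pred, gold):
    """Pixel-wise F1 score (identical to the Dice coefficient) for one binary mask pair.

    pred, gold: equally sized 2D lists of 0/1 values, where 1 marks a tumor pixel.
    """
    if len(pred) != len(gold) or any(len(p) != len(g) for p, g in zip(pred, gold)):
        raise ValueError("masks must have the same shape")
    tp = fp = fn = 0
    for pred_row, gold_row in zip(pred, gold):
        for p, g in zip(pred_row, gold_row):
            if p and g:
                tp += 1  # tumor pixel correctly found
            elif p:
                fp += 1  # tumor predicted where the gold standard has none
            elif g:
                fn += 1  # tumor pixel missed
    denom = 2 * tp + fp + fn
    # Convention: if neither mask marks any tumor, count it as perfect agreement.
    return 1.0 if denom == 0 else 2 * tp / denom


def dataset_f1(pairs):
    """Mean per-image F1 over (pred, gold) mask pairs, e.g. the 1,000-image gold subset."""
    scores = [mask_f1(pred, gold) for pred, gold in pairs]
    return sum(scores) / len(scores)


gold = [[0, 1, 1],
        [0, 1, 0]]
pred = [[0, 1, 0],
        [1, 1, 0]]
print(round(mask_f1(pred, gold), 3))  # 2 TP, 1 FP, 1 FN -> 0.667
```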

Performance Metrics and Analysis

The data from the comparative experiment reveals clear trends and trade-offs, summarized in the table below.

Table: Benchmarking Performance of Data Annotation Methods

| Performance Metric | Manual Annotation | AI-Assisted Annotation | Hybrid (Human-in-the-Loop) |
|---|---|---|---|
| Throughput (images/hour) | 20-30 | 200-500 | 100-250 |
| Relative Speed | 1x (Baseline) | ~10x | ~5x [4] |
| Typical Accuracy (F1-Score) | High (95-98%) [2] | Variable (85-95%) [2] | Very High (96-99%) [4] |
| Best Use Case | Complex, novel, or nuanced data (e.g., subjective medical imagery) [2] | Structured, repetitive, and large-scale tasks [2] | Most real-world scenarios, balancing speed and precision [4] |
| Cost Efficiency | Low (High labor cost) | High (Low cost per label) | Medium (Optimized allocation) [4] |
| Handling of Edge Cases | Excellent (Human judgment) | Poor (Relies on training data) | Good (Human review of low-confidence predictions) |

The data shows that AI-assisted annotation provides the highest speed and scalability, making it suitable for processing the massive datasets common in genomics and high-throughput screening [2]. However, its accuracy is inherently tied to its training data and can falter with novel or ambiguous information.

Conversely, manual annotation delivers superior accuracy and nuance for complex tasks, such as interpreting subtle pathological features in medical images or labeling complex molecular interactions [2]. Its primary limitations are poor scalability and high cost.

In practice, the hybrid Human-in-the-Loop (HITL) approach has emerged as the industry standard for critical applications in drug development [5] [4]. This method leverages AI for initial, high-volume pre-labeling, while human experts focus on quality control, complex cases, and edge conditions. Real-world implementations report 5x faster throughput and 30-35% cost savings while maintaining or even improving accuracy levels compared to purely manual workflows [4].

Essential Reagents: The Data Annotation Toolkit for Researchers

Selecting the right tools and workflows is as crucial as any laboratory reagent. The following toolkit outlines the core components of a modern data annotation pipeline for drug development research.

Table: Research Reagent Solutions for Data Annotation

| Category | Solution / Tool | Primary Function in Annotation |
|---|---|---|
| Annotation Platforms | Labelbox, Encord, SuperAnnotate, V7 | Provides the core software environment for managing data, defining ontologies, and executing labeling tasks [6] [7]. |
| AI-Assisted Labeling | SAM2, T-Rex2, Platform-specific models | Automates repetitive labeling tasks (e.g., segmentation) using pre-trained models, dramatically increasing speed [6] [4]. |
| Human-in-the-Loop (HITL) | Custom workflows on major platforms | A framework that integrates human expert review into the AI-driven pipeline to validate results and handle complex edge cases [5] [2]. |
| Data Management & QC | Integrated analytics dashboards | Tools for monitoring annotation throughput, user activity, and label accuracy in real-time, enabling continuous quality control [4]. |

Implementing an Optimized Annotation Workflow

An effective annotation pipeline is a cyclical process of continuous improvement. The following diagram maps the logical flow of an optimized, hybrid annotation workflow.

Diagram: Hybrid Human-in-the-Loop Annotation Workflow

The workflow begins with raw, unlabeled data (e.g., molecular structures, medical images). This data first undergoes AI-assisted pre-labeling, where initial models apply labels at high speed. The pre-labeled data then moves to human expert review, where specialists correct errors and handle complex cases that the AI could not confidently resolve. This is followed by a rigorous quality control and validation stage to ensure the dataset meets required standards. The output is a high-quality curated dataset ready for model training. A critical final component is the feedback loop, where the improved AI model can be used to enhance the pre-labeling for future annotation cycles, creating a virtuous cycle of increasing efficiency and accuracy [2] [4].
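The routing step at the center of this workflow sends confident pre-labels onward and queues uncertain ones for experts. It can be sketched in a few lines. This is a minimal illustration, not any platform's API. `PreLabel`, `route_prelabels`, `build_curated_set`, and the 0.9 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def route_prelabels(prelabels, threshold=0.9):
    """Split AI pre-labels into an auto-accept pool and an expert-review queue.

    The 0.9 threshold is an illustrative assumption; in practice it would be
    tuned against a gold-standard subset.
    """
    auto_accept = [p for p in prelabels if p.confidence >= threshold]
    needs_review = sorted(
        (p for p in prelabels if p.confidence < threshold),
        key=lambda p: p.confidence,  # least confident items reach experts first
    )
    return auto_accept, needs_review


def build_curated_set(auto_accept, expert_labels):
    """Merge accepted pre-labels with expert decisions (expert labels take precedence)."""
    curated = {p.item_id: p.label for p in auto_accept}
    curated.update(expert_labels)
    return curated


batch = [
    PreLabel("img_001", "tumor", 0.97),
    PreLabel("img_002", "normal", 0.55),
    PreLabel("img_003", "tumor", 0.72),
]
accepted, queue = route_prelabels(batch)
print([p.item_id for p in queue])  # ['img_002', 'img_003']
curated = build_curated_set(accepted, {"img_002": "tumor", "img_003": "tumor"})
```

In a fuller pipeline, a random sample of auto-accepted items would also pass through the quality-control stage. The curated dictionary would then become training data for the next pre-labeling model, closing the feedback loop.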

The "Human-in-the-Loop" Imperative in Drug Development

Given the high-stakes nature of pharmaceutical research, where model errors can lead to costly clinical trial failures or safety issues, the "Human-in-the-Loop" (HITL) model is particularly critical [8]. AI systems can struggle with the nuanced context, rare edge cases, and complex biology inherent to drug development. Human experts provide the indispensable judgment for tasks like [5] [2]:

- Interpreting ambiguous pathological findings in medical images.

- Labeling complex, multi-step biological pathways from scientific literature.

- Validating the context of adverse event reports in pharmacovigilance.

Diagram: The Human-in-the-Loop Annotation Cycle

This cyclical process, as shown in the diagram, ensures that AI becomes a powerful assistant that augments—rather than replaces—human expertise, leading to progressively smarter and more reliable automated annotation.

The assertion that data annotation is the bedrock of AI in drug development is firmly supported by the data. The choice of annotation strategy has a direct and measurable impact on the success of AI initiatives. While AI-assisted methods provide unmatched speed for scalable data processing, and manual methods offer superior nuance for novel complexities, the evidence points to a hybrid Human-in-the-Loop approach as the most effective and robust strategy for the pharmaceutical industry.

By strategically investing in high-quality annotated data and modern annotation platforms, drug development teams can build more accurate and reliable AI models. This, in turn, accelerates the entire R&D pipeline, bringing life-saving treatments to patients faster and more efficiently. The future of AI in drug discovery will not be won by the best algorithm alone, but by the best algorithm built upon the highest-quality data foundation.

Within the context of benchmarking traditional versus AI-driven data annotation methods, this guide provides an objective comparison of their performance for scientific and drug development applications. While automated tools offer scalability, traditional manual annotation remains indispensable for tasks requiring deep contextual understanding, domain expertise, and the handling of complex edge cases. This analysis synthesizes current experimental data and methodologies, demonstrating that a hybrid approach—leveraging both human nuance and algorithmic speed—often yields the most robust and reliable training data for critical research applications.

For researchers, scientists, and drug development professionals, the quality of annotated data is a foundational element in building reliable AI models. Data annotation is the process of labeling raw data (images, text, audio, or video) so that supervised machine learning algorithms can use it [9]. In scientific domains, the stakes for accuracy are exceptionally high: a mislabeled medical image or a misinterpreted chemical compound structure can compromise an entire model's validity.

The debate between traditional manual annotation and modern AI-assisted methods is not about absolute superiority but about optimal application. This guide objectively compares these methodologies within a benchmarking framework, providing the experimental data and protocols needed to inform data strategy in research environments. The core distinction lies in the deployment of human expertise: manual annotation leverages continuous human judgment, whereas AI-assisted annotation automates repetitive tasks, often with human oversight reserved for quality control [10] [11].

Core Differentiators: Manual vs. AI-Assisted Annotation

The choice between manual and AI-assisted annotation involves a fundamental trade-off between the nuanced accuracy of human intelligence and the scalable efficiency of automation. The table below summarizes their core performance characteristics based on aggregated industry data and studies.

Table 1: Performance Benchmarking of Manual vs. AI-Assisted Annotation

| Criterion | Manual Annotation | AI-Assisted Annotation |
|---|---|---|
| Accuracy | Very high; professionals interpret nuance, context, and domain-specific terminology [12]. | Moderate to high; excels with clear, repetitive patterns but struggles with subtle or specialized content [12] [13]. |
| Speed | Slow; human annotators label each data point individually, taking days or weeks for large volumes [12]. | Very fast; once configured, models can label thousands of data points in hours [12]. |
| Scalability | Limited; scaling requires hiring and training more annotators [12]. | Excellent; annotation pipelines can handle massive data volumes once trained [12]. |
| Adaptability | Highly flexible; annotators adjust in real-time to new taxonomies and unusual edge cases [12] [13]. | Limited; models operate within pre-defined rules and require retraining for significant workflow changes [12]. |
| Cost Structure | High; due to skilled labor, multi-level reviews, and specialist expertise [12] [10]. | Lower long-term cost; reduces human labor but incurs upfront model development and training expenses [12]. |
| Edge Case Handling | Exceptional; human reasoning is critical for rare, complex, or ambiguous data [13] [11]. | Poor; models fail when encountering data outside their training distribution, often with high confidence [13]. |
| Best For | Complex, subjective, or safety-critical data; domains requiring deep expertise (e.g., medical imaging, drug discovery) [14] [11]. | Large, repetitive datasets with well-defined objects and patterns; rapid prototyping [12] [10]. |

Experimental data underscores this performance trade-off. One study found that while AI-assisted pre-labeling accelerates workflows, an average of 42% of all automated data labels require human correction or intervention to meet quality standards [11]. Furthermore, well-executed manual labeling can improve model performance from an average baseline of 60-70% accuracy to the 95% accuracy range, which is often essential for research-grade outputs [11].

Experimental Protocols for Benchmarking Annotation Methods

To ensure valid and reproducible comparisons between annotation methods, a structured experimental protocol is essential. The following methodology outlines a robust benchmarking workflow suitable for scientific validation.

Workflow Design

The benchmarking process is a cycle that integrates both manual and automated components to continuously improve data quality and model performance.

Diagram Title: Benchmarking Workflow for Annotation Methods

Methodology Details

- Define Scope & Objectives: Determine the specific domain (e.g., histopathology image analysis, chemical structure recognition) and the key metrics for evaluation, such as inter-annotator agreement, label accuracy, time-per-task, and downstream model performance [15].

- Select & Prepare Dataset: Curate a representative dataset that includes common examples as well as known edge cases critical to the domain. The dataset should be split, with a portion reserved for creating a "gold standard" through expert manual annotation [13].

- Manual Annotation (Expert Gold Standard): Domain experts (e.g., radiologists, biologists) annotate the gold standard dataset. This process should involve multiple annotators to measure inter-annotator agreement (IAA), a key metric for establishing benchmark quality and consistency [9] [11].

- AI-Assisted Pre-labeling: Utilize one or more AI-assisted annotation tools (e.g., Encord, CVAT, Labelbox) to pre-label the same dataset. The models may be pre-trained or fine-tuned on a separate, annotated dataset [6] [14].

- Quantitative Comparison: Compare the AI-generated labels against the human gold standard using metrics like precision, recall, F1-score, and mean Average Precision (mAP). One reported finding at this stage is that an average of 42% of automated labels required human correction [11].

- Qualitative Analysis: Experts perform a root-cause analysis on discrepancies. This identifies why the AI failed, often revealing issues with contextual ambiguity, rare data, or domain complexity that quantitative metrics alone cannot explain [13].

- Model Retraining & Iteration: Human corrections and qualitative feedback are fed back into the AI model in a Human-in-the-Loop (HITL) active learning cycle. This improves the model for subsequent benchmarking rounds, creating a continuous improvement loop [13] [11].
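The quantitative comparison step can be expressed as a small scoring function. The sketch below assumes a single-label classification task in which each item has one AI label and one gold-standard label. `compare_to_gold` is a hypothetical name. "Correction rate" is simplified here to the share of AI labels that disagree with the gold standard, which may not match how the 42% figure in [11] was defined. mAP, which applies to ranked detections, is not covered.

```python
def compare_to_gold(ai_labels, gold_labels, positive):
    """Score AI pre-labels against an expert gold standard.

    ai_labels, gold_labels: dicts mapping item_id -> label over the same items.
    positive: the class treated as "positive" for precision, recall and F1.
    """
    if not gold_labels or ai_labels.keys() != gold_labels.keys():
        raise ValueError("AI and gold labels must cover the same, non-empty item set")
    tp = fp = fn = disagreements = 0
    for item, gold in gold_labels.items():
        ai = ai_labels[item]
        if ai != gold:
            disagreements += 1  # a reviewer would have to change this label
        if ai == positive and gold == positive:
            tp += 1
        elif ai == positive:
            fp += 1
        elif gold == positive:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "correction_rate": disagreements / len(gold_labels),
    }


gold = {"c1": "toxic", "c2": "toxic", "c3": "safe", "c4": "safe", "c5": "toxic"}
ai = {"c1": "toxic", "c2": "safe", "c3": "toxic", "c4": "safe", "c5": "toxic"}
print(compare_to_gold(ai, gold, positive="toxic"))
```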

The Researcher's Toolkit: Annotation Platforms & Solutions

Selecting the right tools is critical for executing a valid benchmark. The landscape includes a range of platforms, from open-source to enterprise-grade solutions, each with strengths for different research scenarios.

Table 2: Research Reagent Solutions: Annotation Platforms & Tools

| Tool / Platform | Primary Function | Key Features for Research |
|---|---|---|
| Encord | Platform for labeling and managing high-volume visual datasets [14]. | Native support for DICOM medical imaging; AI-assisted video annotation; collaborative workflow management for large teams [14]. |
| CVAT | Open-source image and video annotation tool [6]. | Completely free and self-hosted; supports basic AI-assisted labeling; strong community support via OpenCV [6] [14]. |
| T-Rex Label | Web-based AI-assisted annotation tool [6]. | Features the T-Rex2 model for visual-prompt annotation; excels in rare object detection; free model available [6]. |
| Labelbox | One-stop data and model management platform [6]. | Integrates data annotation with model training and analysis; supports active learning to prioritize impactful data [6]. |
| Supervisely | Unified operating system for computer vision [14]. | Intuitive interface with strong support for DICOM and other medical imaging modalities; customizable plugin architecture [14]. |
| Domain Experts | Human annotators with specialized knowledge [11]. | Provide ground truth for gold standard datasets; handle edge cases and complex annotations in fields like drug discovery and medical diagnostics [11]. |

The benchmarking data and experimental protocols presented confirm that traditional manual annotation is not obsolete but has evolved into a strategic, high-value function within the modern AI research pipeline. Its unparalleled strength in managing contextual understanding, domain expertise, and edge cases makes it irreplaceable for safety-critical and scientifically rigorous fields like drug development [16] [13] [11].

The future of data annotation in research does not lie in a binary choice between human and machine, but in sophisticated hybrid pipelines. In these workflows, automation handles scalable, repetitive labeling tasks, while human expertise is strategically deployed for quality assurance, complex case resolution, and guiding model retraining through active learning loops [12] [13] [11]. For researchers building models where accuracy is non-negotiable, a benchmarked and validated hybrid approach that leverages both human nuance and AI efficiency will yield the most reliable and impactful results.

Data annotation, the process of labeling data to make it understandable for machines, is a foundational step in developing artificial intelligence systems. For researchers, scientists, and drug development professionals, the shift from traditional manual annotation to AI-powered methods represents a critical evolution in how we build training datasets for machine learning models. This transformation is particularly relevant in biomedical contexts, where the volume of unstructured text from scientific publications has made manual knowledge extraction impractical [17]. The emerging paradigm of AI-powered annotation offers unprecedented potential for automation and scalability while introducing new considerations for accuracy and validation.

This guide provides an objective comparison between traditional and AI-powered annotation methods, focusing on experimental performance data and practical implementation frameworks. By examining quantitative benchmarks, methodological protocols, and specialized tools, we aim to equip research professionals with the evidence needed to make informed decisions about integrating AI annotation into their scientific workflows, particularly in drug discovery and biomedical research contexts where annotation quality directly impacts model reliability and research outcomes.

Performance Benchmarks: Quantitative Comparisons

Rigorous evaluation of annotation methods requires multiple performance dimensions. The following comparative analysis examines both agreement metrics and computational efficiency across different annotation approaches.

Annotation Agreement and Accuracy

Table 1: Human vs. AI Annotation Agreement Metrics

| Annotation Method | Pearson Correlation with Human Consensus | Agreement Metric (Fleiss' κ/Cohen's κ) | Domain Context | Source |
|---|---|---|---|---|
| GPT-4 (Analyze-Rate Prompt) | 0.61 (Likert scale) | N/A | Conversational Safety | [18] |
| Median Human Annotator | 0.51 | N/A | Conversational Safety | [18] |
| GPT-4 (Binary) | 0.59 | N/A | Conversational Safety | [18] |
| Llama 3.1 405B (Rating Only) | 0.60 (Likert scale) | N/A | Conversational Safety | [18] |
| ICU Consultants (11 Annotators) | N/A | 0.383 (Fleiss' κ) | Clinical ICU Scoring | [19] |
| EEG Experts | N/A | 0.38 (Average pairwise Cohen's κ) | ICU EEG Analysis | [19] |
| Pathologists (Breast Lesions) | N/A | 0.34 (Fleiss' κ) | Medical Diagnostics | [19] |
| Psychiatrists (Depression) | N/A | 0.28 (Fleiss' κ) | Mental Health | [19] |

The data reveals that advanced AI models can surpass median human performance in correlating with human consensus on annotation tasks. In the evaluation of conversational safety, GPT-4 with a chain-of-thought prompting strategy achieved a Pearson correlation of 0.61 with the average rating of 112 human annotators, exceeding the median human annotator's correlation of 0.51 [18]. This suggests that in certain domains, AI annotation can not only match but exceed the consistency of individual human annotators when measured against collective human judgment.

However, the consistently modest inter-annotator agreement among human experts across medical domains (with Fleiss' κ scores ranging from 0.28 to 0.38) highlights the inherent subjectivity and "noise" in human judgment [19]. This variability presents a fundamental challenge for establishing reliable ground truth in biomedical annotation tasks, particularly in domains requiring clinical expertise where even highly trained specialists demonstrate significant disagreements in their annotations.
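The Fleiss' κ values in Table 1 measure how much several raters agree beyond what chance alone would produce. κ = 0 means chance-level agreement and κ = 1 means perfect agreement. The sketch below implements the standard Fleiss' κ formula for the case where every item receives the same number of ratings. The function name and the toy ratings are illustrative and are not data from [19].

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for items that each received the same number of categorical ratings.

    ratings: one list of category labels per item, e.g. [["A", "A", "B"], ["B", "B", "B"]].
    """
    if not ratings:
        raise ValueError("no items to score")
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    category_totals = Counter()
    mean_item_agreement = 0.0
    for item in ratings:
        counts = Counter(item)
        category_totals.update(counts)
        # Share of rater pairs that agree on this item.
        mean_item_agreement += (sum(c * c for c in counts.values()) - n_raters) / (
            n_raters * (n_raters - 1)
        )
    mean_item_agreement /= len(ratings)
    total = len(ratings) * n_raters
    # Agreement expected by chance, from the overall category proportions.
    chance = sum((c / total) ** 2 for c in category_totals.values())
    if chance == 1.0:
        return float("nan")  # every rating is the same category: kappa is undefined
    return (mean_item_agreement - chance) / (1 - chance)


toy = [["A", "A", "A"], ["B", "B", "B"], ["A", "A", "B"], ["A", "B", "B"]]
print(round(fleiss_kappa(toy), 3))  # 0.333
```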

Efficiency and Scalability Metrics

Table 2: Operational Efficiency Comparisons

| Metric | Traditional Manual Annotation | AI-Powered Annotation | Context |
|---|---|---|---|
| Speed | Baseline reference | Up to 10x faster with AI-assisted labeling | Computer Vision Projects [20] |
| Scalability | Limited by human resources | Massive dataset handling without degradation | Enterprise AI Platforms [20] [21] |
| Consistency | Subject to inter-annotator variability | High algorithmic consistency | Quality Assurance [22] |
| Domain Adaptation | Requires retraining annotators | Model fine-tuning | Cross-domain Applications [14] |
| Cost Structure | Linear scaling with volume | Higher initial investment, lower marginal cost | Total Cost of Ownership [20] |

AI-powered annotation demonstrates significant advantages in operational efficiency, particularly for large-scale projects. In computer vision applications, AI-assisted labeling can accelerate annotation speed by up to 10x compared to purely manual approaches [20]. This acceleration stems from capabilities like AI-assisted pre-labeling, automated object tracking in video sequences, and active learning systems that prioritize the most valuable samples for human review [14].
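Active learning systems that "prioritize the most valuable samples for human review" often do so with uncertainty sampling. The sketch below ranks unlabeled items by the entropy of the model's predicted class probabilities and returns the most uncertain ones, up to a review budget. Entropy-based uncertainty sampling is one common heuristic, chosen here for illustration; the platforms cited may use different strategies. `select_for_review` and the example probabilities are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions, budget):
    """Return up to `budget` item ids, most uncertain prediction first.

    predictions: dict mapping item_id -> list of class probabilities summing to 1.
    """
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]


preds = {
    "cell_01": [0.98, 0.02],
    "cell_02": [0.55, 0.45],
    "cell_03": [0.70, 0.30],
    "cell_04": [0.50, 0.50],
}
print(select_for_review(preds, budget=2))  # ['cell_04', 'cell_02']
```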

The scalability of AI-powered methods represents another substantial advantage. Where traditional manual annotation faces practical limits due to human resource constraints, AI systems can maintain consistent performance across massive datasets without degradation in quality or throughput [20]. This capability is particularly valuable in drug development contexts where the volume of biomedical literature and research data continues to grow exponentially, making comprehensive manual annotation increasingly impractical [17].
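The "Cost Structure" row in Table 2 contrasts costs that scale linearly with volume for manual work against a higher initial investment but lower marginal cost for AI-powered annotation. Together these imply a break-even dataset size. As a simplified model, assume a per-item manual cost $c_m$, a one-time setup cost $F$ for the AI pipeline (configuration, fine-tuning, integration), and a per-item cost $c_a$ for AI-assisted labeling that already includes residual human review. These symbols are illustrative assumptions, not quantities reported by the cited sources.

$$
C_{\text{manual}}(N) = c_m N, \qquad C_{\text{AI}}(N) = F + c_a N
$$

$$
C_{\text{AI}}(N) < C_{\text{manual}}(N) \iff N > N^{*} = \frac{F}{c_m - c_a}, \qquad \text{provided } c_m > c_a
$$

Take hypothetical values of $F = 20{,}000$, $c_m = 2.00$, and $c_a = 0.40$ (in any single currency). Then $N^{*} = 20{,}000 / 1.60 = 12{,}500$ items, and below that volume manual annotation would be cheaper under this model. The model ignores quality differences, so the accuracy and edge-case rows of the tables above still bear on the final choice.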

Experimental Protocols: Methodological Framework

To ensure reproducible and valid comparisons between annotation methodologies, researchers should adhere to structured experimental protocols.

Protocol 1: Benchmarking AI-Human Annotation Alignment

Objective: Quantify the alignment between AI-generated annotations and human consensus across demographic groups.

Dataset Preparation:

- Utilize the DICES-350 dataset containing 350 multi-turn conversations [18]

- Incorporate annotations from 112 human annotators spanning 10 race-gender groups

- Establish ground truth through majority voting or weighted consensus

AI Annotation Procedure:

- Implement zero-shot prompting with models (GPT-4, Llama 3.1 405B, Gemini 1.5 Pro)

- Apply both "rating-only" and "analyze-rate" (chain-of-thought) prompt strategies

- Configure output format to match human rating scales (Likert or binary)

Quality Validation:

- Calculate Pearson correlation between AI annotations and human consensus

- Compute inter-annotator agreement metrics (Fleiss' κ, Cohen's κ)

- Perform bootstrap resampling (e.g., 1000 iterations) for statistical significance

- Conduct qualitative analysis of systematic disagreement patterns

This protocol revealed that GPT-4 with chain-of-thought prompting achieved a correlation of r=0.61 with human consensus, exceeding the median human annotator's correlation of r=0.51 [18]. The methodology also enabled investigation of whether AI models align more closely with specific demographic groups, though the DICES-350 dataset was underpowered to detect significant differences [18].
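The core of the Quality Validation steps can be sketched with the Python standard library alone: correlate each annotator and the model with human consensus, then bootstrap for uncertainty. Two choices here are assumptions rather than details from [18]. First, each human is compared with a leave-one-out consensus of the other annotators, so that no one is correlated with an average that includes their own ratings. Second, the confidence interval uses a simple percentile bootstrap over items. All names and ratings are hypothetical.

```python
import random
import statistics


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def consensus(human, exclude=None):
    """Per-item mean rating across annotators, optionally leaving one annotator out."""
    raters = [ratings for name, ratings in human.items() if name != exclude]
    return [statistics.fmean(item) for item in zip(*raters)]


def median_human_r(human):
    """Median correlation of each annotator with the consensus of the other annotators."""
    return statistics.median(
        [pearson(ratings, consensus(human, exclude=name)) for name, ratings in human.items()]
    )


def bootstrap_ci(model, target, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for pearson(model, target), resampling items."""
    rng = random.Random(seed)
    n = len(target)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        xs, ys = [model[i] for i in idx], [target[i] for i in idx]
        # Skip degenerate resamples in which either side has zero variance.
        if len(set(xs)) > 1 and len(set(ys)) > 1:
            stats.append(pearson(xs, ys))
    if not stats:
        raise ValueError("every resample was degenerate")
    stats.sort()
    lower = stats[int(alpha / 2 * len(stats))]
    upper = stats[int((1 - alpha / 2) * len(stats)) - 1]
    return lower, upper


# Hypothetical 1-5 Likert safety ratings for six conversations.
human = {"h1": [1, 2, 3, 4, 5, 2], "h2": [2, 2, 3, 5, 4, 1], "h3": [1, 3, 2, 4, 5, 2]}
model = [1, 2, 3, 5, 5, 1]
target = consensus(human)
print(round(pearson(model, target), 2), round(median_human_r(human), 2))
print(bootstrap_ci(model, target))
```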

Protocol 2: Evaluating Clinical Annotation Consistency

Objective: Assess the impact of annotation inconsistencies on AI model performance in clinical decision-making.

Experimental Design:

- Recruit 11 ICU consultants as expert annotators [19]

- Provide identical patient cases described by six clinical features

- Collect annotations on a five-point ICU Patient Scoring System (ICU-PSS)

Model Development:

- Train separate classifier models for each consultant's annotations

- Apply identical machine learning algorithms and feature engineering

- Maintain consistent validation procedures across all models

Validation Framework:

- Internal validation: Compare model performance on held-out test sets

- External validation: Evaluate classifiers on HiRID dataset (external ICU data)

- Measure agreement between model classifications (pairwise Cohen's κ)

- Assess clinical utility for specific decisions (mortality prediction vs. discharge)

This experimental approach demonstrated that models trained on different experts' annotations showed minimal agreement (average Cohen's κ = 0.255) when applied to external validation data [19]. The research also revealed that standard consensus approaches like majority voting often yield suboptimal models, suggesting that assessing "annotation learnability" may produce better outcomes.
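The agreement statistic reported here, the average of pairwise Cohen's κ between models, can be computed as below. This sketch treats the five ICU-PSS levels as nominal categories. Because the scale is ordinal, a weighted κ would be a reasonable alternative, and this sketch does not assume which variant [19] used. Function names and the toy predictions are hypothetical.

```python
from collections import Counter
from itertools import combinations


def cohen_kappa(a, b):
    """Unweighted Cohen's kappa between two label sequences over the same cases."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    # Chance agreement from each rater's own label frequencies.
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if expected == 1.0:
        return float("nan")  # both used one identical label throughout: undefined
    return (observed - expected) / (1 - expected)


def mean_pairwise_kappa(labels_by_model):
    """Average Cohen's kappa over every pair of models (or annotators)."""
    pairs = list(combinations(labels_by_model, 2))
    if not pairs:
        raise ValueError("need at least two models")
    total = sum(cohen_kappa(labels_by_model[m1], labels_by_model[m2]) for m1, m2 in pairs)
    return total / len(pairs)


# Hypothetical ICU-PSS levels (1-5) predicted for the same four external cases.
external_preds = {
    "model_consultant_A": [1, 1, 2, 2],
    "model_consultant_B": [1, 2, 2, 2],
    "model_consultant_C": [1, 1, 2, 2],
}
print(round(mean_pairwise_kappa(external_preds), 3))  # 0.667
```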

Workflow Visualization

Diagram: Experimental Workflow for Annotation Benchmarking

AI-Powered Annotation Tools and Platforms

The landscape of AI-powered annotation tools has evolved significantly, with platforms offering specialized capabilities for different research contexts.

Table 3: Specialized Annotation Platforms for Research Applications

| Platform | AI-Powered Features | Supported Data Types | Research Applications | Limitations |
|---|---|---|---|---|
| Encord | Micro-models, automated object tracking, active learning | Video, DICOM, SAR, Documents, Audio | Physical AI, medical imaging, robotics | Less suitable for non-visual data [14] |
| SuperAnnotate | AI-assisted labeling, pre-labeling, automation tools | Images, video, text, audio, 3D | Computer vision, RLHF, agent evaluation | Platform can feel heavy for simple projects [20] [21] |
| Labelbox | Model-assisted labeling, automated workflows, AI-assisted curation | Images, video, text, audio, PDFs, geospatial | Enterprise AI development, medical imagery | Cost forecasting needs careful management [20] [21] |
| CVAT | Semi-automated labeling, custom model integration | Images, video | Robotics, autonomous vehicles (open-source) | Requires engineering resources for extensibility [14] |
| Scale AI | Human-in-the-loop verification, quality control | Images, video, text, LiDAR | Large-scale complex AI projects | Pricing may be high for smaller organizations [20] |

These platforms demonstrate the increasing specialization of annotation tools for research contexts. Encord offers particular strength in physical AI applications with support for complex video workflows and multimodal data synchronization [14]. SuperAnnotate provides comprehensive functionality for computer vision projects but may present a steeper learning curve for simpler applications [20]. For resource-constrained research teams, open-source options like CVAT provide fundamental AI-assisted labeling capabilities while allowing full customization [14].

Domain-Specific Applications in Drug Development

AI-powered annotation offers particular promise in pharmaceutical and biomedical research contexts where manual annotation presents significant bottlenecks.

Biomedical Corpus Development

The creation of specialized annotated corpora enables the application of NLP techniques to traditional medicine research. The Traditional Formula-Disease Relationship (TFDR) corpus exemplifies this approach, containing 6,211 traditional formula mentions and 7,166 disease mentions from 740 PubMed abstracts, with 1,109 relationships between them [17]. This manually annotated resource facilitates the automatic extraction of TF-disease relationships from biomedical literature, demonstrating how structured annotation frameworks can accelerate knowledge discovery in specialized domains.

The TFDR corpus development workflow involved:

- Vocabulary development from Traditional Korean Medicine ontology

- Dictionary-based pre-annotation of traditional formula mentions

- Algorithmic pre-annotation of disease mentions using TaggerOne with MEDIC vocabulary

- Manual validation and relationship annotation by domain experts

This hybrid approach combining automated pre-annotation with expert validation represents an efficient methodology for creating high-quality annotated resources in specialized biomedical domains.
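The dictionary-based pre-annotation step can be approximated with a longest-match lookup, so that when one dictionary entry is a prefix of another, the longer entry wins. Word boundaries also stop a short name from matching inside a longer one. This is a simplified sketch, not the TFDR team's implementation. The dictionary entries are illustrative, matching is case-insensitive, and the output is a list of candidate spans for expert validation. Disease pre-annotation with TaggerOne, a trained tagger, is not reproduced.

```python
import re


def build_matcher(dictionary):
    """Compile one case-insensitive pattern; longer entries are tried first."""
    if not dictionary:
        raise ValueError("dictionary is empty")
    terms = sorted(dictionary, key=len, reverse=True)
    pattern = r"\b(?:" + "|".join(re.escape(term) for term in terms) + r")\b"
    return re.compile(pattern, re.IGNORECASE)


def pre_annotate(text, dictionary, entity_type):
    """Return candidate mentions as (start, end, surface_text, entity_type) tuples.

    These are suggestions for expert validation, not final annotations.
    """
    matcher = build_matcher(dictionary)
    return [(m.start(), m.end(), m.group(0), entity_type) for m in matcher.finditer(text)]


formulas = {"Gamisoyo-san", "Soyo-san"}  # illustrative entries only
abstract = "Gamisoyo-san was compared with Soyo-san in patients."
print(pre_annotate(abstract, formulas, "TF"))
# [(0, 12, 'Gamisoyo-san', 'TF'), (31, 39, 'Soyo-san', 'TF')]
```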

Drug Target Discovery

AI-powered annotation plays a crucial role in modern drug target discovery through several emerging methodologies:

Network-Based and Machine Learning Approaches

- Guilt by association: Identifies proteins interacting with known drug targets as potential targets [23]

- Network-based inference: Combines multiple bioinformatics networks for improved prediction accuracy [23]

- Random walks: Models network traversal to identify nodes relevant to known drug targets [23]

- Supervised learning: Trains models on known drug-target interactions to predict new relationships [23]

These approaches rely on comprehensive annotation of biological entities and their relationships, enabling computational methods to prioritize potential drug targets for experimental validation.
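The sketch below implements random walk with restart (RWR) on a toy network, as one concrete instance of the random-walk idea. The network, node names, and restart probability of 0.3 are hypothetical choices for illustration. Published methods differ in how they build and weight the network.

```python
from typing import Dict, List, Set

# Hypothetical undirected interaction network. "T1" stands in for a known
# drug target; A-D are unlabeled candidate proteins.
NETWORK: Dict[str, List[str]] = {
    "T1": ["A", "B"],
    "A": ["T1", "B"],
    "B": ["T1", "A", "C"],
    "C": ["B", "D"],
    "D": ["C"],
}


def random_walk_with_restart(
    graph: Dict[str, List[str]],
    seeds: Set[str],
    restart: float = 0.3,
    tol: float = 1e-10,
    max_iter: int = 1000,
) -> Dict[str, float]:
    """Steady-state visit probabilities of a walker that jumps back to the
    seed set with probability `restart` at every step."""
    nodes = sorted(graph)
    # Restart distribution: uniform over the known targets.
    r = {v: (1.0 / len(seeds) if v in seeds else 0.0) for v in nodes}
    p = dict(r)
    for _ in range(max_iter):
        nxt = {v: restart * r[v] for v in nodes}
        for u in nodes:
            # A node without neighbors sends its mass back to the seeds.
            targets = graph[u] or sorted(seeds)
            share = (1.0 - restart) * p[u] / len(targets)
            for v in targets:
                nxt[v] += share
        delta = sum(abs(nxt[v] - p[v]) for v in nodes)
        p = nxt
        if delta < tol:
            break
    return p


seeds = {"T1"}
scores = random_walk_with_restart(NETWORK, seeds)
# Rank non-seed proteins by score as candidates for experimental follow-up.
candidates = sorted((v for v in scores if v not in seeds), key=scores.get, reverse=True)
print(candidates)
```

Nodes that sit closer to the known target, or that connect to it by more paths, receive higher steady-state probability. The result is a ranked list of candidates for experimental validation.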

Research Reagent Solutions

Table 4: Essential Research Resources for Annotation Studies

| Resource Type | Specific Examples | Research Function | Access Considerations |
|---|---|---|---|
| Annotation Datasets | DICES-350, TFDR Corpus, HiRID ICU Dataset | Benchmarking, validation, methodological development | Licensing, data use agreements, privacy compliance |
| Computational Models | GPT-4, Llama 3.1 405B, Gemini 1.5 Pro, Custom ML Models | AI-powered annotation, baseline comparisons | API access, computational resources, licensing fees |
| Annotation Platforms | Encord, SuperAnnotate, Labelbox, CVAT | Workflow management, quality control, collaboration | Subscription costs, deployment options, integration requirements |
| Biomedical Vocabularies | MEDIC, OMIM, MeSH, Traditional Medicine Ontologies | Entity recognition, relationship extraction, normalization | License restrictions, coverage limitations, update frequency |
| Quality Metrics | Pearson Correlation, Fleiss' κ, Cohen's κ, Precision/Recall | Performance evaluation, method comparison, validation | Implementation complexity, interpretation guidelines |

These research reagents constitute the essential toolkit for conducting rigorous studies comparing annotation methodologies. The DICES-350 dataset has been particularly valuable for evaluating alignment with human perceptions of conversational safety [18], while clinical datasets like the HiRID ICU data enable validation of annotation approaches in healthcare contexts [19]. Biomedical vocabularies such as MEDIC and traditional medicine ontologies provide the standardized terminology necessary for consistent entity annotation across research teams [17].

Implementation Framework and Decision Protocol

Selecting the appropriate annotation methodology requires careful consideration of project requirements and constraints. The following decision protocol visualizes this process:

Annotation Methodology Decision Protocol

Implementation Guidelines

For research teams implementing AI-powered annotation, the following evidence-based recommendations can optimize outcomes:

Hybrid Workflow Design

- Use AI for initial bulk annotation with human expert review for quality assurance

- Implement active learning to identify uncertain predictions for human verification

- Establish clear annotation guidelines and quality metrics before project initiation

- Maintain audit trails for all annotations to enable continuous improvement
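Entropy-based uncertainty sampling is one common way to apply the active-learning recommendation, among several possible selection strategies. In this sketch the model probabilities, document ids, and the ADE (adverse drug event) label set are hypothetical. The review budget is a free parameter.

```python
import math
from typing import Dict, List


def entropy(probs: Dict[str, float]) -> float:
    """Shannon entropy (in nats) of one predicted label distribution."""
    return -sum(p * math.log(p) for p in probs.values() if p > 0)


def select_for_review(predictions: Dict[str, Dict[str, float]], budget: int) -> List[str]:
    """Pick the `budget` items with the most uncertain predictions for expert review."""
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for an adverse-drug-event labeling task.
predictions = {
    "doc1": {"ADE": 0.98, "no_ADE": 0.02},
    "doc2": {"ADE": 0.55, "no_ADE": 0.45},
    "doc3": {"ADE": 0.70, "no_ADE": 0.30},
    "doc4": {"ADE": 0.05, "no_ADE": 0.95},
}
review_queue = select_for_review(predictions, budget=2)
# Confident predictions are kept, subject to later spot checks and audit.
auto_accepted = [d for d in predictions if d not in review_queue]
print("expert review:", review_queue, "auto-accepted:", auto_accepted)
```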

Quality Assurance Framework

- Implement multi-stage review processes with domain experts

- Calculate inter-annotator agreement metrics even for AI-generated labels

- Conduct regular error analysis to identify systematic annotation patterns

- Establish feedback loops between annotators and model developers

Validation Strategies

- Compare AI annotations against expert consensus on representative samples

- Assess potential demographic biases in alignment patterns

- Evaluate impact on downstream model performance, not just annotation metrics

- Conduct qualitative analysis of systematic disagreement patterns

The evidence demonstrates that AI-powered annotation has reached a maturity level where it can significantly accelerate research workflows while maintaining quality standards comparable to human annotators. In drug development and biomedical research contexts, these methods offer particular promise for scaling knowledge extraction from the rapidly expanding scientific literature.

The most effective approaches implement hybrid strategies that leverage the scalability of AI with the contextual understanding of human experts. This balanced methodology is especially valuable in domains like clinical research and drug discovery, where annotation errors can have significant consequences. As AI annotation capabilities continue advancing, with models like GPT-4 already exceeding median human performance in correlation with consensus ratings [18], the research community should focus on developing more sophisticated validation frameworks and domain-specific implementations.

For research professionals, successfully adopting AI-powered annotation requires careful consideration of project requirements, available resources, and quality standards. By implementing structured evaluation protocols and maintaining human oversight for critical applications, teams can harness the scalability of AI methods while ensuring the reliability required for scientific research and drug development.

The journey from a theoretical molecule to an approved medicine is fundamentally a process of data generation, annotation, and interpretation. In drug discovery, data annotation—the methodical labeling of raw data to give it context and meaning—is the critical bridge that transforms unstructured information into predictive insights. This process is undergoing a profound transformation, moving from traditional, manual, and hypothesis-driven methods to modern, automated, and AI-driven data-centric approaches. The core data types, spanning molecular structures, biological assay results, and clinical text, each present unique annotation challenges and opportunities. The choice of annotation strategy directly impacts the speed, cost, and ultimate success of discovering new therapeutics. This guide provides a comparative benchmark of traditional versus AI-powered annotation methodologies across these core data types, equipping researchers with the experimental protocols and performance data needed to inform their data strategy.

Comparative Analysis: Traditional vs. AI Annotation Methods

The performance of traditional and AI-driven annotation methods varies significantly across different data types and metrics. The following table synthesizes key comparative data to guide methodology selection.

Table 1: Performance Benchmark of Annotation Methods Across Data Types

| Metric | Traditional Manual Annotation | AI-Driven & Hybrid Annotation |
|---|---|---|
| Reported Throughput Speed | Baseline (Time-consuming) [24] | Up to 5x faster throughput; 60% faster iteration cycles [4] |
| Reported Cost Efficiency | Expensive (High labor costs) [24] | 30-35% cost savings; over 33% lower labeling costs [4] |
| Accuracy & Handling of Complexity | High accuracy for complex, nuanced data (e.g., medical imaging, legal docs) [24] [10] | Can achieve high accuracy; hybrid approaches reported 30% increase in annotation accuracy [4] |
| Scalability | Difficult to scale; requires extensive human resources [24] | Highly scalable for large datasets; enables handling of massive data volumes [24] [4] |
| Best-Suited Data Types | Small, complex datasets; novel data without pre-trained models; tasks requiring expert contextual judgment [24] [10] | Large-scale, repetitive tasks (e.g., image segmentation); structured data with existing models for pre-labeling [24] [4] |
| Typical Workflow | Linear, human-driven process with high oversight [7] | Hybrid human-in-the-loop (HITL); AI pre-labeling with human validation and QA [4] |

Annotation of Molecular Structures

Traditional Molecular Representation and Annotation

Molecular representation is the foundational annotation step that translates a chemical structure into a computer-readable format [25]. Traditional methods rely on rule-based feature extraction.

- Simplified Molecular-Input Line-Entry System (SMILES): A string-based notation system that uses ASCII characters to describe a molecule's structure through a depth-first traversal of its atoms and bonds [25]. For example, serotonin (5-hydroxytryptamine) can be written as C1=CC2=C(C=C1O)C(=CN2)CCN.

- Molecular Fingerprints (e.g., ECFP): These encode a molecule's substructural information as a fixed-length binary bit string. Each bit indicates the presence or absence of a substructural pattern. In hashed fingerprints such as ECFP, several patterns can map to the same bit [25].

- Molecular Descriptors: These are numerical values that quantify a molecule's physical or chemical properties, such as molecular weight, logP (hydrophobicity), or topological indices [25].
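Fingerprints are usually compared with the Tanimoto coefficient. The sketch below assumes the fingerprints are already computed and stored as sets of on-bit indices. In practice a cheminformatics toolkit such as RDKit would generate ECFP bits. The 12-bit vectors here are hypothetical and far shorter than the 1024 or 2048 bits commonly used.

```python
from typing import Iterable, Set


def to_bitstring(on_bits: Iterable[int], n_bits: int) -> str:
    """Render a fingerprint (set of on-bit indices) as a fixed-length bit string."""
    on = set(on_bits)
    return "".join("1" if i in on else "0" for i in range(n_bits))


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto similarity: shared on-bits divided by on-bits present in either."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(fp_a & fp_b) / len(union)


# Hypothetical on-bit sets for two molecules in a 12-bit fingerprint.
mol_a = {1, 4, 7, 9}
mol_b = {1, 4, 8, 9, 11}
print(to_bitstring(mol_a, 12), to_bitstring(mol_b, 12), tanimoto(mol_a, mol_b))
```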

Experimental Protocol for Traditional QSAR Modeling:

- Data Curation: Assemble a library of molecules with known biological activity (e.g., IC50 values).

- Structure Annotation (Featurization): Convert each molecular structure into its representative form (e.g., SMILES strings, ECFP fingerprints, or a vector of molecular descriptors).

- Model Training: Use the annotated features to train a machine learning model (e.g., Random Forest, Support Vector Machine) to predict biological activity from molecular structure.

- Validation: Evaluate model performance on a held-out test set. Use ROC-AUC when actives are classified against inactives, or R² when continuous activity values are regressed.
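Both validation metrics have short definitions that can be computed without a library. ROC-AUC is the probability that a randomly chosen active is scored above a randomly chosen inactive. R² compares residual error with the variance of the measured values. The sketch below uses hypothetical held-out predictions. Its pairwise ROC-AUC loop takes quadratic time, so for large test sets a library implementation such as scikit-learn's `roc_auc_score` would be the usual choice.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random active (1) outscores a random inactive (0); ties count half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("test set needs both actives and inactives")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination for continuous activity predictions."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical held-out results: activity classes with model scores,
# and measured vs. predicted continuous activity values.
auc = roc_auc([1, 1, 0, 0, 1, 0], [0.9, 0.8, 0.7, 0.3, 0.4, 0.2])
r2 = r_squared([5.0, 6.0, 7.0], [5.5, 6.0, 6.5])
print(auc, r2)
```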

Modern AI-Driven Molecular Representation

AI-driven methods have shifted from predefined rules to data-driven learning paradigms [25]. These models learn continuous, high-dimensional feature embeddings directly from large datasets.

- Graph Neural Networks (GNNs): Represent a molecule as a graph with atoms as nodes and bonds as edges. GNNs learn to aggregate information from a node's neighbors to create a powerful representation that captures both local and global structural information [25].

- Language Model-Based Approaches: Models like Transformers are adapted to process SMILES or SELFIES strings as a specialized chemical language. They learn contextual relationships between atoms and functional groups, similar to how language models understand words in a sentence [25].

- Multimodal Learning: This advanced approach integrates multiple data types—such as molecular structure, protein binding data, and cellular images—into a single model. This creates a holistic view of the drug-target interaction, overcoming the limitations of single-modality analysis [26].
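The neighbor aggregation at the core of GNNs can be shown on a three-atom graph. This sketch is deliberately untrained: the update only adds neighbor vectors to each atom's own vector. A real GNN layer would also apply learned weight matrices and a nonlinearity. Ethanol and the one-hot atom features are illustrative choices.

```python
from typing import List

ATOM_TYPES = ["C", "N", "O"]


def one_hot(symbol: str) -> List[float]:
    """Initial node feature: one-hot encoding of the element symbol."""
    return [1.0 if symbol == t else 0.0 for t in ATOM_TYPES]


def message_passing_round(features: List[List[float]], adjacency: List[List[int]]) -> List[List[float]]:
    """New atom vector = own vector + sum of neighbor vectors (no learned weights)."""
    updated = []
    for v, h in enumerate(features):
        agg = list(h)
        for u in adjacency[v]:
            agg = [a + b for a, b in zip(agg, features[u])]
        updated.append(agg)
    return updated


def readout(features: List[List[float]]) -> List[float]:
    """Sum-pool atom vectors into one molecule-level vector."""
    return [sum(column) for column in zip(*features)]


# Ethanol (SMILES CCO), heavy atoms only: C0-C1-O2.
atoms = ["C", "C", "O"]
adjacency = [[1], [0, 2], [1]]
h0 = [one_hot(a) for a in atoms]
h1 = message_passing_round(h0, adjacency)
h2 = message_passing_round(h1, adjacency)
print(h1, readout(h1))
print(h2[0])
```

After one round the two carbons already differ, because only one of them is bonded to oxygen. After a second round the terminal carbon also carries information about the oxygen two bonds away. Stacking rounds in this way is how GNNs move from local to more global structure.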

Experimental Protocol for AI-Driven Scaffold Hopping:

- Data Preparation: Collect a large dataset of molecules, often represented as graphs or SMILES strings.

- Model Pre-training: Train a generative model (e.g., a Variational Autoencoder or a graph-based generative model) on the dataset to learn a continuous, meaningful latent space of chemical structures.

- Property-Guided Generation: Use optimization techniques (e.g., Bayesian optimization or reinforcement learning) to navigate the latent space and generate novel molecular structures that are structurally distinct from the starting point (scaffold hop) but are predicted by the model to retain the desired biological activity [25].

- Validation: Synthesize and experimentally test the top-ranked generated molecules to confirm the predicted activity.

Diagram Title: Molecular Annotation Workflows

Annotation of Clinical and Biological Text

Manual Curation and Rule-Based Systems

The annotation of clinical text—such as electronic health records (EHRs), scientific literature, and clinical trial protocols—has traditionally been a manual and labor-intensive process.

- Expert-Led Curation: Domain experts (e.g., physicians, biologists) read and label text documents, identifying and extracting entities such as gene names, disease phenotypes, drug mechanisms, and adverse event reports. This process is essential for creating high-quality "gold-standard" datasets [24] [10].

- Rule-Based & Dictionary-Based NLP: Early computational methods used handcrafted rules (e.g., regular expressions) and predefined dictionaries to identify relevant terms in text. These systems are highly interpretable but lack flexibility and struggle with linguistic variation and context [10].

Experimental Protocol for Manual Corpus Annotation:

- Ontology Definition: Define a strict schema (ontology) of the entities and relationships to be extracted (e.g., linking a drug to a target protein).

- Annotator Training: Train a team of expert annotators on the schema and annotation guidelines.

- Iterative Labeling & Adjudication: Have multiple annotators label the same documents to measure inter-annotator agreement. Resolve discrepancies through adjudication by a senior expert to create a consensus ground-truth dataset.

- Model Training (Optional): Use the ground-truth dataset to train a supervised machine learning model.
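The adjudication step can be sketched as majority voting with an escalation queue. The sketch assumes that every annotator labels every item and that a strict majority is required. The relation labels and the senior expert's decision are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple


def majority_consensus(labels: Dict[str, List[str]]) -> Tuple[List[Optional[str]], List[int]]:
    """Majority-vote label per item. Items without a strict majority get None
    and are queued for adjudication by a senior expert."""
    annotators = list(labels)
    n_items = len(labels[annotators[0]])
    if any(len(labels[a]) != n_items for a in annotators):
        raise ValueError("every annotator must label every item")
    consensus: List[Optional[str]] = []
    to_adjudicate: List[int] = []
    for i in range(n_items):
        label, count = Counter(labels[a][i] for a in annotators).most_common(1)[0]
        if count > len(annotators) / 2:
            consensus.append(label)
        else:
            consensus.append(None)
            to_adjudicate.append(i)
    return consensus, to_adjudicate


# Hypothetical drug-target relation labels from three annotators.
labels = {
    "annotator_1": ["inhibits", "activates", "no_relation", "inhibits"],
    "annotator_2": ["inhibits", "inhibits", "no_relation", "activates"],
    "annotator_3": ["inhibits", "activates", "inhibits", "no_relation"],
}
consensus, queue = majority_consensus(labels)
# Hypothetical senior-expert decisions for the disputed items.
adjudicated = {3: "inhibits"}
gold = [adjudicated.get(i, lab) for i, lab in enumerate(consensus)]
print(consensus, queue, gold)
```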

AI-Powered Annotation of Clinical Text

The advent of Large Language Models (LLMs) and multimodal AI has revolutionized the annotation of complex biological and clinical text [26].

- LLM-Powered Named Entity Recognition (NER) and Relationship Extraction: Models like GPT-4o and Gemini can be fine-tuned to automatically identify and link entities (e.g., "drug," "gene," "mutation") and their relationships (e.g., "inhibits," "is associated with") from vast corpora of scientific literature at high speed [26].

- Multimodal Knowledge Graphs: These AI systems integrate textual data from publications and EHRs with structured data from genomic databases, protein structures, and cellular imaging. By associating concepts across these modalities, they can identify hidden patterns and generate novel hypotheses for target discovery and patient stratification [26].

Experimental Protocol for AI-Assisted Clinical Trial Recruitment:

- Model Fine-Tuning: Fine-tune a clinical LLM on a labeled dataset of de-identified EHRs, where patient records are annotated with trial eligibility criteria (e.g., specific diagnoses, lab values, medication history).

- Deployment for Screening: Apply the model to a large, unlabeled EHR database to automatically identify and flag potentially eligible patients for a given clinical trial.

- Human-in-the-Loop Validation: A clinical research coordinator reviews the model's predictions, confirming or rejecting the matches. This step is critical for ensuring patient safety and protocol adherence [4] [27].

- Continuous Learning: The human-validated results are fed back into the model to improve its accuracy over time.

Diagram Title: Multimodal Data Integration for Discovery

The Scientist's Toolkit: Essential Reagents & Materials

The following table details key resources and tools used in modern, data-driven drug discovery experiments.

Table 2: Key Research Reagent Solutions for Data-Driven Discovery

| Reagent / Resource | Type | Primary Function in Experimentation |
|---|---|---|
| HUVEC Cells | Biological Model | Human umbilical vein endothelial cells; a standard cellular model used in high-content screening to study cellular perturbations in a controlled, standardized way [28]. |
| CRISPR-Cas9 | Molecular Tool | Used for precise gene knockout in cellular models to generate fit-for-purpose data on gene function and identify novel drug targets [28]. |
| RxRx3-core Dataset | Data Resource | A public, standardized dataset of cellular microscopy images used to benchmark AI models for tasks like drug-target interaction prediction [28]. |
| AlphaFold / Genie | AI Software Tool | AI models for protein structure. AlphaFold predicts 3D structures from amino acid sequences, and Genie generates novel protein backbones. Both support target assessment and structure-based drug design [27]. |
| ChEMBL / Protein Data Bank | Data Resource | Public databases containing curated chemical bioactivity data and 3D protein structures, used for training and validating predictive models [28]. |
| Clinical LLM (e.g., TrialGPT) | AI Software Tool | A large language model framework that matches patient records against trial eligibility criteria to streamline patient recruitment for clinical trials [27]. |

The adoption of artificial intelligence (AI) in high-stakes fields like pharmaceutical research has fundamentally shifted requirements for training data quality. As AI models grow more sophisticated, the annotation quality—the accuracy, consistency, and expertise embodied in labeled data—has emerged as a critical determinant of real-world performance. This is particularly evident in drug discovery, where AI systems are increasingly deployed for tasks ranging from target identification to clinical trial optimization [29] [30]. The traditional paradigm of using crowdsourced annotation from non-specialists is proving inadequate for these complex domains, creating a pressing need for domain-expert-driven annotation methodologies [31].

This guide examines the critical relationship between annotation quality and model performance through a comparative analysis of traditional versus AI-enhanced annotation methods. By synthesizing current research metrics and experimental findings, we provide drug development professionals with an evidence-based framework for evaluating annotation approaches and their tangible impact on predictive outcomes in biomedical research.

Quantitative Comparison: Traditional vs. Domain-Expert Annotation

A systematic analysis of performance metrics reveals substantial differences between annotation methodologies. The following table synthesizes empirical data from recent studies evaluating annotation quality and its downstream effects on model performance.

Table 1: Performance Metrics Comparison: Traditional vs. Domain-Expert Annotation

| Performance Metric | Traditional Annotation | Domain-Expert Annotation | Measurement Context |
|---|---|---|---|
| Model Accuracy Improvement | Baseline | +28% improvement [31] | Specialized domains (e.g., biomedical) |
| Real-World Error Reduction | Baseline | 85% reduction [31] | High-stakes deployment environments |
| Model Iteration Speed | Baseline | 30-40% faster cycles [31] | Time from data labeling to production |
| Data Efficiency | Lower | 50-95% data pruning achievable [31] | Quality-focused curation processes |
| Multimodal Understanding | Limited by segregated workflows | Enhanced through integrated expertise [31] | Complex text-image relationships |
| Reasoning Capabilities | Superficial pattern recognition | Deep conceptual understanding [31] | STEM problem-solving tasks |

The comparative advantage of domain-expert annotation is particularly pronounced in specialized fields like drug discovery. Here, accurate annotation requires a nuanced understanding of biomedical concepts, molecular interactions, and clinical contexts that typically falls outside the knowledge of general-purpose annotators [31]. The reported 28% improvement in model accuracy with expert annotation should yield more reliable predictions in critical applications such as toxicity forecasting and therapeutic efficacy assessment [29] [31].

Experimental Protocols: Methodologies for Annotation Quality Assessment

Systematic Framework for Annotation Quality Evaluation

Rigorous assessment of annotation methodologies requires controlled experimental designs that isolate the impact of annotation quality on model performance. The following protocol outlines a comprehensive approach for comparing annotation methodologies:

Table 2: Experimental Protocol for Annotation Quality Assessment

| Experimental Phase | Methodology | Key Performance Indicators (KPIs) |
|---|---|---|
| Dataset Preparation | Curate standardized dataset with gold-standard references; partition for different annotation methods | Dataset diversity, complexity, reference standard quality |
| Annotation Process | Apply traditional (crowdsourced) and domain-expert annotation to identical datasets; control for time and resources | Annotation throughput, inter-annotator agreement, consistency metrics |
| Model Training | Train identical model architectures on differentially annotated datasets; maintain consistent hyperparameters | Training convergence speed, loss function trajectory, computational requirements |
| Performance Validation | Evaluate models on held-out test sets with gold-standard labels; assess generalizability | Accuracy, precision, recall, F1 scores, specialized benchmark performance (e.g., STEM) |
| Real-World Fidelity | Deploy models in simulated or controlled real-world environments; assess practical utility | Error rates in application contexts, user satisfaction, task completion efficacy |

This protocol emphasizes the importance of controlling for confounding variables while directly measuring the impact of annotation quality on model performance across the development lifecycle. The methodology is adapted from systematic reviews of AI in drug discovery that have identified annotation quality as a critical success factor [29].

Domain-Specific Validation in Pharmaceutical Applications

In drug discovery contexts, additional validation steps are necessary to ensure biological and clinical relevance. The experimental workflow for pharmaceutical applications extends the general protocol with domain-specific verification:

Diagram 1: Pharmaceutical Annotation Workflow

This workflow highlights the iterative validation process required for pharmaceutical AI applications, where annotation quality must ultimately demonstrate correlation with clinically relevant outcomes [29] [30]. The process begins with raw compound and disease data, progresses through expert annotation and model training, and culminates in clinical correlation—with each stage dependent on the annotation quality of preceding stages.

Annotation Quality in AI-Driven Drug Discovery: Specialized Applications

The impact of annotation quality is particularly evident in specific drug discovery applications where specialized knowledge dramatically influences model utility:

Molecular Interaction and Target Validation

In target identification and validation, accurately annotated chemical structures, protein interactions, and binding affinities enable more precise prediction of drug-target interactions. Expert-curated annotations incorporating structural biology principles and kinetic parameters produce models with significantly higher predictive value for compound efficacy [29].

Clinical Trial Optimization and Digital Twins

AI systems using digital twin technology—virtual patient models that simulate disease progression—are particularly sensitive to annotation quality. Inaccurate annotations of patient data, disease milestones, or treatment responses propagate through the models, reducing their reliability for clinical trial optimization [8]. Domain-expert annotation of electronic health records, medical imaging, and biomarker data is essential for creating valid digital twins that can meaningfully predict patient outcomes.

Table 3: Research Reagent Solutions for AI-Driven Drug Discovery

| Research Reagent | Function in AI Workflow | Annotation Requirements |
|---|---|---|
| Multi-Omics Datasets | Provide integrated genomic, proteomic, metabolomic data for target identification | Cross-domain expert knowledge for accurate feature labeling |
| Chemical Compound Libraries | Serve as input for virtual screening and molecular generation | Structural annotation with biochemical properties and activity data |
| High-Content Screening Systems | Generate phenotypic data for mechanism-of-action analysis | Computer vision expertise for image annotation and pattern recognition |
| Biomedical Knowledge Graphs | Structured representation of biological knowledge for reasoning | Relationship extraction requiring biological domain expertise |
| Clinical Trial Datasets | Enable prediction of patient outcomes and trial optimization | Annotation of complex medical terminology and outcomes |

The Mechanism of Impact: How Annotation Quality Influences Model Performance

The relationship between annotation quality and model performance operates through multiple interconnected mechanisms that collectively determine predictive accuracy:

Diagram 2: Annotation Quality Impact Mechanism

High-quality annotation directly improves feature representation by ensuring that inputs to models capture semantically meaningful patterns rather than superficial correlations [31]. This foundational improvement enables more effective model generalization beyond training data distributions, particularly crucial for applications in diverse patient populations or across different disease subtypes. The compounding benefits ultimately manifest as enhanced predictive accuracy in real-world scenarios, where models must handle novel data with clinical or economic consequences [29] [8].

Future Directions: Evolving Annotation Paradigms for Advanced AI

As AI architectures grow more sophisticated—exemplified by Mixture of Experts models and native multimodal systems—annotation methodologies must correspondingly evolve [31]. The emerging paradigm emphasizes:

- Integrated Multimodal Annotation: Simultaneous labeling of interconnected data modalities (text, image, structural data) rather than segregated annotation workflows

- Reasoning-Focused Labeling: Annotation schemes that capture logical inference chains and problem-solving approaches rather than merely categorical labels

- Adaptive Annotation Systems: Human-in-the-loop frameworks that continuously refine annotation quality based on model performance feedback

- Domain-Specialized Annotation Platforms: Tools specifically designed for expert annotators in specialized fields like structural biology or clinical medicine

These advancements acknowledge that as model capabilities expand from pattern recognition to complex reasoning, the annotation processes that fuel them must similarly advance from simple labeling to rich, context-aware knowledge representation [31].

The evidence reviewed here indicates that annotation quality is not merely a technical implementation detail but a fundamental determinant of AI model performance in pharmaceutical applications. The 28% improvement in model accuracy, 85% reduction in real-world errors, and 30-40% faster iteration cycles reported for domain-expert annotation represent strategic advantages in the highly competitive and resource-intensive drug development landscape [31].

For research organizations, investing in high-quality annotation infrastructure—whether through internal expertise development, specialized vendor partnerships, or hybrid approaches—delivers compounding returns throughout the drug development pipeline. From target identification to clinical trial optimization, superior annotation practices enable more reliable predictions, reduce costly late-stage failures, and ultimately accelerate the delivery of effective therapies to patients [29] [8] [30].

As AI continues its transformative impact on pharmaceutical research, organizations that systematically address the annotation quality challenge will establish a sustainable competitive advantage in both research efficiency and therapeutic outcomes.

Methodologies and Real-World Applications in Drug Development Pipelines

In the era of accelerating artificial intelligence automation, manual data annotation persists as a critical methodology for developing high-quality, reliable AI systems, particularly in specialized domains requiring expert knowledge. As of 2025, the manual annotation segment continues to hold the largest market share at 41.3%, demonstrating its fundamental role in handling complex, nuanced datasets where accuracy is paramount [32]. This guide examines manual annotation workflows within the broader context of benchmarking traditional versus AI annotation methods, providing researchers and drug development professionals with evidence-based best practices, experimental protocols, and comparative performance data.

Manual annotation involves human experts assigning metadata labels to raw, unstructured data, creating the foundational training sets that enable machine learning models to interpret information accurately [33] [10]. Unlike automated approaches, manual annotation excels where contextual understanding, subjective judgment, and domain-specific expertise are required—precisely the conditions prevalent in pharmaceutical research, medical imaging, and scientific discovery [34] [10]. The central thesis of contemporary annotation research posits that rather than being replaced by automation, manual annotation is evolving toward hybrid workflows where human expertise focuses on complex edge cases, quality validation, and tasks requiring specialized knowledge [35] [36].

Core Principles of Effective Manual Annotation

Establishing Annotation Quality Frameworks

The foundation of any successful manual annotation project lies in implementing rigorous quality frameworks. High-quality manual annotation directly correlates with improved model performance, with studies showing that a few thousand perfectly labeled samples often prove more valuable than millions of mediocre annotations [33]. The principle of "Garbage In, Garbage Out" remains fundamental in machine learning, because models faithfully learn the errors, inconsistencies, and biases present in their training data [33].

Inter-Annotator Agreement (IAA) serves as the primary metric for quantifying annotation quality and consistency [33] [37]. This measure involves having multiple annotators independently label the same data samples, then calculating the degree to which their labels align. High IAA scores indicate clear annotation guidelines and reproducible processes, while low scores signal problematic ambiguity in labeling criteria. Research protocols should establish IAA benchmarks before project initiation, with ongoing monitoring throughout the annotation lifecycle.
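For two annotators, IAA on categorical labels is commonly measured with Cohen's kappa, which discounts the agreement expected by chance. The formula is kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the chance agreement implied by each annotator's label frequencies. The minimal sketch below uses hypothetical abstract-screening labels.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two annotators labeling the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two equal-length, non-empty label sequences")
    n = len(a)
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    # Chance agreement from each annotator's own label frequencies.
    p_expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when both annotators use one identical label")
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical relevance labels from two annotators on ten abstracts.
rater_1 = ["relevant", "relevant", "irrelevant", "irrelevant", "relevant",
           "irrelevant", "relevant", "irrelevant", "relevant", "relevant"]
rater_2 = ["relevant", "irrelevant", "irrelevant", "irrelevant", "relevant",
           "irrelevant", "relevant", "relevant", "relevant", "relevant"]
kappa = cohens_kappa(rater_1, rater_2)
print(round(kappa, 3))
```

The two raters agree on 8 of 10 items, but the chance-corrected value is noticeably lower than 0.8. Raw percent agreement can therefore overstate consistency.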

Domain Expertise Integration

For drug development professionals and scientific researchers, domain expertise represents the most crucial element distinguishing manual from automated annotation approaches. In fields such as medical imaging, compound analysis, and clinical data interpretation, specialized knowledge enables annotators to recognize subtle patterns, contextual relationships, and domain-specific nuances that automated systems frequently miss [38] [33]. Studies consistently show that annotation quality improves significantly when performed by subject matter experts rather than general-purpose annotators, particularly in specialized domains like healthcare and life sciences [33] [32].

Experimental Design for Manual Annotation Benchmarking

Protocol 1: Quality Assurance Measurement

Objective: Quantify annotation quality and consistency across multiple expert annotators.

Methodology:

- Select a representative sample dataset of 200-500 items from the target domain

- Develop comprehensive annotation guidelines with definitions, examples, and edge cases

- Train 3-5 domain experts on annotation protocols and guidelines

- Each annotator independently labels the entire sample dataset

- Calculate Inter-Annotator Agreement using Cohen's Kappa or Fleiss' Kappa for categorical data, or Intraclass Correlation Coefficient for continuous measurements

- Conduct reconciliation sessions where annotators discuss discrepancies and refine guidelines

- Repeat annotation cycle until acceptable agreement thresholds are met (typically >0.8 for Kappa)

Metrics: Inter-Annotator Agreement scores, annotation throughput (items/hour), error distribution analysis, guideline revision cycles required.
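This protocol uses 3-5 annotators, so Fleiss' kappa is the relevant measure: it extends chance-corrected agreement to more than two raters. The sketch below assumes every item is rated by the same number of annotators. The ratings are hypothetical, and the 0.8 cut-off is the threshold mentioned in the protocol.

```python
from collections import Counter
from typing import Hashable, Sequence


def fleiss_kappa(ratings: Sequence[Sequence[Hashable]]) -> float:
    """Fleiss' kappa; ratings[i] lists the labels that each of n raters gave item i."""
    n_items = len(ratings)
    if n_items == 0:
        raise ValueError("no items")
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(r) != n_raters for r in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    counts = [Counter(r) for r in ratings]
    # Mean per-item agreement: share of agreeing rater pairs on each item.
    p_bar = sum(
        (sum(c * c for c in cnt.values()) - n_raters) / (n_raters * (n_raters - 1))
        for cnt in counts
    ) / n_items
    # Chance agreement from overall category proportions.
    categories = {label for cnt in counts for label in cnt}
    p_e = sum(
        (sum(cnt[j] for cnt in counts) / (n_items * n_raters)) ** 2 for j in categories
    )
    if p_e == 1.0:
        raise ValueError("kappa is undefined when every rating uses the same label")
    return (p_bar - p_e) / (1.0 - p_e)


# Hypothetical labels from three raters on four items.
ratings = [["A", "A", "A"], ["A", "A", "B"], ["B", "B", "B"], ["A", "B", "B"]]
kappa = fleiss_kappa(ratings)
print(round(kappa, 3), "meets 0.8 threshold:", kappa > 0.8)
```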

Protocol 2: Manual vs. Automated Annotation Comparison

Objective: Compare performance characteristics of manual annotation against AI-assisted approaches.

Methodology:

- Select a stratified sample of data representing common cases (70%), edge cases (20%), and novel cases (10%)

- Implement three parallel annotation workflows:

  - Pure manual annotation by domain experts

  - AI pre-annotation with human verification and correction

  - Fully automated annotation with post-hoc quality assessment

- Measure time investment, accuracy, precision, recall, and cost across all approaches

- Use gold-standard reference annotations created by senior domain experts for accuracy benchmarking

- Conduct qualitative analysis of error types and distribution across methodologies

Metrics: Time per annotation, accuracy rates, precision/recall metrics, cost efficiency, error type classification.
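Because the sample is stratified, accuracy is most informative when reported per stratum. That way, a method's weakness on edge or novel cases is not hidden by its performance on common cases. The sketch below scores one workflow against the gold standard and adds precision, recall, and F1 for a chosen positive class. The labels are hypothetical. In Protocol 2, the same functions would be run once per workflow.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence, Tuple


def per_stratum_accuracy(
    gold: Sequence[Hashable], pred: Sequence[Hashable], strata: Sequence[str]
) -> Dict[str, float]:
    """Accuracy of one workflow's labels against the gold standard, per stratum."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for g, p, s in zip(gold, pred, strata):
        totals[s] += 1
        hits[s] += int(g == p)
    return {s: hits[s] / totals[s] for s in totals}


def precision_recall_f1(
    gold: Sequence[Hashable], pred: Sequence[Hashable], positive: Hashable
) -> Tuple[float, float, float]:
    """Precision, recall and F1 for one class treated as positive."""
    tp = sum(g == positive and p == positive for g, p in zip(gold, pred))
    fp = sum(g != positive and p == positive for g, p in zip(gold, pred))
    fn = sum(g == positive and p != positive for g, p in zip(gold, pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical 10-item sample: 70% common, 20% edge, 10% novel cases.
strata = ["common"] * 7 + ["edge"] * 2 + ["novel"]
gold = ["active", "inactive", "active", "inactive", "inactive",
        "active", "inactive", "active", "inactive", "active"]
# Hypothetical labels from one workflow (e.g. fully automated).
pred = ["active", "inactive", "active", "inactive", "active",
        "active", "inactive", "inactive", "inactive", "inactive"]
print(per_stratum_accuracy(gold, pred, strata))
print(precision_recall_f1(gold, pred, positive="active"))
```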

Comparative Performance Analysis

Quantitative Benchmarking Data

Table 1: Performance Metrics Comparison Across Annotation Approaches

| Metric | Pure Manual Annotation | AI-Assisted Hybrid | Fully Automated |
|---|---|---|---|
| Accuracy on Complex Tasks | 94-98% [34] | 88-95% [36] | 70-85% [34] |
| Throughput (items/hour) | 1-20x (baseline) [36] | 3-5x faster than manual [36] | 10-100x faster than manual [35] |
| Setup & Training Time | 2-4 weeks [36] | 1-2 weeks [36] | 1-4 weeks [35] |
| Cost per Annotation | Highest [34] | 30-35% reduction vs. manual [36] | 60-80% reduction vs. manual [34] |
| Edge Case Performance | Superior [34] [10] | Good with human oversight [35] | Poor [34] |
| Adaptability to New Tasks | Immediate [34] | Requires retraining [35] | Requires complete retraining [34] |
| Domain Expertise Requirement | Essential [33] | Human verification for complex cases [35] | Limited [34] |
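The relative figures in Table 1 become more tangible when applied to a concrete project. The sketch below turns the speed-up and cost-reduction ranges into best- and worst-case hours and cost. The dataset size, manual labeling rate, and manual cost per item in the usage line are hypothetical inputs, not benchmark results.

```python
# Relative figures transcribed from Table 1 (speed-up and cost reduction vs. manual).
SPEEDUP = {"manual": (1, 1), "hybrid": (3, 5), "automated": (10, 100)}
COST_REDUCTION = {"manual": (0.0, 0.0), "hybrid": (0.30, 0.35), "automated": (0.60, 0.80)}


def project_estimate(approach, n_items, manual_items_per_hour, manual_cost_per_item):
    """Return (best, worst) hours and cost for labeling n_items with one approach."""
    slow, fast = SPEEDUP[approach]
    small_cut, large_cut = COST_REDUCTION[approach]
    manual_hours = n_items / manual_items_per_hour
    manual_cost = n_items * manual_cost_per_item
    return {
        "hours": (manual_hours / fast, manual_hours / slow),
        "cost": (manual_cost * (1 - large_cut), manual_cost * (1 - small_cut)),
    }


# Hypothetical project: 10,000 items, 10 items/hour manually, 2.0 currency units per item.
for approach in SPEEDUP:
    print(approach, project_estimate(approach, 10_000, 10, 2.0))
```

For this hypothetical project, the hybrid workflow shrinks 1,000 manual hours to roughly 200 to 333 hours. However, the accuracy row of the same table shows what that speed costs on complex tasks.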

Table 2: Manual Annotation Performance Across Domains (2025 Benchmarking Data)

| Domain | Annotation Type | Accuracy | Time Investment | Expertise Level Required |
|---|---|---|---|---|
| Medical Imaging | Semantic Segmentation | 96-98% [34] | 15-30 min/image [33] | Radiologist/Specialist [33] |
| Drug Compound Analysis | Entity Recognition | 92-95% [37] | 5-10 min/document [37] | Pharmaceutical Expert [37] |
| Clinical Text Analysis | Intent & Sentiment Classification | 90-94% [39] | 2-5 min/text [39] | Clinical Linguist [39] |
| Molecular Structure | Relationship Annotation | 95-97% [32] | 10-20 min/structure [32] | Chemistry Expert [32] |
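Combining the per-item times in Table 2 with the Protocol 1 design (every one of 3-5 experts labels every item) gives a quick budget for expert time. This is a minimal sketch. The domain keys and function name are illustrative, and the figure excludes reconciliation sessions and repeat cycles.

```python
# Per-item time ranges in minutes, transcribed from Table 2.
MINUTES_PER_ITEM = {
    "medical_imaging": (15, 30),      # per image
    "drug_compound": (5, 10),         # per document
    "clinical_text": (2, 5),          # per text
    "molecular_structure": (10, 20),  # per structure
}


def expert_hours(domain, n_items, n_annotators):
    """Return (low, high) expert hours when every annotator labels every item."""
    low, high = MINUTES_PER_ITEM[domain]
    labels = n_items * n_annotators
    return labels * low / 60, labels * high / 60
```

For example, the smallest Protocol 1 configuration in medical imaging (200 images, three radiologists) already implies 150 to 300 expert hours for a single annotation cycle.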

Qualitative Factors Analysis

Beyond quantitative metrics, manual annotation demonstrates distinct qualitative advantages in complex domains:

Contextual Interpretation: Domain experts bring nuanced understanding of context, ambiguity, and intentionality that automated systems struggle to replicate [33]. In drug development, this includes recognizing experimental caveats, methodological limitations, and theoretical implications that might escape pattern-based AI systems.

Adaptive Learning: Human annotators continuously refine their approach based on accumulating experience, whereas automated systems require explicit retraining cycles [34]. This enables manual workflows to adapt more gracefully to evolving research paradigms and emerging concepts.

Implicit Knowledge Application: Experts unconsciously apply years of accumulated domain knowledge, recognizing subtle patterns, relationships, and anomalies that may not be explicitly defined in annotation guidelines [33] [10]. This tacit knowledge represents a significant advantage over explicit rule-based systems.

Best Practices for Optimizing Manual Annotation Workflows

Workflow Design & Implementation

Table 3: Research Reagent Solutions for Manual Annotation

| Tool Category | Representative Solutions | Primary Function | Domain Specialization |
|---|---|---|---|
| Annotation Platforms | Labelbox, Scale AI, V7, CVAT [33] | Interface for manual labeling, collaboration, QA | General with domain customization [33] |
| Quality Assurance Tools | Argilla, Encord Analytics [10] [36] | IAA calculation, error detection, performance monitoring | Cross-domain with statistical focus [10] |
| Domain-Specific Tools | GdPicture, Medical Imaging Specialized Platforms [37] [32] | Specialized interfaces for domain data types | Healthcare, Life Sciences [37] [32] |
| Data Management Systems | Custom BPO Platforms, Active Learning Integration [35] [36] | Data versioning, workflow management, distribution | Scalable enterprise solutions [35] |

Structured workflows are essential for maintaining quality while controlling manual annotation costs. A practical framework proceeds in four stages:

Stage 1: Guideline Development & Annotator Training

- Create comprehensive annotation manuals with explicit definitions, examples, and edge case handling [33]

- Conduct interactive training sessions with calibration exercises

- Establish quality benchmarks and IAA thresholds before full-scale annotation

Stage 2: Pilot Annotation & Process Refinement

- Begin with small batches (100-200 items) to validate guidelines and processes

- Measure initial IAA scores and conduct reconciliation sessions

- Refine guidelines based on pilot results before scaling

Stage 3: Full-Scale Annotation with Multi-Layer QA

- Implement parallel annotation with regular IAA monitoring

- Incorporate senior reviewer validation for low-confidence or contentious labels

- Maintain continuous feedback loops between annotators and project leads
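The Stage 3 rule of sending low-confidence or contentious labels to a senior reviewer can be sketched as a simple router. The router also draws a random audit sample from the items that passed, in line with the tiered review described under Quality Assurance Protocols below. It assumes annotators record a self-rated confidence score between 0 and 1 with each label. The thresholds and the function name are hypothetical defaults to be tuned per project.

```python
import random
from collections import Counter


def route_for_senior_review(labels, confidence, min_agreement=1.0,
                            min_confidence=0.7, audit_rate=0.05, seed=0):
    """Split items into (flagged, audit) sets for senior-reviewer validation.

    labels[item_id]     -> labels from each annotator for that item
    confidence[item_id] -> each annotator's self-rated confidence in [0, 1]
    """
    flagged = set()
    for item_id, item_labels in labels.items():
        top_votes = Counter(item_labels).most_common(1)[0][1]
        contentious = top_votes / len(item_labels) < min_agreement
        low_confidence = min(confidence[item_id]) < min_confidence
        if contentious or low_confidence:
            flagged.add(item_id)
    # Random spot-check of items that passed, so reviewers also see "easy" labels.
    remaining = sorted(set(labels) - flagged)
    n_audit = round(len(remaining) * audit_rate)
    audit = set(random.Random(seed).sample(remaining, n_audit))
    return flagged, audit
```

With `min_agreement=1.0`, any disagreement triggers review. Lowering it (for example, to 2/3) trades reviewer load against the risk of accepting a split decision.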

Stage 4: Gold Standard Creation & Validation

- Select representative samples for expert consensus labeling

- Use gold standards for ongoing quality assurance and model training

- Document all guideline revisions and decision rationales
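Expert consensus labeling in Stage 4 can be approximated by a majority vote that refuses to decide ties or weak majorities. Those items are passed to explicit adjudication, where decision rationales are recorded. The two-thirds threshold and the function names below are assumptions for illustration.

```python
from collections import Counter


def consensus_label(votes, min_share=2 / 3):
    """Majority label if it wins outright with at least min_share of votes, else None."""
    if not votes:
        return None
    ranked = Counter(votes).most_common()
    top_label, top_n = ranked[0]
    if len(ranked) > 1 and ranked[1][1] == top_n:
        return None  # tie between the leading labels
    return top_label if top_n / len(votes) >= min_share else None


def build_gold_standard(votes_by_item, min_share=2 / 3):
    """Return (gold labels, item ids that need expert adjudication)."""
    gold, needs_adjudication = {}, []
    for item_id, votes in votes_by_item.items():
        label = consensus_label(votes, min_share)
        if label is None:
            needs_adjudication.append(item_id)
        else:
            gold[item_id] = label
    return gold, needs_adjudication
```

The adjudication list is a natural place to attach the documented rationale for each final decision, so the gold standard stays auditable.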

Quality Assurance Protocols

Effective quality management in manual annotation requires systematic approaches:

Multi-Layer Review Processes: Implement tiered review systems where junior annotators handle initial labeling, with senior experts validating complex cases and random samples [33]. This optimizes resource allocation while maintaining quality standards.

Continuous Calibration: Schedule regular recalibration sessions where annotators review challenging cases together, discussing discrepancies and refining shared understanding [37]. This helps prevent "concept drift," in which annotation criteria gradually shift over time.
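One way to decide when a recalibration session is due is to track each annotator's agreement with gold-standard items over time. The sketch below flags time windows where that agreement falls noticeably below an initial baseline. It assumes gold items are seeded into annotation queues, and the window count and tolerance are hypothetical defaults.

```python
def agreement_with_gold(pairs):
    """Fraction of (annotator_label, gold_label) pairs that match."""
    return sum(a == g for a, g in pairs) / len(pairs)


def drift_alerts(window_scores, baseline_windows=3, tolerance=0.05):
    """Indices of time windows whose agreement falls more than `tolerance` below baseline."""
    if len(window_scores) <= baseline_windows:
        return []
    baseline = sum(window_scores[:baseline_windows]) / baseline_windows
    return [
        i
        for i, score in enumerate(window_scores[baseline_windows:], start=baseline_windows)
        if score < baseline - tolerance
    ]
```

Each window's score would come from `agreement_with_gold` on the seeded gold items labeled in that window (for example, one week). An alert is a prompt for recalibration rather than a verdict on the annotator.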

Performance Analytics: Deploy dashboard monitoring of annotator performance, flagging statistical outliers for retraining or guideline clarification [36]. Track metrics beyond simple accuracy, including timing patterns, error type distributions, and consistency measures.
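With teams of only 3-5 annotators, a plain z-score is a poor outlier test: with population standard deviation, no value in a group of five can exceed |z| = 2. The sketch below therefore swaps in the median-based modified z-score, using the common 0.6745 scaling constant and 3.5 cutoff. The metric dictionary and the function name are illustrative.

```python
from statistics import median


def flag_outliers(metric_by_annotator, threshold=3.5):
    """Return {annotator: modified z-score} for annotators beyond the threshold."""
    values = list(metric_by_annotator.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)  # median absolute deviation
    if mad == 0:
        return {}  # most values identical; this sketch raises no flags in that case
    scores = {name: 0.6745 * (v - med) / mad for name, v in metric_by_annotator.items()}
    return {name: z for name, z in scores.items() if abs(z) > threshold}
```

Applied to a timing metric such as seconds per item, an unusually fast annotator stands out. That pattern can point to skimming or to a guideline ambiguity worth clarifying.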

For research organizations and drug development professionals, manual annotation remains indispensable in high-complexity, high-stakes domains where accuracy outweighs efficiency. The benchmark data show that while automated methods offer compelling advantages for standardized, high-volume tasks, manual annotation performed by domain experts delivers superior performance on complex, nuanced, or novel challenges [34] [10].

The most effective annotation strategy employs a hybrid approach that leverages the respective strengths of both methodologies. Manual annotation should be prioritized for gold standard creation, edge case handling, and domains requiring specialized expertise, while automated methods can augment efficiency for routine labeling tasks once quality benchmarks are established [35] [36]. As AI-assisted annotation continues to advance, the role of domain experts will evolve from performing repetitive labeling to focusing on quality validation, guideline development, and managing the complex cases that demand human judgment [38] [10].
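A hybrid pipeline of this kind often comes down to a routing rule for model pre-labels: accept high-confidence predictions automatically and send everything else, plus any items reserved for gold-standard work, to domain experts. This is a minimal sketch. The `PreLabel` record, the 0.95 threshold, and the `always_review` set are hypothetical, and in practice the threshold should be calibrated against the gold standard before it is trusted.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def split_prelabels(prelabels, accept_threshold=0.95, always_review=frozenset()):
    """Return (auto_accepted, needs_expert_review) lists of pre-labels."""
    auto, review = [], []
    for p in prelabels:
        # Gold-standard and edge-case items always go to experts, whatever the score.
        if p.confidence >= accept_threshold and p.item_id not in always_review:
            auto.append(p)
        else:
            review.append(p)
    return auto, review
```

Raising `accept_threshold` shifts work back toward experts. A reasonable practice is to spot-check a sample of the auto-accepted items against gold labels to confirm the threshold holds up.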

For research institutions implementing manual annotation workflows, the critical success factors include: investing in comprehensive guideline development, establishing rigorous quality assurance protocols, maintaining continuous annotator training and calibration, and implementing performance analytics for ongoing optimization. When properly structured and managed, manual annotation workflows provide the foundation for robust, reliable AI systems capable of meeting the exacting standards required in scientific research and drug development.

In the world of artificial intelligence, data annotation has transformed from a manual, labor-intensive process into a sophisticated technological domain where human expertise collaborates with advanced automation. As of 2025, the global data annotation market is growing significantly, driven by increasing AI adoption across healthcare, autonomous vehicles, and pharmaceutical research [5]. This evolution is characterized by a fundamental shift from purely human-driven annotation toward hybrid human-AI systems that leverage the strengths of both approaches.

The traditional manual annotation process, where specialists would spend hours labeling data points, is being augmented by AI-assisted tools that can pre-label data, suggest annotations, and automate repetitive tasks. This transformation is particularly crucial for drug development professionals and researchers who require high-quality, domain-specific datasets for training specialized AI models. The emergence of Large Language Models (LLMs) and multimodal AI systems has further accelerated this trend, creating new possibilities for annotation scalability while introducing new challenges in quality control and validation [40] [5].

Within research contexts, particularly when benchmarking traditional against AI annotation methods, understanding this landscape is essential. The core challenge has shifted from simply generating labeled data to creating reliable, ethically sourced, and scientifically valid annotations that can support mission-critical AI applications in drug discovery, medical imaging, and biomedical research. This guide provides a comprehensive comparison of the current AI-assisted annotation ecosystem, experimental methodologies for evaluation, and practical frameworks researchers can employ to select appropriate tools for their specific scientific domains.

Comparative Analysis of Leading AI-Assisted Annotation Platforms

The market for AI-assisted annotation tools has matured significantly, with platforms now offering specialized capabilities for different research and industry needs. Our review of current platforms identified six leading solutions in the 2025 landscape, each with distinct strengths and limitations for research applications.

Table 1: Comprehensive Comparison of Leading AI-Assisted Annotation Platforms

| Platform | Best For | Supported Data Types | AI Automation Features | Key Strengths | Limitations |
|---|---|---|---|---|---|
| SuperAnnotate | Scalable, enterprise-grade multimodal projects [41] [42] | Image, video, audio, text, LiDAR, geospatial [41] [42] | AI-assisted labeling, custom model integration, automated workflows [41] | Comprehensive multimodal support, strong QA system, enterprise security [41] [42] | Steep learning curve, opaque pricing, resource-intensive [42] |
| Labelbox | Complex, high-volume multimodal datasets [41] [42] | Image, video, text, audio, documents, geospatial [7] [42] | Model-assisted labeling, foundation model integration, active learning [41] [42] | End-to-end platform, model feedback loops, strong governance [41] [42] | High cost of entry, steep learning curve, cloud-only [42] |
| Dataloop | End-to-end automation & large-scale workflows [41] [42] | Image, video, audio, text, LiDAR [41] [7] | AI pre-labeling, automated QC, Python SDK for customization [41] [42] | Powerful automation, enterprise flexibility, version control [41] [42] | High complexity, enterprise-leaning pricing, cloud-dependent [42] |
| V7 | Fast, accurate image & video labeling [41] [7] | Image, video, PDF, medical imaging [41] [7] | AI-powered auto-labeling, segmentation, real-time model updates [41] [7] | User-friendly interface, efficient automation, medical imaging specialty [41] [7] | Limited data modalities, niche application focus [7] |
| BasicAI | 3D sensor fusion & LiDAR data [7] | Image, video, audio, 3D sensor fusion, 4D-BEV, text [7] | Smart annotation tools, auto-labeling, object tracking [7] | Industry-leading 3D capabilities, sensor fusion support [7] | Lacks open API support, limited third-party integrations [7] |
| Label Studio | Open-source customization & research teams [42] | Image, video, audio, text, time series [42] | Model-assisted labeling, custom algorithm integration [42] | Maximum flexibility, open-source foundation, no vendor lock-in [42] | Requires technical expertise, self-hosted setup and maintenance [42] |

For research and drug development applications, the selection criteria extend beyond basic functionality to include domain-specific capabilities, security compliance, and integration with scientific workflows. Platforms like SuperAnnotate and Labelbox offer enterprise-grade security features essential for handling sensitive research data, including HIPAA compliance for healthcare applications [41] [42]. Specialized capabilities such as V7's medical imaging suite or BasicAI's 3D sensor fusion support can be particularly valuable for specific research domains like medical image analysis or spatial biology [7].

The trend toward multimodal annotation capabilities reflects the growing complexity of AI research applications, where models must process and interpret diverse data types simultaneously. Platforms that support image, video, text, and specialized data formats within integrated environments provide significant advantages for cross-disciplinary research teams [41] [7]. This capability is particularly relevant for drug development workflows that might integrate molecular imaging, clinical text data, and experimental results within unified AI models.

Benchmarking Methodologies: Traditional vs. AI-Assisted Annotation

Rigorous benchmarking of annotation approaches requires standardized methodologies and metrics that can objectively quantify performance across multiple dimensions. The transition from traditional human-only annotation to AI-assisted workflows necessitates comprehensive evaluation frameworks that account for both quantitative efficiency gains and qualitative quality considerations.

Key Performance Metrics and Measurement Protocols

Table 2: Core Metrics for Benchmarking Annotation Quality and Efficiency

| Metric Category | Specific Metrics | Measurement Protocol | Interpretation Guidelines |
|---|---|---|---|