Beyond Single Samples: A Framework for Assessing and Ensuring Generalizability Across Tissue Types in Biomedical Research

Abstract

The ability of computational models and analytical frameworks to generalize across diverse tissue types is a critical benchmark for their clinical and research utility. This article provides a comprehensive resource for researchers and drug development professionals on the principles and practices of evaluating generalizability. We first explore the foundational concepts of tissue diversity and the key challenges, such as batch effects and biological variability, that hinder model transferability. The article then details state-of-the-art methodological approaches, from multi-omics integration to unsupervised annotation tools, that are designed for cross-tissue application. Furthermore, we discuss troubleshooting and optimization strategies to mitigate performance degradation, including data harmonization techniques and hyperparameter tuning. Finally, we present a rigorous framework for validation, emphasizing the importance of external test sets and benchmark comparisons. By synthesizing insights from recent advances in spatial omics, digital pathology, and AI, this work aims to equip scientists with the knowledge to build more robust, reliable, and generalizable tools for tissue analysis.

The What and Why: Core Concepts and Challenges in Cross-Tissue Generalizability

The pursuit of generalizability—the ability of a research finding or model to maintain its performance across diverse and unseen conditions—represents a fundamental challenge in computational biology and precision medicine. Within tissue-based research, this challenge manifests as the transition from demonstrating excellent performance on a single tissue type (single-tissue performance) to achieving reliable results across multiple tissue types and experimental conditions (pan-tissue reliability). This distinction is particularly crucial for the development of robust diagnostic tools, predictive models, and therapeutic strategies that can function effectively in real-world clinical settings, where biological variability is the norm rather than the exception.

The assessment of generalizability requires careful consideration of multiple performance dimensions, including predictive accuracy, biological relevance, computational efficiency, and translational potential. This comparison guide provides an objective evaluation of current methodologies for predicting spatial gene expression from histology images, with a specific focus on their generalizability across tissue types. By benchmarking these approaches against standardized metrics and datasets, we aim to provide researchers with critical insights for selecting and developing methods that offer not just optimal performance, but also reliable pan-tissue applicability.

Performance Comparison of Spatial Gene Expression Prediction Methods

Comprehensive Benchmarking Across Evaluation Categories

Eleven methods for predicting spatial gene expression from histology images have been comprehensively evaluated using 28 metrics across five key categories: SGE prediction performance, model generalizability, clinical translational impact, usability, and computational efficiency [1]. The evaluation utilized five Spatially Resolved Transcriptomics (SRT) datasets and included external validation using The Cancer Genome Atlas (TCGA) data to assess cross-study applicability [1].

Table 1: Overall Performance Ranking of Spatial Gene Expression Prediction Methods

| Method | SGE Prediction Performance | Model Generalizability | Clinical Translational Impact | Usability | Computational Efficiency |
|---|---|---|---|---|---|
| EGNv2 | Highest (PCC = 0.28) | Limited | Limitations in distinguishing survival risk groups | Moderate | Moderate |
| Hist2ST | High (AUC = 0.63) | Notable | Moderate | High | Moderate |
| DeepSpaCE | Moderate | Notable | Moderate | High | Moderate |
| HisToGene | Moderate | Notable | Moderate | High | Moderate |
| DeepPT | High for Visium data | Limited | Highest for survival prediction | Moderate | Moderate |

The benchmarking results revealed that no single method emerged as the definitive top performer across all evaluation categories [1]. While EGNv2 and DeepPT demonstrated the highest accuracy in predicting spatial gene expression for ST and Visium data respectively, they showed limitations in distinguishing survival risk groups and in model generalizability based on the predicted SGE [1]. Conversely, HisToGene, DeepSpaCE, and Hist2ST demonstrated notable performance in model generalizability and usability, highlighting the inherent trade-offs between prediction accuracy and broader applicability [1].

Quantitative Performance Metrics Across Tissue Types

The predictive performance of these methods was quantitatively assessed using multiple metrics, including Pearson Correlation Coefficient (PCC), Mutual Information (MI), Structural Similarity Index (SSIM), and Area Under the Curve (AUC) [1]. These metrics were applied to evaluate performance on both lower-resolution spatial transcriptomics (ST) data and higher-resolution 10x Visium data [1].

Table 2: Detailed Performance Metrics by Method and Tissue Context

| Method | PCC (HER2+ ST) | MI (HER2+ ST) | SSIM (HER2+ ST) | AUC (HER2+ ST) | Performance on HVGs | Performance on SVGs |
|---|---|---|---|---|---|---|
| EGNv2 | 0.28 | 0.06 | 0.22 | 0.65 | p < 0.05 | p < 0.05 |
| Hist2ST | Moderate | 0.06 | Moderate | 0.63 | Not significant | p < 0.05 |
| DeepPT | Moderate | Moderate | Moderate | Moderate | p < 0.05 | p < 0.05 |
| GeneCodeR | Moderate | Moderate | Moderate | Moderate | p < 0.05 | p < 0.05 |
| iStar | Moderate | Moderate | Moderate | Moderate | p < 0.05 | p < 0.05 |

Notably, most methods exhibited higher correlation or SSIM for both highly variable genes (HVGs) and spatially variable genes (SVGs) compared to using all genes, providing a more meaningful evaluation of biological relevance [1]. For HVGs and SVGs, most methods showed statistically significant improvements in performance (with p < 0.05 for most methods under both gene categories), indicating their capacity to capture biologically relevant patterns despite relatively low average correlation across all genes [1].
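The contrast between all-gene and HVG-restricted correlation can be illustrated with a minimal sketch. It assumes NumPy is available, uses synthetic data, and ranks genes by raw variance as a crude stand-in for HVG selection; the function names `per_gene_pcc` and `top_variable_genes` are hypothetical and are not taken from the benchmarked methods.

```python
import numpy as np

def per_gene_pcc(pred, truth):
    """Pearson correlation per gene (column) between predicted and measured expression."""
    pred = np.asarray(pred, dtype=float)
    truth = np.asarray(truth, dtype=float)
    pc = pred - pred.mean(axis=0)
    tc = truth - truth.mean(axis=0)
    denom = np.sqrt((pc ** 2).sum(axis=0) * (tc ** 2).sum(axis=0))
    with np.errstate(divide="ignore", invalid="ignore"):
        r = (pc * tc).sum(axis=0) / denom
    # Genes with zero variance have an undefined correlation.
    return np.where(denom > 0, r, np.nan)

def top_variable_genes(truth, n_top):
    """Indices of the n_top genes with the largest variance across spots (crude HVG proxy)."""
    var = np.asarray(truth, dtype=float).var(axis=0)
    return np.argsort(var)[::-1][:n_top]

# Synthetic example: 200 spots x 50 genes (assumed sizes, for illustration only).
rng = np.random.default_rng(0)
n_spots, n_genes = 200, 50
truth = rng.normal(size=(n_spots, n_genes))
truth[:, :10] *= 5.0                                          # first 10 genes vary strongly
pred = truth.copy()
pred[:, :10] += rng.normal(scale=2.0, size=(n_spots, 10))     # reasonable prediction here
pred[:, 10:] = rng.normal(size=(n_spots, n_genes - 10))       # uninformative elsewhere

pcc_all = per_gene_pcc(pred, truth)
hvg_idx = top_variable_genes(truth, n_top=10)
mean_all = float(np.nanmean(pcc_all))
mean_hvg = float(np.nanmean(pcc_all[hvg_idx]))
print(f"mean PCC, all genes: {mean_all:.2f}; HVGs only: {mean_hvg:.2f}")
```

In this toy setting the all-gene average is dragged down by genes the model cannot predict, which mirrors why a gene-subset evaluation can be more informative than a single global average.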

Experimental Protocols and Methodologies

Standardized Benchmarking Framework

The comprehensive benchmarking study employed a rigorously designed evaluation framework encompassing five key categories to ensure fair comparison across methods [1]:

Within-image SGE prediction performance: Evaluation was conducted on hold-out test images from cross-validation for both lower-resolution ST data and higher-resolution 10x Visium data [1]. Models were trained consistently to predict SGE from histology, with predicted SGE compared to ground truth SGE using multiple correlation and similarity metrics [1].

Cross-study model generalizability: This critical assessment involved applying models trained on ST data to predict gene expression in Visium tissues, as well as predicting gene expression for TCGA images to determine utility for existing H&E image repositories [1].

Clinical translational impact: The practical utility of predicted SGE was assessed through survival outcome prediction and identification of canonical pathological regions, evaluating the potential for real-world clinical application [1].

Usability: This category encompassed evaluation of code quality, documentation completeness, and manuscript clarity, addressing practical implementation concerns for researchers [1].

Computational efficiency: Assessment of resource requirements and processing speeds, crucial considerations for large-scale studies and clinical deployment [1].

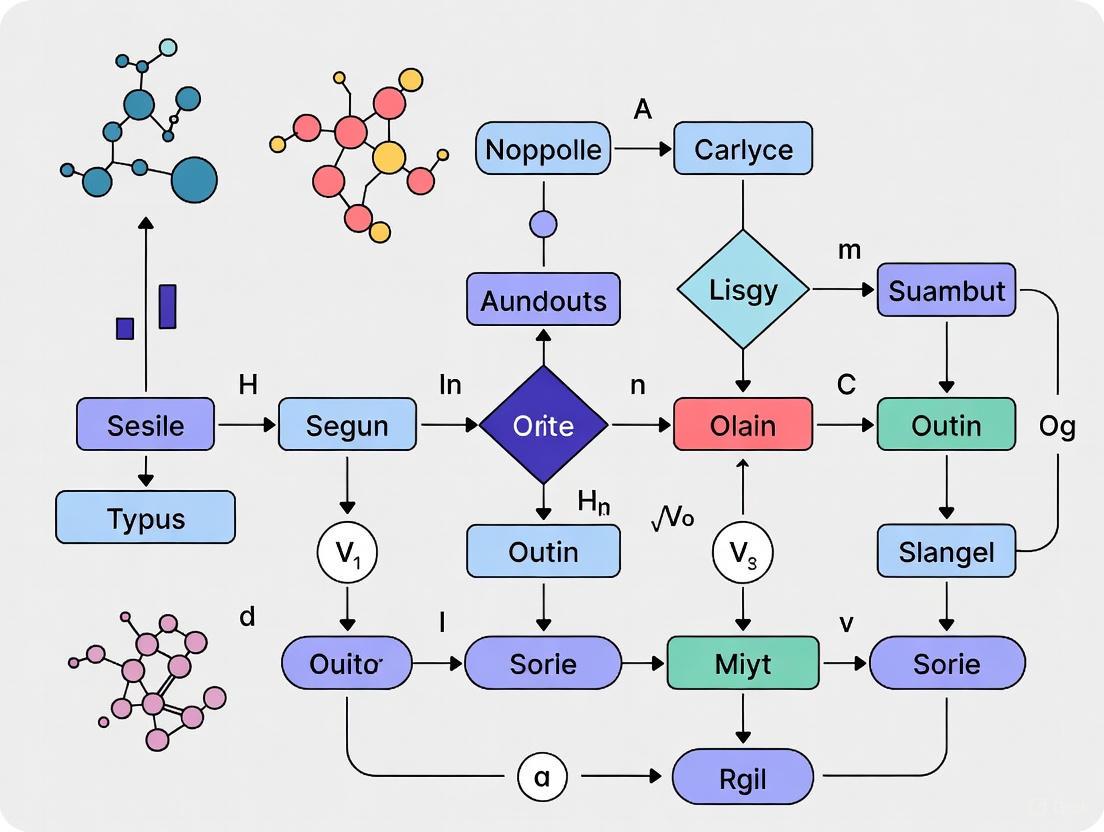

The experimental workflow for assessing generalizability across tissue types can be visualized as follows:

Pan-Cancer Drug Response Prediction Protocol

Complementing the spatial gene expression benchmarking, research on pan-cancer predictions of drug sensitivity provides important insights into tissue-specific considerations. These studies typically employ the following methodology [2]:

Data Acquisition: Utilizing public pharmacogenomic databases of patient-derived cancer cell lines (such as Klijn 2015 and Cancer Cell Line Encyclopedia) containing drug response data alongside molecular characterization including RNA expression, point mutations, and copy number variations [2].

Tissue-specific Stratification: Analysis is stratified by cancer type defined by organ site, with focus on well-represented cancer types (n≥15 in both datasets for MEK inhibitor studies) to ensure robust within-tissue evaluation [2].

Between-Tissue vs Within-Tissue Signal Parsing: Implementing analytical approaches that distinguish signals derived from differences between tissue types from those reflecting variation among individual tumors within the same tissue type [2].

Cross-Dataset Validation: Applying prediction models across independent datasets to evaluate consistency and generalizability of findings, assessing whether performance advantages in pan-cancer models are primarily attributable to larger sample sizes rather than truly shared regulatory mechanisms [2].

This methodology revealed that while tissue-level drug responses can be accurately predicted (between-tissue ρ = 0.88-0.98), only 5 of 10 cancer types showed successful within-tissue prediction performance (within-tissue ρ = 0.11-0.64) [2]. Between-tissue differences made substantial contributions to pan-cancer MEKi response predictions, with exclusion of between-tissue signals leading to decreased performance from Spearman's ρ range of 0.43-0.62 to 0.30-0.51 [2].
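The stratification criterion described above (cancer types with n≥15 in both datasets) can be sketched as a simple filter. The tissue labels and the function name `well_represented_tissues` are hypothetical; this is an illustration of the selection rule, not the cited study's code.

```python
from collections import Counter

def well_represented_tissues(tissues_a, tissues_b, min_n=15):
    """Return tissue labels with at least min_n samples in BOTH datasets."""
    counts_a = Counter(tissues_a)
    counts_b = Counter(tissues_b)
    return sorted(
        t for t in counts_a
        if counts_a[t] >= min_n and counts_b.get(t, 0) >= min_n
    )

# Hypothetical per-cell-line tissue labels from two pharmacogenomic datasets.
dataset_a = ["lung"] * 40 + ["skin"] * 20 + ["liver"] * 8
dataset_b = ["lung"] * 30 + ["skin"] * 12 + ["liver"] * 25

selected = well_represented_tissues(dataset_a, dataset_b)
print(selected)
```

Only tissues passing the threshold in both datasets are retained, so a tissue that is abundant in one resource but sparse in the other is excluded from within-tissue evaluation.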

The Impact of Tissue Context on Model Performance

Between-Tissue vs. Within-Tissue Predictive Signals

The performance of predictive models varies substantially when considering between-tissue differences versus within-tissue variation. Research on pan-cancer drug sensitivity predictions has demonstrated that between-tissue differences contribute significantly to apparent model performance, potentially masking limited within-tissue predictive capability [2].

Table 3: Between-Tissue vs. Within-Tissue Prediction Performance for MEK Inhibitors

| Cancer Type | Between-Tissue Prediction (ρ) | Within-Tissue Prediction (ρ) | Successful Within-Tissue Prediction |
|---|---|---|---|
| Pan-Cancer (Overall) | 0.88-0.98 | 0.11-0.64 | Mixed Performance |
| Tissue Type A | High | 0.64 | Yes |
| Tissue Type B | High | 0.11 | No |
| Tissue Type C | High | 0.45 | Yes |
| Tissue Type D | High | 0.23 | No |
| Tissue Type E | High | 0.58 | Yes |

This analysis reveals that approximately half of cancer types examined show poor within-tissue prediction despite strong overall pan-cancer performance, highlighting the critical importance of distinguishing between these two types of predictive signals when evaluating model generalizability [2].
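To make the distinction concrete, the sketch below computes three Spearman correlations on toy data: pooled across all samples, between tissues (on per-tissue means), and within each tissue. The data are invented for illustration and the helper names are hypothetical; the decomposition is one simple way to separate the two signals and is not presented as the cited study's exact procedure.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / (sxx * syy) ** 0.5

def spearman(x, y):
    """Spearman's rho as the Pearson correlation of ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def between_and_within(observed, predicted, tissues):
    """Between-tissue rho on tissue means, plus one within-tissue rho per tissue."""
    groups = {}
    for o, p, t in zip(observed, predicted, tissues):
        obs_list, pred_list = groups.setdefault(t, ([], []))
        obs_list.append(o)
        pred_list.append(p)
    names = sorted(groups)
    mean_obs = [sum(groups[t][0]) / len(groups[t][0]) for t in names]
    mean_pred = [sum(groups[t][1]) / len(groups[t][1]) for t in names]
    between = spearman(mean_obs, mean_pred)
    within = {t: spearman(groups[t][0], groups[t][1]) for t in names}
    return between, within

# Toy data: predictions track tissue-level differences but not variation inside tissues.
observed = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12]
predicted = [2.6, 2.4, 2.5, 2.3, 6.4, 6.6, 6.5, 6.7, 10.5, 10.4, 10.6, 10.3]
tissues = ["A"] * 4 + ["B"] * 4 + ["C"] * 4

pooled = spearman(observed, predicted)
between, within = between_and_within(observed, predicted, tissues)
print(round(pooled, 2), round(between, 2), {t: round(r, 2) for t, r in within.items()})
```

Here the pooled and between-tissue correlations are high even though two of the three tissues show negative within-tissue correlation, which is the masking effect described above.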

Biological Factors Influencing Tissue-Specific Performance

The molecular distinctness of tissue types significantly impacts prediction generalizability. Studies comparing normal adjacent to tumor (NAT) tissue across multiple cancer types have demonstrated that NAT presents a unique intermediate state between healthy and tumor tissue across all tissue types examined [3]. Dimensionality reduction of transcriptomic data consistently shows clear distinction between healthy, NAT, and tumor tissues, with NAT samples consistently positioned between tumor and healthy samples across disparate tissue contexts [3].

This biological continuum has important implications for model generalizability, as methods trained exclusively on tumor tissue may fail to capture the nuanced molecular profiles of NAT tissues, and vice versa. The unique gene expression signature of NAT tissue—characterized by activation of pro-inflammatory immediate-early response genes concordant with endothelial cell stimulation—represents a pan-cancer phenomenon that must be accounted for in robust predictive models [3].

Essential Research Reagents and Computational Tools

Critical Datasets for Generalizability Assessment

The rigorous evaluation of method generalizability requires utilization of diverse, publicly available datasets that encompass multiple tissue types and technological platforms:

The Cancer Genome Atlas (TCGA): Provides H&E images and molecular data across multiple cancer types, essential for external validation and assessment of clinical translational potential [1] [3].

Genotype-Tissue Expression (GTEx) Project: Offers transcriptomic profiling of healthy tissues from multiple sites, enabling comparison with disease states and assessment of tissue-specific effects [3].

Spatially Resolved Transcriptomics (SRT) Datasets: Include both lower-resolution ST data and higher-resolution 10x Visium data spanning multiple tissue types, crucial for evaluating spatial prediction performance across resolutions [1].

Cancer Cell Line Encyclopedia (CCLE): Contains drug response and molecular characterization data for tumor cell lines across diverse cancer types, enabling pan-cancer drug response prediction studies [2].

Computational Frameworks and Visualization Tools

The development and evaluation of generalizable models requires specific computational frameworks and visualization approaches:

Convolutional Neural Networks (CNNs) and Transformers: Commonly selected architectures for extracting local and global 2D vision features from histology image patches for gene expression prediction [1].

Graph Neural Networks (GNNs): Implemented in some methods to capture neighborhood relationships between adjacent spots, enhancing spatial context understanding [1].

Exemplar Modules: Used in advanced methods to guide predictions by inferring from gene expression of the most similar exemplars [1].

Urban Institute Data Visualization Tools: Include Excel macros and R packages (urbnthemes) that facilitate creation of standardized, accessible visualizations with proper color contrast and typographic hierarchy [4].

Visualization Framework for Generalizability Assessment

The relationship between model complexity, performance, and generalizability across tissue types can be conceptualized through the following framework:

This comprehensive comparison demonstrates that assessing generalizability requires moving beyond single-tissue performance metrics to incorporate multiple dimensions of reliability across tissue types. The current state of spatial gene expression prediction reveals a landscape of method-specific strengths and limitations, with clear trade-offs between prediction accuracy, generalizability, and clinical utility.

The most accurate methods for specific tissue types (EGNv2 for ST data and DeepPT for Visium data) do not necessarily translate to the most generalizable approaches across tissues [1]. Similarly, pan-cancer drug response models show variable performance across tissue types, with between-tissue differences contributing substantially to apparent success [2]. These findings emphasize the critical importance of rigorous, multi-tissue validation frameworks that parse within-tissue and between-tissue signals when evaluating methodological generalizability.

For researchers and drug development professionals, this analysis underscores the necessity of selecting methods based not only on reported performance metrics but also on demonstrated reliability across diverse tissue contexts and experimental conditions. Future methodological development should prioritize architectures and training strategies that explicitly address tissue-specific biases while capturing biologically meaningful pan-tissue signals, ultimately bridging the gap between single-tissue performance and genuine pan-tissue reliability.

For researchers, scientists, and drug development professionals working across diverse tissue types, achieving generalizable results is paramount. The path to reliable, reproducible findings is fraught with three interconnected obstacles: batch effects, technical artifacts, and biological heterogeneity. Batch effects are technical variations introduced due to differences in experimental conditions, sequencing runs, reagents, or equipment that are unrelated to the biological questions under investigation [5]. Left unaddressed, they can obscure true biological signals, reduce statistical power, and even lead to incorrect conclusions [5]. Technical artifacts encompass a broader range of non-biological noise, including variations in sample preparation, storage conditions, and instrumentation [5]. Perhaps most critically, biological heterogeneity (the natural variation in molecular, cellular, and physiological characteristics within and between samples) represents both a fundamental property of living systems and a significant analytical challenge [6].

The central dilemma in multi-tissue research lies in successfully removing technical noise while preserving meaningful biological variation. Over-correction of batch effects can eliminate the very biological heterogeneity essential for identifying novel subtypes, understanding disease mechanisms, and developing personalized therapeutic strategies [7] [6]. This challenge is particularly acute in cancer genomics, where heterogeneity drives disease progression and treatment response [7]. Furthermore, the problem extends to clinical translation, where limitations in generalizability often restrict the adoption of quantitative imaging biomarkers and genomic classifiers across institutions and patient populations [8] [9]. This guide objectively compares current methodologies to navigate these challenges, providing experimental frameworks for assessing their effectiveness in preserving biological signals while removing technical artifacts.

Understanding Batch Effects and Technical Artifacts

Batch effects and technical artifacts arise throughout the experimental workflow, from initial study design to final data analysis. Understanding their origins is the first step toward effective mitigation. The fundamental cause can be partially attributed to the basic assumptions of data representation in omics, where the relationship between the actual abundance of an analyte and the instrument readout may fluctuate due to experimental factors [5].

Table 1: Common Sources of Batch Effects and Technical Artifacts

| Stage | Source | Description | Affected Omics/Fields |
|---|---|---|---|
| Study Design | Flawed or Confounded Design | Non-randomized sample collection or selection based on specific characteristics confounded with batches [5]. | Common across omics [5] |
| Study Design | Minor Treatment Effect | Small effect sizes are harder to distinguish from batch effects [5]. | Common across omics [5] |
| Sample Preparation | Protocol Procedures | Variations in centrifugal forces, time/temperature before centrifugation [5]. | Common across omics [5] |
| Sample Preparation | Sample Storage Conditions | Variations in storage temperature, duration, freeze-thaw cycles [5]. | Common across omics [5] |
| Data Generation | Reagent Lots | Differences between enzyme batches for cell dissociation or RNA amplification kits [7] [10]. | scRNA-seq, Genomics [7] [10] |
| Data Generation | Sequencing Runs | Differences between sequencing platforms (e.g., Illumina vs. Ion Torrent) or different runs [5] [10]. | scRNA-seq, Bulk RNA-seq [5] [10] |
| Data Analysis | Analysis Pipelines | Different normalization methods, parameters, or software versions [5] [8]. | Common across omics, Radiomics [5] [8] |

The negative impact of these technical variations is profound. In benign cases, they increase variability and decrease the power to detect real biological signals. In worse scenarios, they can actively mislead research. For example, a change in RNA-extraction solution in a clinical trial led to incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy [5]. Similarly, what appeared to be significant cross-species differences between human and mouse gene expression was later shown to be primarily driven by batch effects related to data generation timepoints [5]. These artifacts are a paramount factor contributing to the widely recognized reproducibility crisis in scientific research [5].

The Critical Role of Biological Heterogeneity

Biological heterogeneity is not noise to be eliminated but a fundamental property of living systems that provides critical information [6]. It operates at all scales—from molecular and cellular to tissue and organism levels—and can be categorized into three main types:

- Population Heterogeneity: Variation in phenotypes among individuals in a population at a single time point [6].

- Spatial Heterogeneity: Variation in variables at different spatial locations within a sample (e.g., within a tumor) [6].

- Temporal Heterogeneity: Variation in measured variables as a function of time [6].

Furthermore, heterogeneity can be classified as micro-heterogeneity (variance within an apparently uniform population) or macro-heterogeneity (the presence of distinct subpopulations) [6]. In oncology, this heterogeneity enables tumors to adapt, progress, and develop resistance to therapies. Therefore, analytical methods that preserve this heterogeneity are essential for realizing the goals of precision medicine, where personalized genomic signatures guide optimal treatment selection for individual patients [7] [6].

Diagram: The central challenge lies in balancing the removal of technical artifacts with the preservation of meaningful biological heterogeneity, which directly impacts the generalizability of research findings.

Comparative Analysis of Batch Effect Correction Methods

Algorithm Performance and Benchmarking

Multiple computational methods have been developed to address batch effects, each with distinct approaches, strengths, and limitations. A comprehensive benchmark study evaluating 14 batch correction methods for single-cell RNA sequencing data provides critical insights for researchers selecting appropriate tools [11].

Table 2: Comparison of Select Batch Effect Correction Methods

| Method | Underlying Approach | Strengths | Limitations | Performance in Benchmarking |
|---|---|---|---|---|
| Harmony | Iterative clustering in PCA space with diversity maximization [11]. | Fast, scalable, preserves biological variation [10] [11]. | Limited native visualization tools [10]. | Recommended; fast runtime with good efficacy [11]. |
| Seurat 3 | CCA to find correlated features, then MNNs as "anchors" [11] [10]. | High biological fidelity, comprehensive workflow [10]. | Computationally intensive, requires parameter tuning [10]. | Recommended; good efficacy but slower [11]. |
| LIGER | Integrative non-negative matrix factorization (NMF) [11]. | Distinguishes technical from biological variation [11]. | Requires reference dataset selection [11]. | Recommended; good for preserving biological variation [11]. |
| ComBat | Empirical Bayes framework with linear models [7]. | Established method, models known batches [7]. | Risk of over-correction, requires biological covariates [7]. | Not top-ranked; can remove biological heterogeneity [7] [11]. |
| BBKNN | Graph-based method creating batch-balanced KNN networks [10]. | Computationally efficient, easy to use in Scanpy [10]. | Less effective for complex non-linear batch effects [10]. | Not top-ranked; efficient but may lack correction power [11]. |
| pSVA | Models artifacts blind to biology using permuted covariates [7]. | Retains unknown biological heterogeneity, good for subtype identification [7]. | Less established than other methods [7]. | Specific to genomic data; improves cross-study validation [7]. |

The benchmark, which used datasets with identical and non-identical cell types across multiple technologies, evaluated methods based on computational runtime, ability to handle large datasets, and efficacy in batch-effect correction while preserving cell type purity [11]. Metrics included kBET (k-nearest neighbor Batch Effect Test), LISI (Local Inverse Simpson's Index), ASW (Average Silhouette Width), and ARI (Adjusted Rand Index) [11]. Based on the overall performance, Harmony, LIGER, and Seurat 3 emerged as the recommended methods, with Harmony offering a particularly favorable balance of speed and accuracy [11].

The Risk of Over-Correction

A significant concern with many batch correction algorithms is their potential to remove true biological heterogeneity. Methods like ComBat and standard Surrogate Variable Analysis (SVA) use linear models that require pre-specification of biological covariates to "protect" during correction [7]. When studying novel disease subtypes or dynamic processes where relevant biological groups are unknown a priori, these algorithms may incorrectly model true biological heterogeneity as technical artifacts and remove it [7]. This is particularly problematic in cancer genomics, where personalized genomic signatures are the central goal.

The permuted-SVA (pSVA) algorithm was developed specifically to address this over-correction problem [7]. By reversing the standard SVA approach—modeling known technical covariates and iteratively estimating biological heterogeneity from genes not associated with these artifacts—pSVA retains biological heterogeneity while removing technical artifacts [7]. In head and neck cancer gene expression data, pSVA facilitated accurate subtype identification and improved cross-study validation for predicting HPV status, even when batches were highly confounded with HPV status [7].

Experimental Protocols for Assessing Generalizability

Standardized Workflow for Method Evaluation

To objectively compare batch effect correction methods and assess their impact on generalizability, researchers should implement standardized experimental protocols. The following workflow outlines key steps for rigorous evaluation:

Dataset Selection and Preparation: Utilize publicly available datasets with known ground truth, such as:

- Human PBMCs (Peripheral Blood Mononuclear Cells): Available from multiple technologies (10X, Smart-seq2) with well-annotated cell types [11].

- Pancreatic Cell Data: Contains multiple batches from different technologies with significantly different cell type distributions [11].

- Head and Neck Cancer Data: Includes formalin-fixed and frozen samples with different RNA amplification kits, highly confounded with HPV status [7].

Preprocessing: Follow consistent normalization and scaling procedures. For scRNA-seq data, this includes quality control, filtering, and selection of highly variable genes (HVGs) using standardized pipelines [11].

Batch Correction Application: Apply multiple correction methods to the same preprocessed data, ensuring consistent parameter settings according to developer recommendations.

Dimensionality Reduction and Visualization: Generate UMAP and t-SNE plots from the corrected data to visually inspect batch mixing and cell type separation [11].

Quantitative Assessment: Calculate multiple benchmarking metrics to evaluate different aspects of performance:

- kBET (k-nearest neighbor Batch Effect Test): Measures local batch mixing using a chi-square test [11]. Lower rejection rates indicate better mixing.

- LISI (Local Inverse Simpson's Index): Quantifies both batch mixing (Batch LISI) and cell type separation (Cell Type LISI) [10] [11]. Higher Batch LISI indicates better integration, while higher Cell Type LISI indicates preserved biological separation.

- ASW (Average Silhouette Width): Assesses clustering compactness and separation [11]. Can be computed on batch labels (higher values indicate poor integration) or cell type labels (higher values indicate preserved biology).

- ARI (Adjusted Rand Index): Measures similarity between clustering results and known cell type labels [11]. Higher values indicate better preservation of biological structure.
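The intuition behind LISI can be shown with a deliberately simplified sketch: for each cell, take its k nearest neighbors and compute the inverse Simpson's index of their batch labels, then average over cells. This is an assumption-laden simplification, since the published LISI weights neighbors with a perplexity-based kernel rather than counting them equally; the function names are hypothetical and the coordinates are toy values.

```python
import math
from collections import Counter

def inverse_simpson(labels):
    """1 / sum(p_i^2): the effective number of distinct labels in a neighborhood."""
    counts = Counter(labels)
    n = len(labels)
    return 1.0 / sum((c / n) ** 2 for c in counts.values())

def simple_lisi(points, labels, k):
    """Mean inverse Simpson's index over unweighted k-nearest-neighbor label sets."""
    scores = []
    for i, p in enumerate(points):
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbor_labels = [labels[j] for _, j in dists[:k]]
        scores.append(inverse_simpson(neighbor_labels))
    return sum(scores) / len(scores)

# Two batches that remain separated in the embedding (poor integration).
separated_points = [(float(x), 0.0) for x in range(5)] + [(100.0 + x, 0.0) for x in range(5)]
separated_labels = ["batch1"] * 5 + ["batch2"] * 5

# Two batches interleaved in the embedding (good mixing).
mixed_points = [(float(x), 0.0) for x in range(10)]
mixed_labels = ["batch1" if x % 2 == 0 else "batch2" for x in range(10)]

lisi_separated = simple_lisi(separated_points, separated_labels, k=3)
lisi_mixed = simple_lisi(mixed_points, mixed_labels, k=4)
print(lisi_separated, lisi_mixed)
```

With two batches the score ranges from 1 (every neighborhood contains a single batch) to 2 (both batches equally represented). Passing cell type labels instead of batch labels gives the cell type variant of the score.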

Diagram: Standardized workflow for evaluating batch effect correction methods, incorporating both technical metrics and biological validation.

Assessing Impact on Downstream Biological Analyses

Beyond technical metrics, evaluating the impact of batch correction on downstream biological analyses is crucial for assessing generalizability:

- Differential Expression Analysis: Using simulated datasets with known differentially expressed genes (DEGs), compare the precision and recall of DEG detection before and after batch correction. The F-score (harmonic mean of precision and recall) provides a single metric for comparison [11].

- Novel Subtype Identification: Apply clustering algorithms to corrected data and compare identified clusters to known biological groups or clinical outcomes. Methods that facilitate accurate identification of previously unknown subtypes (e.g., pSVA in head and neck cancer [7]) demonstrate superior preservation of biological heterogeneity.

- Cross-Study Prediction: Train classifiers (e.g., for HPV status or clinical outcomes) on corrected data from one study and test performance on independent datasets from different institutions or technologies. Improved cross-study validation indicates successful removal of technical artifacts without sacrificing biological signal [7].
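The F-score comparison for differential expression recovery reduces to a few set operations. The sketch below uses invented gene identifiers and a hypothetical function name; it shows the arithmetic only and says nothing about which DEG caller is used upstream.

```python
def deg_detection_scores(detected, truth):
    """Precision, recall, and F-score of detected DEGs against a known truth set."""
    detected, truth = set(detected), set(truth)
    true_positives = len(detected & truth)
    precision = true_positives / len(detected) if detected else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f_score = 2 * precision * recall / (precision + recall)
    return precision, recall, f_score

# Hypothetical simulated ground truth and DEG calls after batch correction.
true_degs = {"g1", "g2", "g3", "g4"}
called_degs = {"g1", "g2", "g3", "g9", "g10"}

precision, recall, f_score = deg_detection_scores(called_degs, true_degs)
print(f"precision={precision:.2f} recall={recall:.2f} F={f_score:.2f}")
```

Running the same function on DEG calls made before and after correction gives a like-for-like comparison of how each method affects recovery of the simulated signal.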

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Mitigating Technical Variation

| Reagent/Material | Function | Considerations for Generalizability |
|---|---|---|
| Reference Standards | Calibrate instruments and normalize measurements across batches and labs [6] [8]. | Essential for distinguishing biological heterogeneity from system variability; use matrix-matched standards where possible [6]. |
| RNA Amplification Kits | Amplify limited RNA input for sequencing (e.g., from FFPE or frozen tissues) [7]. | Different kits (e.g., NuGEN Ovation) introduce systematic variations; balance kits across experimental groups [7]. |
| Cell Dissociation Enzymes | Dissociate tissues into single-cell suspensions for scRNA-seq [10]. | Enzyme batch variability can affect cell viability and subtype representation; record lot numbers and test new batches [10]. |
| Fetal Bovine Serum (FBS) | Cell culture supplement for maintaining cells prior to analysis [5]. | Batch variability can dramatically impact results, including failure to reproduce key findings; use single lot or pre-test multiple lots [5]. |
| Multimodal Feature Barcodes | Simultaneously profile surface proteins and gene expression (CITE-seq) [10]. | Normalize protein data separately using CLR (Centered Log Ratio) normalization; enables cross-modal validation [10]. |
| Spatial Barcoding Slides | Capture spatial gene expression patterns in tissue sections [12]. | Preserves spatial heterogeneity lost in dissociation-based methods; integrates with single-cell data for spatial deconvolution [12]. |
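The CLR normalization mentioned in the table can be sketched in its textbook form: take the log of each count and subtract the mean of the logs, which is equivalent to dividing by the geometric mean. The pseudocount of 1 and the choice to normalize one vector of protein counts at a time are assumptions made here for illustration; analysis toolkits differ in both details.

```python
import math

def clr(counts, pseudocount=1.0):
    """Centered log-ratio: log(count + pseudocount) minus the mean of those logs."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]

# Hypothetical antibody-derived tag counts for three surface proteins in one cell.
adt_counts = [0, 9, 99]
normalized = clr(adt_counts)
print([round(v, 3) for v in normalized])
```

The transformed values sum to zero by construction, so they describe each protein relative to the others in the same vector rather than in absolute terms.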

Achieving generalizability across tissue types requires carefully balanced strategies that address both technical artifacts and biological heterogeneity. Based on current evidence, researchers should prioritize methods like Harmony, Seurat 3, and LIGER for standard batch integration tasks, while considering specialized approaches like pSVA when preserving unknown biological heterogeneity is paramount [7] [11]. Experimental design remains the most powerful tool—randomizing sample processing, balancing technical factors across biological groups, and incorporating reference standards can significantly reduce batch effects before computational correction [5] [10]. Validation should extend beyond technical metrics to include biological endpoints such as differential expression recovery, novel subtype identification, and cross-study predictive performance [7] [11]. As the field advances, the integration of multimodal data and spatial context will provide additional anchors for distinguishing technical artifacts from biologically meaningful heterogeneity, ultimately enhancing the generalizability of findings across diverse tissues and populations.
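Balancing technical factors across biological groups at the design stage can be sketched as a stratified random assignment: shuffle samples within each biological group, then deal them across processing batches in turn. The sample names, group labels, and the function `assign_batches` are hypothetical; this is one simple scheme among many.

```python
import random
from collections import Counter, defaultdict

def assign_batches(sample_groups, n_batches, seed=0):
    """Randomly assign samples to batches while spreading each group evenly."""
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)
    assignment = {}
    offset = 0
    for group in sorted(by_group):
        samples = sorted(by_group[group])
        rng.shuffle(samples)              # random order within the biological group
        for i, sample in enumerate(samples):
            # Round-robin dealing; the offset keeps leftovers from piling into batch 0.
            assignment[sample] = (i + offset) % n_batches
        offset += len(samples)
    return assignment

# Hypothetical study: 8 tumor and 8 normal samples processed in 4 batches.
samples = {f"tumor_{i}": "tumor" for i in range(8)}
samples.update({f"normal_{i}": "normal" for i in range(8)})

batches = assign_batches(samples, n_batches=4)
layout = Counter((batch, samples[name]) for name, batch in batches.items())
print(sorted(layout.items()))
```

Because every batch receives the same mix of groups, batch is not confounded with biology, which makes any later computational correction less likely to remove real signal.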

The Impact of Disease Progression on Tissue Architecture and Model Performance

The pursuit of tissue-agnostic therapeutics represents a paradigm shift in precision oncology, moving away from treatments defined by tumor origin to those targeting specific molecular alterations. A fundamental assumption underpinning this approach is that key biological processes and their manifestation in the tissue microenvironment are consistent across different cancer types. This guide critically examines that assumption by exploring the interplay between disease progression, the resulting disruption of tissue architecture, and the performance of computational models designed to decode this spatial complexity. As the benchmarks below show, the generalizability of models across tissue types is not a given but a property that must be rigorously assessed, because alterations in tissue structure can significantly affect the accuracy and clinical applicability of both spatial and prognostic models.

Benchmarking Spatial and Prognostic Models

To objectively evaluate the current landscape, this section benchmarks the performance of several computational models that analyze tissue architecture or disease progression. The following table summarizes key performance metrics from recent studies, highlighting their applicability across different tissue types and disease contexts.

Table 1: Performance Benchmarking of Spatial and Prognostic Models

| Model Name | Primary Function | Key Performance Metrics | Tissue Types Applied | Generalizability Strengths |

|---|---|---|---|---|

| SpatialTopic [13] | Identifies recurrent spatial patterns (topics) in tissue images. | High precision & interpretability; processes 100,000 cells in <1 min [13]. | NSCLC, melanoma, healthy lung, mouse spleen [13]. | Highly scalable across multiple platforms (CODEX, mIF, IMC, CosMx); identifies consistent structures like TLS [13]. |

| SNOWFLAKE [14] | Integrates single-cell morphology & protein expression via graph neural networks. | Outperformed conventional ML in classifying pediatric COVID-19 infection status [14]. | Lymphoid tissues, breast cancer, Tertiary Lymphoid Structures [14]. | Generalizes across tissue types; identifies interpretable spatial motifs linked to infection and survival [14]. |

| Leaspy [15] [16] | Parametric disease progression modeling for cognitive decline. | AUC: 0.96; Correlation with observed conversion time: r=0.78 [15]. | Neuropsychological data (ADNI cohort) [15] [16]. | Effective for early detection and prognosis of Alzheimer's disease using neuropsychological markers [15]. |

| RPDPM [15] | Parametric disease progression modeling. | Superior robustness to missing data (accurate with up to 40% data loss) [15]. | Neuropsychological data (ADNI cohort) [15]. | Maintains predictive accuracy with incomplete data, enhancing real-world applicability [15]. |

The data reveal a critical insight for tissue-agnostic research: while spatial models like SpatialTopic and SNOWFLAKE demonstrate technical generalizability across imaging platforms and tissue types, the biological features they identify, such as Tertiary Lymphoid Structures (TLS), may not hold consistent prognostic value across all cancers [13] [17]. Similarly, the high performance of disease progression models like Leaspy is contingent on a specific, compact set of biomarkers (e.g., CDRSB, ADAS13, MMSE), underscoring that model generalizability depends on the consistent relevance of its input features [15].

Experimental Protocols for Model Evaluation

To ensure fair and reproducible comparisons, researchers must adhere to standardized experimental protocols. The methodologies below are derived from the benchmarked studies and can be adapted for evaluating model generalizability.

Protocol for Spatial Topic Modeling of Tissue Architecture

This protocol is based on the SpatialTopic model, designed to decode spatial tissue architecture from multiplexed imaging data [13].

- Input Data Preparation: The primary inputs are cell type annotations and their spatial coordinates within whole-slide tissue images. Cell types should be pre-determined using a phenotyping algorithm appropriate for the dataset's marker panel [13].

- Model Initialization:

- Anchor Cell Selection: Select regional centers via spatially stratified sampling.

- KNN Graph Construction: For each image, construct a K-nearest neighbor graph between anchor cells and all other cells.

- Initial Region Assignment: Assign cells to initial regions based on proximity to these anchor cells [13].

- Model Inference via Collapsed Gibbs Sampling: This iterative process has two main steps per cell:

- Sample Topic Assignment ($Z_{gi}$): The topic for each cell is sampled conditional on its region assignment, cell type, and the current topic composition of its region.

- Sample Region Assignment ($D_{gi}$): The region for each cell is sampled conditional on its current topic assignment, spatial distance to the region center, and the topic composition of the region. Spatial information is weakly incorporated using a kernel function [13].

- Output Analysis: After convergence, the model outputs:

- Topic Content: The distribution of cell types for each identified spatial topic.

- Cell Assignment: Each cell is assigned to the topic with the highest posterior probability, allowing for the visualization of spatial patterns across the tissue [13].
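
The initialization stage of this protocol can be sketched in a few lines. This is an illustrative simplification, not the SpaTopic implementation: it assumes anchors are drawn one per square grid bin (one possible form of spatially stratified sampling) and replaces the KNN graph with a direct nearest-anchor search. The function names, grid size, and simulated coordinates are all hypothetical, and the Gibbs sampling stage is omitted.

```python
import math
import random

def select_anchors(coords, grid_size, rng):
    """Pick one anchor cell index per non-empty grid square (stratified sampling)."""
    bins = {}
    for idx, (x, y) in enumerate(coords):
        key = (int(x // grid_size), int(y // grid_size))
        bins.setdefault(key, []).append(idx)
    return [rng.choice(members) for _, members in sorted(bins.items())]

def assign_initial_regions(coords, anchor_ids):
    """Assign every cell to the region of its nearest anchor cell."""
    regions = []
    for x, y in coords:
        nearest = min(
            range(len(anchor_ids)),
            key=lambda r: math.dist((x, y), coords[anchor_ids[r]]),
        )
        regions.append(nearest)
    return regions

rng = random.Random(0)
# Hypothetical cell centroids (in microns) for one image.
coords = [(rng.uniform(0, 200), rng.uniform(0, 200)) for _ in range(500)]
anchor_ids = select_anchors(coords, grid_size=50, rng=rng)
regions = assign_initial_regions(coords, anchor_ids)
print(len(anchor_ids), "anchors;", len(set(regions)), "initial regions")
```

The brute-force nearest-anchor search is quadratic in the worst case; for whole-slide images with millions of cells a spatial index would be needed, which is the role the KNN graph plays in the protocol.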

Protocol for Benchmarking Predictive Performance in Tissue-Agnostic Indications

This protocol outlines the use of real-world evidence (RWE) to assess whether treatment effects are truly consistent across tissue types, as detailed in the analysis of tissue-agnostic therapies [17].

- Dataset Curation: Compile a large, pan-cancer database of molecularly profiled tumor samples with linked clinical outcome data. The analyzed dataset included 295,316 samples across 57 tumor types, profiled for alterations like TMB-High, MSI-High/MMRd, and BRAF V600E mutations [17].

- Outcome Measures: Define and extract key clinical endpoints. The primary outcomes were:

- Time on Treatment (TOT): The median duration a patient remains on a specific therapy (e.g., pembrolizumab).

- Overall Survival (OS): The median survival time from the start of treatment [17].

- Statistical Comparison: Calculate the median TOT and OS for the entire treated cohort (the global median). Then, compare the median TOT and OS for each specific tumor type against this global median to identify statistically significant (P < 0.05) deviations. This reveals whether certain cancers derive more or less benefit from the same tissue-agnostic treatment [17].
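
The comparison of tumor-specific medians against the global median can be sketched as follows. The source does not state which statistical test was used, so this example assumes a simple permutation test and ignores censoring, which a real survival analysis would have to handle. The tumor types, time-on-treatment values, and function name are hypothetical.

```python
import random
from statistics import median

def median_deviation_pvalue(group, everyone, n_perm=2000, seed=0):
    """Two-sided permutation p-value for |median(group) - median(everyone)|.

    The null distribution comes from random subsets of the full cohort with
    the same size as the group. Censoring is ignored in this sketch.
    """
    rng = random.Random(seed)
    global_median = median(everyone)
    observed = abs(median(group) - global_median)
    hits = 0
    for _ in range(n_perm):
        sample = rng.sample(everyone, len(group))
        if abs(median(sample) - global_median) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

# Hypothetical time-on-treatment values (months) keyed by tumor type.
tot_by_tumor = {
    "colorectal": [2.1, 3.0, 2.5, 1.8, 2.9, 3.3, 2.2, 2.7],
    "endometrial": [9.5, 11.2, 8.7, 12.4, 10.1, 9.9, 13.0, 10.8],
    "gastric": [5.0, 6.1, 4.4, 7.2, 5.5, 6.6, 4.9, 5.8],
}
all_tot = [v for values in tot_by_tumor.values() for v in values]
global_median = median(all_tot)

results = {}
for tumor, values in tot_by_tumor.items():
    p = median_deviation_pvalue(values, all_tot)
    results[tumor] = (median(values), p)
    flag = "deviates" if p < 0.05 else "consistent"
    print(f"{tumor}: median={median(values):.1f} vs global={global_median:.1f}, p={p:.3f} ({flag})")
```

With dozens of tumor types tested against the same global median, a multiple-testing correction would also be advisable before interpreting individual P values.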

Visualizing the Research Workflow

The following diagram illustrates the logical workflow and key relationships in assessing how disease progression impacts tissue architecture and how this, in turn, influences model performance and therapeutic generalizability.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful spatial analysis and disease modeling rely on a suite of specialized reagents, platforms, and computational tools. The following table catalogs key solutions mentioned in the benchmarked research.

Table 2: Key Research Reagent Solutions for Spatial Analysis and Modeling

| Item Name / Category | Function / Description | Example Use-Case / Platform |

|---|---|---|

| Multiplexed Tissue Imaging | Enables in-situ profiling of RNA/protein expression at single-cell resolution within intact tissue architecture. | CODEX, Multiplexed Immunofluorescence (mIF), Imaging Mass Cytometry (IMC) [13]. |

| Spatial Transcriptomics | Provides whole-transcriptome or targeted RNA expression data with spatial context. | NanoString CosMx Spatial Molecular Imager [13]. |

| Cell Phenotyping Algorithm | Software to classify individual cells into specific types (e.g., T-cells, macrophages) based on marker expression. | Required pre-processing input for SpatialTopic analysis [13]. |

| R Package: SpaTopic | Efficient R implementation of the SpatialTopic algorithm for scalable analysis of large images. | Used for spatial topic modeling on datasets with millions of cells [13]. |

| Graph Neural Networks (GNNs) | A class of deep learning models that operate on graph-structured data, ideal for modeling cell-cell interactions. | Core architecture of the SNOWFLAKE pipeline [14]. |

| Neuropsychological Test Battery | A compact set of clinical tests to assess cognitive function for disease progression modeling. | CDRSB, ADAS13, and MMSE were sufficient for reliable training of Leaspy and RPDPM models [15]. |

The integration of advanced spatial analytics and rigorous model benchmarking reveals a nuanced reality for tissue-agnostic research. While computational models demonstrate an increasing ability to identify conserved spatial patterns of disease progression, their predictive power and the efficacy of associated therapies are not universally generalizable. Instead, they are often context-dependent, influenced by the tissue of origin and the specific ways in which disease remodels the local microenvironment. Future research must therefore move beyond merely validating model accuracy and toward a deeper understanding of the biological and architectural contexts that limit or enable successful generalization across the diverse landscape of human tissues.

Tissue Microarrays (TMAs) represent a transformative technology in molecular pathology, enabling the simultaneous analysis of hundreds of tissue specimens on a single slide. This high-throughput approach is indispensable for validating findings across diverse tissue types. This case study examines how TMAs facilitate robust, large-scale tissue analysis, their methodological advantages, and their critical role in assessing the generalizability of research across different tissues and disease states.

A Tissue Microarray (TMA) is a platform constructed by extracting small cylindrical tissue cores from numerous donor paraffin blocks and embedding them in a single recipient paraffin block in a precise grid pattern [18] [19]. This design allows for the parallel analysis of up to hundreds of tissue samples under identical experimental conditions, dramatically accelerating research workflows [18].

- High-Throughput Efficiency: TMAs enable the analysis of hundreds of tissues on one slide, significantly reducing reagent consumption, processing time, and overall costs compared to traditional slide-by-slide analysis [19] [20].

- Experimental Uniformity: A key strength of TMA technology is its ability to subject all arrayed samples to the same staining, incubation, and analysis conditions on a single slide, which minimizes technical variability and enhances the reproducibility and reliability of results [18] [19].

- Broad Applicability: TMAs support various analytical techniques, including immunohistochemistry (IHC), fluorescence in situ hybridization (FISH), and RNA in situ hybridization (RNA-ISH), making them versatile tools for protein, DNA, and RNA investigation [18] [19].

TMA Workflow and Experimental Protocols

The process of creating and utilizing TMAs involves a series of standardized, high-precision steps.

TMA Construction and Analysis Workflow

The following diagram illustrates the end-to-end process of TMA-based research:

Detailed Experimental Protocol: DESI-MS Analysis of TMAs

A cutting-edge application involves using desorption electrospray ionization mass spectrometry (DESI-MS) for rapid, label-free molecular profiling [21]. The protocol below demonstrates a high-throughput approach:

- TMA Generation: A high-density TMA is created using an automated fluid handling workstation (e.g., Beckman Biomek i7) equipped with a 384-pin tool. Minute amounts of tissue (hundreds of nanograms) are transferred from a microtiter plate onto a specially coated DESI slide, creating sample spots of approximately 800 µm diameter [21].

- Array Density: This method can generate ultra-high-density arrays containing up to 6,144 sample spots per slide, with a center-to-center distance of about 1.1 mm [21].

- Mass Spectrometry Analysis: The spotted TMA slide is automatically transferred to a mass spectrometer (e.g., a Synapt G2-Si quadrupole time-of-flight instrument) equipped with a 2D DESI stage. The analysis is performed in a spot-to-spot manner [21].

- Data Acquisition: In full-scan mode, the effective analysis time can be as short as 500 milliseconds per sample. Tandem MS (MS/MS) analysis for targeted compound identification takes approximately 6 seconds per spot [21].

- Molecular Profiling: This label-free method allows for both untargeted analysis (e.g., tissue classification based on lipid profiles) and targeted analysis (e.g., identification of specific mutations like isocitrate dehydrogenase in glioma) [21].
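
To put the quoted acquisition times in perspective, the following back-of-the-envelope calculation estimates the time needed for one full-density slide. It uses only the figures given above and assumes pure acquisition time, with no allowance for stage movement, slide transfer, or data handling.

```python
SPOTS_PER_SLIDE = 6144          # maximum array density quoted above
FULL_SCAN_SECONDS = 0.5         # effective full-scan time per sample
MSMS_SECONDS = 6.0              # approximate tandem MS time per spot

def acquisition_hours(n_spots, seconds_per_spot):
    """Pure acquisition time in hours, ignoring stage movement and overhead."""
    return n_spots * seconds_per_spot / 3600

full_scan_h = acquisition_hours(SPOTS_PER_SLIDE, FULL_SCAN_SECONDS)
msms_h = acquisition_hours(SPOTS_PER_SLIDE, MSMS_SECONDS)
print(f"Full scan: {full_scan_h * 60:.1f} min per slide")
print(f"MS/MS:     {msms_h:.2f} h per slide")
```

Under these assumptions a full-scan pass over 6,144 spots takes roughly 51 minutes, whereas targeted MS/MS on every spot takes roughly 10 hours, which suggests reserving MS/MS for a subset of spots flagged by the untargeted pass.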

Quantitative Data and Performance Comparison

Economic and Operational Advantages

The high-throughput nature of TMAs translates into significant economic and operational benefits, as shown in the following comparison with traditional methods.

Table 1: Cost and Efficiency Comparison: TMA vs. Traditional Tissue Analysis

| Feature | Traditional Tissue Analysis | Tissue Microarray (TMA) |

|---|---|---|

| Samples Processed per Slide | One tissue per slide [18] | Hundreds of tissues per slide [18] |

| Reagent Consumption | High [18] | Significantly reduced [18] |

| Time Efficiency | Labor-intensive and time-consuming [18] | High-throughput, faster results [18] |

| Experimental Variability | Higher due to sample-to-sample processing differences [18] | Lower, as all samples are processed under identical conditions [18] |

| Cost for 10,000 Analyses | Approximately $200,000 (estimated @ $20/slide) [19] | Approximately $600 (estimated @ $20/slide for 30 slides) [19] |
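
The cost row can be reproduced with simple arithmetic. The sketch below uses only the figures from the table ($20 per slide, 10,000 analyses, 30 TMA slides) and derives the array density those figures imply; it does not account for the cost of constructing the TMA blocks.

```python
import math

COST_PER_SLIDE = 20      # USD, the estimate used in the table
N_ANALYSES = 10_000
TMA_SLIDES = 30          # slide count quoted in the table

traditional_cost = N_ANALYSES * COST_PER_SLIDE   # one tissue per slide
tma_cost = TMA_SLIDES * COST_PER_SLIDE
cores_per_slide = math.ceil(N_ANALYSES / TMA_SLIDES)  # implied array density

print(traditional_cost, tma_cost, cores_per_slide)
```

The implied density of about 334 cores per slide is consistent with the "hundreds of tissues per slide" stated earlier.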

Addressing Tissue Heterogeneity: A Sampling Challenge

A critical consideration in TMA analysis is whether a small tissue core adequately represents a heterogeneous tumor. Research indicates that sampling strategy is crucial, particularly for highly variable cancers like epithelial ovarian cancer (EOC).

Table 2: Impact of Sampling Strategy on Biomarker Interpretation

| Sampling Method | Cases Showing Loss of MMR Expression | Key Finding |

|---|---|---|

| Cores from Tumor Center | 17 out of 59 cases (29%) [22] | Initial analysis suggested a high rate of MMR deficiency. |

| Cores from Tumor Periphery | 6 out of 17 original cases (35% of initial positives) [22] | Follow-up analysis from peripheral samples showed loss of expression in only 6 cases, highlighting significant sampling variability. |

These data underscore that optimal tissue fixation often occurs at the tumor periphery, so sampling from this region can yield more reliable IHC results for heterogeneous tumors [22]. For robust conclusions, it is considered best practice to sample multiple cores (e.g., two to three) from different regions of a donor block to account for tumor heterogeneity [19].

The Scientist's Toolkit: Essential Reagents and Solutions

Successful TMA experimentation relies on a suite of specialized instruments and reagents.

Table 3: Key Research Reagent Solutions for TMA Workflows

| Item | Function/Description | Application in TMA Workflow |

|---|---|---|

| TMA Arrayer | A precision instrument (e.g., Chemicon ATA-100, 3DHISTECH models) for extracting and placing tissue cores [22] [23]. | Core extraction from donor blocks and precise assembly of the recipient TMA block [18]. |

| DESI Mass Spectrometer | An ambient ionization MS system (e.g., Synapt G2-Si) for direct, label-free analysis [21]. | High-throughput molecular profiling of TMA spots via lipidomic or metabolic signatures [21]. |

| Primary Antibodies | Antibodies specific to target proteins (e.g., against MLH1, MSH2, HER2) for IHC [22]. | Detection and localization of protein expression across hundreds of tissue samples simultaneously [18]. |

| FISH/RNA-ISH Probes | Fluorescently or enzymatically labeled DNA/RNA probes [18] [19]. | Detection of gene amplifications, translocations, or mRNA expression levels on TMA sections [19]. |

| PTFE-Coated Slides | Specially coated glass slides for high-density spotting in DESI-MS applications [21]. | Serve as the substrate for creating spotted TMAs for ambient ionization MS analysis [21]. |

Analytical Workflow for Cross-Tissue Generalization

The power of TMAs in assessing generalizability lies in a structured workflow that moves from data generation to biological insight, as shown in the diagram of the analytical process for cross-tissue generalization.

This process integrates data from various TMA types, each serving a distinct purpose in establishing generalizability:

- Prevalence TMAs: Contain samples from numerous tumor types to determine the frequency of a biomarker across a wide spectrum of diseases [20].

- Progression TMAs: Include samples from different stages of a specific tumor type to uncover associations between molecular alterations and disease advancement [20].

- Prognosis TMAs: Comprise samples with extensive clinical follow-up data to evaluate the relationship between molecular features and patient outcomes [19] [20].

- Normal Tissue TMAs: Feature samples from vital organs to assess potential "on-target, off-organ" side effects of novel therapies, a crucial step in drug discovery [20].

Tissue Microarrays have fundamentally changed the scale and efficiency of histopathology-based research. By enabling the parallel processing of vast tissue cohorts, they provide a powerful and statistically robust platform for biomarker validation, drug target discovery, and clinical translation.

The case for TMAs is strengthened by their demonstrable cost-effectiveness and methodological rigor, which standardizes conditions and reduces assay variability [19]. While challenges such as tissue heterogeneity require thoughtful sampling strategies [22], the integration of advanced analytical techniques like DESI-MS [21] and sophisticated computational tools [24] continues to expand their utility.

In the context of assessing generalizability, TMAs are indispensable. They provide the necessary high-throughput framework to rigorously test whether molecular discoveries hold true across diverse tissue types, disease states, and patient populations. This capability is paramount for advancing precision medicine, ensuring that new diagnostics and therapeutics are developed based on findings that are not only statistically significant but also broadly applicable and clinically relevant.

Building Robust Tools: Methodologies for Pan-Tissue Analysis and Model Application

Leveraging Multi-Omics Integration (e.g., MESA) for a Holistic Tissue View

Understanding complex tissues requires more than just a catalog of their cellular components; it demands insight into how these cells are spatially organized and interact. The spatial organization of cells within tissues fundamentally influences biological processes, from development to disease progression [25]. Multi-omics integration has emerged as a powerful paradigm for achieving a comprehensive view by combining data from various molecular layers, such as transcriptomics, proteomics, and epigenomics. This guide objectively compares one such method, MESA (Multiomics and Ecological Spatial Analysis), against other statistical and deep learning-based integration approaches. We focus on their performance and, crucially, their generalizability—the ability to yield consistent, biologically relevant insights across diverse tissue types and disease states, a core requirement for robust biomedical research.

Multi-Omics Integration Methodologies

Multi-omics integration methods can be broadly categorized by their underlying computational strategies. The key differentiator for generalizability is whether a method relies solely on inherent data patterns or can leverage external biological knowledge.

The Ecological Approach: MESA

MESA introduces a unique, ecology-inspired framework for analyzing spatial omics data. It treats cell types in a tissue analogously to species in an ecosystem [25] [26]. Its workflow involves:

- In Silico Multi-Omics Fusion: MESA first enriches spatial proteomics data (e.g., from CODEX) with corresponding single-cell RNA sequencing (scRNA-seq) data from the same tissue type using a data integration algorithm like MaxFuse. This step creates a comprehensive multi-omics profile for each cell without requiring additional experiments [25].

- Cellular Neighborhood Characterization: Instead of using pre-defined cell types, MESA characterizes the local environment of each cell by aggregating multi-omics information (e.g., average protein and mRNA levels) from its spatially determined neighbors. These neighborhoods are then clustered to identify conserved tissue niches [25].

- Spatial Diversity Quantification: Drawing from ecology, MESA introduces systematic metrics to quantify cellular diversity [25]:

- Multiscale Diversity Index (MDI): Measures how cellular diversity changes across different spatial scales.

- Global and Local Diversity Indices (GDI/LDI): Identify spatial clusters of high and low cellular diversity ("hot spots" and "cold spots").

- Diversity Proximity Index (DPI): Evaluates the spatial relationships between these spots, suggesting the potential for dynamic cellular interactions.

Statistical and Deep Learning Approaches

Other prominent methods employ distinct strategies for integration and feature selection, which impact their generalizability.

- Statistical-Based (MOFA+): MOFA+ (Multi-Omics Factor Analysis) is an unsupervised factor analysis method. It reduces the dimensionality of multi-omics datasets into latent factors that capture shared sources of variation across the different omics modalities. Features are selected based on their absolute loadings from the latent factor explaining the highest shared variance [27].

- Deep Learning-Based (MoGCN): MoGCN (Multi-omics Graph Convolutional Network) uses graph convolutional networks for cancer subtype analysis. It often employs autoencoders for dimensionality reduction and noise removal. Feature importance is calculated by multiplying the absolute encoder weights by the standard deviation of each input feature [27].
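
The two feature-ranking rules described above can be contrasted in a short sketch. It is not the MOFA+ or MoGCN code: the loadings, encoder weights, and feature names are hypothetical, and summing the absolute encoder weights over hidden units is an assumption, since the description above does not specify how weights are aggregated per feature.

```python
from statistics import pstdev

def rank_by_abs_loading(loadings, names):
    """Factor-analysis style ranking: sort features by |loading| on one latent factor."""
    scored = sorted(zip(names, loadings), key=lambda pair: abs(pair[1]), reverse=True)
    return [name for name, _ in scored]

def encoder_importance(encoder_weights, samples):
    """Autoencoder style score: summed |weight| per input feature times that feature's std.

    encoder_weights[j][k] is the weight from input feature j to hidden unit k;
    samples is a list of per-sample feature vectors.
    """
    scores = []
    for j, weights in enumerate(encoder_weights):
        column = [row[j] for row in samples]
        scores.append(sum(abs(w) for w in weights) * pstdev(column))
    return scores

names = ["GENE_A", "GENE_B", "GENE_C"]          # hypothetical features
factor1_loadings = [0.10, -0.85, 0.40]           # hypothetical loadings on one factor
ranking = rank_by_abs_loading(factor1_loadings, names)
print(ranking)

encoder_weights = [[0.5, -0.5], [0.1, 0.1], [2.0, 1.0]]   # 3 features x 2 hidden units
samples = [[1.0, 5.0, 0.0], [3.0, 5.0, 0.0], [5.0, 5.0, 0.1], [7.0, 5.0, 0.1]]
scores = encoder_importance(encoder_weights, samples)
print([round(s, 3) for s in scores])
```

In the toy data the third feature has the largest encoder weights but very little variance, so it scores below the first feature; scaling by the standard deviation is what keeps large weights on near-constant inputs from dominating the ranking.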

Experimental Workflows at a Glance

The diagrams below illustrate the core workflows for benchmarking multi-omics methods and the specific analytical pipeline of MESA.

Comparative Performance Across Tissue Types

Generalizability is tested by applying methods to diverse datasets. The following tables summarize quantitative performance data from independent benchmarks and original studies.

Table 1: Benchmarking Performance Across Integration Tasks

Data from a large-scale Registered Report in Nature Methods benchmarking 40 integration methods across 64 real and 22 simulated datasets [28].

| Integration Category | Top-Performing Methods | Key Evaluation Tasks | Performance Summary |

|---|---|---|---|

| Vertical (Paired multi-omics from same cells) | Seurat WNN, Multigrate, Matilda | Dimension Reduction, Clustering | Generally strong performance in preserving biological variation of cell types across 13 RNA+ADT and 12 RNA+ATAC datasets. Performance is dataset and modality-dependent. |

| Feature Selection (From multi-omics data) | MOFA+, scMoMaT, Matilda | Feature Selection, Clustering, Classification | MOFA+ produced more reproducible features. scMoMaT and Matilda features led to better cell type clustering and classification. |

| Mosaic (Non-overlapping features) | StabMap | Alignment under feature mismatch | Robust integration of datasets measuring different features by leveraging shared cell neighborhoods [29]. |

Table 2: Method Performance in Disease Subtyping & Spatial Analysis

Data from studies focused on specific biological questions, demonstrating translational relevance.

| Method | Study Context | Performance & Generalizability Findings |

|---|---|---|

| MESA (Spatial Ecology) | Human tonsil, mouse spleen, human intestine, human liver (CosMx SMI) [25]. | Identified novel spatial structures and key cell populations linked to disease states (e.g., subniches within germinal centers) not discerned by prior techniques. Quantified spatial diversity shifts. |

| MOFA+ (Statistical) | Breast cancer subtype classification (960 samples) [27]. | Achieved F1 score of 0.75 in nonlinear classification. Identified 121 relevant pathways. Outperformed a deep learning model (MoGCN) in feature selection for subtyping. |

| Biologically-Informed DL (Deep Learning) | Pan-cancer classification (30 cancer types, 7632 samples) [30]. | Classified tissue of origin with 96.67% accuracy on external datasets. Showed superior separation of cancer types in latent space compared to single-omics models. |

| MIIT (Spatial Toolset) | Prostate tissue (Spatial Transcriptomics & Mass Spectrometry Imaging) [31]. | Enabled integration of spatially resolved multi-omics from serial sections via a novel non-rigid registration algorithm (GreedyFHist), validated on 244 images. |

Experimental Protocols for Assessing Generalizability

To ensure findings are reproducible and comparable, below are detailed methodologies for key experiments cited in this guide.

Protocol for Benchmarking Multi-Omics Integration Methods

This protocol is adapted from the Registered Report in Nature Methods [28].

- 1. Data Curation: Assemble a diverse panel of single-cell multimodal omics datasets. This should include datasets with different modality combinations (e.g., RNA+ADT, RNA+ATAC) and from various tissues and conditions.

- 2. Method Categorization: Classify methods into integration categories: Vertical, Diagonal, Mosaic, and Cross integration based on their input data structure and modality combination.

- 3. Task-Based Evaluation: Evaluate each method on multiple common computational tasks:

- Dimension Reduction & Clustering: Use metrics like Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), and Average Silhouette Width (ASW) to assess how well the integrated data separates known cell types.

- Feature Selection: Evaluate selected features by their ability to cluster cells (using clustering metrics) and classify cell types (using F1 score).

- Batch Correction: Assess the ability to remove technical variation while preserving biological variation.

- 4. Cross-Validation: Apply methods to both real and simulated datasets to distinguish robust performance from overfitting. Calculate overall rank scores for each method across all datasets and tasks.
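
Of the clustering metrics named in step 3, the Adjusted Rand Index is the simplest to compute directly from two label vectors. The sketch below is a plain-Python illustration that assumes at least two cells; the cell labels are hypothetical, and NMI and ASW are not shown. In practice an established library implementation would normally be used.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same cells."""
    n = len(labels_true)
    pair_counts = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in pair_counts.values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both labelings
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical annotations for eight cells.
cell_types = ["T", "T", "T", "B", "B", "B", "Mac", "Mac"]
perfect = [0, 0, 0, 1, 1, 1, 2, 2]
merged = [0, 0, 0, 0, 0, 0, 1, 1]   # T and B cells collapsed into one cluster

ari_perfect = adjusted_rand_index(cell_types, perfect)
ari_merged = adjusted_rand_index(cell_types, merged)
print(ari_perfect, round(ari_merged, 3))
```

Because the index is corrected for chance agreement, collapsing two cell types into one cluster is penalized even though every pair of cells that truly shares a type still ends up clustered together.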

Protocol for Evaluating Spatial Method Generalizability (MESA)

This protocol is based on the application of MESA across multiple tissues as described in Nature Genetics [25].

- 1. Multi-Omics Data Integration:

- Input: Collect spatial proteomics data (e.g., CODEX) and matched scRNA-seq data from the same tissue type and disease condition.

- Integration: Use MaxFuse to impute and enrich the spatial data with gene expression information, creating a fused multi-omics spatial dataset.

- 2. Neighborhood Identification and Clustering:

- For each cell, calculate the average multi-omics profile (protein and mRNA levels) of its local neighborhood (e.g., 20 nearest neighbors).

- Apply k-means clustering to these neighborhood profiles to identify conserved cellular neighborhoods across the tissue.

- 3. Spatial Diversity Analysis:

- MDI Calculation: Tessellate the tissue into patches of varying sizes. Calculate diversity (e.g., Shannon index) within each patch and regress against spatial scale. The MDI is the slope of this regression.

- Hot/Cold Spot Identification: Use Local Diversity Index (LDI) to compute a diversity heatmap. Apply spatial autocorrelation analysis (e.g., Getis-Ord Gi*) to identify statistically significant hot spots (high diversity) and cold spots (low diversity).

- 4. Validation: Demonstrate generalizability by applying the entire pipeline to distinct tissue types (e.g., tonsil, spleen, intestine, liver) and showing the discovery of consistent, biologically plausible spatial patterns in each.
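
The MDI calculation in step 3 can be sketched as follows. This is an illustration under stated assumptions rather than the MESA package itself: patches are squares on a regular grid, diversity is averaged over non-empty patches, and the regression is against the base-2 logarithm of patch size (the protocol above says only "spatial scale"). The simulated tissue and all function names are hypothetical.

```python
import math
from collections import Counter

def shannon_index(labels):
    """Shannon diversity H = -sum(p * ln p) over the cell types in one patch."""
    total = len(labels)
    return -sum((c / total) * math.log(c / total) for c in Counter(labels).values())

def mean_patch_diversity(cells, patch_size):
    """Tessellate into square patches and average the Shannon index over non-empty patches."""
    patches = {}
    for x, y, cell_type in cells:
        patches.setdefault((int(x // patch_size), int(y // patch_size)), []).append(cell_type)
    return sum(shannon_index(p) for p in patches.values()) / len(patches)

def slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)

# Hypothetical tissue: the left half holds only type "A", the right half only type "B".
cells = [(x + 0.5, y + 0.5, "A" if x < 8 else "B") for x in range(16) for y in range(16)]
patch_sizes = [4, 8, 16]
diversity = [mean_patch_diversity(cells, s) for s in patch_sizes]
mdi = slope([math.log2(s) for s in patch_sizes], diversity)
print([round(d, 3) for d in diversity], round(mdi, 3))
```

In this segregated toy tissue, small patches are pure and diversity appears only at the largest scale, so the slope is positive; a well-mixed tissue would show similar diversity at every scale and a slope near zero.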

Core Ecological Concepts in MESA

MESA's power comes from translating well-established ecological concepts to cellular distributions.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful multi-omics integration relies on both computational tools and high-quality biological data. The following table details key resources for implementing these analyses.

| Category | Item / Tool | Function & Application |

|---|---|---|

| Computational Tools | MESA (Python package) | Applies ecological metrics to quantify spatial cellular diversity and identify niches from multi-omics data [25] [26]. |

| Computational Tools | MOFA+ (R package) | Unsupervised statistical tool for multi-omics integration via factor analysis; effective for feature selection and subtyping [27]. |

| Computational Tools | Seurat WNN (R package) | Weighted Nearest Neighbors method for vertical integration of paired multi-omics data; strong performer in benchmarking [28]. |

| Computational Tools | StabMap | Enables mosaic integration of datasets with non-overlapping features by leveraging shared cell neighborhoods [29]. |

| Spatial Profiling Technologies | CODEX | Multiplexed protein imaging technology that provides high-dimensional spatial data on tissue sections [25]. |

| Spatial Profiling Technologies | CosMx SMI | In situ imaging platform for spatially resolved RNA and protein measurement at single-cell resolution [25]. |

| Spatial Profiling Technologies | Spatial Transcriptomics | Technologies capturing gene expression data while retaining spatial location information in a tissue [31]. |

| Reference Data Resources | scRNA-seq Data | Single-cell RNA sequencing data from matched tissues used to computationally enrich spatial data in frameworks like MESA [25]. |

| Reference Data Resources | TCGA, ICGC, CPTAC | Large-scale public archives providing multi-omics data from cancer and normal samples for method development and validation [32] [30] [27]. |

Unsupervised Annotation Tools (e.g., SCGP) for Universal Tissue Structure Discovery

Tissues are organized into anatomical and functional units at different scales, from cellular neighborhoods to entire tissue compartments. The advent of high-dimensional molecular profiling technologies has enabled the characterization of these structure-function relationships in unprecedented molecular detail. However, consistently identifying key functional units across batches, experiments, tissues, and disease contexts still demands extensive manual annotation, creating a critical bottleneck in spatial biology research. In particular, generalizing annotations from a reference dataset to new or unseen data remains a major methodological hurdle [33] [24].

This comparison guide assesses unsupervised computational tools designed to address this generalizability challenge. We focus specifically on methods that enable tissue structure discovery without extensive manual supervision, evaluating their performance across diverse tissue types, experimental conditions, and technological platforms. The ability to generalize annotations across different contexts is particularly crucial for large-scale atlas projects and comparative studies of disease progression.

Performance Comparison of Unsupervised Annotation Tools

Quantitative Performance Metrics Across Tissue Types

Comprehensive benchmarking across multiple biological contexts reveals significant differences in tool performance. The following table summarizes quantitative performance metrics for leading unsupervised annotation tools evaluated across diverse tissue types and spatial omics technologies.

Table 1: Performance Comparison of Unsupervised Annotation Tools Across Tissue Types

| Tool | Algorithm Type | Key Metric | Kidney (DKD) | Tonsil/BE | Breast Cancer | Liver |
|---|---|---|---|---|---|---|
| SCGP [33] | Graph partitioning | ARI | 0.60 | - | - | - |
| SCGP [33] | Graph partitioning | Glomeruli F1 Score | ~0.80 | - | - | - |
| UTAG [33] | Linear weighting | Glomeruli F1 Score | ~0.80 | - | - | - |
| SpaGCN [33] | Graph neural network | Tubule F1 Score | High | - | - | - |
| scNiche [34] | Multi-view GAE | ARI | - | - | - | Best |
| STELLAR [35] | Geometric deep learning | Accuracy | - | 93% | - | - |

Evaluation metrics include Adjusted Rand Index (ARI) measuring similarity between algorithmic and expert annotations, and F1 scores for specific tissue structures. SCGP demonstrates particularly strong performance in kidney tissues, achieving a median ARI of 0.60, significantly outperforming competing methods (Wilcoxon signed-rank test) [33]. SCGP and UTAG show exceptional capability in identifying glomeruli structures (F1 ≈ 0.8), while SpaGCN excels at recognizing tubule structures in kidney tissue [33].
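The two metrics can be sketched in a few lines of dependency-free Python. This is a minimal illustration, not the benchmarking code used in [33]: the function names are hypothetical, and the F1 helper assumes that unsupervised cluster IDs have already been mapped to the expert's structure names.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """ARI between two labelings of the same items (1.0 = identical partitions)."""
    if len(labels_true) != len(labels_pred):
        raise ValueError("label lists must have the same length")
    n = len(labels_true)
    # Contingency counts: how many items fall in each (true, predicted) pair.
    pair_counts = Counter(zip(labels_true, labels_pred))
    true_counts = Counter(labels_true)
    pred_counts = Counter(labels_pred)

    sum_pairs = sum(comb(c, 2) for c in pair_counts.values())
    sum_true = sum(comb(c, 2) for c in true_counts.values())
    sum_pred = sum(comb(c, 2) for c in pred_counts.values())
    total = comb(n, 2)

    expected = sum_true * sum_pred / total if total else 0.0
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:  # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (sum_pairs - expected) / (max_index - expected)


def f1_for_class(labels_true, labels_pred, target):
    """F1 score for one structure, treating `target` as the positive class."""
    pairs = list(zip(labels_true, labels_pred))
    tp = sum(t == target and p == target for t, p in pairs)
    fp = sum(t != target and p == target for t, p in pairs)
    fn = sum(t == target and p != target for t, p in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```

ARI is invariant to how clusters are named, so it can be computed directly on raw cluster IDs, whereas a per-structure F1 score requires the mapping step first. Reporting both helps when small structures such as glomeruli would otherwise be swamped by abundant ones in a single global score.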

Cross-Technology and Generalization Performance

The ability to maintain performance across different spatial omics technologies and generalize from reference to query datasets is crucial for practical utility. The following table compares tool performance across technological platforms and generalization capabilities.

Table 2: Cross-Technology Performance and Generalization Capabilities

| Tool | CODEX Performance | Visium Performance | MERFISH Performance | Generalization Approach | Novel Type Discovery |
|---|---|---|---|---|---|
| SCGP [33] | Excellent | Excellent | Excellent | SCGP-Extension pipeline | Limited |
| SCGP-Extension [33] | Excellent | Excellent | Excellent | Reference-query alignment | Limited |
| STELLAR [35] | Excellent | - | Excellent | Geometric deep learning | Supported |
| scNiche [34] | - | Good | - | Multi-view integration | Limited |

SCGP shows outstanding performance across 8 distinct spatial omics datasets spanning different technologies including CODEX, Visium, IMC, and MERFISH, totaling more than 2.5 million cells [33]. SCGP-Extension effectively generalizes existing tissue structure labels to unseen samples, performing data integration and tissue structure discovery while addressing common data integration challenges [33] [24]. STELLAR demonstrates robust cross-tissue application, successfully transferring annotations from human tonsil to Barrett's esophagus tissue with 93% accuracy while discovering novel cell types specific to the target tissue [35].

Experimental Protocols and Methodologies

Core Algorithmic Approaches

SCGP (Spatial Cellular Graph Partitioning) Methodology [33]: SCGP performs community detection on specialized graph representations of tissue samples. Nodes in the graphs represent small spatial units characterized by spatial coordinates and gene/protein expression. The algorithm constructs two edge types: (1) Spatial edges based on Delaunay triangulation of node coordinates to capture adjacency relationships, and (2) Feature edges connecting nodes with similar expression profiles to interrelate similar tissue structures even when spatially separated. The Leiden graph community detection algorithm is then applied to identify tissue structures, with both edge types ensuring spatial continuity and consistent interpretation.
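The dual-edge graph construction can be illustrated with a small, dependency-free sketch. It is not the SCGP implementation: the function names and parameters are hypothetical, and spatial edges are built with k-nearest neighbours on coordinates as a stand-in for the Delaunay triangulation described above. The community detection step is omitted; the resulting edge list could be handed to any Leiden implementation.

```python
from math import dist


def knn_edges(vectors, k):
    """Undirected k-nearest-neighbour edges (i, j) with i < j, Euclidean distance."""
    edges = set()
    for i, v in enumerate(vectors):
        others = sorted((j for j in range(len(vectors)) if j != i),
                        key=lambda j: dist(v, vectors[j]))
        for j in others[:k]:
            edges.add((min(i, j), max(i, j)))
    return edges


def build_scgp_style_graph(coords, expression, k_spatial=3, k_feature=2):
    """Return {edge: set of edge types} combining spatial and feature edges.

    ASSUMPTION: spatial edges use kNN on coordinates as a simple stand-in
    for the Delaunay triangulation described in the text.
    """
    graph = {}
    # Spatial edges link nodes that are physically adjacent in the tissue.
    for edge in knn_edges(coords, k_spatial):
        graph.setdefault(edge, set()).add("spatial")
    # Feature edges link nodes with similar expression, even if far apart.
    for edge in knn_edges(expression, k_feature):
        graph.setdefault(edge, set()).add("feature")
    return graph
```

Keeping the edge type on each edge makes the role of the two sets visible: spatial edges encourage contiguous partitions, while feature edges let spatially separated instances of the same structure (for example, glomeruli in different parts of a section) end up in one community.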

scNiche Multi-View Framework [34]: scNiche employs a multi-view feature fusion approach, integrating three default feature views: (1) molecular profiles of the cell itself, (2) molecular profiles of its neighborhoods, and (3) cellular compositions of its neighborhoods. The method uses a neural network architecture of multiple graph autoencoder (M-GAE) coupled with a graph fusion network (GFN) to integrate multi-view features into a joint representation. A multi-view mutual information maximization (MMIM) module guides the joint representation to be more clustering-friendly by boosting similarity between representations of neighboring samples.
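To make the three feature views concrete, the sketch below assembles them for each cell from a precomputed neighbour list. It is an illustration under stated assumptions rather than scNiche's own preprocessing: the function name is hypothetical, neighbourhood expression is summarized by a simple mean, and the graph autoencoder and fusion network that consume these views are not shown.

```python
from collections import Counter


def build_views(expression, cell_types, neighbors):
    """Build three per-cell feature views from a precomputed neighbour list.

    expression: list of per-cell expression vectors
    cell_types: list of per-cell type labels
    neighbors:  list where neighbors[i] holds the indices of cell i's neighbours
    """
    type_order = sorted(set(cell_types))
    n_genes = len(expression[0])
    views = []
    for i, own_profile in enumerate(expression):
        nbrs = neighbors[i]
        if nbrs:
            # View 2: mean expression over the neighbourhood (assumed aggregation).
            nbr_profile = [sum(expression[j][g] for j in nbrs) / len(nbrs)
                           for g in range(n_genes)]
            # View 3: fraction of each cell type in the neighbourhood.
            counts = Counter(cell_types[j] for j in nbrs)
            composition = [counts[t] / len(nbrs) for t in type_order]
        else:
            nbr_profile = [0.0] * n_genes
            composition = [0.0] * len(type_order)
        views.append({
            "cell": list(own_profile),  # View 1: the cell's own profile
            "neighborhood_expression": nbr_profile,
            "neighborhood_composition": composition,
        })
    return views, type_order
```

Each view captures a different aspect of the niche, so two cells with identical expression can still be separated when their surroundings differ in expression or in cell-type makeup.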

STELLAR Geometric Deep Learning [35]: STELLAR utilizes graph convolutional neural networks to learn latent low-dimensional cell representations that jointly capture spatial and molecular similarities. The framework consists of two components: (1) separation of reference cell types by controlling intra-class variance using adaptive margin mechanism, and (2) discovery of novel classes by generating auxiliary labels for unannotated data based on nearest neighbors in the embedding space.

Benchmarking Experimental Designs

Performance evaluations typically employ multiple datasets with expert annotations as ground truth. The DKD Kidney dataset comprises 17 tissue sections from 12 individuals with diabetes and various stages of diabetic kidney disease, imaged using CODEX and annotated for four major kidney compartments [33]. Benchmarking involves quantitative metrics including Adjusted Rand Index (ARI) for overall concordance with manual annotations, and F1 scores for specific tissue structures to account for class imbalance [33]. Cross-technology validation assesses performance consistency across platforms (CODEX, Visium, MERFISH, IMC), while cross-tissue experiments evaluate generalization capability [33] [35].

Visualization of Method Workflows and Relationships

Algorithmic Architectures and Data Flows

[Figure: SCGP workflow. Spatial and feature edges are jointly analyzed.]

[Figure: scNiche multi-view architecture. Integrating multiple feature views.]

Performance Relationship Mapping

[Figure: Performance strengths. Different tools excel in specific contexts.]

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Research Reagents and Computational Solutions for Spatial Omics

| Category | Specific Resource | Function/Application | Example Use |
|---|---|---|---|
| Spatial Technologies | CODEX [33] [35] | Multiplexed protein imaging | High-dimensional spatial proteomics |
| Spatial Technologies | 10X Visium [33] [36] | Spatial transcriptomics | Gene expression with spatial context |
| Spatial Technologies | MERFISH [33] | Single-molecule RNA imaging | High-resolution spatial transcriptomics |
| Spatial Technologies | IMC [33] | Imaging mass cytometry | Spatial proteomics at single-cell resolution |
| Computational Tools | Leiden Algorithm [33] | Graph community detection | Partitioning spatial cellular graphs |
| Computational Tools | Graph Neural Networks [34] [35] | Deep learning on graphs | Learning spatial-cell representations |
| Computational Tools | Harmony [37] | Batch correction | Integrating datasets from different sources |
| Computational Tools | scVI [37] | Probabilistic modeling | Single-cell variational inference |
| Reference Datasets | DKD Kidney [33] | Diabetic kidney disease benchmark | 17 tissue sections, 137,654 cells |
| Reference Datasets | HuBMAP Intestine [35] | Human intestine reference | 2.6 million cells, 54 protein markers |
| Reference Datasets | Triple-negative breast cancer [34] | Cancer microenvironment | Patient-specific niche identification |

The table summarizes critical experimental platforms, computational algorithms, and reference datasets that form the foundation of robust spatial omics analysis. CODEX and Visium represent widely adopted spatial profiling technologies, while algorithmic tools like the Leiden algorithm and graph neural networks provide the computational foundation for structure discovery [33] [34] [35]. Carefully curated reference datasets such as the DKD Kidney collection enable method benchmarking and validation [33].

The comparative analysis reveals that tool selection must be guided by specific research objectives and experimental contexts. SCGP demonstrates exceptional performance in identifying conserved tissue structures across multiple samples and technologies, with its extension pipeline providing robust generalization to unseen data [33]. STELLAR offers unique advantages for cross-tissue annotation where novel cell type discovery is anticipated, successfully identifying previously uncharacterized cell populations [35]. scNiche provides a flexible framework for microenvironment analysis, particularly when leveraging multiple feature views enhances discovery potential [34].

For atlas-building initiatives and large-scale spatial studies, SCGP and SCGP-Extension provide reliable, consistent performance across diverse samples. In exploratory settings with potentially novel biology, STELLAR's ability to identify unseen cell types offers significant value. scNiche's multi-view approach enables comprehensive microenvironment characterization, particularly valuable in complex disease contexts like cancer. As spatial omics continues to evolve, generalizable unsupervised annotation will remain crucial for translating high-dimensional spatial data into meaningful biological insights.

Foundation models (FMs), pre-trained on vast amounts of unlabeled data using self-supervised learning (SSL), promise to revolutionize computational pathology by serving as versatile starting points for developing various diagnostic AI tools [38]. Their potential to encode rich, transferable feature representations of histopathology images could accelerate the creation of models for cancer diagnosis, prognostication, and biomarker prediction. However, the central challenge lies in their generalizability—the ability to perform robustly across diverse tissue types, cancer subtypes, staining protocols, and medical institutions [39] [40]. A model that excels on data from one source may fail dramatically on another due to "domain shift," a phenomenon where differences in data distribution between training and real-world deployment scenarios lead to significant performance degradation [39]. This guide objectively compares the performance, training methodologies, and limitations of current histopathology foundation models, providing a framework for assessing their true generalizability for research and drug development.

Comparative Performance of Pathology Foundation Models

Evaluating FMs on tasks like cancer subtyping, biomarker prediction, and slide retrieval reveals a complex landscape where scale and architecture alone do not guarantee robustness.

Benchmarking Slide-Level Classification and Retrieval

Table 1: Performance Comparison of Selected Foundation Models

| Model | Pretraining Data Scale | Key Strengths | Reported Limitations / Performance |
|---|---|---|---|
| TITAN [38] | 335,645 WSIs; multimodal (images + reports/synthetic captions) | Superior slide-level representation; outperforms other FMs in few-shot/zero-shot tasks & rare cancer retrieval. | Evaluated on diverse tasks; generalizability to very rare conditions remains to be fully proven. |
| Virchow2 [40] | Not Specified | Achieved a Robustness Index (RI) > 1.2, meaning embeddings cluster more by biology than by site. | An exception; most other models showed significant site bias. |
| UNI, Phikon-v2, Others [40] | Large-scale | Competitive performance on data from training distribution. | Low Robustness Index (RI ≈ 1 or <1); embeddings cluster by hospital/scanner, not cancer type. |
| PathDino [40] | <10 million parameters | Highest rotation invariance (m-kNN: 0.85), indicating better geometric stability. | Smaller model; may lack the broad feature diversity of larger models. |
| Task-Specific (TS) Models [40] | Task-specific datasets | Can match or outperform FMs when sufficient labeled data is available; up to 35x more energy-efficient. | Lack the "off-the-shelf" versatility of FMs; require extensive labeling for each new task. |

The TITAN model demonstrates the potential of large-scale, multimodal pretraining, showing strong performance across classification and retrieval tasks, even in low-data scenarios [38]. However, a systematic evaluation of robustness reveals a critical weakness in most FMs: they often learn to recognize the source of the image (e.g., the hospital or scanner) rather than the underlying biology. A study evaluating ten leading FMs found that only Virchow2 learned embeddings where biological class similarity definitively outweighed site-specific bias [40]. This lack of robustness translates to poor performance when these models are applied to data from new medical centers.
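One way to quantify whether embeddings cluster by biology or by site is a neighbour-based ratio. The sketch below shows one plausible formulation and is an assumption on our part: the exact Robustness Index definition used in [40] is not given here and may differ. For each embedding, it inspects the k nearest neighbours and compares how many share the biological class but come from another site against how many share the site but belong to another class.

```python
from math import dist


def robustness_index(embeddings, bio_labels, site_labels, k=3):
    """Ratio of biology-driven to site-driven nearest neighbours.

    ASSUMPTION: an illustrative formulation, not necessarily the definition
    in the cited study. Values above 1 suggest biology dominates site.
    """
    same_class_other_site = 0
    other_class_same_site = 0
    for i, e in enumerate(embeddings):
        nearest = sorted((j for j in range(len(embeddings)) if j != i),
                         key=lambda j: dist(e, embeddings[j]))[:k]
        for j in nearest:
            same_class = bio_labels[j] == bio_labels[i]
            same_site = site_labels[j] == site_labels[i]
            if same_class and not same_site:
                same_class_other_site += 1
            elif same_site and not same_class:
                other_class_same_site += 1
    if other_class_same_site == 0:
        return float("inf")  # no site-driven neighbours at all
    return same_class_other_site / other_class_same_site
```

Neighbours that match on both class and site, or on neither, are ignored because they do not indicate which signal the embedding space prioritizes.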

Limitations in Zero-Shot and Fine-Tuning Paradigms

The promise of FMs is their adaptability, but in practice, their downstream application is often limited to linear probing (training a shallow classifier on frozen features) rather than full fine-tuning. This is because fine-tuning these massive models on typical clinical dataset sizes often leads to overfitting and catastrophic forgetting [40]. This reliance on linear probing contradicts the core FM premise of easy adaptation and indicates that current models function more as static feature extractors than truly adaptable foundations.
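Linear probing can be sketched as a logistic regression trained on fixed feature vectors. The example below is a minimal pure-Python illustration for a binary task: the feature vectors stand in for frozen foundation model embeddings, the function names and hyperparameters are hypothetical, and in practice a library classifier would normally be used instead.

```python
from math import exp


def train_linear_probe(features, labels, lr=0.1, epochs=500):
    """Fit binary logistic regression on frozen feature vectors (labels 0/1)."""
    n = len(features)
    n_dim = len(features[0])
    weights = [0.0] * n_dim
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_dim
        grad_b = 0.0
        for x, y in zip(features, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            p = 1.0 / (1.0 + exp(-z))  # predicted probability of class 1
            err = p - y
            for d in range(n_dim):
                grad_w[d] += err * x[d]
            grad_b += err
        # Only the probe's parameters are updated; the features never change.
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(features, weights, bias):
    """Return hard 0/1 predictions from the trained probe."""
    return [int(sum(w * xi for w, xi in zip(weights, x)) + bias > 0)
            for x in features]
```

Because the encoder is never updated, the probe can only reweight information that the embeddings already contain, which is why site bias in the features carries straight through to the downstream classifier.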

Furthermore, in zero-shot retrieval tasks—where a model retrieves similar cases without task-specific training—even large FMs show modest performance. One evaluation on over 11,000 whole-slide images (WSIs) across 23 organs found macro-averaged F1 scores around 40-42% for top-5 retrieval, with performance varying drastically between organs (e.g., 68% for kidneys vs. 21% for lungs) [40]. This indicates that while FMs capture some general textures, their ability to generalize to meaningful diagnostic morphology across the board is limited.

Experimental Protocols for Training and Evaluation

Understanding the methodologies used to train and benchmark FMs is crucial for interpreting their reported performance and limitations.

Training Workflows for Generalizable Models

The training of a robust FM involves multiple stages designed to instill both visual and semantic understanding.

Diagram 1: Multimodal Foundation Model Pretraining Workflow