Beyond the Basics: Advanced Strategies to Resolve Ambiguity in Single-Cell Annotation of Similar Subtypes

This article provides a comprehensive guide for researchers and drug development professionals facing the critical challenge of accurately annotating highly similar cell subtypes in single-cell RNA sequencing (scRNA-seq) data.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals facing the critical challenge of accurately annotating highly similar cell subtypes in single-cell RNA sequencing (scRNA-seq) data. We begin by exploring the biological and technical sources of annotation ambiguity. We then detail advanced computational methodologies, including multi-modal integration, graph-based techniques, and ensemble learning. The guide addresses common pitfalls, offers optimization strategies for real-world datasets, and establishes rigorous validation and benchmarking frameworks. By synthesizing current best practices, this resource aims to enhance the reliability of cell-type identification, directly impacting downstream analyses in disease modeling, biomarker discovery, and therapeutic target identification.

The Annotation Ambiguity Problem: Why Distinguishing T Cells from T Cells is So Hard

Troubleshooting Guides & FAQs

FAQ 1: My single-cell RNA-seq clustering reveals a continuous gradient instead of distinct clusters. Is this biological reality or a batch effect?

- Answer: This is a core challenge. First, systematically rule out technical noise.

- Check Batch Integration: Use visualizations like UMAPs colored by batch, library size, or percent mitochondrial reads. A strong batch correlation suggests technical noise.

- Apply QC Metrics: See Table 1 for key metrics to calculate per batch.

- Test Integration Algorithms: Apply a method like Harmony, Seurat's CCA, or Scanorama. If the continuum disappears once batches are well mixed post-integration, it was likely technical. If the gradient persists across batches, it is more likely biological.

FAQ 2: After integration, my marker genes for putative subtypes have low expression and high dropout. How can I be confident they are real?

- Answer: Low-expression markers are vulnerable to technical noise. Employ a multi-modal validation workflow.

- Within-assay validation: Use differential expression testing that accounts for dropout (e.g., MAST, Wilcoxon with proper filtering). Require markers to be co-expressed in the same cells (see pathway diagram).

- Cross-platform validation: Correlate your RNA-seq findings with protein abundance (CITE-seq, flow cytometry) or accessible chromatin (scATAC-seq) from the same or matched samples.

- Functional validation: Design perturbation experiments (CRISPRi, inhibitor assays) based on the putative subtype markers and measure subtype-specific functional outputs.

FAQ 3: I am using CITE-seq to resolve subtypes, but the ADT data is noisy. How do I troubleshoot poor antibody-derived tag (ADT) data?

- Answer: Noisy ADT data often stems from protocol-specific issues.

- High Background: This can be due to non-specific antibody binding or insufficient washing. Compare to isotype controls and increase wash steps.

- Low Signal: Could be caused by antibody degradation, low cell surface antigen expression, or poor conjugation. Titrate antibodies, use fresh aliquots, and include a high-expressing positive control sample.

- Doublet Artifacts: Cells expressing high levels of many unrelated ADTs may be doublets. Run a doublet detection tool (e.g., DoubletFinder, which works from the RNA counts), check whether its calls coincide with the multi-ADT-high cells, and remove suspected doublets.

FAQ 4: My trajectory inference analysis yields different results with different algorithms. Which one should I trust?

- Answer: Disagreement between algorithms (e.g., Monocle3, Slingshot, PAGA) is common. Treat this as a hypothesis-generating, not confirmatory, step.

- Benchmark with Ground Truth: If available, use a published dataset with a known lineage to test which algorithm recapitulates it best for your data type.

- Seek Consensus: Look for the shared topological features (e.g., a key branch point) present across multiple method outputs.

- Validate with Pseudotime Markers: Genes that algorithmically change along the pseudotime should be validated by orthogonal methods like RNAscope to confirm spatial/temporal expression patterns.

Table 1: Key QC Metrics for Batch Effect Diagnosis

| Metric | Calculation | Interpretation | Acceptable Threshold* |
|---|---|---|---|
| Median Genes per Cell | Count of genes with >0 counts per cell, median across cells in batch. | Low values indicate poor library complexity or dead cells. | Batch difference < 20% |
| Total Counts per Cell | Sum of all UMIs/reads per cell, median across batch. | Captures differences in sequencing depth or cell size. | Batch difference < 50% |
| % Mitochondrial Reads | (Counts in mitochondrial genes / Total counts) * 100, median. | High values indicate stressed or dying cells. | Batch difference < 2x |
| # of Doublets | Estimated by DoubletFinder or scDblFinder. | High doublet rates can create artificial continua. | Batch difference < 2% |

*Thresholds are starting points; vary by tissue and protocol.
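The per-batch comparison in Table 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not a replacement for a full QC pipeline: the cell records, the field names (`batch`, `n_genes`, `pct_mito`), and the choice to express the "batch difference" relative to the lowest batch median are all assumptions made for the example.

```python
from statistics import median

def batch_medians(cells, metric):
    """Median of one QC metric per batch; `cells` is a list of dicts."""
    by_batch = {}
    for cell in cells:
        by_batch.setdefault(cell["batch"], []).append(cell[metric])
    return {batch: median(values) for batch, values in by_batch.items()}

def relative_gap(medians):
    """(max - min) / min across batch medians; 0.25 means a 25% gap."""
    low, high = min(medians.values()), max(medians.values())
    return (high - low) / low

def fold_difference(medians):
    """max / min across batch medians; 2.0 means a two-fold difference."""
    return max(medians.values()) / min(medians.values())

# Toy values, invented for illustration only.
cells = [
    {"batch": "A", "n_genes": 2000, "pct_mito": 4.0},
    {"batch": "A", "n_genes": 2200, "pct_mito": 5.0},
    {"batch": "A", "n_genes": 2400, "pct_mito": 6.0},
    {"batch": "B", "n_genes": 1500, "pct_mito": 9.0},
    {"batch": "B", "n_genes": 1600, "pct_mito": 11.0},
    {"batch": "B", "n_genes": 1700, "pct_mito": 13.0},
]

gene_medians = batch_medians(cells, "n_genes")
gene_gap = relative_gap(gene_medians)
genes_flagged = gene_gap > 0.20          # Table 1 starting threshold: < 20%

mito_fold = fold_difference(batch_medians(cells, "pct_mito"))
mito_flagged = mito_fold > 2.0           # Table 1 starting threshold: < 2x

print(gene_medians, round(gene_gap, 3), genes_flagged, mito_flagged)
```

A flagged metric is a prompt to inspect that batch more closely, not proof of a batch effect, since the thresholds vary by tissue and protocol.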

Experimental Protocol: Cross-Modal Subtype Validation

Title: Integrated scRNA-seq and CITE-seq Workflow for Subtype Annotation

Methodology:

- Sample Preparation: Generate single-cell suspensions from tissue or culture.

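- Multimodal Library Generation: For joint RNA and surface protein, run CITE-seq (e.g., 10x Genomics Chromium with Feature Barcode kits); for joint RNA and chromatin accessibility, use the 10x Genomics Chromium Single Cell Multiome ATAC + Gene Expression kit. Alternatively, perform separate scRNA-seq and CITE-seq/flow cytometry on split aliquots from the same sample.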

- Sequencing: Follow platform-specific guidelines (e.g., Illumina NovaSeq).

- Primary Analysis (scRNA-seq):

- QC & Filtering: Using `Cell Ranger` and `Seurat`, remove cells with <200 genes, >6000 genes, or >10% mitochondrial reads.

- Integration: Normalize data (`SCTransform`), identify anchors, and integrate batches using `IntegrateData` in Seurat.

- Clustering: Run PCA, UMAP, and Leiden clustering on integrated data.

- Primary Analysis (CITE-seq):

- ADT Normalization: Normalize ADT counts using centered log-ratio (CLR) normalization in Seurat.

- Integration with RNA: Use WNN (Weighted Nearest Neighbor) analysis in Seurat to jointly cluster cells based on RNA and protein.

- Differential Expression & Marker Validation:

- Find conserved markers (`FindConservedMarkers`) for RNA-based clusters across batches.

- Find ADT markers that are differentially expressed (`FindAllMarkers` on ADT assay).

- Visually confirm co-localization of top RNA marker expression and corresponding protein expression on UMAP plots.

- Functional Assay:

- Sort putative subtypes via FACS based on top 2 ADT markers.

- Perform a bulk or single-cell functional assay (e.g., cytokine secretion, drug response, metabolic flux) on sorted populations.
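Two of the numerical steps in this methodology, the cell-level QC filter and the centered log-ratio (CLR) transform for ADT counts, are simple enough to sketch directly. The code below is an illustrative approximation in plain Python; the function names are hypothetical, the pseudocount of 1 is an assumption, and Seurat's own CLR implementation may differ in detail (for example in how zeros enter the geometric mean and along which margin it is applied).

```python
import math

def passes_qc(n_genes, pct_mito, min_genes=200, max_genes=6000, max_mito=10.0):
    """Keep a cell unless it has <200 genes, >6000 genes, or >10% mito reads."""
    return min_genes <= n_genes <= max_genes and pct_mito <= max_mito

def clr(counts, pseudocount=1.0):
    """Centered log-ratio: each log value minus the mean log value.

    `counts` can be one cell's ADT vector or one ADT across cells,
    depending on the margin chosen for normalization.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]

# Toy ADT counts for a single cell (invented values).
adt_counts = [0, 10, 100, 1000]
normalized = clr(adt_counts)
print([round(v, 2) for v in normalized])
```

Because CLR values are centered, they sum to zero within the normalized vector, which is a quick sanity check after running any implementation.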

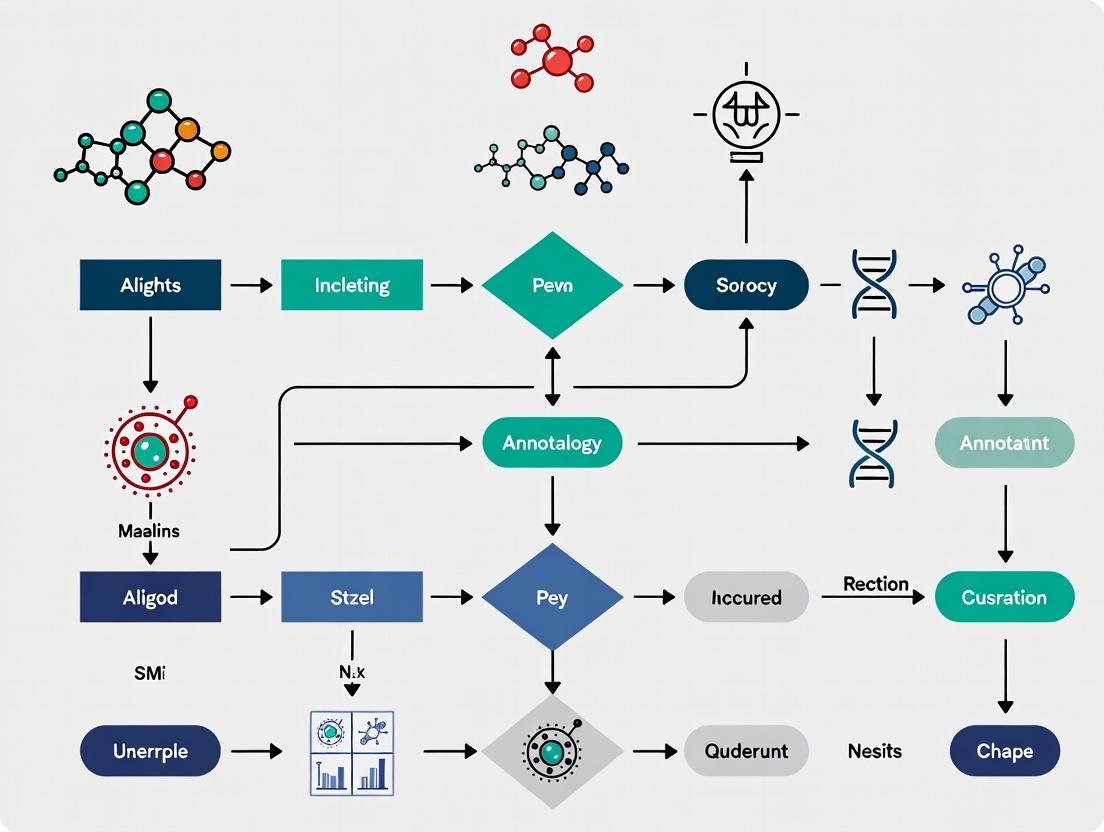

Diagram 1: Multi-modal Validation Workflow

Diagram 2: Technical Noise vs. Biological Continuum Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context |

|---|---|

| 10x Genomics Feature Barcode Kits | Enables simultaneous measurement of RNA and surface proteins (CITE-seq) or CRISPR perturbations (Perturb-seq) from the same cell, crucial for linking subtype identity to function. |

| Cell Hashing Antibodies (TotalSeq) | Allows multiplexing of samples, reducing batch effects and costs. Essential for designing experiments where controls and conditions are processed together. |

| Viability Dyes (e.g., Propidium Iodide, DAPI) | Critical for pre-sequencing FACS sorting to remove dead cells, which are a major source of technical noise and spurious gene expression. |

| DNase I / RNase Inhibitors | DNase I limits cell clumping caused by DNA released from lysed cells, and RNase inhibitors maintain RNA integrity during single-cell suspension preparation, preserving true biological signals and minimizing stress-response artifacts. |

| UltraPure BSA | Used as a blocking agent in CITE-seq and cell hashing protocols to reduce non-specific antibody binding, improving signal-to-noise ratio in ADT data. |

| Chromium Next GEM Chips & Kits | Standardized microfluidic platform for partitioning single cells with barcoded beads, ensuring consistent cell throughput and library quality. |

| Validated Flow Cytometry Antibodies | Independent protein-level validation of transcriptional subtype markers identified from scRNA-seq, confirming protein expression and enabling FACS sorting for functional assays. |

Troubleshooting Guide

Q1: Our single-cell RNA sequencing analysis of T cells shows a continuous gradient of gene expression rather than discrete clusters. How do we determine if this is a true biological maturation gradient or an artifact of transcriptional overlap?

A: This is a common challenge. First, rule out technical artifacts:

- Check batch effects: Use integration tools (e.g., Seurat's CCA, Harmony) to ensure the gradient is not driven by sample processing batches.

- Examine gene dropout: Plot the relationship between gene detection rate and the pseudotime score. A correlation may indicate a technical gradient.

- Validate with known markers: Project established, stage-specific marker genes (e.g., for naive, effector, memory T cells) onto your gradient. A biologically meaningful progression should align these markers in a consistent order.
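The dropout check above amounts to a rank correlation between per-cell gene detection and pseudotime. A minimal sketch follows, assuming you already have one pseudotime value and one detected-gene count per cell; the variable names and toy values are invented, and Spearman correlation is used here as one reasonable choice rather than a prescribed method.

```python
def ranks(values):
    """Average 1-based ranks, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

# Toy example: detection falls steadily along pseudotime (invented values).
pseudotime = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
genes_detected = [3100, 2900, 2500, 2200, 1800, 1500]
rho = spearman(pseudotime, genes_detected)
print(round(rho, 3))
```

A strong correlation in either direction does not by itself prove a technical gradient, because cell size and transcriptional activity can change during real maturation, but it is a reason to inspect library complexity before interpreting the trajectory.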

If technical issues are ruled out, proceed to confirm a maturation gradient:

- Pseudotime Analysis: Use tools like Monocle3, Slingshot, or PAGA to construct a trajectory. The root should be set using prior knowledge (e.g., the most naive-like cluster).

- Differential Expression Testing along Pseudotime: Identify genes whose expression changes smoothly along the trajectory. A true maturation gradient will show coordinated waves of gene programs.

- Cross-Platform Validation: Sort cells from key points along the inferred gradient using a few key surface proteins (by FACS) and perform bulk RNA-seq or qPCR to confirm the transcriptional continuum.

Q2: We have identified a novel cell population that co-expresses markers typically associated with two distinct lineages (e.g., myeloid and lymphoid). How can we resolve if this is a mixed identity state, a technical doublet, or a new activation state?

A: Follow this systematic troubleshooting workflow:

Doublet Detection:

- Apply computational doublet detectors (e.g., Scrublet, DoubletFinder) to your data. A high doublet score for the ambiguous cluster is a strong indicator.

- Experimentally, if possible, re-run the assay with a lower cell loading concentration to reduce doublet rate.

Assess Activation/Transient State:

- Perform Gene Set Enrichment Analysis (GSEA) on the ambiguous population against databases of activation signatures (e.g., MSigDB Hallmarks, inflammatory responses).

- Check for high expression of immediate-early genes (e.g., FOS, JUN, EGR1), which can indicate a transient activation state blurring lineage boundaries.

- Design in vitro stimulation/resting experiments. If the mixed phenotype converges to a canonical state upon resting, it was likely an activation state.
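The immediate-early gene check can be made concrete with a simple per-cluster score. The sketch below averages log-normalized expression of the three genes named above; the data layout (a dict of gene to expression per cell) and the function names are hypothetical, and a plain mean is a simplification of the background-corrected module scores that analysis toolkits usually provide.

```python
IEG_PANEL = ["FOS", "JUN", "EGR1"]

def ieg_score(cell_expr, panel=IEG_PANEL):
    """Mean log-normalized expression of the panel genes in one cell."""
    return sum(cell_expr.get(gene, 0.0) for gene in panel) / len(panel)

def cluster_ieg_scores(cells):
    """Average the per-cell IEG score within each cluster."""
    per_cluster = {}
    for cell in cells:
        per_cluster.setdefault(cell["cluster"], []).append(ieg_score(cell["expr"]))
    return {c: sum(scores) / len(scores) for c, scores in per_cluster.items()}

# Toy cells with invented expression values.
cells = [
    {"cluster": "ambiguous", "expr": {"FOS": 3.0, "JUN": 3.0, "EGR1": 3.0}},
    {"cluster": "ambiguous", "expr": {"FOS": 2.0, "JUN": 1.0}},
    {"cluster": "canonical", "expr": {"FOS": 0.75}},
    {"cluster": "canonical", "expr": {}},
]

scores = cluster_ieg_scores(cells)
print(scores)
```

A cluster whose score stands well above the rest is a candidate transient activation state and worth testing with the resting experiment described above.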

Q3: When integrating multiple public datasets to define a reference atlas, how do we disentangle true biological activation states from study-specific batch effects?

A: This requires careful iterative integration and annotation.

- Strategy: Use a "canonical marker-first" integration approach.

- Step 1: Perform a coarse integration using only a small, universally accepted set of core lineage-defining genes (e.g., CD3E for T cells, CD19 for B cells). This anchors major lineages.

- Step 2: Within each anchored lineage, perform a secondary integration of all genes, using strong integration anchors from Step 1.

- Step 3: Identify clusters that are dataset-specific. For these, test if they express coherent gene programs (suggesting a biological state) or random genes (suggesting residual batch effect).
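For Step 3, identifying dataset-specific clusters reduces to tabulating each cluster's composition by source dataset. The following is a minimal sketch; the 90% cutoff, the input format (pairs of cluster and dataset labels), and the function names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

def dataset_composition(cells):
    """Fraction of each cluster's cells contributed by each dataset."""
    counts = defaultdict(Counter)
    for cluster, dataset in cells:
        counts[cluster][dataset] += 1
    return {
        cluster: {d: n / sum(c.values()) for d, n in c.items()}
        for cluster, c in counts.items()
    }

def dataset_specific(composition, threshold=0.9):
    """Clusters where a single dataset contributes at least `threshold`."""
    return sorted(
        cluster for cluster, fractions in composition.items()
        if max(fractions.values()) >= threshold
    )

# Toy labels: (cluster, dataset) per cell, invented for the example.
cells = (
    [("c0", "A")] * 5 + [("c0", "B")] * 5
    + [("c1", "A")] * 10
    + [("c2", "B")] * 19 + [("c2", "A")]
)

composition = dataset_composition(cells)
flagged = dataset_specific(composition)
print(flagged)
```

Flagged clusters are then the ones to test for coherent gene programs versus seemingly random genes, as described in Step 3.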

Q4: Our flow cytometry data shows intermediate expression levels of a key marker, making gating subjective. How can we improve the resolution of these activation states?

A: Move beyond one-dimensional gating.

- Solution: Employ a panel of 3-4 markers that collectively define the activation state, even if each is expressed intermediately. Use dimensionality reduction (e.g., t-SNE, UMAP) on the flow cytometry data itself to visualize cell states. Then, index sort cells from different regions of the low-dimensional space and perform low-input RNA-seq to transcriptionally validate the distinctness of the populations defined by combinatorial protein expression.

Frequently Asked Questions (FAQs)

Q: What is the most reliable way to assign a cell to a specific subtype when its transcriptome shows significant overlap with another?

A: There is no single method. The most robust strategy is a consensus approach:

- Use a high-confidence marker gene panel (≥3 genes) verified by protein expression.

- Apply multiple independent classification algorithms (e.g., SingleR, SCINA, random forest) and only assign a label where there is agreement.

- For borderline cells, report them as "intermediate" or "transitional" rather than forcing a discrete label.

Q: How many cells do we need to profile to reliably detect rare transition states or cells along a maturation gradient?

A: The number is highly dependent on the rarity and length of the transition. As a rule of thumb, if you suspect a transition state representing 1% of your population, you should aim for at least 100 cells from that state for basic characterization. This often requires profiling 10,000+ total cells. Use power analysis tools (e.g., powsimR) for more precise estimation.
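The arithmetic behind this rule of thumb is worth making explicit: 100 cells at 1% frequency gives 100 / 0.01 = 10,000 cells as the expected requirement, but sampling is random, so profiling exactly 10,000 cells captures at least 100 rare cells only about half the time. The sketch below uses a simple binomial sampling model, which is an assumption (it ignores capture bias, QC losses, and doublets), and the function names are hypothetical.

```python
import math

def prob_at_least(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p), summed in log space for stability."""
    below = 0.0
    for i in range(k):
        log_pmf = (
            math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
            + i * math.log(p) + (n - i) * math.log1p(-p)
        )
        below += math.exp(log_pmf)
    return 1.0 - below

def cells_needed(k, p, confidence=0.95, step=100):
    """Smallest n (searched in increments of `step`) reaching the confidence."""
    n = round(k / p)  # start from the expected-value estimate
    while prob_at_least(k, n, p) < confidence:
        n += step
    return n

p_naive = prob_at_least(100, 10_000, 0.01)   # chance of success at 10,000 cells
n_95 = cells_needed(100, 0.01)               # cells for a 95% chance
print(round(p_naive, 2), n_95)
```

Under this model the 95% target lands noticeably above 10,000 cells, which is one reason to treat "10,000+" as a lower bound and to budget extra cells for QC filtering.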

Q: Are there specific experimental protocols to 'freeze' cells in a transient activation state for better characterization?

A: Yes. Pharmacological inhibitors can be used to arrest cells in specific states shortly after stimulation (e.g., protein translation inhibitors to capture immediate-early responses). However, this perturbs biology. A better practice is high-throughput time-course sampling (e.g., scRNA-seq at 0, 15 min, 1 h, 4 h, 12 h post-stimulation) to computationally reconstruct the trajectory.

Experimental Protocols

Protocol 1: CITE-seq for Resolving Transcriptional Overlap with Surface Protein Expression

Objective: To simultaneously measure RNA and surface protein expression in single cells, linking ambiguous transcriptional profiles to definitive protein markers.

Materials: Fresh single-cell suspension, TotalSeq antibody cocktail matched to the kit chemistry (TotalSeq-C for 10x 5' kits; TotalSeq-B is designed for 3' kits), Chromium Next GEM Single Cell 5' Kit, sequencer.

Steps:

- Antibody Staining: Stain 1-2 million cells with a pre-titrated panel of TotalSeq antibodies matched to the kit chemistry (TotalSeq-C for the 5' kit used here) for 30 minutes on ice. Wash twice.

- Single-Cell Partitioning: Process stained cells according to the 10x Genomics Chromium Single Cell 5' Protocol.

- Library Preparation: Generate separate gene expression (GE) and antibody-derived tag (ADT) libraries as per kit instructions.

- Sequencing & Analysis: Sequence libraries. Process GE data (Cell Ranger). Process ADT data (Cell Ranger or CITE-seq-Count). Normalize ADT counts using centered log-ratio (CLR) transformation. Integrate with transcriptome data for joint clustering.

Protocol 2: Cellular Indexing of Transcriptomes and Epitopes by Sequencing (CITE-seq) Workflow

Protocol 3: Pseudotime Analysis with RNA Velocity

Objective: To infer a directed maturation trajectory and predict future cell states.

Materials: scRNA-seq data prepared using a protocol that retains unspliced RNA information (e.g., 10x Genomics Chromium Single Cell 3' v3, or SMART-seq).

Steps:

- Data Preprocessing: Align reads to a reference genome using a tool that distinguishes spliced and unspliced transcripts (e.g., `cellranger count` with `--include-introns` or `STARsolo`). Confirm that the output contains separate spliced and unspliced count matrices (for example by running `velocyto run10x` on the Cell Ranger output), because intron-inclusive counting alone yields a single combined matrix.

- RNA Velocity Estimation: Compute velocity vectors using `scvelo` or `velocyto.py`. This models transcriptional dynamics from the ratio of unspliced to spliced mRNA.

- Embedding and Visualization: Embed cells in a low-dimensional space (UMAP) using the transcriptome. Project the velocity vectors onto this embedding to visualize the direction and speed of state transitions.

- Pseudotime Inference: Use `scvelo.tl.latent_time` or combine with PAGA to construct a robust, velocity-informed pseudotime ordering from a user-defined root cell.
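To show what "modeling dynamics from the ratio of unspliced to spliced mRNA" means for a single gene, here is a deliberately simplified steady-state sketch. It is not the scVelo or velocyto implementation: those tools fit the ratio on extreme quantiles or with a full dynamical model, whereas this example assumes a hand-picked set of steady-state cells and uses an ordinary least-squares slope through the origin. All names and values are hypothetical.

```python
def fit_gamma(spliced, unspliced):
    """Least-squares slope through the origin for u ~ gamma * s.

    Under the steady-state assumption, gamma is the expected
    unspliced/spliced ratio for a gene that is neither being
    induced nor repressed.
    """
    numerator = sum(s * u for s, u in zip(spliced, unspliced))
    denominator = sum(s * s for s in spliced)
    return numerator / denominator

def velocities(spliced, unspliced, gamma):
    """Per-cell velocity v = u - gamma * s.

    Positive values mean more unspliced RNA than steady state predicts
    (induction); negative values suggest repression.
    """
    return [u - gamma * s for s, u in zip(spliced, unspliced)]

# Cells assumed to be at steady state for this gene (invented counts).
steady_spliced = [2.0, 4.0, 6.0]
steady_unspliced = [1.0, 2.0, 3.0]
gamma = fit_gamma(steady_spliced, steady_unspliced)

# Two query cells with equal spliced counts but different unspliced counts.
v = velocities([4.0, 4.0], [3.0, 1.0], gamma)
print(gamma, v)
```

The two query cells look identical by spliced counts alone, yet their velocities have opposite signs, which is the extra directional information that velocity adds to a conventional pseudotime ordering.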

Protocol 4: RNA Velocity-Informed Pseudotime Analysis

Table 1: Common Causes and Solutions for Ambiguity in Single-Cell Data

| Source of Ambiguity | Key Indicators | Recommended Confirmatory Experiment |

|---|---|---|

| Transcriptional Overlap | Co-expression of marker genes from >1 lineage; Low confidence scores from classifiers. | CITE-seq or flow cytometry for protein markers; Index sorting + qPCR. |

| Maturation Gradient | Continuous gene expression changes in UMAP; Lack of clear cluster boundaries. | RNA velocity; Time-course experiments; Pseudotime with in situ validation (FISH). |

| Transient Activation State | High expression of immediate-early/response genes; State disappears upon rest. | Pharmacologic arrest (e.g., cycloheximide); High-temporal-resolution scRNA-seq. |

| Technical Doublet | High doublet classifier score; Simultaneous expression of mutually exclusive markers. | Re-run with lower cell load; Use doublet-aware clustering and removal. |

Table 2: Comparison of Trajectory Inference Tools

| Tool | Method | Best For | Key Input | Consideration |

|---|---|---|---|---|

| Monocle3 | Reverse graph embedding | Complex trees, branching points | Cell & feature matrix | Sensitive to root cell selection. |

| PAGA | Abstract graph mapping | Preserving global topology | Nearest-neighbor graph | Provides abstract trajectory map. |

| Slingshot | Minimum spanning trees | Linear and branching trajectories | Cluster labels & reduced dims | Requires pre-defined clusters. |

| scVelo | RNA velocity dynamics | Directed trajectories, kinetics | Spliced/unspliced counts | Requires specific library prep. |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Tool | Function | Example Use Case |

|---|---|---|

| TotalSeq Antibodies (BioLegend) | Oligo-tagged antibodies for CITE-seq. | Resolving transcriptional overlap by adding 20-30 protein dimensions. |

| Cell Hashing Antibodies (BioLegend) | Sample multiplexing oligo-antibodies. | Pooling samples to minimize batch effects before sequencing. |

| Chromium Single Cell Immune Profiling (10x) | Targeted library prep for V(D)J + gene expression. | Defining clonality and activation states of T/B cells simultaneously. |

| SMART-Seq v4 Ultra Low Input Kit (Takara) | Full-length, high-sensitivity scRNA-seq. | Deep sequencing of rare or sorted intermediate cells for gradient analysis. |

| CellTrace Proliferation Kits (Invitrogen) | Fluorescent dye to track cell divisions. | Correlating maturation state with proliferative history. |

| scATAC-seq Kit (10x Genomics) | Single-cell assay for transposase-accessible chromatin. | Identifying regulatory landscapes driving activation/transition states. |

Technical Support Center: Troubleshooting Guides and FAQs

FAQ 1: How can mis-annotated cell clusters lead to misleading differential expression (DE) results?

Answer: Mis-annotation merges distinct cell types or splits a homogeneous population. This causes DE analysis to compare apples-to-oranges (e.g., neurons vs. glia) or find spurious differences within the same cell type. Key artifacts include:

- False Positives: Up-regulated genes that are merely markers of a contaminating, unannotated cell subtype.

- False Negatives: True differentially expressed genes are diluted when compared across a mixed population.

- Pathway Misinterpretation: Enriched pathways reflect the identity of the mis-annotated cells, not the biological condition.

Table 1: Common DE Artifacts from Poor Annotation

| Annotation Error | Downstream DE Consequence | Typical P-value/LogFC Pattern |

|---|---|---|

| Cluster Merging: Two subtypes as one. | False negatives; diluted signal. | High p-values, attenuated log2FC for true marker genes. |

| Cluster Splitting: One type as two. | False positives; batch/technical effect genes appear significant. | Low p-values for technical or state-specific (e.g., cell cycle) genes. |

| Contamination: Unannotated minor subtype. | False positives for subtype marker genes. | Low p-values, high log2FC for unknown markers misattributed to condition. |

Troubleshooting Protocol: Validating DE Results Post-Annotation

- Check Marker Overlap: For each DE gene list, verify known cell identity markers are not driving the signal. Use public databases (e.g., CellMarker, PanglaoDB).

- Re-cluster and Re-test: Re-perform DE analysis on a subset of well-annotated, high-confidence clusters. Compare results to the full dataset.

- Pseudobulk Correlation: Create pseudobulk samples per cluster+condition and correlate them. Unexpectedly high correlation between clusters annotated as distinct types suggests mis-annotation and unreliable DE.

- Doublet Detection: Run a doublet detection algorithm (e.g., Scrublet, DoubletFinder). Exclude predicted doublets and re-run DE.
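The pseudobulk correlation step from this protocol can be sketched as follows. The example sums raw counts per cluster and condition and compares profiles with Pearson correlation; the data layout, gene panel, and counts are invented, and in practice you would normalize and log-transform the pseudobulk profiles over many more genes before correlating them.

```python
from collections import defaultdict

def pseudobulk(cells, genes):
    """Sum counts per (cluster, condition) group over the listed genes."""
    sums = defaultdict(lambda: [0] * len(genes))
    for cell in cells:
        key = (cell["cluster"], cell["condition"])
        for i, gene in enumerate(genes):
            sums[key][i] += cell["counts"].get(gene, 0)
    return dict(sums)

def pearson(x, y):
    """Pearson correlation of two equal-length profiles."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

genes = ["CD3E", "CD8A", "CD4", "MS4A1"]
cells = [
    {"cluster": "CD8_T", "condition": "ctrl", "counts": {"CD3E": 5, "CD8A": 4}},
    {"cluster": "CD8_T", "condition": "ctrl", "counts": {"CD3E": 6, "CD8A": 5}},
    {"cluster": "CD4_T", "condition": "ctrl", "counts": {"CD3E": 5, "CD4": 4}},
    {"cluster": "CD4_T", "condition": "ctrl", "counts": {"CD3E": 7, "CD4": 3}},
    {"cluster": "B", "condition": "ctrl", "counts": {"MS4A1": 8}},
    {"cluster": "B", "condition": "ctrl", "counts": {"MS4A1": 6, "CD3E": 1}},
]

profiles = pseudobulk(cells, genes)
r_t_vs_t = pearson(profiles[("CD8_T", "ctrl")], profiles[("CD4_T", "ctrl")])
r_t_vs_b = pearson(profiles[("CD8_T", "ctrl")], profiles[("B", "ctrl")])
print(round(r_t_vs_t, 2), round(r_t_vs_b, 2))
```

Related T-cell clusters correlate more strongly with each other than with B cells in this toy case; a pair of supposedly distinct types that correlates as tightly as replicates of one type is the pattern to investigate.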

FAQ 2: Why does trajectory/pseudotime inference fail or produce illogical paths after annotation?

Answer: Trajectory tools (e.g., Monocle3, PAGA, Slingshot) rely on accurate topology. Mis-annotation introduces "short-circuit" connections between unrelated lineages or breaks continuous transitions.

Table 2: Trajectory Errors from Annotation Issues

| Problem | Root Cause | Manifestation in Trajectory Graph |

|---|---|---|

| Disconnected Graph | Over-splitting of a continuous cell state into multiple discrete annotations. | Multiple, isolated trajectories instead of a connected manifold. |

| Circular/Illogical Paths | Merging of distinct lineages (e.g., merging precursor cells for different end states). | Branches that converge incorrectly or cycles where none exist biologically. |

| Incorrect Branch Order | Contamination of a branch point cluster with cells from an unrelated lineage. | The inferred sequence of cell fate decisions does not match known biology. |

Troubleshooting Protocol: Diagnosing Faulty Trajectories

- Marker Gene Heatmap along Pseudotime: Order cells by pseudotime. Plot expression of known lineage-specific markers. They should show monotonic increase/decrease along a branch, not mixed patterns.

- Graph Robustness Test: Sub-sample cells from key questionable clusters and re-run trajectory inference multiple times. If branch topology is unstable, annotation of those clusters is likely flawed.

- Cluster Connectivity Analysis: Calculate k-nearest neighbor connections before annotation. If cells in two annotated clusters are highly inter-connected, they may be the same type or state and should be merged for trajectory analysis.
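The cluster connectivity analysis can be illustrated with a brute-force k-nearest-neighbor search on a toy embedding. This sketch assumes cells are already embedded (for example in PCA space) and uses a hypothetical connectivity measure, the fraction of one cluster's neighbor edges that land in another cluster; real datasets would need an approximate nearest-neighbor library rather than this quadratic loop.

```python
import math

def knn_indices(points, k):
    """Indices of the k nearest neighbors of every point (brute force)."""
    neighbors = []
    for i, p in enumerate(points):
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbors.append([j for _, j in dists[:k]])
    return neighbors

def cross_cluster_fraction(points, labels, a, b, k=2):
    """Fraction of kNN edges from cluster `a` cells that land in cluster `b`."""
    neighbors = knn_indices(points, k)
    total = hits = 0
    for i, label in enumerate(labels):
        if label != a:
            continue
        for j in neighbors[i]:
            total += 1
            hits += labels[j] == b
    return hits / total if total else 0.0

# Toy embedding: X and Y are interleaved, Z sits far away (invented).
points = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0), (5, 0),
          (100, 0), (101, 0), (102, 0)]
labels = ["X", "Y", "X", "Y", "X", "Y", "Z", "Z", "Z"]

frac_xy = cross_cluster_fraction(points, labels, "X", "Y")
frac_xz = cross_cluster_fraction(points, labels, "X", "Z")
print(round(frac_xy, 3), frac_xz)
```

Here most of X's neighbors are Y cells, so X and Y would be candidates for merging before trajectory inference, while Z is clearly separate.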

Title: How Annotation Quality Drives Downstream Analysis Outcomes

FAQ 3: What experimental and computational protocols improve annotation for similar subtypes?

Answer: A multi-modal, iterative approach is required.

Detailed Protocol: Iterative Annotation Refinement

- Initial Clustering & Marker Detection: Use high-resolution clustering (e.g., Leiden with a high `resolution` setting). Find top markers per cluster (Wilcoxon rank-sum test).

- Cross-Reference with Multi-Omics Atlases: Query markers against specialized databases (e.g., Allen Brain Map, Human Cell Atlas) and CITE-seq/ASAP-seq datasets from similar tissues for surface protein correlation.

- Confirm with Orthogonal Data:

- Experimental Validation: Perform multiplexed FISH (e.g., MERFISH) on a panel of ~5-10 key marker genes from putative subtypes.

- Functional Assay: Sort populations based on putative marker genes (e.g., via FACS) and conduct a brief functional assay (e.g., cytokine secretion, phagocytosis) to confirm phenotypic difference.

- Re-cluster with Integrated Labels: Integrate validated labels and re-run downstream analyses.

The Scientist's Toolkit: Key Reagents & Resources

Table 3: Essential Resources for Accurate Subtype Annotation

| Item | Function | Example/Provider |

|---|---|---|

| High-Quality Reference Atlas | Provides pre-annotated datasets for mapping/transferring labels. | CellTypist, SingleR, Azimuth, Human/Mouse Cell Atlases. |

| Multiplexed FISH Reagents | Spatially validates co-expression of putative marker genes in situ. | Vizgen (MERSCOPE), 10x Genomics (Xenium). |

| CITE-seq Antibody Panels | Adds surface protein expression, crucial for distinguishing transcriptomically similar subtypes. | BioLegend TotalSeq, BD AbSeq. |

| Cell Hashing Antibodies | Enables sample multiplexing, reducing batch effects that confound annotation. | BioLegend TotalSeq Hashtag antibodies, BD Single-Cell Multiplexing Kit. |

| CRISPR Screening Libraries (Perturb-seq) | Links genes to causal cell state changes, defining functional subtypes. | Custom sgRNA libraries targeting subtype marker genes. |

| Doublet Detection Software | Identifies & removes artifactual cell multiplets that appear as novel subtypes. | Scrublet, DoubletFinder, scDblFinder. |

Title: Iterative Workflow for Robust Cell Subtype Annotation

Troubleshooting Guides & FAQs

Q1: Our single-cell RNA-seq data shows inconsistent annotation results when using different public reference atlases for the same tissue (e.g., brain cortex). What is the likely cause and how can we resolve it?

A: This directly highlights the "Gold Standard Problem." Different atlases are built using specific protocols, donors, and bioinformatics pipelines, leading to batch effects and differing definitions of cell states. To resolve:

- Use Multiple References & Consensus: Annotate your data against 2-3 high-quality, recent atlases (e.g., HuBMAP, HCA). Cells with concordant labels across atlases are high-confidence.

- Apply Harmony or Seurat's CCA: Perform integration between your query dataset and the reference to correct for technical batch effects before label transfer.

- Validate with Novel Marker Expression: Check the expression of recently published, high-specificity marker genes for your target subtypes in your unannotated data.

Q2: A canonical marker gene for a cell type (e.g., SLC17A7 for excitatory neurons) is expressed in unexpected clusters in our dataset. How should we interpret this?

A: Marker gene promiscuity is common. Proceed as follows:

- Check Expression Level & Co-expression: Ensure expression is biologically meaningful (not low/ambient). Use a dual-gene plot to see if it co-expresses with a contradictory marker (e.g., GAD1 for inhibitory neurons).

- Examine Metadata: Verify the marker's specificity in the original reference literature—it may define a broad class, not a subtype.

- Run a Specificity Test: Calculate metrics like the Gini index or AUC for the gene across all your clusters. A true marker should have high specificity for one cluster.

Q3: After using an automated annotation tool (Azimuth, SingleR), we get a large "unassigned" or "low-confidence" population. What are the next steps?

A: This indicates your data contains cell states not well-represented in the reference.

- Sub-cluster the "Unassigned" Cells: Re-cluster only these cells at a higher resolution. Perform de novo differential expression to find new marker genes.

- Manual Annotation with Updated Markers: Compare new cluster markers against the latest literature (e.g., recent papers on rare subtypes) and databases like CellMarker 2.0.

- Consider a Custom Reference: If you have FACS-sorted or well-validated cells from a pilot experiment, build a small, custom reference for your specific biological system.

Q4: How do we validate annotation accuracy for two highly similar subtypes (e.g., CD8+ T-cell exhaustion states Tex1 vs. Tex2) where marker overlap is significant?

A: Move beyond transcriptome-only annotation.

- Multimodal Validation: If you have CITE-seq data, validate at the protein level for key surface markers (e.g., check PD-1, TIM-3 protein).

- Pseudotime/RNA Velocity: Confirm the annotated populations align with a biologically plausible trajectory (e.g., progenitor → exhausted).

- Functional Assay Correlation: Sort populations based on key markers and perform a functional assay (e.g., cytokine release) to confirm phenotypic differences.

Key Methodologies for Improving Annotation Accuracy

Protocol 1: Building a Multireference Consensus Annotation Pipeline

- Preprocess Query Data: Normalize (SCTransform) and integrate (if multiple batches) your single-cell dataset.

- Parallel Label Transfer: Run SingleR (with `ref` as a list of multiple atlases), Azimuth, and SCINA (using marker gene lists) independently.

- Create a Consensus Matrix: For each cell, record the label from each tool. Calculate concordance.

- Assign Final Labels: Cells with >70% agreement receive that label. Flag low-confidence cells for manual review.

- Manual Curation: Examine marker expression and differentially expressed genes (DEGs) for flagged cells using a visualization tool like CellBrowser.
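The consensus and final-label steps of this protocol reduce to a majority vote with an agreement threshold. The sketch below assumes each tool's per-cell labels have already been harmonized to a shared vocabulary, which is often the hardest part in practice; the function name and example labels are hypothetical.

```python
from collections import Counter

def consensus_label(labels, min_agreement=0.7):
    """Return (label, agreement); label is None when agreement is too low.

    `labels` holds one call per tool for a single cell. A label is
    assigned only when the top label's share strictly exceeds
    `min_agreement`, mirroring the ">70% agreement" rule.
    """
    top_label, votes = Counter(labels).most_common(1)[0]
    agreement = votes / len(labels)
    return (top_label if agreement > min_agreement else None), agreement

# Toy calls from three tools per cell (invented labels).
calls = {
    "cell_1": ["CD8 Tex", "CD8 Tex", "CD8 Tex"],
    "cell_2": ["CD8 Tex", "CD8 Tem", "CD8 Tex"],
}

final = {cell: consensus_label(labels) for cell, labels in calls.items()}
needs_review = sorted(cell for cell, (label, _) in final.items() if label is None)
print(final, needs_review)
```

Note that with exactly three tools, two-out-of-three agreement is about 67% and falls below the 70% cutoff, so only unanimous cells are labeled automatically; adding more tools or references makes the threshold less all-or-nothing.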

Protocol 2: Experimental Validation of Annotations via Multiplexed FISH

- Probe Design: Select 3-5 top marker genes from bioinformatics analysis for each contested subtype, plus 1-2 housekeeping genes.

- Tissue Sectioning: Use the same tissue sample as for single-cell sequencing (adjacent section) or a genetically matched model.

- Hybridization & Imaging: Perform multiplexed error-robust FISH (MERFISH) according to manufacturer protocol.

- Image Analysis & Co-localization: Identify cells and quantify transcript counts per gene. Cluster cells based on spatial transcriptomics profiles.

- Correlation: Compare cluster identities from spatial data with single-cell annotations to confirm spatial relationships and subtype localization.

Research Reagent Solutions Toolkit

| Item | Function & Application in Annotation |

|---|---|

| 10x Genomics Chromium Single Cell Immune Profiling | Provides paired V(D)J and gene expression data critical for disentangling immune subtypes (e.g., B-cell clones, T-cell states). |

| CELLection Dynabeads | For immune cell depletion or enrichment from tissue digests prior to sequencing, reducing complexity and improving resolution of rarer stromal/parenchymal cells. |

| Visium Spatial Gene Expression Slide | Enables validation of annotated cell type localization within tissue architecture, confirming biologically plausible distributions. |

| TotalSeq Antibodies (BioLegend) | For CITE-seq, allowing protein-level measurement of key marker genes (e.g., CD markers) to confirm transcriptome-based annotations. |

| NucleoBond Xtra Maxi Kit (Macherey-Nagel) | For high-quality, high-molecular-weight DNA extraction when performing single-cell multiome (ATAC + GEX) assays to integrate chromatin accessibility. |

| Live-or-Dye Fixable Viability Stains | Critical for ensuring high viability of single-cell suspensions, directly improving clustering and reducing ambient RNA artifacts. |

Table 1: Comparison of Major Public Reference Atlases (Human and Mouse)

| Atlas Name (Project) | Tissue Scope | Cell Count | Key Feature | Common Annotation Challenge |

|---|---|---|---|---|

| Human Cell Atlas (HCA) | Comprehensive, Multi-tissue | ~50M (aim) | Community-driven standard, diverse donors. | Inconsistent granularity across tissues. |

| HuBMAP | Healthy adult tissues | ~15M (to date) | High-resolution spatial mapping integrated. | Focus on healthy states may limit disease relevance. |

| Tabula Sapiens | 24 organs, same donors | ~500k | Multi-organ from the same donors, reducing variability. | Lower per-organ cell count limits rare subtype discovery. |

| Tabula Muris & Tabula Muris Senis | Mouse, across lifespan | ~200k | Aging model, FACS and droplet-based. | Mouse-to-human translation discrepancies. |

| Azimuth References | Specific tissues (e.g., PBMC) | Varies | Optimized for direct use in Azimuth web app. | Black-box algorithm; hard to debug low-confidence calls. |

Table 2: Quantitative Metrics for Marker Gene Evaluation

| Metric | Formula/Description | Interpretation | Ideal Value for Subtype Marker |

|---|---|---|---|

| Log2 Fold Change (log2FC) | mean(exp_group) - mean(exp_ref), computed on log2-scale expression | Magnitude of expression difference. | >1.5 |

| Percent Expressed (Pct.Exp) | % of cells in group where gene > 0 | How ubiquitous the gene is in the group. | High in group (>60%) |

| Percent Expressed Ratio (Pct.Ratio) | Pct.Exp_Group / Pct.Exp_Ref | Specificity of expression. | >>1 (e.g., >3) |

| Area Under the ROC Curve (AUC) | Probability a random cell from group ranks higher than from ref. | Overall classification power. | >0.85 |

| Gini Index | Measures inequality of expression across all clusters. | Specificity (1 = expressed in one cluster only). | >0.6 |
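
The metrics in Table 2 can be computed for a single gene with a few lines of Python. The sketch below is illustrative only: the function names `marker_metrics` and `gini` are hypothetical, the inputs are assumed to be log-normalized expression values (one per cell), and the Gini index is computed over per-cluster mean expression, which is one of several possible definitions.

```python
import math

def marker_metrics(group, ref):
    """Score one gene as a marker for `group` versus `ref`.

    `group` and `ref` are lists of log-normalized expression values
    (one value per cell) for the same gene.
    """
    mean_g = sum(group) / len(group)
    mean_r = sum(ref) / len(ref)
    # Difference of means on the log scale, as in the table's formula.
    log_fc = mean_g - mean_r

    pct_g = sum(v > 0 for v in group) / len(group)
    pct_r = sum(v > 0 for v in ref) / len(ref)
    # Guard against division by zero when the gene is absent from ref.
    pct_ratio = pct_g / pct_r if pct_r > 0 else math.inf

    # AUC: fraction of (group, ref) cell pairs in which the group cell
    # ranks higher; ties count as half a win.
    wins = sum((g > r) + 0.5 * (g == r) for g in group for r in ref)
    auc = wins / (len(group) * len(ref))
    return {"log_fc": log_fc, "pct_exp": pct_g, "pct_ratio": pct_ratio, "auc": auc}

def gini(cluster_means):
    """Gini index of a gene's mean expression across clusters."""
    x = sorted(cluster_means)
    n, total = len(x), sum(x)
    if total == 0:
        return 0.0
    weighted = sum((i + 1) * v for i, v in enumerate(x))
    return (2 * weighted) / (n * total) - (n + 1) / n
```

With this estimator, a gene expressed in exactly one of n clusters scores (n - 1) / n rather than exactly 1, so the ">0.6" guideline is easier to reach when many clusters are present. The pairwise AUC loop is quadratic in cell number and is meant for inspection of a few genes, not genome-wide screening.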

Visualizations

Title: Multi-Reference Consensus Annotation Workflow

Title: Root Causes of the Gold Standard Problem

Title: Multi-Tier Validation Strategy for Subtype Annotation

From Manual Gating to AI: A Toolkit for High-Resolution Cell Typing

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During CITE-seq library preparation, I observe a significant drop in ADT counts compared to my previous experiment. What could be the cause?

A: A drop in Antibody-Derived Tag (ADT) counts is commonly linked to antibody degradation or conjugation issues. First, verify the storage conditions of your TotalSeq-B antibodies against the manufacturer's datasheet; BioLegend's liquid TotalSeq reagents are generally specified for storage at 2-8°C without freezing, so confirm that the vials have not been frozen or left at room temperature. Second, ensure the cell staining and wash steps are performed with a large excess of cold wash buffer containing a protein carrier (e.g., 0.5% BSA in PBS) to block non-specific binding. Third, check the viability of your single-cell suspension, as high debris or dead cells can sequester antibodies. Finally, confirm that the correct PCR cycle number is used for the ADT library (typically 12-18 cycles): over-amplification introduces PCR artifacts such as chimeric molecules and duplicates, while under-amplification yields low counts.

Q2: In an integrated CITE-seq + ATAC-seq experiment, my ATAC-seq data shows unusually high mitochondrial read content. How do I resolve this?

A: High mitochondrial reads in ATAC-seq (>20-30%) typically indicate excessive cell lysis or suboptimal transposition, where exposed mitochondrial DNA is preferentially tagmented. To troubleshoot:

- Cell Permeabilization: Precisely titrate the digitonin concentration in the transposition mix. Standard protocols use 0.01-0.05% digitonin. Over-permeabilization lyses cells completely.

- Cell Counting & Input: Use an accurate, viable cell count. Do not exceed 50,000 cells per reaction for most commercial kits. Overloading can inhibit the transposase reaction.

- Transposition Time/Temp: Strictly adhere to the recommended time (30 min) and temperature (37°C) for the transposition reaction. Prolonged incubation increases mitochondrial tagmentation.

- Nuclei Isolation: Consider a separate nuclei isolation step prior to transposition for challenging cell types. Wash nuclei gently but thoroughly to remove cytoplasmic mitochondrial contaminants.

Q3: When integrating spatial transcriptomics data with CITE-seq/ATAC-seq references, the cell type mapping is inconsistent or has low confidence scores. What steps can improve this?

A: Low mapping confidence often arises from technical and biological disparities.

- Batch Effect Correction: Apply robust integration tools (e.g., Harmony, Seurat's CCA/Integration, SCVI) to the scRNA-seq/CITE-seq reference and the spatial data before mapping. Use shared highly variable genes or total features.

- Feature Selection: For spatial mapping, ensure your reference includes landmark genes that are robustly detected by the spatial platform (e.g., Visium, MERFISH). Avoid relying solely on genes with low capture efficiency in spatial data.

- Multi-modal Anchor Finding: Use tools like Weighted Nearest Neighbor (WNN) in Seurat or MOFA+ that can leverage ADT (protein) and ATAC (chromatin) modalities from the reference to find more robust anchors against the spatial gene expression data.

- Region-Specific Annotation: Manually check marker genes for the ambiguous spots. The spatial context itself (e.g., being in a specific anatomical layer) should be used as a prior to refine annotations, not just as a validation.

Q4: The integration of ATAC-seq peaks with CITE-seq-derived clusters fails to reveal expected transcription factor motifs. What are the potential reasons?

A:

- Low Resolution Clustering: The CITE-seq clusters may be too broad, masking subtype-specific chromatin accessibility. Re-cluster using WNN analysis that combines RNA and ADT, or perform sub-clustering on populations of interest.

- Inadequate Peak Calling: Perform peak calling on subsets of cells (pseudo-bulk) corresponding to the refined cell subtypes, not on the entire dataset. This increases signal-to-noise for subtype-specific peaks.

- Motif Database: Ensure you are using a comprehensive and appropriate motif database (e.g., JASPAR, CIS-BP) that includes motifs for the species and cell type relevant to your study.

- Chromatin vs. Protein Correlation: Remember that ATAC-seq peaks indicate potential regulatory regions. The correlation between TF motif accessibility and actual TF protein levels (from ADT) may not be perfect due to post-translational regulation. Directly compare the motif activity score with the paired protein expression level from the same cell.

Experimental Protocols for Improving Annotation Accuracy

Protocol 1: Multi-modal Reference Atlas Construction using CITE-seq and ATAC-seq

Objective: To create a high-resolution, multi-omics reference for cell subtypes that integrates gene expression, surface protein, and chromatin accessibility.

Methodology:

- Cell Preparation: Generate a high-viability (>90%) single-cell suspension from target tissue(s). Count using a fluorescent viability dye.

- CITE-seq Staining: Incubate cells with a pre-titrated TotalSeq-B antibody cocktail for 30 min on ice. Wash 3x with cold cell staining buffer.

- Nuclear Tagmentation (Multiome): Process cells through the 10x Genomics Chromium Single Cell Multiome ATAC + Gene Expression assay according to the manufacturer's protocol. This co-encapsulates a single nucleus for simultaneous GEX and ATAC library generation.

- Library Preparation: Generate three libraries: Gene Expression (GEX), Antibody Capture (ADT), and ATAC-seq. Use unique sample indices for multiplexing.

- Sequencing: Sequence GEX and ADT libraries on a NovaSeq (PE 28x8x0x91). Sequence ATAC library on a HiSeq 4000 or NovaSeq (PE 50x50) for sufficient coverage in open chromatin regions.

- Primary Analysis: Use Cell Ranger ARC (10x) for demultiplexing, barcode processing, and initial peak calling.

- Integrated Analysis in Seurat/Signac:

- Create a Seurat object for GEX and ADT data. Normalize ADT counts using centered log ratio (CLR).

- Create a ChromatinAssay object in Signac for the ATAC data. Call peaks using MACS2.

- Perform weighted nearest neighbor (WNN) analysis on the combined RNA, ADT, and ATAC (gene activity score) matrices to construct a unified UMAP and define cell clusters.

- Identify multi-modal markers: differential RNA expression, differential surface protein abundance, and differential chromatin accessibility peaks.
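
The centered log ratio (CLR) step mentioned above can be illustrated outside Seurat. The following is a minimal sketch, assuming a cells-by-antibodies count matrix and a pseudocount of 1; the function name `clr_normalize` is hypothetical, and Seurat's own CLR implementation may differ in detail (for example in how zeros are handled and along which margin the ratio is centered).

```python
import numpy as np

def clr_normalize(adt_counts, pseudocount=1.0):
    """Centered log-ratio transform applied per cell (rows = cells)."""
    counts = np.asarray(adt_counts, dtype=float)
    log_counts = np.log(counts + pseudocount)
    # Subtracting the per-cell mean of the logs is equivalent to dividing
    # each count by the geometric mean of that cell's pseudocounted values.
    return log_counts - log_counts.mean(axis=1, keepdims=True)

# Hypothetical ADT counts: 2 cells x 3 antibodies.
adt = [[0, 9, 99],
       [4, 4, 4]]
normalized = clr_normalize(adt)
```

Each row of the result sums to zero, so a cell with uniform staining (the second row) maps to all zeros, and values express enrichment of one antibody relative to the others in the same cell.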

Protocol 2: Spatial Validation and Context Integration using a Multi-modal Reference

Objective: To map and validate fine-grained cell subtypes onto a spatial transcriptomics slide and interpret spatial neighborhoods.

Methodology:

- Spatial Transcriptomics: Perform 10x Visium or similar spatial gene expression protocol on a consecutive tissue section (preferably fresh frozen).

- Image & Data Processing: Process slide through Space Ranger for alignment, tissue detection, and barcode/UMI counting.

- Anchor-Based Mapping:

- Restrict the multi-modal reference (from Protocol 1) and the spatial data to a shared set of ~5,000 highly variable genes.

- Find integration anchors using the `FindTransferAnchors()` function in Seurat, setting the reference to the WNN-integrated CITE-seq/ATAC-seq object and the query to the spatial data.

- Transfer cell type predictions (and optionally, imputed ADT or gene activity scores) to each spatial spot using `TransferData()`.

- Spatial Neighborhood Analysis:

- Perform niche analysis with `SpaCell` or `Giotto` to identify recurrent spot neighborhoods based on the transferred cell type composition.

- Correlate local cell type colocalization with key pathway activity (inferred from the reference's RNA data) or with histology from the H&E image.

Table 1: Common Issues and Solutions in Multi-modal Data Generation

| Issue | Primary Assay | Likely Cause | Recommended Solution |

|---|---|---|---|

| Low ADT Recovery | CITE-seq | Antibody degradation, poor staining/wash | Aliquot antibodies, use cold BSA buffer, titrate antibody amount. |

| High Mitochondrial % | ATAC-seq (Multiome) | Cell over-lysis, high cell input | Titrate digitonin (<0.05%), use accurate viable cell count (<50k). |

| Low Gene Complexity | scRNA-seq/GEX | Cell damage, poor RT/amplification | Assess cell viability, check reagent freshness, avoid over-amplification. |

| Low Peak Signal | ATAC-seq | Incomplete transposition, low cell input | Verify Tn5 activity, ensure correct cell concentration, check for inhibitor carryover. |

| Low Mapping Confidence | Spatial Integration | Batch effects, mismatched features | Apply Harmony/CCA, use robust spatial marker genes, leverage WNN anchors. |

Table 2: Recommended Sequencing Parameters for Multi-modal Studies

| Library Type | Platform | Recommended Depth | Read Configuration | Key Quality Metric |

|---|---|---|---|---|

| scRNA-seq (GEX) | Illumina NovaSeq | 20,000-50,000 reads/cell | 28bp Read1, 8bp i7, 0bp i5, 91bp Read2 | >70% reads confidently mapped to transcriptome. |

| CITE-seq (ADT) | Illumina NovaSeq | 5,000-20,000 reads/cell | 22bp Read1, 8bp i7, 0bp i5, 20bp* Read2 | Distinct antibody UMI distribution, low background. |

| scATAC-seq | Illumina NovaSeq | 25,000-100,000 fragments/cell | 50bp Paired-End | TSS enrichment score >5, FRiP score >0.2. |

| Visium (Spatial) | Illumina NovaSeq | 50,000-200,000 reads/spot | 28bp Read1, 10bp i7, 10bp i5, 90bp Read2 | >30% reads in spots under tissue, high UMIs/spot. |

*ADT Read2 length is determined by the specific TotalSeq-B antibody panel.

Visualizations

Workflow: Constructing a Multi-modal Reference Atlas

Spatial Mapping with a Multi-modal Reference

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example/Key Feature |

|---|---|---|

| TotalSeq-B Antibodies | Barcoded antibodies for quantifying surface protein expression alongside RNA in CITE-seq. | BioLegend, ~1,000+ human/mouse targets, contain PCR handle for library prep. |

| Chromium Single Cell Multiome ATAC + Gene Expression Kit | Enables co-assay of chromatin accessibility (ATAC) and gene expression (GEX) from the same nucleus. | 10x Genomics, includes nucleus isolation buffers, transposase, gel beads. |

| Chromium Next GEM Chip K | Microfluidic chip for partitioning cells/nuclei into Gel Bead-in-Emulsions (GEMs). | 10x Genomics, essential for all 10x single-cell library generation. |

| Digitonin | Mild, cholesterol-dependent detergent for permeabilizing cell membranes in ATAC-seq protocols. | Used at low concentration (0.01-0.05%) in transposition mix to allow Tn5 entry. |

| DMSO | Cryoprotectant for long-term storage of single-cell suspensions or nuclei prior to loading. | Use at 5-10% final concentration; helps maintain cell viability and prevent clumping. |

| BSA (0.5% in PBS) | Protein blocking agent for antibody staining and wash buffers. | Reduces non-specific binding of antibodies in CITE-seq and cell adhesion to tubes. |

| RNase Inhibitor | Protects RNA integrity during sample processing prior to cDNA synthesis. | Critical for high-quality GEX data, added to lysis and wash buffers. |

| Visium Spatial Tissue Optimization Slide & Kit | Determines optimal permeabilization time for tissue prior to spatial transcriptomics run. | 10x Genomics, essential for maximizing RNA capture efficiency from FFPE or frozen tissue. |

| SPRIselect Beads | Magnetic beads for size selection and clean-up of DNA libraries (ATAC, ADT). | Beckman Coulter, used for post-PCR purification and fragment size selection. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During graph-based clustering (e.g., Leiden, Louvain) of single-cell data, my results are overly granular, splitting known cell types into too many meaningless clusters. How can I optimize resolution?

- A: This is a common issue related to the resolution parameter and graph construction.

- Diagnosis: First, inspect the k-nearest neighbor (kNN) graph. A `k` that is too small, or a distance metric unsuited to your data (e.g., Euclidean on highly sparse data), can fragment the graph so that community detection splits genuine populations into many small clusters.

- Protocol - Systematic Resolution Scanning:

- Run the clustering algorithm across a logarithmic series of resolution parameters (e.g., 0.1, 0.2, 0.5, 1.0, 2.0).

- For each result, calculate cluster stability metrics (e.g., average silhouette width, confidence in clustering via bootstrapping).

- Use a known marker gene (from prior knowledge) as a pseudo-ground truth. Calculate the Adjusted Rand Index (ARI) between clustering results and a binary classification based on expression of this marker.

- Select the resolution that maximizes both stability and biological plausibility (see Table 1).

- Adjust Graph Construction: Consider using a cosine distance metric for high-dimensional transcriptomic data. Because the problem here is over-splitting, increase `k` in the kNN step to build a better-connected graph, which tends to yield fewer, larger clusters.

Q2: When applying a supervised classifier (e.g., Random Forest, SVM) to annotate new cell subtypes, performance drops significantly on data from a different batch or donor. How to improve generalization?

- A: This indicates batch effect confounding the model. The solution involves integration before classification.

- Diagnosis: Perform PCA and color cells by batch. If batches separate in low-dimensional space, batch correction is mandatory.

- Protocol - Integration-First Classification Workflow:

- Step 1: Merge all datasets (training and new) and perform standard normalization.

- Step 2: Apply a batch integration method (e.g., Harmony, Seurat's CCA) without using the cell labels.

- Step 3: Use the integrated embeddings (not the original counts) as features for training your classifier on the labeled training data.

- Step 4: Apply the trained model to predict labels on the integrated embeddings of the new data. This ensures the classifier learns on a batch-corrected feature space.

Q3: In semi-supervised learning for annotation, how do I select which unlabeled cells to query for expert labeling to maximize model improvement with minimal effort?

- A: Implement an Active Learning loop based on uncertainty sampling.

- Protocol - Active Learning Cycle:

- Train an initial model on your small set of labeled cells.

- Apply the model to all unlabeled cells to obtain prediction probabilities.

- Calculate the uncertainty for each unlabeled cell. For a Random Forest, use `1 - max(prediction_probability)`. For a model with calibrated probabilities, use entropy.

- Rank unlabeled cells by uncertainty (highest first).

- Present the top `N` (e.g., 20-50) most uncertain cells to the domain expert for labeling.

- Add the newly labeled cells to the training set, retrain the model, and repeat.

- Key Consideration: To avoid sampling bias, periodically also sample a small random subset of low-uncertainty cells for validation.
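
The ranking step of the active learning cycle can be sketched as follows. This is a minimal illustration, assuming the classifier's class probabilities are already available as a cells-by-classes matrix; the function names `uncertainty_scores` and `select_queries` are hypothetical.

```python
import numpy as np

def uncertainty_scores(proba, method="least_confident"):
    """Per-cell uncertainty from an (n_cells, n_classes) probability matrix."""
    proba = np.asarray(proba, dtype=float)
    if method == "least_confident":
        # 1 - max(prediction_probability), as described for Random Forests.
        return 1.0 - proba.max(axis=1)
    if method == "entropy":
        # Clip to avoid log(0); zero-probability classes contribute ~0.
        p = np.clip(proba, 1e-12, 1.0)
        return -(p * np.log(p)).sum(axis=1)
    raise ValueError(f"unknown method: {method}")

def select_queries(proba, n_queries=20, method="least_confident"):
    """Indices of the n_queries most uncertain cells, most uncertain first."""
    scores = uncertainty_scores(proba, method)
    order = np.argsort(-scores, kind="stable")
    return order[:n_queries]

# Hypothetical probabilities for three unlabeled cells and three subtypes.
proba = [[0.90, 0.05, 0.05],
         [0.40, 0.35, 0.25],
         [0.60, 0.30, 0.10]]
queries = select_queries(proba, n_queries=2)
```

Here the second cell is queried first because its top probability is lowest. The two scoring rules usually agree on clear cases but can rank borderline cells differently, since entropy also reflects how the remaining probability mass is spread over the other classes.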


Q4: What quantitative metrics should I use to benchmark the final annotation accuracy of my pipeline against a manually curated gold standard?

- A: Rely on a suite of metrics, not just one. Compare your algorithmic labels (`Pred`) to expert labels (`True`) using the following, summarized in Table 1.

Table 1: Benchmarking Metrics for Annotation Accuracy

| Metric | Formula / Description | Interpretation & Use Case |

|---|---|---|

| Overall Accuracy | (Correct Cells) / (Total Cells) | Simple global measure. Can be misleading under class imbalance. |

| Balanced Accuracy | Mean of per-class recall; equals (Sensitivity + Specificity) / 2 for two classes | Better for imbalanced classes, because every subtype counts equally regardless of its size. |

| Adjusted Rand Index (ARI) | Adjusted for chance similarity of two partitions. Range: [-1, 1]. | Measures cluster similarity. 1=perfect match. Robust to label permutations. |

| Weighted F1-Score | Harmonic mean of precision & recall, averaged weighted by class size. | Good overall measure of classifier performance per class. |

| Confusion Matrix | C(i,j) = cells of true class i predicted as class j. | Essential for diagnosing which subtypes are consistently confused. |
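
The metrics in this table are simple enough to compute directly, which can help when checking what a library function reports. The sketch below uses only the Python standard library; all function names are hypothetical, and labels are assumed to be sortable values such as strings.

```python
from collections import Counter
from math import comb

def confusion_matrix(true, pred):
    """Counter keyed by (true_label, predicted_label)."""
    return Counter(zip(true, pred))

def accuracy(true, pred):
    return sum(t == p for t, p in zip(true, pred)) / len(true)

def per_class_stats(true, pred):
    """Recall, precision, F1 and support for every class present in `true`."""
    cm = confusion_matrix(true, pred)
    stats = {}
    for c in sorted(set(true)):
        tp = cm[(c, c)]
        support = sum(n for (t, _), n in cm.items() if t == c)
        predicted = sum(n for (_, p), n in cm.items() if p == c)
        recall = tp / support
        precision = tp / predicted if predicted else 0.0
        f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
        stats[c] = {"recall": recall, "precision": precision,
                    "f1": f1, "support": support}
    return stats

def balanced_accuracy(true, pred):
    stats = per_class_stats(true, pred)
    return sum(s["recall"] for s in stats.values()) / len(stats)

def weighted_f1(true, pred):
    stats = per_class_stats(true, pred)
    return sum(s["f1"] * s["support"] for s in stats.values()) / len(true)

def adjusted_rand_index(true, pred):
    n = len(true)
    sum_cells = sum(comb(v, 2) for v in Counter(zip(true, pred)).values())
    sum_rows = sum(comb(v, 2) for v in Counter(true).values())
    sum_cols = sum(comb(v, 2) for v in Counter(pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical imbalanced example: 4 Naive cells, 2 Exhausted cells.
true = ["Naive"] * 4 + ["Exhausted"] * 2
pred = ["Naive", "Naive", "Naive", "Exhausted", "Exhausted", "Naive"]
```

In this toy example overall accuracy is 4/6 while balanced accuracy is 0.625, because the smaller Exhausted class is recovered only half the time. The ARI ignores label names entirely, so two partitions that differ only by a relabeling score 1.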

Experimental Protocols

Protocol 1: Benchmarking Graph Clustering for Subtype Discovery

- Input: Normalized single-cell RNA-seq count matrix (e.g., 10k cells x 20k genes).

- Method:

- Feature Selection: Select 2,000-5,000 highly variable genes.

- Dimensionality Reduction: PCA (50 principal components).

- Graph Construction: Build a shared nearest neighbor (SNN) graph using the first 30 PCs (`k=20` neighbors).

- Clustering: Apply the Leiden algorithm at resolution `r`.

- Optimization: Repeat step 4 for `r` in `[0.2, 0.5, 1.0, 1.5, 2.0]`. Calculate average silhouette width per `r`.

- Validation: For each `r`, compute the separation of known major type markers (e.g., CD3E for T cells) using per-cluster log fold-change. Select the `r` yielding high silhouette width and clear marker separation.

Protocol 2: Semi-Supervised Annotation with Self-Training

- Input: Integrated embedding matrix (e.g., Harmony dimensions), small set of labeled cells (`L`), large set of unlabeled cells (`U`).

- Method:

- Initial Model: Train a Support Vector Machine (SVM) with radial basis function kernel on `L`.

- Pseudo-Labeling: Apply the SVM to `U`. Select cells where prediction probability exceeds a high threshold (e.g., 0.95).

- Expansion: Add these high-confidence pseudo-labeled cells to `L`.

- Reiteration: Retrain the SVM on the expanded `L`. Repeat steps 2-3 for 2-3 iterations.

- Final Classification: Apply the final model to all cells in `U` with lower confidence thresholds for annotation.
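
The self-training loop of Protocol 2 might be sketched with scikit-learn as follows. This is an illustration under stated assumptions, not a reference implementation: the function name `self_train` is hypothetical, inputs are assumed to be integrated embedding coordinates, and probabilities come from `SVC(probability=True)`, which relies on Platt scaling and can be poorly calibrated on small labeled sets.

```python
import numpy as np
from sklearn.svm import SVC

def self_train(X_labeled, y_labeled, X_unlabeled, threshold=0.95, n_iter=3):
    """Self-training loop with an RBF-kernel SVM on embedding coordinates."""
    X_l, y_l = np.asarray(X_labeled), np.asarray(y_labeled)
    X_u = np.asarray(X_unlabeled)
    remaining = np.ones(len(X_u), dtype=bool)  # cells not yet pseudo-labeled

    model = SVC(kernel="rbf", probability=True, random_state=0).fit(X_l, y_l)
    for _ in range(n_iter):
        if not remaining.any():
            break
        proba = model.predict_proba(X_u[remaining])
        confident = proba.max(axis=1) >= threshold
        if not confident.any():
            break  # nothing passes the threshold; stop early
        idx = np.flatnonzero(remaining)[confident]
        pseudo = model.classes_[proba[confident].argmax(axis=1)]
        # Expansion: move confident cells from U into L with pseudo-labels.
        X_l = np.vstack([X_l, X_u[idx]])
        y_l = np.concatenate([y_l, pseudo])
        remaining[idx] = False
        model = SVC(kernel="rbf", probability=True, random_state=0).fit(X_l, y_l)
    return model
```

The returned model can then be applied to all unlabeled cells for the final classification step. scikit-learn also ships a packaged `SelfTrainingClassifier` in `sklearn.semi_supervised` that wraps the same idea around any probabilistic estimator. Pseudo-labels are never revisited in this loop, so an early mistake can propagate; a strict threshold limits that risk.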

Visualizations

Title: Self-Training Semi-Supervised Learning Workflow

Title: Graph-Based Clustering Optimization Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Cell Subtype Annotation Research

| Item | Function in Context |

|---|---|

| 10x Genomics Chromium | Platform for high-throughput single-cell RNA/DNA library preparation. Generates the primary barcoded sequencing data. |

| Cell Hashing Antibodies (e.g., BioLegend TotalSeq-A) | Allows multiplexing of samples, reducing batch effects and costs. Enables post-hoc sample demultiplexing. |

| Feature Barcoding Kits (CITE-seq/REAP-seq) | Enables simultaneous measurement of surface protein abundance alongside transcriptome, crucial for defining similar subtypes. |

| Seurat R Toolkit / Scanpy Python Toolkit | Comprehensive software suites for single-cell analysis, including graph construction, clustering, and visualization. |

| Harmony Integration Algorithm | Software package for batch effect correction without using labels, creating integrated embeddings for downstream analysis. |

| Cell Annotation Databases (CellMarker, PanglaoDB) | Curated resources of marker genes for cell types, used as prior knowledge for seeding supervised/semi-supervised models. |

| Google Colab / High-Performance Computing (HPC) Cluster | Computational environment required for running advanced algorithms on large-scale single-cell datasets. |

Troubleshooting Guides & FAQs

Q1: Our ensemble model (e.g., Random Forest or a custom voting classifier) is consistently overfitting to our training data on single-cell RNA-seq datasets, leading to poor generalization on validation batches. What are the primary checks and steps to mitigate this?

A1: Overfitting in ensembles for cell annotation often stems from correlated base classifiers or dataset-specific noise.

- Check Base Learner Diversity: Calculate pairwise correlations between the predicted probability outputs of your base classifiers (e.g., different k-NN, SVM, and decision tree configurations) on a held-out set. Low correlation indicates good diversity. Introduce more algorithmically diverse models (neural networks, gradient boosting) if correlations are high (>0.8).

- Review Aggregation Method: Switch from a simple majority vote to a weighted vote, where weights are inversely proportional to each classifier's error rate on a validation set, or use stacking with a simple meta-learner (like logistic regression) trained on cross-validated base classifier outputs.

- Apply Consensus Feature Selection: Before training, restrict the gene set to features that several independent selection methods agree on. Use the following protocol:

- Run Recursive Feature Elimination (RFE) with a linear SVM, Boruta, and a variance threshold filter independently.

- Retain only the features selected by at least 2 out of 3 methods.

- Retrain your ensemble on this reduced gene expression matrix.
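
The "at least 2 out of 3" rule amounts to a vote over gene sets. A minimal sketch, assuming each selector has already produced its list of retained genes; the function name `consensus_features`, the dictionary keys, and the gene lists are hypothetical.

```python
from collections import Counter

def consensus_features(selections, min_votes=2):
    """Genes chosen by at least `min_votes` of the selection methods.

    `selections` maps a method name to the collection of genes it kept.
    """
    # set() ensures a gene listed twice by one method still counts once.
    votes = Counter(gene for genes in selections.values() for gene in set(genes))
    return sorted(gene for gene, n in votes.items() if n >= min_votes)

# Hypothetical outputs of the three selectors named in the protocol.
selections = {
    "rfe_linear_svm": ["CD8A", "GZMK", "PDCD1", "TOX"],
    "boruta": ["CD8A", "PDCD1", "LAG3", "TOX"],
    "variance_threshold": ["CD8A", "GZMK", "LAG3", "MALAT1"],
}
kept = consensus_features(selections)
print(kept)
```

Genes supported by a single method (here `MALAT1`) are dropped. To avoid leaking information, the selectors themselves should be run on training cells only.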

Q2: When using a stacking ensemble, the performance of the meta-classifier is worse than that of the best base classifier. What could be going wrong and how do we debug the stacking workflow?

A2: This usually indicates data leakage during the generation of the training data for the meta-learner or a poorly chosen meta-learner.

- Debug the Training Protocol: Ensure you used proper out-of-fold predictions for the base layer. The correct workflow is:

- Split your training data into k folds.

- For each base classifier, train on k-1 folds and predict probabilities on the held-out fold. Repeat for all k folds to generate a full set of out-of-fold predictions for the training set.

- Train the meta-classifier only on these out-of-fold predictions and their true labels.

- Finally, train all base classifiers on the entire training set.

- Simplify the Meta-Learner: Start with a simple linear model (e.g., Logistic Regression) as your meta-classifier to avoid adding another layer of complexity. Non-linear meta-learners can overfit quickly.

- Check Base Classifier Outputs: Ensure your base classifiers output well-calibrated probability estimates (not just decision labels) for the meta-learner to utilize.
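
The leakage-free stacking workflow described above can be sketched with scikit-learn. The data here are synthetic (`make_classification`) and stand in for an expression or embedding matrix; the choice of base models and all hyperparameters are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC

# Synthetic stand-in for a matrix of cells with three subtypes.
X, y = make_classification(n_samples=600, n_features=20, n_informative=8,
                           n_classes=3, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

base_models = [
    SVC(kernel="rbf", probability=True, random_state=0),
    RandomForestClassifier(n_estimators=100, random_state=0),
    KNeighborsClassifier(n_neighbors=5),
]
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Out-of-fold probabilities: no cell is scored by a model that saw it in training.
oof = np.hstack([
    cross_val_predict(m, X_train, y_train, cv=cv, method="predict_proba")
    for m in base_models
])

# The meta-learner sees only out-of-fold predictions and the true labels.
meta = LogisticRegression(max_iter=1000).fit(oof, y_train)

# Refit every base model on the full training set for inference.
fitted = [m.fit(X_train, y_train) for m in base_models]
test_features = np.hstack([m.predict_proba(X_test) for m in fitted])
stacked_accuracy = meta.score(test_features, y_test)
print(f"stacked accuracy: {stacked_accuracy:.3f}")
```

If the meta-learner were instead trained on predictions from base models fitted to the same cells, its inputs would look far more confident than they are on new data, which is the leakage the answer warns about. scikit-learn's `StackingClassifier` performs the same out-of-fold procedure internally and is usually the more convenient option.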

Q3: How do we quantitatively decide between a hard voting and a soft voting ensemble approach for our cell subtype classification task?

A3: The decision should be based on the confidence calibration of your base classifiers. Follow this experimental comparison:

Protocol:

- Train your candidate base classifiers (e.g., Classifier A, B, C).

- On a dedicated validation set, record predictions for both strategies:

- Hard Vote: Final class = mode of each classifier's predicted class label.

- Soft Vote: Final class = argmax of the average predicted probabilities for each class.

- Calculate key metrics for both ensemble types.

Quantitative Comparison Table:

| Metric | Hard Voting Ensemble | Soft Voting Ensemble | Interpretation |

|---|---|---|---|

| Overall Accuracy | 94.2% | 95.7% | Soft voting marginally better. |

| Avg. Precision (Macro) | 0.89 | 0.92 | Soft voting better at ranking positive cells. |

| Cohen's Kappa | 0.91 | 0.93 | Soft voting leads to better agreement beyond chance. |

| Runtime (Prediction) | ~1.2s | ~1.5s | Hard voting is slightly faster. |

Conclusion: If base classifiers produce meaningful probabilities (are well-calibrated), soft voting is generally superior. Use hard voting if probabilities are unreliable or speed is critical.
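
The two voting rules differ only in what is aggregated, which a small numerical example makes concrete. This is a minimal sketch assuming class probabilities are stacked into an array of shape (n_classifiers, n_cells, n_classes); the function names `hard_vote` and `soft_vote` are hypothetical.

```python
import numpy as np

def hard_vote(proba):
    """Majority vote over each classifier's argmax label."""
    labels = proba.argmax(axis=2)                    # (n_classifiers, n_cells)
    n_classes = proba.shape[2]
    # One-hot encode the labels and count votes per cell and class.
    counts = np.eye(n_classes)[labels].sum(axis=0)   # (n_cells, n_classes)
    return counts.argmax(axis=1)                     # ties go to the lowest class index

def soft_vote(proba):
    """Argmax of the class probabilities averaged over classifiers."""
    return proba.mean(axis=0).argmax(axis=1)

# One cell, two classes: two lukewarm votes for class 0, one confident vote for class 1.
proba = np.array([
    [[0.55, 0.45]],
    [[0.55, 0.45]],
    [[0.05, 0.95]],
])
print(hard_vote(proba), soft_vote(proba))
```

Hard voting returns class 0 (two votes to one), while soft voting returns class 1 because the averaged probability for class 1 is about 0.62. Whether the soft result is preferable depends on whether the third classifier's 0.95 is trustworthy, which is why calibration is the deciding factor.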

Q4: We observe high disagreement among classifiers for a specific rare cell subtype (e.g., a novel T-cell state). How should we handle these "low-consensus" cells to improve annotation robustness?

A4: High disagreement is an opportunity for discovery or quality control. Implement a consensus threshold filter.

- Define a Consensus Score: For each cell, calculate the proportion of base classifiers that agree with the final ensemble call. For soft voting, use instead the standard deviation, across base classifiers, of the probability assigned to the called class; a high SD indicates low consensus.

- Set a Threshold: Based on your validation set, plot consensus score against classifier confidence. Identify a score below which manual review is required (see table for example).

- Action Protocol: Implement a triage system based on consensus level.

Consensus Triage Protocol Table:

| Consensus Score (Proportion) | Action | Outcome |

|---|---|---|

| ≥ 0.9 (High) | Accept automated call. | Robust annotation for downstream analysis. |

| 0.6 - 0.89 (Medium) | Flag for review via visualizations (UMAP with highlighted cell). | Check if cells lie in ambiguous region in gene expression space. |

| ≤ 0.59 (Low) | Reject automated call. Send for manual annotation or label as "Uncertain". | Prevents erroneous calls from skewing rare population analysis. |
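
The triage table can be applied per cell with two small helpers. This sketch assumes the final ensemble call is the majority label among base classifiers; the function names and the action strings are hypothetical, and the thresholds are the example values from the table.

```python
from collections import Counter

def consensus_score(votes):
    """Winning label and the fraction of base classifiers that voted for it."""
    label, n_agree = Counter(votes).most_common(1)[0]
    return label, n_agree / len(votes)

def triage(score, accept=0.9, review=0.6):
    """Map a consensus score to the action tiers in the table above."""
    if score >= accept:
        return "accept"
    if score >= review:
        return "review"
    return "uncertain"

# Hypothetical votes from four base classifiers for one cell.
label, score = consensus_score(["Tex", "Tex", "Tex", "Tem"])
action = triage(score)
```

With only four base classifiers the score can take just four values (0.25, 0.5, 0.75, 1.0), so the 0.9 cutoff effectively means unanimity. Thresholds are best re-derived on a validation set whenever the number of base classifiers changes.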

Q5: What are the essential computational tools and packages for implementing ensemble methods in a Python-based single-cell analysis pipeline?

A5: The following toolkit is standard for building classifier ensembles in this domain.

Research Reagent Solutions (Computational Tools):

| Tool/Package | Primary Function | Use Case in Ensemble Cell Annotation |

|---|---|---|

| scikit-learn | Core ML & ensemble algorithms. | Providing base estimators (SVM, RF, k-NN) and ensemble wrappers (VotingClassifier, StackingClassifier). |

| Scanpy/Anndata | Single-cell data management. | Housing expression matrices, cell metadata, and storing ensemble prediction results as new annotations. |

| scGeneFit | Marker selection & feature extraction. | Identifying discriminative genes for training classifiers, reducing dimensionality. |

| CellTypist | Pre-trained & transfer learning models. | Can be used as a powerful base classifier within a custom ensemble. |

| Joblib | Parallel processing. | Parallelizing the training of multiple base classifiers to reduce runtime. |

| UNCURL | Preprocessing & denoising. | Generating alternative, denoised views of the data to train diverse base classifiers. |

Experimental Protocol: Benchmarking Ensemble Strategies for Cell Annotation

Objective: To compare the performance of a single classifier versus multiple ensemble methods on a benchmark single-cell dataset with known, challenging similar subtypes (e.g., CD8+ T-cell exhaustion states).

1. Data Preparation:

- Dataset: Use a public PBMC dataset with expert-annotated CD8+ T-cell subtypes (Naive, Central Memory, Effector, Exhausted).

- Preprocessing: Log-normalize, identify highly variable genes (2000), and scale the data. Split into 70% training, 15% validation, 15% test, ensuring balanced subtype representation.

2. Base Classifier Training:

- Train four distinct base classifiers on the training set using 5-fold cross-validation:

- C1: Support Vector Machine (RBF kernel).

- C2: Random Forest (100 trees).

- C3: k-Nearest Neighbors (k=5).

- C4: Multi-layer Perceptron (1 hidden layer).

3. Ensemble Construction:

- Hard Voting (HV): Majority vote from C1-C4.

- Soft Voting (SV): Argmax of average predicted probabilities from C1-C4.

- Stacking (ST): Use out-of-fold predictions from C1-C4 on the training set to train a Logistic Regression meta-classifier.

4. Evaluation:

- Evaluate all models on the held-out test set.

- Key Metrics: Accuracy, Weighted F1-Score, Matthews Correlation Coefficient (MCC).

5. Consensus Analysis:

- For each cell in the test set, calculate the consensus score (proportion of agreeing base classifiers).

- Correlate low-consensus cells with their position in UMAP space.

Title: Ensemble Method Benchmarking Workflow for Cell Annotation

Title: Decision Logic for Low-Consensus Cell Triage

Debugging Your Annotations: Common Pitfalls and Pro Tips for Refinement

Troubleshooting Guide & FAQs

Q1: After automated annotation, a significant subset of cells has low confidence scores (<0.5). What should I do first?

A1: First, perform UMAP inspection. Generate a UMAP plot colored by confidence score and a second plot colored by the preliminary cluster labels. Overlaying these helps identify if low-confidence cells are isolated in specific regions (suggesting a novel or poorly represented subtype) or diffusely spread (suggesting technical noise or batch effect).

Q2: In UMAP space, my low-confidence cells form a distinct, dense cluster separate from high-confidence populations. What does this indicate?

A2: This pattern strongly suggests the presence of a biologically distinct cell subtype not well-represented in your reference dataset. The annotation algorithm cannot confidently map these cells to existing labels. The next step is to perform differential expression analysis on this cluster versus the nearest high-confidence cluster to identify potential marker genes for a new subtype.

Q3: Low-confidence cells are scattered diffusely across all clusters in UMAP. What are the likely causes and solutions?

A3: This typically points to data quality issues or batch effects.

- Cause 1: High doublet or multiplet rate.

- Solution: Re-run doublet detection (e.g., using `scrublet`) and remove suspected doublets before re-annotation.

- Cause 2: Significant batch effect confounding the analysis.

- Solution: Apply a robust integration/batch correction method (e.g., `Harmony`, `Scanorama`, `BBKNN`) before generating the embeddings used for UMAP and annotation. Note that Harmony and BBKNN operate on a PCA embedding rather than on raw counts.

- Cause 3: Low sequencing depth or high mitochondrial gene percentage in those specific cells.

- Solution: Filter cells based on stricter QC thresholds (`nCount_RNA`, `nFeature_RNA`, `percent.mt`).

Q4: How do I decide the threshold for a "low" confidence score? Is it universal?

A4: No, the threshold is not universal. It depends on your annotation tool and dataset complexity.

- Plot the distribution of confidence scores for your entire dataset.

- Identify natural breaks or bimodality in the distribution.

- Manually inspect the expression of canonical markers in cells from different score ranges (e.g., 0-0.3, 0.3-0.6, 0.6-1.0) to validate the biological relevance of the threshold.

Q5: What is the step-by-step protocol for the differential expression analysis recommended for investigating a novel low-confidence cluster?

A5: Protocol: Marker Identification for Low-Confidence Clusters

- Subset Data: Isolate the cells from the distinct low-confidence cluster (Cluster A) and the cells from the nearest high-confidence cluster (Cluster B).

- Normalization: Perform library size normalization and log transformation on the raw count matrix for this subset (e.g., `SCTransform` or `NormalizeData` in Seurat).

- Differential Testing: Use a statistical test appropriate for single-cell data (e.g., Wilcoxon rank-sum test via `FindMarkers` in Seurat) to compare Cluster A vs. Cluster B.

- Filter Results: Apply thresholds (e.g., `avg_log2FC > 0.5`, `p_val_adj < 0.01`) to identify significant differentially expressed genes (DEGs).

- Validation: Visually validate top upregulated DEGs in Cluster A using feature plots on the UMAP and violin plots. Cross-reference with external literature or databases (e.g., CellMarker, PanglaoDB) to assess novelty.

Table 1: Common Causes and Diagnostic Signals of Low-Confidence Annotations

| Pattern in UMAP | Likely Primary Cause | Key Diagnostic Check | Recommended Action |

|---|---|---|---|

| Distinct, isolated cluster | Novel cell type/subtype | DEGs vs. nearest cluster; Check literature for markers | Curate new label; Expand reference dataset |

| Diffuse scattering across plots | High doublet rate | Run doublet detection score | Remove predicted doublets and re-analyze |

| Mixing at cluster boundaries | Ambiguous transitional state | Check expression of cycling (MKI67) or stress markers | Apply a "transitioning" or "unknown" label; Use trajectory inference |

| Batch-specific distribution | Batch effect | Color UMAP by sample/batch of origin | Apply batch correction before annotation |

Table 2: Typical Confidence Score Ranges and Interpretation

| Score Range | Interpretation | Recommended Action |

|---|---|---|

| 0.8 – 1.0 | High-confidence assignment. | Accept label for downstream analysis. Use these cells as a stable core for comparisons. |

| 0.5 – 0.8 | Moderate confidence. | Accept label tentatively. Flag for manual review if subpopulation analysis is critical. |

| 0.3 – 0.5 | Low confidence. | Mandatory manual inspection. Likely ambiguous or poorly represented subtype. Primary target for diagnostic workflow. |

| 0.0 – 0.3 | Very low/no confidence. | Highly ambiguous or aberrant cells. Check for technical artifacts (doublets, low quality). |
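The ranges in Table 2 can be applied programmatically to triage cells. The sketch below assumes scores lie in [0, 1] and that a boundary value belongs to the higher tier (for example, 0.8 counts as high confidence); the table itself does not specify this, so treat it as an assumption. The function name and scores are illustrative.

```python
from collections import Counter

def confidence_tier(score):
    """Map a per-cell confidence score in [0, 1] to a Table 2 tier."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score out of range: {score}")
    if score >= 0.8:
        return "high"
    if score >= 0.5:
        return "moderate"
    if score >= 0.3:
        return "low"
    return "very_low"

# Hypothetical per-cell scores from an annotation tool
scores = [0.95, 0.81, 0.62, 0.44, 0.29, 0.05]
tier_counts = Counter(confidence_tier(s) for s in scores)
print(dict(tier_counts))
```

Because the appropriate cut-offs depend on the annotation tool and dataset, the numeric boundaries should be adjusted to match the thresholds validated for a given project.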

Visualizations

Diagram 1: Workflow for Diagnosing Low Confidence Annotations

Diagram 2: Signaling Pathway for Cell State Ambiguity (Example: IFN Response)

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function/Application in Diagnosis |
|---|---|
| Single-Cell Annotation Software (e.g., scArches, SingleR, scPred) | Provides the automated cell-type label and the associated per-cell confidence score, which is the starting point for diagnosis. |
| Integration/Batch Correction Tools (e.g., Harmony, BBKNN) | Critical for resolving diffuse low-confidence patterns caused by batch effects. Corrects embeddings before annotation. |
| Doublet Detection Algorithms (e.g., Scrublet, DoubletFinder) | Identifies and removes technical multiplets, a common cause of unassignable, low-confidence cells. |
| Marker Gene Databases (e.g., CellMarker, PanglaoDB) | Used to validate potential novel markers from low-confidence clusters against known biology. |
| Visualization Packages (e.g., scanpy.pl, Seurat::DimPlot) | Enables generation of UMAP/t-SNE plots colored by confidence score and cluster ID for pattern recognition. |
| Differential Expression Tool (e.g., Seurat::FindMarkers, scanpy.tl.rank_genes_groups) | Performs statistical comparison between low-confidence clusters and reference populations to identify signature genes. |

Batch Effect Correction Strategies for Consistent Cross-Dataset Annotation

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After integrating two single-cell RNA-seq datasets from different labs, my shared cell subtype clusters separately in UMAP. What is the first step to diagnose the issue? A1: This pattern usually indicates a strong residual batch effect. The first diagnostic step is a pre-correction visualization. Calculate principal components (PCs) on the combined, normalized (e.g., log(CP10K+1)) but uncorrected data. Create a PC heatmap with cells annotated by batch label, and plot the variance explained by each PC. If the early PCs (PC1-PC5) separate cells by batch and explain a large share of the variance, technical batch effect is confounding biological variation.
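To put a number on "batch association" for each PC, one option is the fraction of a PC's variance explained by the batch label (the eta-squared statistic from a one-way ANOVA). This statistic is an illustrative choice rather than something prescribed above, and the PC scores below are toy values standing in for real per-cell PCA output.

```python
from statistics import mean

def batch_r2(pc_scores, batches):
    """Fraction of one PC's variance explained by batch (one-way ANOVA eta-squared)."""
    grand = mean(pc_scores)
    ss_total = sum((x - grand) ** 2 for x in pc_scores)
    ss_between = 0.0
    for b in set(batches):
        group = [x for x, label in zip(pc_scores, batches) if label == b]
        ss_between += len(group) * (mean(group) - grand) ** 2
    return ss_between / ss_total if ss_total > 0 else 0.0

# Toy per-cell PC scores for six cells from two batches (hypothetical values)
batches = ["A", "A", "A", "B", "B", "B"]
pcs = {
    "PC1": [-2.1, -1.9, -2.0, 2.0, 1.9, 2.1],  # separates the two batches
    "PC2": [1.0, -1.0, 0.0, 1.0, -1.0, 0.0],   # unrelated to batch
}
r2 = {name: round(batch_r2(values, batches), 3) for name, values in pcs.items()}
print(r2)
```

A value near 1 for an early PC means that PC mostly encodes batch; values near 0 suggest the PC is driven by something else.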

Q2: I used Harmony to integrate my datasets, but now I suspect it is over-correcting and removing real biological signal. How can I verify this? A2: Over-correction is a critical risk. To verify, conduct a differential expression analysis for known, biologically defined marker genes within a batch-corrected cluster, but using the pre-correction, batch-separated data. Follow this protocol:

- Identify a cluster of interest post-Harmony.

- Map cells in this cluster back to their original, uncorrected expression matrices and batch labels.

- For a strong marker gene (e.g., CD8A for cytotoxic T cells), plot its expression (log counts) across batches within this cluster using a violin plot.

- If the gene is expressed in only one batch pre-correction (e.g., a Wilcoxon rank-sum test between batches gives p < 0.05), the apparent marker signal was likely a batch artifact. If it is consistently expressed across batches pre-correction but its signal has been diluted post-correction, over-correction is likely. Lower Harmony's theta parameter (higher values impose a stronger diversity penalty and therefore more aggressive correction; theta = 0 applies no diversity penalty) and repeat.
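The per-batch check in this protocol can be sketched as follows, assuming each cell of the post-integration cluster has been mapped back to its batch label and its uncorrected expression values. The data structure, batch names, and counts are hypothetical.

```python
from collections import defaultdict

def fraction_expressing(cells, gene):
    """Per-batch fraction of cells with nonzero uncorrected expression of `gene`."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for cell in cells:
        totals[cell["batch"]] += 1
        if cell["expr"].get(gene, 0) > 0:
            positives[cell["batch"]] += 1
    return {batch: positives[batch] / totals[batch] for batch in totals}

# Cells from one post-Harmony cluster with their ORIGINAL (uncorrected) counts
cluster_cells = [
    {"batch": "lab1", "expr": {"CD8A": 3}},
    {"batch": "lab1", "expr": {"CD8A": 1}},
    {"batch": "lab1", "expr": {"CD8A": 0}},
    {"batch": "lab2", "expr": {"CD8A": 2}},
    {"batch": "lab2", "expr": {}},          # gene not detected in this cell
    {"batch": "lab2", "expr": {"CD8A": 4}},
]
frac = fraction_expressing(cluster_cells, "CD8A")
print(frac)
```

Similar detection rates across batches suggest the marker is genuinely shared; a rate near zero in one batch points toward a batch-specific signal that deserves closer inspection with the violin plot.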

Q3: Which batch correction method should I choose for integrating datasets generated with different platforms (e.g., 10x Genomics v2 vs. SMART-seq2)? A3: Platform-based differences can be severe. Use a mutual nearest neighbors (MNN) method or Seurat's CCA-based anchor method, as they are designed for strong, non-linear biases. Avoid ComBat in this scenario: its linear model assumes that batches have similar cell-type composition and expression distributions, which rarely holds across platforms. Critical pre-processing step: Perform aggressive, feature-based selection by retaining only genes detected (expression > 0) in a minimum percentage of cells (e.g., 5%) in all batches. This focuses correction on robustly measured biological signal.
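The gene pre-filter described above can be sketched as a set intersection across batches. The example assumes each batch is available as a list of per-cell gene-to-count dictionaries; the function name and the tiny toy dataset are hypothetical, and the toy run uses a 50% cut-off only because it contains two cells per batch.

```python
def shared_detected_genes(batches, min_fraction=0.05):
    """Genes detected (count > 0) in at least `min_fraction` of cells in EVERY batch."""
    keep = None
    for cells in batches.values():
        n_cells = len(cells)
        detected = {}
        for cell in cells:
            for gene, count in cell.items():
                if count > 0:
                    detected[gene] = detected.get(gene, 0) + 1
        passing = {g for g, n in detected.items() if n / n_cells >= min_fraction}
        # Intersect so that a gene must pass the cut-off in all batches
        keep = passing if keep is None else keep & passing
    return sorted(keep or [])

# Toy counts: two cells per platform (hypothetical values)
batches = {
    "10x_v2": [{"CD3E": 2, "CD8A": 0, "XIST": 1}, {"CD3E": 1, "CD8A": 3, "XIST": 0}],
    "smartseq2": [{"CD3E": 40, "CD8A": 12, "XIST": 0}, {"CD3E": 25, "CD8A": 0, "XIST": 0}],
}
genes = shared_detected_genes(batches, min_fraction=0.5)
print(genes)
```

Here XIST is dropped because it is never detected in the second platform, even though it passes the cut-off in the first.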

Q4: My batch-corrected data shows good integration visually, but downstream differential expression (DE) results yield many insignificant or inconsistent genes. What might be wrong?

A4: The correction may have altered the variance structure. Run DE testing on gene-level expression data, never on the integrated low-dimensional embeddings: either test the uncorrected counts with batch included as a covariate, or use the model-based DE provided by the integration method itself (e.g., the differential_expression method of an scvi-tools model). Ensure you are using statistical models (e.g., Wilcoxon, MAST, or NB models from scvi-tools) that account for the data's technical noise. Running DE on PCA embeddings will produce invalid statistics.

Experimental Protocols

Protocol 1: Benchmarking Correction Performance Using a Mixed-Species Experiment This protocol is the gold standard for quantifying batch correction accuracy.

- Experimental Design: Generate a "batch" dataset by mixing human (HEK293) and mouse (3T3) cells in equal proportions. Process half the mix for library prep on Day 1 (Batch A) and the other half on Day 2 (Batch B).

- Bioinformatics Processing: Align reads to a combined human (GRCh38) and mouse (GRCm39) genome. This allows unambiguous species assignment for each cell barcode.

- Benchmark Metric Calculation: Apply your chosen correction method (e.g., Seurat, Harmony, Scanorama). Post-integration, calculate two batch-mixing metrics:

- Batch ASW (Average Silhouette Width): Compute the silhouette width for each cell using batch labels as the grouping. Values range from -1 to 1; aim for a value close to 0, indicating no batch structure.

- kBET (k-nearest neighbor batch effect test): For a random subset of cells, test if the batch label distribution in its local neighborhood matches the global distribution. Reports a rejection rate. Aim for < 0.1.

- Biological Conservation Metric: Perform clustering on the corrected data. Calculate the Adjusted Rand Index (ARI) between clusters and the known species labels. Aim for an ARI > 0.9, confirming that biological signal (species) is preserved while batch is removed.
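For the biological conservation step, the Adjusted Rand Index can be computed directly from the contingency counts between clusters and species labels. The sketch below is a plain-Python version for illustration (scikit-learn's adjusted_rand_score computes the same metric); the labels are toy values, and at least two cells are assumed.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same cells (n >= 2)."""
    n = len(labels_true)
    contingency = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)  # agreement expected by chance
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0  # degenerate case: both labelings are trivially identical
    return (sum_cells - expected) / (max_index - expected)

# Toy example: known species labels vs. two hypothetical clusterings
species = ["human"] * 4 + ["mouse"] * 4
ari_good = adjusted_rand_index(species, [0, 0, 0, 0, 1, 1, 1, 1])
ari_mixed = adjusted_rand_index(species, [0, 0, 1, 1, 0, 0, 1, 1])
print(ari_good, round(ari_mixed, 3))
```

A clustering that recovers the species split scores 1, while one that cuts across species scores at or below 0, which is the pattern expected when correction has erased biological signal.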

Protocol 2: Validating Annotations with a Hold-Out Dataset This protocol tests the generalizability of your annotation model.

- Data Split: Designate one full dataset as your reference. Hold out one or more distinct datasets as your query.

- Reference Processing & Labeling: Process, batch-correct (if multiple references), and cluster the reference data. Manually annotate clusters using a vetted marker gene list to establish "ground truth" labels.

- Query Mapping: Use a label transfer algorithm (e.g., Seurat's FindTransferAnchors and TransferData, or scANVI). This projects query cells into the reference's classification space.

- Validation: In the query data, check the prediction confidence scores and visually inspect the expression of reference marker genes in the predicted query cell groups. High confidence with concordant marker expression validates both the correction and annotation.

Table 1: Comparison of Common Batch Correction Algorithms

| Method | Core Principle | Best For | Key Parameter | Runtime (10k cells) | Preserves Global Biology |
|---|---|---|---|---|---|
| ComBat | Empirical Bayes, linear model | Weak technical batches (same platform) | model (covariates) | ~1 min | Moderate (can shrink biological variance) |
| Harmony | Iterative clustering & linear correction | Multiple datasets, cell type imbalance | theta (diversity penalty) | ~5 min | High (explicitly modeled) |
| Seurat v5 | Reciprocal PCA & MNN anchors | Large-scale, strong batch effects | k.anchor (neighbors used when picking anchors) | ~15 min | High (uses mutual nearest neighbors) |
| Scanorama | Panorama stitching via MNN | Very large datasets (>100k cells) | k (neighbors for matching) | ~10 min | High |
| scVI | Deep generative model (VAE) | Complex, non-linear effects; downstream DE | n_latent (latent space dim) | ~1 hour (GPU) | Very High (models count distribution) |

Table 2: Benchmark Metrics from a Mixed-Species Experiment (Representative Results)

| Correction Method Applied | Batch ASW (Ideal: 0) | kBET Rejection Rate (Ideal: <0.1) | ARI to Species (Ideal: 1) | Interpretation |

|---|---|---|---|---|

| No Correction | 0.82 | 0.95 | 0.99 | Strong batch effect, perfect biology. |

| ComBat | 0.15 | 0.25 | 0.85 | Batch reduced, some biology lost. |

| Harmony | 0.08 | 0.12 | 0.97 | Batch well-removed, biology preserved. |

| Seurat v5 Integration | 0.05 | 0.08 | 0.99 | Excellent integration and biology. |

| Over-Corrected Example | 0.01 | 0.05 | 0.65 | Batch removed, but biological signal destroyed. |

Visualizations

Title: Batch Effect Correction Experimental Workflow

Title: The Correction Dilemma: Balancing Risks

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Studies |

|---|---|

| Cell Hashing/Oligo-tagged Antibodies | Enables multiplexing of samples from different batches into a single sequencing library, physically eliminating batch effects from library prep. |

| Spike-in RNAs (e.g., from Another Species) | Added in equal amounts across batches to monitor and computationally remove global technical variation. |

| Commercial Reference RNA Samples | Provides a standardized biological control across experiments and platforms to benchmark technical performance. |

| Validated Primer/Panel for Key Markers | Enables orthogonal validation (e.g., by flow cytometry) of cell subtype identities predicted from corrected scRNA-seq data. |

| Pre-mixed Multi-species Cell Lines (e.g., Human/Mouse) | Serves as a controlled, ground-truth benchmark sample for quantifying correction accuracy (see Protocol 1). |

| scRNA-seq Platform Calibration Beads | Used to monitor instrument performance and reagent lot variability over time, identifying a source of batch effects. |

Parameter Tuning for Clustering Resolution and Classification Thresholds

Troubleshooting Guides & FAQs

Q1: My clustering analysis yields one giant cluster and many very small clusters. How can I achieve better separation of cell subtypes? A: This typically indicates a suboptimal clustering resolution parameter. The resolution parameter directly influences the number and granularity of clusters found. For single-cell RNA-seq data analyzed with Seurat or similar tools, a resolution that is too low (e.g., 0.2) under-clusters, while a very high value (e.g., 2.0) may over-cluster. Conduct a parameter sweep and use cluster stability metrics to find the optimal value.

Q2: After manual annotation, I find that my automated cell type classification has mixed two similar subtypes. Which threshold should I adjust? A: This is a common precision/recall trade-off. The classification score threshold is likely set too low, allowing cells with lower confidence scores to be assigned. Increase the classification threshold (e.g., from 0.5 to 0.7 or 0.8) to require higher confidence for label assignment. This improves precision at the potential cost of leaving more cells unassigned.
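The effect of raising the classification threshold can be illustrated with a small sketch. It assumes the classifier returns a score per candidate label for each cell; the subtype names and scores are toy values, and assign_labels is a hypothetical helper rather than part of any annotation package.

```python
def assign_labels(score_rows, threshold):
    """Give each cell its top-scoring label, or 'unassigned' if below threshold."""
    calls = []
    for scores in score_rows:
        label, best = max(scores.items(), key=lambda item: item[1])
        calls.append(label if best >= threshold else "unassigned")
    return calls

# Hypothetical per-cell classification scores for two similar subtypes
score_rows = [
    {"CD4 naive": 0.55, "CD4 memory": 0.45},  # ambiguous cell
    {"CD4 naive": 0.10, "CD4 memory": 0.90},
    {"CD4 naive": 0.72, "CD4 memory": 0.28},
]
loose = assign_labels(score_rows, threshold=0.5)
strict = assign_labels(score_rows, threshold=0.7)
print(loose)
print(strict)
```

With the stricter threshold the ambiguous first cell is left unassigned instead of being forced into one subtype, which is the precision gain (and the coverage cost) described above.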

Q3: How do I quantitatively determine the "best" clustering resolution without known ground truth labels? A: Use internal validation metrics on a sweep of resolution parameters. Calculate metrics like the Silhouette Index, Davies-Bouldin Index, or clustering stability using bootstrapping for each resolution. The resolution yielding the optimal balance of these metrics (high Silhouette, low Davies-Bouldin, high stability) is typically selected.
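A resolution sweep scored with the Silhouette Index might look like the sketch below. It assumes the clustering has already been run at each resolution; the coordinates and the three labelings are toy stand-ins for real embeddings and cluster assignments, and Euclidean distance is an assumption of this example. At least two clusters per labeling are required.

```python
from math import dist
from statistics import mean

def silhouette_score(points, labels):
    """Mean silhouette width over all cells; singleton clusters score 0."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    widths = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            widths.append(0.0)
            continue
        # Mean distance to the other members of the same cluster
        a = sum(dist(point, q) for q in own) / (len(own) - 1)
        # Mean distance to the nearest other cluster
        b = min(
            mean([dist(point, q) for q in members])
            for other, members in clusters.items()
            if other != label
        )
        widths.append((b - a) / max(a, b))
    return mean(widths)

# Toy 2-D embedding with three well-separated groups (hypothetical coordinates)
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10), (20, 0), (20, 1), (21, 0)]
labelings = {  # hypothetical cluster assignments obtained at each resolution
    0.2: [0, 0, 0, 0, 0, 0, 1, 1, 1],  # under-clustered
    0.8: [0, 0, 0, 1, 1, 1, 2, 2, 2],
    2.0: [0, 0, 1, 2, 2, 3, 4, 4, 5],  # over-clustered
}
sil = {res: silhouette_score(points, labs) for res, labs in labelings.items()}
best_resolution = max(sil, key=sil.get)
print(best_resolution)
```

In practice the silhouette should be read alongside the Davies-Bouldin Index and bootstrap stability rather than used alone, since a single metric can favor overly coarse partitions.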

Q4: My threshold tuning improves annotation for one subtype but severely hurts performance for another. How should I proceed? A: Avoid a single global threshold for all cell types. Implement cell type-specific classification thresholds. Calculate the distribution of classification scores for a manually curated, high-confidence training set for each subtype. Set thresholds based on the score distribution (e.g., 10th percentile) for each class independently.
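Per-class thresholds at a chosen percentile can be derived as in the following sketch. It assumes a curated set of classification scores is available for each subtype (at least two scores per subtype); the subtype names, scores, and helper names are illustrative only.

```python
from statistics import quantiles

def per_class_thresholds(training_scores, percentile=10):
    """Map each subtype to the given percentile of its curated score distribution."""
    thresholds = {}
    for subtype, scores in training_scores.items():
        # quantiles(..., n=100) returns the 1st to 99th percentile cut points
        cut_points = quantiles(scores, n=100, method="inclusive")
        thresholds[subtype] = cut_points[percentile - 1]
    return thresholds

# Hypothetical scores from manually curated, high-confidence cells
training_scores = {
    "Treg": [0.90, 0.91, 0.92, 0.93, 0.94, 0.95, 0.96, 0.97, 0.98, 0.99, 1.00],
    "Th17": [0.55, 0.58, 0.60, 0.62, 0.65, 0.70, 0.72, 0.75, 0.80, 0.85, 0.90],
}
thresholds = per_class_thresholds(training_scores)

def passes(subtype, score):
    """Accept a predicted label only if the score clears that subtype's threshold."""
    return score >= thresholds[subtype]

print(thresholds)
```

In this toy case a score of 0.7 is acceptable for the harder-to-classify subtype but not for the one whose curated cells all score above 0.9, which a single global threshold could not express.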