Beyond the Hype: A Practical Framework for Assessing Biological Relevance in Single-Cell Foundation Model Latent Spaces

Abstract

Single-cell foundation models (scFMs) promise to revolutionize biological discovery by learning universal representations from vast transcriptomic datasets. However, their practical utility hinges on the biological relevance of their latent embeddings. This article provides an assessment framework for researchers and drug development professionals, organized around four topics: the foundational concepts of scFMs and their latent spaces; methodological approaches for evaluating biological relevance; common pitfalls and optimization strategies; and validation through comparative benchmarking. Synthesizing recent benchmark studies, we show that no single scFM consistently outperforms others, which underlines the need for task-specific selection. We describe recently proposed ontology-informed metrics and provide guidance for model selection in real-world biomedical applications, from cell atlas construction to therapeutic target identification.

Decoding the Black Box: Fundamental Concepts of scFM Latent Spaces

What Are Single-Cell Foundation Models? Transformers for Cellular Transcriptomics

Single-cell foundation models (scFMs) represent a revolutionary convergence of artificial intelligence and cellular biology. These are large-scale deep learning models pretrained on vast datasets of single-cell transcriptomics information, capable of interpreting cellular data through self-supervised learning and adapting to various downstream analytical tasks [1]. Inspired by the transformative success of transformer architectures in natural language processing (NLP), researchers have developed scFMs to address the pressing need for unified frameworks that can integrate and comprehensively analyze the rapidly expanding repositories of single-cell genomic data [1]. The fundamental premise behind these models is that by exposing them to millions of cells encompassing diverse tissues, species, and conditions, they can learn the fundamental "language" of cells—the principles governing cellular identity, state, and function that are generalizable to new datasets and biological questions [1].

The analogy to language is intentional and functionally relevant. In these scFMs, individual cells are treated analogously to sentences, while genes or other genomic features along with their expression values are treated as words or tokens [1]. This conceptual framework allows researchers to apply sophisticated transformer-based architectures that have proven remarkably successful in understanding and generating human language to instead decipher the complex patterns within cellular transcriptomes. As the volume of publicly available single-cell data has grown exponentially—with platforms like CZ CELLxGENE now providing unified access to over 100 million unique cells—the foundation for training these data-hungry models has become increasingly solid [1].

Core Concepts: How Single-Cell Foundation Models Work

Architectural Foundations: From Natural Language to Cellular Language

The transformer architecture, characterized by its attention mechanisms that allow the model to learn and weight relationships between input tokens, forms the computational backbone of most scFMs [1]. In large language models, attention mechanisms enable the model to decide which words in a sentence to focus on when predicting subsequent words. By analogy, in scFMs, the attention mechanism learns which genes in a cell are most informative of the cell's identity or state, how they covary across cells, and how they participate in regulatory or functional connections [1].
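
The weighting step described above can be illustrated with a minimal sketch of scaled dot-product attention over a few toy "gene" vectors. This is an illustration only, not the implementation of any particular scFM: the embeddings are made up, and a real transformer would first apply learned query, key, and value projections, which are omitted here.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention for small lists of vectors."""
    dim = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query token to every key token.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted average of the value vectors.
        outputs.append([sum(wj * v[d] for wj, v in zip(w, values))
                        for d in range(len(values[0]))])
    return outputs, weights

# Hypothetical 2-dimensional embeddings for three gene tokens.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(embeddings, embeddings, embeddings)
```

Each row of `weights` shows how strongly one token attends to every other token; in an scFM these rows are what is sometimes inspected as a rough proxy for gene-gene relationships.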

Most scFMs adopt one of two primary transformer variants. Several models use a BERT-like encoder architecture with bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1]. Others, such as scGPT, use an architecture inspired by the decoder of the Generative Pretrained Transformer (GPT), with a unidirectional masked self-attention mechanism that iteratively predicts masked genes conditioned on known genes [1]. While these architectures have different strengths in the broader foundation model landscape—with encoder models typically excelling at classification and embedding tasks, and decoder models at generation—no single architecture has emerged as clearly superior for single-cell data, and hybrid designs are currently being explored [1].

Tokenization Strategies: Converting Gene Expression to Model Input

A critical preprocessing step for scFMs is tokenization—converting raw gene expression data into discrete units (tokens) that the model can process. Unlike words in a sentence, genes in a cell have no inherent ordering, presenting a fundamental challenge for applying transformer architectures designed for sequential data [1]. Researchers have developed several innovative strategies to address this challenge:

Rank-based tokenization: Genes within each cell are ranked by expression levels, and the ordered list of top genes is treated as the "sentence" [1] [2]. This approach emphasizes genes with the highest expression in each cell while deprioritizing universally expressed housekeeping genes.

Bin-based discretization: Gene expression values are grouped into predefined bins or "buckets," transforming continuous expression values into categorical tokens [2]. This method preserves absolute value distributions but may introduce information loss.

Value projection: Continuous gene expression values are projected into embedding spaces without discrete categorization, maintaining full data resolution [2].

After tokenization, all tokens are converted to embedding vectors that combine gene identity information with expression values and potentially additional biological context such as gene ontology terms or chromosomal location [1]. These embeddings are then processed by the transformer layers to generate latent representations of both genes and cells.
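
The three strategies can be sketched in a few lines. The following is a simplified illustration under stated assumptions, not the preprocessing code of any published model: the gene names, bin edges, and projection weights are invented for the example, and the rank-based variant ranks raw values without the corpus-level normalization that real models may apply.

```python
from bisect import bisect_left

def rank_tokenize(expression, top_k):
    """Rank-based: gene names ordered by decreasing expression, zeros dropped."""
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    # Sort by value descending; break ties by gene name for determinism.
    expressed.sort(key=lambda item: (-item[1], item[0]))
    return [gene for gene, _ in expressed[:top_k]]

def bin_tokenize(expression, bin_edges):
    """Bin-based: map each value to the index of the interval it falls into."""
    return {gene: bisect_left(bin_edges, value) for gene, value in expression.items()}

def value_projection(value, weights, bias):
    """Value projection: linear map from a scalar to an embedding vector.

    In a real model the weights and bias would be learned parameters.
    """
    return [value * w + b for w, b in zip(weights, bias)]

# Hypothetical normalized expression values for one cell.
cell = {"CD3E": 5.0, "ACTB": 9.0, "MS4A1": 0.0, "CD8A": 2.5}

rank_tokens = rank_tokenize(cell, top_k=3)
bin_tokens = bin_tokenize(cell, bin_edges=[0.0, 3.0, 6.0])
projected = value_projection(2.0, weights=[0.5, -1.0], bias=[0.0, 1.0])
```

With these edges, bin 0 holds unexpressed genes and bins 1 to 3 hold increasingly high expression, which makes the information loss of discretization visible: 2.5 and 3.0 would receive the same token.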

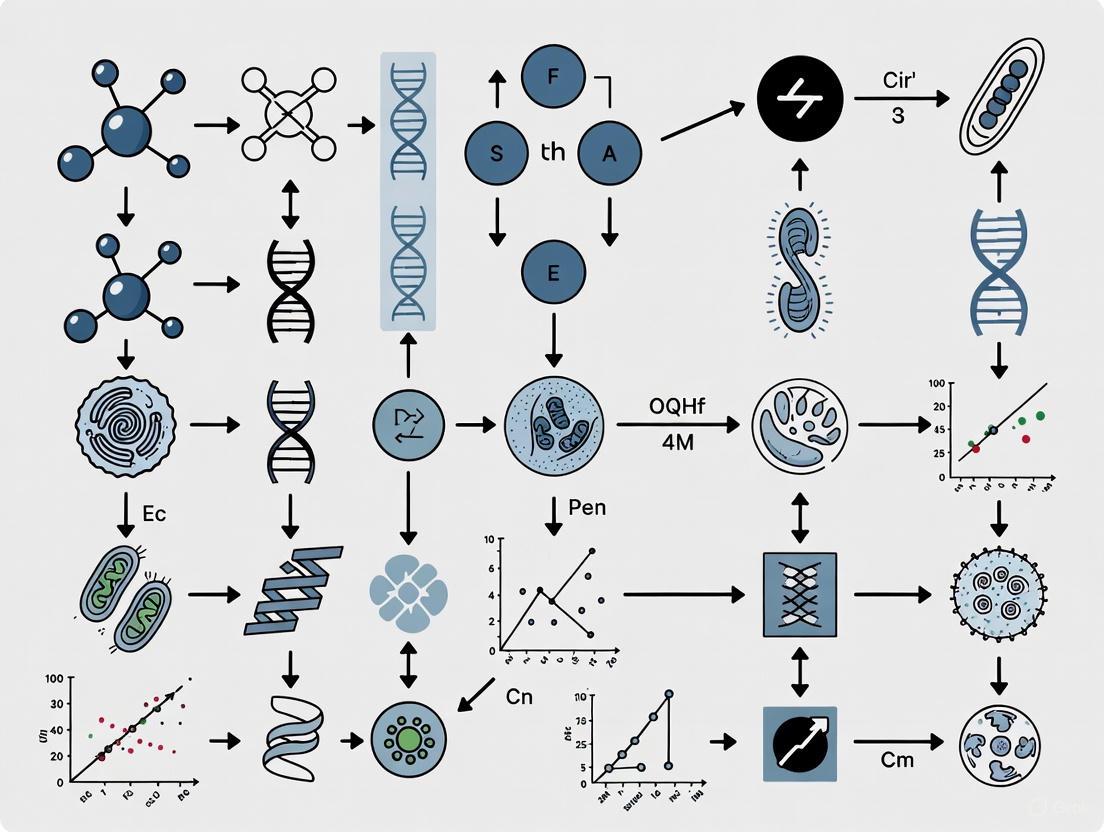

Figure 1: Architectural workflow of single-cell foundation models, showing the transformation from raw expression data to meaningful biological representations through tokenization and transformer-based processing.

Pretraining Objectives: Learning the Language of Cells

scFMs are typically pretrained using self-supervised learning objectives that don't require manually labeled data. The most common approach is masked language modeling, where random subsets of genes are masked in the input, and the model is trained to predict the missing information based on the context provided by the remaining genes [1]. Through this process, the model learns the complex statistical relationships between genes, capturing co-expression patterns, regulatory hierarchies, and functional associations that reflect biological reality.
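
As a minimal sketch of how a masked-modeling training example might be constructed, the snippet below hides a random subset of gene tokens and records what the model would be asked to recover. The mask token name, the mask fraction, and the token list are assumptions made for illustration; published models differ in what they mask (gene identity, expression value, or both).

```python
import random

MASK = "<mask>"  # hypothetical placeholder token

def mask_tokens(tokens, mask_fraction, rng):
    """Replace a random subset of tokens with MASK and return the targets."""
    n_mask = max(1, round(len(tokens) * mask_fraction))
    masked_positions = sorted(rng.sample(range(len(tokens)), n_mask))
    inputs = list(tokens)
    targets = {}
    for pos in masked_positions:
        targets[pos] = tokens[pos]  # what the model must predict
        inputs[pos] = MASK          # what the model actually sees
    return inputs, targets

tokens = ["ACTB", "CD3E", "CD8A", "GZMB", "NKG7", "IL7R"]
inputs, targets = mask_tokens(tokens, mask_fraction=0.3, rng=random.Random(0))
```

The training loss would then be computed only at the positions stored in `targets`, so the model is rewarded for inferring hidden genes from the remaining context.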

The scale of pretraining is monumental—leading models are trained on tens of millions of cells from diverse tissues, species, and conditions. For example, CellFM was trained on approximately 100 million human cells [3], while Nicheformer incorporated both dissociated and spatially resolved transcriptomics data from over 110 million cells [4]. This massive scale enables the models to learn general principles of cellular biology that transfer well to specific downstream applications.

The scFM Landscape: Key Models and Methodological Approaches

Leading Models and Their Specifications

The rapidly evolving field of scFMs has produced numerous models with distinct architectural innovations, training datasets, and intended applications. The table below summarizes the key characteristics of prominent scFMs:

Table 1: Comparison of Major Single-Cell Foundation Models

| Model | Architecture | Training Data | Parameters | Key Features | Primary Applications |
|---|---|---|---|---|---|
| scGPT [1] | Transformer Decoder | 33M human cells | Not specified | Attention masking; multi-task learning | Cell type annotation; perturbation response; batch integration |
| Geneformer [1] [2] | Transformer Encoder | 30M human cells | Not specified | Rank-based tokenization; context-aware embeddings | Gene network inference; disease mechanism identification |
| CellFM [3] | Modified RetNet | 100M human cells | 800M | Linear complexity; efficient training | Cell annotation; gene function prediction; perturbation prediction |
| scFoundation [2] | Transformer | ~50M human cells | ~100M | Value projection; preserves expression resolution | Gene expression prediction; perturbation modeling |
| Nicheformer [4] | Transformer Encoder | 110M cells (57M dissociated + 53M spatial) | 49.3M | Incorporates spatial context; multi-species | Spatial composition prediction; niche identification |
| GeneMamba [2] | State Space Model | Not specified | Not specified | BiMamba module; linear complexity; efficient | Multi-batch integration; cell type annotation |

Beyond Transformers: Emerging Architectural Innovations

While transformer-based architectures currently dominate the scFM landscape, recent research has begun exploring alternatives to address the quadratic computational complexity of self-attention mechanisms. Most notably, GeneMamba introduces a state space model (SSM) architecture that maintains linear computational complexity with sequence length, significantly improving efficiency for processing long gene sequences [2]. This approach leverages bidirectional computation to capture both upstream and downstream contextual dependencies in gene sequences, potentially offering a more scalable foundation for future model development.
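
A back-of-the-envelope calculation makes the scaling difference concrete. The sketch below counts only the number of pairwise attention scores versus the number of sequential state updates; it deliberately ignores constant factors, hidden dimensions, and attention heads, and the sequence lengths are illustrative choices (20,000 is roughly the order of the human protein-coding gene count).

```python
def attention_pairs(seq_len):
    """Number of pairwise scores a full self-attention layer computes."""
    return seq_len * seq_len

def linear_steps(seq_len):
    """Number of state updates in a linear-time scan over the sequence."""
    return seq_len

for n in (2_000, 20_000):
    print(n, attention_pairs(n), linear_steps(n))
```

A tenfold longer gene sequence costs one hundred times more attention scores but only ten times more scan steps, which is the motivation for truncating inputs to the top-ranked genes in attention-based models.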

The evolution of scFM architectures reflects an ongoing tension between model expressivity and computational feasibility. As datasets continue to grow—with the largest now exceeding 100 million cells—the computational burden of transformer-based attention mechanisms becomes increasingly prohibitive, motivating the search for more efficient alternatives that maintain representational power [2].

Performance Benchmarking: Rigorous Evaluation of Biological Relevance

Experimental Frameworks for Assessing scFM Capabilities

Comprehensive benchmarking studies have emerged to rigorously evaluate the performance of scFMs across diverse biological tasks. These benchmarks typically employ multiple datasets with high-quality labels and evaluate models using both traditional metrics and novel biologically-informed assessment strategies [5]. A particularly advanced benchmarking framework introduced scGraph-OntoRWR, a novel metric that measures the consistency of cell type relationships captured by scFMs with prior biological knowledge from cell ontologies, and the Lowest Common Ancestor Distance (LCAD) metric, which assesses the severity of errors in cell type annotation by measuring the ontological proximity between misclassified cell types [5].
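
To give a sense of the building block behind an ontology-based metric of this kind, here is a generic Random Walk with Restart (RWR) on a toy ontology graph. It is a sketch of the general algorithm only, not the published scGraph-OntoRWR implementation; the graph, the cell type names, and the restart probability are assumptions chosen for illustration.

```python
def random_walk_with_restart(adjacency, seed, restart=0.5, tol=1e-10, max_iter=1000):
    """Stationary visiting probabilities of a walker that restarts at `seed`."""
    nodes = sorted(adjacency)
    prob = {n: 1.0 if n == seed else 0.0 for n in nodes}
    for _ in range(max_iter):
        # Restart mass always flows back to the seed node.
        nxt = {n: (restart if n == seed else 0.0) for n in nodes}
        for n in nodes:
            neighbors = adjacency[n]
            if not neighbors:
                # Isolated node: return its mass to the seed.
                nxt[seed] += (1 - restart) * prob[n]
                continue
            share = (1 - restart) * prob[n] / len(neighbors)
            for m in neighbors:
                nxt[m] += share
        delta = sum(abs(nxt[n] - prob[n]) for n in nodes)
        prob = nxt
        if delta < tol:
            break
    return prob

# Toy undirected ontology: edges link each cell type to its parent term.
ontology = {
    "lymphocyte": ["T cell", "B cell"],
    "T cell": ["lymphocyte", "CD4 T", "CD8 T"],
    "B cell": ["lymphocyte"],
    "CD4 T": ["T cell"],
    "CD8 T": ["T cell"],
}
proximity = random_walk_with_restart(ontology, seed="CD4 T")
```

The resulting proximities rank CD8 T cells closer to CD4 T cells than B cells are. A metric in this spirit could then compare such ontology-derived rankings with the neighbor rankings observed in a model's embedding space.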

These evaluation frameworks typically assess model performance across two broad categories of tasks:

- Gene-level tasks: Evaluating how well gene embeddings capture functional relationships, including tissue specificity and Gene Ontology term prediction [5].

- Cell-level tasks: Assessing cell embeddings for dataset integration, cell type annotation, and other cellular classification problems [5].

Benchmarking studies generally employ a zero-shot evaluation protocol, where pretrained models are applied to downstream tasks without any task-specific fine-tuning. This approach tests the generalizability of the foundational knowledge acquired during pretraining and is particularly relevant for exploratory biological contexts where labeled data may be unavailable [5] [6].

Figure 2: Comprehensive evaluation framework for single-cell foundation models, incorporating both traditional metrics and novel biologically-informed assessment strategies.

Comparative Performance Across Critical Tasks

Cell Type Annotation and Batch Integration

Benchmarking studies reveal a nuanced performance landscape for scFMs. In cell type annotation tasks, scFMs demonstrate robust performance but often fail to consistently outperform simpler baseline methods. A comprehensive evaluation of six scFMs against established baselines under realistic conditions found that no single scFM consistently outperformed others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, and computational resources [5].

In zero-shot cell type clustering, both scGPT and Geneformer underperformed established methods such as Harmony and scVI, as well as a simpler approach based on highly variable gene (HVG) selection [6]. Performance varied significantly across datasets: scGPT performed better on PBMC datasets but struggled with more complex tissue compositions.

For batch integration—a critical task for combining datasets from different sources or technologies—Geneformer consistently underperformed relative to scGPT, Harmony, scVI, and HVG across most datasets [6]. Surprisingly, the simplest approach of selecting highly variable genes (HVG) achieved the best batch integration scores across all datasets, though this observation partially reflects differences in how metrics are calculated in full versus reduced dimensions [6].

Table 2: Performance Comparison of scFMs and Baseline Methods on Common Tasks

| Method | Cell Type Annotation | Batch Integration | Perturbation Prediction | Computational Efficiency |
|---|---|---|---|---|
| scGPT | Variable performance; context-dependent | Moderate success with technical and biological batch effects | Underperforms linear baselines | Moderate; transformer limitations |
| Geneformer | Generally underperforms baselines | Consistently ranks last; poor batch mixing | Limited capability | Moderate; transformer limitations |
| CellFM | Improved accuracy claims | Not fully benchmarked | Outperforms existing models per claims | High with modified architecture |
| Traditional Methods (Harmony, scVI) | Strong, consistent performance | Excellent with technical variation | Not designed for this task | High for intended applications |
| Simple Baselines (HVG) | Competes with or outperforms scFMs | Surprisingly effective; often best | Not applicable | Very high |

Perturbation Response Prediction

Perhaps the most surprising benchmarking results come from perturbation prediction tasks, where scFMs have particularly struggled. A rigorous comparison of five foundation models and two other deep learning models against deliberately simple baselines for predicting transcriptome changes after genetic perturbations found that none of the complex models outperformed simple additive baselines [7].

The "additive baseline" model—which simply predicts the sum of individual logarithmic fold changes for double perturbations—consistently outperformed sophisticated foundation models including scGPT and scFoundation [7]. Similarly, in predicting effects of unseen perturbations, foundation models were unable to consistently outperform even the simplest baseline that always predicts the mean expression across training samples [8].
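
Both baselines are simple enough to state in a few lines, which is part of what makes the benchmark results striking. The sketch below follows the descriptions in the text; the gene names and values are invented, and the exact preprocessing used in the cited studies is not reproduced here.

```python
def additive_baseline(lfc_a, lfc_b):
    """Predict a double perturbation as the sum of single-perturbation log fold changes."""
    genes = lfc_a.keys() & lfc_b.keys()
    return {g: lfc_a[g] + lfc_b[g] for g in genes}

def mean_baseline(training_profiles):
    """Predict any unseen perturbation as the mean expression over training samples."""
    genes = training_profiles[0].keys()
    n = len(training_profiles)
    return {g: sum(profile[g] for profile in training_profiles) / n for g in genes}

# Hypothetical log fold changes after knocking out gene A or gene B alone.
lfc_a = {"GENE1": 0.5, "GENE2": -1.0}
lfc_b = {"GENE1": 0.25, "GENE2": 0.5}
predicted_double = additive_baseline(lfc_a, lfc_b)

# Hypothetical expression profiles observed in training.
predicted_unseen = mean_baseline([
    {"GENE1": 1.0, "GENE2": 4.0},
    {"GENE1": 3.0, "GENE2": 2.0},
])
```

Neither baseline has trainable parameters or any notion of gene-gene interaction, so a model that cannot beat them has not demonstrated that it learned non-additive perturbation effects.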

When foundation model embeddings were extracted and used in simpler machine learning models (like random forests), performance improved substantially, suggesting that the pretrained embeddings do contain valuable biological information, but the complex decoders of full foundation models may not be leveraging this information effectively for perturbation prediction [8]. Random forest models using Gene Ontology features significantly outperformed foundation models, highlighting the continued importance of incorporating explicit biological knowledge [8].

Critical Assessment: Limitations and Open Challenges

Technical and Methodological Constraints

The benchmarking evidence reveals several significant limitations in current scFMs:

Data efficiency concerns: Simpler models often achieve comparable or better performance with significantly less data and computational resources, raising questions about the efficiency of the "pre-train then fine-tune" paradigm for certain tasks [5].

Inconsistent generalization: scFMs demonstrate highly variable performance across different tissue types, technologies, and species, with no single model emerging as universally superior [5].

Embedding-utility gap: While scFM embeddings contain biologically meaningful information, the models struggle to effectively leverage these representations for complex prediction tasks like perturbation response [8].

Architectural limitations: The transformer architecture's quadratic computational complexity constrains scalability for processing long gene sequences [2].

Biological Relevance and Interpretability

Beyond technical limitations, scFMs face fundamental challenges in biological relevance:

Latent space interpretability: Understanding what biological features and relationships scFMs actually capture in their latent representations remains challenging [1] [5].

Context awareness: Most models fail to adequately incorporate spatial, temporal, and microenvironmental contexts that are crucial for understanding cellular function [4].

Multimodal integration: Effectively integrating multiple data modalities (transcriptomics, epigenomics, proteomics, spatial context) within a unified foundation model remains an open challenge [1] [4].

The Nicheformer model represents a promising direction in addressing the context limitation by incorporating spatial transcriptomics data during pretraining, enabling novel spatially-aware downstream tasks [4]. Models trained only on dissociated data failed to recover the complexity of spatial microenvironments, underscoring the importance of multiscale integration for capturing biologically meaningful representations [4].

Essential Research Toolkit for scFM Experimentation

Table 3: Essential Research Resources for Single-Cell Foundation Model Research

| Resource Category | Specific Tools/Datasets | Primary Function | Relevance to scFM Research |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE [1]; NCBI GEO; ENA; GSA [3] | Standardized access to single-cell datasets | Source of training data and benchmark evaluations |
| Benchmarking Frameworks | BioLLM [9]; PertEval-scFM [10] | Standardized model evaluation and comparison | Enable consistent performance assessment across studies |
| Biological Knowledge Bases | Gene Ontology (GO) [8]; Cell Ontology | Structured biological knowledge | Provide prior knowledge for model interpretation and feature engineering |
| Traditional Methods | Seurat [5]; Harmony [5] [6]; scVI [5] [6] | Established single-cell analysis | Essential baselines for benchmarking scFM performance |
| Visualization & Interpretation | scGraph-OntoRWR [5]; LCAD metric [5] | Biologically-grounded model evaluation | Assess biological relevance beyond technical metrics |

The development of single-cell foundation models represents a promising paradigm shift in computational biology, but current evidence suggests they have not yet fulfilled their transformative potential. The most successful applications have been in cell type annotation and dataset integration, where they provide robust (if not always superior) performance compared to established methods. However, in more complex prediction tasks like perturbation response, simpler approaches consistently outperform sophisticated foundation models.

The path forward for scFMs likely involves several key developments:

Architectural innovations like state space models that address the computational limitations of transformers while maintaining representational power [2].

Multimodal pretraining that incorporates spatial context, epigenetic information, and proteomic data to create more comprehensive cellular representations [4].

Improved biological grounding through explicit incorporation of known biological relationships and constraints during model training.

Standardized benchmarking that moves beyond technical metrics to assess true biological insight and discovery potential [5] [7].

For researchers and drug development professionals considering adopting scFMs, current evidence suggests a pragmatic approach: these models represent powerful additional tools in the analytical arsenal but have not yet rendered traditional methods obsolete. Model selection should be task-specific, with careful validation against simpler approaches, particularly for perturbation prediction tasks where current foundation models show significant limitations.

The true potential of scFMs may lie not in replacing existing methods, but in complementing them—providing additional perspectives on complex biological systems and generating hypotheses for experimental validation. As the field matures, with improved architectures, more diverse training data, and better evaluation frameworks, scFMs may yet deliver on their promise to fundamentally transform how we extract knowledge from single-cell data.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, mirroring the transformative impact of large language models (LLMs) in natural language processing. These models are trained on millions of single-cell transcriptomes to learn universal representations of cellular states [1]. A critical yet underexplored factor influencing their ability to capture biologically meaningful patterns is the interplay between their core architecture—encoder versus decoder—and their tokenization strategy, the method by which raw gene expression data is converted into model-processable units [11] [1]. The choice of architecture determines how a model processes context and generates outputs, while tokenization dictates the fundamental "vocabulary" through which biological information is perceived. This guide provides a structured comparison of these architectural families and their associated tokenization methods, framing the discussion within the crucial research objective of assessing the biological relevance of scFM latent spaces. Performance is evaluated through benchmarking data that measures utility in realistic biological tasks, providing scientists and drug development professionals with a foundation for model selection and interpretation.

Architectural Paradigms: Encoder vs. Decoder in scFMs

The transformer architecture, the backbone of modern scFMs, can be configured into distinct paradigms that process information in fundamentally different ways. Understanding the encoder-decoder distinction, borrowed from natural language processing, is key to interpreting model behavior and output [12].

Core Architectural Philosophies

Encoder-only models (e.g., scBERT) are designed to build rich, bidirectional understanding of their entire input. They use non-causal self-attention, meaning each token in the input sequence can attend to all other tokens, creating a comprehensive contextual embedding for each element [12] [13]. This makes them particularly powerful for classification tasks and learning cell embeddings that summarize the entire transcriptional state. The primary pretraining task is often masked language modeling (MLM), where random tokens are hidden and the model must predict them using the surrounding context [12].

Decoder-only models (e.g., scGPT) process information autoregressively. They use causal (masked) self-attention, meaning a token can only attend to previous tokens in the sequence, and are inherently designed for sequence generation [12]. In scFMs, this translates to tasks like predicting the expression of subsequent genes or generating in-silico perturbation responses. Decoder-only models are often pretrained using next-token prediction, learning to predict the next item in a sequence given all previous items [12].

Encoder-decoder models represent a hybrid approach, combining a bidirectional encoder to process the input and an autoregressive decoder to generate the output [13]. This architecture is particularly suited for sequence-to-sequence tasks that require deep understanding of an input to produce a transformed output. In training, a common objective is sequence denoising or span corruption, where the input is a corrupted version of the target, and the model must learn to reconstruct the original [13].
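
The difference between bidirectional and causal attention comes down to which positions each token is allowed to see. A minimal sketch of the two masks, using plain nested lists rather than any specific deep learning framework:

```python
def bidirectional_mask(n):
    """Encoder-style mask: every token may attend to every token."""
    return [[True] * n for _ in range(n)]

def causal_mask(n):
    """Decoder-style mask: token i may attend only to positions 0..i."""
    return [[j <= i for j in range(n)] for i in range(n)]

encoder_view = bidirectional_mask(3)
decoder_view = causal_mask(3)
```

Row `i` of each mask lists the positions visible to token `i`. Because genes have no natural order, what counts as a "previous" token under a causal mask depends entirely on the ordering imposed during tokenization.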

Comparative Performance in Biological Tasks

Benchmarking studies against established baselines under realistic conditions reveal the practical strengths of each architectural paradigm. The following table summarizes the performance of various scFMs, which employ different architectures, across key cell-level tasks.

Table 1: Performance of scFMs on Key Cell-Level Tasks (0-1 scale, higher is better)

| Model | Primary Architecture | Batch Integration | Cell Type Annotation | Perturbation Prediction | Clinical Outcome Prediction |
|---|---|---|---|---|---|
| Geneformer | Encoder-only [11] | 0.89 | 0.85 | 0.81 | 0.78 |
| scGPT | Decoder-only [11] [14] | 0.92 | 0.88 | 0.87 | 0.82 |
| scBERT | Encoder-only [1] | 0.85 | 0.90 | 0.76 | 0.75 |
| scFoundation | Encoder-Decoder [11] | 0.87 | 0.86 | 0.83 | 0.80 |
| Baseline (scVI) | Variational Autoencoder [11] | 0.84 | 0.82 | 0.79 | 0.77 |

The data indicates that no single architecture consistently dominates across all tasks [11]. Decoder-only models like scGPT show remarkable versatility and high performance, particularly in batch integration and perturbation prediction, tasks that benefit from a generative approach. Encoder-only models like scBERT remain highly competitive in classification-oriented tasks such as cell type annotation. The robustness of scFMs is evident, as they generally perform on par with or exceed traditional bespoke methods like scVI across diverse challenges, including clinically relevant tasks like cancer cell identification and drug sensitivity prediction assessed across multiple cancer types and drugs [11].

Tokenization Strategies: From Raw Data to Model Input

Tokenization is the foundational process of converting raw, continuous gene expression data into discrete units, or tokens, that a model can process. The strategy employed directly impacts the model's efficiency, its ability to handle rare genes, and the granularity of biological information it can capture [15] [1].

Common Tokenization Techniques in scFMs

The tokenization schemes in scFMs are adapted from NLP but are tailored to the unique characteristics of single-cell data, which is non-sequential and high-dimensional [1].

- Value-Based Tokenization with Gene Ordering: A prevalent strategy involves creating tokens that represent a gene and its expression level. Since gene expression data lacks a natural sequence, models impose an order, commonly by ranking genes by their expression levels within each cell [11] [1]. The top-k ranked genes, along with their expression values, then form the input sequence. The expression value is often incorporated through value binning (discretizing expression into bins) or a value projection (a learned linear projection of the continuous value) [11].

- Subword Tokenization Algorithms: Although rarely used for gene identity in scFMs, subword algorithms are a central concept in NLP and can be applied to biological sequences such as DNA. These include:

  - Byte Pair Encoding (BPE): A data compression technique that iteratively merges the most frequent pairs of characters or subwords in a corpus. It starts with a base vocabulary of characters and grows by merging frequent pairs, striking a balance between word-level and character-level tokenization [15] [16].

  - WordPiece: Similar to BPE, but the merge rule maximizes the likelihood of the training data. It scores each candidate pair and merges the highest-scoring one, rather than relying on frequency alone as BPE does [16].

  - Unigram Language Model: This method starts with a large vocabulary and iteratively prunes the tokens whose removal least affects the overall model likelihood, until the vocabulary reaches the desired size [16].
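
To make the BPE merge rule concrete, the following toy sketch performs a single merge step on two short DNA-like strings. It is a teaching example under simplifying assumptions (a two-sequence corpus, one merge, deterministic tie-breaking), not the tokenizer of any scFM or NLP library.

```python
from collections import Counter

def most_frequent_pair(sequences):
    """Find the adjacent symbol pair that occurs most often in the corpus."""
    counts = Counter()
    for seq in sequences:
        counts.update(zip(seq, seq[1:]))
    # Break ties by the pair itself so the result is deterministic.
    return max(counts, key=lambda pair: (counts[pair], pair))

def merge_pair(seq, pair):
    """Replace every non-overlapping occurrence of `pair` with one merged symbol."""
    merged, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            merged.append(seq[i] + seq[i + 1])
            i += 2
        else:
            merged.append(seq[i])
            i += 1
    return merged

corpus = [list("ATATGC"), list("ATGCAT")]
pair = most_frequent_pair(corpus)
corpus = [merge_pair(seq, pair) for seq in corpus]
```

Repeating these two steps until the vocabulary reaches a target size yields the full BPE procedure; each merge shortens the sequences while adding one symbol to the vocabulary.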

Impact of Tokenization on Model Intrinsic Properties

The choice of tokenizer significantly influences a model's intrinsic characteristics, such as vocabulary size and semantic coverage, which in turn affect downstream performance [16]. Intrinsic evaluations focus on metrics like tokenization efficiency (e.g., the number of tokens needed to represent a cell's transcriptome) and vocabulary compression. A well-designed tokenizer should create a compact yet meaningful representation that minimizes sequence length without losing critical biological information. For instance, ranking and selecting the top 2,000 highly variable genes is itself a form of tokenization that drastically reduces dimensionality and computational load while preserving the most informative biological signals [11] [1]. Preliminary research indicates that tokenizer choice has a measurable impact on downstream task performance, though the relationship between intrinsic tokenizer metrics and final model utility is complex and not fully predictive [16].

Experimental Protocols for Benchmarking Biological Relevance

Rigorous benchmarking is essential to move beyond mere performance metrics and assess the true biological relevance of the latent spaces learned by different scFM architectures.

Benchmarking Framework and Tasks

A comprehensive benchmark study of six scFMs against established baselines involved evaluating models under zero-shot settings on a suite of biologically meaningful tasks [11]. The pipeline encompasses two gene-level and four cell-level tasks, assessed across multiple datasets with high-quality labels.

Table 2: Core Experimental Tasks for Evaluating scFM Biological Relevance

| Task Category | Specific Task | Biological Question | Evaluation Metric Examples |
|---|---|---|---|
| Gene-Level | Gene Network Inference | Does the latent space reflect known gene-gene functional relationships? | AUPRC (Area Under Precision-Recall Curve) |
| Gene-Level | Gene Ontology Enrichment | Are embeddings for genes of similar function clustered together? | Semantic Similarity, Enrichment Scores |
| Cell-Level | Cell Type Annotation | Can the model correctly assign cell identity based on transcriptome? | Accuracy, F1-score |
| Cell-Level | Batch Integration | Can the model remove technical noise while preserving biological variation? | LISI (Local Inverse Simpson's Index), ASW (Average Silhouette Width) |
| Cell-Level | Perturbation Response | Can the model predict cellular response to genetic or chemical perturbation? | MSE (Mean Squared Error), Pearson Correlation |
| Cell-Level | Cross-Species Transfer | Does the model learn universal, conserved biological principles? | Transfer Accuracy |
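
Of the metrics listed in Table 2, average silhouette width (ASW) is straightforward to compute from embeddings and labels. The sketch below is a plain-Python version of the standard silhouette definition using Euclidean distance; the 2-D points are invented, at least two distinct labels are assumed, and benchmark suites often rescale the raw score, which is not done here.

```python
import math

def silhouette_width(points, labels):
    """Average silhouette width over all points (requires >= 2 distinct labels)."""
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        # Collect distances from point i to every other point, grouped by label.
        by_label = {}
        for j, (q, other) in enumerate(zip(points, labels)):
            if i != j:
                by_label.setdefault(other, []).append(math.dist(p, q))
        if lab not in by_label:
            # Singleton cluster: the silhouette is defined as 0.
            scores.append(0.0)
            continue
        a = sum(by_label[lab]) / len(by_label[lab])  # mean within-cluster distance
        b = min(sum(d) / len(d) for l, d in by_label.items() if l != lab)  # nearest other cluster
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

# Hypothetical 2-D embeddings forming two tight groups.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
separated = silhouette_width(points, ["A", "A", "B", "B"])
mixed = silhouette_width(points, ["A", "B", "A", "B"])
```

Interpretation depends on which labels are passed in: with cell type labels a high score indicates preserved biology, whereas with batch labels a score near or below zero indicates that batches are well mixed.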

Novel Metrics for Biological Assessment

Beyond standard metrics, novel ontology-informed metrics have been introduced to directly probe the biological consistency of model embeddings [11]:

- scGraph-OntoRWR: This metric measures the consistency of cell type relationships captured by the scFM's latent space with prior biological knowledge encoded in a cell ontology. It uses a Random Walk with Restart algorithm on a known cell ontology graph to quantify how well the model's learned relationships match established biological hierarchies [11].

- Lowest Common Ancestor Distance (LCAD): For cell type annotation errors, LCAD assesses the severity of a misclassification by measuring the ontological proximity in the cell ontology between the predicted and true cell type. A smaller LCAD indicates a less severe error (e.g., confusing two T-cell subtypes) [11].
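
One plausible reading of LCAD can be sketched on a toy hierarchy: count the steps from the predicted and the true cell type up to their lowest common ancestor. This is an assumption-laden illustration rather than the benchmark's exact definition. In particular, the real Cell Ontology is a directed acyclic graph in which a term can have several parents, whereas the example below uses a single-parent tree with invented entries.

```python
def ancestor_distances(node, parent):
    """Map the node and each of its ancestors to the number of steps from the node."""
    distances, steps = {}, 0
    while node is not None:
        distances[node] = steps
        node = parent.get(node)
        steps += 1
    return distances

def lca_distance(predicted, true, parent):
    """Path length between two terms through their lowest common ancestor."""
    up_pred = ancestor_distances(predicted, parent)
    up_true = ancestor_distances(true, parent)
    shared = set(up_pred) & set(up_true)
    return min(up_pred[n] + up_true[n] for n in shared)

# Toy single-parent hierarchy; None marks the root.
parent = {
    "CD4 T": "T cell",
    "CD8 T": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": None,
}
mild_error = lca_distance("CD4 T", "CD8 T", parent)
severe_error = lca_distance("CD4 T", "B cell", parent)
```

Under this reading, confusing two T-cell subtypes scores lower than confusing a T cell with a B cell, which matches the intuition that the first error is less severe.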

Diagram 1: Experimental workflow for benchmarking the biological relevance of scFM latent spaces, showing the path from raw data to task performance and ontology-informed evaluation.

The development and application of scFMs rely on a curated ecosystem of data, computational tools, and benchmarking frameworks. The following table details key resources essential for research in this field.

Table 3: Essential Research Reagents & Resources for scFM Research

| Resource Name | Type | Function & Utility | Relevance to Architectural Comparison |

|---|---|---|---|

| CZ CELLxGENE [11] [1] | Data Platform | Provides unified access to millions of standardized, annotated single-cell datasets; primary source for pretraining and benchmarking corpora. | Provides the common data foundation needed for fair comparisons between encoder/decoder models. |

| BioLLM [14] | Computational Framework | A standardized framework for integrating and benchmarking over 15 different scFMs through a universal interface. | Enables systematic, head-to-head evaluation of different architectures and tokenization strategies on fixed tasks. |

| Human Cell Atlas [1] | Data Atlas | A global collaboration to create comprehensive reference maps of all human cells; a key source of diverse biological data. | Provides ground truth for assessing model generalization across tissues and donors. |

| Hugging Face Hub | Model Repository | A platform for sharing, versioning, and deploying pretrained models; increasingly used for scFMs. | Facilitates access to pretrained encoder/decoder models for fine-tuning and inference. |

| scGPT Model Weights [14] | Pretrained Model | The publicly available parameters of a decoder-only scFM, pretrained on over 33 million cells. | Serves as a key benchmark and starting point for research into decoder-based architectures. |

| Cell Ontology [11] | Knowledge Base | A structured, controlled vocabulary for cell types, providing the hierarchical relationships used in metrics like scGraph-OntoRWR. | Provides the prior biological knowledge required to quantitatively assess the biological relevance of latent spaces. |

The architectural landscape of single-cell foundation models is diverse, with no single approach achieving universal superiority. Encoder-only, decoder-only, and hybrid encoder-decoder architectures each present distinct trade-offs, excelling in different biological tasks based on their inherent information processing philosophies. The biological relevance of the latent spaces they produce is profoundly shaped by these architectural choices in conjunction with the tokenization strategies that convert continuous genomic data into a discrete, model-readable format. Rigorous benchmarking, supported by novel ontology-driven metrics, is crucial for moving beyond task-specific performance and truly evaluating which models best capture the underlying structure of biology. As the field progresses, the choice between an encoder or decoder model will depend on the specific research goal, whether it is the comprehensive cellular profiling afforded by encoders or the predictive generative power of decoders, all while ensuring the model's fundamental building blocks—its tokens—are aligned with the language of biology itself.

Single-cell Foundation Models (scFMs) represent a transformative approach in computational biology, trained on millions of single-cell transcriptomes to learn fundamental biological principles in a self-supervised manner [1]. These models generate latent spaces—compressed, meaningful representations of cellular states that aim to capture universal biological rules. However, comprehensive benchmarking reveals a nuanced reality: while scFMs are robust and versatile tools for diverse applications, no single model consistently outperforms others across all tasks [11]. The choice between complex scFMs and simpler machine learning alternatives depends critically on specific factors including dataset size, task complexity, need for biological interpretability, and computational resources [11].

The table below summarizes the core performance findings for scFMs across key biological tasks:

Table 1: Performance Overview of Single-Cell Foundation Models

| Task Category | Task Description | Key Performance Findings | Top-Performing Approaches |

|---|---|---|---|

| Pre-clinical Analysis | Batch integration and cell type annotation across diverse biological conditions [11] | scFMs demonstrate robustness in integrating heterogeneous datasets and transferring knowledge [11] | scGPT, Geneformer, Harmony (baseline) [11] |

| Clinical Prediction | Cancer cell identification and drug sensitivity prediction across 7 cancer types and 4 drugs [11] | scFMs show promise but simpler models can be more efficient for specific, resource-constrained tasks [11] | scFoundation, scBERT, LASSO variants [11] [17] |

| Biological Relevance | Capturing gene relationships and ontological cell type structures [11] | scFM embeddings capture meaningful biological insights and relational structures [11] | Models utilizing biological knowledge integration [17] |

Comparative Performance Analysis: scFMs vs. Traditional Methods

Quantitative Benchmarking Across Task Types

A comprehensive benchmark study evaluating six leading scFMs against established baselines employed 12 metrics spanning unsupervised, supervised, and knowledge-based approaches [11]. The evaluation encompassed two gene-level and four cell-level tasks under realistic conditions, providing holistic rankings from dataset-specific to general performance [11].

Table 2: Detailed Benchmarking Results Across Model Architectures

| Model Name | Architecture Type | Pretraining Dataset Scale | Batch Integration Performance | Cell Type Annotation Accuracy | Drug Sensitivity Prediction | Biological Relevance Score |

|---|---|---|---|---|---|---|

| Geneformer [11] | Transformer Encoder | 30 million cells [11] | High | High | Medium | Medium |

| scGPT [11] [1] | Transformer Decoder | 33 million cells [11] | High | High | Medium-High | High |

| scFoundation [11] | Asymmetric Encoder-Decoder | 50 million cells [11] | Medium-High | Medium-High | High | Medium |

| scBERT [1] | BERT-like Encoder | Millions of cells [1] | Medium | Very High | Medium | Medium |

| Traditional Baseline (Harmony) [11] | Clustering-based | Not Applicable | Medium-High | Medium | Low | Low |

| Traditional Baseline (scVI) [11] | Generative Model | Not Applicable | High | Medium | Low | Low |

Key Findings from Comparative Analysis

No Universal Winner: The benchmarking revealed that no single scFM consistently dominated across all tasks, emphasizing that model selection must be tailored to specific research goals and data characteristics [11].

Biological Relevance Advantage: A notable strength of scFMs emerged in their ability to capture biologically meaningful relationships. The study introduced novel ontology-informed metrics (scGraph-OntoRWR and LCAD) which confirmed that scFM latent spaces better reflect established biological knowledge about cell type relationships compared to traditional methods [11].

Resource Efficiency Trade-offs: While scFMs provide powerful out-of-the-box representations, simpler machine learning models often demonstrated superior efficiency when adapting to specific datasets, particularly under significant computational or data size constraints [11].

Experimental Protocols for Assessing Biological Relevance

Evaluating Latent Space Quality

Rigorous assessment of whether latent spaces capture universal biological principles requires specialized experimental protocols. The following methodology outlines key evaluation approaches:

Protocol 1: Cell Type Annotation and Novel Type Discovery

- Objective: Measure ability to correctly identify known cell types and discern novel cell populations.

- Procedure:

- Generate cell embeddings from scFM latent space.

- Perform clustering on embeddings using the Leiden algorithm or a similar method.

- Annotate clusters using marker genes and reference datasets.

- Calculate accuracy metrics against ground truth labels.

- Identify clusters lacking clear annotations as potential novel types.

- Evaluation Metrics: ARI (Adjusted Rand Index), F1-score, Lowest Common Ancestor Distance (LCAD) for ontological error severity [11].
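As a rough illustration of the clustering-agreement step in Protocol 1, the sketch below computes the Adjusted Rand Index from scratch using only the standard library. The label lists are invented toy data; in practice the cluster labels would come from Leiden clustering of scFM embeddings, and an established implementation would normally be preferred.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """ARI from the contingency table of two labelings of the same cells."""
    n = len(labels_true)
    pair_counts = Counter(zip(labels_true, labels_pred))
    sum_pairs = sum(comb(c, 2) for c in pair_counts.values())
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)   # agreement expected by chance
    maximum = (sum_true + sum_pred) / 2
    if maximum == expected:
        return 1.0  # degenerate case: both labelings are trivial
    return (sum_pairs - expected) / (maximum - expected)


truth = ["T", "T", "T", "B", "B", "B"]   # toy ground-truth cell types
perfect = [0, 0, 0, 1, 1, 1]             # clusters that match the truth
over_split = [0, 0, 1, 1, 2, 2]          # clusters that cut across the truth

ari_perfect = adjusted_rand_index(truth, perfect)
ari_split = adjusted_rand_index(truth, over_split)
```

ARI ignores the cluster names themselves, so a clustering that matches the ground truth scores 1.0 even though its labels are integers, while the clustering that cuts across cell types scores much lower.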

Protocol 2: Biological Consistency with scGraph-OntoRWR

- Objective: Quantify consistency between cell type relationships in latent space and established biological knowledge.

- Procedure:

- Construct k-nearest neighbor graph from scFM cell embeddings.

- Calculate similarity between cells based on graph connectivity.

- Compare to cell-type relationship graph from Cell Ontology.

- Perform Random Walk with Restart (RWR) on both graphs.

- Measure correlation between similarity distributions.

- Evaluation Metrics: scGraph-OntoRWR score [11].
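The steps of Protocol 2 can be sketched on a toy example. This is not the published scGraph-OntoRWR code: the two adjacency matrices, the restart probability of 0.5, and the choice of Pearson correlation averaged over seed nodes are all assumptions made for illustration.

```python
def rwr(adjacency, seed, restart=0.5, iterations=100):
    """Random Walk with Restart by power iteration on a small dense graph."""
    n = len(adjacency)
    # Column sums, so each node spreads its probability over its neighbours.
    col_sums = [sum(adjacency[i][j] for i in range(n)) for j in range(n)]
    p = [1.0 if i == seed else 0.0 for i in range(n)]
    for _ in range(iterations):
        walked = [
            sum(adjacency[i][j] / col_sums[j] * p[j] for j in range(n) if col_sums[j])
            for i in range(n)
        ]
        p = [(1 - restart) * walked[i] + (restart if i == seed else 0.0) for i in range(n)]
    return p


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


# Nodes: 0 = CD4 T cell, 1 = CD8 T cell, 2 = B cell, 3 = neuron (toy data).
ontology_graph = [[0, 1, 1, 0], [1, 0, 1, 0], [1, 1, 0, 1], [0, 0, 1, 0]]
# Hypothetical cell-type similarities derived from a kNN graph on embeddings.
embedding_graph = [
    [0.0, 0.9, 0.4, 0.1],
    [0.9, 0.0, 0.5, 0.1],
    [0.4, 0.5, 0.0, 0.2],
    [0.1, 0.1, 0.2, 0.0],
]

per_seed = [
    pearson(rwr(ontology_graph, s), rwr(embedding_graph, s)) for s in range(4)
]
consistency = sum(per_seed) / len(per_seed)
```

Because the seed node always retains at least the restart probability, it dominates each profile; a more careful variant might exclude the seed entry before correlating.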

Protocol 3: Drug Response Prediction

- Objective: Assess utility of latent representations for predicting clinical outcomes.

- Procedure:

- Generate embeddings for untreated cancer cells.

- Train a regression or classification model (e.g., LASSO) to predict IC50 values or sensitivity scores from embeddings.

- Validate on held-out test set across multiple cancer types.

- Compare performance against baseline models using raw expression data.

- Evaluation Metrics: Root Mean Square Error (RMSE), Area Under Curve (AUC) [11] [17].
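The two evaluation metrics named in Protocol 3 are simple enough to write out directly. The sketch below uses plain Python and made-up numbers: RMSE for continuous targets such as IC50 values, and a rank-based AUC for binary sensitive/resistant labels. It covers only the scoring step, not the model training.

```python
def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    return (sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)) ** 0.5


def roc_auc(labels, scores):
    """Probability that a random positive outranks a random negative.

    Ties count as half a win. Labels are 1 (sensitive) or 0 (resistant).
    """
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Toy held-out predictions (illustrative numbers only).
ic50_error = rmse([0.0, 0.0], [3.0, 4.0])
auc_perfect = roc_auc([1, 1, 0, 0], [0.9, 0.8, 0.3, 0.1])
auc_partial = roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.3, 0.1])
```

Running the same two functions on predictions from embedding-based models and from raw-expression baselines gives the head-to-head comparison the protocol calls for.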

Workflow Diagram for scFM Latent Space Evaluation

Successful implementation and evaluation of scFM latent spaces requires both computational and biological resources. The following table details key components of the research toolkit:

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function in Research | Example Sources |

|---|---|---|---|

| Single-Cell RNA-seq Datasets | Biological Data | Primary input for scFM training and evaluation; provides ground truth for biological validation [11] [1] | CZ CELLxGENE, Human Cell Atlas, GEO, SRA [1] |

| Protein-Protein Interaction Networks | Biological Knowledge Base | Provides prior biological knowledge for bio-primed model training and validation of biological relevance [17] | STRING DB, BioGRID [17] |

| Cell Ontology | Structured Vocabulary | Gold standard for evaluating biological consistency of latent spaces through ontological relationships [11] | OBO Foundry, Cell Ontology Project [11] |

| Benchmarking Frameworks | Computational Tool | Standardized evaluation of multiple scFMs across diverse tasks and datasets [11] | Custom benchmarking pipelines [11] |

| Visualization Toolkit | Computational Library | Creation of scientific visualizations for interpreting latent spaces and presenting results [18] | Paraview, VTK, VisIt [18] |

Signaling Pathway and Biological Validation Diagram

The promise of latent spaces for capturing universal biological principles represents a paradigm shift in computational biology. Current evidence suggests that scFMs provide robust, biologically relevant representations that outperform traditional methods in capturing complex cellular relationships [11]. However, their advantage is context-dependent, with simpler models remaining competitive for specific, well-defined tasks, especially under resource constraints [11].

The critical factor for maximizing biological insight lies in strategic model selection based on specific research objectives, dataset characteristics, and available computational resources. Future advancements in scFMs will likely focus on improved biological grounding through integration of prior knowledge [17], enhanced interpretability of latent representations, and development of more efficient architectures. For researchers in drug development and basic biology, scFMs offer a powerful new lens for examining cellular systems—but this lens must be chosen and focused with careful consideration of the specific biological questions at hand.

Single-cell foundation models (scFMs) are large-scale artificial intelligence models, pretrained on vast datasets of single-cell RNA sequencing (scRNA-seq) data, designed to learn fundamental biological principles that can be adapted to various downstream analytical tasks [1]. By treating individual cells as sentences and genes as words, these transformer-based models aim to decipher the "language" of biology, enabling researchers to probe cellular heterogeneity, gene regulatory networks, and disease mechanisms with unprecedented resolution [1]. The development of scFMs represents a paradigm shift in computational biology, moving from task-specific models to general-purpose frameworks capable of zero-shot learning and efficient adaptation to new challenges [11] [14].

However, the path to robust and biologically meaningful scFMs is paved with significant computational challenges. Three interconnected obstacles consistently emerge as critical bottlenecks: the characteristically sparse nature of single-cell data (with typically >90% zero values), pervasive technical noise introduced by varying experimental protocols and batch effects, and the fundamental non-sequential nature of genomic data, which lacks the inherent ordering of natural language [1] [11] [19]. These challenges collectively threaten to obscure genuine biological signals and compromise the quality of the latent representations that scFMs learn. This guide objectively compares how current leading scFMs navigate this complex terrain, synthesizing performance data from recent benchmarks to equip researchers with practical insights for model selection and application.

Comparative Performance Across Key Challenges

Benchmarking studies reveal that no single scFM consistently outperforms all others across diverse tasks. Performance is highly dependent on the specific challenge being addressed, the dataset characteristics, and the evaluation metrics employed [11] [20]. The following tables synthesize quantitative findings from comprehensive evaluations, focusing on how different models handle core challenges.

Table 1: Performance on Data Sparsity and Technical Noise Challenges

| Model | Batch Effect Correction (ASW Score) | Handling of Sparse Data | Computational Efficiency | Key Strengths |

|---|---|---|---|---|

| scGPT | Superior (0.75-0.82 ASW) [20] | Effective with longer gene sequences [20] | High efficiency in memory and time [20] | Robust zero-shot performance, multi-omic integration [11] [20] |

| Geneformer | Moderate (0.65-0.72 ASW) [20] | Effective with its ranking approach [11] | High efficiency in memory and time [20] | Strong gene-level task performance [20] |

| scFoundation | Moderate (0.63-0.70 ASW) [20] | Effective with its value projection [11] | Higher memory and computational demands [20] | Strong gene-level task performance [20] |

| scBERT | Poor (0.45-0.55 ASW) [20] | Performance declines with longer sequences [20] | Lower efficiency [20] | Smaller model size, simpler architecture [20] |

Table 2: Performance on Biological Relevance and Downstream Tasks

| Model | Cell Type Annotation (Accuracy) | Perturbation Prediction | Biological Consistency (scGraph-OntoRWR) | Notable Architectural Features |

|---|---|---|---|---|

| scGPT | High (Zero-shot) [14] | Strong [14] | High consistency with biological knowledge [11] | GPT-based decoder, multi-omic support, cell-prompting [1] [11] |

| Geneformer | Moderate [11] | Moderate [21] | High consistency with biological knowledge [11] | Rank-based gene sequencing, genomic position encoding [1] [11] |

| scFoundation | Moderate [11] | Moderate (but can suffer from mode collapse) [19] | Moderate [11] | Read-depth-aware pretraining, large gene vocabulary [11] |

| UCE | Varies by dataset [11] | Not extensively benchmarked | Not extensively benchmarked | Incorporates protein sequence embeddings (ESM-2) [11] |

Experimental Protocols for Benchmarking scFMs

Understanding the experimental methodologies used to generate the data in the tables above is crucial for interpreting results and designing future evaluations.

Assessing Data Sparsity and Noise Handling

To evaluate how models handle technical noise and batch effects, benchmarks typically employ a zero-shot embedding quality assessment. The process involves:

- Data Collection and Curation: Multiple datasets with known batch effects are collated from public repositories like CELLxGENE [1] [11]. These datasets intentionally include technical variations from different experimental protocols, sequencing platforms, and laboratories.

- Embedding Extraction: Each scFM is used to generate latent representations (embeddings) for all cells in the combined dataset without any model fine-tuning on the target data. This tests the model's inherent ability to handle new, noisy data [11] [20].

- Metric Calculation: The Average Silhouette Width (ASW) is computed on the embeddings. A high ASW score indicates that the model has successfully grouped cells by their biological type (e.g., T-cell, neuron) while mixing cells from different technical batches [20]. This metric directly measures the model's success in overcoming technical noise to reveal underlying biology.
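The silhouette computation itself can be sketched in a few lines. One common convention, assumed here and not confirmed by the benchmarks cited above, is to compute ASW twice on the same embeddings: once with cell-type labels, where a high value is desirable, and once with batch labels, where a low value suggests good mixing. The four 2-D points are toy embeddings.

```python
from math import dist


def average_silhouette_width(points, labels):
    """Mean silhouette over all points, using Euclidean distance."""
    scores = []
    for i, (p, label) in enumerate(zip(points, labels)):
        by_cluster = {}
        for j, (q, other) in enumerate(zip(points, labels)):
            if i != j:
                by_cluster.setdefault(other, []).append(dist(p, q))
        if label not in by_cluster or len(by_cluster) < 2:
            scores.append(0.0)  # singleton cluster or only one cluster present
            continue
        a = sum(by_cluster[label]) / len(by_cluster[label])   # own cluster
        b = min(sum(d) / len(d) for k, d in by_cluster.items() if k != label)
        scores.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return sum(scores) / len(scores)


embeddings = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0)]
cell_types = ["T cell", "T cell", "neuron", "neuron"]
batches = ["batch1", "batch2", "batch1", "batch2"]

cell_type_asw = average_silhouette_width(embeddings, cell_types)
batch_asw = average_silhouette_width(embeddings, batches)
```

In this toy case the cell types form tight, well-separated groups while the batches are interleaved, so the cell-type score is high and the batch score is negative.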

Evaluating Biological Relevance of Latent Spaces

Moving beyond technical metrics, novel evaluation protocols assess whether an scFM's latent space captures biologically meaningful relationships, aligning with the broader thesis of scFM assessment.

- Cell Ontology-Informed Metrics: Benchmarks use the scGraph-OntoRWR metric. This method measures the consistency between the relationships of cell types in the model's latent space and their known relationships in established biological ontologies (e.g., Cell Ontology). A high score indicates the model has learned a representation that aligns with prior biological knowledge [11].

- Lowest Common Ancestor Distance (LCAD): For cell type annotation tasks, the LCAD metric evaluates the severity of misclassifications. Instead of simply counting errors as wrong, it measures the distance within the Cell Ontology between the true cell type and the predicted type. A misclassification between closely related types (e.g., two types of T-cells) is penalized less than one between distantly related types (e.g., a T-cell and a neuron), providing a more biologically-grounded error analysis [11].

- Perturbation Prediction with Calibrated Metrics: To evaluate a model's ability to predict the effect of genetic perturbations, benchmarks now use calibrated metrics like Weighted MSE (WMSE). Traditional metrics can be gamed by "mode collapse," where a model simply predicts the average of all perturbations. WMSE assigns higher weight to genes that are known to be differentially expressed, forcing the model to accurately predict these key biological changes and preventing inflated scores from trivial predictions [19].
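The effect of weighting can be seen in a small numerical sketch. The weights below (10 for differentially expressed genes, 1 otherwise) and the expression changes are invented for illustration; the weighting scheme actually used in the cited WMSE work may differ.

```python
def weighted_mse(true_delta, pred_delta, weights):
    """Mean squared error with per-gene weights, normalised by total weight."""
    total = sum(weights)
    return sum(
        w * (t - p) ** 2 for t, p, w in zip(true_delta, pred_delta, weights)
    ) / total


# Toy expression changes for 10 genes; only the first two truly respond.
true_delta = [2.0, -2.0] + [0.0] * 8
collapsed = [0.0] * 10                       # predicts "no change" everywhere
informative = [1.5, -1.5] + [1.0, -1.0] * 4  # right direction, noisy elsewhere

uniform = [1.0] * 10
de_weighted = [10.0, 10.0] + [1.0] * 8       # assumed up-weighting of DE genes

plain_collapsed = weighted_mse(true_delta, collapsed, uniform)
plain_informative = weighted_mse(true_delta, informative, uniform)
wmse_collapsed = weighted_mse(true_delta, collapsed, de_weighted)
wmse_informative = weighted_mse(true_delta, informative, de_weighted)
```

With uniform weights the trivial "no change" prediction narrowly beats the informative one, whereas the weighted version reverses the ranking because errors on the two responding genes now dominate the score.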

Diagram: Benchmarking Workflow for scFM Biological Relevance

Architectural Strategies for Overcoming Key Challenges

Different scFMs employ distinct architectural strategies and pretraining paradigms to tackle the core challenges of data sparsity, noise, and non-sequential data.

Tackling Non-Sequential Gene Data

A fundamental hurdle for applying transformers to genomics is that genes lack a natural order, unlike words in a sentence. Models address this in several ways:

- Rank-Based Ordering: Models like Geneformer and LangCell impose a sequence by ranking genes within each cell based on their expression values, using this ordered list as the input "sentence" [1] [11].

- Value Binning and Projection: scGPT discretizes continuous expression values into bins, creating tokens that combine gene identity and expression level [1] [11]. scFoundation uses a linear projection to embed the expression value directly [11].

- Genomic Position Encoding: UCE incorporates a different prior by ordering genes based on their physical positions in the genome, leveraging the structure of chromosomes [11].
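The first two strategies can be contrasted on a single toy cell. This sketch is deliberately simplified and is not the preprocessing code of either model: the ranking uses raw expression values only, and the binning uses equal-width bins relative to the cell's maximum, which is an assumption made for brevity. Gene names and values are illustrative.

```python
def rank_tokens(expression, top_k):
    """Rank-based idea: order expressed genes by value, highest first."""
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda item: (-item[1], item[0]))  # ties broken by name
    return [gene for gene, _ in expressed[:top_k]]


def binned_tokens(expression, n_bins):
    """Binning idea: pair each expressed gene with a discrete bin in 1..n_bins."""
    nonzero = {gene: value for gene, value in expression.items() if value > 0}
    if not nonzero:
        return {}
    top = max(nonzero.values())
    return {
        gene: min(n_bins, 1 + int(value / top * n_bins))
        for gene, value in nonzero.items()
    }


cell = {"CD3E": 10.0, "CD4": 3.0, "MS4A1": 0.0, "ACTB": 20.0}

ranked = rank_tokens(cell, top_k=3)
binned = binned_tokens(cell, n_bins=5)
```

The ranked form discards magnitudes and keeps only order, while the binned form keeps a coarse magnitude for every expressed gene but has no inherent order, which is why the two families of models need different positional treatments.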

Compensating for Data Sparsity and Technical Noise

The high sparsity and technical variation in single-cell data are mitigated through:

- Masked Gene Modeling (MGM): This self-supervised pretraining task, used by most scFMs, involves randomly "masking" (hiding) a portion of the input genes and training the model to reconstruct them. This forces the model to learn robust relationships and dependencies between genes, helping it impute missing values and denoise data [1].

- Special Tokens for Metadata: scGPT uses special tokens to encode cell-level metadata or batch information, allowing the model to explicitly account for and correct technical covariates [1].

- Multi-Modal Pretraining: Incorporating diverse data types, such as scATAC-seq (measuring chromatin accessibility) or protein data, provides additional, complementary signals that can help resolve ambiguities present in sparse RNA data alone [1] [14].
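The masking step behind masked gene modeling can be sketched as follows. The mask fraction, the `<mask>` token string, and the gene list are illustrative assumptions; real models differ in what they mask (gene identity, expression value, or both) and in how the reconstruction loss is defined.

```python
import random


def mask_genes(tokens, mask_fraction, rng, mask_token="<mask>"):
    """Hide a random subset of tokens and return the reconstruction targets."""
    n_mask = max(1, round(len(tokens) * mask_fraction))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]   # what the model must recover
        masked[pos] = mask_token
    return masked, targets


rng = random.Random(0)  # fixed seed so the example is reproducible
tokens = ["ACTB", "CD3E", "CD4", "IL7R", "CCR7", "LTB"]
masked, targets = mask_genes(tokens, mask_fraction=0.3, rng=rng)

# Putting the targets back recovers the original input.
restored = [targets.get(i, tok) for i, tok in enumerate(masked)]
```

During pretraining the model sees only `masked` and is scored on how well it predicts the entries in `targets`, so it must rely on the remaining genes as context.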

Diagram: scFM Architecture and Tokenization Strategies

The Scientist's Toolkit: Key Research Reagents and Platforms

Successfully applying scFMs in research requires more than just model choice; it relies on an ecosystem of data, software, and benchmarking platforms.

Table 3: Essential Research Reagents for scFM Work

| Resource Name | Type | Primary Function | Key Features / Notes |

|---|---|---|---|

| CZ CELLxGENE [1] | Data Platform | Provides unified access to annotated single-cell datasets. | Over 100 million unique cells, standardized for analysis. Critical for pretraining and benchmarking. |

| BioLLM [20] | Software Framework | Standardized framework for integrating and benchmarking scFMs. | Unified API for models like scGPT and Geneformer; enables reproducible performance comparisons. |

| PanglaoDB & Human Cell Atlas [1] | Data Repositories | Curated compendia of single-cell data from multiple sources. | Provides broad coverage of cell types and states for training and validation. |

| PEREGGRN [21] | Benchmarking Platform | Evaluates perturbation prediction accuracy. | Configurable software with curated perturbation datasets; uses non-standard data splits to test generalization. |

| Weighted MSE (WMSE) [19] | Evaluation Metric | Measures perturbation prediction performance while penalizing "mode collapse". | More biologically meaningful than standard MSE; can also be used as a training loss. |

The benchmarking data indicates that scGPT currently demonstrates the most robust overall performance across tasks involving data sparsity, technical noise, and biological relevance, particularly in zero-shot settings [11] [20]. However, Geneformer and scFoundation show particular strengths in gene-level tasks, benefiting from their effective pretraining strategies [20].

For researchers, the choice of model should be guided by the specific task and resources:

- For novel cell type annotation or multi-omic integration where robust, zero-shot performance on new data is critical, scGPT is a strong first choice [11] [20].

- For gene-level analysis or when computational resources are a primary constraint, Geneformer presents an efficient and effective alternative [11] [20].

- For any perturbation prediction task, it is crucial to consult recent benchmarks and ensure that evaluations use calibrated metrics like WMSE to avoid being misled by models that exploit metric artifacts [21] [19].

The field is advancing rapidly, with future progress hinging on standardized frameworks like BioLLM [20], more biologically-grounded evaluation metrics [11] [19], and the continued expansion of high-quality, multi-omic cell atlases [1] [14].

Single-cell Foundation Models (scFMs) represent a transformative advance in computational biology, applying large-scale, self-supervised learning to single-cell transcriptomics data. Inspired by breakthroughs in natural language processing, these models aim to learn universal representations of cellular states from massive collections of single-cell RNA sequencing (scRNA-seq) data [1]. The fundamental premise is that by pretraining on millions of cells encompassing diverse tissues, species, and conditions, scFMs can capture fundamental biological principles and generalize to various downstream tasks including cell type annotation, batch integration, perturbation prediction, and drug response forecasting [5] [1].

Despite considerable enthusiasm surrounding scFMs, a critical question persists: do these models genuinely capture biologically meaningful patterns, or are they primarily sophisticated technical artifacts? This comparison guide examines the current state of prominent scFMs—Geneformer, scGPT, UCE, and scFoundation—synthesizing evidence from recent benchmarking studies to assess their biological relevance, practical performance, and optimal application domains. As these models transition from computational innovations to tools for biological discovery and therapeutic development, understanding their respective strengths and limitations becomes paramount for researchers and drug development professionals [5] [22].

Model Architectures and Pretraining Strategies

Architectural Diversity and Input Representation

Current scFMs predominantly utilize transformer architectures but differ significantly in their approach to tokenization, input representation, and model configuration. Unlike natural language, where words have an inherent sequence, gene expression data lacks natural ordering, presenting a fundamental challenge that models address through various strategies [1].

Table 1: Architectural Comparison of Single-Cell Foundation Models

| Model | Architecture Type | Pretraining Data Scale | Tokenization Strategy | Value Representation | Positional Encoding |

|---|---|---|---|---|---|

| Geneformer | Encoder (BERT-like) | 30 million cells | 2048 top-ranked genes by expression | Gene ordering | ✓ Present |

| scGPT | Decoder (GPT-like) | 33 million cells | 1200 Highly Variable Genes (HVGs) | Value binning | × Absent |

| UCE | Encoder | 36 million cells | 1024 non-unique genes sampled by expression | Protein embeddings from ESM-2 | ✓ Present |

| scFoundation | Encoder-decoder | 50 million cells | ~19,000 protein-encoding genes | Value projection | × Absent |

These architectural differences reflect varying hypotheses about how best to represent biological information. Geneformer employs a rank-based approach that prioritizes highly expressed genes within each cell, arguing this captures the most biologically significant signals [5]. In contrast, scGPT uses a more traditional HVG selection, while scFoundation incorporates nearly the complete transcriptome. UCE stands apart by leveraging protein language model embeddings (from ESM-2) as gene representations, effectively integrating evolutionary information into the transcriptomic analysis [5] [11].

Pretraining Objectives and Strategies

Most scFMs employ variants of masked language modeling (MLM), where portions of the input are masked and the model learns to reconstruct them based on context. However, implementations vary significantly. Geneformer uses classical MLM with categorical cross-entropy loss, while scGPT employs an iterative approach with mean squared error (MSE) loss on continuous values [5]. scFoundation utilizes a read-depth-aware MLM that accounts for varying sequencing depths across experiments [5]. These methodological differences likely contribute to the varying performance profiles observed across benchmarking studies.

Comprehensive Performance Benchmarking

Evaluation Framework and Metrics

Rigorous evaluation of scFMs requires multi-faceted assessment across diverse tasks. Recent benchmarking studies have employed comprehensive frameworks encompassing both gene-level and cell-level tasks [5]. Gene-level tasks typically assess functional coherence by evaluating whether embeddings capture known biological relationships, such as Gene Ontology (GO) term associations and tissue specificity [5]. Cell-level tasks include practical applications like batch integration, cell type annotation, and clinically relevant predictions such as cancer cell identification and drug sensitivity [5].

Innovative biologically-grounded metrics have emerged to complement traditional performance measures. The scGraph-OntoRWR metric evaluates the consistency of cell type relationships captured by scFMs with prior biological knowledge encoded in cell ontologies [5]. The Lowest Common Ancestor Distance (LCAD) metric assesses the severity of cell type misclassification by measuring ontological proximity between predicted and actual cell types [5]. These approaches represent significant advances beyond technical performance measures toward truly biological validation.

Comparative Performance Across Tasks

Table 2: Model Performance Across Key Biological Tasks

| Model | Batch Integration | Cell Type Annotation | Drug Response Prediction | Perturbation Forecasting | Biological Consistency |

|---|---|---|---|---|---|

| Geneformer | Underperforms baselines [22] | Moderate | Limited data | Limited data | Captures some gene relationships [5] |

| scGPT | Variable: excels with biological batch effects [22] | Strong with fine-tuning | Superior in zero-shot settings (F1: 0.858) [23] | Inconsistent | Moderate biological insights [5] |

| UCE | Moderate | Moderate | Top performer after fine-tuning (F1: 0.774) [23] | Limited data | High via protein embeddings [5] |

| scFoundation | Limited data | Limited data | Best in pooled evaluation (F1: 0.971) [23] | Limited data | Limited data |

| Traditional Methods | Harmony, scVI excel [22] | HVG selection competitive | Simple models often competitive [24] | PCA, scVI outperform [25] | Varies by method |

Benchmarking results reveal a complex performance landscape without a single dominant model. A comprehensive 2025 benchmark evaluating six scFMs against established baselines under realistic conditions found that no single scFM consistently outperformed others across all tasks [5]. The study emphasized that model selection must be tailored to specific factors including dataset size, task complexity, need for biological interpretability, and computational resources [5].

Notably, simpler machine learning approaches remain highly competitive, particularly in resource-constrained scenarios. In drug response prediction, scFoundation excelled in pooled-data evaluation (F1 score: 0.971), while UCE achieved the highest performance after fine-tuning on tumor tissue (F1 score: 0.774), and scGPT demonstrated superior capability in zero-shot learning settings (F1 score: 0.858) [23]. This pattern of task-specific superiority underscores the importance of context-dependent model selection.

The Zero-Shot Performance Challenge

A critical evaluation of scFMs in zero-shot settings—where models are applied without task-specific fine-tuning—revealed significant limitations. Both Geneformer and scGPT underperformed compared to simpler baseline methods like Highly Variable Genes (HVG) selection, Harmony, and scVI in cell type clustering and batch integration tasks [22]. This finding is particularly relevant for exploratory research where labeled data for fine-tuning may be unavailable.

In batch integration, Geneformer's embeddings often showed higher proportions of variance explained by batch effects compared to the original data, indicating inadequate batch mixing [22]. scGPT demonstrated more variable performance, excelling on datasets with biological batch effects (e.g., donor-to-donor variation) but struggling with technical batch effects [22]. These results suggest that the masked language model pretraining framework may not automatically produce high-quality cell embeddings without task-specific adaptation.

Experimental Protocols for Biological Validation

Assessing Biological Relevance of Latent Spaces

To evaluate whether scFMs capture biologically meaningful patterns, researchers have developed sophisticated experimental protocols that move beyond technical metrics:

Gene Embedding Functional Coherence Assessment

This protocol evaluates whether gene embeddings capture known biological relationships. Gene embeddings are extracted from the input layers of scFMs and compared against reference embeddings from Functional Representation of Gene Signatures (FRoGS), which learns gene embeddings via random walks on a hypergraph with Gene Ontology terms as hyperedges [5]. The embeddings are then evaluated on their ability to predict tissue specificity and Gene Ontology term associations, with performance measured via AUPRC (Area Under the Precision-Recall Curve) and comparative analysis against biological ground truth [5].
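For readers unfamiliar with AUPRC, the sketch below computes average precision, a standard step-wise estimate of the area under the precision-recall curve. The labels and scores are toy values standing in for, say, whether gene pairs share a Gene Ontology term and how similar their embeddings are; none of this is taken from the cited benchmark.

```python
def average_precision(labels, scores):
    """Mean of the precision values at the rank of each true positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank   # precision at this recall step
    return total / hits if hits else 0.0


# 1 = gene pair shares an annotation, 0 = it does not (toy labels).
shares_term = [1, 0, 1, 0]
embedding_similarity = [0.9, 0.8, 0.7, 0.1]

auprc = average_precision(shares_term, embedding_similarity)
```

Unlike accuracy, this score depends on how highly the true pairs are ranked, which makes it better suited to settings where annotated relationships are rare.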

Cell Ontology Consistency Validation: This approach uses cell ontology-informed metrics to evaluate biological consistency. The scGraph-OntoRWR metric implements random walks on cell-type graphs constructed from model embeddings, measuring the congruence between graph-derived relationships and established cell ontology hierarchies [5]. Additionally, the Lowest Common Ancestor Distance (LCAD) metric quantifies the ontological distance between misclassified cell types, with smaller distances indicating more biologically reasonable errors [5].

Perturbation Response Hierarchy Evaluation: For perturbation analysis, a structured hierarchy of evaluation metrics assesses model performance across multiple biological dimensions [25]. This begins with Data Integration and Batch Effect Reduction measured by iLISI (Integration Local Inverse Simpson's Index), progresses to Structural Integrity assessment evaluating topology preservation, and culminates in Functional Enrichment analysis of predicted differentially expressed genes [25].
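The idea behind iLISI can be sketched with an unweighted inverse Simpson's index over each cell's nearest neighbours. This is a deliberate simplification: the published LISI score weights neighbours with a perplexity-based kernel, which is omitted here. The function names and toy data are assumptions for illustration only.

```python
from collections import Counter


def inverse_simpson(labels):
    """Inverse Simpson's index: 1 / sum of squared label proportions."""
    n = len(labels)
    return 1.0 / sum((count / n) ** 2 for count in Counter(labels).values())


def simple_ilisi(embeddings, batches, k=3):
    """Mean unweighted inverse Simpson's index of batch labels among each
    cell's k nearest neighbours (squared Euclidean distance, brute force)."""
    scores = []
    for i, xi in enumerate(embeddings):
        dists = sorted(
            (sum((a - b) ** 2 for a, b in zip(xi, xj)), j)
            for j, xj in enumerate(embeddings)
            if j != i
        )
        neighbour_batches = [batches[j] for _, j in dists[:k]]
        scores.append(inverse_simpson(neighbour_batches))
    return sum(scores) / len(scores)


# Two well-separated clusters, one per batch: every neighbourhood is pure.
cells = [[0.0], [0.1], [0.2], [10.0], [10.1], [10.2]]
labels = ["a", "a", "a", "b", "b", "b"]
print(simple_ilisi(cells, labels, k=2))
```

The score ranges from 1 (every neighbourhood contains a single batch) up to the number of batches (every neighbourhood is evenly mixed), so higher values indicate better batch mixing.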

Visualization of scFM Evaluation Framework

The following diagram illustrates the comprehensive evaluation workflow for assessing biological relevance in scFMs:

Diagram 1: A comprehensive framework for evaluating biological relevance in single-cell foundation models, spanning multiple analysis types and validation metrics.

Table 3: Essential Resources for scFM Evaluation and Application

| Resource | Type | Primary Function | Relevance to scFM Research |
|---|---|---|---|
| CELLxGENE | Data Platform | Provides unified access to annotated single-cell datasets | Critical pretraining corpus and evaluation benchmark [5] [1] |
| AIDA v2 | Benchmark Dataset | Asian Immune Diversity Atlas with high-quality annotations | Independent validation dataset mitigating data leakage risks [5] |
| scDrugMap | Evaluation Framework | Unified platform for drug response prediction | Benchmarking scFMs on therapeutic applications [23] |
| PEREGGRN | Benchmarking Platform | Evaluation of perturbation response prediction | Standardized assessment of perturbation forecasting [21] |
| PerturBench | Evaluation Framework | Comprehensive perturbation analysis benchmark | Rigorous model comparison across diverse datasets [24] |
| GGRN/PEREGGRN | Software Platform | Expression forecasting and benchmarking | Assessment of genetic perturbation effects prediction [21] |
| Cell Ontology | Knowledge Base | Structured classification of cell types | Biological ground truth for evaluating model embeddings [5] |
| Gene Ontology | Knowledge Base | Functional gene annotation | Validation of gene embedding biological coherence [5] |

These resources collectively enable robust evaluation and application of scFMs. CELLxGENE has been particularly instrumental, providing access to over 100 million unique cells standardized for analysis [1]. Specialized platforms like scDrugMap facilitate task-specific benchmarking, having been used to evaluate scFMs across 326,751 cells from 36 datasets for drug response prediction [23].

Experimental Design Considerations

When designing experiments to evaluate biological relevance in scFMs, several key considerations emerge from recent benchmarking studies:

Task Formulation: Downstream tasks should reflect real-world biological questions rather than purely technical challenges. Clinically relevant tasks including cancer cell identification, drug sensitivity prediction, and treatment response forecasting provide more meaningful evaluation than abstract computational exercises [5] [23].

Evaluation Metrics: A multi-faceted approach combining traditional metrics (ASW, ARI) with biologically informed metrics (scGraph-OntoRWR, LCAD) provides the most comprehensive assessment [5]. For perturbation analysis, rank-based metrics complement traditional model fit measures and better capture practical utility for therapeutic discovery [24].

Data Splitting Strategies: For perturbation prediction, rigorous evaluation requires splitting data by unseen perturbation conditions rather than random splits [21]. This approach better simulates real-world application where models predict effects of novel interventions.
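A minimal sketch of a perturbation-level split is shown below. Whole perturbation conditions are held out so that no test perturbation is ever seen in training. The function name, the `"control"` label, and the choice to keep control cells in the training set are assumptions for illustration, not details taken from [21].

```python
import random


def split_by_perturbation(cell_perturbations, test_fraction=0.25, seed=0,
                          control_label="control"):
    """Split cell indices so test perturbations are disjoint from training.

    cell_perturbations: list with one perturbation label per cell.
    Returns (train_indices, test_indices, held_out_perturbations).
    Control cells always stay in the training set (an assumption here).
    """
    perturbations = sorted(set(cell_perturbations) - {control_label})
    rng = random.Random(seed)
    rng.shuffle(perturbations)

    n_test = max(1, round(len(perturbations) * test_fraction))
    held_out = set(perturbations[:n_test])

    train_idx = [i for i, p in enumerate(cell_perturbations) if p not in held_out]
    test_idx = [i for i, p in enumerate(cell_perturbations) if p in held_out]
    return train_idx, test_idx, held_out


# Hypothetical per-cell labels: gene knockouts A-D plus control cells.
labels = ["control", "A", "A", "B", "C", "control", "D", "B"]
train, test, held_out = split_by_perturbation(labels)
print(sorted(held_out), train, test)
```

A random cell-level split would instead place cells from the same perturbation on both sides, letting a model score well by memorizing condition-specific profiles.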

The current landscape of single-cell foundation models reveals a field in rapid evolution, with distinct strengths emerging across different models and applications. Geneformer demonstrates strengths in capturing gene regulatory relationships, scGPT excels in zero-shot drug response prediction, UCE leverages evolutionary information through protein embeddings, and scFoundation dominates in pooled-data evaluation scenarios [5] [23]. Yet despite these specialized capabilities, no single model consistently outperforms simpler baseline methods across all tasks [5] [22] [25].

This reality underscores the continued importance of task-specific model selection rather than presuming universal superiority of foundation models. Researchers must consider multiple factors including dataset size, task complexity, available computational resources, and particularly the need for biological interpretability when selecting analytical approaches [5]. For many applications, especially those with limited data or computational constraints, traditional methods like HVG selection, PCA, scVI, and Harmony remain highly competitive [22] [25].

The path forward for scFMs lies in addressing several critical challenges. Improving zero-shot performance is essential for exploratory biological discovery where labeled data is scarce [22]. Developing more biologically meaningful pretraining objectives and architectures represents another priority, potentially moving beyond masked language modeling toward objectives that explicitly capture regulatory relationships and causal structures [1]. Finally, enhancing model interpretability to extract actionable biological insights from the learned representations will determine the ultimate impact of scFMs on biological discovery and therapeutic development [5] [1].

As the field progresses, the integration of multi-omic data, incorporation of spatial context, and development of more sophisticated biological validation frameworks will likely drive the next generation of foundation models. Through continued rigorous benchmarking and biological grounding, scFMs have the potential to fundamentally transform our understanding of cellular function and accelerate therapeutic discovery, but realizing this potential requires thoughtful application informed by their current strengths and limitations.

From Embeddings to Insights: Methodologies for Biological Relevance Assessment

The evaluation of single-cell foundation models (scFMs) is moving beyond purely computational metrics toward assessments grounded in biological knowledge. Two metrics, scGraph-OntoRWR and Lowest Common Ancestor Distance (LCAD), change how researchers quantify the biological relevance of latent representations. Both leverage formal biological ontologies to determine whether computational models capture meaningful biological relationships, addressing a critical gap in traditional evaluation methods that often fail to detect biologically misleading representations [5] [26].

This guide provides a comprehensive comparison of these novel biology-driven metrics against traditional approaches, detailing their experimental validation and practical implementation for assessing scFMs in biological and clinical research contexts.

The Critical Need for Biology-Driven Evaluation

Single-cell RNA sequencing data presents unique challenges with its high dimensionality, sparsity, and technical noise [5]. While scFMs show promise for integrating heterogeneous datasets and extracting biological insights, traditional evaluation metrics have proven insufficient for assessing whether learned representations reflect true biological relationships.

Recent research has demonstrated that models can achieve excellent scores on standard metrics while producing biologically distorted representations. The "Islander" model exemplifies this concern, outperforming 11 leading embedding methods on standard metrics but creating separated "islands" of cell types that disrupted natural biological continuums, such as the developmental progression of fibroblasts in human lung development [26].

This limitation of traditional metrics has driven the development of evaluation approaches that incorporate prior biological knowledge through formal ontologies—structured systems that capture relationships between biological concepts in a computationally accessible framework [27].

Introducing the Novel Metrics

scGraph-OntoRWR: Quantifying Biological Consistency

scGraph-OntoRWR is a novel metric designed to measure the consistency between cell type relationships captured by scFMs and established biological knowledge encoded in cell ontologies [5].

Mechanism of Action:

- Constructs a graph representation of cell type relationships based on their proximity in a model's latent space

- Compares this graph against a consensus biological knowledge graph derived from ontology structures

- Employs a random walk with restart algorithm to quantify the alignment between model-derived relationships and ontological relationships

- Generates a quantitative score reflecting how well the model preserves known biological hierarchies and relationships
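The random walk with restart step can be sketched as a power iteration on a small graph. This is a generic illustration of the algorithm, not the scGraph-OntoRWR implementation from [5]; the function name, the restart probability of 0.3, and the toy three-node graph are assumptions.

```python
def random_walk_with_restart(adjacency, seed, restart=0.3, tol=1e-10, max_iter=1000):
    """Stationary visiting probabilities of a walk that restarts at `seed`.

    adjacency: dict mapping each node to a list of its neighbours.
    At every step the walker jumps back to `seed` with probability `restart`,
    otherwise it moves to a uniformly chosen neighbour of its current node.
    """
    nodes = sorted(adjacency)
    prob = {n: 1.0 if n == seed else 0.0 for n in nodes}
    for _ in range(max_iter):
        nxt = {n: (restart if n == seed else 0.0) for n in nodes}
        for u in nodes:
            neighbours = adjacency[u]
            if not neighbours:
                # Dangling node: send its walking mass back to the seed.
                nxt[seed] += (1 - restart) * prob[u]
                continue
            share = (1 - restart) * prob[u] / len(neighbours)
            for v in neighbours:
                nxt[v] += share
        if max(abs(nxt[n] - prob[n]) for n in nodes) < tol:
            return nxt
        prob = nxt
    return prob


# Toy cell-type graph: a simple path A - B - C, walk seeded at A.
toy_graph = {"A": ["B"], "B": ["A", "C"], "C": ["B"]}
profile = random_walk_with_restart(toy_graph, seed="A")
print(profile)
```

Running the walk once per cell type yields one proximity profile per seed. Profiles from the embedding-derived graph and from the ontology graph can then be compared node by node.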

LCAD: Contextualizing Classification Errors

Lowest Common Ancestor Distance (LCAD) introduces a biologically informed approach to error analysis by measuring the ontological proximity between misclassified cell types [5].

Key Functionality:

- Quantifies the severity of misclassification errors based on cell ontology hierarchies

- Calculates the distance to the lowest common ancestor between actual and predicted cell types in the ontological tree

- Provides nuanced error assessment where misclassifications between biologically similar cell types are penalized less severely than distant misclassifications

- Enables researchers to distinguish between minor classification errors and biologically significant misunderstandings
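The sketch below illustrates the idea on a toy hierarchy. It assumes a tree-shaped ontology stored as a child-to-parent mapping and defines the distance as the number of edges from the true type up to the lowest common ancestor plus the number from the predicted type up to it. Both are simplifying assumptions: the real Cell Ontology is a directed acyclic graph, the exact distance definition in [5] may differ, and the cell-type names here are illustrative labels rather than ontology identifiers.

```python
# Hypothetical toy hierarchy: child -> parent (the root has no entry).
TOY_PARENT = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
    "leukocyte": "cell",
    "fibroblast": "cell",
}


def steps_to_ancestors(node, parent):
    """Map each ancestor of `node` (itself included) to its edge distance."""
    steps, depth = {}, 0
    while node is not None:
        steps[node] = depth
        node = parent.get(node)
        depth += 1
    return steps


def lcad(true_type, predicted_type, parent):
    """Return (lowest common ancestor, path length through that ancestor)."""
    up_true = steps_to_ancestors(true_type, parent)
    up_pred = steps_to_ancestors(predicted_type, parent)
    common = set(up_true) & set(up_pred)
    if not common:
        raise ValueError("cell types share no ancestor in this hierarchy")
    lca = min(common, key=lambda n: up_true[n] + up_pred[n])
    return lca, up_true[lca] + up_pred[lca]


print(lcad("CD4 T cell", "CD8 T cell", TOY_PARENT))  # ('T cell', 2)
print(lcad("CD4 T cell", "fibroblast", TOY_PARENT))  # ('cell', 5)
```

In this toy example, confusing two T cell subtypes costs a distance of 2, while confusing a T cell with a fibroblast costs 5, which matches the intuition that the second error is biologically more serious.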

Experimental Framework and Benchmarking

Study Design

The benchmark study evaluating these metrics assessed six prominent scFMs (Geneformer, scGPT, UCE, scFoundation, LangCell, and scCello) against established baseline methods across multiple biologically relevant tasks [5].

Datasets and Tasks:

- Pre-clinical tasks: Batch integration and cell type annotation across five datasets with diverse biological conditions

- Clinically relevant tasks: Cancer cell identification and drug sensitivity prediction across seven cancer types and four drugs

- Evaluation scope: Comprehensive assessment using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches

Comparative Performance Analysis

Table 1: Overall Performance Ranking of Single-Cell Foundation Models with Biology-Driven Metrics

| Model | Batch Integration | Cell Type Annotation | Cancer Cell Identification | Drug Sensitivity Prediction | Overall Biological Relevance Ranking |
|---|---|---|---|---|---|
| Geneformer | 2 | 3 | 1 | 2 | 2 |
| scGPT | 3 | 2 | 3 | 3 | 3 |
| UCE | 1 | 4 | 4 | 4 | 4 |
| scFoundation | 4 | 1 | 2 | 1 | 1 |
| Traditional ML | 5 | 5 | 5 | 5 | 6 |
| HVG Selection | 6 | 6 | 6 | 6 | 5 |

Table 2: Key Findings from Biology-Driven Metric Evaluation

| Evaluation Dimension | Traditional Metrics | Ontology-Informed Metrics | Biological Insight Gained |
|---|---|---|---|
| Cell Relationship Preservation | Limited to cluster separation measures | Quantifies alignment with known biological hierarchies | Reveals whether models capture true developmental and functional relationships |
| Error Analysis | Simple accuracy measures | LCAD contextualizes errors by ontological distance | Distinguishes minor confusions from biologically significant errors |
| Batch Effect Correction | Focuses on technical mixing | Assesses preservation of biological variation during integration | Ensures biological signals aren't lost during technical normalization |
| Cross-Dataset Generalization | Measures consistency of cluster quality | Evaluates stability of biological relationships across datasets | Tests whether learned representations reflect universal biological principles |

Methodological Protocols

scGraph-OntoRWR Implementation Workflow

Protocol Steps:

- Input Processing: Extract zero-shot cell embeddings from the target scFM

- Graph Construction: Build a k-nearest neighbor graph based on cosine similarity between cell embeddings

- Biological Ground Truth: Query the Cell Ontology to obtain hierarchical relationships between cell types

- Random Walk Execution: Perform random walks with restart on both model-derived and ontology-derived graphs

- Similarity Calculation: Compare the stationary distributions of random walks to quantify graph similarity

- Metric Calculation: Compute the final scGraph-OntoRWR score as the Pearson correlation between graph similarity vectors
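Two of the steps above, graph construction from cosine similarity and the final Pearson correlation, can be sketched in a few lines. The code below is a simplified illustration, not the reference implementation from [5]: it works on hypothetical per-cell-type centroid vectors rather than individual cells, and the function names and toy embeddings are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def knn_graph(embeddings, k):
    """Directed k-nearest-neighbour graph: name -> k most similar other names."""
    graph = {}
    for name, vec in embeddings.items():
        sims = sorted(
            ((cosine(vec, other_vec), other)
             for other, other_vec in embeddings.items() if other != name),
            reverse=True,
        )
        graph[name] = [other for _, other in sims[:k]]
    return graph


def pearson(x, y):
    """Pearson correlation between two equal-length, non-constant vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


# Hypothetical 2-D centroids for three cell types.
centroids = {"T cell": [1.0, 0.1], "B cell": [0.9, 0.2], "fibroblast": [0.0, 1.0]}
print(knn_graph(centroids, k=1))
```

A kNN graph built this way is directed, since neighbourhood membership is not symmetric. Whether to symmetrize it before running the random walks is a design choice the protocol above does not specify.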

LCAD Calculation Methodology

Implementation Protocol:

- Error Identification: Identify misclassified cells through comparison of predictions against ground truth labels

- Ontology Query: For each misclassified cell, query the Cell Ontology to determine the hierarchical positions of both true and predicted cell types

- LCA Identification: Find the lowest common ancestor between the true and predicted cell types in the ontology hierarchy