Beyond the Hype: A Practical Framework for Validating LLM-Based Cell Type Annotations with Marker Gene Expression

Abstract

The integration of Large Language Models (LLMs) into single-cell RNA sequencing analysis promises to revolutionize cell type annotation by reducing manual labor and leveraging vast biological knowledge. However, ensuring the reliability of these automated annotations is paramount for downstream research and drug discovery. This article provides a comprehensive guide for researchers and drug development professionals on validating LLM-generated cell type calls through rigorous marker gene expression analysis. We explore the foundational principles of LLM-based annotation, detail cutting-edge methodological frameworks that integrate external verification, address common troubleshooting and optimization scenarios, and present a comparative analysis of validation strategies. By establishing a robust workflow for confirmation, this resource aims to build trust in automated annotations, enhance reproducibility, and accelerate the translation of single-cell genomics into therapeutic insights.

The New Frontier: Understanding LLMs in Cellular Taxonomy and the Imperative for Validation

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to dissect cellular heterogeneity, yet accurate cell type annotation remains a significant bottleneck in data analysis pipelines. Traditional methods rely heavily on expert knowledge or reference datasets, introducing subjectivity and limitations in generalizability [1]. The emergence of Large Language Models (LLMs) presents a paradigm shift, offering the potential to automate this process without requiring extensive domain expertise. However, this promise comes with inherent perils, including the risk of model "hallucination" where LLMs generate confident but biologically incorrect annotations.

This guide objectively evaluates the performance of a pioneering LLM-based tool, LICT (Large Language Model-based Identifier for Cell Types), against established annotation methods. We frame this comparison within the critical thesis that validation with marker gene expression is non-negotiable for reliable biological interpretation, providing experimental data and protocols to empower researchers in implementing and validating these approaches in their own work.

Evaluating LLM Performance in scRNA-seq Annotation

The LICT Framework: Multi-Model Integration and Validation

The LICT tool was developed to address key limitations in existing LLM-based annotation approaches. It employs three core strategies to enhance performance and reliability [1]:

- Multi-Model Integration: Leverages five top-performing LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE 4.0) selected from an initial evaluation of 77 models, using their complementary strengths to improve accuracy.

- "Talk-to-Machine" Strategy: Implements an iterative human-computer interaction process where initial annotations are validated against marker gene expression, with structured feedback loops for ambiguous cases.

- Objective Credibility Evaluation: Provides a reference-free framework to assess annotation reliability based on marker gene expression patterns within the input dataset.

Table 1: Top-Performing LLMs Integrated in LICT for scRNA-seq Annotation

| LLM Model | Key Characteristics | Performance Highlights |
|---|---|---|
| GPT-4 | General-purpose multimodal LLM | Strong overall performance in heterogeneous cell populations |
| Claude 3 | Conversation-focused model | Highest overall performance in initial evaluation |
| Gemini | Multimodal capabilities | 39.4% consistency with manual annotations for embryo data |
| LLaMA-3 | Open-source foundation model | Balanced performance across datasets |
| ERNIE 4.0 | Baidu-developed LLM with strong Chinese-language support | Complementary capabilities for diverse data sources |

Performance Benchmarking Across Diverse Biological Contexts

LICT was systematically validated across four scRNA-seq datasets representing diverse biological contexts to assess its generalizability [1]:

- Normal Physiology: Peripheral Blood Mononuclear Cells (PBMCs) - widely used benchmark

- Developmental Stages: Human embryonic cells

- Disease States: Gastric cancer samples

- Low-Heterogeneity Environments: Stromal cells from mouse organs

Table 2: LICT Performance Comparison Across Biological Contexts

| Dataset | Annotation Match Rate | Mismatch Rate | Key Challenges |
|---|---|---|---|
| PBMCs (High heterogeneity) | 90.3% (after integration strategy) | 9.7% (reduced from 21.5%) | Minimal challenges with robust performance |
| Gastric Cancer (High heterogeneity) | 91.7% (after integration strategy) | 8.3% (reduced from 11.1%) | Strong performance in disease context |
| Human Embryo (Low heterogeneity) | 48.5% match rate | 51.5% inconsistency | Significant challenges with partial differentiation states |
| Stromal Cells (Low heterogeneity) | 43.8% match rate | 56.2% inconsistency | Limited transcriptional diversity problematic |

The benchmarking revealed a critical pattern: while LLMs excel with highly heterogeneous cell populations, their performance diminishes significantly with less heterogeneous datasets such as embryonic cells and stromal populations [1]. This highlights a fundamental limitation in applying current LLM technology to cell types with subtle transcriptional differences.

Experimental Protocols for LLM Annotation Validation

Multi-Model Integration Methodology

The multi-model integration strategy follows a structured protocol to leverage complementary LLM strengths [1]:

- Input Standardization: Prepare standardized prompts incorporating the top ten marker genes for each cell subset, following established benchmarking methodologies.

- Parallel Model Query: Simultaneously query all five selected LLMs with identical input prompts containing marker gene information.

- Result Selection: Instead of conventional majority voting, select the best-performing results from the five LLMs based on validation criteria.

- Cross-Validation: Assess annotations against known cell type signatures and expression patterns.

This protocol was validated using PBMC and gastric cancer datasets, with performance measured by consistency with manual expert annotations and reduction in mismatch rates.
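The input-standardization and parallel-query steps can be sketched in Python. This is a minimal illustration under stated assumptions, not LICT's implementation: the prompt wording and the helpers `build_prompt` and `query_all` are hypothetical, and each model is represented by a plain callable that would, in practice, wrap that vendor's API client.

```python
from typing import Callable, Dict, List

# Hypothetical template; LICT's actual prompt wording is not reproduced here.
PROMPT_TEMPLATE = (
    "Identify the most likely cell type of each cluster from {tissue}, "
    "given its top marker genes. Answer with one cell type per line.\n{clusters}"
)


def build_prompt(tissue: str, markers: Dict[str, List[str]], top_n: int = 10) -> str:
    """Build one standardized prompt listing the top `top_n` markers per cluster."""
    lines = [f"{cluster}: {', '.join(genes[:top_n])}" for cluster, genes in markers.items()]
    return PROMPT_TEMPLATE.format(tissue=tissue, clusters="\n".join(lines))


def query_all(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Send the identical prompt to every model and collect the raw answers."""
    # Sequential for clarity; a thread pool could issue the calls concurrently.
    return {name: ask(prompt) for name, ask in models.items()}


# Usage with a stub standing in for a real LLM client.
markers = {"cluster_0": ["CD3E", "CD3D", "IL7R"], "cluster_1": ["MS4A1", "CD79A"]}
prompt = build_prompt("human PBMCs", markers)
answers = query_all(prompt, {"stub_model": lambda p: "T cell\nB cell"})
```

Keeping the prompt byte-for-byte identical across models is what makes their answers directly comparable in the result-selection step.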

"Talk-to-Machine" Iterative Validation Protocol

The "talk-to-machine" strategy implements a rigorous iterative validation workflow [1]:

- Initial Annotation: LLM provides preliminary cell type predictions based on input marker genes.

- Marker Gene Retrieval: Query the LLM for representative marker genes for each predicted cell type.

- Expression Validation: Assess expression of these marker genes within corresponding clusters in the input dataset.

- Validation Thresholding: Classify annotations as valid if >4 marker genes are expressed in ≥80% of cells within the cluster.

- Iterative Refinement: For failed validations, generate structured feedback prompts with expression results and additional differentially expressed genes (DEGs) to re-query the LLM.

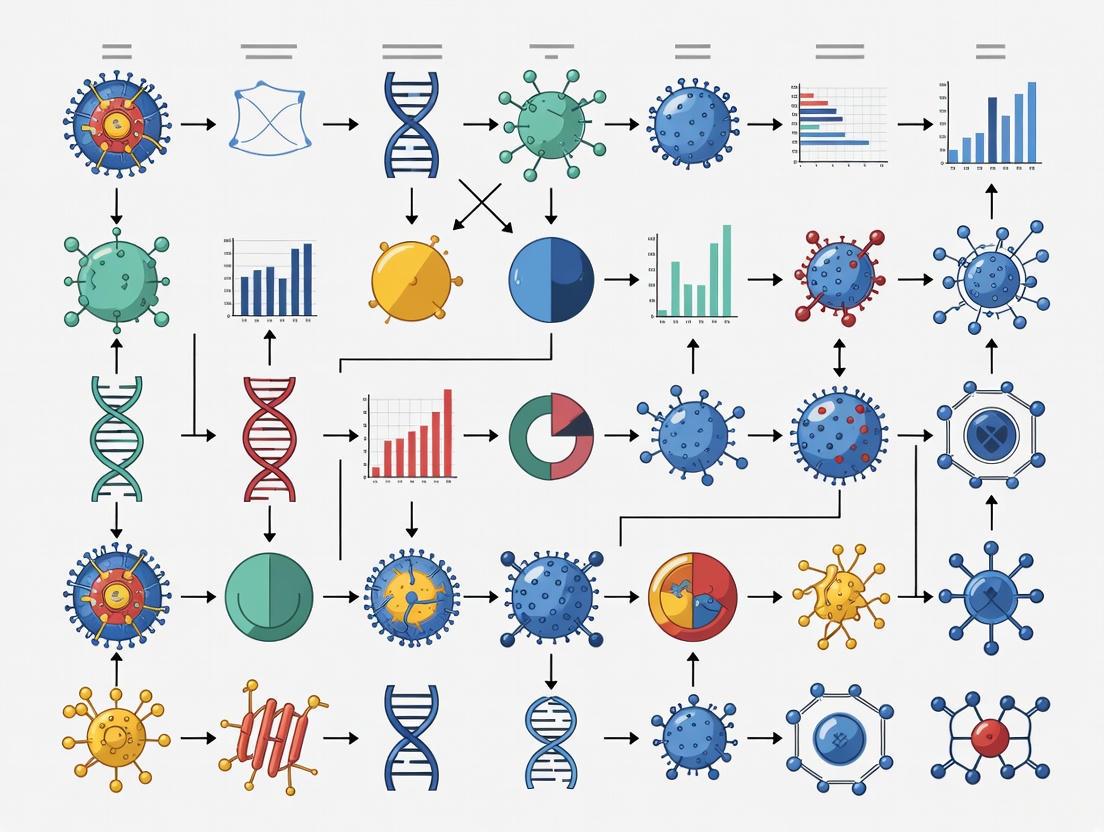

Diagram 1: Talk-to-Machine Validation Workflow
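The talk-to-machine loop can be sketched as follows, assuming a cells × genes count matrix for one cluster and reading ">4 marker genes expressed in ≥80% of cells" as "at least five markers, each with a non-zero count in at least 80% of the cluster's cells" (an interpretive assumption). `annotate` and `get_markers` are hypothetical callables standing in for the two LLM queries, and the feedback wording is illustrative only.

```python
from typing import Callable, List, Sequence, Tuple

import numpy as np


def supported_markers(expr: np.ndarray, genes: Sequence[str], markers: Sequence[str],
                      min_frac: float = 0.8) -> List[str]:
    """Markers with a non-zero count in at least `min_frac` of the cluster's cells."""
    col = {g: j for j, g in enumerate(genes)}
    return [m for m in markers if m in col and np.mean(expr[:, col[m]] > 0) >= min_frac]


def talk_to_machine(expr: np.ndarray, genes: Sequence[str], prompt: str,
                    annotate: Callable[[str], str],
                    get_markers: Callable[[str], List[str]],
                    extra_degs: Sequence[str], max_rounds: int = 3,
                    min_markers: int = 5, min_frac: float = 0.8):
    """Re-query until the LLM's own markers are supported or rounds run out."""
    history: List[Tuple[str, List[str]]] = []
    for _ in range(max_rounds):
        cell_type = annotate(prompt)                              # (re-)annotation
        markers = get_markers(cell_type)                          # marker retrieval
        hits = supported_markers(expr, genes, markers, min_frac)  # expression check
        history.append((cell_type, hits))
        if len(hits) >= min_markers:                              # ">4 markers" rule
            return cell_type, True, history
        # Structured feedback: report the expression result and add further DEGs.
        missing = [m for m in markers if m not in hits]
        prompt += (f"\nYour answer '{cell_type}' was not supported: only {len(hits)} of "
                   f"its markers were expressed in >= {min_frac:.0%} of cells "
                   f"(unsupported: {', '.join(missing) or 'none'}). "
                   f"Additional DEGs: {', '.join(extra_degs)}. Please revise.")
    return cell_type, False, history


# Usage: genes G0-G5 are detected in every cell, G6-G7 in none (toy data).
rng = np.random.default_rng(0)
genes = [f"G{i}" for i in range(8)]
expr = np.zeros((50, 8), dtype=int)
expr[:, :6] = rng.integers(1, 5, size=(50, 6))
replies = iter(["fibroblast", "T cell"])
label, ok, history = talk_to_machine(
    expr, genes, "Cluster 3 top markers: G0, G1, G2",
    annotate=lambda p: next(replies),
    get_markers=lambda ct: ["G6", "G7", "G0"] if ct == "fibroblast" else genes[:6],
    extra_degs=["G3", "G4"])
```

In the toy run, the first answer fails (only G0 is supported), the feedback prompt is sent, and the second answer passes with six supported markers.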

Objective Credibility Assessment Protocol

The credibility evaluation strategy provides a critical framework for distinguishing methodological limitations from dataset-intrinsic constraints [1]:

- Marker Gene Generation: For each predicted cell type, query the LLM to generate representative marker genes.

- Expression Analysis: Analyze expression patterns of these marker genes within corresponding cell clusters.

- Credibility Thresholding: Classify annotations as reliable if >4 marker genes are expressed in ≥80% of cells within the cluster.

- Comparative Assessment: Apply the same credibility standards to both LLM-generated and manual expert annotations.

- Discrepancy Resolution: Investigate cases where both LLM and manual annotations are classified as reliable but differ in their conclusions.

This protocol revealed that in low-heterogeneity datasets, LLM-generated annotations sometimes demonstrated higher credibility than manual annotations based on objective marker expression criteria [1].
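The comparative-assessment and discrepancy-resolution steps reduce to bookkeeping once each annotation has been scored with the same credibility threshold. A minimal sketch; the function names are hypothetical, and the case-insensitive string comparison is a simplification that ignores synonyms (e.g. "CD4 T cell" vs "helper T cell").

```python
from typing import Dict, Tuple

# cluster -> (llm_label, llm_credible, manual_label, manual_credible)
Results = Dict[str, Tuple[str, bool, str, bool]]


def compare_annotations(results: Results) -> Dict[str, str]:
    """Label each cluster by agreement and by which annotation passes credibility."""
    verdicts = {}
    for cluster, (llm, llm_ok, manual, manual_ok) in results.items():
        if llm.strip().lower() == manual.strip().lower():
            verdicts[cluster] = "match"
        elif llm_ok and manual_ok:
            verdicts[cluster] = "both credible but different: investigate"
        elif llm_ok:
            verdicts[cluster] = "only LLM credible"
        elif manual_ok:
            verdicts[cluster] = "only manual credible"
        else:
            verdicts[cluster] = "neither credible"
    return verdicts


def credibility_among_mismatches(results: Results) -> Tuple[float, float]:
    """Fraction of mismatched clusters whose LLM / manual label is credible."""
    mismatched = [r for r in results.values()
                  if r[0].strip().lower() != r[2].strip().lower()]
    if not mismatched:
        return 0.0, 0.0
    n = len(mismatched)
    return sum(r[1] for r in mismatched) / n, sum(r[3] for r in mismatched) / n


# Toy example with four clusters.
results = {
    "c0": ("T cell", True, "T cell", True),
    "c1": ("fibroblast", True, "pericyte", False),
    "c2": ("endothelial cell", True, "epithelial cell", True),
    "c3": ("mesenchymal cell", False, "stromal cell", False),
}
verdicts = compare_annotations(results)
llm_rate, manual_rate = credibility_among_mismatches(results)
```

The second function yields the kind of summary reported in Table 4 below: the share of mismatched annotations that pass the credibility threshold for each source.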

Comparative Performance Analysis

Quantitative Benchmarking Against Established Methods

Comprehensive performance assessment reveals both strengths and limitations of the LICT framework compared to existing approaches:

Table 3: Strategy Performance Comparison Across Dataset Types

| Strategy | PBMC Match Rate | Gastric Cancer Match Rate | Embryo Match Rate | Stromal Cell Match Rate |
|---|---|---|---|---|
| Single LLM (GPT-4) | 78.5% | 88.9% | ~3% (estimated) | ~30% (estimated) |
| Multi-Model Integration | 90.3% | 91.7% | 48.5% | 43.8% |
| Talk-to-Machine Enhancement | 92.5% full match | 97.2% full match | 48.5% full match | 43.8% full match |

The data show that multi-model integration alone cuts mismatch rates from 21.5% to 9.7% in PBMCs (roughly halving them) and from 11.1% to 8.3% in gastric cancer, while the talk-to-machine approach further enhances accuracy, particularly for challenging low-heterogeneity environments [1].
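The relative size of the mismatch reduction differs between the two high-heterogeneity datasets. The arithmetic below recomputes it from the single-LLM and multi-model mismatch rates reported in Tables 2 and 3.

```python
# Mismatch rates (%) for the high-heterogeneity datasets: single LLM (GPT-4)
# versus multi-model integration, as reported in Tables 2 and 3.
mismatch = {"PBMC": (21.5, 9.7), "Gastric cancer": (11.1, 8.3)}


def relative_reduction(before: float, after: float) -> float:
    """Percent decrease of the mismatch rate relative to its starting value."""
    return 100.0 * (before - after) / before


for dataset, (before, after) in mismatch.items():
    print(f"{dataset}: {before}% -> {after}% "
          f"({relative_reduction(before, after):.1f}% relative reduction)")
# PBMC: 21.5% -> 9.7% (54.9% relative reduction)
# Gastric cancer: 11.1% -> 8.3% (25.2% relative reduction)
```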

Credibility Assessment: LLM vs. Manual Annotations

The objective credibility evaluation provides critical insights into annotation reliability beyond simple match rates:

Table 4: Credibility Assessment of LLM vs. Manual Annotations

| Dataset | LLM Credibility Rate | Manual Annotation Credibility Rate | Notable Findings |
|---|---|---|---|
| Gastric Cancer | Comparable to manual | Comparable to LLM | Both methods show similar reliability |
| PBMC | Higher than manual | Lower than LLM | LLM outperforms in objective criteria |
| Human Embryo | 50% of mismatched annotations credible | 21.3% credible | LLM shows higher credibility despite mismatches |
| Stromal Cells | 29.6% credible | 0% credible | Manual annotations fail credibility threshold |

This analysis reveals that discrepancy between LLM-generated and manual annotations does not necessarily indicate reduced LLM reliability. In some cases, particularly with low-heterogeneity datasets, LLM annotations demonstrate superior objective credibility based on marker gene expression evidence [1].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 5: Key Research Reagents and Computational Tools for LLM-Based Annotation

| Resource Category | Specific Examples | Function/Application |
|---|---|---|
| Reference Datasets | PBMC datasets (GSE164378), Human embryo data, Gastric cancer scRNA-seq | Benchmarking and validation of annotation methods |
| LLM Platforms | GPT-4, Claude 3, Gemini, LLaMA-3, ERNIE 4.0 | Core annotation engines with complementary strengths |
| Validation Tools | Marker gene expression analysis, Differential expression testing | Objective credibility assessment of annotations |
| Experimental Platforms | 10x Genomics Chromium, BD Rhapsody | Single-cell RNA sequencing technology options [2] |
| Visualization Tools | BioRender, ConceptDraw Biology | Scientific figure creation and pathway visualization [3] [4] |

Technical Implementation Considerations

Feature Selection Impact on Analysis Quality

Beyond annotation methods, feature selection significantly impacts scRNA-seq data integration and interpretation. Recent benchmarks show that highly variable feature selection remains effective for producing high-quality integrations, with important considerations for [5]:

- Number of Features: The number of selected features matters; the trade-off lies between the widely used 2,000 highly variable features and smaller, targeted gene sets

- Batch-Aware Selection: Accounting for technical batch effects during feature selection improves integration quality

- Lineage-Specific Features: For focused biological questions, selecting features relevant to specific lineages enhances resolution
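The first two considerations can be illustrated with a deliberately simplified numpy sketch. It ranks genes by the variance-to-mean dispersion of log-normalized counts (without the mean-binned normalization that tools such as Seurat and Scanpy apply) and, when batch labels are given, prefers genes that are highly variable within many batches, similar in spirit to Scanpy's `batch_key` option. It is not the method evaluated in the cited benchmark; `select_hvgs` and its parameters are hypothetical.

```python
import numpy as np


def log_normalize(counts: np.ndarray, target_sum: float = 1e4) -> np.ndarray:
    """Scale each cell (row) to `target_sum` total counts, then apply log1p."""
    lib = counts.sum(axis=1, keepdims=True)
    lib[lib == 0] = 1.0  # leave empty cells at zero instead of dividing by zero
    return np.log1p(counts / lib * target_sum)


def dispersion_rank(logx: np.ndarray) -> np.ndarray:
    """Rank genes by variance/mean of log expression (0 = most variable)."""
    mean = logx.mean(axis=0)
    var = logx.var(axis=0, ddof=1)
    disp = np.where(mean > 0, var / np.maximum(mean, 1e-12), 0.0)
    return np.argsort(np.argsort(-disp))


def select_hvgs(counts: np.ndarray, batches=None, n_top: int = 2000) -> np.ndarray:
    """Indices of `n_top` highly variable genes, optionally batch-aware."""
    logx = log_normalize(counts.astype(float))
    if batches is None:
        return np.argsort(dispersion_rank(logx))[:n_top]
    batches = np.asarray(batches)
    ranks = np.stack([dispersion_rank(logx[batches == b]) for b in np.unique(batches)])
    n_batches_hvg = (ranks < n_top).sum(axis=0)  # in how many batches a gene is top-n
    median_rank = np.median(ranks, axis=0)       # tie-breaker
    return np.lexsort((median_rank, -n_batches_hvg))[:n_top]


# Toy data: gene 0 differs only *between* two batches (a pure batch effect).
rng = np.random.default_rng(1)
counts = rng.poisson(5, size=(100, 50))
counts[:50, 0], counts[50:, 0] = 0, 50
batches = np.repeat(["a", "b"], 50)
naive = select_hvgs(counts, n_top=5)
aware = select_hvgs(counts, batches, n_top=5)
```

In the toy data the naive ranking picks gene 0 because of the batch effect, whereas the batch-aware ranking does not, since gene 0 is not variable within either batch.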

Sequencing Technology Selection Framework

Choosing appropriate scRNA-seq technologies forms the foundation for reliable analysis. A comprehensive evaluation of nine commercial technologies provides guidance based on [2]:

- Performance Metrics: The Chromium Fixed RNA Profiling kit (10x Genomics) demonstrated best overall performance

- Cost-Balance Considerations: The Rhapsody WTA kit (Becton Dickinson) offers balanced performance and cost efficiency

- Read Utilization: A critical metric differentiating kits based on efficiency of converting sequencing reads to usable counts

Diagram 2: Integrated scRNA-seq Analysis Pipeline

The integration of LLMs into scRNA-seq analysis represents a significant advancement in automated cell type annotation, with the LICT framework demonstrating superior efficiency, consistency, and accuracy compared to single-model approaches. However, the persistent challenges with low-heterogeneity datasets highlight the critical importance of objective credibility assessment through marker gene expression validation.

The most successful implementation strategy combines multi-model integration with iterative validation protocols, enabling researchers to harness the automation potential of LLMs while mitigating the risks of biological hallucination. As the field evolves, the framework of validating computational predictions with experimental evidence remains paramount for biological discovery.

Researchers should approach LLM-based annotation as a powerful but imperfect tool—one that enhances but does not replace rigorous biological validation and expert critical evaluation. The protocols and comparative data presented here provide a foundation for implementing these approaches while maintaining scientific rigor in the age of AI-driven discovery.

Why Marker Gene Expression is the Gold Standard for Biological Ground-Truthing

In the rapidly evolving field of single-cell and spatial biology, the need for reliable biological ground-truthing has never been more critical. As artificial intelligence, particularly large language models (LLMs), becomes increasingly integrated into cellular annotation pipelines, the validation of these computational predictions requires a firm biological foundation. Marker gene expression has emerged as the gold standard for this validation, providing an objective, measurable benchmark rooted in fundamental biology. This article explores the central role of marker genes in verifying cell type identities and states, with a specific focus on their application in validating emerging LLM-based annotation tools.

The Biological Foundation of Marker Genes

Marker genes are uniquely expressed or highly enriched in specific cell types or states, serving as molecular fingerprints that allow for precise cellular identification. The utility of a marker gene is determined by the extent to which it satisfies key biological desiderata: it must be expressed at detectable levels yet not ubiquitously; its expression should vary sufficiently to permit detection of differential expression; and it should be concentrated within the state of interest [6].

The "Goldilocks principle" applies to ideal marker genes—they must be expressed at levels that are "not too high but not too low" for detection using standard spatial analysis techniques like antisense mRNA in situ hybridization and immunofluorescence [6]. These experimental techniques represent the conventional gold standard in organismal biology for identifying spatially distinct cell states, providing crucial spatial information lacking in transcriptomic approaches alone.

Marker Genes as Validation Benchmarks for LLM-Based Annotations

The Rise of LLM-Based Cell Type Annotation

Recent advancements have introduced LLM-based tools for cell type annotation, such as LICT (Large Language Model-based Identifier for Cell Types), which leverages multiple model integration and a "talk-to-machine" approach to annotate single-cell RNA sequencing data [1]. These tools represent a significant shift away from both traditional manual annotation, which suffers from subjectivity and dependence on annotator experience, and reference-based automated tools, which can inherit biases from their reference datasets.

The Critical Role of Marker Expression in Validation

Marker gene expression serves as the fundamental validation metric for assessing the reliability of LLM-generated annotations. In the LICT framework, an objective credibility evaluation strategy directly uses marker gene expression to assess annotation reliability [1]. The methodology follows these critical steps:

- Marker Gene Retrieval: For each predicted cell type, the LLM is queried to generate representative marker genes based on the initial annotation.

- Expression Pattern Evaluation: The expression of these marker genes is analyzed within corresponding cell clusters in the input dataset.

- Credibility Assessment: An annotation is deemed reliable if more than four marker genes are expressed in at least 80% of cells within the cluster [1].

This approach provides a reference-free, objective method for validating computational predictions against biological reality. Notably, the LICT study found that in low-heterogeneity datasets, LLM-generated annotations sometimes outperformed manual expert annotations on this criterion: 50% of mismatched LLM annotations were deemed credible versus only 21.3% of expert annotations in embryo data, and 29.6% versus 0% in stromal cell data [1].
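The credibility criterion translates directly into code. A minimal sketch, assuming a cells × genes count matrix, per-cell cluster labels, and LLM-supplied marker lists per cluster; it reads "more than four marker genes" as at least five and "expressed" as a non-zero count, which are interpretive assumptions. `is_credible` and `credibility_report` are hypothetical names, not part of LICT.

```python
from typing import Dict, Sequence

import numpy as np


def is_credible(cluster_expr: np.ndarray, genes: Sequence[str], markers: Sequence[str],
                min_markers: int = 5, min_frac: float = 0.8) -> bool:
    """True if >= `min_markers` markers are non-zero in >= `min_frac` of the cells."""
    col = {g: j for j, g in enumerate(genes)}
    idx = [col[m] for m in dict.fromkeys(markers) if m in col]  # dedupe, drop unknowns
    if not idx:
        return False
    detected = (cluster_expr[:, idx] > 0).mean(axis=0)  # per-marker detection rate
    return int((detected >= min_frac).sum()) >= min_markers


def credibility_report(expr: np.ndarray, genes: Sequence[str], labels: Sequence[str],
                       markers_by_cluster: Dict[str, Sequence[str]]) -> Dict[str, bool]:
    """Apply `is_credible` to every cluster listed in `markers_by_cluster`."""
    labels = np.asarray(labels)
    return {c: is_credible(expr[labels == c], genes, m)
            for c, m in markers_by_cluster.items()}


# Toy example: cluster A expresses six markers in every cell, cluster B only four.
genes = [f"G{i}" for i in range(10)]
expr = np.zeros((20, 10), dtype=int)
expr[:10, :6] = 1
expr[10:, :4] = 1
labels = ["A"] * 10 + ["B"] * 10
markers = {"A": genes[:6], "B": genes[:6]}
report = credibility_report(expr, genes, labels, markers)  # {'A': True, 'B': False}
```

Because the same function can score manual labels (by supplying markers for the expert's cell type), it applies one standard to both sources, which is what makes the LLM-versus-expert comparison meaningful.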

Experimental Protocols for Marker-Based Validation

Ensemble Methods for Robust Marker Identification

Identifying reliable marker genes is itself a challenging computational task. The EIGEN (Ensemble Identification of Gene Enrichment) approach demonstrates that an ensemble of differential expression methods (Welch's t-test, Wilcoxon rank-sum test, binomial test, and MAST) robustly identifies genes that mark co-clustering cells and whose restricted expression is confirmed by antisense mRNA in situ hybridization and immunofluorescence [6].

Table 1: Performance Comparison of Differential Expression Methods in Identifying Validated Marker Genes

| Method | AUROC Performance Across Clusters | AUPR Performance Across Clusters | Ranking of Validated Markers |
|---|---|---|---|
| EIGEN (Ensemble) | Best performer for 11/12 clusters | Best performer for 7/12 clusters | Highest rank in 9/13 validated cases |
| Wilcoxon Rank-Sum Test | Intermediate performance | Intermediate performance | Variable performance across markers |
| MAST | Lower performance | Lower performance | Suboptimal ranking of validated markers |
| Binomial Test | Lower performance | Lower performance | Variable performance across markers |
| Welch's t-test | Intermediate performance | Intermediate performance | Variable performance across markers |

The superiority of the ensemble approach is reflected in its higher combined performance score across clusters and its ability to rank experimentally validated "anchor genes" among the top candidates in all cases [6].

Advanced Spatial Validation Frameworks

With the advent of spatial transcriptomics, marker validation has expanded beyond traditional techniques. Methods like MaskGraphene create interpretable joint embeddings for multi-slice spatial transcriptomics by establishing "hard-links" through cluster-wise local alignment and "soft-links" through triplet loss in latent embedding space [7]. The framework benchmarks integration performance against biological ground truth, including layer-wise alignment accuracy based on the critical hypothesis that aligned spots across adjacent consecutive slices are more likely to belong to the same spatial domain or cell type [7].

Meanwhile, GHIST represents another advancement, predicting spatial gene expression at single-cell resolution from histology images using deep learning. It validates predictions by comparing cell-type distributions and examining correlation between predicted and ground-truth expression for spatially variable genes, with top markers showing median correlations of 0.6-0.7 [8].
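The expression-correlation check can be sketched as a per-gene Pearson correlation across cells between predicted and measured expression, summarized by the median over a chosen gene set. A minimal numpy sketch; the selection of spatially variable genes is assumed to happen upstream, and this is not GHIST's code.

```python
from typing import Sequence

import numpy as np


def per_gene_correlation(pred: np.ndarray, truth: np.ndarray) -> np.ndarray:
    """Pearson r across cells for each gene (column); NaN where a gene is constant."""
    p = pred - pred.mean(axis=0)
    t = truth - truth.mean(axis=0)
    denom = np.sqrt((p ** 2).sum(axis=0) * (t ** 2).sum(axis=0))
    with np.errstate(invalid="ignore", divide="ignore"):
        return (p * t).sum(axis=0) / denom


def median_correlation(pred: np.ndarray, truth: np.ndarray,
                       gene_idx: Sequence[int]) -> float:
    """Median per-gene correlation over a gene subset, e.g. the top SVGs."""
    r = per_gene_correlation(pred[:, gene_idx], truth[:, gene_idx])
    return float(np.nanmedian(r))


# Toy example: genes 0 and 1 are predicted perfectly (up to scale), gene 2 inversely.
rng = np.random.default_rng(3)
truth = rng.normal(size=(200, 3))
pred = truth * 2 + 1
pred[:, 2] = -truth[:, 2]
r = per_gene_correlation(pred, truth)
med = median_correlation(pred, truth, [0, 1, 2])
```

Reporting the median rather than the mean keeps a few badly predicted genes from dominating the summary.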

Comparative Performance Data: Marker-Validated Methods

Table 2: Performance Metrics of Advanced Spatial Analysis Methods Using Marker Validation

| Method | Primary Function | Key Validation Metric | Reported Performance |
|---|---|---|---|
| LICT | LLM-based cell type annotation | Marker expression credibility (>4 markers in ≥80% of cells) | 50% credibility for embryo data vs 21.3% for manual annotations |
| EIGEN | Marker gene identification | Experimental validation via in situ hybridization | Ranked validated markers in top 25 in all experimentally tested cases |
| MaskGraphene | Multi-slice spatial transcriptomics integration | Layer-wise alignment accuracy | Superior alignment and mapping accuracy across 9 DLPFC slice pairs |
| GHIST | Spatial gene prediction from histology | Correlation of predicted vs actual marker expression | Median correlation 0.6-0.7 for top spatially variable genes |
| Cepo | Trait-cell type mapping (GWAS + scRNA-seq) | Prioritization of gold-standard marker genes | Outperformed 7 other metrics in mapping power and false positive rate control [9] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for Marker-Based Validation

| Reagent/Platform | Function | Application in Validation |
|---|---|---|
| 10x Visium | Spot-based spatial transcriptomics | Provides spatial context for marker gene expression patterns [7] [8] |
| MERFISH | Imaging-based spatial transcriptomics | High-resolution spatial mapping of marker expression [7] |
| 10x Xenium | Subcellular spatial transcriptomics | Single-cell resolution spatial gene expression for validation [8] |
| H&E Stained Images | Routine histopathology | Morphological context for spatial predictions [8] |
| Antisense mRNA In Situ Hybridization | Spatial gene expression validation | Gold-standard technique for verifying restricted marker expression [6] |
| Immunofluorescence | Protein-level spatial validation | Confirms translation of marker gene expression [6] |
| scRNA-seq Reference Data | Single-cell RNA sequencing | Provides marker gene lists for cell type annotation [1] |

Methodological Workflows for Marker-Based Ground-Truthing

Workflow 1: LLM Annotation Validation Pipeline

Workflow 2: Ensemble Marker Identification and Validation

Marker gene expression remains the indispensable gold standard for biological ground-truthing in the age of computational biology and artificial intelligence. As LLM-based annotation tools and advanced spatial analysis methods continue to evolve, the rigorous validation against experimentally verified marker expression patterns provides the critical biological anchor that ensures computational predictions reflect biological reality. The integration of ensemble methods for marker identification, spatial validation frameworks, and objective credibility evaluation based on marker expression creates a robust ecosystem for advancing cellular research while maintaining scientific rigor. For researchers, drug development professionals, and computational biologists, this marker-centered validation paradigm offers a reliable pathway to leverage cutting-edge computational tools while ensuring biological fidelity.

In the fields of bioinformatics and drug development, the use of Large Language Models (LLMs) to annotate unstructured biomedical text and genomic data represents a paradigm shift with the potential to accelerate discovery. However, beneath this excitement lies a fundamental threat to scientific validity: the phenomenon of LLM hacking. This term describes how researcher choices in model selection, prompting, and parameter settings can systematically bias LLM outputs, leading to incorrect downstream scientific conclusions [10]. In statistical terms, these errors manifest as false positives (Type I), false negatives (Type II), incorrect effect signs (Type S), or exaggerated effect magnitudes (Type M) [10]. For researchers validating biomarker candidates or interpreting transcriptomic data, the implications are profound. An LLM-based analysis could incorrectly associate a gene with a disease pathway or misrepresent the effect size of a therapeutic target. This article defines the key metrics for assessing the credibility of LLM-generated annotations, providing a framework grounded in the rigorous principles of marker discovery and validation [11]. By establishing clear benchmarks and experimental protocols, we empower scientists to harness LLMs' scalability without compromising the integrity of their research.

Quantitative Landscape: Benchmarking LLM Performance on Annotation Tasks

Empirical assessments across diverse annotation tasks reveal significant variation in LLM reliability. A large-scale replication of 37 data annotation tasks from published studies, involving 13 million LLM labels, found that the risk of drawing incorrect conclusions from LLM-annotated data is substantial. The error rate fluctuates dramatically based on the model used and the specific task [10].

Table 1: LLM Hacking Risk and Error Rates Across Model Scales

| Model Scale | Overall LLM Hacking Risk | Dominant Error Type | Average Effect Size Deviation |
|---|---|---|---|
| State-of-the-Art (70B+ parameters) | 31% | Type II (False Negative) | 40% - 77% |
| Small Language Models (~1B parameters) | 50% | Type II (False Negative) | 40% - 77% |

The risk is not uniform across all tasks. For instance, the error rate for humor detection is relatively low at around 5%, but it soars to over 65% for more complex tasks like ideology and frame classification [10]. This is a critical consideration for researchers who might use LLMs to classify scientific literature or patient records into specific biological categories.

Performance on standardized benchmarks provides a baseline for model selection. The table below summarizes the capabilities of leading 2025 models across key competencies relevant to scientific annotation, such as knowledge, reasoning, and coding [12].

Table 2: Performance Benchmarks of Leading LLMs (2025)

| Model | Knowledge (MMLU) | Reasoning (GPQA) | Coding (SWE-bench) | Best Application Context |
|---|---|---|---|---|
| OpenAI o3 | 84.2% | 87.7% | 69.1% | Complex reasoning, mathematical tasks |
| Claude 3.7 Sonnet | 90.5% | 78.2% | 70.3% | Software engineering, factual content |
| GPT-4.1 | 91.2% | 79.3% | 54.6% | General use, knowledge-intensive tasks |
| Gemini 2.5 Pro | 89.8% | 84.0% | 63.8% | Balanced performance and cost |
| Grok 3 | 86.4% | 80.2% | - | Mathematics, visual reasoning |

Alarmingly, even when models correctly identify statistically significant effects, the estimated effect sizes can deviate from true values by 40% to 77% on average [10]. This systematic bias in effect magnitude—a Type M error—is particularly dangerous in biomarker research, where it could lead to misallocated resources based on overstated findings.

Core Metrics for Annotation Credibility

Assessing the credibility of LLM-generated annotations requires a multi-faceted approach that goes beyond simple accuracy metrics. The framework below visualizes the core components of this validation process, connecting computational outputs with established biological research pathways.

Statistical Reliability and Error Typology

The most direct threat to credible research is LLM hacking, which quantifies how often a researcher's configuration choices lead to incorrect conclusions [10]. The associated error types are critical to monitor:

- Type I Errors (False Positives): The LLM annotation pipeline identifies a non-existent effect or association. In a biomarker context, this could mean incorrectly labeling a gene as a significant marker.

- Type II Errors (False Negatives): The pipeline fails to identify a true effect. This is the dominant error type for LLMs, occurring in 31-59% of cases depending on model size [10].

- Type S Errors (Sign Errors): The direction of a significant effect is reversed. For example, an LLM might annotate a gene as being significantly downregulated in a disease when it is actually upregulated.

- Type M Errors (Magnitude Errors): The effect size is correctly signed but is substantially exaggerated or underestimated, with average deviations of 40-77% from true values [10].
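These error types can be operationalized by running the same downstream test twice, once on LLM-annotated data and once on ground-truth (e.g. expert) annotations, and comparing the two results. A minimal sketch: the 0.05 significance level is conventional, while `magnitude_tol` (the relative deviation beyond which a correctly signed, significant estimate counts as a Type M error) is a hypothetical threshold, not a value from the cited study.

```python
from dataclasses import dataclass


@dataclass
class Result:
    effect: float   # estimated effect size, e.g. a regression coefficient
    p_value: float


def classify_error(truth: Result, llm: Result, alpha: float = 0.05,
                   magnitude_tol: float = 0.5) -> str:
    """Classify the LLM-based conclusion relative to the ground-truth conclusion."""
    true_sig, llm_sig = truth.p_value < alpha, llm.p_value < alpha
    if llm_sig and not true_sig:
        return "Type I"    # spurious significant effect
    if true_sig and not llm_sig:
        return "Type II"   # real effect missed
    if true_sig and llm_sig:
        if truth.effect * llm.effect < 0:
            return "Type S"  # significant, but in the wrong direction
        if truth.effect != 0:
            rel_dev = abs(llm.effect - truth.effect) / abs(truth.effect)
            if rel_dev > magnitude_tol:
                return "Type M"  # right direction, size badly off
    return "no error"


print(classify_error(Result(1.0, 0.001), Result(-0.8, 0.01)))  # Type S
```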

Agreement with Expert Benchmarks

For tasks involving nuanced judgment, the gold standard is comparison to human expertise. Studies show that expert agreement serves as a more informative benchmark for contextualizing LLM performance than standard classification metrics alone [13]. In one study comparing experts, crowdworkers, and LLMs on annotating empathic communication, LLMs consistently approached expert-level benchmarks and exceeded the reliability of crowdworkers across four evaluative frameworks [13]. The key metrics here are inter-annotator agreement scores, such as Cohen's Kappa or Intraclass Correlation Coefficient (ICC), calculated between the LLM and a panel of domain expert annotators.
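Cohen's kappa corrects raw agreement for the agreement expected by chance: κ = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement implied by each rater's label frequencies. A minimal, dependency-free sketch for one LLM versus one expert; scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same statistic.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if p_e == 1.0:
        raise ValueError("kappa is undefined when both raters use a single label")
    return (p_o - p_e) / (1 - p_e)


llm = ["T cell", "T cell", "B cell", "B cell", "NK cell", "T cell"]
expert = ["T cell", "T cell", "B cell", "NK cell", "NK cell", "T cell"]
kappa = cohens_kappa(llm, expert)  # 17/23, about 0.74
```

Here the raters agree on 5 of 6 items (p_o ≈ 0.83), but because both use "T cell" often, about 0.36 of that agreement is expected by chance, so kappa is noticeably lower than raw accuracy.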

Contextual Robustness

An annotation system is not credible if it is brittle. Contextual robustness measures the variance in outputs resulting from plausible, non-malicious changes to the input prompt, model parameters (like temperature), or the underlying LLM model itself [10]. A robust annotation protocol will yield consistent labels across these reasonable variations. The risk of LLM hacking is highest when p-values are near significance thresholds (e.g., 0.05), where error rates can approach 70% [10].
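Contextual robustness can be quantified by re-running the same items under several plausible configurations and measuring how often the labels agree. A minimal sketch; the two summary statistics (mean share of configurations agreeing with each item's majority label, and fraction of items labelled unanimously) are illustrative choices, not metrics defined in the cited study, and the configuration names are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


def label_stability(labels_by_config: Dict[str, Sequence[str]]) -> Dict[str, float]:
    """Summarize agreement of per-item labels across annotation configurations.

    `labels_by_config` maps a configuration name (model, prompt, temperature)
    to the labels it produced for the same items, in the same order.
    """
    runs = list(labels_by_config.values())
    n_items = len(runs[0])
    if any(len(run) != n_items for run in runs):
        raise ValueError("every configuration must label the same items")
    shares = []
    for i in range(n_items):
        top_count = Counter(run[i] for run in runs).most_common(1)[0][1]
        shares.append(top_count / len(runs))  # share of configs agreeing with the mode
    return {"mean_majority_share": sum(shares) / n_items,
            "fraction_unanimous": sum(s == 1.0 for s in shares) / n_items}


stability = label_stability({
    "large_t0": ["pos", "neg", "pos"],
    "large_t1": ["pos", "pos", "pos"],
    "small_t0": ["pos", "neg", "neg"],
})
```

Items that are not unanimous are natural candidates for expert review, since their labels, and any conclusion built on them, depend on configuration choices.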

Experimental Protocols for Validation

Validating an LLM annotation system for scientific use requires a rigorous, multi-stage experimental design. The following protocol ensures a comprehensive assessment of credibility.

Protocol: A Multi-Stage Validation Framework

Stage 1: Establish a Ground Truth Benchmark Dataset

- Procedure: Curate or generate a dataset of text samples (e.g., scientific abstracts, clinical notes, gene descriptions) that have been annotated by a minimum of three independent domain experts. The annotation guideline should be meticulously detailed.

- Metrics: Calculate inter-expert agreement using Cohen's Kappa (defined for pairs of raters, so computed pairwise here), Fleiss' Kappa (a generalization to three or more raters), or ICC. A Kappa value above 0.8 indicates excellent agreement and a reliable ground truth. This expert consensus becomes the benchmark for all subsequent LLM evaluations [13].
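Because Cohen's kappa is defined for two raters, a panel of three or more experts is commonly summarized with Fleiss' kappa. A minimal numpy sketch, assuming every item was labelled by the same number of experts; `statsmodels.stats.inter_rater.fleiss_kappa` is an established alternative that takes a precomputed item-by-category count table.

```python
from typing import Sequence

import numpy as np


def fleiss_kappa(ratings: Sequence[Sequence[str]]) -> float:
    """Fleiss' kappa; `ratings[i]` lists every expert's label for item i."""
    categories = sorted({label for item in ratings for label in item})
    col = {c: j for j, c in enumerate(categories)}
    counts = np.zeros((len(ratings), len(categories)))
    for i, item in enumerate(ratings):
        for label in item:
            counts[i, col[label]] += 1
    m = counts.sum(axis=1)
    if not np.all(m == m[0]) or m[0] < 2:
        raise ValueError("each item needs the same number (>= 2) of ratings")
    m = m[0]
    p_item = ((counts ** 2).sum(axis=1) - m) / (m * (m - 1))  # per-item agreement
    p_cat = counts.sum(axis=0) / counts.sum()                 # overall label shares
    p_bar, p_e = p_item.mean(), (p_cat ** 2).sum()
    if p_e == 1.0:
        raise ValueError("kappa is undefined when only one label is ever used")
    return float((p_bar - p_e) / (1 - p_e))


ratings = [["A", "A", "A"], ["A", "A", "B"], ["B", "B", "B"], ["B", "B", "B"]]
kappa = fleiss_kappa(ratings)  # 23/35, about 0.66
```

In this toy panel the value falls short of the 0.8 bar, which would signal that the annotation guideline needs tightening before the labels serve as ground truth.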

Stage 2: Systematically Test LLM Configurations

- Procedure: Execute the annotation task across a wide array of configurations. This should include multiple LLMs (from small to state-of-the-art), numerous prompt paraphrases that capture the same task instruction, and different decoding parameters (e.g., temperature settings from 0 to 1).

- Metrics: For each configuration, compute standard task performance metrics (Precision, Recall, F1-Score) against the expert benchmark. More importantly, run the planned downstream statistical analysis (e.g., t-test, regression) on the LLM-annotated data and record the resulting p-values and effect sizes. This allows for the direct quantification of Type I, II, S, and M errors [10].
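The configuration sweep can be organized as a grid over models, prompt paraphrases, and temperatures, scoring each run against the expert labels and keeping the labels for the downstream test. A minimal sketch: `annotate(item, model, prompt, temperature)` is a hypothetical wrapper around whatever LLM client is in use, and macro-averaged F1 is one reasonable choice of summary score.

```python
import itertools
from typing import Callable, Dict, Sequence, Tuple


def macro_f1(truth: Sequence[str], pred: Sequence[str]) -> float:
    """Unweighted mean of per-class F1 over every class seen in either list."""
    scores = []
    for c in set(truth) | set(pred):
        tp = sum(t == c and p == c for t, p in zip(truth, pred))
        fp = sum(t != c and p == c for t, p in zip(truth, pred))
        fn = sum(t == c and p != c for t, p in zip(truth, pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def sweep(items: Sequence, truth: Sequence[str],
          annotate: Callable[..., str], models: Sequence[str],
          prompts: Sequence[str], temperatures: Sequence[float]) -> Dict[Tuple, Dict]:
    """Run every (model, prompt, temperature) configuration and score it."""
    results = {}
    for model, prompt, temp in itertools.product(models, prompts, temperatures):
        labels = [annotate(item, model, prompt, temp) for item in items]
        # Keep the labels too: the planned downstream test is rerun on each set.
        results[(model, prompt, temp)] = {"macro_f1": macro_f1(truth, labels),
                                          "labels": labels}
    return results


# Stub annotator: the "big" model is always right, the "small" one always says "pos".
def stub_annotate(item, model, prompt, temp):
    return ("pos" if item > 0 else "neg") if model == "big" else "pos"


items, truth = [1, -1, 2, -2], ["pos", "neg", "pos", "neg"]
res = sweep(items, truth, stub_annotate, ["big", "small"], ["v1", "v2"], [0.0, 1.0])
```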

Stage 3: Integrate with Biological Validation

- Procedure: When LLM annotations generate novel biological hypotheses (e.g., identifying a previously uncharacterized gene-disease association), these findings must be tested in a wet-lab setting, following established experimental pathways.

- Workflow: The diagram below outlines a standardized workflow for the experimental validation of marker genes, from hypothesis generation through functional analysis. This mirrors the process used in studies identifying oxidative stress genes in hypertrophic cardiomyopathy [14].

Stage 4: Implement Continuous Observability

- Procedure: In production, instrument the LLM annotation workflow with an observability platform. Log every prompt, completion, token usage, and latency. Attach automated evaluators to score outputs for factuality, relevance, and potential hallucination [15].

- Metrics: Monitor token usage and cost, latency, and automated evaluation scores in real time. Route low-confidence outputs to human-in-the-loop review. This creates a feedback loop that continuously improves the system's reliability and allows for rapid diagnosis of performance regressions [15].
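The logging and routing described in Stage 4 can be sketched in a vendor-neutral way. This is not the API of Maxim AI or any other observability platform; `llm` and the evaluator functions are hypothetical callables, whitespace word counts stand in for a real tokenizer, and the 0.7 review threshold is an arbitrary assumption.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AnnotationRecord:
    prompt: str
    completion: str
    prompt_tokens: int
    completion_tokens: int
    latency_s: float
    scores: dict = field(default_factory=dict)  # evaluator name -> score in [0, 1]


def traced_call(llm: Callable[[str], str], prompt: str, evaluators: dict) -> AnnotationRecord:
    """Call the model, time it, and attach automated evaluator scores."""
    start = time.perf_counter()
    completion = llm(prompt)
    latency = time.perf_counter() - start
    # Whitespace counts are a crude stand-in for the provider's token accounting.
    return AnnotationRecord(
        prompt, completion, len(prompt.split()), len(completion.split()), latency,
        {name: fn(prompt, completion) for name, fn in evaluators.items()},
    )


def route(records: List[AnnotationRecord], threshold: float = 0.7):
    """Split records into auto-accepted and human-review queues.

    A record is sent to review if any evaluator scored it below `threshold`
    or if it carries no scores at all.
    """
    accepted, review = [], []
    for rec in records:
        if rec.scores and min(rec.scores.values()) >= threshold:
            accepted.append(rec)
        else:
            review.append(rec)
    return accepted, review
```

Taking the minimum across evaluators is a deliberately conservative choice: a single low hallucination or factuality score is enough to pull an annotation into the human review queue.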

The Scientist's Toolkit: Research Reagent Solutions

Bridging computational annotations with biological discovery requires a specific set of computational and experimental tools. The following table details essential "research reagents" for this field.

Table 3: Essential Research Reagent Solutions for Validation

| Research Reagent | Function / Application | Example Use Case |
|---|---|---|
| LLM Observability Platform (e.g., Maxim AI) | Provides distributed tracing, token accounting, and eval pipelines to monitor LLM workflows in production. | Tracking prompt-completion correlation and detecting hallucination flags in a high-throughput annotation pipeline [15]. |
| Bioinformatics Suites (GSVA, GSEA, CIBERSORT) | Perform gene set variation, enrichment, and immune cell infiltration analysis on transcriptomic data. | Identifying if LLM-identified marker genes are enriched in specific KEGG pathways or correlate with tumor microenvironment cells [14]. |
| Feature Selection Algorithms (LASSO, SVM-RFE) | Machine learning algorithms used to identify the most informative genes from high-dimensional genomic data. | Refining a large set of differentially expressed genes down to a concise panel of diagnostic biomarkers [14]. |
| Adenoviral Vectors (e.g., for PRKAG2 gene) | Tools for gene overexpression or knockdown in cellular models to test gene function. | Validating the functional role of a candidate gene identified via LLM annotation in disease pathogenesis [14]. |
| ROS Detection Probe (Dihydroethidium - DHE) | A fluorescent dye used to detect superoxide production and measure oxidative stress in cells. | Quantifying oxidative stress levels in cardiomyocytes after perturbation of an LLM-identified gene [14]. |
| Primary Cells (e.g., Neonatal Rat Cardiomyocytes) | Biologically relevant in vitro models for studying disease mechanisms and therapeutic effects. | Establishing a cellular model to test hypotheses generated from LLM-annotated literature and genomic data [14]. |

| Primary Cells (e.g., Neonatal Rat Cardiomyocytes) | Biologically relevant in vitro models for studying disease mechanisms and therapeutic effects. | Establishing a cellular model to test hypotheses generated from LLM-annotated literature and genomic data [14]. |

The integration of LLMs into the biomedical research workflow offers unparalleled scale but introduces a new layer of methodological risk. Credibility is not guaranteed by the model's general capabilities but must be actively built and measured. The key is to shift from viewing LLMs as oracles to treating them as complex scientific instruments that require rigorous calibration and validation. This involves quantifying statistical error profiles, benchmarking against expert consensus, and, most critically, tethering computational findings to experimental results in the laboratory. By adopting the metrics and protocols outlined here, researchers can fortify their use of LLM-based annotations, ensuring that this powerful tool enhances, rather than undermines, the integrity of scientific discovery in drug development and beyond.

The application of Large Language Models (LLMs) to single-cell RNA sequencing (scRNA-seq) data represents a paradigm shift in cellular research. A critical challenge in this domain lies in the accurate annotation of cell types, a process traditionally dependent on expert knowledge or automated tools constrained by their reference data. This guide objectively compares the performance of various LLMs in annotating cell populations with high and low heterogeneity, framing the evaluation within the broader thesis of validating LLM-based annotations against the ground truth of marker gene expression. For researchers and drug development professionals, understanding these performance characteristics is essential for selecting appropriate tools and interpreting results with confidence.

Quantitative Performance Comparison

Table 1: Overall Annotation Performance of Top LLMs on Benchmark Datasets [1] [16]

| Model | Company | High-Heterogeneity Match Rate (e.g., PBMCs) | Low-Heterogeneity Match Rate (e.g., Embryo) | Performance Drop (percentage points) |
|---|---|---|---|---|
| Claude 3 Opus | Anthropic | ~84% (26/31) | ~33% (Stromal Cells) | ~51 |
| LLaMA 3 70B | Meta | ~81% (25/31) | Data Not Specified | - |
| ERNIE-4.0 | Baidu | ~81% (25/31) | Data Not Specified | - |
| GPT-4 | OpenAI | ~77% (24/31) | ~3% (Baseline for Embryo) | ~74 |
| Gemini 1.5 Pro | Google | ~77% (24/31) | ~39% (Embryo) | ~38 |

Independent benchmarking of major LLMs using the AnnDictionary package on the Tabula Sapiens v2 atlas found that Claude 3.5 Sonnet achieved the highest agreement with manual annotations [17] [18]. A key finding across studies is that the performance of all LLMs diminishes significantly when annotating less heterogeneous datasets [1] [16]. For example, while models like Claude 3 excelled with highly heterogeneous cell subpopulations found in PBMCs and gastric cancer samples, they showed substantial discrepancies in low-heterogeneity environments like human embryos and stromal cells [1].

Performance of Advanced Multi-Model Strategies

To address performance gaps, advanced strategies like the LICT (LLM-based Identifier for Cell Types) tool were developed, employing multi-model integration. The following table summarizes the performance improvements achieved by this approach.

Table 2: Performance of Multi-Model Integration Strategy (LICT) [1] [16]

| Dataset | Heterogeneity | Single Model Mismatch (e.g., GPT-4) | Multi-Model (LICT) Mismatch | Improvement (percentage points) |
|---|---|---|---|---|
| PBMCs | High | 21.5% | 9.7% | 11.8 |
| Gastric Cancer | High | 11.1% | 8.3% | 2.8 |
| Human Embryo | Low | >50% (Est. 97%) | 42.4% | >7.6 (~54.6 vs. Est. 97%) |
| Stromal Cells | Low | >50% (Est. 95%) | 56.2% | ~38.8 (vs. Est. 95%) |

The multi-model integration strategy, which selects the best-performing results from five top LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE 4.0), significantly enhanced annotation accuracy [1] [16]. This approach leverages the complementary strengths of different models, reducing uncertainty and increasing reliability, particularly for challenging low-heterogeneity cell types [1].
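The source does not spell out how LICT chooses among the five models' answers, so the following is only one plausible reading of "selects the best-performing results": for each cluster, keep the candidate label with the highest credibility score (for example, a marker-based check), breaking ties by how many models proposed it and then alphabetically. `integrate_models` and the `credibility` callable are hypothetical.

```python
from collections import Counter
from typing import Callable, Dict


def integrate_models(
    cluster_labels: Dict[str, Dict[str, str]],
    credibility: Callable[[str, str], float],
) -> Dict[str, str]:
    """Pick one label per cluster from several models' annotations.

    cluster_labels[cluster][model] is the label `model` proposed for `cluster`;
    credibility(cluster, label) returns a score, higher meaning more credible.
    """
    final = {}
    for cluster, by_model in cluster_labels.items():
        votes = Counter(by_model.values())
        # max() returns the first maximal item, so iterating over sorted labels
        # makes the final alphabetical tie-break deterministic.
        final[cluster] = max(
            sorted(votes),
            key=lambda label: (credibility(cluster, label), votes[label]),
        )
    return final
```

Ranking by evidence first and consensus second means a minority label can win when the expression data support it better, which is the kind of complementary strength the multi-model approach aims to exploit.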

Experimental Protocols and Validation Workflows

Standardized Benchmarking Methodology

The foundational protocol for evaluating LLM performance on cell type annotation involves a standardized benchmarking process [1] [17] [16]:

Dataset Selection and Pre-processing: Benchmarking utilizes diverse scRNA-seq datasets representing various biological contexts, including:

- Normal Physiology: Peripheral Blood Mononuclear Cells (PBMCs), widely used for evaluating automated annotation tools due to well-defined cell types [1] [16].

- Disease States: Gastric cancer samples [1].

- Developmental Stages: Human embryo data [1].

- Low-Heterogeneity Environments: Stromal cells from mouse organs [1].

Standard pre-processing is then performed, including normalization, log-transformation, scaling, PCA, neighborhood graph calculation, clustering via the Leiden algorithm, and identification of differentially expressed genes (DEGs) for each cluster [17] [18].

Prompting and Annotation: A standardized prompt incorporating the top marker genes for each cell cluster is used to query the LLMs. The models are then tasked with providing a cell type label based on this gene list [1] [16].

Performance Assessment: The primary metric for evaluation is the agreement between the LLM-generated annotation and the manual, expert-derived annotation. This can be measured via direct string comparison, Cohen’s kappa, or LLM-assisted rating of label match quality (e.g., perfect, partial, or not-matching) [17] [18].
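The prompting step can be sketched with NumPy alone. The ranking below uses the difference in mean log1p expression between a cluster and all other cells as a simple proxy for log fold change (Scanpy's `rank_genes_groups` is the usual production route), and the prompt template is a hypothetical example rather than the wording used in the cited studies.

```python
import numpy as np


def top_markers(log_expr: np.ndarray, clusters: np.ndarray, genes: list,
                cluster: str, n: int = 10) -> list:
    """Rank genes for one cluster by mean log-expression difference vs. all other cells.

    log_expr: cells x genes matrix of log1p-normalized expression.
    clusters: per-cell cluster labels (same length as log_expr).
    """
    in_cluster = clusters == cluster
    diff = log_expr[in_cluster].mean(axis=0) - log_expr[~in_cluster].mean(axis=0)
    order = np.argsort(-diff)[:n]  # largest differences first
    return [genes[i] for i in order]


def build_prompt(markers: list, tissue: str) -> str:
    # Hypothetical standardized template; keep it fixed across models and clusters.
    return (
        f"Identify the cell type of a cluster from {tissue} tissue using these "
        f"top marker genes: {', '.join(markers)}. Reply with the cell type name only."
    )
```

Keeping the template identical across every cluster and model is what makes the resulting match rates comparable between LLMs.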

The "Talk-to-Machine" Iterative Validation Strategy

For a more robust validation of annotations against marker expression, the "talk-to-machine" strategy provides an iterative workflow [1] [16]. This process creates a feedback loop that refines the LLM's output based on empirical gene expression data.

Objective Credibility Evaluation Framework

Discrepancies between LLM and manual annotations do not always indicate LLM failure, as manual annotations can also be subjective or biased [1] [16]. An objective credibility evaluation strategy was developed to assess the intrinsic reliability of any annotation (whether from an LLM or an expert) based on marker gene expression within the dataset itself [1].

Table 3: Credibility Assessment of Conflicting Annotations [1] [16]

| Dataset | Conflicting Annotation Source | Percentage Deemed Credible by Marker Evidence |
|---|---|---|
| Human Embryo | LLM-generated | 50.0% |
| Human Embryo | Expert (Manual) | 21.3% |
| Stromal Cells | LLM-generated | 29.6% |
| Stromal Cells | Expert (Manual) | 0.0% |

This framework involves:

- For a given cell type annotation, the LLM is queried to generate a list of representative marker genes.

- The expression of these marker genes is analyzed within the corresponding cell cluster from the input scRNA-seq dataset.

- The annotation is deemed objectively credible if more than four marker genes are expressed in at least 80% of cells within the cluster. Otherwise, it is classified as unreliable [1] [16]. This method provides a reference-free, unbiased metric for validating annotation results, shifting the focus from simple agreement with a human label to a more fundamental biological validation.
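The credibility rule above reduces to a few lines of NumPy. This sketch assumes that a marker counts as "expressed" in a cell when its value is greater than zero and that markers absent from the dataset are simply ignored; both are assumptions, since the source does not define them. The function name is illustrative.

```python
import numpy as np


def is_credible(cluster_expr: np.ndarray, genes: list, markers: list,
                min_markers: int = 5, min_fraction: float = 0.8) -> bool:
    """Marker-based credibility check for one annotated cluster.

    cluster_expr: cells x genes matrix restricted to the cluster's cells.
    Credible if more than four markers (i.e., >= 5) are expressed (> 0)
    in at least 80% of the cluster's cells.
    """
    index = {g: i for i, g in enumerate(genes)}
    present = [index[m] for m in markers if m in index]  # skip markers not in the dataset
    if not present:
        return False
    frac_expressing = (cluster_expr[:, present] > 0).mean(axis=0)
    return int((frac_expressing >= min_fraction).sum()) >= min_markers
```

Because the same function can be applied to LLM-proposed and expert-proposed labels alike (querying the LLM for markers of either label), it supports the head-to-head credibility comparison reported in Table 3.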

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Tools and Datasets for LLM-based Cell Annotation

| Tool / Resource | Type | Primary Function | Relevance to Heterogeneity |
|---|---|---|---|
| LICT (LLM-based Identifier for Cell Types) [1] [16] | Software Package | Integrates multiple LLMs & strategies for cell type identification. | Specifically designed to improve performance on low-heterogeneity data. |
| AnnDictionary [17] [18] | Python Package | Provides a unified interface for multiple LLMs to annotate AnnData objects. | Enables large-scale benchmarking across diverse tissues and cell types. |
| PBMC Dataset [1] [16] | scRNA-seq Data | Gold-standard benchmark for high-heterogeneity cell populations. | Tests model performance on well-defined, diverse immune cells. |
| Human Embryo Dataset [1] | scRNA-seq Data | Represents a low-heterogeneity biological context. | Challenges models to distinguish subtly different cell states. |
| Tabula Sapiens v2 [17] [18] | scRNA-seq Atlas | A large, multi-tissue reference atlas. | Provides a comprehensive testbed for model generalizability. |

The benchmarking data and experimental protocols presented in this guide illuminate a critical aspect of employing LLMs for cell type annotation: their performance is intrinsically linked to the heterogeneity of the cell population under investigation. While top-tier models like Claude 3.5 Sonnet demonstrate high accuracy (often 80-90%) for major, well-defined cell types in high-heterogeneity environments, a significant performance drop occurs in low-heterogeneity scenarios. Strategies such as multi-model integration (LICT) and iterative validation workflows ("talk-to-machine") partially mitigate this challenge, although mismatch rates on low-heterogeneity data remain substantial (42.4% for human embryo and 56.2% for stromal cells with LICT). Furthermore, the move towards objective credibility evaluation based on marker gene expression, rather than sole reliance on agreement with manual labels, represents a more robust framework for validating LLM-based annotations. For the scientific community, this underscores the importance of selecting not just a powerful model, but a comprehensive validation strategy tailored to the biological complexity of their specific research question.

Building Trustworthy Pipelines: Strategies and Tools for Integrated Verification

The integration of multiple Large Language Models represents a paradigm shift in scientific artificial intelligence applications, moving beyond the limitations of single-model approaches. While individual LLMs demonstrate remarkable capabilities, standalone models inevitably exhibit specific strengths and weaknesses, creating reliability concerns for high-stakes domains like drug development and marker expression research where accurate annotations are paramount [19]. Multi-model integration strategically combines complementary AI systems to create a more robust, accurate, and trustworthy analytical framework capable of supporting complex scientific workflows.

This approach is particularly valuable for validating LLM-based annotations in scientific research, where different models can cross-verify findings and provide consensus-based outcomes. Research indicates that while individual LLMs show notable variability in performance across different tasks and domains, integrated systems leverage their complementary strengths to deliver more consistent and reliable results [19] [20]. For scientific researchers and drug development professionals, this multi-model framework offers a methodological advancement that enhances both the precision and reproducibility of AI-assisted annotations in critical research areas such as biomarker identification and expression analysis.

Comparative Performance Analysis of Leading LLMs

Quantitative Benchmarking in Scientific Domains

Rigorous evaluation of LLM performance across scientific domains reveals significant differences in capabilities. A recent expert-led study assessed five prominent models—Claude 3.5 Sonnet, Gemini, GPT-4o, Mistral Large 2, and Llama 3.1 70B—across multiple dimensions including depth, accuracy, relevance, and clarity of scientific responses [19]. Sixteen expert scientific reviewers with h-indices ranging from 10 to 58 conducted blinded evaluations using a standardized rubric, providing a robust assessment framework for research applications.

Table 1: Overall Performance Scores of LLMs on Scientific Question-Answering (Scale: 0-10)

| Model | Overall Score | Accuracy | Depth | Relevance | Clarity |
|---|---|---|---|---|---|
| Claude 3.5 Sonnet | 8.42 | 8.5 | 8.3 | 8.6 | 8.2 |
| Gemini | 7.98 | 8.1 | 7.8 | 8.2 | 7.8 |
| GPT-4o | 7.35 | 7.4 | 7.2 | 7.5 | 7.1 |
| Mistral Large 2 | 6.87 | 6.9 | 6.7 | 7.0 | 6.8 |
| Llama 3.1 70B | 6.52 | 6.5 | 6.4 | 6.7 | 6.4 |

The findings demonstrated that Claude 3.5 Sonnet emerged as the highest-performing model for scientific tasks, particularly excelling in accuracy and relevance [19]. This performance hierarchy provides researchers with critical guidance for model selection in multi-model frameworks, where higher-performing models might anchor complex analytical tasks while specialized models contribute specific capabilities.

Specialized Capabilities Across Modalities

Beyond general scientific reasoning, LLMs demonstrate specialized performance across different data modalities relevant to marker expression research. A comprehensive evaluation of facial emotion recognition capabilities—pertinent to behavioral marker analysis—revealed substantial differences in model performance on the validated NimStim dataset [20].

Table 2: Performance Comparison on Facial Emotion Recognition Task (NimStim Dataset)

| Model | Overall Accuracy | Cohen's Kappa (κ) | Strength on Emotions | Common Misclassifications |
|---|---|---|---|---|
| GPT-4o | 86% | 0.83 | Calm/Neutral, Surprise, Happy | Fear → Surprise (52.5%) |
| Gemini 2.0 Experimental | 84% | 0.81 | Surprise, Happy, Calm/Neutral | Fear → Surprise (36.25%) |
| Claude 3.5 Sonnet | 74% | 0.70 | Happy, Angry | Fear → Surprise (36.25%), Sadness → Disgust (20.24%) |

The evaluation demonstrated that GPT-4o and Gemini 2.0 Experimental achieved reliability comparable to human observers for most emotion categories, with GPT-4o significantly outperforming Claude 3.5 Sonnet on several emotions including Calm/Neutral, Sad, Disgust, and Surprise [20]. This modality-specific performance stratification underscores the importance of multi-model integration, as no single model dominates across all data types and analytical tasks.

Epistemic Reliability and Confidence Alignment

A critical consideration for scientific applications is the reliability of model-expressed confidence levels. Research on epistemic markers—verbal expressions of uncertainty like "I am fairly confident"—reveals important limitations in how LLMs communicate confidence in their outputs [21]. Studies evaluating marker confidence stability across question-answering datasets found that while markers generalize well within the same distribution, their confidence becomes inconsistent in out-of-distribution scenarios, raising significant concerns about relying on verbal confidence indicators alone [21].

Advanced models like GPT-4o and Qwen2.5-32B-Instruct demonstrated better understanding of epistemic markers with lower calibration errors (C-AvgECE of 11.84 and 10.40 respectively) compared to smaller models like Mistral-7B-Instruct-v0.3 (C-AvgECE of 24.81) [21]. This research highlights the importance of multi-model approaches with built-in confidence validation mechanisms, particularly for scientific applications where understanding uncertainty is crucial for reliable annotations.
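To make "calibration error" concrete, the sketch below computes the standard equal-width-bin expected calibration error (ECE). It is not the C-AvgECE variant reported in [21], whose exact definition and scale may differ; it assumes each verbal epistemic marker has already been mapped to a numeric confidence in [0, 1].

```python
import numpy as np


def expected_calibration_error(confidence, correct, n_bins: int = 10) -> float:
    """Standard ECE with equal-width confidence bins.

    confidence: stated confidence in [0, 1] per answer (e.g., the value a
    marker such as "fairly confident" is mapped to).
    correct: 1 if the answer was right, else 0.
    """
    confidence = np.asarray(confidence, dtype=float)
    correct = np.asarray(correct, dtype=float)
    # Bin index 0..n_bins-1; a confidence of exactly 1.0 goes in the last bin.
    bins = np.minimum((confidence * n_bins).astype(int), n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bins == b
        if mask.any():
            gap = abs(correct[mask].mean() - confidence[mask].mean())
            ece += mask.mean() * gap  # weight each bin by its share of answers
    return float(ece)
```

A model that says "fairly confident" (mapped, say, to 0.8) and is right 80% of the time contributes no error; one that uses the same phrase but is always wrong contributes the full 0.8 gap for those answers.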

Experimental Protocols and Methodologies

Retrieval-Augmented Generation for Scientific Accuracy

The implementation of Retrieval-Augmented Generation significantly enhances LLM performance in scientific contexts by grounding responses in domain-specific literature [19]. The experimental protocol implemented for scientific benchmarking provides a reproducible framework for researchers:

Context Collection: A targeted search of scientific databases (e.g., Scopus) using domain-specific terms retrieves relevant literature. In the benchmark study, searching "Extraction AND Agricultural AND Byproduct" returned 306 articles with abstracts [19].

Query Expansion: Each LLM performs query expansion to refine search and retrieval of scientific abstracts, enabling more targeted document selection from scientific databases.

Embedding and Selection: The expanded queries are used to select the most relevant article abstracts through embedding similarity matching.

Superprompt Construction: Integrated prompts combine specific scientific context, the research question, and clear instructions for answering.

Answer Generation: Each LLM generates responses to scientific questions using the superprompts in isolated sessions to prevent interference [19].

This methodology significantly improved the precision and relevance of LLM outputs across all tested models, providing a robust framework for scientific applications including marker expression research where domain literature integration is essential.
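The embedding-selection and superprompt steps of this protocol can be sketched as follows. The embeddings are assumed to come from any sentence-embedding model (none is specified here), cosine similarity is assumed as the matching criterion, and the prompt wording is a hypothetical template rather than the one used in [19].

```python
import numpy as np


def select_abstracts(query_vec: np.ndarray, abstract_vecs: np.ndarray, k: int = 5) -> np.ndarray:
    """Indices of the k abstracts whose embeddings are most cosine-similar to the query."""
    q = query_vec / np.linalg.norm(query_vec)
    a = abstract_vecs / np.linalg.norm(abstract_vecs, axis=1, keepdims=True)
    sims = a @ q
    return np.argsort(-sims)[:k]  # highest similarity first


def build_superprompt(question: str, abstracts: list) -> str:
    """Combine retrieved context, the research question, and answering instructions."""
    context = "\n\n".join(f"[{i + 1}] {text}" for i, text in enumerate(abstracts))
    return (
        "Use only the scientific context below to answer the question.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer concisely and cite context passages by their bracketed number."
    )
```

Each model would then receive its superprompt in a fresh, isolated session, so that no model's answer can leak into another's context.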

Multi-Model Ensemble Framework

The Multi-model Integration for Dynamic Forecasting framework provides a methodological template for integrating multiple AI models [22]. Though developed for wind forecasting, its architecture offers valuable insights for scientific research applications:

Specialized Model Selection: Identify models with complementary strengths—probabilistic forecasting capabilities (DeepAR) and attention mechanisms for multivariate data (Temporal Fusion Transformer) [22].

Two-Step Meta-Learning: Implement incremental refinement where models strategically leverage each other's strengths through a structured integration process.

Cross-Validation Mechanism: Establish protocols where model outputs can be validated against complementary systems, enhancing reliability.

Uncertainty Quantification: Incorporate probabilistic outputs to gauge confidence levels and identify areas requiring human expert validation.

This ensemble approach achieved superior performance with MSE values of 0.0035 for wind speed and 0.00052 for wind direction, significantly reducing errors compared to standalone models [22]. The framework demonstrates how strategically combined models can overcome individual limitations while enhancing overall system robustness.

Literature Screening and Annotation Protocol

For scientific annotation tasks, a structured screening methodology has demonstrated efficacy across multiple LLMs [23]. The protocol involves:

Target Set Creation: Compile validated studies from authoritative systematic reviews to establish benchmark annotations.

Similarity Stratification: Use semantic similarity models (e.g., all-mpnet-base-v2) to stratify literature into quartiles of descending relevance to the research topic.

Multi-Model Classification: Employ multiple LLMs with standardized prompts to classify articles or annotations as "Accepted" or "Rejected" based on inclusion criteria.

Performance Metrics: Calculate precision, recall, and F1 scores to evaluate model performance against expert judgments, with high recall being particularly important to avoid discarding relevant studies [23].

This methodology proved effective with advanced models like Claude 3 Haiku, GPT-3.5 Turbo, and GPT-4o consistently achieving high recall rates, though precision varied across similarity quartiles [23]. The approach provides a validated framework for annotation tasks in marker expression research where comprehensive literature coverage is essential.
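The metric step of this screening protocol can be sketched as below: articles are ordered by similarity score, split into quartiles (Q1 most similar), and precision, recall, and F1 are computed per quartile against expert decisions. The function names are illustrative, and undefined ratios (for example, precision when nothing was accepted) are returned as NaN by choice.

```python
import numpy as np

NAN = float("nan")


def precision_recall_f1(pred, truth):
    """pred/truth: boolean arrays where True means "Accepted"."""
    pred, truth = np.asarray(pred, bool), np.asarray(truth, bool)
    tp = int((pred & truth).sum())
    fp = int((pred & ~truth).sum())
    fn = int((~pred & truth).sum())
    precision = tp / (tp + fp) if tp + fp else NAN
    recall = tp / (tp + fn) if tp + fn else NAN
    # Equivalent form of the harmonic mean that avoids dividing NaNs.
    f1 = 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else NAN
    return precision, recall, f1


def metrics_by_quartile(similarity, pred, truth):
    """Score the LLM's accept/reject calls within each similarity quartile."""
    similarity = np.asarray(similarity, float)
    pred, truth = np.asarray(pred, bool), np.asarray(truth, bool)
    order = np.argsort(-similarity)  # most similar articles first
    return {
        f"Q{q}": precision_recall_f1(pred[idx], truth[idx])
        for q, idx in enumerate(np.array_split(order, 4), start=1)
    }
```

Reporting recall per quartile makes it easy to check the property the protocol emphasizes: relevant studies should not be discarded even in the low-similarity quartiles.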

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Multi-Model LLM Validation

| Research Reagent | Function | Example Implementation |
|---|---|---|
| Validated Benchmark Datasets | Provide ground truth for model evaluation | NimStim facial expression dataset with expert-validated emotional expressions [20] |
| Domain-Specific Literature Corpora | Contextual grounding for scientific accuracy | Scopus/PubMed abstracts on specific research domains [19] |
| Semantic Similarity Models | Stratify research materials by relevance | all-mpnet-base-v2 for article similarity scoring [23] |
| Standardized Evaluation Rubrics | Ensure consistent expert assessment | Criteria for accuracy, depth, relevance, and clarity (0-10 scale) [19] |
| Epistemic Marker Lexicons | Evaluate uncertainty communication | Defined markers like "fairly confident" with confidence accuracy correlations [21] |
| Retrieval-Augmented Generation Framework | Enhance factual accuracy | Custom pipelines integrating scientific databases with LLM queries [19] |
| Multi-Model Orchestration Systems | Coordinate complementary AI capabilities | Platforms like Magai providing access to 50+ AI models [24] |

Integrated Workflow for Annotation Validation

The integration of multiple LLMs into a cohesive annotation validation system requires careful architectural planning. The workflow must leverage the complementary strengths of different models while maintaining scientific rigor and reproducibility.

Multi-model integration represents a methodological advancement in leveraging artificial intelligence for scientific research, particularly in validating LLM-based annotations for marker expression studies. The complementary strengths of different models—Claude's analytical depth, GPT-4o's multimodal capabilities, and Gemini's visual recognition prowess—create a more robust validation framework than any single model can provide [19] [20].

Successful implementation requires careful attention to experimental protocols, particularly retrieval-augmented generation for scientific accuracy [19], structured ensemble methodologies [22], and rigorous confidence calibration [21]. By adopting these structured approaches and leveraging the specialized tools outlined in this guide, researchers can develop more reliable, reproducible, and valid annotation systems for critical drug development and biomarker research applications.

The future of multi-model integration will likely involve increasingly sophisticated orchestration frameworks, improved uncertainty quantification, and domain-specific fine-tuning. As these technologies evolve, they promise to enhance the scientist's ability to extract meaningful patterns from complex biological data while maintaining the rigorous standards required for scientific discovery and therapeutic development.

In single-cell RNA sequencing (scRNA-seq) analysis, the annotation of cell types represents a critical bottleneck. Traditional methods, which rely either on manual expert knowledge or automated tools using reference datasets, are often constrained by subjectivity and limited generalizability [1]. The emergence of Large Language Models (LLMs) has introduced a promising pathway for automating this process by leveraging their encoded biological knowledge. However, a significant challenge remains: how can we objectively validate the reliability of LLM-generated annotations against ground-truth biological data?

This comparison guide explores the 'Talk-to-Machine' strategy, an iterative feedback loop methodology designed to bridge this validation gap. This approach moves beyond single-query interactions, implementing a cyclical verification process where initial LLM annotations are tested against marker gene expression patterns, with results fed back to the model for refinement. We will objectively compare the performance of this strategy against other annotation methods, using experimental data from recent studies to evaluate its precision, reliability, and applicability in biomarker research and drug development.

Methodology: Implementing Iterative Feedback Loops

The 'Talk-to-Machine' strategy transforms the standard LLM annotation process from a single query into a dynamic, evidence-based dialogue. The methodology, as implemented in tools like LICT (Large Language Model-based Identifier for Cell Types), follows a structured, iterative workflow [1]:

- Initial Annotation Query: The process begins by providing an LLM with a list of top marker genes identified from a cell cluster in an scRNA-seq dataset.

- Marker Gene Retrieval and Validation: For each cell type predicted by the LLM, the system queries the model to generate a list of representative marker genes. The expression of these genes is then quantitatively assessed within the corresponding cell cluster in the input dataset.

- Iterative Feedback and Revision: An annotation is considered valid if more than four marker genes are expressed in at least 80% of cells within the cluster. If this threshold is not met, the annotation fails validation. A structured feedback prompt is then generated, containing the expression validation results and additional differentially expressed genes (DEGs) from the dataset. This prompt is used to re-query the LLM, prompting it to revise or confirm its previous annotation [1].
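The three steps above can be sketched as a single loop. This is not LICT's code: `ask` is a hypothetical callable wrapping any chat-model API, the prompts are illustrative, "expressed" is assumed to mean a value greater than zero, and `max_iter=3` is an arbitrary cap. Only the validation thresholds come from the description above.

```python
from typing import Callable, List

import numpy as np


def check_markers(cluster_expr: np.ndarray, genes: List[str], markers: List[str],
                  min_markers: int = 5, min_fraction: float = 0.8):
    """Return (passed, markers expressed in >= min_fraction of the cluster's cells)."""
    idx = [genes.index(m) for m in markers if m in genes]
    if not idx:
        return False, []
    frac = (cluster_expr[:, idx] > 0).mean(axis=0)
    ok = [genes[i] for i, f in zip(idx, frac) if f >= min_fraction]
    return len(ok) >= min_markers, ok


def talk_to_machine(ask: Callable[[str], str], cluster_expr: np.ndarray, genes: List[str],
                    top_degs: List[str], extra_degs: List[str], max_iter: int = 3):
    """Annotate, validate against marker expression, feed back, revise."""
    prompt = f"Top marker genes of a cluster: {', '.join(top_degs)}. Name the cell type."
    label = None
    for attempt in range(1, max_iter + 1):
        label = ask(prompt).strip()
        reply = ask(f"List representative marker genes for {label}, comma-separated.")
        markers = [m.strip() for m in reply.split(",") if m.strip()]
        passed, ok = check_markers(cluster_expr, genes, markers)
        if passed:
            return label, attempt, True
        # Structured feedback: validation results plus additional DEGs.
        prompt = (
            f"Your annotation '{label}' failed validation: only {len(ok)} of its markers "
            f"({', '.join(ok) or 'none'}) are expressed in >=80% of cells. "
            f"Additional DEGs: {', '.join(extra_degs)}. Revise or confirm the cell type."
        )
    return label, max_iter, False
```

Returning the attempt count and a pass/fail flag alongside the label lets the pipeline report how many clusters needed revision and which ones never passed, rather than silently accepting the last answer.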

This workflow can be visualized as a cyclical process of annotation, validation, and refinement:

Figure 1: The 'Talk-to-Machine' iterative feedback loop for validating LLM-generated cell type annotations against marker gene expression data.

Performance Comparison: 'Talk-to-Machine' vs. Alternative Annotation Methods

To objectively evaluate the 'Talk-to-Machine' strategy, we compare its performance against other common annotation approaches, including manual expert annotation, single-query LLM annotation, and multi-model integration without iterative feedback. The evaluation leverages experimental data from studies involving diverse biological contexts, including Peripheral Blood Mononuclear Cells (PBMCs), gastric cancer, human embryo, and stromal cell datasets [1].

Annotation Accuracy Across Diverse Biological Contexts

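The following table summarizes the performance of different annotation strategies in matching expert manual annotations across four distinct dataset types. Values are match rates as reported; where the source specifies it, each cell notes whether the rate counts full matches only or combined full and partial matches. The final row gives the mismatch rate for the 'Talk-to-Machine' strategy.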

Table 1: Comparison of Annotation Match Rates Across Methods and Datasets

| Annotation Method | PBMC Dataset | Gastric Cancer Dataset | Human Embryo Dataset | Stromal Cell Dataset |
|---|---|---|---|---|
| Single-Query LLM (GPT-4) | Data Not Available | Data Not Available | ~3% (Baseline) | ~2.7% (Baseline) |
| Multi-Model Integration | 90.3% Match Rate | 91.7% Match Rate | 48.5% Match Rate (Combined Full & Partial) | 43.8% Match Rate (Combined Full & Partial) |
| 'Talk-to-Machine' Strategy | 34.4% (Full Match) | 69.4% (Full Match) | 48.5% (Full Match) | 43.8% (Full Match) |
| Mismatch Rate (Talk-to-Machine) | 7.5% | 2.8% | 42.4% | 56.2% |

The data reveal several key insights, although the rows are not all reported on the same basis (some cells give combined full and partial match rates, others full matches only), so cross-method comparisons should be read with care. The 'Talk-to-Machine' strategy improves annotation precision: in the gastric cancer dataset it achieved a 69.4% full match rate with manual annotations while reducing the mismatch rate to 2.8% [1]. It also delivered roughly a 16-fold improvement in the full match rate for the challenging low-heterogeneity human embryo data (48.5% versus the ~3% single-query GPT-4 baseline) [1].

Objective Reliability Assessment via Marker Expression

Beyond simple agreement with manual labels, a more rigorous validation involves an objective assessment of the biological credibility of the annotations based on marker gene expression. The following table compares the credibility of annotations generated by the 'Talk-to-Machine' strategy versus manual expert annotations, based on the objective criterion that a credible annotation must have more than four associated marker genes expressed in at least 80% of cells in the cluster [1].

Table 2: Credibility Assessment of LLM vs. Manual Annotations Based on Marker Expression

| Dataset | Credible 'Talk-to-Machine' Annotations | Credible Manual Annotations | Key Findings |
|---|---|---|---|
| PBMC | Higher than manual | Lower than LLM | LLM annotations showed higher objective credibility [1]. |
| Gastric Cancer | Comparable to manual | Comparable to LLM | Both methods demonstrated similar, high reliability [1]. |
| Human Embryo | 50.0% of mismatched annotations were credible | 21.3% of mismatched annotations were credible | LLM identified biologically plausible cell types missed by experts [1]. |
| Stromal Cells | 29.6% of mismatched annotations were credible | 0% of mismatched annotations were credible | LLM annotations were objectively more reliable where experts struggled [1]. |

This objective evaluation is critical. It demonstrates that discrepancies with manual annotations do not necessarily indicate LLM errors. In datasets like human embryos and stromal cells, the 'Talk-to-Machine' strategy produced annotations with significantly higher objective credibility scores than manual annotations, suggesting it can identify biologically plausible cell types that may be overlooked by experts constrained by pre-existing classifications [1].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Implementing a robust 'Talk-to-Machine' validation pipeline requires a suite of computational tools and biological resources. The table below details key research reagent solutions essential for this workflow.

Table 3: Essential Research Reagents and Platforms for LLM-Assisted Annotation

| Item Name | Type | Primary Function | Key Features |
|---|---|---|---|
| LICT (LLM-based Identifier for Cell Types) [1] | Software Package | Implements the core 'Talk-to-Machine' strategy. | Multi-model integration, iterative feedback loops, objective credibility evaluation [1]. |
| AnnDictionary [18] | Open-source Python Package | Provides a flexible backend for parallel LLM-based annotation of multiple datasets. | LLM-agnostic (single line to switch models), multithreading optimizations, integrates with Scanpy [18]. |
| Tabula Sapiens v2 [18] | Reference scRNA-seq Atlas | A benchmark dataset for training and validating annotation models. | Multi-tissue, multi-donor, manually annotated high-quality data [18]. |
| LangChain | Framework | Used within packages like AnnDictionary to manage LLM interactions. | Simplifies prompt orchestration, context management, and connection to various LLM providers [18]. |
| Claude 3.5 Sonnet [18] | Large Language Model | A top-performing LLM for cell type annotation tasks. | Achieved the highest agreement with manual annotation in independent benchmarks [18]. |

Experimental Protocols for Benchmarking

To ensure reproducible and comparable results when evaluating the 'Talk-to-Machine' strategy, adherence to standardized experimental protocols is essential. The following methodology is adapted from recent benchmarking studies [1] [18].

- Data Pre-processing: Process scRNA-seq data for each tissue or sample independently. Standard steps include normalization, log-transformation, selection of highly variable genes, scaling, Principal Component Analysis (PCA), neighborhood graph construction, and clustering with an algorithm such as Leiden. Differentially expressed genes (DEGs) are then computed for each cluster.

- LLM Annotation Setup: Configure the LLM backend (e.g., via AnnDictionary). For each cluster, format the top DEGs (e.g., the top 10 by log-fold change) into a standardized prompt for the LLM.

- Iterative Feedback Loop Execution:
  - Initialization: Submit the DEG list to the LLM for an initial cell type prediction.
  - Validation Check: Query the LLM for known marker genes of the predicted cell type, then check the expression of these genes in the original cluster.
  - Decision Point: If marker expression validation passes (e.g., more than four markers expressed in at least 80% of cells), finalize the annotation. If it fails, compile a feedback prompt containing the failed validation results and additional high-quality DEGs from the cluster.
  - Refinement: Resubmit the feedback prompt to the LLM for a revised annotation. Repeat until validation passes or a maximum number of iterations is reached.

- Performance Benchmarking: Compare final annotations against a gold standard (e.g., manual expert annotations) using metrics such as direct string match, Cohen's Kappa, and the objective credibility score based on marker expression.
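The benchmarking step names two simple agreement metrics, and both can be computed directly from paired label lists. The sketch below is a minimal Python version. It assumes one predicted label and one reference label per cluster. The helper names are hypothetical. Labels are compared case-insensitively with no synonym mapping, so "NK cell" and "natural killer cell" still count as a mismatch. If scikit-learn is available, its `cohen_kappa_score` gives the same kappa calculation.

```python
from collections import Counter
from typing import Sequence


def _norm(label: str) -> str:
    # Case- and whitespace-insensitive comparison only; synonyms such as
    # "NK cell" vs "natural killer cell" need separate harmonization.
    return label.strip().lower()


def string_match_accuracy(pred: Sequence[str], ref: Sequence[str]) -> float:
    """Fraction of clusters whose predicted label matches the reference."""
    if len(pred) != len(ref) or not ref:
        raise ValueError("need two non-empty label lists of equal length")
    return sum(_norm(p) == _norm(r) for p, r in zip(pred, ref)) / len(ref)


def cohens_kappa(pred: Sequence[str], ref: Sequence[str]) -> float:
    """Observed agreement corrected for agreement expected by chance."""
    if len(pred) != len(ref) or not ref:
        raise ValueError("need two non-empty label lists of equal length")
    p = [_norm(x) for x in pred]
    r = [_norm(x) for x in ref]
    n = len(r)
    observed = sum(a == b for a, b in zip(p, r)) / n
    p_counts, r_counts = Counter(p), Counter(r)
    # Chance agreement from each rater's label frequencies (missing keys count 0).
    expected = sum(p_counts[k] * r_counts[k] for k in p_counts) / n**2
    if expected == 1.0:
        return 1.0  # both raters assign the same single label everywhere
    return (observed - expected) / (1.0 - expected)


predicted = ["T cell", "B cell", "NK cell", "T cell"]
reference = ["T cell", "b cell", "T cell", "T cell"]
print(string_match_accuracy(predicted, reference))  # 0.75
print(round(cohens_kappa(predicted, reference), 3))  # 0.556
```

Kappa is lower than raw accuracy here because the dominant "T cell" label would produce some agreement by chance alone.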

The relationships and data flow between these core components of the benchmarking protocol are illustrated below.

Figure 2: Workflow and data flow for benchmarking the 'Talk-to-Machine' annotation strategy against gold standards.

The experimental data presented in this guide make a compelling case for the 'Talk-to-Machine' strategy as a superior method for validating LLM-based cell type annotations against the ground truth of marker gene expression. Two properties make it an invaluable tool for researchers and drug developers seeking reliable biological insights from scRNA-seq data. The first is its precision, particularly in complex and low-heterogeneity datasets. The second is its ability to generate objectively credible annotations, sometimes surpassing expert labels.

While challenges remain, especially in achieving perfect alignment with manual annotations in all contexts, the implementation of iterative feedback loops represents a significant leap forward. It moves LLMs from being static knowledge repositories to dynamic, reasoning partners in scientific discovery. As LLM technology and our understanding of cellular biomarkers continue to evolve, this collaborative, human-in-the-loop approach is poised to become an indispensable component of the precision medicine toolkit, enhancing the reproducibility and reliability of research in cell biology and therapeutic development.

The adoption of large language models (LLMs) for automated cell type annotation represents a significant advancement in single-cell RNA sequencing (scRNA-seq) analysis, offering the potential to reduce manual labor and standardize classification. However, these models face a fundamental challenge: the phenomenon of "hallucination," where they may generate confident but factually incorrect responses, including fabricated cell type annotations [25]. This reliability concern is particularly critical in biomedical research and drug development, where inaccurate cell identification can compromise downstream analyses and experimental validity.

Database-driven verification has emerged as a powerful strategy to mitigate these limitations by grounding LLM outputs in empirically validated biological data. This approach integrates the sophisticated pattern recognition and contextual understanding of LLMs with the rigorous, data-driven validation provided by established marker gene databases [16] [25]. Cross-referencing with curated databases like CellxGene and PanglaoDB provides an objective framework for assessing annotation reliability, effectively distinguishing genuine biological insights from methodological artifacts [16]. This guide objectively compares how these verification databases perform when integrated with LLM-based annotation tools, providing researchers with the experimental data needed to select appropriate validation strategies for their specific research contexts.

Key Database Profiles

- CellxGene Discover: A comprehensive repository from the Chan Zuckerberg Initiative containing single-cell gene expression data from 1634 datasets across 257 studies. It allows queries based on species, tissue type, cell type, and marker gene name, covering over 41 million cells and 106,944 genes [25].

- PanglaoDB: A publicly available database of marker genes for cell types in tissues from various species, particularly strong in data from murine and human tissues. It is one of the resources integrated into the Cell Marker Accordion platform [26].

The Database Heterogeneity Challenge

A significant challenge in database-driven verification stems from the substantial heterogeneity across available marker gene resources. Systematic analysis of seven available marker gene databases revealed low consistency between them, with an average Jaccard similarity index of just 0.08 and a maximum of 0.13 between matching cell types [26]. This means different databases frequently recommend different marker genes for the same cell type, which can lead to inconsistent annotations when used for verification.

For example, when annotating a human bone marrow scRNA-seq dataset, using CellMarker2.0 and PanglaoDB as separate verification sources resulted in divergent cell types assigned to the same cluster (e.g., "hematopoietic progenitor cell" versus "anterior pituitary gland cell") and inconsistent nomenclature (e.g., "Natural killer cell" versus "NK cells") [26]. This heterogeneity raises profound concerns for data mining and interpretation, highlighting the importance of selecting appropriate verification databases matched to specific research contexts.
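The Jaccard index used in this consistency analysis is the size of the intersection of two marker sets divided by the size of their union. The following minimal Python sketch computes it for matching cell types across two marker databases and averages the result. The marker sets are small hypothetical examples, not entries from CellMarker2.0, PanglaoDB, or any other resource. The sketch also assumes that cell type names have already been harmonized, which is exactly the step the nomenclature mismatches above make difficult.

```python
from typing import Dict, Iterable, Set


def jaccard(a: Iterable[str], b: Iterable[str]) -> float:
    """|A intersect B| / |A union B|; defined as 0.0 when both sets are empty."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mean_jaccard(db_a: Dict[str, Set[str]], db_b: Dict[str, Set[str]]) -> float:
    """Average Jaccard index over cell types present (by exact name) in both."""
    shared = set(db_a) & set(db_b)
    if not shared:
        raise ValueError("no cell type names in common; harmonize names first")
    return sum(jaccard(db_a[ct], db_b[ct]) for ct in shared) / len(shared)


# Hypothetical toy marker sets for illustration only.
db_a = {"NK cell": {"NKG7", "GNLY", "KLRD1", "NCAM1"},
        "B cell": {"CD19", "MS4A1", "CD79A"}}
db_b = {"NK cell": {"NKG7", "KLRF1", "FCGR3A"},
        "B cell": {"MS4A1", "CD79B", "PAX5", "CD19"}}
print(round(mean_jaccard(db_a, db_b), 3))  # 0.283
```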

Comparative Performance Analysis of Verification Strategies

Performance Metrics Across Tools and Datasets

Table 1: Performance Comparison of Database-Verified LLM Annotation Tools

| Tool | Verification Database | Reported Accuracy | Test Datasets | Key Advantage |
|---|---|---|---|---|
| CellTypeAgent | CellxGene | Consistently outperforms other methods across all 9 tested datasets [25] | 303 cell types from 36 tissues across 9 datasets [25] | Combines LLM inference with empirical expression data verification |
| LICT | Multiple sources via internal weighting | Superior to GPTCelltype in efficiency, consistency, accuracy, and reliability [16] | PBMCs, human embryos, gastric cancer, stromal cells [16] | Multi-model integration reduces uncertainty |
| Cell Marker Accordion | 23 integrated databases (including PanglaoDB) | Significantly improved accuracy versus other tools in benchmark [26] | 93,456-cell FACS-sorted dataset, human bone marrow CITE-seq [26] | Evidence consistency scoring across multiple sources |

Impact of Verification on Annotation Accuracy

The integration of database verification substantially enhances annotation performance. In direct comparisons, CellTypeAgent demonstrated consistent superiority over both LLM-only approaches (GPTCelltype) and database-only methods (CellxGene alone) across all evaluated datasets [25]. The verification component is particularly valuable for resolving ambiguous cases where multiple cell types exhibit similar marker gene expression patterns.

For example, when annotating pericyte cells in human adipose tissue, querying CellxGene alone yielded multiple cell types (mural cells, pericytes, and muscle cells) with similarly high average gene expression, leading to frequent misclassification. When enhanced with LLM pre-screening, CellTypeAgent correctly identified pericytes, whereas GPTCelltype misclassified them as fibroblasts [25]. This demonstrates how the combined approach of LLM inference followed by database verification achieves higher precision than either method used independently.

Experimental Protocols for Database Verification

CellTypeAgent with CellxGene Verification Protocol

Workflow Description: This methodology implements a two-stage verification process that combines LLM-based candidate generation with quantitative validation against single-cell gene expression data from CellxGene [25].

Methodology Details:

Stage 1: LLM-Based Candidate Prediction

- Input: A set of marker genes $G = \{g_1, g_2, \ldots, g_n\}$ from a specific tissue ($\tau$) and species ($s$).

- LLM Prompting: Uses the standardized prompt: "Identify most likely top 3 cell types of [tissue type] using the following markers: [marker genes]. The higher the probability, the further left it is ranked, separated by commas."

- Output: An ordered set of candidate cell types $C = \{c_1, c_2, c_3\}$, where $c_1$ is the highest-probability candidate [25].
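Stage 1 comes down to filling in a prompt template and turning a comma-separated reply into an ordered candidate list. The minimal Python sketch below copies the prompt text quoted above. It also attaches the LLM rank score $r_c$ that is used later (3, 2, 1 for the first three candidates). The helper names are hypothetical. Two details are assumptions rather than CellTypeAgent's documented behavior: joining markers with ", " and stripping stray periods from the reply. The LLM call itself is not shown.

```python
from typing import Dict, Sequence

# Template text taken verbatim from the standardized prompt quoted above.
PROMPT_TEMPLATE = (
    "Identify most likely top 3 cell types of {tissue} using the following "
    "markers: {markers}. The higher the probability, the further left it is "
    "ranked, separated by commas."
)


def build_prompt(tissue: str, markers: Sequence[str]) -> str:
    return PROMPT_TEMPLATE.format(tissue=tissue, markers=", ".join(markers))


def parse_candidates(reply: str, k: int = 3) -> Dict[str, int]:
    """Map up to k ordered candidates to rank scores r_c = k, k-1, ..."""
    names = []
    for part in reply.split(","):
        name = part.strip(" .\n")  # tolerate trailing periods / whitespace
        if name and name not in names:  # keep first occurrence only
            names.append(name)
    return {name: k - i for i, name in enumerate(names[:k])}


prompt = build_prompt("adipose tissue", ["RGS5", "PDGFRB", "KCNJ8"])
print(parse_candidates("pericyte, smooth muscle cell, fibroblast."))
# {'pericyte': 3, 'smooth muscle cell': 2, 'fibroblast': 1}
```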

Stage 2: Gene Expression-Based Candidate Evaluation

- Data Extraction: For each candidate cell type $c \in C$, query CellxGene to extract:

  - $e_{g,c,s,\tau}$: scaled expression value of gene $g$ in cell type $c$ for species $s$ and tissue $\tau$.

  - $\rho_{g,c,s,\tau}$: expressed ratio of gene $g$ in cell type $c$ for species $s$ and tissue $\tau$.

- Selection Score Calculation: When the tissue type is known:

  $$\mathrm{score}(c) = r_c + \mathrm{rank}\Big(\sum_{g} e_{g,c,s,\tau}\Big) + \mathrm{rank}\Big(\sum_{g} \rho_{g,c,s,\tau}\Big) + \frac{1}{|T|}\sum_{\tau} \mathrm{rank}\big(e_{g,c,s}\big)$$

  where $r_c$ is the initial rank score from the LLM (e.g., 3 for the top candidate, 2 for the second, 1 for the third) [25].

- Final Selection: The candidate cell type with the highest selection score is chosen: $c^* = \arg\max_{c \in C} \mathrm{score}(c)$.
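The minimal Python sketch below implements the known-tissue selection score. It uses only the first three terms: $r_c$, the rank of summed expression, and the rank of summed expressed ratio. It omits the cross-tissue averaging term. Several choices here are assumptions, not CellTypeAgent's published implementation:

- Ranks are dense and ascending, so the candidate with the largest sum receives the largest rank.
- Genes missing from the lookup tables count as zero.
- The expression values are invented toy numbers, not CellxGene data.

The example mirrors the adipose pericyte case described earlier, where expression evidence overrides the LLM's first choice.

```python
from typing import Dict, Sequence


def dense_rank(values: Dict[str, float]) -> Dict[str, int]:
    """Largest value gets the largest rank; tied values share a rank."""
    levels = sorted(set(values.values()))
    return {key: levels.index(v) + 1 for key, v in values.items()}


def selection_scores(
    llm_rank: Dict[str, int],
    markers: Sequence[str],
    expr: Dict[str, Dict[str, float]],
    ratio: Dict[str, Dict[str, float]],
) -> Dict[str, int]:
    """score(c) = r_c + rank(sum_g e) + rank(sum_g rho); cross-tissue term omitted."""
    total_e = {c: sum(expr.get(c, {}).get(g, 0.0) for g in markers) for c in llm_rank}
    total_rho = {c: sum(ratio.get(c, {}).get(g, 0.0) for g in markers) for c in llm_rank}
    rank_e, rank_rho = dense_rank(total_e), dense_rank(total_rho)
    return {c: llm_rank[c] + rank_e[c] + rank_rho[c] for c in llm_rank}


# Hypothetical toy inputs: the LLM ranks "fibroblast" first.
llm_rank = {"fibroblast": 3, "pericyte": 2, "smooth muscle cell": 1}
markers = ["RGS5", "PDGFRB", "KCNJ8"]
expr = {"fibroblast": {"RGS5": 0.1, "PDGFRB": 0.6, "KCNJ8": 0.0},
        "pericyte": {"RGS5": 0.9, "PDGFRB": 0.8, "KCNJ8": 0.7},
        "smooth muscle cell": {"RGS5": 0.5, "PDGFRB": 0.4, "KCNJ8": 0.2}}
ratio = {"fibroblast": {"RGS5": 0.1, "PDGFRB": 0.5, "KCNJ8": 0.0},
         "pericyte": {"RGS5": 0.8, "PDGFRB": 0.7, "KCNJ8": 0.6},
         "smooth muscle cell": {"RGS5": 0.4, "PDGFRB": 0.3, "KCNJ8": 0.1}}

scores = selection_scores(llm_rank, markers, expr, ratio)
best = max(scores, key=scores.get)  # argmax over candidates
print(scores, best)  # {'fibroblast': 5, 'pericyte': 8, 'smooth muscle cell': 5} pericyte
```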

Cell Marker Accordion with PanglaoDB Integration Protocol

Workflow Description: This approach integrates PanglaoDB and 22 other marker sources into a unified database with evidence-weighted scoring, implemented through an R package or web interface [26].

Methodology Details:

Database Integration and Standardization

- Source Integration: Combines marker genes from 23 databases, distinguishing positive from negative markers.

- Ontology Mapping: Standardizes cell type nomenclature to Cell Ontology terms and tissue names to Uber-anatomy ontology (Uberon) terms to resolve nomenclature inconsistencies [26].

- Evidence Weighting: Genes are weighted by:
  - Specificity Score (SPs): Indicates whether a gene is a marker for multiple different cell types, i.e., how specific it is to a single cell type.
  - Evidence Consistency Score (ECs): Measures agreement across different annotation sources [26].

Annotation Process

- Input: Single-cell count matrix or Seurat object.

- Marker-Based Assignment: Automatically annotates cell populations using the built-in database, weighting markers by their EC and SPs scores.

- Interpretation Features: Provides top marker genes that most significantly determine the final annotation and evaluates similarity of competing cell types using Cell Ontology hierarchy [26].
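Evidence-weighted annotation of this kind can be illustrated with deliberately simplified stand-ins for the two scores. The Python sketch below is a hypothetical illustration, not the Cell Marker Accordion algorithm:

- Consistency is taken as the fraction of sources that list a gene for a given cell type.
- Specificity is taken as one divided by the number of cell types the gene is listed for.
- A cluster's score for each cell type is the weight-normalized mean expression of that type's markers.

The sketch ignores negative markers and Cell Ontology mapping. The function names, database entries, and expression values are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Entry = Tuple[str, str, str]  # (source database, cell type, marker gene)


def evidence_weights(entries: Iterable[Entry]) -> Dict[str, Dict[str, float]]:
    """Weight = (fraction of sources listing gene for type) * (1 / #types for gene)."""
    entries = set(entries)  # count each (source, type, gene) triple once
    n_sources = len({src for src, _, _ in entries})
    listed_by = defaultdict(set)       # (cell type, gene) -> sources
    types_for_gene = defaultdict(set)  # gene -> cell types it marks
    for src, cell_type, gene in entries:
        listed_by[(cell_type, gene)].add(src)
        types_for_gene[gene].add(cell_type)
    weights: Dict[str, Dict[str, float]] = defaultdict(dict)
    for (cell_type, gene), srcs in listed_by.items():
        consistency = len(srcs) / n_sources
        specificity = 1.0 / len(types_for_gene[gene])
        weights[cell_type][gene] = consistency * specificity
    return dict(weights)


def annotate_cluster(weights, cluster_expr: Dict[str, float]):
    """Return the best-scoring cell type and the weighted mean marker expression per type."""
    scores = {}
    for cell_type, genes in weights.items():
        total = sum(genes.values())
        scores[cell_type] = sum(w * cluster_expr.get(g, 0.0) for g, w in genes.items()) / total
    return max(scores, key=scores.get), scores


entries = [("db1", "NK cell", "NKG7"), ("db2", "NK cell", "NKG7"),
           ("db3", "NK cell", "NKG7"), ("db1", "NK cell", "GNLY"),
           ("db1", "T cell", "CD3E"), ("db2", "T cell", "CD3E"),
           ("db2", "T cell", "NKG7")]
best, scores = annotate_cluster(evidence_weights(entries),
                                {"NKG7": 0.9, "GNLY": 0.8, "CD3E": 0.1})
print(best, round(scores["NK cell"], 2))  # NK cell 0.86
```

NKG7 is shared by both cell types in the toy entries, so its specificity weight is halved. That stops it from pulling the T cell score up.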

LICT Multi-Model Verification Protocol

Workflow Description: The LICT framework employs a "talk-to-machine" strategy that iteratively refines annotations through human-computer interaction and multi-LLM integration [16].

Methodology Details:

Multi-Model Integration

- Model Selection: Identifies top-performing LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0) through systematic evaluation on PBMC benchmark datasets.

- Complementary Strengths: Selects best-performing results from multiple LLMs rather than using majority voting, particularly beneficial for low-heterogeneity datasets where single-model performance declines [16].

Iterative "Talk-to-Machine" Verification

- Step 1 - Marker Retrieval: The LLM provides representative marker genes for its predicted cell type.

- Step 2 - Expression Evaluation: The expression of these markers is assessed within corresponding clusters in the input dataset.

- Step 3 - Validation Check: Annotation is validated if >4 marker genes are expressed in ≥80% of cells in the cluster.

- Step 4 - Iterative Feedback: For failed validations, a structured feedback prompt with expression results and additional DEGs is used to re-query the LLM for revised annotation [16].
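The four steps above can be sketched as a small loop around two LLM calls. The minimal Python version below makes the following assumptions:

- `ask_cell_type` and `ask_markers` are hypothetical callables that wrap whatever LLM backend is in use. Stubs stand in for them here.
- A marker counts as "expressed" in a cell when its count is above zero.
- Each feedback round adds the next batch of ten DEGs.
- The feedback prompt wording is illustrative, not LICT's actual prompt.

The pass rule follows the stated threshold: more than four markers detected in at least 80% of the cluster's cells.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Cell = Dict[str, float]  # gene -> expression count for one cell


def marker_validation(cluster: Sequence[Cell], markers: Sequence[str],
                      more_than: int = 4, min_fraction: float = 0.8
                      ) -> Tuple[bool, Dict[str, float]]:
    """Pass if more than `more_than` markers are detected in >= min_fraction of cells."""
    if not cluster:
        raise ValueError("cluster has no cells")
    fractions = {g: sum(cell.get(g, 0.0) > 0 for cell in cluster) / len(cluster)
                 for g in markers}
    n_passing = sum(f >= min_fraction for f in fractions.values())
    return n_passing > more_than, fractions


def talk_to_machine(cluster: Sequence[Cell], degs: List[str],
                    ask_cell_type: Callable[[str], str],
                    ask_markers: Callable[[str], List[str]],
                    max_iter: int = 3, batch: int = 10) -> Tuple[str, bool, int]:
    """Return (annotation, validated?, iterations used)."""
    prompt = "Top DEGs: " + ", ".join(degs[:batch])
    offset, cell_type = batch, ""
    for iteration in range(1, max_iter + 1):
        cell_type = ask_cell_type(prompt)                       # initial / revised call
        ok, fractions = marker_validation(cluster, ask_markers(cell_type))
        if ok:
            return cell_type, True, iteration
        # Feedback: per-marker detection rates plus the next batch of DEGs.
        report = ", ".join(f"{g}: {f:.0%}" for g, f in fractions.items())
        extra = ", ".join(degs[offset:offset + batch])
        offset += batch
        prompt = (f"The annotation '{cell_type}' failed validation. Fraction of cells "
                  f"expressing each proposed marker: {report}. Additional DEGs: "
                  f"{extra}. Please provide a revised cell type.")
    return cell_type, False, max_iter


# Stubbed LLM: wrong first answer, corrected after feedback.
nk_cluster = [{"NKG7": 3, "GNLY": 5, "KLRD1": 1, "PRF1": 2, "GZMB": 4} for _ in range(10)]
marker_lookup = {"T cell": ["CD3E", "CD3D", "CD2", "IL7R", "CD5"],
                 "NK cell": ["NKG7", "GNLY", "KLRD1", "PRF1", "GZMB"]}
result = talk_to_machine(
    nk_cluster, ["NKG7", "GNLY", "KLRD1", "PRF1", "GZMB", "CTSW"],
    ask_cell_type=lambda p: "NK cell" if "failed validation" in p else "T cell",
    ask_markers=lambda ct: marker_lookup[ct])
print(result)  # ('NK cell', True, 2)
```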

The Scientist's Toolkit: Essential Research Reagents & Databases

Table 2: Key Databases and Computational Tools for Cell Type Verification

| Resource | Type | Primary Function in Verification | Key Features |
|---|---|---|---|
| CellxGene Discover | Gene Expression Database | Provides quantitative expression data for candidate validation | 1634 datasets, 7 species, 50 tissues, 714 cell types [25] |
| PanglaoDB | Marker Gene Database | Source of curated marker genes for cell type identification | Murine and human tissue focus, integrated into multiple tools [26] |
| Cell Marker Accordion DB | Integrated Marker Database | Provides evidence-weighted markers from multiple sources | 23 integrated databases, Cell Ontology mapping, EC/SPs scores [26] |
| Cell Ontology | Structured Vocabulary | Standardizes cell type nomenclature across sources | Resolves naming inconsistencies between databases and tools [26] |