Cell Type Annotation 2025: From Foundational Concepts to AI-Driven Validation in Single-Cell Research

Abstract

This article provides a comprehensive guide to cell type annotation for researchers and drug development professionals. It covers foundational principles, explores the latest automated methods including large language models (LLMs) and hybrid approaches, addresses common troubleshooting scenarios, and establishes robust validation frameworks. By synthesizing current methodologies and benchmarking data, this resource aims to enhance annotation accuracy, reproducibility, and biological insight across single-cell RNA sequencing, ATAC-seq, and spatial omics applications.

Defining Cellular Identity: The Evolution and Core Principles of Cell Type Annotation

What is a Cell Type? Evolving Definitions from Morphology to Transcriptomics

The question "What is a cell type?" represents one of the most fundamental inquiries in biology, yet it has eluded a simple, universal definition. Cell types are broadly understood as the basic functional units of an organism, where cells within a type exhibit similar structure and function that are distinct from cells in other types [1]. This conceptual framework has served biology for over a century, dating back to the pioneering work of Ramón y Cajal and his contemporaries who first categorized cells based on their morphological characteristics [1]. However, the rapid advancement of single-cell technologies, particularly single-cell RNA sequencing (scRNA-seq), has fundamentally transformed our understanding of cellular identity and diversity.

The traditional view of cell types as discrete, easily categorizable entities has given way to a more nuanced understanding that acknowledges the continuous nature of biological variation [2]. This evolution in thinking reflects a broader shift in biological research from qualitative descriptions to quantitative, data-driven classifications. In the era of single-cell biology, the definition of cell type identity remains actively debated, requiring researchers to integrate evidence from multiple modalities and present compelling arguments for their labeling schemes [3]. This review traces the conceptual journey of cell type definition from its morphological origins to the current transcriptomic era, examining the organizing principles, methodological approaches, and challenges that define this dynamic field.

The Historical Perspective: Morphology and Physiology as Defining Features

The Anatomical Foundation of Cell Typing

The initial classification of cell types relied heavily on visual characteristics observable through microscopy. Morphological properties such as cell size, shape, nuclear characteristics, and organizational patterns provided the first systematic approach to categorizing cellular diversity [3]. In the nervous system, this approach allowed early neuroscientists to distinguish between major neuronal classes—such as pyramidal neurons with their distinctive apical dendrites versus spiny stellate cells—and to relate these morphological differences to potential functional specializations [1]. These anatomical definitions created a foundational taxonomy that still informs our understanding of cellular diversity today.

Physiological measurements eventually complemented morphological characterization, particularly in electrically excitable tissues. For neurons, properties such as action potential waveform, firing patterns, and synaptic connectivity became essential criteria for classification [1]. The Petilla Convention, a major community effort to define cortical interneuron types, exemplified the rigorous application of multidisciplinary criteria—including morphological, physiological, and molecular features—to establish a consistent nomenclature [1]. This historical approach, while powerful, faced significant limitations in scalability and objectivity, as comprehensive characterization required labor-intensive techniques that were difficult to standardize across laboratories.

Technical Limitations and Conceptual Constraints

Traditional methods for cell type classification, including immunohistochemistry, electrophysiology, and morphological reconstruction, provided rich qualitative data but suffered from inherent limitations:

- Low-throughput nature: Techniques like intracellular recording and dye-filling could only characterize small numbers of cells in each experiment [4]

- Subjective categorization: Qualitative descriptions often varied between researchers and laboratories [1]

- Context-dependent properties: Physiological measurements could change under different experimental conditions [1]

- Limited multiplexing: Traditional approaches could typically examine only a few features simultaneously

These constraints began to dissolve with the advent of molecular biology and genomic technologies, which offered more standardized, quantitative, and scalable approaches to cell type classification.

The Molecular Revolution: Transcriptomics as a Quantitative Basis for Cell Identity

The Rise of Single-Cell Transcriptomics

The development of single-cell RNA sequencing (scRNA-seq) technologies marked a paradigm shift in cell type classification. By simultaneously measuring the expression levels of thousands of genes in individual cells, scRNA-seq provides a high-dimensional, quantitative, and largely unbiased molecular signature for each cell [4]. This technological advancement has enabled researchers to move beyond subjective morphological descriptions to data-driven classifications based on comprehensive molecular profiles.

The scalability of scRNA-seq has been particularly transformative, allowing characterization of hundreds of thousands to millions of cells in a single experiment [1]. This unprecedented depth and breadth of cellular sampling has facilitated the creation of detailed cell type taxonomies, or "cell atlases," across diverse species, tissues, and brain regions [1]. Large-scale consortium efforts like the Human Cell Atlas and the BRAIN Initiative Cell Census Network aim to create comprehensive reference maps of all cell types in the human body and brain, respectively [1] [4]. These projects represent a fundamental change in scale and approach to cataloging cellular diversity.

Complementary Molecular Modalities

While transcriptomics has become the dominant approach for cell type classification, other molecular modalities provide complementary information:

- Single-cell epigenomics: Techniques such as single-nucleus ATAC-seq characterize chromatin accessibility and reveal cell type-specific gene regulatory landscapes [1]

- Spatially resolved transcriptomics: Methods based on in situ imaging or sequencing preserve spatial context, revealing organizational principles of tissues [1]

- Proteomics: Measurement of protein levels and modifications provides information closer to functional output [1]

The integration of these multimodal data streams offers a more comprehensive view of cellular identity than any single approach could provide alone.

Table 1: Comparison of Methodologies for Cell Type Classification

| Methodology | Key Measured Features | Throughput | Key Advantages | Major Limitations |
|---|---|---|---|---|
| Morphology | Cell shape, size, structure | Low | Direct visualization, historical context | Subjective, low-throughput |
| Electrophysiology | Action potential properties, firing patterns | Low | Functional relevance, high temporal resolution | Invasive, technically demanding |
| scRNA-seq | Genome-wide mRNA expression | High | Unbiased, quantitative, scalable | Captures only transcriptome, technical noise |
| snATAC-seq | Chromatin accessibility landscape | High | Reveals regulatory architecture | Indirect measure of gene expression |
| Spatial Transcriptomics | mRNA expression with spatial coordinates | Medium | Preserves tissue context | Lower resolution than scRNA-seq |

Methodological Framework: From Data Generation to Cell Type Annotation

The Single-Cell RNA Sequencing Workflow

The standard scRNA-seq workflow involves multiple critical steps, each contributing to the quality and interpretability of the resulting data:

- Cell isolation and library preparation: Single cells are isolated through fluorescence-activated cell sorting (FACS), microfluidics, or droplet-based methods [4]

- Reverse transcription and amplification: mRNA is reverse-transcribed to cDNA and amplified [4]

- Sequencing: High-throughput sequencing generates thousands to millions of reads per cell, depending on the platform and sequencing depth [4]

- Bioinformatic processing: Raw sequences are aligned to reference genomes and quantified as count matrices [4]

Different technological platforms, such as 10x Genomics and Smart-seq, offer distinct tradeoffs between throughput, sensitivity, and cost [4]. The 10x Genomics platform employs droplet-based encapsulation for high-throughput profiling of large cell populations, while Smart-seq amplifies full-length transcripts for deeper coverage of individual cells [4]. These technical differences significantly impact downstream analyses and must be considered when designing experiments and interpreting results.

Computational Approaches for Cell Type Annotation

The accumulation of large-scale scRNA-seq data has driven the development of diverse computational methods for cell type annotation, which can be broadly categorized into four approaches:

- Specific gene expression-based methods: Utilize known marker genes to manually label cells based on characteristic expression patterns [4]

- Reference-based correlation methods: Categorize unknown cells by comparing their gene expression profiles to pre-annotated reference datasets [4]

- Data-driven reference methods: Train classification models on pre-labeled datasets to predict cell types in new data [4]

- Large-scale pretraining-based methods: Employ unsupervised learning on massive collections of scRNA-seq data to learn generalizable features [5]

Each approach has distinct strengths and limitations, and researchers often combine multiple methods to achieve robust annotations [6]. Deep learning models such as scGPT, which adapts the transformer architecture to predict gene expression patterns, represent the cutting edge of annotation technology [5]. When fine-tuned on specific tissues such as the retina, scGPT has achieved F1-scores of 99.5% in cell type prediction [5].
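As an illustration of the reference-based correlation idea listed above, the following minimal sketch assigns a query cluster to the reference cell type whose average expression profile has the highest Spearman correlation with it. The gene panel, the reference profiles, and the function names are hypothetical toy values chosen for illustration; this is not the implementation used by SingleR or any other published tool.

```python
from statistics import mean


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0


def spearman(x, y):
    """Spearman correlation is the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


def annotate_by_correlation(query_profile, reference_profiles):
    """Return the best-matching reference label and all correlation scores."""
    scores = {
        label: spearman(query_profile, profile)
        for label, profile in reference_profiles.items()
    }
    return max(scores, key=scores.get), scores


# Hypothetical average expression over the genes CD3E, CD19, LYZ, NKG7.
reference_profiles = {
    "T cell": [9.0, 0.1, 0.5, 2.0],
    "B cell": [0.2, 8.5, 0.4, 0.1],
    "Monocyte": [0.1, 0.3, 9.5, 0.6],
}
query_cluster = [7.5, 0.0, 1.0, 1.5]

label, scores = annotate_by_correlation(query_cluster, reference_profiles)
print(label, scores)
```

Rank-based correlation is used here because it is less sensitive to differences in scale between query and reference data than a correlation on raw values. Real tools typically add gene selection and fine-tuning steps that this sketch omits.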

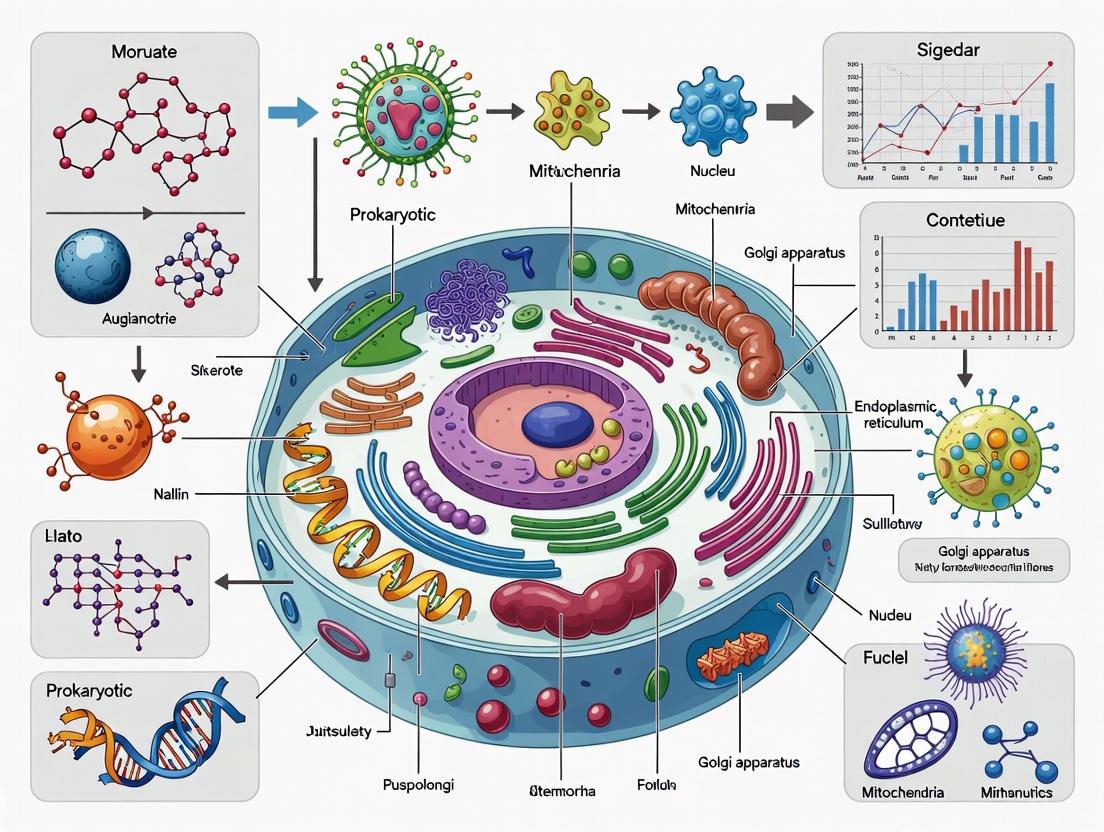

Diagram 1: scRNA-seq analysis workflow showing major steps from cell capture to annotation

Addressing Technical Challenges in scRNA-seq Analysis

Single-cell transcriptomic data present several unique analytical challenges that must be addressed to ensure accurate cell type annotation:

- Data sparsity: scRNA-seq data typically contain a high proportion of zero counts, due both to biological factors and technical dropout events [4]

- Batch effects: Technical variation between experiments can introduce confounding patterns that obscure biological signals [4]

- Imbalanced cell type distribution: Rare cell types may be underrepresented in the data, making them difficult to identify [7]

Novel computational methods like Coralysis have been developed specifically to address these challenges, particularly the problem of imbalanced data where cell types vary substantially in abundance between samples [7]. Coralysis uses a multi-level integration approach inspired by puzzle assembly, progressively refining cellular identities through multiple rounds of divisive clustering [7].
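Two of the challenges above, sparsity and imbalanced cell type abundance, can be quantified directly before any annotation is attempted. The sketch below computes the fraction of zero entries in a count matrix and the per-sample cell type proportions. The toy matrix, sample identifiers, and labels are invented for illustration, and the code is unrelated to how Coralysis works internally.

```python
from collections import Counter


def zero_fraction(count_matrix):
    """Fraction of entries equal to zero in a cells-by-genes count matrix."""
    total = sum(len(row) for row in count_matrix)
    zeros = sum(1 for row in count_matrix for value in row if value == 0)
    return zeros / total if total else 0.0


def abundance_by_sample(sample_ids, cell_labels):
    """Proportion of each cell type within each sample."""
    counts = {}
    for sample, label in zip(sample_ids, cell_labels):
        counts.setdefault(sample, Counter())[label] += 1
    return {
        sample: {label: n / sum(c.values()) for label, n in c.items()}
        for sample, c in counts.items()
    }


# Hypothetical toy data: 4 cells x 5 genes.
counts = [
    [0, 3, 0, 0, 1],
    [0, 0, 0, 2, 0],
    [5, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
]
samples = ["s1", "s1", "s1", "s2"]
labels = ["T cell", "T cell", "B cell", "B cell"]

sparsity = zero_fraction(counts)
proportions = abundance_by_sample(samples, labels)
print(sparsity, proportions)
```

Large differences in proportions between samples are one indication that an integration method designed for imbalanced data may be needed.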

Table 2: Key Computational Tools for Cell Type Annotation

| Tool Name | Annotation Approach | Key Features | Applicability |
|---|---|---|---|
| SingleR | Reference-based | Fast correlation with reference data | General purpose |
| Azimuth | Reference-based | Web application, Seurat integration | Human and mouse tissues |
| scGPT | Deep learning | Transformer architecture, high accuracy | Tissue-specific fine-tuning |
| SCINA | Marker-based | Uses pre-defined marker gene sets | Knowledge-driven annotation |
| Coralysis | Multi-level integration | Handles imbalanced data, confidence estimates | Cross-sample integration |
| CellMarker | Marker database | Manually curated markers | Manual annotation support |

The creation of comprehensive reference databases has been instrumental in standardizing cell type annotation across the research community. These resources provide essential ground truth data that enable reproducible cell type identification:

- Human Cell Atlas (HCA): A collaborative project to create comprehensive reference maps of all human cells [4]

- Tabula Muris/Sapiens: Multi-organ atlases for mouse and human, containing transcriptome data from diverse tissues [1] [2]

- Allen Brain Cell Atlas: Specialized resource for neuronal cell types in human and mouse brain [4]

- CellMarker 2.0: Manually curated database of cell type markers from over 100,000 publications [4] [2]

- PanglaoDB: Marker gene database with information on 155 human cell types [4]

These databases vary in scope, species coverage, and data type, allowing researchers to select the most appropriate references for their specific experimental context.

Experimental Validation and Integration

While computational annotation methods have advanced dramatically, biological validation remains essential for confirming cell type identities. The most robust annotation workflows integrate computational predictions with experimental evidence:

- Immunohistochemistry: Protein-level validation of marker gene expression [3]

- Fluorescence-activated cell sorting (FACS): Physical isolation of cell populations based on surface markers [4]

- Multiplexed error-robust fluorescence in situ hybridization (MERFISH): Spatial validation of transcriptomic predictions [1]

- Functional assays: Tests of cellular properties predicted from transcriptomic profiles [3]

This integrative approach ensures that computational annotations reflect genuine biological differences rather than technical artifacts.

Current Challenges and Future Directions in Cell Type Definition

Conceptual and Technical Limitations

Despite significant progress, the field continues to grapple with fundamental challenges in cell type definition and annotation:

- Discrete classification vs. continuous variation: The imposition of discrete categories on continuously varying cellular states often fails to capture biological complexity [1] [2]

- Context-dependent identity: Cell properties can change dramatically across developmental stages, physiological conditions, and disease states [3]

- Modality alignment: Correlating cell types defined by different modalities (transcriptomics, morphology, physiology) remains difficult [1]

- The "long-tail" problem: Rare cell types are frequently undersampled and misclassified in standard analyses [4]

These challenges highlight the need for more sophisticated conceptual frameworks that can accommodate the dynamic, multi-dimensional nature of cellular identity.

Emerging Technologies and Approaches

Several promising directions are emerging that may address current limitations:

- Multi-omics integration: Simultaneous measurement of multiple molecular modalities from the same cells [4]

- Dynamic cell state tracking: Time-resolved analyses that capture transitions between states rather than static snapshots [3]

- Spatial transcriptomics advancement: Higher resolution spatial methods that preserve architectural context [1]

- Deep learning architectures: More sophisticated models that can recognize novel cell types in an "open-world" framework [4] [5]

- Cross-species alignment: Systematic comparison of cell types across evolutionarily distant organisms [1]

These technological developments, combined with more nuanced computational approaches, promise to yield increasingly refined and biologically meaningful cell type definitions.

Diagram 2: Evolution of cell type classification approaches from historical to future methods

The definition of a cell type has evolved dramatically from static, morphology-based classifications to dynamic, multidimensional characterizations based on molecular signatures. This conceptual shift reflects broader changes in biological research, embracing complexity, dynamics, and quantitative approaches. While transcriptomics has emerged as a powerful and scalable basis for cell type classification, the most robust definitions integrate information across multiple modalities, including morphology, physiology, epigenetics, and spatial context.

The future of cell type classification lies in developing frameworks that can accommodate continuous variation, dynamic state transitions, and context-dependent identities. As single-cell technologies continue to advance and computational methods become increasingly sophisticated, we move closer to a comprehensive understanding of cellular diversity that reflects the true complexity of biological systems. This evolving understanding of cell types will fundamentally shape basic research, drug development, and therapeutic strategies across human health and disease.

Cell type annotation is an indispensable step in the analysis of single-cell RNA sequencing (scRNA-seq) data. It enables biological discovery and deepens our understanding of tissue biology by allowing researchers to label groups of cells based on known or unknown cellular phenotypes [8] [9]. In the broader context of cell type annotation research, accurately determining cellular identity serves as the gateway to exploring cellular diversity and functional differences and to gaining insight into biological processes and disease mechanisms [8]. The fundamental challenge is that gene expression levels exist on a continuum rather than as discrete values, and differences in gene expression do not always translate directly into differences in cellular function [2]. As a result, the accuracy of annotation directly determines the quality of the biological insights that can be derived from single-cell studies.

The process of cell type identification faces significant technical hurdles due to the high-dimensional and highly sparse nature of single-cell RNA sequencing data [8]. Moreover, the field lacks universally standardized categorization systems, as the size of categories and borders drawn between them are partly subjective and can evolve with new technologies that provide higher resolution views of cells [9]. These challenges are compounded when researchers attempt to integrate multiple datasets or identify novel cell populations, making robust annotation methodologies essential for advancing our understanding of cellular biology in health and disease.

The Critical Impact of Annotation Accuracy on Biological Interpretation

Direct Consequences for Research Outcomes

Accurate cell type annotation serves as the foundation for virtually all downstream analyses in single-cell research. Errors in this foundational step can propagate through subsequent analyses, potentially leading to flawed biological interpretations and misleading conclusions. The reliability of annotation directly influences how researchers interpret cellular composition, identify rare cell populations, understand disease mechanisms, and develop potential therapeutic strategies [9]. When annotation is performed accurately, it enables researchers to make valid inferences about cellular functions, developmental trajectories, and responses to perturbations, thereby driving meaningful biological discovery.

The impact of annotation quality extends beyond basic research into translational applications. In drug development, for instance, incorrectly annotated cell types could lead to misidentification of therapeutic targets or misinterpretation of drug effects on specific cellular populations. Furthermore, as single-cell technologies increasingly enter clinical diagnostics, the reliability of cell type identification becomes paramount for accurate patient stratification and disease classification [10]. The scientific community recognizes these stakes, with recent research highlighting how annotation inaccuracies can result in wasted resources, failed experiments, and delayed scientific progress due to the propagation of errors through subsequent analyses [11].

Technical Challenges in Annotation

The path to accurate annotation is fraught with technical challenges that directly impact biological interpretation. Single-cell RNA-seq data is characterized by its high dimensionality, extreme sparsity, and significant technical noise [8]. Conventional annotation methods that rely on clustering cells and identifying marker genes through differential expression analysis become increasingly time-consuming and impractical as dataset sizes grow to encompass millions of cells [8]. The selection of highly variable genes (HVG) to reduce dimensionality, while computationally advantageous, inevitably results in information loss that can weaken a model's generalization performance and adaptability to novel datasets [8].
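To make the highly variable gene step concrete, the sketch below ranks genes by a simple dispersion statistic (variance divided by mean) and keeps the top few. This is a deliberately simplified stand-in: established pipelines use more elaborate, mean-adjusted statistics, and the gene names and values here are hypothetical. It also shows where information is lost, since every gene outside the selected set is discarded.

```python
from statistics import mean, pvariance


def select_highly_variable_genes(expression, gene_names, n_top):
    """Rank genes by dispersion (variance / mean) and return the top n_top.

    expression: list of cells, each a list of values in gene_names order.
    """
    dispersions = []
    for g, name in enumerate(gene_names):
        column = [cell[g] for cell in expression]
        mu = mean(column)
        # Genes with zero mean carry no signal and get dispersion 0.
        dispersion = pvariance(column) / mu if mu > 0 else 0.0
        dispersions.append((dispersion, name))
    dispersions.sort(key=lambda item: item[0], reverse=True)
    return [name for _, name in dispersions[:n_top]]


# Hypothetical toy data: 4 cells x 3 genes.
gene_names = ["ACTB", "CD3E", "MS4A1"]
expression = [
    [10, 0, 0],
    [11, 8, 0],
    [9, 0, 6],
    [10, 9, 0],
]

hvgs = select_highly_variable_genes(expression, gene_names, n_top=2)
print(hvgs)
```

In this toy example the uniformly expressed gene is dropped, while the two genes that differ between cells are retained.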

Batch effects present another substantial challenge, where technical variations between experiments can obscure true biological signals [12]. These effects can arise from differences in patients, sampling procedures, or sequencing processes, leading to unwanted variations in the data that do not reflect genuine biological variation [12]. When unaddressed, these technical artifacts can be misinterpreted as biological phenomena, fundamentally compromising the insights derived from the data. The problem is particularly acute in large-scale integrative studies that combine datasets from multiple sources, where inconsistent annotation can severely limit the utility of combined analyses [13].

Methodological Landscape: Annotation Approaches and Techniques

Traditional and Manual Annotation Methods

The classical approach to cell type annotation relies on marker gene identification based on prior biological knowledge. This method dates back to pre-scRNA-seq times when single-cell data was low dimensional, such as FACS data with gene panels consisting of no more than 30-40 genes [9]. In this paradigm, researchers typically cluster cells first and then annotate groups of cells rather than making per-cell calls, which provides robustness against the inherent sparsity of single-cell data where a single cell might not have a count for a specific marker even if it was expressed [9].

Manual annotation typically follows one of two pathways: working from an established table of marker genes for expected cell types and checking which clusters express these markers, or examining which genes are highly expressed in defined clusters and then determining whether they are associated with known cell types [9]. While manual annotation benefits from expert knowledge, it is inherently subjective and highly dependent on the annotator's experience, creating challenges for reproducibility and standardization across studies [10]. The labor-intensive nature of this process also makes it impractical for the enormous datasets generated by modern single-cell technologies.
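The first pathway, checking clusters against a marker table, can be sketched as follows. For each candidate cell type, the score is the average fraction of cells in the cluster that express each marker. Scoring at the cluster level is what gives robustness to dropout: an individual cell may lack a count for a marker while the cluster as a whole still clearly expresses it. The marker table, cell data, and function names are hypothetical.

```python
def fraction_expressing(cluster_cells, gene):
    """Fraction of cells in the cluster with a non-zero count for gene."""
    expressing = sum(1 for cell in cluster_cells if cell.get(gene, 0) > 0)
    return expressing / len(cluster_cells)


def annotate_cluster(cluster_cells, marker_table):
    """Score each cell type by the mean fraction of cells expressing its markers."""
    scores = {}
    for cell_type, markers in marker_table.items():
        fractions = [fraction_expressing(cluster_cells, g) for g in markers]
        scores[cell_type] = sum(fractions) / len(markers)
    return max(scores, key=scores.get), scores


# Hypothetical marker table and one cluster of four cells (gene -> count).
marker_table = {
    "T cell": ["CD3D", "CD3E"],
    "B cell": ["MS4A1", "CD79A"],
}
cluster = [
    {"CD3D": 2, "CD3E": 1},
    {"CD3E": 3},  # CD3D missing here, e.g. due to dropout
    {"CD3D": 1},
    {"CD3D": 4, "CD3E": 2, "MS4A1": 1},
]

cluster_label, cluster_scores = annotate_cluster(cluster, marker_table)
print(cluster_label, cluster_scores)
```

A per-cell call on the second or third cell alone would be less certain than the cluster-level call, which is the motivation for annotating groups of cells.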

Automated and Reference-Based Approaches

To address the limitations of manual annotation, numerous automated cell type identification methods have been developed. These can be broadly categorized into reference-based and reference-free approaches. Reference-based methods, such as Azimuth and CellTypist, transfer labels from well-annotated reference datasets to new query data using various similarity metrics [2] [13]. These approaches benefit from curated knowledge but face limitations when encountering novel cell types not present in the reference data [13].

Table 1: Comparison of Automated Cell Type Annotation Methods

| Method | Approach | Advantages | Limitations |
|---|---|---|---|
| SingleR [13] | Reference-based | Fast computation; utilizes reference transcriptomes | Limited to cell types in reference |
| CellTypist [13] | Reference-based | Large collection of tissue-specific models | May miss dataset-specific cell populations |
| scExtract [13] | LLM-assisted automation | Processes data from articles; prior-informed integration | Requires article text as input |
| scTrans [8] | Deep learning with sparse attention | Uses all non-zero genes; minimizes information loss | Computational complexity |
| LICT [10] | Multi-LLM integration | Reference-free; objective reliability assessment | Dependent on multiple API services |

Automated methods provide greater objectivity compared to manual annotation but often depend heavily on the quality and comprehensiveness of reference datasets [10]. This dependency can limit their accuracy and generalizability, particularly for rare cell types or disease-specific cellular states [10]. The performance of these methods also varies significantly across tissues and biological contexts, necessitating careful selection and validation for specific applications.
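The dependence on reference coverage can be illustrated with a small label-transfer sketch. It assigns a query cell the majority label of its k most similar reference cells, but returns "unassigned" when even the closest reference cell is not similar enough. The similarity threshold, the value of k, and the toy expression vectors are all hypothetical choices; Azimuth, CellTypist, and SingleR each use their own, different procedures.

```python
import math
from collections import Counter


def cosine(u, v):
    """Cosine similarity between two expression vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def transfer_label(query, reference, k=3, min_similarity=0.8):
    """Majority vote over the k nearest reference cells, with a reject option.

    reference: list of (expression_vector, label) pairs.
    """
    neighbors = sorted(
        reference, key=lambda item: cosine(query, item[0]), reverse=True
    )[:k]
    # If nothing in the reference resembles the query, refuse to label it.
    if cosine(query, neighbors[0][0]) < min_similarity:
        return "unassigned"
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical reference over three genes, containing only T and B cells.
reference = [
    ([9, 0, 1], "T cell"),
    ([8, 1, 0], "T cell"),
    ([0, 9, 1], "B cell"),
    ([1, 8, 0], "B cell"),
]

known = transfer_label([7, 0, 1], reference)
novel = transfer_label([0, 0, 9], reference)
print(known, novel)
```

Without the reject option, the second query would be forced into one of the two reference labels, which is how cell types absent from the reference end up misannotated.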

Emerging LLM-Based and Deep Learning Approaches

Recent advancements in artificial intelligence have introduced novel approaches to cell type annotation using large language models (LLMs) and specialized deep learning architectures. Methods like scTrans employ Transformer-based models with sparse attention mechanisms to utilize all non-zero genes in single-cell data, effectively reducing input dimensionality while minimizing information loss [8]. This approach demonstrates strong generalization capabilities and can efficiently handle datasets approaching a million cells even with limited computational resources [8].

LLM-based tools represent another frontier, with frameworks like mLLMCelltype integrating multiple large language models to improve annotation accuracy through consensus-based predictions [14]. These methods leverage the extensive knowledge embedded in pre-trained language models while addressing individual model limitations through multi-model integration [14]. The "talk-to-machine" strategy represents a particularly innovative approach, where LLMs iteratively enrich their input with contextual information through human-computer interaction, mitigating ambiguous or biased outputs [10].

Table 2: Performance Comparison of Annotation Methods Across Datasets

| Method | PBMC Accuracy | Gastric Cancer Accuracy | Embryo Data Accuracy | Stromal Cells Accuracy |
|---|---|---|---|---|
| Traditional Manual | High [10] | Moderate [10] | Variable [10] | Low [10] |
| GPT-4 Only | 78.5% [10] | 88.9% [10] | 24.2% [10] | 33.3% [10] |
| Multi-LLM Integration (LICT) | 90.3% [10] | 91.7% [10] | 48.5% [10] | 43.8% [10] |
| scExtract | Top performer [13] | Top performer [13] | Not reported | Not reported |

Experimental Framework and Protocol Design

Benchmarking Strategies and Validation Protocols

Rigorous benchmarking is essential for evaluating the performance of cell type annotation methods. The standard methodology involves comparing automated annotations against manually curated gold-standard labels, typically using metrics such as accuracy, balanced accuracy, and F1 score [13]. Peripheral blood mononuclear cells (PBMCs) serve as a common benchmark dataset due to their well-characterized cell types and widespread use in method evaluation [10]. However, comprehensive benchmarking should include diverse biological contexts including normal physiology, developmental stages, disease states, and low-heterogeneity cellular environments to assess method robustness [10].
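The three metrics named above behave differently when cell types are imbalanced, which a small worked example makes visible. The sketch below implements accuracy, balanced accuracy (mean per-class recall), and macro-averaged F1 from their standard definitions. The labels are hypothetical: eight T cells and two plasmacytoid dendritic cells (pDC), one of which is mislabeled.

```python
def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted label matches the gold standard."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_counts(y_true, y_pred):
    """True positives, false positives and false negatives for each label."""
    labels = sorted(set(y_true) | set(y_pred))
    counts = {label: {"tp": 0, "fp": 0, "fn": 0} for label in labels}
    for t, p in zip(y_true, y_pred):
        if t == p:
            counts[t]["tp"] += 1
        else:
            counts[t]["fn"] += 1
            counts[p]["fp"] += 1
    return counts


def balanced_accuracy(y_true, y_pred):
    """Mean recall over the classes that occur in the gold standard."""
    recalls = [
        c["tp"] / (c["tp"] + c["fn"])
        for c in per_class_counts(y_true, y_pred).values()
        if c["tp"] + c["fn"] > 0
    ]
    return sum(recalls) / len(recalls)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    f1_scores = []
    for c in per_class_counts(y_true, y_pred).values():
        denominator = 2 * c["tp"] + c["fp"] + c["fn"]
        f1_scores.append(2 * c["tp"] / denominator if denominator else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Hypothetical benchmark: a rare population (pDC) is half misclassified.
y_true = ["T cell"] * 8 + ["pDC"] * 2
y_pred = ["T cell"] * 8 + ["T cell", "pDC"]

acc = accuracy(y_true, y_pred)
bal_acc = balanced_accuracy(y_true, y_pred)
f1 = macro_f1(y_true, y_pred)
print(acc, bal_acc, f1)
```

Plain accuracy stays high because the abundant cell type dominates, whereas balanced accuracy and macro F1 weight every cell type equally and so expose the poor performance on the rare population.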

The benchmarking protocol for LLM-based methods typically involves providing standardized prompts incorporating top marker genes for each cell subset and assessing agreement between manual and automated annotations [10]. For traditional computational methods, standard practice involves using manually annotated datasets from resources like cellxgene, comparing performance across multiple human tissues and organs to ensure generalizability [13]. These evaluations should specifically test method performance on challenging scenarios such as identifying novel cell types, handling batch effects, and maintaining accuracy across different sequencing technologies.

Integration of Multi-Model and Consensus Approaches

Advanced annotation frameworks increasingly employ multi-model strategies to enhance accuracy and reliability. The multi-LLM integration approach, for instance, selects the best-performing results from multiple language models rather than relying on conventional majority voting or a single top-performing model [10]. This strategy effectively leverages the complementary strengths of different models, significantly reducing mismatch rates particularly for low-heterogeneity datasets where individual models struggle [10].

The consensus approach extends beyond simply combining predictions to include iterative discussion mechanisms where LLMs evaluate evidence and refine annotations through multiple rounds of discussion [14]. This process incorporates validation steps where annotations are checked against marker gene expression patterns, with failed validations triggering structured feedback prompts that include expression validation results and additional differentially expressed genes from the dataset [10]. This iterative refinement continues until consensus is reached or a predetermined number of iterations is completed, ensuring robust and reliable annotations.
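The control flow of such an iterative refinement loop can be sketched independently of any particular model. In the code below, `propose` stands in for a language-model call and `validate` for a marker-expression check. Both are hypothetical stubs, as are the feedback message and the round limit; the sketch shows only the loop structure described above, not the actual LICT or mLLMCelltype implementation.

```python
def refine_annotation(propose, validate, marker_genes, max_rounds=3):
    """Propose a label, validate it, and retry with feedback until it passes.

    propose(marker_genes, feedback) -> label
    validate(label) -> (passed, feedback_message)
    Returns (label or None, history of (round, label, passed)).
    """
    feedback = None
    history = []
    for round_index in range(1, max_rounds + 1):
        label = propose(marker_genes, feedback)
        passed, feedback = validate(label)
        history.append((round_index, label, passed))
        if passed:
            return label, history
    # No proposal passed validation within the allowed number of rounds.
    return None, history


def stub_propose(marker_genes, feedback):
    """Hypothetical stand-in for an LLM: changes its answer after feedback."""
    return "NK cell" if feedback is None else "T cell"


def stub_validate(label):
    """Hypothetical stand-in for checking marker expression in the cluster."""
    markers_expressed = {"T cell": True, "NK cell": False}
    if markers_expressed.get(label, False):
        return True, None
    message = (
        f"Markers for {label} are not expressed; "
        "additional differentially expressed genes: CD3D, CD3E"
    )
    return False, message


final_label, history = refine_annotation(
    stub_propose, stub_validate, ["CD3D", "CD3E", "NKG7"]
)
print(final_label, history)
```

Returning the full history alongside the final label keeps a record of rejected proposals, which can help when reviewing clusters that needed several rounds.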

Figure 1: Multi-Model Consensus Annotation Workflow

Advanced Technical Solutions and Research Reagents

Research Reagent Solutions for Single-Cell Analysis

Table 3: Essential Research Reagents and Computational Tools for Cell Type Annotation

| Reagent/Tool | Type | Function | Application Context |
|---|---|---|---|
| 10X Genomics Platform [2] | Experimental Platform | Single-cell RNA sequencing | Generating single-cell gene expression data |
| Cellxgene [13] | Data Resource | Literature-curated single-cell database | Access to annotated reference datasets |
| Scanpy [13] | Computational Tool | Python-based single-cell analysis | Data preprocessing, clustering, and visualization |
| Tabula Muris [2] | Reference Database | Mouse single-cell transcriptome data | Reference-based annotation for mouse studies |
| CellMarker 2.0 [2] | Marker Database | Manually curated cell marker resource | Marker gene identification for manual annotation |
| Seurat [2] | Computational Tool | R package for single-cell analysis | Data integration, clustering, and annotation |

Technical Frameworks for Large-Scale Annotation

Advanced computational frameworks have been developed specifically to address the challenges of large-scale cell type annotation. The CELLULAR framework employs contrastive learning and a carefully designed loss function to create a generalizable embedding space from scRNA-Seq data [12]. This approach effectively reduces batch effects while preserving biological information, outperforming existing methods in learning representations that transfer well across datasets [12]. The model's architecture focuses on maximizing true biological differences while minimizing technical variations, creating embeddings that support both accurate cell type classification and novel cell type detection.

The scExtract framework represents another technical advancement, leveraging large language models to automate the entire single-cell data analysis pipeline from preprocessing to annotation and integration [13]. It extracts information from research articles to guide data processing, implementing an LLM agent that emulates human expert analysis by automatically processing datasets while incorporating background information from the article [13]. The framework includes modified versions of integration algorithms, scanorama-prior and cellhint-prior, that incorporate prior annotation information to improve batch correction while preserving biological diversity. This addresses a critical limitation of conventional integration methods, which fail to leverage prior knowledge [13].

Figure 2: Single-Cell Analysis Workflow from Data to Insight

Validation and Quality Assurance Frameworks

Objective Credibility Evaluation

Ensuring the reliability of cell type annotations requires robust validation frameworks that can objectively assess annotation quality. The credibility evaluation strategy addresses this need by providing a reference-free method to distinguish discrepancies caused by annotation methodology from those due to intrinsic limitations in the dataset itself [10]. This approach involves retrieving representative marker genes for each predicted cell type, analyzing their expression patterns within corresponding cell clusters, and deeming an annotation reliable if more than four marker genes are expressed in at least 80% of cells within the cluster [10].

This objective assessment framework has revealed that LLM-generated annotations can sometimes outperform manual annotations in terms of reliability, particularly for low-heterogeneity datasets where manual annotations show higher rates of unreliable calls [10]. The framework also identifies cases where both LLM and manual annotations differ but are both classified as reliable, highlighting situations where single cell populations exhibit multifaceted traits that could reasonably be interpreted as different cell types [10]. This capability allows researchers to focus on biologically meaningful ambiguities rather than methodological limitations.
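The credibility rule described above (more than four marker genes, each expressed in at least 80% of the cells in a cluster) can be sketched in a few lines of Python. This is a minimal illustration rather than the published implementation: the function name `is_annotation_credible`, the gene-to-counts dictionary layout, and the choice to treat any count above zero as "expressed" are assumptions made for the example.

```python
from typing import Dict, List


def is_annotation_credible(
    expression: Dict[str, List[float]],
    marker_genes: List[str],
    min_markers: int = 5,        # "more than four" marker genes
    min_fraction: float = 0.8,   # expressed in at least 80% of cells
) -> bool:
    """Check whether enough marker genes are broadly expressed in a cluster.

    `expression` maps gene name -> per-cell counts for one cluster.
    A gene counts as expressed in a cell when its count is > 0 (assumption).
    """
    supported = 0
    for gene in marker_genes:
        counts = expression.get(gene)
        if not counts:
            continue  # marker not measured in this dataset
        fraction = sum(1 for c in counts if c > 0) / len(counts)
        if fraction >= min_fraction:
            supported += 1
    return supported >= min_markers


# Toy cluster of five cells (illustrative values only).
cluster = {
    "CD3D": [2, 1, 3, 1, 4],
    "CD3E": [1, 1, 0, 2, 1],
    "CD2":  [1, 2, 1, 1, 1],
    "TRAC": [3, 0, 1, 1, 2],
    "IL7R": [1, 1, 1, 0, 1],
    "CD19": [0, 0, 0, 1, 0],
}
t_cell_markers = ["CD3D", "CD3E", "CD2", "TRAC", "IL7R"]
b_cell_markers = ["CD19", "MS4A1", "CD79A", "CD79B", "CD74"]

print(is_annotation_credible(cluster, t_cell_markers))  # True
print(is_annotation_credible(cluster, b_cell_markers))  # False
```

Because the check needs only the marker list and the cluster's own expression values, it can be applied equally to a manual label or an automated one.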

Uncertainty Quantification and Novel Cell Type Detection

Advanced annotation frameworks incorporate explicit uncertainty quantification to help researchers identify potentially problematic annotations. Methods like mLLMCelltype provide Consensus Proportion and Shannon Entropy metrics that enable quantitative assessment of annotation confidence [14]. These metrics are particularly valuable for identifying borderline cases where cell identities are ambiguous, allowing researchers to prioritize validation efforts on the most uncertain annotations.
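To make the two metrics concrete, the sketch below computes them for the labels that several models propose for one cluster. It assumes the simplest reading of the terms: consensus proportion is the share of votes that match the most common label, and Shannon entropy is taken over the label distribution in bits. The function name `consensus_metrics` is hypothetical, and the exact definitions used by mLLMCelltype may differ.

```python
import math
from collections import Counter
from typing import List, Tuple


def consensus_metrics(votes: List[str]) -> Tuple[str, float, float]:
    """Return (majority label, consensus proportion, Shannon entropy in bits)."""
    counts = Counter(votes)
    total = len(votes)
    top_label, top_count = counts.most_common(1)[0]
    # Entropy of the vote distribution: 0 when all models agree,
    # larger when the votes are spread over many labels.
    entropy = sum(-(n / total) * math.log2(n / total) for n in counts.values())
    return top_label, top_count / total, entropy


# Four hypothetical model votes for a single cluster.
label, proportion, entropy = consensus_metrics(
    ["T cell", "T cell", "T cell", "NK cell"]
)
print(label, proportion, round(entropy, 3))  # T cell 0.75 0.811
```

A cluster with a low consensus proportion or a high entropy is a natural candidate for manual review.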

The ability to detect novel cell types represents another critical aspect of annotation quality assurance. The CELLULAR framework addresses this challenge by designing its architecture to identify instances where it is not confident about any known cell type [12]. By setting appropriate likelihood thresholds, researchers can capture samples that may represent new cell types, significantly enhancing the method's utility in discovery-oriented research [12]. This capability is especially important for avoiding false negatives when working with diverse or poorly characterized tissues.
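The thresholding idea can be illustrated independently of any particular model. The sketch below assumes a classifier that returns one probability per known cell type and leaves a cell unassigned when even the best score falls below a cutoff. The function name, the 0.5 default, and the "unassigned" label are hypothetical choices for the example, not values taken from CELLULAR.

```python
from typing import Dict


def assign_or_flag(
    probabilities: Dict[str, float],
    threshold: float = 0.5,            # hypothetical cutoff; tune per dataset
    unknown_label: str = "unassigned",
) -> str:
    """Return the best-scoring known type, or flag the cell as possibly novel."""
    best_label = max(probabilities, key=probabilities.get)
    if probabilities[best_label] >= threshold:
        return best_label
    return unknown_label


print(assign_or_flag({"T cell": 0.90, "B cell": 0.10}))                   # T cell
print(assign_or_flag({"T cell": 0.40, "B cell": 0.35, "NK cell": 0.25}))  # unassigned
```

Raising the threshold flags more cells for follow-up at the cost of leaving more well-known cells unlabeled, so the cutoff is usually chosen with the study's tolerance for each kind of error in mind.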

The critical importance of accurate cell type annotation for biological insight cannot be overstated. As single-cell technologies continue to evolve and dataset sizes grow exponentially, the development of robust, scalable, and accurate annotation methods remains a central challenge in the field. The emergence of multi-model consensus approaches, advanced deep learning architectures, and LLM-integrated frameworks represents significant progress in addressing this challenge. These methods demonstrate that combining complementary approaches—leveraging prior biological knowledge while maintaining flexibility for novel discoveries—provides the most promising path forward for the research community.

Future advancements in cell type annotation will likely focus on several key areas. Multi-modal deep learning approaches that integrate other data types alongside scRNA-seq, such as cell images or chromatin accessibility data, promise to provide more comprehensive cellular representations [12]. The development of standardized, community-accepted cell type representation schemes would significantly enhance reproducibility and comparability across studies. As the field moves toward clinical applications, ensuring annotation reliability will become increasingly critical for diagnostic accuracy and therapeutic development. By addressing these challenges through continued methodological innovation and rigorous validation, the single-cell research community can fully leverage the transformative potential of these technologies to advance our understanding of biology and disease.

In single-cell RNA sequencing (scRNA-seq) data analysis, cell type annotation is a foundational step for interpreting cellular heterogeneity and function. While automated computational methods are rapidly evolving, expert manual annotation is still widely regarded as the gold standard for assigning cell type identities to cell clusters. This whitepaper examines the critical role, established methodologies, and inherent limitations of manual annotation by domain experts. By exploring its integration with emerging automated approaches and the growing availability of curated biological knowledge bases, we frame manual annotation's enduring value within a modern, hybrid cell annotation workflow essential for rigorous biological discovery and therapeutic development.

The analysis of scRNA-seq data enables the dissection of complex tissues into their constituent cell types and states at unprecedented resolution. A crucial step in this process is cell type annotation, the assignment of biological identities to clusters of cells based on their gene expression profiles. Within this domain, expert manual annotation persists as the benchmark against which all automated methods are evaluated [15] [16].

This approach involves researchers with domain expertise manually inspecting cluster-specific upregulated genes and comparing them against prior knowledge of cell-type markers derived from the scientific literature [15]. The continued reliance on this method stems from its ability to leverage the nuanced, contextual understanding that human experts bring to the annotation process. Experts can interpret ambiguous expression patterns, identify novel cell types, and account for biological context in a way that purely algorithmic approaches have yet to fully replicate. Consequently, manual curation leaves researchers with "a vivid understanding of cell types and deeply portray[s] the characteristics of different cell types" [15]. This deep, intuitive understanding is particularly valuable for identifying rare cell populations or novel cell states that do not fit predefined classifications.

The Manual Annotation Methodology: A Step-by-Step Guide

The process of expert manual annotation typically follows a systematic, albeit labor-intensive, workflow. Adherence to a standardized protocol enhances the reproducibility and reliability of the results.

Standardized Workflow for Cluster Annotation

The core steps in the expert manual annotation process are outlined below:

Step 1: Cell Clustering. After standard preprocessing of the scRNA-seq data, unsupervised clustering algorithms (e.g., those in Seurat or Scanpy) are applied to group cells with similar gene expression profiles [16]. This step identifies putative cell populations without any prior labeling.

Step 2: Differential Expression Analysis. For each cell cluster, statistical tests are performed to identify differentially expressed genes (DEGs)—genes that are significantly upregulated in a specific cluster compared to all other clusters [15] [16]. This generates a ranked list of potential marker genes for each cluster.

Step 3: Literature Curation & Knowledge Base Query. The expert then compares the identified DEGs against known cell-type markers from existing scientific literature and curated databases. Resources such as CellMarker, singleCellBase, and ACT provide manually curated collections of cell type and marker gene associations, which are invaluable for this step [15] [17]. singleCellBase, for instance, contains 9,158 entries linking 1,221 cell types with 8,740 gene markers across 31 species [17].

Step 4: Expert Label Assignment. The core of the manual process involves the expert synthesizing the evidence from the previous steps to assign a cell type label. This is not a simple lookup exercise; it requires contextual interpretation of marker co-expression, expression strength, and tissue or disease context to make a final determination [16].

Step 5: Validation & Iteration. The assigned labels are assessed for biological plausibility. This may involve checking the expression of canonical markers via visualizations like feature plots and validating that the composition of cell types makes sense within the sampled tissue. Clusters with ambiguous identities may be re-clustered or subjected to further analysis [16].
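Steps 2 and 3 can be mimicked on a toy example to show the logic an expert follows. The sketch below ranks genes by log2 fold change between a cluster and all other cells, then counts how many top-ranked genes appear in each entry of a small marker table. It is a simplification under stated assumptions: real analyses use a statistical test in Seurat or Scanpy rather than a bare fold change, and the function names, the pseudocount, and the two-entry marker table are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def rank_markers(
    cluster_means: Dict[str, float],
    rest_means: Dict[str, float],
    pseudocount: float = 1.0,
    top_n: int = 3,
) -> List[str]:
    """Rank genes by log2 fold change (cluster vs. all other cells)."""
    lfc = {
        gene: math.log2(
            (cluster_means[gene] + pseudocount) / (rest_means[gene] + pseudocount)
        )
        for gene in cluster_means
    }
    return sorted(lfc, key=lfc.get, reverse=True)[:top_n]


def suggest_labels(
    top_genes: List[str], marker_db: Dict[str, List[str]]
) -> List[Tuple[str, int]]:
    """Score each candidate cell type by the number of shared marker genes."""
    scores = {
        cell_type: len(set(top_genes) & set(markers))
        for cell_type, markers in marker_db.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Mean expression per gene (illustrative values only).
cluster_means = {"CD3D": 8.0, "CD3E": 6.0, "IL7R": 4.0, "MS4A1": 0.2, "ACTB": 20.0}
rest_means = {"CD3D": 0.5, "CD3E": 0.4, "IL7R": 0.8, "MS4A1": 3.0, "ACTB": 19.0}

# Tiny stand-in for a curated marker resource.
marker_db = {
    "T cell": ["CD3D", "CD3E", "IL7R"],
    "B cell": ["CD79A", "MS4A1", "CD19"],
}

top_genes = rank_markers(cluster_means, rest_means)
print(top_genes)                             # ['CD3D', 'CD3E', 'IL7R']
print(suggest_labels(top_genes, marker_db))  # [('T cell', 3), ('B cell', 0)]
```

The overlap score only proposes candidates. Step 4 remains a judgment call, because the expert still weighs expression strength, co-expression, and tissue context before assigning a label.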

Essential Research Reagents and Knowledge Bases

The manual annotation process is heavily dependent on high-quality, curated biological knowledge. The table below details key resources that provide the essential prior knowledge required for expert annotation.

Table 1: Key Research Reagent Solutions for Manual Cell Type Annotation

| Resource Name | Type | Key Features and Function | Coverage |
|---|---|---|---|
| ACT (Annotation of Cell Types) [15] | Web Server & Marker Map | Provides a hierarchically organized marker map curated from ~7,000 publications; integrates a weighted gene set enrichment method (WISE). | Human, Mouse |
| singleCellBase [17] | Manually Curated Database | A high-quality resource of cell type and marker gene associations; features extensive species coverage and a user-friendly interface for browsing and searching. | 31 species (Animalia, Protista, Plantae) |
| CellMarker [15] [17] | Database | A widely used database of manually curated cell markers in human and mouse, often integrated into other analysis tools. | Human, Mouse |
| PanglaoDB [17] | Database | A web server for exploration of mouse and human single-cell RNA sequencing data, including curated marker genes. | Human, Mouse |

Benchmarking Manual Against Automated Annotation

Despite its status as the gold standard, it is critical to evaluate the performance of manual annotation objectively, particularly as automated methods advance. Benchmarking studies and the emergence of new AI-driven tools provide a framework for this comparison.

Quantitative Performance and Subpopulation Challenges

Studies evaluating cell annotation methods reveal that while manual annotation is robust for broad cell types, its effectiveness can vary. A benchmark of five annotation methods (including GSEA, GSVA, and CIBERSORT) on several scRNA-seq datasets found that all methods could perform well for major cell types, with an average area under the receiver operating characteristic curve (ROC AUC) of 0.91 [18]. However, precision-recall performance varied widely (average area under the precision-recall curve of 0.53), indicating that accurate annotation remains challenging across the board [18].
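The gap between the two figures is easier to interpret with a small worked example. The sketch below computes ROC AUC (as the probability that a positive cell outranks a negative one) and average precision (one common summary of the precision-recall curve) for a rare cell type. The data are invented for illustration and the function names are hypothetical; the benchmark in [18] may have used a different precision-recall summary.

```python
from typing import List


def roc_auc(labels: List[int], scores: List[float]) -> float:
    """ROC AUC as the fraction of positive/negative pairs ranked correctly."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels: List[int], scores: List[float]) -> float:
    """Mean of the precision values at each true positive (needs >= 1 positive)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            total += hits / rank
    return total / hits


# Ten cells, two of which truly belong to the rare type (label 1).
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
scores = [0.95, 0.90, 0.85, 0.80, 0.40, 0.35, 0.30, 0.20, 0.10, 0.05]

print(roc_auc(labels, scores))            # 0.875
print(average_precision(labels, scores))  # 0.75
```

With few positives, a couple of high-scoring false positives barely move ROC AUC but cut precision sharply, which is one reason the two summaries can diverge for rare populations.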

A significant limitation of both manual and automated methods is annotating subtle cell subpopulations. This is particularly evident in heterogeneous populations like T cells, where distinguishing between highly similar subtypes (e.g., T helper 1 vs. 2) based on scRNA-seq data alone remains problematic [16]. The granularity of annotation is a key factor; pushing for overly specific labels can reduce confidence and accuracy [19].

The Rise of LLM-Based Evaluation and Hybrid Approaches

New technologies are emerging to objectively assess annotation quality. Tools like LICT (Large Language Model-based Identifier for Cell Types) leverage multiple AI models to provide an objective credibility evaluation of cell type annotations [10]. LICT assesses reliability by checking if a set of model-generated marker genes for a predicted cell type are expressed in the cell cluster. This provides a reference-free method to identify potentially unreliable annotations, whether they originate from manual or automated sources [10].

In one evaluation, such objective checks revealed that for certain low-heterogeneity datasets, a significant proportion of manual expert annotations failed to meet credibility thresholds, whereas some LLM-generated annotations that disagreed with the expert were deemed reliable [10]. This highlights that manual annotations are not infallible and can benefit from objective, computational verification.

Critical Analysis: Limitations and the Path Forward

The reliance on expert manual annotation presents several concrete challenges for the scalability and reproducibility of single-cell research.

Table 2: Key Limitations of Expert Manual Annotation and Emerging Mitigations

| Limitation | Impact on Research | Emerging Solutions and Mitigations |
|---|---|---|
| Labor-Intensive Process [15] [16] | Low throughput; not feasible for the growing volume of scRNA-seq data. | Development of semi-automated tools (e.g., CellTypist, scGate) [16] and AI assistants (e.g., LICT) [10] to accelerate the expert review process. |
| Requires Domain Expertise [15] [16] | Creates a bottleneck; results are dependent on scarce specialist knowledge. | Creation of comprehensive, hierarchically organized knowledge bases (e.g., ACT [15]) that codify expert knowledge for broader use. |
| Subjectivity and Low Reproducibility [16] [18] | Introduces variability and limits the consistency of annotations across studies and labs. | Implementation of objective credibility checks [10] and the use of standardized, controlled vocabularies for cell type names [15] [17]. |
| Dependence on Prior Knowledge [15] [17] | Struggles to identify truly novel cell types not described in existing literature. | Hybrid approaches that combine automated clustering with expert review, allowing experts to focus on unannotated or ambiguous clusters [16]. |

The Evolving Gold Standard: A Hybrid Paradigm

The field is moving towards a two-step, hybrid annotation process that leverages the strengths of both automated and manual methods [16]. This is now considered a gold-standard approach in modern pipelines [16]. The workflow involves:

- Primary Automated Annotation: An initial, rapid annotation of the majority of cell clusters using supervised, reference-based, or marker-based automated tools.

- Expert-Based Manual Interrogation: A subsequent, focused review by a domain expert to validate the automated labels, resolve ambiguities, and annotate cell populations that the algorithm failed to classify or identified as novel [16].

This hybrid model, illustrated below, balances efficiency with the irreplaceable value of expert insight.
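The two-step process amounts to a triage rule: accept automated labels that clear a confidence bar and queue everything else for an expert. The sketch below shows one way to express that rule. The function name, the input layout (cluster ID mapped to a label and a confidence), and the 0.8 cutoff are assumptions for illustration, not part of any cited pipeline.

```python
from typing import Dict, List, Optional, Tuple


def triage_clusters(
    auto_calls: Dict[str, Tuple[Optional[str], float]],
    min_confidence: float = 0.8,  # hypothetical cutoff
) -> Tuple[Dict[str, str], List[str]]:
    """Split automated calls into accepted labels and clusters for expert review."""
    accepted: Dict[str, str] = {}
    needs_review: List[str] = []
    for cluster_id, (label, confidence) in auto_calls.items():
        if label is None or confidence < min_confidence:
            needs_review.append(cluster_id)  # unclassified or low confidence
        else:
            accepted[cluster_id] = label
    return accepted, needs_review


# Output of a hypothetical automated annotator.
auto_calls = {
    "c0": ("T cell", 0.97),
    "c1": ("B cell", 0.91),
    "c2": ("Monocyte", 0.55),
    "c3": (None, 0.0),
}
accepted, needs_review = triage_clusters(auto_calls)
print(accepted)      # {'c0': 'T cell', 'c1': 'B cell'}
print(needs_review)  # ['c2', 'c3']
```

In practice an expert may still spot-check the accepted labels, but the review queue concentrates effort on the clusters where it is most likely to change the outcome.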

Expert manual annotation remains the cornerstone of reliable cell type identification in single-cell genomics, providing the contextual understanding and flexibility that purely computational methods currently lack. Its role as a gold standard is thus well-deserved but nuanced. However, its inherent limitations—subjectivity, labor-intensity, and dependency on prior knowledge—render it unsustainable as the sole method in an era of exponentially growing data.

The future of accurate and scalable cell type annotation lies not in choosing between manual expertise and automated efficiency, but in strategically integrating them. The emerging hybrid paradigm, which combines robust automated pre-annotation with targeted expert validation and discovery, represents the new best practice. For researchers and drug developers, leveraging curated knowledge bases, adopting objective validation tools like LICT, and implementing this hybrid workflow is essential for ensuring that cell type annotations—the fundamental units of analysis in single-cell biology—are both biologically insightful and technically robust.

Cell type annotation, the process of identifying and labeling distinct cell populations within a biological sample using data from techniques like single-cell RNA sequencing (scRNA-seq), has emerged as a foundational capability in modern life sciences [20]. This process transcends mere cataloging, serving as a critical gateway to understanding cellular diversity and function within complex tissues and organisms. The ability to accurately classify cells into specific types—such as neurons, immune cells, or epithelial cells—based on their gene expression profiles has revolutionized our approach to biological research and therapeutic development [20]. Within the broader thesis of cell type annotation research, this technical guide examines how advanced annotation methodologies are being leveraged for two paramount applications: the discovery of novel cell types and the systematic identification of druggable targets. This dual-purpose capability establishes cell type annotation not merely as an analytical endpoint but as a powerful discovery engine that bridges fundamental cellular biology with translational medicine, enabling researchers to decipher the cellular composition of diseases and accelerate the development of precision therapeutics.

Advanced Methodologies in Cell Type Annotation

The evolution from manual annotation based on known marker genes to automated, computational methods represents a significant paradigm shift in single-cell analysis. Supervised classification-based methods now dominate the landscape, training models on reference datasets to label cell types in unlabeled data [21]. Recent advances have introduced several sophisticated deep-learning architectures, each offering distinct mechanistic advantages for interpreting scRNA-seq data.

Kolmogorov-Arnold Networks (KANs) present a novel architecture for single-cell analysis. The scKAN framework utilizes learnable activation functions on the edges of its network, rather than fixed weights, to model gene-to-cell relationships directly [22]. This design provides superior interpretability for identifying cell-type-specific marker genes and gene sets, as the activation curves visualize specific gene interactions. scKAN employs a knowledge distillation strategy where a large pre-trained model (teacher) guides a KAN-based module (student), integrating prior knowledge with ground truth cell type information. This approach has demonstrated a 6.63% improvement in macro F1 score over state-of-the-art methods in cell-type annotation tasks [22].
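The phrase "learnable activation functions on the edges" can be made concrete with a toy KAN node. In the sketch below, each edge applies its own univariate function to one input, and the node simply sums the edge outputs. Each edge function is written as a weighted sum of Gaussian bumps. This basis is an assumption chosen for brevity: KANs are usually described with spline bases, and none of these names or parameters come from scKAN.

```python
import math
from typing import List


def rbf_basis(x: float, centers: List[float], width: float = 1.0) -> List[float]:
    """Evaluate Gaussian basis functions at x (stand-in for a spline basis)."""
    return [math.exp(-((x - c) / width) ** 2) for c in centers]


def edge_activation(x: float, coeffs: List[float], centers: List[float]) -> float:
    """One edge: a univariate function whose shape is set by learnable coeffs."""
    return sum(w * b for w, b in zip(coeffs, rbf_basis(x, centers)))


def kan_node(
    inputs: List[float], edge_coeffs: List[List[float]], centers: List[float]
) -> float:
    """One node: the sum of its incoming edge activations (no extra nonlinearity)."""
    return sum(
        edge_activation(x, coeffs, centers)
        for x, coeffs in zip(inputs, edge_coeffs)
    )


centers = [-1.0, 0.0, 1.0]
# Two input genes, each with its own (hypothetical) learned coefficients.
edge_coeffs = [[0.0, 1.0, 0.0], [0.5, 0.0, -0.5]]
print(kan_node([0.0, 0.0], edge_coeffs, centers))  # 1.0
```

Plotting `edge_activation` over a range of inputs gives the activation curve for that gene-to-node connection, which is the kind of object that is inspected for interpretability.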

Transformer-based Models with attention mechanisms have been adapted for single-cell data, though with modifications to address computational constraints. scTrans utilizes sparse attention mechanisms to focus on all non-zero genes in the input data, effectively reducing dimensionality while minimizing information loss that typically plagues highly variable gene (HVG) selection approaches [8]. This architecture efficiently processes large-scale datasets while maintaining robust generalization capabilities for novel datasets. The self-attention mechanism dynamically assesses gene relevance, capturing long-range dependencies within the transcriptomic profile [8] [23].

Graph Neural Networks (GNNs) offer a distinct approach by incorporating cellular topological information. WCSGNet constructs Weighted Cell-Specific Networks (WCSNs) for individual cells, capturing unique gene interaction patterns rather than assuming a universal network across all cells [21]. These cell-specific networks are built using highly variable genes and inherently capture both gene expression patterns and gene association network structure features. A graph neural network then extracts features from these personalized networks to perform accurate cell type classification, demonstrating particular strength with imbalanced datasets [21].

Large Language Models (LLMs) represent the most recent innovation, with models like CellTypeAgent and LICT leveraging natural language processing capabilities for annotation tasks [11] [24]. These frameworks often incorporate verification from biological databases to mitigate hallucinations and improve reliability. Their "talk-to-machine" approach provides an objective framework for assessing annotation reliability, even when single-cell populations exhibit multifaceted traits [11].

Table 1: Comparative Analysis of Advanced Cell Type Annotation Methods

| Method | Core Architecture | Key Innovation | Strengths | Limitations |
|---|---|---|---|---|
| scKAN [22] | Kolmogorov-Arnold Network | Learnable activation curves for gene-cell relationships | High interpretability for marker genes; Superior accuracy (6.63% F1 improvement) | Requires knowledge distillation from teacher model |
| scTrans [8] | Transformer with Sparse Attention | Focuses on all non-zero genes, minimizing information loss | Efficient processing of large datasets; Strong generalization | Computational complexity remains non-trivial |
| WCSGNet [21] | Graph Neural Network | Weighted Cell-Specific Networks for individual cells | Excellent with imbalanced data; Captures cell-specific gene interactions | Network construction adds computational overhead |
| CellTypeAgent [24] | Large Language Model | Database verification to reduce hallucinations | High accuracy; Handles multifaceted cell populations | Dependent on quality and scope of verification databases |

From Annotation to Drug Target Discovery

The transition from cell type identification to therapeutic target discovery represents a critical pathway in translational medicine. Accurate cell type annotation enables researchers to identify cell populations specifically implicated in disease processes, thereby revealing potential therapeutic targets within these cells [20]. This approach has evolved beyond simple differential expression analysis to incorporate sophisticated multi-omics integration and functional validation.

The foundational principle underlying this application is that diseases often affect specific cell types rather than entire tissues uniformly. By identifying which cell types are pathogenic—such as specific immune cell subsets in autoimmune disorders or rare cancer stem cell populations in tumors—researchers can focus target identification efforts on molecules that are critical to these cells' survival or function [22] [20]. This cell-type-specific targeting strategy enhances therapeutic efficacy while minimizing off-target effects, as modulating a target present primarily in pathogenic cells reduces disruption to healthy tissue function [25].

Advanced annotation methods like scKAN facilitate a more nuanced approach to target discovery by identifying not just highly expressed genes but those with high functional significance through their learned activation curves [22]. This capability enables the discovery of potential therapeutic targets that might be overlooked by conventional differential expression methods, particularly targets with moderate expression levels but high functional importance to the cell type's identity [22]. The resulting gene signatures provide biologically informed starting points for therapeutic intervention.

The integration of cell-type-specific gene importance scores with activation curve patterns creates a novel framework for identifying druggable targets [22]. In a case study on pancreatic ductal adenocarcinoma (PDAC), scKAN-identified gene signatures led to a potential drug repurposing candidate, with molecular dynamics simulations subsequently validating binding stability [22]. This end-to-end pipeline—from single-cell analysis to drug candidate validation—demonstrates the powerful synergy between advanced annotation methods and therapeutic discovery.

Table 2: Key Steps in Transitioning from Cell Annotation to Target Identification

| Step | Process | Key Techniques | Outcome |
|---|---|---|---|
| 1. Pathogenic Cell Identification | Identify cell types quantitatively expanded or altered in disease states | Clustering, differential abundance testing | Definition of disease-relevant cellular compartments |
| 2. Functional Gene Prioritization | Identify genes critical to pathogenic cell identity or function | Importance scoring (e.g., scKAN edges), pathway enrichment | Shortlist of potential therapeutic targets |
| 3. Druggability Assessment | Evaluate target tractability for therapeutic intervention | Structural analysis, database mining (DrugBank, TTD) [26] | Prioritized list of druggable targets |
| 4. Experimental Validation | Confirm target functional relevance | siRNA knockdown [25], binding assays | Validated therapeutic targets |

Experimental Protocols and Workflows

Integrated Workflow for Target Discovery via Cell Type Annotation

The following diagram illustrates the comprehensive experimental workflow that bridges single-cell RNA sequencing data with drug target identification and validation:

Protocol for Drug Target Identification Using scKAN

The following protocol outlines the specific methodology for leveraging scKAN in drug target discovery, as demonstrated in the PDAC case study [22]:

Phase I: Model Training and Knowledge Distillation

- Teacher Model Fine-tuning: Utilize a pre-trained single-cell foundation model (e.g., scGPT pre-trained on 33 million cells) and fine-tune it on the target dataset using standard transformer architecture with gene encoder, expression embeddings, and condition embeddings [22].

- Student Model Training: Implement the scKAN student model with multiple KAN layers. Train using knowledge distillation from the teacher model combined with ground truth cell type information.

- Loss Function Optimization: Employ a combined loss function integrating:

  - Knowledge distillation loss

  - Self-entropy loss to prevent over-concentration on dominant cell types

  - Modified deep divergence-based clustering (DDC) loss using Cauchy-Schwarz divergence to optimize feature-cluster alignment [22].
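The structure of such a combined objective can be sketched without a deep-learning framework. The example below is an illustration under several assumptions, not the scKAN loss: the distillation term is a temperature-softened KL divergence, ground-truth labels enter through cross-entropy, the self-entropy term is the negative entropy of the batch-averaged prediction, the DDC term is omitted, and the weights `alpha` and `beta` are hypothetical.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student: List[float], teacher: List[float], temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions."""
    p_t = softmax(teacher, temperature)
    p_s = softmax(student, temperature)
    return sum(t * math.log(t / s) for t, s in zip(p_t, p_s) if t > 0)


def cross_entropy(student: List[float], true_index: int) -> float:
    return -math.log(softmax(student)[true_index])


def negative_batch_entropy(batch: List[List[float]]) -> float:
    """Lower when average predictions spread across types (assumed form)."""
    probs = [softmax(logits) for logits in batch]
    mean = [sum(p[k] for p in probs) / len(probs) for k in range(len(probs[0]))]
    return sum(m * math.log(m) for m in mean if m > 0)


def combined_loss(student_batch, teacher_batch, labels, alpha=0.5, beta=0.1) -> float:
    n = len(labels)
    kd = sum(kd_loss(s, t) for s, t in zip(student_batch, teacher_batch)) / n
    ce = sum(cross_entropy(s, y) for s, y in zip(student_batch, labels)) / n
    return alpha * kd + (1 - alpha) * ce + beta * negative_batch_entropy(student_batch)


student_batch = [[2.0, 0.5], [0.2, 1.5]]   # student logits for two cells
teacher_batch = [[3.0, 0.1], [0.0, 2.5]]   # teacher logits for the same cells
labels = [0, 1]                            # ground-truth cell type indices
print(combined_loss(student_batch, teacher_batch, labels))
```

The entropy term pushes the batch-level average prediction away from a single dominant class, which is one plausible way to implement the stated goal of avoiding over-concentration on dominant cell types.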

Phase II: Cell-Type-Specific Gene Identification

- Importance Score Calculation: Extract edge scores from the trained KAN model, adapting them to quantify the learned contribution of each gene to specific cell type classification.

- Marker Gene Validation: Validate importance scores by demonstrating significant enrichment for known cell-type-specific markers and differentially expressed genes.

- Activation Curve Analysis: Cluster genes with similar learned activation function curves to reveal functionally related gene sets and co-expression patterns within specific cell types.

Phase III: Target Prioritization and Validation

- Functional Enrichment Analysis: Perform pathway enrichment on high-importance genes to identify biologically coherent processes.

- Druggability Assessment: Cross-reference prioritized genes with druggable genome databases (e.g., DrugBank, Therapeutic Target Database) using BLASTp with an E-value cutoff of 10⁻⁴ [26].

- Compound Screening: Screen compound libraries (e.g., flavonoid libraries for MRSA targets [26]) against prioritized targets using molecular docking.

- Experimental Validation: Assess binding stability through molecular dynamics simulations, analyzing root-mean-square deviation (RMSD), root-mean-square fluctuation (RMSF), radius of gyration (ROG), and solvent-accessible surface area (SASA), and calculate binding free energies [22] [26].
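The E-value cutoff in the druggability step can be applied with a short filter over BLAST tabular output. The sketch below assumes the search was run with the default 12-column tabular format (`-outfmt 6`), in which the E-value is the eleventh column. The source does not state how BLASTp was invoked, and the sequence identifiers in the example are hypothetical.

```python
from typing import Dict, Iterable, List, Tuple


def filter_blast_hits(
    tabular_lines: Iterable[str], max_evalue: float = 1e-4
) -> Dict[str, List[Tuple[str, float]]]:
    """Keep hits at or below the E-value cutoff, grouped by query ID.

    Assumes default `-outfmt 6` columns: qseqid, sseqid, pident, length,
    mismatch, gapopen, qstart, qend, sstart, send, evalue, bitscore.
    """
    hits: Dict[str, List[Tuple[str, float]]] = {}
    for line in tabular_lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments
        fields = line.rstrip("\n").split("\t")
        query, subject, evalue = fields[0], fields[1], float(fields[10])
        if evalue <= max_evalue:
            hits.setdefault(query, []).append((subject, evalue))
    return hits


# Two made-up result rows: one strong hit, one weak hit.
example = [
    "geneA\ttarget_0001\t85.0\t200\t30\t0\t1\t200\t1\t200\t2e-50\t350",
    "geneB\ttarget_0002\t30.0\t50\t35\t2\t1\t50\t10\t60\t0.5\t25",
]
druggable = filter_blast_hits(example)
print(druggable)  # {'geneA': [('target_0001', 2e-50)]}
```

Genes with no surviving hit are not necessarily undruggable. They simply lack a close sequence match in the database that was searched.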

Protocol for Target Deconvolution and Validation

For scenarios where therapeutic effects are observed before targets are identified, target deconvolution approaches are employed:

Affinity-Based Target Deconvolution

- Affinity Probe Design: Design small molecule affinity probes incorporating the therapeutic compound with photoactivatable or chemical cross-linking groups.

- Cellular Treatment: Expose disease-relevant cell types to the affinity probes under physiological conditions.

- Target Capture and Isolation: UV-irradiate for photoactivation (if applicable), lyse cells, and isolate target complexes using affinity chromatography.

- Mass Spectrometry Analysis: Identify captured targets through liquid chromatography-tandem mass spectrometry (LC-MS/MS) and database searching [25].

Functional Genomics Approaches

- siRNA Screening: Implement high-throughput siRNA screens to systematically knock down genes in disease-relevant cell types and identify genes whose knockdown phenocopies therapeutic treatment.

- CRISPR-Based Validation: Utilize CRISPR-Cas9 to generate knockout cell lines for putative targets and validate their role in therapeutic response.

- Dose-Response Correlation: Establish correlation between target protein reduction (via siRNA) and phenotypic effect, comparing to compound dose-response relationships [25].
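The dose-response comparison in the last step reduces to asking whether deeper knockdown tracks with a stronger phenotype. A minimal way to quantify that is a Pearson correlation, sketched below with invented numbers. The function name and data are illustrative only, and a real analysis would typically also fit dose-response curves and compare them with the compound's.

```python
import math
from typing import List


def pearson_r(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


# Fraction of target protein removed by siRNA (hypothetical titration).
knockdown = [0.1, 0.3, 0.5, 0.7, 0.9]
# Normalized phenotypic effect measured at each knockdown level (hypothetical).
effect = [0.05, 0.20, 0.45, 0.60, 0.85]

print(round(pearson_r(knockdown, effect), 3))  # 0.996
```

A strong positive correlation supports, but does not prove, that the phenotype is mediated by the candidate target. Off-target siRNA effects remain a possible confounder.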

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementation of the described methodologies requires specific reagents and computational resources. The following table details key components of the experimental toolkit for cell type annotation and subsequent drug target discovery:

Table 3: Essential Research Reagents and Solutions for Cell Annotation and Target Discovery

| Category | Item/Solution | Specification/Function | Application Examples |
|---|---|---|---|
| Reference Datasets | Tabula Muris, Human Cell Atlas | Annotated single-cell transcriptomes from multiple tissues | Training and benchmarking annotation algorithms [8] [21] |
| Analysis Platforms | Polly, Seurat, Scanpy | Integrated platforms for data retrieval, processing, and analysis | Automated cell type annotation; Multi-omics data integration [20] |
| Target Databases | DrugBank, Therapeutic Target Database (TTD) | Curated repositories of druggable targets and drug interactions | Druggability assessment; Target prioritization [26] |
| Validation Reagents | siRNA Libraries, CRISPR-Cas9 Systems | Tools for targeted gene knockdown/knockout | Functional validation of candidate targets [25] |
| Structural Tools | AutoDock Vina, Molecular Dynamics Software | Computational tools for binding prediction and dynamics | Binding stability assessment; Binding free energy calculations [22] [26] |
| Specialized Algorithms | scKAN, scTrans, WCSGNet | Specialized algorithms for annotation and marker discovery | Cell-type-specific gene identification; Network analysis [22] [8] [21] |

The integration of advanced cell type annotation methodologies with drug target discovery represents a paradigm shift in translational research. Techniques such as scKAN, scTrans, and WCSGNet are transforming single-cell analysis from a descriptive exercise to a hypothesis-generating engine that directly fuels the therapeutic development pipeline. By enabling the identification of cell-type-specific molecular features with high functional relevance, these approaches are accelerating the discovery of novel therapeutic targets while improving the specificity and efficacy of candidate interventions. As these methodologies continue to evolve—particularly through the integration of multi-omics data and more sophisticated AI architectures—their impact on personalized medicine and drug development is poised to grow substantially, ultimately enabling more precise and effective therapies for complex diseases.

In single-cell RNA sequencing (scRNA-seq) research, the transformation of raw sequencing data into biologically meaningful insights hinges on two fundamental pre-processing steps: quality control (QC) and clustering. These technical procedures form the indispensable foundation upon which all subsequent biological interpretation, including the critical task of cell type annotation, is built. Within the broader context of cell type annotation research, the accuracy and reliability of final cell type labels are directly constrained by the quality of these preliminary analytical stages. As single-cell technologies increasingly inform drug development and clinical applications, establishing robust, standardized protocols for these foundational steps becomes paramount for ensuring reproducible and biologically valid discoveries.

The intrinsic relationship between pre-processing and annotation is elegantly summarized by the observation that "accurate cell type prediction is a crucial step in the interpretation of single-cell RNA-seq data, as downstream biological insights strongly depend on these predictions" [27]. This dependency creates an analytical chain where early decisions in QC and clustering parameters propagate through the entire analytical pipeline, ultimately determining whether researchers can accurately identify established cell types, discover novel populations, or delineate disease-specific cellular states [3]. This technical guide examines the operational principles, methodological considerations, and practical implementation of these foundational procedures specifically within the research framework of cell type annotation.

Quality Control: The First Gatekeeper of Data Integrity

Core Quality Control Metrics and Thresholding Strategies

Quality control serves as the initial filter through which raw scRNA-seq data must pass before any biological interpretation can occur. This process aims to distinguish intact, viable cells from artifacts resulting from technical variance, while preserving legitimate biological heterogeneity. The standard QC workflow operates primarily on three complementary metrics that collectively identify compromised cells: the number of counts per barcode (count depth), the number of genes detected per barcode, and the fraction of counts originating from mitochondrial genes [28].

Low count depth, few detected genes, and an elevated mitochondrial fraction together typically indicate a broken membrane: cytoplasmic mRNA has leaked out of the cell, leaving mostly mitochondrial mRNA behind [28]. However, these metrics must be interpreted jointly rather than in isolation, because certain biological contexts, such as cells with high respiratory activity or quiescent populations, may naturally exhibit higher mitochondrial content or lower transcriptional activity [28].
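As a minimal sketch of how the three core metrics can be derived from a count matrix, the following standard-library Python computes them per barcode. The function name `qc_metrics`, the toy matrix, and the use of a human-style `MT-` gene-name prefix to identify mitochondrial genes are illustrative assumptions, not part of any cited pipeline.

```python
from typing import Dict, List


def qc_metrics(counts: List[List[int]], gene_names: List[str],
               mito_prefix: str = "MT-") -> List[Dict[str, float]]:
    """Compute per-barcode QC metrics from a dense barcodes x genes matrix."""
    # Assumption: mitochondrial genes share a name prefix (human-style "MT-").
    mito_idx = [j for j, g in enumerate(gene_names)
                if g.upper().startswith(mito_prefix.upper())]
    metrics = []
    for row in counts:
        total = sum(row)                        # count depth
        n_genes = sum(1 for c in row if c > 0)  # genes with positive counts
        mito = sum(row[j] for j in mito_idx)    # counts from mitochondrial genes
        pct_mito = 100.0 * mito / total if total > 0 else 0.0
        metrics.append({"total_counts": total,
                        "n_genes": n_genes,
                        "pct_mito": pct_mito})
    return metrics


# Toy data: two barcodes, five genes (values are invented for illustration).
genes = ["MT-CO1", "MT-ND1", "CD3E", "MS4A1", "LYZ"]
toy_counts = [
    [5, 3, 40, 0, 52],   # deeper barcode with a small mitochondrial share
    [12, 8, 0, 0, 5],    # shallow barcode dominated by mitochondrial counts
]
qc = qc_metrics(toy_counts, genes)
for barcode_metrics in qc:
    print(barcode_metrics)
```

In this toy input the second barcode combines low depth, fewer detected genes, and a high mitochondrial share, which is the joint pattern described above.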

Effective thresholding strategies balance permissiveness with stringency. Overly aggressive filtering risks eliminating rare cell populations or biologically distinct states, while excessively lenient thresholds permit technical artifacts to distort downstream clustering and annotation [28]. Two primary approaches dominate practice:

- Manual thresholding based on visual inspection of distribution plots (e.g., violin plots, scatter plots) [28].

- Automated thresholding using robust statistical measures like Median Absolute Deviations (MAD), where cells exceeding 5 MADs from the median are typically flagged as outliers [28].
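The MAD rule can be sketched in a few lines of standard-library Python. The helper name `mad_outliers` and the example values are hypothetical; in practice the rule is often applied to log-transformed counts, and sometimes only in one direction (for example, only high mitochondrial fractions), which this two-sided sketch does not do.

```python
from statistics import median
from typing import List, Sequence


def mad_outliers(values: Sequence[float], n_mads: float = 5.0) -> List[bool]:
    """Flag values lying more than n_mads median absolute deviations from the median."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    # Note: if mad is 0 (over half the values are identical), every value that
    # differs from the median is flagged; real pipelines may need a fallback.
    return [abs(v - med) > n_mads * mad for v in values]


# Toy count depths for seven barcodes (invented for illustration).
depths = [4200, 3900, 4500, 4100, 3800, 150, 4300]
flags = mad_outliers(depths, n_mads=5.0)
print(flags)
```

Here the median is 4100 and the MAD is 200, so only the barcode with 150 counts lies more than 5 MADs away and is flagged.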

Table 1: Essential Quality Control Metrics and Interpretation Guidelines

| QC Metric | Technical Interpretation | Biological Consideration | Common Thresholding Approach |
|---|---|---|---|
| Count Depth (total counts per barcode) | Low values may indicate empty droplets; high values may suggest multiplets | Large cells or highly transcriptionally active populations naturally have higher counts | MAD-based outlier detection or manual percentile-based thresholds [28] |
| Genes Detected (number of genes with positive counts) | Low values suggest poor cell capture or dying cells | Small cells or quiescent populations may naturally express fewer genes | Correlate with count depth; filter joint outliers [28] |
| Mitochondrial Fraction (% of counts from mitochondrial genes) | High values indicate broken cell membranes | Cardiomyocytes and other metabolically active cells naturally have high mitochondrial RNA content | Tissue-dependent; typically in the 5-20% range, but validate biologically [29] [28] |
| Ribosomal Fraction (% of counts from ribosomal genes) | May indicate cellular stress responses | Varies by cell type and metabolic state | Often used as a diagnostic but less frequently for filtering [28] |

Specialized QC Challenges and Advanced Approaches

Beyond these core metrics, specialized QC challenges require additional analytical consideration. Ambient RNA contamination arises from free-floating RNA released by lysed cells during sample preparation, which can be absorbed by intact cells during the partitioning process [29]. This contamination is particularly problematic for detecting rare cell types whose marker genes might also be present at low levels in the ambient pool [29]. Computational tools such as SoupX and CellBender have been developed to estimate and subtract this background contamination [29].
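The general idea of background subtraction can be illustrated with a deliberately simplified sketch. It assumes an ambient profile estimated from empty droplets and a contamination fraction `rho` that is already known; it is not the algorithm implemented by SoupX or CellBender, and all function names and numbers below are hypothetical.

```python
from typing import List, Sequence


def ambient_profile(empty_droplets: Sequence[Sequence[int]]) -> List[float]:
    """Estimate the ambient expression profile as gene fractions across empty droplets."""
    gene_totals = [sum(col) for col in zip(*empty_droplets)]
    grand_total = sum(gene_totals)
    return [g / grand_total for g in gene_totals]


def subtract_ambient(cell_counts: Sequence[float], profile: Sequence[float],
                     rho: float) -> List[float]:
    """Remove the expected ambient contribution, clipping at zero.

    rho is the assumed fraction of the cell's counts that come from ambient RNA.
    """
    total = sum(cell_counts)
    return [max(0.0, c - rho * total * f) for c, f in zip(cell_counts, profile)]


# Toy data: two empty droplets and one cell over three genes (invented values).
empties = [[8, 1, 1], [8, 2, 0]]
profile = ambient_profile(empties)            # gene 0 dominates the ambient pool
corrected = subtract_ambient([10, 80, 10], profile, rho=0.1)
print(profile)
print(corrected)
```

In this toy case most of the cell's counts for the first gene are attributed to background, which mirrors the concern above: a marker that is abundant in the ambient pool can appear at low levels in cells that do not express it.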

Doublet detection represents another critical QC component, as multiplets—droplets containing two or more cells—can create artificial hybrid expression profiles that mislead both clustering and annotation [28]. As dataset complexity increases, the probability of doublets grows, necessitating specialized detection algorithms that identify cells expressing mutually exclusive marker genes or exhibiting unusually high gene counts [28].
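The marker co-expression heuristic mentioned above can be sketched as follows. This is only a simple illustration: the helper name `flag_coexpression`, the count threshold, and the choice of CD3E (a T cell marker) and MS4A1 (a B cell marker) as a mutually exclusive pair are assumptions made for the example, and dedicated doublet detection tools use more elaborate strategies.

```python
from typing import Dict, List, Sequence, Tuple


def flag_coexpression(cells: Sequence[Dict[str, int]],
                      exclusive_pairs: Sequence[Tuple[str, str]],
                      min_count: int = 1) -> List[bool]:
    """Flag cells that express both genes of any mutually exclusive marker pair."""
    flags = []
    for expr in cells:
        suspicious = any(
            expr.get(a, 0) >= min_count and expr.get(b, 0) >= min_count
            for a, b in exclusive_pairs
        )
        flags.append(suspicious)
    return flags


# Toy expression profiles (gene -> count); values are invented.
toy_cells = [
    {"CD3E": 12, "MS4A1": 0},   # T cell-like
    {"CD3E": 0, "MS4A1": 9},    # B cell-like
    {"CD3E": 7, "MS4A1": 6},    # expresses both markers: candidate doublet
]
doublet_flags = flag_coexpression(toy_cells, [("CD3E", "MS4A1")], min_count=2)
print(doublet_flags)
```

A flag from this heuristic is a prompt for inspection rather than proof of a doublet, since ambient RNA or genuine transitional states can also produce co-expression.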

The sequencing technology itself also informs QC strategy. For imaging-based spatial transcriptomics platforms like 10x Xenium, which typically profile only several hundred genes, QC must accommodate the distinct statistical properties of targeted gene panels compared to whole-transcriptome assays [30].

Clustering: From Cellular Neighborhoods to Discrete Populations

Algorithmic Foundations and Parameter Selection

Clustering transforms the continuous landscape of gene expression space into discrete cellular populations that serve as the primary units for annotation. Most modern scRNA-seq pipelines employ graph-based clustering approaches that operate in a low-dimensional space, typically derived from principal components analysis (PCA) [31]. The standard workflow involves constructing a k-nearest neighbor (KNN) graph in PCA space, followed by community detection algorithms such as Louvain or Leiden to identify densely connected groups of cells [31].
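The graph construction step can be sketched with a brute-force neighbor search. The sketch assumes that each cell is already represented by its principal component coordinates (the toy `pcs` values are invented) and uses Euclidean distance; production pipelines typically rely on approximate nearest-neighbor search and may weight edges, neither of which is shown here.

```python
import math
from typing import Dict, List, Sequence


def knn_graph(points: Sequence[Sequence[float]], k: int) -> Dict[int, List[int]]:
    """Return, for each point index, the indices of its k nearest neighbors."""
    graph = {}
    for i, p in enumerate(points):
        # Distances from point i to every other point (brute force, O(n^2)).
        dists = [(math.dist(p, q), j) for j, q in enumerate(points) if j != i]
        dists.sort()
        graph[i] = [j for _, j in dists[:k]]
    return graph


# Toy PC coordinates for six cells forming two well-separated groups.
pcs = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.2),
       (5.0, 5.0), (5.1, 5.0), (5.0, 5.3)]
graph = knn_graph(pcs, k=2)
for cell, neighbors in graph.items():
    print(cell, neighbors)
```

With k=2, every cell's neighbors fall within its own group, so a community detection algorithm run on this graph would see two disconnected components.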

The Leiden algorithm has increasingly supplanted Louvain as the community detection method of choice because it guarantees well-connected communities and addresses connectivity limitations observed in the Louvain approach [31]. This technical improvement is particularly valuable for identifying subtle subtypes in immunology or oncology applications where connectivity directly impacts biological interpretation [31].

Clustering outcomes are profoundly influenced by several key parameters that must be carefully tuned based on dataset characteristics and biological questions:

- Number of Principal Components: Determines the "amount of data" used for clustering and must balance signal preservation against noise inclusion [27] [32].

- Resolution Parameter: Controls the granularity of clustering, with higher values producing more fine-grained partitions [27] [32].

- Number of Nearest Neighbors: Affects graph connectivity, with lower values creating sparser graphs that better preserve local relationships [32].
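To make the role of the resolution parameter concrete, the sketch below evaluates a resolution-scaled modularity for two candidate partitions of a small graph. It assumes the Reichardt-Bornholdt style quality function, Q = sum over communities of [e_c / m - gamma * (d_c / 2m)^2], which Louvain and Leiden implementations commonly optimize; the source does not state which quality function its cited studies used, and the function name `modularity` and the toy graph are hypothetical.

```python
from typing import Dict, List, Tuple


def modularity(edges: List[Tuple[int, int]], membership: Dict[int, int],
               resolution: float = 1.0) -> float:
    """Resolution-scaled modularity of a partition of an unweighted, undirected graph."""
    m = len(edges)
    degree: Dict[int, int] = {}
    internal: Dict[int, int] = {}   # edges with both endpoints in the same community
    for u, v in edges:
        degree[u] = degree.get(u, 0) + 1
        degree[v] = degree.get(v, 0) + 1
        if membership[u] == membership[v]:
            c = membership[u]
            internal[c] = internal.get(c, 0) + 1
    degree_sum: Dict[int, int] = {}  # total degree per community
    for node, d in degree.items():
        c = membership[node]
        degree_sum[c] = degree_sum.get(c, 0) + d
    return sum(internal.get(c, 0) / m - resolution * (ds / (2 * m)) ** 2
               for c, ds in degree_sum.items())


# Two triangles joined by a single bridging edge.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
split = {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}   # two clusters
merged = {n: 0 for n in range(6)}               # one cluster

for gamma in (0.2, 1.0):
    print(gamma, modularity(edges, split, gamma), modularity(edges, merged, gamma))
```

For this graph the merged partition scores higher at a resolution of 0.2, while the split partition scores higher at 1.0, which is the sense in which higher resolution values favor finer-grained partitions.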

Table 2: Key Clustering Parameters and Their Impact on Downstream Annotation

| Parameter | Technical Function | Impact on Annotation | Empirical Optimization Guidance |
|---|---|---|---|
| Resolution | Controls partition granularity in community detection | Higher resolution improves rare cell type detection but may over-split populations; lower resolution better captures broad structure [27] | Test range (0.2-1.2 initially); use clustering metrics to evaluate [27] [32] |
| Number of PCs | Defines the dimensionality of the neighborhood graph | Too few PCs lose biological signal; too many introduce noise [27] | Assess variance explained; often 20-50 for diverse tissues [27] |
| Number of Nearest Neighbors | Determines local connectivity in graph construction | Sparse graphs (fewer neighbors) preserve fine-grained relationships; dense graphs emphasize global structure [32] | Balance local and global structure; typically 10-30 for most datasets [32] |
| Clustering Algorithm (Louvain vs. Leiden) | Defines how communities are identified in the graph | Leiden typically produces better-connected communities, improving biological coherence of clusters [31] | Prefer Leiden for most applications, especially when subtle subtypes matter [31] |

Recent research has demonstrated that parameter selection should be guided by both intrinsic goodness metrics and the specific annotation goals. Studies evaluating clustering quality against ground-truth annotations have revealed that "there is no direct correlation between clustering quality and a good cell type prediction performance" when using standard clustering metrics alone [27]. Instead, different parameter configurations offer complementary biological insights, suggesting that a single "optimal" clustering may not exist for complex annotation tasks [27].

Quantitative Framework for Clustering Optimization

The relationship between clustering parameters and annotation outcomes can be systematically evaluated using both intrinsic metrics (calculated without reference to external labels) and extrinsic metrics (calculated against ground-truth annotations) [32]. Research analyzing three organ datasets with curated ground-truth annotations has identified that intrinsic measures including within-cluster dispersion and the Banfield-Raftery index serve as reliable proxies for clustering accuracy when true labels are unavailable [32].
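Both intrinsic measures named above can be computed from cell coordinates and cluster labels alone. The sketch below assumes the commonly documented form of the Banfield-Raftery index, the sum over clusters of n_k * ln(W_k / n_k), where W_k is the within-cluster sum of squared distances to the centroid and lower values indicate tighter clusters; this formula, the function names, and the toy data are assumptions for illustration and are not taken from [32].

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Hashable, Sequence


def within_cluster_dispersion(points: Sequence[Sequence[float]],
                              labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Sum of squared distances to the cluster centroid, per cluster."""
    groups = defaultdict(list)
    for p, lab in zip(points, labels):
        groups[lab].append(p)
    dispersion = {}
    for lab, members in groups.items():
        dim = len(members[0])
        centroid = [sum(p[d] for p in members) / len(members) for d in range(dim)]
        dispersion[lab] = sum((p[d] - centroid[d]) ** 2
                              for p in members for d in range(dim))
    return dispersion


def banfield_raftery(points: Sequence[Sequence[float]],
                     labels: Sequence[Hashable]) -> float:
    """Assumed form: sum_k n_k * ln(W_k / n_k); zero-dispersion clusters are skipped."""
    sizes = Counter(labels)
    disp = within_cluster_dispersion(points, labels)
    return sum(sizes[lab] * math.log(w / sizes[lab])
               for lab, w in disp.items() if w > 0)


# Four toy cells in two obvious groups, labeled well and badly.
cells = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
good_labels = ["a", "a", "b", "b"]
bad_labels = ["a", "b", "a", "b"]

total_good = sum(within_cluster_dispersion(cells, good_labels).values())
total_bad = sum(within_cluster_dispersion(cells, bad_labels).values())
print(total_good, total_bad)
print(banfield_raftery(cells, good_labels), banfield_raftery(cells, bad_labels))
```

Both measures are lower for the labeling that respects the two groups, without any reference to external annotations.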

A robust linear mixed regression analysis of parameter impacts revealed that using UMAP for neighborhood graph generation combined with increased resolution parameters generally benefits accuracy, particularly when paired with fewer nearest neighbors to create sparser, more locally sensitive graphs [32]. This configuration appears to better preserve fine-grained cellular relationships that correspond to biologically distinct populations.

The computational framework for parameter optimization involves:

- Subsampling and preprocessing the dataset using standardized normalization approaches [32].

- Systematic parameter permutation across dimensions, resolution, and algorithm selections [27] [32].

- Intrinsic metric calculation using packages like bluster in Bioconductor, which implements silhouette width, purity, and RMSD metrics [27].

- Extrinsic validation against ground-truth labels when available, using measures like Adjusted Rand Index (ARI) [27] [32].

- Accuracy prediction through trained regression models that map intrinsic metrics to expected accuracy [32].
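For the extrinsic validation step, the Adjusted Rand Index can be computed directly from pair counts in the contingency table between ground-truth and predicted labels. The following is a minimal standard-library sketch with invented labels; established statistical libraries provide equivalent functions that would normally be preferred.

```python
from collections import Counter
from math import comb
from typing import Hashable, Sequence


def adjusted_rand_index(labels_true: Sequence[Hashable],
                        labels_pred: Sequence[Hashable]) -> float:
    """Adjusted Rand Index between two labelings of the same cells."""
    total_pairs = comb(len(labels_true), 2)
    if total_pairs == 0:
        return 1.0  # fewer than two cells: treated as trivial agreement
    contingency = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / total_pairs
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both labelings form a single cluster
    return (sum_cells - expected) / (max_index - expected)


truth = ["T", "T", "T", "B", "B", "B"]
relabeled = [1, 1, 1, 0, 0, 0]       # same partition, different cluster names
over_split = [0, 0, 1, 1, 2, 2]      # one cluster straddles both cell types

ari_perfect = adjusted_rand_index(truth, relabeled)
ari_partial = adjusted_rand_index(truth, over_split)
print(ari_perfect, ari_partial)
```

Because the index depends only on which cells are grouped together, renaming clusters leaves it at 1.0, while the partition that mixes the two cell types scores much lower.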

This methodological framework enables researchers to select clustering parameters that maximize the biological fidelity of the resulting partitions, thereby creating a more reliable foundation for subsequent annotation.

Integrated Workflow: From Raw Data to Annotation-Ready Clusters

The interdependence of quality control, clustering, and annotation necessitates an integrated analytical workflow where decisions at each stage influence subsequent outcomes. The complete pathway from raw data to annotation-ready clusters involves both linear processing steps and iterative refinement cycles.

Diagram 1: Integrated scRNA-seq Pre-processing Workflow. This workflow illustrates the sequential steps from raw data to annotation-ready clusters, highlighting the parameter dependencies and the iterative refinement involved in the process.

The critical connection between clustering outcomes and annotation fidelity is demonstrated by empirical studies showing that clustering configurations with more partitions (higher resolution) prove more effective at detecting rare cell types, as evidenced by stronger performance in macro-averaged metrics [27]. Conversely, clusterings with fewer partitions excel at capturing broad cell type structure, reflected in superior weighted-average, Cohen's Kappa, and Matthews Correlation Coefficient scores [27]. This fundamental tradeoff necessitates careful alignment between clustering strategies and annotation objectives.

Implementing robust QC and clustering workflows requires leveraging specialized computational tools and reference resources. The field has developed a rich ecosystem of software packages, each optimized for specific aspects of the pre-processing pipeline.

Table 3: Essential Computational Tools for scRNA-seq Pre-processing

| Tool/Package | Primary Function | Key Features | Integration Compatibility |
|---|---|---|---|
| Seurat [27] [30] [31] | Comprehensive scRNA-seq analysis | Implementation of graph-based clustering, visualization, and reference mapping | R-based; compatible with SingleR and Azimuth for annotation |
| Scanpy [28] | Python-based scRNA-seq analysis | Scalable processing for large datasets; Leiden clustering implementation | Python ecosystem; interfaces with CellTypist and scVI |
| SingleR [30] | Reference-based cell type annotation | Correlation-based prediction using reference datasets; fast computation | Works with Seurat and SingleCellExperiment objects |
| Azimuth [30] | Reference-based mapping | Weighted nearest neighbor integration with curated references | Built on Seurat framework; web application available |
| bluster [27] | Clustering metric calculation | Comprehensive intrinsic metric implementation for clustering evaluation | Bioconductor package; compatible with SingleCellExperiment |
| SoupX [29] | Ambient RNA correction | Estimates and removes background contamination from lysed cells | R package; can be integrated into Seurat/Scanpy workflows |
| SC3 [32] | Consensus clustering | Ensemble approach for clustering stability; optimized for smaller datasets | R package; can complement graph-based methods |

Beyond these computational tools, successful implementation requires access to appropriate reference datasets for both validation and method selection. The CellTypist organ atlas provides manually curated annotations across multiple tissues that can serve as ground truth for evaluating clustering performance [32]. Similarly, the Azimuth references offer multi-level annotations that support both broad and fine-grained cell type identification [27] [3].

Quality control and clustering represent more than mere technical preliminaries in the scRNA-seq analytical pipeline; they constitute the fundamental substrate upon which biologically meaningful annotation depends. The empirical evidence demonstrates that decisions made during these pre-processing stages directly constrain and shape all subsequent biological interpretation, from identifying established cell types to discovering novel populations.