Complete Guide to Immune Cell Annotation with CellTypist: From Basics to Advanced Applications in Single-Cell RNA-Seq


Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with essential knowledge for utilizing CellTypist in immune cell annotation of scRNA-seq data. Covering foundational concepts through advanced applications, the article explores CellTypist's logistic regression-based automated classification system, detailed methodological workflows for both built-in and custom models, optimization strategies for large datasets, and validation techniques against established cell ontologies. With practical examples from recent immunological studies and troubleshooting guidance, this resource enables accurate, reproducible cell type identification to accelerate research in immunology, disease mechanisms, and therapeutic development.

Understanding CellTypist: Core Principles and Immune Cell Annotation Fundamentals

What is CellTypist? Automated cell type annotation for scRNA-seq data

CellTypist is an automated cell type annotation tool designed for single-cell RNA sequencing (scRNA-seq) data. It employs logistic regression classifiers optimized by a stochastic gradient descent algorithm to provide rapid and precise prediction of cell identities [1] [2]. Originally developed to explore tissue adaptation of immune cells, CellTypist has evolved into an open-source tool with a community-driven knowledge base for cell types, serving as a standardized platform for automated cell annotation [3]. A key advantage is its comprehensive training set, which encompasses a wide range of immune cell types across diverse human tissues and enables accurate organ-agnostic classification of immune compartments [2]. Its models are designed to recapitulate the cell type structure and biology of independent datasets, and they are scalable and flexible enough to integrate into existing analysis pipelines [4].

Performance and Validation

Quantitative Performance Metrics

CellTypist has demonstrated high performance in cell type classification across multiple metrics. When trained on deeply curated and harmonized cell types from 20 different tissues across 19 reference datasets, CellTypist achieved precision, recall, and global F1-scores of approximately 0.9 for cell type classification at both high- and low-hierarchy levels [2]. The performance is notably robust to technical variations, including differences in gene expression sparseness between training and query datasets, as well as batch effects commonly encountered in scRNA-seq data [2].

Table 1: Performance Metrics of CellTypist Classifiers

| Classifier Hierarchy | Number of Cell Types | Precision | Recall | F1-Score |
|---|---|---|---|---|
| High-hierarchy (low-resolution) | 32 | ~0.9 | ~0.9 | ~0.9 |
| Low-hierarchy (high-resolution) | 91 | ~0.9 | ~0.9 | ~0.9 |

In comparative assessments with other label-transfer methods, CellTypist has shown comparable or better performance with minimal computational cost [2]. A notable advantage is its ability to resolve transcriptionally similar populations; for instance, it clearly distinguishes between monocytes and macrophages, which often form a transcriptomic continuum in scRNA-seq datasets due to their functional plasticity [2].

Comparison with Alternative Approaches

Emerging annotation approaches provide useful context for these results. A recent evaluation of GPT-4 for cell type annotation showed that it can generate expert-comparable annotations, with over 75% full or partial matches to manual annotations in most tissues [5]. CellTypist, by contrast, is a specialized framework trained directly on scRNA-seq reference data, which avoids limitations associated with large language models such as training corpus opacity and the risk of hallucinated annotations [5].

Table 2: Comparison of Automated Cell Annotation Methods

| Method | Approach | Advantages | Limitations |
|---|---|---|---|
| CellTypist | Logistic regression with SGD | High performance (~0.9 F1-score), fast prediction, immune-focused | Organ-specific models may be needed for non-immune tissues |
| GPT-4 | Large language model | Broad knowledge base, no reference data needed | Undisclosed training corpus, potential hallucinations |
| SingleR | Correlation-based | Simple implementation, reference-based | Requires high-quality reference datasets |
| ScType | Marker-based | Marker gene focused, web application | Limited to predefined marker genes |

Installation and Setup

Installation Methods

CellTypist can be installed through multiple package management systems. For users with Python 3.6+ installed, the simplest approach is via pip (pip install celltypist).

Alternatively, installation through bioconda is also supported (conda install -c bioconda -c conda-forge celltypist).

The installation includes dependencies such as pandas, scikit-learn, scanpy, and numpy, which are essential for the annotation workflow [6] [7].

Model Download and Configuration

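CellTypist operates using pre-trained models that serve as the basis for cell type predictions. Users can download the available models through the Python API, for example by calling models.download_models(force_update = True) after importing the models module (from celltypist import models).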

The models are stored in a local directory (default: .celltypist/ in the user's home directory), though this path can be customized by setting the environment variable CELLTYPIST_FOLDER [1]. Since each model averages about 1 megabyte in size, downloading all available models is recommended for comprehensive analysis [1].

Core Annotation Workflow

Basic Annotation Procedure

The standard CellTypist workflow begins with importing the necessary modules and loading the query data. The input data should be a raw count matrix (reads or UMIs) in formats such as .txt, .csv, .tsv, .tab, .mtx or .mtx.gz, with cells as rows and gene symbols as columns [1]. Annotation is then performed with celltypist.annotate(), which takes the input file and the name of a model (e.g., Immune_All_Low.pkl).

For data in gene-by-cell format, the transpose_input = True parameter should be specified. For MTX format files, additional gene_file and cell_file arguments are required to identify the feature and observation names [1].

Prediction Modes

CellTypist offers two distinct prediction modes to accommodate different annotation scenarios:

- Best match mode (mode = 'best match'): The default mode, in which each query cell is predicted to have the cell type with the largest score/probability among all possible types. This approach is straightforward and ideal for differentiating between highly homogeneous cell types [1].

- Probability match mode (mode = 'prob match'): In this mode, a probability cutoff (default: 0.5, adjustable via p_thres) determines whether a cell is assigned to none, one, or multiple cell types. Cells failing the probability cutoff for all cell types receive an 'Unassigned' label, while those passing the cutoff for multiple types receive concatenated labels (e.g., "T cell|B cell") [1]. This mode is particularly valuable for identifying ambiguous cell states or novel cell types not well represented in the reference model.
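To make the difference between the two modes concrete, here is a minimal NumPy sketch that turns a cell-by-type probability matrix into labels. The function name assign_labels is hypothetical and not part of the CellTypist API; the strict greater-than comparison against p_thres and the ordering of concatenated labels (by column order) are assumptions of this sketch.

```python
import numpy as np


def assign_labels(prob, cell_types, mode="best match", p_thres=0.5):
    """Hypothetical sketch of CellTypist-style label assignment.

    prob: (n_cells, n_types) array of per-type probabilities.
    cell_types: list of n_types cell type names (column order of prob).
    """
    prob = np.asarray(prob, dtype=float)
    if mode == "best match":
        # Each cell takes the single type with the highest probability.
        return [cell_types[j] for j in prob.argmax(axis=1)]
    if mode == "prob match":
        labels = []
        for row in prob:
            # Assumption: a type "passes" when its probability exceeds p_thres.
            passed = [cell_types[j] for j in np.flatnonzero(row > p_thres)]
            # No passing type -> 'Unassigned'; several -> joined with '|'.
            labels.append("|".join(passed) if passed else "Unassigned")
        return labels
    raise ValueError(f"unknown mode: {mode!r}")


types = ["T cell", "B cell", "NK cell"]
prob = [[0.9, 0.1, 0.2],
        [0.7, 0.6, 0.1],
        [0.2, 0.3, 0.1]]
print(assign_labels(prob, types))                     # ['T cell', 'T cell', 'B cell']
print(assign_labels(prob, types, mode="prob match"))  # ['T cell', 'T cell|B cell', 'Unassigned']
```

The third cell shows the practical difference: best match forces a label onto a low-confidence cell, whereas probability match leaves it unassigned.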

Majority Voting Refinement

To enhance annotation accuracy, CellTypist incorporates a majority voting approach that refines predictions within local cell clusters. When enabled (majority_voting = True), this feature performs over-clustering of the query dataset and assigns the dominant cell type label within each cluster [4] [8]. This strategy helps mitigate potential batch effects and improves consistency, because transcriptionally similar cells that fall in the same cluster receive the same label [8].

The majority voting process generates additional columns in the output, including the original predictions, over-clustering assignments, and consensus labels after voting [9].
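The voting step itself can be sketched in a few lines of standard-library Python. This assumes the over-clustering has already been computed and is passed in as one cluster ID per cell (the clustering step is not shown). majority_vote is a hypothetical helper, and its tie-breaking rule (the label encountered first wins) may not match CellTypist's behavior.

```python
from collections import Counter


def majority_vote(predicted, clusters):
    """Relabel every cell with the most frequent prediction in its cluster.

    predicted: per-cell labels from the classifier.
    clusters: per-cell over-clustering IDs (computed beforehand).
    """
    if len(predicted) != len(clusters):
        raise ValueError("predicted and clusters must have the same length")
    counts = {}
    for label, cluster in zip(predicted, clusters):
        counts.setdefault(cluster, Counter())[label] += 1
    # most_common(1) returns the dominant label; ties go to the label seen first.
    winner = {c: cnt.most_common(1)[0][0] for c, cnt in counts.items()}
    return [winner[c] for c in clusters]


predicted = ["T cell", "T cell", "B cell", "B cell", "B cell", "NK cell"]
clusters = [0, 0, 0, 1, 1, 1]
print(majority_vote(predicted, clusters))
# ['T cell', 'T cell', 'T cell', 'B cell', 'B cell', 'B cell']
```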

Output Interpretation and Visualization

Result Extraction

The AnnotationResult object returned by the annotate function contains three primary components:

- predicted_labels: The main prediction results, including cell type assignments for each cell.

- decision_matrix: The raw decision scores for each cell across all cell types.

- probability_matrix: Probabilities transformed from the decision matrix using the sigmoid function [1].
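The sigmoid relationship between the decision and probability matrices can be illustrated with NumPy. The sketch below applies the logistic function element-wise to a hypothetical decision matrix. Because each cell type is transformed independently, a cell's probabilities need not sum to 1, and a probability of exactly 0.5 (the default p_thres) corresponds to a decision score of 0.

```python
import numpy as np


def decision_to_probability(decision):
    """Apply the logistic sigmoid element-wise to a decision matrix."""
    decision = np.asarray(decision, dtype=float)
    return 1.0 / (1.0 + np.exp(-decision))


# One cell, three cell types (hypothetical decision scores).
decision = np.array([[2.0, 0.0, -2.0]])
prob = decision_to_probability(decision)
# prob[0, 1] is 0.5; positive scores map above 0.5 and negative scores below.
# The row sums to about 1.5, not 1, because each type is scored independently.
print(prob, prob.sum())
```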

These results can be exported for further analysis, for example as tables with the result's to_table() method or as an AnnData object with its to_adata() method.

Visualization Methods

CellTypist provides built-in visualization capabilities to facilitate result interpretation, available through the to_plots() method of the annotation result.

The visualization function automatically generates UMAP coordinates using a canonical Scanpy pipeline, overlaying the predicted cell types for intuitive assessment of annotation quality [1].

Advanced Applications

Multi-Label Classification

For complex biological scenarios where cells may exhibit hybrid identities or transitional states, CellTypist supports multi-label classification. This approach is particularly valuable when dealing with unexpected cell types (e.g., low-quality cells or novel types) or ambiguous cell states (e.g., doublets) that fall outside the traditional "find-a-best-match" paradigm [6]. The multi-label capability allows CellTypist to assign zero (unassigned), one, or multiple cell type labels to each query cell, providing a more nuanced interpretation of cellular identities [6].

Custom Model Training

While CellTypist provides numerous pre-trained models, users can also train custom models on their own reference datasets with celltypist.train(), which returns a model that can be saved with its write() method and then passed to celltypist.annotate().

The training process can optionally incorporate feature selection (feature_selection = True) to identify the most informative genes for cell type discrimination, which optimizes model performance and reduces computational requirements [9]. Custom models can be particularly valuable for specialized cell types or experimental conditions not adequately covered by the pre-trained models.

Online Interface

For users preferring a web-based approach, CellTypist offers an online interface accessible through the CellTypist portal [4] [8]. The online version accepts .csv or .h5ad files, with specific requirements for each format: CSV files should contain raw count matrices, while H5AD files require log-normalized expression data (normalized to 10,000 counts per cell) [8]. Results are delivered via email and include the same core components as the Python package: predicted labels, decision matrix, and probability matrix [8].

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for CellTypist Workflow

| Tool/Resource | Function | Specifications | Application Context |
|---|---|---|---|
| CellTypist Python Package | Core annotation engine | Python 3.6+, requires scikit-learn, scanpy, pandas | Primary analysis tool for local execution |
| Pre-trained Models | Reference classifiers for prediction | ~1MB each; immune-focused (e.g., Immune_All_Low.pkl) | Standardized cell type annotation without custom training |
| Raw Count Matrix | Input query data | Cells × genes (CSV, TSV, MTX, H5AD formats) | Essential input format for accurate prediction |
| Scanpy Ecosystem | Complementary analysis toolkit | Single-cell analysis pipeline for Python | Preprocessing, normalization, and visualization |
| CELLxGENE References | Curated data corpus | 22.2 million human cells, 164 cell types | Training data for model development and benchmarking |

Practical Implementation Protocol

Step-by-Step Experimental Guide

Data Preparation

- Format your scRNA-seq data as a raw count matrix with cells as rows and gene symbols as columns

- Ensure proper quality control has been performed (filtering of low-quality cells and genes)

- For log-normalized data (as needed for the online interface), use scanpy.pp.normalize_total(target_sum=1e4) followed by scanpy.pp.log1p() [8]
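For readers working outside Scanpy, the log-normalization in the last step amounts to the following NumPy computation on a dense cells × genes count matrix. This is a simplified sketch (normalize_log1p is a hypothetical name); Scanpy's own functions also handle sparse matrices and further options.

```python
import numpy as np


def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell (row) to target_sum total counts, then apply log(1 + x).

    NumPy sketch of scanpy.pp.normalize_total(target_sum=1e4) followed by
    scanpy.pp.log1p() on a dense cells x genes count matrix.
    """
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # leave all-zero cells at zero instead of dividing by 0
    return np.log1p(counts / totals * target_sum)


counts = np.array([[1, 3, 0],
                   [5, 5, 0],
                   [0, 0, 0]])
logged = normalize_log1p(counts)
# Undoing log1p recovers 10,000 counts for every non-empty cell.
print(np.expm1(logged).sum(axis=1))
```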

Model Selection

- For immune cell annotation: Start with Immune_All_Low.pkl or Immune_All_High.pkl [8]

- Explore available models using models.models_description() to identify tissue-specific options

- Consider custom training if pre-trained models don't cover your cell types of interest

Annotation Execution

- Run basic annotation: predictions = celltypist.annotate(input_file, model='selected_model.pkl')

- Enable majority voting for cluster-refined results: majority_voting=True

- Use probability mode for complex populations: mode='prob match', p_thres=0.5

Result Validation

- Examine confidence scores in the output (e.g., via predictions.to_adata(insert_conf=True))

- Compare with marker gene expression patterns

- Perform differential expression analysis between predicted clusters

- Utilize multi-label classifications to identify ambiguous populations

Downstream Analysis

- Integrate predictions with other analytical modalities (e.g., trajectory inference, gene regulatory networks)

- Compare CellTypist results with alternative annotation methods (e.g., manual annotation, other tools)

- Export results for publication-quality visualizations and further computational analysis

This protocol is intended to help researchers implement CellTypist for immune cell annotation, from initial setup through advanced analytical applications.

The logistic regression classifier optimized by stochastic gradient descent

Algorithm Fundamentals

Logistic regression optimized by stochastic gradient descent (SGD) represents a powerful machine learning approach that combines the probabilistic interpretation of logistic regression with the computational efficiency of iterative gradient-based optimization. This method is particularly valuable in scenarios with large-scale datasets where traditional optimization methods become computationally prohibitive. The core concept involves applying a stochastic approximation of gradient descent to minimize the logistic loss function, resulting in faster iterations though with a potentially lower convergence rate compared to batch methods [10].

The fundamental objective function in logistic regression follows the form of a sum: Q(w) = 1/n * ΣQ_i(w), where w represents the parameters to be estimated, and each Q_i typically corresponds to the loss for an individual data point [10]. SGD optimizes this function by iteratively updating parameters using the gradient computed from individual samples or small mini-batches rather than the entire dataset, making it particularly suitable for large-scale problems in machine learning and statistical estimation [10].

Relevance to CellTypist in Immune Cell Annotation

Within the CellTypist ecosystem, logistic regression with SGD serves as the computational engine enabling rapid and accurate annotation of immune cell types from single-cell RNA sequencing (scRNA-seq) data [11] [2]. This implementation allows researchers to automatically transfer cell type labels from comprehensively curated reference models to query datasets, dramatically accelerating the analysis pipeline while maintaining biological accuracy [4]. The choice of SGD optimization is particularly strategic given the substantial sizes of modern scRNA-seq datasets, which frequently encompass hundreds of thousands of cells across numerous samples and conditions [2].

Algorithm Specification and Mathematical Formulation

Core Algorithm Components

The logistic regression classifier with SGD optimization integrates several mathematical components to achieve efficient model training:

Sigmoid Function: Transforms linear combinations of input features into probability estimates ranging between 0 and 1, representing the probability that a given sample belongs to a particular class [12].

Log Loss Function: Also known as cross-entropy loss, this function measures the discrepancy between predicted probabilities and actual class labels. The loss for a single sample is the negative log-likelihood of its observed label, and the total loss is the sum across all training samples [12].

L2 Regularization: Incorporated to prevent overfitting by penalizing large parameter values, enhancing model generalization to unseen data [12] [13]. The regularization strength is controlled through the parameter α (SGD) or C (traditional logistic regression), where C represents the inverse of regularization strength [13].
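Written out for a single cell type treated as a binary problem (y_i ∈ {0, 1}, intercept omitted), these three components combine into the per-sample loss and SGD update below. The α/2 scaling of the L2 term is an assumed convention (it matches scikit-learn's SGDClassifier); with it, the last line is the generic update w := w - η∇Q_i(w) specialized to the regularized logistic loss.

```latex
\begin{aligned}
\sigma(z) &= \frac{1}{1 + e^{-z}}, \qquad \hat{p}_i = \sigma(w^\top x_i) \\
Q_i(w) &= -\bigl[\, y_i \log \hat{p}_i + (1 - y_i) \log(1 - \hat{p}_i) \,\bigr] + \frac{\alpha}{2} \lVert w \rVert^2 \\
\nabla Q_i(w) &= (\hat{p}_i - y_i)\, x_i + \alpha w \\
w &:= w - \eta \bigl[ (\hat{p}_i - y_i)\, x_i + \alpha w \bigr]
\end{aligned}
```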

Parameter Update Mechanism

The SGD algorithm updates model parameters according to the following iterative process:

- Initialization: Initialize the parameter vector w and learning rate η [10]

- Iteration loop: Repeat until convergence criteria are met [10]

  - Data shuffling: Randomly shuffle the samples in the training set [10]

  - Sequential processing: For each sample i = 1, 2, ..., n, update the parameters: w := w - η∇Q_i(w) [10]

For linear regression with a squared-error loss, Q_i(w) = (w^T x_i - y_i)^2, the parameter update takes the specific form w := w - 2η(w^T x_i - y_i) x_i.

This illustrates how each parameter is adjusted proportionally to the negative gradient of the loss with respect to that parameter [10].
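The same update rule can be sketched for binary logistic regression in NumPy. This is an illustrative plain per-sample SGD loop with a fixed learning rate and an unregularized intercept, not CellTypist's or scikit-learn's implementation (scikit-learn's SGDClassifier, for instance, uses its own learning-rate schedule by default); sgd_logistic and its defaults are hypothetical.

```python
import numpy as np


def sgd_logistic(X, y, eta=0.1, alpha=1e-4, epochs=50, seed=0):
    """Binary logistic regression fitted by plain per-sample SGD with an L2 penalty.

    X: (n_samples, n_features) array; y: 0/1 labels. Returns (w, b).
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        for i in rng.permutation(n):                   # reshuffle every epoch
            p = 1.0 / (1.0 + np.exp(-(X[i] @ w + b)))  # sigmoid of the linear score
            err = p - y[i]                             # d(log loss)/d(score)
            w -= eta * (err * X[i] + alpha * w)        # w := w - eta * grad Q_i(w)
            b -= eta * err                             # intercept left unregularized
    return w, b


X = np.array([[-2.0, -1.0], [-1.0, -2.0], [1.0, 2.0], [2.0, 1.0]])
y = np.array([0, 0, 1, 1])
w, b = sgd_logistic(X, y)
pred = (1.0 / (1.0 + np.exp(-(X @ w + b))) > 0.5).astype(int)
print(w, b, pred)
```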

Mini-Batch Extension

CellTypist implements an enhanced variant of SGD utilizing mini-batch training, where small batches of cells (typically 1,000 cells per batch) are processed sequentially rather than individual samples [14] [13]. This approach represents a compromise between computing the true gradient (using all data) and the gradient at a single sample, enabling better computational efficiency through vectorization while maintaining the beneficial stochastic properties of the algorithm [10].
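A mini-batch variant replaces the per-sample loop with a vectorized gradient averaged over each batch. In this sketch every epoch walks through all shuffled batches; CellTypist additionally exposes a batch_number parameter (default 100), which this sketch does not model. The function name and defaults are hypothetical.

```python
import numpy as np


def minibatch_sgd_logistic(X, y, batch_size=1000, epochs=10, eta=0.1,
                           alpha=1e-4, seed=0):
    """Binary logistic regression trained on shuffled mini-batches.

    Each step averages per-sample gradients over one batch, so the update is
    a single vectorized matrix product instead of a loop over cells.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        order = rng.permutation(n)
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            Xb, yb = X[idx], y[idx]
            p = 1.0 / (1.0 + np.exp(-(Xb @ w + b)))
            err = p - yb
            # Mean log-loss gradient over the batch plus the L2 term.
            w -= eta * (Xb.T @ err / len(idx) + alpha * w)
            b -= eta * err.mean()
    return w, b


# Two well-separated synthetic "cell types" with 2 features each.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-2, 1, (500, 2)), rng.normal(2, 1, (500, 2))])
y = np.r_[np.zeros(500), np.ones(500)]
w, b = minibatch_sgd_logistic(X, y, batch_size=100, epochs=20, eta=0.5)
accuracy = ((X @ w + b > 0) == y).mean()
print(accuracy)
```

Larger batches give smoother gradient estimates per step; smaller batches give more updates per epoch.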

Table 1: Comparison of Optimization Approaches in CellTypist

| Aspect | Traditional Logistic Regression | SGD Logistic Classifier | Mini-batch SGD |
|---|---|---|---|
| Data Usage | Entire dataset per iteration | Single random point per iteration | 1,000 cells per batch |
| Regularization | L2 with parameter C | L2 with parameter α | L2 with parameter α |
| Computational Efficiency | Lower for large datasets | Higher for large datasets | Highest for very large datasets |
| Convergence Behavior | Stable but slow | Noisy but fast | Balanced stability/speed |
| CellTypist Application | Small to medium datasets | Large datasets (>50k cells) | Very large datasets (>100k cells) |

Implementation Protocols for Immune Cell Annotation

CellTypist Model Training Procedure

The following protocol outlines the comprehensive procedure for training logistic regression models with SGD optimization using CellTypist for immune cell annotation:

Input Data Preparation:

- Format input data in cell-by-gene matrix structure (transpose if gene-by-cell) [13]

- Ensure expression data is log1p normalized to 10,000 counts per cell [13]

- Provide cell type labels as a list-like object or column name in AnnData metadata [13]

- Include gene identifiers corresponding to matrix columns [13]

Parameter Configuration:

- Set use_SGD = True to enable stochastic gradient descent learning [13]

- For large datasets (>100k cells), enable mini_batch = True for enhanced efficiency [13]

- Configure batch_size = 1000 and batch_number = 100 as the default mini-batch parameters [13]

- Set epochs = 10 as the default number of training epochs [13]

- Specify the L2 regularization strength using alpha = 0.0001 (default) [13]

- For traditional logistic regression (non-SGD), use C = 1.0 as the inverse regularization strength [13]

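Model Training Execution:

- Call celltypist.train() with the prepared expression data, cell type labels, genes, and the parameters configured above, then save the returned model with its write() method so that it can later be passed to celltypist.annotate()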

Model Validation and Application:

- Evaluate model performance using precision, recall, and F1-score metrics [2]

- Apply trained model to query datasets for cell type prediction [4]

- Optionally employ majority voting to refine predictions based on transcriptional similarity [15]

Advanced Training Configurations

For specialized applications, CellTypist offers several advanced training options:

Feature Selection Optimization:

- Enable feature_selection = True for two-pass data training [13]

- Set top_genes = 300 to select the top genes from each cell type based on absolute regression coefficients [13]

- The final feature set is the union across all cell types [13]
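The union step can be sketched with NumPy, assuming a first-pass coefficient matrix with one row per cell type and one column per gene. The helper name select_union_features and the toy coefficients are hypothetical; in the two-pass scheme, the model would then be retrained on the returned genes (not shown).

```python
import numpy as np


def select_union_features(coef, genes, top_genes=300):
    """Union of the top_genes genes per cell type, ranked by |coefficient|.

    coef: (n_cell_types, n_genes) coefficients from a first-pass model.
    genes: gene names matching the columns of coef.
    """
    coef = np.asarray(coef, dtype=float)
    k = min(top_genes, coef.shape[1])
    # Sorting by -|coef| puts the largest absolute coefficients first in each row.
    top_idx = np.argsort(-np.abs(coef), axis=1)[:, :k]
    selected = np.unique(top_idx)  # union across cell types (sorted column indices)
    return [genes[j] for j in selected]


genes = ["CD3E", "MS4A1", "NKG7", "LYZ", "CD14"]
coef = [[3.0, -0.1, 0.2, 0.0, 0.1],    # T cell
        [0.1, -2.5, 0.0, 0.3, 0.0],    # B cell
        [0.0, 0.2, 1.5, -0.4, 0.1]]    # NK cell
print(select_union_features(coef, genes, top_genes=2))
# ['CD3E', 'MS4A1', 'NKG7', 'LYZ']
```

Ranking by absolute value keeps strongly negative coefficients (genes whose expression argues against a cell type), which are as informative as positive ones.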

Class Imbalance Adjustment:

- Activate balance_cell_type = True to address imbalanced cell type frequencies [13]

- Rare cell types are sampled with higher probability [13]

- This ensures close-to-even cell type distributions in mini-batches [13]
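One way to obtain close-to-even mini-batches is to weight each cell by the inverse of its cell type's frequency. The NumPy sketch below illustrates that weighting idea; balanced_sample is a hypothetical helper, and sampling with replacement is an assumption, so CellTypist's exact procedure may differ.

```python
import numpy as np


def balanced_sample(labels, n, seed=0):
    """Draw n cell indices so that each cell type is equally likely on average.

    Each cell is weighted by 1 / (n_types * size_of_its_type); the weights sum
    to 1 and give every type the same total probability of 1 / n_types.
    """
    labels = np.asarray(labels)
    types, inverse, counts = np.unique(labels, return_inverse=True,
                                       return_counts=True)
    p = 1.0 / (len(types) * counts[inverse])
    rng = np.random.default_rng(seed)
    return rng.choice(len(labels), size=n, replace=True, p=p)


labels = np.array(["T cell"] * 900 + ["B cell"] * 90 + ["pDC"] * 10)
batch = labels[balanced_sample(labels, 1000)]
for t in ["T cell", "B cell", "pDC"]:
    print(t, (batch == t).mean())  # each share should be close to 1/3
```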

Computational Performance Tuning:

- Specify n_jobs = -1 to utilize all available CPUs [13]

- For traditional logistic regression, consider use_GPU = True to enable GPU acceleration [13]

- Adjust max_iter based on dataset size: 200 (large), 500 (medium), 1000 (small datasets) [13]

Table 2: CellTypist Training Parameters for Different Data Scenarios

| Scenario | use_SGD | mini_batch | batch_size | epochs | balance_cell_type | feature_selection |
|---|---|---|---|---|---|---|
| Small dataset (<50k cells) | Optional | False | N/A | N/A | Optional | Recommended |
| Standard dataset (50-100k cells) | True | False | N/A | 10 | Optional | Recommended |
| Large dataset (100-500k cells) | True | True | 1000 | 10 | Recommended | Optional |
| Very large dataset (>500k cells) | True | True | 1000 | 10-30 | Highly Recommended | Optional |
| Imbalanced cell types | True | True | 1000 | 10-30 | True | Optional |
| High-dimensional data | True | Optional | 1000 | 10 | Optional | True |

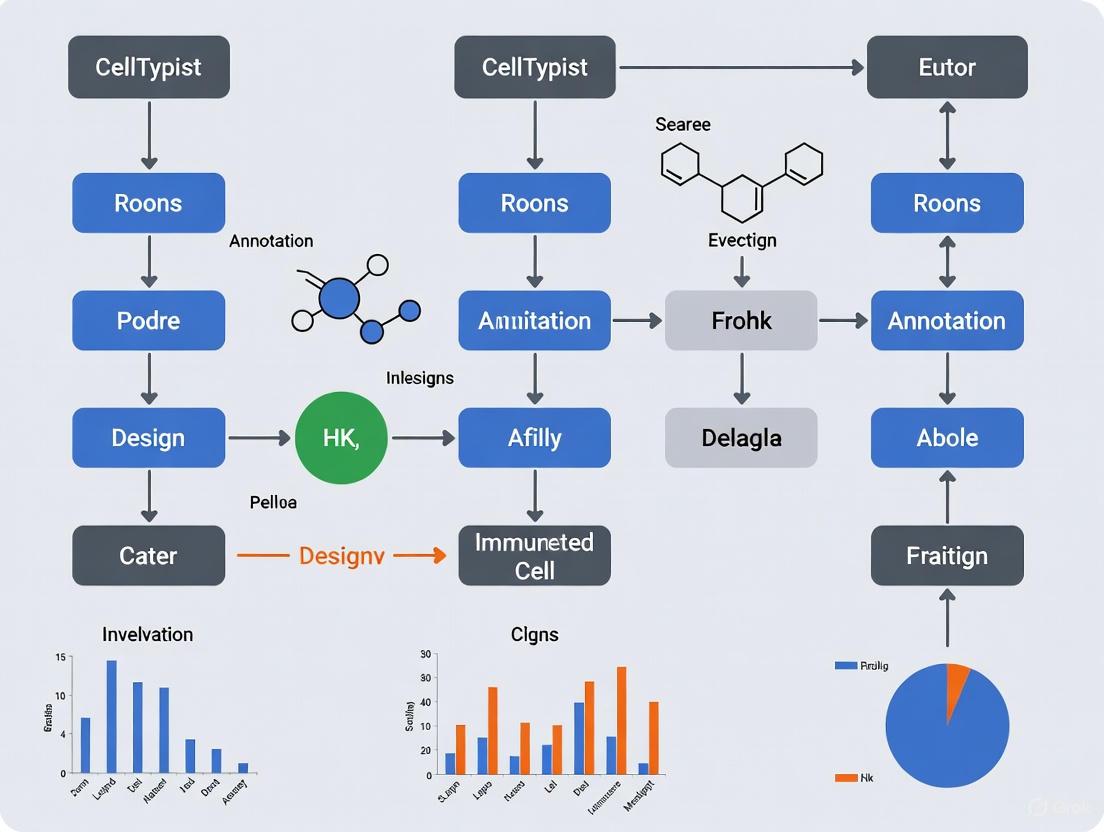

Workflow Visualization and Experimental Design

CellTypist SGD Training Workflow

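The complete workflow for training logistic regression models with SGD optimization in CellTypist follows the training protocol above: input data preparation, parameter configuration, model training, and model validation before application to query data.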

Immune Cell Annotation Pipeline

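The CellTypist annotation pipeline applies an SGD-optimized logistic regression model to a normalized query dataset, optionally refines the predictions by majority voting over an over-clustered dataset, and returns predicted labels, decision scores, and probabilities for downstream validation.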

Performance Metrics and Validation

Model Evaluation Framework

CellTypist's logistic regression with SGD has been rigorously validated using comprehensive metrics and benchmarking:

Performance Metrics:

- Precision: Measures the accuracy of positive predictions [2]

- Recall: Quantifies the ability to identify all relevant instances [2]

- F1-Score: Harmonic mean of precision and recall, providing balanced assessment [2]

- Global F1-Score: Overall performance metric reaching approximately 0.9 for cell type classification [2]
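These metrics can be computed directly from true and predicted labels. The sketch below reports per-class precision, recall, and F1 together with their unweighted (macro) mean. How the global F1-score in [2] was aggregated is not stated here, so the macro average is only one common choice; per_class_f1 is a hypothetical helper.

```python
from collections import Counter


def per_class_f1(y_true, y_pred):
    """Per-class (precision, recall, F1) and the unweighted (macro) mean F1."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted as p, but the cell is not p
            fn[t] += 1  # the cell is t, but was not predicted as t
    scores = {}
    for c in sorted(set(y_true) | set(y_pred)):
        prec = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        rec = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        scores[c] = (prec, rec, f1)
    macro_f1 = sum(s[2] for s in scores.values()) / len(scores) if scores else 0.0
    return scores, macro_f1


y_true = ["T cell", "T cell", "B cell", "B cell"]
y_pred = ["T cell", "B cell", "B cell", "B cell"]
scores, macro_f1 = per_class_f1(y_true, y_pred)
# T cell: precision 1.0, recall 0.5; B cell: precision 2/3, recall 1.0
print(scores, macro_f1)
```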

Cross-Dataset Robustness:

- Model performance remains robust against variations in gene expression sparseness [2]

- Prediction accuracy maintained despite batch effects between datasets [2]

- Performance correlates with representation of cell types in training data [2]

Table 3: CellTypist Performance on Immune Cell Annotation

| Evaluation Aspect | High-Hierarchy (32 types) | Low-Hierarchy (91 types) | Validation Method |
|---|---|---|---|
| Precision | ~0.9 | ~0.9 | Cross-dataset validation |
| Recall | ~0.9 | ~0.9 | Cross-dataset validation |
| F1-Score | ~0.9 | ~0.9 | Cross-dataset validation |
| Cell Types Identified | 15 major populations | 43 specific subtypes | Multi-tissue dataset |
| Robustness to Sparseness | High | High | Systematic testing |
| Batch Effect Resistance | High | High | Multi-dataset analysis |
| Computational Efficiency | High | High | Comparison to alternatives |

Comparative Performance Analysis

In benchmark studies, CellTypist demonstrated comparable or superior performance relative to other label-transfer methods while requiring minimal computational resources [2]. The tool successfully recapitulated immune cell biology across independent datasets, accurately resolving transcriptionally similar cell populations including:

- T cell heterogeneity (αβ vs. γδ T cells, CD4+ vs. CD8+ subsets) [2]

- B cell compartment (naive vs. memory B cells) [2]

- Mononuclear phagocytes (monocytes, macrophages, dendritic cells) [2]

- Innate and innate-like lymphocytes (NK cells, non-conventional T cells) [2]

- Dendritic cell subsets (DC1, DC2, migratory DCs) [2]

The granularity of annotation enabled the identification of tissue-specific immune features, such as distinct macrophage subpopulations in lung tissue characterized by expression of GPNMB, TREM2, and TNIP3 [2].

Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Resources for CellTypist Implementation

| Resource Type | Specific Solution | Function/Purpose | Implementation Example |
|---|---|---|---|
| Data Input Format | Cell-by-gene matrix | Standardized input structure | AnnData objects, CSV/TSV/MTX files |
| Reference Data | Multi-tissue immune atlas | Training data for model development | 357,211 immune cells from 16 tissues |
| Preprocessing Tool | Scanpy pipeline | Data normalization and QC | Log1p normalization, HVG selection |
| SGD Implementation | Scikit-learn SGDClassifier | Core optimization algorithm | SGDClassifier(loss='log_loss') |
| Model Training | celltypist.train function | End-to-end model training | celltypist.train(use_SGD=True) |
| Visualization | celltypist.dotplot | Result visualization and interpretation | celltypist.dotplot(predictions, use_as_reference=...) |
| Cluster Analysis | Leiden algorithm | Over-clustering for majority voting | scanpy.tl.leiden() integration |
| Performance Metrics | Precision/Recall/F1 | Model validation and benchmarking | Cross-dataset performance assessment |
| Gene Selection | top_genes parameter | Feature selection for improved performance | Select 300 genes per cell type |
| Batch Correction | Built-in standardization | Handling technical variability | Data scaling during prediction |

Technical Notes and Troubleshooting

Optimal Parameter Selection

The following guidelines help achieve good performance on immune cell datasets:

Learning Rate Considerations:

- SGD requires careful learning rate tuning to balance convergence speed and stability [16]

- Excessive learning rates cause divergence, while insufficient rates slow convergence [10]

- CellTypist implements appropriate default values based on dataset characteristics [13]
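The learning-rate trade-off can be seen on the simplest possible loss, Q(w) = w², used here purely as a toy stand-in for the logistic loss. With gradient 2w, each step multiplies w by (1 - 2η), so a small η shrinks w slowly, a moderate η shrinks it quickly, and η > 1 makes the iterates grow without bound. gd_on_quadratic is a hypothetical helper.

```python
def gd_on_quadratic(eta, steps=50, w0=1.0):
    """Gradient descent on the toy loss Q(w) = w**2 (gradient 2w).

    Each step computes w := w - eta * 2w = (1 - 2*eta) * w, so the iterates
    shrink only when |1 - 2*eta| < 1, i.e. 0 < eta < 1.
    """
    w = w0
    for _ in range(steps):
        w -= eta * 2 * w
    return w


for eta in (0.01, 0.4, 1.1):
    print(eta, gd_on_quadratic(eta))
# eta=0.01: still far from the minimum at 0 after 50 steps (slow)
# eta=0.4:  essentially 0 (fast convergence)
# eta=1.1:  magnitude in the thousands (divergence)
```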

Data Scaling Requirements:

- SGD performance is sensitive to feature scaling [16]

- Input data should be standardized for optimal results [16]

- CellTypist automatically performs appropriate scaling during training [13]
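The essential point of scaling at prediction time is that query cells are standardized with the reference (training) statistics rather than their own, so each gene is on the scale the classifier was trained on. The sketch below shows that pattern with NumPy; fit_scaler and decision_scores are hypothetical helpers, and any further adjustments CellTypist may apply to the scaled values are omitted.

```python
import numpy as np


def fit_scaler(X_ref):
    """Per-gene mean and standard deviation from the reference (training) data."""
    X_ref = np.asarray(X_ref, dtype=float)
    mean = X_ref.mean(axis=0)
    sd = X_ref.std(axis=0)
    sd[sd == 0] = 1.0  # constant genes: avoid division by zero
    return mean, sd


def decision_scores(X_query, mean, sd, coef, intercept):
    """Standardize query cells with the reference statistics, then score them.

    coef: (n_types, n_genes); intercept: (n_types,). Returns (n_cells, n_types).
    """
    Z = (np.asarray(X_query, dtype=float) - mean) / sd
    return Z @ np.asarray(coef, dtype=float).T + np.asarray(intercept, dtype=float)


mean, sd = fit_scaler([[0.0, 2.0], [2.0, 2.0], [4.0, 2.0]])
coef = [[1.0, 0.0], [-1.0, 0.0]]
intercept = [0.5, -0.5]
# A query cell equal to the reference mean scores exactly the intercepts.
print(decision_scores([[2.0, 2.0]], mean, sd, coef, intercept))
```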

Regularization Strategy:

- L2 regularization prevents overfitting by penalizing large coefficients [12]

- Regularization strength (α for SGD, C for traditional) requires tuning based on dataset size and complexity [13]

- Smaller C values (stronger regularization) may improve generalization at a potential cost in accuracy [13]

Troubleshooting Common Issues

Non-Convergence Solutions:

- Increase the max_iter parameter if the cost function fails to converge [13]

- Reduce the learning rate for more stable convergence [10]

- Ensure proper data preprocessing and normalization [13]

Performance Optimization:

- For datasets >100,000 cells, enable mini_batch=True for training efficiency [13]

- Utilize n_jobs=-1 to parallelize computation across all available CPUs [13]

- Consider feature selection (feature_selection=True) for high-dimensional data [13]

Biological Validation:

- Always verify automated annotations with marker gene expression [2]

- Employ majority voting to refine predictions based on transcriptional similarity [15]

- Cross-reference identified populations with established immune cell signatures [2]

Within the field of single-cell RNA sequencing (scRNA-seq) analysis, accurate cell type annotation is a critical step for interpreting data and drawing meaningful biological conclusions. CellTypist has emerged as an automated tool that leverages logistic regression classifiers optimized by stochastic gradient descent to address this need [14] [4]. For researchers focusing on immune cells, the choice between the two primary built-in models, Immune_All_Low.pkl and Immune_All_High.pkl, forms a fundamental decision point that balances resolution against broad classification. These models are part of a curated collection available on the CellTypist website, where they can be downloaded for use within a Python environment [14] [1]. The "Low" and "High" suffixes refer directly to the hierarchy level of the cell types they contain; "Low" indicates low-hierarchy (high-resolution) cell types and subtypes, whereas "High" indicates high-hierarchy (low-resolution) cell types [14]. This protocol outlines a structured approach to exploring these models, enabling researchers to select the appropriate tool based on their experimental goals, whether for discovering novel immune subsets or for broader immune population mapping.

Model Characteristics and Quantitative Comparison

The Immune_All_Low and Immune_All_High models serve distinct purposes, and their differences are quantified in the table below. This comparison is essential for making an informed selection.

Table 1: Quantitative Comparison of CellTypist's Key Immune Models

| Feature | Immune_All_Low | Immune_All_High |
|---|---|---|
| Hierarchy Level | Low-hierarchy (High-resolution) | High-hierarchy (Low-resolution) |
| Number of Cell Types | 98 | 32 |
| Use Case | Detailed annotation of immune cell subtypes | Broad classification of major immune lineages |
| Example Annotation | Follicular B cells, Germinal center B cells, Memory B cells, Naive B cells [17] | B cells [17] |
| Recommended For | In-depth investigation of heterogeneous populations, novel subtype discovery | Initial data exploration, projects focused on major immune cell categories |

These models are built on a logistic regression framework, and for large training datasets, an SGD logistic regression approach using mini-batch training (e.g., 1,000 cells per batch) may be employed to enhance efficiency [14]. The models are serialized in a pickle format and can be easily inspected within Python to list all contained cell types and genes, providing transparency into the annotation process [1].

Experimental Protocols for Model Application

Workflow for Cell Type Annotation Using CellTypist

The general workflow for applying CellTypist models to an scRNA-seq dataset runs from data preparation through model selection, annotation, optional majority voting, and result interpretation.

Protocol 1: Model Inspection and Selection in Python

Before applying a model, it is good practice to inspect its contents. The following protocol details this process.

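Step 1: Install and Import CellTypist. Ensure CellTypist is installed in your Python environment, then import the necessary modules (import celltypist and from celltypist import models).

Step 2: Download the Models. Download the models of interest to your local machine, for example with models.download_models(model = ['Immune_All_Low.pkl', 'Immune_All_High.pkl']). The default storage directory is ~/.celltypist/.

Step 3: Inspect Model Content. Load a model (e.g., models.Model.load(model = 'Immune_All_Low.pkl')) and examine the cell types it contains (its cell_types attribute) to confirm it suits your research question.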

Protocol 2: Cell Annotation and Result Refinement

This protocol covers the core annotation process and the optional but recommended majority voting refinement.

Step 1: Prepare Input Data. Your input can be a raw count matrix in a format such as .csv, or an .h5ad file. For .h5ad files, the data should be log-normalized (to 10,000 counts per cell) using scanpy.pp.normalize_total(target_sum=1e4) and scanpy.pp.log1p() [8].

Step 2: Run Cell Annotation. Use the celltypist.annotate function to predict cell identities. The mode parameter allows you to choose between "best match" (the default) and a more conservative "probability match" strategy (mode = 'prob match').

Step 3: Apply Majority Voting Refinement. Majority voting (majority_voting = True) refines initial predictions by over-clustering the data and assigning the most frequent label within each local cluster to all its cells. This helps to reduce noise and improve consistency.

Step 4: Interpret and Export Results. The prediction results can be examined, exported as tables, or converted into an AnnData object for further analysis and visualization.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for scRNA-seq Annotation with CellTypist

| Item | Function / Description | Example / Note |
|---|---|---|
| CellTypist Python Package | Core software for automated cell type annotation. | Install via pip install celltypist [4]. |
| Pre-trained Models (Immune_All_Low/High) | Reference classifiers containing immune cell type signatures. | Downloaded via models.download_models() [1]. |
| Processed scRNA-seq Data | Query data in a compatible format for annotation. | A raw count matrix in .csv format or a log-normalized matrix in .h5ad format [8]. |
| Scanpy | Python library for single-cell data analysis. | Used for data pre-processing (normalization, PCA) and visualization (UMAP) [18]. |
| Jupyter Notebook / Python Script | Environment for executing the analysis workflow. | Provides reproducibility and a record of the analysis steps. |

Case Study: Application in a Multi-omic Immune Atlas

The utility of CellTypist's models is exemplified by their use in constructing the Human Immune Health Atlas, a high-resolution reference from over 100 healthy donors aged 11 to 65 years [18] [19]. In this large-scale study, researchers utilized multiple CellTypist models (Immune_All_High, Immune_All_Low, and Healthy_COVID19_PBMC) alongside Seurat's reference to guide expert annotation of 71 immune cell subsets [18]. This atlas, which includes 35 T cell, 11 B cell, 7 monocyte, and 6 NK cell subsets, was subsequently used to label cells in a longitudinal multi-omic study of immune aging [20] [19]. The project's analytical trace, from raw FASTQ files to the final annotated atlas, is documented within the Human Immune System Explorer (HISE) framework, showcasing a rigorous and reproducible application of these tools [18]. This real-world example demonstrates how CellTypist models can be integrated into a larger, high-throughput pipeline to generate biologically significant findings, such as identifying robust, non-linear transcriptional reprogramming in T cell subsets with age [20].

Troubleshooting and Best Practices

- Data Preprocessing is Critical: Ensure your query data is properly normalized. For the online interface, .csv files require raw counts, while .h5ad files require log-normalized data [8]. Mismatched normalization can lead to poor predictions.

- Choosing Between Low and High Resolution: Begin your analysis with the Immune_All_Low model for the most detailed view. If the results appear overly fragmented or noisy for your research question, switch to Immune_All_High to consolidate cells into broader, more robust populations.

- Leverage Majority Voting: Always enable majority voting (majority_voting = True) for your final analysis. This step is crucial for consolidating predictions within biologically meaningful clusters and mitigating the impact of outlier cells or technical artifacts [8].

- Biological Validation is Essential: Treat automated annotations as strong hypotheses. Use marker gene expression (e.g., via dot plots or feature plots in Scanpy) and prior biological knowledge to validate the assigned cell types, especially for rare or unexpected populations.
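Mismatched normalization is a common failure mode, so it can help to run a pre-flight check before calling celltypist.annotate on an .h5ad file. The hypothetical looks_log1p_normalized helper below undoes log1p with expm1 and tests whether each sampled cell sums to roughly 10,000. The tolerance and sample size are arbitrary assumptions.

```python
import numpy as np


def looks_log1p_normalized(X, target_sum=1e4, n_check=100, tol=1.0):
    """Heuristically check that rows of X look like log1p(normalize_total(target_sum)).

    Hypothetical helper: inspects the first `n_check` cells (rows). Accepts dense
    arrays or SciPy sparse matrices (anything with .toarray()).
    """
    sub = X[:n_check]
    if hasattr(sub, "toarray"):
        sub = sub.toarray()
    sub = np.asarray(sub, dtype=float)
    if (sub < 0).any():
        return False  # log1p of non-negative counts is never negative
    totals = np.expm1(sub).sum(axis=1)  # undo log1p, then sum per cell
    return bool(np.all(np.abs(totals - target_sum) <= tol))
```

If looks_log1p_normalized(adata.X) returns False, run scanpy.pp.normalize_total(adata, target_sum=1e4) and scanpy.pp.log1p(adata) on the raw counts first. Do not apply these steps to data that has already been transformed.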

CellTypist's Role in the Single-Cell Analysis Workflow Pipeline

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological and medical research by enabling the exploration of transcriptomic profiles at individual cell resolution, revealing cellular heterogeneity and complex communication networks [21]. A critical step in scRNA-seq analysis is cell type annotation, which traditionally relied on manual expert knowledge, introducing subjectivity and variability [22]. CellTypist addresses these challenges as an automated cell type annotation tool specifically designed for scRNA-seq datasets, leveraging machine learning to provide rapid, precise classification of immune cell types and subtypes [4] [2].

This computational tool implements regularised linear models optimized via Stochastic Gradient Descent (SGD), balancing prediction accuracy with computational efficiency [4] [15]. Its training incorporates a comprehensive collection of immune cells from multiple tissues, creating a pan-tissue immune database that enables robust annotation across diverse biological contexts [2]. Unlike methods dependent on limited reference datasets, CellTypist's community-driven approach facilitates continuous knowledge expansion, allowing researchers to contribute new cell types and annotations [15].

Performance Characteristics and Quantitative Evaluation

Model Performance Metrics

CellTypist demonstrates high performance in cell type classification across multiple metrics. Validation studies reported precision, recall, and global F1-scores of approximately 0.9 for classification at both high- and low-hierarchy levels [2]. The tool's performance compares favorably against other label-transfer methods while maintaining minimal computational costs, making it suitable for large-scale datasets [2].

Table 1: Performance Metrics of CellTypist Models

| Metric | High-Hierarchy Model | Low-Hierarchy Model | Evaluation Context |
|---|---|---|---|
| Precision | ~0.9 | ~0.9 | Multi-tissue immune cell classification [2] |
| Recall | ~0.9 | ~0.9 | Multi-tissue immune cell classification [2] |
| F1-Score | ~0.9 | ~0.9 | Multi-tissue immune cell classification [2] |
| Training Cells | 360,000+ | 360,000+ | 16 tissues from 12 donors [2] |
| Cell Types Resolved | 32 | 91 | Initial model specifications [2] |
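To compute metrics like those in Table 1 on your own data, score the predictions against a trusted manual annotation. The sketch below computes precision, recall and F1 for each cell type, then averages them with equal weight per cell type (macro averaging). The cited study's averaging scheme is not stated here, so treat macro averaging as an assumption. scikit-learn's precision_recall_fscore_support performs the same computation and offers other averaging options.

```python
import numpy as np


def macro_precision_recall_f1(true_labels, pred_labels):
    """Macro-averaged precision, recall and F1 over the cell types in true_labels.

    Hypothetical helper: a cell type never predicted gets precision 0.
    """
    true = np.asarray(true_labels)
    pred = np.asarray(pred_labels)
    precisions, recalls, f1s = [], [], []
    for label in np.unique(true):
        tp = np.sum((pred == label) & (true == label))  # correctly labeled cells
        fp = np.sum((pred == label) & (true != label))  # wrongly given this label
        fn = np.sum((pred != label) & (true == label))  # cells of this type missed
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    return float(np.mean(precisions)), float(np.mean(recalls)), float(np.mean(f1s))
```

Macro averaging weights rare and abundant cell types equally. A single "global" score can therefore be pulled down by a few poorly resolved rare populations.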

Comparison with Alternative Approaches

When benchmarked against emerging annotation methods, CellTypist maintains distinct advantages. A 2025 study evaluating LLM-based approaches found that while tools like LICT (Large Language Model-based Identifier for Cell Types) showed promise, CellTypist provided more consistent performance across diverse tissue contexts [22]. Specifically, LLM-based methods demonstrated diminished performance when annotating less heterogeneous datasets, with consistency rates dropping to 39.4% for embryo data and 33.3% for fibroblast data compared to manual annotations [22].

Table 2: Cross-Tool Performance Comparison in Immune Cell Annotation

| Tool | Methodology | Strengths | Limitations | Best Application Context |
|---|---|---|---|---|
| CellTypist | Logistic regression with SGD | High precision (~0.9), fast computation, immune-focused | Limited non-immune cell types | Multi-tissue immune cell annotation [4] [2] |
| LICT | Multi-model LLM integration | Reference-free, objective credibility assessment | Lower consistency in low-heterogeneity data (~39%) | Scenarios requiring reference-free approach [22] |
| Manual Annotation | Expert knowledge | Incorporates domain expertise | Subjective, time-consuming, variable between experts | Small datasets with available expertise [22] |
| GPTCelltype | Single LLM (ChatGPT) | No reference data needed | Limited biological context adaptation | Preliminary annotations before refinement [22] |

Experimental Protocols for CellTypist Implementation

Data Preparation and Input Requirements

Proper data preparation is essential for optimal CellTypist performance. The tool accepts multiple input formats, each with specific requirements:

- Format Specifications: Input data can be provided as .csv, .h5ad, .txt, .tsv, .tab, or .mtx files [1]. For online analysis, only .csv and .h5ad formats are accepted [8].

- Matrix Orientation: Expression matrices can be provided with cells as rows and genes as columns, or the reverse by setting transpose_input = True [1].

- Normalization Requirements: For .csv files, raw count matrices are expected, which also reduces file size and upload burden. For .h5ad files, log-normalized expression matrices (normalized to 10,000 counts per cell) are required, processed by scanpy.pp.normalize_total(target_sum=1e4) followed by scanpy.pp.log1p() [8].

- Gene Format: Gene symbols should be used as identifiers in the expression matrix [8].
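The normalization rule above scales each cell to 10,000 total counts and then takes log1p. This NumPy sketch shows what scanpy.pp.normalize_total(target_sum=1e4) followed by scanpy.pp.log1p does to a dense cells x genes matrix. In practice the Scanpy calls are preferable, and log_normalize is a hypothetical name.

```python
import numpy as np


def log_normalize(counts, target_sum=1e4):
    """Scale each cell (row) to `target_sum` total counts, then apply log1p.

    Hypothetical NumPy equivalent of normalize_total + log1p for a dense
    cells x genes matrix.
    """
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    # Cells with zero total counts keep all-zero rows instead of dividing by zero.
    scale = np.divide(target_sum, totals, out=np.zeros_like(totals), where=totals > 0)
    return np.log1p(counts * scale)
```

For a genes x cells table, transpose the input first (log_normalize(counts.T)). Alternatively, keep raw counts in the file and pass transpose_input=True to celltypist.annotate.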

Model Selection and Configuration

CellTypist provides multiple pre-trained models optimized for different annotation contexts:

- Immune_All_Low.pkl: Recommended starting point for immune cell types, containing low-hierarchy (high-resolution) cell types and subtypes [14].

- Immune_All_High.pkl: Alternative model with high-hierarchy (low-resolution) immune cell types [14].

- Custom Models: User-trained models for specialized applications [15].

Cell Annotation Workflow

The core annotation process transfers cell type labels from a reference model to the query data.
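Conceptually, label transfer with a linear classifier scores each query cell against every reference cell type and keeps the highest score. The NumPy sketch below illustrates that idea with hypothetical coefficient and intercept arrays. It is not CellTypist's internal code, which (as an assumption here) also standardizes query genes using statistics stored in the model before scoring.

```python
import numpy as np


def transfer_labels(X, coef, intercept, cell_types):
    """Best-match label transfer with a one-vs-rest linear classifier (sketch).

    X: cells x genes, assumed already scaled the way the model expects.
    coef: cell_types x genes weights; intercept: one bias per cell type.
    Returns the decision matrix and the top-scoring label for each cell.
    """
    decision = np.asarray(X) @ np.asarray(coef).T + np.asarray(intercept)
    best = np.asarray(cell_types)[decision.argmax(axis=1)]
    return decision, best
```

The genes in X must be ordered the same way as the model's features. This ordering is one reason gene symbol mismatches between query and model degrade predictions.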

Result Interpretation and Validation

CellTypist generates multiple output matrices requiring different interpretation approaches:

- predicted_labels: Primary annotation results, including over-clustering information and majority-voting refined labels [8].

- decision_matrix: Continuous scores representing each cell's similarity to reference cell types [8].

- probability_matrix: Transformed probabilities (via sigmoid function) for each cell type assignment [8].
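The relationship between decision_matrix and probability_matrix can be illustrated with pandas. The sigmoid maps each raw score to a value between 0 and 1 independently for each cell type, so probabilities across cell types need not sum to 1. The helper names are hypothetical. Using the top probability as a conf_score analogue is an assumption about how CellTypist reports confidence in best match mode.

```python
import numpy as np
import pandas as pd


def decision_to_probability(decision_matrix):
    """Apply the logistic sigmoid element-wise to a cells x cell_types DataFrame."""
    return 1.0 / (1.0 + np.exp(-decision_matrix))


def best_match_with_confidence(probability_matrix):
    """Top label per cell and its probability (hypothetical conf_score analogue)."""
    return pd.DataFrame({
        "predicted_labels": probability_matrix.idxmax(axis=1),
        "conf_score": probability_matrix.max(axis=1),
    })
```

The sigmoid is monotonic, so the best-scoring cell type is the same whether it is taken from the decision matrix or the probability matrix. Only the scale of the scores changes.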

Integration with Single-Cell Analysis Workflows

Workflow Integration Diagram

The following diagram illustrates CellTypist's role within a comprehensive single-cell analysis pipeline:

Majority Voting Mechanism

CellTypist's majority voting refinement improves annotation accuracy by leveraging transcriptional similarity among cells.
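The voting step can be sketched with pandas. Given per-cell predictions and an over-clustering, every cell inherits its cluster's most frequent label. This illustrates the idea rather than reproducing CellTypist's implementation. The min_prop cutoff and the "Heterogeneous" fallback loosely mirror the min_prop option of celltypist.annotate, and majority_vote is a hypothetical name.

```python
import pandas as pd


def majority_vote(predicted_labels, over_clusters, min_prop=0.0):
    """Relabel every over-cluster with its most frequent predicted label.

    Clusters whose dominant label covers less than `min_prop` of their cells
    are labeled "Heterogeneous" instead.
    """
    df = pd.DataFrame({"label": list(predicted_labels), "cluster": list(over_clusters)})
    voted = {}
    for cluster, group in df.groupby("cluster"):
        counts = group["label"].value_counts()  # sorted from most to least frequent
        top_label, top_count = counts.index[0], counts.iloc[0]
        voted[cluster] = top_label if top_count / len(group) >= min_prop else "Heterogeneous"
    return df["cluster"].map(voted).tolist()
```

Isolated mislabeled cells are overruled by their neighbors in this scheme. The trade-off is that a genuinely rare population sharing a cluster with a larger one can be absorbed, which is why raising min_prop can help surface mixed clusters.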

Table 3: Key Research Reagent Solutions for CellTypist Implementation

| Resource Category | Specific Solution/Format | Function in Workflow | Implementation Notes |
|---|---|---|---|
| Input Data Formats | .h5ad (AnnData) | Preferred format for Python workflow | Contains log-normalized expression matrix [8] |
| | .csv (raw counts) | Alternative for online interface | Accepted by the web-based CellTypist interface [4] |
| Reference Models | Immune_All_Low.pkl | High-resolution immune cell annotation | Recommended starting point [14] |
| | Immune_All_High.pkl | Lower-resolution immune cell annotation | Alternative for broader classification [14] |
| Software Dependencies | Python 3.6+ | Core programming environment | Required for local installation [4] |
| | Scanpy | Single-cell analysis ecosystem | Enables seamless data exchange [1] |
| | NumPy/SciPy | Mathematical operations | Foundation for model calculations [15] |
| Computational Resources | CPU configuration | Model application | Minimum 8GB RAM recommended for large datasets |
| | Internet connection | Model download | Required for initial setup and updates [1] |

Advanced Applications and Integration Opportunities

Multi-Tissue Immune Cell Atlas Construction

CellTypist enables systematic resolution of immune cell heterogeneity across tissues, as demonstrated in a comprehensive analysis of 16 tissues from 12 donors [2]. This approach revealed tissue-specific features in mononuclear phagocytes, including distinct macrophage subpopulations in lung (alveolar macrophages expressing GPNMB and TREM2) and liver tissues [2]. The tool successfully classified 43 specific immune cell subtypes, including T cell subsets (CD4+ helpers, regulatory, cytotoxic), B cell compartments (naive, memory), and dendritic cell subsets (DC1, DC2, migDCs) [2].

Custom Model Development for Specialized Applications

For researchers investigating novel cell types or specialized tissues, CellTypist provides functionality for custom model development.
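A minimal sketch of custom model training follows. It assumes a reference AnnData whose .X is log1p-normalized to 10,000 counts per cell and whose .obs holds curated labels. celltypist.train and Model.write are CellTypist calls. train_custom_model, the label column name and the output path are hypothetical placeholders.

```python
def train_custom_model(adata, label_key, out_path, use_sgd=False, feature_selection=True):
    """Train and save a custom CellTypist model from a labeled reference AnnData.

    Assumes adata.X is log1p-normalized to 10,000 counts per cell and that
    adata.obs[label_key] holds the reference cell type labels.
    """
    if label_key not in adata.obs.columns:
        raise KeyError(f"adata.obs has no column {label_key!r}")
    import celltypist  # imported lazily; requires `pip install celltypist`

    model = celltypist.train(
        adata,
        labels=label_key,                     # column in adata.obs with cell type labels
        use_SGD=use_sgd,                      # SGD logistic regression for large references
        feature_selection=feature_selection,  # two-pass training restricted to top genes
        n_jobs=-1,                            # use all available CPUs
    )
    model.write(out_path)  # e.g. "my_model.pkl"
    return model
```

The saved file can then be passed by path, for example celltypist.annotate(query_adata, model="my_model.pkl"), in the same way as a built-in model.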

Integration with Spatial Transcriptomics

CellTypist annotations can be projected onto spatial transcriptomics data to resolve cellular organization patterns [21]. This integration enables mapping of immune cell distributions within tissue architecture, revealing spatial relationships between different immune subsets and their tissue microenvironments.

Troubleshooting and Technical Considerations

Common Implementation Challenges

- Gene Name Mismatches: Ensure consistent gene symbol nomenclature between query data and reference models [1].

- Batch Effects: While CellTypist demonstrates robustness to batch effects, pronounced technical variability may require integration methods prior to annotation [2].

- Novel Cell Types: The probability match mode (mode = 'prob match') helps identify cells lacking clear reference counterparts [1].

- Computational Resources: Large datasets (>100,000 cells) may benefit from command-line implementation to optimize memory usage [4].
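Gene name mismatches can be caught before annotation by comparing the query's gene symbols with the model's features. The hypothetical gene_overlap helper below does an exact, case-sensitive comparison, so Ensembl IDs or differently capitalized symbols will appear as missing.

```python
def gene_overlap(query_genes, model_features):
    """Report how many model features are present in the query data (hypothetical helper)."""
    query = set(query_genes)
    features = list(model_features)
    shared = [g for g in features if g in query]
    missing = [g for g in features if g not in query]
    return {
        "n_shared": len(shared),
        "fraction_of_model": len(shared) / len(features) if features else 0.0,
        "missing_preview": missing[:10],  # first few absent model genes
    }
```

A typical call is gene_overlap(adata.var_names, model.features), with the model loaded through models.Model.load. A low fraction_of_model suggests converting identifiers to gene symbols before annotating.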

Quality Assessment Metrics

- Confidence Scores: Examine the conf_score field in results to identify low-confidence predictions requiring manual verification [1].

- Marker Gene Expression: Validate annotations by checking expression of canonical marker genes for assigned cell types [2].

- Cross-Validation: Employ dataset splitting or cross-dataset validation to assess annotation stability [2].
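Summarizing low-confidence predictions per label shows where manual verification is most needed. The sketch assumes obs is the .obs table of the AnnData returned by predictions.to_adata(), with majority_voting and conf_score columns. flag_for_review is a hypothetical name, and the 0.5 cutoff is an arbitrary illustration, not a CellTypist default.

```python
import pandas as pd


def flag_for_review(obs, label_col="majority_voting", conf_col="conf_score", threshold=0.5):
    """Count low-confidence cells per label, worst labels first (hypothetical helper)."""
    low = obs[conf_col] < threshold
    summary = pd.DataFrame({
        # observed=True skips unused categories when labels are categorical
        "n_cells": obs.groupby(label_col, observed=True).size(),
        "n_low_conf": low.groupby(obs[label_col], observed=True).sum(),
    })
    summary["frac_low_conf"] = summary["n_low_conf"] / summary["n_cells"]
    return summary.sort_values("frac_low_conf", ascending=False)
```

Labels at the top of this table are good candidates for marker gene checks.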

CellTypist represents a robust, efficient solution for automated cell type annotation within single-cell RNA sequencing workflows, particularly for immune cell analysis. Its continuous model expansion and community-driven knowledge base position it as an increasingly valuable resource for the single-cell research community.

Cell Types, Subtypes, States, and Annotation Hierarchies

CellTypist is an automated cell type annotation tool for single-cell RNA sequencing (scRNA-seq) data that uses logistic regression classifiers optimized by a stochastic gradient descent algorithm [4] [1]. It represents a significant advancement in the field of cellular annotation by enabling rapid and consistent classification of immune cell types and subtypes without the subjectivity and time-intensive nature of manual annotation [23]. The platform operates through a global reference system that recapitulates cell type structure and biology across independent datasets, providing robust models that are both scalable and flexible for integration into existing analysis pipelines [4]. For researchers studying immune cells, CellTypist offers specially trained models with a current focus on immune sub-populations, allowing for accurate discrimination of closely related immune cell types [1].

The importance of automated annotation tools like CellTypist becomes evident when considering the limitations of manual annotation approaches, which can require 20 to 40 hours to manually annotate approximately 30 clusters in a typical single-cell dataset and are prone to subjective interpretation and inter-researcher variability [23]. Automated methods provide consistent results, enhance reproducibility, and significantly reduce analysis time while leveraging well-curated reference databases and computational algorithms [23]. CellTypist specifically addresses these challenges by implementing a supervised classification approach based on machine learning, where classifiers are trained using labeled reference scRNA-seq datasets and then applied to query datasets for cell type prediction [23].

Core Methodology of CellTypist

Algorithmic Foundation

CellTypist employs a regularized linear model with Stochastic Gradient Descent (SGD) to provide fast and accurate prediction of cell identities [4] [1]. The SGD optimization allows the model to efficiently handle large-scale scRNA-seq data while maintaining robust performance across diverse tissue types and experimental conditions. The model operates on raw count matrices (reads or UMIs) and requires gene expression data in either cell-by-gene or gene-by-cell format, supporting multiple file types including .txt, .csv, .tsv, .tab, .mtx or .mtx.gz [1]. A key aspect of the algorithmic implementation is the recommendation to include non-expressed genes in the input table as they provide important negative transcriptomic signatures that enhance the model's discriminatory power when compared against the reference model [1].

The prediction workflow in CellTypist offers two distinct modes for cell type assignment [1]:

- Best Match Mode (default): Each query cell is predicted into the cell type with the largest score/probability among all possible cell types. This approach works well for differentiating highly homogeneous cell types.

- Probability Match Mode: A more flexible approach where query cells are assigned to multiple cell types if they exceed a probability threshold (default: 0.5), or marked as "Unassigned" if they fail to pass the probability cutoff for any cell type. This mode accommodates cells with ambiguous identities or transitional states.
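The two assignment rules can be compared on a toy probability table. This pandas sketch illustrates the rules and is not CellTypist's code. It uses a strict "exceeds p_thres" comparison and joins multiple passing labels with "|"; both choices are assumptions about the exact output format. assign_labels is a hypothetical name.

```python
def assign_labels(prob, mode="best match", p_thres=0.5):
    """Turn a cells x cell_types probability DataFrame into labels (sketch)."""
    if mode == "best match":
        # Highest-probability cell type for every cell.
        return prob.idxmax(axis=1)
    if mode == "prob match":
        def _labels_above(row):
            hits = row[row > p_thres].index.tolist()  # every cell type above the cutoff
            return "|".join(hits) if hits else "Unassigned"
        return prob.apply(_labels_above, axis=1)
    raise ValueError("mode must be 'best match' or 'prob match'")
```

Consider a cell with probabilities 0.9 and 0.7 for two closely related T cell subsets. Best match silently picks one subset, while prob match reports both and so exposes the ambiguity.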

Model Architecture and Reference Databases

CellTypist employs a structured model architecture that includes both built-in and custom-trained models. The built-in models, such as Immune_All_Low.pkl and Immune_All_High.pkl, are specifically optimized for immune cell annotation and are regularly updated to incorporate the latest biological knowledge [1]. These models are distributed through a centralized repository, with each model file averaging approximately 1 megabyte in size, making them easily downloadable and manageable [1]. Users can access comprehensive information about available models through the models.models_description() function and download specific models or entire collections based on their research needs [1].

The model structure encapsulates detailed information about cell types and the features (genes) used for discrimination. Users can inspect any model by loading it as an instance of the Model class, which provides access to the complete set of cell types and genes/features contained within the model [1]. This transparency allows researchers to verify the biological relevance of the reference model before applying it to their data. By default, CellTypist stores these models in a folder called .celltypist/ within the user's home directory, though this location can be customized through environment variables [1].

Quantitative Performance Benchmarks

Comparison with Manual and Alternative Automated Methods

Recent benchmarking studies have demonstrated CellTypist's strong performance in automated cell type annotation. In comprehensive evaluations across diverse biological contexts, including normal physiology (PBMCs), developmental stages (human embryos), disease states (gastric cancer), and low-heterogeneity cellular environments (stromal cells), CellTypist and similar automated methods have shown consistent performance advantages over manual annotation approaches [22]. The tool's logistic regression framework combined with SGD optimization provides particularly robust performance for immune cell annotation, where it successfully discriminates between closely related cell subtypes [1].

Table 1: Performance Comparison of Cell Type Annotation Methods

| Method Category | Approach | Accuracy Range | Time Requirements | Consistency | Key Limitations |
|---|---|---|---|---|---|
| Manual Annotation | Expert-based marker inspection | Variable (subjective) | 20-40 hours for 30 clusters | Low inter-researcher consistency | Subjective, experience-dependent, time-consuming [23] |
| CellTypist | Logistic regression + SGD | High (immune cells) | Minutes to hours | High | Reference-dependent [1] |
| LLM-Based Methods (LICT) | Multi-model integration + talk-to-machine | 48.5-69.4% full match rate | Moderate | High | Performance varies by dataset heterogeneity [22] |
| sc-ImmuCC | Hierarchical + ssGSEA | 71-90% accuracy | Moderate | High | Specific to immune cells [24] |

Performance Across Dataset Types

CellTypist's performance demonstrates variability depending on the heterogeneity of the cell populations being analyzed. In highly heterogeneous datasets such as peripheral blood mononuclear cells (PBMCs) and gastric cancer samples, automated annotation tools typically achieve high accuracy with mismatch rates between 2.8% and 9.7% when compared to expert manual annotations [22]. However, in low-heterogeneity environments such as stromal cells in mouse organs or specific developmental stages in human embryos, the performance of all automated methods, including CellTypist, shows more variability, with match rates ranging from 43.8% to 48.5% for embryo and fibroblast data [22]. This pattern highlights the importance of dataset characteristics in determining the appropriate annotation approach and the potential need for method selection based on specific experimental contexts.

Table 2: Annotation Performance Across Biological Contexts

| Biological Context | Example Tissue/Cell Types | CellTypist Performance | Key Challenges | Recommended Approach |
|---|---|---|---|---|
| High Heterogeneity | PBMCs, Gastric Cancer | Mismatch rates: 7.5-9.7% [22] | Distinguishing closely related subtypes | Standard CellTypist models with best match mode |
| Low Heterogeneity | Embryonic cells, Stromal cells | Match rates: 43.8-48.5% [22] | Limited transcriptomic distinction | Ensemble methods + manual verification |
| Immune-specific | T cell subsets, B cell types | High accuracy for major subtypes [1] | Rare cell population detection | Specialized immune models + majority voting |
| Cross-tissue | Multiple organ systems | Recapitulates tissue-specific features [3] | Batch effects, technical variation | Batch correction + tissue-aware models |

Hierarchical Annotation Frameworks for Immune Cells

Conceptual Foundation of Annotation Hierarchies

Hierarchical annotation represents an advanced approach to cell type classification that mirrors the natural differentiation pathways of immune cells. This method organizes cell identities in a tree-like structure, with broad categories at higher levels (e.g., lymphoid vs. myeloid cells) and progressively finer subdivisions at lower levels (e.g., CD4+ T cell subsets) [24]. The hierarchical framework is particularly valuable for immune cells given their extensive diversity and lineage relationships, enabling more accurate and biologically meaningful annotations that capture both major cell types and specialized subtypes [24] [25]. Tools like sc-ImmuCC implement this approach through a three-layer hierarchy that can annotate nine major immune cell types and 29 cell subtypes, significantly improving annotation granularity compared to flat classification systems [24].

The power of hierarchical annotation lies in its ability to model the developmental continuum of immune cells while maintaining discrete classification categories that are practical for downstream analysis. This approach acknowledges that cells exist along differentiation trajectories rather than in strictly discrete categories, while still providing defined reference points for consistent annotation across datasets [26]. For T cells specifically, hierarchical frameworks have demonstrated the capacity to identify 46 reproducible gene expression programs (GEPs) reflecting core T cell functions including proliferation, cytotoxicity, exhaustion, and effector states, far exceeding the resolution of traditional clustering-based approaches [26].

Implementation in CellTypist

CellTypist implements hierarchical annotation through its majority voting feature, which refines initial cell-level predictions by considering the consensus of cells within clusters [4] [1]. This approach operates as a two-tiered hierarchy: first, individual cells receive preliminary annotations based on their transcriptomic profiles; second, these predictions are contextualized within cluster-level patterns to generate more robust assignments. The majority voting process significantly enhances annotation accuracy by leveraging the biological principle that cells of the same type tend to cluster together in transcriptional space, thereby reducing spurious assignments based on technical noise or individual cell variability [1].

The tool also supports custom model training, allowing researchers to build hierarchical classifiers tailored to specific biological questions or tissue types [1]. This flexibility enables the creation of specialized annotation frameworks that can capture tissue-specific immune cell states or disease-associated alterations in cell identity. For complex immune cell landscapes, such as tumor microenvironments, CellTypist's ability to integrate multiple reference models provides a pseudo-hierarchical approach that can resolve subtle differences between activated, exhausted, and resident memory T cell subsets [3].

Experimental Protocols for Immune Cell Annotation

Standard CellTypist Workflow

The standard CellTypist workflow for immune cell annotation involves sequential steps from data preparation through final annotation and visualization. The following protocol outlines the key experimental steps for comprehensive immune cell profiling:

Data Preprocessing Requirements:

- Input data must be a raw count matrix (reads or UMIs) in either cell-by-gene or gene-by-cell format; .h5ad (AnnData) input is the exception and should be log-normalized to 10,000 counts per cell

- Recommended file formats: .csv, .h5ad, .txt, .tsv, .tab, or .mtx/.mtx.gz

- For .mtx formats, separate gene and cell files must be provided

- Non-expressed genes should be included as they provide negative transcriptomic signatures [1]

Cell Type Prediction Protocol:

- Import CellTypist and its models module

- Download and inspect appropriate immune cell models

- Perform cell type prediction with majority voting

- Extract and examine results

- Export results in multiple formats
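For count tables stored on disk, the prediction, extraction and export steps might look like the sketch below; model download and inspection work as in Protocol 1. celltypist.annotate (with transpose_input, gene_file and cell_file), predictions.to_table and predictions.to_plots are CellTypist calls. annotate_file, the output folder and the "immune_" file prefix are hypothetical.

```python
def annotate_file(input_file, out_dir, model_name="Immune_All_Low.pkl",
                  transpose_input=False, gene_file=None, cell_file=None):
    """Annotate a raw count table on disk, then export tables and plots (sketch).

    Text inputs (.csv/.txt/.tsv/.tab) are expected to hold raw counts; .mtx inputs
    additionally need files listing the gene and cell names.
    """
    if input_file.endswith((".mtx", ".mtx.gz")) and (gene_file is None or cell_file is None):
        raise ValueError(".mtx input needs both gene_file and cell_file")
    import celltypist  # imported lazily; requires `pip install celltypist`

    predictions = celltypist.annotate(
        input_file,
        model=model_name,
        transpose_input=transpose_input,  # True if the table is genes x cells
        gene_file=gene_file,
        cell_file=cell_file,
        majority_voting=True,
    )
    # Export labels, decision scores and probabilities as tables.
    predictions.to_table(folder=out_dir, prefix="immune_")
    # Plots of the predictions (see the CellTypist docs for how the embedding is obtained).
    predictions.to_plots(folder=out_dir, prefix="immune_")
    return predictions
```

For a cells x genes CSV of raw counts, call annotate_file("counts.csv", "results"); for an .mtx file, supply gene_file and cell_file as well. The returned object's predicted_labels attribute holds the per-cell table for inspection.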

Validation and Quality Control:

- Compare annotations across different models (e.g., Immune_All_Low vs. Immune_All_High)

- Verify confidence scores for critical cell populations

- Inspect decision matrices for ambiguous assignments

- Visualize annotations in low-dimensional space (UMAP/t-SNE) to check for coherent labeling [1]

Advanced Hierarchical Annotation for T Cell Subsets

For researchers focusing specifically on T cell biology, advanced hierarchical approaches provide enhanced resolution of T cell states and functions. The following protocol integrates CellTypist with specialized T cell annotation frameworks:

T Cell-Specific Annotation Workflow:

Primary T Cell Identification:

- Use CellTypist with immune models to isolate T cells from heterogeneous samples

- Apply probability match mode (p_thres = 0.5) to identify ambiguous cells

- Extract T cell populations for secondary analysis

Subset Resolution:

- Apply T cell-specific references or custom models

- Utilize hierarchical classification strategies

- Implement cross-dataset normalization when integrating multiple samples

Functional State Annotation:

- Integrate gene expression programs (GEPs) for activation, exhaustion, and differentiation states

- Employ tools like T-CellAnnoTator (TCAT) for predefined GEP quantification [26]

- Contextualize states within subset identities (e.g., exhausted CD8+ T cells vs. exhausted CD4+ T cells)

Validation and Biological Interpretation:

- Correlate transcriptomic annotations with surface protein expression (if available)

- Verify expected proportional relationships between subsets (e.g., CD4:CD8 ratios)

- Confirm presence of critical marker genes for assigned subsets
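The first step of this workflow, isolating T cells from CellTypist output, can be sketched as a label filter. The pattern below is a hypothetical example. Label wording differs between models, so check the pattern against model.cell_types before relying on it. t_cell_mask is a hypothetical name.

```python
import re


def t_cell_mask(labels, pattern=r"T cell|MAIT|Treg"):
    """Boolean mask of labels that look like T cell subsets (case-sensitive).

    The default pattern is illustrative only; note that it also matches
    "NKT cells". "Unassigned" cells from prob match mode are always excluded.
    """
    regex = re.compile(pattern)
    return [bool(regex.search(str(lab))) and str(lab) != "Unassigned" for lab in labels]
```

For example, mask = t_cell_mask(adata.obs["majority_voting"]) followed by t_adata = adata[np.array(mask)].copy() yields a T cell subset for the subset resolution and functional state steps. The pattern is case-sensitive so that labels such as "Mast cells" are not caught by "T cell".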

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Resources for Immune Cell Annotation

| Resource Category | Specific Tool/Resource | Function in Annotation Workflow | Key Features | Implementation in CellTypist |
|---|---|---|---|---|
| Reference Databases | Immune_All_Low.pkl | High-resolution annotation of immune cell subtypes | Low-hierarchy (fine-grained) cell types, ~1 MB size [1] | Default model for immune annotation |
| Reference Databases | Immune_All_High.pkl | Broad annotation of major immune cell types | High-hierarchy (coarse) cell types [1] | Secondary model for validation |
| Custom Models | User-trained classifiers | Tissue-specific or disease-specific annotation | Tailored to specific research contexts [1] | celltypist.train() function |
| Analysis Environments | Python 3.6+ | Computational environment for CellTypist | Required for package installation [1] | Base requirement |
| Data Formats | .h5ad, .csv, .mtx | Input data compatibility | Flexible data input options [1] | Multiple format support |
| Visualization Tools | UMAP/t-SNE plotting | Visual validation of annotations | Spatial confirmation of labels [1] | predictions.to_plots() |
| Validation Resources | Decision matrices | Assessment of prediction confidence | Quantitative confidence scoring [1] | predictions.decision_matrix |
| Benchmarking Datasets | PBMC references | Method validation and performance testing | Standardized evaluation [22] | Quality control application |

Integration with Emerging Technologies

The field of automated cell type annotation is rapidly evolving with advancements in both sequencing technologies and computational methods. CellTypist exists within an ecosystem of complementary tools and approaches that collectively enhance our ability to resolve immune cell identities with increasing precision. Recent developments in large language model (LLM) applications for cell type annotation demonstrate promising alternative approaches that can achieve 48.5-69.4% full match rates with manual annotations across diverse datasets [22]. Tools like LICT (Large Language Model-based Identifier for Cell Types) leverage multi-model integration and "talk-to-machine" strategies to improve annotation reliability, particularly for challenging cell populations with ambiguous identities [22].

Single-cell long-read sequencing technologies represent another frontier with significant implications for cell type annotation, as they enable isoform-level transcriptomic profiling that provides higher resolution than conventional gene expression-based methods [27]. These technical advances offer opportunities to redefine cell types based on splicing patterns and isoform usage, potentially leading to more precise classifications of immune cell states and functions. Integration of these multi-modal data sources with tools like CellTypist will likely enhance annotation accuracy and biological relevance, particularly for discriminating between closely related immune cell states that exhibit subtle transcriptional differences.

For T cell immunology specifically, specialized annotation frameworks like TCAT (T-CellAnnoTator) and STCAT have demonstrated the ability to identify reproducible gene expression programs (GEPs) reflecting activation states, functional specializations, and subset identities [26] [25]. These tools can complement CellTypist by providing deeper insights into T cell biology beyond basic subset classification, enabling researchers to connect cell identities with functional capacities and clinical implications. The integration of these specialized approaches with CellTypist's robust classification framework represents a powerful strategy for comprehensive immune cell analysis in research and drug development contexts.

The Pan Immune Atlas represents a comprehensive, cross-tissue compendium of immune cells, systematically characterizing the diversity and distribution of immune populations across the human body. This atlas provides a foundational resource for understanding immune cell heterogeneity in health and disease, enabling the deconvolution of complex immune responses from various tissues [28]. Built upon large-scale single-cell RNA sequencing (scRNA-seq) initiatives, it captures detailed transcriptional profiles of both common and rare immune subsets, establishing a reference framework for automated cell type annotation.

CellTypist is an automated cell type annotation tool for scRNA-seq data that leverages logistic regression classifiers optimized by stochastic gradient descent (SGD) [11]. Its integration with immune cell atlases, including the Pan Immune Atlas, allows researchers to accurately classify immune cell types and subtypes in query datasets by leveraging pre-trained models built on comprehensive reference data [14] [4]. This synergy between expansive immune cell references and robust classification algorithms has positioned CellTypist as a valuable tool for standardized immune cell annotation in research and clinical applications.

Atlas Composition and Quantitative Features

The Pan Immune Atlas encompasses diverse immune cell populations across multiple biological contexts. The following table summarizes key quantitative features of major immune atlases integrated with CellTypist.

Table 1: Quantitative Features of Immune Cell Atlases in CellTypist

| Atlas/Model Name | Number of Cell Types/Subsets | Biological Context | Key Features | Source/Reference |
|---|---|---|---|---|
| Human Immune Health Atlas (Allen Institute) | 71 immune cell subsets [20] | Peripheral blood mononuclear cells (PBMCs) from healthy donors (age 25-90) [20] [29] | Cross-age atlas; >1.8 million cells from 108 healthy donors; longitudinal flu vaccination data [29] | Nature (2025) [20] |
| CellTypist Pan Immune Atlas v2 | 98 low-hierarchy cell types [17] | Multiple tissues; pan-immune system coverage [17] | Includes high- and low-hierarchy cell types; mapped to Cell Ontology IDs [17] | CellTypist Wiki [17] |
| Cross-Tissue Atlas | 76 non-epithelial cell subsets (majority immune) [28] | 35 healthy human tissues; 2.3 million cells [28] | Identified 12 cross-tissue coordinated cellular modules (CMs) [28] | Nature (2025) [28] |

The cellular composition of these atlases reveals significant immune heterogeneity across tissues. For example, the cross-tissue atlas demonstrated that peripheral blood and immune organs (bone marrow, lymph nodes, spleen) are predominantly composed of immune cells, while reproductive tissues exhibit a higher proportion of stromal cells [28]. Furthermore, rare subsets like age-associated B cells (ABCs), constituting less than 1% of total B cells, were identified not only in expected tissues like the liver and spleen but also in unexpected locations such as the ureter and skeletal muscle [28].
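Tissue-level composition and rare-subset frequencies of this kind are simple proportions computed over per-cell annotations. The sketch below assumes each annotated cell is represented as a hypothetical `(tissue, compartment, label)` tuple (for example, assembled from the `.obs` columns of an annotated dataset); the function names are illustrative, and nothing here reproduces the cross-tissue atlas analysis itself.

```python
from collections import Counter, defaultdict


def composition_by_tissue(cells):
    """Return {tissue: {compartment: fraction}} from per-cell annotations.

    `cells` is an iterable of (tissue, compartment, label) tuples
    (a hypothetical layout chosen for this sketch).
    """
    counts = defaultdict(Counter)
    for tissue, compartment, _label in cells:
        counts[tissue][compartment] += 1
    result = {}
    for tissue, ctr in counts.items():
        total = sum(ctr.values())
        result[tissue] = {comp: n / total for comp, n in ctr.items()}
    return result


def subset_fraction(labels, subset, parent_members):
    """Fraction of cells labelled `subset` among all cells of the parent lineage."""
    parent = [label for label in labels if label in parent_members]
    return parent.count(subset) / len(parent) if parent else 0.0
```

With toy input of nine immune cells and one stromal cell from blood, `composition_by_tissue` reports an immune fraction of 0.9 for blood; `subset_fraction` gives the share of a rare subset, such as age-associated B cells, within all cells of the B lineage rather than within the whole dataset.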

CellTypist Methodology for Immune Cell Annotation

Core Algorithm and Model Training

CellTypist operates on a logistic regression framework, with the option to implement SGD learning for large training datasets [14]. The model training process involves:

- Traditional Logistic Regression: Used in most cases for standard dataset sizes.

- SGD Logistic Regression: Applied for large datasets (>100,000 cells) using mini-batch training (1,000 cells per batch) over 10-30 epochs [14].

- Regularization: Incorporates L2 regularization to prevent overfitting and improve model generalizability.

- Gene Selection: Utilizes curated marker genes from the Pan Immune Atlas for feature selection in classification models [17].
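The training options above can be illustrated with a small, dependency-free sketch of one-vs-rest logistic regression fitted by mini-batch stochastic gradient descent with an L2 penalty. This is not CellTypist's code (CellTypist delegates model fitting to scikit-learn, whose `SGDClassifier` handles multiclass problems with the same one-versus-all scheme); the gene columns, labels, learning rate and epoch count in the usage example are assumptions chosen so the toy problem converges quickly.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_ovr_sgd(X, y, classes, epochs=10, batch_size=1000, lr=0.1, l2=1e-4, seed=0):
    """Fit one binary logistic classifier per class with mini-batch SGD and L2.

    X: list of per-cell expression vectors; y: list of cell-type labels.
    Returns per-class weight vectors W and biases b.
    """
    rng = random.Random(seed)
    n_features = len(X[0])
    W = {c: [0.0] * n_features for c in classes}
    b = {c: 0.0 for c in classes}
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)  # new mini-batch composition every epoch
        for start in range(0, len(order), batch_size):
            batch = order[start:start + batch_size]
            m = len(batch)
            for c in classes:
                grad_w = [0.0] * n_features
                grad_b = 0.0
                for i in batch:
                    target = 1.0 if y[i] == c else 0.0
                    z = sum(w * x for w, x in zip(W[c], X[i])) + b[c]
                    err = sigmoid(z) - target  # derivative of the log-loss w.r.t. z
                    for j in range(n_features):
                        grad_w[j] += err * X[i][j]
                    grad_b += err
                for j in range(n_features):
                    # averaged log-loss gradient plus L2 penalty gradient (bias unpenalised)
                    W[c][j] -= lr * (grad_w[j] / m + l2 * W[c][j])
                b[c] -= lr * grad_b / m
    return W, b


def predict_proba(W, b, x):
    """Per-class sigmoid scores; in one-vs-rest these need not sum to 1."""
    return {c: sigmoid(sum(w * xi for w, xi in zip(W[c], x)) + b[c]) for c in W}
```

For example, training on a handful of toy cells described by three hypothetical marker columns (CD3E, MS4A1, CD14) with `batch_size=2` and `epochs=50` separates T cells, B cells and monocytes; the label with the highest score is taken as the prediction. Because each class has its own binary classifier, the scores are independent probabilities rather than a distribution that sums to one.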

Table 2: CellTypist Model Selection Guide for Immune Cell Annotation

| Model Name | Resolution | Cell Types Covered | Recommended Use Case |
|---|---|---|---|
| Immune_All_Low | Low-hierarchy (high-resolution) | Detailed immune subtypes | Fine-grained annotation of immune cell subsets [14] |
| Immune_All_High | High-hierarchy (low-resolution) | Major immune lineages | Rapid annotation of major immune cell classes [14] |
| Pan Immune Atlas v2 | Multi-level | 98 immune cell types | Comprehensive cross-tissue immune annotation [17] |

Annotation Workflow and Validation

Figure: CellTypist Immune Annotation Workflow

The workflow begins with quality-controlled scRNA-seq data as input, followed by selection of a model suited to the biological context of the query data [14]. For immune cell annotation, the Immune_All_Low or Immune_All_High models are typically recommended as starting points [14]. CellTypist then computes prediction probabilities for each cell; these can be refined through majority voting, which over-clusters the data and assigns each cluster of transcriptionally similar cells its dominant predicted label, improving annotation robustness [4].
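Majority voting can be sketched as follows. This is a simplified illustration, not CellTypist's implementation: the over-clustering (which CellTypist derives from a neighbourhood graph unless one is supplied through the `over_clustering` argument of `celltypist.annotate`) is passed in here as a precomputed list, and the `min_prop` threshold with a "Heterogeneous" fallback is modelled on the `min_prop` option of `celltypist.annotate`.

```python
from collections import Counter


def majority_vote(predicted, clusters, min_prop=0.0):
    """Assign each (over-)cluster the dominant per-cell label.

    predicted: per-cell labels; clusters: per-cell cluster IDs in the same order.
    A cluster whose dominant label covers less than `min_prop` of its cells
    is labelled "Heterogeneous".
    """
    if len(predicted) != len(clusters):
        raise ValueError("predicted and clusters must have the same length")
    by_cluster = {}
    for label, cluster in zip(predicted, clusters):
        by_cluster.setdefault(cluster, Counter())[label] += 1
    consensus = {}
    for cluster, counts in by_cluster.items():
        label, n = counts.most_common(1)[0]
        share = n / sum(counts.values())
        consensus[cluster] = label if share >= min_prop else "Heterogeneous"
    # Every cell inherits its cluster's consensus label
    return [consensus[cluster] for cluster in clusters]
```

Raising `min_prop` trades coverage for confidence: mixed clusters are flagged instead of being forced into their most frequent label.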

Experimental Protocols for Immune Cell Annotation

Basic Cell Annotation Protocol

Materials Required:

- Single-cell RNA sequencing data in matrix format (cells × genes)

- CellTypist installation (Python 3.6+ environment)

- Pre-trained immune cell model (downloaded automatically or manually)

Procedure:

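- Load the query data and import CellTypist in Python. CellTypist expects log1p-transformed expression normalized to 10,000 counts per cell.

- Download and select the appropriate immune model (e.g., Immune_All_Low for fine-grained subtypes or Immune_All_High for major lineages).

- Run cell type prediction, optionally enabling majority voting.

- Examine the predicted labels and confidence scores, then export the results.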
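The four steps of the basic protocol can be sketched as a single helper, assuming `scanpy` and `celltypist` are installed and the query is stored as an `.h5ad` file. The function name, its defaults and the output folder are hypothetical; the CellTypist calls (`models.download_models`, `models.Model.load`, `celltypist.annotate`, `predicted_labels`, `to_table`, `to_adata`) follow the package's documented API, but they should be checked against the installed version.

```python
import os


def annotate_with_celltypist(h5ad_path, model_name="Immune_All_Low.pkl",
                             majority_voting=True, out_dir="celltypist_results"):
    """Run the basic annotation protocol on one query file; return annotated AnnData."""
    # Deferred imports: defining the function does not require the packages.
    import scanpy as sc
    import celltypist
    from celltypist import models

    # 1. Load query data (cells x genes), already log1p-normalised
    #    to 10,000 counts per cell.
    adata = sc.read_h5ad(h5ad_path)

    # 2. Download the selected immune model into CellTypist's model folder, then load it.
    models.download_models(model=model_name)
    model = models.Model.load(model=model_name)

    # 3. Predict; majority voting over-clusters the cells and refines labels.
    predictions = celltypist.annotate(adata, model=model,
                                      majority_voting=majority_voting)

    # 4. Inspect per-cell labels, then export tables to out_dir.
    print(predictions.predicted_labels.head())
    os.makedirs(out_dir, exist_ok=True)
    predictions.to_table(folder=out_dir)
    return predictions.to_adata()
```

A call such as `annotate_with_celltypist("query_pbmc.h5ad", model_name="Immune_All_High.pkl")` (hypothetical file name) would run the coarse model instead; the returned AnnData carries the predicted labels, majority-voting labels and confidence scores in `.obs`.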

Advanced Protocol for Cross-Tissue Immune Annotation

For complex datasets involving multiple tissues or disease states, additional steps enhance annotation accuracy:

Model Customization:

- Combine multiple references to cover tissue-specific immune subsets

- Fine-tune models using transfer learning when reference data is limited

Hierarchical Annotation:

- First annotate major immune lineages using high-hierarchy models

- Then refine subsets using tissue-specific or state-specific models
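The hierarchical strategy can be sketched as a two-stage loop. The classifiers below are hypothetical rule-based stand-ins keyed on a few marker genes with arbitrary thresholds; in practice the coarse stage would be a high-hierarchy model (e.g., Immune_All_High) and each refinement a tissue- or state-specific model.

```python
def hierarchical_annotate(cells, coarse_model, refine_models):
    """Two-stage annotation: coarse lineage first, then lineage-specific refinement.

    coarse_model: callable(cell) -> lineage label.
    refine_models: dict mapping lineage -> callable(cell) -> subtype label.
    Cells whose lineage has no refinement model keep the coarse label.
    """
    results = []
    for cell in cells:
        lineage = coarse_model(cell)
        refine = refine_models.get(lineage)  # None if no finer model exists
        results.append((lineage, refine(cell) if refine else lineage))
    return results


# Hypothetical stand-ins for trained classifiers (thresholds on log-expression).
def toy_coarse_model(cell):
    if cell.get("CD3E", 0.0) > 1.0:
        return "T cells"
    if cell.get("MS4A1", 0.0) > 1.0:
        return "B cells"
    return "Myeloid"


def toy_t_refiner(cell):
    return "CD8+ T cells" if cell.get("CD8A", 0.0) > 1.0 else "CD4+ T cells"
```

Restricting each refinement model to one lineage keeps it from having to discriminate between unrelated cell types, which is the main motivation for annotating in stages.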

Validation Integration:

- Incorporate marker gene expression validation post-annotation

- Compare with orthogonal methods (e.g., flow cytometry) when available

Table 3: Essential Research Reagents and Computational Tools for Immune Cell Annotation

| Resource Type | Specific Tool/Reagent | Function/Purpose | Availability |
|---|---|---|---|
| Reference Atlas | Human Immune Health Atlas [29] | Gold-standard reference for PBMC immune cells | Allen Institute Portal |
| Annotation Software | CellTypist [4] | Automated cell type classification | Python package: pip install celltypist |
| Cell Ontology | Cell Ontology IDs [17] | Standardized cell type nomenclature | Cell Ontology |
| Pre-trained Models | Immune_All_Low, Immune_All_High [14] | Ready-to-use classifiers for immune cells | Built-in CellTypist models |
| Validation Tool | LICT (LLM-based Identifier) [22] | Objective annotation reliability assessment | Communications Biology |
| Data Visualization | CellTypist UMAP Visualization [29] | Visual assessment of annotation quality | Allen Institute visualization tools |

Applications in Immunobiology and Drug Development

The integration of Pan Immune Atlas data with CellTypist annotation enables several advanced applications in research and therapeutic development:

Aging Immune Studies

Longitudinal immune profiling using CellTypist with the Human Immune Health Atlas has revealed non-linear transcriptional reprogramming in T cell subsets with age, particularly in naive CD4+ and CD8+ T cells, with robust changes already detectable before advanced age [20]. These findings provide insights into age-related immune dysregulation that affects vaccine responses and infection susceptibility.

Cancer Immunotherapy Research

CellTypist facilitates the analysis of tumor-infiltrating lymphocytes (TILs) using immune signatures derived from atlas data. Recent pan-cancer analyses have identified prognostic TIL signatures, such as the Zhang CD8 TCS signature, which showed higher prognostic accuracy than the other signatures compared across multiple cancer types [30]. Such signatures can support better patient stratification for immunotherapy response.

Cross-Tissue Immune Coordination Analysis

The identification of coordinated cellular modules (CMs) across tissues reveals fundamental principles of immune organization. CellTypist can annotate these conserved cellular ecosystems, such as CM04 and CM05 enriched in primary and secondary immune organs, providing insights into systemic immune coordination and its dysregulation in disease [28].

Figure: Applications of CellTypist in Immune Research

Validation and Quality Control Framework

Ensuring annotation accuracy requires systematic validation approaches:

Objective Credibility Evaluation:

- Retrieve marker genes for predicted cell types

- Check their expression in the corresponding clusters; a prediction is treated as credible when more than four marker genes are each expressed in over 80% of the cluster's cells [22]

- Calculate confidence scores based on marker concordance
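The credibility criterion can be expressed as a small check over one cluster. This is a sketch under stated assumptions: expression is given as per-cell dictionaries, "expressed" means a value above a hypothetical `threshold` of 0, and the concordance score (fraction of supported markers) is one simple choice, not necessarily the scoring used by LICT [22].

```python
def marker_credibility(cluster_cells, markers, min_markers=4, min_frac=0.8, threshold=0.0):
    """Marker-based credibility check for one cluster's predicted cell type.

    cluster_cells: list of {gene: expression} dicts for the cells in the cluster.
    A marker is supported when it is expressed (> threshold) in more than
    `min_frac` of the cells; the prediction is credible when more than
    `min_markers` markers are supported.
    """
    n = len(cluster_cells)
    supported = [
        gene for gene in markers
        if sum(1 for cell in cluster_cells if cell.get(gene, 0.0) > threshold) / n > min_frac
    ]
    score = len(supported) / len(markers)  # simple concordance score in [0, 1]
    return len(supported) > min_markers, supported, score
```

For a putative B cell cluster, for instance, five broadly expressed canonical markers pass the check, whereas the same cluster evaluated against only four supported markers would be flagged for review.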

Multi-Model Integration:

- Combine predictions from multiple LLMs or classification algorithms

- Leverage complementary strengths of different approaches

- Resolve discrepancies through iterative refinement [22]
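One simple way to combine several annotators is a per-cell plurality vote that flags low-agreement cells for further refinement. The sketch assumes each model's labels have already been harmonised to a shared vocabulary (for example via Cell Ontology terms); the `min_agree` threshold and the "Unresolved" label are hypothetical choices.

```python
from collections import Counter


def consensus_labels(predictions_by_model, min_agree=2):
    """Combine per-cell labels from several annotators by plurality vote.

    predictions_by_model: dict mapping model name -> list of labels (same cell order).
    Cells where fewer than `min_agree` models share the top label are marked
    "Unresolved" for manual review or iterative refinement.
    """
    out = []
    for labels in zip(*predictions_by_model.values()):
        label, votes = Counter(labels).most_common(1)[0]
        out.append(label if votes >= min_agree else "Unresolved")
    return out
```

Cells left "Unresolved" are natural candidates for the marker-based checks and expert review described above.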

Benchmarking Against Gold Standards:

- Compare with manual expert annotations

- Validate using orthogonal methods (e.g., protein markers)

- Assess reproducibility across technical replicates
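Agreement with manual expert annotations is commonly summarised as overall accuracy plus per-label recall. The sketch assumes predicted and reference labels share the same vocabulary and cell order; the function name is illustrative.

```python
from collections import Counter


def agreement_report(predicted, reference):
    """Overall accuracy and per-reference-label recall against expert annotations."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    correct = sum(p == r for p, r in zip(predicted, reference))
    totals = Counter(reference)
    hits = Counter(r for p, r in zip(predicted, reference) if p == r)
    # Recall per expert label: fraction of those cells the tool labelled identically
    per_label = {label: hits[label] / n for label, n in totals.items()}
    return correct / len(reference), per_label
```

Per-label recall matters because overall accuracy can look high while a rare subset is consistently mislabelled.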

This multi-layered validation framework addresses the limitations of both manual annotations (subjectivity, inter-rater variability) and automated methods (reference bias, technical artifacts), ensuring robust and biologically meaningful cell type assignments [22] [31].

Future Directions and Development

The continued expansion of Pan Immune Atlas resources and CellTypist capabilities includes several promising directions:

- Temporal Dynamics Integration: Incorporating longitudinal immune profiling data to model immune changes across lifespan and disease progression [20]

- Multi-omic Expansion: Integrating transcriptomic, proteomic, and epigenetic data for multi-modal cell type definition

- Spatial Context Integration: Combining single-cell resolution with spatial positioning information from emerging spatial transcriptomics technologies [28]

- Automated Ontology Alignment: Enhanced mapping to standardized cell ontologies to improve annotation consistency and interoperability [17]

These developments will further establish CellTypist as an essential tool for leveraging comprehensive immune cell atlases in basic research, translational studies, and therapeutic development.

Integration with Cell Ontology for standardized cell type identification

The advent of high-throughput single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to characterize cellular heterogeneity at unprecedented resolution. However, this technological advancement has introduced a significant challenge: the inconsistent annotation of cell types across different studies, tissues, and laboratories. The Cell Ontology (CL) serves as a structured, controlled vocabulary for cell types, providing standardized terminology and definitions that enable data integration and comparison across experiments. The integration of Cell Ontology with automated cell type annotation tools represents a critical advancement toward building a unified Human Cell Atlas where cellular annotations can be consistently interpreted across the scientific community [32].

CellTypist, an automated cell type annotation tool for scRNA-seq data, has emerged as a key platform that facilitates this integration. By incorporating Cell Ontology identifiers into its annotation framework, CellTypist enables researchers to bridge the gap between computational predictions and biologically meaningful, standardized cell type nomenclature. This integration is particularly valuable for immune cell annotation, given the extensive diversity and functional specialization of immune cell populations across tissues and physiological states [17]. The harmonization of cell type annotations through ontological frameworks addresses a fundamental challenge in single-cell biology: reconciling differences in annotation resolution and technical biases across independently generated datasets [33].

Cell Ontology fundamentals and structure

The Cell Ontology is a community-based, structured vocabulary that represents a comprehensive collection of cell types across multiple species, with a particular emphasis on mammalian cell types. Built upon formal ontological principles, CL employs a directed acyclic graph structure in which cell types are connected through "is_a" and "part_of" relationships, creating a hierarchical organization from broad to specific cell type categories. This hierarchical structure enables annotations at multiple levels of resolution, from general categories (e.g., "immune cell") to highly specialized subtypes (e.g., "CD4-positive, alpha-beta memory T cell") [32].
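Because CL is a directed acyclic graph, fine-grained annotations can be rolled up to a coarser resolution by walking `is_a` edges. The graph below is a hypothetical, heavily simplified fragment (plain term names, no IDs, and far fewer intermediate parents than the real ontology); a real workflow would load CL from its official OBO/OWL release.

```python
from collections import deque

# Hypothetical, simplified fragment of the CL is_a hierarchy (child -> parents).
IS_A = {
    "CD4-positive, alpha-beta memory T cell": ["CD4-positive, alpha-beta T cell"],
    "CD4-positive, alpha-beta T cell": ["T cell"],
    "T cell": ["lymphocyte"],
    "B cell": ["lymphocyte"],
    "lymphocyte": ["immune cell"],
    "immune cell": [],
}


def ancestors(term, is_a=IS_A):
    """All ancestors of `term` via is_a edges, nearest first (breadth-first)."""
    seen = []
    queue = deque(is_a.get(term, []))
    while queue:
        parent = queue.popleft()
        if parent not in seen:  # a DAG may reach the same parent by several paths
            seen.append(parent)
            queue.extend(is_a.get(parent, []))
    return seen


def roll_up(term, allowed, is_a=IS_A):
    """Map a term to itself or its nearest ancestor in `allowed`; None if none match."""
    if term in allowed:
        return term
    for parent in ancestors(term, is_a):
        if parent in allowed:
            return parent
    return None
```

For instance, rolling "CD4-positive, alpha-beta memory T cell" up to the set {"T cell", "B cell"} yields "T cell", which is how predictions at different resolutions can be compared on a common level.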

Each cell type in the CL is assigned a unique ontology identifier (e.g., CL:0000236 for B cells) and includes precise definitions, synonyms, and relationships to other cell types. This standardized approach facilitates computational reasoning and enables the integration of cell type information across different databases and analytical platforms. The CL is continuously updated through community curation efforts, incorporating new cell types as they are discovered and characterized through single-cell genomics and other experimental approaches [32].

For immune cells specifically, the CL encompasses the diverse lineages and functional states of the immune system, building upon decades of immunological research and classification systems such as the CD nomenclature established through the International Workshop on Human Leukocyte Differentiation Antigens [32]. This comprehensive coverage makes CL particularly suitable for standardizing annotations in immune cell-focused single-cell studies.

CellTypist's integration with Cell Ontology

Implementation of ontological standards

CellTypist incorporates Cell Ontology integration through several key mechanisms. The platform's model repository includes CL identifiers for the majority of cell types in its reference atlases, creating a direct mapping between computationally predicted labels and standardized ontological terms. This mapping enables consistent annotation across different datasets and analytical contexts [17]. For example, in the CellTypist Pan Immune Atlas v2, most low-hierarchy cell types are associated with specific CL identifiers, allowing predictions to be grounded in established biological definitions rather than dataset-specific nomenclature [17].
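Attaching CL identifiers to predictions amounts to a lookup from label to ID, with unmapped labels flagged for manual curation. The label strings and the small table below are illustrative assumptions: CL:0000236 (B cell) is the identifier cited above, and the other IDs should be checked against the current CL release and the mapping distributed with the chosen CellTypist model.

```python
# Hypothetical label -> CL ID table; verify IDs against the current CL release.
LABEL_TO_CL = {
    "B cells": "CL:0000236",   # B cell
    "T cells": "CL:0000084",   # T cell
    "NK cells": "CL:0000623",  # natural killer cell
}


def attach_cl_ids(labels, mapping=LABEL_TO_CL, missing="unmapped"):
    """Pair each predicted label with its CL identifier (or a placeholder)."""
    return [(label, mapping.get(label, missing)) for label in labels]


def unmapped_labels(labels, mapping=LABEL_TO_CL):
    """Sorted distinct labels that lack a CL mapping and need curation."""
    return sorted({label for label in labels if label not in mapping})
```

Keeping the unmapped labels explicit, rather than silently dropping them, makes it clear which predicted types still rely on dataset-specific nomenclature.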