Dataset Size and scFM Performance: A Practical Guide for Biomedical Researchers

Abstract

Single-cell foundation models (scFMs) represent a transformative advancement for analyzing cellular heterogeneity, yet their effective application is critically dependent on dataset size and quality. This article synthesizes the latest 2024-2025 research to provide a comprehensive framework for researchers and drug development professionals. We explore the foundational principles of scFMs, detailing how architectural choices and pretraining data volume impact model capability. The review systematically compares methodological approaches for diverse dataset scenarios, from large-scale atlas construction to resource-limited studies. We offer evidence-based troubleshooting strategies to overcome data sparsity, optimize feature selection, and mitigate batch effects. Finally, we present rigorous validation benchmarks and novel biological metrics to guide model selection, empowering scientists to make informed decisions that maximize analytical robustness and biological discovery across genomics, oncology, and clinical translation.

Understanding scFMs: Core Concepts and the Critical Role of Data Scale

Frequently Asked Questions (FAQs)

Q1: What is a single-cell foundation model (scFM), and how does it relate to transformer architectures?

A single-cell foundation model (scFM) is a large-scale deep learning model pretrained on vast datasets of single-cell omics data, capable of being adapted to a wide range of downstream biological tasks [1]. Inspired by advances in natural language processing (NLP), these models often use transformer architectures to process single-cell data [1]. In this analogy, an individual cell is treated like a sentence, and genes or genomic features (along with their expression values) are treated as words or tokens. The transformer's self-attention mechanism allows the model to learn complex relationships and dependencies between genes, helping to decipher the fundamental 'language' of cells [1].

Q2: My dataset is relatively small. Will a pretrained scFM still be beneficial for my analysis?

The utility of a pretrained scFM for small datasets is a key research question. Benchmark studies suggest that the decision to use a complex scFM versus a simpler model depends on factors like dataset size, task complexity, and available computational resources [2] [3]. For smaller datasets, leveraging the zero-shot embeddings from a model pretrained on millions of cells can sometimes improve performance by providing a biologically meaningful starting representation. However, evidence indicates that simpler machine learning models can be more adept at efficiently adapting to specific, small datasets, particularly under resource constraints [2] [3]. The following table summarizes considerations for model selection based on dataset size.

Table: Guidance on Model Selection Relative to Dataset Size

| Dataset Size | Recommended Approach | Rationale |
|---|---|---|
| Large (e.g., >100k cells) | Use and fine-tune a scFM. | Large datasets provide sufficient data for effective fine-tuning, allowing the model to adapt its broad knowledge to your specific task [1]. |
| Small (e.g., <10k cells) | Consider using zero-shot scFM embeddings or simpler baseline models (e.g., Seurat, scVI). | Simple models are less prone to overfitting on limited data. Zero-shot embeddings transfer knowledge without needing fine-tuning [2] [3]. |
| Medium | Evaluate scFMs against baselines; consider the roughness index (ROGI) for dataset-specific selection [2]. | Performance is variable; empirical testing on your data is crucial. The ROGI metric can help predict which model will perform best [2]. |

Q3: What are the most common technical challenges when applying scFMs, and how can I troubleshoot them?

Common challenges include managing the non-sequential nature of omics data, handling batch effects and data quality inconsistency, and the computational intensity of training and fine-tuning [1]. Furthermore, interpreting the biological relevance of the model's latent embeddings remains non-trivial [1].

Table: Troubleshooting Guide for Common scFM Challenges

| Problem | Potential Cause | Troubleshooting Steps |
|---|---|---|
| Poor performance on a downstream task (e.g., cell annotation) | Data quality issues; model not capturing relevant biology; task mismatch. | 1. Check quality control metrics for your input data [4]. 2. Verify that the model was pretrained on a relevant biological corpus (e.g., similar species, tissues). 3. Compare against a simpler baseline model to see if the scFM paradigm is appropriate [2]. |
| Inability to reproduce a published scFM's results | Data preprocessing differences; version mismatches; hyperparameter variations. | 1. Replicate the exact data preprocessing, tokenization, and normalization steps described in the original paper [1]. 2. Use standardized frameworks like BioLLM to ensure consistent model loading and evaluation [5]. |
| High computational resource demands | Large model size; inefficient fine-tuning. | 1. Consider using smaller variants of scFMs if available. 2. Employ parameter-efficient fine-tuning (PEFT) methods. 3. Use models that offer a "zero-shot" option to avoid fine-tuning altogether [2] [3]. |
| Difficulty interpreting model outputs or embeddings | "Black box" nature of deep learning models. | 1. Use attention analysis to identify genes that were important for a specific prediction [1] [2]. 2. Validate embeddings using ontology-informed metrics like scGraph-OntoRWR or LCAD to see if the model's cell relationships match prior biological knowledge [2] [3]. |

Q4: How do I choose the right scFM for my specific biological task?

There is no single scFM that consistently outperforms all others across every task [2] [3]. Your choice should be guided by the nature of your task (gene-level vs. cell-level), the required output, and the model's pretraining data. The table below benchmarks several prominent scFMs across different task types based on a comprehensive 2025 study [2] [3].

Table: Benchmarking of scFMs Across Different Task Categories

| Model Name | Primary Architecture | Strengths | Ideal For |
|---|---|---|---|
| scGPT [2] [5] | Transformer (Decoder) | Robust performance across diverse tasks (zero-shot & fine-tuning); multi-omics capability [2] [5]. | General-purpose applications, especially when analyzing multiple data modalities. |
| Geneformer [2] | Transformer (Encoder) | Strong performance on gene-level tasks and predicting perturbation effects [2]. | Studying gene-network dynamics and causal relationships. |
| scFoundation [2] [5] | Asymmetric Encoder-Decoder | Strong gene-level task performance; trained on a vast number of genes [2] [5]. | Tasks requiring a broad representation of the protein-coding genome. |
| UCE [2] | Transformer (Encoder) | Incorporates protein sequence information via ESM-2 embeddings [2]. | Exploring the link between genetic sequence and gene expression. |
| scBERT [2] [5] | Transformer (Encoder) | Early pioneering model for cell type annotation [2]. | Educational purposes or as a baseline; may be outperformed by newer, larger models on complex tasks [5]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key "Research Reagent Solutions" for scFM Workflows

| Item / Resource | Function / Description | Example Use in scFM Research |
|---|---|---|
| Public Data Repositories | Sources of large-scale, diverse single-cell data for model pretraining and benchmarking. | Platforms like CZ CELLxGENE, Human Cell Atlas, and GEO provide the "vast datasets" necessary for pretraining robust scFMs [1]. |
| Unified Software Frameworks | Tools that standardize access and evaluation of different scFMs. | The BioLLM framework provides standardized APIs for seamless integration and benchmarking of diverse scFMs, eliminating coding inconsistencies [5]. |
| Cell Ontologies | Structured, controlled vocabularies for cell types. | Used to create novel evaluation metrics like scGraph-OntoRWR, which measures if an scFM's learned cell relationships are consistent with established biological knowledge [2] [3]. |
| Tokenizer & Input Formatter | The method that converts raw gene expression data into a sequence of model tokens. | A critical preprocessing step; common strategies include ranking genes by expression level or binning expression values. This defines how the model "reads" a cell [1]. |
| Benchmarking Datasets | High-quality, labeled datasets with known biological ground truth. | Used to rigorously evaluate scFM performance on tasks like cell annotation, batch integration, and drug sensitivity prediction under realistic conditions [2] [3]. |

Experimental Protocols & Workflows

Protocol 1: Benchmarking scFM Performance on Cell Type Annotation

This protocol assesses an scFM's ability to correctly assign cell identity, a fundamental downstream task.

- Feature Extraction: Input your target single-cell RNA-seq dataset (e.g., from a new study) into the scFM and extract the zero-shot cell embeddings without any further model fine-tuning [2] [3].

- Classifier Training: Using a set of labeled, high-quality reference cells (e.g., from an atlas), train a simple classifier (e.g., a logistic regression or k-NN model) on the extracted embeddings.

- Prediction and Evaluation: Use the trained classifier to predict cell types on a held-out test set from your target dataset. Evaluate performance using standard metrics like accuracy.

- Biological Relevance Assessment: Implement advanced metrics for a deeper biological evaluation:

  - Lowest Common Ancestor Distance (LCAD): For any misclassified cells, calculate the ontological distance between the true and predicted cell type. A smaller LCAD indicates a less severe error (e.g., confusing two T cell subtypes vs. confusing a T cell with a neuron) [2] [3].

  - scGraph-OntoRWR: Measure the consistency between the cell-type relationships in the scFM's latent space and the known relationships in a formal cell ontology [2] [3].
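The LCAD idea can be illustrated with a minimal sketch. It assumes a tiny, hypothetical tree-shaped ontology (`PARENT`) and defines the distance as the number of edges from each term up to their lowest common ancestor, summed. The real Cell Ontology is a graph in which a term can have several parents, and the benchmark's exact formula may differ, so treat this as an illustration of the concept only.

```python
# Toy cell ontology as child -> parent edges (hypothetical, for illustration only).
PARENT = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "neuron": "cell",
}


def ancestors(term):
    """Return the path from a term up to the root, starting with the term itself."""
    path = [term]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path


def lca_distance(true_type, predicted_type):
    """Edges from each term up to their lowest common ancestor, summed."""
    true_path = ancestors(true_type)
    pred_path = ancestors(predicted_type)
    for steps_up_true, term in enumerate(true_path):
        if term in pred_path:
            return steps_up_true + pred_path.index(term)
    raise ValueError("terms share no common ancestor")


mild = lca_distance("CD4 T cell", "CD8 T cell")   # two T cell subtypes
severe = lca_distance("CD4 T cell", "neuron")     # unrelated lineages
print(mild, severe)
```

In this toy tree, confusing two T cell subtypes yields a smaller distance than confusing a T cell with a neuron, which is the ordering the metric is meant to capture.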

Protocol 2: Evaluating Data Integration and Batch Correction

This protocol evaluates how well an scFM can merge datasets from different sources while removing technical noise.

- Dataset Compilation: Combine two or more scRNA-seq datasets that profile similar biological systems but contain known batch effects (e.g., from different labs, patients, or sequencing platforms) [2].

- Embedding Generation: Process the combined, unintegrated data through the scFM to obtain a unified set of cell embeddings.

- Visualization and Quantitative Assessment:

  - Visualize the embeddings using UMAP or t-SNE. A successful integration will show cells grouping by cell type rather than by dataset of origin.

  - Quantify integration performance with the Local Inverse Simpson's Index (LISI): the integration variant (iLISI) measures how well batches are mixed, and the cell-type variant (cLISI) measures how well cell types remain separated.

  - Compare the scFM's performance against established batch correction methods like Seurat or Harmony [2].
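The quantitative step can be sketched with a simplified, unweighted version of the inverse Simpson's index. This is an assumption-laden illustration: the published LISI weights neighbors with a Gaussian kernel at a fixed perplexity, whereas the hypothetical `knn_inverse_simpson` helper below counts the k nearest neighbors equally. The embeddings and labels are synthetic.

```python
import math
from collections import Counter


def knn_inverse_simpson(embeddings, labels, k):
    """Per-cell inverse Simpson's index of `labels` among the k nearest neighbors."""
    scores = []
    for i, query in enumerate(embeddings):
        # Rank all other cells by Euclidean distance to the query cell.
        others = [j for j in range(len(embeddings)) if j != i]
        nearest = sorted(others, key=lambda j: math.dist(query, embeddings[j]))[:k]
        counts = Counter(labels[j] for j in nearest)
        simpson = sum((c / len(nearest)) ** 2 for c in counts.values())
        scores.append(1.0 / simpson)
    return scores


# Synthetic 2-D embeddings: two cell-type clusters, each containing both batches.
embeddings = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1),
              (5.0, 5.0), (5.1, 5.0), (5.0, 5.1), (5.1, 5.1)]
batches = ["A", "B", "A", "B", "A", "B", "A", "B"]
cell_types = ["T", "T", "T", "T", "B", "B", "B", "B"]

ilisi = knn_inverse_simpson(embeddings, batches, k=3)      # higher = batches better mixed
clisi = knn_inverse_simpson(embeddings, cell_types, k=3)   # near 1 = cell types kept apart
print(sum(ilisi) / len(ilisi), sum(clisi) / len(clisi))
```

A score of 1 means a neighborhood contains a single label, and the score rises toward the number of labels as the neighborhood becomes more evenly mixed.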

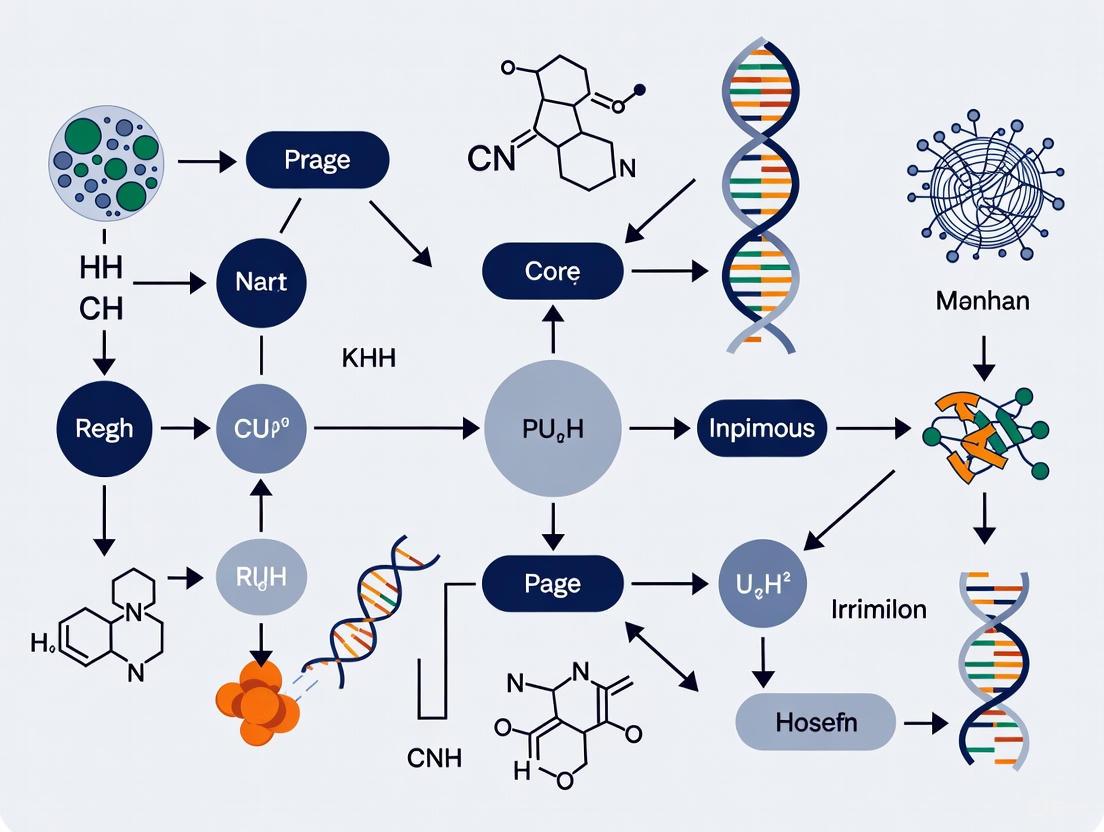

The following diagram illustrates the logical workflow for a typical scFM benchmarking process, incorporating the protocols above.

Diagram: Workflow for scFM Performance Benchmarking

The following table synthesizes key quantitative findings from a major 2025 benchmark study that evaluated six leading scFMs against traditional methods [2] [3]. This data is crucial for understanding the practical performance landscape under the constraints of dataset size and task type.

Table: Consolidated Benchmarking Results for scFM Performance

| Evaluation Dimension | Key Finding | Implication for Research |
|---|---|---|
| Overall Model Superiority | No single scFM consistently outperformed all others across every task [2] [3]. | Researchers should select models based on their specific task (gene-level vs. cell-level) and data characteristics, rather than relying on a single "best" model. |
| scGPT Performance | Demonstrated robust and competitive performance across all evaluated tasks, including both zero-shot learning and fine-tuning scenarios [2] [5]. | A strong candidate for a general-purpose, all-rounder model, especially for projects involving multiple types of analysis. |
| Gene-level Tasks | Geneformer and scFoundation showed particularly strong capabilities, benefiting from their effective pretraining strategies [2] [5]. | These models are preferred for tasks like predicting gene-gene interactions or the effects of genetic perturbations. |
| Performance vs. Simpler Models | Pretrained scFMs are robust and versatile, but simpler machine learning models (e.g., on HVGs) can be more efficient and effective for specific datasets, especially under resource constraints [2]. | For analyses of limited scope or with very small datasets, starting with a traditional method is a valid and computationally efficient strategy. |
| Basis for Model Selection | The Roughness Index (ROGI) can serve as a proxy to recommend an appropriate model in a dataset-dependent manner [2]. | Provides a data-driven method for model selection, helping to predict which scFM will create the most structured and analyzable latent space for a given dataset. |

Frequently Asked Questions

What is a single-cell foundation model (scFM)? A single-cell foundation model (scFM) is a large-scale artificial intelligence model, typically based on a transformer architecture, that is pretrained on vast datasets of single-cell omics data. Through self-supervised learning, it develops a fundamental understanding of cellular biology that can be adapted to various downstream tasks like cell type annotation, batch integration, and perturbation prediction [1].

Why is pretraining data volume so crucial for scFMs? Large and diverse pretraining datasets are essential for teaching the model the universal "language" of cells. Exposing the model to millions of cells from diverse tissues, species, and conditions allows it to learn generalizable patterns of gene expression and cellular function, which is the core of its emergent capabilities and robustness [2] [1].

My scFM underperforms on a specific task. Should I use a simpler model? Benchmarking studies reveal that while scFMs are robust and versatile, simpler machine learning models can sometimes be more efficient and effective, particularly for tasks focused on a specific dataset or under computational constraints [2] [6]. The choice depends on factors like dataset size, task complexity, and available resources [2].

Can scFMs accurately predict the effect of genetic perturbations? This is an active area of research, but current benchmarks suggest that the performance of scFMs for predicting transcriptome changes after genetic perturbation is still limited. Several studies have found that they often do not outperform deliberately simple linear baselines [6] [7]. This remains a significant challenge for the field.

Troubleshooting Guides

Problem: Poor Performance on Downstream Tasks

Potential Causes and Solutions:

Cause 1: Data Mismatch

The biological context of your fine-tuning data (e.g., a specific cancer type) is not well-represented in the model's pretraining corpus.

- Solution: Probe the model's zero-shot performance on your data type. If performance is low, consider using a model pretrained on a more relevant data compendium or supplementing your training with data from similar contexts [2].

Cause 2: Insufficient Fine-Tuning

The model has not been adequately adapted to your specific task.

- Solution: Ensure you are using an appropriate fine-tuning protocol. Leverage the model's latent embeddings and train a task-specific head on your data. The performance gain often arises because the pretrained latent space has a "smoother landscape," making it easier to train subsequent models [2].

Cause 3: Overwhelming Distribution Shift

Your experimental data is too far from the pretraining data distribution.

Problem: Inconsistent or Uninterpretable Results

Potential Causes and Solutions:

- Cause: Fragmented Internal Knowledge

The model's knowledge about an entity (e.g., a cell type) may be inconsistent due to variations in how it was represented across different pretraining datasets.

- Solution: Evaluate the biological relevance of the model's outputs using ontology-informed metrics. Novel metrics like scGraph-OntoRWR can measure the consistency of cell type relationships captured by the scFM with established biological knowledge, helping you assess if the results are meaningful [2].

Problem: High Computational Resource Demands

Potential Causes and Solutions:

- Cause: Large Model Size

scFMs are inherently large models, and fine-tuning them can be computationally intensive.

Benchmarking Data and scFM Performance

The table below summarizes key findings from recent benchmark studies evaluating scFMs against traditional methods. This data can guide your model selection.

Table 1: scFM Performance Across Common Tasks [2]

| Task Category | Example Tasks | Performance Summary | Key Insight |
|---|---|---|---|
| Cell-level Tasks | Batch integration, Cell type annotation | scFMs are robust and versatile tools for these applications. | No single scFM consistently outperforms all others across every task. |
| Gene-level Tasks | Drug sensitivity prediction | Performance varies; simpler models can be more adept at adapting to specific datasets. | Model selection must be tailored to dataset size and task complexity. |
| Perturbation Prediction | Predicting transcriptome changes after single/double genetic perturbations | Does not yet outperform simple linear baselines (e.g., an additive model of single-gene effects) [6] [7]. | Highlights the current limitations of scFMs for this complex task. |

Experimental Protocols

Protocol 1: Benchmarking scFM Embeddings for a New Dataset

This protocol helps you evaluate whether an scFM is suitable for your specific data.

- Feature Extraction: Extract zero-shot cell embeddings from the scFM for your dataset.

- Baseline Setup: Establish simple baselines (e.g., using Highly Variable Genes (HVGs), or embeddings from traditional methods like scVI or Harmony).

- Task Application: Use the extracted features to perform your downstream task (e.g., classify cell types using a simple classifier).

- Performance Evaluation: Compare the performance of the scFM-based model against your baselines using relevant metrics. For cell type annotation, consider using the Lowest Common Ancestor Distance (LCAD) metric to assess the biological severity of any misclassifications [2].

- Landscape Analysis (Advanced): Quantitatively estimate the "cell-property landscape roughness" in the pretrained latent space. A smoother landscape often correlates with better downstream task performance [2].
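The comparison step of this protocol can be sketched as below. This is a minimal illustration, not the benchmark's pipeline: `loo_knn_accuracy` is a hypothetical helper that scores any feature matrix with a leave-one-out nearest-neighbor classifier, and both feature sets are synthetic stand-ins, so the numbers say nothing about real models. In practice the two inputs would be the scFM's zero-shot embeddings and a baseline representation such as HVG-based features.

```python
import math
from collections import Counter


def loo_knn_accuracy(features, labels, k=1):
    """Leave-one-out accuracy of a k-nearest-neighbor majority vote."""
    correct = 0
    for i, query in enumerate(features):
        others = [j for j in range(len(features)) if j != i]
        nearest = sorted(others, key=lambda j: math.dist(query, features[j]))[:k]
        vote = Counter(labels[j] for j in nearest).most_common(1)[0][0]
        correct += vote == labels[i]
    return correct / len(features)


labels = ["T", "T", "T", "B", "B", "B"]

# Synthetic stand-ins for two candidate representations of the same six cells.
candidate_embeddings = [(0, 0), (0, 1), (1, 0.5), (9, 9), (9, 10), (10, 9.5)]
baseline_features = [(0, 0), (4, 4), (1, 0.2), (4.3, 4), (0.2, 1.1), (8, 8)]

candidate_acc = loo_knn_accuracy(candidate_embeddings, labels)
baseline_acc = loo_knn_accuracy(baseline_features, labels)
print(candidate_acc, baseline_acc)
```

Running the same simple classifier on every representation keeps the comparison about the features themselves and not about classifier capacity.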

Protocol 2: Evaluating Perturbation Effect Prediction

This protocol is based on benchmarks that found current models lacking [6] [7].

- Data Preparation: Use a dataset with known genetic perturbations and transcriptomic readouts (e.g., Norman et al. or Replogle et al. datasets).

- Model Fine-tuning: Fine-tune the scFM (e.g., scGPT, scFoundation) on a subset of single and double perturbations.

- Baseline Comparison: Compare the model's predictions on held-out double perturbations against a simple "additive baseline," which sums the logarithmic fold changes of the two corresponding single perturbations.

- Assessment: Evaluate the L2 distance between predicted and observed expression values for the top 1,000 highly expressed genes. Current benchmarks show the additive baseline is often superior [6].
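The additive baseline and the L2 comparison can be written in a few lines. The sketch below works in log-fold-change space on four hypothetical genes with made-up values. A real evaluation would use the top 1,000 highly expressed genes and would compute the same distance for the fine-tuned scFM's prediction.

```python
import math


def additive_baseline(lfc_a, lfc_b):
    """Predict a double perturbation by summing the two single-perturbation LFCs."""
    return [a + b for a, b in zip(lfc_a, lfc_b)]


def l2_distance(predicted, observed):
    """Euclidean distance between two equally long vectors."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)))


# Hypothetical log fold changes versus control for four genes.
lfc_gene_a = [1.0, 0.0, -0.5, 0.25]
lfc_gene_b = [0.5, -1.0, 0.0, 0.25]
observed_double = [1.5, -1.0, -0.5, 1.5]  # last gene responds non-additively

baseline_pred = additive_baseline(lfc_gene_a, lfc_gene_b)
baseline_error = l2_distance(baseline_pred, observed_double)
print(baseline_pred, baseline_error)
```

A model only adds value on this task if its error on held-out double perturbations is lower than `baseline_error` computed the same way.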

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Resources for scFM Research [2] [1]

| Item | Function |
|---|---|
| Public Data Platforms (e.g., CZ CELLxGENE) | Provide unified access to curated single-cell datasets comprising tens of millions of cells, serving as the primary raw material for pretraining. |
| Pretrained Model Weights | The core "reagent," containing the learned biological knowledge from pretraining, which can be fine-tuned for specific tasks. |
| Tokenization Strategy | The method for converting raw gene expression data into a sequence of discrete tokens (e.g., by ranking genes by expression) that the transformer model can process. |
| Benchmarking Frameworks (e.g., PertEval-scFM) | Standardized tools to objectively evaluate model performance on specific tasks like perturbation prediction, crucial for validating claims. |
| Ontology-Informed Metrics (e.g., scGraph-OntoRWR) | Specialized metrics that gauge whether the model's learned relationships align with established biological knowledge from cell ontologies. |

Frequently Asked Questions

Q1: What is the core functional difference between an encoder and a decoder in a transformer model? Encoders are designed to create rich, context-aware representations (embeddings) of the input text. They use bi-directional attention, meaning they consider all words in a sentence (both preceding and succeeding) to understand context. These embeddings are typically used for tasks like classification. In contrast, decoders are designed for text generation. They use masked multi-head self-attention, which prevents the model from attending to future words in a sequence, ensuring predictions depend only on known previous outputs. This auto-regressive property is key for tasks like translation or question answering [8] [9].
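The difference between the two attention patterns comes down to which positions each token is allowed to see. The sketch below builds both masks as plain nested lists, where 1 means "may attend" and 0 means "blocked". The function names are illustrative and do not come from any particular library.

```python
def bidirectional_mask(n):
    """Encoder-style mask: every position may attend to every position."""
    return [[1] * n for _ in range(n)]


def causal_mask(n):
    """Decoder-style mask: position i may attend only to positions 0..i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


for row in causal_mask(4):
    print(row)
```

The causal mask is lower-triangular, which is what keeps a decoder's prediction at each step independent of later tokens.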

Q2: My sequence-to-sequence model performs well on short sentences but poorly on long, complex sequences. What could be the issue? This is a common problem, often related to the bottleneck of the fixed-length context vector. In early RNN-based encoder-decoder models, the encoder had to compress all information from a potentially long input sequence into a single vector of fixed dimensionality, which can lead to information loss, especially for long sequences [10] [11] [12]. For such models, consider integrating an attention mechanism. This allows the decoder to dynamically focus on different parts of the input sequence at each decoding step, thereby mitigating the information bottleneck and significantly improving performance on long sequences [13]. Transformer-based models already include attention, so for them the more likely limits are the maximum context length and the range of sequence lengths seen during training.

Q3: When should I choose a hybrid encoder-decoder model like T5 or BART over a purely encoder-only or decoder-only architecture? The choice depends on your task's nature. Use encoder-only models (like BERT, RoBERTa) for tasks requiring deep understanding of the input, such as text classification, named entity recognition, or sentiment analysis [8] [9]. Use decoder-only models (like the GPT family) for classic text generation tasks, such as creative writing or open-ended question answering [8] [9]. Encoder-decoder hybrid models (like T5, BART) are particularly powerful for tasks that involve both a deep understanding of an input sequence and the generation of a new, related output sequence. These are ideal for text summarization, machine translation, and abstractive question answering, where there is a complex, non-sequential mapping between input and output [8] [14] [9].

Q4: During training, my autoregressive decoder model suffers from slow convergence and error propagation. Are there established techniques to address this? Yes, a standard technique is Teacher Forcing. During training, instead of feeding the decoder's own (potentially incorrect) previous prediction as the next input, the actual target token from the training dataset is provided. This helps accelerate training convergence and reduces error propagation by preventing the model from being exposed to its own mistakes during the early stages of training [12] [13]. It is common practice to use a scheduled sampling ratio to gradually transition from using teacher forcing to using the model's own predictions.
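A minimal sketch of the input-selection step behind teacher forcing and scheduled sampling follows. It makes a simplifying assumption: the model's predictions are passed in as a precomputed list, whereas in real training each prediction depends on the inputs chosen so far. The names `decoder_inputs` and `teacher_forcing_ratio` are illustrative.

```python
import random


def decoder_inputs(targets, predictions, teacher_forcing_ratio, rng, start_token="<s>"):
    """Choose, per step, whether the next input is the true token or the model's guess."""
    inputs = [start_token]
    for true_tok, pred_tok in zip(targets[:-1], predictions[:-1]):
        use_truth = rng.random() < teacher_forcing_ratio
        inputs.append(true_tok if use_truth else pred_tok)
    return inputs


targets = ["the", "cell", "divides", "</s>"]
predictions = ["a", "cell", "grows", "</s>"]  # stand-in for model outputs
rng = random.Random(0)

full_forcing = decoder_inputs(targets, predictions, 1.0, rng)   # always the true token
free_running = decoder_inputs(targets, predictions, 0.0, rng)   # always the model's guess
print(full_forcing)
print(free_running)
```

Scheduled sampling would lower `teacher_forcing_ratio` from 1.0 toward 0.0 over the course of training, so the decoder gradually learns to cope with its own outputs.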

Troubleshooting Guides

Problem: Model Generates Irrelevant or Factually Incorrect Outputs

This issue, often a form of "hallucination," can be critical in scientific applications.

- Possible Cause 1: Insufficient or Noisy Training Data.

- Solution: Curate a high-quality, domain-specific dataset. For research on scFM, ensure the training corpus is relevant and accurately represents the domain. Data cleaning and preprocessing are crucial.

- Possible Cause 2: Lack of Proper Context Alignment.

- Solution: For encoder-decoder models, verify the effectiveness of the encoder-decoder attention layer. This layer is responsible for helping the decoder align its output with relevant parts of the input sequence. Ensure the model is correctly learning to focus on the most pertinent input tokens [8] [13]. Fine-tuning on a task-specific dataset can strengthen these alignment patterns.

Problem: Training is Unstable or Diverging

This can manifest as exploding gradients or wild fluctuations in the loss curve.

- Possible Cause 1: Improperly Scaled or Unnormalized Activations.

- Solution: Leverage the "Add & Normalize" (Layer Normalization) components integral to the transformer architecture. These residual connections and normalization layers stabilize the training process and enable the training of very deep networks. Check that these components are correctly implemented [8] [9].

- Possible Cause 2: Suboptimal Learning Rate or Vanishing Gradients.

Problem: Poor Performance on Downstream Tasks After Pre-training

This is a key concern when adapting a pre-trained model to a specific task, such as analyzing single-cell data.

- Possible Cause: Mismatch between Pre-training Objective and Downstream Task.

- Solution: Carefully select a pre-trained model whose pre-training objective aligns with your goal. For instance, BART is pre-trained as a denoising autoencoder, where text is corrupted and the model learns to reconstruct the original. This makes it particularly strong for comprehension and conditional generation tasks like summarization and translation [14] [9]. Fine-tune the selected model on your specific, smaller domain dataset to adapt its knowledge.

Comparative Analysis of Model Architectures

The following table summarizes the key characteristics of different model paradigms to guide selection for scFM research.

Table 1: Comparison of Core Architectural Paradigms in Transformer Models

| Feature | Encoder-Only (e.g., BERT, RoBERTa) | Decoder-Only (e.g., GPT Series) | Encoder-Decoder Hybrid (e.g., T5, BART) |
|---|---|---|---|
| Core Function | Understanding & representing input text [8] [9] | Autoregressive text generation [8] [9] | Sequence-to-sequence mapping (understanding input & generating output) [8] [14] |
| Attention Mechanism | Bi-directional (full context) [8] | Masked (causal, only previous tokens) [8] [9] | Encoder: bi-directional; decoder: masked + cross-attention to encoder [8] |
| Primary Use Cases | Text classification, sentiment analysis, named entity recognition [9] | Text completion, open-ended generation, some Q&A [8] [9] | Machine translation, text summarization, abstractive Q&A [8] [14] |
| Pre-training Objective | Masked Language Modeling (MLM), Next Sentence Prediction [9] | Next Token Prediction [9] | Varied denoising objectives (e.g., text infilling, sentence shuffling) [14] |

Experimental Protocols for Model Evaluation

Protocol 1: Benchmarking Model Performance on Summarization Tasks

This protocol is relevant for evaluating how models condense large scientific texts.

- Dataset Preparation: Use a standard summarization dataset (e.g., CNN/DailyMail) as a proxy for scientific text condensation. For scFM-specific evaluation, curate an internal dataset of scientific abstracts and corresponding full-text conclusions.

- Model Fine-tuning: Select pre-trained encoder-decoder models like BART or T5. Fine-tune them on the training split of your chosen dataset. Use a sequence length appropriate for your documents.

- Evaluation Metric: Use ROUGE scores (Recall-Oriented Understudy for Gisting Evaluation) to automatically measure the overlap of n-grams and word sequences between the model-generated summary and the reference (human-written) summary [14]. A higher ROUGE score typically indicates better performance.
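To make the metric concrete, the sketch below computes ROUGE-1 (unigram overlap) by hand. It is a simplified illustration that assumes lowercase whitespace tokenization and non-empty inputs. Established ROUGE implementations add stemming, longer n-grams, and longest-common-subsequence variants, so reported scores should come from such a tool and not from this helper.

```python
from collections import Counter


def rouge1(candidate, reference):
    """Unigram-overlap recall, precision, and F1 between two non-empty strings."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count of each shared word.
    overlap = sum((cand & ref).values())
    recall = overlap / sum(ref.values())
    precision = overlap / sum(cand.values())
    f1 = 0.0 if overlap == 0 else 2 * precision * recall / (precision + recall)
    return {"recall": recall, "precision": precision, "f1": f1}


reference = "the model integrates batches well"
candidate = "the model integrates data"
scores = rouge1(candidate, reference)
print(scores)
```

Here three of the five reference words appear in the candidate, so recall is 3/5, while three of the four candidate words are in the reference, so precision is 3/4.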

Protocol 2: Probing Context Understanding with Masked Language Modeling

This tests a model's ability to understand biological context, which is crucial for scFM.

- Task Formulation: Present the model with a sentence from a scientific paper where a key technical term or gene name has been masked (e.g., "The expression of [MASK] is a marker for T-cell exhaustion.").

- Procedure: Use an encoder-only model like BERT or RoBERTa, which are pre-trained for this exact task. Pass the masked sentence to the model and analyze the top-k predicted tokens for the mask [9].

- Evaluation: The accuracy of the model in predicting the correct, contextually relevant token is a direct measure of its domain-specific understanding. This can be performed with both pre-trained and fine-tuned models to gauge the effect of domain adaptation.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Computational "Reagents" for Transformer-Based Research

| Item / Component | Function in Experimental Workflow |
|---|---|
| Pre-trained Model Weights (e.g., BERT-base, GPT-2, BART-large) | Foundational model parameters trained on large corpora; serves as the starting point for transfer learning and fine-tuning on specific scFM tasks [9]. |
| Tokenization Vocabulary (e.g., WordPiece, SentencePiece) | A dictionary that maps words or subwords to numerical IDs; critical for preprocessing raw text into a format the model can understand [8] [13]. |
| Attention Mask Matrix | A binary matrix that tells the model which tokens in the input sequence to pay attention to and which to ignore (e.g., padding tokens), ensuring valid computation [8] [9]. |
| Fine-Tuning Dataset (Domain-Specific) | A curated collection of labeled data specific to scFM; used to adapt the general knowledge of a pre-trained model to the nuances of the target scientific domain [9]. |
| Teacher Forcing Ratio | A hyperparameter that controls the probability of using the true previous token versus the model's own output during decoder training, crucial for stabilizing sequence generation [12]. |

Architectural Diagrams

The following diagrams illustrate the core workflows and logical relationships of the discussed architectures.

Diagram: Encoder-Decoder Sequence Flow

Diagram: Model Paradigm Comparison

Frequently Asked Questions (FAQs)

General Tokenization Concepts

What is tokenization in the context of single-cell foundation models (scFMs)?

Tokenization is the process of converting raw single-cell omics data into discrete units called tokens that can be processed by deep learning models. In single-cell biology, individual genes or genomic features along with their expression values are treated as the fundamental tokens, analogous to words in a sentence. These tokens serve as structured input for transformer-based architectures that power scFMs [1].

Why is tokenization challenging for single-cell RNA-seq data?

Single-cell gene expression data presents unique tokenization challenges because, unlike natural language, genes lack a natural sequential order. Additional complexities include high sparsity, high dimensionality, low signal-to-noise ratio, and technical variations between experiments [2] [1]. Researchers have developed various strategies to impose structure on this non-sequential biological data for model consumption.

Technical Implementation

What are the main components of tokenization input layers in scFMs?

Most scFMs incorporate three key components in their input layers [2]:

- Gene Embeddings: Unique vector representations for each gene identifier, analogous to word embeddings in NLP.

- Value Embeddings: Representations of gene expression levels, often processed through binning, normalization, or ranking.

- Positional Embeddings: Information about gene order since transformers require positional input, despite the inherent lack of natural sequence in genomic data.
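As a rough illustration of how these three components can be combined, the sketch below sums a gene embedding, a value embedding, and an optional positional embedding for each token. Summation is only one common way of combining them and is an assumption here, as are the toy vocabulary, the number of expression bins, the embedding width, and the helper names `make_table` and `embed_cell`; none of these are taken from a specific scFM.

```python
import random

random.seed(0)
EMBED_DIM = 4  # toy width; real models use much larger embeddings


def make_table(n_rows, dim=EMBED_DIM):
    """Return a randomly initialised lookup table (one vector per row)."""
    return [[random.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(n_rows)]


gene_vocab = {"CD3E": 0, "MS4A1": 1, "LYZ": 2}  # hypothetical gene vocabulary
gene_table = make_table(len(gene_vocab))        # gene embeddings
value_table = make_table(5)                     # one vector per expression bin (5 bins assumed)
position_table = make_table(8)                  # one vector per input position (max length 8 assumed)


def embed_cell(tokens, use_positions=True):
    """Embed a cell given as a list of (gene_symbol, expression_bin) pairs.

    The list order is the model's input order (e.g., ranked by expression).
    """
    embedded_tokens = []
    for pos, (gene, value_bin) in enumerate(tokens):
        gene_vec = gene_table[gene_vocab[gene]]
        value_vec = value_table[value_bin]
        vec = [g + v for g, v in zip(gene_vec, value_vec)]
        if use_positions:
            vec = [x + p for x, p in zip(vec, position_table[pos])]
        embedded_tokens.append(vec)
    return embedded_tokens


cell = [("LYZ", 4), ("CD3E", 2), ("MS4A1", 0)]
embedded = embed_cell(cell)
```

Setting `use_positions=False` mimics models that omit positional embeddings, in which case the order of the input genes no longer affects each token's vector.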

How do different scFMs handle gene ordering in their tokenization schemes?

Different models employ distinct gene ordering strategies, as shown in the table below:

Table: Gene Ordering Strategies in Popular scFMs

| Model Name | Gene Ordering Strategy | Input Genes | Positional Embedding |

|---|---|---|---|

| Geneformer | Ranking by expression levels | 2,048 ranked genes | ✓ |

| scGPT | Not specified | 1,200 HVGs | × |

| UCE | Ordering by genomic positions | 1,024 non-unique genes sampled by expression | ✓ |

| scFoundation | Not specified | ~19,000 protein-encoding genes | × |

| LangCell | Ranking by expression levels | 2,048 ranked genes | Information not available [2] |

Performance & Optimization

Does tokenization strategy impact scFM performance on downstream tasks?

Yes, tokenization significantly affects model performance. Benchmark studies reveal that no single scFM consistently outperforms others across all tasks, indicating that tokenization and architectural choices create different strengths and limitations. Performance depends on factors including dataset size, task complexity, and biological context [2].

What tokenization approach works best for small datasets?

For smaller datasets or resource-constrained environments, simpler machine learning models with established preprocessing steps like Highly Variable Genes (HVGs) selection may be more efficient than large foundation models. When using scFMs, models with gene ranking strategies (like Geneformer) or HVG-based approaches (like scGPT) may offer better performance on smaller datasets due to their focused input representation [2].

Troubleshooting Guides

Problem: Poor Model Performance on Specific Cell Types

Symptoms:

- Low accuracy in cell type annotation for specific populations

- Misclassifications that group biologically distinct cell types together

- Inconsistent performance across tissues or conditions

Solution:

Evaluate Tokenization Comprehensiveness:

- Verify that marker genes for problematic cell types are included in your model's vocabulary

- For models with fixed gene sets, check if any critical genes are missing from the input representation

Analyze Tokenization Strategy Compatibility:

- For heterogeneous cell populations, consider models that incorporate gene ranking within cells (e.g., Geneformer) as they may better capture cell-specific expression patterns

- For focused analyses, models using HVG selection (e.g., scGPT) may provide more targeted representation

Implementation Protocol:

- Utilize the scGraph-OntoRWR metric or similar ontology-based evaluation methods to assess whether misclassifications are biologically reasonable [2]

- Calculate the Lowest Common Ancestor Distance (LCAD) to measure ontological proximity between misclassified cell types [2]

- Compare performance across multiple tokenization strategies using the roughness index (ROGI) as a proxy for dataset-specific model suitability [2]

Problem: Handling Multi-Omic and Spatial Data

Symptoms:

- Inability to incorporate multiple data modalities

- Poor integration of spatial context

- Limited cross-modality transfer learning

Solution:

Advanced Tokenization Workflow for Multi-Modal Data:

Table: Multi-Omic Tokenization Specifications

| Data Modality | Token Components | Value Representation | Special Tokens |

|---|---|---|---|

| scRNA-seq | Gene ID + Expression value | Normalized counts, bins, or ranks | [CELL] token for cell-level context |

| scATAC-seq | Peak ID + Accessibility score | Binarized or normalized counts | [ATAC] modality indicator |

| Spatial Data | Coordinate information | Relative or absolute positions | [SPATIAL] modality indicator |

| Protein Data | Antibody ID + Abundance | Normalized protein expression | [ADT] modality indicator |

Problem: Computational Efficiency and Scaling Issues

Symptoms:

- Long training times

- Memory constraints during tokenization

- Inability to process large-scale datasets

Solution:

Gene Selection Strategies:

- Implement Highly Variable Genes (HVG) selection to reduce token sequence length

- Use gene ranking approaches to focus on most informative genes per cell

- Consider sampling-based methods for extremely large datasets

Optimized Tokenization Protocol:

- Step 1: Pre-filter genes using HVG selection (1,000-5,000 genes based on dataset size)

- Step 2: For each cell, rank genes by expression value and select top N genes (typically 1,000-2,000)

- Step 3: Apply value embedding through binning or normalization to reduce vocabulary complexity

- Step 4: Implement efficient positional encoding based on expression rank

- Step 5: Utilize memory-efficient attention mechanisms for long token sequences
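Steps 1 to 4 above can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions: plain variance stands in for a proper HVG procedure (dedicated tools model the mean-variance trend), binning is relative to each cell's maximum value, and the function names `select_hvgs` and `tokenize_cell` are hypothetical.

```python
from statistics import pvariance


def select_hvgs(matrix, n_hvg):
    """Step 1 (simplified): keep the n_hvg gene columns with the highest variance."""
    n_genes = len(matrix[0])
    variances = [pvariance([row[j] for row in matrix]) for j in range(n_genes)]
    top = sorted(range(n_genes), key=lambda j: variances[j], reverse=True)[:n_hvg]
    return sorted(top)


def tokenize_cell(row, genes, keep, top_n, n_bins):
    """Steps 2-4: rank expressed genes, keep the top_n, bin values, record rank."""
    expressed = [(j, row[j]) for j in keep if row[j] > 0]
    ranked = sorted(expressed, key=lambda item: item[1], reverse=True)[:top_n]
    if not ranked:
        return []
    max_val = ranked[0][1]
    tokens = []
    for rank, (j, val) in enumerate(ranked):
        # Bin relative to the cell's own maximum; the top value lands in the last bin.
        bin_id = min(n_bins - 1, int(val / max_val * n_bins))
        tokens.append((genes[j], bin_id, rank))  # rank doubles as the position index
    return tokens


genes = ["A", "B", "C", "D"]
matrix = [  # toy counts: rows are cells, columns are genes
    [0, 5, 1, 2],
    [0, 1, 9, 2],
    [0, 3, 4, 2],
]
keep = select_hvgs(matrix, n_hvg=2)
tokens = tokenize_cell(matrix[0], genes, keep, top_n=2, n_bins=4)
```

Step 5 (memory-efficient attention) is a property of the model implementation rather than of tokenization, so it is not shown.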

Research Reagent Solutions

Table: Essential Computational Tools for scFM Tokenization

| Tool/Resource | Function | Application Context |

|---|---|---|

| Transformer Architectures | Base model architecture for scFMs | Captures complex gene-gene interactions and dependencies |

| Gene Embedding Layers | Converts gene identifiers to vector representations | Provides semantic representation of gene identity |

| Positional Encoding Schemes | Adds information about gene order | Compensates for lack of natural sequence in biological data |

| Value Binning/Normalization | Processes continuous expression values | Reduces complexity of continuous expression data |

| Cellular Barcode Systems | Tags mRNA from individual cells | Enables single-cell resolution in tokenization |

| Unique Molecular Identifiers (UMIs) | Labels individual mRNA molecules | Distinguishes biological duplicates from amplification artifacts [15] |

| Cell Ontology Resources | Standardized cell type terminology | Provides biological ground truth for evaluation [2] |

Core Concepts and Troubleshooting FAQs

This section addresses fundamental questions on how the amount of training data influences the performance of single-cell Foundation Models (scFMs) and other machine learning models, providing clear, actionable guidance for researchers.

FAQ 1: What is the fundamental relationship between training data size and model performance?

The performance of machine learning models, including scFMs, typically improves as the size of the training dataset increases, following a power-law relationship [16] [17]. This means that initial performance gains are rapid as data is added, but the benefits diminish as the dataset grows very large, leading to a plateau in the learning curve [17]. For simpler machine learning models, performance may be less influenced by dataset size, especially if the model is well-specified with relevant features [18]. In contrast, complex deep learning models and foundation models generally require substantially more data to learn robust representations and avoid overfitting [19].
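One common way to write such a power-law learning curve is shown below. The parameterization is an assumption for illustration (the cited sources may use a different form): ε(n) is the test error at training set size n, a and b are fitted constants, and c is an irreducible error floor.

```latex
\varepsilon(n) = a\,n^{-b} + c, \qquad a > 0,\; b > 0,\; c \ge 0

\frac{d\varepsilon}{dn} = -a\,b\,n^{-(b+1)}
```

The derivative shrinks in magnitude as n grows, which is the diminishing-returns behavior described above: each additional cell reduces the error by less than the previous one, and the curve flattens toward c.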

FAQ 2: My scFM isn't performing well on a specific downstream task. Is more data the only solution?

Not necessarily. Before seeking more data, consider these troubleshooting steps:

- Check Data Quality: The axiom of "garbage in, garbage out" holds true. Noisy, biased, or low-quality data can be detrimental, and far larger volumes may be required to overcome these issues [19]. First, profile your data for statistical distribution, redundancy, and completeness.

- Assess Model Specification: For certain tasks, especially with less complex data interactions, a well-specified traditional machine learning model (e.g., with carefully selected interaction terms) can outperform a foundation model trained on the same data [18] [2]. Evaluate if your problem truly requires the complexity of an scFM.

- Leverage Transfer Learning: A key advantage of scFMs is their ability to be fine-tuned on smaller, task-specific datasets. You can leverage the universal biological knowledge the model learned during its large-scale pretraining, adapting it to your specific task with a relatively modest amount of high-quality data [19] [1].

FAQ 3: How do I estimate the amount of data needed for my project?

While there is no one-size-fits-all answer, several heuristics and methods can provide a starting point:

- The 10 Times Rule: A common rule-of-thumb is to have at least 10 examples for each feature or predictor variable in your model [19] [20]. For instance, if your model uses 1,000 highly variable genes (features), this rule suggests a minimum of 10,000 cells. Note that this was developed for simpler models and may be insufficient for deep neural networks.

- Consider Model Complexity: Deep neural networks, with their high parameter counts, demand substantially more data. Another heuristic is to budget dataset size as a function of trainable parameters, such as having 10-20 samples per parameter [19].

- Performance vs. Budget Trade-off: Empirical evidence suggests that it is often possible to retain 95% of a model's final performance by training on only a fraction (e.g., 5% to 30%) of a very large dataset, offering significant speedups in training and hyperparameter tuning [17].
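The arithmetic behind these heuristics is simple enough to script. The helper names below are hypothetical, and the defaults simply restate the numbers quoted above; they are starting points, not validated requirements for any particular scFM.

```python
def ten_times_rule(n_features, factor=10):
    """Minimum number of cells suggested by the '10 examples per feature' heuristic."""
    return n_features * factor


def samples_from_parameters(n_params, per_param=(10, 20)):
    """Range of samples suggested by the 'samples per trainable parameter' heuristic."""
    low, high = per_param
    return n_params * low, n_params * high


def subset_range(n_total, fractions=(0.05, 0.30)):
    """Subset sizes corresponding to the 5%-30% range reported to retain most performance."""
    return tuple(round(n_total * f) for f in fractions)


cells_for_hvgs = ten_times_rule(1_000)              # 1,000 HVGs
cells_for_small_net = samples_from_parameters(50_000)  # a 50k-parameter classifier head
pilot_sizes = subset_range(1_000_000)               # a 1M-cell atlas
```

For a model with millions of parameters the per-parameter heuristic quickly exceeds any realistic dataset, which is one reason fine-tuning a pretrained scFM is usually preferred over training from scratch.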

Table 1: Guidelines for Estimating Training Data Requirements

| Guideline/Method | Description | Best Suited For | Key Considerations |

|---|---|---|---|

| Power-Law Scaling [16] [17] | Performance improves as a power of the training set size. | General ML models and scFMs. | Initial gains are rapid; plateaus for large datasets. |

| 10 Times Rule [19] [20] | At least 10 examples per feature. | Simpler models (e.g., linear/logistic regression). | Often insufficient for modern deep learning models. |

| Factor of Model Parameters [19] | 10-20 samples per model parameter. | Deep Neural Networks. | Indirectly encodes model complexity into data needs. |

| Compute-Optimal Training (Chinchilla) [21] | Model size and training tokens should scale equally. | Large Language Models (LLMs). | For scFMs, the optimal ratio is an active research area. |

FAQ 4: For a fixed compute budget, should I prioritize a larger model or more data?

This is a critical trade-off. Early scaling laws suggested that model size was more important [21]. However, the Chinchilla paradigm shift demonstrated that for a fixed compute budget, model size and the amount of training data should be scaled equally to produce the highest quality model [21]. The "20:1 rule" (20 tokens per parameter) emerged as a baseline for LLMs, and recent models like Llama-3 have successfully pushed this ratio much higher, a trend known as "overtraining" [21]. This suggests that investing in more high-quality data for a given model size can be more effective than solely increasing parameters.
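To make the trade-off concrete, the sketch below splits a fixed compute budget between parameters and training tokens. It assumes the widely used approximation that training a transformer costs about 6 FLOPs per parameter per token, together with a fixed tokens-per-parameter ratio (20 by default, as in the rule above). Both come from the LLM literature; whether they transfer to scFMs, and how "tokens" should be counted for cells, is an open question. The function name is hypothetical.

```python
import math


def compute_optimal_split(flops_budget, tokens_per_param=20.0):
    """Split a FLOP budget C between parameters N and tokens D.

    Assumes C ~= 6 * N * D and D = tokens_per_param * N,
    which gives N = sqrt(C / (6 * tokens_per_param)).
    """
    n_params = math.sqrt(flops_budget / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


n_params, n_tokens = compute_optimal_split(1.2e20)
overtrained = compute_optimal_split(1.2e20, tokens_per_param=200.0)
```

Raising `tokens_per_param` for the same budget yields a smaller model trained on more data, which is the "overtraining" regime mentioned above.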

Experimental Protocols for Investigating Data Scaling

To empirically determine the data requirements for your specific scFM task, the following experimental protocol is recommended.

Protocol: Establishing a Learning Curve

Objective: To characterize the relationship between training set size and model performance for a specific scFM and downstream task.

Materials & Reagents:

Table 2: Research Reagent Solutions for Scaling Experiments

| Item/Solution | Function in Experiment |

|---|---|

| Base scFM (e.g., scGPT, Geneformer) | The foundation model to be fine-tuned and evaluated. |

| Benchmark Dataset (e.g., from CZ CELLxGENE) | A large, diverse, and high-quality single-cell dataset for creating training subsets. |

| Downstream Task Dataset | A separate, curated dataset with high-quality labels for evaluation (e.g., cell type annotation, drug sensitivity prediction). |

| Computational Cluster | Provides the necessary hardware (GPUs/TPUs) for multiple training runs. |

Methodology:

- Data Subsampling: From your large benchmark dataset, create multiple random subsets of increasing size (e.g., 1%, 5%, 10%, 25%, 50%, 100%).

- Model Training & Fine-tuning: Fine-tune the base scFM on each of the sampled subsets. Keep all other hyperparameters (learning rate, architecture, etc.) constant across runs to isolate the effect of data size.

- Performance Evaluation: Evaluate each fine-tuned model on the same, held-out downstream task dataset. Record relevant performance metrics (e.g., Accuracy, F1 Score, AUC [18]).

- Analysis: Plot the performance metric against the training set size to generate the learning curve. Fit a power-law function to the data to model the scaling relationship [17].
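The subsampling and analysis steps of this protocol can be sketched as follows. Two simplifications are assumed: subsets are nested (each smaller subset is contained in the larger ones, which reduces sampling noise between points on the curve), and the power law is fitted without an error floor by ordinary least squares in log-log space. The function names and the synthetic error values are illustrative only; in practice the errors come from evaluating each fine-tuned model.

```python
import math
import random


def nested_subsets(cell_ids, fractions, seed=0):
    """Shuffle once, then take prefixes so smaller subsets nest inside larger ones."""
    rng = random.Random(seed)
    shuffled = list(cell_ids)
    rng.shuffle(shuffled)
    return {f: shuffled[: max(1, round(f * len(shuffled)))] for f in fractions}


def fit_power_law(sizes, errors):
    """Fit error = a * n**(-b) via least squares on log(error) vs. log(n)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    covariance = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    spread = sum((x - x_mean) ** 2 for x in xs)
    slope = covariance / spread
    a = math.exp(y_mean - slope * x_mean)
    return a, -slope


fractions = [0.01, 0.05, 0.10, 0.25, 0.50, 1.00]
subsets = nested_subsets(range(1000), fractions)

# Synthetic stand-in for measured test errors (1 - accuracy) at each subset size.
sizes = [len(subsets[f]) for f in fractions]
errors = [2.0 * n ** -0.3 for n in sizes]
a, b = fit_power_law(sizes, errors)
```

If the measured curve clearly flattens at large sizes, a fit that includes an offset term (an irreducible error) is more appropriate than this two-parameter form.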

[Figure: workflow for the learning-curve experiment]

Advanced Protocol: Data Quality vs. Quantity

Objective: To determine if investing in data quality (e.g., cleaning, filtering) can be more effective than simply collecting more data.

Methodology:

- Create Two Tracks:

- Quantity Track: Fine-tune your scFM on progressively larger random samples from a raw, uncurated dataset.

- Quality Track: Fine-tune your scFM on samples of matching sizes that have undergone rigorous quality control (e.g., cell/gene filtering, batch effect correction, data augmentation [19]).

- Compare Learning Curves: Plot the learning curves for both tracks. If the Quality Track curve lies above the Quantity Track curve, it indicates that, at a given training set size, improving data quality yields better performance than adding more uncurated data.

The Scientist's Toolkit: Optimization and Advanced Strategies

When data is limited or expensive to acquire, consider these advanced strategies to maximize model performance.

Strategy 1: Data Augmentation and Synthesis

Automatically expand your training set by applying label-preserving transformations. For single-cell data, this can include generating realistic synthetic cell profiles using techniques like Generative Adversarial Networks (GANs) [19]. This exposes the model to greater variability without new wet-lab experiments.

Strategy 2: Leveraging Pre-trained Models and Transfer Learning

This is a cornerstone of the scFM approach. Instead of training a model from scratch, start with a model that has already been pre-trained on millions of cells from diverse tissues and conditions [2] [1]. This model has learned universal biological knowledge, which you can then transfer to your specific task by fine-tuning on your smaller, target dataset.

Strategy 3: Active Learning

Instead of passively using all available data, an active learning algorithm iteratively queries for the most informative data points to be labeled next [20]. This targeted approach ensures the model learns from the most effective examples, maximizing performance gains with minimal data.

Strategy 4: Data Efficiency through Architectural Innovation

The field is continuously evolving to improve data efficiency. The emerging "densing law" observes that the capability density of models (the performance per parameter) is growing exponentially over time [22]. This means newer, more efficient model architectures can match or exceed the performance of older, larger models with significantly less data and fewer parameters.

[Figure: conceptual relationship between the core strategies]

Frequently Asked Questions

Q1: What is the primary factor that determines whether I should use an scFM or a traditional model?

The decision hinges on a combination of dataset size, task complexity, and available computational resources. For large, diverse datasets and complex tasks like cross-tissue analysis, scFMs generally provide more robust and biologically meaningful insights. For smaller, focused datasets (often below a few hundred cells) or when computational resources are limited, traditional machine learning models or simpler baselines can be more efficient and equally effective [2] [3].

Q2: Is there a specific sample size threshold that dictates when scFMs become advantageous?

While a universal magic number does not exist, insights from related machine learning fields suggest that datasets with N ≤ 300 cells are highly prone to overfitting and may overestimate model performance. Studies indicate that N = 500 can help mitigate overfitting, but performance often does not converge until N = 750–1500 [23]. For scFMs specifically, their strength is unlocked with larger and more diverse datasets that allow the model's pre-trained knowledge to be effectively transferred [2].

Q3: Do scFMs consistently outperform all traditional methods?

No. Comprehensive benchmarks reveal that no single scFM consistently outperforms all others across every task [2] [3]. While scFMs are robust and versatile, simpler machine learning models can be more adept at efficiently adapting to specific datasets, particularly under resource constraints. The choice of model must be tailored to the specific task [3].

Q4: How can I evaluate if an scFM has learned biologically relevant information?

Beyond standard accuracy metrics, novel evaluation perspectives are crucial. You can use cell ontology-informed metrics like:

- scGraph-OntoRWR: Measures the consistency of cell type relationships captured by the scFM with established biological knowledge from cell ontologies.

- Lowest Common Ancestor Distance (LCAD): Assesses the severity of cell type annotation errors by measuring the ontological proximity between misclassified cell types [2] [3].
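To show the idea behind LCAD, the sketch below computes the number of ontology edges between a true and a predicted cell type via their lowest common ancestor. This is one plausible reading of the metric, not the benchmark's published implementation. The toy hierarchy is hypothetical and is treated as a tree with a single parent per type, whereas the real Cell Ontology is a directed acyclic graph in which a type can have several parents.

```python
def ancestors_with_distance(node, parent):
    """Map node and each of its ancestors to the number of edges from node."""
    distances = {}
    steps = 0
    while node is not None:
        distances[node] = steps
        node = parent.get(node)  # the root has no entry, which ends the walk
        steps += 1
    return distances


def lca_distance(true_type, predicted_type, parent):
    """Edges on the path true -> lowest common ancestor -> predicted."""
    up_true = ancestors_with_distance(true_type, parent)
    up_pred = ancestors_with_distance(predicted_type, parent)
    common = set(up_true) & set(up_pred)
    if not common:
        raise ValueError("cell types share no ancestor in this ontology")
    return min(up_true[c] + up_pred[c] for c in common)


# Hypothetical child -> parent relations for illustration only.
toy_parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
}

mild_error = lca_distance("CD4 T cell", "CD8 T cell", toy_parent)
severe_error = lca_distance("CD4 T cell", "monocyte", toy_parent)
```

Confusing two T cell subtypes yields a smaller distance than confusing a T cell with a monocyte, which matches the intuition that the first error is biologically less severe.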

Troubleshooting Guides

Issue 1: Poor Model Performance on a Small Dataset

Problem: Your dataset is relatively small, and the scFM is underperforming compared to a simpler baseline model.

Diagnosis Steps:

- Quantify Dataset Size: Determine the exact number of cells (N) in your dataset.

- Benchmark Against Baselines: Always compare your scFM's performance against established traditional methods like Seurat, Harmony, or scVI on the same dataset [3].

- Check for Overfitting: Examine the gap between training and test set performance. A large gap indicates overfitting, a common issue with small data [23].

Solutions:

- If N < 500: Strongly consider using a simpler, traditional method. These models have fewer parameters and are less likely to overfit on small sample sizes [23].

- Leverage Pre-trained Embeddings: Even if fine-tuning fails, use the scFM in a zero-shot setting to generate cell embeddings. Then, use these embeddings as input for a simpler classifier, which can be more effective with limited data [3].

- Data Augmentation: If possible, explore legitimate data augmentation techniques to artificially expand your training set, though this must be done carefully to avoid introducing biases.

Issue 2: Choosing the Right scFM for a Specific Task

Problem: With multiple scFMs available (e.g., Geneformer, scGPT, scFoundation), it's unclear which one to select for your specific task.

Diagnosis Steps:

- Define Your Task Precisely: Identify if your task is gene-level (e.g., gene function prediction) or cell-level (e.g., cell type annotation, batch integration) [2] [3].

- Review Model Specializations: Consult benchmarking studies to see which models excel at your task of interest. For instance, some models may be better at clinical prediction tasks, while others are stronger at batch integration [2].

Solutions:

- Consult Holistic Rankings: Use benchmarking studies that provide task-specific model rankings. The table below summarizes general findings.

- Use the Roughness Index (ROGI): This is a dataset-dependent metric that can serve as a proxy to recommend an appropriate model. A smoother latent space landscape (lower roughness) often correlates with better downstream task performance [2] [3].

- Prioritize Interpretability: If understanding the model's decision is critical, explore models that offer attention-based interpretability analyses to uncover which genes the model deems important [2].

Issue 3: Validating the Biological Relevance of scFM Results

Problem: The model produces results with high statistical accuracy, but you are unsure if the findings are biologically meaningful.

Diagnosis Steps:

- Analyze Gene Embeddings: Check if functionally similar genes are clustered together in the gene embedding space learned by the scFM. Compare these embeddings to those from knowledge-driven methods like FRoGS, which uses Gene Ontology (GO) terms [3].

- Inspect Cell-type Relationships: Use the scGraph-OntoRWR metric to validate that the relationships between cell types in the model's latent space align with known cell ontology hierarchies [3].

Solutions:

- Implement Ontology-Based Metrics: Integrate the LCAD and scGraph-OntoRWR metrics into your evaluation pipeline. A lower LCAD for misclassifications and a higher scGraph-OntoRWR score indicate that the model's errors are biologically reasonable and its internal knowledge is consistent with established science [2] [3].

- Perform Attention Analysis: For transformer-based scFMs, analyze the attention weights to identify which genes were most influential for a specific prediction, potentially revealing novel gene regulatory relationships [2].

Table 1: General Performance Guide for scFMs vs. Traditional Methods

| Scenario | Recommended Approach | Rationale |

|---|---|---|

| Large, diverse dataset (N > 1000 cells) | Single-cell Foundation Model (scFM) | scFMs leverage pre-trained knowledge for robust integration and insight discovery on complex data [2] [3]. |

| Small, focused dataset (N < 500 cells) | Traditional ML / Simple Baseline (e.g., Seurat, Harmony, scVI) | Simpler models are less prone to overfitting and are more efficient with limited data [2] [23]. |

| Need for biological interpretability | scFM with ontology-based metrics (e.g., scGraph-OntoRWR, LCAD) | These models and metrics provide insights consistent with prior biological knowledge [3]. |

| Limited computational resources | Traditional ML / Simple Baseline | Training or fine-tuning large scFMs is computationally intensive [2]. |

| Task-specific optimization | Consult task-specific benchmarks | No single scFM is best for all tasks; selection must be tailored [2] [3]. |

Table 2: Key Evaluation Metrics for scFM Performance

| Metric Category | Metric Name | Description | What It Measures |

|---|---|---|---|

| Knowledge-Based | scGraph-OntoRWR | Measures consistency of model's cell-type relationships with a known cell ontology [2] [3]. | Biological relevance of the learned representations. |

| Knowledge-Based | Lowest Common Ancestor Distance (LCAD) | Measures ontological distance between misclassified and true cell types [2] [3]. | Severity of cell-type annotation errors. |

| Unsupervised | Cell-Property Landscape Roughness | Quantifies the smoothness of the latent space with respect to cell properties [2]. | Generalizability and ease of training downstream models. |

| Supervised | Standard Accuracy / AUC | Standard classification accuracy or Area Under the Curve. | Overall predictive performance on a specific task. |

Experimental Protocols

Protocol 1: Benchmarking an scFM Against Traditional Baselines

Objective: To determine the most suitable model for a specific dataset and task (e.g., cell type annotation).

Materials:

- Your target single-cell dataset (e.g., scRNA-seq data).

- Access to scFMs (e.g., Geneformer, scGPT).

- Access to traditional methods (e.g., Seurat, Harmony, scVI).

- Computational environment with sufficient resources (GPU recommended for scFMs).

Methodology:

- Data Preprocessing: Standardize the preprocessing of your dataset (normalization, filtering) to ensure a fair comparison.

- Feature Extraction:

- For scFMs: Extract zero-shot cell embeddings from the pre-trained model without any fine-tuning [3].

- For Traditional Methods: Generate cell embeddings using the respective algorithms (e.g., PCA in Seurat, latent space in scVI).

- Downstream Task Evaluation:

- On the extracted embeddings, train a simple classifier (e.g., logistic regression) for cell type annotation.

- Use a consistent train/test split for all models.

- Performance Assessment:

- Calculate standard metrics (e.g., Accuracy, F1-score, AUC).

- Calculate biological insight metrics like LCAD for annotation tasks [3].

- Analysis: Compare the performance and computational cost of all models to guide selection.
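The Performance Assessment step can be sketched as below for the standard metrics. The snippet assumes that predictions from a classifier trained on each model's embeddings are already available; the labels, the two sets of predictions, and the model names are made up for illustration. Macro-averaged F1 is used here because it weights rare cell types equally, which is a choice rather than something the protocol prescribes.

```python
def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores over the classes present in y_true."""
    scores = []
    for label in sorted(set(y_true)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Same held-out test cells for every model (consistent split).
y_true = ["T", "T", "B", "B", "Mono", "Mono"]
predictions = {
    "scFM zero-shot embedding": ["T", "T", "B", "T", "Mono", "Mono"],
    "PCA baseline": ["T", "B", "B", "T", "Mono", "T"],
}

report = {
    name: {"accuracy": accuracy(y_true, y_pred), "macro_f1": macro_f1(y_true, y_pred)}
    for name, y_pred in predictions.items()
}
```

Recording wall-clock time and memory alongside these scores covers the computational-cost half of the final comparison.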

Protocol 2: Evaluating Biological Relevance with scGraph-OntoRWR

Objective: To validate that an scFM captures biologically meaningful relationships between cell types.

Materials:

- Cell embeddings from an scFM.

- A structured cell ontology (e.g., Cell Ontology).

- Implementation of the scGraph-OntoRWR algorithm [2].

Methodology:

- Graph Construction: Construct a graph from the scFM's embeddings where nodes are cells, and edges represent similarity (e.g., k-nearest neighbors).

- Ontology Graph: Represent the known cell-type relationships from the cell ontology as a separate graph.

- Random Walk with Restart (RWR): Perform RWR on both the embedding-derived graph and the ontology graph.

- Similarity Calculation: Measure the similarity between the steady-state probability distributions of the RWR on the two graphs. A higher similarity indicates the scFM's internal representation is more aligned with biological knowledge [2] [3].
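The random-walk comparison can be illustrated with a small, dependency-free sketch. It is not the published scGraph-OntoRWR implementation. It assumes both graphs have already been reduced to the same set of cell-type nodes (for example, by aggregating cell-level neighbors per type), uses a restart probability of 0.5, and compares the two steady-state distributions with cosine similarity. All of these choices, the function names, and the toy adjacency matrices are assumptions.

```python
import math


def rwr(adj, seed, restart=0.5, n_iter=200):
    """Random walk with restart from one seed node on a weighted adjacency matrix."""
    n = len(adj)
    col_sums = [sum(adj[i][j] for i in range(n)) for j in range(n)]
    p = [1.0 if i == seed else 0.0 for i in range(n)]
    for _ in range(n_iter):
        # One walk step using column-normalised transition weights.
        walked = [
            sum(adj[i][j] / col_sums[j] * p[j] for j in range(n) if col_sums[j])
            for i in range(n)
        ]
        # Mix in the restart mass at the seed node.
        p = [(1 - restart) * w + (restart if i == seed else 0.0) for i, w in enumerate(walked)]
    return p


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rwr_agreement(adj_a, adj_b, restart=0.5):
    """Mean cosine similarity of RWR distributions, seeding from every node in turn."""
    n = len(adj_a)
    return sum(cosine(rwr(adj_a, s, restart), rwr(adj_b, s, restart)) for s in range(n)) / n


# Toy graphs over the same four cell types (indices 0-3).
ontology_adj = [[0, 1, 1, 0], [1, 0, 1, 0], [1, 1, 0, 1], [0, 0, 1, 0]]
embedding_adj = [[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]]

score = rwr_agreement(ontology_adj, embedding_adj)
```

A score close to 1 means that cell types the ontology places near each other are also near each other in the embedding-derived graph.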

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for scFM Research

| Item | Function in Research | Example / Note |

|---|---|---|

| Benchmarking Framework | Provides a standardized pipeline to evaluate and compare different scFMs and baselines across various tasks and datasets [2] [3]. | Custom framework from benchmarking studies. |

| Cell Ontology | A structured, controlled vocabulary for cell types. Serves as the ground truth for calculating biology-driven metrics like scGraph-OntoRWR and LCAD [3]. | Cell Ontology from OBO Foundry. |

| Pre-trained scFM Models | Ready-to-use models that can be applied to new data for zero-shot embedding extraction or fine-tuned for specific tasks. | Geneformer, scGPT, scFoundation [2] [1]. |

| Traditional Baseline Algorithms | Essential for establishing a performance baseline to contextualize scFM results. | Seurat (anchor-based), Harmony (clustering-based), scVI (generative) [3]. |

Workflow and Relationship Diagrams

Diagram 1: Model Selection Strategy

Diagram 2: Biological Relevance Evaluation Workflow

Strategic Implementation: Matching scFMs to Your Dataset Constraints

Technical Support Center: Troubleshooting Single-Cell Foundation Models

This technical support center provides practical guidance for researchers conducting large-scale single-cell studies. The following troubleshooting guides and FAQs address common challenges in atlas construction and cross-study integration, framed within research on how dataset size constraints impact single-cell foundation model (scFM) performance.

Frequently Asked Questions (FAQs)

Q1: My integrated atlas shows strong batch effects instead of biological variation. What should I do?

A1: This indicates inadequate batch effect correction. First, ensure you've selected an integration method appropriate for your data's complexity. For complex atlas-level tasks with multiple laboratories and protocols, methods like scANVI, Scanorama, or scVI are recommended. Using Highly Variable Genes (HVG) selection before integration generally improves performance. If batch effects persist, avoid scaling your data before integration, as this can push methods to over-prioritize batch removal at the expense of conserving biological variation [24].

Q2: How can I assess if my integration has preserved meaningful biological trajectories?

A2: Use trajectory conservation metrics to evaluate your results. A well-integrated dataset should maintain continuous biological processes, such as development or differentiation. Inspect trajectories like erythrocyte development in immune cell atlases. Poor methods may introduce unexpected branching or overclustering. Quantitative metrics from benchmarking pipelines like scIB can calculate a trajectory conservation score for objective assessment [24].

Q3: For cross-modality integration (e.g., scRNA-seq with scATAC-seq), which methods are most effective?

A3: Performance depends on your feature space. Harmony and LIGER have proven effective for scATAC-seq data on window and peak feature spaces. Alternatively, consider gene-based integration methods like GIANT, which constructs gene graphs from different modalities (scRNA-seq, scATAC-seq, spatial transcriptomics) and embeds them into a unified space, sidestepping challenges of direct cell-based alignment across modalities [24] [25].

Q4: What is the practical impact of dataset size on scFM performance for annotation tasks?

A4: Benchmarking reveals that no single scFM consistently outperforms all others across tasks. While scFMs are robust and versatile, simpler machine learning models can be more efficient and adaptable for specific datasets, particularly under computational or data constraints. The choice between a complex scFM and a simpler alternative should be guided by factors like dataset size, task complexity, and available resources [2].

Q5: How can I evaluate the biological relevance of the latent embeddings produced by an scFM?

A5: Beyond standard clustering metrics, use ontology-informed metrics. The scGraph-OntoRWR metric evaluates whether the cell-type relationships captured by the model are consistent with established biological knowledge from cell ontologies. The Lowest Common Ancestor Distance (LCAD) metric assesses the severity of cell type misclassification by measuring the ontological proximity between predicted and true cell types [2].

Troubleshooting Guide for Common Experimental Issues

| Problem | Root Cause | Solution Steps |

|---|---|---|

| Poor Integration of Complex Batches | Nested batch effects from multiple labs/protocols; incorrect method choice [24]. | 1. Preprocess: Apply HVG selection. 2. Select Method: Use a method proven for complex tasks (e.g., Scanorama, scVI). 3. Evaluate: Check batch mixing with kBET and iLISI metrics; verify biology is conserved with trajectory metrics [24]. |

| Loss of Biological Variation | Over-correction during integration; method prioritizes batch removal over biology [24]. | 1. Avoid Scaling: Do not scale data pre-integration if the method advises against it. 2. Tune Parameters: Reduce the batch correction strength parameter in your chosen method. 3. Validate: Use bio-conservation metrics (e.g., ARI, NMI, cell-type ASW) to ensure cell types remain distinct [24]. |

| Failure in Cross-Modality Integration | Technical variation between modalities overwhelms biological signals; cell-based alignment fails [25]. | 1. Reassess Unit: Consider a gene-based integration method like GIANT. 2. Feature Space: For scATAC-seq, try methods like Harmony on window/peak features. 3. Check Input: Ensure features are correctly aligned between modalities (e.g., gene activity scores from ATAC). |

| Low Accuracy on New Dataset (scFM) | Task or data characteristics do not match the scFM's pretraining strengths [2]. | 1. Benchmark: Run simple baselines (e.g., Seurat, Harmony) for comparison. 2. Assess Landscape: Calculate the Roughness Index (ROGI) of your data in the scFM's latent space; a smoother landscape suggests better fit. 3. Fine-Tune: If possible, use task-specific data to fine-tune the pretrained scFM. |

Quantitative Data and Method Performance

Table 1: Benchmarking Scores of Selected Integration Methods on a Human Immune Cell Task (Example) [24]

| Method | Overall Accuracy Score | Batch Removal Score | Bio-Conservation Score | Key Strength |
|---|---|---|---|---|
| Scanorama (embedding) | High | High | High | Excellent batch mixing, good trajectory conservation |
| scANVI | High | Medium | High | Best when cell annotations are available |
| FastMNN (embedding) | High | Medium | High | Robust performance |
| Harmony | Medium | High | Medium | Effective for scATAC-seq; can merge rare populations |

Table 2: Key Evaluation Metrics for Data Integration [24]

| Metric Category | Metric Name | Description | What it Measures |
|---|---|---|---|
| Batch Effect Removal | kBET | k-nearest-neighbor batch effect test | Whether local neighborhoods mix batches well. |
| Batch Effect Removal | iLISI | Integration Local Inverse Simpson's Index | Diversity of batches in any local region. |
| Biological Conservation | ARI/NMI | Adjusted Rand Index / Normalized Mutual Information | Agreement between clusters computed on the integrated data and the known cell-type labels. |
| Biological Conservation | ASW (cell-type) | Average Silhouette Width | How well cell-type identities are separated. |
| Biological Conservation | Trajectory Conservation | - | How well continuous biological processes are preserved. |
| Label-Free Conservation | HVG Overlap | Overlap of Highly Variable Genes | Conservation of gene-wise variance structure. |
| Label-Free Conservation | Cell-Cycle Variance | - | Retention of cell-cycle variation signal. |
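To make the batch-mixing idea behind iLISI concrete, the following is a minimal sketch rather than the scIB implementation. It assumes uniform weights over each cell's k nearest neighbours, whereas the published LISI score uses perplexity-based neighbour weights; the function names `inverse_simpson` and `simple_ilisi` are illustrative only.

```python
import numpy as np

def inverse_simpson(labels):
    """Inverse Simpson's index of a set of batch labels.

    Equals 1 when all labels are identical and equals the number of
    batches when they are equally represented.
    """
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return 1.0 / np.sum(p ** 2)

def simple_ilisi(embedding, batches, k=5):
    """Mean inverse Simpson's index over each cell's k nearest neighbours.

    Simplification (assumption): neighbours are weighted uniformly,
    not with the perplexity-based weights of the original LISI score.
    """
    X = np.asarray(embedding, dtype=float)
    batches = np.asarray(batches)
    # Pairwise squared Euclidean distances; a cell is not its own neighbour.
    dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(dist, np.inf)
    neighbours = np.argsort(dist, axis=1)[:, :k]
    return float(np.mean([inverse_simpson(batches[idx]) for idx in neighbours]))
```

Under this simplification, a value near 1 means neighbourhoods are dominated by a single batch, and a value near the number of batches means they are well mixed.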

Experimental Protocols for Key Analyses

Protocol 1: Benchmarking an Integration Method for an Atlas Task

This protocol is adapted from large-scale benchmarking studies [24].

- Data Preparation: Collect your batches (e.g., from multiple donors, labs, protocols). Perform standard quality control on each batch separately. Optionally, select Highly Variable Genes (HVGs) common across batches.

- Method Execution: Run the integration method (e.g., Scanorama, scVI). For a comprehensive evaluation, run the method with different preprocessing combinations (e.g., with/without HVGs, with/without scaling). Save the output (corrected matrix or embedding).

- Metric Calculation: Use a benchmarking pipeline (e.g., the scIB Python module) to compute a suite of metrics.

  - Batch Removal: Calculate kBET, iLISI, and graph connectivity.

  - Bio-Conservation: Calculate ARI, NMI, cell-type ASW, and isolated label scores.

  - Label-Free Conservation: Compute trajectory and cell-cycle conservation scores.

- Result Interpretation: Aggregate metrics into overall batch removal and bio-conservation scores. A 40/60 weighting (40% batch removal, 60% bio-conservation) is often used for the final score. Visually inspect UMAP plots to confirm that the embedding agrees with the metrics.
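The aggregation in the last step can be sketched as a weighted mean. This is a minimal illustration that assumes every metric has already been scaled to [0, 1] with higher values being better; the function name `overall_score` and the example values in the usage comment are placeholders, not benchmark results.

```python
def overall_score(batch_metrics, bio_metrics, batch_weight=0.4, bio_weight=0.6):
    """Combine metric groups into a single score.

    Assumes each metric is already scaled to [0, 1], higher is better.
    The default weights follow the 40/60 (batch removal / bio-conservation)
    convention.
    """
    batch = sum(batch_metrics) / len(batch_metrics)
    bio = sum(bio_metrics) / len(bio_metrics)
    return batch_weight * batch + bio_weight * bio

# Usage with placeholder values (not real benchmark numbers):
# overall_score(batch_metrics=[0.8, 0.7], bio_metrics=[0.9, 0.6, 0.75])
```

Keeping the two group scores alongside the combined value is usually worthwhile, since a single number can hide a method that mixes batches well only by erasing biological structure.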

Protocol 2: Evaluating a Single-Cell Foundation Model (scFM) on a Downstream Task

This protocol is based on contemporary scFM benchmarking practices [2].

- Embedding Extraction: In a zero-shot setting, pass your dataset through the pretrained scFM without fine-tuning to extract cell embeddings.

- Task Application: Use the extracted embeddings for your specific downstream task (e.g., cell type annotation, drug sensitivity prediction).

- Performance Evaluation:

  - Standard Metrics: Apply standard supervised and unsupervised metrics relevant to the task (e.g., accuracy for annotation).

  - Knowledge-Based Metrics: Calculate biology-aware metrics like scGraph-OntoRWR (to check alignment with known cell ontology) and LCAD (to gauge severity of misclassifications).

  - Landscape Analysis: Compute the Roughness Index (ROGI) of the latent space for your data; a lower roughness often correlates with better task performance.

- Comparative Analysis: Benchmark the scFM's performance against established non-FM baselines (e.g., Seurat, Harmony, scVI) to determine the value of using a foundation model for your specific use case.
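One simple way to run the comparative step is to score each set of embeddings with the same lightweight classifier. The sketch below is an assumption about how this could be done, not the procedure of the cited benchmark: it computes leave-one-out k-nearest-neighbour accuracy, so scFM embeddings and baseline embeddings (e.g., PCA or scVI latents) can be compared on equal terms. The function name `loo_knn_accuracy` is illustrative.

```python
import numpy as np

def loo_knn_accuracy(embeddings, labels, k=3):
    """Leave-one-out k-NN accuracy of cell-type labels in an embedding space.

    Each cell is classified by majority vote among its k nearest
    neighbours, excluding the cell itself.
    """
    X = np.asarray(embeddings, dtype=float)
    y = np.asarray(labels)
    dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(dist, np.inf)  # a cell must not vote for itself
    neighbours = np.argsort(dist, axis=1)[:, :k]
    correct = 0
    for i, idx in enumerate(neighbours):
        values, counts = np.unique(y[idx], return_counts=True)
        correct += int(values[np.argmax(counts)] == y[i])
    return correct / len(y)

# Usage: compute the score once per embedding and compare, e.g.
# loo_knn_accuracy(scfm_embeddings, cell_types) versus
# loo_knn_accuracy(baseline_embeddings, cell_types)
```

The pairwise distance matrix is quadratic in the number of cells, so this form is only suitable for small evaluation sets; a nearest-neighbour index would be needed at atlas scale.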

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Atlas-Level Integration [24] [2] [25]

| Tool / Resource | Type | Primary Function in Integration |
|---|---|---|
| Scanorama | Integration Algorithm | Efficiently integrates large-scale datasets by merging overlapping panoramas of batches. |
| scVI / scANVI | Generative Model (Python) | Uses deep generative models to integrate data and can incorporate cell annotations (scANVI). |
| Harmony | Integration Algorithm | Linear method that iteratively corrects embeddings to remove batch effects. |
| GIANT | Gene-Based Integration | Integrates data at the gene-graph level, useful for cross-modality analysis. |
| Seurat (CCA, RPCA) | Integration Toolkit (R) | Canonical Correlation Analysis (CCA) or Reciprocal PCA (RPCA) for anchoring batches. |
| scIB Python Module | Benchmarking Pipeline | Provides metrics and a pipeline to objectively evaluate integration method performance. |
| Cell Ontology | Knowledge Base | Provides a structured, controlled vocabulary for cell types for biology-aware evaluation. |
| CZ CELLxGENE | Data Repository | Platform providing unified access to annotated single-cell datasets spanning millions of cells, for pretraining and analysis. |

Frequently Asked Questions

This FAQ addresses common challenges in applying transfer learning and pre-trained embeddings when data or compute is limited, with a focus on how dataset size constrains single-cell foundation model (scFM) performance.

FAQ 1: With a very small dataset (under 5MB), should I fine-tune a pre-trained model or train a new model from scratch?

- Answer: For very small datasets (e.g., 1-5MB), training a model from scratch can appear to yield superior metrics, but this often reflects memorization rather than genuine linguistic or biological understanding. The model achieves near-perfect perplexity by essentially copying training sequences [26]. For tasks where capturing domain-specific nuances is critical, using a pre-trained model with Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA is often more robust. This approach leverages the general knowledge within the pre-trained model while adapting it with minimal data, reducing the risk of overfitting [27] [26].

FAQ 2: How can I adapt a large pre-trained model to my specific single-cell analysis task without a powerful GPU cluster?

- Answer: Parameter-Efficient Fine-Tuning (PEFT) techniques are designed for this scenario. LoRA (Low-Rank Adaptation) freezes all original weights and injects small trainable low-rank matrices into the transformer layers of the pre-trained model. This can reduce the number of trainable parameters by over 90%, sharply cutting GPU memory requirements and training time. After training, these matrices can be merged back into the base model, so inference incurs no added latency [27] [28].
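The arithmetic behind LoRA can be illustrated on a single linear layer. This is a minimal NumPy sketch, not the API of any PEFT library: the layer size (512 x 512), rank `r = 8`, and scaling `alpha = 16` are assumed values chosen for illustration, and `lora_forward` and `merge_lora` are hypothetical helper names.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, alpha = 512, 512, 8, 16  # illustrative sizes (assumptions)

W = rng.normal(size=(d_out, d_in))          # frozen pre-trained weight
A = rng.normal(scale=0.01, size=(r, d_in))  # trainable low-rank factor
B = np.zeros((d_out, r))                    # trainable, zero-initialised so the update starts at 0

def lora_forward(x, W, A, B, alpha, r):
    """Frozen path plus scaled low-rank update: x W^T + (alpha / r) x A^T B^T."""
    return x @ W.T + (alpha / r) * (x @ A.T) @ B.T

def merge_lora(W, A, B, alpha, r):
    """Fold the adapter into the base weight for inference."""
    return W + (alpha / r) * (B @ A)

full_params = W.size           # 262,144 weights in the frozen layer
lora_params = A.size + B.size  # 8,192 trainable adapter weights
print(f"trainable fraction for this layer: {lora_params / full_params:.4f}")
```

Because the merged weight has the same shape as `W`, a merged model runs exactly like the original layer, which is why merging adds no inference latency.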

FAQ 3: I am using pre-trained embeddings from a public model for molecular property prediction, but my performance is worse than using traditional fingerprints. Why?

- Answer: This is a recognized issue in the field. Some benchmarking studies have found that despite their sophistication, many modern pre-trained molecular embedding models show negligible or no improvement over traditional, much simpler methods like Extended Connectivity Fingerprints (ECFP) [29]. Potential causes include a mismatch between the model's pretraining objective and your specific task, or the embeddings not generalizing well to your dataset's unique chemical space. It is recommended to validate against simple baselines like ECFP and consider models that incorporate strong chemical inductive biases [29].

FAQ 4: How do I prevent a pre-trained single-cell foundation model from losing its general biological knowledge when I fine-tune it on my narrow dataset?

- Answer: This phenomenon, known as catastrophic forgetting, can be mitigated with several strategies [27]:

  - Use a lower learning rate (e.g., 5e-5) during fine-tuning to make smaller, more conservative updates to the weights [27].

  - Employ progressive unfreezing, where you first fine-tune only the final layers and then gradually unfreeze and train earlier layers with an even lower learning rate [28].

  - Apply PEFT methods like LoRA, which are less prone to causing catastrophic forgetting because they constrain the updates to a low-rank space [27].

  - Continuously validate performance on general benchmarks (e.g., MMLU for LLMs or broad cell type annotation tasks for scFMs) alongside your domain-specific metrics [27].
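Progressive unfreezing with decreasing learning rates can be planned as a simple schedule before any training code is written. The sketch below is framework-independent and rests on assumptions: the layer names are hypothetical, and the halving factor `decay=0.5` is an illustrative choice, while the base rate of 5e-5 follows the example above.

```python
def unfreezing_schedule(layer_names, base_lr=5e-5, decay=0.5):
    """Return one {layer: learning_rate} dict per training stage.

    layer_names is ordered from earliest to final layer. Stage 0 trains
    only the final layer; each later stage unfreezes one earlier layer.
    Earlier layers receive smaller learning rates (base_lr * decay ** depth).
    """
    n = len(layer_names)
    layer_lr = {
        name: base_lr * decay ** (n - 1 - i)
        for i, name in enumerate(layer_names)
    }
    stages = []
    for stage in range(n):
        trainable = layer_names[n - 1 - stage:]
        stages.append({name: layer_lr[name] for name in trainable})
    return stages

# Hypothetical layer names, for illustration only.
for stage, lrs in enumerate(unfreezing_schedule(["embed", "block1", "block2", "head"])):
    print(stage, lrs)
```

Each stage's dictionary maps naturally onto per-parameter-group learning rates in most deep learning frameworks, with any layer absent from the dictionary kept frozen.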

FAQ 5: What is the practical difference between transfer learning and fine-tuning?

- Answer: While the terms are sometimes used interchangeably, a key distinction lies in the scope of retraining [30]:

  - Transfer Learning typically involves using a pre-trained model as a fixed feature extractor. Most of the model's layers are frozen, and only a new classifier head (the final layers) is trained on the new task. This is data- and compute-efficient.

  - Fine-Tuning involves updating some or all of the pre-trained model's weights on the new data. This is more adaptable and can achieve higher accuracy but requires more data and computational resources and carries a higher risk of overfitting [30].
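In code, the distinction comes down to which parameters receive updates. The following is a minimal sketch with a hypothetical two-part model (`encoder` and `head`) and a plain gradient step; real frameworks express the same idea by marking parameters as non-trainable.

```python
import numpy as np

def sgd_step(params, grads, trainable, lr=0.1):
    """Apply one gradient step, updating only parameters named in `trainable`.

    Parameters not listed in `trainable` are frozen and returned unchanged.
    """
    return {
        name: (value - lr * grads[name]) if name in trainable else value
        for name, value in params.items()
    }

# Hypothetical parameters and gradients, for illustration only.
params = {"encoder": np.ones(3), "head": np.ones(2)}
grads = {"encoder": np.ones(3), "head": np.ones(2)}

feature_extraction = sgd_step(params, grads, trainable={"head"})       # encoder stays frozen
full_fine_tuning = sgd_step(params, grads, trainable={"encoder", "head"})  # everything updates
```

The frozen-encoder variant leaves far fewer values to fit, which is why it tolerates smaller datasets.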

The table below summarizes a core quantitative finding related to dataset size, a critical constraint in research.

Table 1: Performance Comparison on Different Dataset Sizes [26]

| Dataset Size | Training Approach | Generalization Score | Test Perplexity (PPL) | Key Interpretation |
|---|---|---|---|---|
| 1MB | From Scratch | 59.0 | 151.6 | Superior score but high PPL indicates limited real learning. |
| 1MB | Pre-trained (GPT-2) | 57.8 | 27.0 | Lower score but better PPL shows more stable generalization. |
| 5MB | From Scratch | 88.7 | 1.0 | Near-perfect PPL suggests dataset memorization, not understanding. |
| 5MB | Pre-trained (GPT-2) | 63.6 | 18.7 | Consistent, stable performance. |
| 10MB | From Scratch | 36.4 | 1.0 | High overfitting; model fails to generalize. |
| 10MB | Pre-trained (GPT-2) | 56.7 | 19.0 | Pre-trained model becomes the better option. |
| 20MB | From Scratch | 40.8 | 1.0 | Severe overfitting persists. |
| 20MB | Pre-trained (GPT-2) | 46.0 | 18.8 | Clear advantage for the pre-trained approach. |
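To read the perplexity column, it helps to recall how the value is computed: perplexity is the exponential of the mean negative log-likelihood per token. The short sketch below uses the standard definition; the function name `perplexity` and the probabilities in the comments are illustrative, not values from the cited study.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp(mean negative log-likelihood of the observed tokens)."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# A model that assigns probability 1 to every observed token has the
# minimum possible perplexity of 1.
memorized = perplexity([1.0, 1.0, 1.0])

# A model that spreads probability evenly over 4 options per token has
# a perplexity of about 4.
uniform_over_four = perplexity([0.25, 0.25, 0.25, 0.25])
```

A perplexity of 1.0 therefore means the model predicts each evaluated token with near certainty, which is consistent with the memorization interpretation given in the table.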

Experimental Protocols

This section provides detailed methodologies for key experiments cited in the FAQs, enabling replication and validation of the presented findings.

Protocol 1: Benchmarking Pre-trained Molecular Embeddings vs. Traditional Fingerprints

- Objective: To rigorously evaluate the performance of pre-trained molecular embedding models against classic ECFP fingerprints across multiple property prediction tasks [29].

- Methodology:

  - Model & Data Selection: Select 25 pre-trained models of varying modalities (e.g., GNNs, Graph Transformers, NLP-based). Gather 25 diverse molecular property prediction datasets [29].

  - Feature Extraction: For each model, generate static molecular embeddings for all compounds in the datasets. Simultaneously, compute ECFP4 fingerprints for the same compounds [29].

  - Model Training & Evaluation: Use a simple, consistent predictor (e.g., a Logistic Regression or shallow Feed-Forward Network) on top of both the pre-trained embeddings and the ECFP fingerprints. Train and evaluate using a consistent data splitting strategy (e.g., 5-fold cross-validation). Record performance metrics (e.g., AUC-ROC, Accuracy) for all model-dataset pairs [29].

  - Statistical Analysis: Employ a hierarchical Bayesian statistical model to perform paired comparisons and determine if the performance differences between neural embeddings and ECFP are statistically significant [29].
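A fair comparison depends on evaluating the embeddings and the ECFP fingerprints on identical folds. The sketch below shows one way to generate reusable 5-fold splits with the standard library; it is an illustrative helper (`k_fold_indices` is a hypothetical name), not the splitting code of the cited study, and it does not cover the hierarchical Bayesian analysis.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for k-fold cross-validation.

    A fixed seed gives the same folds on every call, so every feature
    type (embeddings, fingerprints) can be scored on identical splits.
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

# Usage: iterate once per feature type with the same seed, e.g.
# for train_idx, test_idx in k_fold_indices(len(compounds), k=5, seed=0): ...
```

For molecular data, random splits can overestimate generalization to new chemical space; a scaffold-based split is a common alternative, but that choice is outside what the protocol above specifies.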

Protocol 2: Parameter-Efficient Fine-Tuning of an scFM for a Custom Cell Type Annotation Task

- Objective: To adapt a large single-cell Foundation Model (e.g., scGPT or Geneformer) for a specialized cell type annotation task using a small, labeled dataset and the LoRA technique [27] [2].

- Methodology:

  - Data Preparation: Curate a high-quality dataset of 5K–50K single cells with expert-annotated cell type labels. Split into training, validation, and test sets (e.g., 80/10/10). Format the data into instruction-tuning format: "Task: Annotate the cell type. Input: [gene expression sequence] Output: [cell type label]" [27].

  - Model Setup: Load the pre-trained scFM. Configure LoRA, typically with a rank (r) of 16-32, targeting the attention mechanism layers or all linear layers in the transformer. Freeze all base model parameters [27].

  - Training: Train only the LoRA parameters for 2-5 epochs using the AdamW optimizer. Use an adaptive learning rate (e.g., 2e-4 for this data size) and monitor the validation loss for early stopping [27] [26].

  - Validation & Deployment: Evaluate the fine-tuned model on the held-out test set, measuring metrics like accuracy and F1-score. Compare against a baseline model. For deployment, merge the LoRA adapter weights into the base model, creating a single, inference-ready model file [27].
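The data preparation step of this protocol can be sketched with the standard library alone. The example assumes that the "[gene expression sequence]" placeholder is filled with a space-separated list of gene symbols ranked by expression; that encoding, and the helper names `format_example` and `split_80_10_10`, are assumptions for illustration, since the protocol does not specify them.

```python
import random

PROMPT = "Task: Annotate the cell type. Input: {genes} Output: {label}"

def format_example(ranked_genes, label):
    """Render one cell as an instruction-tuning string.

    Assumption: the gene expression sequence is a space-separated list
    of gene symbols, ordered by expression.
    """
    return PROMPT.format(genes=" ".join(ranked_genes), label=label)

def split_80_10_10(items, seed=0):
    """Shuffle and split items into train/validation/test (80/10/10)."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test

# Usage with an illustrative cell:
example = format_example(["CD3E", "CD3D", "IL7R"], "T cell")
```

When cells come from several donors or batches, splitting by donor rather than by cell is often the safer choice, because it keeps near-duplicate cells from the same sample out of the test set.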

Protocol 3: Establishing the Dataset Size Threshold for Effective Fine-Tuning

- Objective: To empirically determine the dataset size at which fine-tuning a pre-trained model becomes more effective than training a model from scratch [26].

- Methodology:

- Dataset Creation: Extract subsets of increasing size (e.g., 1MB, 5MB, 10MB, 20MB) from a large, coherent text corpus [26].

- Model Training: