Decoding Cellular Diversity: How Single-Cell Foundation Models Are Revolutionizing Biomedical Research

Abstract

Single-cell foundation models (scFMs) are emerging as transformative artificial intelligence tools for deciphering cellular heterogeneity in biomedical research. Trained on millions of single-cell transcriptomes, these models learn fundamental biological principles that can be adapted to diverse downstream tasks. This article explores the core concepts and architectures of scFMs, their practical applications in cell type annotation, perturbation prediction, and spatial analysis, alongside critical benchmarking insights that guide model selection. We also address current limitations in interpretability and data integration while highlighting validation frameworks that ensure biological relevance. For researchers and drug development professionals, this synthesis provides a comprehensive guide to leveraging scFMs for unlocking deeper insights into cellular function, disease mechanisms, and therapeutic development.

The New Language of Biology: Understanding Single-Cell Foundation Models

Single-cell foundation models (scFMs) represent a transformative paradigm in computational biology, leveraging large-scale deep learning architectures pretrained on massive single-cell omics datasets. Inspired by natural language processing (NLP) breakthroughs, these models adapt transformer-based architectures to decipher the complex "language" of cellular function, where genes serve as words and cells as sentences [1]. This technical guide examines the core architecture, pretraining methodologies, and biological applications of scFMs within the broader context of cellular heterogeneity research. We provide a comprehensive analysis of current model performance across key tasks, detailed experimental protocols for model evaluation, and essential computational tools that empower researchers to harness these advanced artificial intelligence systems for unraveling cellular complexity in development, homeostasis, and disease.

The fundamental analogy driving scFM development treats biological systems as linguistic structures—individual cells constitute meaningful sentences composed of gene "words" that follow grammatical rules of regulation and interaction [1] [2]. This conceptual framework enables the application of transformer architectures, originally developed for NLP, to single-cell omics data. Foundation models in single-cell biology are defined as large-scale machine learning models pretrained on extensive and diverse datasets, making them generalizable to specific downstream tasks with minimal fine-tuning [3]. The rapid accumulation of single-cell data—with repositories like CZ CELLxGENE now providing access to over 100 million unique cells—has created the necessary training corpus for these models to learn fundamental biological principles [1] [2].

Within cellular heterogeneity research, scFMs offer unprecedented capability to move beyond descriptive cataloging of cell types toward predictive modeling of cellular states and behaviors. Traditional analytical approaches face significant challenges in capturing the complex, high-dimensional relationships that define cellular identity and function. scFMs address these limitations by learning latent representations that encode biological knowledge from millions of cells across diverse tissues, species, and experimental conditions [4] [2]. This pretrained knowledge can then be efficiently adapted to specific research contexts, from identifying novel cell subpopulations in tumor microenvironments to predicting cellular responses to genetic perturbations.

Core Architectural Framework of Single-Cell Foundation Models

Tokenization Strategies for Biological Data

Tokenization converts raw gene expression data into structured inputs that transformer models can process. Unlike natural language with its inherent word sequence, gene expression data lacks natural ordering, requiring specialized approaches:

- Gene-based tokenization: Individual genes serve as tokens, with expression values incorporated through value embeddings [1] [4]. Genes are typically ordered by expression magnitude within each cell, creating a deterministic sequence analogous to word order in sentences.

- Modality-specific tokens: For multi-omics models, special tokens indicate different data modalities (e.g., scRNA-seq vs. scATAC-seq) [1] [2].

- Metadata incorporation: Cell-level metadata (e.g., tissue origin, donor information) can be prepended as special tokens to provide biological context [1].
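The strategies above can be sketched in a few lines of Python. This is a minimal illustration, assuming a toy vocabulary and a hypothetical function name (`rank_tokenize`); the special tokens `<rna>` and `<lung>` are invented for the example and do not correspond to any particular model's vocabulary. It orders expressed genes by descending expression and prepends metadata tokens.

```python
from typing import Dict, List, Optional


def rank_tokenize(
    expression: Dict[str, float],
    vocab: Dict[str, int],
    metadata_tokens: Optional[List[str]] = None,
    max_len: int = 2048,
) -> List[int]:
    """Turn one cell's expression profile into a list of token ids."""
    # Keep only expressed genes that the (hypothetical) vocabulary knows.
    expressed = [(g, v) for g, v in expression.items() if v > 0 and g in vocab]
    # Sort by descending expression; ties are broken by gene name so the
    # resulting order is deterministic.
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    # Special tokens (modality, tissue, ...) go in front of the gene tokens.
    prefix = [vocab[t] for t in (metadata_tokens or []) if t in vocab]
    gene_ids = [vocab[g] for g, _ in expressed]
    return (prefix + gene_ids)[:max_len]


# Toy vocabulary and cell; all names and values are illustrative.
vocab = {"<rna>": 0, "<lung>": 1, "CD3E": 2, "MS4A1": 3, "LYZ": 4, "GAPDH": 5}
cell = {"GAPDH": 12.0, "CD3E": 7.5, "LYZ": 0.0, "MS4A1": 1.0, "UNKNOWN1": 3.0}

tokens = rank_tokenize(cell, vocab, metadata_tokens=["<rna>", "<lung>"])
print(tokens)  # [0, 1, 5, 2, 3]
```

Genes missing from the vocabulary (`UNKNOWN1`) and unexpressed genes (`LYZ`) are dropped, which mirrors the vocabulary-overlap step that real pipelines perform before inference.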

Transformer Architectures in scFMs

Most scFMs utilize transformer architectures, which employ self-attention mechanisms to model complex dependencies between genes:

- Encoder models: BERT-like architectures with bidirectional attention capture all gene contexts simultaneously, ideal for classification and embedding tasks [1] [5].

- Decoder models: GPT-like architectures with unidirectional attention iteratively predict masked genes conditioned on known genes, excelling at generation tasks [1] [2].

- Hybrid architectures: Emerging models combine encoder-decoder structures or integrate transformers with other neural network components for specialized applications [1] [2].

The attention mechanism enables scFMs to learn which genes are most informative for determining cellular identity and state, effectively modeling regulatory relationships and functional pathways [1]. Positional encoding schemes adapted from NLP represent the relative ordering of genes based on expression ranks, while gene embeddings capture functional similarities analogous to semantic relationships in word embeddings [1] [4].

Quantitative Performance Benchmarking Across Biological Tasks

Model Comparison and Task Performance

Table 1: Performance benchmarking of major single-cell foundation models across key biological tasks

| Model | Architecture Type | Pretraining Scale | Cell Type Annotation (Accuracy) | Batch Integration (Mixing Metric) | Perturbation Prediction (Pearson r) | Cross-Species Generalization |
|---|---|---|---|---|---|---|
| scGPT [2] | Decoder (GPT-like) | 33 million cells | 94.2% | 0.89 | 0.78 | Moderate |
| Geneformer [4] | Encoder (BERT-like) | 30 million cells | 92.7% | 0.85 | 0.82 | High |
| scFoundation [4] | Hybrid | 50 million cells | 95.1% | 0.91 | 0.85 | High |
| scPlantFormer [2] | Encoder-Decoder | 15 million cells | 92.0%* | 0.87 | 0.79 | High* |
| Nicheformer [2] | Spatial Graph Transformer | 53 million cells | 96.3% | 0.93 | 0.81 | Moderate |

\*Performance on plant-specific datasets | \*\*Spatial annotation accuracy

Biological Relevance Metrics

Table 2: Performance evaluation using biologically-informed metrics across five benchmarking datasets

| Model | scGraph-OntoRWR (Cell Ontology Consistency) | LCAD (Annotation Error Severity) | Landscape Roughness (ROGI) | Computational Requirements (GPU hours) |
|---|---|---|---|---|
| scGPT [4] | 0.67 | 2.3 | 0.12 | 480 |
| Geneformer [4] | 0.72 | 2.1 | 0.09 | 520 |
| scFoundation [4] | 0.75 | 1.8 | 0.07 | 650 |
| UCE [4] | 0.63 | 2.5 | 0.15 | 380 |
| LangCell [4] | 0.70 | 2.0 | 0.11 | 710 |

Recent benchmarking studies reveal that no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [4]. scGraph-OntoRWR measures consistency between model-derived cell relationships and established biological knowledge in cell ontologies, while Lowest Common Ancestor Distance (LCAD) quantifies the biological severity of cell type misclassification errors [4]. Models that achieve lower landscape roughness (ROGI) typically demonstrate better generalization to new datasets, as they learn smoother, more biologically plausible representations of cellular states [4].
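To make the idea behind LCAD concrete, the sketch below computes an ontology distance on a toy cell-type hierarchy. It assumes one plausible reading of the metric: the number of edges from the true label and from the predicted label up to their lowest common ancestor. The hierarchy, the `PARENT` table, and the function names are hypothetical, and the benchmark's exact formula may differ.

```python
from typing import Dict, List, Optional

# Toy child -> parent ontology (hypothetical, not the real Cell Ontology).
PARENT: Dict[str, Optional[str]] = {
    "cell": None,
    "lymphocyte": "cell",
    "myeloid cell": "cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "monocyte": "myeloid cell",
}


def path_to_root(label: str) -> List[str]:
    """Return the label followed by all of its ancestors up to the root."""
    path = []
    node: Optional[str] = label
    while node is not None:
        path.append(node)
        node = PARENT[node]
    return path


def lcad(true_label: str, predicted_label: str) -> int:
    """Edges from both labels up to their lowest common ancestor."""
    pred_depth = {node: d for d, node in enumerate(path_to_root(predicted_label))}
    for true_depth, node in enumerate(path_to_root(true_label)):
        if node in pred_depth:  # first shared ancestor is the lowest one
            return true_depth + pred_depth[node]
    raise ValueError("labels do not share a root")


print(lcad("CD4 T cell", "CD8 T cell"))  # 2: sibling subtypes, mild error
print(lcad("CD4 T cell", "B cell"))      # 3
print(lcad("CD4 T cell", "monocyte"))    # 5: different lineage, severe error
```

Under this reading, a plain accuracy score treats all three mistakes identically, whereas the ontology distance separates a near miss between T cell subtypes from a cross-lineage error.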

Experimental Protocols for scFM Evaluation

Protocol 1: Zero-Shot Cell Type Annotation

Purpose: To evaluate model capability to accurately annotate cell types without task-specific fine-tuning.

Materials:

- Preprocessed scRNA-seq dataset with held-out cell type labels

- Pretrained scFM with gene vocabulary matching target dataset

- Computing environment with GPU acceleration

Methodology:

- Data Preprocessing: Map dataset genes to model vocabulary, retaining only genes present in both (typically 70-85% coverage) [4].

- Embedding Generation: Process each cell through scFM to extract cell-level embeddings (typically from [CLS] token or mean pooling of gene embeddings).

- Similarity Calculation: Compute cosine similarity between query cell embeddings and reference cell type centroids in embedding space.

- Annotation Assignment: Assign each cell to the cell type with highest similarity score.

- Performance Validation: Compare against ground truth labels using accuracy, F1-score, and LCAD metrics.

Technical Notes: Zero-shot performance depends heavily on vocabulary overlap and on how well similar cell types are represented in the pretraining corpus [4]. Models typically achieve 40-70% accuracy on novel cell types not explicitly seen during pretraining [4].
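The similarity and assignment steps of this protocol can be sketched as follows. The example assumes that cell embeddings have already been extracted from a pretrained model; the two-dimensional vectors and the function names (`build_centroids`, `annotate`) are made up for illustration.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_centroids(
    embeddings: List[Sequence[float]], labels: List[str]
) -> Dict[str, List[float]]:
    """Average the reference embeddings of each cell type."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for emb, lab in zip(embeddings, labels):
        acc = sums.setdefault(lab, [0.0] * len(emb))
        for i, x in enumerate(emb):
            acc[i] += x
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [x / counts[lab] for x in acc] for lab, acc in sums.items()}


def annotate(
    queries: List[Sequence[float]], centroids: Dict[str, List[float]]
) -> List[str]:
    """Assign each query cell to the most similar centroid."""
    return [
        max(centroids, key=lambda lab: cosine(q, centroids[lab])) for q in queries
    ]


# Toy reference and query embeddings (illustrative values only).
reference = [[1.0, 0.1], [0.9, 0.0], [0.0, 1.0], [0.1, 0.8]]
reference_labels = ["T cell", "T cell", "B cell", "B cell"]
queries = [[0.8, 0.2], [0.2, 0.9]]

predictions = annotate(queries, build_centroids(reference, reference_labels))
print(predictions)  # ['T cell', 'B cell']
```

The predicted labels can then be compared against held-out ground truth with accuracy, F1-score, or an ontology-aware metric such as LCAD.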

Protocol 2: In Silico Perturbation Prediction

Purpose: To predict transcriptomic changes resulting from genetic perturbations.

Materials:

- Wild-type expression profiles

- Perturbation targets (gene knockouts/overexpression)

- Fine-tuned or prompted scFM with perturbation modeling capability

Methodology:

- Baseline Establishment: Process wild-type cells through model to establish baseline embeddings.

- Perturbation Application: Modify input sequences to represent experimental perturbations (e.g., zeroing out expression of knocked-out genes).

- Prediction Generation: Process perturbed inputs through model and capture output expression profiles.

- Comparison: Calculate differential expression between predicted perturbed and wild-type states.

- Validation: Compare predictions to ground truth experimental data when available.

Technical Notes: scGPT and Geneformer have demonstrated capability to predict perturbation effects with correlation coefficients of 0.75-0.85 against experimental validation data [2]. Performance varies significantly by gene, with hub genes in regulatory networks showing more predictable effects [4].
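The workflow above (baseline, perturbation, prediction, comparison) can be illustrated with a deliberately simple stand-in for the model. In the sketch below, `toy_model` is a hypothetical linear regulatory map, not a real scFM, and the gene names, weights, and biases are invented; only the structure of the procedure is meant to carry over.

```python
from typing import Dict

# Hypothetical regulatory weights standing in for a fine-tuned scFM.
WEIGHTS = {"TARGET_A": {"TF1": 0.8}, "TARGET_B": {"TF1": -0.5, "TF2": 0.3}}
BIAS = {"TARGET_A": 0.0, "TARGET_B": 2.0}


def toy_model(expr: Dict[str, float]) -> Dict[str, float]:
    """Predict an expression profile from an input profile (toy stand-in)."""
    predicted = dict(expr)  # regulators pass through unchanged
    for target, regulators in WEIGHTS.items():
        predicted[target] = BIAS[target] + sum(
            w * expr.get(reg, 0.0) for reg, w in regulators.items()
        )
    return predicted


def knock_out(expr: Dict[str, float], gene: str) -> Dict[str, float]:
    """Represent a knockout by zeroing the gene in a copy of the input."""
    perturbed = dict(expr)
    perturbed[gene] = 0.0
    return perturbed


def predicted_shift(expr: Dict[str, float], gene: str) -> Dict[str, float]:
    """Predicted perturbed profile minus predicted wild-type profile."""
    baseline = toy_model(expr)
    perturbed = toy_model(knock_out(expr, gene))
    return {g: perturbed[g] - baseline[g] for g in baseline}


wild_type = {"TF1": 2.0, "TF2": 1.0, "TARGET_A": 0.0, "TARGET_B": 0.0}
shift = predicted_shift(wild_type, "TF1")
for gene, delta in shift.items():
    print(f"{gene}: {delta:+.2f}")
```

With a real model, the final validation step would correlate these predicted shifts with measured differential expression from a perturbation experiment.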

Protocol 3: Cross-Modality Integration

Purpose: To align cells from different omics modalities into shared embedding space.

Materials:

- Multimodal single-cell data (e.g., scRNA-seq + scATAC-seq)

- scFM with cross-modal architecture (e.g., scMODAL)

- Limited set of linked features between modalities

Methodology:

- Input Preparation: Process each modality through separate but linked encoders.

- Anchor Identification: Use known linked features (e.g., gene expression and chromatin accessibility for same gene) to identify mutual nearest neighbors.

- Adversarial Alignment: Employ generative adversarial network components to minimize distribution differences between modalities.

- Geometric Preservation: Apply regularization to preserve within-modality cell neighborhood structures.

- Validation: Assess mixing metrics and biological preservation using cell type labels.

Technical Notes: scMODAL demonstrates state-of-the-art performance with as few as 10-20 linked features, effectively integrating modalities with weak correlations like protein abundance and gene expression [6].
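The anchor identification step can be sketched as a mutual nearest neighbor search over the linked features. This is a simplified illustration with k = 1 and Euclidean distance on toy coordinates; the function names are hypothetical, and it is not a reproduction of scMODAL's implementation.

```python
from typing import List, Sequence, Tuple


def nearest(src: List[Sequence[float]], dst: List[Sequence[float]]) -> List[int]:
    """For each point in src, the index of its closest point in dst."""
    def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return [min(range(len(dst)), key=lambda j: sq_dist(p, dst[j])) for p in src]


def mutual_nearest_neighbors(
    a: List[Sequence[float]], b: List[Sequence[float]]
) -> List[Tuple[int, int]]:
    """Pairs (i, j) where a[i] and b[j] are each other's nearest neighbor."""
    a_to_b = nearest(a, b)
    b_to_a = nearest(b, a)
    return [(i, j) for i, j in enumerate(a_to_b) if b_to_a[j] == i]


# Toy linked-feature values for three cells per modality (illustrative).
rna_cells = [[0.0, 0.0], [5.0, 5.0], [10.0, 0.0]]
atac_cells = [[0.2, 0.1], [5.1, 4.8], [5.5, 5.5]]

anchors = mutual_nearest_neighbors(rna_cells, atac_cells)
print(anchors)  # [(0, 0), (1, 1)]
```

The third RNA cell and the third ATAC cell each have a nearest neighbor in the other modality, but the relationship is not reciprocal, so neither becomes an anchor. Requiring reciprocity is what keeps poorly matched cells from distorting the alignment.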

The Scientist's Computational Toolkit

Table 3: Essential computational tools and resources for scFM implementation

| Tool/Resource | Type | Primary Function | Access Method | Key Applications |
|---|---|---|---|---|
| scGPT [2] | Foundation Model | Single-cell analysis & perturbation | Python package | Cell annotation, perturbation prediction, gene network inference |
| scMODAL [6] | Integration Framework | Multi-omics data alignment | Python package | Cross-modality integration, feature imputation |
| CZ CELLxGENE [1] [2] | Data Repository | Curated single-cell datasets | Web portal/API | Model pretraining, benchmarking |
| BioLLM [2] | Benchmarking Suite | Foundation model evaluation | Python package | Performance comparison, model selection |
| DISCO [2] | Data Resource | Single-cell data aggregation | Web portal | Large-scale pretraining corpus assembly |
| scGNN+ [2] | Analysis Pipeline | Automated single-cell analysis | Open-source package | Downstream analysis automation |

Future Directions and Implementation Challenges

Despite their transformative potential, scFMs face significant implementation challenges that require ongoing methodological development. Current limitations include computational intensity during training, with models requiring hundreds of GPU hours and specialized expertise [4] [3]. Model interpretability remains challenging, as the biological relevance of latent embeddings and attention mechanisms is not always transparent [1] [4]. There is also a notable gap between computational development and biological validation, with few novel model predictions being experimentally confirmed [3].

Future development priorities should focus on several key areas. Enhanced model interpretability through biologically grounded attention mechanisms and integration with prior knowledge will increase utility for biological discovery [1] [4]. Multimodal integration capabilities must expand to incorporate emerging spatial proteomics and metabolomics data types [2]. Development of resource-efficient fine-tuning approaches will democratize access for research groups with limited computational resources [4] [3]. Finally, the creation of user-friendly interfaces and standardized benchmarking frameworks will bridge the accessibility gap for experimental biologists [3].

The rapid evolution of single-cell foundation models represents a paradigm shift in computational biology, transitioning from task-specific algorithms to generalizable AI systems that capture fundamental principles of cellular function. As these models mature and address current limitations, they hold extraordinary promise for accelerating therapeutic development and deepening our understanding of cellular heterogeneity in health and disease.

The application of transformer neural networks represents a paradigm shift in the analysis of cellular data, particularly in the domain of cellular heterogeneity research. Originally developed for natural language processing (NLP), transformers have been adapted to decode the complex "language" of cellular systems, where genes function as words and entire cell transcriptomes form meaningful biological sentences [1]. This architectural transition from recurrent neural networks (RNNs) to attention-based mechanisms has effectively solved the critical problem of long-range dependencies, enabling models to capture intricate relationships across thousands of genes that were previously computationally intractable [7]. The emergence of single-cell foundation models (scFMs) built on transformer architectures now provides researchers with powerful tools capable of integrating massive-scale single-cell datasets and extracting previously inaccessible biological insights into cellular behavior, disease mechanisms, and therapeutic targets [1] [8].

Within this context, transformer architectures serve as the computational backbone for analyzing single-cell RNA sequencing (scRNA-seq) data, which provides comprehensive transcriptomic profiling at individual cell resolution. The self-attention mechanism inherent to transformers allows these models to dynamically weight the importance of different genes within and across cells, effectively identifying key biomarkers and regulatory relationships that define cellular states [9] [1]. This capability is particularly valuable for drug development professionals seeking to identify novel therapeutic targets within complex tissues like tumors, where understanding cellular heterogeneity at unprecedented resolution can reveal critical disease mechanisms and treatment opportunities [8].

Core Architectural Principles: From Language to Cellular Data

Fundamental Components of Transformer Networks

The transformer architecture, first introduced in the landmark paper "Attention Is All You Need," fundamentally redesigned sequence processing by replacing recurrence with self-attention mechanisms [7]. Unlike traditional RNNs and LSTMs that process data sequentially and struggle with long-term dependencies, transformers process all elements in parallel while using attention to model relationships regardless of their positional distance [7]. The core architectural components include:

Self-Attention Mechanism: Computes attention scores for each element in a sequence relative to all other elements, allowing the model to determine which inputs to "focus on" when processing cellular data. For cellular applications, this enables the identification of co-expressed gene modules and regulatory networks [7] [1].

Multi-Head Attention: Employs multiple attention heads in parallel, each capable of learning different types of relationships between genes—effectively capturing diverse biological relationships within the same model [7] [9].

Positional Encodings: Since transformers lack inherent sequential processing, these encodings inject information about the position of elements in the sequence. For cellular data, this presents a unique challenge as gene expression lacks natural ordering, requiring innovative approaches to represent positional context [1] [8].

Feed-Forward Networks: Applied independently to each position after attention layers, these networks transform the attention-weighted representations into formats suitable for downstream biological tasks [7].
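As a concrete reference point for the self-attention component, the sketch below implements single-head scaled dot-product attention in plain Python. To keep it short it assumes that queries, keys, and values are the input embeddings themselves (no learned projection matrices), which real transformers do not do; the three "gene embeddings" are toy values.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix) -> Tuple[Matrix, Matrix]:
    """Scaled dot-product self-attention with Q = K = V = x.

    Returns the attention weights and the attended outputs.
    """
    dim = len(x[0])
    scale = math.sqrt(dim)
    # Score every token against every other token.
    scores = [
        [sum(q_i * k_i for q_i, k_i in zip(q, k)) / scale for k in x] for q in x
    ]
    weights = [softmax(row) for row in scores]
    # Each output is a weighted average of all value vectors.
    outputs = [
        [sum(w * v[j] for w, v in zip(w_row, x)) for j in range(dim)]
        for w_row in weights
    ]
    return weights, outputs


# Three toy gene embeddings: the first two are similar, the third is not.
gene_embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
weights, outputs = self_attention(gene_embeddings)
for row in weights:
    print([round(w, 3) for w in row])
```

In this toy case the first gene attends more strongly to the second gene than to the third, which is the mechanism by which attention can pick up groups of related genes. Multi-head attention repeats this computation with several independent sets of learned projections.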

Adapting Transformers for Cellular Data

Applying transformer architectures to cellular data requires significant modifications to handle the unique characteristics of biological data. Unlike natural language with its inherent sequential structure, gene expression data is non-sequential and high-dimensional with substantial technical noise [1] [8]. Key adaptations include:

Tokenization Strategies: Genes are treated as tokens analogous to words in a sentence. However, determining the optimal "gene order" for transformer input remains challenging. Common approaches include ranking genes by expression levels within each cell, binning genes by expression values, or using normalized counts without complex ranking [1].

Specialized Embeddings: Gene token embeddings typically combine a gene identifier with its expression value. Additional special tokens may represent cell identity, experimental batch, or modality information (e.g., scATAC-seq, spatial transcriptomics) [1].

Biological Positional Encodings: Since gene-gene interactions lack natural sequence, positional encodings are adapted using deterministic orderings based on expression magnitude or other biologically relevant rankings [1].

The following table summarizes the key architectural adaptations required for applying transformers to cellular data:

Table: Architectural Adaptations for Cellular Data

| Component | Standard Transformer | Cellular Data Adaptation | Biological Rationale |
|---|---|---|---|
| Input Tokens | Words/subwords | Genes/features with expression values | Captures transcriptional activity |
| Token Order | Natural language sequence | Expression-based ranking or binning | Provides consistent input structure |
| Positional Encoding | Sentence position | Expression rank or genomic position | Encodes relational context between genes |
| Special Tokens | [CLS], [SEP] | Cell type, batch, modality indicators | Incorporates experimental metadata |

scGraphformer: A Case Study in Cellular Transformer Architecture

Architectural Framework and Implementation

scGraphformer represents a cutting-edge implementation of transformer architecture specifically designed for single-cell RNA sequencing data analysis [9] [10]. This model integrates transformer capabilities with graph neural networks (GNNs) to overcome limitations of traditional GNNs that rely on predefined cell-cell relational graphs, which often introduce noise and bias through k-nearest neighbor (kNN) approximations [9]. The scGraphformer architecture consists of two interconnected modules:

Transformer Module: Processes gene representations using multi-head attention mechanisms to discern latent gene-gene interactions that influence cellular phenotypes. This module employs biologically re-engineered Query, Key, and Value sub-modules, where the Query utilizes global gene information, the Key captures cross-cell dependencies, and the Value provides contextualized cell representations [9].

Cell Network Learning Module: Dynamically constructs and refines cell-cell relationship networks from the data itself, rather than relying on predefined graphs. This module amalgamates learned gene-gene interactions with the evolving cell network to continuously refine topological structures [9].

The model begins by processing scRNA-seq data through standard preprocessing steps—removing low-quality cells and genes, normalization, and selecting highly variable genes (HVGs). Unlike other methods, scGraphformer tailors HVG selection based on expression matrix dimensionality rather than fixed counts, preserving more genetic information [9]. The data is then transformed into a graph structure where cells represent nodes with HVGs as features, optionally initialized with a kNN graph.

Experimental Methodology and Performance Evaluation

The experimental validation of scGraphformer employed rigorous benchmarking across 20 diverse datasets against nine state-of-the-art computational methods for scRNA-seq cell annotation: CellTypist, scVI, scmap-cluster, scmap-cell, ACTINN, scBERT, TOSICA, scType, and scBalance [9]. Evaluation metrics focused on classification accuracy across diverse cell types, with particular attention to performance on complex datasets including Campbell, Zilionis, and Zheng 68K [9].

The following table summarizes the key performance comparisons between scGraphformer and other prominent methods:

Table: Performance Comparison of Single-Cell Analysis Methods

| Method | Architecture Type | Key Strengths | Performance Notes |
|---|---|---|---|
| scGraphformer | Transformer + GNN | Dynamic graph learning, identifies subtle patterns | Superior accuracy in intra-dataset evaluation |
| scBERT | Transformer encoder | Bidirectional attention | Limited performance on rare cell types |
| scGPT | Transformer decoder | Generative pretraining | Strong on large datasets |
| scVI | Generative model | Probabilistic modeling | Efficient on specific tasks |
| scmap | kNN-based | Fast computation | Limited on complex datasets |
| ACTINN | Neural network | Simple architecture | Struggles with heterogeneity |

Implementation of scGraphformer involves several critical steps. First, data preprocessing includes quality control, normalization, and HVG selection tailored to dataset dimensionality. The model then undergoes iterative refinement of cell-cell connections through its transformer modules, with training typically employing fivefold cross-validation to ensure robustness [9]. For researchers seeking to implement similar architectures, key considerations include computational resource allocation (particularly for attention mechanisms on large datasets), careful handling of batch effects, and strategies for interpreting the biological significance of learned attention weights.

Visualization of Architectures and Workflows

scGraphformer Architecture Diagram

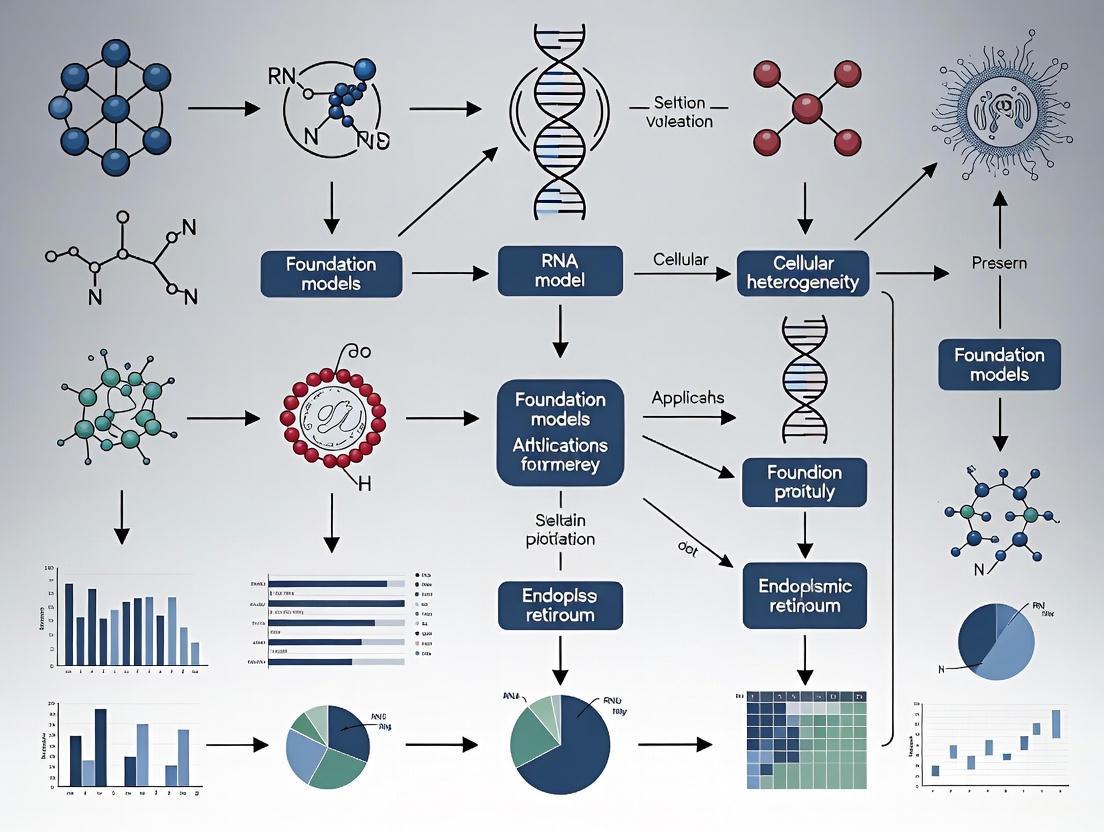

Single-Cell Foundation Model Workflow

Essential Research Reagents and Computational Tools

The implementation of transformer-based approaches for cellular data analysis requires both biological and computational resources. The following table details key research reagents and computational tools essential for working with these models:

Table: Essential Research Reagents and Computational Tools

| Resource Type | Specific Examples | Function/Purpose |
|---|---|---|
| Data Resources | CZ CELLxGENE, Human Cell Atlas, GEO, SRA | Provide standardized, annotated single-cell datasets for model training and validation [1] |
| Preprocessing Tools | Seurat, Scanpy | Perform quality control, normalization, and feature selection on raw scRNA-seq data [9] |
| Model Architectures | scGraphformer, scGPT, Geneformer, scBERT | Provide specialized transformer implementations for cellular data [9] [1] [8] |
| Benchmarking Frameworks | Custom evaluation pipelines, scGraph-OntoRWR, LCAD metrics | Enable performance comparison and biological interpretation of model outputs [8] |
| Computational Infrastructure | GPUs with substantial memory, High-performance computing clusters | Handle computational demands of transformer training and inference [9] [8] |

Transformer networks have fundamentally transformed our approach to analyzing cellular heterogeneity, providing unprecedented capabilities for deciphering complex biological systems. As these models continue to evolve, several critical challenges and opportunities emerge. Current limitations include the non-sequential nature of omics data, inconsistencies in data quality, and the substantial computational resources required for training and fine-tuning [1]. Future architectural innovations will likely focus on developing more efficient attention mechanisms, improving model interpretability to extract biologically meaningful insights, and creating better methods for integrating multimodal single-cell data [1] [8].

For researchers and drug development professionals, transformer-based cellular models offer powerful new approaches for identifying novel therapeutic targets, understanding disease mechanisms at single-cell resolution, and predicting cellular responses to perturbations. The emerging paradigm of single-cell foundation models pretrained on massive diverse datasets and fine-tuned for specific applications represents a significant advancement over traditional analysis methods [1] [8]. As these models become more accessible and computationally efficient, they will increasingly serve as essential tools in the precision medicine toolkit, enabling deeper insights into cellular function and accelerating the development of targeted therapeutics for complex diseases.

In single-cell genomics, foundation models are revolutionizing our ability to decipher cellular heterogeneity and complexity. These models rely on sophisticated tokenization strategies to convert gene expression data into meaningful numerical representations that machine learning architectures can process. This technical guide examines the current methodologies for transforming biological sequences into model inputs, detailing preprocessing workflows, architectural considerations, and downstream applications in drug discovery and disease research. By providing a comprehensive framework for gene tokenization, we enable more accurate modeling of cellular dynamics and accelerate therapeutic development for complex diseases.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, enabling unprecedented analysis of cellular heterogeneity at scale. These large-scale deep learning models, pretrained on vast single-cell datasets, have revolutionized data interpretation through self-supervised learning with capacity for various downstream tasks [1]. The effectiveness of these models hinges critically on their tokenization strategies—the processes that convert raw biological data into structured numerical inputs that deep learning architectures can process.

In natural language processing (NLP), tokenization breaks text into smaller units like words or subwords, standardizing unstructured data into formats models can understand and process [11] [12]. By analogy, single-cell foundation models employ specialized tokenization approaches that define what constitutes a "token" from single-cell data, typically representing each gene or genomic feature as a token [1]. These tokens serve as fundamental input units, with combinations collectively representing individual cells, much like words form sentences [1].

The fundamental challenge in gene expression tokenization stems from the nonsequential nature of omics data. Unlike words in a sentence, genes in a cell have no inherent ordering [1]. This creates unique computational challenges that require innovative solutions to structure biological data for transformer-based architectures that power modern foundation models. Effective tokenization must preserve biological meaning while enabling efficient model training on datasets encompassing millions of cells and thousands of genes.

Biological Foundation: From Genetic Code to Model Input

The Nature of Genomic Data

Understanding the biological basis of genomic data is essential for developing effective tokenization strategies. At its core, gene expression involves the process by which information contained within a gene is used to produce functional gene products, primarily proteins or functional RNA molecules [13]. This process begins with transcription, where DNA sequences are copied into RNA, followed by translation for protein-coding genes, where the RNA sequence is decoded to produce amino acid chains [13].

The protein-coding regions of genes comprise open reading frames (ORFs) consisting of codons that specify the amino acid sequence of the resulting protein [14]. Each ORF begins with an initiation codon (usually ATG) and ends with a termination codon (TAA, TAG, or TGA) [14]. In computational terms, genomic data exhibits several unique characteristics that influence tokenization design. The six possible reading frames (three forward, three reverse) in DNA sequences, the presence of introns and exons in eukaryotic genes, and the variable length of gene sequences all contribute to the complexity of biological tokenization [14].
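The six reading frames and the start/stop codon rules described above can be made concrete with a small ORF scanner. This is a simplified sketch: it assumes an uppercase or lowercase DNA string containing only A, C, G, and T, ignores introns, and reports the first start codon before each in-frame stop. The function names and the example sequence are illustrative.

```python
from typing import List, Tuple

STOP_CODONS = {"TAA", "TAG", "TGA"}
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA string (A, C, G, T only)."""
    return seq.translate(COMPLEMENT)[::-1]


def find_orfs(seq: str, min_codons: int = 2) -> List[Tuple[str, int, str]]:
    """Scan all six reading frames for ATG...stop open reading frames.

    Returns (strand, frame, orf_sequence) tuples; min_codons counts the
    start and stop codons as part of the ORF length.
    """
    seq = seq.upper()
    orfs = []
    for strand, s in (("+", seq), ("-", reverse_complement(seq))):
        for frame in range(3):
            start = None
            for i in range(frame, len(s) - 2, 3):
                codon = s[i:i + 3]
                if start is None and codon == "ATG":
                    start = i  # open a candidate ORF
                elif start is not None and codon in STOP_CODONS:
                    if (i + 3 - start) // 3 >= min_codons:
                        orfs.append((strand, frame, s[start:i + 3]))
                    start = None  # close it and keep scanning
    return orfs


print(find_orfs("CCATGAAATAGGG"))  # [('+', 2, 'ATGAAATAG')]
```

The same sequence contains a `TGA` in forward frame 0 and an `ATG` on the reverse strand, but neither forms a complete start-to-stop pair in its frame, which shows why the choice of frame matters for any sequence-level tokenization.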

Single-Cell Genomics Primer

Single-cell technologies, particularly single-cell RNA sequencing (scRNA-seq), measure gene expression at individual cell resolution, creating high-dimensional data matrices where rows represent cells and columns represent genes [1]. These datasets capture the transcriptional states of individual cells, revealing cellular heterogeneity, rare cell populations, and dynamic processes like differentiation or disease progression [1] [15].

The data generated from these technologies presents specific challenges for tokenization, including technical noise, dropout events (where genes are measured as unexpressed due to technical limitations), and batch effects across experiments [1] [15]. Effective tokenization strategies must account for these biological and technical considerations to create robust input representations for foundation models.

Tokenization Methodologies for Genomic Data

Core Tokenization Approaches

Gene-Based Tokenization

The most common approach in scFMs treats individual genes as tokens, analogous to words in NLP models [1]. Each gene's expression value in a given cell must be incorporated into the token representation, typically through:

- Gene identifier embedding: Unique representation for each gene

- Expression value encoding: Incorporation of expression magnitude

- Positional encoding: Artificial ordering to accommodate transformer architectures

Since gene expression data lacks natural ordering, various strategies have been developed to impose structure. These include ranking genes by expression levels within each cell, partitioning genes into expression value bins, or using normalized counts directly [1]. Positional encoding schemes then represent the relative order or rank of each gene in the cell [1].

Sequence-Based Tokenization

For DNA sequence data, alternative tokenization approaches include:

- K-mer tokenization: Breaking sequences into overlapping subsequences of length k

- Nucleotide-level tokenization: Treating individual nucleotides as tokens

- Motif-based tokenization: Identifying and tokenizing biologically meaningful sequence patterns
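The first two options in the list can be sketched in a few lines. The helper names `kmer_tokenize` and `build_vocab` are hypothetical. Nucleotide-level tokenization is the special case `k=1`, and non-overlapping k-mers correspond to `stride=k`.

```python
def kmer_tokenize(seq, k=3, stride=1):
    """Split a DNA string into k-mers; stride=1 gives overlapping tokens."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]


def build_vocab(tokens):
    """Assign integer ids to tokens in order of first appearance."""
    vocab = {}
    for token in tokens:
        vocab.setdefault(token, len(vocab))
    return vocab


tokens = kmer_tokenize("ATGCGT", k=3)
vocab = build_vocab(tokens)
print(tokens, [vocab[t] for t in tokens])
```

With overlapping k-mers the sequence length stays close to the number of nucleotides while the vocabulary grows as 4^k, which is the granularity versus cost trade-off that tokenizer design has to balance.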

Current research indicates that significant work remains in developing efficient tokenization techniques that can capture or model underlying motifs within DNA sequences [16]. Many existing methods reduce scalability through naive sequence representation, model motifs incorrectly, or are borrowed directly from NLP tasks without sufficient biological adaptation [16].

Advanced Tokenization Strategies

Multi-Modal Token Integration

Advanced scFMs incorporate multiple data modalities through specialized tokenization approaches:

- Modality indication tokens: Special tokens indicating data type (e.g., scATAC-seq, spatial transcriptomics)

- Batch effect tokens: Encoding technical variables to mitigate batch effects

- Metadata tokens: Incorporating cell-type labels, experimental conditions, or temporal information

These approaches enable models to learn unified representations across diverse data types, enhancing their biological relevance and predictive power [1].

Expression Value Representation

A critical consideration is how to represent expression values alongside gene identities:

- Value binning: Discretizing continuous expression values into categorical bins

- Continuous encoding: Using feature projections to combine gene identity and expression

- Hybrid approaches: Combining categorical gene tokens with continuous value embeddings
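A minimal sketch of value binning is shown below. It assumes a per-cell quantile scheme in which zero counts get bin 0 and nonzero values are spread over bins 1 to `n_bins`. The function name `bin_expression` and the default bin count are illustrative choices rather than the settings of a particular model.

```python
import bisect


def bin_expression(values, n_bins=5):
    """Discretize one cell's expression values into integer bins.

    Zero counts map to bin 0. Nonzero values map to 1..n_bins according to
    their quantile among the nonzero values of the same cell.
    """
    nonzero = sorted(v for v in values if v > 0)
    n = len(nonzero)
    bins = []
    for v in values:
        if v <= 0:
            bins.append(0)
        else:
            rank = bisect.bisect_right(nonzero, v)  # 1..n
            # Integer ceiling of rank * n_bins / n, avoiding float rounding.
            bins.append((rank * n_bins + n - 1) // n)
    return bins


print(bin_expression([0, 1, 2, 3, 4], n_bins=2))  # -> [0, 1, 1, 2, 2]
```

Because the bins are computed within each cell, the same raw count can land in different bins in different cells. That makes the encoding less sensitive to sequencing depth, at the cost of the discretization artifacts noted for this strategy.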

Table 1: Comparison of Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Key Methodology | Advantages | Limitations | Example Models |
|---|---|---|---|---|
| Gene Ranking | Orders genes by expression level within each cell | Deterministic, preserves high-expression genes | May lose low-expression signals | Geneformer, iSEEEK, tGPT |
| Expression Binning | Partitions genes into bins by expression values | Handles continuous expression values | Introduces discretization artifacts | scBERT, scGPT |
| Normalized Counts | Uses normalized expression values directly | Simplicity, minimal preprocessing | May require specialized architectures | Recent scFMs |
| Multi-Modal Tokens | Incorporates special tokens for different data types | Enables integrated multi-omics analysis | Increased model complexity | scGPT, emerging models |
| Metadata Enrichment | Prepends cell identity and metadata tokens | Provides biological context | Requires careful embedding design | scGPT, custom architectures |

Implementation Framework

Experimental Protocol for Gene Tokenization

Data Preprocessing Workflow

A standardized preprocessing pipeline ensures consistent tokenization across experiments:

- Quality Control: Filter cells based on quality metrics (mitochondrial content, detected genes)

- Gene Selection: Identify highly variable genes or use full gene sets

- Normalization: Apply appropriate normalization (e.g., logCPM, SCTransform)

- Batch Correction: Implement harmonization methods if using multiple datasets

- Token Preparation: Format processed data for model ingestion
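The quality-control and normalization steps of this workflow can be sketched with the standard library alone. The thresholds (`min_genes`, `max_mito_frac`) are placeholder values chosen for the toy data, and the function name `preprocess` is hypothetical. Highly variable gene selection and batch correction are left out for brevity; in practice these steps are usually run with tools such as Scanpy or Seurat.

```python
import math


def preprocess(cells, gene_names, min_genes=2, max_mito_frac=0.2, scale=1e6):
    """Filter low-quality cells, then apply log(1 + CPM) normalization.

    cells: dict mapping cell id -> list of raw counts aligned with gene_names.
    Mitochondrial genes are detected by the human "MT-" symbol prefix.
    """
    is_mito = [name.startswith("MT-") for name in gene_names]
    processed = {}
    for cell_id, counts in cells.items():
        total = sum(counts)
        detected = sum(1 for c in counts if c > 0)
        if total == 0 or detected < min_genes:
            continue  # empty droplet or too few detected genes
        mito_frac = sum(c for c, m in zip(counts, is_mito) if m) / total
        if mito_frac > max_mito_frac:
            continue  # high mitochondrial content suggests a damaged cell
        processed[cell_id] = [math.log1p(c / total * scale) for c in counts]
    return processed


genes = ["CD3E", "MS4A1", "MT-CO1"]
raw = {"good": [5, 4, 1], "dying": [1, 1, 8], "empty": [0, 0, 0], "sparse": [3, 0, 0]}
print(list(preprocess(raw, genes)))  # -> ['good']
```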

Tokenization Algorithm

The core tokenization process follows these computational steps:

Tokenization Workflow: From Raw Data to Model Input
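One possible realization of the final step, turning an ordered gene list and its binned values into fixed-length model input, is sketched below. The special tokens `<pad>` and `<cls>`, the dictionary keys, and the function names are assumptions made for illustration. Real models define their own vocabularies and input schemas.

```python
SPECIAL_TOKENS = ["<pad>", "<cls>"]


def build_gene_vocab(gene_names):
    """Map special tokens and gene symbols to integer ids."""
    return {tok: i for i, tok in enumerate(SPECIAL_TOKENS + sorted(gene_names))}


def encode_cell(genes, value_bins, vocab, max_len):
    """Build padded gene ids, value bins, and an attention mask for one cell."""
    ids = [vocab["<cls>"]] + [vocab[g] for g in genes]
    vals = [0] + list(value_bins)  # the <cls> token carries no expression value
    ids, vals = ids[:max_len], vals[:max_len]  # truncate long cells
    n_pad = max_len - len(ids)
    return {
        "gene_ids": ids + [vocab["<pad>"]] * n_pad,
        "value_bins": vals + [0] * n_pad,
        "attention_mask": [1] * len(ids) + [0] * n_pad,
    }


vocab = build_gene_vocab(["CD3E", "GAPDH"])
print(encode_cell(["GAPDH", "CD3E"], [2, 1], vocab, max_len=5))
```

The attention mask marks padded positions so that attention layers can ignore them. A `<cls>`-style token is one common way to obtain a cell-level embedding from a sequence of gene tokens.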

Model Architecture Integration

Embedding Layer Design

The token embedding layer must accommodate both gene identity and expression information:

- Gene embedding matrix: Lookup table for gene identifiers

- Value projection layers: Neural network components for expression values

- Combination mechanisms: Methods to fuse identity and value information

Positional Encoding Strategies

To address the lack of natural sequence in genomic data:

- Learned positional embeddings: Treat gene order as a learned parameter

- Fixed schematic ordering: Use consistent ordering based on genomic position or other criteria

- Attention masking: Allow full connectivity without positional bias

Table 2: Research Reagent Solutions for Single-Cell Tokenization Experiments

| Resource Type | Specific Examples | Function in Tokenization Pipeline | Implementation Considerations |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE, Human Cell Atlas, GEO | Provide standardized single-cell datasets for pretraining | Data quality variation, batch effects |
| Processing Tools | Seurat, Scanpy | Perform quality control, normalization, and feature selection | Parameter tuning, scalability |
| Modeling Frameworks | scGPT, scBERT, UNAGI | Implement tokenization layers and model architectures | Computational resources, customization |
| Visualization Tools | UMAP, t-SNE, custom plots | Validate tokenization quality and model performance | Interpretation, biological relevance |
| Benchmark Datasets | PanglaoDB, Human Ensemble Cell Atlas | Standardized evaluation of tokenization strategies | Dataset size, annotation quality |

Applications in Cellular Heterogeneity Research

Drug Discovery Applications

Effective tokenization enables foundation models to power drug discovery pipelines. For example, UNAGI—a deep generative model for analyzing time-series single-cell transcriptomic data—leverages sophisticated tokenization to capture complex cellular dynamics underlying disease progression [15]. This approach enhances drug perturbation modeling and screening by representing cellular states in ways that enable in silico prediction of drug effects [15].

In practice, tokenized representations allow researchers to simulate cellular responses to therapeutic interventions, identifying candidates that shift diseased cells toward healthier states. This application was demonstrated in idiopathic pulmonary fibrosis, where the model identified nifedipine as a potential anti-fibrotic treatment, later validated using human tissue models [15].

Cellular Dynamics Mapping

Tokenization strategies enable the reconstruction of cellular trajectories and gene regulatory networks. By creating meaningful representations of cell states, researchers can:

- Infer differentiation pathways and lineage relationships

- Identify key transcriptional regulators

- Map disease progression trajectories

- Discover novel cell states and subtypes

Analysis Pipeline: From Tokens to Therapeutic Insights

Technical Considerations and Optimization

Computational Efficiency

Tokenization design significantly impacts model scalability and training efficiency:

- Vocabulary size: Balance between granularity and computational requirements

- Sequence length: Truncation vs. compression strategies for long gene lists

- Memory optimization: Efficient embedding implementations for large-scale data

Biological Relevance Preservation

Maintaining biological meaning during tokenization requires:

- Gene relationship modeling: Capturing co-expression and regulatory relationships

- Multi-scale representation: Integrating gene-level, pathway-level, and cell-level information

- Context awareness: Incorporating spatial, temporal, and environmental factors

Future Directions

The field of genomic tokenization continues to evolve rapidly. Promising research directions include:

- Adaptive tokenization: Methods that learn optimal tokenization strategies from data

- Hierarchical representations: Multi-scale tokens capturing genes, pathways, and systems

- Cross-species generalization: Tokenization approaches enabling model transfer across organisms

- Integrated multimodal tokens: Unified representations for diverse data types

As single-cell technologies advance and datasets grow, sophisticated tokenization strategies will become increasingly critical for unlocking the full potential of foundation models in biological research and therapeutic development.

The advent of high-throughput single-cell sequencing technologies has generated vast amounts of data, profiling cellular heterogeneity with unprecedented precision. This data explosion has created an urgent need for unified computational frameworks capable of integrating and analyzing rapidly expanding cellular datasets. Inspired by advancements in artificial intelligence, researchers have extended foundation model techniques to single-cell analysis, giving rise to single-cell foundation models (scFMs). These large-scale deep learning models are pretrained on massive cellular datasets through self-supervised learning and can be adapted to various downstream tasks in biological research [1].

Foundation models represent a paradigm shift in machine learning, where models are trained on extensive datasets at scale and then adapted to a wide range of tasks. A defining feature is their training via self-supervised objectives, often through predicting masked segments, enabling the model to learn generalizable patterns without manual labeling. These models develop rich internal representations that can be fine-tuned to excel at specific tasks with relatively few additional labeled examples [1]. In cellular research, scFMs typically use transformer architectures to incorporate diverse omics data and extract latent patterns at both cell and gene/feature levels for analyzing cellular heterogeneity and complex regulatory networks [1].

Core Architectural Frameworks and Pretraining Strategies

Data Processing and Tokenization Methods

A critical ingredient for any scFM is the compilation of large and diverse datasets. Platforms such as CZ CELLxGENE provide unified access to annotated single-cell datasets, with over 100 million unique cells standardized for analysis. Likewise, the Human Cell Atlas and other multiorgan atlases provide broad coverage of cell types and states. Public repositories including the National Center for Biotechnology Information (NCBI) Gene Expression Omnibus (GEO) and Sequence Read Archive (SRA) host thousands of single-cell sequencing studies [1].

Tokenization refers to the process of converting raw input data into discrete units called tokens. For scFMs, tokenization involves defining what constitutes a 'token' from single-cell data, typically representing each gene or feature as a token. These tokens serve as fundamental input units for the model, analogous to words in a sentence [1]. Several strategies have emerged for processing single-cell data:

- Gene Ranking: Genes within each cell are ranked by expression levels, and the ordered list of top genes is fed as a 'sentence' to the model [1]

- Value Categorization: Gene expression values are binned into discrete categories, transforming continuous expression into classification problems [17]

- Value Projection: Gene expression vectors are expressed as sums of projections, preserving full data resolution [17]

Table 1: Primary Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Mechanism | Advantages | Representative Models |
|---|---|---|---|
| Gene Ranking | Orders genes by expression level within each cell | Deterministic sequence generation | Geneformer, iSEEEK, tGPT |
| Value Categorization | Bins continuous expression values into discrete categories | Enables classification approaches | scBERT, scGPT |
| Value Projection | Projects expression values while preserving resolution | Maintains full data precision | scFoundation, GeneCompass, CellFM |

Model Architectures for Single-Cell Data

Most successful scFMs are built on transformer architectures, which are neural networks characterized by attention mechanisms that allow the model to learn and weight relationships between any pair of input tokens [1]. In scFMs, the attention mechanism can learn which genes in a cell are most informative of the cell's identity or state, how they covary across cells, and how they are linked through regulatory or functional relationships.

The gene expression profile of each cell is converted to a set of gene tokens serving as inputs for the model, and its attention layers gradually build up a latent representation of each cell or gene [1]. Several architectural variants have been implemented:

- BERT-like Encoders: Use bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1]

- GPT-style Decoders: Employ unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes [1]

- Hybrid Architectures: Combine encoder-decoder designs or custom modifications [1]

- RetNet Variants: Use retentive network (RetNet) designs, which replace standard softmax attention with a retention mechanism of linear complexity for improved efficiency [17]
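The practical difference between bidirectional (BERT-like) and unidirectional (GPT-style) attention is the mask applied to the attention scores. The sketch below implements plain scaled dot-product attention on small Python lists to show this. It omits the learned query, key, and value projections and the multiple heads of a real transformer, and the function names are illustrative.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention; causal=True hides later tokens."""
    dim = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        if causal:
            # Token i may only attend to tokens 0..i.
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy gene-token embeddings
_, bidirectional = attention(tokens, tokens, tokens)
_, unidirectional = attention(tokens, tokens, tokens, causal=True)
print(unidirectional[0])  # -> [1.0, 0.0, 0.0]
```

In the bidirectional case every gene token receives nonzero weight from every other token. In the causal case the first token can only attend to itself, so the imposed gene order directly shapes what the model can condition on.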

Diagram 1: Single-Cell Foundation Model Architecture

Implementation and Experimental Protocols

Pretraining Workflows and Methodologies

Pretraining an scFM involves training it on self-supervised tasks across unlabeled cellular datasets. The most common approach is masked language modeling adapted for cellular data, where portions of the input are masked and the model learns to predict them based on context [1]. Implementation requires careful consideration of several components:

- Data Curation: CellFM demonstrates a comprehensive data processing workflow involving quality control for filtering cells and genes, gene name standardization according to HUGO Gene Nomenclature Committee guidelines, and conversion to unified sparse matrix formats [17]

- Model Initialization: Large parameter models (e.g., CellFM with 800 million parameters) require strategic initialization and distributed training across multiple NPUs or GPUs [17]

- Efficient Attention Mechanisms: Models like CellFM implement modified RetNet frameworks with gated multi-head attention and simple gated linear units to balance efficiency and performance [17]
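The masking step behind this self-supervised objective can be sketched as follows. The function name `mask_values`, the sentinel `mask_value=-1`, and the 15% default rate are assumptions for illustration. Each model chooses its own masking ratio and mask representation.

```python
import random


def mask_values(value_bins, mask_prob=0.15, mask_value=-1, seed=0):
    """Hide a random subset of expression bins for masked pretraining.

    Returns (inputs, targets): inputs is a copy with masked positions set to
    mask_value, and targets maps each masked position to its original bin.
    """
    n = len(value_bins)
    if n == 0:
        return [], {}
    rng = random.Random(seed)  # seeded for reproducibility
    n_mask = min(n, max(1, round(n * mask_prob)))
    inputs = list(value_bins)
    targets = {}
    for pos in sorted(rng.sample(range(n), n_mask)):
        targets[pos] = inputs[pos]
        inputs[pos] = mask_value
    return inputs, targets


bins = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
inputs, targets = mask_values(bins, mask_prob=0.2)
print(inputs, targets)
```

During pretraining the loss would be computed only at the positions in `targets`, so the model has to infer hidden expression values from the remaining genes in the same cell.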

Diagram 2: Single-Cell Data Processing Workflow

Evaluation Frameworks for scFMs

Evaluating self-supervised learning methods is challenging because learned representations can be assessed in many different ways. The community has developed several evaluation protocols to compare representation quality, resulting in proxy metrics for unobserved downstream tasks [18]. Common evaluation approaches include:

- Linear Probing: Freezing the encoder and training a shallow classifier for classification tasks [18] [19]

- End-to-end Fine-tuning: Updating all or most encoder weights on supervised downstream tasks [18]

- k-Nearest Neighbor (kNN) Classification: Measuring class consistency of embedding space under non-parametric clustering [18] [19]

- Few-shot Learning: Fine-tuning using only small subsets of available labels [18]

Recent benchmarks emphasize the importance of evaluating on suites spanning natural, synthetic, and distributional shifts, using aggregate metrics to prevent cherry-picking [19]. Studies have found that in-domain linear/kNN probing protocols are, on average, the best general predictors for out-of-domain performance [18].
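As an example of a training-free protocol, the sketch below scores frozen embeddings with a k-nearest-neighbor classifier. The two-dimensional toy embeddings, the labels, and the function names are made up for illustration. Real cell embeddings would come from a forward pass through the pretrained encoder.

```python
import math
from collections import Counter


def knn_predict(train_emb, train_labels, query, k=3):
    """Predict a label by majority vote among the k nearest embeddings."""
    nearest = sorted((math.dist(emb, query), i) for i, emb in enumerate(train_emb))
    votes = Counter(train_labels[i] for _, i in nearest[:k])
    return votes.most_common(1)[0][0]


def knn_accuracy(train_emb, train_labels, test_emb, test_labels, k=3):
    """Fraction of test cells whose kNN prediction matches the true label."""
    hits = sum(knn_predict(train_emb, train_labels, q, k) == y
               for q, y in zip(test_emb, test_labels))
    return hits / len(test_labels)


train = [[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]]
labels = ["T cell", "T cell", "T cell", "B cell", "B cell", "B cell"]
print(knn_accuracy(train, labels, [[0.5, 0.5], [5.5, 5.5]], ["T cell", "B cell"]))
```

Because no parameters are trained, the score reflects only how well the embedding space already separates cell types, which is why kNN and linear probes are used as proxies for representation quality.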

Table 2: Evaluation Protocols for Self-Supervised Learning in Biology

| Protocol | Mechanism | Use Cases | Advantages |
|---|---|---|---|
| Linear Probing | Freezes encoder, trains linear classifier | Standard evaluation for vision, speech, tabular data | Measures feature quality directly |
| kNN Classification | Non-parametric clustering in embedding space | Fast, training-free evaluation | Reveals embedding space structure |
| End-to-end Fine-tuning | Updates all model parameters | Transfer learning to new domains | Maximizes task-specific performance |
| Few-shot Learning | Uses minimal labeled examples | Low-data regimes, efficiency testing | Measures data efficiency |
| Unsupervised Clustering | k-means with Hungarian matching | Exploratory analysis, no labels needed | Evaluates inherent clusterability |

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Resources for Single-Cell Foundation Model Development

| Resource | Type | Function | Examples |
|---|---|---|---|
| Public Data Repositories | Data Sources | Provide standardized single-cell datasets | CZ CELLxGENE, Human Cell Atlas, GEO, SRA |
| Sequence Processing Tools | Software | Convert raw sequencing data to expression matrices | Kallisto, Cell Ranger |
| Quality Control Frameworks | Software | Filter cells and genes based on quality metrics | Scanpy, Seurat, SynEcoSys |
| Deep Learning Frameworks | Computational Tools | Model training and implementation | TensorFlow, PyTorch, MindSpore |
| Specialized Single-Cell Tools | Software | Single-cell specific analyses | scvi-tools, Scanpy |
| Computational Infrastructure | Hardware | Large-scale model training | Ascend NPUs, GPUs, High-performance Clusters |

Applications in Cellular Heterogeneity Research

Advancing Understanding of Cellular Senescence

Single-cell foundation models have enabled comprehensive analysis of cellular senescence heterogeneity across cancer types. A recent pan-cancer study characterized five molecular subgroups of cellular senescence with distinct biological features: Inflamm-aging, DNA Damage Response, Autophagy, Immunologically Quiet, and Metabolic Disorder [20]. These subgroups showed cancer-type and tissue-type specific distribution and revealed significant associations with cancer prognosis, intratumoral microbiota, immunophenotypic features, and multi-omic alterations [20].

The study integrated multi-platform data including prognosis, microbiota, immune microenvironment, multi-omics, and drug sensitivity to investigate associations with the cellular senescence (CS) subgroups. Additionally, 12 single-cell datasets and 19 immunotherapy cohorts were collected to evaluate the CS subgroups. The researchers developed a machine-learning model integrating CS-related cancer driver genes to infer CS subgroups and verified its prediction capability for immunotherapy response and prognosis in independent cohorts [20].

Spatial Transcriptomics and Brain Mapping

In neuroscience, self-supervised learning frameworks have been applied to analyze multi-FISH labeled cell-type maps in thick brain slices. The Voxelwise U-shaped Swin-Mamba network (VUSMamba) employs contrastive learning and pretext tasks for self-supervised learning on unlabeled data, followed by fine-tuning with minimal annotations [21]. This approach enables simultaneous high-precision segmentation of glutamatergic neurons, GABAergic neurons, and nuclei in 300 μm thick brain slices [21].

The framework begins with preprocessing of image data for three types of labeled cells (Hoechst, Vglut1, Vgat), followed by construction of a self-supervised training dataset. Three pretext tasks—rotation prediction, image reconstruction, and image recovery—are designed to enable representation learning through contrastive self-supervised learning. The pretrained model is then fine-tuned using a small set of manually annotated ground truth data [21].

Cancer Research and Therapeutic Development

scFMs are transforming cancer research by elucidating unique mechanisms underlying disease progression and therapeutic resistance. In a 2024 study, researchers used single-cell RNA analysis bolstered by scGPT-enabled cell annotation to pinpoint factors driving therapeutic resistance in a mouse model of breast cancer [22]. The approach identified subsets of tumor-associated macrophages with different roles in modulating resistance to PARP inhibitor therapy [22].

In another application, single-cell sequencing and scVI modeling identified changes in the tumor microenvironment that influence the progression of prostate cancer in mice and humans. Researchers found that different clusters of stromal cells were associated with distinct disease states, deriving a transcriptional signature that effectively predicts local metastasis [22].

Future Directions and Challenges

Despite their promising results, scFMs face several challenges that must be addressed for broader adoption. Technical hurdles include the nonsequential nature of omics data, inconsistency in data quality, and the computational intensity required for training and fine-tuning [1]. Furthermore, interpreting the biological relevance of latent embeddings and model representations remains nontrivial [1].

Future development of scFMs will likely focus on enhancing robustness, interpretability, and scalability. As one researcher at Helmholtz Munich put it, "One of my dreams in the next 10 years is to produce a virtual cell. What I mean by virtual cell is you model the whole function of the cell with an AI system" [23]. This vision of creating comprehensive digital models that simulate how individual cells behave in both health and disease represents the long-term potential of foundation models in cellular biology.

The integration of multimodal data represents another important frontier. Current models primarily focus on transcriptomic data, but future iterations will likely incorporate proteomic, epigenomic, and spatial information to create more comprehensive cellular representations. As these models evolve, they will increasingly enable researchers to simulate cellular behavior under various conditions, predict disease progression, and identify novel therapeutic targets, ultimately advancing personalized medicine and drug discovery.

Foundation models represent a paradigm shift in computational biology, leveraging large-scale pretraining on massive datasets to learn universal representations of cellular states. These models, built primarily on transformer architectures, have demonstrated remarkable success in natural language processing and are now being adapted to decode the complex "language" of cellular biology. Where words form sentences, genes define cellular identity and function. In single-cell RNA sequencing (scRNA-seq) data analysis, foundation models promise to transform our approach to cellular heterogeneity by enabling context-aware understanding of gene-gene interactions, robust cell type annotation, and prediction of cellular responses to perturbations. This technical guide examines the major model variants—Geneformer, scGPT, and scBERT—alongside emerging next-generation architectures, providing researchers with a comprehensive framework for their application in cellular heterogeneity research and drug development.

Core Model Architectures and Technical Specifications

Geneformer employs a transformer encoder architecture pretrained on approximately 30 million human single-cell transcriptomes using a novel rank-value encoding scheme [24]. This approach represents each cell's transcriptome as a sequence of genes ranked by their expression relative to their mean expression across the entire pretraining corpus, effectively deprioritizing ubiquitously expressed housekeeping genes while emphasizing transcription factors that distinguish cell states. The model uses six transformer encoder layers with self-attention mechanisms that enable context-aware gene representations, embedding each gene into a 256-dimensional space that encodes the gene's characteristics specific to each cellular context [24].
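The effect of normalizing by corpus-wide expression before ranking can be seen in a small sketch. Here `corpus_scale` stands in for a per-gene scaling factor computed over the pretraining corpus. The numbers, the function name `rank_value_encode`, and the `max_len` default are illustrative assumptions and not Geneformer's actual tokenizer.

```python
def rank_value_encode(cell_counts, corpus_scale, max_len=2048):
    """Rank genes by depth-normalized expression relative to the corpus.

    cell_counts: dict gene -> raw count in one cell.
    corpus_scale: dict gene -> typical normalized expression across the corpus.
    """
    total = sum(cell_counts.values())
    scored = []
    for gene, count in cell_counts.items():
        scale = corpus_scale.get(gene, 0)
        if count > 0 and scale > 0:
            # Share of the cell's counts, relative to the corpus-wide level.
            scored.append((count / total / scale, gene))
    scored.sort(key=lambda sg: (-sg[0], sg[1]))
    return [gene for _, gene in scored[:max_len]]


cell = {"ACTB": 100, "GATA1": 10, "TAL1": 5}
corpus = {"ACTB": 0.2, "GATA1": 0.001, "TAL1": 0.002}
print(rank_value_encode(cell, corpus))  # -> ['GATA1', 'TAL1', 'ACTB']
```

By raw counts the housekeeping gene ACTB would rank first. After dividing by its high corpus-wide level it drops behind the two transcription factors, which is the deprioritization described above.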

scGPT uses a generative pretrained transformer architecture trained on a repository of over 33 million cells, implementing a standard transformer backbone with task-specific heads for diverse downstream applications [25]. The model employs a masked language modeling objective during pretraining, where it learns to predict randomly masked genes based on their cellular context. scGPT incorporates both gene and value embeddings, treating genes as tokens and their expression values as additional features, which allows it to learn relationships among genes and cells during pretraining [25].

scBERT adapts the Bidirectional Encoder Representations from Transformers (BERT) architecture for single-cell data, creating gene embeddings through gene2vec to capture semantic similarities between genes [26]. The model generates expression embeddings through a term-frequency-style analysis that discretizes continuous expression values by binning and converts each bin into a 200-dimensional vector. scBERT uses Performer blocks in its architecture and includes a reconstructor module that calculates reconstruction loss for masked genes during self-supervised pretraining [26].

Comparative Technical Specifications

Table 1: Technical Specifications of Major Foundation Model Variants

| Specification | Geneformer | scGPT | scBERT |
|---|---|---|---|
| Architecture | Transformer Encoder | Generative Pretrained Transformer | BERT-style with Performer blocks |
| Pretraining Data Scale | ~30 million human cells [24] | ~33 million cells [25] | Not specified in detail |
| Input Representation | Rank-value encoding [24] | Gene tokens with expression values [25] | Gene2vec embeddings + expression bins [26] |
| Embedding Dimension | 256 [24] | 512 [27] | Varies |
| Primary Pretraining Objective | Masked gene prediction [24] | Masked language modeling [25] | Masked expression reconstruction [26] |
| Context Awareness | Yes, via attention weights [24] | Presumed yes | Limited information |

Figure 1: Generalized Workflow for Single-Cell Foundation Model Development and Application

Performance Benchmarks and Comparative Analysis

Zero-Shot Capabilities and Limitations

Comprehensive benchmarking reveals critical insights into the practical performance of single-cell foundation models. A rigorous zero-shot evaluation of Geneformer and scGPT demonstrated that these models face significant reliability challenges when used without any further training. In cell type clustering tasks, both models performed worse than established approaches such as selecting highly variable genes (HVG), Harmony, and scVI, as measured by average BIO score [28]. Similarly, in batch integration tasks, Geneformer consistently underperformed relative to scGPT, Harmony, scVI, and HVG across most datasets. Its embedding space often failed to retain cell type information, and clustering was driven primarily by batch effects [28].

For perturbation prediction, recent benchmarks show that foundation models struggle to outperform deliberately simple baselines. In predicting transcriptome changes after single or double genetic perturbations, five foundation models and two other deep learning models failed to outperform simple linear baselines or even a "no change" model that always predicts the same expression as in control conditions [29]. This performance gap highlights the challenge these models face in capturing the complex biological reality of genetic interactions, despite significant computational resources required for fine-tuning.
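The two deliberately simple baselines are easy to state in code. The sketch below assumes mean expression vectors (one value per gene) for the control condition and for each single perturbation. The function names and toy numbers are illustrative, and the additive rule shown is one common form of such a baseline rather than the exact implementation used in the cited benchmark.

```python
def no_change_baseline(control):
    """Predict that a perturbation leaves expression at control levels."""
    return list(control)


def additive_baseline(control, single_a, single_b):
    """Predict a double perturbation as control plus both single effects."""
    return [c + (a - c) + (b - c) for c, a, b in zip(control, single_a, single_b)]


def mse(predicted, observed):
    """Mean squared error across genes."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


control = [1.0, 2.0, 3.0]
pert_a = [2.0, 2.0, 3.0]    # perturbation A raises gene 1
pert_b = [1.0, 2.0, 1.0]    # perturbation B lowers gene 3
observed_ab = [2.0, 2.0, 1.0]

print(mse(no_change_baseline(control), observed_ab))
print(mse(additive_baseline(control, pert_a, pert_b), observed_ab))
```

A model that claims to capture genetic interactions should beat the additive prediction on double perturbations whose observed effect deviates from the sum of the single effects. In this toy case there is no interaction, so the additive baseline is exact.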

Performance Across Biological Contexts

Table 2: Performance Comparison Across Key Biological Tasks

| Task | Best Performing Model(s) | Key Limitations | Performance Notes |
|---|---|---|---|
| Cell Type Annotation | scGraphformer, scBERT [9] [26] | Geneformer and scGPT underperform in zero-shot [28] | scBERT shows 85.1% accuracy on NeurIPS dataset vs 80.1% for Seurat [26] |
| Batch Integration | Harmony, scVI, HVG [28] | Geneformer shows inadequate batch mixing [28] | Foundation models underperform in both full and reduced dimensions [28] |
| Perturbation Prediction | Simple linear baselines, "no change" model [29] | Foundation models fail to predict genetic interactions [29] | GEARS, scGPT, scFoundation outperformed by simple additive models [29] |
| Spatial Context Prediction | Nicheformer [27] | Models trained only on dissociated data fail spatial tasks [27] | Nicheformer enables prediction of spatial context for dissociated cells [27] |

Impact of Pretraining Data Composition

The composition and diversity of pretraining data significantly influence model performance. Studies with scGPT variants demonstrated that pretraining on tissue-specific data (e.g., 10.3 million blood and bone marrow cells) provides clear improvements for specific tissue types, but more diverse pretraining datasets do not consistently confer additional benefits [28]. Surprisingly, scGPT pretrained on 33 million non-cancerous human cells slightly underperformed the blood-specific version, even on datasets involving tissue types beyond blood and bone marrow [28].

Nicheformer, trained on both dissociated single-cell and spatial transcriptomics data (SpatialCorpus-110M), demonstrates that models trained exclusively on dissociated data fail to recover the complexity of spatial microenvironments [27]. This underscores the importance of multiscale integration for spatially aware tasks and highlights a fundamental limitation of models trained solely on dissociated data.

Next-Generation Architectures and Emerging Solutions

Graph-Integrated and Multi-View Models

scGraphformer represents a significant architectural advancement by integrating transformer capabilities with graph neural networks to transcend the limitations of predefined graphs [9]. The model learns an all-encompassing cell-cell relational network directly from scRNA-seq data through an iterative refinement process that constructs a dense graph structure capturing the full spectrum of cellular interactions [9]. This approach abandons dependence on predefined graphs and instead derives a cellular interaction network directly from the data, allowing for identification of subtle and previously obscured cellular patterns and relationships.

scHybridBERT implements a multi-view modeling framework that integrates spatiotemporal embeddings and cell graphs using a combination of graph attention networks and Performer models [30]. This architecture captures both spatial dynamics at the molecular level through gene co-expression networks and temporal patterns through gene and expression embeddings, addressing the limitation of conventional models that focus primarily on temporal expression patterns while ignoring inherent gene-gene and cell-cell interactions [30].

Spatial-Aware and Cross-Species Models

Nicheformer addresses the critical limitation of spatial awareness by training on both dissociated single-cell and targeted spatial transcriptomics data [27]. The model uses a shared vocabulary for human and mouse data by concatenating orthologous protein-coding genes and species-specific ones, totaling 20,310 gene tokens [27]. This approach enables novel downstream tasks such as spatial composition prediction and spatial label transfer, allowing researchers to predict the spatial context of dissociated cells and transfer rich spatial information to scRNA-seq datasets.

Mouse-Geneformer demonstrates the species-specific adaptation of foundation models, addressing the prominence of mouse as a primary mammalian model in biological and medical research [31]. This variant, pretrained on 21 million mouse scRNA-seq profiles, not only enhances accuracy for mouse-specific cell type classification but also shows potential for cross-species application, achieving comparable performance to human Geneformer on human data after ortholog-based gene name conversion [31].

Experimental Protocols and Methodologies

Standardized Fine-Tuning Protocols

For cell type annotation using scBERT, the standard protocol involves:

- Data preprocessing: Filtering low-quality cells and genes, normalization, and selection of highly variable genes [26]

- Model setup: Loading pretrained scBERT weights and adapting the final classification layer for target cell types

- Training configuration: Using learning rates between 1e-5 and 5e-5 with early stopping based on validation accuracy

- Evaluation: Assessing using accuracy, F1 score, and confusion matrices across cell types
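For the evaluation step, accuracy and macro-averaged F1 can be computed directly from predicted and true labels. This is a minimal standard-library sketch with made-up labels. Libraries such as scikit-learn provide equivalent, more complete metrics.

```python
def accuracy(y_true, y_pred):
    """Fraction of cells with a correct predicted label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1, so rare cell types count equally."""
    scores = []
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


y_true = ["T", "T", "B", "B"]
y_pred = ["T", "B", "B", "B"]
print(accuracy(y_true, y_pred), macro_f1(y_true, y_pred))
```

Macro F1 is often reported alongside accuracy in cell type annotation because accuracy alone can look high when a model simply favors abundant cell types.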

For perturbation response prediction with scGPT:

- Data preparation: Formatting perturbation data as paired control-treatment cell populations

- Model adaptation: Utilizing scGPT's inherent perturbation prediction head or adapting the model through fine-tuning

- Training approach: Employing a mean squared error loss between predicted and observed expression changes

- Validation: Using held-out perturbations and comparing against additive and "no change" baselines [29]
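The validation step above can be made concrete with a toy comparison against the two baselines. The sketch below rests on assumptions, since the exact baseline definitions used in [29] are not reproduced here. It treats the "no change" baseline as predicting zero expression change from control, and the additive baseline as summing the mean changes of two single perturbations to predict their combination. All data values and function names are invented for illustration.

```python
def mean_profile(cells):
    """Average expression per gene across a list of cells (one row per cell)."""
    n = len(cells)
    return [sum(col) / n for col in zip(*cells)]


def expression_change(treated_cells, control_mean):
    """Mean expression change of a treated population relative to control."""
    return [t - c for t, c in zip(mean_profile(treated_cells), control_mean)]


def mse(predicted, observed):
    """Mean squared error between predicted and observed expression changes."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


# Toy populations over three genes (made-up numbers).
control = [[1.0, 2.0, 0.0], [1.0, 2.0, 2.0]]
pert_a = [[2.0, 2.0, 1.0], [2.0, 2.0, 1.0]]
pert_b = [[1.0, 3.0, 1.0], [1.0, 5.0, 1.0]]
pert_ab = [[2.0, 4.5, 1.0], [2.0, 4.5, 1.0]]  # held-out combination

control_mean = mean_profile(control)
observed = expression_change(pert_ab, control_mean)

# "No change" baseline: the perturbation is assumed to have no effect.
no_change = [0.0] * len(control_mean)

# Additive baseline: effects of the single perturbations are summed.
delta_a = expression_change(pert_a, control_mean)
delta_b = expression_change(pert_b, control_mean)
additive = [a + b for a, b in zip(delta_a, delta_b)]

mse_no_change = mse(no_change, observed)
mse_additive = mse(additive, observed)
print("no-change MSE:", round(mse_no_change, 4))
print("additive MSE:", round(mse_additive, 4))
```

A model's predicted changes for the held-out perturbation would be scored with the same `mse` function, and only an error below both baselines would suggest that the model adds value.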

Zero-Shot Evaluation Methodology

Comprehensive evaluation of foundation models requires rigorous zero-shot assessment:

- Embedding extraction: Running target datasets through the pretrained model in inference mode to generate cell embeddings without any fine-tuning [28] [27]

- Task application: Applying embeddings to downstream tasks including clustering, batch integration, and spatial composition prediction

- Benchmarking: Comparing against established baselines like HVG selection, Harmony, and scVI using multiple metrics [28]

- Biological validation: Assessing whether learned representations capture known biological relationships through ontology-informed metrics [4]

Figure 2: Model-Task-Metric Relationships in Single-Cell Foundation Model Evaluation

Table 3: Essential Research Resources for Single-Cell Foundation Model Applications

| Resource Category | Specific Tools/Databases | Primary Function | Key Considerations |
|---|---|---|---|
| Pretraining Corpora | CELLxGENE Census [25], Genecorpus-30M [24], Mouse-Genecorpus-20M [31] | Large-scale, curated single-cell data for model pretraining | Tissue representation, data quality, and batch effects vary significantly |
| Spatial Omics Data | MERFISH, Xenium, CosMx, ISS datasets [27] | Enable spatially-aware model training | Targeted gene panels limit full transcriptome analysis |
| Benchmarking Datasets | Pancreas benchmark [28], PBMC datasets [28], NeurIPS multi-omics [26] | Standardized model evaluation | Dataset complexity impacts benchmark results |
| Model Implementations | scGPT GitHub repository [25], Geneformer Hugging Face [24] | Access to pretrained models and fine-tuning code | Computational requirements vary significantly (GPU memory, training time) |
| Biological Knowledge Bases | Gene Ontology [29], Cell Ontology [4] | Biological validation of model outputs | Essential for ontology-informed metrics like scGraph-OntoRWR [4] |

The landscape of single-cell foundation models is rapidly evolving, with distinct architectural variants offering complementary strengths and limitations. Geneformer's rank-based approach provides robust context-awareness, scGPT's generative framework enables flexible downstream application, and scBERT's BERT-inspired architecture offers strong performance on annotation tasks. However, rigorous benchmarking reveals that these models do not consistently outperform simpler methods in zero-shot settings or perturbation prediction, highlighting significant room for improvement.

Next-generation architectures like scGraphformer, scHybridBERT, and Nicheformer point toward promising directions by integrating graph-based reasoning, multi-view modeling, and spatial awareness. These approaches address fundamental limitations in capturing the complex spatial and relational dynamics of cellular systems. For researchers and drug development professionals, selection of appropriate model variants must be guided by specific application requirements, dataset characteristics, and computational resources, with careful consideration of each model's empirically demonstrated strengths rather than relying solely on claimed capabilities.

As the field progresses, the integration of multi-omic data, improved zero-shot generalization, and more biologically-meaningful evaluation metrics will be crucial for advancing these tools from computational novelties to essential resources for deciphering cellular heterogeneity and accelerating therapeutic development.

From Data to Discovery: Practical Applications of scFMs in Research

The advent of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by providing unprecedented resolution to analyze cellular heterogeneity. Concurrently, foundation models, pre-trained on vast datasets through self-supervised learning, have emerged as powerful tools for interpreting complex biological data. This whitepaper explores the integration of single-cell foundation models (scFMs) into the core tasks of cell type annotation and novel subpopulation discovery. We provide a technical examination of scFM architectures, including transformer-based models like scGPT and Geneformer, and detail their application through benchmarked protocols. Furthermore, we present novel methodologies such as multi-resolution variational inference (MrVI) for uncovering sample-level heterogeneity and advanced denoising frameworks like ZILLNB for enhancing data quality. This guide serves as an essential resource for researchers and drug development professionals seeking to leverage cutting-edge artificial intelligence to unlock deeper insights into cellular function and disease mechanisms.

Single-cell genomics has redefined our understanding of biology by resolving cellular heterogeneity with unprecedented precision, moving beyond the limitations of bulk sequencing that obscures critical differences between individual cells [32]. This technology has proven particularly transformative in complex tissue environments like tumors, where it reveals rare subclones and dynamic microenvironment interactions that drive disease progression and therapeutic resistance [32]. However, the high-dimensional, sparse, and noisy nature of scRNA-seq data presents significant analytical challenges that traditional computational methods struggle to address effectively [33] [8].

The emergence of foundation models represents a paradigm shift in single-cell data analysis. These large-scale deep learning models are pre-trained on massive, diverse datasets through self-supervised objectives, enabling them to learn fundamental biological principles that can be adapted to various downstream tasks [1]. Inspired by breakthroughs in natural language processing, researchers have developed single-cell foundation models (scFMs) that treat cells as "sentences" and genes or their expression values as "words" or "tokens" [1]. This approach allows scFMs to capture complex gene-gene interactions and cellular states across millions of cells spanning diverse tissues, species, and experimental conditions.

This technical guide examines how scFMs are revolutionizing the core tasks of cell type annotation and novel subpopulation discovery, which are fundamental to advancing our understanding of development, disease, and treatment response. By providing a comprehensive framework for implementing these technologies, we aim to bridge the gap between cutting-edge computational methods and biological discovery in both research and clinical applications.

Foundations of Single-Cell Foundation Models (scFMs)

Core Architectural Principles

Single-cell foundation models typically use transformer architectures, whose attention mechanisms weight relationships between all genes in a cell simultaneously, enabling the model to learn complex regulatory and functional connections [1]. Most scFMs adopt one of two primary configurations: Bidirectional Encoder Representations from Transformers (BERT)-like encoder architectures that learn from all genes in a cell at once, or Generative Pretrained Transformer (GPT)-like decoder architectures that iteratively predict masked genes conditioned on known genes [1]. While both approaches have demonstrated success, no single architecture has yet emerged as clearly superior for single-cell data, leading to ongoing exploration of hybrid designs.

A critical challenge in applying transformer architectures to single-cell data is the non-sequential nature of gene expression. Unlike words in a sentence, genes have no inherent ordering. scFMs address this through various tokenization strategies, including ranking genes by expression levels within each cell, binning genes by expression values, or using normalized counts directly [1]. These approaches provide the deterministic sequence structure required by transformer models while attempting to preserve biological relevance.
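As a minimal sketch of two of the tokenization strategies described above, the Python below turns one cell's expression values into a rank-ordered gene sequence and into per-gene bin labels. The function names, the toy expression values, the alphabetical tie-breaking, and the equal-width binning rule are illustrative assumptions rather than the scheme of any particular model. Published models typically add normalization steps and a fixed gene vocabulary, both omitted here.

```python
def rank_tokenize(expression, max_len=None):
    """Order expressed genes from highest to lowest value.

    Ties are broken alphabetically so the output is deterministic.
    Unexpressed genes (value 0) are dropped from the sequence.
    """
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    tokens = [gene for gene, _ in expressed]
    return tokens[:max_len] if max_len else tokens


def bin_tokenize(expression, n_bins=3):
    """Assign each gene an expression bin within this cell.

    Zeros get bin 0; nonzero values get equal-width bins 1..n_bins
    spanning the cell's own nonzero range (an illustrative choice).
    """
    nonzero = [v for v in expression.values() if v > 0]
    if not nonzero:
        return {gene: 0 for gene in expression}
    lo, hi = min(nonzero), max(nonzero)
    width = (hi - lo) / n_bins or 1.0  # avoid zero width when all values are equal
    bins = {}
    for gene, value in expression.items():
        if value <= 0:
            bins[gene] = 0
        else:
            bins[gene] = min(n_bins, int((value - lo) / width) + 1)
    return bins


# One toy cell with made-up normalized expression values.
cell = {"CD3E": 5.0, "CD19": 0.0, "MS4A1": 0.0, "IL7R": 2.0, "ACTB": 9.0, "GAPDH": 5.0}

rank_tokens = rank_tokenize(cell)
bin_tokens = bin_tokenize(cell, n_bins=3)
print(rank_tokens)
print(bin_tokens)
```

Both functions impose a deterministic structure on an unordered gene set, which is the property the transformer input requires. Ranking discards absolute magnitudes, while binning keeps a coarse version of them.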

Pre-training Strategies and Data Requirements

The power of scFMs stems from their pre-training on massive, diverse single-cell datasets. Public archives and databases such as CZ CELLxGENE provide unified access to annotated single-cell datasets containing over 100 million unique cells standardized for analysis [1]. Additional resources like the Human Cell Atlas, PanglaoDB, and public repositories including GEO and SRA contribute to extensive training corpora that enable scFMs to capture a wide spectrum of biological variation [1].

During pre-training, scFMs learn through self-supervised tasks similar to those used in natural language processing. The most common approach is masked gene modeling (MGM), where the model learns to predict randomly masked genes based on the context of other genes in the cell [1] [8]. Alternative strategies include predicting whether a gene is expressed or not, or using read-depth-aware reconstruction losses [8]. This self-supervised approach allows the models to learn fundamental biological principles without requiring manually labeled training data.
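The masked gene modeling objective can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `<mask>` token, the masking fraction, and the function names are hypothetical, and a real scFM would predict the hidden genes (or their expression values) with a transformer and train on a loss rather than on the simple accuracy shown here.

```python
import random

MASK = "<mask>"  # hypothetical placeholder token


def mask_tokens(tokens, mask_fraction, seed=0):
    """Hide a random subset of gene tokens.

    Returns the masked sequence and a dict mapping each masked
    position to the gene that was hidden there (the training target).
    """
    if not tokens:
        return [], {}
    rng = random.Random(seed)
    n_mask = min(len(tokens), max(1, round(len(tokens) * mask_fraction)))
    positions = rng.sample(range(len(tokens)), n_mask)
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]
        masked[pos] = MASK
    return masked, targets


def masked_accuracy(predictions, targets):
    """Fraction of masked positions where the predicted gene matches the hidden gene."""
    hits = sum(predictions.get(pos) == gene for pos, gene in targets.items())
    return hits / len(targets)


# A toy rank-ordered cell "sentence" of eight gene tokens.
cell_tokens = ["ACTB", "GAPDH", "CD3E", "IL7R", "CD2", "LCK", "CCR7", "TCF7"]
masked_cell, targets = mask_tokens(cell_tokens, mask_fraction=0.25, seed=0)

print(masked_cell)
print(targets)

# A perfect "model" recovers every hidden gene; an empty prediction recovers none.
print(masked_accuracy(dict(targets), targets))
print(masked_accuracy({}, targets))
```

Because the targets come from the data itself, no manual cell labels are needed, which is what makes the objective self-supervised.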

Table 1: Representative Single-Cell Foundation Models and Their Specifications

| Model Name | Omics Modalities | Model Parameters | Pre-training Dataset Size | Architecture Type | Key Pre-training Task |
|---|---|---|---|---|---|
| Geneformer | scRNA-seq | 40 million | 30 million cells | Encoder | MGM with gene ID prediction |
| scGPT | scRNA-seq, scATAC-seq, CITE-seq, spatial | 50 million | 33 million cells | Encoder with attention mask | Iterative MGM with MSE loss |
| UCE | scRNA-seq | 650 million | 36 million cells | Encoder | Binary classification of gene expression |
| scFoundation | scRNA-seq | 100 million | 50 million cells | Asymmetric encoder-decoder | Read-depth-aware MGM |
| LangCell | scRNA-seq | 40 million | 27.5 million cells | Encoder | MGM with cell type integration |

Figure 1: Single-Cell Foundation Model Workflow. This diagram illustrates the end-to-end process for developing and applying scFMs, from raw data processing through pre-training to downstream task adaptation.

Methodologies for Cell Type Annotation

scFM-Based Annotation Pipelines

Cell type annotation represents a fundamental application of scFMs, where models pre-trained on massive single-cell atlases can be fine-tuned or used directly to classify cells into known types. The scBERT model, one of the early transformer-based scFMs, demonstrated the viability of this approach by training on millions of single-cell transcriptomes in a self-supervised manner specifically for cell type annotation [1]. These models learn rich representations that capture both explicit and subtle transcriptional differences between cell types, enabling more accurate classification compared to traditional methods.

Benchmark studies have revealed that while scFMs show robust performance across diverse annotation tasks, their effectiveness varies depending on specific contexts. In comprehensive evaluations comparing six prominent scFMs against established baselines, no single model consistently outperformed all others across all tasks and datasets [8]. This highlights the importance of task-specific model selection rather than relying on a one-size-fits-all approach. Performance is influenced by factors including dataset size, biological complexity, and the degree of similarity between target cells and those encountered during pre-training.

Benchmarking Performance and Evaluation Metrics

Rigorous evaluation of annotation accuracy requires multiple metrics that capture different aspects of model performance. The Adjusted Rand Index (ARI) and Adjusted Mutual Information (AMI) measure clustering similarity against ground truth labels, while novel ontology-informed metrics like scGraph-OntoRWR assess the biological consistency of cell type relationships captured by scFMs [8]. The Lowest Common Ancestor Distance (LCAD) metric further evaluates the severity of misclassification by measuring ontological proximity between predicted and actual cell types [8].
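To make two of these metrics concrete, the sketch below computes the Adjusted Rand Index from its standard pair-counting formula and a toy ontology distance. The ARI function follows the usual definition. The `lca_distance` function is only one plausible reading of an LCA-based distance (edges from each label up to their lowest common ancestor in a hand-written tree); the benchmark in [8] may define LCAD differently, and the real Cell Ontology is a graph rather than the simple tree assumed here.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Pair-counting ARI: 1.0 for identical partitions, about 0 for random ones."""
    n = len(labels_true)
    if n < 2:
        return 1.0
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


def ancestors(node, parent):
    """Path from a node up to the root, including the node itself."""
    path = [node]
    while path[-1] in parent:
        path.append(parent[path[-1]])
    return path


def lca_distance(a, b, parent):
    """Edges from a and from b up to their lowest common ancestor (toy definition)."""
    depth_b = {node: d for d, node in enumerate(ancestors(b, parent))}
    for d_a, node in enumerate(ancestors(a, parent)):
        if node in depth_b:
            return d_a + depth_b[node]
    raise ValueError("labels share no common ancestor")


# Hypothetical miniature ontology: child -> parent.
toy_ontology = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "monocyte": "cell",
}

print(adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0]))            # same partition, relabeled
print(round(adjusted_rand_index([0, 0, 1, 1], [0, 0, 1, 2]), 4))  # one cluster split
print(lca_distance("CD4 T cell", "CD8 T cell", toy_ontology))     # sibling confusion
print(lca_distance("CD4 T cell", "monocyte", toy_ontology))       # distant confusion
```

Under this toy definition, confusing CD4 with CD8 T cells costs less than confusing a T cell with a monocyte, which is the kind of graded penalty an ontology-aware metric is meant to capture.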

In comparative evaluations, the ZILLNB framework, which integrates zero-inflated negative binomial regression with deep generative modeling, achieved the highest ARI and AMI scores among tested methods for cell type classification, with improvements ranging from 0.05 to 0.2 over alternatives including VIPER, scImpute, DCA, and DeepImpute [33]. These advances demonstrate how hybrid approaches that combine statistical rigor with deep learning can enhance annotation accuracy.

Table 2: Performance Comparison of Cell Annotation Methods Across Multiple Datasets

| Method | Average ARI | Average AMI | Computational Efficiency | Novel Type Detection |
|---|---|---|---|---|
| ZILLNB | 0.82 | 0.85 | Medium | Limited |
| scGPT | 0.78 | 0.81 | Low | Good |
| Geneformer | 0.76 | 0.79 | Low | Good |
| scVI | 0.75 | 0.78 | Medium | Limited |
| Seurat | 0.71 | 0.74 | High | Limited |
| Harmony | 0.69 | 0.72 | High | Poor |

Protocol for Cell Type Annotation Using scFMs

A standardized protocol for implementing scFM-based cell type annotation includes the following key steps:

Data Preprocessing: Begin with quality control to remove low-quality cells and genes, followed by normalization to account for sequencing depth variations. For transformer-based scFMs, convert the normalized expression matrix into token sequences using the model's specified tokenization strategy (e.g., ranking genes by expression levels) [1].
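The quality control and normalization steps can be sketched as follows, using plain Python lists in place of a real count matrix. The thresholds (`min_genes`, `min_cells`), the target sum of 10,000 counts per cell, and the log1p transform are common conventions adopted here as assumptions. They are not values prescribed by any specific scFM, and each model's documentation should be checked for its expected input.

```python
import math


def filter_cells(counts, min_genes=2):
    """Keep cells (rows) in which at least `min_genes` genes are detected."""
    return [cell for cell in counts if sum(1 for c in cell if c > 0) >= min_genes]


def filter_genes(counts, min_cells=1):
    """Keep genes (columns) detected in at least `min_cells` cells.

    Returns the filtered matrix and the indices of the retained genes.
    """
    if not counts:
        return [], []
    keep = [
        j for j in range(len(counts[0]))
        if sum(1 for cell in counts if cell[j] > 0) >= min_cells
    ]
    return [[cell[j] for j in keep] for cell in counts], keep


def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to `target_sum` total counts, then apply log(1 + x)."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        scale = target_sum / total if total else 0.0
        normalized.append([math.log1p(c * scale) for c in cell])
    return normalized


# Toy raw count matrix: 3 cells x 4 genes (made-up values).
raw_counts = [
    [10, 0, 5, 0],
    [0, 0, 1, 0],   # only one detected gene: removed by the cell filter
    [2, 0, 2, 0],
]

filtered_cells = filter_cells(raw_counts, min_genes=2)
filtered, kept_genes = filter_genes(filtered_cells, min_cells=1)
normalized = normalize_log1p(filtered)

print("cells kept:", len(filtered), "gene indices kept:", kept_genes)
print(normalized)
```

The normalized values would then be passed to the model's own tokenizer, for example by ranking genes within each cell.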

Model Selection and Setup: Choose an appropriate scFM based on dataset size and biological context. For large, diverse datasets (>10,000 cells), models like scGPT or Geneformer are generally suitable. For smaller datasets, or when computational resources are limited, simpler baselines like Seurat or Harmony may be more efficient [8].