Decoding Cellular Heterogeneity: A Comprehensive Guide to scRNA-seq Data Analysis for Biomedical Research


Abstract

Single-cell RNA sequencing (scRNA-seq) has revolutionized biomedical research by enabling the precise dissection of cellular heterogeneity, revealing previously hidden cell types, states, and dynamics within tissues. This article provides a comprehensive guide for researchers and drug development professionals, covering the foundational principles of scRNA-seq, its pivotal role in uncovering cellular diversity in fields like cancer research and developmental biology, and the key methodological steps from experimental design to data interpretation. It further addresses critical challenges in data analysis, including technical noise and batch effects, and offers robust solutions for troubleshooting and optimization. Finally, it explores the validation of findings and the powerful integration of scRNA-seq with other omics technologies, highlighting its transformative potential in advancing drug discovery and personalized medicine.

The Power of Single-Cell Resolution: Unraveling Cellular Diversity with scRNA-seq

Single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of cellular systems by enabling the precise characterization of gene expression at the resolution of individual cells. Unlike bulk RNA sequencing, which averages signals across thousands of cells, scRNA-seq dissects cellular heterogeneity, unveiling rare cell types and transitional states that are critical for development, homeostasis, and disease. This technical guide explores the foundational principles, methodologies, and analytical frameworks of scRNA-seq, with a focus on its transformative role in identifying hidden cell populations. We detail experimental and computational best practices, provide actionable protocols for rare cell investigation, and highlight applications in drug discovery and precision medicine, offering researchers a comprehensive resource for leveraging scRNA-seq to decode complex biological systems.

Cellular heterogeneity is a fundamental principle of biology, where genetically identical cells exhibit diverse molecular profiles, functions, and behaviors. Traditional bulk RNA sequencing approaches, which measure the average gene expression across thousands to millions of cells, inevitably mask this heterogeneity [1]. They are unable to resolve distinct cell subtypes, rare populations, or continuous transitional states, limiting our understanding of complex biological processes such as embryonic development, immune responses, and tumor evolution.

The advent of single-cell RNA sequencing (scRNA-seq) has overcome these limitations by allowing researchers to profile the transcriptomes of individual cells within a complex tissue. Since its initial demonstration in 2009, scRNA-seq has rapidly evolved from a low-throughput, specialized technique to a high-throughput, widely accessible technology [2] [3]. It has become an indispensable tool for discovering novel cell types, mapping differentiation trajectories, and investigating the molecular mechanisms underlying cellular identity and function.

This whitepaper frames scRNA-seq within the broader thesis of understanding cellular heterogeneity. By providing an in-depth technical guide, we aim to equip researchers and drug development professionals with the knowledge to design, execute, and interpret scRNA-seq studies, with a particular emphasis on uncovering hidden and rare cell populations.

The Fundamental Shift from Bulk to Single-Cell Transcriptomics

Bulk and single-cell RNA sequencing differ fundamentally in their input material, output data, and biological insights. The core distinctions are summarized in the table below.

Table 1: Key Differences Between Bulk and Single-Cell RNA Sequencing

| Feature | Bulk RNA Sequencing | Single-Cell RNA Sequencing |
|---|---|---|
| Input Material | RNA extracted from a population of thousands to millions of cells. | RNA from individually isolated cells. |
| Output Data | An average gene expression profile for the entire cell population. | Gene expression profiles for each individual cell. |
| Resolution | Population-level; obscures cellular heterogeneity. | Single-cell level; reveals cellular heterogeneity. |
| Ability to Detect Rare Cell Types | Very limited; signals from rare cells are diluted. | High; enables identification and characterization of rare cell types. |
| Primary Applications | Comparing overall gene expression between different tissue samples or conditions. | Cell type discovery, trajectory inference, and analysis of cellular heterogeneity. |

A major strength of scRNA-seq is its ability to identify and characterize rare cell populations that are often overlooked in bulk analyses, such as antigen-specific memory B cells, dormant cancer cells, or rare progenitor states [4] [1]. These populations can be biologically and clinically significant, acting as key drivers in immune responses, disease recurrence, or tissue regeneration.
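The dilution of rare-cell signal in a bulk measurement can be made concrete with a toy calculation. The sketch below uses invented numbers (1,000 cells, 1% of which express a marker gene) and is only meant to illustrate the averaging effect, not to model a real dataset.

```python
from statistics import mean

# Hypothetical toy data: UMI counts of one marker gene in 1,000 cells.
# 10 rare cells (1%) express the gene strongly; the other 990 do not.
rare_cells = [50] * 10
common_cells = [0] * 990
counts = rare_cells + common_cells

# Bulk-like view: one average over the whole population.
bulk_average = mean(counts)

# Single-cell view: each cell is kept separate, so expressing cells can be counted.
expressing = [c for c in counts if c > 0]
fraction_expressing = len(expressing) / len(counts)

print(f"bulk average: {bulk_average:.2f} counts per cell")
print(f"cells expressing the gene: {len(expressing)} ({fraction_expressing:.1%})")
print(f"mean within expressing cells: {mean(expressing):.1f}")
```

In the bulk view the gene looks weakly expressed everywhere, while the per-cell view shows a small, strongly expressing subpopulation.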

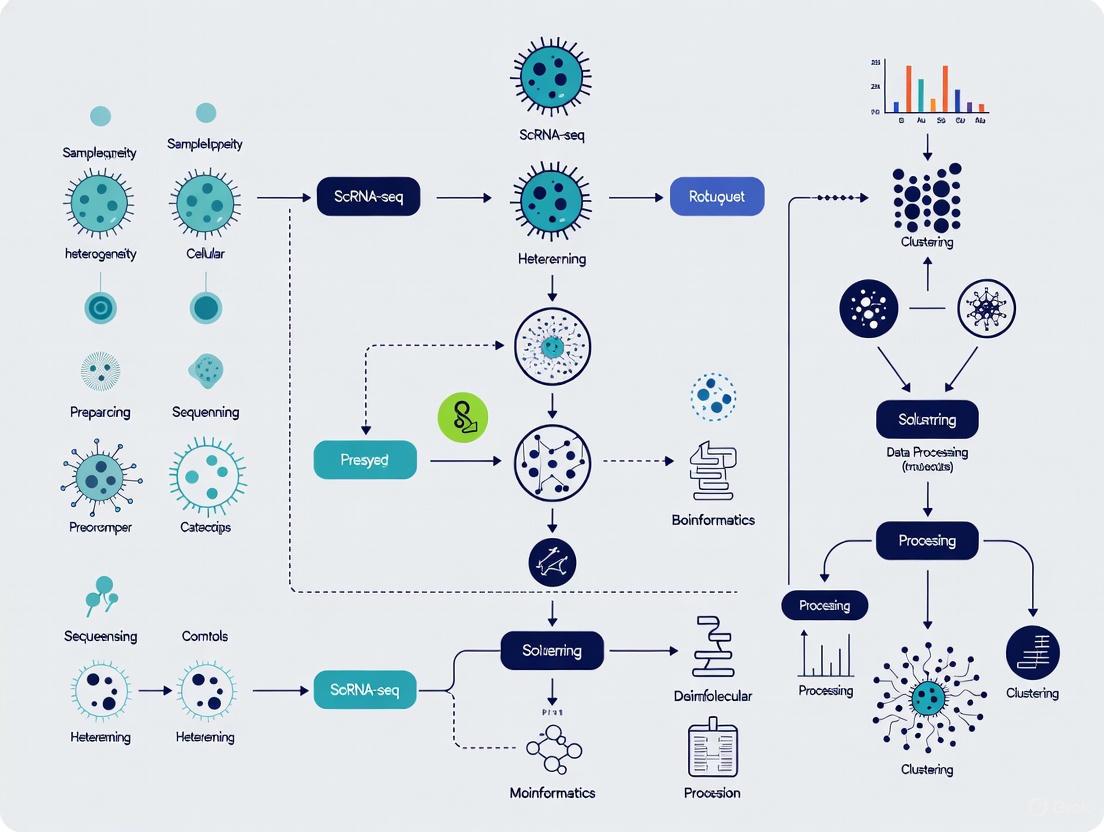

The following diagram illustrates the conceptual shift from bulk to single-cell analysis and the key steps involved in a typical scRNA-seq workflow.

Technical Foundations of scRNA-seq

Core Technological Principles

A standard scRNA-seq workflow involves isolating single cells, capturing their mRNA, reverse transcribing the RNA into cDNA, amplifying the cDNA, and sequencing it. Two key innovations have been critical for its scalability and accuracy:

- Cellular Barcoding: This involves labeling all cDNA molecules from a single cell with a unique cellular barcode during reverse transcription. This allows transcripts from thousands of cells to be pooled and sequenced together, as bioinformatic tools can later assign each read back to its cell of origin using the barcode [3].

- Unique Molecular Identifiers (UMIs): These are short, random nucleotide sequences added to each mRNA molecule during reverse transcription. UMIs enable accurate quantification of transcript counts by accounting for amplification bias, as PCR duplicates will share the same UMI [3].

The sensitivity of scRNA-seq protocols—the percentage of mRNA molecules present in a cell that are successfully captured and sequenced—has steadily improved, typically ranging from 3% to 20%. This has been achieved through optimized reverse transcription enzymes, buffer conditions, and reduced reaction volumes, such as in nanoliter-scale microfluidic devices [3].
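To see why a capture efficiency of 3% to 20% matters for lowly expressed genes, consider a simplified model in which each mRNA molecule is captured independently with the same probability. Under that assumption (real capture is not perfectly independent or uniform), a transcript present in n copies is missed entirely with probability (1 - p)^n. The function name below is illustrative.

```python
def dropout_probability(n_molecules: int, capture_efficiency: float) -> float:
    """Probability that none of n_molecules copies of a transcript is captured,
    assuming independent capture of each molecule (a simplification)."""
    return (1.0 - capture_efficiency) ** n_molecules

# Sweep the sensitivity range quoted in the text against a few copy numbers.
for efficiency in (0.03, 0.10, 0.20):
    for copies in (1, 5, 20):
        p = dropout_probability(copies, efficiency)
        print(f"efficiency={efficiency:.0%} copies={copies:>2} -> P(dropout)={p:.2f}")
```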

Major scRNA-seq Platforms and Methods

Platforms for scRNA-seq can be broadly categorized into two types:

- Plate-Based Methods: Early protocols relied on fluorescence-activated cell sorting (FACS) to sort individual cells into the wells of 96- or 384-well plates. While these methods offer high sensitivity and are well-suited for processing smaller numbers of cells, their throughput is limited [4] [3].

- Droplet-Based Methods: Technologies like the 10X Genomics Chromium system encapsulate single cells in nanoliter-sized droplets along with barcoded beads. This approach enables the high-throughput profiling of tens of thousands of cells in a single experiment and has become the most widely used platform for large-scale atlas projects [2] [3].

More recent developments include combinatorial indexing methods (e.g., Parse Biosciences' Evercode), which use split-pool barcoding to profile millions of cells without the need for specialized droplet equipment, offering unprecedented scalability [5].

Experimental Design for Investigating Rare Cells

Strategic Considerations

Studying rare cell populations requires careful experimental planning. Key considerations include:

- Targeted vs. Agnostic Isolation: A strict a priori approach, where only the cells of interest are isolated (e.g., using FACS with specific surface markers), reduces heterogeneity and sequencing costs. However, a more agnostic approach, sequencing a mixed population, is beneficial for de novo discovery of new cell subtypes and can reveal unexpected cellular diversity [4].

- Cell Number and Sequencing Depth: Statistical power analysis is recommended to determine the number of cells to sequence. Detecting rare cells requires sequencing enough cells to ensure they are captured. For example, for a population representing 1% of a sample, sequencing 10,000 cells would capture about 100 of these cells on average. Sequencing depth (number of reads per cell) must also be sufficient to detect lowly expressed genes, with ~500,000 reads per cell often being a good starting point [4]. This is far deeper than the tens of thousands of reads per cell typically targeted in droplet-based protocols, so the appropriate depth depends on the platform.

- Minimizing Technical Artifacts: Batch effects can severely confound analysis. Randomizing samples across library preparation plates and sequencing lanes, or processing all samples simultaneously, is crucial. The use of spike-in RNA controls (e.g., ERCC or Sequin standards) can help calibrate measurements and account for technical variability [4].
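The cell-number reasoning above can be sketched with a binomial model: if rare cells make up a fraction f of the suspension and cells are sampled independently, the number captured among N sequenced cells follows Binomial(N, f). This is a minimal sketch under that assumption; it ignores dissociation bias, cell loss, and QC filtering, and the function names are illustrative.

```python
from math import exp, lgamma, log

def binomial_pmf(i: int, n: int, p: float) -> float:
    """P(X = i) for X ~ Binomial(n, p), computed in log space to avoid overflow."""
    log_coeff = lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
    return exp(log_coeff + i * log(p) + (n - i) * log(1.0 - p))

def prob_at_least(k: int, n_cells: int, frequency: float) -> float:
    """Probability of capturing at least k rare cells among n_cells sequenced."""
    upper = min(k, n_cells + 1)  # cannot observe more rare cells than cells sequenced
    p_fewer = sum(binomial_pmf(i, n_cells, frequency) for i in range(upper))
    return max(0.0, 1.0 - p_fewer)

def min_cells_for(k: int, frequency: float, confidence: float = 0.95, step: int = 100) -> int:
    """Smallest multiple of `step` cells giving P(at least k rare cells) >= confidence."""
    n = step
    while prob_at_least(k, n, frequency) < confidence:
        n += step
    return n

# Example from the text: a 1% population in 10,000 sequenced cells.
print(f"expected rare cells: {10_000 * 0.01:.0f}")
print(f"P(at least 50 rare cells) = {prob_at_least(50, 10_000, 0.01):.4f}")
n_needed = min_cells_for(50, 0.01)
print(f"cells needed for >=50 rare cells at 95% confidence: {n_needed}")
```

The expected count (100 cells here) is only an average; planning around a minimum count at a stated confidence gives a more conservative target.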

Sample Preparation and Cell Isolation

The method of sample preparation is dictated by the tissue of interest. While immune organs like lymph nodes and spleen are easily dissociated, complex solid tissues or tumors require mechanical or enzymatic dissociation, which can induce cellular stress and transcriptional changes. Using cold-active proteases can help minimize this [4]. Viable cells can be obtained from cryopreserved samples, allowing for batch processing of samples collected at different times [4].

For identifying rare cells, several advanced techniques exist:

- Fluorescent Reporter Models: Genetically engineered mice with fluorescent proteins driven by cell-type-specific promoters allow for precise identification without the need for defined surface markers [4].

- Photolabeling for Spatial Context: Technologies like two-photon photoactivation or photoconversion (e.g., using PA-GFP or Kaede) can mark cells based on their precise microanatomical location within a tissue, which can then be isolated for scRNA-seq (e.g., NICHE-seq) [4].

Table 2: Methods for Isolating Rare Single Cells

| Method | Principle | Advantages | Considerations |
|---|---|---|---|
| FACS | Uses antibodies or fluorescent reporters to sort single cells into plates. | High purity; well-established. | Lower throughput; requires known markers. |
| Droplet-Based Encapsulation | Cells are individually encapsulated in droplets with barcoded beads. | Extremely high throughput; commercial solutions available. | Higher doublet rate; equipment cost. |
| Photolabeling | Cells are optically marked in their native tissue context using microscopy. | Preserves spatial information; no marker bias. | Technically complex; requires specialized models. |
| Combinatorial Indexing | Cells are labeled through multiple rounds of barcoding in plates. | Extremely scalable; no specialized equipment. | Applied to fixed cells or nuclei. |

Computational Analysis of scRNA-seq Data

Standard Analytical Workflow

The analysis of scRNA-seq data is a multi-step process. Standardized pipelines like scRNASequest help automate this workflow, which includes [6]:

- Quality Control (QC) and Filtering: Removal of low-quality cells (based on low gene counts or high mitochondrial RNA percentage, indicating cell stress) and potential doublets (droplets containing two cells).

- Normalization and Harmonization: Adjusting for technical variation like sequencing depth and correcting for batch effects between samples using tools like Harmony, Seurat, or LIGER.

- Dimensionality Reduction: The high-dimensional gene expression data is compressed into a small number of dimensions, typically with PCA, and methods like t-SNE or UMAP then project the cells into 2 or 3 dimensions for visualization.

- Clustering and Cell Type Annotation: Cells are grouped based on transcriptional similarity. These clusters are then annotated as specific cell types using known marker genes or by comparing to reference datasets.

- Downstream Analysis: This includes identifying differentially expressed genes between conditions, inferring gene regulatory networks, and reconstructing developmental trajectories (pseudotime analysis).
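The first two steps of this workflow (QC filtering and depth normalization) can be illustrated without any single-cell library. The sketch below uses an invented three-cell count matrix; the thresholds, the `MT-` prefix convention for mitochondrial genes, and the scale factor of 10,000 are assumptions chosen for illustration, and real analyses would normally use Seurat or Scanpy with dataset-specific cutoffs.

```python
from math import log1p

# Hypothetical toy count matrix: cell -> {gene: UMI count}.
cells = {
    "cell_A": {"CD3E": 4, "ACTB": 30, "MT-CO1": 3, "GAPDH": 13},
    "cell_B": {"ACTB": 2, "MT-CO1": 18},  # few genes, mostly mitochondrial
    "cell_C": {"MS4A1": 6, "ACTB": 25, "MT-ND1": 2, "GAPDH": 17},
}

MIN_GENES = 3            # assumed cutoff; real datasets use hundreds of genes
MAX_MITO_FRACTION = 0.2  # assumed cutoff; tissue dependent

def qc_metrics(counts):
    """Return (number of detected genes, mitochondrial fraction) for one cell."""
    total = sum(counts.values())
    mito = sum(v for gene, v in counts.items() if gene.startswith("MT-"))
    n_genes = sum(1 for v in counts.values() if v > 0)
    return n_genes, (mito / total if total else 0.0)

def passes_qc(counts):
    n_genes, mito_fraction = qc_metrics(counts)
    return n_genes >= MIN_GENES and mito_fraction <= MAX_MITO_FRACTION

def normalize(counts, target_sum=10_000):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    total = sum(counts.values())
    return {gene: log1p(v / total * target_sum) for gene, v in counts.items()}

kept = {name: counts for name, counts in cells.items() if passes_qc(counts)}
normalized = {name: normalize(counts) for name, counts in kept.items()}

for name in cells:
    n_genes, mito = qc_metrics(cells[name])
    status = "kept" if name in kept else "removed"
    print(f"{name}: genes={n_genes} mito={mito:.0%} -> {status}")
```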

Specialized Tools for Rare Cell Identification

The high dimensionality and sparsity of scRNA-seq data pose specific challenges. Dimensionality reduction methods must be chosen carefully, as they can distort local and global data structures, potentially obscuring rare populations [7]. Furthermore, technical noise and "dropout" events (false zeros) can be mitigated using denoising tools like the deep learning-based ZILLNB framework [8].

Specialized algorithms have been developed specifically for rare cell detection. These include scSID (single-cell similarity division algorithm), which identifies rare populations by deeply analyzing inter-cluster and intra-cluster similarities, demonstrating superior performance and scalability on benchmark datasets [9].

The following diagram outlines a standard analytical workflow and highlights where specialized tools for rare cell analysis are applied.

The Scientist's Toolkit: Essential Reagents and Computational Tools

Successfully conducting an scRNA-seq study, especially for rare cell populations, requires a combination of wet-lab reagents and dry-lab computational resources.

Table 3: Key Research Reagent Solutions and Computational Tools

| Category | Item | Function |
|---|---|---|
| Wet-Lab Reagents | Enzymatic Dissociation Kits | Liberates individual cells from solid tissues for analysis. |
| Wet-Lab Reagents | Viability Stains (e.g., DAPI, Propidium Iodide) | Distinguishes live cells from dead cells during FACS to improve data quality. |
| Wet-Lab Reagents | Antibody Panels for FACS | Isolates specific cell populations based on surface protein expression. |
| Wet-Lab Reagents | Commercial scRNA-seq Kits (e.g., 10X Genomics, Parse Evercode) | Provides all necessary reagents for library construction in an optimized system. |
| Wet-Lab Reagents | Spike-in RNA Controls (e.g., ERCC) | Adds synthetic RNA transcripts to the sample to monitor technical performance. |
| Computational Tools | Cell Ranger (10X Genomics) | Standard pipeline for processing raw sequencing data into a UMI count matrix. |
| Computational Tools | Seurat / Scanpy | Comprehensive R/Python packages for the entire analysis workflow. |
| Computational Tools | Harmony | Fast and robust tool for integrating data from multiple batches or experiments. |
| Computational Tools | scSID, CellBender | Specialized algorithms for rare cell identification (scSID) and ambient RNA removal (CellBender). |
| Computational Tools | ZILLNB, DCA | Denoising tools to impute dropouts and correct technical noise. |

Applications in Drug Discovery and Development

scRNA-seq is transforming the pharmaceutical pipeline by providing unprecedented insights into disease mechanisms and therapeutic effects [2] [5].

- Target Identification and Validation: scRNA-seq can pinpoint genes with cell-type-specific expression in disease-relevant tissues. A 2024 study showed that this cell-type specificity is a robust predictor of a drug target's successful progression from Phase I to Phase II clinical trials [5].

- Mechanism of Action (MoA) Studies: In drug screening, scRNA-seq moves beyond simple viability readouts to provide detailed, cell-type-specific gene expression profiles. This helps elucidate a drug's MoA, identify biomarkers of response, and understand resistance mechanisms [2] [5].

- Biomarker Discovery and Patient Stratification: By defining cellular subtypes and their associated transcriptional programs in diseases like cancer, scRNA-seq enables the discovery of more accurate diagnostic and prognostic biomarkers. This allows for better stratification of patient populations for clinical trials and more personalized therapeutic strategies [2] [5].

- Evaluating Preclinical Models: scRNA-seq allows researchers to compare the cellular composition and gene expression profiles of animal models or patient-derived organoids to human tissues, assessing their translatability and relevance before investing in costly clinical trials [2].

Single-cell RNA sequencing has fundamentally changed our approach to investigating complex biological systems. By moving beyond the averaging inherent in bulk analyses, scRNA-seq empowers researchers to dissect cellular heterogeneity at an unparalleled resolution. As this guide has detailed, the careful application of scRNA-seq—from robust experimental design and sample preparation to sophisticated computational analysis—enables the discovery of hidden cell populations and rare cell types that are pivotal to understanding health and disease. The continued integration of scRNA-seq into biomedical research, particularly in drug discovery, promises to accelerate the development of targeted therapies and advance the era of precision medicine.

The fundamental goal of single-cell RNA sequencing (scRNA-seq) is to map gene expression at the individual cell level, enabling researchers to track heterogeneous cell sub-populations and infer regulatory relationships between genes and pathways [10]. Unlike bulk RNA sequencing, which provides an averaged expression profile from thousands of cells, scRNA-seq reveals the cell-to-cell variability that exists even in seemingly homogeneous populations [3]. This cellular heterogeneity is a central feature of biological systems, arising from developmental processes, physiological responses, and stochastic molecular events [10] [3]. Dissecting this heterogeneity is crucial for understanding how biological systems develop, maintain homeostasis, and respond to perturbations—with particular relevance for uncovering disease mechanisms and advancing drug development [3] [11].

The ability to resolve this heterogeneity depends on two interconnected technological foundations: cellular barcoding to tag individual cells, and unique molecular identifiers (UMIs) to accurately count mRNA molecules. These tools have transformed scRNA-seq from a specialized technique limited to small cell numbers into a high-throughput method capable of profiling tens of thousands of cells in a single experiment [10] [3]. This guide examines the core concepts of transcriptome analysis, barcoding strategies, and UMI implementation within the framework of studying cellular heterogeneity, providing researchers with both theoretical understanding and practical methodologies.

The scRNA-seq Workflow: From Cells to Data

The journey from biological sample to single-cell data involves a sophisticated workflow that preserves the identity of individual cells and their molecular constituents. The fundamental steps include sample preparation, cell partitioning, barcoding, library preparation, and sequencing, culminating in computational analysis.

Table 1: Key Steps in Single-Cell RNA Sequencing Workflow

| Workflow Stage | Key Components | Primary Function | Impact on Data Quality |
|---|---|---|---|
| Sample Preparation | Fresh/frozen tissue, dissociation protocol, viability assessment | Obtain high-quality single-cell suspension | Critical for cell viability and representative sampling |
| Cell Partitioning | Microfluidic devices, droplets, microwells | Isolate individual cells | Determines throughput and doublet rate |
| Barcoding | Cell barcodes, UMIs, capture sequences | Tag cellular origin of molecules | Enables multiplexing and cell identification |
| Library Preparation | Reverse transcription, PCR amplification, adapter ligation | Prepare molecules for sequencing | Impacts sensitivity and technical noise |
| Sequencing | Illumina, Nanopore, PacBio platforms | Generate raw sequence data | Determines read length, accuracy, and depth |
| Computational Analysis | Demultiplexing, alignment, quantification | Extract biological insights | Reveals cell types, states, and heterogeneity |

Cell Partitioning and Barcoding Strategies

Modern scRNA-seq employs innovative partitioning systems to process thousands of cells simultaneously. Droplet-based methods, such as those developed by 10x Genomics, use microfluidic chips to encapsulate individual cells in nanoliter-scale emulsion droplets (GEMs) containing barcoded primers [12]. Each droplet functions as an individual reaction chamber where cell lysis, mRNA capture, and barcoding occur simultaneously [10] [12]. Alternative methods use microwell arrays or combinatorial barcoding in multi-well plates to achieve similar goals through different physical mechanisms [3].

The core innovation enabling high-throughput scRNA-seq is cellular barcoding—where each cell's transcripts are tagged with a unique nucleotide sequence during reverse transcription [10] [3]. This allows material from thousands of cells to be pooled for efficient processing and sequencing while maintaining the ability to computationally separate the data by cell of origin during analysis [3]. The development of cellular barcoding represented a watershed moment in scaling single-cell transcriptomics, moving beyond the limitations of plate-based methods that processed only 70-90 cells per run [10].
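The computational side of cellular barcoding, assigning each read back to its cell of origin, can be sketched as follows. The barcodes, read names, 8-nt barcode length, and the one-mismatch correction rule are all assumptions for illustration; production pipelines use their own whitelists and correction models.

```python
from collections import defaultdict

# Hypothetical whitelist of valid cell barcodes (8 nt here for brevity).
WHITELIST = {"AAACCCGG", "TTTGGGCC", "GGGTTTAA"}

def hamming(a: str, b: str) -> int:
    """Number of mismatching positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))

def assign_barcode(observed: str, whitelist: set):
    """Return the whitelist barcode for a read, tolerating a single mismatch.

    Returns None when no barcode, or more than one, lies within one mismatch.
    """
    if observed in whitelist:
        return observed
    candidates = [bc for bc in whitelist
                  if len(bc) == len(observed) and hamming(bc, observed) == 1]
    return candidates[0] if len(candidates) == 1 else None

# Pooled reads as (observed barcode, read name) pairs.
reads = [
    ("AAACCCGG", "read_1"),
    ("AAACCCGT", "read_2"),  # one sequencing error, corrected to AAACCCGG
    ("TTTGGGCC", "read_3"),
    ("CCCCCCCC", "read_4"),  # matches no barcode, discarded
]

reads_by_cell = defaultdict(list)
for observed, name in reads:
    barcode = assign_barcode(observed, WHITELIST)
    if barcode is not None:
        reads_by_cell[barcode].append(name)

for barcode, names in sorted(reads_by_cell.items()):
    print(barcode, names)
```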

Figure 1: Single-Cell Barcoding Workflow. The process begins with a single-cell suspension that is partitioned into droplets or wells. Within each partition, cells are lysed, mRNA is captured, and reverse transcription incorporates cellular barcodes that preserve cell-of-origin information throughout subsequent processing.

Unique Molecular Identifiers: Principles and Applications

The Fundamental Challenge of Amplification Bias

A central challenge in scRNA-seq stems from the exceptionally small amounts of starting material—a single mammalian cell contains approximately 10⁵-10⁶ mRNA molecules [13]. To make these molecules detectable on sequencing platforms, amplification through polymerase chain reaction (PCR) is necessary. However, PCR amplification is not uniform across all sequences; certain fragments amplify more efficiently than others due to sequence-specific biases [13] [14]. This amplification bias can distort the true representation of transcripts in the original sample, potentially leading to erroneous biological conclusions.

UMI Mechanism and Implementation

Unique Molecular Identifiers (UMIs) solve the amplification bias problem by providing each original mRNA molecule with a unique tag before amplification occurs. UMIs are short, random nucleotide sequences (typically 8-12 bases in length) that are incorporated into sequencing libraries during the reverse transcription step [13] [14]. When incorporated into cDNA synthesis primers, each mRNA molecule receives a random UMI sequence, creating a unique combination of transcript sequence and molecular barcode [13].

The power of UMIs becomes apparent during computational analysis. After sequencing, bioinformatics pipelines can distinguish between PCR duplicates (multiple copies of the same original molecule) and unique molecules by grouping reads that share both alignment coordinates and UMIs [13] [14]. This enables precise counting of the original mRNA molecules present in each cell, providing accurate digital gene expression counts rather than analog read counts distorted by amplification biases [13].
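A minimal sketch of this deduplication step is shown below. For brevity it uses the gene name as a stand-in for alignment coordinates and treats UMIs as error-free; both are simplifying assumptions, and the read tuples are invented.

```python
from collections import defaultdict

# Each aligned read reduced to (cell barcode, gene, UMI). Hypothetical data.
reads = [
    ("cell_1", "GAPDH", "AACGT"),
    ("cell_1", "GAPDH", "AACGT"),  # PCR duplicate of the read above
    ("cell_1", "GAPDH", "AACGT"),  # another PCR duplicate
    ("cell_1", "GAPDH", "TTGCA"),  # second original GAPDH molecule
    ("cell_1", "CD3E", "AACGT"),   # same UMI, different gene: a different molecule
    ("cell_2", "GAPDH", "AACGT"),  # same UMI, different cell: a different molecule
]

read_counts = defaultdict(int)  # analog signal, inflated by amplification
umi_sets = defaultdict(set)     # distinct UMIs per (cell, gene)

for cell, gene, umi in reads:
    read_counts[(cell, gene)] += 1
    umi_sets[(cell, gene)].add(umi)

# Digital expression: number of distinct UMIs per cell and gene.
umi_counts = {key: len(umis) for key, umis in umi_sets.items()}

for key in sorted(umi_counts):
    print(key, f"reads={read_counts[key]}", f"molecules={umi_counts[key]}")
```

Here four GAPDH reads in `cell_1` collapse to two molecules, which is the count that enters the expression matrix.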

Figure 2: UMI Workflow for Accurate Transcript Quantification. Each original mRNA molecule receives a unique UMI tag before PCR amplification. After sequencing, computational deduplication identifies reads originating from the same original molecule (sharing UMI and alignment position), enabling precise counting of original molecules despite amplification biases.

UMI Design Considerations and Technical Advances

Effective UMI implementation requires careful design. The pool of possible UMI sequences must be substantially larger than the number of molecules being tagged to ensure each molecule receives a unique identifier [13]. For example, a 10-nucleotide UMI provides 4¹⁰ (1,048,576) possible unique sequences [13]. Recent advances have addressed challenges in UMI recovery and accuracy, particularly with the rise of long-read sequencing technologies. Innovative designs incorporating anchor sequences between barcodes and UMIs help mitigate issues caused by oligonucleotide synthesis errors and improve demarcation of UMI regions in long-read data [15].
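The pool-size requirement can be checked numerically. Assuming UMIs are drawn uniformly at random (real UMI pools show sequence bias), the expected number of distinct UMIs among n tagged molecules from a pool of size N = 4^L is N(1 - (1 - 1/N)^n); the gap between n and that value is the expected undercount from collisions. Function names are illustrative.

```python
def umi_pool_size(length: int) -> int:
    """Number of possible UMI sequences of the given length (4 bases per position)."""
    return 4 ** length

def expected_distinct_umis(n_molecules: int, umi_length: int) -> float:
    """Expected number of distinct UMIs when n_molecules each draw one uniformly."""
    pool = umi_pool_size(umi_length)
    return pool * (1.0 - (1.0 - 1.0 / pool) ** n_molecules)

print(f"10-nt pool size: {umi_pool_size(10):,}")

# Molecules of the same gene in the same cell compete for UMIs.
for length in (6, 8, 10, 12):
    n = 1_000
    distinct = expected_distinct_umis(n, length)
    lost = n - distinct
    print(f"UMI length {length:>2}: expected undercount for {n} molecules = {lost:.2f}")
```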

Table 2: UMI Applications and Benefits in scRNA-seq

| Application | Technical Challenge | UMI Solution | Impact on Data Quality |
|---|---|---|---|
| Gene Expression Quantification | Amplification bias during PCR | Molecular counting without amplification distortion | More accurate differential expression analysis |
| Rare Transcript Detection | Distinguishing true low expression from technical noise | Absolute molecule counting | Improved sensitivity for rare cell types and transcripts |
| Multiomics Integration | Coordinating different molecular readouts | Shared barcoding system across data types | Better correlation between transcriptome and other modalities |
| Long-Read Sequencing | Higher error rates in third-generation sequencing | Error-correcting UMI designs | Maintains accuracy despite sequencing errors |

Experimental Protocols for scRNA-seq

Droplet-Based Single-Cell RNA Sequencing Protocol

The following protocol outlines the key steps for performing droplet-based scRNA-seq, based on established methodologies [10] [12]:

Sample Preparation and Quality Control

- Dissociate tissue to obtain single-cell suspension using appropriate enzymatic digestion

- Filter cells through a cell strainer (30-40 μm) to remove aggregates

- Assess cell viability (>90% recommended) and concentration using trypan blue or automated cell counters

- Adjust concentration to 700-1,200 cells/μL in appropriate buffer (e.g., PBS + 0.04% BSA)
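The viability check and concentration adjustment in the steps above reduce to two small calculations. The sketch below assumes a simple C1·V1 = C2·V2 dilution and uses invented counts and volumes; the function names are illustrative.

```python
def viability(live_cells: int, dead_cells: int) -> float:
    """Fraction of counted cells that are live (e.g., trypan blue excluding)."""
    return live_cells / (live_cells + dead_cells)

def dilution_volumes(stock_conc: float, target_conc: float, final_volume_ul: float):
    """Return (stock volume, buffer volume) in µL using C1*V1 = C2*V2.

    Concentrations are in cells/µL. Raises if the stock is too dilute,
    in which case the suspension would need to be concentrated instead.
    """
    if target_conc > stock_conc:
        raise ValueError("stock is more dilute than the target concentration")
    stock_ul = target_conc * final_volume_ul / stock_conc
    return stock_ul, final_volume_ul - stock_ul

# Hypothetical count: 188 live and 12 dead cells.
v = viability(188, 12)
print(f"viability: {v:.0%} ({'OK' if v > 0.90 else 'below 90% recommendation'})")

# Hypothetical stock at 4,000 cells/µL, diluted to 1,000 cells/µL in 100 µL.
stock_ul, buffer_ul = dilution_volumes(4_000, 1_000, 100)
print(f"mix {stock_ul:.1f} µL cell stock with {buffer_ul:.1f} µL buffer")
```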

Cell Partitioning and Barcoding

- Load cell suspension onto microfluidic device along with barcoded beads and partitioning oil

- Generate gel beads-in-emulsion (GEMs) at rates of 10-100 droplets per second

- Co-encapsulate single cells with single barcoded beads in nanoliter droplets

- Lyse cells within droplets to release mRNA

- Hybridize mRNA to barcoded oligo-dT primers containing cell barcode and UMI sequences

- Perform reverse transcription within droplets to produce barcoded cDNA

Library Preparation

- Break emulsion and recover barcoded cDNA

- Perform cDNA amplification using PCR (12-14 cycles typically recommended)

- Fragment amplified cDNA to optimal size for sequencing (if required by protocol)

- Add sequencing adapters and sample indices via additional PCR (8-10 cycles)

- Purify library using SPRI beads and quantify using fluorometric methods

- Validate library quality using Bioanalyzer or TapeStation

Sequencing

- Pool multiple libraries using unique dual indexes

- Sequence on appropriate platform (Illumina recommended for most applications)

- Target sequencing depth: 20,000-50,000 reads per cell

- Include custom sequencing primers as required by specific chemistry
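The depth target translates directly into a read budget per library. The sketch below multiplies cell number by reads per cell and estimates how many libraries fit in one run; the run capacity of 2 billion reads is a placeholder assumption, not a specification of any instrument.

```python
def total_reads(n_cells: int, reads_per_cell: int) -> int:
    """Reads required for one library at the chosen depth."""
    return n_cells * reads_per_cell

def libraries_per_run(run_capacity_reads: int, n_cells: int, reads_per_cell: int) -> int:
    """How many equally sized libraries fit into one sequencing run."""
    return run_capacity_reads // total_reads(n_cells, reads_per_cell)

RUN_CAPACITY = 2_000_000_000  # hypothetical run output in reads

for depth in (20_000, 50_000):
    needed = total_reads(10_000, depth)
    fit = libraries_per_run(RUN_CAPACITY, 10_000, depth)
    print(f"{depth:,} reads/cell x 10,000 cells = {needed:,} reads; {fit} libraries per run")
```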

Quality Control and Troubleshooting

Critical quality control checkpoints throughout the protocol include:

- Cell Quality: Ensure high viability and single-cell suspension to minimize doublets

- Barcoded Beads: Verify bead integrity and priming efficiency

- Emulsion Quality: Monitor droplet generation for consistent size and stability

- cDNA Yield: Assess after amplification (typically 1-10 ng/μL)

- Final Library: Confirm size distribution (broad peak ~1-5 kb for full-length protocols)

Common issues and solutions:

- Low Cell Recovery: Optimize cell concentration and minimize dead cells

- High Doublet Rate: Reduce cell loading concentration or implement doublet detection algorithms

- Low mRNA Capture Efficiency: Check bead quality and reverse transcription efficiency

- Library Complexity Issues: Verify input quality and reduce amplification cycles
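The link between loading concentration and doublet rate can be approximated with a Poisson model of droplet occupancy: if droplets receive cells at a mean rate λ per droplet, the fraction of cell-containing droplets that hold two or more cells is (1 - e^(-λ) - λe^(-λ)) / (1 - e^(-λ)). This is an idealized sketch that ignores bead occupancy and cell clumping, so it should not be read as a vendor-specified doublet rate.

```python
from math import exp

def multiplet_fraction(mean_cells_per_droplet: float) -> float:
    """Fraction of non-empty droplets containing two or more cells (Poisson model)."""
    lam = mean_cells_per_droplet
    p_empty = exp(-lam)
    p_single = lam * exp(-lam)
    return (1.0 - p_empty - p_single) / (1.0 - p_empty)

# Lower loading (smaller lambda) means fewer multiplets but more empty droplets.
for lam in (0.01, 0.05, 0.10, 0.20):
    print(f"lambda={lam:.2f}: multiplets among occupied droplets = {multiplet_fraction(lam):.2%}")
```

For small λ the multiplet fraction is roughly λ/2, which is why halving the loading concentration approximately halves the doublet rate at the cost of capturing fewer cells.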

The Scientist's Toolkit: Essential Reagents and Technologies

Table 3: Essential Research Reagents for scRNA-seq Experiments

| Reagent Category | Specific Examples | Function | Technical Considerations |
|---|---|---|---|
| Cell Preparation | Collagenase/Dispase enzymes, DNase I, viability dyes, FACS buffers | Tissue dissociation and cell quality control | Enzyme selection tissue-specific; viability critical for data quality |
| Barcoding Systems | 10x Genomics Gel Beads, Drop-seq beads, inDrop BHM | Cellular and molecular indexing | Barcode complexity determines cell throughput; compatibility with downstream steps |
| Reverse Transcription | Template-switching reverse transcriptases, dNTPs, RNase inhibitors | cDNA synthesis with UMI incorporation | High efficiency critical for sensitivity; template-switching enables full-length capture |
| Amplification | High-fidelity DNA polymerases, dNTPs, buffer systems | cDNA/library amplification | Minimize cycles to reduce bias; maintain sequence fidelity |
| Library Preparation | Fragmentation enzymes, ligases, index primers, SPRI beads | Sequencing library construction | Size selection critical for insert distribution; dual indexing recommended |
| Quality Control | Bioanalyzer/TapeStation reagents, fluorometric quantitation dyes | QC at multiple workflow stages | Critical for identifying issues early; ensures sequencing success |

Advanced Applications and Future Directions

The integration of cellular barcoding and UMIs has enabled sophisticated applications that extend beyond basic transcriptome profiling. Multiomic approaches now simultaneously profile DNA and RNA from the same single cells, linking genetic variants to transcriptional consequences [11]. Tools like SDR-seq (single-cell DNA-RNA-sequencing) capture both genomic variation and gene expression in thousands of cells simultaneously, particularly valuable for understanding non-coding variants that constitute over 95% of disease-associated genetic changes [11].

Computational methods continue to evolve alongside experimental technologies. Advanced algorithms like BLAZE enable accurate cell barcode identification from long-read scRNA-seq data without matched short-read sequencing, simplifying workflows and reducing costs [16]. Graph neural network approaches such as scGraphformer leverage the relational information in scRNA-seq data to identify subtle cellular patterns and relationships that might be obscured by traditional analysis methods [17].

Future directions focus on improving integration across molecular modalities, enhancing sensitivity for rare cell types and transcripts, and developing more robust computational frameworks that can handle the increasing scale and complexity of single-cell data. As these technologies mature, they promise to deepen our understanding of cellular heterogeneity in development, disease, and therapeutic response.

For studies of cellular heterogeneity, the standard scRNA-seq workflow is more than a procedural necessity: it is the foundation for capturing the true diversity of cell types, states, and transitions within a complex biological system. Unlike bulk RNA sequencing, which provides an averaged transcriptome across thousands of cells, scRNA-seq empowers researchers to dissect this heterogeneity, revealing rare cell populations, continuous cellular trajectories, and probabilistic expression events that would otherwise be obscured [3] [18] [1]. This technical guide details the core steps of the scRNA-seq workflow, from a tissue sample to a sequenced library, providing researchers, scientists, and drug development professionals with the methodologies essential for robust and interpretable data generation.

Sample Preparation and Single-Cell Isolation

The initial phase is critical, as the quality of the single-cell suspension directly determines the success of the entire experiment. The overarching goal is to generate a suspension of viable, dissociated single cells that accurately represent the in vivo cellular composition without introducing technical artifacts.

Tissue Dissociation

The process begins with procured tissue, which must be dissociated into a single-cell suspension. A typical protocol involves a combination of:

- Tissue Dissection and Mechanical Mincing: Physically breaking down the tissue structure.

- Enzymatic Breakdown: Using enzyme mixes (e.g., collagenase, trypsin) to digest the extracellular matrix [19].

The dissociation process is a major source of technical variation, and standardization is paramount. Automated tissue dissociators (e.g., gentleMACS Dissociator, PythoN Tissue Dissociation System, Singulator) offer significant advantages in consistency, speed, and cell viability by using predefined, tissue-specific programs [19]. Key considerations include optimizing the protocol for the specific tissue type to minimize stress-induced changes to the transcriptome and maximizing cell viability.

Cell Isolation Strategies

Once a single-cell suspension is achieved, individual cells must be isolated for separate processing. The common methods are:

Table 1: Common Cell Isolation Methods for scRNA-seq

| Method | Principle | Advantages | Limitations | Throughput |

|---|---|---|---|---|

| Fluorescence-Activated Cell Sorting (FACS) [20] [18] | Cells are sorted into multi-well plates based on light scattering and fluorescence. | High specificity if using labeled antibodies; high cell viability. | Lower throughput; higher cost. | 96 to 384 wells per run. |

| Droplet-Based Microfluidics (e.g., 10x Genomics) [21] | Cells are co-encapsulated with barcoded beads in nanoliter-scale droplets. | Extremely high throughput; cost-effective per cell. | Requires specialized equipment; limited ability to select specific cells. | Tens of thousands to millions of cells per run. |

| Microwell-Based Platforms (e.g., Seq-Well) [20] | Cells are randomly seeded into arrays of thousands of microwells. | Portable; lower cost; no complex equipment needed. | Throughput is lower than droplet-based methods. | Tens of thousands of cells per run. |

| Combinatorial Indexing (e.g., SPLiT-seq) [20] [3] | Cells are labeled in successive rounds of barcoding in multi-well plates. | Does not require physical single-cell isolation; ultra-high throughput. | Can only be applied to permeabilized fixed cells or nuclei. | Up to millions of cells. |

Single-Cell RNA Sequencing: Core Steps

After isolation, the single cells undergo a series of molecular biology steps to convert their minute amounts of RNA into a sequenceable library.

Diagram 1: Core scRNA-seq workflow

Cell Lysis and mRNA Capture

Within each isolated reaction vessel (well or droplet), the cell is lysed to release its RNA. To target polyadenylated messenger RNA (mRNA) specifically and avoid capturing abundant ribosomal RNAs (rRNAs), most protocols use poly(T) primers. These primers anneal to the poly(A) tails of mRNAs, enabling their selective capture [20] [18].

Reverse Transcription and Barcoding

This is a pivotal step where technical innovations have enabled modern high-throughput scRNA-seq. Reverse transcription (RT) converts the captured mRNA into more stable complementary DNA (cDNA). Two key barcoding strategies are incorporated here:

- Cellular Barcoding (CB): All RT primers from a single reaction vessel (representing one cell) share a unique cell barcode. This allows all sequencing reads derived from that cell to be bioinformatically grouped during analysis, enabling the multiplexing of thousands of cells in a single library [3].

- Unique Molecular Identifiers (UMI): Each RT primer also contains a random molecular barcode, the UMI. When a cDNA molecule is synthesized, it is tagged with a unique UMI. This allows bioinformatic correction for amplification bias, as the number of distinct UMIs for a gene reflects the original number of mRNA molecules, not the amplified number of reads [3].

In droplet-based methods like the 10x Genomics Chromium system, this occurs inside Gel Beads-in-emulsion (GEMs), where a single cell, a single barcoded gel bead, and RT reagents are combined [21].
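To make the role of the two barcodes concrete, the following minimal sketch groups reads by cell barcode and counts distinct UMIs per gene. The read tuples, barcode strings, and gene names are hypothetical toy data. Real pipelines also correct sequencing errors in barcodes and UMIs, which is omitted here.

```python
from collections import Counter, defaultdict

# Hypothetical toy reads: (cell_barcode, umi, gene). PCR duplicates of one
# original molecule share the same cell barcode, UMI, and gene.
reads = [
    ("AAAC", "TTGA", "GAPDH"),
    ("AAAC", "TTGA", "GAPDH"),  # PCR duplicate of the read above
    ("AAAC", "CCGT", "GAPDH"),
    ("AAAC", "GGAT", "CD3E"),
    ("TTTG", "TTGA", "GAPDH"),  # same UMI but another cell: separate molecule
    ("TTTG", "TTGA", "GAPDH"),
    ("TTTG", "TTGA", "GAPDH"),
]


def count_reads(reads):
    """Raw read counts per (cell, gene); inflated by PCR duplicates."""
    return Counter((cell, gene) for cell, _umi, gene in reads)


def count_molecules(reads):
    """Distinct UMIs per (cell, gene); an estimate of original mRNA molecules."""
    umis = defaultdict(set)
    for cell, umi, gene in reads:
        umis[(cell, gene)].add(umi)
    return {key: len(umi_set) for key, umi_set in umis.items()}


read_counts = count_reads(reads)
molecule_counts = count_molecules(reads)
for key in sorted(molecule_counts):
    print(key, "reads:", read_counts[key], "molecules:", molecule_counts[key])
```

In this toy data, both cells have three GAPDH reads, but the UMI counts differ (two molecules versus one), which is the amplification bias that UMIs are meant to remove.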

cDNA Amplification and Library Preparation

The minute amounts of cDNA from a single cell must be amplified to generate sufficient material for sequencing. This is typically achieved by PCR or, in some protocols like CEL-Seq2, by in vitro transcription (IVT) [20]. After amplification, the barcoded cDNA from all cells is pooled, and a standard NGS library preparation is performed. This involves fragmentation (for full-length protocols), size selection, and the addition of sequencing adapters [18].

Key scRNA-seq Protocols and Their Properties

Various scRNA-seq protocols have been developed, differing in their transcript coverage, amplification method, and throughput. The choice of protocol depends on the specific biological question.

Table 2: Comparison of Key scRNA-seq Protocols

| Protocol | Isolation Strategy | Transcript Coverage | UMI | Amplification Method | Unique Features |

|---|---|---|---|---|---|

| Smart-Seq2 [20] | FACS | Full-length | No | PCR | High sensitivity for low-abundance transcripts; ideal for isoform and mutation analysis. |

| Drop-Seq [20] | Droplet-based | 3'-only | Yes | PCR | High-throughput, low cost per cell. |

| inDrop [20] | Droplet-based | 3'-only | Yes | IVT | Uses hydrogel beads. |

| CEL-Seq2 [20] | FACS | 3'-only | Yes | IVT | Linear amplification reduces bias. |

| Seq-Well [20] | Microwell-based | 3'-only | Yes | PCR | Portable and low-cost. |

| SPLiT-Seq [20] | Not required | 3'-only | Yes | PCR | Combinatorial indexing; fixed cells/nuclei; highly scalable and low cost. |

Diagram 2: Protocol selection logic
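As a rough illustration of how the properties in Table 2 can drive protocol choice, the sketch below filters the listed protocols by two requirements. It is not a reconstruction of the diagram: the dictionary layout and the `select_protocols` helper are hypothetical, and only the coverage, UMI, and amplification columns are transcribed.

```python
# Properties transcribed from the protocol comparison table (Table 2).
PROTOCOLS = {
    "Smart-Seq2": {"coverage": "full-length", "umi": False, "amplification": "PCR"},
    "Drop-Seq": {"coverage": "3'", "umi": True, "amplification": "PCR"},
    "inDrop": {"coverage": "3'", "umi": True, "amplification": "IVT"},
    "CEL-Seq2": {"coverage": "3'", "umi": True, "amplification": "IVT"},
    "Seq-Well": {"coverage": "3'", "umi": True, "amplification": "PCR"},
    "SPLiT-Seq": {"coverage": "3'", "umi": True, "amplification": "PCR"},
}


def select_protocols(need_full_length=False, need_umi=False):
    """Return the names of protocols that satisfy the stated requirements."""
    selected = []
    for name, props in PROTOCOLS.items():
        if need_full_length and props["coverage"] != "full-length":
            continue
        if need_umi and not props["umi"]:
            continue
        selected.append(name)
    return selected


print("Isoform analysis:", select_protocols(need_full_length=True))
print("UMI-based counting:", select_protocols(need_umi=True))
print("Both:", select_protocols(need_full_length=True, need_umi=True))
```

Among the protocols listed in the table, none combines full-length coverage with UMIs, so requesting both returns an empty list. That trade-off is the main reason the biological question has to be fixed before the protocol is chosen.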

Sequencing and Data Analysis

Sequencing

The final library is sequenced on a next-generation sequencing (NGS) platform (e.g., Illumina, PacBio). For 3' end-counting methods, the sequencing read must cover the cellular barcode and UMI in addition to a fragment of the transcript's 3' end [21].

Primary Data Analysis

The raw sequencing data (FASTQ files) are processed using specialized pipelines (e.g., Cell Ranger for 10x Genomics data) to:

- Demultiplex the data based on cellular barcodes.

- Align reads to a reference genome.

- Generate a gene expression matrix, where rows represent genes, columns represent individual cells, and values are the UMI counts, quantifying the expression level of each gene in each cell [21].

This matrix is the primary data product for all subsequent bioinformatic analyses aimed at deciphering cellular heterogeneity, including clustering, cell type identification, trajectory inference, and differential expression analysis.
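The layout of this matrix can be sketched in a few lines. The example below assumes UMI counts have already been collapsed per cell and gene. The barcodes, gene names, and counts are hypothetical, and production pipelines store the matrix in a sparse format rather than nested lists.

```python
# Hypothetical UMI counts keyed by (cell_barcode, gene).
umi_counts = {
    ("AAAC", "GAPDH"): 2,
    ("AAAC", "CD3E"): 1,
    ("TTTG", "GAPDH"): 1,
    ("TTTG", "EPCAM"): 4,
}


def build_matrix(counts):
    """Return (genes, cells, matrix) with genes as rows and cells as columns."""
    genes = sorted({gene for _cell, gene in counts})
    cells = sorted({cell for cell, _gene in counts})
    matrix = [[counts.get((cell, gene), 0) for cell in cells] for gene in genes]
    return genes, cells, matrix


genes, cells, matrix = build_matrix(umi_counts)

# Total UMIs per cell (column sums), a common per-cell quality metric.
umis_per_cell = {
    cell: sum(row[j] for row in matrix) for j, cell in enumerate(cells)
}

print("cells:", cells)
for gene, row in zip(genes, matrix):
    print(gene, row)
print("UMIs per cell:", umis_per_cell)
```

Most entries of a real matrix are zero, because any one cell yields detectable transcripts from only a fraction of genes, which is why sparse storage is the norm.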

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for scRNA-seq

| Item | Function | Example/Note |

|---|---|---|

| Tissue Dissociation Kits | Enzymatic mixes for breaking down extracellular matrix to create single-cell suspensions. | Predefined, tissue-specific kits (e.g., MACS Tissue Dissociation Kits) ensure consistency [19]. |

| Viability Stain | Distinguishes live from dead cells for sorting or quality control. | Propidium iodide or DAPI for flow cytometry. |

| Barcoded Gel Beads | Microbeads coated with oligonucleotides containing poly(T), UMIs, and cell barcodes. | Core component of droplet-based systems (e.g., 10x Genomics) [21]. |

| Reverse Transcriptase | Enzyme that synthesizes cDNA from mRNA templates. | Optimized enzymes are critical for sensitivity and yield [3]. |

| Poly(T) Primers | Oligonucleotides that specifically capture polyadenylated mRNA. | Foundational for mRNA enrichment in most protocols [20] [18]. |

| Template Switching Oligo | Facilitates the addition of universal primer sequences during RT for full-length protocols. | Used in protocols like Smart-Seq2. |

| Library Preparation Kit | Reagents for fragmenting, amplifying, and adding sequencing adapters to cDNA. | Often tailored to specific scRNA-seq platforms. |

The standard scRNA-seq workflow, from meticulous cell isolation to the generation of barcoded sequencing libraries, is a sophisticated but now highly accessible process. By carefully executing each step and selecting the appropriate protocol, researchers can obtain high-quality data that faithfully captures the transcriptional landscape of individual cells. This technical foundation is indispensable for achieving the central goal of dissecting cellular heterogeneity, ultimately driving discoveries in fundamental biology, drug discovery, and personalized medicine.

Single-cell RNA sequencing (scRNA-seq) has emerged as a transformative technology in cancer research, providing unprecedented resolution to dissect the cellular heterogeneity that defines malignant tumors. Unlike bulk RNA sequencing, which averages gene expression across thousands of cells, scRNA-seq enables researchers to profile individual cells within a complex tissue, revealing rare subpopulations, developmental trajectories, and cell-specific responses to therapy [22]. This technical capability is particularly crucial for understanding the tumor microenvironment (TME), a complex ecosystem comprising malignant cells and diverse non-malignant components including immune populations, fibroblasts, endothelial cells, and other stromal elements [23]. The ability to deconstruct this cellular complexity at single-cell resolution has fundamentally advanced our understanding of tumor biology, with direct implications for drug development and therapeutic targeting.

The power of scRNA-seq lies in its capacity to illuminate three critical aspects of cancer biology: (1) the intricate cellular composition and spatial organization of tumors, (2) the developmental pathways and lineage relationships between cell subpopulations, and (3) the molecular mechanisms underlying drug sensitivity and resistance. As we explore in this technical guide, these applications are transforming precision oncology by enabling the identification of novel therapeutic targets, informing combination therapy strategies, and revealing biomarkers of treatment response [24]. The following sections provide a comprehensive examination of the methodologies, applications, and practical implementations of scRNA-seq in contemporary cancer research.

Technical Foundations of scRNA-seq

Core Methodological Principles

A typical scRNA-seq workflow encompasses multiple critical steps: sample acquisition, single-cell isolation, cell lysis, reverse transcription, cDNA amplification, library construction, sequencing, and bioinformatic analysis [22]. The initial single-cell isolation represents a fundamental technical challenge, with current methods including fluorescence-activated cell sorting (FACS), magnetic-activated cell sorting (MACS), laser-capture microdissection (LCM), and increasingly, microfluidic approaches that provide superior throughput and efficiency [22]. Among these, droplet-based microfluidics has emerged as the dominant high-throughput platform, where individual cells are encapsulated in nanoliter droplets containing lysis buffer and barcoded beads using microfluidic and reverse emulsion devices [22].

The scRNA-seq protocols can be broadly categorized into two classes: full-length transcript sequencing approaches (e.g., Smart-seq2, MATQ-seq) and 3′/5′-end transcript sequencing methods (e.g., Drop-seq, inDrop, 10× Genomics) [22]. Full-length methods provide comprehensive transcript coverage, enabling isoform usage analysis, allelic expression detection, and identification of RNA editing markers. In contrast, tag-based methods are typically combined with unique molecular identifiers (UMIs) to reduce amplification bias and improve quantitative accuracy, making them ideal for large-scale cell population studies despite their limitation in transcript coverage [22]. The choice between these approaches depends on the specific research questions, with full-length protocols offering deeper molecular characterization per cell, and tag-based methods enabling larger-scale population analyses.

Quantitative Comparison of scRNA-seq Technologies

Table 1: Comparison of High-Throughput scRNA-seq Platforms

| Platform | Transcript Coverage | Cell Throughput | Per-Cell Cost | UMI Implementation | Key Advantages |

|---|---|---|---|---|---|

| 10× Genomics | 3' or 5' counting | High (thousands) | ~$0.50 | Yes | High sensitivity, low technical noise, commercial support |

| Drop-seq | 3' counting | High (thousands) | ~$0.10 | Yes | Cost-effective, customizable protocol |

| inDrop | 3' counting | High (thousands) | ~$0.25 | Yes | Customizable, good for low-abundance transcripts |

| CEL-seq2 | 3' counting | Medium (hundreds) | Moderate | Yes | High accuracy, low amplification noise |

| MARS-seq2.0 | 3' counting | High (8,000-10,000) | ~$0.10 | Yes | Automated, low background (2%), minimal doublets |

| Seq-Well | 3' counting | High (thousands) | Low | Yes | Portable, minimal equipment requirements |

Recent methodological advances have substantially improved the efficiency and accessibility of scRNA-seq technologies. For example, MARS-seq2.0 has achieved a roughly sixfold reduction in library production costs (from $0.65 to $0.10 per cell) while reducing background levels from 10-15% to just 2% [22]. Similarly, highly scalable droplet-based platforms have reduced library preparation costs to approximately 5 cents per cell, with overall costs of ~$1,400 per tumor, including sequencing, at a throughput of ~5,000 cells [24]. These advancements have made large-scale scRNA-seq studies routine and cost-effective, enabling comprehensive characterization of cellular heterogeneity across diverse cancer types.
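The cost figures quoted above can be checked with simple arithmetic. The sketch below assumes that the ~$1,400 per-tumor total covers the ~5,000 cells mentioned, an interpretation of the text rather than a figure reported in the cited studies.

```python
# Figures quoted in the text.
mars_old_cost = 0.65   # USD per cell, original MARS-seq
mars_new_cost = 0.10   # USD per cell, MARS-seq2.0
library_cost_per_cell = 0.05   # USD, droplet-based library preparation
cells_per_tumor = 5000
total_cost_per_tumor = 1400.0  # USD, including sequencing

# Fold reduction in MARS-seq library cost.
fold_reduction = mars_old_cost / mars_new_cost

# Assumption: the per-tumor total applies to all ~5,000 cells.
library_total = library_cost_per_cell * cells_per_tumor
library_share = library_total / total_cost_per_tumor
all_in_cost_per_cell = total_cost_per_tumor / cells_per_tumor

print(f"MARS-seq fold reduction: {fold_reduction:.1f}x")
print(f"Library prep per tumor: ${library_total:.0f}")
print(f"Library prep share of total: {library_share:.0%}")
print(f"All-in cost per cell: ${all_in_cost_per_cell:.2f}")
```

Under that assumption, library preparation is a minor share of the per-tumor cost, so sequencing and the remaining steps account for most of the budget.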

Illuminating Tumor Microenvironments

Deconstructing Cellular Heterogeneity

The application of scRNA-seq to tumor microenvironments has revealed an extraordinary degree of cellular diversity that was previously obscured by bulk sequencing approaches. A representative study integrating scRNA-seq with spatial transcriptomics in colorectal cancer (CRC) profiled 41,700 cells from three CRC tumor-normal-blood pairs, identifying eight major cell populations: B cells, T cells, monocytes, NK cells, epithelial cells, fibroblasts, mast cells, and endothelial cells [23]. Further analysis revealed significant differences in cellular composition between tumor and normal tissues, with an approximately 2.5-fold increase in monocytes and a corresponding decrease in NK and B cell populations (to 0.3-0.4 times normal levels) in tumor tissues, suggesting a myeloid-driven immunosuppressive environment in CRC [23].
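Composition differences of this kind are computed by comparing cell-type proportions, not raw counts, between conditions. The sketch below uses hypothetical counts, chosen only so that the resulting fold changes resemble those quoted above. They are not data from the cited study.

```python
# Hypothetical annotated cell counts per tissue (not data from the study).
normal_counts = {"monocyte": 40, "NK": 100, "B": 200, "other": 660}
tumor_counts = {"monocyte": 100, "NK": 40, "B": 60, "other": 800}


def proportions(counts):
    """Convert raw cell counts into fractions of the sample total."""
    total = sum(counts.values())
    return {cell_type: n / total for cell_type, n in counts.items()}


def fold_changes(case_counts, control_counts):
    """Ratio of case to control proportions for each shared cell type."""
    case = proportions(case_counts)
    control = proportions(control_counts)
    return {
        cell_type: case[cell_type] / control[cell_type]
        for cell_type in control
        if cell_type in case and control[cell_type] > 0
    }


fc = fold_changes(tumor_counts, normal_counts)
for cell_type, value in fc.items():
    print(f"{cell_type}: {value:.2f}x")
```

Normalizing to proportions matters because tumor and normal samples rarely yield the same number of cells. Dedicated compositional methods also account for the fact that proportions are not independent, which this sketch ignores.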

Beyond cataloging cellular diversity, scRNA-seq enables the identification of novel cell states and functional subsets within broad cell categories. Subclustering of epithelial cells in the CRC study revealed nine distinct subpopulations, including crypt cells, enterocytes, goblet cells, proinflammatory cells, stem-like cells, and tumor cells [23]. The ability to resolve these previously unrecognized cellular states provides critical insights into the functional organization of tumors and their microenvironments, with direct implications for understanding disease mechanisms and identifying therapeutic vulnerabilities.

Spatial Organization and Cellular Interactions

While scRNA-seq provides unparalleled resolution of cellular diversity, it inherently disrupts native spatial context. This limitation has been addressed through integration with spatial transcriptomic (ST) technologies, which preserve spatial information while providing transcriptome-wide data [23]. The combination of these approaches enables researchers to map cell populations back to their original tissue locations, revealing the spatial architecture of tumors and the proximity relationships between different cell types.

In the CRC study, transferring cellular annotations from scRNA-seq to ST data allowed researchers to delineate four distinct tissue regions: tumor, stroma, immune infiltration, and colon epithelium [23]. This integrated analysis revealed intensive intercellular interactions between stroma and tumor regions, including a specific ligand-receptor pair (C5AR1-RPS19) that appeared to mediate cross-talk between these compartments [23]. Additionally, region-specific molecular features were identified, with tumor regions characterized by high TMSB4X expression and stroma regions marked by elevated VIM expression [23]. These findings illustrate how spatial context informs functional interpretation of scRNA-seq data, revealing organizational principles of tumor ecosystems that would remain invisible with either approach alone.

Diagram 1: Cellular Architecture of the Colorectal Cancer Microenvironment. This diagram illustrates the complex cellular composition of the CRC TME as revealed by integrated scRNA-seq and spatial transcriptomics, highlighting key cellular subpopulations, spatial regions, and molecular interactions.

Analyzing Cellular Development and Lineage Relationships

Developmental Trajectories and Cellular Plasticity

scRNA-seq enables the reconstruction of developmental trajectories and lineage relationships within tumors through computational approaches that order cells along pseudotemporal axes based on transcriptomic similarity. This application has been particularly powerful for understanding the cellular hierarchy and plasticity of epithelial populations in colorectal cancer. Studies have demonstrated that human colon cancer cells recapitulate multilineage differentiation processes observed in normal colon epithelia, with distinct subpopulations representing various stages of differentiation and malignant transformation [23].
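The idea of ordering cells along a pseudotemporal axis can be illustrated with a deliberately simplified stand-in: ranking cells by their expression-space distance from a chosen root cell. This is not a published trajectory algorithm (those typically build a cell-cell graph or fit principal curves), and the two-gene expression vectors and cell labels below are hypothetical.

```python
import math

# Hypothetical expression vectors: (stemness marker, differentiation marker).
cells = {
    "stem_like": (9.0, 1.0),
    "enterocyte": (1.0, 9.0),
    "progenitor": (6.0, 4.0),
    "intermediate": (4.0, 6.0),
}


def pseudotime_from_root(cells, root):
    """Scale each cell's Euclidean distance from the root cell to [0, 1]."""
    distances = {
        name: math.dist(vector, cells[root]) for name, vector in cells.items()
    }
    max_distance = max(distances.values())
    return {name: d / max_distance for name, d in distances.items()}


pseudotime = pseudotime_from_root(cells, root="stem_like")
ordering = sorted(pseudotime, key=pseudotime.get)
print(ordering)
```

The choice of root is an input, not a result: the same data ordered from a different root yields a different trajectory, which is why root selection usually relies on prior knowledge such as known stem-cell markers.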

The identification of seven subtypes of malignant epithelial cells in CRC—tumorCAV1, tumorATF3JUN|FOS, tumorZEB2, tumorVIM, tumorWSB1, tumorLXN, and tumorPGM1—reflects the remarkable heterogeneity within the transformed compartment [23]. Each of these subtypes likely represents distinct functional states with potential implications for therapeutic response and disease progression. The transition from normal epithelium to intraepithelial neoplasia has been associated with patient survival in CRC, highlighting the clinical relevance of understanding these developmental pathways [23]. Similar approaches have been applied across cancer types, revealing conserved principles of tumor evolution while identifying context-specific developmental programs.

Stemness and Differentiation States

A particularly important application of trajectory inference in cancer scRNA-seq data is the identification and characterization of stem-like cells, which often represent therapy-resistant populations responsible for tumor maintenance and recurrence. In glioblastoma, single-cell transcriptomics has made it possible to distinguish malignantly transformed tumor cells from untransformed cells in the tumor microenvironment while revealing novel insights into the developmental programs underlying disease pathogenesis [24]. The ability to resolve these rare but critical populations provides opportunities for developing targeted therapies aimed at eliminating the cells that drive tumor propagation.

Predicting and Understanding Drug Responses

Dissecting Mechanisms of Drug Sensitivity and Resistance

scRNA-seq has emerged as a powerful approach for understanding the cellular basis of drug response heterogeneity in cancer. A seminal study in melanoma used scRNA-seq to discover varying proportions of cells harboring drug-susceptible and drug-resistant phenotypes across patients [24]. The authors inferred interactions between malignant cells and T cells and identified expression patterns correlating with T cell infiltration, providing mechanistic insights into the variable clinical responses observed with targeted and immunotherapies [24]. Similarly, studies in lung adenocarcinoma have used scRNA-seq to identify subclonal heterogeneity in anti-cancer drug responses, revealing that pre-existing resistant subpopulations can expand under therapeutic pressure [25].

The ability to profile tumors at single-cell resolution before, during, and after treatment enables direct tracking of dynamic changes in cellular composition and cell states in response to therapy. This application allows researchers to determine whether specific subpopulations are ablated or altered by treatment compared to untreated specimens, providing a powerful approach for understanding mechanism of action and identifying potential resistance pathways [24]. Furthermore, scRNA-seq can reveal whether a therapeutic target is pervasively expressed or restricted to a rare subpopulation, and whether targets for combination therapy are expressed in redundant pathways or separate subpopulations [24].
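The target-distribution question raised above reduces to counting which cells express which targets. The sketch below uses hypothetical per-cell UMI counts for two placeholder genes, `TARGET_A` and `TARGET_B`, and a detection threshold of one UMI. The names and the threshold are illustrative assumptions.

```python
# Hypothetical per-cell UMI counts for two candidate drug targets.
cells = (
    [{"TARGET_A": 5, "TARGET_B": 0}] * 6    # subpopulation expressing A only
    + [{"TARGET_A": 0, "TARGET_B": 3}] * 3  # subpopulation expressing B only
    + [{"TARGET_A": 0, "TARGET_B": 0}] * 1  # expresses neither target
)


def expression_summary(cells, gene_a, gene_b, min_umis=1):
    """Fractions of cells expressing gene_a, gene_b, both, or at least one."""
    n = len(cells)
    a = [cell[gene_a] >= min_umis for cell in cells]
    b = [cell[gene_b] >= min_umis for cell in cells]
    return {
        "a": sum(a) / n,
        "b": sum(b) / n,
        "both": sum(x and y for x, y in zip(a, b)) / n,
        "either": sum(x or y for x, y in zip(a, b)) / n,
    }


summary = expression_summary(cells, "TARGET_A", "TARGET_B")
print(summary)
```

In this toy population the two targets are never co-expressed, so a combination would act on separate subpopulations and together reach 90% of cells. A high "both" fraction would instead point to targets that coincide in the same cells.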

Computational Tools for Drug Response Prediction

The integration of scRNA-seq data with drug response prediction has led to the development of specialized computational tools such as scDrug, a bioinformatics workflow that provides a one-step pipeline for identifying cell clusters in scRNA-seq data, coupled with methods to predict drug treatments [25]. The scDrug pipeline consists of three main modules: scRNA-seq analysis for identification of tumor cell subpopulations, functional annotation of cellular subclusters, and prediction of drug responses [25]. This integrated approach facilitates drug repurposing by enabling the exploration of scRNA-seq data to identify candidate therapies that target specific cellular subpopulations within heterogeneous tumors.

Table 2: scRNA-seq Applications in Drug Discovery and Development

| Application | Methodological Approach | Key Insights | Clinical Implications |

|---|---|---|---|

| Resistance Mechanism Identification | Pre- and post-treatment scRNA-seq profiling | Reveals pre-existing resistant subclones and adaptive responses | Guides rational combination therapies to prevent resistance |

| Target Validation | Co-expression analysis across cell subpopulations | Determines target distribution across heterogeneous populations | Informs patient selection strategies for targeted therapies |

| Combination Therapy Design | Analysis of target co-expression in single cells | Identifies whether combination targets are in same or different pathways | Guides selection of synergistic drug combinations |

| Biomarker Discovery | Correlation of cell state signatures with clinical response | Identifies predictive biomarkers of treatment efficacy | Enables development of companion diagnostics |

| Drug Repurposing | scDrug and similar computational pipelines | Identifies novel indications for existing drugs based on cell state | Accelerates therapeutic development through repositioning |

Diagram 2: scDrug Computational Workflow for Drug Response Prediction. This diagram outlines the three-module bioinformatics pipeline for analyzing scRNA-seq data to predict drug sensitivity and identify repurposing opportunities, connecting cellular heterogeneity directly to therapeutic strategies.

Essential Research Reagent Solutions

The successful implementation of scRNA-seq studies requires carefully selected reagents and materials optimized for single-cell applications. The following table summarizes key solutions used in the field, drawn from methodologies described in the literature.

Table 3: Essential Research Reagents for scRNA-seq Applications

| Reagent Category | Specific Examples | Function | Technical Considerations |

|---|---|---|---|

| Cell Dissociation Kits | Tumor Dissociation Kits (commercial) | Liberate viable single cells from tissue | Must balance yield with preservation of transcriptome state |

| Cell Viability Stains | Propidium iodide, 7-AAD, DAPI | Identify live/dead cells for sorting | Critical for excluding compromised cells from analysis |

| Barcoded Beads | 10× Genomics Gel Beads, Drop-seq Beads | Capture mRNA with cell barcodes and UMIs | Determine sequencing sensitivity and cell throughput |

| Reverse Transcription Mix | Template-switch oligonucleotides, dNTPs | Convert mRNA to cDNA | Critical step determining library complexity |

| Amplification Reagents | PCR master mixes, IVT kits | Amplify cDNA for library construction | Major source of technical noise if not optimized |

| Library Preparation Kits | Nextera XT, custom tagmentation mixes | Fragment and add sequencing adapters | Impact library quality and sequencing efficiency |

| Cell Surface Antibodies | CD45, CD3, EPCAM, others | Identify cell types via protein markers | Enable CITE-seq and cell sorting applications |

| Nucleic Acid Quality Controls | Bioanalyzer RNA chips, Qubit assays | Assess RNA integrity and quantity | Essential for troubleshooting and quality assurance |

The selection of appropriate reagents is critical for generating high-quality scRNA-seq data. For example, the development of MARS-seq2.0 involved optimization of multiple reagent components including lysis buffer composition, reverse transcription primer concentration, second-strand-synthesis enzymes, and barcoded ligation adaptors, resulting in a sixfold cost reduction and significant improvement in performance [22]. Similarly, advances in barcoded bead technologies have been instrumental in enabling highly multiplexed scRNA-seq approaches, with different platforms offering trade-offs between cost, sensitivity, and throughput [24] [22].

Single-cell RNA sequencing has fundamentally transformed our approach to studying cancer biology by providing unprecedented resolution to dissect tumor heterogeneity, microenvironmental organization, and therapeutic responses. The applications discussed in this technical guide—ranging from deconstructing cellular ecosystems to predicting drug sensitivity—illustrate the power of this technology to reveal biological mechanisms that remain invisible to bulk sequencing approaches. As methodological advances continue to reduce costs and improve accessibility, scRNA-seq is poised to become an increasingly integral component of both basic cancer research and clinical translational studies, ultimately enabling more precise and effective therapeutic strategies for cancer patients.

From Sample to Insight: scRNA-seq Methodologies and Translational Applications

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to dissect cellular heterogeneity, moving beyond the limitations of bulk sequencing to reveal the complex transcriptomic landscapes of individual cells within tissues and tumors. The selection of an appropriate scRNA-seq platform is a critical first step that directly influences the scale, resolution, and biological insights of a study. This technical guide provides an in-depth comparison of two foundational approaches: high-throughput droplet-based systems, exemplified by 10x Genomics, and the more traditional, sensitive plate-based methods. We detail the underlying technologies, experimental workflows, and performance metrics to equip researchers and drug development professionals with the information necessary to align their platform choice with specific research objectives in the study of cellular diversity.

Cellular heterogeneity is a fundamental characteristic of biological systems, existing even within seemingly homogeneous populations of cells. Understanding this diversity is crucial for unraveling how tissues develop, maintain homeostasis, and respond to disease and treatment [3]. scRNA-seq enables an unbiased, genome-wide characterization of this heterogeneity by providing quantitative molecular profiles from tens of thousands of individual cells [3] [26]. Unlike bulk RNA sequencing, which averages gene expression across thousands of cells, scRNA-seq can identify distinct cell types, reveal rare cell populations, and delineate continuous transitions in cell states, such as those occurring during differentiation or in response to therapies [26]. This high-resolution view is particularly valuable in cancer research, where it can dissect the complex cellular ecosystem of a tumor, revealing malignant sub-clones and the diverse immune and stromal cells that constitute the tumor microenvironment [26]. The core technological challenge of scRNA-seq lies in efficiently isolating individual cells, capturing their often sparse mRNA transcripts, and labeling these molecules with unique identifiers so that data from thousands of cells can be pooled and sequenced simultaneously while retaining single-cell resolution.

Core Technologies and Principles

Plate-Based scRNA-seq Methods

Plate-based methods were the first developed for scRNA-seq. These protocols rely on fluorescence-activated cell sorting (FACS) to distribute individual cells into the wells of a microplate (e.g., 96, 384, or 1,536 wells) [27] [3]. Within each well, cells are lysed, and their mRNA is reverse-transcribed into cDNA.

- Early Protocols (SMART-seq & CEL-seq): Initial methods like SMART-seq provided high sensitivity and full-length transcript coverage but lacked built-in cell barcodes, requiring separate library preparation for each cell [27]. CEL-seq incorporated unique barcoded primers from the start, allowing cDNA from all wells to be pooled after reverse transcription [27].

- Combinatorial Indexing: A significant advancement in plate-based throughput, combinatorial indexing uses multiple rounds of barcoding in a split-pool manner [27] [3]. Fixed, permeabilized cells are distributed into a first plate and tagged with a well-specific barcode. The cells are then pooled and redistributed into a second plate for a second round of barcoding. The resulting combination of barcodes uniquely identifies each cell, enabling the processing of up to 1 million cells in a single experiment without the need for physical compartmentalization [27].
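The scaling behind combinatorial indexing follows from simple counting: each barcoding round multiplies the number of possible barcode combinations. The sketch below assumes 96 wells per round and uniform random redistribution of cells. Both are illustrative assumptions, not parameters of any specific protocol.

```python
def barcode_space(wells_per_round, rounds):
    """Number of distinct barcode combinations after split-pool barcoding."""
    return wells_per_round ** rounds


def expected_collision_fraction(n_cells, n_barcodes):
    """Expected fraction of cells sharing their combination with another cell.

    Assumes each cell receives one of n_barcodes combinations uniformly at
    random and independently of the other cells.
    """
    return 1.0 - (1.0 - 1.0 / n_barcodes) ** (n_cells - 1)


for rounds in (2, 3, 4):
    space = barcode_space(96, rounds)
    collisions = expected_collision_fraction(1000, space)
    print(f"{rounds} rounds: {space:,} combinations, "
          f"{collisions:.2%} of 1,000 cells expected to collide")
```

Because the barcode space grows exponentially with the number of rounds while the collision rate falls, adding a round is the usual route to processing more cells without physical compartmentalization.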

Droplet-Based scRNA-seq (10x Genomics)

Droplet-based methods use microfluidics to achieve high-throughput single-cell isolation. The 10x Genomics Chromium system is a leading commercial platform that creates nanoliter-scale emulsion droplets, each functioning as an independent reaction chamber [27] [28].

The process begins with an aqueous suspension of cells and gel beads. Each bead is coated with oligonucleotides containing several key elements:

- A poly(dT) sequence to capture polyadenylated mRNA.

- A cell barcode unique to each bead, ensuring all transcripts from the same cell receive the same identifier.

- A Unique Molecular Identifier (UMI) that labels each individual mRNA molecule to correct for amplification bias [27] [29].

This suspension is combined with oil in a microfluidic chip, generating thousands of Gel Beads-in-emulsion (GEMs). Ideally, each GEM contains a single cell and a single bead. Upon cell lysis within the droplet, the released mRNA hybridizes to the bead's oligonucleotides. Reverse transcription then occurs, producing barcoded cDNA. After breaking the emulsion, the cDNA is pooled, amplified, and prepared for sequencing [27] [29]. The 10x Genomics system is engineered to ensure most droplets contain exactly one bead, improving efficiency and enabling higher cell throughput [27].
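Why single-cell occupancy is an ideal and not a guarantee can be seen from a simple loading model. The sketch below assumes that the number of cells per droplet follows a Poisson distribution, a common modeling assumption for random encapsulation that the cited sources do not state. The loading rate `lam` (mean cells per droplet) is a hypothetical value.

```python
import math


def poisson_pmf(k, lam):
    """Probability of exactly k cells in a droplet at mean loading lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def droplet_occupancy(lam):
    """Fractions of droplets that are empty, single-cell, or multi-cell."""
    empty = poisson_pmf(0, lam)
    single = poisson_pmf(1, lam)
    multiple = 1.0 - empty - single
    return {
        "empty": empty,
        "single": single,
        "multiple": multiple,
        # Share of cell-containing droplets that hold more than one cell.
        "multiplet_rate": multiple / (1.0 - empty),
    }


for lam in (0.05, 0.1, 0.3):
    occ = droplet_occupancy(lam)
    print(f"lam={lam}: empty={occ['empty']:.3f}, single={occ['single']:.3f}, "
          f"multiplet rate={occ['multiplet_rate']:.3f}")
```

Under this model, loading fewer cells lowers the multiplet rate but leaves more droplets empty, which is the basic trade-off between cell recovery and doublet contamination.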

Diagram 1: Droplet-Based Cell Isolation. A single cell and a barcoded gel bead are co-encapsulated in an oil emulsion droplet, where cell lysis and mRNA barcoding occur.

Comparative Performance Analysis

The choice between plate-based and droplet-based methods involves trade-offs between throughput, sensitivity, and cost. The table below summarizes the key performance characteristics of each platform.

Table 1: Performance Comparison of scRNA-seq Platforms

| Feature | Plate-Based scRNA-seq | Droplet-Based scRNA-seq (10x Genomics) |

|---|---|---|

| Throughput | Lower (Combinatorial indexing increases scale) [27] | Higher (Tens of thousands of cells per run) [27] |

| Cost per Cell | Higher (Greater reagent consumption) [27] | Lower (Miniaturization via microfluidics) [27] |

| Sensitivity | Higher (Ideal for detecting lowly-expressed genes) [27] | Lower than plate-based [27] |

| Workflow | Flexible but labor-intensive (manual sorting/pipetting) [27] | Highly automated (requires proprietary microfluidics equipment) [27] |

| Best For | Smaller-scale, in-depth studies; rare cell populations [27] | Large-scale studies; atlas-building; profiling heterogeneous tissues [27] |

Beyond these core metrics, each method has specific strengths. Plate-based protocols, particularly full-length ones like SMART-seq3, are superior for detecting splice variants and isoform-level heterogeneity [27]. Droplet-based systems excel in scalability and are the preferred choice for large cohort studies. A 2025 comparative study also highlighted that all major methods, including those from 10x Genomics and Parse Biosciences (a combinatorial indexing provider), are capable of generating high-quality data from sensitive clinical samples like human neutrophils, though sample collection and handling remain critical [30].

Experimental Workflow and Protocols

Detailed Protocol: 10x Genomics Chromium Universal 3'

The following workflow is specific to the 10x Genomics Chromium Single Cell 3' Gene Expression platform [29].

Gel Bead Emulsion (GEM) Generation:

- A single-cell suspension is combined with Master Mix and loaded into a Chromium chip along with gel beads and partitioning oil.

- The instrument generates up to 80,000 GEMs per channel. Ideally, each GEM contains a single cell, a single gel bead, and the reagents that dissolve the bead.

- The gel bead dissolves, releasing oligonucleotides with the following structure:

Illumina P5-Read 1-Cell Barcode (16 bp)-UMI (12 bp)-Poly(dT)30-VN [29].
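Given this oligonucleotide layout, the barcode and UMI can be recovered from fixed positions. The sketch below assumes that Read 1 begins at the first base of the cell barcode, so the first 16 bases are the barcode and the next 12 are the UMI; the function name and example sequence are illustrative only.

```python
CELL_BARCODE_LEN = 16  # from the oligonucleotide structure above
UMI_LEN = 12           # from the oligonucleotide structure above


def split_read1(sequence):
    """Split a Read 1 sequence into (cell_barcode, umi).

    Assumes the read starts at the first base of the cell barcode.
    Any bases after the UMI (the poly(dT) stretch) are ignored.
    """
    needed = CELL_BARCODE_LEN + UMI_LEN
    if len(sequence) < needed:
        raise ValueError(f"Read 1 must be at least {needed} bases long")
    cell_barcode = sequence[:CELL_BARCODE_LEN]
    umi = sequence[CELL_BARCODE_LEN:needed]
    return cell_barcode, umi


# Made-up read: 16-base barcode, 12-base UMI, then the start of poly(dT).
barcode, umi = split_read1("ACGTACGTACGTACGT" + "TTGGCCAATTGG" + "TTTT")
```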

Reverse Transcription and cDNA Synthesis:

- Within each GEM, the cell is lysed, and polyadenylated RNA binds to the poly(dT) sequence on the released oligonucleotides.

- Reverse transcription occurs, primed by the oligonucleotide, to produce full-length, barcoded cDNA.

- The terminal transferase activity of the reverse transcriptase adds a few non-templated nucleotides, predominantly cytosines, to the 3' end of the cDNA strand.

Template Switching:

- A Template Switching Oligo (TSO) anneals to these non-templated cytosines, enabling the reverse transcriptase to "switch" templates and copy the TSO sequence, thus completing the cDNA strand [29].

cDNA Amplification and Library Construction:

- The emulsion is broken, and all barcoded cDNA is pooled.

- The cDNA is PCR-amplified using primers specific to the TSO and the oligonucleotide sequence.

- The amplified cDNA is fragmented and size-selected. An Illumina TruSeq adapter is ligated, and a sample index is added via a second PCR to create the final sequencing-ready library. The final library structure incorporates Illumina P5/P7 adapters, cell barcode, UMI, and the cDNA fragment [29].

Diagram 2: 10x Genomics Library Workflow. Key steps from single-cell encapsulation to sequencing library preparation.

The Scientist's Toolkit: Essential Reagent Solutions

Table 2: Key Research Reagents for 10x Genomics and Plate-Based Workflows

| Reagent / Kit | Function | Example Product |
|---|---|---|
| Chromium Chip & Reagents | Forms microfluidic droplets for single-cell isolation and barcoding. | 10x Genomics GEM-X Chip Kit [31] |
| Barcoded Gel Beads | Supplies cell barcodes and UMIs for mRNA capture. | 10x Genomics Barcoded Gel Beads [29] |
| Library Construction Kit | Converts barcoded cDNA into a sequencing-ready library. | 10x Genomics Library Construction Kit [31] |
| Dual Index Kit | Adds unique sample indices for multiplexing multiple libraries. | 10x Genomics Dual Index Kit TT Set A [31] [32] |
| Combinatorial Indexing Kit | Enables plate-based, split-pool barcoding for high cell numbers. | Parse Biosciences Evercode Kit [27] |
| Nuclei Isolation Kit | Prepares nuclei suspensions for samples difficult to dissociate into single cells. | Chromium Nuclei Isolation Kit [31] |
| Feature Barcoding Kit | Enables simultaneous profiling of cell surface proteins alongside gene expression. | Chromium Feature Barcode Kit [31] |

The decision between a droplet-based platform like 10x Genomics and a plate-based method is not a matter of one being universally superior, but of selecting the right tool for the biological question and experimental scale. For large-scale atlas projects, clinical trial monitoring, or any study that must profile tens of thousands of cells to map heterogeneity comprehensively, droplet-based methods offer a strong combination of throughput and cost-effectiveness. Conversely, for focused investigations of specific cell populations, studies where high sensitivity for transcript detection is paramount, or work with fixed or particularly precious samples, plate-based methods (especially modern combinatorial indexing approaches) remain a powerful and often preferable option. By understanding the technical foundations and practical trade-offs outlined in this guide, researchers can make an informed choice that suits their investigation of cellular diversity.

Understanding cellular heterogeneity is a central goal in single-cell RNA sequencing (scRNA-seq) research. The resolution to distinguish rare cell types, define novel states, and accurately reconstruct biological continua depends overwhelmingly on the quality of the underlying data. This technical guide details the three foundational pillars of a robust scRNA-seq experimental design—cell viability, capture efficiency, and sequencing depth. Optimizing these parameters is not merely a technical exercise; it is a prerequisite for generating biologically meaningful insights into cellular heterogeneity, enabling discoveries in fundamental biology, disease mechanisms, and drug development.

Cell Viability: The Foundation of Quality Data

Cell viability refers to the proportion of live, intact cells in a single-cell suspension prior to library preparation. Compromised viability directly introduces noise and artifacts that can obscure true biological signals.

Impact on Data Integrity

Low-viability libraries are a primary source of misleading results in downstream analyses [33]. The consequences include:

- Formation of artifactual clusters: Debris and dying cells can form distinct clusters or create artificial intermediate states between genuine cell types, complicating interpretation [33].

- Skewed differential expression: Ambient RNA released from lysed cells can be captured together with intact cells, creating the false appearance that those cells express the corresponding genes [34].

- Masking biological heterogeneity: High levels of technical variation from low-quality cells can drown out subtle but biologically important transcriptomic differences [33].

Assessment and Quality Control (QC)

Rigorous QC is essential. The standard metrics, typically assessed jointly, are summarized in Table 1 [35] [34] [36].

Table 1: Key Quality Control Metrics for scRNA-seq

| QC Metric | Description | Indication of Low Quality | Typical Threshold (Example) |
|---|---|---|---|
| Count Depth | Total number of UMIs or reads per cell | Damaged cell, insufficient cDNA capture | Low end: Significantly below population median [33] |
| Features per Cell | Number of detected genes per cell | Damaged cell, loss of transcript diversity | Low end: Significantly below population median [33] |
| Mitochondrial Read Fraction | Percentage of reads mapping to mitochondrial genes | Cell stress, apoptosis, or broken cell membrane | High end: >10-20% (varies by sample and cell type) [34] [36] |
| Hemoglobin Gene Expression | Expression of genes like HBB and HBA | Contamination from red blood cells [34] | Presence in non-erythroid cells [34] |
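The first three metrics in the table can be computed and applied jointly, as in the minimal sketch below. Several choices are assumptions for illustration rather than recommendations from the cited sources: mitochondrial genes are identified by the human `MT-` symbol prefix, "significantly below the population median" is implemented as more than three median absolute deviations (MADs) below the median on a log scale, and the mitochondrial cutoff is set to 20%. The function names are hypothetical and do not belong to any particular library.

```python
import math
import statistics


def qc_metrics(cell_counts):
    """Per-cell count depth, detected genes and mitochondrial fraction.

    `cell_counts` maps cell ID -> {gene symbol: UMI count}.
    """
    metrics = {}
    for cell, genes in cell_counts.items():
        total = sum(genes.values())
        detected = sum(1 for count in genes.values() if count > 0)
        # Assumption: human mitochondrial gene symbols start with "MT-".
        mito = sum(count for gene, count in genes.items() if gene.startswith("MT-"))
        metrics[cell] = {
            "count_depth": total,
            "n_genes": detected,
            "mito_fraction": mito / total if total else 0.0,
        }
    return metrics


def is_low_outlier(values, n_mads=3.0):
    """Flag values more than `n_mads` MADs below the median on a log1p scale."""
    logs = [math.log1p(v) for v in values]
    med = statistics.median(logs)
    mad = statistics.median([abs(x - med) for x in logs])
    cutoff = med - n_mads * mad
    return [x < cutoff for x in logs]


def passing_cells(metrics, max_mito_fraction=0.2, n_mads=3.0):
    """Return cell IDs that pass all three filters, assessed jointly."""
    cells = list(metrics)
    low_depth = is_low_outlier([metrics[c]["count_depth"] for c in cells], n_mads)
    low_genes = is_low_outlier([metrics[c]["n_genes"] for c in cells], n_mads)
    return [
        c
        for c, depth_flag, gene_flag in zip(cells, low_depth, low_genes)
        if not depth_flag
        and not gene_flag
        and metrics[c]["mito_fraction"] <= max_mito_fraction
    ]


# Toy data: three healthy cells, one mitochondria-rich cell, one near-empty barcode.
toy = {
    "c1": {"GAPDH": 50, "ACTB": 40, "CD3E": 10, "MT-CO1": 5},
    "c2": {"GAPDH": 60, "ACTB": 30, "CD3E": 12, "MT-CO1": 6},
    "c3": {"GAPDH": 55, "ACTB": 35, "CD3E": 8, "MT-CO1": 4},
    "c4": {"GAPDH": 10, "MT-CO1": 30, "MT-ND1": 20},
    "c5": {"GAPDH": 2},
}
metrics = qc_metrics(toy)
kept = passing_cells(metrics)
```

Data-driven cutoffs of this kind adapt to each sample, but as the table notes, appropriate thresholds vary by sample and cell type, so any fixed values should be checked against the distributions actually observed.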

Diagram: Workflow for preparing a single-cell suspension and performing quality control.

Practical Protocols for Maximizing Viability

- Temperature Control: Maintain cells at 4°C after creating a suspension to arrest metabolic activity and reduce stress-induced gene expression [37].

- Gentle Handling: Use media without calcium or magnesium to prevent cation-dependent clumping. Avoid over-pelleting cells during centrifugation, and gently filter the suspension to remove aggregates [37].

- Dissociation Optimization: Tailor dissociation protocols to specific tissues using commercially available enzyme cocktails or automated dissociators (e.g., gentleMACS Dissociator) for reproducible results [37].

- Fixation as an Alternative: For challenging logistics (e.g., clinical samples, time-course experiments), consider fixed-cell or fixed-nuclei protocols. Fixation halts transcriptomic processes, allowing samples to be stored and batched later, thereby mitigating batch effects [37].

Capture Efficiency and Technology Selection

Capture efficiency denotes the effectiveness of a scRNA-seq platform at isolating individual cells and converting their mRNA into sequenceable libraries. The choice of platform dictates the scale, cost, and applicability of your study.

Comparison of Commercial Platforms

The selection of a platform is a trade-off between throughput, cell size tolerance, and compatibility with sample type. Key commercial solutions are detailed in Table 2 [38].

Table 2: Research Reagent Solutions for Single-Cell Capture

| Commercial Solution | Capture Platform | Throughput (Cells/Run) | Max Cell Size | Fixed Cell Support | Key Considerations |
|---|---|---|---|---|---|
| 10x Genomics Chromium | Microfluidic oil partitioning | 500 - 20,000 | ~30 µm | Yes | High throughput, widely adopted [38] |
| BD Rhapsody | Microwell partitioning | 100 - 20,000 | ~30 µm | Yes | Allows for targeted mRNA enrichment [38] |
| Parse Evercode | Multiwell-plate combinatorial barcoding | 1,000 - 1M+ | No strict limit | Yes (exclusively) | Extremely high throughput, cost-effective per cell, requires high cell input [38] |
| Fluent/PIPseq (Illumina) | Vortex-based oil partitioning | 1,000 - 1M+ | No strict limit | Yes | Flexible input, no microfluidics hardware [38] |
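The constraints in the table above can be encoded as a simple shortlist filter. This is only a sketch: it treats the listed ranges as hard limits, reads "1M+" as no upper bound, and interprets "Yes (exclusively)" as fixed samples only. All of these are simplifications, and the `Platform` class and `shortlist` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Platform:
    name: str
    min_cells: int
    max_cells: Optional[int]       # None: no upper bound listed ("1M+")
    max_cell_um: Optional[float]   # None: "No strict limit"
    fixed_only: bool               # True: "Yes (exclusively)"


# Values transcribed from the table above.
PLATFORMS = [
    Platform("10x Genomics Chromium", 500, 20_000, 30.0, False),
    Platform("BD Rhapsody", 100, 20_000, 30.0, False),
    Platform("Parse Evercode", 1_000, None, None, True),
    Platform("Fluent/PIPseq (Illumina)", 1_000, None, None, False),
]


def shortlist(n_cells, cell_diameter_um, sample_is_fixed):
    """Return names of platforms whose listed limits fit the experiment."""
    names = []
    for p in PLATFORMS:
        if n_cells < p.min_cells:
            continue
        if p.max_cells is not None and n_cells > p.max_cells:
            continue
        if p.max_cell_um is not None and cell_diameter_um > p.max_cell_um:
            continue
        if p.fixed_only and not sample_is_fixed:
            continue
        names.append(p.name)
    return names


small_pilot = shortlist(200, 15.0, sample_is_fixed=False)
large_fresh = shortlist(50_000, 40.0, sample_is_fixed=False)
fixed_mid = shortlist(5_000, 15.0, sample_is_fixed=True)
```

A filter like this only narrows the options; cost, targeted enrichment, and hardware availability from the "Key Considerations" column still need to be weighed by hand.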

Cells vs. Nuclei

The decision to sequence whole cells or isolated nuclei is critical and depends on the biological question and sample constraints [37] [38]:

- Whole Cells: Capture the full cytoplasmic mRNA content, providing a richer snapshot of the cell's transcriptomic state. Ideal for high-viability suspensions from tissues that are easy to dissociate.

- Single Nuclei: Recommended for tissues that are difficult to dissociate without damage (e.g., brain, fat, frozen archival tissues). While some cytoplasmic RNA is lost, nuclei contain most of the transcribed RNA and are more resilient to processing [37]. Nuclear sequencing is also the gateway to multi-ome assays, such as paired scRNA-seq and ATAC-seq [38].

Diagram: Decision process for selecting the appropriate starting material and platform.

Sequencing Depth: Balancing Resolution and Cost

Sequencing depth refers to the number of reads allocated per cell. It largely determines whether lowly expressed genes can be detected and whether subtle expression differences can be resolved.

Determining Optimal Depth

The optimal depth is a function of the study's goals and the complexity of the system under investigation [39].

- Cell Type Discovery and Atlas Generation: These studies often prioritize sequencing more cells at a moderate depth (e.g., 20,000-50,000 reads/cell) to comprehensively sample the cellular heterogeneity within a tissue [36].

- Detecting Subtle Expression Differences: Studies focused on identifying weak transcriptional responses, such as those to drug treatments, or characterizing continuous processes like differentiation, often require a greater sequencing depth (e.g., 50,000-100,000 reads/cell) to achieve the necessary sensitivity for low-abundance transcripts [39].

- Rare Cell Population Detection: Identifying very rare cell types (e.g., stem cells, circulating tumor cells) is primarily a function of the total number of cells profiled. However, sufficient depth is still needed to confidently assign identity based on their transcriptome once they are captured.
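The trade-off between cell number and depth can be made concrete with a short calculation. The sketch below assumes each profiled cell is an independent draw from the tissue, so the number of rare cells captured follows a binomial distribution; this ignores capture bias and QC losses. The frequency, minimum cell count, and confidence level are hypothetical inputs.

```python
import math


def prob_at_least(n_cells, frequency, min_hits):
    """P(at least `min_hits` rare cells) for Binomial(n_cells, frequency)."""
    p_fewer = sum(
        math.comb(n_cells, k) * frequency**k * (1.0 - frequency) ** (n_cells - k)
        for k in range(min_hits)
    )
    return 1.0 - p_fewer


def cells_needed(frequency, min_hits, confidence=0.95):
    """Smallest number of profiled cells that meets the confidence target."""
    lo, hi = min_hits, max(min_hits, 1)
    # Double the upper bound until it satisfies the target.
    while prob_at_least(hi, frequency, min_hits) < confidence:
        lo, hi = hi, hi * 2
    # Binary search for the smallest passing value in [lo, hi].
    while lo < hi:
        mid = (lo + hi) // 2
        if prob_at_least(mid, frequency, min_hits) >= confidence:
            hi = mid
        else:
            lo = mid + 1
    return lo


def total_reads(n_cells, reads_per_cell):
    """Sequencing budget implied by a cell number and a per-cell depth."""
    return n_cells * reads_per_cell


# Hypothetical design: a population at 0.1% frequency, at least 10 cells wanted.
n = cells_needed(frequency=0.001, min_hits=10, confidence=0.95)
budget = total_reads(n, reads_per_cell=20_000)
```

Raising `min_hits` or lowering `frequency` increases the required cell number quickly, and the read budget scales linearly with it, which is why rare-population studies often accept moderate depth per cell.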

The Relationship Between Parameters

The three pillars are deeply interconnected: cell viability and capture efficiency set the upper limit for data quality, and sequencing depth determines how much of that quality is actually resolved.

Key reagents and tools that support these parameters include:

- Tissue Dissociation Kits (e.g., Miltenyi Biotec): Pre-optimized enzyme cocktails for generating high-viability single-cell suspensions from various tissues [37].

- Live/Dead Stains and FACS: Fluorescence-activated cell sorting with viability dyes to remove debris and dead cells, or to enrich for specific populations [38].

- Cell Culture Media (Ca/Mg-free): Buffered solutions such as HEPES-buffered media or Hanks' Balanced Salt Solution (HBSS) to prevent cell clumping during processing [37].

- Density Gradient Centrifugation Media (e.g., Ficoll, OptiPrep): Media for separating viable cells from debris and myelin in samples like PBMCs or brain tissue [37].

- Fixed Sample Preservation Kits: Reagents for methanol or crosslinker-based fixation, enabling sample batching and storage [37] [38].

- Nuclei Isolation Kits: Optimized buffers for extracting nuclei from fresh or frozen tissues for single-nuclei RNA-seq [37].

- Ambient RNA Removal Tools (e.g., SoupX, CellBender): Computational tools to correct for contamination from ambient RNA in the solution, a common issue in low-viability samples [36].

A deep understanding of cellular heterogeneity through scRNA-seq is predicated on a rigorously optimized experimental design. Cell viability, capture efficiency, and sequencing depth are not isolated parameters but are deeply intertwined. High viability and appropriate technology selection create a high-fidelity cellular representation, while sufficient sequencing depth ensures the resolution to detect its nuances. By systematically addressing these essentials—employing stringent QC, making informed platform choices, and strategically allocating sequencing resources—researchers can ensure their data is a true reflection of biology, paving the way for robust discoveries in basic research and therapeutic development.