Decoding Cellular Logic: A Comprehensive Guide to Single-Cell Gene Regulatory Network Inference

Abstract

This article provides a thorough exploration of gene regulatory network (GRN) inference from single-cell data, a key methodology for understanding the transcriptional programs that define cell identity and function. Aimed at researchers and bioinformaticians, we cover foundational concepts, current computational methods—including SCENIC, DAZZLE, and Meta-TGLink—and the significant challenge of data sparsity or 'dropout.' The guide details practical workflows, troubleshooting strategies for real-world data, and essential validation techniques. By synthesizing insights from foundational principles to advanced applications, this resource equips scientists with the knowledge to robustly infer GRNs and gain deeper insights into developmental biology, disease mechanisms, and potential therapeutic targets.

The Blueprint of the Cell: Understanding Gene Regulatory Networks and Single-Cell Fundamentals

What is a Gene Regulatory Network? Defining Nodes, Edges, and Regulons

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the expression levels of mRNA and proteins, which in turn determine cellular function [1]. These networks play a central role in fundamental biological processes, including morphogenesis (the creation of body structures) and cellular differentiation, ensuring that genes are expressed at the proper time and in the proper amounts to produce appropriate functional outcomes [1] [2]. GRNs represent the intricate control systems that allow genetically identical cells to adopt diverse fates and functions, forming the blueprint that guides development and physiological responses from a single set of genetic instructions [3].

In single-celled organisms, GRNs primarily respond to the external environment, optimizing the cell for survival. In multicellular organisms, these networks have been co-opted to control complex body plans through gene cascades and morphogen gradients that provide positional information to cells within the developing embryo [1]. The study of GRNs has been revolutionized by technological advances, enabling researchers to move from understanding individual gene interactions to analyzing differential gene expression at a systems level [2].

Core Components of a GRN

Nodes: The Biological Entities

In a GRN, nodes represent the key biological entities involved in regulatory processes. These typically include:

- Transcription Factor (TF) Nodes: Proteins that regulate gene expression by binding to specific DNA sequences. TFs can activate or repress their target genes.

- Target Gene Nodes: Genes whose expression is regulated by transcription factors.

- Non-coding RNA Nodes: Regulatory RNA molecules, such as microRNAs, that can influence gene expression.

- Protein/Protein Complex Nodes: Molecular complexes that can modify or interact with transcription factors.

- Cellular Process Nodes: Biological processes that emerge from the network activity.

Nodes that lie along vertical lines in network diagrams are often associated with cell/environment interfaces, while others are free-floating and can diffuse within the cellular environment [1].

Edges: The Regulatory Interactions

Edges represent the functional relationships and interactions between nodes in the network. These connections can be categorized as:

- Inductive/Activating Edges: Represented by arrowheads or '+' signs, where an increase in the regulator leads to an increase in the target node [1].

- Inhibitory/Repressive Edges: Represented by filled circles, blunt arrows, or '-' signs, where an increase in the regulator leads to a decrease in the target node [1].

- Dual Edges: Where depending on context, the regulator can either activate or inhibit the target node.

- Physical Interaction Edges: Direct molecular interactions, such as TF binding to DNA.

- Regulatory Relationship Edges: Functional relationships that may not involve direct physical contact.

The edges form the wiring diagram of the regulatory network, creating chains of dependencies that can include feedback loops—cyclic chains that are crucial for maintaining cellular states and enabling dynamic responses [1].

Regulons: The Functional Units

A regulon represents a collection of genes regulated by a common transcription factor or set of transcription factors. This concept extends beyond simple TF-target relationships to encompass the complete set of regulatory interactions controlled by a particular regulatory program. In computational biology tools like SCENIC, regulons are identified by combining transcription factor binding motifs with co-expression patterns to define stable functional units of regulation [4]. Each regulon operates as a semi-autonomous module within the broader GRN, contributing to specific aspects of cellular function or identity.
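As a minimal sketch of the regulon idea, the snippet below groups a signed edge list by regulator, so that each TF maps to its set of targets. The edge list, gene names, and the `build_regulons` helper are hypothetical illustrations and are not part of SCENIC, which additionally requires motif support before a target enters a regulon.

```python
from collections import defaultdict

# Hypothetical signed edges: (regulator, target, sign), +1 = activation, -1 = repression.
EDGES = [
    ("TF_A", "gene1", +1),
    ("TF_A", "gene2", -1),
    ("TF_B", "gene2", +1),
    ("TF_B", "TF_A", +1),  # a TF can itself be a target
]


def build_regulons(edge_list):
    """Group signed targets under each regulator (one regulon per TF)."""
    regulons = defaultdict(dict)
    for tf, target, sign in edge_list:
        regulons[tf][target] = sign
    return dict(regulons)


regulons = build_regulons(EDGES)
for tf, targets in sorted(regulons.items()):
    print(tf, targets)
```

Storing the sign alongside each target keeps activating and repressive interactions distinguishable when regulon activity is scored later.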

Table 1: Core Components of a Gene Regulatory Network

| Component | Biological Meaning | Representation in Models | Functional Role |

|---|---|---|---|

| Transcription Factor Node | Protein regulating gene expression | Node with high out-degree | Master regulator of gene expression |

| Target Gene Node | Gene being regulated | Node with high in-degree | Executor of cellular functions |

| Activating Edge | Transcriptional activation | Arrow or '+' sign | Turns on genetic programs |

| Inhibitory Edge | Transcriptional repression | Blunt arrow or '-' sign | Suppresses genetic programs |

| Regulon | TF + its target genes | Network module | Functional regulatory unit |

| Hub | Highly connected node | Node with many edges | Integration point for multiple signals |

Structural and Functional Properties of GRNs

Network Topology and Architecture

Gene regulatory networks exhibit distinct topological properties that reflect their evolutionary origins and functional constraints. GRNs generally approximate a hierarchical scale-free network topology, characterized by a few highly connected nodes (hubs) and many poorly connected nodes [1] [2]. This structure is thought to evolve through preferential attachment of duplicated genes to more highly connected genes, with natural selection favoring networks with sparse connectivity [1].

Key topological features include:

- Node Degree: The number of relationships in which a node engages, with two types:

  - In-degree: Number of transcription factors regulating a gene

  - Out-degree: Number of genes bound by a transcription factor

- Hubs: Nodes with unusually high connectivity, including:

  - TF Hubs: Transcription factors that bind to many target genes

  - Gene Hubs: Genes regulated by many transcription factors

- Flux Capacity: The product of a node's in-degree and out-degree, representing the number of potential information paths passing through it [2].

- Betweenness: The number of shortest paths connecting node pairs that pass through a specific node, indicating nodes that centrally connect different network modules [2].
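The degree-based measures above can be computed directly from a directed edge list. The following is a small sketch on a hypothetical toy network (the node names and the `degree_stats` helper are assumptions made for illustration); betweenness is left out because it requires a shortest-path computation.

```python
from collections import Counter

# Hypothetical directed edges (regulator -> target).
EDGES = [
    ("TF_A", "TF_B"),
    ("TF_A", "g1"),
    ("TF_A", "g2"),
    ("TF_B", "g2"),
    ("TF_C", "TF_B"),
]


def degree_stats(edge_list):
    """Return in-degree, out-degree and flux capacity (in * out) per node."""
    out_deg = Counter(src for src, _ in edge_list)
    in_deg = Counter(dst for _, dst in edge_list)
    nodes = sorted(set(out_deg) | set(in_deg))
    return {
        n: {"in": in_deg[n], "out": out_deg[n], "flux": in_deg[n] * out_deg[n]}
        for n in nodes
    }


stats = degree_stats(EDGES)
for node, values in stats.items():
    print(node, values)
```

In this toy network only TF_B has a non-zero flux capacity, because it is the only node that both receives and sends regulatory edges.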

Network Motifs

GRNs contain characteristic network motifs—repetitive topological patterns that appear more frequently than in randomly generated networks [1]. These motifs represent basic computational elements that perform specific regulatory functions:

- Feed-forward Loops: Consist of three nodes where a top-level transcription factor regulates a second TF, and both jointly regulate a target gene. This motif can create different input-output behaviors, serving as persistence detectors, accelerators, or fold-change detectors [1].

- Feedback Loops: Create self-sustaining circuits that can maintain cellular states or generate oscillatory behaviors.

- Single-input Modules: A single transcription factor controlling multiple target genes with similar functions.

The enrichment of these motifs in GRNs has been proposed to follow convergent evolution as "optimal designs" for specific regulatory purposes, though some researchers argue their abundance may be a non-adaptive side-effect of network evolution [1].
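To make the feed-forward loop concrete, the sketch below enumerates every triple (X, Y, Z) in which X regulates Y and both X and Y regulate Z. The edge list and the `find_feed_forward_loops` helper are hypothetical; a real motif analysis would also compare the observed count against randomized networks, which is not shown here.

```python
from collections import defaultdict


def find_feed_forward_loops(edge_list):
    """Return sorted (X, Y, Z) triples with edges X->Y, X->Z and Y->Z."""
    targets = defaultdict(set)
    for src, dst in edge_list:
        targets[src].add(dst)

    loops = []
    for x in list(targets):
        for y in targets[x]:
            if y == x:
                continue
            # .get avoids adding new keys to the defaultdict while looping
            for z in targets.get(y, ()):
                if z != x and z != y and z in targets[x]:
                    loops.append((x, y, z))
    return sorted(loops)


# Hypothetical toy network containing exactly one feed-forward loop.
EDGES = [("X", "Y"), ("X", "Z"), ("Y", "Z"), ("Z", "W")]
ffl = find_feed_forward_loops(EDGES)
print(ffl)
```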

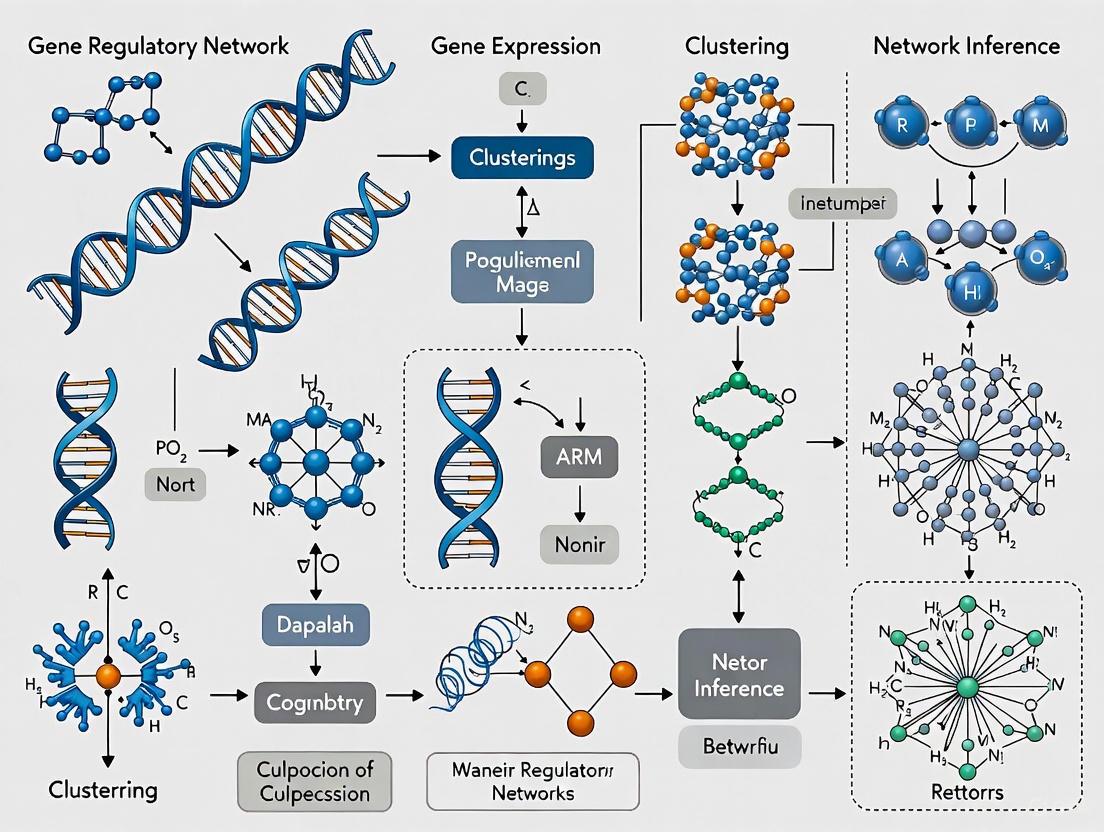

Diagram 1: GRN structural elements showing hubs, feed-forward, and feedback loops.

GRN Inference Methods and Experimental Protocols

Computational Inference from Omics Data

The field of GRN inference has evolved significantly with advances in sequencing technologies, moving from microarray data to next-generation sequencing, and from bulk to single-cell and multi-omics approaches [4]. Current methods leverage diverse computational frameworks to reconstruct networks from experimental data:

Table 2: GRN Inference Methods and Their Applications

| Method/Tool | Data Input Types | Modelling Approach | Regulatory Output | Key Applications |

|---|---|---|---|---|

| SCENIC/SCENIC+ | scRNA-seq, scATAC-seq | Linear | Signed, weighted regulons | Cell identity, differentiation trajectories |

| CellOracle | scRNA-seq, ATAC-seq | Linear | Signed, weighted | Perturbation prediction, developmental trajectories |

| scGPT | scRNA-seq | Transformer-based | Gene embeddings | Multi-task prediction, batch integration |

| ANANSE | Bulk groups, contrasts | Linear | Weighted | Differential networks between conditions |

| GRaNIE | Paired/integrated multi-omics | Linear | Weighted | eQTL-informed networks, disease contexts |

| Inferelator 3.0 | Unpaired multi-omics | Linear/non-linear | Weighted | Dynamic network inference, prokaryotes |

| Pando | Paired/integrated multi-omics | Linear/non-linear | Signed, weighted | Multi-omic network inference, TF binding prioritization |

Multi-omics GRN Inference Protocol

The most robust GRN inference leverages multi-omics data, particularly combining transcriptomic and epigenomic information. Below is a detailed protocol for GRN inference from single-cell multi-omics data:

Protocol: GRN Inference with SCENIC+ from Paired scRNA-seq and scATAC-seq Data

Sample Preparation and Sequencing:

- Cell Preparation: Isolate single-cell suspensions from tissue of interest, ensuring high viability (>80%).

- Multi-ome Library Preparation: Use 10x Genomics Multiome (ATAC + Gene Expression) kit according to manufacturer's protocol.

- Sequencing: Target >20,000 read pairs per cell for gene expression and >25,000 read pairs per cell for chromatin accessibility.

Data Preprocessing:

- Quality Control:

  - Filter cells with <500 detected genes for RNA

  - Filter cells with <1,000 fragments for ATAC

  - Remove cells with >10% mitochondrial reads

- Normalization:

  - RNA data: SCTransform normalization

  - ATAC data: Term frequency-inverse document frequency (TF-IDF) normalization

- Integration: Use weighted nearest neighbors (WNN) integration to align RNA and ATAC modalities.
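The quality-control thresholds listed above can be expressed as a simple per-cell filter. This is a sketch only: the per-cell dictionary layout, the barcodes, and the `passes_qc` helper are hypothetical, and in practice these metrics would come from a toolkit such as Seurat or Scanpy.

```python
# Hypothetical per-cell QC metrics.
CELLS = [
    {"barcode": "AAAC", "n_genes": 1800, "n_fragments": 5200, "pct_mito": 3.1},
    {"barcode": "AAAG", "n_genes": 320, "n_fragments": 4100, "pct_mito": 2.0},
    {"barcode": "AACT", "n_genes": 2100, "n_fragments": 800, "pct_mito": 4.5},
    {"barcode": "AAGT", "n_genes": 1500, "n_fragments": 3000, "pct_mito": 18.0},
]


def passes_qc(cell, min_genes=500, min_fragments=1000, max_pct_mito=10.0):
    """Keep cells that meet the RNA, ATAC and mitochondrial thresholds."""
    return (
        cell["n_genes"] >= min_genes
        and cell["n_fragments"] >= min_fragments
        and cell["pct_mito"] <= max_pct_mito
    )


kept = [c["barcode"] for c in CELLS if passes_qc(c)]
print(kept)
```

The thresholds are exposed as keyword arguments because appropriate cut-offs vary with tissue and protocol.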

GRN Inference with SCENIC+:

- Region-to-Gene Linking:

  - Identify candidate regulatory regions within 500 kb of the gene TSS

  - Calculate correlation between chromatin accessibility and gene expression

  - Retain links with FDR < 0.25 and correlation > 0.45

- TF-motif Analysis:

  - Scan regulatory regions for known TF motifs using cisTarget databases

  - Calculate motif enrichment for each region

- GRN Inference:

  - Use gradient boosting machines to model gene expression from TF expression and motif accessibility

  - Run using default parameters: scenicplus --mode grn_inference

- Regulon Processing:

  - Binarize regulons using AUCell: scenicplus --mode aucell

  - Calculate regulon specificity scores (RSS) for cell type identification
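The region-to-gene linking step can be illustrated with a plain Pearson correlation and a distance filter. The sketch below is not the SCENIC+ implementation: it assumes a single chromosome, uses hypothetical peak names and coordinates, and omits the FDR calculation, keeping only the 500 kb window and the correlation cut-off of 0.45 from the protocol.

```python
import math


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def link_regions(gene_tss, expression, regions, max_dist=500_000, min_corr=0.45):
    """Return (region, r) for regions near the TSS whose accessibility tracks expression."""
    links = []
    for name, (position, accessibility) in regions.items():
        if abs(position - gene_tss) > max_dist:
            continue  # outside the search window
        r = pearson(accessibility, expression)
        if r > min_corr:
            links.append((name, round(r, 3)))
    return links


# Hypothetical data: one gene measured in five cells, three candidate peaks.
expression = [0, 1, 2, 3, 4]
regions = {
    "peak_near": (1_200_000, [0, 1, 1, 3, 4]),
    "peak_far": (5_000_000, [0, 1, 2, 3, 4]),
    "peak_uncorrelated": (1_100_000, [2, 0, 3, 0, 1]),
}
links = link_regions(1_000_000, expression, regions)
print(links)
```

Only the nearby, positively correlated peak survives; the perfectly correlated but distant peak is rejected by the window alone.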

Downstream Analysis:

- Cell State Regulation: Identify key regulons driving cell state differences using differential RSS.

- Trajectory Analysis: Infer regulatory changes along differentiation trajectories with PAGA or RNA velocity.

- Validation: Compare inferred networks with publicly available ChIP-seq data or perform CRISPR perturbations for key predictions.
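For readers unfamiliar with the regulon specificity score, the sketch below assumes the commonly used definition based on Jensen-Shannon divergence: regulon activity across cells and a cell-type indicator are each normalized to probability distributions, and the score is one minus the square root of their divergence. The activity values and labels are hypothetical, and the formula should be checked against the tool in use.

```python
import math


def _entropy(p):
    """Shannon entropy in bits, skipping zero entries."""
    return -sum(v * math.log2(v) for v in p if v > 0)


def jensen_shannon(p, q):
    """Jensen-Shannon divergence (base 2) between two distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]
    return _entropy(m) - (_entropy(p) + _entropy(q)) / 2


def regulon_specificity(activity, labels, cell_type):
    """Assumed definition: RSS = 1 - sqrt(JSD(activity, cell-type indicator))."""
    total = sum(activity)
    members = [1.0 if label == cell_type else 0.0 for label in labels]
    n_members = sum(members)
    if total == 0 or n_members == 0:
        raise ValueError("regulon has no activity or cell type has no cells")
    p = [a / total for a in activity]
    q = [m / n_members for m in members]
    return 1.0 - math.sqrt(max(jensen_shannon(p, q), 0.0))


# Hypothetical regulon activity (e.g. AUCell scores) in four cells.
activity = [1.0, 1.0, 0.0, 0.0]
labels = ["T", "T", "B", "B"]
rss_t = regulon_specificity(activity, labels, "T")
rss_b = regulon_specificity(activity, labels, "B")
print(rss_t, rss_b)
```

A regulon active only in T cells scores close to 1 for that type and close to 0 for B cells, which is the contrast a differential comparison would rank on.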

Diagram 2: Multi-omics GRN inference workflow from data collection to final model.

Single-Cell Foundation Models (scFMs) for GRN Analysis

The Emergence of scFMs in GRN Research

Single-cell foundation models (scFMs) represent a transformative approach in computational biology, leveraging large-scale deep learning models pretrained on vast single-cell datasets to interpret cellular systems [5]. These models adapt transformer architectures—originally developed for natural language processing—to single-cell omics data, treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [5]. This paradigm shift enables researchers to build unified models that learn fundamental principles of cellular organization generalizable to new datasets and downstream tasks, including GRN inference.

Key scFMs relevant to GRN research include:

- scGPT: Uses a generative pretrained transformer architecture trained on over 33 million cells to learn gene and cell representations, enabling multi-task prediction including GRN inference [5].

- scBERT: Applies bidirectional encoder representations for cell type annotation and regulatory analysis [5].

- Geneformer: A transformer model pretrained on approximately 30 million single-cell transcriptomes that learns network-level relationships during pretraining [6].

scRegNet: Integrating scFMs with Graph Learning

The scRegNet framework represents a state-of-the-art approach that leverages scFMs with joint graph-based learning for gene regulatory link prediction [6]. This method addresses the significant challenges posed by high sparsity, noise, and dropout events inherent in scRNA-seq data by combining large-scale pretrained models with supervised learning on known regulatory interactions.

Protocol: GRN Inference Using scRegNet

Prerequisite Data:

- Single-cell RNA-seq count matrix (cells × genes)

- Known TF-target interactions for supervision (from resources like TRRUST or RegNetwork)

- Pre-trained scFM embeddings (scGPT or Geneformer)

Implementation Steps:

- Feature Extraction:

  - Generate gene embeddings using pre-trained scFM

  - Extract cell context-aware representations for each gene

- Graph Construction:

  - Create bipartite graph with TF and target gene nodes

  - Initialize node features using scFM embeddings

- Graph Neural Network Training:

  - Train GNN with known TF-target pairs as positive examples

  - Use negative sampling for non-interacting pairs

  - Optimize with binary cross-entropy loss

- Link Prediction:

  - Compute similarity scores between TF and target embeddings

  - Rank potential regulatory connections

  - Apply threshold for final GRN construction

Performance Characteristics: scRegNet achieves state-of-the-art results compared to nine baseline methods across seven scRNA-seq benchmark datasets and demonstrates superior robustness on noisy training data [6].
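The supervised part of the implementation steps (negative sampling, binary cross-entropy, and similarity-based scoring) can be sketched without a deep learning framework. The code below is not scRegNet: it uses fixed, hypothetical two-dimensional embeddings in place of scFM features, contains no graph neural network, and only evaluates the loss rather than optimizing it.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def score(emb, tf, gene):
    """Edge probability as the sigmoid of the embedding dot product."""
    return sigmoid(sum(a * b for a, b in zip(emb[tf], emb[gene])))


def sample_negatives(tfs, genes, positives, k, rng):
    """Draw up to k TF-gene pairs that are not known interactions."""
    candidates = [(t, g) for t in tfs for g in genes
                  if t != g and (t, g) not in positives]
    return rng.sample(candidates, min(k, len(candidates)))


def bce_loss(emb, positives, negatives, eps=1e-12):
    """Mean binary cross-entropy over positive and sampled negative pairs."""
    losses = [-math.log(score(emb, t, g) + eps) for t, g in positives]
    losses += [-math.log(1.0 - score(emb, t, g) + eps) for t, g in negatives]
    return sum(losses) / len(losses)


# Hypothetical embeddings and known interactions.
emb = {
    "TF1": [1.0, 0.0], "TF2": [0.0, 1.0],
    "g1": [2.0, 0.0], "g2": [0.0, 2.0], "g3": [-1.0, -1.0],
}
positives = {("TF1", "g1"), ("TF2", "g2")}
rng = random.Random(0)
negatives = sample_negatives(["TF1", "TF2"], ["g1", "g2", "g3"], positives, 2, rng)
loss = bce_loss(emb, sorted(positives), negatives)
print(negatives, round(loss, 4))
```

In a trained model the embeddings would be updated to lower this loss, after which the same `score` function ranks unseen TF-gene pairs for thresholding.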

Table 3: Research Reagent Solutions for GRN Analysis

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| TF Binding Databases | CIS-BP, JASPAR, TRANSFAC | Motif scanning and enrichment | Identifying potential TF binding sites |

| Validation Databases | TRRUST, RegNetwork | Known TF-target interactions | Supervised learning and validation |

| Experimental Validation | CUT&Tag, ChIP-seq, Perturb-seq | Direct TF binding measurement | Experimental validation of predictions |

| Software Frameworks | SCENIC+, CellOracle, Pando | End-to-end GRN inference | Multi-omics network construction |

| Foundation Models | scGPT, Geneformer, scBERT | Large-scale pretrained models | Context-aware gene representations |

| Visualization Tools | Cytoscape, SCope, hdWGCNA | Network visualization and exploration | Biological interpretation of GRNs |

| Benchmark Resources | DREAM challenges, BEELINE | Standardized performance evaluation | Method comparison and validation |

Applications in Drug Discovery and Development

GRN analysis provides critical insights for pharmaceutical research by elucidating the regulatory mechanisms underlying disease states and therapeutic responses. In drug discovery, understanding GRNs enables:

- Target Identification: Pinpointing master regulator transcription factors driving disease phenotypes, which represent potential therapeutic targets [2].

- Mechanism of Action Studies: Deconvoluting how drug treatments rewire regulatory networks to produce therapeutic effects.

- Biomarker Discovery: Identifying regulon activity signatures that stratify patient populations or predict treatment response.

- Toxicity Assessment: Understanding off-target effects through analysis of unintended regulatory consequences.

The integration of scFMs with GRN analysis is particularly promising for drug development, as these models can leverage large-scale public data to build context-specific networks across diverse cell types, tissues, and disease states [5]. This approach enables more accurate prediction of how regulatory programs change in response to compound treatments and how genetic variation influences network topology in individual patients.

Future Perspectives

The field of GRN research is rapidly evolving with several emerging trends:

- Multi-modal Foundation Models: Integration of transcriptomic, epigenomic, proteomic, and spatial data within unified foundation models will enable more comprehensive and accurate GRN inference [5].

- Dynamic Network Modeling: Moving from static to time-resolved networks that capture regulatory changes during processes like differentiation, disease progression, and drug response.

- Cross-species Conservation: Leveraging evolutionary conservation to identify core regulatory circuits and species-specific adaptations.

- Clinical Translation: Applying GRN analysis to patient samples for personalized medicine approaches, particularly in oncology and rare genetic diseases.

- Integration with Large Language Models: Combining biological knowledge encoded in LLMs with single-cell data for enhanced biological reasoning and hypothesis generation.

As single-cell technologies continue to advance and computational methods become more sophisticated, GRN analysis will play an increasingly central role in deciphering the regulatory code that governs cellular identity and function, ultimately accelerating therapeutic development across a wide range of human diseases.

Gene Regulatory Networks (GRNs) represent the complex web of interactions where transcription factors (TFs) regulate target genes, ultimately determining cellular identity and function [7] [8]. The reconstruction of accurate GRNs is fundamental to understanding cellular dynamics, disease mechanisms, and developing therapeutic strategies [8]. Traditionally, GRN inference relied on bulk RNA-sequencing data, which averaged gene expression across thousands to millions of cells, obscuring the cellular heterogeneity crucial for deciphering true regulatory relationships [9].

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized this field by enabling the measurement of gene expression at the resolution of the fundamental biological unit—the individual cell [9] [10]. This technological shift provides an unprecedented view into cellular heterogeneity, rare cell populations, and dynamic developmental processes, thereby transforming our approach to GRN inference [9] [11]. This application note details how scRNA-seq data is powering this revolution, framed within the advancing context of single-cell foundation models (scFMs), and provides structured protocols for researchers embarking on this cutting-edge work.

The scRNA-seq Advantage for GRN Inference

ScRNA-seq provides several distinct advantages over bulk sequencing for GRN inference, primarily by capturing the natural variation in gene expression across individual cells.

- Resolution of Cellular Heterogeneity: ScRNA-seq can identify distinct cell populations within seemingly homogeneous tissues, allowing for the construction of cell-type-specific GRNs rather than composite networks derived from averaged signals [9] [10]. This is critical as regulatory logic often differs dramatically between cell types.

- Identification of Rare Cell Populations: The technology can detect rare cell types that would be masked in bulk analyses, such as malignant tumor cells within a tumor mass or hyper-responsive immune cells, enabling the study of their unique regulatory programs [9].

- Temporal Dynamics Reconstruction: Through trajectory inference methods, scRNA-seq data can be used to order cells along a pseudo-temporal continuum, such as during differentiation or disease progression. This allows researchers to infer the dynamic rewiring of GRNs across different cellular states [12].

Table 1: How scRNA-seq Data Characteristics Impact GRN Inference

| Data Characteristic | Impact on GRN Inference |

|---|---|

| Single-Cell Resolution | Enables inference of cell-type-specific GRNs and reveals regulatory heterogeneity. |

| High Dimensionality | Captures coordinated expression of thousands of genes across thousands of cells, providing rich data for network inference. |

| Transcriptional Noise | Can be leveraged to distinguish direct regulatory relationships from indirect correlations. |

| Data Sparsity | Presents a challenge by introducing "dropout" events (false zeros), requiring specialized computational methods to address. |

Evolution of Computational Methods for GRN Inference

The unique characteristics of scRNA-seq data—high dimensionality, sparsity, and noise—have driven the development of specialized computational methods.

Traditional and Deep Learning Approaches

Early computational methods adapted from bulk sequencing, such as GENIE3 and GRNBoost2, infer regulatory relationships from co-expression patterns using tree-based regression (random forests and gradient boosting, respectively) [8]. While useful, these methods can struggle with the noise and sparsity inherent to scRNA-seq data. More recently, deep learning models have been applied to this challenge. For example, CNNC and DeepDRIM convert gene expression data into images and use convolutional neural networks (CNNs) to infer networks [8].

Graph-Based Deep Learning

The state-of-the-art has progressed to models that explicitly incorporate prior knowledge of GRN topology. GRLGRN is a deep learning model that uses a graph transformer network to extract implicit links from a prior GRN and combines this with scRNA-seq expression profiles to infer latent regulatory dependencies [8]. It employs attention mechanisms to improve feature extraction and has been shown to outperform previous models on benchmark datasets [8].

The Rise of Single-Cell Foundation Models (scFMs)

Inspired by breakthroughs in natural language processing, single-cell foundation models (scFMs) represent a paradigm shift [11] [13]. These models, including Geneformer, scGPT, and scBERT, are pre-trained on vast, diverse single-cell datasets comprising millions of cells [11] [13]. The core concept treats individual cells as "sentences" and genes (along with their expression values) as "words," allowing the model to learn the fundamental "language" of cellular biology [13]. These pre-trained models can then be adapted (fine-tuned) for various downstream tasks, including GRN inference, with remarkable efficiency and often in a zero-shot or few-shot learning context [11].
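One way to turn a cell into a "sentence" is to rank its expressed genes from highest to lowest expression and emit the corresponding token IDs. The sketch below assumes that scheme; the vocabulary, the expression values, and the `tokenize_cell` helper are hypothetical, and real models typically apply additional normalization before ranking, which is omitted here.

```python
def tokenize_cell(expression, vocab, max_len=2048):
    """Rank expressed genes by descending expression and map them to token IDs.

    Ties are broken alphabetically so the output is deterministic.
    """
    expressed = [(gene, value) for gene, value in expression.items()
                 if value > 0 and gene in vocab]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [vocab[gene] for gene, _ in expressed[:max_len]]


# Hypothetical vocabulary and one cell's expression values.
vocab = {"GATA1": 0, "SPI1": 1, "CEBPA": 2, "ACTB": 3}
cell = {"ACTB": 50, "GATA1": 7, "SPI1": 0, "CEBPA": 7}
tokens = tokenize_cell(cell, vocab)
print(tokens)
```

Unexpressed genes are dropped rather than padded, so the sequence length varies per cell up to `max_len`.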

Table 2: Comparison of Computational Approaches for GRN Inference from scRNA-seq Data

| Method Category | Representative Tools | Key Principles | Advantages | Limitations |

|---|---|---|---|---|

| Traditional ML | GENIE3, GRNBoost2 | Infers networks based on correlation, mutual information, or regression. | Intuitive; well-established. | Can struggle with scRNA-seq sparsity and noise; may infer indirect relationships. |

| Deep Learning (CNN-based) | CNNC, DeepDRIM | Treats expression data as images for pattern recognition via CNNs. | Can capture complex, non-linear relationships. | Does not inherently incorporate prior network structure. |

| Graph-Based Deep Learning | GRLGRN, GCNG | Uses Graph Neural Networks (GNNs) to integrate expression data with prior GRN topology. | Leverages known biological information; can predict novel implicit links. | Performance depends on quality of prior network. |

| Single-Cell Foundation Models (scFMs) | Geneformer, scGPT, scBERT | Leverages large-scale pre-training on diverse cell types; uses transformer architecture with attention mechanisms. | High generalizability; efficient adaptation to new tasks; captures rich biological context. | Computationally intensive to pre-train; model interpretability can be challenging. |

Experimental and Computational Protocols

Below is a detailed protocol for inferring GRNs from scRNA-seq data, integrating both wet-lab and computational best practices.

Protocol 1: Sample Preparation and Library Generation

Goal: To generate high-quality single-cell RNA sequencing libraries.

- Sample Selection and QC: Begin with fresh or fixed cells/nuclei. For tissues unsuitable for single-cell analysis (e.g., frozen or fibrotic samples), nuclei are recommended [10]. Cell quality is critical; assess viability, membrane integrity, and RNA integrity via microscopy and staining techniques [10].

- Tissue Dissociation: Optimize dissociation protocols for the specific tissue type using resources like the Worthington Tissue Dissociation Guide or commercial instruments (e.g., Miltenyi gentleMACS) [10]. The goal is a suspension of intact, single cells while minimizing stress and RNA degradation.

- Single-Cell Partitioning and Barcoding: Use a commercial platform (e.g., 10x Genomics, Parse Biosciences) to isolate and barcode individual cells [9]. Droplet-based systems partition cells into nanoliter-scale reactions, while combinatorial barcoding methods label transcripts through successive split-pool rounds. In both cases, each cell's transcripts carry a unique cellular barcode, and each mRNA molecule is tagged with a unique molecular identifier (UMI) to account for amplification bias [9] [12].

- Library Preparation and Sequencing: Generate sequencing libraries following the platform-specific protocol. The fixation step incorporated in some combinatorial barcoding methods is particularly apt for time-course experiments due to sample storage stability [10].
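The role of the cellular barcode and UMI can be shown with a short counting sketch: reads that share the same barcode, gene, and UMI are treated as amplification copies of one molecule and counted once. The read records and the `umi_counts` helper are hypothetical, and production pipelines additionally correct sequencing errors in barcodes and UMIs, which is not done here.

```python
from collections import defaultdict

# Hypothetical aligned reads: (cell barcode, gene, UMI).
READS = [
    ("CELL1", "GATA1", "AAAT"),
    ("CELL1", "GATA1", "AAAT"),  # PCR duplicate of the read above
    ("CELL1", "GATA1", "CCGT"),
    ("CELL2", "SPI1", "GGTA"),
]


def umi_counts(read_records):
    """Count distinct UMIs per (cell, gene), collapsing amplification duplicates."""
    seen = defaultdict(set)
    for barcode, gene, umi in read_records:
        seen[(barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}


counts = umi_counts(READS)
print(counts)
```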

Protocol 2: Computational Data Analysis and GRN Inference

Goal: To process raw scRNA-seq data and infer a gene regulatory network.

Workflow Overview:

Diagram 1: Computational GRN Inference Workflow

Raw Data Processing and Quality Control

- Processing: Use standardized pipelines (e.g., Cell Ranger for 10x Genomics, CeleScope for Singleron) to demultiplex samples, align reads to a reference genome, and generate a cell-by-gene UMI count matrix [12].

- Quality Control: Filter out low-quality cells using metrics like total UMI count (count depth), number of detected genes, and fraction of mitochondrial counts. High mitochondrial fraction suggests dying cells, while an abnormally high gene/UMI count may indicate doublets (multiple cells with the same barcode) [12]. Tools like Seurat and Scater facilitate this step.

Basic Data Analysis and Feature Selection

- Normalization and Scaling: Normalize the count data to account for varying sequencing depth per cell and scale the data for downstream analyses.

- Feature Selection: Identify highly variable genes (HVGs) that drive biological heterogeneity, which will form the candidate gene set for GRN inference.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) to reduce noise and compress the data.

- Clustering and Annotation: Cluster cells based on their gene expression profiles using graph-based methods. Manually annotate cell types using known marker genes. This step is crucial for deciding whether to infer a global GRN or cell-type-specific GRNs.
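The normalization and feature-selection steps above can be sketched in a few lines. The example assumes library-size scaling to a fixed total followed by a log(1 + x) transform, and ranks genes by plain variance; toolkits such as Seurat and Scanpy use more refined variance models, so this is an illustration rather than a replacement. Gene names and counts are hypothetical.

```python
import math


def normalize_log1p(counts, target_sum=10_000):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        scale = target_sum / total if total > 0 else 0.0
        normalized.append([math.log1p(c * scale) for c in cell])
    return normalized


def top_variable_genes(matrix, gene_names, n_top):
    """Rank genes by variance across cells (ties broken alphabetically)."""
    n_cells = len(matrix)
    ranked = []
    for j, gene in enumerate(gene_names):
        column = [row[j] for row in matrix]
        mean = sum(column) / n_cells
        variance = sum((v - mean) ** 2 for v in column) / n_cells
        ranked.append((variance, gene))
    ranked.sort(key=lambda vg: (-vg[0], vg[1]))
    return [gene for _, gene in ranked[:n_top]]


# Hypothetical cells x genes count matrix.
GENES = ["ACTB", "GATA1", "SPI1"]
COUNTS = [
    [10, 8, 0],
    [10, 0, 2],
    [10, 8, 0],
    [10, 0, 2],
]
norm = normalize_log1p(COUNTS)
hvgs = top_variable_genes(norm, GENES, 2)
print(hvgs)
```

The housekeeping-like gene with constant raw counts varies little after normalization and is excluded, while the two cell-state-specific genes are retained.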

GRN Inference using a Foundation Model

- Model Selection: Choose a pre-trained scFM such as scGPT or Geneformer based on the task and dataset size [11]. For a standard GRN inference task, a model pre-trained on a large, diverse human cell atlas would be appropriate.

- Data Tokenization: Convert the processed scRNA-seq data (cell-by-gene matrix) into a format the model understands. This typically involves ranking genes within each cell by expression level and creating a sequence of gene tokens, analogous to words in a sentence [13].

- Fine-Tuning / Zero-Shot Inference:

  - Fine-Tuning: If labeled GRN data is available, fine-tune the pre-trained model on this specific task to adapt its knowledge.

  - Zero-Shot Inference: Use the model's inherent knowledge directly. Extract gene embeddings from the model's input layer; functionally similar or regulatory-related genes should be in close proximity in this latent space [11]. The model's attention mechanisms can also be interpreted to infer which genes the model "focuses on" when representing a cell's state, suggesting potential regulatory relationships [13].

- Validation: Compare the inferred network against a ground-truth network from a database like STRING or a cell-type-specific ChIP-seq network to assess performance using metrics like AUROC and AUPRC [8].
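The zero-shot and validation steps can be combined in a small sketch: candidate TF-gene pairs are scored by cosine similarity of their embeddings, and the ranking is compared with a ground-truth edge set using AUROC. The embeddings and ground truth are hypothetical, and AUROC is computed from its pairwise-ranking definition rather than with a library; AUPRC is omitted for brevity.

```python
import math


def cosine(u, v):
    """Cosine similarity; returns 0.0 for zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def auroc(scores, labels):
    """Probability that a true edge outranks a false edge (ties count 0.5)."""
    pos = [s for s, label in zip(scores, labels) if label == 1]
    neg = [s for s, label in zip(scores, labels) if label == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical gene embeddings and ground-truth edges.
emb = {"TF1": [1.0, 0.0], "g1": [0.9, 0.1], "g2": [0.1, 0.9], "g3": [0.8, 0.3]}
truth = {("TF1", "g1"), ("TF1", "g3")}
pairs = [("TF1", "g1"), ("TF1", "g2"), ("TF1", "g3")]

scores = [cosine(emb[tf], emb[gene]) for tf, gene in pairs]
labels = [1 if pair in truth else 0 for pair in pairs]
result = auroc(scores, labels)
print(result)
```

Because true edges are usually a small minority of all candidate pairs, AUPRC is often the more informative of the two metrics in practice.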

Table 3: Key Resources for scRNA-seq and GRN Inference

| Category / Item | Function / Description | Example Tools / Sources |

|---|---|---|

| Commercial scRNA-seq Platforms | Provides integrated hardware and reagents for single-cell partitioning, barcoding, and library prep. | 10x Genomics Chromium, Parse Biosciences, Singleron [10] [12] |

| Data Processing Pipelines | Processes raw sequencing data into a cell-by-gene count matrix. | Cell Ranger, CeleScope, kallisto bustools [12] |

| Analysis Toolkits | Comprehensive software packages for QC, normalization, clustering, and visualization of scRNA-seq data. | Seurat (R), Scanpy (Python) [10] [12] |

| GRN Inference Software | Specialized tools and models for inferring regulatory networks from single-cell data. | GRLGRN (graph-based deep learning), Geneformer (scFM), scGPT (scFM) [11] [8] |

| Benchmark Datasets | Standardized datasets with ground-truth networks for validating and benchmarking GRN inference methods. | BEELINE database (7 cell lines, 3 ground-truth network types) [8] |

| Prior Knowledge Databases | Source databases for constructing initial GRN graphs or validating predictions. | STRING, ChIP-seq databases, Gene Ontology (GO) [8] |

The field is moving toward foundation models that serve as powerful, generalizable starting points for diverse tasks. Future developments will focus on improving their robustness, interpretability, and ability to integrate multi-omic data (e.g., scATAC-seq, spatial transcriptomics) [11] [13]. A key challenge remains the biologically meaningful interpretation of the latent representations learned by these complex models.

The integration of scRNA-seq data with advanced computational methods, particularly graph-based deep learning and single-cell foundation models, has fundamentally transformed GRN inference. This synergy allows researchers to move from static, population-averaged networks to dynamic, cell-type-specific, and context-aware regulatory maps. As these technologies and models become more accessible and refined, they will continue to drive discoveries in basic biology, disease pathogenesis, and therapeutic development, solidifying their role as indispensable tools in modern biomedical research.

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized biology by allowing researchers to examine gene expression at the resolution of individual cells. This capability is crucial for understanding cellular heterogeneity, developmental biology, and disease mechanisms. However, the analysis of scRNA-seq data, particularly for the inference of Gene Regulatory Networks (GRNs), presents significant computational challenges. Two of the most pressing issues are data sparsity, caused predominantly by technical "dropout" events where true gene expression is measured as zero, and network complexity, referring to the difficulty in reconstructing accurate, large-scale networks from high-dimensional data [14]. Single-cell Foundation Models (scFMs) represent a transformative approach to these problems. These are large-scale deep learning models, typically based on transformer architectures, pre-trained on vast single-cell datasets to learn fundamental biological principles that can be adapted to various downstream tasks, including GRN inference [5]. This application note details the specific challenges posed by data sparsity and network complexity and provides structured protocols for addressing them using advanced computational methods.

Challenge 1: Data Sparsity and the Dropout Problem

Understanding Data Sparsity

A defining characteristic of scRNA-seq data is its high sparsity, manifesting as an excess of zero values in the expression matrix. Studies show that between 57% and 92% of observed counts in typical scRNA-seq datasets are zeros [14]. These zeros are a mixture of true biological absence of expression and technical artifacts known as "dropouts," where transcripts expressed at low-to-moderate levels in a cell fail to be detected by the sequencing technology. This zero-inflation problem severely biases downstream analyses, including GRN inference, by obscuring true co-expression relationships and regulatory dynamics.
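Before choosing a mitigation strategy, it helps to measure how sparse a given matrix actually is. The following minimal sketch computes the overall and per-gene zero fractions for a toy cells × genes matrix. The function names and the example counts are illustrative assumptions, not part of any cited tool.

```python
def zero_fraction(matrix):
    """Fraction of entries equal to zero in a cells x genes count matrix."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for value in row if value == 0)
    return zeros / total if total else 0.0


def per_gene_zero_fraction(matrix):
    """Fraction of cells in which each gene (column) has a zero count."""
    n_cells = len(matrix)
    return [sum(1 for row in matrix if row[g] == 0) / n_cells
            for g in range(len(matrix[0]))]


# Toy 3-cell x 4-gene count matrix (hypothetical values).
counts = [
    [0, 3, 0, 0],
    [1, 0, 0, 7],
    [0, 0, 2, 0],
]

print(f"overall zero fraction: {zero_fraction(counts):.2f}")
print("per-gene zero fraction:", per_gene_zero_fraction(counts))
```

Note that a zero fraction on its own cannot separate technical dropouts from genuine absence of expression; it only indicates how much of the matrix is affected.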

Quantitative Impact on Downstream Analysis

Table 1: Characteristics of Zero-Inflation in scRNA-seq Data

| Dataset Type | Range of Zero Percentages | Primary Cause of Zeros | Impact on GRN Inference |
|---|---|---|---|
| Early Droplet Protocols | 85% - 92% | Technical Dropouts | High false negative regulatory links |
| Advanced Protocols (10X) | 70% - 85% | Mixed Technical & Biological | Moderate missing edge detection |
| Integrated Atlas Data | 57% - 75% | Primarily Biological | Lower but non-negligible bias |

Protocol: Dropout Augmentation (DA) for Model Regularization

Counter-intuitively, augmenting the training data with additional, randomly placed zeros can make a model more robust to dropout noise. This protocol, known as Dropout Augmentation (DA), acts as a regularizer during training rather than as an imputation step that alters the underlying dataset.

Application Note: DA is particularly effective for neural network-based GRN inference models, such as autoencoders, where resilience to input noise is critical.

Materials:

- Input Data: Preprocessed scRNA-seq count matrix, normalized via the \( \log(x+1) \) transformation.

- Computing Environment: Python environment with PyTorch or TensorFlow, H100 or equivalent GPU recommended.

- Software: DAZZLE implementation (https://bcb.cs.tufts.edu/DAZZLE).

Procedure:

- Data Preprocessing: Transform raw counts \( x \) to \( \log(x+1) \) to reduce variance and avoid taking the logarithm of zero.

- Augmentation Parameter Tuning: Set the dropout augmentation rate \( \alpha \), typically 5% to 15% of non-zero values.

- Iterative Training with DA:

- For each training iteration \( t \), sample a proportion \( \alpha \) of the expression values.

- Set these sampled values to zero to create an augmented batch \( X_{aug} \).

- Forward pass \( X_{aug} \) through the model.

- Noise Classifier Co-training: Simultaneously train a noise classifier to predict the probability that each zero is an augmented dropout. This helps the model learn to down-weight likely dropout events during reconstruction.

- Model Convergence: Monitor reconstruction loss and GRN structure stability across epochs. Training typically requires 100-500 epochs depending on dataset size.
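The augmentation step of this procedure can be sketched in a few lines of plain Python. This is a minimal illustration, not the DAZZLE implementation: the function names are hypothetical, sampling is restricted to non-zero entries (one reading of the procedure above), and the returned mask is only a suggestion for how labels for a noise classifier could be produced.

```python
import math
import random


def log1p_transform(counts):
    """Apply log(x + 1) to every entry of a cells x genes count matrix."""
    return [[math.log(x + 1) for x in row] for row in counts]


def dropout_augment(batch, alpha, rng):
    """Zero out a fraction alpha of the non-zero entries of batch.

    Returns the augmented copy and a 0/1 mask marking the entries that were
    zeroed; the mask can serve as labels for a noise classifier.
    """
    nonzero = [(i, j) for i, row in enumerate(batch)
               for j, value in enumerate(row) if value != 0]
    n_drop = round(alpha * len(nonzero))
    dropped = set(rng.sample(nonzero, n_drop))
    augmented = [[0.0 if (i, j) in dropped else value
                  for j, value in enumerate(row)]
                 for i, row in enumerate(batch)]
    mask = [[1 if (i, j) in dropped else 0 for j in range(len(row))]
            for i, row in enumerate(batch)]
    return augmented, mask


# Toy 4-cell x 5-gene matrix with no zeros, so 10% of 20 entries = 2 drops.
raw_counts = [[5 * i + j + 1 for j in range(5)] for i in range(4)]
x = log1p_transform(raw_counts)
rng = random.Random(0)  # fixed seed for reproducibility

for iteration in range(3):
    x_aug, da_mask = dropout_augment(x, alpha=0.10, rng=rng)
    n_zeroed = sum(map(sum, da_mask))
    print(f"iteration {iteration}: zeroed {n_zeroed} entries")
    # A real training step would pass x_aug to the model here.
```

A fresh mask is drawn at every iteration and the original matrix is never modified, which is what makes DA a regularizer rather than a preprocessing step.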

Challenge 2: Network Complexity and Model Architectures

The Scalability Problem in GRN Inference

GRN inference is inherently a high-dimensional problem. A network of \( N \) genes has up to \( N^2 \) potential regulatory interactions, creating a massive search space. For example, a focused study on 1,000 genes involves estimating up to 1,000,000 potential edges, and this count grows quadratically with the number of genes. scFMs, particularly those based on transformer architectures, are designed to manage this complexity by leveraging self-supervised learning on large corpora of single-cell data [5].
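The arithmetic behind this search space is easy to check. The sketch below counts candidate directed edges for a given number of genes; the optional restriction to a smaller set of regulators (for example, known transcription factors) and the option to exclude self-loops are assumptions added for illustration and are not prescribed by the text above.

```python
def candidate_edges(n_genes, n_regulators=None, allow_self_loops=True):
    """Number of candidate directed regulator -> target edges.

    By default every gene may regulate every gene (N * N edges). If
    n_regulators is given, only that many genes may act as regulators.
    """
    regulators = n_genes if n_regulators is None else n_regulators
    edges = regulators * n_genes
    if not allow_self_loops:
        edges -= regulators  # each regulator loses its own self-edge
    return edges


print(candidate_edges(1_000))                              # all gene pairs
print(candidate_edges(2_000))                              # doubling N quadruples the count
print(candidate_edges(1_000, 50, allow_self_loops=False))  # 50 hypothetical regulators
```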

scFM Architectures for GRN Inference

scFMs typically use transformer architectures, which employ attention mechanisms to model complex dependencies between genes. Two predominant architectural patterns have emerged:

- Encoder-based models (scBERT-like): Use bidirectional attention, considering all genes in a cell simultaneously. This is particularly effective for classification tasks and embedding generation [5].

- Decoder-based models (scGPT-like): Use unidirectional masked self-attention, iteratively predicting masked genes conditioned on known genes. This approach excels at generative tasks [5].

Table 2: Comparison of scFM Architectural Approaches for GRN Inference

| Architecture | Attention Mechanism | Strengths for GRN | Limitations |
|---|---|---|---|
| Encoder-based (scBERT) | Bidirectional | Captures global gene context; Better for classification | Less effective for generation |
| Decoder-based (scGPT) | Unidirectional (masked) | Excels at imputation & prediction | Sequential processing limitations |
| Hybrid Designs | Both bidirectional & unidirectional | Flexibility for multiple tasks | Increased computational complexity |

Protocol: DAZZLE Model for Robust GRN Inference

DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) is a specialized model integrating DA with a variational autoencoder (VAE) framework for GRN inference, demonstrating improved stability and robustness compared to baseline methods like DeepSEM.

Materials:

- Input Data: Preprocessed and normalized scRNA-seq matrix (cells × genes).

- Software Framework: DAZZLE implementation (Python-based).

- Hardware: GPU (H100 or equivalent) for accelerated training.

- Prior Knowledge (Optional): Partially known GRN from databases for guided inference.

Procedure:

Model Initialization:

- Parameterize the adjacency matrix \( A \) representing the GRN.

- Initialize encoder and decoder networks with dimensions matching the input data.

Staged Training Strategy:

- Phase 1 (Warm-up, 50-100 epochs): Train without sparsity constraints on \( A \) to allow initial convergence.

- Phase 2 (Full training, 100-400 epochs): Introduce the sparsity loss term \( L_{sparse} \) to promote a sparse, biologically plausible network.

Dropout Augmentation Integration:

- Apply DA as described in the Dropout Augmentation protocol above during both training phases.

- Co-train the noise classifier to identify likely dropout events.

Adjacency Matrix Extraction:

- After convergence, extract the weights of the trained adjacency matrix \( A \) as the inferred GRN.

- Apply thresholding to remove negligible edges and focus on high-confidence interactions.
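The final extraction and thresholding step can be sketched as follows. This is an illustrative post-processing helper, not part of the DAZZLE code base: the function name is hypothetical, and the convention that rows are regulators and columns are targets is an assumption that should be checked against the model actually used.

```python
def top_edges(adjacency, genes, k, drop_self_loops=True):
    """Return the k edges with the largest absolute weight.

    Assumes adjacency[i][j] is the weight of the edge from genes[i]
    (regulator) to genes[j] (target).
    """
    edges = []
    for i, row in enumerate(adjacency):
        for j, weight in enumerate(row):
            if drop_self_loops and i == j:
                continue
            edges.append((genes[i], genes[j], weight))
    # Rank by magnitude so strong repressive (negative) edges are kept too.
    edges.sort(key=lambda edge: abs(edge[2]), reverse=True)
    return edges[:k]


# Toy learned adjacency matrix for three genes (hypothetical values).
genes = ["TF1", "G2", "G3"]
A = [
    [0.90, 0.05, -0.70],
    [0.00, 0.80, 0.30],
    [0.02, -0.60, 0.10],
]

for regulator, target, weight in top_edges(A, genes, k=2):
    print(f"{regulator} -> {target}: {weight:+.2f}")
```

Keeping a fixed number of top-ranked edges is one possible thresholding rule; a cutoff on the absolute weight is an equally simple alternative.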

Integrated Workflow and Benchmarking

Comprehensive Protocol: From Raw Data to GRN Inference

This integrated protocol combines solutions for both sparsity and complexity into a unified workflow for GRN inference using scFMs.

Materials:

- Data Sources: Public single-cell repositories (CZ CELLxGENE, Human Cell Atlas, GEO/SRA) [5].

- Preprocessing Tools: Scanpy or Seurat for quality control and normalization.

- Model Implementation: scFM frameworks (scGPT, scBERT) or specialized GRN tools (DAZZLE).

- Validation Resources: Benchmark datasets (BEELINE), prior biological knowledge from regulatory databases.

Procedure:

Data Acquisition and Curation:

- Collect single-cell datasets from public repositories, prioritizing diversity in cell types and conditions.

- Perform rigorous quality control: filter cells by mitochondrial content, number of detected genes, and count depth.

- Address batch effects using integration methods (Harmony, Scanorama) if multiple datasets are combined.

Tokenization and Input Representation:

- For transformer-based scFMs, convert gene expression profiles into token sequences.

- Adopt a deterministic gene ordering strategy (e.g., by expression level within each cell) to create input sequences.

- Incorporate special tokens for cell identity, batch information, or experimental conditions as needed [5].

Model Training and Fine-tuning:

- Option A (From Scratch): Pre-train a foundation model on large, diverse single-cell corpora.

- Option B (Transfer Learning): Fine-tune a pre-existing scFM on your specific dataset for GRN inference.

- Implement DA throughout training to enhance robustness to dropout noise.

GRN Extraction and Validation:

- Extract the regulatory network from the trained model (e.g., adjacency matrix weights in DAZZLE).

- Compare inferred networks against gold-standard benchmarks (e.g., BEELINE) using metrics like AUROC and AUPR.

- Validate key regulatory predictions using orthogonal data (e.g., ChIP-seq, perturbation studies) where available.
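The benchmark comparison step relies on ranking metrics. The sketch below computes average precision (a common estimate of AUPR) and AUROC for a scored edge list against binary ground-truth labels using only the standard library. The function names and the toy scores are illustrative assumptions; in practice an established library implementation would normally be preferred.

```python
def average_precision(scores, labels):
    """Average precision for edges ranked by descending score."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_positive = sum(labels)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank  # precision at each true edge
    return total / n_positive if n_positive else 0.0


def auroc(scores, labels):
    """Probability that a true edge outscores a false edge (ties count 0.5)."""
    positives = [s for s, l in zip(scores, labels) if l]
    negatives = [s for s, l in zip(scores, labels) if not l]
    if not positives or not negatives:
        return float("nan")
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


# Five candidate edges: inferred scores and gold-standard labels (toy values).
edge_scores = [0.9, 0.8, 0.7, 0.4, 0.2]
edge_labels = [1, 0, 1, 0, 0]

print(f"AUPR (average precision): {average_precision(edge_scores, edge_labels):.3f}")
print(f"AUROC: {auroc(edge_scores, edge_labels):.3f}")
```

Because true edges are rare in realistic gold standards, AUPR is usually the more informative of the two; tied scores are ranked in input order in this simplified version.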

Performance Benchmarks and Validation

Table 3: Comparative Performance of GRN Inference Methods

| Method | Architecture | Key Innovation | Stability | BEELINE Benchmark (AUPR) |
|---|---|---|---|---|
| GENIE3/GRNBoost2 | Tree-based | Feature importance | High | 0.12 - 0.18 |
| DeepSEM | VAE | Structural equation model | Low | 0.15 - 0.22 |
| DAZZLE | VAE + DA | Dropout Augmentation | High | 0.18 - 0.25 |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for scFM-based GRN Inference

| Tool/Resource | Type | Primary Function | Application in GRN Inference |
|---|---|---|---|
| CZ CELLxGENE | Data Repository | Provides unified access to annotated single-cell data | Source of diverse training data for scFMs [5] |
| DAZZLE | Software Model | GRN inference with Dropout Augmentation | Robust network inference from zero-inflated data [14] |
| Transformer Models (scGPT, scBERT) | Foundation Model | General-purpose single-cell representation learning | Base models for transfer learning and GRN tasks [5] |
| BEELINE | Benchmark Framework | Standardized evaluation of GRN methods | Performance validation and method comparison [14] |
| Scanpy | Python Toolkit | Single-cell data preprocessing and analysis | Data quality control, normalization, and visualization |
| GPU (H100/equivalent) | Hardware | Accelerated deep learning computation | Enables training of large scFMs and complex GRN models [14] |

Gene regulatory networks (GRNs) form the fundamental control systems of biology, specifying the causal interactions between genes that drive cellular structure, function, and identity. These networks represent the functional output of complex genetic and epigenetic mechanisms that operate in a cell-type and context-specific manner to shape transcriptional programs. The emergence of single-cell genomics has revolutionized our ability to observe the molecular components of these regulatory circuits at unprecedented resolution, while the parallel development of single-cell foundation models (scFMs) represents a transformative computational approach for deciphering these complex relationships from large-scale transcriptomic data.

Single-cell RNA sequencing (scRNA-seq) provides a granular view of transcriptomics at cellular resolution, enabling researchers to observe the heterogeneous expression patterns that underlie cellular identity and function. However, these data are characterized by high sparsity, high dimensionality, and a low signal-to-noise ratio, presenting significant challenges for traditional computational approaches. Single-cell foundation models have emerged as powerful tools to address these challenges, leveraging transformer-based architectures trained on millions of single cells to learn universal biological patterns that can be adapted to various downstream tasks, including GRN inference.

These scFMs treat individual cells as sentences and genes as words, allowing them to learn the "language" of cellular regulation through self-supervised pretraining on vast datasets. By capturing intricate relationships between genes across diverse cell types and states, scFMs provide a powerful framework for uncovering the genetic and epigenetic mechanisms that shape regulatory circuits in development, homeostasis, and disease.

Computational Framework: Single-Cell Foundation Models for Regulatory Network Analysis

Architecture and Tokenization Strategies for Single-Cell Data

Single-cell foundation models employ sophisticated neural architectures, primarily based on the transformer, which utilize attention mechanisms to weight relationships between any pair of input tokens. In the context of scFMs, genes or genomic features serve as tokens, and their expression levels provide the contextual information that the model uses to learn regulatory relationships.

A critical challenge in applying transformer architectures to single-cell data is the non-sequential nature of gene expression data. Unlike words in a sentence, genes lack an inherent ordering. To address this, different scFMs have employed various tokenization strategies:

- Expression-ranked ordering: Genes are ranked within each cell by expression levels, creating a deterministic sequence based on expression magnitude.

- Value binning: Gene expression values are partitioned into bins, with rankings determined by these binned values.

- Normalized counts: Some models forgo complex ranking strategies and simply use normalized counts without specific ordering.

Each gene is typically represented as a token embedding that combines a gene identifier with its expression value in the given cell. Positional encoding schemes are then adapted to represent the relative order or rank of each gene within the cell's context. Special tokens may be added to represent cell identity, metadata, or modality information, enabling the model to learn cell-level context and incorporate multi-omics data.
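Two of the tokenization strategies above, expression-ranked ordering and value binning, can be illustrated with a short sketch. The function names, the tie-breaking rule, and the use of equal-width bins are assumptions made for this example; published models may rank and bin differently.

```python
def rank_tokens(expression, max_len=None):
    """Order expressed genes by descending expression (ties broken by name)."""
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda item: (-item[1], item[0]))
    tokens = [gene for gene, _ in expressed]
    return tokens if max_len is None else tokens[:max_len]


def bin_values(expression, n_bins):
    """Map non-zero values to integer bins 1..n_bins; zeros stay in bin 0.

    Uses equal-width bins over the non-zero range of this one cell.
    """
    nonzero = [value for value in expression.values() if value > 0]
    if not nonzero:
        return {gene: 0 for gene in expression}
    low, high = min(nonzero), max(nonzero)
    width = (high - low) / n_bins or 1.0  # avoid a zero width when all values match
    return {
        gene: 0 if value <= 0 else min(n_bins, int((value - low) / width) + 1)
        for gene, value in expression.items()
    }


# One cell's normalized expression profile (toy values).
cell = {"GATA1": 5.0, "SPI1": 0.0, "TAL1": 2.0, "KLF1": 5.0, "CEBPA": 1.0}

print(rank_tokens(cell))
print(bin_values(cell, n_bins=4))
```

The deterministic tie-break matters: without it, two cells with identical profiles could yield different token sequences.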

Pretraining Objectives and Knowledge Acquisition

scFMs are pretrained using self-supervised objectives that enable the model to learn fundamental biological principles without explicit labeling. Common pretraining strategies include:

- Masked Gene Modeling (MGM): Random subsets of genes are masked, and the model learns to predict their expression values based on the remaining context, analogous to masked language modeling in NLP.

- Gene ID prediction: Models learn to predict the identity of genes based on their expression patterns and context.

- Binary expression classification: Some models employ binary classification to predict whether a gene is expressed or not.

Through these pretraining tasks on datasets encompassing tens of millions of cells from diverse tissues and conditions, scFMs develop rich internal representations of gene-gene relationships, regulatory patterns, and cellular states that can be transferred to specific GRN inference tasks.
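As a concrete illustration of masked gene modeling, the sketch below hides a random subset of expression values and scores predictions only on the hidden positions. It is a data-preparation and loss sketch under stated assumptions: the `<mask>` placeholder, the function names, and the mean-squared-error loss are illustrative choices, and no actual model is trained here.

```python
import random

MASK = "<mask>"  # placeholder standing in for a hidden expression value


def mask_genes(tokens, values, mask_rate, rng):
    """Hide a random subset of values; return model inputs and targets."""
    n_mask = max(1, round(mask_rate * len(tokens)))
    masked_idx = set(rng.sample(range(len(tokens)), n_mask))
    inputs = [(gene, MASK if i in masked_idx else value)
              for i, (gene, value) in enumerate(zip(tokens, values))]
    targets = {tokens[i]: values[i] for i in sorted(masked_idx)}
    return inputs, targets


def masked_mse(predictions, targets):
    """Mean squared error computed over the masked genes only."""
    return sum((predictions[g] - v) ** 2 for g, v in targets.items()) / len(targets)


tokens = ["GATA1", "KLF1", "TAL1", "CEBPA", "SPI1"]
values = [5.0, 4.0, 2.0, 1.0, 0.5]

inputs, targets = mask_genes(tokens, values, mask_rate=0.4, rng=random.Random(1))
print("model input:", inputs)

# Stand-in for a model: predict 0.0 for every masked gene.
dummy_predictions = {gene: 0.0 for gene in targets}
print("loss on masked genes:", masked_mse(dummy_predictions, targets))
```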

Application Note 1: Interpretable Circuit Extraction from scFMs using Transcoder-Based Analysis

Experimental Protocol: Transcoder-Based Circuit Analysis

Purpose: To extract biologically interpretable decision-making circuits from single-cell foundation models, enabling the discovery of regulatory mechanisms underlying model predictions.

Background: While scFMs demonstrate state-of-the-art performance on various tasks, their decision-making processes remain less interpretable than traditional methods. Transcoder-based approaches address this limitation by extracting internal circuits that correspond to real-world biological mechanisms.

Methodology:

Model Selection and Preparation:

- Select a pretrained single-cell foundation model (e.g., cell2sentence model)

- Prepare model architecture for circuit extraction by identifying attention layers and connectivity patterns

Transcoder Training:

- Train a transcoder model on the target scFM to learn mappings between model internals and biological concepts

- Utilize attention head activation patterns to identify important regulatory relationships

- Optimize transcoder parameters to maximize interpretability while preserving predictive performance

Circuit Extraction:

- Identify consistent activation patterns across cell types and conditions

- Map attention patterns to gene regulatory relationships

- Filter spurious connections using statistical significance thresholds

Biological Validation:

- Compare extracted circuits to known biological pathways and regulatory networks

- Perform functional enrichment analysis on genes within extracted circuits

- Validate novel predictions using experimental data or literature evidence

Applications: This approach has been successfully applied to extract circuits corresponding to real-world biological mechanisms from the cell2sentence model, demonstrating the potential of transcoders to uncover biologically plausible pathways within complex single-cell models.

Research Reagent Solutions

Table 1: Essential Research Reagents for Transcoder-Based Circuit Analysis

| Reagent/Resource | Function | Specifications |
|---|---|---|
| Pretrained scFM (e.g., cell2sentence) | Provides foundation for circuit extraction | Trained on large-scale single-cell datasets (30M+ cells) |
| Single-cell dataset | Validation and testing | scRNA-seq data with appropriate cell type annotations |
| Transcoder implementation | Circuit extraction algorithm | Adapted from LLM interpretability methods |
| Biological pathway databases | Validation of extracted circuits | KEGG, Reactome, GO databases |
| High-performance computing resources | Model training and inference | GPU clusters with sufficient memory for large models |

Application Note 2: Lineage-Aware GRN Inference using Multi-Task Learning

Experimental Protocol: Single-cell Multi-Task Network Inference (scMTNI)

Purpose: To infer cell type-specific gene regulatory networks from scRNA-seq and scATAC-seq data while incorporating lineage relationships between cell types.

Background: Traditional GRN inference methods often infer a single network for an entire dataset or fail to properly model the population structure important for discerning network dynamics. scMTNI addresses these limitations by integrating cell lineage structure with multi-omics data.

Methodology:

Input Preparation:

- Obtain scRNA-seq and scATAC-seq data for the cell population of interest

- Define cell clusters with distinct transcriptional and accessibility profiles

- Construct cell lineage tree using trajectory inference methods (e.g., Monocle, PAGA) or prior knowledge

Prior Network Generation:

- Generate cell type-specific motif-based TF-target interactions from scATAC-seq data

- Filter priors using accessibility information to create cell type-specific prior networks

- Quantify confidence for each regulatory edge in the prior network

Multi-Task Learning Framework:

- Implement a probabilistic lineage-tree prior that models how the GRN changes along the lineage

- Optimize network parameters using multi-task learning objective function:

- Minimize prediction error for each cell type-specific GRN

- Incorporate lineage constraints to enforce similarity between related cell types

- Balance data fidelity with network sparsity

Network Analysis and Interpretation:

- Perform edge-based clustering to identify dynamic network modules

- Apply topic modeling to discover regulatory programs associated with lineage branches

- Identify key regulators of fate transitions by analyzing network rewiring

Validation: scMTNI has been rigorously benchmarked on simulated and experimental datasets, demonstrating superior performance compared to single-task learning approaches, with significant improvements in AUPR and F-scores across cell types.

Performance Comparison of Multi-Task vs. Single-Task Learning

Table 2: Benchmarking Results of scMTNI Against Single-Task Methods

| Method | AUPR (Dataset 1) | F-score (Dataset 1) | AUPR (Dataset 2) | F-score (Dataset 2) | Learning Type |
|---|---|---|---|---|---|
| scMTNI | 0.48 | 0.42 | 0.45 | 0.39 | Multi-task |
| MRTLE | 0.46 | 0.41 | 0.43 | 0.38 | Multi-task |
| LASSO | 0.32 | 0.28 | 0.29 | 0.25 | Single-task |
| SCENIC | 0.35 | 0.31 | 0.32 | 0.28 | Single-task |
| INDEP | 0.33 | 0.29 | 0.30 | 0.26 | Single-task |

Application Note 3: Metacell-Based GRN Inference for Lineage-Specific Analysis

Experimental Protocol: NetID for Scalable GRN Inference

Purpose: To overcome technical noise in scRNA-seq data and enable accurate inference of lineage-specific gene regulatory networks using homogeneous metacells.

Background: The sparsity of scRNA-seq data presents significant challenges for GRN inference, as traditional imputation methods can introduce spurious correlations. NetID addresses this by leveraging homogeneous metacells while preserving biological covariation.

Methodology:

Metacell Construction:

- Normalize scRNA-seq data and reduce its dimensionality using PCA

- Select seed cells using geosketch sampling for homogeneous coverage

- Compute k-nearest neighbors for each seed cell

- Prune outlier cells using VarID2 background model (negative binomial distribution)

- Resolve shared neighbors through iterative assignment

- Aggregate expression profiles for each metacell
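The last step of metacell construction, aggregation, can be sketched independently of how the assignments were obtained. The example below sums raw counts over the cells assigned to each metacell. Summation, the function name, and the toy assignment are assumptions for illustration; the NetID pipeline may aggregate differently.

```python
def aggregate_metacells(counts, assignment):
    """Sum raw counts over the cells assigned to each metacell.

    counts is a cells x genes matrix; assignment maps a cell index to a
    metacell id. Cells missing from assignment (e.g. pruned outliers) are
    ignored.
    """
    n_genes = len(counts[0])
    metacells = {}
    for cell_index, metacell_id in assignment.items():
        profile = metacells.setdefault(metacell_id, [0] * n_genes)
        for g, value in enumerate(counts[cell_index]):
            profile[g] += value
    return metacells


# Four cells x three genes; cell 3 was pruned and has no assignment.
cell_counts = [
    [1, 0, 2],
    [0, 0, 3],
    [4, 1, 0],
    [9, 9, 9],
]
cell_to_metacell = {0: "m1", 1: "m1", 2: "m2"}

print(aggregate_metacells(cell_counts, cell_to_metacell))
```

Pooling counts from similar cells lowers the zero fraction of each profile, which is the motivation for working with metacells instead of imputed single cells.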

Lineage-Aware GRN Inference:

- Calculate cell fate probabilities using pseudotime or RNA velocity

- Order cells along lineage trajectories

- Infer directed regulator-target relationships using Granger causality tests

- Integrate Granger causal models with GENIE3-based network inference

- Generate lineage-specific GRNs for each major branch

Parameter Optimization:

- Determine optimal number of seed cells using sparsity-coverage tradeoff

- Optimize neighborhood size for metacell construction

- Validate network quality using ground truth references

Advantages: NetID demonstrates superior performance compared to imputation-based methods, with significant improvements in early precision rate (EPR) and area under the receiver operating characteristic curve (AUROC) across multiple benchmarking datasets.

Research Reagent Solutions

Table 3: Essential Computational Tools for Metacell-Based GRN Inference

| Tool/Resource | Function | Key Features |
|---|---|---|
| NetID pipeline | Metacell construction and GRN inference | Geosketch sampling, VarID2 pruning |
| VarID2 | Neighborhood pruning | Local background model of gene expression |
| GENIE3 | GRN inference from metacells | Random forest-based network inference |
| Granger causality | Directed regulatory inference | Tests for predictive temporal relationships |
| dyngen | Simulation for benchmarking | In silico ground truth generation |
| STRING database | Validation resource | Known biological interactions |

Application Note 4: Causal Inference for Robust GRN Discovery

Experimental Protocol: Causal Inference Using Composition of Transactions (CICT)

Purpose: To accurately identify causal regulatory connections in GRNs by distinguishing patterns resulting from true regulatory processes from random associations.

Background: Many GRN inference methods struggle to achieve performance beyond random classifiers, particularly with realistic datasets. CICT addresses this by directly predicting causality through supervised learning on distinctive patterns produced by causal generative processes.

Methodology:

Feature Engineering:

- Calculate gene-gene association network using correlation or mutual information

- For each gene pair \( (j, h) \), compute confidence \( w_{j \to h} \) and contribution \( w_{h \to j} \) values

- Define distribution zones around each node in the relevance network

- Calculate Z-scores for values within each distribution zone (F0 features)

- Apply summarization functions to extract distribution statistics (F1 features)

- Compute network-level Z-scores to capture position within global context (F2 features)

Supervised Learning Framework:

- Prepare labeled edges indicating true regulatory relationships from prior knowledge

- Create balanced learning set with true edges and random edges (1:4 ratio)

- Split data into training (70%) and validation (30%) sets

- Train random forest classifier with 20 trees at depth 10 using 5-fold cross-validation

- Apply trained model to score all potential regulatory edges in the network

Performance Evaluation:

- Rank predicted regulatory edges by confidence scores

- Calculate early precision (EP) as fraction of true positives in top k predictions

- Compute partial area under precision-recall curve (pAUPR) for high-confidence predictions

- Compare performance to random classifiers using relative early precision ratio (rEPR)
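The early-precision metrics in this list can be sketched directly from their definitions. In the example below, the baseline for a random classifier is taken to be the fraction of true edges among all candidate edges; this baseline, the function names, and the toy edges are assumptions for illustration, and the exact rEPR definition used by CICT should be taken from its publication.

```python
def early_precision(ranked_edges, true_edges, k):
    """Fraction of the top-k ranked edges that are true edges."""
    top = ranked_edges[:k]
    return sum(1 for edge in top if edge in true_edges) / k


def early_precision_ratio(ranked_edges, true_edges, k, n_candidate_edges):
    """Early precision divided by the precision expected from random ranking."""
    random_precision = len(true_edges) / n_candidate_edges
    return early_precision(ranked_edges, true_edges, k) / random_precision


# Predicted edges, best first, for a toy three-gene network.
ranked = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "A")]
truth = {("A", "B"), ("B", "C")}

k = len(truth)            # a common choice: k = number of true edges
n_candidates = 3 * 2      # directed edges among 3 genes, no self-loops

print("EP:", early_precision(ranked, truth, k))
print("ratio vs. random:", early_precision_ratio(ranked, truth, k, n_candidates))
```

A ratio near 1 means the method is no better than random ranking among its top predictions, which is the failure mode described in the background above.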

Performance: CICT has demonstrated significant performance advantages, ranging from 10 to more than 100 times higher than several general-purpose and single-cell-specific network inference methods in rigorous benchmarking using both simulated and experimental scRNA-seq datasets.

CICT Feature Engineering Specifications

Table 4: Feature Definitions for CICT-Based GRN Inference

| Feature Type | Mathematical Definition | Biological Interpretation |
|---|---|---|
| F0 Features | \( Z_{jh}^{(D_j^1)}, Z_{jh}^{(D_j^2)}, Z_{hj}^{(D_h^1)}, Z_{hj}^{(D_h^2)} \) | Normalized position of edge weights within local node distributions |
| F1 Features | \( \phi_m(S_j^1), \phi_m(S_j^2), \phi_m(S_h^1), \phi_m(S_h^2) \) | Summary statistics (median, mode, moments) of local distributions |
| F2 Features | \( Z(Z_{jh}^{(D_j^1)}), Z(\phi_m(S_j^1)) \), etc. | Position of local features within global network context |
| Confidence Values | \( w_{j \to h} = a_{jh} / \operatorname{mean}(a_{j:}) \) | Relevance of source gene to target normalized by average associations |
| Contribution Values | \( w_{h \to j} = a_{jh} / \operatorname{mean}(a_{:h}) \) | Relevance of target gene to source normalized by average associations |
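The confidence and contribution values in the table can be computed from an association matrix as sketched below. This follows the two formulas as written in the table; the function name, the choice to include the zero diagonal when taking row and column means, and the toy matrix are assumptions for illustration.

```python
def confidence_and_contribution(assoc):
    """Normalize association a[j][h] by its row mean and by its column mean.

    confidence[j][h]   = a[j][h] / mean(a[j][:])
    contribution[j][h] = a[j][h] / mean(a[:][h])
    """
    n = len(assoc)
    row_mean = [sum(row) / n for row in assoc]
    col_mean = [sum(assoc[i][h] for i in range(n)) / n for h in range(n)]
    confidence = [[assoc[j][h] / row_mean[j] if row_mean[j] else 0.0
                   for h in range(n)] for j in range(n)]
    contribution = [[assoc[j][h] / col_mean[h] if col_mean[h] else 0.0
                     for h in range(n)] for j in range(n)]
    return confidence, contribution


# Symmetric toy association matrix (e.g. absolute correlation) for 3 genes.
association = [
    [0.0, 0.8, 0.2],
    [0.8, 0.0, 0.4],
    [0.2, 0.4, 0.0],
]

conf, contrib = confidence_and_contribution(association)
print(f"confidence[0][1]   = {conf[0][1]:.2f}")
print(f"confidence[1][0]   = {conf[1][0]:.2f}")
print(f"contribution[0][1] = {contrib[0][1]:.2f}")
```

Even though the input matrix is symmetric, the normalized values are not, which is what lets downstream features carry directional information.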

Integrated Workflow: Combining scFMs with Specialized GRN Inference Methods

Comprehensive Protocol for Genetic and Epigenetic Regulatory Circuit Mapping

Purpose: To provide an integrated workflow that leverages the strengths of single-cell foundation models with specialized GRN inference methods for comprehensive mapping of genetic and epigenetic regulatory circuits.

Methodology:

Data Preprocessing and Integration:

- Collect scRNA-seq and scATAC-seq data from target biological system

- Perform quality control, normalization, and batch correction

- Identify cell types and states using clustering approaches

- Construct lineage relationships using trajectory inference

Foundation Model Embedding:

- Process data through pretrained scFM to obtain gene and cell embeddings

- Extract attention patterns for potential regulatory relationships

- Identify candidate regulatory interactions based on attention weights

Multi-Method GRN Inference:

- Apply transcoder-based analysis to extract interpretable circuits from scFM

- Implement scMTNI for lineage-aware network inference

- Utilize NetID for robust network inference via metacells

- Employ CICT for causal network inference

Network Integration and Validation:

- Combine networks from different methods using consensus approaches

- Validate networks using ground truth references and functional annotations

- Perform comparative analysis across methods and parameters

- Identify high-confidence regulatory circuits for experimental validation

Biological Interpretation:

- Annotate networks with epigenetic information from scATAC-seq

- Identify key regulators and network motifs

- Characterize dynamic network rewiring across lineages

- Relate regulatory circuits to functional outcomes

Implementation Considerations: This integrated approach leverages the complementary strengths of different methods—scFMs provide generalizable patterns and feature representations, while specialized GRN inference methods offer robust, interpretable, and context-specific network models.
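One simple way to realize the consensus step of this workflow is average-rank aggregation, sketched below. This is only one of several possible consensus schemes: the function name, the requirement that an edge be reported by at least two methods, and the toy rankings are assumptions for illustration.

```python
def consensus_edges(rankings, min_methods=2):
    """Average-rank consensus across methods.

    rankings maps a method name to its edge list, best edge first. Edges
    reported by fewer than min_methods methods are dropped; the rest are
    ordered by mean rank (lower is better).
    """
    ranks = {}
    for ranked in rankings.values():
        for position, edge in enumerate(ranked, start=1):
            ranks.setdefault(edge, []).append(position)
    kept = {edge: sum(r) / len(r)
            for edge, r in ranks.items() if len(r) >= min_methods}
    return sorted(kept.items(), key=lambda item: (item[1], item[0]))


# Hypothetical ranked outputs from three of the methods discussed above.
method_rankings = {
    "scMTNI": [("GATA1", "KLF1"), ("SPI1", "CEBPA"), ("TAL1", "GATA1")],
    "NetID": [("SPI1", "CEBPA"), ("GATA1", "KLF1")],
    "CICT": [("GATA1", "KLF1"), ("RUNX1", "SPI1")],
}

for (regulator, target), mean_rank in consensus_edges(method_rankings):
    print(f"{regulator} -> {target}: mean rank {mean_rank:.2f}")
```

Working with ranks rather than raw scores avoids having to put the different methods' edge weights on a common scale.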

Comparative Analysis of GRN Inference Methods

Table 5: Method Selection Guide for Different Research Contexts

| Method | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|
| Transcoder Analysis | High interpretability, reveals internal model logic | Dependent on scFM quality and architecture | Explaining scFM predictions, hypothesis generation |
| scMTNI | Incorporates lineage structure, multi-omics integration | Requires predefined lineage tree | Developmental systems, differentiation studies |
| NetID | Robust to noise, lineage-specific inference | Computationally intensive for large datasets | Noisy data, identifying branch-specific regulation |
| CICT | Causal inference, high precision | Requires labeled edges for training | Precision-critical applications, validation |
| Ensemble Approaches | Improved robustness, consensus networks | Complex implementation, computational cost | High-confidence discovery, integrative studies |

The integration of single-cell foundation models with specialized GRN inference methods represents a powerful paradigm for deciphering the genetic and epigenetic mechanisms that shape regulatory circuits. The approaches detailed in these application notes—transcoder-based circuit analysis, lineage-aware multi-task learning, metacell-based inference, and causal network discovery—provide researchers with a comprehensive toolkit for investigating regulatory networks across diverse biological contexts.

As single-cell technologies continue to evolve, generating increasingly complex and multi-modal datasets, these computational frameworks will be essential for extracting meaningful biological insights from the data deluge. The methods highlighted here not only address current challenges in GRN inference but also provide flexible foundations that can incorporate new data types and computational approaches as they emerge.

For research applications in drug development and disease mechanism elucidation, these protocols offer robust pathways for identifying key regulatory nodes and network perturbations associated with pathological states. By bridging cutting-edge computational approaches with fundamental biological questions, these methods enable deeper understanding of the dual genetic and epigenetic forces that shape the regulatory circuits governing cellular identity and function.

From Data to Networks: A Practical Guide to scGRN Inference Methods and Workflows

In the field of single-cell genomics, inferring accurate gene regulatory networks (GRNs) is fundamental for understanding cellular identity, differentiation, and disease mechanisms. GRNs model the complex interactions between transcription factors and their target genes, providing a systems-level view of transcriptional regulation. The advent of single-cell RNA sequencing (scRNA-seq) has provided unprecedented resolution for this task but also introduced significant technical challenges, most notably the "dropout" phenomenon—an excess of false zero measurements due to low mRNA capture efficiency. This article provides a detailed overview of three computational frameworks—SCENIC, IReNA, and DAZZLE—designed to address these challenges, complete with application notes, experimental protocols, and key resources for researchers and drug development professionals.

The following table summarizes the core characteristics, strengths, and limitations of the three toolkits.

Table 1: Comparative Analysis of GRN Inference Toolkits

| Framework | Core Methodology | Primary Input | Key Output | Handling of scRNA-seq Dropout | Key Advantage |
|---|---|---|---|---|---|
| SCENIC | Co-expression module identification + cis-regulatory motif analysis | scRNA-seq data | Regulons (TF + target genes) | Relies on initial co-expression inference (e.g., GENIE3/GRNBoost2) | Identifies biologically meaningful regulons via motif enrichment |
| IReNA | Integrated regulatory network analysis | scRNA-seq data, often with pseudo-temporal ordering | Gene modules and TFs driving trajectories | Not specifically addressed in core methodology | Facilitates network analysis along differentiation trajectories |
| DAZZLE | Autoencoder-based Structural Equation Model (SEM) + Dropout Augmentation (DA) | scRNA-seq data | Weighted adjacency matrix (GRN) | Explicitly models and regularizes against dropout via data augmentation | Improved robustness and stability against zero-inflation [15] |

Detailed Framework Protocols

DAZZLE: Protocol for Robust GRN Inference

DAZZLE introduces a novel approach to mitigate the impact of zero-inflation in single-cell data by using Dropout Augmentation (DA), a model regularization technique that improves resilience to dropout noise [15]. Its workflow is based on a stabilized autoencoder-based Structural Equation Model (SEM).

Experimental Workflow

[Figure: DAZZLE pipeline for inferring gene regulatory networks from single-cell RNA-sequencing data.]

Step-by-Step Protocol

- Input Data Preparation: Start with a raw single-cell gene expression count matrix (cells x genes). The prevalence of dropout means 57-92% of values can be zeros [15].

- Data Transformation: Apply a variance-stabilizing transformation. DAZZLE uses \( \log(x + 1) \) to reduce variance and avoid taking the log of zero [15].

- Dropout Augmentation (DA): During each training iteration, augment the input data by artificially setting a small, random subset of non-zero values to zero. This simulates additional dropout events, forcing the model to learn robustness against this noise [15].

- Model Training: Train the autoencoder-based SEM. The model is trained to reconstruct its input, and the parameterized adjacency matrix A is optimized as a part of this process. The DA regularization helps prevent overfitting to the dropout noise.

- GRN Extraction: Upon completion of training, the weights of the trained adjacency matrix A are retrieved. These weights represent the inferred regulatory interactions between genes, with higher absolute weights indicating stronger potential interactions [15].
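The transformation and Dropout Augmentation steps above can be illustrated with a minimal NumPy sketch. It is not the DAZZLE implementation: the function names, the `rate` parameter, and the toy Poisson matrix are assumptions made for illustration, and the autoencoder itself is omitted.

```python
import numpy as np

def log_transform(counts):
    """Apply log(x + 1) to a raw cells-by-genes count matrix."""
    return np.log1p(np.asarray(counts, dtype=float))

def dropout_augment(x, rate=0.1, rng=None):
    """Return a copy of x with a random subset of its non-zero entries set to zero.

    `rate` (probability of zeroing each non-zero entry) is a hypothetical
    hyperparameter name chosen for this sketch.
    """
    rng = np.random.default_rng() if rng is None else rng
    x_aug = x.copy()
    # Only entries that are currently non-zero are eligible to be dropped.
    drop = (rng.random(x_aug.shape) < rate) & (x_aug != 0)
    x_aug[drop] = 0.0
    return x_aug

rng = np.random.default_rng(0)
counts = rng.poisson(0.5, size=(200, 50))  # toy sparse count matrix
x = log_transform(counts)
# In a training loop, a fresh augmentation would be drawn at every iteration.
x_aug = dropout_augment(x, rate=0.1, rng=rng)
```

Drawing a new mask per iteration, rather than augmenting once, is what makes this act as a regularizer: the model never sees the same pattern of synthetic zeros twice.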

Research Reagent Solutions

Table 2: Essential Computational Reagents for DAZZLE

| Item | Function/Description | Key Feature |
|---|---|---|
| Processed scRNA-seq Data | Input for GRN inference; a cells-by-genes matrix. | Must be pre-processed (e.g., normalized). Raw counts transformed using \( \log(x+1) \) [15]. |
| Dropout Augmentation (DA) Algorithm | Model regularization component that adds synthetic zeros during training. | Improves model robustness and stability against zero-inflation, moving beyond imputation [15]. |
| Parameterized Adjacency Matrix (A) | Core model parameter representing the GRN structure. | Learned during training; its weights indicate the strength and direction of gene-gene interactions [15]. |
| DAZZLE Software | The implemented model combining the autoencoder SEM and DA. | Provides a stabilized and robust version of GRN inference. Source code is publicly available [15]. |
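Once an adjacency matrix A has been learned, turning it into a ranked edge list is straightforward. The sketch below assumes a toy 3-gene matrix and assumes that A[i, j] holds the weight of the edge from gene i to gene j; the orientation convention and the helper name `rank_edges` are illustrative, not taken from the DAZZLE code.

```python
import numpy as np

def rank_edges(A, genes, top_k=5):
    """List the top_k strongest off-diagonal entries of A as (regulator, target, weight).

    Assumes A[i, j] is the weight of the edge genes[i] -> genes[j]
    (an assumption of this sketch).
    """
    A = np.asarray(A, dtype=float)
    strength = np.abs(A)             # new array, so A itself is left untouched
    np.fill_diagonal(strength, 0.0)  # ignore self-loops
    order = np.argsort(strength, axis=None)[::-1][:top_k]
    rows, cols = np.unravel_index(order, A.shape)
    return [(genes[i], genes[j], float(A[i, j])) for i, j in zip(rows, cols)]

genes = ["G1", "G2", "G3"]  # hypothetical gene names
A = np.array([[0.9, 0.1, -0.8],
              [0.0, 0.5, 0.3],
              [0.2, 0.0, 0.7]])
edges = rank_edges(A, genes, top_k=2)
```

Ranking by absolute weight keeps both activating (positive) and repressing (negative) candidate interactions, with the sign preserved in the returned tuples.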

SCENIC: Protocol for Regulon-Based Analysis

SCENIC (Single-Cell rEgulatory Network Inference and Clustering) is a widely used pipeline that infers transcription factor regulatory networks, known as regulons, and uses them to identify cell states.

Experimental Workflow

Step-by-Step Protocol

- Co-expression Network Inference: Identify potential TF-target gene relationships from the scRNA-seq data using a tree-based algorithm like GENIE3 or GRNBoost2. This step generates a list of potential targets for each TF.

- Regulon Inference (RcisTarget): Prune the co-expression modules using cis-regulatory motif analysis. This step retains only those targets for a TF where the gene set is significantly enriched for the TF's binding motif, resulting in direct regulons (TF + high-confidence targets).

- Cellular Activity Scoring (AUCell): Quantify the activity of each regulon in individual cells by calculating the Area Under the recovery Curve (AUC) for the regulon's gene set against the cell's expression ranking.

- Downstream Analysis: The resulting regulon activity matrix (cells x regulons) can be used for clustering cells, identifying key drivers of cell fate, and visualizing cellular states.
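To make the activity-scoring step concrete, here is a simplified NumPy sketch of a recovery-curve AUC. It is not the AUCell implementation: ties are broken by a stable sort, the normalization is a plain "best possible curve" ratio, and `top_fraction`, the regulon names, and the toy matrix are assumptions for illustration.

```python
import numpy as np

def recovery_auc(expr_row, gene_idx, max_rank):
    """Normalized area under the recovery curve for one cell and one gene set."""
    # Rank genes from highest to lowest expression.
    order = np.argsort(-expr_row, kind="stable")
    in_set = np.isin(order[:max_rank], gene_idx)
    # Recovery curve: number of set genes found among the top r genes, r = 1..max_rank.
    recovered = np.cumsum(in_set)
    # Best case: all set genes occupy the very top ranks.
    n_hit = min(len(gene_idx), max_rank)
    best = np.cumsum(np.arange(max_rank) < n_hit)
    return float(recovered.sum() / best.sum())

def regulon_activity(expr, regulons, top_fraction=0.05):
    """Return a cells-by-regulons matrix of recovery-curve scores."""
    max_rank = max(1, int(round(top_fraction * expr.shape[1])))
    return np.array([[recovery_auc(cell, idx, max_rank) for idx in regulons.values()]
                     for cell in expr])

# Toy data: 2 cells x 6 genes; regulons are given as column indices.
expr = np.array([[5.0, 4.0, 3.0, 2.0, 1.0, 0.0],
                 [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]])
regulons = {"TF_A": [0, 1], "TF_B": [4, 5]}  # hypothetical regulons
activity = regulon_activity(expr, regulons, top_fraction=0.5)
```

Because the score depends only on within-cell gene rankings, it is insensitive to per-cell scaling, which is the property that makes regulon activity comparable across cells.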

IReNA: Protocol for Integrated Network Analysis along Trajectories

IReNA (Integrated Regulatory Network Analysis) integrates pseudo-temporal ordering of single cells with network analysis to identify TFs and gene modules that drive differentiation processes.

Experimental Workflow

Step-by-Step Protocol

- Pseudo-time Construction: Order single cells along a continuous trajectory (e.g., using Monocle, PAGA, or Slingshot) based on transcriptomic similarity to reconstruct a dynamic biological process like differentiation.

- Co-expression Module Detection: Perform weighted gene co-expression network analysis (WGCNA) or similar on the pseudo-temporally ordered cells to identify modules of genes with correlated expression patterns across the trajectory.

- Network Integration: Link co-expression modules to candidate TFs by integrating TF-binding motifs (e.g., from JASPAR) and/or TF expression patterns. This builds a coordinated regulatory network.

- Validation and Key Driver Analysis: Validate the inferred networks using functional enrichment analysis and identify key regulatory TFs that sit at the top of the network hierarchy and drive module expression.
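The first two steps of this protocol rest on a simple idea: smooth expression along pseudotime, then look for genes whose smoothed profiles move together. The sketch below illustrates that idea with NumPy on simulated data. It is not IReNA's code; the binning scheme, the function name, and the toy genes (gene 0 standing in for a candidate TF) are assumptions.

```python
import numpy as np

def bin_by_pseudotime(expr, pseudotime, n_bins=10):
    """Average a cells-by-genes matrix within equal-size bins of pseudotime-ordered cells."""
    order = np.argsort(pseudotime)
    chunks = np.array_split(order, n_bins)
    return np.vstack([expr[idx].mean(axis=0) for idx in chunks])

rng = np.random.default_rng(1)
pseudotime = rng.random(300)
# Toy data: genes 0 and 1 rise along the trajectory, gene 2 falls.
signal = np.column_stack([pseudotime, pseudotime, 1.0 - pseudotime])
expr = signal + rng.normal(0.0, 0.3, size=signal.shape)

profiles = bin_by_pseudotime(expr, pseudotime, n_bins=10)  # bins x genes
corr = np.corrcoef(profiles, rowvar=False)                 # genes x genes
```

In this toy case, `corr[0, 1]` is strongly positive and `corr[0, 2]` strongly negative, which is the kind of signal that would place genes 0 and 1 in one module and flag gene 0 as a candidate activator of gene 1 and repressor of gene 2, pending motif support.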

Performance Benchmarking and Data Presentation

Benchmarking on the BEELINE framework demonstrates the performance characteristics of different GRN inference methods. DAZZLE, in particular, was developed to address stability issues observed in other neural network-based methods like DeepSEM, whose inferred network quality can degrade quickly after model convergence due to overfitting to dropout noise [15].

Table 3: Quantitative Benchmarking of GRN Inference Methods (Illustrative Data)

| Method | AUC (Early Development) | AUC (Differentiation) | Stability (Score) | Run Time (CPU Hours) | Key Metric |
|---|---|---|---|---|---|
| GENIE3 | 0.75 | 0.68 | High | 12.5 | Area Under the Precision-Recall Curve (AUPR) |
| GRNBoost2 | 0.76 | 0.69 | High | 5.2 | Area Under the Precision-Recall Curve (AUPR) |
| DeepSEM | 0.82 | 0.75 | Low | 1.1 | Area Under the Precision-Recall Curve (AUPR) |
| DAZZLE | 0.84 | 0.78 | High | 1.3 | Area Under the Precision-Recall Curve (AUPR) |
| Benchmark Dataset | mESC (GSE75748) | mDC (GSE48968) | Variation across runs | Standard hardware | Performance Measure |
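For readers unfamiliar with the AUPR metric named in the table, the following pure-Python sketch computes it with the average-precision estimator for a ranked list of predicted edges. The scores and labels are made-up toy values, and the function name is an assumption; benchmark suites may use a different interpolation of the precision-recall curve.

```python
def average_precision(scores, labels):
    """Estimate the area under the precision-recall curve via average precision.

    scores: predicted edge weights (higher = more confident).
    labels: 1 if the edge is in the reference network, else 0.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(labels)
    hits, total = 0, 0.0
    for k, (_, is_true) in enumerate(ranked, start=1):
        if is_true:
            hits += 1
            total += hits / k  # precision at the rank of each true edge
    return total / n_pos

scores = [0.9, 0.8, 0.7, 0.6]  # toy predicted edge weights
labels = [1, 0, 1, 0]          # toy ground truth
ap = average_precision(scores, labels)
baseline = sum(labels) / len(labels)  # expected precision of a random ranking
```

Because true regulatory edges are rare relative to all possible gene pairs, comparing `ap` against the random `baseline` is usually more informative than reading the raw value alone.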

Application Notes for Drug Development

- Identifying Novel Therapeutic Targets: SCENIC's regulon analysis can pinpoint master regulator TFs specific to diseased cell states (e.g., cancer stem cells). Because TFs are often considered "undruggable", their downstream regulon members can be explored as alternative, druggable points of intervention.

- Mechanism of Action (MoA) Elucidation: Applying DAZZLE to single-cell data from drug-treated versus control samples can reveal shifts in GRN architecture. This systems-level view helps deconvolute a drug's MoA by identifying the key regulatory pathways it disrupts, beyond just differential gene expression.

- Biomarker Discovery for Patient Stratification: IReNA can identify TFs and regulatory programs that define distinct disease endotypes along a progression trajectory. The activity of these regulons, derived from patient biopsies, can serve as biomarkers for stratifying patients for targeted therapies.

Gene regulatory network (GRN) inference is fundamental for understanding cellular identity, function, and the molecular basis of disease. A regulon, a set of genes controlled by a common transcription factor (TF), represents a key functional module within GRNs. The advent of single-cell RNA sequencing (scRNA-seq) has enabled the resolution of GRNs at the cellular level, while single-cell foundation models (scFMs) leverage large-scale pretraining to learn generalizable representations of cellular biology [5] [16]. This protocol details a pipeline, framed within scFM research, for inferring regulons from single-cell genomic data. The framework integrates universal preprocessing, scFM-based feature extraction and analysis, and specialized GRN inference tools to identify context-specific regulons, giving researchers and drug development professionals a methodological foundation.

Background and Definitions

- Gene Regulatory Network (GRN): A graph-level representation where nodes denote genes and edges represent regulatory interactions between transcription factors and their target genes. GRNs are central to understanding cellular dynamics and metabolic systems [8].

- Regulon: A functional subunit of a GRN, consisting of a transcription factor and its direct target genes.

- Single-cell Foundation Models (scFMs): Large-scale deep learning models (e.g., scGPT, Geneformer) pretrained on vast, diverse single-cell datasets using self-supervised objectives. They can be adapted (e.g., via fine-tuning) for various downstream tasks, including GRN inference and regulon identification, by learning fundamental principles of gene expression and regulation [5] [16].

- Universal Preprocessing: A standardized workflow for handling single-cell genomics data from different technologies (e.g., scRNA-seq, scATAC-seq) to mitigate batch effects and ensure consistency for meta-analyses [17].

Preliminary: Data Acquisition and Preprocessing

The first step involves gathering a high-quality single-cell dataset. Useful resources include:

- Public Repositories: NCBI GEO, EMBL-EBI Expression Atlas, and single-cell-specific platforms like CZ CELLxGENE, which provides standardized access to millions of annotated single cells [5].

- Curated Compendia: PanglaoDB and the Human Cell Atlas, which collate data from multiple sources [5].

Raw sequencing data (FASTQ files) must undergo quality control. Tools like FastQC can assess sequence quality. For scRNA-seq data, key quality metrics include:

- The number of genes detected per cell.

- The total count depth per cell.

- The percentage of mitochondrial reads, which helps identify low-quality or dying cells.
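The three per-cell metrics listed above can be computed directly from a count matrix. The NumPy sketch below assumes a dense cells-by-genes array and assumes that mitochondrial genes are identified by the "MT-" symbol prefix (the human convention; the comparison is case-insensitive so that "mt-" also matches). The function name and toy data are illustrative.

```python
import numpy as np

def qc_metrics(counts, gene_names, mito_prefix="MT-"):
    """Return genes detected, total counts, and percent mitochondrial counts per cell."""
    counts = np.asarray(counts)
    genes_per_cell = (counts > 0).sum(axis=1)
    depth = counts.sum(axis=1)
    # Case-insensitive prefix match so that both "MT-" and "mt-" symbols are caught.
    is_mito = np.array([g.upper().startswith(mito_prefix) for g in gene_names])
    mito_counts = counts[:, is_mito].sum(axis=1)
    # Guard against division by zero for empty cells.
    pct_mito = 100.0 * mito_counts / np.maximum(depth, 1)
    return genes_per_cell, depth, pct_mito

gene_names = ["MT-CO1", "GAPDH", "ACTB"]
counts = np.array([[5, 3, 2],
                   [0, 0, 4]])
genes_per_cell, depth, pct_mito = qc_metrics(counts, gene_names)
```

Here the first cell has half of its counts in a mitochondrial gene and would typically be flagged, while the second cell is flagged on the other two metrics (one gene detected, very low depth).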

Universal Preprocessing and Count Matrix Generation

This critical step converts raw sequencing reads into a gene expression count matrix, which is the standard input for scFMs and downstream analysis. The universal preprocessing approach ensures consistency across different assay types [17].

Experimental Protocol: Universal Preprocessing with cellatlas and kb-python

This protocol is based on the cellatlas package, which uses kallisto and bustools via kb-python for rapid, uniform processing [17].

Input Requirements:

- Paired-end FASTQ files (R1.fastq.gz, R2.fastq.gz).

- A genome reference file in FASTA format (genome.fa).

- A gene annotation file in GTF format (genome.gtf).

- A seqspec assay specification file (spec.yaml), which machine-readably describes the structure of the sequencing reads (e.g., positions of cellular barcodes, UMIs, and cDNA) [17].

- A barcode allow-list file (bcs.txt).

Execution: Run the cellatlas pipeline as a single command in a terminal. The -m parameter specifies the molecular modality (e.g., rna for RNA-seq). This command automates:

- Read Cataloging: Mapping reads to genomic features.

- Barcode Error Correction: Using a consistent strategy (e.g., Hamming-1 distance) to correct sequencing errors in barcodes.

- Read/UMI Counting: Generating a sparse count matrix of genes (or features) by cells [17].

Output: A gene-cell count matrix, essential for all subsequent steps.

Data Wrangling and Filtering

The raw count matrix requires further processing before model input. Using tools like R/Bioconductor or Python-based frameworks:

- Filtering: Remove low-quality cells (e.g., with an abnormally low number of genes or high mitochondrial content) and genes that are detected in only a few cells.

- Normalization: Adjust counts for sequencing depth variation between cells (e.g., using log-normalization).

- Variable Gene Selection: Identify a subset of genes that exhibit high cell-to-cell variation, which are often the most biologically informative.
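The three steps above can be sketched end to end in NumPy. This is a deliberately simplified illustration: the thresholds, the `target_sum` scaling factor, and ranking genes by raw variance of the normalized values are all assumptions of the sketch, whereas established toolkits typically model the mean-variance trend when selecting variable genes.

```python
import numpy as np

def preprocess(counts, min_genes=5, min_cells=3, target_sum=1e4, n_top=10):
    """Filter, log-normalize, and select variable genes from a cells-by-genes matrix."""
    counts = np.asarray(counts, dtype=float)
    # Filtering: drop cells with too few detected genes, then rarely detected genes.
    keep_cells = (counts > 0).sum(axis=1) >= min_genes
    counts = counts[keep_cells]
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts = counts[:, keep_genes]
    # Normalization: scale each cell to a common depth, then apply log(x + 1).
    depth = np.maximum(counts.sum(axis=1, keepdims=True), 1.0)
    norm = np.log1p(counts / depth * target_sum)
    # Variable gene selection: keep the n_top genes with the highest variance.
    variances = norm.var(axis=0)
    top = np.sort(np.argsort(variances)[::-1][:n_top])
    return norm[:, top], keep_cells, np.flatnonzero(keep_genes)[top]

rng = np.random.default_rng(2)
counts = rng.poisson(1.0, size=(100, 30))  # toy count matrix
counts[:5] = 0                             # simulate five empty droplets
X, kept_cells, kept_genes = preprocess(counts)
```

The returned `kept_cells` mask and `kept_genes` indices refer to the original matrix, so cell and gene annotations can be subset consistently with `X`.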

Table 1: Key Research Reagents and Computational Tools

| Item Name | Function/Biological Role | Example/Format |
|---|---|---|
| CZ CELLxGENE [5] | Data source; provides unified access to curated, annotated single-cell datasets. | Online platform/database |
| cellatlas [17] | Universal preprocessing; generates a count matrix from raw FASTQ files for various assays. | Python package/command-line tool |
| kb-python [17] | Core preprocessing engine; performs read cataloging, barcode error correction, and counting. | Python package |
| seqspec File [17] | Assay specification; machine-readable description of read structure for universal preprocessing. | YAML file |
| Barcode Allow-list [17] [18] | Demultiplexing; list of known, valid barcode sequences for assigning reads to cells. | .tabular or .txt file |