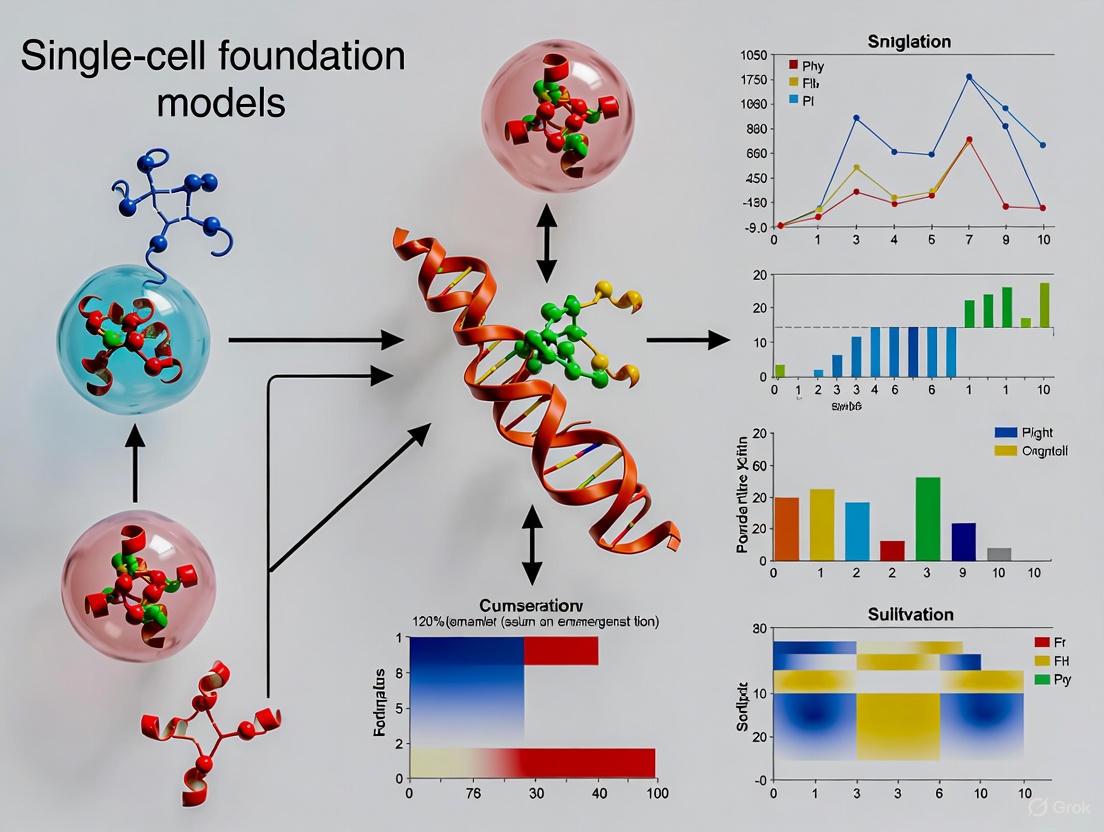

Emergent Abilities in Single-Cell Foundation Models: A Comprehensive Guide for Biomedical Researchers

Abstract

Single-cell foundation models (scFMs) represent a paradigm shift in computational biology, leveraging large-scale pretraining on millions of cells to develop emergent capabilities for downstream biological tasks. This article explores the transformative potential of scFMs in enabling zero-shot cell type annotation, cross-species data integration, in silico perturbation modeling, and gene regulatory network inference. We examine the underlying architectural innovations, including transformer-based models like scGPT and Geneformer, and provide a critical assessment of their performance against traditional methods. For researchers and drug development professionals, this review offers a balanced perspective on both the promising applications and current limitations of scFMs, including challenges in biological interpretability, computational demands, and benchmarking standards. Finally, we discuss future directions for translating these computational advances into mechanistic insights and clinical applications.

Demystifying Single-Cell Foundation Models: From Basic Concepts to Architectural Breakthroughs

What Defines a Single-Cell Foundation Model? Core Principles and Analogies to Large Language Models

Single-cell foundation models (scFMs) represent a paradigm shift in computational biology, leveraging large-scale deep learning to interpret the complex language of cellular biology. Much like large language models (LLMs) have revolutionized natural language processing, scFMs are pretrained on vast, diverse single-cell omics datasets to learn fundamental biological principles. These models employ self-supervised learning on millions of single-cell transcriptomes, treating cells as sentences and genes as words to capture universal patterns of gene regulation and cellular function [1]. The core architecture typically relies on transformer-based networks that enable the model to handle various downstream tasks through fine-tuning or zero-shot learning, demonstrating emergent abilities such as predicting cellular responses to perturbations and annotating novel cell types [2] [3]. This technical guide explores the defining principles of scFMs, their architectural foundations, and the striking analogies to LLMs that underpin their transformative potential in biological research and therapeutic development.

The advent of high-throughput single-cell sequencing has generated massive volumes of transcriptomic data, creating both an unprecedented opportunity and a substantial computational challenge for extracting biological insights. Single-cell RNA sequencing (scRNA-seq) data exhibits characteristic high dimensionality, sparsity, and technical noise that complicate analysis using traditional machine learning approaches [4]. Concurrently, the transformer architecture has revolutionized artificial intelligence, enabling the development of foundation models (large-scale models pretrained on extensive datasets that can be adapted to diverse downstream tasks) [1].

The conceptual bridge between natural language and biology has enabled this transformation: just as LLMs learn the statistical relationships between words in vast text corpora, scFMs learn the regulatory relationships between genes across millions of cells [3]. These models develop a fundamental understanding of cellular grammar—the rules governing how gene expression patterns define cell identity, state, and function [1]. The emergence of scFMs represents a pivotal advancement in computational biology, offering a unified framework for analyzing cellular heterogeneity and complex regulatory networks that underpin both normal physiology and disease processes [4].

Core Architectural Principles of Single-Cell Foundation Models

Fundamental Components and LLM Analogies

Single-cell foundation models build upon a conceptual framework that directly parallels the architecture of large language models. The table below systematizes the core components and their biological analogues.

Table 1: Core Components of Single-Cell Foundation Models and Their LLM Analogies

| Component | LLM Equivalent | Description in scFMs | Key Function |
|---|---|---|---|
| Token | Word | Gene or genomic feature | Fundamental unit of input data; represents individual biological components |
| Tokenization | Word segmentation | Converting gene expression values into discrete units | Standardizes raw expression data into model-processable tokens [1] |
| Sentence | Sequence of words | Single cell's complete gene expression profile | Represents a complete cellular state as an ordered collection of genes [5] |
| Embedding | Word vector | Numerical representation of genes/cells | Captures semantic biological relationships in continuous vector space [2] |
| Training Corpus | Text collection (e.g., Wikipedia) | Aggregated single-cell datasets (e.g., CZ CELLxGENE) | Provides diverse examples of cellular states for self-supervised learning [1] |
| Attention Mechanism | Context weighting | Gene-gene and cell-cell dependency modeling | Identifies influential genes and regulatory relationships within cellular contexts [1] |

Model Architectures and Tokenization Strategies

Most scFMs utilize transformer architectures, though with significant adaptations for biological data. A primary challenge is that gene expression data lacks inherent sequence—unlike words in a sentence, genes have no natural ordering [1]. To address this, models employ various tokenization strategies:

- Expression-based ordering: Genes are ranked by expression levels within each cell, creating a deterministic sequence from highest to lowest expressed genes [1] [3].

- Bin-based partitioning: Genes are partitioned into expression level bins, with rankings determining positional encoding [1].

- Graph-based approaches: Emerging models like CGCompass represent cells as graphs, with genes as nodes and regulatory relationships as edges, avoiding an artificially imposed gene order entirely [2].
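To make the first two strategies concrete, here is a minimal NumPy sketch for a single cell. It assumes an expression vector and a matching list of gene names; the function names are hypothetical, and published models add steps this sketch omits (for example, per-gene normalization across the corpus before ranking, or model-specific bin definitions).

```python
import numpy as np

def rank_tokenize(expr, gene_names, max_len=None):
    """Expression-based ordering: return expressed genes sorted from highest to lowest."""
    expr = np.asarray(expr, dtype=float)
    expressed = np.flatnonzero(expr > 0)  # drop genes with zero counts
    # Descending order of expression; a stable sort keeps ties in input order
    order = expressed[np.argsort(-expr[expressed], kind="stable")]
    tokens = [gene_names[i] for i in order]
    return tokens if max_len is None else tokens[:max_len]

def bin_tokenize(expr, n_bins=5):
    """Bin-based discretization: map each nonzero value to a bin 1..n_bins using
    per-cell quantile edges; zero values keep the reserved bin 0."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        # n_bins - 1 interior edges at evenly spaced quantiles of the nonzero values
        inner_edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nonzero] = np.digitize(expr[nonzero], inner_edges) + 1
    return bins
```

Rank-based ordering yields a variable-length gene sequence with no explicit values, while binning keeps every gene position and replaces its value with a small integer that can be embedded like a word.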

The transformer architecture in scFMs typically follows either encoder-based (BERT-like) or decoder-based (GPT-like) designs. Encoder models use bidirectional attention to learn from all genes in a cell simultaneously, making them effective for classification tasks like cell type annotation. Decoder models use masked (unidirectional) self-attention, so that each prediction is conditioned only on genes the model treats as already known; they iteratively predict the remaining genes and excel at generative tasks [1]. Hybrid architectures that combine graph neural networks with transformers are also emerging, leveraging message-passing mechanisms to incorporate prior biological knowledge [2].
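Much of the difference between the two designs comes down to which positions each token may attend to. The sketch below builds boolean attention masks (True means "may attend"). The function names are hypothetical, and `known_gene_mask` is only an illustrative way to condition predictions on known genes, not the mask of any specific published model.

```python
import numpy as np

def encoder_mask(n):
    """Bidirectional (BERT-like): every token may attend to every token."""
    return np.ones((n, n), dtype=bool)

def causal_mask(n):
    """Causal (GPT-like): token i may attend only to tokens 0..i."""
    return np.tril(np.ones((n, n), dtype=bool))

def known_gene_mask(known):
    """Hypothetical variant: each token sees all 'known' genes plus itself,
    so predictions for unknown genes are conditioned on known genes only."""
    known = np.asarray(known, dtype=bool)
    n = known.size
    mask = np.tile(known, (n, 1))   # column j is visible to every row if gene j is known
    mask |= np.eye(n, dtype=bool)   # every token may also attend to itself
    return mask
```

Because genes have no natural order, a strictly causal mask imposes an arbitrary direction on the sequence; a known/unknown split like the last function avoids that while still keeping hidden genes from seeing one another.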

Pretraining Strategies and Data Requirements

Pretraining represents the foundational phase where scFMs learn universal biological principles from massive-scale data. The self-supervised pretraining objective typically involves masked gene prediction, where a portion of gene expression values are randomly masked, and the model learns to reconstruct them based on the remaining cellular context [1] [2]. This process forces the model to internalize gene-gene relationships, regulatory patterns, and cellular states without requiring labeled data.
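A minimal sketch of the masked gene prediction objective follows. It assumes a 1-D expression vector, a 15% masking ratio borrowed from the BERT convention, a sentinel value of -1 for masked entries, and a mean-squared-error loss restricted to masked positions; all of these are illustrative assumptions, and individual scFMs differ in what they mask and which loss they use.

```python
import numpy as np

def mask_expression(expr, mask_ratio=0.15, mask_value=-1.0, rng=None):
    """Randomly hide a fraction of one cell's expression values.

    Returns the corrupted vector and a boolean array marking masked positions."""
    rng = np.random.default_rng() if rng is None else rng
    expr = np.asarray(expr, dtype=float)
    n_mask = max(1, int(round(mask_ratio * expr.size)))
    idx = rng.choice(expr.size, size=n_mask, replace=False)
    is_masked = np.zeros(expr.size, dtype=bool)
    is_masked[idx] = True
    corrupted = expr.copy()
    corrupted[is_masked] = mask_value  # sentinel the model must learn to fill in
    return corrupted, is_masked

def masked_mse(pred, target, is_masked):
    """Reconstruction loss evaluated only on the masked positions."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    diff = pred[is_masked] - target[is_masked]
    return float(np.mean(diff ** 2))

# Usage with a trivial baseline "model" that fills every masked slot with the
# mean of the visible values (a stand-in for a transformer's prediction).
expr = np.array([0.0, 3.2, 1.1, 0.0, 5.4, 2.2, 0.7, 4.0])
corrupted, is_masked = mask_expression(expr, rng=np.random.default_rng(0))
baseline = np.where(is_masked, corrupted[~is_masked].mean(), corrupted)
loss = masked_mse(baseline, expr, is_masked)
```

Scoring only the masked positions means the model gains nothing by copying visible inputs; it can only lower the loss by inferring hidden values from the rest of the cell.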

The scale and diversity of pretraining data critically determines model capabilities. Successful scFMs train on tens of millions of human cells spanning diverse tissues, conditions, and experimental platforms [1] [2]. Major data sources include:

- CZ CELLxGENE: Provides unified access to annotated single-cell datasets with over 100 million unique cells standardized for analysis [1]

- Human Cell Atlas: Offers broad coverage of cell types and states across multiple organs [1]

- Public repositories: GEO, SRA, and EMBL-EBI Expression Atlas host thousands of single-cell sequencing studies [1]

Data quality challenges include batch effects, technical noise, and varying processing steps across studies. Effective pretraining requires careful dataset selection, cell and gene filtering, and quality control to ensure the model learns biological rather than technical variations [1].
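The cell and gene filtering step can be sketched as below for a dense cells-by-genes count matrix. The thresholds (at least 200 detected genes per cell, at least 3 cells per gene, at most 20% mitochondrial counts) and the `MT-` prefix for human mitochondrial genes are common tutorial defaults used here as assumptions, not the criteria of any particular scFM corpus.

```python
import numpy as np

def qc_filter(counts, gene_names, min_genes=200, min_cells=3, max_mito_frac=0.2):
    """Drop low-quality cells, then drop genes detected in too few remaining cells."""
    counts = np.asarray(counts)
    genes = np.asarray(gene_names).astype(str)
    # Cell-level filters: detected genes and mitochondrial fraction
    genes_per_cell = (counts > 0).sum(axis=1)
    total = counts.sum(axis=1)
    is_mito = np.char.startswith(genes, "MT-")
    mito_frac = np.divide(counts[:, is_mito].sum(axis=1), total,
                          out=np.zeros(len(total)), where=total > 0)
    keep_cells = (genes_per_cell >= min_genes) & (mito_frac <= max_mito_frac)
    counts = counts[keep_cells]
    # Gene-level filter, computed on the retained cells only
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    return counts[:, keep_genes], genes[keep_genes], keep_cells
```

Filtering cells before genes matters: a gene expressed only in discarded cells should not survive just because those cells were counted.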

Experimental Framework and Evaluation

Benchmarking Methodologies for scFM Performance

Rigorous evaluation frameworks have been developed to assess scFM capabilities across diverse biological tasks. The table below summarizes key performance metrics and evaluation paradigms used in comprehensive benchmarking studies.

Table 2: scFM Evaluation Metrics and Benchmarking Frameworks

| Evaluation Dimension | Specific Metrics | Description | Leading Performers |
|---|---|---|---|
| Cell-level Tasks | Cell type annotation accuracy, Batch correction (ASW, ARI), Label transfer F1 score | Evaluates model's ability to correctly identify and group cells by type and integrate datasets | scGPT, Geneformer, CGCompass [4] [6] |
| Gene-level Tasks | Gene function prediction, Gene-gene interaction recovery, Gene embedding quality | Assesses whether embeddings capture functional biological relationships between genes | scFoundation, Geneformer, CGCompass [4] [6] |
| Perturbation Prediction | Expression change correlation, Top-k candidate accuracy | Measures ability to predict cellular responses to genetic or chemical perturbations | scGPT, Geneformer [4] |
| Biological Relevance | scGraph-OntoRWR, LCAD metrics | Novel metrics evaluating consistency with prior biological knowledge from cell ontologies | CGCompass, scGPT [4] |
| Zero-shot Performance | Task adaptation without fine-tuning | Tests emergent capabilities on novel tasks without additional training | scGPT, Geneformer [4] |

Key Experimental Protocols

To ensure reproducible evaluation of scFMs, researchers have standardized several experimental protocols:

Protocol 1: Zero-shot Cell Type Annotation

- Input Preparation: Extract cell embeddings from pretrained scFM without fine-tuning

- Reference Mapping: Project query cells into reference embedding space using canonical correlation analysis

- Label Transfer: Apply k-nearest neighbors classification against annotated reference cells

- Evaluation: Calculate accuracy against held-out annotations and ontological consistency using Lowest Common Ancestor Distance (LCAD) metric [4]
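The label-transfer and evaluation steps of Protocol 1 can be sketched with NumPy. This sketch assumes the embeddings already share one space (the CCA projection step is omitted), uses cosine similarity with a majority vote over k neighbors, and reads LCAD as the number of ontology edges between the predicted and true labels through their lowest common ancestor in a tree. That reading is an assumption; the benchmark's exact definition may differ, and the function names are hypothetical.

```python
import numpy as np
from collections import Counter

def knn_transfer(ref_emb, ref_labels, query_emb, k=5):
    """Assign each query cell the majority label among its k most similar reference cells."""
    ref = np.asarray(ref_emb, dtype=float)
    query = np.asarray(query_emb, dtype=float)
    ref_n = ref / np.linalg.norm(ref, axis=1, keepdims=True)      # unit length, so the
    query_n = query / np.linalg.norm(query, axis=1, keepdims=True)  # dot product is cosine
    neighbors = np.argsort(-(query_n @ ref_n.T), axis=1)[:, :k]
    return [Counter(ref_labels[j] for j in row).most_common(1)[0][0] for row in neighbors]

def ancestors(term, parent):
    """Path from a term up to the ontology root (parent maps child -> parent)."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path

def lca_distance(a, b, parent):
    """Edges from a to b via their lowest common ancestor; 0 for a correct prediction."""
    path_a, path_b = ancestors(a, parent), ancestors(b, parent)
    depth_in_b = {t: i for i, t in enumerate(path_b)}
    for i, t in enumerate(path_a):
        if t in depth_in_b:
            return i + depth_in_b[t]
    raise ValueError("terms share no common ancestor")

def evaluate(pred, true, parent):
    """Accuracy plus mean LCAD, so near-misses are penalized less than distant errors."""
    acc = float(np.mean([p == t for p, t in zip(pred, true)]))
    mean_lcad = float(np.mean([lca_distance(p, t, parent) for p, t in zip(pred, true)]))
    return acc, mean_lcad

# Hypothetical toy ontology
ONTOLOGY = {"CD4 T cell": "T cell", "CD8 T cell": "T cell",
            "T cell": "lymphocyte", "B cell": "lymphocyte"}
```

With this toy ontology, mislabeling a CD4 T cell as a CD8 T cell costs 2 edges, while mislabeling it as a B cell costs 3, which is the kind of graded error signal plain accuracy cannot express.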

Protocol 2: In-silico Perturbation Prediction

- Baseline Representation: Encode wild-type cell state using scFM to establish baseline embeddings

- Perturbation Simulation: Mask or modify target gene tokens to simulate knockout or overexpression

- Forward Pass: Generate predicted post-perturbation expression profile through model inference

- Validation: Compare predicted expression changes to experimental ground truth using Pearson correlation and mean squared error [1] [2]
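A minimal sketch of the validation step follows. It computes Pearson correlation on expression changes relative to the unperturbed control rather than on raw profiles, a common choice because raw profiles are dominated by baseline expression and can correlate highly even when the predicted change is wrong. The function name and dictionary keys are hypothetical.

```python
import numpy as np

def perturbation_metrics(control, predicted, observed):
    """Compare a predicted post-perturbation profile with the measured one."""
    control = np.asarray(control, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    observed = np.asarray(observed, dtype=float)
    pred_delta = predicted - control  # predicted change from the wild-type baseline
    obs_delta = observed - control    # measured change from the same baseline
    pearson_delta = float(np.corrcoef(pred_delta, obs_delta)[0, 1])
    mse = float(np.mean((predicted - observed) ** 2))
    return {"pearson_delta": pearson_delta, "mse": mse}
```

The two metrics are complementary: a prediction can get the direction of every change right (correlation near 1) while still misjudging its magnitude, which only the MSE reveals.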

Protocol 3: Batch Integration Assessment

- Dataset Selection: Curate multi-batch datasets with known biological ground truth

- Embedding Generation: Process each batch through scFM to obtain integrated embeddings

- Metric Calculation: Compute average silhouette width (ASW) scores for batch mixing (ASW_batch) and biological conservation (ASW_bio)

- Visualization: Project embeddings using UMAP for qualitative assessment of batch mixing and structure preservation [4]
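The two silhouette scores from Protocol 3 can be sketched in NumPy as follows. As an assumption, this follows scIB-style conventions: the biological ASW is rescaled from [-1, 1] to [0, 1], and the batch ASW is computed within each cell type as the mean of 1 - |s|, so well-mixed batches score near 1. Published benchmarks may use variants of these formulas, and the function names are hypothetical.

```python
import numpy as np

def silhouette_samples_np(X, labels):
    """Per-cell silhouette s = (b - a) / max(a, b); cells in singleton groups get 0."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)  # pairwise distances
    groups = np.unique(labels)
    s = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        if same.sum() <= 1:
            continue
        a = D[i, same].sum() / (same.sum() - 1)  # mean distance to own group (self excluded)
        b = min(D[i, labels == g].mean() for g in groups if g != labels[i])
        s[i] = (b - a) / max(a, b)
    return s

def bio_asw(X, cell_types):
    """Higher is better: cell types form compact, well-separated groups."""
    return float((silhouette_samples_np(X, cell_types).mean() + 1) / 2)

def batch_asw(X, batches, cell_types):
    """Higher is better: within each cell type, batches are indistinguishable."""
    X = np.asarray(X, dtype=float)
    batches = np.asarray(batches)
    cell_types = np.asarray(cell_types)
    scores = []
    for ct in np.unique(cell_types):
        idx = cell_types == ct
        if len(np.unique(batches[idx])) < 2:
            continue  # a cell type seen in only one batch carries no mixing information
        s = silhouette_samples_np(X[idx], batches[idx])
        scores.append(np.mean(1 - np.abs(s)))
    return float(np.mean(scores))
```

Computing the batch score within cell types keeps the two goals separate: a model is not rewarded for mixing batches by also blurring distinct cell types together.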

Research Reagent Solutions

The experimental ecosystem for developing and evaluating scFMs relies on several key computational frameworks and datasets:

Table 3: Essential Research Reagents for scFM Development

| Resource Type | Specific Tools | Function | Access |
|---|---|---|---|
| Model Frameworks | BioLLM, scGPT, scvi-tools | Standardized APIs for model training, fine-tuning, and evaluation | Open-source (GitHub) [6] |
| Pretraining Data | CZ CELLxGENE, PanglaoDB, Human Cell Atlas | Curated single-cell datasets for large-scale pretraining | Public repositories [1] |
| Benchmarking Suites | scBench, scGraph-OntoRWR | Comprehensive evaluation metrics and datasets | Open-source (GitHub) [4] |
| Visualization Tools | UCSC Cell Browser, SCope | Web-based platforms for exploring model outputs and embeddings | Web applications [1] |
| Specialized Architectures | CGCompass, GeneCompass | Domain-adapted model architectures for specific biological questions | Open-source (GitHub) [2] |

Emergent Abilities and Biological Applications

Single-cell foundation models exhibit remarkable emergent capabilities that mirror phenomena observed in large language models, including in-context learning, zero-shot reasoning, and compositional generalization.

Zero-shot Learning and Few-shot Adaptation

Pretrained scFMs demonstrate surprising proficiency on novel tasks without task-specific fine-tuning. For example, models like scGPT can perform accurate cell type annotation on previously unseen tissues using only a few labeled examples as references, effectively performing few-shot learning [4]. This emergent capability suggests that scFMs develop a fundamental understanding of cellular identity that transcends their training distribution. The biological knowledge embedded during pretraining enables models to make meaningful predictions about entirely new cell types and states through analogical reasoning and pattern completion mechanisms similar to those observed in LLMs [3].

In-silico Perturbation Prediction

One of the most powerful emergent capabilities of scFMs is predicting cellular responses to genetic and chemical perturbations. By modifying input tokens corresponding to specific genes or treatment conditions, models can simulate expression changes across the entire transcriptome [1] [3]. This capability enables in-silico screening of therapeutic interventions and genetic modifications, dramatically accelerating hypothesis generation and experimental design. For instance, scGPT has been used to identify candidate genes for immune cell engineering by predicting how transcription factor perturbations would alter T-cell states [5].

Cross-modal and Cross-species Generalization

Advanced scFMs exhibit the ability to integrate and reason across multiple data modalities, including transcriptomics, epigenomics, and proteomics [1] [7]. Models like GET (General Expression Transformer) demonstrate remarkable generalizability, accurately predicting gene expression in completely unseen cell types by leveraging chromatin accessibility data and sequence information [7]. This cross-modal transfer capability mirrors the cross-lingual understanding observed in multilingual LLMs and enables scFMs to fill data gaps by leveraging information from complementary assays.

Future Directions and Challenges

Despite rapid progress, several significant challenges remain in the development and application of single-cell foundation models. Technical limitations include computational intensity during training and inference, which currently restricts accessibility for many research groups [5]. Biological interpretation of model representations and attention patterns remains challenging, requiring specialized techniques to extract meaningful mechanistic insights [1]. Data quality and consistency issues across studies introduce potential confounding factors that models may inadvertently learn [4].

Promising research directions include:

- Multimodal integration: Developing unified architectures that simultaneously process transcriptomic, epigenomic, proteomic, and spatial data [1] [7]

- Interpretability advances: Creating specialized visualization and analysis tools to decipher the biological knowledge encoded in model parameters [4]

- Resource-efficient training: Exploring parameter-efficient fine-tuning methods and distilled model architectures to improve accessibility [6]

- Causal reasoning: Incorporating causal inference frameworks to distinguish correlation from causation in gene regulatory networks [2]
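To illustrate the parameter-efficient fine-tuning idea from the resource-efficient training direction, the sketch below uses LoRA (low-rank adaptation), a widely used technique chosen here purely as an example; the source does not name a specific method. The pretrained weight `W` stays frozen and only two small matrices `A` and `B` would be trained. The class is hypothetical and omits the training loop.

```python
import numpy as np

class LoRALinear:
    """Frozen linear layer W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, W, r=8, alpha=16, rng=None):
        rng = np.random.default_rng(0) if rng is None else rng
        self.W = np.asarray(W, dtype=float)            # frozen, shape (d_out, d_in)
        d_out, d_in = self.W.shape
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable
        self.B = np.zeros((d_out, r))                   # trainable; zero init, so the
        self.scale = alpha / r                          # update starts at exactly 0

    def __call__(self, x):
        # x has shape (n, d_in); output has shape (n, d_out)
        return x @ (self.W + self.scale * self.B @ self.A).T

    def trainable_params(self):
        return self.A.size + self.B.size
```

For a 512 × 512 layer with rank r = 8, this trains 8 × 512 + 512 × 8 = 8,192 parameters instead of 262,144, about 3% of the full layer, which is where the memory savings come from.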

As these challenges are addressed, scFMs are poised to become indispensable tools for unraveling cellular complexity, accelerating therapeutic development, and building comprehensive virtual models of cellular behavior.

The advent of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by enabling the investigation of gene expression at the ultimate level of resolution: the individual cell. This technology has become a staple tool for unraveling cellular heterogeneity, developmental trajectories, and disease mechanisms in fields ranging from oncology to immunology [5]. However, the very power of single-cell technologies generates their greatest challenge: they produce massive, high-dimensional, and notoriously noisy datasets characterized by high sparsity, technical artifacts, and complex batch effects [8]. Traditional computational approaches, often designed for lower-dimensional or single-modality data, struggle to effectively harness biological signals from this data deluge, creating a critical analytical bottleneck.

Inspired by breakthroughs in natural language processing (NLP), single-cell foundation models (scFMs) have emerged as a transformative paradigm to overcome these limitations [1] [9]. These are large-scale deep learning models pretrained on vast, diverse collections of single-cell data using self-supervised objectives. The foundational premise is that by exposing a model to millions of cells across varied tissues, species, and conditions, it can learn the fundamental "language" of cellular biology [1]. This pretraining endows scFMs with the remarkable capacity to be adapted (via fine-tuning) to a wide array of downstream tasks—from cell type annotation to perturbation prediction—without requiring task-specific training from scratch. This "pre-train then fine-tune" paradigm represents a seismic shift in computational biology, moving away from specialized, single-task models toward unified frameworks capable of integrative and comprehensive biological analysis [1] [10].

Architectural Foundations: How scFMs Learn Cellular Language

Core Conceptual Framework: From Words to Genes

scFMs draw a powerful analogy between natural language and cellular biology. In this framework, individual cells are treated as "sentences," while genes or other genomic features, along with their expression values, are treated as "words" or "tokens" [1] [5]. The model's objective is to learn the contextual relationships between these genes—which combinations and expression levels define specific cell states—much as a language model learns grammatical structure and semantic meaning from word sequences.

The Tokenization Process: Structuring Unstructured Data

A critical technical challenge is that gene expression data lacks the inherent sequence of natural language. Unlike words in a sentence, genes in a cell have no natural ordering. scFMs overcome this through various tokenization strategies that impose a meaningful structure on the input data [1]:

- Rank-based tokenization: Genes are ordered by their expression levels within each cell, creating a deterministic sequence from highest to lowest expressed gene [1] [8].

- Binning approaches: Expression values are discretized into bins, with each bin representing a different expression level [1].

- Gene identity embedding: Each gene is assigned a unique identifier embedding, allowing the model to learn gene-specific properties across different cellular contexts [8].

This tokenization process typically combines information about gene identity with its expression value, often supplemented with special tokens for cell identity, omics modality, or batch information [1].
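A minimal sketch of how an input sequence might combine gene-identity tokens, expression-value tokens, and special tokens follows. The token names (`<cls>`, `<pad>`, `<mask>`, `<batch:...>`), the padding scheme, and both helper functions are hypothetical; each published model defines its own vocabulary.

```python
SPECIAL = ["<pad>", "<cls>", "<mask>"]

def build_vocab(genes, batches):
    """Map special tokens, one token per batch, and every gene to integer ids."""
    tokens = SPECIAL + [f"<batch:{b}>" for b in batches] + list(genes)
    return {t: i for i, t in enumerate(tokens)}

def encode_cell(gene_values, vocab, batch=None, max_len=8, pad_value=0):
    """gene_values: (gene, binned_value) pairs, already filtered and ordered.

    Returns parallel gene-id and value sequences plus an attention mask."""
    ids, vals = [vocab["<cls>"]], [pad_value]   # cell-level summary token first
    if batch is not None:
        ids.append(vocab[f"<batch:{batch}>"])   # optional technical-context token
        vals.append(pad_value)
    for gene, value in gene_values:
        if gene in vocab:                        # genes outside the vocabulary are dropped
            ids.append(vocab[gene])
            vals.append(value)
    ids, vals = ids[:max_len], vals[:max_len]
    n_pad = max_len - len(ids)
    ids += [vocab["<pad>"]] * n_pad
    vals += [pad_value] * n_pad
    attention = [1] * (max_len - n_pad) + [0] * n_pad
    return ids, vals, attention
```

Keeping gene identity and expression value in two parallel sequences lets the model sum a gene embedding and a value embedding at each position, which is one common way to feed both pieces of information into a transformer.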

Model Architecture: The Transformer Backbone

Most advanced scFMs are built on the transformer architecture, which uses attention mechanisms to weight the importance of relationships between any pair of input tokens [1] [9]. This allows the model to learn complex, long-range dependencies between genes—effectively discerning which gene combinations are most informative for defining cellular identity and state. Two predominant architectural variants have emerged:

- Encoder-based models (e.g., scBERT): Use bidirectional attention, considering all genes simultaneously to build contextual representations [1] [6].

- Decoder-based models (e.g., scGPT): Employ masked self-attention, iteratively predicting masked genes based on known context [1] [6].

The following diagram illustrates a generalized workflow for how raw single-cell data is processed through an scFM to generate latent biological insights:

Pretraining Strategies: Self-Supervised Learning at Scale

scFMs are pretrained using self-supervised objectives on massive, unlabeled datasets, typically comprising tens of millions of cells from public repositories like CZ CELLxGENE, which provides access to over 100 million standardized single cells [1] [9]. The most common pretraining objective is Masked Gene Modeling (MGM), where random portions of a cell's gene expression profile are masked, and the model is trained to predict the missing values based on the remaining context [1]. Through this process, the model internalizes fundamental principles of gene co-expression, regulatory networks, and cellular function without requiring manually annotated labels.

Emergent Abilities: The Transformative Capabilities of scFMs

The large-scale pretraining of scFMs on diverse cellular data enables them to exhibit what are termed emergent abilities—capabilities not explicitly programmed but arising from the model's scale and comprehensive training. These abilities represent a qualitative leap beyond traditional analytical methods.

Zero-Shot and Few-Shot Learning

Perhaps the most significant emergent ability is performing tasks with little to no task-specific training. For example, scGPT has demonstrated exceptional zero-shot cell type annotation capabilities, accurately classifying cell types without previous exposure to labeled examples from the target dataset [9] [6]. This is particularly valuable for rare cell types or novel biological contexts where training data is scarce. Benchmark studies have shown that scFMs pretrained on massive datasets capture universal biological patterns that transfer effectively to new datasets and species, with models like scPlantFormer achieving 92% cross-species annotation accuracy in plant systems [9] [11].

Multimodal Integration and Cross-Modal Reasoning

Advanced scFMs can integrate and reason across different data modalities—such as transcriptomics, epigenomics, proteomics, and spatial data—within a unified representation space [9] [10]. For instance, Nicheformer, trained on over 110 million cells, integrates single-cell analysis with spatial transcriptomics, allowing researchers to infer spatial context for cells that were previously studied in isolation [12] [10]. This capability enables the reconstruction of how cells are organized and interact in tissues, providing crucial insights for understanding tumor microenvironments and tissue development.

In Silico Perturbation Modeling

scFMs can predict cellular responses to genetic or chemical perturbations, essentially serving as a "virtual laboratory" for testing hypotheses computationally. By manipulating input representations of genes or pathways, researchers can simulate the effects of perturbations—such as gene knockouts or drug treatments—and observe predicted changes in cellular state [9] [5]. This emergent capability has profound implications for drug discovery, allowing for rapid in silico screening of candidate therapeutics and identification of potential side effects before conducting wet-lab experiments.
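A toy sketch of this idea for a rank-based input follows, in the spirit of models that represent a cell as a ranked gene list: deletion removes a gene from the list, overexpression moves it to the front, and the effect is scored as the cosine shift of the cell embedding. The random gene embeddings and the rank-weighted `embed_cell` function are hypothetical stand-ins for a real model's forward pass, so the resulting rankings carry no biological meaning.

```python
import numpy as np

rng = np.random.default_rng(42)
GENES = [f"g{i}" for i in range(50)]
GENE_EMB = {g: rng.normal(size=16) for g in GENES}  # stand-in for learned gene embeddings

def embed_cell(ranked_genes):
    """Toy cell embedding: rank-weighted mean of gene embeddings (weight 1 / rank)."""
    w = 1.0 / np.arange(1, len(ranked_genes) + 1)
    E = np.stack([GENE_EMB[g] for g in ranked_genes])
    return (w[:, None] * E).sum(axis=0) / w.sum()

def in_silico_delete(ranked_genes, target):
    """Simulated knockout: remove the target gene from the ranked list."""
    return [g for g in ranked_genes if g != target]

def in_silico_overexpress(ranked_genes, target):
    """Simulated overexpression: move the target gene to the top rank."""
    return [target] + [g for g in ranked_genes if g != target]

def cosine_shift(u, v):
    """1 - cosine similarity; 0 means the perturbation left the embedding unchanged."""
    return 1.0 - float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

# Usage: rank genes in one cell by how far deleting them moves the cell embedding
cell = GENES[:20]
base = embed_cell(cell)
shifts = {g: cosine_shift(base, embed_cell(in_silico_delete(cell, g))) for g in cell}
top_candidates = sorted(shifts, key=shifts.get, reverse=True)[:5]
```

In a real screen, the embedding shift would typically be measured toward or away from a reference state (for example, a disease versus healthy centroid) rather than only in magnitude.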

The following diagram illustrates how these emergent abilities create a powerful feedback loop for biological discovery:

Quantitative Performance: Benchmarking scFMs Against Traditional Methods

Rigorous benchmarking studies provide critical insights into the real-world performance of scFMs compared to traditional methods. A comprehensive 2025 benchmark evaluating six leading scFMs against established baselines across multiple tasks reveals both the promise and limitations of current approaches [8].

Table 1: Performance Comparison of scFMs vs. Traditional Methods on Cell-Level Tasks

| Task Category | Best Performing scFM | Traditional Baseline | Performance Gap | Key Findings |
|---|---|---|---|---|
| Batch Integration | scGPT (fine-tuned) | Harmony / Seurat | scFMs show superior biology preservation | Specialized frameworks (scVI, CLAIRE) also excel |
| Cell Type Annotation | scPlantFormer | HVG Selection | 92% cross-species accuracy for scPlantFormer | Generic SSL methods (VICReg, SimCLR) competitive |
| Cancer Cell Identification | Multiple (task-dependent) | Standard ML | Robust across 7 cancer types | No single scFM dominates all cancer types |
| Drug Sensitivity Prediction | Multiple (task-dependent) | Standard ML | Effective across 4 drugs | Dataset size critically impacts performance |

Table 2: scFM Performance on Gene-Level and Spatial Tasks

| Task Category | Leading Model | Pretraining Data | Key Capability | Performance Notes |
|---|---|---|---|---|
| Gene Regulatory Network Inference | Geneformer | 30M cells | Network topology predictions | Benefits from targeted pretraining strategy |
| Spatial Context Prediction | Nicheformer | 110M cells (53M spatial) | Transfers spatial context to dissociated cells | Outperforms existing spatial approaches |
| Cross-Modal Prediction | scGPT | 33M cells | Integrates transcriptomics, epigenomics, proteomics | Superior multi-omic integration |
| Zero-Shot Annotation | scPlantFormer | 1M plant cells | Cross-species transfer | Lightweight yet highly effective |

The benchmark results indicate that while scFMs are robust and versatile tools, they don't consistently outperform simpler methods in all scenarios [8] [13]. The decision to use a complex foundation model versus a simpler alternative depends on factors including dataset size, task complexity, need for biological interpretability, and computational resources [8]. Notably, no single scFM consistently outperforms all others across diverse tasks, emphasizing the importance of task-specific model selection [8].

Experimental Protocols: Methodologies for scFM Implementation

Standardized Evaluation Framework

To ensure fair comparison and reproducibility, recent benchmarking efforts have established standardized evaluation protocols for scFMs [8]. The typical workflow involves:

- Embedding Extraction: Generating zero-shot gene and cell embeddings from pretrained scFMs without task-specific fine-tuning.

- Task-Specific Evaluation: Applying these embeddings to predefined downstream tasks using consistent metrics.

- Biological Validation: Assessing the biological relevance of results using ontology-informed metrics like scGraph-OntoRWR, which measures consistency between model-derived cell relationships and established biological knowledge [8].

Critical Experimental Considerations

When implementing scFMs in research workflows, several methodological factors require careful attention:

- Data Preprocessing: Models vary in their input requirements regarding gene selection, normalization, and transformation. Compatibility between preprocessing pipelines is essential for valid comparisons [8].

- Batch Effect Management: While some scFMs demonstrate inherent robustness to technical biases, others require explicit batch information as special tokens during training [1].

- Computational Resources: Large-scale scFMs require significant GPU memory and training time. Parameter-efficient fine-tuning techniques can mitigate these requirements while preserving performance [9].
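As an example of preprocessing choices that must match between pipelines, the sketch below applies library-size normalization to 10,000 counts per cell followed by log1p, analogous to the common Scanpy `normalize_total` plus `log1p` convention, and then selects the most variable genes by raw variance. The target sum and the variance-based selection are simplifying assumptions; many tools use dispersion-based highly variable gene selection instead, and the function names are hypothetical.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to target_sum total counts, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    lib = counts.sum(axis=1, keepdims=True)
    lib[lib == 0] = 1.0  # avoid division by zero for empty cells
    return np.log1p(counts / lib * target_sum)

def top_variable_genes(X, n_top=2000):
    """Column indices of the n_top genes with the highest variance across cells."""
    var = np.asarray(X, dtype=float).var(axis=0)
    n_top = min(n_top, var.size)
    return np.argsort(-var, kind="stable")[:n_top]
```

If a model was pretrained on log-normalized input, feeding it raw counts (or a different target sum) shifts every value it sees, which is why matching the preprocessing matters for valid comparisons.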

The Scientist's Toolkit: Essential Research Reagents for scFM Implementation

Table 3: Key Computational Tools and Platforms for scFM Research

| Tool/Platform | Type | Primary Function | Research Application |
|---|---|---|---|
| BioLLM | Framework | Unified interface for >15 scFMs | Standardized benchmarking and model access |
| CZ CELLxGENE | Data Repository | 100M+ annotated single cells | Pretraining corpus assembly and validation |
| scGPT | Foundation Model | Multi-omic integration and generation | Cell annotation, perturbation modeling, network inference |
| Nicheformer | Spatial Foundation Model | Spatial context prediction | Tissue organization analysis, tumor microenvironment studies |
| Geneformer | Foundation Model | Network biology predictions | Gene regulatory network analysis, mechanistic insights |
| scPlantFormer | Domain-Specific FM | Plant single-cell omics | Cross-species plant biology, specialized applications |

Future Directions: Toward a Virtual Cell

The trajectory of scFM development points toward increasingly sophisticated and biologically grounded models. A key frontier is the development of tissue foundation models that incorporate physical relationships between cells to better understand tissue organization in health and disease [12]. Concurrently, efforts are underway to improve model interpretability, enabling researchers to not only predict cellular behavior but also understand the molecular regulators driving those predictions [9] [10].

The ultimate vision is the creation of a comprehensive "Virtual Cell"—a computational representation of how cells behave and interact within their native environments that can accurately simulate cellular responses to genetic, environmental, and therapeutic perturbations [12] [11]. Realizing this vision will require addressing persistent challenges including technical variability across platforms, limited model interpretability, and gaps in translating computational insights into clinical applications [9] [10].

As scFMs continue to evolve, they are poised to fundamentally transform how we approach biological investigation, drug discovery, and therapeutic development—moving from observation to prediction, and from analysis to engineering of cellular systems.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, mirroring the transformative impact of large language models (LLMs) in natural language processing. These scFMs are trained on millions of single-cell transcriptomes to learn fundamental biological principles that generalize across diverse tissues, conditions, and downstream tasks [1]. The core architectural framework enabling this revolution stems from the transformer model, adapted to handle the unique characteristics of biological data. This technical guide provides an in-depth examination of the transformer variants, tokenization strategies, and pretraining approaches that form the architectural backbone of modern scFMs, with particular focus on their implications for emergent abilities in biological research and drug development.

For researchers and drug development professionals, understanding these architectural nuances is crucial for selecting, implementing, and innovating upon existing models. The adaptation of transformer architectures to single-cell data presents unique challenges compared to traditional NLP applications, including the non-sequential nature of genomic data, high dimensionality, sparsity, and complex batch effects [4] [1]. This review systematically addresses these challenges through detailed architectural analysis, quantitative comparisons, and experimental methodologies that highlight the path toward emergent capabilities such as zero-shot cell type annotation, cross-species generalization, and therapeutic outcome prediction.

Transformer Architecture Fundamentals and Biological Adaptations

Core Transformer Mechanics

The transformer architecture, originally developed for sequence-to-sequence tasks, utilizes self-attention mechanisms to weight the importance of different elements in an input sequence when generating representations [1]. In natural language processing, this allows models to dynamically focus on relevant contextual words. The mathematical foundation of self-attention involves computing query (Q), key (K), and value (V) vectors for each token, with attention weights derived from the compatibility between queries and keys:

Attention(Q, K, V) = softmax(QKᵀ/√dₖ)V

where dₖ represents the dimension of the key vectors. This mechanism enables transformers to capture long-range dependencies more effectively than previous recurrent or convolutional architectures [1].
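To make the formula concrete, here is a minimal NumPy sketch of single-head scaled dot-product attention over a toy "cell" of five gene tokens. The random embeddings, projection matrices, and dimensions are illustrative assumptions. Real scFMs use learned, multi-head projections.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row max for numerical stability before exponentiating.
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V, mask=None):
    """Compute softmax(Q K^T / sqrt(d_k)) V for a single attention head.

    mask: optional boolean array (n_queries, n_keys); False entries are blocked.
    Returns (output, attention_weights).
    """
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

# Toy example: 5 gene tokens with 16-dim embeddings (random, hypothetical values).
rng = np.random.default_rng(0)
n_genes, d_model, d_k = 5, 16, 8
X = rng.normal(size=(n_genes, d_model))                 # token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
out, attn = scaled_dot_product_attention(X @ W_q, X @ W_k, X @ W_v)
print(out.shape)          # (5, 8)
print(attn.sum(axis=1))   # each row of attention weights sums to 1
```

Each row of `attn` shows how strongly one gene token attends to every other gene token in the same cell.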

Critical Adaptations for Single-Cell Data

Applying transformers to single-cell RNA sequencing (scRNA-seq) data requires significant architectural adaptations to address fundamental differences between language and biological data:

- Non-sequential data structure: Unlike words in a sentence, genes in a cell have no inherent ordering, necessitating artificial sequence construction [1]

- High dimensionality and sparsity: scRNA-seq assays typically measure 20,000+ genes, and the resulting expression matrices are sparse and zero-inflated [4]

- Technical artifacts: Batch effects, sequencing depth variations, and platform-specific biases must be addressed [4] [1]

- Multi-modal integration: Modern scFMs increasingly incorporate multiple data modalities (ATAC-seq, proteomics, spatial data) [1]

These challenges have driven the development of specialized transformer variants that maintain the benefits of self-attention while accommodating the unique properties of biological data.

Transformer Variants for Single-Cell Foundation Models

Architectural Spectrum in Current scFMs

Table 1: Transformer Variants in Single-Cell Foundation Models

| Model | Architecture Type | Core Innovation | Attention Mechanism | Typical Application |
|---|---|---|---|---|
| scBERT [4] [1] | Encoder-only | Bidirectional context understanding | Full self-attention (approximated with Performer) | Cell type annotation, classification tasks |
| scGPT [4] [1] | Decoder-only | Generative pre-training | Masked self-attention | Cell generation, perturbation prediction |
| Geneformer [4] | Encoder-only | Context-aware gene embeddings from rank-ordered input | Full (bidirectional) self-attention | Gene network analysis, disease modeling |
| UCE [4] | Hybrid | Multi-modal integration | Modified cross-attention | Multi-omics integration |
| scFoundation [4] | Encoder-decoder | Transfer learning optimization | Sparse attention | General-purpose embeddings |

The architectural landscape of scFMs primarily divides between encoder-based and decoder-based transformers, with emerging hybrid approaches [4] [1]. Encoder-based models like scBERT use bidirectional attention, allowing each gene to attend to all other genes in the cell simultaneously. This approach mirrors BERT-style architectures in NLP and excels at classification tasks such as cell type annotation [1]. In contrast, decoder-style models like scGPT use masked self-attention, in which an attention mask limits which genes each position can see (in the classic GPT formulation, only earlier tokens in the sequence). This makes them particularly suited for generative tasks such as predicting cellular responses to perturbation [1].
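In code, the difference between bidirectional (encoder-style) and causal (decoder-style) attention comes down to the boolean mask applied to the score matrix. The sketch below assumes a toy sequence of five gene tokens with random query and key vectors. It uses the generic GPT-style lower-triangular mask. scGPT's published attention mask is a custom variant designed for its generative objective and is not reproduced here.

```python
import numpy as np

def masked_attention_weights(Q, K, mask):
    # Scaled scores; blocked positions (mask == False) get -inf before the softmax.
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    scores = np.where(mask, scores, -np.inf)
    scores -= scores.max(axis=1, keepdims=True)
    w = np.exp(scores)
    return w / w.sum(axis=1, keepdims=True)

n = 5  # number of gene tokens in the toy "cell"
bidirectional_mask = np.ones((n, n), dtype=bool)     # encoder (BERT-style): every gene sees every gene
causal_mask = np.tril(np.ones((n, n), dtype=bool))   # decoder (GPT-style): token i sees tokens 0..i

rng = np.random.default_rng(1)
Q = rng.normal(size=(n, 8))
K = rng.normal(size=(n, 8))

w_enc = masked_attention_weights(Q, K, bidirectional_mask)
w_dec = masked_attention_weights(Q, K, causal_mask)

# In the causal case, every weight above the diagonal is exactly zero.
print(np.allclose(np.triu(w_dec, k=1), 0.0))   # True
print(w_dec[0])   # the first token can only attend to itself: [1. 0. 0. 0. 0.]
```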

Efficient Transformer Alternatives for Large-Scale Biological Data

The computational cost of standard self-attention grows quadratically with the number of tokens per input. For scFMs, that means the number of genes used to represent each cell. This becomes a significant bottleneck when every cell contributes thousands of gene tokens and pretraining corpora contain millions of cells. Several efficient alternatives have emerged:

- Mamba Architecture: A promising transformer alternative that uses selective state space models (SSMs) to scale linearly with sequence length, offering 5x higher inference throughput and a constant-size recurrent state regardless of sequence length [14]

- cosFormer: Implements cosine-based reweighting to approximate attention with 10x memory reduction for long sequences while maintaining 92-97% of traditional transformer accuracy [14]

- Linformer: Employs low-rank projection to compress attention matrices, achieving 76% memory reduction while maintaining 99% of RoBERTa performance on benchmarks [14]

- Performer: Uses random feature maps to approximate softmax attention, enabling 4,000x faster processing on very long sequences [14]

These efficient architectures enable researchers to process larger datasets with limited computational resources, though trade-offs exist in modeling precision and biological interpretability.
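The Performer idea fits in a few lines. It replaces exp(q·k) with an inner product of positive random features φ(q)·φ(k), so attention can be computed as φ(Q)(φ(K)ᵀV) in time linear in sequence length. This is a simplified sketch using i.i.d. Gaussian features, with no orthogonal features and no feature redrawing. The toy dimensions and input scaling are assumptions. It is not the reference Performer implementation.

```python
import numpy as np

def softmax_attention(Q, K, V):
    # Exact attention: builds the full n x n matrix, O(n^2) in sequence length.
    s = Q @ K.T / np.sqrt(Q.shape[-1])
    s -= s.max(axis=1, keepdims=True)
    w = np.exp(s)
    return (w / w.sum(axis=1, keepdims=True)) @ V

def positive_random_features(X, W):
    # phi(x) = exp(W x - |x|^2 / 2) / sqrt(m), so that E[phi(q) . phi(k)] = exp(q . k).
    m = W.shape[0]
    return np.exp(X @ W.T - 0.5 * np.sum(X**2, axis=1, keepdims=True)) / np.sqrt(m)

def performer_attention(Q, K, V, n_features=4096, seed=0):
    d = Q.shape[-1]
    # Fold the 1/sqrt(d) temperature into Q and K (a factor d^(-1/4) each).
    Qs, Ks = Q / d**0.25, K / d**0.25
    W = np.random.default_rng(seed).normal(size=(n_features, d))
    q_feat = positive_random_features(Qs, W)   # (n, m)
    k_feat = positive_random_features(Ks, W)   # (n, m)
    # Linear-time evaluation: summarize keys/values first, never forming the n x n matrix.
    kv = k_feat.T @ V                          # (m, d_v)
    normalizer = q_feat @ k_feat.sum(axis=0)   # (n,)
    return (q_feat @ kv) / normalizer[:, None]

rng = np.random.default_rng(42)
n, d = 64, 8
Q = 0.5 * rng.normal(size=(n, d))
K = 0.5 * rng.normal(size=(n, d))
V = rng.normal(size=(n, d))

exact = softmax_attention(Q, K, V)
approx = performer_attention(Q, K, V)
print(np.abs(exact - approx).mean())   # small approximation error
```

The approximation error shrinks as `n_features` grows, which illustrates the precision-versus-efficiency trade-off mentioned above.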

Hybrid and Specialized Architectures

Emerging hybrid architectures combine multiple attention mechanisms to balance efficiency and performance. Jamba, for instance, interleaves Mamba blocks with standard transformer attention layers in a 52-billion-parameter mixture-of-experts model. It supports a 256,000-token context window, and contexts of roughly 140,000 tokens fit on a single 80 GB GPU [14]. In biological applications, such hybrids could enable efficient processing of large gene sets while retaining the attention layers needed for complex reasoning about regulatory networks.

Table 2: Performance Comparison of Transformer Variants for Biological Data

| Architecture | Memory Efficiency | Training Speed | Sequence Length Handling | Reported Accuracy Retention (mostly NLP benchmarks) |
|---|---|---|---|---|
| Standard Transformer | Baseline | Baseline | ~1-4K genes | 100% (baseline) |
| Mamba [14] | 7.8x improvement | 5x faster | 140K+ context | Competitive on most tasks |
| cosFormer [14] | 10x improvement | 2-22x faster | Linear scaling | 92-97% |
| Linformer [14] | 76% reduction | Moderate improvement | ~4K genes | 99% |
| Performer [14] | Significant improvement | 4,000x faster (long seqs) | Extreme lengths | 92-97% |
| Hybrid (Jamba) [14] | 3x improvement | 3x throughput | 256K tokens | Near-parity with transformers |

Tokenization Strategies for Single-Cell Data

Fundamental Tokenization Approaches

Tokenization converts raw gene expression data into discrete units processable by transformer models. Unlike NLP, where tokens typically represent words or subwords, scFMs face the unique challenge of representing continuous expression values in a discrete token space [1].

Table 3: Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Gene Representation | Expression Value Handling | Positional Encoding | Implementation Examples |
|---|---|---|---|---|
| Rank-based [1] | Gene identifiers | Implicit through ordering | Absolute position embeddings | Geneformer |
| Value-binning [1] | Gene identifiers + expression bins | Discrete expression levels | Standard transformer encoding | scBERT, scGPT |
| Raw value integration [1] | Gene embeddings + value embeddings | Continuous value embeddings | Modified for non-sequential data | scFoundation, UCE |
| Multi-modal tokens [1] | Modality-specific embeddings | Combined representation | Special modality tokens | Multi-modal scFMs |

The most common tokenization approaches include:

- Rank-based tokenization: Genes are ordered by expression level within each cell, creating a deterministic sequence where position indicates relative expression [1]

- Value-binning: Continuous expression values are discretized into bins (e.g., low/medium/high), with each bin representing a different token [1]

- Raw value integration: Gene identifiers and expression values are embedded separately and combined, preserving continuous expression information [1]
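The three strategies can be contrasted on a single toy cell. The sketch below makes several assumptions:

- a five-gene vocabulary with log-normalized values
- five equal-width bins over nonzero values (real models choose their own bin counts)
- random vectors standing in for the learned gene-ID table and value projection

Geneformer also rescales each gene by its corpus-wide median before ranking. That step is omitted here.

```python
import numpy as np

genes = np.array(["CD3E", "MS4A1", "LYZ", "NKG7", "GAPDH"])   # toy vocabulary
expr = np.array([2.1, 0.0, 4.3, 0.7, 3.5])                    # log-normalized expression (toy)

# 1) Rank-based (Geneformer-style): order expressed genes by decreasing expression.
#    Sequence position encodes relative expression; absolute values are dropped.
expressed = expr > 0
order = np.argsort(-expr[expressed], kind="stable")
rank_tokens = genes[expressed][order]
print(rank_tokens.tolist())   # ['LYZ', 'GAPDH', 'CD3E', 'NKG7']

# 2) Value binning (scBERT/scGPT-style): each gene gets a discrete expression-bin token.
#    Bin 0 = not expressed; bins 1..n_bins = equal-width bins over the nonzero values.
n_bins = 5
edges = np.linspace(expr[expressed].min(), expr[expressed].max(), n_bins + 1)
bins = np.zeros_like(expr, dtype=int)
bins[expressed] = np.digitize(expr[expressed], edges[1:-1]) + 1
print(dict(zip(genes.tolist(), bins.tolist())))
# {'CD3E': 2, 'MS4A1': 0, 'LYZ': 5, 'NKG7': 1, 'GAPDH': 4}

# 3) Raw value integration: gene-ID embedding plus a projection of the continuous value.
d_model = 4
rng = np.random.default_rng(0)
gene_embedding = rng.normal(size=(len(genes), d_model))   # hypothetical learned gene-ID table
value_proj = rng.normal(size=(d_model,))                  # hypothetical learned value projection
tokens = gene_embedding + expr[:, None] * value_proj      # continuous values are preserved
print(tokens.shape)   # (5, 4)
```

Rank-based tokens discard absolute magnitude. Binning keeps a coarse version of it. Value projection keeps it in full, at the cost of a continuous input space.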

Advanced Tokenization with Biological Prior Knowledge

More sophisticated tokenization schemes incorporate biological knowledge to enhance model performance:

Diagram 1: Comprehensive Tokenization Workflow for scFMs. This workflow illustrates the transformation of raw expression data into model-ready tokens with biological knowledge integration.

- Gene ontology integration: Incorporating functional gene annotations as additional tokens or embedding initializations [4]

- Pathway-aware tokenization: Grouping genes by functional pathways to create hierarchical token structures [1]

- Multi-modal tokens: Using special tokens to represent different data modalities (e.g., [ATAC], [PROTEIN], [SPATIAL]) [1]

- Cell context tokens: Prepending tokens that represent cell type, tissue origin, or experimental condition to provide global context [1]

Positional Encoding Adaptations

Since gene sequences lack natural ordering, scFMs employ various positional encoding strategies:

- Learnable position embeddings: Standard transformer approach treating each position as a unique learnable embedding [1]

- Expression-ranked encodings: Position embeddings based on expression percentiles rather than fixed positions [1]

- Relative attention biases: Modifying attention weights based on functional relationships between genes rather than sequence position [4]

- Position-free approaches: Some models omit positional encodings entirely, relying on the model to learn gene relationships without sequence bias [1]

Pretraining Approaches and Methodologies

Pretraining Objectives and Strategies

Pretraining forms the foundational phase where scFMs learn generalizable biological knowledge from vast datasets. The standard paradigm follows self-supervised learning approaches where models learn by predicting masked portions of the input [1].

Table 4: Pretraining Objectives in Single-Cell Foundation Models

| Pretraining Objective | Methodology | Strengths | Limitations | Examples |
|---|---|---|---|---|
| Masked Language Modeling [1] | Randomly mask gene tokens and predict their identities | Bidirectional context understanding | May not optimize for generative tasks | scBERT, scFoundation, Geneformer |
| Generative Pretraining [1] | Autoregressive next-gene prediction | Excellent for generation, perturbation modeling | Unidirectional context limitation | scGPT |
| Contrastive Learning [4] | Maximize similarity between related cellular states | Robust representations, batch correction | Requires careful negative sampling | UCE, scVI variants |
| Multi-task Pretraining [4] | Combine multiple objectives simultaneously | Comprehensive skill acquisition | Training complexity, balancing losses | Recent scFMs |

The dominant pretraining strategies include:

- Masked language modeling (MLM): Randomly masking a portion of gene tokens (typically 15-30%) and training the model to predict the masked genes based on context [1]

- Autoregressive next-gene prediction: Training models to predict each gene in sequence given previous genes, similar to GPT-style training [1]

- Contrastive objectives: Learning embeddings by maximizing similarity between related cells (e.g., same cell type) while minimizing similarity between unrelated cells [4]
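The masking step behind masked gene modeling can be sketched in a few lines. This sketch assumes integer gene-token IDs, a reserved [MASK] ID, a padding ID that is never masked, and a 15% masking rate (the low end of the range above). The ID values and the ignore label -100 (a common convention for PyTorch cross-entropy losses) are assumptions. BERT's 80/10/10 replacement scheme is omitted for brevity.

```python
import numpy as np

MASK_ID = 1          # hypothetical reserved token ID for [MASK]
PAD_ID = 0           # hypothetical padding ID (never masked)
IGNORE_LABEL = -100  # label value skipped by the loss

def mask_gene_tokens(token_ids, mask_rate=0.15, seed=None):
    """Return (masked_inputs, labels) for masked gene modeling.

    Labels hold the original gene ID at masked positions and IGNORE_LABEL elsewhere,
    so the loss is computed only where the model must recover a hidden gene.
    """
    rng = np.random.default_rng(seed)
    token_ids = np.asarray(token_ids)
    candidates = np.flatnonzero(token_ids != PAD_ID)
    n_mask = max(1, int(round(mask_rate * candidates.size)))
    chosen = rng.choice(candidates, size=n_mask, replace=False)

    inputs = token_ids.copy()
    labels = np.full_like(token_ids, IGNORE_LABEL)
    labels[chosen] = token_ids[chosen]
    inputs[chosen] = MASK_ID
    return inputs, labels

# A toy cell: 20 gene tokens (IDs 2..21) followed by 4 padding positions.
cell = np.concatenate([np.arange(2, 22), np.zeros(4, dtype=int)])
inputs, labels = mask_gene_tokens(cell, mask_rate=0.15, seed=0)
print((inputs == MASK_ID).sum())   # 3 tokens masked (15% of 20)
```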

Data Curation and Preprocessing for Effective Pretraining

Data quality and composition critically impact pretraining success. Current best practices include:

- Large-scale data aggregation: Models are typically pretrained on 10-100 million cells from diverse sources like CELLxGENE, Human Cell Atlas, and GEO [1]

- Strategic dataset balancing: Curating datasets to represent diverse tissues, conditions, and technologies to prevent bias [4]

- Quality control and normalization: Rigorous filtering of low-quality cells and genes, with appropriate normalization for sequencing depth [1]

- Batch effect management: Implementing strategies to learn biological signals while remaining robust to technical artifacts [4]
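The cell-level quality-control filter can be sketched with NumPy on a raw count matrix. The thresholds (at least 200 detected genes, at most 20% mitochondrial reads) and the "MT-" prefix for human mitochondrial genes are common conventions assumed here. The cited models do not prescribe these values. In practice, Scanpy or Seurat perform these steps.

```python
import numpy as np

def qc_filter_cells(counts, gene_names, min_genes=200, max_mito_frac=0.20):
    """Return a boolean mask of cells that pass basic QC.

    counts: (n_cells, n_genes) raw UMI counts; gene_names: gene symbols.
    """
    counts = np.asarray(counts)
    is_mito = np.char.startswith(np.asarray(gene_names, dtype=str), "MT-")
    total = counts.sum(axis=1)
    n_detected = (counts > 0).sum(axis=1)
    # Empty cells (total == 0) get a mitochondrial fraction of 1.0, so they are removed.
    mito_frac = np.divide(counts[:, is_mito].sum(axis=1), total,
                          out=np.ones_like(total, dtype=float), where=total > 0)
    return (n_detected >= min_genes) & (mito_frac <= max_mito_frac)

# Toy data: 3 cells x 300 genes, the last 3 of which are mitochondrial.
rng = np.random.default_rng(0)
gene_names = np.array([f"GENE{i}" for i in range(297)] + ["MT-CO1", "MT-ND1", "MT-ATP6"])
counts = rng.poisson(3.0, size=(3, 300))
counts[:, 297:] = 1      # modest mitochondrial counts in every cell
counts[1, 50:297] = 0    # cell 1: only ~50 genes detected
counts[2, 297:] = 500    # cell 2: dominated by mitochondrial reads
keep = qc_filter_cells(counts, gene_names)
print(keep)   # [ True False False]
```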

The scale of pretraining continues to grow, with modern scFMs trained on datasets encompassing hundreds of billions of tokens, though the optimal compute-data-parameter balance remains an active research area [15].

Experimental Protocol: Standard Pretraining Implementation

Diagram 2: Comprehensive Pretraining Pipeline for scFMs. This diagram outlines the end-to-end process for pretraining single-cell foundation models, from data collection to evaluation.

A standardized pretraining protocol involves:

- Data Acquisition: Collecting 20-50 million high-quality cells from diverse public repositories like CELLxGENE [1]

- Preprocessing: Filtering low-quality cells (high mitochondrial percentage, low gene counts), normalizing for sequencing depth, and selecting highly variable genes (5,000-20,000) [1]

- Tokenization: Implementing rank-based or value-embedding tokenization with appropriate positional encoding [1]

- Model Configuration: Selecting appropriate architecture (encoder/decoder/hybrid) with 100-500 million parameters depending on available compute [4]

- Training Loop: Implementing masked language modeling with 15-30% masking rate, using AdamW optimizer with learning rate 1e-4 to 5e-4, linear warmup, and cosine decay [1]

- Validation: Monitoring loss on held-out validation sets and periodic evaluation on downstream tasks [4]
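The learning-rate schedule in the training-loop step (linear warmup followed by cosine decay) is easy to get subtly wrong, so here is a framework-agnostic sketch. The peak rate of 1e-4 falls in the range quoted above. The warmup length, total step count, and final rate of zero are illustrative assumptions.

```python
import math

def warmup_cosine_lr(step, peak_lr=1e-4, warmup_steps=1_000, total_steps=100_000, min_lr=0.0):
    """Learning rate at a given optimizer step.

    Linear warmup from 0 to peak_lr over warmup_steps, then cosine decay from
    peak_lr to min_lr at total_steps; the rate is held at min_lr afterwards.
    """
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

for s in (0, 500, 1_000, 50_500, 100_000):
    print(s, f"{warmup_cosine_lr(s):.2e}")
```

In PyTorch, one option is to pair `torch.optim.AdamW` with `torch.optim.lr_scheduler.LambdaLR`. `LambdaLR` expects a multiplicative factor of the base rate, so you would pass `lambda s: warmup_cosine_lr(s) / peak_lr`.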

The Scientist's Toolkit: Essential Research Reagents

Table 5: Essential Research Tools for scFM Development and Application

| Tool/Category | Specific Examples | Function | Relevance to Emergent Abilities |
|---|---|---|---|
| Data Resources | CELLxGENE [4] [1], Human Cell Atlas [1], GEO/SRA [1] | Provide massive, diverse training corpora | Enables emergence through scale and diversity |
| Model Architectures | scGPT [4] [1], Geneformer [4], scBERT [1] | Pretrained foundation models | Transfer learning, zero-shot capabilities |
| Benchmarking Frameworks | Custom evaluation pipelines [4], scGraph-OntoRWR [4] | Standardized performance assessment | Quantifies emergent abilities |
| Bioinformatics Libraries | Scanpy, Seurat, scvi-tools | Data preprocessing and analysis | Critical for data quality and interpretation |
| Specialized Metrics | scGraph-OntoRWR [4], LCAD [4], Roughness Index (ROGI) [4] | Biologically grounded evaluation | Connects model performance to biological relevance |

Emergent Abilities and Biological Insight

The architectural decisions detailed in this review directly enable the emergent abilities observed in state-of-the-art scFMs. These include:

- Zero-shot cell type annotation: Models can annotate cell types without task-specific training, although zero-shot accuracy varies considerably across datasets [4]

- Cross-species generalization: Learned representations transfer effectively across species boundaries [4]

- Cellular response prediction: Models can predict how cells will respond to perturbations, drugs, or disease states [1]

- Multi-modal integration: Emerging capabilities to harmonize and interpret data across transcriptomics, epigenomics, and proteomics [1]

Recent benchmarking studies reveal that no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [4]. The roughness index (ROGI) has emerged as a valuable proxy for predicting model performance on specific datasets, correlating with the smoothness of the cell-property landscape in the learned latent space [4].

The architectural landscape of single-cell foundation models continues to evolve rapidly, with transformer variants, tokenization strategies, and pretraining approaches becoming increasingly sophisticated. The field is progressing from single-modality transcriptomic models to multi-omic foundations capable of integrating diverse data types [1]. Future directions include developing more efficient architectures capable of scaling to billions of cells, improving interpretability to extract novel biological insights, and enhancing generalization across technologies and species [4] [1].

For researchers and drug development professionals, understanding these architectural fundamentals enables more effective application of existing models and informed participation in model development. As these technologies mature, they promise to unlock new capabilities in target identification, patient stratification, and therapeutic optimization through deep biological representation learning.

The rapid accumulation of single-cell RNA sequencing (scRNA-seq) data has created an unprecedented opportunity to decode cellular heterogeneity with revolutionary precision. Simultaneously, this data deluge presents significant analytical challenges due to inherent noise, high dimensionality, and batch effects [16] [1]. Inspired by the success of large language models (LLMs) in natural language processing, computational biologists have begun developing single-cell foundation models (scFMs)—large-scale deep learning models pre-trained on vast single-cell datasets using self-supervised learning [1] [8]. These models aim to learn a universal representation of cellular states that can be efficiently adapted to diverse downstream tasks, from cell type annotation to perturbation prediction.

A compelling aspect of scFMs is their potential for emergent abilities—capabilities not explicitly programmed during training that arise from scaling up model size and data diversity [1]. These may include zero-shot generalization to unseen cell types, prediction of novel gene functions, or inference of complex gene regulatory relationships. This whitepaper provides a comprehensive technical comparison of four prominent scFMs—scGPT, Geneformer, CellFM, and scBERT—framed within the context of these emergent abilities. We examine their architectural philosophies, pre-training strategies, and performance across biological tasks, offering researchers and drug development professionals a guide to navigating this rapidly evolving field.

Model Architectures and Pre-training Strategies

Foundational Design Philosophies

Single-cell foundation models adapt the transformer architecture to gene expression data by treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [1]. However, they diverge significantly in how they handle the fundamental challenge that gene expression data is not naturally sequential. The table below summarizes the core architectural and pre-training characteristics of the four models.

Table 1: Architectural and Pre-training Specifications of scFMs

| Model | Model Parameters | Pre-training Dataset Size | Core Architecture | Tokenization Strategy | Pre-training Objective |
|---|---|---|---|---|---|
| scGPT [8] [17] | ~50 Million | 33 Million human cells | Transformer Encoder with attention mask | Value binning of ~1200 Highly Variable Genes (HVGs) | Iterative masked gene modeling with MSE loss |
| Geneformer [16] [8] | ~40 Million | 30 Million cells (human & mouse) | Transformer Encoder | Ranking of 2,048 genes by expression level | Masked gene modeling with gene ID prediction (CE loss) |
| CellFM [16] [18] | 800 Million | 100 Million human cells | Modified RetNet (ERetNet Layers) | Value projection | Masked gene recovery from linear projections |
| scBERT [19] [20] | Not specified | PanglaoDB & other sources | Performer (BERT-like encoder) | Value binning into 7 categories | Masked gene expression reconstruction |

Tokenization and Input Representation

A critical differentiator among scFMs is their tokenization strategy—how continuous gene expression values are converted into discrete tokens for the transformer model [1]. Three predominant strategies have emerged:

Value Categorization (Binning): Used by scGPT and scBERT, this approach discretizes continuous gene expression values into a finite number of "buckets" or bins, converting regression into a classification problem [16] [19]. scBERT, for instance, bins expression values into 7 categories [20].

Ordering (Rank-based): Employed by Geneformer, this method ranks genes within each cell by expression levels and uses the ranked list of gene identifiers as the input sequence [16] [8]. This emphasizes relative expression patterns over absolute values.

Value Projection: Used by CellFM, this strategy aims to preserve the full resolution of the data. It represents each gene's input as the sum of a linear projection of its expression value and a positional or gene embedding [16].

Figure 1: Tokenization Strategies in Single-Cell Foundation Models

Performance Benchmarking Across Biological Tasks

Quantitative Performance Comparison

Rigorous benchmarking is essential to understand the strengths and limitations of each model. The following table synthesizes performance data across key tasks from multiple studies, including large-scale benchmarks. It's important to note that performance can vary significantly based on dataset characteristics and task specifics.

Table 2: Comparative Model Performance Across Key Biological Tasks

| Model | Cell Type Annotation (Accuracy) | Batch Integration (Performance) | Perturbation Prediction | Gene Function Prediction | Zero-Shot Clustering (AvgBIO vs. HVG baseline) |
|---|---|---|---|---|---|
| scGPT | High (e.g., ~85% on NeurIPS data) [19] | Variable (outperforms Harmony/scVI on complex biological batches) [21] | Strong [16] | Good [16] | Underperforms HVG baseline [21] |
| Geneformer | High [16] | Struggles (primary structure in embeddings often driven by batch) [21] | Strong [16] | Good [16] | Underperforms HVG baseline [21] |
| CellFM | Outperforms existing models [16] | Not explicitly benchmarked | Outperforms existing models [16] | Improves accuracy [16] | Not evaluated in zero-shot setting |
| scBERT | High (e.g., outperforms Seurat) but sensitive to imbalanced data [19] | Not explicitly benchmarked | Not a primary focus | Not a primary focus | Not evaluated in zero-shot setting |

The Critical Role of Fine-Tuning and Zero-Shot Capabilities

A crucial consideration for researchers is the trade-off between zero-shot performance (using pre-trained models directly) and fine-tuned performance (additional task-specific training). A recent zero-shot evaluation revealed that both scGPT and Geneformer can underperform simpler baselines like Highly Variable Genes (HVG) selection or established methods (Harmony, scVI) on tasks like cell type clustering and batch integration when used without fine-tuning [21]. For instance, in batch integration, Geneformer's embeddings often failed to correct for batch effects, while scGPT showed mixed results, performing well on some datasets but not others [21].

However, fine-tuning—the process of adapting a pre-trained model to a specific task with a relatively small amount of labeled data—can dramatically improve performance. One analysis suggests that fine-tuning scGPT can yield a 10-25 percentage point accuracy jump on specific datasets like multiple sclerosis and tumor-infiltrating myeloid cells [22]. This highlights that while emergent zero-shot abilities are a promising direction, practical application often still benefits from task-specific adaptation, especially for complex or novel cell states.

Practical Implementation and Experimental Protocols

A Guide to Model Selection and Workflow

Choosing the right model and application strategy is paramount for research success. The following workflow diagram and subsequent guidance outline a structured approach based on the user's goal, data resources, and technical constraints.

Figure 2: A Workflow for Selecting and Applying Single-Cell Foundation Models

Effectively working with scFMs requires a suite of computational "research reagents." The table below details key resources, their functions, and practical considerations for researchers.

Table 3: Essential Computational Reagents for scFM Research

| Resource / Solution | Function / Purpose | Key Considerations & Examples |
|---|---|---|
| Pre-trained Model Weights | Provides the foundational model parameters learned during large-scale pre-training, enabling transfer learning. | Available from model repositories (e.g., scGPT Model Zoo [17], scBERT GitHub [20]). Choice depends on organism and tissue context. |
| Curated Reference Dataset | Serves as a high-quality ground truth for fine-tuning and evaluation. Critical for cell type annotation. | Platforms like CZ CELLxGENE [1] and the Human Cell Atlas [1] provide standardized, annotated datasets. |
| GPU Computing Resources | Accelerates model training and inference, reducing time from days to hours. | Fine-tuning scGPT typically requires a GPU (e.g., A100). Zero-shot inference for embedding generation can be more flexible [22]. |
| Differential Expression Tool | Identifies marker genes for clusters, which can be used for validation or prompting LLMs like GPT-4. | Standard tools like those in Scanpy [19] or Seurat. For LLM prompting, top 10 genes often outperform top 20 by reducing noise [22]. |
| Batch Integration Algorithm | Corrects for technical variation across experiments, often used in conjunction with scFM embeddings. | Tools like Harmony [21] or scVI [21] can be applied to correct scFM embeddings if batch effects persist in zero-shot mode. |

Detailed Protocol for Fine-Tuning scGPT for Cell Type Annotation

The following protocol provides a step-by-step methodology for adapting a pre-trained scGPT model to a custom dataset for cell type annotation, a common and critical task in single-cell analysis.

Data Preprocessing:

- Input: Raw count matrix (cells x genes).

- Gene Symbol Standardization: Map gene symbols to an official database (e.g., NCBI Gene) so they match the model's vocabulary. Remove unmatched or duplicated genes [20].

- Normalization: Normalize total counts per cell (sc.pp.normalize_total in Scanpy), followed by a log1p transformation (sc.pp.log1p) [19] [20].

- HVG Selection: Select the top ~2,000 highly variable genes to match the model's expected input dimension [8] [22].
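The NumPy sketch below shows the arithmetic behind the normalization and log1p steps. It normalizes to an assumed target of 10,000 counts per cell, whereas Scanpy's default scales to the median total count. It then selects HVGs with a deliberately simplified criterion: the variance of log-normalized values. Scanpy's `sc.pp.highly_variable_genes` uses dispersion- or variance-stabilization-based flavors instead. Treat this as an illustration, not a drop-in replacement.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    # Scale each cell to the same total count, then apply log(1 + x).
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0   # avoid division by zero for empty cells
    return np.log1p(counts / totals * target_sum)

def select_top_variable_genes(log_expr, n_top=2000):
    # Simplified HVG selection: keep the genes with the highest variance of log expression.
    variances = log_expr.var(axis=0)
    n_top = min(n_top, log_expr.shape[1])
    return np.sort(np.argsort(variances)[::-1][:n_top])

rng = np.random.default_rng(0)
counts = rng.poisson(2.0, size=(100, 5000))   # toy matrix: 100 cells x 5,000 genes
log_expr = normalize_log1p(counts)
hvg_idx = select_top_variable_genes(log_expr, n_top=2000)
X_model = log_expr[:, hvg_idx]
print(X_model.shape)   # (100, 2000)
```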

Model Setup:

Fine-Tuning Loop:

- Data Splitting: Split the labeled data into training (e.g., 80%) and validation (e.g., 20%) sets. Ensure stratified sampling if cell types are imbalanced.

- Add Classification Head: The scGPT model requires a task-specific head for cell type classification. This is typically implemented as a linear layer on top of the pooled cell embedding.

- Training Configuration:

- Objective Function: Use Cross-Entropy Loss.

- Optimizer: Use AdamW optimizer with a low learning rate (e.g., 1e-5) to avoid catastrophic forgetting.

- Epochs: Train for 5-10 epochs, which typically takes approximately 20 minutes on a single A100 GPU for thousands of cells [22].

- Validation: Monitor validation accuracy after each epoch to prevent overfitting. Use early stopping if the validation performance plateaus.
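The sketch below illustrates two pieces of this loop: a stratified train/validation split and a linear classification head trained with cross-entropy. It does not use scGPT's API. It assumes pooled cell embeddings have already been extracted from the pretrained model, and synthetic vectors stand in for them here. Only the head is trained, with plain gradient descent and an L2 penalty. Full fine-tuning as described above would also update the transformer weights, typically with AdamW in a framework such as PyTorch.

```python
import numpy as np

def stratified_split(labels, val_frac=0.2, seed=0):
    # Split indices so that every cell type keeps roughly the same train/val proportion.
    rng = np.random.default_rng(seed)
    train, val = [], []
    for c in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == c))
        n_val = max(1, int(round(val_frac * idx.size)))
        val.extend(idx[:n_val])
        train.extend(idx[n_val:])
    return np.array(train), np.array(val)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def train_linear_head(X, y, n_classes, lr=0.01, epochs=200, weight_decay=1e-4):
    """Linear head + mean cross-entropy loss, trained by full-batch gradient descent."""
    W = np.zeros((X.shape[1], n_classes))
    b = np.zeros(n_classes)
    Y = np.eye(n_classes)[y]                    # one-hot targets
    for _ in range(epochs):
        P = softmax(X @ W + b)
        grad_logits = (P - Y) / len(X)          # gradient of mean cross-entropy w.r.t. logits
        W -= lr * (X.T @ grad_logits + weight_decay * W)   # L2 penalty on the weights
        b -= lr * grad_logits.sum(axis=0)
    return W, b

# Synthetic stand-in for pooled cell embeddings: 3 cell types, 64 dims, 300 cells.
rng = np.random.default_rng(0)
n_per_type, dim, n_types = 100, 64, 3
centers = rng.normal(scale=2.0, size=(n_types, dim))
labels = np.repeat(np.arange(n_types), n_per_type)
emb = centers[labels] + rng.normal(size=(labels.size, dim))

train_idx, val_idx = stratified_split(labels, val_frac=0.2)
W, b = train_linear_head(emb[train_idx], labels[train_idx], n_types)
val_pred = (emb[val_idx] @ W + b).argmax(axis=1)
print("validation accuracy:", (val_pred == labels[val_idx]).mean())
```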

Model Inference and Evaluation:

- Prediction: Run the held-out test set through the fine-tuned model to generate cell type predictions.

- Evaluation Metrics: Calculate accuracy, F1-score (especially important for imbalanced datasets [19]), and generate a confusion matrix to identify specific areas of confusion between cell types.

- Novel Type Detection: Cells with a maximum predicted probability below a set threshold (e.g., 0.5) can be flagged as potential novel or unknown cell types [19] [20].
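The evaluation and novel-type steps can be combined in one small function. This sketch assumes the fine-tuned model outputs a probability matrix of shape (cells, classes) and uses the example threshold of 0.5. The cell type names and probabilities are made up for illustration. scikit-learn's `accuracy_score`, `f1_score(average="macro")`, and `confusion_matrix` compute the same metrics; they are written out here to keep the block dependency-free.

```python
import numpy as np

def evaluate_predictions(probs, y_true, class_names, unknown_threshold=0.5):
    """Accuracy, macro-F1, confusion matrix, and low-confidence 'unknown' calls.

    probs: (n_cells, n_classes) predicted probabilities from the fine-tuned model.
    """
    n_classes = len(class_names)
    y_pred = probs.argmax(axis=1)
    accuracy = float((y_pred == y_true).mean())

    confusion = np.zeros((n_classes, n_classes), dtype=int)   # rows: true, columns: predicted
    np.add.at(confusion, (y_true, y_pred), 1)

    f1_per_class = []
    for k in range(n_classes):
        tp = confusion[k, k]
        fp = confusion[:, k].sum() - tp
        fn = confusion[k, :].sum() - tp
        denom = 2 * tp + fp + fn
        f1_per_class.append(2 * tp / denom if denom > 0 else 0.0)
    macro_f1 = float(np.mean(f1_per_class))   # unweighted mean, so rare types count equally

    # Cells whose best class probability falls below the threshold are flagged as potential novel types.
    calls = np.where(probs.max(axis=1) < unknown_threshold, "unknown",
                     np.asarray(class_names, dtype=object)[y_pred])
    return accuracy, macro_f1, confusion, calls

class_names = ["T cell", "B cell", "Monocyte"]
probs = np.array([
    [0.90, 0.05, 0.05],   # confident T cell
    [0.10, 0.80, 0.10],   # confident B cell
    [0.40, 0.35, 0.25],   # low confidence -> flagged as "unknown"
    [0.05, 0.15, 0.80],   # confident Monocyte
])
y_true = np.array([0, 1, 2, 2])
acc, f1, cm, calls = evaluate_predictions(probs, y_true, class_names)
print(acc, round(f1, 3))   # 0.75 0.778
print(calls.tolist())      # ['T cell', 'B cell', 'unknown', 'Monocyte']
```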

Discussion and Future Directions

The development of single-cell foundation models represents a paradigm shift in how we analyze and interpret transcriptomic data. While models like scGPT, Geneformer, CellFM, and scBERT have demonstrated impressive performance, particularly after fine-tuning, critical challenges remain. The inconsistent zero-shot performance compared to simpler baselines [21] indicates that the emergent, generalizable biological understanding these models are designed for is still evolving. Furthermore, no single scFM consistently outperforms all others across every task, emphasizing that model selection must be tailored to the specific biological question, dataset size, and available computational resources [8].

The path forward will likely involve several key developments. First, multi-modal integration—combining transcriptomics with data from epigenomics, proteomics, and spatial technologies—will be crucial for building more comprehensive models of cellular function [1]. Second, enhancing interpretability is essential for building trust and extracting novel biological insights, not just predictions [1] [8]. Finally, as models scale in size and scope, establishing rigorous and biologically meaningful benchmarking standards that prioritize real-world discovery scenarios will be critical for measuring true progress [8] [21]. The promise of scFMs is vast, and continued development in these areas will be key to unlocking their full potential for revolutionizing cell biology and therapeutic development.

The development of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, mirroring the revolution caused by large language models in natural language processing [1]. The core thesis of this whitepaper posits that the emergence of advanced capabilities in scFMs—including zero-shot learning, cross-dataset generalization, and sophisticated biological reasoning—is intrinsically linked to the scale, diversity, and quality of the pretraining corpus [1] [23]. This document provides an in-depth technical guide to the construction and utilization of these foundational datasets, framing them not merely as input but as the critical determinant of emergent phenotypic understanding for researchers and drug development professionals.

The Architecture of a Pretraining Corpus

A pretraining corpus for scFMs is a large-scale, integrated collection of single-cell genomics data, meticulously assembled from diverse public repositories and curated cell atlases. Its primary function is to serve as the comprehensive "textbook" from which self-supervised models learn the fundamental language of cellular identity, state, and function [1]. The emergent abilities observed in scaled models—such as in-context learning and robust generalization—are directly contingent upon the biological and technical variety encapsulated within this corpus [23] [8].

Core Data Components and Public Repositories

The pretraining corpus is synthesized from an ecosystem of public data repositories, each contributing essential components. The table below summarizes the primary sources and their specific roles in corpus construction.

Table 1: Key Public Data Repositories for scFM Pretraining

| Repository Name | Data Type & Role | Scale & Context | Primary Use in Corpus Construction |
|---|---|---|---|
| CZ CELLxGENE [1] [24] | Curated single-cell datasets | Over 100 million unique cells; standardized analysis [1] | Provides a unified, high-quality source of annotated cells for diverse tissue and condition coverage. |
| Human Cell Atlas (HCA) [25] | Multiorgan, cross-tissue atlases | Aims to map every cell type in the human body [25] | Supplies broad coverage of cell types and states from diverse individuals. |
| Gene Expression Omnibus (GEO) / Sequence Read Archive (SRA) [1] | Archive for raw and processed sequencing data | Hosts thousands of individual single-cell studies [1] | Serves as a primary source for aggregating vast amounts of public data. |
| PanglaoDB [1] | Curated compendium of scRNA-seq data | Collates data from multiple sources and studies [1] | Offers a pre-filtered resource for model training. |
| Broad Institute Single Cell Portal [24] [25] | Tissue and disease-specific datasets | Includes massive cross-tissue atlases (e.g., 23.4M+ cells) [26] | Provides access to large-scale, systematically generated datasets. |

Quantitative Dimensions of a Modern Corpus

The scale of a pretraining corpus is a key driver of model performance. Leading scFM development efforts now leverage corpora comprising tens of millions of cells.

Table 2: Quantitative Scale of Exemplary Pretraining Corpora

| Model / Atlas | Reported Corpus Scale | Number of Studies | Diversity of Tissues/Cell Types |
|---|---|---|---|
| SCimilarity Foundation Model [26] | 23.4 million cells | 412 studies | 184 unique Tissue Ontology terms, 132 Disease Ontology terms |
| scGPT [8] | 33 million cells | Not Specified | Multiple omics modalities (scRNA-seq, scATAC-seq, spatial) |
| Geneformer [8] | 30 million cells | Not Specified | Focus on scRNA-seq data |
| Benchmark Training Set [26] | ~7.9 million cells (training) | 56 studies | 203 Cell Ontology author-annotated terms |

Technical Protocols for Corpus Construction and Experimental Pipelines

Constructing a robust pretraining corpus is a multi-stage process that involves data ingestion, standardization, and quality control. The following protocols are critical for ensuring data integrity and utility.

Data Ingestion and Pre-processing Workflow

A standardized pipeline is essential for transforming raw data from repositories into an analysis-ready corpus [24].

- Data Acquisition and Quality Control: Programmatic download of raw sequencing reads (FASTQ files) and associated metadata from GEO, SRA, and other repositories is the first step [24]. Initial quality control checks ensure data integrity.

- Uniform Processing and Gene-Cell Matrix Generation: A unified computational pipeline processes all raw data using consistent software versions and parameters. This involves alignment, barcode assignment, and generation of gene expression count matrices to minimize technical artifacts introduced by varying processing steps [1] [24].

- Metadata Annotation and Ontology Mapping: Sample, gene, and cell-level metadata are ingested and standardized. Critically, cell type annotations are mapped to a structured Cell Ontology [24] [26], which provides a standardized vocabulary vital for interoperability and for defining "similar" and "dissimilar" cells during supervised metric learning [26].

- Batch Effect Detection and Mitigation: Technical artifacts (batch effects) arising from differences in experiments, donors, or processing are identified. While not always eliminated at this stage, their documentation is crucial for downstream correction strategies [24].
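Taken together, these steps must ultimately place every study's count matrix in one shared gene space before tokenization. A minimal sketch, assuming dense NumPy count matrices whose gene identifiers have already been harmonized; the helper `align_to_vocabulary` and the gene panels are hypothetical, and production pipelines operate on sparse, disk-backed matrices instead:

```python
import numpy as np


def align_to_vocabulary(counts, genes, vocabulary):
    """Reorder a cells x genes count matrix into a shared gene vocabulary.

    Genes missing from the study are zero-filled; genes outside the
    vocabulary are dropped. Also returns a boolean mask marking which
    vocabulary genes the study actually measured, so downstream code can
    tell "not measured" apart from "measured as zero".
    """
    column_of = {gene: i for i, gene in enumerate(genes)}
    aligned = np.zeros((counts.shape[0], len(vocabulary)), dtype=counts.dtype)
    measured = np.zeros(len(vocabulary), dtype=bool)
    for j, gene in enumerate(vocabulary):
        i = column_of.get(gene)
        if i is not None:
            aligned[:, j] = counts[:, i]
            measured[j] = True
    return aligned, measured


# Two hypothetical studies with overlapping gene panels.
vocab = ["CD3E", "CD19", "LYZ", "MS4A1"]
study_a = np.array([[5, 0, 2], [0, 3, 1]])  # columns: CD3E, LYZ, NKG7
study_b = np.array([[4, 1], [0, 7]])        # columns: MS4A1, CD19

a, mask_a = align_to_vocabulary(study_a, ["CD3E", "LYZ", "NKG7"], vocab)
b, mask_b = align_to_vocabulary(study_b, ["MS4A1", "CD19"], vocab)
corpus = np.vstack([a, b])  # 4 cells x 4 shared genes
```

Keeping the `measured` mask alongside the zero-filled matrix matters because a gene absent from a study's panel is not the same as a gene that was measured and found unexpressed.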

Protocol for Tokenization and Input Representation

To apply transformer architectures, the non-sequential gene expression data must be converted into a sequence of tokens. This process, known as tokenization, is a critical architectural choice for scFMs [1]. The following methodology is employed by leading models:

- Gene Selection: The vast number of genes is typically reduced to the most informative set (e.g., 1,200-20,000 genes) based on high variability or high expression [8].

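- Expression Value Representation: Continuous expression values must be encoded in a form the model can consume. Common strategies include value binning, which discretizes each value into one of a fixed number of bins (e.g., scGPT); rank-based encoding, in which a gene's expression level is implied by its position in an expression-ordered list (e.g., Geneformer); and value projection, which maps the continuous value directly to an embedding without discretization (e.g., scFoundation) [8].

- Sequence Construction: The selected genes are formed into an input sequence. Because genes have no natural order, one must be imposed, most commonly by ranking genes by their expression level within each cell (e.g., Geneformer, LangCell); models without positional encodings, such as scGPT, effectively treat the genes as an unordered set [8].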

- Embedding: Each element in the sequence (comprising a gene identifier and its processed expression value) is converted into a numerical vector (embedding). Special tokens for cell identity or modality may be prepended [1].
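The tokenization steps above can be sketched for a single cell using a rank-based scheme in the spirit of Geneformer: counts are normalized for sequencing depth and by a per-gene factor, expressed genes are sorted from highest to lowest, the list is truncated, and a cell-level token is prepended. The special tokens, the scaling factors, and the function names are illustrative assumptions rather than any released model's preprocessing code.

```python
import numpy as np

# Hypothetical special-token IDs; real models define their own vocabularies.
SPECIAL_TOKENS = {"<pad>": 0, "<cls>": 1}


def build_vocab(genes):
    """Assign integer token IDs to genes, after the special tokens."""
    offset = len(SPECIAL_TOKENS)
    return {gene: offset + i for i, gene in enumerate(genes)}


def rank_tokenize(expr, genes, gene_scale, vocab, max_len=2048):
    """Token IDs for one cell, ordered by normalized expression (highest first).

    expr: raw counts for one cell, aligned with `genes`.
    gene_scale: per-gene normalization factors, assumed precomputed over the
    corpus, so ubiquitously high genes do not lead every sequence.
    """
    expr = np.asarray(expr, dtype=float)
    scaled = expr / expr.sum() / gene_scale       # depth- then gene-normalized
    expressed = np.flatnonzero(scaled > 0)        # unexpressed genes are dropped
    order = expressed[np.argsort(-scaled[expressed], kind="stable")]
    tokens = [vocab[genes[i]] for i in order[: max_len - 1]]  # leave room for <cls>
    return [SPECIAL_TOKENS["<cls>"]] + tokens


genes = ["CD3E", "LYZ", "MS4A1", "ACTB"]
vocab = build_vocab(genes)                     # CD3E=2, LYZ=3, MS4A1=4, ACTB=5
cell = [12, 0, 3, 40]
gene_scale = np.array([1.0, 1.0, 1.0, 10.0])   # ACTB is heavily down-weighted
print(rank_tokenize(cell, genes, gene_scale, vocab))  # prints [1, 2, 5, 4]
```

Without the per-gene factor, the housekeeping gene ACTB would head this cell's sequence; with it, the marker CD3E comes first, which illustrates why rank-based schemes normalize each gene against its typical level across the corpus.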

Experimental Protocol: Querying for Biologically Similar Cells

The power of a foundation model is often validated by its ability to find transcriptionally similar cells across the entire corpus. The following protocol, as implemented in the SCimilarity framework, details this process [26]:

Model Training with Triplet Loss:

- Input: Sample millions of cell "triplets" from the pretraining corpus. Each triplet consists of an Anchor cell, a Positive cell (same cell type as anchor, but from a different study), and a Negative cell (a different cell type).

- Training: Train a deep metric learning model to minimize a combined loss function. The Triplet Loss ensures the anchor's embedding is closer to the positive's than to the negative's. A Reconstruction Loss (e.g., Mean Squared Error) ensures the model preserves subtle gene expression patterns.

- Output: A model that can project any new cell profile into a unified latent space where Euclidean distance corresponds to biological similarity.
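The combined objective can be sketched in a few lines of NumPy. The margin value, the weighting factor `alpha`, and the use of a simple hinge over fixed triplets (rather than, for example, online hard-negative mining) are assumptions for illustration; the loss configuration actually used for SCimilarity is reported in [26].

```python
import numpy as np


def triplet_loss(anchor, positive, negative, margin=0.05):
    """Mean of max(0, d(a, p) - d(a, n) + margin) over a batch of triplets.

    Each argument is a (batch, dim) array of embeddings; d is Euclidean.
    """
    d_pos = np.linalg.norm(anchor - positive, axis=1)
    d_neg = np.linalg.norm(anchor - negative, axis=1)
    return float(np.maximum(0.0, d_pos - d_neg + margin).mean())


def reconstruction_loss(x, x_hat):
    """Mean squared error between input expression and decoder output."""
    return float(np.mean((x - x_hat) ** 2))


def combined_loss(anchor, positive, negative, x, x_hat, alpha=0.5, margin=0.05):
    """Weighted sum of the two objectives; alpha is a hypothetical weight."""
    return (alpha * triplet_loss(anchor, positive, negative, margin)
            + (1.0 - alpha) * reconstruction_loss(x, x_hat))


anchor = np.array([[0.0, 0.0], [0.0, 0.0]])
positive = np.array([[0.0, 1.0], [0.0, 2.0]])  # same cell type, other study
negative = np.array([[3.0, 0.0], [1.0, 0.0]])  # different cell type
# Triplet 1 already satisfies the margin (1 < 3) and contributes 0;
# triplet 2 places the negative closer than the positive and is penalized.
x = np.array([[1.0, 2.0]])
x_hat = np.array([[1.0, 0.0]])
loss = combined_loss(anchor, positive, negative, x, x_hat)
```

The reconstruction term keeps the encoder from collapsing each cell type to a single point, which would satisfy the triplet constraint while discarding within-type variation.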

Corpus Indexing: Process the entire pretraining corpus (e.g., 23.4 million cells) through the trained model to generate a database of latent embeddings.

Query Execution:

- Input: A query cell profile (e.g., a disease-associated macrophage state).

- Processing: Project the query cell into the same latent space.

- Output: A ranked list of the most transcriptionally similar cells from the entire corpus, identified via a fast nearest-neighbor search in the latent space. This can reveal similar states across diseases, tissues, or in vitro models [26].
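Corpus indexing and query execution reduce to storing one embedding per cell and ranking the stored embeddings by their distance to the embedded query. The sketch below uses exact brute-force search over a handful of hypothetical cells; searching tens of millions of cells quickly would require an approximate nearest-neighbor index in place of the linear scan, and this is not SCimilarity's own code.

```python
import numpy as np


class EmbeddingIndex:
    """Exact Euclidean nearest-neighbor search over indexed cell embeddings."""

    def __init__(self, embeddings, metadata):
        self.embeddings = np.asarray(embeddings, dtype=float)  # (n_cells, dim)
        self.metadata = list(metadata)                           # one record per cell

    def query(self, q, k=10):
        """Return the k closest cells as (distance, metadata) pairs, nearest first."""
        q = np.asarray(q, dtype=float)
        dists = np.linalg.norm(self.embeddings - q, axis=1)
        nearest = np.argsort(dists, kind="stable")[:k]
        return [(float(dists[i]), self.metadata[i]) for i in nearest]


# Hypothetical embeddings produced by a trained encoder (corpus indexing).
index = EmbeddingIndex(
    embeddings=[[0.0, 0.0], [1.0, 1.0], [0.1, 0.0], [5.0, 5.0]],
    metadata=[
        {"study": "S1", "context": "lung, disease"},
        {"study": "S2", "context": "liver, disease"},
        {"study": "S3", "context": "in vitro model"},
        {"study": "S4", "context": "blood, healthy"},
    ],
)
# Query execution: the query profile is assumed to be already embedded.
for dist, meta in index.query([0.02, 0.0], k=2):
    print(f"{meta['study']} ({meta['context']}): {dist:.2f}")
```

Returning the metadata with each hit is what turns a distance ranking into the cross-tissue, cross-disease comparison described above.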

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and data resources essential for working with single-cell pretraining corpora and foundation models.

Table 3: Essential Research Reagent Solutions for scFM Research

| Tool / Resource | Type | Function & Application |
|---|---|---|
| CELLxGENE | Data Repository | Provides unified access to millions of curated and standardized single-cell datasets, enabling efficient data discovery and reuse [24]. |
| Cell Ontology | Structured Vocabulary | Provides standardized terms for cell type annotation, crucial for dataset interoperability and for training supervised components of scFMs [24] [26]. |
| SCimilarity | Foundation Model | A metric-learning model for searching a massive atlas of single-cell profiles to find transcriptionally similar cells across tissues and diseases, generating testable hypotheses [26]. |
| scGPT | Foundation Model | A versatile transformer-based scFM capable of multiple downstream tasks, including perturbation prediction and cell type annotation, trained on a multi-omic corpus [1] [8]. |
| Harmony / scVI | Integration Algorithm | Computational tools for correcting batch effects and integrating multiple datasets into a coherent space, a critical step in corpus construction and analysis [8] [26]. |
| Zarr / Parquet | Data Format | Disk-backed, efficient file formats for storing and processing very large single-cell datasets that exceed memory limitations [24]. |

The Relationship Between Corpus Scale and Emergent Abilities

The emergence of novel capabilities in large-scale models is a phenomenon documented across complex systems [23]. In single-cell biology, this translates to scFMs acquiring representations of cellular mechanisms that they were never explicitly trained to capture. The diagram below illustrates the causal pathway from data scaling to emergent biological insights.

Scaling the pretraining corpus is credited with enabling several key emergent abilities:

- Zero-Shot Learning and Cross-Dataset Generalization: Models trained on a corpus encompassing many tissues, diseases, and technical protocols learn a universal representation of cellular state. This allows them to make accurate predictions on entirely new datasets without task-specific fine-tuning, a capability benchmarked in recent studies [8].

- In-Context Learning: Analogous to few-shot prompting in LLMs, some scFMs can adapt to a new task when provided with a few examples in the input context, such as learning a new cell type classification scheme from minimal data [1] [23].

- Biological Reasoning and Insight Generation: With a comprehensive model of cellular biology, scFMs can be queried to generate novel hypotheses. For example, querying a disease-associated macrophage state from a lung fibrosis study against a massive corpus identified similar cell states in other fibrotic diseases and pinpointed a specific 3D hydrogel system as the top in vitro hit—a finding that was subsequently experimentally validated [26]. This demonstrates an emergent capacity to connect biological concepts across traditional experimental boundaries.

Practical Applications: Leveraging scFM Capabilities for Drug Discovery and Biomedical Research

The rapid accumulation of single-cell RNA sequencing (scRNA-seq) data has created an urgent need for computational strategies that can automatically interpret cellular heterogeneity without extensive manual intervention. Single-cell foundation models (scFMs) represent a transformative approach, trained on millions of cells through self-supervised objectives to learn universal patterns in transcriptomic data [27]. These models promise emergent abilities—capabilities not explicitly programmed but arising from scale—including zero-shot cell type annotation, where models classify cell types without task-specific training [8] [4]. This emergent capacity is particularly valuable in discovery settings where labels are unknown or for rare cell types with limited examples [21]. The significance of robust zero-shot performance extends across biological research and therapeutic development, enabling rapid annotation of novel cell types in disease states, tumor microenvironments, and developmental processes [8] [4]. However, recent evaluations reveal that the zero-shot performance of proposed foundation models varies considerably, with simpler methods sometimes outperforming these sophisticated approaches [21] [8]. This technical guide examines the current state, methodologies, and practical applications of zero-shot cell type annotation, providing researchers with a framework for evaluating and implementing these emerging capabilities in biological and clinical research.

The Zero-Shot Paradigm in Single-Cell Analysis

Conceptual Framework and Biological Significance

Zero-shot evaluation tests a model's ability to perform tasks without any dataset-specific fine-tuning, using only its pre-trained representations [21]. In single-cell biology, this setting is crucial for applications where fine-tuning is not possible, particularly exploratory research in which cellular identities are unknown and no labels exist to fine-tune on [21]. The fundamental premise is that scFMs pretrained on massive datasets will learn biologically meaningful representations of cells and genes that generalize to new datasets and unseen cell types [8] [4]. These models typically treat cells as "sentences" and genes as "words," adapting transformer architectures to capture complex gene-gene interactions across diverse cellular contexts [27].

The biological significance of robust zero-shot annotation is profound: it enables discovery of novel cell types without reference databases, identifies rare cell populations in complex tissues, and facilitates cross-species comparisons by learning universal cellular principles [8]. For drug development, reliable zero-shot classification can accelerate target identification by immediately characterizing cell types in disease models without requiring extensive manual annotation [28]. However, the non-sequential nature of gene expression data presents a distinct challenge: unlike words in a sentence, genes have no inherent order, so models require tokenization strategies that either impose an order or do without one [8] [4].

Current Model Landscape and Performance Gaps

Recent benchmarking studies reveal significant performance variations among scFMs in zero-shot settings. A comprehensive evaluation of six prominent scFMs against established baselines using biologically informed metrics demonstrates that no single model consistently outperforms others across all tasks [8] [4]. Surprisingly, simpler methods such as selecting Highly Variable Genes (HVG) sometimes surpass foundation models in both cell type clustering and batch integration tasks [21].
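Given how often this baseline matches or beats foundation-model embeddings, it helps to see how little it involves. The sketch below normalizes each cell to a fixed total count, log-transforms, and keeps the highest-variance genes. It is a simplified stand-in: common implementations such as Scanpy's `highly_variable_genes` by default rank genes by normalized dispersion within mean-expression bins rather than by raw variance, and the target sum of 10,000 is a conventional but assumed choice.

```python
import numpy as np


def hvg_baseline(counts, n_top=2000, target_sum=1e4):
    """Log-normalized expression restricted to the highest-variance genes.

    counts: (n_cells, n_genes) raw count matrix.
    Returns the reduced matrix and the selected gene column indices.
    """
    counts = np.asarray(counts, dtype=float)
    lib_size = counts.sum(axis=1, keepdims=True)
    lib_size[lib_size == 0] = 1.0                        # guard empty cells
    lognorm = np.log1p(counts / lib_size * target_sum)   # depth-normalize, then log
    variances = lognorm.var(axis=0)
    n_top = min(n_top, counts.shape[1])
    selected = np.sort(np.argsort(-variances, kind="stable")[:n_top])
    return lognorm[:, selected], selected


counts = np.array([[4, 4, 2], [4, 0, 6], [4, 4, 2], [4, 0, 6]])
reduced, selected = hvg_baseline(counts, n_top=2)
# Gene 0 is a constant fraction of every cell's counts, so it is dropped.
print(selected)
```

In a zero-shot comparison, the reduced matrix stands in for a foundation model's cell embeddings when computing clustering and integration metrics.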

Table 1: Zero-Shot Performance Comparison Across Single-Cell Foundation Models

| Model | Pretraining Data Scale | Key Strengths | Zero-Shot Limitations |
|---|---|---|---|
| scGPT | 33 million human cells [16] | Flexible architecture supporting multiple omics modalities [8] | Inconsistent cell type separation; batch effect challenges [21] |
| Geneformer | 30 million single-cell transcriptomes [16] | Context-aware gene embeddings [28] | Underperforms HVG in clustering; poor batch mixing [21] |
| CellFM | 100 million human cells [16] | Large parameter count (800M); improved accuracy [16] | Limited independent benchmarking available |
| UCE | 36 million cells [8] | Cross-species integration; protein language model integration [8] | Computational intensity [8] |
| scFoundation | 50 million human cells [16] | Value projection preserves data resolution [16] | Less established in annotation tasks [8] |

Quantitative assessments show that both Geneformer and scGPT underperform established methods such as Harmony and scVI in cell type clustering, as measured by the average bio-conservation (AvgBio) score [21]. In some evaluations, HVG selection outperforms both foundation models across all metrics [21]. This performance gap highlights the ongoing challenge of translating massive pretraining into reliable zero-shot capabilities.
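As a reference point for this comparison, AvgBio in scGPT-style zero-shot evaluations is commonly defined as the mean of three bio-conservation scores: normalized mutual information (NMI) and adjusted Rand index (ARI) between annotated cell types and clusters computed on the embedding, plus the cell-type average silhouette width (ASW) rescaled to [0, 1]. The NumPy sketch below assumes that definition and takes cluster assignments as given; benchmark pipelines typically derive them with Leiden clustering over several resolutions, which is omitted here.

```python
import numpy as np


def _contingency(labels_a, labels_b):
    """Counts of cells for every (label_a, label_b) pair."""
    _, a = np.unique(labels_a, return_inverse=True)
    _, b = np.unique(labels_b, return_inverse=True)
    table = np.zeros((a.max() + 1, b.max() + 1))
    np.add.at(table, (a, b), 1)
    return table


def _pairs(n):
    """Number of unordered pairs, n choose 2, elementwise."""
    return n * (n - 1) / 2.0


def ari(labels_true, labels_pred):
    """Adjusted Rand index between two labelings."""
    table = _contingency(labels_true, labels_pred)
    index = _pairs(table).sum()
    rows = _pairs(table.sum(axis=1)).sum()
    cols = _pairs(table.sum(axis=0)).sum()
    expected = rows * cols / _pairs(table.sum())
    max_index = (rows + cols) / 2.0
    if max_index == expected:          # degenerate case, e.g. one cluster each
        return 1.0
    return float((index - expected) / (max_index - expected))


def nmi(labels_true, labels_pred):
    """Mutual information normalized by the arithmetic mean of the entropies."""
    p = _contingency(labels_true, labels_pred)
    p /= p.sum()
    pu, pv = p.sum(axis=1), p.sum(axis=0)
    nz = p > 0
    mi = np.sum(p[nz] * np.log(p[nz] / np.outer(pu, pv)[nz]))
    hu, hv = -np.sum(pu * np.log(pu)), -np.sum(pv * np.log(pv))
    if hu + hv == 0:
        return 1.0
    return float(mi / ((hu + hv) / 2.0))


def asw_celltype(embeddings, labels):
    """Mean silhouette width over cell-type labels, rescaled to [0, 1]."""
    x = np.asarray(embeddings, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=-1)
    scores = []
    for i in range(len(x)):
        same = labels == labels[i]
        same[i] = False
        if not same.any():             # singleton label: silhouette defined as 0
            scores.append(0.0)
            continue
        a = dist[i, same].mean()
        b = min(dist[i, labels == other].mean()
                for other in np.unique(labels) if other != labels[i])
        scores.append((b - a) / max(a, b))
    return float((np.mean(scores) + 1.0) / 2.0)


def avg_bio(embeddings, cell_types, clusters):
    """AvgBio = (NMI + ARI + rescaled cell-type ASW) / 3."""
    return (nmi(cell_types, clusters) + ari(cell_types, clusters)
            + asw_celltype(embeddings, cell_types)) / 3.0


emb = np.array([[0.0, 0.0], [0.0, 0.1], [10.0, 10.0], [10.0, 10.1]])
cell_types = ["T", "T", "B", "B"]
clusters = [0, 0, 1, 1]          # assumed output of a clustering step
score = avg_bio(emb, cell_types, clusters)
```

Because NMI and ARI depend on the clustering step while ASW is computed directly on the embedding, two models can reach similar AvgBio values for different reasons, which is one argument for reporting the components alongside the average.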

Methodological Approaches to Zero-Shot Annotation

Model Architectures and Tokenization Strategies

Single-cell foundation models employ diverse architectural strategies to convert gene expression data into meaningful representations. The input layers typically comprise three components: gene embeddings (analogous to word embeddings), value embeddings, and positional embeddings [8] [4].
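The three-part input layer can be sketched as a per-token sum of lookups, in the way transformer language models add token and position embeddings. The NumPy code below uses randomly initialized tables in place of learned weights; the dimensions, the number of value bins, and the choice to sum the components (rather than, say, concatenate them) are assumptions, and models differ in which components they include.

```python
import numpy as np

rng = np.random.default_rng(0)
N_GENES, N_BINS, MAX_LEN, DIM = 100, 51, 64, 16     # illustrative sizes

# Random stand-ins for learned lookup tables.
gene_table = rng.normal(size=(N_GENES, DIM))        # one vector per gene ID
value_table = rng.normal(size=(N_BINS, DIM))        # one vector per expression bin
position_table = rng.normal(size=(MAX_LEN, DIM))    # one vector per sequence slot


def embed_cell(gene_ids, value_bins, use_positions=True):
    """Per-token sum of gene, value, and (optionally) positional embeddings.

    gene_ids, value_bins: equal-length integer sequences for one cell.
    Returns a (sequence_length, DIM) array that would feed the transformer.
    """
    gene_ids = np.asarray(gene_ids)
    value_bins = np.asarray(value_bins)
    x = gene_table[gene_ids] + value_table[value_bins]
    if use_positions:
        x = x + position_table[np.arange(len(gene_ids))]
    return x


ordered = embed_cell([5, 17, 42], [50, 12, 3])
unordered = embed_cell([5, 17, 42], [50, 12, 3], use_positions=False)
```

Without positional embeddings, permuting the input genes merely permutes the output rows, so the model sees the cell as an unordered set of genes; adding them makes the order of the sequence itself informative, as in rank-based models.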

Table 2: Input Representation Strategies in Single-Cell Foundation Models

| Model | Tokenization Approach | Value Representation | Positional Encoding |
|---|---|---|---|
| Geneformer | 2048 ranked genes by expression [8] | Ordering-based | ✓ Present [8] |
| scGPT | 1200 Highly Variable Genes [8] | Value binning | × Absent [8] |
| UCE | 1024 non-unique genes sampled by expression [8] | Protein embeddings from ESM-2 | ✓ Present [8] |
| scFoundation | 19,264 human protein-encoding genes [16] | Value projection | × Absent [8] |
| LangCell | 2048 ranked genes [8] | Ordering-based | ✓ Present [8] |
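The value-binning strategy listed for scGPT above can be illustrated with per-cell quantile binning. This is a sketch of the general idea only; the default of 51 bins and the exact edge handling are assumptions and may differ from the released scGPT code.

```python
import numpy as np


def bin_expression(expr, n_bins=51):
    """Per-cell quantile binning of one cell's expression vector.

    Zeros stay in bin 0. Non-zero values are split into n_bins - 1 groups
    using quantiles of this cell's own non-zero values, giving bins
    1..n_bins-1, so a bin means "relatively high within this cell".
    """
    expr = np.asarray(expr, dtype=float)
    binned = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if not nonzero.any():
        return binned
    edges = np.quantile(expr[nonzero], np.linspace(0.0, 1.0, n_bins))
    # Interior edges only: values map to 0..n_bins-2, then shift up by one.
    binned[nonzero] = np.digitize(expr[nonzero], edges[1:-1]) + 1
    return binned


cell = [0, 1, 2, 3, 4, 0, 8]
print(bin_expression(cell, n_bins=5))    # [0 1 2 3 4 0 4]
deeper = [10 * v for v in cell]          # same cell at ~10x sequencing depth
print(bin_expression(deeper, n_bins=5))
```

Because bin edges are recomputed for every cell, scaling a cell's counts by a constant, a crude proxy for sequencing depth, leaves its bins unchanged; the trade-off is that absolute expression differences between cells are discarded.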

The masked gene modeling (MGM) pretraining objective is common across most scFMs, where a subset of genes is masked and the model must predict their expression values based on context [27]. This approach encourages the model to learn biological relationships between genes and cellular states. However, evidence suggests this framework does not automatically produce useful cell embeddings for zero-shot tasks, indicating potential limitations in current pretraining methodologies [21].
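The masking step of this objective can be sketched as below, in the regression form the paragraph describes (predicting masked expression values with a squared-error loss). The 15% default masking ratio follows the common BERT convention and, like the placeholder mask value, is an assumption; rank-based models such as Geneformer instead predict the identity of masked gene tokens with a classification loss.

```python
import numpy as np


def mask_for_mgm(values, mask_ratio=0.15, mask_value=-1.0, rng=None):
    """Hide a random subset of one cell's expression values.

    Returns the corrupted input and a boolean mask of the positions the
    model must reconstruct. mask_value is a hypothetical placeholder for
    whatever mask token or value a given model uses.
    """
    rng = np.random.default_rng() if rng is None else rng
    values = np.asarray(values, dtype=float)
    n_mask = max(1, int(round(mask_ratio * len(values))))
    positions = rng.choice(len(values), size=n_mask, replace=False)
    mask = np.zeros(len(values), dtype=bool)
    mask[positions] = True
    corrupted = values.copy()
    corrupted[mask] = mask_value
    return corrupted, mask


def mgm_loss(predictions, targets, mask):
    """Mean squared error on masked positions only; visible genes are ignored."""
    return float(np.mean((predictions[mask] - targets[mask]) ** 2))


values = np.array([3.0, 0.0, 5.0, 1.0, 2.0, 4.0, 0.0, 7.0, 6.0, 1.0])
corrupted, mask = mask_for_mgm(values, mask_ratio=0.3, rng=np.random.default_rng(0))
# A stand-in "model" that is off by 1.0 on every masked gene and wildly
# wrong elsewhere; only the masked errors count toward the loss.
predictions = np.where(mask, values + 1.0, 100.0)
loss = mgm_loss(predictions, values, mask)  # 1.0
```

Scoring only the masked positions forces the model to infer hidden genes from the visible context, which is what makes gene-gene relationships the signal it learns; nothing in this objective directly rewards a well-separated cell-level embedding.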

Large Language Models for Marker-Based Annotation