Expert vs. Automated Annotation: A Framework for Consistency Evaluation in Biomedical AI


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate the consistency between expert and automated data annotations, a critical bottleneck in building reliable AI models for healthcare. It explores the foundational challenges of human annotation inconsistency, details emerging methodologies like LLM-driven verification, offers practical strategies for troubleshooting and optimizing annotation workflows, and establishes a rigorous validation framework for comparative assessment. By synthesizing current research and real-world case studies, this guide aims to equip teams with the knowledge to ensure data quality, accelerate model deployment, and build trustworthy AI systems for clinical and biomedical applications.

The Annotation Consistency Challenge: Why Human Expertise and AI Disagree

The Critical Role of Data Annotation in AI-Driven Drug Development

In AI-driven drug development, high-quality, annotated data is the fundamental substrate that powers machine learning (ML) and artificial intelligence (AI) models. The accuracy and reliability of these models are directly contingent upon the quality of their training data [1]. Data annotation—the process of labeling raw data with informative tags—enables AI systems to interpret complex biological information, from medical images to molecular structures. Within the context of consistency evaluation between expert and automated annotation, this process becomes critical for building trustworthy and regulatory-acceptable AI tools. As the industry moves toward AI-native frameworks, the methodologies for creating this clinical-grade data are undergoing significant transformation, blending expert human oversight with advanced automation to achieve new levels of speed and precision [2] [3].

The Expanding Scope of Data Annotation in Drug Development

Data annotation requirements span the entire drug development lifecycle, with specific data types and annotation needs at each stage.

Table: Data Annotation Applications Across the Drug Development Pipeline

| Development Stage | Data Types Requiring Annotation | Annotation Purpose | Common Annotation Methods |
|---|---|---|---|
| Target Identification | Scientific literature, genomic data, proteomic data [4] | Identify disease-associated proteins & pathways [5] | Named Entity Recognition (NER), semantic annotation [1] |
| Preclinical Research | Medical images (DICOM, NIfTI), molecular structures, assay data [6] [3] | Disease biomarker detection, compound efficacy & toxicity assessment [5] | Bounding boxes, semantic/instance segmentation, polygon annotation [1] |
| Clinical Trials | Trial imaging (CT, MRI), EHRs, lab reports, adverse event data [3] | Treatment efficacy evaluation, patient stratification, safety monitoring [5] | Object detection, temporal segmentation, activity recognition [1] |
| Post-Market Surveillance | Real-World Evidence (RWE), patient forums, pharmacovigilance reports [3] | Outcome monitoring, drug repurposing, safety signal detection [5] | Sentiment analysis, intent annotation, NER tagging [1] |

The complexity of biomedical data necessitates specialized annotation approaches. In computer vision applications for drug discovery, this includes:

- Image Annotation: Critical for histopathology and cellular imaging, involving techniques from simple bounding boxes to detailed polygon annotation for precise object delineation [1].

- Video Annotation: Used for tracking dynamic processes over time, employing frame-by-frame annotation or more efficient interpolation where models estimate object positions between annotated frames [1].

- DICOM and Medical Image Annotation: Requires specialized tools and expertise to handle complex file formats like DICOM and NIfTI, with projects often needing to comply with FDA guidelines and HIPAA security standards [6].
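The interpolation idea from video annotation can be sketched in a few lines. This minimal example assumes simple linear motion of an axis-aligned bounding box between two hand-annotated keyframes; production tools may instead rely on tracking models, and the Box type and interpolate_boxes helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def interpolate_boxes(keyframes: Dict[int, Box]) -> Dict[int, Box]:
    """Estimate boxes for frames between annotated keyframes by linear interpolation.

    Frames before the first or after the last keyframe are left unlabeled.
    """
    frames = sorted(keyframes)
    result = dict(keyframes)
    for start, end in zip(frames, frames[1:]):
        a, b = keyframes[start], keyframes[end]
        for f in range(start + 1, end):
            t = (f - start) / (end - start)  # fraction of the way from start to end
            result[f] = Box(
                a.x_min + t * (b.x_min - a.x_min),
                a.y_min + t * (b.y_min - a.y_min),
                a.x_max + t * (b.x_max - a.x_max),
                a.y_max + t * (b.y_max - a.y_max),
            )
    return result


# An annotator labels frames 0 and 4 by hand; frames 1-3 are filled in.
boxes = interpolate_boxes({0: Box(10, 10, 50, 50), 4: Box(30, 10, 70, 50)})
print(boxes[2])
```

Only the keyframes carry human judgment; every interpolated frame is an estimate that a reviewer may still need to correct when motion is not linear.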

Comparative Analysis of Data Annotation Service Providers

Selecting an appropriate data annotation partner is crucial for pharmaceutical companies building AI capabilities. Different providers offer varying levels of domain expertise, compliance adherence, and technological sophistication.

Table: Provider Capability Comparison for Clinical-Grade AI Data

| Capability | iMerit | CureMeta | Scale AI | CloudFactory | Centaur Labs |
|---|---|---|---|---|---|
| GxP-Aligned Workflows | Yes [3] | No [3] | No [3] | No [3] | No [3] |
| Clinician-Annotated Datasets | Yes [3] | Yes [3] | No [3] | No [3] | Partial [3] |
| Multimodal Data Support | Yes (imaging, omics, EHR) [3] | Partial [3] | No [3] | Partial [3] | No [3] |
| FDA/EMA Submission Readiness | Yes [3] | No [3] | No [3] | No [3] | No [3] |
| Expert-in-the-Loop QA | Yes [3] | Yes [3] | No [3] | Partial [3] | Yes [3] |
| Domain-Specific Protocol Knowledge | Yes (oncology, pathology, radiology) [3] | Yes (oncology, neurology) [3] | No [3] | No [3] | No [3] |

Provider Specializations and Limitations:

- iMerit positions itself as a "Digital CRO" (Contract Research Organization), providing end-to-end, clinical-grade data solutions with robust compliance frameworks (HIPAA, ISO 27001, SOC 2) and a workforce trained in specialized therapeutic areas like oncology and pathology [3].

- CureMeta offers therapeutic-specific expertise, particularly in neurodegeneration and solid tumors, but has limitations in multimodal data integration and full regulatory compliance for GxP workflows [3].

- Scale AI excels at high-volume, general-purpose data labeling with fast turnaround times, but lacks deep domain-specific reviewer depth and GxP-aligned workflows needed for regulated clinical pipelines [3].

- CloudFactory provides flexible human-in-the-loop labeling at scale with process templating, but requires additional compliance layers for FDA/EMA-facing use cases and doesn't offer medical expert sourcing [3].

- Centaur Labs utilizes a crowd-consensus model among medical students and early-career clinicians, making it suitable for initial model validation but not for full-scale, production-level annotation pipelines requiring stringent compliance [3].

Experimental Protocols for Annotation Consistency Evaluation

Rigorous experimental protocols are essential to validate annotation consistency between experts and automated systems. The following methodology provides a framework for this critical evaluation.

Methodology for Comparative Consistency Assessment

1. Dataset Curation and Preparation:

- Select a representative dataset of biomedical images (e.g., 1,000 DICOM slides from a cancer pathology repository) [3].

- Ensure dataset diversity covering multiple disease stages, anatomical variations, and imaging conditions to robustly test annotation consistency.

- Establish a "gold standard" ground truth through consensus among a panel of three board-certified domain specialists (e.g., pathologists, radiologists) [3].

2. Expert Annotation Protocol:

- Engage five domain experts, each with a minimum of 5 years of specialty experience.

- Provide standardized annotation guidelines detailing label definitions, boundary criteria, and classification rules.

- Implement a double-blinded review process in which each expert annotates the same set of images independently, without access to the other experts' labels.

- Use specialized annotation software (e.g., Ango Hub, proprietary platforms) supporting the required annotation types [3].

3. Automated Annotation Protocol:

- Pre-label the dataset using an AI-assisted annotation tool with a confidence threshold set at 80% [7].

- Route cases with confidence scores below 80% to human reviewers for manual annotation, creating a hybrid workflow [7].

- Deploy active learning frameworks where the model flags ambiguous data points for prioritized human review, creating continuous feedback loops [7].

4. Consistency Metrics and Analysis:

- Calculate Inter-Annotator Agreement (IAA) using Cohen's Kappa for categorical labels from pairs of annotators, Fleiss' Kappa when all five experts are compared jointly, and the Intraclass Correlation Coefficient (ICC) for continuous measurements.

- Measure Dice Similarity Coefficient (Dice Score) for spatial overlap in segmentation tasks.

- Compute Precision, Recall, and F1-score for object detection against the "gold standard" ground truth.

- Perform Bland-Altman analysis to assess agreement between quantitative measurements from expert vs. automated approaches.

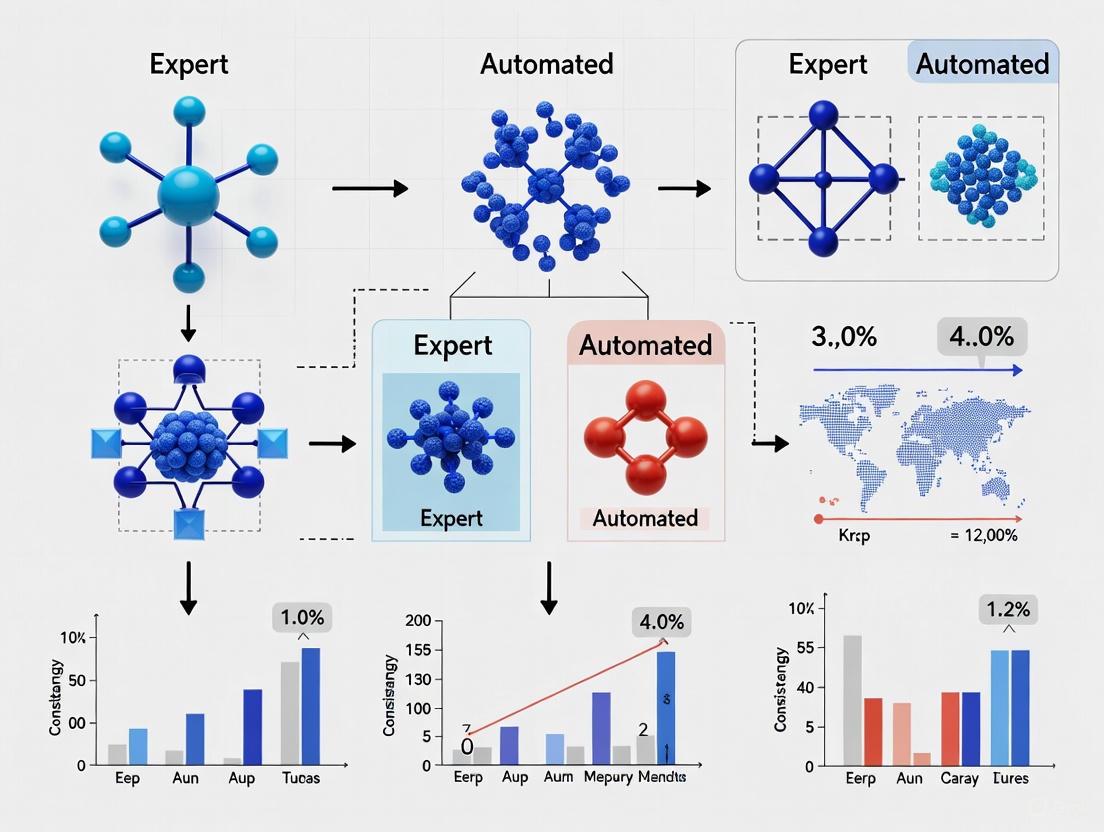

Diagram 1: Experimental Workflow for Annotation Consistency Evaluation
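Several of the consistency metrics listed in step 4 are simple to compute once annotations are in a common format. The sketch below is a minimal, dependency-free illustration, assuming binary segmentation masks flattened to 0/1 lists, detection results already matched into true positives, false positives, and false negatives, and paired continuous measurements (for example, lesion areas) from the expert and automated pipelines. The function names and input values are hypothetical; kappa and ICC are omitted, and a validated statistics library would normally be preferred in a regulated setting.

```python
import statistics


def dice(mask_a, mask_b):
    """Dice similarity coefficient for two binary masks flattened to 0/1 sequences."""
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must contain the same number of pixels")
    overlap = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(mask_a) + sum(mask_b)
    # Convention assumed here: two empty masks count as perfect agreement.
    return 1.0 if total == 0 else 2 * overlap / total


def precision_recall_f1(tp, fp, fn):
    """Detection metrics from true positives, false positives, and false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def bland_altman(expert, automated):
    """Mean difference (automated minus expert) and 95% limits of agreement.

    Limits of agreement = mean difference +/- 1.96 * sample SD of the differences.
    """
    diffs = [a - e for e, a in zip(expert, automated)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # requires at least two pairs
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


print(dice([1, 1, 0, 0], [1, 0, 0, 0]))
print(precision_recall_f1(tp=8, fp=2, fn=2))
print(bland_altman([10.0, 12.0, 14.0], [11.0, 12.0, 16.0]))
```

Bland-Altman analysis reports a systematic offset and a range rather than a single score, which is why it complements overlap metrics such as Dice when comparing quantitative outputs.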

Quantitative Outcomes of Annotation Approaches

Industry case studies demonstrate the tangible benefits of effectively implemented annotation strategies:

Table: Performance Metrics of AI-Native Annotation in Drug Development

| Use Case / Company | Annotation Method | Reported Outcome | Significance |
|---|---|---|---|
| Biomedical Annotation for Drug Discovery [2] | Hybrid AI-Automation Model | Achieved >80% automation with 90% accuracy in biomedical annotation [2] | Accelerated R&D initiatives & enabled faster training of high-quality AI models [2] |
| Clinical Trial Oversight [2] | AI-Powered Trial Operations Insights | Saved $2.4 million and reduced open issues by 75% within 6 months [2] | Provided unified reporting & predictive risk analytics for multi-site trial management [2] |
| Regulatory Response Automation [2] | GenAI-Powered HAQ Response Assistant | Cut Health Authority Query turnaround time by >50% [2] | Improved response consistency & eased regulatory workload for faster submissions [2] |
| AI-Assisted Data Labeling [7] | AI Pre-labeling with Human Review | Reduced manual annotation effort by 25-30% while maintaining quality standards [7] | Enabled cost-effective scaling of annotation projects for large datasets [7] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Building an effective data annotation pipeline for AI-driven drug development requires both technological infrastructure and human expertise.

Table: Essential Components for Clinical AI Data Annotation

| Component | Function | Example Solutions / Standards |
|---|---|---|
| Annotation Platforms | Provide core tooling for labeling tasks with workflow management | Ango Hub [3], Encord [6], Proprietary GxP-compliant platforms [3] |
| Quality Control Systems | Ensure annotation accuracy & consistency through multi-layer review | Confidence thresholding [7], Crowd consensus validation [3], Active learning feedback loops [7] |
| Compliance Frameworks | Meet regulatory requirements for drug development data | GxP-aligned workflows [3], HIPAA-compliant infrastructure [6] [3], FDA/EMA submission-ready pipelines [3] |
| Domain Expertise | Provide therapeutic-area knowledge for accurate labeling | Board-certified clinicians [3], Oncology/pathology specialists [3], Biomedical annotators [2] |
| Computational Infrastructure | Support data-intensive processing & model training | Cloud-based solutions (AWS) [8], Federated learning platforms [9], High-performance computing resources |

Integrated Workflows: Bridging Expert and Automated Annotation

The most effective annotation pipelines for drug development seamlessly integrate human expertise with AI automation, creating a continuous cycle of improvement and validation.

Diagram 2: Expert-in-the-Loop Annotation Quality Framework

This integrated approach, often called "expert-in-the-loop" or human-in-the-loop (HITL), creates a virtuous cycle where [2] [7]:

- AI handles scalable processing of straightforward cases through pre-labeling and automation.

- Human experts focus their attention on complex, ambiguous, or low-confidence cases where judgment and domain knowledge are most valuable.

- Continuous feedback from expert corrections improves the AI models over time.

- Quality control is embedded throughout the process rather than being a final checkpoint.

This methodology is particularly crucial in sensitive, regulated domains like healthcare, where purely automated systems may lack the nuanced understanding required for clinical applications [7]. For example, in tumor detection from medical images, AI can pre-label potential areas of interest, but radiologists provide essential final validation, especially for borderline or complex cases [7].
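The routing logic behind this workflow can be sketched as follows. This is a minimal illustration, assuming each AI pre-label carries a confidence score in [0, 1] and reusing the 80% threshold from the automated annotation protocol; sorting the review queue least-confident first is one simple form of uncertainty sampling, not necessarily what any cited platform does. The PreLabel type, item IDs, and helper names are hypothetical.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.80  # the 80% cut-off used in the automated annotation protocol


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model confidence in [0, 1]


def route(prelabels, threshold=CONFIDENCE_THRESHOLD):
    """Split AI pre-labels into auto-accepted items and an expert review queue.

    The queue is sorted least-confident first (simple uncertainty sampling),
    so experts see the most ambiguous cases earliest.
    """
    accepted = [p for p in prelabels if p.confidence >= threshold]
    queue = sorted(
        (p for p in prelabels if p.confidence < threshold),
        key=lambda p: p.confidence,
    )
    return accepted, queue


def feedback_examples(queue, expert_labels):
    """Pair each reviewed item with the expert's label and flag corrections.

    The (item_id, label, was_corrected) tuples could be fed back as training data.
    """
    return [
        (p.item_id, expert_labels[p.item_id], expert_labels[p.item_id] != p.label)
        for p in queue
    ]


items = [
    PreLabel("img-001", "tumor", 0.95),
    PreLabel("img-002", "normal", 0.55),
    PreLabel("img-003", "tumor", 0.72),
]
accepted, queue = route(items)
print([p.item_id for p in accepted])  # auto-accepted
print([p.item_id for p in queue])  # sent to radiologists, most ambiguous first
print(feedback_examples(queue, {"img-002": "tumor", "img-003": "tumor"}))
```

In a real deployment, auto-accepted items would typically still be spot-checked, since a confident model can be confidently wrong.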

In AI-driven drug development, data annotation is not merely a preliminary technical task but a strategic component directly influencing the success and speed of therapeutic innovation. As the industry progresses, the convergence of domain expertise, regulatory-compliant workflows, and intelligent automation will define the next generation of drug discovery platforms. The critical evaluation of consistency between expert and automated annotation provides the foundation for building trustworthy AI models that can accelerate the delivery of novel treatments to patients while maintaining the rigorous standards required in pharmaceutical development. Companies that prioritize investment in robust, scalable, and high-quality data annotation pipelines will establish a significant competitive advantage in the evolving landscape of AI-native drug development.

In the rigorous world of scientific research, particularly in drug development and AI-assisted analysis, the term "gold standard" is frequently invoked to signify the highest level of reference or ground truth. This benchmark is often established through expert annotations—the meticulous labeling of data by seasoned professionals, whether it involves classifying cellular structures in histopathology images, identifying adverse events in clinical trial narratives, or coding complex tutoring interactions for educational research. These annotations form the critical foundation upon which machine learning models are trained and validated; their quality directly dictates the performance, reliability, and, ultimately, the safety of AI-driven tools in high-stakes environments.

However, a growing body of evidence challenges the presumed infallibility of this standard. The central thesis of this article is that expert annotations are inherently inconsistent. This variability is not a mere artifact of carelessness but stems from deep-seated factors such as subjective interpretation, cognitive biases, and the inherent ambiguity of complex phenomena. This article will objectively compare the performance of human expert annotation against emerging automated and orchestrated methods, framing this analysis within the broader context of consistency evaluation. By synthesizing recent experimental data and detailing the methodologies used to quantify this inconsistency, we aim to provide researchers and drug development professionals with a clearer framework for assessing the true reliability of their foundational data.

Quantitative Comparison: Expert vs. Automated Annotation Performance

Recent empirical studies have begun to quantify the performance gaps and relationships between expert human annotators and automated systems. The following table summarizes key findings from a 2025 study that benchmarked human experts against Large Language Models (LLMs) in annotating tutoring discourse, a task analogous to labeling complex interactions in other domains.

Table 1: Performance Comparison of Human and LLM-based Annotation Systems (2025 Study) [10]

| Annotation System | Average Agreement with Adjudicated Standard (Cohen's κ) | Key Strengths | Key Weaknesses |
|---|---|---|---|
| Human Experts (Gold Standard) | Used as benchmark | Nuanced interpretation, context understanding | Time- and labor-intensive; moderate inherent inconsistency |
| Unverified LLM Annotation | Variable; often below human agreement | Highly scalable, low cost, rapid | Unstable; sensitive to prompt design and construct ambiguity |
| LLM with Self-Verification | ~58% improvement over unverified baseline | Improves stability and reliability; robust gains | Added computational overhead |
| LLM with Cross-Verification | ~37% improvement over unverified baseline | Leverages complementary model biases; selective improvements | Benefits are pair- and construct-dependent; can reduce alignment |

The data reveal a critical insight: the traditional binary of "human vs. machine" is outdated. The so-called gold standard established by human experts, while valuable, shows only moderate reliability and is difficult to scale [10]. Direct LLM annotation is scalable but unstable; adding verification-oriented orchestration substantially narrows the performance gap. Self-verification, in which a model checks its own work, produced the largest and most robust gains in agreement with the reference standard (roughly 58% above the unverified baseline), while cross-verification, in which one model audits another's output, also improved agreement, though by less (roughly 37%) and more variably across model pairs and constructs [10]. This suggests that consistency is not an intrinsic property of an annotator but an achievable outcome of a well-designed system that incorporates checks and balances.

Experimental Protocols: Measuring and Mitigating Inconsistency

Protocol 1: Benchmarking Annotation Quality

A fundamental method for quantifying inconsistency is benchmarking, a process that systematically compares annotations against an agreed-upon standard to measure accuracy, completeness, and consistency [11].

- Objective: To evaluate the effectiveness of an annotation team (human or automated) and identify systematic errors or interpretation discrepancies.

- Methodology:

  - Define Benchmarking Object: Clearly identify the specific process or data product to be analyzed, such as the labeling of a specific biological structure in imaging data [11].

  - Establish a Reference Standard: This often involves creating "gold standard" labels through disagreement-focused adjudication by multiple senior experts. In this process, annotations from two or more independent human raters are compared, and disagreements are resolved through discussion or a third adjudicator to create a refined ground truth [10].

  - Data Collection and Comparison: Annotators (whether human teams or AI models) label the benchmark dataset. Their outputs are compared against the reference standard.

  - Quantitative Analysis: Key metrics are calculated, including:

    - Inter-Annotator Agreement (IAA): Measures how consistently different annotators label the same data. Common statistics include Cohen's Kappa (κ) for two annotators or Fleiss' Kappa for multiple annotators [10] [12].

    - Confidence Scoring: AI models can assign scores to labels based on their certainty, which can then be validated by human review [12].

  - Gap Analysis and Iteration: The results are used to identify weaknesses in the annotation guidelines, training needs for human annotators, or architectural tweaks for AI models, leading to an iterative refinement cycle [11].
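For the multi-annotator case in the quantitative analysis step, Fleiss' kappa can be computed directly from its standard definition: mean observed pairwise agreement per item, corrected by the agreement expected from pooled category proportions. This is a minimal sketch assuming every item is labeled by the same number of annotators; the function name and example labels are illustrative, and established statistics packages provide tested implementations.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for items that are each labeled by the same number of raters.

    ratings: one list of labels per item, e.g. [["A", "A", "B"], ["B", "B", "B"]].
    """
    N = len(ratings)
    n = len(ratings[0]) if ratings else 0
    if N == 0 or n < 2 or any(len(item) != n for item in ratings):
        raise ValueError("each item needs the same number (>= 2) of ratings")
    counts = [Counter(item) for item in ratings]  # raters per category, per item
    categories = set().union(*counts)

    # Observed agreement: average share of agreeing rater pairs per item.
    p_bar = sum(
        (sum(c[k] ** 2 for k in categories) - n) / (n * (n - 1)) for c in counts
    ) / N
    # Chance agreement from the pooled category proportions.
    p_e = sum((sum(c[k] for c in counts) / (N * n)) ** 2 for k in categories)
    if p_e == 1:
        raise ValueError("kappa is undefined when every rating uses one category")
    return (p_bar - p_e) / (1 - p_e)


# Three annotators label four items (hypothetical labels).
print(fleiss_kappa([["A", "A", "A"], ["A", "A", "B"], ["B", "B", "B"], ["A", "B", "B"]]))
```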

Protocol 2: Verification-Oriented LLM Orchestration

This protocol, derived from a 2025 study on annotating tutoring discourse, provides a framework for enhancing the consistency of automated annotations, which can be adapted for various research contexts [10].

- Objective: To improve the reliability and stability of LLM-generated annotations beyond single-pass, unverified outputs.

- Methodology:

  - Base Annotation: A production LLM (e.g., GPT-5, Claude, Gemini) is prompted to perform an initial annotation of the text or data based on a predefined rubric or codebook [10].

  - Verification Stage:

    - Self-Verification: The same LLM is prompted to review its own initial annotation, checking for errors, inconsistencies, or misapplications of the rubric, and then given the opportunity to refine its output [10].

    - Cross-Verification: A different LLM acts as a verifier, auditing the initial annotations produced by the first model. This introduces a second, potentially complementary, perspective to catch errors the first model might miss [10].

  - Adjudication and Output: The verified output is taken as the final annotation. The study used a concise notation (e.g., Gemini(GPT) for Gemini cross-verifying GPT's annotations) to standardize reporting [10].

  - Validation: The final outputs from both verified conditions are benchmarked against a human-adjudicated reference standard to quantify the improvement in agreement, as measured by Cohen's κ [10].
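The orchestration pattern can be expressed independently of any particular model. In this hypothetical sketch, the annotator and verifier are plain Python callables standing in for LLM calls (no real model API is assumed), so a single function covers the unverified, self-verified, and cross-verified conditions. The toy keyword annotator and negation verifier exist only to make the example runnable; they are not the study's code or prompts.

```python
from typing import Callable, List, Optional

# Hypothetical interfaces: in a real pipeline each callable would wrap an LLM call.
Annotator = Callable[[str, str], str]  # (text, rubric) -> label
Verifier = Callable[[str, str, str], str]  # (text, rubric, proposed label) -> final label


def orchestrate(texts: List[str], rubric: str, annotator: Annotator,
                verifier: Optional[Verifier] = None) -> List[str]:
    """Base annotation, optionally followed by one verification pass per item.

    verifier=None: unverified baseline.
    Verifier backed by the same model: self-verification.
    Verifier backed by another model: cross-verification, e.g. Gemini(GPT).
    """
    labels = []
    for text in texts:
        label = annotator(text, rubric)
        if verifier is not None:
            label = verifier(text, rubric, label)
        labels.append(label)
    return labels


def agreement_rate(predicted: List[str], reference: List[str]) -> float:
    """Raw agreement with the adjudicated reference (kappa would add chance correction)."""
    return sum(p == r for p, r in zip(predicted, reference)) / len(reference)


# Toy stand-ins for models, purely for illustration.
def keyword_annotator(text, rubric):
    return "praise" if "good" in text else "other"


def negation_verifier(text, rubric, label):
    # Revises a label the rubric would reject: "not good" is not praise.
    return "other" if label == "praise" and "not good" in text else label


texts = ["good job", "not good yet", "try again"]
reference = ["praise", "other", "other"]
print(agreement_rate(orchestrate(texts, "praise vs other", keyword_annotator), reference))
print(agreement_rate(orchestrate(texts, "praise vs other", keyword_annotator, negation_verifier), reference))
```

Keeping the verifier as a separate, swappable argument makes it straightforward to benchmark every annotator-verifier pairing against the same reference standard.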

Workflow Visualization: From Raw Data to Verified Annotation

The following diagram illustrates the logical workflow of the verification-oriented orchestration protocol, highlighting how it introduces critical feedback loops to enhance consistency.

The Scientist's Toolkit: Essential Reagents for Annotation Research

For researchers designing experiments to evaluate annotation consistency, a set of core "reagent solutions" is essential. The following table details these key components and their functions.

Table 2: Key Research Reagent Solutions for Annotation Consistency Evaluation

| Research Reagent | Function & Explanation |
|---|---|
| Adjudicated Reference Standard | A high-quality "ground truth" dataset created by resolving disagreements between multiple expert annotators. It serves as the benchmark for evaluating all other annotation methods [10]. |
| Structured Annotation Rubric | A detailed codebook that defines annotation categories, provides clear definitions, and includes examples and decision rules. This is critical for minimizing subjective interpretation by both humans and AI [10]. |
| Inter-Annotator Agreement (IAA) Metrics | Statistical tools like Cohen's κ or Krippendorff's α that quantify the level of agreement between annotators, correcting for chance. These are the primary metrics for measuring consistency [10] [11]. |
| Verification-Oriented Orchestration Framework | A software framework that implements self- and cross-verification loops for AI-based annotation, enabling the empirical testing of these consistency-enhancing strategies [10]. |
| Quality Assurance (QA) Pipelines | Integrated workflows within annotation platforms that support built-in QA, such as multi-pass review, consensus checks, and anomaly detection, to maintain label integrity [13] [12]. |
| Benchmarking Platform | Tools and standardized processes for continuously comparing annotation quality against internal goals and external industry standards to track progress and identify gaps [11]. |

The pursuit of a perfectly consistent "gold standard" in expert annotations is a scientific ideal that, in practice, remains elusive. The experimental data and methodologies presented herein demonstrate that inconsistency is an inherent property of complex annotation tasks, whether performed by humans or AI. The future of reliable data annotation, therefore, does not lie in seeking a single infallible source of truth but in architecting systems that explicitly manage and mitigate variability. This involves a paradigm shift from a reliance on solo expert judgment to the adoption of orchestrated, multi-agent frameworks that leverage the strengths of both human expertise and AI scalability through rigorous verification and continuous benchmarking. For researchers and drug development professionals, the imperative is clear: to build trustworthy AI models, we must first build more trustworthy, transparent, and systematically validated data annotation processes.

In high-stakes fields, from clinical medicine to data science, the consistency of expert judgment is a fundamental pillar of reliability. The intensive care unit (ICU) serves as a critical paradigm for studying expert disagreement, where decisions are complex, time-pressured, and carry profound consequences. Research reveals that clinician disagreement is not an anomaly but a prevalent feature of critical care environments, directly impacting patient outcomes and resource allocation [14] [15]. Similarly, in the domain of data science, expert inconsistency in tasks such as data annotation introduces significant noise into training datasets, ultimately compromising the performance of machine learning models [16] [17].

This guide examines the quantification of expert disagreement through the lens of clinical ICU studies, extracting transferable methodologies, metrics, and mitigation strategies. The ICU functions as a controlled, high-fidelity laboratory for studying human judgment under pressure. By understanding how disagreement is measured and managed in this critical setting, researchers across domains—particularly those evaluating consistency between expert and automated annotation—can develop more robust frameworks for quantifying and improving judgment reliability in their own fields.

Quantifying Disagreement: Key Metrics and Analytical Frameworks

Clinical studies employ sophisticated frameworks to dissect the components of judgment error, providing a template for systematic analysis in other domains.

The Bias-Noise Framework of Judgment Error

In ICU settings, judgment error is systematically categorized into two distinct components: bias (systematic, directional error) and noise (unsystematic, random variability) [18]. This distinction is crucial for deploying appropriate corrective strategies, as reducing one does not necessarily reduce the other.

- Bias includes cognitive biases like anchoring (over-relying on initial information) and availability (overweighting memorable cases), as well as discriminatory biases against patient subgroups [18].

- Noise comprises unwanted variability in professional judgments that should ideally be identical. The bias-noise framework enables precise measurement and targeted intervention [18].
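Because bias and noise are both defined relative to a known true value, they can be separated numerically. The sketch below assumes several numeric judgments of a single case with a known ground truth (the numbers are hypothetical) and uses the standard identity MSE = bias² + noise², where bias is the mean error and noise is the population standard deviation of the errors. It illustrates the framework; it is not a procedure taken from the cited study.

```python
import statistics


def bias_noise(judgments, truth):
    """Decompose the mean squared error of several judgments of one case.

    bias  = mean error (systematic, directional)
    noise = population standard deviation of the errors (random scatter)
    The identity MSE = bias**2 + noise**2 holds exactly.
    """
    errors = [j - truth for j in judgments]
    bias = statistics.fmean(errors)
    noise = statistics.pstdev(errors)
    mse = statistics.fmean([e * e for e in errors])
    return bias, noise, mse


# Hypothetical example: five clinicians estimate a quantity whose true value is 50.
bias, noise, mse = bias_noise([58, 52, 61, 55, 54], truth=50)
print(round(bias, 2), round(noise, 2), round(mse, 2))
```

The decomposition makes the point of the framework concrete: recalibrating everyone downward would remove the bias term but leave the noise term untouched, and vice versa.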

Components of System Noise

Research identifies three distinct sources of system noise in clinical judgment, each measurable with specific metrics:

- Level Noise: Variability in clinicians' average judgments. Some clinicians are consistently more interventionist, while others are more conservative, independent of specific case details [18].

- Stable Pattern Noise: Arises from clinicians weighing patient factors differently. For example, one clinician might place greater emphasis on age, while another focuses more on functional status [18].

- Occasion Noise: Random variability in a single clinician's judgments across time, influenced by fatigue, mood, or time pressure [18].
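Given repeated judgments, these components can be estimated with a simple variance decomposition. The sketch below assumes a fully crossed design in which every annotator (or clinician) judges every case on at least two occasions; it is a simplified illustration with hypothetical names, not the estimation procedure of the cited work. Squared level noise and squared pattern noise sum exactly to squared system noise, while occasion noise is measured from each annotator's own repeated judgments.

```python
import statistics


def noise_components(y):
    """Squared noise components for a fully crossed repeated-judgment design.

    y[a][c][o] is the judgment of annotator a on case c at occasion o; every
    annotator judges every case on the same number (>= 2) of occasions.
    Returns a dict in which system == level + pattern (exactly).
    """
    A, C = len(y), len(y[0])
    # Each annotator's judgment of each case, averaged over occasions.
    m = [[statistics.fmean(y[a][c]) for c in range(C)] for a in range(A)]
    consensus = [statistics.fmean([m[a][c] for a in range(A)]) for c in range(C)]
    # Deviation of each annotator from the case consensus.
    d = [[m[a][c] - consensus[c] for c in range(C)] for a in range(A)]
    offsets = [statistics.fmean(row) for row in d]  # level: annotator's average offset
    cells = [(a, c) for a in range(A) for c in range(C)]
    return {
        "system": statistics.fmean([d[a][c] ** 2 for a, c in cells]),
        "level": statistics.fmean([o ** 2 for o in offsets]),
        # Residual annotator-by-case deviations. This term still includes the part
        # of occasion noise that survives averaging over occasions.
        "pattern": statistics.fmean([(d[a][c] - offsets[a]) ** 2 for a, c in cells]),
        # Within-annotator variance of the same case across occasions.
        "occasion": statistics.fmean([statistics.pvariance(y[a][c]) for a, c in cells]),
    }


# Two annotators, three cases, two occasions: annotator 1 is always 2 points higher.
print(noise_components([[[0, 0], [1, 1], [2, 2]], [[2, 2], [3, 3], [4, 4]]]))
```

In the usage example the only source of disagreement is a constant offset, so all system noise is level noise, and pattern and occasion noise are zero.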

Table 1: Analytical Frameworks for Quantifying Expert Disagreement

| Framework Component | Clinical ICU Manifestation | Data Annotation Analog |
|---|---|---|
| Bias (Systematic Error) | Consistent underestimation of pain in specific patient demographics [18] | Annotators consistently mislabeling a specific entity due to guideline ambiguity |
| Level Noise | Some intensivists consistently estimate higher mortality probabilities than colleagues [18] | Some annotators consistently apply stricter criteria for labeling "sentiment" |
| Stable Pattern Noise | Disagreement on how heavily to weight age versus comorbidities in prognosis [18] | Annotators disagreeing on which text features most indicate "sarcasm" |
| Occasion Noise | Same clinician making different triage decisions when fatigued versus rested [18] | Same annotator applying different standards to identical items at different times |

Prevalence and Detection of Disagreement

Empirical studies in ICUs show that physician-surrogate conflict is common. One prospective cohort study found that either physicians or surrogates identified conflict in 63% of cases, although physicians were less likely to perceive conflict than surrogates (27.8% vs. 42.3%) [15]. This perception gap highlights the complex nature of disagreement and the importance of multi-perspective assessment.

Agreement between physicians and surrogates about the presence of conflict is notably poor (kappa = 0.14), indicating that simplistic assessment methods may fail to capture the true extent of disagreement [15]. This has direct parallels in annotation projects, where project managers and annotators may have different perceptions of label quality and consistency.
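Cohen's kappa corrects raw agreement for the agreement expected by chance, so a value of 0.14 indicates agreement only slightly above chance. A minimal sketch of the calculation follows, using invented labels for illustration rather than data from the cited study; scikit-learn's sklearn.metrics.cohen_kappa_score provides a tested implementation.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater labeled independently at their own base rates.
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical label")
    return (observed - expected) / (1 - expected)


# Hypothetical labels: did each rater perceive conflict in six cases?
rater_1 = ["conflict", "none", "none", "none", "conflict", "none"]
rater_2 = ["conflict", "conflict", "none", "none", "conflict", "conflict"]
print(round(cohens_kappa(rater_1, rater_2), 3))
```

Here the raters agree on four of six cases (67%), yet kappa is only 0.4, because their differing base rates already produce substantial agreement by chance.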

Experimental Protocols for Measuring Disagreement

Research in critical care employs rigorous methodological approaches to capture and quantify disagreement, providing replicable templates for experimental design.

Prospective Cohort Studies with Multi-Rater Assessment

Protocol Overview: This design simultaneously captures perspectives from multiple stakeholders in real-world clinical settings to measure disagreement prevalence and correlates [15].

Key Methodological Elements:

- Population: ICU physicians and surrogate decision makers for critically ill patients [15]

- Data Collection: Parallel questionnaires administered to physicians and surrogates assessing perceptions of conflict, communication quality, and decision-making preferences [15]

- Measurement Instrument: Validated scales including the Quality of Communication instrument (17 items) and Physician Trust instrument (5 items) [15]

- Statistical Analysis: Hierarchical multivariate modeling to identify predictors of conflict while accounting for clustering of multiple surrogates per patient and multiple patients per physician [15]

Application to Annotation Research: This protocol can be adapted to measure disagreement between expert annotators and project managers, assessing not just labeling outcomes but perceptions of guideline clarity, task difficulty, and communication quality.

High-Fidelity Simulation with Standardized Cases

Protocol Overview: Simulation creates controlled laboratory conditions using standardized cases and professional actors to isolate variability in expert judgment [19].

Key Methodological Elements:

- Case Development: Evidence-based scenarios designed to reliably produce disagreement, such as end-of-life decision conflicts with clear advance directives [19]

- Standardization: Professional actors trained to portray surrogate decision makers with consistent responses across participants [19]

- Data Capture: Audio recording of simulated family conferences with verbatim transcription and de-identification [19]

- Behavioral Coding: Systematic coding of communication behaviors using codebooks developed through inductive and deductive approaches until thematic saturation is achieved [19]

Application to Annotation Research: This approach translates directly to annotation consistency research through the use of "gold standard" datasets with pre-established labels, allowing researchers to measure how experts deviate from standards and from each other when labeling identical content.

Repeated Judgment Design with Ground Truth Verification

Protocol Overview: This design captures within-expert inconsistency by having the same experts judge the same cases twice, months apart, without awareness of repetition [17].

Key Methodological Elements:

- Stimuli: Real-world diagnostic cases (e.g., mammograms, spinal images) with confirmed diagnostic outcomes from follow-up research [17]

- Procedure: Experts judge each case with sufficient time interval between presentations to prevent recognition, typically several months [17]

- Measurement: Consistency rates, confidence assessments, and comparison to inter-expert agreement levels [17]

- Theoretical Framework: Application of models like the Self-Consistency Model to relate within-expert inconsistency to between-expert disagreement [17]

Application to Annotation Research: This method directly applies to measuring annotation consistency by having expert annotators label the same data at different time points, revealing occasion noise and the stability of individual annotation patterns.
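In its simplest form, the repeated-judgment design compares two agreement rates: each annotator against their own earlier labels (test-retest consistency) and annotators against one another. The sketch below uses hypothetical names and labels, and raw percent agreement for brevity; a chance-corrected statistic such as kappa could be substituted.

```python
from itertools import combinations


def agreement(labels_1, labels_2):
    """Proportion of items given the same label in two label lists."""
    return sum(a == b for a, b in zip(labels_1, labels_2)) / len(labels_1)


def within_vs_between(sessions):
    """sessions[annotator] = (labels at time 1, labels at time 2) for the same items.

    Returns each annotator's test-retest agreement and the mean pairwise
    agreement between annotators at time 1.
    """
    if len(sessions) < 2:
        raise ValueError("need at least two annotators")
    within = {name: agreement(t1, t2) for name, (t1, t2) in sessions.items()}
    pairs = list(combinations(sessions.values(), 2))
    between = sum(agreement(s1[0], s2[0]) for s1, s2 in pairs) / len(pairs)
    return within, between


sessions = {
    "A": (["x", "y", "x", "y"], ["x", "y", "y", "y"]),
    "B": (["x", "x", "x", "y"], ["x", "x", "x", "y"]),
}
within, between = within_vs_between(sessions)
print(within, between)
```

An annotator whose test-retest agreement is no higher than the between-annotator agreement is adding occasion noise comparable in size to the disagreement with colleagues.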

Comparative Analysis: Human vs. Algorithmic Performance

Clinical studies provide compelling data on the relative performance of human experts versus algorithmic approaches, with direct implications for the expert versus automated annotation debate.

Quantitative Comparisons of Judgment Accuracy

In critical care mortality prediction, physicians' predictions of in-hospital mortality achieved an Area Under the Curve (AUC) of 0.68 (95% CI 0.63–0.73), while the APACHE IV algorithmic scoring system significantly outperformed humans with an AUC of 0.83 (95% CI 0.79–0.88) [18]. This performance advantage is largely attributed to algorithms' superior consistency in applying the same weighting rules across all cases, eliminating human noise [18].
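AUC can be read as the probability that a randomly chosen patient who died received a higher risk score than a randomly chosen survivor. An AUC of 0.5 corresponds to chance ranking and 1.0 to perfect separation. The sketch below computes AUC in exactly that pairwise (Mann-Whitney) form, assuming binary outcomes coded 1/0. The scores are invented toy values, not data from the cited study.

```python
def auc(scores, outcomes):
    """AUC as the probability that a positive case (outcome 1) is scored
    above a negative case (outcome 0); tied pairs count as half a win."""
    positives = [s for s, y in zip(scores, outcomes) if y == 1]
    negatives = [s for s, y in zip(scores, outcomes) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


outcomes = [1, 1, 1, 0, 0, 0]               # 1 = died in hospital (toy data)
clinician = [0.9, 0.4, 0.6, 0.5, 0.3, 0.6]  # hypothetical subjective estimates
score = [0.8, 0.7, 0.9, 0.2, 0.3, 0.4]      # hypothetical algorithmic risk scores
print(round(auc(clinician, outcomes), 3), auc(score, outcomes))
```

The pairwise loop is quadratic and only suited to small illustrations. Rank-based implementations give the same value far faster on real cohorts.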

The Self-Consistency Model explains that expert inconsistency arises from the probabilistic sampling of evidence—when experts judge the same case twice, they may sample different pieces of evidence from memory or the environment, leading to different decisions [17]. This theoretical framework predicts that inconsistency is highest for cases where the evidence is most ambiguous (approaching a 50/50 split between alternatives), which aligns with empirical findings across multiple diagnostic domains [17].
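The SCM's central prediction can be illustrated with a deliberately simplified model. This model is an assumption of the sketch, not the published model's full specification. On each occasion the expert samples a fixed, odd number of cues, each cue independently favors option A with probability `p_cue`, and the expert picks the majority option. Two occasions then agree with probability P² + (1 - P)², where P is the chance of choosing A. This agreement is lowest when the cues are split 50/50.

```python
from math import comb


def prob_choose_a(p_cue, n_cues=5):
    """Probability that a majority of n_cues sampled cues favour option A,
    when each cue independently favours A with probability p_cue."""
    if n_cues % 2 == 0:
        raise ValueError("use an odd number of cues so a majority always exists")
    return sum(comb(n_cues, i) * p_cue**i * (1 - p_cue) ** (n_cues - i)
               for i in range(n_cues // 2 + 1, n_cues + 1))


def repeat_consistency(p_cue, n_cues=5):
    """Probability that the same expert makes the same choice on two
    occasions, each drawing an independent fresh sample of cues."""
    p = prob_choose_a(p_cue, n_cues)
    return p**2 + (1 - p) ** 2


for p_cue in (0.5, 0.6, 0.7, 0.9):
    print(f"cue split {p_cue:.1f}: repeat consistency {repeat_consistency(p_cue):.3f}")
```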

Relative Strengths and Limitations

Table 2: Human Expert vs. Algorithmic Judgment in Clinical and Annotation Contexts

| Performance Dimension | Human Experts | Algorithmic Approaches |
|---|---|---|
| Consistency | Prone to level, pattern, and occasion noise [18] | Perfect consistency in applying rules [18] |
| Context Adaptation | Can incorporate unmodeled contextual factors [18] | Limited to predefined variables and relationships |
| Ambiguity Handling | Struggle with ambiguous cases (highest inconsistency) [17] | Apply consistent rules regardless of ambiguity [18] |
| Error Types | Both random (noise) and systematic (bias) errors [18] | Primarily biased training data or flawed feature weighting |
| Scalability | Limited by human cognitive capacity and time | Highly scalable once developed |
| Explanatory Capacity | Can articulate reasoning process (though potentially flawed) | Limited explainability without specific design features |

Mitigation Strategies for Expert Disagreement

ICU research has identified and tested multiple strategies for reducing disagreement, offering practical approaches for improving consistency.

Algorithmic Assistance and Decision Support

The implementation of standardized scoring systems like APACHE (Acute Physiology and Chronic Health Evaluation) demonstrates how algorithms can reduce system noise by ensuring multiple clinicians generate identical scores for identical patients [18]. These systems standardize both data collection (which variables to consider) and data combination (how to weight variables), addressing both level and pattern noise [18].
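A noise audit shows level and pattern noise in practice. Several judges score the same cases, and the spread of their scores is then decomposed. The sketch below assumes numeric scores and that every judge scores every case. It uses population variances, so system noise splits exactly into level noise (differences in judges' average scores) plus pattern noise (judge-by-case interaction). Without repeated judgments, occasion noise cannot be separated out and is absorbed into the pattern term. The function name and toy scores are hypothetical.

```python
from statistics import mean, pvariance


def noise_audit(judgments):
    """Decompose system noise for a judges x cases matrix of numeric scores.

    judgments[j][c] is judge j's score for case c. Returns variances:
    system = average over cases of the variance between judges,
    level = variance of the judges' mean scores,
    pattern = system - level (judge-by-case interaction)."""
    n_cases = len(judgments[0])
    if any(len(row) != n_cases for row in judgments):
        raise ValueError("every judge must score every case")
    system = mean(pvariance([row[c] for row in judgments]) for c in range(n_cases))
    level = pvariance([mean(row) for row in judgments])
    return {"system": system, "level": level, "pattern": system - level}


# Judge B scores every case two points higher than judge A: pure level noise.
print(noise_audit([[2, 4, 6], [4, 6, 8]]))
# Same average scores, opposite case rankings: pure pattern noise.
print(noise_audit([[2, 4, 6], [6, 4, 2]]))
# A rule-based score gives every "judge" the same output: no system noise.
print(noise_audit([[3, 5, 7], [3, 5, 7]]))
```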

A crucial finding from judgment and decision-making research is that human judges often identify too many exceptions to algorithms, introducing noise and ultimately reducing accuracy [18]. This highlights the importance of understanding when to trust algorithmic consistency versus human intuition.

Structured Communication Protocols

Palliative care specialists demonstrate distinct conflict management approaches compared to intensivists, using 55% fewer task-focused communication statements and 48% more relationship-building statements [19]. This suggests that communication style significantly influences disagreement resolution.

Specific effective techniques include [20] [19]:

- Building trust through availability and engagement

- Targeted education to correct misunderstandings

- Exploring patient values rather than focusing solely on medical facts

- Providing time for surrogates to process information

- Emphasizing shared interests rather than differences in perspective

Aggregation of Independent Judgments

Averaging independent judgments (the "wisdom of crowds" principle) reduces noise statistically. The standard deviation of the random error shrinks by a factor of the square root of the number of judgments averaged, because independent errors tend to cancel each other out [18]. In clinical contexts, this translates to multidisciplinary team meetings where multiple specialists contribute independent assessments before reaching a collective decision.

The critical requirement for this approach to be effective is independence of judgments—when assessments are influenced by group dynamics or dominant voices, the noise-reduction benefit is diminished [18].
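The square-root rule and its dependence on independence can be checked numerically. The sketch below assumes that every judge's error has the same standard deviation `sigma`, and that any two judges' errors share a correlation `rho` standing in for group influence. Under those assumptions, the averaged error has standard deviation sigma * sqrt(rho + (1 - rho) / n), which reduces to sigma / sqrt(n) only when rho = 0. A small Monte Carlo simulation checks the formula.

```python
import random
from math import sqrt
from statistics import pstdev


def noise_of_average(sigma, n_judges, rho=0.0):
    """SD of the mean of n_judges errors, each with SD sigma and
    pairwise correlation rho."""
    return sigma * sqrt(rho + (1 - rho) / n_judges)


def simulate(sigma, n_judges, rho=0.0, trials=20000, seed=0):
    """Monte Carlo check: each judge's error = shared part + private part."""
    rng = random.Random(seed)
    errors = []
    for _ in range(trials):
        shared = rng.gauss(0, sigma * sqrt(rho))  # e.g. a dominant voice everyone follows
        private = [rng.gauss(0, sigma * sqrt(1 - rho)) for _ in range(n_judges)]
        errors.append(shared + sum(private) / n_judges)
    return pstdev(errors)


for rho in (0.0, 0.5):
    for n in (1, 4, 16):
        print(rho, n, round(noise_of_average(1.0, n, rho), 3), round(simulate(1.0, n, rho), 3))
```

With rho = 0.5, averaging 16 judges still leaves about 73% of a single judge's noise. With fully independent judgments, the same average leaves 25%.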

Based on successful implementation in clinical research, the following tools and approaches form an essential toolkit for quantifying and addressing expert disagreement.

Table 3: Essential Research Reagent Solutions for Disagreement Measurement

| Tool Category | Specific Instrument | Function and Application |
|---|---|---|
| Disagreement Assessment | Conflict Scale (0-10) [15] | Quantifies perceived disagreement between parties on a standardized scale |
| Communication Quality Measurement | Quality of Communication (QOC) instrument (17 items) [15] | Assesses multiple dimensions of communication quality in decision-making contexts |
| Trust Assessment | Physician Trust instrument (5 items) [15] | Measures trust between stakeholders, a key factor in disagreement resolution |
| Engagement Measurement | FAMily Engagement (FAME) tool [21] | Validated 12-item questionnaire measuring engagement behaviors in care decisions |
| Theoretical Framework | Self-Consistency Model (SCM) [17] | Predicts relationship between confidence, inconsistency, and case ambiguity |
| Coding Framework | Communication Behavior Codebook [19] | Systematically categorizes communication approaches during conflict scenarios |

Clinical ICU studies provide robust methodologies and insights directly transferable to quantifying expert disagreement in data annotation and other fields. The bias-noise distinction offers a crucial framework for diagnosing and addressing different types of inconsistency, while experimental protocols from clinical research provide validated approaches for measurement. The consistent finding that algorithmic approaches outperform humans in consistency (though not necessarily in every domain of judgment) warrants careful consideration of the role of automation in annotation pipelines.

Furthermore, the demonstrated effectiveness of structured communication and judgment aggregation provides practical pathways for improving consistency without completely replacing human expertise. As annotation quality remains the foundation of reliable machine learning systems, these clinical lessons offer valuable guidance for developing more rigorous approaches to measuring, understanding, and improving expert consistency across research domains.

In the scientific pursuit of reliable artificial intelligence (AI) for high-stakes fields like drug development, the quality of annotated data is paramount. Research on consistency between expert and automated annotation shows that "noise" (inaccuracies and inconsistencies in labeled data) is often not random error but a structured product of cognitive, methodological, and technological sources. This noise directly compromises the integrity of AI models, influencing their predictive accuracy and generalizability in critical applications.

This guide objectively compares the performance of contemporary data annotation platforms, focusing on their capacity to mitigate two primary sources of noise: cognitive biases originating from human experts and task ambiguities exacerbated by inadequate tooling. By synthesizing current experimental data and protocols, we provide researchers and scientists with a framework to evaluate annotation tools, not just on speed, but on the robustness of their outputs against these inherent noise sources.

Decoding Noise: Cognitive Biases and Task Ambiguity

Cognitive Biases as a Source of Systematic Noise

Cognitive biases are systematic patterns of deviation from norm or rationality in judgment. In expert annotation, these biases are not random errors but become structured noise that can skew AI model training.

- Interpretation Bias: This occurs when experts resolve ambiguity in data in a manner consistent with their underlying expectations or affective state. For instance, in a virtual reality study, healthy participants showed a bias in interpreting ambiguous cues as threatening, which was quantitatively linked to both their gaze (attention) and their subjective threat ratings [22]. This demonstrates how a pre-existing cognitive state can systematically influence the labeling of ambiguous information.

- Attention Bias: This bias reflects the preferential allocation of attention toward specific types of information. Research has shown that attention and interpretation biases can be interrelated, each uniquely contributing to task interference, suggesting they are distinct yet connected sources of noise in human decision-making [22].

- Measurement Reliability: The reliability of tools measuring these biases is a critical factor. The Ambiguous Cue Task (ACT), for instance, has been validated to provide a highly reliable measurement of interpretation bias with high internal consistency (rSB = .91 – .96) [23]. Using unreliable measurement paradigms is, in itself, a source of noise, as it fails to consistently capture the underlying construct.

Task Ambiguity and Platform-Induced Noise

Task ambiguity arises from poorly designed annotation interfaces, vague labeling guidelines, or complex data modalities. This ambiguity is often amplified by the annotation platform itself, leading to platform-induced noise.

- Labeler Uncertainty: When task instructions or object boundaries are unclear, it forces annotators to make subjective calls, increasing inter-annotator disagreement and introducing inconsistencies [12].

- Scalability vs. Accuracy Trade-off: As projects scale, maintaining consistency across a large annotator pool becomes challenging. One study found that overlapping polygons and incomplete labels from outsourced labeling created cascading errors that hampered model training [24].

- Domain Complexity: In fields like medical imaging or geospatial analysis, the data inherently contains edge cases and nuanced features. Without tools designed for these specific modalities, annotation quality inevitably suffers [13].

The following diagram illustrates how these primary sources of noise originate and ultimately impact model performance.

The Annotation Platform Landscape: A Comparative Analysis

The choice of annotation platform is a critical defense against noise. The following section benchmarks current tools based on empirical data from real-world implementations in 2024 and 2025, focusing on their effectiveness in managing cognitive bias and task ambiguity.

Quantitative Performance Benchmarking

Table 1: Benchmarking Data Annotation Platform Performance (2025)

| Platform | Primary Use Case | Reported Throughput Increase | Reported Accuracy Improvement | Key Strengths / Mitigation Strategies |
|---|---|---|---|---|
| Encord | Physical AI, Medical Imaging | 5x faster project setup; 5x data throughput [24] | 30% increase in annotation accuracy; 15% boost in downstream task precision [24] | AI-assisted labeling; Active learning; Integrated QA dashboards [13] [24] |
| Supervisely | Computer Vision, Healthcare | Not reported | Not reported | Custom scripting for niche domains; Support for DICOM & point-clouds [13] |
| CVAT | General Computer Vision | Not reported | Not reported | Open-source; Semi-automated labeling; Strong community support [13] [25] |
| Dataloop | Robotics, Autonomous Systems | Not reported | Not reported | Multi-format video support; Integrated quality control [13] |
| Roboflow | Rapid Prototyping | Not reported | Not reported | Auto-annotation via pre-trained models; Public dataset hosting [25] |
| Labelbox | End-to-End ML Lifecycle | Not reported | Not reported | Active learning for data prioritization; Elastic scalability [25] |
| T-Rex Label | Complex/rare object detection | Not reported | Not reported | Visual prompt models for rare objects; Low usage barrier [25] |

Analysis of Platform Approaches to Noise Mitigation

- AI-Assisted Labeling for Consistency: Platforms like Encord and Roboflow leverage AI for pre-labeling, which establishes a consistent baseline. This reduces the volume of manual, variable human input, directly countering attention and interpretation biases by providing a uniform starting point. Encord's use of computer vision models like SAM2 has been shown to reduce labeling costs by over 33% while improving consistency [24].

- Hybrid Workflows for Expert Oversight: The prevailing trend is toward hybrid, or human-in-the-loop (HITL), workflows. In this model, AI handles repetitive, high-certainty annotations, while human experts are reserved for complex edge cases and quality assurance [26] [24]. This approach leverages human judgment where it is most needed—resolving genuine ambiguity—while minimizing exposure to repetitive tasks that can induce cognitive fatigue and bias.

- Active Learning for Bias-Aware Sampling: Advanced platforms incorporate active learning, which uses the model's own uncertainty to prioritize which data points need human annotation [13] [25]. This methodology ensures that expert effort is focused on the most informative and challenging samples, which can help identify and correct for systemic blind spots in both the model and the annotation guidelines.
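The confidence-based routing and uncertainty sampling described above can be sketched in a few lines. This is a minimal illustration, not any platform's API. The input format (item ID mapped to class probabilities), the acceptance threshold, and the per-round review budget are all hypothetical parameters. Items the model is confident about keep their pre-label. The rest are ranked by predictive entropy, so experts see the most ambiguous items first.

```python
from math import log


def entropy(probs):
    """Shannon entropy (nats) of a class distribution; higher means less certain."""
    return -sum(p * log(p) for p in probs if p > 0)


def triage(predictions, accept_threshold=0.95, review_budget=2):
    """Split model pre-labels into auto-accepted labels and an expert queue.

    predictions maps item_id -> list of class probabilities (hypothetical format).
    Items whose top probability reaches accept_threshold keep the model label;
    the rest are ranked by entropy and the review_budget most uncertain are queued."""
    auto_accepted, uncertain = {}, []
    for item_id, probs in predictions.items():
        top = max(range(len(probs)), key=probs.__getitem__)
        if probs[top] >= accept_threshold:
            auto_accepted[item_id] = top
        else:
            uncertain.append((entropy(probs), item_id))
    uncertain.sort(reverse=True)  # most uncertain first
    review_queue = [item_id for _, item_id in uncertain[:review_budget]]
    return auto_accepted, review_queue


preds = {"img1": [0.98, 0.02], "img2": [0.55, 0.45], "img3": [0.80, 0.20], "img4": [0.50, 0.50]}
auto, queue = triage(preds)
print(auto, queue)
```

Tuning `accept_threshold` trades expert workload against the risk of accepting confident but wrong pre-labels. In practice, the threshold would be validated against an expert-labeled sample.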

Experimental Protocols for Consistency Evaluation

To empirically evaluate the consistency between expert and automated annotations, researchers can adopt the following rigorous protocols, derived from recent academic and industry research.

Protocol 1: Evaluating Label Noise Robustness

This protocol is based on frameworks like the Equal-Quality Instance-Dependent Noise (EQ-IDN) model, which treats label noise not as a bug to be eliminated but as a variable for systematic benchmarking [27].

- Objective: To assess an AI model's robustness to different types and levels of label noise, simulating real-world imperfections.

- Methodology:

- Dataset Creation: Start with a "clean" dataset verified by multiple domain experts. Programmatically inject noise into the labels based on a defined model (e.g., EQ-IDN) to create controlled noisy datasets with varying noise rates and types [27].

- Model Training: Train identical model architectures on both the clean and various noisy datasets.

- Evaluation: Compare model performance on a held-out, clean test set. Key metrics include accuracy, precision, recall, and generalization error.

- Outcome Measure: The performance degradation curve as a function of noise rate and type. Models (or annotation pipelines) that lead to slower degradation are deemed more robust.
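The noise-injection step can be prototyped before adopting a full framework. The sketch below uses simple symmetric (class-independent) noise, in which a chosen fraction of labels is flipped uniformly to another class. This is a stand-in assumption for illustration and does not implement EQ-IDN, whose noise depends on the individual instance. The function name and toy labels are hypothetical.

```python
import random


def inject_symmetric_noise(labels, num_classes, noise_rate, seed=0):
    """Return a copy of labels in which a fraction noise_rate of items is
    flipped to a different class chosen uniformly at random.

    NOTE: symmetric, class-independent noise for illustration only;
    this is not the instance-dependent EQ-IDN model."""
    if not 0.0 <= noise_rate <= 1.0:
        raise ValueError("noise_rate must be in [0, 1]")
    rng = random.Random(seed)
    noisy = list(labels)
    n_flip = round(noise_rate * len(labels))
    for idx in rng.sample(range(len(labels)), n_flip):
        alternatives = [c for c in range(num_classes) if c != labels[idx]]
        noisy[idx] = rng.choice(alternatives)
    return noisy


clean = [i % 3 for i in range(300)]  # toy 3-class "expert-verified" labels
noisy_sets = {rate: inject_symmetric_noise(clean, 3, rate) for rate in (0.0, 0.1, 0.2, 0.4)}
for rate, noisy in noisy_sets.items():
    observed = sum(a != b for a, b in zip(clean, noisy)) / len(clean)
    print(f"target {rate:.1f} -> observed {observed:.3f}")
```

Each noisy copy would then train an identical architecture. All models are scored on the same held-out clean test set to trace the degradation curve.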

Protocol 2: Measuring Inter-Annotator Agreement (IAA) and Expert-AI Consensus

This protocol measures annotation consistency directly, which serves as a proxy for noise levels.

- Objective: To quantify the reliability of annotations from both human experts and AI tools, and the consensus between them.

- Methodology:

- Task Design: A set of data samples is independently annotated by multiple domain experts (the "gold standard" panel) and by the AI annotation tool.

- Expert Agreement: Calculate IAA among the human experts using established statistics like Fleiss' Kappa or Krippendorff's Alpha. This establishes the baseline human reliability [12].

- Consensus Calculation: Calculate the agreement between the AI-generated labels and the consolidated expert opinion.

- Outcome Measure: High IAA indicates low task ambiguity and strong guideline clarity. A high Expert-AI consensus suggests the automated tool is effectively replicating expert-level judgment. Discrepancies highlight areas of high ambiguity or potential systematic bias.
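The two agreement calculations in this protocol can be sketched as follows. Fleiss' kappa is implemented from its standard definition for a fixed number of raters per item. Krippendorff's alpha, which also handles missing ratings, is not shown. Consolidating the panel by plurality vote and skipping tied items are assumptions of this sketch. Other consolidation rules, such as adjudication by a senior expert, are equally valid.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """ratings[i] is the list of labels the expert panel gave item i
    (the same number of raters for every item)."""
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(r) != n_raters for r in ratings):
        raise ValueError("need the same number (>= 2) of raters for every item")
    n_items = len(ratings)
    category_totals = Counter()
    per_item_agreement = []
    for item in ratings:
        counts = Counter(item)
        category_totals.update(counts)
        # Fraction of rater pairs that agree on this item.
        agreeing_pairs = sum(c * (c - 1) for c in counts.values())
        per_item_agreement.append(agreeing_pairs / (n_raters * (n_raters - 1)))
    p_bar = sum(per_item_agreement) / n_items
    p_e = sum((t / (n_items * n_raters)) ** 2 for t in category_totals.values())
    if p_e == 1.0:
        return 1.0  # every rating is the same label: kappa undefined, treat as perfect
    return (p_bar - p_e) / (1 - p_e)


def consensus_agreement(ratings, ai_labels):
    """Fraction of items where the AI label matches the panel's plurality label.
    Items whose top label is tied are skipped (a hypothetical policy)."""
    matches = evaluated = 0
    for item, ai in zip(ratings, ai_labels):
        (top, top_n), *rest = Counter(item).most_common()
        if rest and rest[0][1] == top_n:
            continue  # tie: no consensus label
        evaluated += 1
        matches += ai == top
    return matches / evaluated if evaluated else float("nan")


# Hypothetical three-expert panel and AI pre-labels for four images.
panel = [["tumor", "tumor", "tumor"], ["normal", "normal", "normal"],
         ["tumor", "normal", "tumor"], ["normal", "normal", "tumor"]]
ai = ["tumor", "normal", "normal", "normal"]
print(fleiss_kappa(panel), consensus_agreement(panel, ai))
```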

The workflow for a comprehensive consistency evaluation, integrating these protocols, is visualized below.

The Scientist's Toolkit: Essential Reagents for Annotation Research

For researchers designing experiments to evaluate annotation consistency, the following "reagents" are essential. This list details key methodological components and their functions in ensuring a robust evaluation.

Table 2: Essential Research Reagents for Annotation Consistency Evaluation

| Reagent / Methodological Component | Function in the Experimental Protocol | Examples & Notes |
|---|---|---|
| Gold Standard Dataset | Serves as the ground truth for evaluating annotation quality and model performance. Created by consolidating labels from a panel of domain experts. | Critical for Protocol 2; requires high Inter-Annotator Agreement to be valid. |
| Noise Injection Model | Systematically introduces realistic label noise into a clean dataset to test model and pipeline robustness. | EQ-IDN Framework [27]; Allows for controlled, scalable experiments. |
| Inter-Annotator Agreement (IAA) Metric | Quantifies the consistency and reliability of human annotators, establishing the upper limit of annotation quality for a task. | Fleiss' Kappa, Krippendorff's Alpha [12]; High IAA indicates low task ambiguity. |
| Active Learning Sampling Strategy | Optimizes the annotation workflow by prioritizing the most informative data points for expert review, reducing total cost and time. | Integrated into platforms like Encord and Labelbox [13] [25]; Focuses expert effort on edge cases. |
| Confidence Scoring System | Provides a measure of the AI model's certainty in its predictions or pre-labels, used to triage data for human review in hybrid workflows. | A core feature of AI-assisted platforms [24]; Low-confidence samples are routed to experts. |
| Quality Assurance (QA) Dashboards | Enables real-time monitoring of annotation progress, flagging of discrepancies, and tracking of annotator performance. | Tools like Encord Analytics [24]; Essential for managing large-scale annotation projects. |

The empirical data and comparative analysis presented confirm that noise in data annotation is a multi-faceted challenge, stemming from deeply rooted cognitive biases and platform-dependent task ambiguities. The consistency between expert and automated annotation is not a fixed value but a metric that can be optimized through careful tool selection and workflow design.

Platforms that champion AI-assisted hybrid workflows have demonstrated measurable superiority in mitigating these noise sources, delivering not only speed (e.g., 5x throughput) but also quantifiable gains in accuracy (e.g., 30% improvement) [24]. The future of reliable AI in scientific domains like drug development hinges on a continued rigorous, empirical approach to data annotation. Future research must focus on developing more nuanced noise models, creating standardized benchmarks for annotation consistency, and building tools that are not just powerful, but also cognitively aligned to augment—rather than be hindered by—human expertise.

In the development of artificial intelligence (AI) for medical applications, annotation noise—discrepancies, inconsistencies, or inaccuracies in labeled data—poses a fundamental challenge to model reliability and, consequently, patient safety. The performance of any supervised learning model is intrinsically tied to the quality of its training data; models learn to replicate the patterns in their training data, including any errors present in the annotations [6] [1]. In high-stakes fields like medical imaging and drug development, where AI assists in diagnosis and treatment planning, these propagated errors can translate directly into negative patient outcomes, including misdiagnosis, inappropriate treatment, and compromised safety [1]. This guide frames the critical issue of annotation noise within the broader thesis of evaluating consistency between expert and automated annotations, providing researchers and scientists with a comparative analysis of emerging solutions designed to enhance data quality and model robustness.

Quantifying the Impact: Annotation Noise and Its Consequences

The risks associated with poor-quality annotations are not merely theoretical. In medical imaging, for instance, a model trained on inaccurately labeled data can produce false positives or hallucinations, leading a system to identify non-existent pathologies or, conversely, to miss critical signs of disease [1]. Empirical studies demonstrate that annotation noise is a pervasive problem. For example, research on the AIDE (Annotation-effIcient Deep lEarning) framework revealed that conventional deep learning models, which rely heavily on large volumes of high-quality manual annotations, suffer significant performance degradation when trained on imperfect datasets [28]. This reliance creates a major bottleneck, as curating large, expertly annotated medical datasets is time-consuming, expensive, and prone to inter-annotator variation [28].

The table below summarizes the core challenges and documented impacts of annotation noise in biomedical AI.

Table 1: Documented Impacts and Challenges of Annotation Noise

| Challenge | Impact on Model Performance | Potential Patient Outcome Risk |
|---|---|---|
| Limited Annotations (Semi-Supervised Learning challenge) [28] | Reduced segmentation accuracy and model generalizability. | Inaccurate measurement of tumors or organs, affecting diagnosis and treatment planning. |
| Label Noise (Noisy Label Learning challenge) [28] | Model learns incorrect features, leading to misclassification. | False positives/negatives in diagnostic assays or image-based detection. |
| Inter-annotator Variation [28] [29] | Inconsistent model predictions and unreliable performance benchmarks. | Lack of trust in AI-assisted diagnostics; variability in patient care. |
| Subjective Interpretation [29] | Introduction of bias and inaccuracies into the training data. | Model performance that reflects human error rather than ground truth. |

Comparative Analysis of Frameworks for Noisy Medical Data

To address these challenges, researchers have developed frameworks that are robust to annotation noise. The following table provides a structured comparison of two advanced approaches: AIDE, designed for medical image segmentation, and a Diffusion-based framework for ECG signal quality assessment.

Table 2: Framework Comparison for Handling Annotation Noise

| Evaluation Aspect | AIDE (Annotation-effIcient Deep lEarning) [28] | Diffusion-Based ECG Noise Quantification [29] |
|---|---|---|
| Primary Objective | Medical image segmentation with limited, noisy, or domain-shifted annotations. | ECG noise quantification and quality assessment via anomaly detection. |
| Core Methodology | Cross-model self-correction with two networks co-training, featuring local label filtering and global label correction. | Diffusion model trained on clean ECGs; identifies noisy signals as anomalies via reconstruction error. |
| Key Innovation | Transforms Semi-Supervised Learning (SSL) and Unsupervised Domain Adaptation (UDA) into a Noisy Label Learning (NLL) problem; leverages model-generated pseudo-labels. | Uses Wasserstein-1 distance ($W_1$) for distributional evaluation of reconstruction error, mitigating annotation inconsistencies. |
| Handling of Expert Annotations | Can achieve performance comparable to full supervision using only 10% of high-quality training annotations. | Identifies and excludes mislabeled signals from training set (e.g., noisy signals incorrectly annotated as clean). |
| Reported Performance | On CHAOS (liver segmentation): 87.9% DSC with 10 labeled cases vs. 86.4% DSC with AIDE using fewer labels. | Macro-average $W_1$ score of 1.308, outperforming next-best method by over 48%. Strong generalizability in external validations. |
| Ideal Application Scope | Large-scale medical image segmentation (e.g., tumors, organs) where expert labels are scarce. | Real-time or continuous ECG monitoring in clinical and wearable settings where signal quality is variable. |

Experimental Protocols and Workflows

A deeper understanding of these frameworks requires an examination of their core experimental protocols.

AIDE Workflow Protocol

The AIDE framework employs a cross-model co-optimization strategy [28]:

- Task Standardization: For SSL, a model is pre-trained on limited annotated data to generate pseudo-labels for unlabeled data. For UDA, a model trained on a source domain generates pseudo-labels for the target domain. This standardizes SSL and UDA as NLL problems.

- Parallel Network Training: Two deep neural networks are trained in parallel on the dataset containing both high-quality and noisy/pseudo-labels.

- Local Label Filtering (Per Iteration): In each training iteration, samples suspected of having noisy labels are filtered out. These samples are augmented (e.g., rotated, flipped), and pseudo-labels are generated by distilling the predictions of the augmented inputs.

- Global Label Correction (Per Epoch): After each training epoch, the entire training set's labels are analyzed. Labels with low similarity (e.g., low Dice score) to the network's current predictions are updated according to a designed schedule, progressively refining the dataset.
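The global correction step hinges on a similarity score between each stored label and the network's current prediction. The sketch below uses the Dice coefficient on flattened binary masks and replaces the least-consistent labels, with the corrected fraction growing over epochs. The linear schedule, its parameters, and the function names are hypothetical placeholders. The cited work's actual schedule and thresholds are not reproduced here, and a real pipeline would operate on image tensors rather than Python lists.

```python
def dice(mask_a, mask_b):
    """Dice similarity of two binary masks given as flat lists of 0/1."""
    intersection = sum(a and b for a, b in zip(mask_a, mask_b))
    total = sum(mask_a) + sum(mask_b)
    return 1.0 if total == 0 else 2 * intersection / total


def correct_labels(labels, predictions, epoch, max_fraction=0.3, ramp_epochs=10):
    """Replace the stored labels that agree least (lowest Dice) with the
    current binarized predictions. The corrected fraction rises linearly
    from 0 to max_fraction over ramp_epochs (hypothetical schedule)."""
    fraction = max_fraction * min(epoch / ramp_epochs, 1.0)
    n_update = int(fraction * len(labels))
    ranked = sorted(range(len(labels)), key=lambda i: dice(labels[i], predictions[i]))
    corrected = [list(mask) for mask in labels]
    for i in ranked[:n_update]:
        corrected[i] = list(predictions[i])
    return corrected


labels = [[1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 1, 1]]  # toy stored masks
preds = [[1, 1, 0, 0], [0, 0, 1, 1], [0, 0, 1, 0]]   # toy binarized predictions
print([round(dice(l, p), 3) for l, p in zip(labels, preds)])
print(correct_labels(labels, preds, epoch=10, max_fraction=0.5))
```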

Diffusion-Based ECG Protocol

This method frames noise detection as an anomaly detection task [29]:

- Preprocessing with Adaptive Superlet Transform (ASLT): The raw ECG signal is transformed into a time-frequency representation (scalogram) using ASLT. This method provides superior resolution for physiological signals compared to conventional techniques like STFT or CWT, reducing cross-band contamination.

- Model Training on Clean Data: A diffusion model is trained exclusively on ECG signals annotated as "clean." The model learns to reverse a gradual noising process, effectively learning the distribution of clean ECG data.

- Anomaly Detection via Reconstruction: During inference, a potentially noisy ECG signal is fed into the trained diffusion model. The model attempts to reconstruct a clean version. A high reconstruction error indicates that the input signal is anomalous (noisy).

- Data Refinement and Quantification: The framework can identify and exclude mislabeled examples from the training set. The distribution of reconstruction errors for clean vs. noisy ECGs is compared using the Wasserstein-1 distance, providing a robust metric for noise quantification.

The logical workflow of this diffusion-based approach is detailed in the following diagram.

Diffusion-Based ECG Noise Assessment Workflow
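The quantification step compares two distributions of reconstruction errors rather than single values. The sketch below computes the Wasserstein-1 distance between two 1-D samples as the area between their empirical CDFs. It also adds a simple flagging rule that marks a signal as noisy when its error exceeds a high quantile of the errors seen on clean signals. Both the quantile rule and the toy error values are assumptions, and the diffusion model that produces the reconstruction errors is not shown. For production use, `scipy.stats.wasserstein_distance` offers an established implementation of the same 1-D distance.

```python
from bisect import bisect_right


def wasserstein_1(sample_a, sample_b):
    """W1 distance between two 1-D empirical distributions, computed as
    the area between their empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    points = sorted(set(a) | set(b))
    distance = 0.0
    for left, right in zip(points, points[1:]):
        # Both empirical CDFs are constant on [left, right).
        cdf_a = bisect_right(a, left) / len(a)
        cdf_b = bisect_right(b, left) / len(b)
        distance += abs(cdf_a - cdf_b) * (right - left)
    return distance


def flag_noisy(errors, clean_reference, quantile=0.95):
    """Flag signals whose reconstruction error exceeds the given quantile
    of errors observed on known-clean signals (hypothetical decision rule)."""
    ref = sorted(clean_reference)
    threshold = ref[min(int(quantile * len(ref)), len(ref) - 1)]
    return [i for i, e in enumerate(errors) if e > threshold]


clean_errors = [0.10, 0.20, 0.15, 0.12, 0.18]  # toy errors on clean ECGs
test_errors = [0.11, 0.50, 0.19, 0.90]         # toy errors on signals to screen
print(flag_noisy(test_errors, clean_errors))
print(round(wasserstein_1(clean_errors, test_errors), 3))
```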

The Scientist's Toolkit: Essential Reagents for Annotation-Conscious Research

For researchers designing experiments to evaluate annotation consistency or develop noise-robust models, the following tools and materials are essential.

Table 3: Key Research Reagents and Solutions

| Tool / Reagent | Function in Research |
|---|---|
| AIDE Framework [28] | An open-source deep learning framework that provides a methodological baseline for handling imperfect datasets (SSL, UDA, NLL) in medical image segmentation. |
| Diffusion Model Architecture [29] | Serves as a core component for reconstruction-based anomaly detection tasks, particularly for signal or image quality assessment and noise quantification. |
| Adaptive Superlet Transform (ASLT) [29] | Provides high-resolution time-frequency representation of physiological signals (ECG, EEG), crucial for accurate feature extraction before model training. |
| Wasserstein-1 Distance ($W_1$) [29] | A robust distributional metric for comparing reconstruction error distributions between clean and noisy data, mitigating the effects of annotation inconsistencies. |
| Human-in-the-Loop (HITL) Platform [7] [6] | An annotation tool that combines AI pre-labeling with human expert review, essential for creating gold-standard datasets and validating model outputs. |
| DICOM/NIfTI Annotation Tools [6] | Specialized software capable of handling complex medical image file formats, enabling precise annotation of medical images for model training and validation. |

The comparative analysis presented in this guide underscores a critical paradigm shift in biomedical AI: from simply amassing larger datasets to intelligently managing data quality. The high stakes of patient outcomes demand robust frameworks like AIDE and diffusion-based anomaly detection, which explicitly address the realities of annotation noise and expert label scarcity. The consistency between expert and automated annotations is not a static goal but a dynamic process that can be managed and improved. By leveraging these advanced methodologies, researchers and drug development professionals can build more reliable, generalizable, and trustworthy AI models. The future of the field lies in creating efficient, human-in-the-loop ecosystems where automated tools handle scalable tasks under the vigilant guidance of expert oversight, ensuring that model performance translates safely into real-world clinical benefits.

Modern Annotation Methods: From Human-in-the-Loop to LLM Orchestration

In the development of artificial intelligence (AI), particularly for models that interact with the physical world, high-quality annotated data is a cornerstone. Annotation, the process of labeling raw data to train supervised learning algorithms, transforms unstructured data into a form that machines can learn from [13]. For researchers and drug development professionals, the choice of annotation methodology and platform is not merely a technical decision but a strategic one, directly influencing the accuracy, reliability, and safety of the resulting AI models [13] [16]. This guide objectively compares leading annotation solutions, framing the analysis within the critical thesis of evaluating consistency between expert and automated annotation—a key concern for scientific applications where precision is non-negotiable.

Annotation Methodologies: Manual vs. Automated

The fundamental choice in designing an annotation pipeline lies in the balance between human expertise and computational speed. The decision between manual and automated annotation involves trade-offs between accuracy, cost, and scalability, making the choice highly dependent on project-specific requirements, such as dataset complexity and the required level of precision [16].

Table 1: Comparative Analysis of Manual vs. Automated Annotation

| Criterion | Manual Annotation | Automated Annotation |
|---|---|---|
| Accuracy | Very high; professionals interpret nuance, context, and domain-specific terminology [16]. | Moderate to high; works well for clear, repetitive patterns but can mislabel subtle content [16]. |
| Speed | Slow; annotators label each data point individually, taking days or weeks for large volumes [16]. | Very fast; once set up, models can label thousands of data points in hours [16]. |
| Adaptability | Highly flexible; annotators adjust to new taxonomies, changing requirements, or unusual edge cases in real-time [16]. | Limited; models operate within pre-defined rules and require retraining for significant workflow shifts [16]. |
| Scalability | Limited; scaling requires hiring and training more annotators, which is costly and time-consuming [16]. | Excellent; once trained, annotation pipelines can scale to millions of data points with minimal marginal cost [16]. |
| Cost | High; involves skilled labor, multi-level reviews, and specialist expertise [16]. | Lower long-term cost; reduces human labor, though it incurs upfront model development and training costs [16]. |
| Ideal Use Case | High-risk applications, complex data types, smaller datasets, or projects requiring deep domain knowledge (e.g., medical, legal) [16]. | Large-scale datasets with clear, repetitive structures, and projects where speed and cost-efficiency are priorities [16]. |

For many research applications, a hybrid approach often yields the optimal results. This pipeline uses automated tools for bulk annotation to achieve scale, while human experts review, refine, and handle complex edge cases to ensure final quality and accuracy [16].

Comparative Analysis of Leading Annotation Platforms

Selecting the right platform is crucial for efficient dataset creation. The following section and table provide a detailed comparison of specialized companies and platforms based on their supported data types, annotation features, and primary use cases.

Table 2: Overview of Specialized Annotation Platforms

| Platform | Primary Focus & Supported Data Types | Key Features & Strengths | Considerations |
|---|---|---|---|
| Encord [13] [30] | Physical AI (Video, Images, DICOM, SAR) | AI-powered video engine; active learning integration; dataset quality metrics; strong security/compliance [13]. | Limited support for advanced 3D data and non-visual data types like text [30]. |
| BasicAI [30] | 3D Sensor Fusion (Image, Video, LiDAR, 4D-BEV, Text, Audio) | Industry-leading 3D sensor fusion; smart annotation tools; scalable project management [30]. | Lacks open API support and integrations with platforms like Databricks and TensorFlow [30]. |
| Supervisely [13] [30] | Computer Vision, Medical (Image, Video, DICOM, LiDAR) | "Unified OS" for CV; integrates state-of-the-art neural network models; strong visualization tools [13] [30]. | Does not support non-visual data (text, audio); steeper learning curve for non-technical users [30]. |
| CVAT [13] [31] [32] | Computer Vision (Image, Video) | Open-source; mature UI for vision; advanced video tools (tracking, interpolation); strong community [13] [31] [32]. | Complex UI for first-time users; requires more manual configuration for enterprise deployment [32]. |
| Label Studio [31] [32] | Multi-Domain (Text, Image, Audio, Video, Time-Series) | Extreme flexibility; intuitive and customizable UI; robust cloud-native integrations and API [31] [32]. | Less precision for advanced vision tasks vs. CVAT; toolset limited by initial project configuration [31] [32]. |
| Dataloop [13] [30] | AI Development & Vision (Image, Video, Audio, Text, LiDAR) | Flexible and scalable platform; intuitive data pipeline builder; integrated quality control [13] [30]. | Lacks built-in auto-annotation; limited support for PDF/HTML and some annotation tools [30]. |
| V7 [30] | Medical Imaging, Vision (Image, Video, Medical Files) | Comprehensive medical imaging suite; efficient AI-powered labeling and segmentation [30]. | Supports fewer data modalities; more niche in application [30]. |

Focused Comparison: CVAT vs. Label Studio

The choice between CVAT and Label Studio is a common point of consideration, as they represent two different philosophies in the open-source arena.

CVAT (Computer Vision Annotation Tool) is purpose-built for computer vision tasks. It excels in annotating images and videos, offering features like automatic object tracking and interpolation between frames, which can accelerate video annotation significantly [13] [32]. Its interface is highly specialized, which can be powerful for skilled annotators but may present a steeper learning curve [32].
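
Interpolation is what makes keyframe-based video annotation fast: an annotator labels an object on a few frames and the tool fills in the frames between them. The sketch below illustrates the idea with plain linear interpolation of bounding-box coordinates. It is not CVAT's actual implementation, and `interpolate_boxes` is a hypothetical name.

```python
from typing import Dict, Tuple

# Axis-aligned bounding box: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def interpolate_boxes(keyframes: Dict[int, Box]) -> Dict[int, Box]:
    """Fill every frame between annotated keyframes by linear interpolation.

    Illustrative only; not CVAT's implementation.
    """
    if not keyframes:
        return {}
    frames = sorted(keyframes)
    filled: Dict[int, Box] = {}
    # Walk consecutive keyframe pairs and blend their coordinates.
    for start, end in zip(frames, frames[1:]):
        box_a, box_b = keyframes[start], keyframes[end]
        span = end - start
        for frame in range(start, end):
            t = (frame - start) / span  # 0.0 at start, approaching 1.0 at end
            filled[frame] = tuple(a + t * (b - a) for a, b in zip(box_a, box_b))
    # The final keyframe is copied exactly as annotated.
    filled[frames[-1]] = keyframes[frames[-1]]
    return filled


if __name__ == "__main__":
    # An annotator draws boxes on frames 0 and 4 only.
    result = interpolate_boxes({0: (10, 10, 50, 50), 4: (30, 10, 70, 50)})
    print(result[2])  # (20.0, 10.0, 60.0, 50.0)
```

Linear blending works well for smooth motion; for objects that change speed or direction, annotators typically add more keyframes where the interpolated box drifts.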

Label Studio is designed as a flexible, multi-domain platform. It supports a wide array of data types, including text, audio, and time-series, making it ideal for projects that span multiple data modalities [31] [32]. Its user interface is often considered more modern and intuitive, and it offers stronger, cloud-native integrations out-of-the-box [32].

Experimental Protocols for Consistency Evaluation

Rigorously evaluating the consistency between expert human annotators and automated systems is central to advanced annotation research. The workflow below sets out a standardized protocol for this assessment, which is needed both to validate automated systems and to establish quality benchmarks for research-grade data production.

Detailed Experimental Methodology

The protocol has six stages:

- 1. Dataset Curation: Select a representative, gold-standard subset of data from the broader project dataset. This subset should encompass the full spectrum of expected scenarios and edge cases to ensure a robust evaluation [13].

- 2. Expert Annotation: Multiple domain experts independently annotate the gold-standard subset without consulting one another (a blinded setup). A consensus meeting then resolves discrepancies and establishes a single "ground truth" annotation set [16].

- 3. Automated Annotation: Pre-trained AI models (e.g., segmentation, object detection) available within or integrated into the annotation platform (such as those in CVAT or Encord) are used to automatically label the same gold-standard dataset [13] [31].

- 4. Quantitative Analysis: This phase involves calculating key metrics to objectively measure consistency.

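- Inter-Annotator Agreement (IAA): Chance-corrected agreement statistics quantify consistency. Cohen's Kappa fits the two-rater comparison between the expert ground truth and the automated labels, while Fleiss' Kappa extends the idea to three or more raters, such as the independent experts in stage 2 before consensus [16].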

- Precision and Recall: Precision is the fraction of the automated system's positive predictions that match the ground truth; recall is the fraction of ground-truth instances the system identifies [13].

- 5. Qualitative Review: Experts perform a systematic analysis of disagreements between the expert ground truth and automated output. The goal is to identify patterns of error, such as consistent failure on specific edge cases (e.g., occluded objects, poor lighting) or systematic biases introduced by the model [13] [16].

- 6. Pipeline Refinement: Insights from the qualitative review are used to refine the automated annotation pipeline. This can involve retraining models on the corrected edge cases, adjusting the confidence thresholds for automated pre-labeling, or updating the quality assurance (QA) rules used in the platform's review workflow [13] [16].
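
The stage 4 metrics can be computed with a few lines of standard-library Python. The sketch below covers Cohen's kappa (two raters, e.g. ground truth vs. automated output), Fleiss' kappa (any fixed number of raters, e.g. several experts before consensus), and per-class precision and recall. It assumes one categorical label per item; for detection or segmentation, predicted objects must first be matched to ground-truth objects (typically with an overlap threshold such as IoU) before counting. Function names and example labels are hypothetical, and tested implementations exist in scikit-learn (`cohen_kappa_score`) and statsmodels (`fleiss_kappa`).

```python
from collections import Counter
from typing import Hashable, List, Sequence, Tuple


def cohen_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa: chance-corrected agreement between two raters."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater labelled at random with its own marginals.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return float("nan")  # undefined: both raters used one identical category
    return (observed - expected) / (1 - expected)


def fleiss_kappa(ratings: List[Sequence[Hashable]]) -> float:
    """Fleiss' kappa: ratings[i] lists every rater's label for item i.

    Assumes the same number of raters (at least two) for every item.
    """
    if not ratings:
        raise ValueError("need at least one rated item")
    n_items, n_raters = len(ratings), len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    totals: Counter = Counter()
    per_item_agreement = []
    for item in ratings:
        counts = Counter(item)
        totals.update(counts)
        # Ordered rater pairs that agree on this item: sum of c * (c - 1).
        agreeing_pairs = sum(c * c for c in counts.values()) - n_raters
        per_item_agreement.append(agreeing_pairs / (n_raters * (n_raters - 1)))
    p_bar = sum(per_item_agreement) / n_items
    p_expected = sum((t / (n_items * n_raters)) ** 2 for t in totals.values())
    if p_expected == 1.0:
        return float("nan")  # undefined: every rating is the same category
    return (p_bar - p_expected) / (1 - p_expected)


def precision_recall(
    truth: Sequence[Hashable], predicted: Sequence[Hashable], positive: Hashable
) -> Tuple[float, float]:
    """Precision and recall of automated labels for one positive class."""
    pairs = list(zip(truth, predicted))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


if __name__ == "__main__":
    ground_truth = ["tumor", "normal", "tumor", "normal"]
    automated = ["tumor", "tumor", "tumor", "normal"]
    print(cohen_kappa(ground_truth, automated))  # 0.5
    print(precision_recall(ground_truth, automated, "tumor"))  # precision 2/3, recall 1.0
```

In this toy example raw agreement is 75%, but kappa is only 0.5 because half of that agreement would be expected by chance given each rater's label frequencies.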

The Scientist's Toolkit: Essential Research Reagent Solutions

In the context of building a robust data annotation pipeline for scientific research, the "reagent solutions" are the core components of the annotation platform and its integrated ecosystem. The selection of these tools dictates the efficiency, quality, and scalability of the research data production.

Table 3: Key "Research Reagent Solutions" for Annotation Pipelines

| Tool/Component | Function in the Annotation Workflow |
|---|---|
| AI-Assisted Labeling Engine [13] | Uses micro-models and automated tracking to pre-label data (e.g., objects in video sequences), drastically reducing manual effort and accelerating the annotation process. |
| Active Learning Integration [13] | Algorithms that automatically identify and surface the most ambiguous or valuable data points from a large unlabeled dataset for human review, optimizing the use of expert annotator time. |
| Quality Assurance (QA) Workflows [13] [31] | Built-in review pipelines that enable multi-pass validation, consensus checks, and expert audits to ensure label integrity and adherence to project guidelines. |
| Multi-Modal Data Support [13] [30] | The platform's capability to handle and synchronize diverse data types (e.g., video, LiDAR, DICOM, text) essential for complex research projects like those in physical AI or medical imaging. |
| Collaboration & Role Management [13] [32] | Features for managing annotator teams, assigning tasks, tracking performance, and controlling access, which are critical for maintaining organization in large-scale projects. |
| Model Integration Backend [31] [32] | An interface (e.g., REST API, ML backend) that allows custom or pre-trained models to be integrated into the platform for tasks like automated pre-labeling and model-assisted refinement. |
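
Table 3 describes active learning as surfacing the most ambiguous data points for expert review. One widely used way to rank ambiguity is uncertainty sampling by predictive entropy; the platforms cited may use different or more elaborate strategies. The sketch below assumes a model that outputs class probabilities for each unlabeled item, and the function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions: Dict[str, Sequence[float]], budget: int) -> List[str]:
    """Return the IDs of the `budget` items the model is least certain about.

    predictions maps item ID -> predicted class probabilities (assumed to sum to 1).
    """
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]


if __name__ == "__main__":
    unlabeled = {
        "slide_a": [0.98, 0.02],  # confident
        "slide_b": [0.50, 0.50],  # maximally uncertain
        "slide_c": [0.70, 0.30],
    }
    print(select_for_review(unlabeled, budget=2))  # ['slide_b', 'slide_c']
```

After experts label the selected items, the model is retrained and the ranking repeated, so expert time concentrates on the cases the model finds hardest.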

The landscape of annotation solutions is diverse, with platforms offering specialized strengths tailored to different research needs. Pure computer vision projects may find a powerful solution in CVAT, while multi-modal research efforts might gravitate towards the flexibility of Label Studio or Dataloop. For domains with high stakes, such as medical AI or autonomous systems, platforms like Encord and Supervisely offer the necessary rigor, security, and advanced features for video and multimodal data [13] [30].

The critical takeaway for researchers and drug development professionals is that there is no single "best" platform, only the most suitable one for a specific project's data, accuracy requirements, and operational constraints [16] [30]. A disciplined approach to consistency evaluation, following the experimental protocols outlined, is indispensable for building trust in automated annotation systems and for producing the high-fidelity datasets that underpin reliable and impactful scientific AI models.

Human-in-the-Loop Workflows for Complex Biomedical Data

In biomedical AI, the convergence of human expertise and automated systems is not just an advantage but a necessity for ensuring reliability, accuracy, and regulatory compliance. This guide compares leading human-in-the-loop (HITL) platforms and workflows, framed within a broader thesis on evaluating consistency between expert and automated annotations. As of 2025, the strategic integration of human oversight is critical for preventing model degradation: studies show that continuous human feedback can reverse performance decay and improve accuracy by over 23% in real-world applications like radiology AI [33] [34]. The following analysis, based on published validations and feature comparisons, gives researchers and drug development professionals a data-centric overview of the tools and methodologies shaping robust biomedical AI.

Platform Comparison: Capabilities and Compliance

The selection of an annotation platform is pivotal for building reliable AI models. The table below summarizes the core capabilities of leading tools designed for complex biomedical data, such as medical images (e.g., DICOM, NIfTI) and systematic literature review components.

Table 1: Feature Comparison of Leading Biomedical HITL Platforms

| Platform / Feature | iMerit + Ango Hub | V7 | Encord | RedBrick AI | 3D Slicer |
|---|---|---|---|---|---|
| In-house Expert Workforce | Yes (incl. Radiologists) [35] | No [35] | No [35] | No [35] | No [35] |
| Regulatory Support | HIPAA, 21 CFR Part 11 [35] | HIPAA, FDA, CE [36] | Limited [35] | FDA 510(k) [35] | No [35] |
| Key Biomedical Data Support | DICOM, NRRD, NIfTI, 16 simultaneous DICOM views [35] | DICOM, Volumetric Annotation [36] | DICOM, NIfTI [36] | DICOM, Multi-series upload [35] | DICOM, NIfTI, 3D/4D images [36] |
| 3D Multiplanar Annotation | Native [35] | Yes [36] | No [35] | Yes [35] | Yes (Open-source) [36] |
| Specialized Workflow | Radiology suite, multi-sequence comparison [35] | Consensus workflows, radiology & pathology [36] | Active learning pipelines, model fine-tuning [30] | Cloud-based, synced scrolling [35] | Research-focused, AI framework integration [36] |

Performance Benchmarks: Quantitative Validation Data

Independent validation studies and platform disclosures provide critical performance metrics. These quantitative data are essential for evaluating the consistency and efficiency of HITL systems against expert-driven gold standards.

Table 2: Performance Benchmarks for HITL AI Tools in Evidence Synthesis

| SLR Process Stage | AI Tool / Method | Key Performance Metric | Reported Result | Human Time Savings |
|---|---|---|---|---|
| Search Strategy Generation | AutoLit Smart Search (Boolean) | Recall vs. Gold Standard [37] | 76.8% - 79.6% [37] | Not Specified |
| Abstract Screening | AutoLit Supervised ML | Recall at Reviewer-level [37] | 82% - 97% [37] | ~50% [37] |
| Data Extraction (PICOs) | AutoLit Multi-model System | F1 Score [37] | 0.74 [37] | 70-80% [37] |
| Data Extraction (Study Details) | AutoLit Multi-model System | Accuracy [37] | 74% (Type), 78% (Location), 91% (Size) [37] | Not Specified |
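
The recall and F1 figures in Table 2 compare an AI tool's output with an expert gold standard. For study inclusion, that comparison reduces to set overlap, as in the sketch below. The matching rules used in the cited validation [37] (for example, how extracted PICO elements were judged to match) are not reproduced here, so treat this as a simplified scheme; `set_metrics` and the study identifiers are hypothetical.

```python
from typing import Dict, Set


def set_metrics(gold: Set[str], predicted: Set[str]) -> Dict[str, float]:
    """Precision, recall, and F1 of a predicted set against a gold-standard set."""
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Hypothetical study identifiers.
    gold_included = {"study_01", "study_02", "study_03", "study_04"}
    ai_included = {"study_01", "study_02", "study_03", "study_09"}
    print(set_metrics(gold_included, ai_included))
    # {'precision': 0.75, 'recall': 0.75, 'f1': 0.75}
```

In screening, recall is usually the priority: a relevant study missed at this stage is lost to the review, whereas false positives can still be removed at full-text review.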

Inside the Black Box: Experimental Protocols for Validation

To ensure the validity of HITL workflows, a rigorous, transparent experimental methodology is required. The following protocol, modeled on validation studies for AI-assisted systematic literature reviews (SLRs), provides a framework for objectively evaluating consistency between expert and automated annotations [37].

Protocol for Validating an AI-Assisted SLR Pipeline

This protocol is designed to compare an AI tool's performance at key SLR stages against a manually produced "gold standard" dataset created by domain experts.

1. Gold Standard Establishment

- Objective: Create a reference dataset of confirmed "included" studies and correctly extracted data against which AI performance will be measured.

- Methodology:

- Source Reviews: Select a set of previously completed, high-quality systematic reviews (e.g., from Cochrane) [37].

- Data Points: From these reviews, extract the final list of included studies and their key data (e.g., PICOs, study size, location) to form the positive set for validation [37].

- Expert Agreement: Ensure all extracted data points have been validated by multiple human experts during the original review process.

2. AI Tool Execution

- Objective: Execute the same review task using the AI tool and record its outputs.

- Methodology:

- Input: Provide the AI system (e.g., AutoLit's Smart Search) with only the research question or aim from the gold standard reviews [37].

- Process: Allow the AI to generate Boolean search strings, screen abstracts, and extract data from the resulting records without prior knowledge of the gold standard included studies [37].

- Output: Record the list of studies the AI identifies as "included" and the data it extracts from them.

3. Performance Analysis & Metric Calculation