Extended Characterization in Comparability Studies: A Strategic Guide for Ensuring Biologic Quality and Efficacy



Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical role of extended characterization in comparability studies for biologics. It covers the foundational principles of quality attributes and regulatory guidelines, delves into advanced methodological approaches and phase-appropriate strategies, addresses troubleshooting for complex modalities like cell and gene therapies, and outlines frameworks for data validation and establishing acceptance criteria. By synthesizing current regulatory expectations and scientific best practices, this resource aims to equip scientists with the knowledge to design robust comparability packages that ensure patient safety and facilitate regulatory success throughout a product's lifecycle.

The Foundation of Comparability: Understanding Critical Quality Attributes and Regulatory Expectations

The ICH Q5E guideline, titled "Comparability of Biotechnological/Biological Products Subject to Changes in Their Manufacturing Process," provides the foundational international standard for evaluating the impact of manufacturing process changes on biologic drugs [1]. The core principle of this framework is that demonstrating "comparability" does not require the pre-change and post-change products to be identical; rather, they must be highly similar [2]. The guideline mandates that the existing knowledge about the product must be sufficiently predictive to ensure that any differences in quality attributes have no adverse impact upon the safety or efficacy of the drug product [2]. This "highly similar" standard is the cornerstone for all subsequent experimental work, ensuring that patient safety and product efficacy are maintained while allowing for necessary manufacturing innovations.

The necessity for comparability studies arises throughout the entire drug development lifecycle. Changes may stem from improvements in process efficiencies, raw material changes, supply chain issues, evolving regulatory requirements, increasing production to meet patient needs, or other unforeseen circumstances [2]. The comparability package is intended to give regulatory authorities a transparent pathway linking the safety, efficacy, and quality data from pre-change clinical batches to post-change batches, built on sound science and a thorough understanding of the highly similar, and often improved, product [2].

The "Highly Similar" Standard: A Risk-Based Approach

The "highly similar" standard is a practical and scientific recognition of the inherent complexity of biological products. Unlike small molecule drugs, biologics are large, complex molecules produced from living systems, and they can exhibit a degree of natural variability. The objective of a comparability exercise is not to prove that two products are identical, but to assure that any differences between the pre-change and post-change product have no adverse impact on safety or efficacy [3]. In practice, the safety, identity, purity, and activity of the two products should be highly similar, and the impact of any differences should be predictable from existing knowledge [3].

A risk-based approach, as outlined in ICH Q9, is central to implementing the Q5E framework [3]. Risk assessment helps determine the scope and depth of the comparability study, guiding decisions on batch selection, analytical methods, and the necessity of supplementary studies (e.g., extended characterization, forced degradation, non-clinical, or clinical studies) [3]. The level of evidence required scales with the perceived risk of the manufacturing change, as illustrated in Table 1 below.

Table 1: Risk-Based Scoping for Comparability Studies

| Process Change | Comparability Risk | Recommended Study Content |

|---|---|---|

| Production site transfer | Low | Release testing, including activity, structural characterization, and accelerated stability studies [3]. |

| Site transfer with minor process changes | Low-Medium | Transfer all assays to the new facility, plus add receptor affinity analysis, ADCC, or other functional assays [3]. |

| Changes in culture methods or purification processes | Medium | All analytical tests, potentially supplemented by animal PK/PD testing [3]. |

| Cell line changes | Medium-High | Comprehensive analytical testing, potentially requiring GLP toxicology studies and human bridging studies [3]. |
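
The mapping in Table 1 can be captured as a simple lookup so that a study plan records the risk tier and recommended content alongside the change being assessed. The following is a minimal Python sketch, not a regulatory tool: the dictionary keys (`site_transfer`, `cell_line_change`, and so on) and the names `StudyScope`, `TABLE_1_SCOPE`, and `scope_for` are hypothetical labels introduced here, while the risk tiers and study content are transcribed from the table.

```python
from typing import NamedTuple


class StudyScope(NamedTuple):
    risk: str
    content: str


# Rows transcribed from Table 1. The dictionary keys are hypothetical
# short labels introduced for this sketch only.
TABLE_1_SCOPE = {
    "site_transfer": StudyScope(
        "Low",
        "Release testing, including activity, structural characterization, "
        "and accelerated stability studies",
    ),
    "site_transfer_minor_changes": StudyScope(
        "Low-Medium",
        "Transfer all assays to the new facility, plus receptor affinity "
        "analysis, ADCC, or other functional assays",
    ),
    "culture_or_purification_change": StudyScope(
        "Medium",
        "All analytical tests, potentially supplemented by animal PK/PD testing",
    ),
    "cell_line_change": StudyScope(
        "Medium-High",
        "Comprehensive analytical testing, potentially requiring GLP "
        "toxicology studies and human bridging studies",
    ),
}


def scope_for(change_type: str) -> StudyScope:
    """Return the Table 1 row for a change type, or fail loudly if unlisted."""
    if change_type not in TABLE_1_SCOPE:
        raise ValueError(f"No Table 1 entry for change type {change_type!r}")
    return TABLE_1_SCOPE[change_type]


scope = scope_for("cell_line_change")
print(scope.risk)  # prints "Medium-High"
```

Raising an error for an unlisted change type, rather than defaulting to a tier, reflects the point that scope should come from a documented risk assessment and not from a fallback value.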

Experimental Design and Batch Selection Strategy

A scientifically sound comparability study hinges on a robust experimental design. The lot selection strategy is essential, as batches must be representative of the pre- and post-change processes [2]. The pre- and post-change batches should be manufactured as close together in time as possible to avoid natural age-related differences that could confound the results [2]. Furthermore, the selection strategy should be defined in the comparability protocol or study plan before testing begins [2].

The number of batches required for a comparability study is phase-appropriate and depends on the magnitude of the change and the stage of product development. For major changes post-approval, ≥3 batches of commercial-scale samples are generally selected after the change. For medium changes, 3 batches are typical, while minor changes can be studied with ≥1 batch [3]. The use of multiple batches helps demonstrate process robustness. For early-phase development, when representative batches are limited, it is acceptable to use single batches of pre- and post-change material to establish biophysical characteristics using platform methods [2]. The following workflow diagram outlines the key decision points in designing a comparability study.

Analytical Methodologies for Extended Characterization

Extended characterization provides a finer level of detail that is orthogonal to routine release methods and is critical for demonstrating comparability, especially for critical quality attributes (CQAs) [2]. These methods offer a more comprehensive detection of the impact of product changes on safety and efficacy, providing a detailed assessment of molecular structure [3]. Since these analytical methods are more complex and often lack extensive historical data, head-to-head comparative analysis of pre-change and post-change samples is typically required [3]. The following table summarizes the key analytical techniques used in extended characterization.

Table 2: Extended Characterization Analytical Methods for Monoclonal Antibodies

| Parameter / Structural Element | Analytical Technique | Function in Comparability Assessment |

|---|---|---|

| Primary Structure | Peptide Mapping (LC-MS) | Confirms amino acid sequence and identifies post-translational modifications (PTMs) [2] [3]. |

| Molecular Weight | LC-MS (e.g., ESI-TOF MS) | Determines accurate molecular mass and checks that it is consistent with the expected protein sequence [2] [3]. |

| Higher-Order Structure | Circular Dichroism (CD) | Assesses secondary and tertiary structure, detecting changes in protein folding [3]. |

| Disulfide Bonds & Free Thiols | Peptide Map (non-reduced) / Spectrophotometry | Confirms correct disulfide bond linkages and quantifies free cysteine content [3]. |

| Purity & Heterogeneity | SEC-MALS / Analytical Ultracentrifugation (AUC) | Quantifies aggregates, fragments, and oligomers, providing size distribution and molecular weight [2] [3]. |

| Charge Variants | iCIEF / CEX-HPLC | Separates and quantifies charge isoforms resulting from modifications like deamidation or sialylation [2] [3]. |

| Glycosylation | Oligosaccharide Mapping (HPLC/UPLC) | Profiles glycan species, which can impact biological activity and immunogenicity [2] [3]. |

| Biological Function | Cell-Based Assays / Binding Affinity (e.g., SPR) | Measures potency and mechanism-of-action through receptor binding and effector functions [2] [3]. |

Forced Degradation and Stability Studies

Forced degradation studies, also known as stress studies, are a critical component of the comparability exercise. They are designed to unveil degradation pathways that may not be observed under real-time or accelerated stability conditions [2]. By subjecting pre-change and post-change samples to various stress conditions, scientists can compare the degradation profiles, kinetics, and pathways, thereby demonstrating quality alignment between the two processes through the analysis of trendline slopes, bands, and peak patterns [2].

These studies are not GMP activities, and it is important to note in the protocol that treated samples are not expected to meet release acceptance criteria, as the treatment conditions are outside typical process ranges [2]. The insights gained from forced degradation studies are invaluable for informing analytical test method limits, creating identification strategies for post-translational modifications or charge variants, and preparing for formal stability studies [2]. The standard conditions used in these studies are summarized below.

Table 3: Standard Forced Degradation Stress Conditions

| Stress Condition | Typical Parameters | Primary Degradation Pathways Assessed |

|---|---|---|

| Thermal (Solution) | e.g., 25°C, 40°C for 1-3 months | Aggregation, fragmentation, deamidation, oxidation [2]. |

| Thermal (Solid State) | e.g., 40°C, 60°C for 1-3 months | Dehydration, aggregation, chemical degradation [2]. |

| Photo-stability | Exposed to UV and Vis light per ICH Q1B | Photo-oxidation, discoloration, fragmentation [2]. |

| Oxidative | Incubation with oxidizing agents (e.g., H₂O₂) | Methionine/tryptophan oxidation, cross-linking [2]. |

| Acidic/Basic (pH) | Incubation at low (e.g., pH 3-4) and high (e.g., pH 9-10) pH | Deamidation, isomerization, hydrolysis, aggregation [2]. |

| Mechanical Stress | Shaking, agitation, freezing/thawing | Subvisible particle formation, aggregation, surface-induced denaturation [2]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of a comparability study relies on a suite of specialized reagents and materials. The following table details key solutions and their critical functions in the analytical workflow.

Table 4: Essential Research Reagent Solutions for Comparability Studies

| Research Reagent / Material | Function in Comparability Assessment |

|---|---|

| Reference Standard / Material | A well-characterized benchmark product batch used for head-to-head comparison in all analytical testing to ensure data validity [2] [3]. |

| Cell-Based Bioassay Reagents | Includes cells, cytokines, and detection substrates to measure the biological activity (potency) of the product, ensuring functional comparability [3]. |

| LC-MS Grade Solvents & Columns | High-purity solvents and specialized chromatography columns (e.g., C18 for peptide mapping; C4 or C8 for intact or subunit mass analysis) are essential for reproducible and high-resolution separation in LC-MS analyses [2]. |

| Enzymes for Peptide Mapping | Sequencing-grade enzymes like trypsin are used to digest the protein into peptides for primary structure and PTM analysis via LC-MS [2] [3]. |

| Biosensor Chips (e.g., SPR) | Sensor chips functionalized with target antigens or Fc receptors to quantitatively measure binding affinity and kinetics, a key functional attribute [3]. |

| Stability Study Buffers & Excipients | Formulation buffers and stabilizers used in real-time and accelerated stability studies to assess the product's shelf-life and degradation profile under recommended storage conditions [2] [3]. |

| Forced Degradation Reagents | Chemical stressors such as hydrogen peroxide (oxidative), hydrochloric acid/sodium hydroxide (pH), and light sources (photostability) to intentionally degrade the product and study its degradation pathways [2]. |

Establishing Acceptance Criteria and Data Interpretation

Establishing prospective acceptance criteria is a fundamental requirement for a defensible comparability study. These criteria should be based on historical process and product quality data, and any exclusion of data must be adequately justified [3]. The acceptance criteria cannot be less stringent than the official quality standard unless a wider limit is shown to be reasonable [3]. Acceptance criteria fall into two groups: quantitative criteria, under which results must fall within a predefined range, and qualitative criteria, which rest on the comparison of charts and patterns (e.g., peptide maps, spectra) [3].
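
As an illustration of a quantitative criterion derived from historical data, the sketch below computes a range as the historical mean plus or minus k standard deviations and checks post-change results against it. This is only one possible approach and is an assumption of this example: the source does not prescribe a statistical method, and the choice of k = 3, the function names `acceptance_range` and `within_range`, and all numeric values are hypothetical.

```python
from statistics import mean, stdev


def acceptance_range(historical, k=3.0):
    """Return (low, high) as mean +/- k sample standard deviations.

    Assumption of this sketch: a mean +/- k*SD range is an acceptable way
    to summarize historical batch data for the attribute in question.
    """
    if len(historical) < 2:
        raise ValueError("need at least two historical results")
    centre = mean(historical)
    spread = stdev(historical)
    return centre - k * spread, centre + k * spread


def within_range(values, limits):
    """True if every value lies inside the inclusive (low, high) limits."""
    low, high = limits
    return all(low <= v <= high for v in values)


# Hypothetical main-peak purity results (%), for illustration only.
historical = [98.1, 98.4, 97.9, 98.3, 98.0, 98.2]
post_change = [98.2, 98.0, 98.5]

limits = acceptance_range(historical)
print(f"range: {limits[0]:.2f} to {limits[1]:.2f}")
print("post-change batches within range:", within_range(post_change, limits))
```

A range computed this way would still need to be checked against the registered specification, since the criteria may not be less stringent than the official quality standard without justification.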

Pre-defining both quantitative and qualitative acceptance criteria in the comparability study protocol alleviates pressure to interpret oftentimes complicated, subjective results as "comparable" or "not comparable" [2]. When evaluating the data, the focus is on whether any observed differences in quality attributes have an adverse impact on safety and efficacy. The following diagram illustrates the logical decision-making process for interpreting comparability data.

In conclusion, the ICH Q5E framework's "highly similar" standard provides a robust and practical pathway for managing manufacturing changes for biologics. A successful comparability assessment relies on a risk-based approach, strategic experimental design, and a comprehensive analytical package that heavily leverages extended characterization and forced degradation studies. The data generated from these studies form the scientific backbone of the comparability package, providing the deep molecular understanding required to justify that a process change has no adverse impact on the product.

Ultimately, regulators assess comparability based on a totality of evidence [4]. A strong, well-planned comparability study that integrates data from routine testing, extended characterization, and stability studies will leave regulators with a sense of confidence in the product and the company, paving the way for new drug approvals and future manufacturing improvements [2]. As analytical technologies continue to advance, the role of extended characterization will only grow in importance, potentially reducing the need for comparative clinical studies in some cases and solidifying its place as a cornerstone of comparability research [4].

The Role of Extended Characterization in the Biologics Lifecycle

In the development and manufacturing of biologics, extended characterization is not a single activity but a comprehensive, systematic approach to achieving deep molecular understanding. Biologics, produced in living systems, are inherently complex and heterogeneous. Even minor alterations in the manufacturing process can introduce subtle differences in a biologic's structure [5]. Extended characterization provides the analytical foundation to identify and control these variants, ensuring that product quality, and therefore patient safety and efficacy, are never compromised [2] [5].

This document frames extended characterization within the critical context of comparability studies. As defined in the ICH Q5E guideline, demonstrating comparability does not require the pre- and post-change materials to be identical, but they must be "highly similar" such that any differences in quality attributes have no adverse impact upon safety or efficacy [2]. A robust comparability package, underpinned by extended characterization and forced degradation studies, provides regulatory authorities with a transparent pathway to justify that a manufacturing change does not adversely impact the product [2]. With regulatory paradigms evolving—exemplified by the FDA's recent proposal to eliminate comparative clinical efficacy studies for biosimilars when supported by robust analytical data—the role of extended characterization as the primary tool for demonstrating product similarity is more crucial than ever [6].

Application Note: Extended Characterization in Comparability Protocols

Objective and Strategic Implementation

The primary objective of incorporating extended characterization into a comparability study is to provide a higher-resolution analytical assessment than routine testing alone can offer. It is designed to detect subtle, potentially impactful differences between pre-change and post-change biologics that standard release assays might miss [2]. This is achieved through a suite of orthogonal methods that probe the drug substance's structural, physicochemical, and functional properties in great detail.

The strategy for these studies should be phase-appropriate. Early in development, single batches may be characterized using platform methods. As development advances toward a Biologics License Application (BLA), the complexity increases, culminating in head-to-head testing of multiple pre- and post-change batches, with three pre-change versus three post-change batches regarded as the gold standard [2]. Proper planning is essential; test methods and molecular characteristics must be well understood before facing the scrutiny of a formal comparability study [2]. Key considerations include:

- Lot Selection: Batches must be representative of their respective processes and manufactured close together in time to avoid age-related differences confounding the results [2].

- Protocol Definition: The comparability study protocol should predefine quantitative and qualitative acceptance criteria for extended characterization methods to alleviate pressure to interpret complicated, subjective results [2].

- Risk Mitigation: Unexpected results from extended characterization can open processes to scrutiny. Facing these challenges early saves time and energy by enabling teams to identify and mitigate risks before late-phase development [2].

Key Analytical Panels for Comparability

Extended characterization employs a multi-attribute approach to build a complete picture of the biologic. The table below summarizes a typical testing panel for a monoclonal antibody, though the specific methods may vary for other biologic modalities.

Table 1: Example Extended Characterization Testing Panel for Monoclonal Antibodies

| Characterization Category | Analytical Technique | Key Attributes Assessed |

|---|---|---|

| Structural Characterization | Liquid Chromatography-Mass Spectrometry (LC-MS) / Peptide Mapping [5] | Amino acid sequence, post-translational modifications (e.g., deamidation, oxidation), disulfide bond linkages [5] |

| Structural Characterization | High-Resolution Mass Spectrometry (e.g., ESI-TOF MS) [2] | Molecular weight, confirmation of primary structure, identification of modifications [2] |

| Structural Characterization | Capillary Electrophoresis (cIEF, CE-SDS) [5] | Charge and size heterogeneity (acidic/basic variants, fragments, aggregates) [5] |

| Structural Characterization | Spectroscopy (CD, FTIR, HDX-MS) [5] | Higher-order structure (secondary/tertiary), conformational dynamics, and stability [5] |

| Functional Characterization | Surface Plasmon Resonance (SPR) [5] | Binding affinity (kinetics: on-rate/off-rate), specificity for antigen |

| Functional Characterization | Cell-Based Bioassays [5] | Biological potency reflecting mechanism of action (e.g., ADCC, CDC, cytokine neutralization) |

| Functional Characterization | Enzyme-Linked Immunosorbent Assay (ELISA) [5] | Binding activity and immunoreactivity |

Forced Degradation Studies as a Pressure Test

Forced degradation studies are an integral component of extended characterization for comparability. These studies intentionally stress the biologic under conditions more severe than normal storage or process conditions (e.g., elevated temperature, light exposure, oxidative stress) to unveil degradation pathways and profile product variants [2]. In a comparability context, the forced degradation profiles of pre-change and post-change materials are compared. The analysis of trendline slopes, bands, and peak patterns demonstrates whether the products degrade in a similar manner, providing strong evidence of analytical comparability [2].

Table 2: Common Types of Forced Degradation Stress Conditions

| Stress Condition | Typical Parameters | Primary Degradation Pathways Induced |

|---|---|---|

| Thermal Stress | e.g., 25°C to 50°C for 1-3 months [2] | Aggregation, fragmentation, deamidation, oxidation |

| Photo Stress | Exposure to UV and visible light [2] | Oxidation (e.g., of methionine, tryptophan), cleavage |

| Oxidative Stress | Incubation with hydrogen peroxide [2] | Methionine/tryptophan oxidation, histidine modification |

| Acidic/Basic Stress | Low/high pH incubation [2] | Deamidation, fragmentation, aggregation |

Experimental Protocols for Extended Characterization

Protocol 1: Primary Structure and PTM Analysis via LC-MS Peptide Mapping

1.0 Objective: To confirm the amino acid sequence and identify and quantify post-translational modifications (PTMs) of a monoclonal antibody in pre- and post-change samples for comparability assessment.

2.0 Research Reagent Solutions:

Table 3: Key Reagents for LC-MS Peptide Mapping

| Item | Function |

|---|---|

| Trypsin, Lys-C | Proteolytic enzymes for specific digestion of the antibody into peptides for analysis. |

| Urea / Guanidine HCl | Denaturants to unfold the protein for complete enzymatic digestion. |

| Dithiothreitol (DTT) | Reducing agent to break disulfide bonds. |

| Iodoacetamide (IAA) | Alkylating agent to cap cysteine residues and prevent reformation of disulfides. |

| Trifluoroacetic Acid (TFA) | Acid used to quench the digestion (pH < 3); also acts as an ion-pairing agent in reversed-phase chromatography. |

| Mobile Phase A (0.1% formic acid (FA) in water) | Aqueous mobile phase for LC-MS separation. |

| Mobile Phase B (0.1% FA in acetonitrile) | Organic mobile phase for LC-MS separation. |

3.1 Sample Preparation:

- Denaturation & Reduction: Dilute the antibody to 1 mg/mL in a solution containing 6 M Guanidine HCl and 5 mM DTT. Incubate at 37°C for 30-60 minutes.

- Alkylation: Add IAA to a final concentration of 15 mM. Incubate in the dark at room temperature for 30 minutes.

- Digestion: Desalt the protein using a buffer exchange column into a digestion-compatible buffer (e.g., 50 mM Tris-HCl, pH 8.0). Add trypsin at an enzyme-to-substrate ratio of 1:50 (w/w). Incubate at 37°C for 4-16 hours.

- Reaction Quenching: Acidify the sample with TFA to pH < 3 to stop the digestion.
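
The quantities in the sample preparation steps follow from simple ratios, which can be worth scripting so that every pre- and post-change sample is prepared identically. The sketch below is illustrative only: it assumes full protein recovery through the buffer exchange, and the 100 µL sample volume and the function names are hypothetical. The 1 mg/mL concentration, the 1:50 (w/w) enzyme-to-substrate ratio, and the 5 mM DTT and 15 mM IAA concentrations come from the protocol above.

```python
def protein_mass_ug(conc_mg_per_ml: float, volume_ul: float) -> float:
    """Protein mass in micrograms; 1 mg/mL is numerically equal to 1 ug/uL."""
    return conc_mg_per_ml * volume_ul


def trypsin_mass_ug(protein_ug: float, substrate_per_enzyme: float = 50.0) -> float:
    """Trypsin mass for an enzyme-to-substrate ratio of 1:substrate_per_enzyme (w/w)."""
    if substrate_per_enzyme <= 0:
        raise ValueError("ratio denominator must be positive")
    return protein_ug / substrate_per_enzyme


def molar_excess(alkylator_mm: float, reductant_mm: float) -> float:
    """Fold excess of alkylating agent over reducing agent."""
    if reductant_mm <= 0:
        raise ValueError("reductant concentration must be positive")
    return alkylator_mm / reductant_mm


# Hypothetical 100 uL aliquot at the protocol's 1 mg/mL; assumes no loss
# of protein during the buffer exchange step.
protein = protein_mass_ug(conc_mg_per_ml=1.0, volume_ul=100.0)
print(f"protein: {protein:.0f} ug")
print(f"trypsin at 1:50 (w/w): {trypsin_mass_ug(protein):.1f} ug")
print(f"IAA over DTT: {molar_excess(15.0, 5.0):.0f}-fold")
```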

3.2 LC-MS Analysis:

- Chromatography: Inject the digested peptide mixture onto a reversed-phase UHPLC column (e.g., C18, 1.7 µm, 2.1 x 150 mm). Elute peptides using a gradient from 2% to 40% Mobile Phase B over 90 minutes at a flow rate of 0.2 mL/min.

- Mass Spectrometry: Couple the UHPLC system to a high-resolution mass spectrometer (e.g., Q-TOF). Acquire data in data-dependent acquisition (DDA) mode, switching between a full MS scan and subsequent MS/MS scans of the most intense ions.

4.0 Data Analysis:

- Use software to map the acquired MS/MS spectra against the expected antibody sequence.

- Identify and relatively quantify PTMs (e.g., deamidation of asparagine, oxidation of methionine) by comparing the extracted ion chromatograms of modified and unmodified peptides between the pre- and post-change samples.
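
Relative quantification from extracted ion chromatograms is commonly expressed as the modified peak area divided by the summed area of the modified and unmodified forms of the same peptide. The sketch below shows that arithmetic under stated assumptions: it treats the two forms as having similar ionization efficiency, which is an approximation, and the peak areas and the function name `percent_modified` are hypothetical.

```python
def percent_modified(modified_area: float, unmodified_area: float) -> float:
    """Relative abundance (%) of the modified form of one peptide.

    Assumes modified and unmodified peptides respond similarly in the mass
    spectrometer, so that peak-area ratios approximate molar ratios.
    """
    total = modified_area + unmodified_area
    if total <= 0:
        raise ValueError("summed peak area must be positive")
    return 100.0 * modified_area / total


# Hypothetical XIC peak areas (arbitrary units) for one
# methionine-containing peptide in each sample.
pre = percent_modified(modified_area=2.0e5, unmodified_area=9.8e6)
post = percent_modified(modified_area=2.5e5, unmodified_area=9.75e6)

print(f"pre-change oxidation:  {pre:.2f}%")
print(f"post-change oxidation: {post:.2f}%")
print(f"difference:            {post - pre:+.2f} percentage points")
```

Whether a given difference is acceptable is decided by the criteria predefined in the comparability protocol, not by the calculation itself.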

Protocol 2: Forced Degradation via Thermal Stress

1.0 Objective: To accelerate the formation of product-related variants and compare the degradation profiles of pre- and post-change drug substance samples.

2.0 Research Reagent Solutions:

Table 4: Key Reagents for Thermal Stress Studies

| Item | Function |

|---|---|

| Formulation Buffer | The native buffer of the drug substance, providing the relevant stress environment. |

| SEC-HPLC Mobile Phase | A compatible buffer (e.g., phosphate) for separating and quantifying monomers and aggregates. |

| cIEF Reagents | Ampholytes, markers, and mobilizer for analyzing charge variant profiles. |

3.1 Sample Preparation and Stress Conditions:

- Prepare aliquots of the pre-change and post-change drug substance in its formulation buffer.

- Place samples in a controlled stability chamber set at 40°C ± 2°C.

- Withdraw samples at pre-determined time points (e.g., 1, 2, and 4 weeks). Analyze withdrawn samples immediately or freeze at -80°C until analysis.

3.2 Analysis of Stressed Samples: Analyze the stressed samples and unstressed controls (stored at -80°C) side-by-side using the following methods:

- Size Heterogeneity: Use Size-Exclusion Chromatography (SEC-HPLC) to quantify the percentage of high-molecular-weight aggregates and low-molecular-weight fragments.

- Charge Heterogeneity: Use capillary isoelectric focusing (cIEF) to monitor the formation of acidic and basic charge variants.

- Biological Activity: Perform a cell-based bioassay to determine any loss of potency relative to the unstressed control.

4.0 Data Analysis:

- Plot the increase in aggregates and charge variants over time for both pre- and post-change samples.

- Compare the slopes of the trendlines and the overall degradation profiles. The products are considered comparable if the degradation pathways and rates are highly similar.
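
The slope comparison described above can be sketched with an ordinary least-squares fit of each attribute against time. The example below is a minimal illustration, assuming an approximately linear trend over the stress period; the time points include a week-0 unstressed control, and the aggregate percentages and the function name `ols_slope` are hypothetical. No threshold for "highly similar" is applied, because that limit must come from the predefined protocol.

```python
def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have equal length of at least 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


# Hypothetical SEC-HPLC high-molecular-weight species (%) at 40 C.
weeks = [0, 1, 2, 4]
pre_change_hmw = [0.8, 1.1, 1.4, 2.0]
post_change_hmw = [0.9, 1.2, 1.6, 2.2]

pre_slope = ols_slope(weeks, pre_change_hmw)
post_slope = ols_slope(weeks, post_change_hmw)

print(f"pre-change:  {pre_slope:.3f} % HMW per week")
print(f"post-change: {post_slope:.3f} % HMW per week")
print(f"slope ratio (post/pre): {post_slope / pre_slope:.2f}")
```

The same fit can be repeated for acidic and basic charge variants and for relative potency, giving one slope pair per attribute for the comparison.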

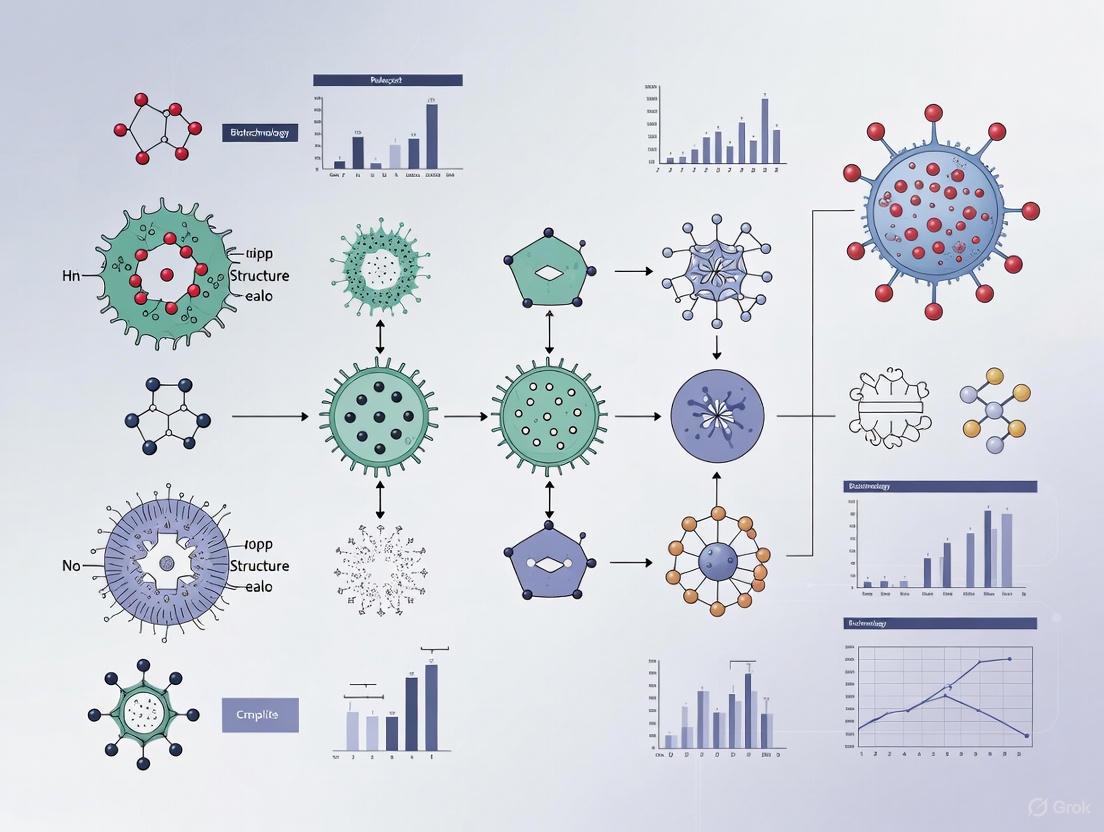

Visualizing Workflows and Pathways

The following diagrams illustrate the logical workflow for implementing extended characterization in a comparability study and the pathways explored in forced degradation.

Comparability Study Workflow

Forced Degradation Pathways

Extended characterization is the scientific backbone of the biologics lifecycle. It transforms a biologic from a black box into a well-understood entity, whose critical quality attributes are identified, monitored, and controlled. This deep product knowledge is fundamental to establishing batch-to-batch consistency and is indispensable for successfully navigating manufacturing changes via comparability studies [5]. By employing a rigorous, orthogonal analytical toolbox—including advanced structural techniques, functional bioassays, and predictive forced degradation studies—manufacturers can demonstrate with a high degree of confidence that their product maintains the requisite quality, safety, and efficacy profile throughout its commercial life. A well-executed extended characterization strategy not only de-risks development and accelerates regulatory approvals but also solidifies a company's reputation as a trusted leader in the biopharmaceutical industry [2].

A Deep Dive into Critical Quality Attributes (CQAs) for mAbs and Other Biologics

In the realm of biopharmaceuticals, Critical Quality Attributes (CQAs) are defined as physical, chemical, biological, or microbiological properties or characteristics that must remain within an appropriate limit, range, or distribution to ensure the desired product quality, safety, and efficacy [7]. For complex biologics like monoclonal antibodies (mAbs), these attributes form the very blueprint of product quality [8]. Unlike small molecule drugs, biologics are produced by living systems, making them inherently more complex, variable, and sensitive to manufacturing conditions [8]. This complexity necessitates a rigorous framework for identifying and controlling CQAs throughout the product lifecycle.

The foundation for CQAs lies within the Quality by Design (QbD) framework, a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding based on sound science and quality risk management [7]. Within QbD, CQAs provide the critical link between the Quality Target Product Profile (QTPP)—which outlines the desired characteristics of the drug product—and the development of a robust control strategy [7]. By focusing on CQAs, quality is designed and built into the product from the outset, rather than relying solely on end-product testing [7] [8].

Table: Key Elements of the Quality by Design (QbD) Framework

| QbD Element | Description | Relationship to CQAs |

|---|---|---|

| Predefined Objectives | Define Quality Target Product Profile (QTPP) | QTPP guides the identification of which attributes are critical [7]. |

| Product & Process Understanding | Identify Critical Material Attributes (CMAs) and Critical Process Parameters (CPPs) | Establish functional relationships linking CMAs/CPPs to CQAs [7]. |

| Process Control | Develop an appropriate Control Strategy | Control strategy is built around monitoring and maintaining CQAs [7]. |

| Sound Science | Apply science-driven development (e.g., DoE) | Provides the data to understand and control CQAs [7]. |

| Quality Risk Management | Implement a risk-based development approach | Helps prioritize which attributes are truly critical and require stringent control [7]. |

CQAs for Monoclonal Antibodies (mAbs) and Advanced Modalities

Core CQAs for Monoclonal Antibodies

For monoclonal antibodies, CQAs typically encompass a range of structural and functional properties. Commonly monitored attributes include potency, which ensures the mAb performs its intended biological function; purity, which involves minimizing process-related impurities such as host cell proteins (HCPs) and DNA; and stability, which involves monitoring aggregation or degradation over time [8]. A particularly critical attribute is glycosylation, a post-translational modification that can significantly affect the antibody's effector function, half-life, and immunogenicity [8]. Controlling the glycosylation pattern is a key challenge in mAb production, as it is highly sensitive to cell culture conditions and critical process parameters (CPPs) like temperature and pH [8].

Extended CQAs for Complex Modalities: The Case of ADCs

Antibody-Drug Conjugates (ADCs) present an even greater complexity, requiring an expanded set of CQAs beyond those for traditional mAbs. The 2025 edition of the Chinese Pharmacopoeia outlines a comprehensive "CQA panorama" for ADCs, emphasizing the need to control structural integrity (e.g., proportions of intact ADC, free antibody, and free payload), conjugation characteristics (e.g., Drug-to-Antibody Ratio (DAR) distribution and conjugation site heterogeneity), and payload properties (e.g., payload stability and retained bioactivity) [9]. Additional critical attributes include the control of aggregates and fragments, as well as specific process-related impurities like linker precursors, unreacted toxins, and residual HCPs [9]. For instance, the pharmacopoeia sets a strict limit for free toxin (≤0.1%), a key safety-related CQA [9].
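
A DAR distribution is often summarized as an average DAR, calculated as the area-weighted mean of the drug load across the separated species. The sketch below shows that calculation only; it is a commonly used formula rather than a method taken from the pharmacopoeia cited above, and the peak areas, the even-numbered drug loads, and the function name `average_dar` are hypothetical.

```python
def average_dar(species_areas: dict) -> float:
    """Area-weighted average drug-to-antibody ratio.

    species_areas maps drug load (drugs per antibody) to relative peak area,
    for example from a chromatographic or mass-spectrometric separation.
    """
    total = sum(species_areas.values())
    if total <= 0:
        raise ValueError("total peak area must be positive")
    weighted = sum(load * area for load, area in species_areas.items())
    return weighted / total


# Hypothetical relative peak areas (%) for a cysteine-linked ADC.
distribution = {0: 5.0, 2: 25.0, 4: 40.0, 6: 22.0, 8: 8.0}

print(f"average DAR: {average_dar(distribution):.2f}")
print(f"unconjugated antibody: {distribution[0]:.1f}% of total area")
```

Two lots can share the same average DAR while differing in distribution, which is why the distribution itself, and not only the average, is listed as an attribute to control.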

Table: Key CQAs for mAbs and Advanced Biologics

| Product Class | Critical Quality Attribute Category | Specific Examples |

|---|---|---|

| Monoclonal Antibodies (mAbs) | Purity & Impurities | Host Cell Proteins (HCP), DNA, aggregates [8]. |

| Monoclonal Antibodies (mAbs) | Potency | Biological activity, binding affinity [8]. |

| Monoclonal Antibodies (mAbs) | Structural Integrity | Glycosylation patterns, charge variants, sequence integrity [8]. |

| Antibody-Drug Conjugates (ADCs) | Conjugation Attributes | Drug-to-Antibody Ratio (DAR), conjugation site heterogeneity [9]. |

| Antibody-Drug Conjugates (ADCs) | Payload & Linker | Free toxin (≤0.1%), linker stability, payload activity [9]. |

| Antibody-Drug Conjugates (ADCs) | Structural Integrity | Intact ADC, free antibody, fragment levels [9]. |

Analytical Methodologies for CQA Monitoring

The Multi-Attribute Method (MAM) for mAbs

The Multi-Attribute Method (MAM) has emerged as a platform for the simultaneous monitoring of multiple CQAs in monoclonal antibodies [10]. Leveraging high-resolution mass spectrometry (HRMS), MAM integrates peptide mapping with targeted and untargeted data processing workflows. This allows for the accurate identification and quantification of product variants, post-translational modifications (PTMs), and sequence variants in a single, streamlined assay [10]. By consolidating several conventional assays into one, MAM enhances efficiency and provides a more holistic view of product quality. Recent advances in MAM workflows include automation, advanced data analytics, and hybrid methodologies that incorporate orthogonal techniques like Raman spectroscopy and hydrogen-deuterium exchange mass spectrometry (HDX-MS) [10].

Orthogonal Techniques for Extended Characterization

For a comprehensive comparability study, a suite of orthogonal analytical techniques is required. These methods provide a deeper level of characterization beyond routine release testing and are critical for demonstrating product similarity after a manufacturing change [2]. Key technologies include:

- Liquid Chromatography-Mass Spectrometry (LC-MS): Used for peptide mapping, sequence variant analysis, and glycan profiling [2].

- High-Resolution Mass Spectrometry (HRMS): Essential for intact mass analysis and detailed characterization of PTMs [10] [9].

- Size Exclusion Chromatography with Multi-Angle Light Scattering (SEC-MALS): Provides absolute determination of molecular weight and is critical for quantifying aggregates and fragments [2] [11]. The recent integration of MALS detectors into centralized chromatography data system (CDS) software such as Waters Empower simplifies data acquisition and can reduce analysis time by 20% [11].

- Capillary Electrophoresis-Sodium Dodecyl Sulfate (CE-SDS): Used for high-resolution purity analysis, separating fragments and aggregates under reducing and non-reducing conditions [9].
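As an illustration of how size-variant results from these methods are typically reported, the sketch below converts integrated SEC peak areas into percent HMW, monomer, and LMW. The peak areas are hypothetical values in arbitrary units, and the function name is introduced here only for illustration.

```python
def sec_profile(areas: dict[str, float]) -> dict[str, float]:
    """Convert integrated SEC peak areas into percent of total area."""
    total = sum(areas.values())
    if total <= 0:
        raise ValueError("total peak area must be positive")
    return {name: 100.0 * area / total for name, area in areas.items()}


# Hypothetical integrated areas (arbitrary units) for one batch.
batch_areas = {"HMW": 12.0, "monomer": 980.0, "LMW": 8.0}

profile = sec_profile(batch_areas)
print({name: round(pct, 2) for name, pct in profile.items()})
```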

Diagram 1: Multi-Attribute Method (MAM) Workflow. This illustrates the integrated LC-MS workflow for simultaneous monitoring of multiple critical quality attributes.

Application Note: A Protocol for Comparability Studies

Study Design and Objectives

This application note outlines a phase-appropriate protocol for conducting an extended characterization study to demonstrate comparability between pre-change and post-change drug substance for a monoclonal antibody following a manufacturing process change. The objective is to provide scientific evidence that the change has no adverse impact on the safety or efficacy of the product, per ICH Q5E requirements [2]. The study is designed as a head-to-head comparison of multiple batches, employing orthogonal analytical methods to assess a comprehensive panel of CQAs.

Materials and Reagents

Table: Research Reagent Solutions for Extended Characterization

| Reagent / Material | Function / Application | Key Considerations |

|---|---|---|

| Trypsin, Sequencing Grade | Enzymatic digestion for peptide mapping and LC-MS analysis. | High purity and specificity are required for reproducible digestion [10]. |

| Reference Standard | Serves as a benchmark for analytical method performance and data comparison. | Must be well-characterized and traceable to a primary standard [9]. |

| Mobile Phase Buffers | For chromatographic separation (LC, SEC, CE). | Prepared with high-purity reagents; pH and composition are critical for reproducibility. |

| Forced Degradation Stressors | Chemicals for oxidative (e.g., H2O2), thermal, and pH stress studies. | Used to elucidate degradation pathways and product stability [2]. |

Experimental Protocol: Extended Characterization

Step 1: Batch Selection and Study Initiation

- Select a minimum of three pre-change and three post-change drug substance batches for a late-phase (Phase 3 or BLA) study [2]. Batches should be representative, have passed release criteria, and be manufactured as close together as possible to avoid age-related differences [2].

- Prepare a detailed study protocol pre-defining all analytical methods, test articles, and acceptance criteria (both quantitative and qualitative) for interpreting results as "comparable" [2].
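The batch selection rules in Step 1 can be checked mechanically before the study starts. The sketch below is one possible way to do that; the `Batch` record, the 180-day manufacturing window, and the batch identifiers are all assumptions made for illustration, and only the "three versus three" and "passed release" rules come from the text above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Batch:
    batch_id: str
    arm: str              # "pre" or "post"
    passed_release: bool
    manufactured: date


def check_batch_selection(batches, min_per_arm=3, max_age_gap_days=180):
    """Return a list of findings; an empty list means no issue was detected."""
    findings = []
    for arm in ("pre", "post"):
        arm_batches = [b for b in batches if b.arm == arm]
        if len(arm_batches) < min_per_arm:
            findings.append(f"{arm}-change arm has {len(arm_batches)} batches; need {min_per_arm}")
        findings += [f"{b.batch_id} did not pass release" for b in arm_batches if not b.passed_release]
    dates = [b.manufactured for b in batches]
    # The window is an assumed proxy for "manufactured as close together as possible".
    if dates and (max(dates) - min(dates)).days > max_age_gap_days:
        findings.append("manufacturing dates span more than the allowed window")
    return findings


selection = [
    Batch("PRE-01", "pre", True, date(2024, 1, 10)),
    Batch("PRE-02", "pre", True, date(2024, 2, 14)),
    Batch("PRE-03", "pre", True, date(2024, 3, 20)),
    Batch("POST-01", "post", True, date(2024, 4, 2)),
    Batch("POST-02", "post", True, date(2024, 5, 6)),
]
findings = check_batch_selection(selection)
print(findings)
```

In this example the only finding is the missing third post-change batch, which would need to be resolved or justified in the protocol before testing begins.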

Step 2: Orthogonal Analytical Testing

Perform head-to-head testing on the selected batches using the following panel of methods, as derived from industry standards for mAb characterization [2]:

- Intact Mass Analysis by HRMS: Determine the molecular weight of the intact antibody and its major variants.

- Peptide Mapping with LC-MS/MS: Identify and quantify post-translational modifications (e.g., deamidation, oxidation) and sequence variants.

- Glycan Analysis: Release, label, and separate N-linked glycans using HILIC-UPLC or LC-MS to profile glycoforms.

- SEC-MALS: Quantify high molecular weight (HMW) aggregates and low molecular weight (LMW) fragments.

- CE-SDS (reducing and non-reducing): Assess purity and fragments.

- Ion-Exchange Chromatography (IEC): Profile charge variants resulting from modifications like C-terminal lysine processing or deamidation.

- Bioassay / Potency Assay: Measure the biological activity of the molecules.

Step 3: Forced Degradation Studies

Subject pre- and post-change samples to controlled stress conditions to compare their degradation profiles and pathways [2]. This "pressure test" reveals differences not always apparent in real-time stability studies.

- Thermal Stress: Incubate at 25°C and 40°C for defined durations.

- Oxidative Stress: Expose to a low concentration of hydrogen peroxide (e.g., 0.01% - 0.1%).

- pH Stress: Incubate at low (e.g., pH 3-4) and high (e.g., pH 9-10) conditions.

- Mechanical Stress: Agitate via shaking or stirring.

After stress, analyze samples using SE-HPLC, CE-SDS, and IEC. Compare the trendline slopes, band patterns, and peak profiles to demonstrate similarity in degradation behavior [2].

Step 4: Data Analysis and Reporting

- Analyze data for both side-by-side qualitative similarity (e.g., chromatographic profiles, mass spectra) and quantitative equivalence [2].

- Use statistical models where appropriate, though acknowledge challenges with small sample sizes common in biologics development [12].

- Compile a comprehensive report concluding on comparability based on the totality of evidence from all studies.
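The quantitative part of this data analysis can be expressed as a comparison of post-change results against the limits that were fixed in the protocol. The sketch below assumes simple lower and upper limits per attribute; the attribute names, limits, and results are hypothetical, and qualitative profile comparisons still require expert review outside of any such script.

```python
from statistics import mean

# Hypothetical pre-defined criteria: attribute -> (lower limit, upper limit).
criteria = {
    "monomer_pct": (97.0, 100.0),
    "main_charge_peak_pct": (60.0, 75.0),
    "relative_potency_pct": (80.0, 125.0),
}

# Hypothetical results for three post-change batches.
post_change = {
    "monomer_pct": [98.6, 98.9, 98.7],
    "main_charge_peak_pct": [66.1, 67.4, 65.8],
    "relative_potency_pct": [96.0, 104.0, 101.0],
}


def evaluate(criteria, results):
    """Flag each attribute as within or outside its pre-defined limits."""
    report = {}
    for attribute, (low, high) in criteria.items():
        values = results[attribute]
        report[attribute] = {
            "mean": mean(values),
            "all_within": all(low <= v <= high for v in values),
        }
    return report


report = evaluate(criteria, post_change)
quantitative_pass = all(entry["all_within"] for entry in report.values())
print(report, quantitative_pass)
```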

The Role of CQAs in Comparability and Real-Time Control

CQAs as the Foundation for Successful Comparability

The primary goal of a comparability study is to demonstrate that the pre- and post-change products are highly similar and that the existing knowledge is sufficiently predictive to ensure no adverse impact on safety or efficacy [2]. CQAs are the central pillar of this assessment. A well-executed comparability study, as detailed in the protocol above, relies on measuring a wide panel of CQAs through extended characterization to provide a "finer level of detail" that is orthogonal to routine release methods [2]. This is crucial for gaining regulatory confidence that a manufacturing change has not altered the product in a meaningful way.

Emerging Trends: Real-Time Monitoring and Process Analytical Technology (PAT)

The biopharmaceutical industry is increasingly moving towards real-time quality control. Process Analytical Technology (PAT) is a system that utilizes real-time monitoring and control of critical process parameters (CPPs) to ensure they remain within predefined limits, thereby directly influencing CQAs [13]. By integrating analytical tools like Raman spectroscopy directly into bioreactors, PAT enables real-time adjustment of processes, embodying the QbD principle of building quality in rather than testing it in at the end [13]. This approach is a cornerstone of "BioPharma 4.0," facilitating a comprehensive digital transformation of pharmaceutical production [13].
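The core idea of keeping a process parameter within predefined limits can be sketched as a simple check over a stream of in-line readings. This is only an illustration: the glucose values, the limits, and the function name are hypothetical, and a real PAT system would act on such events through a validated control loop rather than a script.

```python
def out_of_limit_events(readings, low, high):
    """Yield (index, value) for each reading outside the predefined limits."""
    for index, value in enumerate(readings):
        if not (low <= value <= high):
            yield index, value


# Hypothetical in-line glucose readings (g/L), e.g. predicted from Raman spectra.
glucose = [4.1, 3.8, 3.2, 2.7, 1.9, 2.4, 3.5]

events = list(out_of_limit_events(glucose, low=2.0, high=6.0))
print(events)
```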

For monoclonal antibodies and other complex biologics, Critical Quality Attributes are the definitive metrics of product quality. A deep understanding and rigorous control of CQAs—from early development through commercial manufacturing and across process changes—is non-negotiable for ensuring patient safety and product efficacy. The application of advanced analytical strategies like the Multi-Attribute Method (MAM), coupled with robust, phase-appropriate protocols for extended characterization and comparability, provides the scientific foundation required by regulators. As the industry advances, the integration of real-time monitoring and sophisticated data analytics will further enhance our ability to control these attributes, driving forward the development of safe, effective, and high-quality biologic therapies.

Impact of Post-Translational Modifications (PTMs) on Safety and Efficacy

Post-translational modifications are chemical modifications that occur on proteins after their synthesis, serving as critical regulatory mechanisms that govern protein stability, activity, localization, and interactions [14]. For therapeutic biologics, including monoclonal antibodies, fusion proteins, and peptide therapeutics, PTMs represent a crucial quality attribute that must be thoroughly characterized throughout the product lifecycle [2]. The impact of PTMs extends from basic biological function to direct implications for the safety profile and clinical efficacy of protein-based therapeutics, making their comprehensive understanding essential for successful drug development [15] [16].

Within the framework of comparability studies, PTM analysis forms the cornerstone of demonstrating product consistency following manufacturing process changes [2]. As regulatory guidance evolves to emphasize the importance of analytical characterization – evidenced by the FDA's recent proposal to eliminate comparative efficacy studies for biosimilars in favor of robust analytical assessment – the role of PTM analysis has become increasingly prominent in demonstrating product quality [6] [17]. This application note provides detailed methodologies for PTM characterization within comparability studies, supported by quantitative data and experimental protocols designed for researchers and drug development professionals.

Quantitative Landscape of Clinically Relevant PTMs

The comprehensive characterization of PTMs requires an understanding of their prevalence and functional impact. Large-scale proteomic studies have generated substantial quantitative data on various modification types across biological systems. The qPTMplants database, for instance, hosts over 1.2 million experimentally identified PTM events across 429,821 nonredundant sites on 123,551 proteins, encompassing 23 different PTM types [18]. While this resource is plant-focused, it demonstrates the scale and complexity of PTM analysis that must be applied to therapeutic proteins.

Table 1: Prevalence and Functional Impact of Major PTM Types in Therapeutic Proteins

| PTM Type | Key Residues Affected | Impact on Protein Function | Role in Comparability Studies |

|---|---|---|---|

| Glycosylation | Asparagine (N-linked), Serine/Threonine (O-linked) | Stability, half-life, immunogenicity, receptor binding [15] [16] | Critical Quality Attribute (CQA) for many biologics; affects efficacy and pharmacokinetics [2] |

| Phosphorylation | Serine, Threonine, Tyrosine | Signaling, activation state, protein-protein interactions [14] [16] | Potential impact on biological activity; process-related changes |

| Ubiquitination | Lysine | Protein degradation, signaling pathways [14] [16] | Affects protein turnover and stability; indicator of product quality |

| Acetylation | Lysine | Protein-protein interactions, activity, stability [14] [16] | Can influence functional properties; monitored in characterization |

| Succinylation | Lysine | Metabolic regulation, enzyme activity [16] | Emerging importance in therapeutic proteins |

For immune checkpoint proteins targeted by immunotherapies, specific PTMs have demonstrated direct clinical relevance. Glycosylation of PD-1/PD-L1, for instance, affects binding affinity and directly influences the efficacy of immune checkpoint inhibitors [16]. Phosphorylation patterns on CTLA-4 modulate its endocytosis and surface expression, ultimately affecting T-cell activation thresholds [16]. These examples underscore why PTM monitoring is essential for ensuring consistent safety and efficacy profiles throughout a product's lifecycle.

Analytical Methodologies for PTM Assessment in Comparability Studies

Extended Characterization Workflow

A comprehensive PTM assessment within comparability studies follows a tiered approach that progresses from general characterization to targeted analysis of specific modifications. The workflow integrates orthogonal analytical techniques to build a complete picture of product quality attributes.

High-Throughput PTM Screening Protocol

Recent advances in high-throughput methodologies have accelerated PTM characterization. The integration of cell-free expression (CFE) systems with AlphaLISA detection provides a rapid platform for screening PTM-installing enzymes and their protein substrates [15].

Table 2: Research Reagent Solutions for High-Throughput PTM Screening

| Reagent/Category | Specific Examples | Function in PTM Analysis |

|---|---|---|

| Expression Systems | PUREfrex CFE System [15] | Rapid protein expression without living cells |

| Detection Assays | AlphaLISA Beads (anti-FLAG, anti-MBP) [15] | Sensitive, bead-based proximity assay for protein interactions |

| Modification Enzymes | Oligosaccharyltransferases (OSTs), RiPP Modification Enzymes [15] | Install specific PTMs on target proteins |

| Analytical Standards | FluoroTect GreenLys [15] | Monitor protein expression and purity |

| Bioinformatics Tools | dbPTM, PhosphoSitePlus, UniProt [14] | PTM database mining and sequence analysis |

Protocol: Cell-Free Expression Coupled with AlphaLISA for PTM Screening

Purpose: To rapidly characterize PTM enzyme activity and substrate modification using high-throughput cell-free expression and detection [15].

Materials:

- PUREfrex cell-free protein expression system

- DNA templates for PTM enzymes and substrate proteins

- AlphaLISA anti-FLAG donor beads and anti-MBP acceptor beads

- White, low-volume 384-well microplates

- Acoustic liquid handling robot

- Plate reader capable of AlphaLISA detection

Procedure:

- Cell-Free Expression: Express RiPP recognition element (RRE) fusion proteins and N-terminally sFLAG-tagged peptide substrates in individual PUREfrex reactions according to manufacturer specifications [15].

- Reaction Assembly: Mix RRE protein-expressing PUREfrex reactions with corresponding peptide substrate-expressing reactions in 384-well plate format.

- Bead Incubation: Add anti-FLAG donor beads and anti-MBP acceptor beads to each well. Incubate for 1-2 hours at room temperature protected from light.

- Signal Detection: Measure chemiluminescent signal using an AlphaLISA-compatible plate reader. Only RRE-peptide binding brings acceptor and donor beads into proximity, generating a detectable signal [15].

- Data Analysis: Normalize signals to appropriate controls and calculate binding activities or modification efficiencies.
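The normalization in the final step can take several forms. One common choice, shown here as an assumption rather than the method used in [15], is to rescale raw counts so that the negative-control mean maps to 0 and the positive-control mean maps to 1. The well names and counts are hypothetical.

```python
from statistics import mean


def normalize_signals(raw, negative_controls, positive_controls):
    """Scale raw counts so negative controls average 0 and positive controls average 1."""
    background = mean(negative_controls)
    window = mean(positive_controls) - background
    if window <= 0:
        raise ValueError("positive controls must exceed negative controls")
    return {well: (counts - background) / window for well, counts in raw.items()}


# Hypothetical luminescence counts per well.
raw_counts = {"A1": 52_000, "A2": 6_500, "A3": 101_000}

normalized = normalize_signals(
    raw_counts,
    negative_controls=[1_900, 2_100],
    positive_controls=[98_000, 106_000],
)
print({well: round(value, 3) for well, value in normalized.items()})
```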

Applications: This protocol is particularly valuable for characterizing RiPP recognition elements and engineering oligosaccharyltransferases for improved glycosylation efficiency [15]. The method enables screening of hundreds to thousands of enzyme variants in a plate-based format, significantly accelerating engineering cycles for PTM-installing enzymes.

LC-MS/MS-Based PTM Characterization Protocol

Liquid chromatography coupled with tandem mass spectrometry represents the gold standard for comprehensive PTM mapping in comparability studies.

Protocol: Comprehensive Peptide Mapping for PTM Identification and Quantification

Purpose: To identify and quantify site-specific PTMs on therapeutic proteins as part of extended characterization for comparability assessment [2].

Materials:

- Reduced and alkylated protein samples

- Sequencing-grade trypsin or other proteolytic enzymes

- High-resolution LC-MS/MS system (Q-Exactive Orbitrap or similar)

- C18 reverse-phase chromatography columns

- Data processing software (MaxQuant, Proteome Discoverer)

Procedure:

- Sample Preparation: Denature, reduce, and alkylate protein samples following standard protocols. Digest with appropriate protease (typically trypsin) overnight at 37°C.

- LC Separation: Desalt and separate peptides using reverse-phase C18 chromatography with a 60-120 minute gradient optimized for peptide separation.

- MS Data Acquisition: Acquire MS1 spectra at high resolution (70,000 or higher), followed by data-dependent MS/MS fragmentation of the most abundant ions. Use inclusion lists to target specific peptides of interest.

- Database Searching: Search fragmentation spectra against protein sequence databases using search engines such as Andromeda or Sequest, enabling PTM searches as variable modifications.

- Quantitative Analysis: For comparability studies, use label-free quantification or isobaric tagging (TMT, iTRAQ) to quantify PTM changes between pre- and post-change materials.

Applications: This methodology is essential for comprehensive characterization of biosimilars and for demonstrating comparability after manufacturing changes [2]. It enables identification of specific glycosylation sites, oxidation-prone methionine residues, deamidation sites, and other PTMs that may impact product quality.
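For label-free data, site-level PTM abundance is often reported as the modified peptide's share of the combined modified and unmodified signal. The sketch below shows that calculation for pre- and post-change material. It assumes comparable ionization efficiency for both peptide forms, which does not always hold, and the site labels and peak areas are hypothetical.

```python
def percent_modified(modified_area: float, unmodified_area: float) -> float:
    """Relative abundance of the modified peptide as a percentage of both forms."""
    total = modified_area + unmodified_area
    if total <= 0:
        raise ValueError("peptide not detected")
    return 100.0 * modified_area / total


# Hypothetical extracted-ion-chromatogram areas: site -> (modified, unmodified).
pre_change = {"Met oxidation (site A)": (3.0e6, 97.0e6), "Asn deamidation (site B)": (1.5e6, 48.5e6)}
post_change = {"Met oxidation (site A)": (4.5e6, 95.5e6), "Asn deamidation (site B)": (1.6e6, 48.4e6)}

differences = {}
for site in pre_change:
    pre = percent_modified(*pre_change[site])
    post = percent_modified(*post_change[site])
    differences[site] = post - pre
    print(f"{site}: pre {pre:.1f}%, post {post:.1f}%, difference {post - pre:+.1f}")
```

Whether a given difference matters is then judged against the pre-defined acceptance criteria and the known structure-function relationship of the site, not against the number alone.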

Regulatory Considerations and Strategic Implementation

The regulatory landscape for PTM assessment in comparability studies is evolving toward increased emphasis on analytical characterization. The FDA's recent draft guidance proposes eliminating comparative clinical efficacy studies for biosimilars when robust analytical data demonstrates high similarity to the reference product [6] [17]. This shift places greater importance on comprehensive PTM characterization as part of the comparative analytical assessment.

Strategic implementation of PTM assessment should be phase-appropriate, with increasing complexity throughout development. Early-phase development should focus on identifying PTM patterns and establishing platform methods, while late-phase development requires rigorous head-to-head testing of multiple pre- and post-change batches (typically 3 vs. 3) [2]. Forced degradation studies are particularly valuable for understanding how PTM profiles change under stress conditions and identifying potential degradation pathways not observed in real-time stability studies [2].

Thorough characterization of post-translational modifications is no longer optional but essential for demonstrating product quality, safety, and efficacy throughout the biologic lifecycle. The methodologies outlined in this application note provide a framework for implementing comprehensive PTM assessment within comparability studies, aligned with evolving regulatory expectations. As analytical technologies continue to advance, the ability to characterize PTMs with greater sensitivity and throughput will further enhance our understanding of their impact on therapeutic protein quality and performance.

Risk-Based Approaches for Scoping Comparability Studies

In the lifecycle of biopharmaceutical products, particularly complex molecules like recombinant monoclonal antibodies (mAbs), changes to the manufacturing process are inevitable [19]. The goal of a comparability study is not to demonstrate that the pre-change and post-change products are identical, but to establish that they are highly similar and that the existing knowledge is sufficiently predictive to ensure any differences in quality attributes have no adverse impact upon safety or efficacy of the drug product [2] [20]. A risk-based approach to scoping these studies ensures that the level of effort and scrutiny is commensurate with the potential impact of the change on product quality, safety, and efficacy, effectively balancing scientific rigor with resource allocation [21] [20].

This approach aligns with regulatory guidance, such as ICH Q5E, which emphasizes a science-based understanding of the relationship between quality attributes and their impact on safety and efficacy [21] [19]. For researchers and drug development professionals, implementing a risk-based framework is crucial for managing changes under expedited development paradigms, enabling faster implementation of process improvements without compromising patient safety or program timelines [20].

Theoretical Foundation and Risk Assessment

The Risk-Based Framework

A risk-based comparability approach operates on the principle that the extent and comprehensiveness of the comparability exercise should be appropriately aligned with the stage of development and the potential risk posed by the manufacturing change [20]. This philosophy is encapsulated in a hierarchical testing approach, where analytical comparison serves as the primary and often sufficient layer of assessment.

Table 1: Hierarchy of Comparability Testing Approach

| Testing Layer | When Required | Key Objective |

|---|---|---|

| Analytical Studies | First-line approach for all changes [20] | To demonstrate high similarity using biochemical, biophysical, and biological methods [19] |

| Non-Clinical Studies | When analytical comparability is insufficient to resolve uncertainties about safety or efficacy [20] | To address specific residual risks not fully characterized by analytical methods |

| Clinical Studies | When a potential clinically meaningful impact on efficacy, safety, or immunogenicity is suspected [20] | To confirm the absence of adverse clinical impacts in patients |

The foundation of this framework is a thorough risk assessment that evaluates the proposed process change against factors such as the molecule's stage of development, the level of existing product and process knowledge, and the potential for the change to impact Critical Quality Attributes (CQAs) [20]. A well-executed risk assessment allows teams to focus resources on the most impactful studies, streamlining the path to implementation.
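The hierarchy in Table 1 can be read as a small decision rule: analytical studies are always performed, and further layers are added only when specific conditions are met. The sketch below encodes that reading. The function and parameter names are hypothetical, and in practice the two inputs are outcomes of a documented risk assessment rather than simple flags.

```python
def required_layers(analytical_sufficient: bool, clinical_impact_suspected: bool) -> list[str]:
    """Return the comparability testing layers implied by the hierarchy in Table 1."""
    layers = ["analytical"]  # first-line approach for every change
    if not analytical_sufficient:
        layers.append("non-clinical")  # residual uncertainty after analytical testing
    if clinical_impact_suspected:
        layers.append("clinical")  # potential clinically meaningful impact
    return layers


print(required_layers(analytical_sufficient=True, clinical_impact_suspected=False))
print(required_layers(analytical_sufficient=False, clinical_impact_suspected=True))
```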

Risk Assessment and Logical Workflow

The following diagram illustrates the logical decision workflow for implementing a risk-based comparability strategy, from identifying a process change to determining the appropriate scope of studies.

Implementing the Risk-Based Approach

Phase-Appropriate Strategy

The application of a risk-based approach must be phase-appropriate [2] [20]. The level of product and process knowledge, as well as the definition of CQAs, evolves throughout the development lifecycle. Consequently, the strategy for demonstrating comparability should also mature.

Table 2: Phase-Appropriate Comparability Strategy

| Development Phase | Batch Strategy | Analytical Focus | Acceptance Criteria |

|---|---|---|---|

| Early Phase (e.g., IND, Phase 1) | Single batches of pre- and post-change material may be acceptable [2] | Platform methods for biophysical characteristics; screening forced degradation conditions [2] | Focus on safety attributes; establish molecular characteristics [2] |

| Late Phase (e.g., Phase 3, BLA) | Multiple batches (e.g., 3 pre-change vs. 3 post-change) [2] | Molecule-specific methods; comprehensive extended characterization and forced degradation [2] | Statistically informed criteria (e.g., ETTI); alignment with historical data and defined CQAs [2] [20] |

| Post-Approval | PPQ batches and commercial-scale batches | Comprehensive comparability including routine, extended, and stability data [19] | Tight acceptance criteria aligned with the licensed product and prior knowledge [21] |

Analytical Testing Strategy

The analytical testing suite forms the cornerstone of any comparability exercise. For a complex molecule like a monoclonal antibody, the strategy should be designed to probe all relevant aspects of the molecule's structure and function. The following table outlines a comprehensive analytical testing panel for a thorough comparability assessment.

Table 3: Analytical Testing Strategy for mAb Comparability

| Attribute Category | Example Analytical Methods | Purpose in Comparability |

|---|---|---|

| Structural & Physicochemical | Peptide Mapping (LC-MS/MS); SEC-MALS; cIEF / icIEF; ESI-TOF MS [2] [19] | Verifies primary structure, confirms higher-order structure, detects charge variants and post-translational modifications [19] |

| Purity & Impurities | CE-SDS (reduced/non-reduced); Host Cell Protein (HCP) assays; DNA assays [19] | Quantifies product-related variants (fragments, aggregates) and process-related impurities [19] |

| Potency & Biological Activity | Binding assays (e.g., SPR); Cell-based bioassays (e.g., ADCC, CDC) [19] [20] | Demonstrates functional equivalence and confirms mechanism of action is maintained [20] |

| Stability | Real-time stability studies; Accelerated stability studies; Forced degradation studies [2] [19] | Assesses degradation profiles and confirms similarity in stability behavior [2] |

Experimental Application: Detailed Protocol

This section provides a detailed, actionable protocol for executing an analytical comparability study for a recombinant monoclonal antibody following a manufacturing process change.

Protocol: Analytical Comparability Study for a Post-Change mAb

1.0 Objective: To demonstrate, through analytical testing, that the drug substance produced after a specified manufacturing process change is highly similar to the pre-change drug substance in terms of identity, purity, quality, potency, and stability.

2.0 Scope: This protocol applies to the comparability assessment between [Number] pre-change batches and [Number] post-change batches of [Drug Substance Name].

3.0 Materials and Reagents

Table 4: Research Reagent Solutions and Key Materials

| Item | Function / Application |

|---|---|

| Reference Standard | Well-characterized material used as a system suitability control and for data normalization [2] |

| Cell-Based Assay Reagents | (e.g., effector cells, target cells, substrate reagents) for measuring biological activity (ADCC, CDC) [19] |

| Chromatography Resins & Columns | For HPLC/UPLC analyses (e.g., SEC, CEX, RP-HPLC, HIC) [19] |

| Mass Spectrometry Grade Solvents | For sample preparation and mobile phases in LC-MS analyses to minimize background interference [19] |

| Forced Degradation Reagents | (e.g., Hydrogen peroxide, acidic/basic buffers) for stress studies to elucidate degradation pathways [2] |

4.0 Pre-Study Planning

- 4.1 Risk Assessment: Document the risk assessment for the change, identifying CQAs potentially impacted [20].

- 4.2 Lot Selection: Select batches that are representative of the pre- and post-change processes. Use the latest available batches that have passed release criteria. Define the selection strategy a priori [2].

- 4.3 Acceptance Criteria: Pre-define quantitative and qualitative acceptance criteria for the study in a statistical quality control (SQC) file or study plan [2].

5.0 Experimental Workflow and Methodologies

The following diagram outlines the core experimental workflow for the comparability study, from sample management through data analysis and reporting.

6.0 Key Experimental Procedures

- 6.1 Extended Characterization: Perform the battery of tests listed in Table 3. Methods must be validated or qualified. All testing should be performed head-to-head under the same conditions for pre- and post-change samples [2].

- 6.2 Forced Degradation Studies: Conduct stress studies on pre- and post-change batches to compare degradation profiles under accelerated conditions. This "pressure-test" reveals differences not seen in real-time stability [2].

Table 5: Forced Degradation Stress Conditions

| Stress Type | Example Conditions | Attributes Monitored |

|---|---|---|

| Thermal | Incubation at 25°C - 40°C for 1-4 weeks [2] | Aggregation (SEC), Fragmentation (CE-SDS), Charge Variants (cIEF) |

| Oxidative | Incubation with 0.01% - 0.1% H₂O₂ [2] | Oxidation (Peptide Map), Potency (Bioassay) |

| Light | Per ICH Q1B option 1 or 2 [2] | Color, Clarity, Oxidation, Aggregation |

- 6.3 Stability Studies: Initiate real-time, accelerated, and if applicable, stress stability studies on post-change batches, comparing the data to the historical stability profile of pre-change material [19].
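For the forced degradation comparison in 6.2, one simple quantitative view is to fit a straight line to each stressed time course and compare the degradation rates. The sketch below uses ordinary least squares on hypothetical % HMW values under thermal stress. A linear trend is an assumption that should be checked against the data, and the time points and values are illustrative only.

```python
def slope(times, values):
    """Ordinary least-squares slope of values versus times."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired observations")
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("time points must not all be identical")
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    return sxy / sxx


weeks = [0, 1, 2, 4]
pre_hmw = [1.0, 1.3, 1.6, 2.2]   # hypothetical % HMW at 40°C, pre-change batch
post_hmw = [1.1, 1.4, 1.8, 2.3]  # hypothetical % HMW at 40°C, post-change batch

pre_rate = slope(weeks, pre_hmw)
post_rate = slope(weeks, post_hmw)
print(f"pre {pre_rate:.3f} %/week, post {post_rate:.3f} %/week, ratio {post_rate / pre_rate:.2f}")
```

Similar rates support a conclusion of comparable degradation behavior, but they complement rather than replace the side-by-side review of chromatographic and electrophoretic profiles.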

7.0 Data Analysis and Reporting

- 7.1 Statistical Analysis: For quantitative data, use appropriate statistical methods. In late-stage development, an Equal-Tailed Tolerance Interval (ETTI) approach with pre-defined criteria is often employed to compare the post-change data to the pre-change historical data [20].

- 7.2 Assessment of Differences: Any observed differences must be evaluated in the context of the risk assessment. The scientific rationale should justify why the differences do not adversely impact safety or efficacy, referencing prior knowledge and the structure-function relationship of attributes [19] [20].

- 7.3 Conclusion: The final report must provide a definitive conclusion on whether analytical comparability has been demonstrated.
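As a simplified illustration of the statistical comparison in 7.1, the sketch below builds a range of the form mean ± k·SD from historical pre-change data and checks whether post-change results fall inside it. This is not the ETTI calculation itself: the multiplier `K_FACTOR` is an assumed input that a real study would derive from the chosen coverage, confidence level, and sample size using a validated statistical tool. All data values are hypothetical.

```python
from statistics import mean, stdev


def tolerance_style_range(historical, k):
    """Return (lower, upper) as mean -/+ k times the sample SD of the historical data."""
    center = mean(historical)
    spread = stdev(historical)
    return center - k * spread, center + k * spread


pre_change_monomer = [98.2, 98.6, 98.4, 98.9, 98.5, 98.4]  # hypothetical % monomer
post_change_monomer = [98.3, 98.7, 98.6]

K_FACTOR = 3.0  # assumed multiplier, for illustration only

low, high = tolerance_style_range(pre_change_monomer, K_FACTOR)
within = [low <= value <= high for value in post_change_monomer]
print(f"range {low:.2f} to {high:.2f}; post-change within range: {within}")
```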

A risk-based approach to scoping comparability studies is a fundamental enabler for efficient and effective biopharmaceutical development. By leveraging a deep understanding of the product and process, coupled with phase-appropriate strategies and robust analytical tools, developers can ensure that manufacturing changes are implemented without compromising product quality or patient safety. This scientific, data-driven approach not only facilitates continuous improvement but also builds regulatory confidence, ultimately accelerating the delivery of vital therapies to patients.

Advanced Analytical Toolbox: Methodologies and Phase-Appropriate Application

Designing an Orthogonal Analytical Test Panel for Extended Characterization

Within the framework of comparability studies for biologics, extended characterization provides the foundational data required to demonstrate that a manufacturing process change does not adversely impact the product's safety or efficacy profile [2]. This process is critical throughout the drug development lifecycle, as changes to improve process efficiency, scale-up production, or address supply chain issues are common [2]. A well-designed orthogonal analytical test panel is indispensable for this exercise, moving beyond routine release testing to provide a deeper understanding of molecule-specific attributes and degradation pathways [2] [22].

The term "orthogonal" in this context refers to the use of multiple analytical methods that employ different physical or chemical principles to measure the same product attribute [22]. This approach offers a systematic way to achieve a complete picture of the components that need to be separated and identified, ensuring that no critical quality attributes (CQAs) are overlooked [22]. By integrating orthogonal methods, scientists can mitigate the risk of analytical gaps that have been a persistent cause of Complete Response Letters (CRLs) from regulatory agencies, often due to inadequate assay validation or unexpected method drift during scale-up [23].

This application note provides detailed protocols and workflows for constructing a robust orthogonal analytical strategy, directly supporting the broader thesis that comprehensive extended characterization is vital for successful comparability assessments and regulatory approval.

The Orthogonal Method Toolbox for Extended Characterization

An effective orthogonal panel for the extended characterization of biologics, such as monoclonal antibodies, should assess a wide range of physicochemical and functional properties. The selection of methods should be based on a risk assessment that considers the potential impact of manufacturing changes on product CQAs [2] [24].

Table 1: Orthogonal Methods for Extended Characterization of Biologics

| Category | Technique | Primary Purpose | Critical Quality Attributes (CQAs) Assessed |

|---|---|---|---|

| Purity & Impurities | Size Exclusion Chromatography (SEC) | Quantify soluble aggregates and fragments | % Monomer, % High Molecular Weight (HMW) Species, % Low Molecular Weight (LMW) Species |

| Capillary Electrophoresis-SDS (CE-SDS) | Evaluate purity and integrity under denaturing conditions | % Purity, % Fragmentation, % Non-glycosylated Heavy Chain | |

| Liquid Chromatography-Mass Spectrometry (LC-MS) | Identify and characterize product-related impurities and sequence variants | Sequence Variants, Incomplete Processing | |

| Charge Variants | Cation Exchange Chromatography (CEX) | Separate and quantify acidic and basic variants | % Acidic Variants, % Main Peak, % Basic Variants |

| Structural Integrity | Circular Dichroism (CD) | Assess secondary and tertiary structure | Thermal Melting Point (Tm), Structural Folding |

| Structural Integrity | Differential Scanning Fluorimetry (nanoDSF) | Probe conformational stability | Tm, Onset of Aggregation (Tagg) |

| Structural Integrity | Small-Angle X-Ray Scattering (SAXS) | Analyze solution-state structure and flexibility | Particle Size, Shape, and Conformational Flexibility [25] |

| Size & Aggregation | Dynamic Light Scattering (DLS) | Determine hydrodynamic size and polydispersity | Hydrodynamic Radius (Rh), Polydispersity Index (PDI) |

| Size & Aggregation | Mass Photometry | Measure individual particle mass and oligomeric state in solution | Oligomeric State, Molecular Mass |

| Size & Aggregation | Electron Microscopy (EM) | Visualize particles and aggregates | Particle Morphology, Aggregate Visualization [25] |

| Potency & Function | Biological Potency Assay (e.g., cell-based) | Quantify biological activity | Mechanism of Action (MoA)-linked Activity, Relative Potency |

| Surface Plasmon Resonance (SPR) | Measure binding kinetics and affinity | Binding Affinity (KD), Association Rate (Kon), Dissociation Rate (Koff) |

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents essential for executing the described orthogonal methods.

Table 2: Essential Research Reagent Solutions and Materials

| Item | Function/Application | Example Specifications |

|---|---|---|

| Expi293F Cells | Mammalian expression system for transient transfection and production of recombinant proteins [25]. | Cat. no. A14527 (ThermoFisher) |

| Protein-G Columns | Affinity chromatography purification for antibodies and Fc-fusion proteins [25]. | Cat. no. 17-0405-01 (Cytiva) |

| Polyethylenimine (PEI) | Transfection reagent for delivery of plasmid DNA into mammalian cells [25]. | Cat. no. A14527 (Polysciences) |

| LDS Sample Buffer | Denaturing buffer for protein sample preparation for SDS-PAGE analysis [25]. | Cat. no. B0007 (Life Technologies) |

| Size Exclusion Columns | High-resolution separation of protein monomers, aggregates, and fragments under native conditions. | Superdex Increase 10/300 (Cytiva) [25] |

| Mobile Phase Modifiers | Buffers and additives for chromatographic method development to achieve orthogonal separations [22]. | Trifluoroacetic Acid (TFA), Formic Acid, Ammonium Acetate |

Experimental Protocol: A Systematic Workflow for Orthogonal Method Implementation

This protocol outlines a systematic approach, from generating samples to selecting and applying orthogonal methods for extended characterization in a comparability study.

Sample Generation and Selection

Materials: All available batches of drug substance and drug product; reagents for forced degradation (see Table 3).

Procedure:

- Collect Representative Samples: Gather all available GMP and non-GMP batches of drug substance and drug product to capture the full range of process- and product-related impurities [22].

- Perform Forced Degradation Studies: Subject the drug substance and drug product to various stress conditions to generate potential degradation products. The goal is typically 5-15% degradation to minimize the formation of secondary degradants [22]. Key stress conditions are listed in Table 3.

- Store Stressed Samples: After stress, immediately store samples at -20°C to arrest further degradation [22].

- Initial Screening: Analyze all collected and stressed samples using a single, broad-gradient chromatographic method (e.g., reversed-phase HPLC). The purpose is to identify samples with unique impurity or degradation profiles for further, in-depth orthogonal analysis [22].

Table 3: Forced Degradation Stress Conditions

| Stress Condition | Typical Parameters | Target Degradation Pathways |

|---|---|---|

| Acidic Hydrolysis | e.g., 0.1 M HCl, room temperature for several hours | Deamidation, Fragmentation, Truncation |

| Basic Hydrolysis | e.g., 0.1 M NaOH, room temperature for several hours | Isomerization, Racemization, Fragmentation |

| Oxidative Stress | e.g., 0.1% H₂O₂, room temperature for several hours | Methionine/Tryptophan Oxidation, Cross-linking |

| Thermal Stress | e.g., 40°C for several weeks | Aggregation, Fragmentation, Oxidation |

| Photo-stability | Per ICH Q1B guidelines | Oxidation, Cleavage |
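The 5-15% degradation target from the procedure above can be checked numerically for each stressed sample. The sketch below assumes that degradation is estimated as the loss of main-peak area relative to an unstressed control, which is one common convention and not necessarily the one used in [22]. The function names and example areas are hypothetical.

```python
def percent_degradation(control_main_area, stressed_main_area):
    """Loss of main-peak area relative to the unstressed control, in percent."""
    if control_main_area <= 0:
        raise ValueError("control main-peak area must be positive")
    return 100.0 * (control_main_area - stressed_main_area) / control_main_area


def classify_stress(pct, low=5.0, high=15.0):
    """Compare a degradation level against the 5-15% target window."""
    if pct < low:
        return "under-stressed"
    if pct > high:
        return "over-stressed"
    return "in target window"


# Hypothetical main-peak areas (arbitrary units) for one control and two stress arms.
control = 1000.0
oxidative = percent_degradation(control, 900.0)
thermal = percent_degradation(control, 700.0)

print(oxidative, classify_stress(oxidative))
print(thermal, classify_stress(thermal))
```

A sample classified as over-stressed would typically be repeated under milder conditions, since heavy degradation increases the chance of secondary degradants.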

Orthogonal Method Screening and Selection

Materials: HPLC/UPLC system with multiple detector options (PDA, FLD), a set of at least six HPLC columns with different selectivities (e.g., C18, C8, PFP, Phenyl, HILIC), various mobile phase modifiers (e.g., formic acid, TFA, ammonium acetate, phosphate) [22].

Procedure:

- Column and Mobile Phase Screening: Take the "samples of interest" identified in the Initial Screening step of Sample Generation and Selection and screen them using multiple (e.g., six) broad-gradient methods, each on a different column chemistry [22]. Typical mobile phase modifiers include 0.1% formic acid, 0.1% trifluoroacetic acid, and 5 mM ammonium acetate, prepared from concentrated stocks [22].

- Data Analysis: Review all chromatographic data to identify:

  - The method that provides the best separation of all known components (this becomes the primary method for release and stability).

  - A second method that provides a fundamentally different selectivity profile (this becomes the orthogonal method) [22].

- Method Optimization: Use software tools (e.g., DryLab) to optimize the primary and orthogonal methods by adjusting parameters such as gradient steepness, temperature, and pH [22].

- Final Verification: Analyze the forced degradation and representative batch samples with both the optimized primary and orthogonal methods to confirm that no peaks were missed during the initial screening [22].
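The data-analysis step above can be approximated with a simple scoring heuristic. The sketch below is not the procedure from [22]. It assumes the primary method is the one with the widest critical-pair spacing, and that the orthogonal method is the one whose retention order correlates least with the primary. The retention times and method labels are hypothetical.

```python
from math import sqrt

# Hypothetical retention times (minutes) for the same five components
# on three screening methods; real values come from the screening runs.
RETENTION = {
    "C18 / formic acid": [5.0, 8.0, 12.0, 15.0, 20.0],
    "C8 / formic acid": [4.5, 7.4, 11.0, 14.2, 18.5],
    "HILIC / ammonium acetate": [18.0, 9.0, 14.0, 6.0, 11.0],
}


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def critical_gap(times):
    """Smallest spacing between adjacent peaks (the critical pair)."""
    ordered = sorted(times)
    return min(b - a for a, b in zip(ordered, ordered[1:]))


def pick_methods(retention):
    """Primary = widest critical gap; orthogonal = lowest |r| versus primary."""
    primary = max(retention, key=lambda name: critical_gap(retention[name]))
    others = [name for name in retention if name != primary]
    orthogonal = min(
        others, key=lambda name: abs(pearson(retention[primary], retention[name]))
    )
    return primary, orthogonal


primary, orthogonal = pick_methods(RETENTION)
print(primary, "|", orthogonal)
```

With these example values, the two reversed-phase methods are almost perfectly correlated, so the HILIC method is selected as the orthogonal partner. In practice, peak shape, recovery, and detector compatibility would also weigh on the choice.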

Application in Comparability Studies

Materials: Pre-change and post-change drug substance/drug product batches, validated primary methods, qualified orthogonal methods.

Procedure:

- Prospective Protocol: Develop a detailed comparability study protocol with pre-defined acceptance criteria based on historical data and process capability [2] [24].

- Side-by-Side Testing: Analyze pre-change and post-change batches using the full panel of orthogonal methods listed in Table 1. Testing should be performed side-by-side, in the same assay run where possible, to minimize assay variability [2] [24].

- Data Comparison: For each attribute (e.g., aggregate levels, charge variant profile, thermal stability), compare the data from pre- and post-change batches. The use of orthogonal methods can reveal differences that a single method might miss, such as co-eluting impurities [22].

- Assessment: Conclude comparability if the results are highly similar and any observed differences have no adverse impact on safety or efficacy, per ICH Q5E [2].
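As an illustration of pre-defined acceptance criteria based on historical data, the sketch below derives a range from pre-change batches and checks post-change results against it. The mean ± 3 SD rule, the batch identifiers, and the % monomer values are assumptions made for the example. Other statistical approaches, such as tolerance intervals or equivalence testing, may be more appropriate depending on the attribute and the number of batches.

```python
from statistics import mean, stdev


def acceptance_range(historical, k=3.0):
    """Range of mean ± k sample standard deviations from historical batches."""
    center = mean(historical)
    spread = stdev(historical)
    return center - k * spread, center + k * spread


def assess(post_change, limits):
    """Flag each post-change batch as inside (True) or outside (False) the range."""
    low, high = limits
    return {batch: low <= value <= high for batch, value in post_change.items()}


# Hypothetical SEC % monomer results.
historical_monomer = [98.6, 98.9, 98.7, 99.0, 98.8]
post_change_monomer = {"PC-01": 98.7, "PC-02": 98.2}

limits = acceptance_range(historical_monomer)
results = assess(post_change_monomer, limits)
print(limits)
print(results)
```

A batch outside the range does not by itself mean the products are not comparable. Under ICH Q5E the question is whether the observed difference could adversely affect safety or efficacy, so an excursion triggers further evaluation.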

Workflow Visualization

The logical workflow for designing and implementing an orthogonal analytical test panel is summarized in the following figure.

[Figure: Workflow for Orthogonal Test Panel Design]

Concluding Remarks

A strategically designed orthogonal analytical test panel is not merely a technical exercise but a critical component of risk mitigation in biopharmaceutical development. By employing a systematic workflow that includes comprehensive sample generation, rigorous method screening, and structured comparability assessment, developers can build a robust scientific case to support manufacturing changes. This approach, firmly embedded within extended characterization protocols, provides the deep product understanding required by regulators and ensures that life-saving biologics maintain their quality, safety, and efficacy throughout their lifecycle.

Application Notes and Protocols for Extended Characterization in Comparability Studies

In the development of biopharmaceuticals, comparability studies are critical for demonstrating that manufacturing process changes do not adversely impact the product's quality, safety, or efficacy. This requires a comprehensive analytical approach using orthogonal techniques that provide complementary data on primary structure, higher-order structure, and physicochemical properties. Extended characterization employs sophisticated methodologies to detect subtle changes in critical quality attributes (CQAs) that conventional analytics might miss. The integration of separation techniques with advanced detection methods like mass spectrometry has dramatically enhanced our ability to characterize complex biologics at a molecular level, providing the depth of information necessary for robust comparability assessments.

The following application notes detail five key techniques—Peptide Mapping, SEC-MALS, CIEF, CD, and Mass Spectrometry—that form the cornerstone of extended characterization platforms. For each technique, we provide detailed protocols, data interpretation guidelines, and specific application scenarios within comparability studies, supported by tabulated experimental data and visual workflow diagrams to facilitate implementation in the laboratory.

Peptide Mapping with LC/MS

Application Note

Peptide mapping serves as the workhorse technique for comprehensive primary structure characterization of protein therapeutics. When interfaced with mass spectrometry, it enables identification of proteins based on peptide fragment patterns, determination of post-translational modifications (PTMs), confirmation of genetic sequence fidelity, and localization of modification sites. This technique is particularly valuable in comparability studies for lot-to-lot consistency evaluation and detecting subtle sequence variants or modifications resulting from process changes. The high resolution and mass accuracy of modern LC/MS systems significantly enhance information content by differentiating co-eluting peptides and identifying low-abundance modifications that traditional UV detection cannot resolve [26].

Experimental Protocol

Sample Preparation:

- Denaturation: Dilute protein to 1 mg/mL in 50 mM Tris-HCl buffer, pH 8.0. Add RapiGest SF to a final concentration of 0.1% (w/v).

- Reduction: Add dithiothreitol (DTT) to 5 mM final concentration. Incubate at 60°C for 30 minutes.

- Alkylation: Add iodoacetamide to 15 mM final concentration. Incubate in darkness at room temperature for 30 minutes.

- Digestion: Add trypsin at a 1:20 (w/w) enzyme-to-substrate ratio. Incubate at 37°C for 4 hours.

- Acidification: Add trifluoroacetic acid (TFA) to 0.5% final concentration to stop digestion. Centrifuge at 14,000 × g for 10 minutes to remove precipitates.
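The reagent additions in the sample preparation steps above reduce to simple dilution arithmetic. The sketch below shows one way to compute them. The 100 µL aliquot and the stock concentrations (100 mM DTT, 200 mM iodoacetamide) are hypothetical, and the small volume contributed by the RapiGest addition is ignored.

```python
def spike_volume_ul(current_volume_ul, stock_mM, final_mM):
    """Volume of stock to add so the diluted mixture reaches final_mM.

    Solves stock_mM * v = final_mM * (current_volume_ul + v) for v.
    """
    if final_mM >= stock_mM:
        raise ValueError("stock must be more concentrated than the target")
    return final_mM * current_volume_ul / (stock_mM - final_mM)


def trypsin_mass_ug(protein_ug, ratio=20):
    """Trypsin mass for a 1:ratio (w/w) enzyme-to-substrate digest."""
    return protein_ug / ratio


sample_ul = 100.0             # hypothetical aliquot of protein at 1 mg/mL
protein_ug = sample_ul * 1.0  # 1 mg/mL equals 1 µg/µL

# Assumed stock concentrations; substitute the stocks actually in use.
dtt_ul = spike_volume_ul(sample_ul, stock_mM=100.0, final_mM=5.0)
iaa_ul = spike_volume_ul(sample_ul + dtt_ul, stock_mM=200.0, final_mM=15.0)
trypsin_ug = trypsin_mass_ug(protein_ug)

print(round(dtt_ul, 2), round(iaa_ul, 2), trypsin_ug)
```

Because each addition increases the total volume, the iodoacetamide volume is calculated from the volume after the DTT has been added.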

LC/MS Analysis:

- Column: ACQUITY UPLC Peptide BEH C18 Column (130Å, 1.7 µm, 1.0 mm × 100 mm)

- Mobile Phase A: 0.1% Formic acid in water

- Mobile Phase B: 0.1% Formic acid in acetonitrile

- Gradient: 1-40% B over 45 minutes

- Flow Rate: 0.1 mL/min

- Column Temperature: 55°C

- MS Detection: Xevo G3 QTof MS with MSE data acquisition mode

- Data Processing: Use BiopharmaLynx software for automated peptide identification and modification characterization [26]

Data Interpretation and Reporting

Table 1: Key Peptide Mapping Parameters for Comparability Assessment

| Parameter | Target Value | Acceptance Criteria | Typical Variability |

|---|---|---|---|

| Sequence Coverage | >95% | Match to reference standard | ±2% |

| PTM Identification | Consistent profile | Qualitative match | Site-specific quantification required |

| Deamidation Sites | Asn 32, 55, 82 | <5% increase vs. reference | ±0.5% |

| Oxidation Sites | Met 101, 155 | <3% increase vs. reference | ±0.3% |

| Glycan Profiles | Consistent pattern | Qualitative match | N/A |
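Two of the parameters in the table above are simple calculations: sequence coverage and site-level modification. The sketch below shows both. It assumes peptide positions are 1-based and inclusive, that percent modification is the ratio of modified to total peak area for a peptide, and that "increase vs. reference" means an absolute difference in percentage points. The table does not specify any of these conventions, and all values are hypothetical.

```python
def sequence_coverage(sequence_length, peptides):
    """Percent of residues covered by at least one identified peptide.

    `peptides` holds (start, end) residue positions, 1-based and inclusive.
    """
    covered = set()
    for start, end in peptides:
        covered.update(range(start, end + 1))
    return 100.0 * len(covered) / sequence_length


def percent_modified(modified_area, unmodified_area):
    """Site-level modification from extracted peak areas of one peptide."""
    return 100.0 * modified_area / (modified_area + unmodified_area)


def increase_within_limit(reference_pct, test_pct, max_increase):
    """True if the absolute increase (percentage points) is below the limit."""
    return (test_pct - reference_pct) < max_increase


# Hypothetical 100-residue chain with three identified peptides.
coverage = sequence_coverage(100, [(1, 40), (35, 70), (75, 100)])

# Hypothetical deamidation at one site: reference lot versus post-change lot.
reference = percent_modified(30.0, 970.0)
post_change = percent_modified(45.0, 955.0)

print(coverage, reference, post_change)
print(increase_within_limit(reference, post_change, max_increase=5.0))
```

Overlapping peptides are counted once because coverage is built from a set of residue positions.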

Size-Exclusion Chromatography with Multi-Angle Light Scattering (SEC-MALS)

Application Note

SEC-MALS provides an absolute determination of molar mass and size distributions of macromolecules in solution without relying on column calibration standards. This technique is indispensable in comparability studies for characterizing aggregation status, detecting fragmentation, and confirming oligomeric state consistency after process changes. Recent applications have expanded to include complex new modalities like mRNA therapeutics, where it enables size heterogeneity assessment and quantification of dimeric species. The direct, model-free measurement of molar mass makes SEC-MALS particularly valuable for confirming the integrity of complex biologics where hydrodynamic behavior may not follow standard globular protein models [27] [28].
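Molar-mass averages across a chromatographic peak are conventionally calculated from per-slice concentration and molar mass. The sketch below applies the standard definitions, Mw = Σ(c·M) / Σc and Mn = Σc / Σ(c/M). The slice values are hypothetical, and instrument software would normally perform this calculation.

```python
def molar_mass_averages(concentrations, molar_masses):
    """Return (Mw, Mn, Mw/Mn) from per-slice concentration and molar mass.

    Mw = sum(c * M) / sum(c)      weight-average molar mass
    Mn = sum(c) / sum(c / M)      number-average molar mass
    """
    total_c = sum(concentrations)
    mw = sum(c * m for c, m in zip(concentrations, molar_masses)) / total_c
    mn = total_c / sum(c / m for c, m in zip(concentrations, molar_masses))
    return mw, mn, mw / mn


# Hypothetical slices: mostly 148 kDa monomer with 10% (by mass) of a 296 kDa dimer.
slice_conc = [1.0, 8.0, 1.0]             # relative mass concentration
slice_mass = [148_000, 148_000, 296_000]  # g/mol

mw, mn, pdi = molar_mass_averages(slice_conc, slice_mass)
print(round(mw), round(mn), round(pdi, 3))
```

Because Mw weights larger species more heavily than Mn, even a small dimer fraction raises Mw/Mn above 1, which is why the ratio is a sensitive indicator of aggregation.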

Experimental Protocol

Sample Preparation:

- For proteins: Prepare sample at 0.5-2 mg/mL in mobile phase

- For mRNA: Dilute to 0.1 mg/mL in nuclease-free water

- Centrifuge at 14,000 × g for 10 minutes to remove particulates

- Filter through 0.1 µm syringe filter (for proteins) or 0.22 µm filter (for mRNA)

SEC-MALS Analysis:

- Column: GTxResolve Premier SEC 1000 Å 3 µm 7.8 × 300 mm Column

- Mobile Phase: 0.2 µm filtered PBS, pH 7.4 (10 mM phosphate, 200 mM KCl, 0.02% NaN₃)

- Flow Rate: 1 mL/min

- Injection Volume: 10 µL

- Column Temperature: Ambient (22°C)

- Detection: DAWN MALS detector (18 angles), UV absorbance at 260 nm (mRNA) or 280 nm (proteins)

- System Equilibration: Flush the column with 20 column volumes of mobile phase, continuing until a stable MALS baseline is achieved

- System Suitability: Verify with a BSA standard (5 mg/mL, 50 µg injection), confirming a monomer Mw of 66.4 kDa [28]
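The equilibration and injection figures in the settings above can be cross-checked with a few lines of arithmetic. The sketch below estimates the column volume from its nominal dimensions, treating it as an empty cylinder (an assumption, since the true volume depends on the packing), and confirms that a 10 µL injection at 5 mg/mL delivers 50 µg.

```python
from math import pi


def column_volume_ml(inner_diameter_mm, length_mm):
    """Geometric (empty-cylinder) column volume in mL from nominal dimensions."""
    radius_cm = inner_diameter_mm / 20.0  # mm diameter -> cm radius
    length_cm = length_mm / 10.0
    return pi * radius_cm ** 2 * length_cm


def injected_mass_ug(concentration_mg_per_ml, injection_ul):
    """Mass on column; mg/mL multiplied by µL gives µg."""
    return concentration_mg_per_ml * injection_ul


cv_ml = column_volume_ml(7.8, 300)   # 7.8 x 300 mm column
flush_ml = 20 * cv_ml                # 20 column volumes
flush_min = flush_ml / 1.0           # at 1 mL/min
bsa_ug = injected_mass_ug(5.0, 10.0)

print(round(cv_ml, 1), round(flush_ml), round(flush_min / 60, 1), bsa_ug)
```

On this estimate one column volume is about 14.3 mL, so a 20-column-volume flush at 1 mL/min takes close to five hours, which is worth planning for when scheduling a run.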

Data Interpretation and Reporting

Table 2: SEC-MALS Output Parameters for Comparability Assessment

| Parameter | Protein Therapeutic | mRNA Therapeutic | Acceptance Criteria |

|---|---|---|---|

| Weight-Average Molar Mass (Mw) | 144-152 kDa for mAbs | 1.2-1.5 MDa for 4.5 kb mRNA | ±5% of reference standard |

| Polydispersity Index (Mw/Mn) | <1.01 | <1.05 | ≤1.05 for proteins, ≤1.10 for mRNA |