Foundation Models for Single-Cell Multi-Omics Integration: A New Paradigm for Cellular Biology and Precision Medicine

Abstract

The emergence of single-cell multi-omics technologies has created an urgent need for computational frameworks capable of integrating complex, high-dimensional data. Foundation models, large-scale deep learning architectures pretrained on vast cellular datasets, are revolutionizing this field. This article explores the core concepts of single-cell foundation models (scFMs), detailing their transformer-based architectures and self-supervised pretraining strategies. We examine cutting-edge methodologies for multimodal data alignment, their transformative applications in drug discovery and disease research, and critical challenges including data sparsity, batch effects, and model interpretability. Through comparative analysis of tools like scGPT, Nicheformer, and scMODAL, we provide a roadmap for researchers and drug development professionals to leverage these powerful AI tools for unlocking deeper insights into cellular heterogeneity, drug response mechanisms, and personalized therapeutic development.

Demystifying Single-Cell Foundation Models: Core Concepts and Architectural Principles

What Are Foundation Models and Why Do They Matter for Single-Cell Biology?

Foundation models are large-scale deep learning models pretrained on vast datasets that can be adapted to a wide range of downstream tasks. Inspired by breakthroughs in natural language processing, these models are revolutionizing single-cell biology by learning universal representations from millions of cells. This technical review examines the core architectures, pretraining strategies, and evaluation frameworks for single-cell foundation models (scFMs), with a focus on their transformative potential for multi-omics integration. We provide quantitative performance comparisons across key benchmarks, detailed experimental protocols for model evaluation, and visualizations of core workflows. For researchers and drug development professionals, scFMs offer powerful new capabilities for cell annotation, perturbation prediction, spatial context reconstruction, and drug target discovery, positioning them as indispensable tools for next-generation biological research.

Foundation models represent a paradigm shift in computational biology, defined as large-scale deep learning models pretrained on extensive datasets using self-supervised learning that can be adapted to diverse downstream tasks [1]. These models have revolutionized natural language processing and computer vision, and are now transforming single-cell genomics by learning universal representations from massive cellular datasets [1] [2]. The fundamental premise of single-cell foundation models (scFMs) is that by exposing a model to millions of cells encompassing diverse tissues, species, and conditions, it can learn the fundamental principles of cellular behavior that generalize to new biological contexts [1].

The urgent need for scFMs stems from the exponential growth of single-cell transcriptomics data, which presents characteristics of high sparsity, high dimensionality, and low signal-to-noise ratio that challenge traditional machine learning approaches [3]. Single-cell RNA sequencing (scRNA-seq) has become a cornerstone of biological research, enabling high-resolution analysis of gene expression at the individual cell level to uncover cellular heterogeneity, developmental trajectories, and disease mechanisms [4]. However, traditional analytical pipelines struggle with the complexity of modern single-cell datasets, creating a critical need for more powerful computational frameworks [2].

scFMs typically leverage transformer architectures to incorporate diverse omics data and extract latent patterns at both cell and gene/feature levels for analyzing cellular heterogeneity and complex regulatory networks [1]. These models treat cells as sentences and genes or genomic features along with their values as words or tokens, creating a "language of biology" that can be decoded using similar approaches to natural language processing [1]. The core value proposition of scFMs lies in their ability to learn generalizable biological patterns during pretraining that endow them with emergent capabilities for zero-shot learning and efficient adaptation to various downstream tasks with minimal fine-tuning [3].

Core Architectures and Technical Approaches

Model Architectures and Pretraining Strategies

Single-cell foundation models employ diverse neural architectures, with transformer-based designs currently dominating the landscape. These architectures can be broadly categorized into encoder-based, decoder-based, and hybrid models, each with distinct strengths for biological applications [1] [2]. Encoder-based models like scBERT employ bidirectional attention mechanisms that learn from all genes in a cell simultaneously, making them particularly effective for classification tasks and embedding generation [1]. Decoder-based models such as scGPT use unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes, demonstrating strong performance in generative tasks [1]. Emerging architectures like GeneMamba incorporate state-space models (SSMs), whose computational cost scales linearly with sequence length rather than quadratically as in transformer self-attention, enabling more efficient processing of long gene sequences [5].

Pretraining strategies for scFMs primarily utilize self-supervised learning objectives that learn from unlabeled data. The most common approach is masked language modeling (MLM), where the model learns to predict randomly masked genes based on their cellular context [1] [6]. Alternative strategies include rank-based prediction, where models predict gene rankings based on expression levels [7] [6], and bin-based classification that discretizes continuous expression values into categories [5] [6]. Multi-task learning approaches that combine self-supervision with biological annotation prediction are also emerging, as demonstrated by the Teddy model family which leverages rich metadata annotations to enhance representation learning [6].
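The masking step behind masked language modeling can be illustrated with a minimal sketch. It is not taken from any of the cited models: the function name `mask_gene_tokens`, the 15% masking fraction (borrowed from common NLP practice), and the use of `-1` as an "ignore" label are all assumptions made for illustration.

```python
import numpy as np

def mask_gene_tokens(tokens, mask_id, mask_fraction=0.15, seed=0):
    """Hide a random subset of positions and return (inputs, labels).

    labels holds the original token at masked positions and -1 elsewhere,
    so a training loss can be restricted to the masked positions.
    mask_fraction=0.15 is an assumed default, not a value from the source.
    """
    rng = np.random.default_rng(seed)
    tokens = np.asarray(tokens)
    n_mask = max(1, int(round(mask_fraction * tokens.size)))
    masked_pos = rng.choice(tokens.size, size=n_mask, replace=False)

    inputs = tokens.copy()
    labels = np.full(tokens.shape, -1, dtype=tokens.dtype)
    labels[masked_pos] = tokens[masked_pos]   # remember what was hidden
    inputs[masked_pos] = mask_id              # replace it with the mask token
    return inputs, labels

# Twenty hypothetical gene-token ids standing in for one cell.
cell_tokens = np.arange(100, 120)
inputs, labels = mask_gene_tokens(cell_tokens, mask_id=0)
```

During pretraining, the model would receive `inputs` and be scored only on the positions where `labels` is not `-1`.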

Tokenization Strategies for Gene Expression Data

A critical technical challenge for scFMs is converting continuous, non-sequential gene expression data into discrete token sequences that transformers can process. Unlike words in natural language, genes have no inherent ordering, requiring carefully designed tokenization strategies [1]. The three predominant approaches are:

- Rank-based discretization: Genes are ordered by expression level within each cell, creating a ranked sequence that emphasizes highly expressed genes. This approach, used in Geneformer and Nicheformer, effectively captures relative expression patterns and demonstrates robustness to batch effects [7] [5].

- Bin-based discretization: Expression values are grouped into predefined bins or categories, converting continuous measurements into discrete tokens. scBERT and scGPT employ variations of this approach, which preserves absolute expression ranges but may introduce information loss for genes with subtle expression differences [5].

- Value projection: Continuous expression values are projected into embedding space through linear transformations, maintaining full data resolution. scFoundation uses this method, though its impact on model performance compared to discrete tokenization remains an active research area [5].

Table 1: Comparison of Primary Tokenization Strategies in scFMs

| Strategy | Key Advantage | Limitation | Representative Models |
|---|---|---|---|
| Rank-based discretization | Robust to batch effects and noise | Loses absolute expression values | Geneformer, Nicheformer |
| Bin-based discretization | Preserves expression ranges | Sensitive to parameter selection | scBERT, scGPT |
| Value projection | Maintains full data resolution | Diverges from NLP tokenization traditions | scFoundation |
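To make the three strategies concrete, the sketch below applies each one to a single toy expression vector. It is illustrative only: the function names are hypothetical, quantile binning is just one possible binning rule, and the published models differ in their exact preprocessing.

```python
import numpy as np

def rank_tokens(expr, gene_ids, max_len=None):
    """Rank-based: ids of expressed genes, highest expression first."""
    expressed = np.flatnonzero(expr > 0)
    order = expressed[np.argsort(-expr[expressed], kind="stable")]
    return gene_ids[order][:max_len]

def bin_tokens(expr, n_bins=5):
    """Bin-based: zeros stay 0; nonzero values fall into quantile bins 1..n_bins.

    Quantile edges are an assumption; other binning rules are possible.
    """
    bins = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        inner_quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
        edges = np.quantile(expr[nonzero], inner_quantiles)
        bins[nonzero] = np.digitize(expr[nonzero], edges, right=True) + 1
    return bins

def value_projection(expr, weight, bias):
    """Value projection: map each scalar value to a d-dimensional vector."""
    return expr[:, None] * weight[None, :] + bias[None, :]

expr = np.array([0.0, 5.0, 1.0, 0.0, 3.0, 9.0])   # toy expression for six genes
gene_ids = np.array([10, 11, 12, 13, 14, 15])      # hypothetical gene-token ids

ranked = rank_tokens(expr, gene_ids)               # gene ids, most expressed first
binned = bin_tokens(expr, n_bins=2)                # one bin index per gene
projected = value_projection(expr, np.ones(4), np.zeros(4))  # shape (6, 4)
```

The rank output discards magnitudes entirely, the bin output keeps coarse magnitudes, and the projection keeps the exact values, which mirrors the trade-offs listed in Table 1.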

Multimodal and Spatial Integration

Advanced scFMs increasingly incorporate multimodal data integration capabilities, combining transcriptomics with epigenomics, proteomics, and spatial information [2]. Nicheformer represents a groundbreaking approach specifically designed for spatial transcriptomics, trained on both dissociated single-cell and spatially resolved data to learn cellular representations that capture spatial context [7] [8]. This model demonstrates that spatial patterns leave measurable traces in gene expression even when cells are dissociated, enabling the transfer of spatial context to standard scRNA-seq data [8].

Cross-species integration is another advanced capability, with models like scPlantLLM specifically designed for plant single-cell data to address unique challenges posed by plant cellular complexity, including cell wall structures, polyploidy, and tissue-specific expression patterns [4]. These specialized models highlight the importance of domain-specific adaptations in scFM development.

Performance Benchmarks and Evaluation Frameworks

Comprehensive Model Evaluation

Rigorous benchmarking of scFMs reveals distinct performance profiles across different biological tasks. A comprehensive evaluation of six leading scFMs against traditional baselines using 12 metrics across gene-level and cell-level tasks provides nuanced insights into their relative strengths [3]. The benchmarking demonstrates that no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection based on specific dataset characteristics and research objectives [3].

At the gene level, scFMs are evaluated on their ability to capture functional gene relationships and biological pathways. Gene embeddings from foundation models are assessed by how well they cluster functionally similar genes and predict Gene Ontology terms, with performance varying significantly across models [3]. For cell-level tasks, including batch integration, cell type annotation, and disease state classification, scGPT generally demonstrates robust performance across tasks, while Geneformer and scFoundation show particular strengths in gene-level applications [3] [9].

Table 2: Performance Overview of Leading Single-Cell Foundation Models

| Model | Training Scale | Architecture | Strengths | Notable Applications |
|---|---|---|---|---|
| Nicheformer | 110M cells (53M spatial) | Transformer | Spatial context prediction, microenvironment modeling | Tissue organization, cellular neighborhoods [7] |
| Geneformer | 30-95M cells | Transformer (rank-based) | Gene regulatory networks, chromatin dynamics | Network inference, perturbation prediction [6] |
| scGPT | 33M cells | Transformer (bin-based) | Multi-omic integration, strong all-around performance | Cell type annotation, cross-species transfer [2] [9] |
| scPlantLLM | Plant-specific | Transformer | Plant genomics, zero-shot learning | Plant development, environmental response [4] |
| GeneMamba | 50M+ cells | State-space model | Computational efficiency, long sequences | Large-scale integration, resource-constrained settings [5] |
| Teddy Family | 116M cells | Transformer variants | Disease biology, scaling properties | Disease state classification [6] |

Novel Evaluation Metrics and Biological Relevance

Beyond traditional performance metrics, researchers are developing novel evaluation frameworks that assess the biological relevance of scFM representations. The scGraph-OntoRWR metric measures the consistency of cell type relationships captured by scFMs with prior biological knowledge encoded in cell ontologies [3]. Similarly, the Lowest Common Ancestor Distance (LCAD) metric assesses the severity of errors in cell type annotation by measuring the ontological proximity between misclassified cell types [3].
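The intuition behind an ancestor-based error metric can be sketched on a toy hierarchy. The example below is a simplification and not the benchmark's implementation: it assumes a tree-shaped ontology (the real Cell Ontology is a directed acyclic graph), defines the distance as the number of edges from each label up to their lowest common ancestor, and uses a hand-written `toy_parent` mapping.

```python
def path_to_root(node, parent):
    """Return [node, parent(node), ..., root] for a child -> parent mapping."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path

def lca_distance(true_label, predicted_label, parent):
    """Edges from both labels up to their lowest common ancestor (assumed definition)."""
    true_path = path_to_root(true_label, parent)
    pred_path = path_to_root(predicted_label, parent)
    pred_hops = {node: hops for hops, node in enumerate(pred_path)}
    for hops_from_true, node in enumerate(true_path):
        if node in pred_hops:
            return hops_from_true + pred_hops[node]
    raise ValueError("labels do not share an ancestor")

# Hypothetical miniature ontology, child -> parent.
toy_parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "hepatocyte": "cell",
}

mild_error = lca_distance("CD4 T cell", "CD8 T cell", toy_parent)    # siblings
severe_error = lca_distance("CD4 T cell", "hepatocyte", toy_parent)  # unrelated lineage
```

Under this definition, confusing two T-cell subtypes is penalized less than labeling a T cell as a hepatocyte, which is the distinction that plain accuracy cannot express.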

These biologically informed metrics address a critical gap in scFM evaluation by moving beyond technical performance to assess how well models capture established biological relationships. Benchmarking results indicate that pretrained scFM embeddings do indeed capture meaningful biological insights into the relational structure of genes and cells, which provides explanatory power for their strong performance across diverse downstream tasks [3].

Experimental Protocols for scFM Evaluation

Standardized Evaluation Workflows

Reproducible evaluation of scFMs requires standardized protocols for benchmarking studies. The BioLLM framework provides unified APIs and evaluation pipelines that enable consistent comparison across diverse models [9]. A typical evaluation workflow encompasses data preprocessing, feature extraction, task-specific fine-tuning or zero-shot evaluation, and multi-dimensional performance assessment.

For zero-shot evaluation, frozen pretrained models generate cell and gene embeddings without task-specific fine-tuning. These embeddings are then evaluated on downstream tasks using simple classifiers (linear probing) to assess the intrinsic quality of the learned representations [3]. For fine-tuning evaluation, models are adapted to specific tasks using limited labeled data, simulating real-world scenarios with constrained annotations [9].
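A linear probe on frozen embeddings can be sketched as follows. Everything here is an assumption for illustration: the embeddings are synthetic Gaussian clusters rather than outputs of a real scFM, and closed-form ridge regression onto one-hot labels stands in for whatever simple classifier a given benchmark actually uses.

```python
import numpy as np

def fit_linear_probe(embeddings, labels, n_classes, ridge=1e-3):
    """Closed-form ridge regression onto one-hot labels, with a bias column."""
    X = np.hstack([embeddings, np.ones((len(embeddings), 1))])
    Y = np.eye(n_classes)[labels]
    A = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ Y)

def predict_linear_probe(weights, embeddings):
    """Assign each cell to the class with the highest linear score."""
    X = np.hstack([embeddings, np.ones((len(embeddings), 1))])
    return (X @ weights).argmax(axis=1)

# Synthetic stand-in for frozen cell embeddings: three well-separated clusters.
rng = np.random.default_rng(0)
centers = rng.normal(size=(3, 16)) * 4.0
labels = rng.integers(0, 3, size=600)
embeddings = centers[labels] + rng.normal(size=(600, 16))

train, test = np.arange(0, 400), np.arange(400, 600)
W = fit_linear_probe(embeddings[train], labels[train], n_classes=3)
accuracy = (predict_linear_probe(W, embeddings[test]) == labels[test]).mean()
```

Because the pretrained model is never updated, the probe's accuracy reflects how linearly separable the cell types already are in the embedding space.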

Benchmarking datasets should encompass diverse biological conditions, including inter-patient, inter-platform, and inter-tissue variations that present realistic integration challenges [3]. Independent validation on held-out datasets not seen during pretraining is essential to assess model generalization and mitigate data leakage concerns [3].

Specialized Spatial Evaluation Tasks

For spatially aware models like Nicheformer, specialized evaluation tasks assess capabilities beyond standard cell annotation. Spatial composition prediction tasks challenge models to predict local cellular density or cell-type composition within spatially homogeneous niches [7]. Spatial label prediction evaluates model performance on human-annotated tissue regions and microenvironments, with additional assessment of predictive uncertainty [7].
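The target of a spatial composition task can be illustrated by computing, for each cell, the cell-type fractions in its local neighborhood. This is a sketch under stated assumptions: a fixed Euclidean radius defines the neighborhood, the center cell is counted, and the dense pairwise distance matrix is only practical for small examples. The cited work may define niches differently.

```python
import numpy as np

def neighborhood_composition(coords, cell_types, n_types, radius):
    """Per-cell fraction of each cell type among cells within `radius`.

    The centre cell belongs to its own neighbourhood, so every row sums to 1.
    Uses an O(n^2) distance matrix; a spatial index would be needed at scale.
    """
    diffs = coords[:, None, :] - coords[None, :, :]
    within = np.sqrt((diffs ** 2).sum(axis=-1)) <= radius   # (n, n) boolean
    one_hot = np.eye(n_types)[cell_types]                   # (n, n_types)
    counts = within.astype(float) @ one_hot
    return counts / counts.sum(axis=1, keepdims=True)

# Four toy cells: three close together, one isolated.
coords = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [10.0, 10.0]])
cell_types = np.array([0, 1, 1, 0])
composition = neighborhood_composition(coords, cell_types, n_types=2, radius=1.5)
```

A model evaluated on this task would be asked to predict each row of `composition` from the expression profile of the corresponding cell alone.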

These spatial tasks require specialized datasets with paired single-cell and spatial transcriptomics measurements. Models are evaluated on their ability to transfer spatial context identified in spatial transcriptomics onto dissociated single-cell data, enabling the enrichment of standard scRNA-seq datasets with spatial information [7].

The Scientist's Toolkit for scFM Research

Implementing and evaluating scFMs requires specialized computational resources and frameworks. The following tools constitute essential components of the scFM research ecosystem:

Table 3: Essential Research Tools for Single-Cell Foundation Model Applications

| Resource | Type | Primary Function | Key Features |
|---|---|---|---|
| BioLLM [9] | Software Framework | Unified model interface and evaluation | Standardized APIs, benchmarking tasks, model switching |
| CELLxGENE [1] [6] | Data Repository | Curated single-cell data | 100M+ standardized cells, cross-study annotations |
| CZ CELLxGENE Discover [2] | Data Platform | Federated data analysis | Scalable exploration, collaborative annotation |
| scPlantLLM [4] | Specialized Model | Plant single-cell analysis | Species adaptation, zero-shot learning for plants |
| SpatialCorpus-110M [7] | Training Corpus | Multimodal pretraining | 57M dissociated + 53M spatial cells, cross-technology |

BioLLM has emerged as a critical framework for addressing the challenge of heterogeneous architectures and coding standards across scFMs [9]. By providing unified APIs and comprehensive documentation, it enables streamlined model access and consistent benchmarking, significantly reducing the engineering overhead required for comparative evaluation [9].

Data resources like CELLxGENE provide the foundational datasets necessary for both pretraining and evaluation, with over 100 million unique cells standardized for analysis [1]. These curated collections are essential for training robust models that capture biological variation across tissues, species, and experimental conditions [1] [6].

Future Directions and Research Challenges

Despite rapid progress, several challenges persist in the development and application of single-cell foundation models. Technical variability across experimental platforms, limited model interpretability, and gaps in translating computational insights to clinical applications represent significant hurdles [2]. Batch effect propagation in transfer learning remains a particular concern, as models pretrained on diverse datasets may inadvertently introduce technical artifacts when applied to new studies [2].

The field is evolving toward more biologically grounded architectures that incorporate prior knowledge through biological ontologies and pathway databases [6]. Scaling laws for scFMs are still being established, though early evidence from the Teddy model family suggests that performance improves predictably with both data volume and parameter count [6]. Multimodal integration represents another frontier, with approaches like pathology-aligned embeddings and tensor-based fusion combining transcriptomic, epigenomic, proteomic, and spatial imaging data [2].

For drug discovery and development, scFMs offer particular promise in mapping drug-chromatin engagements and understanding cellular heterogeneity in treatment response [10]. As these models continue to mature, they are poised to become central tools in precision medicine, enabling more targeted therapeutic interventions based on comprehensive cellular understanding.

Foundation models represent a transformative advancement in single-cell biology, offering unprecedented capabilities for analyzing cellular heterogeneity, gene regulatory networks, and tissue organization. By learning universal representations from massive datasets, these models enable zero-shot transfer and efficient adaptation to diverse downstream tasks, from basic cell annotation to complex spatial composition prediction. As the field matures, standardized evaluation frameworks like BioLLM and biologically informed metrics will be crucial for rigorous model assessment and selection.

For researchers and drug development professionals, scFMs are evolving from specialized tools to essential components of the analytical pipeline. Their ability to integrate multimodal data, reconstruct spatial context, and predict cellular responses to perturbation positions them as critical technologies for unlocking new insights into disease mechanisms and therapeutic opportunities. While challenges remain in model interpretability, clinical translation, and computational efficiency, the rapid pace of innovation suggests that foundation models will fundamentally reshape how we understand and manipulate cellular systems in health and disease.

The advent of single-cell omics technologies has revolutionized biological research by enabling the detailed analysis of individual cells, uncovering unprecedented cellular heterogeneity, and providing insights into complex biological processes. However, the high dimensionality, technical noise, and multimodal nature of modern single-cell datasets have exposed critical limitations in traditional computational methodologies. In parallel, transformer-based architectures have revolutionized natural language processing (NLP) and computer vision by capturing intricate long-range relationships in data. This convergence has catalyzed a transformative approach to single-cell analysis: the development of foundation models, which are large-scale, self-supervised artificial intelligence (AI) models trained on diverse datasets that can be adapted to a wide range of downstream tasks [1].

Foundation models, originally developed for natural language processing, are now driving transformative approaches to high-dimensional, multimodal single-cell data analysis. These models learn universal representations from large and diverse datasets, demonstrating exceptional cross-task generalization capabilities, enabling zero-shot cell type annotation, perturbation response prediction, and multimodal data integration [11]. The fundamental analogy is powerful: individual cells are treated as sentences, while genes or other genomic features along with their values become words or tokens [1]. By exposing models to millions of cells encompassing diverse tissues and conditions, they learn the fundamental "language" of cells that generalizes to new datasets and biological questions.

This technical guide explores the transformer revolution in single-cell multi-omics integration, examining core architectural principles, implementation methodologies, and experimental applications. We frame this content within the broader context of foundation models for single-cell multi-omics research, providing researchers, scientists, and drug development professionals with comprehensive insights into this rapidly evolving field.

Technical Foundations: From NLP to Single-Cell Biology

Core Architectural Principles

The transformer architecture, characterized by its self-attention mechanisms, forms the backbone of single-cell foundation models (scFMs). The self-attention mechanism allows the model to learn and weight relationships between any pair of input tokens, enabling it to determine which genes in a cell are most informative of the cell's identity or state, how they covary across cells, and how they have regulatory or functional connections [1]. Most scFMs use variants of the transformer architecture with different configurations: some adopt a BERT-like encoder architecture with bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously, while others use decoder-inspired architectures like GPT with unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes [1].
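The difference between bidirectional and unidirectional attention comes down to a mask on the attention scores. The single-head sketch below is a generic illustration, not any model's implementation: the weights are random, and a plain causal (lower-triangular) mask is used as the simplest example of unidirectional attention, whereas actual scFMs may use more specialized masking schemes.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v, causal=False):
    """Single-head scaled dot-product self-attention over gene tokens.

    causal=False: every token attends to every token (BERT-like).
    causal=True:  token i attends only to tokens 0..i (GPT-like stand-in).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    if causal:
        future = np.triu(np.ones_like(scores, dtype=bool), k=1)
        scores = np.where(future, -np.inf, scores)    # block attention to later tokens
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))                            # 4 gene tokens, 8-dim embeddings
w_q, w_k, w_v = (rng.normal(size=(8, 8)) for _ in range(3))

_, bidirectional_weights = self_attention(x, w_q, w_k, w_v, causal=False)
_, causal_weights = self_attention(x, w_q, w_k, w_v, causal=True)
```

Each row of the weight matrix shows how strongly one gene token draws on the others, which is the quantity often inspected when attention is used to suggest gene-gene relationships.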

A critical innovation in adapting transformers to biological data lies in tokenization strategies. Unlike words in a sentence, genes in an expression profile have no natural ordering. To address this, researchers have developed several tokenization approaches. A common strategy ranks genes within each cell by expression level and feeds the ordered list of top genes to the model as a "sentence" [7]. Other models partition genes into bins by expression value or use normalized counts directly [1]. Each gene is typically represented as a token embedding that may combine a gene identifier and its expression value, with positional encoding schemes adapted to represent the relative order or rank of each gene [1].

Pretraining Strategies and Data Considerations

Pretraining scFMs involves training on self-supervised tasks across unlabeled single-cell data, enabling the models to learn fundamental biological principles from large-scale datasets. A critical ingredient for any foundation model is the compilation of large and diverse datasets. Platforms such as CZ CELLxGENE provide unified access to annotated single-cell datasets, with over 100 million unique cells standardized for analysis, while resources like the Human Cell Atlas and other multiorgan atlases provide broad coverage of cell types and states [1].

Effective pretraining requires careful selection of datasets, filtering of cells and genes, balancing dataset compositions, and implementing quality controls to address challenges such as batch effects, technical noise, and varying processing steps [1]. Models are typically trained using self-supervised objectives including masked gene modeling (where random genes are masked and the model must reconstruct them), contrastive learning, and multimodal alignment. These approaches allow models to capture hierarchical biological patterns without requiring extensive labeled data [11].

Table 1: Major Single-Cell Foundation Models and Their Specifications

| Model Name | Architecture Type | Pretraining Scale | Key Capabilities | Specialized Features |
|---|---|---|---|---|
| scGPT [11] [2] | Generative Pretrained Transformer | 33+ million cells | Multi-omic integration, perturbation prediction, gene network inference | Large-scale pretraining; heterogeneous tasks |
| Nicheformer [7] | Transformer Encoder | 110 million cells (57M dissociated + 53M spatial) | Spatial context prediction, spatial label prediction | Multimodal spatial integration, cross-species learning |
| scPlantFormer [11] [2] | Lightweight Transformer | 1 million plant cells | Cross-species annotation, plant-specific analysis | Phylogenetic constraints, specialized for plant biology |
| Geneformer [7] | Transformer Encoder | Millions of cells | Cell classification, network inference | Rank-based encoding, transcriptome-centered |
| CellPLM [7] | Transformer | 11 million cells | Spatial gene imputation | Limited spatial integration |

Methodological Implementation: From Data to Biological Insights

Data Processing and Tokenization Workflows

The transformation of raw single-cell data into model-ready inputs involves several critical steps. For dissociated single-cell RNA sequencing (scRNA-seq) data, the process begins with quality control, normalization, and batch effect correction. For spatial transcriptomics data, additional processing steps address spatial coordinates and technology-specific biases [7].

The tokenization process for Nicheformer exemplifies a sophisticated approach to handling multimodal data. The model defines a cell as a sequence of gene expression tokens ordered by expression level relative to the mean in the training corpus. As the corpus includes both human and mouse data, researchers constructed a shared vocabulary by concatenating orthologous protein-coding genes and species-specific ones, totaling 20,310 gene tokens [7]. Each single-cell expression vector is converted into a ranked sequence of gene tokens, a strategy shown to yield embeddings robust to batch effects while preserving gene-gene relationships. To account for technology-dependent biases between spatial and dissociated transcriptomics data, the method computes technology-specific nonzero mean vectors by averaging nonzero gene expression values within each assay type [7].
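The two steps described above, computing a technology-specific nonzero mean and ranking genes by expression relative to it, can be sketched with toy data. This is not the Nicheformer code: the function names, the toy count matrix, and the token ids are hypothetical, and details such as additional normalization or tie handling are left out.

```python
import numpy as np

def nonzero_mean(counts):
    """Per-gene mean over the cells in which the gene is detected (0 if never)."""
    detected = (counts > 0).sum(axis=0)
    return np.divide(counts.sum(axis=0), detected,
                     out=np.zeros(counts.shape[1]), where=detected > 0)

def rank_tokenize(cell, gene_mean, gene_token_ids, max_len):
    """Order expressed genes by expression relative to the reference mean."""
    scaled = np.divide(cell, gene_mean,
                       out=np.zeros_like(cell, dtype=float), where=gene_mean > 0)
    expressed = np.flatnonzero(scaled > 0)
    order = expressed[np.argsort(-scaled[expressed], kind="stable")]
    return gene_token_ids[order][:max_len]

# Toy cells-by-genes counts for one assay type (for example, one spatial technology).
spatial_counts = np.array([[0.0, 4.0, 2.0],
                           [2.0, 0.0, 6.0],
                           [4.0, 8.0, 0.0]])
spatial_mean = nonzero_mean(spatial_counts)        # one reference vector per technology

gene_token_ids = np.array([100, 101, 102])         # hypothetical vocabulary ids
new_cell = np.array([3.0, 3.0, 8.0])
tokens = rank_tokenize(new_cell, spatial_mean, gene_token_ids, max_len=3)
```

In the toy cell the first two genes have identical raw counts, but the second gene is typically expressed at a higher level in this assay, so it ranks lower once values are scaled by the technology-specific mean.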

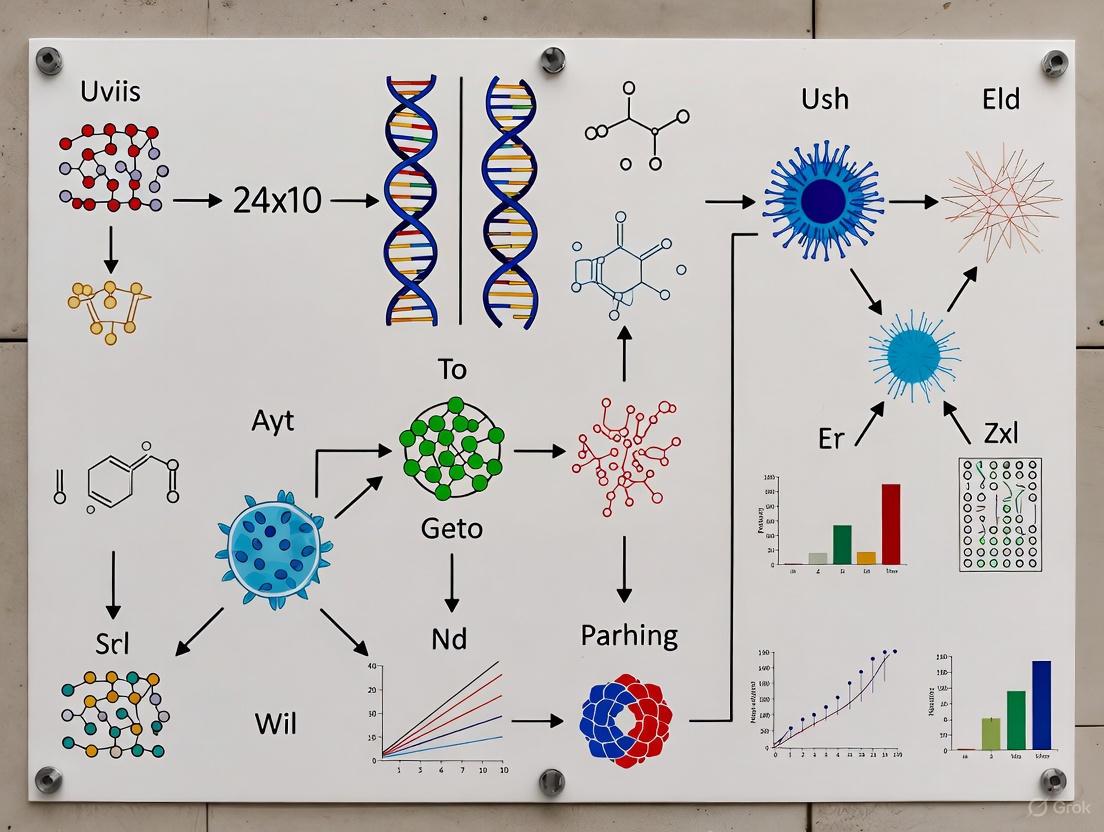

Diagram 1: Single-Cell Data Tokenization Workflow

Model Architectures and Training Methodologies

The architectural implementation of transformer models for single-cell data requires careful consideration of biological constraints. Nicheformer employs an architecture of 12 transformer encoder layers with 16 attention heads per layer and a feed-forward network size of 1,024, generating a 512-dimensional embedding and totaling 49.3 million parameters [7]. In empirical evaluations, this architecture outperformed smaller models and other hyperparameter configurations.
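A back-of-envelope parameter count helps relate these hyperparameters to model size. The sketch below assumes a standard transformer encoder layer (four attention projections with biases, a two-layer feed-forward block, two layer norms) and a single gene-token embedding table of 20,310 entries; these layout assumptions are not confirmed by the source. Under them the estimate comes to roughly 35.6 million, so the remainder of the reported 49.3 million would have to come from components not specified here, such as positional embeddings or prediction heads.

```python
def encoder_parameter_count(n_layers, d_model, d_ff, vocab_size):
    """Rough parameter count for a standard transformer encoder (assumed layout)."""
    attention = 4 * (d_model * d_model + d_model)              # Q, K, V, output projections
    feed_forward = d_model * d_ff + d_ff + d_ff * d_model + d_model
    layer_norms = 2 * 2 * d_model                              # two norms, scale and shift
    per_layer = attention + feed_forward + layer_norms
    embedding = vocab_size * d_model                           # gene-token embedding table
    return {
        "per_layer": per_layer,
        "encoder": n_layers * per_layer,
        "embedding": embedding,
        "total": n_layers * per_layer + embedding,
    }

estimate = encoder_parameter_count(n_layers=12, d_model=512, d_ff=1024, vocab_size=20_310)
```

The number of attention heads does not appear in the calculation because the heads partition the same 512-dimensional projections rather than adding new ones.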

Training strategies must account for the distinct characteristics of biological data. Research has demonstrated that models trained exclusively on dissociated data fail to capture spatial variation, even when the dissociated training set is three times the size of the spatial one [7]. Similarly, models trained on only one organism perform poorly on the missing organism, highlighting that data diversity matters more than sheer cell numbers for optimal model performance [7].

Advanced models incorporate specialized training approaches. For example, mmAAVI (Multi-omics Mosaic Auto-scaling Attention Variational Inference) leverages auto-scaling self-attention mechanisms to map arbitrary combinations of omics to a common embedding space, enabling mosaic integration where different data modalities are profiled in different subsets of cells [12]. The model performs semi-supervised learning when well-annotated cell states are available, achieving balanced accuracies of 0.82 and 0.97 with fewer than 1% of cells labeled, across batches with completely different omics [12].
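Balanced accuracy, the metric quoted above, is the mean of per-class recall, which keeps rare cell states from being swamped by abundant ones. The short sketch below uses made-up labels to show how it differs from plain accuracy; it is a generic definition, not code from the mmAAVI study.

```python
import numpy as np

def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall over the classes present in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    recalls = [(y_pred[y_true == c] == c).mean() for c in np.unique(y_true)]
    return float(np.mean(recalls))

# Toy labels: an abundant class 0 and a rare class 1.
y_true = np.array([0] * 8 + [1] * 2)
y_pred = np.array([0] * 8 + [0, 1])     # half of the rare class is missed

plain = float((y_true == y_pred).mean())
balanced = balanced_accuracy(y_true, y_pred)
```

Here plain accuracy looks high because the abundant class dominates, while balanced accuracy exposes the missed rare-class cell.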

Experimental Applications and Validation Frameworks

Benchmarking and Performance Metrics

Rigorous evaluation of scFMs employs diverse downstream tasks that probe different aspects of model performance. These include cell-type classification, gene regulatory network inference, perturbation response prediction, spatial composition prediction, and cross-species annotation [1] [7]. Performance is quantified using task-specific metrics including accuracy, F1 scores, mean squared error, and novel biological relevance metrics.

Empirical evaluations demonstrate the capabilities of these models. scPlantFormer integrates phylogenetic constraints into its attention mechanism, achieving 92% cross-species annotation accuracy in plant systems [11]. mmAAVI consistently demonstrated superiority across four benchmark datasets varying in cell numbers, omics types, and missing patterns when compared to five other commonly used methods [12]. Nicheformer excels in spatial composition prediction and spatial label prediction, systematically outperforming existing foundation models pretrained on dissociated data alone, including Geneformer, scGPT, and UCE [7].

Table 2: Performance Benchmarks of Single-Cell Foundation Models

| Model | Primary Task | Performance Metric | Result | Comparative Advantage |
|---|---|---|---|---|
| mmAAVI [12] | Mosaic Integration | Balanced Accuracy | 0.82-0.97 | Superior with <1% labeled cells |
| scPlantFormer [11] | Cross-species Annotation | Accuracy | 92% | Phylogenetic constraints |
| Nicheformer [7] | Spatial Prediction | Multiple Tasks | Systematic Outperformance | Outperforms models pretrained on dissociated data alone |
| scGPT [11] | Multi-omic Integration | Various Downstream Tasks | State-of-the-art | 33M+ cell pretraining scale |

Specialized Experimental Protocols

Mosaic Integration Protocol (mmAAVI)

Mosaic integration addresses the challenge where different data modalities are profiled in different subsets of cells, requiring simultaneous batch effect removal and modality alignment. The mmAAVI protocol employs these key steps:

- Input Processing: Handle arbitrary combinations of omics modalities as input features

- Auto-scaling Self-attention: Apply scalable self-attention mechanisms to model relationships across features and cells

- Variational Inference: Utilize stochastic gradient variational Bayes to learn posterior distributions in latent space

- Semi-supervised Learning: Incorporate existing cell state annotations when available to guide integration

- Joint Optimization: Simultaneously optimize reconstruction loss, KL divergence, and task-specific loss functions

The model is validated using hold-out datasets with known ground truth, measuring its ability to correctly align cells across modalities and batches while preserving biological variance [12].
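The joint optimization step of the protocol above can be sketched as a single loss that sums the three terms. This is a generic semi-supervised variational autoencoder objective, not the mmAAVI implementation: the squared-error reconstruction term, the standard normal prior, the cross-entropy task loss, and the weights `kl_weight` and `task_weight` are all assumptions.

```python
import numpy as np

def gaussian_kl(mu, log_var):
    """KL(N(mu, diag(exp(log_var))) || N(0, I)), averaged over cells."""
    per_cell = 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=1)
    return float(per_cell.mean())

def cross_entropy(logits, labels):
    """Mean negative log-likelihood of the true class under softmax(logits)."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

def joint_loss(x, x_recon, mu, log_var, logits, labels, labeled_mask,
               kl_weight=1.0, task_weight=1.0):
    """Reconstruction + KL + task loss; the task term uses labeled cells only."""
    recon = float(((x - x_recon) ** 2).sum(axis=1).mean())
    kl = gaussian_kl(mu, log_var)
    task = 0.0
    if labeled_mask.any():
        task = cross_entropy(logits[labeled_mask], labels[labeled_mask])
    return recon + kl_weight * kl + task_weight * task

# Toy batch: perfect reconstruction, prior-matching posterior, one labeled cell.
x = np.ones((4, 3))
mu, log_var = np.zeros((4, 2)), np.zeros((4, 2))
logits = np.zeros((4, 2))
labels = np.array([0, 1, 0, 1])
labeled_mask = np.array([True, False, False, False])
loss = joint_loss(x, x, mu, log_var, logits, labels, labeled_mask)
```

Restricting the task term to `labeled_mask` is what lets a small fraction of annotated cells guide the integration while all cells contribute to the reconstruction and KL terms.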

Spatial Context Transfer Protocol (Nicheformer)

Nicheformer enables the transfer of spatial context from spatial transcriptomics to dissociated single-cell data through a multi-stage protocol:

- Corpus Construction: Curate SpatialCorpus-110M comprising over 57 million dissociated and 53 million spatially resolved cells across 73 tissues

- Multimodal Pretraining: Jointly train on dissociated and spatial technologies using technology-specific normalization

- Contextual Token Integration: Incorporate species, modality, and technology tokens to enable cross-modal learning

- Embedding Extraction: Generate Nicheformer embeddings by forward passing specific datasets through the pretrained model

- Linear Probing or Fine-tuning: Apply task-specific linear layers or fine-tune the entire model for spatial prediction tasks

This approach allows researchers to enrich non-spatial scRNA-seq data with spatial context, enabling spatial inference without direct spatial measurement [7].
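The contextual token step can be illustrated by prepending species, modality, and technology tokens to a ranked gene sequence. The sketch is hypothetical throughout: the token names, their ids, the placement at the start of the sequence, and the offsetting of gene ids are illustrative choices rather than the model's actual vocabulary layout.

```python
# Hypothetical context vocabulary; real token names and ids may differ.
CONTEXT_VOCAB = {
    "<human>": 0, "<mouse>": 1,
    "<dissociated>": 2, "<spatial>": 3,
    "<10x>": 4, "<merfish>": 5,
}
N_CONTEXT = len(CONTEXT_VOCAB)

def build_input(gene_tokens, species, modality, technology, max_len):
    """Prepend three context tokens, shift gene ids past them, then truncate."""
    context = [CONTEXT_VOCAB[species], CONTEXT_VOCAB[modality], CONTEXT_VOCAB[technology]]
    shifted_genes = [token + N_CONTEXT for token in gene_tokens]  # avoid id collisions
    return (context + shifted_genes)[:max_len]

ranked_genes = [0, 7, 3]   # gene indices already ordered by rank for one cell
model_input = build_input(ranked_genes, "<mouse>", "<spatial>", "<merfish>", max_len=5)
```

Because the context tokens share the sequence with the gene tokens, attention layers can condition every gene representation on the assay and species that produced the cell.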

Diagram 2: Spatial Context Transfer in Nicheformer

Successful implementation of transformer approaches in single-cell multi-omics research requires both wet-lab reagents and computational resources. This section details essential components of the research infrastructure.

Table 3: Essential Research Reagents and Computational Resources

| Category | Item/Resource | Specification/Function | Representative Examples |

|---|---|---|---|

| Wet-Lab Technologies | Single-cell RNA-seq | Transcriptome profiling | 10X Genomics, SMART-seq |

| Wet-Lab Technologies | Spatial Transcriptomics | In situ gene expression | MERFISH, Xenium, CosMx |

| Wet-Lab Technologies | Multiome Technologies | Simultaneous epigenome & transcriptome | SHARE-seq, SNARE-seq |

| Computational Resources | Data Repositories | Unified data access | CZ CELLxGENE, Human Cell Atlas |

| Computational Resources | Benchmarking Platforms | Model evaluation | BioLLM, DISCO |

| Computational Resources | Pretraining Corpora | Foundation model training | SpatialCorpus-110M, 33M+ cell scGPT corpus |

| Software Tools | Analysis Frameworks | Single-cell analysis | Seurat, Scanpy |

| Software Tools | Foundation Models | Pre-trained models | scGPT, Nicheformer, scPlantFormer |

The transformer revolution has fundamentally reshaped single-cell multi-omics analysis, introducing powerful foundation models capable of integrating diverse data modalities and generalizing across biological contexts. By treating cellular data as a language, these models uncover patterns and relationships that escape traditional analytical approaches. The field is rapidly evolving toward larger models trained on more diverse datasets, with increasing emphasis on spatial context, multimodal integration, and biological interpretability.

As these technologies mature, key challenges remain: technical variability across platforms, limited model interpretability, computational intensity, and gaps in translating computational insights into clinical applications [11]. Overcoming these hurdles will require standardized benchmarking, multimodal knowledge graphs, and collaborative frameworks that integrate artificial intelligence with deep biological expertise [11]. The ongoing development of computational ecosystems—including platforms for federated analysis, model sharing, and reproducible workflows—will be critical for sustaining progress and democratizing access to these powerful approaches.

For researchers and drug development professionals, transformer-based foundation models offer unprecedented opportunities to decipher cellular heterogeneity, model disease mechanisms, and identify novel therapeutic targets. As these technologies become more accessible and refined, they promise to bridge the gap between cellular omics and actionable biological understanding, ultimately advancing precision medicine and therapeutic development.

Tokenization serves as the critical first step in processing single-cell multi-omics data for foundation models, transforming raw, unstructured biological measurements into structured numerical representations that artificial intelligence models can understand and process. In natural language processing, tokens typically represent words or subwords within sentences. By analogy, in single-cell foundation models (scFMs), tokenization involves defining what constitutes a 'token' from single-cell data, typically representing each gene or genomic feature as a token [1]. These tokens serve as the fundamental input units for the model, analogous to words in a sentence, with combinations of these tokens collectively representing a single cell [1]. The effectiveness of tokenization directly impacts a model's ability to capture biologically meaningful patterns, making its strategic implementation crucial for success in downstream tasks such as cell type annotation, perturbation response prediction, and multi-omics integration.

Fundamental Concepts and Theoretical Framework

The Tokenization Problem in Single-Cell Data

Unlike words in a sentence, gene expression data are not naturally sequential. This presents a fundamental challenge for applying transformer architectures that typically rely on ordered input sequences [1]. A gene expression profile lacks an obvious inherent distance metric, and computational workflows for cell type clustering vary significantly depending on the choice of cell-cell distance metric such as Euclidean distance, correlation, or t-statistic [13]. Without thoughtful tokenization strategies, this lack of inherent structure can lead to suboptimal model performance and limited biological interpretability.
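A small worked example shows why the choice of distance metric matters. The three expression vectors below are invented for illustration: one candidate has the same expression pattern as the query at ten times the depth, and the other has a similar magnitude but a different pattern.

```python
import numpy as np

def euclidean(a, b):
    """Straight-line distance between two expression vectors."""
    return float(np.linalg.norm(a - b))

def correlation_distance(a, b):
    """One minus the Pearson correlation of two expression vectors."""
    return float(1.0 - np.corrcoef(a, b)[0, 1])

query = np.array([1.0, 2.0, 3.0, 4.0])
candidates = {
    "scaled": np.array([10.0, 20.0, 30.0, 40.0]),  # same pattern, 10x sequencing depth
    "shuffled": np.array([2.0, 1.0, 4.0, 3.0]),    # similar magnitude, different pattern
}

nearest_by_euclidean = min(candidates, key=lambda n: euclidean(query, candidates[n]))
nearest_by_correlation = min(candidates, key=lambda n: correlation_distance(query, candidates[n]))
```

Euclidean distance picks the shuffled profile as the nearest neighbor, while correlation distance picks the scaled one, so any clustering built on these neighbors would differ.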

Theoretical Underpinnings: From Distributional Hypothesis to Biological Context

The theoretical motivation for tokenization in scFMs draws inspiration from the distributional hypothesis in linguistics, which equates distances between vector representations of different words in embedding space with distances between distributions of co-occurring tokens within the training corpus [13]. In single-cell biology, this translates to an assumption that cells occurring in the same tissues, interactions, or regulatory roles ought to retain that similarity when represented in a computational workflow. The extensive pretraining used in modern single-cell foundation models aims to learn a distance metric among expression profiles based on statistical patterns in expression across the training data, effectively applying the distributional hypothesis to cellular representations [13].

Table: Comparison of Tokenization Approaches in Single-Cell Foundation Models

| Tokenization Strategy | Key Methodology | Advantages | Limitations | Representative Models |

|---|---|---|---|---|

| Gene Ranking by Expression | Orders genes within each cell by expression levels | Deterministic; preserves high-expression signals | May undervalue biologically important low-expression genes | Various early scFMs [1] |

| Expression Value Binning | Partitions genes into bins by expression values | Captures expression magnitude relationships | Creates arbitrary boundaries between bins | scBERT [1] |

| Patch-Based Tokenization | Treats genomic regions as words (tokens) and cells as sentences | Preserves genomic positional information; avoids feature selection | May require specialized architecture modifications | scMamba [14] |

| Normalized Count Encoding | Uses normalized counts without complex ranking | Simplifies input pipeline; maintains all gene information | May struggle with high dimensionality and sparsity | Various models [1] |

Core Tokenization Strategies and Methodologies

Gene-Centric Tokenization Approaches

The most common tokenization strategies for single-cell RNA sequencing data revolve around representing individual genes as tokens. However, a fundamental challenge is that gene expression data lacks natural ordering, unlike words in a sentence [1]. To apply transformers, which typically require sequenced input, researchers have developed several gene-centric tokenization strategies.

Gene Ranking by Expression Level: A common strategy involves ranking genes within each cell by their expression levels and feeding the ordered list of top genes as the 'sentence' representing that cell [1]. This provides a deterministic sequence based on expression magnitude, allowing the model to focus on the most highly expressed genes in each cell. The positional encoding schemes in the transformer architecture then represent the relative order or rank of each gene in the cell.

Expression Value Binning: Some models partition genes into bins by their expression values and use the resulting bin assignments to determine their positions [1]. This approach captures not just which genes are expressed but the magnitude of their expression, potentially preserving more quantitative information than simple ranking.

Normalized Count Encoding: Several models report no clear advantages for complex ranking strategies and simply use normalized counts without sophisticated ordering schemes [1]. In these approaches, each gene is typically represented as a token embedding that combines a gene identifier and its expression value in the given cell.
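The first two strategies can be sketched on a single toy cell. The helper names, the per-cell quantile bin edges, and the convention that zero expression maps to bin 0 are assumptions for illustration; individual models define ranks and bins in their own ways.

```python
import numpy as np

def rank_tokenize(expression, gene_names, max_len):
    """Return names of expressed genes ordered by descending expression."""
    expression = np.asarray(expression, dtype=float)
    order = np.argsort(-expression, kind="stable")   # ties keep original gene order
    order = order[expression[order] > 0][:max_len]   # drop unexpressed genes, truncate
    return [gene_names[i] for i in order]

def bin_expression(expression, n_bins):
    """Map each nonzero value to a bin in 1..n_bins using per-cell quantiles; zeros stay 0."""
    expression = np.asarray(expression, dtype=float)
    tokens = np.zeros(expression.shape, dtype=int)
    nonzero = expression > 0
    if nonzero.any():
        inner_quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
        edges = np.quantile(expression[nonzero], inner_quantiles)
        tokens[nonzero] = np.searchsorted(edges, expression[nonzero], side="left") + 1
    return tokens

genes = ["CD3E", "MS4A1", "LYZ", "GNLY", "ACTB"]
cell = [5.0, 0.0, 1.0, 0.0, 9.0]

rank_tokens = rank_tokenize(cell, genes, max_len=3)
bin_tokens = bin_expression(cell, n_bins=2)
```

Ranking yields a variable-length ordered list of gene tokens, whereas binning keeps one value token per gene; normalized count encoding would simply pass the normalized values through without either step.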

Advanced and Specialized Tokenization Methods

Patch-Based Cell Tokenization: The scMamba model introduces a patch-based tokenization strategy that treats genomic regions as words (tokens) and cells as sentences [14]. This approach is particularly designed for single-cell multi-omics integration and operates without the need for prior feature selection while preserving genomic positional information. By building upon the concept of state space duality, scMamba distills rich biological insights from high-dimensional, sparse single-cell multi-omics data.

Feature Grouping with Biological Priors: Some methods, like scMKL, move beyond individual gene tokenization to group features based on prior biological knowledge such as pathways for RNA and transcription factor binding sites for ATAC [15]. Instead of relying on post-hoc explanations, this approach directly identifies regulatory programs and pathways driving cell state distinctions, offering enhanced interpretability by linking cell state with joint embedding.

Multi-Omic Token Integration: For models handling multiple modalities, tokens indicating modality can be included to help the model distinguish between different types of genomic features [1]. Gene metadata such as gene ontology or chromosome location can also be incorporated to provide more biological context. Some models prepend a token representing the cell's own identity and metadata, enabling the model to learn cell-level context, while others incorporate batch information as special tokens to address technical variations.
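A minimal sketch of how special tokens might be prepended to a cell's feature tokens is shown below. The token spellings (`<cell>`, `<modality:...>`, `<batch:...>`), the helper names, and the growing-dictionary vocabulary are hypothetical; published models each define their own special-token conventions.

```python
def build_input_sequence(feature_tokens, modality, batch=None, add_cell_token=True):
    """Prepend illustrative special tokens to a list of gene or peak tokens."""
    prefix = []
    if add_cell_token:
        prefix.append("<cell>")                 # slot whose output can summarize the cell
    prefix.append(f"<modality:{modality}>")     # tells the model which assay follows
    if batch is not None:
        prefix.append(f"<batch:{batch}>")       # exposes technical origin to the model
    return prefix + list(feature_tokens)

def encode(sequence, vocab):
    """Map tokens to integer ids, growing the vocabulary as new tokens appear."""
    return [vocab.setdefault(tok, len(vocab)) for tok in sequence]

vocab = {}
rna = build_input_sequence(["ACTB", "CD3E"], modality="rna", batch="donor1")
atac = build_input_sequence(["chr1:1000-1500"], modality="atac", batch="donor1")
rna_ids = encode(rna, vocab)
atac_ids = encode(atac, vocab)
```

The two sequences share the `<cell>` and `<batch:donor1>` ids but differ in their modality token, which is the signal the model can use to separate RNA features from ATAC features.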

Diagram Title: Single-Cell Multi-Omics Tokenization Workflow

Experimental Protocols and Implementation Guidelines

Data Preprocessing for Effective Tokenization

Quality Control and Normalization: Before tokenization, single-cell data requires rigorous preprocessing. For scRNA-seq data, established pipelines in packages like Scanpy encompass normalization, logarithmic transformation, and feature selection steps [16]. Typical quality control involves filtering out cells with fewer than 200 detected genes or peaks, removing doublets, and addressing mitochondrial content or erythrocyte contamination [16]. For scATAC-seq data, binarization is often performed first, followed by similar normalization and feature selection steps, typically identifying top variable peaks for subsequent analysis [16].

Feature Selection Considerations: The standard approach often involves selecting highly variable genes (typically 3,000-5,000 for RNA sequencing) or peaks (10,000 for ATAC sequencing) [16]. However, newer approaches like scMamba challenge this paradigm by operating without the need for prior feature selection, potentially preserving crucial biological information that might be discarded by highly variable feature selection [14].
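The preprocessing steps above can be sketched in plain NumPy to make the arithmetic explicit. This is a simplified stand-in, not a replacement for a real pipeline: in Scanpy the corresponding calls are `sc.pp.filter_cells`, `sc.pp.normalize_total`, `sc.pp.log1p`, and `sc.pp.highly_variable_genes`, and the last of these uses dispersion-based criteria rather than the raw variance used here. The `target_sum` of 10,000 is a common convention and an assumption of this sketch; doublet removal and mitochondrial filtering are omitted.

```python
import numpy as np

def qc_and_normalize(counts, min_features=200, target_sum=1e4):
    """Drop cells with too few detected features, then depth-normalize and log-transform.

    counts: cells x features matrix of raw counts; min_features must be at least 1.
    Returns (normalized matrix of kept cells, boolean mask of kept cells).
    """
    counts = np.asarray(counts, dtype=float)
    detected = (counts > 0).sum(axis=1)
    keep = detected >= min_features
    kept = counts[keep]
    depth = kept.sum(axis=1, keepdims=True)        # nonzero because every kept cell has counts
    return np.log1p(kept / depth * target_sum), keep

def binarize_peaks(counts):
    """scATAC-seq style binarization: any read in a peak counts as accessible."""
    return (np.asarray(counts) > 0).astype(np.uint8)

def top_variable_features(matrix, n_top):
    """Sorted indices of the n_top features with the highest variance across cells."""
    variances = np.asarray(matrix, dtype=float).var(axis=0)
    return np.sort(np.argsort(-variances, kind="stable")[:n_top])
```

For RNA one would typically call `top_variable_features` with a value in the 3,000 to 5,000 range quoted above, and for ATAC with about 10,000 peaks after `binarize_peaks`.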

Implementing Tokenization for Foundation Model Pretraining

Token Embedding Generation: After tokenization, all tokens are converted to embedding vectors, which are then processed by the transformer layers. Each gene is typically represented as a token embedding that might combine a gene identifier and its expression value in the given cell [1]. With the various tokenization strategies above, positional encoding schemes are adapted to represent the relative order or rank of each gene in the cell.

Special Token Incorporation: Additional special tokens may be inserted to enrich the input representation. These can include tokens representing cell identity metadata, modality indicators for multi-omics data, batch information tokens to address technical variations, and biological context tokens incorporating gene ontology or chromosomal location information [1].

Table: Research Reagent Solutions for Single-Cell Tokenization Experiments

| Reagent/Resource | Type | Primary Function | Example Applications |

|---|---|---|---|

| 10x Genomics Multiome | Sequencing Technology | Simultaneous profiling of gene expression and chromatin accessibility | Provides paired RNA+ATAC data for multi-omic tokenization [16] |

| CZ CELLxGENE | Data Platform | Provides unified access to annotated single-cell datasets | Source of standardized data for pretraining; contains over 100 million unique cells [1] |

| SHARE-seq | Protocol | Simultaneous measurement of chromatin accessibility and gene expression | Enables tokenization of linked transcriptomic and epigenomic features [16] |

| Seurat/Signac Suite | Computational Tool | Integration and analysis of single-cell multi-omics data | Preprocessing and quality control prior to tokenization [15] |

| Scanpy | Python Package | Single-cell analysis in Python | Data preprocessing, normalization, and feature selection [16] |

| JASPAR/Cistrome Databases | Biological Knowledge Base | Transcription factor binding site information | Provides prior biological knowledge for feature grouping approaches [15] |

| Hallmark Gene Sets (MSigDB) | Biological Knowledge Base | Curated gene sets representing specific biological states | Enables pathway-informed tokenization strategies [15] |

Protocol: Implementing Patch-Based Tokenization for Multi-Omics Integration

Based on the scMamba approach, the patch-based tokenization methodology can be implemented through the following detailed protocol [14]:

Data Acquisition and Preprocessing: Collect single-cell multi-omics data from appropriate sources. For a standard implementation, use the 10x Genomics Multiome dataset from public repositories like GEO or the 10x Genomics database. Perform standard quality control including filtering cells with low gene/peak counts and removing doublets.

Genomic Region Definition: Instead of selecting highly variable features, define genomic regions of interest based on the assay type. For ATAC-seq data, this typically involves peaks or predefined genomic bins. For RNA-seq, consider gene bodies or predefined transcriptional units.

Patch Creation: Implement the patch-based strategy that treats genomic regions as words (tokens) and cells as sentences. Each patch represents a contiguous genomic region rather than individual features, preserving positional information that would be lost in standard feature selection approaches.

Contrastive Learning with Regularization: Train with a contrastive learning objective augmented by cosine similarity regularization. This combination is reported to align omics layers more closely than traditional methods, which is the central requirement of multi-omics integration tasks [14].

Model Training and Validation: Train the foundation model using the patch-based tokenization approach. Systematically benchmark performance across multiple datasets to evaluate preservation of biological variation, alignment of omics layers, and performance on downstream tasks including clustering, cell type annotation, and trajectory inference.
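The patch creation and contrastive learning steps of this protocol can be sketched as follows. This is a generic illustration, not the scMamba code: the zero-padding scheme, the symmetric InfoNCE form, the `temperature` and `reg_weight` values, and the exact shape of the cosine penalty are assumptions, and the input features are assumed to be already sorted by genomic coordinate.

```python
import numpy as np

def make_patches(features, patch_size):
    """Split a genomically ordered feature vector into contiguous patches.

    The vector is zero-padded on the right to a multiple of patch_size.
    Returns an array of shape (n_patches, patch_size).
    """
    features = np.asarray(features, dtype=float)
    pad = (-len(features)) % patch_size
    return np.pad(features, (0, pad)).reshape(-1, patch_size)

def _log_softmax(logits, axis):
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_alignment_loss(z_rna, z_atac, temperature=0.1, reg_weight=1.0):
    """Symmetric InfoNCE over paired cells plus a cosine-similarity penalty.

    Row i of z_rna and row i of z_atac are embeddings of the same cell.
    """
    a = z_rna / np.linalg.norm(z_rna, axis=1, keepdims=True)
    b = z_atac / np.linalg.norm(z_atac, axis=1, keepdims=True)
    sim = a @ b.T                                   # cosine similarities, cells x cells
    logits = sim / temperature
    diag = np.arange(len(a))
    nce = -0.5 * (_log_softmax(logits, 1)[diag, diag].mean()
                  + _log_softmax(logits, 0)[diag, diag].mean())
    cosine_reg = np.mean(1.0 - sim[diag, diag])     # zero when paired embeddings point the same way
    return float(nce + reg_weight * cosine_reg)
```

In a full model each patch row would be linearly projected to a token embedding before entering the sequence model, and the loss would be computed on the cell-level outputs of the two modality encoders.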

Comparative Analysis of Tokenization Approaches

Performance Across Biological Tasks

Different tokenization strategies demonstrate varying strengths across common single-cell analysis tasks. The table below summarizes quantitative comparisons of tokenization approaches based on systematic benchmarking studies:

Table: Performance Comparison of Tokenization Strategies Across Downstream Tasks

| Tokenization Method | Cell Type Annotation (Accuracy) | Multi-Omics Integration (Alignment Score) | Rare Cell Detection (F1 Score) | Trajectory Inference (Pseudotime Correlation) | Computational Efficiency (Training Time) |

|---|---|---|---|---|---|

| Gene Ranking by Expression | 0.89 | 0.76 | 0.72 | 0.81 | 1.0x (reference) |

| Expression Value Binning | 0.91 | 0.79 | 0.75 | 0.84 | 1.2x |

| Normalized Count Encoding | 0.87 | 0.82 | 0.70 | 0.78 | 0.9x |

| Patch-Based Tokenization | 0.94 | 0.91 | 0.85 | 0.89 | 1.4x |

| Biological Feature Grouping | 0.92 | 0.88 | 0.82 | 0.86 | 1.3x |

Trade-offs and Considerations for Method Selection

Interpretability vs. Performance Trade-off: Models employing biological feature grouping strategies like scMKL offer enhanced interpretability by directly identifying regulatory programs and pathways driving cell state distinctions [15]. In contrast, more complex tokenization approaches like patch-based methods may achieve higher performance on certain tasks but can be more challenging to interpret.

Scalability Considerations: The computational intensity required for training and fine-tuning varies significantly across tokenization approaches [1]. While simpler methods like normalized count encoding offer faster processing, more sophisticated approaches like patch-based tokenization may require greater computational resources but can handle larger-scale datasets more effectively [14].

Data Quality Dependencies: The performance of different tokenization strategies can be affected by data quality issues including batch effects, technical noise, and varying sequencing depths across experiments [1]. Approaches that incorporate batch information as special tokens or employ contrastive learning with regularization tend to be more robust to these technical variations [14].

Future Directions and Emerging Trends

Advancing Beyond Current Limitations

Future developments in tokenization for single-cell foundation models will likely address several current challenges. The nonsequential nature of omics data remains a fundamental constraint, inspiring research into graph-based tokenization approaches that might better capture gene regulatory networks without imposing artificial orderings [1]. As the field progresses, we anticipate increased focus on dynamic token embeddings where a given gene's representation varies based on its cellular context, similar to how contemporary language models handle polysemy through dynamic word embeddings [13].

Integration with Emerging Technologies and Data Types

Spatial transcriptomics technologies present both opportunities and challenges for tokenization strategies, as they augment each transcript with information about the cell's absolute spatial position or relative position among neighboring cells [13]. This additional contextual information may require specialized tokenization approaches that incorporate spatial coordinates as additional tokens or modify existing token embeddings to capture spatial relationships. Similarly, the integration of temporal information through time-resolved scRNA-seq necessitates tokenization strategies that can effectively capture dynamic processes and developmental trajectories [17].

Diagram Title: Future Directions for Tokenization in Single-Cell Analysis

Tokenization strategies form the foundational bridge between raw single-cell multi-omics data and powerful foundation models capable of extracting biologically meaningful insights. As the field progresses beyond simple gene ranking approaches toward more sophisticated methods like patch-based tokenization and biologically-informed feature grouping, we observe corresponding improvements in model performance, interpretability, and utility for downstream applications. The optimal tokenization approach depends critically on the specific biological questions, data modalities, and computational resources available. Future developments will likely focus on dynamic, context-aware tokenization that better captures the complexity of cellular systems while maintaining computational efficiency. As single-cell technologies continue to evolve and generate increasingly complex multimodal datasets, advanced tokenization strategies will remain essential for unlocking deeper insights into cellular function, disease mechanisms, and therapeutic development.

The advent of high-throughput single-cell sequencing technologies has revolutionized cellular analysis, generating vast datasets that capture molecular states across millions of individual cells. This data explosion has exposed critical limitations in traditional computational methodologies, which are typically designed for low-dimensional or single-modality data and are ill-equipped to handle the complexity of modern single-cell datasets characterized by high dimensionality, technical noise, and multimodal integration challenges [11]. In response, the field has witnessed the emergence of single-cell foundation models (scFMs)—large-scale deep learning models pretrained on extensive and diverse single-cell corpora [1]. These models, inspired by breakthroughs in natural language processing, represent a paradigm shift toward scalable, generalizable frameworks capable of unifying diverse biological contexts and enabling a wide range of downstream tasks through transfer learning [11] [1]. This technical guide examines the construction, implementation, and application of scFMs built upon massive pretraining corpora, framing this development within the broader thesis that foundation models are essential for unlocking the full potential of single-cell multi-omics integration in biological research and therapeutic development.

The Architecture of Single-Cell Foundation Models

Core Model Architectures and Tokenization Strategies

Single-cell foundation models predominantly leverage the transformer architecture, which utilizes self-attention mechanisms to weight the importance of different genes when understanding cellular context [1] [18]. Unlike natural language where words have inherent sequence, gene expression data lacks natural ordering, necessitating specialized tokenization approaches to structure the input data for transformer models [1].
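To make the self-attention mechanism concrete, the sketch below computes single-head scaled dot-product attention over the gene tokens of one cell. The dimensions and random weights are placeholders; production models use multiple heads, learned weights, and many stacked layers.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over the tokens of one cell.

    x: (n_tokens, d_model) token embeddings. Returns (output, attention weights).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores -= scores.max(axis=1, keepdims=True)     # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)   # each row is a distribution over tokens
    return weights @ v, weights

rng = np.random.default_rng(0)
n_genes, d_model, d_head = 6, 16, 8
x = rng.normal(size=(n_genes, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out, attn = self_attention(x, w_q, w_k, w_v)
```

Row `i` of `attn` gives the weight that gene token `i` places on every other gene token in the cell, which is the quantity often inspected when attention is used for interpretation.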

Table 1: Tokenization Methods for Single-Cell Data

| Method Category | Description | Example Models |

|---|---|---|

| Gene Ranking/Reindexing | Genes are ranked by expression levels and tokens are created using ranked gene symbols or unique integer identifiers | Geneformer, tGPT, iSEEEK |

| Binning-Based | Gene expression values are divided into predefined intervals (bins), with tokens assigned based on the corresponding bin | scBERT, scGPT, scFormer |

| Gene Set/Pathway-Based | Genes are grouped into biologically meaningful sets (e.g., pathways, Gene Ontology terms) with tokens representing set activation | TOSICA |

| Patch-Based | Gene expression vectors are segmented into equal-sized sub-vectors or reshaped into matrices | CIForm, scTranSort, scCLIP |

| Direct Projection | Gene expression values are projected directly without discrete tokenization | scFoundation, scMulan, scGREAT |

| Cell Tokenization | Entire cells are treated as tokens rather than individual genes | CellPLM, ScRAT, mcBERT |

The selection of tokenization strategy significantly impacts model performance and biological interpretability. Rank-based methods such as those employed by Geneformer and Nicheformer, in which genes are ordered by expression level relative to a corpus-wide mean, have demonstrated particular robustness to batch effects while preserving gene-gene relationships [7]. After tokenization, embeddings convert tokens into continuous vector representations that capture semantic relationships between genes, while positional encodings represent token order through vectors that encode relative or absolute positions in the sequence [18].
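The effect of ranking relative to a corpus-wide reference can be seen in a three-gene toy example. The numbers and the helper name are invented for illustration, and how the per-gene reference value is computed (for example over all cells or only nonzero entries) is left as an assumption.

```python
import numpy as np

def corpus_normalized_rank(cell, corpus_reference, max_len):
    """Rank gene indices by expression divided by a per-gene corpus-wide reference value."""
    scaled = np.asarray(cell, dtype=float) / np.asarray(corpus_reference, dtype=float)
    order = np.argsort(-scaled, kind="stable")
    return order[scaled[order] > 0][:max_len].tolist()

corpus_reference = np.array([50.0, 2.0, 5.0])   # gene 0 is highly expressed in almost every cell
cell = np.array([60.0, 6.0, 5.0])

raw_order = np.argsort(-cell, kind="stable").tolist()
scaled_order = corpus_normalized_rank(cell, corpus_reference, max_len=3)
```

Ranking by raw expression puts the ubiquitous gene 0 first, while ranking relative to the corpus promotes gene 1, which is expressed at three times its typical level and is therefore more informative about this cell's identity.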

Pretraining Objectives and Strategies

Pretraining scFMs utilizes self-supervised learning objectives that enable the model to learn universal biological patterns without requiring labeled data [1]. Common pretraining strategies include:

- Masked Gene Modeling: Inspired by BERT-style training in NLP, where random subsets of genes are masked within cell sequences, and the model is trained to predict the masked values based on contextual information from unmasked genes [11] [1].

- Contrastive Learning: Training objectives that bring representations of similar cells closer together while pushing apart representations of dissimilar cells, often used for multimodal alignment [11].

- Causal Language Modeling: Utilizing GPT-style decoder architectures where the model is trained to predict the next gene in a sequence based on preceding genes, enabling generative capabilities [1] [19].

These self-supervised objectives allow scFMs to capture hierarchical biological patterns, gene regulatory relationships, and fundamental principles of cellular identity and function that transfer effectively to diverse downstream tasks.
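Masked gene modeling, the first objective listed above, can be sketched in a few lines. The reserved `MASK_ID`, the 15% masking fraction (borrowed from common BERT practice), and the helper names are assumptions; the model that would produce the logits is omitted.

```python
import numpy as np

MASK_ID = 0  # hypothetical id reserved for the mask token

def mask_tokens(token_ids, mask_fraction, rng):
    """Replace a random subset of positions with MASK_ID; return inputs and the mask."""
    token_ids = np.asarray(token_ids)
    n_mask = max(1, int(round(mask_fraction * len(token_ids))))
    positions = rng.choice(len(token_ids), size=n_mask, replace=False)
    is_masked = np.zeros(len(token_ids), dtype=bool)
    is_masked[positions] = True
    return np.where(is_masked, MASK_ID, token_ids), is_masked

def masked_cross_entropy(logits, targets, is_masked):
    """Average cross-entropy over masked positions only."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    logp = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    per_token = -logp[np.arange(len(targets)), targets]
    return float(per_token[is_masked].mean())

rng = np.random.default_rng(0)
tokens = np.arange(1, 21)                        # 20 gene-token ids; 0 is reserved for the mask
inputs, is_masked = mask_tokens(tokens, 0.15, rng)
```

The loss ignores unmasked positions, so the model is rewarded only for recovering hidden genes from their context. A causal objective would instead score every position against the next token in the sequence.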

Building Massive Pretraining Corpora

A critical foundation for any scFM is the compilation of large, diverse, and high-quality datasets. The scale and diversity of the pretraining corpus directly determine the model's ability to generalize across biological contexts, species, and experimental conditions [1]. Major data sources for constructing massive pretraining corpora include:

- CZ CELLxGENE Discover: Provides unified access to annotated single-cell datasets, with over 100 million unique cells standardized for analysis [1].

- Human Cell Atlas: A global consortium aimed at creating comprehensive reference maps of all human cells [11] [1].

- Public Repositories: NCBI Gene Expression Omnibus (GEO), Sequence Read Archive (SRA), and EMBL-EBI Expression Atlas host thousands of single-cell sequencing studies [1].

- Curated Compendia: Resources such as PanglaoDB and the Human Ensemble Cell Atlas collate data from multiple sources and studies [1].

The creation of SpatialCorpus-110M for Nicheformer exemplifies modern corpus construction, incorporating over 57 million dissociated and 53 million spatially resolved cells across 73 human and mouse tissues, specifically designed to capture spatial context in cellular representation [7].

Technical Considerations for Corpus Assembly

Assembling high-quality pretraining corpora requires addressing several technical challenges:

- Batch Effect Mitigation: Technical variation across protocols, instruments, and sequencing centers must be carefully accounted for to prevent models from learning non-biological artifacts [11] [1].

- Data Quality Control: Implementation of rigorous filtering criteria for cells and genes, balancing dataset compositions, and establishing quality thresholds to ensure robust model training [1].

- Multimodal Integration: Harmonizing data from diverse technologies and modalities, including transcriptomic, epigenomic, proteomic, and spatial imaging data [11] [20].

- Cross-Species Alignment: For models spanning multiple organisms, establishing orthologous gene mappings enables learning of conserved biological principles [7].

The careful curation and preprocessing of pretraining data are as important as model architecture in building a robust and generalizable scFM [1].
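Cross-species alignment through orthologous gene mapping can be illustrated with a toy lookup. The three-entry mapping and the `Gm12345` symbol are placeholders for illustration; a real table would come from an orthology resource and would need an explicit policy for one-to-many orthologs, which this sketch simply does not handle.

```python
def map_to_shared_vocabulary(expression_by_gene, ortholog_map):
    """Rename genes to a shared symbol; drop genes without an entry in the mapping."""
    shared = {}
    for gene, value in expression_by_gene.items():
        target = ortholog_map.get(gene)
        if target is not None:
            shared[target] = value
    return shared

# Toy one-to-one mouse -> human mapping.
mouse_to_human = {"Cd3e": "CD3E", "Ptprc": "PTPRC", "Actb": "ACTB"}

# "Gm12345" stands in for a mouse gene with no mapped human ortholog.
mouse_cell = {"Cd3e": 4.0, "Ptprc": 7.0, "Gm12345": 2.0}
shared_cell = map_to_shared_vocabulary(mouse_cell, mouse_to_human)
```

After mapping, mouse and human cells can be tokenized against the same gene vocabulary, with a species token marking their origin.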

Table 2: Exemplary Large-Scale Pretraining Corpora

| Corpus Name | Scale | Composition | Notable Models |

|---|---|---|---|

| SpatialCorpus-110M | 110 million cells | 57M dissociated + 53M spatially resolved cells across 73 human and mouse tissues | Nicheformer |

| scGPT Corpus | 33 million+ cells | Diverse human and mouse cell types across multiple tissues and conditions | scGPT |

| Geneformer Corpus | Millions of cells | Curated collection from various human tissues | Geneformer |

| CZ CELLxGENE | 100 million+ cells | Standardized collection of annotated single-cell datasets | Multiple models |

Experimental Protocols for Model Development

Protocol 1: Standard Pretraining Workflow for scFMs

Objective: Train a foundation model on millions of single-cell transcriptomes to learn universal cellular representations.

Materials:

- Hardware: High-performance computing cluster with multiple GPUs (e.g., NVIDIA Tesla T4 or higher) with substantial VRAM (≥16GB per GPU) [19]

- Software: Python with specialized libraries (accelerate, transformers, flash-attn, torch, datasets) [19]

- Data: Curated single-cell corpus with standardized preprocessing

Methodology:

- Data Tokenization: Convert raw gene expression matrices into tokenized sequences using selected strategy (e.g., rank-based encoding)

- Model Architecture Configuration: Implement transformer architecture with optimized parameters (e.g., 12 layers, 16 attention heads, 512-dimensional embedding for Nicheformer) [7]

- Self-Supervised Pretraining: Train model using masked gene modeling objective on large corpus

- Validation and Checkpointing: Monitor training metrics and save model checkpoints periodically

- Embedding Extraction: Generate latent representations for downstream task evaluation

Key Parameters:

- Learning rate: 1e-4 to 5e-4

- Batch size: Optimized for available GPU memory

- Context length: 1,500 tokens (Nicheformer) [7]

- Training epochs: Until validation loss plateaus
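The parameters of Protocol 1 can be collected into a small configuration object. The architecture values and learning-rate range come from the protocol above; `mask_fraction`, `patience`, and the plateau rule in `should_stop` are assumptions added so that "until validation loss plateaus" has a concrete form.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PretrainConfig:
    n_layers: int = 12             # Nicheformer example from the protocol
    n_heads: int = 16
    d_model: int = 512
    context_length: int = 1500
    learning_rate: float = 1e-4    # lower end of the 1e-4 to 5e-4 range
    mask_fraction: float = 0.15    # assumption: not specified in the protocol
    patience: int = 3              # assumption: epochs without improvement before stopping

    def __post_init__(self):
        if self.d_model % self.n_heads != 0:
            raise ValueError("d_model must be divisible by n_heads")
        if not 1e-4 <= self.learning_rate <= 5e-4:
            raise ValueError("learning_rate outside the 1e-4 to 5e-4 range")

def should_stop(val_losses, patience):
    """True once the best validation loss is at least `patience` epochs old."""
    if len(val_losses) <= patience:
        return False
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    return len(val_losses) - 1 - best_epoch >= patience

cfg = PretrainConfig()
```

With the default values each attention head operates on 512 / 16 = 32 dimensions. Batch size is deliberately left out because the protocol ties it to available GPU memory.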

Protocol 2: Multimodal Integration and Spatial Context Incorporation

Objective: Extend foundation models to incorporate spatial context and multiple omics modalities.

Materials:

- Spatial Transcriptomics Data: Image-based spatial technologies (MERFISH, Xenium, CosMx, ISS)

- Multimodal Single-Cell Data: Paired or unpaired transcriptomic, epigenomic, and proteomic data

- Integration Tools: SIMO, StabMap, or custom integration pipelines [20]

Methodology:

- Technology-Specific Normalization: Account for platform-specific biases through separate normalization strategies [7]

- Contextual Token Incorporation: Introduce special tokens for species, modality, and technology to enable cross-modal learning [7]

- Multimodal Alignment: Use contrastive learning or optimal transport methods to align representations across modalities [20]

- Spatial Graph Construction: Incorporate spatial neighborhood information through graph-based representations

- Joint Representation Learning: Train model to capture relationships across modalities and spatial contexts

Validation Metrics:

- Spatial composition prediction accuracy

- Cross-modal retrieval performance

- Biological conservation of learned representations
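The spatial graph construction step, and the neighborhood composition that the first validation metric targets, can be sketched with a brute-force k-nearest-neighbor search. The function names are hypothetical and the dense distance matrix scales quadratically with the number of cells, so this form is only suitable for small examples; real pipelines would use a spatial index.

```python
import numpy as np

def knn_graph(coords, k):
    """Return an (n_cells, k) array holding each cell's k nearest spatial neighbors."""
    coords = np.asarray(coords, dtype=float)
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)                  # a cell is not its own neighbor
    return np.argsort(dist, axis=1, kind="stable")[:, :k]

def neighborhood_composition(neighbors, cell_types, n_types):
    """Fraction of each cell type among every cell's neighbors."""
    one_hot = np.eye(n_types)[np.asarray(cell_types)]
    return one_hot[neighbors].mean(axis=1)

coords = [[0, 0], [1, 0], [10, 0], [11, 0]]
cell_types = [0, 0, 1, 1]
neighbors = knn_graph(coords, k=1)
composition = neighborhood_composition(neighbors, cell_types, n_types=2)
```

The rows of `composition` are the kind of target a model would be asked to predict from a dissociated cell's embedding when evaluating spatial composition prediction.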

Diagram 1: Comprehensive workflow for developing single-cell foundation models, showing the pipeline from diverse data sources through curation and tokenization to model training and downstream applications.

Table 3: Essential Research Reagent Solutions for scFM Development

| Resource Category | Specific Tools/Platforms | Function/Purpose |

|---|---|---|

| Data Repositories | CZ CELLxGENE, Human Cell Atlas, GEO, SRA | Provide standardized access to millions of curated single-cell datasets for pretraining |

| Model Architectures | Transformer variants (Encoder, Decoder, Hybrid) | Core neural network architecture for processing tokenized single-cell data |

| Tokenization Methods | Gene ranking, Binning, Pathway tokens | Convert raw gene expression data into structured model inputs |

| Pretraining Frameworks | Hugging Face Transformers, PyTorch, Custom scFM implementations | Software libraries enabling efficient model training and optimization |

| Computational Infrastructure | High-performance GPUs (NVIDIA Tesla T4+, A100), Cloud computing platforms | Essential hardware for processing massive datasets and training large models |

| Integration Tools | SIMO, StabMap, Harmony, Seurat | Enable multimodal data integration and spatial context incorporation |

| Benchmarking Platforms | BioLLM, Custom evaluation pipelines | Standardized frameworks for comparing model performance across diverse tasks |

Evaluation and Benchmarking Frameworks

Performance Metrics and Biological Validation

Evaluating scFMs requires multifaceted approaches that assess both computational efficiency and biological relevance. Standard evaluation paradigms include:

- Zero-shot and Few-shot Learning: Testing model ability to perform tasks with minimal or no task-specific training data [3]

- Linear Probing: Training simple linear classifiers on frozen model embeddings to assess representation quality [3] [7]

- Fine-tuning Evaluation: Adapting the entire model to specific downstream tasks with limited additional training [1]

Novel biologically-informed metrics are increasingly important for proper model assessment. The scGraph-OntoRWR metric measures consistency between cell type relationships captured by scFMs and established biological knowledge from cell ontologies, while the Lowest Common Ancestor Distance (LCAD) metric quantifies the ontological proximity between misclassified cell types, providing more nuanced error analysis than simple accuracy metrics [3].
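The intuition behind an ontology-aware error measure such as LCAD can be shown on a toy hierarchy. This sketch treats the ontology as a tree and sums the edges from each term to their lowest common ancestor; both choices are assumptions, since the Cell Ontology is a directed acyclic graph and the benchmark in [3] may define the distance differently.

```python
def ancestors(term, parent):
    """Path from a term up to the root, inclusive, in a tree given as child -> parent."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path

def lca_distance(a, b, parent):
    """Edges from a to the lowest common ancestor plus edges from b to it."""
    path_b = ancestors(b, parent)
    depth_in_b = {term: i for i, term in enumerate(path_b)}
    for steps_a, term in enumerate(ancestors(a, parent)):
        if term in depth_in_b:
            return steps_a + depth_in_b[term]
    raise ValueError("terms do not share a root")

# Toy hierarchy for illustration only.
parent = {
    "CD4 T cell": "T cell", "CD8 T cell": "T cell",
    "T cell": "lymphocyte", "B cell": "lymphocyte",
    "lymphocyte": "immune cell", "monocyte": "immune cell",
}

near_miss = lca_distance("CD4 T cell", "CD8 T cell", parent)
far_miss = lca_distance("CD4 T cell", "monocyte", parent)
```

Confusing a CD4 T cell with a CD8 T cell scores 2, while confusing it with a monocyte scores 4. Plain accuracy would count both as the same single error.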

Comparative Performance Across Downstream Tasks

Independent benchmarking studies reveal that while scFMs demonstrate robust and versatile performance across diverse applications, no single model consistently outperforms others across all tasks [3]. Performance varies based on factors including:

- Dataset Size and Complexity: Larger, more heterogeneous datasets often benefit more from scFM approaches

- Task Specificity: Simpler machine learning models may outperform foundation models for highly specific, narrow tasks

- Computational Constraints: Resource-intensive scFMs may not be optimal when computational resources are limited

Notably, models incorporating spatial context during pretraining (e.g., Nicheformer) significantly outperform models trained only on dissociated data for spatially-aware tasks, highlighting the importance of task-aligned pretraining corpora [7].

Diagram 2: Comprehensive evaluation framework for single-cell foundation models, showing relationships between evaluation metrics, downstream tasks, and representative models excelling in each area.

Future Directions and Challenges

The development of scFMs trained on massive corpora faces several significant challenges that represent opportunities for future research:

- Model Interpretability: Despite their impressive performance, understanding the biological reasoning behind model predictions remains challenging, necessitating improved interpretation methods [11] [1]

- Computational Resource Demands: Training models on tens to hundreds of millions of cells requires substantial computational resources, limiting accessibility [1]

- Standardization and Benchmarking: Inconsistent evaluation metrics and unreproducible pretraining protocols hinder cross-study comparisons and model selection [11] [3]

- Clinical Translation: Gaps persist in translating computational insights into clinically actionable applications, requiring closer integration with biomedical research [11]

Emerging solutions include federated computational platforms that enable decentralized data analysis, standardized benchmarking initiatives, multimodal knowledge graphs that integrate diverse biological knowledge, and collaborative frameworks that combine artificial intelligence with domain expertise [11]. As the field progresses, the development of more efficient architectures, improved tokenization strategies, and better integration of biological prior knowledge will further enhance the capabilities and applications of scFMs in biomedical research and therapeutic development.

The construction of foundation models on massive single-cell corpora represents a fundamental shift in computational biology, enabling unprecedented exploration of cellular heterogeneity, developmental trajectories, and disease mechanisms. By providing universal representations that capture the complex language of cellular function, these models serve as powerful platforms for accelerating biological discovery and advancing precision medicine.

The emergence of single-cell foundation models (scFMs) represents a transformative advancement in computational biology, enabling researchers to decipher the complex "language" of cells using artificial intelligence. These large-scale models, pretrained on millions of single-cell transcriptomes, learn fundamental biological principles that can be adapted to diverse downstream tasks including cell type annotation, perturbation response prediction, and gene regulatory network inference [1] [21]. At the core of this approach lies self-supervised learning (SSL), a pretraining paradigm that allows models to learn meaningful representations from vast amounts of unlabeled genomic data without human annotations [22]. By leveraging SSL objectives, scFMs can uncover latent patterns in gene expression and epigenetic regulation that underpin cellular heterogeneity, developmental trajectories, and disease mechanisms. This technical guide explores the architectural frameworks, methodological approaches, and experimental validations that establish SSL as the engine powering scFM pretraining, with particular emphasis on applications within single-cell multi-omics integration research.

Core SSL Principles in scFM Architecture

Foundational Concepts

Self-supervised learning generates supervisory signals directly from the structure of the data itself, removing the dependency on manually curated labels, which are often scarce, inconsistent, or expensive to obtain in biological domains [22]. In single-cell genomics, SSL methods exploit the relationships within and across cells to learn rich, generalizable representations. The fundamental advantage of SSL is its ability to harness the rapidly expanding repositories of single-cell data: platforms such as CZ CELLxGENE now provide unified access to over 100 million unique cells standardized for analysis [1] [2]. Unlabeled data at this scale is an ideal training ground for SSL methods, which discover biological patterns without explicit guidance.

The SSL paradigm in single-cell genomics differs from traditional supervised learning by using pairwise relationships within data (X) for training, rather than relying on labeled examples (X with Y) [22]. It also diverges from purely unsupervised learning by creating structured prediction tasks that guide the model to learn meaningful representations. This approach has proven exceptionally powerful in other data-intensive domains including computer vision and natural language processing, and now serves as the foundational framework for scFMs [22].

Tokenization: Converting Biological Data to Model Input

A critical preprocessing step for applying SSL to single-cell data is tokenization, the process of converting raw input data into discrete units called tokens that models can process [1] [21]. In natural language processing, tokens typically represent words or subwords; in scFMs, tokens generally correspond to genes or genomic features along with their expression values.

A fundamental challenge in this domain is that gene expression data lacks natural sequential ordering, unlike words in a sentence. To address this, researchers have developed several tokenization strategies:

- Expression-based ranking: Genes are ranked within each cell by expression levels, creating an ordered list of top genes that serves as the cellular "sentence" [1] [7]

- Binning approaches: Genes are partitioned into bins based on expression values, with rankings determining positional encoding [1]

- Normalized counts: Some models report no clear advantages for complex ranking strategies and simply use normalized counts [1]

Each gene is typically represented as a token embedding combining a gene identifier with its expression value. Additional special tokens may be incorporated to enrich biological context, including modality indicators for multi-omics data, batch information, species identifiers, and gene metadata such as genomic location or functional annotations [1] [7]. After tokenization, all tokens are converted to embedding vectors processed by transformer layers, ultimately generating latent embeddings for each gene token and often a dedicated embedding for the entire cell.
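The ranking and binning strategies described above can be sketched in a few lines. This is an illustrative example under stated assumptions, not the tokenizer of any specific model: the function names `rank_tokens` and `bin_tokens` are hypothetical, the expression values are made up, and the rank-based binning rule is one simple choice among many.

```python
def rank_tokens(expression, max_len=4):
    """Expression-based ranking: expressed genes ordered from highest to lowest."""
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda item: (-item[1], item[0]))  # ties broken by name
    return [gene for gene, _ in expressed[:max_len]]

def bin_tokens(expression, n_bins=3):
    """Value binning: map each nonzero value to a bin in 1..n_bins within this cell."""
    nonzero = sorted(v for v in expression.values() if v > 0)
    tokens = {}
    for gene, value in expression.items():
        if value <= 0:
            tokens[gene] = 0  # bin 0 is reserved for unexpressed genes
        else:
            rank = sum(1 for v in nonzero if v <= value)  # 1..len(nonzero)
            tokens[gene] = 1 + (rank - 1) * n_bins // len(nonzero)
    return tokens

# Made-up normalized expression values for one cell (assumption).
cell = {"CD3E": 5.0, "MS4A1": 0.0, "LYZ": 1.0, "NKG7": 3.0, "GNLY": 2.0}

sentence = rank_tokens(cell, max_len=3)  # ordered gene "sentence"
binned = bin_tokens(cell, n_bins=3)      # gene -> expression bin
print(sentence)
print(binned)
```

In the ranking scheme, order carries the expression information, so the cell becomes a sequence of gene identifiers. In the binning scheme, each gene keeps an explicit discretized value that can be embedded alongside its identifier.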

Transformer Architectures for Single-Cell Data

Most successful scFMs utilize transformer architectures characterized by attention mechanisms that learn and weight relationships between input tokens [1] [21]. The attention mechanism enables the model to determine which genes in a cell are most informative of cellular identity or state, how they co-vary across cells, and their potential regulatory or functional connections.

Architectural variations in scFMs include:

- BERT-like encoders: Utilize bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1]

- GPT-inspired decoders: Employ unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes [1]

- Hybrid designs: Combine encoder-decoder components for specialized applications [1]

- Spatially aware transformers: Incorporate spatial relationships between cells, as demonstrated by Nicheformer, which learns joint representations of dissociated and spatial transcriptomics data [7]

These architectures gradually build latent representations of cells and genes through multiple layers of attention and feed-forward networks, capturing hierarchical biological patterns at varying scales of resolution.
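The difference between bidirectional (BERT-like) and unidirectional (GPT-inspired) attention comes down to which tokens each position may attend to. The sketch below implements single-head scaled dot-product attention in plain Python with an optional causal mask. It is a simplified illustration: real scFMs add learned query, key, and value projections, multiple heads, and many layers, and the three token vectors here are arbitrary placeholders.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention; causal=True hides later tokens."""
    scale = math.sqrt(len(keys[0]))
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # masked position gets zero weight
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / scale)
        row = softmax(scores)
        weights.append(row)
        outputs.append([sum(w * v[d] for w, v in zip(row, values))
                        for d in range(len(values[0]))])
    return outputs, weights

# Three placeholder gene-token embeddings (assumption).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
_, bidir_w = attention(tokens, tokens, tokens)                # encoder-style
_, causal_w = attention(tokens, tokens, tokens, causal=True)  # decoder-style
```

With `causal=False` every gene token attends to every other token in the cell. With `causal=True` the first token can only attend to itself, the second to the first two, and so on.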

SSL Methodologies in scFM Pretraining

Masked Autoencoding Strategies

Masked autoencoding has emerged as a particularly effective SSL approach for single-cell genomics. It outperforms contrastive methods in this domain, a notable divergence from trends in computer vision [22]. The method randomly masks portions of the input data and trains the model to reconstruct the original values from the remaining context.

Table 1: Masked Autoencoder Strategies in Single-Cell SSL

| Strategy | Mechanism | Biological Insight | Applications |
|---|---|---|---|
| Random Masking | Randomly selects genes to mask | Minimal inductive bias | General-purpose pretraining |
| Gene Programme (GP) Masking | Masks functionally related gene sets | Leverages known biological pathways | Pathway-level representation learning |
| GP-to-GP Masking | Predicts one gene programme from another | Captures interactions between biological programmes | Regulatory network inference |
| GP-to-TF Masking | Predicts transcription factors from target genes | Models regulatory relationships | Gene regulatory network reconstruction |

In practice, models like scGPT implement masked language modeling pretraining where 15-30% of input genes are randomly masked, and the model learns to reconstruct their values based on the remaining genomic context [1] [2]. This approach forces the model to learn the complex dependencies and correlations between genes, effectively capturing the underlying structure of transcriptional programs.
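A minimal sketch of the masking step and the reconstruction objective is shown below. It covers random masking and gene-programme masking from Table 1 under stated assumptions: all function names are hypothetical, the mask sentinel `-1.0` and the expression values are arbitrary, the three-gene interferon-response set is used purely as an illustrative programme, and mean squared error stands in for whatever reconstruction loss a given model actually uses.

```python
import random

def random_mask(n_genes, mask_fraction, rng):
    """Random masking: choose gene positions to hide uniformly at random."""
    n_masked = max(1, round(n_genes * mask_fraction))
    return sorted(rng.sample(range(n_genes), n_masked))

def programme_mask(gene_names, programme):
    """Gene-programme masking: hide every gene that belongs to one programme."""
    return [i for i, g in enumerate(gene_names) if g in programme]

def apply_mask(values, masked_positions, mask_value=-1.0):
    """Replace masked positions with a sentinel the model sees instead of the value."""
    hidden = set(masked_positions)
    return [mask_value if i in hidden else v for i, v in enumerate(values)]

def masked_mse(predicted, target, masked_positions):
    """Reconstruction loss evaluated only at the masked positions."""
    return sum((predicted[i] - target[i]) ** 2
               for i in masked_positions) / len(masked_positions)

genes = ["IFIT1", "ISG15", "MX1", "ACTB", "GAPDH",
         "CD3E", "LYZ", "NKG7", "GNLY", "MS4A1"]
values = [float(i + 1) for i in range(len(genes))]  # made-up expression values

rng = random.Random(0)
random_positions = random_mask(len(genes), 0.2, rng)  # hides 2 of 10 genes
programme_positions = programme_mask(genes, {"IFIT1", "ISG15", "MX1"})
model_input = apply_mask(values, programme_positions)

# A model that always predicts zero, used only to show how the loss is scored.
zero_prediction = [0.0] * len(genes)
loss = masked_mse(zero_prediction, values, programme_positions)
```

Because the loss is computed only on hidden positions, the model cannot succeed by copying its input; it has to infer masked values from the genes that remain visible.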

Contrastive Learning Approaches

Contrastive learning represents another important SSL paradigm adapted for single-cell data, focusing on learning representations by contrasting positive and negative sample pairs [22] [23]. These methods aim to pull semantically similar cells closer in the embedding space while pushing dissimilar cells apart.

Key contrastive frameworks applied to single-cell data include:

- Barlow Twins: Avoids negative pairs entirely by learning embeddings whose cross-correlation matrix, computed between the embeddings of two augmented views of the same cells, is close to the identity matrix [22]

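- BYOL (Bootstrap Your Own Latent): Uses two neural networks, an online network and a target network. The online network learns to predict the target network's representation of a differently augmented view, while the target's weights track a moving average of the online weights, so no explicit negative sampling is required [22]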

- Domain-specific adaptations: Methods like CLAIRE employ novel augmentation strategies using mutual nearest neighbors between experimental batches to generate positive pairs [23]

While contrastive methods have shown value, empirical analyses indicate that masked autoencoders generally outperform contrastive approaches in single-cell genomics, particularly for gene-expression reconstruction and transfer learning scenarios [22].
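As a concrete reference for the Barlow Twins objective mentioned above, the sketch below computes the loss for two small batches of embeddings in plain Python. It is a simplified illustration, not the implementation benchmarked in [22]: the embeddings are hand-picked toy values, the encoder and augmentations are omitted, and the off-diagonal weight of 0.005 is an illustrative setting.

```python
import math

def standardize(batch):
    """Z-score each embedding dimension across the batch (rows are cells)."""
    n, dim = len(batch), len(batch[0])
    out = [[0.0] * dim for _ in range(n)]
    for d in range(dim):
        column = [row[d] for row in batch]
        mean = sum(column) / n
        std = math.sqrt(sum((x - mean) ** 2 for x in column) / n) or 1.0
        for i in range(n):
            out[i][d] = (batch[i][d] - mean) / std
    return out

def barlow_twins_loss(view_a, view_b, off_diag_weight=0.005):
    """Push the cross-correlation matrix of the two views toward the identity."""
    za, zb = standardize(view_a), standardize(view_b)
    n, dim = len(za), len(za[0])
    loss = 0.0
    for i in range(dim):
        for j in range(dim):
            c_ij = sum(za[k][i] * zb[k][j] for k in range(n)) / n
            if i == j:
                loss += (1.0 - c_ij) ** 2            # invariance term
            else:
                loss += off_diag_weight * c_ij ** 2  # redundancy-reduction term
    return loss

# Toy embeddings of four cells under two "augmentations" (assumption).
view_a = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
consistent = barlow_twins_loss(view_a, view_a)
swapped = barlow_twins_loss(view_a, [[row[1], row[0]] for row in view_a])
```

The loss is near zero when both views yield the same decorrelated embedding and grows when the embedding dimensions disagree between views, as in the swapped case.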

Specialized SSL Frameworks for Single-Cell Data

Beyond generic SSL approaches, several specialized frameworks have been developed specifically for single-cell data challenges:

- scMGCL: Utilizes graph contrastive learning for multi-omics integration, where each modality's graph structure serves as an augmentation for the other in a cross-modality contrastive paradigm [24]

- Closed-loop methods: Incorporate experimental perturbation data during model fine-tuning, significantly improving prediction accuracy for tasks such as identifying therapeutic targets [25]

- Multimodal alignment: Techniques that align representations across different omics modalities (transcriptomics, epigenomics, proteomics) through contrastive objectives or shared latent spaces [26] [23]

These specialized approaches address unique characteristics of single-cell data including sparsity, technical noise, batch effects, and the need for multimodal integration.
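One common way to express a contrastive objective for multimodal alignment is an InfoNCE-style loss over paired cells. The sketch below is a generic illustration and not the loss of scMGCL or any other cited method: it assumes row i of each input is the same cell measured in two modalities (labeled RNA and ATAC here purely for illustration), scores only the RNA-to-ATAC direction, and uses an arbitrary temperature of 0.1.

```python
import math

def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(rna_embeddings, atac_embeddings, temperature=0.1):
    """Mean negative log-probability of picking each cell's true partner."""
    n = len(rna_embeddings)
    total = 0.0
    for i in range(n):
        logits = [cosine(rna_embeddings[i], atac_embeddings[j]) / temperature
                  for j in range(n)]
        peak = max(logits)  # log-sum-exp trick for numerical stability
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - logits[i]
    return total / n

# Toy embeddings for three paired cells (assumption).
rna = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
atac_aligned = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
atac_shuffled = [[0.0, 1.0], [-1.0, 0.0], [1.0, 0.0]]

aligned_loss = info_nce(rna, atac_aligned)
shuffled_loss = info_nce(rna, atac_shuffled)
```

Minimizing this loss pulls the two embeddings of the same cell together while pushing apart embeddings of different cells, which is the behavior the aligned and shuffled toy inputs are meant to contrast.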

Quantitative Performance of SSL in scFMs

Empirical Evaluation of SSL Benefits

Rigorous benchmarking studies have quantified the performance advantages conferred by SSL pretraining in single-cell foundation models. The most significant benefits emerge in transfer learning scenarios where models pretrained on large auxiliary datasets are adapted to smaller, target datasets [22].

Table 2: SSL Performance Improvements in Downstream Tasks

| Downstream Task | Dataset | Baseline Performance | SSL-Enhanced Performance | Key Improvement |
|---|---|---|---|---|
| Cell-type Prediction | PBMC (422K cells, 30 types) | 0.7013 macro F1 | 0.7466 macro F1 | +6.5% relative improvement, especially for rare cell types |
| Cell-type Prediction | Tabula Sapiens (483K cells, 161 types) | 0.2722 macro F1 | 0.3085 macro F1 | +13.3% relative improvement, better identification of specific types |
| Gene-expression Reconstruction | Multiple datasets | Varies by baseline | Significant improvements | Enhanced reconstruction accuracy |
| In-silico Perturbation | T-cell activation | 3% PPV (open-loop) | 9% PPV (closed-loop) | 3x improvement in positive predictive value |
| Data Integration | Multiple atlas datasets | Lower batch mixing | Higher batch mixing | Improved preservation of biological variation |