Gene Function Prediction with Single-Cell Foundation Models: A Practical Guide to Embeddings, Applications, and Benchmarking

Abstract

This article provides a comprehensive overview of the rapidly evolving field of gene function prediction using single-cell foundation model (scFM) embeddings. Tailored for researchers and drug development professionals, it explores the foundational concepts of scFMs, which treat cells as sentences and genes as words to learn universal biological principles from vast single-cell datasets. The content details methodological approaches for extracting and utilizing gene and cell embeddings in functional tasks, from variant effect prediction to in silico perturbation modeling. Crucially, it addresses current limitations and optimization strategies, synthesizing evidence from recent rigorous benchmarks that reveal scFMs often struggle to outperform simple linear baselines for specific prediction tasks. Finally, the article offers a framework for validation and model selection, empowering scientists to critically evaluate these powerful tools and apply them effectively in biomedical research.

Demystifying Single-Cell Foundation Models: From Cellular 'Language' to Functional Embeddings

What are Foundation Models and Why Do They Matter for Biology?

Foundation models are a class of large-scale deep learning models trained on vast and diverse datasets and adaptable to a wide range of downstream tasks [1]. In biology, these models are trained on massive genomic, transcriptomic, proteomic, and other omics datasets to learn the fundamental "language" of life [2]. They matter because they mark a shift from traditional single-task models toward an integrated, systems-level view of biology, which can help researchers decode disease complexity and accelerate drug discovery [3].

The core idea behind biological foundation models is their pretraining on extensive, unlabeled datasets through self-supervised learning. This process allows the model to learn generalizable patterns and relationships within the data [1] [4]. Once a foundation model is established, it can be fine-tuned for specific applications with relatively few additional labeled examples, transferring its learned knowledge to improve performance on target tasks [1].

The "Language" of Biology

Inspired by successes in natural language processing (NLP), researchers treat biological components analogously to words in a language [4].

- Single-Cell Biology: Individual cells are treated as "sentences," and genes or other genomic features (along with their expression values) are treated as "words" or tokens [1] [4].

- DNA and Proteins: Nucleotides in a DNA sequence or amino acids in a protein sequence are processed as sequential tokens, allowing the model to learn grammar and semantics from genomic or proteomic data [3] [5].

This approach allows models to capture intricate long-range relationships and dependencies within biological data using transformer architectures, which use attention mechanisms to weight the importance of different tokens [1].

Key Applications in Biological Research

Foundation models are being applied across diverse areas of biology, from understanding single-cell function to designing novel proteins.

Single-Cell Biology (scFMs)

Single-cell foundation models (scFMs) learn from millions of single-cell transcriptomes to characterize cellular heterogeneity and states [1]. Key applications include:

- Cell Type Annotation: Models like scBERT are trained in a self-supervised manner for annotating novel cell types [1] [4].

- Predicting Perturbation Responses: Models can be fine-tuned to predict how cells will respond to genetic perturbations or drug treatments, although this remains a challenging area [2] [6]. For example, scGen predicts single-cell perturbation responses [2], and GEARS integrates gene-gene relationship knowledge graphs to predict transcriptional outcomes [2].

- Multi-omics Data Integration: Models such as scGPT are designed to integrate diverse data types, including transcriptomics, epigenomics, and proteomics, to create a unified representation of cellular state [2] [7].

Genomics and Gene Regulation

Models trained on DNA sequences learn to interpret the genetic code and predict regulatory elements.

- Non-Coding Variant Effects: DeepSEA uses deep learning to predict the effects of noncoding genomic variants on chromatin and epigenetic regulatory mechanisms [7]. Enformer extends such sequence-based predictions to long-range interactions (up to 100 kb) [7].

- Function-Guided Sequence Design: Evo, a genomic language model, can perform "semantic design" by using a DNA prompt encoding genomic context to generate novel sequences enriched for specific functions. This has been validated by experimentally testing generated anti-CRISPR proteins and toxin–antitoxin systems [5].

Proteomics

Proteomic foundation models have revolutionized the prediction of protein structures and functions.

- Protein Structure Prediction: AlphaFold uses neural networks to predict 3D protein structures from amino acid sequences with near-experimental accuracy [2] [7]. Two of its developers, Demis Hassabis and John Jumper, shared half of the 2024 Nobel Prize in Chemistry for this work.

- Protein Function and Design: Models like ESM3 are language models that simultaneously reason over the sequence, structure, and function of proteins, enabling the simulation of evolution and the design of novel proteins [2].

Spatial Biology

Spatial foundation models incorporate spatial context, which is crucial for understanding tissue architecture and cellular communication.

- Nicheformer: Trained on both dissociated and spatially-resolved transcriptomics data, this model makes context-specific predictions about the spatial microenvironment of cells, helping to bridge the gap between cell-atlas data and spatial context [7].

- Novae: This model uses graph-based learning to correct for batch effects and enable more informative comparisons of spatial domains across different tissue samples and experiments [7].

Table 1: Selected Biological Foundation Models and Their Primary Applications

| Model Name | Domain | Primary Application | Key Feature |
|---|---|---|---|
| scGPT [7] | Single-Cell | Multi-omics integration, cell annotation, perturbation prediction | Generative pre-trained transformer on ~33 million cells [2] |
| Geneformer [7] | Single-Cell | Network dynamics from scRNA-seq | Pretrained on ~95 million single-cell transcriptomes [7] |
| AlphaFold [7] | Proteomics | Protein structure prediction | Near-experimental accuracy from amino acid sequence [2] [7] |
| Evo [5] | Genomics | De novo gene and operon design | Uses genomic context ("semantic design") for function-guided generation |
| Enformer [7] | Genomics | Gene expression prediction | Incorporates long-range DNA interactions (up to 100 kb) |
| Nicheformer [7] | Spatial | Spatial microenvironment prediction | Integrates dissociated and spatially-resolved data |

Quantitative Performance of Foundation Models

While foundation models show great promise, their performance must be critically evaluated against simpler baseline methods.

Benchmarking Perturbation Prediction

A recent benchmark study evaluated several foundation models (scGPT, scFoundation) and other deep learning models (GEARS, CPA) against simple linear baselines for predicting transcriptome changes after single or double genetic perturbations [6]. The baselines were:

- 'No change' model: Always predicts the same expression as the control condition.

- 'Additive' model: For a double perturbation, predicts the sum of the individual logarithmic fold changes (LFCs) from single perturbations.
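
The two baselines are simple enough to write down directly. The sketch below is a minimal illustration, assuming predictions and observations are log fold changes (LFCs) relative to control in log-expression space, so the L2 distance between predicted and observed expression equals the L2 distance between their LFCs. Restricting to the 1,000 genes with the highest control expression, and the random toy data, are assumptions for illustration, not the exact evaluation pipeline of [6].

```python
import numpy as np

def no_change_prediction(n_genes: int) -> np.ndarray:
    """'No change': predict an LFC of zero, i.e. expression identical to control."""
    return np.zeros(n_genes)

def additive_prediction(lfc_a: np.ndarray, lfc_b: np.ndarray) -> np.ndarray:
    """'Additive': predict the double-perturbation LFC as the sum of the two single LFCs."""
    return lfc_a + lfc_b

def l2_top_genes(pred_lfc, observed_lfc, control_expr, k=1000):
    """L2 distance over the k genes with the highest control expression (assumed gene selection)."""
    top = np.argsort(control_expr)[::-1][:k]
    return float(np.linalg.norm(pred_lfc[top] - observed_lfc[top]))

# Toy data: the observed double perturbation is close to additive by construction.
rng = np.random.default_rng(0)
n_genes = 2000
control_expr = rng.gamma(2.0, 1.0, n_genes)
lfc_a = rng.normal(0.0, 0.3, n_genes)
lfc_b = rng.normal(0.0, 0.3, n_genes)
observed_ab = lfc_a + lfc_b + rng.normal(0.0, 0.1, n_genes)

for name, pred in [("no change", no_change_prediction(n_genes)),
                   ("additive", additive_prediction(lfc_a, lfc_b))]:
    print(name, round(l2_top_genes(pred, observed_ab, control_expr), 2))
```

Because the toy double perturbation is constructed to be nearly additive, the additive baseline wins here by design; the sketch shows how the comparison is computed, not what a real dataset would give.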

The study found that none of the deep learning models outperformed the simple additive baseline in predicting expression changes for held-out double perturbations [6]. Furthermore, when predicting genetic interactions (where the double perturbation effect is non-additive), no model performed better than the 'no change' baseline [6].

Table 2: Benchmarking Results for Perturbation Prediction (L2 Distance for Top 1,000 Genes) [6]

| Model Type | Example Models | Performance vs. Additive Baseline | Notes |
|---|---|---|---|
| Simple Baseline | Additive Model | Best (Reference) | Simple, non-AI baseline |
| Simple Baseline | No Change Model | Worse | Simple, non-AI baseline |
| Foundation Models | scGPT, scFoundation | Worse | Required significant computational expense for fine-tuning |
| Other DL Models | GEARS, CPA | Worse | CPA was not designed for unseen perturbations |

Utility of Learned Representations

The same study also investigated whether the data representations (embeddings) learned by foundation models during pretraining provided any benefit. They extracted gene embedding matrices from scFoundation and scGPT and used them in a simple linear model [6]. The findings were mixed:

- Linear models equipped with these pretrained embeddings performed as well as or better than the original models with their in-built decoders [6].

- However, these embeddings did not consistently outperform a linear model using embeddings derived directly from the perturbation training data [6].

- The best-performing approach was a linear model with embeddings pretrained on a different perturbation dataset (from a different cell line), suggesting that pretraining on specific perturbation data is more beneficial than pretraining on general single-cell atlas data for this task [6].
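
A minimal sketch of the "linear model with pretrained embeddings" idea follows, assuming each perturbation is represented by the embedding row of its target gene and a ridge regression maps that row to the perturbation's mean LFC profile. The embedding matrix here is random stand-in data; in practice it would be exported from a model such as scGPT or scFoundation. The study in [6] uses its own linear formulation, which this simplified version does not reproduce exactly.

```python
import numpy as np

def fit_linear_probe(X, Y, lam=1.0):
    """Ridge regression with an intercept (handled by centering X and Y).

    X: (n_perturbations, d) embedding of each perturbation's target gene.
    Y: (n_perturbations, n_readout_genes) mean LFC of each perturbation."""
    x_mean, y_mean = X.mean(axis=0), Y.mean(axis=0)
    Xc, Yc = X - x_mean, Y - y_mean
    W = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]), Xc.T @ Yc)
    return W, x_mean, y_mean

def predict(X, W, x_mean, y_mean):
    return (X - x_mean) @ W + y_mean

rng = np.random.default_rng(1)
n_targets, d, n_readout = 200, 16, 50
gene_emb = rng.normal(size=(n_targets, d))           # stand-in for a pretrained gene embedding matrix
W_true = rng.normal(scale=0.5, size=(d, n_readout))
lfc = gene_emb @ W_true + rng.normal(scale=0.1, size=(n_targets, n_readout))  # toy effects

train, test = np.arange(150), np.arange(150, 200)    # perturbations held out by target gene
W, x_mean, y_mean = fit_linear_probe(gene_emb[train], lfc[train])
pred = predict(gene_emb[test], W, x_mean, y_mean)
mse_probe = float(np.mean((pred - lfc[test]) ** 2))
mse_mean = float(np.mean((y_mean - lfc[test]) ** 2))  # baseline: average training effect
print(mse_probe, mse_mean)
```

Swapping `gene_emb` for a matrix learned on a different perturbation screen, or for embeddings derived from the training data itself, gives the other comparisons described above.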

Experimental Protocols

This section provides detailed methodologies for key experiments involving foundation models, particularly in the context of gene function prediction and validation.

Protocol: Fine-Tuning an scFM for Perturbation Response Prediction

This protocol outlines the steps to adapt a pretrained single-cell foundation model to predict transcriptional responses to genetic perturbations [6] [1].

Research Reagent Solutions & Materials

- Pretrained Model Weights: e.g., for scGPT or Geneformer.

- Perturbation Dataset: A single-cell RNA-seq dataset profiling genetic perturbations (e.g., CRISPR-based) and an unperturbed control. Example: Norman et al. (K562 cells with CRISPRa on 100 single genes and 124 pairs) [6].

- Computing Environment: High-performance computing node with GPU acceleration (e.g., NVIDIA A100 or H100).

- Software Libraries: PyTorch or TensorFlow, scvi-tools, and the model's specific codebase (e.g., scGPT GitHub repository).

Procedure

Data Preprocessing:

- Tokenization: Convert the gene expression matrix of the perturbation dataset into the token format required by the model. This typically involves ranking genes by expression level within each cell or binning expression values [1] [4].

- Formatting: Structure the data into (perturbation, expression profile) pairs. For the control population, assign a "no perturbation" token.

Model Setup:

- Load the pretrained foundation model architecture and its weights.

- Add a task-specific prediction head if needed (e.g., a linear decoder that maps the model's cell embedding to the gene expression space) [6].

Fine-Tuning:

- Freeze a portion of the pretrained layers initially to avoid catastrophic forgetting.

- Train the model on the perturbation dataset using a regression loss (e.g., Mean Squared Error) between the predicted and observed expression profiles. Use a held-out validation set for early stopping.

- Unfreeze all layers and continue training with a lower learning rate for full model adaptation.

Evaluation:

- Evaluate the model on a completely held-out test set of perturbations (e.g., double perturbations not used in training). Compare its performance against simple baselines like the 'additive' model using metrics like L2 distance or Pearson correlation [6].
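
The fine-tuning schedule (frozen warm-up, early stopping on a validation set, then full adaptation at a lower learning rate) can be illustrated without a deep learning framework. The sketch below is a toy under stated assumptions: a linear `enc` matrix stands in for the pretrained layers, a linear `head` for the added decoder, and the data, shapes, and learning rates are hypothetical. In a real scFM the same schedule would be expressed by disabling gradients on the frozen modules (for example `requires_grad = False` in PyTorch) and rebuilding the optimizer for the second stage.

```python
import numpy as np

def mse(pred, target):
    return float(np.mean((pred - target) ** 2))

def forward(params, X):
    # Toy "model": a linear encoder (stand-in for pretrained layers) followed by a linear head.
    return (X @ params["enc"]) @ params["head"]

def train_phase(params, X, Y, X_val, Y_val, trainable, lr, max_steps=500, patience=20):
    """Full-batch gradient descent on MSE that updates only the parameters named in `trainable`.
    Stops when validation loss has not improved for `patience` steps; returns the best parameters."""
    params = {k: v.copy() for k, v in params.items()}
    best_val = mse(forward(params, X_val), Y_val)
    best_params = {k: v.copy() for k, v in params.items()}
    stale = 0
    for _ in range(max_steps):
        H = X @ params["enc"]
        G = 2.0 * (H @ params["head"] - Y) / Y.size      # gradient of MSE w.r.t. predictions
        grads = {"head": H.T @ G, "enc": X.T @ (G @ params["head"].T)}
        for name in trainable:                          # frozen parameters are simply not updated
            params[name] -= lr * grads[name]
        val = mse(forward(params, X_val), Y_val)
        if val < best_val:
            best_val, stale = val, 0
            best_params = {k: v.copy() for k, v in params.items()}
        else:
            stale += 1
            if stale >= patience:
                break
    return best_params, best_val

rng = np.random.default_rng(2)
X = rng.normal(size=(300, 20))
enc_true = rng.normal(scale=20 ** -0.5, size=(20, 8))
head_true = rng.normal(scale=0.5, size=(8, 30))
Y = X @ enc_true @ head_true + rng.normal(scale=0.05, size=(300, 30))
X_tr, Y_tr, X_val, Y_val = X[:240], Y[:240], X[240:], Y[240:]

pretrained = {"enc": enc_true + rng.normal(scale=0.05, size=(20, 8)),  # imperfect "pretrained" weights
              "head": np.zeros((8, 30))}                                 # newly added task head
val_before = mse(forward(pretrained, X_val), Y_val)
stage1, val_frozen = train_phase(pretrained, X_tr, Y_tr, X_val, Y_val, ["head"], lr=1.0)
stage2, val_full = train_phase(stage1, X_tr, Y_tr, X_val, Y_val, ["enc", "head"], lr=0.1)
print(val_before, val_frozen, val_full)
```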

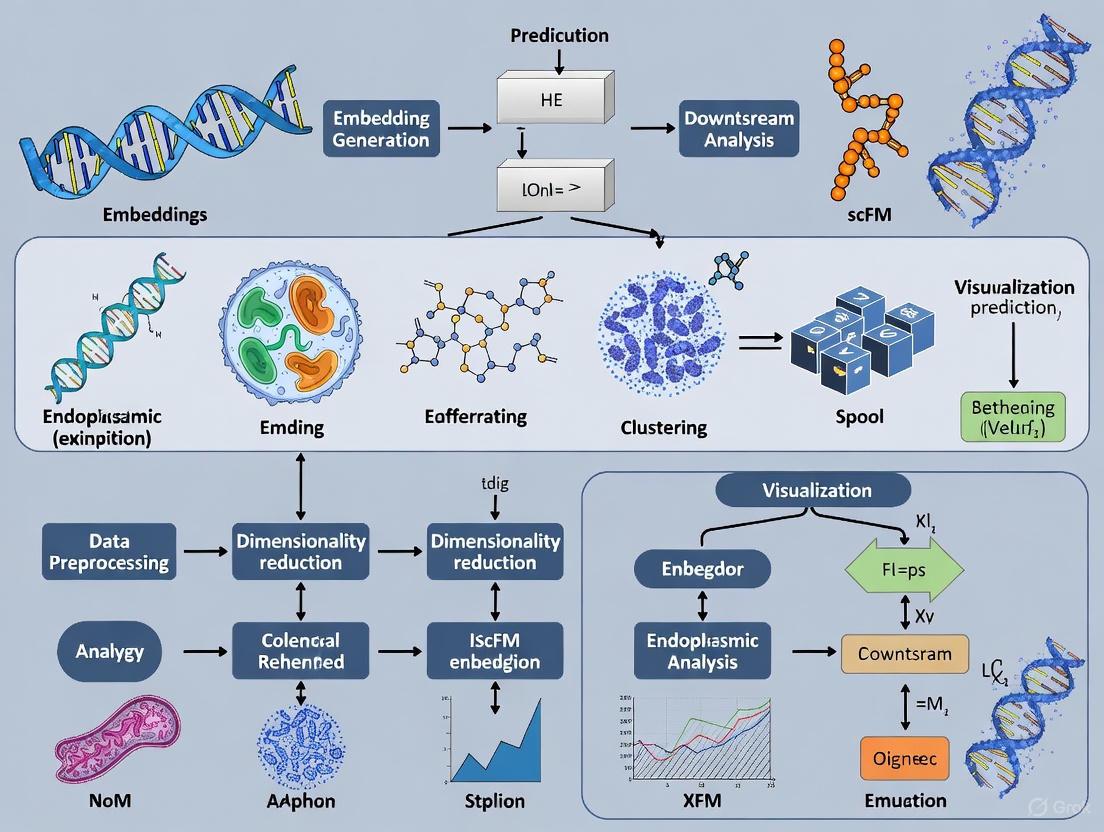

Diagram 1: Workflow for fine-tuning an scFM on perturbation data.

Protocol: Semantic Design of Functional Genes with a Genomic LM

This protocol describes the use of a generative genomic language model, like Evo, for designing novel functional genes based on genomic context, as validated in recent research [5].

Research Reagent Solutions & Materials

- Genomic Language Model: Evo 1.5 model [5].

- DNA Prompt Sequence: A genomic sequence of known function to serve as context (e.g., a gene or operon).

- Sampling Compute: Server with sufficient memory to run the model with long-context prompts (e.g., 131k context length).

- Validation Assays: Resources for functional validation (e.g., growth inhibition assays for toxins, interaction assays for antitoxins).

Procedure

Prompt Engineering:

- Identify a genomic "context" sequence associated with the function of interest. In prokaryotes, this could be a gene from a toxin-antitoxin system or a known anti-CRISPR gene [5].

- The prompt can be the sense strand, the reverse complement, or the upstream/downstream genomic context of the target gene.

Sequence Generation:

- Input the prompt into the Evo model and use it to generate a set of candidate sequences through sampling. The model will "autocomplete" the sequence based on the learned distributional semantics of prokaryotic genomes [5].

In Silico Filtering:

- Filter the generated sequences for those that encode open reading frames (for proteins).

- Apply novelty filters, e.g., requiring low sequence identity to known proteins in databases.

- For multi-component systems (e.g., toxin-antitoxin), use structure prediction tools to assess potential complex formation [5].

Experimental Validation:

- Synthesize the top candidate sequences.

- Test their function in a relevant biological assay. For example, for a generated toxin, test its ability to inhibit bacterial growth in a growth inhibition assay. For a generated anti-CRISPR, test its ability to inhibit CRISPR-Cas activity [5].
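
The prompt-construction and in silico filtering steps can be sketched in plain Python. This is a minimal, hypothetical illustration: `find_orfs` scans one strand only (apply it to the reverse complement as well to cover both strands), and `kmer_jaccard` is a crude stand-in for the alignment-based identity search against protein databases that a real novelty filter would use. The 50-codon minimum and 0.5 similarity cutoff are arbitrary.

```python
COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")
STOP_CODONS = {"TAA", "TAG", "TGA"}

def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA string, e.g. to build a reverse-strand prompt."""
    return seq.translate(COMPLEMENT)[::-1]

def find_orfs(seq: str, min_codons: int = 50):
    """(start, end) of ORFs on the given strand: first in-frame ATG to the next stop, all three frames."""
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        start = None
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if start is None and codon == "ATG":
                start = i
            elif start is not None and codon in STOP_CODONS:
                if (i - start) // 3 >= min_codons:
                    orfs.append((start, i + 3))
                start = None
    return orfs

def kmer_jaccard(a: str, b: str, k: int = 5) -> float:
    """Crude similarity proxy (hypothetical stand-in for alignment-based identity)."""
    ka = {a[i:i + k] for i in range(len(a) - k + 1)}
    kb = {b[i:i + k] for i in range(len(b) - k + 1)}
    union = ka | kb
    return len(ka & kb) / len(union) if union else 0.0

def filter_candidates(generated, known, min_codons=50, max_similarity=0.5):
    """Keep generated sequences that contain an ORF and are not too similar to any known sequence."""
    return [s for s in generated
            if find_orfs(s, min_codons)
            and all(kmer_jaccard(s, ref) < max_similarity for ref in known)]
```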

Diagram 2: Semantic design of genes using a genomic LM.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function/Description | Example Use Case |
|---|---|---|
| Pretrained Model Weights | Pre-learned parameters of a foundation model that can be downloaded and fine-tuned. | Starting point for adapting scGPT or Geneformer to a specific task without training from scratch [7]. |
| Curated Single-Cell Atlas | Large, integrated collection of single-cell datasets used for pretraining or benchmarking. | CELLxGENE and the Human Cell Atlas provide standardized data for over 100 million cells [1] [4]. |
| Perturbation Datasets | Single-cell RNA-seq data from genetic or chemical perturbation experiments. | Used as labeled data for fine-tuning models to predict perturbation responses (e.g., Norman et al. data) [6]. |
| GPU Computing Cluster | High-performance computing resource with multiple GPUs. | Essential for training and fine-tuning large foundation models, which are computationally intensive [1] [6]. |
| Functional Assay Kits | Wet-lab kits for testing biological function (e.g., growth inhibition, protein-binding). | Critical for experimentally validating the function of sequences generated by models like Evo [5]. |

Foundation models represent a paradigm shift in computational biology, offering a unified framework to integrate and interpret complex biological data. Their ability to learn the fundamental principles of biological systems from massive datasets holds immense promise for gene function prediction, novel therapeutic design, and unraveling cellular mechanisms. However, critical benchmarks reveal that their performance on specific tasks, such as predicting genetic perturbation effects, does not yet consistently surpass that of simple linear models [6]. This highlights the importance of rigorous evaluation and continued method development. The future of foundation models in biology will likely involve more sophisticated multimodal integration, improved scalability, and a stronger focus on generating interpretable and actionable biological insights that can be validated experimentally.

The explosion of single-cell RNA sequencing (scRNA-seq) data has revolutionized our understanding of biological systems at cellular resolution. Concurrently, artificial intelligence has witnessed remarkable progress through foundation models in natural language processing (NLP). This confluence has given rise to a powerful conceptual framework: viewing cells as sentences and genes as words. In this analogy, the complete transcriptome of a cell forms a coherent biological "sentence," where the expression patterns of individual genes (words) create meaning through their contextual relationships [8].

Single-cell foundation models (scFMs) operationalize this analogy by treating scRNA-seq data as a biological "corpus" from which to learn universal representations. These models aim to capture the fundamental grammar and syntax of cellular states, enabling researchers to predict how cells respond to perturbations, annotate cell types, and infer gene function [9] [8]. This section provides application notes and experimental protocols for leveraging scFM embeddings in gene function prediction, framed within a broader thesis on advancing therapeutic discovery through computational biology.

Quantitative Benchmarking of scFMs for Biological Prediction

Performance Evaluation Across Multiple Tasks

Recent benchmarking studies have systematically evaluated scFMs against traditional methods. The table below summarizes performance findings across key biological prediction tasks:

Table 1: Performance of single-cell foundation models across diverse tasks

| Task Category | Specific Task | Model Performance Findings | Key References |
|---|---|---|---|
| Perturbation Effect Prediction | Predicting transcriptional responses to genetic perturbations | scFM embeddings showed limited improvement over simple baselines, particularly under distribution shift and for strong/atypical perturbations [9] [10]. | PertEval-scFM framework [9] |
| Cell-level Tasks | Batch integration; Cell type annotation | scFMs are robust and versatile, but simpler models can be more efficient for specific datasets; no single scFM consistently outperforms others [8]. | Biology-driven benchmark [8] |
| Gene-level Tasks | Gene function prediction; Tissue specificity | Gene embeddings from scFMs capture functional relationships and can predict Gene Ontology terms [8]. | FuncBase; FRoGS comparison [8] |

Evaluation Metrics and Framework Insights

The PertEval-scFM framework provides standardized assessment for perturbation prediction, while broader benchmarks employ multiple metrics:

Table 2: Evaluation metrics and frameworks for scFM assessment

| Evaluation Dimension | Specific Metrics | Framework Insights |
|---|---|---|
| Perturbation Prediction | Zero-shot embedding performance; Distribution shift robustness | Reveals that current scFMs struggle with strong or atypical perturbations, likely due to training on mostly mild perturbations [9]. |
| Biological Relevance | scGraph-OntoRWR (cell type relationships); LCAD (annotation error severity) | Novel ontology-informed metrics show scFMs capture biologically meaningful relationships between cell types [8]. |
| General Model Utility | 12+ metrics including unsupervised, supervised, and knowledge-based approaches | Holistic rankings help guide model selection based on dataset size, task complexity, and computational resources [8]. |

Experimental Protocols for scFM-Based Gene Function Prediction

Protocol 1: Gene Embedding Extraction and Functional Analysis

Purpose: To extract gene embeddings from scFMs and use them to predict gene function and relationships.

Materials and Reagents:

- Computational Environment: High-performance computing cluster with GPU acceleration

- Software: Python/R environment with scFM implementations (Geneformer, scGPT, UCE, scFoundation, LangCell, scCello) [8]

- Data: Preprocessed scRNA-seq dataset; Gene Ontology annotations

Procedure:

- Model Selection and Setup: Choose appropriate scFM based on task requirements. Install and configure the model according to documentation [8].

- Gene Embedding Extraction: Access the gene embedding matrix from the input layer of the scFM. These embeddings are typically learned from diverse cellular contexts during pretraining [8].

- Functional Similarity Analysis: Compute cosine similarity between gene embeddings to identify functionally related genes. Validate against known biological pathways.

- Gene Ontology Prediction: Train simple classifiers (e.g., logistic regression) using gene embeddings to predict GO term associations. Compare performance against traditional methods like FRoGS [8].

- Cross-Validation: Implement k-fold cross-validation to assess prediction robustness. Use metrics like AUC-ROC for quantitative comparison.
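
The GO-prediction and cross-validation steps can be sketched with NumPy alone. A real analysis would more likely use scikit-learn (`LogisticRegression`, `roc_auc_score`) and real GO annotations; here the embeddings and labels are synthetic, the regularization strength and learning rate are arbitrary assumptions, and one binary classifier is trained per GO term.

```python
import numpy as np

def roc_auc(y_true, scores):
    """ROC AUC via the Mann-Whitney U statistic (ties are not averaged, for brevity)."""
    ranks = np.empty(len(scores))
    ranks[np.argsort(scores)] = np.arange(1, len(scores) + 1)
    n_pos = int(y_true.sum())
    n_neg = len(y_true) - n_pos
    return float((ranks[y_true == 1].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg))

def fit_logreg(X, y, lam=1e-2, lr=0.5, steps=500):
    """L2-regularized logistic regression fitted by full-batch gradient descent."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        w -= lr * (X.T @ (p - y) / len(y) + lam * w)
        b -= lr * float(np.mean(p - y))
    return w, b

def kfold_auc(emb, labels, k=5, seed=0):
    """Mean test-fold AUC for predicting one GO term from gene embeddings."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(labels)), k)
    aucs = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        w, b = fit_logreg(emb[train], labels[train])
        aucs.append(roc_auc(labels[test], emb[test] @ w + b))
    return float(np.mean(aucs))

# Toy data: 400 "genes" with 16-d embeddings; GO membership depends on one hidden direction.
rng = np.random.default_rng(3)
emb = rng.normal(size=(400, 16))
direction = rng.normal(size=16)
direction /= np.linalg.norm(direction)
labels = (emb @ direction + 0.3 * rng.normal(size=400) > 0.5).astype(int)
print("5-fold AUC:", round(kfold_auc(emb, labels), 3))
```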

Troubleshooting Tips:

- If embeddings show poor functional discrimination, ensure the scFM was pretrained on relevant cellular contexts

- For large gene sets, consider dimensionality reduction techniques (PCA, UMAP) to visualize embedding relationships

Protocol 2: Perturbation Response Prediction Using Zero-Shot Embeddings

Purpose: To predict cellular responses to genetic perturbations using zero-shot scFM embeddings.

Materials and Reagents:

- Framework: PertEval-scFM benchmark framework [9]

- Data: Perturb-seq data combining gene expression from perturbed and unperturbed cells

- Controls: Baseline models (e.g., HVG selection, Seurat, Harmony, scVI) [8]

Procedure:

- Data Preparation: Process Perturb-seq data following PertEval-scFM guidelines. Select highly variable genes to focus analysis on most informative features [9].

- Embedding Generation: Generate cell embeddings using scFMs in zero-shot mode (without task-specific fine-tuning).

- Control State Representation: Compute average gene expression for control cells to establish baseline reference state [9].

- Perturbation Effect Modeling: Train simple models (e.g., linear regression probes) on scFM embeddings to predict perturbation effects, and compare them with the same models trained on raw gene expression.

- Evaluation Under Distribution Shift: Test model performance across different experimental conditions to assess robustness to distribution shifts [9] [10].

- Benchmarking: Compare against baseline methods using metrics implemented in PertEval-scFM framework.
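
The control-state, effect-modeling, and distribution-shift steps can be sketched as follows, assuming a cells × genes expression matrix and a per-cell perturbation label. The split into "mild" and "strong" perturbations by effect norm is one hypothetical way to probe the failure mode on strong perturbations described in [9]; PertEval-scFM's own data splits and metrics may differ, and the "no change" predictor is only a placeholder for a probe trained on scFM embeddings.

```python
import numpy as np

def perturbation_effects(expr, labels, control="control"):
    """Mean shift of each perturbation relative to the average control profile."""
    control_mean = expr[labels == control].mean(axis=0)
    perts = sorted(set(labels.tolist()) - {control})
    effects = np.stack([expr[labels == p].mean(axis=0) - control_mean for p in perts])
    return perts, control_mean, effects

def error_by_strength(true_effects, pred_effects, quantile=0.75):
    """MSE reported separately for 'mild' and 'strong' perturbations (split on effect norm)."""
    strength = np.linalg.norm(true_effects, axis=1)
    strong = strength >= np.quantile(strength, quantile)
    err = np.mean((true_effects - pred_effects) ** 2, axis=1)
    return {"mild": float(err[~strong].mean()), "strong": float(err[strong].mean())}

rng = np.random.default_rng(4)
n_genes = 100
blocks, labels = [rng.normal(size=(200, n_genes))], ["control"] * 200
for i, shift in enumerate([0.1, 0.2, 0.3, 2.0]):  # one deliberately strong perturbation
    direction = rng.choice([-1.0, 1.0], size=n_genes)
    blocks.append(rng.normal(size=(50, n_genes)) + shift * direction)
    labels += [f"p{i}"] * 50
expr, labels = np.vstack(blocks), np.array(labels)

perts, control_mean, effects = perturbation_effects(expr, labels)
# Placeholder predictor: 'no change' (zero effect); swap in predictions from an embedding probe.
print(error_by_strength(effects, np.zeros_like(effects)))
```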

Interpretation Guidelines:

- Minimal improvement over baselines suggests scFM embeddings may not capture perturbation-specific information

- Performance degradation on strong perturbations indicates limited generalization capability

- Consistent performance across distribution shifts indicates robust biological learning

Protocol 3: Biological Knowledge Validation Using Ontology-Informed Metrics

Purpose: To validate whether scFMs capture biologically meaningful relationships using ontology-based metrics.

Materials and Reagents:

- Metrics: scGraph-OntoRWR, Lowest Common Ancestor Distance (LCAD) [8]

- Data: Cell ontology hierarchies; Expert-annotated cell type references

Procedure:

- Cell Embedding Extraction: Generate zero-shot cell embeddings from scFMs for a diverse set of cell types.

- Cell Relationship Mapping: Construct k-nearest neighbor graphs from cell embeddings to identify similarity relationships between cell types.

- Ontological Consistency Assessment: Apply scGraph-OntoRWR metric to measure consistency between embedding-derived relationships and established cell ontology hierarchies [8].

- Annotation Error Analysis: Use LCAD metric to quantify the severity of cell type misclassifications by measuring ontological distance between predicted and true cell types [8].

- Comparative Analysis: Evaluate multiple scFMs using these metrics to identify which models best capture biological ground truth.
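
The LCAD idea can be illustrated on a toy hierarchy. This sketch assumes one plausible formulation, the number of ontology edges from the true and predicted types up to their lowest common ancestor, averaged over misclassified cells; the exact definition and normalization used in [8] may differ, and the real Cell Ontology is a directed acyclic graph with multiple parents rather than the single-parent tree used here.

```python
def path_to_root(node, parent):
    """Node followed by its ancestors up to the root (toy tree: one parent per term)."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path

def lca_distance(a, b, parent):
    """Edges from a up to the lowest common ancestor of a and b, plus edges from b up to it."""
    depth_from_b = {n: d for d, n in enumerate(path_to_root(b, parent))}
    for d, n in enumerate(path_to_root(a, parent)):
        if n in depth_from_b:
            return d + depth_from_b[n]
    raise ValueError(f"{a!r} and {b!r} share no ancestor")

def mean_lcad(true_types, predicted_types, parent):
    """Average LCA distance over misclassified cells only (0.0 if there are none)."""
    dists = [lca_distance(t, p, parent) for t, p in zip(true_types, predicted_types) if t != p]
    return sum(dists) / len(dists) if dists else 0.0

# Hypothetical toy hierarchy; the real Cell Ontology is a DAG with many more terms.
parent = {"CD4 T cell": "T cell", "CD8 T cell": "T cell", "T cell": "lymphocyte",
          "B cell": "lymphocyte", "lymphocyte": "leukocyte", "monocyte": "leukocyte"}
print(lca_distance("CD4 T cell", "CD8 T cell", parent))  # → 2 (near miss)
print(lca_distance("CD4 T cell", "monocyte", parent))    # → 4 (more severe error)
```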

Validation Criteria:

- High scGraph-OntoRWR scores indicate strong alignment with biological knowledge

- Lower LCAD values for errors suggest semantically reasonable misclassifications

- Consistent performance across diverse tissue types indicates generalizable biological understanding

Visualization of scFM Workflows and Conceptual Framework

The Core Analogy: From Biological Data to Linguistic Concepts

scFM-Based Gene Function Prediction Workflow

Table 3: Essential resources for scFM research and gene function prediction

| Resource Category | Specific Tool/Resource | Function and Application |
|---|---|---|
| Benchmarking Frameworks | PertEval-scFM [9] [10] | Standardized evaluation of perturbation effect prediction; assesses performance under distribution shift |
| Evaluation Platforms | OmicsEV [11] | R package with 15+ evaluation metrics for omics data; generates HTML reports for comparative analysis |
| Gene Function Databases | FuncBase [12] | Resource for quantitative machine learning-based gene function annotations with community feedback system |
| Single-Cell Foundation Models | Geneformer, scGPT, UCE, scFoundation, LangCell, scCello [8] | Pretrained models with different architectures for extracting gene and cell embeddings |
| Biological Validation Metrics | scGraph-OntoRWR, LCAD [8] | Cell ontology-informed metrics measuring biological consistency of learned representations |
| Data Resources | CELLxGENE [8]; AIDA v2 [8] | Curated single-cell datasets for benchmarking and validation |

The "cells as sentences, genes as words" analogy provides a powerful conceptual framework for leveraging advances in NLP for biological discovery. Current benchmarking reveals that while scFMs show promise in capturing biological relationships, they have limitations in specific prediction tasks like perturbation response [9] [10]. The field is evolving toward more specialized models, higher-quality datasets capturing diverse cellular states, and improved evaluation methods that better reflect biological reality [9] [8].

Future development should focus on creating training datasets that encompass broader cellular states, including both subtle and strong perturbation effects [9]. Additionally, specialized models designed to take full advantage of large datasets while maintaining biological interpretability will enhance prediction capabilities [8]. As these models improve, they will become increasingly valuable for therapeutic development, offering in silico methods for triaging experimental candidates and identifying novel treatment strategies [12].

Single-cell foundation models (scFMs) are large-scale deep learning models pretrained on vast datasets of single-cell omics data, capable of being adapted to a wide range of downstream biological tasks [1] [4]. Inspired by the success of transformer architectures in natural language processing (NLP), researchers have developed scFMs that treat individual cells as sentences and genes or genomic features as words or tokens [1] [4]. By training on millions of cells encompassing diverse tissues and conditions, these models learn fundamental principles of cellular biology that generalize to new datasets and tasks, such as cell type annotation, perturbation response prediction, and gene regulatory network inference [1] [13].

The core innovation lies in applying the transformer's self-attention mechanism to single-cell data. This allows the model to weigh the importance of different genes within a cell, capturing complex, long-range dependencies and gene-gene interactions that are crucial for understanding cellular function and state [1]. Models like scGPT and Geneformer exemplify this approach, leveraging massive pretraining corpora to create foundational representations for single-cell biology [1] [14] [13].

Model Architectures and Core Technical Components

Foundational Transformer Architecture

At their core, scFMs are built on the transformer neural network architecture. Transformers utilize a self-attention mechanism that allows the model to dynamically weight the relevance of all input tokens (genes) when processing each individual token, thereby capturing complex contextual relationships within the data [1] [15]. The standard transformer comprises several key components:

- Embedding Layer: Converts raw input tokens into dense vector representations. In scFMs, this typically involves creating embeddings for gene identifiers and their expression values [1].

- Encoder Stack: Processes input embeddings through multiple layers of multi-head self-attention and feed-forward neural networks to build contextualized representations [15].

- Positional Encoding: Injects information about the order of tokens, necessary since transformers lack inherent sequential processing capabilities [1] [15].
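
The self-attention step at the heart of the encoder stack can be written in a few lines of NumPy. This is a minimal single-head sketch with random weights: tokens are rows of `X`, and the optional mask is a generic causal (unidirectional) mask for illustration, not a reproduction of any particular scFM's masking scheme. Real implementations add multiple heads, residual connections, normalization, and feed-forward layers.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the row max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv, causal=False):
    """Single-head scaled dot-product self-attention over one cell's gene tokens.

    X: (n_tokens, d_model) token embeddings (e.g., gene identity + expression embedding).
    Returns the contextualized tokens and the (n_tokens, n_tokens) attention weights."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1])
    if causal:  # generic unidirectional mask: token i may attend only to tokens 0..i
        allowed = np.tril(np.ones(scores.shape, dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

rng = np.random.default_rng(5)
n_genes, d_model, d_head = 6, 8, 4
X = rng.normal(size=(n_genes, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out_bi, A_bi = self_attention(X, Wq, Wk, Wv)                    # bidirectional, as in BERT-style encoders
out_causal, A_causal = self_attention(X, Wq, Wk, Wv, causal=True)
```

Each row of the attention matrix is a probability distribution over genes, which is what makes attention weights usable as a rough indicator of which genes a token draws on.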

For single-cell data, where genes lack a natural sequential order, researchers have developed innovative tokenization strategies to structure the input. Common approaches include ranking genes by expression levels within each cell or binning genes based on expression values to create deterministic sequences for transformer processing [1] [4].
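
Both tokenization strategies can be sketched for a single cell. These are simplified illustrations under stated assumptions: `rank_tokenize` is loosely in the style of rank-value encoding (normalize by a per-gene reference median, then order expressed genes from highest to lowest), with `gene_medians` a hypothetical precomputed vector of positive values and other preprocessing such as library-size normalization omitted; `bin_values` maps nonzero values to per-cell quantile bins. Neither function reproduces a specific model's exact preprocessing.

```python
import numpy as np

def rank_tokenize(expr, gene_ids, gene_medians, max_len=2048):
    """Order expressed genes by median-normalized expression (highest first) as a token sequence."""
    norm = expr / gene_medians
    expressed = np.flatnonzero(expr > 0)
    order = expressed[np.argsort(-norm[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:max_len]]

def bin_values(expr, n_bins=5):
    """Per-cell value binning: nonzero values go to bins 1..n_bins by within-cell quantiles; zeros stay 0."""
    bins = np.zeros(expr.shape, dtype=int)
    nz = expr > 0
    if nz.any():
        edges = np.quantile(expr[nz], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nz] = np.digitize(expr[nz], edges) + 1
    return bins

expr = np.array([0.0, 5.0, 1.0, 10.0])          # one cell, four genes
gene_ids = ["A", "B", "C", "D"]
gene_medians = np.array([1.0, 5.0, 0.5, 2.0])   # hypothetical reference medians
print(rank_tokenize(expr, gene_ids, gene_medians))  # → ['D', 'C', 'B']
print(bin_values(expr, n_bins=2))
```

The rank-based sequence discards absolute values but gives the transformer a deterministic token order, whereas binning keeps a coarse value per gene and leaves ordering to other mechanisms.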

Key scFM Architectures: scGPT and Geneformer

scGPT Architecture

scGPT adopts a GPT-like decoder architecture with a unidirectional masked self-attention mechanism [1]. This design enables the model to iteratively predict masked genes conditioned on known genes in the cell's expression profile. The model employs several technical innovations:

- Gene Tokenization: Represents each gene with a token embedding that combines gene identifier and expression value information [1].

- Special Tokens: Incorporates cell-level context tokens and modality indicators for multi-omic integration [1].

- Efficient Attention Mechanisms: Utilizes optimized attention patterns to handle the high dimensionality of gene expression data [13].

scGPT has been pretrained on over 33 million non-cancerous human cells, creating one of the most comprehensive scFMs to date [13]. This extensive pretraining enables strong performance across diverse downstream tasks including zero-shot cell type annotation and in silico perturbation modeling [13].

Geneformer Architecture

Geneformer employs a BERT-like encoder architecture with bidirectional attention mechanisms [14]. This allows the model to learn from the context of all genes in a cell simultaneously during pretraining. Key characteristics include:

- Contextual Gene Embeddings: Learns representations that capture how gene function varies across cellular contexts [14].

- Transfer Learning Focus: Emphasizes fine-tuning capabilities for specific biological applications [14].

- Hierarchical Representation: Builds embeddings at both gene and cell levels for multi-scale analysis [14].

Geneformer's pretraining incorporates attention mechanisms that learn and weight relationships between any pair of input tokens, enabling the model to identify which genes are most informative of a cell's identity or state [1].

Architectural Evolution and Innovations

Recent advancements in scFM architectures have introduced several improvements over vanilla transformer designs:

- Normalization Techniques: Modern implementations often use RMSNorm instead of LayerNorm for improved training stability and efficiency [16].

- Activation Functions: SwiGLU and GELU activations have largely replaced ReLU in feed-forward networks, providing smoother gradients and better performance [16].

- Sparse Attention: Optimization of attention mechanisms to handle the long sequences represented by thousands of genes per cell [16].
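
For reference, the normalization and activation functions named above follow standard definitions, sketched here in NumPy. Whether a given scFM uses RMSNorm or SwiGLU is model-specific, and the dimensions and random weights below are arbitrary; sparse attention is not shown.

```python
import numpy as np

def layer_norm(x, gamma, beta, eps=1e-5):
    """LayerNorm: subtract the mean, divide by the standard deviation, then scale and shift."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return gamma * (x - mu) / np.sqrt(var + eps) + beta

def rms_norm(x, gamma, eps=1e-6):
    """RMSNorm: divide by the root mean square only (no mean subtraction, no bias)."""
    rms = np.sqrt(np.mean(x ** 2, axis=-1, keepdims=True) + eps)
    return gamma * x / rms

def silu(x):
    """SiLU / Swish activation: x * sigmoid(x)."""
    return x / (1.0 + np.exp(-x))

def swiglu_ffn(x, W_gate, W_up, W_down):
    """SwiGLU feed-forward block: (SiLU(x W_gate) * (x W_up)) W_down."""
    return (silu(x @ W_gate) * (x @ W_up)) @ W_down

rng = np.random.default_rng(6)
d_model, d_ff = 8, 16
x = rng.normal(size=(3, d_model))                    # three token embeddings
h = rms_norm(x, gamma=np.ones(d_model))
shapes = [(d_model, d_ff), (d_model, d_ff), (d_ff, d_model)]
y = swiglu_ffn(h, *(rng.normal(scale=0.1, size=s) for s in shapes))
```

RMSNorm drops the mean-centering and bias of LayerNorm, which saves a reduction per token; SwiGLU replaces a single activation with a gated product of two projections.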

Table 1: Comparative Architecture of Leading scFMs

| Feature | scGPT | Geneformer |
|---|---|---|
| Architecture Type | GPT-like Decoder | BERT-like Encoder |
| Attention Mechanism | Unidirectional/Masked | Bidirectional |
| Primary Pretraining Objective | Generative Gene Prediction | Masked Gene Modeling |
| Typical Pretraining Scale | 33+ million cells [13] | ~30 million cells (original release); ~95 million in a later release [7] |
| Tokenization Strategy | Gene ranking + value binning [1] | Gene ranking by expression [1] |
| Multi-omic Capability | Yes (transcriptomics, epigenomics, spatial) [1] | Primarily transcriptomics |

Application Notes for Gene Function Prediction

Zero-Shot Gene Function Annotation

scFMs enable zero-shot gene function prediction by leveraging the biological knowledge encoded during pretraining. The workflow involves:

- Embedding Generation: Process single-cell data through the foundation model to generate contextual gene embeddings.

- Similarity Analysis: Calculate cosine similarity between gene embeddings in the latent space.

- Functional Transfer: Infer functions for poorly characterized genes based on their proximity to well-annotated genes in the embedding space.

In practice, genes with similar functions tend to cluster together in the embedding space, allowing functional annotations to be transferred from known to unknown genes without additional training [17]. For example, scGPT embeddings have been shown to group genes from the same pathways and biological processes, enabling prediction of novel gene functions through neighborhood analysis in the latent space [17] [13].
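The three-step workflow above (embed, compare by cosine similarity, transfer labels from neighbors) can be sketched as a k-nearest-neighbor majority vote. The embeddings below are hand-made toy vectors, the functional labels are simplified placeholders rather than GO terms, and `GENE_X` is a hypothetical uncharacterized gene; in practice the embedding matrix would come from a pretrained scFM.

```python
import numpy as np
from collections import Counter

def cosine_similarity_matrix(emb):
    """Pairwise cosine similarity between rows of a (n_genes, dim) matrix."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    return unit @ unit.T

def transfer_annotation(emb, gene_names, annotations, query, k=3):
    """Predict a label for `query` by majority vote over its k most
    cosine-similar annotated genes."""
    sim = cosine_similarity_matrix(emb)
    qi = gene_names.index(query)
    # Annotated genes ranked by similarity to the query, most similar first.
    candidates = [i for i in np.argsort(-sim[qi])
                  if i != qi and gene_names[i] in annotations]
    neighbors = [gene_names[i] for i in candidates[:k]]
    votes = Counter(annotations[g] for g in neighbors)
    label, _ = votes.most_common(1)[0]
    return label, neighbors

# Toy embeddings (not real model output): two functional groups plus one query gene.
genes = ["RPL3", "RPL4", "RPS6", "CDK1", "CCNB1", "MKI67", "GENE_X"]
emb = np.array([
    [1.0, 0.1, 0.0], [0.9, 0.0, 0.1], [1.0, 0.0, 0.2],
    [0.0, 1.0, 0.1], [0.1, 0.9, 0.0], [0.2, 1.0, 0.0],
    [0.95, 0.05, 0.1],
])
annotations = {"RPL3": "ribosome", "RPL4": "ribosome", "RPS6": "ribosome",
               "CDK1": "cell cycle", "CCNB1": "cell cycle", "MKI67": "cell cycle"}

label, neighbors = transfer_annotation(emb, genes, annotations, "GENE_X", k=3)
print(label, neighbors)
```

A majority vote is the simplest transfer rule; similarity-weighted votes or a held-out evaluation of `k` are natural refinements.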

Gene-Gene Interaction and Pathway Analysis

scFMs can help identify context-specific gene-gene interactions that vary across cell types and states. The scNET framework, a graph neural network approach, complements this by integrating protein-protein interaction (PPI) networks with scRNA-seq data [17]. The protocol involves:

- Dual-View Encoding: Simultaneously learning gene-gene relationships from PPI networks and cell-cell relationships from expression similarity.

- Attention-Based Refinement: Using attention mechanisms to prune irrelevant connections in the cell-cell similarity graph.

- Pathway Activation Scoring: Calculating pathway activity scores from the integrated embeddings.

Quantitative evaluations show that scNET's gene embeddings achieve substantially higher correlation with Gene Ontology semantic similarity (mean correlation ~0.17) than methods without prior biological knowledge [17]. Integrating PPI information with expression data thus improves the detection of functional pathways and complexes from single-cell data [17].
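The evaluation metric cited above compares two gene-by-gene matrices: similarity in embedding space and Gene Ontology semantic similarity. A minimal sketch of that comparison follows, assuming a precomputed GO semantic similarity matrix aligned to the same gene order. The Pearson correlation over unique gene pairs is one reasonable choice and may differ from the exact statistic used in [17]; the toy values are placeholders, not real GO scores.

```python
import numpy as np

def embedding_go_correlation(emb, go_sim):
    """Pearson correlation between embedding cosine similarity and GO semantic
    similarity, computed over unique gene pairs (upper triangle, no diagonal)."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    emb_sim = unit @ unit.T
    iu = np.triu_indices(emb.shape[0], k=1)  # each unordered gene pair once
    return float(np.corrcoef(emb_sim[iu], go_sim[iu])[0, 1])

# Toy example: 4 genes forming two functional pairs (placeholder values).
emb = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
go_sim = np.array([
    [1.0, 0.8, 0.1, 0.2],
    [0.8, 1.0, 0.2, 0.1],
    [0.1, 0.2, 1.0, 0.9],
    [0.2, 0.1, 0.9, 1.0],
])
r = embedding_go_correlation(emb, go_sim)
print(round(r, 3))
```

On real data, correlations are far lower than in this toy case (the ~0.17 reported above), so comparing against a baseline embedding is essential for interpretation.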

In Silico Perturbation Modeling

scFMs enable in silico perturbation experiments to predict gene function by simulating knockout or overexpression scenarios:

- Input Manipulation: Modify the expression value of a target gene in the input representation.

- Forward Pass: Process the perturbed input through the foundation model.

- Response Analysis: Measure the predicted changes in other genes' expression values.

- Network Inference: Identify downstream effects and affected biological pathways.
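The four steps above can be sketched with a deliberately simple stand-in for the foundation model. Here a cell is a rank-ordered list of gene token IDs, the hypothetical `encode` function (one softmax-attention layer over random gene embeddings) plays the role of the scFM forward pass, and knockout is modeled as deleting the target gene's token. The response is measured as how far the cell embedding and each remaining gene's contextual embedding move (1 minus cosine similarity). A real analysis would call the pretrained model instead; every name here is illustrative.

```python
import numpy as np

def encode(tokens, gene_emb):
    """Hypothetical stand-in for an scFM forward pass: one softmax-attention
    layer over the cell's gene tokens, returning contextual gene embeddings."""
    x = gene_emb[tokens]                       # (n_tokens, dim)
    scores = x @ x.T / np.sqrt(x.shape[1])
    scores -= scores.max(axis=1, keepdims=True)
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)
    return x + attn @ x                        # residual + attention update

def cosine(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def in_silico_delete(tokens, target, gene_emb):
    """Delete `target` from the ranked token list; report the shift
    (1 - cosine similarity) of the cell embedding and of each remaining gene."""
    base = encode(tokens, gene_emb)
    perturbed_tokens = [t for t in tokens if t != target]
    pert = encode(perturbed_tokens, gene_emb)
    base_rest = base[[i for i, t in enumerate(tokens) if t != target]]
    cell_shift = 1 - cosine(base.mean(axis=0), pert.mean(axis=0))
    gene_shift = {t: 1 - cosine(b, p)
                  for t, b, p in zip(perturbed_tokens, base_rest, pert)}
    return cell_shift, gene_shift

rng = np.random.default_rng(0)
gene_emb = rng.normal(size=(10, 16))  # hypothetical 10-gene vocabulary
cell = [3, 7, 1, 0, 5]                # token IDs ranked by expression
cell_shift, gene_shift = in_silico_delete(cell, target=7, gene_emb=gene_emb)
print(f"cell embedding shift: {cell_shift:.4f}")
for gene, shift in sorted(gene_shift.items(), key=lambda kv: -kv[1]):
    print(gene, round(shift, 4))
```

Genes whose embeddings shift most are candidate downstream effects, which would then be tested for pathway enrichment.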

scGPT has been reported to perform well in perturbation response prediction, modeling how targeted manipulations affect global expression patterns and cellular states [13]. Such in silico experiments offer an inexpensive computational first step for hypothesis generation before wet-lab validation, although their predictions should be benchmarked against simple baselines.

Experimental Protocols and Benchmarking

Protocol for Gene Function Prediction Using scFM Embeddings

Materials: Preprocessed scRNA-seq dataset, pretrained scFM (scGPT or Geneformer), computational environment with adequate GPU resources.

Procedure:

Data Preprocessing:

- Normalize gene expression counts using log(CP10K+1) transformation.

- Filter to include highly variable genes (typically top 2000-5000).

- Format data according to model-specific requirements (gene ordering or binning).
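The first two preprocessing steps can be sketched directly in NumPy on a cells-by-genes count matrix. This is a simplified illustration with simulated counts: ranking genes by raw variance of the log-normalized values stands in for the dispersion- or variance-stabilized criteria that tools such as Scanpy use, and the model-specific formatting step is omitted.

```python
import numpy as np

def log_cp10k(counts):
    """log(CP10K + 1): scale each cell (row) to 10,000 total counts, then log1p."""
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1  # avoid division by zero for empty cells
    return np.log1p(counts / totals * 1e4)

def top_variable_genes(norm, n_top):
    """Indices of the n_top genes with the highest variance across cells.
    Simplification: Scanpy's highly_variable_genes uses dispersion- or
    variance-stabilized criteria rather than raw variance."""
    variances = norm.var(axis=0)
    return np.argsort(variances)[::-1][:n_top]

rng = np.random.default_rng(0)
# Simulated data: 200 cells x 50 genes with gene-specific mean counts.
counts = rng.poisson(lam=rng.uniform(0.1, 5.0, size=50), size=(200, 50))
norm = log_cp10k(counts)
hvg_idx = top_variable_genes(norm, n_top=10)
norm_hvg = norm[:, hvg_idx]
print(norm_hvg.shape)  # (200, 10)
```

On real data, `n_top` would follow the 2,000-5,000 range given above rather than the toy value of 10.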

Embedding Generation:

- Load pretrained model weights (available from BioLLM or model-specific repositories) [13].

- Process dataset through model to extract gene and cell embeddings.

- Save embeddings in standardized format (H5AD or CSV) for downstream analysis.

Functional Annotation:

- Calculate pairwise cosine similarities between all gene embeddings.

- Perform clustering (k-means or hierarchical) on gene embeddings.

- Conduct Gene Ontology enrichment analysis on resulting clusters.

- Annotate unknown genes based on cluster membership and similarity to characterized genes.
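The GO enrichment step typically reduces to a one-sided hypergeometric test per cluster and term, followed by multiple-testing correction across terms. A dependency-free sketch under those standard assumptions is shown below; the cluster and term sizes in the example are invented for illustration, and dedicated enrichment tools would normally be used in practice.

```python
from math import comb

def hypergeom_enrichment_p(k, cluster_size, term_size, universe):
    """One-sided hypergeometric p-value P(X >= k): the probability of seeing at
    least k genes annotated to a GO term in a cluster of `cluster_size` genes,
    when `term_size` of the `universe` background genes carry that term."""
    total = comb(universe, cluster_size)
    upper = min(cluster_size, term_size)
    hits = sum(comb(term_size, i) * comb(universe - term_size, cluster_size - i)
               for i in range(k, upper + 1))
    return hits / total

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical cluster: 8 of 20 genes carry a term that covers 100 of 10,000 genes.
p = hypergeom_enrichment_p(k=8, cluster_size=20, term_size=100, universe=10_000)
print(f"{p:.2e}")
qvals = benjamini_hochberg([0.01, 0.04, 0.03])
print(qvals)
```

The background universe should match the genes actually present in the embedding vocabulary; using all annotated genes instead inflates significance.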

Validation:

- Compare predictions to known gene functions in databases like GO, KEGG.

- Perform cross-validation using holdout sets of well-annotated genes.

- Assess biological coherence of predicted gene functions through literature review.
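Cross-validation with holdout sets of well-annotated genes can be sketched with a nearest-centroid classifier on gene embeddings. The classifier choice, fold count, and synthetic two-group embeddings are assumptions for illustration. Rerunning the same procedure with shuffled labels gives a chance-level reference, in the spirit of comparing against simple baselines.

```python
import numpy as np

def nearest_centroid_predict(train_x, train_y, test_x):
    """Assign each test gene the label whose mean embedding (centroid) is most
    cosine-similar to it."""
    labels = sorted(set(train_y))
    y_arr = np.array(train_y)
    centroids = np.stack([train_x[y_arr == lab].mean(axis=0) for lab in labels])
    centroids /= np.linalg.norm(centroids, axis=1, keepdims=True)
    test_unit = test_x / np.linalg.norm(test_x, axis=1, keepdims=True)
    return [labels[i] for i in np.argmax(test_unit @ centroids.T, axis=1)]

def kfold_accuracy(x, y, n_folds=5, seed=0):
    """Hold out each fold of annotated genes in turn and score predictions on it."""
    idx = np.random.default_rng(seed).permutation(len(y))
    scores = []
    for fold in np.array_split(idx, n_folds):
        train = np.setdiff1d(idx, fold)
        preds = nearest_centroid_predict(x[train], [y[i] for i in train], x[fold])
        scores.append(np.mean([p == y[i] for p, i in zip(preds, fold)]))
    return float(np.mean(scores))

# Synthetic embeddings for two hypothetical functional terms.
rng = np.random.default_rng(1)
x = np.vstack([rng.normal(0, 0.5, (20, 8)) + np.eye(8)[0] * 3,
               rng.normal(0, 0.5, (20, 8)) + np.eye(8)[1] * 3])
y = ["term_A"] * 20 + ["term_B"] * 20

acc = kfold_accuracy(x, y)
shuffled = kfold_accuracy(x, list(np.random.default_rng(2).permutation(y)))
print(f"accuracy: {acc:.2f}  shuffled-label baseline: {shuffled:.2f}")
```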

Table 2: Quantitative Performance of scFMs on Gene Function Prediction Tasks

| Model | GO Semantic Similarity Correlation | Cluster Enrichment (GO Terms) | Cross-Species Accuracy |
|---|---|---|---|
| scNET | 0.17 (mean) [17] | Significant improvement across clustering resolutions [17] | Not Reported |
| scGPT | Not Reported | Not Reported | High (demonstrated in plant models) [13] |
| Geneformer | Not Reported | Not Reported | Not Reported |
| Traditional Methods | <0.1 (estimated) [17] | Lower enrichment percentages [17] | Variable |

Benchmarking Results and Limitations

Recent zero-shot evaluations provide critical insights into scFM capabilities and limitations. When applied without task-specific fine-tuning:

- Both scGPT and Geneformer underperform simpler methods like Highly Variable Genes (HVG) selection in cell type clustering tasks [14].

- For batch integration, scGPT shows better performance on datasets with both technical and biological batch effects, while traditional methods like Harmony and scVI excel at correcting technical variation alone [14].

- Pretraining provides clear benefits over randomly initialized models, but larger and more diverse pretraining datasets do not consistently confer additional advantages [14].

These results highlight that while scFMs show tremendous promise, their zero-shot performance requires careful validation against established baselines, particularly for discovery-focused applications where fine-tuning may not be feasible.

Table 3: Essential Research Reagents and Computational Tools for scFM Research

| Resource | Type | Function/Purpose | Example/Availability |
|---|---|---|---|
| CZ CELLxGENE | Data Platform | Provides unified access to annotated single-cell datasets; contains >100 million standardized cells [1] [13] | CELLxGENE Discover [1] |
| BioLLM | Software Framework | Standardized framework for integrating and benchmarking single-cell foundation models [13] | Universal interface for scFM access [13] |
| Pretrained Model Weights | Computational Resource | Enable transfer learning without expensive pretraining | scGPT (33M cells), Geneformer weights [13] |
| ARCHS4 | Data Repository | Uniformly processed RNA-seq data from GEO with AI-curated annotations [18] | 705,430 human transcriptomes with matched text [18] |
| Protein-Protein Interaction Networks | Biological Database | Provide functional context for gene embedding interpretation | Integrated in scNET for enhanced functional analysis [17] |
| DISCO Database | Data Platform | Aggregates single-cell data for federated analysis | Over 100 million cells for cross-study comparisons [13] |

Workflow and Pathway Visualizations

Figure: scFM gene function prediction workflow.

Figure: Multi-omic integration architecture.

In the rapidly evolving field of single-cell genomics, single-cell foundation models (scFMs) are revolutionizing how researchers interpret complex biological systems. These large-scale deep learning models, pretrained on vast single-cell datasets, have demonstrated remarkable capabilities in predicting gene function, annotating cell types, and simulating cellular responses to perturbation [4]. A critical preprocessing step that enables this powerful analysis is tokenization—the process of converting raw gene expression data into a structured format that artificial intelligence models can understand and process [4]. Within the context of gene function prediction research, effective tokenization transforms high-dimensional, sparse single-cell RNA sequencing (scRNA-seq) data into meaningful numerical representations that capture the fundamental biological principles governing cellular behavior and gene regulatory networks [19]. This technical note details the methodologies and protocols for implementing tokenization strategies that optimally prepare single-cell data for scFM training and fine-tuning, with particular emphasis on their application in gene function prediction.

Tokenization Strategies for Single-Cell Data

Core Concept and Biological Analogy

Tokenization serves as the crucial bridge between biological measurements and computational analysis. In natural language processing (NLP), tokens represent words or subwords within sentences. By analogy, scFMs treat individual cells as "sentences" and genes or genomic features, along with their expression values, as "words" or "tokens" [4]. This framework allows models to learn the "language" of cells by exposing them to millions of cellular transcriptomes spanning diverse tissues, states, and conditions. The primary challenge is that gene expression data, unlike text, has no inherent sequential order, so researchers must impose a meaningful structure before the data can be processed by transformer-based architectures [4].

Preprocessing and Input Representation

Before tokenization can occur, scRNA-seq data must undergo rigorous preprocessing to ensure quality and consistency:

- Quality Control: Filtering out low-quality cells and potential multiplets, particularly critical in droplet-based methods [20].

- Gene Filtering: Retaining genes expressed above minimum thresholds to reduce noise.

- Normalization: Applying specialized single-cell normalization techniques to account for varying sequencing depth, avoiding methods designed for bulk RNA-seq that can introduce errors [20].

- Batch Effect Consideration: Accounting for technical variations between experiments while preserving biological variation of interest [20].

Following preprocessing, the continuous, high-dimensional gene expression profiles must be converted into discrete tokens. Because expression data lack an inherent ordering [4], several strategies have been developed to impose one, each with distinct advantages for specific applications in gene function prediction.

Table 1: Comparison of Tokenization Strategies for Single-Cell Foundation Models

| Strategy | Core Methodology | Key Advantages | Considerations for Gene Function Prediction | Example Models |
|---|---|---|---|---|
| Gene Ranking | Genes ordered by expression level within each cell; top genes form sequence | Deterministic, captures most influential genes | May overlook lowly expressed functionally important genes | Geneformer [4], scBERT [19] |
| Value Categorization | Continuous expression values binned into discrete categories | Converts regression to classification problem | Loss of resolution for subtle expression differences | scGPT [19] |
| Value Projection | Gene expression vector projected and combined with positional/gene embedding | Preserves full resolution of expression data | Computationally intensive for very large datasets | scFoundation [19] |
| Multi-Modal Incorporation | Integration of gene metadata, batch information, or other omics data | Provides richer biological context | Increased complexity in token structure and processing | UCE [19] |

Protocol for Implementing Tokenization in Gene Function Prediction

Experimental Workflow and Design

The following protocol outlines a procedure for implementing gene ranking tokenization, which is well suited to gene function prediction with scFMs. Rank-based tokenization is used by models such as Geneformer [4]; other large-scale models adopt different schemes, for example CellFM, trained on 100 million human cells, uses value projection [19].

Reagent and Resource Requirements

Table 2: Essential Research Reagents and Computational Tools

| Item | Specification | Function/Purpose |
|---|---|---|
| Single-Cell Suspension | Highly viable cells from tissue of interest | Source of transcriptomic data |
| scRNA-seq Library Prep Kit | 10x Genomics 3' or similar platform | Generation of barcoded cDNA libraries |
| Sequence Alignment Tool | STAR, CellRanger, or scRNA-seq specialized aligners | Mapping reads to reference genome |
| Quality Control Software | FastQC, Seurat, or Scanpy | Assessing cell and gene quality metrics |
| Normalization Algorithm | scran, SCTransform, or specialized single-cell methods | Technical noise removal and count normalization |
| Tokenization Framework | Custom Python scripts implementing ranking logic | Conversion of expression matrix to token sequences |
| Foundation Model Architecture | Transformer-based (e.g., ERetNet, standard Transformer) | Learning representations for gene function prediction |

Sample Preparation and Sequencing

- Single-Cell Isolation: Extract viable single cells from tissue of interest using appropriate dissociation protocols. For tissues where dissociation is challenging (e.g., neuronal tissues), consider single-nucleus RNA-seq (snRNA-seq) as an alternative [20].

- Library Preparation: Perform scRNA-seq using preferred technology (e.g., droplet-based 10x Genomics, SMART-Seq2). Document all protocol parameters including amplification method (PCR or IVT) and transcript coverage (full-length vs. 3'/5' end) as these impact downstream tokenization [20].

- Sequencing: Execute high-throughput sequencing with sufficient depth to capture transcriptional diversity. For gene function prediction, deeper sequencing may be beneficial to detect lowly expressed transcription factors and regulatory genes.

Computational Processing and Tokenization

Diagram 1: Tokenization workflow for scRNA-seq data.

Primary Data Processing:

Expression Matrix Normalization:

- Apply specialized normalization methods designed for single-cell data to address varying sequencing depths while preserving biological heterogeneity.

- Avoid bulk RNA-seq normalization techniques that may introduce errors in single-cell data interpretation [20].

Tokenization Implementation:

- For each cell, rank all expressed genes by their normalized expression values in descending order.

- Select the top N genes (typically 1,000-2,000) based on expression magnitude to form the representative sequence for each cell.

- Convert each gene in the ranked list to a discrete token, typically represented as a unique integer identifier.

- Incorporate special tokens to represent cell-level metadata, batch information, or experimental conditions when available [4].
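The ranking steps above can be sketched for a single cell as follows. The vocabulary layout (IDs 0 and 1 reserved for hypothetical [PAD] and [CLS] tokens), the gene list, and the expression values are assumptions for illustration; each model's own tokenizer defines the real vocabulary and any additional per-gene normalization applied before ranking.

```python
import numpy as np

PAD, CLS = 0, 1  # hypothetical special-token IDs

def build_vocab(gene_names):
    """Map each gene to an integer token ID, reserving 0 and 1 for special tokens."""
    return {g: i + 2 for i, g in enumerate(gene_names)}

def rank_tokenize(expr_row, gene_names, vocab, max_len):
    """Rank-based tokenization for one cell: keep expressed genes, order them by
    normalized expression (highest first), truncate so the sequence including
    [CLS] fits in max_len, then pad."""
    expressed = np.flatnonzero(expr_row > 0)
    # Stable sort on negated values gives descending order; ties keep gene order.
    order = expressed[np.argsort(-expr_row[expressed], kind="stable")]
    tokens = [CLS] + [vocab[gene_names[i]] for i in order[: max_len - 1]]
    return tokens + [PAD] * (max_len - len(tokens))

genes = ["CD3E", "MS4A1", "LYZ", "NKG7", "GAPDH"]
vocab = build_vocab(genes)
expr = np.array([2.1, 0.0, 0.3, 1.5, 3.0])  # normalized expression for one cell
print(rank_tokenize(expr, genes, vocab, max_len=6))  # [1, 6, 2, 5, 4, 0]
```

Here GAPDH (highest expression) comes first after [CLS], MS4A1 is dropped because it is not expressed, and the final slot is padding.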

Positional Encoding:

- Apply positional encoding schemes to represent the relative ranking of each gene within the sequence, enabling the transformer architecture to utilize ordering information [4].
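One common way to encode rank position is the fixed sinusoidal scheme from the original Transformer; learned position embeddings are an equally common alternative, and which one a given scFM uses should be checked in its documentation. A minimal sketch that adds sinusoidal encodings to stand-in token embeddings (the random embedding table and the token IDs are hypothetical):

```python
import numpy as np

def sinusoidal_positions(max_len, d_model):
    """Fixed encodings: PE[pos, 2i] = sin(pos / 10000^(2i/d)),
    PE[pos, 2i+1] = cos(pos / 10000^(2i/d)). Assumes d_model is even."""
    pos = np.arange(max_len)[:, None]
    div = np.power(10000.0, np.arange(0, d_model, 2) / d_model)
    pe = np.zeros((max_len, d_model))
    pe[:, 0::2] = np.sin(pos / div)
    pe[:, 1::2] = np.cos(pos / div)
    return pe

rng = np.random.default_rng(0)
vocab_size, d_model, max_len = 100, 16, 8
token_table = rng.normal(size=(vocab_size, d_model))  # stand-in for learned gene embeddings
tokens = np.array([1, 42, 7, 13, 0, 0, 0, 0])         # hypothetical ranked token IDs
pe = sinusoidal_positions(max_len, d_model)
x = token_table[tokens] + pe  # position i now encodes "i-th most expressed gene"
print(x.shape)  # (8, 16)
```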

Advanced Tokenization Applications in Gene Function Prediction

Multi-Modal Tokenization for Enhanced Functional Insights

While basic gene ranking tokenization provides a solid foundation, advancing gene function prediction requires more sophisticated approaches that integrate diverse biological contexts:

- Gene Metadata Incorporation: Enhance token representations by including information about gene ontology terms, chromosome location, or protein domains to provide biological context that aids in functional annotation [4].

- Multi-Omic Integration: Develop specialized tokens for incorporating additional data modalities such as single-cell ATAC-seq (scATAC-seq) for chromatin accessibility, spatial transcriptomics for positional context, or proteomics data to create comprehensive cellular representations [4].

- Functional Prompt Engineering: Implement semantic design strategies where known functional gene contexts prompt the generation of novel gene sequences with related functions, facilitating discovery of genes with predicted functional relationships [5].

Case Study: CellFM Tokenization Implementation

CellFM, an 800-million parameter foundation model trained on 100 million human cells, implements a value projection-based tokenization strategy that preserves the full resolution of gene expression data [19]. In this approach:

- The gene expression vector is represented as the sum of a projection of the gene expression values and a positional or gene embedding.

- This method maintains continuous expression information rather than discretizing into bins or ranks, potentially capturing more subtle functional relationships.

- The model employs a modified RetNet framework with gated multi-head attention mechanisms to efficiently process the tokenized input while managing computational complexity [19].
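The value-projection scheme in the first bullet amounts to x_g = v_g · w + b + e_g: a learned vector scaled by the continuous expression value, plus a gene-identity embedding. The sketch below shows that combination with random stand-in parameters. It illustrates the general scheme described for CellFM [19], not CellFM's actual implementation, whose projection details may differ.

```python
import numpy as np

rng = np.random.default_rng(0)
n_genes_vocab, d_model = 50, 16
gene_table = rng.normal(size=(n_genes_vocab, d_model))  # gene-identity embeddings
w_value = rng.normal(size=(1, d_model))                 # projection of a scalar value
b_value = np.zeros(d_model)

def value_projection_tokens(gene_ids, values):
    """Input embedding per gene = linear projection of its continuous expression
    value + its gene-identity embedding. No binning or ranking is applied."""
    value_emb = values[:, None] @ w_value + b_value  # (n, d_model)
    return value_emb + gene_table[gene_ids]

gene_ids = np.array([3, 10, 27])
values = np.array([0.5, 2.25, 1.0])  # e.g. log-normalized expression
x = value_projection_tokens(gene_ids, values)
print(x.shape)  # (3, 16)
```

Because the value enters linearly, two cells that differ only slightly in a gene's expression receive slightly different input vectors, which is the resolution that binning gives up.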

Table 3: Qualitative Performance Comparison of Tokenization Strategies in Gene Function Prediction

| Tokenization Method | Prediction Accuracy | Novel Function Discovery | Computational Efficiency | Data Requirements |
|---|---|---|---|---|
| Gene Ranking | Moderate to High | Limited | High | Standard |
| Value Categorization | High | Moderate | Moderate | Standard |
| Value Projection | Very High | High | Lower | Extensive |
| Multi-Modal Integration | Highest | Highest | Lowest | Extensive |

Discussion and Technical Considerations

Optimization Guidelines for Gene Function Prediction

Implementing effective tokenization for gene function prediction requires addressing several technical challenges:

- Batch Effect Management: Incorporate batch information as special tokens or employ statistical harmonization methods to reduce technical variation while preserving biologically relevant functional signals [4].

- Rare Cell Type Considerations: Adjust tokenization strategies for rare cell populations by implementing oversampling techniques or weighted ranking approaches to ensure adequate representation in training data.

- Cross-Species Generalization: When predicting gene functions across species, implement orthology-based token mapping to align gene tokens across different organisms.

- Interpretability: Develop attention visualization techniques to interpret which tokens (genes) most strongly influence functional predictions, providing biological validation of model decisions.

Validation and Quality Assessment

Rigorously validate tokenization implementations through the following quality metrics:

- Sequence Recovery Tests: Assess the model's ability to reconstruct known functional gene sequences from partial prompts, analogous to genomic "autocomplete" tasks [5].

- Functional Enrichment Analysis: Validate that embeddings derived from tokenized input show appropriate enrichment for known biological pathways and processes.

- Novelty Assessment: Quantify the entropy and variability of generated token sequences to ensure the model extends beyond mere memorization of training data [5].
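For the novelty check, two simple quantities are the Shannon entropy of the token distribution within each generated sequence (very low entropy flags repetitive, degenerate output) and the fraction of generated sequences that appear verbatim in the training data (a crude memorization signal). A minimal sketch, assuming sequences are lists of integer token IDs; the toy sequences are invented and the exact metrics used in [5] may differ.

```python
import math
from collections import Counter

def shannon_entropy(tokens):
    """Shannon entropy (bits) of the token frequency distribution in one sequence."""
    counts = Counter(tokens)
    n = len(tokens)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

def exact_copy_rate(generated, training):
    """Fraction of generated sequences that occur verbatim in the training set."""
    seen = {tuple(s) for s in training}
    return sum(tuple(s) in seen for s in generated) / len(generated)

training = [[5, 9, 2, 7], [3, 3, 8, 1]]
generated = [[5, 9, 2, 7], [4, 4, 4, 4], [6, 2, 9, 1]]
print([round(shannon_entropy(s), 2) for s in generated])  # [2.0, 0.0, 2.0]
print(exact_copy_rate(generated, training))                # 0.3333333333333333
```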

Diagram 2: Tokenization integration in function prediction pipeline.

Tokenization represents a fundamental preprocessing step that transforms complex gene expression data into structured inputs accessible to single-cell foundation models. As research in gene function prediction advances, refined tokenization strategies that preserve biological nuance while enabling computational efficiency will be crucial. The protocols outlined herein provide a framework for implementing tokenization approaches optimized for extracting functional insights from single-cell transcriptomic data. Future directions will likely involve more sophisticated multi-modal tokenization, integration of prior biological knowledge directly into token representations, and adaptive tokenization strategies that dynamically optimize based on specific prediction tasks. Through continued refinement of these methodologies, tokenization will remain an essential component in the pipeline from raw sequencing data to biologically meaningful functional predictions, accelerating discovery in basic research and therapeutic development.

The development of robust single-cell foundation models (scFMs) is critically dependent on access to large-scale, high-quality, and biologically diverse datasets. These models, which treat cells as "sentences" and genes as "words," learn the fundamental language of biology through self-supervised pretraining on vast collections of single-cell transcriptomic data [4] [1]. The performance and generalizability of scFMs are directly influenced by the scope, quality, and diversity of their pretraining data. This application note provides a comprehensive overview of major public data sources essential for pretraining scFMs, with a specific focus on their application in gene function prediction research. We detail standardized protocols for data acquisition, processing, and integration to empower researchers and drug development professionals in constructing effective models for predicting gene function and cellular behavior.

The table below summarizes the key characteristics of major public data sources relevant for scFM pretraining, highlighting their unique contributions and scale.

Table 1: Major Public Data Sources for scFM Pretraining

| Database Name | Primary Focus & Description | Scale (Number of Cells) | Key Features for scFM Pretraining | Data Accessibility |
|---|---|---|---|---|
| CZ CELLxGENE Discover [21] | A comprehensive platform for exploring single-cell data, hosting a wide array of curated datasets. | >35 million cells (from portal); platforms provide access to over 100 million standardized cells [4] [1]. | Standardized data processing via Census [21]; rich metadata and interactive Explorer tool; directly integrated into analysis workflows for differential expression and cell type annotation. | Web interface; data available via AWS cloud; Python/R tools (Census) [21] [22]. |
| Human Cell Atlas (HCA) [22] | A global consortium aimed at creating comprehensive reference maps of all human cells. | Contributes to large-scale integrations (e.g., 58 million cells listed in one resource) [22]. | Aims for complete coverage of human cell types and states; enforces strict metadata standards for data consistency; focuses on healthy human tissues, providing a baseline for disease studies. | HCA Data Portal; cloud-based storage and analysis platforms. |
| Arc Virtual Cell Atlas [23] | A newly released, massive resource integrating both observational and perturbational single-cell data. | ~300 million cells (combined from Tahoe-100M and scBaseCount) [23]. | Includes Tahoe-100M, a perturbation atlas with 100M cells from 60,000 drug-cell interactions [23] [22]; scBaseCount provides 200M AI-curated cells from public data; uniquely combines natural cell states with drug perturbation responses. | Open source and freely accessible via Arc Institute's portal; Google Cloud Storage [23] [22]. |
| Single Cell Expression Atlas (SCEA) [22] [24] | A cross-species repository from EMBL-EBI providing uniformly processed single-cell RNA-seq data. | Varies; part of larger aggregated resources. | Uniformly reprocesses data to facilitate cross-study comparisons; maps metadata to Experimental Factor Ontology (EFO) for enhanced integration; categorizes studies as "baseline" or "differential" for targeted queries. | Web interface; downloadable data matrices and raw data via FTP. |
| Gene Expression Omnibus (GEO) / SRA [4] [24] | NIH's primary archival repository for high-throughput functional genomics data. | Tens of millions of datasets available [4]. | Largest and most diverse repository of primary data; essential for accessing the most recent studies not yet in curated portals; requires significant curation and processing effort due to heterogeneity. | Web interface; FASTQ and processed data files; often requires custom processing. |
| PanglaoDB [4] [22] | A curated database of mouse and human single-cell RNA-seq experiments. | Varies; incorporates data from over 1,300 experiments [24]. | Includes pre-annotated cell-type markers, useful for validating gene functions; user-friendly for exploring gene expression across cell types and studies. | Web interface; downloadable data as R objects or text files. |

Experimental Protocols for Data Utilization in Gene Function Prediction

Application: Building a large, diverse, and high-quality dataset for initial pretraining of an scFM from curated sources like CELLxGENE and the Arc Virtual Cell Atlas.

Materials and Reagents:

- Computational Resources: High-performance computing cluster or cloud computing environment (e.g., AWS, Google Cloud) with substantial CPU, RAM, and storage.

- Software/Tools: Python environment with data manipulation libraries (Pandas, NumPy), single-cell analysis tools (Scanpy, Seurat), and relevant API clients (e.g., CellxGene Census).

Procedure:

- Data Source Identification: Select databases that align with your research goals. For general-purpose human cell models, prioritize CZ CELLxGENE Discover and the HCA. For perturbation-focused models, the Arc Virtual Cell Atlas (Tahoe-100M) is indispensable [23] [22].

- Bulk Data Download: Utilize provided bulk download options and cloud APIs. For example, access CELLxGENE data via the cellxgene-census Python package, which streams data efficiently from the cloud without requiring full local downloads [21] [22].

- Data Harmonization: The data from curated sources like CELLxGENE Census and Arc scBaseCount are already uniformly processed. Verify that the gene identifiers are consistent across datasets (e.g., all using ENSEMBL IDs). Merge the AnnData objects, treating each source as a separate "batch."

- Quality Control (QC) and Filtering: Perform initial QC on the merged dataset. Standard filters include:

- Removing cells with an extreme number of detected genes (potential doublets) or high mitochondrial gene percentage (low viability).

- Filtering out genes detected in very few cells.

- Dataset Splitting: Partition the data into pretraining, validation, and hold-out test sets. Ensure the splits are stratified by tissue, cell type, or study to prevent data leakage and allow for robust evaluation of model generalizability.
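The QC filters and the leakage-aware split can be sketched on a plain cells-by-genes count matrix. The thresholds, the mitochondrial-gene mask, and the study labels below are illustrative assumptions to be tuned per dataset and technology. The split assigns whole studies, not individual cells, to train, validation, or test, so no study contributes cells to more than one partition.

```python
import numpy as np

def qc_mask(counts, is_mito, min_genes=200, max_genes=6000, max_mito_frac=0.2):
    """Boolean mask of cells passing basic QC (illustrative thresholds)."""
    n_genes = (counts > 0).sum(axis=1)
    totals = counts.sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(totals, 1)
    return (n_genes >= min_genes) & (n_genes <= max_genes) & (mito_frac <= max_mito_frac)

def gene_mask(counts, min_cells=3):
    """Keep genes detected in at least `min_cells` cells."""
    return (counts > 0).sum(axis=0) >= min_cells

def split_by_study(study_ids, frac_val=0.1, frac_test=0.1, seed=0):
    """Assign whole studies to train/val/test to limit leakage between splits."""
    studies = np.unique(study_ids)
    np.random.default_rng(seed).shuffle(studies)
    n_val = max(1, round(frac_val * len(studies)))
    n_test = max(1, round(frac_test * len(studies)))
    split_of = {s: "test" for s in studies[:n_test]}
    split_of.update({s: "val" for s in studies[n_test:n_test + n_val]})
    split_of.update({s: "train" for s in studies[n_test + n_val:]})
    return np.array([split_of[s] for s in study_ids])

rng = np.random.default_rng(0)
counts = rng.poisson(1.0, size=(300, 1000))           # simulated 300 cells x 1000 genes
is_mito = np.zeros(1000, dtype=bool)
is_mito[:13] = True                                   # pretend the first 13 genes are MT- genes
study_ids = np.repeat([f"study_{i}" for i in range(10)], 30)

keep = qc_mask(counts, is_mito)
genes_kept = gene_mask(counts)
filtered = counts[keep][:, genes_kept]
split = split_by_study(study_ids[keep])
print(filtered.shape, dict(zip(*np.unique(split, return_counts=True))))
```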

Protocol 2: Data Preprocessing and Tokenization for scFMs

Application: Converting raw single-cell gene expression matrices into the tokenized sequences required by transformer-based scFMs.

Materials and Reagents:

- Input Data: A quality-controlled single-cell expression matrix (cells x genes).

- Software/Tools: scGPT or Geneformer codebases, which include implemented tokenizers.

Procedure:

- Normalization: Normalize the gene expression counts per cell to a standard scale (e.g., 10,000 counts per cell) and apply a logarithmic transformation (log1p) to stabilize variance.

- Gene Selection: Select a subset of highly variable genes (HVGs) for model input. This reduces computational complexity and focuses the model on biologically informative features. A common target is 5,000-20,000 genes.

- Tokenization: This is a critical step where continuous expression values are converted into discrete tokens for the model.

- Strategy 1 (Expression-based ranking): For each cell, rank the selected genes by their expression level. The input sequence is the ordered list of gene IDs from highest to lowest expression. The expression value itself can be incorporated as a separate "value embedding" [4] [1] [8].

- Strategy 2 (Binning): Bin gene expression values (e.g., no expression, low, medium, high) and create a combined token representing both the gene ID and its expression level bin [1].

- Sequence Assembly: Assemble the tokenized genes into a single sequence for each cell. Prepend special tokens to the sequence if supported by the model architecture, such as a [CLS] token whose final embedding can represent the entire cell, or modality tokens for multi-omics data [1] [8].
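Strategy 2 and the sequence-assembly step can be sketched as follows. Binning here uses per-cell quantiles of the non-zero values, which keeps bins comparable across cells with different sequencing depth. The number of bins, the reserved bin 0 for zero expression, the [CLS] ID, and the placeholder value bin at the [CLS] position are all assumptions; in practice each model's own tokenizer should be used.

```python
import numpy as np

CLS_ID = 0  # hypothetical special-token ID; gene IDs start at 1

def bin_expression(expr_row, n_bins=5):
    """Map each value to an integer bin: 0 for zero expression, 1..n_bins-1 for
    non-zero values according to per-cell quantiles of the non-zero values."""
    bins = np.zeros(expr_row.shape, dtype=int)
    nz = expr_row > 0
    if nz.any():
        edges = np.quantile(expr_row[nz], np.linspace(0, 1, n_bins)[1:-1])
        bins[nz] = np.digitize(expr_row[nz], edges) + 1
    return bins

def assemble_sequence(gene_ids, expr_row, n_bins=5):
    """Return parallel (gene_token, value_bin) sequences with a leading [CLS];
    the [CLS] position gets a placeholder value bin of 0."""
    value_bins = bin_expression(expr_row, n_bins)
    return [CLS_ID] + list(gene_ids), [0] + value_bins.tolist()

gene_ids = [11, 12, 13, 14, 15, 16]
expr = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 0.0])  # normalized expression for one cell
toks, vals = assemble_sequence(gene_ids, expr)
print(toks)  # [0, 11, 12, 13, 14, 15, 16]
print(vals)  # [0, 0, 1, 2, 3, 4, 0]
```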

The following diagram illustrates this multi-stage preprocessing and tokenization workflow.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Essential Computational Tools for scFM Research

| Item Name | Type | Primary Function in scFM Workflow |
|---|---|---|
| Scanpy [25] [26] | Python Library | Provides a comprehensive toolkit for single-cell data analysis, including preprocessing, clustering, trajectory inference, and visualization. Essential for initial data QC and exploration. |
| Seurat [22] [8] | R Package | A widely used R package for single-cell genomics, offering similar functionality to Scanpy for QC, integration, and analysis. |
| CellxGene Census [21] [22] | API / Data Source | A Python API that provides efficient, cloud-native access to the massive, uniformly processed CZ CELLxGENE corpus, enabling scalable data loading. |
| scGPT / Geneformer [4] [1] [8] | Foundation Model | Pretrained scFMs that can be fine-tuned for specific downstream tasks like gene function prediction, perturbation response modeling, and cell type annotation. |
| AnnData Format [25] [22] | Data Format | A flexible file format (.h5ad) for storing single-cell data matrices alongside rich metadata, layers (e.g., normalized counts), and embeddings. The standard for interoperability in Python-based scFM workflows. |
| Transformer Architecture [4] [1] | Model Architecture | The neural network backbone of most scFMs. Its self-attention mechanism allows the model to learn complex, context-dependent relationships between genes. |

Workflow for Gene Function Prediction Using scFM Embeddings

Leveraging scFM embeddings for gene function prediction involves a structured pipeline from data preparation to functional validation. The following diagram maps the key stages of this process.

Workflow Description:

- Input: A pretrained single-cell foundation model, whose gene embeddings have been learned from diverse cellular contexts [4] [8].

- Extraction: Gene embeddings are extracted from the model's input layer. Each gene is represented as a high-dimensional vector that summarizes functional information learned from its co-expression patterns across millions of cells [8].

- Analysis: The gene embedding space is analyzed to predict gene function. This can be done by:

- Similarity Search: Finding genes with embedding vectors similar to a gene of unknown function, implying functional relatedness.

- Supervised Prediction: Training a classifier on embeddings of well-annotated genes to predict Gene Ontology terms for unknown genes [8].

- Clustering: Identifying modules of co-functional genes based on embedding proximity.

- Validation: Predictions are validated against external knowledge bases like Gene Ontology and pathway databases (e.g., KEGG, Reactome) to assess accuracy and biological relevance [8].
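The clustering option can be sketched with plain k-means on L2-normalized gene embeddings. After normalization, squared Euclidean distance equals 2 - 2 × cosine similarity, so the clusters follow cosine proximity. The embeddings below are synthetic, and the deterministic farthest-point seeding is a simplification chosen for reproducibility; library implementations such as scikit-learn's KMeans, which defaults to k-means++ seeding, would normally be used instead.

```python
import numpy as np

def farthest_point_init(x, k):
    """Deterministic maximin seeding: start from the first row, then repeatedly add
    the point farthest from all chosen centroids. Simple but outlier-sensitive."""
    chosen = [0]
    d2 = ((x - x[0]) ** 2).sum(axis=1)
    for _ in range(k - 1):
        nxt = int(d2.argmax())
        chosen.append(nxt)
        d2 = np.minimum(d2, ((x - x[nxt]) ** 2).sum(axis=1))
    return x[chosen].copy()

def kmeans(x, k, n_iter=100):
    """Lloyd's algorithm; returns (labels, centroids)."""
    centroids = farthest_point_init(x, k)
    for _ in range(n_iter):
        # Assign each gene to its nearest centroid (squared Euclidean distance).
        d2 = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        new = np.array([x[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                        for j in range(k)])
        if np.allclose(new, centroids):
            break
        centroids = new
    return labels, centroids

# Synthetic gene embeddings: three modules of 10 genes each.
rng = np.random.default_rng(0)
centers = np.eye(3, 16) * 4
emb = np.vstack([c + rng.normal(0, 0.3, size=(10, 16)) for c in centers])
unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
labels, _ = kmeans(unit, k=3)
print(labels)
```

Each resulting module can then be passed to the enrichment test described in the protocol above.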

The availability of large-scale, curated public data sources like CZ CELLxGENE, the Human Cell Atlas, and the Arc Virtual Cell Atlas has fundamentally transformed the landscape of single-cell computational biology. These resources provide the essential fuel for training the next generation of scFMs. By adhering to the standardized protocols for data acquisition, preprocessing, and tokenization outlined in this application note, researchers can construct robust models capable of unraveling the complex language of gene function. As these datasets continue to grow in size and diversity, and as models and benchmarking practices evolve [6] [8], the potential for scFMs to drive discoveries in basic biology and therapeutic development will only increase.

Interpreting Gene and Cell Embeddings as Functional Representations

Single-cell foundation models (scFMs) are large-scale deep learning models pretrained on vast datasets of single-cell omics data, capable of generating meaningful low-dimensional representations, or embeddings, of genes and cells [4]. These embeddings are foundational for gene function prediction, as they encode complex biological relationships in a structured latent space. The core premise is that the embedding space learned by scFMs captures functional biological relationships; genes with similar functions or involved in the same pathways are positioned proximally, while cells in similar states or types form distinct clusters [27] [8]. This structured representation provides a powerful, computable framework for extracting novel biological insights and forming testable hypotheses about gene function and cellular identity without relying solely on predefined annotations.

Methodologies for Accessing and Processing Embeddings

Extraction of Gene and Cell Embeddings

The first step in functional interpretation involves extracting the raw embedding vectors from a pretrained scFM. The protocol varies slightly depending on the model architecture but generally follows a consistent pattern.

For Gene Embeddings: Gene embeddings are typically accessed from the input layer (or first layer) of the transformer model. Each gene is associated with a unique identifier (e.g., Ensembl ID or gene symbol). In models such as scGPT, its initial representation in a given cell combines a static gene embedding with a dynamic value embedding that encodes the gene's expression level [8]; rank-based models such as Geneformer instead encode expression through token order. For functional analysis, the static gene embedding is the primary vector of interest, because it is expected to capture the gene's intrinsic functional properties across diverse cellular contexts. This matrix of gene embeddings can be extracted directly from the model's parameters after pretraining [8].
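The difference between the static gene table and the per-cell input can be sketched with plain NumPy arrays standing in for model parameters. The vocabulary, the dimensions, and the 51 expression bins are hypothetical placeholders. In a PyTorch checkpoint, the static table is typically the `weight` tensor of an `nn.Embedding` layer, but its attribute path differs between models and has to be looked up in each model's code.

```python
import numpy as np

# Hypothetical stand-ins for pretrained parameters (not a real checkpoint)
vocab = {"<pad>": 0, "<cls>": 1, "IL7R": 2, "CD3D": 3, "CD3E": 4, "ALB": 5}
d_model = 8
rng = np.random.default_rng(0)
gene_embedding_weight = rng.normal(size=(len(vocab), d_model))   # static gene table
value_embedding_weight = rng.normal(size=(51, d_model))          # one row per expression bin

def token_input(gene, expr_bin):
    """Per-cell input for one gene: static gene vector + expression-value vector."""
    return gene_embedding_weight[vocab[gene]] + value_embedding_weight[expr_bin]

def extract_gene_embeddings(genes):
    """Return the context-independent (static) rows used for functional analysis."""
    missing = [g for g in genes if g not in vocab]
    if missing:
        raise KeyError(f"genes not in model vocabulary: {missing}")
    return gene_embedding_weight[[vocab[g] for g in genes]]

E = extract_gene_embeddings(["IL7R", "CD3D", "CD3E"])
print(E.shape)
```

The per-cell input changes with the expression bin, while the extracted static row stays the same for every cell.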

For Cell Embeddings: Cell embeddings are often derived from a special classification token (e.g., [CLS]) that is prepended to the input sequence of genes. The final hidden state corresponding to this token serves as a global representation of the entire cell's state [4]. Alternatively, some models generate cell embeddings by pooling (e.g., mean pooling) the final hidden states of all gene tokens for that cell [4].
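Both pooling strategies reduce to simple array operations on the final hidden states. The sketch below assumes the [CLS] token sits at position 0 and that a padding mask is available. Whether [CLS] is included in mean pooling varies by model; here it is excluded as an assumption.

```python
import numpy as np

def cls_embedding(hidden, cls_index=0):
    """Cell embedding = final hidden state at the [CLS] position.
    hidden: (n_cells, seq_len, d) final-layer hidden states."""
    return hidden[:, cls_index, :]

def mean_pooled_embedding(hidden, mask, cls_index=0):
    """Cell embedding = mean of final hidden states over real gene tokens.
    mask: (n_cells, seq_len), 1 for real tokens and 0 for padding.
    The [CLS] position is excluded from the average (assumption; models differ)."""
    weights = np.asarray(mask, dtype=float).copy()
    weights[:, cls_index] = 0.0
    summed = (hidden * weights[:, :, None]).sum(axis=1)
    counts = np.clip(weights.sum(axis=1, keepdims=True), 1.0, None)  # avoid division by zero
    return summed / counts

# Two cells, sequence length 4 (position 0 = [CLS]), hidden size 3
hidden = np.arange(24, dtype=float).reshape(2, 4, 3)
mask = np.array([[1, 1, 1, 0],    # cell 0: last position is padding
                 [1, 1, 1, 1]])
print(cls_embedding(hidden))
print(mean_pooled_embedding(hidden, mask))
```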

Table 1: Common Embedding Extraction Points in Popular scFMs

| Model Name | Gene Embedding Source | Cell Embedding Source | Key Reference |
|---|---|---|---|
| scGPT | Input gene embedding layer | [CLS] token or mean pooling | [8] |
| Geneformer | Input gene embedding layer | Final layer context | [8] |
| scBERT | Input gene embedding layer | [CLS] token | [4] |
| UCE | Input gene embedding layer | Cell-specific output token | [8] |

Normalization and Dimensionality Reduction

Once extracted, raw embeddings often require preprocessing before biological interpretation.

- Normalization: A common first step is L2 normalization, which scales each embedding vector to unit norm. Similarity metrics such as cosine similarity then depend only on a vector's direction, not its magnitude, which makes comparisons more stable.

- Dimensionality Reduction: The original embedding space is high-dimensional (typically hundreds to a few thousand dimensions). For visualization and qualitative assessment, researchers commonly apply techniques like Uniform Manifold Approximation and Projection (UMAP) or t-Distributed Stochastic Neighbor Embedding (t-SNE) to project the embeddings into 2D or 3D space [27] [28]. This allows visual inspection of clusters of genes or cells.
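L2 normalization is a one-line operation, and after it a plain dot product equals cosine similarity. The toy matrix below is illustrative: rows 0 and 2 differ only in magnitude and become identical once normalized.

```python
import numpy as np

def l2_normalize(X, eps=1e-12):
    """Scale each row (one gene or cell embedding) to unit Euclidean norm."""
    norms = np.linalg.norm(X, axis=1, keepdims=True)
    return X / np.maximum(norms, eps)   # eps guards against all-zero rows

X = np.array([[3.0, 4.0],
              [0.0, 2.0],
              [6.0, 8.0]])   # rows 0 and 2 point in the same direction
Xn = l2_normalize(X)
cos = Xn @ Xn.T              # dot products of unit vectors are cosine similarities
print(np.linalg.norm(Xn, axis=1))
print(cos)
```

The normalized matrix is what would then be handed to a UMAP or t-SNE implementation (for example, Scanpy's `sc.pp.neighbors` followed by `sc.tl.umap`).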

The following diagram illustrates the complete workflow from single-cell data to functional interpretation of embeddings.

Workflow for Interpreting scFM Embeddings

Experimental Protocols for Functional Interpretation

Protocol 1: Gene-Gene Similarity and Functional Clustering

This protocol assesses whether the gene embedding space captures known biological relationships by measuring the similarity between genes.

- Input: A set of gene embeddings for all genes of interest (e.g., ~20,000 protein-coding genes).

- Similarity Calculation: Compute the pairwise cosine similarity between all gene embeddings to create a gene-gene similarity matrix. Cosine similarity is preferred because it depends only on the angle between two vectors, matching the intuition that functional similarity is encoded in an embedding's direction rather than its magnitude.

- Validation against Ground Truth:

- Gene Ontology (GO) Analysis: For a query gene (e.g., IL7R), retrieve its top k most similar genes (nearest neighbors) by cosine similarity and run GO enrichment analysis on this set (using tools like Enrichr or clusterProfiler). A successful prediction is indicated by significant enrichment (false discovery rate, FDR < 0.05) of the query gene's known biological processes [8].

- Protein-Protein Interaction (PPI) Validation: Check if the top similar genes are known interactors in PPI databases (e.g., STRING). Statistical significance can be evaluated using permutation tests.

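- Quantitative Metric: Calculate the Area Under the Precision-Recall Curve (AUPRC) for recovering known gene-gene functional relationships from a reference database, and compare it with the random baseline, which for AUPRC equals the fraction of gene pairs that are true functional links.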
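The similarity, nearest-neighbor, and AUPRC steps of Protocol 1 can be sketched in NumPy as follows. AUPRC is estimated here as average precision, a standard step-wise approximation. The four-gene embedding and the reference links are hypothetical toy data.

```python
import numpy as np

def cosine_similarity_matrix(E):
    """Pairwise cosine similarity between rows of E (genes x dims)."""
    En = E / np.linalg.norm(E, axis=1, keepdims=True)
    return En @ En.T

def top_k_neighbors(S, query_idx, k):
    """Indices of the k genes most similar to the query, excluding the query itself."""
    sims = S[query_idx].astype(float).copy()
    sims[query_idx] = -np.inf
    return np.argsort(-sims, kind="stable")[:k]

def average_precision(scores, labels):
    """Average precision, a step-wise estimate of AUPRC.
    scores: similarity for each candidate gene pair
    labels: 1 if the pair is a known functional link in the reference, else 0"""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    y = np.asarray(labels, dtype=float)[order]
    precision_at_rank = np.cumsum(y) / np.arange(1, y.size + 1)
    return float(np.sum(precision_at_rank * y) / y.sum())

# Toy example: genes 0-1 share one function, genes 2-3 another
E = np.array([[1.0, 0.1, 0.0],
              [0.9, 0.2, 0.0],
              [0.0, 0.1, 1.0],
              [0.1, 0.0, 0.9]])
S = cosine_similarity_matrix(E)
print(top_k_neighbors(S, query_idx=0, k=1))

pairs = [(i, j) for i in range(4) for j in range(i + 1, 4)]
reference_links = {(0, 1), (2, 3)}      # hypothetical reference database
labels = [int(p in reference_links) for p in pairs]
scores = [S[i, j] for i, j in pairs]
auprc = average_precision(scores, labels)
baseline = sum(labels) / len(labels)    # approximate AUPRC of a random ranking
print(auprc, baseline)
```

Here both true links outrank every unrelated pair, so the AUPRC is 1.0 against a baseline of 1/3. Real gene sets contain far more pairs and far lower prevalence, which is why the baseline matters when reporting AUPRC.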

Table 2: Example Output from Gene-Gene Similarity Analysis for IL7R

| Rank | Gene Symbol | Cosine Similarity | Known Functional Link to IL7R |
|---|---|---|---|
| 1 | CD3D | 0.92 | T-cell receptor complex, co-expression |
| 2 | CD3E | 0.91 | T-cell receptor complex, co-expression |
| 3 | CD8B | 0.89 | T-cell marker, shared immune function |
| 4 | CCR7 | 0.87 | T-cell homing and activation |
| 5 | SELL (L-selectin) | 0.85 | T-cell adhesion and migration |

Protocol 2: Clustering-Free Marker Gene Discovery

This protocol uses the cell-gene co-embedding space to identify cell-type-specific marker genes without pre-defined clustering.

- Input: A co-embedding of cells and genes from a model like SIMBA, where both cell nodes and gene nodes reside in the same latent space [27].

- Query Execution:

- With Known Cell Labels: For a group of cells (e.g., CD8+ T-cells), identify the closest gene neighbors in the embedding space. The most proximate genes are strong candidates for marker genes for that cell type [27].

- Without Known Labels: Use metrics like the Gini index to calculate the imbalance of a gene's proximity to all cells. A high Gini index indicates that a gene is specifically close to a small subset of cells, suggesting it is a cell-type-specific marker rather than a universally expressed "housekeeping" gene [27].

- Validation: Compare the discovered markers against known markers from the literature or from the CellMarker database, and quantify performance with metrics such as precision@k (the fraction of the top k candidates that are known markers).
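Both query modes and the precision@k check can be sketched on a toy co-embedding. Several parts are assumptions rather than SIMBA's exact procedure: proximity is defined as `exp(cosine / temperature)`, the labeled-cell query uses the group centroid, and the gene names are hypothetical.

```python
import numpy as np

def gini(x):
    """Gini index of a non-negative vector: 0 = perfectly even, near 1 = concentrated."""
    x = np.sort(np.asarray(x, dtype=float))
    n, total = x.size, x.sum()
    if total == 0:
        return 0.0
    ranks = np.arange(1, n + 1)
    return float(2.0 * np.sum(ranks * x) / (n * total) - (n + 1.0) / n)

def unit(X):
    return X / np.linalg.norm(X, axis=1, keepdims=True)

def gene_specificity(gene_emb, cell_emb, temperature=0.1):
    """Gini index of each gene's proximity to every cell (assumed proximity: exp(cos / T))."""
    proximity = np.exp(unit(gene_emb) @ unit(cell_emb).T / temperature)  # (n_genes, n_cells)
    return np.array([gini(row) for row in proximity])

def nearest_genes_to_group(gene_emb, cell_emb, in_group, k):
    """Genes closest (cosine) to the centroid of a labeled group of cells."""
    centroid = cell_emb[in_group].mean(axis=0, keepdims=True)
    sims = (unit(gene_emb) @ unit(centroid).T).ravel()
    return np.argsort(-sims, kind="stable")[:k]

def precision_at_k(ranked_genes, known_markers, k):
    """Fraction of the top-k candidates that are known markers."""
    return sum(g in known_markers for g in ranked_genes[:k]) / k

# Toy co-embedding: five type-A cells and five type-B cells
cell_emb = np.array([[1.0, 0.0]] * 5 + [[0.0, 1.0]] * 5)
cell_type = np.array(["A"] * 5 + ["B"] * 5)
gene_names = ["MARKER_A", "HOUSEKEEPING", "MARKER_B"]
gene_emb = np.array([[1.0, 0.0], [1.0, 1.0], [0.0, 1.0]])

spec = gene_specificity(gene_emb, cell_emb)
ranked = [gene_names[i] for i in np.argsort(-spec, kind="stable")]
print(dict(zip(gene_names, spec.round(3))))
print(precision_at_k(ranked, {"MARKER_A", "MARKER_B"}, k=2))
closest_to_A = [gene_names[i] for i in nearest_genes_to_group(gene_emb, cell_emb, cell_type == "A", k=1)]
print(closest_to_A)
```

The "housekeeping" gene is equally close to every cell and gets a Gini index near 0, while each marker concentrates its proximity on one cell type.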

Protocol 3: Gene Embedding for Functional Annotation of Novel Genes

This protocol outlines a strategy for predicting the function of poorly characterized or novel genes.

- Input: Gene embeddings for all genes, including the novel gene (e.g., GENEX).

- Nearest Neighbor Retrieval: Find the top k (e.g., 50) most similar genes to GENEX in the embedding space based on cosine similarity.

- Functional Imputation: Perform functional enrichment analysis (GO, KEGG pathways) on the set of nearest neighbor genes. The significantly enriched terms are the predicted functions for GENEX.

- Confidence Scoring: Assign a confidence score to the prediction based on the functional coherence (e.g., the enrichment FDR and the average similarity) of the neighbor gene set.
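Protocol 3 can be sketched end to end without external services. This sketch substitutes a one-sided hypergeometric test with Benjamini-Hochberg correction for tools like Enrichr or clusterProfiler. It reports the two confidence ingredients named above (enrichment FDR and mean neighbor similarity) rather than inventing a combined score. The background set (all other embedded genes), the gene names, and the annotation sets are hypothetical.

```python
import math
import numpy as np

def hypergeom_sf(k, M, n, N):
    """P(X >= k) for X ~ Hypergeometric(population M, n annotated genes, N drawn)."""
    return sum(math.comb(n, i) * math.comb(M - n, N - i)
               for i in range(k, min(n, N) + 1)) / math.comb(M, N)

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values (FDR)."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    adj = p[order] * m / np.arange(1, m + 1)
    adj = np.minimum.accumulate(adj[::-1])[::-1]   # enforce monotonicity
    q = np.empty(m)
    q[order] = np.minimum(adj, 1.0)
    return q

def predict_function(query, emb, gene_names, annotations, k=50):
    """Enrichment among the query's k nearest neighbors.
    Returns (term, FDR, mean neighbor similarity) sorted by FDR."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    qi = gene_names.index(query)
    sims = unit @ unit[qi]
    sims[qi] = -np.inf                              # exclude the query itself
    nbr = np.argsort(-sims, kind="stable")[:k]
    neighbors = {gene_names[i] for i in nbr}
    background = set(gene_names) - {query}
    terms = sorted(annotations)
    pvals = []
    for term in terms:
        members = annotations[term] & background
        hits = len(members & neighbors)
        pvals.append(hypergeom_sf(hits, len(background), len(members), len(neighbors)))
    fdr = benjamini_hochberg(pvals)
    mean_sim = float(sims[nbr].mean())
    return sorted(zip(terms, fdr.tolist(), [mean_sim] * len(terms)), key=lambda r: r[1])

# Synthetic embeddings: GENEX lies near the "ribosome" genes
rng = np.random.default_rng(0)
dim = 8
rib = np.eye(dim)[0] + 0.1 * rng.normal(size=(10, dim))
imm = np.eye(dim)[1] + 0.1 * rng.normal(size=(10, dim))
genex = np.eye(dim)[0] + 0.1 * rng.normal(size=(1, dim))
emb = np.vstack([rib, imm, genex])
gene_names = [f"RIB{i}" for i in range(10)] + [f"IMM{i}" for i in range(10)] + ["GENEX"]
annotations = {"ribosome": {f"RIB{i}" for i in range(10)},
               "immune response": {f"IMM{i}" for i in range(10)}}
results = predict_function("GENEX", emb, gene_names, annotations, k=5)
for term, fdr, mean_sim in results:
    print(f"{term}: FDR={fdr:.3g}, mean neighbor similarity={mean_sim:.2f}")
```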

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for scFM Embedding Analysis

| Tool/Resource | Type | Function in Analysis | Reference/URL |
|---|---|---|---|
| CELLxGENE | Data Repository | Provides access to millions of curated, annotated single-cell datasets for model pretraining and validation. | [4] |
| Scanpy | Python Toolkit | A versatile library for general single-cell data analysis, often used for preprocessing data before embedding extraction and for downstream UMAP/t-SNE visualization. | [27] |
| PyTorch-BigGraph | Graph Embedding Framework | A scalable framework used by models like SIMBA for efficiently generating co-embeddings of millions of cells and features. | [27] |
| Enrichr / clusterProfiler | Functional Enrichment Tool | Web-based and R-based tools, respectively, for performing Gene Ontology and pathway enrichment analysis on gene sets derived from embedding queries. | [8] |
| scFMs (e.g., scGPT, Geneformer) | Pretrained Models | Provide the core gene and cell embeddings for functional analysis. They are the primary "reagent" for this research. | [8] |

Visualization and Quantitative Validation of Embeddings

Effective interpretation relies on robust quantitative and visual methods to validate the biological signals within embeddings.

Visual Inspection: A UMAP projection of gene embeddings should show clustering of genes from the same pathway or functional category. For example, genes involved in oxidative phosphorylation should form a distinct cluster separate from genes involved in ribosome biogenesis [8]. Similarly, a co-embedding of cells and genes should place known marker genes (e.g., IL7R for CD4+ T-cells) spatially close to the cell type they define [27].

Ontology-Informed Metrics: Beyond standard clustering metrics, novel evaluation metrics like scGraph-OntoRWR measure the consistency of cell type relationships captured by scFMs with prior biological knowledge encoded in cell ontologies [8]. Another metric, the Lowest Common Ancestor Distance (LCAD), assesses the severity of errors in cell type annotation by measuring the ontological proximity between misclassified cell types, providing a more biologically grounded assessment than simple accuracy [8].
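To illustrate why an ontology-aware error measure differs from plain accuracy, here is a sketch of one simple lowest-common-ancestor distance. It assumes a tree-shaped toy ontology and counts edges from the true and predicted types up to their lowest common ancestor. The exact LCAD definition used in [8] may differ, and real cell ontologies are directed acyclic graphs rather than trees.

```python
def distances_to_ancestors(cell_type, parent):
    """Map cell_type and each of its ancestors to the number of edges away."""
    dist, node, d = {}, cell_type, 0
    while node is not None:
        dist[node] = d
        node = parent.get(node)
        d += 1
    return dist

def lca_distance(true_type, predicted_type, parent):
    """Edges from both types up to their lowest common ancestor (0 if correct)."""
    a = distances_to_ancestors(true_type, parent)
    b = distances_to_ancestors(predicted_type, parent)
    shared = set(a) & set(b)
    if not shared:
        raise ValueError("cell types have no common ancestor")
    lca = min(shared, key=lambda n: a[n] + b[n])
    return a[lca] + b[lca]

# Hypothetical toy ontology (child -> parent)
parent = {
    "CD4+ T cell": "T cell",
    "CD8+ T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "hepatocyte": "cell",
}
errors = [("CD4+ T cell", "CD8+ T cell"), ("CD4+ T cell", "hepatocyte")]
for true_t, pred_t in errors:
    print(true_t, "->", pred_t, lca_distance(true_t, pred_t, parent))
```

Confusing two T-cell subsets scores 2, while calling a CD4+ T cell a hepatocyte scores 4. Averaging this distance over misclassified cells therefore penalizes biologically implausible errors more heavily.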

The following diagram illustrates the relationship between the embedding space and the final biological interpretation, highlighting the key validation steps.

From Embeddings to Biological Insight

From Embeddings to Insights: Practical Workflows for Gene Function Prediction

Extracting and Utilizing Gene-Level Embeddings for Functional Analysis

Single-cell foundation models (scFMs) are large-scale deep learning models pretrained on vast datasets of single-cell omics data, capable of being adapted to a wide range of downstream biological tasks [1] [4]. A core component of their architecture is the learning of gene-level embeddings: vector representations that capture functional, regulatory, and contextual information about genes based on their expression patterns across millions of cells [29] [8]. These embeddings are learned in a self-supervised manner, typically by training the model on tasks such as masked gene modeling, where the model must predict randomly masked genes from the context of the other genes in the cell [1]. The premise is that by being exposed to an immense diversity of cellular states and conditions, the model internalizes fundamental principles of gene function and interaction [1] [4]. The resulting gene embeddings provide a compact representation that can be leveraged for various functional analysis tasks, moving beyond traditional methods that rely on predefined gene sets or annotations.
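The input side of masked gene modeling can be sketched in a few lines. The special-token ids and the 15% masking rate are assumptions borrowed from BERT-style training; individual scFMs choose their own values and may add further corruption schemes. The label value -100 is the default `ignore_index` of PyTorch's `CrossEntropyLoss`, so only masked positions contribute to the loss.

```python
import numpy as np

PAD_ID, CLS_ID, MASK_ID = 0, 1, 2   # hypothetical special-token ids
IGNORE_INDEX = -100                  # default ignore_index of PyTorch's CrossEntropyLoss

def mask_gene_tokens(token_ids, mask_prob=0.15, seed=0):
    """Return (masked_inputs, labels) for one cell's gene-token sequence."""
    rng = np.random.default_rng(seed)
    tokens = np.asarray(token_ids).copy()
    maskable = ~np.isin(tokens, [PAD_ID, CLS_ID])            # never mask special tokens
    chosen = maskable & (rng.random(tokens.shape) < mask_prob)
    labels = np.where(chosen, tokens, IGNORE_INDEX)            # loss only on masked genes
    tokens[chosen] = MASK_ID
    return tokens, labels

# One "cell": [CLS], 100 gene tokens (ids 10-109), then padding
cell = np.array([CLS_ID] + list(range(10, 110)) + [PAD_ID] * 20)
inputs, labels = mask_gene_tokens(cell)
print((labels != IGNORE_INDEX).sum(), "of 100 gene tokens masked")
```

The model then receives `inputs` and is trained to recover the original gene ids at every position where `labels` is not -100.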

Source Models and Embedding Extraction Protocols

Several prominent scFMs provide the functionality to extract gene-level embeddings. These models differ in their pretraining data, architectural details, and the specific nature of the embeddings they produce. The following table summarizes key models used for this purpose.

Table 1: Single-Cell Foundation Models for Gene Embedding Extraction

| Model Name | Omics Modalities | Embedding Dimensionality | Key Feature of Embedding Strategy |
|---|---|---|---|
| Geneformer [29] | scRNA-seq | 256 or 512 | Lookup table embedding; genes are ranked by expression for input. |
| scGPT [29] | scRNA-seq, scATAC-seq, Multiome | 512 | Lookup table embedding with value binning for expression levels. |
| UCE [29] | scRNA-seq | 1280 | Uses protein embeddings from ESM-2, integrating protein sequence information. |
| scFoundation [29] | scRNA-seq | 3072 | Lookup table embedding; trained on a fixed set of ~19,000 genes. |
| scBERT [30] | scRNA-seq | 512 | An early encoder-based model for single-cell transcriptomes. |

Technical Protocol: Extracting Embeddings

The process of extracting gene embeddings is model-specific but generally follows a common workflow. The protocol below outlines the key steps, with specific examples for leading models.

Protocol 1: Gene Embedding Extraction Workflow

Step 1: Model Selection and Setup

- Select an scFM based on the task and compatibility with your data. For general gene function prediction, Geneformer and scGPT are strong starting points [30].

- Install the required software packages and download the pretrained model weights. Frameworks like BioLLM can provide a unified interface for multiple models, reducing the amount of model-specific code that has to be written [30].

Step 2: Data Preprocessing and Tokenization

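- Format Input Data: Prepare your single-cell RNA-seq data as a gene-by-cell count matrix. Whether raw counts or normalized values are expected differs between models; Geneformer's tokenizer, for example, expects raw counts and applies its own normalization.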

- Gene Filtering and Ordering:

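- For Geneformer, restrict the matrix to genes in the model's token dictionary (its gene vocabulary, which is much larger than the input length). Within each cell, rank these genes by expression normalized against each gene's median across the pretraining corpus, and keep the top 2,048 (the input length of the original model, or a user-defined number) to form the input "sentence" [29].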

- For scGPT, a common approach is to use 1,200 highly variable genes (HVGs). The expression value for each gene is often transformed, for example, by binning into discrete levels [29].

- Tokenization: The model converts each gene symbol (and sometimes its expression value) into a numerical token. This token is then mapped to a dense vector in the model's embedding layer.
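The two preprocessing styles in Step 2 can be sketched for a single cell as follows. Both functions are simplified illustrations, not the models' own tokenizers. For the rank encoding, the vocabulary, token ids, and per-gene medians are hypothetical, and the 10,000 scaling constant is an assumption that does not affect the ranking. The binning function assigns non-zero values to per-cell quantile bins and leaves zeros in bin 0.

```python
import numpy as np

def rank_value_tokens(counts, genes, vocab, gene_medians, max_len=2048):
    """Simplified Geneformer-style rank encoding for one cell.
    counts: raw counts aligned with genes; vocab: gene id -> token id;
    gene_medians: per-gene normalization factors (hypothetical here)."""
    counts = np.asarray(counts, dtype=float)
    medians = np.asarray(gene_medians, dtype=float)
    keep = np.array([g in vocab for g in genes]) & (counts > 0)   # in vocabulary and expressed
    idx = np.flatnonzero(keep)
    # depth-normalize, then divide by each gene's median; the constant does not change ranks
    norm = counts[idx] / counts.sum() * 10_000 / medians[idx]
    order = idx[np.argsort(-norm, kind="stable")]
    return [vocab[genes[i]] for i in order[:max_len]]

def bin_values(expr, n_bins=51):
    """Simplified scGPT-style binning for one cell: zeros stay 0,
    non-zero values go to per-cell quantile bins numbered 1..n_bins-1."""
    expr = np.asarray(expr, dtype=float)
    binned = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins - 1))
        binned[nonzero] = np.digitize(expr[nonzero], edges)
    return binned

genes = ["G0", "G1", "G2", "G3", "NOT_IN_VOCAB"]
vocab = {"G0": 10, "G1": 11, "G2": 12, "G3": 13}
counts = [0, 5, 10, 1, 100]
medians = [1.0, 1.0, 4.0, 0.1, 1.0]
print(rank_value_tokens(counts, genes, vocab, medians))
print(bin_values([0, 1, 2, 3, 4, 0, 5], n_bins=5))
```

Dividing by the gene median promotes G3: it has only one count, but that count is far above its typical level, so it is ranked ahead of the more highly expressed G1 and G2.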

Step 3: Embedding Extraction