How Single-Cell Foundation Models Work: A Guide for Biomedical Researchers


Abstract

Single-cell foundation models (scFMs) are transformative AI tools trained on data from millions of single cells to decipher the complex language of cellular biology. This article provides a comprehensive overview for researchers and drug development professionals, detailing how these models leverage transformer architectures to analyze gene expression data for tasks like cell type annotation, perturbation prediction, and drug sensitivity analysis. We explore the core concepts, from tokenization of gene expression to self-supervised pretraining, and critically evaluate their real-world performance against traditional methods. The content also addresses current limitations, offers guidance for model selection and optimization, and discusses the future potential of scFMs in advancing personalized medicine and therapeutic discovery.

The New Paradigm: How Foundation Models are Decoding Cellular Language

Single-cell foundation models (scFMs) bring together artificial intelligence and cellular biology, offering a new framework for reading what can be described as the "language" of cells. These models are defined as large-scale deep learning models pretrained on vast single-cell datasets using self-supervised learning objectives, enabling them to be adapted to a wide range of downstream biological tasks [1]. Inspired by the success of large language models (LLMs) in natural language processing, researchers have developed scFMs that treat individual cells as "sentences" and genes or genomic features as "words" or "tokens" in a cellular language [1] [2]. This approach offers a new way to interpret gene regulation, cellular states, and biological systems at single-cell resolution.

The development of scFMs addresses a critical need in single-cell genomics for unified frameworks capable of integrating and comprehensively analyzing rapidly expanding data repositories [1]. With public archives now containing tens of millions of single-cell omics profiles spanning diverse cell types, states, and conditions, traditional analytical approaches struggle to leverage this wealth of information effectively [1]. scFMs address these limitations by learning fundamental principles of cellular biology from millions of cells encompassing many tissues and conditions, capturing biological variation at an unprecedented scale. This knowledge can then be transferred to new datasets or downstream tasks through fine-tuning or zero-shot learning approaches [3], establishing scFMs as pivotal tools for advancing our understanding of cellular function and disease mechanisms.

Fundamental Concepts: From Natural Language to Cellular Linguistics

Architectural Foundations: Transformers in Biology

The transformer architecture, which has revolutionized natural language processing and computer vision, serves as the computational backbone of single-cell foundation models [1] [4]. Transformers are neural network architectures characterized by attention mechanisms that allow the model to learn and weight relationships between any pair of input tokens [1]. In the context of single-cell biology, this attention mechanism enables scFMs to determine which genes in a cell are most informative of the cell's identity or state, how they co-vary across cells, and how they participate in regulatory or functional networks [1].
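To make the attention mechanism concrete, here is a minimal single-head sketch in Python with NumPy. It assumes each gene in a cell has already been turned into a vector (the random `tokens` below are toy stand-ins); the projection matrices `w_q`, `w_k`, `w_v` would be learned in a real model, and actual scFMs typically stack many heads and layers. Row *i* of the returned attention matrix shows how strongly gene *i* draws on every gene when building its updated representation.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product attention over the gene tokens of one cell.

    tokens: (n_genes, d_model) array of gene token embeddings.
    Returns updated token representations and the (n_genes, n_genes) attention
    matrix; row i holds the weights gene i places on every gene (rows sum to 1).
    """
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

rng = np.random.default_rng(0)
n_genes, d_model, d_head = 5, 8, 4
tokens = rng.normal(size=(n_genes, d_model))  # toy embeddings for 5 gene tokens
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out, attn = self_attention(tokens, w_q, w_k, w_v)
print(out.shape, attn.shape)  # (5, 4) (5, 5)
```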

Most scFMs employ variants of the transformer architecture, primarily falling into two categories: encoder-based models and decoder-based models [1]. Encoder-based models, such as those inspired by BERT (Bidirectional Encoder Representations from Transformers), utilize bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1] [5]. In contrast, decoder-based models like scGPT use a unidirectional masked self-attention mechanism that iteratively predicts masked genes conditioned on known genes [1] [4]. Each architectural approach offers distinct advantages—encoder models typically excel at classification and embedding tasks, while decoder models show stronger performance in generation tasks—though no single architecture has emerged as clearly superior for single-cell data [1].
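The practical difference between the two families comes down to the attention mask. The illustrative NumPy sketch below contrasts a full bidirectional mask (encoder style) with a standard causal mask in which a token sees only itself and earlier tokens (decoder style). Actual scFMs, scGPT included, may use more specialized masking patterns; this shows only the textbook contrast.

```python
import numpy as np

def attention_mask(n_tokens, causal):
    """Boolean mask: entry [i, j] is True if token i may attend to token j."""
    full = np.ones((n_tokens, n_tokens), dtype=bool)
    # Encoder (BERT-like): every gene sees every other gene.
    # Decoder (GPT-like): gene i sees only genes 0..i (lower triangle).
    return np.tril(full) if causal else full

def masked_softmax(scores, mask):
    # Disallowed positions get -inf, so they receive exactly zero weight
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    e = np.exp(scores)
    return e / e.sum(axis=-1, keepdims=True)

scores = np.zeros((4, 4))  # equal raw scores isolate the effect of the mask
print(masked_softmax(scores, attention_mask(4, causal=False))[0])  # [0.25 0.25 0.25 0.25]
print(masked_softmax(scores, attention_mask(4, causal=True))[0])   # [1. 0. 0. 0.]
```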

The Cellular Linguistics Framework

The core conceptual innovation underlying scFMs is the treatment of cellular biology as a language with its own grammar and semantics. In this framework, individual cells are treated analogously to sentences, while genes or genomic features along with their expression values serve as words or tokens [1] [2]. This analogy enables the application of linguistic principles to biological data, where cellular states can be "read" and "interpreted" through their gene expression patterns, much like sentences can be understood through their constituent words.

The premise of this approach is that by exposing a model to millions of cells encompassing many tissues and conditions, the model can learn the fundamental "grammar" of cellular behavior—the rules governing how genes interact and coordinate their expression to define cell identity and function [1]. This learned knowledge enables scFMs to generalize to new biological contexts and perform various analytical tasks without task-specific training, mirroring the zero-shot capabilities of large language models [3]. The cellular linguistics framework thus provides a powerful conceptual bridge between natural language understanding and biological interpretation, enabling researchers to decipher the complex language of cellular systems.

Technical Architecture of Single-Cell Foundation Models

Data Tokenization and Encoding Strategies

Tokenization, the process of converting raw input data into discrete units called tokens, represents a critical challenge in adapting transformer architectures to single-cell data. Unlike natural language, where words have inherent sequential order, gene expression data lacks natural ordering, requiring specialized tokenization strategies [1] [3].

Table: Tokenization and Encoding Strategies in Single-Cell Foundation Models

| Component | Encoding Method | Description | Example Models |
|---|---|---|---|
| Gene Identity | Learnable embedding | Each gene is projected into a high-dimensional space via one-hot encoding plus a projection network | scBERT, scGPT, Geneformer |
| Gene Identity | External knowledge integration | Incorporates promoter embeddings, co-expression patterns, or protein language model outputs | GeneCompass, UCE |
| Expression Values | Rank encoding | Genes sorted by expression level; each gene's rank is captured through positional encoding | Geneformer, tGPT |
| Expression Values | Continuous value encoding | Direct projection of continuous expression values into the embedding space | scFoundation, GeneCompass |
| Expression Values | Discrete value encoding | Expression values discretized into bins; each bin treated as a categorical variable | scGPT, scMulan |
| Expression Values | Reference encoding | Expression values used as sampling weights or as a reference for gene embeddings | UCE, scELMo |
| Extra Information | Modality tokens | Special tokens indicating the data type (e.g., scRNA-seq, scATAC-seq) | scGPT, Nicheformer |
| Extra Information | Batch tokens | Tokens representing batch information to address technical variability | scGPT |
| Extra Information | Metadata incorporation | Integration of cell metadata, spatial coordinates, or experimental conditions | scMulan, Nicheformer |

A fundamental challenge in applying transformers to single-cell data is that gene expression data are not naturally sequential [1] [3]. Unlike words in a sentence, genes in a cell have no inherent ordering, requiring researchers to impose artificial structure. Common strategies include ranking genes within each cell by their expression levels and feeding the ordered list of top genes as the "sentence" [1]. Other models partition genes into bins by their expression values or simply use normalized counts without complex ranking schemes [1]. Each gene is typically represented as a composite token embedding that combines a gene identifier and its expression value in the given cell, with positional encoding schemes adapted to represent the relative order or rank of each gene [1].
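A minimal sketch of rank-based tokenization for one cell, assuming a plain list of gene symbols as the vocabulary and toy expression values. Real pipelines typically add normalization steps before ranking that are omitted here.

```python
import numpy as np

def rank_tokenize(expression, gene_ids, top_k):
    """Turn one cell's expression vector into a 'sentence' of gene tokens.

    Genes are sorted from highest to lowest expression; unexpressed genes are
    dropped and only the top_k genes are kept. A token's position in the
    returned list encodes its rank.
    """
    expressed = np.flatnonzero(expression > 0)
    # Stable sort on negated values: descending order, ties keep input order
    order = expressed[np.argsort(-expression[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:top_k]]

genes = ["CD3E", "MS4A1", "LYZ", "GAPDH", "NKG7"]
cell = np.array([4.0, 0.0, 1.5, 9.0, 4.0])  # toy expression values
print(rank_tokenize(cell, genes, top_k=3))  # ['GAPDH', 'CD3E', 'NKG7']
```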

Model Architectures and Pretraining Strategies

scFMs employ diverse architectural configurations and pretraining objectives tailored to single-cell data. The transformer backbone processes tokenized gene expression data through multiple layers of self-attention and feed-forward networks, gradually building latent representations of cells and genes [1].

Table: Architecture and Pretraining Approaches in Representative Single-Cell Foundation Models

| Model | Architecture Type | Pretraining Data Scale | Pretraining Objective | Special Features |
|---|---|---|---|---|
| Geneformer | BERT-like encoder | 30 million cells | Masked gene prediction | Rank-based encoding; network biology focus |
| scGPT | GPT-like decoder | 33 million human cells | Masked gene prediction | Multi-omic support; discrete value encoding |
| scBERT | Performer encoder | Not specified | Masked expression prediction, then fine-tuned for cell type prediction | Focus on cell type annotation |
| scFoundation | Transformer encoder | 50 million cells | Masked gene prediction | Continuous value encoding |
| Nicheformer | Hybrid transformer | 110 million cells | Multi-task learning | Integrates single-cell + spatial data |
| scPlantLLM | Transformer | Plant-specific data | Masked LM + cell annotation | Specialized for plant genomics |

Pretraining scFMs involves self-supervised learning on massive single-cell datasets without explicit labeling [1]. The most common pretraining objective is masked language modeling, where random subsets of genes are masked from the input, and the model is trained to predict the masked genes based on the remaining context [1] [5]. This approach forces the model to learn the complex dependencies and relationships between genes, effectively capturing the underlying "grammar" of gene regulation. Through this process, scFMs develop rich internal representations that encode biological knowledge about cellular states, gene functions, and regulatory relationships, which can then be transferred to various downstream tasks through fine-tuning or used directly in zero-shot settings [3].
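The masking step of this objective can be sketched as below. `MASK_ID`, the toy gene ids, and the random `logits` standing in for model output are hypothetical; the point is that the loss is averaged only over masked positions, so the model is rewarded solely for reconstructing what it could not see.

```python
import numpy as np

MASK_ID = 0  # hypothetical id reserved for the [MASK] token

def mask_tokens(token_ids, mask_frac, rng):
    """Replace a random fraction of gene tokens with MASK_ID.

    Returns the corrupted input and a boolean array marking masked positions.
    """
    n_mask = max(1, int(round(mask_frac * len(token_ids))))
    positions = rng.choice(len(token_ids), size=n_mask, replace=False)
    is_masked = np.zeros(len(token_ids), dtype=bool)
    is_masked[positions] = True
    corrupted = np.where(is_masked, MASK_ID, token_ids)
    return corrupted, is_masked

def masked_cross_entropy(logits, targets, is_masked):
    """Mean cross-entropy over masked positions only. logits: (n_tokens, vocab)."""
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    nll = -log_probs[np.arange(len(targets)), targets]
    return nll[is_masked].mean()

rng = np.random.default_rng(42)
tokens = np.array([7, 3, 12, 5, 9, 21, 4, 16, 8, 11])  # toy gene ids for one cell
corrupted, is_masked = mask_tokens(tokens, mask_frac=0.15, rng=rng)
logits = rng.normal(size=(len(tokens), 32))  # stand-in for model predictions
print(corrupted, masked_cross_entropy(logits, tokens, is_masked))
```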

Experimental Framework and Benchmarking

Benchmarking Methodologies for scFM Evaluation

Comprehensive benchmarking is essential for evaluating the performance and capabilities of scFMs. Recent studies have developed sophisticated evaluation frameworks that assess models across multiple dimensions, including biological relevance, technical performance, and practical utility [3]. These benchmarks typically evaluate scFMs against well-established baseline methods under realistic conditions across diverse tasks, such as batch integration, cell type annotation, cancer cell identification, and drug sensitivity prediction [3].

Evaluation metrics for scFMs span unsupervised, supervised, and knowledge-based approaches [3]. Traditional metrics assess technical performance like clustering accuracy and batch correction effectiveness, while novel biologically-grounded metrics evaluate the ability of models to capture meaningful biological relationships. These include scGraph-OntoRWR, which measures the consistency of cell type relationships captured by scFMs with prior biological knowledge, and Lowest Common Ancestor Distance (LCAD), which assesses the severity of errors in cell type annotation based on ontological proximity [3]. Such biologically-informed metrics provide crucial insights into whether scFMs capture functionally relevant biological patterns beyond technical optimizations.
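One plausible way to compute an LCAD-style score is sketched below: count the ontology edges from the true and the predicted label up to their lowest common ancestor. The parent map is a small hand-made toy, not the real Cell Ontology, and the benchmark's exact definition may differ (for example, in how terms with multiple parents are handled).

```python
def ancestors(term, parent):
    """Path from a term up to the root, starting with the term itself."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path

def lca_distance(true_type, predicted_type, parent):
    """Edges from each label up to their lowest common ancestor, summed.

    0 means a correct prediction; larger values mean the predicted cell type
    is ontologically further from the true one.
    """
    true_path = ancestors(true_type, parent)
    pred_depth = {term: i for i, term in enumerate(ancestors(predicted_type, parent))}
    for i, term in enumerate(true_path):
        if term in pred_depth:  # first shared term is the lowest common ancestor
            return i + pred_depth[term]
    raise ValueError("terms do not share a root")

# Toy, simplified ontology (child -> parent); not the real Cell Ontology
parent = {
    "CD4 T cell": "T cell", "CD8 T cell": "T cell",
    "T cell": "lymphocyte", "B cell": "lymphocyte",
    "lymphocyte": "immune cell", "monocyte": "immune cell",
}
print(lca_distance("CD4 T cell", "CD8 T cell", parent))  # 2
print(lca_distance("CD4 T cell", "monocyte", parent))    # 4
```

Under this scoring, confusing two T-cell subtypes is penalized less than calling a T cell a monocyte, which is the intuition the metric is meant to capture.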

Key Experimental Protocols

Zero-Shot Evaluation Protocol

The zero-shot capabilities of scFMs represent one of their most powerful features, enabling model assessment without task-specific training [3]. The standard zero-shot evaluation protocol involves:

- Embedding Extraction: Generating cell or gene embeddings from pretrained models without any fine-tuning

- Task Application: Applying these embeddings to specific downstream tasks (e.g., clustering, classification)

- Performance Assessment: Evaluating performance using task-appropriate metrics compared to baseline methods

This protocol tests the general biological knowledge encoded during pretraining and the model's ability to transfer this knowledge to novel tasks and datasets [3]. Studies have shown that scFMs pretrained on diverse cellular contexts can generate embeddings that capture meaningful biological relationships, enabling effective performance even on previously unseen cell types or conditions [3] [6].
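As an illustration of the embedding-extraction and task-application steps, the sketch below transfers labels from reference to query cells by k-nearest-neighbour voting on frozen embeddings. The embeddings are synthetic clusters standing in for scFM output, and kNN is just one simple choice of downstream task; benchmarks also use clustering metrics and other classifiers.

```python
import numpy as np

def knn_label_transfer(ref_emb, ref_labels, query_emb, k=3):
    """Predict query labels by majority vote among the k most cosine-similar
    reference cells. The embeddings are used as-is (no fine-tuning)."""
    def unit(x):
        return x / np.linalg.norm(x, axis=1, keepdims=True)
    sims = unit(query_emb) @ unit(ref_emb).T
    neighbors = np.argsort(-sims, axis=1)[:, :k]
    preds = []
    for row in neighbors:
        labels, counts = np.unique(ref_labels[row], return_counts=True)
        preds.append(labels[np.argmax(counts)])
    return np.array(preds)

rng = np.random.default_rng(1)
# Stand-ins for frozen scFM cell embeddings: two well-separated clusters
centers = {"T cell": np.array([5.0, 0.0, 0.0]), "B cell": np.array([0.0, 5.0, 0.0])}
ref_labels = np.array(["T cell"] * 20 + ["B cell"] * 20)
ref_emb = np.stack([centers[lab] + rng.normal(scale=0.5, size=3) for lab in ref_labels])
query_labels = np.array(["T cell"] * 5 + ["B cell"] * 5)
query_emb = np.stack([centers[lab] + rng.normal(scale=0.5, size=3) for lab in query_labels])
preds = knn_label_transfer(ref_emb, ref_labels, query_emb, k=5)
print("accuracy:", (preds == query_labels).mean())
```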

Cross-Species and Cross-Tissue Generalization Assessment

A critical test for scFMs is their ability to generalize across biological contexts not seen during training. The standard protocol involves:

- Model Pretraining: Training models on data from specific species or tissues

- Cross-Context Evaluation: Applying models to data from different species or tissue types

- Performance Comparison: Assessing whether performance degrades gracefully or maintains effectiveness

This evaluation has revealed that while some models show remarkable cross-context generalization capabilities (e.g., scPlantLLM performing zero-shot learning on unseen plant species) [6], performance varies significantly across models and tasks, highlighting the importance of dataset diversity during pretraining.

The development and application of scFMs rely on curated data resources that provide standardized, high-quality single-cell datasets.

Table: Key Data Resources for Single-Cell Foundation Models

| Resource Name | Scale | Content Description | Primary Use in scFMs |
|---|---|---|---|
| CZ CELLxGENE | 100+ million cells | Annotated single-cell datasets from diverse tissues and conditions | Primary pretraining corpus for many models |
| Human Cell Atlas | Not specified | Comprehensive reference map of all human cells | Pretraining data source |
| PanglaoDB | Not specified | Curated compendium of single-cell data | Pretraining and benchmarking |
| SpatialCorpus-110M | 110 million cells | Curated single-cell + spatial transcriptomics data | Pretraining for spatial-aware models |
| DISCO | Not specified | Single-cell data across human tissues and development | Multitask pretraining |
| Asian Immune Diversity Atlas (AIDA) | Not specified | Diverse human immune cell data | Benchmarking and validation |

Computational Infrastructure and Software Tools

Implementing scFMs requires substantial computational resources and specialized software tools. The transformer architectures used in these models typically require graphics processing units (GPUs) with substantial memory (16 GB or more) for both training and inference [1] [2]. Training large-scale scFMs from scratch may require hundreds or thousands of GPU hours distributed across multiple high-end GPUs [1], though fine-tuning pretrained models for specific tasks is computationally less intensive.
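A back-of-the-envelope calculation shows where part of the memory goes. The sketch assumes a hypothetical 100-million-parameter model, fp32 weights, and the Adam optimizer; it deliberately ignores activations, which grow with batch size and with the number of gene tokens per cell.

```python
def training_memory_gb(n_params, bytes_per_param=16):
    """Rough memory for weights + optimizer state when training with Adam in fp32.

    16 bytes per parameter = 4 (weights) + 4 (gradients) + 8 (Adam's two moment
    estimates). Activations are NOT included.
    """
    return n_params * bytes_per_param / 1e9

def inference_memory_gb(n_params, bytes_per_param=4):
    """Weights only: 4 bytes/param in fp32, 2 in fp16/bf16."""
    return n_params * bytes_per_param / 1e9

n_params = 100_000_000  # hypothetical 100M-parameter scFM
print(training_memory_gb(n_params))   # 1.6
print(inference_memory_gb(n_params))  # 0.4
```

Comparing these small figures with the 16 GB-class GPUs mentioned above suggests that activation memory, rather than the weights themselves, accounts for much of the practical footprint.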

Key software frameworks for developing and applying scFMs include PyTorch and TensorFlow for model implementation, Hugging Face Transformers for architectural components, and specialized single-cell analysis libraries like Scanpy for data preprocessing and evaluation [7]. The field is also developing standardized benchmarking platforms such as scBench [3] to facilitate fair comparison across different models and methods.

Signaling Pathways and Biological Workflows

scFM Analytical Workflow

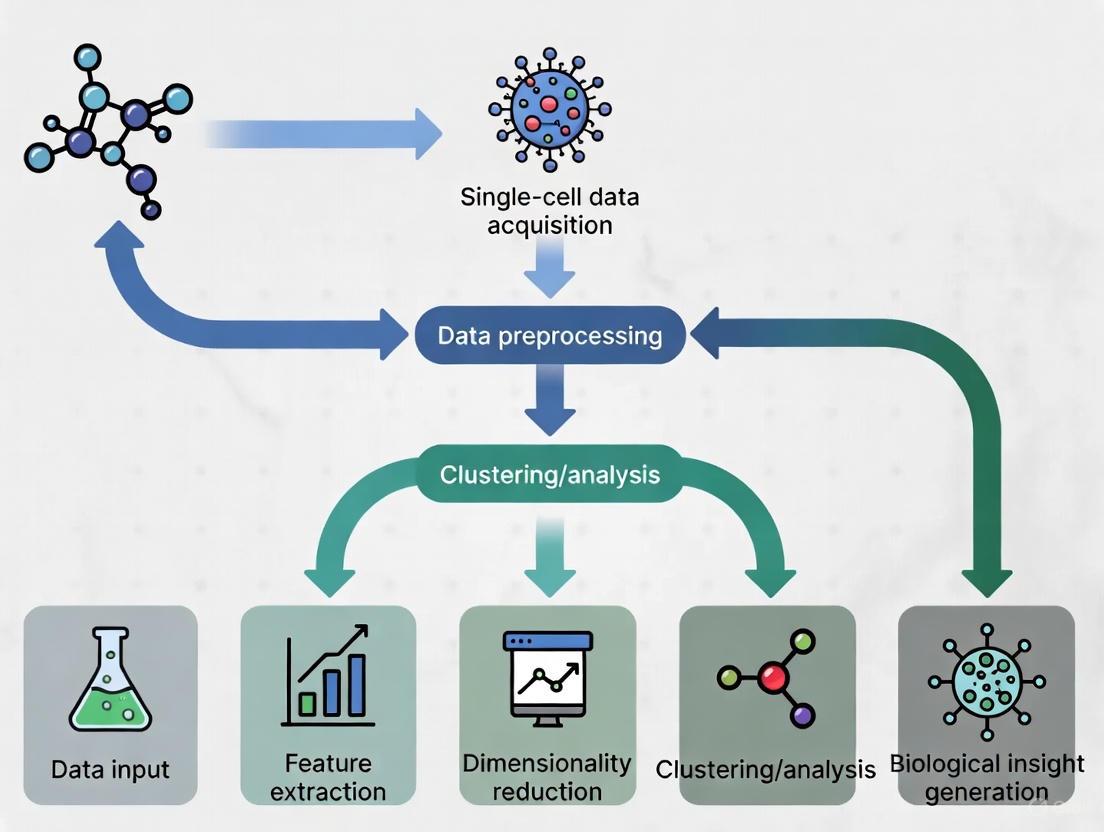

The following diagram illustrates the standard analytical workflow for applying single-cell foundation models to biological research questions:

Multi-modal scFM Architecture

The following diagram illustrates the architecture of a multi-modal single-cell foundation model capable of integrating diverse data types:

Performance Benchmarking and Comparative Analysis

Quantitative Performance Across Tasks

Rigorous benchmarking studies have evaluated scFMs across diverse biological and clinical tasks to assess their practical utility and performance advantages over traditional methods.

Table: Performance Comparison of Single-Cell Foundation Models Across Key Tasks

| Task Category | Best Performing Model(s) | Key Performance Metrics | Advantage Over Baseline |
|---|---|---|---|
| Cell Type Annotation | scBERT, scGPT | Accuracy: 85-95% (varies by dataset) | Superior for novel/rare cell types |
| Batch Integration | scGPT, scVI | LISI scores: 1.5-2.5 (higher better) | Better biological preservation |
| Drug Sensitivity Prediction | Geneformer, scGPT | AUROC: 0.75-0.90 | Context-aware predictions |
| Spatial Pattern Reconstruction | Nicheformer | Spatial correlation: 0.6-0.8 | Transfer spatial context to dissociated cells |
| Cross-Species Generalization | scPlantLLM, UCE | Zero-shot accuracy: 70-85% | Leverage protein language models |
| Perturbation Prediction | Geneformer, scGPT | Top-k accuracy: 0.7-0.9 | Identify master regulators |

Benchmarking results reveal that no single scFM consistently outperforms all others across every task, emphasizing the importance of task-specific model selection [3]. While scFMs generally demonstrate robust performance across diverse applications, simpler machine learning models can sometimes outperform foundation models on specific tasks, particularly under resource constraints or with limited data [3]. This highlights that the choice between complex foundation models and simpler alternatives should be guided by factors including dataset size, task complexity, need for biological interpretability, and available computational resources [3].

Biological Insight Extraction

Beyond technical performance metrics, scFMs are evaluated on their ability to generate novel biological insights. Models are assessed through attention-based interpretability analyses that examine which genes the model attends to when making specific predictions [3]. For example, studies have shown that scFMs can identify biologically meaningful gene-gene relationships and regulatory networks without explicit supervision, demonstrating that these models capture functionally relevant biological patterns [3].

The biological relevance of scFM embeddings is further validated through gene-level tasks that assess whether functionally similar genes are embedded in close proximity in the latent space [3]. Performance on predicting known biological relationships, including tissue specificity and Gene Ontology terms, provides crucial evidence that scFMs learn biologically meaningful representations rather than merely technical artifacts of the data [3].
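A gene-level check of this kind can be sketched as a nearest-neighbour query in embedding space. The 2-D vectors below are hand-made stand-ins for learned gene embeddings, chosen so that the T-cell genes (CD3E, CD3D) and B-cell genes (MS4A1, CD79A) form separate groups; with a real model, one would compare neighbours against annotations such as Gene Ontology terms.

```python
import numpy as np

def nearest_genes(query, gene_names, gene_emb, top_n=2):
    """Return the top_n genes closest to `query` by cosine similarity."""
    unit = gene_emb / np.linalg.norm(gene_emb, axis=1, keepdims=True)
    sims = unit @ unit[gene_names.index(query)]
    ranked = [i for i in np.argsort(-sims) if gene_names[i] != query]
    return [gene_names[i] for i in ranked[:top_n]]

# Hand-made 2-D vectors standing in for learned gene embeddings
gene_names = ["CD3E", "CD3D", "MS4A1", "CD79A"]
gene_emb = np.array([[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]])
print(nearest_genes("CD3E", gene_names, gene_emb, top_n=1))   # ['CD3D']
print(nearest_genes("MS4A1", gene_names, gene_emb, top_n=1))  # ['CD79A']
```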

Future Directions and Challenges

Despite their significant promise, scFMs face several important challenges that represent active areas of research. A primary limitation is the non-sequential nature of omics data, which complicates the direct application of transformer architectures designed for sequential data [1]. Additional challenges include inconsistency in data quality across studies, the computational intensity required for training and fine-tuning, and difficulty in interpreting the biological relevance of latent embeddings and model representations [1].

Future development of scFMs will likely focus on several key directions. Multi-modal integration represents a frontier, with models increasingly incorporating diverse data types including transcriptomics, epigenomics, proteomics, and cellular images [6] [8]. Spatial context awareness is another critical direction, with models like Nicheformer pioneering the integration of spatial relationships into cellular representations [8]. Additionally, efforts to improve model interpretability and biological grounding will be essential for building trust and facilitating biological discovery [3]. Finally, development of more efficient architectures and training methods will be crucial for making scFMs accessible to researchers with limited computational resources [1] [3].

As the field progresses, scFMs are poised to become increasingly central to single-cell research, potentially evolving toward comprehensive "virtual cell" models that can simulate cellular behavior and response in silico [8]. This trajectory promises to deepen our understanding of cellular biology and accelerate therapeutic development across a wide range of diseases.

Single-cell foundation models (scFMs) represent a paradigm shift in computational biology, leveraging transformer networks to decipher the complex language of cellular function. Inspired by the success of transformers in natural language processing (NLP), these models are pretrained on vast datasets comprising millions of single cells to learn universal biological representations [1] [9]. The core premise is that individual cells can be treated as sentences, with genes and their expression levels acting as the words or tokens [1] [2]. This adaptation allows transformers to capture intricate patterns of gene-gene interactions and cellular heterogeneity, providing a foundational tool for a wide range of downstream biological tasks, from cell type annotation to perturbation prediction [1] [9] [3].

Core Architectural Framework

Transformer Architecture: From NLP to Single-Cell Biology

The transformer architecture, characterized by its self-attention mechanism, forms the backbone of modern scFMs. Self-attention allows the model to dynamically weigh and consider the relationships between all genes in a cell simultaneously, thereby capturing complex, non-linear dependencies within the gene expression profile [1]. In biological terms, this enables the model to learn how the expression of one gene might influence or be associated with the expression of thousands of others across diverse cellular contexts [1] [3].

Most scFMs implement specific variants of the transformer architecture:

- Encoder-based models (e.g., BERT-like): These models use a bidirectional attention mechanism, meaning each gene token can attend to all other genes in the cell context. This is particularly useful for tasks that require a comprehensive understanding of the entire cellular state, such as cell type classification [1].

- Decoder-based models (e.g., GPT-like): These models employ a unidirectional or masked self-attention mechanism, where a gene can only attend to preceding genes in the sequence. This architecture is often used for generative tasks, such as predicting masked genes or in-silico perturbation effects [1].

- Hybrid designs: Some models explore custom combinations of encoder and decoder blocks, though a clearly superior architecture for single-cell data has not yet emerged [1].

Key Adaptation: Tokenization Strategies for Single-Cell Data

A critical challenge in adapting transformers to single-cell omics is tokenization—the process of converting raw gene expression data into a sequence of discrete tokens that the model can process. Unlike words in a sentence, genes lack a natural sequential order [1] [3]. To overcome this, several strategies have been developed, as summarized in the table below.

Table 1: Common Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Description | Rationale | Examples of Use |
|---|---|---|---|
| Rank-based Encoding | Genes are ordered by their expression level within each cell, from highest to lowest. | Provides a deterministic, cell-specific sequence that reflects the most to least active genes. | Geneformer [10], Nicheformer [10] |
| Binning | Expression values are partitioned into discrete bins or value ranges, and each bin is treated as a token. | Reduces the complexity of continuous expression values and can capture nonlinear relationships. | scBERT [1], scGPT [1] |
| Normalized Counts | Uses normalized expression counts (e.g., log-transformed) directly or with minimal discretization. | Simplicity; avoids potential information loss from aggressive binning or ranking. | Some models report no advantage to complex ranking [1] |
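The binning strategy in Table 1 can be sketched as per-cell quantile binning. The bin count, the use of each cell's own quantiles as bin edges, and keeping zeros in a dedicated bin 0 are assumptions for illustration; individual models make their own choices.

```python
import numpy as np

def bin_expression(expression, n_bins):
    """Map one cell's expression values to integer bin tokens.

    Zeros stay in bin 0; non-zero values are split into bins 1..n_bins using
    quantiles of that cell's own non-zero values.
    """
    binned = np.zeros(len(expression), dtype=int)
    nonzero = expression > 0
    if nonzero.any():
        edges = np.quantile(expression[nonzero], np.linspace(0, 1, n_bins + 1))
        # digitize against the interior edges gives 0..n_bins-1; shift to 1..n_bins
        binned[nonzero] = np.digitize(expression[nonzero], edges[1:-1], right=True) + 1
    return binned

cell = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 0.0, 8.0])
print(bin_expression(cell, n_bins=3))  # [0 1 1 2 3 0 3]
```

Because the edges come from each cell's own distribution, multiplying a cell's values by a constant leaves its bins unchanged, so a bin reflects a gene's relative level within that cell.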

The tokenization process typically results in a sequence where each gene is represented by an embedding vector that combines a gene identifier embedding (analogous to a word embedding) and a value embedding (representing its expression level) [3]. To provide the model with structural information, positional encodings are added to inform the model of the gene's rank or position in the input sequence [1] [2].
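A minimal sketch of the composite token embedding, assuming three lookup tables (gene identity, expression bin, position) whose rows are summed; some models concatenate or use small networks instead. Table sizes and token ids are illustrative, and the tables are random rather than trained.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, n_bins, max_len, d_model = 100, 51, 16, 8  # illustrative sizes

# Lookup tables (learned during pretraining; random here)
gene_table = rng.normal(size=(vocab_size, d_model))   # gene identity ("word") embeddings
value_table = rng.normal(size=(n_bins, d_model))      # expression-value embeddings
position_table = rng.normal(size=(max_len, d_model))  # rank/position embeddings

def embed_cell(gene_ids, value_bins):
    """Composite token embedding: gene identity + expression value + position."""
    positions = np.arange(len(gene_ids))
    return gene_table[gene_ids] + value_table[value_bins] + position_table[positions]

x = embed_cell(np.array([12, 7, 42]), np.array([50, 31, 4]))
print(x.shape)  # (3, 8)
```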

Furthermore, special tokens are often incorporated to enrich the biological context:

- Cell-level context tokens: Prepend information about the cell's identity or metadata [1].

- Modality tokens: Indicate the source of the data (e.g., scRNA-seq, scATAC-seq, spatial transcriptomics) [1] [10].

- Batch tokens: Used to account for technical variations between different experiments [1].
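Prepending such tokens is a simple list operation. The vocabulary below is hypothetical; each model defines its own special tokens and id layout.

```python
# Hypothetical special-token ids; real models define their own vocabularies
SPECIAL = {"<cls>": 0, "<rna>": 1, "<atac>": 2, "<batch_A>": 3, "<batch_B>": 4}
FIRST_GENE_ID = len(SPECIAL)  # gene ids start after the special tokens

def build_input(gene_token_ids, modality, batch):
    """Prepend cell-level, modality, and batch tokens to a cell's gene tokens."""
    prefix = [SPECIAL["<cls>"], SPECIAL[f"<{modality}>"], SPECIAL[f"<batch_{batch}>"]]
    return prefix + list(gene_token_ids)

genes = [FIRST_GENE_ID + i for i in (10, 3, 27)]
print(build_input(genes, modality="rna", batch="B"))  # [0, 1, 4, 15, 8, 32]
```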

The following diagram illustrates the complete tokenization and data preparation workflow for a transformer model like scGPT or Nicheformer.

Comparative Analysis of Model Architectures

The field has seen the development of several prominent scFMs, each with distinct architectural choices and pretraining corpora. The table below provides a quantitative comparison of key models.

Table 2: Comparative Analysis of Single-Cell Foundation Models

| Model Name | Core Architecture | Pretraining Data Scale | Key Technical Features | Notable Applications |
|---|---|---|---|---|
| scGPT [1] [9] | Decoder (GPT-like) | 33+ million cells [9] | Uses value binning to tokenize expression; focuses on generative tasks. | Zero-shot cell annotation, multi-omic integration, in-silico perturbation prediction. |
| Geneformer [10] [3] | Encoder (BERT-like) | Not specified in detail | Employs rank-based encoding; context length of 2,048 genes. | Cell network inference, disease module identification. |
| Nicheformer [10] | Encoder | 110 million cells (SpatialCorpus-110M) | Jointly trained on dissociated and spatial transcriptomics data; uses species and technology tokens. | Spatial composition prediction, transferring spatial context to dissociated data. |
| scBERT [1] | Encoder (BERT-like) | Millions of cells | Uses gene binning for tokenization. | Cell type annotation. |
| UCE [3] | Encoder | Not specified in detail | A unified cell embedding model. | Cell type annotation, dataset integration. |

Experimental Protocols and Methodologies

Pretraining Strategies

The power of scFMs stems from self-supervised pretraining on massive, diverse collections of single-cell data. The primary objective is to learn generalizable representations of gene and cell function without the need for human-annotated labels [1] [10].

A. Data Sourcing and Curation:

- Sources: Pretraining corpora are assembled from public repositories such as the Gene Expression Omnibus (GEO), Sequence Read Archive (SRA), CZ CELLxGENE, the Human Cell Atlas, and PanglaoDB [1].

- Curation: This involves careful selection of datasets, filtering of low-quality cells and genes, and balancing dataset compositions to capture a wide spectrum of biological variation across tissues, species, and disease states [1]. A key challenge is managing batch effects and technical noise from different experimental protocols [1] [10].
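The filtering and normalization side of curation can be sketched on a toy cells-by-genes count matrix. The thresholds are illustrative only; the scaling to a fixed total followed by log1p is similar in spirit to Scanpy's `sc.pp.normalize_total` (with a fixed `target_sum`) followed by `sc.pp.log1p`.

```python
import numpy as np

def qc_and_normalize(counts, min_genes=2, min_cells=2, target_sum=1e4):
    """Toy curation of a cells x genes raw count matrix.

    1. Drop cells with fewer than min_genes detected genes (e.g., empty droplets).
    2. Drop genes detected in fewer than min_cells of the remaining cells.
    3. Scale each cell to target_sum total counts, then apply log1p.
    """
    keep_cells = (counts > 0).sum(axis=1) >= min_genes
    counts = counts[keep_cells]
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts = counts[:, keep_genes]
    size = np.maximum(counts.sum(axis=1, keepdims=True), 1)  # guard against all-zero cells
    return np.log1p(counts / size * target_sum), keep_cells, keep_genes

counts = np.array([
    [5, 0, 3, 1],
    [0, 0, 1, 0],  # only one detected gene: removed by the cell filter
    [2, 0, 4, 6],
    [1, 1, 0, 2],
])
normalized, keep_cells, keep_genes = qc_and_normalize(counts)
print(keep_cells, keep_genes, normalized.shape)
```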

B. Self-Supervised Pretraining Tasks:

- Masked Gene Modeling: A fraction (e.g., 15-20%) of the input gene tokens are randomly masked or replaced. The model is then trained to predict the original identity and/or expression value of the masked genes based on the context provided by the unmasked genes [1]. This task forces the model to learn the complex co-expression relationships and dependencies between genes.

- Contrastive Learning: Used in some models to align representations of similar cells or genes while pushing apart representations of dissimilar ones, often leveraging data augmentations [9].
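The contrastive objective can be illustrated with an InfoNCE-style loss, one common formulation; which loss a given model uses is not specified here. Two "views" of each cell are created by adding small noise, a toy stand-in for real data augmentations.

```python
import numpy as np

def info_nce(z1, z2, temperature=0.1):
    """InfoNCE-style loss for paired views: z1[i] and z2[i] are two views of cell i.

    Each row of z1 should be most similar to the matching row of z2 (its positive)
    and less similar to all other rows of z2 (its negatives).
    """
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature  # scaled cosine similarities, (batch, batch)
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_probs))  # positives lie on the diagonal

rng = np.random.default_rng(0)
cells = rng.normal(size=(8, 16))  # stand-in cell embeddings
view_a = cells + 0.05 * rng.normal(size=cells.shape)  # noise as a toy "augmentation"
view_b = cells + 0.05 * rng.normal(size=cells.shape)
matched = info_nce(view_a, view_b)
mismatched = info_nce(view_a, np.roll(view_b, 1, axis=0))  # pairs deliberately misaligned
print(matched < mismatched)  # True
```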

Model Fine-Tuning and Evaluation

After pretraining, scFMs can be adapted to specific downstream tasks through fine-tuning or linear probing.

A. Fine-Tuning: The entire model or a subset of its layers is further trained on a smaller, task-specific labeled dataset. This process adjusts the model's parameters to specialize for the target application [1] [10].

B. Linear Probing: The weights of the pretrained model are frozen, and only a simple linear classifier is trained on top of the extracted cell or gene embeddings. This method tests the quality and generalizability of the representations learned during pretraining [10] [3].

C. Key Downstream Tasks for Evaluation:

- Cell-level tasks: Cell type annotation, batch integration, and patient classification [3].

- Gene-level tasks: Gene function prediction, gene regulatory network (GRN) inference, and analysis of gene-gene relationships [3].

- Spatial tasks: Prediction of spatial cellular niches and tissue region composition (for spatially-aware models like Nicheformer) [10].

- Perturbation modeling: Predicting cellular responses to genetic or chemical perturbations in silico [1] [2].
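Linear probing can be sketched as multinomial logistic regression trained on frozen embeddings. The embeddings are synthetic, the learning rate and epoch count are untuned guesses, and accuracy is reported on the training data only; a real evaluation would score a held-out split.

```python
import numpy as np

def train_linear_probe(emb, labels, n_classes, lr=0.1, epochs=300):
    """Multinomial logistic regression on frozen embeddings (full-batch GD).

    Only W and b are learned; the model that produced `emb` is never updated.
    """
    n, d = emb.shape
    W, b = np.zeros((d, n_classes)), np.zeros(n_classes)
    onehot = np.eye(n_classes)[labels]
    for _ in range(epochs):
        logits = emb @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n  # gradient of mean cross-entropy w.r.t. logits
        W -= lr * emb.T @ grad
        b -= lr * grad.sum(axis=0)
    return W, b

rng = np.random.default_rng(3)
labels = rng.integers(0, 3, size=150)
centers = rng.normal(scale=3.0, size=(3, 10))
emb = centers[labels] + rng.normal(size=(150, 10))  # stand-in for frozen scFM embeddings
emb = (emb - emb.mean(axis=0)) / emb.std(axis=0)    # standardize features before probing
W, b = train_linear_probe(emb, labels, n_classes=3)
acc = ((emb @ W + b).argmax(axis=1) == labels).mean()
print(f"training accuracy: {acc:.2f}")
```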

The Scientist's Toolkit

The following table details key computational reagents and resources essential for working with single-cell foundation models.

Table 3: Essential Research Reagents and Resources for scFM Research

| Item / Resource | Function / Description | Example Tools / Platforms |
|---|---|---|
| Pretrained Model Weights | The learned parameters of a scFM, allowing researchers to perform inference or fine-tuning without the prohibitive cost of pretraining. | scGPT, Geneformer, Nicheformer model checkpoints [1] [10]. |
| Processed Data Corpora | Large-scale, curated collections of single-cell data used for model pretraining and benchmarking. | CZ CELLxGENE [1] [9], DISCO [9], SpatialCorpus-110M [10]. |
| Benchmarking Frameworks | Standardized platforms for evaluating and comparing the performance of different scFMs across a suite of biological tasks. | BioLLM [9], custom benchmarking pipelines [3]. |
| Visualization Tools | Software for exploring and interpreting single-cell data and model outputs, such as embeddings and attention weights. | scViewer [11], cellxgene [11], UCSC Cell Browser [11]. |

Visualizing the End-to-End Workflow

The entire process, from data preparation to model output and application, is summarized in the following workflow diagram.

Single-cell foundation models (scFMs) are revolutionizing our understanding of cellular biology and disease by leveraging large-scale, self-supervised learning. The performance and capability of these models are intrinsically linked to the scale and quality of their training data. This technical guide explores the central role of massive public repositories, with a focus on CZ CELLxGENE, in constructing the foundational corpora that power scFMs, enabling them to decode the complex "language" of gene regulation and cellular function for biomedical research and drug discovery.

The Indispensable Role of Large-Scale Data in scFM Development

A foundation model is defined as a large-scale deep learning model pretrained on vast datasets, which can then be adapted to a wide range of downstream tasks. The success of this paradigm in single-cell biology is contingent on the availability of extensive and diverse training corpora [1].

The public domain now contains tens of millions of single-cell omics profiles, spanning a vast array of cell types, states, and conditions. This wealth of data enables researchers to train large models to decipher the fundamental principles of cellular behavior. The premise is that by exposing a model to millions of cells from diverse tissues and conditions, it can learn generalizable representations that transfer effectively to new datasets or tasks, such as cell type annotation, perturbation prediction, and disease state classification [1]. The performance of these models has been shown to scale predictably with both the volume of pre-training data and the number of model parameters, making repositories like CELLxGENE critical for state-of-the-art performance [12].

Public databases aggregate and curate single-cell data, providing the essential raw material for scFM training. The table below summarizes key repositories, highlighting their scale and specialization.

Table 1: Key Public Single-Cell RNA-Sequencing Databases and Their Scope

| Database Name | Description | Scale (Number of Cells) | Primary Focus |
|---|---|---|---|
| CZ CELLxGENE [13] | A platform for downloading and visually exploring single-cell data. | >33 million | General, multi-species |
| Arc Virtual Cell Atlas [14] | An AI-curated repository integrating single-cell profiles. | >300 million | General, multi-species |
| DISCO [14] | A database aggregating and harmonizing public single-cell datasets. | >100 million | General |
| Human Cell Atlas (HCA) [14] | A global collaborative effort to create comprehensive reference maps of all human cells. | 58 million | General (Human) |
| PanglaoDB [14] | A database of mouse and human scRNA-seq experiments with pre-annotated cell-type markers. | Not reported | General |
| Tumor Immune Single-cell Hub (TISCH2) [14] | A resource dedicated to the tumor microenvironment. | Not reported | Cancer-focused |

These resources provide the large sample sizes needed to power scFMs. For example, the Teddy family of foundation models was trained on a corpus of 116 million cells sourced directly from CELLxGENE, leading to substantial improvements in downstream tasks like disease state identification [12]. Because these databases integrate many studies, their aggregate cell counts boost statistical power for detecting rare cell populations or subtle gene expression changes that would be difficult to detect in any single study [14].

CELLxGENE: A Cornerstone for scFM Pretraining

CELLxGENE has emerged as a critical infrastructure component for the single-cell research community. It provides unified access to a massive, standardized corpus of single-cell data, which is a prerequisite for effective model training.

The platform provides over 33 million unique cells from more than 436 datasets, encompassing over 2,700 cell types from human and mouse tissues [13]. This diversity is crucial for training models that can generalize across biological contexts. CELLxGENE facilitates model development not just as a data source but also as a platform for community innovation, showcasing research projects that leverage its data, such as PINNACLE (a model for contextual protein biology) and scCIPHER (a deep learning approach for precision medicine in neurological disorders) [15].

Table 2: Computational Tools and Resources for scFM Research

| Tool / Resource | Type | Primary Function in scFM Workflow |
|---|---|---|
| CELLxGENE Census [13] | Python/R API | Provides programmatic access to any custom slice of standardized cell data from the CELLxGENE corpus for model training and analysis. |
| Seurat [16] | Software Toolkit | A comprehensive toolkit for single-cell analysis, often used as a baseline or for comparison in reference mapping and data integration tasks. |
| Harmony [3] | Algorithm | A clustering-based method for dataset integration, used to correct for batch effects while preserving biological variation. |
| scVI [3] | Generative Model | A probabilistic neural network for single-cell data integration, used as a baseline model in benchmarking studies against scFMs. |
| Scanpy [14] | Python Library | A scalable toolkit for analyzing single-cell gene expression data, commonly used for preprocessing data before model training. |

A Technical Workflow for Leveraging CELLxGENE in scFM Development

The process of transforming raw data from a repository like CELLxGENE into a trained scFM involves several critical, interconnected steps. The following diagram illustrates this end-to-end workflow.

Data Curation and Assembly

The first step involves the careful selection and integration of datasets from the CELLxGENE corpus to create a pretraining cohort. This step is as important as the model architecture itself [1]. Challenges include managing batch effects, technical noise from different sequencing protocols, and varying data processing steps [1]. Effective pretraining requires meticulous dataset selection, filtering of cells and genes, balancing dataset compositions, and rigorous quality control [1]. CELLxGENE aids this process by providing standardized data and metadata, which reduces the preprocessing burden on model developers.
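The filtering and balancing steps can be illustrated with a minimal sketch in plain Python, so it runs without dependencies. The data layout (one dictionary of gene counts per cell, tagged with its source dataset), the function names, and the thresholds are illustrative assumptions; production pipelines typically perform these steps on sparse AnnData matrices with tools such as Scanpy.

```python
import random
from collections import defaultdict


def qc_filter(cells, min_genes=200, min_cells=3):
    """Drop low-complexity cells, then rarely detected genes.

    cells: list of {"dataset": str, "counts": {gene: count}} (hypothetical layout).
    Thresholds are illustrative. Returns (filtered_cells, kept_genes).
    """
    # 1. Keep cells that detect at least `min_genes` genes.
    kept = [c for c in cells if sum(1 for v in c["counts"].values() if v > 0) >= min_genes]
    # 2. Count, for each gene, how many surviving cells detect it.
    detected = defaultdict(int)
    for c in kept:
        for gene, v in c["counts"].items():
            if v > 0:
                detected[gene] += 1
    genes = {g for g, n in detected.items() if n >= min_cells}
    # 3. Restrict every cell to the retained genes.
    filtered = [
        {"dataset": c["dataset"], "counts": {g: v for g, v in c["counts"].items() if g in genes}}
        for c in kept
    ]
    return filtered, genes


def balance_by_dataset(cells, max_per_dataset, seed=0):
    """Cap each source dataset's contribution by random downsampling,
    so a few very large studies do not dominate the pretraining corpus."""
    rng = random.Random(seed)
    by_dataset = defaultdict(list)
    for c in cells:
        by_dataset[c["dataset"]].append(c)
    balanced = []
    for name in sorted(by_dataset):
        group = by_dataset[name]
        if len(group) > max_per_dataset:
            group = rng.sample(group, max_per_dataset)
        balanced.extend(group)
    return balanced
```

Capping per-dataset contributions is only one way to balance composition; weighting or stratified sampling by tissue or donor are alternatives with the same goal.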

Tokenization and Input Representation

Tokenization converts raw gene expression data into a sequence of discrete units (tokens) that the model can process. This is a critical and non-trivial step because, unlike words in a sentence, genes have no inherent sequential order [1]. Common strategies implemented by scFMs include:

- Rank-based Encoding: Genes within each cell are ranked by their expression levels, and the ordered list of top genes is treated as the "sentence" representing the cell. This is used by models like Geneformer and Teddy-G [1] [12].

- Binning: Gene expression values are discretized into bins, and the model is trained to predict the bin for a given gene. This approach is used by scGPT and the Teddy-X variant [1] [12].

- Value Embeddings: Some models, like scFoundation, represent gene expressions as weighted combinations of learned embeddings [12].

During this step, gene metadata (e.g., gene ontology terms) and cell metadata (e.g., tissue of origin) can be incorporated as special tokens to provide richer biological context for the model [1].
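The rank-based encoding described above can be sketched as follows. Genes with zero expression or absent from the vocabulary are dropped, the rest are sorted from highest to lowest expression, and each gene is mapped to an integer token id. Geneformer additionally scales each gene's expression by a corpus-wide per-gene median before ranking, which pushes ubiquitously high genes down the list; the optional `gene_medians` argument imitates that idea. The function name, the vocabulary, and the `max_len` default are illustrative assumptions, not Geneformer's code.

```python
def rank_encode(expression, vocab, gene_medians=None, max_len=2048):
    """Rank-value tokenization sketch.

    expression: {gene: normalized expression} for one cell.
    vocab: {gene: token id} (hypothetical vocabulary).
    gene_medians: optional {gene: corpus-wide median} used to rescale values.
    Returns token ids ordered from highest to lowest (rescaled) expression.
    """
    scores = {}
    for gene, value in expression.items():
        if value <= 0 or gene not in vocab:
            continue  # unexpressed or out-of-vocabulary genes are not tokens
        if gene_medians:
            value = value / gene_medians.get(gene, 1.0)
        scores[gene] = value
    # Descending score; ties broken by gene name so the output is deterministic.
    ordered = sorted(scores, key=lambda g: (-scores[g], g))
    return [vocab[g] for g in ordered[:max_len]]


vocab = {"ACTB": 0, "CD3E": 1, "MS4A1": 2, "GAPDH": 3}
cell = {"ACTB": 50, "CD3E": 5, "MS4A1": 0, "GAPDH": 40, "XIST": 9}
print(rank_encode(cell, vocab))
```

Without rescaling, the highly expressed housekeeping genes ACTB and GAPDH lead the "sentence"; passing per-gene medians lets a cell-type marker such as CD3E move to the front.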

Model Pretraining and Fine-Tuning

After tokenization, models are pretrained on a self-supervised task using the entire curated corpus. The most common objective is Masked Language Modeling (MLM), where a random subset of gene tokens is masked, and the model is trained to predict them based on the context of the unmasked genes in the cell [1] [12]. This process forces the model to learn the underlying relationships and co-dependencies between genes.
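The masking step of MLM can be sketched in a few lines. A fraction of positions is chosen at random, their tokens are replaced by a reserved mask id in the model input, and the original tokens are kept as labels only at those positions. The mask id, the 15% rate, and the function name are assumptions for illustration; the label value -100 is chosen because it is the default `ignore_index` of PyTorch's `CrossEntropyLoss`. BERT-style recipes often also leave some selected tokens unchanged or swap in random tokens, which this simplified version omits.

```python
import random

MASK_ID = 0    # hypothetical token id reserved for [MASK]
IGNORE = -100  # label meaning "no loss at this position"


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Return (inputs, labels) for masked language modeling on one cell."""
    if not tokens:
        return [], []
    rng = random.Random(seed)
    n_mask = max(1, round(mask_prob * len(tokens)))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    # The model sees [MASK] at the chosen positions...
    inputs = [MASK_ID if i in positions else t for i, t in enumerate(tokens)]
    # ...and is scored only on recovering the original token there.
    labels = [t if i in positions else IGNORE for i, t in enumerate(tokens)]
    return inputs, labels
```

The loss is computed only where `labels` is not `IGNORE`, so the model must infer each hidden gene from the genes that remain visible in the same cell.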

Once pretrained, the model encodes general-purpose representations of genes and cells. This base model can then be efficiently fine-tuned with a smaller, task-specific dataset for downstream applications such as cell type annotation, drug sensitivity prediction, or disease classification [3]. This "pretrain then fine-tune" paradigm allows the knowledge gained from millions of cells to be transferred to specialized tasks with limited labeled data.

Experimental Validation and Benchmarking

To validate the effectiveness of scFMs trained on large repositories, researchers conduct rigorous benchmarking studies. A 2025 benchmark evaluated six scFMs against established baselines on both biological and clinically relevant tasks [3]. The findings reveal that scFMs are robust and versatile but also highlight important nuances:

- Robustness and Versatility: scFMs demonstrate strong performance across diverse tasks, including dataset integration and cell type annotation, particularly in preserving biological relationships as measured by novel ontology-informed metrics [3].

- No Single Best Model: No single scFM consistently outperformed all others across every task. Model selection must be tailored based on factors like dataset size, task complexity, and computational resources [3].

- Comparison to Simpler Models: In certain scenarios, simpler, task-specific machine learning models can be more efficient and perform on par with or even surpass large foundation models, especially when computational resources are constrained [3] [12].

These results underscore that while large-scale data is powerful, its effective translation into model performance depends on thoughtful architectural choices and training strategies.

The rapid accumulation of single-cell genomics data has created an unprecedented opportunity to decipher the fundamental principles of cellular function. Drawing inspiration from the success of large language models (LLMs) in natural language processing (NLP), researchers have begun treating cellular biology as a linguistic system, creating single-cell foundation models (scFMs) that reinterpret biological data through a computational linguistic lens [1]. In this analogous framework, individual cells are treated as "sentences" while genes and their expression values become the "words" or tokens that constitute these sentences [1] [6]. This paradigm shift enables the application of transformer-based architectures, which have revolutionized NLP, to the complex, high-dimensional space of single-cell data, potentially unlocking deeper insights into cellular heterogeneity, gene regulatory networks, and disease mechanisms [1].

The core premise of this approach is that by exposing a model to millions of cells encompassing diverse tissues, species, and biological conditions, the model can learn the fundamental "grammar" and "syntax" of cellular behavior [1]. Just as LLMs learn contextual relationships between words by training on vast text corpora, scFMs learn the contextual relationships between genes across different cellular states and environments [3]. This whitepaper explores the technical foundations, methodological considerations, and practical applications of this transformative analogy, providing researchers with a comprehensive guide to understanding and utilizing single-cell foundation models in biological and pharmaceutical research.

Core Architectural Framework

Tokenization Strategies for Single-Cell Data

Tokenization converts raw gene expression data into discrete units (tokens) that models can process. Unlike natural language, where words have inherent sequence, gene expression data lacks natural ordering, presenting a fundamental challenge for applying sequential models [1]. Researchers have developed several strategic approaches to address this challenge, as summarized in Table 1.

Table 1: Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Method | Advantages | Limitations |
|---|---|---|---|
| Expression Ranking | Genes are ranked by expression levels within each cell; top genes form the "sentence" [1] | Deterministic; provides structured input for transformers | Arbitrary sequence may not reflect biological gene relationships |
| Value Binning | Expression values are partitioned into bins; bin assignments determine token identity [1] | Captures expression magnitude information; reduces vocabulary size | May lose fine-grained expression differences |
| Normalized Counts | Uses normalized expression values directly without complex ranking [1] | Simpler implementation; maintains continuous nature of data | Requires careful normalization to handle technical variability |
| Multi-Modal Tokens | Incorporates special tokens for different omics modalities (e.g., ATAC-seq, proteomics) [1] | Enables integrated multi-omics analysis; captures broader regulatory context | Increases model complexity and computational requirements |

In addition to these core tokenization methods, models often incorporate special tokens to enrich biological context. These may include tokens representing cell identity metadata, batch information, or gene metadata such as chromosomal location or Gene Ontology terms [1]. After tokenization, all tokens are converted to embedding vectors processed by transformer layers, ultimately producing latent embeddings for each gene token and often a dedicated embedding for the entire cell [1].
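Value binning can be sketched as per-cell, equal-frequency binning: zero counts map to bin 0, and non-zero values map to bins 1 through `n_bins` according to their quantile among that cell's non-zero values. scGPT is described as binning non-zero expression within each cell; this sketch follows that idea, but the bin count, tie handling, and function name are assumptions rather than scGPT's implementation.

```python
import bisect


def bin_expression(values, n_bins=5):
    """Per-cell value binning sketch.

    Zero counts -> bin 0; non-zero values -> bins 1..n_bins by their
    quantile among this cell's non-zero values (equal-frequency bins).
    """
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    bins = []
    for v in values:
        if v <= 0:
            bins.append(0)
            continue
        # Number of non-zero values strictly smaller than v (its empirical rank).
        rank = bisect.bisect_left(nonzero, v)
        bins.append(1 + min(n_bins - 1, rank * n_bins // len(nonzero)))
    return bins


print(bin_expression([0, 1, 2, 3, 4, 0, 10]))
```

Because the bins are relative to each cell, the same raw count can fall into different bins in a shallowly and a deeply sequenced cell, which is one way binning dampens differences in sequencing depth at the cost of absolute magnitude.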

Model Architectures and Attention Mechanisms

Most scFMs utilize transformer architectures characterized by attention mechanisms that learn and weight relationships between any pair of input tokens [1]. These attention mechanisms enable models to determine which genes in a cell are most informative of the cell's identity or state, how genes covary across cells, and how they participate in regulatory or functional connections [1]. The gene expression profile of each cell is converted to a set of gene tokens that serve as inputs, and the attention layers gradually build latent representations of each cell and gene [1].
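The core computation can be illustrated with a minimal sketch of scaled dot-product attention over the gene tokens of one cell, written in plain Python. It assumes query, key, and value vectors are already given; in a real transformer they come from learned linear projections of the token embeddings, and several attention heads run in parallel.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys):
    """Row i is how strongly gene token i attends to every gene token in the cell."""
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)  # keeps dot products in a range where softmax is not saturated
    return [
        softmax([scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys])
        for q in queries
    ]


def attend(weights, values):
    """Each updated gene representation is a weighted mix of all value vectors."""
    dim = len(values[0])
    return [
        [sum(w * v[j] for w, v in zip(row, values)) for j in range(dim)]
        for row in weights
    ]
```

Each row of the weight matrix sums to 1, which is why attention weights are often read as a distribution over "which other genes this gene looks at" when interpreting a trained model.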

Two predominant architectural paradigms have emerged in scFM development, each with distinct characteristics and applications, as detailed in Table 2.

Table 2: Transformer Architectures in Single-Cell Foundation Models

| Architecture | Attention Mechanism | Common Applications | Representative Models |
|---|---|---|---|
| BERT-like Encoder | Bidirectional attention; learns from all genes in a cell simultaneously [1] | Cell type annotation; embedding generation; classification tasks | scBERT [1] |
| GPT-like Decoder | Unidirectional masked self-attention; iteratively predicts masked genes conditioned on known genes [1] | Generative tasks; perturbation prediction; sequence generation | scGPT [1] |
| Encoder-Decoder | Combines both bidirectional and unidirectional attention; can encode input and decode output [1] | Multi-task learning; complex prediction tasks | Hybrid designs under exploration [1] |
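The practical difference between the encoder and decoder rows above comes down largely to the attention mask. The sketch below builds a bidirectional mask (every token may attend to every other token) and a causal mask (token i may attend only to positions up to i), then applies one to a row of attention scores. The function names are illustrative; published decoder-style scFMs such as scGPT reportedly use custom masks adapted to unordered genes rather than a strict left-to-right order, so treat the causal mask here as the generic GPT case only.

```python
import math


def attention_mask(n, causal):
    """mask[i][j] is True when token i may attend to token j."""
    return [[(j <= i) if causal else True for j in range(n)] for i in range(n)]


def masked_softmax(scores, allowed):
    """Softmax over one row of scores, giving zero weight to disallowed positions."""
    kept = [s for s, ok in zip(scores, allowed) if ok]
    m = max(kept)
    exps = [math.exp(s - m) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]


causal = attention_mask(3, causal=True)
print(causal)  # lower-triangular: later tokens cannot be seen
print(masked_softmax([1.0, 2.0, 3.0], causal[1]))
```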

The attention mechanism in scFMs can be visualized as a process where each gene "looks" at other genes in the same cell to determine their contextual relationships. This process generates attention weights that signify the strength of relationships between gene pairs, potentially mirroring biological interactions such as co-regulation or functional pathway membership [3].

Figure 1: Core Architecture of Single-Cell Foundation Models

Experimental Protocols and Benchmarking

Pretraining Strategies and Self-Supervised Learning

Pretraining scFMs involves training on self-supervised tasks across unlabeled single-cell data, typically using masked language modeling objectives [1]. In this approach, random subsets of gene tokens are masked, and the model is trained to predict the masked tokens based on the context provided by the remaining genes in the cell [1]. This process enables the model to learn the fundamental relationships between genes and cellular states without requiring manually annotated labels.

The scale of pretraining corpora has expanded dramatically, with recent models training on datasets containing tens to hundreds of millions of cells from diverse sources including CELLxGENE, the Human Cell Atlas, and GEO [1] [17]. Such scale is essential for capturing the broad spectrum of biological variation across cell types, tissues, and conditions. Corpora are also becoming richer, not just larger: the CellWhisperer framework, for example, was trained on over 1 million human RNA-seq profiles with matched textual annotations created through LLM-assisted curation of sample metadata [17].

Benchmarking Framework and Performance Metrics

Comprehensive benchmarking studies have evaluated scFMs against traditional methods to assess their capabilities and limitations. A recent biology-driven benchmark evaluated six scFMs against established baselines across two gene-level and four cell-level tasks using 12 different metrics [3]. The evaluation encompassed both pre-clinical applications (batch integration and cell type annotation across five datasets with diverse biological conditions) and clinically relevant tasks (cancer cell identification and drug sensitivity prediction across seven cancer types and four drugs) [3].

Table 3 summarizes key benchmarking results that highlight the comparative performance of scFMs versus traditional methods across different task categories.

Table 3: Performance Comparison of scFMs vs. Traditional Methods

| Task Category | Superior Approach | Key Findings | Practical Implications |
|---|---|---|---|
| Batch Integration | scFMs show advantages in large, complex datasets [18] | Deep learning methods better preserve biological variation while removing technical artifacts | Recommended for atlas-level integration projects |
| Cell Type Annotation | scFMs excel in zero-shot learning for novel cell types [3] | Foundation models transfer knowledge to unseen cell types better than traditional classifiers | Valuable for discovering or characterizing rare cell populations |
| Drug Sensitivity Prediction | Traditional ML can outperform for specific, narrow datasets [3] | Simpler models may adapt more efficiently to homogeneous data with limited samples | Consider task specificity and data resources when selecting approach |
| Gene Function Prediction | scFMs provide biologically meaningful gene embeddings [3] | Gene embeddings capture functional relationships and tissue specificity | Useful for inferring gene function and regulatory relationships |

Notably, benchmarking reveals that no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, biological interpretability requirements, and computational resources [3]. To address this challenge, researchers have proposed the roughness index (ROGI) as a proxy metric to recommend appropriate models in a dataset-dependent manner [3].

Visualization of Model Training and Evaluation Workflow

Figure 2: scFM Training and Evaluation Workflow

Implementing and utilizing single-cell foundation models requires familiarity with both computational resources and biological data sources. Table 4 provides a comprehensive overview of key tools, platforms, and datasets essential for researchers working with scFMs.

Table 4: Essential Research Reagents and Resources for scFM Research

| Resource Category | Specific Examples | Function and Utility | Access Information |
|---|---|---|---|
| Data Repositories | CELLxGENE [1], GEO [17], Human Cell Atlas [1] | Provide standardized, annotated single-cell datasets for model training and validation | Publicly available; CELLxGENE contains >100 million unique cells [1] |
| Pre-trained Models | scGPT [1], Geneformer [3], scPlantLLM [6] | Offer transfer learning capabilities; can be fine-tuned for specific applications without pretraining from scratch | Various licensing; some open-source implementations available |
| Benchmarking Frameworks | scIB [18], Biology-Driven Benchmark [3] | Provide standardized evaluation metrics and pipelines for comparing model performance | Open-source implementations; custom metrics for biological relevance |
| Specialized Tools | CellWhisperer [17], scANVI [18] | Enable specific applications like natural language query or semi-supervised integration | Mixed availability; some open-source, some proprietary |
| Computational Infrastructure | GPU clusters, Cloud computing platforms | Handle intensive computational requirements of training and fine-tuning large foundation models | Institutional HPC, commercial cloud providers |

Specialized domain-adapted models have also emerged to address specific research contexts. For example, scPlantLLM represents a transformer-based model specifically trained on plant single-cell data to address unique challenges such as polyploidy, cell walls, and complex tissue-specific expression patterns not adequately handled by models trained primarily on animal data [6].

Applications and Biological Insights

Downstream Task Applications

Single-cell foundation models pretrained using the "cells as sentences" analogy can be adapted to numerous downstream biological tasks through fine-tuning or zero-shot learning. Key applications include:

Cell Type Annotation and Discovery: scFMs can annotate cell types in new datasets based on learned representations, with demonstrated capability for identifying novel cell populations not present in training data [3]. For example, models like scBERT have been specifically designed for cell type annotation tasks [1].

Batch Integration and Data Harmonization: Foundation models effectively remove technical batch effects while preserving biological variation, enabling integration of datasets across different platforms, laboratories, and experimental conditions [18]. This is particularly valuable for large-scale atlas projects combining data from multiple sources.

Gene Function and Regulatory Inference: The attention mechanisms in scFMs can reveal gene-gene relationships and potential regulatory interactions, providing insights into gene function beyond what is available in existing annotations [3]. Attention weights have been reported to correspond with known biological pathways.

Perturbation Prediction and Drug Response Modeling: scFMs can predict cellular responses to genetic perturbations or drug treatments, with applications in pharmaceutical development and personalized medicine [3]. Models have been benchmarked on drug sensitivity prediction across multiple cancer types [3].

Cross-Modal and Cross-Species Transfer Learning: The representations learned by scFMs can transfer across related domains, enabling applications such as protein expression prediction from transcriptomic data or knowledge transfer between model organisms and humans [6].
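The cell type annotation application above can be approximated without fine-tuning by comparing embeddings. The sketch below assumes cell embeddings have already been produced by some scFM (here they are toy 2-D vectors), builds one centroid per reference cell type, and assigns each query cell to the most similar centroid by cosine similarity. Cells below a hypothetical `min_similarity` threshold are left "unassigned" as candidates for novel populations. This is a generic nearest-centroid baseline, not the annotation procedure of any specific model.

```python
import math
from collections import defaultdict


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def build_centroids(ref_embeddings, ref_labels):
    """Average the reference embeddings of each labeled cell type."""
    sums, counts = {}, defaultdict(int)
    for emb, label in zip(ref_embeddings, ref_labels):
        if label not in sums:
            sums[label] = [0.0] * len(emb)
        sums[label] = [s + x for s, x in zip(sums[label], emb)]
        counts[label] += 1
    return {label: [s / counts[label] for s in vec] for label, vec in sums.items()}


def annotate(query_embeddings, centroids, min_similarity=0.5):
    """Label each query cell with its most similar centroid, or 'unassigned'."""
    labels = []
    for emb in query_embeddings:
        best_label, best_sim = max(
            ((label, cosine(emb, c)) for label, c in centroids.items()),
            key=lambda pair: pair[1],
        )
        labels.append(best_label if best_sim >= min_similarity else "unassigned")
    return labels


ref = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
centroids = build_centroids(ref, ["T cell", "T cell", "B cell", "B cell"])
print(annotate([[1.0, 0.05], [0.02, 1.0], [-1.0, -1.0]], centroids))
```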

Biological Interpretation of Model Representations

A critical challenge in scFM development is ensuring that learned representations capture biologically meaningful patterns rather than technical artifacts or spurious correlations. Researchers have developed several approaches to address this challenge:

The scGraph-OntoRWR metric measures the consistency of cell type relationships captured by scFMs with prior biological knowledge encoded in cell ontologies [3]. This provides a biologically grounded evaluation that goes beyond traditional clustering metrics. Similarly, the Lowest Common Ancestor Distance (LCAD) metric assesses the ontological proximity between misclassified cell types, providing a more nuanced evaluation of annotation errors [3].
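The idea behind LCAD can be sketched on a toy ontology. The exact formulation used in the benchmark [3] may differ. This sketch assumes a tree (the real Cell Ontology is a directed acyclic graph in which a term can have several parents), encodes it as a hypothetical child-to-parent dictionary, and counts the edges from the true and predicted terms up to their lowest common ancestor. A correct prediction scores 0, and confusing two sibling subtypes costs less than confusing distant lineages.

```python
def ancestors(term, parent):
    """Path from a term up to the root: [term, parent, grandparent, ...]."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path


def lca_distance(true_type, predicted_type, parent):
    """Edges from both terms to their lowest common ancestor (0 if identical)."""
    true_path = ancestors(true_type, parent)
    pred_depth = {t: i for i, t in enumerate(ancestors(predicted_type, parent))}
    for i, t in enumerate(true_path):
        if t in pred_depth:  # first shared term walking upward is the LCA
            return i + pred_depth[t]
    raise ValueError("terms share no common ancestor")


toy_ontology = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
}
print(lca_distance("CD4 T cell", "CD8 T cell", toy_ontology))
print(lca_distance("CD4 T cell", "monocyte", toy_ontology))
```

Averaging this distance over misclassified cells gives a single score in which "near misses" are penalized less than biologically implausible errors.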

Expression variability itself, not only mean expression, can also be biologically informative. Studies have revealed that certain neurodevelopmental conditions, including trisomy 21 and CHD8 haploinsufficiency, drive increased gene expression variability in brain cell types [19]. This variability, which is uncoupled from changes in mean transcript abundance, may contribute to the diverse phenotypic outcomes observed in these conditions [19].

Future Directions and Challenges

As single-cell foundation models continue to evolve, several key challenges and opportunities merit attention. Current limitations include the nonsequential nature of omics data, inconsistencies in data quality, computational intensity of training and fine-tuning, and difficulties in interpreting the biological relevance of latent embeddings [1]. Future developments will likely focus on several key areas:

Multimodal Integration: Combining transcriptomic data with other modalities such as epigenomics, proteomics, and spatial information to create more comprehensive foundation models [1] [6]. Techniques like cross-modal graph contrastive learning that combine cellular images with transcriptomic data show particular promise [6].

Interpretability and Explainability: Developing methods to better interpret model predictions and attention mechanisms in biological terms, potentially revealing novel biological insights [1] [3]. This includes refining metrics like scGraph-OntoRWR that evaluate biological consistency.

Efficiency and Scalability: Creating more computationally efficient architectures and training approaches to handle the exponentially growing volumes of single-cell data [1]. This is particularly important as datasets approach billions of cells.

Specialized Domain Models: Developing foundation models tailored to specific biological domains, similar to scPlantLLM for plant genomics [6], but extending to other specialized areas such as immunology, neurobiology, or cancer research.

The analogy of "cells as sentences and genes as words" has provided a powerful framework for applying advanced AI techniques to single-cell biology. As this field matures, scFMs are poised to become indispensable tools for extracting meaningful biological insights from the increasingly complex and high-dimensional data generated by modern single-cell technologies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling high-resolution analysis of gene expression at the individual cell level, uncovering cellular heterogeneity, developmental trajectories, and complex regulatory networks that were previously obscured by bulk sequencing approaches [6]. However, this technology also introduces three interrelated data quality challenges: high sparsity, technical noise, and batch effects. The data are inherently sparse, with a high percentage of zero counts arising from both the biological absence of expression and technical dropout events [3] [20]. Technical noise arises from amplification biases, stochastic sampling, and other experimental artifacts [21]. Meanwhile, batch effects, meaning technical variations between experiments conducted at different times, at different sites, or with different protocols, can confound biological interpretation and impede data integration [21].
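A simple way to quantify sparsity is the zero rate: the fraction of entries in the cells-by-genes count matrix that are exactly zero. The sketch below computes it on a toy nested-list matrix, an assumption made for readability (real count matrices are usually stored in sparse formats). The number alone cannot separate biological zeros from technical dropouts.

```python
def zero_rate(matrix):
    """Fraction of entries in a cells x genes count matrix that are zero."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for v in row if v == 0)
    return zeros / total if total else 0.0


counts = [
    [0, 0, 3, 0],  # cell 1
    [1, 0, 0, 0],  # cell 2
]
print(zero_rate(counts))  # 6 of 8 entries are zero
```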

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in addressing these challenges. These large-scale artificial intelligence models, pretrained on vast datasets comprising millions of cells, leverage self-supervised learning to capture universal patterns of cellular behavior [1] [3]. Inspired by transformer architectures that revolutionized natural language processing, scFMs learn fundamental biological principles that can be transferred to various downstream tasks through fine-tuning or zero-shot learning [1] [22]. This technical guide examines how scFMs are overcoming persistent data challenges in scRNA-seq analysis, providing researchers with powerful new tools for extracting biological insights from complex single-cell datasets.

Foundation Model Architecture: Biological Language Processing

Conceptual Framework: Cells as Sentences

Single-cell foundation models operate on a powerful analogy: treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [1] [22]. This conceptual framework allows researchers to apply sophisticated transformer-based architectures originally developed for natural language processing to biological data. By training on massive collections of single-cell transcriptomes encompassing diverse tissues, species, and conditions, these models learn the fundamental "language" of cellular biology [1].

The underlying premise is that exposure to millions of cells across varied biological contexts enables the model to internalize the fundamental principles governing cellular states and functions. This learned knowledge becomes transferable to new datasets and analytical tasks through mechanisms like fine-tuning and zero-shot inference [1] [3]. The model develops rich internal representations that capture biological relationships between genes and cell types, creating a foundation for diverse applications from cell type annotation to perturbation prediction.

Tokenization Strategies: From Expression Values to Model Input

A critical technical challenge in adapting transformer architectures to single-cell data is tokenization—the process of converting raw gene expression profiles into discrete units that the model can process. Unlike words in a sentence, genes have no inherent ordering, requiring strategic approaches to impose structure on the data. Current scFMs employ several tokenization strategies:

- Expression-based ordering: Ranking genes within each cell by their expression levels and feeding the ordered list of top genes as a "sentence" [1]

- Binning approaches: Partitioning genes into bins based on their expression values and using the resulting bin order to determine each gene's position in the input [1]

- Normalized counts: Some models report no clear advantage to complex ranking strategies and simply use normalized counts without sophisticated ordering [1]

Each gene is typically represented as a token embedding that combines a gene identifier with its expression value in the given cell. Special tokens may be added to represent cell identity, metadata, or experimental batch information, enabling the model to learn contextual relationships [1]. Positional encoding schemes are adapted to represent the relative order or rank of each gene within the cell's pseudo-sequence.
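The combination of gene identity and expression value can be sketched as the sum of two embedding lookups, with a cell-level token prepended whose final hidden state can serve as the cell embedding. The vocabulary size, number of value bins, embedding dimension, reserved `CLS_ID`, and random initialization are all hypothetical; in a trained model both tables are learned, and some models concatenate or project rather than add.

```python
import random


def make_table(n_rows, dim, seed):
    """Randomly initialized embedding table (learned during training in practice)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(n_rows)]


DIM = 8
CLS_ID = 0                                # hypothetical gene-table row reserved for [CLS]
gene_table = make_table(100, DIM, seed=1)  # one row per gene id (hypothetical vocab size)
value_table = make_table(11, DIM, seed=2)  # one row per expression bin (hypothetical 0..10)


def embed_cell(gene_ids, value_bins):
    """Input token = gene-identity embedding + expression-value embedding.

    A [CLS] token is prepended; after the transformer layers its hidden state
    can be used as a summary embedding for the whole cell.
    """
    tokens = [list(gene_table[CLS_ID])]
    for g, b in zip(gene_ids, value_bins):
        tokens.append([x + y for x, y in zip(gene_table[g], value_table[b])])
    return tokens


sequence = embed_cell(gene_ids=[5, 7], value_bins=[3, 0])
print(len(sequence), len(sequence[0]))  # 3 tokens, each of dimension DIM
```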

Transformer Architectures for Single-Cell Data

Most scFMs are built on transformer architectures characterized by attention mechanisms that learn and weight relationships between any pair of input tokens [1]. In practice, this allows the model to determine which genes in a cell are most informative about cellular identity or state, and how they co-vary across cells and conditions. The two primary architectural approaches are:

- Encoder-based models (e.g., BERT-like): Employ bidirectional attention mechanisms that learn from all genes in a cell simultaneously, ideal for classification and embedding tasks [1]

- Decoder-based models (e.g., GPT-like): Use unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes, particularly effective for generation tasks [1]

The attention layers gradually build latent representations of each cell and gene, capturing hierarchical biological relationships that prove valuable for downstream analytical tasks.

Figure 1: Single-Cell Foundation Model Architecture. scFMs transform raw scRNA-seq data through tokenization strategies and transformer architectures to produce latent representations enabling diverse downstream tasks.

Quantitative Performance Benchmarking

Comparative Performance Across Analytical Tasks

Rigorous benchmarking studies have evaluated scFMs against traditional methods across diverse analytical tasks. A comprehensive 2025 benchmark study evaluated six prominent scFMs (Geneformer, scGPT, UCE, scFoundation, LangCell, and scCello) against established baselines using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches [3]. The results demonstrate that while scFMs are robust and versatile tools, no single model consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection.

Table 1: Performance Comparison of Single-Cell Foundation Models Across Key Tasks

| Model | Batch Integration | Cell Type Annotation | Gene Function Prediction | Perturbation Modeling | Computational Efficiency |
|---|---|---|---|---|---|
| scGPT | High | High | High | High | Medium |
| Geneformer | Medium | High | High | Medium | Medium |
| scFoundation | Medium | Medium | High | Medium | Low |
| scBERT | Low | Medium | Low | Low | High |
| Traditional Methods | Variable | Variable | Low | Low | High |

The benchmarking revealed that scGPT demonstrates robust performance across all tasks, including zero-shot learning and fine-tuning scenarios, while Geneformer and scFoundation show strong capabilities in gene-level tasks [23]. Simpler machine learning models sometimes outperform foundation models in tasks with limited data or when efficiently adapting to specific datasets, particularly under resource constraints [3].

Performance Under Technical Challenges

How conventional analysis pipelines cope with data sparsity and batch effects provides a reference point for evaluating scFMs. A 2023 benchmarking study assessed 46 workflows for single-cell differential expression analysis, examining how batch effects, sequencing depth, and data sparsity affect performance [21]. Its findings indicate that:

- For highly sparse data (zero rate > 80%), the use of batch-corrected data rarely improves differential expression analysis

- With substantial batch effects, batch covariate modeling improves analysis compared to naive approaches

- For low-depth data, single-cell techniques based on zero-inflation models deteriorate in performance, whereas analysis of uncorrected data using limmatrend, Wilcoxon test, and fixed effects model performs well [21]
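Why modeling the batch helps can be shown on a toy example in which one batch is shifted upward and also contains more treated cells. The naive estimate compares all treated cells with all control cells, so the batch shift leaks into the effect; the batch-aware estimate averages the treated-minus-control difference computed within each batch, so the shared shift cancels. This is an unweighted simplification of a fixed-effects model (a regression with a batch term would weight batches differently), and the data layout and numbers are made up for illustration.

```python
from statistics import mean


def naive_difference(samples):
    """samples: list of (condition, batch, expression), condition in {0, 1}."""
    treated = [x for c, _, x in samples if c == 1]
    control = [x for c, _, x in samples if c == 0]
    return mean(treated) - mean(control)


def batch_fixed_effect_difference(samples):
    """Average of within-batch treated-minus-control differences."""
    diffs = []
    for batch in sorted({b for _, b, _ in samples}):
        treated = [x for c, b, x in samples if b == batch and c == 1]
        control = [x for c, b, x in samples if b == batch and c == 0]
        if treated and control:  # batches lacking one condition carry no information
            diffs.append(mean(treated) - mean(control))
    return mean(diffs)


# True effect is +2 in both batches; batch 2 is shifted up by 10.
samples = [(0, 1, 1), (0, 1, 1), (0, 1, 1), (1, 1, 3),
           (0, 2, 11), (1, 2, 13), (1, 2, 13), (1, 2, 13)]
print(naive_difference(samples), batch_fixed_effect_difference(samples))
```

The naive estimate is inflated to 7.0 because treated cells are concentrated in the shifted batch, while the within-batch estimate recovers the effect of 2.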

Notably, scFMs have demonstrated particular strength in zero-shot learning scenarios, maintaining high accuracy in cell type annotation and batch integration even on previously unseen data from different species [6]. This capability is particularly valuable for plant single-cell genomics, where models like scPlantLLM overcome issues with batch effect correction and cross-platform data integration that plague traditional methods [6].

Table 2: Method Performance Under Technical Challenges in scRNA-seq Analysis

| Challenge Type | High-Performance Methods | Performance Limitations | Key Considerations |
|---|---|---|---|
| High sparsity (zero rate > 80%) | limmatrend, LogN_FEM, DESeq2 | Batch-effect-corrected (BEC) data show minimal improvement | Avoid zero-inflation models for very sparse data |
| Substantial batch effects | MASTCov, ZWedgeR_Cov | Pseudobulk methods perform poorly | Covariate modeling outperforms BEC data |
| Low sequencing depth | Wilcoxon test, fixed effects model (FEM) | scVI+limmatrend effectiveness diminished | Simple methods remain robust |
| Cross-species | scPlantLLM, Geneformer | Animal-trained models applied to plant data | Species-specific training is beneficial |

Experimental Protocols for scFM Implementation

Standardized Framework for Model Application

The application and evaluation of scFMs present significant challenges due to heterogeneous architectures and coding standards. To address this, the BioLLM framework provides a unified interface for integrating and applying diverse scFMs to single-cell RNA sequencing analysis [23]. This standardized approach includes:

- Unified APIs: Standardized application programming interfaces that eliminate architectural and coding inconsistencies

- Comprehensive documentation: Support for streamlined model switching and consistent benchmarking

- Zero-shot and fine-tuning support: Flexible adaptation to various analytical scenarios and resource constraints

The implementation protocol begins with data preprocessing and quality control, followed by model selection based on task requirements and data characteristics, then proceeds to either zero-shot inference or model fine-tuning, and concludes with output interpretation and biological validation [23].
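The protocol above can be sketched as a small pipeline around a common model interface, in the spirit of a unified API. Everything here is hypothetical: `FoundationModel`, `RandomProjectionStub`, and `run_protocol` are illustrative names, not BioLLM's actual classes or functions, and the stub merely stands in for a real pretrained scFM so that the control flow can run.

```python
from abc import ABC, abstractmethod
from typing import Optional

import numpy as np

class FoundationModel(ABC):
    """Hypothetical shared interface so models can be swapped without changing pipeline code."""

    @abstractmethod
    def embed(self, X: np.ndarray) -> np.ndarray:
        """Return one embedding vector per cell (rows of X)."""

    @abstractmethod
    def fine_tune(self, X: np.ndarray, labels: np.ndarray) -> None:
        """Adapt the model to a labeled dataset."""

class RandomProjectionStub(FoundationModel):
    """Toy stand-in for a pretrained scFM: a fixed random projection of gene space."""

    def __init__(self, n_genes: int, dim: int = 8, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.weights = rng.normal(size=(n_genes, dim))
        self.fine_tuned = False

    def embed(self, X: np.ndarray) -> np.ndarray:
        return X @ self.weights

    def fine_tune(self, X: np.ndarray, labels: np.ndarray) -> None:
        self.fine_tuned = True  # a real model would update its parameters here

def run_protocol(model: FoundationModel, counts: np.ndarray,
                 labels: Optional[np.ndarray] = None) -> np.ndarray:
    """Preprocess, fine-tune if labels are supplied (otherwise zero-shot), then embed.

    Output interpretation and biological validation are left to the caller.
    """
    X = np.log1p(counts)  # placeholder for the full QC and normalization step
    if labels is not None:
        model.fine_tune(X, labels)
    return model.embed(X)

counts = np.ones((5, 20))
model = RandomProjectionStub(n_genes=20)
zero_shot = run_protocol(model, counts)                  # zero-shot path
tuned = run_protocol(model, counts, labels=np.zeros(5))  # fine-tuning path
```

Because the pipeline depends only on the abstract interface, switching models means supplying a different subclass rather than rewriting analysis code.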

Data Preprocessing and Quality Control

Effective application of scFMs requires careful data preprocessing to ensure model compatibility and reliability. Key steps include:

- Data selection and filtering: Careful selection of datasets, filtering of cells and genes, balancing dataset compositions, and quality controls [1]

- Normalization: Standardization of expression values to mitigate technical variations while preserving biological signals [21]

- Gene selection: Identification of highly variable genes to reduce dimensionality and computational requirements [3]
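These three steps can be sketched with NumPy on a dense cells x genes count matrix. This is a simplified illustration under stated assumptions: thresholds are arbitrary, normalization is library-size scaling followed by `log1p`, and highly variable genes are chosen by raw variance rather than the dispersion-based methods used in practice. In Scanpy, the corresponding real functions are `scanpy.pp.filter_cells`, `scanpy.pp.filter_genes`, `scanpy.pp.normalize_total`, `scanpy.pp.log1p`, and `scanpy.pp.highly_variable_genes`. Individual scFMs may also prescribe their own input requirements (such as a fixed gene vocabulary), so each model's documentation should be checked.

```python
import numpy as np

def filter_cells(X: np.ndarray, min_genes: int) -> np.ndarray:
    """Keep cells (rows) with at least `min_genes` detected genes."""
    return X[(X > 0).sum(axis=1) >= min_genes]

def filter_genes(X: np.ndarray, min_cells: int) -> np.ndarray:
    """Keep genes (columns) detected in at least `min_cells` cells."""
    return X[:, (X > 0).sum(axis=0) >= min_cells]

def normalize_log1p(X: np.ndarray, target_sum: float = 1e4) -> np.ndarray:
    """Scale each cell to `target_sum` total counts, then apply log(1 + x)."""
    totals = X.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # guard against dividing empty cells by zero
    return np.log1p(X / totals * target_sum)

def select_hvg(X: np.ndarray, n_top: int) -> np.ndarray:
    """Return column indices of the `n_top` highest-variance genes, in column order."""
    return np.sort(np.argsort(X.var(axis=0))[::-1][:n_top])

counts = np.array([[1, 0, 3, 0],
                   [0, 0, 0, 0],
                   [2, 2, 0, 5],
                   [4, 0, 1, 1]], dtype=float)
X = filter_cells(counts, min_genes=1)  # drops the empty second cell
X = filter_genes(X, min_cells=2)       # drops the gene seen in only one cell
X = normalize_log1p(X)
hvg = select_hvg(X, n_top=2)
```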

Assembling high-quality, non-redundant datasets for analysis is as important as model selection for obtaining robust biological insights [1]. For optimal performance, researchers should prioritize data quality over quantity, though scFMs benefit from larger datasets during pretraining.

Model Selection and Fine-Tuning Strategies

Selecting the appropriate scFM requires consideration of multiple factors, including dataset size, task complexity, need for biological interpretability, and available computational resources [3]. Practical guidelines include:

- For large, diverse datasets requiring multiple analytical tasks: scGPT or Geneformer

- For gene-level tasks and network analysis: Geneformer or scFoundation

- For resource-constrained environments or focused tasks: Traditional methods may be preferable

- For plant single-cell data: scPlantLLM, which is designed specifically for plant genomics [6]
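The selection guidelines above can be encoded as a simple rule-based helper. This is a hypothetical sketch: the function name, its arguments, and especially the order in which rules are checked (plant data first, then resource limits, then task type) are assumptions, since the guidelines do not specify how to resolve cases where several apply.

```python
def suggest_models(task: str, species: str = "human",
                   large_multitask: bool = True,
                   resource_constrained: bool = False) -> list:
    """Hypothetical encoding of the model-selection guidelines.

    task: e.g. "annotation", "integration", "gene_level", or "network".
    large_multitask: True for large, diverse datasets needing multiple analyses;
    False for a focused task.
    """
    if species == "plant":
        return ["scPlantLLM"]
    if resource_constrained:
        return ["traditional methods"]
    if task in {"gene_level", "network"}:
        return ["Geneformer", "scFoundation"]
    if large_multitask:
        return ["scGPT", "Geneformer"]
    return ["traditional methods"]  # focused task without broad multi-task needs

print(suggest_models("annotation"))
print(suggest_models("network"))
```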

Fine-tuning strategies should be tailored to the specific analytical task. For cell type annotation, full fine-tuning on labeled datasets typically yields best results. For batch integration, lighter fine-tuning that preserves the model's general biological knowledge is often more effective [3].
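The difference between full and lighter fine-tuning comes down to which parameters are allowed to change. The sketch below, with hypothetical layer names and an assumed default of two unfrozen blocks, returns the trainable layers for each strategy. In a PyTorch model, one would then set `requires_grad = False` on every parameter from `model.named_parameters()` whose name does not belong to those layers.

```python
def trainable_layers(n_layers: int, strategy: str, n_unfrozen: int = 2) -> list:
    """Names of layers to update during fine-tuning (hypothetical naming scheme).

    "full": every encoder block plus the task head is trained.
    "light": only the last `n_unfrozen` encoder blocks and the head are trained,
    so earlier pretrained blocks stay frozen and keep their general knowledge.
    """
    blocks = [f"encoder.{i}" for i in range(n_layers)]
    if strategy == "full":
        return blocks + ["head"]
    if strategy == "light":
        start = max(n_layers - n_unfrozen, 0)  # n_unfrozen=0 leaves only the head
        return blocks[start:] + ["head"]
    raise ValueError(f"unknown strategy: {strategy!r}")

print(trainable_layers(4, "light"))
```

Setting `n_unfrozen=0` trains only the head, which amounts to fitting a task-specific layer on top of frozen pretrained embeddings.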

Table 3: Key Research Reagent Solutions for Single-Cell Foundation Model Research

| Resource Category | Specific Examples | Function and Application | Key Features |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE, Human Cell Atlas, GEO/SRA | Provide standardized single-cell datasets for model training and validation | Over 100 million unique cells; standardized annotations [1] |
| Pretrained Models | scGPT, Geneformer, scFoundation, scPlantLLM | Ready-to-use foundation models for various single-cell analysis tasks | Different architectural strengths; species specializations [1] [6] |
| Analysis Frameworks | BioLLM, Seurat, Scanpy | Standardized environments for applying and evaluating scFMs | Unified APIs; benchmarking capabilities [23] |
| Evaluation Metrics | scGraph-OntoRWR, LCAD, ROGI | Specialized metrics for assessing biological relevance of model outputs | Cell ontology-informed evaluation [3] |
| Computational Infrastructure | GPU clusters, Cloud computing platforms | Enable training and deployment of resource-intensive foundation models | Essential for large-scale model training and inference |

Future Directions and Implementation Workflow

Emerging Trends and Development Priorities

The field of single-cell foundation models is rapidly evolving, with several promising directions emerging. Future development priorities include enhancing model interpretability to extract biologically meaningful insights from latent representations, improving scalability to handle increasingly large datasets, and developing better methods for multimodal data integration [1] [6]. Additionally, there is growing interest in incorporating spatial transcriptomics data and single-cell epigenomics to create more comprehensive models of cellular function and regulation [6].

The integration of cross-modal learning approaches, such as graph contrastive learning that combines cellular images with transcriptomic data, shows particular promise for bridging structural and functional genomics [6]. These advancements will not only enrich our knowledge of basic biological processes but also drive innovations in drug development and precision medicine.

Integrated Workflow for Overcoming scRNA-seq Data Challenges

A systematic approach to implementing scFMs for overcoming data challenges involves multiple stages, from data preparation through biological interpretation.

Figure 2: Implementation Workflow for Addressing scRNA-seq Data Challenges. A systematic approach for applying scFMs to overcome sparsity, noise, and batch effects in single-cell data analysis.

The workflow begins with comprehensive data preparation and quality control, followed by assessment of the primary data challenges present in the specific dataset. Based on this assessment, researchers select appropriate models and implementation strategies, such as zero-shot inference for well-represented cell types or fine-tuning for novel cellular states. Finally, biological validation using ontology-informed metrics ensures that computational improvements translate to meaningful biological insights [3].

Single-cell foundation models represent a transformative approach to overcoming the persistent challenges of data sparsity, technical noise, and batch effects in scRNA-seq analysis. By leveraging large-scale pretraining on diverse cellular contexts, these models capture fundamental biological principles that enable robust performance across a wide range of analytical tasks. While implementation requires careful consideration of model selection, data preparation, and validation strategies, scFMs offer powerful new capabilities for extracting biological insights from complex single-cell datasets. As the field continues to evolve, these models are poised to become indispensable tools for advancing our understanding of cellular biology and driving innovations in drug development and precision medicine.

Inside the Engine Room: Tokenization, Training, and Practical Applications

Single-cell foundation models (scFMs) represent a transformative approach in computational biology, leveraging large-scale deep learning to interpret complex single-cell genomics data. These models are trained on vast datasets encompassing millions of cells to learn fundamental biological principles that generalize across diverse tissues and conditions [1]. The core challenge enabling this technology lies in effectively converting raw gene expression profiles into structured representations that deep learning architectures can process—a procedure known as tokenization.

In natural language processing (NLP), tokenization converts raw text into discrete units called tokens. Similarly, for single-cell data, tokenization transforms gene expression information into a structured format that scFMs can understand and process [1]. This process is foundational because it determines how biological information is encoded and what patterns the model can potentially learn. The tokenization strategy directly impacts a model's ability to capture biological relationships, handle technical variations, and perform accurately on downstream tasks such as cell type annotation, perturbation prediction, and batch integration [3].

Core Components of Tokenization in scFMs

Fundamental Concepts and Definitions

Tokenization in single-cell genomics serves as the critical bridge between biological measurements and computational analysis. Unlike natural language, where words naturally form discrete units, gene expression data presents unique challenges: genes have no inherent order within a cell, and the data are high-dimensional and sparse [1] [3]. The primary goal of tokenization is to overcome these challenges by creating a standardized, structured representation that preserves biological signal while enabling efficient model training.

In practice, tokenization for scFMs involves defining what constitutes a "token" from single-cell data, typically representing each gene or genomic feature as a token. These tokens serve as the fundamental input units for the model, analogous to words in a sentence [1]. The process must also address how to incorporate additional information such as expression values, positional context, and metadata to create rich, informative representations.
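As a concrete example of turning one cell into tokens, the sketch below uses expression-based ordering: detected genes are sorted by decreasing expression and mapped to integer IDs through a gene vocabulary. It is a simplified illustration loosely modeled on rank-based schemes; the vocabulary, the `max_len` cutoff, and the tie-breaking rule are assumptions. Geneformer's published scheme additionally normalizes each gene by its median expression across the pretraining corpus before ranking, a step omitted here.

```python
import numpy as np

def tokenize_cell(expr, gene_names, vocab, max_len=2048):
    """Map one cell's expression vector to a rank-ordered list of gene token IDs.

    Undetected genes (zero counts) and genes missing from `vocab` are dropped;
    ties keep the original gene order because the sort is stable.
    """
    expr = np.asarray(expr, dtype=float)
    detected = np.flatnonzero(expr > 0)
    order = detected[np.argsort(-expr[detected], kind="stable")]
    tokens = [vocab[gene_names[i]] for i in order if gene_names[i] in vocab]
    return tokens[:max_len]

genes = ["A", "B", "C", "D"]
vocab = {"A": 10, "B": 11, "C": 12, "D": 13}  # toy vocabulary
tokens = tokenize_cell([0, 5, 2, 5], genes, vocab)
```

Gene `A` is dropped because it is not detected, and the two genes tied at 5 keep their original order, giving the token sequence B, D, C.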

Three Core Components of scFM Tokenization

Gene Embeddings

Gene embeddings function as unique identifier vectors for each gene, analogous to word embeddings in NLP. These embeddings allow the model to learn contextual relationships between genes across different cellular environments [3]. Through training on massive single-cell datasets, the model develops embedding spaces where functionally related genes (e.g., those participating in the same biological pathways) are positioned in proximity within the latent space [24]. For example, research has demonstrated that these embeddings can capture protein domain information, gene-disease associations, and transcription factor targets despite being trained solely on expression data [24].
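The claim that related genes sit close together in embedding space is usually probed with cosine similarity. The sketch below ranks a query gene's nearest neighbors. The two-dimensional vectors are hand-made toy values chosen for illustration, not embeddings from any trained scFM, which are learned and much higher-dimensional.

```python
import numpy as np

def nearest_genes(emb: np.ndarray, names: list, query: str, k: int = 2) -> list:
    """Return the k genes whose embeddings have the highest cosine similarity to `query`."""
    idx = names.index(query)
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)  # normalize rows to length 1
    sims = unit @ unit[idx]
    sims[idx] = -np.inf  # exclude the query gene itself
    top = np.argsort(-sims)[:k]
    return [(names[i], float(sims[i])) for i in top]

names = ["CD3E", "CD3D", "MS4A1", "CD79A"]
emb = np.array([[1.0, 0.1],   # toy values: T-cell genes point one way,
                [0.9, 0.2],
                [0.1, 1.0],   # B-cell genes point the other way
                [0.2, 0.9]])
neighbors = nearest_genes(emb, names, "CD3E")
```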

Value Embeddings

Value embeddings represent the expression level of each gene in a specific cell, capturing quantitative information beyond mere gene identity. This component is crucial because the same gene can have dramatically different expression patterns across cell types, states, and conditions [3]. Different strategies exist for handling expression values, including binning approaches that discretize continuous expression values into categorical ranges, and normalized count representations that maintain relative expression levels [1] [24]. The "Binning-By-Gene" method has shown particular promise by allocating each gene's expression values across samples into bins based on expression rank, reducing bias toward genes with atypical expression distributions [24].
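The sketch below implements one interpretation of binning by gene: for each gene separately, nonzero values are ranked across cells and assigned to bins `1..n_bins` by rank, while zeros keep a dedicated bin 0. Treating zeros separately, the number of bins, and the tie handling are assumptions, and the cited method [24] may differ in detail. What it illustrates is that a gene with very large counts and a gene with small counts end up using the same bin range.

```python
import numpy as np

def bin_by_gene(X, n_bins: int = 5) -> np.ndarray:
    """Per-gene rank binning of a cells x genes matrix (illustrative interpretation).

    Zeros map to bin 0; nonzero values of each gene map to bins 1..n_bins
    according to their rank among that gene's nonzero values across cells.
    Ties are broken by cell order.
    """
    X = np.asarray(X, dtype=float)
    bins = np.zeros(X.shape, dtype=int)
    for j in range(X.shape[1]):
        col = X[:, j]
        nz = np.flatnonzero(col > 0)
        if nz.size == 0:
            continue
        ranks = np.argsort(np.argsort(col[nz], kind="stable"), kind="stable")  # 0 = lowest
        bins[nz, j] = 1 + (ranks * n_bins) // nz.size
    return bins

X = [[0, 100], [1, 200], [2, 300], [3, 400]]  # second gene has ~100x larger counts
bins = bin_by_gene(X, n_bins=3)
```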

Positional Embeddings

Positional embeddings address the non-sequential nature of genomic data by supplying an artificial ordering. Because genes have no inherent sequence in expression data, various strategies have emerged for imposing one, including expression-based ordering (ranking genes by expression level within each cell) and genomic coordinate-based ordering [1] [3]. Self-attention on its own is insensitive to token order, so these embeddings are what allow the transformer to use the imposed order (for example, a gene's expression rank) as information. Some models instead employ Attention with Linear Biases (ALiBi), which replaces positional embeddings with distance-dependent penalties on attention scores and is particularly beneficial for handling long input sequences [25].
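ALiBi adds a fixed penalty to the attention scores (before the softmax) that grows linearly with the distance between two positions, with a different slope per attention head. The sketch below builds that bias tensor. The slopes follow the geometric sequence from the original ALiBi paper for a power-of-two number of heads; using the symmetric distance |i - j| is an assumption suited to bidirectional encoders (the original formulation targeted causal language models), and the variant used by any particular scFM [25] may differ.

```python
import numpy as np

def alibi_slopes(n_heads: int) -> np.ndarray:
    """Per-head slopes 2^(-8h/n) for h = 1..n (defined here for power-of-two n)."""
    return np.array([2.0 ** (-8.0 * h / n_heads) for h in range(1, n_heads + 1)])

def alibi_bias(seq_len: int, n_heads: int) -> np.ndarray:
    """Bias tensor of shape (n_heads, seq_len, seq_len) to add to attention logits."""
    pos = np.arange(seq_len)
    distance = np.abs(pos[None, :] - pos[:, None])  # |i - j|, symmetric variant
    return -alibi_slopes(n_heads)[:, None, None] * distance[None, :, :]

bias = alibi_bias(seq_len=4, n_heads=8)
```

Because the penalty depends only on distance and contains no learned per-position vectors, the same function applies to any sequence length, which is why the approach is attractive for long inputs.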

Table 1: Core Components of Tokenization in Single-Cell Foundation Models

| Component | Function | Implementation Examples | Biological Significance |
|---|---|---|---|
| Gene Embeddings | Unique identifier for each gene | Learned vectors for each gene identifier | Captures functional relationships between genes across cellular contexts |
| Value Embeddings | Represents expression magnitude | Binned expression values; Normalized counts | Encodes quantitative gene activity levels essential for understanding cell state |
| Positional Embeddings | Provides artificial sequence context | Expression-based ordering; Genomic coordinates; ALiBi | Enables attention mechanisms to model gene-gene interactions despite non-sequential nature |
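The three components in Table 1 are typically combined into a single input vector per token. The sketch below assumes they are simply summed, which is one common choice (models may instead concatenate them or omit the positional term). The table sizes are arbitrary, and the random matrices stand in for parameters that would be learned during pretraining.

```python
import numpy as np

rng = np.random.default_rng(0)
N_GENES, N_BINS, MAX_LEN, DIM = 100, 51, 64, 16  # arbitrary illustrative sizes

# Lookup tables: random here, learned during pretraining in a real model.
gene_table = rng.normal(size=(N_GENES, DIM))
value_table = rng.normal(size=(N_BINS, DIM))
pos_table = rng.normal(size=(MAX_LEN, DIM))

def embed_tokens(gene_ids, value_bins) -> np.ndarray:
    """Input matrix (seq_len x DIM) = gene + value + positional embeddings."""
    gene_ids = np.asarray(gene_ids)
    value_bins = np.asarray(value_bins)
    positions = np.arange(len(gene_ids))
    return gene_table[gene_ids] + value_table[value_bins] + pos_table[positions]

x = embed_tokens(gene_ids=[3, 7, 42], value_bins=[5, 0, 12])
```

Each row of `x` is the vector the transformer receives for one gene token; the gene term says which gene it is, the value term how strongly it is expressed, and the positional term where it sits in the imposed order.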

Tokenization Strategies and Architectures

Input Representation Strategies

The conversion of raw gene expression data into tokenized inputs requires careful consideration of biological principles and computational efficiency. A key challenge is that gene expression data lacks natural ordering, unlike words in a sentence [1]. To address this, several strategic approaches have been developed: