How to Validate Cell Type Annotations in scRNA-seq: A 2024 Guide for Biomedical Researchers


Abstract

This article provides a comprehensive, step-by-step guide for researchers and drug development professionals on validating single-cell RNA sequencing (scRNA-seq) cell type annotations. We explore the foundational principles of why validation is critical for scientific rigor and reproducibility. We then detail current methodological best practices, from marker gene evaluation to automated classifiers and multimodal integration. The guide tackles common troubleshooting scenarios, such as handling ambiguous or novel cell states. Finally, we present a framework for rigorous comparative validation, including benchmarking against gold standards and assessing annotation confidence. This resource empowers scientists to generate robust, defensible annotations that translate into reliable biological insights and accelerate therapeutic discovery.

Why Annotation Validation is Non-Negotiable: The Pillars of Reproducible scRNA-seq Science

Single-cell RNA sequencing (scRNA-seq) has revolutionized biology, enabling the dissection of tissue heterogeneity, identification of novel cell states, and understanding of disease mechanisms at unprecedented resolution. However, its translation into clinical diagnostics and therapeutics hinges on one critical, non-negotiable factor: robust and validated cell type annotations. Incorrect annotation can lead to misinterpretation of disease biology, misidentification of therapeutic targets, and ultimately, clinical trial failure. This guide frames the technical journey from data generation to clinical application within the core thesis of rigorous annotation validation.

The Validation Imperative: A Multi-Faceted Approach

Validating cell type annotations is not a single step but a multi-layered process integrating computational, experimental, and cross-modal evidence.

Computational & Statistical Validation

These are the first line of defense, assessing the internal consistency of clustering and annotation.

Key Metrics & Methods:

- Cluster Stability: Using bootstrapping or subsampling to test if clusters are reproducible.

- Differential Expression (DE) Analysis: Validating that annotated clusters have strong, statistically significant DE markers.

- Intra-cluster vs. Inter-cluster Distance: Quantifying that cells within a cluster are transcriptionally more similar to each other than to cells in other clusters.
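
The intra- versus inter-cluster comparison above can be sketched in a few lines. This is a minimal illustration, assuming cells have already been embedded in a low-dimensional space (for example, PCA coordinates); the function name `intra_inter_ratio` and the toy coordinates are hypothetical, and Euclidean distance is one reasonable choice among several.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def intra_inter_ratio(embedding, labels):
    """Mean within-cluster distance divided by mean between-cluster distance.

    Values well below 1 suggest that cells are closer to members of their
    own cluster than to cells in other clusters.
    """
    intra, inter = [], []
    n = len(embedding)
    for i in range(n):
        for j in range(i + 1, n):
            d = euclidean(embedding[i], embedding[j])
            if labels[i] == labels[j]:
                intra.append(d)
            else:
                inter.append(d)
    return (sum(intra) / len(intra)) / (sum(inter) / len(inter))

# Toy embedding (hypothetical values): two well-separated groups of cells.
embedding = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2),
             (5.0, 5.1), (5.2, 4.9), (4.9, 5.0)]
labels = ["T cell"] * 3 + ["B cell"] * 3

ratio = intra_inter_ratio(embedding, labels)
scrambled = intra_inter_ratio(embedding, ["a", "b", "a", "b", "a", "b"])
print(f"ratio with real labels: {ratio:.3f}; with scrambled labels: {scrambled:.3f}")
```

Comparing the ratio against a scrambled labeling, as in the last lines, gives a quick sense of whether the observed separation is better than an arbitrary partition. The pairwise loop is quadratic in the number of cells, so on real data it would be run on a subsample.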

Biological & Experimental Validation

Computational predictions must be anchored in biological reality through orthogonal wet-lab techniques.

Core Experimental Protocols for Validation:

A. Fluorescence-Activated Cell Sorting (FACS) with Known Markers

- Purpose: To physically isolate a predicted cell population based on putative surface protein markers derived from scRNA-seq data.

- Protocol:

- Prepare a single-cell suspension from the tissue of interest.

- Stain cells with fluorochrome-conjugated antibodies targeting the candidate surface proteins (e.g., CD3, CD19, EpCAM).

- Use a FACS sorter to isolate the double-positive (or defined marker combination) cell population into a lysis buffer.

- Perform bulk RNA-seq or qPCR on the sorted population.

- Validation: Compare the expression profile of the sorted population to the computational cluster. High correlation confirms the annotation.
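
The comparison in the validation step can be sketched as a correlation between the cluster's averaged ("pseudobulk") profile and the profile of the sorted population. This is a minimal sketch, assuming both are available as gene-to-value mappings; the gene values below are made up for illustration, and the choice of Pearson correlation on log-transformed values is an assumption rather than a prescribed method.

```python
import math

def pseudobulk(cells):
    """Average expression per gene across a list of per-cell dicts."""
    genes = cells[0].keys()
    return {g: sum(c[g] for c in cells) / len(cells) for g in genes}

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def profile_correlation(cluster_cells, sorted_bulk):
    """Correlate log1p pseudobulk and log1p bulk values over shared genes."""
    pb = pseudobulk(cluster_cells)
    shared = sorted(set(pb) & set(sorted_bulk))
    return pearson([math.log1p(pb[g]) for g in shared],
                   [math.log1p(sorted_bulk[g]) for g in shared])

# Hypothetical counts: a putative T-cell cluster and a CD3+ sorted population.
cluster_cells = [
    {"CD3E": 12, "CD2": 8, "CD19": 0, "EPCAM": 0, "ACTB": 50},
    {"CD3E": 9, "CD2": 6, "CD19": 1, "EPCAM": 0, "ACTB": 40},
]
sorted_bulk = {"CD3E": 300, "CD2": 210, "CD19": 5, "EPCAM": 2, "ACTB": 1500}

r = profile_correlation(cluster_cells, sorted_bulk)
print(f"Pearson r between cluster pseudobulk and sorted bulk: {r:.3f}")
```

With only a handful of genes the coefficient is not meaningful on its own; in practice the comparison would use thousands of shared genes and be contrasted with the correlation against other clusters.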

B. Multiplexed Fluorescence In Situ Hybridization (FISH) - e.g., RNAscope

- Purpose: To visualize the spatial co-expression of key marker genes from an annotated cluster within intact tissue architecture.

- Protocol:

- Fix and section the tissue sample. Perform pretreatment to permit probe access.

- Hybridize target-specific, proprietary ZZ-probes for 2-5 key marker genes from the cluster, each with a unique fluorescent channel.

- Amplify signals and image using a confocal or multiplexed fluorescence microscope.

- Validation: Identification of individual cells or regions expressing the full combination of predicted markers, confirming they exist in situ and their spatial context matches biological expectation.

C. Cellular Indexing of Transcriptomes and Epitopes by Sequencing (CITE-seq)

- Purpose: To directly correlate cell surface protein abundance with transcriptomic profiles in the same single cell.

- Protocol:

- Label a single-cell suspension with a panel of antibodies conjugated to oligonucleotide barcodes (TotalSeq antibodies).

- Perform standard scRNA-seq workflows (e.g., 10x Genomics) where both cellular mRNAs and antibody-derived tags are captured and co-sequenced.

- Generate a dual-modality data matrix: gene expression counts and antibody-derived counts (ADT).

- Validation: The protein-level expression of canonical markers (e.g., CD4, CD8) should strongly align with the transcriptional cluster identity, providing a powerful orthogonal confirmation.

Quantitative Landscape of scRNA-seq in Clinical Translation

Table 1: Clinical Trial Landscape Involving scRNA-seq (2020-2024)

| Therapeutic Area | Number of Trials* | Primary Application of scRNA-seq | Phase I | Phase II | Phase III |

|---|---|---|---|---|---|

| Oncology | 85 | Biomarker Discovery, Therapy Response Monitoring | 45 | 32 | 8 |

| Immunology/Autoimmunity | 41 | Target ID, Patient Stratification | 28 | 12 | 1 |

| Neurology | 18 | Disease Mechanism Elucidation | 15 | 3 | 0 |

| Infectious Disease | 9 | Host-Pathogen Interaction, Immune Profiling | 7 | 2 | 0 |

*Data compiled from recent searches of ClinicalTrials.gov using terms "single cell RNA sequencing" or "scRNA-seq". Numbers are approximate and indicative of trends.

Table 2: Key Performance Metrics for Clinical-Grade scRNA-seq Protocols

| Metric | Research-Grade Standard | Proposed Clinical-Grade Threshold | Validation Method |

|---|---|---|---|

| Cell Viability (Input) | >70% | >85% | Trypan Blue/Flow Cytometry |

| Median Genes per Cell | 1,000 - 3,000 | >2,500 with low variance | Scatter plot & IQR |

| Mitochondrial Read % | <20% | <10% | QC Software (e.g., Cell Ranger) |

| Doublet Rate | 1-10% (library dependent) | <5% for 10k cells | DoubletFinder, Scrublet |

| Annotation Concordance (vs. IHC/FACS) | >70% | >90% | Orthogonal protein-level assay |

Pathways from Data to Clinical Insight

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for scRNA-seq Validation Workflows

| Reagent / Kit | Vendor Examples | Primary Function in Validation |

|---|---|---|

| Single-Cell 3' / 5' Gene Expression Kits | 10x Genomics, Parse Biosciences | Generate the foundational transcriptomic data for cluster identification. |

| TotalSeq Antibodies (for CITE-seq) | BioLegend | Oligo-tagged antibodies to simultaneously quantify surface protein and mRNA in single cells. |

| RNAscope Multiplex Fluorescent Kit | ACD Bio | Enable visualization of several marker RNAs in situ (up to 12 with the HiPlex assay variant) for spatial validation of annotated clusters. |

| Chromium Next GEM Chip K | 10x Genomics | Microfluidic device for partitioning single cells and barcoding beads with controlled cell load to minimize doublets. |

| Live-Dead Stain (e.g., Zombie Dye) | BioLegend | Distinguish and gate out dead cells during sample prep, crucial for high-quality input. |

| Cell Hashing Antibodies (for Multiplexing) | BioLegend | Tag cells from different samples with unique barcodes, allowing pooled processing and demultiplexing, reducing batch effects. |

| Single-Cell Multiome ATAC + Gene Exp. Kit | 10x Genomics | Adds chromatin accessibility data to transcriptome, aiding annotation of cell states via regulatory landscapes. |

The stakes of scRNA-seq are indeed high. Transitioning from a research curiosity to a clinical tool demands a rigorous, validation-centric culture. By embedding multi-modal validation—spanning computational checks, protein-level confirmation, and spatial context—into the core workflow, researchers can build the robust, reproducible annotations necessary for discovering actionable biomarkers, identifying reliable drug targets, and ultimately, guiding patient care. The future of clinical scRNA-seq lies not just in technological advancement, but in the steadfast commitment to biological truth.

Common Pitfalls and Consequences of Unvalidated Annotations

Cell type annotation is a critical step in single-cell RNA sequencing (scRNA-seq) analysis, translating high-dimensional gene expression data into biologically meaningful categories. This section details the significant risks of proceeding with unvalidated labels. Relying solely on automated, reference-based, or marker-gene-driven annotation without rigorous validation lets errors propagate into downstream analyses, where they can invalidate biological interpretation and translational applications.

Core Pitfalls of Unvalidated Annotations

The consequences cascade from analytical mistakes to flawed scientific conclusions.

Pitfall 1: Over-reliance on Reference Datasets without Context Matching

Automated label transfer from a public reference atlas (e.g., via Seurat's FindTransferAnchors or SingleR) fails when the query data derives from a different tissue preparation, disease state, or species. This leads to "forced" annotations where cells are assigned the closest, yet incorrect, label.

Pitfall 2: Misinterpretation of Canonical Marker Genes

Using outdated or non-specific marker gene lists can mislead annotations. For example, using CD3D alone for T cells is insufficient in a tumor microenvironment where natural killer (NK) cells may also express it at lower levels.

Pitfall 3: Ignoring Cellular Doublets or Intermediate States

Unvalidated pipelines often annotate doublets or cells in transition as a pure cell type, creating artifactual cell populations that distort pathway analysis.

Pitfall 4: Technical Artifact-Driven Clustering

Batch effects or ambient RNA contamination can drive the formation of clusters that are then incorrectly annotated as novel cell types.

Pitfall 5: Circular Validation

Using the same genes for annotation and subsequent differential expression analysis creates biased, statistically invalid results.

Quantified Consequences: Impact on Data Interpretation

The following table summarizes documented repercussions from studies that initially used unvalidated annotations.

Table 1: Consequences of Unvalidated Annotations in Published Studies

| Consequence Category | Reported Impact (Quantitative) | Downstream Effect |

|---|---|---|

| Misidentification Rate | 15-30% of cells in cross-tissue atlas projects (Squair et al., 2022) | False discovery of "disease-specific" cell states |

| Differential Expression (DE) Error | Up to 50% of DE genes are false positives when annotation is 20% incorrect (Freytag et al., 2018) | Incorrect pathway and mechanistic insights |

| Trajectory Inference Failure | Incorrect root or branch assignment in >40% of cases with poor annotation (Tritschler et al., 2019) | Wrong model of cell differentiation or tumor evolution |

| Drug Target Mis-prioritization | In silico screens of incorrectly annotated endothelial cells proposed irrelevant targets, reducing hit rate by ~70% (Jambusaria et al., 2020) | Wasted preclinical resources |

Foundational Validation Methodologies

A multi-modal, iterative validation framework is essential. Below are core experimental protocols.

Wet-Lab Validation Protocol: Multiplexed Fluorescence In Situ Hybridization (FISH)

Purpose: Spatial confirmation of putative cell type markers from scRNA-seq clusters.

Reagents:

- RNAscope Multiplex Fluorescent Reagent Kit v2 (ACD Bio)

- Target probe sets for 2-4 key marker genes per annotated cell type

- DAPI for nuclear counterstain

- Confocal or fluorescence microscope with appropriate filter sets

Workflow:

- Tissue Sectioning: Generate 5-10 µm formalin-fixed paraffin-embedded (FFPE) or frozen sections from the same biological sample used for scRNA-seq.

- Probe Hybridization: Follow manufacturer's protocol. Briefly, bake slides, deparaffinize, perform target retrieval, and apply protease digest. Hybridize with target-specific oligonucleotide probe sets.

- Signal Amplification & Detection: Apply sequential amplification steps. Use fluorophores (e.g., Opal 520, 570, 650) with distinct emission spectra for each channel.

- Imaging & Analysis: Acquire high-resolution z-stack images. Co-localization of mRNA signals from multiple marker genes within a single cell validates the scRNA-seq-derived annotation.

Computational Cross-Validation Protocol: Ensemble Annotation with Discrepancy Flagging

Purpose: Identify cells with ambiguous or conflicting annotations across multiple independent methods.

Tools Required: Seurat, SingleR, SCINA, scANVI (part of scvi-tools, used alongside Scanpy).

Workflow:

- Independent Annotations: Annotate the same dataset using at least three distinct methods:

- Method A: Reference-based (SingleR with Human Cell Atlas reference).

- Method B: Marker-based (SCINA using curated gene sets from CellMarker).

- Method C: Unsupervised clustering + manual annotation (based on top DEGs).

- Consensus & Discrepancy Analysis: Create a consensus label for cells where ≥2 methods agree. Flag cells where all three methods disagree for further investigation.

- Ambiguity Metric: Calculate an "Annotation Confidence Score" per cell as the proportion of methods agreeing on the label. Clusters with a mean score <0.7 require re-evaluation.
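
The consensus and ambiguity steps above can be sketched as a simple majority vote. This is an illustrative sketch, assuming the three methods' labels have already been mapped to a shared vocabulary; the function names and toy calls are hypothetical, and the 0.7 cutoff is the one stated in the workflow.

```python
from collections import Counter

def consensus_annotation(calls, min_agree=2):
    """Return (consensus label or None, confidence score) for one cell.

    `calls` holds one label per annotation method. The confidence score is
    the proportion of methods that agree on the most common label.
    """
    label, votes = Counter(calls).most_common(1)[0]
    score = votes / len(calls)
    return (label if votes >= min_agree else None), score

def cluster_confidence(per_cell_calls, clusters):
    """Mean per-cell confidence score for each cluster."""
    scores = {}
    for calls, cluster in zip(per_cell_calls, clusters):
        scores.setdefault(cluster, []).append(consensus_annotation(calls)[1])
    return {cluster: sum(s) / len(s) for cluster, s in scores.items()}

# Hypothetical calls from methods A, B, and C for four cells in two clusters.
per_cell_calls = [
    ("T cell", "T cell", "T cell"),
    ("T cell", "T cell", "NK cell"),
    ("B cell", "Monocyte", "NK cell"),   # all three methods disagree
    ("B cell", "B cell", "B cell"),
]
clusters = [0, 0, 1, 1]

means = cluster_confidence(per_cell_calls, clusters)
flagged = [c for c, m in means.items() if m < 0.7]
print(means, "clusters needing re-evaluation:", flagged)
```

Cells for which `consensus_annotation` returns `None` are the ones where all methods disagree and would be set aside for manual investigation.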

Table 2: Research Reagent Solutions for Validation

| Reagent / Resource | Provider Example | Function in Validation |

|---|---|---|

| RNAscope Multiplex Assay | Advanced Cell Diagnostics (ACD) | Gold-standard spatial validation of marker gene co-expression at single-cell resolution. |

| CITE-seq Antibody Panels | BioLegend (TotalSeq) | Protein surface marker measurement integrated with transcriptome to confirm identity (e.g., CD45, CD3, EpCAM). |

| CellHash / MULTI-seq Oligos | BioLegend, Custom Synthesis | Demultiplex samples to confirm cell type annotations are consistent across biological replicates and are not batch artifacts. |

| Curated Reference Atlases | HuBMAP, CellTypist, Azimuth | Benchmark annotations against high-quality, community-vetted references. |

| cellsnp-lite & Vireo | GitHub (single-cell genetics tools) | Use natural genetic variants (SNPs) in donor samples to verify clonal relationships and detect doublets. |

Visualizing the Validation Workflow and Pitfalls

Title: Annotation Workflow: Pitfalls vs. Validation Pathway

Title: Iterative Cell Type Annotation & Validation Protocol

In the context of single-cell RNA sequencing (scRNA-seq) research, the validation of cell type annotations stands as a critical, non-trivial challenge. A robust validation framework hinges on the precise understanding and measurement of four foundational metrological concepts: Accuracy, Precision, Reproducibility, and Resolution. This whitepaper defines these concepts within the scRNA-seq annotation workflow, provides methodologies for their assessment, and details essential resources for implementation.

Core Definitions in the Context of scRNA-seq Annotation

- Accuracy: The degree of closeness of an annotated cell type label to its true biological identity. High accuracy means annotations match definitive, orthogonal biological evidence (e.g., in situ hybridization, indexed flow cytometry).

- Precision (Repeatability): The degree of agreement between independent annotation results obtained under identical conditions (same algorithm, same analyst, same reference dataset on the same computational environment). It measures stochastic noise in the process.

- Reproducibility: The degree of agreement between independent annotation results obtained under varied but acceptable conditions (different algorithms, different reference atlases, different analysts, or different laboratories). It measures the robustness of the annotation pipeline to methodological choices.

- Resolution: The granularity at which cell types or states can be distinguished. High resolution allows separation of closely related subtypes (e.g., naive vs. memory T cells), but must be balanced against statistical confidence.

Quantitative Framework & Data Presentation

The following table summarizes key metrics and their targets for validating scRNA-seq annotations.

Table 1: Metrics for Validating scRNA-seq Cell Type Annotation Concepts

| Concept | Typical Assessment Metric | Ideal Target (Benchmark) | Data Source for Validation |

|---|---|---|---|

| Accuracy | F1-score, Balanced Accuracy | >0.85 (vs. gold-standard) | Cell hashing/sorting, CITE-seq, spatial transcriptomics (same tissue), known marker genes |

| Precision | Adjusted Rand Index (ARI) | ARI > 0.9 | Repeated runs of the same clustering/annotation pipeline on a fixed dataset |

| Reproducibility | Cohen's Kappa (κ), ARI | κ > 0.6 (Substantial agreement) | Comparing annotations from different pipelines, reference atlases, or analysts on the same dataset |

| Resolution | Cluster Significance (Silhouette Width), Differential Expression | Silhouette > 0.25; >5 DE genes (adj. p < 0.01) | Within-dataset analysis of subcluster distinctness |

Experimental Protocols for Validation

Protocol 1: Assessing Accuracy with CITE-seq

- Library Preparation: Generate paired scRNA-seq and antibody-derived tag (ADT) libraries from a single cell suspension using a platform like 10x Genomics.

- Data Processing: Sequence libraries and pre-process RNA and ADT counts separately (standard normalization, QC).

- Annotation: Annotate cell types based solely on the scRNA-seq data using a chosen classifier (e.g., SingleR, SCINA) and a reference atlas.

- Validation: Use the independently quantified surface protein (ADT) levels as an orthogonal validation. Calculate the confusion matrix between RNA-based annotations and protein marker-defined populations.

- Analysis: Compute accuracy metrics (F1-score, Balanced Accuracy) from the confusion matrix.
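
The last two steps of Protocol 1 can be sketched as follows. This is a minimal sketch, assuming each cell already has a protein-defined label (from ADT gating) and an RNA-based label in the same vocabulary; the toy labels are invented for illustration.

```python
from collections import Counter

def per_class_counts(truth, pred):
    """True positives, false positives, and false negatives for each class."""
    pairs = Counter(zip(truth, pred))  # the confusion matrix as a sparse table
    stats = {}
    for c in sorted(set(truth) | set(pred)):
        tp = pairs[(c, c)]
        fn = sum(v for (t, p), v in pairs.items() if t == c and p != c)
        fp = sum(v for (t, p), v in pairs.items() if t != c and p == c)
        stats[c] = (tp, fp, fn)
    return stats

def macro_f1(truth, pred):
    """Unweighted mean of per-class F1 scores."""
    f1s = []
    for tp, fp, fn in per_class_counts(truth, pred).values():
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return sum(f1s) / len(f1s)

def balanced_accuracy(truth, pred):
    """Unweighted mean of per-class recall, over classes present in truth."""
    recalls = [tp / (tp + fn)
               for tp, fp, fn in per_class_counts(truth, pred).values()
               if tp + fn > 0]
    return sum(recalls) / len(recalls)

# Hypothetical labels: protein-gated populations vs. RNA-based annotation.
adt_gated = ["CD4 T"] * 4 + ["CD8 T"] * 4 + ["B"] * 2
rna_labels = ["CD4 T", "CD4 T", "CD4 T", "CD8 T",
              "CD8 T", "CD8 T", "CD8 T", "CD8 T",
              "B", "B"]

bal_acc = balanced_accuracy(adt_gated, rna_labels)
f1 = macro_f1(adt_gated, rna_labels)
print(f"balanced accuracy = {bal_acc:.3f}, macro F1 = {f1:.3f}")
```

Macro-averaging gives rare populations the same weight as abundant ones, which is usually the desired behavior when the populations of interest are small.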

Protocol 2: Assessing Reproducibility via Cross-Method Comparison

- Dataset Selection: Use a publicly available, well-characterized scRNA-seq dataset (e.g., PBMCs).

- Independent Annotation: Have two or more analysts, or apply two or more annotation tools (e.g., Seurat label transfer, scANVI, SingleR) to the same pre-processed dataset.

- Harmonization: Map the annotation labels from different methods to a common ontology (e.g., Cell Ontology terms) where possible.

- Metric Calculation: Compute the agreement between the label sets using Cohen's Kappa (for categorical agreement) or ARI (for cluster-level agreement).
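
The harmonization and metric steps of Protocol 2 can be sketched as below. The mapping dictionary stands in for a proper Cell Ontology lookup and is entirely hypothetical, as are the tool outputs; the kappa formula itself is the standard one, (observed agreement - chance agreement) / (1 - chance agreement).

```python
from collections import Counter

# Hypothetical mapping from tool-specific label strings to shared names.
HARMONIZE = {
    "T_cells": "T cell", "CD4+ T": "T cell",
    "B_cell": "B cell", "B cells": "B cell",
    "Mono": "monocyte", "Monocytes": "monocyte",
}

def harmonize(labels, mapping=HARMONIZE):
    """Map labels to the shared vocabulary, leaving unknown labels as-is."""
    return [mapping.get(lab, lab) for lab in labels]

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two label vectors over the same cells."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical outputs of two annotation tools on the same ten cells.
tool_a = ["T_cells"] * 4 + ["B_cell"] * 3 + ["Mono"] * 3
tool_b = ["CD4+ T", "CD4+ T", "CD4+ T", "B cells", "B cells",
          "B cells", "B cells", "Monocytes", "Monocytes", "CD4+ T"]

kappa = cohens_kappa(harmonize(tool_a), harmonize(tool_b))
print(f"Cohen's kappa = {kappa:.3f}")
```

Skipping the harmonization step would count purely cosmetic differences in label strings as disagreement and depress kappa artificially.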

Visualization of the Validation Workflow

Title: scRNA-seq Annotation Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for scRNA-seq Annotation & Validation

| Item | Function & Relevance to Validation |

|---|---|

| 10x Genomics Chromium Single Cell Immune Profiling | Provides paired gene expression (GEX) and surface protein (ADT) data. The definitive reagent for Accuracy validation via orthogonal protein measurement. |

| Cell Hashing Antibodies (e.g., BioLegend TotalSeq-A) | Enables sample multiplexing and doublet detection. Improves precision by allowing clean, sample-specific clustering before annotation. |

| Reference Atlases (e.g., Human Cell Landscape, Mouse Brain Atlas) | Pre-annotated, high-quality datasets used as a training reference for label transfer. Choice of atlas directly impacts reproducibility and achievable resolution. |

| Single-cell Annotation Software (Seurat, Scanpy, SingleR) | Computational toolkits implementing clustering and classification algorithms. The core of the annotation pipeline where parameters affect all four key concepts. |

| Benchmarking Datasets (e.g., from DCP or CZ CELLxGENE) | Gold-standard, ground-truth datasets (often with CITE-seq or sorted cells) essential for accuracy benchmarking of new annotation methods. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of cellular heterogeneity. The process of assigning cell identities—cell type annotation—is a critical but non-trivial step in the analysis pipeline. Validation is not a separate, final check but an integral component woven throughout the annotation workflow. This guide details the technical steps of the annotation workflow, explicitly framing each stage within the context of validation to ensure robust and biologically meaningful results for downstream research and drug development.

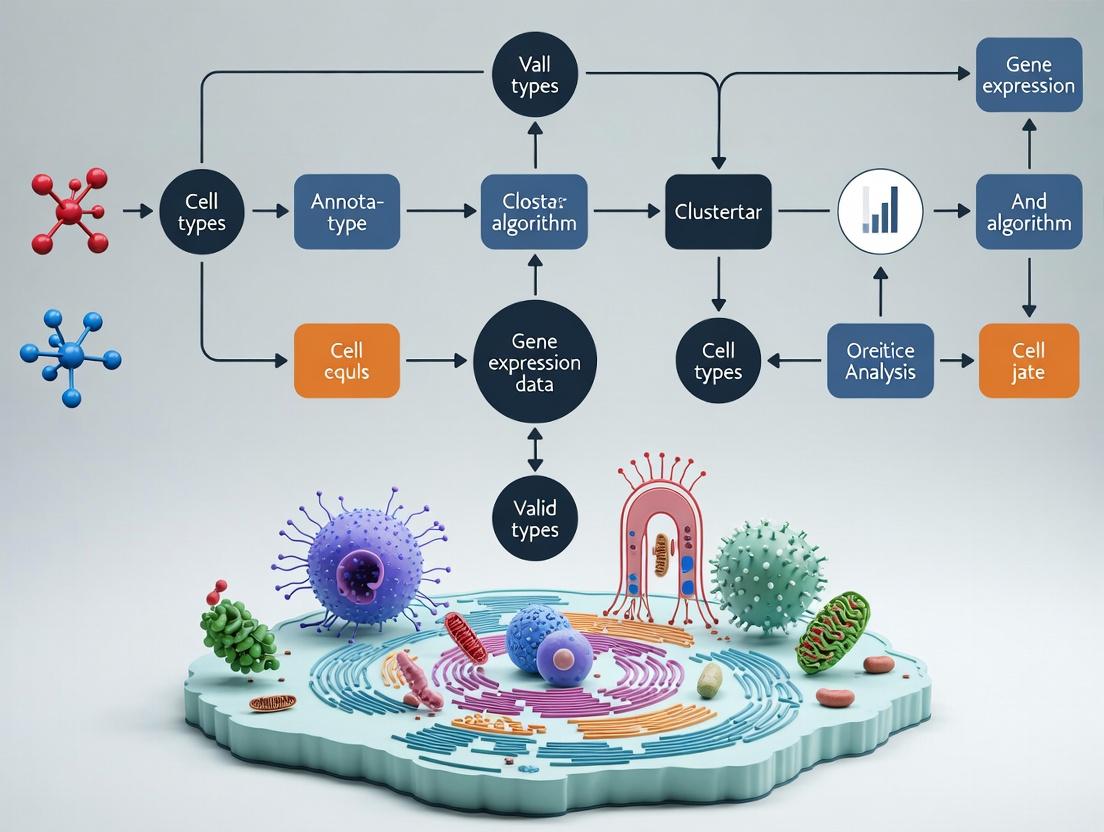

The Integrated Annotation & Validation Workflow

The annotation process is a cycle of hypothesis generation and testing. The following diagram illustrates this integrated workflow.

Diagram Title: The Integrated scRNA-seq Annotation and Validation Workflow

Stages of Annotation and Corresponding Validation Techniques

Pre-processing and Quality Control (QC)

This foundational stage requires validation of data quality before any annotation is attempted.

Experimental Protocol: Ambient RNA Correction with SoupX

- Input: Raw cellranger output matrices (filtered and raw).

- Estimate Contamination: Use the autoEstCont function in SoupX to estimate the global background contamination fraction from the raw matrix.

- Calculate Soup Profile: Generate the background expression profile.

- Adjust Counts: Subtract the estimated contaminating counts using adjustCounts to produce a corrected count matrix.

- Validation Metric: Monitor the change in expression of known marker genes for highly expressed ambient RNAs (e.g., HBB for red blood cells in tissues) before and after correction. A significant drop in their spurious expression across the population validates the correction.

Table 1: Key QC Metrics and Validation Targets

| Metric | Acceptance Threshold | Validation Purpose |

|---|---|---|

| Reads/Cell | >20,000 (3' end); >50,000 (full-length) | Excludes low-information cells |

| Genes/Cell | >500-1,000 (tissue-dependent) | Filters damaged/empty droplets |

| Mitochondrial % | <10-20% (tissue-dependent) | Identifies dying/stressed cells |

| Hemoglobin Genes % | <5% (non-erythroid samples) | Flags ambient RNA contamination |
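
The thresholds in Table 1 can be applied as a per-cell check. This is a sketch under stated assumptions: the field names (`reads`, `genes`, `mito_pct`, `hb_pct`) are hypothetical, and where the table gives a tissue-dependent range the most lenient end is used as a default that should be tuned per tissue.

```python
# Defaults taken from the lenient end of the ranges in the table above
# (3' protocol); these are starting points, not universal cutoffs.
QC_THRESHOLDS = {
    "min_reads": 20_000,
    "min_genes": 500,
    "max_mito_pct": 20.0,
    "max_hb_pct": 5.0,
}

def qc_flags(cell, thresholds=QC_THRESHOLDS):
    """Return the list of QC criteria that a cell fails (empty if it passes)."""
    flags = []
    if cell["reads"] <= thresholds["min_reads"]:
        flags.append("low_reads")
    if cell["genes"] <= thresholds["min_genes"]:
        flags.append("low_genes")
    if cell["mito_pct"] >= thresholds["max_mito_pct"]:
        flags.append("high_mito")
    if cell["hb_pct"] >= thresholds["max_hb_pct"]:
        flags.append("high_hemoglobin")
    return flags

# Hypothetical per-cell QC summaries.
cells = {
    "cell_1": {"reads": 45_000, "genes": 2_100, "mito_pct": 4.2, "hb_pct": 0.1},
    "cell_2": {"reads": 8_000, "genes": 310, "mito_pct": 31.0, "hb_pct": 0.3},
    "cell_3": {"reads": 30_000, "genes": 1_500, "mito_pct": 6.0, "hb_pct": 12.0},
}

report = {name: qc_flags(c) for name, c in cells.items()}
passing = [name for name, flags in report.items() if not flags]
print(report, "passing:", passing)
```

Returning the reasons rather than a single pass/fail flag makes it easier to see whether failures concentrate in one sample or one criterion, which is itself a useful validation signal.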

Provisional Annotation

Initial labels are assigned using computational methods, each requiring specific validation approaches.

Experimental Protocol: Marker-Based Annotation with Wilcoxon Test

- Find Markers: For each cluster from unsupervised analysis, perform a Wilcoxon rank-sum test comparing gene expression in the cluster vs. all other cells.

- Filter: Apply thresholds (e.g., log fold-change > 0.5, adjusted p-value < 0.01, min.pct > 0.25).

- Map to Reference: Compare top markers (e.g., top 5 per cluster) to canonical cell type markers from curated databases (CellMarker, PanglaoDB) or tissue-specific literature.

- Assign Provisional Label: Assign the cell type whose canonical markers best match the cluster's differentially expressed genes (DEGs).
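
The filter, map, and assign steps above can be sketched as follows, assuming the Wilcoxon test has already been run and its results exported as one record per gene. The record fields, the tiny marker dictionary, and the overlap-fraction score are illustrative assumptions, not the output format of any particular tool or database.

```python
def filter_markers(de_results, min_logfc=0.5, max_padj=0.01,
                   min_pct=0.25, top_n=5):
    """Apply the thresholds from the protocol and keep the top genes by logFC."""
    kept = [r for r in de_results
            if r["logfc"] > min_logfc
            and r["padj"] < max_padj
            and r["pct"] > min_pct]
    kept.sort(key=lambda r: r["logfc"], reverse=True)
    return [r["gene"] for r in kept[:top_n]]

def best_label(top_markers, canonical):
    """Score each cell type by the fraction of its canonical markers recovered."""
    scores = {cell_type: len(set(top_markers) & genes) / len(genes)
              for cell_type, genes in canonical.items()}
    return max(scores, key=scores.get), scores

# Hypothetical canonical markers (a real run would draw on a curated database).
canonical = {
    "T cell": {"CD3D", "CD3E", "CD2", "IL7R"},
    "B cell": {"CD79A", "MS4A1", "CD19"},
    "Monocyte": {"CD14", "LYZ", "S100A8"},
}

# Hypothetical DE results for one cluster versus all other cells.
de_results = [
    {"gene": "CD3E", "logfc": 1.8, "padj": 1e-30, "pct": 0.90},
    {"gene": "CD3D", "logfc": 1.6, "padj": 1e-25, "pct": 0.85},
    {"gene": "IL7R", "logfc": 1.1, "padj": 1e-12, "pct": 0.60},
    {"gene": "MALAT1", "logfc": 0.3, "padj": 1e-5, "pct": 0.99},  # fails logFC
    {"gene": "LYZ", "logfc": 0.9, "padj": 0.2, "pct": 0.30},      # fails p-value
    {"gene": "CD2", "logfc": 0.8, "padj": 1e-8, "pct": 0.20},     # fails min.pct
]

top = filter_markers(de_results)
label, scores = best_label(top, canonical)
print(top, "->", label, scores)
```

The resulting label is provisional by design: a high overlap score only says the cluster resembles the canonical list, which is why the validation cycle that follows is still needed.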

The Core Validation Cycle

Validation at this stage is multi-faceted, moving from internal consistency to external biological evidence.

Diagram Title: The Three Pillars of scRNA-seq Annotation Validation

Table 2: Validation Techniques and Their Applications

| Validation Type | Common Tools/Methods | Key Output/Readout | What a Successful Validation Confirms |

|---|---|---|---|

| Internal | Sub-clustering, Marker expression UMAPs, Doublet detectors | Homogeneous expression of markers within clusters; No sub-structure correlating with technical artifacts. | Annotation is consistent with the intrinsic structure of this dataset. |

| External | SingleR, Azimuth, Seurat label transfer | High-confidence scores across cells; Agreement with independent, curated reference. | Annotation is generalizable and matches established biological knowledge. |

| Biological | CITE-seq, Spatial Transcriptomics, Functional assays | Co-expression of RNA and protein; Anatomically plausible location; Expected functional response. | Annotation corresponds to a true biological state with protein-level and spatial/functional correlates. |

Experimental Protocol: Cross-Validation with SingleR

- Prepare Reference: Download a high-quality, manually annotated scRNA-seq reference (e.g., from the Human Cell Atlas or Blueprint/ENCODE for SingleR).

- Map Query: Run SingleR (SingleR() function) using the reference and the query dataset's normalized log-expression matrix.

- Score Annotations: Examine the per-cell assignment scores ($scores). High scores indicate confident matches.

- Resolve Discrepancies: For clusters with low scores or ambiguous labels, compare SingleR's suggestions with the original marker-based labels and investigate discordant cells via differential expression.
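
One way to make "low scores or ambiguous labels" operational is to look at the margin between the best and second-best score for each cell. The sketch below assumes the per-cell scores have been exported from R into a plain mapping of label to score; the score values and the 0.05 margin are illustrative assumptions, not SingleR defaults.

```python
def call_with_margin(scores, min_margin=0.05):
    """Return (best label, margin to runner-up, is_ambiguous) for one cell."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    (best, top_score), (_, second_score) = ranked[0], ranked[1]
    margin = top_score - second_score
    return best, margin, margin < min_margin

# Hypothetical exported scores for two cells.
cell_scores = {
    "cell_1": {"T cell": 0.62, "NK cell": 0.60, "B cell": 0.21},
    "cell_2": {"B cell": 0.70, "T cell": 0.30, "NK cell": 0.25},
}

calls = {cell: call_with_margin(s) for cell, s in cell_scores.items()}
ambiguous = [cell for cell, (_, _, flag) in calls.items() if flag]
print(calls, "ambiguous:", ambiguous)
```

Here the first cell is flagged because T cell and NK cell score almost equally, which is exactly the kind of case worth checking against the marker-based label.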

Experimental Protocol: Orthogonal Protein Validation with CITE-seq

- Sample Preparation: Perform a feature barcoding experiment, hybridizing antibody-derived tags (ADTs) against 50-200 key surface proteins to the same cell suspension used for scRNA-seq.

- Sequencing & Processing: Sequence cDNA (RNA) and ADT libraries, then align and quantify using tools like CITE-seq-Count and Cell Ranger.

- Normalization: Normalize ADT counts using centered log-ratio (CLR) transformation.

- Correlation Analysis: For each annotated cell type, check the correlation between RNA expression of the marker gene and its corresponding protein (ADT) level (e.g., CD3E RNA vs. CD3 protein). High correlation validates the annotation at the protein level.
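
The normalization and correlation steps can be sketched as below. CLR has several variants in common use; this sketch assumes the per-cell form with a pseudocount of 1, where each log-transformed ADT count is centered on the mean log count of that cell. The ADT counts and RNA values are invented for illustration.

```python
import math

def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one cell's ADT counts."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical ADT counts per cell, in the order (CD3, CD19, CD14).
adt_counts = [
    [250, 4, 6],    # T cell
    [310, 2, 9],    # T cell
    [5, 180, 7],    # B cell
    [3, 220, 4],    # B cell
    [6, 5, 400],    # monocyte
]
# Hypothetical normalized CD3E RNA expression for the same five cells.
cd3e_rna = [2.1, 2.6, 0.0, 0.1, 0.0]

cd3_protein = [clr(cell)[0] for cell in adt_counts]
r = pearson(cd3e_rna, cd3_protein)
print(f"CD3E RNA vs. CD3 protein (CLR): r = {r:.3f}")
```

Because RNA measurements are sparse, a modest correlation at the single-cell level can still be compatible with a correct annotation; comparing cluster-level averages is a useful complement.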

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Research Reagents and Kits for Validation Experiments

| Reagent/Kits | Provider Examples | Primary Function in Validation |

|---|---|---|

| Chromium Next GEM Single Cell 5' Kit w/ Feature Barcoding | 10x Genomics | Enables paired scRNA-seq and surface protein quantification (CITE-seq) for orthogonal validation. |

| TotalSeq Antibodies | BioLegend | Antibody-derived tags (ADTs) conjugated with oligonucleotide barcodes for use in CITE-seq experiments. |

| Visium Spatial Tissue Optimization & Gene Expression Slides | 10x Genomics | Enables spatial transcriptomic validation of annotated cell type localization within tissue architecture. |

| SMART-seq HT Kit | Takara Bio | Provides high-sensitivity, full-length scRNA-seq for generating deep reference datasets or validating rare cell types. |

| Cell Hashing Antibodies (TotalSeq-C) | BioLegend | Allows sample multiplexing, reducing batch effects and improving the power of cross-dataset validation. |

| Multiplexed FACS Antibody Panels | Standard Flow Cytometry Suppliers | Enables traditional flow cytometric sorting or analysis of cell populations defined by scRNA-seq for functional validation. |

Validation is the critical thread that runs through every stage of the scRNA-seq annotation workflow, from initial QC to final biological interpretation. A rigorous, multi-modal validation strategy—incorporating internal, external, and biological pillars—transforms provisional computational labels into biologically defensible cell type annotations. This robust foundation is essential for generating reliable insights in basic research and for building trustworthy biomarkers and therapeutic targets in drug development.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to deconstruct tissue heterogeneity. However, the critical step of assigning cell type identities to clusters—cell type annotation—remains a major challenge with significant implications for downstream biological interpretation. Validation is not a single step but a continuum of evidence, ranging from internal checks of the data itself to confirmation through independent, external biological assays. This guide provides a technical framework for implementing a rigorous, multi-layered validation strategy to ensure robust and reproducible cell type annotations.

The Validation Hierarchy: A Layered Approach

Effective validation operates on a hierarchy of evidence, each layer providing increasing confidence.

Diagram 1: The four-layer validation hierarchy for scRNA-seq annotations.

Layer 1: Internal Consistency Validation

This layer assesses the quality and logical coherence of the clustering and annotation process using only the scRNA-seq dataset itself.

Cluster Quality Metrics

A foundational step is to ensure clusters are robust and separable before annotation.

Table 1: Key Internal Cluster Quality Metrics

| Metric | Ideal Value | Interpretation | Common Tool/Function |

|---|---|---|---|

| Silhouette Width | Close to 1 | Measures how similar a cell is to its own cluster vs. others. High value indicates good separation. | cluster::silhouette() (R), sklearn.metrics.silhouette_score (Python) |

| Modularity (for graph-based) | > 0.3 | Quality of graph partitioning. Higher values indicate strong community structure. | Louvain/Leiden algorithm output |

| Within-cluster sum of squares | Elbow in scree plot | Guides optimal cluster number (k) selection. | scikit-learn KMeans inertia_ |

| Average Jaccard Index (Stability) | > 0.75 | Checks cluster robustness upon subsampling. High index indicates stable clusters. | fpc::clusterboot, scclusteval |
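
The Jaccard-based stability metric in the last row can be sketched as follows. The sketch assumes clustering has already been re-run on each subsample and that results are available as sets of cell identifiers; the function names and toy memberships are hypothetical, and matching each original cluster to its best-overlapping re-cluster is one common convention.

```python
def jaccard(a, b):
    """Jaccard index of two collections of cell identifiers."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)

def cluster_stability(original, resampled_runs):
    """Average best-match Jaccard index per original cluster.

    `original` maps cluster name to a set of cell ids. Each run is a pair
    (sampled cell ids, re-clustering of those cells as name -> set of ids).
    """
    stability = {}
    for name, members in original.items():
        best = []
        for sampled_ids, reclusters in resampled_runs:
            kept = members & sampled_ids  # members that were subsampled
            if kept:
                best.append(max(jaccard(kept, c) for c in reclusters.values()))
        stability[name] = sum(best) / len(best) if best else float("nan")
    return stability

# Toy example: eight cells, two original clusters, two subsampling runs.
original = {"c0": {1, 2, 3, 4}, "c1": {5, 6, 7, 8}}
resampled_runs = [
    ({1, 2, 3, 5, 6, 7}, {"a": {1, 2, 3}, "b": {5, 6, 7}}),
    ({1, 2, 4, 5, 6, 8}, {"a": {1, 2, 4, 5}, "b": {6, 8}}),
]

stability = cluster_stability(original, resampled_runs)
print(stability)
```

In the second run one cell from `c1` is absorbed into the other cluster, which lowers both scores; real analyses would average over many more subsamples before comparing against the 0.75 guideline.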

Marker Gene Assessment

Annotation relies on marker genes. Their expression must be evaluated systematically.

Protocol: Differential Expression & Specificity Scoring

- Perform DE: For each cluster, run a differential expression test (e.g., Wilcoxon rank-sum, MAST) against all other cells.

- Calculate Specificity Metrics:

- Log2 Fold Change (log2FC): Threshold > 0.58 (∼1.5x linear fold change, since log2(1.5) ≈ 0.585).

- Area Under the ROC Curve (AUROC): Threshold > 0.8. Measures how well a gene separates one cluster from all others.

- Precision-Recall AUC: Particularly useful for rare cell types.

- Visualize: Create dot plots or heatmaps showing expression level (mean) and fraction of cells expressing (% expressed) for top markers per cluster.
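
The two main specificity metrics above can be computed directly for a single gene. This is a minimal sketch with invented expression values; the AUROC is obtained from pairwise comparisons (equivalent to the normalized Mann-Whitney U statistic), and the pseudocount used in the fold change is an assumption.

```python
import math

def auroc(in_cluster, out_cluster):
    """Probability that a random in-cluster cell expresses the gene more
    highly than a random out-of-cluster cell (ties count as one half)."""
    wins = 0.0
    for x in in_cluster:
        for y in out_cluster:
            if x > y:
                wins += 1.0
            elif x == y:
                wins += 0.5
    return wins / (len(in_cluster) * len(out_cluster))

def log2_fold_change(in_cluster, out_cluster, pseudocount=1.0):
    """log2 ratio of mean expression, with a pseudocount to avoid log(0)."""
    mean_in = sum(in_cluster) / len(in_cluster)
    mean_out = sum(out_cluster) / len(out_cluster)
    return math.log2((mean_in + pseudocount) / (mean_out + pseudocount))

# Hypothetical normalized expression of one candidate marker.
in_cluster = [5, 7, 6, 0, 8]        # cells in the cluster of interest
out_cluster = [0, 0, 1, 0, 2, 0]    # all other cells

auc = auroc(in_cluster, out_cluster)
lfc = log2_fold_change(in_cluster, out_cluster)
passes = lfc > 0.58 and auc > 0.8
print(f"AUROC = {auc:.3f}, log2FC = {lfc:.3f}, passes thresholds: {passes}")
```

The pairwise loop is quadratic in the number of cells; rank-based implementations give the same AUROC much faster on full datasets.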

Diagram 2: Workflow for internal marker gene validation.

Layer 2: Internal Predictive Validation

This layer uses computational cross-validation to test the stability and accuracy of the annotations.

Cross-Validation with Classifiers

Protocol: Train-Validate Classifier on Own Data

- Split Data: Randomly partition cells into a training set (e.g., 80%) and a held-out test set (20%), stratified by cluster label.

- Train Classifier: On the training set, train a cell type classifier (e.g., Random Forest, SVM, or a simple k-NN classifier) using the expression of top marker genes.

- Predict & Benchmark: Predict labels for the test set. Calculate metrics like Balanced Accuracy and F1-score (macro-averaged).

- Interpret: High accuracy (>85%) suggests annotations are consistent with the expression data. Low accuracy indicates poor or non-discriminative markers.
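
The train-validate protocol can be sketched end to end with a deliberately simple model. A nearest-centroid classifier is used here as a stand-in for the Random Forest, SVM, or k-NN options named above, and the two-gene expression data are simulated, so the numbers illustrate the mechanics only.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Split cell indices into train/test sets, stratified by label."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for i, lab in enumerate(labels):
        by_label[lab].append(i)
    train, test = [], []
    for idx in by_label.values():
        rng.shuffle(idx)
        n_test = max(1, round(len(idx) * test_fraction))
        test.extend(idx[:n_test])
        train.extend(idx[n_test:])
    return train, test

def fit_centroids(X, labels):
    """Mean marker expression vector per label."""
    groups = defaultdict(list)
    for row, lab in zip(X, labels):
        groups[lab].append(row)
    return {lab: [sum(col) / len(rows) for col in zip(*rows)]
            for lab, rows in groups.items()}

def predict(centroids, row):
    """Assign the label of the nearest centroid (squared Euclidean distance)."""
    return min(centroids,
               key=lambda lab: sum((a - b) ** 2
                                   for a, b in zip(row, centroids[lab])))

def balanced_accuracy(truth, pred):
    """Unweighted mean of per-class recall."""
    recalls = []
    for lab in set(truth):
        idx = [i for i, t in enumerate(truth) if t == lab]
        recalls.append(sum(pred[i] == lab for i in idx) / len(idx))
    return sum(recalls) / len(recalls)

# Simulated expression of two marker genes (columns: a T-cell and a B-cell marker).
rng = random.Random(1)
X = ([[rng.gauss(5, 1), rng.gauss(0.5, 0.5)] for _ in range(50)]
     + [[rng.gauss(0.5, 0.5), rng.gauss(5, 1)] for _ in range(50)])
y = ["T cell"] * 50 + ["B cell"] * 50

train_idx, test_idx = stratified_split(y)
centroids = fit_centroids([X[i] for i in train_idx], [y[i] for i in train_idx])
predicted = [predict(centroids, X[i]) for i in test_idx]
score = balanced_accuracy([y[i] for i in test_idx], predicted)
print(f"held-out balanced accuracy: {score:.2f}")
```

One caveat applies to any version of this check: if the marker genes were selected using all cells, including the held-out ones, the test accuracy is optimistic, so marker selection should ideally happen inside the training split.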

Leave-One-Out Gene Validation

Tests the dependency of the annotation on a single canonical marker.

- Annotate clusters using a full marker list.

- Systematically remove one key marker gene (e.g., CD3E for T cells).

- Re-run the annotation logic (automated or manual). Robust annotations should not change upon removal of a single gene.
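
The leave-one-out procedure can be sketched with a toy annotation rule. The rule used here (pick the cell type whose markers have the highest mean expression in the cluster) is a simplified stand-in for whatever automated or manual logic is actually used, and the marker sets and expression values are hypothetical.

```python
def annotate(cluster_mean, marker_sets):
    """Pick the cell type whose markers have the highest mean expression."""
    scores = {cell_type: sum(cluster_mean.get(g, 0.0) for g in genes) / len(genes)
              for cell_type, genes in marker_sets.items() if genes}
    return max(scores, key=scores.get)

def fragile_markers(cluster_mean, marker_sets):
    """Return the baseline label and the markers whose removal changes it."""
    baseline = annotate(cluster_mean, marker_sets)
    flips = []
    for gene in sorted(marker_sets[baseline]):
        reduced = {cell_type: [g for g in genes if g != gene]
                   for cell_type, genes in marker_sets.items()}
        if annotate(cluster_mean, reduced) != baseline:
            flips.append(gene)
    return baseline, flips

marker_sets = {
    "T cell": ["CD3E", "CD3D", "CD2"],
    "NK cell": ["NKG7", "GNLY", "KLRD1"],
}

# Cluster whose T-cell call rests almost entirely on CD3E.
fragile = {"CD3E": 3.0, "CD3D": 0.2, "CD2": 0.1,
           "NKG7": 1.2, "GNLY": 1.0, "KLRD1": 0.9}
# Cluster supported by all three T-cell markers.
robust = {"CD3E": 2.5, "CD3D": 2.2, "CD2": 1.9,
          "NKG7": 0.3, "GNLY": 0.1, "KLRD1": 0.2}

fragile_result = fragile_markers(fragile, marker_sets)
robust_result = fragile_markers(robust, marker_sets)
print("fragile cluster:", fragile_result)
print("robust cluster:", robust_result)
```

A non-empty list of flipping genes identifies exactly which marker the annotation depends on, which is more actionable than a bare stable/unstable verdict.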

Table 2: Predictive Validation Metrics & Interpretation

| Validation Method | Metric | Target Threshold | Indication of Problem |

|---|---|---|---|

| Train-Test Classifier | Balanced Accuracy | > 0.85 | Annotations are not reliably predictable from expression data. |

| Leave-One-Gene-Out | Annotation Stability | 100% stable | Annotation is overly reliant on a single, potentially noisy gene. |

Layer 3: External Biological & Database Evidence

This layer grounds annotations in prior biological knowledge from independent sources.

Reference Dataset Mapping

Protocol: Projection onto Atlas References

- Select Reference: Choose a well-curated, public scRNA-seq atlas (e.g., Human Cell Landscape, Mouse Cell Atlas, Tabula Sapiens).

- Harmonization: Use a batch integration method (e.g., Seurat's CCA, Scanorama, Harmony) to co-embed query data with the reference.

- Label Transfer: Employ a label transfer algorithm (e.g., Seurat's FindTransferAnchors and TransferData, or scArches).

- Evaluate Concordance: Calculate the proportion of cells where the transferred label matches your original annotation. Disagreements require biological scrutiny.
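The concordance calculation can be sketched with pandas once both label sets are exported per cell. The label vectors below are invented, and the sketch assumes the reference and query labels have already been harmonized to the same vocabulary (for example via Cell Ontology terms); without that step, naming differences would be counted as disagreements.

```python
import pandas as pd

# Hypothetical per-cell labels; in practice these come from your annotation and label transfer.
original = pd.Series(
    ["T cell"] * 4 + ["B cell"] * 3 + ["NK cell"] * 3, name="original"
)
transferred = pd.Series(
    ["T cell", "T cell", "T cell", "NK cell",
     "B cell", "B cell", "B cell",
     "NK cell", "T cell", "T cell"],
    name="transferred",
)

agree = original == transferred
overall_concordance = float(agree.mean())          # proportion of matching cells
per_label = agree.groupby(original).mean()         # concordance within each original label
disagreements = pd.crosstab(original, transferred) # which labels are swapped for which

print(f"Overall concordance: {overall_concordance:.2f}")
print(per_label)
print(disagreements)
```

The per-label breakdown is usually more informative than the overall figure, since a single poorly matched population can hide behind a high global rate.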

Enrichment Analysis for Functional Coherence

Check if marker genes for an annotated cell type enrich for known biological pathways.

- Gene List: Extract top 100-200 markers for a given cluster.

- Enrichment Test: Run Gene Ontology (GO), Kyoto Encyclopedia of Genes and Genomes (KEGG), or cell-type-specific signature (e.g., CellMarker) enrichment using tools like clusterProfiler or Enrichr.

- Interpret: A T-cell cluster should enrich for "T cell receptor signaling," "immune response," etc. Lack of expected enrichment is a red flag.
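To make the statistic behind such an enrichment test concrete, here is a minimal sketch of a one-sided hypergeometric over-representation test, a common choice for this kind of analysis. The function name and all gene counts are illustrative assumptions; dedicated tools additionally handle gene-set databases and multiple-testing correction, which this sketch omits.

```python
from math import comb

def overrepresentation_p(n_universe, n_pathway, n_markers, n_overlap):
    """P(overlap >= n_overlap) when n_markers genes are drawn at random
    from a universe containing n_pathway pathway genes."""
    total = comb(n_universe, n_markers)
    upper = min(n_pathway, n_markers)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_markers - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Illustrative numbers: 150 cluster markers, a 100-gene pathway,
# 12 genes in common, and a background of 20,000 genes.
p_value = overrepresentation_p(20000, 100, 150, 12)
print(f"Enrichment p-value: {p_value:.2e}")
```

With fewer than one overlapping gene expected by chance in this example, an overlap of 12 yields a very small p-value; the choice of background universe strongly affects the result and should match the genes actually detectable in the experiment.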

Layer 4: External Experimental Validation

The gold standard, providing direct biological confirmation.

Orthogonal Single-Cell Modalities

Protocol: Multimodal Co-measurement

- CITE-seq/REAP-seq: Measure surface protein abundance alongside transcriptome. Directly validate protein-level expression of key markers (e.g., CD3, CD19) used in RNA-based annotation.

- Spatial Transcriptomics (e.g., 10x Visium, Slide-seq): Validate that cells annotated as a specific type localize to expected tissue microenvironments (e.g., glomerular cells within kidney glomeruli).

- scATAC-seq: Confirm that chromatin accessibility in an annotated cell type is enriched at key cell-type-specific regulatory elements.

In Situ Hybridization & Immunohistochemistry

Protocol: Spatial Validation on Tissue Sections

- Based on scRNA-seq annotations, select 2-3 highly specific RNA markers per cell type.

- Design RNAscope probes or antibodies for corresponding proteins.

- Perform multiplexed in situ hybridization (ISH) or immunohistochemistry (IHC) on serial sections of the original tissue.

- Confirm that the spatial distribution and co-localization of signals match the predicted relationships from the annotation (e.g., that "Marker A+" cells are found in the expected histological layer).

Table 3: Key Research Reagent Solutions for Validation

| Item / Resource | Function in Validation | Example Product/Platform |

|---|---|---|

| Cell Hashing/Optimized Nuclei Isolation Kits | Reduces batch effects in internal validation by enabling cleaner multiplexing. | BioLegend TotalSeq-C Antibodies, 10x Multiome ATAC + Gene Exp. |

| Validated Antibody Panels (for CITE-seq) | Provides orthogonal protein-level evidence for transcript-based markers. | BioLegend TotalSeq, BD AbSeq Assays |

| Multiplexed Spatial Profiling Platforms (FISH/ISH and imaging) | Enables spatial confirmation of marker expression at the RNA level (RNAscope, GeoMx) or protein level (CODEX, GeoMx). | Advanced Cell Diagnostics RNAscope, NanoString GeoMx, Akoya CODEX |

| Curated Reference Atlases | Provides external biological evidence for label transfer and consensus. | Human: Tabula Sapiens, HCA. Mouse: Tabula Muris Senis (TMS). Reference mapping for human and mouse tissues: Azimuth. |

| Automated Annotation & Benchmarking Software | Standardizes internal consistency and predictive validation checks. | scType, SingleR, SCINA, scMatch, scMAGIC |

| Benchmarking Datasets (Gold Standards) | Provides positive controls for validating the entire annotation pipeline. | PBMC datasets from 10x Genomics, mouse brain datasets from Saunders et al. |

The Validation Toolkit: A Step-by-Step Guide to Methods and Best Practices

Validating cell type annotations in single-cell RNA sequencing (scRNA-seq) is a critical step to ensure biological conclusions are robust. While log2 fold-change (log2FC) remains a cornerstone for identifying differentially expressed genes (DEGs), it provides an incomplete picture. This guide details advanced metrics—specifically gene specificity scores and expression distribution analysis—that are essential for rigorous marker gene assessment within a comprehensive validation thesis.

Beyond Log2FC: Core Concepts

Log2FC measures the average expression difference between groups but fails to capture expression distribution across cells. A gene with a high log2FC may still be expressed in many non-target cell types, making it a poor specific marker. The following advanced approaches address this limitation.

Specificity Scores

Specificity scores quantify how restricted a gene's expression is to a particular cell type or cluster. The table below summarizes key metrics gathered from current literature.

Table 1: Comparison of Gene Specificity Metrics

| Metric Name | Formula (Conceptual) | Range | Interpretation | Key Advantage |

|---|---|---|---|---|

| Gini Index | Inequality of mean expression across n clusters: ∑_i ∑_j abs(x_i − x_j) / (2n ∑_i x_i) | 0 (uniform) to (n−1)/n (expression confined to one cluster) | Higher = more specific to a subset of cells. | Robust, scale-invariant measure of inequality. |

| Tau (τ) | ∑(1 - x_i / max(x)) / (n-1) | 0 (ubiquitous) to 1 (cell-type specific) | Values >0.85 often indicate a cell-type-specific gene. | Designed explicitly for tissue/cell type specificity. |

| Jensen-Shannon Divergence (JSD) | Distance of cluster expression profile from uniform distribution. | 0 (uniform) to just under 1 (specific), using base-2 logarithms; the attainable maximum rises with the number of clusters | Higher = distribution is skewed toward specific clusters. | Information-theoretic; symmetric and bounded. |

| Specificity Metric (SPM) | (Max Mean Expression) / (Sum of Mean Expressions) | ~0 to 1 | Closer to 1 indicates expression dominated by one cluster. | Intuitive; directly uses mean expression values. |

| Area Under ROC Curve (AUC) | Classifier ability to identify cluster using gene expression. | 0.5 (random) to 1 (perfect) | AUC > 0.7 suggests predictive power for cell identity. | Evaluates discriminative power at single-cell level. |

Expression Distribution Analysis

Inspecting the full distribution of expression (e.g., via violin plots, ridge plots, or empirical cumulative distribution functions) reveals heterogeneity within the putative target cluster (e.g., only a subtype expresses the marker) and "leakage" into off-target clusters.
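A numeric companion to these plots is the fraction of cells in each cluster that detect the gene at all. The sketch below computes it with NumPy; the function name, the detection threshold of zero, and the toy expression values are assumptions for illustration.

```python
import numpy as np

def fraction_expressing(expr, clusters, threshold=0.0):
    """Return {cluster: fraction of its cells with expression above threshold}."""
    expr = np.asarray(expr, dtype=float)
    clusters = np.asarray(clusters)
    return {
        str(c): float(np.mean(expr[clusters == c] > threshold))
        for c in np.unique(clusters)
    }

# Toy normalized expression of one candidate T-cell marker across 12 cells.
expr = [2.1, 1.8, 0.0, 2.5,   # T cluster
        0.0, 0.0, 0.3, 0.0,   # B cluster
        0.0, 0.0, 0.0, 1.1]   # Mono cluster
clusters = ["T"] * 4 + ["B"] * 4 + ["Mono"] * 4

fractions = fraction_expressing(expr, clusters)
print(fractions)
```

Here the marker is detected in 75% of the target cluster but also in a quarter of each off-target cluster, the kind of leakage that a mean-based log2FC alone would not reveal.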

Experimental Protocols for Validation

Protocol: Calculating Specificity Scores from an scRNA-seq Count Matrix

Objective: Compute Tau and JSD scores for all genes across annotated clusters.

Input: Normalized (e.g., CPM, log-normalized) expression matrix with cell cluster labels.

Software: R (with Seurat, SCINA, scran packages) or Python (with scanpy, scikit-learn).

Steps:

- Aggregate Expression: Calculate the mean (or median) normalized expression for each gene in each cell cluster.

- Compute Tau:

  a. For each gene g, find its maximum mean expression across clusters, x_max.

  b. Compute relative expression for each cluster i: x_i / x_max.

  c. Tau = [∑ (1 - x_i / x_max)] / (N - 1), where N is the number of clusters.

- Compute JSD:

  a. Convert the vector of mean expressions per cluster for gene g to a probability distribution, P.

  b. Define a uniform distribution Q over the same N clusters.

  c. Calculate M = 0.5 * (P + Q).

  d. JSD(P||Q) = 0.5 * [KL(P||M) + KL(Q||M)], where KL is the Kullback-Leibler divergence. Use base-2 logarithms so that JSD is bounded by 1.

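- Integrate with DEGs: Filter DEGs (based on log2FC and adjusted p-value) to those with Tau > 0.85 and/or JSD > 0.5 to generate a high-confidence specific marker list. Because the maximum JSD attainable against a uniform distribution depends on the number of clusters (it stays below 0.5 when N ≤ 3), treat the JSD cutoff as a starting point and lower it for datasets with few clusters.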
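The Tau and JSD steps above translate directly into NumPy. This is a sketch under two assumptions: the input is a vector of non-negative mean expression values per cluster for one gene, and genes with zero expression everywhere are assigned a score of 0. The function names are illustrative.

```python
import numpy as np

def tau_score(mean_expr):
    """Tau = sum(1 - x_i / x_max) / (N - 1) over N clusters."""
    x = np.asarray(mean_expr, dtype=float)
    x_max = x.max()
    if x_max <= 0:
        return 0.0  # gene not expressed in any cluster
    return float(np.sum(1.0 - x / x_max) / (x.size - 1))

def _kl(a, b):
    """Kullback-Leibler divergence in bits, skipping zero-probability terms of a."""
    mask = a > 0
    return float(np.sum(a[mask] * np.log2(a[mask] / b[mask])))

def jsd_from_uniform(mean_expr):
    """Jensen-Shannon divergence between the cluster profile and a uniform profile."""
    x = np.asarray(mean_expr, dtype=float)
    if x.sum() <= 0:
        return 0.0
    p = x / x.sum()                      # expression profile as a distribution
    q = np.full(x.size, 1.0 / x.size)    # uniform distribution over clusters
    m = 0.5 * (p + q)
    return 0.5 * (_kl(p, m) + _kl(q, m))

# Mean expression of three illustrative genes across four clusters.
print(tau_score([5, 0, 0, 0]), jsd_from_uniform([5, 0, 0, 0]))  # cluster-restricted
print(tau_score([2, 2, 2, 2]), jsd_from_uniform([2, 2, 2, 2]))  # ubiquitous
print(tau_score([4, 2, 0, 0]), jsd_from_uniform([4, 2, 0, 0]))  # intermediate
```

Applying both functions row-wise to a genes x clusters matrix of means gives per-gene scores that can be joined to a DEG table for filtering.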

Protocol: Orthogonal Validation by Multiplexed Fluorescence In Situ Hybridization (FISH)

Objective: Visually confirm spatial restriction and co-expression patterns of candidate markers.

Method: RNAscope or MERFISH.

Steps:

- Probe Design: Design oligonucleotide probes against top candidate markers from scRNA-seq analysis.

- Sample Preparation: Use the same or biologically matched tissue as used for scRNA-seq. Perform standard tissue fixation, embedding, and sectioning.

- Hybridization & Amplification: Follow manufacturer protocol for multiplexed FISH assay (e.g., RNAscope Multiplex Fluorescent v2). Include positive and negative control probes.

- Imaging: Acquire high-resolution, multi-channel z-stack images on a confocal or specialized spatial imaging platform.

- Analysis: Quantify signal co-localization and determine the percentage of target cell types expressing the marker versus off-target cells.

Visualization of the Validation Workflow

Diagram Title: Integrated scRNA-seq Marker Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Marker Validation

| Item | Function/Application in Validation | Example/Note |

|---|---|---|

| Chromium Single Cell 3' / 5' Reagent Kits (10x Genomics) | Generate the initial scRNA-seq libraries for marker discovery. | Essential for consistent, high-throughput single-cell gene expression profiling. |

| Cell Ranger / Space Ranger Analysis Pipelines | Process raw sequencing data into gene-cell count matrices and perform initial clustering. | Standardized software for data alignment, barcode processing, and UMI counting. |

| Seurat (R) or Scanpy (Python) | Comprehensive toolkit for downstream analysis: normalization, clustering, DEG calling, and visualization. | Enables calculation of specificity metrics and distribution plotting. |

| RNAscope Multiplex Fluorescent Reagent Kit v2 (ACD Bio) | For orthogonal FISH validation. Allows simultaneous detection of up to 4 RNA targets in tissue. | Provides high sensitivity and single-molecule visualization in fixed tissue. |

| Validated Antibodies for Protein Detection | Confirm marker expression at the protein level via IHC or IF on serial tissue sections. | Check Human Protein Atlas for antibody validation data. Crucial for translational work. |

| Cell Hash Tagging Antibodies (BioLegend) | For multiplexing samples, reducing batch effects, and improving cluster alignment. | Enables robust cross-sample comparisons to assess marker consistency. |

| SIRV / ERCC Spike-In Controls | Monitor technical sensitivity and accuracy of the scRNA-seq assay itself. | Used to calibrate experiments and assess quantitative performance. |

| Doublet Detection Tools (e.g., DoubletFinder, scDblFinder) | Identify and remove doublets/multiplets that can confound marker identification. | Critical for ensuring clusters represent pure cell types. |

Within the critical task of validating cell type annotations in single-cell RNA sequencing (scRNA-seq) research, leveraging comprehensive, expertly annotated reference atlases has emerged as a gold-standard methodology. This technical guide details the process of mapping novel scRNA-seq datasets to major consortium references—the Human Cell Atlas (HCA), the Human BioMolecular Atlas Program (HuBMAP)—and specialized disease-specific databases. This mapping provides a robust, independent benchmark for annotation confidence, moving beyond cluster analysis and marker genes to a systems-level validation.

The Human Cell Atlas (HCA)

The HCA aims to create a comprehensive reference map of all human cells. Its data coordination platform, the HCA Data Portal, aggregates single-cell and spatial transcriptomics data from numerous international projects, applying standardized pipelines for primary analysis.

Key Features for Validation:

- Census of Cell Types: A growing, community-curated collection of canonical cell types across tissues.

- Standardized Annotations: Cell type labels are often generated using controlled ontologies (e.g., Cell Ontology).

- Integrated Analysis Tools: The HCA Data Explorer enables cross-dataset querying.

The Human BioMolecular Atlas Program (HuBMAP)

HuBMAP focuses on constructing a spatial framework of the human body at the cellular level. It complements the HCA by emphasizing high-resolution spatial mapping of tissues using technologies like multiplexed immunofluorescence, in situ sequencing, and spatial transcriptomics.

Key Features for Validation:

- Spatial Context: Provides the anatomical "address" for cell types, allowing validation of whether annotated cells are expected in the sampled tissue location.

- 3D Tissue Reference Maps: Publishes registered, segmented tissue maps showing zonation and microenvironments.

Disease-Specific Databases

Numerous databases house scRNA-seq data focused on specific pathologies. These are crucial for validating annotations in disease-context research.

Prominent Examples:

- Single Cell Portal (Broad Institute): Hosts disease-focused atlases for COVID-19, cancer, and more.

- CELLxGENE: A platform by CZI hosting curated, analyzed single-cell datasets, many with disease foci.

- The Cancer Genome Atlas (TCGA) & Cancer Single-Cell Atlas: Provide bulk and single-cell references for oncology.

Table 1: Core Characteristics of Major Reference Atlases for scRNA-seq Validation

| Resource | Primary Scope | Key Data Types | Typical Scale (Cells) | Spatial Context | Primary Use in Validation |

|---|---|---|---|---|---|

| Human Cell Atlas (HCA) | Comprehensive, multi-tissue cell census | scRNA-seq, snRNA-seq, scATAC-seq | 10^6 - 10^7 per integrated atlas | Limited (developing) | Defining canonical cell type gene expression profiles. |

| HuBMAP | Tissue microenvironment architecture | Spatial transcriptomics, Imaging, CODEX | Varies by tissue voxel | Core Feature | Confirming anatomical plausibility of annotated cell types. |

| CELLxGENE | Curated disease & tissue datasets | scRNA-seq, with curated metadata | 10^4 - 10^6 per study | Possible, if original study included it | Benchmarking against published, peer-reviewed annotations. |

| Single Cell Portal (Broad) | Disease mechanisms (Cancer, COVID-19) | scRNA-seq, CITE-seq, functional screens | 10^4 - 10^6 per study | Sometimes | Validating disease-associated cell states and phenotypes. |

Core Experimental Protocol: Reference-Based Annotation & Validation

This protocol describes using a reference atlas to annotate and validate a novel query scRNA-seq dataset (e.g., from a disease cohort).

Protocol: Supervised Mapping with Seurat v4/v5

Objective: To transfer cell type labels from an integrated reference atlas to a query dataset and assess confidence.

Research Reagent Solutions & Essential Materials:

Table 2: Key Tools for Reference Mapping and Validation

| Item | Function | Example/Note |

|---|---|---|

| Seurat R Toolkit (v4+) | Primary software for reference-based integration and label transfer. | Provides FindTransferAnchors() and TransferData() functions. |

| SingleR R Package | Annotation using correlation to reference bulk or scRNA-seq data. | Useful for independent, correlation-based validation. |

| Pre-processed Reference Atlas | The curated source of "ground truth" labels. | e.g., HCA immune cell atlas, HuBMAP kidney scaffold. |

| High-Performance Computing (HPC) Cluster | For computationally intensive integration steps. | ≥32 GB RAM recommended for large references. |

| scANVI / scArches (Python) | Deep learning-based alternative for mapping to a reference. | Useful for harmonizing complex batch effects. |

Step-by-Step Methodology:

Reference Selection & Download:

- Identify a reference atlas that best matches the tissue/organ and technology of your query data.

- Download the pre-processed, annotated reference object (e.g., an .rds file for Seurat from a portal like CELLxGENE).

Query Dataset Pre-processing:

- Process your raw count matrix using standard Seurat workflow: QC filtering, normalization (SCTransform recommended), and preliminary PCA.

Anchor Finding & Label Transfer:

- Find integration anchors between reference and query using FindTransferAnchors. Use the reference's PCA or supervised PCA (sPCA) space.

- Transfer cell type labels and prediction scores using TransferData.

Validation & Confidence Assessment:

- Analyze the prediction.score.max metadata column, which contains the highest score per cell. Cells with low scores (<0.5) represent uncertain mappings.

- Visualize the query cells colored by both predicted label and prediction score. Use UMAP with the reference-derived PCA dimensions.

- Perform a sanity check by visualizing canonical marker genes for the predicted types in the query dataset.
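Once the per-cell metadata has been exported from the mapping step (for example as a CSV), the score-based triage can be sketched in pandas. The column names predicted.id and prediction.score.max follow Seurat's label transfer output; the table contents and the "Unassigned" label are illustrative assumptions, and 0.5 is the cutoff quoted above rather than a universal constant.

```python
import pandas as pd

# Hypothetical exported metadata; real data would be read with pd.read_csv(...).
meta = pd.DataFrame(
    {
        "predicted.id": ["T cell", "T cell", "B cell", "NK cell", "B cell"],
        "prediction.score.max": [0.95, 0.42, 0.88, 0.30, 0.71],
    },
    index=["cell_1", "cell_2", "cell_3", "cell_4", "cell_5"],
)

SCORE_CUTOFF = 0.5  # cells below this are treated as uncertain mappings

confident = meta["prediction.score.max"] >= SCORE_CUTOFF
meta["final_label"] = meta["predicted.id"].where(confident, "Unassigned")

frac_uncertain = float((~confident).mean())
median_score_by_label = meta.groupby("predicted.id")["prediction.score.max"].median()

print(meta)
print(f"Fraction of uncertain cells: {frac_uncertain:.2f}")
print(median_score_by_label)
```

A label whose median score sits near the cutoff deserves the marker-gene sanity check before it is carried into downstream analysis.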

Protocol: Spatial Validation with HuBMAP Data

Objective: To assess if annotated cell types are found in biologically plausible tissue locations.

- Access HuBMAP Spatial Data: Download a processed spatial dataset (e.g., a Visium or CODEX dataset) for a relevant tissue from the HuBMAP Portal.

- Cell Type Deconvolution: Use a tool like Cell2location, SpatialDWLS, or RCTD to deconvolute the spatial spots/volumes using your validated scRNA-seq data as a signature reference.

- Cross-Reference with HuBMAP Annotations: Compare your deconvolution results with the expert-annotated structures and cell types provided in the HuBMAP dataset. Co-localization provides strong spatial validation.

Visualizing the Validation Workflow

Diagram Title: Reference-Based scRNA-seq Validation Workflow.

For highest robustness, map query data to multiple references (e.g., HCA for consensus, a disease atlas for context). Discrepancies highlight uncertain or novel cell states requiring further investigation.

Diagram Title: Multi-Reference Consensus Strategy.
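A simple way to operationalize a multi-reference consensus is a per-cell vote across the labels transferred from each reference. The sketch below is one possible rule, not an established standard: the function name, the minimum-agreement parameter, and the example labels are assumptions, and it presumes labels from all references share a common vocabulary.

```python
from collections import Counter

def consensus_label(labels, min_agreement=2):
    """Return the majority label, or 'Uncertain' on ties or insufficient agreement."""
    counts = Counter(labels).most_common()
    top_label, top_votes = counts[0]
    tied = len(counts) > 1 and counts[1][1] == top_votes
    if tied or top_votes < min_agreement:
        return "Uncertain"
    return top_label

# Labels transferred to each cell from three hypothetical references
# (e.g., a general atlas, a tissue atlas, and a disease atlas).
per_cell_labels = {
    "cell_1": ["T cell", "T cell", "T cell"],
    "cell_2": ["T cell", "NK cell", "T cell"],
    "cell_3": ["B cell", "Plasma cell", "Monocyte"],
}

consensus = {cell: consensus_label(labels) for cell, labels in per_cell_labels.items()}
print(consensus)
```

Cells that end up "Uncertain" are the ones to inspect first, since disagreement between references may reflect a novel or disease-specific state rather than a mapping failure.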

Integrating scRNA-seq data with major reference atlases is no longer optional for rigorous validation; it is a fundamental step. By systematically mapping to the HCA for foundational typing, HuBMAP for spatial context, and disease-specific databases for pathological relevance, researchers can produce cell type annotations that are reproducible, biologically plausible, and immediately interpretable within the global research ecosystem. This multi-reference approach significantly strengthens the thesis that annotation validation requires external, consortia-level benchmarks.

Within the broader thesis on validating cell type annotations in single-cell RNA sequencing (scRNA-seq) research, the automated transfer of labels from a reference to a query dataset is a cornerstone methodology. Tools like scPred, SingleR, and Seurat's label transfer functions are widely adopted, yet their performance is contingent on the biological context and data quality. This technical guide provides an in-depth comparison of evaluation metrics and protocols for these classifiers, ensuring robust and reproducible validation in research and drug development pipelines.

Core Performance Metrics for Annotation Classifiers

The evaluation of automated cell type classifiers hinges on a suite of metrics, each illuminating different aspects of performance, from overall accuracy to class-specific reliability. The following metrics are essential.

1. Accuracy: The proportion of total cells correctly classified. While intuitive, it can be misleading in imbalanced datasets where a majority class dominates.

2. Balanced Accuracy: The average of recall (sensitivity) obtained on each class. Corrects for dataset imbalance.

3. Precision (Positive Predictive Value): For a given cell type, the proportion of cells predicted as that type that truly belong to it. High precision indicates low false positive rates.

4. Recall (Sensitivity): For a given cell type, the proportion of truly existing cells of that type that were correctly identified. High recall indicates low false negative rates.

5. F1-Score: The harmonic mean of precision and recall, providing a single metric that balances both concerns.

6. Cohen's Kappa: Measures agreement between predicted and true labels, correcting for the agreement expected by chance. Values >0.8 indicate excellent agreement.

7. Confusion Matrix: A fundamental table showing the detailed breakdown of correct predictions and confusion between every pair of cell types.

These metrics should be calculated on a held-out test set not used during classifier training or tuning.
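All seven metrics are available in scikit-learn. The sketch below computes them on a small, deliberately imbalanced toy example; the label vectors are invented, and `zero_division=0` is set so that a class with no predictions (NK here) reports a precision of 0 instead of raising a warning.

```python
from sklearn.metrics import (
    accuracy_score,
    balanced_accuracy_score,
    cohen_kappa_score,
    confusion_matrix,
    precision_recall_fscore_support,
)

labels = ["T", "B", "NK"]
# Toy held-out test set: 6 T cells, 3 B cells, 1 NK cell.
y_true = ["T"] * 6 + ["B"] * 3 + ["NK"]
y_pred = ["T", "T", "T", "T", "T", "B",   # one T cell called B
          "B", "B", "T",                  # one B cell called T
          "T"]                            # the NK cell is missed entirely

accuracy = accuracy_score(y_true, y_pred)
balanced_accuracy = balanced_accuracy_score(y_true, y_pred)
kappa = cohen_kappa_score(y_true, y_pred)
precision, recall, f1, support = precision_recall_fscore_support(
    y_true, y_pred, labels=labels, zero_division=0
)
cm = confusion_matrix(y_true, y_pred, labels=labels)  # rows: true, columns: predicted

print(f"Accuracy: {accuracy:.2f}  Balanced accuracy: {balanced_accuracy:.2f}  Kappa: {kappa:.3f}")
for name, p, r, f in zip(labels, precision, recall, f1):
    print(f"{name}: precision={p:.2f} recall={r:.2f} F1={f:.2f}")
print(cm)
```

The gap between accuracy (0.70) and balanced accuracy (0.50) in this example comes entirely from the missed rare NK population, which is why the imbalance-aware metrics matter for rare cell types.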

Quantitative Performance Comparison

Performance varies based on dataset complexity, technology, and similarity between reference and query. The following table synthesizes typical metric ranges from benchmark studies.

Table 1: Typical Metric Ranges for Classifiers on Benchmark scRNA-seq Datasets

| Metric | scPred | SingleR | Seurat Label Transfer | Notes |

|---|---|---|---|---|

| Overall Accuracy | 85-95% | 80-92% | 88-96% | Highly dependent on reference quality. |

| Balanced Accuracy | 82-93% | 78-90% | 85-94% | Superior for imbalanced datasets. |

| Mean F1-Score | 0.83-0.92 | 0.79-0.89 | 0.86-0.95 | Best single aggregate metric. |

| Cohen's Kappa | 0.80-0.90 | 0.75-0.87 | 0.82-0.93 | Accounts for chance agreement. |

| Runtime (10k cells) | Moderate | Fast | Slow to Moderate | SingleR is often fastest. |

| Key Strength | Probabilistic, uses PCA/SVM | Fast, correlation-based | Integrative, uses CCA/anchors | |

| Key Limitation | Requires reference PCA model | Can be noisy for fine-grained types | Computationally intensive | |

Experimental Protocol for Benchmarking

A standardized protocol is critical for fair comparison. This methodology assumes a gold-standard, annotated reference dataset and a query dataset with ground truth labels for validation.

Protocol 1: Cross-Validation on a Combined Dataset

- Data Preprocessing: Log-normalize counts for both reference and query datasets. Identify highly variable genes (2000-3000) using the reference.

- Integration & Splitting: Use a mild integration method (e.g., Seurat's CCA or Harmony) to combine datasets while removing batch effects. Randomly split the combined data into training (70%) and test (30%) sets, stratifying by cell type.

- Classifier Training on Training Set:

- scPred: Extract principal components (PCs) from the reference portion of the training set. Train a support vector machine (SVM) model per cell type using these PCs.

- SingleR: Use the reference portion of the training set as the labeled reference directly. No explicit training phase.

- Seurat: Train a joint multi-dataset PCA on the training set. Find transfer anchors between the reference and query portions of the training set. Transfer labels using the TransferData function.

- Prediction on Test Set: Apply each trained classifier to the held-out test set.

- Evaluation: Compare predictions against ground truth for the test set. Calculate all metrics in Table 1. Generate a multi-panel figure containing per-class bar plots for precision/recall and a combined confusion matrix.

Title: Benchmarking Workflow for Classifier Evaluation

Protocol 2: Leave-One-Dataset-Out Validation

This protocol tests generalizability to entirely new studies.

- Reference Selection: Designate one or multiple fully annotated datasets as the reference.

- Query as Entire External Study: Use a completely separate, annotated dataset as the query. No cells, samples, or donors are shared between reference and query during training; only the gene feature space overlaps.

- Classifier Application: Apply classifiers directly without combined training.

- scPred: Project query onto reference PCA space; classify with pre-trained SVM.

- SingleR: Run directly with the reference dataset.

- Seurat: Perform reference-based mapping (FindTransferAnchors, MapQuery).

- Evaluation: Compare predicted labels for the external query to its ground truth. This tests robustness to batch effects and biological variation.
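The leave-one-dataset-out logic can be sketched generically with scikit-learn's `LeaveOneGroupOut`, using the study of origin as the grouping variable. This does not reproduce scPred, SingleR, or Seurat; a k-NN classifier on synthetic data stands in for them, and the study names, batch-shift magnitude, and gene counts are assumptions for illustration.

```python
import numpy as np
from sklearn.metrics import balanced_accuracy_score
from sklearn.model_selection import LeaveOneGroupOut
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(1)
n_genes, n_per_type = 10, 100

# Three synthetic studies, each with its own additive batch shift.
X_parts, y_parts, study_parts = [], [], []
for study in ["study_A", "study_B", "study_C"]:
    batch_shift = rng.normal(0.0, 0.5, size=n_genes)
    for cell_type, block in [("T cell", slice(0, 5)), ("B cell", slice(5, 10))]:
        cells = rng.normal(0.0, 1.0, size=(n_per_type, n_genes)) + batch_shift
        cells[:, block] += 3.0  # cell-type-specific marker block
        X_parts.append(cells)
        y_parts += [cell_type] * n_per_type
        study_parts += [study] * n_per_type

X = np.vstack(X_parts)
y = np.array(y_parts)
studies = np.array(study_parts)

# Hold out one whole study at a time; train on the remaining studies.
scores = {}
for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=studies):
    clf = KNeighborsClassifier(n_neighbors=15).fit(X[train_idx], y[train_idx])
    held_out = str(studies[test_idx][0])
    scores[held_out] = balanced_accuracy_score(y[test_idx], clf.predict(X[test_idx]))

print(scores)
```

Reporting the score per held-out study, rather than only the average, shows whether one dataset is systematically harder to generalize to.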

Advanced Metrics and Diagnostic Visualizations

Beyond standard metrics, these diagnostics are crucial for deployment.

Prediction Score Distributions: Examine the distribution of classification scores (e.g., scPred's max.score, Seurat's prediction.score.max). Low scores indicate uncertain predictions, often corresponding to mislabels or novel cell states.

Table 2: Interpretation of Prediction Score Diagnostics

| Score Pattern | Potential Issue | Recommended Action |

|---|---|---|

| Bimodal distribution (high & low peaks) | Clear vs. ambiguous cells | Flag low-score cells for manual review or label as "Unassigned". |

| Uniformly low scores | Poor reference-query match or low-quality query | Re-evaluate reference choice or query data QC. |

| High scores but low accuracy | Overconfident, incorrect model | Check for severe batch effect or reference label errors. |

Confusion Network Analysis: Visualize persistent confusion between specific cell types (e.g., CD4+ T cell subtypes) across tools to identify biologically ambiguous populations.

Title: Common Cell Type Confusion Network
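The edges of such a confusion network can be derived from a confusion matrix by summing the misclassifications in both directions for each pair of cell types. The sketch below does this with NumPy; the function name, cell type names, and counts are illustrative assumptions.

```python
import numpy as np

def top_confusions(cm, labels, k=3):
    """Return the k most-confused label pairs as (label_a, label_b, count)."""
    cm = np.asarray(cm, dtype=float)
    sym = cm + cm.T            # confusion in either direction
    np.fill_diagonal(sym, 0)   # ignore correct predictions
    n = len(labels)
    pairs = [
        (labels[i], labels[j], int(sym[i, j]))
        for i in range(n)
        for j in range(i + 1, n)
        if sym[i, j] > 0
    ]
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)[:k]

labels = ["CD4 naive", "CD4 memory", "CD8 T", "B cell"]
# Rows: true labels, columns: predicted labels (toy counts).
cm = [
    [80, 15, 5, 0],
    [12, 85, 3, 0],
    [4, 2, 94, 0],
    [0, 0, 1, 99],
]

edges = top_confusions(cm, labels, k=2)
print(edges)
```

Running the same function on the confusion matrices from several tools and intersecting the top pairs highlights populations that are ambiguous regardless of method.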

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Automated Classification & Validation

| Item / Solution | Function in Validation | Example / Note |

|---|---|---|

| Annotated Reference Atlas | Gold-standard for training and benchmarking. | Human Cell Landscape, Mouse Cell Atlas, disease-specific atlases. |

| Benchmarking Datasets | Provide ground truth for controlled tests. | PBMC datasets from 10x Genomics, pancreatic islet data. |

| scRNA-seq Analysis Suite | Primary toolkits containing or interfacing with classifiers. | Seurat (R); Scanpy (Python), alongside scvi-tools (scANVI) and CellTypist. |

| Metric Calculation Library | Standardized computation of performance metrics. | scikit-learn (Python: metrics), caret (R). |

| Visualization Package | Generate confusion matrices, UMAPs with labels, score plots. | ggplot2 (R), matplotlib/seaborn (Python). |

| High-Performance Compute (HPC) | Manages computationally intensive anchor finding and integration. | Cloud services (AWS, GCP) or local clusters with SLURM. |

| Containerization Software | Ensures reproducibility of software environment. | Docker, Singularity. |

Validating automated cell type annotations requires a multi-faceted approach grounded in rigorous metrics. For robust thesis research or drug development pipelines:

- Never rely on a single metric. Report a suite including Balanced Accuracy, F1-score, and Cohen's Kappa.

- Use prediction scores as uncertainty indicators. Implement a score threshold to flag cells for manual re-evaluation.

- Context is critical. Choose a reference atlas that matches your query's biological context (species, tissue, disease state).

- Visualize errors. Use confusion matrices and UMAPs to understand systematic misclassifications.

- Benchmark multiple tools. As shown, performance is tool- and data-dependent. scPred offers probabilistic rigor, SingleR excels in speed, and Seurat provides deep integration.

Automated classification is a powerful accelerant, but its output must be validated with the same rigor applied to wet-lab experiments. This systematic evaluation framework ensures that downstream biological interpretations and translational findings are built upon a foundation of credible cell type annotations.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to deconvolve cellular heterogeneity. However, cell type annotation remains a significant challenge, often relying on reference datasets and marker genes that can be context-dependent or insufficiently specific. This technical guide, framed within the broader thesis on validating cell type annotations, details a multimodal framework integrating protein expression (CITE-seq), chromatin accessibility (ATAC-seq), and spatial context (Spatial Transcriptomics) to achieve robust, cross-validated annotations.

Core Technologies and Their Synergistic Roles

Each technology provides a distinct, orthogonal layer of evidence for cell identity.

- Cellular Indexing of Transcriptomes and Epitopes by Sequencing (CITE-seq): Measures transcriptomes and surface protein abundance simultaneously using antibody-derived tags (ADTs). It provides direct, quantitative protein-level validation of transcriptional marker-based annotations.

- Single-cell Assay for Transposase-Accessible Chromatin using Sequencing (scATAC-seq): Identifies regions of open chromatin, informing on regulatory potential and cell state. It validates scRNA-seq annotations by confirming the accessibility of marker gene promoters and lineage-specific enhancers.

- Spatial Transcriptomics (e.g., 10x Visium, MERFISH): Preserves the architectural context of cells within tissue. It validates clustered annotations by confirming that putative cell types reside in biologically plausible tissue locations and neighborhoods.

Integrated Experimental Workflow

The following diagram outlines the core logic and workflow for multimodal validation.

Title: Multimodal Validation Workflow for Cell Typing

Detailed Methodological Protocols

Protocol: CITE-seq for Transcriptome & Protein Capture

Principle: Stain a single-cell suspension with a panel of DNA-barcoded antibodies, followed by co-encapsulation and library construction for both cDNA and Antibody-Derived Tags (ADTs).

Key Steps:

- Cell Preparation: Generate a high-viability (>90%) single-cell suspension. Count and adjust concentration to 700-1200 cells/µL.

- Antibody Staining: Incubate 1x10^5 - 1x10^6 cells with titrated CITE-seq antibody cocktail (in PBS + 0.04% BSA) for 30 min on ice. Wash twice with cell staining buffer.

- Multimodal Capture: Load stained cells onto a 10x Genomics Chromium Chip (Single Cell 5' or 3' v3.1 with Feature Barcode technology) per manufacturer's instructions.

- Library Prep: Generate separate cDNA and ADT libraries. Use the Sample Index PCR set for cDNA and the Feature Barcode PCR set for ADT amplification.

- Sequencing: Pool libraries. Sequence cDNA library to standard depth (e.g., 50,000 reads/cell). Sequence ADT library to lower depth (e.g., 5,000 reads/cell).

Protocol: scATAC-seq for Chromatin Accessibility

Principle: Use a hyperactive Tn5 transposase to insert sequencing adapters into accessible genomic regions, followed by single-cell encapsulation and library amplification.

Key Steps:

- Nuclei Isolation: Lyse cells in cold lysis buffer (10mM Tris-HCl, pH 7.4, 10mM NaCl, 3mM MgCl2, 0.1% Tween-20, 0.1% Nonidet P40, 0.01% Digitonin, 1% BSA) for 3-5 min on ice. Quench and wash with nuclei buffer.

- Transposition: Incubate ~10,000 nuclei with pre-loaded Tn5 transposase (from 10x Chromium Next GEM ATAC kit) at 37°C for 60 min.

- Single-Cell Capture: Load transposed nuclei onto a 10x Chromium Chip for ATAC-seq.

- Library Construction: Perform PCR amplification with indexed primers to create the final library.

- Sequencing: Sequence on an Illumina platform with paired-end reads (e.g., 2x50 bp), targeting ~25,000 fragments per nucleus.

Protocol: Integration with Spatial Transcriptomics (10x Visium)

Principle: Align multimodal single-cell data to a spatially resolved reference map.

Key Steps:

- Spatial Library Prep: Generate spatial gene expression data from a serial or adjacent tissue section using the 10x Visium platform (H&E staining, imaging, permeabilization, cDNA synthesis, and library construction).

- Data Alignment: Use computational tools (Cell2location, Tangram, SpatialDWLS) to deconvolve or map the scRNA-seq/CITE-seq derived cell type signatures onto the spatial spots.

- Validation: Assess if transcriptionally defined cell types localize to histologically and biologically expected regions (e.g., keratinocytes in epidermis, podocytes in kidney glomeruli).

Data Integration & Analysis Pathway

The computational integration of these datasets is critical. The following diagram illustrates the key analytical steps.

Title: Computational Integration Pathway for Multimodal Data

Table 1: Comparative Metrics of Multimodal Validation Technologies

| Technology | Measured Modality | Typical Cells/Experiment | Key Validation Metric | Common Concordance Rate with scRNA-seq* |

|---|---|---|---|---|

| CITE-seq | mRNA + 10-200 Surface Proteins | 5,000 - 10,000 | Protein/RNA correlation of marker genes | 85-95% for major types |

| scATAC-seq | Genome-wide Chromatin Accessibility | 5,000 - 50,000 | Gene Activity Score vs. RNA expression | 70-90% (challenged for fine subtypes) |

| Spatial Transcriptomics (Visium) | mRNA in Tissue Context | ~5,000 spots (multi-cell) | Histologically-plausible localization | >90% for spatially segregated types |

*Concordance rates are approximate and highly dependent on tissue quality, panel design, and analysis parameters.
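One simple way to compute a concordance rate of the kind listed in the table is to compare, cell by cell, the RNA-based annotation with a label derived independently from the second modality (for example, protein-based gating in CITE-seq). The following is a minimal sketch under that assumption; the label vectors and the function name `label_concordance` are hypothetical.

```python
from collections import Counter

def label_concordance(rna_labels, other_labels):
    """Overall and per-type agreement between two label vectors for the same cells."""
    if len(rna_labels) != len(other_labels):
        raise ValueError("Label vectors must describe the same cells in the same order.")
    if not rna_labels:
        raise ValueError("Label vectors are empty.")
    totals = Counter(rna_labels)
    matches = Counter(r for r, o in zip(rna_labels, other_labels) if r == o)
    # Per-type rate: share of cells with a given RNA label that the other modality agrees on.
    per_type = {ct: matches[ct] / n for ct, n in totals.items()}
    overall = sum(matches.values()) / len(rna_labels)
    return overall, per_type

# Hypothetical labels for ten cells.
rna = ["T", "T", "T", "B", "B", "Mono", "Mono", "Mono", "Mono", "NK"]
adt = ["T", "T", "NK", "B", "B", "Mono", "Mono", "Mono", "B", "NK"]

overall, per_type = label_concordance(rna, adt)
print(overall, per_type)
```

Reporting the per-type rates alongside the overall rate matters because a high overall figure can hide poor agreement for a rare population.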

Table 2: Essential Software Tools for Integrated Analysis

| Tool Name | Primary Function | Key Output |

|---|---|---|

| Seurat (v4+) | WNN for CITE-seq/RNA integration; spatial mapping | Unified multimodal clusters |

| Signac | scATAC-seq analysis & RNA/ATAC integration | Linked peaks & genes, co-embeddings |

| Cell2location | Spatial mapping of scRNA-seq to Visium data | Cell density maps per type |

| MOFA+ | Multi-omics factor analysis | Shared latent factors across modalities |

The Scientist's Toolkit: Essential Research Reagents & Kits

Table 3: Key Reagent Solutions for Multimodal Validation Experiments

| Item | Supplier Example | Function in Validation Workflow |

|---|---|---|

| TotalSeq Antibodies | BioLegend | DNA-barcoded antibodies for CITE-seq; directly link protein epitope to cell barcode. |

| Chromium Next GEM Single Cell 5' Kit v2 | 10x Genomics | Enables simultaneous gene expression and protein detection (CITE-seq) library prep. |

| Chromium Next GEM ATAC Kit | 10x Genomics | Library prep for single-cell chromatin accessibility profiling. |

| Visium Spatial Tissue Optimization & Gene Expression Kits | 10x Genomics | Optimize permeabilization and generate spatially barcoded cDNA libraries from tissue sections. |

| Digitonin | MilliporeSigma | Critical permeabilization agent for nuclei isolation in scATAC-seq protocols. |

| Hyperactive Tn5 Transposase | Illumina / DIY | Enzyme that simultaneously fragments and tags accessible chromatin. |

| Dual Index Kit TT Set A | 10x Genomics | Provides unique sample indices for multiplexing multiple CITE-seq/ATAC libraries. |

| Ribonuclease Inhibitor | Takara / NEB | Protects RNA integrity during single-cell suspension preparation and staining steps. |

| BSA (0.04% in PBS) | MilliporeSigma | Used as a blocking and wash buffer component to reduce nonspecific antibody binding in CITE-seq. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to deconstruct tissue heterogeneity. Cell type annotation, typically via cluster analysis and marker gene expression, assigns putative identities. However, these annotations, often derived from reference databases or prior knowledge, remain hypotheses until they are tested. Differential Expression (DE) analysis serves as a critical validation step that confirms functional identity by comparing transcriptomic profiles against well-characterized controls or between well-controlled experimental conditions. This guide details the experimental and computational framework for using DE analysis as a robust validation tool within a cell type annotation pipeline.

Core Experimental Design for Validation

A robust validation design moves beyond cluster marker discovery.

2.1. Key Comparison Paradigms:

- Benchmarking: Annotated clusters vs. FACS-sorted or bulk RNA-seq samples of known identity.

- Perturbation Response: Annotated clusters vs. themselves after a specific ligand stimulation or genetic perturbation expected to elicit a known, cell-type-specific response.

- Pseudotime/State Transitions: DE analysis between anchor points (e.g., progenitor vs. mature cell) to confirm expected differentiation trajectory.

2.2. Essential Experimental Protocols:

Protocol A: In Vitro Stimulation Followed by scRNA-seq for Functional Validation

- Cell Preparation: Isolate live cells of interest using FACS based on cluster-defining surface markers (e.g., CD45+CD3+ for T cells).

- Stimulation: Split cells into control (unstimulated) and experimental conditions.

- For T cells: Plate cells with anti-CD3/CD28 antibodies (5 µg/mL each) and IL-2 (100 IU/mL) for 24-48 hours.

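- Include protein transport inhibitors (e.g., Brefeldin A) only if intracellular cytokine protein is also measured (e.g., by parallel flow cytometry); they are not required for a transcript-level readout.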

- Library Preparation & Sequencing: Process control and stimulated cells separately through the same scRNA-seq platform (e.g., 10x Genomics). Maintain consistent cell numbers and sequencing depth.

- Analysis: Integrate datasets, re-cluster, and perform DE analysis between control and stimulated cells within the re-identified cluster of interest. Validate known activation signatures (e.g., NF-κB, AP-1 target genes).

Protocol B: Benchmarking Using Public Bulk RNA-seq Data

- Reference Data Curation: Download bulk RNA-seq data (e.g., from GEO) for purified cell types. Ensure relevance of tissue and disease model.

- Pseudo-bulk Creation: Aggregate counts from all cells within each annotated scRNA-seq cluster.

- DE Analysis: Apply bulk RNA-seq DE tools (e.g., DESeq2) to compare each pseudo-bulk profile with its corresponding purified reference profile.

- Validation Metric: Assess enrichment of cell-type-defining gene sets from independent studies in the DE results.
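The pseudo-bulk creation step of Protocol B can be sketched in a few lines. The example below is a minimal illustration with toy counts and a made-up purified reference profile; the function names `pseudobulk` and `log_cpm` are hypothetical helpers, and the correlation at the end is only a quick sanity check before formal DE testing, not a replacement for it.

```python
import numpy as np

def pseudobulk(counts, cluster_labels):
    """Sum raw counts over all cells in each annotated cluster.

    counts: (n_cells, n_genes) raw UMI counts.
    cluster_labels: length n_cells cluster annotation per cell.
    Returns (clusters, profiles) with profiles of shape (n_clusters, n_genes).
    """
    counts = np.asarray(counts)
    labels = np.asarray(cluster_labels)
    clusters = sorted(set(labels.tolist()))
    profiles = np.vstack([counts[labels == c].sum(axis=0) for c in clusters])
    return clusters, profiles

def log_cpm(profiles, pseudocount=1.0):
    """Normalize each profile to counts per million, then log2-transform."""
    profiles = np.asarray(profiles, dtype=float)
    cpm = profiles / profiles.sum(axis=1, keepdims=True) * 1e6
    return np.log2(cpm + pseudocount)

# Toy data (hypothetical): 5 cells x 3 genes, two annotated clusters.
counts = np.array([[5, 0, 1],
                   [7, 1, 0],
                   [0, 4, 2],
                   [1, 6, 3],
                   [0, 5, 1]])
labels = ["T cell", "T cell", "B cell", "B cell", "B cell"]
clusters, profiles = pseudobulk(counts, labels)

# Correlate each pseudo-bulk profile with a hypothetical purified T-cell bulk profile.
reference_t = np.array([[120, 10, 8]])
lp, ref = log_cpm(profiles), log_cpm(reference_t)[0]
corr = {c: float(np.corrcoef(lp[i], ref)[0, 1]) for i, c in enumerate(clusters)}
print(clusters, profiles.tolist(), corr)
```

For the DE step itself, count-based tools such as DESeq2 need replicate-level profiles to estimate dispersion, so in practice the aggregation is usually done per cluster and per sample rather than per cluster alone.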

Computational Workflow & Data Interpretation

3.1. Standardized DE Analysis Pipeline: The table below compares common DE methods for single-cell data.

Table 1: Comparison of Differential Expression Methods for scRNA-seq Validation

| Method | Core Algorithm | Best For Validation Because... | Key Consideration |

|---|---|---|---|

| Wilcoxon Rank-Sum | Non-parametric test on normalized counts. | Speed, simplicity, effective for identifying distinct marker sets. | Sensitive to cell number per group. |

| MAST | Generalized linear model with hurdle component. | Explicitly models dropouts, ideal for stimulated vs. control designs. | More computationally intensive. |

| DESeq2 (pseudo-bulk) | Negative binomial GLM on aggregated counts. | Robust variance estimation, direct benchmarking against bulk data. | Loses single-cell resolution. |

| limma-voom (pseudo-bulk) | Linear modeling of log-CPM with precision weights. | High specificity, excellent for well-powered designs. | Assumes normal distribution of log-counts. |

3.2. Quantitative Outputs for Validation: DE analysis for validation must yield quantitatively stringent outputs.

Table 2: Key Quantitative Metrics for Validating Functional Identity via DE

| Metric | Target Threshold | Interpretation for Validation |

|---|---|---|

| Number of DE Genes | Concordance with literature (e.g., >100 genes for strong activation). | Too few genes suggests weak or incorrect response. |

| Enrichment of Canonical Pathways | FDR < 0.01 and absolute Normalized Enrichment Score (NES) > 1.5 | Confirms expected biological functions are active. |

| Overlap with Gold-Standard Sets | Jaccard Index > 0.2 or Hypergeometric p < 1e-5 | Confirms identity against independent datasets. |

| Log2 Fold Change | Majority of expected genes show absolute log2 fold change (LFC) > 0.58 (1.5x linear change) | Ensures biological, not technical, differences. |
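Several of the metrics in this table reduce to short calculations. The sketch below computes the Jaccard index, a one-sided hypergeometric overlap p-value, and the fraction of expected genes passing the LFC threshold, using only the Python standard library. The gene lists, the universe size of 20,000, and the function names are illustrative assumptions.

```python
from math import comb

def jaccard(a, b):
    """Jaccard index between two gene sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def hypergeom_pvalue(n_overlap, n_de, n_gold, n_universe):
    """P(overlap >= n_overlap) when n_de genes are drawn at random from a
    universe of n_universe genes containing n_gold gold-standard genes."""
    upper = min(n_de, n_gold)
    tail = sum(comb(n_gold, k) * comb(n_universe - n_gold, n_de - k)
               for k in range(n_overlap, upper + 1))
    return tail / comb(n_universe, n_de)

def fraction_passing_lfc(lfc_by_gene, expected_genes, threshold=0.58):
    """Share of expected genes (among those tested) with |log2FC| above threshold."""
    tested = [g for g in expected_genes if g in lfc_by_gene]
    if not tested:
        return 0.0
    return sum(abs(lfc_by_gene[g]) > threshold for g in tested) / len(tested)

# Hypothetical DE result and gold-standard activation signature.
de_genes = {"IL2", "IFNG", "TNF", "CD69", "NFKBIA", "ACTB"}
gold = {"IL2", "IFNG", "TNF", "CD69", "NFKBIA", "JUN", "FOS", "IL2RA"}
lfc = {"IL2": 3.1, "IFNG": 2.4, "TNF": 0.3, "CD69": -1.2}

j = jaccard(de_genes, gold)
p = hypergeom_pvalue(len(de_genes & gold), len(de_genes), len(gold), 20000)
frac = fraction_passing_lfc(lfc, ["IL2", "IFNG", "TNF", "CD69"])
print(j, p, frac)
```

The choice of gene universe strongly affects the hypergeometric p-value; restricting it to genes actually detected in the dataset is the more conservative option.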

Visualization of Key Concepts

Diagram Title: Logical Workflow for DE-Based Cell Type Validation

Diagram Title: Experimental Pipeline for Stimulation-Response Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Functional DE Validation Experiments

| Reagent / Material | Function in Validation Experiment | Example Product/Catalog |

|---|---|---|

| Anti-CD3/CD28 Antibodies | Polyclonal T-cell receptor stimulation to validate T-cell identity and function. | Gibco Dynabeads Human T-Activator CD3/CD28 |

| Recombinant Cytokines (IL-2, IFN-γ, etc.) | Cell-type-specific priming and activation. | PeproTech human IL-2, carrier-free |

| Brefeldin A / Monensin | Protein transport inhibitors to intracellularly accumulate cytokines for detection. | BioLegend Protein Transport Inhibitor Cocktail |

| FACS Antibodies (Cell Surface) | Fluorescence-activated cell sorting (FACS) to isolate pure populations for benchmarking. | BioLegend Anti-Human CD45 Pacific Blue |

| Viability Dye (e.g., DAPI, PI) | Exclusion of dead cells during sorting to improve RNA quality. | Thermo Fisher Scientific DAPI (4',6-Diamidino-2-Phenylindole) |

| Chromium Next GEM Chip K | Generating single-cell partitions for 10x Genomics library prep. | 10x Genomics Chromium Next GEM Chip K Single Cell Kit |

| Cell Ranger Software | Primary analysis pipeline for demultiplexing, alignment, and counting. | 10x Genomics Cell Ranger (v7.0+) |

| Seurat / Scanpy R/Python Packages | Comprehensive toolkits for integrated scRNA-seq analysis and DE testing. | CRAN: Seurat v5, PyPI: scanpy v1.9 |

| MSigDB (Molecular Signatures Database) | Curated gene sets for pathway enrichment analysis of DE results. | Broad Institute GSEA MSigDB C2 & C7 collections |

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to deconstruct tissue heterogeneity. However, the subsequent step of annotating discrete cell populations remains a significant challenge, prone to technical artifacts and biological misinterpretation. Validation is therefore not a peripheral concern but a core component of robust single-cell analysis. This guide details how three specific visualizations—UMAP, Dot Plots, and Violin Plots—serve as essential, complementary diagnostic tools for validating hypothesized cell type annotations, ensuring biological fidelity and reproducible results.

Core Diagnostic Visualizations: Principles and Applications

UMAP: Assessing Population Coherence and Segregation

Uniform Manifold Approximation and Projection (UMAP) is a non-linear dimensionality reduction technique used to visualize high-dimensional scRNA-seq data in two dimensions. For validation, it is not a clustering tool per se, but a canvas upon which clustering and annotation results are evaluated.

Diagnostic Purpose:

- Coherence: Do cells with the same annotation form a contiguous, tight manifold?

- Segregation: Are different annotated populations well-separated, indicating distinct transcriptomic states?

- Outliers: Are there cells lying between major clusters, suggesting intermediate states, doublets, or misannotation?

Interpretation Workflow:

- Generate UMAP embedding using a stable set of parameters (e.g., n_neighbors=30, min_dist=0.3).

- Color cells by their assigned cell type label.

- Diagnose: Scattered colors within a visual cluster imply poor coherence. Overlapping colors between clusters imply poor segregation, necessitating re-examination of markers or clustering resolution.
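Visual judgments of coherence and segregation can be backed by a simple number. One option, sketched below as an assumption rather than a standard prescribed by this guide, is nearest-neighbor label purity: for each cell, the fraction of its k nearest neighbors that share its annotation. The function name `neighbor_label_purity` and the synthetic two-blob embedding are hypothetical.

```python
import numpy as np

def neighbor_label_purity(embedding, labels, k=15):
    """Mean fraction of each cell's k nearest neighbors (Euclidean distance in
    the embedding) that carry the same annotation, reported per label."""
    X = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    # Dense pairwise distances: fine for a sketch, too memory-hungry for large datasets.
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)  # exclude each cell from its own neighbor list
    nn = np.argsort(d, axis=1)[:, :k]
    per_cell = (labels[nn] == labels[:, None]).mean(axis=1)
    return {str(lab): float(per_cell[labels == lab].mean()) for lab in np.unique(labels)}

# Synthetic 2-D embedding: two well-separated populations of 30 cells each.
rng = np.random.default_rng(0)
blob_a = rng.normal(loc=(0.0, 0.0), scale=0.5, size=(30, 2))
blob_b = rng.normal(loc=(10.0, 10.0), scale=0.5, size=(30, 2))
embedding = np.vstack([blob_a, blob_b])
labels = np.array(["T cell"] * 30 + ["B cell"] * 30)

clean = neighbor_label_purity(embedding, labels, k=5)
shuffled = neighbor_label_purity(embedding, rng.permutation(labels), k=5)
print(clean, shuffled)
```

Because UMAP does not preserve distances faithfully, it is worth running the same function on the PCA coordinates used to build the embedding and checking that the two results agree.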

Dot Plots: Validating Marker Gene Specificity and Expression Patterns

Dot plots provide a compact, quantitative summary of gene expression across annotated cell groups. They visualize two key dimensions: the proportion of cells expressing a gene (dot size) and the average expression level (color intensity).

Diagnostic Purpose:

- Specificity Check: Do canonical marker genes show enriched expression in their expected cell types?

- Exclusivity Check: Are putative markers truly restricted to one population or shared, indicating a common functional state?

- Annotation Rationale: Provides an immediate, communicable snapshot of the evidence underlying annotations.

Interpretation Workflow:

- Define a panel of canonical marker genes for expected cell types (e.g., CD3E for T cells, MS4A1 for B cells, CD14 for classical monocytes, FCGR3A for non-classical monocytes).

- Plot average expression and percent expressed across all annotated clusters.

- Diagnose: Expected patterns (e.g., high INS expression only in beta cells) confirm annotations. Unexpected expression (e.g., epithelial marker in immune cluster) flags potential contamination or misannotation.
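The two quantities a dot plot encodes can be computed directly, which is useful when a pattern needs to be checked numerically rather than by eye. The sketch below assumes a small normalized expression matrix and hypothetical annotations; `dotplot_stats` is an illustrative helper, not a function from Seurat or Scanpy.

```python
import numpy as np

def dotplot_stats(expr, groups):
    """Per-group dot plot statistics.

    expr: (n_cells, n_genes) normalized expression values.
    groups: length n_cells annotation per cell.
    Returns (group_names, frac_expressing, mean_expression), where the two
    arrays have shape (n_groups, n_genes).
    """
    expr = np.asarray(expr, dtype=float)
    groups = np.asarray(groups)
    names = [str(g) for g in np.unique(groups)]
    frac = np.vstack([(expr[groups == g] > 0).mean(axis=0) for g in names])  # dot size
    mean = np.vstack([expr[groups == g].mean(axis=0) for g in names])        # dot color
    return names, frac, mean

# Toy data (hypothetical): columns are CD3E and MS4A1.
genes = ["CD3E", "MS4A1"]
expr = [[2, 0], [1, 0], [3, 1], [0, 0],   # cells annotated as T cells
        [0, 2], [0, 4]]                   # cells annotated as B cells
groups = ["T cell"] * 4 + ["B cell"] * 2

names, frac, mean = dotplot_stats(expr, groups)
for i, name in enumerate(names):
    print(name, dict(zip(genes, frac[i])), dict(zip(genes, mean[i])))
```

In this toy case one of four T cells expresses MS4A1, the kind of low-level off-target signal that warrants a closer look at doublets or ambient RNA.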

Violin Plots: Interrogating Expression Distribution and Unimodality

Violin plots depict the full distribution of expression (probability density) for a single gene across annotated populations. They reveal nuances obscured by the summary statistics of dot plots.

Diagnostic Purpose:

- Distribution Shape: Is the expression within an annotated cluster unimodal (suggesting purity) or bimodal (suggesting a mixed population)?

- Expression Magnitude: What is the full range of expression, including outliers?

- Detailed Comparison: Enables direct visual comparison of expression distributions between two specific clusters for a disputed marker, which can then be backed by a formal statistical test.

Interpretation Workflow:

- Select key marker genes and clusters requiring deep validation.

- Generate violin plots for these genes across relevant clusters.

- Diagnose: A bimodal distribution within one annotation suggests a subset of cells may belong to a different type. A long tail of high expression may indicate an activated sub-state.
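Bimodality can also be flagged numerically before inspecting the violin plot. The sketch below uses a simplified form of the bimodality coefficient, computed from population moments as (skewness² + 1) / kurtosis; values above 5/9, the value for a uniform distribution, are commonly taken as a hint of bimodality. This is an illustrative heuristic that omits the finite-sample correction, and the expression values are synthetic.

```python
import numpy as np

def bimodality_coefficient(values):
    """Simplified bimodality coefficient: (skewness^2 + 1) / kurtosis,
    using population moments and non-excess kurtosis."""
    x = np.asarray(values, dtype=float)
    centered = x - x.mean()
    m2 = np.mean(centered ** 2)
    if m2 == 0:
        return float("nan")  # constant expression: coefficient is undefined
    skew = np.mean(centered ** 3) / m2 ** 1.5
    kurt = np.mean(centered ** 4) / m2 ** 2
    return float((skew ** 2 + 1) / kurt)

# Synthetic marker expression within one annotated cluster.
rng = np.random.default_rng(0)
pure_cluster = rng.normal(loc=2.0, scale=0.5, size=500)   # one expression mode
mixed_cluster = np.array([0.0] * 50 + [3.0] * 50)         # two distinct modes

bc_pure = bimodality_coefficient(pure_cluster)
bc_mixed = bimodality_coefficient(mixed_cluster)
print(bc_pure, bc_mixed, 5 / 9)
```

Strongly skewed or zero-inflated distributions, both common in scRNA-seq, can also push the coefficient above 5/9, so a high value is a reason to look at the violin plot rather than a verdict on cluster purity.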

Integrated Validation Workflow

The power of these tools is multiplicative when used in a structured workflow. The following diagram outlines a standard diagnostic cycle for annotation validation.

Diagram: The scRNA-seq Annotation Validation Cycle

Recent benchmarking studies have quantified the impact of rigorous visual validation on annotation accuracy. The table below summarizes key findings.

Table 1: Impact of Multi-Visual Diagnostic Strategies on Annotation Accuracy