In Silico Perturbation Modeling with Single-Cell Foundation Models: A New Frontier for Predictive Biology

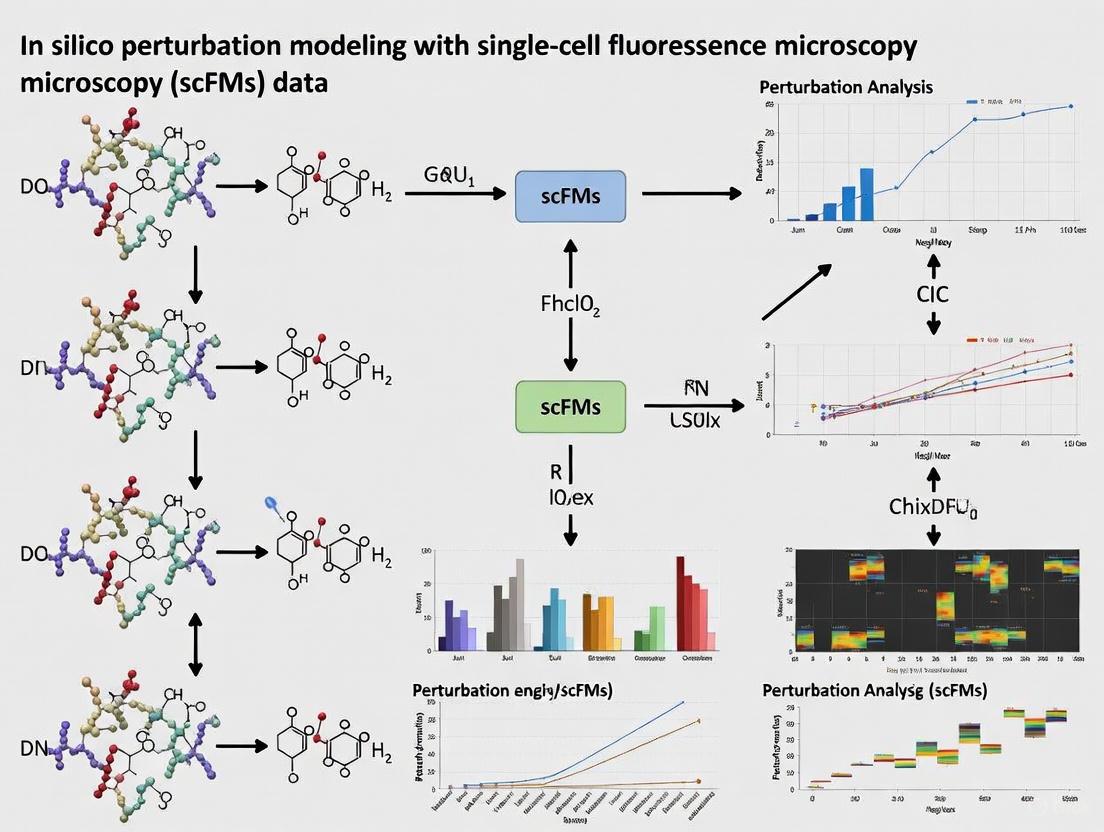

In silico perturbation modeling using single-cell foundation models (scFMs) promises to revolutionize biological discovery and therapeutic development by predicting cellular responses to genetic and chemical interventions.

Abstract

In silico perturbation modeling using single-cell foundation models (scFMs) promises to revolutionize biological discovery and therapeutic development by predicting cellular responses to genetic and chemical interventions. This article explores the foundational concepts of scFMs, their architectural principles, and their application in predicting perturbation effects. It critically examines current methodological approaches, including the emerging 'closed-loop' fine-tuning paradigm, which significantly enhances predictive accuracy by iteratively incorporating experimental data. Furthermore, the article addresses the pressing challenges and limitations highlighted by recent rigorous benchmarks, which show that current models often struggle to outperform simple linear baselines. Finally, it provides a comprehensive overview of the validation landscape, synthesizing insights from multiple benchmarking studies to guide researchers in evaluating model performance and to outline a path forward for realizing the full potential of virtual cell models in biomedical research.

Demystifying Single-Cell Foundation Models: From Core Concepts to Architectural Principles

What Are Foundation Models? Defining the Self-Supervised Learning Paradigm for Biology

Foundation models are a class of artificial intelligence systems trained on vast datasets using self-supervised learning objectives, enabling them to develop generalized representations that can be adapted to diverse downstream tasks with little or no task-specific training [1]. In biology, these models are changing how researchers analyze complex biological systems by learning fundamental patterns from massive, unlabeled datasets including genomic sequences, single-cell transcriptomes, and protein structures [2]. The core innovation of foundation models lies in their self-supervised pretraining phase, where models learn to predict masked or contextually relevant elements within their input data, thereby capturing biological relationships without human-provided labels [1] [3].

The application of foundation models to biological data represents a paradigm shift from traditional supervised approaches, which require extensive labeled datasets that are often expensive and time-consuming to create [3]. Instead, biological foundation models leverage the enormous quantities of unlabeled data being generated by modern high-throughput technologies, from single-cell sequencing platforms to genomic databases [1] [2]. This approach has proven particularly powerful in biological domains where labeled data is scarce but unlabeled data is abundant, enabling models to learn the fundamental "language" of biology—whether that be the grammar of gene regulation, the syntax of protein folding, or the vocabulary of cellular states [1].

Table: Key Characteristics of Biological Foundation Models

| Characteristic | Description | Biological Examples |
|---|---|---|
| Self-Supervised Pretraining | Models learn by predicting masked portions of input data without human labeling | Predicting masked genes in single-cell data [1] |
| Transfer Learning | Pretrained models adapt to new tasks with minimal additional training | Geneformer fine-tuned for disease-specific predictions [2] |
| Scalability | Models trained on millions to billions of data points | scGPT trained on ~30 million cells [2] |
| Multi-task Capability | Single model handles diverse prediction tasks | LPM predicts perturbation effects and identifies mechanisms [4] |

Core Concepts: Self-Supervised Learning in Biological Contexts

Self-supervised learning (SSL) represents the foundational training paradigm that enables foundation models to learn meaningful representations from unlabeled biological data [3]. In biological contexts, SSL methods create training signals directly from the data itself by designing pretext tasks that require the model to learn intrinsic patterns and relationships [3]. For genomic sequences, this might involve predicting missing nucleotides or reverse-complement sequences; for single-cell data, this typically means predicting masked gene expressions based on the context of other genes within the same cell [1] [3].
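The masked-expression pretext task described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the training code of any particular model: the 30% mask fraction, the `-1.0` mask value, and the function names `mask_expression` and `masked_mse` are all hypothetical choices made for the example.

```python
import numpy as np

def mask_expression(expr, mask_fraction=0.15, mask_value=-1.0, seed=0):
    """Hide a random subset of genes in one cell's expression vector.

    Returns the masked input, the boolean mask, and the hidden target values.
    The mask value and fraction are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    expr = np.asarray(expr, dtype=float)
    n_masked = max(1, int(round(mask_fraction * expr.size)))
    masked_idx = rng.choice(expr.size, size=n_masked, replace=False)
    mask = np.zeros(expr.size, dtype=bool)
    mask[masked_idx] = True
    masked_input = expr.copy()
    masked_input[mask] = mask_value  # the model never sees the true values here
    return masked_input, mask, expr[mask]

def masked_mse(predicted, target, mask):
    """Reconstruction loss computed only on the masked positions."""
    predicted = np.asarray(predicted, dtype=float)
    return float(np.mean((predicted[mask] - target) ** 2))

# Toy expression vector for one cell (ten genes, made-up values).
cell = np.array([0.0, 3.2, 1.1, 0.0, 5.4, 2.0, 0.7, 0.0, 4.1, 1.5])
masked_input, mask, target = mask_expression(cell, mask_fraction=0.3)
```

The training signal comes entirely from the data: a model receives `masked_input`, predicts every gene, and is scored only where `mask` is true.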

The transformer architecture has emerged as the dominant backbone for biological foundation models due to its ability to capture long-range dependencies and complex relationships within sequential data [1]. In single-cell biology, transformers process gene expression profiles by treating individual genes as "tokens" analogous to words in a sentence, allowing the model to learn how genes co-express and regulate one another across diverse cellular contexts [1]. Models like scBERT and Geneformer employ bidirectional attention mechanisms that consider all genes simultaneously, enabling comprehensive understanding of gene-gene interactions [1] [5]. Alternatively, decoder-based models like scGPT use autoregressive approaches that predict gene expressions sequentially, similar to how language models generate text [1].

Tokenization strategies form a critical component of biological foundation models, determining how raw biological data is transformed into model-processable units [1]. For single-cell data, this involves converting gene expression profiles into discrete tokens, typically by binning expression values or ranking genes by expression level within each cell [1]. A key challenge in this process is that gene expression data lacks natural sequential ordering—unlike words in a sentence—requiring researchers to impose artificial orderings based on expression magnitude or other criteria [1]. Advanced tokenization approaches may incorporate additional biological context, such as gene ontology terms or chromosomal locations, to enrich the input representations [1].

Application to In Silico Perturbation Modeling with scFMs

Single-cell foundation models (scFMs) have emerged as powerful tools for in silico perturbation modeling, enabling researchers to simulate cellular responses to genetic and chemical perturbations without conducting expensive wet-lab experiments [4] [6]. These models learn the fundamental principles of cellular organization from large-scale single-cell atlases, capturing how gene networks interact and respond to disturbances [1]. When applied to perturbation modeling, scFMs can predict transcriptomic changes resulting from gene knockouts, drug treatments, or other interventions, significantly accelerating biological discovery and drug development [4].

The Large Perturbation Model (LPM) represents a cutting-edge approach that specifically addresses the challenges of integrating heterogeneous perturbation data across different experimental contexts, readout modalities, and perturbation types [4]. LPM employs a disentangled architecture that separately represents perturbations (P), readouts (R), and contexts (C) as distinct dimensions, enabling the model to learn generalizable perturbation-response rules that transfer across biological settings [4]. This approach has demonstrated superior performance in predicting post-perturbation transcriptomes compared to existing methods, while also enabling the identification of shared molecular mechanisms between chemical and genetic perturbations [4].
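One way to picture the disentangled (P, R, C) representation is as three independent lookup tables whose vectors are concatenated before prediction. The sketch below is only an illustration of that idea: the class names, the embedding size, the random initialization, and the second context label are assumptions made for the example and are not taken from the LPM implementation.

```python
from dataclasses import dataclass
import numpy as np

@dataclass(frozen=True)
class PRCExample:
    """One experiment described by its perturbation, readout, and context."""
    perturbation: str
    readout: str
    context: str

class EmbeddingTable:
    """Hypothetical lookup table: one vector per symbol, created on first use."""
    def __init__(self, dim, seed):
        self.dim = dim
        self.rng = np.random.default_rng(seed)
        self.vectors = {}

    def __call__(self, key):
        if key not in self.vectors:
            self.vectors[key] = self.rng.normal(size=self.dim)
        return self.vectors[key]

def encode(example, p_table, r_table, c_table):
    """Concatenate separate P, R, and C embeddings into one model input."""
    return np.concatenate([
        p_table(example.perturbation),
        r_table(example.readout),
        c_table(example.context),
    ])

p_table, r_table, c_table = (EmbeddingTable(8, seed=s) for s in (1, 2, 3))
ex1 = PRCExample("KRAS knockout", "transcriptome", "A549 lung cancer cells")
ex2 = PRCExample("KRAS knockout", "transcriptome", "hypothetical other cell line")
x1 = encode(ex1, p_table, r_table, c_table)
x2 = encode(ex2, p_table, r_table, c_table)
```

Because the perturbation embedding is shared across contexts, whatever a downstream network learns about "KRAS knockout" in one cell line is available when predicting in another, which is the intuition behind cross-context transfer.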

Table: Performance Comparison of Perturbation Modeling Approaches

| Method | Architecture | Perturbation Types Supported | Prediction Accuracy (Pearson R) | Key Applications |
|---|---|---|---|---|
| LPM [4] | PRC-disentangled transformer | Genetic, chemical, multi-omics | 0.72-0.89 (across contexts) | Mechanism identification, drug-target mapping |
| Geneformer [4] [2] | Transformer encoder | Genetic | 0.61-0.75 | Network dynamics, disease modeling |
| scGPT [4] [5] | GPT-style decoder | Genetic, chemical | 0.65-0.81 | Cell annotation, multi-omic integration |
| CPA [4] | Autoencoder | Chemical, combinations | 0.58-0.72 | Drug combination prediction |
| GEARS [4] | Graph-enhanced MLP | Genetic | 0.63-0.78 | Genetic interaction mapping |

In pharmaceutical research, perturbation models are increasingly used to identify novel therapeutic applications for existing compounds and to understand their mechanisms of action [4] [2]. For example, LPM has demonstrated the ability to cluster pharmacological inhibitors with genetic perturbations targeting the same genes, effectively mapping compound-CRISPR relationships in a unified latent space [4]. This approach identified the anti-inflammatory properties of pravastatin, which clustered near non-steroidal anti-inflammatory drugs in the perturbation space—a finding corroborated by clinical observations [4]. Similarly, scGPT-enabled analysis of tumor-associated macrophages identified C5aR1 gene expression as a key modulator of PARP inhibitor resistance in breast cancer models, suggesting promising therapeutic targets [2].

Experimental Protocols for In Silico Perturbation Modeling

Protocol 1: Setting Up the Computational Environment

Objective: Establish a standardized environment for scFM-based perturbation analysis using containerized solutions to ensure reproducibility across research teams [5].

Materials:

- Computing resources: GPU cluster with ≥16GB VRAM (e.g., NVIDIA A100 or V100)

- Container platform: Docker or Singularity

- BioLLM framework [5]

- Pretrained scFM weights (scGPT, Geneformer, or LPM)

Procedure:

- Environment Configuration:

  - Create a Dockerfile based on PyTorch 2.0+ and Python 3.9+

  - Install BioLLM using `pip install biollm`

  - Verify CUDA compatibility and flash attention support

- Data Preprocessing:

  - Implement quality control using Scanpy or Seurat

  - Filter out cells with mitochondrial gene percentage >20%

  - Remove genes expressed in <10 cells

  - Normalize using sctransform or Scanpy's `pp.normalize_total`

- Model Initialization:

  - Load pretrained weights through BioLLM's standardized API

  - Configure tokenization parameters matching the pretraining setup

  - Set attention mechanisms and hidden dimensions according to model specifications
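The preprocessing thresholds in this protocol can be sketched with plain NumPy on a dense count matrix. This is a simplified stand-in for illustration, not a replacement for Scanpy or Seurat: the `MT-` prefix for mitochondrial genes (a human gene-symbol convention), the `target_sum` of 10,000, the log transform, and the function name `quality_control` are assumptions of the example. In a real pipeline the equivalent Scanpy steps would typically be `sc.pp.calculate_qc_metrics`, `sc.pp.filter_genes`, `sc.pp.normalize_total`, and `sc.pp.log1p`.

```python
import numpy as np

def quality_control(counts, gene_names, max_mito_pct=20.0, min_cells=10, target_sum=1e4):
    """Filter cells and genes, then library-size normalize and log-transform.

    counts: (n_cells, n_genes) raw count matrix.
    Mitochondrial genes are assumed to start with "MT-".
    """
    counts = np.asarray(counts, dtype=float)
    is_mito = np.array([g.upper().startswith("MT-") for g in gene_names])

    # Drop cells whose mitochondrial fraction exceeds the threshold.
    total = counts.sum(axis=1)
    mito_pct = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)
    keep_cells = (mito_pct <= max_mito_pct) & (total > 0)
    counts = counts[keep_cells]

    # Drop genes detected in fewer than `min_cells` of the remaining cells.
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts = counts[:, keep_genes]

    # Scale every cell to the same total count, then apply log1p.
    size = counts.sum(axis=1, keepdims=True)
    normalized = np.log1p(counts / np.maximum(size, 1.0) * target_sum)
    return normalized, keep_cells, keep_genes

# Toy data: 12 cells, 3 genes; cell 0 is dominated by mitochondrial reads,
# and GENE_B is detected in only two cells.
gene_names = ["MT-CO1", "GENE_A", "GENE_B"]
counts = np.tile([1, 10, 0], (12, 1))
counts[0] = [50, 10, 0]
counts[1, 2] = 4
counts[2, 2] = 7
normalized, keep_cells, keep_genes = quality_control(counts, gene_names)
```

Filtering cells before genes means the gene-detection count reflects only cells that pass quality control; the reverse order is also common and can give slightly different gene sets.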

Protocol 2: Performing In Silico Perturbations with LPM

Objective: Simulate transcriptional responses to genetic and chemical perturbations using the Large Perturbation Model architecture [4].

Materials:

- LPM implementation (available from original publication)

- Perturbation database (e.g., LINCS, DepMap)

- Reference single-cell dataset (e.g., CELLxGENE census)

Procedure:

- Data Integration:

  - Format perturbation data as (P, R, C) tuples

  - Align gene identifiers across datasets using HGNC symbols

  - Batch correct using Harmony or scVI if multiple datasets are combined

- Model Inference:

  - Input the desired perturbation (e.g., "KRAS knockout" or "doxorubicin treatment")

  - Specify the biological context (e.g., "A549 lung cancer cells")

  - Define readout parameters (e.g., "transcriptome 48h post-perturbation")

  - Execute a forward pass through LPM to obtain the predicted expression profile

- Result Interpretation:

  - Calculate differential expression compared to unperturbed control

  - Perform pathway enrichment analysis using GO, KEGG, or Reactome

  - Compare predicted expression changes to empirical data when available
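The result-interpretation step can be sketched as a log fold change against the unperturbed control followed by a correlation with measured changes. The expression values below are made up for illustration, and the pseudocount of 1 and the helper names are assumptions of the example; a real analysis would use a proper differential expression test across many cells.

```python
import numpy as np

def log2_fold_change(perturbed, control, pseudocount=1.0):
    """Per-gene log2 fold change; the pseudocount avoids division by zero."""
    perturbed = np.asarray(perturbed, dtype=float)
    control = np.asarray(control, dtype=float)
    return np.log2((perturbed + pseudocount) / (control + pseudocount))

def top_changed_genes(lfc, gene_names, k=3):
    """Genes with the largest absolute predicted change."""
    order = np.argsort(-np.abs(lfc))[:k]
    return [(gene_names[i], float(lfc[i])) for i in order]

def pearson_r(x, y):
    """Pearson correlation between two per-gene vectors."""
    return float(np.corrcoef(x, y)[0, 1])

genes = ["KRAS", "MYC", "CDKN1A", "GAPDH"]
control = np.array([100.0, 50.0, 10.0, 200.0])   # unperturbed mean expression (toy values)
predicted = np.array([25.0, 50.0, 40.0, 210.0])  # model output (toy values)
observed = np.array([30.0, 55.0, 35.0, 190.0])   # empirical measurement (toy values)

predicted_lfc = log2_fold_change(predicted, control)
observed_lfc = log2_fold_change(observed, control)
top = top_changed_genes(predicted_lfc, genes, k=2)
agreement = pearson_r(predicted_lfc, observed_lfc)
```

Correlating fold changes rather than raw expression matters: raw profiles are dominated by baseline expression differences between genes, so they correlate strongly even when the predicted perturbation effect is wrong.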

Protocol 3: Cross-Model Benchmarking with BioLLM

Objective: Compare performance across different scFMs for perturbation prediction tasks using standardized evaluation metrics [5].

Materials:

- BioLLM framework installation [5]

- Benchmark dataset with empirical perturbation responses

- Evaluation metrics suite (silhouette scores, RMSE, Pearson correlation)

Procedure:

- Dataset Preparation:

  - Curate ground truth perturbation dataset (e.g., CRISPR screens with scRNA-seq readouts)

  - Split data into training/validation/test sets (70/15/15%)

  - Implement k-fold cross-validation with 5 folds

- Model Comparison:

  - Initialize multiple scFMs (scGPT, Geneformer, scBERT) through BioLLM APIs

  - Fine-tune each model on identical training data

  - Generate predictions for held-out test perturbations

  - Calculate metrics: Pearson R (gene-level), ASW (cell embedding), RMSE (expression)

- Results Analysis:

  - Perform paired t-tests between model performances

  - Visualize using UMAP/t-SNE for embedding quality assessment

  - Identify model strengths by perturbation type and cellular context
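The split and the scalar metrics in this protocol can be sketched without any framework. The example below splits by perturbation rather than by cell, so that test perturbations are truly held out; that choice, the helper names, and the toy scores are assumptions of the sketch, and the paired t statistic is computed by hand rather than through a statistics library.

```python
import numpy as np

def split_perturbations(perturbations, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle unique perturbations and split them into train/validation/test."""
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(sorted(set(perturbations))).tolist()
    n_train = int(round(fractions[0] * len(shuffled)))
    n_val = int(round(fractions[1] * len(shuffled)))
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])

def rmse(predicted, observed):
    """Root mean squared error between two expression vectors."""
    diff = np.asarray(predicted, dtype=float) - np.asarray(observed, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

def pearson_r(predicted, observed):
    """Gene-level Pearson correlation."""
    return float(np.corrcoef(predicted, observed)[0, 1])

def paired_t_statistic(scores_a, scores_b):
    """t statistic for paired per-perturbation scores of two models."""
    diff = np.asarray(scores_a, dtype=float) - np.asarray(scores_b, dtype=float)
    return float(diff.mean() / (diff.std(ddof=1) / np.sqrt(diff.size)))

perturbations = [f"gene_{i}" for i in range(20)]
train, val, test = split_perturbations(perturbations)

# Toy per-perturbation Pearson scores for two hypothetical models.
t_stat = paired_t_statistic([0.8, 0.7, 0.9], [0.6, 0.6, 0.7])
```

Pairing the scores by perturbation removes the variance that comes from some perturbations simply being easier to predict than others.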

Table: Research Reagent Solutions for scFM Perturbation Modeling

| Resource | Type | Function | Example Implementation |
|---|---|---|---|
| BioLLM [5] | Software Framework | Standardized API for multiple scFMs | Unified interface for scGPT, Geneformer, scBERT |
| CELLxGENE [1] | Data Repository | Curated single-cell datasets | >100 million standardized cells for model training |
| LPM [4] | Specialized Model | Multi-modal perturbation prediction | PRC-disentangled architecture for cross-context prediction |
| scvi-tools [2] | Analysis Suite | Probabilistic modeling of single-cell data | Differential expression, dimensionality reduction |
| TabPFN [7] | Tabular Foundation Model | Small-sample tabular predictions | Bayesian inference for experimental design |
| Self-GenomeNet [3] | SSL Method | Genomic sequence representation | Reverse-complement aware pre-training |

The integration of foundation models into biological research requires both computational resources and specialized knowledge. For researchers beginning with scFMs, starting with user-friendly frameworks like BioLLM provides immediate access to multiple models through standardized APIs, eliminating architectural inconsistencies and simplifying benchmarking [5]. When designing perturbation studies, careful consideration of model selection is crucial—encoder-based models like Geneformer excel at gene-level tasks and network inference, while decoder-based models like scGPT demonstrate stronger performance in cell-level predictions and batch effect correction [5].

Data quality remains paramount for successful perturbation modeling. Researchers should prioritize datasets with appropriate controls, sufficient replication, and minimal technical artifacts. For novel therapeutic applications, integration across multiple evidence streams—including foundation model predictions, electronic health records, and experimental validation—creates a compelling case for candidate targets [4] [2]. As these technologies mature, the scientific community is developing standards for reporting model predictions and establishing benchmarks for methodological comparisons, further accelerating the adoption of foundation models in biological discovery and drug development.

Single-cell foundation models (scFMs) represent a transformative approach in computational biology, drawing direct inspiration from large language models (LLMs) in natural language processing (NLP). The core concept involves reframing cellular biology as a linguistic system, where individual cells are treated as "sentences" and the genes within them as "words" or "tokens" [8]. This analogy allows researchers to apply the powerful transformer architecture, which has revolutionized machine understanding of human language, to decipher the complex "language" of cellular function and state [8]. This paradigm shift is particularly impactful for in silico perturbation modeling, where the goal is to predict how targeted genetic interventions might alter cellular states, potentially accelerating therapeutic discovery [9].

Foundational Concepts: From Biological Data to Linguistic Units

The Tokenization Process: Converting Gene Expression to Tokens

Tokenization is the critical first step that converts raw, non-sequential gene expression data into a structured format that transformer models can process. Unlike words in a sentence, genes have no inherent order, requiring scFMs to implement specific strategies to impose sequence [8].

- Gene Identity Tokens: Each gene is represented by a unique identifier token, analogous to a word in a dictionary. These tokens are typically converted into dense vector representations (embeddings) [8] [10].

- Expression Value Encoding: A gene's expression level in a given cell must be incorporated alongside its identity. Common methods include:

  - Value Binning: Discretizing continuous expression values into categorical bins (e.g., low, medium, high) [10].

  - Value Projection: Using a neural network layer to project the continuous value into an embedding vector [10].

  - Rank-based Ordering: Ranking genes by their expression level within a cell and using this order to create the sequence [8] [10].

- Special Tokens: Models often include additional tokens to provide context, such as `[CELL]` tokens to represent cell-level information, or modality indicators for multi-omics data [8].
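Two of the encoding strategies listed above, rank-based ordering and value binning, can be sketched as follows. This is a simplified illustration: published models apply their own normalization before ranking or binning, and the quantile-based bin edges, the reserved bin 0 for unexpressed genes, and the function names are assumptions of the example.

```python
import numpy as np

def rank_tokens(expr, gene_ids, max_len=2048):
    """Order expressed genes from highest to lowest expression.

    Unexpressed genes are dropped and the sequence is truncated to max_len.
    """
    expr = np.asarray(expr, dtype=float)
    expressed = np.flatnonzero(expr > 0)
    order = expressed[np.argsort(-expr[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:max_len]]

def bin_values(expr, n_bins=5):
    """Discretize nonzero expression into quantile bins 1..n_bins (0 = not expressed)."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.size, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        # Interior quantile edges computed per cell from its nonzero values.
        edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nonzero] = np.digitize(expr[nonzero], edges, right=True) + 1
    return bins

gene_ids = ["A", "B", "C", "D"]
expr = [0.0, 5.0, 2.0, 9.0]
tokens = rank_tokens(expr, gene_ids)   # gene identities ordered by expression
bins = bin_values(expr, n_bins=3)      # one categorical value per gene
```

Rank-based ordering discards absolute magnitudes and keeps only relative order, while binning keeps a coarse magnitude per gene and leaves the gene order to some other rule.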

Model Architecture: The Transformer Backbone

Most scFMs are built on the transformer architecture, which uses self-attention mechanisms to weigh the importance of all genes (tokens) when processing the information of each individual gene [8]. Two primary architectural variants are employed:

- Encoder-based Models (e.g., scBERT, Geneformer): These use a bidirectional attention mechanism, meaning each gene token can attend to all other genes in the cell simultaneously. This is well-suited for classification and embedding tasks [8].

- Decoder-based Models (e.g., scGPT): These use a unidirectional (masked) attention mechanism, where each token can only attend to previous tokens in the sequence. This architecture is often used for generative tasks, such as predicting masked genes [8].
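The difference between the two attention patterns comes down to which token pairs are allowed to interact. A minimal sketch, with the helper name `attention_mask` chosen for this example:

```python
import numpy as np

def attention_mask(n_tokens, causal):
    """Boolean matrix: entry [i, j] is True if token i may attend to token j."""
    full = np.ones((n_tokens, n_tokens), dtype=bool)
    # A causal (unidirectional) mask keeps only the lower triangle,
    # so each token sees itself and earlier positions.
    return np.tril(full) if causal else full

bidirectional = attention_mask(4, causal=False)  # encoder-style
unidirectional = attention_mask(4, causal=True)  # decoder-style
```

In practice, disallowed positions have their attention scores set to a large negative number before the softmax, so they receive effectively zero weight.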

Table 1: Overview of Prominent Single-Cell Foundation Models

| Model Name | Architecture Type | Primary Pretraining Task | Input Gene Count | Key Differentiating Feature |
|---|---|---|---|---|
| Geneformer [10] | Encoder | Masked Gene Modeling (Gene ID prediction) | 2,048 (ranked) | Uses ranked gene lists; lookup table for gene embeddings. |
| scGPT [10] | Encoder (with masking) | Iterative Masked Gene Modeling (Value prediction) | ~1,200 (HVGs) | Value binning; multi-omics capability; generative pretraining. |
| scFoundation [10] | Asymmetric Encoder-Decoder | Read-depth-aware Masked Gene Modeling | ~19,000 | Uses nearly the full transcriptome; value projection. |
| UCE [10] | Encoder | Binary prediction of gene expression | 1,024 (genomic position) | Uses protein sequence embeddings from ESM-2. |

The diagram below illustrates the core workflow of how a single cell's data flows through a typical scFM based on the transformer architecture.

Figure 1: From Cell to Embedding: The Core scFM Workflow. This diagram visualizes the process of converting a cell's gene expression profile into a unified latent representation via tokenization and transformer layers.

Protocols for In Silico Perturbation Modeling

In silico perturbation (ISP) is a premier application of scFMs, enabling the prediction of a cell's transcriptional state after a hypothetical genetic manipulation (e.g., gene knockout or overexpression).

Protocol 1: Open-Loop In Silico Perturbation

This is the baseline method for predicting perturbation effects without incorporating prior experimental perturbation data into the model fine-tuning [9].

- Model Fine-Tuning for State Classification:

  - Input: A dataset of single-cell RNA sequencing (scRNA-seq) data from two biological states (e.g., healthy vs. diseased, resting vs. activated T-cells) [9].

  - Process: A pretrained scFM (e.g., Geneformer) is fine-tuned on this dataset to accurately classify a cell's state. This teaches the model the transcriptomic features distinguishing the states.

  - Output: A fine-tuned model whose latent space is structured to separate the two states.

- Perturbation Simulation and Prediction:

  - Input: A query cell from the "diseased" (or target) population.

  - Process: The fine-tuned model is prompted to simulate the effect of perturbing a specific gene (e.g., setting its expression to zero for a knockout). The model generates a predicted expression profile for the perturbed cell [9].

  - Analysis: The predicted profile is projected into the model's latent space. The direction and magnitude of shift from the original "diseased" state towards the "healthy" state is quantified. A significant shift suggests the perturbation could be therapeutic [9].
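The shift analysis can be quantified in several ways; one simple option is to project the embedding change onto the axis connecting the two state centroids. The sketch below assumes cell embeddings are already available as vectors, and the scoring rule, function name, and two-dimensional toy embeddings are illustrative assumptions rather than the method of the cited study.

```python
import numpy as np

def shift_toward_target(original, perturbed, source_centroid, target_centroid):
    """Fraction of the source-to-target distance covered by the perturbation.

    1.0 means the cell moved the full centroid-to-centroid distance toward the
    target state; negative values mean it moved away from the target.
    """
    axis = np.asarray(target_centroid, dtype=float) - np.asarray(source_centroid, dtype=float)
    delta = np.asarray(perturbed, dtype=float) - np.asarray(original, dtype=float)
    return float(np.dot(delta, axis) / np.dot(axis, axis))

diseased_centroid = np.array([0.0, 0.0])
healthy_centroid = np.array([4.0, 0.0])
cell_before = np.array([0.5, 0.2])   # embedding of the unperturbed cell (toy values)
cell_after = np.array([2.5, 0.1])    # embedding after the simulated knockout (toy values)

score = shift_toward_target(cell_before, cell_after, diseased_centroid, healthy_centroid)
```

Ranking candidate genes by this score across many query cells gives a list of perturbations predicted to move cells toward the target state.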

Table 2: Performance of Open-Loop ISP vs. Differential Expression (DE) for T-cell Activation [9]

| Method | Positive Predictive Value (PPV) | Negative Predictive Value (NPV) | Sensitivity | Specificity |
|---|---|---|---|---|
| Open-Loop ISP | 3% | 98% | 48% | 60% |
| Differential Expression (DE) | 3% | 78% | 40% | 50% |
| ISP & DE Overlap | 7% | - | - | - |

Protocol 2: Closed-Loop In Silico Perturbation

This advanced protocol iteratively incorporates experimental data to significantly enhance prediction accuracy, creating a "virtuous cycle" of model improvement [9].

- Initial Model Fine-Tuning: Perform the same initial fine-tuning step as in the Open-Loop protocol.

- Integration of Perturbation Data:

  - Input: scRNA-seq data from a Perturb-seq (or similar) experiment, where cells have been experimentally perturbed. The data is labeled only with the cell's resulting state (e.g., activated/resting), not the perturbed gene's identity [9].

  - Process: The model is further fine-tuned on this combined dataset (original state data + perturbation data). This teaches the model how real perturbations manifest in transcriptomic space.

- Closed-Loop Prediction and Validation:

  - Process: ISP is performed with the newly fine-tuned model on a set of candidate genes.

  - Key Advantage: This method dramatically improves prediction quality. In a T-cell activation study, it increased the Positive Predictive Value (PPV) from 3% to 9% and boosted sensitivity to 76% and specificity to 81% [9].

  - Iteration: The top predictions can be validated experimentally, and the results can be fed back into the model, further refining its accuracy in subsequent cycles.
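The four metrics used to compare open-loop and closed-loop predictions follow directly from a confusion matrix over the screened genes. A minimal sketch, with made-up gene names and hit lists used purely to show the arithmetic:

```python
def screening_metrics(predicted_hits, true_hits, screened_genes):
    """PPV, NPV, sensitivity, and specificity for a set of screened genes."""
    predicted_hits, true_hits = set(predicted_hits), set(true_hits)
    tp = fp = tn = fn = 0
    for gene in screened_genes:
        predicted, actual = gene in predicted_hits, gene in true_hits
        if predicted and actual:
            tp += 1
        elif predicted:
            fp += 1
        elif actual:
            fn += 1
        else:
            tn += 1

    def ratio(num, den):
        # Undefined ratios (empty denominator) are reported as NaN.
        return num / den if den else float("nan")

    return {
        "ppv": ratio(tp, tp + fp),          # predicted hits that are real
        "npv": ratio(tn, tn + fn),          # predicted non-hits that are real non-hits
        "sensitivity": ratio(tp, tp + fn),  # real hits that were found
        "specificity": ratio(tn, tn + fp),  # real non-hits correctly rejected
    }

screened = [f"g{i}" for i in range(20)]
true_hits = ["g0", "g1", "g2", "g3"]
predicted_hits = ["g0", "g1", "g2", "g10", "g11", "g12"]
metrics = screening_metrics(predicted_hits, true_hits, screened)
```

When true hits are rare among screened genes, PPV stays low even for a reasonably sensitive and specific predictor, which is why single-digit PPV values can coexist with much higher sensitivity and specificity.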

Figure 2: The Closed-Loop Framework for Iterative Model Improvement. This workflow demonstrates how integrating experimental perturbation data creates a feedback loop that enhances the scFM's predictive accuracy.

Performance Benchmarking and Current Limitations

Despite their promise, critical benchmarking studies reveal that the performance of scFMs, particularly for perturbation prediction, must be rigorously evaluated against simpler baselines.

Benchmarking Against Simple Baselines

A 2025 benchmark study compared five scFMs and two other deep learning models against simple linear models for predicting transcriptome changes after single or double genetic perturbations [11]. The findings were sobering:

- Double Perturbation Prediction: For predicting effects of double-gene perturbations, no deep learning model outperformed a simple additive baseline (sum of individual logarithmic fold changes) [11].

- Unseen Perturbation Prediction: In predicting effects of entirely unseen perturbations, foundation models like scGPT and Geneformer were unable to consistently outperform a simple baseline that always predicts the mean expression from the training set [11].

- Utility of Pretrained Embeddings: While the end-to-end models struggled, the gene embeddings extracted from scGPT and scFoundation could be used in a simple linear model, which then performed competitively. This suggests the pretraining does capture some useful biological information, but the models' complex decoders may not leverage it optimally for this task [11].
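Both baselines named above are short enough to write out in full, which is part of what makes the benchmark result notable. The sketch below uses toy numbers, and the function names are chosen for this example.

```python
import numpy as np

def additive_baseline(lfc_a, lfc_b):
    """Predict a double perturbation as the sum of the two single-gene
    log fold changes (no interaction term)."""
    return np.asarray(lfc_a, dtype=float) + np.asarray(lfc_b, dtype=float)

def mean_baseline(training_profiles):
    """Predict every unseen perturbation as the mean training-set profile."""
    return np.mean(np.asarray(training_profiles, dtype=float), axis=0)

def l2_error(predicted, observed):
    """Euclidean distance between predicted and observed profiles."""
    return float(np.linalg.norm(np.asarray(predicted, dtype=float) - np.asarray(observed, dtype=float)))

# Toy per-gene log fold changes for two single perturbations.
lfc_a = [1.0, 0.0, -1.0]
lfc_b = [0.5, 0.5, 0.0]
double_prediction = additive_baseline(lfc_a, lfc_b)

# Toy training profiles (rows are perturbations, columns are genes).
unseen_prediction = mean_baseline([[1.0, 2.0], [3.0, 4.0]])
```

Any model evaluated on these tasks should be compared against `l2_error` (or a similar metric) for these two predictions before its own score is interpreted.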

Table 3: Key Findings from Benchmarking scFMs on Perturbation Prediction [11]

| Benchmark Scenario | Top Performing Model(s) | Key Implication |
|---|---|---|
| Double Gene Perturbation | Additive Linear Model (Baseline) | Current scFMs fail to capture non-additive genetic interactions better than a simple heuristic. |
| Unseen Single Gene Perturbation | Mean Prediction (Baseline); Linear Model with Perturbation Data | Pretraining on single-cell atlases offers less predictive power than pretraining on perturbation data itself. |
| Use of Model Embeddings | Linear Model using scGPT/scFoundation Gene Embeddings | Pretrained embeddings contain valuable biological knowledge, but may be better utilized by simpler models. |

Practical Application: A Case Study in RUNX1-FPD

The closed-loop framework has shown tangible success in a real disease context. Researchers applied it to RUNX1-Familial Platelet Disorder (RUNX1-FPD), a rare blood disorder [9]. After fine-tuning Geneformer on hematopoietic stem cells (HSCs) with RUNX1 loss-of-function, closed-loop ISP identified 14 high-confidence gene targets whose perturbation could shift diseased cells toward a healthy state. This led to the identification of several therapeutic pathways, including mTOR and protein kinase C, demonstrating the potential of scFMs to accelerate drug discovery for rare diseases where samples are scarce [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for scFM-Based Perturbation Studies

| Item / Reagent | Function in scFM Research |
|---|---|
| Public Cell Atlas Data (e.g., CZ CELLxGENE) [8] | Provides the large-scale, diverse single-cell datasets required for pretraining scFMs. Serves as a source of healthy/diseased reference data. |
| Perturb-seq / CRISPR Screens [9] | Generates the essential ground-truth dataset of single-cell transcriptomes following experimental genetic perturbations. Critical for closed-loop fine-tuning. |
| High-Quality scRNA-seq Datasets | Used for the initial fine-tuning of scFMs to learn the transcriptional signatures of specific biological states (e.g., T-cell activation, disease model vs. control). |
| Engineered Cell Models [9] | Provides a controlled system for modeling genetic diseases (e.g., RUNX1-FPD) and validating in silico perturbation predictions. |
| GPU Computing Clusters | Provides the necessary computational power for the fine-tuning and inference of large transformer models, which is computationally intensive [8]. |

Single-cell foundation models (scFMs) represent a revolutionary convergence of deep learning and computational biology, with transformer architectures at their core. These models fundamentally reinterpret cellular biology by treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [12]. This conceptual framework allows researchers to analyze cellular heterogeneity and complex regulatory networks using the same architectural principles that have revolutionized natural language processing. The adaptation of transformer models to single-cell genomics addresses a critical need for unified frameworks capable of integrating and comprehensively analyzing rapidly expanding biological data repositories, which now encompass tens of millions of single-cell omics datasets spanning diverse tissues, species, and biological conditions [12] [13].

The core innovation lies in applying self-supervised learning to vast single-cell datasets, enabling models to capture fundamental biological principles that generalize across diverse downstream tasks. Unlike traditional single-task models, scFMs leverage transformer architectures to incorporate diverse omics data—including single-cell RNA sequencing (scRNA-seq), single-cell ATAC sequencing (scATAC-seq), multiome sequencing, spatial transcriptomics, and proteomics—extracting latent patterns at both cell and gene/feature levels [12]. This architectural foundation has enabled breakthroughs in cross-species cell annotation, in silico perturbation modeling, and gene regulatory network inference, representing a paradigm shift toward scalable, generalizable frameworks capable of unifying diverse biological contexts [13].

Architectural Foundations: From Attention to Biological Insight

Core Attention Mechanism Components

The transformative capability of scFMs originates from the attention mechanism, which enables models to dynamically weight the importance of different genes when making predictions about cellular states. The mechanism operates through three fundamental components:

- Queries (Q): Vectors that represent the current focus or question being asked about the cellular state, analogous to asking "What here is relevant?" in a biological context [14].

- Keys (K): Vectors that contain information about what each gene token can provide, essentially answering "Here's what I have!" from the perspective of individual genomic features [14].

- Values (V): Vectors that contain the actual biological information used to construct the model's output representations [14].

These components are derived from the same input gene embeddings through learned linear transformations, allowing the model to project genomic data into spaces where biological relationships become computationally apparent [14]. The attention weights are calculated through scaled dot-product operations, followed by softmax normalization to convert similarity scores into probabilities that highlight the most important genetic relationships for any given cellular context [14].
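Written out, the computation is `softmax(Q K^T / sqrt(d_k)) V`. The following single-head sketch uses random matrices in place of learned weights and four toy gene tokens; the dimensions and variable names are assumptions of the example.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(embeddings, w_q, w_k, w_v):
    """Single-head self-attention over gene token embeddings.

    embeddings: (n_tokens, d_model); w_q, w_k, w_v: (d_model, d_k) projections.
    Returns the attended outputs and the (n_tokens, n_tokens) attention weights.
    """
    q = embeddings @ w_q   # what each token is looking for
    k = embeddings @ w_k   # what each token offers
    v = embeddings @ w_v   # the information that gets mixed
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
embeddings = rng.normal(size=(4, 8))            # four gene tokens, d_model = 8
w_q, w_k, w_v = (rng.normal(size=(8, 8)) for _ in range(3))
output, weights = scaled_dot_product_attention(embeddings, w_q, w_k, w_v)
```

Row `i` of `weights` shows how strongly gene token `i` draws on every other token when its output representation is built.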

Multi-Head Attention and Biological Specialization

Advanced scFMs employ multi-head attention, which operates like a team of biological experts analyzing the same cellular data from different perspectives. Each attention "head" independently focuses on distinct biological relationships—such as regulatory dynamics, functional pathways, or co-expression patterns—with their outputs merged to form rich, nuanced cellular representations [14]. This architectural approach enables models to capture diverse relationship types simultaneously, making them robust to biological variability and complexity [14].
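Mechanically, multi-head attention splits the projected embeddings into several lower-dimensional slices, runs attention on each slice independently, and merges the results. The sketch below uses random weights and toy dimensions; whether a given head ends up tracking a particular kind of biological relationship is learned during training and is not something this code demonstrates.

```python
import numpy as np

def multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads):
    """Multi-head self-attention.

    x: (n_tokens, d_model); all weight matrices: (d_model, d_model).
    Returns the merged output and per-head weights of shape
    (n_heads, n_tokens, n_tokens).
    """
    n_tokens, d_model = x.shape
    if d_model % n_heads != 0:
        raise ValueError("d_model must be divisible by n_heads")
    d_head = d_model // n_heads

    def split(m):
        # (n_tokens, d_model) -> (n_heads, n_tokens, d_head)
        return m.reshape(n_tokens, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)

    heads = weights @ v                                   # (n_heads, n_tokens, d_head)
    merged = heads.transpose(1, 0, 2).reshape(n_tokens, d_model)
    return merged @ w_o, weights

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 8))                               # five gene tokens
w_q, w_k, w_v, w_o = (rng.normal(size=(8, 8)) for _ in range(4))
out, head_weights = multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads=2)
```

Each head produces its own attention pattern in `head_weights`, and the output projection `w_o` mixes the concatenated head outputs back into one representation per token.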

For single-cell data, which lacks natural sequential ordering unlike linguistic data, transformers require specialized adaptation through deterministic gene ranking strategies. Common approaches include ranking genes by expression levels within each cell or partitioning genes into expression value bins, creating artificial "sentences" from fundamentally non-sequential data [12]. Positional encoding schemes are then adapted to represent the relative order or rank of each gene in the cell, preserving critical information about expression hierarchies [12].

Model Architecture Variations

scFMs demonstrate significant architectural diversity while maintaining core transformer principles:

Table: Architectural Variations in Single-Cell Foundation Models

| Model Type | Architecture | Tokenization Approach | Gene Ranking Method | Notable Examples |
|---|---|---|---|---|
| Encoder-based | Bidirectional transformer | Gene-level tokens | Expression magnitude ranking | scBERT, Geneformer |
| Decoder-based | Autoregressive transformer | Natural language tokenization | Rank-based sequencing | cell2sentence (C2S) |
| Hybrid | Transformer with specialized components | Combined gene and metadata tokens | Multi-factor ranking | scGPT, scPlantFormer |

Most scFMs use variants of the transformer architecture configured with different attention head counts, layer depths, and hidden dimension sizes [12]. Encoder-based models like scBERT employ bidirectional attention to capture genomic context from both "directions" simultaneously, while decoder-based models like cell2sentence leverage autoregressive approaches that generate gene sequences sequentially [15]. Emerging hybrid architectures incorporate specialized components for spatial relationships, phylogenetic constraints, or multimodal integration [13].

Application Notes: Experimental Protocols for scFM Implementation

Protocol 1: In Silico Perturbation Prediction with Closed-Loop Fine-Tuning

Purpose: To predict transcriptional responses to genetic perturbations and iteratively improve prediction accuracy through experimental feedback.

Background: In silico perturbation (ISP) modeling enables researchers to simulate how cells respond to genetic manipulations without costly wet-lab experiments. The "closed-loop" approach significantly enhances prediction accuracy by incorporating experimental perturbation data during model fine-tuning [9].

Materials:

- Pre-trained scFM (e.g., Geneformer-30M-12L)

- Single-cell RNA sequencing data from resting and activated cell states

- Perturb-seq data with genetic perturbation labels

- Computational environment with GPU acceleration

Procedure:

- Baseline Fine-tuning: Fine-tune the pre-trained scFM to classify cell states using scRNA-seq data from resting and activated conditions. Validate classification accuracy on hold-out test sets (>99% accuracy achievable) [9].

- Open-loop ISP: Perform in silico perturbation across the gene set, simulating both gene knockout and overexpression. For Geneformer, this involves computationally masking target genes and predicting expression changes [9].

- Experimental Validation: Validate open-loop predictions against orthogonal measurement modalities (e.g., flow cytometry for T-cell activation markers) to establish baseline performance [9].

- Closed-loop Fine-tuning: Incorporate Perturb-seq data alongside original scRNA-seq data during additional fine-tuning cycles. Critically, the Perturb-seq data should be labeled with activation status but not with specific gene perturbations to prevent data leakage [9].

- Closed-loop ISP: Perform perturbation predictions using the refined model, excluding genes used in perturbation training to avoid circularity [9].

- Performance Assessment: Evaluate positive predictive value, negative predictive value, sensitivity, and specificity against ground truth measurements. The closed-loop approach has demonstrated three-fold improvement in positive predictive value (from 3% to 9%) with concurrent enhancements in other metrics [9].
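
The four metrics in the Performance Assessment step follow from a confusion matrix over the screened genes. The sketch below assumes predictions and ground truth are available as sets of gene names; the function name `screen_metrics` and the toy gene labels are hypothetical.

```python
def screen_metrics(predicted_hits, true_hits, all_genes):
    """PPV, NPV, sensitivity, and specificity for a perturbation screen."""
    predicted_hits, true_hits = set(predicted_hits), set(true_hits)
    tp = len(predicted_hits & true_hits)                     # predicted and confirmed
    fp = len(predicted_hits - true_hits)                     # predicted, not confirmed
    fn = len(true_hits - predicted_hits)                     # confirmed, not predicted
    tn = len(set(all_genes) - predicted_hits - true_hits)    # neither

    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }

all_genes = [f"g{i}" for i in range(10)]
metrics = screen_metrics(
    predicted_hits=["g0", "g1", "g2", "g3"],
    true_hits=["g0", "g1", "g4"],
    all_genes=all_genes,
)
print(metrics)
```

In screens where true hits are rare, PPV is typically far lower than NPV, which is consistent with the single-digit PPV values reported for both open-loop and closed-loop ISP [9].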

Troubleshooting:

- If performance improvements plateau, incrementally add perturbation examples (10-20 examples are often sufficient for substantial improvement) [9].

- For batch effect concerns, incorporate batch information as special tokens during fine-tuning [12].

- If the model fails to converge, verify that the gene tokenization strategy matches the one used during pre-training [12].

Protocol 2: Mechanistic Interpretability via Transcoder-based Circuit Analysis

Purpose: To extract biologically interpretable decision-making circuits from scFMs, connecting model internal mechanisms to known biological pathways.

Background: A significant challenge in scFMs is the "black box" nature of their predictions. Transcoder-based circuit analysis resolves the polysemanticity problem—where individual model components encode multiple biological concepts simultaneously—by decomposing transformer operations into interpretable components [15].

Materials:

- Trained scFM (e.g., cell2sentence model)

- Target dataset for analysis (e.g., Heart Cell Atlas v2)

- Transcoder implementation adapted for scFMs

- Computational resources for feature attribution analysis

Procedure:

- Transcoder Training: Train transcoders on each MLP layer of the target scFM using a biologically relevant dataset (90/10 train/validation split recommended). Use a maximum learning rate of 1×10⁻⁴ and sparsity regularization to encourage interpretable features [15].

- Feature Activation Analysis: For biological questions of interest, identify transcoder features with high activation levels. These represent specialized biological functions learned by the model [15].

- Circuit Tracing: Calculate attribution scores between transcoder feature pairs using the formula $z^{(l,i)}(x)\,\bigl(f_{\mathrm{dec}}^{(l,i)} \cdot f_{\mathrm{enc}}^{(l',j)}\bigr)$, where $z^{(l,i)}(x)$ is the input-dependent activation and the dot product $f_{\mathrm{dec}}^{(l,i)} \cdot f_{\mathrm{enc}}^{(l',j)}$ represents the input-independent connection [15].

- Attention Head Integration: Track information flow across different genomic tokens through attention head OV matrices, identifying which gene tokens contribute to specific biological features [15].

- Computational Subgraph Extraction: Iteratively apply attribution calculations to identify primary computational paths that activate specific biological features, integrating these paths into sparse computational subgraphs representing the model's internal decision-making process [15].

- Biological Validation: Establish correspondence between extracted circuits and known biological mechanisms through literature validation and experimental data comparison [15].
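
The attribution formula in the Circuit Tracing step can be evaluated for all feature pairs at once. This is a minimal sketch, assuming the upstream decoder vectors and downstream encoder vectors are stored as rows of two matrices; the array names and toy values are illustrative and not taken from any transcoder implementation.

```python
import numpy as np

def pairwise_attribution(z_up, dec_up, enc_down):
    """Attribution from every upstream feature i to every downstream feature j.

    z_up:     (n_up,)       activations z^(l,i)(x) on one input
    dec_up:   (n_up, d)     decoder vectors f_dec^(l,i)
    enc_down: (n_down, d)   encoder vectors f_enc^(l',j)
    """
    connection = dec_up @ enc_down.T        # input-independent term, shape (n_up, n_down)
    return z_up[:, None] * connection       # scaled by input-dependent activation

z_up = np.array([2.0, 0.0])                     # second feature is inactive on this input
dec_up = np.array([[1.0, 0.0], [0.0, 1.0]])
enc_down = np.array([[0.5, 0.5], [1.0, -1.0]])
scores = pairwise_attribution(z_up, dec_up, enc_down)
print(scores)
```

An inactive upstream feature contributes zero attribution regardless of how strongly its decoder vector aligns with a downstream encoder, which is why circuits extracted this way are specific to the input being analyzed.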

Troubleshooting:

- If transcoder features remain polysemantic, increase sparsity regularization strength [15].

- For weak circuit signals, focus on high-variance biological contexts where model predictions are most confident.

- If biological interpretation proves difficult, integrate gene ontology databases or known pathway information as prior knowledge [15].

Benchmarking and Performance Evaluation

Quantitative Performance Assessment

Recent systematic benchmarking reveals critical insights into scFM capabilities and limitations, particularly for perturbation prediction tasks:

Table: Benchmarking Results for Perturbation Effect Prediction

| Model/Approach | Double Perturbation Prediction Error (L2 Distance) | Single Perturbation Prediction | Genetic Interaction Detection | Computational Efficiency |
|---|---|---|---|---|
| Simple Additive Baseline | Reference performance | Varies by dataset | Not applicable | Most efficient |
| No Change Baseline | Higher than additive | Outperformed by linear models | Limited to buffering interactions | Most efficient |
| scGPT | Higher than baselines | Comparable to linear models | Poor (mostly buffering) | Moderate |
| Geneformer | Higher than baselines | Below linear models | Poor | Moderate |
| scBERT | Highest among benchmarks | Below linear models | Poor | Less efficient |
| Linear Model with Pretrained Embeddings | N/A | Best performance | Varies | Efficient |

Notably, current scFMs generally do not outperform deliberately simple baselines for perturbation effect prediction, particularly in zero-shot settings where models must generalize without task-specific fine-tuning [11] [16] [17]. The additive baseline model, which simply sums individual logarithmic fold changes for double perturbations, consistently outperforms or matches complex foundation models across multiple benchmarks [11]. Similarly, simple linear models using pretrained perturbation embeddings outperform foundation models for predicting effects of unseen single perturbations [11].
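
The additive baseline is simple enough to state in a few lines. The sketch below uses made-up log fold change (LFC) vectors over three genes; the function names and numbers are illustrative, and the "no change" prediction is modeled here as an all-zero LFC vector.

```python
import numpy as np

def additive_baseline(lfc_a, lfc_b):
    """Predict the double-perturbation LFC as the sum of the single-perturbation LFCs."""
    return np.asarray(lfc_a) + np.asarray(lfc_b)

def l2_error(predicted, observed):
    """L2 distance between predicted and observed LFC vectors."""
    return float(np.linalg.norm(np.asarray(predicted) - np.asarray(observed)))

lfc_a = np.array([1.0, 0.0, -0.5])         # perturbation A alone
lfc_b = np.array([0.5, 0.2, 0.0])          # perturbation B alone
observed_ab = np.array([1.5, 0.2, -1.5])   # A+B measured; third gene deviates from additivity

err_additive = l2_error(additive_baseline(lfc_a, lfc_b), observed_ab)
err_no_change = l2_error(np.zeros(3), observed_ab)
print(err_additive, err_no_change)
```

The residual between the additive prediction and the observed profile is concentrated in the third gene, which is the kind of deviation a genetic interaction analysis would flag.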

Critical Considerations for Model Selection

Performance evaluations across multiple domains reveal distinct model strengths and trade-offs:

- Embedding Quality: scGPT consistently generates the highest quality cell embeddings in zero-shot settings, achieving superior separation of cell types in visualization landscapes [5].

- Batch Effect Correction: scGPT demonstrates superior performance in removing technical batch effects while preserving biological distinctions, though all models struggle with cross-technology integration [5].

- Input Length Sensitivity: scGPT embedding quality improves with longer gene input sequences, while scBERT performance typically degrades with increasing sequence length [5].

- Computational Efficiency: scGPT and Geneformer show superior memory and computational efficiency compared to scBERT and scFoundation, making them more practical for large-scale analyses [5].

These findings highlight that model selection must be guided by specific application requirements rather than assuming general superiority of foundation models over simpler approaches.

Research Reagent Solutions: Essential Computational Tools

Table: Essential Research Reagents and Computational Tools for scFM Research

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| BioLLM | Standardized Framework | Unified interface for diverse scFMs | Model benchmarking and deployment [5] |
| PertEval-scFM | Benchmarking Framework | Standardized evaluation of perturbation predictions | Model performance validation [16] [17] |
| CZ CELLxGENE | Data Repository | Unified access to annotated single-cell datasets | Pretraining data sourcing [12] |
| DISCO | Data Atlas | Aggregated single-cell data for federated analysis | Multimodal data integration [13] |
| cell2sentence (C2S) | Pre-trained Model | Decoder-based scFM with biological literature training | Interpretability studies [15] |
| Geneformer | Pre-trained Model | Encoder-based scFM with focus on gene relationships | Gene-level tasks [5] |
| scGPT | Pre-trained Model | Large-scale transformer supporting multi-omic tasks | General-purpose applications [5] |

Visualizing Architectural Components and Workflows

[Figure: Core scFM Architecture with Attention Mechanisms]

[Figure: Closed-Loop In Silico Perturbation Workflow]

While transformer-based scFMs represent a significant architectural advancement in computational biology, substantial challenges remain. Current models face limitations in perturbation effect prediction, often failing to outperform simple linear baselines [11] [16]. The interpretability gap persists despite advances in mechanistic interpretability techniques [15], and batch effects continue to complicate cross-study integration [5].

Future developments will likely focus on specialized architectures for perturbation modeling, improved multimodal integration strategies, and more biologically-grounded benchmarking frameworks. The emergence of closed-loop approaches that iteratively incorporate experimental feedback demonstrates promising pathways for enhancing predictive accuracy [9]. As the field matures, standardized evaluation frameworks like BioLLM [5] and PertEval-scFM [16] [17] will be crucial for directing methodological progress toward biologically meaningful improvements rather than purely algorithmic advancements.

The integration of transformer architectures with single-cell genomics has substantially expanded the scale and scope of computational biological analysis. Through continued architectural innovation and rigorous biological validation, scFMs hold the potential to evolve from powerful pattern recognition tools into genuinely predictive in silico models of cellular behavior.

The development of robust single-cell foundation models (scFMs) for in silico perturbation modeling is fundamentally constrained by the scale, diversity, and quality of the data used for their pretraining. A foundation model is a large-scale deep learning model pretrained on vast datasets through self-supervised learning, which allows it to be adapted to a wide range of downstream tasks [1]. The premise of scFMs is that by exposing a model to millions of cells encompassing diverse tissues, species, and conditions, it can learn fundamental, generalizable principles of cellular identity and function [1]. For perturbation modeling, this extensive pretraining is critical, as it allows the model to internalize a representation of the "normal" cellular state space, against which the effects of genetic or chemical perturbations can be predicted. The success of models like scGPT, pretrained on over 33 million cells, illustrates the appeal of this approach [18]. This protocol details the data sources and methodologies for constructing a comprehensive pretraining corpus tailored for scFMs focused on perturbation biology.

Compendium of Public Data Repositories

Assembling a pretraining dataset requires leveraging multiple public repositories that host and standardize single-cell data. The table below summarizes the key resources, their primary content, and quantitative metrics relevant for scFM development.

Table 1: Key Public Repositories for Single-Cell and Perturbation Data

| Repository Name | Primary Content & Specialization | Reported Scale (Cells / Datasets) | Notable Features for Perturbation Modeling |
|---|---|---|---|
| CZ CELLxGENE [1] | Curated single-cell census data; multi-species, multi-tissue | Over 100 million cells [1] | Unified access to annotated datasets; standardized for analysis |
| Human Cell Atlas (HCA) [19] | Multi-omic, community-generated open data | 70.3 million cells; 523 projects; 11.2k donors [19] | Aims for a comprehensive reference map of all human cells |
| PerturbSeq.db [20] | Curated single-cell perturbation datasets | 189 datasets (165 scRNA-seq, 24 scATAC-seq) from 77 studies [20] | Dedicated to genetic (CRISPR) and chemical perturbation data |
| Expression Atlas [21] | Bulk and single-cell gene expression under different conditions | Not reported | Provides differential expression data across diverse biological conditions |
| DISCO [18] | Single-cell omics data browser and repository | Aggregates over 100 million cells [18] | Supports federated analysis across multiple data sources |
| Gene Expression Omnibus (GEO) / SRA [1] | Primary archive for high-throughput sequencing data | Thousands of single-cell studies [1] | Raw, primary data; requires significant processing and curation |

Protocol: Constructing a Pretraining Corpus for Perturbation scFMs

This protocol outlines a systematic procedure for building a large-scale, high-quality pretraining dataset from the repositories listed above, with a specific emphasis on enabling robust in silico perturbation modeling.

Stage 1: Data Discovery and Selection

Objective: To identify and select relevant datasets that maximize biological and technical diversity.

Materials: Access to the internet, computational resources for metadata handling.

Procedure:

- Prioritize Perturbation-Centric Repositories: Begin the data collection by querying specialized perturbation databases like PerturbSeq.db. This repository is pre-curated and provides a direct source of single-cell data from CRISPR-based (KO, CRISPRi, CRISPRa) and small-molecule compound screens [20].

- Expand to General Cell Atlases: Integrate data from large-scale cell atlases, primarily CZ CELLxGENE and the Human Cell Atlas [1] [19]. These resources provide the essential "baseline" representation of cellular states across tissues, donors, and species, which is the foundation upon which perturbation responses are modeled.

- Define Inclusion Criteria: Establish and adhere to the following criteria during dataset selection:

- Species: Focus on Homo sapiens and Mus musculus, as they are the best-represented species in public repositories and are primary models for drug development [20].

- Cell and Tissue Diversity: Actively select datasets from a wide range of primary cells, cell lines, and tissues (e.g., immune cells, neural, epithelial) to ensure the model learns a generalizable representation of cellular systems [20].

- Technology and Modality: Initially focus on single-cell RNA sequencing (scRNA-seq) data due to its abundance. Subsequently, incorporate single-cell ATAC-seq (scATAC-seq) data to empower the model to reason about gene regulatory networks underlying perturbation responses [20].

- Metadata Completeness: Give preference to datasets with comprehensive sample annotations, including donor information, tissue origin, and detailed experimental protocols.

Stage 2: Data Retrieval and Quality Control

Objective: To download selected data and perform rigorous quality control to ensure dataset integrity.

Materials: High-performance computing cluster, sufficient data storage, tools like wget or aws s3 for data transfer, and single-cell analysis toolkits (e.g., Scanpy in Python).

Procedure:

- Data Download: Download datasets in standardized formats such as H5AD (`.h5ad` files) where available, as this is the common format for CZ CELLxGENE and many other resources [22].

- Initial Quality Filtering: For each dataset, apply standard single-cell QC filters using a consistent pipeline. This typically includes:

- Removing cells with an unusually low or high number of detected genes (potential empty droplets or doublets).

- Filtering cells with a high percentage of mitochondrial reads (indicative of apoptotic or low-quality cells).

- Filtering out genes that are detected in only a very small number of cells.

- Batch Effect Audit: Visually inspect the data using Uniform Manifold Approximation and Projection (UMAP) plots colored by dataset of origin, sequencing platform, and other technical variables. This qualitative assessment is crucial for identifying strong technical batch effects that will require specialized handling during integration [1].
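
The three filtering criteria listed under Initial Quality Filtering can be sketched directly on a dense count matrix. This is a NumPy-only illustration and not a substitute for a toolkit such as Scanpy; the function name `qc_filter`, the default thresholds, and the toy matrix are assumptions and should be tuned per dataset.

```python
import numpy as np

def qc_filter(counts, is_mito, min_genes=200, max_genes=6000,
              max_mito_frac=0.20, min_cells=3):
    """Return boolean masks for cells and genes that pass basic QC.

    counts:  (n_cells, n_genes) raw count matrix
    is_mito: (n_genes,) boolean flag for mitochondrial genes
    All thresholds are placeholder defaults, not recommendations.
    """
    counts = np.asarray(counts)
    is_mito = np.asarray(is_mito, dtype=bool)
    genes_per_cell = (counts > 0).sum(axis=1)
    total_counts = counts.sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total_counts, 1)
    keep_cells = (
        (genes_per_cell >= min_genes)       # too few genes: likely empty droplet
        & (genes_per_cell <= max_genes)     # too many genes: possible doublet
        & (mito_frac <= max_mito_frac)      # high mitochondrial share: low-quality cell
    )
    # Gene filter is computed on the cells that survived.
    cells_per_gene = (counts[keep_cells] > 0).sum(axis=0)
    keep_genes = cells_per_gene >= min_cells
    return keep_cells, keep_genes

# Toy matrix: 4 cells x 5 genes, the last gene is mitochondrial.
counts = np.array([
    [3, 2, 1, 0, 1],
    [0, 0, 0, 1, 0],   # only one gene detected
    [1, 1, 0, 0, 8],   # 80% mitochondrial counts
    [2, 0, 4, 0, 0],
])
is_mito = [False, False, False, False, True]
keep_cells, keep_genes = qc_filter(counts, is_mito, min_genes=2, max_genes=5,
                                   max_mito_frac=0.2, min_cells=2)
print(keep_cells, keep_genes)
```

Applying the same function with the same thresholds to every dataset is one way to keep the pipeline consistent, as the protocol requires.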

Stage 3: Data Integration and Harmonization

Objective: To merge the individually curated datasets into a unified, analysis-ready corpus while mitigating technical noise.

Materials: Integrated development environment (e.g., RStudio, Jupyter Notebook), single-cell integration tools (e.g., scVI, Harmony, Scanorama).

Procedure:

- Feature Space Unification: Intersect the gene features (vocabulary) across all datasets to create a common feature space for model input. This often involves retaining only highly variable genes that are robustly measured across multiple studies.

- Apply Integration Algorithms: Utilize advanced batch integration methods, such as sysVI (a batch-aware conditional Variational Autoencoder) or similar tools, to align the datasets [18]. The goal is to create a shared latent space where cells cluster by biological identity rather than technical origin.

- Corpus Splitting: Partition the fully integrated corpus into three distinct sets:

- Pretraining Set (~90%): Used for the self-supervised training of the scFM.

- Validation Set (~5%): Used for monitoring training progress and tuning hyperparameters.

- Hold-out Test Set (~5%): A completely withheld set of data, ideally from unique studies or donors, used for the final evaluation of the model's generalization performance, especially on perturbation prediction tasks.
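
Because the hold-out set should ideally come from unique studies or donors, one option is to split at the study level instead of the cell level. The sketch below is an assumption about how that could be done; the function name `split_by_study` and the toy corpus are hypothetical, and the resulting cell-level proportions only approximate 90/5/5 when studies differ in size.

```python
import numpy as np

def split_by_study(study_ids, fractions=(0.90, 0.05, 0.05), seed=0):
    """Assign whole studies to pretraining, validation, or test splits."""
    study_ids = np.asarray(study_ids)
    studies = np.random.default_rng(seed).permutation(np.unique(study_ids))
    n_train = int(round(fractions[0] * len(studies)))
    n_val = int(round(fractions[1] * len(studies)))
    parts = {
        "pretrain": studies[:n_train],
        "validation": studies[n_train:n_train + n_val],
        "test": studies[n_train + n_val:],
    }
    # One boolean mask over cells per split; no study appears in two splits.
    return {name: np.isin(study_ids, members) for name, members in parts.items()}

# Toy corpus: 20 studies with 50 cells each.
study_ids = np.repeat([f"study_{i:02d}" for i in range(20)], 50)
masks = split_by_study(study_ids)
print({name: int(mask.sum()) for name, mask in masks.items()})
```

Splitting by study prevents cells from the same experiment from appearing on both sides of the evaluation boundary, which would otherwise inflate apparent generalization.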

The following diagram illustrates the complete workflow from data discovery to a finalized pretraining corpus.

Figure 1: Workflow for building a pretraining corpus for perturbation scFMs.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key computational tools, data resources, and platforms that constitute the essential "reagent solutions" for developing scFMs for perturbation modeling.

Table 2: Key Research Reagent Solutions for scFM Pretraining

| Item Name | Type | Primary Function in Pretraining |
|---|---|---|
| PerturbSeq.db [20] | Database | A pre-curated repository of single-cell perturbation datasets, providing ready-made data for training and benchmarking perturbation models. |
| CZ CELLxGENE / HCA [1] [19] | Data Platform | Provides the foundational "baseline" cellular data at scale, essential for teaching the model normal cellular states. |
| scGPT / scPlantFormer [18] | Foundation Model | Examples of state-of-the-art scFMs whose architectures and pretraining protocols can be adopted or adapted for new models. |
| BioLLM [18] | Software Framework | A standardized framework for integrating and benchmarking different single-cell foundation models, enabling performance comparison. |
| sysVI [18] | Computational Tool | A batch integration tool that preserves biological variation while removing technical noise, critical for data harmonization. |
| FISHscale / FISHspace [22] | Analysis Pipeline | Software for processing and analyzing spatial transcriptomics data (e.g., EEL-FISH), allowing for the inclusion of spatial context. |

Visualization of Data Quality Control Workflow

A critical, iterative step in the protocol is ensuring the quality of the incoming data. The diagram below details the quality control process applied to each dataset before integration.

Figure 2: Data quality control and batch effect audit workflow.

The construction of a high-quality, large-scale pretraining corpus is a foundational step in developing scFMs capable of accurate in silico perturbation modeling. By systematically leveraging public repositories—from specialized resources like PerturbSeq.db for perturbation data to expansive atlases like the HCA for cellular baselines—and adhering to a rigorous protocol of selection, quality control, and integration, researchers can build the robust datasets required to power the next generation of predictive models in computational biology and drug discovery.

Tokenization, the process of converting raw gene expression data into discrete, model-readable units or "tokens," is a foundational step in building single-cell foundation models (scFMs). For in silico perturbation modeling—where the goal is to computationally predict cellular responses to genetic or chemical perturbations—the choice of tokenization strategy directly impacts a model's ability to learn meaningful biological representations and generalize to unseen data. This document outlines prevalent tokenization strategies, provides quantitative comparisons, details experimental protocols for their implementation, and visualizes key workflows and pathways relevant to perturbation modeling.

In single-cell RNA sequencing (scRNA-seq) analysis, tokenization strategies define how the high-dimensional and non-sequential gene expression profile of a single cell is transformed into a structured sequence for transformer-based models [1]. The core challenge is that gene expression data lacks inherent sequence; the order of genes in a cell does not carry semantic meaning as words do in a sentence. scFMs address this by imposing a deterministic order or structure on the gene features.

Table 1: Common Tokenization Strategies for Single-Cell Foundation Models

| Strategy Name | Core Principle | Typical Model Examples | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Expression-Based Ranking | Genes are ordered by their expression value within each cell, and the top-k genes form the input sequence [1]. | scGPT, Geneformer [1] | Simple, deterministic; captures most active genes. | Order is arbitrary and may not reflect biological gene-gene relationships. |
| Expression Binning | Genes are partitioned into bins (e.g., high/medium/low expression) based on their expression values, and the bin membership determines the token [1]. | scBERT [1] | Reduces vocabulary size; can capture coarse-grained expression levels. | Loss of fine-grained, continuous expression information. |
| Direct Normalized Counts | Uses normalized count values (or their log-transform) directly as input features without imposing a gene order [1]. | Some scFMs [1] | Preserves full, continuous expression information. | Model must learn to handle high dimensionality and sparsity directly. |
| Convolutional Tokenization | The entire gene expression vector is segmented into fixed-size windows, and 1D-convolution is applied to generate local feature tokens [23]. | scSFUT [23] | Eliminates need for gene selection; uses full gene vector; expands attention receptive field. | Computationally intensive; less interpretable at the single-gene level. |
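
To make the Expression Binning row concrete, the sketch below assigns each gene a discrete bin token from within-cell quantiles of its non-zero expression values. Quantile-based edges, the reserved token 0 for unexpressed genes, and the function name `bin_expression` are assumptions for illustration, not the binning scheme of scBERT or any other model.

```python
import numpy as np

def bin_expression(expression, n_bins=3):
    """Map expression values to integer bin tokens (0 = not expressed)."""
    expression = np.asarray(expression, dtype=float)
    tokens = np.zeros(expression.shape, dtype=int)
    expressed = expression > 0
    if expressed.any():
        # Interior quantile edges computed from this cell's expressed genes only.
        quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
        edges = np.quantile(expression[expressed], quantiles)
        # Bins are numbered 1..n_bins from lowest to highest expression.
        tokens[expressed] = np.digitize(expression[expressed], edges, right=True) + 1
    return tokens

cell = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
bin_tokens = bin_expression(cell, n_bins=3)
print(bin_tokens)  # [0 1 1 2 2 3 3]
```

The example shows the trade-off named in the table: genes with expression 1.0 and 2.0 receive the same token, so the vocabulary shrinks at the cost of fine-grained expression information.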

Quantitative Comparison of Strategy Performance

The performance of a tokenization strategy is intrinsically linked to the downstream task. For in silico perturbation (ISP) prediction, the "closed-loop" framework—which incorporates experimental perturbation data during model fine-tuning—has demonstrated significant improvements over "open-loop" approaches. The following table summarizes performance metrics from a benchmark study that utilized a Geneformer model, highlighting the impact of data integration on ISP accuracy [9].

Table 2: Performance of Open-Loop vs. Closed-Loop In Silico Perturbation Prediction in T-Cell Activation [9]

| Prediction Method | Positive Predictive Value (PPV) | Negative Predictive Value (NPV) | Sensitivity | Specificity | AUROC |
|---|---|---|---|---|---|
| Differential Expression (DE) | 3% | 78% | 40% | 50% | Not Reported |
| Open-Loop ISP | 3% | 98% | 48% | 60% | 0.63 |
| DE + Open-Loop ISP Overlap | 7% | Not Reported | Not Reported | Not Reported | Not Reported |
| Closed-Loop ISP | 9% | 99% | 76% | 81% | 0.86 |

A critical finding for practical implementation is that the performance of the closed-loop model improved dramatically with just 10 perturbation examples and approached saturation with approximately 20 examples, indicating that even modest experimental validation can substantially enhance predictive accuracy [9].

Detailed Protocols for Tokenization and In Silico Perturbation

Protocol 1: Expression-Based Ranking and Tokenization for scFM Fine-Tuning

This protocol details the steps for fine-tuning a pre-trained scFM, like Geneformer, using an expression-based ranking tokenization strategy for a specific in silico perturbation task [9] [1].

Materials and Reagents:

- Hardware: Workstation with >= 32 CPUs, >= 64 GB RAM, and >= 64 GB free storage [24].

- Software: Command-line interface (Bash), Python, and relevant machine learning libraries (PyTorch/TensorFlow). Pre-trained scFM (e.g., Geneformer).

- Data: scRNA-seq dataset (FASTQ or count matrices) for the biological system of interest (e.g., T-cells, HSCs). For closed-loop learning, Perturb-seq data for the same system is required [9].

Method Details:

Data Preprocessing and Quality Control:

- Quality Control: Filter cells with low gene counts and genes with low expression across cells. Perform log-normalization (e.g., `log10(gexp + 1)`) to stabilize variance and manage long-tailed distributions [25].

- Data Integration (if multiple batches): Apply batch effect correction methods like ComBat if integrating data from multiple studies or platforms, though some scFMs report robustness to batch effects without specific correction [1] [26].

Tokenization and Input Sequencing:

- For each cell, rank all genes by their normalized expression value.

- Select the top 2,000 - 6,000 genes (model-dependent) to form the input sequence for that cell [1].

- Convert each gene's identifier (e.g., Ensembl ID) and its expression value into a combined token embedding. The sequence of these tokens, in the order of their rank, represents the "sentence" for the cell.

Model Fine-Tuning:

- Initialize the model with pre-trained weights from the scFM.

- Fine-tune the model on the tokenized sequences from your target dataset. The learning objective is typically a classification task (e.g., activated vs. resting T-cells) or a regression task to predict a cellular state [9].

In Silico Perturbation Prediction:

- To simulate a gene knockout, set the expression value of the target gene to zero in the input data and re-tokenize.

- To simulate overexpression, artificially elevate the expression value of the target gene beyond its normal range and re-tokenize.

- Feed the modified token sequence through the fine-tuned model and compare the output embedding or prediction to the unperturbed state. A significant shift indicates a predicted phenotypic change [9].
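
The knockout and overexpression steps can be illustrated end to end with a toy stand-in for the model. In this sketch `toy_embed` is a hypothetical placeholder (a rank-weighted average of random gene vectors) and is not how Geneformer or any scFM computes embeddings; only the perturb, re-tokenize, and compare pattern is the point. Doubling the maximum expression value to mimic overexpression is likewise an arbitrary assumption.

```python
import numpy as np

def rank_tokens(expr, top_k=2000):
    """Indices of expressed genes, ordered by descending expression."""
    order = np.argsort(-expr, kind="stable")
    return [int(i) for i in order if expr[i] > 0][:top_k]

def toy_embed(tokens, gene_vectors):
    """Hypothetical stand-in for a fine-tuned scFM: rank-weighted mean of gene vectors."""
    if not tokens:
        return np.zeros(gene_vectors.shape[1])
    weights = 1.0 / (1.0 + np.arange(len(tokens)))
    return (weights[:, None] * gene_vectors[tokens]).sum(axis=0) / weights.sum()

def perturb(expr, gene_idx, mode):
    """Return a perturbed copy of the expression vector."""
    out = np.array(expr, dtype=float)
    if mode == "knockout":
        out[gene_idx] = 0.0                 # gene drops out of the token sequence
    elif mode == "overexpress":
        out[gene_idx] = 2.0 * out.max()     # assumption: push above the observed range
    else:
        raise ValueError(f"unknown mode: {mode}")
    return out

def cosine_shift(a, b):
    """1 - cosine similarity between two embeddings."""
    return 1.0 - float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

rng = np.random.default_rng(0)
gene_vectors = rng.normal(size=(4, 8))      # toy gene embedding table
expr = np.array([5.0, 3.0, 0.0, 1.0])

baseline = toy_embed(rank_tokens(expr), gene_vectors)
ko_tokens = rank_tokens(perturb(expr, 0, "knockout"))
oe_tokens = rank_tokens(perturb(expr, 2, "overexpress"))
ko_shift = cosine_shift(baseline, toy_embed(ko_tokens, gene_vectors))
print(ko_tokens, oe_tokens, ko_shift)
```

In practice the shift would be compared against a null distribution, for example shifts from perturbing random genes, before calling it significant.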

Protocol 2: Implementing a Closed-Loop ISP Framework

This protocol extends Protocol 1 by iteratively incorporating experimental data to refine the scFM, dramatically improving ISP accuracy [9].

Method Details:

Initial Model and Perturbation Screening:

- Fine-tune a scFM on baseline scRNA-seq data (e.g., resting and activated T-cells) as in Protocol 1.

- Perform open-loop ISP on a wide panel of genes to generate initial predictions of which perturbations shift the cell state.

Experimental Validation and Data Integration:

- Select top candidate perturbations from the open-loop screen for experimental validation using a method like Perturb-seq.

- Generate scRNA-seq data for cells subjected to the candidate genetic perturbations.

Closed-Loop Fine-Tuning:

- Combine the original baseline scRNA-seq data with the new Perturb-seq data. The Perturb-seq data should be labeled with the resulting cell state (e.g., activated), but not with the identity of the perturbed gene during this step [9].

- Re-fine-tune the pre-trained scFM on this combined dataset. Because perturbation identity is withheld, the model learns from the expression states that real perturbations produce, not from explicit perturbation labels.

- The resulting closed-loop model is now ready for a new, more accurate round of ISP.
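
The data handling in the closed-loop step is easy to get wrong, so a small sketch may help. It assumes each cell is a dictionary with an expression vector and a state label, plus a `perturbed_gene` field for Perturb-seq cells; these field names and both function names are hypothetical. Excluding already-perturbed genes from the next ISP round follows the circularity precaution described for closed-loop ISP [9].

```python
def build_closed_loop_set(baseline_cells, perturb_cells):
    """Merge baseline and Perturb-seq cells, keeping state labels only."""
    # The perturbed-gene identity is deliberately not copied into the training records.
    training = [{"expr": c["expr"], "state": c["state"]}
                for c in list(baseline_cells) + list(perturb_cells)]
    # Remember which genes were experimentally perturbed.
    seen_genes = {c["perturbed_gene"] for c in perturb_cells}
    return training, seen_genes

def isp_candidates(all_genes, seen_genes):
    """Genes eligible for the next ISP round (those not used in fine-tuning)."""
    return [g for g in all_genes if g not in seen_genes]

baseline_cells = [
    {"expr": [5, 0, 1], "state": "resting"},
    {"expr": [1, 4, 2], "state": "activated"},
]
perturb_cells = [
    {"expr": [0, 3, 2], "state": "activated", "perturbed_gene": "GENE_A"},
    {"expr": [4, 0, 0], "state": "resting", "perturbed_gene": "GENE_B"},
]
training, seen_genes = build_closed_loop_set(baseline_cells, perturb_cells)
candidates = isp_candidates(["GENE_A", "GENE_B", "GENE_C", "GENE_D"], seen_genes)
print(len(training), sorted(seen_genes), candidates)
```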

Visualization of Workflows and Pathways

The following diagrams illustrate the core closed-loop framework and a key signaling pathway identified through its application.

Closed-Loop ISP Workflow

RUNX1-FPD Signaling Pathways

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for scFM and ISP

| Item Name | Function / Application | Specific Examples / Notes |
|---|---|---|
| Pre-trained scFMs | Provides a foundational model that can be fine-tuned for specific tasks, saving computational resources. | Geneformer, scGPT, scBERT [1]. |
| Containerization Platform | Ensures computational reproducibility by encapsulating the entire software environment. | Docker [24]. |
| Integrated Pipelines | Provides pre-defined workflows for processing raw sequencing data into analyzable formats. | RumBall (for RNA-seq), bioBakery Workflows (for metagenomics) [24] [27]. |
| Data Preprocessing Tools | Performs quality control, normalization, and batch effect correction on raw count matrices. | Scanpy in Python [23]. |
| Perturbation Screening Tech | Experimentally validates in silico predictions and generates data for closed-loop learning. | CRISPRi/CRISPRa screens, Perturb-seq [9]. |
| Reference Datasets | Used for model pretraining and as benchmarks for fine-tuned models. | CZ CELLxGENE, Human Cell Atlas, TCGA, GTEx [1] [26]. |

Self-supervised learning (SSL) has emerged as a transformative approach for analyzing single-cell transcriptome data, enabling researchers to extract meaningful biological insights from vast amounts of unlabeled data. By learning representations without manual annotation, SSL methods have demonstrated a strong capability to capture complex cellular states and functions, forming the basis for advanced in silico perturbation modeling with single-cell foundation models (scFMs). This paradigm shift allows computational biologists to predict cellular responses to genetic and therapeutic interventions, accelerating therapeutic discovery, particularly for rare diseases where experimental data is scarce.

The power of SSL lies in its ability to leverage the intrinsic structure of single-cell RNA sequencing (scRNA-seq) data through pretext tasks that require the model to learn meaningful representations without explicit supervision. These pre-trained models can then be fine-tuned for specific downstream applications with remarkable efficiency. Within the context of scFMs research, SSL provides the essential pre-training framework that enables accurate prediction of perturbation effects, cell-type annotation, and data integration across diverse biological contexts.

Key Findings and Performance Benchmarks

Comparative Performance of SSL Strategies

Extensive benchmarking across multiple single-cell genomics datasets reveals the nuanced effectiveness of different SSL approaches. The following table summarizes key quantitative findings from large-scale studies evaluating SSL methods on millions of single cells:

Table 1: Performance comparison of self-supervised learning methods on single-cell transcriptomes

| SSL Method | Key Application | Performance Metric | Result | Reference |
|---|---|---|---|---|
| Masked Autoencoder (Random masking) | Cell-type prediction (PBMC) | Macro F1 score | 0.7466 ± 0.0057 | [28] |
| Supervised Baseline | Cell-type prediction (PBMC) | Macro F1 score | 0.7013 ± 0.0077 | [28] |
| Masked Autoencoder (GP masking) | Cell-type prediction (Tabula Sapiens) | Macro F1 score | 0.3085 ± 0.0040 | [28] |
| Supervised Baseline | Cell-type prediction (Tabula Sapiens) | Macro F1 score | 0.2722 ± 0.0123 | [28] |
| Closed-loop ISP Framework | Perturbation prediction (T-cell activation) | Positive Predictive Value | 9% (vs. 3% open-loop) | [9] |
| scPML | Cross-platform cell annotation | Accuracy | 0.87 (mean) | [29] |
| Geneformer | Cross-platform cell annotation | Accuracy | 0.72 (mean) | [29] |

Critical Insights on SSL Effectiveness

Research indicates that SSL demonstrates particularly strong performance in specific biological scenarios:

Transfer learning applications: SSL pre-training on large auxiliary datasets (e.g., scTab with >20 million cells) significantly improves performance on smaller target datasets for cell-type prediction and gene-expression reconstruction [28]. Improvements are most pronounced for underrepresented cell types, as evidenced by stronger gains in macro F1 scores compared to micro F1 scores [28].

Architectural advantages: Masked autoencoders consistently outperform contrastive learning methods in single-cell genomics applications, contrary to trends observed in computer vision [28] [30]. This advantage is maintained across multiple masking strategies, including random masking and biologically-informed gene program masking.

Data efficiency: The "closed-loop" framework for perturbation modeling demonstrates that incorporating even small amounts of experimental data (10-20 perturbation examples) during fine-tuning can substantially improve prediction accuracy [9].
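
The macro versus micro F1 distinction in the transfer-learning point above can be made concrete with a small worked example. The sketch below is illustrative only: the cell-type labels and predictions are invented toy data, not results from [28], and `f1_scores` is a hypothetical helper written for this example.

```python
from collections import Counter

def f1_scores(y_true, y_pred):
    """Return (macro_f1, micro_f1) for single-label, multi-class predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gains a false positive
            fn[truth] += 1  # true class gains a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    # Macro: average the per-class F1, so every class counts equally.
    macro = sum(f1(tp[c], fp[c], fn[c]) for c in labels) / len(labels)
    # Micro: pool the counts first, so abundant classes dominate.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return macro, micro

# Toy data (assumption): 18 abundant "T cell" examples, 2 rare "pDC" examples.
y_true = ["T cell"] * 18 + ["pDC"] * 2
baseline = ["T cell"] * 20                      # never predicts the rare type
improved = ["T cell"] * 18 + ["pDC", "T cell"]  # recovers one rare cell

macro_b, micro_b = f1_scores(y_true, baseline)
macro_i, micro_i = f1_scores(y_true, improved)
print(f"macro F1: {macro_b:.3f} -> {macro_i:.3f}")
print(f"micro F1: {micro_b:.3f} -> {micro_i:.3f}")
```

With these toy labels, correctly recovering a single rare cell moves micro F1 from 0.90 to 0.95, while macro F1 moves from roughly 0.47 to 0.82. This is why larger macro than micro gains point to improvements on underrepresented cell types.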

Experimental Protocols

SSL Pre-training Protocol for Single-Cell Transcriptomes

Data Preparation and Preprocessing

Data Collection: Assemble a large-scale single-cell transcriptomics dataset for pre-training. The scTab dataset, drawn from the CELLxGENE Census and comprising over 20 million cells across diverse tissues and conditions, is a suitable starting point [28]. Include all 19,331 human protein-coding genes to maximize generalizability.

Quality Control: Apply standard scRNA-seq quality control metrics:

- Remove cells with fewer than 200 detected genes

- Exclude cells with high mitochondrial read percentage (>20%)

- Filter out genes expressed in fewer than 10 cells

Normalization: Normalize gene expression values using standard scRNA-seq processing:

- Apply library size normalization to obtain counts per 10,000 (CP10K)

- Log-transform using log1p, i.e. log(1 + CP10K)

- Scale features to zero mean and unit variance
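
The quality-control and normalization steps above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the pipeline used in [28]: the function names are hypothetical, the count matrix is a tiny invented example (cells as rows, genes as columns), and the thresholds are relaxed in the toy call so that the small matrix survives filtering.

```python
import math
from statistics import mean, pstdev

def filter_cells(counts, mito_idx, min_genes=200, max_mito_frac=0.20):
    """Drop cells with too few detected genes or too many mitochondrial reads."""
    kept = []
    for cell in counts:
        total = sum(cell)
        n_detected = sum(1 for c in cell if c > 0)
        mito_frac = sum(cell[i] for i in mito_idx) / total if total else 1.0
        if n_detected >= min_genes and mito_frac <= max_mito_frac:
            kept.append(cell)
    return kept

def filter_genes(counts, min_cells=10):
    """Keep genes (columns) detected in at least `min_cells` cells."""
    keep = [j for j in range(len(counts[0]))
            if sum(1 for cell in counts if cell[j] > 0) >= min_cells]
    return [[cell[j] for j in keep] for cell in counts], keep

def normalize_log1p(counts, target_sum=10_000):
    """Library-size normalize each cell to `target_sum`, then apply log1p."""
    out = []
    for cell in counts:
        total = sum(cell)
        out.append([math.log1p(c / total * target_sum) for c in cell])
    return out

def scale_genes(matrix):
    """Scale each gene to zero mean and unit variance across cells."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sd = mean(column), pstdev(column)
        scaled_columns.append([(v - mu) / sd if sd > 0 else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]

# Toy matrix (assumption): 3 cells x 4 genes; column 3 is a mitochondrial gene.
raw = [[5, 0, 3, 2], [0, 0, 1, 9], [4, 6, 0, 0]]
cells = filter_cells(raw, mito_idx=[3], min_genes=2)  # relaxed threshold
cells, kept_genes = filter_genes(cells, min_cells=1)  # relaxed threshold
log_norm = normalize_log1p(cells)
scaled = scale_genes(log_norm)
```

At the scale of millions of cells these steps would normally be run with a sparse-matrix library rather than nested lists; Scanpy, for example, provides `pp.filter_cells`, `pp.filter_genes`, `pp.normalize_total`, `pp.log1p`, and `pp.scale` for the same operations.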

Model Architecture and Training

Network Architecture: Implement a fully connected autoencoder network with the following specifications [28]:

- Input layer: 19,331 neurons (one per human protein-coding gene)

- Bottleneck layer: 512 neurons (compressed representation)

- Output layer: 19,331 neurons (reconstruction)

- Activation functions: ReLU for hidden layers, linear/sigmoid for output

Pretext Task Implementation - Masked Autoencoding:

- Apply random masking to 30% of input features (genes)

- Alternative strategy: Implement gene program (GP) masking using biologically defined gene sets

- Train the model to reconstruct masked features using mean squared error loss computed only on masked positions
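
The masked-autoencoding pretext task can be sketched as follows. This is an illustration rather than the implementation from [28]: the helper names are hypothetical, masked values are set to zero as an assumed corruption scheme, and the "reconstruction" is a hand-made stand-in for a network output so that the loss computation can be shown without a deep learning framework.

```python
import random

def random_mask(n_genes, mask_fraction=0.30, rng=None):
    """Choose a random subset of gene indices to mask."""
    rng = rng or random.Random()
    n_masked = round(n_genes * mask_fraction)
    return sorted(rng.sample(range(n_genes), n_masked))

def gene_program_mask(gene_names, program_genes):
    """Mask every gene belonging to one biologically defined gene program."""
    program = set(program_genes)
    return [i for i, g in enumerate(gene_names) if g in program]

def apply_mask(expression, masked_idx, mask_value=0.0):
    """Return a corrupted copy of the input with masked genes overwritten."""
    corrupted = list(expression)
    for i in masked_idx:
        corrupted[i] = mask_value
    return corrupted

def masked_mse(target, reconstruction, masked_idx):
    """Mean squared error computed only on the masked positions."""
    if not masked_idx:
        return 0.0
    return sum((target[i] - reconstruction[i]) ** 2 for i in masked_idx) / len(masked_idx)

# Toy cell (assumption): 10 normalized expression values.
expression = [0.0, 1.2, 0.4, 2.0, 0.0, 0.7, 1.5, 0.3, 0.9, 1.1]
masked_idx = random_mask(len(expression), rng=random.Random(0))
corrupted = apply_mask(expression, masked_idx)

# Stand-in reconstruction: exact on masked genes, off by 1.0 everywhere else.
reconstruction = [v if i in masked_idx else v + 1.0 for i, v in enumerate(expression)]
loss = masked_mse(expression, reconstruction, masked_idx)
```

Because the loss ignores unmasked positions, the stand-in reconstruction above scores a loss of zero even though it is wrong on every visible gene. The objective only rewards inferring hidden genes from their context, not copying the input.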

Training Specifications:

- Optimization: Adam optimizer with learning rate of 0.001

- Batch size: 256 cells

- Training epochs: 100-200 with early stopping

- Hardware: High-performance GPU cluster (e.g., NVIDIA A100)

Protocol for In Silico Perturbation Modeling

Foundation Model Fine-tuning

Base Model Initialization: Start with a foundation model pre-trained on large-scale single-cell data (e.g., Geneformer) [9] [11].

Task-Specific Fine-tuning:

- Prepare labeled dataset of perturbation responses (e.g., CRISPR screens)

- Add a classification or regression head appropriate for the prediction task

- Fine-tune with a lower learning rate (1e-5 to 1e-4) to adapt the pre-trained weights

Closed-Loop Framework Implementation [9]:

- Incorporate experimental perturbation data during fine-tuning

- Use as few as 10-20 perturbation examples to substantially improve prediction accuracy

- Implement iterative refinement cycles where model predictions guide subsequent experiments

Perturbation Effect Prediction

In Silico Perturbation Simulation:

- For gene knockout: Set target gene expression to zero in the input vector

- For gene overexpression: Increase target gene expression by 2-3 standard deviations

- Pass modified input through the fine-tuned model to predict transcriptomic response
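
The simulation steps above amount to editing one entry of the input vector and comparing model outputs before and after. The sketch below assumes a generic `model` callable that maps an expression vector to a predicted expression vector. The `toy_model` used here is a hypothetical linear stand-in with invented weights, not a fine-tuned foundation model, and the helper names are illustrative.

```python
def knock_out(expression, gene_idx):
    """Simulate a knockout by setting the target gene's expression to zero."""
    perturbed = list(expression)
    perturbed[gene_idx] = 0.0
    return perturbed

def overexpress(expression, gene_idx, gene_sd, n_sd=2.5):
    """Simulate overexpression by adding n_sd standard deviations to the target gene."""
    perturbed = list(expression)
    perturbed[gene_idx] += n_sd * gene_sd
    return perturbed

def predicted_shift(model, baseline, perturbed):
    """Per-gene difference between the model's perturbed and baseline predictions."""
    return [p - b for p, b in zip(model(perturbed), model(baseline))]

# Hypothetical stand-in for a fine-tuned model: a fixed 3x3 linear map.
WEIGHTS = [[1.0, 0.5, 0.0],
           [0.0, 1.0, -0.5],
           [0.2, 0.0, 1.0]]

def toy_model(x):
    return [sum(w * v for w, v in zip(row, x)) for row in WEIGHTS]

cell = [2.0, 1.0, 4.0]  # toy expression values for three genes
ko_shift = predicted_shift(toy_model, cell, knock_out(cell, gene_idx=2))
oe_shift = predicted_shift(toy_model, cell, overexpress(cell, gene_idx=0, gene_sd=0.5, n_sd=2.0))
```

Both helpers return modified copies rather than editing the input in place, so the same baseline cell can be reused across many simulated perturbations.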

Validation and Interpretation:

- Compare predictions to held-out experimental data

- Analyze predicted expression changes in pathway context

- Prioritize candidate genes based on effect size and confidence metrics
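
Two of the validation steps above, scoring predictions against held-out experiments and prioritizing candidates, can be sketched as follows. The gene names, effect sizes, and confidence values are invented placeholders, and the ranking rule (filter by confidence, then sort by absolute effect size) is one plausible choice rather than the procedure used in [9].

```python
def positive_predictive_value(predicted_hits, validated_hits):
    """Fraction of predicted hits that were confirmed experimentally."""
    predicted = set(predicted_hits)
    if not predicted:
        return 0.0
    return len(predicted & set(validated_hits)) / len(predicted)

def prioritize(candidates, min_confidence=0.5):
    """Rank genes by absolute effect size, keeping only confident predictions.

    `candidates` is a list of (gene, effect_size, confidence) tuples.
    """
    confident = [c for c in candidates if c[2] >= min_confidence]
    ranked = sorted(confident, key=lambda c: abs(c[1]), reverse=True)
    return [gene for gene, _, _ in ranked]

# Placeholder predictions (assumption): (gene, predicted effect, confidence).
candidates = [
    ("GENE_A", -1.8, 0.90),
    ("GENE_B", 0.4, 0.95),
    ("GENE_C", 2.3, 0.30),  # large effect but low confidence, so filtered out
    ("GENE_D", 1.1, 0.70),
]
shortlist = prioritize(candidates)

# Placeholder held-out experimental hits.
validated = {"GENE_A", "GENE_C"}
ppv = positive_predictive_value(shortlist, validated)
```

Here one of the three shortlisted genes is confirmed, giving a positive predictive value of one third. The 3% and 9% figures reported for open-loop and closed-loop prediction are the same ratio computed over real screens.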

Visualizing SSL Frameworks and Workflows

Figure: Self-Supervised Learning Framework for Single-Cell Transcriptomics

Figure: Closed-Loop In Silico Perturbation Framework

Table 2: Key research reagents and computational resources for SSL in single-cell transcriptomics

| Resource | Type | Function/Application | Example/Reference |
|---|---|---|---|
| scTab Dataset | Data Resource | Large-scale reference dataset for SSL pre-training; contains >20 million cells | CELLxGENE census [28] |
| Masked Autoencoder | Algorithm | SSL method for learning representations through reconstruction of masked input features | [28] [30] |
| Gene Program Annotations | Biological Knowledge | Curated gene sets for biologically-informed masking strategies | Pathway databases [29] |
| Geneformer | Foundation Model | Pre-trained transformer model for single-cell transcriptomics | [9] [11] |
| Closed-Loop Framework | Methodology | Approach for incorporating experimental data to improve perturbation predictions | [9] |
| scPML | Software Tool | Pathway-based multi-view learning for cell type annotation | [29] |
| Perturb-seq Data | Experimental Data | Single-cell CRISPR screening data for perturbation model training | [9] [11] |

Self-supervised learning represents a paradigm shift in the analysis of single-cell transcriptomes, providing a powerful framework for extracting biological insights from unlabeled data at scale. The protocols and applications outlined in this document demonstrate the tangible benefits of SSL in enhancing cell-type annotation, data integration, and—most critically—predicting cellular responses to perturbations. As the field progresses toward more sophisticated "virtual cell" models, SSL will continue to serve as the foundational element enabling accurate in silico experiments and accelerating therapeutic discovery, particularly for rare diseases where experimental data remains limited. The integration of SSL pre-training with closed-loop experimental validation creates a powerful cycle of discovery that promises to transform computational biology and drug development.

Implementing In Silico Perturbation Prediction: From Virtual Cells to Therapeutic Discovery

In silico perturbation (ISP) is a computational approach in cellular biology that lets researchers predict the effects of genetic manipulations, such as gene knockouts and overexpression, without conducting costly and time-intensive laboratory experiments. The methodology builds on single-cell foundation models (scFMs), large-scale deep learning models pre-trained on datasets comprising millions of single-cell transcriptomes [1]. These models learn general patterns of cellular biology and gene regulation, which allows them to be fine-tuned for specific tasks such as predicting transcriptional changes following genetic perturbations [9] [31]. The core premise of ISP is the creation of "virtual cells" that can simulate cellular responses to diverse perturbations, thus accelerating biological discovery and therapeutic development, particularly for rare diseases where patient samples are scarce [9].

The workflow operates by treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [1]. Through sophisticated tokenization and embedding processes, scFMs can model the complex, high-dimensional relationships within gene regulatory networks. When a perturbation is simulated, the model predicts how the removal (knockout) or enhanced expression (overexpression) of specific genes alters the transcriptional state of the cell [9] [32]. This capability is invaluable for prioritizing gene targets for functional validation, understanding disease mechanisms, and identifying potential therapeutic interventions [9].

Workflow and Methodology

The ISP workflow involves a sequence of critical steps, from data preparation and model setup to the execution and validation of in silico experiments. The following diagram illustrates the logical flow and key decision points in a standard ISP pipeline.

Data Preparation and Tokenization

The initial phase involves curating high-quality single-cell RNA sequencing (scRNA-seq) data, which serves as the input for the scFM. The model requires a gene-by-cell count matrix from wild-type (WT) samples [32]. A critical challenge is that gene expression data lacks inherent sequential order, unlike words in a sentence. To address this, scFMs employ various tokenization strategies to structure the data for the model:

- Gene Ranking by Expression: Genes within each cell are ranked by their expression levels, and the ordered list of top genes is treated as the input "sentence" [1].

- Expression Binning: Genes are partitioned into bins based on their expression values, and these bin assignments determine their positions in the sequence [1].

- Gene Identifier and Value Embedding: Each gene is typically represented as a token embedding that combines a gene identifier with its expression value in the given cell [1].

Additional special tokens may be incorporated to provide biological context, such as cell identity metadata, modality indicators for multi-omics data, or gene ontology information [1]. Positional encoding schemes are then applied to represent the relative order or rank of each gene in the cell.
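
The tokenization strategies listed above can be illustrated on a single toy cell. The sketch below is a simplification under stated assumptions: gene names and values are placeholders, ties in the ranking are broken alphabetically, and the binning uses a simple per-cell rank-based quantile rule. Individual scFMs differ in the details of each step.

```python
import bisect

def rank_tokens(expression, gene_ids, max_len=None):
    """Order expressed genes from highest to lowest expression (the cell 'sentence')."""
    expressed = [(value, gene) for gene, value in zip(gene_ids, expression) if value > 0]
    expressed.sort(key=lambda pair: (-pair[0], pair[1]))  # ties broken by gene name
    tokens = [gene for _, gene in expressed]
    return tokens[:max_len] if max_len else tokens

def bin_expression(expression, n_bins=3):
    """Assign each gene a bin: 0 for unexpressed genes, 1..n_bins by rank among expressed genes."""
    nonzero = sorted(v for v in expression if v > 0)
    bins = []
    for value in expression:
        if value <= 0:
            bins.append(0)
        else:
            rank = bisect.bisect_left(nonzero, value)  # expressed values strictly below
            bins.append(1 + rank * n_bins // len(nonzero))
    return bins

# Toy cell (assumption): four placeholder genes with normalized expression values.
gene_ids = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]
expression = [0.0, 5.0, 1.0, 3.0]

sentence = rank_tokens(expression, gene_ids)
bins = bin_expression(expression)
# Gene identifier paired with its binned value, ready for an embedding lookup.
gene_value_tokens = list(zip(gene_ids, bins))
```

For this cell the rank-based sentence is `GENE_B, GENE_D, GENE_C`, with the unexpressed `GENE_A` dropped, while the gene-and-value representation keeps every gene and attaches a bin of 0 to 3 to each.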

Model Selection and Configuration

Selecting an appropriate scFM is crucial for ISP success. Current models vary in their architectures, pretraining data, and specific capabilities. The table below summarizes key models and their applications in ISP.

Table 1: Single-Cell Foundation Models for In Silico Perturbation

| Model Name | Architecture Type | Key ISP Features | Perturbation Types Supported | Notable Applications |
|---|---|---|---|---|
| Geneformer [9] [31] | Transformer-based Encoder | Predicts direction of cell state shift (e.g., toward activation or rest); Can be used in open or closed-loop modes. | Knockout, Overexpression | T-cell activation studies, RUNX1-familial platelet disorder target identification. |
| scGPT [11] [31] | GPT-like Decoder | Predicts post-perturbation transcriptomes; Can be combined with a linear decoder for perturbation tasks. | Single/double gene knockout | Benchmarking studies on CRISPRa/i datasets. |
| scTenifoldKnk [32] | Tensor-based Workflow | Constructs Gene Regulatory Networks (GRNs) from WT data; virtually deletes a gene from the GRN to identify differentially regulated genes. | Virtual knockout | Systematic virtual KO analysis; recapitulation of real-animal KO findings. |
| Large Perturbation Model (LPM) [31] | PRC-disentangled Decoder | Integrates diverse perturbation data (genetic, chemical); disentangles Perturbation, Readout, and Context dimensions. | CRISPR, Chemical compounds | Predicting outcomes of unobserved experiments, mapping compound-CRISPR shared space. |

Executing the In Silico Perturbation

The core of the ISP workflow involves applying the selected and configured model to simulate the genetic perturbation.

For Gene Knockout Simulation

- GRN-Based Approach (scTenifoldKnk): The Wild-Type Gene Regulatory Network (WT scGRN) is constructed from the input data. The target gene is then "virtually deleted" by setting the entire row corresponding to that gene in the adjacency matrix of the WT scGRN to zero, creating a pseudo-KO scGRN. Manifold alignment is used to compare the WT and pseudo-KO scGRNs to identify differentially regulated (DR) genes [32].

- Encoder-Based Approach (Geneformer): The model, fine-tuned to classify cell states, is used to predict the direction of cell state change (e.g., toward a resting or activated state in T-cells) upon in silico knockout of a specific gene. The magnitude of the predicted shift indicates the gene's importance in maintaining the cell state [9].

- Decoder-Based Approach (scGPT, LPM): The model is tasked with predicting the complete post-perturbation transcriptome given the perturbation (e.g., "knockout of Gene X") and the cellular context as input [11] [31].
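
The GRN-based knockout described in the first bullet reduces to a simple matrix operation, sketched below on a toy three-gene network. The function names are hypothetical and are not the scTenifoldKnk package API. The scoring step is a naive edge-weight difference used purely as a stand-in, since the actual workflow compares the two networks by manifold alignment [32].

```python
def virtual_knockout(adjacency, gene_names, target):
    """Return a pseudo-KO network: a copy of the WT network with the target gene's row zeroed."""
    idx = gene_names.index(target)
    knocked_out = [list(row) for row in adjacency]  # copy so the WT network is untouched
    knocked_out[idx] = [0.0] * len(gene_names)
    return knocked_out

def edge_loss_scores(wt, ko, gene_names):
    """Naive stand-in score: total absolute edge weight each gene loses as a regulatory target."""
    return {
        gene: sum(abs(wt[i][j] - ko[i][j]) for i in range(len(wt)))
        for j, gene in enumerate(gene_names)
    }

# Toy WT network (assumption): entry [i][j] is the weight of the edge from gene i to gene j.
genes = ["GENE_A", "GENE_B", "GENE_C"]
wt_grn = [[0.0, 0.8, 0.1],
          [0.3, 0.0, 0.0],
          [0.0, 0.5, 0.0]]

ko_grn = virtual_knockout(wt_grn, genes, target="GENE_A")
scores = edge_loss_scores(wt_grn, ko_grn, genes)
ranked = sorted(scores, key=scores.get, reverse=True)
```

In this toy network `GENE_B` loses the most regulatory input after the virtual deletion of `GENE_A`, so it ranks first. A real analysis would replace the naive score with the manifold-alignment distance between WT and pseudo-KO networks.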

For Gene Overexpression Simulation

Simulating overexpression typically uses the same underlying architecture as knockout simulation. The key difference lies in how the perturbation is represented to the model: instead of removing a gene's influence, the input is altered so that the model predicts the transcriptional consequences of elevated expression of the target gene. In Geneformer, for example, the target gene is moved to the front of the cell's rank-ordered gene encoding, as if it were the most highly expressed gene, and the predicted shift in the cell's embedding within the state space is then analyzed [9].
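
For rank-based encoders, knockout and overexpression can both be expressed as edits to the ordered gene list rather than to numeric expression values. The sketch below follows the behavior described for Geneformer's in silico perturbation, where deletion removes the gene from the rank encoding and overexpression moves it to the front. The function names and gene tokens are hypothetical, and the embedding comparison that would follow is omitted.

```python
def delete_gene(rank_encoding, gene):
    """In silico knockout: remove the gene from the rank-ordered encoding."""
    return [g for g in rank_encoding if g != gene]

def overexpress_gene(rank_encoding, gene):
    """In silico overexpression: move (or insert) the gene at the front of the encoding."""
    return [gene] + [g for g in rank_encoding if g != gene]

# Toy rank encoding (assumption): placeholder genes ordered from highest to lowest rank.
cell_encoding = ["GENE_B", "GENE_D", "GENE_C", "GENE_A"]

knocked_out = delete_gene(cell_encoding, "GENE_D")
overexpressed = overexpress_gene(cell_encoding, "GENE_C")
```

The original and edited encodings would then each be passed through the fine-tuned model, and the difference between the resulting cell embeddings taken as the predicted effect of the perturbation.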

The Closed-Loop Framework for Enhanced Accuracy

A significant advancement in ISP is the "closed-loop" framework, which iteratively improves model predictions by incorporating experimental data [9]. The process is as follows: