LICT: How a Large Language Model is Revolutionizing Automated Cell Type Annotation

Abstract

Accurate cell type annotation remains a significant bottleneck in single-cell RNA sequencing analysis. This article explores LICT (Large language model-based Identifier for Cell Types), a novel tool that leverages multi-model integration and an interactive 'talk-to-machine' strategy to overcome the limitations of both manual and traditional automated methods. Tailored for researchers and drug development professionals, we provide a comprehensive analysis of LICT's foundational principles, its unique methodology for reliable annotation, strategies for optimizing performance on challenging datasets, and a critical validation against existing tools. The discussion concludes with the implications of this objective, reference-free framework for enhancing reproducibility and accelerating discovery in biomedical research.

The Cell Annotation Challenge: Why LLMs Like LICT Are a Game Changer

The Critical Bottleneck of Cell Type Annotation in Single-Cell RNA-seq

The interpretation of results represents one of the most challenging tasks in single-cell RNA sequencing (scRNA-seq) data analysis [1]. While obtaining cell clusters is computationally straightforward, determining the biological identity of each cluster creates a significant bottleneck in the analysis workflow [1]. This process requires bridging the gap between the current dataset and prior biological knowledge, which is not always available in a consistent, quantitative form [1]. The concept of a "cell type" itself lacks a clear definition, with most practitioners relying on an intuitive "I'll know it when I see it" approach that resists computational formalization [1]. As a result, interpretation is often manual, time-consuming, and highly dependent on expert knowledge, which introduces subjectivity and variability across studies [2].

The emergence of large language models (LLMs) offers promising solutions to this persistent challenge. Unlike traditional reference-based methods that depend on pre-annotated datasets, LLM-based approaches can leverage vast biological knowledge encoded in their training parameters [2]. One such advancement is LICT (Large Language Model-based Identifier for Cell Types), which employs multi-model integration and a "talk-to-machine" approach to improve annotation reliability [2]. This protocol details the application of LLM-based frameworks, with particular emphasis on LICT, to address the critical bottleneck in cell type annotation.

LICT Framework: Protocol and Implementation

LICT addresses limitations of previous LLM applications by implementing three complementary strategies: multi-model integration, iterative "talk-to-machine" refinement, and objective credibility evaluation [2]. It was developed by first evaluating 77 publicly available LLMs on a benchmark scRNA-seq dataset of peripheral blood mononuclear cells (PBMCs) [2]. Using standardized prompts that incorporated the top ten marker genes for each cell subset, five top-performing models were selected for integration: GPT-4, LLaMA-3, Claude 3, Gemini, and the Chinese language model ERNIE 4.0 [2].

Multi-Model Integration Strategy

The multi-model integration strategy leverages complementary strengths of multiple LLMs rather than relying on conventional approaches like majority voting or a single top-performing model [2]. This approach significantly improves annotation accuracy, particularly for challenging low-heterogeneity datasets.

Experimental Protocol: Multi-Model Integration

- Input Preparation: For each cell cluster identified through unsupervised clustering (e.g., Leiden algorithm), extract the top differentially expressed genes based on statistical testing.

- Parallel LLM Query: Format standardized prompts containing the marker gene list and submit to all five integrated LLMs simultaneously.

- Result Selection: The best-performing annotation from the five LLMs is selected for each cluster, effectively leveraging their complementary strengths.

- Validation: This strategy reduced mismatch rates from 21.5% to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data compared to single-model approaches [2].
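
The input preparation and parallel query steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not LICT's implementation: `build_prompt`, `query_models`, and the stub model callables are hypothetical names, and the prompt wording is illustrative. The result-selection step is left out because the text does not specify how the best-performing annotation is chosen.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# Illustrative template; the exact wording used by LICT may differ.
PROMPT_TEMPLATE = "Annotate the cell type based on the following marker genes: {genes}"


def build_prompt(marker_genes: List[str]) -> str:
    """Format one standardized prompt from a cluster's top marker genes."""
    return PROMPT_TEMPLATE.format(genes=", ".join(marker_genes))


def query_models(
    marker_genes: List[str],
    models: Dict[str, Callable[[str], str]],
) -> Dict[str, str]:
    """Send the same prompt to every model concurrently.

    Returns a mapping of model name to the annotation that model produced.
    """
    prompt = build_prompt(marker_genes)
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


# Stand-in "models" (hypothetical): in practice each callable would wrap
# a real LLM API client and return that model's answer as text.
stub_models = {
    "model_a": lambda prompt: "B cell",
    "model_b": lambda prompt: "Naive B cell",
}
annotations = query_models(["MS4A1", "CD79A", "CD79B"], stub_models)
```

Keeping each model behind a plain callable means a provider can be swapped without touching the query logic.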

Talk-to-Machine Iterative Refinement

The "talk-to-machine" strategy implements a human-computer interaction process to enhance annotation precision, particularly for low-heterogeneity cell types where LLM performance typically declines [2].

Experimental Protocol: Talk-to-Machine Refinement

- Marker Gene Retrieval: The LLM is queried to provide representative marker genes for each predicted cell type based on initial annotations.

- Expression Pattern Evaluation: Analyze the expression of these marker genes within the corresponding clusters in the input dataset.

- Validation Check: An annotation is considered valid if more than four marker genes are expressed in at least 80% of cells within the cluster. Otherwise, it is classified as a validation failure.

- Iterative Feedback: For failed validations, generate a structured feedback prompt containing (i) expression validation results and (ii) additional differentially expressed genes from the dataset.

- Re-query: Use the feedback prompt to re-query the LLM, prompting revision or confirmation of previous annotation.

This optimization strategy significantly improved alignment with manual annotations, increasing full match rates to 34.4% for PBMC and 69.4% for gastric cancer data, while reducing mismatches to 7.5% and 2.8% respectively [2].
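
The validation check and the structured feedback prompt from the protocol above can be sketched as below. This is a hedged illustration: the function names, the data layout (a dictionary of per-cell counts for each gene), the prompt wording, and the rule that a gene counts as "expressed" when its count is above `min_count` (default 0) are all assumptions. Only the "more than four genes in at least 80% of cells" rule comes from the text.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ValidationResult:
    valid: bool
    fractions: Dict[str, float]  # marker gene -> fraction of cells expressing it


def fraction_expressing(counts: List[float], min_count: float = 0.0) -> float:
    """Fraction of cells whose count for one gene exceeds min_count."""
    if not counts:
        return 0.0
    return sum(c > min_count for c in counts) / len(counts)


def validate_annotation(
    cluster_counts: Dict[str, List[float]],
    marker_genes: List[str],
    min_fraction: float = 0.8,
    min_genes: int = 4,
    min_count: float = 0.0,
) -> ValidationResult:
    """Valid if MORE THAN min_genes markers are expressed in AT LEAST
    min_fraction of the cluster's cells. Genes absent from the data are
    treated as not expressed (an assumption of this sketch)."""
    fractions = {
        gene: fraction_expressing(cluster_counts.get(gene, []), min_count)
        for gene in marker_genes
    }
    n_supported = sum(f >= min_fraction for f in fractions.values())
    return ValidationResult(valid=n_supported > min_genes, fractions=fractions)


def build_feedback_prompt(cell_type: str, result: ValidationResult, extra_degs: List[str]) -> str:
    """Combine (i) the expression validation results and (ii) additional DEGs."""
    lines = [f"Your previous annotation was '{cell_type}'.",
             "Fraction of cluster cells expressing each marker you proposed:"]
    lines += [f"- {gene}: {frac:.0%}" for gene, frac in result.fractions.items()]
    lines.append("Additional differentially expressed genes: " + ", ".join(extra_degs))
    lines.append("Please revise or confirm the annotation.")
    return "\n".join(lines)


# Toy cluster of five cells (made-up counts, for illustration only).
toy_counts = {
    "CD3D": [2, 1, 3, 1, 4], "CD3E": [1, 1, 2, 0, 1], "CD3G": [1, 2, 1, 1, 0],
    "CD2": [3, 1, 1, 2, 1], "TRAC": [1, 0, 2, 1, 1], "IL7R": [0, 0, 1, 0, 0],
}
passed = validate_annotation(toy_counts, ["CD3D", "CD3E", "CD3G", "CD2", "TRAC"])
failed = validate_annotation(toy_counts, ["CD3D", "CD3E", "CD3G", "CD2", "IL7R"])
feedback = build_feedback_prompt("T cell", failed, ["NKG7", "GNLY"])
```

In the toy data the second marker list fails because only four of its genes reach the 80% level, and the rule requires more than four.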

Objective Credibility Evaluation

Discrepancies between LLM-generated and manual annotations do not necessarily indicate reduced LLM reliability, as manual annotations often exhibit inter-rater variability and systematic biases [2]. The objective credibility evaluation strategy provides a framework to distinguish methodology-related discrepancies from intrinsic dataset limitations.

Experimental Protocol: Credibility Assessment

- Marker Gene Retrieval: For each predicted cell type, query the LLM to generate representative marker genes.

- Expression Analysis: Evaluate the expression patterns of these marker genes within corresponding cell clusters.

- Credibility Assessment: An annotation is deemed reliable if more than four marker genes are expressed in at least 80% of cells within the cluster; otherwise, it is classified as unreliable.

This evaluation demonstrated that LLM-generated annotations outperformed manual annotations in reliability for PBMC and low-heterogeneity datasets [2]. Specifically, in embryo data, 50% of mismatched LLM annotations were credible versus only 21.3% for expert annotations [2].

Performance Benchmarking and Comparison

Quantitative Performance Metrics

Table 1: Performance Comparison of LLM-Based Annotation Tools Across Diverse Biological Contexts

| Tool | PBMC Full Match Rate | Gastric Cancer Full Match Rate | Embryo Data Full Match Rate | Stromal Cells Full Match Rate | Key Innovation |

|---|---|---|---|---|---|

| LICT | 34.4% [2] | 69.4% [2] | 48.5% [2] | 43.8% [2] | Multi-model integration + talk-to-machine |

| GPT-4 Only | Not reported | Not reported | ~3% (improved to 48.5% with LICT) [2] | Not reported | Single LLM approach |

| Claude 3.5 Sonnet | Highest agreement in benchmark [3] | Not reported | Not reported | Not reported | Top-performing individual model |

| scExtract | Outperformed established methods across tissues [4] | Not reported | Not reported | Not reported | LLM-based automated article processing |

Table 2: LICT Performance Improvement with Multi-Model Integration Strategy

| Dataset Type | Single Model Mismatch Rate | LICT Multi-Model Mismatch Rate | Improvement |

|---|---|---|---|

| PBMC (High Heterogeneity) | 21.5% [2] | 9.7% [2] | 54.9% reduction |

| Gastric Cancer (High Heterogeneity) | 11.1% [2] | 8.3% [2] | 25.2% reduction |

| Embryo (Low Heterogeneity) | >50% mismatch [2] | 51.5% mismatch [2] | Match rate increased to 48.5% |

| Fibroblast (Low Heterogeneity) | >50% mismatch [2] | 56.2% mismatch [2] | Match rate increased to 43.8% |
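
The "Improvement" column for the two high-heterogeneity rows is a relative reduction in mismatch rate. The short sketch below reproduces that arithmetic from the rates quoted in the table; the function name is a hypothetical helper.

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative reduction, in percent, when a rate drops from `before` to `after`."""
    if before <= 0:
        raise ValueError("`before` must be positive")
    return (before - after) / before * 100.0


# Mismatch rates (percent) as quoted in Table 2.
pbmc_reduction = round(relative_reduction(21.5, 9.7), 1)
gastric_reduction = round(relative_reduction(11.1, 8.3), 1)
print(pbmc_reduction, gastric_reduction)  # 54.9 25.2
```

The low-heterogeneity rows are reported differently: there the match rate is simply 100% minus the mismatch rate (for example, 100 - 51.5 = 48.5).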

Benchmarking Protocol

Experimental Protocol: Performance Evaluation

- Dataset Selection: Utilize diverse scRNA-seq datasets representing normal physiology (PBMCs), developmental stages (human embryos), disease states (gastric cancer), and low-heterogeneity environments (stromal cells) [2].

- Pre-processing: Process each dataset independently with a standard pipeline: normalization, log-transformation, identification of high-variance genes, scaling, PCA, neighborhood graph calculation, and clustering with the Leiden algorithm [3].

- Differential Expression: Compute differentially expressed genes for each cluster using standard statistical methods.

- Annotation Comparison: Apply LLM-based tools and compare results with manual annotations using direct string comparison, Cohen's kappa (κ), and LLM-derived quality ratings (perfect, partial, or not-matching) [3].

- Credibility Assessment: Apply objective credibility evaluation to both LLM and manual annotations using marker gene expression thresholds.
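
For the annotation comparison step, Cohen's kappa measures agreement between two sets of labels after correcting for the agreement expected by chance. The sketch below is a plain-Python implementation of the standard formula, kappa = (p_o - p_e) / (1 - p_e). The labels are toy values, and the direct string comparison assumes both label sets already use the same naming convention.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Cohen's kappa for two equally long sequences of categorical labels."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: share of clusters with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of the two marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both sequences use one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Toy per-cluster labels (illustrative only, not from any benchmark).
manual = ["T cell", "T cell", "B cell", "B cell", "NK cell", "Monocyte"]
llm = ["T cell", "T cell", "B cell", "NK cell", "NK cell", "Monocyte"]
kappa = cohens_kappa(manual, llm)
```

Here five of six labels agree (p_o = 5/6) and the chance agreement is p_e = 9/36, giving kappa = 7/9, about 0.78.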

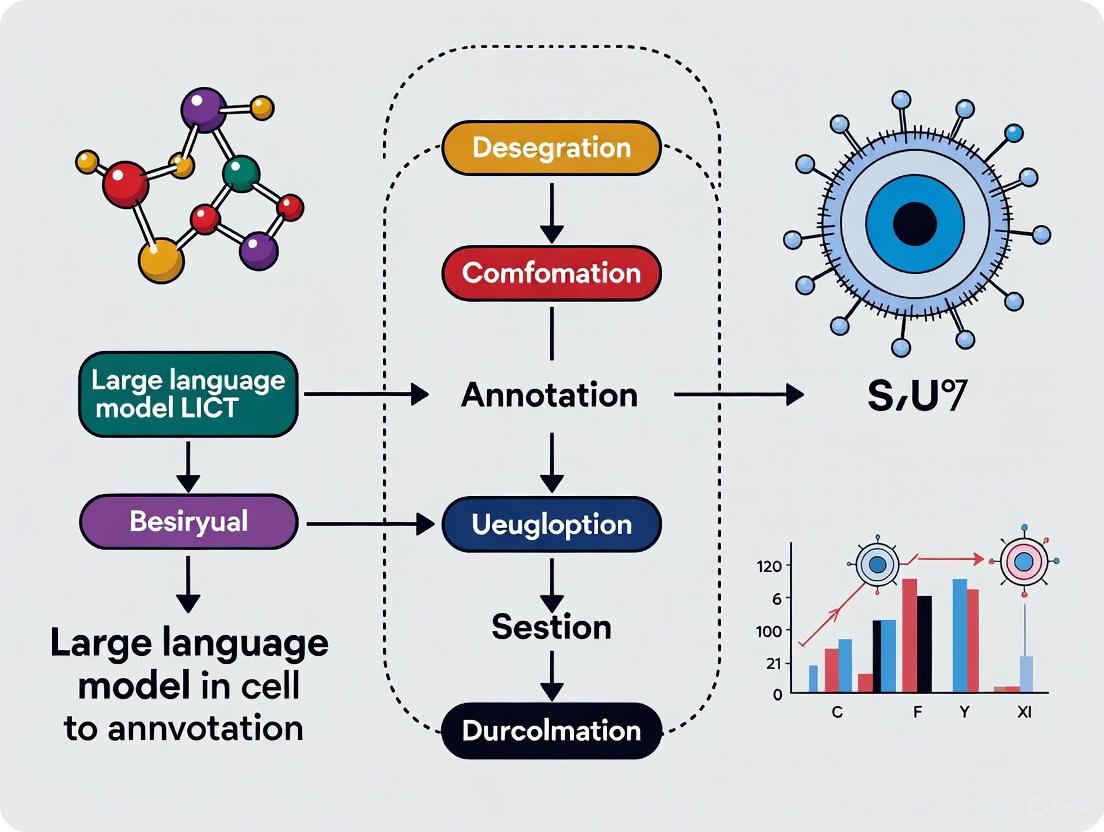

Integrated Workflow Visualization

LICT Annotation Workflow - This diagram illustrates the integrated LICT workflow combining multi-model integration with iterative talk-to-machine refinement for reliable cell type annotation.

Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagent Solutions for scRNA-seq Annotation

| Resource Type | Specific Tool/Database | Primary Function | Application Context |

|---|---|---|---|

| Marker Gene Databases | CellMarker 2.0 [5] | Manually curated resource of cell type markers from >100k publications | Manual annotation validation |

| Reference Atlases | Tabula Sapiens [5] | Reference-based annotation pipeline for human cell atlas | Reference-based annotation |

| Reference Atlases | Tabula Muris [5] | Repository of scRNA-seq data from 20 mouse organs | Cross-species validation |

| Web Tools | Azimuth [5] | Web-based reference mapping using Seurat algorithm | Programming-free annotation |

| Annotation Packages | AnnDictionary [3] | LLM-agnostic Python package for cell type annotation | Flexible LLM integration |

| Annotation Packages | scExtract [4] | LLM framework for automated processing of published data | Automated literature-based annotation |

| Annotation Packages | CellAnnotator [6] | scverse tool using OpenAI models for annotation | Ecosystem-integrated solution |

The LICT framework represents a significant advancement in addressing the critical bottleneck of cell type annotation in scRNA-seq analysis. By implementing the three core strategies—multi-model integration, talk-to-machine refinement, and objective credibility evaluation—researchers can achieve more reliable, consistent annotations while reducing manual effort. The protocols detailed herein provide comprehensive guidance for implementing this approach across diverse biological contexts, from high-heterogeneity immune cells to challenging low-heterogeneity microenvironments. As LLM technology continues to evolve, these methodologies offer a scalable foundation for extracting meaningful biological insights from the growing volume of single-cell transcriptomic data.

Limitations of Manual Annotation and Reference-Dependent Automated Tools

Cell type annotation is a critical step in the analysis of single-cell RNA sequencing (scRNA-seq) data, essential for understanding cellular composition and function [2] [7]. Traditionally, this process has relied on two primary approaches: manual annotation by domain experts and automated tools dependent on reference datasets. Manual annotation, while leveraging deep expert knowledge, is inherently subjective, time-consuming, and difficult to scale [2] [7]. Conversely, automated tools offer greater objectivity and speed but are often constrained by the scope and quality of their training data, limiting their accuracy and generalizability [2] [7] [8]. These limitations can introduce biases, lead to downstream errors, and consume significant resources in subsequent corrections, posing a significant challenge in cellular functional research [2] [7]. The emergence of large language models (LLMs) offers a promising path forward. Framed within research on the LICT (Large Language Model-based Identifier for Cell Types) tool, this analysis details the specific limitations of traditional methods and validates an advanced, reference-free approach for reliable cell annotation [2] [7].

Results

Comparative Performance of Annotation Methods

The limitations of traditional annotation methods are quantifiable across key metrics such as accuracy, scalability, and objectivity. The following table synthesizes performance data from benchmarking studies involving the LICT tool and other LLM-based methods against manual and reference-dependent automated techniques [2] [9] [7].

Table 1: Performance Comparison of Cell Type Annotation Methods

| Method Category | Example Tool | Reported Accuracy | Key Strengths | Key Limitations |

|---|---|---|---|---|

| Manual Annotation | Expert Curation | High for known types [10] | Nuanced judgment, handles complex data [10] | Subjective, time-consuming, non-scalable, prone to inter-rater variability [2] [7] |

| Reference-Dependent Automated | SingleR, CellTypist [4] | Varies with reference quality [8] | Fast, objective, scalable for simple tasks [10] [8] | Limited to reference knowledge, poor generalizability, misses novel types [2] [7] [4] |

| LLM-Based (Single Model) | GPT-4, Claude 3 [2] | 80-90% for major types [9] [3] | No reference needed, broad knowledge base [2] | Performance drops on low-heterogeneity data [2] [7] |

| Advanced LLM Framework | LICT [2] [7] | Mismatch rate as low as 2.8% in gastric cancer data [2] [7] | High accuracy & reliability, objective credibility assessment, interprets complex populations [2] [7] | Requires iterative computation |

Performance is highly dependent on dataset context. In highly heterogeneous datasets like peripheral blood mononuclear cells (PBMCs) or gastric cancer samples, top-performing single LLMs like Claude 3 can show high agreement with manual annotations [2] [7]. However, their performance significantly diminishes in low-heterogeneity environments, such as stromal cells or human embryo data, where consistency with manual labels can fall to ~30-40% [2] [7]. This highlights a critical weakness of relying on a single model. The LICT framework addresses this via multi-model integration, drastically reducing mismatch rates—from 21.5% to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data compared to a baseline tool, GPTCelltype [2] [7].

Table 2: LICT Annotation Performance Across Diverse Biological Contexts

| Dataset Type | Example | Challenge | LICT Performance Post-Optimization |

|---|---|---|---|

| High Heterogeneity | PBMCs [2] [7] | Diverse, well-defined immune cells | 34.4% full match, 7.5% mismatch rate |

| Disease State | Gastric Cancer [2] [7] | Altered and complex cell states | 69.4% full match, 2.8% mismatch rate |

| Low Heterogeneity | Human Embryo [2] [7] | Less distinct transcriptional profiles | 48.5% full match (16x vs. GPT-4) |

| Low Heterogeneity | Mouse Stromal Cells [2] [7] | Subtle differences between populations | 43.8% full match |
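
The full match and mismatch rates in the table are shares of clusters falling into each agreement category. A minimal sketch of that tally follows, assuming each cluster has already been rated as "full", "partial", or "mismatch". The category names and toy ratings are illustrative and are not taken from the benchmark data.

```python
from collections import Counter
from typing import Dict, Iterable

CATEGORIES = ("full", "partial", "mismatch")


def match_rates(ratings: Iterable[str]) -> Dict[str, float]:
    """Percentage of clusters in each agreement category."""
    counts = Counter(ratings)
    unknown = set(counts) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown rating(s): {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no ratings supplied")
    return {category: 100.0 * counts[category] / total for category in CATEGORIES}


# Ten toy clusters: 6 full matches, 3 partial matches, 1 mismatch.
toy_ratings = ["full"] * 6 + ["partial"] * 3 + ["mismatch"]
rates = match_rates(toy_ratings)
```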

Objective Credibility Evaluation

A key innovation of the LICT framework is its objective credibility evaluation strategy, which addresses the subjectivity inherent in manual annotation [2] [7]. Discrepancies between LLM-generated and manual annotations do not inherently favor the manual result; manual annotations are also prone to inter-rater variability and systematic biases, especially in ambiguous cell clusters [2] [7]. LICT's credibility assessment provides a reference-free, unbiased validation by checking if the LLM-predicted cell type is supported by the expression of its own suggested marker genes within the dataset [2] [7]. This process revealed that in stromal cell data, 29.6% of mismatched LICT annotations were credible, whereas none of the conflicting manual annotations met the objective credibility threshold [2] [7]. This demonstrates that LLM-based methods can, in some cases, provide a more reliable assessment than expert judgment alone.

Experimental Protocols

Protocol 1: LICT Annotation Workflow with Multi-Model Integration and "Talk-to-Machine" Strategy

This protocol details the core LICT methodology for de novo cell type annotation of scRNA-seq data clusters using a multi-LLM ensemble and an iterative feedback loop [2] [7].

- Input Preparation: For each cell cluster identified via unsupervised clustering (e.g., Leiden algorithm), compute the top N (e.g., 10) differentially expressed genes (DEGs) [2] [7] [3].

- Multi-Model Parallel Annotation:

- Prompt Engineering: Construct a standardized prompt containing the list of top DEGs for a cluster. Example: "Annotate the cell type based on the following marker genes: [List of Genes]" [2] [9].

- LLM Querying: Submit the prompt in parallel to multiple pre-selected, high-performing LLMs (e.g., GPT-4, Claude 3, Gemini, LLaMA-3, ERNIE-4.0) [2] [7].

- Result Integration: Collect all annotations and select the best-performing result, leveraging the complementary strengths of the different models rather than using simple majority voting [2] [7].

- Iterative "Talk-to-Machine" Validation:

- Marker Gene Retrieval: For the annotated cell type, query the same LLM to provide a list of representative marker genes [2] [7].

- Expression Pattern Evaluation: Assess the expression of these retrieved marker genes within the original cell cluster from the input dataset [2] [7].

- Validation Check: The annotation is considered valid if more than four marker genes are expressed in at least 80% of the cells within the cluster. If not, it proceeds to the feedback step [2] [7].

- Structured Feedback & Re-query: For failed validations, generate a new prompt containing the validation results and additional DEGs from the dataset. Re-query the LLM to revise or confirm its annotation [2] [7].
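
The annotate, validate, and re-query cycle in Protocol 1 can be sketched as a small driver loop. Everything here is an assumption made for illustration: the function and parameter names, the feedback wording, and especially the `max_rounds` stopping rule, since the text does not say when iteration ends. The LLM calls and the expression check are passed in as callables, and the ones used below are stubs.

```python
from typing import Callable, List, Tuple


def talk_to_machine(
    initial_degs: List[str],
    extra_degs: List[str],
    annotate: Callable[[str], str],             # prompt -> cell type label
    get_markers: Callable[[str], List[str]],    # label -> marker genes
    is_supported: Callable[[List[str]], bool],  # markers -> passes expression check?
    max_rounds: int = 3,                        # assumed stopping rule
) -> Tuple[str, bool, int]:
    """Return (final label, whether it was validated, rounds used)."""
    prompt = ("Annotate the cell type based on the following marker genes: "
              + ", ".join(initial_degs))
    label = annotate(prompt)
    for round_no in range(1, max_rounds + 1):
        markers = get_markers(label)
        if is_supported(markers):
            return label, True, round_no
        if round_no == max_rounds:
            break
        # Structured feedback: validation outcome plus additional DEGs.
        prompt = (f"The annotation '{label}' failed validation: too few of its "
                  f"markers ({', '.join(markers)}) are expressed in at least 80% "
                  f"of cells. Additional differentially expressed genes: "
                  f"{', '.join(extra_degs)}. Please revise or confirm the annotation.")
        label = annotate(prompt)
    return label, False, max_rounds


# Stubs standing in for real LLM calls and a real expression check.
def stub_annotate(prompt: str) -> str:
    return "NK cell" if "failed validation" in prompt else "T cell"

stub_markers = {
    "T cell": ["CD3D", "CD3E", "CD3G", "CD2", "TRAC"],
    "NK cell": ["NKG7", "GNLY", "KLRD1", "KLRF1", "NCAM1"],
}
well_expressed = {"NKG7", "GNLY", "KLRD1", "KLRF1", "NCAM1"}

def stub_is_supported(markers: List[str]) -> bool:
    return sum(m in well_expressed for m in markers) > 4

result = talk_to_machine(["NKG7", "GNLY"], ["KLRD1", "KLRF1"],
                         stub_annotate, stub_markers.__getitem__, stub_is_supported)
```

With these stubs the first label fails the check, the feedback prompt triggers a revised label, and the loop stops in the second round once that label is supported.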

Protocol 2: Objective Credibility Evaluation of Annotations

This protocol provides a method to objectively assess the reliability of any cell type annotation, whether generated manually or by an automated tool, using the underlying gene expression data as ground truth [2] [7].

- Annotation Input: Begin with a cell type label for a specific cluster, from any source (e.g., manual expert, LLM, reference tool) [2] [7].

- Marker Gene Generation: Use an LLM to generate a list of representative marker genes for the provided cell type label. Note: For manual annotations, this step is still performed by the LLM to ensure a consistent basis for evaluation [2] [7].

- Expression Analysis: Calculate the percentage of cells within the cluster that express each of the generated marker genes. A gene is typically considered "expressed" if its count is above a technical noise threshold [2] [7].

- Credibility Assessment: The original annotation is deemed credible if more than four of the LLM-suggested marker genes are expressed in at least 80% of the cells in the cluster. Otherwise, the annotation is classified as unreliable for downstream analysis [2] [7].
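
Once each annotation has a credibility verdict, statistics of the form "X% of mismatched annotations were credible" can be computed per annotation source. The sketch below assumes the verdicts already exist as booleans and treats any difference between the two label strings as a mismatch, which is a simplification. The record layout and toy values are hypothetical.

```python
from typing import Dict, List, NamedTuple


class ClusterRecord(NamedTuple):
    llm_label: str
    manual_label: str
    llm_credible: bool     # verdict of the credibility check for the LLM label
    manual_credible: bool  # verdict of the same check for the manual label


def credible_share_among_mismatches(records: List[ClusterRecord]) -> Dict[str, float]:
    """Percentage of credible annotations per source, over mismatched clusters only."""
    mismatched = [r for r in records if r.llm_label != r.manual_label]
    if not mismatched:
        return {"llm": 0.0, "manual": 0.0}
    n = len(mismatched)
    return {
        "llm": 100.0 * sum(r.llm_credible for r in mismatched) / n,
        "manual": 100.0 * sum(r.manual_credible for r in mismatched) / n,
    }


# Toy records (illustrative only): one agreement and three mismatches.
toy_records = [
    ClusterRecord("T cell", "T cell", True, True),
    ClusterRecord("NK cell", "T cell", True, False),
    ClusterRecord("Fibroblast", "Pericyte", True, True),
    ClusterRecord("Monocyte", "Macrophage", False, False),
]
shares = credible_share_among_mismatches(toy_records)
```

Because both sources are scored with the same marker-expression rule, the two percentages are directly comparable.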

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Tool Name | Function / Application | Relevance to Protocol |

|---|---|---|

| LICT (LLM-based Identifier for Cell Types) [2] [7] | Integrated tool for reference-free cell annotation. | Implements the core multi-model and talk-to-machine strategies. |

| AnnDictionary [9] [3] | Open-source Python package for LLM-provider-agnostic single-cell analysis. | Backend for parallel processing of AnnData objects and easy switching of LLM backends. |

| scExtract [4] | LLM framework for automated scRNA-seq data processing from articles. | Automates information extraction from literature to guide preprocessing and annotation. |

| Scanpy [4] | Standard Python toolkit for single-cell data analysis. | Used for core data processing: normalization, PCA, clustering, and DEG calculation. |

| Peripheral Blood Mononuclear Cell (PBMC) Dataset [2] [7] | A standard, highly heterogeneous benchmark dataset. | Essential for initial validation and benchmarking of annotation performance. |

| Tabula Sapiens v2 Atlas [9] [3] | A large, multi-tissue, manually annotated single-cell transcriptomic atlas. | Serves as a comprehensive benchmark for de novo annotation accuracy across tissues. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized biology by enabling the characterization of cellular heterogeneity at unprecedented resolution. However, a significant bottleneck persists: cell type annotation. This process, fundamental to interpreting scRNA-seq data, has traditionally relied on manual expertise to compare differentially expressed genes against canonical marker genes—a laborious, time-consuming, and subjective task [11] [12]. While automated computational methods exist, they often depend on specific reference datasets, limiting their generalizability and accuracy [2].

The emergence of Large Language Models (LLMs) like GPT-4 presents a paradigm shift. Trained on vast corpora of scientific literature, these models encode extensive knowledge of cell biology and marker genes, offering the potential for rapid, reference-free, and expert-level cell type annotation [11] [13]. This application note details the journey from general-purpose models like GPT-4 to the development of specialized, robust solutions such as the Large Language Model-based Identifier for Cell Types (LICT), providing structured experimental protocols and resources for their application.

From Generalist to Specialist: The Evolution of LLM-Based Annotation Tools

The development of LLM-based annotation tools has progressed from leveraging a single general-purpose model to sophisticated frameworks that integrate multiple models and strategies to enhance reliability.

GPT-4: The Proof of Concept

The initial breakthrough was demonstrating that GPT-4 could accurately annotate cell types using marker gene information. Evaluated across hundreds of tissue and cell types from five species, GPT-4 generated annotations that showed strong concordance with manual annotations provided by domain experts [11] [13]. Key findings from this foundational work are summarized in Table 1.

Table 1: Performance Summary of GPT-4 in Cell Type Annotation

| Evaluation Metric | Performance Result | Context and Notes |

|---|---|---|

| Agreement with Manual Annotation | >75% (Full or Partial Match) | Consistent across most studies and tissues [11] |

| Optimal Input | Top 10 Differential Genes | Derived from a two-sided Wilcoxon test [11] [12] |

| Robustness to Input Strategy | High | Comparable performance across basic, chain-of-thought, and repeated prompts [11] |

| Identification of Unknown Types | 99% Accuracy | In simulations distinguishing known from unknown cell types [11] [13] |

| Distinction of Pure vs. Mixed Types | 93% Accuracy | In simulated complex data scenarios [11] |

| Reproducibility | 85% | Rate of identical annotations for the same marker genes [11] |

LICT: A Specialized Multi-Model Solution

To address limitations of single models, including performance variability and "hallucination," the LICT framework was developed. It employs three core strategies to improve upon general-purpose LLMs [2]:

- Multi-Model Integration: Leverages multiple top-performing LLMs (e.g., GPT-4, Claude 3, Gemini) and selects the best-performing result, exploiting their complementary strengths.

- "Talk-to-Machine" Strategy: An iterative human-computer interaction process that enriches model input with contextual information and validation based on marker gene expression within the dataset.

- Objective Credibility Evaluation: Provides a reference-free, unbiased assessment of annotation reliability by validating the expression of LLM-retrieved marker genes in the input data.

This multi-faceted approach significantly reduces mismatch rates compared to single-model tools and offers a measurable confidence score for each annotation, which is crucial for downstream biological analysis [2].
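
To make the contrast with majority voting concrete, the sketch below places a conventional vote next to an evidence-based selection. The second function is only one plausible reading of "selects the best-performing result": choosing the label whose markers are best supported in the data is an assumption of this sketch, not LICT's documented criterion. All names and toy values are hypothetical.

```python
from collections import Counter
from typing import Dict


def majority_vote(annotations: Dict[str, str]) -> str:
    """Conventional baseline: the most frequent label wins."""
    return Counter(annotations.values()).most_common(1)[0][0]


def select_by_marker_support(annotations: Dict[str, str], support: Dict[str, int]) -> str:
    """ASSUMED criterion: pick the label with the most markers supported by
    the dataset (for example, the number passing an expression check)."""
    return max(annotations.values(), key=lambda label: support.get(label, 0))


# Toy example: two models agree, but the minority label has better support.
toy_annotations = {"model_a": "T cell", "model_b": "T cell", "model_c": "NK cell"}
toy_support = {"T cell": 2, "NK cell": 5}  # made-up supported-marker counts

voted = majority_vote(toy_annotations)
selected = select_by_marker_support(toy_annotations, toy_support)
```

In this toy case the vote returns "T cell" while the evidence-based rule returns "NK cell", which shows how a single model's answer can win when the data support it.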

Experimental Protocols for LLM-Based Cell Annotation

This section provides detailed methodologies for implementing two primary approaches to LLM-based annotation.

Protocol 1: Basic Cell Annotation Using a Single LLM

This protocol utilizes tools like GPTCelltype to annotate cell clusters via a single LLM API, suitable for standard analyses with well-defined marker genes [11].

Input Materials:

- Differential Gene List: A list of top differentially expressed genes for each cell cluster, typically identified using a two-sided Wilcoxon rank-sum test from standard pipelines (Seurat, Scanpy).

- LLM API Access: Access to an LLM such as OpenAI's GPT-4.

- Software Package: The GPTCelltype R package.

Procedure:

- Differential Expression Analysis: Perform clustering and differential expression analysis on your scRNA-seq dataset using your preferred pipeline (e.g., Seurat). For each cluster, extract the top 10 genes ranked by P-value and effect size.

- Input Preparation: Format the top 10 marker genes for a target cluster into a simple text string.

- Prompt Construction: Use a basic prompt strategy. Example: "What is the cell type for a cell with high expression of [Gene1], [Gene2], ..., [Gene10]?"

- Query and Annotation: Use the GPTCelltype software to send the prompt to the LLM API and retrieve the cell type label.

- Iteration and Validation: Repeat steps 2-4 for all clusters. It is critical to validate the LLM's annotations by checking the expression of canonical marker genes for the proposed cell types via dot plots or violin plots in your analysis environment.
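
The gene selection and prompt construction steps of this procedure can be sketched in Python as below. This does not use or reproduce the GPTCelltype package. The record type, the restriction to significantly up-regulated genes, the `alpha` cutoff, and the tie-breaking by effect size are assumptions made for illustration, and the toy statistics are made up.

```python
from typing import List, NamedTuple


class DEResult(NamedTuple):
    gene: str
    p_value: float
    log_fold_change: float


def top_marker_genes(results: List[DEResult], n: int = 10, alpha: float = 0.05) -> List[str]:
    """Keep significantly up-regulated genes, then rank by p-value
    (ascending) and break ties by effect size (descending)."""
    up = [r for r in results if r.log_fold_change > 0 and r.p_value < alpha]
    ranked = sorted(up, key=lambda r: (r.p_value, -r.log_fold_change))
    return [r.gene for r in ranked[:n]]


def basic_prompt(genes: List[str]) -> str:
    """Basic prompt strategy, following the example wording in the procedure."""
    return ("What is the cell type for a cell with high expression of "
            + ", ".join(genes) + "?")


# Toy differential expression output for one cluster.
toy_results = [
    DEResult("CD79A", 1e-50, 3.2),
    DEResult("MS4A1", 1e-50, 4.1),
    DEResult("ACTB", 0.5, 0.1),     # not significant
    DEResult("CD3D", 1e-20, -2.0),  # down-regulated in this cluster
    DEResult("CD79B", 1e-30, 2.5),
]
genes = top_marker_genes(toy_results)
prompt = basic_prompt(genes)
```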

Protocol 2: High-Reliability Annotation with the LICT Framework

This protocol employs the LICT framework for complex scenarios, such as annotating low-heterogeneity cell populations or when the highest confidence is required.

Input Materials:

- Differential Gene List: As in Protocol 1.

- Multiple LLM API Keys: Access to several LLMs (e.g., GPT-4, Claude 3, Gemini).

- LICT Software Suite.

Procedure:

- Initial Multi-Model Annotation: Submit the marker gene list for a cluster to multiple integrated LLMs within the LICT framework.

- Consensus Generation: The framework applies its integration strategy to select the most accurate annotation from the pool of model responses.

- Iterative "Talk-to-Machine" Validation:

- The LLM is queried to provide a list of representative marker genes for its predicted cell type.

- The expression of these genes is evaluated within the corresponding cluster in your dataset.

- Validation Check: If more than four marker genes are expressed in at least 80% of the cluster's cells, the annotation is considered validated.

- If validation fails, a feedback prompt containing the validation results and additional differentially expressed genes is sent back to the LLM to revise or confirm the annotation.

- Credibility Scoring: The framework outputs a final annotation alongside a credibility score based on the objective evaluation strategy, allowing researchers to prioritize high-confidence annotations for further analysis.

The logical flow and components of this advanced protocol are visualized below.

Successful implementation of LLM-based annotation requires a suite of computational "research reagents." Key resources are cataloged in Table 2.

Table 2: Essential Research Reagents for LLM-Based Cell Annotation

| Reagent / Resource | Type | Primary Function | Reference / Source |

|---|---|---|---|

| GPTCelltype | R Software Package | Interfaces with GPT-4 API for automated cell type annotation using marker gene lists. | [11] |

| LICT Framework | Multi-Model Software Suite | Integrates multiple LLMs for consensus annotation and provides objective credibility evaluation. | [2] |

| Seurat / Scanpy | Computational Pipeline | Standard tools for scRNA-seq preprocessing, clustering, and differential expression analysis to generate input marker genes. | [11] [12] |

| mLLMCelltype | Consensus Framework | An open-source tool that integrates 10+ LLM providers to improve accuracy via consensus and quantify uncertainty. | [14] |

| Cell Ontology (CL) | Biological Ontology | A structured, controlled vocabulary for cell types, used for standardizing annotation outputs across studies. | [15] |

| GCTHarmony | LLM-based Tool | Harmonizes inconsistent cell type annotations across studies by mapping them to standard CL terms using text embeddings. | [15] |

Discussion and Outlook

The emergence of LLMs in biology, particularly for cell annotation, marks a transition from reliance on manual expertise to augmented, AI-assisted workflows. Initial tools like GPTCelltype demonstrated feasibility, while next-generation solutions like LICT and mLLMCelltype address key challenges of reliability and reproducibility through multi-model consensus and objective validation [2] [14].

Future directions point toward greater automation and integration. The development of LLM "agents" that can autonomously plan and execute analysis pipelines—from data querying to code execution and annotation—is already underway [16]. Furthermore, tools like GCTHarmony highlight the growing need to standardize LLM-generated annotations using established ontologies, ensuring consistency and enabling meta-analyses across disparate studies [15]. As these models continue to evolve, they will increasingly function not just as annotation tools, but as collaborative partners in the scientific discovery process, helping researchers navigate the complexity of single-cell data more efficiently and insightfully.

Accurate cell type annotation is a critical, yet challenging, step in single-cell RNA sequencing (scRNA-seq) analysis. Traditional methods, whether manual expert annotation or automated tools, present significant limitations. Manual annotation is inherently subjective and dependent on the annotator's experience, while automated tools often lack generalizability due to their dependence on reference datasets, potentially leading to biased results and downstream analytical errors [2]. The recently developed LICT (Large Language Model-based Identifier for Cell Types) addresses these challenges by combining multi-model integration with a "talk-to-machine" approach [2] [17]. It also provides an objective framework for assessing annotation reliability that is independent of reference data, which supports generalizability across scRNA-seq datasets and reproducibility in cellular research [2].

Table: Comparison of Cell Type Annotation Methods

| Method Type | Key Features | Primary Limitations |

|---|---|---|

| Manual Expert Annotation | Benefits from expert knowledge and biological context [2]. | Inherently subjective; dependent on annotator's experience; exhibits inter-rater variability and systematic biases [2]. |

| Traditional Automated Tools | Provides greater objectivity and speed [2]. | Accuracy and generalizability are limited by reliance on reference datasets; can be biased or constrained by training data [2]. |

| LICT (LLM-based) | Independent of reference data; uses objective credibility evaluation; leverages multiple LLMs for robust results [2] [17]. | Performance can diminish on low-heterogeneity datasets without its integrated optimization strategies [2]. |

Performance Benchmarking of LICT

LICT was systematically validated against existing methods across diverse biological contexts to evaluate its performance and generalizability. The tool was benchmarked on scRNA-seq datasets representing normal physiology (Peripheral Blood Mononuclear Cells, or PBMCs), developmental stages (human embryos), disease states (gastric cancer), and low-heterogeneity cellular environments (mouse stromal cells) [2]. The benchmarking methodology followed a standardized approach that assesses agreement between the tool's annotations and manual expert annotations [2].

The initial evaluation identified five top-performing LLMs for integration into LICT: GPT-4, LLaMA-3, Claude 3, Gemini, and the Chinese language model ERNIE 4.0 [2]. While these models excelled in annotating highly heterogeneous cell populations, their performance significantly diminished in low-heterogeneity environments. For instance, in stromal cell data, the highest consistency with manual annotations achieved by any single model was only 33.3% [2]. This highlighted the necessity of LICT's integrated strategies to overcome the limitations of individual models.

Table: LICT Performance Across Diverse Datasets

| Dataset Type | Example | Key Performance Finding | Impact of LICT's Multi-Model Strategy |

|---|---|---|---|

| High Heterogeneity | Peripheral Blood Mononuclear Cells (PBMCs) [2] | All selected LLMs excelled at annotating highly heterogeneous subpopulations [2]. | Reduced mismatch rate from 21.5% (using GPTCelltype) to 9.7% [2]. |

| High Heterogeneity | Gastric Cancer [2] | Models like Claude 3 demonstrated high performance [2]. | Reduced mismatch rate from 11.1% to 8.3% [2]. |

| Low Heterogeneity | Human Embryos [2] | Significant discrepancies vs. manual annotation; Gemini 1.5 Pro achieved 39.4% consistency [2]. | Increased match rate (combined full and partial) to 48.5% [2]. |

| Low Heterogeneity | Stromal Cells [2] | Significant discrepancies vs. manual annotation; Claude 3 achieved 33.3% consistency [2]. | Increased match rate to 43.8% [2]. |

Core Methodology: Multi-Model Integration Strategy

LICT's first core strategy involves the integration of multiple large language models to leverage their complementary strengths, rather than relying on a single model or conventional majority voting [2]. This approach is particularly crucial for improving annotation accuracy and consistency across diverse cell types, especially in low-heterogeneity datasets where individual model performance wanes [2].

The workflow for this strategy is outlined below.

Application Note: The "Talk-to-Machine" Protocol

To further enhance precision, particularly for challenging low-heterogeneity cell types, LICT employs an interactive "talk-to-machine" strategy. This human-computer interaction protocol iteratively refines annotations by validating the model's predictions against the actual expression data [2]. The following detailed protocol is designed to be reproducible and can be directly incorporated into a research methodology.

Detailed Experimental Protocol

Purpose: To iteratively refine and validate automated cell type annotations using LICT's "talk-to-machine" strategy, ensuring high-confidence results.

Step-by-Step Workflow:

- Initialization: Provide LICT with the list of top marker genes for a cell cluster to receive the initial automated annotation [2].

- Marker Gene Retrieval: Query the same LLM to generate a list of representative marker genes for its predicted cell type [2].

- Expression Pattern Evaluation:

- Assess the expression of the retrieved marker genes within the corresponding cell cluster in your input scRNA-seq dataset.

- Validation Threshold: An annotation is considered preliminarily valid if more than four marker genes are expressed in at least 80% of the cells within the cluster [2].

- Iterative Feedback Loop (For Validation Failures):

- If the validation threshold is not met, the annotation is classified as a failure.

- Generate a structured feedback prompt containing: i. The results of the expression validation. ii. A list of additional differentially expressed genes (DEGs) from the dataset [2].

- Re-query the LLM with this prompt, instructing it to revise or confirm its previous annotation based on the new evidence [2].

- Output: The final annotation is the label provided after the iterative feedback loop, which demonstrates higher confidence and alignment with the underlying gene expression data.
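The validation threshold in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not LICT's actual code: the function names, the constants, and the dictionary layout (gene name mapped to one expression value per cell in the cluster) are assumptions made for the example, and a gene is treated as "expressed" when its value is above zero.

```python
from typing import Dict, List

MIN_CELL_FRACTION = 0.8  # "at least 80% of the cells within the cluster"
MIN_MARKER_COUNT = 4     # "more than four marker genes": strictly greater than 4


def count_supported_markers(cluster_expr: Dict[str, List[float]],
                            markers: List[str]) -> int:
    """Count markers detected (value > 0) in at least 80% of the cluster's cells."""
    supported = 0
    for gene in markers:
        values = cluster_expr.get(gene)
        if not values:
            # Gene is absent from the dataset, so it cannot support the label.
            continue
        fraction = sum(v > 0 for v in values) / len(values)
        if fraction >= MIN_CELL_FRACTION:
            supported += 1
    return supported


def is_annotation_valid(cluster_expr: Dict[str, List[float]],
                        markers: List[str]) -> bool:
    """Apply the rule: more than four markers must pass the 80% check."""
    return count_supported_markers(cluster_expr, markers) > MIN_MARKER_COUNT
```

Note that the rule is strict on the marker count (five or more markers must pass) but inclusive on the cell fraction (exactly 80% passes).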

The logical flow of this protocol, including its critical validation and feedback loop, is visualized in the following diagram.

Expected Outcomes

This protocol has been shown to significantly improve alignment with manual annotations. In highly heterogeneous datasets like PBMCs and gastric cancer, mismatch rates were reduced to 7.5% and 2.8%, respectively [2]. For low-heterogeneity datasets, such as human embryo data, the full match rate improved by 16-fold compared to using a base model like GPT-4 alone [2].

Objective Credibility Assessment Framework

A pivotal innovation of LICT is its objective framework for assessing annotation reliability, which moves beyond simple agreement with manual labels. This is critical because discrepancies between LLM-generated and manual annotations do not automatically indicate LLM error; manual annotations themselves can suffer from inter-rater variability and systematic biases [2]. LICT's credibility assessment provides a reference-free and unbiased metric for validation [2].

The assessment process, while sharing initial steps with the "talk-to-machine" protocol, serves a distinct purpose: to assign a confidence score to an annotation, regardless of its source.

The power of this objective evaluation was demonstrated in benchmarking studies. In the human embryo dataset, 50% of the LLM-generated annotations that disagreed with manual labels were deemed credible by this framework, compared to only 21.3% of the conflicting expert annotations. Strikingly, for the stromal cell dataset, 29.6% of LLM annotations were credible, whereas none of the manual annotations met the objective credibility threshold [2]. This underscores the limitations of relying solely on expert judgment and provides researchers with a data-driven method to identify reliably annotated cell types for robust downstream analysis.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key components and their functions in a typical LICT-based cell annotation workflow. This serves as an essential checklist for researchers seeking to implement this methodology.

Table: Essential Research Reagents and Resources for LICT

| Item Name / Resource | Function / Description | Critical Reporting Notes |

|---|---|---|

| scRNA-seq Dataset | The input data containing gene expression counts per cell. Must include a matrix of counts and pre-processing (quality control, normalization). | Report the source (e.g., public repository, in-house), unique accession ID if available, and key pre-processing steps and parameters [18]. |

| Cell Clustering Results | Pre-defined cell clusters (e.g., from graph-based clustering) that will be annotated. | Specify the clustering algorithm used (e.g., Louvain, Leiden) and the resolution parameter [2]. |

| Cluster Marker Genes | A list of differentially expressed genes that define each cluster. | Provide the method used for differential expression testing (e.g., Wilcoxon rank-sum test) and the criteria for significance (e.g., log fold-change, p-value) [2]. |

| Large Language Models (LLMs) | The AI models powering the annotation. LICT integrates multiple models. | For reproducibility, report the specific models and their versions used (e.g., GPT-4, Claude 3) [2] [18]. |

| Computational Environment | The software and hardware required to run LICT. | Document the software version (LICT), programming language (Python/R), and key library dependencies to ensure computational reproducibility [18]. |

Inside LICT's Engine: A Multi-Model, Interactive Approach to Annotation

LICT (Large Language Model-based Identifier for Cell Types) represents a paradigm shift in automated cell type annotation for single-cell RNA sequencing (scRNA-seq) data. Its core innovation lies in a multi-model architecture designed to overcome the limitations inherent to individual Large Language Models (LLMs), such as performance degradation when annotating less heterogeneous cell populations [2]. The framework is built on the premise that no single LLM can accurately annotate all cell types with high reliability. By systematically integrating multiple, complementary LLMs, LICT achieves a level of robustness and accuracy unattainable by single-model systems [2]. This architecture is particularly vital because cellular environments range from highly heterogeneous (e.g., peripheral blood mononuclear cells, or PBMCs) to low-heterogeneity (e.g., stromal cells or embryonic cells), each presenting unique annotation challenges [2].

The Rationale for a Multi-LLM Strategy

The initial development of LICT involved a rigorous evaluation of 77 publicly available LLMs to identify those most suitable for cell type annotation. This benchmarking, performed on a standard PBMC dataset, led to the selection of five top-performing models: GPT-4, LLaMA-3, Claude 3, Gemini, and the Chinese language model ERNIE 4.0 [2]. This selection was not arbitrary; each model possesses unique strengths and training data, leading to complementary capabilities in interpreting biological marker genes.

A critical finding motivating the multi-model approach was the significant performance drop observed when individual LLMs were applied to low-heterogeneity datasets. For instance, while models excelled with PBMCs and gastric cancer samples, their performance markedly decreased with human embryo and stromal cell data. Gemini 1.5 Pro achieved only 39.4% consistency with manual annotations for embryo data, and Claude 3 reached just 33.3% for fibroblast data [2]. This demonstrated that relying on a single LLM introduces a substantial risk of annotation errors in specific biological contexts. The multi-model integration strategy directly counteracts this vulnerability by leveraging the collective intelligence of diverse LLMs, ensuring that the strengths of one model compensate for the weaknesses of another.

Core Architectural Components of LICT

LICT's robustness is achieved through three synergistic core strategies: Multi-Model Integration, a "Talk-to-Machine" feedback loop, and an Objective Credibility Evaluation. The interplay of these components is illustrated in the following workflow.

Strategy I: Multi-Model Integration

The first pillar of LICT's architecture is its multi-model integration strategy. Unlike conventional approaches that might use simple majority voting, LICT is designed to select the best-performing result from its ensemble of five LLMs for any given annotation task [2]. This process actively harnesses the complementary strengths of the different models.

- Input Processing: A standardized prompt containing the top marker genes for a cell subset is sent concurrently to all five LLMs.

- Result Aggregation: The system collects the independent annotations from each model.

- Intelligent Selection: LICT compares the five independent annotations and selects the best-performing one for the cluster, rather than relying on a simple democratic process. This is crucial because a single correct annotation from one model is more valuable than a consensus of incorrect answers.
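The concurrent querying and aggregation steps above can be sketched as follows. This is an illustrative outline under stated assumptions: each model is represented by a hypothetical callable that takes a prompt and returns a label (in practice it would wrap a provider's API client), and the prompt wording in `build_prompt` is invented for the example, since the source only states that the prompt is standardized.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# Hypothetical stand-in type: one callable per LLM, prompt in, label out.
ModelFn = Callable[[str], str]


def build_prompt(marker_genes: List[str]) -> str:
    """Assumed wording; every model receives exactly the same text."""
    return ("Identify the cell type for a cluster with these top marker genes: "
            + ", ".join(marker_genes))


def query_all_models(models: Dict[str, ModelFn],
                     marker_genes: List[str]) -> Dict[str, str]:
    """Send the identical prompt to every model concurrently and collect replies."""
    prompt = build_prompt(marker_genes)
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


# Usage with dummy models that return canned labels instead of calling an API.
dummy_models = {
    "model_a": lambda prompt: "CD4+ T cell",
    "model_b": lambda prompt: "T cell",
}
answers = query_all_models(dummy_models, ["CD3E", "CD4", "IL7R"])
```

Keeping the per-model answers separate, keyed by model name, leaves the selection step free to compare them instead of collapsing them into a vote.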

This strategy yielded significant performance gains. In highly heterogeneous datasets, it reduced the annotation mismatch rate from 21.5% (with the single-model tool GPTCelltype) to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data [2]. The improvement was even more dramatic for low-heterogeneity datasets, where the match rate (including both fully and partially matching annotations) increased to 48.5% for embryo data and 43.8% for stromal cell (fibroblast) data [2]. The quantitative performance improvements across different dataset types are summarized in Table 1.

Table 1: Performance of LICT's Multi-Model Integration Strategy vs. Single-Model Approach

| Dataset Type | Example | Single-Model Mismatch Rate (e.g., GPTCelltype) | LICT Multi-Model Mismatch Rate | Match Rate (Full + Partial) |

|---|---|---|---|---|

| High-Heterogeneity | PBMCs | 21.5% | 9.7% | Not Specified |

| High-Heterogeneity | Gastric Cancer | 11.1% | 8.3% | Not Specified |

| Low-Heterogeneity | Human Embryo | Not Specified | Not Specified | 48.5% |

| Low-Heterogeneity | Stromal Cells | Not Specified | Not Specified | 43.8% |

Strategy II: The "Talk-to-Machine" Interactive Strategy

To further address discrepancies, particularly in low-heterogeneity cells, LICT employs an interactive "Talk-to-Machine" strategy. This human-computer interaction creates a dynamic feedback loop that refines the initial annotations [2].

The process, detailed in the protocol below, involves:

- Marker Gene Retrieval: The LLM is queried to provide a list of representative marker genes for its predicted cell type.

- Expression Pattern Evaluation: The system checks the expression of these suggested marker genes within the corresponding cell cluster in the input dataset.

- Validation & Iteration: An annotation is considered valid if more than four marker genes are expressed in at least 80% of the cells in the cluster. If this threshold is not met, the validation fails.

- Structured Feedback: For failed validations, a structured prompt is generated containing the expression validation results and additional differentially expressed genes (DEGs) from the dataset. This enriched prompt is used to re-query the LLM, prompting it to revise or confirm its annotation [2].

This iterative dialogue significantly enhances annotation accuracy. For example, in the gastric cancer dataset, the full match rate with manual annotations reached 69.4%, with a mismatch rate of only 2.8% [2].

Strategy III: Objective Credibility Evaluation

A groundbreaking feature of LICT's architecture is its objective framework for assessing annotation reliability, which moves beyond the traditional reliance on expert opinion. This strategy recognizes that a discrepancy between an LLM and a manual annotation does not automatically imply the LLM is wrong, as manual annotations can suffer from inter-rater variability and bias [2].

The credibility evaluation uses the same core check as the "Talk-to-Machine" validation but applies it as a final, objective assessment for all annotations, whether from the LLM or a human expert. An annotation is deemed reliable if the cluster expresses more than four of the LLM-suggested marker genes in at least 80% of its cells [2].

This evaluation revealed that LICT's annotations often have higher credibility than manual expert annotations. In the stromal cell dataset, 29.6% of LICT's annotations were considered credible, whereas none of the manual annotations met the credibility threshold [2]. Similarly, in the embryo dataset, 50% of the mismatched LLM-generated annotations were credible, compared to only 21.3% of the expert annotations [2]. This demonstrates LICT's capacity to provide a more reliable and less biased foundation for downstream biological analysis. A comparison of credibility rates is shown in Table 2.

Table 2: Credibility Assessment of LICT vs. Manual Expert Annotations

| Dataset | Credible LICT Annotations | Credible Manual Annotations | Notable Finding |

|---|---|---|---|

| Gastric Cancer | Comparable to Manual | Comparable to LICT | Both methods showed similar reliability. |

| PBMCs | Outperformed Manual | Underperformed LICT | LICT annotations were more credible. |

| Human Embryo | 50% (of mismatches) | 21.3% (of mismatches) | Over double the credibility in discrepancies. |

| Stromal Cells | 29.6% | 0% | No manual annotations passed the objective check. |

Experimental Protocol for LICT-Based Cell Type Annotation

This protocol details the step-by-step procedure for utilizing the LICT tool to annotate cell types from an scRNA-seq dataset, incorporating its three core strategies.

4.1 Pre-processing and Input Preparation

- Data Clustering: Perform standard scRNA-seq analysis (quality control, normalization, dimensionality reduction, and clustering) using tools like Seurat or Scanpy to define cell clusters.

- Marker Gene Identification: Calculate differentially expressed genes (DEGs) for each cluster compared to all other cells. Select the top 10 marker genes per cluster based on log fold-change and statistical significance.

- Input Formatting: Prepare a standardized input for LICT. This should be a structured list (e.g., a JSON file) where each entry contains:

- cluster_id: A unique identifier for the cell cluster.

- marker_genes: A list of the top 10 marker genes (e.g., ["CD3E", "CD4", "IL7R"]).
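As a rough illustration of this input preparation, the sketch below turns a table of differential expression results into the cluster_id / marker_genes structure. The row keys (`cluster`, `gene`, `logfc`, `pval_adj`), the adjusted p-value cutoff of 0.05, and the function name are assumptions for the example, not part of LICT's documented interface.

```python
import json
from collections import defaultdict


def build_lict_input(deg_rows, top_n=10):
    """Group DEG rows by cluster and keep the top_n upregulated, significant genes."""
    by_cluster = defaultdict(list)
    for row in deg_rows:
        # Assumed significance filter: adjusted p < 0.05 and positive fold-change.
        if row["pval_adj"] < 0.05 and row["logfc"] > 0:
            by_cluster[row["cluster"]].append(row)
    entries = []
    for cluster_id in sorted(by_cluster):
        ranked = sorted(by_cluster[cluster_id],
                        key=lambda r: r["logfc"], reverse=True)
        entries.append({
            "cluster_id": cluster_id,
            "marker_genes": [r["gene"] for r in ranked[:top_n]],
        })
    return entries


# Toy DEG table; real rows would come from Seurat or Scanpy output.
example_rows = [
    {"cluster": "0", "gene": "CD3E", "logfc": 2.5, "pval_adj": 1e-10},
    {"cluster": "0", "gene": "IL7R", "logfc": 3.1, "pval_adj": 1e-8},
    {"cluster": "0", "gene": "ACTB", "logfc": 0.2, "pval_adj": 0.4},
    {"cluster": "1", "gene": "MS4A1", "logfc": 4.0, "pval_adj": 1e-12},
]
lict_input = build_lict_input(example_rows)
print(json.dumps(lict_input, indent=2))
```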

4.2 Execution of Multi-Model Annotation

- Model Querying: Submit the standardized prompt containing the marker genes for each cluster to the five integrated LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0) concurrently via their respective APIs.

- Initial Annotation Aggregation: Collect the raw cell type predictions from each LLM for every cluster.

4.3 Interactive Validation and Refinement ("Talk-to-Machine")

- For each cluster and its set of initial annotations, initiate the validation loop:

- Marker Gene Retrieval: For a given annotation, prompt the respective LLM with: "List representative marker genes for [predicted cell type]."

- Expression Validation:

- Calculate the percentage of cells within the cluster that express each of the LLM-suggested marker genes. A gene is considered "expressed" if its count is above a pre-defined noise threshold (e.g., > 0).

- Count the number of suggested marker genes that are expressed in at least 80% of the cluster's cells.

- Decision Point:

- IF the count of validated genes is >4, accept the annotation. Proceed to Section 4.4.

- ELSE (validation fails), generate a feedback prompt:

- "Your previous suggestion was [predicted cell type]. The expression validation for the marker genes you provided ([list genes]) failed. Specifically, only [number] genes were expressed in at least 80% of cells. Here are additional highly expressed genes from this cluster: [list of top 5 DEGs]. Please re-annotate the cell type based on this combined information."

- Submit this feedback prompt to the LLM and collect the revised annotation.

- Repeat the marker gene retrieval, expression validation, and decision steps above using the revised annotation. A maximum of 3 iteration cycles is recommended so that the loop always terminates.
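The control flow of this validation loop can be outlined as below. It is a sketch only: the three callables (`get_markers`, `count_supported`, `revise`) are hypothetical hooks standing in for the LLM marker query, the expression check, and the feedback re-query, and none of these names come from LICT itself.

```python
from typing import Callable, List, Tuple

MAX_CYCLES = 3  # upper bound on iterations recommended in the protocol


def refine_annotation(initial_label: str,
                      get_markers: Callable[[str], List[str]],
                      count_supported: Callable[[List[str]], int],
                      revise: Callable[[str, List[str], int], str]
                      ) -> Tuple[str, bool]:
    """Return (label, validated) after at most MAX_CYCLES validation rounds."""
    label = initial_label
    for _ in range(MAX_CYCLES):
        markers = get_markers(label)      # markers the LLM lists for its own label
        n_ok = count_supported(markers)   # markers expressed in >= 80% of cells
        if n_ok > 4:                      # "more than four" validated markers
            return label, True
        # Validation failed: send feedback and take the revised label.
        label = revise(label, markers, n_ok)
    return label, False
```

When the cycle limit is reached, the function returns the last revised label with `validated=False`, which leaves the decision to the final credibility assessment in Section 4.4.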

4.4 Final Credibility Assessment

- For the final accepted annotation for each cluster, perform a final credibility check using the same logic as the validation step (more than four genes expressed in at least 80% of cells).

- Flag annotations that fail this final check as "Low Reliability" in the output. These clusters may require further expert investigation or suggest low-quality or novel cell populations.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Resources for LICT-Based Cell Annotation Research

| Item | Function in the LICT Workflow |

|---|---|

| scRNA-seq Dataset | The fundamental input data. Requires pre-processing (QC, normalization, clustering) to generate cell clusters and marker genes for LICT analysis [2]. |

| Reference Annotations (e.g., PBMC) | A benchmark dataset with well-established cell types, used for validating and benchmarking LICT's performance on new data [2]. |

| LICT Software Package | The core tool that implements the multi-model integration, "talk-to-machine" strategy, and credibility evaluation. It handles API calls to the various LLMs and the internal logic [2]. |

| API Access to LLMs (GPT-4, Claude 3, etc.) | Essential infrastructure for LICT to function. Requires operational API keys and accounts for the five integrated LLMs to perform the annotation queries [2]. |

| Marker Gene Database (e.g., CellMarker) | External databases of known cell marker genes can be used for additional validation or to supplement the knowledge embedded within the LLMs [19]. |

Application Note: Enhancing Cell Type Annotation Reliability

In the context of Large Language Model-based Identifier for Cell Types (LICT) research, the multi-model integration strategy is designed to overcome the limitations inherent to relying on a single large language model (LLM) for automated cell type annotation. Individual LLMs, even top performers, exhibit significant variability and can struggle with accuracy, particularly when annotating low-heterogeneity cell populations such as those found in developmental stages or stromal cell datasets [2]. This strategy leverages the complementary strengths of multiple LLMs to produce more comprehensive, consistent, and reliable annotations, thereby providing an objective framework for assessing annotation credibility and freeing researchers to focus on underlying biological insights [2].

Quantitative Performance Data

Table 1: Performance of Multi-Model Integration vs. a Single Model (GPTCelltype) across Diverse Biological Contexts [2]

| Dataset Type | Example Dataset | Annotation Consistency (Single Model) | Annotation Consistency (Multi-Model) | Key Performance Improvement |

|---|---|---|---|---|

| High Heterogeneity | Peripheral Blood Mononuclear Cells (PBMCs) | 78.5% Match [2] | 90.3% Match [2] | Mismatch rate reduced from 21.5% to 9.7% [2] |

| High Heterogeneity | Gastric Cancer | 88.9% Match [2] | 91.7% Match [2] | Mismatch rate reduced from 11.1% to 8.3% [2] |

| Low Heterogeneity | Human Embryos | Low (Specific % not stated) [2] | 48.5% Match [2] | Match rate (full and partial combined) increased to 48.5% [2] |

| Low Heterogeneity | Stromal Cells / Fibroblasts | Low (Specific % not stated) [2] | 43.8% Match [2] | Significant increase in match rate; mismatch decreased [2] |

Experimental Protocol: Multi-Model Integration for Cell Annotation

Purpose

To execute a multi-model integration strategy that selects the best-performing cell type annotation from a panel of LLMs, enhancing accuracy and consistency across diverse cell populations, particularly for low-heterogeneity datasets [2].

Pre-Experimental Requirements

- A pre-processed single-cell RNA sequencing (scRNA-seq) dataset with cells clustered.

- A list of top marker genes (e.g., top 10) for each cell cluster to be annotated.

- Access to the five pre-identified top-performing LLMs for cell type annotation: GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE 4.0 [2].

Step-by-Step Procedure

- Input Preparation: For a given cell cluster, prepare a standardized prompt that incorporates the list of top marker genes [2].

- Parallel Model Querying: Submit the identical prompt to each of the five LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, and ERNIE 4.0) to obtain independent cell type annotations [2].

- Result Integration: Instead of using a simple majority vote, evaluate the responses from all five models and select the annotation that is determined to be the best-performing for that specific cluster and biological context. This approach actively leverages the complementary strengths of the different models [2].

- Validation and Iteration (Optional but Recommended): Integrate this step with subsequent "talk-to-machine" and objective credibility evaluation strategies. Query the LLM for marker genes of its predicted cell type and validate that these genes are expressed in the cluster. If validation fails, use a structured feedback prompt to re-query the models [2].
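The source does not spell out how the "best-performing" annotation is chosen among the five responses. Purely as an illustration of how selection can differ from majority voting, the sketch below ranks candidates by the number of suggested markers that pass the expression check. This scoring rule, the function name, and the data layout are all assumptions for the example, not LICT's documented selection logic.

```python
from typing import Dict, Tuple


def select_annotation(candidates: Dict[str, Tuple[str, int]]
                      ) -> Tuple[str, str, int]:
    """Pick the candidate with the most supported markers.

    candidates maps a model name to (predicted label, supported marker count).
    Ties go to the model listed first, because max() keeps the first maximum.
    """
    best_model = max(candidates, key=lambda name: candidates[name][1])
    label, score = candidates[best_model]
    return best_model, label, score


# Two models agree on "Fibroblast", but a third label has stronger support.
example_candidates = {
    "model_a": ("Fibroblast", 3),
    "model_b": ("Pericyte", 6),
    "model_c": ("Fibroblast", 3),
}
chosen = select_annotation(example_candidates)
```

In this toy case a majority vote would return "Fibroblast", while the evidence-based rule returns the label whose markers are best supported by the cluster's expression data.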

Workflow Visualization

Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Multi-Model Integration [2]

| Item Name | Function / Role in the Protocol | Specification / Notes |

|---|---|---|

| Top-Performing LLMs | Provides the core annotation capability. The ensemble (GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0) ensures coverage of complementary strengths. | Selected based on benchmarking against a PBMC scRNA-seq dataset [2]. |

| Standardized Prompt Template | Ensures consistency in queries across different LLMs, reducing variability introduced by prompt engineering. | Contains the list of top marker genes for the cell cluster [2]. |

| scRNA-seq Dataset | The biological substrate for annotation. Provides the gene expression matrix derived from clustering analysis. | Used benchmark datasets include PBMCs (GSE164378), human embryos, gastric cancer, and mouse stromal cells [2]. |

| Computational Environment | Enables the parallel querying of multiple LLMs and the subsequent processing/integration of their outputs. | Requires stable API access or local deployment for the selected LLMs. |

Application Note

Within the framework of the Large Language Model-based Identifier for Cell Types (LICT), Strategy II, the "talk-to-machine" iterative feedback loop, is designed to significantly enhance annotation precision, particularly for challenging low-heterogeneity cell populations where standard LLM outputs can be ambiguous or biased [2]. This human-computer interaction protocol mitigates a key limitation of automated annotation by introducing a structured, evidence-based refinement cycle. It moves beyond single-pass queries, allowing the model to correct itself by integrating new evidence from the dataset itself, thereby closing the gap between initial prediction and biological validity [2] [20].

The core of this strategy lies in its ability to use iterative prompting to transform vague initial predictions into verified annotations. By treating the model's initial output as a hypothesis to be tested against gene expression data, this process mirrors the scientific method, fostering a collaborative dialogue between the researcher and the model [20]. This is crucial for building trust in LLM-generated annotations and for ensuring that the final results are grounded in the underlying data, which directly addresses concerns about model hallucinations in biological contexts [21].

Performance and Quantitative Validation

The "talk-to-machine" strategy has been quantitatively validated across diverse biological contexts, from highly heterogeneous peripheral blood mononuclear cells (PBMCs) to low-heterogeneity stromal cells and human embryo data [2]. The table below summarizes the performance improvements observed after implementing the iterative feedback loop, using manual expert annotations as the benchmark.

Table 1: Performance Metrics of the "Talk-to-Machine" Strategy Across Diverse Datasets

| Dataset | Cell Type Heterogeneity | Full Match with Expert Annotation (After Iteration) | Mismatch Rate (After Iteration) | Key Improvement |

|---|---|---|---|---|

| PBMC [2] | High | 34.4% | 7.5% | Mismatch reduced further, from 9.7% after multi-model integration to 7.5%. |

| Gastric Cancer [2] | High | 69.4% | 2.8% | Mismatch reduced further, from 8.3% after multi-model integration to 2.8%. |

| Human Embryo [2] | Low | 48.5% | 42.4% | Full match rate improved 16-fold compared to using GPT-4 alone. |

| Fibroblast/Stromal [2] | Low | 43.8% | 56.2% | Demonstrated the ongoing challenge of low-heterogeneity cells. |

The data shows a dramatic increase in the full match rate for low-heterogeneity datasets, such as the 16-fold improvement for human embryo data [2]. Furthermore, the strategy successfully minimized mismatch rates in high-heterogeneity datasets to very low levels (e.g., 2.8% for gastric cancer) [2]. These results underscore the strategy's role in making LICT a more robust and reliable tool for single-cell RNA sequencing analysis.

Protocol

This protocol details the step-by-step procedure for implementing the "talk-to-machine" iterative feedback loop within the LICT framework for scRNA-seq cell type annotation.

Experimental Workflow

The following diagram illustrates the logical flow and decision points of the iterative feedback loop.

Step-by-Step Procedures

Step 1: Marker Gene Retrieval

- Objective: To obtain a list of representative marker genes for the cell type predicted by LICT's initial annotation.

- Procedure:

- From the initial LICT annotation output, extract the proposed cell type label for a specific cell cluster.

- Formulate a structured prompt to the LLM. Example: "Provide a list of representative marker genes for [predicted cell type]."

- Submit the prompt to the LLM component (e.g., GPT-4, Claude 3, LLaMA-3) and retrieve the list of genes [2] [21].

Step 2: Expression Pattern Evaluation

- Objective: To quantitatively assess the expression of the retrieved marker genes within the corresponding cell cluster in the input scRNA-seq dataset.

- Procedure:

- Using the scRNA-seq data matrix (e.g., counts matrix), isolate the cells belonging to the cluster in question.

- For each marker gene retrieved in Step 1, calculate two key metrics:

- Expression Proportion: The percentage of cells within the cluster where the gene is detected (expression > 0).

- Average Expression Level: The mean expression value of the gene across all cells in the cluster.

- Record these values for the validation check [2].
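The two metrics in this step can be computed as in the following sketch. The input layout (gene name mapped to a list with one expression value per cell in the cluster) and the function name are assumptions chosen to keep the example dependency-free; with a real dataset these values would be read from the expression matrix of a Seurat or AnnData object.

```python
from statistics import mean
from typing import Dict, List


def marker_metrics(cluster_expr: Dict[str, List[float]],
                   markers: List[str]) -> Dict[str, Dict[str, float]]:
    """Return expression proportion and average expression for each marker."""
    metrics = {}
    for gene in markers:
        values = cluster_expr.get(gene, [])
        if not values:
            # Gene not measured in this dataset: report zeros for both metrics.
            metrics[gene] = {"expression_proportion": 0.0,
                             "average_expression": 0.0}
            continue
        metrics[gene] = {
            # Fraction of cells in which the gene is detected (value > 0).
            "expression_proportion": sum(v > 0 for v in values) / len(values),
            # Mean over all cells in the cluster, including zeros.
            "average_expression": mean(values),
        }
    return metrics


# Toy cluster with four cells.
toy_cluster = {"CD3E": [2.0, 0.0, 1.0, 3.0]}
toy_metrics = marker_metrics(toy_cluster, ["CD3E", "CD19"])
```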

Step 3: Validation Check

- Objective: To automatically determine if the initial annotation is supported by the expression data.

- Procedure:

- Apply a predefined credibility threshold. Based on LICT validation, the threshold is defined as follows: An annotation is considered valid if more than four marker genes are expressed in at least 80% of the cells within the cluster [2].

- If the condition is met, proceed to "Annotation Validated."

- If the condition is not met, the annotation is classified as a "Validation Failure," and the iterative loop is triggered.

Step 4: Generate Feedback Prompt (For Failed Validations)

- Objective: To create a new, enriched prompt that provides the LLM with contextual feedback to guide a revised annotation.

- Procedure:

- Structure a feedback prompt that includes:

- Expression Validation Results: Explicitly state that the previously suggested marker genes did not meet the validation threshold.

- Additional Differentially Expressed Genes (DEGs): Incorporate a list of top DEGs specific to the cell cluster from the scRNA-seq dataset analysis. This provides the model with new, data-driven evidence [2].

- Example prompt: "The previous suggestion of [initial cell type] was not supported because its key markers were not highly expressed. Here are the top differentially expressed genes from this cluster: [list of DEGs]. Please reassess the cell type."
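A feedback prompt of this kind can be assembled with simple string formatting. The sketch below is illustrative: the function name and arguments are hypothetical, and the wording adapts the example prompt above rather than reproducing LICT's internal template.

```python
from typing import List


def build_feedback_prompt(previous_label: str,
                          failed_markers: List[str],
                          n_supported: int,
                          extra_degs: List[str]) -> str:
    """Combine the validation result and additional DEGs into one prompt."""
    return (
        f"The previous suggestion of {previous_label} was not supported: "
        f"only {n_supported} of its suggested markers "
        f"({', '.join(failed_markers)}) were expressed in at least 80% "
        "of the cluster's cells. "
        "Here are the top differentially expressed genes from this cluster: "
        f"{', '.join(extra_degs)}. Please reassess the cell type."
    )


example_prompt = build_feedback_prompt(
    "B cell", ["CD19", "MS4A1", "CD79A"], 0, ["CD3E", "IL7R", "TRAC"]
)
```

Stating both the failed markers and the new DEGs gives the model the evidence against its first answer together with the evidence for an alternative.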


Step 5: Re-query LLM

- Objective: To obtain a refined cell type annotation based on the new evidence.

- Procedure:

- Submit the feedback prompt generated in Step 4 to the LLM.

- The LLM processes the new information and outputs a revised or confirmed cell type annotation.

- This revised annotation is then fed back into Step 1 of the protocol, creating a closed iterative loop until the validation check is passed or a maximum number of iterations is reached [2] [20].

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Implementing the "Talk-to-Machine" Loop

| Item | Function/Description | Example or Note |

|---|---|---|

| LLM Backbone | Provides the core natural language understanding and biological knowledge for initial annotation and marker gene retrieval. | LICT integrates multiple models like GPT-4, Claude 3, and LLaMA-3 for complementary strengths [2]. |

| scRNA-seq Dataset | The input data containing the gene expression matrix and cell cluster information to be annotated. | Requires pre-processed data with cell clustering already performed (e.g., Seurat object, Scanpy AnnData). |

| Marker Gene Database | A source of ground truth for marker genes, used for validation and sometimes integrated directly into the agent. | The CELLxGENE database is used in related tools like CellTypeAgent for verification [21]. |

| Differential Expression Analysis Tool | Identifies genes that are significantly upregulated in each cluster compared to all others, providing the "Additional DEGs" for feedback. | Tools like Seurat's FindMarkers or Scanpy's tl.rank_genes_groups are essential for Step 4 [2]. |

| Credibility Threshold Parameters | The predefined numerical criteria that automate the validation check. | Key parameters are: min_markers = 4, applied as a strict lower bound (more than four markers must pass), and min_expression_proportion = 0.8 (80%) [2]. |

Application Note: Objective Credibility Evaluation

Within the framework of the Large Language Model-based Identifier for Cell Types (LICT), Strategy III: Objective Credibility Evaluation provides a reference-free, unbiased method for assessing the reliability of cell type annotations. This strategy addresses a critical challenge in single-cell RNA sequencing (scRNA-seq) analysis: discrepancies between automated or LLM-generated annotations and manual expert annotations do not inherently indicate reduced reliability, as manual annotations themselves can suffer from inter-rater variability and systematic biases [2]. Strategy III establishes an objective framework to distinguish between discrepancies caused by annotation methodology and those arising from intrinsic limitations in the dataset itself, such as ambiguous cell clusters [2]. The core principle is to validate the annotation by verifying the expression of canonical marker genes for the predicted cell type within the cluster, thereby moving beyond mere prediction to evidence-based confidence assessment.

Key Quantitative Performance Data

The implementation of Strategy III within LICT has demonstrated that LLM-generated annotations can achieve comparable or even superior objective credibility relative to manual expert annotations across diverse biological contexts [2].

Table 1: Performance of Objective Credibility Evaluation Across Datasets [2]

| Dataset Type | Biological Context | Credible Annotations (LICT) | Credible Annotations (Manual) |

|---|---|---|---|

| High-heterogeneity | Peripheral Blood Mononuclear Cells (PBMCs) | Outperformed manual annotations [2] | Lower than LICT [2] |

| High-heterogeneity | Gastric Cancer | Comparable to manual annotations [2] | Comparable to LICT [2] |

| Low-heterogeneity | Human Embryo | 50.0% (of mismatched annotations) [2] | 21.3% (of mismatched annotations) [2] |

| Low-heterogeneity | Stromal Cells (Mouse) | 29.6% (of mismatched annotations) [2] | 0% (of mismatched annotations) [2] |

Table 2: Credibility Threshold Criteria for Marker Gene Expression [2]

| Parameter | Threshold Value | Interpretation |

|---|---|---|

| Number of Marker Genes | > 4 genes | More than four of the retrieved marker genes must meet the cellular expression threshold. |

| Cellular Expression | ≥ 80% of cells in the cluster | The marker genes must be expressed in the vast majority of cells within the annotated cluster. |

| Final Assessment | Both thresholds met | The annotation is deemed reliable for downstream analysis. |

Experimental Protocol

Detailed Step-by-Step Methodology

This protocol describes the procedure for implementing Strategy III to evaluate the credibility of cell type annotations generated by LICT or other methods.

Input Requirements:

- A list of cell clusters with their preliminary annotations (from LICT or other sources).

- A processed scRNA-seq count matrix (or a Seurat or AnnData object) in which cells are assigned to clusters.

Procedure:

Marker Gene Retrieval:

- For each cell cluster and its preliminary annotation, query the integrated LLM to generate a list of representative marker genes for the predicted cell type. The prompt should be standardized, for example: "List the top 10 canonical marker genes for [ predicted cell type ]."

Expression Pattern Evaluation:

- For each cluster, extract the expression data from the input scRNA-seq dataset for the marker genes obtained during marker gene retrieval.

- Calculate the percentage of cells within the cluster that express each marker gene (expression > 0).

- Validation Criteria: An annotation is considered provisionally valid if more than four marker genes are expressed in at least 80% of cells within the cluster [2].

Credibility Assessment and Output:

- Reliable Annotation: If the validation criteria are met, flag the annotation as "Credible" or "Reliable." These clusters are safe for downstream biological analysis.

- Unreliable Annotation: If the validation criteria are not met (i.e., four or fewer marker genes meet the expression threshold), flag the annotation as "Unreliable" or "Requires Review." These clusters should be treated with caution, and investigators may consider refining the annotation using iterative strategies like LICT's "talk-to-machine" approach [2].
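The procedure above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not LICT's actual implementation: the function names (`build_marker_prompt`, `fraction_expressing`, `evaluate_credibility`), the constant names, and the toy expression values are all hypothetical, and the marker list stands in for whatever the LLM would return. Only the prompt wording and the two thresholds (more than four markers, at least 80% of cells) come from the protocol.

```python
from typing import Dict, List

MIN_MARKERS = 4             # credible only if MORE than this many markers qualify
MIN_EXPR_PROPORTION = 0.8   # a marker qualifies if expressed in >= 80% of cells


def build_marker_prompt(cell_type: str, n_markers: int = 10) -> str:
    """Standardized marker-retrieval prompt (hypothetical helper)."""
    return f"List the top {n_markers} canonical marker genes for {cell_type}."


def fraction_expressing(values: List[float]) -> float:
    """Fraction of cells in the cluster with expression > 0 for one gene."""
    return sum(1 for v in values if v > 0) / len(values) if values else 0.0


def evaluate_credibility(cluster_expr: Dict[str, List[float]],
                         markers: List[str]) -> Dict[str, object]:
    """cluster_expr maps gene -> per-cell expression values for ONE cluster."""
    passing = [
        gene for gene in markers
        if fraction_expressing(cluster_expr.get(gene, [])) >= MIN_EXPR_PROPORTION
    ]
    label = "Credible" if len(passing) > MIN_MARKERS else "Requires Review"
    return {"passing_markers": passing, "label": label}


# Toy cluster of 10 cells (made-up values, not real data).
expr = {
    "CD3D": [1, 2, 1, 3, 1, 1, 2, 1, 1, 0],   # expressed in 9/10 cells
    "CD3E": [1] * 10,
    "CD2":  [2] * 9 + [0],
    "IL7R": [1] * 9 + [0],
    "TRAC": [3] * 10,
    "CD4":  [1] * 5 + [0] * 5,                # expressed in 5/10 cells, fails
}
# Stand-in for an LLM reply; CCR7 is absent from the matrix and counts as failing.
markers = ["CD3D", "CD3E", "CD2", "IL7R", "TRAC", "CD4", "CCR7"]

prompt = build_marker_prompt("T cell")
result = evaluate_credibility(expr, markers)
print(prompt)
print(result["label"], result["passing_markers"])
```

In this toy case five markers clear the 80% threshold, so the cluster is flagged "Credible"; with only four passing markers the same cluster would be flagged "Requires Review", because the criterion is strictly more than four. Genes missing from the expression matrix are treated as not expressed, which is a conservative choice made for this sketch.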

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Implementation

| Item Name | Function / Description | Example / Note |

|---|---|---|

| LICT Software Package | The core tool integrating multiple LLMs and the three strategies (multi-model integration, talk-to-machine, objective evaluation) for scRNA-seq cell type annotation [2]. | Available as described in Communications Biology, 2025 [2]. |

| Benchmark scRNA-seq Datasets | Validated datasets used for performance evaluation and protocol calibration. | Peripheral Blood Mononuclear Cells (PBMCs), human embryo data, gastric cancer data, mouse stromal cells [2]. |

| Top-Performing LLMs | The large language models integrated within LICT to perform the initial annotation and marker gene retrieval. | GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0 [2]. |

| Marker Gene Database | A source of canonical cell type-specific marker genes, which can be used to supplement or verify LLM-generated lists. | Can be derived from literature or specialized databases. The LLM itself serves this function in the protocol. |

| Processed Count Matrix | The essential input data containing normalized gene expression counts for each cell barcode, with cells assigned to clusters. | Typically generated from raw sequencing data (FASTQ) via preprocessing pipelines (e.g., Cell Ranger, STARsolo). |

LICT (Large Language Model-based Identifier for Cell Types) represents a significant advancement in the automation of cell type annotation for single-cell RNA sequencing (scRNA-seq) data. This tool addresses a fundamental bottleneck in single-cell analysis by leveraging the power of large language models (LLMs) to interpret marker gene information, thereby reducing the reliance on extensive manual curation and reference datasets that can introduce bias [2]. Traditional annotation methods face limitations; manual annotation is subjective and time-consuming, while automated tools often depend on reference data that may not generalize well across diverse biological contexts [2] [22]. LICT overcomes these challenges through an objective, reference-free framework that enhances reproducibility and provides reliable results for downstream biological analysis [2].

The operational superiority of LICT is grounded in three complementary core strategies. First, its multi-model integration strategy leverages the collective strengths of multiple top-performing LLMs, selectively using the best output for each annotation task to reduce uncertainty and improve accuracy [2]. Second, the "talk-to-machine" strategy implements an iterative human-computer interaction that enriches model input with contextual information and validation feedback, mitigating ambiguous or biased outputs [2]. Third, an objective credibility evaluation strategy systematically assesses annotation reliability based on marker gene expression patterns within the input dataset, enabling reference-free and unbiased validation of results [2]. This strategic framework allows LICT to consistently align with expert annotations while interpreting complex cases where single cell populations exhibit multifaceted traits [2].

Prerequisites and Installation

System Requirements and Computational Environment

Before implementing LICT, ensure your computational environment meets the necessary requirements. The tool is implemented as an R package and requires R version 4.1.0 or higher [1]. Memory requirements are not explicitly documented, but comparable single-cell analysis tools typically benefit from at least 16 GB of RAM when handling large scRNA-seq datasets. Package dependencies include SingleCellExperiment and Seurat for data handling; consult the official repository for the current dependency list [23].

Installation Procedure

LICT is available through its GitHub repository and is installed and loaded from within an R session, following the instructions provided there.

The installation will include all necessary dependencies, including connectivity packages for API access to various LLM services [23]. After installation, users should configure their LLM API keys according to the provider documentation to enable seamless integration with the language models used by LICT.

Experimental Design and Workflow Configuration

Research Reagent Solutions

Successful implementation of LICT requires several key computational and data resources. The table below outlines the essential components of the "research reagent solutions" needed for effective cell type annotation with LICT:

Table 1: Essential Research Reagents and Resources for LICT Implementation

| Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| LLM Providers | GPT-4, Claude 3, Gemini, LLaMA-3, ERNIE 4.0 [2] | Core annotation engines providing complementary strengths for cell type identification |

| Reference Datasets | PBMC (GSE164378) [2], Tabula Sapiens [9] | Benchmarking and validation of annotation performance |

| Marker Gene Databases | CellMarker, PanglaoDB [24] [22] | Reference knowledge for cell type signatures and validation |

| Single-cell Analysis Packages | Scanpy, Seurat, SingleCellExperiment [1] [9] | Data preprocessing, clustering, and differential expression analysis |

| Annotation Validation Tools | Cell Ontology [25], AUCell [1] | Standardized nomenclature and objective credibility assessment |

LLM Selection and Configuration

LICT's performance depends on strategic selection of underlying language models. The developers identified five top-performing LLMs for cell type annotation through systematic evaluation of 77 publicly available models using PBMC datasets as benchmarks [2]. These models include GPT-4, LLaMA-3, Claude 3, Gemini, and the Chinese language model ERNIE 4.0 [2]. Each model brings unique strengths, with Claude 3 demonstrating particularly high overall performance in heterogeneous cell populations, though all models show limitations when annotating low-heterogeneity datasets such as stromal cells or embryonic tissues [2].

Configuration of these models requires API access set up according to each provider's specifications. The multi-model integration strategy automatically selects the best-performing output from these five LLMs, leveraging their complementary strengths rather than relying on simple majority voting or a single model [2]. Compared with single-model tools such as GPTCelltype, this approach reduces mismatch rates from 21.5% to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data [2].
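To put the reported mismatch rates in perspective, the short calculation below converts them into absolute and relative reductions. The helper name `relative_reduction` is illustrative; the input percentages are the ones quoted above, and the derived figures are simple arithmetic rather than values reported in [2].

```python
def relative_reduction(before_pct: float, after_pct: float) -> float:
    """Relative reduction, in percent, between two mismatch rates."""
    return (before_pct - after_pct) / before_pct * 100.0


# Mismatch rates quoted in the text: (single-model, multi-model integration)
reported = {"PBMC": (21.5, 9.7), "Gastric cancer": (11.1, 8.3)}

for name, (before, after) in reported.items():
    absolute = before - after
    relative = relative_reduction(before, after)
    print(f"{name}: {absolute:.1f} points absolute, {relative:.1f}% relative")
```

This works out to a relative reduction of roughly 55% for PBMCs and roughly 25% for gastric cancer, so the benefit of integration is noticeably larger on the PBMC benchmark.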

Step-by-Step Protocol for Cell Type Annotation

Data Preprocessing and Quality Control

Proper data preprocessing is fundamental for reliable annotation with LICT. The workflow begins with standard single-cell RNA sequencing quality-control and preprocessing steps.

These preprocessing steps eliminate low-quality cells and technical artifacts by evaluating standard metrics including the number of detected genes, total molecule count, and mitochondrial gene expression percentage [24]. The resulting quality-controlled dataset ensures that downstream differential expression analysis produces reliable marker genes for LLM interpretation.
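The filtering logic behind these three metrics can be sketched as follows. This is a library-free illustration, assuming per-cell metrics have already been computed (in practice Seurat or Scanpy would do this); the `CellQC` record, the `passes_qc` function, and every threshold value are hypothetical and should be tuned per dataset.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CellQC:
    barcode: str
    n_genes: int        # number of detected genes
    total_counts: int   # total molecule (UMI) count
    pct_mito: float     # percent of counts from mitochondrial genes


# Illustrative cutoffs only; appropriate values are dataset-specific.
MIN_GENES = 200
MAX_GENES = 6000
MIN_COUNTS = 500
MAX_PCT_MITO = 10.0


def passes_qc(cell: CellQC) -> bool:
    """Keep cells with plausible complexity, depth, and mitochondrial content."""
    return (
        MIN_GENES <= cell.n_genes <= MAX_GENES
        and cell.total_counts >= MIN_COUNTS
        and cell.pct_mito <= MAX_PCT_MITO
    )


cells: List[CellQC] = [
    CellQC("CELL_A", n_genes=1800, total_counts=5200, pct_mito=3.1),   # kept
    CellQC("CELL_B", n_genes=120, total_counts=300, pct_mito=2.0),     # too few genes
    CellQC("CELL_C", n_genes=2500, total_counts=9000, pct_mito=27.5),  # high mito
    CellQC("CELL_D", n_genes=7400, total_counts=60000, pct_mito=4.0),  # too many genes
]

kept = [cell for cell in cells if passes_qc(cell)]
print([cell.barcode for cell in kept])
```

The upper bound on detected genes is included as a crude guard against doublets; whether to apply it, and at what value, is a judgment call that depends on the tissue and chemistry.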

Cluster Identification and Differential Expression Analysis

Following preprocessing, cell clustering and marker gene identification provide the essential inputs for LICT.

This cluster analysis generates the differentially expressed genes (DEGs) that serve as the primary input for LICT. The top marker genes (typically 10-15 genes per cluster) are compiled for submission to the LLM ensemble [2]. The selection of appropriate clustering resolution is important, as over-clustering may lead to fragmented cell populations while under-clustering can obscure biologically distinct populations.
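Compiling the per-cluster marker lists from a differential expression table might look like the sketch below. It assumes the DE results have been exported as rows of (cluster, gene, log2 fold change, adjusted p-value); the row layout, the `top_markers` function, the significance cutoff, and the example values are all hypothetical and do not reflect LICT's internal format.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (cluster, gene, log2 fold change, adjusted p-value): hypothetical DE result rows
DERow = Tuple[str, str, float, float]


def top_markers(rows: List[DERow], n_top: int = 10,
                max_padj: float = 0.05) -> Dict[str, List[str]]:
    """Return the n_top significant, upregulated genes per cluster."""
    per_cluster: Dict[str, List[Tuple[float, str]]] = defaultdict(list)
    for cluster, gene, lfc, padj in rows:
        if lfc > 0 and padj <= max_padj:   # upregulated and significant only
            per_cluster[cluster].append((lfc, gene))
    return {
        cluster: [gene for _, gene in
                  sorted(genes, key=lambda pair: pair[0], reverse=True)[:n_top]]
        for cluster, genes in per_cluster.items()
    }


# Made-up example rows, not real results.
rows: List[DERow] = [
    ("0", "CD3E", 2.1, 1e-30),
    ("0", "CD3D", 2.8, 1e-40),
    ("0", "IL7R", 1.5, 1e-12),
    ("0", "MS4A1", -1.9, 1e-20),   # downregulated, dropped
    ("1", "MS4A1", 3.2, 1e-50),
    ("1", "CD79A", 2.9, 1e-45),
    ("1", "ACTB", 0.2, 0.40),      # not significant, dropped
]

markers_by_cluster = top_markers(rows, n_top=2)
print(markers_by_cluster)
```

Ranking by fold change after filtering on adjusted p-value is one common convention; ranking by a test statistic instead is equally defensible, and the choice can change which genes reach the LLM prompt.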

Core LICT Annotation Workflow

The annotation process integrates LICT's three strategic approaches through a structured workflow:

Diagram 1: Complete LICT Annotation Workflow

The workflow begins with the multi-model integration strategy, where cluster-specific marker genes are submitted to all five LLMs simultaneously.

This multi-model approach selectively uses the best-performing results from the five LLMs, significantly improving annotation accuracy across diverse cell types [2]. For highly heterogeneous datasets like PBMCs, this strategy reduced mismatch rates from 21.5% to 9.7% compared to single-model approaches [2].
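The idea of picking the best output rather than taking a majority vote can be illustrated with the sketch below. The text does not specify how LICT ranks candidate annotations, so the scoring rule here is an assumption: each candidate is scored by how many of its marker genes pass an expression check in the cluster. The model names, the `select_best_annotation` function, and the scores are placeholders, and no real LLM API is called.

```python
from typing import Callable, Dict, Tuple


def select_best_annotation(candidates: Dict[str, str],
                           score: Callable[[str], float]) -> Tuple[str, str]:
    """Return (model_name, annotation) with the highest score.

    On ties, the first candidate in insertion order wins.
    """
    best_model = max(candidates, key=lambda model: score(candidates[model]))
    return best_model, candidates[best_model]


# Placeholder outputs for one cluster; real use would collect LLM replies here.
candidates = {
    "model_a": "T cell",
    "model_b": "CD4+ T cell",
    "model_c": "NK cell",
    "model_d": "T cell",
}

# ASSUMED scoring: number of marker genes passing the expression check
# for each proposed label (made-up numbers for illustration).
passing_marker_counts = {"T cell": 4, "CD4+ T cell": 6, "NK cell": 1}

best_model, best_label = select_best_annotation(
    candidates, score=lambda label: passing_marker_counts.get(label, 0)
)
print(best_model, best_label)
```

Note that a majority vote over these placeholder outputs would return "T cell" (two of four models), whereas score-based selection returns "CD4+ T cell", which shows how the two aggregation rules can diverge.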

Iterative Validation via "Talk-to-Machine" Strategy

The initial annotations undergo validation through LICT's innovative "talk-to-machine" approach: