Manual Cell Type Annotation Best Practices 2025: A Researcher's Guide to Accurate Single-Cell Analysis

Abstract

This comprehensive guide provides researchers and drug development professionals with current best practices for manual cell type annotation in single-cell RNA sequencing data. Covering foundational concepts to advanced validation techniques, we explore how to define cell identities across established types, novel populations, and disease states. The article details systematic workflows from quality control through marker gene validation, addresses common troubleshooting scenarios, and compares manual annotation with emerging automated and AI-assisted methods. With the growing importance of reliable cellular characterization in biomedical research, this resource equips scientists with strategies to produce biologically meaningful and technically sound annotations that withstand scientific scrutiny.

Understanding Cell Identity: The Conceptual Foundation of Manual Annotation

The definition of a cell type, a fundamental concept in biology, has undergone a profound transformation with the advent of single-cell technologies. Traditionally, biologists defined cell types based on morphological characteristics (e.g., the shape of eosinophil granulocytes) and physiological function (e.g., the capacity of stem cells to differentiate) [1]. The introduction of antibody labeling added another dimension, enabling definition via cell surface markers [1]. Today, single-cell RNA sequencing (scRNA-seq) has unlocked the ability to define cell types by their complete gene expression profiles, moving beyond a handful of markers to a holistic, data-driven view of cellular identity [1] [2].

This shift has revealed that cellular properties across different modalities—molecular, morphological, physiological—are highly heterogeneous and do not always align neatly [2]. Consequently, the scientific community actively debates what truly constitutes a cell type, and a single, universal definition remains elusive [1] [2]. This whitepaper, framed within a broader thesis on manual annotation best practices, explores the modern, multi-faceted approach to defining cell type identity. We outline the core principles, detailed methodologies, and essential tools that empower researchers to navigate this complexity and assign meaningful biological identities to the clusters revealed by single-cell transcriptomics.

Modern Frameworks for Cell Type Identity

In the single-cell era, cell identities derived from transcriptomic data generally fall into several interconnected categories, each requiring a slightly different interpretive lens [1].

- Established Cell Types: These are well-characterized populations, such as endothelial cells expressing PECAM1, and are typically straightforward to identify using existing reference datasets and canonical marker genes [1].

- Novel Cell Types: Rare but significant, these are biologically distinct clusters—potentially based on function or developmental origin—that cannot be mapped to known types. Their discovery relies on differential expression analysis and must be followed by functional validation [1].

- Cell States and Disease Stages: scRNA-seq can capture transient, dynamic conditions such as activation, stress, or pathology. These are not necessarily distinct cell types but rather functional or disease-associated alterations within a type [1].

- Developmental Stages: In developmental contexts, cells exist on a continuum. Trajectory inference and pseudotime analysis are used to reconstruct these paths and annotate cells according to their position in a differentiation process [1].

Foundational Pillars of Cell Type Annotation

Robust cell type annotation rests on three foundational pillars, which are often applied in an iterative manner.

Pillar 1: In-depth Preprocessing and Quality Control

High-quality annotation is impossible without high-quality data. The foundation is rigorous quality control to filter out low-quality cells, doublet detection to exclude multiplets, and batch effect correction to mitigate technical variation [1] [3] [4]. This process concludes with preliminary clustering to group cells with similar transcriptomic profiles, providing the initial structure for annotation [1]. For example, best practices for 10x Genomics data include filtering cells on UMI counts, the number of genes detected, and the percentage of mitochondrial reads, which removes damaged or dying cells as well as barcodes dominated by ambient RNA [4].
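The three-metric filter described above can be sketched in a few lines of Python. This is a minimal illustration, assuming per-cell QC metrics have already been computed from the count matrix; the `CellQC` record, the barcodes, and the UMI and gene thresholds are hypothetical, and only the 10% mitochondrial cutoff for PBMCs comes from the guidance cited in this article. Real thresholds should be chosen per tissue from the observed metric distributions.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    """Per-cell QC metrics (hypothetical record; compute these from your count matrix)."""
    barcode: str
    total_umis: int
    n_genes: int
    mito_umis: int

    @property
    def pct_mito(self) -> float:
        # Share of the cell's UMIs that come from mitochondrial genes, in percent.
        return 100.0 * self.mito_umis / self.total_umis if self.total_umis else 0.0


def passes_qc(cell: CellQC, min_umis: int = 500, min_genes: int = 200,
              max_pct_mito: float = 10.0) -> bool:
    """True if the cell clears all three thresholds.

    min_umis and min_genes are illustrative assumptions; max_pct_mito=10.0
    follows the PBMC guidance cited in the text and is tissue dependent.
    """
    return (cell.total_umis >= min_umis
            and cell.n_genes >= min_genes
            and cell.pct_mito <= max_pct_mito)


cells = [
    CellQC("AAACCTG-1", total_umis=4200, n_genes=1500, mito_umis=210),  # 5% mito: kept
    CellQC("AAACGGG-1", total_umis=3900, n_genes=1300, mito_umis=780),  # 20% mito: likely damaged
    CellQC("AAAGATG-1", total_umis=150, n_genes=90, mito_umis=3),       # too few UMIs: likely empty droplet
]
kept = [c.barcode for c in cells if passes_qc(c)]
print(kept)  # ['AAACCTG-1']
```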

Pillar 2: Reference-Based Annotation

This approach involves aligning the gene expression profiles of cell clusters to well-annotated reference datasets or cell atlases, such as those provided by the Human Cell Atlas or the BRAIN Initiative Cell Census Network [1] [2]. Tools like SingleR and Azimuth perform this mapping computationally [1] [5]. A key advantage is that references like Azimuth provide annotations at different levels of granularity, allowing researchers to choose the resolution that best fits their biological question [1].
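To make correlation-based mapping concrete, the sketch below scores a query profile against reference profiles with Spearman correlation and picks the best-scoring label. It is a simplified illustration in the spirit of SingleR, not SingleR's algorithm: SingleR also restricts the comparison to label-specific marker genes, aggregates scores over many reference samples per label, and applies an iterative fine-tuning step, none of which is reproduced here. The genes, values, and labels are invented for illustration.

```python
from statistics import mean


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho, computed as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    return sxy / (sxx * syy) ** 0.5 if sxx and syy else 0.0


def annotate(query, reference):
    """Return (best label, {label: rho}) for one query profile.

    query: {gene: expression}; reference: {label: {gene: expression}}.
    Only genes shared by the query and every reference profile are compared.
    """
    genes = sorted(set(query).intersection(*reference.values()))
    q = [query[g] for g in genes]
    scores = {label: spearman(q, [prof[g] for g in genes])
              for label, prof in reference.items()}
    return max(scores, key=scores.get), scores


# Invented reference profiles (e.g., per-label mean expression) and one query cluster.
reference = {
    "Endothelial": {"PECAM1": 9.0, "CDH5": 8.0, "COL1A1": 1.0, "PTPRC": 0.5, "CD3E": 0.2},
    "Fibroblast": {"PECAM1": 0.5, "CDH5": 0.3, "COL1A1": 9.5, "PTPRC": 0.4, "CD3E": 0.1},
    "T cell": {"PECAM1": 0.8, "CDH5": 0.1, "COL1A1": 0.2, "PTPRC": 8.0, "CD3E": 7.5},
}
query = {"PECAM1": 6.0, "CDH5": 5.5, "COL1A1": 0.7, "PTPRC": 0.9, "CD3E": 0.0}
best, scores = annotate(query, reference)
print(best, scores)
```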

Pillar 3: Expert-Guided Manual Refinement

Automated methods, while powerful, can miss subtle distinctions or be misled by ambiguous expression patterns [1]. Manual refinement adds a crucial layer of biological insight by:

- Verifying expression of canonical marker genes.

- Interpreting results from differential gene expression analysis.

- Contextualizing findings within the scientific literature.

- Integrating the researcher's domain-specific knowledge to resolve edge cases and identify novel populations [1].

This collaborative process between computational output and expert intuition ensures that final cell type assignments are both technically sound and biologically meaningful [1].

Quantitative Comparison of Annotation Tools and Performance

The field has developed a diverse array of computational tools to assist with cell type annotation. They can be broadly categorized as reference-based, marker-based, or hybrid methods, each with distinct strengths and performance characteristics.

Table 1: Classification and Characteristics of Selected Cell Type Annotation Tools

| Tool Name | Category | Core Methodology | Key Features |
|---|---|---|---|
| SingleR [5] [6] | Reference-based | Spearman correlation to reference scRNA-seq data | Fast, does not require clustering; depends on reference quality. |
| ScType [5] [6] | Marker-based | Scoring system using positive and negative marker sets | Utilizes comprehensive marker database (ScTypeDB); supports negative markers. |
| ScInfeR [6] | Hybrid | Graph-based integration of references and marker sets | Hierarchical framework for subtype identification; versatile across scRNA-seq, scATAC-seq, and spatial omics. |
| Garnett [6] | Marker-based | Generalized linear machine learning model | Performs hierarchical classification of types and subtypes; depends on training data quality. |
| LICT [7] | LLM-based | Multi-model LLM integration with "talk-to-machine" strategy | Reference-free; provides objective credibility evaluation of annotations. |
| GPTCelltype [5] | LLM-based | Leverages GPT-4's language understanding with marker gene input | No custom reference needed; cost-effective; can annotate with high granularity. |
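The positive/negative marker idea behind tools like ScType can be illustrated with a simple scoring rule: z-score each gene across cells, then subtract the mean z-score of a type's negative markers from the mean z-score of its positive markers. This is an assumed simplification (ScType additionally weights markers by specificity and uses its own scaling), and the marker sets and expression values are hypothetical.

```python
from statistics import mean, pstdev


def zscore_by_gene(expr):
    """Standardize each gene across cells. expr: {cell: {gene: value}}."""
    genes = list(next(iter(expr.values())))
    z = {cell: {} for cell in expr}
    for g in genes:
        vals = [expr[c][g] for c in expr]
        mu, sd = mean(vals), pstdev(vals)
        for c in expr:
            z[c][g] = (expr[c][g] - mu) / sd if sd > 0 else 0.0
    return z


def marker_scores(z, markers):
    """Per cell and type: mean z of positive markers minus mean z of negative markers."""
    out = {}
    for cell, zc in z.items():
        out[cell] = {}
        for ctype, (pos, neg) in markers.items():
            score = mean(zc[g] for g in pos)
            if neg:
                score -= mean(zc[g] for g in neg)
            out[cell][ctype] = score
    return out


# Hypothetical marker sets: (positive markers, negative markers).
markers = {
    "T cell": (["CD3E", "CD3D"], ["CD19"]),
    "B cell": (["CD19", "MS4A1"], ["CD3E"]),
}
expr = {
    "cell1": {"CD3E": 5.0, "CD3D": 4.0, "CD19": 0.0, "MS4A1": 0.0},
    "cell2": {"CD3E": 0.0, "CD3D": 0.0, "CD19": 4.0, "MS4A1": 6.0},
    "cell3": {"CD3E": 1.0, "CD3D": 2.0, "CD19": 1.0, "MS4A1": 0.0},
}
scores = marker_scores(zscore_by_gene(expr), markers)
best = {cell: max(s, key=s.get) for cell, s in scores.items()}
print(best)  # {'cell1': 'T cell', 'cell2': 'B cell', 'cell3': 'T cell'}
```

Note that cell3 receives only a weak T cell score; in practice such low-margin calls are exactly the cases that benefit from manual review.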

Recent benchmarks have quantitatively evaluated the performance of these tools, including emerging methods that leverage large language models (LLMs).

Table 2: Performance Benchmarking of Annotation Tools Across Diverse Datasets

| Tool / Method | Reported Agreement with Manual Annotation | Notable Strengths | Noted Limitations |
|---|---|---|---|
| GPT-4 (via GPTCelltype) [5] | Over 75% full or partial match in most tissues/types. | High accuracy across many tissues; cost-efficient; requires no custom reference. | Performance dips for small populations (<10 cells); cannot annotate malignant cells without distinct gene sets. |
| LICT [7] | Significantly reduced mismatch rates (e.g., 9.7% vs. 21.5% in PBMCs). | Superior in low-heterogeneity datasets; provides objective reliability score. | Over 50% inconsistency remains for some low-heterogeneity data. |
| ScInfeR [6] | Superior performance in >100 cell-type prediction tasks. | Robust to batch effects; versatile across data modalities (RNA, ATAC, spatial). | Dependency on the quality of integrated references and marker sets. |
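Agreement figures such as "full or partial match" depend on how a partial match is defined. The sketch below uses one hypothetical rule: a prediction counts as partial when it shares a broad parent category with the manual label in a hand-written hierarchy. The benchmarks cited above used their own criteria, which this code does not reproduce; the `BROAD` map and the label pairs are illustrative.

```python
# Hypothetical two-level hierarchy: fine label -> broad category.
BROAD = {
    "CD4+ T cell": "T cell", "CD8+ T cell": "T cell", "T cell": "T cell",
    "Naive B cell": "B cell", "B cell": "B cell",
    "Fibroblast": "Stromal cell", "Stromal cell": "Stromal cell",
}


def agreement(predicted, manual):
    """Classify one prediction as 'full', 'partial', or 'mismatch'."""
    if predicted == manual:
        return "full"
    bp, bm = BROAD.get(predicted), BROAD.get(manual)
    if bp is not None and bp == bm:
        return "partial"
    return "mismatch"


def summarize(pairs):
    """Fraction of (predicted, manual) pairs in each agreement category."""
    counts = {"full": 0, "partial": 0, "mismatch": 0}
    for pred, man in pairs:
        counts[agreement(pred, man)] += 1
    return {k: v / len(pairs) for k, v in counts.items()}


pairs = [
    ("CD4+ T cell", "CD4+ T cell"),   # exact label
    ("T cell", "CD8+ T cell"),        # right lineage, coarser label
    ("Stromal cell", "Fibroblast"),   # right broad category
    ("B cell", "CD4+ T cell"),        # wrong lineage
]
rates = summarize(pairs)
print(rates)  # {'full': 0.25, 'partial': 0.5, 'mismatch': 0.25}
```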

Detailed Experimental Protocols for Cell Type Annotation

A Standardized Workflow for scRNA-seq Cell Type Annotation

The following protocol describes a comprehensive combinatorial approach, integrating both reference-based and manual methods for robust annotation [1].

Step 1: Data Preprocessing and Clustering

- Quality Control: Filter cells based on metrics like UMI counts, genes detected, and mitochondrial read percentage. For PBMCs, a common threshold is to remove cells with >10% mitochondrial UMIs [4].

- Normalization and Scaling: Normalize each cell's counts for sequencing depth, log-transform them, and scale each gene before dimensionality reduction.

- Dimensionality Reduction and Clustering: Perform principal component analysis (PCA), followed by graph-based clustering and visualization with UMAP or t-SNE to reveal the cellular population structure [1].
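Depth normalization can be illustrated with the log-normalization scheme Seurat uses by default (`LogNormalize`): each count is divided by the cell's total, multiplied by a scale factor of 10,000, and transformed with the natural log of (1 + x). The toy cells below are hypothetical; they show that two cells with the same composition but a tenfold difference in sequencing depth end up with identical normalized values. Gene-wise scaling before PCA is a separate step not shown here.

```python
import math


def log_normalize(counts, scale_factor=10_000):
    """Depth-normalize one cell's counts and log-transform them.

    counts: {gene: raw UMI count} for a single cell. Each count is divided by
    the cell's total, multiplied by scale_factor, and passed through log1p.
    """
    total = sum(counts.values())
    if total == 0:
        return {g: 0.0 for g in counts}
    return {g: math.log1p(c / total * scale_factor) for g, c in counts.items()}


shallow = {"CD3E": 2, "MS4A1": 0, "ACTB": 8}   # 10 UMIs in total
deep = {"CD3E": 20, "MS4A1": 0, "ACTB": 80}    # same composition, 10x deeper
print(log_normalize(shallow) == log_normalize(deep))  # True
```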

Step 2: Obtain Preliminary Annotations via Reference Mapping

- Reference Selection: Identify a suitable, well-annotated reference dataset (e.g., from the Human Cell Atlas or Azimuth) that matches the tissue and species of interest [1].

- Automated Label Transfer: Use a tool like SingleR or the Azimuth web application to map your clusters against the reference. This will generate a preliminary cell type label for each cluster [1] [5].
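Label-transfer tools often predict a label per cell, so a cluster-level preliminary label needs an aggregation rule. The sketch below assumes a simple majority vote and marks clusters whose top label covers less than half of their cells as ambiguous; the 0.5 cutoff, barcodes, and labels are illustrative assumptions rather than a rule taken from SingleR or Azimuth.

```python
from collections import Counter


def cluster_labels(cell_clusters, cell_labels, min_fraction=0.5):
    """Collapse per-cell predictions into one preliminary label per cluster.

    cell_clusters / cell_labels: {cell barcode: cluster id / predicted label}.
    Clusters whose most common label covers less than min_fraction of their
    cells are marked 'Ambiguous' for manual review.
    """
    by_cluster = {}
    for cell, cluster in cell_clusters.items():
        by_cluster.setdefault(cluster, []).append(cell_labels[cell])
    result = {}
    for cluster, labels in by_cluster.items():
        label, n = Counter(labels).most_common(1)[0]
        frac = n / len(labels)
        result[cluster] = (label if frac >= min_fraction else "Ambiguous", round(frac, 2))
    return result


cell_clusters = {"c1": "0", "c2": "0", "c3": "0", "c4": "1", "c5": "1", "c6": "1"}
cell_labels = {"c1": "B cell", "c2": "B cell", "c3": "T cell",
               "c4": "NK cell", "c5": "T cell", "c6": "CD14+ Monocyte"}
res = cluster_labels(cell_clusters, cell_labels)
print(res)  # {'0': ('B cell', 0.67), '1': ('Ambiguous', 0.33)}
```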

Step 3: Expert-Driven Manual Refinement and Validation

- Differential Expression Analysis: For each cluster, identify the top marker genes using a statistical test like the two-sided Wilcoxon rank-sum test [1] [5].

- Interrogate Marker Gene Expression: Create visualizations (dot plots, feature plots, violin plots) to inspect the expression of known canonical markers for the proposed cell types, as well as the top differentially expressed genes from your analysis.

- Contextualize and Refine: Compare the automated labels with the marker evidence. For example, if a cluster is labeled "Stromal Cells" but expresses high levels of type I collagen genes (COL1A1, COL1A2) and not type II, it may be more accurately annotated as "Fibroblasts" [5]. Use this evidence to accept, refine, or reject the automated labels.
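The two-sided Wilcoxon rank-sum test used for marker detection compares the ranks of one gene's expression in a cluster against all other cells. The stdlib-only sketch below uses the normal approximation with average ranks for ties, a tie-corrected variance, and no continuity correction; packages such as Seurat and Scanpy have their own implementations and defaults, which may differ in those details. The expression values are made up, and for very small groups an exact test is preferable.

```python
import math
from collections import Counter


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum (Mann-Whitney U) test, normal approximation.

    x: one gene's expression in the cluster; y: the same gene in all other cells.
    Returns (U for x, z, two-sided p). Ties receive average ranks and the
    variance is tie-corrected; no continuity correction is applied.
    """
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups need at least one cell")
    values = list(x) + list(y)
    n = n1 + n2
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # average rank of the tie block
        i = j + 1
    u = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    tie_term = sum(t**3 - t for t in Counter(values).values()) / (n * (n - 1))
    var = n1 * n2 / 12 * ((n + 1) - tie_term)
    if var == 0:  # all values identical: no evidence either way
        return u, 0.0, 1.0
    z = (u - n1 * n2 / 2) / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return u, z, p


cluster = [5.1, 4.8, 6.0, 5.5, 4.9]      # gene in cluster cells (toy values)
rest = [0.0, 0.3, 1.2, 0.0, 0.8, 0.5]    # same gene in all other cells
u, z, p = rank_sum_test(cluster, rest)
print(u, round(z, 2), p)
```

Running the test for every gene in a cluster and ranking by p-value (keeping only genes with positive z, i.e. upregulated) gives the kind of top-gene list used in the steps that follow.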

Step 4: Finalize and Document

- Produce a final annotated UMAP plot and document the evidence (reference source, key marker genes, differential expression results) used for each cell type's identity to ensure reproducibility.
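One lightweight way to keep that evidence reproducible is to store a structured record per cluster alongside the annotated object. The field names below are a hypothetical schema, not a standard, and the reference entry is left as a placeholder; the example mirrors the fibroblast refinement described in Step 3.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ClusterAnnotation:
    """Evidence behind one cluster's final label (hypothetical schema)."""
    cluster: str
    final_label: str
    automated_label: str                 # label proposed by SingleR, Azimuth, etc.
    reference: str                       # reference atlas and version used
    key_markers: list = field(default_factory=list)
    de_test: str = "two-sided Wilcoxon rank-sum"
    notes: str = ""


records = [
    ClusterAnnotation(
        cluster="3",
        final_label="Fibroblasts",
        automated_label="Stromal Cells",
        reference="<reference atlas and version>",
        key_markers=["COL1A1", "COL1A2"],
        notes="High type I collagen, no type II collagen; automated label refined.",
    ),
]
report = json.dumps([asdict(r) for r in records], indent=2)
print(report)
```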

Protocol for LLM-Assisted Annotation with GPTCelltype

The emergence of LLMs like GPT-4 offers a powerful, reference-free alternative. The following protocol has been validated across hundreds of cell types [5].

- Input Preparation: For the cell cluster of interest, generate a list of marker genes. Benchmarking indicates that using the top 10 differential genes identified by the two-sided Wilcoxon test yields optimal performance with GPT-4 [5].

- Prompting the Model: Use a basic prompt strategy to query the model. An example prompt structure is: "What is the most specific cell type identity given the expression of the following marker genes: [list of top 10 genes]?" [5].

- Validation and Iteration: The model's output should be treated as a hypothesis. Crucially, validate the annotation by checking the expression of known marker genes for the proposed cell type in your dataset. For ambiguous cases, the "talk-to-machine" strategy implemented in LICT can be used: the model is provided with the validation results and asked to refine its annotation [7].
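The prompting and validation steps above reduce to string construction around a model call. The sketch builds the basic prompt quoted above from a cluster's top 10 genes, plus a hypothetical follow-up prompt that reports how widely the proposed type's markers are expressed in the cluster, in the spirit of a "talk-to-machine" exchange. It is not LICT's implementation; the model call itself is deliberately omitted because client libraries differ, and the gene list and fractions are illustrative.

```python
def initial_prompt(top_genes):
    """Fill the basic prompt quoted in the text with a cluster's top 10 genes."""
    genes = ", ".join(top_genes[:10])
    return ("What is the most specific cell type identity given the expression "
            f"of the following marker genes: {genes}?")


def feedback_prompt(proposed_type, marker_support):
    """Hypothetical follow-up prompt reporting validation results back to the model.

    marker_support: {marker gene: fraction of cluster cells expressing it},
    computed from your own data for the markers of the proposed type.
    """
    lines = [f"- {gene}: expressed in {frac:.0%} of cells"
             for gene, frac in marker_support.items()]
    return (f"You proposed '{proposed_type}'. In this cluster its markers show:\n"
            + "\n".join(lines)
            + "\nBased on this evidence, keep or revise the annotation and explain why.")


# Toy ranked marker list (most significant first); only the first 10 are used.
top = ["PECAM1", "CDH5", "VWF", "CLDN5", "EGFL7", "ESAM", "KDR", "TIE1",
       "EMCN", "FLT1", "PLVAP"]
print(initial_prompt(top))
print(feedback_prompt("Endothelial cell", {"PECAM1": 0.92, "VWF": 0.15}))
```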

Visualizing Annotation Workflows and Data Integration

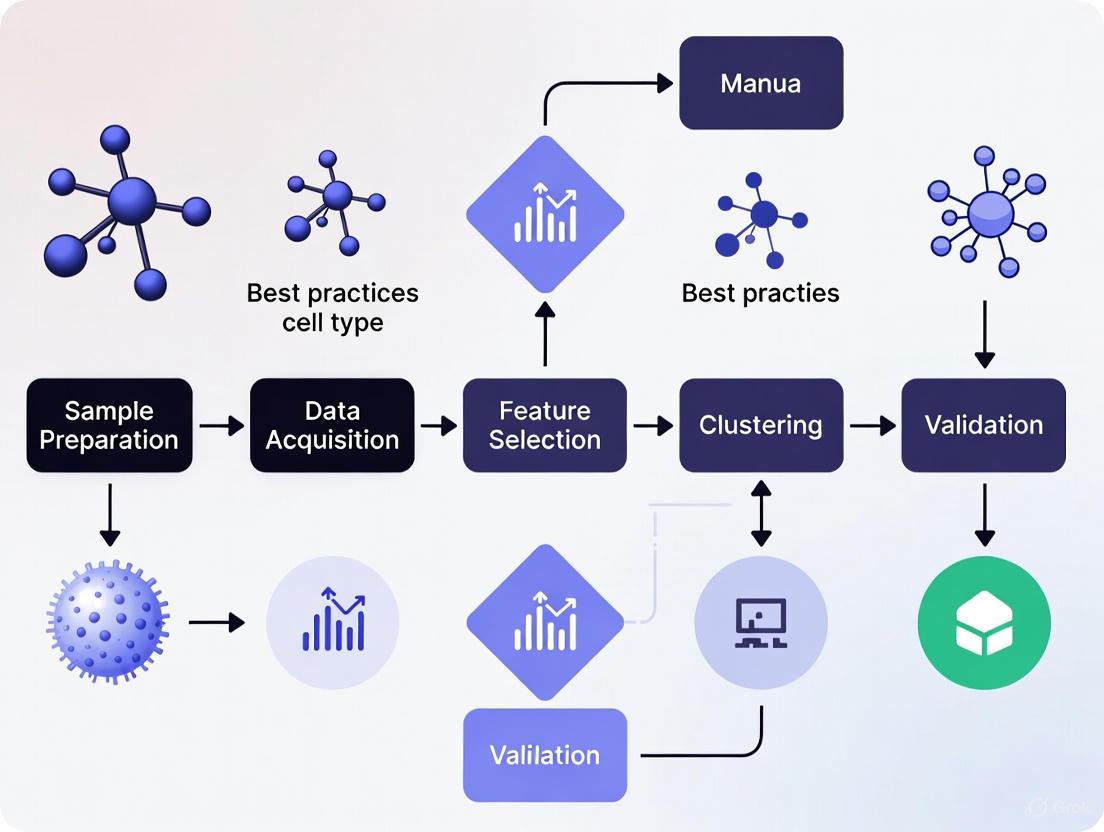

The following diagram illustrates the core logical workflow for modern cell type annotation, integrating both traditional and AI-assisted methods.

Figure 1: Integrated Cell Type Annotation Workflow. This diagram outlines the key steps in a modern annotation pipeline, from raw data to a finalized annotated dataset, highlighting the complementary roles of automated tools and expert-led refinement.

A critical prerequisite for multi-sample annotation is the integration of datasets to remove technical batch effects. The following diagram visualizes the semi-supervised integration process used by tools like STACAS, which leverages prior cell type knowledge to preserve biological variance.

Figure 2: Semi-Supervised Data Integration. This process uses prior cell type labels to guide the integration of multiple datasets, ensuring that technical batch effects are removed without obscuring true biological differences.

Successful cell type annotation relies on a suite of computational tools, reference data, and databases. The following table details key resources.

Table 3: Essential Reagents and Resources for Cell Type Annotation

| Resource Name | Type | Primary Function in Annotation | Key Application Notes |
|---|---|---|---|
| Seurat [8] | R Toolkit | Comprehensive environment for single-cell data analysis, including preprocessing, integration, and clustering. | The de facto standard for many analysis workflows; provides functions for reference-based integration. |
| Cell Ranger [4] | Analysis Pipeline | Processes raw 10x Genomics FASTQ data into gene-cell count matrices and performs initial secondary analysis. | Generates the foundational data (count matrices) for all downstream annotation work. |
| Human Cell Atlas [2] | Reference Database | Aims to create comprehensive reference maps of all human cells. | A growing source of high-quality, standardized reference data for multiple tissues. |
| ScInfeRDB [6] | Marker Database | An interactive database of 2,497 manually curated gene markers for 329 cell types across 28 tissues. | Can be directly integrated with the ScInfeR tool for marker-based annotation. |
| CellMarker / PanglaoDB [6] | Marker Database | Databases of cell type-specific markers compiled from literature. | Useful for manual refinement and validation of cluster identities. |
| Azimuth [1] [5] | Web Application / Reference | Provides automated cell type annotation for user-uploaded data against curated reference atlases. | Offers annotations at multiple levels of resolution, from broad categories to fine subtypes. |

The journey to define cell type identity has evolved from relying on simple morphological observations to integrating complex, high-dimensional transcriptomic data. The modern paradigm is combinatorial, leveraging automated reference mapping, emerging AI and LLM-based tools, and, indispensably, expert-guided manual refinement [1] [7] [5]. This integrated approach ensures that annotations are not only computationally derived but also biologically grounded.

Looking forward, several trends will shape the future of cell type annotation. The field is moving towards a multi-omic definition of cell identity, integrating not just transcriptomics but also epigenomic (e.g., scATAC-seq), proteomic, and spatial data to build a more complete picture [2] [6]. Furthermore, as LLM-based tools mature, their ability to interpret complex biological contexts will improve, but they will likely remain most powerful when used in a "human-in-the-loop" model [7]. Finally, the success of any annotation effort hinges on the quality of the underlying data and the availability of comprehensive, tissue-specific reference atlases. Continued community efforts to build and standardize these resources, such as the Human Cell Atlas, will be critical for deepening our understanding of cellular function in health and disease [1] [2].

In the era of single-cell biology, the definition of cell type identity is continuously evolving, moving beyond traditional morphological and physiological descriptions to encompass detailed transcriptomic signatures [1]. Assigning cell type identities is a central challenge in interpreting single-cell RNA sequencing (scRNA-seq) data, transforming clusters of gene expression data into meaningful biological insights. This process is fundamental for understanding complex biological systems, disease mechanisms, and developmental processes [1] [9]. Robust cell type identification depends on multiple factors: data quality, availability of suitable reference studies, and the validity of chosen marker genes or gene sets [1]. The annotation process is highly collaborative, combining computational expertise with deep biological knowledge to ensure annotations are technically sound and biologically meaningful [1]. Within this framework, cellular identities generally fall into several distinct categories, each requiring specific approaches for identification and validation.

Established Cell Types

Established cell types are the most straightforward to identify and are typically recognized through comparison with existing reference datasets or canonical marker genes [1]. These cell types have consistent, well-documented transcriptomic profiles supported by extensive previous research.

- Identification Methodology: The primary method for identifying established cell types is reference-based annotation. This involves aligning the gene expression profiles of single cells against established reference datasets from similar tissues using tools such as SingleR or Azimuth [1] [9]. The Azimuth project, for instance, provides annotations at different levels of granularity, from broad categories to detailed subtypes [1].

- Marker Gene Verification: Many established cell types possess distinct marker genes. For example, PECAM1 is a classic marker for endothelial cells, and PFN1 for osteocytes [1]. Verification involves checking for the expression of these canonical markers in the clusters of interest.

- Validation and Refinement: After an initial automated annotation, results are checked for consistency. If a reference indicates two clusters represent the same established cell type, they are merged. If it suggests finer distinctions, the clustering resolution is adjusted to capture those subtypes [1].
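The merge check can be expressed as a grouping of cluster ids by their assigned reference label. The sketch below is illustrative only (the cluster ids and labels are hypothetical); whether grouped clusters are actually merged, or instead examined for finer subtypes, remains an expert decision.

```python
def merge_by_label(cluster_to_label):
    """Group cluster ids that received the same reference label.

    Returns {label: sorted cluster ids}; labels covering several clusters are
    candidates for merging (or for a closer look at finer subtypes).
    """
    groups = {}
    for cluster, label in cluster_to_label.items():
        groups.setdefault(label, []).append(cluster)
    return {label: sorted(ids) for label, ids in groups.items()}


labels = {"0": "CD14+ Monocyte", "1": "B cell", "2": "CD14+ Monocyte", "3": "NK cell"}
groups = merge_by_label(labels)
to_merge = {lab: ids for lab, ids in groups.items() if len(ids) > 1}
print(to_merge)  # {'CD14+ Monocyte': ['0', '2']}
```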

Novel Cell Populations

Novel cell populations are biologically distinct clusters that do not align with any known cell type based on existing references or marker gene databases. Their identification is a key driver of discovery in single-cell research.

- Identification Methodology: The process begins with differential expression analysis, which identifies genes that are statistically significantly upregulated in a cluster of interest compared to all other clusters [1] [9]. A cluster is considered potentially novel if its top differentially expressed genes do not match any known cell type signature.

- Biological Distinctness Assessment: Researchers must then assess whether the cluster's distinct transcriptomic profile correlates with a unique function or developmental origin [1]. This requires careful literature review and domain expertise to argue for the existence of a new cell type.

- Functional Validation: The identification of a novel cell type is considered provisional until followed up with independent validation experiments. This may include fluorescence in situ hybridization (FISH) to confirm spatial localization, or functional assays to determine the cell's role [1].
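The "no match to any known signature" criterion can be made explicit with an over-representation test between a cluster's top DE genes and each curated signature. The sketch below assumes a hypergeometric test against a gene universe of fixed size and flags a cluster as a candidate novel population when no signature reaches p < 0.01. The signatures, universe size, gene names such as `GENE_0`, and the cutoff are illustrative assumptions, and no multiple-testing correction is applied. A flag is only a starting point for the distinctness assessment and validation described above.

```python
from math import comb


def overlap_pvalue(k, n_de, n_markers, n_universe):
    """Hypergeometric upper tail P(X >= k): chance of >= k shared genes at random."""
    total = comb(n_universe, n_de)
    tail = sum(comb(n_markers, i) * comb(n_universe - n_markers, n_de - i)
               for i in range(k, min(n_de, n_markers) + 1))
    return tail / total


def match_signatures(de_genes, signatures, n_universe, alpha=0.01):
    """Rank signatures by overlap with a cluster's top DE genes.

    Returns ([(type, overlap, p), ...] sorted by p, candidate_novel). The cluster
    is a candidate novel population when no signature reaches p < alpha
    (no multiple-testing correction is applied in this sketch).
    """
    de = set(de_genes)
    hits = []
    for ctype, genes in signatures.items():
        sig = set(genes)
        k = len(de & sig)
        hits.append((ctype, k, overlap_pvalue(k, len(de), len(sig), n_universe)))
    hits.sort(key=lambda h: h[2])
    candidate_novel = not hits or hits[0][2] >= alpha
    return hits, candidate_novel


# Illustrative signatures and a 20,000-gene universe (assumptions, not a curated database).
signatures = {
    "Endothelial": ["PECAM1", "CDH5", "VWF", "KDR", "CLDN5"],
    "Fibroblast": ["COL1A1", "COL1A2", "DCN", "LUM", "PDGFRA"],
}
endo_like = ["PECAM1", "CDH5", "VWF", "KDR", "MALAT1",
             "ACTB", "TMSB4X", "EEF1A1", "RPL13", "FTL"]
unknown = [f"GENE_{i}" for i in range(10)]  # hypothetical unmatched genes

print(match_signatures(endo_like, signatures, 20_000))
print(match_signatures(unknown, signatures, 20_000)[1])  # True: no signature matches
```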

Transitional States and Disease-Associated Phenotypes

Cells can undergo changes in state without transitioning to a completely different type. These transitional states are often linked to processes like activation, stress, or disease pathology.

- Identification Methodology: Methods such as gene set enrichment analysis or co-expression analysis are used to identify transcriptomic patterns associated with specific cellular states, such as activation or apoptosis [1]. In disease research, this involves comparing cells from healthy and diseased tissues to identify disease-associated expression signatures.

- Contextual Interpretation: Identifying a cell state requires deep biological context. For example, an increased level of mitochondrial transcripts can indicate an unhealthy or stressed cell state [4]. However, for some cell types like cardiomyocytes, mitochondrial gene expression is biologically meaningful and should not be used as a stress indicator [4].

- Trajectory Analysis: For understanding progressive changes, such as in development or disease progression, trajectory inference and pseudotime analysis are employed. These tools reconstruct the paths cells take as they progress from one state to another, providing dynamic insights beyond static classification [1].
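Gene-set-based state detection can be illustrated with a simple per-cell score: the mean expression of a state gene set minus the mean expression of all other genes. This is a deliberately simplified stand-in for module-scoring methods, which typically compare against expression-matched control genes; the stress gene set and the values below are illustrative assumptions.

```python
from statistics import mean


def state_score(cell_expr, gene_set):
    """Mean expression of a state gene set minus the mean of all remaining genes.

    cell_expr: {gene: normalized expression} for one cell. Simplified stand-in
    for module scores that use expression-matched control genes.
    """
    in_set = [v for g, v in cell_expr.items() if g in gene_set]
    background = [v for g, v in cell_expr.items() if g not in gene_set]
    if not in_set or not background:
        raise ValueError("need genes both inside and outside the set")
    return mean(in_set) - mean(background)


# Illustrative stress-response gene set and toy normalized profiles.
STRESS = {"FOS", "JUN", "HSPA1A", "EGR1"}
resting = {"FOS": 0.2, "JUN": 0.1, "HSPA1A": 0.3, "EGR1": 0.0, "ACTB": 3.0, "CD3E": 2.0}
stressed = {"FOS": 3.5, "JUN": 3.0, "HSPA1A": 4.0, "EGR1": 2.5, "ACTB": 3.0, "CD3E": 2.0}
print(state_score(resting, STRESS) < state_score(stressed, STRESS))  # True
```

As the cardiomyocyte example above shows for mitochondrial genes, a high score is a hypothesis about cell state that still needs biological context before it is interpreted.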

Quantitative Comparison of Cell Type Annotation Methodologies

The choice of methodology for assigning cellular identities has a significant impact on the accuracy and reliability of the results. The following table summarizes the key approaches, their mechanisms, and their performance characteristics.

Table 1: Comparison of Cell Type Annotation Methodologies

| Method Category | Examples | Core Mechanism | Relative Speed | Key Requirements | Pros | Cons |
|---|---|---|---|---|---|---|
| Manual Curation | N/A | Inspection of cluster-specific differential genes against known markers [9]. | Slow | Known marker genes, accurate clustering, literature/databases (e.g., CellMarker) [9]. | Complete expert control; high reliability if meticulous [9]. | Time-consuming; requires expert knowledge; public databases not always updated [9]. |
| Traditional Automated | SingleR, CellTypist, Azimuth [9] [5] | Classification or reference mapping of cells to a reference dataset [9]. | Fast [5] | A single high-quality reference dataset similar to the query [9]. | Fast; no clustering needed; reliable with a good reference [9]. | Matching reference not always available; custom reference creation is non-trivial [9]. |
| AI and Foundation Models | scGPT, SCimilarity, Geneformer [9] [5] [10] | Leveraging models pre-trained on millions of cells to annotate using marker gene inputs [5]. | Varies (can be fast) [9] | GPU resources for some; possible fine-tuning with a reference [9]. | Can work without a reference; integrates multiple references in one model [9]. | Difficult setup; models are "black boxes" and not frequently updated [9]. |
| Knowledgebase-Driven | CellKb [9] | Rank-based search against a manually curated database of cell type signatures from literature [9]. | Fast | Web access; selection of relevant references from the knowledgebase [9]. | No installation; uses multiple, regularly updated references; simple interface [9]. | Not a free service [9]. |

Performance Note: A recent evaluation of GPT-4 found it could generate cell type annotations that fully or partially matched manual annotations in over 75% of cell types across several datasets, showcasing the potential of advanced AI in this field [5].

Integrated Experimental Workflow for Cell Type Annotation

A robust cell type annotation pipeline integrates multiple steps, from raw data processing to final validation. The following workflow diagram and protocol outline this integrated process.

Diagram: Integrated Workflow for Cell Identity Annotation

Detailed Protocol for Cell Identity Classification

In-depth Preprocessing and Quality Control:

- Quality Control: Filter out low-quality cells based on metrics like total UMI counts, number of features (genes), and the percentage of mitochondrial reads. For example, in PBMC data, a threshold of 10% mitochondrial reads is often used to remove damaged cells [4].

- Batch Correction: Apply computational methods to mitigate technical variation caused by differences in sample preparation or sequencing runs [1].

- Clustering: Perform a preliminary clustering analysis to group cells with similar transcriptomic profiles, providing the initial structural view of the dataset [1].

Combinatorial Annotation Strategy:

- Reference-Based Mapping: Use tools like Azimuth to map your pre-processed dataset against established reference atlases. This provides a first, automated preliminary annotation [1].

- Differential Expression: For each cluster, perform differential expression analysis (e.g., using a two-sided Wilcoxon rank-sum test) to identify genes that are statistically significantly upregulated compared to all other cells. The top genes (e.g., top 10) form the marker gene signature for the cluster [5].

- Marker Gene Cross-Referencing: Cross-reference the differential gene lists with canonical marker genes from literature and databases. This step is crucial for verifying established types and identifying the hallmarks of novel populations or states [1] [9].

Expert-Led Refinement and Validation:

- Cluster Merging/Splitting: Based on the annotation results, iteratively refine the clusters. Merge clusters predicted to be the same type or increase resolution to separate subtypes [1].

- Contextual Interpretation: Integrate domain-specific knowledge to interpret ambiguous clusters, edge cases, and biologically plausible states [1].

- Independent Validation: Plan and execute orthogonal follow-up experiments (e.g., immunohistochemistry, functional assays) to further characterize the identified cell types, especially novel ones [1].

Successful cell type annotation relies on a suite of computational tools, reference data, and experimental reagents.

Table 2: Key Research Reagent Solutions for scRNA-seq Annotation

| Tool/Reagent Category | Examples | Primary Function |
|---|---|---|
| Commercial Platforms & Software | 10x Genomics Cell Ranger, Loupe Browser [4] | Processes raw sequencing data (FASTQ) into gene-cell matrices; provides initial QC, clustering, and interactive data visualization [4]. |
| Reference Datasets & Atlases | HuBMAP, Azimuth, Tabula Sapiens, Human Cell Atlas [1] [5] | Serve as a ground truth for reference-based annotation, providing pre-annotated cell types from various tissues [1]. |
| Automated Annotation Tools | SingleR, CellTypist, scGPT [9] [5] | Provide algorithmic cell type prediction using classification or reference mapping, reducing manual effort [9] [5]. |
| Marker Gene Databases | CellKb, CellMarker, PanglaoDB [9] | Curated collections of cell type-specific marker genes from published literature, used for manual verification of cluster identities [9]. |
| Experimental Validation Reagents | Antibodies for IHC/FISH, CRISPR kits [1] | Used for independent validation of cell type identities and functions identified through scRNA-seq analysis [1]. |

Assigning cell type identities is a central challenge and a foundational step in interpreting single-cell data. It is the process of transforming clusters of gene expression data into clear, meaningful biological insights [1]. Fundamentally, there is no universal method for defining cell identity [1]. With every publication, researchers must propose a cell type label and deliver compelling arguments for it by extracting evidence from the scRNA-seq data, drawing on the scientific literature, and performing validation experiments [1]. This process is highly collaborative and not merely a default part of preliminary analysis; it requires partnering computational expertise with deep domain-specific biological knowledge to ensure annotations are both technically sound and biologically meaningful [1].

The following diagram illustrates the core decision-making workflow in manual cell type annotation, highlighting the critical role of researcher expertise at each stage.

The Inherent Complexities of Cell Identity

The very definition of a "cell type" is actively debated and continuously evolving, moving beyond traditional definitions based on morphology and physiology to encompass gene expression profiles and molecular states [1]. This complexity means cell identities often fall into multiple, sometimes overlapping, categories:

- Established cell types are identified through reference datasets and distinct markers (e.g., PECAM1 for endothelial cells) [1].

- Novel cell types are discovered when clusters are biologically distinct based on function or developmental origin, guided by differential expression and functional validation [1].

- Cell states and disease stages represent transient changes in response to perturbation, identified through patterns tied to activation, stress, or pathology [1].

- Developmental stages reveal progression from progenitor to mature cell types, reconstructed using trajectory and pseudotime analyses [1].

Methodological Landscape: From Manual Curation to AI Assistance

Traditional and Emerging Annotation Approaches

Cell type annotation methodologies generally fall into three categories, each with distinct strengths and limitations, as summarized in the table below.

Table 1: Comparison of Cell Type Annotation Methodologies

| Method Category | Key Examples | Pros | Cons | Expertise Dependency |
|---|---|---|---|---|
| Manual Annotation | Marker gene checking with databases (CellMarker, PanglaoDB) [9] | Complete control; High reliability if meticulous [9] | Time-consuming; Requires known markers; Depends on accurate clustering [9] | Very High |
| Automated Reference-Based | SingleR, Azimuth, CellTypist, scmap [9] [11] | Fast; No clustering needed; Objective [9] | Requires high-quality matching reference; Limited customization [9] | Medium |
| AI & Foundation Models | LICT, scGPT, Geneformer, SCimilarity [7] [9] | Can work without reference; Integrates multiple references [9] | Difficult setup; Models infrequently updated; Struggles with rare cell types [9] | Medium to High |

The Promise and Limitations of AI-Based Tools

Recent advancements have introduced artificial intelligence (AI) and large language models (LLMs) to cell type annotation. Tools like LICT (LLM-based Identifier for Cell Types) leverage a "talk-to-machine" strategy, where the model is iteratively queried with marker gene expression patterns to refine its predictions [7]. While these tools can reduce mismatch rates in highly heterogeneous datasets like PBMCs from 21.5% to 9.7% compared to earlier methods [7], their performance diminishes with less heterogeneous datasets. For example, even top-performing LLMs like Gemini 1.5 Pro and Claude 3 achieve only 39.4% and 33.3% consistency with manual annotations for human embryo and stromal cell data, respectively [7]. This highlights that AI tools serve as aids rather than replacements for expert judgment, particularly in complex or novel biological contexts.

Quantitative Benchmarks: How Methods Compare

Performance Across Dataset Types

Rigorous benchmarking studies provide quantitative evidence of the challenges in automated annotation. The following table summarizes the performance of a leading LLM-based method (LICT) across diverse biological contexts, demonstrating the variability in annotation success.

Table 2: Performance of LICT (LLM-based method) Across Diverse Biological Contexts [7]

| Dataset Type | Example Tissue/Condition | Match Rate with Manual Annotation | Key Challenges |
|---|---|---|---|
| High Heterogeneity | Peripheral Blood Mononuclear Cells (PBMCs) [7] | 90.3% (Low mismatch rate of 9.7%) [7] | Distinguishing closely related immune subtypes |
| High Heterogeneity | Gastric Cancer [7] | 91.7% (Low mismatch rate of 8.3%) [7] | Separating malignant from non-malignant cells |
| Low Heterogeneity | Human Embryos [7] | 48.5% (Match rate) [7] | Limited transcriptomic diversity between early lineages |
| Low Heterogeneity | Mouse Stromal Cells [7] | 43.8% (Match rate) [7] | Subtle differences between fibroblast subtypes |

Spatial Transcriptomics Present Unique Hurdles

In spatial transcriptomics, the challenge intensifies. A 2025 benchmarking study on 10x Xenium data for human HER2+ breast cancer found that reference-based methods like SingleR, while performing best among automated tools, still required manual validation, particularly for rare or ambiguous cell populations [11]. The study emphasized that manual annotation based on marker genes, despite being time-consuming, remains crucial for reconciling discrepancies and ensuring biologically plausible results [11].

A Practical Protocol for Manual Annotation

This section provides a detailed, executable protocol for researchers performing manual cell type annotation, incorporating both reference-based and expert-driven refinement.

Foundational Preprocessing and QC

- Step 1: Rigorous Quality Control - Filter out low-quality cells and genes, and exclude multiplets. Critically assess metrics such as the percentage of mitochondrial reads, which can indicate stressed or dying cells (e.g., removing cells with more than 10% mitochondrial reads is a common threshold for PBMCs) [4].

- Step 2: Batch Effect Correction - Apply bioinformatic corrections to mitigate technical variation from differences in sample preparation or sequencing runs [1].

- Step 3: Preliminary Clustering - Perform clustering analysis to group cells with similar transcriptomic profiles, providing the initial structural view of the dataset [1]. Use UMAP or t-SNE for visualization.

Reference-Based Annotation

- Step 4: Literature and Atlas Review - Conduct an in-depth review to identify suitable reference datasets and canonical marker genes for the expected cell types in the tissue of interest [1].

- Step 5: Automated Label Transfer - Use tools like SingleR or Azimuth to align gene expression profiles of each cell with chosen references. These tools provide annotations at different levels, from broad categories to detailed subtypes [1].

- Step 6: Iterative Cluster Refinement - Check how predicted cell types align with clusters. Merge clusters predicted to be the same type or adjust clustering resolution to capture finer differences. Use multiple references to generate a robust consensus annotation [1].
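Step 6's consensus and merging can be sketched as a majority vote across references. This is a minimal illustration under three stated assumptions:

- Each reference run has already produced one label per cluster.
- A strict majority of references is required, which is an arbitrary rule chosen here.
- Anything below that threshold is left as "Unresolved" for the manual refinement cycle that follows.

The helper names are hypothetical and are not part of SingleR or Azimuth.

```python
from collections import Counter


def consensus_labels(per_reference):
    """Majority-vote cluster labels across several reference-based runs.

    per_reference: list of dicts, one per reference, mapping cluster ID -> label.
    A label is accepted only if a strict majority of references agree;
    otherwise the cluster is flagged "Unresolved" for manual review.
    """
    clusters = set().union(*per_reference)
    result = {}
    for cluster in sorted(clusters):
        votes = Counter(ref[cluster] for ref in per_reference if cluster in ref)
        label, count = votes.most_common(1)[0]
        result[cluster] = label if 2 * count > len(per_reference) else "Unresolved"
    return result


def merge_by_label(consensus):
    """Group cluster IDs that share a consensus label (candidates for merging)."""
    groups = {}
    for cluster, label in consensus.items():
        groups.setdefault(label, []).append(cluster)
    return groups


references = [
    {"0": "T cell", "1": "B cell", "2": "T cell", "3": "Monocyte"},
    {"0": "T cell", "1": "B cell", "2": "T cell", "3": "Dendritic cell"},
    {"0": "T cell", "1": "Plasma cell", "2": "NK cell", "3": "Macrophage"},
]
consensus = consensus_labels(references)
print(consensus)
# {'0': 'T cell', '1': 'B cell', '2': 'T cell', '3': 'Unresolved'}
print(merge_by_label(consensus))
# {'T cell': ['0', '2'], 'B cell': ['1'], 'Unresolved': ['3']}
```

Clusters that end up sharing a label are only candidates for merging. Whether they truly represent one population is decided in the manual refinement steps.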

The Crucial Manual Refinement Cycle

- Step 7: Differential Expression Analysis - For each cluster, identify statistically significant upregulated genes compared to all other clusters. This generates cluster-specific gene lists beyond the preliminary markers [9].

- Step 8: Canonical Marker Validation - Manually check the expression patterns of known canonical marker genes for the hypothesized cell type. Use databases like CellMarker or PanglaoDB, but be aware that they may not be updated regularly [9].

- Step 9: Biological Contextualization - Integrate domain knowledge to interpret ambiguous clusters or edge cases. This is often essential for distinguishing closely related cell subtypes, identifying transitional states, or flagging potentially novel populations [1].

- Step 10: Resolution of Inconsistencies - Reconcile discrepancies between reference-based predictions, marker gene evidence, and biological plausibility. This may involve re-clustering, consulting additional literature, or hypothesizing new cell states [1].
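The canonical-marker check in Step 8 can be partly quantified before plotting. The sketch below uses a simplified, hypothetical scoring rule: for each candidate cell type, it averages the fraction of a cluster's cells in which each canonical marker is detected.

Its assumptions are:

- Raw counts are held in per-cell dictionaries.
- The marker lists are short illustrative examples (CD3D/CD3E for T cells, PECAM1/VWF for endothelial cells), not entries pulled from CellMarker or PanglaoDB.

A high score supports a label but does not prove it. Violin or feature plots should still be inspected.

```python
def detection_rate(cells, gene):
    """Fraction of cells (dicts of gene -> count) with a nonzero count for gene."""
    return sum(1 for cell in cells if cell.get(gene, 0) > 0) / len(cells)


def rank_cell_types(cells, marker_sets):
    """Rank candidate cell types for one cluster by mean marker detection rate.

    cells: the cluster's cells, each a dict of gene -> count.
    marker_sets: dict mapping cell type -> list of canonical marker genes.
    """
    scores = {
        cell_type: sum(detection_rate(cells, g) for g in markers) / len(markers)
        for cell_type, markers in marker_sets.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


markers = {
    "T cell": ["CD3D", "CD3E"],
    "B cell": ["CD79A", "MS4A1"],
    "Endothelial cell": ["PECAM1", "VWF"],
}
cluster_cells = [
    {"CD3D": 5, "CD3E": 2},
    {"CD3D": 3, "CD3E": 0, "MS4A1": 1},
    {"CD3D": 4, "CD3E": 6},
    {"CD3E": 1},
]
print(rank_cell_types(cluster_cells, markers))
# [('T cell', 0.75), ('B cell', 0.125), ('Endothelial cell', 0.0)]
```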

The following diagram details the iterative "talk-to-machine" strategy, a modern approach that exemplifies the collaboration between computational tools and researcher expertise.

Table 3: Key Research Reagent Solutions for Cell Type Annotation

| Tool/Resource | Function in Annotation | Application Context |
|---|---|---|
| CellKb [9] | A knowledgebase of high-quality cell type signatures from manually curated publications; allows use of multiple references without integration. | Annotating individual cells or clusters via a web interface without installation. |
| CellMarker/PanglaoDB [9] | Databases of known marker genes for various cell types across tissues and species. | Initial hypothesis generation during manual refinement and marker validation. |
| Azimuth [1] [11] | A reference-based annotation tool integrated within the Seurat platform; provides annotations at different resolution levels. | Transferring labels from a prepared reference (e.g., from the Human Cell Atlas) to query data. |
| SingleR [11] | A reference-based method that predicts cell types using correlation between query and reference datasets. | Fast, accurate annotation of common cell types, particularly in immune cells [11]. |
| LICT [7] | An LLM-based tool that uses a "talk-to-machine" approach for reference-free annotation. | Generating initial labels when a high-quality reference is unavailable; providing an objective credibility score. |
| STAMapper [12] | A heterogeneous graph neural network for transferring labels from scRNA-seq to single-cell spatial transcriptomics data. | Annotating challenging spatial data with high accuracy, especially with low gene numbers. |

Robust cell type identification is not a solved computational problem but a complex inference process that depends on multiple factors: data quality, the availability of suitable references, and the biological validity of chosen markers [1]. While automated and AI-driven methods are becoming increasingly powerful, they do not obviate the need for deep biological expertise. Instead, they shift the researcher's role from performing tedious comparisons to exercising critical judgment in interpreting results, reconciling discrepancies, and applying contextual knowledge [7] [9].

The most reliable annotations emerge from a combinatorial approach that integrates computational predictions with expert curation. It is also a critical best practice to follow up scRNA-seq experiments with independent validation using other methodological approaches, such as fluorescence in situ hybridization or immunohistochemistry, to further characterize the cells in a sample and confirm their identity [1]. Ultimately, accurately naming a cell type is the first step toward understanding its function, and this process remains fundamentally a human interpretation of complex data within a biological context.

In single-cell RNA sequencing (scRNA-seq) research, the path to biologically meaningful discoveries is paved long before the assignment of cell type labels. Manual cell type annotation, a cornerstone of biological interpretation, is entirely dependent on the quality of the data and the integrity of the initial clustering upon which it is built [1]. This guide details the two key prerequisites for any rigorous annotation workflow: comprehensive data quality assessment (DQA) and a foundational understanding of clustering analysis. Without excellence in these initial stages, even the most sophisticated annotation tools and expert biological knowledge can lead to spurious conclusions. The process transforms raw data into clusters of cells with similar expression profiles, which are then interpreted and labeled by researchers [1]. This document, framed within a broader thesis on manual cell type annotation best practices, provides researchers and drug development professionals with the essential technical groundwork to ensure their analytical pipeline is robust, reproducible, and ready for accurate biological interpretation.

Data Quality Assessment: Ensuring Analytical Robustness

A rigorous Data Quality Assessment (DQA) is the first and most critical step in the scRNA-seq pipeline. It serves to identify and mitigate technical artifacts that can obscure true biological signal, ensuring that downstream clustering and annotation are based on reliable data.

Core Quality Control Metrics and Filtering Strategies

After processing raw sequencing data with pipelines like Cell Ranger, the initial DQA examines key metrics to make informed decisions about filtering out low-quality cells [4]. The standard approach assesses three primary metrics for each cell barcode, which help distinguish intact cells from background noise or damaged cells.

Table 1: Key Quality Control Metrics for Single-Cell RNA-seq Data

| Metric | Description | Interpretation & Common Thresholds |
|---|---|---|
| UMI Counts per Cell | Total number of Unique Molecular Identifiers (UMIs) detected per cell. | Indicates sequencing depth. Cells with very high counts may be multiplets; cells with very low counts may be empty droplets or contain ambient RNA [4]. |
| Genes Detected per Cell | The number of unique genes detected per cell. | Correlates with UMI counts. High numbers can indicate multiplets; low numbers can indicate poor-quality cells or empty droplets [4]. |
| Mitochondrial Read Fraction | The percentage of reads mapping to the mitochondrial genome. | A high percentage (>10% in PBMCs) often indicates apoptotic or stressed cells due to cytoplasmic mRNA leakage [4]. |

These diagnostics are visualized and used for manual filtering in tools like Loupe Browser, where distributions are examined to remove extreme outliers [4]. Furthermore, the HTML summary file generated by Cell Ranger provides an initial, critical overview, indicating whether "No critical issues were identified" and showing expected values for cells recovered, mapping rates, and median genes per cell [4].
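The three metrics in Table 1 are simple per-barcode summaries. The following is a minimal sketch, assuming that one cell's counts are held in a dictionary keyed by human gene symbols. In that convention mitochondrial genes carry the "MT-" prefix, while mouse annotations typically use "mt-". In Scanpy, `sc.pp.calculate_qc_metrics` computes the equivalent columns on an AnnData object. The function below is a hypothetical stand-in for illustration.

```python
def qc_metrics(counts, mito_prefix="MT-"):
    """Return total UMIs, detected genes, and % mitochondrial UMIs for one cell.

    counts: dict mapping gene symbol -> UMI count for a single barcode.
    mito_prefix: assumed prefix of mitochondrial gene symbols ("MT-" for human).
    """
    total = sum(counts.values())
    n_genes = sum(1 for n in counts.values() if n > 0)
    mito = sum(n for gene, n in counts.items() if gene.startswith(mito_prefix))
    pct_mt = 100.0 * mito / total if total else 0.0
    return {"total_umis": total, "n_genes": n_genes, "pct_mt": pct_mt}


cell = {"CD3D": 12, "CD3E": 8, "MT-CO1": 3, "MT-ND1": 2, "ACTB": 25, "GAPDH": 0}
print(qc_metrics(cell))
# {'total_umis': 50, 'n_genes': 5, 'pct_mt': 10.0}
```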

Addressing Technical Noise and Batch Effects

Beyond per-cell filtering, DQA must account for broader technical noise. A key challenge is ambient RNA, which arises from free-floating RNA released by lysed cells during sample preparation. This contamination can mask true expression patterns, particularly for rare cell types. Computational tools like SoupX and CellBender are recommended to estimate and subtract this background signal [4]. Additionally, when multiple samples or batches are involved, batch effect correction is a vital pre-processing step to prevent technical variation from being misinterpreted as biological variation during clustering [1]. This ensures that cells cluster together based on their type or state, not their sample of origin.
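To make the idea of removing technical variation concrete, the sketch below subtracts each batch's mean from one gene's values. The approach is deliberately naive and is not a substitute for dedicated integration methods. It removes only additive per-batch shifts. If batches differ in cell type composition, it will also erase genuine biological differences, which is exactly the failure mode that proper batch correction tries to avoid. It is included only to illustrate what "correcting" a batch offset means, and the function is hypothetical.

```python
from statistics import mean


def center_per_batch(values, batches):
    """Subtract each batch's mean from that batch's cells, for one gene.

    values: expression values (e.g., log-normalized) for one gene, one per cell.
    batches: batch label for each cell, in the same order as values.
    Only additive per-batch offsets are removed.
    """
    by_batch = {}
    for value, batch in zip(values, batches):
        by_batch.setdefault(batch, []).append(value)
    batch_means = {batch: mean(vals) for batch, vals in by_batch.items()}
    return [value - batch_means[batch] for value, batch in zip(values, batches)]


values = [1.0, 2.0, 3.0, 5.0, 6.0, 7.0]
batches = ["run1", "run1", "run1", "run2", "run2", "run2"]
print(center_per_batch(values, batches))
# [-1.0, 0.0, 1.0, -1.0, 0.0, 1.0]
```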

Clustering Analysis Fundamentals: Grouping Cells by Similarity

Clustering is the unsupervised learning process that groups cells based on the similarities in their gene expression profiles, forming the structural basis upon which cell type identities are assigned [13] [1].

The Clustering Workflow and Algorithm Selection

The standard clustering workflow in scRNA-seq analysis involves a sequence of steps designed to reduce dimensionality and identify natural groupings within the data. The following diagram illustrates this foundational workflow and its direct connection to the subsequent manual annotation phase.

The choice of clustering algorithm can significantly impact the results. Below is a comparison of common algorithms used in single-cell analysis, each with distinct strengths and limitations.

Table 2: Comparison of Common Clustering Algorithms in Single-Cell Analysis

| Algorithm | Underlying Principle | Advantages | Disadvantages |
|---|---|---|---|
| K-means [14] | Partitional; minimizes variance within K pre-defined clusters. | Computationally efficient for large datasets. | Requires prior specification of K (number of clusters); assumes spherical clusters. |
| Hierarchical Clustering [13] [14] | Builds a tree-like structure (dendrogram) of clusters. | Does not require pre-specifying cluster count; highly interpretable. | Computationally intensive on large datasets; sensitive to noise. |
| Leiden Algorithm [15] | Optimizes network structure to find tightly connected communities. | Fast, scalable, and guarantees connected clusters. | Resolution parameter impacts granularity; may require tuning. |
| DBSCAN [14] | Density-based; identifies dense regions separated by sparse areas. | Can find arbitrarily shaped clusters and identify outliers/noise. | Struggles with clusters of varying densities. |

In modern single-cell pipelines, such as those implemented in Scanpy, the Leiden algorithm (a successor to the Louvain method) is frequently used for community detection in graphs built from cells in a reduced dimensionality space [15].

Determining Cluster Resolution and Validation

A crucial step after clustering is validation, to ensure that the groups are robust and meaningful. Metrics such as the silhouette score or the Davies-Bouldin index quantify clustering quality by indicating how well separated the clusters are [14]. The choice of resolution is equally important. Too low a resolution may merge distinct cell types, while too high a resolution may split a single cell type into several overly fine-grained clusters. Choosing the resolution is often an iterative process, guided by biological knowledge and by differential expression analysis that tests whether clusters have distinct transcriptomic profiles [1] [15].
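The silhouette score has a short definition. For each cell, let a be the mean distance to the other members of its cluster and b the mean distance to the closest other cluster. The cell's silhouette width is then (b - a) / max(a, b), and the score is the average width over all cells. Values near 1 indicate well-separated clusters, while negative values suggest cells closer to another cluster than to their own. `sklearn.metrics.silhouette_score` and `sklearn.metrics.davies_bouldin_score` provide tested implementations. The pure-Python version below only makes the definition explicit and assumes Euclidean distance in a low-dimensional embedding such as PCA coordinates.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette width using Euclidean distance.

    points: coordinates per cell (e.g., a few principal components).
    labels: cluster label per cell; at least two clusters are required.
    Cells in singleton clusters contribute 0, a common convention.
    """
    clusters = {}
    for i, label in enumerate(labels):
        clusters.setdefault(label, []).append(i)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    widths = []
    for i, label in enumerate(labels):
        own = [j for j in clusters[label] if j != i]
        if not own:
            widths.append(0.0)
            continue
        # a: mean distance to the other members of the cell's own cluster
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        # b: mean distance to the nearest other cluster
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items()
            if other != label
        )
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / len(widths)


points = [(0, 0), (0, 1), (10, 0), (10, 1)]
print(round(silhouette_score(points, [0, 0, 1, 1]), 3))  # well separated, close to 1
print(round(silhouette_score(points, [0, 1, 0, 1]), 3))  # poor split, negative
```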

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

A successful single-cell study relies on a combination of wet-lab reagents and dry-lab computational tools. The table below details key resources essential for generating and analyzing data for manual cell type annotation.

Table 3: Essential Toolkit for Single-Cell RNA-seq Analysis

| Item / Tool | Type | Primary Function |
|---|---|---|
| Chromium Platform & Kits (e.g., 3' Gene Expression v4) [4] | Wet-lab Reagent | Platform for generating barcoded single-cell RNA-seq libraries from cell suspensions. |
| Cell Ranger [4] | Computational Tool | Primary analysis pipeline that processes FASTQ files to perform alignment, barcode counting, and initial clustering. |
| Loupe Browser [4] | Computational Tool | Interactive desktop software for visualization, quality control (filtering by UMI, genes, mt-reads), and initial exploration of clustering results. |
| Seurat / Scanpy [1] [15] | Computational Tool | Comprehensive R/Python packages for the entire downstream analysis workflow, including advanced normalization, dimensionality reduction, clustering, and differential expression. |
| Reference Atlases (e.g., Human Cell Atlas) [1] | Data Resource | Curated collections of cell type gene expression profiles used for automated (e.g., via Azimuth) or manual reference-based annotation. |
| Ambient RNA Removal Tools (e.g., SoupX, CellBender) [4] | Computational Tool | Algorithms to correct for background contamination, enhancing the signal-to-noise ratio in the count matrix. |

Integrated Workflow: From Raw Data to Annotated Clusters

The individual components of DQA and clustering form a cohesive, sequential pipeline. The following diagram provides a high-level overview of the complete journey from raw sequencing data to annotated cell types, highlighting the critical prerequisites covered in this guide.

The reliability of manual cell type annotation is inextricably linked to the meticulous application of data quality assessment and clustering analysis fundamentals. As the field advances with new technologies like single-cell long-read sequencing and automated annotation tools powered by large language models, the demand for high-quality input data and robust clustering only increases [10] [15]. By establishing a rigorous, reproducible approach to these foundational steps, researchers ensure that their subsequent biological interpretations and conclusions about cell identity, state, and function are built upon a solid analytical foundation, ultimately driving meaningful discoveries in biology and drug development.

Cell type annotation, a foundational step in single-cell RNA sequencing (scRNA-seq) analysis, has evolved from a purely manual, expert-driven process to one increasingly assisted by sophisticated computational tools. However, the integration of computational output with domain-specific biological knowledge remains a critical component for achieving accurate, biologically meaningful, and reproducible results. This whitepaper delineates the best practices for this collaborative paradigm, framing it within the broader context of manual annotation as the gold standard. It provides a technical guide for researchers and drug development professionals on effectively marrying automated predictions with expert curation to navigate the complexities of cellular heterogeneity, novel cell state discovery, and the inherent challenges of transcriptomic data interpretation.

The Indispensable Role of Manual Annotation and the Case for Collaboration

Manual cell type annotation is traditionally regarded as the benchmark for quality in scRNA-seq analysis. This process involves clustering cells based on gene expression profiles and then assigning cell identities by meticulously comparing cluster-specific gene lists with known canonical markers from scientific literature and databases [1] [16]. This expert-dependent approach provides deep biological insights and allows for the identification of novel or transient cell states that may not be predefined in existing classification schemas.

However, the manual process is labor-intensive, time-consuming, and suffers from poor scalability as datasets grow to encompass millions of cells [9] [17]. It is also susceptible to subjective biases and requires continuous consultation of a vast and ever-expanding body of literature. These limitations have spurred the development of numerous automated annotation methods. The core thesis of this guide is that these computational methods are not replacements for expert knowledge but are powerful partners. The most robust annotation strategy is a collaborative, iterative cycle where computational tools generate initial hypotheses and experts refine, validate, or correct these predictions using their domain-specific knowledge [1]. This synergy mitigates the weaknesses of both approaches, enhancing both efficiency and biological fidelity.

A Landscape of Computational Annotation Methods

Automated cell type annotation methods can be broadly categorized, each with distinct strengths, weaknesses, and appropriate use cases. Understanding this landscape is the first step toward effective integration.

Table 1: Categorization of Automated Cell Type Annotation Methods

| Method Category | Core Principle | Example Tools | Pros | Cons |
|---|---|---|---|---|
| Reference-Based | Transfers labels from a well-annotated reference dataset to a query dataset by correlating gene expression profiles. | SingleR [15] [11], Azimuth [1] [11], Seurat [6] | Fast, scalable, leverages established atlases. | Performance depends entirely on the quality and relevance of the reference; fails on cell types absent from the reference. |
| Marker-Based | Uses predefined lists of cell-type-specific marker genes to classify cells or clusters. | ScType [5] [6], SCINA [6], ACT [17] | Intuitive, based on established biological knowledge; does not require a full reference dataset. | Relies on the quality and completeness of marker lists; struggles with overlapping markers for similar subtypes. |
| Large Language Models (LLMs) | Leverages vast biological knowledge encoded in pre-trained models to annotate cell types from marker gene lists. | GPT-4 [5], AnnDictionary [15], Claude 3.5 Sonnet [15] | Broad knowledge base; requires no custom reference; can provide granular annotations. | "Black box" nature; potential for hallucination; requires expert validation [5]. |
| Hybrid & Advanced AI | Integrates multiple data sources (e.g., references and markers) or uses deep learning for hierarchical classification. | ScInfeR [6], STAMapper [18], scGPT [9] | Improved robustness and accuracy; can handle complex hierarchical relationships. | Often computationally intensive; complex setup and usage [9]. |

Quantitative Performance Benchmarking of Automated Tools

Selecting an appropriate computational tool requires an evidence-based approach. Recent benchmarking studies provide crucial performance metrics across various technologies and tissue types.

Table 2: Benchmarking Performance of Selected Annotation Tools

| Tool | Reported Performance | Context / Dataset | Key Finding |
|---|---|---|---|
| SingleR | Best performing, fast, and accurate [11]. | 10x Xenium spatial data (human breast cancer) | Predictions closely matched manual annotation. |
| Claude 3.5 Sonnet | >80-90% accuracy for major cell types; highest agreement with manual annotation [15]. | Tabula Sapiens v2 atlas (de novo annotation) | Leader in LLM-based annotation benchmarks. |
| GPT-4 | ~75% of cell types fully or partially matched manual annotations [5]. | Across 10 datasets, 5 species, normal and cancer samples. | Substantially outperformed other methods (e.g., SingleR, ScType) on average agreement scores. |
| STAMapper | Highest accuracy on 75 of 81 scST datasets [18]. | 81 single-cell spatial transcriptomics datasets across 8 technologies. | Superior performance in spatial transcriptomics, especially with low gene numbers. |
| ScInfeR | Superior accuracy and sensitivity in scRNA-seq, scATAC-seq, and spatial omics [6]. | Benchmarking over 100 prediction tasks across multiple atlas-scale datasets. | Robust against batch effects; effective as a hybrid method. |
| CellTypist | 65.4% exact match with author annotations [9]. | Asian Immune Diversity Atlas (AIDA) v2. | Example of performance in a specific, diverse immune dataset. |

A Detailed Workflow for Collaborative Integration

The following workflow provides a step-by-step protocol for integrating computational and manual annotation, ensuring that domain knowledge guides the entire process.

Experimental Protocol: The Collaborative Annotation Cycle

Step 1: Foundational Preprocessing and Quality Control

- Methodology: Begin with rigorous quality control (QC) to filter out low-quality cells, doublets, and technical artifacts. Standard steps include normalization, variable feature selection, dimensionality reduction (PCA), and clustering [1] [19].

- Domain Knowledge Integration: Experts must define QC thresholds (e.g., mitochondrial read percentage, number of detected genes) based on the specific biological system and technology. The initial clustering resolution is also a biological decision, balancing the desire to find subtypes against creating artifactual clusters.

Step 2: Generate Computational Hypotheses

- Methodology: Run one or more automated annotation tools. A recommended strategy is to use a reference-based tool (e.g., SingleR with an atlas like Tabula Sapiens) in tandem with an LLM-based tool (e.g., via AnnDictionary) that takes the top differential genes from each cluster as input [15] [5].

- Domain Knowledge Integration: The expert selects the reference datasets or marker databases that are most relevant to their tissue and species. This choice critically influences the outcome.
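In Step 2, the input to an LLM-based tool is simply the top-ranked genes for each cluster. The sketch below only assembles such a prompt. The wording is a hypothetical example, not the prompt used by AnnDictionary, LICT, or the GPT-4 benchmarks. Sending the prompt to a model is deliberately left out because client APIs differ. Keeping prompt construction explicit also makes it easy to record exactly what the model saw, which helps when reconciling its answer with the reference-based labels.

```python
def build_annotation_prompt(top_genes, tissue, species, n_genes=10):
    """Format top differentially expressed genes per cluster as a text prompt.

    top_genes: dict mapping cluster ID -> genes ranked by differential
    expression (strongest first). Only the first n_genes are listed.
    """
    lines = [
        f"Identify the most likely cell type for each cluster in {species} "
        f"{tissue} single-cell RNA-seq data.",
        "Answer with one line per cluster in the form '<cluster>: <cell type>'.",
        "",
    ]
    for cluster, genes in top_genes.items():
        lines.append(f"Cluster {cluster}: {', '.join(genes[:n_genes])}")
    return "\n".join(lines)


top_genes = {"0": ["CD3D", "CD3E", "IL7R"], "1": ["MS4A1", "CD79A", "CD79B"]}
print(build_annotation_prompt(top_genes, tissue="blood", species="human", n_genes=2))
```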

Step 3: Systematic Expert Curation and Refinement

- Methodology: This is the core manual validation step. For each cluster, experts should:

- Inspect Automated Labels: Compare the labels from different tools for consistency.

- Examine Differential Expression: Generate and review lists of differentially expressed genes for each cluster.

- Validate with Canonical Markers: Check the expression of well-established marker genes (e.g., PECAM1 for endothelial cells) via violin plots or feature plots to confirm the computational prediction [1].

- Investigate Ambiguities: For clusters with low-confidence or conflicting predictions, perform deeper analysis. This may involve gene set enrichment analysis (GSEA) to identify active biological pathways or subclustering to resolve potential mixed populations.

- Domain Knowledge Integration: Experts use their knowledge to interpret marker co-expression, recognize transitional states, and identify when a cluster may represent a novel cell type not present in reference databases. This step also corrects computational errors such as excessive granularity (e.g., a tool labeling cells as "fibroblasts" and "osteoblasts" when the manual label is the broader "stromal cells") [5].
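The "Examine Differential Expression" step can be approximated by ranking genes on their log2 fold change between a cluster and all other cells. This is a minimal sketch with no significance testing. In practice a statistical test is applied, for example Scanpy's `sc.tl.rank_genes_groups` with `method="wilcoxon"`. The pseudocount of 1 is an assumption made here to avoid division by zero and to damp fold changes for lowly expressed genes.

```python
import math
from statistics import mean


def rank_markers(expr, labels, cluster, pseudocount=1.0):
    """Rank genes by log2 fold change of mean expression, cluster vs. rest.

    expr: dict mapping gene -> per-cell values, in the same cell order as labels.
    labels: cluster label per cell.
    Returns (gene, log2FC) pairs sorted from most to least enriched.
    """
    inside = [i for i, label in enumerate(labels) if label == cluster]
    outside = [i for i, label in enumerate(labels) if label != cluster]
    ranked = []
    for gene, values in expr.items():
        mean_in = mean(values[i] for i in inside)
        mean_out = mean(values[i] for i in outside)
        log2fc = math.log2((mean_in + pseudocount) / (mean_out + pseudocount))
        ranked.append((gene, log2fc))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


labels = ["a", "a", "b", "b"]
expr = {"PECAM1": [7, 9, 1, 1], "ACTB": [10, 10, 10, 10], "PTPRC": [0, 0, 6, 8]}
for gene, lfc in rank_markers(expr, labels, "a"):
    print(f"{gene}\t{lfc:+.2f}")
```

A housekeeping gene such as ACTB lands near zero, which is why fold change alone is paired with detection rates and canonical markers before a label is accepted.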

Step 4: Iterative Refinement and Validation

- Methodology: Annotation is rarely linear. Based on expert curation, clusters may be merged, split, or re-analyzed at a different resolution. The process returns to Step 2 until a stable and biologically defensible annotation is achieved.

- Domain Knowledge Integration: The entire iterative loop is driven by biological reasoning. Final annotations must form a coherent picture that aligns with the known biology of the tissue.

The following diagram illustrates this iterative workflow:

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key resources required for implementing the collaborative annotation workflow.

Table 3: Essential Resources for Cell Type Annotation

| Item / Resource | Type | Function in Annotation | Examples |
|---|---|---|---|
| Reference Atlases | Data | Provides a ground-truth set of gene expression profiles for reference-based methods. | Human Cell Atlas [1], Tabula Sapiens [15] [6], Tabula Muris [19] |
| Marker Gene Databases | Database | Curated lists of cell-type-specific genes for marker-based validation and manual annotation. | CellMarker [5] [19], PanglaoDB [9] [19], ACT [17] |
| Annotation Software (R/Python) | Tool | Executes automated annotation algorithms and provides frameworks for analysis. | SingleR [15] [11], Seurat [6] [11], Scanpy [15], AnnDictionary [15] |
| Visualization Platforms | Tool | Enables visual inspection of gene expression and cluster relationships in 2D/3D. | ScDiscoveries EDR [1], UCSC Cell Browser, commercial software suites |
| Validated Experimental Markers | Wet-lab Reagent | Provides orthogonal validation of computationally annotated cell types (e.g., via IHC, flow cytometry). | Antibodies for protein markers (e.g., CD3, CD19) [19], RNAscope probes |

The process of cell type annotation is most powerful when it is a collaborative dialogue between computational output and domain-specific knowledge. Automated methods provide unprecedented speed, scalability, and a valuable starting point, but they cannot fully encapsulate the nuanced, evolving understanding of cell identity and function. Manual expert annotation remains the cornerstone of biological interpretation, ensuring that results are not just statistically sound but also biologically meaningful. By adopting the integrated, iterative workflow outlined in this guide, researchers can enhance the accuracy and reliability of their single-cell analyses, thereby accelerating discovery in basic research and drug development.

The Manual Annotation Workflow: A Step-by-Step Protocol for Researchers

Robust manual cell type annotation in single-cell RNA sequencing (scRNA-seq) is fundamentally dependent on the quality of the underlying data. Preceding any biological interpretation, comprehensive quality control (QC) processes are essential to ensure that observed transcriptomic patterns reflect true biology rather than technical artifacts. This technical guide details the core QC pillars—filtering low-quality cells, detecting multiplets, and mitigating batch effects—within the context of preparing data for reliable manual annotation. As emphasized by single-cell research experts, "High-quality data is the foundation of reliable cell annotation" [1]. The presence of technical artifacts such as ambient RNA contamination and doublets can skew clustering and obscure genuine cell populations, leading to misinterpretation during the annotation process [20]. Furthermore, batch effects introduced during sample processing can create spurious clusters that mimic biological heterogeneity, fundamentally compromising the integrity of any subsequent cell type identification [21]. This guide provides researchers with a structured framework for implementing these critical QC steps, supported by current methodologies and quantitative benchmarks to ensure that manual annotation efforts are built upon a trustworthy data foundation.

Critical QC Metrics and Cell Filtering

Essential Quality Control Metrics

The initial phase of scRNA-seq quality control involves a systematic assessment of key metrics to identify and filter out low-quality cells. These metrics provide distinct insights into cell viability, capture efficiency, and technical artifacts that could confound downstream analysis. Rigorously quality-controlled data forms the essential foundation upon which all subsequent annotation is built [1].

The following table summarizes the primary QC metrics, their biological or technical interpretations, and standard filtering criteria:

Table 1: Key Quality Control Metrics for scRNA-seq Data

| Metric | Interpretation | Common Filtering Threshold/Rationale |
|---|---|---|
| UMI Counts per Cell | Total transcript count; indicates capture efficiency and cell integrity. | Filter extremes: low counts (empty droplets/lysed cells) and very high counts (potential multiplets) [4]. |
| Genes Detected per Cell | Cellular complexity; measures diversity of expressed genes. | Filter outliers with very low or high numbers of features; high counts may indicate doublets [4]. |
| Mitochondrial Read Percentage | Cell stress or apoptosis; high percentages suggest low viability. | Threshold varies by sample type (e.g., >10% for PBMCs). Note: some cell types (e.g., cardiomyocytes) naturally have high mtRNA [4]. |
| Ambient RNA Contamination | Background noise from lysed cells; can obfuscate true cell identity. | Use computational tools (e.g., SoupX, CellBender, DecontX) for estimation and removal [20] [4]. |

A Practical Workflow for Diagnostic QC

Implementation of these metrics follows a logical diagnostic workflow. The process typically begins with an assessment of the Cell Ranger summary report, which provides a first-pass evaluation of data quality, including metrics like the number of cells recovered, median genes per cell, and the confidently mapped read fraction [4]. Following this initial check, diagnostic plots such as the Barcode Rank Plot (which should show a characteristic "cliff-and-knee" shape separating cells from background) and violin plots of QC metrics per sample are used for visual inspection [4].

The actual filtering process involves applying thresholds to the metrics in Table 1. For instance, in a standard PBMC dataset, one might remove cell barcodes with UMI counts or gene counts in the extreme low and high percentiles of the distribution, and further filter out cells where the percentage of mitochondrial reads exceeds 10% [4]. This workflow ensures the removal of barcodes representing empty droplets, dead/dying cells, and multiplets, preserving only high-quality cells for downstream analysis and annotation.
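The filtering described above can be sketched as follows. The 1st and 99th percentiles are assumed cut-offs standing in for "extreme low and high percentiles". The 10% mitochondrial limit follows the PBMC example. Both should be tuned to the tissue and chemistry. The function is hypothetical and operates on per-barcode metric dictionaries such as those produced by a QC step.

```python
from statistics import quantiles


def filter_cells(metrics, low_pct=1, high_pct=99, max_pct_mt=10.0):
    """Return barcodes passing percentile bounds on UMIs and genes plus an mt cap.

    metrics: dict mapping barcode -> {"total_umis", "n_genes", "pct_mt"}.
    low_pct/high_pct: integer percentiles (1-99) used as lower/upper bounds.
    Needs at least two barcodes.
    """
    def bounds(key):
        # 99 cut points: cuts[p - 1] is the p-th percentile
        cuts = quantiles([m[key] for m in metrics.values()], n=100, method="inclusive")
        return cuts[low_pct - 1], cuts[high_pct - 1]

    umi_lo, umi_hi = bounds("total_umis")
    gene_lo, gene_hi = bounds("n_genes")
    return {
        barcode
        for barcode, m in metrics.items()
        if umi_lo <= m["total_umis"] <= umi_hi
        and gene_lo <= m["n_genes"] <= gene_hi
        and m["pct_mt"] <= max_pct_mt
    }


# 98 ordinary cells plus three problem barcodes
metrics = {
    f"cell{i}": {"total_umis": 1000 + 10 * i, "n_genes": 500 + 5 * i, "pct_mt": 2.0}
    for i in range(98)
}
metrics["empty"] = {"total_umis": 20, "n_genes": 15, "pct_mt": 0.0}
metrics["doublet"] = {"total_umis": 50000, "n_genes": 8000, "pct_mt": 3.0}
metrics["dying"] = {"total_umis": 1500, "n_genes": 700, "pct_mt": 35.0}

kept = filter_cells(metrics)
print(len(kept), sorted(set(metrics) - kept))
# 98 ['doublet', 'dying', 'empty']
```

Percentile cut-offs always trim a fixed share of barcodes from each tail. That is why the diagnostic plots should be checked before accepting them.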

Detection and Removal of Doublets

Understanding the Doublet Challenge

Doublets (or multiplets) are technical artifacts that occur when two or more cells are captured within a single droplet or well and are subsequently labeled as a single cell. These artifacts pose a significant challenge for cell type annotation, as they exhibit hybrid gene expression profiles that can be misinterpreted as novel or intermediate cell types [20]. The prevalence of doublets increases with the number of cells loaded into the instrument, making them a particularly critical concern in high-throughput droplet-based protocols [20]. If not removed, doublets can lead to the formation of spurious clusters that lack biological basis, thereby misleading annotation efforts and potentially resulting in the false discovery of non-existent cell states.

Computational Doublet Detection Strategies

Accurate doublet detection requires specialized computational tools, because a doublet's mixed profile can closely resemble a genuine intermediate or transitional cell state. The field has developed several robust algorithms designed to identify and remove these artifacts.

Table 2: Computational Tools for Doublet Detection

| Tool | Underlying Principle | Key Application Note |
|---|---|---|
| Scrublet | Simulates doublets by combining randomly sampled pairs of observed cells and scores each cell based on its similarity to these simulated doublets [20]. | Effective in heterogeneous samples; performance may vary with homogeneous cell populations. |
| DoubletFinder | Generates artificial doublets and flags real cells whose nearest neighbors in gene expression space include a high proportion of these artificial doublets [20]. | A widely used and benchmarked method integrated into many analysis pipelines. |

Best practices recommend using these tools in a complementary fashion rather than relying on a single method. For instance, one might run both Scrublet and DoubletFinder on a dataset and treat cells flagged by either tool as putative doublets for removal. This union-based approach prioritizes the removal of technical artifacts, at the cost of occasionally discarding genuine cells. After doublet removal, the cleaned dataset provides a more accurate representation of genuine cell types, forming a more reliable basis for manual annotation.
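The simulated-doublet idea shared by Scrublet and DoubletFinder can be illustrated in a few lines. This sketch is not either tool's actual algorithm. It averages random pairs of cells to create artificial doublets, then scores each real cell by the fraction of artificial profiles among its k nearest neighbors. That scoring is similar in spirit to DoubletFinder's proportion of artificial nearest neighbors. The sketch assumes cells are already represented in a low-dimensional space such as PCA coordinates. The value of k, the number of simulated doublets, and the function name are illustrative choices.

```python
import math
import random


def doublet_scores(cells, n_sim=None, k=5, seed=0):
    """Fraction of simulated doublets among each real cell's k nearest neighbors.

    cells: list of equal-length coordinate lists (e.g., PCA embeddings).
    n_sim: number of artificial doublets (defaults to the number of cells).
    """
    rng = random.Random(seed)
    n_sim = n_sim or len(cells)
    simulated = []
    for _ in range(n_sim):
        a, b = rng.sample(range(len(cells)), 2)  # two distinct real cells
        simulated.append([(x + y) / 2 for x, y in zip(cells[a], cells[b])])

    # Pool of real (False) and simulated (True) profiles; real cells come first,
    # so pool index i is real cell i.
    pool = [(vec, False) for vec in cells] + [(vec, True) for vec in simulated]
    scores = []
    for i, cell in enumerate(cells):
        # Sort by distance; on exact ties, real neighbors sort before simulated ones.
        neighbors = sorted(
            (math.dist(cell, vec), is_sim)
            for j, (vec, is_sim) in enumerate(pool)
            if j != i
        )
        scores.append(sum(is_sim for _, is_sim in neighbors[:k]) / k)
    return scores


# Two tight populations and one barcode sitting halfway between them
cells = [[0.0, 0.0]] * 20 + [[10.0, 10.0]] * 20 + [[5.0, 5.0]]
scores = doublet_scores(cells, n_sim=60, k=5)
print(max(scores[:-1]), scores[-1])  # real cells vs. the in-between barcode
```

The barcode between the two populations is surrounded by simulated profiles and scores high, while cells inside either population do not. Doublets formed from two cells of the same type (homotypic doublets) would not be separated this way.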

Mitigating Ambient RNA Contamination

Ambient RNA contamination is a pervasive technical issue in droplet-based scRNA-seq, originating from the release of RNA fragments from lysed or dead cells into the cell suspension during sample preparation [20]. This extracellular RNA is then co-encapsulated with intact cells into droplets, leading to a background "soup" of counts that is added to the native transcriptome of every cell. The presence of this contamination can be particularly damaging for cell type annotation because it can cause misclassification of cell identities, especially for rare cell types whose marker genes may also be present at low levels in the ambient pool [20]. Sources of ambient RNA are numerous, including cell lysis during tissue dissociation, mechanical stress, enzymatic digestion, and even the laboratory environment or reagents [20].

Computational Decontamination Tools

To address this challenge, several computational decontamination tools have been developed. These methods estimate the profile of the ambient RNA and subtract its contribution from the gene expression counts of genuine cells.
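The estimate-then-subtract idea can be shown in a few lines. This is a deliberately simplified sketch, not SoupX's algorithm. It assumes the contamination fraction `rho` is already known. In SoupX itself, `rho` is typically estimated with `autoEstCont` or set with `setContaminationFraction`. Corrected counts then come from `adjustCounts`, which is more careful than the plain subtraction below. The toy counts are hypothetical.

```python
def ambient_profile(empty_droplets):
    """Estimate the ambient expression profile (per-gene proportions) by pooling
    counts from barcodes assumed to be empty droplets."""
    n_genes = len(empty_droplets[0])
    totals = [sum(d[g] for d in empty_droplets) for g in range(n_genes)]
    grand_total = sum(totals)
    return [t / grand_total for t in totals]


def subtract_ambient(cell_counts, profile, rho):
    """Remove the expected ambient contribution rho * library_size * profile
    from one cell's counts, clipping at zero so no gene goes negative."""
    library_size = sum(cell_counts)
    return [max(0.0, c - rho * library_size * p) for c, p in zip(cell_counts, profile)]


# Hypothetical 3-gene example: the ambient pool is dominated by gene 0.
profile = ambient_profile([[8, 2, 0], [8, 2, 0]])
cleaned = subtract_ambient([50, 10, 40], profile, rho=0.1)
print(profile, cleaned)
```

Gene 2, which is absent from the ambient pool, is left untouched. Counts of genes abundant in the "soup" are reduced in proportion to the cell's library size.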

Table 3: Computational Tools for Ambient RNA Removal

| Tool | Methodology | Key Strength |
|---|---|---|
| SoupX | Estimates the ambient RNA profile from empty droplets, estimates (or accepts) a contamination fraction, and subtracts the expected ambient counts from cell-containing droplets [20] [4]. | A widely adopted and effective method for background correction. |
| CellBender | Employs a deep generative model to perform unsupervised removal of ambient RNA noise, distinguishing true cell-specific signal from technical background [20]. | A more recent, powerful approach that also models barcode swapping and distinguishes cell-containing from empty droplets. |
| DecontX | Uses a Bayesian model to estimate the fraction of each cell's counts that derives from contamination, then removes it [20]. | Provides per-cell contamination estimates. |

The application of these tools is a critical preprocessing step. By computationally "cleaning" the count matrix, they enhance the signal-to-noise ratio, leading to sharper cluster definitions and more reliable expression of marker genes. This, in turn, provides the manual annotator with a much clearer and more accurate picture of the underlying biology, preventing misinterpretations driven by technical artifacts.

Batch Effect Identification and Correction

The Nature and Source of Batch Effects

In the context of building a robust dataset for manual annotation, batch effects are systematic technical variations introduced when samples are processed in different batches, sequencing runs, or by different protocols. These non-biological variations can cause cells of the same type to appear transcriptionally distinct, leading to misleading clustering that can be falsely interpreted as novel biological states or subtypes during annotation [21]. A clear example comes from scATAC-seq studies, where variability in the nuclei-to-Tn5 transposase ratio between parallel reactions has been identified as a major source of batch effects, directly impacting data quality and confounding downstream analysis [21]. Similar issues arise in scRNA-seq from differences in library preparation, sequencing depth, or reagent lots.
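A simple way to spot a batch effect is to check how often a cell's nearest neighbors come from its own batch. The sketch below is a hypothetical diagnostic in the spirit of established mixing metrics such as kBET or LISI; it is not either of those metrics. It assumes cells are given as coordinates in a low-dimensional embedding.

```python
import math


def same_batch_neighbor_fraction(coords, batches, k=3):
    """For each cell, the fraction of its k nearest neighbors (Euclidean) that
    come from the same batch. Values near 1 everywhere suggest cells group by
    batch; under good mixing they approach each batch's share of all cells."""
    fractions = []
    for i, ci in enumerate(coords):
        nearest = sorted(
            (math.dist(ci, cj), j) for j, cj in enumerate(coords) if j != i
        )[:k]
        same = sum(batches[j] == batches[i] for _, j in nearest)
        fractions.append(same / k)
    return fractions


# Toy embeddings (hypothetical): batches fully separated vs. interleaved.
separated = [(0, i) for i in range(4)] + [(100, i) for i in range(4)]
sep_batches = ["a"] * 4 + ["b"] * 4
interleaved = [(0, i) for i in range(8)]
int_batches = ["a", "b"] * 4
sep = same_batch_neighbor_fraction(separated, sep_batches)
mixed = same_batch_neighbor_fraction(interleaved, int_batches)
print(sum(sep) / len(sep), sum(mixed) / len(mixed))
```

A high same-batch fraction is only suggestive. Batches that truly differ in cell type composition will also score high, so interpret the value alongside known sample metadata.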

Strategies for Batch Effect Mitigation

Addressing batch effects requires a multi-faceted strategy, combining experimental design and computational correction.

Figure 1: A dual-pronged strategy combining experimental and computational methods is most effective for mitigating batch effects.

The effectiveness of computational integration is highly dependent on proper feature selection. A recent large-scale benchmark study reinforced that using highly variable genes for integration is an effective common practice. Furthermore, the study provides guidance that batch-aware feature selection (considering variation across batches) and selecting an appropriate number of features (often around 2,000) can significantly improve the quality of integration and subsequent mapping of query samples to a reference [22]. Successful batch correction results in a dataset where cells cluster by biological identity rather than technical origin, creating a reliable foundation for accurate manual cell type annotation.
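In Scanpy, batch-aware selection is available as `sc.pp.highly_variable_genes(adata, n_top_genes=2000, batch_key="batch")`, where `"batch"` is an assumed column name in `adata.obs`. The dependency-free sketch below is loosely modeled on that approach and is not Scanpy's exact procedure. Genes are ranked first by the number of batches in which they are among the most variable, then by their mean within-batch rank. It uses plain variance where real pipelines use normalized dispersion, and the data are hypothetical.

```python
from statistics import pvariance


def batch_aware_hvgs(expr_by_batch, n_per_batch, n_top):
    """expr_by_batch: {batch: {gene: [value per cell]}}, same genes in every batch.
    Returns n_top genes ordered by (number of batches where the gene is among the
    n_per_batch most variable, descending; mean within-batch rank, ascending)."""
    hv_counts, rank_sums = {}, {}
    for genes in expr_by_batch.values():
        # Rank genes within this batch from most to least variable.
        ranked = sorted(genes, key=lambda g: pvariance(genes[g]), reverse=True)
        for rank, gene in enumerate(ranked):
            rank_sums[gene] = rank_sums.get(gene, 0) + rank
            if rank < n_per_batch:
                hv_counts[gene] = hv_counts.get(gene, 0) + 1
    n_batches = len(expr_by_batch)
    ordered = sorted(
        rank_sums,
        key=lambda g: (-hv_counts.get(g, 0), rank_sums[g] / n_batches),
    )
    return ordered[:n_top]


expr = {
    "batch1": {"geneA": [0, 4, 0, 4], "geneB": [0, 10, 0, 10], "geneC": [1, 1, 1, 1]},
    "batch2": {"geneA": [0, 4, 0, 4], "geneB": [1, 1, 1, 1], "geneC": [1, 2, 1, 2]},
}
selected = batch_aware_hvgs(expr, n_per_batch=2, n_top=2)
print(selected)
```

geneB has the largest variance of any gene in batch1 but is flat in batch2. Ranking by cross-batch agreement therefore puts geneA, which is variable in both batches, first.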

Implementing a comprehensive QC pipeline requires a suite of specialized tools and reagents. The following table catalogs key resources referenced in this guide.

Table 4: Essential Reagents and Computational Tools for scRNA-seq QC

| Category | Item/Tool | Primary Function in QC |
|---|---|---|
| Commercial Platform | 10x Genomics Chromium | A droplet-based system for high-throughput single-cell partitioning and barcoding [4]. |
| Data Processing Suite | Cell Ranger | Primary pipeline for processing 10x Genomics data, performing alignment, barcode counting, and initial QC [4]. |
| Visualization Software | Loupe Browser | Interactive visualization tool for exploring scRNA-seq data, assessing QC metrics, and performing initial filtering [4]. |
| Ambient RNA Removal | SoupX, CellBender, DecontX | Computational tools for estimating and removing background ambient RNA contamination [20] [4]. |
| Doublet Detection | Scrublet, DoubletFinder | Algorithms for identifying and filtering out multiplets from the dataset [20]. |
| Batch Correction | scVI, Harmony, Seurat CCA | Integration tools that merge datasets from different batches while preserving biological variance [22]. |
| Feature Selection | Scanpy (Highly Variable Genes) | Identifies genes with high biological variance for use in downstream integration and analysis, crucial for mitigating technical noise [22]. |

Quality control is not a standalone procedure but an integrated, foundational component of rigorous single-cell research. The processes of filtering low-quality cells, removing doublets, and correcting for batch effects are prerequisites that directly determine the success and accuracy of manual cell type annotation. As this guide outlines, a systematic approach—leveraging both established diagnostic metrics and advanced computational tools—is essential for transforming raw sequencing data into a biologically meaningful representation of cellular heterogeneity. By adhering to these best practices, researchers can build a trustworthy data foundation, ensuring that the identities they assign to cells during manual annotation are reflective of true biology, thereby enabling robust and reproducible scientific discovery.

The accurate identification of distinct cell types in complex tissue samples represents a critical prerequisite for elucidating the roles of cell populations in various biological processes, including hematopoiesis, embryonic development, and disease pathogenesis [23]. Central to this identification process are marker genes—genes whose expression is specific to one or a limited number of cell types and which serve as defining molecular signatures for cellular identity [24]. The systematic selection of these marker genes is therefore not merely a technical preliminary but a fundamental determinant of the validity and robustness of subsequent biological interpretations derived from single-cell RNA sequencing (scRNA-seq) data.

The process of cell type annotation has evolved from purely manual curation to increasingly automated computational methods. Yet all approaches ultimately depend on the quality and specificity of marker genes, whether used directly or through the labels of a reference dataset [9]. Traditional manual annotation involves clustering cells by their transcriptomic profiles and then comparing cluster-specific gene expression against known marker databases. This process is time-consuming, potentially subjective, and complicated by the fact that many candidate genes are expressed in multiple cell types [23] [25]. Automated methods, both marker-based and reference-based, offer scalability but need high-quality, well-curated marker gene sets or annotated references to perform accurately [9]. Despite technological advances, a significant challenge persists: marker gene specificity varies considerably across species, samples, and cell subtypes, necessitating careful strategies for marker selection and validation [24].
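A common first pass in manual annotation is to score each cluster against candidate marker sets before inspecting individual genes. The minimal sketch below assumes per-cluster mean expression has already been computed, for example from log-normalized data. The marker lists use well-known canonical markers, but the expression values are hypothetical. The top-scoring label is only a candidate for the annotator to confirm.

```python
def score_clusters(mean_expr, marker_sets):
    """mean_expr: {cluster: {gene: mean expression}};
    marker_sets: {cell_type: [marker genes]}.
    Returns {cluster: (best_cell_type, score)}, where score is the mean
    expression of that type's markers in the cluster (missing genes count as 0)."""
    calls = {}
    for cluster, expr in mean_expr.items():
        scored = {
            cell_type: sum(expr.get(g, 0.0) for g in genes) / len(genes)
            for cell_type, genes in marker_sets.items()
        }
        best = max(scored, key=scored.get)
        calls[cluster] = (best, scored[best])
    return calls


markers = {
    "T cell": ["CD3D", "CD3E"],
    "B cell": ["CD79A", "MS4A1"],
    "Monocyte": ["CD14", "LYZ"],
}
mean_expr = {  # hypothetical cluster means
    "0": {"CD3D": 3.1, "CD3E": 2.8, "LYZ": 0.2},
    "1": {"CD79A": 2.5, "MS4A1": 2.2, "CD3D": 0.1},
    "2": {"CD14": 2.9, "LYZ": 4.0},
}
calls = score_clusters(mean_expr, markers)
print(calls)
```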

This technical guide frames the process of systematic marker gene selection within the broader context of manual cell type annotation best practices, providing researchers with a comprehensive methodology for leveraging databases and literature curation to build robust, evidence-based marker gene panels. By integrating principles from computational biology, rigorous statistical evaluation, and experimental validation, we outline a structured approach to navigating the complexities of marker gene selection that balances biological relevance with technical practicality.

Curated Marker Gene Databases and Knowledgebases

A foundation of any systematic marker selection strategy is the utilization of comprehensively curated databases that aggregate marker gene information from diverse sources. These resources vary in scope, curation methodology, and functionality, but collectively provide an essential starting point for evidence-based marker selection.

Table 1: Key Marker Gene Databases and Their Characteristics

| Database | Scope | Key Features | Curation Method | Update Frequency |
|---|---|---|---|---|
| GeneMarkeR | Human, mouse | Standardized marker results from 25 studies across 21,012 genomic entities; hierarchical ontology mapping; marker gene scoring algorithm [24] | Manual extraction and standardization from publications; statistical results integration | Not specified |
| ScType Database | Human, mouse | Comprehensive cell-specific markers; includes positive and negative markers; enables fully automated annotation [23] | Integrated within computational platform; specificity scoring | Not specified |
| CellMarker | Human, mouse | Manually extracted marker lists from multiple sources [9] | Manual literature curation | Not regularly updated [9] |
| CellKb | Multiple species | Web-based interface; high-quality cell type signatures from curated publications; regular updates [9] | Manual curation from reference publications [9] | Every 3 months [9] |

The GeneMarkeR database exemplifies a sophisticated approach to marker gene consolidation, incorporating a novel scoring algorithm that quantifies the evidence supporting each gene-cell type relationship [24]. This system normalizes disparate statistical endpoints from original publications onto a uniform 0 to 1 scale. On that scale, 0.5 corresponds to the statistical significance cutoff used in the original study, and values between 0.5 and 1 represent increasingly strong evidence [24]. This normalization enables cross-study comparison and the identification of markers that are consistent across species, methodologies, and sample types.
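GeneMarkeR's exact formula is not given here. The following hypothetical mapping reproduces only the stated properties for p-value endpoints: a 0 to 1 scale, exactly 0.5 at the original study's cutoff, and values approaching 1 as evidence strengthens. Other endpoints, such as fold changes or rankings, would need their own mappings.

```python
import math


def evidence_score(p_value, cutoff=0.05):
    """Hypothetical mapping of a p-value onto [0, 1): with x = -log10(p) and
    c = -log10(cutoff), score = x / (x + c), so p == cutoff gives 0.5,
    p == 1 gives 0, and smaller p-values approach 1."""
    if not 0.0 < p_value <= 1.0:
        raise ValueError("p_value must be in (0, 1]")
    if not 0.0 < cutoff < 1.0:
        raise ValueError("cutoff must be in (0, 1)")
    x = -math.log10(p_value)
    c = -math.log10(cutoff)
    return x / (x + c)


for p in (0.5, 0.05, 1e-3, 1e-10):
    print(p, round(evidence_score(p), 3))
```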

Database Integration and Marker Selection Strategy

Effective use of these databases requires a strategy that acknowledges their complementary strengths and limitations. Researchers should prioritize databases that implement standardized ontologies (such as Cell Ontology terms) to ensure consistent cell type nomenclature across studies [24] [9]. Cellular hierarchy must also be considered, because markers may be specific to broad cell classes (e.g., "immune cells") or to narrow subtypes (e.g., "CD16+ monocytes") [24]. The ScType platform addresses this specificity challenge by weighting marker genes with a computed specificity score, intended to ensure that markers are specific across both cell clusters and cell types [23].
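ScType's exact scoring is not reproduced here. The following hypothetical illustration captures the underlying idea that a marker shared by many cell types carries less information. Each gene is weighted by a rescaled inverse of how many cell types list it: 1.0 for a gene listed under a single type, 0.0 for a gene listed under every type.

```python
def marker_specificity(marker_sets):
    """marker_sets: {cell_type: [marker genes]}.
    Hypothetical weighting: (n_types - count) / (n_types - 1), where count is
    the number of cell types listing the gene."""
    counts = {}
    for genes in marker_sets.values():
        for g in set(genes):
            counts[g] = counts.get(g, 0) + 1
    n_types = len(marker_sets)
    if n_types < 2:
        return {g: 1.0 for g in counts}
    return {g: (n_types - c) / (n_types - 1) for g, c in counts.items()}


markers = {
    "T cell": ["CD3D", "CD3E", "PTPRC"],
    "B cell": ["CD79A", "MS4A1", "PTPRC"],
    "Monocyte": ["CD14", "LYZ", "PTPRC"],
}
weights = marker_specificity(markers)
print(weights["PTPRC"], weights["CD3D"])
```

PTPRC (CD45), a pan-leukocyte marker, gets weight 0 in this example. It is useful at the broad "immune cells" level of the hierarchy but uninformative for telling immune subtypes apart.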

A critical best practice involves cross-referencing multiple databases to identify consistently reported markers while remaining cognizant of potential technological biases. For instance, markers identified through protein-based methods (e.g., FACS) may not always perform optimally in transcriptomic data, making RNA-based sources generally more reliable for scRNA-seq applications [25]. Furthermore, researchers should verify that selected markers have demonstrated effectiveness in contexts biologically relevant to their study system, as marker specificity can vary substantially across tissues and physiological states [24].
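Cross-referencing can be made explicit by counting how many sources report each gene for a given cell type. This minimal sketch assumes gene symbols and cell type names have already been harmonized across sources, for example to Cell Ontology terms. The database names and marker lists below are hypothetical and were not extracted from any real database.

```python
def consensus_markers(db_lists, min_support=2):
    """db_lists: {database_name: [marker genes for ONE cell type]}.
    Returns genes reported by at least min_support databases,
    most-supported first (ties broken alphabetically)."""
    support = {}
    for genes in db_lists.values():
        for g in set(genes):  # count each database at most once per gene
            support[g] = support.get(g, 0) + 1
    kept = [g for g, n in support.items() if n >= min_support]
    return sorted(kept, key=lambda g: (-support[g], g))


nk_lists = {  # hypothetical NK cell marker lists from three sources
    "db1": ["NKG7", "GNLY", "KLRD1", "NCAM1"],
    "db2": ["NKG7", "GNLY", "FCGR3A"],
    "db3": ["NKG7", "KLRD1", "PRF1"],
}
print(consensus_markers(nk_lists))
```

Raising `min_support` trades coverage for consistency. Genes with single-source support are not necessarily wrong, but they should be checked against the study's own data.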

Methodologies for Marker Gene Selection

Computational Framework for Marker Selection

The selection of optimal marker genes from candidate pools requires computational methodologies that can evaluate gene specificity and discriminative power. These methods range from traditional statistical tests to advanced machine learning approaches, each with distinct strengths and performance characteristics.

Table 2: Marker Gene Selection Methods and Performance Characteristics

| Method Category | Representative Methods | Key Principles | Performance Notes |
|---|---|---|---|
| Differential Expression-Based | Wilcoxon rank-sum test, t-test, logistic regression [26] | Identifies genes differentially expressed between specific cell groups | Simple methods like Wilcoxon show competitive performance; balance of accuracy and speed [26] |
| Feature Selection-Based | RankCorr [27] | Sparse selection inspired by proteomic applications | Theoretical guarantees; good experimental performance [27] |
| Machine Learning-Based | SMaSH [27], MarkerMap [27] | Neural network frameworks leveraging explainable AI techniques | Competitive performance; particularly effective with limited markers [27] |
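The Wilcoxon rank-sum approach has a convenient effect-size counterpart. The Mann-Whitney U statistic divided by the product of the two group sizes equals the one-vs-rest AUROC. The dependency-free sketch below ranks genes for one cluster by that AUROC; it does not compute p-values. In practice the equivalent test is run with, for example, `sc.tl.rank_genes_groups(adata, groupby="leiden", method="wilcoxon")` in Scanpy, where `"leiden"` is an assumed cluster column. Seurat's `FindAllMarkers` uses a Wilcoxon test by default. The toy data are hypothetical.

```python
def auroc(target, rest):
    """Probability that a random target cell exceeds a random other cell
    (ties count 0.5); equals Mann-Whitney U / (len(target) * len(rest))."""
    wins = 0.0
    for x in target:
        for y in rest:
            if x > y:
                wins += 1.0
            elif x == y:
                wins += 0.5
    return wins / (len(target) * len(rest))


def rank_markers(expr, labels, cluster):
    """expr: {gene: [value per cell]}; labels: cluster label per cell.
    Ranks genes by one-vs-rest AUROC for `cluster` (1.0 = perfectly specific)."""
    in_idx = [i for i, lab in enumerate(labels) if lab == cluster]
    out_idx = [i for i, lab in enumerate(labels) if lab != cluster]
    scores = {
        gene: auroc([v[i] for i in in_idx], [v[i] for i in out_idx])
        for gene, v in expr.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


labels = ["T", "T", "T", "B", "B", "B"]
expr = {
    "CD3E": [5, 4, 6, 0, 0, 1],
    "MS4A1": [0, 0, 1, 5, 6, 4],
    "ACTB": [3, 3, 3, 3, 3, 3],  # housekeeping-like, uninformative
}
ranking = rank_markers(expr, labels, "T")
print(ranking)
```

An AUROC near 0.5 flags uninformative genes, such as the housekeeping-like ACTB here. Values near 0 indicate genes specific to the other cells rather than to the target cluster.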