Manual vs. Automated Annotation: A Precision-Focused Analysis for Biomedical AI



Abstract

This article provides a comprehensive comparison of manual and automated data annotation accuracy, tailored for researchers and professionals in drug development and biomedical science. It explores the foundational principles of data annotation, examines methodological applications in real-world research scenarios, addresses critical challenges like bias and inconsistency, and presents rigorous validation frameworks. By synthesizing evidence from recent studies and industry practices, the content offers a strategic guide for selecting and optimizing annotation methodologies to ensure the reliability of AI models in high-stakes clinical and research environments.

The Critical Role of Annotation Accuracy in Biomedical AI

Data annotation is the critical process of labeling raw data—whether images, text, audio, or video—to create the ground truth that enables supervised machine learning models to learn and make accurate predictions [1] [2]. The choice between manual and automated annotation methods directly impacts the quality, efficiency, and success of AI projects, a decision particularly salient in research and drug development where precision is paramount [1] [3].

This guide objectively compares the performance of manual and automated data annotation, presenting supporting experimental data and methodologies relevant to scientific applications.

Experimental Protocols for Assessing Annotation Accuracy

Research into annotation accuracy employs rigorous methodologies to quantify performance and ensure the reliability of resulting datasets. The following protocols are standard in the field.

Protocol 1: Measuring Inter-Annotator Agreement (IAA)

IAA metrics assess the consistency of labels across multiple annotators, serving as a key indicator of annotation quality and guideline clarity [4].

- Objective: To quantify the consistency and reliability of annotations by measuring agreement between multiple human annotators, or between human annotators and an automated system.

- Procedure:

  - Sample Selection: A representative subset is drawn from the full dataset.

  - Multiple Annotations: The same sample is annotated independently by two or more trained annotators (or by an automated system and a human expert).

  - Statistical Analysis: Agreement is calculated with chance-corrected metrics such as Cohen's Kappa (two annotators) or Fleiss' Kappa (three or more annotators) [4]. For classification tasks, a confusion matrix is often used to visualize disagreements [4].

- Key Metrics:

  - Cohen's Kappa (κ): Values range from -1 (complete disagreement) through 0 (agreement no better than chance) to 1 (perfect agreement). A score above 0.8 is typically considered excellent agreement, indicating high-quality, consistent annotations [4].

  - F1 Score: The harmonic mean of precision and recall, providing a balanced measure of annotation performance against a reference labeling [4].
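The two key metrics can be computed in a few lines. The following is a minimal sketch in plain Python, not a reference implementation; the function names and the example labels are illustrative assumptions, and a production pipeline would more likely rely on an established statistics library.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items given identical labels.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, from each annotator's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (p_o - p_e) / (1 - p_e)


def f1_score(reference, predicted, positive):
    """F1 of `predicted` against `reference` for one positive class."""
    pairs = list(zip(reference, predicted))
    tp = sum(r == positive and p == positive for r, p in pairs)
    fp = sum(r != positive and p == positive for r, p in pairs)
    fn = sum(r == positive and p != positive for r, p in pairs)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Illustrative labels only (not taken from any cited study).
expert = ["benign", "malignant", "benign", "benign",
          "malignant", "benign", "malignant", "benign"]
system = ["benign", "malignant", "benign", "malignant",
          "malignant", "benign", "benign", "benign"]

print(round(cohens_kappa(expert, system), 3))           # 0.467
print(round(f1_score(expert, system, "malignant"), 3))  # 0.667
```

In this example raw agreement is 75%, yet kappa is only about 0.47, because both annotators label most items "benign" and much of the agreement is expected by chance.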

Protocol 2: Performance Benchmarking with Control Tasks

This method uses a "gold standard" dataset to benchmark the accuracy of new annotations [4].

- Objective: To evaluate labeling accuracy by testing annotators against a predefined set of questions with known correct labels.

- Procedure:

  - Gold Standard Creation: A set of control tasks is meticulously labeled by domain experts to establish a ground truth.

  - Integration and Evaluation: These control tasks are randomly interspersed within the main annotation workload. Annotators' responses are compared against the gold standard answers.

  - Performance Tracking: Individual annotator accuracy is tracked, and systematic errors are identified for corrective feedback or guideline refinement [4].
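As a rough illustration of the evaluation and tracking steps, the sketch below scores each annotator on the control tasks hidden in their workload. The record layout, the function names, and the 0.95 flagging threshold are assumptions chosen for the example, not part of the cited protocol.

```python
from collections import defaultdict


def score_against_gold(responses, gold):
    """Per-annotator accuracy on control tasks.

    responses: iterable of (annotator_id, task_id, label) tuples
    gold:      dict mapping control task_id -> expert label
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for annotator, task_id, label in responses:
        if task_id not in gold:
            continue  # ordinary task with no known answer; skip it
        total[annotator] += 1
        correct[annotator] += int(label == gold[task_id])
    return {a: correct[a] / total[a] for a in total}


def flag_annotators(accuracy, threshold=0.95):
    """Annotators whose control-task accuracy falls below the threshold."""
    return sorted(a for a, acc in accuracy.items() if acc < threshold)


# Hypothetical control tasks and responses.
gold = {"c1": "actionable", "c2": "non-actionable"}
responses = [
    ("ann_a", "c1", "actionable"),
    ("ann_a", "t7", "actionable"),      # regular task, ignored
    ("ann_a", "c2", "non-actionable"),
    ("ann_b", "c1", "non-actionable"),  # disagrees with gold
    ("ann_b", "c2", "non-actionable"),
]
accuracy = score_against_gold(responses, gold)
print(accuracy, flag_annotators(accuracy))
```

Flagged annotators are candidates for feedback, but a cluster of errors on the same control task can equally point to an ambiguous guideline.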

Protocol 3: Tiered Quality Control and Validation

A multi-layered quality assurance (QA) framework is implemented to maintain high annotation standards throughout a project [2].

- Objective: To implement a multi-layered validation system that catches errors at various stages of the annotation pipeline.

- Procedure:

  - Initial Validation: Automated checks for completeness and basic conformity [2].

  - Peer Review: A second annotator reviews a sample of completed work to identify errors or guideline misinterpretations [2] [5].

  - Expert Review: Domain experts review challenging cases and random samples to ensure domain-specific accuracy, a crucial step in fields like medical imaging [2] [5].

  - Statistical Analysis: Automated detection of outlier patterns or inconsistencies across the dataset [2].
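The first two tiers lend themselves to simple automation. The sketch below shows one possible shape for the initial validation check and the peer-review sample; the record schema (`item_id`, `label`, `annotator`), the 10% sampling fraction, and the function names are hypothetical choices for illustration.

```python
import random

# Hypothetical minimal schema for an annotation record.
REQUIRED_FIELDS = ("item_id", "label", "annotator")


def initial_validation(record, allowed_labels):
    """Tier 1: return a list of problems; an empty list means the record passes."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS
                if not record.get(field)]
    if record.get("label") and record["label"] not in allowed_labels:
        problems.append("unknown label")
    return problems


def sample_for_peer_review(records, fraction=0.1, seed=0):
    """Tier 2: draw a reproducible random sample for a second annotator."""
    if not records:
        return []
    k = max(1, round(len(records) * fraction))
    return random.Random(seed).sample(records, k)


allowed = {"nodule", "normal"}
print(initial_validation({"item_id": "x2", "label": "cyst"}, allowed))
# ['missing annotator', 'unknown label']
```

Records that fail tier 1 can be returned to the annotator immediately, so that peer and expert reviewers spend their time on judgment calls rather than on formatting errors.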

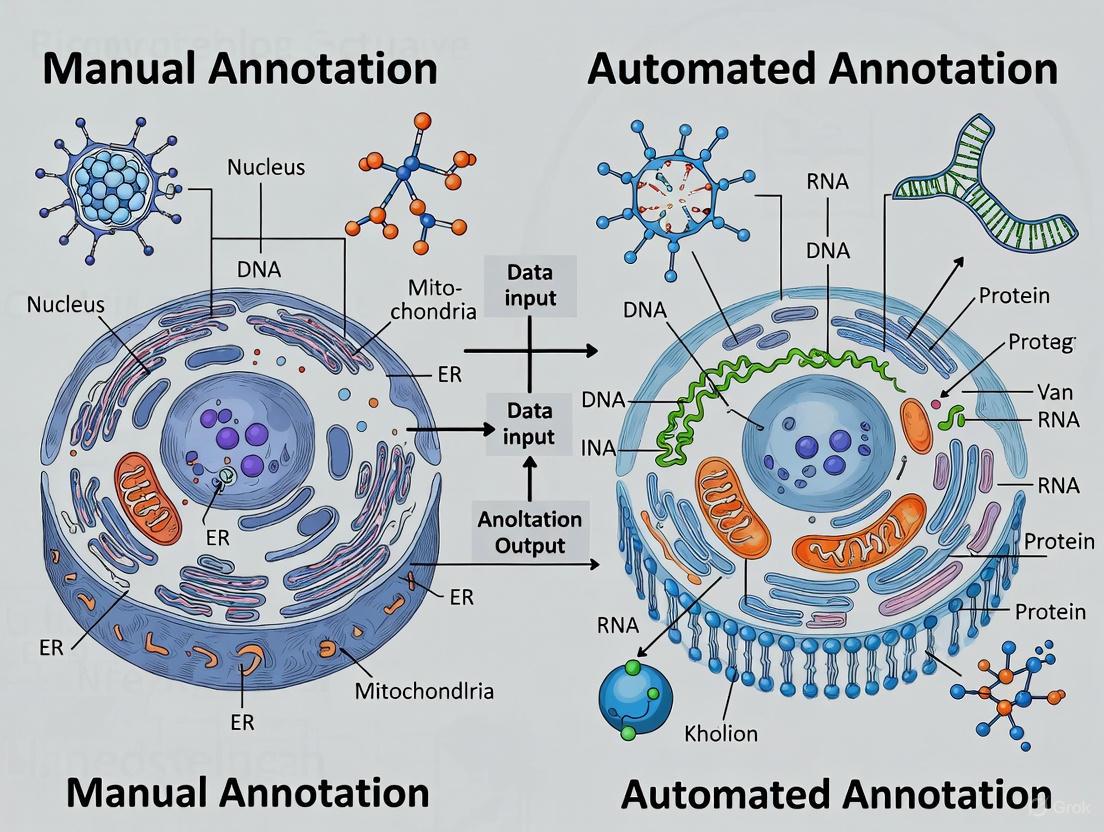

The following workflow diagram illustrates how these protocols and methods can be integrated into a robust annotation pipeline for a research setting.

Quantitative Comparison: Manual vs. Automated Annotation

The table below summarizes the comparative performance of manual and automated annotation across key metrics, synthesizing data from experimental protocols and industry benchmarks [1] [6] [7].

| Metric | Manual Annotation | Automated Annotation |
|---|---|---|
| Inherent Accuracy | Very high, especially for complex/nuanced data [1] [7] | Moderate to high; struggles with complexity and context [1] [6] |
| Typical Consistency (IAA Score) | High with rigorous training & guidelines (κ > 0.8) [4] | Perfect consistency on simple tasks; can be inconsistent on novel data [1] |
| Best-Suited Data Complexity | Excellent for complex, ambiguous, or subjective data (e.g., medical imagery) [1] [8] | Excellent for simple, repetitive tasks with clear patterns [1] |
| Experimental Control Task Performance | High accuracy (>95%) on domain-specific tasks with expert annotators [4] | High on training-like data; performance drops on edge cases and new distributions [7] |
| Impact on Model Performance | Can improve final model accuracy by up to 20% with high-quality labels [3] | Enables rapid iteration; model ceiling limited by annotation accuracy [1] |
| Scalability | Limited by human resources; difficult and costly to scale [1] [7] | Highly scalable; can process massive datasets rapidly [1] [6] |
| Cost & Time Efficiency | Time-consuming and costly due to labor [1] [6] | Cost-effective for large volumes after initial setup [1] [6] |

The Researcher's Toolkit: Essential Annotation Solutions

For scientists and drug development professionals, selecting the right tools and approaches is critical. The following table details key solutions and their applications in a research context.

| Research Reagent Solution | Function & Application |
|---|---|
| Specialized Annotation Platforms (e.g., Encord, Labelbox) | Support multimodal data (e.g., medical images in DICOM format), custom workflows, and MLOps integration for production-grade datasets [9]. |
| Active Learning Frameworks | Machine learning methods that identify the most informative data points for annotation, optimizing the time of expert annotators [8] [10]. |
| Inter-Annotator Agreement (IAA) Metrics | Quantitative measures (Cohen's Kappa, Fleiss' Kappa) to statistically validate annotation consistency and guideline clarity across a team [4]. |
| Hierarchical Labeling Systems | Organize labels into a structured, multi-level framework (e.g., "Vehicle" -> "Car" -> "Sedan") to improve accuracy and contain errors within branches [5]. |
| Pre-Trained & Foundation Models | Models like T-Rex2 or DINO-X provide AI-assisted pre-annotation, significantly speeding up initial labeling for common objects [9]. |
| Quality Control (QC) & Benchmarking Suites | Integrated software features for creating control tasks, performing tiered reviews, and tracking quality metrics over time [2] [4]. |
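To make the "Active Learning Frameworks" row concrete, the sketch below ranks unlabeled items by the entropy of a model's predicted class probabilities and returns the most uncertain ones for expert annotation. Entropy-based uncertainty sampling is only one of several selection strategies, and the function names and example probabilities are assumptions.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Pick the `budget` items whose predictions are most uncertain.

    predictions: dict mapping item_id -> list of class probabilities
    """
    ranked = sorted(predictions,
                    key=lambda item: entropy(predictions[item]),
                    reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for three unlabeled images.
predictions = {
    "img1": [0.98, 0.02],  # confident
    "img2": [0.55, 0.45],  # very uncertain
    "img3": [0.70, 0.30],  # somewhat uncertain
}
print(select_for_annotation(predictions, budget=2))  # ['img2', 'img3']
```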

Analysis of Experimental Data and Research Trends

Experimental data confirms that manual annotation, while slower, provides superior accuracy on complex and nuanced tasks, which is critical for applications like medical image analysis where error costs are high [1] [3]. The high IAA scores achievable with trained experts make this the gold standard for creating reliable ground truth datasets [4].

Conversely, automated annotation excels in throughput and scalability. Its performance is highly dependent on the quality and similarity of its training data to the target dataset. Performance can degrade significantly with domain shift—when new data differs from the training distribution—a common challenge in research [8] [7].

The emerging paradigm that addresses these trade-offs is Human-in-the-Loop (HITL) automation [1] [2]. This hybrid approach leverages AI for initial, high-volume labeling and reserves human expertise for complex edge cases, quality control, and reviewing the most uncertain predictions. This strategy balances efficiency with the high accuracy required for scientific model development.
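A minimal sketch of the routing step in such a hybrid pipeline follows. It assumes each automated prediction carries a confidence score, and it uses a hypothetical 0.9 acceptance threshold; in practice the threshold would be tuned against a gold-standard set.

```python
def route_predictions(predictions, confidence_threshold=0.9):
    """Split automated labels into auto-accepted and human-review queues.

    predictions: list of dicts with "item_id", "label", and "confidence"
    Returns (auto_accepted, needs_review); the review queue is ordered
    from least to most confident so experts see the hardest cases first.
    """
    auto_accepted, needs_review = [], []
    for pred in predictions:
        if pred["confidence"] >= confidence_threshold:
            auto_accepted.append(pred)
        else:
            needs_review.append(pred)
    needs_review.sort(key=lambda pred: pred["confidence"])
    return auto_accepted, needs_review


# Hypothetical model outputs.
predictions = [
    {"item_id": "a", "label": "nodule", "confidence": 0.97},
    {"item_id": "b", "label": "normal", "confidence": 0.85},
    {"item_id": "c", "label": "nodule", "confidence": 0.62},
]
auto, review = route_predictions(predictions)
print([p["item_id"] for p in auto], [p["item_id"] for p in review])
# ['a'] ['c', 'b']
```

Confidence scores are only useful for routing if they are reasonably calibrated, so auditing a random sample of the auto-accepted items remains advisable.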

In the rapidly evolving field of artificial intelligence, the precision of data annotation directly dictates the performance of machine learning models. While automated annotation offers compelling advantages in speed and scalability for large datasets, manual annotation—the human-driven process of labeling data—remains indispensable for tasks requiring nuanced judgment, contextual understanding, and domain-specific expertise [1] [6]. This is particularly true in high-stakes fields like healthcare and scientific research, where accuracy is paramount and errors can have significant consequences [11]. This guide objectively compares the performance of manual and automated annotation, presenting supporting experimental data that underscores the human advantage in managing complexity and ambiguity. The evidence confirms that in scenarios demanding sophisticated judgment, manual annotation provides a level of quality and reliability that automation has yet to surpass.

Experimental Evidence: Manual Annotation in Action

The superiority of manual annotation is not merely theoretical; it is demonstrated through rigorous experiments and practical applications across complex domains. The following case studies provide quantitative and qualitative evidence of its critical role.

Case Study 1: Medical Image Annotation for AI-Assisted Diagnosis

A 2025 study on constructing a thyroid nodule ultrasound database quantified the value of human expertise in medical imaging [12]. The research established a two-stage manual annotation protocol: initial annotation by junior physicians, followed by review and correction by senior physicians (associate chief physicians or chief physicians). This process created a high-quality "gold standard" dataset for training a YOLOv8 AI model. The study found that, for a small dataset of 1,360 images, assistance from an AI model pre-trained on augmented data saved junior physicians only about 30% of their manual annotation workload [12]. This suggests that expert human judgment remains the backbone of reliable medical imaging datasets, a task too critical to automate fully.

Case Study 2: A Scalable, Rule-Based Method for Clinical Alarm Annotation

Research into reducing "alarm fatigue" in Intensive Care Units (ICUs) faced the challenge of labeling the actionability of millions of patient monitoring alarms [13]. A purely manual, case-by-case approach was deemed too slow and resource-intensive. The solution was an interdisciplinary, consensus-based manual process to develop a rule-based annotation method. Clinicians and researchers manually defined a set of eight rules and mapping tables to classify alarms as actionable or non-actionable based on data from the Patient Data Management System [13]. This methodology enabled fast, retrospective, semi-automatic annotation of a large number of alarms. The case demonstrates that manual expertise is crucial for establishing the foundational logic and rules that can later be scaled with technology.
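The general shape of such a rule-based annotator can be sketched as an ordered list of expert-defined rules applied to each alarm record. The two rules, the field names, and the 15-minute and 10-second cut-offs below are invented purely for illustration; they are not the eight rules or mapping tables defined in [13].

```python
# Hypothetical rules for illustration only; not the rule set from [13].
def intervention_documented(alarm):
    minutes = alarm.get("minutes_to_intervention")
    return minutes is not None and minutes <= 15


def self_resolved_quickly(alarm):
    duration = alarm.get("duration_seconds")
    return (duration is not None and duration < 10
            and alarm.get("minutes_to_intervention") is None)


# Rules are evaluated in order; the first match decides the label.
RULES = [
    ("intervention_documented", intervention_documented, "actionable"),
    ("self_resolved_quickly", self_resolved_quickly, "non-actionable"),
]


def annotate_alarm(alarm):
    """Return (label, rule_name), or (None, None) if no rule applies."""
    for name, predicate, label in RULES:
        if predicate(alarm):
            return label, name
    return None, None  # left unlabeled for manual review


print(annotate_alarm({"minutes_to_intervention": 5, "duration_seconds": 40}))
# ('actionable', 'intervention_documented')
```

Recording which rule produced each label keeps the annotation auditable, and alarms that match no rule can be queued for clinician review rather than guessed.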

Case Study 3: Curating a Precision Cancer Drug Combination Database

The OncoDrug+ database, a 2025 resource for precision combinatorial therapy, was built entirely through manual curation [14]. To create this knowledge base, researchers systematically integrated data from FDA databases, clinical guidelines, trials, case reports, and biomedical literature. This process required professionals to interpret and synthesize complex, context-dependent information from disparate sources, a task fundamentally reliant on human discernment. The result was a highly annotated resource covering 7,895 data entries, 77 cancer types, and 1,200 biomarkers [14]. This project exemplifies manual annotation's flexibility and its ability to handle unstructured, multi-source information where automated tools would struggle.

Comparative Performance Data

The following tables synthesize experimental data and key differentiators between manual and automated annotation, illustrating why the choice of method is context-dependent.

Table 1: Workload Reduction Results from the Medical Imaging Study [12]

| Dataset Size | Annotation Method | Workload Reduction for Junior Physicians | Classification Accuracy vs. Junior Physicians |
|---|---|---|---|
| 1,360 images | AI Pre-annotation + Human Review | ~30% | Not Reported |
| 6,800+ images | AI Pre-annotation + Human Review | Not Required | Very Close |

Table 2: Key Differentiators Between Manual and Automated Annotation [1] [6] [7]

| Criterion | Manual Annotation | Automated Annotation |
|---|---|---|
| Accuracy & Complexity | High accuracy, especially for complex, nuanced, or subjective data [1]. | Lower accuracy for complex data; consistent for simple, repetitive tasks [1]. |
| Handling Novel Data | Highly flexible; humans adapt quickly to new challenges and edge cases [6]. | Limited flexibility; requires retraining for new data types, struggles with edge cases [1]. |
| Domain Expertise | Essential for specialized fields (medical, legal) [6]. | Minimal expertise needed; operates on pre-defined patterns. |
| Best-Suited Project Size | Small to medium datasets, or large datasets where quality is critical [7]. | Large to massive datasets (millions of instances) with tight deadlines [1] [7]. |

The Researcher's Toolkit: Protocols for High-Quality Manual Annotation

Successful manual annotation requires more than just human effort; it demands structured protocols, specialized tools, and careful management to ensure quality and consistency.

Detailed Experimental Protocols

The methodologies from the cited experiments provide a blueprint for robust manual annotation:

- Two-Stage Review with Expert Oversight (Medical Imaging): This protocol involves initial annotation by trained personnel (e.g., junior physicians), followed by a mandatory review and correction cycle by senior domain experts. This creates a verified "gold standard" dataset and mitigates individual error [12].

- Interdisciplinary Rule-Set Development (Clinical Alarms): This method involves convening a multidisciplinary team (e.g., clinicians, data scientists) to manually analyze a problem domain. Through consensus, the team defines a logical rule set and mapping tables. This transforms human expertise into a scalable, rule-based annotation system [13].

- Systematic Data Curation and Integration (Drug Database): This protocol entails manually collecting data from multiple, heterogeneous sources (databases, literature, patient records). Professionals then interpret, synthesize, and integrate this information based on pre-defined evidence scores and inclusion criteria, ensuring a comprehensive and evidence-based final resource [14].
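The two-stage review protocol in the first bullet can be expressed as a small merge step in which senior corrections override junior labels. The sketch below is an assumption about how such a merge might be recorded, not the procedure used in [12]; the correction rate it reports is one simple way to monitor first-pass quality.

```python
def two_stage_review(junior_labels, senior_corrections):
    """Merge senior corrections into junior labels to form the gold standard.

    junior_labels:      dict item_id -> label from the first pass
    senior_corrections: dict item_id -> label, only for items the
                        senior reviewer changed
    Returns (gold_labels, correction_rate).
    """
    unknown = set(senior_corrections) - set(junior_labels)
    if unknown:
        raise KeyError(f"corrections for unannotated items: {sorted(unknown)}")
    gold = {**junior_labels, **senior_corrections}
    changed = sum(junior_labels[item] != label
                  for item, label in senior_corrections.items())
    rate = changed / len(junior_labels) if junior_labels else 0.0
    return gold, rate


# Hypothetical labels for four ultrasound images.
junior = {"a": "benign", "b": "benign", "c": "suspicious", "d": "benign"}
gold, rate = two_stage_review(junior, {"b": "suspicious"})
print(gold["b"], rate)  # suspicious 0.25
```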

Essential Research Reagent Solutions

The following tools and concepts are fundamental to executing a manual annotation project.

Table 3: Key Solutions for Manual Annotation Research

| Solution / Tool | Function / Description |
|---|---|
| Two-Stage Expert Review | A quality control process where initial annotations are reviewed and corrected by senior experts to establish a gold standard [12]. |
| Interdisciplinary Teams | Groups comprising domain experts (e.g., physicians) and data specialists to define annotation rules and standards [13]. |
| Annotation Guidelines & Rule Sets | Formally documented instructions that define classes, labels, and processes to ensure consistency across human annotators [13]. |
| Platforms like Encord & CVAT | Software tools that provide interfaces for manual labeling (e.g., drawing bounding boxes), workflow management, and collaboration for visual data [15]. |

Visualizing Annotation Workflows

The diagrams below illustrate the core methodologies derived from the featured research, providing a clear visual representation of the structured processes that underpin high-quality manual annotation.

Diagram 1: Two-stage medical annotation workflow with expert oversight.

Diagram 2: Consensus-driven process for creating a scalable rule-based annotation system.

The experimental data and case studies presented affirm that manual annotation holds a decisive advantage in scenarios where data is complex, ambiguous, or domain-specific. The human capacity for nuanced judgment, contextual understanding, and adaptive learning makes it an indispensable component in the development of reliable AI, particularly in critical fields like healthcare and drug development. While automated annotation excels in processing large volumes of standardized data, the evidence clearly shows that for tasks requiring deep expertise and complex judgment, manual annotation is not just a preference—it is a necessity. The most effective future path lies not in choosing one over the other, but in leveraging a hybrid approach, using automation for scale and speed while relying on human expertise to guide, correct, and handle the edge cases that define true intelligence.

In the development of artificial intelligence (AI) and machine learning (ML) models, data annotation serves as the critical foundation, transforming raw data into structured, machine-readable information. The choice between manual and automated annotation methods directly influences the accuracy, efficiency, and scalability of AI systems, particularly in sensitive fields like drug development and clinical research. Manual annotation relies on human expertise to label datasets, offering superior contextual understanding but operating under significant constraints of time and scalability. Conversely, automated annotation employs algorithms and AI-assisted tools to accelerate the labeling process, enabling rapid processing of large-scale datasets while facing challenges in handling nuanced or complex data. Understanding the mechanisms, accuracy, and appropriate applications of each approach is paramount for researchers and scientists aiming to build reliable, high-performing models for biomedical applications.

This guide provides a comprehensive, evidence-based comparison of manual versus automated data annotation, synthesizing current research findings and empirical data. It details specific experimental protocols from clinical benchmark studies, presents structured quantitative comparisons, and outlines the essential toolkit for implementing these methodologies in research environments. The analysis is particularly framed within the context of drug development and clinical research, where annotation accuracy directly impacts patient safety and therapeutic efficacy.

Comparative Accuracy: Quantitative Analysis

Empirical assessments across multiple studies demonstrate significant differences in error rates and performance metrics between manual and automated data annotation methods. The following tables synthesize quantitative findings from clinical research, computer vision applications, and large-scale data processing studies.

Table 1: Data Processing Error Rates in Clinical Research

A systematic review and meta-analysis of data quality in clinical studies revealed substantial variability in error rates across processing methods. The analysis, which categorized 93 papers published from 1978 to 2008, calculated pooled error rates using meta-analysis of single proportions based on the Freeman-Tukey transformation method [16].

| Data Processing Method | Pooled Error Rate (%) | 95% Confidence Interval | Error Range (per 10,000 fields) |
|---|---|---|---|
| Medical Record Abstraction (MRA) | 6.57 | 5.51 - 7.72 | 200 - 2,784 |
| Optical Scanning | 0.74 | 0.21 - 1.60 | 21 - 160 |
| Single-Data Entry | 0.29 | 0.24 - 0.35 | 24 - 35 |
| Double-Data Entry | 0.14 | 0.08 - 0.20 | 8 - 20 |

Medical Record Abstraction, a primarily manual process, showed both the highest and the most variable error rate (6.57%, 95% CI: 5.51-7.72), with reported errors ranging from 200 to 2,784 per 10,000 fields [16]. An error rate of this size can reduce statistical power and may require larger sample sizes in clinical trials. The other three methods had much lower pooled error rates: optical scanning, the only automated capture method in the comparison, reached 0.74%, single-data entry 0.29%, and double-data entry the lowest rate at 0.14% (95% CI: 0.08-0.20) [16]. Because single- and double-data entry are themselves manual keying procedures, the contrast in this table is between interpretive abstraction and structured transcription rather than strictly between human and machine.
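For readers unfamiliar with the pooling method, the sketch below applies the Freeman-Tukey double arcsine transformation to per-study error counts and combines them with inverse-variance weights. It is a simplified fixed-effect illustration under stated assumptions: the study counts are invented, the back-transformation is the simple sin² form rather than a small-sample correction, and the cited meta-analysis [16] may have used a different model (for example, random effects).

```python
import math


def freeman_tukey(errors, fields):
    """Double arcsine transform of the proportion errors / fields."""
    return (math.asin(math.sqrt(errors / (fields + 1)))
            + math.asin(math.sqrt((errors + 1) / (fields + 1))))


def pooled_error_rate(studies):
    """Fixed-effect pooled proportion from (errors, fields) pairs.

    The transformed value has variance 1 / (fields + 0.5), so the
    inverse-variance weight of each study is fields + 0.5.
    """
    weights = [fields + 0.5 for _, fields in studies]
    t_bar = sum(w * freeman_tukey(e, n)
                for w, (e, n) in zip(weights, studies)) / sum(weights)
    # Simple back-transformation to the proportion scale.
    return math.sin(t_bar / 2) ** 2


def errors_per_10k(rate):
    """Convert a proportion to errors per 10,000 fields."""
    return rate * 10_000


# Invented counts for two hypothetical studies: (errors, fields checked).
studies = [(5, 1000), (30, 2000)]
print(f"pooled error rate: {pooled_error_rate(studies):.4f}")
print(f"6.57% = {errors_per_10k(0.0657):.0f} errors per 10,000 fields")
```

The unit conversion in the last line is how the percentage and per-10,000 columns of the table relate: a pooled rate of 6.57% corresponds to 657 errors per 10,000 fields.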

Table 2: Performance Metrics in Specialized Annotation Tasks

Studies in specialized domains reveal distinct performance patterns for manual and automated approaches, particularly in handling complex data types.

| Domain | Task Type | Manual Annotation Performance | Automated Annotation Performance | Key Metrics |
|---|---|---|---|---|
| Radiographic Landmark Identification | Pelvic tilt annotation | Maximum angular disagreement: 9.51°-16.55° (cloud size: 6.04 mm-17.90 mm) | Requires established benchmark for comparison | Landmark cloud size at 95% threshold [17] |
| Medication Error Analysis | Named Entity Recognition | Gold-standard dataset creation | F1-score: 0.97 | Cross-validation [18] |
| Medication Error Analysis | Intention/Factuality Analysis | Gold-standard dataset creation | F1-score: 0.76 | Cross-validation [18] |
| General Complex Data Handling | Contextual understanding | Superior accuracy | Struggles with nuance | Qualitative assessment [1] |

In clinical imaging annotation, a benchmark dataset for pelvic tilt landmarks revealed substantial human annotator variability, with landmark cloud sizes of 6.04 mm-17.90 mm at a 95% dataset threshold, corresponding to 9.51°–16.55° maximum angular disagreement in clinical settings [17]. This variability highlights the inherent challenges in establishing "ground truth" for ambiguous anatomical landmarks, whether for human annotators or AI systems.

For medication error analysis, automated annotation achieved remarkably high performance in Named Entity Recognition (F1-score: 0.97) but showed more moderate performance in the more complex intention/factuality analysis (F1-score: 0.76) based on cross-validation exercises [18]. This pattern demonstrates the current capabilities and limitations of automated systems in handling layered linguistic tasks.
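For entity-level tasks such as NER, F1 is commonly computed over whole entities rather than individual tokens. The sketch below uses exact matching on (start, end, type) spans; this is one common convention and an assumption here, since the matching criterion used in [18] is not described in this article, and the example spans are invented.

```python
def entity_f1(gold_entities, predicted_entities):
    """Exact-match F1 over entities given as (start, end, type) tuples."""
    gold, predicted = set(gold_entities), set(predicted_entities)
    true_positives = len(gold & predicted)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


# Invented character spans for a single report.
gold = [(0, 7, "drug"), (8, 13, "strength"), (14, 18, "route")]
predicted = [(0, 7, "drug"), (8, 13, "strength"),
             (20, 25, "timing"), (30, 34, "route")]
print(round(entity_f1(gold, predicted), 3))  # 0.571
```

Here two of three gold entities are found (recall 0.67) but only two of four predictions are correct (precision 0.50), giving an F1 of about 0.57.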

Experimental Protocols and Methodologies

Clinical Radiographic Landmark Annotation Study

A clinical benchmark study established a methodology for quantifying annotation accuracy in pelvic tilt radiographic measurements, providing a framework for comparing human and AI annotation performance [17].

Objective: To quantify inter-annotator variability in pelvic tilt landmark identification and create a probabilistic benchmark dataset for validating AI annotation methods.

Imaging Dataset: Researchers sourced 115 consecutive sagittal radiographs (EOS Imaging, France) from 93 unique patients (62 males, 31 females, average age 64.6 ± 11.4 years) awaiting hip surgeries. The dataset was ethically approved (2019/ETH09656, St Vincent's Hospital Human Research Ethics Committee, Sydney, Australia) and shared under a CC-BY license [17].

Annotation Protocol:

- Five independent annotators (one senior surgeon, two orthopedic fellows, two orthopedic engineers) received equal training for labeling points defining pelvic tilt using a custom-designed MATLAB GUI program.

- Two pelvic tilt definitions were annotated: anatomical pelvic tilt (defined by the anterior pelvic plane) and mechanical pelvic tilt (defined by the line connecting the midpoint of the sacral plate and the center of the two femoral heads).

- Before annotation, images were zoomed until the anatomical structures filled the screen to ensure precision.

Probabilistic Model Calculation:

- Image-specific length parameters scaled skeletal sizes across different images using a standardized factor (η).

- Annotation coordinates were transformed to represent orientation of interest (θ) using coordinate transformation equations.

- Landmark accuracy was calculated from the maximum effect that the point-cloud diameter containing k% of the annotations at each of the two landmark ends could have on the measurement, expressed as angular and length disagreement.

- The centroid of annotations from multiple annotators was considered the "ground-truth" point for benchmark establishment.

This methodology produced a quantified point cloud dataset for each landmark corresponding to different probabilities, enabling assessment of directional annotation distribution and parameter-wise impact [17].
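The core geometric idea can be illustrated with a simplified sketch: take the centroid of the annotators' points as the reference, measure the radius of the cloud that contains k% of the points, and bound the angular disagreement of a line drawn between two such clouds. This is not the model from [17]; it omits the scale factor η and the coordinate transformation, treats each cloud as a disc, and uses hypothetical function names and coordinates.

```python
import math


def centroid(points):
    """Mean position of a list of (x, y) annotation points."""
    xs, ys = zip(*points)
    return sum(xs) / len(xs), sum(ys) / len(ys)


def cloud_radius(points, k=95):
    """Radius around the centroid that contains k% of the points."""
    cx, cy = centroid(points)
    distances = sorted(math.hypot(x - cx, y - cy) for x, y in points)
    index = math.ceil(k / 100 * len(distances)) - 1
    return distances[max(index, 0)]


def max_angular_disagreement(points_a, points_b, k=95):
    """Largest angle (degrees) between the centroid-to-centroid line and
    any line joining a point in disc A to a point in disc B."""
    separation = math.dist(centroid(points_a), centroid(points_b))
    spread = cloud_radius(points_a, k) + cloud_radius(points_b, k)
    if separation == 0:
        return 90.0  # coincident centroids: direction is undefined
    return math.degrees(math.asin(min(1.0, spread / separation)))


# Invented annotations (in mm) for two landmarks about 100 mm apart.
landmark_a = [(0, 1), (0, -1), (1, 0), (-1, 0)]
landmark_b = [(100, 1), (100, -1), (101, 0), (99, 0)]
print(round(max_angular_disagreement(landmark_a, landmark_b), 2))  # 1.15
```

The bound follows from the geometry of two discs: a line can touch both only if the sine of its angle to the center line is at most the sum of the radii divided by the distance between the centers. It also shows why the same cloud size produces a larger angular disagreement when the two landmarks are close together.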

Medication Error Incident Report Annotation Study

A large-scale study created an annotated corpus of medication error reports to develop and validate automated information extraction systems for patient safety applications [18].

Objective: To develop a machine annotator for extracting medication error-related information from unstructured clinical narrative reports and create a large annotated corpus for model training.

Data Source: 58,568 annotatable free-text medication error reports from the Japan Council for Quality Health Care's "Project to Collect Medical Near-Miss/Adverse Event Information" (2010-2020). The corpus included 478,175 medication error-related named entities [18].

Annotation Scheme:

- Named Entity Recognition (NER): Annotation of drug-related entities including 'drug', 'form', 'strength', 'duration', 'timing', 'frequency', 'date', 'dosage', and 'route'.

- Intention/Factuality Analysis (I&F): Labeling named entities as 'intended and actual' (IA), 'intended and not actual' (IN), or 'not intended and actual' (NA), with IN and NA indicating medication errors.

- Incident Type Classification: Determining error type based on which named entities were intended versus actual.
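One way to represent this three-layer scheme in code is shown below: each named entity carries its type and an intention/factuality status, and the entity types involved in an error are those with any IN or NA entity. The class and function names, and the example report content, are assumptions for illustration; the mapping from entity types to named incident types in [18] is not reproduced here.

```python
from dataclasses import dataclass

VALID_STATUSES = {"IA", "IN", "NA"}


@dataclass(frozen=True)
class Entity:
    text: str
    entity_type: str  # e.g. "drug", "strength", "route"
    status: str       # "IA", "IN", or "NA"

    def __post_init__(self):
        if self.status not in VALID_STATUSES:
            raise ValueError(f"unknown status: {self.status}")


def error_entity_types(entities):
    """Entity types with at least one IN or NA entity, i.e. where what
    was intended and what actually happened differ."""
    return sorted({e.entity_type for e in entities
                   if e.status in ("IN", "NA")})


# Invented example: 5 mg was intended, 50 mg was actually given.
report = [
    Entity("drug X", "drug", "IA"),
    Entity("5 mg", "strength", "IN"),
    Entity("50 mg", "strength", "NA"),
]
print(error_entity_types(report))  # ['strength']
```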

Machine Annotation Pipeline:

- Pre-training: A BERT model with a SentencePiece tokenizer was pre-trained on Japanese Wikipedia and Twitter corpora, then further pre-trained on the full JQ (Japan Council for Quality Health Care) incident report corpus of 121,244 free-text documents.

- Fine-tuning: The model was fine-tuned with rule-based annotated data, using a list of unique drug names based on Japan's Ministry of Health, Labour and Welfare 2022 standard drug list.

- Validation: The model achieved F1-scores of 0.97 for NER and 0.76 for intention/factuality analysis in cross-validation exercises.

This workflow produced the world's largest publicly available body of annotated incident reports covering concepts and attributes related to drug errors [18].

Workflow Comparison: Manual vs Automated Annotation

The fundamental processes of manual and automated annotation differ significantly in their operational sequences, quality control mechanisms, and human involvement requirements. The following diagram illustrates the core workflows for each approach:

The Researcher's Annotation Toolkit

Implementing effective annotation workflows requires specialized tools and platforms tailored to research needs. The following table details key solutions and their applications in scientific contexts.

Table 3: Essential Annotation Tools for Research Applications

| Tool/Platform | Type | Primary Research Applications | Key Features | Best For |
|---|---|---|---|---|
| Encord | Commercial | Medical imaging, DICOM annotation | AI-assisted labeling, active learning pipelines, HIPAA compliance | Medical image annotation with specialized file format support [19] |
| Labelbox | Commercial | Multi-modal data annotation | Automated labeling, project management, multi-user workflows | Large-scale projects requiring flexible annotation across data types [1] [20] |
| CVAT | Open-source | Computer vision research | Semantic segmentation, bounding boxes, object tracking | Academic and industry computer vision projects with limited budgets [20] |
| Amazon SageMaker Ground Truth | Commercial | Large-scale clinical data processing | Built-in ML model integration, managed labeling workforce | Teams integrated with AWS ecosystem needing scalable solutions [1] [20] |
| SuperAnnotate | Commercial | Medical imaging, video annotation | AI-assisted image segmentation, automated quality checks | Computer vision projects requiring precise, high-volume annotations [1] [20] |
| Custom MATLAB GUI | Research-specific | Radiographic landmark annotation | Custom-designed interface for specific measurement tasks | Specialized clinical measurement tasks requiring tailored interfaces [17] |
| BERT-based NLP Pipeline | Research-specific | Medication error extraction | Multi-task BERT model, intention/factuality analysis | Natural language processing of clinical narratives and reports [18] |

Tool selection should align with specific research requirements, including data type (medical images, clinical text), compliance needs (HIPAA, SOC 2), scalability requirements, and integration with existing research workflows. Open-source solutions like CVAT offer flexibility for academic settings, while commercial platforms typically provide enhanced security features and specialized functionality for regulated clinical research environments [20].

The comparative analysis of manual and automated annotation reveals a complex landscape where methodological choice significantly impacts research outcomes. Manual annotation delivers superior accuracy for complex, nuanced tasks but faces limitations in scalability and consistency. Automated annotation offers dramatic efficiency gains for large datasets but requires careful validation, particularly in ambiguous domains. The emerging hybrid paradigm—combining AI-assisted pre-labeling with human expert oversight—represents a promising direction for biomedical research, leveraging the strengths of both approaches while mitigating their respective limitations.

Future directions in annotation methodology will likely focus on enhancing AI capabilities for contextual understanding, developing more sophisticated benchmark datasets for validation, and creating specialized tools for domain-specific applications in drug development and clinical research. As AI systems continue to evolve, the establishment of rigorous, standardized evaluation frameworks remains essential for ensuring annotation quality and, consequently, the reliability of AI models in critical healthcare applications.

In modern drug discovery, artificial intelligence (AI) and machine learning (ML) models have become indispensable tools, capable of compressing discovery timelines from years to months [21]. The performance of these models is not merely a function of their algorithms but is fundamentally dependent on the quality of the training data from which they learn [6]. This training data acquires its predictive power through annotation—the process of labeling raw, unstructured data to identify meaningful entities and relationships [6] [1]. In the context of drug discovery, this can include labeling protein structures, molecular interactions, or clinical outcomes. The accuracy and consistency of these annotations create the foundational reality that AI models internalize. Consequently, the choice between manual and automated annotation methodologies carries profound implications for the entire research and development pipeline, influencing everything from initial target identification to clinical trial success rates [6] [1]. This guide provides an objective comparison of manual versus automated annotation, supporting drug development professionals in making evidence-based decisions for their AI initiatives.

Manual vs. Automated Annotation: A Comparative Analysis

The decision between manual and automated annotation is multifaceted, involving trade-offs between accuracy, speed, cost, and scalability. The table below summarizes the key performance characteristics of each method, synthesized from comparative studies.

Table 1: Performance Comparison of Manual vs. Automated Annotation

| Performance Criterion | Manual Annotation | Automated Annotation |

|---|---|---|

| Accuracy | Very high; excels with complex, nuanced data [6] [1] | Moderate to high; best for clear, repetitive patterns [6] |

| Speed | Slow; human-limited throughput [6] [1] | Very fast; processes thousands of data points per hour [6] |

| Cost | High, due to skilled labor costs [6] [1] | Lower long-term cost; high initial setup investment [6] [1] |

| Scalability | Limited; requires hiring and training [6] | Excellent; scales effortlessly with computing power [6] |

| Adaptability | Highly flexible to new tasks and taxonomies [6] | Limited flexibility; requires retraining for new data [1] |

| Consistency | Prone to human error and subjective bias [1] | High consistency for well-defined tasks [6] |

| Best-Suited For | Complex, small-scale, or mission-critical tasks (e.g., medical imaging, legal documents) [6] [1] | Large-scale, repetitive tasks with simple patterns (e.g., virtual screening) [6] [1] |

The Qualitative Trade-Offs

- Contextual Understanding: Manual annotation, performed by domain experts such as medicinal chemists or biologists, provides irreplaceable contextual and causal reasoning. This is critical for interpreting ambiguous data in areas like toxicology or patient stratification [6]. Automated systems, while consistent, operate on pre-defined rules and patterns and can struggle with novel or highly specialized content [6] [1].

- Bias and Validation: Human annotators can introduce unconscious bias, but can also be trained to identify and mitigate it. Automated models, however, can perpetuate and even amplify biases present in their training data, requiring rigorous human-in-the-loop oversight for quality control [6] [1].

Experimental Protocols for Assessing Annotation Quality

To objectively determine the optimal annotation strategy for a given project, researchers should implement controlled experiments that measure key performance indicators. The following protocols outline methodologies for benchmarking quality and its downstream impact on AI model performance.

Protocol 1: Benchmarking Annotation Accuracy

This protocol measures the intrinsic quality of the annotations themselves before they are used for model training.

- Dataset Curation: Select a gold-standard benchmark dataset relevant to the drug discovery task (e.g., a publicly available protein-ligand binding affinity database). Manually curate and verify a "ground truth" subset to use as the evaluation benchmark [22].

- Experimental Arms:

- Arm A (Manual): Provide the raw dataset to a team of expert annotators (e.g., PhD-level chemists). Implement a multi-level validation process where a subset of annotations is peer-reviewed by a second expert [6].

- Arm B (Automated): Process the same raw dataset using the chosen automated annotation tool (e.g., a graph neural network for molecular property prediction) [23] [24].

- Arm C (Hybrid): Process the dataset with the automated tool, then have expert annotators review and correct the outputs [6] [1].

- Quality Metrics: Compare the outputs of each arm against the ground truth benchmark. Calculate:

- Precision/Recall: To measure correctness and completeness.

- F1-Score: The harmonic mean of precision and recall.

- Inter-Annotator Agreement (IAA): For manual annotation, use Fleiss' Kappa to measure consistency among experts. For automated vs. manual, use Cohen's Kappa [6].
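The quality metrics above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of any cited study: labels are binary (1 = active, 0 = inactive), the function names `precision_recall_f1` and `cohens_kappa` are hypothetical, and the toy label lists are invented purely for demonstration.

```python
from collections import Counter


def precision_recall_f1(truth, predicted, positive=1):
    """Precision, recall, and F1 for one positive class against ground truth."""
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between exactly two label sequences."""
    n = len(labels_a)
    observed = sum(1 for a, b in zip(labels_a, labels_b) if a == b) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if expected == 1.0:  # both sequences use a single identical label
        return 1.0
    return (observed - expected) / (1 - expected)


# Toy data (invented): ground truth vs. the automated arm's output.
ground_truth = [1, 1, 1, 0, 0, 0, 1, 0]
automated = [1, 1, 0, 0, 0, 1, 1, 0]

precision, recall, f1 = precision_recall_f1(ground_truth, automated)
kappa = cohens_kappa(ground_truth, automated)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f} kappa={kappa:.2f}")
```

Running the same two functions on each arm's output gives directly comparable numbers. Kappa is worth reporting alongside F1 because it discounts the agreement that two labelers would reach by chance alone.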

Protocol 2: Downstream Model Performance

This protocol evaluates how the quality of annotations from different methods ultimately affects the performance of a drug discovery AI model.

- Training Set Generation: Use the finalized annotated datasets from each arm of Protocol 1 (Manual, Automated, Hybrid) as separate training sets.

- Model Training: Train three identical AI models—for example, a Graph Neural Network (GNN) for predicting drug-target interactions—each on one of the three training sets. Keep all model architectures and hyperparameters constant [23] [24].

- Performance Evaluation: Test all three models on the same, pristine, held-out test set. Evaluate using domain-specific metrics:

- Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve for classification tasks (e.g., active/inactive compound) [24].

- Mean Squared Error (MSE) for regression tasks (e.g., predicting binding affinity) [22].

- Success Rate in Virtual Screening: The top candidates identified by each model can be validated in wet-lab experiments, with the hit-rate serving as a final performance measure [24] [25].
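As a rough sketch of the evaluation step, the two numeric metrics can be computed without any external library. The ROC AUC below uses the pairwise (Mann-Whitney) formulation, which is equivalent to the area under the ROC curve. The function names, the scores, and the affinity values are all hypothetical placeholders, not results from any study.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive outranks a random negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


def mean_squared_error(targets, predictions):
    """Average squared difference between measured and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(targets, predictions)) / len(targets)


# Held-out test labels (1 = active compound) shared by all arms; values invented.
test_labels = [1, 0, 1, 0, 1]
scores_by_arm = {
    "manual": [0.9, 0.2, 0.8, 0.3, 0.7],
    "automated": [0.9, 0.6, 0.5, 0.3, 0.7],
}
auc_by_arm = {arm: roc_auc(test_labels, s) for arm, s in scores_by_arm.items()}
for arm, auc in auc_by_arm.items():
    print(f"{arm}: AUC={auc:.3f}")

# Regression example with invented binding affinities.
mse = mean_squared_error([7.0, 6.0, 8.0], [6.5, 6.0, 9.0])
print(f"MSE={mse:.3f}")
```

Because every model is scored on the same held-out set with the same functions, any difference in AUC or MSE can be attributed to the training labels rather than to the evaluation procedure.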

Table 2: Key Reagent Solutions for Annotation and AI Modeling in Drug Discovery

| Research Reagent / Solution | Function in Annotation & AI Modeling |

|---|---|

| FAIR Data Repositories (e.g., ChEMBL, PubChem) [22] | Provide structured, accessible data for training automated annotation models and establishing benchmark ground truth. |

| Graph Neural Networks (GNNs) [23] [24] | AI models that naturally represent molecules as graphs for highly accurate property prediction and virtual screening. |

| Computer-Assisted Synthesis Planning (CASP) Tools [22] | Automate the annotation of viable synthetic pathways for AI-designed molecules, critical for the "Make" step in DMTA cycles. |

| High-Throughput Experimentation (HTE) [22] | Generates large-scale, high-quality experimental data for training and validating automated annotation systems in chemistry. |

| AI-Powered Visualization Platforms (e.g., Labelbox, SageMaker) [1] | Provide interfaces for human experts to perform manual annotation and quality control efficiently. |

The relationship between annotation methodology, data quality, and final model performance is a causal chain: the chosen method shapes label quality, which in turn shapes the performance of any model trained on those labels.

Diagram 1: Annotation workflow and impact on model performance.

The choice between manual and automated annotation is not about finding a universally superior option, but rather the contextually optimal one. The evidence demonstrates that manual annotation is unparalleled for complex, small-scale, or high-stakes tasks where accuracy and nuanced understanding are paramount, such as in early-stage lead optimization for a first-in-class drug target [6] [22]. Conversely, automated annotation is essential for leveraging large-scale datasets, such as in virtual screening of billion-compound libraries, where its speed and consistency provide a decisive advantage [6] [24].

For most modern drug discovery pipelines, a hybrid strategy offers the most robust path forward. This approach uses automated tools for bulk processing and initial labeling, reserving scarce and expensive expert manual labor for quality control, edge cases, and the most critical data subsets [6] [1]. This creates a synergistic loop where human expertise trains and refines the automated systems, which in turn augment human productivity. By strategically aligning annotation methodology with project goals—prioritizing accuracy for foundational models and scalability for exploratory research—drug development professionals can build higher-performing AI models, ultimately accelerating the delivery of novel therapeutics.

Strategic Implementation: Choosing the Right Annotation Method for Your Research

Optimal Use Cases for Manual Annotation in Biomedical Research

In the development of artificial intelligence (AI) for biomedical applications, the creation of high-quality training datasets through annotation is a foundational step. This process, which involves labeling raw data such as medical images or clinical text to provide context for machine learning models, is performed through two primary methodologies: manual annotation by human experts and automated annotation via algorithms. While automated approaches offer compelling advantages in speed and scalability, manual annotation remains indispensable for numerous complex biomedical tasks. This guide objectively compares the performance of manual and automated annotation, framing the discussion within broader research on annotation accuracy to delineate the specific, optimal scenarios where the precision of human experts is not just beneficial but essential.

Manual vs. Automated Annotation: A Comparative Framework

The choice between manual and automated annotation is not a question of which is universally superior, but which is optimal for a specific project's goals, constraints, and data characteristics. The decision hinges on the trade-off between the scalability of automation and the nuanced understanding of human intelligence.

The table below summarizes the core performance characteristics of each method based on comparative analyses [26] [1] [27]:

| Performance Criterion | Manual Annotation | Automated Annotation |

|---|---|---|

| Accuracy & Precision | Very high, especially for complex/nuanced data [26] [1] | Moderate to high for clear, repetitive patterns; struggles with subtlety [26] [27] |

| Contextual Understanding | Excellent; can interpret ambiguity, jargon, and cultural nuance [28] | Limited; operates on pre-defined rules and patterns [26] |

| Speed & Throughput | Slow; human-limited and time-consuming [26] [1] | Very fast; can process thousands of data points in hours [26] [27] |

| Scalability | Limited and costly to scale [26] | Excellent; scales effortlessly with computing resources [26] [28] |

| Adaptability & Flexibility | Highly flexible; can adjust to new guidelines and edge cases in real-time [26] | Low flexibility; requires retraining or reprogramming for new tasks [1] |

| Operational Cost | High due to skilled labor and time [26] [28] | Lower long-run cost; high initial setup investment [26] [27] |

| Consistency | Prone to inter-annotator variability and subjective bias [29] [28] | High consistency in applying labeling rules [26] |

The Critical Challenge of Inter-Annotator Variability

A significant factor unique to manual annotation is inter-annotator variability—the inconsistency that arises when different experts label the same phenomenon. This is not merely a result of error but often stems from inherent differences in expert judgment, a source of "noise" in the system [29].

A landmark 2023 study extensively investigated this issue in a real-world clinical setting [29]. The experiment involved 11 Intensive Care Unit (ICU) consultants independently annotating a common dataset of 60 patient instances based on six clinical variables, assigning a severity score (A-E). The resulting classifiers, built from each consultant's annotations, showed only "fair" agreement internally (Fleiss' κ = 0.383). More critically, when these models were validated on an external ICU dataset, their classifications showed only "minimal" agreement (average Cohen's κ = 0.255). This demonstrates that the "ground truth" can shift significantly depending on which expert provides the labels, potentially leading to unpredictable model behavior in real-world clinical decision-support systems [29].
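For readers unfamiliar with the statistic, Fleiss' κ extends chance-corrected agreement to more than two raters: κ = (P̄ − P̄ₑ) / (1 − P̄ₑ), where P̄ is the mean per-item agreement and P̄ₑ is the agreement expected by chance. The sketch below is a minimal implementation under stated assumptions: the function name `fleiss_kappa` is hypothetical, every item is rated by the same number of raters, and the toy counts are invented (they are not the ICU study's data).

```python
def fleiss_kappa(counts):
    """Fleiss' kappa from an items x categories table of rating counts.

    counts[i][j] is the number of raters who assigned item i to category j.
    Every row must sum to the same number of raters (at least 2).
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("each item needs the same number (>= 2) of ratings")
    n_categories = len(counts[0])

    # Per-item agreement: fraction of rater pairs that agree on the item.
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(per_item) / n_items

    # Chance agreement from the overall share of each category.
    shares = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    p_expected = sum(s * s for s in shares)
    if p_expected == 1.0:  # only one category was ever used
        return 1.0
    return (p_bar - p_expected) / (1 - p_expected)


# Toy example (invented): 4 patient instances, 3 raters, 2 severity categories.
toy_counts = [[3, 0], [2, 1], [1, 2], [0, 3]]
toy_kappa = fleiss_kappa(toy_counts)
print(f"Fleiss' kappa = {toy_kappa:.3f}")
```

In the toy table, raters agree fully on two items and split 2-to-1 on the other two, which yields a κ of one third.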

Optimal Use Cases for Manual Annotation

The strengths of manual annotation make it the preferred or required method in several high-stakes biomedical scenarios.

Complex and Subjective Data Interpretation

Human experts excel at tasks requiring deep contextual understanding and judgment that is difficult to codify into rules.

- Sentiment and Intent Analysis in Patient Data: Understanding patient-reported outcomes or sentiments in clinical notes often involves interpreting sarcasm, cultural nuance, and ambiguous phrasing, a task where human annotators significantly outperform automated tools [1] [27].

- Rhetorical Classification in Scientific Literature: Identifying the function of sentences in publications (e.g., "self-acknowledged limitations") requires understanding scientific argumentation. A 2018 study achieved good inter-annotator agreement (Krippendorff’s α = 0.781) using manual annotation, which was then used to train a rule-based classifier. The human-defined rules ultimately yielded the highest classification accuracy (91.5%), underscoring the value of human insight for setting up automated systems [30].

Mission-Critical Applications in Clinical and Diagnostic Fields

In domains like medicine, where annotation errors can have direct consequences for patient care, the accuracy of manual annotation is paramount.

- Medical Imaging Analysis: Annotating radiology scans or pathology slides to identify subtle disease markers requires a level of expertise and nuanced judgment that automated systems cannot reliably replicate. Manual annotation is the gold standard for creating training data in these fields [1] [28].

- Clinical Decision Support Systems: As evidenced by the ICU study, clinical judgment is variable. For building models that classify patient severity or predict outcomes, manual annotation by domain experts is essential, though consensus-building among multiple annotators is critical to mitigate individual bias [29].

Specialized Domains with Complex Jargon and Ontologies

Biomedical sub-fields often possess highly specialized terminologies and conceptual relationships.

- Legal and Regulatory Document Analysis: Interpreting complex language in clinical trial protocols or patient consent forms demands a human understanding of legal and regulatory context [27].

- Ontology Mapping and Relation Extraction: Mapping biological sample labels to standardized ontologies is a complex task. A 2025 study found that even a fine-tuned GPT-4 model achieved a precision of only 47-64% for annotating cell lines and types, indicating a continued strong need for expert curation to ensure validity [31]. Tools like MetaTron have been developed specifically to support biomedical experts in the manual and semi-automatic annotation of complex relations, integrating ontologies to aid this process [32].

Small, High-Value Datasets and Edge Cases

For pilot studies, rare diseases, or projects with limited, highly valuable data, the cost of setting up an automated system is not justified. Manual annotation ensures that every data point is labeled with the highest possible accuracy [1]. Furthermore, human annotators are uniquely equipped to identify and correctly label unusual or unexpected edge cases that fall outside the patterns an automated model was trained on [28].

Experimental Protocols and Performance Data

To move from theoretical comparison to empirical evidence, we examine specific experimental protocols that benchmark manual against automated or semi-automated methods.

Experiment 1: Digital Pathology Annotation Benchmark

A 2024 study provided a direct benchmark of manual versus semi-automated annotation in computational pathology, a domain requiring extreme precision [33].

- Objective: To compare the working time, reproducibility, and precision of manual (using a mouse or touchpad) and semi-automated (AI-assisted Segment Anything Model, SAM) methods for annotating renal tissue structures.

- Methodology:

- Annotations: Two pathologists annotated 57 tubules, 53 glomeruli, and 58 arteries from a PAS-stained whole slide image (WSI).

- Methods: Each used three methods: mouse, touchpad, and SAM (which uses a rough bounding box from the annotator to generate a fine outline).

- Metrics: Time to annotate, reproducibility (measured by overlap fraction of pixels between annotators), and precision (a 0-10 score from expert nephropathologists).

- Results Summary: The quantitative results are summarized in the table below.

| Annotation Method | Average Time (min) | Time Variability (Δ) | Reproducibility (Overlap Fraction) | Key Finding |

|---|---|---|---|---|

| Semi-Automated (SAM) | 13.6 ± 0.2 | 2% | 0.96 (0.99 for Glomeruli) | Fastest, most reproducible for common structures. |

| Manual (Mouse) | 29.9 ± 10.2 | 24% | 0.96 (0.97 for Glomeruli) | 121% slower than SAM. |

| Manual (Touchpad) | 47.5 ± 19.6 | 45% | 0.94 (0.93 for Glomeruli) | 249% slower than SAM; highest variability. |

Conclusion: The semi-automated method was dramatically faster and showed superior inter-observer reproducibility for most structures. However, its performance dropped for more complex annotations (arteries, overlap=0.89), indicating that automation may still require expert refinement for certain tasks. Manual methods, while slower, provided a high-quality baseline [33].
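To make the reproducibility metric concrete, the following sketch computes a pixel overlap between two annotators' outlines of the same structure. The exact definition of "overlap fraction" used in the study is not given here, so this example assumes intersection over union; the helper names and the rectangular masks are hypothetical and chosen only so the arithmetic is easy to follow.

```python
def rectangle_mask(top, left, height, width):
    """Set of (row, col) pixels covered by an axis-aligned rectangle."""
    return {
        (row, col)
        for row in range(top, top + height)
        for col in range(left, left + width)
    }


def overlap_fraction(mask_a, mask_b):
    """Shared pixels divided by all pixels marked by either annotator.

    Assumption: overlap is defined as intersection over union.
    """
    union = mask_a | mask_b
    if not union:  # neither annotator marked anything
        return 1.0
    return len(mask_a & mask_b) / len(union)


# Two annotators outline the same 10x10 structure, shifted by one pixel column.
annotator_1 = rectangle_mask(top=0, left=0, height=10, width=10)
annotator_2 = rectangle_mask(top=0, left=1, height=10, width=10)

overlap = overlap_fraction(annotator_1, annotator_2)
print(f"overlap fraction = {overlap:.3f}")
```

Even a one-pixel shift on a small structure lowers the score noticeably, which is one plausible reason why thin or irregular structures are harder to annotate reproducibly than large, compact ones.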

Experimental Workflow: Pathology Annotation

Experiment 2: Quantifying Clinical Annotation Inconsistency

The previously mentioned 2023 study on ICU data provides a protocol for quantifying the impact of inter-annotator variability [29].

- Objective: To assess the impact of annotation inconsistencies among clinical experts on the performance of resulting AI models.

- Methodology:

- Annotations: 11 ICU consultants independently annotated an identical dataset of 60 patient instances, labeling a severity score based on six clinical variables.

- Model Building: A separate classifier was built from the dataset labeled by each consultant.

- Validation: Models underwent internal validation (agreement among the models themselves) and broad external validation on a separate ICU dataset (HiRID). Agreement was measured using Fleiss' κ and Cohen's κ.

- Results Summary:

- Internal Validation: Fair agreement (Fleiss' κ = 0.383).

- External Validation: Minimal agreement (average Cohen's κ = 0.255). Models disagreed more on discharge decisions (κ = 0.174) than on mortality predictions (κ = 0.267).

- Consensus Analysis: Standard consensus methods like majority voting led to suboptimal models. The study suggested assessing "annotation learnability" to determine a better consensus.

- Conclusion: The "ground truth" in clinical settings is often not unitary. Relying on a single expert's annotations can produce models that reflect that individual's bias. Optimal model development requires acknowledging and managing this variability, for instance, through learnability-weighted consensus from multiple experts [29].
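The difference between plain majority voting and a weighted consensus can be illustrated with a short sketch. This is not the study's algorithm: the per-annotator weights below are hypothetical stand-ins for whatever learnability score a team derives, and the function names, labels, and values are invented for demonstration.

```python
from collections import Counter, defaultdict


def majority_vote(labels):
    """Most frequent label; ties are broken alphabetically for determinism."""
    counts = Counter(labels)
    top = max(counts.values())
    return sorted(label for label, count in counts.items() if count == top)[0]


def weighted_vote(labels, weights):
    """Label with the largest total weight; ties are broken alphabetically."""
    totals = defaultdict(float)
    for label, weight in zip(labels, weights):
        totals[label] += weight
    best = max(totals.values())
    return sorted(label for label, total in totals.items() if total == best)[0]


# Five annotators score one patient instance on the A-E severity scale.
annotations = ["B", "B", "C", "C", "C"]
# Hypothetical per-annotator weights (placeholders, not derived from real data).
annotator_weights = [0.9, 0.8, 0.3, 0.3, 0.2]

plain = majority_vote(annotations)
weighted = weighted_vote(annotations, annotator_weights)
print(f"majority vote: {plain}, weighted vote: {weighted}")
```

Here the two schemes disagree: three low-weight annotators outvote two high-weight ones under majority voting, while the weighted scheme sides with the latter. How the weights are obtained is the substantive question and is left open in this sketch.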

Logical Flow: ICU Annotation Inconsistency

The Scientist's Toolkit: Research Reagent Solutions

Selecting the right tools is critical for executing a successful manual or semi-automated annotation project. The following table details key solutions based on tool evaluations and experimental protocols [32] [34] [33].

| Tool / Resource | Primary Function | Application Context |

|---|---|---|

| MetaTron | Open-source, web-based annotation tool for biomedical texts. | Supports document-level and relation annotation with ontology integration; ideal for collaborative NLP projects [32]. |

| QuPath with SAM Extension | Digital pathology software with AI-assisted segmentation. | Used for semi-automated annotation of whole slide images; dramatically speeds up outlining structures like glomeruli [33]. |

| Segment Anything Model (SAM) | Foundation model for image segmentation. | Can be integrated into pipelines (e.g., in QuPath) to provide a "semi-automatic" annotation layer, reducing manual labor [33]. |

| brat | Web-based text annotation tool. | A widely-used, rapid annotation tool for structuring natural language data; common in NLP research [34]. |

| WebAnno | Web-based, customizable annotation tool. | Highly rated for linguistic annotation tasks; supports a wide range of project types and collaborative work [34]. |

| Medical-Grade Display (e.g., BARCO) | High-resolution, color-accurate monitor. | Essential for manual annotation of medical images where precision is critical; shown to impact annotation time and accuracy [33]. |

| Consensus Guidelines & Rubrics | Documented protocols for annotators. | Mitigates inter-annotator variability by providing clear, unambiguous rules for labeling complex or subjective data [29]. |

The empirical data indicate that manual annotation is the optimal choice in biomedical research when the primary requirements are high contextual accuracy, the ability to interpret complex and subjective data, and domain expertise for tasks in clinical, diagnostic, or specialized fields. Its limitations in speed, scalability, and consistency due to human variability nevertheless remain significant.

The future of annotation in biomedicine does not lie in a binary choice but in hybrid, human-in-the-loop pipelines [28] [27]. In these workflows, automated tools like SAM are used for initial, rapid labeling of large datasets or straightforward tasks, which are then refined and validated by human experts who handle edge cases, complex judgments, and quality assurance. This approach leverages the scalability of automation while preserving the irreplaceable nuanced understanding of the human expert, thereby creating the most robust and reliable annotated corpora for powering the next generation of biomedical AI.

When to Leverage Automated Annotation for Scalable Analysis

For researchers, scientists, and drug development professionals, the quality of annotated data directly determines the performance of machine learning models that underpin modern scientific discovery. The choice between manual and automated annotation is particularly crucial in fields like drug development, where precision must be balanced against the need to process massive datasets at scale. While manual annotation has long been the gold standard for accuracy, automated annotation is increasingly becoming the solution for scalable analysis, particularly as AI models consume more data than ever before [35].

This guide objectively compares these approaches within the broader context of accuracy research, providing experimental data and methodologies to help scientific teams make evidence-based decisions for their annotation workflows. The central thesis is that a strategic hybrid approach—leveraging automation for scalability while retaining human oversight for complex judgments—delivers the optimal balance for research-grade data annotation.

Manual vs. Automated Annotation: A Quantitative Comparison

The table below summarizes core performance metrics between manual and automated annotation approaches, synthesizing data from multiple industry implementations and research studies.

Table 1: Performance Comparison of Manual vs. Automated Annotation

| Performance Metric | Manual Annotation | Automated Annotation | Experimental Context |

|---|---|---|---|

| Throughput Speed | Slow (human-limited) | Up to 5× faster [36] | Image annotation workflows with AI pre-labeling [36] |

| Accuracy Level | Very High (context-aware) | Moderate to High (pattern-based) | Complex data (e.g., medical texts) [6] [37] |

| Relative Cost | High (labor-intensive) | 30-35% lower at scale [36] | Large-scale dataset labeling [36] |

| Scalability | Limited (linear scaling) | Excellent (parallel processing) | Projects with millions of data points [6] [1] |

| Attribute Modeling Accuracy | Benchmark (Gold Standard) | >0.9 F-measure for many attributes [37] | Prescription regimen annotation study [37] |

Experimental Protocols: Methodologies for Annotation Research

Protocol 1: Hybrid Workflow Performance Assessment

Objective: To quantify the performance improvements of a human-in-the-loop (HITL) annotation system compared to purely manual or fully automated approaches [35] [36].

Methodology:

- Pre-labeling & Confidence Thresholding: An AI model pre-labels the dataset. Labels with high confidence scores are automatically approved, while low-confidence labels are routed for human review [35].

- Active Learning Integration: The system flags ambiguous or contentious data points for prioritized human review. Each correction made by a human annotator is fed back into the model as a training signal [35].

- Comparative Analysis: The same dataset is annotated using three different workflows: (a) purely manual, (b) fully automated, and (c) hybrid HITL. Throughput, accuracy, and cost are measured for each.
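The pre-labeling and confidence-thresholding step can be sketched as a simple routing function. This is an illustrative outline only: the `PreLabel` record, the `route_prelabels` function, the item identifiers, and the 0.9 threshold are all assumptions made for the example, and a real threshold would need to be tuned against a validated sample.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model confidence in [0, 1]


def route_prelabels(prelabels, threshold=0.9):
    """Split model pre-labels into auto-approved and human-review queues.

    The threshold is a hypothetical default, not a recommended value.
    """
    auto_approved, needs_review = [], []
    for prelabel in prelabels:
        if prelabel.confidence >= threshold:
            auto_approved.append(prelabel)
        else:
            needs_review.append(prelabel)
    # Least confident items first, so reviewers see the hardest cases early.
    needs_review.sort(key=lambda p: p.confidence)
    return auto_approved, needs_review


# Invented pre-labels for four images.
batch = [
    PreLabel("img-001", "tumor", 0.97),
    PreLabel("img-002", "normal", 0.55),
    PreLabel("img-003", "tumor", 0.91),
    PreLabel("img-004", "normal", 0.72),
]

approved, review_queue = route_prelabels(batch)
print("auto-approved:", [p.item_id for p in approved])
print("human review:", [p.item_id for p in review_queue])
```

Sorting the review queue by ascending confidence is one simple way to prioritize ambiguous items; corrections made on that queue would then be collected as new training examples for the next model iteration.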

Key Workflow Diagram: Core logical flow of the hybrid, AI-assisted annotation process (pre-labeling, confidence-based routing, human review, and feedback into the model).

Protocol 2: Automated Schema Modeling for Medical Texts

Objective: To evaluate the accuracy of automated annotation models in extracting structured information from complex medical texts, such as prescription regimens [37].

Methodology:

- Gold Standard Creation: A subset of a prescription corpus (e.g., 1,746 instructions) is manually annotated by multiple human experts to create a ground-truth dataset. This process involves cross-validation and reconciliation between annotators to ensure high inter-annotator agreement [37].

- Model Training: Machine learning models, such as Conditional Random Fields (CRF), are trained on the manually annotated data to learn the annotation schema (e.g., tags for dose, frequency, route) [37].

- Accuracy Measurement: The automated model's output is compared against the held-out gold standard labels. Performance is measured using standard metrics like F-measure (the harmonic mean of precision and recall) for tag labels and spans, and accuracy for normalized attribute values [37].
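Span-level F-measure can be sketched as follows. The example assumes exact-match scoring, where a predicted span counts as correct only if its start offset, end offset, and tag all match a gold span; the cited study may score partial matches differently. The function name, the tag names, and the sample instruction are hypothetical.

```python
def span_f_measure(gold_spans, predicted_spans):
    """Exact-match precision, recall, and F-measure over (start, end, tag) spans."""
    gold, predicted = set(gold_spans), set(predicted_spans)
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    denom = precision + recall
    f_measure = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f_measure


# Invented instruction with character offsets (end index is exclusive).
text = "take 2 tablets twice daily by mouth"
gold = [(5, 6, "DOSE"), (15, 26, "FREQUENCY"), (27, 35, "ROUTE")]
# The model finds the dose and frequency but truncates the route span.
predicted = [(5, 6, "DOSE"), (15, 26, "FREQUENCY"), (30, 35, "ROUTE")]

span_p, span_r, span_f = span_f_measure(gold, predicted)
print(f"precision={span_p:.3f} recall={span_r:.3f} F={span_f:.3f}")
for start, end, tag in gold:
    print(tag, repr(text[start:end]))
```

Exact matching is strict: the truncated route span counts as both a false positive and a false negative, which is why span boundaries deserve explicit rules in the annotation guidelines.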

Key Workflow Diagram: Sequential stages of the experimental protocol for modeling medical texts (gold standard creation, model training, accuracy measurement).

The Scientist's Toolkit: Essential Research Reagent Solutions

For researchers designing annotation experiments, the "reagents" are the platforms and tools that enable the work. The table below details key solutions and their primary functions in the context of annotation research and deployment.

Table 2: Key Research Reagent Solutions for Data Annotation

| Tool / Platform | Primary Function | Research Application |

|---|---|---|

| Encord | Unified platform for multimodal data annotation, curation, and model evaluation [38]. | Manages petabyte-scale datasets; features AI-assisted labeling (SAM2, GPT-4o) and HITL workflows, ideal for complex computer vision and medical AI projects [36] [38]. |

| CVAT (Computer Vision Annotation Tool) | Open-source tool for annotating images, videos, and 3D data [38]. | Provides a flexible, customizable environment for computer vision research with support for multiple annotation types and algorithmic assistance [38]. |

| Lightly | Data curation platform using active learning for smart data selection [38]. | Optimizes annotation budgets by identifying the most valuable data points to label, reducing redundant effort in large-scale projects [38]. |

| Scale AI | Enterprise-grade data labeling infrastructure and pipelines [35]. | Provides the strategic labeling infrastructure needed for large-scale, mission-critical AI pipelines in areas like autonomous systems [35]. |

| Conditional Random Fields (CRF) | Probabilistic model for segmenting and labeling sequence data [37]. | Effective for structured information extraction from textual data, such as annotating concepts in medical prescriptions [37]. |

The experimental data and methodologies presented confirm that automated annotation is no longer a fringe approach but a core component of scalable analysis in scientific research. The key is strategic implementation: leveraging automation for its unmatched speed, scalability, and cost-efficiency on large, well-structured datasets, while relying on human expertise for complex edge cases, nuanced judgments, and quality assurance [6] [36].

The emerging gold standard is the human-in-the-loop model, which creates a virtuous cycle where automation handles volume and humans train the model on harder cases, leading to progressively smarter systems [35]. For research teams in drug development and related fields, adopting this hybrid approach is not just an optimization—it is a strategic necessity for keeping pace with the exploding demands of data-intensive AI models.

In the field of AI and machine learning, particularly within data-intensive sectors like drug development, the debate between manual and automated data annotation is central to research and operational success. Data annotation—the process of labeling data to train AI models—directly dictates the performance, accuracy, and reliability of resulting algorithms. This guide objectively compares the performance of manual, automated, and hybrid annotation approaches, framing them within the broader thesis of accuracy research and providing the experimental data and protocols needed for scientific evaluation.

Data annotation is the foundational process of labeling raw data (images, text, audio, video) to create a structured dataset for training and validating machine learning models [6] [1]. In scientific domains such as drug development, the precision of these labels is paramount, as errors can propagate through models, leading to flawed predictions and unreliable outcomes.

The core methodologies are:

- Manual Annotation: A human-driven approach where experts label each data point individually. This method is prized for its high accuracy and ability to handle nuanced, complex, or subjective data [1].

- Automated Annotation: A technology-driven approach that uses algorithms and pre-trained models to label data with minimal human intervention. This method excels in speed, scalability, and cost-effectiveness for large, repetitive datasets [1].

- Hybrid Annotation: An integrated approach that strategically blends human expertise with automated efficiency. In this model, automation handles the bulk of initial, repetitive labeling, while human experts focus on complex edge cases, quality control, and continuous model refinement [39]. This synergy is the focus of this guide.

Quantitative Comparison of Annotation Methods

The choice between annotation strategies involves trade-offs between accuracy, cost, speed, and scalability. The following tables summarize key performance metrics derived from industry practices and research.

Table 1: Core Performance Metrics of Annotation Methods

| Criterion | Manual Annotation | Automated Annotation | Hybrid Annotation |

|---|---|---|---|

| Accuracy | 92-98% (High for complex data) [1] | 85-90% (Moderate, context-dependent) [1] | >95% (High, enhanced by human review) [39] |

| Relative Speed | Slow (Time-consuming) [6] | Very Fast (Thousands of data points/hour) [1] | Fast (Faster than manual, slightly slower than full auto) [39] |

| Scalability | Low (Limited by human resources) [1] | High (Easily scales with compute power) [6] | High (Efficiently scales through task allocation) [39] |

| Cost Profile | High (Labor-intensive) [6] | Low (After initial setup) [1] | Moderate (Optimizes human and compute costs) [39] |

Table 2: Operational and Qualitative Factors

| Criterion | Manual Annotation | Automated Annotation | Hybrid Annotation |

|---|---|---|---|

| Handling Complex Data | Excellent (Nuance, context, subjectivity) [1] | Struggles (Lacks contextual judgment) [1] | Excellent (Automates routine, humans handle complexity) [39] |

| Consistency | Prone to human error/inconsistency [1] | High (Uniform rules) [1] | High (Human oversight ensures consistency) [39] |

| Adaptability | Highly Flexible (Adapts quickly to new tasks) [6] | Low (Requires retraining for new data) [1] | High (Humans guide model adaptation) [39] |

| Best For | Small, complex datasets; high-stakes tasks (e.g., medical imaging) [1] | Large, repetitive datasets with clear patterns [1] | Most real-world projects, especially evolving or complex domains [39] |

Experimental Protocols for Annotation Accuracy Research

To generate comparable data on annotation performance, researchers can implement the following experimental protocols. These are designed to objectively measure the metrics outlined in the previous section.

Protocol 1: Controlled Accuracy Benchmarking

This experiment is designed to quantify the accuracy and error profiles of each annotation method against a verified ground truth dataset.

- Objective: To measure and compare the accuracy, precision, and recall of manual, automated, and hybrid annotation methods on a standardized dataset.

- Materials & Dataset:

  - A pre-annotated, ground truth dataset (e.g., 1,000 medical images with confirmed pathology labels from a public repository like TCIA).

  - For automated annotation: Access to a pre-trained model (e.g., from Roboflow, Encord) or training a model on a subset of the ground truth data [9].

  - For manual annotation: A group of 3-5 expert annotators (e.g., biochemists or radiologists).

  - For hybrid annotation: The same automated tool, with output reviewed by one expert annotator.

- Methodology:

  - Step 1: Preparation. Withhold the ground truth labels from the test set (e.g., 200 images).

  - Step 2: Execution.

    - Manual Group: Provide the test set to expert annotators for independent labeling.

    - Automated Group: Process the test set through the chosen automated annotation tool.

    - Hybrid Group: Process the test set through the automated tool, then have a single expert reviewer correct the output labels.

  - Step 3: Analysis. Compare all outputs against the ground truth. Calculate standard metrics: Accuracy, Precision, Recall, and F1-Score. Document the time taken by each method to complete the task.

- Expected Outcome: The manual and hybrid methods are expected to show higher accuracy and F1-scores than the fully automated approach, in line with the ranges in Table 1 (92-98% for manual, >95% for hybrid, 85-90% for automated). The hybrid method should also demonstrate a significant time saving over the purely manual process [1] [39].
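
The analysis in Step 3 can be sketched in a few lines of Python. This is a minimal illustration, assuming a binary labeling task; the function name `binary_metrics`, the label strings, and the toy label lists are hypothetical and not part of any cited study.

```python
from collections import Counter


def binary_metrics(truth, predicted, positive="pathology"):
    """Compare one method's labels against ground truth for a binary task."""
    if len(truth) != len(predicted) or not truth:
        raise ValueError("truth and predicted must be non-empty and equal in length")
    counts = Counter()
    for t, p in zip(truth, predicted):
        if p == positive:
            counts["tp" if t == positive else "fp"] += 1
        else:
            counts["fn" if t == positive else "tn"] += 1
    tp, fp, fn, tn = (counts[k] for k in ("tp", "fp", "fn", "tn"))
    # Guard against empty denominators (e.g. a method that never predicts positive).
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(truth),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Toy data (hypothetical): ground truth vs. one method's output on 8 images.
ground_truth = ["pathology", "pathology", "normal", "normal",
                "pathology", "normal", "normal", "pathology"]
method_output = ["pathology", "normal", "normal", "normal",
                 "pathology", "pathology", "normal", "pathology"]

metrics = binary_metrics(ground_truth, method_output)
```

Running the same function once per method (manual, automated, hybrid) on the withheld test set yields directly comparable numbers; for multi-class labels, the per-class values would need to be averaged.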

Protocol 2: Scalability and Cost-Efficiency Workflow

This experiment assesses the operational efficiency of each method as the dataset volume increases, a critical factor for large-scale drug discovery projects.

- Objective: To analyze the scaling capabilities and cost structure of each annotation method as data volume grows by an order of magnitude (from a 1,000-point baseline to the full 10,000-point dataset).

- Materials & Dataset: A large, raw dataset of at least 10,000 data points (e.g., cell microscopy images or scientific papers).

- Methodology:

  - Step 1: Baseline Establishment. Use a small subset (1,000 points) to estimate the per-unit time and cost for each method.

  - Step 2: Scaling Simulation. Project the time and cost required to annotate the full 10,000-point dataset for each method. For the manual group, model linear scaling. For the automated group, model a high initial setup time followed by minimal marginal cost. For the hybrid group, model a setup time with sub-linear scaling of human review time.

  - Step 3: Validation. If resources allow, execute a partial scaling run (e.g., on 5,000 points) to validate projections.

- Expected Outcome: The automated method will show the lowest cost and time at large scale, but with potential accuracy trade-offs. The hybrid approach will demonstrate a favorable balance, maintaining high accuracy while being significantly more scalable and cost-effective than the manual approach [6] [39].
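
The scaling simulation in Step 2 can be sketched as a simple cost model. All numbers below are hypothetical placeholders to be replaced by the baseline estimates from Step 1, and expressing sub-linear scaling through an exponent below 1 is an assumption of this sketch, not a method stated in the cited sources.

```python
def project_cost(n_items, setup_cost, per_item_cost, exponent=1.0):
    """Projected cost = fixed setup + per-item cost * n_items ** exponent.

    exponent == 1.0 models linear scaling; exponent < 1.0 models sub-linear scaling.
    """
    return setup_cost + per_item_cost * n_items ** exponent


# Hypothetical parameters (arbitrary cost units), standing in for Step 1 estimates.
METHODS = {
    # Manual: no setup, cost grows linearly with volume.
    "manual": {"setup_cost": 0.0, "per_item_cost": 2.0, "exponent": 1.0},
    # Automated: high initial setup, minimal marginal cost.
    "automated": {"setup_cost": 3000.0, "per_item_cost": 0.01, "exponent": 1.0},
    # Hybrid: setup plus human review time that grows sub-linearly.
    "hybrid": {"setup_cost": 3000.0, "per_item_cost": 1.5, "exponent": 0.9},
}


def project_all(n_items):
    """Return the projected cost of every method for a dataset of n_items."""
    return {name: project_cost(n_items, **params) for name, params in METHODS.items()}


baseline = project_all(1_000)     # Step 1 subset
full_scale = project_all(10_000)  # Step 2 projection
partial = project_all(5_000)      # Step 3 validation run
```

With these placeholder values, manual annotation is cheapest on the 1,000-point subset, while the automated method is cheapest at 10,000 points and the hybrid method falls between the two. Comparing `partial` with the measured cost of the 5,000-point run indicates whether the chosen model fits.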

Visualizing the Hybrid Workflow

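The hybrid approach is not a simple sequential process but an integrated system with a continuous feedback loop: the automated tool pre-annotates the data, expert reviewers correct its output, and the corrected labels guide further adaptation of the model, which gives the workflow its self-improving nature.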

The Scientist's Toolkit: Essential Research Reagent Solutions

For researchers embarking on annotation projects, selecting the right tools is as critical as choosing laboratory reagents. The following table catalogs key platforms that facilitate the hybrid annotation methodology.

Table 3: Key Data Annotation Tools for Research in 2025

| Tool Name | Primary Function | Key Features for Research | Typified Use Case |
|---|---|---|---|
| Encord [9] | Hybrid Annotation Platform | Supports multimodal data (DICOM, geospatial); custom workflows; robust API for integration; production-grade MLOps. | Annotating medical imaging datasets for a pathology detection model. |
| Labelbox [9] | End-to-End Data & Model Management | Active learning prioritization; elastic scalability; comprehensive SDK/API support. | Managing the entire lifecycle of a large-scale cell image classification project. |
| Roboflow [9] | Computer Vision Platform | Simple interface; automatic pre-annotation; easy dataset hosting and export. | Rapidly prototyping and validating a new object detection model on public datasets. |
| T-Rex Label [9] | AI-Assisted Annotation | Out-of-the-box browser operation; state-of-the-art visual prompt models (T-Rex2) for rare objects. | Efficiently annotating dense scenes or rare biological structures in microscopy images. |
| CVAT [9] | Open-Source Annotation Tool | Full control over workflow and data; plugin support; completely free. | Academic research teams with technical expertise needing a customizable, cost-free solution. |

The performance ranges and experimental protocols presented above indicate that the hybrid annotation approach is not merely a compromise but a superior methodology for scientific research and drug development. By integrating human expertise with automated efficiency, it balances the high accuracy required for sensitive domains with the scalability demanded by modern big-data challenges. This synergy creates a continuous learning loop in which automated tools increase throughput and human experts ensure reliability and context, ultimately accelerating the path from raw data to actionable scientific insights.

The global health crisis of antimicrobial resistance (AMR) necessitates advanced tools for rapidly identifying resistance genes in bacterial pathogens. Annotation tools that analyze whole-genome sequencing data are critical for this task, yet their performance varies significantly based on underlying algorithms and databases [40]. This comparative guide evaluates the performance of prominent AMR annotation tools, framing the analysis within a broader research thesis comparing manual curation versus automated annotation accuracy. As AMR prediction increasingly integrates machine learning (ML), establishing benchmark performance for tools that identify known resistance markers is essential [41]. This study provides an objective, data-driven comparison to assist researchers, scientists, and drug development professionals in selecting appropriate tools for specific genomic applications.

Comparative Performance Analysis of AMR Annotation Tools

Tool Selection and Evaluation Framework

This assessment is based on a recent large-scale study analyzing Klebsiella pneumoniae genomes, a pathogen known for its genomic diversity and role in shuttling resistance genes [41]. The study implemented a "minimal model" approach, using machine learning models built exclusively on known resistance determinants from annotation tools to predict binary resistance phenotypes for 20 major antimicrobials [41]. The core methodology involved:

- Data Collection: 18,645 K. pneumoniae samples from the BV-BRC public database were filtered for quality, resulting in 3,751 high-quality genomes with corresponding resistance data for 15 antibiotic classes [41].

- Sample Annotation: Eight commonly used annotation tools were applied: Kleborate, ResFinder, AMRFinderPlus, DeepARG, RGI, SraX, Abricate, and StarAMR [41].

- Machine Learning Modeling: Two ML models (Elastic Net logistic regression and XGBoost) were trained using presence/absence matrices of annotated AMR features to predict resistance phenotypes [41].

- Performance Benchmarking: Model performance was evaluated to identify where known mechanisms fail to explain observed resistance, highlighting knowledge gaps and tool-specific limitations [41].

Quantitative Performance Comparison

The following tables summarize key performance metrics and characteristics derived from the comparative assessment.

Table 1: Performance Metrics of Annotation Tools in AMR Prediction

| Annotation Tool | Primary Database | Key Strengths | Prediction Limitations |
|---|---|---|---|
| AMRFinderPlus | NCBI AMRFinder | Comprehensive coverage of genes and point mutations [41] | Varies by antibiotic; known markers insufficient for some drugs [41] |
| Kleborate | Species-specific | Optimized for K. pneumoniae; concise, less spurious hits [41] | Species-specific; limited to known K. pneumoniae variants [41] |
| ResFinder/PointFinder | ResFinder | Integrated gene and mutation detection; K-mer based rapid analysis [40] | Focuses on acquired genes and specific chromosomal mutations [40] |
| DeepARG | DeepARG | Machine learning-based; predicts novel/low-abundance ARGs [40] | In silico predictions may include unvalidated genes [40] |
| RGI (CARD) | CARD | Rigorous manual curation; ontology-based organization [40] | Limited to experimentally validated genes; slower updates [40] |
| Abricate | Multiple (CARD default) | Supports multiple databases; user-friendly [41] | Cannot detect point mutations with default settings [41] |

Table 2: Database Curation Approaches and Their Impacts

| Database | Curation Approach | Inclusion Criteria | Impact on Annotation Accuracy |
|---|---|---|---|
| CARD | Manual Expert Curation | Experimental validation & peer-review required [40] | High accuracy but potential gaps for emerging genes [40] |
| ResFinder | Manual Curation | Focus on acquired resistance genes [40] | High reliability for known acquired ARGs [40] |
| DeepARG | Automated ML Curation | In silico prediction of ARGs [40] | Broader coverage including novel ARGs, but may contain false positives [40] |
| NDARO/FARME | Consolidated Automated Curation | Integrates multiple data sources [40] | Wide coverage but potential consistency and redundancy issues [40] |

Experimental Protocols for Annotation Tool Assessment

Workflow for Comparative Tool Evaluation

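The experimental workflow for evaluating annotation tool performance, as implemented in the foundational case study, proceeds from genome quality control and phenotype data processing, through annotation with each tool and feature engineering, to machine learning modeling and performance benchmarking [41].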

Database Selection and Curation Pathways

The annotation tools rely on databases with fundamentally different curation philosophies, which significantly impact their performance characteristics.

Detailed Methodological Approach

The core experiment followed a rigorous protocol to ensure comparable results across tools:

Genome Quality Control: Initial K. pneumoniae genomes were filtered to exclude outliers with excessive contigs (>250) or abnormal lengths (<4.9 Mbp or >6.4 Mbp). Species typing was verified using Kleborate, removing 125 samples that matched other Klebsiella species [41].
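
The quality-control thresholds above translate directly into a filter. The following is a minimal sketch: the `Assembly` record and its field names are hypothetical, and the species call is assumed to have been obtained beforehand (for example from Kleborate output) rather than computed here.

```python
from dataclasses import dataclass

# Thresholds as stated in the text.
MAX_CONTIGS = 250
MIN_LENGTH_BP = 4_900_000  # 4.9 Mbp
MAX_LENGTH_BP = 6_400_000  # 6.4 Mbp


@dataclass
class Assembly:
    """Hypothetical summary record for one assembled genome."""
    genome_id: str
    n_contigs: int
    length_bp: int
    species: str  # species call supplied by an external typing step


def passes_qc(assembly, expected_species="Klebsiella pneumoniae"):
    """Keep genomes within the contig and length limits that match the expected species."""
    return (
        assembly.n_contigs <= MAX_CONTIGS
        and MIN_LENGTH_BP <= assembly.length_bp <= MAX_LENGTH_BP
        and assembly.species == expected_species
    )


# Toy records (hypothetical values).
assemblies = [
    Assembly("g1", 120, 5_500_000, "Klebsiella pneumoniae"),
    Assembly("g2", 400, 5_500_000, "Klebsiella pneumoniae"),    # too many contigs
    Assembly("g3", 90, 4_500_000, "Klebsiella pneumoniae"),     # too short
    Assembly("g4", 90, 5_600_000, "Klebsiella variicola"),      # other species
]
kept_ids = [a.genome_id for a in assemblies if passes_qc(a)]
```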

Phenotype Data Processing: Antimicrobial susceptibility testing data for 76 antibiotics were filtered to include only those with data for ≥1800 samples, resulting in 3,751 genomes with reliable phenotype annotations. Binary resistance labels were used as provided by BV-BRC to maintain database consistency [41].
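
The antibiotic-level filter can be illustrated as follows. This is a sketch under assumptions: the record layout `(genome_id, antibiotic, label)` and the encoding of labels as 1 (resistant) and 0 (susceptible) are hypothetical, and the toy example lowers the threshold so that it fits on a few lines.

```python
from collections import defaultdict

MIN_SAMPLES = 1800  # threshold stated in the text


def antibiotics_with_enough_data(records, min_samples=MIN_SAMPLES):
    """Return the antibiotics tested on at least min_samples distinct genomes."""
    genomes_per_drug = defaultdict(set)
    for genome_id, antibiotic, _label in records:
        genomes_per_drug[antibiotic].add(genome_id)
    return {drug for drug, genomes in genomes_per_drug.items()
            if len(genomes) >= min_samples}


def filter_records(records, min_samples=MIN_SAMPLES):
    """Drop phenotype records for antibiotics below the sample threshold."""
    keep = antibiotics_with_enough_data(records, min_samples)
    return [record for record in records if record[1] in keep]


# Toy records (hypothetical): label 1 = resistant, 0 = susceptible.
toy_records = [
    ("g1", "meropenem", 1),
    ("g2", "meropenem", 0),
    ("g3", "meropenem", 1),
    ("g1", "colistin", 0),
]
kept_records = filter_records(toy_records, min_samples=3)
```

The binary labels are passed through unchanged, mirroring the decision to use them as provided by BV-BRC.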

Feature Engineering: Positive identifications of resistance genes/variants were formatted into a presence/absence matrix $X \in \{0,1\}^{p \times n}$, where the $p$ features represented unique AMR determinants and the $n$ samples represented genomes. For antibiotics tested as combination therapies, gene sets were combined (e.g., amoxicillin-tetracycline included both amoxicillin and tetracycline gene sets) [41].
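
A presence/absence matrix of this kind can be built from per-genome hit sets as sketched below. The input layout, the function names, and the gene names in the toy data are illustrative assumptions; rows correspond to features and columns to genomes, matching the feature-by-sample orientation described above.

```python
def presence_absence_matrix(hits):
    """Build a binary feature-by-genome matrix.

    hits maps genome_id -> set of AMR determinants reported by one annotation tool.
    Returns (features, genomes, matrix) with matrix[i][j] == 1 when
    features[i] was detected in genomes[j].
    """
    genomes = sorted(hits)
    features = sorted(set().union(*hits.values()))
    matrix = [[1 if feature in hits[genome] else 0 for genome in genomes]
              for feature in features]
    return features, genomes, matrix


def combined_gene_set(gene_sets, components):
    """Union of the gene sets of each component of a combination therapy."""
    return set().union(*(gene_sets[c] for c in components))


# Toy annotation output (hypothetical hits for three genomes).
toy_hits = {
    "g1": {"blaKPC-2", "tet(A)"},
    "g2": {"blaSHV-1"},
    "g3": set(),
}
features, genomes, matrix = presence_absence_matrix(toy_hits)

# Toy per-antibiotic gene sets (hypothetical) merged for a combination.
toy_gene_sets = {"drug_a": {"blaSHV-1"}, "drug_b": {"tet(A)"}}
combo = combined_gene_set(toy_gene_sets, ["drug_a", "drug_b"])
```

Genomes with no hits, such as `g3`, are kept as all-zero columns so that the models also see samples in which no known determinant was found.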

Machine Learning Implementation: The dataset was split 70/30 for training and testing. Models were evaluated on their ability to predict resistance phenotypes using only the known AMR markers identified by each annotation tool, creating a "minimal model" baseline for assessing the sufficiency of current knowledge [41].
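
The 70/30 split can be reproduced with the standard library alone. This sketch assumes a simple seeded random shuffle; the text does not state whether the original study stratified the split by resistance label, and the function name and seed are hypothetical.

```python
import random


def train_test_split_ids(genome_ids, train_fraction=0.7, seed=0):
    """Shuffle genome IDs reproducibly and split them into train and test lists."""
    ids = sorted(genome_ids)            # fixed starting order for reproducibility
    random.Random(seed).shuffle(ids)    # local RNG, does not touch global state
    cut = round(len(ids) * train_fraction)
    return ids[:cut], ids[cut:]


# Toy example with ten hypothetical genome IDs.
toy_ids = [f"g{i}" for i in range(10)]
train_ids, test_ids = train_test_split_ids(toy_ids)
```

Splitting once on genome IDs and reusing the same partition for every annotation tool keeps the comparison fair, because each tool's model is then trained and tested on identical genomes.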

Table 3: Essential Research Resources for AMR Annotation Studies

| Resource Category | Specific Tools/Databases | Primary Function in AMR Research |
|---|---|---|
| Manual Curation Databases | CARD [40], ResFinder/PointFinder [40], MEGARes [40] | Provide rigorously validated reference data for known resistance determinants with high reliability. |
| Automated/ML Databases | DeepARG [40], NDARO [40], SARG [40] | Enable discovery of novel resistance genes and broader resistome characterization through computational prediction. |
| Species-Specialized Tools | Kleborate [41], TBProfiler [41], Mykrobe [41] | Offer optimized detection for specific pathogens, reducing spurious annotations in targeted studies. |
| General Annotation Tools | AMRFinderPlus [41], Abricate [41], RGI [41] | Provide flexible, multi-organism annotation capabilities using various database backends. |
| Analysis & Validation Tools | BV-BRC [41], BUSCO [42], Proteomics (NP10 metric) [42] | Support genome quality assessment, data integration, and experimental validation of genomic predictions. |