Mastering scGPT Hyperparameter Tuning: A Practical Guide for Enhanced Single-Cell Analysis

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with advanced strategies for hyperparameter optimization when fine-tuning scGPT, a foundational generative AI model for single-cell transcriptomics. From foundational concepts to practical applications, we explore parameter-efficient fine-tuning (PEFT) techniques that can reduce trainable parameters by up to 90% while improving performance on tasks such as cell type annotation and perturbation prediction. The article delivers actionable methodologies for optimizing learning rates, batch sizes, and adapter configurations. It also covers troubleshooting of common pitfalls and validation frameworks for benchmarking model performance against biological baselines. By implementing these tuning protocols, researchers can achieve state-of-the-art results, such as an F1-score of 99.5% in cell type classification, while maintaining computational efficiency and biological interpretability in their single-cell analyses.

Understanding scGPT Architecture and the Critical Role of Hyperparameters

Demystifying scGPT's Transformer Architecture for Single-Cell Biology

Core Architecture & Technical Specifications

scGPT is a foundation model based on a generative pre-trained transformer (GPT) architecture, specifically designed for single-cell multi-omics data [1]. The table below summarizes its core architectural parameters.

Table 1: scGPT Model Architecture Specifications

| Component | Specification | Function |
|---|---|---|
| Embedding Size | 512 | Dimension of the vector representing each gene token. |
| Transformer Blocks | 12 | Number of sequential transformer layers. |
| Attention Heads | 8 per block | Parallel attention mechanisms per transformer block. |
| Total Parameters | 53 million | Total number of trainable weights in the model. |

Tokenization: How scGPT "Reads" a Cell

A fundamental challenge in applying transformers to biology is that gene expression data is not naturally sequential. scGPT overcomes this by treating a cell's gene expression profile like a "sentence". The process is outlined in the diagram below.

Tokenization Workflow for scGPT

- Gene Tokens: Each gene is treated as a distinct token and assigned a unique identifier [1]. The initial input is a raw cell-by-gene count matrix [1].

- Value Binning: A value binning technique converts continuous expression counts into discrete, relative values for the model to process [1].

- Positional Information: Because gene order is arbitrary, many single-cell foundation models rank genes by their expression levels within each cell to create a deterministic sequence. They then add positional encodings to inform the model of this order [2] [3].

- Special Tokens: The model can incorporate special "condition tokens" that encompass meta-information, such as functional pathways (pathway tokens) or details from perturbation experiments (perturbation tokens) [1].
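To make the value-binning step concrete, here is a minimal sketch of per-cell relative binning. It rests on two assumptions:

- Zero counts stay in bin 0.
- Each non-zero value goes into one of `n_bins - 1` bins according to its quantile rank among that cell's non-zero values.

This follows the idea described above but is not scGPT's own implementation. The function names and the toy vocabulary are hypothetical.

```python
from bisect import bisect_left


def bin_cell_expression(counts, n_bins=51):
    """Convert one cell's expression values into relative, per-cell bin indices.

    Zeros map to bin 0. Each non-zero value maps to a bin in 1..n_bins-1 based on
    the fraction of this cell's non-zero values that are strictly smaller than it,
    so the same raw count can land in different bins in different cells.
    """
    nonzero = sorted(v for v in counts if v > 0)
    n_nonzero = len(nonzero)
    binned = []
    for v in counts:
        if v <= 0:
            binned.append(0)
        else:
            quantile = bisect_left(nonzero, v) / n_nonzero  # always in [0, 1)
            binned.append(1 + int(quantile * (n_bins - 1)))
    return binned


def tokenize_cell(gene_names, counts, vocab, n_bins=51):
    """Pair each gene's integer token id with its binned expression value."""
    gene_ids = [vocab[name] for name in gene_names]
    return gene_ids, bin_cell_expression(counts, n_bins)


# Toy example with a hypothetical six-gene vocabulary.
vocab = {"CD3E": 0, "CD8A": 1, "MS4A1": 2, "LYZ": 3, "NKG7": 4, "GNLY": 5}
genes = ["CD3E", "CD8A", "MS4A1", "LYZ", "NKG7", "GNLY"]
counts = [0, 5, 1, 10, 0, 5]
gene_ids, value_bins = tokenize_cell(genes, counts, vocab, n_bins=5)
print(gene_ids)    # [0, 1, 2, 3, 4, 5]
print(value_bins)  # [0, 2, 1, 4, 0, 2]
```

Because the bins are relative to each cell, a scheme like this is less sensitive to differences in overall sequencing depth between cells than binning on absolute counts.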

Frequently Asked Questions (FAQs)

Q1: When should I use scGPT in zero-shot mode versus fine-tuning it for my specific task? Your choice depends on your goal and data. The decision framework below illustrates the optimal path for different scenarios.

Decision Framework for scGPT Operating Modes

- Zero-Shot (Pre-trained only): You apply the foundation model directly to your data without any additional training [4].

- Task-Specific Fine-Tuning: You start with the pre-trained model and further train it on a small, labeled subset of your own data [4].

Q2: What are the key hyperparameters for fine-tuning scGPT, and what are their recommended values? The original research provides a set of default hyperparameters that serve as a strong starting point for fine-tuning.

Table 2: Key Fine-Tuning Hyperparameters for scGPT

| Hyperparameter | Recommended Value | Description |
|---|---|---|
| Initial Learning Rate | 0.0001 | The starting step size for weight updates during fine-tuning. Decays by 10% after each epoch [1]. |
| Batch Size | 512 | The number of cells processed before the model's internal parameters are updated [1]. |
| Number of Epochs | 30 | The number of complete passes through the fine-tuning dataset for most tasks [1]. |
| Mask Ratio | 0.4 | The fraction of gene tokens randomly masked (hidden) during training for the model to predict [1]. |
| Train/Evaluation Split | 90%/10% | The recommended split of your labeled data for training and validation [1]. |

Q3: I'm encountering an issue installing the flash-attn dependency. How can I resolve this?

This is a common issue due to specific hardware and software requirements.

- Solution: The `flash-attn` dependency often requires a specific GPU and CUDA version. The scGPT GitHub repository recommends using CUDA 11.7 and installing `flash-attn<1.0.5` [5]. If problems persist, consult the official `flash-attn` repository for detailed installation instructions.

Q4: How does scGPT's performance compare to using general-purpose LLMs like GPT-4 for cell type annotation? Both approaches are viable but have different strengths and limitations.

- scGPT: A specialized foundation model trained directly on single-cell data. It is designed for a wide range of tasks beyond annotation, including batch correction, multi-omic integration, and perturbation prediction [1] [6].

- GPT-4 (via tools like GPTCelltype): A general-purpose LLM that can be prompted with marker gene lists to annotate cell types, showing strong concordance with manual expert annotations [7] [8]. It requires no training on single-cell data but relies on high-quality marker genes.

Practical Tip: These models can be complementary. You can use GPT-4 to sanity-check scGPT's predictions or to label clusters that scGPT flags as "unknown." This ensemble approach can improve accuracy for borderline cases [4].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools

| Item | Function / Explanation |
|---|---|
| CZ CELLxGENE Discover Census Data | The primary source of non-spatial RNA sequencing data used to pre-train scGPT, providing a massive, diverse corpus of single-cell data [1]. |
| Pre-trained Model Checkpoints | The starting weights of the model, pre-trained on over 33 million cells. Essential for transfer learning to avoid training from scratch [5] [9]. |
| Highly Variable Genes (HVGs) | A subset of genes (e.g., top 2,000-3,000) that exhibit the highest cell-to-cell variation, used as input tokens to reduce noise and computational load [4] [9]. |
| Marker Gene Lists | Curated sets of genes known to be specifically expressed in particular cell types. Used for prompting LLMs like GPT-4 and for validating model predictions [8]. |
| GPU (e.g., A100) | Essential hardware for efficient fine-tuning, significantly reducing the time required for model training compared to CPUs [4]. |

Frequently Asked Questions (FAQs)

Q1: Why can't I just use the default hyperparameters in scGPT for my single-cell analysis?

Default hyperparameters provide a starting point, but they are a one-size-fits-all solution. Your dataset has its own characteristics, such as the number of cells, sequencing depth, and the biological question, and default settings are not designed to address them. Proper tuning adapts the model to the specific noise, sparsity, and batch effects in your data. This is crucial for generating biologically relevant insights rather than merely computational outputs [2] [10]. A tuned model can improve task performance by 10–20% or more. In a biological context, that could mean the difference between accurately identifying a rare cell type and missing it entirely [11].

Q2: My fine-tuned scGPT model performs perfectly on training data but fails on new data. What went wrong?

This is a classic sign of overfitting. Your model has likely learned the noise and specific patterns in your training dataset too well, including its technical artifacts, and has lost the generalizable knowledge it gained during its foundation model pretraining. [12] To prevent this:

- Use a validation set: Always monitor your loss metrics on a validation set that is not used for training. [12]

- Implement early stopping: Halt training when the validation loss stops improving and begins to increase. [13] [12]

- Apply regularization: Techniques like dropout and weight decay (L2 regularization) can prevent the model from becoming over-reliant on specific nodes or features in your training data [14] [12].
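A minimal, framework-agnostic sketch of the early-stopping rule follows. The `patience` and `min_delta` names are our own choices, not scGPT settings. Weight decay, by contrast, is usually passed directly to the optimizer, for example through the `weight_decay` argument of PyTorch's `torch.optim.AdamW`.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs in a row."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta          # minimum decrease that counts as an improvement
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


# Toy validation curve: improves until epoch 3, then starts to rise (overfitting).
val_losses = [0.90, 0.70, 0.62, 0.61, 0.63, 0.66, 0.70]
stopper = EarlyStopping(patience=3)
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        break
print(epoch, stopper.best_epoch)  # 6 3
```

In a real run, you would also save a checkpoint whenever `best_loss` improves and restore that checkpoint after stopping.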

Q3: I have limited computational resources. Which hyperparameters should I prioritize tuning for scGPT?

Focus on the hyperparameters with the highest impact on model performance and training dynamics. Based on benchmarks from large-scale tuning, the following are most critical [13] [14]:

- Learning Rate: Directly controls the speed and stability of learning. This is the most important parameter to get right.

- Batch Size: Influences the stability of gradient estimates and the model's ability to generalize.

- Dropout Rate: Key for preventing overfitting, especially with smaller datasets.

You can use efficient search methods like Bayesian Optimization, which can find optimal configurations with far fewer trials than traditional grid or random search. [13]

Q4: What does "catastrophic forgetting" mean in the context of fine-tuning scGPT?

Catastrophic forgetting occurs when the process of fine-tuning on your new, specific dataset causes the model to overwrite and lose the broad, general biological knowledge it learned during its large-scale pretraining on millions of cells. [12] The model might become an expert on your small dataset but fail at basic tasks it could previously handle. To retain this valuable pretrained knowledge, consider using Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA (Low-Rank Adaptation), which freeze the core model weights and only train small adapter modules, thus preserving the original capabilities. [15] [12]
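LoRA's parameter savings follow from simple arithmetic. LoRA leaves a frozen weight matrix W (d_out × d_in) untouched and learns a low-rank update ΔW = B·A, where B is d_out × r and A is r × d_in. The sketch below counts the trainable weights for one layer.

Two values are assumptions made for illustration. The 512 × 512 layer size matches the embedding size in Table 1. The rank of 8 is not a recommendation from the sources.

```python
def lora_trainable_fraction(d_in, d_out, rank):
    """Share of a linear layer's weights that a LoRA adapter trains.

    Full fine-tuning updates all d_out * d_in weights. LoRA freezes them and
    trains B (d_out x rank) and A (rank x d_in), i.e. rank * (d_in + d_out) weights.
    Bias terms are ignored for simplicity.
    """
    full = d_in * d_out
    lora = rank * (d_in + d_out)
    return lora / full


# Assumed 512 x 512 projection with an illustrative rank of 8.
fraction = lora_trainable_fraction(512, 512, rank=8)
print(fraction)  # 0.03125, i.e. about 3% of this layer's weights
```

If adapters are added to only some modules and the rest of the network stays frozen, the overall trainable share is lower still. The exact reduction depends on which modules receive adapters.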

Troubleshooting Guides

Issue: Poor Cell Type Annotation Accuracy

Symptoms:

- Low F1 score or accuracy on cell type classification tasks.

- Model consistently misclassifies specific or rare cell populations.

- Visualization (e.g., UMAP) of cell embeddings shows poor separation of known cell types.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Check | Recommended Solution |
|---|---|---|
| Suboptimal Learning Rate | Plot the training and validation loss. A wildly fluctuating or stagnating loss curve suggests an inappropriate learning rate. | Tune the learning rate using a log-uniform range (e.g., 1e-5 to 1e-2). Employ a learning rate scheduler with warmup [13] [14]. |
| Overfitting | Compare training vs. validation accuracy. A large gap indicates overfitting. | Increase the dropout rate and/or weight decay. Implement early stopping based on validation performance [14] [12]. |
| Inadequate Model Capacity | Model performance plateaus despite extended training. | If resources allow, increase the model's hidden dimension size or the number of transformer layers [14]. Note that changing the backbone's dimensions or depth means the pre-trained weights can no longer be loaded unchanged. |

Verification: After applying these tuning steps, retrain the model and evaluate on a held-out test set. A successful tune will show improved and more consistent separation of cell types in the embedding space. [10]
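The learning-rate row of the table above recommends a scheduler with warmup. Such a schedule can be written as a plain function of the training step. This is a minimal sketch that assumes linear warmup followed by cosine decay. The default `peak_lr` of 1e-4 echoes Table 2, and `warmup_steps` is an illustrative value, not an scGPT default.

```python
import math


def warmup_cosine_lr(step, total_steps, peak_lr=1e-4, warmup_steps=100, min_lr=0.0):
    """Learning rate at a given optimizer step: linear warmup, then cosine decay.

    During the first warmup_steps steps the rate rises linearly to peak_lr; it then
    follows half a cosine wave down to min_lr, which is reached at total_steps.
    """
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


schedule = [warmup_cosine_lr(step, total_steps=1000) for step in range(1000)]
print(f"peak {max(schedule):.1e}, last {schedule[-1]:.1e}")
```

In PyTorch, a function like this can drive `torch.optim.lr_scheduler.LambdaLR` if it is rewritten to return a multiplier, that is, the value divided by `peak_lr`.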

Issue: Unstable or Diverging Training Loss

Symptoms:

- Training loss becomes `NaN` (Not a Number).

- Loss values increase dramatically instead of decreasing.

- Wild oscillations in the loss curve.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Check | Recommended Solution |
|---|---|---|
| Learning Rate Too High | This is the most common cause. Check the initial loss values; divergence often happens in the first few steps. | Drastically reduce the learning rate. Use a learning rate finder tool if available. Introduce gradient clipping to cap the size of parameter updates [14] [12]. |
| Improper Data Preprocessing | Check the distribution of your input data. Extreme values can destabilize training. | Ensure gene expression values are properly normalized. Consider scaling or binning expression values as done in models like scBERT and scGPT [2]. |
| Gradient Explosion | Monitor gradient norms during training. A sudden spike indicates an explosion. | Implement gradient clipping. Review and adjust the weight initialization strategy [14]. |
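Gradient clipping by global norm, as recommended in the table, rescales all gradients by a common factor so their combined L2 norm never exceeds a threshold. The sketch below uses plain Python lists for clarity. The threshold of 1.0 is an illustrative choice.

In PyTorch, the equivalent call is `torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm)`. It also returns the pre-clipping norm, which is worth logging to spot explosions.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0):
    """Scale all gradient vectors by a common factor so their joint L2 norm is <= max_norm.

    grads is a list of per-parameter gradient vectors (lists of floats). Returns the
    possibly rescaled gradients and the global norm measured before clipping.
    """
    total_norm = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total_norm > max_norm:
        scale = max_norm / total_norm
        grads = [[g * scale for g in vec] for vec in grads]
    return grads, total_norm


clipped, norm_before = clip_by_global_norm([[3.0], [4.0]], max_norm=1.0)
print(norm_before)  # 5.0
print(clipped)      # direction preserved, joint norm now 1.0
```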

Issue: Long Training Times with Minimal Performance Gain

Symptoms:

- Training is slow per epoch.

- Performance (e.g., accuracy) improves very slowly or not at all.

- Computational costs are becoming prohibitive.

Diagnosis and Solutions:

| Possible Cause | Diagnostic Check | Recommended Solution |
|---|---|---|
| Inefficient Hyperparameter Search | You are using Grid Search over a large parameter space. | Switch to Bayesian Optimization or Random Search. These methods find good parameters with far fewer trials [13] [16]. |
| Ineffective Early Stopping | Training runs for the full number of epochs every time, even when no progress is made. | Implement a robust early stopping callback that halts training when validation performance plateaus [13]. |
| Untuned Batch Size | Training is slow because the batch size is too small for your hardware. | Find the maximum batch size that fits your GPU memory, and use it with a correspondingly adjusted learning rate. Consider gradient accumulation to simulate a larger batch size [12]. |
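The batch-size row above can be turned into a small planning helper. It assumes the linear scaling heuristic, in which the learning rate grows in proportion to the effective batch size. That heuristic is a common rule of thumb rather than an scGPT recommendation. The function and argument names are hypothetical.

```python
def accumulation_plan(target_batch, micro_batch, base_lr, base_batch):
    """Return (accumulation steps, scaled learning rate) for a target effective batch size.

    micro_batch is the largest batch that fits in GPU memory. Gradients from
    accumulation_steps micro-batches are summed before each optimizer step, so each
    micro-batch loss should be divided by accumulation_steps before backpropagation.
    """
    if target_batch % micro_batch != 0:
        raise ValueError("target_batch must be a multiple of micro_batch")
    accumulation_steps = target_batch // micro_batch
    # Linear scaling heuristic: learning rate proportional to effective batch size.
    scaled_lr = base_lr * target_batch / base_batch
    return accumulation_steps, scaled_lr


# Reproduce Table 2's batch size of 512 (lr = 1e-4) on a GPU that fits only 16 cells.
print(accumulation_plan(target_batch=512, micro_batch=16, base_lr=1e-4, base_batch=512))
# Half the effective batch, half the learning rate under linear scaling.
print(accumulation_plan(target_batch=256, micro_batch=16, base_lr=1e-4, base_batch=512))
```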

The Researcher's Toolkit: Hyperparameter Tuning Solutions

The following table summarizes key software and methodological "reagents" for a successful scGPT fine-tuning experiment.

| Tool / Method | Function | Use Case in scGPT Fine-Tuning |
|---|---|---|
| Ray Tune with BoTorch [13] | A scalable framework for distributed hyperparameter tuning using Bayesian Optimization. | Ideal for tuning a large number of parameters (e.g., learning rate, layers, dropout) across multiple GPUs. |
| Low-Rank Adaptation (LoRA) [15] [12] | A parameter-efficient fine-tuning method that freezes the base model and trains only small rank-decomposition matrices. | Dramatically reduces compute cost and memory usage for fine-tuning, while helping to prevent catastrophic forgetting. |
| Learning Rate Scheduler [14] | Dynamically adjusts the learning rate during training according to a predefined rule (e.g., cosine decay). | Helps refine learning in later stages of training, leading to better convergence and higher accuracy. |
| Scikit-Optimize [13] | A simple library for performing Bayesian Optimization. | A good starting point for smaller-scale tuning on a single machine. |
| Optuna [16] | An auto-ML framework that features efficient sampling and pruning algorithms. | Useful for defining complex search spaces and automatically pruning unpromising trials early. |

Experimental Protocol: A Standard Workflow for scGPT Hyperparameter Tuning

This protocol outlines a systematic approach to hyperparameter tuning for scGPT, drawing from best practices in the field. [13] [14] [12]

Objective: To optimize scGPT's performance on a specific downstream task (e.g., cell type annotation) for a novel single-cell RNA sequencing dataset.

Workflow Overview:

Step-by-Step Procedure:

Data Preparation:

- Partition your single-cell dataset (AnnData object) into three subsets: Training (~70%), Validation (~15%), and a held-out Test set (~15%). [12]

- Perform standard preprocessing (quality control, normalization) on the training set and apply the same parameters to the validation and test sets to avoid data leakage.
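A minimal sketch of the 70/15/15 split follows, using a seeded shuffle. It assumes cells are indexed 0..n-1 in the same order as the rows of your AnnData object, so that `adata[train_idx]` selects the training cells. The function name is hypothetical.

For imbalanced cell types, a stratified split preserves class proportions better, for example scikit-learn's `train_test_split` with its `stratify` argument.

```python
import random


def split_indices(n_cells, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle cell indices with a fixed seed and cut them into train/validation/test lists."""
    indices = list(range(n_cells))
    random.Random(seed).shuffle(indices)
    n_train = round(n_cells * train_frac)
    n_val = round(n_cells * val_frac)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # the remaining ~15%
    return train, val, test


train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))  # 700 150 150
```

Fit any data-dependent preprocessing choices (for example, HVG selection) on `train_idx` only, then apply them unchanged to the other two subsets.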

Establish Baseline Performance:

- Fine-tune scGPT using its default hyperparameter configuration.

- Evaluate its performance on the validation set. Record the key metric (e.g., annotation accuracy). This is your baseline for measuring improvement.

Select a Tuning Method and Define the Search Space:

- For efficiency, select a Bayesian Optimization framework like Ray Tune. [13]

- Define the search space for the most impactful hyperparameters based on the toolkit and FAQs. Example search space in Python code:
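A framework-agnostic sketch of such a search space is shown below. The ranges are assumptions. The learning-rate bounds reuse the log-uniform range from the troubleshooting table (1e-5 to 1e-2), and the other ranges are illustrative choices around the defaults quoted in this guide.

In Ray Tune, the same space would typically be expressed with `tune.loguniform`, `tune.uniform`, and `tune.choice`. The plain-Python sampler here only shows what those primitives do.

```python
import math
import random

# Hypothetical search space: name -> (sampler type, arguments).
SEARCH_SPACE = {
    "lr": ("loguniform", 1e-5, 1e-2),        # every decade is equally likely
    "batch_size": ("choice", [16, 32, 64]),
    "dropout": ("uniform", 0.0, 0.3),
    "mask_ratio": ("choice", [0.25, 0.4, 0.5]),
}


def sample_config(space, rng):
    """Draw one hyperparameter configuration from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "loguniform":
            low, high = spec[1], spec[2]
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        elif kind == "uniform":
            config[name] = rng.uniform(spec[1], spec[2])
        elif kind == "choice":
            config[name] = rng.choice(spec[1])
        else:
            raise ValueError(f"unknown sampler type: {kind}")
    return config


rng = random.Random(42)
for _ in range(5):
    print(sample_config(SEARCH_SPACE, rng))
```

A Bayesian optimizer differs from this random sampler in how it picks the next configuration. Instead of sampling each trial independently, it uses the scores of earlier trials to propose promising regions.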

Execute Tuning Trials:

- Launch the tuning job. The optimizer will select hyperparameter combinations, train the model, and evaluate it on the validation set.

- Utilize early stopping in each trial to terminate underperforming runs early, saving significant compute resources. [13]

Final Evaluation:

- Once the tuning process is complete, select the hyperparameter set that achieved the best performance on the validation set.

- Perform a final evaluation by training a model with these optimal hyperparameters on the combined training and validation set, and then assessing it on the held-out test set. This provides an unbiased estimate of its real-world performance. [12]

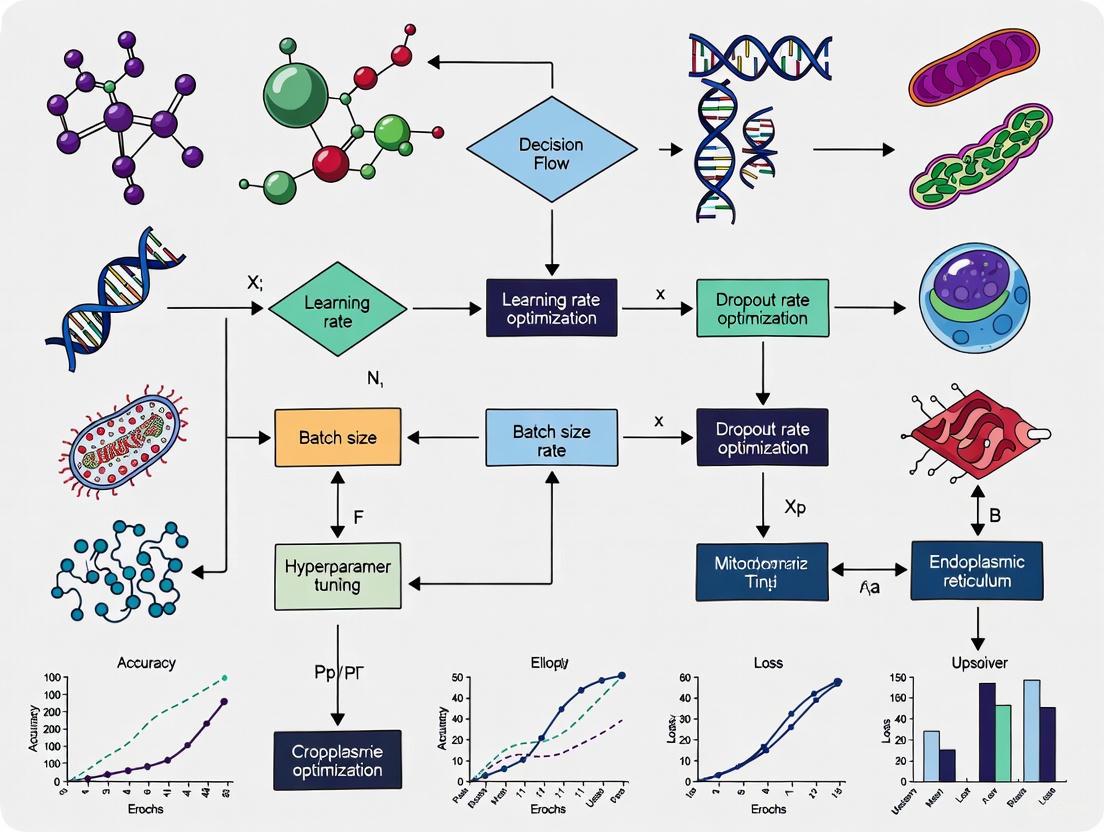

Decision Framework: Choosing a Tuning Strategy

Use this flowchart to determine the most efficient hyperparameter tuning strategy for your project's constraints. The process balances computational resources against desired performance gains. [13] [14] [16]

Frequently Asked Questions

Q: What are the foundational steps for fine-tuning scGPT? A: The fine-tuning process builds upon a model pre-trained on 33 million human cells. A standard workflow involves data preprocessing (normalization, binning, HVG selection), followed by model training for a specific downstream task. The pre-trained model is then adapted using your dataset over a set number of epochs with a defined learning rate and batch size [17] [18] [19].

Q: My fine-tuned model for perturbation prediction produces nearly identical outputs for different conditions. What is wrong? A: This is a known issue in which predictions for different perturbations are almost indistinguishable, with a Pearson correlation of ~0.99 between them [20]. Potential causes and solutions include:

- Hyperparameters: Review and adjust your mask ratio and learning rate. A typical mask ratio used during fine-tuning is 0.4 [21].

- Batch Labels: Ensure that `use_batch_labels = True` is correctly set in your configuration if your model requires this information, as a missing `batch_labels` parameter can cause errors [21].

- Task Setup: Verify that the model's objective (e.g., `CLS` for classification) is correctly enabled for your specific task [21].
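To check for the collapse described above, you can measure how similar the predicted profiles are across perturbations. This minimal sketch computes the mean pairwise Pearson correlation.

Raw profiles are dominated by baseline expression, so they correlate highly even when a model is working. For that reason the sketch also supports correlating the predicted changes relative to a control profile, which is the more informative check. Profiles are plain lists here, and the names and values are hypothetical.

```python
import itertools
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (NaN if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return float("nan")
    return cov / (sd_x * sd_y)


def mean_pairwise_correlation(predictions, control=None):
    """Average Pearson r over all pairs of predicted profiles.

    predictions maps perturbation name -> predicted expression profile.
    If control is given, profiles are first converted to changes versus control.
    """
    profiles = list(predictions.values())
    if control is not None:
        profiles = [[p - c for p, c in zip(profile, control)] for profile in profiles]
    pairs = list(itertools.combinations(profiles, 2))
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


control = [5.0, 3.0, 1.0]
predictions = {"pert_A": [6.0, 3.0, 1.0], "pert_B": [5.0, 4.0, 1.0]}
print(mean_pairwise_correlation(predictions))           # about 0.92 on raw profiles
print(mean_pairwise_correlation(predictions, control))  # about -0.5 on predicted changes
```

Values near 0.99 on the predicted changes, not just on the raw profiles, are the real warning sign.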

Q: For cell-type annotation, when should I use zero-shot versus fine-tuned scGPT? A: The choice depends on your data and accuracy requirements [4].

- Zero-Shot (Pre-trained only): Best for rapid exploration when you have no labeled reference data. It is instant and requires no GPU but may miss rare cell populations.

- Fine-Tuned: Essential for publication-quality or clinical-grade labels. It requires a GPU and a labeled subset of your data (typically 5-10 epochs taking ~20 minutes on a single A100 GPU) but can boost accuracy by 10-25 percentage points on complex datasets [4].

Q: How do I set the number of highly variable genes (HVGs) for fine-tuning?

A: The number of HVGs is a critical hyperparameter. Common values found in protocols are 1,200 or 4,000 genes [21]. The max_seq_len parameter should be set to your n_hvg value plus one [21].

Q: What is a good starting point for key hyperparameters? A: Based on published protocols and discussions, you can use the values in the table below as a starting point for your experiments.

| Hyperparameter | Suggested Starting Value | Context & Notes |
|---|---|---|
| Learning Rate (`lr`) | 1e-3 | Common value used for fine-tuning [21]. |
| Batch Size | 16 | Used in multi-omic and annotation fine-tuning [21]. |
| Epochs | 25-50 | 25 epochs used in tutorials [21]; ~20 minutes for 5-10 epochs on an A100 GPU [4]. |
| Mask Ratio | 0.4 | Ratio of input values masked for generative training [21]. |
| Number of HVGs (`n_hvg`) | 1200, 4000 | Defines the sequence length of gene tokens [21]. |
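The starting values above can be collected into a single configuration. This is a hypothetical sketch: its key names mirror the identifiers used in this guide, not a verified scGPT configuration schema.

The sketch also derives `max_seq_len` from `n_hvg` using the `n_hvg + 1` rule given earlier. We assume, without confirmation from the sources, that the extra position is reserved for the special `<cls>` token.

```python
def starting_config(n_hvg=1200):
    """Starting-point fine-tuning settings from the table above (hypothetical key names)."""
    return {
        "lr": 1e-3,
        "batch_size": 16,
        "epochs": 25,
        "mask_ratio": 0.4,
        "n_hvg": n_hvg,
        "max_seq_len": n_hvg + 1,  # one extra position, assumed to hold the <cls> token
    }


config = starting_config(n_hvg=4000)
print(config["max_seq_len"])  # 4001
```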

Troubleshooting Guides

Issue 1: Handling a `batch_labels is None` Error During Fine-Tuning

This error occurs during training when the model expects batch information but cannot find it.

- Error Message: Training fails with an error indicating that `batch_labels` is `None`.

- Solution:

- Verify Hyperparameter: In your configuration, ensure that `use_batch_labels = True` is set [21].

- Check Data Processing: Confirm that your data preprocessing pipeline correctly identifies and includes the batch labels from your metadata. The error indicates that the variable was defined but is not being passed correctly to the model during the training step [21].

Issue 2: Poor Performance on Genetic Perturbation Prediction Tasks

Recent independent benchmarks have shown that foundation models, including scGPT, can struggle to outperform simple baseline models on perturbation prediction tasks [22] [23].

- Problem: The fine-tuned model fails to predict distinct transcriptome changes after single or double genetic perturbations more accurately than a simple baseline (e.g., an additive model of individual effects or just predicting the mean of the training data) [22] [23].

- Diagnosis Steps:

- Benchmark with Baselines: Always compare your model's performance against simple baselines, such as the "no change" model (predicts control expression) or the "additive" model (sums individual logarithmic fold changes) [22].

- Examine Predictions: Check if your model's predictions for different perturbations are overly similar, a known issue where the Pearson correlation between predicted profiles is unnaturally high (e.g., ~0.99) [20].

- Mitigation Strategies:

- Manage Expectations: Be aware that predicting unseen perturbations is still an open challenge, and current models may not generalize well in this setting [22].

- Leverage Embeddings: Consider using the gene embeddings learned by scGPT during pre-training as features for a simpler model, like a Random Forest. One study found that a Random Forest using scGPT's embeddings sometimes outperformed the fine-tuned scGPT model itself [23].

- Incorporate Prior Knowledge: Simple models using features from Gene Ontology (GO) have been shown to outperform foundation models on these tasks. Exploring hybrid approaches may be beneficial [23].
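The two simple baselines mentioned above take only a few lines. The sketch assumes all profiles are on a log scale (for example, log-transformed normalized expression averaged over cells), so a log fold change is a plain difference from control. The function names and toy values are hypothetical.

```python
def no_change_baseline(control):
    """Predict that the perturbation leaves expression at control levels."""
    return list(control)


def additive_baseline(control, single_a, single_b):
    """Predict a double perturbation by summing the two single-perturbation log fold changes."""
    return [c + (a - c) + (b - c) for c, a, b in zip(control, single_a, single_b)]


def mse(pred, truth):
    """Mean squared error between two profiles."""
    return sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth)


control = [1.0, 2.0, 3.0]
single_a = [2.0, 2.0, 3.0]     # perturbation A raises gene 1
single_b = [1.0, 1.0, 3.0]     # perturbation B lowers gene 2
observed_ab = [2.0, 1.0, 2.5]  # toy measured double perturbation

additive = additive_baseline(control, single_a, single_b)
print(additive)                                        # [2.0, 1.0, 3.0]
print(mse(no_change_baseline(control), observed_ab))   # 0.75
print(mse(additive, observed_ab))                      # about 0.083
```

A fine-tuned model adds value only if its error on held-out perturbations is clearly below both baselines.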

Issue 3: Fine-Tuned Model Fails to Generalize or Shows Low Accuracy

This can happen if the model overfits to the training data or the hyperparameters are suboptimal.

- Problem: The model performs well on training data but poorly on held-out test data or new samples.

- Solution:

- Regularization: Utilize the `dropout` hyperparameter. A common default value is 0.2 [21].

- Learning Rate Scheduling: Use the `schedule_ratio` parameter (e.g., 0.95) to decay the learning rate, which can help the model converge to a better solution [21].

- Freeze Layers: If you have very limited task-specific data, try setting `freeze = True` to freeze most of the pre-trained model weights and fine-tune only the final layers [21].

- Gene Selection: Ensure the number of Highly Variable Genes (`n_hvg`) is appropriate for your dataset. Letting the model focus on the most informative genes can improve performance [4].
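The per-epoch decay controlled by `schedule_ratio` is a simple geometric schedule. This minimal sketch assumes the rate is multiplied by `schedule_ratio` once after every epoch, so a ratio of 0.9 corresponds to the 10% per-epoch decay in Table 2. In PyTorch, the same behavior is provided by `torch.optim.lr_scheduler.StepLR(optimizer, step_size=1, gamma=schedule_ratio)`.

```python
def step_decay_lr(initial_lr, schedule_ratio, epoch):
    """Learning rate during a 0-based epoch when it shrinks by schedule_ratio after each epoch."""
    return initial_lr * schedule_ratio ** epoch


# Compare the two ratios quoted in this guide over 25 epochs, starting from lr = 1e-4.
for ratio in (0.9, 0.95):
    final_lr = step_decay_lr(1e-4, ratio, epoch=24)
    print(f"schedule_ratio={ratio}: lr in the last epoch = {final_lr:.2e}")
```

A smaller ratio shrinks the learning rate much faster. With 0.9, the final-epoch rate is under a tenth of the initial value; with 0.95, it stays near 30%.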

Experimental Protocols & Workflows

Standard Fine-Tuning Protocol for Cell-Type Annotation

This protocol, adapted from a Nature Protocol paper, details the steps to achieve high-accuracy (e.g., 99.5% F1-score) cell-type annotation on a custom retina dataset [18] [19].

- Data Preprocessing: Clean, normalize, and bin the raw gene expression data into a predefined number of bins (`n_bins=51` is a typical value). Select a set of Highly Variable Genes (`n_hvg=1200` or `4000`). The output is a processed file ready for training [21] [18] [19].

- Hyperparameter Setup: Configure the model for the annotation task. Key settings include `task = 'annotation'`, `do_train = True`, `load_model = "../save/scGPT_human"` (path to pre-trained model), `CLS = True` (enables the cell-type classification objective), `lr = 1e-3`, `batch_size = 16`, `epochs = 25`, and `mask_ratio = 0.4` [21].

- Model Fine-Tuning: Load the pre-trained scGPT model and the processed data. Run the training loop for the specified number of epochs. The model's weights are updated to learn the cell-type labels from your reference data [18].

- Evaluation: Use the fine-tuned model to predict cell types on the query dataset. The output includes a UMAP visualization and a file with prediction results. A confusion matrix is generated if ground truth labels are available [18] [19].

Workflow for Multi-omic Integration Tasks

Integrating data from multiple omics layers or batches requires specific settings to guide the model.

- Data Preprocessing: Process the multi-omic data similarly to the standard protocol, ensuring consistency across modalities [21].

- Hyperparameter Setup: Key differences from the standard setup include:

- Model Fine-Tuning: Load the pre-trained model and fine-tune. The model learns to generate a joint embedding that integrates information across the different omics layers [17].

The following diagram illustrates the logical workflow for a standard fine-tuning process, from data preparation to model evaluation.

Fine-tuning Workflow and Key Hyperparameters

The Scientist's Toolkit

| Research Reagent / Resource | Function in scGPT Fine-Tuning |
|---|---|
| Pre-trained scGPT Model | The foundation model (pre-trained on ~33 million cells) that provides the initial weights for transfer learning [17]. |
| Annotated Reference Dataset | A single-cell dataset with pre-validated cell-type labels; used as the ground truth for training the classifier during fine-tuning [18] [4]. |
| Processed Multi-omic Data | Input data that has been normalized, binned, and filtered for Highly Variable Genes (HVGs) to be used for model training [21]. |
| Gene Ontology (GO) Annotations | External biological knowledge; can be used as feature vectors in baseline models to benchmark scGPT's perturbation prediction performance [23]. |
| Computational Baselines (e.g., Additive Model) | Simple models (e.g., predicting no change or additive effects) used to validate and benchmark the performance of the fine-tuned scGPT model [22] [23]. |

Frequently Asked Questions (FAQs)

Q1: What is tokenization in the context of single-cell data analysis, and why is it a critical step for foundation models like scGPT? Tokenization is the process of converting raw gene expression data into discrete units, or tokens, that a deep learning model can process. For single-cell foundation models (scFMs), this typically involves representing genes or genomic features as tokens, and their expression values as the input data [2] [3]. This step is fundamental because gene expression data is not naturally sequential; unlike words in a sentence, genes lack an inherent order. Successful tokenization transforms this unstructured data into a structured format that transformer-based models like scGPT can learn from, enabling tasks such as cell type annotation and perturbation prediction [2] [3].

Q2: My model performance is poor after fine-tuning. Could my gene ranking strategy be at fault? Yes, the strategy for ordering genes is a known hyperparameter that can significantly impact model performance. If you are using a simple ranking by expression magnitude, consider that this approach, while common, introduces an arbitrary sequence. Some models report no clear advantage from complex ranking strategies and may perform well with normalized counts [2] [3]. To troubleshoot, you could experiment with alternative ordering schemes, such as binning genes by their expression values before ranking [2], or evaluate whether your current strategy is discarding important biological signal from lowly expressed but functionally critical genes.

Q3: How does the choice between value binning and value projection affect the resolution of my gene expression data? The choice between these encoding methods directly determines whether your model treats expression as categorical or continuous data.

- Value Binning categorizes continuous expression values into discrete "buckets," transforming the task into a classification problem. This can simplify learning but loses some granularity of the original data [15]. For example, scBERT uses this approach [15].

- Value Projection methods, used by models like scFoundation and CellFM, aim to preserve the full, continuous resolution of the gene expression values. This strategy projects the expression values, allowing the model to predict raw or normalized counts directly, which can be crucial for tasks requiring fine-grained discrimination [15]. A direct comparison of these strategies on your specific downstream task is the best way to determine which is more suitable.

Q4: What are some methods to incorporate additional biological context during the tokenization process? You can enrich the tokenization input by adding special tokens that represent various types of metadata. This can provide valuable context for the model and potentially improve its biological relevance. Strategies include:

- Prepending a token representing the cell's own identity or metadata [2] [3].

- Including tokens that indicate the data modality (e.g., scRNA-seq vs. scATAC-seq) when working with multi-omics data [2] [3].

- Incorporating gene metadata, such as Gene Ontology terms or chromosomal location, into the gene token embeddings [2] [3].

- Using batch information as special tokens to help the model account for technical variations [2].
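As an illustration, the prefix-token strategies above might look like this when building a model input. The token spellings (`<cls>`, `[RNA]`, `<batch:...>`) and the function names are hypothetical. scGPT and other models define their own special-token vocabularies, and each string would still need to be mapped to an integer id. The toy `to_ids` helper does that mapping, growing the vocabulary for illustration only.

```python
def build_input_tokens(gene_tokens, cell_token="<cls>", modality=None, batch=None):
    """Prepend cell, modality, and batch tokens to a cell's gene-token sequence."""
    prefix = [cell_token]
    if modality is not None:
        prefix.append(f"[{modality}]")
    if batch is not None:
        prefix.append(f"<batch:{batch}>")
    return prefix + list(gene_tokens)


def to_ids(tokens, vocab):
    """Map tokens to integer ids, adding unseen tokens to the (toy) vocabulary."""
    return [vocab.setdefault(token, len(vocab)) for token in tokens]


vocab = {}
tokens = build_input_tokens(["CD3E", "CD8A"], modality="RNA", batch="donor1")
print(tokens)                 # ['<cls>', '[RNA]', '<batch:donor1>', 'CD3E', 'CD8A']
print(to_ids(tokens, vocab))  # [0, 1, 2, 3, 4]
```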

Q5: Why is my model struggling with rare cell types, and can tokenization help? Rare cell types are a common challenge for scFMs. While tokenization itself may not directly solve this, the way you handle the input data can influence the model's sensitivity. Ensure your tokenization and preprocessing steps do not inadvertently filter out genes that are characteristic of rare populations. Furthermore, during fine-tuning, you might explore strategies like oversampling cells from rare types or adjusting the loss function to be more sensitive to class imbalance, working in conjunction with a well-structured tokenization pipeline.
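One way to make the loss more sensitive to class imbalance is to weight each cell type inversely to its frequency. The sketch below uses the same formula as scikit-learn's `class_weight="balanced"` option: n_samples / (n_classes × count). The labels are toy values.

The resulting weights could be passed to a weighted loss such as PyTorch's `torch.nn.CrossEntropyLoss(weight=...)`. Alternatively, per-cell weights derived from them could drive oversampling with `torch.utils.data.WeightedRandomSampler`.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count); balanced data gives 1.0 everywhere."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


labels = ["T cell"] * 90 + ["B cell"] * 9 + ["pDC"] * 1
weights = balanced_class_weights(labels)
print(weights)  # the single pDC receives by far the largest weight

# Per-cell weights, e.g. for a weighted sampler that oversamples rare types.
per_cell_weights = [weights[label] for label in labels]
```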

Troubleshooting Guides

Issue 1: Inconsistent Model Performance Across Datasets

Problem: Your fine-tuned scGPT model performs well on one dataset but fails to generalize to others, potentially due to batch effects or data quality inconsistencies introduced during tokenization.

Solution:

- Standardize Input Data: Implement a rigorous and consistent quality control (QC) pipeline before tokenization. This should include filtering low-quality cells and genes, and normalizing counts across all datasets you plan to use [15]. Using a standardized workflow, like the one used to train CellFM on 100 million cells, is crucial [15].

- Metadata Tokenization: Incorporate batch information as special tokens during the tokenization step. This explicitly informs the model about the source of the data, allowing it to learn to distinguish technical artifacts from biological signal [2] [3].

- Validate with Controls: If available, use RNA spike-in controls to assess and correct for technical variation during data preprocessing, before the tokenization step [24].

Issue 2: Loss of Information from Over-Aggressive Binning

Problem: Converting continuous gene expression values into too few bins results in a loss of resolution, hampering the model's ability to detect subtle but biologically important expression changes.

Solution:

- Diagnose Bin Distribution: Plot the distribution of expression values for key marker genes. If the distribution is highly compressed into one or two bins, you are losing information.

- Optimize Bin Number: Increase the number of expression bins. Instead of 10 bins, experiment with 20, 50, or more. The optimal number is a trade-off between resolution and computational complexity.

- Consider Alternative Encoding: If fine-grained prediction is critical for your task (e.g., predicting dose-dependent drug responses), consider switching from a binning-based tokenization to a value projection-based method that preserves continuous values [15].
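The bin-distribution diagnostic can be made concrete with a small sketch. It assumes per-cell quantile binning of non-zero values, similar in spirit to scGPT's relative binning but not necessarily identical to it. The helpers `bin_cell` and `top2_bin_fraction` are hypothetical. The example bins one toy expression vector and reports how much of the non-zero signal falls into just two bins.

```python
import numpy as np


def bin_cell(expr: np.ndarray, n_bins: int) -> np.ndarray:
    """Map one cell's expression vector to integer bins (hypothetical helper).

    Zeros stay in bin 0. Non-zero values are split into n_bins - 1
    quantile-based bins computed within this cell only.
    """
    binned = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if not nonzero.any():
        return binned
    # n_bins quantile points define n_bins - 1 intervals; keep only the interior edges.
    edges = np.quantile(expr[nonzero], np.linspace(0.0, 1.0, n_bins))
    # digitize yields 0..n_bins-2 for non-zero values; +1 keeps bin 0 reserved for zeros.
    binned[nonzero] = np.digitize(expr[nonzero], edges[1:-1], right=True) + 1
    return binned


def top2_bin_fraction(binned: np.ndarray) -> float:
    """Share of non-zero entries that land in the two most populated bins."""
    nz = binned[binned > 0]
    if nz.size == 0:
        return 0.0
    counts = np.bincount(nz)
    return float(np.sort(counts)[-2:].sum() / nz.size)


expr = np.array([0, 0, 1, 1, 1, 1, 2, 5, 10, 50], dtype=float)
binned = bin_cell(expr, n_bins=5)
print(binned.tolist())            # [0, 0, 1, 1, 1, 1, 3, 3, 4, 4]
print(top2_bin_fraction(binned))  # 0.75
```

In this example the four tied values fill the lowest bin and bin 2 stays empty, so 75% of the non-zero values sit in only two bins. Running the same check on real marker genes across many cells, with increasing `n_bins`, shows whether the extra bins actually recover resolution.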

Issue 3: Handling Multi-Omic Data Integration

Problem: Tokenizing and integrating data from different single-cell modalities (e.g., scRNA-seq and scATAC-seq) into a unified model input.

Solution:

- Modality Tokens: A standard and effective approach is to include special tokens that indicate the modality of each input sequence. For example, an [RNA] token could precede gene expression tokens, and an [ATAC] token could precede chromatin accessibility features [2] [3].

- Cross-Modal Attention: Utilize model architectures that support cross-modal attention. This allows genes from one modality to attend to and interact with features from another modality within the transformer layers, facilitating integration [2].

- Unified Feature Space: Ensure that the feature dimensions (i.e., the token embeddings) are aligned across modalities. This might involve projecting all features into a common embedding space before processing by the transformer.
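The modality-token idea can be illustrated with a minimal sketch. The token strings follow the [RNA]/[ATAC] example above, but the function names, the placeholder value of 0.0 for special tokens, and the peak naming are all assumptions. The returned modality ids are the kind of signal a modality embedding or a cross-modal attention mask could consume. Building one shared vocabulary means both modalities index a single embedding table.

```python
from typing import Dict, List, Tuple

RNA_TOKEN, ATAC_TOKEN = "[RNA]", "[ATAC]"


def build_multimodal_input(
    rna: Dict[str, float], atac: Dict[str, float]
) -> Tuple[List[str], List[float], List[int]]:
    """Concatenate RNA and ATAC features into one token sequence (hypothetical helper).

    Returns parallel lists of tokens, values and modality ids (0 = RNA, 1 = ATAC).
    Special tokens carry a placeholder value of 0.0, which is an assumption.
    """
    tokens, values, modality = [RNA_TOKEN], [0.0], [0]
    for gene, value in rna.items():
        tokens.append(gene)
        values.append(value)
        modality.append(0)
    tokens.append(ATAC_TOKEN)
    values.append(0.0)
    modality.append(1)
    for peak, value in atac.items():
        tokens.append(peak)
        values.append(value)
        modality.append(1)
    return tokens, values, modality


def build_shared_vocab(sequences: List[List[str]]) -> Dict[str, int]:
    """One vocabulary, and therefore one embedding table, across both modalities."""
    vocab: Dict[str, int] = {}
    for seq in sequences:
        for tok in seq:
            vocab.setdefault(tok, len(vocab))
    return vocab


tokens, values, modality = build_multimodal_input(
    {"CD3E": 2.0, "MS4A1": 0.0}, {"chr1:1000-1500": 1.0}
)
vocab = build_shared_vocab([tokens])
print(tokens)    # ['[RNA]', 'CD3E', 'MS4A1', '[ATAC]', 'chr1:1000-1500']
print(modality)  # [0, 0, 0, 1, 1]
```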

Quantitative Data and Methodologies

Comparison of Primary Tokenization Strategies

The table below summarizes the core tokenization strategies used in modern single-cell foundation models, which are critical hyperparameters to consider when fine-tuning scGPT.

| Tokenization Strategy | Core Principle | Example Models | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Gene Ranking | Genes are ordered within each cell by expression level to create a sequence. | Geneformer [15], scGPT [3] | Creates a deterministic input sequence; mimics next-word prediction in NLP. | Introduces arbitrary gene order; may obscure co-expression. |
| Value Binning | Continuous expression values are categorized into discrete bins/buckets. | scBERT [15] [2] | Simplifies the prediction task to classification; can be more stable. | Loses continuous resolution of expression data. |
| Value Projection | Projects continuous expression values directly, preserving full resolution. | CellFM [15], scFoundation [15] | Maintains full data granularity for fine-grained analysis. | Can be more computationally demanding and sensitive to noise. |
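The three strategies can be contrasted on a single toy cell with a short sketch. The functions are deliberately simplified stand-ins and do not reproduce the exact preprocessing of any model listed in the table. Binning here uses equal-width bins for brevity, and the projection weights are random rather than learned. The gene names and counts are made up.

```python
import numpy as np

genes = np.array(["CD3E", "MS4A1", "LYZ", "GNLY", "NKG7"])
expr = np.array([5.0, 0.0, 12.0, 1.0, 3.0])  # toy counts for a single cell


def rank_tokens(genes, expr):
    """Gene ranking: expressed genes ordered from highest to lowest expression."""
    expressed = expr > 0
    order = np.argsort(-expr[expressed], kind="stable")
    return genes[expressed][order].tolist()


def bin_tokens(expr, n_bins):
    """Value binning: n_bins equal-width bins over (0, max]; zeros stay in bin 0."""
    top = expr.max()
    if top <= 0:
        return np.zeros(expr.shape, dtype=int)
    return np.ceil(expr / top * n_bins).astype(int)


def project_values(expr, dim, seed=0):
    """Value projection: each log1p value scales a vector (random here, learned in practice)."""
    rng = np.random.default_rng(seed)
    w, b = rng.normal(size=dim), rng.normal(size=dim)
    return np.log1p(expr)[:, None] * w[None, :] + b[None, :]


print(rank_tokens(genes, expr))       # ['LYZ', 'CD3E', 'NKG7', 'GNLY']
print(bin_tokens(expr, 4).tolist())   # [2, 0, 4, 1, 1]
print(project_values(expr, 8).shape)  # (5, 8)
```

Ranking discards magnitudes but keeps order, while binning keeps coarse magnitudes (CD3E and NKG7 are 5 and 3 counts yet land in different bins, whereas GNLY and NKG7 share bin 1). Projection keeps the full value at the cost of a continuous, noise-sensitive input.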

Experimental Protocol: Benchmarking Tokenization Schemes

Objective: Systematically evaluate the impact of different tokenization strategies on the performance of a fine-tuned scGPT model for a specific downstream task (e.g., cell type annotation).

Materials:

- A curated and QC-controlled single-cell dataset with ground truth cell type labels.

- A pre-trained scGPT model.

- Computing environment with necessary deep learning libraries (PyTorch, scGPT package).

Methodology:

- Data Preparation: Split your dataset into training, validation, and test sets. Use a stratified split so that cell-type proportions are similar across the three sets.

- Tokenization Variants: Create three separate data loaders implementing the different tokenization schemes:
  - Variant A (Ranking): Tokenize by ranking genes from highest to lowest expression.
  - Variant B (Binning): Tokenize by binning expression values (e.g., 20 bins) and using bin indices as tokens.
  - Variant C (Projection): If supported, use a value projection method to tokenize continuous values.

- Fine-Tuning: Fine-tune three separate instances of the pre-trained scGPT model, each on one of the tokenized data variants. Keep all other hyperparameters (learning rate, batch size, number of epochs) constant.

- Evaluation: Evaluate each fine-tuned model on the held-out test set. Use metrics including:
  - Accuracy / F1-score for cell type annotation.
  - Silhouette score or other clustering metrics on the cell embeddings produced by the model.

- Analysis: Compare the performance metrics across the three variants to determine the most effective tokenization strategy for your specific data and task.
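The data-preparation and evaluation steps can be sketched in plain NumPy. This is a minimal illustration, assuming integer or string cell-type labels. `sklearn.model_selection.train_test_split(..., stratify=labels)` and `sklearn.metrics.f1_score(..., average="macro")` provide the same functionality in practice, and `sklearn.metrics.silhouette_score` covers the embedding metric. Macro-F1 is used here because it weights rare cell types equally with common ones.

```python
import numpy as np


def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split indices per cell type so each split keeps similar label proportions."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    train, val, test = [], [], []
    for cell_type in np.unique(labels):
        idx = np.flatnonzero(labels == cell_type)
        rng.shuffle(idx)
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        test.extend(idx[n_train + n_val:])
    return np.array(train), np.array(val), np.array(test)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all labels seen in y_true or y_pred."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for cls in np.union1d(y_true, y_pred):
        tp = np.sum((y_pred == cls) & (y_true == cls))
        fp = np.sum((y_pred == cls) & (y_true != cls))
        fn = np.sum((y_pred != cls) & (y_true == cls))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))


labels = ["T"] * 20 + ["B"] * 10
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))
print(macro_f1([0, 0, 1, 1], [0, 1, 1, 1]))  # ≈ 0.733
```

The same split indices should be reused for all three tokenization variants, so that differences in the metrics reflect tokenization rather than data partitioning.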

Workflow Visualization

Figure: Tokenization Strategies for scGPT

Figure: scGPT Fine-tuning with Tokenization

| Tool / Resource | Function / Purpose | Relevance to Tokenization & Fine-tuning |
|---|---|---|
| Pre-trained scGPT Model | A foundational model pre-trained on millions of single-cell transcriptomes. | The starting point for all fine-tuning experiments. Its architecture dictates the supported tokenization methods (e.g., ranking, binning). |
| CZ CELLxGENE Database | A platform providing unified access to annotated single-cell datasets. | A primary source for large-scale, diverse training data. High-quality, standardized data from such sources is crucial for effective tokenization [2] [3] [15]. |
| PanglaoDB / Human Cell Atlas | Curated compendia of single-cell data from multiple sources. | Provides well-annotated reference data useful for benchmarking tokenization strategies and model performance [2] [3]. |
| Scanpy / Seurat | Standard software toolkits for single-cell data analysis in Python/R. | Used for essential preprocessing steps (QC, normalization, filtering) that must be applied before tokenization [15]. |
| Gene Metadata (e.g., GO Terms) | Functional annotations for genes from databases like Gene Ontology. | Can be incorporated during tokenization to create biologically-informed token embeddings, potentially enhancing model interpretability and performance [2] [3]. |

Parameter-Efficient Fine-Tuning (PEFT) has emerged as a critical methodology for adapting large pre-trained models to specific downstream tasks without the prohibitive computational cost and risk of catastrophic forgetting associated with full fine-tuning. In the context of single-cell biology, where foundation models like scGPT are pre-trained on millions of cells to understand universal gene expression patterns, PEFT enables researchers to specialize these models for specific applications while preserving their valuable pre-trained biological knowledge. This technical support center provides essential guidance for researchers implementing PEFT strategies for scGPT in their single-cell RNA sequencing workflows, particularly focusing on hyperparameter optimization and troubleshooting common experimental challenges.

FAQs: PEFT Fundamentals and Implementation

What is Parameter-Efficient Fine-Tuning and why is it particularly valuable for scGPT?

Parameter-Efficient Fine-Tuning refers to a collection of techniques that fine-tune only a small subset of parameters in a pre-trained model, instead of updating all weights [25]. For scGPT, which is pre-trained on over 33 million cells to learn fundamental biological patterns, PEFT offers significant advantages [25] [5]. Traditional fine-tuning can cause "catastrophic forgetting" where the model overwrites its original parameters on narrow, task-specific datasets, losing broader pre-learned knowledge [25]. PEFT preserves this foundational biological understanding while adapting to new tasks. Additionally, PEFT can reduce the number of trainable parameters by up to 90% compared to conventional fine-tuning, dramatically decreasing computational requirements and training time [25].

What are the main PEFT methods available for single-cell large language models like scGPT?

The two primary PEFT strategies adapted for single-cell Large Language Models (scLLMs) are LoRA (Low-Rank Adaptation) and prefix prompt tuning [25]. LoRA works by injecting trainable rank decomposition matrices into transformer layers while keeping original weights frozen [25]. Prefix prompt tuning involves prepending trainable tensors to each transformer block, allowing adaptation without modifying core parameters [25]. Recent research has also introduced drug-conditional adapters for molecular perturbation prediction, which use less than 1% of the original foundation model's parameters while effectively linking cell representations with chemical structures [26].
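The LoRA mechanism described above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not scGPT's or any library's implementation. It wraps one frozen weight matrix with trainable factors `A` and `B`, uses the usual `alpha / r` scaling, and initializes `B` to zero so the wrapped layer starts out identical to the pre-trained one. The 512 width matches the scGPT embedding size given earlier in this guide.

```python
import numpy as np


class LoRALinear:
    """Frozen dense layer plus a trainable low-rank update: y = x W^T + (alpha / r) x A^T B^T."""

    def __init__(self, weight: np.ndarray, r: int = 8, alpha: float = 16.0, seed: int = 0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                            # pre-trained, kept frozen
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable down-projection
        self.B = np.zeros((d_out, r))                   # trainable up-projection, zero-initialised
        self.scaling = alpha / r

    def __call__(self, x: np.ndarray) -> np.ndarray:
        return x @ self.weight.T + self.scaling * (x @ self.A.T) @ self.B.T

    def trainable_params(self) -> int:
        return self.A.size + self.B.size


rng = np.random.default_rng(42)
W = rng.normal(size=(512, 512))  # stands in for one 512-wide projection
layer = LoRALinear(W, r=8)
x = rng.normal(size=(4, 512))
print(np.allclose(layer(x), x @ W.T))   # True: B = 0, so the update starts at zero
print(layer.trainable_params(), W.size)  # 8192 262144
```

Only `A` and `B` would receive gradients. After training, the update can be merged into the frozen matrix as `W + (alpha / r) * B @ A`, so inference costs no more than the original layer.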

When should I use fine-tuning versus zero-shot approaches with scGPT?

Your choice between zero-shot and fine-tuned scGPT should be guided by your specific research context and requirements [4]:

| Approach | Best Use Cases | Performance Characteristics | Resource Requirements |
|---|---|---|---|
| Zero-shot | Quick exploration, initial data assessment, when no reference labels exist | Can miss rare/novel cell states; lower macro-F1 on out-of-distribution data | Instant results; no GPU needed |
| Fine-tuned | Publication-quality analysis, clinical-grade labels, rare cell type identification | +10-25 percentage point accuracy improvement on complex datasets; better subtype resolution | Requires GPU; 5-10 epochs (≈20 min on 1 A100) |
| PEFT | Specializing models for specific tasks while preserving broad knowledge, limited data scenarios | Comparable to full fine-tuning while preserving pre-trained knowledge; enables zero-shot generalization | Up to 90% parameter reduction; minimal computational overhead |

How do I select the right hyperparameters for PEFT with scGPT?

Based on established fine-tuning protocols, the following hyperparameters provide a solid starting point for scGPT adaptation [27]:

| Hyperparameter | Recommended Value | Impact on Training | Adjustment Guidance |
|---|---|---|---|
| Learning Rate | 1e-4 to 1e-3 | Critical for convergence stability | Higher rates (1e-3) for larger datasets; lower (1e-4) for smaller ones |
| Batch Size | 16-64 | Affects gradient stability and memory use | Smaller batches for limited GPU memory; larger for stable convergence |
| Epochs | 15-25 | Balances underfitting and overfitting | Monitor validation loss for early stopping |
| Mask Ratio | 0.4 | Determines fraction of masked genes in MLM | Higher ratios make the task harder; 0.4 is the value used in the scGPT batch-integration protocol [27] and a reasonable default |
| Dropout | 0.2 | Regularization to prevent overfitting | Increase if evidence of overfitting on small datasets |
| DAB Weight | 1.0 | Batch correction strength | Increase with stronger batch effects present in data |
| Schedule Ratio | 0.9 | Multiplicative learning-rate decay factor | Adjust based on convergence stability |
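The starting values in this table can be collected into one configuration object, and the effect of the schedule ratio can be computed directly. This sketch assumes the schedule ratio acts as a per-epoch multiplicative decay. In PyTorch that corresponds to `torch.optim.lr_scheduler.StepLR(optimizer, step_size=1, gamma=schedule_ratio)`, stepped once per epoch. The dictionary keys are illustrative names, not a fixed scGPT API.

```python
# Starting values taken from the table above; the dict keys are illustrative names.
config = {
    "lr": 1e-4,
    "batch_size": 32,
    "epochs": 20,
    "mask_ratio": 0.4,
    "dropout": 0.2,
    "dab_weight": 1.0,
    "schedule_ratio": 0.9,
}


def lr_at_epoch(base_lr: float, schedule_ratio: float, epoch: int) -> float:
    """Step decay: the LR is multiplied by schedule_ratio once per completed epoch (0-based)."""
    return base_lr * schedule_ratio ** epoch


for epoch in (0, 1, 5, config["epochs"] - 1):
    print(epoch, f"{lr_at_epoch(config['lr'], config['schedule_ratio'], epoch):.2e}")
```

With a ratio of 0.9, the learning rate in the last of 20 epochs is about 13.5% of its starting value (0.9^19 ≈ 0.135). Longer runs therefore spend many late epochs at very small step sizes, which is worth remembering when tuning the epoch count.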

Troubleshooting Common PEFT Implementation Issues

Error: "AssertionError" with batch_labels being None during fine-tuning

Problem Context: This error typically occurs when the model expects batch label information but doesn't receive it, commonly encountered when adapting multi-omics workflows [21].

Root Cause: The hyperparameter `use_batch_labels` or `DSBN` (Domain-Specific Batch Normalization) is set to True, but the data loader isn't providing batch label annotations [21].

Solution:

- Verify your data contains batch information in the `adata.obs` dataframe

- Ensure batch labels are properly formatted as categorical variables

- Check that the `per_seq_batch_sample` parameter aligns with your data structure

- If not using batch correction, set `use_batch_labels = False` and `DSBN = False` in your hyperparameters [21]

Code Verification:
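A check along these lines can be run on `adata.obs` before training. This is a minimal sketch: `check_batch_setup` and the `batch_key` argument are hypothetical, and the flags simply mirror the `use_batch_labels` and `DSBN` hyperparameters discussed above. The function returns the problems that would leave the model without batch labels.

```python
import pandas as pd


def check_batch_setup(obs: pd.DataFrame, batch_key: str,
                      use_batch_labels: bool, dsbn: bool) -> list:
    """Return a list of problems that would leave batch_labels empty (hypothetical helper)."""
    if not (use_batch_labels or dsbn):
        return []  # batch labels are not needed
    if batch_key not in obs.columns:
        return [f"'{batch_key}' missing from adata.obs: add it, or set "
                "use_batch_labels = False and DSBN = False"]
    problems = []
    column = obs[batch_key]
    if column.isna().any():
        problems.append(f"'{batch_key}' contains missing values")
    if not isinstance(column.dtype, pd.CategoricalDtype):
        problems.append(f"'{batch_key}' is not categorical; use .astype('category')")
    return problems


obs = pd.DataFrame({"batch": ["rep1", "rep2", "rep1"]})
print(check_batch_setup(obs, "batch", use_batch_labels=True, dsbn=False))
obs["batch"] = obs["batch"].astype("category")
print(check_batch_setup(obs, "batch", use_batch_labels=True, dsbn=False))  # []
```

Once the column is categorical, integer batch ids can be derived from it with `.cat.codes`.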

Problem: Poor downstream task performance after PEFT application

Problem Context: After implementing PEFT, model performance on your target task doesn't meet expectations, with low accuracy in cell type annotation or poor batch integration [25] [4].

Potential Causes and Solutions:

Insufficient Adaptation: The PEFT method may not be providing enough capacity for your specific task

- Solution: Increase the rank parameter in LoRA or lengthen the prompt in prefix tuning

Hyperparameter Sensitivity: Learning rate and training schedule significantly impact PEFT effectiveness

- Solution: Run learning-rate range tests (short runs across several orders of magnitude) and try cosine annealing schedules

Data Representation Issues: Gene representation may not align between pre-training and target dataset

- Solution: Verify gene vocabulary alignment and use the common gene set between your data and pre-trained model [27]

Task-Objective Mismatch: The PEFT strategy may not align with your downstream task

- Solution: For cell type identification, ensure the CLS objective is enabled; for integration, enable the DAR objective together with DSBN (domain-specific batch normalization) layers [27]

Diagnostic Steps:

- Compare training and validation loss curves to detect overfitting

- Evaluate on a small subset with known labels to verify basic functionality

- Test different PEFT methods (LoRA vs adapters) for your specific task

Challenge: Expanding model predictions to additional genes beyond tutorial examples

Problem Context: After fine-tuning, researchers often want to explore perturbation effects on genes not explicitly covered in tutorial examples [28].

Solution Approach:

- Ensure your target genes are present in the vocabulary of the pre-trained model

- Verify these genes are included in the highly variable gene selection process

- Modify visualization functions to accommodate custom gene sets [28]

Implementation Guidance:
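Before extending an analysis to new genes, a quick classification of each target helps separate the two failure modes listed above. This is a minimal sketch: `check_target_genes` is hypothetical, `vocab` can be any container that supports `in` (for example a set of gene symbols taken from the model vocabulary), and the gene names, including the deliberately fake `FAKEGENE1`, are illustrative.

```python
def check_target_genes(targets, vocab, hvg_genes):
    """Classify each target gene before running perturbation analyses (hypothetical helper).

    vocab: any container supporting `in`, e.g. a set of gene symbols from the model vocabulary.
    hvg_genes: genes kept by the highly variable gene selection.
    """
    hvg = set(hvg_genes)
    report = {}
    for gene in targets:
        if gene not in vocab:
            report[gene] = "missing from model vocabulary"
        elif gene not in hvg:
            report[gene] = "in vocabulary but dropped by HVG selection"
        else:
            report[gene] = "ok"
    return report


vocab = {"CD3E", "GATA1", "SPI1", "CEBPA"}
hvg = ["CD3E", "SPI1"]
print(check_target_genes(["SPI1", "GATA1", "FAKEGENE1"], vocab, hvg))
```

Genes that are in the vocabulary but were dropped by HVG selection can usually be added back to the gene list before subsetting. Genes missing from the vocabulary have no pre-trained embedding and cannot be studied without further changes to the model.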

Experimental Protocols for PEFT with scGPT

Standardized PEFT Workflow for Cell Type Annotation

The following workflow provides a robust foundation for implementing PEFT with scGPT, based on established protocols that have achieved 99.5% F1-score in retinal cell type annotation [19] [29]:

Hyperparameter Optimization Experimental Design

For systematic hyperparameter tuning in scGPT PEFT, implement the following factorial design:
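A full factorial design can be generated mechanically from a dictionary of factor levels. The levels below are illustrative choices drawn from the learning-rate, batch-size and LoRA-rank ranges discussed in this guide; they are not values prescribed by the source. Each configuration is repeated across several random seeds so that seed-to-seed variance can be estimated.

```python
from itertools import product

# Illustrative levels drawn from the ranges discussed in this guide; adjust to your budget.
grid = {
    "lr": [1e-4, 3e-4, 1e-3],
    "batch_size": [16, 32, 64],
    "lora_rank": [8, 16, 32],
}


def factorial_design(grid, seeds=(0,)):
    """Expand a dict of factor levels into one run config per combination and seed."""
    names = list(grid)
    runs = []
    for levels in product(*(grid[name] for name in names)):
        for seed in seeds:
            run = dict(zip(names, levels))
            run["seed"] = seed
            runs.append(run)
    return runs


runs = factorial_design(grid, seeds=(0, 1, 2))
print(len(runs))  # 81 = 3 x 3 x 3 levels x 3 seeds
print(runs[0])    # {'lr': 0.0001, 'batch_size': 16, 'lora_rank': 8, 'seed': 0}
```

The number of runs grows multiplicatively with every added factor. Once more than three or four factors are involved, random or Bayesian search is usually a cheaper way to cover the space.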

Research Reagent Solutions for scGPT PEFT Experiments

| Resource Category | Specific Solution | Function in PEFT Experiments | Access Information |
|---|---|---|---|
| Pre-trained Models | scGPT Human Whole-body Model | Foundation for PEFT adaptation; pre-trained on 33M+ cells | Available via scGPT model zoo [5] |
| Dataset Resources | Retinal Cell Atlas | Benchmark dataset for fine-tuning evaluation; specialized cell types | Zenodo repository (19.7 GB) [29] |
| Software Tools | scGPT Fine-tuning Protocol | End-to-end workflow for model adaptation | GitHub: RCHENLAB/scGPTfineTuneprotocol [19] |
| Evaluation Metrics | F1-score, ARI, ASW | Quantitative assessment of cell type annotation quality | Standard scGPT evaluation pipeline [19] [29] |
| Computational Environment | A100 GPU, Python 3.7+ | Hardware/software requirements for efficient PEFT training | Cloud platforms or local GPU clusters |

In single-cell RNA sequencing analysis, researchers face fundamental statistical-computational tradeoffs—the inherent tension between achieving optimal statistical accuracy and maintaining computational feasibility when fine-tuning scGPT foundation models [30]. As high-dimensional single-cell data and model complexity increase, achieving minimal statistical error often becomes computationally intractable, while restricting to computationally efficient procedures typically degrades statistical efficiency [30]. This tradeoff permeates all aspects of scGPT fine-tuning, from hyperparameter selection to training strategy implementation.

The core challenge manifests as a gap between information-theoretic thresholds (the theoretical performance achievable without computational constraints) and computational thresholds (what polynomial-time algorithms can realistically achieve) [30]. Understanding and navigating this landscape is essential for researchers working with limited computational resources while striving to maintain biological relevance in their findings.

Frequently Asked Questions (FAQs)

Q1: What are the most critical computational constraints when fine-tuning scGPT?

The primary constraints include GPU memory capacity, training time, and storage requirements. scGPT's base architecture contains approximately 50 million parameters [31], and full fine-tuning requires storing optimizer states and gradients for all parameters, typically consuming 3-4 times the base model memory. For context, pretraining utilized 33 million human cells [25], but effective fine-tuning can be achieved with significantly smaller datasets through appropriate techniques.
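The "3-4 times the base model memory" figure can be checked with back-of-the-envelope arithmetic. This sketch assumes fp32 storage and the Adam/AdamW optimizer, which keeps two moment estimates per trainable value. It counts only weights, gradients and optimizer state; activations, which grow with batch size and sequence length, are excluded and often dominate in practice. The 1% trainable fraction is an illustrative assumption.

```python
def training_memory_gb(n_params: float, n_trainable: float, bytes_per_value: int = 4) -> float:
    """Rough fp32 estimate for weights + gradients + two Adam moments; activations excluded."""
    weights = n_params * bytes_per_value
    gradients = n_trainable * bytes_per_value
    adam_moments = 2 * n_trainable * bytes_per_value  # first and second moment per trainable value
    return (weights + gradients + adam_moments) / 1e9


n = 50e6  # approximate scGPT parameter count quoted above
print(training_memory_gb(n, n))         # full fine-tuning: ~0.8 GB, 4x the ~0.2 GB of weights
print(training_memory_gb(n, 0.01 * n))  # ~0.206 GB if only 1% of values are trainable
```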

Q2: How does parameter-efficient fine-tuning (PEFT) help balance this trade-off?

PEFT methods address both catastrophic forgetting (where models lose pre-learned knowledge during fine-tuning) and computational inefficiencies by keeping original model parameters fixed while selectively updating newly introduced minimal parameters [25]. Research demonstrates that PEFT can achieve up to 90% reduction in trainable parameters compared to conventional fine-tuning while maintaining or enhancing performance on tasks like cell type identification [25]. This makes PEFT a favorable point on the statistical-computational tradeoff for many fine-tuning scenarios.

Q3: What is the relationship between pretraining data size and zero-shot performance?

Evaluations reveal an unclear relationship between pretraining dataset scale and zero-shot performance on downstream tasks [32]. While pretraining provides clear improvements over randomly initialized models, the benefits plateau beyond certain dataset sizes. Surprisingly, scGPT pretrained on 10.3 million blood and bone marrow cells sometimes outperformed scGPT pretrained on 33 million diverse human cells, even on non-blood tissue datasets [32]. This suggests that dataset composition and quality may outweigh sheer volume in the computational trade-off calculus.

Q4: When should researchers choose scGPT over simpler traditional methods?

Benchmark studies indicate that no single foundation model consistently outperforms others across all tasks [31]. Simpler machine learning methods often adapt more efficiently to specific datasets under resource constraints, particularly for standardized analyses [31]. scGPT provides greatest value when: (1) analyzing diverse, complex datasets requiring integration; (2) performing multiple downstream tasks from a shared representation; (3) working with sufficient computational resources to justify the overhead; and (4) tackling novel problems where traditional methods have proven inadequate.

Q5: What are the performance implications of different fine-tuning strategies?

Strategies exist along a spectrum of computational cost versus adaptability. Full fine-tuning offers maximum task specificity but requires substantial resources and risks overfitting and catastrophic forgetting. Parameter-efficient methods (LoRA, prefix tuning) preserve pretrained knowledge with dramatically reduced computational load. Multi-task learning enables adaptation to multiple objectives simultaneously but requires careful balancing. The optimal choice depends on dataset size, task complexity, and available resources, reflecting the core statistical-computational tradeoff.

Troubleshooting Common Experimental Issues

Problem 1: Installation and Dependency Conflicts

Issue: Users encounter "No module named 'torch'" errors or difficulties installing flash attention dependencies [33] [34].

Solution: Follow this validated installation protocol:

- Use Mamba instead of pip for faster dependency resolution: `mamba create -n scgpt python=3.10`, then `mamba activate scgpt` [34]

- Install PyTorch 1.13 specifically: `pip install torch==1.13` [34]

- Verify CUDA compatibility: check `torch.version.cuda` and install the matching CUDA toolkit (e.g., 11.7) [34]

- Update GCC if you encounter GLIBCXX errors: `sudo apt-get upgrade gcc` [34]

Computational Trade-off Note: Using containerized solutions (Docker) simplifies installation but introduces additional storage overhead and platform dependencies [34].

Problem 2: Poor Zero-Shot Performance on Target Data

Issue: scGPT embeddings underperform simpler methods like Highly Variable Genes (HVG) or established algorithms like Harmony and scVI in cell type clustering and batch integration [32].

Solution: Implement a strategic fine-tuning protocol rather than relying on zero-shot performance:

- Extract cell embeddings using the pretrained model

- Apply PEFT methods rather than full fine-tuning to preserve general biological knowledge

- Leverage the model's domain-specific batch normalization (DSBN) for integration tasks [27]

- Monitor performance against simple baselines to ensure computational investment justifies gains

Computational Trade-off Note: The decision between using simple methods versus fine-tuning scGPT represents a classic statistical-computational tradeoff. Simple methods have lower computational requirements but may lack adaptability, while scGPT fine-tuning offers greater potential adaptability at substantial computational cost [32] [30].

Problem 3: Memory Constraints During Fine-Tuning

Issue: Training fails due to GPU memory limitations, especially with large single-cell datasets.

Solution: Implement memory-efficient training strategies:

- Reduce batch size (e.g., 64 as used in official tutorials [27]) at the cost of potential training instability

- Use gradient accumulation to maintain effective batch size

- Employ mixed precision training (`amp=True`) to reduce memory footprint [27]

- Implement LoRA or other PEFT methods to dramatically reduce trainable parameters [25]

Computational Trade-off Note: Each memory reduction strategy introduces statistical costs: smaller batches increase variance; mixed precision reduces numerical precision; PEFT methods limit model adaptability. The optimal balance depends on specific task requirements and resource constraints [30].
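Gradient accumulation is justified by a simple fact: for a mean loss, the gradient over the full batch equals a size-weighted sum of micro-batch gradients. The NumPy sketch below checks this on a toy least-squares problem. In a PyTorch loop, the equivalent is dividing each micro-batch loss by the number of accumulation steps and calling `optimizer.step()` only every k micro-batches. Mixed precision would typically use `torch.cuda.amp.autocast` with `GradScaler`. One caveat applies: layers that compute batch statistics, such as batch normalization, still see only the micro-batch, so accumulation is not a perfect substitute for a larger physical batch.

```python
import numpy as np


def mse_grad(w, X, y):
    """Gradient of the mean loss 0.5 * mean((X @ w - y) ** 2) with respect to w."""
    return X.T @ (X @ w - y) / len(y)


def accumulated_grad(w, X, y, micro_batch):
    """Sum micro-batch gradients, each weighted by its share of the full batch."""
    total = np.zeros_like(w)
    for start in range(0, len(y), micro_batch):
        Xb, yb = X[start:start + micro_batch], y[start:start + micro_batch]
        total += mse_grad(w, Xb, yb) * len(yb) / len(y)
    return total


rng = np.random.default_rng(0)
X, y, w = rng.normal(size=(64, 5)), rng.normal(size=64), rng.normal(size=5)
print(np.allclose(accumulated_grad(w, X, y, micro_batch=16), mse_grad(w, X, y)))  # True
```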

Problem 4: Inconsistent Performance Across Datasets

Issue: Fine-tuned models show variable performance across different biological contexts or sequencing technologies.

Solution:

- Ensure dataset compatibility with pretraining corpus - retain only genes present in scGPT's vocabulary [27]

- Implement comprehensive data preprocessing matching pretraining protocols (binning, normalization)

- Use explicit zero probability handling for sparse single-cell data [27]

- Apply appropriate regularization (DAB weight, ECS threshold) for specific tasks [27]

Computational Trade-off Note: The tension between generalizability and specialization represents a fundamental statistical-computational tradeoff. Over-optimizing for specific datasets improves immediate performance but reduces model flexibility and increases retraining costs for new applications [31].

Hyperparameter Optimization Guidelines

Critical Hyperparameters and Their Computational Trade-offs

Table 1: Hyperparameter Settings for Common Fine-Tuning Tasks

| Task Objective | Recommended Mask Ratio | DAB Weight | ECS Threshold | Learning Rate | Training Epochs |
|---|---|---|---|---|---|
| Batch Integration | 0.4 [27] | 1.0 [27] | 0.8 [27] | 1e-4 [27] | 15 [27] |
| Cell Type Annotation | 0.4-0.6 | 0.0 | 0.5-0.7 | 1e-4 | 10-20 |
| Perturbation Prediction | 0.3-0.5 | 0.2 | 0.6-0.8 | 5e-5 | 20-30 |

Quantitative Performance Comparisons

Table 2: Computational Costs vs. Performance Gains Across Methods

| Method | Trainable Parameters | Memory Usage | Training Time | Cell Type Accuracy | Batch Correction |
|---|---|---|---|---|---|
| Zero-Shot | 0 | Low | Minimal | Variable [32] | Inconsistent [32] |
| PEFT (LoRA) | ~10% of full [25] | Moderate | Moderate | High [25] | Good |
| Full Fine-Tuning | 100% | High | Extended | Highest | Best |
| Traditional Methods (HVG, scVI) | N/A | Low | Minimal | Competitive [32] | Good [32] |

Experimental Protocols for Optimal Trade-off Balance

Protocol 1: Parameter-Efficient Fine-Tuning for Cell Type Identification

- Initial Setup: Load the pretrained scGPT model (approximately 50M parameters) [31]

- Data Preparation:

- PEFT Implementation:
  - Freeze all base model parameters
  - Introduce task-specific adapters using the LoRA methodology [25]
  - Configure the adapter rank based on available resources (a higher rank adds trainable parameters and may improve performance)

- Training Configuration:

- Validation: Compare against an HVG baseline to ensure the computational investment is justified [32]

Protocol 2: Resource-Aware Hyperparameter Tuning

- Computational Budget Assessment:
  - Determine available GPU memory and time constraints
  - Identify non-negotiable performance thresholds for your biological question

- Staged Optimization:
  - First stage: Tune critical parameters (learning rate, mask ratio) with fixed efficient settings
  - Second stage: Optimize secondary parameters (regularization strengths) if resources allow

- Performance Monitoring:
  - Track both statistical performance (accuracy, integration metrics) and computational costs
  - Establish early stopping criteria based on diminishing returns

- Trade-off Analysis:
  - Calculate performance gain per unit computational cost
  - Identify the "knee in the curve" where additional resources yield minimal improvements
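The trade-off analysis step can be expressed as a small calculation over configurations sorted by cost. This is a minimal sketch. The GPU-hour and macro-F1 values below are made-up illustrative inputs, not measured results, and the minimum acceptable gain per unit of cost is a hypothetical threshold that you would set from your own budget. The "knee" is taken as the last configuration reached while every incremental step still paid for itself.

```python
def marginal_gains(costs, scores):
    """Score improvement per extra unit of cost between consecutive configurations."""
    return [
        (s1 - s0) / (c1 - c0)
        for c0, c1, s0, s1 in zip(costs, costs[1:], scores, scores[1:])
    ]


def knee_index(costs, scores, min_gain_per_unit):
    """Index of the last configuration reached while every step still paid off."""
    knee = 0
    for i, gain in enumerate(marginal_gains(costs, scores)):
        if gain < min_gain_per_unit:
            break
        knee = i + 1
    return knee


# Made-up illustrative inputs: GPU-hours vs. validation macro-F1, sorted by cost.
gpu_hours = [0.5, 1, 2, 4, 8]
val_f1 = [0.80, 0.86, 0.89, 0.90, 0.905]
k = knee_index(gpu_hours, val_f1, min_gain_per_unit=0.01)
print(k, gpu_hours[k])  # 2 2
```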

Workflow Visualization

Figure: Computational Trade-off Decision Workflow

Table 3: Key Research Reagent Solutions for scGPT Fine-Tuning

| Resource Category | Specific Solution | Function/Purpose | Trade-off Considerations |
|---|---|---|---|
| Pretrained Models | scGPT human (33M cells) [25] | Base for transfer learning | Larger models may not always outperform specialized smaller ones [32] |
| Parameter Efficiency | LoRA adapters [25] | Reduces trainable parameters by ~90% | Balance between parameter efficiency and task specificity [25] |
| Integration Methods | Domain Specific Batch Norm [27] | Handles technical batch effects | Adds complexity but improves integration metrics [27] |
| Regularization | Elastic Cell Similarity [27] | Preserves biological variance while integrating | Threshold (0.8) balances integration and preservation [27] |
| Optimization | AdamW with LR scheduling [27] | Stable convergence with resource constraints | Schedule ratio (0.9) balances convergence speed and stability [27] |
| Evaluation | scib metrics [27] | Comprehensive performance assessment | Multiple metrics provide robust evaluation but increase complexity [27] |

Practical Strategies for Optimizing scGPT Fine-Tuning Parameters

Implementing Parameter-Efficient Fine-Tuning (PEFT) with LoRA and Adapters

This technical support center provides targeted guidance for researchers and scientists, particularly those in drug development, who are implementing Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA and Adapters. The content is framed within hyperparameter tuning research for scGPT fine-tuning, a key tool for biological data analysis. The following FAQs, troubleshooting guides, and protocols are designed to address specific, high-impact issues encountered during experimental work.

FAQs & Troubleshooting Guides

FAQ 1: My fine-tuned model is overfitting to the small training dataset. What key LoRA hyperparameters should I adjust to improve generalization?

Overfitting occurs when the model memorizes the training data, harming its performance on new, unseen inputs [35]. To counteract this:

- Reduce the LoRA Rank (`r`): The rank controls the number of trainable parameters. A higher rank increases model capacity but also the risk of overfitting. If you started with a rank of 64 or 128, try reducing it to 16 or 32 [35].

- Increase Weight Decay: Weight decay is a regularization term that penalizes large weights. Consider increasing it from 0.01 towards 0.1 to prevent overfitting and improve generalization [35].

- Use LoRA Dropout: While sometimes omitted for speed, `lora_dropout` can be an effective regularizer. If overfitting is severe, try a small dropout value like 0.1 [35].

- Reduce the Number of Epochs: Training for too many epochs can lead to memorization. For most instruction-based datasets, 1-3 epochs are recommended, as training beyond this offers diminishing returns and increases overfitting risk [35].
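The effect of the rank can be quantified directly. LoRA adds `r * (d_in + d_out)` trainable values to a `d_out x d_in` weight. This sketch applies that count to a 512 x 512 projection, matching the scGPT embedding size given earlier in this guide. It also shows the `lora_alpha / r` scaling when `lora_alpha` is set equal to the rank.

```python
def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable values LoRA adds to one d_out x d_in weight: A is r x d_in, B is d_out x r."""
    return r * (d_in + d_out)


d_model = 512  # scGPT embedding size
full = d_model * d_model
for r in (8, 16, 32, 64, 128):
    added = lora_params(d_model, d_model, r)
    alpha = r  # lora_alpha = r gives a scaling factor alpha / r of 1.0
    print(f"r={r:>3}: {added:>6} trainable ({added / full:.1%} of the layer), scaling={alpha / r}")
```

At `r = 128`, the adapter on a 512 x 512 projection already holds half as many trainable values as the layer itself. On a model this narrow, high ranks therefore give up much of LoRA's regularizing effect, which is one reason to start at 16 or 32.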

FAQ 2: I am getting "Out of Memory" (OOM) errors when fine-tuning a large model on my single GPU. What are my primary PEFT options?

OOM errors are common when working with large models. The solution involves reducing the memory footprint.

- Use QLoRA over LoRA: LoRA uses 16-bit precision, while QLoRA is a 4-bit fine-tuning method. QLoRA uses 4× less VRAM, allowing you to fine-tune models like a 70B parameter LLaMA on a GPU with less than 48GB of VRAM [35].

- Adjust Batch Size and Gradient Accumulation: The effective batch size is the product of `batch_size` and `gradient_accumulation_steps`. To reduce VRAM usage, decrease `batch_size` (e.g., to 2) and increase `gradient_accumulation_steps` (e.g., to 8) to maintain a stable effective batch size (e.g., 16) [35].

- Enable Gradient Checkpointing: This technique trades compute for memory by not storing all activations; the missing ones are recomputed during the backward pass. Using an optimized version like `"unsloth"` can reduce memory usage by an extra 30% [35].

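The "4× less VRAM" figure follows from weight storage alone (16 bits versus 4 bits per parameter), as this arithmetic sketch shows. It ignores activations, LoRA parameters, optimizer state and quantization overhead, so real usage is higher. The same arithmetic suggests that for a ~50M-parameter model such as scGPT the weights are tiny. Out-of-memory errors there are more likely driven by activations (batch size times sequence length), so batch size, gradient accumulation and checkpointing are the more relevant levers than quantization.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Memory for the model weights alone; activations, LoRA params and optimizer state excluded."""
    return n_params * bits_per_param / 8 / 1e9


def effective_batch_size(batch_size: int, grad_accum_steps: int) -> int:
    """Samples contributing to each optimizer step."""
    return batch_size * grad_accum_steps


print(weight_memory_gb(70e9, 16))  # ~140 GB in 16-bit
print(weight_memory_gb(70e9, 4))   # ~35 GB in 4-bit, under a 48 GB card
print(weight_memory_gb(50e6, 16))  # ~0.1 GB for a ~50M-parameter model such as scGPT
print(effective_batch_size(2, 8))  # 16
```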
FAQ 3: What is the fundamental difference between LoRA and Adapters?

Both are PEFT methods, but they work differently [36]:

- LoRA (Reparameterization-based): LoRA does not add new layers. Instead, it uses low-rank matrix decomposition to represent weight updates for existing layers (e.g., the attention mechanism's linear layers). It injects trainable rank decomposition matrices (A and B) alongside the original weights, which are frozen [37] [36].

- Adapters (Additive): Adapters introduce new, small neural network modules (e.g., a two-layer fully connected network) into the architecture of the transformer model, typically after the attention or feed-forward layers. The original model's parameters are frozen, and only the adapters are trained [38] [36].
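For comparison with LoRA, a bottleneck adapter can be sketched in NumPy. It is a small down-projection, a nonlinearity and an up-projection, added back to its input through a residual connection. Initializing the up-projection to zero, so the adapter starts as an identity map, is a common choice and is assumed here. The class name and sizes are illustrative. The practical difference from LoRA is that this module stays in the forward pass at inference time, whereas a LoRA update can be merged into the existing weight.

```python
import numpy as np


class BottleneckAdapter:
    """Additive adapter: h + up(relu(down(h))), inserted after a sublayer."""

    def __init__(self, d_model: int, bottleneck: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.W_down = rng.normal(0.0, 0.01, size=(d_model, bottleneck))
        self.b_down = np.zeros(bottleneck)
        self.W_up = np.zeros((bottleneck, d_model))  # zero-init: the adapter starts as an identity map
        self.b_up = np.zeros(d_model)

    def __call__(self, h: np.ndarray) -> np.ndarray:
        z = np.maximum(0.0, h @ self.W_down + self.b_down)  # down-projection + ReLU
        return h + z @ self.W_up + self.b_up                # up-projection + residual

    def n_params(self) -> int:
        return sum(p.size for p in (self.W_down, self.b_down, self.W_up, self.b_up))


adapter = BottleneckAdapter(d_model=512, bottleneck=64)
h = np.random.default_rng(1).normal(size=(3, 512))
print(np.allclose(adapter(h), h))  # True at initialisation
print(adapter.n_params())          # 66112
```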

FAQ 4: For scGPT fine-tuning, which parts of the model should I target with LoRA to ensure optimal performance?

Research has shown that for optimal performance, LoRA should be applied to all major linear layers to match the performance of full fine-tuning [35]. When configuring target_modules, it is recommended to include the modules for both attention and the MLP (Multilayer Perceptron):

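- Attention Projections: `q_proj` (query), `k_proj` (key), `v_proj` (value), `o_proj` (output) [35].

- MLP Projections: `gate_proj`, `up_proj`, `down_proj` [35].

While removing some modules can reduce memory, it is not advised, as the savings are minimal and can significantly impact final model quality [35]. Note that these module names come from LLaMA-style language models, the setting of [35]. scGPT's encoder is built from PyTorch-style transformer encoder layers, which do not contain modules with these names, so list the model's linear layers first and map the "all major linear layers" recommendation onto the names you actually find before setting `target_modules`.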
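A quick way to avoid silently targeting nothing is to filter the model's real module names before building a LoRA configuration. This is a minimal sketch: the helper is hypothetical, and the example names assume one encoder layer laid out like `torch.nn.TransformerEncoderLayer`, with a hypothetical `transformer_encoder` prefix. On a real model, the names would come from `[n for n, m in model.named_modules() if isinstance(m, torch.nn.Linear)]`.

```python
def select_lora_targets(module_names, wanted):
    """Keep module paths whose last component is in `wanted`."""
    return [name for name in module_names if name.rsplit(".", 1)[-1] in wanted]


# Hypothetical names for one encoder layer, following the layout of
# torch.nn.TransformerEncoderLayer: q/k/v live in a single in_proj_weight
# parameter rather than separate Linear modules, so they do not appear here.
names = [
    "transformer_encoder.layers.0.self_attn.out_proj",
    "transformer_encoder.layers.0.linear1",
    "transformer_encoder.layers.0.linear2",
]

llama_style = {"q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"}
print(select_lora_targets(names, llama_style))                         # []
print(select_lora_targets(names, {"out_proj", "linear1", "linear2"}))  # all three names
```

An empty result is a signal that the target-module list was written for a different architecture.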

Experimental Protocols & Hyperparameter Guidance

This section provides detailed methodologies for establishing a baseline and optimizing your PEFT experiments, with a focus on scGPT.

Standard Protocol for scGPT Fine-Tuning

The following protocol, derived from scGPT documentation, outlines key steps and a tested hyperparameter setup for a batch integration task [27].

Workflow Overview:

Detailed Methodology:

- Hyperparameter Setup: Adopt the recommended hyperparameters for scGPT batch integration tasks [27]. See Table 1 for values.

- Load and Pre-process Data:
  - Load your target dataset (e.g., PBMC 10K for scGPT) [27].
  - Perform gene filtering to retain only the highly variable genes (`n_hvg = 1200`) [27].
  - A critical step is to cross-check the gene set in your data against the vocabulary of the pre-trained scGPT model. Retain only the common genes for fine-tuning [27].

- Load Pre-trained Model: Load the pre-trained scGPT model, its tokenizer (vocab), and its configuration file [27].

- Task-Specific Fine-tuning: Fine-tune the model using the specified objectives. For batch integration in scGPT, this includes:
  - GEPC: Gene Expression Prediction for Cell modelling objective.
  - ECS: Elastic Cell Similarity objective.
  - DAR: Domain Adaptation via Reverse backpropagation objective for batch correction [27].

- Evaluation: Evaluate the fine-tuned model on the downstream task using relevant metrics [27].
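The vocabulary cross-check in the data-loading step reduces to a set-membership filter. This minimal sketch uses a hypothetical `filter_to_vocab` helper and a toy stand-in vocabulary. It keeps dataset genes that the model has embeddings for and reports the match rate. With AnnData, the kept list could then be used to subset the object, for example `adata[:, kept]`.

```python
def filter_to_vocab(dataset_genes, vocab):
    """Keep dataset genes the pre-trained model has embeddings for, in their original order."""
    kept = [gene for gene in dataset_genes if gene in vocab]
    match_rate = len(kept) / len(dataset_genes) if dataset_genes else 0.0
    return kept, match_rate


vocab = {"CD3E", "CD14", "LYZ", "MS4A1", "NKG7"}    # stand-in for the model vocabulary
hvgs = ["CD3E", "LYZ", "ENSG00000105374", "MS4A1"]  # one entry is an Ensembl-style ID, not a symbol
kept, rate = filter_to_vocab(hvgs, vocab)
print(kept, rate)  # ['CD3E', 'LYZ', 'MS4A1'] 0.75
```

A low match rate often points to an identifier mismatch, such as Ensembl IDs in the dataset versus gene symbols in the vocabulary, rather than to genuinely missing genes.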

Table 1: Example Hyperparameters for scGPT Fine-tuning (Batch Integration Task) [27]

| Hyperparameter | Recommended Value | Function |
|---|---|---|
| Learning Rate (lr) | 1e-4 | Controls how much model weights are adjusted during training. |
| Epochs | 15 | Number of full passes through the training dataset. |
| Batch Size | 64 | Number of samples processed per forward/backward pass. |
| Mask Ratio | 0.4 | Proportion of input values randomly masked for prediction. |
| DAB Weight | 1.0 | Weight for the Domain Adaptation (batch correction) objective. |

LoRA Hyperparameter Optimization Guide

Fine-tuning LoRA's hyperparameters is crucial for balancing performance, speed, and stability [35].

Table 2: Key LoRA Hyperparameters & Recommendations [35]

| Hyperparameter | Function | Recommended Range / Value |
|---|---|---|
| LoRA Rank (r) | Controls the number of trainable parameters. Higher rank = more capacity, but a greater risk of overfitting. | 8, 16, 32, 64, 128. Start with 16 or 32. |
| LoRA Alpha (lora_alpha) | Scaling factor for the LoRA adjustments; controls the magnitude of updates. | Set equal to the rank (r) or to double the rank (r * 2). |
| LoRA Dropout | Regularization technique to prevent overfitting. | 0 (for speed) to 0.1 (if overfitting is an issue). |
| Learning Rate | Defines the step size for weight updates. | 2e-4 (0.0002) for normal LoRA/QLoRA fine-tuning. |
| Weight Decay | Regularization term that penalizes large weights. | 0.01 (recommended) to 0.1. |
| Target Modules | Specifies which model parts to apply LoRA to. | q_proj, k_proj, v_proj, o_proj, gate_proj, up_proj, down_proj. These names follow LLaMA-style LLM layers and may not exist in scGPT; list your model's actual module names before configuring. |
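The rank and alpha rows follow from how standard LoRA parameterizes an update: a frozen d_out x d_in weight W is augmented with a trainable product B A of rank r, scaled by lora_alpha / r. The arithmetic sketch below counts the added parameters for a square 512 x 512 projection (512 matches the embedding size in the architecture table; treating the layer as square is an assumption for illustration). The helper names are hypothetical.

```python
def lora_param_count(d_in, d_out, r):
    """Trainable parameters LoRA adds to one d_in x d_out linear layer.

    LoRA learns B (d_out x r) and A (r x d_in), so it trains r * (d_in + d_out)
    parameters instead of the full d_in * d_out weight matrix.
    """
    return r * (d_in + d_out)


def lora_scaling(lora_alpha, r):
    """Factor applied to the low-rank update B @ A in standard LoRA."""
    return lora_alpha / r


d = 512  # embedding size from the scGPT architecture table
full = d * d
for r in (8, 16, 32, 64):
    added = lora_param_count(d, d, r)
    print(f"r={r:>3}: {added:>6} trainable params vs {full} in the full matrix")
```

At r = 16 the layer trains 16,384 parameters, 6.25% of the 262,144 in the full matrix. With lora_alpha equal to r the scaling factor is 1, and with lora_alpha = 2r it is 2, which is why doubling alpha amplifies the update. In Hugging Face PEFT these settings correspond to the r, lora_alpha, lora_dropout, and target_modules arguments of LoraConfig.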

Optimization Protocol:

- Establish a Baseline: Start with the recommended values in Table 2 (e.g., r=16, lora_alpha=16, lr=2e-4).

- Use a Pruner: Employ a tool like Optuna, which features automated early-stopping (pruning) algorithms. This automatically halts unpromising trials at an early stage, saving significant compute time [39].

- Perform a Hyperparameter Search: Use a framework like Ray Tune to run a distributed search. It integrates with various search algorithms (e.g., HyperOpt, Bayesian Optimization) and can parallelize trials across multiple GPUs [39].

- Evaluate and Iterate: Use your validation set performance to guide the search for the optimal hyperparameter combination.
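Pruning, as used in the protocol above, reduces to a simple rule applied at each reporting step. The sketch below implements the median stopping rule for a minimized validation loss in plain Python; it is similar in spirit to Optuna's MedianPruner but is not its implementation. The function name should_prune, the min_trials threshold, and the loss histories are hypothetical.

```python
from statistics import median


def should_prune(trial_value, step, completed_histories, min_trials=3):
    """Median stopping rule for a minimized metric (e.g., validation loss).

    completed_histories holds one list of per-epoch validation losses for each
    finished trial. The current trial is pruned if its value at `step` is
    worse (higher) than the median of earlier trials at the same step.
    """
    peers = [h[step] for h in completed_histories if len(h) > step]
    if len(peers) < min_trials:
        return False  # not enough evidence yet to compare against
    return trial_value > median(peers)


# Made-up validation-loss curves from three finished trials.
history = [
    [0.90, 0.70, 0.55],
    [0.95, 0.80, 0.65],
    [0.85, 0.60, 0.50],
]
```

A trial reporting 0.75 after epoch 1 would be stopped here, since the finished trials' median at that epoch is 0.70; the min_trials guard avoids pruning on too little evidence early in a study.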

The Scientist's Toolkit

This table lists essential "research reagents" (software tools and libraries) for conducting efficient PEFT experiments.

Table 3: Essential Research Reagent Solutions for PEFT Experiments

| Tool / Library | Function | Use Case / Rationale |
|---|---|---|
| PEFT Library (Hugging Face) | Provides implementations of LoRA, Adapters, and other PEFT methods. | Core library for applying Parameter-Efficient Fine-Tuning to Hugging Face transformer models [36]. |
| Transformers Library | Offers pre-trained models and training utilities. | The standard library for working with transformer models, which integrates seamlessly with PEFT [36]. |
| Ray Tune | A scalable library for hyperparameter tuning. | Enables distributed hyperparameter search using cutting-edge algorithms, speeding up optimization [39]. |
| Optuna | A hyperparameter optimization framework. | Simplifies the search process with an intuitive define-by-run API and efficient pruning algorithms [39]. |
| scGPT | A pre-trained foundation model for single-cell biology. | The target model for fine-tuning in this guide, designed for analyzing single-cell data [27]. |
| Unsloth | An optimized library for faster LoRA/QLoRA fine-tuning. | Offers bug fixes and optimizations (e.g., for gradient accumulation) that can significantly speed up training [35]. |

Workflow Visualization: PEFT Method Selection

The following diagram outlines a logical decision pathway for selecting and configuring a PEFT method, based on your primary experimental constraint.

Frequently Asked Questions (FAQs)

Q1: Why is a learning rate schedule critical for fine-tuning models like scGPT? A learning rate schedule is vital because it directly controls the stability and quality of convergence during training. Using a learning rate that is too large can cause optimization to diverge, while one that is too small can lead to extremely long training times or convergence to a suboptimal result [40]. A well-designed schedule helps the model navigate the loss landscape efficiently, which is especially important for computationally expensive fine-tuning of foundation models on specialized biological data [41].

Q2: What is the primary mechanism and benefit of using a learning rate warmup? The primary benefit of warmup is to allow the network to tolerate a larger target learning rate than would otherwise be possible [42]. The underlying mechanism involves moving the model from a poorly-conditioned area of the loss landscape at initialization to a better-conditioned, flatter region. This is achieved by starting with a small learning rate, which prevents large, destabilizing updates from the initially random parameters. This process reduces the sharpness (the top eigenvalue of the Hessian of the loss), enabling the use of a higher target learning rate for faster convergence and more robust hyperparameter tuning [42].
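A linear warmup is straightforward to express as a function of the training step. The following is a minimal sketch assuming a step-based schedule that starts at zero; warmup_lr is a hypothetical helper, not part of scGPT.

```python
def warmup_lr(step, target_lr, warmup_steps, start_lr=0.0):
    """Linearly increase the learning rate from start_lr to target_lr.

    After warmup_steps the target learning rate is simply held here; in
    practice a decay schedule usually takes over at that point.
    """
    if warmup_steps <= 0 or step >= warmup_steps:
        return target_lr
    frac = step / warmup_steps
    return start_lr + frac * (target_lr - start_lr)


# Learning rate every 200 steps for a 1,000-step warmup to 1e-4.
lrs = [warmup_lr(s, 1e-4, 1000) for s in range(0, 1200, 200)]
```

To use a rule like this with PyTorch, one option is to wrap the ratio warmup_lr(step, ...) / target_lr in torch.optim.lr_scheduler.LambdaLR, which multiplies the optimizer's base learning rate by the returned factor each time the scheduler steps.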

Q3: My training loss is oscillating and fails to decrease. What could be wrong? This is a classic sign of a learning rate that is too high. Your optimizer is likely taking steps that are too large, causing it to bounce around or overshoot the minimum of the loss function [43]. We recommend the following troubleshooting steps:

- Implement a warmup schedule if you are not using one, as this can prevent initial instability [42].

- Reduce your target learning rate and consider using a more aggressive decay schedule [40].

- Check your batch size. There is a complex interaction between batch size and learning rate. A very small batch size with a high learning rate can lead to noisy, oscillating updates [44].

Q4: Are complex decay schedules always better than a constant learning rate? Not necessarily. While decay schedules often improve performance, recent research on fine-tuning small LLMs (3B-7B parameters) found that a constant learning rate, with warmup and decay omitted entirely, is a simpler alternative that can sometimes yield competitive results [44]. The optimal choice depends on your specific model, dataset, and compute budget.

Q5: How can I systematically find the best learning rate schedule for my project? Instead of manual tuning, we recommend using hyperparameter optimization frameworks. These tools automate the search for optimal schedules and other hyperparameters.

- Ray Tune is a scalable Python library that can parallelize searches across multiple GPUs and integrates with various optimization algorithms like Ax/Botorch and HyperOpt [39].

- Optuna is a framework designed for machine learning hyperparameter optimization that efficiently searches spaces using algorithms like Bayesian optimization and can prune unpromising trials early to save computation [39] [45]. A simple code example for setting up a study with Optuna is provided in the "Researcher's Toolkit" section.

Troubleshooting Guides

Issue 1: Managing Unstable or Diverging Training Loss

Symptoms:

- Training loss becomes NaN (Not a Number).

- Loss values increase dramatically over successive epochs.

- Wild oscillations in the loss value.

Diagnosis:

This is typically caused by an excessively large effective update step, which is a product of the learning rate and the gradient [46]. At the beginning of training, gradients can be very large because the randomly initialized model is far from a solution. A large learning rate applied to these large gradients causes the parameters to be updated too aggressively, leaving the region of useful optimization.

Resolution Protocol:

- Implement Linear Warmup: This is the most direct solution. Gradually increase the learning rate from a small value (e.g., 0) to your target value over a set number of steps (e.g., 5,000-10,000 steps). This allows gradient statistics to stabilize and the sharpness to decrease [42] [46].

- Apply Gradient Clipping: Cap the maximum magnitude of the gradient vector before the parameter update. This prevents any single step from being catastrophically large.

- Re-tune Learning Rate: If you are already using warmup, your target learning rate may still be too high. Reduce it by a factor of 2 or 5 and restart from the last checkpoint saved before the loss diverged.
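Gradient clipping by global norm, the usual form of the clipping step above, rescales all gradients together when their combined L2 norm exceeds a threshold. The plain-Python sketch below shows the arithmetic on lists of floats; the function name is hypothetical, and in PyTorch the same operation is normally done with torch.nn.utils.clip_grad_norm_ after loss.backward() and before optimizer.step().

```python
import math


def clip_by_global_norm(grads, max_norm):
    """Rescale gradient vectors so their joint L2 norm is at most max_norm.

    Direction is preserved; only the magnitude is capped. Returns the clipped
    gradients and the norm measured before clipping (useful to log).
    """
    total_norm = math.sqrt(sum(g * g for vec in grads for g in vec))
    scale = min(1.0, max_norm / (total_norm + 1e-6))  # epsilon avoids division by zero
    clipped = [[g * scale for g in vec] for vec in grads]
    return clipped, total_norm


# Two parameter tensors' gradients, flattened; joint norm is 5.0.
grads = [[3.0, 0.0], [0.0, 4.0]]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
```

Logging norm_before over training is a cheap diagnostic: norms that spike just before the loss becomes NaN point to an update-size problem rather than a data problem.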

Issue 2: Overcoming Slow or Stalled Convergence

Symptoms:

- Training loss decreases very slowly.

- Progress plateaus early, with no significant improvement over many epochs.

- The model fails to reach expected performance benchmarks.

Diagnosis:

The learning rate may be too small, causing the optimizer to take minuscule steps toward the minimum. The optimizer can also stall in flat regions or shallow local minima.

Resolution Protocol:

- Use a Learning Rate Decay Schedule: After a warmup period, gradually reduce the learning rate. This allows large steps early for rapid progress and smaller steps later to settle into a good minimum. Common schedules include:

- Cosine Decay: Decreases the learning rate smoothly following a cosine curve to zero or a minimum value.

- Linear Decay: Reduces the learning rate linearly.

- Exponential Decay: Multiplies the learning rate by a fixed factor at each step.

- Increase Batch Size with a Lower Learning Rate: Empirical evidence from fine-tuning 3B-7B parameter models shows that larger batch sizes paired with lower learning rates can improve generalization and final performance on benchmarks like MMLU [44].

- Explore Alternative Schedules: Consider a "Warmup-Stable-Decay" schedule, which maintains a constant learning rate for a prolonged period before applying decay. Theoretical work shows this can outperform direct-decay schedules in terms of scaling efficiency [41].
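The decay schedules listed above can be written as a single function of the step count. This is a sketch under assumptions: warmup is linear, the decay phase spans all remaining steps, and the function and parameter names (scheduled_lr, peak_lr, min_lr, gamma) are illustrative rather than taken from any specific library.

```python
import math


def scheduled_lr(step, total_steps, peak_lr, warmup_steps=0,
                 schedule="cosine", min_lr=0.0, gamma=0.999):
    """Learning rate at `step`: linear warmup, then the chosen decay.

    schedule: "constant", "linear", "cosine", or "exponential".
    gamma is the per-step multiplier used only by the exponential schedule.
    """
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)  # 0 -> 1 over decay
    if schedule == "constant":
        return peak_lr
    if schedule == "linear":
        return min_lr + (peak_lr - min_lr) * (1.0 - progress)
    if schedule == "cosine":
        return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))
    if schedule == "exponential":
        return max(min_lr, peak_lr * gamma ** (step - warmup_steps))
    raise ValueError(f"unknown schedule: {schedule}")


# Cosine curve sampled every 100 steps for a 1,000-step run with 100 warmup steps.
curve = [scheduled_lr(s, 1000, 1e-4, warmup_steps=100) for s in range(0, 1001, 100)]
```

Keeping warmup and decay in one function makes it easy to sweep schedule as a categorical hyperparameter, as in the optimization protocol below.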

Quantitative Comparison of Learning Rate Schedules

The table below summarizes key characteristics of different learning rate schedules to guide your selection.

Table 1: Comparison of Learning Rate Scheduling Strategies

| Schedule Type | Key Mechanism | Theoretical/Empirical Justification | Best-Suited For |
|---|---|---|---|
| Constant | Learning rate remains fixed throughout training. | Simplifies tuning; found to be competitive in some LLM fine-tuning scenarios [44]. | Initial prototyping, environments where schedule tuning is not feasible. |
| Warmup-Only | LR linearly increases from zero to a target value. | Prevents large initial updates, reduces sharpness, allows higher target LR [42] [46]. | Stabilizing the beginning of training, especially with large batch sizes or adaptive optimizers. |
| Cosine Decay | LR decreases smoothly following a cosine curve. | A popular heuristic that provides a smooth transition from high to low learning rates. | General-purpose use, often used in conjunction with warmup for vision and language models. |
| Exponential Decay | LR is multiplied by a decay factor at each step/epoch. | Theoretically shown to boost scaling exponent compared to constant LR [41]. | Scenarios requiring a more rapid reduction in learning rate. |
| Warmup-Stable-Decay (WSD) | Constant LR for a long stable phase, then decay at the end. | Theoretically can substantially outperform direct exponential decay in scaling efficiency [41]. | Large-scale pre-training or fine-tuning where compute optimality is critical. |
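The Warmup-Stable-Decay row differs from the other schedules mainly in when decay starts. The sketch below assumes a linear warmup and a linear final decay over the last decay_steps steps; published WSD variants also use other decay shapes, so treat the shape here as an assumption. The function name wsd_lr is hypothetical, and it expects warmup_steps <= total_steps - decay_steps.

```python
def wsd_lr(step, total_steps, peak_lr, warmup_steps, decay_steps, min_lr=0.0):
    """Warmup-Stable-Decay: linear warmup, long constant phase, then a short
    final decay (linear here) down to min_lr at total_steps.
    """
    decay_start = total_steps - decay_steps
    if step < warmup_steps:
        return peak_lr * step / warmup_steps  # warmup phase
    if step < decay_start:
        return peak_lr  # stable phase
    progress = min(1.0, (step - decay_start) / max(1, decay_steps))
    return peak_lr + (min_lr - peak_lr) * progress  # decay phase


# 1,000 steps: 50 warmup, stable until step 900, then decay over the last 100.
lr_at_500 = wsd_lr(500, 1000, 1e-4, warmup_steps=50, decay_steps=100)
```

Because the stable phase runs at the peak rate, a checkpoint taken before decay starts can later be decayed for a different total budget, which is one practical reason WSD is attractive when compute planning is uncertain.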

Experimental Protocol: Hyperparameter Optimization for scGPT Fine-Tuning

This protocol outlines a systematic method for determining an effective learning rate schedule when fine-tuning an scGPT model on a new single-cell perturbation dataset.

Objective: To find a learning rate and schedule that minimizes the validation loss on a held-out set of cell populations.

Materials:

- Pre-trained scGPT model.

- Single-cell RNA-seq dataset for fine-tuning (e.g., with perturbation labels).

- Access to at least one GPU (e.g., NVIDIA A100).

- Python hyperparameter optimization framework (Optuna or Ray Tune).

Methodology:

- Define the Search Space: Create a parameter space for the optimization trial. This should include:

- initial_lr: log-uniform distribution between 1e-6 and 1e-3.

- warmup_ratio: uniform distribution between 0.0 and 0.2 (the fraction of total training steps used for warmup).

- schedule_type: categorical choice among ['constant', 'cosine', 'linear'].

- batch_size: categorical choice among [32, 64, 128], depending on GPU memory.

Set Up the Objective Function: The function for Optuna to minimize/maximize should:

- Instantiate the scGPT model with the proposed hyperparameters.

- Train the model for a fixed number of epochs (enough to observe convergence trends).

- Use a held-out validation set to compute the performance metric (e.g., loss, accuracy).

- Return the final validation metric.

Configure the Optimization Algorithm:

- Use a Tree-structured Parzen Estimator (TPE) sampler in Optuna for efficient search.

- Enable pruning (e.g., HyperbandPruner) to automatically stop underperforming trials early, saving significant compute resources [39].

Execute and Analyze:

- Run the optimization for 50-100 trials.

- Analyze the results to see which combinations of parameters consistently lead to low validation loss.

- The best trial will provide your optimized set of hyperparameters.
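The search space from the first step of this protocol can be sampled with the standard library alone. The sketch below draws configurations at random, which corresponds to random search rather than the TPE sampler recommended above; it only illustrates the four distributions. The names sample_config, SCHEDULES, and BATCH_SIZES are hypothetical. In Optuna the same space would typically be declared inside the objective with trial.suggest_float("initial_lr", 1e-6, 1e-3, log=True), trial.suggest_float("warmup_ratio", 0.0, 0.2), and trial.suggest_categorical(...) for the two categorical parameters.

```python
import math
import random

SCHEDULES = ["constant", "cosine", "linear"]
BATCH_SIZES = [32, 64, 128]


def sample_config(rng):
    """Draw one configuration from the search space (pure random search)."""
    log_lr = rng.uniform(math.log(1e-6), math.log(1e-3))
    return {
        "initial_lr": math.exp(log_lr),         # log-uniform in [1e-6, 1e-3]
        "warmup_ratio": rng.uniform(0.0, 0.2),  # fraction of total training steps
        "schedule_type": rng.choice(SCHEDULES),
        "batch_size": rng.choice(BATCH_SIZES),
    }


rng = random.Random(42)
configs = [sample_config(rng) for _ in range(5)]
```

Sampling the learning rate in log space matters: a plain uniform draw over [1e-6, 1e-3] would place roughly 90% of trials above 1e-4 and almost never test the small rates that fine-tuning sometimes needs.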

Visual Guide to Scheduling Strategies

The following diagram illustrates the progression of the learning rate under different scheduling strategies discussed in this guide.

The Researcher's Toolkit

This table lists essential software tools and libraries that are critical for implementing effective learning rate scheduling and hyperparameter optimization in your research.

Table 2: Essential Research Reagents & Software Tools