Navigating High Sparsity in scRNA-seq Data: A Comprehensive Guide to Single-Cell Foundation Models

Abstract

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the study of gene expression at cellular resolution. However, the characteristic high sparsity of scRNA-seq data, with an abundance of zero values arising from both technical limitations ('dropouts') and true biological absence, presents significant analytical challenges. This article explores the emerging role of single-cell foundation models (scFMs) in overcoming these hurdles. We provide a foundational understanding of data sparsity, detail the architectural innovations of transformer-based scFMs like scGPT and Geneformer, and offer practical guidance for model selection, tuning, and application in tasks such as batch integration, cell type annotation, and perturbation response prediction. Through a critical evaluation of benchmarking studies and performance metrics, we equip researchers and drug development professionals with the knowledge to leverage scFMs effectively, thereby unlocking deeper biological insights from sparse single-cell data for advancements in biomedicine and clinical research.

The Sparsity Challenge and the Rise of scFMs

FAQ: Addressing Key Questions on scRNA-seq Zeros

What causes zeros in my scRNA-seq data?

Zeros, or "zero expression," in your single-cell RNA-sequencing data arise from two primary sources:

- Biological Zeros: These represent the true biological absence of gene expression. The gene is not transcribed in that particular cell.

- Technical Zeros (Dropout Events): These are measurement failures where a gene is expressed but not detected due to technical limitations like low sequencing depth, inefficient reverse transcription, or unsuccessful amplification [1] [2].

A key challenge is that you cannot directly distinguish these two types of zeros by simple observation [1].

Why is it critical to understand the source of these zeros?

Correctly interpreting the nature of zeros is fundamental because it directly impacts your downstream analysis and biological conclusions.

- Data Interpretation: Misinterpreting technical zeros as true biological absence can lead to incorrect conclusions about cell identity and function [1] [3].

- Analysis Strategy: The chosen method for handling sparsity—whether through specialized statistical models or data imputation—depends on the nature of the zeros in your dataset [1].

My dataset is very sparse. Should I impute the zeros?

Imputation can be a powerful tool, but it must be used with caution. Systematic evaluations have shown that while many imputation methods can help recover biological signals, they can also introduce spurious noise [4].

- When it can help: Imputation may improve analyses that rely on gene-gene relationships or when trying to recover a signal that is very close to the technical noise floor [4].

- When to be cautious: In that evaluation, most imputation methods did not consistently improve common downstream tasks such as clustering and trajectory inference compared with the non-imputed data, and some methods can create artificial correlations or patterns [4].

- Consider binarization: For extremely sparse datasets with very large cell numbers, an emerging and powerful alternative is to convert your data to a binary format (0 for zero, 1 for non-zero). This approach can capture most of the biological variation while offering massive computational savings [5].
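The binarization step itself is a single comparison. The sketch below assumes a small dense NumPy cells-by-genes count matrix for readability; the same `counts > 0` comparison also works on a SciPy sparse matrix. Downstream tools such as scBFA are not reproduced here.

```python
import numpy as np


def binarize_counts(counts: np.ndarray) -> np.ndarray:
    """Return a 0/1 matrix marking detected (non-zero) entries."""
    return (counts > 0).astype(np.int8)


# Toy cells-by-genes UMI count matrix (rows = cells, columns = genes).
counts = np.array([
    [0, 3, 0, 1],
    [2, 0, 0, 0],
    [0, 7, 1, 0],
])

binary = binarize_counts(counts)
sparsity = 1.0 - binary.mean()  # fraction of entries that are zero
print(binary)
print(f"sparsity = {sparsity:.2f}")  # 7 of 12 entries are zero -> 0.58
```

On real data the binary matrix would normally stay in a sparse format, where only the positions of detected entries need to be stored; that is where the memory savings come from.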

How can I diagnose if my sparsity is a technical problem?

You can assess your data using several key quality control (QC) metrics. The following table summarizes the primary QC metrics used to identify technical issues leading to sparsity [6] [3]:

Table 1: Key QC Metrics for Diagnosing Technical Sources of Sparsity

| QC Metric | What It Measures | Indication of a Technical Problem |
|---|---|---|
| Count Depth | Total number of counts (UMIs/reads) per cell barcode. | Too low: Likely an empty droplet. Too high: Could be a doublet/multiplet. |
| Genes Detected | Number of genes detected per cell barcode. | Too low: Empty droplet or dying cell. Too high: Could be a doublet/multiplet. |
| Mitochondrial Count Fraction | Percentage of counts originating from mitochondrial genes. | Unusually high: Often indicates a stressed, dying, or low-quality cell whose cytoplasmic mRNA has leaked out. |
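The three metrics in Table 1 are simple per-cell summaries. This is a minimal NumPy sketch, assuming a dense cells-by-genes count matrix and mitochondrial genes named with an "MT-"/"mt-" prefix; the gene names and thresholds are illustrative only and must be chosen per dataset.

```python
import numpy as np


def qc_metrics(counts, gene_names):
    """Per-cell count depth, genes detected, and mitochondrial percentage."""
    counts = np.asarray(counts, dtype=float)
    total_counts = counts.sum(axis=1)          # count depth
    n_genes = (counts > 0).sum(axis=1)         # genes detected
    # Assumption: mitochondrial genes carry an "MT-"/"mt-" prefix.
    is_mt = np.array([g.upper().startswith("MT-") for g in gene_names])
    mt_counts = counts[:, is_mt].sum(axis=1)
    pct_mt = np.divide(100.0 * mt_counts, total_counts,
                       out=np.zeros_like(total_counts), where=total_counts > 0)
    return total_counts, n_genes, pct_mt


def flag_low_quality(total_counts, n_genes, pct_mt, min_counts, max_counts,
                     min_genes, max_pct_mt):
    """True for barcodes outside any of the (dataset-specific) thresholds."""
    return ((total_counts < min_counts) | (total_counts > max_counts)
            | (n_genes < min_genes) | (pct_mt > max_pct_mt))


genes = ["MT-CO1", "GAPDH", "ACTB", "CD3E"]
counts = np.array([
    [5, 100, 80, 20],   # plausible cell
    [0, 2, 0, 0],       # very few counts: likely empty droplet
    [60, 20, 10, 10],   # 60% mitochondrial: likely damaged cell
])
total, n_genes, pct_mt = qc_metrics(counts, genes)
flags = flag_low_quality(total, n_genes, pct_mt, min_counts=50,
                         max_counts=10_000, min_genes=2, max_pct_mt=20.0)
print(flags)  # [False  True  True]
```

In Scanpy, `sc.pp.calculate_qc_metrics(adata, qc_vars=["mt"], inplace=True)` computes the same three quantities once a boolean `adata.var["mt"]` column has been set.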

To visualize the logical process of diagnosing the source of zeros and selecting an analysis strategy, follow this workflow:

Are there analysis methods that work directly with sparse data without imputation?

Yes, this is often the preferred approach. Many modern statistical models are specifically designed to handle the inherent sparsity of scRNA-seq count data without the need for imputation [1].

- Best Practice: For differential expression analysis, use statistical models like negative binomial or zero-inflated models that are appropriate for sparse count data [1].

- Emerging Trend: For clustering, visualization, and other analyses, simply using a binary representation (0 for not detected, 1 for detected) of gene expression can yield results highly similar to count-based methods, especially as datasets grow larger and sparser [5].
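To see why count models can absorb many zeros without imputation, compare the probability of observing a zero under each distribution. The sketch assumes the mean/inverse-dispersion parameterization commonly used for scRNA-seq (mean μ, inverse dispersion θ, zero-inflation probability π), under which P_NB(0) = (θ / (θ + μ))^θ and P_ZINB(0) = π + (1 - π) P_NB(0). The parameter values are arbitrary.

```python
import numpy as np


def poisson_zero_prob(mu):
    """P(Y = 0) for a Poisson with mean mu."""
    return np.exp(-mu)


def nb_zero_prob(mu, theta):
    """P(Y = 0) for a negative binomial with mean mu and inverse dispersion theta."""
    return (theta / (theta + mu)) ** theta


def zinb_zero_prob(mu, theta, pi):
    """Zero-inflated NB: an extra point mass pi at zero on top of the NB zeros."""
    return pi + (1.0 - pi) * nb_zero_prob(mu, theta)


mu = 1.0
print(poisson_zero_prob(mu))                  # about 0.368
print(nb_zero_prob(mu, theta=1.0))            # 0.5
print(zinb_zero_prob(mu, theta=1.0, pi=0.2))  # about 0.6
```

An overdispersed NB already predicts more zeros than a Poisson with the same mean (and approaches the Poisson as θ grows), so many zeros are expected under the model itself; a ZINB adds π only for zeros beyond that.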

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key platforms and methods relevant to generating and analyzing scRNA-seq data in the context of sparsity.

Table 2: Key Platforms & Methods for scRNA-seq and Sparsity Analysis

| Item / Platform | Primary Function | Relevance to Sparsity |
|---|---|---|
| 10X Genomics Chromium | Droplet-based single-cell partitioning and barcoding. | A major source of high-throughput, often sparse, scRNA-seq data. Understanding its limitations is key [7] [8]. |
| UMIs (Unique Molecular Identifiers) | Molecular barcodes to label individual mRNA molecules. | Critical for mitigating technical noise and quantifying molecules accurately, which helps model sparsity [6] [8]. |
| SAVER | Model-based imputation method. | Uses a probabilistic model to recover gene expression values, primarily for technical zeros [4]. |
| MAGIC | Data-smoothing imputation method. | Uses diffusion-based smoothing to impute values and reduce sparsity by sharing information across similar cells [4]. |
| scBFA | Dimensionality reduction for binary data. | A specialized tool for analyzing binarized scRNA-seq data, an alternative approach to handling sparsity [5]. |
| scIALM | Matrix completion imputation method. | A recent (2024) method that treats dropout imputation as a low-rank matrix completion problem [2]. |
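The diffusion idea behind MAGIC can be illustrated with a much-simplified sketch. Assumptions: a fixed-bandwidth Gaussian kernel over all cell pairs on raw values, rather than MAGIC's adaptive, nearest-neighbor-based kernel on a reduced representation; `sigma` and `t` are illustrative. This is not the MAGIC implementation.

```python
import numpy as np


def diffusion_smooth(X, sigma=1.0, t=3):
    """Simplified MAGIC-style smoothing of a cells-by-genes matrix X.

    Assumption: a fixed-bandwidth Gaussian kernel over all cell pairs, instead
    of MAGIC's adaptive, nearest-neighbor-based kernel.
    """
    X = np.asarray(X, dtype=float)
    sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    affinity = np.exp(-sq_dists / (2.0 * sigma ** 2))
    markov = affinity / affinity.sum(axis=1, keepdims=True)  # each row sums to 1
    diffusion = np.linalg.matrix_power(markov, t)            # t-step random walk
    return diffusion @ X                                     # neighbor-weighted average


# Cells 0-2 are one type; cell 0 shows a dropout (0) for the second gene.
X = np.array([
    [0.0, 0.0],
    [0.0, 4.0],
    [0.0, 4.0],
    [20.0, 0.0],   # a distinct cell type
])
smoothed = diffusion_smooth(X, sigma=3.0, t=3)
print(np.round(smoothed, 2))
```

Because every cell becomes a weighted average of its neighbors, the dropout in cell 0 is filled in from cells 1 and 2, but the same averaging is what can introduce the artificial gene-gene correlations noted earlier for imputation.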

The Limitations of Traditional Analysis Methods in Sparse Environments

Frequently Asked Questions (FAQs)

1. Why do traditional clustering methods often fail on my sparse scRNA-seq data? Traditional clustering methods like K-means and hierarchical clustering struggle with the high dimensionality and extreme sparsity of scRNA-seq data. The prevalence of zero counts (dropouts) means that these algorithms often operate on incomplete information, leading to suboptimal cell grouping. Methods that rely on constructing complete graph Laplacian matrices also face significant computational and storage costs, making them inefficient for large, sparse datasets [9].

2. My data has substantial batch effects from multiple species. Why can't standard cVAE models correct them properly? Standard conditional Variational Autoencoders (cVAEs) use Kullback–Leibler (KL) divergence regularization, which does not distinguish between biological and technical variation. Increasing the KL regularization strength to remove stronger batch effects therefore also removes biological signal: some latent dimensions collapse toward zero and become uninformative. The result is a loss of information needed for downstream analysis rather than selective batch correction [10].

3. What is the risk of using adversarial learning for batch correction on datasets with unbalanced cell types? Adversarial learning aims to make batches indistinguishable in the latent space. However, if cell type proportions are unbalanced across batches, this approach is prone to forcibly mixing embeddings of unrelated cell types. For example, a rare cell type in one batch may be incorrectly aligned with an abundant but biologically distinct cell type from another batch, compromising the biological validity of your integration [10].

4. How does data sparsity specifically impact the identification of cell types and states? High sparsity increases the similarity between cells from distinct populations and the dissimilarity between cells from the same population. This obscures the true biological boundaries between cell types. Consequently, clustering algorithms may either over-cluster, creating spurious subpopulations from noise, or under-cluster, failing to distinguish genuine, biologically distinct cell states [9].

5. Are there specific quality control (QC) pitfalls linked to sparse data? Yes, sparse data complicates QC. It can be challenging to distinguish between low-quality cells (with low gene counts) and genuine small cell types (like platelets). Furthermore, tools for detecting doublets or ambient RNA must be specifically designed to account for high dropout rates to avoid misclassifying singlets as doublets or vice-versa [11].
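A small simulation can make the effect described in question 4 concrete. Assumptions: two hypothetical cell types with independent gamma-distributed mean profiles, Poisson sampling noise, and dropout applied uniformly at random (real dropout depends on expression level), so this only illustrates the direction of the effect: the gap between within-type and between-type correlation shrinks as dropout increases.

```python
import numpy as np


def sample_cells(mean, n_cells, dropout_rate, rng):
    """Poisson counts around a type-specific mean, then random technical dropout."""
    counts = rng.poisson(mean, size=(n_cells, mean.size)).astype(float)
    detected = rng.random(counts.shape) >= dropout_rate
    return counts * detected


def within_between_correlation(cells_a, cells_b):
    """Mean Pearson correlation of log1p profiles within type A and between A and B."""
    corr = np.corrcoef(np.log1p(np.vstack([cells_a, cells_b])))
    n = len(cells_a)
    within = corr[:n, :n][np.triu_indices(n, k=1)].mean()
    between = corr[:n, n:].mean()
    return within, between


rng = np.random.default_rng(0)
n_genes = 2000
mean_a = rng.gamma(shape=2.0, scale=2.0, size=n_genes)  # hypothetical type A profile
mean_b = rng.gamma(shape=2.0, scale=2.0, size=n_genes)  # hypothetical type B profile

results = {}
for rate in (0.0, 0.8):
    a = sample_cells(mean_a, 20, rate, rng)
    b = sample_cells(mean_b, 20, rate, rng)
    results[rate] = within_between_correlation(a, b)
    within, between = results[rate]
    print(f"dropout={rate:.1f}  within={within:.2f}  between={between:.2f}")
```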

Troubleshooting Guides

Issue 1: Poor Clustering Performance on Sparse Data

Problem: Your clustering results are inconsistent, fail to separate known cell types, or are not reproducible.

Solution: Implement deep learning-based clustering methods designed for sparse data.

- Recommended Tool: Use scHSC, a method that employs hard sample mining via contrastive learning [9].

- Protocol:

  - Preprocessing: Follow a standard pipeline using Scanpy:
    - Filter cells and genes with `sc.pp.filter_cells(adata, min_counts=1)` and `sc.pp.filter_genes(adata, min_counts=1)`.
    - Normalize total counts per cell with `sc.pp.normalize_total(adata)`.
    - Apply a logarithmic transformation with `sc.pp.log1p(adata)`.
    - Identify highly variable genes (`sc.pp.highly_variable_genes`) and scale the data to zero mean and unit variance (`sc.pp.scale`) [9].

  - Graph Construction: Build a k-nearest neighbor (KNN) graph from the preprocessed data to capture cellular topology.

  - Model Training: Apply scHSC, which integrates gene expression and graph structure. It focuses on "hard" positive and negative sample pairs to learn a more robust embedding space that is resilient to dropouts [9].
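For readers without Scanpy at hand, the preprocessing and graph-construction steps listed above can be sketched in plain NumPy. This is a simplified stand-in under stated assumptions: dense input, highly variable gene selection omitted, and a brute-force Euclidean kNN; the scHSC model itself is not reproduced.

```python
import numpy as np


def preprocess(counts, target_sum=1e4):
    """Filter, library-size normalize, log1p-transform, and z-score each gene."""
    counts = np.asarray(counts, dtype=float)
    counts = counts[counts.sum(axis=1) >= 1]         # like filter_cells(min_counts=1)
    counts = counts[:, counts.sum(axis=0) >= 1]      # like filter_genes(min_counts=1)
    norm = counts / counts.sum(axis=1, keepdims=True) * target_sum
    logged = np.log1p(norm)
    std = logged.std(axis=0)
    std[std == 0] = 1.0                              # avoid dividing by zero
    return (logged - logged.mean(axis=0)) / std


def knn_graph(X, k=2):
    """Indices of each cell's k nearest neighbors (Euclidean, self excluded)."""
    dists = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    np.fill_diagonal(dists, np.inf)
    return np.argsort(dists, axis=1)[:, :k]


counts = np.array([
    [10, 0, 1, 0],
    [12, 1, 0, 0],
    [9, 0, 2, 0],
    [0, 8, 0, 0],
    [1, 11, 0, 0],
    [0, 9, 1, 0],
])  # the last gene is never detected and is filtered out
Z = preprocess(counts)
neighbors = knn_graph(Z, k=2)
print(Z.shape)
print(neighbors)
```

In Scanpy itself, `sc.pp.neighbors` builds this graph, typically on a PCA representation rather than on the scaled expression matrix directly.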

Issue 2: Batch Effects Persist After Standard Integration

Problem: Technical differences between datasets (e.g., from different labs, species, or protocols) remain visible in your UMAP and are confounding biological analysis.

Solution: Utilize advanced integration models that go beyond standard alignment.

- Recommended Tool: Use sysVI, a cVAE-based method enhanced with VampPrior and cycle-consistency constraints [10].

- Protocol:

  - Model Selection: Choose sysVI for complex integration tasks, such as across species or between organoids and primary tissue.

  - Workflow: The model employs a VampPrior to better preserve biological variation and a cycle-consistency loss to ensure faithful translation of cell states between batches without mixing distinct types.

  - Evaluation: Assess integration success using metrics like:
    - iLISI: Measures batch mixing (higher is better).
    - NMI: Measures cell type preservation (higher is better).

  - Avoid relying solely on visual inspection of UMAP plots [10].
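The two evaluation metrics can be sketched compactly. NMI below uses the arithmetic mean of the two label entropies for normalization. The mixing score is a deliberately simplified stand-in for iLISI: an inverse Simpson's index of batch labels over each cell's k nearest neighbors with uniform weights, whereas the published LISI uses Gaussian-kernel neighbor weights calibrated by perplexity, so absolute values will differ. The embeddings and labels are hypothetical.

```python
import numpy as np


def entropy(labels):
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log(p)).sum())


def nmi(labels_true, labels_pred):
    """Normalized mutual information, normalized by the mean of the two entropies."""
    labels_true, labels_pred = np.asarray(labels_true), np.asarray(labels_pred)
    n = len(labels_true)
    mi = 0.0
    for t in np.unique(labels_true):
        in_t = labels_true == t
        for c in np.unique(labels_pred):
            in_c = labels_pred == c
            n_tc = np.sum(in_t & in_c)
            if n_tc > 0:
                mi += n_tc / n * np.log(n * n_tc / (in_t.sum() * in_c.sum()))
    denom = 0.5 * (entropy(labels_true) + entropy(labels_pred))
    return mi / denom if denom > 0 else 1.0


def simple_ilisi(X, batches, k=3):
    """Mean inverse Simpson's index of batch labels among each cell's k neighbors."""
    X, batches = np.asarray(X, dtype=float), np.asarray(batches)
    d = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d, np.inf)
    scores = []
    for neigh in np.argsort(d, axis=1)[:, :k]:
        _, counts = np.unique(batches[neigh], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))  # 1 = a single batch; higher = more mixing


# Hypothetical 1-D embeddings: two tight groups of four cells each.
X = np.array([[0.0]] * 4 + [[100.0]] * 4)
mixed = ["a", "a", "b", "b", "a", "a", "b", "b"]
separated = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(simple_ilisi(X, mixed), simple_ilisi(X, separated))
print(nmi([0, 0, 1, 1], [1, 1, 0, 0]), nmi([0, 0, 1, 1], [0, 1, 0, 1]))
```

scikit-learn's `normalized_mutual_info_score` uses the same arithmetic normalization by default, so it can serve as a cross-check.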

Issue 3: Loss of Biological Signal After Batch Correction

Problem: After correcting for batch effects, key biological variations (e.g., differential responses to a treatment) have been removed.

Solution: Select a method that explicitly discriminates between technical and biological noise.

- Recommended Tools: Consider sysVI (for its VampPrior) or contrastive learning frameworks [10] [9].

- Protocol:

  - Avoid Over-Correction: Do not maximize batch correction strength blindly. Tools that use a single weight to regulate both biological and technical information (like KL divergence in simple cVAEs) will inevitably remove signal.

  - Benchmark Biological Preservation: After integration, perform differential expression analysis on known marker genes for your cell types to ensure they remain distinct. Use metrics like Normalized Mutual Information (NMI) to quantify cell type separation [10].

  - Leverage Structure: Methods like scHSC that use graph topology can help preserve the inherent biological structure of the data against the diluting effect of sparsity [9].
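The single-weight problem is visible in the KL term itself. For a diagonal Gaussian posterior N(μ, σ²) and a standard normal prior, the per-dimension KL divergence is ½(μ² + σ² - log σ² - 1), and it penalizes every latent dimension the same way regardless of whether that dimension encodes biology or batch. The latent values below are hypothetical and chosen only for illustration.

```python
import numpy as np


def kl_per_dim(mu, log_var):
    """KL(N(mu, exp(log_var)) || N(0, 1)) for each latent dimension."""
    return 0.5 * (mu ** 2 + np.exp(log_var) - log_var - 1.0)


def cvae_objective(recon_nll, mu, log_var, kl_weight):
    """Reconstruction term plus ONE weight on the summed KL term (simple cVAE)."""
    return recon_nll + kl_weight * kl_per_dim(mu, log_var).sum()


# Hypothetical posterior for one cell: dim 0 carries cell-type signal,
# dim 1 carries residual batch signal, dim 2 already matches the prior.
mu = np.array([2.0, 1.5, 0.0])
log_var = np.array([-1.0, -1.0, 0.0])
kl = kl_per_dim(mu, log_var)
print(np.round(kl, 3))
for w in (1.0, 5.0):
    print(w, cvae_objective(10.0, mu, log_var, kl_weight=w))
```

Raising `kl_weight` scales the penalty on the biological dimension 0 at least as much as on the batch dimension 1, so the optimizer can lower the loss by shrinking both toward the prior. A dimension that matches the prior for every cell, like dimension 2, carries no cell-specific information.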

The following table summarizes the performance of various methods on key metrics relevant to sparse data analysis, as revealed by benchmark studies.

Table 1: Benchmarking Performance of scRNA-seq Analysis Methods

| Method | Type | Key Strategy | Performance on Sparsity | Performance on Batch Correction | Biological Preservation |
|---|---|---|---|---|---|
| K-means / Hierarchical Clustering [9] | Traditional Clustering | Distance-based partitioning | Struggles with high dropout rates; provides locally optimal results | Not designed for batch correction | Poor, due to sparsity and noise |
| Standard cVAE [10] | Deep Learning (VAE) | KL divergence regularization | Limited; no special mechanism for sparsity | Limited on substantial batch effects; removes biological signal | Low when KL weight is increased |
| Adversarial cVAE (ADV, GLUE) [10] | Deep Learning (VAE + Adversary) | Aligns batch distributions | Can be misled by sparsity-induced similarities | High, but may over-correct and mix distinct cell types | Low; prone to removing biological variation |
| scHSC [9] | Deep Contrastive Clustering | Hard sample mining & graph topology | High; focuses on informative, hard-to-distinguish cells | Not primarily a batch correction tool | High; designed for accurate cell type identification |
| sysVI (VAMP+CYC) [10] | Enhanced cVAE | VampPrior & cycle-consistency | Improved by better latent space modeling | High, even across substantial batch effects (e.g., species) | High; actively preserves biological states |

Experimental Workflow for Sparse Data

The diagram below outlines a robust experimental workflow designed to address the limitations of traditional methods when analyzing sparse scRNA-seq data.

Research Reagent Solutions

Table 2: Essential Computational Tools for scRNA-seq Analysis in Sparse Environments

| Tool / Resource | Function | Role in Addressing Sparsity & Batch Effects |
|---|---|---|
| Scanpy [9] [11] | Python-based toolkit | Provides the standard preprocessing workflow (normalization, log-transform, HVG selection) which is the critical first step in managing sparse data. |
| scHSC [9] | Deep Clustering | Uses contrastive learning and hard sample mining to improve clustering accuracy directly from sparse count data. |
| sysVI [10] | Data Integration | Integrates datasets with substantial technical/biological differences (batch effects) while preserving biological signals that are often lost. |
| Seurat [12] [11] | R-based toolkit | Offers comprehensive workflows for QC, normalization, clustering, and includes methods for data integration and batch correction. |
| scVI [12] | Deep Learning Framework | Uses variational inference to model gene expression, facilitating tasks like batch correction and clustering in a probabilistic manner. |
| Harmony [12] [13] | Batch Correction | Aligns subpopulations across datasets in a reduced space, effectively mixing batches while preserving biological variation. |
| ZINB Model [9] | Statistical Model | Used within autoencoders to model the zero-inflated nature of scRNA-seq data, explicitly accounting for dropouts. |
| SoupX / CellBender [11] | Ambient RNA Correction | Removes background noise from the count matrix, reducing one source of technical zeros and improving data quality. |

Core Concepts: Frequently Asked Questions

What is a Single-Cell Foundation Model (scFM)? A single-cell foundation model is a large-scale deep learning model pretrained on vast amounts of single-cell omics data, capable of being adapted to a wide range of downstream biological tasks. These models use self-supervised learning to extract fundamental patterns and principles of cellular biology, much like large language models learn the patterns of human language from extensive text corpora [14].

How do scFMs handle the high sparsity of scRNA-seq data? scFMs are designed to manage the high dimensionality and sparsity inherent to scRNA-seq data through their architecture and training strategies. Models employ techniques like masked gene modeling, where random genes in a cell's expression profile are masked, and the network is trained to predict them using the context of other genes. This process teaches the model the complex, co-varying relationships between genes, effectively learning to distinguish biological signals from technical noise and dropout events [14] [15].
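The core of masked gene modeling is a corrupt-then-reconstruct step. This sketch masks only non-zero entries of one cell; that choice, the masking ratio, and the sentinel value of -1 are illustrative assumptions, and published models differ in these details. The model that predicts the masked values is not shown.

```python
import numpy as np


def mask_expression(values, mask_ratio=0.15, mask_value=-1.0, rng=None):
    """Hide a random fraction of a cell's expressed genes for a masked-modeling objective."""
    rng = np.random.default_rng() if rng is None else rng
    values = np.asarray(values, dtype=float)
    mask = np.zeros(values.shape, dtype=bool)
    expressed = np.flatnonzero(values > 0)
    if expressed.size == 0:
        return values.copy(), mask
    n_mask = max(1, int(round(mask_ratio * expressed.size)))
    mask[rng.choice(expressed, size=n_mask, replace=False)] = True
    corrupted = np.where(mask, mask_value, values)  # sentinel marks hidden genes
    return corrupted, mask


def masked_mse(predicted, target, mask):
    """Reconstruction loss on masked positions only; visible genes are context."""
    return float(np.mean((predicted[mask] - target[mask]) ** 2))


cell = np.array([0, 3, 0, 1, 5, 2, 0, 4, 1, 6], dtype=float)
corrupted, mask = mask_expression(cell, mask_ratio=0.3, rng=np.random.default_rng(0))
print(corrupted)
# A model would predict cell[mask] from the unmasked genes; a perfect guess gives 0 loss.
print(masked_mse(cell, cell, mask))
```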

What are the primary architectures used for scFMs? Most scFMs are built on the transformer architecture, which uses attention mechanisms to weight the importance of relationships between any pair of input tokens (genes). Two main variants are employed:

- Encoder-based models (e.g., scBERT): Use bidirectional attention, learning from the context of all genes in a cell simultaneously. They are often preferred for classification and embedding tasks [14].

- Decoder-based models (e.g., scGPT): Use a unidirectional masked attention mechanism, predicting genes in a sequential manner. These are often used for generation tasks [14]. Currently, no single architecture has emerged as clearly superior, and hybrid designs are being explored [14].
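The difference between the two variants comes down to the attention mask. Below is a single-head sketch with random, hypothetical token embeddings and weight matrices; real models use multiple heads, learned embeddings, and in some cases specialized masking schemes rather than the plain causal mask shown here.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(X, Wq, Wk, Wv, causal=False):
    """Single-head scaled dot-product self-attention over a sequence of gene tokens."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    if causal:
        # Unidirectional: token i may attend only to tokens 0..i.
        allowed = np.tril(np.ones(scores.shape, dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    weights = softmax(scores, axis=-1)
    return weights @ V, weights


rng = np.random.default_rng(0)
n_tokens, d = 5, 8                       # 5 gene tokens with 8-dim embeddings
X = rng.normal(size=(n_tokens, d))
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))
_, w_bidir = self_attention(X, Wq, Wk, Wv, causal=False)
_, w_causal = self_attention(X, Wq, Wk, Wv, causal=True)
print(np.round(w_bidir, 2))
print(np.round(w_causal, 2))
```

In the bidirectional weights every token attends to every other token; in the causal weights the upper triangle is zero, so the first token can attend only to itself.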

Why is tokenization important, and how is it done? Tokenization converts raw gene expression data into a structured format the model can process. Since gene expression data lacks a natural sequence, a key challenge is imposing an order. Common strategies include:

- Ranking genes within each cell by expression level, treating the ordered list as a "sentence" [14].

- Value binning, where expression values are categorized into bins [14].

- Including special tokens for cell identity or modality to provide additional biological context [14].
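The first two strategies can be sketched for a single cell. Assumptions: ranking uses raw within-cell values (Geneformer additionally normalizes each gene by its median across the pretraining corpus before ranking), and binning uses within-cell quantiles of the non-zero values; the gene identifiers, bin count, and sequence length are hypothetical.

```python
import numpy as np


def rank_tokens(expr, gene_ids, max_len=None):
    """Rank-based tokens: expressed genes ordered from highest to lowest value."""
    expr = np.asarray(expr, dtype=float)
    expressed = np.flatnonzero(expr > 0)            # zeros are simply left out
    order = expressed[np.argsort(-expr[expressed], kind="stable")]
    tokens = [gene_ids[i] for i in order]
    return tokens if max_len is None else tokens[:max_len]


def bin_values(expr, n_bins=3):
    """Value binning: non-zero values -> bins 1..n_bins by within-cell quantiles; zeros -> 0."""
    expr = np.asarray(expr, dtype=float)
    binned = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins + 1)[1:-1])
        binned[nonzero] = np.digitize(expr[nonzero], edges) + 1
    return binned


genes = [f"G{i}" for i in range(8)]              # hypothetical gene identifiers
cell = np.array([0, 8, 1, 0, 32, 2, 16, 4])
print(rank_tokens(cell, genes, max_len=3))        # ['G4', 'G6', 'G1']
print(bin_values(cell, n_bins=3))                 # [0 2 1 0 3 1 3 2]
```

Both encodings treat zeros specially: ranking drops them from the sequence, while binning reserves bin 0 for them.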

Troubleshooting Common Experimental Challenges

Challenge: Model fails to capture biologically meaningful relationships.

- Potential Cause: Inadequate data preprocessing or poor selection of input genes.

- Solution:

- Ensure rigorous quality control on your input data, including filtering low-quality cells and genes.

- For models requiring a fixed input size, use the recommended method for selecting genes (e.g., Highly Variable Genes or ranking by expression) as specified in the model's documentation [14] [16].

- Verify that the pretraining corpus of the scFM is relevant to your biological context (e.g., immune cells, cancer) [16].

Challenge: Batch effects persist after using scFM embeddings.

- Potential Cause: The model's pretraining data may not have covered the specific technical variation in your dataset.

- Solution:

- Consider fine-tuning the pretrained scFM on a portion of your data, which can help it adapt to specific technical biases [14].

- Explore models that are explicitly designed for multi-batch integration. Benchmarking studies suggest that some scFMs excel at removing complex batch effects while conserving biological variance [16] [17].

- As a baseline, compare the scFM's performance against specialized batch integration tools like Harmony or scVI [16].

Challenge: Choosing between a complex scFM and a simpler model.

- Decision Guide: The choice depends on your resources and task.

- Use scFMs when: You have a complex task (e.g., novel cell type discovery, drug sensitivity prediction), need a versatile model for multiple downstream analyses, or require biologically interpretable embeddings [16] [17].

- Consider simpler models when: Working with limited computational resources, analyzing a small, focused dataset, or performing a single, well-defined task where simpler methods like Seurat or Scanpy are sufficient [16] [15]. No single scFM consistently outperforms others across all tasks, so selection should be task-specific [16].

Performance Benchmarking of Select scFMs

The following table summarizes a comprehensive benchmark of six scFMs across various tasks, providing guidance for model selection [16] [17].

Table 1: Benchmarking scFMs Across Key Downstream Tasks

| Model Name | Primary Architecture | Key Strengths | Considerations |
|---|---|---|---|
| Geneformer [16] | Encoder | Effective for gene network analysis; uses gene ranking by expression. | Input is a ranked list of 2,048 genes. |
| scGPT [16] | Decoder | Versatile for multi-omics; supports generation and prediction tasks. | Uses 1,200 Highly Variable Genes (HVGs) as input. |
| UCE [16] | Encoder | Integrates protein sequence information via ESM-2 embeddings. | Uses a unique sampling of genes by expression and genomic position. |
| scFoundation [16] | Asymmetric Encoder-Decoder | Trained on a vast number of protein-coding genes. | Larger model scale requires more computational resources. |
| LangCell [16] | Encoder | Incorporates text (cell type labels) during pretraining. | Relies on the availability of high-quality textual annotations. |
| scCello [16] | Custom | Designed for single-cell resolution analysis. | Specialized architecture may be less general-purpose. |

Table 2: Overall Model Ranking Based on a Holistic Benchmark Study [16] [17]

| Overall Rank | Model | Notable Performance |
|---|---|---|
| 1 | scGPT | Robust and versatile across diverse tasks. |
| 2 | Geneformer | Strong performance in gene-level tasks. |
| 3 | scFoundation | Effective in large-scale data integration. |
| 4 | UCE | Good at leveraging protein context. |
| 5 | LangCell | Shows promise with text integration. |
| 6 | scCello | Specialized for certain analyses. |

Experimental Protocol: Zero-Shot Cell Embedding for Atlas Integration

This protocol details how to use a pretrained scFM to generate cell embeddings without task-specific fine-tuning (zero-shot), ideal for integrating a new dataset into a reference atlas [16] [17].

1. Load Pretrained Model

- Download a publicly available scFM like scGPT or Geneformer.

- Load the model weights into the appropriate framework (e.g., PyTorch, JAX), ensuring all dependencies are installed.

2. Preprocess Query Dataset

- Quality Control: Filter cells and genes based on standard metrics (mitochondrial counts, number of genes detected).

- Gene Set Alignment: Map the genes in your dataset to the gene vocabulary used during the model's pretraining. This may require subsetting to a common set of highly variable genes.

- Normalization: Apply the normalization method (e.g., log(CP10K+1)) consistent with the model's training procedure.

- Tokenization & Input Formatting: Convert the normalized expression matrix into the input format the model expects, such as a ranked list of gene tokens or binned expression values, depending on the model.
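The normalization and gene-alignment steps of step 2 can be sketched as follows. Assumptions: a dense NumPy count matrix, a model vocabulary given as a plain list of gene symbols (the one shown is hypothetical), and zero-filling for vocabulary genes absent from the query. Normalization is applied before subsetting here so that library sizes reflect all measured genes; some models expect a different order or treat missing genes differently, so the model's documentation takes precedence.

```python
import numpy as np


def log_cp10k(counts):
    """log(counts per 10,000 + 1), using each cell's total over ALL measured genes."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0                      # empty cells stay all-zero
    return np.log1p(counts / totals * 1e4)


def align_to_vocab(matrix, genes, vocab):
    """Reorder columns to the model vocabulary; vocabulary genes not in the query become 0."""
    col = {g: i for i, g in enumerate(genes)}
    aligned = np.zeros((matrix.shape[0], len(vocab)))
    hits = [j for j, g in enumerate(vocab) if g in col]
    for j in hits:
        aligned[:, j] = matrix[:, col[vocab[j]]]
    return aligned, len(hits) / len(vocab)       # fraction of vocabulary covered


query_genes = ["CD3E", "GAPDH", "MS4A1"]
counts = np.array([[1, 3, 0],
                   [0, 0, 0]])
vocab = ["GAPDH", "CD3E", "NKG7", "ACTB"]        # hypothetical model vocabulary
X, coverage = align_to_vocab(log_cp10k(counts), query_genes, vocab)
print(np.round(X, 3), coverage)
```

Zero-filling conflates "not measured" with "not expressed", which is one reason a low vocabulary coverage is worth checking before trusting zero-shot embeddings.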

3. Generate Cell Embeddings

- Pass the preprocessed data through the model.

- Extract the cell-level embedding from the model's output layer. This is often a dedicated "[CLS]" token or the model's internal state that summarizes the entire cell.
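The two common ways of reading out a cell embedding can be sketched as below. Assumptions: the encoder returns a (tokens x dimensions) array whose first row is a [CLS]-style token, plus a boolean padding mask; which readout a given scFM exposes is model-specific, so its documentation should be checked.

```python
import numpy as np


def cell_embedding(hidden, pad_mask, method="cls"):
    """Collapse token-level hidden states (n_tokens x d) into one cell vector."""
    hidden = np.asarray(hidden, dtype=float)
    if method == "cls":
        return hidden[0]                     # dedicated summary token
    if method == "mean":
        keep = ~np.asarray(pad_mask, dtype=bool)
        keep[0] = False                      # average gene tokens only, not [CLS]
        return hidden[keep].mean(axis=0)
    raise ValueError(f"unknown method: {method!r}")


hidden = np.array([[9.0, 9.0],    # [CLS]
                   [1.0, 2.0],    # gene token
                   [3.0, 4.0],    # gene token
                   [0.0, 0.0]])   # padding
pad_mask = np.array([False, False, False, True])
print(cell_embedding(hidden, pad_mask, "cls"))   # [9. 9.]
print(cell_embedding(hidden, pad_mask, "mean"))  # [2. 3.]
```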

4. Downstream Analysis: Batch Integration & Annotation

- Visualization: Use UMAP or t-SNE to visualize the scFM embeddings and assess the mixing of batches and separation of cell types.

- Clustering: Apply clustering algorithms (e.g., Leiden, Louvain) on the embeddings to identify cell populations.

- Annotation: Transfer labels from a reference atlas by finding the nearest neighbors of your cells in the scFM embedding space of the annotated reference data.
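Label transfer by nearest neighbors can be sketched in a few lines. Assumptions: embeddings are plain NumPy arrays, similarity is cosine, k = 3, and ties go to the label encountered first; the toy two-dimensional embeddings stand in for real scFM outputs.

```python
from collections import Counter

import numpy as np


def l2_normalize(x):
    x = np.asarray(x, dtype=float)
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def knn_label_transfer(ref_emb, ref_labels, query_emb, k=3):
    """Majority label among each query cell's k most cosine-similar reference cells."""
    sims = l2_normalize(query_emb) @ l2_normalize(ref_emb).T
    nearest = np.argsort(-sims, axis=1)[:, :k]
    labels = np.asarray(ref_labels)
    return [Counter(labels[row].tolist()).most_common(1)[0][0] for row in nearest]


ref_emb = np.array([[1.0, 0.1], [0.9, 0.0], [1.0, 0.2],    # T cells
                    [0.0, 1.0], [0.1, 0.9], [0.2, 1.0]])   # B cells
ref_labels = ["T", "T", "T", "B", "B", "B"]
query_emb = np.array([[0.95, 0.05], [0.05, 1.0]])
print(knn_label_transfer(ref_emb, ref_labels, query_emb))  # ['T', 'B']
```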

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Resources for scFM Research and Application

| Item / Resource | Function / Description | Example |
|---|---|---|
| Public Cell Atlas Data | Serves as the pretraining corpus for building scFMs. | CZ CELLxGENE [14], Human Cell Atlas [14] |
| Pretrained Model Weights | Allows researchers to use existing scFMs without the prohibitive cost of pretraining. | scGPT [16], Geneformer [16] |
| Standardized Analysis Packages | Provides baseline methods for benchmarking scFM performance. | Seurat [16] [15], Scanpy [15] |
| Specialized Integration Tools | Offers strong baselines for evaluating batch correction performance of scFMs. | Harmony [16], scVI [16] |
| Ontology-Based Metrics | Novel metrics to biologically evaluate the quality of scFM embeddings. | scGraph-OntoRWR, LCAD [16] [17] |

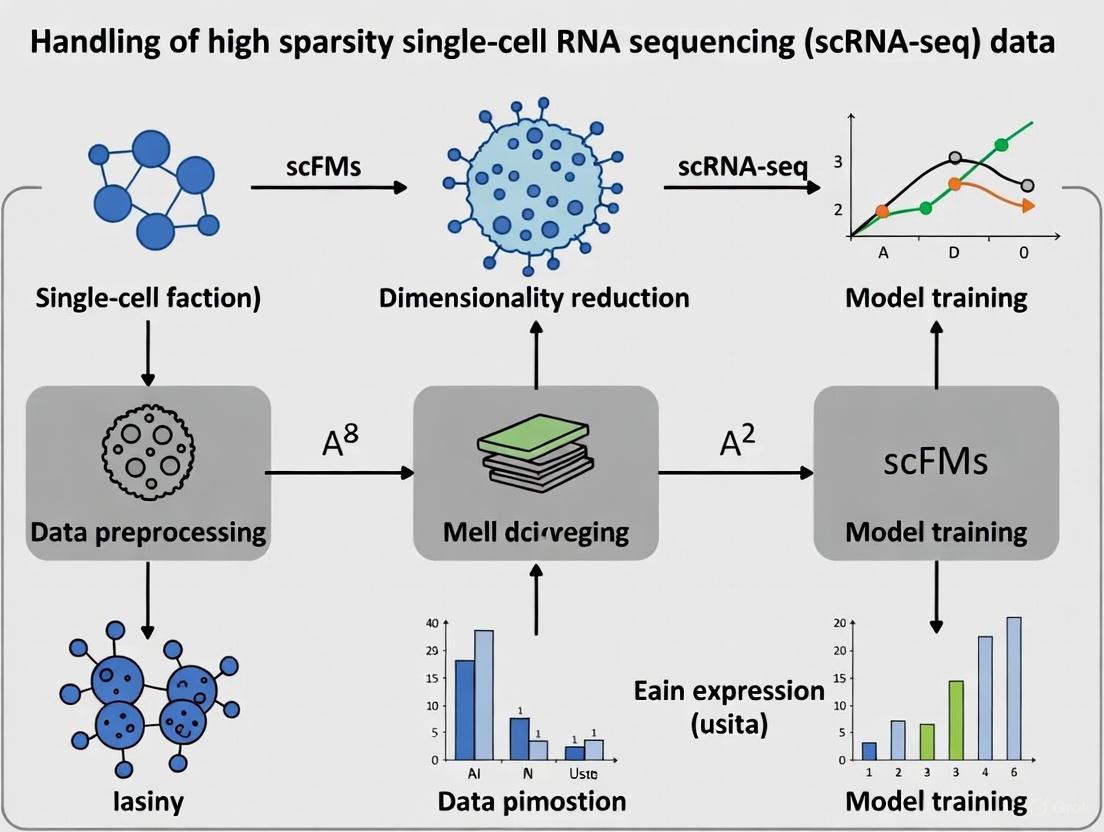

Workflow Diagram: From Single-Cell Data to Foundation Model

The diagram below illustrates the typical workflow for constructing and applying a single-cell foundation model.

How scFMs Learn Universal Representations from Massive, Heterogeneous Datasets

Frequently Asked Questions: Troubleshooting scFMs

FAQ 1: Why does my scFM model perform poorly on a new dataset with a different tissue type? This is often a problem of domain shift. scFMs are pretrained on large corpora but may not generalize perfectly to new biological contexts where cell type distributions or gene expression patterns differ.

- Solution: Utilize the model's fine-tuning capability. Instead of using the model in zero-shot mode, perform additional supervised fine-tuning on a small, annotated subset of your new dataset. This adapts the model's internal representations to the new domain. Starting from pretrained weights typically requires less data and converges faster than training a model from scratch [17].

FAQ 2: How do I handle the extreme sparsity and high dimensionality of my scRNA-seq data before using an scFM? scFMs are specifically designed to handle the high sparsity inherent to scRNA-seq data. The key is not to aggressively impute the data beforehand.

- Solution: Trust the model's architecture. Most modern scFMs use transformer networks with self-supervised pretraining objectives (like masked gene modeling) that are inherently capable of learning from sparse data. Preprocessing should focus on robust normalization and quality control, not extensive imputation which can introduce false signals. Let the model learn to distinguish technical zeros from true biological absence through its pretraining [14] [1].

FAQ 3: My model's cell embeddings are dominated by batch effects. What went wrong? This indicates that the model's pretraining may not have encompassed sufficient technical diversity to learn batch-invariant biological representations.

- Solution: First, ensure you are using the cell embeddings from a model specifically benchmarked for integration tasks. If the problem persists, employ a two-step strategy:

- Use the scFM to generate initial cell embeddings.

- Apply a lightweight, post-hoc integration tool like Harmony or Scanorama on these embeddings to remove residual batch effects. This combines the powerful representation of scFMs with specialized batch correction algorithms [17].

FAQ 4: How can I biologically validate that my scFM has learned meaningful representations? Moving beyond standard clustering metrics is key.

- Solution: Use biology-driven evaluation metrics. One method is to analyze the Lowest Common Ancestor Distance (LCAD) in cell ontology for misclassified cells; smaller distances indicate the model confuses biologically similar cell types, a less severe error. Another is scGraph-OntoRWR, which measures if the relationships between cell types in the embedding space align with established biological knowledge from cell ontologies [17].
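The idea behind LCAD can be illustrated on a toy ontology. Assumptions: the ontology is a tree given as a child-to-parent dictionary (the real Cell Ontology is a directed acyclic graph with far more terms), and the distance is taken as the number of edges from each label up to their lowest common ancestor, summed; the benchmark's exact definition may differ.

```python
def ancestor_distances(term, parent):
    """Map each ancestor of term (including itself) to its number of edges from term."""
    dist, steps = {}, 0
    while term is not None:
        dist[term] = steps
        term = parent.get(term)
        steps += 1
    return dist


def lca_distance(true_label, predicted_label, parent):
    """Edges from each label up to their lowest common ancestor, summed."""
    d_true = ancestor_distances(true_label, parent)
    d_pred = ancestor_distances(predicted_label, parent)
    shared = set(d_true) & set(d_pred)
    return min(d_true[t] + d_pred[t] for t in shared)


def mean_lcad(y_true, y_pred, parent):
    """Average LCA distance over misclassified cells only."""
    errors = [(t, p) for t, p in zip(y_true, y_pred) if t != p]
    if not errors:
        return 0.0
    return sum(lca_distance(t, p, parent) for t, p in errors) / len(errors)


# Toy ontology (child -> parent); "leukocyte" is the root.
parent = {
    "CD4 T cell": "T cell", "CD8 T cell": "T cell",
    "T cell": "lymphocyte", "B cell": "lymphocyte",
    "lymphocyte": "leukocyte", "monocyte": "leukocyte",
    "leukocyte": None,
}
print(lca_distance("CD4 T cell", "CD8 T cell", parent))  # 2: siblings, a mild error
print(lca_distance("CD4 T cell", "monocyte", parent))    # 4: distant lineages
```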

FAQ 5: When should I choose a complex scFM over a simpler, traditional model? The choice depends on your task, data, and resources.

- Solution: Refer to the following decision table:

| Situation | Recommended Approach | Rationale |
|---|---|---|
| Multiple downstream tasks (e.g., annotation, integration, perturbation) | Use an scFM | scFMs are versatile; one pretrained model can be adapted for various tasks, providing a unified analysis framework [14] [17]. |
| Small, focused dataset for a single task (e.g., DE analysis on one cell type) | Use a simpler model (e.g., scVI, Seurat) | Traditional models can be more efficient and easier to train and interpret for specific, narrow applications [17]. |
| Need for zero-shot learning (e.g., identifying novel cell types) | Use an scFM | The broad knowledge encoded during large-scale pretraining allows scFMs to make inferences on data not seen during training [18] [17]. |
| Limited computational resources | Use a simpler model | Training and fine-tuning large scFMs can be computationally intensive [14]. |

Experimental Protocols for Key scFM Analyses

Protocol 1: Zero-Shot Cell Type Annotation and Evaluation

Objective: To assess an scFM's ability to annotate cell types in a new dataset without task-specific training.

Methodology:

- Embedding Extraction: Pass the target scRNA-seq dataset (count matrix) through the pretrained scFM to obtain a cell embedding for each cell.

- Reference Mapping: Calculate the centroid of each known cell type in the embedding space using a small, labeled reference dataset.

- Annotation: For each cell in the target dataset, assign the cell type label of the nearest reference centroid (e.g., using cosine similarity).

- Evaluation:

- Standard Metrics: Calculate accuracy, F1-score, and weighted precision/recall if ground truth labels are available.

- Biological Metrics: Use Lowest Common Ancestor Distance (LCAD) to assess the biological plausibility of misclassifications. A lower average LCAD suggests the model makes "sensible" errors by confusing closely related cell types [17].
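The reference-mapping, annotation, and standard-metric steps can be sketched as below. Assumptions: embeddings are NumPy arrays, the F1-score is macro-averaged over classes (the protocol does not specify the averaging), and the toy embeddings and ground-truth labels are hypothetical stand-ins for real scFM outputs.

```python
import numpy as np


def unit_rows(x):
    x = np.asarray(x, dtype=float)
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def centroid_annotate(ref_emb, ref_labels, query_emb):
    """Assign each query cell the label of the most cosine-similar reference centroid."""
    ref_emb, ref_labels = np.asarray(ref_emb, dtype=float), np.asarray(ref_labels)
    classes = np.unique(ref_labels)
    centroids = np.stack([ref_emb[ref_labels == c].mean(axis=0) for c in classes])
    sims = unit_rows(query_emb) @ unit_rows(centroids).T
    return classes[np.argmax(sims, axis=1)]


def accuracy(y_true, y_pred):
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 = 2TP / (2TP + FP + FN)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for c in np.unique(np.concatenate([y_true, y_pred])):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))


ref_emb = np.array([[1.0, 0.1], [0.9, 0.0], [1.0, 0.2],
                    [0.0, 1.0], [0.1, 0.9], [0.2, 1.0]])
ref_labels = ["T", "T", "T", "B", "B", "B"]
query_emb = np.array([[1.0, 0.0], [0.0, 1.0], [0.9, 0.2]])
y_true = ["T", "B", "B"]                       # hypothetical ground truth
y_pred = centroid_annotate(ref_emb, ref_labels, query_emb)
print(y_pred, accuracy(y_true, y_pred), macro_f1(y_true, y_pred))
```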

Protocol 2: Benchmarking Data Integration Performance

Objective: To evaluate how well an scFM removes batch effects while preserving biological variance.

Methodology:

- Data Preparation: Select a dataset with known batch effects (e.g., from different donors or sequencing platforms) but with consistently annotated cell types across batches.

- Embedding Generation: Generate cell embeddings for the entire dataset using the scFM.

- Visualization & Metric Calculation:

- Visualize the embeddings using UMAP, coloring points by both batch and cell type.

- Qualitative Assessment: A successful integration will show cells from different batches (colors) mixed together within each cell type cluster.

- Quantitative Assessment: Use metrics like:

- BatchASW (Batch Average Silhouette Width): Measures batch mixing. Closer to 0 is better.

- Cell-type ASW (cASW): Measures biological preservation. Closer to 1 is better.

- Graph Connectivity: Assesses whether cells of the same type form a connected graph [17].
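Both ASW scores rest on the per-cell silhouette width s = (b - a) / max(a, b), where a is the mean distance to cells sharing the cell's label and b is the mean distance to the closest other label. The sketch assumes Euclidean distances on a small dense embedding and computes the batch score as the mean absolute silhouette on batch labels within each cell type, so 0 means well mixed; benchmarking pipelines often rescale these scores, so values should only be compared within one convention.

```python
import numpy as np


def silhouette_samples(X, labels):
    """Per-cell silhouette width with Euclidean distances (needs >= 2 labels)."""
    X, labels = np.asarray(X, dtype=float), np.asarray(labels)
    dist = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    s = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        same[i] = False
        if not same.any():
            continue                              # singleton label: s = 0
        a = dist[i, same].mean()                  # cohesion
        b = min(dist[i, labels == other].mean()   # separation
                for other in np.unique(labels) if other != labels[i])
        s[i] = (b - a) / max(a, b)
    return s


def cell_type_asw(X, cell_types):
    """Mean silhouette on cell-type labels; closer to 1 is better."""
    return float(silhouette_samples(X, cell_types).mean())


def batch_asw(X, batches, cell_types):
    """Mean |silhouette| on batch labels within each cell type; closer to 0 is better."""
    X = np.asarray(X, dtype=float)
    batches, cell_types = np.asarray(batches), np.asarray(cell_types)
    values = []
    for ct in np.unique(cell_types):
        idx = cell_types == ct
        if len(np.unique(batches[idx])) > 1:
            values.extend(np.abs(silhouette_samples(X[idx], batches[idx])))
    return float(np.mean(values))


X_types = np.array([[0, 0], [0, 1], [10, 0], [10, 1]])
print(cell_type_asw(X_types, ["A", "A", "B", "B"]))

types = ["A"] * 4
batches = ["b1", "b1", "b2", "b2"]
X_interleaved = np.array([[0, 0], [0, 2], [0, 1], [0, 3]])  # batches interleaved
X_split = np.array([[0, 0], [0, 1], [5, 0], [5, 1]])        # batches in separate regions
print(batch_asw(X_interleaved, batches, types), batch_asw(X_split, batches, types))
```

scikit-learn's `silhouette_samples` computes the same per-cell quantity and can replace the hand-written version on real data.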

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in scFM Research |
|---|---|
| scGPT | A generative pretrained transformer model for single-cell data. Excels at multi-omic integration, perturbation prediction, and zero-shot cell annotation [14] [18]. |
| Geneformer | A transformer model pretrained on millions of cells. Noted for its context-aware gene embeddings and ability to predict downstream effects of perturbation [17]. |
| CZ CELLxGENE | A platform providing unified access to millions of curated single-cell datasets. Serves as a critical data source for pretraining and benchmarking scFMs [14] [18]. |
| Harmony | A robust batch integration algorithm. Often used in conjunction with scFM-generated embeddings to remove residual technical variation [17]. |
| Cell Ontology | A structured, controlled vocabulary for cell types. Used to develop biology-informed metrics (like LCAD) for validating the biological relevance of scFM embeddings [17]. |
| DISCO Database | A curated single-cell database that aggregates data from multiple studies, useful for training and evaluating the generalizability of scFMs [18]. |

Performance Comparison of scFMs and Traditional Methods

Table: Benchmarking results across various downstream tasks (Summarized from [17]).

| Model / Method | Cell Type Annotation (Avg. Accuracy) | Data Integration (BatchASW / cASW) | Perturbation Prediction | Notes |
|---|---|---|---|---|
| scGPT | High | Good / Good | Excellent | A versatile and robust model, strong all-rounder [18] [17]. |
| Geneformer | Good | Fair / Good | Good | Excels in gene-level tasks and capturing gene network relationships [17]. |
| scFoundation | High | Good / Good | Good | Trained on a massive corpus, demonstrates strong generalizability [17]. |
| Seurat (Traditional) | Variable (dataset-specific) | Good / Fair | Not Applicable | A reliable anchor-based method, but not a foundation model [17]. |
| scVI (Traditional) | Good | Excellent / Good | Limited | A powerful generative model, highly effective for integration and annotation of specific datasets [17]. |

Workflow Diagram: The scFM Pretraining and Application Workflow (from sparse data to universal representations)

Detailed View: Tokenization and Encoding in scFMs (the scFM architecture core)

The analysis of single-cell RNA sequencing (scRNA-seq) data is fundamentally challenged by its high sparsity, characterized by a large number of zero values in the cell-gene expression matrix. These zeros arise from both biological absence of expression and technical "dropout" events, where transcripts are not detected due to limitations in sequencing depth or reverse transcription [1] [2]. This sparsity can hinder downstream analyses such as clustering, trajectory inference, and differential expression.

Transformer architectures, which have revolutionized natural language processing (NLP), are well suited to this challenge. Their multi-head self-attention mechanism can learn complex, long-range dependencies among genes without requiring dimensionality reduction at the input stage. This keeps every input feature traceable, which in turn supports interpretation of the model's decisions [19] [20] [21]. This technical guide explores how Transformer-based single-cell foundation models (scFMs) are leveraged to handle high sparsity, providing troubleshooting advice and methodological protocols for researchers.

FAQs & Troubleshooting Guides

FAQ 1: How do Transformer models handle the high sparsity and numerous zeros in scRNA-seq data?

Answer: Transformers manage sparsity through several key strategies. Unlike autoencoders that compress data into an abstract latent space, Transformers typically process data without initial dimensionality reduction, keeping all features traceable [19]. Furthermore, the self-attention mechanism dynamically weights the importance of all genes (tokens) when analyzing a cell, effectively learning to "impute" or pay less attention to dropout zeros by contextualizing them with other co-expressed genes [21]. Some models also use data binarization, converting expression counts to a simple 0 (no expression) or 1 (expression detected). This approach embraces zeros as meaningful biological signals and has been shown to provide results comparable to count-based analyses for tasks like cell type identification and dimensionality reduction, while being computationally more efficient [5].

Troubleshooting Guide: Model performance is poor on a very sparse dataset.

- Symptom: Low accuracy in cell type annotation or poor clustering results after training.

- Potential Cause & Solution: The model may be struggling with the noise from technical dropouts overwhelming the true biological signal.

- Consider Binarization: As a preprocessing step, try binarizing your expression matrix. This can reduce the impact of technical noise and has been shown to be effective for many downstream tasks [5].

- Incorporate Prior Knowledge: Use a knowledge-based mask, such as gene-pathway memberships, to structure the model's initial embedding layer. This guides the model to focus on biologically relevant gene sets and can lead to faster convergence and improved performance compared to using random masks [19].

- Leverage Pre-trained scFMs: Instead of training from scratch, fine-tune a pre-trained single-cell foundation model (scFM). These models have already learned robust feature representations from millions of cells and are better at generalizing to new, sparse data [21].

FAQ 2: What are the best practices for tokenizing non-sequential scRNA-seq data for a Transformer model?

Answer: Tokenization is a critical step for adapting non-sequential gene expression data to Transformer models, which are designed for sequences. The most common approach is to treat each gene as a token [21]. However, because genes lack a natural order, how to define their sequence is still an active area of development.

Troubleshooting Guide: The model seems sensitive to the order of input genes.

- Symptom: Significant changes in model output or attention scores when the input gene order is shuffled.

- Potential Cause & Solution: The chosen positional encoding is introducing artificial dependencies.

- Use Expression-Defined Ordering: Adopt a deterministic, cell-specific ordering based on gene expression values, such as ranking genes from highest to lowest expression. This creates a meaningful sequence for the model [21].

- Validate with Random Orders: Experiment with multiple random orderings during training or inference and aggregate the results to check that predictions are robust to gene order [20].

- Explore Alternative Encodings: Investigate models that use learned positional embeddings based on gene attributes (e.g., chromosomal location) or that are specifically designed to be more permutation-invariant [21].
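A deterministic, expression-defined ordering removes dependence on the input column order only if ties are also broken deterministically. The sketch below ranks expressed genes from highest to lowest value and breaks ties alphabetically by gene name. That tie-breaking rule is an illustrative assumption, not the rule of any specific model. The usage lines shuffle the input columns and confirm that the resulting token order does not change.

```python
def rank_genes(expr, gene_names, top_k=None):
    """Return expressed genes ordered from highest to lowest expression.

    Ties are broken alphabetically by gene name, so the output depends only on the
    (gene, value) pairs and not on the column order of the input. Genes with zero
    expression are dropped, as in rank-based encodings.
    """
    pairs = [(float(v), g) for v, g in zip(expr, gene_names) if v > 0]
    pairs.sort(key=lambda p: (-p[0], p[1]))  # descending value, then gene name
    ranked = [g for _, g in pairs]
    return ranked if top_k is None else ranked[:top_k]

genes = ["CD3E", "MS4A1", "ACTB", "GAPDH", "LYZ"]
values = [3.0, 0.0, 10.0, 10.0, 1.0]
print(rank_genes(values, genes))  # ['ACTB', 'GAPDH', 'CD3E', 'LYZ']

perm = [4, 2, 0, 3, 1]  # shuffle the input columns
shuffled = rank_genes([values[i] for i in perm], [genes[i] for i in perm])
print(shuffled == rank_genes(values, genes))  # True
```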

FAQ 3: How can I ensure my Transformer model is biologically interpretable?

Answer: Interpretability is often cited as a key advantage of Transformer models, and it is typically pursued through the analysis of attention scores [19] [20]. In models such as TOSICA, these scores are calculated between a special classification token (CLS) and all gene/pathway tokens. They indicate which features the model weights most heavily for its prediction (e.g., cell type annotation). By examining these scores, researchers can identify candidate genes or pathways associated with a specific cellular state.
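To make "attention scores between the CLS token and gene/pathway tokens" concrete, the NumPy sketch below runs single-head scaled dot-product attention over a sequence with a CLS token prepended. It returns the CLS row of the attention matrix, excluding the CLS-to-CLS weight. The random projection matrices stand in for trained weights, and `cls_attention_scores` is a hypothetical helper. Real models typically have several heads and layers, and scores are often averaged across them.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def cls_attention_scores(tokens, cls, W_q, W_k):
    """tokens: (n_tokens, d) gene/pathway embeddings; cls: (d,) CLS embedding.

    Returns the CLS query's attention weights over the n_tokens gene/pathway tokens
    (single head, scaled dot-product). The CLS-to-CLS weight is dropped, so the
    returned values sum to slightly less than 1.
    """
    seq = np.vstack([cls[None, :], tokens])  # prepend CLS -> (n_tokens + 1, d)
    Q, K = seq @ W_q, seq @ W_k
    attn = softmax(Q @ K.T / np.sqrt(K.shape[1]), axis=-1)  # rows = queries, cols = keys
    return attn[0, 1:]

rng = np.random.default_rng(0)
d = 8
tokens = rng.normal(size=(5, d))  # e.g. 5 pathway tokens
cls = rng.normal(size=d)
W_q = rng.normal(size=(d, d)) / np.sqrt(d)
W_k = rng.normal(size=(d, d)) / np.sqrt(d)
scores = cls_attention_scores(tokens, cls, W_q, W_k)
top = np.argsort(scores)[::-1][:3]  # indices of the most-attended tokens
```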

Troubleshooting Guide: The attention maps are diffuse and don't highlight known marker genes.

- Symptom: Attention scores are spread evenly across many genes, with no clear biological insight.

- Potential Cause & Solution: The model may not have learned specific, meaningful patterns.

- Inspect Training Data: Ensure the training data is of high quality and that cell type labels are accurate. A model trained on noisy labels will not produce interpretable attention.

- Use Pathway-Level Masking: Instead of raw genes, tokenize the data based on biologically defined gene sets (e.g., pathways, regulons). The attention scores will then directly reflect the importance of these functional units, which are often easier to interpret [19].

- Regularization: Apply regularization during training (e.g., dropout or weight decay) to reduce overfitting; an explicit penalty on attention entropy can additionally encourage sparser, more focused attention patterns.

Experimental Protocols

Protocol 1: Implementing a Basic Transformer for Cell Type Annotation

This protocol outlines the steps to implement TOSICA (Transformer for One-Stop Interpretable Cell-type Annotation), a model designed for interpretable cell type transfer from a reference to a query dataset [19].

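1. Model Architecture and Workflow: TOSICA projects each cell's expression vector into knowledge-masked pathway tokens and adds a trainable CLS token. It then passes the token sequence through Transformer encoder layers and predicts the cell type from the final CLS state.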

2. Key Reagents and Computational Tools

Table 1: Essential Research Reagents and Tools for Implementing TOSICA.

| Item | Function/Description | Example/Note |
|---|---|---|
| Reference Dataset | A scRNA-seq dataset with pre-annotated, high-quality cell type labels. | Human Cell Atlas, PanglaoDB [21]. |
| Query Dataset | The new, unannotated scRNA-seq dataset to be labeled. | Must be normalized and preprocessed similarly to the reference. |
| Knowledge Mask | A binary matrix defining gene membership to biological entities. | Matrices based on pathways (e.g., KEGG, Reactome) or regulons [19]. |
| Transformer Model | The deep learning architecture based on multi-head self-attention. | Implemented in PyTorch or TensorFlow. |
| CLS Token | A trainable parameter vector that aggregates global cell information for classification [19]. | Standard practice in Transformer models. |

3. Step-by-Step Methodology

- Step 1: Data Preprocessing. Normalize both reference and query datasets using standard scRNA-seq pipelines (e.g., SCTransform). Perform feature selection to retain highly variable genes.

- Step 2: Mask Preparation. Construct or download a knowledge mask. This is a binary matrix where rows represent biological entities (e.g., pathways) and columns represent genes, with a 1 indicating membership.

- Step 3: Model Training.

- Input: The normalized gene expression vector for a single cell.

- Cell Embedding: Project the gene expression vector through a fully connected layer whose weights are masked by the knowledge mask. This creates a set of "pathway tokens," where each token's value is derived only from the genes belonging to that pathway.

- Add CLS Token: Append a trainable CLS token to the sequence of pathway tokens.

- Self-Attention: Pass the token sequence through multiple Transformer encoder layers. The multi-head self-attention mechanism allows the model to learn interactions between different pathways.

- Classification: Use the final state of the CLS token to predict cell type probabilities via a linear classifier.

- Loss Function: Train the model using a cross-entropy loss between predictions and reference labels.

- Step 4: Interpretation. Extract attention scores between the CLS token and all pathway tokens. High attention scores for a pathway indicate its importance in classifying that cell type.
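Steps 2 and 3 (mask preparation and knowledge-masked cell embedding) can be illustrated with the NumPy sketch below. It is not TOSICA's released code. The helper names `build_mask` and `pathway_tokens`, the token width `d_token`, and the toy gene sets are hypothetical. The key property is that multiplying the weights by the mask makes each pathway token depend only on the genes in that pathway. In the full model, these tokens would then be joined with a CLS token and fed to the encoder layers, as described in Step 3.

```python
import numpy as np

def build_mask(gene_sets, genes):
    """Binary mask (n_pathways, n_genes); entry is 1 if the gene belongs to the pathway."""
    col = {g: j for j, g in enumerate(genes)}
    names = sorted(gene_sets)
    mask = np.zeros((len(names), len(genes)), dtype=np.float32)
    for i, name in enumerate(names):
        for g in gene_sets[name]:
            if g in col:  # genes absent from the dataset are ignored
                mask[i, col[g]] = 1.0
    return names, mask

def pathway_tokens(x, W, mask):
    """x: (n_genes,) normalized expression; W: (n_pathways, d_token, n_genes) weights.

    Masking the weights means token p only receives input from genes in pathway p.
    Returns (n_pathways, d_token) pathway tokens.
    """
    return np.einsum("pdg,g->pd", W * mask[:, None, :], x)

genes = ["CD3E", "CD8A", "MS4A1", "CD19", "ACTB"]
gene_sets = {"T_cell": ["CD3E", "CD8A"], "B_cell": ["MS4A1", "CD19", "NOT_IN_DATA"]}
names, M = build_mask(gene_sets, genes)

rng = np.random.default_rng(0)
W = rng.normal(size=(len(names), 3, len(genes)))  # d_token = 3 (hypothetical)
x = np.array([1.0, 2.0, 0.0, 0.5, 3.0])
tokens = pathway_tokens(x, W, M)
```

Changing the expression of a T-cell gene (e.g. CD3E) alters only the `T_cell` token, which is what later lets CLS-to-token attention be read at the pathway level.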

Protocol 2: Benchmarking Transformer Models Against Sparsity

1. Experimental Design Workflow

This workflow outlines the process for systematically evaluating a model's performance as data sparsity increases.

2. Key Performance Metrics

Table 2: Quantitative Metrics for Evaluating Model Performance on Sparse Data.

| Metric | Formula/Description | Interpretation in Sparsity Context |
|---|---|---|
| Accuracy (ACC) | $\text{ACC} = \frac{\text{Correct Predictions}}{\text{Total Predictions}}$ | Measures overall cell type annotation correctness as zeros increase. |
| Mean Absolute Error (MAE) | $\text{MAE} = \frac{1}{n}\sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert$ | Evaluates error in imputation tasks; lower is better [2]. |
| Adjusted Rand Index (ARI) | Measures similarity between two data clusterings, corrected for chance. | Assesses clustering stability on sparse data; closer to 1 is better [2]. |
| Silhouette Score (SS) | Measures how similar an object is to its own cluster compared to other clusters. | Evaluates cluster separation in latent space; higher scores indicate better-defined clusters [5]. |
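The ACC, MAE, and ARI definitions in Table 2 can be checked with a small NumPy sketch. The ARI follows the standard pair-counting formula (Hubert and Arabie's adjustment), computed from the contingency table. `sklearn.metrics.adjusted_rand_score` implements the same quantity and is the practical choice for real data. The function names here are illustrative.

```python
import numpy as np

def accuracy(y_true, y_pred):
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))

def mean_absolute_error(y, y_hat):
    return float(np.mean(np.abs(np.asarray(y, float) - np.asarray(y_hat, float))))

def _pairs(n):
    """Number of unordered pairs, n choose 2 (works on scalars and arrays)."""
    return n * (n - 1) / 2.0

def adjusted_rand_index(labels_true, labels_pred):
    _, t = np.unique(labels_true, return_inverse=True)
    _, p = np.unique(labels_pred, return_inverse=True)
    cont = np.zeros((t.max() + 1, p.max() + 1), dtype=np.int64)
    np.add.at(cont, (t, p), 1)  # contingency table of cluster overlaps
    sum_ij = _pairs(cont).sum()
    sum_a = _pairs(cont.sum(axis=1)).sum()
    sum_b = _pairs(cont.sum(axis=0)).sum()
    expected = sum_a * sum_b / _pairs(len(t))
    max_index = (sum_a + sum_b) / 2.0
    if max_index == expected:  # degenerate case, e.g. a single cluster on both sides
        return 1.0
    return float((sum_ij - expected) / (max_index - expected))

print(adjusted_rand_index([0, 0, 1, 1], [0, 0, 1, 2]))  # 4/7, about 0.571
```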

3. Step-by-Step Methodology

- Step 1: Dataset Preparation. Select a scRNA-seq dataset with known "ground truth" cell type labels.

- Step 2: Sparsity Simulation. Artificially introduce additional zeros into the dataset to mimic higher dropout rates. This can be done by randomly masking a defined percentage (e.g., 10%, 30%, 50%) of non-zero expression values.

- Step 3: Model Application. Run the Transformer models (e.g., TOSICA, scBERT) and baseline methods (e.g., Seurat, Scanpy) on each sparsified dataset.

- Step 4: Metric Calculation. For each sparsity level and method, calculate the performance metrics listed in Table 2.

- Step 5: Analysis. Plot metrics against the sparsity level. A model that is robust to sparsity will show a slower decline in performance (e.g., Accuracy, ARI) as sparsity increases.
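Step 2 (sparsity simulation) can be sketched as follows: a chosen fraction of the non-zero entries is set to zero, uniformly at random, leaving the original matrix untouched. The helper names and the fixed seed are illustrative assumptions. In practice, dropout is usually simulated on raw counts before normalization, and each sparsity level is repeated with several seeds.

```python
import numpy as np

def add_dropout(X, frac, seed=0):
    """Return a copy of X with a fraction `frac` of its non-zero entries set to zero."""
    if not 0.0 <= frac <= 1.0:
        raise ValueError("frac must be in [0, 1]")
    X = np.array(X, dtype=float, copy=True)
    rows, cols = np.nonzero(X)
    rng = np.random.default_rng(seed)
    n_drop = int(round(frac * rows.size))
    pick = rng.choice(rows.size, size=n_drop, replace=False)  # which non-zeros to drop
    X[rows[pick], cols[pick]] = 0.0
    return X

def sparsity(X):
    """Fraction of zero entries in the matrix."""
    return float(np.mean(np.asarray(X) == 0))

rng = np.random.default_rng(1)
counts = rng.poisson(2.0, size=(50, 40)).astype(float)
levels = {f: sparsity(add_dropout(counts, f)) for f in (0.1, 0.3, 0.5)}
```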

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Transformer-based scRNA-seq Analysis.

| Item Category | Specific Examples | Function in Research |
|---|---|---|
| Pre-trained Models | scBERT, Geneformer, scGPT [21] | Provide pretrained gene and cell representations for transfer learning, reducing the need for extensive task-specific training data. |
| Data Resources | CZ CELLxGENE, Human Cell Atlas, PanglaoDB [21] | Provide large-scale, annotated scRNA-seq datasets essential for pre-training and benchmarking models. |
| Knowledge Databases | MSigDB, KEGG, Reactome, DoRothEA | Provide curated gene sets for creating knowledge masks to improve model interpretability and biological relevance [19]. |
| Imputation Methods | MAGIC, DCA, ALRA, scIALM [2] | Algorithms used to recover technical zeros in sparse expression matrices before downstream analysis, though their use before Transformers is debated. |

Architectures and Practical Applications of scFMs

Conceptual Foundation: How scFMs Tackle scRNA-seq Sparsity

Single-cell RNA sequencing (scRNA-seq) data is characterized by its high sparsity, containing a large number of observed zero values. These zeros arise from two primary sources: true biological absence of expression ("biological zeros") and technical failures in detection ("technical zeros" or "dropouts") [1] [15]. This sparsity poses significant challenges for downstream analysis, as it can obscure true biological signals and relationships.

Single-cell foundation models (scFMs) address this sparsity challenge through large-scale pre-training on millions of cells [22] [14]. By learning from vast datasets, these models develop robust representations that are less sensitive to technical noise. The transformer architectures at the core of scFMs utilize attention mechanisms that can learn complex gene-gene relationships, effectively inferring missing values based on contextual patterns observed during training [14]. Rather than performing explicit imputation as a separate step, scFMs inherently learn to compensate for sparsity through their pre-training objectives, such as masked language modeling where the model learns to predict randomly masked gene expressions based on their context [22] [14].
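The masked-modeling objective described above can be sketched in a few lines of NumPy. A subset of a cell's genes is hidden, and the reconstruction loss is computed only on the hidden positions. Several assumptions are labelled here. The 15% masking rate is borrowed from BERT-style training. Restricting the mask to expressed genes (`nonzero_only=True`) mirrors the "loss on non-zero genes" variant listed for scFoundation in Table 1 below. The mask placeholder value is hypothetical. The "prediction" in the usage lines is a trivial stand-in for a model's output.

```python
import numpy as np

MASK_VALUE = -1.0  # hypothetical placeholder fed to the model at masked positions

def sample_mask(x, mask_frac=0.15, nonzero_only=True, seed=0):
    """Boolean mask over genes; by default only expressed (non-zero) genes are masked."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x)
    candidates = np.flatnonzero(x > 0) if nonzero_only else np.arange(x.size)
    mask = np.zeros(x.size, dtype=bool)
    if candidates.size == 0:
        return mask
    n = max(1, int(round(mask_frac * candidates.size)))
    mask[rng.choice(candidates, size=n, replace=False)] = True
    return mask

def masked_mse(x_true, x_pred, mask):
    """Mean squared error over masked positions only."""
    mask = np.asarray(mask, dtype=bool)
    return float(np.mean((x_true[mask] - x_pred[mask]) ** 2))

x = np.array([0.0, 2.0, 0.0, 1.5, 3.0, 0.0, 0.5, 4.0])  # log-normalized expression
m = sample_mask(x, mask_frac=0.4)
x_in = np.where(m, MASK_VALUE, x)  # what the model would see
x_pred = np.where(m, 0.0, x)       # stand-in for the model's reconstruction
loss = masked_mse(x, x_pred, m)
```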

Comparative Analysis of Single-Cell Foundation Models

Technical Specifications and Implementation

Table 1: Technical specifications of major single-cell foundation models

| Model | Architecture Type | Pre-training Data Scale | Input Gene Count | Output Dimension | Key Features | Sparsity Handling |
|---|---|---|---|---|---|---|
| scGPT [16] [14] | Decoder-style Transformer | 33 million cells | 1,200 HVGs | 512 | Multi-omic support; value binning | Masked gene modeling with MSE loss |
| Geneformer [16] [14] | Encoder | 30 million cells | 2,048 ranked genes | 256/512 | Rank-based encoding; gene attention | MLM predicting masked gene identities (bidirectional attention) |
| UCE [16] | Encoder | 36 million cells | 1,024 non-unique genes | 1,280 | Protein embeddings from ESM-2 | Modified MLM with binary classification |
| scFoundation [22] [16] | Asymmetric Encoder-Decoder | 50 million cells | ~19,000 genes | 3,072 | Read-depth-aware pre-training | MLM with MSE loss on non-zero genes |
| LangCell [16] | Encoder | 27.5 million cell-text pairs | 2,048 ranked genes | 256 | Text integration; ranking | Order-based modeling |

Performance Characteristics for Sparse Data

Table 2: Performance comparison across biological tasks (2025 benchmarking data) [16] [17]

| Model | Cell Type Annotation | Batch Integration | Gene Function Prediction | Robustness to High Sparsity | Computational Demand |
|---|---|---|---|---|---|
| scGPT | High | Medium-High | Medium | High | High |
| Geneformer | Medium | Low-Medium | Medium | Medium | Medium |
| UCE | Medium-High | Medium | High | Medium | High |
| scFoundation | High | High | High | High | Very High |
| LangCell | Medium | Medium | Medium-High | Medium | Medium |

Troubleshooting Guide: Common Experimental Issues and Solutions

Data Preprocessing and Quality Control

Q: My dataset has extremely high sparsity (>95% zeros). Which scFM is most appropriate?

A: For extremely sparse datasets, scFoundation and scGPT generally demonstrate superior robustness [22] [16]. scFoundation's read-depth-aware pre-training specifically handles varying sampling distributions, while scGPT's value binning approach provides stability against high dropout rates. Consider these strategies:

- Apply quality filters to remove low-quality cells while preserving biological heterogeneity

- Avoid aggressive gene filtering that might remove biologically relevant but rarely detected transcripts

- Utilize the model's inherent handling of sparsity rather than pre-imputing, which can introduce biases [1]

Q: How should I preprocess my scRNA-seq data before applying scFMs?

A: Preprocessing requirements vary significantly by model [22] [16]:

- For Geneformer and LangCell: Implement rank-based encoding where genes are ordered by expression level within each cell

- For scGPT: Use log normalization followed by value binning for continuous expression values

- For scFoundation: Provide raw counts or log-normalized values without extensive preprocessing

- For UCE: Prepare expression values compatible with protein embedding integration
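Two of the preprocessing steps above can be sketched in NumPy: log(CP10K + 1) normalization and per-cell value binning. The binning is written in the spirit of scGPT's scheme: zeros map to bin 0, and non-zero values are assigned to bins 1..n_bins−1 by the cell's own quantiles. It is a simplified illustration, not scGPT's implementation. The default `n_bins=51` is an assumption; check the configuration of the model you use.

```python
import numpy as np

def log_cp10k(counts):
    """log(CP10K + 1): scale each cell (row) to 10,000 total counts, then apply log1p."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # empty cells stay all-zero instead of dividing by zero
    return np.log1p(counts / totals * 1e4)

def bin_values(x, n_bins=51):
    """Per-cell quantile binning: 0 -> bin 0; non-zero values -> bins 1..n_bins-1."""
    if n_bins < 3:
        raise ValueError("n_bins must be at least 3")
    x = np.asarray(x, dtype=float)
    out = np.zeros(x.shape, dtype=np.int64)
    nz = x > 0
    if nz.any():
        edges = np.quantile(x[nz], np.linspace(0.0, 1.0, n_bins))  # cell-specific edges
        out[nz] = np.digitize(x[nz], edges[1:-1]) + 1               # interior edges only
    return out

counts = np.array([[0, 1, 3, 6], [0, 0, 0, 0], [2, 2, 2, 4]])
X = log_cp10k(counts)
binned = np.vstack([bin_values(row, n_bins=5) for row in X])
```

Because the bin edges are computed per cell, two cells with different sequencing depth can still use the full range of bins, which is one reason this discretization is described as stable under high dropout.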

Q: What are the recommended computing resources for fine-tuning scFMs on sparse datasets?

A: Computational requirements vary substantially [16]:

- Minimum configuration: GPU with 16GB+ VRAM (e.g., NVIDIA RTX 4080, A5000)

- Recommended for large datasets: GPU with 24GB+ VRAM (e.g., NVIDIA RTX 4090, A6000)

- Memory requirements: 32-64GB system RAM depending on dataset size

- Training time: 2-48 hours for fine-tuning on typical datasets (10k-100k cells)

Model Selection and Performance Optimization

Q: In zero-shot settings, my scFM embeddings show poor cell type separation. What alternatives exist?

A: This is a documented limitation [23]. When foundation models underperform in zero-shot settings:

- Consider simpler methods: Highly Variable Genes (HVG) selection often outperforms scFMs for clustering tasks [23]

- Evaluate specialized methods: scVI and Harmony provide robust alternatives for batch integration and visualization [23]

- Perform limited fine-tuning: Even minimal fine-tuning (1-2 epochs) on a small subset of labeled data can dramatically improve performance [16]

- Hybrid approach: Extract embeddings from scFMs then apply traditional clustering algorithms
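As a reference point for the "simpler methods" option, here is a deliberately simplified HVG + PCA baseline in NumPy. It selects genes by variance of log-normalized expression and then runs PCA via SVD. This is a stand-in for, not a reproduction of, the dispersion- or VST-based selection in `scanpy.pp.highly_variable_genes` or Seurat. The function name and defaults are illustrative. The same PCA-plus-clustering path can be applied to scFM embeddings in the hybrid approach.

```python
import numpy as np

def hvg_pca_baseline(X_log, n_top=2000, n_comps=50):
    """Simplified baseline on log-normalized data (cells x genes).

    Returns the indices of the selected genes and the PCA scores of all cells.
    """
    X_log = np.asarray(X_log, dtype=float)
    var = X_log.var(axis=0)
    n_top = min(n_top, X_log.shape[1])
    hvg = np.sort(np.argsort(var)[::-1][:n_top])    # top-variance genes, column order kept
    Xc = X_log[:, hvg] - X_log[:, hvg].mean(axis=0)  # center genes before PCA
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    k = min(n_comps, S.size)
    return hvg, U[:, :k] * S[:k]                     # PC scores = U * singular values

rng = np.random.default_rng(0)
X = rng.normal(0, 0.1, (60, 30))
X[:30, :5] += 3.0  # first 30 cells up-regulate genes 0-4
hvg, pcs = hvg_pca_baseline(X, n_top=5, n_comps=2)
```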

Q: How do I choose between multiple scFMs for my specific research question?

A: Model selection should be guided by task requirements and dataset characteristics [16] [17]:

- For gene regulatory inference: scRegNet (built on scFoundation) demonstrates state-of-the-art performance [22]

- For multi-omic integration: scGPT provides native support for multi-modal data [16] [14]

- For transfer learning with limited data: Geneformer's mechanistic representations show strong transferability [16]

- For text integration: LangCell enables natural language queries and annotations [16]

Q: Batch effects persist in my integrated data despite using scFMs. How can I improve integration?

A: Batch correction remains challenging for scFMs [23]. Consider these approaches:

- Apply specialized integration tools: Use scVI, Harmony, or Scanorama after extracting scFM embeddings [23]

- Leverage model-specific features: scGPT offers explicit batch correction capabilities through conditional generation

- Staged processing: Perform initial integration with specialized methods, then apply scFMs for downstream analysis

- Hyperparameter tuning: Adjust integration strength parameters to balance batch removal and biological preservation

Experimental Protocols for scFM Implementation

Standard Workflow for scFM Application

Protocol 1: Zero-Shot Embedding Generation

Purpose: Generate cell embeddings without task-specific fine-tuning for exploratory analysis [23].

Materials:

- Processed scRNA-seq data (cell × gene matrix)

- Pre-trained scFM weights

- Python environment with appropriate libraries (PyTorch, Transformers)

Procedure:

- Data Normalization: Apply model-specific normalization (log(CP10K+1) for most models)

- Tokenization: Convert expression values to model-specific token sequences

- For Geneformer: Rank genes by expression and select top 2,048

- For scGPT: Bin expression values and select top 1,200 HVGs

- Embedding Extraction: Forward pass through model to extract cell embeddings

- Quality Assessment: Evaluate embedding quality using clustering metrics (ASW, ARI)

Troubleshooting:

- Poor separation may indicate need for fine-tuning [23]

- Batch effects may require additional integration steps [23]

Protocol 2: Fine-tuning for Cell Type Annotation

Purpose: Adapt pre-trained scFMs for specific cell type classification tasks [16].

Materials:

- Pre-trained scFM weights (or pre-computed scFM embeddings, if only a classification head is trained)

- Reference cell type labels (partial or complete)

- GPU-enabled computing environment

Procedure:

- Data Partitioning: Split data into training/validation sets (80/20 recommended)

- Classifier Attachment: Add task-specific classification head to base model

- Fine-tuning: Train with cross-entropy loss for 10-50 epochs

- Evaluation: Assess performance on held-out validation set

- Application: Apply trained model to unlabeled data

Optimization Tips:

- Use gradual unfreezing of layers for stability

- Employ learning rate warmup for transformer fine-tuning

- Monitor for overfitting with early stopping
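When only pre-computed embeddings are available, a lightweight stand-in for this protocol is a linear classification head trained on frozen embeddings (a "linear probe"). The NumPy sketch below uses an 80/20 split, linear learning-rate warmup, and early stopping on validation loss. Gradual unfreezing does not apply here because no encoder layers are trained. This is an illustrative substitute for full fine-tuning, not a model-specific recipe, and all hyperparameter defaults are assumptions.

```python
import numpy as np

def _softmax(logits):
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)

def train_linear_head(Z, y, val_frac=0.2, lr=0.1, epochs=300, warmup=10, patience=20, seed=0):
    """Softmax-regression head on frozen cell embeddings Z (cells x dims)."""
    Z = np.asarray(Z, dtype=float)
    rng = np.random.default_rng(seed)
    classes, yi = np.unique(np.asarray(y), return_inverse=True)
    n_val = int(round(val_frac * len(Z)))
    perm = rng.permutation(len(Z))
    val, tr = perm[:n_val], perm[n_val:]
    mu, sd = Z[tr].mean(axis=0), Z[tr].std(axis=0) + 1e-8  # training-set statistics only
    Zs = (Z - mu) / sd
    Y = np.eye(classes.size)[yi]  # one-hot targets
    W = np.zeros((Z.shape[1], classes.size))
    b = np.zeros(classes.size)
    best_loss, best, stale = np.inf, (W.copy(), b.copy()), 0
    for epoch in range(epochs):
        step = lr * min(1.0, (epoch + 1) / warmup)  # linear learning-rate warmup
        G = _softmax(Zs[tr] @ W + b) - Y[tr]        # gradient of cross-entropy w.r.t. logits
        W -= step * Zs[tr].T @ G / tr.size
        b -= step * G.mean(axis=0)
        p_val = _softmax(Zs[val] @ W + b)
        val_loss = -np.mean(np.log(p_val[np.arange(n_val), yi[val]] + 1e-12))
        if val_loss < best_loss - 1e-6:
            best_loss, best, stale = val_loss, (W.copy(), b.copy()), 0
        else:
            stale += 1
            if stale >= patience:
                break  # early stopping
    W, b = best
    val_acc = float(np.mean(np.argmax(Zs[val] @ W + b, axis=1) == yi[val]))
    return {"classes": classes, "mu": mu, "sd": sd, "W": W, "b": b, "val_acc": val_acc}

def predict(head, Z):
    Zs = (np.asarray(Z, dtype=float) - head["mu"]) / head["sd"]
    return head["classes"][np.argmax(Zs @ head["W"] + head["b"], axis=1)]

rng = np.random.default_rng(0)
centers = rng.normal(0, 3, (3, 8))  # toy "embeddings" for three cell types
Z = np.vstack([c + rng.normal(0, 0.5, (50, 8)) for c in centers])
y = np.repeat(np.array(["T", "B", "NK"]), 50)
head = train_linear_head(Z, y)
```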

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key computational tools and resources for scFM research

| Tool/Resource | Type | Purpose | Relevance to Sparsity |
|---|---|---|---|
| Scanpy [24] | Python toolkit | Single-cell analysis ecosystem | Compatible with scFM embeddings for downstream analysis |
| Seurat [24] | R toolkit | Single-cell analysis and integration | Alternative approach for sparse data modeling |
| CellxGene [14] | Data resource | Curated single-cell datasets | Source of high-quality training and benchmarking data |
| scVI [23] | Deep generative model | Probabilistic modeling of scRNA-seq | Strong baseline for sparse data handling |
| Harmony [23] | Integration algorithm | Batch effect correction | Complementary to scFMs for data integration |
| UNCURL [25] | Preprocessing framework | Matrix factorization for sparse data | Preprocessing option for extremely sparse datasets |

Frequently Asked Questions

FAQ 1: What is tokenization in the context of single-cell RNA-seq data and foundation models? Tokenization is the process of converting raw gene expression data from single-cell RNA sequencing (scRNA-seq) into discrete units, or "tokens," that can be processed by deep learning models, particularly transformers. In single-cell foundation models (scFMs), individual cells are treated analogously to sentences, and genes or other genomic features along with their expression values are treated as words or tokens [14]. This process standardizes the unstructured, high-dimensional scRNA-seq data into a structured format that transformer-based architectures can understand and learn from.

FAQ 2: Why is tokenization particularly challenging for sparse scRNA-seq data? scRNA-seq data is characterized by a high degree of sparsity, containing a large number of observed zeros [1]. Datasets that profile more cells tend to have lower detection rates (the fraction of non-zero values) [5]. These zeros can represent either true biological absence of expression or "technical zeros" due to methodological noise and limitations in capturing barely expressed transcripts [1]. This sparsity, combined with the non-sequential nature of gene expression data (genes have no inherent ordering), makes defining a meaningful token sequence difficult [14].

FAQ 3: What are the primary strategies for tokenizing gene expression data? The main strategies involve deciding how to represent genes and their values as tokens, and how to order these tokens into a sequence.

| Strategy | Description | Considerations |
|---|---|---|
| Expression Ranking [14] | Ranks genes within each cell by expression level; the ordered list of top genes is the 'sentence'. | Provides a deterministic sequence based on magnitude. |
| Value Binning [14] | Partitions genes into bins based on expression values, using rankings to determine sequence position. | Offers an alternative discretization of expression values. |
| Binary Representation [5] | Uses a binarized representation (zero vs. non-zero counts) instead of full count data. | Highly efficient for sparse data; can analyze far more cells with the same resources. |
| Gene Identifier + Value [14] | Represents each gene as a token embedding combining a gene identifier and its expression value. | Retains more quantitative information. |

FAQ 4: How does a binary tokenization strategy help with data sparsity? Downstream analyses on binary-based gene expression (zero vs. non-zero) have been shown to give similar results to count-based analyses for tasks like dimensionality reduction, data integration, cell type identification, and differential expression analysis [5]. This is because, as datasets become sparser, counts become less informative relative to binarized expression. A major advantage is computational efficiency: a binary representation can scale up to approximately 50-fold more cells using the same computational resources [5].

FAQ 5: What are some advanced tokenization approaches used in modern scFMs? Modern models like scSFUT (Single-Cell Scale-Free and Unbiased Transformer) segment each cell's high-dimensional data into smaller, information-dense sub-vectors using a fixed window size, which allows the model to learn from the data at its original scale without aggressive gene filtering [26]. Other models incorporate special tokens for cell identity, metadata, or omics modality to provide richer context [14]. The embedding of a token often combines the gene identifier's embedding with a representation of its expression value.
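The fixed-window segmentation idea can be illustrated with the hypothetical NumPy sketch below. It is not scSFUT's code. It splits one cell's full-length expression vector into equal-sized sub-vectors, zero-padding the last one, and projects each segment to a token with a weight matrix `W`. The window size, the zero-padding, and the linear projection are all assumptions made for illustration.

```python
import numpy as np

def segment_genes(x, window):
    """Split a full-length expression vector into (n_segments, window) sub-vectors."""
    x = np.asarray(x, dtype=float)
    n_seg = -(-x.size // window)  # ceiling division
    padded = np.zeros(n_seg * window)
    padded[: x.size] = x          # zero-pad the final segment
    return padded.reshape(n_seg, window)

def segments_to_tokens(segments, W):
    """Project each segment to a d-dimensional token: (n_seg, window) @ (window, d)."""
    return segments @ W

x = np.arange(10.0)  # stand-in for one cell's expression over 10 genes
seg = segment_genes(x, window=4)
tokens = segments_to_tokens(seg, np.ones((4, 6)))
```

No gene filtering is needed: every gene lands in some segment, and sequence length grows with the number of genes divided by the window size rather than with the number of genes.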

Troubleshooting Guides

Problem 1: Poor Model Generalization to New Datasets

- Symptoms: The scFM performs well on training data but fails to accurately annotate cell types or predict expression in new, unseen datasets from different labs or conditions.

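- Potential Causes: Tokenization tied to a fixed gene list or pre-selected HVGs that do not match the genes measured in the new dataset; unresolved batch effects between the training and target data.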

- Solutions:

- During Tokenization: Employ a tokenization strategy that is not dependent on a universal gene list. For example, models like scSFUT process data using a fixed window size across the native gene dimension, making them more flexible [26].

- Data Preprocessing: Use batch effect correction algorithms (e.g., Harmony, ComBat) on the tokenized data or the resulting latent embeddings [27] [14].

- Model Design: Choose or develop models that use precision-preserving attention mechanisms designed for end-to-end learning across the full gene length, reducing bias [26].

Problem 2: Loss of Biologically Relevant Information

- Symptoms: Key rare cell populations are missed, or the model fails to identify meaningful differential expression in downstream tasks.

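- Potential Causes: Tokenization that discards quantitative information (e.g., binarization) when expression levels matter, and treating technical zeros as if they were biological zeros.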

- Solutions:

- Strategy Selection: For tasks where quantitative differences are critical, avoid simple binarization and use strategies that preserve more value information (e.g., Gene Identifier + Value) [14].

- Leverage Probabilistic Models: For a more nuanced approach, use model-based imputation methods (e.g., DCA, scVI) as a preprocessing step. These methods use probabilistic models to distinguish technical zeros from biological zeros and impute values accordingly before tokenization [1].

Problem 3: High Computational and Memory Demands

- Symptoms: Training or inference is prohibitively slow, or the model runs out of memory, especially with large cell numbers.

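- Potential Causes: Long token sequences (standard self-attention scales quadratically with sequence length) combined with large numbers of cells.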

- Solutions:

- Model Architecture: Utilize models that implement efficient attention mechanisms, such as the FlashAttention-based implementation in scGPT or the "unbiased Transformer" in scSFUT, designed to manage computational load [26] [14].

- Binary Tokenization: If scientifically justified for the analysis goal, adopt a binary tokenization strategy. This drastically reduces memory footprint and increases processing speed [5].

- Input Segmentation: Adopt methods like scSFUT that segment the gene vector, allowing the processing of high-dimensional data in parts [26].

Experimental Protocols & Workflows

Protocol 1: Standard Tokenization with Expression Ranking

This is a common method for preparing scRNA-seq data for transformer-based models; it is the input encoding used by Geneformer [14].

- Input: A normalized count matrix (cells x genes).

- Quality Control: Filter out low-quality cells and genes. For example, remove genes expressed in fewer than three cells [26].

- Gene Selection (Optional but common): For models that require it, select a subset of Highly Variable Genes (HVGs) to reduce sequence length. Note: Some modern models like scSFUT avoid this step to prevent information loss [26].

- Cell-wise Ranking: For each cell, rank all genes based on their expression value from highest to lowest.

- Token Sequence Construction: For each cell, create its input sequence by listing the gene identifiers in the order of their rank. The expression values themselves are often integrated into the token embeddings.

- Positional Encoding: Apply positional encodings to the token sequences to inform the model of the gene order.
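The protocol steps can be combined into one NumPy sketch. It filters out genes detected in fewer than `min_cells` cells, ranks each cell's expressed genes, keeps the top `top_k`, maps them to integer token IDs with a CLS token in front and padding behind, and returns the position indices a positional encoding would consume. The special-token IDs (`PAD=0`, `CLS=1`) and the vocabulary layout are hypothetical. Models such as Geneformer also normalize each gene by a corpus-wide median before ranking, which this sketch omits.

```python
import numpy as np

PAD, CLS = 0, 1  # hypothetical special-token ids

def rank_tokenize(X, genes, top_k=2048, min_cells=3):
    """X: normalized (cells x genes) matrix. Returns token ids, vocabulary, positions."""
    X = np.asarray(X, dtype=float)
    keep = (X > 0).sum(axis=0) >= min_cells  # QC: drop genes detected in < min_cells cells
    X = X[:, keep]
    genes = [g for g, k in zip(genes, keep) if k]
    vocab = {g: i + 2 for i, g in enumerate(genes)}  # ids 0 and 1 are reserved
    seqs = np.full((X.shape[0], top_k + 1), PAD, dtype=np.int64)
    for c in range(X.shape[0]):
        expressed = np.flatnonzero(X[c] > 0)
        # highest expression first; the stable sort breaks ties by column order
        order = expressed[np.argsort(-X[c, expressed], kind="stable")][:top_k]
        seqs[c, 0] = CLS
        seqs[c, 1 : 1 + order.size] = [vocab[genes[j]] for j in order]
    positions = np.arange(top_k + 1)  # slot indices for the positional encoding
    return seqs, vocab, positions

genes = ["A", "B", "C", "D"]
X = np.array([[5, 0, 1, 2], [0, 3, 2, 1], [1, 1, 0, 4], [2, 0, 3, 0]])
seqs, vocab, positions = rank_tokenize(X, genes, top_k=2)
```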

Protocol 2: Binary Tokenization for Sparse Data Analysis

This protocol is effective for maximizing computational efficiency and has been shown to be sufficient for many downstream analysis tasks in sparse datasets [5].

- Input: A raw or normalized count matrix (cells x genes).

- Binarization: Convert the count matrix to a binary matrix. All non-zero counts are set to 1, and zero counts remain 0.

```python
X_binary = (X > 0).astype(int)  # 1 where a transcript was detected, 0 otherwise
```

- Dimensionality Reduction (Optional but Recommended): Apply a dimensionality reduction technique suitable for binary data.

- Options: Principal Component Analysis (PCA) on the binary matrix, or specialized methods like scBFA (Binary Factor Analysis) [5].

- Downstream Analysis: Use the reduced dimensions or the binary matrix directly for tasks like clustering, visualization, or differential expression analysis using methods designed for binary data [5].
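A minimal version of the binarize-then-reduce path is shown below. It binarizes counts, reports the detection rate, and runs PCA on the gene-centered binary matrix via SVD. Plain PCA is used here only as the simplest of the options listed above; scBFA, which models binary data explicitly, is not reproduced. The helper name and the toy data are illustrative.

```python
import numpy as np

def binary_pca(X, n_comps=2):
    """PCA on binarized expression (detected = 1, not detected = 0)."""
    B = (np.asarray(X) > 0).astype(np.float64)
    detection_rate = float(B.mean())  # fraction of non-zero entries
    Bc = B - B.mean(axis=0)           # center each gene
    U, S, _ = np.linalg.svd(Bc, full_matrices=False)
    return U[:, :n_comps] * S[:n_comps], detection_rate

rng = np.random.default_rng(0)
X = rng.poisson(0.05, (40, 20))           # sparse background counts
X[:20, :5] += rng.poisson(3.0, (20, 5))   # first 20 cells detect genes 0-4
pcs, rate = binary_pca(X)
```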

Performance Comparison of Tokenization Strategies

The table below summarizes key characteristics of different tokenization approaches, based on evaluations reported in the literature.

| Tokenization Strategy | Reported Performance / Advantage | Computational Efficiency |
|---|---|---|
| Binary Representation [5] | Similar results to count-based analyses for clustering, integration, and annotation (Median F1-score ~0.93). | ~50x more cells analyzed with same resources. Ideal for large, sparse datasets. |
| Expression Ranking (scBERT) [26] | Effective for cell type annotation, but may rely on pre-selected HVGs, potentially losing information. | Standard transformer cost; can be limited by gene list length. |
| Scale-Free & Unbiased (scSFUT) [26] | Outperforms state-of-the-art methods in cross-species cell annotation; avoids HVG selection. | Designed for efficiency with segmented input and unbiased attention. |
| Full-Gene with Value Embedding [14] | Retains maximum quantitative information from the transcriptome. | Highest computational demand due to long sequences and dense value processing. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Tokenization & scFMs |
|---|---|
| Public Data Archives (e.g., CZ CELLxGENE, Human Cell Atlas) [14] | Provides large-scale, diverse scRNA-seq datasets essential for pre-training foundation models. |
| Scanpy [26] | A versatile Python toolkit for single-cell data analysis. Used for critical preprocessing steps like quality control, normalization, and filtering before tokenization. |
| Transformer Architectures (e.g., BERT, GPT) [14] | The core deep learning model architecture. Understanding its components (attention, embedding layers) is key to designing custom tokenizers. |
| Self-Supervised Learning (SSL) [26] [14] | A training paradigm where the model learns from data without explicit labels (e.g., by predicting masked tokens). Fundamental for pre-training scFMs on unlabeled data. |
| Batch Correction Algorithms (e.g., Harmony, ComBat) [27] | Used to mitigate technical variation between datasets, which can be applied before or after tokenization to improve model generalization. |

Troubleshooting Guide & FAQs for Single-Cell Foundation Models

Frequently Asked Questions

FAQ 1: Why is handling data sparsity so critical for pretraining scFMs? scRNA-seq data is inherently sparse, containing a large proportion of zero values. These zeros represent a mix of true biological absence of expression and technical "dropouts" where a transcript was present but not detected [1]. This sparsity can obscure true biological signals [12]. When datasets measure more cells, they often become even sparser [5]. Pretraining scFMs effectively on such data requires strategies that can distinguish meaningful biological signals from this technical noise.
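A small simulation makes this distinction concrete: zeros produced because a gene is off and zeros produced because a transcript was never captured look identical in the observed matrix. The expression probability (0.6) and per-transcript capture rate (0.1) below are hypothetical values chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n_cells, n_genes = 500, 200

# Hypothetical ground truth: each gene is "on" in a given cell with probability 0.6.
expressed = rng.random((n_cells, n_genes)) < 0.6
# Expressed genes carry at least one transcript; silent genes carry none.
true_mrna = np.where(expressed, rng.poisson(5.0, (n_cells, n_genes)) + 1, 0)

# Technical sampling: each transcript is captured independently with probability 0.1.
observed = rng.binomial(true_mrna, 0.1)

bio_zero = ~expressed                          # gene truly not transcribed
tech_zero = expressed & (observed == 0)        # transcribed but not detected (dropout)
print(f"overall fraction of zeros: {np.mean(observed == 0):.2f}")
print(f"  biological zeros:        {bio_zero.mean():.2f}")
print(f"  technical zeros:         {tech_zero.mean():.2f}")
```

In real data the expressed mask is unknown, which is exactly why the two kinds of zero cannot be separated by inspecting the count matrix alone.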

FAQ 2: My model fails to learn meaningful representations. Could the pretraining task be the issue? Yes, the choice of pretraining task is fundamental. Research indicates that Masked Autoencoders (MAE) generally outperform some contrastive learning methods on scRNA-seq data [28]. A successful strategy involves designing the masking scheme carefully, such as masking random genes or, in a biologically informed variant, entire functional gene programmes; both force the model to learn robust contextual relationships between genes [28].

FAQ 3: What is a key advantage of using a self-supervised approach for my sparse single-cell data? SSL allows you to leverage vast amounts of unlabeled scRNA-seq data to learn generalizable patterns of gene expression. Models pre-trained on large, diverse auxiliary datasets (such as the CZ CELLxGENE Census) learn a rich data representation. This provides a powerful starting point that can be fine-tuned for specific tasks, often leading to better performance, especially on sparse target datasets [28].

FAQ 4: How can I assess if my scFM has learned biologically relevant features from the sparse data? Beyond standard performance metrics, you can use novel, biology-driven evaluation methods. The scGraph-OntoRWR metric assesses whether the cell-type relationships captured by your model's embeddings are consistent with established biological knowledge from cell ontologies. Another metric, the Lowest Common Ancestor Distance (LCAD), evaluates the severity of a cell type misannotation by measuring how far apart the predicted and true cell types lie in a known ontological hierarchy [16].
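The idea behind LCAD can be illustrated with a toy ontology. The sketch below assumes a simple tree (the real Cell Ontology is a directed acyclic graph in which a term can have several parents) and defines the distance as the number of edges from the true and predicted labels up to their lowest common ancestor. The benchmark in [16] may use a different exact formulation, and the terms are hypothetical placeholders rather than ontology IDs.

```python
from typing import Dict, List

# Hypothetical mini cell ontology as child -> parent links (not real CL IDs).
PARENT: Dict[str, str] = {
    "immune cell": "cell",
    "lymphocyte": "immune cell",
    "myeloid cell": "immune cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "monocyte": "myeloid cell",
}


def path_to_root(term: str) -> List[str]:
    """Return [term, parent, grandparent, ..., root]."""
    path = [term]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path


def lcad(true_label: str, predicted: str) -> int:
    """Edges from each label up to their lowest common ancestor, summed."""
    true_path = path_to_root(true_label)
    pred_depth = {term: i for i, term in enumerate(path_to_root(predicted))}
    for i, term in enumerate(true_path):
        if term in pred_depth:  # first shared term on the way up is the LCA
            return i + pred_depth[term]
    raise ValueError("labels do not share a root")


print(lcad("CD4 T cell", "CD8 T cell"), lcad("CD4 T cell", "monocyte"))
```

Under this definition, confusing CD4 with CD8 T cells scores 2, whereas calling a CD4 T cell a monocyte scores 5, reflecting a more severe, less biologically plausible error.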

FAQ 5: No single scFM seems to be the best. How do I choose? Benchmarking studies confirm that no single scFM consistently outperforms all others across every task or dataset [16]. Your choice should be guided by your specific goal. The table below summarizes the strengths of several prominent models to aid your selection.

Table: Key Characteristics of Selected Single-Cell Foundation Models

| Model Name | Primary Strengths and Characteristics |
|---|---|
| scGPT | Robust all-around performer across various tasks, supports multi-omic data [16] [29]. |
| Geneformer | Excels in gene-level tasks; uses a ranked-genes input approach [16] [29]. |
| scFoundation | Strong performance on gene-level tasks, trained on a large number of genes [16]. |
| scBERT | May lag in performance due to smaller model size and training data [16] [29]. |

Troubleshooting Common Experimental Issues

Problem: Poor Model Generalization to New Datasets

- Symptoms: The model performs well on its training data but fails to achieve good performance (e.g., in cell type annotation) on new, unseen datasets.

- Possible Causes & Solutions:

- Cause 1: The pre-training dataset lacked diversity.

- Cause 2: High dataset-specific technical noise or batch effects are overwhelming the biological signal.

Problem: Inefficient Learning from Sparse Data

- Symptoms: The model converges slowly or its performance plateaus at a low level during pre-training.

- Possible Causes & Solutions:

- Cause 1: The standard random masking strategy is not effective for highly sparse data.

- Solution: Implement more sophisticated masking strategies. Gene Programme (GP) masking, which masks groups of functionally related genes, can force the model to learn higher-order biological context [28].

- Cause 2: The model architecture is not well-suited for sparse, high-dimensional input.

- Solution: Consider architectures specifically designed for sparsity. For example, the scRobust model combines contrastive learning with gene expression prediction tasks within a Transformer framework to better handle missing data [30].

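The difference between random gene masking and gene programme masking can be sketched as two mask generators. The programme definitions, the sentinel value used to hide masked entries, and the function names are assumptions for illustration. The GP masking evaluated in [28] may differ in how programmes are defined and how many are masked per cell.

```python
import numpy as np

MASK_VALUE = -1.0  # hypothetical sentinel; chosen so it cannot be confused with a real zero


def random_gene_mask(n_genes, mask_ratio, rng):
    """Mask a random subset of individual genes."""
    n_mask = int(round(mask_ratio * n_genes))
    mask = np.zeros(n_genes, dtype=bool)
    mask[rng.choice(n_genes, size=n_mask, replace=False)] = True
    return mask


def gene_programme_mask(programmes, n_genes, n_programmes, rng):
    """Mask every gene belonging to a few randomly chosen programmes (gene sets)."""
    mask = np.zeros(n_genes, dtype=bool)
    for idx in rng.choice(len(programmes), size=n_programmes, replace=False):
        mask[programmes[idx]] = True
    return mask


rng = np.random.default_rng(0)
# Hypothetical programmes given as gene indices (in practice, e.g., pathway gene sets).
programmes = [np.array([0, 1, 2, 3]), np.array([10, 11, 12]), np.array([20, 21, 22, 23, 24])]
expression = rng.poisson(1.0, size=30).astype(float)

gp_mask = gene_programme_mask(programmes, n_genes=30, n_programmes=1, rng=rng)
masked_input = np.where(gp_mask, MASK_VALUE, expression)  # what the model sees
targets = expression[gp_mask]                              # what it must reconstruct
print("masked genes:", np.flatnonzero(gp_mask))
```

With GP masking, no member of the hidden programme is visible, so the model cannot recover a masked gene by copying a co-regulated neighbor and must draw on broader cellular context. Using a sentinel distinct from zero matters for sparse data: if masked entries were set to 0, the model could not tell a hidden value from an undetected gene.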

Problem: Suboptimal Performance on Downstream Tasks After Pre-training

- Symptoms: Pre-training seems successful (low loss), but fine-tuning on a specific task like differential expression yields poor results.

- Possible Causes & Solutions:

- Cause 1: A disconnect between the pre-training objective and the downstream task.

- Solution: Align your pre-training and fine-tuning more closely. If your goal is differential expression, a pre-training task that focuses on reconstructing gene expression values (like MAE) may be more suitable than one designed only for cell embedding.

- Cause 2: The "zero-shot" capabilities of the model are insufficient for the task complexity.

- Solution: Always plan for a fine-tuning step. While zero-shot evaluation is a good diagnostic, supervised fine-tuning on a portion of your target data almost always improves performance [28].


Experimental Protocols & Workflows

Protocol 1: Implementing a Masked Gene Modeling Pre-training Task

Principle: The model is trained to reconstruct randomly masked portions of a cell's gene expression profile, learning the contextual relationships between genes [14] [28].

Materials:

- Hardware: GPU-enabled computing environment.

- Software: Python with PyTorch or TensorFlow, and scFM frameworks (e.g., scGPT, BioLLM [29]).

- Data: A large, normalized scRNA-seq count matrix (cells x genes).

Methodology:

- Input Representation (Tokenization):

- Masking:

- Randomly select a percentage (e.g., 15-30%) of the gene tokens in each input sequence.

- Replace these selected tokens with a special [MASK] token.

- Model Architecture & Training:
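The masking step of this protocol can be sketched independently of any particular scFM framework. The token ids below (0 for padding, 1 for [MASK]) and the random logits standing in for a model's output are hypothetical. The sketch also omits refinements such as BERT's practice of leaving some selected tokens unchanged or swapping them for random tokens. The loss is computed only at masked positions, so the model is scored on reconstructing what it could not see.

```python
import numpy as np

PAD_ID = 0   # hypothetical padding token id (never masked)
MASK_ID = 1  # hypothetical id reserved for the [MASK] token


def mask_tokens(token_ids, mask_ratio, rng):
    """Replace a random fraction of non-padding tokens with MASK_ID.

    Returns the corrupted sequence and a boolean array marking masked positions."""
    token_ids = np.asarray(token_ids)
    candidates = np.flatnonzero(token_ids != PAD_ID)
    n_mask = max(1, int(round(mask_ratio * candidates.size)))
    chosen = rng.choice(candidates, size=n_mask, replace=False)
    corrupted = token_ids.copy()
    corrupted[chosen] = MASK_ID
    is_masked = np.zeros(token_ids.shape, dtype=bool)
    is_masked[chosen] = True
    return corrupted, is_masked


def masked_cross_entropy(logits, target_ids, is_masked):
    """Mean cross-entropy over masked positions only.

    logits: (seq_len, vocab_size) scores; target_ids: (seq_len,) original token ids."""
    z = logits[is_masked]
    z = z - z.max(axis=1, keepdims=True)  # numerical stability
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    targets = target_ids[is_masked]
    return float(-log_probs[np.arange(targets.size), targets].mean())


rng = np.random.default_rng(0)
vocab_size = 50
# Hypothetical gene-token sequence for one cell (ids >= 2), padded to length 12.
tokens = np.array([17, 42, 5, 33, 9, 28, 11, 40, 23, 6, PAD_ID, PAD_ID])
corrupted, is_masked = mask_tokens(tokens, mask_ratio=0.2, rng=rng)
logits = rng.normal(size=(tokens.size, vocab_size))  # stand-in for model(corrupted)
loss = masked_cross_entropy(logits, tokens, is_masked)
print(corrupted, round(loss, 3))
```

In a real run, the logits would come from the transformer applied to the corrupted sequence, and a fresh mask would be sampled for every cell in every batch.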

Protocol 2: Benchmarking scFMs on a Sparse Target Dataset

Principle: Evaluate the effectiveness of a pre-trained scFM by applying it to a downstream task on a new, potentially sparse, dataset in a "zero-shot" or "fine-tuned" setting [16] [28].

Materials:

- Pre-trained scFM model weights.

- Target scRNA-seq dataset with relevant labels (e.g., cell type, condition).

Methodology:

- Feature Extraction:

- Zero-Shot: Pass the target dataset through the pre-trained model without updating its weights. Extract the cell embeddings from the model's output layer.

- Fine-Tuning: Further train the pre-trained model on the target dataset with a small amount of labeled data.

- Downstream Task Execution:

- Use the extracted embeddings to perform tasks like cell type annotation (e.g., using a k-NN classifier) or data visualization (e.g., UMAP) [28].

- Performance Evaluation:

- Cell Type Annotation: Calculate the macro F1-score to handle class imbalance and the Lowest Common Ancestor Distance (LCAD) to gauge the biological reasonableness of errors [16].

- Data Integration: Use metrics like the Local Inverse Simpson's Index (LISI) to quantify batch mixing [5] [16].

- Gene-Level Analysis: For tasks like gene expression reconstruction, use metrics like weighted explained variance [28].
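The annotation and scoring steps can be sketched with plain NumPy: a k-NN majority vote on cell embeddings, followed by a macro F1-score that averages per-class F1 without weighting by class size. The simulated embeddings stand in for scFM output and the helper names are illustrative; in practice, scikit-learn's KNeighborsClassifier and f1_score(average="macro") cover the same ground.

```python
import numpy as np


def knn_predict(train_emb, train_labels, query_emb, k=5):
    """Majority vote among the k nearest training cells (Euclidean distance)."""
    d2 = ((query_emb[:, None, :] - train_emb[None, :, :]) ** 2).sum(axis=-1)
    nn_idx = np.argsort(d2, axis=1)[:, :k]
    preds = []
    for neighbor_labels in train_labels[nn_idx]:
        values, counts = np.unique(neighbor_labels, return_counts=True)
        preds.append(values[np.argmax(counts)])  # ties go to the alphabetically first label
    return np.array(preds)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every class seen in y_true or y_pred."""
    scores = []
    for c in np.union1d(y_true, y_pred):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        scores.append(2 * tp / (2 * tp + fp + fn))
    return float(np.mean(scores))


rng = np.random.default_rng(0)
# Simulated 8-dimensional "cell embeddings" for two well-separated cell types.
train_emb = np.vstack([rng.normal(0, 1, (30, 8)), rng.normal(6, 1, (30, 8))])
train_labels = np.array(["T cell"] * 30 + ["B cell"] * 30)
query_emb = np.vstack([rng.normal(0, 1, (10, 8)), rng.normal(6, 1, (10, 8))])
query_labels = np.array(["T cell"] * 10 + ["B cell"] * 10)
pred = knn_predict(train_emb, train_labels, query_emb, k=5)
print("macro F1:", macro_f1(query_labels, pred))
```

Because each class contributes equally to the average, a model that ignores a rare cell type is penalized heavily even if overall accuracy stays high.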

Table: Key Metrics for Evaluating scFMs on Sparse Data

| Task Category | Evaluation Metric | What It Measures |
|---|---|---|
| Cell Type Annotation | Macro F1-Score | Model's accuracy in predicting cell types, robust to class imbalance [28]. |
| Cell Type Annotation | Lowest Common Ancestor Distance (LCAD) | Biological plausibility of misclassifications based on cell ontology [16]. |
| Data Integration & Embedding Quality | LISI Score | Effectiveness of batch effect correction and cell mixing [5] [16]. |
| Data Integration & Embedding Quality | scGraph-OntoRWR | Concordance of learned cell relationships with prior biological knowledge [16]. |
| Gene-Level Task | Weighted Explained Variance | Accuracy of gene expression reconstruction or prediction [28]. |
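LISI scores each cell by the effective number of batches among its neighbors: values near 1 mean the neighborhood comes from a single batch, and values near the number of batches mean the batches are well mixed. The sketch below is a simplified variant that weights the k nearest neighbors uniformly. The published LISI instead uses a Gaussian kernel calibrated to a fixed perplexity, so the numbers will not match reference implementations exactly.

```python
import numpy as np


def simple_lisi(embedding, labels, k=15):
    """Per-cell inverse Simpson's index of labels among the k nearest neighbors.

    Simplification: neighbors are weighted uniformly, whereas the published LISI
    weights them with a perplexity-calibrated Gaussian kernel.
    """
    d2 = ((embedding[:, None, :] - embedding[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d2, np.inf)  # a cell is not its own neighbor
    nn_idx = np.argsort(d2, axis=1)[:, :k]
    categories = np.unique(labels)
    scores = np.empty(len(embedding))
    for i, idx in enumerate(nn_idx):
        p = np.array([np.mean(labels[idx] == c) for c in categories])
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores


rng = np.random.default_rng(0)
batch = np.array(["A"] * 50 + ["B"] * 50)
mixed = rng.normal(size=(100, 2))                               # batches overlap
separated = mixed + np.where(batch == "A", 0.0, 20.0)[:, None]  # batches far apart
print("mixed:", simple_lisi(mixed, batch).mean())
print("separated:", simple_lisi(separated, batch).mean())  # 1.0: every neighborhood is single-batch
```

Passing cell type labels instead of batch labels gives a cell-type version of the score, where lower values indicate better preservation of biological structure.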

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Application | Relevance to Sparse Data & scFMs |
|---|---|---|
| CZ CELLxGENE [14] [28] | A curated data repository of single-cell datasets. | Provides massive, diverse datasets essential for pre-training generalizable models on sparse data. |
| BioLLM Framework [29] | A unified software framework for integrating and applying various scFMs. | Standardizes benchmarking and model switching, allowing researchers to find the best model for their sparse data challenge. |
| Harmony [5] [16] | Algorithm for integrating datasets and correcting batch effects. | Used in post-processing or analysis of scFM embeddings to ensure technical variation doesn't confound biological signals. |
| scVI [16] [1] | A probabilistic deep learning framework for single-cell data. | A strong baseline model that uses a zero-inflated negative binomial loss, explicitly modeling the sparsity of scRNA-seq data. |
| Transformer Architecture [14] [30] | Neural network model using self-attention mechanisms. | The backbone of most scFMs; its attention mechanism can learn which genes are most informative despite sparsity. |
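The zero-inflated negative binomial (ZINB) likelihood listed for scVI mixes two sources of zeros: an extra-zero component with probability pi, and a negative binomial count with mean mu and inverse dispersion theta, so that P(0) = pi + (1 - pi) NB(0) and P(x) = (1 - pi) NB(x) for x > 0. The plain-Python sketch below evaluates that log-likelihood for a single count. It illustrates the distribution only; it is not scVI's implementation, which predicts these parameters with neural networks in PyTorch.

```python
import math


def nb_log_pmf(x: int, mu: float, theta: float) -> float:
    """Negative binomial log-probability with mean mu > 0 and inverse dispersion theta > 0."""
    return (
        math.lgamma(x + theta) - math.lgamma(theta) - math.lgamma(x + 1)
        + theta * math.log(theta / (theta + mu))
        + x * math.log(mu / (theta + mu))
    )


def zinb_log_pmf(x: int, mu: float, theta: float, pi: float) -> float:
    """Zero-inflated NB: with probability pi the count is an extra (structural) zero;
    otherwise it is drawn from NB(mu, theta)."""
    if x == 0:
        return math.log(pi + (1.0 - pi) * math.exp(nb_log_pmf(0, mu, theta)))
    return math.log(1.0 - pi) + nb_log_pmf(x, mu, theta)


# Same mean and dispersion, with and without zero inflation (illustrative values).
p0_nb = math.exp(nb_log_pmf(0, mu=3.0, theta=2.0))
p0_zinb = math.exp(zinb_log_pmf(0, mu=3.0, theta=2.0, pi=0.3))
print(f"P(0) under NB: {p0_nb:.3f}, under ZINB: {p0_zinb:.3f}")
```

Whether the extra-zero component should be read as "technical dropout" is a modeling interpretation rather than something the likelihood itself guarantees.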

Batch Integration, Cell Type Annotation, and Rare Cell Identification

Frequently Asked Questions (FAQs)

Batch Integration

Q1: Our integrated scRNA-seq data shows poor alignment of the same cell types across batches. What methods are recommended for effective batch-effect correction?

Batch-effect correction is crucial for integrating datasets from different experiments. Based on recent benchmarking studies, the following methods are recommended for their efficacy in removing batch effects while preserving biological variation.

- Recommended Methods: Harmony is highly recommended due to its strong performance across multiple benchmarks and significantly shorter runtime, making it suitable for large datasets [31] [32]. LIGER and Seurat 3 also perform well, particularly in complex integration scenarios [32].

- Methods to Use with Caution: Methods such as MNN, scVI, and LIGER (in some tests) have been shown to introduce measurable artifacts or alter the data considerably during correction [31]. ComBat, ComBat-seq, BBKNN, and Seurat can also introduce detectable artifacts [31].

Table 1: Benchmarking of Common Batch Correction Methods

| Method | Recommended Use | Key Strengths | Noted Limitations |
|---|---|---|---|
| Harmony | Primary recommendation [31] [32] | Fast; well-calibrated; good batch mixing [31] [32] | - |
| LIGER | Alternative, especially for biological variation [32] | Separates technical and biological variation [32] | Can alter data considerably; longer runtime [31] [32] |
| Seurat 3 | Alternative for diverse tasks [32] | Good performance on multiple tasks [32] | May introduce artifacts [31] |
| ComBat | Use with caution | Established method | Can introduce artifacts; may not handle scRNA-seq sparsity well [31] |
| MNN | Not recommended | Early scRNA-seq specific method | Poor calibration; alters data considerably [31] |

Experimental Protocol: Batch Integration with Harmony

- Input Preparation: Prepare a normalized count matrix (e.g., from SCTransform) and a metadata vector specifying the batch for each cell [31].

- Dimensionality Reduction: Perform PCA on the normalized data to obtain a low-dimensional embedding [31].

- Run Harmony: Apply the Harmony algorithm to the PCA embedding and batch metadata. Harmony iteratively clusters cells and corrects their positions to maximize batch mixing within clusters [31] [32].

- Output: The output is a corrected embedding. Use this corrected embedding instead of the original PCA coordinates for all downstream analyses, such as UMAP visualization and clustering [31].
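The cluster-then-correct idea behind this workflow can be illustrated with a deliberately simplified NumPy loop: PCA, k-means clustering, then shifting each batch within each cluster onto the cluster centroid. This is not the Harmony algorithm, which uses soft clustering with a batch-diversity penalty and a regression-based correction; for real analyses use an actual implementation such as the harmony R package or the harmonypy Python port. The simulated data assume the batch shift is smaller than the cell-type difference; without that, plain k-means would cluster by batch and this toy correction would do nothing, which is the failure Harmony's diversity penalty is designed to avoid.

```python
import numpy as np


def pca(x, n_components):
    """PCA scores via SVD of the column-centred matrix."""
    centred = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centred, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def kmeans(z, n_clusters, n_iter=25):
    """Lloyd's k-means with deterministic farthest-first initialization."""
    centroids = [z[0]]
    for _ in range(1, n_clusters):
        d2 = np.min([((z - c) ** 2).sum(axis=1) for c in centroids], axis=0)
        centroids.append(z[np.argmax(d2)])
    centroids = np.array(centroids)
    for _ in range(n_iter):
        d2 = ((z[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
        assign = np.argmin(d2, axis=1)
        centroids = np.array([
            z[assign == c].mean(axis=0) if np.any(assign == c) else centroids[c]
            for c in range(n_clusters)
        ])
    return assign


def cluster_then_correct(z, batches, n_clusters, n_rounds=3):
    """Toy correction: within each cluster, move every batch's mean onto the
    cluster mean. Hard clusters, no diversity penalty (unlike Harmony)."""
    z = z.copy()
    for _ in range(n_rounds):
        assign = kmeans(z, n_clusters)
        for c in np.unique(assign):
            in_c = assign == c
            centroid = z[in_c].mean(axis=0)
            for b in np.unique(batches[in_c]):
                sel = in_c & (batches == b)
                z[sel] += centroid - z[sel].mean(axis=0)
    return z


def batch_gap(z, batches, cells):
    """Distance between the batch-0 and batch-1 means within a subset of cells."""
    return np.linalg.norm(z[cells & (batches == 0)].mean(axis=0)
                          - z[cells & (batches == 1)].mean(axis=0))


# Simulated data: 2 cell types x 2 batches, 200 cells x 20 genes.
rng = np.random.default_rng(0)
cell_type = np.repeat([0, 1], 100)
batch = np.tile([0, 1], 100)
x = rng.normal(size=(200, 20))
x[:, :5] += 8.0 * cell_type[:, None]   # biological difference (large)
x[:, 10:] += 1.0 * batch[:, None]      # technical batch shift (smaller)

z = pca(x, n_components=5)
z_corr = cluster_then_correct(z, batch, n_clusters=2)
for t in (0, 1):
    print(f"cell type {t}: batch gap {batch_gap(z, batch, cell_type == t):.2f}"
          f" -> {batch_gap(z_corr, batch, cell_type == t):.2f}")
```

As in the protocol, the corrected embedding (z_corr here) rather than the raw PCA coordinates would then feed UMAP and clustering.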

Batch Integration Workflow with Harmony

Cell Type Annotation

Q2: When annotating cell types in a sparse scRNA-seq dataset, an automated tool provided conflicting or low-confidence labels. How should we proceed?