Optimizing Clustering Resolution for Single-Cell RNA-Seq Annotation: A Guide for Robust Biological Discovery and Drug Development


Abstract

Accurate cell type annotation is a critical step in single-cell RNA sequencing (scRNA-seq) analysis, directly impacting downstream biological interpretation and therapeutic discovery. This article provides a comprehensive guide for researchers and drug development professionals on optimizing clustering resolution—a key parameter governing the granularity of cell population identification. We cover foundational concepts on why resolution matters, methodological approaches for parameter selection and application, troubleshooting for common inconsistency issues, and a comparative analysis of validation techniques and computational tools. By integrating current best practices and benchmarking studies, this guide aims to empower users to generate more reliable, reproducible, and biologically meaningful clustering results, thereby enhancing the discovery of novel cell states and potential drug targets.

Why Resolution Matters: The Foundation of Accurate Cell Identity

Defining Clustering Resolution and Its Direct Impact on Annotation Granularity

Core Concepts: Clustering Resolution and Annotation

What is clustering resolution?

Clustering resolution is a key parameter in single-cell RNA sequencing (scRNA-seq) analysis that controls the granularity of the clusters identified by graph-based algorithms such as Leiden or Louvain [1]. It governs how many discrete groups of cells with similar expression profiles the algorithm reports. In practice, a low resolution yields a small number of broad clusters, while a high resolution yields a larger number of finer, more specific clusters [1].
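One common way the resolution enters graph-based clustering is as a multiplier on the expected-edge term of the modularity objective. The sketch below assumes that formulation. The toy graph (two triangles joined by one edge) and the function name are illustrative and not taken from Seurat or Scanpy. It shows that a low resolution scores the merged partition higher, while a higher resolution favors the split.

```python
from collections import defaultdict

def modularity(edges, labels, resolution=1.0):
    """Resolution-scaled modularity of a partition of an undirected, unweighted graph."""
    m = len(edges)
    internal = defaultdict(int)    # edges with both endpoints in the same cluster
    degree_sum = defaultdict(int)  # summed node degree per cluster
    for u, v in edges:
        degree_sum[labels[u]] += 1
        degree_sum[labels[v]] += 1
        if labels[u] == labels[v]:
            internal[labels[u]] += 1
    return sum(
        internal[c] / m - resolution * (degree_sum[c] / (2 * m)) ** 2
        for c in degree_sum
    )

# Toy graph: two triangles (0-1-2 and 3-4-5) joined by the edge 2-3.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
merged = {node: "all" for node in range(6)}
split = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}

for gamma in (0.1, 1.0):
    q_merged = modularity(edges, merged, gamma)
    q_split = modularity(edges, split, gamma)
    preferred = "split" if q_split > q_merged else "merged"
    print(f"resolution={gamma}: merged={q_merged:.3f}, split={q_split:.3f} -> {preferred}")
```

Real implementations search over many candidate partitions rather than comparing two, but the trade-off controlled by the resolution is the same.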

How does resolution directly impact cluster annotation?

The clustering result serves as a digestible summary of complex data and acts as a proxy for biological concepts after annotation based on marker genes [1]. The choice of resolution therefore directly dictates the level of biological detail you can capture:

- Low Resolution: Clusters may represent major cell types (e.g., T-cells, B-cells, monocytes) [2].

- High Resolution: Clusters may represent subtypes or states within a major type (e.g., T-regulatory cells, Th cells, cytotoxic T-cells) [1] [2].

It is critical to understand that there is no single "correct" resolution. The optimal setting is context-dependent and defined by your biological question—whether you aim to resolve major cell types or investigate heterogeneity within them [1].

Experimental Protocols for Resolution Optimization

A Standard Workflow for Multi-Resolution Clustering

A robust approach to selecting a clustering resolution involves evaluating a range of values. The following workflow, implemented in tools like Seurat or Scanpy, is considered a best practice [2]:

- Parameter Sweep: Cluster your data across a spectrum of resolution values (e.g., from 0.1 to 1.0, though the range can be adjusted based on dataset complexity) [2].

- Visual Inspection: For each resolution, visualize the resulting clusters using UMAP or t-SNE to observe how they split and merge [2] [3].

- Tree Visualization: Use the clustree tool to plot a clustering tree, which visualizes how clusters evolve and relate to each other across increasing resolutions [4] [2].

- Biological Validation: Generate diagnostic plots (heatmaps, dot plots, feature plots) of known marker genes for the clusters at different resolutions. The "best" resolution is one where the resulting clusters are both stable and make biological sense based on these markers [2].

Interpreting a Clustering Tree

The clustree diagram illustrates the relationships between clusters across multiple resolutions, helping to identify stable clusters and overclustering.
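The edge statistic that a clustering tree relies on can be illustrated without the clustree package. The sketch below computes, for two labelings of the same cells, the in-proportion of each edge: the share of a higher-resolution cluster's cells that came from a given lower-resolution cluster. The labels and function name are made up for illustration.

```python
from collections import Counter

def in_proportions(low_res, high_res):
    """Map each (low-res cluster, high-res cluster) edge to its in-proportion."""
    overlap = Counter(zip(low_res, high_res))  # cells shared by each cluster pair
    high_sizes = Counter(high_res)             # size of each high-res cluster
    return {edge: n / high_sizes[edge[1]] for edge, n in overlap.items()}

# Eight cells clustered at two resolutions (hypothetical labels).
low = ["A", "A", "A", "A", "B", "B", "B", "B"]
high = ["a", "a", "a", "x", "x", "b", "b", "b"]

for (parent, child), prop in sorted(in_proportions(low, high).items()):
    print(f"{parent} -> {child}: in-proportion {prop:.2f}")
```

Cluster x draws half of its cells from A and half from B, so both incoming edges have an in-proportion of 0.5. Many such low in-proportion edges at a given resolution are the pattern that suggests overclustering.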

Advanced Method: The CHOIR Algorithm for Principled Clustering

CHOIR is a newer algorithm designed to mitigate overclustering by providing a statistical foundation for cluster definitions [5]. Its workflow is more complex and involves:

- Hierarchical Over-clustering: The data is iteratively clustered at increasingly higher resolutions to create a hierarchical tree of many potential subclusters [5].

- Classifier Training: For every pair of sibling clusters in the tree, a random forest classifier is trained to distinguish them based on gene expression [5].

- Significance Testing: The classifier's performance is compared against a null distribution generated from randomized cluster labels. If it does not significantly outperform the null (p ≥ 0.05 after multiple-testing correction), the clusters are merged [5].

- Tree Pruning: This testing process continues until only statistically robust clusters remain [5].
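The significance-testing step can be sketched as a permutation test. This is not the CHOIR implementation: a one-dimensional nearest-centroid classifier stands in for the random forest, training accuracy is used instead of held-out accuracy, and the simulated expression values, the number of permutations, and all function names are assumptions made for brevity.

```python
import random

def centroid_accuracy(values, labels):
    """Training accuracy of a 1-D nearest-centroid classifier (stand-in for a random forest)."""
    groups = {}
    for x, y in zip(values, labels):
        groups.setdefault(y, []).append(x)
    centroids = {y: sum(xs) / len(xs) for y, xs in groups.items()}
    hits = sum(
        min(centroids, key=lambda c: abs(x - centroids[c])) == y
        for x, y in zip(values, labels)
    )
    return hits / len(values)

def permutation_p_value(values, labels, n_perm=200, seed=0):
    """Share of label shufflings whose accuracy matches or beats the observed accuracy."""
    rng = random.Random(seed)
    observed = centroid_accuracy(values, labels)
    shuffled = list(labels)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if centroid_accuracy(values, shuffled) >= observed:
            exceed += 1
    return (exceed + 1) / (n_perm + 1)

# Two simulated sibling clusters with clearly different expression of one gene.
rng = random.Random(1)
values = [rng.gauss(0, 1) for _ in range(20)] + [rng.gauss(10, 1) for _ in range(20)]
labels = [0] * 20 + [1] * 20

p = permutation_p_value(values, labels)
print("merge clusters" if p >= 0.05 else "keep clusters separate", f"(p = {p:.4f})")
```

With many sibling pairs in a tree, the resulting p-values would also need the multiple-testing correction mentioned above.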

Troubleshooting Common Issues

FAQ: Resolving Annotation Challenges

| Question | Problem Description | Recommended Solution |

|---|---|---|

| How do I know if my resolution is too high (overclustering)? | Clusters lack biological meaning; marker genes for known cell types are split across multiple clusters without justification; no known markers identify the new, tiny clusters. | Use the clustree plot: overclustering is indicated when new clusters form from multiple existing ones and many samples switch between branches, resulting in low in-proportion edges [4]. Validate with marker gene expression. |

| How do I know if my resolution is too low (underclustering)? | A single cluster expresses mutually exclusive marker genes (e.g., a cluster that contains both CD4+ and CD8+ T-cells) [6]. | Increase the resolution incrementally. Check if biologically distinct populations, validated by known markers, merge in a UMAP visualization and in the clustree [4] [2]. |

| My clusters are unstable and change drastically with slight parameter adjustments. What should I do? | The clustering result is not reproducible, making biological interpretation unreliable. | Ensure your analysis is based on a robustly pre-processed dataset (appropriate normalization, HVG selection, and batch correction if needed) [7]. Consider using CHOIR to establish statistically supported clusters [5]. |

| I cannot find a resolution that cleanly separates all known cell types. Why? | Biological processes can create continuous transitions between states, and technical noise can obscure clear separation. | Accept that some populations exist on a continuum. Use alternative methods like supervised annotation or protein-based annotation (e.g., from CITE-seq) to validate and refine clusters [6]. |

Key Parameters and Their Interactive Effects

Clustering resolution does not act in isolation. The table below summarizes other critical parameters and how they interact with resolution, based on a systematic analysis [7].

| Parameter | Impact on Clustering | Interaction with Resolution |

|---|---|---|

| Number of Nearest Neighbors (k) | Controls how many neighbors are used to build the cell-cell graph. A lower k captures finer local structure but is noisier. | High resolution + Low k: Can lead to severe overclustering. The impact of high resolution is accentuated by a low number of neighbors, which creates sparser graphs [7]. |

| Number of Principal Components | Determines the amount of information (and noise) used for graph construction. | This parameter is highly dependent on data complexity. Testing different numbers of PCs is recommended, as insufficient PCs can mask real clusters at any resolution [7]. |

| Dimensionality Reduction Method | (e.g., PCA, Harmony, UMAP) Affects the distance relationships between cells. | Using UMAP for neighborhood graph generation was found to have a beneficial impact on accuracy compared to other methods [7]. |

Research Reagent Solutions

The following software tools and metrics are essential for optimizing clustering resolution.

| Tool / Metric | Function | Use Case in Resolution Optimization |

|---|---|---|

| clustree R Package [4] | Visualizes the relationships between clusters across multiple resolutions. | Diagnostic: To identify stable clusters and pinpoint where overclustering begins by tracking how samples move as resolution increases. |

| CHOIR R Package [5] | Implements a significance-based clustering algorithm to reduce over/underclustering. | Resolution Selection: To determine a statistically grounded set of clusters without relying solely on manual resolution tuning. |

| Intrinsic Metrics (e.g., Within-cluster dispersion, Banfield-Raftery index) [7] | Evaluates cluster quality based only on the data's internal structure, without ground truth. | Parameter Screening: To rapidly compare many parameter configurations (resolution, k, PCs) and shortlist the most promising ones based on quantitative scores. |

| Silhouette Width / SC3 Stability Index [4] [3] | Silhouette width measures how similar a cell is to its own cluster compared with other clusters; the SC3 stability index scores how stable a cluster remains across resolutions. | Cluster Validation: To assess the compactness, separation, and stability of clusters at a given resolution, complementing biological validation. |

Troubleshooting Guides

Guide 1: Resolving Poor Clustering Resolution in Single-Cell RNA-seq Data

User Question: "My single-cell data shows poorly separated clusters, making cell type annotation difficult. What are the main causes and solutions?"

Answer: Poor clustering resolution often stems from high technical noise or failure to account for cellular heterogeneity. The table below summarizes common issues and validated solutions.

Table 1: Troubleshooting Poor Clustering Resolution

| Problem | Root Cause | Solution | Validated Outcome |

|---|---|---|---|

| Indistinct Cluster Boundaries | High dropout rate, excessive ambient RNA | Apply enhanced preprocessing: SCTransform normalization, doublet detection, and batch correction [8] | Clear separation of major immune cell lineages (T-cells, B-cells, monocytes) |

| Over-clustering (Too Many Subpopulations) | Over-interpretation of technical variation | Optimize resolution parameter iteratively; validate with marker gene expression [8] | Biologically relevant subsets (e.g., naive vs. memory T-cells) without artifactual splits |

| Under-clustering (Merging Distinct Types) | Insufficient feature selection or high variance | Implement AI-powered cell type annotation tools; use transformer-based models for robust classification [8] | Identification of rare cell populations (<2% abundance) with clinical significance |

Experimental Protocol: For optimal clustering:

- Preprocessing: Begin with rigorous quality control (e.g., mitochondrial percentage below 20% and a detected-feature count between 2000 and 7500 per cell; adjust these thresholds to the tissue and protocol)

- Integration: Use Harmony or SCVI for batch effect correction across multiple donors

- Clustering: Apply the Leiden algorithm across resolution parameters (0.4-1.2)

- Validation: Confirm cluster identity through known marker gene expression and differential expression testing
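The quality-control step of this protocol can be written as a simple per-cell filter. The sketch below uses the thresholds quoted above as defaults. The field names (`barcode`, `pct_mito`, `n_features`) and the example cells are hypothetical, and real pipelines would read these values from the QC metrics of their analysis toolkit.

```python
def passes_qc(cell, max_mito_pct=20.0, min_features=2000, max_features=7500):
    """Return True if a cell meets the mitochondrial and feature-count thresholds."""
    return (
        cell["pct_mito"] < max_mito_pct
        and min_features <= cell["n_features"] <= max_features
    )

# Hypothetical per-cell QC metrics.
cells = [
    {"barcode": "AAAC", "pct_mito": 4.2, "n_features": 3100},
    {"barcode": "AAAG", "pct_mito": 27.5, "n_features": 2900},  # too much mitochondrial signal
    {"barcode": "AACT", "pct_mito": 6.0, "n_features": 850},    # too few detected features
    {"barcode": "AAGT", "pct_mito": 9.8, "n_features": 7100},
]

kept = [cell["barcode"] for cell in cells if passes_qc(cell)]
print(f"kept {len(kept)} of {len(cells)} cells: {kept}")
```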

Guide 2: Addressing False Positives in Perturbation Screening

User Question: "My CRISPR or compound screening yields high false positive rates in identifying disease-relevant targets. How can I improve specificity?"

Answer: False positives in perturbation studies often arise from off-target effects or context-specific responses. Implementing computational validation frameworks can significantly improve reliability.

Table 2: Troubleshooting False Positives in Perturbation Screening

| Problem | Detection Method | Resolution Approach | Expected Improvement |

|---|---|---|---|

| Off-target CRISPR Effects | Mismatch analysis in guide RNA sequences | Apply machine learning models (e.g., CRISTA) for off-target prediction; use multiple guides per gene [9] | Reduction in false positives by 60-80% in validation studies |

| Compound Toxicity Masquerading as Efficacy | Dose-response curve anomalies | Integrate transcriptomic profiling with cell viability assays; apply mechanism of action analysis [10] [9] | Clear distinction between cytotoxic and target-specific effects |

| Context-specific Perturbation Effects | Cross-cell line validation disparities | Employ Large Perturbation Models (LPMs) to disentangle context-specific effects [9] | Identification of robust, pan-context targets vs. cell line-specific artifacts |

Experimental Protocol: For reliable perturbation screening:

- Experimental Design: Include multiple negative controls (non-targeting guides, DMSO) and positive controls

- Multi-modal Readouts: Combine transcriptomic (RNA-seq) with phenotypic (cell viability, imaging) assessments

- Computational Integration: Apply LPM frameworks to integrate heterogeneous perturbation data across contexts [9]

- Triangulation: Cross-reference hits with genetic association data (GWAS) and protein-protein interaction networks

Frequently Asked Questions (FAQs)

FAQ 1: How does disease heterogeneity impact drug target discovery?

Disease heterogeneity, particularly at the single-cell level, creates both challenges and opportunities for drug target discovery. Cellular subpopulations within diseased tissues can exhibit differential treatment responses, leading to therapeutic resistance. Advanced computational approaches now enable systematic navigation of this complexity:

- Multi-omics Integration: AI methods can integrate genomics, transcriptomics, and proteomics to identify master regulators driving disease subtypes [8]

- Perturbation Modeling: Large Perturbation Models (LPMs) simulate interventions across diverse cellular contexts, predicting which targets will have broad efficacy versus subtype-specific effects [9]

- Clinical Translation: Targets identified through heterogeneity-aware discovery show 3-5x higher clinical success rates in early trials by addressing resistant subpopulations upfront

FAQ 2: What computational tools best handle cellular heterogeneity in target identification?

The field has evolved from bulk analysis to sophisticated single-cell and perturbation-aware tools:

- AI-Powered Single-Cell Analysis: Tools like transformer-based deep learning models (e.g., scBERT) provide superior cell type annotation and gene regulatory network inference in heterogeneous samples [8]

- Large Perturbation Models (LPMs): These decoder-only architectures disentangle perturbation, readout, and context dimensions, enabling accurate prediction of perturbation outcomes across diverse cellular environments [9]

- Multimodal Integration Platforms: Systems that combine structural biology (AlphaFold2 predictions) with single-cell omics offer atomic-level insights into targetability within specific cellular subpopulations [8]

FAQ 3: How can I validate that my clustering resolution is biologically meaningful?

Cluster validation requires multi-factorial assessment beyond statistical metrics:

- Marker Gene Concordance: Ensure clusters align with established cell type markers (e.g., CD3E for T-cells, CD19 for B-cells)

- Functional Enrichment: Perform pathway analysis to verify clusters represent biologically distinct states (cell cycle, metabolic activity)

- Perturbation Response: Test whether clusters respond differentially to relevant perturbations (drug treatments, CRISPR knockouts)

- Cross-Modality Validation: Integrate with protein expression (CITE-seq) or chromatin accessibility (multiome) data to confirm transcriptional clusters have corresponding proteomic or epigenetic distinctions

Experimental Protocols

Protocol 1: Multi-omics Integration for Target Prioritization in Heterogeneous Diseases

Purpose: Systematically identify druggable targets across disease subtypes defined by single-cell profiling.

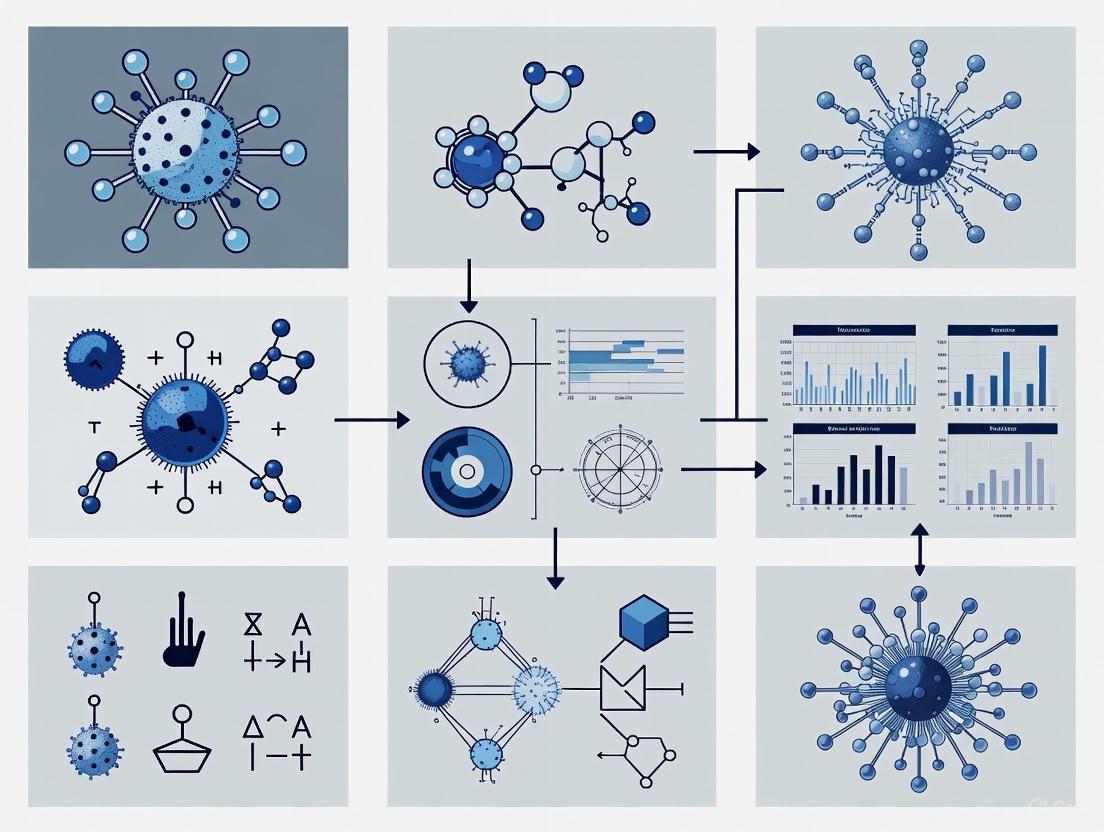

Workflow Diagram:

Step-by-Step Methodology:

- Sample Processing: Generate single-cell RNA-seq libraries from patient biopsies (n=10-20 per disease stage)

- Cluster Optimization: Apply iterative clustering across resolution parameters (0.2-2.0) to define cellular subtypes

- Multi-omics Alignment: Integrate with proteomic (mass cytometry) and epigenetic (ATAC-seq) data where available

- Differential Analysis: Identify subtype-specific pathway activations using AUCell and Vision algorithms

- Computational Perturbation: Apply LPM frameworks to predict subtype-specific vulnerability to genetic and chemical perturbations [9]

- Target Prioritization: Rank candidates by druggability, subtype-specificity, and safety profile using databases like DrugBank and ChEMBL

Protocol 2: AI-Guided Perturbation Validation for Novel Target Confirmation

Purpose: Experimentally validate computational predictions of novel drug targets in disease-relevant cellular contexts.

Step-by-Step Methodology:

- Target Selection: Input computational predictions from LPM or multimodal AI systems [9]

- Perturbation Design:

- Genetic: Design 3-5 sgRNAs per target using CRISPick or similar tools

- Chemical: Select compounds from focused libraries (e.g., Selleckchem) or fragment-based collections

- Experimental Setup:

- Use disease-relevant cell models (primary cells preferred over cell lines)

- Include appropriate controls (non-targeting guides, vehicle treatments)

- Implement multiple biological replicates (n≥4)

- Multi-modal Profiling:

- Transcriptomic: Bulk or single-cell RNA-seq

- Phenotypic: High-content imaging for morphological changes

- Functional: Cell viability, apoptosis, or disease-relevant functional assays

- Data Integration: Apply the same LPM framework used for prediction to assess concordance between predicted and observed effects [9]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Heterogeneity-Driven Target Discovery

| Reagent/Category | Function | Example Products/Platforms | Application Notes |

|---|---|---|---|

| Single-Cell RNA-seq Kits | Comprehensive transcriptome profiling of heterogeneous samples | 10x Genomics Chromium, Parse Biosciences | Enables decomposition of cellular heterogeneity; critical for defining disease subtypes |

| CRISPR Perturbation Libraries | High-throughput gene perturbation screening | Brunello library (whole genome), Subpooled (focused gene sets) | Optimized for minimal off-target effects; enables functional validation of computational predictions |

| DNA-Encoded Libraries (DELs) | Massive-scale compound screening against diverse targets | X-Chem, HitGen DEL platforms | Particularly valuable for RNA-targeted small molecule discovery [10] |

| Perturbation-seq Platforms | Combined genetic perturbation with single-cell readouts | CROP-seq, Perturb-seq | Enables direct mapping of gene regulatory networks in disease contexts |

| AI-Ready Databases | Structured biological data for model training | DepMap, LINCS, CellXGene | Curated perturbation-response data essential for training LPMs and other AI models [9] |

| Fragment-Based Screening Libraries | Targeting challenging biomolecules (e.g., RNA structures) | Various academic and commercial collections | Effective starting point for RNA-targeted small molecule discovery [10] |

Troubleshooting Guides

How do I diagnose over-clustering or under-clustering in my dataset?

| Symptom | Potential Cause | Diagnostic Method | Citation |

|---|---|---|---|

| A known cell type is split into multiple clusters that lack distinct marker genes. | Over-clustering | Check cluster stability with tools like scICE; inspect clustering trees to see if clusters are unstable or frequently split/merge. | [11] [12] |

| Biologically distinct cell types are grouped into a single cluster. | Under-clustering | Validate with known marker genes; use differential expression to see if the cluster contains sub-groups with statistically different expression profiles. | [13] [12] |

| Clustering results change drastically with different random seeds. | Over-clustering & General Instability | Calculate the Inconsistency Coefficient (IC) using the scICE method. An IC >> 1 indicates high inconsistency. | [11] |

| Downstream differential expression analysis produces many false positive marker genes. | Over-clustering (Double-dipping) | Apply the recall method with artificial null variables to calibrate differential expression testing. | [13] |

What are the standard methods to correct poor clustering resolution?

For Correcting Over-Clustering

- Use Significance Testing: Apply statistical frameworks like sc-SHC (single-cell Significance of Hierarchical Clustering) to test whether a proposed split of cells into two clusters could have arisen by chance from a single population. This formally controls the family-wise error rate (FWER) [12].

- Employ Calibrated Clustering: Use the recall algorithm, which adds artificial null variables to the dataset. If differential expression tests cannot distinguish real genes from these null features between two clusters, the clusters are merged, protecting against over-clustering [13].

- Lower Resolution Parameter: In graph-based clustering (e.g., in Seurat or Scanpy), decrease the resolution parameter. This directly reduces the number of clusters output by the algorithm [1].

- Increase the Number of Nearest Neighbors (k): Using a higher k value when building the nearest-neighbor graph creates broader, more interconnected clusters [1].

For Correcting Under-Clustering

- Increase Resolution Parameter: This is the most direct adjustment. A higher resolution value increases the number of clusters found [7] [1].

- Decrease the Number of Nearest Neighbors (k): A lower k value creates a sparser graph that is more sensitive to local structure, potentially revealing finer subpopulations [7] [1].

- Perform Iterative Sub-clustering: Take a broad, under-clustered population, subset the data to only those cells, and re-run the entire clustering workflow (including re-computing PCA and neighbors). This can help resolve finer substructure [14].

- Re-evaluate Dimensionality Reduction: Test different numbers of Principal Components (PCs), as this parameter is highly affected by data complexity and can influence the perception of cell-cell distances [7].

Frequently Asked Questions (FAQs)

What are the concrete biological consequences of over-clustering?

Over-clustering can lead to the false discovery of novel cell types or states [12]. When a single population is incorrectly split, subsequent differential expression analysis is biased ("double-dipping") because the same data are used both to define the clusters and to test them, producing artificially small p-values and false marker genes [13] [12]. This can misdirect experimental validation efforts, wasting resources and potentially leading to incorrect biological conclusions [13].
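The double-dipping effect is easy to reproduce on simulated data. In the sketch below, a single homogeneous population (one gene, standard normal expression) is split at its median, which stands in for a clustering step, and the two halves are then compared with a Welch t-statistic. The simulation settings and function name are assumptions for illustration.

```python
import random
from statistics import mean, variance

def welch_t(a, b):
    """Welch's t-statistic for the difference in means of two samples."""
    return (mean(a) - mean(b)) / (variance(a) / len(a) + variance(b) / len(b)) ** 0.5

# One homogeneous population: 200 cells, one gene, no real subgroups.
rng = random.Random(0)
expression = sorted(rng.gauss(0, 1) for _ in range(200))

# "Cluster" by splitting at the median, then test the same gene on the same cells.
low_cluster, high_cluster = expression[:100], expression[100:]
t_stat = welch_t(high_cluster, low_cluster)
print(f"t-statistic between artificial clusters: {t_stat:.1f}")
```

The statistic is very large even though no biological difference exists, which is why calibrated approaches such as recall or sc-SHC test the split itself rather than trusting marker p-values computed after clustering.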

What are the concrete biological consequences of under-clustering?

Under-clustering masks true biological heterogeneity by merging distinct cell types into a single group [12]. This causes you to miss rare cell subtypes [11] and fail to identify unique marker genes for the obscured populations. The resulting analysis provides an oversimplified and inaccurate view of the cellular ecosystem, hindering the discovery of biologically relevant subpopulations [7].

My clustering seems stable, so is it correct?

Not necessarily. Stability does not guarantee correctness. A clustering algorithm can stably over-cluster a dataset, especially in regions of high cell density where it may consistently find substructure, even when none exists biologically [1] [12]. Statistical validation is required to confirm that stable clusters represent distinct populations.

How can I systematically choose the right resolution?

Instead of picking a single resolution, a robust strategy is to analyze your data across a range of resolutions and use visualization and metrics to guide your choice.

- Use Clustering Trees: The clustree R package visualizes how clusters evolve and relate to each other as resolution increases. This helps identify stable branches and unstable clusters that split frequently with small changes in resolution [4].

- Leverage Intrinsic Metrics: Calculate metrics like the within-cluster dispersion or the Banfield-Raftery index, which can act as proxies for accuracy when ground truth is unknown [7].

- Evaluate Consistency with scICE: Run scICE to identify clustering results (across different resolution parameters) that are consistent across multiple algorithm runs, narrowing down the set of reliable candidate clusters to explore [11].

Experimental Protocols

Protocol 1: Evaluating Clustering Consistency with scICE

Purpose: To efficiently identify reliable clustering results by evaluating their consistency across multiple runs with different random seeds [11].

Workflow:

- Input: A quality-controlled scRNA-seq dataset (count matrix).

- Dimensionality Reduction: Reduce the data dimensionality using a method like scLENS for automatic signal selection [11].

- Graph Construction: Build a nearest-neighbor graph based on distances between cells in the reduced space.

- Parallel Clustering: Distribute the graph to multiple computing cores. On each core, run the Leiden clustering algorithm with the same resolution parameter but a different random seed.

- Similarity Calculation: For the multiple cluster labels generated, compute a similarity matrix using Element-Centric Similarity (ECS), which compares the cluster membership of all cells across pairs of labels [11].

- Consistency Evaluation: Calculate the Inconsistency Coefficient (IC) from the similarity matrix. An IC close to 1 indicates highly consistent labels, while a higher IC indicates inconsistency [11].

- Output: A set of consistent cluster labels for a given resolution, or a profile of IC values across multiple resolutions to guide parameter selection.
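The idea of scoring agreement between runs that differ only in their random seed can be sketched with a much simpler measure than the one scICE uses. The code below is not Element-Centric Similarity and does not compute the Inconsistency Coefficient. As a stand-in, it uses the fraction of cell pairs that two labelings treat the same way (the Rand index), averaged over all pairs of runs. The example labels are made up.

```python
from itertools import combinations

def pair_agreement(a, b):
    """Fraction of cell pairs grouped the same way (together or apart) in both labelings."""
    pairs = list(combinations(range(len(a)), 2))
    same = sum((a[i] == a[j]) == (b[i] == b[j]) for i, j in pairs)
    return same / len(pairs)

def mean_consistency(runs):
    """Average pairwise agreement across all clustering runs."""
    scores = [pair_agreement(x, y) for x, y in combinations(runs, 2)]
    return sum(scores) / len(scores)

# Three runs per resolution; cluster names may differ between runs.
stable_runs = [[0, 0, 1, 1], [1, 1, 0, 0], [0, 0, 1, 1]]
unstable_runs = [[0, 0, 1, 1], [0, 1, 0, 1], [0, 1, 1, 0]]

print("stable resolution:", mean_consistency(stable_runs))
print("unstable resolution:", round(mean_consistency(unstable_runs), 3))
```

Because the measure compares pairs of cells rather than label names, relabeled but otherwise identical partitions score 1.0.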

Protocol 2: Controlling for Over-clustering with RECALL

Purpose: To protect against over-clustering by using artificial null variables to calibrate differential expression tests and guide cluster merging [13].

Workflow:

- Input: An scRNA-seq count matrix X.

- Generate Artificial Nulls: For each gene in X, generate a matching artificial null gene with no biological signal (e.g., drawn from a Zero-Inflated Poisson distribution). Combine the null genes into a null matrix ~X [13].

- Augment and Cluster: Combine the real and null matrices into an augmented matrix X* = [X; ~X]. Preprocess (normalize, scale) X* and cluster the cells (e.g., using Louvain/Leiden) [13].

- Differential Expression with Contrast: For each pair of clusters, perform differential expression (DE) testing for all genes (both real and null). For each gene j, compute a contrast score: W_j = -log(p_real_j) - [-log(p_null_j)] [13].

- Calibrated Threshold: Compute a data-dependent threshold τ using the knockoff+ method to control the False Discovery Rate (FDR) [13].

- Decision and Iterate:

- If τ = ∞ for any pair of clusters, no true differences are detectable between them. The clusters should be merged, and the algorithm returns to the Augment and Cluster step with a smaller target cluster number K.

- If τ < ∞ for all pairs, clustering is considered calibrated, and the final clusters are returned [13].
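The contrast score and the thresholding step can be sketched in a few lines. The code below is not the recall package. It assumes the standard knockoff+ rule, in which τ is the smallest t among the observed |W_j| for which (1 + #{W_j ≤ -t}) / max(1, #{W_j ≥ t}) ≤ q. The target FDR q = 0.05, the p-values, and the function names are illustrative assumptions.

```python
import math

def contrast_scores(p_real, p_null):
    """W_j = -log(p_real_j) - [-log(p_null_j)] for each real/null gene pair."""
    return [-math.log(pr) - (-math.log(pn)) for pr, pn in zip(p_real, p_null)]

def knockoff_plus_threshold(w, q=0.05):
    """Smallest threshold meeting the knockoff+ FDR bound, or infinity if none does."""
    for t in sorted({abs(x) for x in w if x != 0}):
        negatives = sum(1 for x in w if x <= -t)
        positives = sum(1 for x in w if x >= t)
        if (1 + negatives) / max(1, positives) <= q:
            return t
    return math.inf

# Cluster pair with real differences: real genes have far smaller p-values than their nulls.
tau_distinct = knockoff_plus_threshold(contrast_scores([1e-10] * 30, [0.5] * 30))

# Cluster pair with no real differences: real and null genes are indistinguishable.
tau_same = knockoff_plus_threshold(contrast_scores([0.3] * 30, [0.3] * 30))

for name, tau in (("distinct pair", tau_distinct), ("indistinguishable pair", tau_same)):
    print(name, "->", "merge" if math.isinf(tau) else f"keep (tau = {tau:.2f})")
```

With q = 0.05, at least 20 genes must clear the threshold before it can be finite, so very small gene sets will always lead to a merge under this rule.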

Research Reagent Solutions

| Tool / Method | Function | Use Case |

|---|---|---|

| clustree [4] | Visualizes relationships between clusters across multiple resolutions. | Exploring the entire landscape of clusterings to identify stable resolutions and understand splitting/merging patterns. |

| scICE [11] | Efficiently evaluates clustering consistency using the Inconsistency Coefficient (IC). | Rapidly identifying reliable clustering results on large datasets (>10,000 cells). |

| recall [13] | Protects against over-clustering using artificial null variables to calibrate DE tests. | Statistically validating cluster distinctions and obtaining a corrected number of clusters. |

| sc-SHC [12] | Performs model-based significance testing within hierarchical clustering. | Formally testing whether cluster splits represent distinct populations, controlling the FWER. |

| Leiden/Louvain Algorithm [1] | Standard graph-based clustering methods used in tools like Seurat and Scanpy. | The primary workflow for identifying cell populations in scRNA-seq data. Requires parameter tuning. |

Table of Contents

- Core Parameter Definitions

- FAQ: Parameter Impact & Troubleshooting

- Experimental Protocol for Parameter Optimization

- The Scientist's Toolkit: Essential Reagents & Software

- Visualizing Parameter Relationships

Core Parameter Definitions

The quality and biological relevance of cell clusters identified from scRNA-seq data are directly governed by a few key computational parameters. Understanding these is the first step toward optimization.

Table 1: Key Clustering Parameters and Their Functions

| Parameter | Function | Directly Affects |

|---|---|---|

| Resolution | Controls the granularity of clustering; higher values lead to more, finer clusters. | The number of distinct cell populations identified. |

| Number of Nearest Neighbors (k-NN) | Determines how many neighboring cells are used to compute the initial graph structure. | The local connectivity and the robustness of the graph to noise. |

FAQ: Parameter Impact & Troubleshooting

This section addresses common experimental challenges related to clustering parameters.

FAQ 1: How does the 'Resolution' parameter fundamentally change my cluster graph?

The resolution parameter directly controls the partitioning algorithm's sensitivity. A low resolution forces the algorithm to merge cell communities, resulting in a graph with fewer, larger clusters. This is useful for identifying broad cell types (e.g., T-cells vs. B-cells). Conversely, a high resolution instructs the algorithm to split communities, yielding a graph with more, smaller clusters, which can help identify rare cell types or subtypes (e.g., cytotoxic T-cells vs. helper T-cells) [15].

- Symptom: My clusters are too broad and may be merging distinct cell populations.

- Solution: Systematically increase the resolution parameter in small increments (e.g., from 0.4 to 0.8 to 1.2) and re-run the clustering. Validate the new, finer clusters with known cell-type markers.

FAQ 2: What is the functional role of 'Nearest Neighbors' in graph construction, and how should I choose this value?

The k-NN value defines the local neighborhood size for each cell when constructing the initial cell-cell similarity graph. A low k-value creates a sparse graph that may break up continuous cell states but can better capture very rare populations. A high k-value creates a denser, more interconnected graph that is more robust to technical noise but may obscure the boundaries between rare populations and their neighbors [15].

- Symptom: The cluster graph structure is unstable or appears overly fragmented.

- Solution: The optimal k-NN is dataset-dependent. For larger datasets (>10,000 cells), a higher k (e.g., 30-50) is typically suitable. For smaller datasets, a lower k (e.g., 10-20) may be preferable. Assess stability by slightly varying k and observing whether core cluster identities remain consistent.

FAQ 3: How do Resolution and Nearest Neighbors interact to shape the final clustering outcome?

These parameters operate sequentially. The k-NN parameter is used first to build the fundamental graph structure—the network of cells and their connections. The resolution parameter is applied second to partition this pre-built graph into clusters. Therefore, an improperly chosen k-NN (e.g., too low for a large dataset) can create a poor-quality graph that no resolution value can partition effectively.

- Symptom: Adjusting the resolution parameter does not yield the expected change in cluster number or granularity.

- Solution: Revisit the k-NN value and the initial steps of the analysis (normalization, highly variable gene selection, dimensionality reduction) as the underlying graph structure itself may be suboptimal.
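Because the graph is built before any partitioning happens, the choice of k already constrains what a resolution value can do. The sketch below builds a symmetrized k-nearest-neighbor graph on toy one-dimensional "cells" and counts connected components. The coordinates and function names are assumptions for illustration, and real pipelines build the graph in PC space.

```python
from math import dist

def knn_edges(points, k):
    """Undirected edges linking each point to its k nearest neighbors."""
    edges = set()
    for i, p in enumerate(points):
        others = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: dist(p, points[j]),
        )
        for j in others[:k]:
            edges.add((min(i, j), max(i, j)))
    return edges

def n_components(n_nodes, edges):
    """Number of connected components, computed with a small union-find."""
    parent = list(range(n_nodes))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for u, v in edges:
        parent[find(u)] = find(v)
    return len({find(x) for x in range(n_nodes)})

# Two well-separated groups of four cells each.
points = [(float(x),) for x in (0, 1, 2, 3, 10, 11, 12, 13)]

for k in (2, 4):
    print(f"k={k}: {n_components(len(points), knn_edges(points, k))} connected component(s)")
```

With k = 2 the two groups share no edges, so no resolution setting could merge them. With k = 4 each cell must reach into the other group, and the partitioning step has to separate them again.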

FAQ 4: What quantitative and biological metrics should I use to determine the 'optimal' parameters?

There is no single "correct" parameter set; the goal is to find a biologically plausible and analytically robust result.

- Symptom: Uncertainty in which clustering result to use for downstream annotation.

- Solution:

  - Internal Metrics: Use metrics like silhouette score (cluster compactness and separation).

  - External Metrics: If a partial annotation exists, use metrics like Adjusted Rand Index (ARI) to compare clustering results to a ground truth [15].

  - Biological Validation: The most critical step is to inspect the expression of well-established marker genes across the clusters. Optimal parameters should yield clusters with distinct and biologically interpretable marker expression profiles.
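As an illustration of the external metric mentioned above, the following is a minimal standard-library sketch of the Adjusted Rand Index computed from the contingency table of two labelings. The function name and the toy labels are assumptions made for this example; in practice an established implementation from a statistics library would normally be used.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same cells."""
    if len(labels_a) != len(labels_b) or len(labels_a) < 2:
        raise ValueError("need two equally long labelings of at least two cells")

    # Number of cell pairs that share a cluster in both labelings, in A, and in B.
    both = sum(comb(n, 2) for n in Counter(zip(labels_a, labels_b)).values())
    in_a = sum(comb(n, 2) for n in Counter(labels_a).values())
    in_b = sum(comb(n, 2) for n in Counter(labels_b).values())

    expected = in_a * in_b / comb(len(labels_a), 2)  # agreement expected by chance
    maximum = (in_a + in_b) / 2
    if maximum == expected:  # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (both - expected) / (maximum - expected)


# Toy example (made-up labels): a partial annotation against three computed clusters.
annotation = ["T", "T", "T", "B", "B", "B"]
clusters = [0, 0, 1, 1, 2, 2]
ari = adjusted_rand_index(annotation, clusters)
```

The index equals 1 when the two labelings agree up to renaming of clusters and is close to 0 when agreement is no better than chance, which makes it suitable for comparing results obtained at different resolutions against the same partial annotation.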

Experimental Protocol for Parameter Optimization

Below is a step-by-step methodology for systematically evaluating clustering parameters, derived from the cited literature [15].

Aim: To identify a set of clustering parameters (Resolution and k-Nearest Neighbors) that yield a biologically meaningful and robust cell-type classification from an scRNA-seq count matrix.

Procedure:

1. Data Pre-processing: Normalize the raw count matrix (e.g., using SCTransform or log-normalization) and identify a set of highly variable genes (HVGs) that will be used for downstream analysis.

2. Dimensionality Reduction: Perform PCA on the scaled expression data of the HVGs. Determine the number of significant principal components (PCs) to retain using an elbow plot or JackStraw plot.

3. Graph Construction & Clustering: a. Construct a k-Nearest Neighbor graph in the PC space. b. Apply a community detection algorithm (e.g., the smart local moving algorithm in Seurat) to partition the graph into clusters, using a specified resolution parameter [15].

4. Systematic Parameter Grid Test: a. Define a grid of values for k-NN (e.g., k=15, 20, 30, 50) and resolution (e.g., res=0.2, 0.4, 0.8, 1.2, 1.6). b. Iterate the clustering process (Step 3) for each combination of k-NN and resolution.

5. Evaluation and Comparison: a. For each resulting clustering, calculate internal validation metrics (e.g., average silhouette width). b. Visualize all results using UMAP or t-SNE, colored by the cluster labels. c. For each cluster in each result, find the differentially expressed genes (DEGs) compared to all other cells.

6. Biological Interpretation: a. Annotate the clusters from different parameter sets using canonical cell-type markers from the DEG analysis. b. Select the parameter set that produces clusters which are both stable (high internal metrics) and biologically interpretable (distinct, meaningful marker expression).
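The grid test in the procedure above can be sketched as a simple loop over every (k, resolution) pair. This is only a skeleton under stated assumptions: `sweep_parameters` and `placeholder_cluster_fn` are hypothetical names, and the placeholder does not perform real clustering. In an actual analysis, `cluster_fn` would wrap the graph construction and community detection step of whichever toolkit is in use.

```python
from itertools import product


def sweep_parameters(k_values, resolutions, cluster_fn):
    """Run cluster_fn(k, resolution) for every grid combination and collect the labels."""
    results = []
    for k, resolution in product(k_values, resolutions):
        labels = cluster_fn(k, resolution)
        results.append({"k": k, "resolution": resolution,
                        "n_clusters": len(set(labels)), "labels": labels})
    return results


def placeholder_cluster_fn(k, resolution, n_cells=60):
    # Hypothetical stand-in, NOT a real clustering: it only mimics the expected
    # trend (more groups at higher resolution) so the loop can run end to end.
    n_groups = max(1, round(resolution * 150 / k))
    return [cell % n_groups for cell in range(n_cells)]


# Grid values taken from the procedure text.
grid = sweep_parameters(
    k_values=[15, 20, 30, 50],
    resolutions=[0.2, 0.4, 0.8, 1.2, 1.6],
    cluster_fn=placeholder_cluster_fn,
)
```

Keeping the labels of every combination in one list makes it straightforward to compute validation metrics and marker-gene checks afterwards without re-running the clustering.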

The Scientist's Toolkit: Essential Reagents & Software

Table 2: Key Research Reagents and Computational Tools for scRNA-seq Clustering

| Item | Function in Clustering Analysis |

|---|---|

| Seurat (v4.3.0+) | A comprehensive R toolkit for single-cell genomics that provides a complete workflow for clustering, including graph construction and resolution-based partitioning [15]. |

| Scanpy | A Python-based toolkit comparable to Seurat, offering scalable and efficient functions for clustering and analysis of large-scale scRNA-seq data. |

| Biclustering Methods (e.g., QUBIC2, runibic) | Advanced algorithms that cluster cells and genes simultaneously, useful for identifying local gene-expression patterns that might be missed by standard clustering [15]. |

| Clustering Validation Metrics (ARI, Silhouette Score) | Quantitative measures used to compare the performance and quality of different clustering results against a ground truth or based on internal structure [15]. |

| Canonical Cell Marker Genes | Well-established genes known to be specifically expressed in certain cell types; the biological "ground truth" for validating that computationally derived clusters correspond to real cell populations. |

Visualizing Parameter Relationships

The following diagram illustrates the logical workflow and decision-making process involved in optimizing clustering parameters for cell annotation. The path leads from raw data to a validated, biologically annotated cluster graph.

Clustering Parameter Optimization Workflow

From Theory to Practice: Methods for Determining Optimal Clustering Resolution

Frequently Asked Questions (FAQs)

Q1: What is the core challenge in selecting clustering resolution for scRNA-seq data?

The fundamental challenge is that clustering algorithms require user-defined parameters (like resolution), and the optimal values are dataset-specific. Without foreknowledge of cell types, it is difficult to assess cluster quality and avoid under-clustering (masking biological structure) or over-clustering (creating non-biological subdivisions) [16]. Automated methods provide data-driven, objective ways to determine these parameters.

Q2: How does the Average Silhouette Width help in choosing the number of clusters?

The Silhouette Width measures how similar a cell is to its own cluster compared to other clusters. Values range from -1 to 1, where values near +1 indicate well-separated clusters. The average silhouette score across all cells for a given clustering result (e.g., for a specific resolution or k) provides a single metric to compare different parameter sets. The parameter set that maximizes the average silhouette width is often considered a good candidate for the optimal cluster number [17] [18].
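To make the definition concrete, here is a small standard-library sketch of the per-cell silhouette width, using the usual form s = (b - a) / max(a, b), where a is the mean distance to the other cells of the same cluster and b is the smallest mean distance to the cells of any other cluster. The function name and the toy coordinates (standing in for cells in a reduced space such as PCA) are assumptions for illustration.

```python
from math import dist


def silhouette_widths(points, labels):
    """Per-cell silhouette width from Euclidean distances between points."""
    clusters = {}
    for idx, label in enumerate(labels):
        clusters.setdefault(label, []).append(idx)
    if len(clusters) < 2:
        raise ValueError("silhouette width needs at least two clusters")

    widths = []
    for i, label in enumerate(labels):
        own = [j for j in clusters[label] if j != i]
        if not own:  # singleton cluster: width is conventionally set to 0
            widths.append(0.0)
            continue
        a = sum(dist(points[i], points[j]) for j in own) / len(own)
        b = min(
            sum(dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != label
        )
        denom = max(a, b)
        widths.append((b - a) / denom if denom > 0 else 0.0)
    return widths


# Toy coordinates: two well-separated pairs of cells.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
good = silhouette_widths(points, [0, 0, 1, 1])  # labels follow the two groups
bad = silhouette_widths(points, [0, 1, 0, 1])   # labels cut across the groups
avg_good = sum(good) / len(good)
avg_bad = sum(bad) / len(bad)
```

In this toy case the labeling that follows the two groups gives a high average width, while the labeling that cuts across them gives a negative one. Averaging the per-cell values per cluster, rather than globally, shows which clusters are responsible for a low overall score.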

Q3: What is a "robustness score" in the context of clustering, and how is it different from silhouette width?

A robustness score, such as the one generated by the chooseR framework, quantifies the stability of a cluster across multiple iterations of clustering performed on subsampled data. It indicates how often cells are consistently assigned to the same cluster across these iterations [16]. While silhouette width assesses cluster separation based on distances in expression space, the robustness score assesses cluster stability against data perturbations.

Q4: My dataset is very large (>10,000 cells). Are these automated methods still practical?

Computational time is a significant concern for large datasets. Conventional consensus methods like MultiK and chooseR can be slow due to repeated clustering and building consensus matrices [11]. However, newer tools like scICE use a more efficient metric (the Inconsistency Coefficient) and parallel processing, achieving up to a 30-fold speed improvement, making them suitable for larger datasets [11].

Q5: The automated tool suggested a resolution, but one cluster has a low robustness score. What should I do?

This is a common scenario. A globally optimal parameter does not guarantee all clusters are equally well-resolved [16]. The recommended strategy is to take the cells from the low-robustness cluster and perform a re-clustering in isolation. This allows you to better subdivide these cells without the influence of other, more distinct populations, potentially revealing more robust sub-structures [16] [11].

Troubleshooting Guides

Issue 1: Unstable Clustering Results Across Different Random Seeds

Problem: Cluster labels change significantly every time you run the clustering algorithm with a different random seed, undermining the reliability of your results [11].

Diagnosis: This is a known issue with stochastic clustering algorithms like Louvain and Leiden. The inconsistency suggests that the cluster structure at your chosen resolution is not stable.

Solutions:

- Implement a consistency evaluation framework: Use a tool like scICE (Single-cell Inconsistency Clustering Estimator) to calculate the Inconsistency Coefficient (IC) for your clustering results. An IC close to 1 indicates highly consistent labels across random seeds, while a higher IC indicates inconsistency [11].

- Adopt a consensus approach: Use a method like chooseR or MultiK, which run clustering many times on subsampled data. They identify parameter values that produce clusters where cells are consistently co-clustered together across iterations [16] [19].

Issue 2: Choosing Between Multiple "Good" Resolution Parameters

Problem: The average silhouette width or another metric is high for several different resolution values, and you are unsure which one to select for your biological interpretation.

Diagnosis: Biological systems often have a multi-scale organization, meaning different "correct" cluster numbers can exist for different cell type hierarchies [19].

Solutions:

- Use multi-resolution diagnostic tools: Tools like MultiK are explicitly designed to identify multiple insightful numbers of clusters (K). MultiK provides diagnostic plots showing several candidate Ks, which may correspond to major cell types (low K) and finer subtypes (high K) [19].

- Analyze the silhouette width distribution: Instead of just the average, look at the distribution of silhouette widths per cluster for each candidate resolution. A good clustering should have most clusters with high average silhouette scores and no clusters with many negative scores [17] [18].

- Inspect with Clustree: Visualize how cells move between clusters as the resolution increases. A stable cluster tree with clear branching points can help you select a resolution that captures the main biological states without excessive fragmentation [16].

Issue 3: Poor Silhouette or Robustness Scores for Specific Clusters

Problem: The global clustering metrics are acceptable, but a few specific clusters show low silhouette widths or robustness scores.

Diagnosis: This indicates that these specific cell populations are not well-separated from their neighbors or have internal heterogeneity.

Solutions:

- Focus on the problematic clusters: Isolate the cells from the low-scoring clusters and re-run your entire clustering workflow (including dimensionality reduction) on this subset of cells. This "sub-clustering" approach often reveals finer, more robust substructure that was masked in the global analysis [16] [11].

- Check cluster purity: Calculate the purity for each cell, defined as the proportion of its neighboring cells (in expression space) that belong to the same cluster. A low median purity for a cluster confirms it is highly intermixed with another cluster [18].

- Investigate biology: Use differential expression analysis on the poorly separated clusters to determine if the distinction is biologically meaningful. If no strong marker genes are found, merging the clusters might be justified.
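The purity check described above can be sketched with the standard library as follows. The neighbourhood definition (Euclidean k nearest neighbours with k=3), the function names, and the toy coordinates are assumptions for this example; the cited work [18] may define the neighbourhood differently.

```python
from math import dist
from statistics import median


def cell_purity(points, labels, k=3):
    """Fraction of each cell's k nearest neighbours that share its cluster label."""
    if len(points) < 2:
        raise ValueError("need at least two cells")
    purities = []
    for i, point in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        nearest = sorted(others, key=lambda j: dist(point, points[j]))[:k]
        purities.append(sum(labels[j] == labels[i] for j in nearest) / len(nearest))
    return purities


def median_purity_per_cluster(points, labels, k=3):
    """Median purity of the cells in each cluster."""
    purities = cell_purity(points, labels, k)
    return {c: median([p for p, lab in zip(purities, labels) if lab == c])
            for c in sorted(set(labels))}


# Toy coordinates: two compact groups of four cells each.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 0), (10, 1), (11, 0), (11, 1)]
separated = median_purity_per_cluster(points, [0, 0, 0, 0, 1, 1, 1, 1])
intermixed = median_purity_per_cluster(points, [0, 1, 0, 1, 0, 1, 0, 1])
```

A cluster whose median purity is well below 1 has many cells whose neighbours belong to another cluster, which is the pattern to look for before deciding whether to merge or sub-cluster. The result depends on the choice of k, as noted for this metric elsewhere in the guide.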

Comparison of Automated Clustering Selection Methods

The table below summarizes key automated methods for selecting clustering resolution or cluster number.

| Method Name | Core Approach | Key Metric(s) | Primary Output | Notable Features |

|---|---|---|---|---|

| chooseR [16] | Subsampling and bootstrapped iterative clustering | Robustness score, co-clustering frequency | Near-optimal parameter value & per-cluster robustness | Flexible across workflows (Seurat, scVI); identifies less robust clusters |

| Silhouette Analysis [17] | Cluster separation distance | Silhouette width (per cell and average) | Optimal number of clusters (k) | Intuitive measure of cluster cohesion and separation |

| MultiK [19] | Consensus clustering across multiple resolutions | Relative Proportion of Ambiguous Clustering (rPAC), frequency of K | Multiple optimal cluster numbers (K) | Provides a multi-resolution perspective; finds both classes and subclasses |

| scICE [11] | Parallel clustering with random seed variation | Inconsistency Coefficient (IC) | Set of consistent cluster labels | High speed for large datasets; does not require a consensus matrix |

Key Metrics for Cluster Validation

The table below defines and compares the primary metrics used to evaluate clustering quality.

| Metric | Definition | Interpretation | Strengths | Weaknesses |

|---|---|---|---|---|

| Average Silhouette Width [17] [18] | Measures how similar a cell is to its own cluster vs. other clusters. Based on distances in a low-dimensional space (e.g., PCA). | Values close to +1: excellent separation. Near 0: overlapping or ambiguous assignment. Negative: poor separation. | Intuitive; captures both over- and under-clustering. | Can be computationally heavy for very large datasets without approximation. |

| Robustness Score (chooseR) [16] | The frequency with which cells are co-clustered together across multiple subsampling iterations. | High score: stable, reproducible cluster. Low score: unstable cluster. | Directly measures stability to data perturbation; provides a per-cluster score. | Computationally intensive as it requires many clustering runs. |

| Inconsistency Coefficient (IC) (scICE) [11] | Derived from the similarity of cluster labels generated across multiple random seeds. | IC close to 1: high consistency. IC > 1: increasing inconsistency. | Fast to compute; does not require a distance matrix or subsampling. | A newer metric that may be less familiar to researchers. |

| Cluster Purity [18] | The proportion of a cell's neighbors that belong to the same cluster. | High median purity: well-separated clusters. Low purity: intermingled clusters. | Easy to understand; directly measures neighborhood mixing. | Sensitive to the definition of "neighbors" (e.g., k in k-NN graph). |

Experimental Protocol: Implementing chooseR for Robustness Scoring

This protocol outlines the steps to implement the chooseR framework for selecting clustering parameters and assessing cluster robustness [16].

1. Define Parameter Range and Setup:

- Choose the clustering parameter to optimize (e.g., resolution for Seurat or scVI).

- Define a logical range of values to test (e.g., resolution from 0.1 to 3.0).

- Set the number of bootstrap iterations (e.g., 100) and the subsampling proportion (e.g., 80% of cells).

2. Iterative Subsampling and Clustering:

- For each parameter value in the range:

  - For each bootstrap iteration:

    - Randomly subsample the defined proportion of cells from the full dataset.

    - Run the entire clustering workflow (dimensionality reduction, graph construction, clustering) on the subsampled data using the current parameter value.

    - Record the cluster labels for the subsampled cells.

3. Build Co-clustering Matrices:

- For each parameter value, create a co-clustering matrix. This matrix records, for each pair of cells, how many times they were assigned to the same cluster across all bootstrap iterations where both cells were selected.

4. Calculate Robustness Metrics:

- Global Robustness: Identify the parameter value that produces the highest number of robust clusters. This is often determined by analyzing the distribution of median silhouette scores derived from the co-clustering matrices, selecting the value with the highest confidence-interval bound [16].

- Per-cluster Robustness Score: For the chosen optimal parameter, calculate a robustness score for each cluster. This can be the average within-cluster co-clustering frequency or the cluster's silhouette score based on the co-clustering matrix [16].

5. Downstream Analysis:

- Use the cluster labels generated with the optimal parameter for all downstream biological interpretation.

- Use the per-cluster robustness scores to flag less reliable clusters for further investigation or sub-clustering.
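The co-clustering matrix from step 3 can be illustrated with a short standard-library sketch. It is not the chooseR implementation: the function name, the dictionary-based input format, and the toy runs are assumptions chosen to show the counting rule, namely that each pair of cells is scored only over the iterations in which both cells were subsampled.

```python
from collections import defaultdict
from itertools import combinations


def co_clustering_frequencies(runs):
    """Co-clustering frequency per pair of cells.

    runs: list of dicts, one per bootstrap iteration, mapping the ids of the
    subsampled cells to their cluster label in that iteration.
    """
    together = defaultdict(int)  # iterations where both cells share a cluster
    sampled = defaultdict(int)   # iterations where both cells were subsampled
    for labels in runs:
        for a, b in combinations(sorted(labels), 2):
            sampled[(a, b)] += 1
            if labels[a] == labels[b]:
                together[(a, b)] += 1
    return {pair: together[pair] / n for pair, n in sampled.items()}


# Three toy iterations over three cells (made-up labels).
runs = [
    {"c1": 0, "c2": 0, "c3": 1},
    {"c1": 1, "c2": 1},          # c3 was not subsampled in this iteration
    {"c2": 0, "c3": 0},          # c1 was not subsampled in this iteration
]
freq = co_clustering_frequencies(runs)
```

Cluster label values are not comparable across iterations, which is why the sketch only asks whether two cells received the same label within a run. Averaging these frequencies over the pairs inside a final cluster gives one possible per-cluster robustness summary of the kind described in step 4.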

Workflow Visualization

The following diagram illustrates the generic workflow for automated resolution selection using subsampling and robustness metrics, as implemented in tools like chooseR.

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

| Item / Tool | Function / Purpose | Example / Notes |

|---|---|---|

| Seurat [16] [20] | A comprehensive R toolkit for single-cell genomics. Used for QC, normalization, dimensionality reduction, clustering, and differential expression. | The FindClusters function is used for graph-based clustering with a tunable resolution parameter. |

| Scanpy [16] | A scalable Python toolkit for analyzing single-cell gene expression data. Analogous to Seurat. | Can be integrated with scVI for dimensionality reduction and clustering. |

| chooseR [16] | An R framework that wraps around clustering workflows (e.g., Seurat, scVI) to guide parameter selection via subsampling and robustness metrics. | Provides both a near-optimal resolution and a per-cluster robustness score. |

| scICE [11] | A Python tool for fast evaluation of clustering consistency using the Inconsistency Coefficient (IC) and parallel processing. | Recommended for large datasets (>10,000 cells) due to its computational efficiency. |

| MultiK [19] | An R tool for objective, multi-resolution estimation of cluster numbers (K) using consensus clustering. | Outputs multiple candidate Ks, corresponding to different hierarchical levels (e.g., cell types vs. subtypes). |

| Silhouette Analysis [17] [18] | A classic cluster validation method implemented in scikit-learn (Python) and cluster package (R). | The silhouette_score function can be used to compute the average silhouette width for a clustering result. |

| Ground Truth Annotations [7] | Manually curated cell labels from reliable methods (e.g., FACS sorting). Serves as a benchmark for validating clustering accuracy. | Sourced from databases like the CellTypist organ atlas to avoid bias from algorithm-derived labels. |

In the context of optimizing clustering resolution for annotation research, intrinsic goodness metrics provide a powerful, unsupervised method for evaluating the quality of clustering results when ground truth labels are unavailable. These metrics assess cluster quality based solely on the data's inherent structure and the quality of the partition, focusing on the fundamental trade-off between intra-cluster cohesion (how similar data points are within a cluster) and inter-cluster separation (how distinct different clusters are) [21]. For researchers and scientists, particularly in drug development, leveraging these metrics is crucial for validating computational models and ensuring biological findings are robust and reproducible.

Two particularly effective intrinsic metrics are:

- Within-Cluster Dispersion: Measures the compactness or cohesiveness of data points within a single cluster. A lower dispersion indicates a tighter, more well-defined cluster.

- Banfield-Raftery (B-R) Index: A statistical index that helps determine the optimal number of clusters by balancing within-cluster similarity against between-cluster differences.

Recent research on single-cell RNA sequencing (scRNA-seq) data has demonstrated that these two metrics can be effectively used as proxies for clustering accuracy, allowing for the immediate comparison of different clustering parameter configurations [22]. This is especially valuable in biological research where true cell-type labels are often unknown and must be inferred.

Frequently Asked Questions for Experimental Troubleshooting

1. Why should I use intrinsic metrics instead of just comparing known cell types?

Using known cell types for validation (extrinsic metrics) is not always possible, especially when investigating novel or rare cell populations. Intrinsic metrics do not require any external information and assess the goodness of clusters based solely on the initial data [22]. This prevents circular reasoning, where a clustering method is evaluated against labels it helped create, and allows for the discovery of previously unknown biological structures [22] [6].

2. My clustering results change every time I run the algorithm. How can intrinsic metrics help?

Variability in clustering results due to stochastic algorithms is a major challenge that undermines reliability [11]. Intrinsic metrics provide an objective standard for comparison. By calculating metrics like the Within-Cluster Dispersion and Banfield-Raftery Index across multiple algorithm runs, you can identify the most stable and consistent clustering configuration, moving beyond a single, potentially random, result [11].

3. The Banfield-Raftery Index suggests a different number of clusters than the Silhouette Index. Which one should I trust?

Different cluster validity indices have different mathematical models and can exhibit varying characteristics [21]. It is common for metrics to suggest different optimal numbers. The best practice is not to rely on a single index but to use a consensus approach.

- Generate multiple clusterings across a range of parameters (e.g., resolution, number of nearest neighbors).

- Calculate several intrinsic metrics (e.g., B-R Index, Within-Cluster Dispersion, Silhouette Index, Calinski-Harabasz Index) for each configuration.

- Compare the results and look for a configuration that is consistently highly ranked across multiple metrics. This multi-metric approach increases confidence in the final selection.
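One simple way to carry out the comparison in the last step is to rank every configuration under each metric and average the ranks. The sketch below assumes this mean-rank rule, which is only one of several possible consensus schemes; the function name, configuration names, and metric values are made up for illustration, and ties are not treated specially.

```python
def consensus_ranking(metric_table, higher_is_better):
    """Sort configurations by their mean rank across metrics (rank 1 = best).

    metric_table: {config_name: {metric_name: value}}
    higher_is_better: {metric_name: True if larger values are better}
    """
    configs = list(metric_table)
    rank_sums = {config: 0 for config in configs}
    for metric, larger_is_better in higher_is_better.items():
        ordered = sorted(configs, key=lambda c: metric_table[c][metric],
                         reverse=larger_is_better)
        for rank, config in enumerate(ordered, start=1):
            rank_sums[config] += rank
    n_metrics = len(higher_is_better)
    return sorted((rank_sums[c] / n_metrics, c) for c in configs)


# Illustrative values only, not results from any dataset.
metric_table = {
    "res=0.4": {"silhouette": 0.30, "calinski_harabasz": 410.0, "banfield_raftery": -120.0},
    "res=0.8": {"silhouette": 0.42, "calinski_harabasz": 530.0, "banfield_raftery": -150.0},
    "res=1.2": {"silhouette": 0.38, "calinski_harabasz": 560.0, "banfield_raftery": -140.0},
}
directions = {"silhouette": True, "calinski_harabasz": True, "banfield_raftery": False}
ranking = consensus_ranking(metric_table, directions)
best_mean_rank, best_config = ranking[0]
```

Working with ranks rather than raw values avoids having to put metrics with very different scales on a common footing, at the cost of ignoring how large the differences between configurations are.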

4. What are the most common pitfalls when using Within-Cluster Dispersion?

The primary pitfall is that minimizing within-cluster dispersion alone can lead to overfitting. An algorithm can achieve zero dispersion by assigning each data point to its own cluster, which is not a meaningful result. Therefore, Within-Cluster Dispersion must always be used in conjunction with a metric that also accounts for the number of clusters and the separation between them, which is precisely what the Banfield-Raftery Index does [23].
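The pitfall is easy to reproduce on a toy example. The sketch below computes within-cluster dispersion as the sum of squared distances from each point to its cluster centroid (the definition used in the intrinsic-metrics table of this guide); the function name and coordinates are assumptions for illustration.

```python
def within_cluster_dispersion(points, labels):
    """Sum of squared Euclidean distances from each point to its cluster centroid."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)

    total = 0.0
    for members in clusters.values():
        dims = range(len(members[0]))
        centroid = [sum(p[d] for p in members) / len(members) for d in dims]
        total += sum((p[d] - centroid[d]) ** 2 for p in members for d in dims)
    return total


# Toy coordinates: two pairs of cells far apart from each other.
points = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
one_cluster = within_cluster_dispersion(points, [0, 0, 0, 0])
two_clusters = within_cluster_dispersion(points, [0, 0, 1, 1])
singletons = within_cluster_dispersion(points, [0, 1, 2, 3])
```

Dispersion drops sharply when the two real groups are separated, but it keeps falling all the way to zero when every cell becomes its own cluster, so the minimum of this metric alone cannot identify a meaningful partition.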

Experimental Protocol: Validating Clustering Parameters with Intrinsic Metrics

This protocol outlines a systematic approach for using Within-Cluster Dispersion and the Banfield-Raftery Index to optimize clustering parameters, based on methodologies from recent single-cell RNA sequencing studies [22].

1. Data Preprocessing and Subsampling

- Begin with a normalized count matrix (e.g., from scRNA-seq).

- To ensure robustness and computational efficiency, perform stratified subsampling, taking 20% of cells 100 times while respecting the original dataset's proportions [22].

- For each subsample, perform standard preprocessing, including quality control, normalization, and dimensionality reduction (e.g., using PCA or UMAP) [22].
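The repeated stratified subsampling in step 1 can be sketched with the standard library as below. This is a minimal illustration under assumptions: the strata are taken to be known group labels (for example annotated cell types), the function name and the rule of keeping at least one cell per stratum are choices made for this example, and the toy data are made up.

```python
import random
from collections import defaultdict


def stratified_subsample(cell_ids, strata, fraction=0.2, seed=0):
    """Draw roughly `fraction` of the cells from every stratum."""
    rng = random.Random(seed)  # seeded so each subsample is reproducible
    by_stratum = defaultdict(list)
    for cell, stratum in zip(cell_ids, strata):
        by_stratum[stratum].append(cell)

    sample = []
    for cells in by_stratum.values():
        n_take = max(1, round(fraction * len(cells)))  # keep rare strata represented
        sample.extend(rng.sample(cells, n_take))
    return sample


# Toy dataset: 80 cells of one type and 20 of another.
cell_ids = [f"cell{i}" for i in range(100)]
strata = ["T"] * 80 + ["B"] * 20

# 100 subsamples of 20% each, one seed per repetition.
subsamples = [stratified_subsample(cell_ids, strata, 0.2, seed=i) for i in range(100)]
```

Each subsample keeps the 4:1 ratio of the full toy dataset, so rare groups are not lost by chance as they could be with simple random subsampling.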

2. Parameter Space Exploration

- Choose a clustering algorithm (e.g., Leiden, K-means).

- Define a grid of key parameters to test. For graph-based algorithms like Leiden, this typically includes:

  - Resolution Parameter: Controls the granularity of clustering; higher values lead to more clusters.

  - Number of Nearest Neighbors (k): Affects the construction of the neighborhood graph.

  - Number of Principal Components (PCs): Influences the distance calculation between cells by determining the dimensionality of the input space [22].

3. Metric Calculation and Analysis

- For each combination of parameters in your grid, run the clustering algorithm.

- For each resulting clustering, calculate:

  - The Within-Cluster Dispersion.

  - The Banfield-Raftery Index.

  - The number of clusters (k) generated.

- A lower B-R index generally indicates a better cluster configuration. The goal is to find the parameter set that minimizes this index.
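For reference, the two indices are often written as below. This is the formulation commonly given in cluster-validity references; the text does not state which exact variant the cited study [22] used, so the notation should be read as an assumption: $K$ clusters, cluster $C_j$ containing $n_j$ cells with centroid $\mu_j$, and $x_i$ the coordinates of cell $i$ in the reduced space.

$$
\begin{aligned}
\operatorname{tr}(W_j) &= \sum_{i \in C_j} \lVert x_i - \mu_j \rVert^2, \\
\mathrm{WCD} &= \sum_{j=1}^{K} \operatorname{tr}(W_j), \\
\mathrm{BR} &= \sum_{j=1}^{K} n_j \,\ln\!\left(\frac{\operatorname{tr}(W_j)}{n_j}\right).
\end{aligned}
$$

Under this form, each cluster contributes the logarithm of its average squared distance to the centroid, weighted by its size, which is why smaller values point to more compact clusters. The logarithm is undefined for a singleton cluster, where $\operatorname{tr}(W_j) = 0$, so such clusters need special handling.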

4. Results Interpretation

- Research indicates that using UMAP for graph generation and increasing the resolution parameter generally has a beneficial impact on accuracy [22].

- The positive effect of resolution is accentuated when using a reduced number of nearest neighbors, which creates sparser, more locally sensitive graphs [22].

- The optimal number of principal components is highly dependent on data complexity and should be tested thoroughly [22].

The workflow can be summarized as follows:

The following table synthesizes key experimental findings on how clustering parameters impact accuracy, based on a robust linear mixed regression model analysis [22].

| Parameter | Impact on Accuracy | Key Interaction & Finding |

|---|---|---|

| Resolution | Beneficial (increased accuracy with higher values) | Impact is stronger with a reduced number of nearest neighbors, which preserves fine-grained cellular relationships [22]. |

| UMAP for Neighborhood Graph | Beneficial | Using UMAP for graph generation has a positive impact on clustering accuracy [22]. |

| Number of Principal Components (PCs) | Variable | Highly dependent on data complexity; requires systematic testing [22]. |

| Within-Cluster Dispersion & B-R Index | Predictive | Can be used as effective proxies for accuracy to compare parameter configurations [22]. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and metrics essential for experiments in clustering optimization.

| Item | Function & Application |

|---|---|

| Cluster Validity Indices (CVIs) | A category of metrics, including Within-Cluster Dispersion and the Banfield-Raftery Index, used as fitness functions to automatically evaluate the quality of candidate clustering solutions in metaheuristic-based algorithms [21]. |

| Intrinsic Goodness Metrics | Metrics that evaluate cluster quality without external labels, based solely on the data's structure and the partition's cohesion and separation [22]. |

| Stratified Subsampling | A data sampling technique that preserves the original proportion of cell types in subsets, used to ensure robust and unbiased validation of clustering parameters [22]. |

| Element-Centric Similarity (ECS) | A similarity metric used to compare multiple clustering results, which is more intuitive and unbiased than other label similarity metrics. It is used in frameworks like scICE to evaluate clustering consistency [11]. |

| Inconsistency Coefficient (IC) | A metric derived from multiple clustering runs that quantifies the reliability of cluster labels. An IC close to 1 indicates highly consistent and reliable results [11]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

FAQ 1: What is the most critical parameter to optimize in single-cell RNA-seq clustering, and why?

The resolution parameter is often one of the most critical. It directly controls the granularity of the clustering, determining whether you over-merge distinct cell populations or over-split homogeneous ones. Research shows that increasing the resolution parameter generally has a beneficial impact on clustering accuracy, particularly when used in conjunction with UMAP for neighborhood graph generation and a reduced number of nearest neighbors, which creates sparser, more locally sensitive graphs [7].

FAQ 2: How can I evaluate my clustering results when there is no ground truth or prior biological knowledge?

In the absence of ground truth, you should rely on intrinsic goodness metrics. Studies demonstrate that metrics like within-cluster dispersion and the Banfield-Raftery index can effectively serve as proxies for clustering accuracy. These metrics allow for a direct comparison of different parameter configurations without requiring external labels, helping to prevent the misuse of clustering parameters when cell type information is unavailable [7].

FAQ 3: My computational analysis is too slow for large-scale cytometry data. What strategies can help?

For large datasets, such as those in cytometry containing millions of cells, consider an aggregation-based approach. Tools like SuperCellCyto can group highly similar cells into "supercells" or "metacells," reducing dataset size by 10 to 50 times. This significantly lowers computational demands for downstream tasks like clustering and dimensionality reduction while striving to preserve biological heterogeneity, including rare cell subsets that might be lost through simple random subsampling [24].

FAQ 4: Unsupervised clustering of T-cells is not cleanly separating CD4+ and CD8+ populations. What is wrong?

This is a common, documented issue. The assumption that unsupervised clustering will always reflect core T-cell biology like CD4/CD8 lineage can be flawed. Analyses show that clustering is often driven by other factors like cellular metabolism (e.g., glucose metabolism), T-cell receptor (TCR) transcripts, or immunoglobulin genes rather than standard phenotypic markers [6]. For accurate T-cell annotation, prefer semi-supervised approaches that incorporate prior knowledge or, ideally, use paired protein-based data (CITE-seq) or TCR sequencing information to guide or validate clustering [6].

FAQ 5: How can I visualize the relationships between clusters across multiple resolutions?

Use a clustering tree visualization (e.g., from the clustree R package). This tool plots clusters at successively higher resolutions, showing how samples move between clusters as the number of clusters increases. It helps identify stable clusters, reveals which clusters split from others, and shows areas of instability potentially caused by over-clustering, thereby informing the choice of an appropriate resolution [4].
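The information a clustering tree encodes can be approximated with a few lines of standard-library Python: for two successive resolutions, count how many cells move from each lower-resolution cluster to each higher-resolution cluster. This is a simplified sketch rather than the clustree implementation; the function name, the dictionary keys (including `in_prop`, used here for the share of a child cluster that comes from a given parent), and the toy labels are assumptions for illustration.

```python
from collections import Counter


def cluster_tree_edges(low_res_labels, high_res_labels):
    """Edges between clusters at two resolutions, with cell counts and proportions."""
    pair_counts = Counter(zip(low_res_labels, high_res_labels))
    child_sizes = Counter(high_res_labels)
    return [
        {"parent": parent, "child": child, "n_cells": n,
         "in_prop": n / child_sizes[child]}  # share of the child coming from this parent
        for (parent, child), n in sorted(pair_counts.items())
    ]


# Toy labels for eight cells at a lower and a higher resolution.
low = [0, 0, 0, 0, 1, 1, 1, 1]
high = [0, 0, 1, 1, 2, 2, 2, 1]
edges = cluster_tree_edges(low, high)
```

A child cluster that draws all of its cells from a single parent (proportion 1.0) represents a clean split, whereas a child fed by several parents with comparable proportions, such as cluster 1 at the higher resolution here, is the kind of instability that suggests over-clustering.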

Troubleshooting Common Problems

Problem: Inconsistent clustering results between algorithm runs or with slight parameter changes.

- Potential Cause: The inherent stochasticity in some algorithms or high sensitivity to parameters like the number of nearest neighbors or principal components.

- Solution: Use algorithms with deterministic modes if available. For key parameters like the number of PCs, perform a sensitivity analysis as this parameter is highly affected by data complexity [7]. Employ stability measures and clustering trees to identify robust parameter ranges [4].

Problem: Clustering appears driven by technical artifacts or batch effects instead of biology.

- Potential Cause: The data contains strong technical variations (e.g., batch effects, sequencing depth differences) that overshadow biological signal.

- Solution: Implement robust data integration and batch correction methods before clustering. When using a tool like SuperCellCyto, ensure supercells are created within samples to prevent aggregating cells across different batches or samples [24].

Problem: Failure to identify rare cell populations.

- Potential Cause: The clustering resolution is too low, or the algorithm is biased toward major populations. Standard subsampling to handle large datasets can exclude rare cells.

- Solution: Increase the clustering resolution parameter systematically. Avoid simple random subsampling; use methods like SuperCellCyto that aim to preserve rare cell types during data compression [24].

Experimental Protocols & Data Presentation

Detailed Methodology for Clustering Parameter Optimization

This protocol is adapted from research on optimizing clustering parameters for single-cell RNA-seq analysis using intrinsic metrics [7].

1. Data Acquisition and Preprocessing:

- Obtain datasets with reliable, manually curated ground truth annotations from sources like the CellTypist organ atlas. Using annotations derived from biologically reliable methods (e.g., FACS sorting) is crucial to avoid bias.

- Perform standard scRNA-seq preprocessing: quality control, normalization, and filtering. The use of datasets from different anatomical districts enhances the robustness of the parameter analysis.

2. Parameter Sweep and Clustering:

- Select clustering algorithms to test (e.g., Leiden, DESC).

- Define a grid of key parameters to sweep. Essential parameters often include:

  - Resolution: Test a range (e.g., 0.1 to 2.0) to control cluster granularity.

  - Number of Principal Components (PCs): Test various values (e.g., 10, 20, 50).

  - Number of Nearest Neighbors (k): Test different values (e.g., 15, 30, 50) for graph-based methods.

  - Dimensionality Reduction Method: Compare UMAP, t-SNE, etc.

- Run the clustering algorithm for each combination of parameters in the sweep.

3. Performance Evaluation:

- With Ground Truth: Compare cluster labels to ground truth annotations using metrics like Adjusted Rand Index (ARI) or clustering accuracy.

- Without Ground Truth (Intrinsic Validation): Calculate a suite of intrinsic metrics for each clustering result. The study identified 15 such metrics, with within-cluster dispersion and the Banfield-Raftery index being particularly informative proxies for accuracy [7].

4. Model Training and Prediction (Optional):

- Use the computed intrinsic metrics as features to train a regression model (e.g., ElasticNet).

- The goal is to predict the clustering accuracy based solely on intrinsic metrics, which is especially valuable for new datasets lacking ground truth.

5. Analysis and Interpretation:

- Use a linear model to analyze the main effects and interactions of parameters on accuracy. For example, the study found that using UMAP and a higher resolution is beneficial, and this effect is stronger with a lower number of nearest neighbors [7].

- Visualize the results using tools like clustering trees to understand cluster stability and relationships across resolutions [4].

Table 1: Impact of Clustering Parameters on Accuracy. Based on a linear mixed model analysis of parameter interactions in scRNA-seq clustering [7].

| Parameter | Main Effect on Accuracy | Notable Interaction |

|---|---|---|

| Resolution | Positive (Increase is generally beneficial) | Effect is accentuated with a reduced number of nearest neighbors. [7] |

| Nearest Neighbors (k) | Negative (Lower k can be better) | Lower k leads to sparser graphs, preserving fine-grained relationships. Impact is data-dependent. [7] |

| Dimensionality Reduction (UMAP) | Positive | Using UMAP for neighborhood graph generation has a beneficial impact. [7] |

| Number of PCs | Context-dependent / Complex | Effect is highly dependent on data complexity. Requires testing a range of values. [7] |

Table 2: Key Intrinsic Metrics for Clustering Validation. These metrics can predict clustering accuracy in the absence of ground truth labels [7].

| Intrinsic Metric | Description | Utility as Accuracy Proxy |

|---|---|---|

| Within-Cluster Dispersion | Measures the compactness of clusters by calculating the sum of squared distances from points to their cluster centroid. | Effective for immediate comparison of parameter configurations. [7] |

| Banfield-Raftery Index | A likelihood-based metric computed from the within-cluster scatter of each cluster, weighted by cluster size. | Effective for immediate comparison of parameter configurations. [7] |

| Silhouette Coefficient | Measures how similar an object is to its own cluster compared to other clusters. | Commonly used, but not highlighted as a top proxy in the cited study. [4] |
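The first two metrics in Table 2 can be illustrated with a short, dependency-free sketch. It assumes the commonly cited definitions: within-cluster dispersion as the total sum of squared distances to each cluster centroid, and the Banfield-Raftery index as the sum over clusters of n_k · log(tr(W_k) / n_k), where tr(W_k) is that cluster's sum of squared distances. The function names are illustrative, and skipping clusters with zero scatter (where the logarithm is undefined) is a simplifying assumption rather than something taken from the cited study.

```python
from collections import defaultdict
from math import log


def cluster_scatter(points, labels):
    """Return {label: (n_k, sum of squared distances to the cluster centroid)}."""
    groups = defaultdict(list)
    for point, label in zip(points, labels):
        groups[label].append(point)
    scatter = {}
    for label, members in groups.items():
        n_k = len(members)
        dim = len(members[0])
        centroid = [sum(p[d] for p in members) / n_k for d in range(dim)]
        ss = sum((p[d] - centroid[d]) ** 2 for p in members for d in range(dim))
        scatter[label] = (n_k, ss)
    return scatter


def within_cluster_dispersion(points, labels):
    """Total sum of squared distances from each point to its cluster centroid."""
    return sum(ss for _, ss in cluster_scatter(points, labels).values())


def banfield_raftery(points, labels):
    """Sum over clusters of n_k * log(tr(W_k) / n_k).

    Assumption: clusters with zero scatter (e.g. singletons) are skipped.
    """
    total = 0.0
    for n_k, ss in cluster_scatter(points, labels).values():
        if ss > 0:
            total += n_k * log(ss / n_k)
    return total


# Toy embedding: two tight groups far apart along the first axis.
points = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
good = [0, 0, 1, 1]  # matches the two groups
bad = [0, 1, 0, 1]   # mixes the two groups
print(within_cluster_dispersion(points, good), within_cluster_dispersion(points, bad))
```

Under these definitions, lower values indicate more compact clusters. Within-cluster dispersion also tends to fall as the number of clusters grows, so it is most informative when comparing configurations with care for that trend.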

Visualizations

Diagram 1: Parameter Sweep Workflow

Diagram 2: Clustering Tree of Resolutions

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Parameter Optimization

| Item / Resource | Function / Purpose |

|---|---|

| CellTypist Organ Atlas | A source of well-annotated scRNA-seq datasets with manually curated ground truth labels, essential for validating clustering parameters against reliable biological annotations. [7] |

| clustree R Package | Generates clustering tree visualizations to explore relationships between clusters across multiple resolutions, helping to identify stable clusters and appropriate resolution levels. [4] |

| SuperCellCyto R Package | Groups highly similar cells into "supercells" to dramatically reduce the size of large datasets (e.g., from cytometry), enabling faster downstream clustering and analysis without losing rare cell types. [24] |

| Leiden Algorithm | A widely used graph-based clustering algorithm common in single-cell analysis. Its output is strongly influenced by the resolution parameter. [7] |

| DESC Algorithm | A deep learning-based method (Deep Embedding for Single-cell Clustering) known for superior performance in clustering specific cell types and capturing heterogeneity. [7] |

| Word2Vec Embeddings | An NLP-based technique that can be applied to biological sequences (e.g., TCR CDR3 regions) to create vector representations for subsequent clustering and analysis. [25] |

| Intrinsic Goodness Metrics | A set of statistics (e.g., within-cluster dispersion, Banfield-Raftery index) calculated from the data and cluster labels alone to evaluate clustering quality without ground truth. [7] |

Frequently Asked Questions (FAQs)

1. How does clustering resolution directly impact my differential expression (DE) results? Clustering resolution determines the granularity at which cell populations are separated. Using too low a resolution (too few clusters) can merge biologically distinct cell types, causing DE analysis to identify markers for heterogeneous mixtures rather than pure populations. This leads to diluted or misleading gene signatures. Conversely, an excessively high resolution can split homogeneous populations into artificial, over-fitted subgroups, causing DE to identify statistically significant but biologically irrelevant markers based on technical noise rather than true transcriptional differences [7].

2. Why do my functional enrichment results seem inconsistent when I re-run my clustering? This inconsistency often stems from clustering stochasticity. Graph-based clustering algorithms like Leiden have an inherent random component, meaning different random seeds can produce varying cluster labels for the same resolution parameter. When these labels change, the cell composition of each cluster shifts, leading to different sets of differentially expressed genes being passed to the enrichment analysis. This ultimately results in different functional terms (e.g., GO, KEGG pathways) being reported [11].

3. What is a "consistent" clustering result and how do I find it? A consistent clustering result is one that is stable and reproducible across multiple runs of the algorithm with different random seeds. A cluster is considered highly consistent if its labels remain nearly identical every time the clustering is repeated. You can identify these using metrics like the Inconsistency Coefficient (IC), where an IC close to 1 indicates high label consistency. Focusing on resolutions that yield consistent clusters prevents downstream analysis from being built on unstable, arbitrary partitions [11].

4. Which parameters most significantly affect clustering accuracy and integration? The choice of algorithm, the method for generating the neighborhood graph (e.g., UMAP), the number of nearest neighbors, and the resolution parameter are critical. Using UMAP for graph generation and a higher resolution parameter generally improves accuracy, particularly when the number of nearest neighbors is reduced, creating a sparser graph that is more sensitive to fine-grained local relationships. The optimal number of principal components is also highly dependent on your dataset's specific complexity [7].

Troubleshooting Guide

Common Integration Issues and Solutions

| Problem | Symptom | Underlying Cause | Solution |

|---|---|---|---|

| Vanishing Clusters | A cell cluster appears at one resolution but disappears at another or when the random seed is changed [11]. | The cluster is not a robust, consistent population and is highly sensitive to clustering parameters. | Use a tool like scICE to evaluate clustering consistency across seeds. Focus on resolutions that yield stable, high-consistency clusters (IC ≈ 1) [11]. |

| Uninterpretable Enrichment | Functional enrichment analysis returns vague, generic, or biologically implausible pathways. | Clustering resolution is too low, merging distinct cell types and forcing DE to find markers for an artificial, mixed population. | Incrementally increase the resolution parameter and re-cluster. Validate clusters using known marker genes to ensure they represent pure populations before DE [7]. |

| Proliferation of Rare Clusters | High resolution leads to many tiny clusters with no strong marker genes. | Over-clustering; the resolution parameter is too high, splitting true populations and fitting to technical noise. | Use intrinsic metrics like within-cluster dispersion or the Banfield-Raftery index to guide parameter selection. Lower the resolution and merge clusters post-hoc if supported by biology [7]. |

| Unstable DE Gene Lists | The list of differentially expressed genes for a cluster changes dramatically between analysis runs. | Underlying cluster labels are inconsistent due to algorithm stochasticity, not a change in biology [11]. | Employ a consensus clustering approach or use a tool like scICE to find stable cluster labels before performing DE. Run clustering multiple times to assess variability. |

Essential Experimental Protocol for Reliable Integration

Objective: To establish a robust workflow that connects stable clustering results to trustworthy differential expression and functional enrichment.

Step 1: Data Preprocessing and Dimensionality Reduction

- Perform standard quality control (filtering low-quality cells/genes).

- Normalize the data and scale it.

- Perform linear dimensionality reduction via Principal Component Analysis (PCA).

- Use a method like scLENS for automatic signal selection to determine the number of meaningful PCs [11].

Step 2: Systematic Clustering and Consistency Evaluation

- Construct a neighborhood graph (e.g., using UMAP).

- For a range of resolution parameters, run the Leiden algorithm multiple times (e.g., 50-100) with different random seeds.

- For each resolution, calculate the Inconsistency Coefficient (IC) to evaluate the stability of the resulting cluster labels [11].

- Key Metric: The IC is calculated from a similarity matrix of all cluster label pairs. An IC close to 1.0 indicates high consistency, while a higher IC indicates label instability [11].

- Select for downstream analysis only those resolution parameters that yield an IC close to 1 (i.e., stable clusters).
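The idea behind this consistency check can be sketched with a simplified stand-in. The code below is not scICE's implementation: it swaps element-centric similarity for a pairwise co-membership Jaccard similarity, and it assumes the score is the reciprocal of the mean similarity over all pairs of runs, which reproduces the behavior described above (a value of 1 when every run yields the same partition, larger values as labels diverge). All function names are hypothetical.

```python
from itertools import combinations


def co_clustered_pairs(labels):
    """Return the set of cell-index pairs that share a cluster label."""
    return {
        (i, j)
        for i, j in combinations(range(len(labels)), 2)
        if labels[i] == labels[j]
    }


def pair_jaccard(labels_a, labels_b):
    """Jaccard similarity between the co-clustered pair sets of two labelings."""
    pairs_a = co_clustered_pairs(labels_a)
    pairs_b = co_clustered_pairs(labels_b)
    union = pairs_a | pairs_b
    if not union:
        return 1.0  # both labelings consist of singletons only
    return len(pairs_a & pairs_b) / len(union)


def inconsistency_score(label_runs):
    """Reciprocal of the mean pairwise similarity across runs (assumed form).

    Equals 1.0 when all runs give the same partition; grows as runs disagree.
    """
    n_runs = len(label_runs)
    if n_runs == 0:
        raise ValueError("need at least one clustering run")
    total = sum(pair_jaccard(a, b) for a in label_runs for b in label_runs)
    return (n_runs * n_runs) / total


# Three runs with different seeds: same partition, different label names.
stable_runs = [[0, 0, 1, 1], [1, 1, 0, 0], [0, 0, 1, 1]]
print(inconsistency_score(stable_runs))  # 1.0
```

Enumerating all cell pairs scales quadratically with the number of cells, so this sketch is only suitable for small examples; dedicated tools are designed to do this efficiently on full datasets.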

Step 3: Differential Expression and Functional Enrichment

- Using the stable cluster labels from Step 2, perform differential expression analysis between clusters of interest and all other cells.

- Take the resulting list of significant DE genes (e.g., top 100-200 upregulated genes) and input it into a functional enrichment tool (e.g., for Gene Ontology or KEGG pathways).

- The resulting enriched terms are now based on a stable, reproducible cellular grouping, giving greater confidence in the biological interpretation.
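Many enrichment tools are built around an over-representation test, which can be sketched with a one-sided hypergeometric tail probability. This is a generic illustration under stated assumptions, not the procedure of any specific tool: the function name and gene identifiers are hypothetical, the background is taken to be all genes tested in the DE analysis, and real analyses would also apply multiple-testing correction across terms.

```python
from math import comb


def hypergeom_enrichment_pvalue(de_genes, term_genes, background_genes):
    """P(overlap >= observed) for a DE gene list against one annotation term."""
    background = set(background_genes)
    de = set(de_genes) & background      # restrict both sets to the background
    term = set(term_genes) & background
    n_background = len(background)
    n_term = len(term)
    n_de = len(de)
    overlap = len(de & term)

    # Sum the hypergeometric probabilities for overlaps >= the observed one.
    upper = min(n_term, n_de)
    tail = sum(
        comb(n_term, i) * comb(n_background - n_term, n_de - i)
        for i in range(overlap, upper + 1)
    )
    return tail / comb(n_background, n_de)


# Toy example: 10 background genes, a 3-gene term, 3 DE genes, 2 of them in the term.
background = [f"g{i}" for i in range(10)]
p_value = hypergeom_enrichment_pvalue(["g0", "g1", "g5"], ["g0", "g1", "g2"], background)
print(round(p_value, 4))  # 22/120 ≈ 0.1833
```

Because the p-value depends on the size and composition of the DE list, unstable cluster labels change the input to this test and therefore the reported terms, which is why cluster stability is established first.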

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Workflow |

|---|---|

| Leiden Algorithm [7] [11] | A graph-based clustering algorithm widely used in single-cell analysis for its speed and ability to uncover fine-grained community structure in cellular data. |

| scICE [11] | A tool that efficiently evaluates clustering consistency by calculating the Inconsistency Coefficient, helping to identify reliable cluster labels and narrow down the number of clusters to explore. |

| Intrinsic Goodness Metrics [7] | Metrics like within-cluster dispersion and the Banfield-Raftery index that serve as proxies for clustering accuracy in the absence of ground truth, allowing for quick comparison of parameter configurations. |

| Element-Centric Similarity [11] | A similarity metric used to compare two different cluster labels in a more intuitive and unbiased way, forming the basis for calculating the inconsistency coefficient in scICE. |

| UMAP [7] | A dimensionality reduction technique often used for generating the neighborhood graph in clustering, noted for having a beneficial impact on clustering accuracy. |

Workflow for Robust Integrated Analysis

The following diagram illustrates the recommended pathway for connecting stable clustering to downstream interpretation.

Quantitative Impact of Clustering Parameters

This table summarizes how key parameters influence clustering outcomes based on empirical findings [7].

| Parameter | Primary Effect | Impact on Downstream Analysis | Recommended Strategy |

|---|---|---|---|

| Resolution | Controls cluster number & granularity. | High resolution can split true populations; low resolution can merge them, directly affecting DE gene lists. | Test a wide range; use consistency metrics (IC) and known markers to select. |

| Number of Nearest Neighbors (k) | Influences graph connectivity. | A lower k creates a sparser graph, which can improve preservation of fine-grained relationships when combined with higher resolution [7]. | Balance k and resolution; lower k can accentuate the beneficial effect of increased resolution. |

| Dimensionality Reduction Method | Alters cell-to-cell distances. | UMAP for graph generation has been shown to have a beneficial impact on accuracy compared to other methods [7]. | Prefer UMAP for neighborhood graph generation. |

| Random Seed | Impacts stochastic optimization. | Causes label variability for the same resolution, leading to instability in DE and enrichment [11]. | Run multiple iterations (e.g., with scICE) to assess consistency, not just one seed. |