Optimizing Highly Variable Gene Selection for Single-Cell Foundation Model Training: A Comprehensive Guide

Abstract

Selecting highly variable genes (HVGs) is a critical preprocessing step that profoundly impacts the performance and biological relevance of single-cell foundation models (scFMs). This article provides a comprehensive guide for researchers and drug development professionals, covering foundational concepts, methodological implementation, optimization strategies, and validation approaches for HVG selection in scFM training. Drawing on recent benchmarks and emerging methodologies, we explore how informed HVG selection enhances data integration, improves cell type annotation, and boosts model robustness for downstream clinical and biomedical applications.

The Critical Role of Highly Variable Genes in Single-Cell Foundation Models

Defining Highly Variable Genes and Their Importance in scFM Training

Frequently Asked Questions

What are Highly Variable Genes (HVGs) and why are they important for single-cell analysis?

Highly Variable Genes (HVGs) are genes whose expression levels show significant variation across individual cells within a homogeneous cell population. Unlike bulk RNA sequencing, which averages expression across many mixed cells, single-cell RNA sequencing (scRNA-seq) can detect these cell-to-cell differences. HVGs are crucial because they are presumed to contribute strongly to cellular heterogeneity and often reflect underlying biological processes, cellular states, and key transcriptional drivers of cell identity and function. Selecting HVGs is a critical feature selection step that reduces data dimensionality, enhances computational efficiency, and improves the interpretability of downstream analyses like clustering and trajectory inference [1] [2] [3].

Why is HVG selection critical for training single-cell Foundation Models (scFMs)?

HVG selection is a fundamental preprocessing step for scFM training because it directly addresses the high dimensionality, sparsity, and noise characteristic of scRNA-seq data. By focusing on the most informative features, HVG selection:

- Reduces Computational Burden: Training on a subset of genes (e.g., 1,000-5,000 HVGs) instead of the entire genome (>20,000 genes) drastically lowers memory and computational requirements [4] [5].

- Improves Model Performance: It filters out genes that contribute mostly technical noise or uninteresting biological variation, allowing the model to learn more robust and biologically meaningful representations of cells and genes [6] [3].

- Enhances Biological Insight: scFMs trained on HVGs are better at capturing the fundamental principles of cellular identity and state, which improves their performance on downstream tasks like cell type annotation, batch integration, and perturbation prediction [4] [5].

My scFM isn't performing well on downstream tasks. Could my HVG selection be the issue?

Yes, the choice of HVG method and the number of genes selected can significantly impact scFM performance. If your model is struggling, consider these troubleshooting steps:

- Evaluate the Number of Features: Benchmarking studies show that the number of selected features is strongly correlated with the performance of many downstream tasks. While using too few genes can lose biological signal, an excessively large gene set may introduce more noise. It is recommended to test a range of gene set sizes (e.g., from 500 to 5,000) to find the optimum for your specific data and task [6].

- Try a Different HVG Method: Different HVG methods use distinct statistical models to quantify variation, which can lead to varying gene rankings. If one method (e.g., scran) underperforms, try another (e.g., Seurat's VST or the novel GLP method) [1] [6] [3].

- Check for Batch Effects: If your training data combines multiple datasets, consider using batch-aware HVG selection methods. This ensures that the selected genes are variable within biological conditions rather than being driven by technical batch effects [6].

How do I choose the right HVG method for my scFM project?

There is no single "best" method that outperforms all others in every scenario. Your choice should be guided by your data characteristics and project goals. The table below summarizes key methods:

Table 1: Comparison of Highly Variable Gene (HVG) Detection Methods

| Method | Underlying Model / Approach | Key Features | Considerations |

|---|---|---|---|

| Brennecke et al. | Fits a generalized linear model to the relationship between squared coefficient of variation (CV²) and mean expression [1]. | Uses DESeq's normalization; filters genes with high uncertainty. | A foundational method; may be superseded by more modern approaches. |

| scran | Fits a trend to the mean-variance relationship of log-transformed expression values using LOESS [1] [2]. | Uses a specialized pooling algorithm for normalization; decomposes variance into technical and biological components. | Robust; considered a strong performer in benchmarks. |

| Seurat (VST) | Fits a local polynomial (LOESS) regression to the relationship between log(variance) and log(mean), then standardizes expression values using the expected variance [1] [6]. | Ranks genes by the variance of their standardized values; widely used and integrated in Seurat workflows. | A common and effective default choice. |

| BASiCS | Employs a Bayesian hierarchical model to decompose variation into technical and biological components [1]. | Can use spike-in RNAs to model technical noise; can also identify lowly variable genes. | Computationally intensive; powerful for sophisticated noise modeling. |

| GLP | Uses optimized LOESS regression on the relationship between gene average expression and "positive ratio" (fraction of cells expressing the gene) [3]. | Designed to be robust to high sparsity and dropout noise in scRNA-seq data; reported to outperform other methods in some benchmarks. | A recently developed method; promising for handling noisy data. |

A practical workflow is to start with a well-established method like scran or Seurat's VST, and if downstream analysis is unsatisfactory, benchmark against alternative methods like GLP [1] [3].

Experimental Protocols

Standard Workflow for HVG Detection

The following protocol outlines a standard computational workflow for identifying HVGs, which can be applied prior to scFM training.

Inputs: A quality-controlled and normalized single-cell RNA-seq count matrix (cells x genes).

Procedure:

- Quantification of Variation: Calculate a measure of variation for each gene. The most straightforward approach is to compute the variance of the log-normalized expression values across all cells [2].

- Modeling the Mean-Variance Relationship: Model the trend between gene expression abundance (mean) and the chosen variation metric (variance). This step is crucial to account for the fact that variance in expression data is often mean-dependent [2].

- The modelGeneVar() function (e.g., in the scran package) fits a trend to the per-gene variance with respect to abundance. It then decomposes the total variance for each gene into a technical component (the fitted value) and a biological component (the residual from the trend) [2].

- If spike-in RNAs are available, modelGeneVarWithSpikes() can provide a more precise estimate of technical noise by fitting a trend to the spike-in variances [2].

- Statistical Testing & Ranking: Rank genes based on their biological variation component (or a related statistic like the z-score of residuals from the trend). A statistical test (e.g., against a null hypothesis of no biological variation) is often performed, and genes are ranked by significance or the magnitude of the biological component [1] [2].

- Selection of Top HVGs: Select the top N genes (e.g., 2,000-5,000) from the ranked list for downstream analysis or scFM training [6].
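
The procedure above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the scran or Seurat implementation: the trend is approximated by the median variance within abundance bins instead of a LOESS fit, and the function name `rank_hvgs`, its parameters, and the toy matrix are all hypothetical.

```python
import math
import statistics


def rank_hvgs(log_expr, gene_names, n_top=2, n_bins=3):
    """Rank genes by variance in excess of a binned mean-variance trend.

    log_expr is a list of cells, each a list of log-normalized values
    ordered like gene_names.
    """
    n_genes = len(gene_names)
    columns = [[cell[g] for cell in log_expr] for g in range(n_genes)]
    means = [statistics.fmean(col) for col in columns]
    variances = [statistics.variance(col) for col in columns]

    # Order genes by abundance and walk through consecutive bins.
    order = sorted(range(n_genes), key=lambda g: means[g])
    bin_size = math.ceil(n_genes / n_bins)
    biological = [0.0] * n_genes
    for start in range(0, n_genes, bin_size):
        members = order[start:start + bin_size]
        # Assumption: the bin's median variance approximates the trend.
        trend = statistics.median([variances[g] for g in members])
        for g in members:
            # Residual from the trend stands in for the biological component.
            biological[g] = variances[g] - trend

    ranked = sorted(range(n_genes), key=lambda g: biological[g], reverse=True)
    return [gene_names[g] for g in ranked[:n_top]], biological


# Toy data: 4 cells x 6 genes (hypothetical values).
genes = ["G1", "G2", "G3", "G4", "G5", "G6"]
cells = [
    [0.0, 0.0, 2.0, 1.0, 5.0, 3.0],
    [0.0, 1.0, 2.0, 3.0, 5.0, 7.0],
    [0.0, 0.0, 2.0, 1.0, 5.0, 3.0],
    [0.1, 1.0, 2.1, 3.0, 5.1, 7.0],
]
top_genes, biological = rank_hvgs(cells, genes)
```

Comparing each gene only to genes of similar abundance is what keeps highly expressed genes from dominating the ranking purely because their variance scales with the mean. For real data, an established implementation (e.g., scran or Scanpy) is the safer choice.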

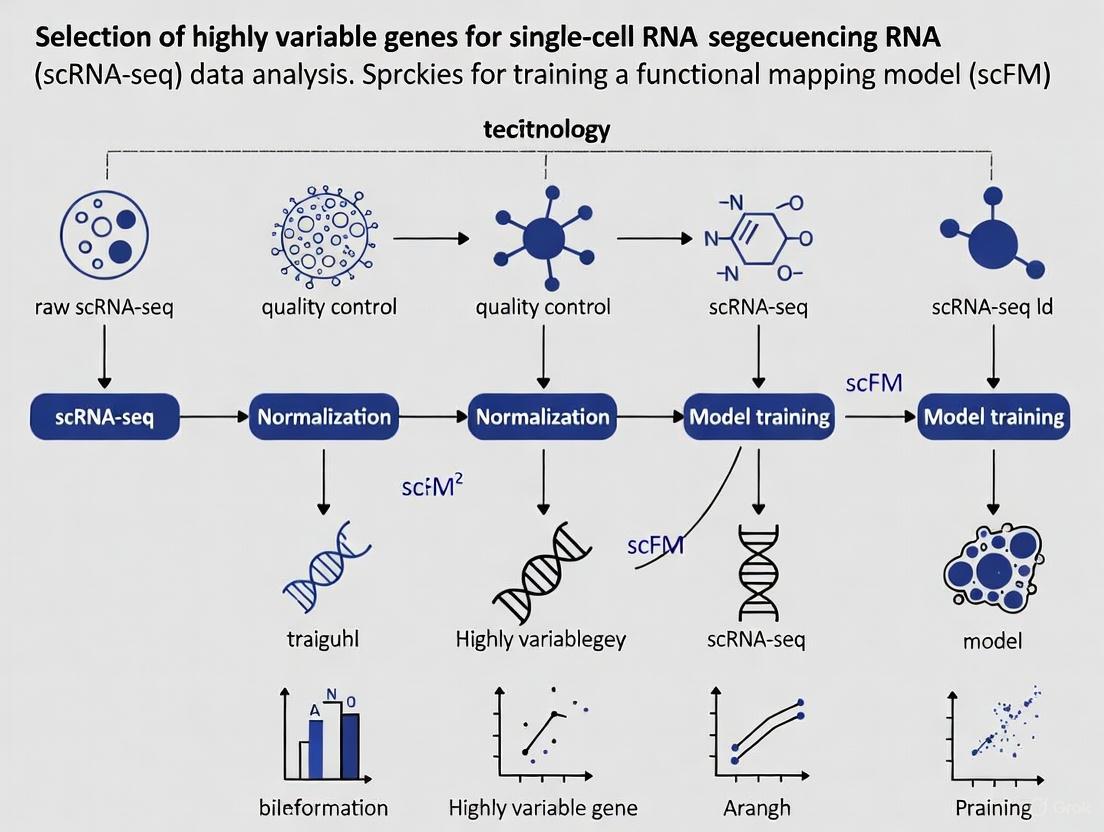

The following diagram illustrates the logical workflow and the key decision points.

Protocol for Validating scFM Biological Relevance Using HVG-Derived Insights

After training an scFM, it is critical to validate that the model has captured meaningful biological patterns and not just technical artifacts.

Inputs: A trained scFM, a held-out test scRNA-seq dataset with high-quality cell type annotations.

Procedure:

- Generate Cell Embeddings: Use the scFM in "zero-shot" mode to generate latent embeddings for all cells in the test dataset without any fine-tuning [4].

- Evaluate with Ontology-Informed Metrics: Assess the quality of the embeddings using novel metrics that incorporate prior biological knowledge.

- scGraph-OntoRWR: This metric evaluates whether the relationships between cell types captured by the scFM embeddings are consistent with known biological relationships defined in cell ontologies [4].

- Lowest Common Ancestor Distance (LCAD): For cell type annotation tasks, this metric measures the ontological proximity between misclassified cell types and the correct label, ensuring that errors are biologically plausible (e.g., confusing two T cell subtypes is less severe than confusing a T cell with a neuron) [4].

- Compare to Baseline Methods: Compare the performance of your scFM against simpler baseline models (e.g., standard HVG selection followed by PCA) on relevant downstream tasks like batch integration, cell type annotation, and cancer cell identification [4].

- Functional Validation (Gold Standard): For the most critical HVGs identified or prioritized by the scFM, plan wet-lab experiments to functionally validate their role. Techniques include:

- RNA FISH / Immunofluorescence (IF): To confirm the spatial localization and protein-level expression of the gene product [7].

- Gene Knockdown/Knockout: Using CRISPR/Cas9 or RNAi to silence the gene and observe phenotypic consequences in functional assays (e.g., migration, proliferation) [7] [8].

- Specific Cell Sorting: Using FACS to isolate cell populations based on HVG expression and validate their identity and function via RT-qPCR [7].
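
To make the idea behind the Lowest Common Ancestor Distance concrete, the sketch below scores a misclassification by how far the predicted and true labels sit from their lowest common ancestor in a small tree. The ontology, the function names, and the choice to sum the two path lengths are assumptions made for illustration; the exact definition used in [4] may differ.

```python
# Hypothetical toy ontology (child -> parent); not taken from the Cell Ontology.
TOY_PARENTS = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "neuron": "cell",
}


def path_to_root(term, parents):
    """Return the terms from `term` up to the root, inclusive."""
    path = [term]
    while path[-1] in parents:
        path.append(parents[path[-1]])
    return path


def lca_distance(predicted, truth, parents):
    """Sum of the steps from each label up to their lowest common ancestor."""
    true_depth = {t: i for i, t in enumerate(path_to_root(truth, parents))}
    for steps_from_pred, term in enumerate(path_to_root(predicted, parents)):
        if term in true_depth:
            return steps_from_pred + true_depth[term]
    raise ValueError("labels do not share an ancestor")


near_miss = lca_distance("CD4 T cell", "CD8 T cell", TOY_PARENTS)
far_miss = lca_distance("CD4 T cell", "neuron", TOY_PARENTS)
```

In this toy tree, confusing two T cell subtypes yields a smaller distance than confusing a T cell with a neuron, which matches the intuition that the first error is biologically more plausible.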

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for scRNA-seq and Validation

| Reagent / Tool | Function | Application Context |

|---|---|---|

| ERCC Spike-in RNAs | Exogenous RNA controls used to precisely model technical noise and improve the accuracy of HVG detection [2]. | scRNA-seq library preparation and normalization. |

| UMI Barcodes | Unique Molecular Identifiers are short random sequences that label individual mRNA molecules, allowing for accurate quantification by correcting for PCR amplification biases [9]. | scRNA-seq library preparation (e.g., in 10x Genomics, Drop-seq). |

| siRNAs / shRNAs | Small interfering RNAs or short hairpin RNAs used for transient gene knockdown to functionally validate the role of a target HVG [8]. | Functional validation in vitro (e.g., in HUVECs). |

| CRISPR-Cas9 System | A gene-editing tool used to create stable gene knockouts, providing definitive evidence for a gene's function [7] [8]. | Functional validation in vitro and in vivo. |

| FACS Antibodies | Fluorescently-labeled antibodies against cell surface or intracellular proteins for isolating specific cell populations via flow cytometry [7]. | Target population isolation and validation. |

| RNA FISH Probes | Fluorescently labeled nucleic acid probes that bind to specific RNA sequences, enabling visualization of gene expression and spatial localization in tissues [7]. | Spatial validation of HVG expression. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is Highly Variable Gene (HVG) selection a critical step in single-cell RNA-seq analysis? HVG selection is the process of identifying genes that exhibit significant cell-to-cell variation in expression within a seemingly homogeneous cell population. This step is crucial because it focuses downstream analyses on the genes most likely to be informative of biological heterogeneity, such as different cell types or states. Using HVGs improves computational efficiency, prevents overfitting, and enhances the performance of clustering algorithms by reducing the data dimensionality from tens of thousands of genes to a manageable set of features that capture key biological signals [2] [10]. Neglecting this step can obscure meaningful biological insights, as clustering and dimensionality reduction are highly sensitive to the choice of input genes [2].

FAQ 2: My single-cell analysis failed to identify a known rare cell population. Could HVG selection be the cause? Yes, this is a common challenge. For abundant and well-separated cell types, even large random gene sets can perform adequately, but the identification of rare or subtly different cell types is highly sensitive to the HVG selection method [10]. For instance, in a study focusing on CD4+ T cells, the standard HVG method successfully identified a FOXP3+ T regulatory (Treg) population (~1.8% of cells), whereas an equal number of randomly selected genes, and even the entire transcriptome, failed to reveal this population [10]. This demonstrates that for subtle biological differences, a thoughtful choice of HVG method is essential.

FAQ 3: I see inconsistent results every time I re-run my HVG analysis on a subset of my data. How can I improve reproducibility? Low reproducibility in HVG selection is a recognized issue that can significantly impact downstream analyses like cell classification. A benchmarking study on hematopoietic cells revealed that the reproducibility of HVG methods—measured as the proportion of overlapping genes identified across multiple tests—varies considerably [11]. Methods like SCHS showed high reproducibility (>90%), while others, including some popular Seurat methods, showed lower reproducibility (50-70%) [11]. To overcome this, consider using a robust strategy like SIEVE (SIngle-cEll Variable gEnes), which employs multiple rounds of random sampling to identify a stable, high-confidence set of HVGs, thereby minimizing stochastic noise and improving the consistency of your results [11].

FAQ 4: How many Highly Variable Genes should I select for my analysis? The optimal number is not fixed and can depend on the complexity of your dataset and the biological question. However, using too many features can be as detrimental as using too few. Evidence suggests that for standard tasks like clustering peripheral blood mononuclear cells (PBMCs), performance plateaus after selecting a few hundred to a few thousand genes [10]. For example, in one PBMC dataset, clustering metrics reached a high level with around 725 selected genes [10]. It is recommended to avoid automatically selecting the maximum number of HVGs, as this can introduce noise. Start with a standard number (e.g., 2,000-3,000) and perform sensitivity checks to ensure your key findings are robust.

FAQ 5: How does HVG selection specifically impact the training of single-cell foundation models (scFMs)? Single-cell foundation models are pre-trained on massive single-cell datasets to learn universal biological knowledge. The choice of input genes fundamentally shapes what the model learns. HVG selection ensures the model focuses its capacity on the most biologically meaningful signals rather than technical noise or uninformative genes. A comprehensive benchmark of scFMs highlights that the input feature space is a critical factor in model performance [4]. While scFMs are robust tools, their ability to generate insightful embeddings for downstream tasks is directly influenced by the quality and relevance of the features they were trained on. A variability-centric view of feature selection aligns with the core strength of scRNA-seq—capturing cell-to-cell heterogeneity—and can empower scFMs to uncover deeper biological insights [12] [4].

Performance Comparison of HVG Selection Methods

The table below summarizes the performance of various HVG methods based on evaluations using hematopoietic stem/progenitor cells (HSPCs) and mature blood cells [11].

| Method | Reproducibility | Preference for Gene Expression Level | Notes on Performance |

|---|---|---|---|

| SCHS | High (>90%) | Prefers highly expressed genes | High accuracy in cell classification; robust performance. |

| Seurat (VST, SCT, DISP) | Low to Medium (50-70%) | Mix of high and low (quarter of genes are lowly expressed) | Common and accessible; performance can be improved with SIEVE. |

| M3Drop | Low (50-70%) | Selects lowly expressed genes | Lower distinguishing capability for similar cell types (e.g., HSPCs). |

| Scran | Medium (80-90%) | Prefers highly expressed genes | Does not select lowly expressed genes. |

| Scmap | Medium (80-90%) | Prefers highly expressed genes | Slightly lower cluster purity. |

| ROGUE/ROGUE_n | Medium (80-90%) | Prefers highly expressed genes | Does not select lowly expressed genes. |

| SIEVE | Very High (After application) | Shifts selected genes towards median expression | A meta-strategy applied to other methods to enhance reproducibility and biological relevance. |

Experimental Protocol: Identifying Robust HVGs with the SIEVE Strategy

The SIEVE strategy is designed to overcome the low reproducibility of many standalone HVG methods by leveraging multiple rounds of random sampling [11].

Sample the Data

- Begin with your complete, quality-controlled, and normalized scRNA-seq dataset (e.g., a Seurat or SingleCellExperiment object).

- Randomly sample (without replacement) a predefined proportion of cells (e.g., 70%) from the full dataset. This subset is termed the "reference set." The remaining cells (e.g., 30%) form the "query set."

Identify HVGs on the Reference Set

- Apply your chosen HVG selection method (e.g., Seurat's VST, scran, SCHS) to the reference set to identify a list of highly variable genes. The number of top HVGs to select per run (e.g., 2,000) should be kept constant.

- Note: It is critical to use the same HVG method and parameters for every iteration.

Iterate the Process

- Repeat the sampling and HVG identification steps above a large number of times (e.g., 50 times). Each iteration generates a new, independent reference set and a corresponding list of HVGs.

Calculate Gene Frequencies and Define the Robust HVG Set

- Across all iterations, count how many times each gene appears in the HVG lists.

- The final, robust set of HVGs is defined as those genes that appear in a high proportion (e.g., >80%) of the iterations. This frequency threshold can be adjusted based on the desired stringency.

Validate with Downstream Analysis

- Use the robust HVG set for downstream tasks such as PCA, clustering, and cell type annotation.

- The SIEVE strategy has been shown to improve the accuracy of single-cell classification and helps recover more biologically relevant genes that are enriched for cluster markers [11].
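
The resampling logic of this protocol can be sketched as follows. This is not the SIEVE software itself: `sieve_like_selection` and `top_variance_genes` are hypothetical names, plain variance ranking stands in for whichever HVG method you actually use, and the query set is not used here because the sketch only covers the frequency-counting step.

```python
import random
import statistics
from collections import Counter


def top_variance_genes(cells, gene_names, n_top):
    """Stand-in HVG method: rank genes by plain variance across cells."""
    variances = {
        gene: statistics.variance([cell[g] for cell in cells])
        for g, gene in enumerate(gene_names)
    }
    return sorted(variances, key=variances.get, reverse=True)[:n_top]


def sieve_like_selection(cells, gene_names, n_top=2, n_iter=50,
                         fraction=0.7, min_freq=0.8, seed=0):
    """Keep genes selected in more than `min_freq` of the resampling rounds."""
    rng = random.Random(seed)
    n_ref = max(2, int(len(cells) * fraction))
    counts = Counter()
    for _ in range(n_iter):
        # Draw a reference set without replacement.
        reference = rng.sample(cells, n_ref)
        # Same HVG method and parameters in every iteration.
        counts.update(top_variance_genes(reference, gene_names, n_top))
    return sorted(g for g in gene_names if counts[g] / n_iter > min_freq)


# Toy data: 10 cells x 4 genes; A and B vary strongly, C and D barely.
toy_genes = ["A", "B", "C", "D"]
toy_cells = [
    [10.0 * (i % 2), 8.0 if i < 5 else 0.0, 0.1 * (i % 3), 1.0]
    for i in range(10)
]
robust_hvgs = sieve_like_selection(toy_cells, toy_genes)
```

Fixing the random seed makes the selection itself repeatable, while the frequency threshold controls how stringent the final gene set is.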

SIEVE Workflow for Robust HVG Selection

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Function in HVG Analysis / scRNA-seq |

|---|---|

| ERCC Spike-in RNAs | External RNA controls used to model technical noise and improve the accuracy of variance estimation during normalization and HVG selection [1] [2]. |

| scRNA-seq Analysis Packages (Seurat, scran, Scanpy) | Software suites that provide integrated implementations of various HVG discovery methods (e.g., VST, scran, M3Drop) within a complete analytical workflow [1] [13] [11]. |

| SIEVE Software | A dedicated tool for implementing the SIEVE resampling strategy to identify a robust and reproducible set of HVGs, available from https://github.com/YinanZhang522/SIEVE [11]. |

| Single-cell Foundation Models (scGPT, Geneformer) | Pre-trained deep learning models on large-scale scRNA-seq data. Proper HVG selection can inform the feature space used for fine-tuning these models on specific tasks [4]. |

Advanced Concepts: Differential Variability (DV) Analysis

Moving beyond traditional differential expression (DE), which focuses on changes in mean expression, Differential Variability (DV) analysis identifies genes with significant differences in expression variability (cell-to-cell heterogeneity) between two conditions [12]. These DV genes can offer distinct functional insights.

Method Spotlight: spline-DV

- Purpose: A statistical framework to identify DV genes from scRNA-seq data between two experimental conditions (e.g., healthy vs. diseased) [12].

- How it works: It models gene-level statistics—mean expression, coefficient of variation (CV), and dropout rate—in a 3D space. A spline-fit curve is generated for each condition, representing the expected relationship between these statistics. For each gene, a vector is drawn from the nearest point on the spline to its observed position. The difference between the vectors for the two conditions (the DV vector) quantifies the change in variability, with its magnitude being the DV score [12].

- Application: In a study on diet-induced obesity, spline-DV identified Plpp1 (increased variability in high-fat diet) and Thrsp (decreased variability in high-fat diet) as top DV genes, providing insights into metabolic dysfunction that were not apparent from mean expression alone [12].
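
The vector arithmetic behind the DV score can be illustrated with a deliberately simplified sketch. It is not the spline-DV implementation: each condition's curve is supplied as a handful of pre-sampled 3D points instead of a fitted spline, the coordinates are arbitrary toy values, and all function names are hypothetical.

```python
import math


def nearest_point(curve, point):
    """Closest of the pre-sampled curve points to `point` (Euclidean)."""
    return min(curve, key=lambda c: math.dist(c, point))


def deviation_vector(curve, point):
    """Vector from the nearest curve point to the observed gene position."""
    anchor = nearest_point(curve, point)
    return tuple(p - a for p, a in zip(point, anchor))


def dv_score(curve_a, point_a, curve_b, point_b):
    """Magnitude of the difference between the two deviation vectors."""
    vec_a = deviation_vector(curve_a, point_a)
    vec_b = deviation_vector(curve_b, point_b)
    return math.dist(vec_b, vec_a)


# Toy curve shared by both conditions; axes stand for
# (mean expression, CV, dropout rate) in arbitrary units.
toy_curve = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (2.0, 2.0, 2.0)]
gene_in_condition_a = (1.0, 1.0, 1.0)   # sits exactly on the curve
gene_in_condition_b = (2.0, 5.0, 6.0)   # deviates from the curve
score = dv_score(toy_curve, gene_in_condition_a, toy_curve, gene_in_condition_b)
```

A gene that lies on the expected curve in one condition and far from it in the other receives a large score, whereas a gene that deviates identically in both conditions scores zero.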

spline-DV Analysis Workflow

Frequently Asked Questions

Q1: What is the fundamental difference between technical and biological variation in single-cell RNA-seq data? Biological variation refers to the natural, functionally relevant differences in gene expression between individual cells. This includes differences due to cell type, cell cycle stage, transcriptional bursts, and response to environmental stimuli [14]. Technical variation arises from the experimental process itself, including cell isolation, reverse transcription, cDNA amplification, and sequencing. This results in biases such as low capture efficiency, high dropout rates (where a gene is observed in one cell but not in another), and amplification noise [14] [15].

Q2: Why is it critical to account for technical variation before selecting Highly Variable Genes (HVGs) for model training? HVG selection focuses on genes that show more cell-to-cell variability than expected from technical noise alone [15]. If technical variation is not accounted for, the selected gene set will be contaminated with technical artifacts rather than true biological signals. This leads to poor performance in downstream tasks such as cell clustering, data integration, and training of single-cell foundation models (scFMs), as the model learns from noise instead of biology [6] [16].

Q3: How does poor feature selection impact the training and performance of a single-cell foundation model (scFM)? Benchmarking studies show that feature selection methods directly affect the quality of data integration and query mapping, which are foundational for building robust reference atlases [6]. Using poorly selected features can cause an scFM to learn incorrect cellular representations, reducing its ability to accurately predict cellular responses to perturbations (in-silico perturbation). For example, an open-loop scFM might have a low positive predictive value, which can be significantly improved by incorporating even a small amount of experimental perturbation data to guide feature selection in a "closed-loop" framework [17].

Q4: What are some common methods to identify and correct for technical variance?

- Highly Variable Gene Selection: Standard practice is to select genes exhibiting high biological variability after modeling the mean-variance trend expected from technical noise [15] [16].

- Batch Correction: Computational integration methods are used to remove technical differences between samples or batches while conserving biological variation [6] [18].

- Utilizing Stable Genes: Using stably expressed genes as a negative control can help establish a baseline for technical noise [6].

Troubleshooting Guides

Issue 1: High Batch Effect in Integrated Data

Problem: After integrating multiple datasets for scFM pre-training, cells cluster strongly by batch or study of origin rather than by biological cell type.

- Potential Cause 1: The feature selection method did not properly account for batch effects.

- Solution: Use a batch-aware feature selection method. Instead of selecting HVGs per dataset, perform feature selection on a collaboratively corrected matrix or use a method that explicitly models batch information to identify features robust to technical variation [6].

- Potential Cause 2: The selected features are themselves driven by technical artifacts.

- Solution: As a diagnostic, check the expression patterns of the top selected features. If they are dominated by mitochondrial or ribosomal genes, they may reflect cell viability or other technical confounders. Re-run HVG selection with these genes filtered out.
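
The diagnostic in Potential Cause 2 can be automated with a simple prefix check. The sketch below assumes gene-symbol conventions (`MT-`, `RPS`, `RPL`); the prefix list and function names are illustrative choices rather than part of any cited method.

```python
# Assumed symbol prefixes for mitochondrial and ribosomal protein genes.
# Caveat: a prefix match is crude (e.g., RPS6KA1 is a kinase, not a
# ribosomal protein), so review flagged genes before discarding them.
TECHNICAL_PREFIXES = ("MT-", "RPS", "RPL")


def is_technical(gene):
    """True if the symbol starts with one of the assumed prefixes."""
    return gene.upper().startswith(TECHNICAL_PREFIXES)


def technical_fraction(genes):
    """Share of the given genes that look mitochondrial or ribosomal."""
    if not genes:
        return 0.0
    return sum(is_technical(g) for g in genes) / len(genes)


def drop_technical(genes):
    """Return the genes that do not match any assumed prefix."""
    return [g for g in genes if not is_technical(g)]


top_features = ["MT-CO1", "RPS6", "RPL13", "CD3E", "FOXP3"]
fraction = technical_fraction(top_features)
kept = drop_technical(top_features)
```

A high fraction among the top-ranked features is a hint, not proof, of a technical confounder; re-running HVG selection on the filtered gene list and comparing the integration result is the more informative check.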

Issue 2: scFM Fails to Generalize to New Query Data

Problem: Your trained scFM performs well on its training data but fails to accurately map or make predictions for new query samples.

- Potential Cause 1: The feature space used for training is not representative of the biological variation in the query.

- Solution: Re-evaluate the feature selection strategy. Ensure that the set of highly variable genes captures broad biological programs rather than being overly specific to the training data. Benchmarking suggests that using highly variable feature selection is effective for producing high-quality integrations and mappings [6].

- Potential Cause 2: Technical differences (e.g., sequencing depth, protocol) between the training and query data are too great.

- Solution: Apply a robust scaling/normalization method (e.g., Robust Scaler) to both training and query data using the same reference to minimize the effect of outliers and technical discrepancies [19].
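
The point about scaling both datasets "using the same reference" is easy to get wrong, so here is a minimal sketch. It centers on the median and divides by the interquartile range, the same idea as scikit-learn's RobustScaler, but is written with the standard library only; the function names and values are hypothetical.

```python
import statistics


def fit_robust_scaler(reference_values):
    """Estimate (median, IQR) from the reference (training) data only."""
    q1, _, q3 = statistics.quantiles(reference_values, n=4, method="inclusive")
    iqr = q3 - q1
    # Fall back to 1.0 so constant features do not cause division by zero.
    return statistics.median(reference_values), (iqr if iqr > 0 else 1.0)


def apply_robust_scaler(values, center, scale):
    """Scale any dataset with parameters fitted on the reference."""
    return [(v - center) / scale for v in values]


# One gene's expression in training cells and in new query cells (toy values).
training = [1.0, 2.0, 3.0, 4.0, 5.0]
query = [3.0, 5.0, 103.0]

center, scale = fit_robust_scaler(training)
training_scaled = apply_robust_scaler(training, center, scale)
query_scaled = apply_robust_scaler(query, center, scale)
```

Fitting the parameters separately on the query would hide genuine shifts between training and query data; reusing the training parameters keeps both on one scale, and the median and IQR are far less sensitive to outliers such as the 103.0 value than the mean and standard deviation would be.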

Issue 3: Low Positive Predictive Value in In-Silico Perturbation

Problem: Predictions made by your scFM for genetic perturbations (e.g., knockout, overexpression) have a low rate of experimental validation.

- Potential Cause: The "open-loop" model predictions are based on patterns in the baseline data that may not fully capture the effects of perturbation.

- Solution: Implement a "closed-loop" fine-tuning framework. Incorporate a small set (as few as 10-20 examples) of experimental perturbation data (e.g., from Perturb-seq) into the model's fine-tuning process. This has been shown to triple the positive predictive value of in-silico perturbation predictions [17].

Experimental Protocols

Protocol 1: Benchmarking Feature Selection for Data Integration and Query Mapping

This protocol is based on a robust benchmarking pipeline from a registered report in Nature Methods [6].

1. Define Evaluation Metrics: Select metrics that cover multiple performance categories:

- Batch Effect Removal: Batch ASW (Average Silhouette Width), Batch PCR (Principal Component Regression).

- Biological Conservation: cLISI (Cell-type Local Inverse Simpson's Index), isolated label F1 score.

- Query Mapping Quality: Cell distance, mLISI (Mapping LISI).

- Unseen Population Detection: Milo, Unseen cell distance.

2. Establish Baseline Methods: Run integrations with diverse baseline feature sets to establish performance ranges for scaling metrics. Recommended baselines include:

- All features.

- 2,000 highly variable features (e.g., using the scanpy implementation).

- 500 randomly selected features (average over 5 sets).

- 200 stably expressed features (e.g., using scSEGIndex) as a negative control.

3. Scale and Summarize Performance: Scale the metric scores for each method relative to the minimum and maximum baseline scores. Aggregate scores within each metric category to summarize performance.
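
Step 3 amounts to a min-max rescaling against the baseline range followed by aggregation. The sketch below shows one way to do this; averaging within a category is an assumption (the protocol only says "aggregate"), and the function names and numbers are hypothetical.

```python
def scale_to_baselines(score, baseline_scores):
    """Rescale a metric so the worst baseline maps to 0 and the best to 1."""
    low, high = min(baseline_scores), max(baseline_scores)
    if high == low:
        raise ValueError("baselines must span a non-zero range")
    return (score - low) / (high - low)


def summarize(scaled_by_category):
    """Average the scaled metrics within each category (assumed aggregation)."""
    return {
        category: sum(values) / len(values)
        for category, values in scaled_by_category.items()
    }


# Toy example: one metric, four baseline feature sets, one candidate method.
baseline_asw = [0.2, 0.4, 0.6, 0.8]
candidate_scaled = scale_to_baselines(0.5, baseline_asw)

summary = summarize({
    "batch_removal": [candidate_scaled, 0.7],
    "bio_conservation": [0.9],
})
```

Scaled scores can fall below 0 or above 1 when a method performs worse or better than every baseline, which is informative in itself and is why no clipping is applied here.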

Protocol 2: Performing Cell-Type Specific Differential Expression with Biological Replicates

This protocol ensures valid statistical testing by treating samples, not individual cells, as experimental units [18].

1. Data Processing:

- Start with raw count data (do not use batch-corrected counts for DE).

- Perform quality control filtering and cell type annotation.

- If multiple samples are analyzed, integrate them with batch correction for clustering and annotation only; keep the raw counts for the differential expression step.

2. Pseudobulk Aggregation:

- For each cell type of interest, sum the UMI counts across all cells belonging to the same sample.

- This creates a representative expression profile for that cell type in each sample.

3. Differential Expression Analysis:

- Use established bulk RNA-seq tools (e.g., edgeR, limma-voom) on the pseudobulk counts.

- The statistical model tests for expression differences between conditions (e.g., treated vs. control), using the samples as replicates.
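
The pseudobulk aggregation in step 2 is a grouped sum over cells. The sketch below shows it on a toy input; the data layout (tuples of sample, cell type, and counts) and the function name are assumptions chosen for illustration, and the differential expression test itself is left to the bulk tools named above.

```python
from collections import defaultdict


def pseudobulk(cells, n_genes):
    """Sum raw UMI counts over all cells sharing a (sample, cell type) pair."""
    totals = defaultdict(lambda: [0] * n_genes)
    for sample_id, cell_type, counts in cells:
        profile = totals[(sample_id, cell_type)]
        for g, count in enumerate(counts):
            profile[g] += count
    return dict(totals)


# Toy input: (sample, annotated cell type, raw counts for two genes).
toy_cells = [
    ("s1", "T", [1, 0]),
    ("s1", "T", [2, 3]),
    ("s1", "B", [0, 5]),
    ("s2", "T", [4, 4]),
]
profiles = pseudobulk(toy_cells, n_genes=2)
```

Each resulting profile represents one cell type in one sample, so the number of observations entering the statistical test equals the number of samples rather than the number of cells.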

Data Presentation

Table 1: Comparison of Multi-Condition Differential Expression Tools for scRNA-seq

| Tool Name | Statistical Approach | Key Feature / Use Case |

|---|---|---|

| muscat [18] | Mixed-effects model or Pseudobulk | Detects subpopulation-specific state transitions from multi-sample, multi-condition data. |

| NEBULA [18] | Mixed-effects model | A fast negative binomial mixed model for large-scale multi-subject data. |

| MAST [18] | Mixed-effects model | Accounts for the high number of zero counts; supports random effects. |

| scran (pseudobulkDGE) [18] | Pseudobulk | Wraps bulk tools edgeR and limma-voom for easy use with single-cell data. |

| distinct [18] | Differential distribution test | Tests for differences in the entire expression distribution, not just the mean. |

Table 2: Reagent and Tool Solutions for scRNA-seq Experimental Design

| Item | Function in Experiment |

|---|---|

| Unique Molecular Identifiers (UMIs) | Molecular barcodes added to each transcript during reverse transcription. They allow for accurate molecule counting by correcting for PCR amplification bias [20]. |

| Cell Barcodes | Short DNA sequences that uniquely label all mRNAs from a single cell, allowing samples to be multiplexed and computationally demultiplexed after sequencing [20]. |

| Fluidigm C1 System | A microfluidic-array platform for automated cell capture and library preparation, suitable for medium-throughput, full-length transcriptome analysis [20]. |

| 10x Chromium | A microfluidic-droplet platform for high-throughput, 3' or 5' tag-based library preparation. It is cost-effective for profiling tens of thousands of cells [20]. |

| SMART-seq2 | A plate-based, full-length RNA-seq protocol that provides uniform transcript coverage, enabling the study of splice variants and allele-specific expression [20]. |

Workflow Visualizations

HVG Selection for scFM Training

scRNA-seq Multi-Condition Experimental Design

Troubleshooting Guide: Addressing Data Sparsity and Technical Noise

FAQ 1: How does data sparsity fundamentally challenge scFM training?

Data sparsity, primarily caused by dropout events where genes are measured as unexpressed due to technical limitations, obscures the true biological signal in single-cell RNA sequencing (scRNA-seq) data. This high sparsity and high dimensionality create a "curse of dimensionality" problem where technical noise accumulates and masks subtle biological phenomena, including tumor-suppressor events in cancer and cell-type-specific transcription factor activities [21].

The core issue is that statistical properties of high-dimensional spaces differ dramatically from our intuitive understanding of two- or three-dimensional spaces. As dimensionality increases, the distance between data points becomes less meaningful, and technical noise dominates the data structure, making it difficult for foundation models to learn meaningful biological representations [21].

Solution: Implement comprehensive noise reduction before scFM training. The RECODE algorithm models technical noise arising from the entire data generation process as a general probability distribution and reduces it using eigenvalue modification theory rooted in high-dimensional statistics. This approach effectively mitigates technical noise while preserving biological signals [21].

FAQ 2: What methods effectively reduce both technical noise and batch effects simultaneously?

Traditional approaches that simply combine technical noise reduction with batch correction often fail because conventional batch correction methods typically rely on dimensionality reduction techniques like PCA, which themselves are insufficient to overcome the curse of dimensionality [21].

Solution: Utilize integrated approaches like iRECODE (integrative RECODE), which synergizes high-dimensional statistical noise reduction with established batch correction methods. iRECODE integrates batch correction within an "essential space" after initial noise variance-stabilizing normalization, thereby minimizing accuracy degradation and computational costs associated with high-dimensional calculations [21].

Table 1: Performance Comparison of Noise Reduction Methods

| Method | Technical Noise Reduction | Batch Effect Correction | Relative Error in Mean Expression | Computational Efficiency |

|---|---|---|---|---|

| Raw Data | None | None | 11.1-14.3% | Baseline |

| RECODE Only | Excellent | Limited | Not Available | High |

| Traditional Batch Correction | Limited | Good | Not Available | Moderate |

| iRECODE | Excellent | Excellent | 2.4-2.5% | 10x more efficient than combined approaches |

FAQ 3: How does feature selection impact scFM performance with sparse data?

Feature selection—specifically the identification of Highly Variable Genes (HVGs)—is critical for managing data sparsity in scFM training. The choice of feature selection method significantly affects downstream integration performance, query mapping, label transfer accuracy, and detection of unseen cell populations [6].

Benchmarking studies reveal that using highly variable genes generally leads to better integrations, but the specific feature selection strategy must be carefully chosen. Methods that leverage the relationship between gene average expression level and positive ratio (the proportion of cells where a gene is detected) can more robustly identify biologically informative features amidst technical noise [3].

Table 2: Feature Selection Method Performance Benchmarks

| Method | Adjusted Rand Index (ARI) | Normalized Mutual Information (NMI) | Silhouette Coefficient | Robustness to Dropout |

|---|---|---|---|---|

| GLP | Highest | Highest | Highest | Excellent |

| VST | High | High | High | Good |

| SCTransform | High | High | High | Good |

| M3Drop/NBDrop | Moderate | Moderate | Moderate | Excellent |

| Random Selection | Low | Low | Low | Poor |

Solution: Consider advanced feature selection methods like GLP (Genes identified through LOESS with Positive ratio), which uses optimized LOESS regression to capture the relationship between gene average expression level and positive ratio while minimizing overfitting. This approach has demonstrated consistent outperformance across multiple benchmark criteria compared to eight leading feature selection methods [3].

FAQ 4: Are foundation models inherently robust to data sparsity, or do traditional methods remain competitive?

Current benchmarking reveals that while scFMs are robust and versatile tools for diverse applications, simpler machine learning models can be more adept at efficiently adapting to specific datasets, particularly under resource constraints. Notably, no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection based on factors such as dataset size, task complexity, and computational resources [4].

The performance improvement of scFMs often arises from creating a "smoother landscape" in the pretrained latent space, which reduces the difficulty of training task-specific models. However, the high sparsity, high dimensionality, and low signal-to-noise ratio of transcriptome data continue to present challenges for all models [4].

Solution: Evaluate the specific requirements of your biological question before committing to scFM approaches. For well-defined tasks with limited data, traditional methods may provide more efficient solutions. For exploratory analyses across diverse cell types and conditions, scFMs may offer advantages in capturing broader biological patterns [4] [5].

Experimental Protocols for Noise Mitigation

Protocol 1: Implementing iRECODE for Dual Noise Reduction

Input Preparation: Format your scRNA-seq data as a standard gene expression matrix with cells as columns and genes as rows [21].

Noise Variance-Stabilizing Normalization (NVSN): Map gene expression data to an essential space using NVSN to stabilize technical variance across the expression range [21].

Singular Value Decomposition: Apply SVD to decompose the normalized matrix into orthogonal components representing the primary sources of variation [21].

Principal Component Variance Modification: Modify principal component variances using eigenvalue modification theory to reduce technical noise [21].

Integrated Batch Correction: Apply Harmony batch correction within the essential space to minimize batch effects while preserving biological variation [21].

Reconstruction: Reconstruct the denoised, batch-corrected expression matrix for downstream scFM training [21].

Protocol 2: GLP Feature Selection for scFM Training

Data Preprocessing: Filter out genes captured in fewer than 3 cells to ensure statistical reliability [3].

Parameter Calculation: For each gene, compute:

- Average expression level (λ) = (1/c) × Σ Xij

- Positive ratio (f) = (1/c) × Σ min(1, Xij)

where c is the number of cells, Xij is the expression value of the gene in a given cell, and each sum runs over all cells [3].

Bayesian Information Criterion Optimization: Use BIC to automatically determine the optimal LOESS smoothing parameter (α) through:

- RSS = Σ(yj - ŷj)²

- BIC = c × ln(RSS/c) + k × ln(c)

where k is the degrees of freedom [3].

Two-Step LOESS Regression:

- First step: Apply Tukey's biweight robust statistical method to identify outlier genes

- Second step: Assign zero weights to outliers and repeat LOESS regression for accurate gene selection [3].

Feature Selection: Select genes with expression levels significantly higher than expected based on the LOESS-predicted values from their positive ratios [3].
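The parameter calculation and BIC steps of this protocol can be sketched in a few lines of NumPy. This is an illustrative sketch, not the GLP reference implementation: the function names, the cells-by-genes matrix orientation, and the candidate fits (RSS and degrees of freedom per smoothing parameter) are hypothetical, and the LOESS fitting itself is omitted.

```python
import math
import numpy as np

def mean_and_positive_ratio(counts):
    """counts: (cells x genes) array of non-negative expression values (assumed layout)."""
    counts = np.asarray(counts, dtype=float)
    c = counts.shape[0]                          # number of cells
    lam = counts.sum(axis=0) / c                 # average expression level per gene
    f = np.minimum(1.0, counts).sum(axis=0) / c  # positive ratio per gene
    return lam, f

def bic(rss, c, k):
    """BIC = c * ln(RSS / c) + k * ln(c), following the formula in the text."""
    return c * math.log(rss / c) + k * math.log(c)

toy = np.array([[0, 2, 1],
                [0, 0, 3],
                [1, 0, 2],
                [0, 0, 0]])
lam, f = mean_and_positive_ratio(toy)

# Hypothetical LOESS fits: smoothing parameter alpha -> (RSS, degrees of freedom k)
fits = {0.1: (4.0, 12.0), 0.3: (4.5, 6.0), 0.7: (9.0, 3.0)}
scores = {alpha: bic(rss, c=100, k=k) for alpha, (rss, k) in fits.items()}
best_alpha = min(scores, key=scores.get)  # the lowest BIC wins
```

The smallest smoothing parameter fits best but pays a larger complexity penalty, so the criterion settles on an intermediate value, which is the overfitting control the protocol relies on.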

Research Reagent Solutions

Table 3: Essential Computational Tools for scFM Training

| Tool/Resource | Primary Function | Application Context | Key Advantage |

|---|---|---|---|

| RECODE/iRECODE | Technical noise and batch effect reduction | Preprocessing for scFM training | Preserves full-dimensional data; parameter-free |

| GLP | Feature selection based on positive ratio | HVG selection for sparse data | Optimized LOESS regression minimizes overfitting |

| Harmony | Batch correction | Multi-dataset integration | Compatible with iRECODE framework |

| Vitessce | Multimodal data visualization | Quality control and result interpretation | Integrates spatial and single-cell data |

| scGPT | Foundation model architecture | scFM training and fine-tuning | Supports multiple omics modalities |

| CZ CELLxGENE | Curated single-cell data | Pretraining data source | Standardized access to annotated datasets |

Advanced Technical Considerations

Evaluating Biological Relevance of scFM Embeddings

When assessing scFM performance beyond standard metrics, implement ontology-informed evaluation strategies:

scGraph-OntoRWR: Measures consistency between cell type relationships captured by scFMs and prior biological knowledge encoded in cell ontologies [4].

Lowest Common Ancestor Distance (LCAD): Quantifies ontological proximity between misclassified cell types to assess the severity of annotation errors [4].

Roughness Index (ROGI): Evaluates the smoothness of the cell-property landscape in the latent space, where smoother landscapes typically indicate better generalization capability [4].

Cross-Modality Applications

The RECODE platform extends beyond transcriptomics to epigenomic and spatial data modalities. For single-cell Hi-C data, RECODE effectively mitigates sparsity to reveal cell-specific chromatin interactions and topologically associating domains that align with bulk Hi-C counterparts [21]. Similarly, for spatial transcriptomics, integrated visualization tools like Vitessce enable correlative analysis of spatial localization and gene expression patterns [22].

Adaptive Model Selection Framework

Given that no single scFM consistently outperforms others across all tasks, implement a decision framework based on:

- Dataset size: Traditional methods often suffice for smaller datasets (<10,000 cells)

- Task complexity: scFMs show advantages for novel cell type discovery and cross-tissue analyses

- Resource constraints: Consider computational requirements relative to available infrastructure

- Biological interpretability: Assess need for mechanistic insights versus predictive accuracy [4]

The Relationship Between HVGs and Foundational Model Architecture

Frequently Asked Questions (FAQs)

1. How does the choice of Highly Variable Genes (HVGs) impact the input structure of a single-cell foundation model (scFM)?

The selection of Highly Variable Genes (HVGs) is a fundamental pre-processing step that directly determines the "vocabulary" and input sequence for a transformer-based scFM. Unlike words in a language, genes in a cell have no inherent sequential order, so models must impose one. A common strategy is to rank genes by their expression levels within each cell, feeding the ordered list of top genes as a "sentence" for the model to process [5]. The number of HVGs selected (e.g., 1,200 or 2,048) defines the sequence length for each cell [4]. Different models employ various gene ordering strategies, and the choice of HVG set can influence how effectively the model learns biological relationships.
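The rank-based ordering described above can be sketched as follows. This is a simplified illustration that does not reproduce any specific model's tokenizer (published models may normalize expression before ranking); the function name `rank_tokenize` and the toy gene list are assumptions.

```python
import numpy as np

def rank_tokenize(expr, gene_ids, max_len):
    """Return the expressed genes of one cell, ordered by decreasing expression."""
    expr = np.asarray(expr, dtype=float)
    expressed = np.flatnonzero(expr > 0)  # unexpressed genes are dropped
    order = expressed[np.argsort(-expr[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:max_len]]  # truncate to the sequence length

genes = ["CD3E", "MS4A1", "LYZ", "NKG7", "GNLY"]
cell = [0.0, 5.0, 2.0, 0.0, 7.0]
tokens = rank_tokenize(cell, genes, max_len=2)
print(tokens)
```

Here `max_len` plays the role of the model's sequence length, so the HVG set determines which genes are even eligible to appear in the "sentence".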

2. My scFM is not performing well on downstream tasks like cell type annotation. Could the HVG selection be a factor?

Yes, absolutely. The benchmark study by Li et al. (2025) found that no single scFM consistently outperforms others across all tasks, and simpler baseline methods can sometimes be more effective, particularly under resource constraints [4] [23]. If your model is underperforming, consider that the HVG set used during pre-training might not be optimal for your specific downstream dataset. The biological variation captured by a general-purpose HVG list may not align perfectly with the cell types or states in your target data. Evaluating the "biological relevance" of the embeddings using ontology-informed metrics can help diagnose this issue [4].

3. What is the relationship between a model's architecture and its need for value embeddings alongside gene token embeddings?

This is a key architectural consideration. Because scRNA-seq data provides an expression value for each gene, models must encode both the gene's identity (the "word") and its expression level (the "emphasis"). This is typically handled through a two-part input layer [4] [23]:

- Gene Token Embedding: A lookup table that represents each gene's identity as a vector, analogous to a word embedding in NLP.

- Value Embedding: A separate representation for the gene's expression value. Models use different strategies for this, such as value binning (discretizing the expression into categories) or value projection (creating an embedding based on the continuous value) [4].

This dual-embedding approach allows the transformer architecture to use its attention mechanisms to weight the importance of genes dynamically based on their context and expression level.
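A two-part input layer of this kind can be sketched with plain NumPy lookup tables. The sketch below uses value binning; the random tables stand in for learned parameters, the quantile-based binning rule and the summation of the two embeddings are assumptions made for illustration, and the sizes are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
n_genes, n_bins, d_model = 5, 4, 8

gene_table = rng.normal(size=(n_genes, d_model))  # gene token embedding (lookup table)
value_table = rng.normal(size=(n_bins, d_model))  # value embedding, one row per bin

def bin_values(expr, n_bins):
    """Bin 0 is reserved for zero expression; non-zero values get quantile bins 1..n_bins-1."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins)[1:-1])
        bins[nonzero] = 1 + np.digitize(expr[nonzero], edges, right=True)
    return bins

cell = np.array([0.0, 5.0, 2.0, 0.0, 7.0])
bins = bin_values(cell, n_bins)
# One input vector per gene: identity embedding plus expression-level embedding
inputs = gene_table[np.arange(n_genes)] + value_table[bins]
```

Value projection would replace the `value_table` lookup with a small learned function of the continuous expression value.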

4. Are there scFMs that avoid the HVG selection problem altogether?

Some models are designed to use the entire genome rather than a pre-selected HVG list. For example, the scFoundation model is pretrained on nearly all human protein-encoding genes (19,264 genes) [4]. While this avoids the potential bias introduced by HVG selection, it comes at a significant computational cost and may require more sophisticated architectures or training strategies to handle the high dimensionality and sparsity of the data effectively.

Troubleshooting Guides

Problem: Poor Batch Integration Performance

Symptoms: After using an scFM for dataset integration, biological cell types remain clustered by batch (e.g., by patient or sequencing platform) instead of mixing seamlessly.

Potential Causes and Solutions:

| Step | Potential Cause | Diagnostic Check | Solution |

|---|---|---|---|

| 1 | HVG Mismatch | The set of HVGs used in pre-training captures technical artifacts specific to the pre-training datasets. | Fine-tune the model on a small sample of your target data to adapt the gene representations. Alternatively, use a model like Nephrobase Cell+ that employs adversarial training to actively remove batch signals [24]. |

| 2 | Insufficient Model Pretraining | The model was not pre-trained on data with batch effects as diverse as yours. | Check the pre-training corpus of your scFM. Select a model pre-trained on massive, diverse datasets (e.g., >30 million cells) from multiple sources, as scale and diversity improve robustness [24]. |

| 3 | Suboptimal Embeddings | The zero-shot cell embeddings from the scFM are not batch-invariant. | Use the scFM embeddings as a starting point and apply a dedicated batch-integration tool like Harmony or Scanorama as a post-processing step [25]. |

Problem: Inaccurate Gene Perturbation Prediction

Symptoms: Your scFM fails to accurately predict gene expression changes following single or double genetic perturbations, performing worse than simple additive baselines.

Potential Causes and Solutions:

| Step | Potential Cause | Diagnostic Check | Solution |

|---|---|---|---|

| 1 | Limited Perturbation Knowledge | The model's pre-training data may have lacked sufficient perturbation examples to learn causal relationships. | A recent benchmark found that simple linear models can outperform complex scFMs for this task [26]. Consider using a baseline model or a linear model enhanced with gene embeddings extracted from an scFM [26]. |

| 2 | Ineffective Gene Embeddings | The gene-token embeddings do not adequately capture functional gene-gene relationships. | Extract the gene embedding matrix (G) from the scFM and use it to train a simpler predictive model. Benchmarks show this can sometimes match or exceed the performance of the scFM's own decoder [26]. |

Experimental Protocols from Key Studies

Benchmarking scFM Performance on Cell-Level Tasks

This protocol is adapted from the comprehensive benchmark study by Li et al. (2025) [4] [23].

Objective: To evaluate the quality of cell embeddings generated by different scFMs for tasks like batch integration and cell type annotation.

Materials:

- Test Datasets: Five high-quality scRNA-seq datasets with manual annotations. These should include multiple sources of batch effects (e.g., inter-patient, inter-platform, inter-tissue variations).

- scFMs for Testing: e.g., Geneformer, scGPT, UCE, scFoundation, LangCell, scCello.

- Baseline Methods: Traditional approaches such as Highly Variable Genes (HVGs) selection, Seurat, Harmony, and scVI.

- Evaluation Metrics: A suite of 12 metrics including:

  - Traditional: Clustering accuracy, Silhouette score.

  - Biology-Informed: scGraph-OntoRWR (measures consistency of captured cell type relationships with known biology), Lowest Common Ancestor Distance (LCAD) (measures severity of cell type misclassification).

Methodology:

- Feature Extraction: For each scFM and baseline method, generate zero-shot cell embeddings from the test datasets.

- Downstream Task Application: Apply the embeddings to specific cell-level tasks, such as:

  - Dataset Integration: Visualize embeddings using UMAP and assess batch mixing and biological conservation.

  - Cell Type Annotation: Train a simple classifier on the embeddings and evaluate its accuracy.

- Evaluation: Score the performance of each model using the full set of evaluation metrics.

- Ranking: Aggregate results using a non-dominated sorting algorithm to provide task-specific and overall model rankings.

Expected Output: A holistic ranking of scFMs, identifying the strengths and limitations of each for different biological applications. The study revealed that while scFMs are robust and versatile, simpler models can be more efficient for specific datasets [4].
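The ranking step can be illustrated with a basic non-dominated (Pareto) sorting routine. This is a generic sketch, not the benchmark's own code; the metric values are invented, and it assumes every metric is oriented so that higher is better.

```python
import numpy as np

def non_dominated_fronts(scores):
    """scores: (models x metrics), higher is better. Returns fronts as lists of row indices."""
    scores = np.asarray(scores, dtype=float)
    remaining = list(range(scores.shape[0]))
    fronts = []
    while remaining:
        front = []
        for i in remaining:
            # i is dominated if another model is at least as good everywhere and better somewhere
            dominated = any(
                np.all(scores[j] >= scores[i]) and np.any(scores[j] > scores[i])
                for j in remaining if j != i
            )
            if not dominated:
                front.append(i)
        fronts.append(front)
        remaining = [i for i in remaining if i not in front]
    return fronts

toy_scores = [[0.9, 0.6],  # model A
              [0.7, 0.8],  # model B
              [0.6, 0.5],  # model C
              [0.5, 0.4]]  # model D
fronts = non_dominated_fronts(toy_scores)
print(fronts)
```

Models A and B share the first front because each wins on a different metric, which mirrors the finding that no single scFM is best across all tasks.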

Evaluating Gene Embeddings for Functional Relevance

Objective: To determine if the gene embeddings learned by an scFM capture meaningful biological relationships.

Materials:

- Gene Embeddings: The gene-token embedding matrix extracted from the input layer of the scFM.

- Reference Data: Known biological relationships from databases like Gene Ontology (GO).

- Baseline Embeddings: e.g., Functional Representation of Gene Signatures (FRoGS), which learns gene embeddings from GO hypergraphs [23].

Methodology:

- Embedding Extraction: For a set of common genes, obtain their vector representations from the scFM and the baseline method.

- Similarity Calculation: Compute the cosine similarity between all pairs of gene embeddings within each method.

- Functional Prediction Task: Use the gene embeddings to predict known biological relationships, such as GO term associations or tissue specificity.

- Performance Comparison: Evaluate and compare the prediction accuracy of the scFM-derived embeddings against the baseline embeddings.

Expected Output: Quantification of how well the scFM's intrinsic gene embeddings align with established biological knowledge, indicating which functional relationships the model has learned during pre-training [23].
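The similarity calculation step of this protocol amounts to a normalized matrix product. A minimal sketch, assuming the embeddings are available as a genes-by-dimensions array (the toy matrix below is invented):

```python
import numpy as np

def cosine_similarity_matrix(emb):
    """Pairwise cosine similarity between the rows of a (genes x dims) embedding matrix."""
    emb = np.asarray(emb, dtype=float)
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    unit = emb / np.clip(norms, 1e-12, None)  # guard against all-zero rows
    return unit @ unit.T

emb = np.array([[1.0, 0.0],
                [2.0, 0.0],   # same direction as gene 0
                [0.0, 3.0]])  # orthogonal to genes 0 and 1
sim = cosine_similarity_matrix(emb)
```

The resulting matrix can then be compared against reference annotations, for example by checking whether gene pairs that share a GO term score higher than pairs that do not.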

Model Architecture and HVG Processing Diagram

The diagram below illustrates how a single-cell foundation model transforms a cell's gene expression profile into a latent representation, highlighting the critical role of HVG selection and tokenization.

HVG Processing in scFM Architecture

Research Reagent Solutions

The following table details key computational tools and resources essential for working with single-cell foundation models and Highly Variable Genes.

| Resource Name | Type | Primary Function | Relevance to HVGs & Architecture |

|---|---|---|---|

| Geneformer [4] | Pre-trained scFM | A transformer model for cell and gene representation learning. | Uses a ranked list of 2,048 genes as input, demonstrating a specific HVG-based architecture. |

| scGPT [4] [5] | Pre-trained scFM | A generative transformer for single-cell biology. | Employs 1,200 HVGs and uses value binning for expression levels, illustrating an alternative input strategy. |

| scFoundation [4] [26] | Pre-trained scFM | A large model for gene expression and perturbation prediction. | Uses all ~19k protein-encoding genes, showcasing an architecture that bypasses HVG selection. |

| Nephrobase Cell+ [24] | Organ-Specific scFM | A kidney-focused foundation model. | Pretrained on ~40M cells; its success suggests that specialized models can outperform general ones, which has implications for HVG relevance in specific tissues. |

| CellxGene [5] | Data Platform | Provides unified access to annotated single-cell datasets. | A primary source for obtaining diverse, high-quality data for model pre-training or benchmarking, which is crucial for defining robust HVG sets. |

| Seurat [25] | Analysis Toolkit | A comprehensive R package for single-cell genomics. | Provides standard pipelines for HVG selection and serves as a common baseline for benchmarking scFMs. |

| Harmony [4] [25] | Integration Algorithm | A tool for dataset integration. | Used as a post-processing step for scFM embeddings or as a baseline to compare against the integration performance of scFMs. |

Practical Implementation: HVG Selection Methods and Integration with scFM Pipelines

Comparative Analysis of Popular HVG Selection Methods (Seurat, Scran, SCHS, SIEVE)

FAQs on HVG Selection Principles and Best Practices

Q1: What is the core purpose of selecting Highly Variable Genes (HVGs) in single-cell RNA-seq analysis?

The primary purpose of HVG selection is to overcome the "curse of dimensionality" in single-cell RNA sequencing data by identifying a subset of genes that are most informative for distinguishing cell types or states. This process filters out genes that represent technical or biological noise, thereby enhancing the signal for downstream analyses such as clustering, dimensionality reduction, and cell type identification. Typically, only 3,000–5,000 of the tens of thousands of sequenced genes relate to cell-type-specific expression patterns, making HVG selection a critical pre-processing step to improve analytical resolution and accuracy [27].

Q2: For a multi-sample experiment, what is the recommended strategy to select HVGs that are robust across batches?

The recommended strategy for multi-sample experiments involves performing HVG selection on a per-batch basis and then identifying the consensus genes. This ensures the selected feature space is shared across samples. The methodology is as follows:

- Compute HVGs separately for each batch using the `batch_key` parameter in your HVG selection function.

- For each gene, note how many batches it was identified as an HVG in.

- Sort all genes by this count (`highly_variable_nbatches`).

- Select the top N genes (e.g., 3000) that are most frequently variable across batches for downstream analysis [28].

This consensus approach is crucial for data integration tasks, as it focuses on a shared set of features, improving integration quality and subsequent analysis.
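The consensus logic can be sketched without any single-cell library. In the sketch below, a simple variance-to-mean dispersion ranking stands in for a real per-batch HVG method, and the function name `consensus_hvgs` and the toy counts are assumptions; in practice the per-batch step would come from your HVG tool of choice.

```python
import numpy as np

def consensus_hvgs(counts, batches, n_per_batch, n_top):
    """counts: (cells x genes); batches: one batch label per cell."""
    counts = np.asarray(counts, dtype=float)
    batches = np.asarray(batches)
    n_batches_hvg = np.zeros(counts.shape[1], dtype=int)
    for b in np.unique(batches):
        x = counts[batches == b]
        mean = x.mean(axis=0)
        # Stand-in HVG score: variance-to-mean ratio within this batch
        disp = np.where(mean > 0, x.var(axis=0) / np.maximum(mean, 1e-12), 0.0)
        top = np.argsort(-disp, kind="stable")[:n_per_batch]
        n_batches_hvg[top] += 1  # count batches in which the gene was selected
    order = np.argsort(-n_batches_hvg, kind="stable")
    return order[:n_top], n_batches_hvg

toy = np.array([[0, 0, 1, 2], [10, 6, 1, 2], [0, 0, 1, 2],   # batch "a"
                [0, 1, 0, 2], [10, 1, 6, 2], [0, 1, 0, 2]])  # batch "b"
labels = ["a", "a", "a", "b", "b", "b"]
selected, n_batches = consensus_hvgs(toy, labels, n_per_batch=2, n_top=1)
```

Only gene 0 is variable in both batches, so it ranks first; genes 1 and 2 are each variable in a single batch and would be candidates for batch-specific signal.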

Q3: Can I use the same set of HVGs for different analysis tasks, such as clustering and integration?

While a single set of HVGs can be used for multiple tasks, the optimal strategy may vary. For integration, the consensus method described above is highly recommended. For clustering within a single, well-controlled dataset, standard HVG selection on the entire dataset might be sufficient. However, it's important to note that no single method is universally best. For instance, SCHS excels in reproducibility but favors highly expressed genes, while other methods like M3Drop select more lowly expressed genes, which can impact clustering results [11]. Researchers should align their HVG selection strategy with their primary analytical goal.

Troubleshooting Common HVG Selection Issues

Q1: The tool I'm using (e.g., Seurat) is not returning the expected number of HVGs, even though I specified the nfeatures parameter. Why?

This is a documented issue that can occur in specific workflows. For example, in Seurat, this behavior has been observed when the RNA assay is split into multiple layers (e.g., by a batch key) before running FindVariableFeatures. The underlying cause may be related to how the function interacts with the split assay object. As a workaround, you can try running the HVG selection on an unsplit object first or ensure you are using the latest version of the software, as this may be a resolved bug. Always check the number of variable features stored in the output object to confirm the function's behavior [29].

Q2: My downstream clustering results are poor or do not resolve known cell populations. Could the HVG selection be the cause?

Yes, the choice of HVG selection method can significantly impact clustering resolution and accuracy. Different methods have biases; for example, some may overlook lowly expressed but biologically critical genes. If clustering performance is unsatisfactory, consider these steps:

- Re-evaluate your HVG method: Switch to a method known for higher accuracy in your biological context. The SIEVE method, for example, was developed to improve robustness and accuracy by minimizing stochastic noise [11].

- Check for batch effects: If your data contains multiple batches, ensure you are using a batch-aware HVG selection strategy. Using HVGs selected without considering batches can lead to batch effects dominating the biological signal.

- Explore method-specific diagnostics: Some methods, like SCHS, show high reproducibility, meaning the same genes are consistently selected across subsamples of your data. Low reproducibility in your chosen method can lead to unstable clustering results [11].

Q3: I am using an integrated object for clustering. Should I re-select HVGs after integration?

No, it is generally not sensible to re-select HVGs based on the integrated or corrected data. Highly Variable Gene detection methods are designed and calibrated for raw (or normalized) count data, which contains the technical and biological variation they are meant to discern. Integration methods like scVI explicitly remove unwanted technical variation (e.g., batch effects) to create a corrected expression matrix. Applying standard HVG selection on this "cleaned" data will not capture the intended sources of variation and is not part of standard analytical workflows [28].

Performance Comparison of HVG Methods

The table below summarizes the performance characteristics of various HVG selection methods based on an evaluation using scRNA-seq data from hematopoietic stem/progenitor cells and mature blood cells.

Table 1: Characteristics and Performance of HVG Selection Methods

| Method | Reproducibility | Key Strengths | Key Limitations | Bias in Gene Expression Level |

|---|---|---|---|---|

| SCHS | High | High reproducibility and accuracy [11] | Prefers selection of highly expressed genes [11] | Prefers highly expressed genes [11] |

| Seurat (VST, SCT, DISP) | Medium | Good distinguishing capability for similar cell types [11] | Moderate reproducibility [11] | Selects a mix, including ~25% lowly expressed genes [11] |

| Scran | Low to Medium | Good distinguishing capability [11] | Lower reproducibility; lower cluster purity [11] | Selects almost no lowly expressed genes [11] |

| M3Drop | Low | Can identify lowly expressed variable genes [11] | Lowest distinguishing capability and classification accuracy [11] | Selects a mix, including ~25% lowly expressed genes [11] |

| ROGUE | Low to Medium | - | Lower reproducibility; lower cluster purity [11] | Selects almost no lowly expressed genes [11] |

| Scmap | Low to Medium | - | Lower reproducibility; lower cluster purity [11] | Prefers highly expressed genes [11] |

| SIEVE | High (by design) | High robustness; improves cell classification accuracy; recovers lowly expressed variable genes [11] | Computationally intensive due to multiple rounds of sampling [11] | Mitigates bias, recovers genes across expression levels [11] |

Table 2: Impact on Downstream Analysis (Based on HSPC and Mature Blood Cell Data)

| Method | Cluster Purity | Classification Accuracy (HSPCs) | Classification Accuracy (Mature Cells) |

|---|---|---|---|

| SCHS | >90% | ~85-90% | >90% |

| Seurat | >90% | ~85-90% | >90% |

| Scran | ~90% (slightly inferior) | ~85-90% | >90% |

| M3Drop | >90% | Lowest | Lowest |

| ROGUE | ~90% (slightly inferior) | ~85-90% | >90% |

| Scmap | ~90% (slightly inferior) | ~85-90% | >90% |

| SIEVE | >90% | Substantially improved | Substantially improved |

Experimental Protocols for Key HVG Methods

Protocol 1: Standard HVG Selection with Seurat

This protocol describes a standard workflow for identifying HVGs on a single-cell dataset using Seurat.

- Normalization: Normalize the raw count data to account for sequencing depth using `NormalizeData`. This typically involves log-normalization.

- Selection: Run the `FindVariableFeatures` function. The key arguments are:

  - `nfeatures`: The number of genes to select (e.g., 3000).

  - `selection.method`: The specific algorithm to use (e.g., "vst", "mean.var.plot", or "dispersion"). SCTransform-based selection is run through the separate `SCTransform` function rather than through this argument.

- Validation: The selected HVGs are stored in the Seurat object. You can access them with `VariableFeatures(object)` and visualize the selection using `VariableFeaturePlot`.

Protocol 2: Robust Multi-Batch HVG Selection with Scanpy

This protocol is essential for datasets comprising multiple batches or samples and is a critical precursor to data integration.

- Per-Batch HVG Calculation: Use the `sc.pp.highly_variable_genes(adata, batch_key='batch')` function in Scanpy. This calculates HVGs within each batch independently and stores a count of how many batches each gene was variable in (`highly_variable_nbatches`).

- Consensus Gene Selection: Identify the consensus HVGs by selecting genes that are variable in the most batches.

- Subsetting: Subset the `AnnData` object to these consensus HVGs before proceeding with integration or joint analysis [28].

Protocol 3: The SIEVE Strategy for Robust HVG Identification

SIEVE is a meta-strategy that can be applied to existing HVG methods to improve their robustness.

- Random Sampling: Perform multiple rounds (e.g., 50) of random sampling. In each round, randomly select a subset of cells (e.g., 70%) to serve as the reference set.

- HVG Selection on Subsets: In each round, apply your chosen base HVG selection method (e.g., Seurat's VST) to the reference set to identify a set of HVGs.

- Identify Consensus HVGs: Across all rounds, compute how frequently each gene is selected as an HVG. The final robust set of HVGs is composed of genes with the highest selection frequency. This process minimizes stochastic noise and identifies a core set of variable genes that are consistently detected, substantially improving downstream classification accuracy [11].
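The three steps above can be sketched as a subsampling loop around any base selector. This is an illustrative sketch, not the published SIEVE implementation: the variance-to-mean ranking is a stand-in for a real base method such as Seurat's VST, and the function names and synthetic counts are assumptions.

```python
import numpy as np

def top_dispersion_genes(x, n_hvg):
    """Stand-in base HVG method: rank genes by variance-to-mean ratio."""
    mean = x.mean(axis=0)
    disp = np.where(mean > 0, x.var(axis=0) / np.maximum(mean, 1e-12), 0.0)
    return np.argsort(-disp, kind="stable")[:n_hvg]

def sieve_like_selection(counts, n_hvg, n_rounds=50, frac=0.7, seed=0):
    """Select the genes most frequently chosen across random cell subsamples."""
    counts = np.asarray(counts, dtype=float)
    rng = np.random.default_rng(seed)
    n_cells, n_genes = counts.shape
    n_sub = max(2, int(round(frac * n_cells)))
    freq = np.zeros(n_genes)
    for _ in range(n_rounds):
        idx = rng.choice(n_cells, size=n_sub, replace=False)  # reference set for this round
        freq[top_dispersion_genes(counts[idx], n_hvg)] += 1
    freq /= n_rounds  # selection frequency per gene
    return np.argsort(-freq, kind="stable")[:n_hvg], freq

# Synthetic data: genes 0 and 1 are bimodal, genes 2-5 are Poisson background
data_rng = np.random.default_rng(1)
bimodal = np.zeros((40, 2))
bimodal[:20, 0] = 20.0
bimodal[::2, 1] = 20.0
toy_counts = np.hstack([bimodal, data_rng.poisson(2.0, size=(40, 4))])
selected, freq = sieve_like_selection(toy_counts, n_hvg=2)
```

Genes whose selection frequency falls well below 1.0 are the ones whose HVG status depends on which cells happened to be sampled, and the frequency threshold is the knob that trades recall for robustness.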

Workflow Diagrams for HVG Selection

SIEVE Strategy Workflow

Multi-Batch HVG Selection Process

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools for HVG Selection and Evaluation

| Tool / Resource | Function in HVG Research | Key Application |

|---|---|---|

| Seurat | A comprehensive toolkit for single-cell analysis. Provides multiple embedded HVG selection methods (VST, SCT, DISP). | Standardized preprocessing and HVG selection for clustering and trajectory inference [11]. |

| Scanpy | A Python-based toolkit for analyzing single-cell gene expression data. Offers functionality broadly comparable to Seurat's. | HVG selection, especially in multi-batch scenarios, and integration with other Python-based ML tools [30] [28]. |

| SCHS | A method for identifying HVGs based on the spatial distribution of cells. | Selecting a reproducible set of variable genes, particularly useful when consistency across subsamples is a priority [11]. |

| SIEVE | A strategy, not a single algorithm, that uses multiple rounds of random sampling to identify robust HVGs. | Improving the robustness and accuracy of any base HVG method, leading to better single-cell classification [11]. |

| scran | A package for low-level analyses of single-cell RNA-seq data. Provides its own method for HVG selection. | An alternative approach to HVG selection, often used in comparative benchmarks [11]. |

| Human Phenotype Ontology (HPO) | A standardized vocabulary of phenotypic abnormalities. | While not directly for HVG selection, it is crucial for phenotype-based prioritization in diagnostic variant discovery following single-cell analysis [31]. |

Batch-Aware Feature Selection for Multi-Dataset Integration

FAQs

1. What is batch-aware feature selection and why is it critical for single-cell foundation model (scFM) training?

Batch-aware feature selection is a computational strategy that identifies informative genes (features) for downstream analysis while explicitly accounting for non-biological technical differences between datasets, known as "batch effects." In the context of scFM training, which uses vast amounts of single-cell RNA sequencing (scRNA-seq) data, this is crucial because technical variation can confound true biological signals [6] [32]. Selecting features without considering batch effects can lead to a model that learns technical artifacts rather than underlying biology, compromising its performance on tasks like cell type annotation, data integration, and query mapping [6]. Proper batch-aware feature selection ensures the scFM learns robust, generalizable biological principles.

2. My integrated dataset shows good batch mixing but poor separation of known cell types. What might be the cause? This is a common challenge indicating that the integration or feature selection process may have been too aggressive, removing biological variation along with technical noise [32]. Specifically:

- Over-correction via KL Regularization: In conditional Variational Autoencoder (cVAE) models, increasing the Kullback–Leibler (KL) divergence regularization strength to force batch integration can indiscriminately remove both batch and biological information, leading to a loss of cell type definition [32] [33].

- Aggressive Adversarial Learning: Methods that use adversarial learning to align batch distributions can sometimes incorrectly merge distinct but proportionally unbalanced cell types across batches (e.g., mixing acinar and immune cells) to achieve statistical indistinguishability [32] [33].

A potential solution is to use a more advanced integration method such as sysVI, which combines a VampPrior with cycle-consistency constraints and has been shown to improve batch correction while better preserving biological signals [32].

3. How does the number of selected features impact integration and downstream mapping tasks? The number of features selected is a critical parameter. Benchmarks show that the performance of integration and mapping is sensitive to this number [6].

- Integration Metrics: Most metrics evaluating batch effect removal and conservation of biological variation are positively correlated with the number of selected features.

- Mapping Metrics: In contrast, metrics assessing the quality of mapping a new query dataset to a reference are often negatively correlated with the feature count. This may be because smaller feature sets can produce noisier, more mixed integrations, in which placing a query cell somewhere within a broad, mixed population is easier [6].

There is therefore a trade-off, and the optimal number may depend on the primary goal of your analysis (e.g., building a reference atlas versus mapping queries to it). It is recommended to benchmark different feature set sizes for your specific application [6].
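The idea behind the batch-aware selection discussed in these FAQs can be illustrated with a small NumPy sketch. It is a simplified stand-in, not the procedure used in [6]: genes are ranked by the variance of log-normalized expression within each batch, and genes that rank highly in many batches are preferred. The function name `batch_aware_hvgs`, the scaling factor of 10,000, and the use of plain variance (rather than a mean-adjusted dispersion) are assumptions made for illustration.

```python
import numpy as np

def batch_aware_hvgs(counts, batches, n_top=2000):
    """Rank genes by variability within each batch, then combine the ranks.

    counts  : (cells, genes) array of non-negative counts
    batches : (cells,) array of batch labels
    Returns the sorted indices of the n_top selected genes.
    """
    counts = np.asarray(counts, dtype=float)
    batches = np.asarray(batches)
    n_genes = counts.shape[1]
    n_top = min(n_top, n_genes)

    # Library-size normalize and log-transform so deeply sequenced cells
    # do not dominate the variance estimates.
    size = counts.sum(axis=1, keepdims=True)
    size[size == 0] = 1.0
    logx = np.log1p(counts / size * 1e4)

    times_selected = np.zeros(n_genes, dtype=int)
    rank_sum = np.zeros(n_genes)
    for b in np.unique(batches):
        var = logx[batches == b].var(axis=0)
        order = np.argsort(-var, kind="stable")
        ranks = np.empty(n_genes, dtype=int)
        ranks[order] = np.arange(n_genes)  # rank 0 = most variable in this batch
        times_selected += ranks < n_top
        rank_sum += ranks

    # Primary key: number of batches in which the gene is in the top n_top.
    # Secondary key: summed within-batch rank (lower is better).
    final = np.lexsort((rank_sum, -times_selected))
    return np.sort(final[:n_top])
```

Because variability is measured inside each batch, a gene whose expression differs only between batches contributes no within-batch variance and is not favored. In practice, scanpy's `highly_variable_genes` accepts a `batch_key` argument that serves the same purpose.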

Troubleshooting Guides

Problem: Poor Data Integration After Batch-Aware Feature Selection

Symptoms:

- Cells cluster strongly by batch instead of by cell type in visualizations like UMAP.

- Low scores on batch correction metrics (e.g., iLISI [32]).

- Inability to transfer labels accurately from a reference to a query dataset [6].

Investigation & Resolution Flowchart

Diagnostic Steps:

Verify Input Data Quality:

- Action: Check the quality control metrics for each batch individually. Look for signs of library preparation issues, such as abnormally low library yield or high levels of adapter contamination, which can create insurmountable batch effects [34].

- Resolution: If problems are found, consult the "Low Library Yield" troubleshooting guide below and consider re-preparing libraries if necessary.

Assess Feature Selection Method:

- Action: Confirm you are using a batch-aware feature selection method. Common practice is to use Highly Variable Gene (HVG) selection. For stronger batch effects, ensure the HVG method accounts for batch.

- Resolution: As demonstrated in benchmarks, using a batch-aware variant of a standard HVG method (such as the Cell Ranger flavor implemented in scanpy) is effective [6]. Avoid simple random gene selection or using all genes.

Evaluate the Integration Algorithm:

- Action: Identify which integration algorithm you are using and understand its limitations. Standard methods (including basic cVAE) can struggle with "substantial batch effects" found across different biological systems (e.g., species) or technologies (e.g., single-cell vs. single-nuclei) [32].

- Resolution: For substantial batch effects, consider newer methods like sysVI, a cVAE-based model that uses VampPrior and cycle-consistency. It has been shown to provide better batch correction while preserving biological variation compared to simply tuning KL regularization or using adversarial learning [32] [33].

Verify Downstream Analysis Parameters:

- Action: Re-visit the number of features selected for the analysis.

- Resolution: Perform a sensitivity analysis. The benchmark in [6] indicates that the number of selected features significantly affects results. Test a range of values (e.g., 500 to 5000) to find the optimum for your data and goal.
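The sensitivity analysis in the last diagnostic step can be prototyped before running a full integration. The sketch below is a rough proxy under stated assumptions, not the benchmark pipeline from [6]: for each feature count it keeps the most variable genes, computes a PCA embedding, and reports how much of the embedding variance is explained by batch versus cell type (a variance-weighted form of principal component regression). The function names and the default of 20 components are illustrative choices.

```python
import numpy as np

def variance_explained(embedding, groups):
    """Fraction of total embedding variance explained by a categorical label."""
    embedding = embedding - embedding.mean(axis=0)
    groups = np.asarray(groups)
    total = (embedding ** 2).sum()
    between = 0.0
    for g in np.unique(groups):
        block = embedding[groups == g]
        # Between-group sum of squares contributed by this group.
        between += len(block) * (block.mean(axis=0) ** 2).sum()
    return between / total if total > 0 else 0.0

def sweep_feature_counts(logx, cell_types, batches,
                         sizes=(500, 1000, 2000, 5000), n_pcs=20):
    """Score several feature-set sizes on log-normalized expression `logx`."""
    logx = np.asarray(logx, dtype=float)
    order = np.argsort(-logx.var(axis=0), kind="stable")
    results = []
    for n in sizes:
        n = min(n, logx.shape[1])
        x = logx[:, order[:n]]
        x = x - x.mean(axis=0)
        # PCA via SVD; keep the leading components as the embedding.
        u, s, _ = np.linalg.svd(x, full_matrices=False)
        k = min(n_pcs, len(s))
        pcs = u[:, :k] * s[:k]
        results.append({
            "n_features": n,
            "batch_r2": variance_explained(pcs, batches),
            "cell_type_r2": variance_explained(pcs, cell_types),
        })
    return results
```

A feature count at which `batch_r2` stays low while `cell_type_r2` stays high is a reasonable starting point, but the final choice should still be confirmed with the integration method and metrics actually used downstream.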

Problem: Low Library Yield in Single-Cell RNA-seq Experiments

Symptoms:

- Final cDNA or library concentrations are well below expectations.

- Electropherogram traces show broad or faint peaks, missing target fragment sizes, or a dominant peak of adapter dimers (~70-90 bp) [34].

Diagnosis and Solutions:

Table 1: Common Causes and Corrective Actions for Low Library Yield

| Category | Root Cause | Corrective Action |

|---|---|---|

| Sample Input / Quality | Degraded RNA or contaminants (phenol, salts) inhibiting enzymes. | Re-purify input sample; use fluorometric quantification (Qubit) over absorbance; ensure high purity (260/230 > 1.8) [34]. |

| Fragmentation & Ligation | Inefficient ligation due to poor enzyme activity or incorrect adapter-to-insert ratio. | Titrate adapter:insert ratios; ensure fresh ligase/buffer; optimize fragmentation parameters [34]. |

| Amplification / PCR | Too few PCR cycles or enzyme inhibitors in the reaction. | Re-amplify from leftover ligation product; avoid over-cycling which causes duplicates and bias [34]. |

| Purification & Cleanup | Overly aggressive size selection or bead cleanup leading to sample loss. | Use correct bead-to-sample ratio; avoid over-drying beads; ensure adequate washing without excessive sample loss [34] [35]. |

Proactive Prevention:

- Run Pilot Experiments: Test a few samples and controls to optimize conditions before processing valuable samples [35].

- Use Controls: Always include a positive control with RNA input mass similar to your cells (e.g., 1-10 pg) and a negative control (e.g., mock FACS buffer) to distinguish experimental from technical issues [35].

- Practice Good Technique: Wear gloves, use low-binding plasticware, maintain separate pre- and post-PCR workspaces, and be meticulous during bead cleanup steps to minimize sample loss and contamination [35].

Experimental Protocols

Protocol: Benchmarking Feature Selection and Integration Workflow

This protocol is adapted from large-scale benchmarking studies [6] to evaluate the impact of feature selection on scRNA-seq data integration and query mapping.

1. Data Preprocessing:

- Input: Multiple scRNA-seq datasets (count matrices).

- Steps:

2. Feature Selection:

- Method: Apply different feature selection strategies to be benchmarked.

- Parameter: Test a range of feature set sizes (e.g., 500, 1000, 2000, 5000).

3. Data Integration:

- Tool: Choose one or more integration methods. For standard batches, methods like Harmony, Seurat, or scVI are common. For substantial batch effects, consider sysVI [32].

- Action: Integrate the reference datasets using each selected feature set from Step 2.

4. Performance Evaluation:

- Action: Calculate a suite of metrics on the integrated data. The table below summarizes key metrics from benchmarks [6].

Table 2: Key Metrics for Evaluating Integration and Mapping Performance

| Category | Metric | Description | What a Good Score Indicates |

|---|---|---|---|

| Batch Correction | iLISI (Integration LISI) | Measures diversity of batches in a cell's neighborhood [32]. | High score: Batches are well-mixed. |

| Batch Correction | Batch PCR (Batch Principal Component Regression) | Quantifies the variance explained by batch in the latent space [6]. | Low score: Less technical variation. |

| Biology Preservation | cLISI (Cell-type LISI) | Measures diversity of cell labels in a cell's neighborhood [6]. | High score: Cell types are distinct. |

| Biology Preservation | bNMI (Batch-balanced NMI) | Compares clustering similarity to cell labels, balanced across batches [6]. | High score: Biological groups are conserved. |

| Query Mapping | Cell Distance | Average distance between query cells and their nearest reference neighbors [6]. | Low score: Query cells map precisely to reference. |

| Query Mapping | mLISI (Mapping LISI) | Assesses mixing of query and reference cells in local neighborhoods [6]. | High score: Query and reference are well-integrated. |
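The LISI-style metrics in Table 2 share one core computation: the inverse Simpson's index of a label within each cell's neighborhood. The sketch below is a simplified version that uses unweighted k-nearest neighbors, whereas published LISI implementations weight neighbors with a perplexity-based kernel. The function name `simple_lisi` and the default `k=30` are assumptions for illustration.

```python
import numpy as np

def simple_lisi(embedding, labels, k=30):
    """Per-cell inverse Simpson's index of `labels` among the k nearest neighbors."""
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    n = embedding.shape[0]
    k = min(k, n - 1)

    # Pairwise squared Euclidean distances (adequate for a few thousand cells).
    sq = (embedding ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * embedding @ embedding.T
    np.fill_diagonal(d2, np.inf)  # a cell is not its own neighbor
    nn = np.argpartition(d2, k - 1, axis=1)[:, :k]

    _, codes = np.unique(labels, return_inverse=True)
    n_labels = codes.max() + 1
    scores = np.empty(n)
    for i in range(n):
        # Label proportions in the neighborhood, then 1 / sum(p^2).
        p = np.bincount(codes[nn[i]], minlength=n_labels) / k
        scores[i] = 1.0 / (p ** 2).sum()
    return scores
```

Passing batch labels gives an iLISI-like score, where values near the number of batches indicate good mixing. Passing cell-type labels gives a raw cLISI-like score, where values near 1 indicate pure neighborhoods; benchmark suites commonly rescale this so that a higher reported value is better.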

Protocol: Implementing sysVI for Substantial Batch Effects

This protocol outlines the use of sysVI, a method designed for challenging integrations [32] [33].

1. Installation and Setup:

- Tool: Access sysVI through the scvi-tools Python package [32].

- Input: An AnnData object containing your multi-batch scRNA-seq data.

2. Model Configuration:

- Key Features: sysVI enhances a standard cVAE with two components:

- VampPrior: Replaces the standard Gaussian prior with a mixture of variational posteriors evaluated at learnable pseudo-inputs, which can better capture multi-modal data distributions and improve biological preservation [32].

- Cycle-Consistency Loss: Encourages that translating a cell's expression from one batch to another and back again reconstructs the original expression, helping to preserve biological identity during integration [32].

3. Execution:

- Train the sysVI model on your multi-batch dataset.

- Obtain the integrated latent representation from the model for downstream analysis like clustering and visualization.

4. Validation:

- Use the metrics in Table 2 to validate the integration quality, paying close attention to the balance between iLISI (batch mixing) and cLISI/bNMI (biology preservation).
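For reference, the two sysVI components described in step 2 can be written compactly. This is a sketch in notation introduced here rather than taken from [32]: $K$ is the number of prior components, $u_k$ are learnable pseudo-inputs, $q_\phi$ is the encoder distribution, $\mathrm{enc}$ and $\mathrm{dec}$ denote the encoder mean and the decoder, $b$ and $b'$ are the source and target batches, $d$ is a distance, and $\beta$ and $\lambda$ are loss weights.

$$
p(z) = \frac{1}{K}\sum_{k=1}^{K} q_\phi(z \mid u_k)
$$

$$
\tilde{x} = \mathrm{dec}\big(\mathrm{enc}(x, b),\, b'\big), \qquad
\hat{x}^{\mathrm{cyc}} = \mathrm{dec}\big(\mathrm{enc}(\tilde{x}, b'),\, b\big), \qquad
\mathcal{L}_{\mathrm{cyc}} = d\big(x, \hat{x}^{\mathrm{cyc}}\big)
$$

$$
\mathcal{L} = \mathcal{L}_{\mathrm{recon}} + \beta\, \mathrm{KL}\big(q_\phi(z \mid x, b)\,\|\,p(z)\big) + \lambda\, \mathcal{L}_{\mathrm{cyc}}
$$

The cycle term is written on expression to match the description in step 2; an implementation may instead compare latent embeddings before and after the round trip. Raising $\beta$ corresponds to the KL over-correction risk noted in the FAQs, which is why the cycle weight $\lambda$ is the more natural knob for strengthening integration.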

The Scientist's Toolkit

Table 3: Essential Computational Tools & Resources for scFM Research

| Resource Name | Type | Primary Function | Relevance to Batch-Aware Analysis |

|---|---|---|---|

| scanpy [6] | Python Package | Scalable single-cell analysis. | Provides implementations for standard HVG selection and preprocessing. |

| scvi-tools [32] | Python Package | Probabilistic models for scRNA-seq. | Hosts scalable integration methods like scVI and sysVI for substantial batch effects. |

| batchelor [36] | R/Bioconductor Package | Methods for correcting batch effects. | Implements fast and efficient batch correction algorithms like MNN. |

| Seurat [37] | R Package | Single-cell genomics analysis. | Offers a comprehensive integration workflow, including anchor-based integration. |

| CZ CELLxGENE [5] | Data Platform | Curated collection of single-cell datasets. | Provides a unified source of high-quality, annotated data essential for scFM pretraining and benchmarking. |

| Harmony [37] | Algorithm / Package | Data integration method. | A popular and efficient method for integrating datasets across technical batches. |

Gene Module-Based Approaches for Enhanced Biological Signal

Frequently Asked Questions

Q1: What are the main advantages of using foundation models over traditional methods for single-cell data analysis? Single-cell foundation models (scFMs) are robust and versatile tools that learn universal biological knowledge from massive datasets during pretraining. This endows them with emergent abilities for zero-shot learning and efficient adaptation to various downstream tasks, such as cell type annotation, batch integration, and drug sensitivity prediction. However, for specific tasks with limited data or resources, simpler machine learning models can sometimes be more efficient and effective [4] [5].

Q2: How can I select highly variable genes (HVGs) effectively for my scFM training? Traditional HVG selection methods can be challenged by the high sparsity and dropout noise of scRNA-seq data. The GLP (LOESS with positive ratio) method provides a robust alternative by identifying biologically informative genes through the relationship between a gene's positive ratio (the fraction of cells where it is detected) and its average expression level. Genes with expression levels significantly higher than expected for their positive ratio are selected, which helps preserve key biological signals for downstream analysis [3].

Q3: Why is my model failing to identify rare cell types or subtle biological signals? This is a common challenge, often stemming from how features are selected. Standard HVG methods may overrepresent highly abundant cell types and miss less abundant ones. The performance is closely tied to dataset size; with larger and more diverse pilot datasets, the proportions of cells in each cluster become more similar to the ground-truth data. Using feature selection methods specifically designed to capture nuanced biological information, like GLP, can improve the detection of rare cell types [38] [3].

Q4: Can I incorporate prior biological knowledge, like gene networks, to improve my model's performance? Yes, integrating known biological networks can significantly increase the power to identify biologically relevant signals. Methods like Markov Random Field (MRF) models appropriately accommodate gene network information as well as dependencies among cell types. This allows the model to borrow information across related genes and cell types, leading to more statistically powerful and biologically insightful identification of features like cell-type-specific differentially expressed genes [39].
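The positive-ratio idea from Q2 can be sketched in a few lines. This is an illustrative approximation, not the GLP implementation from [3]: it fits a tricube-weighted local linear regression of log mean expression on positive ratio and keeps the genes that lie furthest above the fitted curve. The function names, the `frac=0.3` neighborhood size, the `log1p` transform, and the absence of robustness iterations are all assumptions.

```python
import numpy as np

def local_linear_fit(x, y, frac=0.3):
    """Tricube-weighted local linear regression of y on x, evaluated at each x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    k = min(n, max(3, int(np.ceil(frac * n))))
    fitted = np.empty(n)
    for i in range(n):
        dist = np.abs(x - x[i])
        h = np.partition(dist, k - 1)[k - 1]  # distance to the k-th nearest point
        h = h if h > 0 else 1.0
        w = np.clip(1.0 - (dist / h) ** 3, 0.0, None) ** 3
        # Weighted least squares for an intercept and slope centered on x[i].
        sw = np.sqrt(w)
        design = np.column_stack([np.ones(n), x - x[i]])
        coef, *_ = np.linalg.lstsq(design * sw[:, None], y * sw, rcond=None)
        fitted[i] = coef[0]
    return fitted

def positive_ratio_selection(counts, n_top=2000):
    """Select genes whose mean expression exceeds what their positive ratio predicts."""
    counts = np.asarray(counts, dtype=float)
    positive_ratio = (counts > 0).mean(axis=0)  # fraction of cells detecting the gene
    log_mean = np.log1p(counts.mean(axis=0))
    idx = np.flatnonzero(positive_ratio > 0)    # drop genes never detected
    expected = local_linear_fit(positive_ratio[idx], log_mean[idx])
    residual = log_mean[idx] - expected
    top = idx[np.argsort(-residual, kind="stable")[:n_top]]
    return np.sort(top)
```

A gene expressed strongly in a small subpopulation has a low positive ratio but a high mean, so it sits well above the curve traced by uniformly expressed genes. That is the property that makes this family of methods attractive for the rare cell types discussed in Q3.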

Troubleshooting Guides

Issue 1: Poor Model Generalization Across Datasets