Optimizing Machine Learning Annotation Models: A Parameter Tuning Guide for Biomedical Research

This article provides a comprehensive guide for researchers and drug development professionals on parameter tuning for machine learning annotation models.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on parameter tuning for machine learning annotation models. It covers foundational concepts, advanced methodologies like semi-supervised learning and synthetic data generation, and practical optimization strategies including Grid Search, Random Search, and Bayesian Optimization. The content addresses critical challenges such as data quality, annotator bias, and computational efficiency, and offers rigorous validation techniques and performance metrics tailored for high-stakes biomedical applications, from clinical trial analysis to medical image annotation.

Core Concepts: The Critical Role of Parameter Tuning in Biomedical Annotation

Defining Annotation Models and Their Parameters in Biomedical Contexts

Troubleshooting Guide: FAQs on Annotation Models in Biomedical Machine Learning

FAQ 1: How can I train a model effectively when I have very few annotated biomedical images?

This is a common challenge known as data scarcity. Several annotation-efficient deep learning strategies can help.

Solution 1: Employ Weakly Supervised Learning

- Concept: Use simpler, less precise annotations (like bounding boxes or image-level labels) instead of detailed pixel-level segmentations to train models. This significantly reduces annotation time and complexity [1].

- Experimental Protocol:

- Data Preparation: Collect your biomedical images. Instead of precise masks, have domain experts draw bounding boxes around regions of interest (e.g., tumors, cells).

- Model Selection: Choose a model architecture capable of learning from weak labels, such as one that uses a multiple instance learning framework.

- Training: Train the model using the weak labels. The loss function is designed to learn from the incomplete supervisory signal.

- Validation: Validate the model's performance on a small, held-out test set that has precise, pixel-level annotations to assess its segmentation accuracy.

Solution 2: Utilize Active Learning

- Concept: An iterative process where a model identifies the most informative data points it needs annotated next, maximizing performance gains with minimal expert input [1].

- Experimental Protocol:

- Initial Training: Start with a very small set of annotated images to train an initial model.

- Prediction and Uncertainty Quantification: Use the model to predict on the large pool of unlabeled data. Calculate uncertainty scores (e.g., entropy, prediction confidence) for each prediction.

- Expert Annotation: Select the batch of data points with the highest uncertainty and have a domain expert annotate them.

- Model Update: Retrain the model by incorporating the newly annotated data.

- Iteration: Repeat the prediction, expert annotation, and model update steps until a satisfactory performance level is achieved.
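The uncertainty-sampling step of the protocol above can be sketched as follows. This is a minimal illustration that assumes the model outputs class probabilities; the function names and the `pool_probs` values are hypothetical, and entropy is only one of several possible uncertainty scores.

```python
import numpy as np


def predictive_entropy(probs: np.ndarray) -> np.ndarray:
    """Shannon entropy of each row of class probabilities (higher = more uncertain)."""
    eps = 1e-12  # avoids log(0)
    return -np.sum(probs * np.log(probs + eps), axis=1)


def select_for_annotation(probs: np.ndarray, batch_size: int) -> np.ndarray:
    """Return indices of the `batch_size` most uncertain unlabeled samples."""
    scores = predictive_entropy(probs)
    return np.argsort(scores)[::-1][:batch_size]


# Hypothetical softmax outputs for five unlabeled images (two classes).
pool_probs = np.array([
    [0.99, 0.01],
    [0.55, 0.45],
    [0.90, 0.10],
    [0.50, 0.50],
    [0.70, 0.30],
])

# Indices of the samples to send to the domain expert next.
query_indices = select_for_annotation(pool_probs, batch_size=2)
```

In a real loop, the selected samples would be annotated, moved from the unlabeled pool to the training set, and the model retrained before the next round.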

Solution 3: Leverage Self-Supervised Learning (SSL)

- Concept: The model first learns meaningful representations from unlabeled data by solving a "pretext" task (e.g., predicting image rotation, denoising). It is then fine-tuned on the limited labeled data for the specific downstream task [1] [2].

- Experimental Protocol:

- Pretext Task Training: Gather a large volume of unlabeled biomedical images. Train a model on a pretext task like contrastive learning or image inpainting.

- Feature Extraction: Use the pre-trained model to initialize the weights for your target segmentation or classification model.

- Fine-Tuning: Perform supervised fine-tuning on the small set of labeled data you possess.

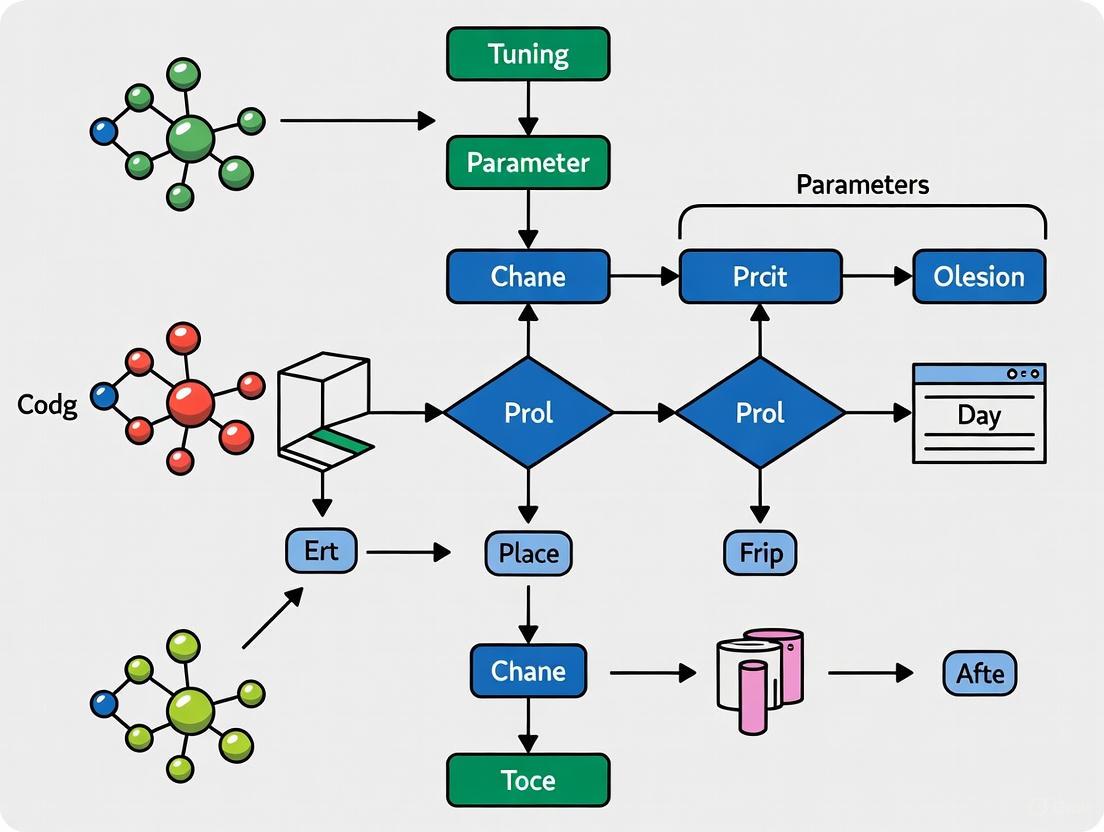

The following workflow integrates these strategies into a cohesive active learning cycle:

FAQ 2: My model's performance is inconsistent. Could this be caused by problems with the annotated data?

Yes, inconsistent model performance is often a direct symptom of issues with the training data, particularly annotation quality. This problem is prevalent in biomedical contexts due to inter-expert variability [3].

Problem: Inconsistent and Noisy Annotations

- Source: Different domain experts (e.g., radiologists) may provide conflicting labels for the same data due to subjective judgment, bias, or human error. This creates a "noisy" ground truth [3] [4].

- Impact: Models trained on such data learn these inconsistencies, leading to poor generalization and unreliable predictions [3].

Solution: Implement a Cross-Model Self-Correction Framework

- Concept: Train two models in parallel. Use their disagreements to identify potentially mislabeled data and collaboratively generate corrected pseudo-labels during training [4].

- Experimental Protocol (Based on the AIDE Framework):

- Parallel Training: Initialize two different model architectures (e.g., a U-Net and a DeepLabV3+) with the same noisy annotated dataset.

- Mini-batch Processing: For each mini-batch, each model makes predictions.

- Local Label Filtering: For each image, identify pixels where the two models' predictions disagree significantly with the original annotation. Apply data augmentation (e.g., rotation, flipping) to these problematic images and generate a consensus prediction from the augmented outputs to create a refined pseudo-label.

- Cross-Model Update: Update both models using a combination of the high-confidence original labels and the refined pseudo-labels.

- Global Label Correction: After each training epoch, analyze the entire dataset. For samples where the model predictions consistently disagree with the stored labels over multiple epochs, update the dataset label with a consensus prediction.
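The global label correction idea can be illustrated with a simplified sketch. It is not the AIDE implementation: it assumes sample-level class labels rather than pixel masks, and the function name, the `min_epochs` threshold, and the majority-vote rule are all illustrative assumptions.

```python
import numpy as np


def correct_labels(stored, preds_a, preds_b, min_epochs=3):
    """
    stored:  (n_samples,) current integer labels
    preds_a: (n_epochs, n_samples) labels predicted by model A at each epoch
    preds_b: (n_epochs, n_samples) labels predicted by model B at each epoch

    A sample is relabeled when, in at least `min_epochs` epochs, both models
    agree with each other and disagree with the stored label. The new label is
    the most frequent prediction over those epochs.
    """
    stored = np.asarray(stored)
    preds_a = np.asarray(preds_a)
    preds_b = np.asarray(preds_b)

    agree = preds_a == preds_b
    conflict = agree & (preds_a != stored[None, :])
    flagged = conflict.sum(axis=0) >= min_epochs

    corrected = stored.copy()
    for i in np.flatnonzero(flagged):
        votes = preds_a[conflict[:, i], i]
        values, counts = np.unique(votes, return_counts=True)
        corrected[i] = values[np.argmax(counts)]
    return corrected, flagged
```

Requiring agreement between two different architectures over several epochs is a conservative choice: it reduces the chance that a single model's own mistakes overwrite correct labels.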

The methodology for this self-correction process is detailed below:

Table 1: Impact of Inter-Annotator Disagreement on Model Performance [3]

| Performance Metric | Result with 11 Independent Expert Annotations | Implication |

|---|---|---|

| Internal Validation Agreement | Fleiss' κ = 0.383 (Fair agreement) | Models built on different expert labels will inherently learn different decision boundaries. |

| External Validation Agreement | Average Cohen’s κ = 0.255 (Minimal agreement) | The resulting models show low consensus when classifying new, external data. |

| Discharge Decision vs. Mortality Prediction | Fleiss' κ = 0.174 (Discharge) vs. 0.267 (Mortality) | Inconsistency impact varies by clinical task, with some being more subjective than others. |

FAQ 3: What are the key parameters to tune when adapting a pre-trained annotation model to my specific biomedical dataset?

This process, known as fine-tuning or transfer learning, is critical for achieving high performance. The key is to adjust hyperparameters that control the learning process [5] [6].

Table 2: Key Hyperparameters for Fine-Tuning Annotation Models

| Hyperparameter | Function | Consideration for Biomedical Data |

|---|---|---|

| Learning Rate | Controls the step size during weight updates. | Use a lower learning rate than pre-training (e.g., 1e-5 to 1e-4) to avoid catastrophic forgetting and gently adapt to new features [6]. |

| Optimizer | Algorithm used to update model weights (e.g., SGD, Adam). | Adam is often a robust starting point. Momentum in SGD can help navigate noisy loss landscapes common with imperfect labels [6]. |

| Batch Size | Number of samples processed before a model update. | Limited by GPU memory. Smaller sizes can offer a regularizing effect, but too small may lead to unstable training. |

| Dropout Rate | Fraction of neurons randomly turned off during training to prevent overfitting. | Crucial when fine-tuning on small datasets. Increase dropout rates if the model overfits the limited training samples quickly [6]. |

| Number of Epochs | Number of complete passes through the training data. | Use early stopping on a validation set to halt training when performance plateaus, preventing overfitting. |
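Early stopping, mentioned in the last row of the table, can be sketched without reference to any particular framework. The `EarlyStopping` class, its `patience` and `min_delta` parameters, and the validation scores below are illustrative assumptions; most deep learning libraries ship their own equivalents.

```python
class EarlyStopping:
    """Stop training when the validation score has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, score):
        """Record one validation score; return True when training should stop."""
        if score > self.best + self.min_delta:
            self.best = score
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical per-epoch validation scores (higher is better).
val_scores = [0.70, 0.74, 0.78, 0.78, 0.77, 0.78, 0.79]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, score in enumerate(val_scores, start=1):
    if stopper.step(score):
        stopped_at = epoch
        break
```

In practice the weights from the best epoch are usually saved and restored, since the model at the stopping epoch is not the best one seen.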

- Methodology for Systematic Hyperparameter Tuning:

- Define Search Space: Establish a range of values for each hyperparameter (e.g., learning rate: [1e-5, 1e-4, 1e-3]).

- Choose a Tuning Method:

- Grid Search: Exhaustively tries all combinations in the defined space. Good for a small number of parameters but computationally expensive.

- Random Search: Randomly samples combinations from the space. Often more efficient than grid search [6].

- Bayesian Optimization: Builds a probabilistic model to guide the search for the best hyperparameters, typically the most efficient method for complex models [6].

- Implement Cross-Validation: Use k-fold cross-validation on your training data to evaluate each hyperparameter set robustly. This provides a more reliable performance estimate and reduces the risk of overfitting to a single validation split.

- Final Evaluation: Once the best hyperparameters are found, retrain the model on the entire training set and evaluate its final performance on a held-out test set.
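To make the difference between grid search and random search concrete, the sketch below only generates candidate configurations; it does not train anything. The hyperparameter names, ranges, and helper functions are assumptions chosen for illustration.

```python
import itertools
import random

# Discrete search space, as used by grid search.
search_space = {
    "learning_rate": [1e-5, 1e-4, 1e-3],
    "dropout": [0.1, 0.3, 0.5],
    "batch_size": [8, 16, 32],
}


def grid_candidates(space):
    """Every combination of the listed values (3 * 3 * 3 = 27 here)."""
    keys = list(space)
    return [dict(zip(keys, combo))
            for combo in itertools.product(*(space[k] for k in keys))]


def random_candidates(n_trials, seed=0):
    """Sample configurations; the learning rate is drawn on a log scale."""
    rng = random.Random(seed)
    candidates = []
    for _ in range(n_trials):
        candidates.append({
            "learning_rate": 10 ** rng.uniform(-5, -3),
            "dropout": rng.uniform(0.1, 0.5),
            "batch_size": rng.choice([8, 16, 32]),
        })
    return candidates


grid = grid_candidates(search_space)
sampled = random_candidates(n_trials=10)
```

Random search tries a fresh value of every hyperparameter in each trial, whereas the grid revisits the same three learning rates nine times each. This is one reason random search often covers the influential dimensions better for the same budget.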

FAQ 4: How do I choose the right annotation tool for a collaborative biomedical project?

Selecting an appropriate tool is vital for ensuring annotation consistency, efficiency, and collaboration among domain experts.

Table 3: Key Criteria for Selecting a Biomedical Annotation Tool [7]

| Criteria | Description | Importance for Biomedical Research |

|---|---|---|

| Schema Configuration | Ability to define custom labels, concepts, and relations. | Essential for adapting to specific biomedical ontologies (e.g., UMLS) and entity types [7]. |

| Collaborative Features | Supports multiple annotators working on the same project. | Enables pooling of expert knowledge and scales annotation efforts across a team [7]. |

| Support for Relations | Allows annotation of relationships between entities (e.g., drug-interacts_with-gene). | Critical for complex tasks like relationship extraction from literature or medical records [7]. |

| Data Format Support | Handles required input/output formats (e.g., PDF, JSON, COCO). | Must process diverse biomedical data sources, including PubMed abstracts and medical reports [7]. |

| Installability & Access | Can be deployed online or on-premises. | On-premises or local Docker deployment is often mandatory for handling sensitive patient data due to privacy regulations [7]. |

- Recommendation: Tools like MetaTron are specifically designed for the biomedical domain, supporting relation annotation, collaboration, and handling documents in various formats, including PDFs and PubMed abstracts [7].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Annotation-Efficient Biomedical ML Research

| Item | Function | Example Use-Case |

|---|---|---|

| AIDE Framework | An open-source deep learning framework designed for annotation-efficient medical image segmentation. It handles semi-supervised learning, unsupervised domain adaptation, and learning with noisy labels [4]. | Segmenting breast tumors in MRI scans using only 10% of the annotated training data while achieving performance comparable to a fully-supervised model [4]. |

| Pre-trained Models (BioImage Model Zoo) | A collection of pre-trained models for bioimage analysis. Provides a starting point for transfer learning, reducing the need for large, task-specific datasets [1]. | Fine-tuning a pre-trained nucleus segmentation model on a new cell type with minimal additional annotation. |

| Parameter-Efficient Fine-Tuning (PEFT) Methods (e.g., LoRA) | A fine-tuning technique that updates only a small subset of a model's parameters, drastically reducing computational cost and memory requirements [5]. | Adapting a large foundation model for a specific task (e.g., radiology report classification) on a single GPU without full fine-tuning. |

| Active Learning Loops | A workflow/script that automates the cycle of model prediction, uncertainty sampling, and expert annotation. | Iteratively improving a model for classifying rare disease phenotypes in medical images by prioritizing the most uncertain cases for expert review. |

| Cross-Model Self-Correction Code | Implementation of a framework (like the one in AIDE) that uses two models to identify and correct noisy labels during training [4]. | Training a robust segmentation model on a dataset annotated by multiple pathologists, where inter-rater variability is high. |

Why Parameter Tuning is Crucial for Clinical-Grade Model Performance

Frequently Asked Questions

FAQ 1: Why can't I use a model's default parameters for my clinical dataset?

Default parameters are generic starting points, but clinical data possesses unique characteristics like high dimensionality, class imbalance, and noise. Using default settings often leads to suboptimal performance and poor generalizability to new patient populations. Systematic tuning adapts the model to the specific statistical properties of medical data, which is essential for clinical reliability [8] [9].

FAQ 2: My model performs well on training data but poorly on the test set. Is parameter tuning the solution?

This is a classic sign of overfitting, and parameter tuning is a primary corrective strategy. Techniques like regularization strength tuning (e.g., adjusting C in SVM or weight decay) and explicitly tuning to maximize performance on a held-out validation set can help the model generalize better. A study on lung nodule classification showed that tuning helped a Random Forest model maintain stable performance between training and testing, whereas an untuned SVM model exhibited significant performance drops [9].

FAQ 3: How do I perform parameter tuning without causing data leakage?

Data leakage is a critical concern. The proper methodology is to perform tuning only within the training fold during cross-validation.

- First, split your data into a fixed training set and a hold-out test set.

- Use k-fold cross-validation on the training set to evaluate different hyperparameters.

- The test set must never be used for tuning or during the training process; it is reserved for the final, unbiased evaluation [10].

Using a `Pipeline` in scikit-learn can also help ensure that preprocessing steps like standardization are fitted only on the training data [10].
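A minimal sketch of this leakage-safe setup with scikit-learn is shown below. The synthetic dataset from `make_classification`, the choice of logistic regression, and the grid of `C` values are assumptions for illustration only.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced stand-in for a clinical dataset.
X, y = make_classification(n_samples=300, n_features=20,
                           weights=[0.8, 0.2], random_state=0)

# 1. Fixed hold-out test set, stratified on the outcome.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# 2. Scaling lives inside the pipeline, so it is re-fitted on the
#    training portion of every cross-validation fold.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),
])

param_grid = {"clf__C": [0.01, 0.1, 1.0, 10.0]}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipeline, param_grid, scoring="roc_auc", cv=cv)
search.fit(X_train, y_train)

# 3. The test set is touched exactly once, after tuning is finished.
test_auc = search.score(X_test, y_test)
```

Because the scaler is a pipeline step, its mean and variance are never computed from validation or test rows, which is the leakage path that manual preprocessing most often opens.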

FAQ 4: What is the difference between a model parameter and a hyperparameter?

- Model parameters are internal to the model and are learned directly from the training data (e.g., the weights and biases in a logistic regression or neural network).

- Hyperparameters are external configuration settings that are not learned from the data. They must be set prior to the training process and control the learning algorithm itself (e.g., the learning rate, the number of trees in a random forest, or the kernel type in an SVM) [11].

FAQ 5: For a clinical application, should I prioritize model interpretability or performance?

In clinical contexts, interpretability can be as crucial as performance. A model that is slightly less accurate but whose decisions can be explained and validated by clinicians is often more trusted and useful than a "black box" model with superior metrics. The choice of model and the tuning process should balance this trade-off. For instance, a well-tuned logistic regression model might be preferred over a more complex but opaque model because its parameters can be more easily related to clinical features [9].

Troubleshooting Guides

Problem: Tuning takes too long and is computationally expensive.

- Solution 1: Use smarter search algorithms. Replace exhaustive Grid Search with more efficient methods like Random Search, Bayesian Optimization (with tools like Hyperopt or Optuna), or Genetic Algorithms [12] [13] [11]. These methods aim to find good parameters with fewer evaluations.

- Solution 2: Tune on a subset. Perform initial tuning cycles on a representative subset of your full dataset to quickly narrow down promising ranges of hyperparameters before running a final tuning job on the full dataset [14].

- Solution 3: Leverage cloud computing. Use scalable cloud resources to run multiple tuning trials in parallel, significantly reducing the total calendar time required [14].

Problem: After extensive tuning, the model still doesn't generalize to the test set.

- Check 1: Review your data splitting. Ensure your training, validation, and test sets are properly separated and that the validation performance is a true indicator of generalization. Stratified splitting is often necessary for imbalanced medical outcomes [10].

- Check 2: Simplify your model. You may be over-complicating the problem. Try a simpler model architecture and ensure your features are clinically relevant and well-engineered. The problem might lie with the data or features, not the parameters [15].

- Check 3: Expand your search space. The optimal hyperparameters might lie outside the ranges you initially defined. Review literature for similar tasks to inform a wider, more appropriate search space [13].

Problem: I'm unsure which hyperparameters to tune for my chosen algorithm.

- Guidance: While the most important parameters are model-specific, here are key ones for common algorithms:

- Tree-based models (Random Forest, XGBoost): `n_estimators`, `max_depth`, `min_samples_split`, `learning_rate` (for boosting) [9] [13].

- Support Vector Machines (SVM): Regularization parameter `C`, kernel parameters (e.g., `gamma` for the RBF kernel) [9] [11].

- Neural Networks: `learning_rate`, `batch_size`, number of layers and units, `dropout` rate [11] [14].

- Use tools like Optuna to automatically compute hyperparameter importance, helping you focus on the most influential ones [13].

Quantitative Data on Tuning Impact

Table 1: Performance Comparison of Machine Learning Models Before and After Hyperparameter Tuning for Lung Nodule Malignancy Classification (AUC Scores) [9].

| Model | AUC (Training - Default) | AUC (Test - Default) | AUC (Training - Tuned) | AUC (Test - Tuned) |

|---|---|---|---|---|

| Logistic Regression | 0.82 | 0.80 | 0.86 | 0.89 |

| Random Forest | 0.85 | 0.84 | 0.89 | 0.91 |

| XGBoost | 0.80 | 0.75 | 0.78 | 0.77 |

| SVM | 0.90 | 0.75 | 0.93 | 0.80 |

| LightGBM | 0.89 | 0.82 | 0.94 | 0.88 |

Table 2: Comparison of Hyperparameter Optimization Methods [10] [12] [13].

| Method | Key Principle | Pros | Cons | Best For |

|---|---|---|---|---|

| Grid Search | Exhaustive search over a predefined set of all possible combinations | Guaranteed to find the best combination within the grid | Computationally very expensive, especially for high-dimensional spaces | Small, well-understood parameter spaces |

| Random Search | Randomly samples parameter combinations from the defined space | Often finds good parameters faster than Grid Search | May miss the optimal point if not run for enough iterations | Faster initial exploration of wider parameter spaces |

| Bayesian Optimization | Builds a probabilistic model of the objective function to guide the search | Highly sample-efficient, requires fewer trials | Higher computational overhead per iteration; more complex to implement | When model evaluation is very time-consuming |

Detailed Experimental Protocol for k-Fold Cross-Validation with Hyperparameter Tuning

This protocol is essential for obtaining a robust and generalizable clinical model.

- Data Partitioning: Begin by splitting the entire dataset into a hold-out test set (e.g., 20%) and a development set (80%). The test set is locked away and must not be used until the very final evaluation [10].

- Define Hyperparameter Space: Specify the hyperparameters you wish to tune and their value ranges (e.g., `'max_depth': [3, 5, 7, 10]`, `'learning_rate': [0.01, 0.1, 0.3]`).

- Set Up Cross-Validation: Divide the development set into k folds (typically k=5 or k=10). The model will be trained k times, each time using k-1 folds for training and 1 different fold for validation [10].

- Automated Tuning Loop: For each candidate set of hyperparameters:

- Train the model on the k-1 training folds.

- Evaluate the model on the remaining validation fold, storing the performance metric (e.g., AUC, accuracy).

- Calculate the average performance across all k validation folds. This average is the objective to maximize (or minimize, in the case of error).

- Model Selection & Final Training: Select the hyperparameter set that yielded the best average cross-validation performance. Train a new model on the entire development set using these optimal hyperparameters [10].

- Final Evaluation: Unlock the hold-out test set and perform a single, final evaluation on the model trained in the previous step. This score provides an unbiased estimate of its performance on new data.
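The protocol above can be written out as an explicit loop, which makes each step visible. This is a sketch under stated assumptions: the data are synthetic, `GradientBoostingClassifier` stands in for whichever model is being tuned, and the search space is deliberately tiny to keep it fast.

```python
from itertools import product

import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, train_test_split

# Data partitioning: 80% development set, 20% locked test set.
X, y = make_classification(n_samples=400, n_features=15, random_state=0)
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Hyperparameter space and cross-validation scheme.
space = {"max_depth": [3, 5], "learning_rate": [0.01, 0.1]}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Tuning loop: mean validation AUC for every candidate.
results = {}
for max_depth, lr in product(space["max_depth"], space["learning_rate"]):
    fold_aucs = []
    for train_idx, val_idx in cv.split(X_dev, y_dev):
        model = GradientBoostingClassifier(
            max_depth=max_depth, learning_rate=lr,
            n_estimators=50, random_state=0)
        model.fit(X_dev[train_idx], y_dev[train_idx])
        val_probs = model.predict_proba(X_dev[val_idx])[:, 1]
        fold_aucs.append(roc_auc_score(y_dev[val_idx], val_probs))
    results[(max_depth, lr)] = float(np.mean(fold_aucs))

# Model selection and final training on the whole development set.
best_depth, best_lr = max(results, key=results.get)
final_model = GradientBoostingClassifier(
    max_depth=best_depth, learning_rate=best_lr,
    n_estimators=50, random_state=0).fit(X_dev, y_dev)

# Single final evaluation on the hold-out test set.
test_auc = roc_auc_score(y_test, final_model.predict_proba(X_test)[:, 1])
```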

The Hyperparameter Tuning Workflow

The following diagram visualizes the standard workflow for tuning a model using a validation set, which forms the core of the k-fold cross-validation process.

Visualizing Hyperparameter Importance

After a tuning run, it is critical to understand which parameters had the most influence on your model's performance. This guides future experimentation.
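As a rough, library-free illustration of the idea, the sketch below ranks hyperparameters by how much the mean score varies across their values. This is a crude marginal-effect estimate, not the algorithm used by any tuning framework, and the trial records are hypothetical.

```python
from collections import defaultdict
from statistics import mean, pvariance


def marginal_importance(trials, score_key="score"):
    """
    For each hyperparameter, group trials by its value, average the score
    within each group, and take the variance of those averages. The result
    is normalized so the importances sum to 1.
    """
    names = [k for k in trials[0] if k != score_key]
    raw = {}
    for name in names:
        by_value = defaultdict(list)
        for trial in trials:
            by_value[trial[name]].append(trial[score_key])
        raw[name] = pvariance([mean(scores) for scores in by_value.values()])
    total = sum(raw.values())
    return {k: (v / total if total > 0 else 0.0) for k, v in raw.items()}


# Hypothetical results from four completed tuning trials.
trials = [
    {"learning_rate": 0.01, "max_depth": 3, "score": 0.80},
    {"learning_rate": 0.01, "max_depth": 5, "score": 0.81},
    {"learning_rate": 0.10, "max_depth": 3, "score": 0.88},
    {"learning_rate": 0.10, "max_depth": 5, "score": 0.89},
]

importance = marginal_importance(trials)
```

In this made-up example almost all of the score variation follows the learning rate, so a follow-up search would reasonably refine that range first. The estimate ignores interactions between hyperparameters, which dedicated tools handle more carefully.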

Table 3: Key Tools and Software for Clinical Machine Learning and Parameter Tuning [10] [9] [13].

| Tool / Resource Name | Type | Primary Function in Tuning |

|---|---|---|

| Scikit-learn (GridSearchCV, RandomizedSearchCV) | Python Library | Provides robust implementations of Grid Search, Random Search, and cross-validation pipelines. |

| Optuna | Hyperparameter Optimization Framework | A state-of-the-art framework for automated hyperparameter optimization using Bayesian methods. |

| Hyperopt | Hyperparameter Optimization Framework | A Python library for serial and parallel Bayesian optimization over awkward search spaces. |

| XGBoost / LightGBM | Machine Learning Library | High-performance gradient boosting frameworks that have many critical hyperparameters to tune. |

| TRIPOD-LLM / TRIPOD+AI | Reporting Guideline | Guidelines for transparent reporting of clinical prediction models, ensuring methodological rigor [16]. |

| PyTorch / TensorFlow | Deep Learning Framework | Core frameworks for building neural networks, often integrated with tuning libraries like Optuna. |

Troubleshooting Guide: Common Data Annotation Challenges

Challenge 1: Managing Subjectivity and Label Noise

Problem Statement: Researchers observe high inter-annotator variability in medical image labels, leading to inconsistent model performance and unreliable ground truth.

Diagnosis Steps:

- Calculate Inter-Annotator Agreement (IAA): Quantify consistency using Cohen's Kappa for two annotators or Fleiss' Kappa for three or more [17] [18].

- Audit Annotation Guidelines: Review instructions for ambiguous definitions of subjective features (e.g., "subtle malignancy," "uncertain margin") [18].

- Identify Confounders: Analyze dataset for hidden variables that systematically influence annotations, such as specific imaging artifacts or patient demographics that correlate with labels [18].

Solutions:

- Develop Consensus-Driven Protocols: Establish standardized, detailed annotation guidelines with visual examples and clear decision trees. Iteratively refine these guidelines based on annotator feedback [17] [18].

- Implement Multi-Step Validation: Adopt a tiered workflow where trained non-medical annotators perform initial labeling, followed by review and adjudication by board-certified clinical experts [17].

- Conduct IAA Studies: Regularly measure agreement and use disagreements as training opportunities to calibrate annotators and refine protocols [17].

Experimental Protocol: Measuring Annotation Subjectivity

- Objective: Quantify inter-observer variability for a tumor segmentation task.

- Materials: A set of 100 MRI scans with suspected tumors; 3 certified radiologists as annotators.

- Method:

- Provide all annotators with the same initial guideline document.

- Each annotator independently segments the tumor regions in all 100 scans.

- Compute the Dice Similarity Coefficient (DSC) pairwise between all annotators.

- Calculate Fleiss' Kappa to assess agreement beyond chance.

- Analysis: Low DSC or Kappa values indicate high subjectivity, necessitating guideline revisions and re-annotation.
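The two agreement measures in this protocol can be computed with a few lines of NumPy. The sketch assumes binary segmentation masks for the Dice coefficient and, for Fleiss' Kappa, a table of categorical ratings (for example, one tumor-present or tumor-absent call per scan); the function names are illustrative.

```python
from itertools import combinations

import numpy as np


def dice(mask_a, mask_b):
    """Dice Similarity Coefficient between two binary masks."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    denom = a.sum() + b.sum()
    if denom == 0:
        return 1.0  # both masks empty: treated as perfect agreement
    return float(2.0 * np.logical_and(a, b).sum() / denom)


def mean_pairwise_dice(masks):
    """Average DSC over all annotator pairs for one scan."""
    return float(np.mean([dice(a, b) for a, b in combinations(masks, 2)]))


def fleiss_kappa(counts):
    """
    counts: (n_items, n_categories) array in which counts[i, j] is the number
    of annotators who assigned item i to category j. Every row must sum to
    the same number of annotators.
    """
    counts = np.asarray(counts, dtype=float)
    n_items = counts.shape[0]
    n_raters = counts[0].sum()
    p_j = counts.sum(axis=0) / (n_items * n_raters)
    p_i = (np.sum(counts ** 2, axis=1) - n_raters) / (n_raters * (n_raters - 1))
    p_bar = p_i.mean()
    p_e = np.sum(p_j ** 2)
    return float((p_bar - p_e) / (1 - p_e))
```

For three annotators, `mean_pairwise_dice` averages over the three possible pairs, matching the pairwise comparison described in the method.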

Challenge 2: Mitigating Annotation Bias

Problem Statement: The AI model exhibits performance disparities across different patient demographics or imaging centers, likely due to biased training labels.

Diagnosis Steps:

- Analyze Annotator Demographics: Assess the diversity (geographic, cultural, clinical background) of the annotator pool [19].

- Perform Slice Analysis: Evaluate model performance separately on data slices defined by demographic attributes or clinical subgroups to identify underperforming areas [20].

- Audit Task Framing: Scrutinize annotation instructions for Western-centric assumptions or framing that ignores cultural or linguistic nuances in multimodal data [19].
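Slice analysis, as described in the diagnosis steps above, amounts to computing the same metric separately per subgroup. The sketch below uses recall (sensitivity) for a binary outcome; the function name, the group labels, and the example values are hypothetical.

```python
from collections import defaultdict


def recall_by_slice(y_true, y_pred, groups):
    """Recall of the positive class, computed separately for each group."""
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_positives[group] += 1
    # Groups with no positive cases are omitted, since recall is undefined there.
    return {g: true_positives[g] / positives[g] for g in positives}


# Hypothetical labels, predictions, and imaging-center identifiers.
y_true = [1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1]
centers = ["A", "A", "B", "B", "A", "B"]

per_center_recall = recall_by_slice(y_true, y_pred, centers)
```

A large gap between slices, as between centers A and B here, is a prompt to inspect how those cases were annotated. It is not proof of bias on its own, particularly when the slices are small.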

Solutions:

- Recruit a Diverse Annotator Pool: Ensure annotators represent diverse backgrounds and, crucially, include clinical experts from the relevant medical specialty [17] [19].

- Implement Bias Detection Tools: Use automated tools to flag underrepresented data segments or skewed label distributions in real-time during annotation [20].

- Apply Post-Hoc Model Adjustments: Use techniques like Weak Ensemble Learning (WEL) to mitigate the impact of known biases in the annotated data [19].

Challenge 3: Overcoming Expert Annotator Scarcity

Problem Statement: A shortage of qualified clinical experts causes significant bottlenecks, delaying annotation projects and increasing costs.

Diagnosis Steps:

- Audit Project Timeline: Identify phases with the longest delays and correlate them with tasks requiring specialist input (e.g., pathology slide review) [17] [21].

- Quantify Workload: Calculate the total hours of specialist time required versus available, factoring in clinical duties and burnout risks [21].

- Evaluate Resource Allocation: Determine if highly skilled experts are spending time on tasks that could be handled by junior annotators or AI pre-labeling [17].

Solutions:

- Adopt AI-Assisted Pre-Labeling: Use foundation models or specialized networks (e.g., UMedPT) to generate initial annotations, which clinical experts then verify and refine, drastically reducing their workload [22] [20].

- Utilize Tiered Annotation Workflows: Assign simple or pre-annotated cases to trained technical annotators, reserving complex and ambiguous cases for senior clinical experts [17].

- Explore Hybrid Annotation Services: Partner with specialized vendors who provide access to vetted pools of clinical annotators and managed annotation platforms, ensuring quality while scaling capacity [23].

Frequently Asked Questions (FAQs)

Q1: How can we ensure annotation quality remains high when scaling up a project?

Maintaining quality at scale requires a hybrid approach. Implement AI-assisted pre-labeling to ensure a consistent baseline, followed by human-in-the-loop review [20]. Use automated quality control (QC) tools to flag inconsistencies, and conduct regular audits on a subset of annotations. A tiered workflow with expert QC is essential for clinical validity [17].

Q2: What are the most effective strategies for managing annotation costs without sacrificing quality?

The most effective strategy is a hybrid model that combines automation with strategic human input. Use AI tools for repetitive pre-labeling to reduce manual hours [20]. Optimize resource allocation by assigning highly skilled and expensive clinical experts only to the most complex tasks, using trained non-medical annotators for others [17]. This can reduce annotation expenses by up to 50% while maintaining high accuracy [20].

Q3: Our model performs well on validation data but fails in real-world clinical settings. Could annotation bias be the cause?

Yes, this is a classic symptom of annotation bias or dataset shift. Common causes include a lack of demographic diversity in your training set [20], cultural or contextual biases in the annotation instructions [19], or annotator pools that lack diversity. Conduct a thorough slice analysis of your model's performance and audit your dataset's representativity.

Q4: How do regulatory requirements like the EU AI Act impact biomedical data annotation?

Regulations like the EU AI Act categorize most medical AI as "high-risk," explicitly requiring high-quality, traceable training data [23]. This means you must document your annotation protocols, annotator qualifications, and quality assurance processes. Data must be handled in compliance with privacy laws like HIPAA and GDPR, often requiring full anonymization before annotation [17] [23].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for a Biomedical Data Annotation Pipeline

| Research Reagent Solution | Function in the Annotation Pipeline |

|---|---|

| Clear Annotation Guidelines & Protocol | Defines the standardized rules, taxonomies, and visual examples for annotators to follow, reducing subjectivity and inconsistency [17] [18]. |

| Inter-Annotator Agreement (IAA) Metrics | A statistical measure (e.g., Fleiss' Kappa) to quantify consistency between different annotators, serving as a quality control check [17] [19]. |

| AI-Assisted Pre-Labeling Tool | A foundation model (e.g., UMedPT) or algorithm that provides initial annotations, drastically reducing the manual workload for human experts [22] [20]. |

| Diverse Annotator Pool | A group of annotators with diverse backgrounds and including relevant clinical experts, which is crucial for mitigating cultural and clinical bias [19]. |

| Secure, Cloud-Based Annotation Platform | A software platform that supports collaborative annotation, version control, task management, and integrates with model training pipelines, often with built-in compliance features [5] [23]. |

Experimental Workflows for Annotation Quality

The following diagrams illustrate a robust, multi-stage workflow for managing annotation quality and mitigating bias, from initial setup to model tuning.

Annotation Quality Assurance Workflow

Bias Detection and Mitigation Process

Frequently Asked Questions

FAQ 1: What are the most critical data errors that impact model tuning, and how can I identify them?

Unreliable model behavior is often caused by errors in the training data, such as mislabeled examples, outliers, or biased values. To identify the most harmful errors, you can use data attribution frameworks and influence functions. These techniques help trace a model's predictions back to its training data, quantifying the importance of individual data points and flagging those with a negative impact for review [24]. Tools like cleanlab implement Confident Learning to automatically characterize and identify label errors in datasets by estimating the joint distribution between noisy given labels and uncorrupted unknown labels [24].
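The core idea behind Confident Learning can be shown in a simplified form. The sketch below is not the cleanlab API and omits much of the full method: it only applies per-class confidence thresholds to flag examples whose given label looks inconsistent with the model's predicted probabilities. It assumes the probabilities were obtained out-of-sample (for example, through cross-validation) and that every class has at least one labeled example.

```python
import numpy as np


def flag_label_issues(labels, pred_probs):
    """
    labels:     (n_samples,) given, possibly noisy, integer labels
    pred_probs: (n_samples, n_classes) out-of-sample predicted probabilities

    Returns indices of examples that are confidently assigned to a class
    other than their given label.
    """
    labels = np.asarray(labels)
    pred_probs = np.asarray(pred_probs, dtype=float)
    n_classes = pred_probs.shape[1]

    # Threshold for class k: mean predicted probability of class k
    # among the examples that carry label k.
    thresholds = np.array(
        [pred_probs[labels == k, k].mean() for k in range(n_classes)])

    # An example is "confidently" class k if its probability for k reaches
    # that threshold; if several classes qualify, take the most probable.
    above = pred_probs >= thresholds
    masked = np.where(above, pred_probs, -np.inf)
    confident_class = np.where(above.any(axis=1), masked.argmax(axis=1), labels)

    return np.flatnonzero(confident_class != labels)
```

Flagged indices are candidates for expert review rather than automatic relabeling, since the model producing the probabilities can itself be wrong.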

FAQ 2: My model performance has plateaued despite extensive parameter tuning. Could the issue be upstream in the annotation pipeline?

Yes, this is a common scenario. Model performance is often bounded by the quality of the training data. Before further tuning, you should:

- Audit your labels: Implement a quality assurance (QA) framework that uses multiple annotators and adjudication to measure and improve label consistency [25].

- Check for data coverage: Ensure your annotated data sufficiently represents the problem space and edge cases you expect in production. Poor coverage can lead to models that fail to generalize [25].

- Re-prioritize errors: Use a tool like `ActiveClean` to prioritize the cleaning of training records that are most likely to affect your model's results, which can improve accuracy more efficiently than indiscriminate cleaning [24].

FAQ 3: For a new drug discovery project, what annotation type and tuning approach should I consider for a molecular property prediction model?

For molecular property prediction (a type of image or structured-data classification), a common and effective approach is:

- Annotation Type: Image classification or tagging of molecular structures or assay results to categorize them based on properties like toxicity or efficacy [26].

- Initial Model & Tuning: Start with a tree-based ensemble model like XGBoost, which performs well on structured molecular data with minimal preprocessing [25]. Hyperparameter tuning should focus on tree depth, learning rate, and the number of estimators. After establishing a baseline, you can explore deep learning with transformer-based models for more complex pattern recognition in molecular sequences or structures [26].

FAQ 4: How can I efficiently incorporate human expertise to tune a model for a highly specialized domain?

Leverage Reinforcement Learning from Human Feedback (RLHF). This process involves:

- Pre-training a language model on your domain-specific text.

- Training a reward model: Domain experts (e.g., drug discovery scientists) rank or score the model's generated responses. These human preferences are used to train a reward model that assigns numerical rewards to the language model's actions [26].

- Fine-tuning with RL: The pre-trained language model is fine-tuned using reinforcement learning to maximize the reward from the reward model, effectively aligning its outputs with expert judgment [26].

Troubleshooting Guides

Issue: High-Variance Results During Model Tuning

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Noisy Training Labels | • Use cleanlab to estimate label noise [24]. • Perform a manual QA audit on a data sample. | • Re-annotate flagged data points. • Improve annotator training and guidelines. |

| Inadequate Data Splitting | • Check for duplicate or highly correlated data points across training and validation splits. | • Implement grouped splitting to prevent data leakage (e.g., ensure all samples from the same patient are in the same split). |

| Unstable Hyperparameters | • Perform a sensitivity analysis on key hyperparameters. | • Use a broader hyperparameter search with more cross-validation folds.• Switch to more robust models like tree-based ensembles as a baseline [25]. |
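The grouped splitting recommended in the table can be sketched as follows. This is a minimal plain-Python illustration, assuming each sample carries a patient identifier; the helper name `grouped_split` and the toy IDs are hypothetical. scikit-learn's `GroupKFold` and `GroupShuffleSplit` offer the same guarantee for cross-validation.

```python
import random


def grouped_split(sample_groups, val_fraction=0.25, seed=0):
    """Split sample indices so that each group lands in exactly one split."""
    groups = sorted(set(sample_groups))
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_val = max(1, round(len(groups) * val_fraction))
    val_groups = set(groups[:n_val])
    train_idx = [i for i, g in enumerate(sample_groups) if g not in val_groups]
    val_idx = [i for i, g in enumerate(sample_groups) if g in val_groups]
    return train_idx, val_idx


# One entry per sample; several samples can share a patient (toy data).
patient_ids = ["p1", "p1", "p2", "p3", "p3", "p3", "p4", "p4"]
train_idx, val_idx = grouped_split(patient_ids)

train_patients = {patient_ids[i] for i in train_idx}
val_patients = {patient_ids[i] for i in val_idx}
print("train:", sorted(train_patients), "validation:", sorted(val_patients))
```

Because the split is drawn over patients rather than samples, no patient contributes to both sets, which removes one common source of optimistic and unstable validation scores.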

Issue: Model Fails to Generalize to Real-World Data After Tuning

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Covariate/Data Drift | • Use statistical tests (KS, Chi-square) to compare input feature distributions between training and live data [25]. | • Retrain the model with recent, representative data.• Implement continuous monitoring and automated retraining triggers. |

| Insufficient Data Coverage | • Analyze feature importance and check for features with low variance in training but high variance in production. | • Acquire and annotate data specifically for underrepresented feature regions.• Employ data augmentation techniques. |

| Annotation Bias | • Audit annotation guidelines for unconscious biases.• Check if annotator demographics match the target population. | • Diversify annotator pool.• Revise guidelines to minimize subjective judgments. |
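For the drift check in the table, the two-sample Kolmogorov-Smirnov (KS) statistic is simply the largest gap between the empirical distribution functions of a feature in the training data and in live data. The sketch below computes it in plain Python on toy values; the function name and data are illustrative assumptions. `scipy.stats.ks_2samp` returns the same statistic together with a p-value.

```python
def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: maximum gap between the empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    i = j = 0
    d_max = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance past every value <= x in both samples.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d_max = max(d_max, abs(i / len(a) - j / len(b)))
    return d_max


train_feature = [1, 2, 3, 4]
live_same = [1, 2, 3, 4]       # same distribution
live_shifted = [5, 6, 7, 8]    # fully shifted distribution

print(ks_statistic(train_feature, live_same))
print(ks_statistic(train_feature, live_shifted))
```

A statistic near 0 suggests similar distributions, while a value near 1 indicates strong drift; the alert threshold is a project-specific choice.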

Issue: LLM Generates Factually Incorrect or Unsafe Output in a Scientific Context

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Hallucinations from Base Model | • Manually evaluate outputs on a test set of known facts. | • Implement Retrieval-Augmented Generation (RAG) to ground the model in a verified, custom knowledge base [25]. |

| Poorly Aligned Objectives | • Check if the model's reward function aligns with factual accuracy and safety. | • Apply RLHF to fine-tune the model based on feedback from scientific experts, penalizing incorrect outputs [26]. |

| Out-of-Domain Queries | • Monitor and categorize user queries that trigger failures. | • Create a classifier to detect out-of-domain questions and respond with a predefined fallback message. |

Experimental Protocols & Methodologies

Protocol 1: Data Valuation using Data Shapley

Objective: To quantify the contribution of individual training data points to a model's performance, identifying both high-value and harmful data points for targeted cleaning and acquisition [24].

Methodology:

- Define the Utility Function: Let \( S \) be a subset of the training data \( D = \{z_1, \ldots, z_n\} \). The utility function \( U(S) \) is typically the performance (e.g., accuracy, F1-score) of a model trained on the subset \( S \) and evaluated on a held-out validation set.

- Calculate Data Shapley Value: The Shapley value for a data point \( z_i \) is its average marginal contribution across all possible subsets of the training data. It is computed as: \( \phi_i = \sum_{S \subseteq D \setminus \{z_i\}} \frac{|S|! \, (|D| - |S| - 1)!}{|D|!} \left[ U(S \cup \{z_i\}) - U(S) \right] \)

- Interpretation: Data points with high Shapley values are the most valuable, while those with low or negative values are potentially harmful or redundant.

Considerations:

- Computational Cost: The exact calculation is combinatorially expensive. For practical applications, use approximation methods like Monte Carlo or gradient-based Shapley value estimation [24].

- Application: This method can be used for debugging models, detecting dataset errors, and guiding data acquisition strategies [24].
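To make the definition concrete, the sketch below computes exact Shapley values for a five-point toy dataset and compares them with a Monte Carlo permutation estimate. Everything here is an illustrative assumption: the 1-D data, the 1-nearest-neighbour utility, the convention U(∅) = 0, and the function names. It is not the implementation from [24].

```python
import itertools
import math
import random

# Toy 1-D dataset of (feature, label). The last training point is mislabeled.
TRAIN = [(0.0, 0), (1.0, 0), (5.0, 1), (6.0, 1), (0.5, 1)]
VALID = [(0.3, 0), (0.7, 0), (5.4, 1), (5.6, 1)]


def utility(subset_idx):
    """U(S): validation accuracy of a 1-nearest-neighbour model trained on S."""
    if not subset_idx:
        return 0.0  # convention for the empty set (an assumption)
    points = [TRAIN[i] for i in subset_idx]
    correct = 0
    for x, y in VALID:
        _, pred = min(points, key=lambda p: abs(p[0] - x))
        correct += pred == y
    return correct / len(VALID)


def exact_shapley():
    """Weighted sum of marginal contributions over all subsets (small n only)."""
    n = len(TRAIN)
    values = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):
            weight = math.factorial(k) * math.factorial(n - k - 1) / math.factorial(n)
            for subset in itertools.combinations(others, k):
                values[i] += weight * (utility(subset + (i,)) - utility(subset))
    return values


def monte_carlo_shapley(n_permutations=2000, seed=0):
    """Average marginal contribution over random orderings of the data."""
    rng = random.Random(seed)
    n = len(TRAIN)
    values = [0.0] * n
    for _ in range(n_permutations):
        order = list(range(n))
        rng.shuffle(order)
        prefix, prev_u = [], utility(())
        for i in order:
            prefix.append(i)
            u = utility(prefix)
            values[i] += (u - prev_u) / n_permutations
            prev_u = u
    return values


exact = exact_shapley()
approx = monte_carlo_shapley()
for i, (e, a) in enumerate(zip(exact, approx)):
    print(f"point {i}: exact={e:+.3f}  monte_carlo={a:+.3f}")
```

In this toy setup the mislabeled point receives the only negative value, which is the signal one would use to queue it for review. The values sum to U(D) − U(∅), a useful sanity check for any approximation.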

Protocol 2: Automated Pipeline for Generating Initial Parameter Estimates

Objective: To automatically generate reliable initial parameter estimates for complex models (e.g., population pharmacokinetics), which is critical for efficient parameter optimization and avoiding model convergence failures [27].

Methodology: The pipeline incorporates several data-driven methods, summarized in the table below.

| Method | Application | Key Formula/Description |

|---|---|---|

| Adaptive Single-Point | Sparse data; calculates clearance (CL) and volume of distribution (Vd). | • \( V_d = \frac{\text{Dose}}{C_1} \), where \( C_1 \) is the concentration measured shortly after the dose. • \( CL = \frac{\text{Dosing Rate}}{C_{ss,avg}} \) at steady state [27]. |

| Naïve Pooled NCA | Rich data; treats all data as from a single subject to derive parameters. | • Uses AUC from naïve pooled data for CL calculation. • \( V_z = \frac{CL}{\lambda_z} \), where \( \lambda_z \) is the terminal slope [27]. |

| Graphic Methods | Single-dose data; visual analysis of concentration-time curves. | • For intravenous data: Vd is inverse of y-intercept from terminal phase extrapolation.• For extravascular data: Ka is slope of residual line from method of residuals [27]. |

| Parameter Sweeping | Complex models; tests a range of candidate values. | • Simulates concentrations for candidate parameters.• Selects values with the best predictive performance (lowest rRMSE) [27]. |
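Two of the methods in the table lend themselves to a short worked example: the single-point formulas and parameter sweeping. The sketch below assumes a one-compartment intravenous bolus model and a relative RMSE defined as the root mean square of relative errors; both are simplifying assumptions, and the exact rRMSE definition and model structure in [27] may differ. All numeric values are hypothetical.

```python
import math


def single_point_estimates(dose, c1, dosing_rate, css_avg):
    """Vd = Dose / C1 and CL = Dosing Rate / Css,avg."""
    return dose / c1, dosing_rate / css_avg


def simulate_iv_bolus(dose, cl, vd, times):
    """One-compartment IV bolus: C(t) = Dose / Vd * exp(-(CL / Vd) * t)."""
    return [dose / vd * math.exp(-cl / vd * t) for t in times]


def rrmse(observed, predicted):
    """Relative RMSE (assumed definition: RMS of relative errors)."""
    terms = [((o - p) / o) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(terms) / len(terms))


def sweep(dose, times, observed, cl_candidates, vd_candidates):
    """Return (score, CL, Vd) for the candidate pair with the lowest rRMSE."""
    best = None
    for cl in cl_candidates:
        for vd in vd_candidates:
            score = rrmse(observed, simulate_iv_bolus(dose, cl, vd, times))
            if best is None or score < best[0]:
                best = (score, cl, vd)
    return best


# Single-point: 100 mg dose, 5 mg/L shortly after dosing; 10 mg/h at 2 mg/L steady state.
vd, cl = single_point_estimates(dose=100.0, c1=5.0, dosing_rate=10.0, css_avg=2.0)
print(f"Vd = {vd} L, CL = {cl} L/h")

# Parameter sweep against noise-free synthetic observations.
times = [1.0, 2.0, 4.0, 8.0]
observed = simulate_iv_bolus(100.0, cl=5.0, vd=20.0, times=times)
best = sweep(100.0, times, observed, [2.5, 5.0, 10.0], [10.0, 20.0, 40.0])
print(f"best rRMSE = {best[0]:.3f} at CL = {best[1]}, Vd = {best[2]}")
```

The selected values would then serve as starting points for the full estimation run rather than as final parameter estimates.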

Protocol 3: Reinforcement Learning from Human Feedback (RLHF)

Objective: To align a pre-trained language model with human preferences and safety requirements, which is crucial for deploying reliable models in scientific and clinical settings [26].

Methodology:

- Pre-train a Language Model (LM): Start with a base LM trained on a large corpus of general and/or domain-specific text.

- Train a Reward Model:

- The LM generates multiple responses to a set of prompts.

- Human annotators (e.g., domain experts) rank these responses from best to worst.

- These rankings are used to train a separate reward model that learns to assign a scalar reward score to any LM output, quantifying human preference.

- Fine-tune the LM with Reinforcement Learning:

- Use the reward model as a reward function.

- Fine-tune the pre-trained LM using a reinforcement learning algorithm (like PPO) to maximize the reward score, encouraging the model to generate outputs that humans prefer [26].
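The reward-model step can be illustrated with a deliberately small example. The sketch assumes a pairwise (Bradley-Terry style) loss, −log σ(r_chosen − r_rejected), which is a common choice but is not specified by the source; the linear reward model, the two hand-made features, and all values are hypothetical. The PPO fine-tuning step is omitted.

```python
import math


def reward(weights, features):
    """Linear reward model: a scalar score for one response."""
    return sum(w * f for w, f in zip(weights, features))


def train_reward_model(preference_pairs, n_features, lr=0.5, epochs=200):
    """Fit weights by SGD on the loss -log(sigmoid(r_chosen - r_rejected))."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in preference_pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # d(loss)/d(margin) = -(1 - sigmoid(margin))
            grad_scale = -(1.0 - 1.0 / (1.0 + math.exp(-margin)))
            for k in range(n_features):
                weights[k] -= lr * grad_scale * (chosen[k] - rejected[k])
    return weights


# Each response is described by [factual_accuracy, verbosity] (toy features).
# In every pair, the first response is the one the expert ranked higher.
preference_pairs = [
    ([0.9, 0.2], [0.3, 0.8]),
    ([0.8, 0.5], [0.4, 0.5]),
    ([0.7, 0.9], [0.2, 0.1]),
]
weights = train_reward_model(preference_pairs, n_features=2)

for chosen, rejected in preference_pairs:
    print(round(reward(weights, chosen), 2), ">", round(reward(weights, rejected), 2))
```

In a real pipeline the reward model is usually a neural network initialized from the language model itself, and its scalar output is what the reinforcement learning step maximizes.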

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in the Annotation & Tuning Pipeline |

|---|---|

| Labeling Platforms (e.g., Label Studio, SuperAnnotate) | Provides interfaces for human annotators to label data (images, text) efficiently. Supports QA workflows, consensus tracking, and project management [26]. |

| Experiment Trackers (e.g., MLflow, W&B) | Tracks code, data, parameters, and metrics for all tuning experiments, ensuring reproducibility [25]. |

| Data Valuation Libraries (e.g., Data Shapley) | Quantifies the importance of individual training data points, helping to identify mislabeled examples or outliers that hurt model performance [24]. |

| Automated Modeling Pipelines (e.g., Pharmpy, pyDarwin) | Automates the process of model selection and parameter estimation, reducing manual effort and standardizing workflows, especially in domains like pharmacometrics [27]. |

| Confident Learning Frameworks (e.g., cleanlab) | Algorithmically identifies label errors in datasets by characterizing the joint distribution between noisy given labels and uncorrupted unknown labels [24]. |

Annotation Pipeline Workflows

High-Level Annotation and Tuning Pipeline

Troubleshooting Loop for Data Quality

Advanced Tuning Strategies for Robust Biomedical Annotation

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between Grid Search and Random Search?

Grid Search is an exhaustive search method that tests every possible combination of hyperparameters within a user-defined grid. It systematically traverses the entire parameter space, guaranteeing that the best combination within the specified grid will be found [28] [29] [30]. In contrast, Random Search randomly samples a fixed number of hyperparameter combinations from predefined distributions. It does not explore the entire space but can cover a broader and more diverse range of values, often leading to more efficient discovery of good hyperparameters [28] [31] [32].

FAQ 2: When should I prefer Random Search over Grid Search?

You should prefer Random Search in the following scenarios [28] [33] [31]:

- You have a large number of hyperparameters to tune, leading to a high-dimensional search space.

- The computational budget or time is limited, as Random Search often finds good parameters faster.

- You are unsure of the exact optimal range for hyperparameter values and want to explore a wider space.

- Only a few hyperparameters significantly impact your model's performance. Random Search is better at finding these important parameters.

FAQ 3: Why is Grid Search considered computationally expensive?

The computational cost of Grid Search grows exponentially with the number of hyperparameters. This is known as the "curse of dimensionality" [29]. For example, if you have 5 hyperparameters and you want to try 10 values for each, Grid Search would train your model 10^5, or 100,000 times. Each of these trainings also involves cross-validation, further multiplying the computational cost [30].
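The arithmetic in this example can be checked with a few lines of Python. The grid below is a placeholder with hypothetical parameter names, and 5-fold cross-validation is assumed.

```python
def grid_search_fits(param_grid, cv_folds):
    """Return (number of combinations, number of model fits including CV)."""
    n_combinations = 1
    for values in param_grid.values():
        n_combinations *= len(values)
    return n_combinations, n_combinations * cv_folds


# 5 hyperparameters with 10 candidate values each (placeholder names).
example_grid = {f"param_{i}": list(range(10)) for i in range(5)}
n_combinations, n_fits = grid_search_fits(example_grid, cv_folds=5)
print(n_combinations, n_fits)  # 100000 500000
```

Adding a sixth hyperparameter with 10 values multiplies both numbers by 10, which is why exhaustive grids become impractical so quickly.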

FAQ 4: Does Random Search's random sampling guarantee finding the best hyperparameters?

No, Random Search does not guarantee that it will find the absolute best hyperparameters within the search space because it does not test every possible combination [31] [34]. However, in practice, it is highly effective at finding a set of hyperparameters that are very good, or "good enough," with significantly fewer iterations than Grid Search [30] [32]. Its efficiency allows you to run more iterations, increasing the probability of finding a superior combination.

FAQ 5: Can Grid Search and Random Search be combined?

Yes, a common hybrid approach is to start with Random Search to get a rough estimate of which regions of the hyperparameter space yield good performance. Then, you can perform a more focused Grid Search in a narrower range around the best values found by the Random Search [28]. This combines the broad exploration of Random Search with the local precision of Grid Search.

Troubleshooting Guides

Issue 1: Hyperparameter tuning is taking too long and consuming excessive computational resources.

Diagnosis: This is a common problem, especially with Grid Search on large parameter grids or complex models [33] [29].

Solution:

- Switch to Random Search: For large search spaces, replace Grid Search with Random Search and set a manageable number of iterations (n_iter) [28] [32].

- Reduce the Search Space: Review your hyperparameter ranges. Use domain knowledge to narrow them down. For parameters like the learning rate, consider a logarithmic scale instead of a linear one [33].

- Use a Subset of Data: For an initial search, use a smaller subset of your training data to quickly eliminate poor hyperparameter combinations. Later, refine the best candidates on the full dataset [35].

- Leverage Parallelization: Both GridSearchCV and RandomizedSearchCV in scikit-learn have an n_jobs parameter. Set n_jobs=-1 to use all available processors and parallelize the computation [28] [35].

Issue 2: The best model from tuning performs well on the validation set but poorly on unseen test data.

Diagnosis: This is a classic sign of overfitting to the validation set. By searching too extensively, the tuning process may have found hyperparameters that are overly specialized to the validation data [28] [32].

Solution:

- Use Nested Cross-Validation: Perform hyperparameter tuning within an inner loop of cross-validation, and use an outer loop to provide an unbiased estimate of the model's generalization performance. This prevents information from the validation set leaking into the model selection process.

- Simplify the Model: The model might be too complex. Use hyperparameters that increase regularization (e.g., a lower C in SVMs, where C is the inverse of the regularization strength; stronger weight decay in neural networks; or a larger min_samples_leaf in Random Forests) [28].

- Limit the Search Space: An excessively large search space increases the risk of overfitting. Define your hyperparameter ranges based on prior research or preliminary experiments [33].
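Nested cross-validation, as described in the first solution above, can be sketched with scikit-learn by passing a search object to `cross_val_score`. The dataset, the logistic regression model, the small grid, and the fold counts are illustrative choices, not recommendations from the cited sources.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

# Scaling lives inside the pipeline so it is re-fit on each training fold.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
# Smaller C means stronger regularization in scikit-learn.
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Inner loop selects hyperparameters; outer loop estimates generalization.
search = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="accuracy")
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")

print(f"Nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

The outer score estimates how the whole procedure (tuning included) generalizes; the final model is typically obtained afterwards by running the inner search once on all available training data.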

Issue 3: The tuning process did not improve my model's performance compared to the default hyperparameters.

Diagnosis: The defined search space might not include the optimal values, or the wrong performance metric is being optimized [32].

Solution:

- Expand the Search Space: The optimal hyperparameters may lie outside the ranges you initially defined. Widen the distributions in Random Search or add more values to the grid in Grid Search [32].

- Check the Evaluation Metric: Ensure the scoring metric (e.g., scoring='accuracy' or scoring='f1') used in the search aligns with your project's ultimate objective [35].

- Verify Data Preprocessing: Ensure that your data is correctly preprocessed (e.g., scaled, encoded) and that there is no data leakage between the training and validation sets.

Data Presentation: Comparative Analysis

The table below summarizes the core characteristics of Grid Search and Random Search.

| Feature | Grid Search | Random Search |

|---|---|---|

| Core Principle | Exhaustive search over a defined grid [29] [30] | Random sampling from specified distributions [31] [30] |

| Exploration Method | Systematic and comprehensive [28] | Stochastic and non-systematic [28] |

| Computational Efficiency | Low; grows exponentially with parameters [33] [29] | High; efficient in high-dimensional spaces [28] [31] |

| Best For | Small, well-understood parameter spaces [28] [33] | Large parameter spaces and limited resources [28] [31] |

| Prior Knowledge Requirement | Requires good intuition for setting the grid [28] | Less reliant on prior knowledge [28] |

| Risk of Overfitting | Higher if the search space is very large [28] [32] | Lower due to less exhaustive validation [28] |

| Guarantee | Finds best parameters within the grid [29] | No guarantee; finds good parameters faster [31] |

Experimental Protocols

Protocol 1: Implementing Hyperparameter Tuning with Scikit-Learn

This protocol provides a step-by-step methodology for performing hyperparameter tuning using Scikit-Learn's GridSearchCV and RandomizedSearchCV [28] [35] [30].

1. Preprocessing and Data Splitting:

2. Defining the Hyperparameter Search Space:

3. Executing the Search with Cross-Validation:

4. Evaluating the Best Model:
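The four steps above can be sketched end to end as follows. This is a minimal example under stated assumptions: the Breast Cancer dataset, a Random Forest, the specific ranges, 3-fold cross-validation, and the F1 metric are illustrative choices rather than the settings used in the cited sources.

```python
from scipy.stats import randint
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV, train_test_split

# 1. Data splitting (tree-based models need no feature scaling).
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# 2. Search spaces: explicit lists for the grid, distributions for random search.
param_grid = {"n_estimators": [50, 100], "max_depth": [3, None]}
param_distributions = {
    "n_estimators": randint(20, 150),
    "max_depth": [3, 5, None],
    "min_samples_leaf": randint(1, 10),
}

# 3. Run both searches with cross-validation.
#    Pass n_jobs=-1 to either search to parallelize across CPU cores.
model = RandomForestClassifier(random_state=42)
grid_search = GridSearchCV(model, param_grid, cv=3, scoring="f1")
random_search = RandomizedSearchCV(
    model, param_distributions, n_iter=8, cv=3, scoring="f1", random_state=42
)
grid_search.fit(X_train, y_train)
random_search.fit(X_train, y_train)

# 4. Evaluate the refit best estimators once on the held-out test set.
for name, search in [("grid", grid_search), ("random", random_search)]:
    print(name, search.best_params_, round(search.score(X_test, y_test), 3))
```

Both search objects refit the best configuration on the full training split by default, so `search.score` and `search.predict` use the tuned model directly.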

Workflow Visualization

The following diagram illustrates the logical workflow and key decision points for choosing between Grid Search and Random Search.

The Scientist's Toolkit: Research Reagent Solutions

This table details key components and their functions when setting up hyperparameter optimization experiments, analogous to a research reagent kit.

| Item | Function | Example/Note |

|---|---|---|

| Scikit-Learn Library | Provides the core implementations for GridSearchCV and RandomizedSearchCV [28] [35]. | Essential Python library for machine learning. |

| Computational Resource (CPU) | Executes the training and validation of multiple model instances. The n_jobs=-1 parameter leverages all cores [28] [35]. | Cloud computing instances (e.g., AWS EC2) can be used for heavy workloads. |

| Cross-Validation (e.g., cv=5) | A resampling technique used to evaluate the model and tune hyperparameters without a separate validation set, providing a more robust performance estimate [35] [30]. | Typically 5 or 10 folds are used. |

| Performance Metric (Scoring) | The objective function that the search process aims to optimize (e.g., accuracy, F1-score, R²) [35]. | Should be chosen to reflect the business or research objective. |

| Parameter Grid/Distributions | The defined search space from which hyperparameter values are drawn for testing [30]. | For Grid Search, it's a list of values. For Random Search, it's a statistical distribution (e.g., randint, uniform from scipy.stats) [28] [32]. |

| Base Estimator/Model | The machine learning algorithm whose hyperparameters are being tuned (e.g., RandomForestClassifier, SVC) [35] [30]. | Must be compatible with Scikit-Learn's API. |

Q1: What is Bayesian Optimization, and when should I use it for my research? Bayesian Optimization (BO) is a powerful strategy for finding the global optimum of functions that are expensive to evaluate, noisy, and lack an analytical expression (black-box functions) [36]. It is particularly suited for tuning machine learning models and optimizing experimental parameters in fields like drug discovery and materials science, where each evaluation can be computationally intensive or resource-consuming [37] [38] [39]. Unlike grid or random search, BO uses past evaluation results to inform future selections, making it significantly more efficient [37].

Q2: How does Bayesian Optimization improve upon methods like Grid Search and Random Search? Grid Search and Random Search are "uninformed" methods, meaning they do not learn from past trials [37]. The table below summarizes key differences:

| Feature | Grid Search | Random Search | Bayesian Optimization |

|---|---|---|---|

| Learning Mechanism | No learning from past trials [37]. | No learning from past trials [37]. | Builds a probabilistic surrogate model to guide the search [37] [40]. |

| Efficiency | Low; scales poorly with dimensionality [39]. | Better than Grid Search, but can still be inefficient [39]. | High; focuses evaluations on promising regions [37]. |

| Best Use Case | Small, low-dimensional parameter spaces. | Larger parameter spaces where Grid Search is infeasible [41]. | Optimizing expensive black-box functions with limited evaluation budgets [36]. |

Q3: What are the core components of a Bayesian Optimization algorithm? A BO algorithm has two main components [39] [36]:

- Surrogate Model: A probability model that approximates the expensive objective function. Common choices are Gaussian Processes (GPs), Random Forest Regressions, and Tree Parzen Estimators (TPE) [37] [39].

- Acquisition Function: A function that uses the surrogate model to determine the next most promising parameters to evaluate by balancing exploration (probing regions of high uncertainty) and exploitation (probing regions with high predicted performance) [39] [36].

The following diagram illustrates the typical Bayesian Optimization workflow:

Implementation & Protocol Guide: Q&A

Q4: What is a standard experimental protocol for implementing Bayesian Optimization? The protocol, known as Sequential Model-Based Optimization (SMBO), involves the following steps [37]:

- Define Domain: Specify the hyperparameters and their search space (e.g., learning rate as a log-uniform distribution between 0.01 and 1.0) [37] [42].

- Initialize History: Start with a small set of randomly selected points and evaluate them on the objective function.

- Iterate until convergence or budget exhaustion:

- Fit Surrogate: Model the objective function using all currently observed data.

- Maximize Acquisition: Identify the parameter set that maximizes the acquisition function (e.g., Expected Improvement).

- Evaluate & Update: Evaluate the expensive objective function at the proposed point and add the result to the history dataset [37].
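The SMBO loop above can be sketched in a few lines. This is a minimal illustration, assuming scikit-learn's `GaussianProcessRegressor` as the surrogate and Expected Improvement as the acquisition function; the toy `objective`, the search range, the candidate grid, and the evaluation budget are hypothetical stand-ins for a real training-and-validation run.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Stand-in for an expensive validation score; peaks at log10(lr) = -2."""
    return -(log_lr + 2.0) ** 2


def expected_improvement(candidates, gp, best_y, xi=0.01):
    """EI for maximization, computed from the surrogate's mean and std."""
    mu, sigma = gp.predict(candidates, return_std=True)
    sigma = np.maximum(sigma, 1e-9)
    improvement = mu - best_y - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
low, high = -4.0, 0.0                          # domain: log10(learning rate)
X_hist = rng.uniform(low, high, size=(3, 1))   # initial random history
y_hist = np.array([objective(x[0]) for x in X_hist])
candidates = np.linspace(low, high, 401).reshape(-1, 1)

for _ in range(10):                            # iterate within the budget
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), normalize_y=True, alpha=1e-6, random_state=0
    )
    gp.fit(X_hist, y_hist)                     # fit surrogate
    ei = expected_improvement(candidates, gp, y_hist.max())
    x_next = candidates[np.argmax(ei)]         # maximize acquisition
    X_hist = np.vstack([X_hist, x_next])       # evaluate and update history
    y_hist = np.append(y_hist, objective(x_next[0]))

best_log_lr = X_hist[np.argmax(y_hist), 0]
print(f"best log10(lr) = {best_log_lr:.2f} after {len(y_hist)} evaluations")
```

Maximizing the acquisition over a fixed candidate grid keeps the sketch short; dedicated libraries use continuous optimizers and handle multi-dimensional, mixed-type spaces.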

Q5: How do I set up a hyperparameter tuning experiment using Bayesian Optimization in Python?

Below is a detailed methodology using BayesSearchCV from the scikit-optimize library [40] [42].

Objective: Tune a Support Vector Classifier (SVC) on the Breast Cancer dataset to maximize accuracy [40].

Materials and Reagents (The Researcher's Toolkit):

| Item | Function/Description | Example/Value |

|---|---|---|

| Breast Cancer Dataset | Standard benchmark dataset for classification tasks [40]. | Loaded via sklearn.datasets.load_breast_cancer. |

| Support Vector Classifier (SVC) | The machine learning model whose hyperparameters are being optimized [40]. | sklearn.svm.SVC |

| Search Space | The defined ranges and options for each hyperparameter to be tuned [40]. | C: (1e-6, 1e+6, 'log-uniform'); gamma: (1e-6, 1e+1, 'log-uniform'); kernel: ['linear', 'poly', 'rbf'] |

| Bayesian Optimizer | The algorithm that conducts the optimization loop [40] [42]. | skopt.BayesSearchCV |

| Surrogate Model | The underlying probabilistic model; often a Gaussian Process is used by default. | Gaussian Process (in BayesSearchCV) |

| Acquisition Function | The criterion for selecting the next parameters [37]. | Expected Improvement (EI) is common. |

Experimental Steps:

- Import packages and load data. Standardize the features to ensure consistent model performance [40].

- Define the hyperparameter search space. [40]

- Initialize and run the Bayesian Optimizer. [40]

- Evaluate results and implement the best model. [40]

Advanced Applications in Drug Discovery & Materials Science: Q&A

Q6: How is Bayesian Optimization applied in real-world scientific research like drug discovery? In drug discovery, BO is used to navigate the complex "chemical space" and optimize molecular structures towards a desired clinical profile. It treats the biological activity or other properties of a candidate molecule as an expensive black-box function [38]. BO can efficiently guide the selection of which compound to synthesize and test next, significantly accelerating the early hit discovery and optimization phases [38].

Q7: My research involves optimizing for multiple, potentially competing, objectives. Can Bayesian Optimization handle this? Yes, this is addressed by Multi-Objective Bayesian Optimization (MOBO). Instead of finding a single best solution, MOBO aims to identify a Pareto front—a set of optimal solutions where no objective can be improved without worsening another [43]. For example, in additive manufacturing (3D printing), researchers might simultaneously optimize for print accuracy and material homogeneity [43]. The acquisition function in MOBO, such as Expected Hypervolume Improvement (EHVI), is designed to handle multiple objectives [43].

Troubleshooting Common Experimental Issues: Q&A

Q8: The optimization process seems to be stuck in a local minimum. How can I encourage more exploration? This is a classic trade-off between exploration and exploitation.

- Solution: Adjust the acquisition function's behavior. For the popular Expected Improvement (EI) function, you can introduce a parameter ζ (zeta) or xi that controls the balance. A larger xi value forces the algorithm to prefer points with higher uncertainty, promoting more exploration [36].

- Action: In libraries like scikit-optimize, you can often tune this parameter. For example, in a custom loop using a GaussianProcessRegressor, you would set the xi parameter in the expected_improvement function.
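The effect of xi can be seen on two hypothetical candidates, one with a high predicted score and low uncertainty, the other with a lower prediction and high uncertainty. The sketch below uses the standard closed-form EI for maximization under a Gaussian posterior; the function is a standalone illustration, not a library call, and the numbers are made up.

```python
import math


def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """EI for maximization; xi > 0 demands a larger improvement before exploiting."""
    if sigma <= 0.0:
        return max(mu - best_so_far - xi, 0.0)
    improvement = mu - best_so_far - xi
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + sigma * pdf


best_so_far = 0.9
confident_point = {"mu": 1.2, "sigma": 0.1}  # high prediction, low uncertainty
uncertain_point = {"mu": 0.8, "sigma": 0.5}  # lower prediction, high uncertainty

for xi in (0.0, 0.5):
    ei_conf = expected_improvement(best_so_far=best_so_far, xi=xi, **confident_point)
    ei_unc = expected_improvement(best_so_far=best_so_far, xi=xi, **uncertain_point)
    choice = "confident" if ei_conf > ei_unc else "uncertain"
    print(f"xi={xi}: EI(confident)={ei_conf:.4f}, EI(uncertain)={ei_unc:.4f} -> {choice}")
```

With xi = 0 the confident candidate wins; raising xi to 0.5 flips the ranking toward the uncertain candidate, which is the exploratory behavior described above.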

Q9: The surrogate model is taking too long to fit as the data grows. What are my options? With a high number of evaluations, Gaussian Processes can become computationally expensive due to cubic scaling with the data size.

- Solution 1: Consider using a different surrogate model that scales more efficiently, such as a Random Forest or the Tree Parzen Estimator (TPE), which is used in the Hyperopt library [37] [41].

- Solution 2: For GPs, use sparse approximation methods to handle larger datasets.

Q10: How do I handle different types of hyperparameters (integer, categorical) within the same optimization?

A key advantage of BO and libraries like BayesSearchCV and Hyperopt is their native support for mixed parameter types [39] [41].

- Integer Parameters: Define them with an integer range (e.g., 'n_estimators': (50, 500)). The internal surrogate model will handle the integer constraint [40] [42].

- Categorical Parameters: Define them as a list of choices (e.g., 'kernel': ['linear', 'rbf']). The underlying model uses a special kernel (like a Hamming kernel for GPs) to handle categorical spaces [40] [42].

Leveraging Semi-Supervised Learning to Reduce Annotation Burden

Troubleshooting Common SSL Challenges

Q1: My semi-supervised model is not converging or showing minimal improvement over the supervised baseline. What could be wrong? A: This is often related to an imbalance between labeled and unlabeled data components. First, verify the ratio of your labeled to unlabeled data; a very small labeled set might be providing an insufficient signal to guide the learning from unlabeled data [44]. Second, check the consistency regularization loss weight—if set too low, the model ignores unlabeled data; if too high, it can destabilize training. Start with a low weight and gradually increase it using a ramp-up schedule [45]. Finally, ensure your unlabeled data comes from the same distribution as your labeled data; domain mismatch can cause the model to learn irrelevant patterns.

Q2: How can I address performance instability and high variance when training with very few labels? A: Instability is common in low-label regimes. Consider these approaches:

- Adopt Mean Teacher or other ensemble methods: The Mean Teacher model maintains an exponential moving average (EMA) of the student model's weights, creating a more stable target for predictions on unlabeled data, which significantly improves consistency [44].

- Implement data augmentation specific to your domain: For medical images, this might include random rotations, flips, or elastic deformations. For molecular data, consider atomic perturbations or graph augmentations [45]. Consistent augmentation is crucial for methods like FixMatch.

- Tune your optimizer aggressively: A reduced learning rate is often necessary. Monitor the loss on a small, held-out validation set and employ early stopping to prevent overfitting to the small labeled dataset.
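Two mechanisms mentioned in these answers, the Mean Teacher weight average and the ramp-up of the consistency loss weight, reduce to a few lines. The sketch below uses plain Python lists as stand-ins for model weights; the function names, the decay value, and the exponential ramp-up shape exp(−5(1 − t)²) are assumptions (the latter is a commonly used schedule, not one prescribed by the cited sources).

```python
import math


def ema_update(teacher_weights, student_weights, alpha=0.99):
    """Teacher <- alpha * teacher + (1 - alpha) * student, element-wise."""
    return [alpha * t + (1.0 - alpha) * s
            for t, s in zip(teacher_weights, student_weights)]


def consistency_weight(step, rampup_steps, max_weight=1.0):
    """Ramp the unlabeled-loss weight from near 0 up to max_weight."""
    if rampup_steps == 0:
        return max_weight
    t = min(max(step / rampup_steps, 0.0), 1.0)
    return max_weight * math.exp(-5.0 * (1.0 - t) ** 2)


teacher = [0.0, 0.0]
student = [1.0, 2.0]
teacher = ema_update(teacher, student, alpha=0.9)
print("teacher after one update:", teacher)

for step in (0, 250, 500, 1000):
    print(step, round(consistency_weight(step, rampup_steps=1000), 4))

# At each training step the total loss would be:
#   supervised_loss + consistency_weight(step, rampup_steps) * consistency_loss
```

Starting with a small weight lets the labeled signal shape the model before the consistency term, which depends on the quality of the teacher's predictions, begins to dominate.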

Q3: My model performs well on the validation set but generalizes poorly to external test data from different institutions. How can I improve robustness? A: Poor cross-domain generalization indicates the model may be overfitting to site-specific noise in your training data. To enhance robustness:

- Incorporate domain-invariant features: Techniques like adversarial training or domain randomization can help the model learn features that are consistent across different scanners or protocols [44].

- Use a diverse unlabeled dataset: Include unlabeled data from multiple sources, institutions, or acquisition protocols in your training pool. This exposes the model to a wider range of variations [44].

- Apply Virtual Adversarial Training (VAT): VAT encourages the model to be smooth against small, directed perturbations on the input, which improves robustness against noise and adversarial examples [45].

Quantitative Performance of SSL Methods

Table 1: Performance Improvement of Semi-Supervised Learning over Supervised Baseline in Medical Image Segmentation [44]

| Test Cohort | DSC Improvement (Half Dataset) | DSC Improvement (Full Dataset) |

|---|---|---|

| Site 1 | 6.3% ± 1.6% | 3.6% ± 0.7% |

| Site 2 | 8.2% ± 3.8% | 2.0% ± 1.5% |

| Site 3 | 8.6% ± 2.6% | 1.8% ± 5.7% |

| Site 4 | 15.4% ± 1.4% | 4.7% ± 1.7% |

Table 2: Common Data Annotation Challenges and their Impact on Projects [46] [20]

| Challenge | Potential Impact on Model | Recommended Solution |

|---|---|---|

| Annotation Inconsistencies | Lower accuracy, biased predictions | Implement tiered review process & clear guidelines [46] |

| High Cost of Labeling | Project delays, limited scale | Use AI-assisted pre-labeling to reduce manual work [20] |

| Data Scarcity & Bias | Poor generalization, unfair outcomes | Leverage SSL and diversify data sources [47] |

| Security & Privacy Risks | Legal consequences, data breaches | Use encrypted, compliant platforms & data anonymization [20] |

Detailed Experimental Protocols

Protocol 1: Brain Metastases Segmentation on Multicenter MRI Data

This protocol is adapted from a multicenter study that demonstrated the efficacy of SSL for segmenting brain metastases using a limited set of labeled MRI scans [44].

1. Dataset Curation:

- Labeled Data: Collect a limited set of fully annotated scans. The referenced study used 156, 65, 324, and 200 labeled scans from four different institutions.

- Unlabeled Data: Gather a larger set of clinical scans without annotations. The study incorporated 519 unlabeled scans from a single institution.

- Key Consideration: Ensure all data (both labeled and unlabeled) includes T1-weighted pre- and post-contrast and FLAIR sequences from both 1.5T and 3T scanners to ensure protocol diversity.

2. Model and Training Setup:

- Architecture: A standard U-Net is a suitable baseline model.

- SSL Methods for Comparison: Adapt and compare multiple SSL methods. The study successfully used:

- Mean Teacher: Trains a student model and a teacher model (an EMA of the student), using consistency between their predictions on unlabeled data as a loss.

- Cross-Pseudo Supervision (CPS): Two networks with the same architecture but different initializations supervise each other by generating pseudo-labels for the unlabeled data.

- Interpolation Consistency Training: Encourages the prediction at an interpolation of two unlabeled inputs to be consistent with the interpolation of the predictions at those inputs.

- Training Regime: Train models on half-sized and full-sized labeled datasets to quantify data efficiency.

3. Evaluation and Validation:

- Test Set: Use a multinational test set from several institutions to evaluate generalizability.

- Metrics: Employ a comprehensive set of metrics including:

- Dice Similarity Coefficient (DSC) for overlap accuracy.

- 95th-percentile Hausdorff Distance (HD95) for boundary segmentation accuracy.

- Number of true positive and false positive predictions.

- Statistical Testing: Use paired sample t-tests to determine the significance of performance differences between SSL and supervised baselines.
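As a small worked example of the primary metric, the Dice Similarity Coefficient is DSC = 2|P ∩ T| / (|P| + |T|) for a predicted mask P and a reference mask T. The sketch below computes it on flattened binary masks; the function name, the toy masks, and the convention of returning 1.0 when both masks are empty are assumptions.

```python
def dice_coefficient(pred_mask, true_mask):
    """DSC for flat binary masks (sequences of 0/1 of equal length)."""
    intersection = sum(p and t for p, t in zip(pred_mask, true_mask))
    total = sum(pred_mask) + sum(true_mask)
    # Convention (assumption): two empty masks count as perfect agreement.
    return 1.0 if total == 0 else 2.0 * intersection / total


predicted = [1, 1, 1, 0, 0, 0]
reference = [0, 1, 1, 1, 0, 0]
dsc = dice_coefficient(predicted, reference)
print(f"DSC = {dsc:.3f}")  # 2 * 2 / (3 + 3)
```

For the statistical comparison, per-case DSC values from the SSL and supervised models can be passed as paired samples to `scipy.stats.ttest_rel`.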

Protocol 2: Molecular Property Prediction for Drug Discovery

This protocol is based on a study that used Graph-based Virtual Adversarial Training (GVAT) for molecular property prediction with limited labeled data [45].

1. Data Preparation:

- Molecular Representation: Represent molecules as graphs, where atoms are nodes and bonds are edges.

- Dataset Split: Use a small subset of a public benchmark dataset (e.g., from the Tox21 challenge) as labeled data. The majority of the dataset should be treated as unlabeled data.

2. GVAT Model Implementation:

- Graph Neural Network (GNN): Use a GNN as the base model to learn molecular representations.

- Virtual Adversarial Training (VAT):

- For a given unlabeled molecule, calculate the model's original prediction.

- Apply a small, adversarial perturbation to the molecular graph representation that maximizes the divergence from the original prediction.

- Add a loss term that penalizes the difference between the predictions of the original and perturbed graphs, encouraging smoothness and robustness around each data point.

3. Evaluation:

- Compare the performance of the GVAT model against fully supervised GNNs and other conventional machine learning models on held-out test data.

- Perform an ablation study to confirm the contribution of the VAT component to the overall performance.
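To make the VAT step in item 2 more concrete, here is a minimal sketch of the virtual adversarial loss for a single unlabeled example. It makes strong simplifying assumptions: a logistic-regression classifier stands in for the GNN, a fixed-length feature vector stands in for the molecular graph representation, and the gradient is written analytically for this toy model. The function names and the values of `eps` and `xi` are illustrative and are not taken from the GVAT study [45].

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bernoulli_kl(p, q, tiny=1e-12):
    """KL(p || q) between two Bernoulli distributions given as probabilities."""
    p = np.clip(p, tiny, 1.0 - tiny)
    q = np.clip(q, tiny, 1.0 - tiny)
    return float(p * np.log(p / q) + (1.0 - p) * np.log((1.0 - p) / (1.0 - q)))

def vat_loss(x, w, b, eps=0.5, xi=1e-2, seed=0):
    """Virtual adversarial loss for a toy logistic model p(y=1|x) = sigmoid(w.x + b)."""
    rng = np.random.default_rng(seed)
    p = sigmoid(x @ w + b)              # original prediction, used as a fixed target

    # Start from a random unit direction.
    d = rng.normal(size=x.shape)
    d /= np.linalg.norm(d)

    # One power-iteration step: gradient of KL(p || q) w.r.t. the perturbation,
    # evaluated at r = xi * d. For this logistic model it equals (q - p) * w.
    q = sigmoid((x + xi * d) @ w + b)
    grad = (q - p) * w
    norm = np.linalg.norm(grad)
    if norm > 0:
        d = grad / norm

    r_adv = eps * d                     # adversarial perturbation of norm eps
    q_adv = sigmoid((x + r_adv) @ w + b)
    return bernoulli_kl(p, q_adv), r_adv

# Toy usage on a hypothetical 3-dimensional molecular embedding.
x = np.array([0.2, -1.0, 0.5])
w = np.array([1.0, -2.0, 0.5])
loss, r_adv = vat_loss(x, w, b=0.1)
```

With a deep model the gradient would come from automatic differentiation rather than a closed form, and the returned loss would be added to the supervised loss with a tunable weight.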

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Components for a Semi-Supervised Learning Pipeline

| Item / Technique | Function in SSL Experiment |
|---|---|
| U-Net Architecture | A standard backbone model for segmentation tasks; provides a strong baseline for computer vision applications [44]. |
| Graph Neural Network (GNN) | Base model for non-Euclidean data; essential for tasks like molecular property prediction in drug discovery [45]. |
| Mean Teacher Model | Stabilizes training and generates better targets for unlabeled data via an exponential moving average of model weights [44]. |
| Virtual Adversarial Training (VAT) | Improves model robustness by enforcing consistency against adversarial perturbations of the input [45]. |
| Parameter-Efficient Fine-Tuning (PEFT) | Techniques like LoRA (Low-Rank Adaptation) that adapt large models with minimal trainable parameters, reducing compute needs [5]. |
| AI-Assisted Pre-labeling | Uses a pre-trained model to generate initial labels, which are then refined by human experts, drastically speeding up annotation [20]. |
| Inter-Annotator Agreement (IAA) | A quality control metric and process where multiple annotators label the same data to ensure consistency and reliability [48]. |


Frequently Asked Questions (FAQs)

Q: Can SSL really match the performance of fully supervised models that use much more data? A: Yes, under the right conditions. Research has shown that semi-supervised models can achieve equal or even better performance than supervised models trained on twice the amount of labeled data [44]. The key is that the unlabeled data must help the model learn a more robust and generalizable representation of the underlying data distribution.

Q: What is the most critical hyperparameter to tune in an SSL experiment? A: While learning rate and batch size are always important, the consistency loss weight (λ) is particularly critical in SSL. This hyperparameter controls the influence of the unlabeled data on the training process. A best practice is to use a ramp-up function for λ, starting from zero and gradually increasing over training epochs. This prevents the model from being overwhelmed by noisy signals from the unlabeled data in the early stages of training [45].
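One way to implement the ramp-up described above is the exponential schedule often paired with Mean Teacher style training. This is a sketch under assumptions: the function names, the 80-epoch ramp length, and the constant 5.0 are illustrative choices, not values reported in the cited work. Note that this particular schedule starts close to zero (about 0.7% of the maximum weight) rather than exactly at zero.

```python
import math

def consistency_weight(epoch, rampup_epochs, max_weight):
    """Ramp lambda from near zero up to max_weight over rampup_epochs."""
    if rampup_epochs <= 0:
        return max_weight
    progress = min(max(epoch / rampup_epochs, 0.0), 1.0)
    return max_weight * math.exp(-5.0 * (1.0 - progress) ** 2)

def total_loss(supervised_loss, unsupervised_loss, epoch,
               rampup_epochs=80, max_weight=1.0):
    """Combine labeled and unlabeled losses with the ramped weight."""
    lam = consistency_weight(epoch, rampup_epochs, max_weight)
    return supervised_loss + lam * unsupervised_loss

# Weight at a few points of a hypothetical 80-epoch ramp.
schedule = [consistency_weight(e, 80, 1.0) for e in (0, 20, 40, 80, 120)]
```

Both the ramp length and `max_weight` are themselves hyperparameters, so they are natural candidates for the search strategies discussed elsewhere in this guide.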

Q: How do I choose the right SSL method for my specific task (e.g., segmentation vs. classification)? A: The choice often depends on the data modality and task:

- For image segmentation: Methods based on consistency regularization like Mean Teacher, Cross Pseudo Supervision, and Interpolation Consistency Training have proven highly effective [44].

- For graph-based tasks (e.g., molecular property prediction): Graph-based Virtual Adversarial Training (GVAT) is a strong candidate, as it builds on GNNs and enforces smoothness in the graph representation space [45].

- For scenarios with extreme data privacy concerns (e.g., cross-hospital data): Federated Learning frameworks can be combined with SSL to train models across data silos without centralizing the data [5].

Q: How can we quantify the cost savings from using SSL? A: Savings are primarily realized through reduced annotation time and costs. You can calculate it by comparing the project timeline and cost of annotating a full dataset versus a small labeled subset. One study reported reducing medical image annotation time from 6 months to 3 weeks by leveraging AI-assisted tools, which is a core enabler for effective SSL [20]. The exact ROI depends on your data's annotation complexity and the hourly rate of domain experts.

Synthetic Data Generation for Rare Events and Privacy-Sensitive Applications

Troubleshooting Guide: Common Issues & Solutions

Q1: My model, trained on synthetic rare events, fails to generalize to real-world data. What is wrong? This indicates a potential realism gap or distribution mismatch between your synthetic and real data [49]. To resolve this:

- Validate with Real Hold-Out Data: Always benchmark your model's performance on a reserved dataset of real-world examples [49]. This is the most reliable way to measure generalization.

- Blend Datasets: Do not rely solely on synthetic data. Augment your real dataset with synthetic examples to cover gaps, rather than replacing real data entirely [50] [49]. A common strategy is to fine-tune a model pre-trained on synthetic data with a smaller set of real data [50].

- Audit for Bias and Realism: Implement a rigorous validation process to check if the synthetic data accurately captures the complex patterns and subtle features of real rare events [49]. Techniques like visualizing synthetic samples and conducting statistical comparisons are essential [50].

Q2: How can I ensure my synthetic data does not accidentally expose private information from the original dataset? Privacy preservation is a key advantage of synthetic data, but it requires careful implementation [51].

- Use Robust Privacy Techniques: For highly sensitive data, employ generation methods that incorporate privacy guarantees, such as differential privacy, which adds calibrated noise to the data or training process [51]. Another method is k-anonymity, which generalizes data so that each individual is indistinguishable from at least k-1 others [51].

- Conduct Privacy Attacks: Actively test your synthetic dataset against membership inference or reconstruction attacks to probe for potential data leakage [51].

- Validate with Domain Experts: Especially in fields like healthcare, have subject matter experts review the synthetic data to ensure no real individuals can be identified [50].
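To illustrate what "calibrated noise" means in the differential privacy bullet above, the sketch below applies the Laplace mechanism to a single counting query. The function name, the example count, and the choice of `epsilon` are assumptions for illustration. Production synthetic data generators usually apply privacy mechanisms during model training instead of to individual statistics, so treat this only as a demonstration of the noise calibration itself.

```python
import numpy as np

def laplace_mechanism(true_value, sensitivity, epsilon, rng):
    """Return true_value plus Laplace noise with scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = sensitivity / epsilon
    return float(true_value + rng.laplace(loc=0.0, scale=scale))

rng = np.random.default_rng(42)

# Hypothetical query: number of patients with a rare adverse event.
# Adding or removing one patient changes a count by at most 1, so sensitivity = 1.
true_count = 37
noisy_count = laplace_mechanism(true_count, sensitivity=1.0, epsilon=0.5, rng=rng)
```

Smaller `epsilon` values give stronger privacy and noisier answers, which is the privacy and utility trade-off that has to be tuned for each application.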

Q3: My generative model for rare events only produces variations of the most common patterns, missing true outliers. How can I improve this? This is a classic challenge in generating true extremes, not just minor variations [52].

- Leverage Statistical Theory: Integrate Extreme Value Theory (EVT) into your generative models. EVT provides a mathematical framework for modeling the tails of distributions. Using approaches like the Peaks Over Threshold (POT) method and fitting a Generalized Pareto Distribution (GPD) can help the model learn and replicate the statistical behavior of genuine outliers [52].

- Specialized Training and Sampling: Investigate generative models that use specialized training loops and sampling mechanisms explicitly designed to capture heavy-tailed distributions and temporal clustering behaviors typical of rare events [52].

- Adjust Sampling Parameters: Many synthetic data platforms allow you to control parameters. You can deliberately oversample from the rare classes or edge cases in your training data to force the generator to focus on these areas [51].
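The Peaks Over Threshold idea from the first bullet can be sketched with SciPy's `genpareto` distribution. The heavy-tailed Student-t sample standing in for real measurements, the 95th-percentile threshold, and all variable names are assumptions for illustration; threshold selection in practice needs diagnostic checks that are omitted here.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# Stand-in for a real heavy-tailed measurement (hypothetical data).
data = rng.standard_t(df=3, size=5000)

# 1. Choose a high threshold and keep only the excesses above it.
threshold = np.quantile(data, 0.95)
excesses = data[data > threshold] - threshold

# 2. Fit a Generalized Pareto Distribution to the excesses (location fixed at 0).
shape, loc, scale = stats.genpareto.fit(excesses, floc=0)

# 3. Sample new synthetic extremes from the fitted tail model.
synthetic_extremes = threshold + stats.genpareto.rvs(
    shape, loc=0, scale=scale, size=1000, random_state=rng
)
```

Samples drawn this way follow the fitted tail and so can exceed the largest observed value, which is the behavior a generator limited to common patterns tends to miss.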

Q4: I am getting poor results when generating synthetic tabular data. What are the best-suited models for this data type? The choice of model is critical and depends on the data structure [51].

- For Tabular Data: Models like Gaussian Copulas are efficient at learning the joint probability distribution and correlations between variables [51]. CTGAN is another advanced deep-learning model designed to handle the nuances of mixed data types (continuous and categorical) commonly found in tables [51].

- Avoid One-Size-Fits-All Models: A model that excels at generating images (like a GAN) may not be the best choice for structured tabular data. Match the generative model to the data modality [49] [51].
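For intuition about how a Gaussian copula captures correlations between columns, the following is a minimal sketch for continuous columns only. The function name and the toy two-column dataset are assumptions; dedicated synthetic data libraries add handling for categorical columns, missing values, and parametric marginals that this sketch leaves out.

```python
import numpy as np
from scipy import stats

def sample_gaussian_copula(real, n_samples, rng):
    """Fit a Gaussian copula to continuous columns and draw synthetic rows."""
    n, d = real.shape
    # 1. Map each column to normal scores via its ranks.
    u = (stats.rankdata(real, axis=0) - 0.5) / n
    z = stats.norm.ppf(u)
    # 2. Estimate the correlation structure in normal-score space.
    corr = np.corrcoef(z, rowvar=False)
    # 3. Sample correlated normals and convert back to uniforms.
    z_new = rng.multivariate_normal(np.zeros(d), corr, size=n_samples)
    u_new = stats.norm.cdf(z_new)
    # 4. Map uniforms back through each column's empirical quantiles.
    return np.column_stack(
        [np.quantile(real[:, j], u_new[:, j]) for j in range(d)]
    )

rng = np.random.default_rng(1)
# Hypothetical table: two correlated columns, the second one skewed.
latent = rng.multivariate_normal([0, 0], [[1.0, 0.8], [0.8, 1.0]], size=1000)
real = np.column_stack([latent[:, 0], np.exp(latent[:, 1])])
synth = sample_gaussian_copula(real, n_samples=500, rng=rng)
```

Because step 4 uses empirical quantiles, synthetic values never leave the observed range of each column, so this approach on its own does not extrapolate into unseen extremes.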

Frequently Asked Questions (FAQs)

Q: Can synthetic data fully replace real data in machine learning models for critical applications like drug development? A: In most high-stakes scenarios, no. While synthetic data can significantly augment real data and address specific gaps, it is generally not advisable to fully replace all real data—especially for highly complex scenarios where authentic real-world interactions and randomness are critical [50]. The best practice is to use a hybrid approach, combining synthetic and real data to achieve optimal model performance and reliability [50] [49].

Q: What are the most important metrics for evaluating the quality of synthetic data for rare events? A: Evaluation must go beyond general similarity metrics [52]. Key dimensions include:

- Statistical Similarity: Do basic statistics (mean, variance) and overall distributions match? [50] [52]

- Dependence Preservation: Are the correlations between variables maintained? [52]

- Extreme Coverage: Does the synthetic data accurately capture the "tail" of the distribution—the rare, high-impact events? This is paramount [52].

- Downstream Task Performance: Ultimately, the best test is to train a model on the synthetic data and evaluate its performance on a real-world task [50] [52].

Q: How does a Human-in-the-Loop (HITL) system integrate with synthetic data generation? A: A HITL system creates a powerful feedback loop [53] [49]. The process typically works as follows:

- AI generates large volumes of synthetic data quickly.

- Human experts (e.g., researchers, annotators) review, validate, and refine this data. They correct errors, ensure realism, and label edge cases that the AI might miss.

- This validated, high-quality data is then used to retrain and improve the AI model. This combination leverages the scalability of AI with the nuanced judgment of humans, leading to more accurate and trustworthy datasets [53] [49].

Q: What is model collapse and how can synthetic data help prevent it? A: Model collapse is a phenomenon where AI models, particularly generative ones, become progressively worse as they are trained on data that increasingly includes their own outputs [53]. This creates a feedback loop of degradation, leading to a loss of diversity and factual accuracy [53]. High-quality synthetic data, especially when generated to represent true underlying distributions or to fill data gaps, can provide a "fresh" source of information. This prevents the model from over-indexing on AI-generated artifacts and helps maintain the richness of the training set [53].

Experimental Protocols & Data

Table 1: Quantitative Metrics for Synthetic Data Evaluation

This table summarizes key metrics for assessing synthetic data quality, based on frameworks from current literature [52].

| Metric Category | Specific Metric | Description | Application Context |
|---|---|---|---|
| Statistical Similarity | Jensen-Shannon Divergence [52] | Measures the similarity between the probability distributions of real and synthetic data. | General use; validates overall distributional fidelity. |
| Statistical Similarity | Maximum Mean Discrepancy (MMD) [52] | A kernel-based statistic for testing whether two samples come from different distributions. | Effective for high-dimensional data. |
| Extreme Coverage | Tail Concentration Function [52] | Quantifies how well the synthetic data captures the extreme values in the tail of the distribution. | Critical for rare events; assesses tail fidelity. |
| Dependence Preservation | Kendall's Rank Correlation [52] | Measures the ordinal association between pairs of variables; comparing the values computed on real and synthetic data shows whether dependencies are preserved. | Validates that variable relationships are maintained. |
| Downstream Task Performance | Performance Drop (Accuracy/F1) [50] [52] | The difference in performance of a model trained on synthetic data vs. real data when tested on a real hold-out set. | The ultimate utility test for the synthetic dataset. |
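Two of the metrics in Table 1 can be computed in a few lines. The sketch below estimates Jensen-Shannon divergence from shared-bin histograms and compares Kendall's tau between a real and a synthetic two-column sample. The helper names, the bin count, and the random toy data are assumptions for illustration, and the histogram estimate is only suitable for one-dimensional continuous variables.

```python
import numpy as np
from scipy import stats

def js_divergence(real, synth, bins=20):
    """Histogram-based Jensen-Shannon divergence in bits (0 = identical, 1 = disjoint)."""
    lo = min(real.min(), synth.min())
    hi = max(real.max(), synth.max())
    p, _ = np.histogram(real, bins=bins, range=(lo, hi))
    q, _ = np.histogram(synth, bins=bins, range=(lo, hi))
    p = p / p.sum()
    q = q / q.sum()
    m = 0.5 * (p + q)

    def kl(a, b):
        mask = a > 0            # terms with a == 0 contribute nothing
        return np.sum(a[mask] * np.log2(a[mask] / b[mask]))

    return float(0.5 * kl(p, m) + 0.5 * kl(q, m))

def kendall_tau_gap(real_xy, synth_xy):
    """Absolute difference in Kendall's tau between real and synthetic column pairs."""
    tau_real, _ = stats.kendalltau(real_xy[:, 0], real_xy[:, 1])
    tau_synth, _ = stats.kendalltau(synth_xy[:, 0], synth_xy[:, 1])
    return float(abs(tau_real - tau_synth))

rng = np.random.default_rng(0)
cov = [[1.0, 0.7], [0.7, 1.0]]
real_xy = rng.multivariate_normal([0, 0], cov, size=800)     # hypothetical real data
synth_xy = rng.multivariate_normal([0, 0], cov, size=800)    # hypothetical synthetic data

jsd = js_divergence(real_xy[:, 0], synth_xy[:, 0])
tau_gap = kendall_tau_gap(real_xy, synth_xy)
```

Values near zero on both measures indicate that the synthetic sample matches the marginal distribution and the pairwise dependence, but neither says anything about tail coverage, which needs a dedicated check.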

Table 2: Research Reagent Solutions Toolkit

A selection of essential techniques and tools for generating and validating synthetic data.

| Tool / Technique | Type | Primary Function | Key Reference / Implementation |
|---|---|---|---|
| Generative Adversarial Network (GAN) | AI Model | Generates high-fidelity synthetic data (images, tabular) through an adversarial training process. | [50] [51] [52] |
| Gaussian Copula | Statistical Model | Efficiently generates synthetic tabular data by learning joint probability distributions of variables. | [51] |
| Extreme Value Theory (EVT) | Statistical Framework | Provides mathematical foundation (e.g., GPD) for modeling the tail behavior of rare events. | [52] |
| Differential Privacy | Privacy Framework | Provides mathematical privacy guarantees by adding calibrated noise to the data or training process. | [51] |