Optimizing Single-Cell Foundation Model Hyperparameters: A Task-Specific Guide for Biomedical Research

Abstract

Single-cell foundation models (scFMs) represent a transformative technology for analyzing cellular heterogeneity, but their effective application hinges on proper hyperparameter optimization. This article provides a comprehensive, evidence-based guide for researchers and drug development professionals on tailoring scFM configurations for specific biological and clinical tasks. Drawing from recent large-scale benchmark studies, we synthesize foundational concepts, methodological workflows, practical optimization strategies, and rigorous validation frameworks. We address critical questions of when to use complex scFMs versus simpler alternatives and how to systematically select and tune models based on dataset characteristics, task complexity, and computational constraints to maximize biological insights in applications ranging from cell atlas construction to drug sensitivity prediction.

Understanding scFM Architecture and Hyperparameter Fundamentals

Frequently Asked Questions

Q1: What is a single-cell Foundation Model (scFM), and how does it relate to transformers?

A single-cell Foundation Model (scFM) is a large-scale deep learning model, typically based on a transformer architecture, that is pretrained on vast and diverse single-cell RNA sequencing (scRNA-seq) datasets in a self-supervised manner [1]. The core idea is to treat a single cell's data as a "sentence." The individual genes, along with their expression levels, are treated as "words" or tokens, allowing the transformer to learn the fundamental "language" of cellular biology [1]. These models learn rich, generalizable representations of genes and cells that can then be adapted (e.g., via fine-tuning) to a wide range of downstream tasks like cell type annotation, batch integration, and perturbation prediction [2] [1].

Q2: My scFM is not generalizing well to my specific dataset. Should I use a simpler model instead?

This is a common consideration. Comprehensive benchmarks reveal that no single scFM consistently outperforms others across all tasks [2] [3]. The choice between a complex scFM and a simpler alternative depends on several factors [2]:

- Dataset Size: For smaller, specific datasets, simpler machine learning models can be more efficient and easier to adapt with limited resources [2].

- Task Complexity: scFMs show strength in versatility and robustness across diverse applications, especially in zero-shot or few-shot learning scenarios [2].

- Computational Resources: Training or fine-tuning large scFMs is computationally intensive, which may be a constraint [1]. Benchmark studies suggest that scFMs serve as powerful plug-and-play modules, but for resource-constrained environments focused on a single task, established baseline methods remain competitive [2] [3].

Q3: I'm encountering memory issues when trying to analyze a large dataset. How can I handle this?

For datasets containing millions of cells, memory bottlenecks are a major challenge. Consider the following solutions:

- GPU Acceleration: Leverage GPU-accelerated analysis pipelines. Tools like ScaleSC and rapids-singlecell are designed to handle massive-scale datasets (up to 10-20 million cells) on a single high-memory GPU (e.g., NVIDIA A100), offering speedups of 20x or more compared to CPU-based tools [4] [5].

- Efficient Data Structures: Use GPU-optimized data structures like `cunnData` (from rapids-singlecell), which stores count matrices directly on the GPU in sparse format, drastically reducing memory overhead and accelerating computations [5].

- Feature Selection: Reduce the dimensionality of your data early in the workflow by selecting Highly Variable Genes (HVGs), which filters out genes with little variation and focuses the analysis on the most informative features [4].
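
The feature-selection idea above can be sketched in plain Python. This is a simplified stand-in that ranks genes by the variance of their log-transformed counts; established pipelines use more refined dispersion-based or variance-stabilized statistics. The function name `select_hvgs`, the gene names, and the toy matrix are hypothetical and chosen only for illustration.

```python
import math
from statistics import pvariance

def select_hvgs(counts, gene_names, n_top):
    """Return the n_top gene names with the highest variance of log1p counts.

    counts: list of cells, each a list of raw counts (one entry per gene).
    """
    scored = []
    for g, name in enumerate(gene_names):
        logged = [math.log1p(cell[g]) for cell in counts]
        scored.append((pvariance(logged), name))
    # Highest variance first; ties are broken by gene name for determinism.
    scored.sort(key=lambda t: (-t[0], t[1]))
    return [name for _, name in scored[:n_top]]

# Toy matrix: 4 cells x 4 genes (hypothetical values).
toy_counts = [
    [0, 5, 1, 10],
    [0, 5, 0, 0],
    [0, 5, 1, 12],
    [0, 5, 0, 1],
]
toy_genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]
hvgs = select_hvgs(toy_counts, toy_genes, n_top=2)
```

Genes that are constant across cells (here `GENE_A` and `GENE_B`) receive zero variance and are filtered out first, which is the behavior the HVG step relies on.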

Q4: How do I choose the right scFM for my task, given the many options available?

Model selection should be guided by your specific task, data characteristics, and the biological questions you are asking. The table below summarizes key characteristics of prominent scFMs to aid in this decision [2]:

| Model Name | Key Architectural / Pretraining Features | Pretraining Scale (Cells) |

|---|---|---|

| Geneformer | Uses a ranked list of genes per cell as input; encoder architecture [2]. | 30 million [2] |

| scGPT | Supports multiple omics modalities; decoder architecture with generative pretraining [2]. | 33 million [2] |

| scFoundation | Asymmetric encoder-decoder; trained on a fixed set of protein-encoding genes [2]. | 50 million [2] |

| UCE | Incorporates protein embeddings from ESM-2; uses genomic position for gene ordering [2]. | 36 million [2] |

| LangCell | Uses a ranked list of genes; trained with text labels (cell types) in a multimodal setting [2]. | 27.5 million [2] |

Q5: The results from my scFM are difficult to interpret biologically. How can I gain insights?

Interpreting the biological relevance of latent embeddings and model representations remains a challenge but is an active area of research [1]. To improve interpretability:

- Use Biologically Informed Metrics: Novel evaluation metrics like scGraph-OntoRWR (which measures consistency of captured cell type relationships with known biological ontologies) and Lowest Common Ancestor Distance (LCAD) (which assesses the severity of cell type misannotation errors) can provide a more biologically grounded perspective on model performance [2] [3].

- Analyze Attention Mechanisms: The attention weights within the transformer can, in some cases, be analyzed to identify which genes the model deems important for specific predictions, potentially revealing gene-gene regulatory relationships [2] [1].

- Consult Knowledge Bases: Integrate your model's outputs (e.g., gene embeddings) with external knowledge bases like Gene Ontology (GO) to validate if functionally similar genes are clustered together in the latent space [3].
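
To make the idea behind an ontology-aware error metric concrete, the sketch below computes a path length between a true and a predicted label through their lowest common ancestor in a small tree. It is one plausible reading of an LCA-style distance, not the published LCAD definition, which operates on the Cell Ontology and may differ in detail. The `toy_parent` hierarchy and both function names are hypothetical.

```python
def ancestors(term, parent):
    """Return the path from term up to the root, inclusive."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path

def lca_distance(true_label, predicted_label, parent):
    """Number of edges between two terms via their lowest common ancestor."""
    true_path = ancestors(true_label, parent)
    pred_depth = {t: i for i, t in enumerate(ancestors(predicted_label, parent))}
    for steps_up, term in enumerate(true_path):
        if term in pred_depth:
            return steps_up + pred_depth[term]
    raise ValueError("labels do not share a common ancestor")

# Hypothetical child -> parent hierarchy (not the real Cell Ontology).
toy_parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "immune cell",
    "monocyte": "immune cell",
}
mild_error = lca_distance("CD4 T cell", "CD8 T cell", toy_parent)   # sibling types
severe_error = lca_distance("CD4 T cell", "monocyte", toy_parent)   # distant types
```

Under this reading, confusing two sibling T cell subtypes scores lower than confusing a T cell with a monocyte, which is the kind of severity ranking a plain accuracy score cannot express.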

Troubleshooting Guides

Issue 1: Discrepancies in Results Between Different scFM Implementations or Between CPU and GPU

Problem: You notice that the output (e.g., principal components, integrated data) differs when running the same analysis with different tools (e.g., Scanpy vs. a GPU-accelerated version) or on different hardware.

Solution: This is often caused by "system variance" and "numerical variance" [4].

- Identify the Source:

- System Variance: Different software libraries (e.g., Scikit-learn on CPU vs. cuML on GPU) may implement the same algorithm with slight variations to optimize for their respective architectures. A known example is the sign of eigenvectors in PCA, which is mathematically correct either way but affects downstream results [4].

- Numerical Variance: Inherent floating-point errors can accumulate differently on CPUs and GPUs.

- Resolution Steps:

- For PCA Discrepancies: Ensure that the sign of the principal components is aligned. Some packages, like Scikit-learn, flip the sign so the largest absolute value in the eigenvector is positive. You may need to implement a similar correction step in your GPU pipeline for consistency [4].

- For Harmony Integration: Discrepancies can arise from differences in the initialization of K-Means clustering within the Harmony algorithm. Using a fixed random seed can help ensure reproducibility [4].

- General Best Practice: For reproducibility, always document the exact versions of all packages and the specific hardware used. When switching to a new tool (e.g., `rapids-singlecell`), use its built-in functions to ensure consistency, such as those implemented in the `ScaleSC` package [4].
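
The PCA sign correction described above can be sketched as follows. This is a minimal illustration of the convention "make the largest-magnitude loading of each component positive", assuming components are available as plain lists; the function name `align_component_signs` and the toy loadings are hypothetical.

```python
def align_component_signs(components):
    """Flip each component so its largest-magnitude entry is positive.

    components: list of components, each a list of loadings.
    Returns the aligned components and the per-component sign (+1.0 or -1.0).
    The same sign must also be applied to the matching column of cell scores.
    """
    aligned, signs = [], []
    for comp in components:
        pivot = max(comp, key=abs)
        sign = -1.0 if pivot < 0 else 1.0
        aligned.append([sign * v for v in comp])
        signs.append(sign)
    return aligned, signs

cpu_pcs = [[0.8, -0.6], [0.6, 0.8]]
gpu_pcs = [[-0.8, 0.6], [0.6, 0.8]]   # same subspace, first component flipped
cpu_aligned, _ = align_component_signs(cpu_pcs)
gpu_aligned, gpu_signs = align_component_signs(gpu_pcs)
```

Applying the same rule to the output of both pipelines removes the arbitrary sign difference, so downstream steps that depend on the sign see identical inputs.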

Issue 2: Poor Performance on Downstream Tasks Like Cell Type Annotation or Batch Integration

Problem: After obtaining embeddings from an scFM, your downstream task (e.g., classifying cell types) is underperforming.

Solution: This can be due to a mismatch between the model and the task or suboptimal use of the embeddings.

- Evaluate in Zero-Shot Mode First: Before fine-tuning, check the quality of the pretrained model's zero-shot cell embeddings. Use them for simple tasks like clustering and visualization to see if biological structures (e.g., separation of cell types) are preserved without any additional training [2] [3].

- Fine-Tune Strategically: If zero-shot performance is inadequate, fine-tune the model on your specific data.

- Start with a Baseline: Compare the scFM against simpler baseline methods (e.g., Seurat, Harmony, scVI) on your specific task to establish a performance benchmark [2] [3].

- Hyperparameter Tuning is Critical: Systematically tune hyperparameters during fine-tuning. The following table outlines a recommended protocol for this process, adapted from general machine learning best practices [6] [7]:

| Step | Methodology | Key Actions |

|---|---|---|

| 1. Baseline | Train a model with default hyperparameters. | Provides a benchmark for measuring improvement [6]. |

| 2. Initial Exploration | Use RandomizedSearchCV with cross-validation. | Efficiently explores a wide hyperparameter space; better for high-dimensional spaces [6] [7]. |

| 3. Focused Search | Use GridSearchCV with cross-validation. | Exhaustively searches a narrower parameter space identified in step 2 [6] [7]. |

| 4. Monitor Overfitting | Plot training and validation curves. | Visualizes if performance plateaus or if the gap between training and validation scores grows, indicating overfitting [6]. |
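
The coarse-to-fine logic of steps 2 and 3 in the table can be sketched without any external library. The code below is a plain-Python stand-in for the randomized and grid searches named in the table, not a use of those scikit-learn classes. `validation_loss` is a hypothetical toy function that replaces "fine-tune with cross-validation and return the mean validation loss", and the parameter ranges are assumptions.

```python
import math
import random

def validation_loss(lr, weight_decay):
    """Hypothetical stand-in for a cross-validated fine-tuning run."""
    return (math.log10(lr) + 4.0) ** 2 + (math.log10(weight_decay) + 3.0) ** 2

def random_stage(n_trials, rng):
    """Initial exploration: sample widely on a log scale."""
    best = None
    for _ in range(n_trials):
        params = {
            "lr": 10 ** rng.uniform(-6, -2),
            "weight_decay": 10 ** rng.uniform(-5, -1),
        }
        loss = validation_loss(**params)
        if best is None or loss < best[0]:
            best = (loss, params)
    return best

def grid_stage(center, factors=(0.5, 1.0, 2.0)):
    """Focused search: exhaustive grid around the best random sample."""
    best = None
    for f_lr in factors:
        for f_wd in factors:
            params = {"lr": center["lr"] * f_lr,
                      "weight_decay": center["weight_decay"] * f_wd}
            loss = validation_loss(**params)
            if best is None or loss < best[0]:
                best = (loss, params)
    return best

rng = random.Random(0)
coarse_loss, coarse_params = random_stage(30, rng)
fine_loss, fine_params = grid_stage(coarse_params)
```

Because the grid includes the coarse optimum itself (factor 1.0), the focused stage can only match or improve on the exploration stage.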

Issue 3: Handling the Non-Sequential Nature of Gene Data in Transformers

Problem: Gene expression data is not naturally ordered like words in a sentence, but transformers require a sequence of tokens as input.

Solution: This is a fundamental challenge addressed by different tokenization strategies. The workflow below illustrates the common approaches to structuring single-cell data for an scFM.

The choice of tokenization strategy (ranking, binning, or using a fixed set) is a key architectural decision that varies between different scFMs and can impact model performance [2] [1].
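
Two of the strategies named above, ranking and binning, can be sketched on a single toy cell. This is a simplified illustration under stated assumptions: the binning shown is equal-width relative to the cell's maximum, whereas real models may use other binning schemes, and the function names and expression values are hypothetical.

```python
import math

def rank_tokenize(expression, max_tokens):
    """Rank-based tokens: gene names ordered by decreasing expression, zeros dropped."""
    expressed = [(value, gene) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda t: (-t[0], t[1]))
    return [gene for _, gene in expressed[:max_tokens]]

def bin_tokenize(expression, n_bins):
    """Value binning: nonzero values map to bins 1..n_bins, zeros stay in bin 0."""
    nonzero = [v for v in expression.values() if v > 0]
    if not nonzero:
        return {gene: 0 for gene in expression}
    top = max(nonzero)
    binned = {}
    for gene, value in expression.items():
        if value <= 0:
            binned[gene] = 0
        else:
            binned[gene] = min(n_bins, max(1, math.ceil(n_bins * value / top)))
    return binned

toy_cell = {"CD3E": 12.0, "CD4": 3.0, "MS4A1": 0.0, "LYZ": 6.0}
rank_tokens = rank_tokenize(toy_cell, max_tokens=3)
bin_tokens = bin_tokenize(toy_cell, n_bins=4)
```

Ranking discards absolute magnitudes and keeps only the order, while binning keeps a coarse magnitude for every gene; which trade-off is appropriate depends on the pretrained model being used.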

The Scientist's Toolkit: Essential Research Reagents

The table below details key computational "reagents" and tools essential for working with single-cell Foundation Models.

| Item / Tool | Function & Explanation |

|---|---|

| Annotated Data (AnnData) | The standard data structure in the scverse ecosystem for handling single-cell data in Python. It stores the count matrix, cell and gene annotations, and reduced dimensions in an integrated object [4] [5]. |

| cunnData | A GPU-accelerated, lightweight version of AnnData from the rapids-singlecell library. It stores count matrices as sparse matrices directly on the GPU, dramatically speeding up preprocessing steps [5]. |

| Highly Variable Genes (HVGs) | A feature selection method that identifies genes with high cell-to-cell variation. Using HVGs (typically 1,000-5,000) reduces the feature space from ~20-50k genes, lessening computational load and noise [4]. |

| Transformer Architecture | The core neural network architecture of most scFMs. Its self-attention mechanism allows the model to weigh the importance of all genes in a cell when learning representations, capturing complex gene-gene interactions [1]. |

| Cell Ontologies | Controlled vocabularies that formally define and relate cell types. They are used to create biologically informed metrics (e.g., scGraph-OntoRWR) for evaluating whether an scFM's embeddings capture known biological relationships [2] [3]. |

Frequently Asked Questions & Troubleshooting Guides

This technical support center addresses common challenges researchers face when tuning hyperparameters for single-cell foundation models (scFMs). These large-scale models, pretrained on vast single-cell datasets, require careful configuration of embedding, attention, and training parameters to excel at specific downstream tasks like cell type annotation, perturbation prediction, and drug sensitivity analysis [2] [1].

Problem: Model Fails to Capture Biological Relevance in Embeddings

Q: My scFM's cell embeddings do not separate well by known cell type and show poor performance on zero-shot cell type annotation tasks. What hyperparameters should I investigate?

A: This often indicates suboptimal configuration of embedding and model architecture parameters. The embedding layer is responsible for converting tokenized genes into vector representations that the transformer can process [1].

Troubleshooting Steps:

- Verify Tokenization Strategy: Ensure your tokenization method (e.g., gene ranking by expression, value binning) aligns with the pretrained model's expectations. Mismatches here can render embeddings meaningless [2] [1].

- Increase Embedding Dimension: If computational resources allow, increase the `gene_embedding_dim` and `value_embedding_dim`. This gives the model more capacity to represent complex gene-gene interactions [2].

- Inspect Positional Embeddings: scRNA-seq data is non-sequential. Experiment with turning positional embeddings on/off (`use_positional_embedding`) or try different encoding strategies (e.g., based on genomic position) as used by models like UCE [2].

- Evaluate with Biological Metrics: Use metrics like `scGraph-OntoRWR` or `Lowest Common Ancestor Distance (LCAD)` to quantitatively assess if the learned embeddings reflect known biological relationships [2].

Problem: Attention Mechanism Lacks Interpretability or Focus

Q: The attention weights from my scFM do not highlight biologically plausible gene regulators. How can I improve the attention mechanism's focus?

A: This suggests the model's attention mechanism isn't learning meaningful gene relationships. Key hyperparameters control how attention is computed and allocated [1].

Troubleshooting Steps:

- Adjust Number of Attention Heads: The `n_heads` parameter controls how many different representation subspaces the model can attend to. For complex tasks involving diverse gene regulatory networks, increasing the number of heads can help capture different types of gene interactions [1].

- Tune Attention Dropout: Apply `attn_dropout_rate` to prevent co-adaptation of attention heads. If attention maps are noisy or uniform, increasing dropout can force heads to specialize more [1].

- Inspect Attention Masking: Verify that the attention masking strategy (bidirectional for encoder models, unidirectional for decoder models) is appropriate for your task. For example, scGPT uses a masked gene modeling objective with a unidirectional attention mask [2] [1].

Problem: Unstable Training or Slow Convergence During Fine-Tuning

Q: When I fine-tune an scFM on my specific dataset, the training loss is unstable, fluctuates wildly, or converges very slowly.

A: This is typically related to the optimization hyperparameters, particularly the learning rate and batch size, which are critical for stable adaptation [8].

Troubleshooting Steps:

- Implement a Learning Rate Schedule: Use a linear warmup followed by cosine decay (`lr_scheduler_type`). This helps stabilize training in the initial phases. A common starting point is a peak learning rate of 1e-5 to 1e-4 for fine-tuning [8].

- Tune the Adam Optimizer: The Adam optimizer's hyperparameters (`learning_rate`, `beta1`, `beta2`) are crucial. A low learning rate (e.g., 1e-5) is often necessary for fine-tuning to avoid catastrophic forgetting [8].

- Increase Batch Size: If memory constraints allow, gradually increase the `batch_size`. Larger batches provide more stable gradient estimates. If you hit memory limits, use gradient accumulation to simulate a larger batch [8].

- Apply Gradient Clipping: Set `max_grad_norm` to 1.0 or 0.5 to prevent exploding gradients, which are common in transformer-based models [8].
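
The warmup-plus-cosine schedule and the gradient-clipping step from the list above can be written out explicitly. The sketch below is framework-independent and only illustrates the arithmetic; deep learning frameworks provide their own scheduler and clipping utilities. The function names, step counts, and gradient values are hypothetical.

```python
import math

def lr_at_step(step, total_steps, warmup_steps, peak_lr, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay toward min_lr."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradients so their L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

schedule = [lr_at_step(s, total_steps=100, warmup_steps=10, peak_lr=1e-4)
            for s in range(100)]
clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

The learning rate rises linearly over the first 10 steps, peaks at 1e-4, and then decays smoothly; the clipped gradient keeps its direction but has its norm reduced from 5.0 to 1.0.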

Problem: Poor Transfer Learning Performance on Small Target Datasets

Q: I have a small, high-value dataset for a specific clinical task (e.g., drug sensitivity prediction). The fine-tuned scFM overfits and fails to generalize.

A: With limited data, aggressive regularization and choosing the right fine-tuning strategy are paramount to success [2].

Troubleshooting Steps:

- Freeze Lower Layers: Set `trainable_layers` to only the last 1-2 transformer blocks and the classification head. This retains the model's general knowledge while adapting to the new task.

- Increase Regularization: Apply strong regularization via `weight_decay` (L2 regularization) and `dropout_rate`. This is more effective than early stopping for very small datasets [9].

- Use a Simplified Model: Benchmark against a simpler baseline (e.g., a model on highly variable genes). As noted in benchmark studies, simpler models can sometimes outperform large scFMs on specific, small-scale tasks [2].

- Employ Bayesian Hyperparameter Optimization: For small datasets, use efficient methods like Bayesian optimization (e.g., `Hyperopt` or `BayesSearchCV`) to find the optimal hyperparameters with fewer trials, as grid search is often computationally prohibitive [10] [11].
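
The layer-freezing step from the list above amounts to choosing which parameters remain trainable. The sketch below shows that selection by parameter name only; the naming pattern (`encoder.layers.<index>.` and a `classifier.` prefix) is a hypothetical convention, and real checkpoints will use their own names. In PyTorch, the equivalent step would be to set `requires_grad` to `False` on every parameter not returned here.

```python
import re

def trainable_parameter_names(param_names, n_layers, n_trainable_layers,
                              head_prefix="classifier."):
    """Select parameters in the last n_trainable_layers blocks plus the task head."""
    first_trainable = n_layers - n_trainable_layers
    selected = []
    for name in param_names:
        match = re.search(r"layers\.(\d+)\.", name)
        if name.startswith(head_prefix):
            selected.append(name)
        elif match and int(match.group(1)) >= first_trainable:
            selected.append(name)
    return selected

# Hypothetical parameter names for a 6-block encoder with a classification head.
toy_names = (
    ["embedding.weight"]
    + [f"encoder.layers.{i}.attn.weight" for i in range(6)]
    + ["classifier.weight"]
)
trainable = trainable_parameter_names(toy_names, n_layers=6, n_trainable_layers=2)
```

Everything outside the returned list, including the embedding layer and the first four blocks, stays frozen and keeps its pretrained values.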

Experimental Protocols & Benchmark Data

Benchmarking scFM Performance Across Tasks

Recent comprehensive benchmarks evaluate six major scFMs against traditional methods on gene-level and cell-level tasks. The table below summarizes key findings, showing that no single model dominates all tasks, highlighting the need for task-specific hyperparameter optimization [2].

Table 1: Benchmark Performance of Single-Cell Foundation Models

| Model Name | Pretraining Data Scale | Key Architecture Features | Strength Areas | Weakness Areas |

|---|---|---|---|---|

| Geneformer [2] | 30M cells | 40M params; Ranked gene input; Encoder | Gene-level tasks | Varies by task |

| scGPT [2] [12] | 33M cells | 50M params; Multi-modal; Encoder with attention mask | Robust overall performance, zero-shot & fine-tuning | Computationally intensive |

| scFoundation [2] | 50M cells | 100M params; Asymmetric encoder-decoder | Gene-level tasks | Varies by task |

| UCE [2] | 36M cells | 650M params; Protein embedding input | Specific embedding tasks | Varies by task |

| scBERT [2] [12] | Not Specified | Smaller model size | Cell type annotation | Lags in many tasks due to size/data |

Protocol: Bayesian Hyperparameter Optimization for scFM Fine-Tuning

This protocol uses Hyperopt to efficiently find optimal hyperparameters, minimizing the number of expensive model training runs [10] [13].

Objective: Find the optimal hyperparameter combination $\theta^*$ that minimizes the loss function $\mathcal{L}$ on the validation set:

$$\theta^* = \arg\min_{\theta} \mathcal{L}(M_\theta, D_{val})$$

where $M_\theta$ is the model trained with hyperparameters $\theta$, and $D_{val}$ is the validation dataset.

Steps:

- Define the Search Space: Specify the hyperparameters and their value ranges. Below is an example space for fine-tuning an scFM classifier.

- Define the Objective Function: Create a function that takes a hyperparameter set, trains the model, and returns the validation loss.

- Run the Optimization: Execute `fmin()` from Hyperopt for a set number of evaluations (`max_evals`).

Table 2: Example Hyperparameter Search Space for scFM Fine-Tuning

| Hyperparameter | Type | Search Space | Notes |
|---|---|---|---|
| `learning_rate` | Continuous | `hp.loguniform('lr', low=np.log(1e-6), high=np.log(1e-3))` | Crucial for stability; use log scale. |
| `batch_size` | Categorical | `hp.choice('batch_size', [16, 32, 64])` | Maximize based on GPU memory. |
| `weight_decay` | Continuous | `hp.uniform('weight_decay', 1e-5, 1e-2)` | Regularization to prevent overfitting. |
| `attn_dropout_rate` | Continuous | `hp.uniform('attn_dropout', 0.0, 0.3)` | Reduces over-reliance on specific attention links. |
| `n_trainable_layers` | Integer | `hp.randint('n_trainable', 1, 6)` | Layer-wise fine-tuning; freeze lower layers. |

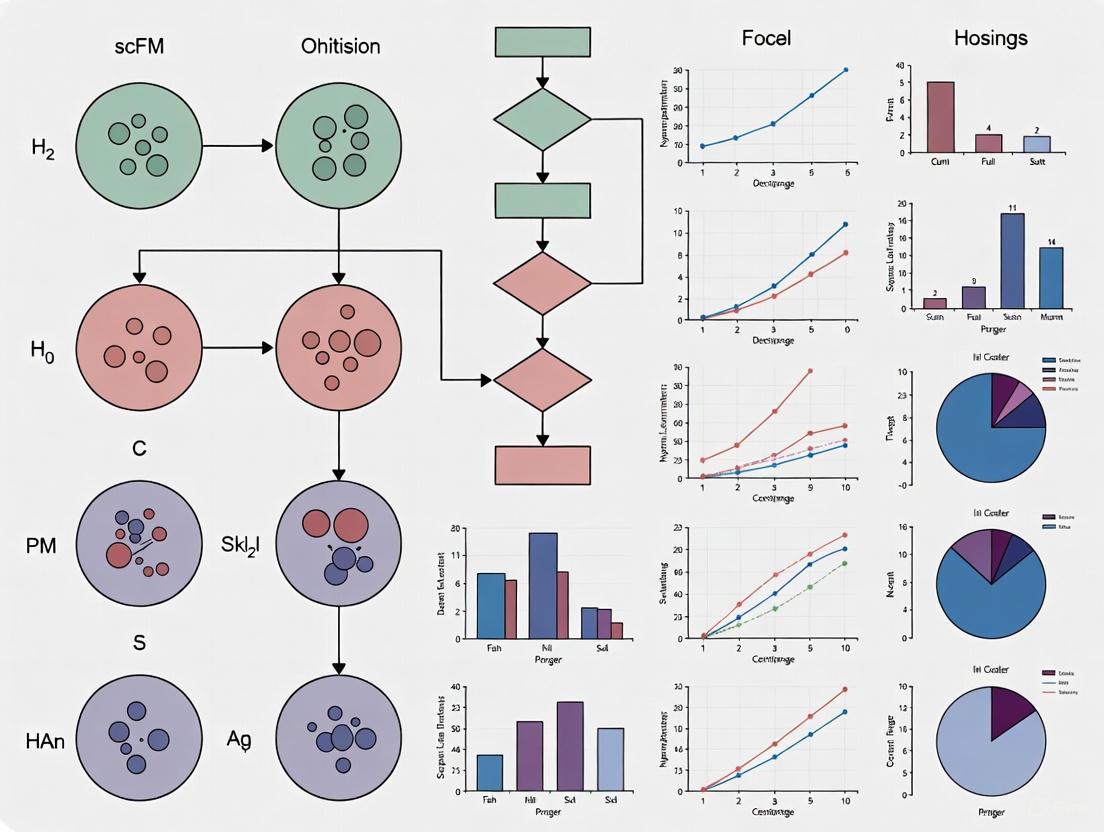

Workflow Visualization

scFM Hyperparameter Optimization Workflow

The diagram below outlines the iterative process of optimizing an scFM for a specific downstream task, integrating both manual tuning strategies and automated Bayesian optimization [2] [10] [13].

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item | Function / Purpose | Example / Note |

|---|---|---|

| Benchmarked scFMs [2] [12] | Pretrained models providing a starting point for fine-tuning. | scGPT, Geneformer, scFoundation. Access via official repositories or BioLLM. |

| BioLLM Framework [12] | Unified framework for integrating and evaluating different scFMs. | Standardizes APIs, enables model switching, and supports benchmarking. |

| Hyperparameter Optimization Libraries [10] [13] [11] | Automates the search for optimal hyperparameters. | Hyperopt, Scikit-Optimize (BayesSearchCV), Optuna. |

| Biological Evaluation Metrics [2] | Quantifies if model outputs are biologically meaningful. | scGraph-OntoRWR, Lowest Common Ancestor Distance (LCAD). |

| Single-Cell Data Platforms [2] [1] | Sources of high-quality, annotated data for pretraining and fine-tuning. | CZ CELLxGENE, Human Cell Atlas, PanglaoDB. |

Frequently Asked Questions

Q1: What is the pre-training and task mismatch problem in single-cell foundation models (scFMs)?

The pre-training and task mismatch problem occurs when a model's generic self-supervised pre-training objectives fail to emphasize the specific, task-critical features needed for a particular downstream application [14]. In single-cell biology, this means a foundation model trained on a massive, general corpus of scRNA-seq data may not adequately capture the nuanced gene expression patterns or cellular states relevant to a specialized task like identifying a rare cancer cell type or predicting drug sensitivity [2]. This can lead to suboptimal performance compared to simpler, task-specific models.

Q2: How can I quickly diagnose if my scFM is suffering from a task mismatch?

A key diagnostic step is to benchmark the scFM's zero-shot embeddings against traditional baseline methods on your specific dataset [2]. Evaluate the foundational embeddings on your target task using a simple classifier (e.g., a linear model). If performance is inferior to methods like Seurat or scVI, or if the model struggles with biologically meaningful distinctions (e.g., confusing closely related cell types), a significant task mismatch is likely present [2].

Q3: What is the difference between fine-tuning and test-time training for correcting mismatches?

Fine-tuning is a stage where the pre-trained model is further trained (often with a small amount of labeled data) on the target task to align its representations with task-specific features [14]. Test-time training (TTT), conversely, is an inference-time strategy that makes lightweight, on-the-fly adjustments to the model for each new, unlabeled test sample, helping to calibrate it to new data distributions and reduce prediction entropy without full retraining [14].

Q4: Are foundation models always the best choice for single-cell analysis?

Not necessarily. Benchmarking studies reveal that while scFMs are robust and versatile, simpler machine learning models can be more efficient and perform better on specific datasets, particularly when computational resources are limited or the task is well-defined [2]. The decision should be guided by factors like dataset size, task complexity, and the need for biological interpretability [2].

Troubleshooting Guide

| Problem Symptom | Possible Cause | Recommended Solution |

|---|---|---|

| Poor performance on a specific cell type annotation task, especially for rare cell types. | Generic pre-training did not capture features discriminative for your cell types of interest. | Perform domain-specific self-supervised fine-tuning using pretext tasks that leverage spectral or spatial features of your data without needing more labels [14]. |

| Model performance degrades when applying the model to data from a new subject, lab, or sequencing protocol. | Domain shift or batch effects between your data and the model's pre-training corpus. | Apply Test-Time Training (TTT) with entropy minimization on incoming unlabeled test samples to adapt the model on-the-fly [14]. |

| The model's predictions are uncertain and lack confidence on your dataset. | The model's feature space is not well-calibrated to the new data distribution. | Implement test-time entropy minimization (e.g., the Tent method) to sharpen predictions and make the model more confident [14]. |

| A simpler, traditional model (e.g., on HVGs) outperforms your scFM on a specific task. | The scFM's capacity is misallocated; its generic knowledge does not align with your task's limited data scope. | Use the simpler model for this specific task, or use the scFM's embeddings as input features but employ a focused hyperparameter optimization strategy for your final classifier [7] [2]. |

Benchmarking ScFMs Against Traditional Methods

The following table summarizes findings from a comprehensive benchmark study, comparing scFMs against established baseline methods across various tasks. This data can help you set realistic performance expectations and guide model selection [2].

| Task Category | Example Task | Best Performing Approach (Varies by task/dataset) | Key Performance Insight |
|---|---|---|---|
| Cell-level Tasks | Batch Integration | scFMs and Traditional Methods (e.g., Harmony, scVI) | Performance is highly dataset-dependent; no single method dominates [2]. |
| | Cell Type Annotation | scFMs and Traditional Methods | scFMs show robustness, but simpler models can be more efficient for specific datasets [2]. |
| | Cancer Cell Identification | scFMs | scFMs demonstrate advantages in capturing features for clinically relevant tasks [2]. |
| Gene-level Tasks | Drug Sensitivity Prediction | scFMs and Traditional Methods | Model superiority is not consistent; task and data specifics are critical [2]. |

Experimental Protocol: A Two-Stage Alignment Strategy

Here is a detailed methodology, inspired by NeuroTTT [14], to align a generic scFM with your specific downstream task. This protocol tackles both feature space misalignment and test-time distribution shifts.

Stage 1: Domain-Specific Self-Supervised Fine-Tuning

- Model Setup: Start with a pre-trained scFM backbone (e.g., scGPT, Geneformer). To this backbone, attach two types of heads: a main task head (e.g., a classifier for your labeled data) and one or more self-supervised task heads.

- Self-Supervised Pretext Tasks: Design lightweight, self-supervised tasks that leverage unlabeled data to steer the model toward biologically relevant features for your goal. Examples include:

- Masked Gene Prediction: Randomly mask a portion of the input gene expression vector and task the model with reconstructing it. This forces the model to learn gene-gene relationships in your data context.

- Cell State Prediction: Create a task where the model predicts a summary statistic or a perturbed state of the cell, encouraging it to capture broader cellular functions.

- Joint Optimization: Jointly train the entire model (backbone and heads) by minimizing a combined loss function:

Total Loss = Supervised Loss (from main task) + Self-Supervised Loss (from pretext tasks). This aligns the backbone's representations with your domain.
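
The combined objective in Stage 1 can be sketched numerically. The example below assumes a cross-entropy loss for the main task and a mean squared error over masked genes for the pretext task; both choices, the `ssl_weight` factor, and all values are illustrative assumptions. With `ssl_weight=1.0` the result matches the unweighted sum given above.

```python
import math

def cross_entropy(probs, true_index):
    """Supervised loss for one cell given predicted class probabilities."""
    return -math.log(probs[true_index])

def masked_mse(predicted, target, mask):
    """Mean squared error over masked positions only."""
    errors = [(p - t) ** 2 for p, t, m in zip(predicted, target, mask) if m]
    return sum(errors) / len(errors) if errors else 0.0

def total_loss(probs, true_index, predicted, target, mask, ssl_weight=1.0):
    """Supervised loss plus (optionally weighted) self-supervised loss."""
    supervised = cross_entropy(probs, true_index)
    self_supervised = masked_mse(predicted, target, mask)
    return supervised + ssl_weight * self_supervised

loss = total_loss(
    probs=[0.7, 0.2, 0.1], true_index=0,          # main task head output
    predicted=[1.0, 9.0, 0.0],                    # pretext head reconstruction
    target=[1.5, 2.0, 0.5],                       # true expression values
    mask=[True, False, True],                     # only masked genes are scored
)
```

A weight below 1.0 on the pretext term is a common way to keep the auxiliary task from dominating the main task, although the appropriate value would need to be tuned.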

Stage 2: Test-Time Training for Inference

- Self-Supervised TTT: For each new, unlabeled test sample, perform a few steps of a self-supervised task (e.g., masked prediction). This calibrates the model to the specific characteristics of that sample.

- Entropy Minimization (Tent): Alternatively, or in addition, update only the model's normalization layer statistics by minimizing the prediction entropy on the test sample. This encourages more confident and accurate outputs on the fly.
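
The entropy-minimization idea can be illustrated on a deliberately tiny model. The sketch below assumes a single scalar normalization gain (`gamma`) in front of a frozen linear head and uses a finite-difference gradient instead of backpropagation; actual Tent-style adaptation updates the affine parameters of normalization layers on batches of test data. All names and numbers are hypothetical.

```python
import math

def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy of a probability vector (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def predict(features, gamma, weights):
    """Toy model: scalar gain gamma followed by a frozen linear head."""
    scaled = [gamma * x for x in features]
    logits = [sum(w * s for w, s in zip(row, scaled)) for row in weights]
    return softmax(logits)

def tent_step(features, gamma, weights, lr=0.1, eps=1e-5):
    """One gradient step on gamma only, minimizing prediction entropy."""
    up = entropy(predict(features, gamma + eps, weights))
    down = entropy(predict(features, gamma - eps, weights))
    grad = (up - down) / (2.0 * eps)
    return gamma - lr * grad

frozen_head = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]   # hypothetical 3-class head
test_cell = [0.9, 0.2]                                # hypothetical normalized features
gamma = 1.0
entropy_before = entropy(predict(test_cell, gamma, frozen_head))
for _ in range(10):
    gamma = tent_step(test_cell, gamma, frozen_head)
entropy_after = entropy(predict(test_cell, gamma, frozen_head))
```

Only `gamma` changes while the head stays frozen, mirroring the restriction to normalization parameters. Lower entropy means more confident predictions, not necessarily more accurate ones, so adapted outputs still need validation.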

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| High-Quality Pre-training Corpus | A large, diverse, and well-curated collection of single-cell datasets (e.g., from CZ CELLxGENE) is the fundamental "reagent" for building a robust scFM, providing the broad biological knowledge base [15]. |

| Self-Supervised Pretext Tasks | These are software "reagents" used during fine-tuning to guide the model without additional labeled data, aligning the model's feature space with task-specific patterns [14]. |

| Benchmarking Datasets | High-quality datasets with reliable labels (e.g., AIDA v2) are essential for rigorously evaluating model performance and diagnosing mismatch issues [2]. |

| Hyperparameter Optimization Framework | Tools like GridSearchCV or RandomizedSearchCV in scikit-learn are crucial for systematically finding the best model settings for your specific task and data [7]. |

Model Selection Workflow

The following diagram illustrates a logical pathway for selecting and applying a model to a single-cell analysis task, helping to mitigate the pretraining-task mismatch.

Diagnostic & Optimization Pathway

For researchers who have identified a potential performance issue, this pathway details the core strategy for diagnosing and resolving a pretraining-task mismatch.

The performance of single-cell foundation models (scFMs) is profoundly influenced by the intrinsic properties of the data on which they are trained and applied. Understanding the interplay between data characteristics—specifically sparsity, dimensionality, and batch effects—and model hyperparameters is crucial for optimizing scFMs for tasks such as cell type annotation, batch integration, and perturbation prediction. ScFMs are large-scale deep learning models, often based on transformer architectures, pretrained on vast single-cell omics datasets to learn universal biological knowledge that can be adapted to various downstream tasks [1]. Despite their promise, these models face significant challenges including the non-sequential nature of omics data, high data sparsity, and technical variations [2] [1]. This guide provides a structured approach to diagnosing and solving hyperparameter selection issues driven by these key data characteristics, enabling researchers to harness the full potential of scFMs in their research and drug development workflows.

Foundational Concepts: How Data Properties Guide Hyperparameter Strategy

The table below summarizes the core data characteristics, their impact on model behavior, and the primary hyperparameters they influence.

Table 1: Fundamental Data Characteristics and Their Hyperparameter Implications

| Data Characteristic | Description & Impact | Key Influenced Hyperparameters |

|---|---|---|

| Sparsity | High proportion of zero counts in scRNA-seq data due to low RNA input and dropout events [16]. Reduces signal-to-noise ratio and challenges model learning. | Masking ratio in pretraining [2]; learning rate and training epochs; loss function weighting (e.g., for zero inflation) |

| Dimensionality | High feature count (genes); scRNA-seq data is high-dimensional with low signal-to-noise [2]. Risks overfitting and increases computational demand. | Number of input genes (token selection) [2]; latent embedding dimension [17]; model architecture width (embedding dimensions) |

| Batch Effects | Technical variations from different labs, protocols, or reagent batches [16] [18]. Can obscure biological signals and lead to misleading integration. | Batch correction layers or token inclusion [1]; attention mechanism parameters; data normalization strategy |

Troubleshooting Guides & FAQs

Sparsity-Related Issues

Q: My scFM fails to learn meaningful representations, and performance on sparse cell populations is poor. What hyperparameters should I adjust?

- A: High sparsity exacerbates the low signal-to-noise ratio of single-cell data. To address this:

- Adjust the Masking Ratio: The masking ratio in self-supervised pretraining tasks (like masked gene modeling) is critical. For very sparse data, a lower masking ratio (e.g., 15-20%) can help the model learn more stable representations without losing too much context. Models like scGPT and Geneformer use different masking strategies, and this is a key hyperparameter to tune for your specific dataset [2].

- Review Tokenization Strategy: How genes and their values are converted into model tokens (tokenization) directly handles sparsity. Strategies include ranking genes by expression within a cell [1] or binning expression values [2]. If using a top-ranked genes approach, increasing the number of input genes (tokens) may help capture more biological signal, but balance this against noise.

- Optimize Learning Rate and Schedule: Sparse data can lead to unstable training. Use a lower learning rate with a warm-up phase to ensure more stable convergence and prevent the model from overfitting to the most abundant signals.
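The warm-up advice above can be made concrete with a small schedule function. The sketch below is only an illustration, not the schedule used by any particular scFM: the function name warmup_cosine_lr, the cosine decay shape, and the default values (peak rate 1e-4, 500 warm-up steps) are assumptions chosen for demonstration.

```python
import math

def warmup_cosine_lr(step, total_steps, peak_lr=1e-4, warmup_steps=500, min_lr=0.0):
    """Linear warm-up to peak_lr, then cosine decay towards min_lr (hypothetical helper)."""
    if step < warmup_steps:
        # Ramp linearly from a small value up to the peak learning rate.
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the post-warm-up phase that has been completed, capped at 1.
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

# Learning rate at every step of a 5000-step fine-tuning run.
schedule = [warmup_cosine_lr(s, total_steps=5000) for s in range(5000)]
```

Lowering peak_lr or lengthening warmup_steps are the two knobs that correspond to the "lower learning rate with a warm-up phase" recommendation; both would normally be tuned against validation loss.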

Q: How can I validate that sparsity is the core issue?

- A: Perform an ablation study. Systematically increase the sparsity of your training data by artificially adding more zeros and observe the performance drop. Tools like scFoundation and scGPT provide metrics on reconstruction loss for zero-inflated features, which can help diagnose this issue [2] [18].
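The ablation described above needs a way to raise sparsity in a controlled manner. The following sketch shows one simple way to do this on a toy count matrix; the helper names sparsity and add_dropout, the independent per-entry dropout model, and the synthetic data are all assumptions made for illustration. In a real study, each degraded matrix would be passed through the model and the downstream metric recorded at every level.

```python
import random

def sparsity(matrix):
    """Fraction of zero entries in a cells x genes count matrix."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for v in row if v == 0)
    return zeros / total

def add_dropout(matrix, extra_rate, seed=0):
    """Copy the matrix, zeroing each nonzero entry with probability extra_rate."""
    rng = random.Random(seed)
    return [[0 if (v != 0 and rng.random() < extra_rate) else v for v in row]
            for row in matrix]

# Synthetic counts standing in for a real dataset (50 cells x 200 genes).
data_rng = random.Random(42)
counts = [[data_rng.choice([0, 0, 1, 2, 5]) for _ in range(200)] for _ in range(50)]

# Sparsity reached at each artificial dropout level.
levels = {rate: sparsity(add_dropout(counts, rate)) for rate in (0.0, 0.2, 0.4, 0.6)}
```

If performance degrades steeply as the added dropout rate grows, sparsity is a plausible bottleneck; a flat curve suggests looking at other causes first.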

Dimensionality-Related Issues

Q: Training is computationally expensive and the model seems to overfit, not generalizing to held-out cell types. How can hyperparameters help?

- A: High dimensionality requires careful regularization and efficient parameterization.

- Tune Latent Embedding Dimension: The dimension of the latent space (e.g., the model's output embedding) is a crucial hyperparameter. While benchmarking has shown that a default of 10 dimensions can be effective for some tasks, performance is highly dependent on this choice [17]. A higher dimension preserves more information but risks overfitting on smaller datasets; a lower dimension promotes compression but may lose biologically relevant variance. This must be tuned with your dataset size and complexity in mind.

- Select Informative Input Genes: Instead of using all ~20,000 genes, most scFMs use a subset. The method for selecting these genes is a key hyperparameter. Using Highly Variable Genes (HVGs) is common (e.g., scGPT uses 1200 HVGs [2]), but other models like Geneformer use the top 2048 ranked genes by expression [2]. Experiment with the number and selection method of input genes to find the optimal trade-off between biological coverage and noise reduction.

- Incorporate Regularization: Increase dropout rates within the transformer layers and use weight decay to prevent overfitting to the high-dimensional input space. Monitor performance on a validation set containing novel cell types to guide this tuning.
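To see how the two gene-selection styles mentioned above differ, the sketch below contrasts a per-cell expression ranking with a dataset-level variance ranking on a toy matrix. Both functions are simplified stand-ins written for this guide: real rank-based tokenizers and HVG procedures apply additional normalization that is omitted here, and the gene names and counts are made up.

```python
def top_ranked_genes(cell, gene_names, k):
    """Rank-style selection: the k most highly expressed nonzero genes in one cell."""
    expressed = [(v, g) for v, g in zip(cell, gene_names) if v > 0]
    expressed.sort(key=lambda pair: (-pair[0], pair[1]))  # ties broken by gene name
    return [g for _, g in expressed[:k]]

def top_variable_genes(matrix, gene_names, k):
    """Simplified HVG proxy: the k genes with the highest variance across cells."""
    n = len(matrix)
    scored = []
    for j, gene in enumerate(gene_names):
        column = [row[j] for row in matrix]
        mean = sum(column) / n
        variance = sum((v - mean) ** 2 for v in column) / n
        scored.append((variance, gene))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [g for _, g in scored[:k]]

genes = ["CD3E", "CD19", "ACTB", "MS4A1"]
cells = [[5, 0, 9, 0], [0, 7, 9, 6], [4, 0, 9, 0]]

ranked = top_ranked_genes(cells[0], genes, k=2)    # dominated by the uniformly high gene
variable = top_variable_genes(cells, genes, k=2)   # favors genes that differ between cells
```

In this toy case the ranking picks up the uniformly expressed gene while the variance criterion ignores it, which is the trade-off between biological coverage and noise that the number and selection method of input genes controls.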

Q: Are there benchmarks to guide dimensionality selection?

- A: Yes, benchmarks show that simpler baselines can sometimes outperform large scFMs on specific tasks, especially with limited data [2]. This highlights the importance of tailoring model complexity (via hyperparameters like latent dimension) to your dataset's scale. The PEREGGRN platform provides a framework for such task-specific evaluations [19].

Batch Effect-Related Issues

Q: After using an scFM, batch effects remain strong, and biological groups are not well separated. What is the solution?

- A: Batch effects are a major challenge in single-cell analysis. While some scFMs show robustness to batch effects, they often require explicit handling.

- Leverage Batch-Aware Pretraining: Some scFMs, like scGPT, allow for the incorporation of batch information as special tokens during pretraining [1]. If your model supports this, ensure it is utilized. For models that don't, consider using the model's embeddings as input to a dedicated post-hoc integration tool like Harmony [2] [18].

- Utilize Reference-Based Scaling: For non-foundation model workflows, a highly effective strategy is a ratio-based method. This involves scaling the feature values of study samples relative to those of a concurrently profiled reference material (e.g., a standard cell line) in each batch. This method has been shown to be particularly effective in confounded scenarios where biological groups and batches are inseparable [18].

- Hyperparameters for Integration Tasks: When using scFMs for batch integration, pay close attention to the hyperparameters of the integration loss component if the model was fine-tuned with one. The weight of the integration loss term versus the reconstruction loss is critical for balancing batch mixing and biological preservation.

Q: My biological groups are confounded with batch. Can I still correct for batch effects?

- A: This is a challenging scenario. Most standard batch correction algorithms fail because they cannot distinguish technical from biological variation [18]. The ratio-based scaling method using a reference sample is one of the few strategies demonstrated to work in such confounded designs, as it provides a stable technical baseline within each batch [18].
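The ratio-based idea can be illustrated in a few lines. The sketch below assumes that every batch contains one sample with the hypothetical identifier "REF" (the concurrently profiled reference material) and expresses each study sample as a log2 ratio to that reference. The log transform, the pseudocount parameter, and the data layout are assumptions for illustration rather than the published procedure.

```python
import math

def ratio_scale(batches, reference_id="REF", pseudocount=1.0):
    """Scale each study sample by the reference sample profiled in the same batch.

    batches maps batch name -> {sample id -> list of feature values}.
    Returns log2 ratios for all non-reference samples, in the same layout.
    """
    scaled = {}
    for batch, samples in batches.items():
        reference = samples[reference_id]
        scaled[batch] = {
            sample_id: [math.log2((v + pseudocount) / (r + pseudocount))
                        for v, r in zip(values, reference)]
            for sample_id, values in samples.items() if sample_id != reference_id
        }
    return scaled

# Toy data: batch2 measures everything 3x higher than batch1 (a pure batch shift).
batches = {
    "batch1": {"REF": [10, 100], "s1": [20, 100]},
    "batch2": {"REF": [30, 300], "s2": [60, 300]},
}
scaled = ratio_scale(batches, pseudocount=0.0)
```

Because the multiplicative shift affects the study sample and the reference equally, it cancels in the ratio, so s1 and s2 end up with identical profiles even though their raw values differ threefold.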

Experimental Protocols for Systematic Hyperparameter Optimization

Protocol: Benchmarking Hyperparameters for Data with High Batch Effects

Objective: To identify the optimal set of hyperparameters for an scFM when applied to a dataset with significant known batch effects.

Materials:

- A labeled scRNA-seq dataset with known batch information and cell type annotations.

- A pretrained scFM (e.g., scGPT, Geneformer).

- Access to a computing cluster with GPU acceleration.

Methodology:

- Data Partitioning: Split the data into training and validation sets, ensuring that all replicates of the same biological sample are in the same split to prevent data leakage.

- Define Hyperparameter Search Space:

- integration_method: ['modeltoken', 'posthoc_harmony']

- latent_dimension: [10, 20, 50, 100]

- learning_rate: [1e-5, 1e-4, 1e-3]

- batch_loss_weight: [0, 0.5, 1.0] (if applicable)

- Evaluation Metric Selection: Use a combination of:

- Batch Mixing Score: The silhouette score where the label is the batch ID. A lower score indicates better batch mixing.

- Biological Conservation Score: The Adjusted Rand Index (ARI) or Normalized Mutual Information (NMI) against known cell type labels. A higher score is better.

- Automated Hyperparameter Search: Run a Bayesian optimization search over 50-100 trials to find the hyperparameter set that maximizes the biological conservation score while maintaining a satisfactorily low batch mixing score.

- Validation: Apply the best-performing hyperparameter set to a held-out test set to obtain a final, unbiased performance estimate.
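The search space and the selection rule in this protocol can be written down compactly. The sketch below enumerates the grid defined above and picks the trial with the highest biological conservation score among those whose batch silhouette stays under a threshold. It does not implement Bayesian optimization; the threshold of 0.1, the function names, and the trial scores are hypothetical values used only to show the selection logic.

```python
import itertools

search_space = {
    "integration_method": ["modeltoken", "posthoc_harmony"],
    "latent_dimension": [10, 20, 50, 100],
    "learning_rate": [1e-5, 1e-4, 1e-3],
    "batch_loss_weight": [0, 0.5, 1.0],
}

def enumerate_configs(space):
    """Expand a dict of value lists into a list of concrete configurations."""
    keys = list(space)
    return [dict(zip(keys, values))
            for values in itertools.product(*(space[k] for k in keys))]

def select_best(results, max_batch_silhouette=0.1):
    """Highest ARI among trials whose batch silhouette is acceptably low."""
    eligible = [r for r in results if r["batch_silhouette"] <= max_batch_silhouette]
    if not eligible:
        return None
    return max(eligible, key=lambda r: r["ari"])

configs = enumerate_configs(search_space)  # 2 * 4 * 3 * 3 grid points

# Made-up scores standing in for real trial outcomes.
results = [
    {"config": configs[0], "ari": 0.81, "batch_silhouette": 0.25},
    {"config": configs[1], "ari": 0.74, "batch_silhouette": 0.05},
    {"config": configs[2], "ari": 0.69, "batch_silhouette": 0.02},
]
best = select_best(results)
```

Treating batch mixing as a constraint rather than folding it into a single weighted score keeps the trade-off explicit: the first trial has the best ARI but is rejected because batches remain separated.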

Protocol: Evaluating Tokenization Strategies for Sparse Data

Objective: To determine the most effective tokenization strategy for a sparse dataset to maximize cell type annotation accuracy.

Materials:

- A sparse scRNA-seq dataset (e.g., from a rare cell population study).

- An scFM that allows flexible input (e.g., scGPT, scFoundation).

Methodology:

- Baseline Establishment: Process the data using the model's default tokenization (e.g., top 2000 ranked genes).

- Alternative Strategy Testing:

- HVG-based: Tokenize using the top 1000, 2000, and 5000 Highly Variable Genes.

- Value-based: Implement a value-binning strategy as used in scBERT [1].

- Zero-Shot Evaluation: Extract cell embeddings from the model using each tokenization strategy without any fine-tuning.

- Downstream Task Assessment: Perform k-means clustering on the embeddings and calculate the Adjusted Mutual Information (AMI) against the true cell labels [17].

- Analysis: The tokenization strategy that yields the highest AMI on the validation set is optimal for that specific data characteristic.
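For the value-based alternative in this protocol, expression values must be mapped to a small set of discrete tokens. The sketch below shows one quantile-style binning scheme: zeros keep a dedicated bin 0 and nonzero values are binned by their rank within the cell. This is an assumed, simplified scheme for illustration; the binning used by a given model may differ in bin count, boundaries, and normalization.

```python
import math

def bin_expression(cell, n_bins=5):
    """Map each nonzero value to a bin in 1..n_bins by its within-cell rank.

    Zeros stay in bin 0 so that sparsity remains visible to the model.
    """
    nonzero = [v for v in cell if v > 0]
    if not nonzero:
        return [0] * len(cell)
    tokens = []
    for v in cell:
        if v == 0:
            tokens.append(0)
            continue
        # Fraction of the cell's nonzero values that are <= v, in (0, 1].
        fraction = sum(1 for x in nonzero if x <= v) / len(nonzero)
        tokens.append(min(n_bins, max(1, math.ceil(fraction * n_bins))))
    return tokens

cell = [0, 1, 0, 3, 10, 0, 2, 50]
tokens = bin_expression(cell, n_bins=4)
```

Binning relative to each cell's own distribution makes the tokens insensitive to sequencing depth, at the cost of discarding absolute magnitudes; n_bins is the hyperparameter to vary when comparing against the rank-based and HVG-based strategies.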

Workflow Diagrams

[Figure: Hyperparameter Tuning Driven by Data Diagnostics]

[Figure: scFM Input Representation and Tokenization]

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Resources for scFM Hyperparameter Optimization

| Resource Name | Type | Function & Application Context |

|---|---|---|

| Quartet Project Reference Materials [18] | Biological Reference | Matched DNA, RNA, protein, and metabolite reference materials from a monozygotic twin family. Used for ratio-based batch correction and method benchmarking in confounded designs. |

| CZ CELLxGENE [2] [1] | Data Repository | A curated platform providing unified access to over 100 million annotated single-cells. Essential for pretraining scFMs and creating diverse, biologically representative benchmark datasets. |

| PEREGGRN Benchmarking Platform [19] | Software Platform | A framework for evaluating expression forecasting methods on unseen genetic perturbations. Used to objectively assess how well tuned models generalize to novel biological conditions. |

| Harmony [2] [18] | Algorithm | A robust batch integration algorithm based on PCA and clustering. Often used as a post-processing step for scFM embeddings or as a strong baseline for benchmarking. |

| ComBat-seq [20] | Algorithm | An empirical Bayes method for batch effect correction designed for RNA-seq count data. A standard tool in bulk and single-cell RNA-seq analysis pipelines. |

This technical support center provides troubleshooting guides and FAQs for researchers working with single-cell foundation models (scFMs), framed within the broader context of optimizing scFM hyperparameters for specific tasks.

Core Concepts: How scFMs Encode Biological Knowledge

What are single-cell foundation models (scFMs) and how do they work?

Single-cell foundation models (scFMs) are large-scale deep learning models pretrained on vast single-cell genomics datasets to learn universal patterns of gene regulation and cellular function [1]. These models adapt transformer architectures from natural language processing to treat individual cells as "sentences" and genes or genomic features as "words" [1] [21]. By training on millions of cells across diverse tissues and conditions, scFMs learn the fundamental principles of cellular biology that can be transferred to various downstream tasks through fine-tuning [1].

How do scFMs capture relationships between genes and cells?

scFMs capture gene and cell relationships through several key mechanisms:

- Attention mechanisms within transformer architectures allow models to learn and weight relationships between any pair of genes in a cell, identifying which genes are most informative of a cell's identity or state [1]

- Self-supervised pretraining tasks like masked gene modeling enable models to learn gene-gene covariance and regulatory patterns from unlabeled data [2] [1]

- Latent representations that encode both gene-level and cell-level information, capturing hierarchical biological organization [2]

[Figure: the core tokenization workflow that enables scFMs to process single-cell data]

Troubleshooting Common scFM Implementation Challenges

Why does my scFM fail to capture biologically meaningful representations?

Problem: Model produces embeddings with poor biological relevance or fails to distinguish known cell types.

Solutions:

- Verify data quality and preprocessing: Ensure proper normalization, batch effect correction, and filtering of low-quality cells [1]

- Check tokenization strategy: Confirm gene ranking or binning approach matches the model's expected input format [1] [21]

- Assess training data diversity: Ensure pretraining or fine-tuning datasets encompass sufficient biological variation for your specific task [2]

- Evaluate with biological metrics: Use ontology-informed metrics like scGraph-OntoRWR or Lowest Common Ancestor Distance (LCAD) to quantitatively measure biological relevance [2]

How can I improve my scFM's performance on specific downstream tasks?

Problem: Model underperforms on tasks like cell type annotation, perturbation response prediction, or cancer cell identification.

Solutions:

- Apply task-specific fine-tuning: Leverage the "pre-train then fine-tune" paradigm with task-specific data [2] [21]

- Optimize hyperparameters systematically: Use grid search or random search methods to identify optimal learning rates, layer configurations, and training epochs [7] [22]

- Consider model selection carefully: Choose scFMs based on dataset size, task complexity, and available computational resources—no single scFM consistently outperforms others across all tasks [2]

- Incorporate biological prior knowledge: Use gene ontology information or pathway databases to inform model architecture or training [1]

Why is my scFM computationally intensive and how can I optimize efficiency?

Problem: Model requires excessive computational resources for training or inference.

Solutions:

- Implement successive halving: Use scikit-learn's HalvingGridSearchCV or HalvingRandomSearchCV to search hyperparameter spaces efficiently [7]

- Reduce model scale strategically: Consider smaller architectures or feature subsets for specific tasks—simpler models sometimes outperform complex scFMs, especially with limited data [2]

- Leverage transfer learning: Utilize pretrained embeddings in a zero-shot manner before committing to full fine-tuning [2]

- Optimize data pipelines: Ensure efficient data loading and preprocessing to avoid bottlenecks [2]
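The successive-halving idea behind the first solution above is easy to express without any library. The sketch below is a plain-Python illustration of the principle, not the scikit-learn implementation: all candidates are scored on a small budget, the better half is kept, and the budget is doubled until one candidate remains. The function names and the toy scoring function (which pretends that a learning rate near 1e-4 is best) are assumptions.

```python
import math

def successive_halving(configs, evaluate, min_budget=1, factor=2):
    """Keep the best 1/factor of the candidates each round while growing the budget."""
    survivors = list(configs)
    budget = min_budget
    history = []  # (budget, number of candidates evaluated) for each round
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        history.append((budget, len(survivors)))
        survivors = ranked[:max(1, len(ranked) // factor)]
        budget *= factor
    return survivors[0], history

def toy_score(learning_rate, budget):
    # Hypothetical validation score: peaks at 1e-4 and improves slightly with budget.
    return -abs(math.log10(learning_rate) + 4) + 0.01 * budget

learning_rates = [1e-6, 1e-5, 3e-5, 1e-4, 3e-4, 1e-3, 3e-3, 1e-2]
best_lr, history = successive_halving(learning_rates, toy_score)
```

In practice the budget would be a number of fine-tuning epochs or a fraction of the training cells, so weak configurations are discarded after only a cheap evaluation.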

Experimental Protocols for scFM Evaluation

Protocol: Evaluating Biological Relevance of scFM Embeddings

Purpose: Quantitatively assess how well scFM embeddings capture known biological relationships.

Methodology:

- Generate embeddings: Extract zero-shot cell embeddings from your scFM

- Compute similarity matrices: Calculate cell-cell similarity using embedding distances

- Compare with biological ground truth: Use cell ontology to define "true" biological relationships

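- Apply evaluation metrics: Use ontology-informed metrics such as scGraph-OntoRWR and Lowest Common Ancestor Distance (LCAD) [2]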

- Benchmark against baselines: Compare with traditional methods like HVG selection, Seurat, or scVI [2]

Protocol: Hyperparameter Optimization for Task-Specific Fine-Tuning

Purpose: Systematically identify optimal hyperparameters for your specific biological task.

Methodology:

- Define search space: Identify critical hyperparameters (learning rate, layer configurations, dropout rates)

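- Select optimization strategy: Choose grid search, random search, or successive halving according to the available compute budget [7] [22]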

- Implement cross-validation: Use nested cross-validation to prevent overfitting and ensure generalizability [7]

- Evaluate multiple metrics: Assess both computational performance (speed, memory) and biological relevance (accuracy, interpretability)

Table 1: Key Hyperparameters for scFM Optimization

| Hyperparameter | Importance Level | Typical Values | Optimization Strategy |

|---|---|---|---|

| Learning Rate | Critical | 1e-5 to 1e-3 | Log-uniform sampling [22] |

| Batch Size | High | 32-512 | Power of 2 values |

| Hidden Layer Size | Medium | 256-3072 | Coarse-to-fine search [22] |

| Attention Heads | Medium | 4-16 | Integer uniform |

| Dropout Rate | Medium | 0.1-0.5 | Uniform sampling |
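The sampling strategies in the table can be combined into a small random-search sampler. The sketch below follows the ranges listed above; the function name sample_config, the specific hidden sizes offered, and the restriction of attention heads to 4, 8, or 16 (so that each hidden size divides evenly, a constraint in many transformer implementations) are assumptions made for illustration.

```python
import random

def sample_config(rng):
    """Draw one hyperparameter configuration using the table's sampling strategies."""
    return {
        # Log-uniform: sample the exponent uniformly, then exponentiate.
        "learning_rate": 10 ** rng.uniform(-5, -3),
        # Powers of two from 32 (2**5) to 512 (2**9).
        "batch_size": 2 ** rng.randint(5, 9),
        "hidden_size": rng.choice([256, 512, 1024, 2048, 3072]),
        # Head counts restricted to values that divide every hidden size above.
        "attention_heads": rng.choice([4, 8, 16]),
        "dropout": rng.uniform(0.1, 0.5),
    }

rng = random.Random(0)  # fixed seed so the trial list is reproducible
trials = [sample_config(rng) for _ in range(20)]
```

Sampling the learning rate on a log scale gives each order of magnitude equal coverage, whereas uniform sampling over 1e-5 to 1e-3 would place almost all trials near the upper end.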

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for scFM Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| scGPT | Transformer-based scFM | Multi-omics integration, perturbation prediction [2] |

| Geneformer | Rank-based gene tokenization | Gene network analysis, disease mechanism identification [2] [21] |

| cell2sentence (C2S) | Natural language tokenization | Cell type annotation, literature-knowledge integration [21] |

| Transcoder Analysis | Mechanistic interpretability | Extracting internal decision circuits from scFMs [21] |

| scGraph-OntoRWR | Biological evaluation metric | Quantifying ontology consistency of embeddings [2] |

| HalvingGridSearchCV | Hyperparameter optimization | Efficient parameter search for resource-intensive models [7] |

Advanced Techniques: Interpretability and Circuit Analysis

How can I interpret what my scFM has learned about biological mechanisms?

Challenge: scFMs operate as "black boxes" with limited inherent interpretability.

Solution:

- Apply transcoder analysis: Train sparse autoencoders to extract interpretable features from MLP layers, resolving polysemanticity in neural representations [21]

- Extract internal circuits: Identify computational subgraphs that correspond to real biological pathways by tracing feature contributions across layers [21]

- Perform attention analysis: Examine attention patterns to identify genes with strong regulatory influences [1]

[Figure: the transcoder-based interpretability workflow]

Key Recommendations for scFM Hyperparameter Optimization

Based on benchmarking studies [2], consider these guiding principles:

- No single scFM dominates all tasks: Select models based on your specific task requirements and data characteristics

- Balance complexity with efficiency: Simpler models can outperform complex scFMs under resource constraints or with small datasets

- Prioritize learning rate optimization: This hyperparameter typically has the greatest impact on model performance [22]

- Validate with biological metrics: Technical performance metrics alone don't guarantee biologically meaningful representations

- Leverage zero-shot capabilities: Before fine-tuning, evaluate pretrained embeddings for your task—they may already capture sufficient biological knowledge

Table 3: Model Selection Guide Based on Task Characteristics

| Task Type | Recommended scFM Type | Key Considerations | Hyperparameter Priority |

|---|---|---|---|

| Cell Type Annotation | Encoder-based (e.g., scBERT) | Handling of novel cell types | Learning rate, classifier head |

| Perturbation Prediction | Decoder-based (e.g., scGPT) | Generalization to unseen combos | Attention layers, dropout |

| Multi-omics Integration | Multi-modal architectures | Cross-modality alignment | Modality weighting, fusion layers |

| Large-scale Atlas Analysis | High-capacity models | Computational efficiency, scaling | Batch size, gradient accumulation |

Task-Specific Hyperparameter Optimization Workflows

Mapping Biological Tasks to Optimal Model Configurations

Troubleshooting Guide: Single-Cell Foundation Models (scFMs)

Why is my scFM failing to identify novel cell types in my dataset?

This typically occurs when the model encounters cell populations not represented in its pretraining data. Single-cell foundation models learn universal biological knowledge during pretraining, but their performance depends on the diversity and quality of this data [2].

Diagnosis & Solutions:

- Check Pretraining Coverage: Verify whether the model's original pretraining dataset included cells from your tissue type or species. Models like Geneformer and scGPT have specific pretraining corpus compositions [2].

- Leverage Zero-Shot Embeddings: Evaluate the intrinsic structure of the model's zero-shot cell embeddings using the scGraph-OntoRWR metric. This measures the consistency of captured cell type relationships with prior biological knowledge from cell ontologies [2].

- Fine-Tuning Protocol: If novel cell types are present, move beyond zero-shot inference. Use a small, annotated subset of your data to fine-tune the model, allowing it to adapt to the new cellular context [2].

How do I resolve poor batch integration that removes real biological variation?

Over-correction during batch integration can strip away meaningful biological signals, such as subtle disease-related transcriptional changes.

Diagnosis & Solutions:

- Evaluate with Biological Metrics: Assess integration using metrics beyond technical mixing. The Lowest Common Ancestor Distance (LCAD) metric evaluates if misclassified cells are at least ontologically similar, ensuring that preserved variation is biologically plausible [2].

- Analyze Landscape Roughness: Use the Roughness Index (ROGI) to quantitatively estimate the "cell-property landscape roughness" in the latent space. A smoother landscape often indicates better generalization and a lower risk of removing continuous biological gradients [2].

- Compare to Baselines: Benchmark your scFM's performance against established methods like Seurat, Harmony, or scVI to determine if the issue is model-specific [2].
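The intuition behind an ontology-distance metric such as LCAD can be shown on a toy hierarchy. The sketch below computes the number of edges between a true and a predicted label via their lowest common ancestor. This is one plausible reading of the idea, not the benchmark's exact definition, and the small tree is a made-up stand-in for the Cell Ontology (which is a graph in which a term can have several parents).

```python
def ancestors_with_distance(node, parent):
    """Map node and each of its ancestors to their distance (in edges) from node."""
    distances = {}
    steps = 0
    while node is not None:
        distances[node] = steps
        node = parent.get(node)
        steps += 1
    return distances

def lca_distance(true_label, predicted_label, parent):
    """Edges on the path between two labels through their lowest common ancestor."""
    up_true = ancestors_with_distance(true_label, parent)
    up_pred = ancestors_with_distance(predicted_label, parent)
    shared = set(up_true) & set(up_pred)
    return min(up_true[n] + up_pred[n] for n in shared)

# Toy hierarchy (child -> parent); the root has parent None.
parent = {
    "cell": None,
    "lymphocyte": "cell", "myeloid cell": "cell",
    "T cell": "lymphocyte", "B cell": "lymphocyte",
    "CD4 T cell": "T cell", "CD8 T cell": "T cell",
    "monocyte": "myeloid cell",
}

mild_error = lca_distance("CD4 T cell", "CD8 T cell", parent)
severe_error = lca_distance("CD4 T cell", "monocyte", parent)
```

Confusing two T cell subtypes yields a small distance, while confusing a T cell with a monocyte yields a large one, which is the distinction plain accuracy cannot make.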

My scFM is underperforming on a specific task like drug sensitivity prediction. Which model should I choose?

No single scFM consistently outperforms others across all tasks. Model selection must be tailored to the specific task, dataset size, and available resources [2].

Decision Framework:

- For Large, Complex Datasets: Models like scFoundation (100M parameters) or UCE (650M parameters), which are trained on massive datasets (50M and 36M cells respectively), may be more suitable for complex tasks like clinical outcome prediction [2].

- For Efficiency and Smaller Datasets: If you have limited data or computational resources, simpler machine learning models or smaller scFMs like Geneformer (40M parameters) can be more efficient and sometimes more effective [2].

- Consult Holistic Rankings: Refer to benchmark studies that provide task-specific model rankings. These aggregate performance across multiple metrics (unsupervised, supervised, knowledge-based) to guide selection [2].

What does it mean if my model has high accuracy but low biological interpretability?

This suggests the model may be learning technical artifacts or superficial patterns in the data rather than underlying biological principles.

Diagnosis & Solutions:

- Conduct Attention Analysis: Examine the model's attention mechanisms. High-performing models should show that genes with high attention weights are part of coherent biological pathways relevant to the task [2].

- Employ Ontology-Based Metrics: Use the scGraph-OntoRWR metric. A high score indicates that the model's embeddings reflect known biological relationships between cell types, increasing confidence in its interpretability [2].

- Perform Ablation Studies: Systematically remove or shuffle key input features to see if the model's performance drops in a biologically predictable way [2].

Frequently Asked Questions (FAQs)

What is the most critical factor for successful scFM fine-tuning?

Task and Dataset Alignment. The key is matching the model's architecture and pretraining strengths to your specific biological question. A comprehensive benchmark study revealed that no single scFM is universally superior. The choice depends on factors like dataset size, task complexity, the need for biological interpretability, and computational resources [2].

How can I assess a model's performance without extensive labeled data?

Use the Roughness Index (ROGI) as a proxy. This unsupervised metric measures the smoothness of the cell-property landscape in the model's latent space. A lower roughness (smoother landscape) often correlates with better performance on downstream tasks, allowing for model comparison even with limited labels [2].

Are larger pretrained models always better for clinical applications?

Not necessarily. While large models like scFoundation and UCE offer broad knowledge, benchmarking studies show that simpler models can be more adept at efficiently adapting to specific, resource-constrained clinical datasets. The decision should be guided by task complexity and dataset size rather than model size alone [2].

How do I know if my scFM has learned biologically meaningful representations?

Beyond standard accuracy metrics, use ontology-informed evaluations like scGraph-OntoRWR and Lowest Common Ancestor Distance (LCAD). These metrics evaluate whether the model's internal representations and any errors it makes align with established biological hierarchies and knowledge [2].

Experimental Protocols & Workflows

Protocol 1: Benchmarking scFMs on Cell-Type Annotation

Objective: Systematically evaluate the performance of different scFMs on a cell-type annotation task, particularly for novel or rare cell types.

Materials:

- Query Dataset: Your single-cell RNA-seq dataset (count matrix).

- Reference Atlas: A well-annotated dataset (e.g., from CellxGene) for evaluation.

- Software Environment: Python with scverse ecosystem (scanpy, scvi-tools) and model-specific libraries (e.g., scGPT, Geneformer).

- Computational Resources: GPU access is recommended for larger models.

Methodology:

- Data Preprocessing: Normalize and log-transform your query dataset. Ensure gene identifier matching between your dataset and the scFM's expected input (usually HGNC symbols).

- Embedding Extraction: Generate zero-shot cell embeddings using each scFM (e.g., model.get_cell_embeddings()).

- Cell-Type Prediction: Transfer labels from the reference atlas to your query data using a simple classifier (e.g., k-NN) on the embeddings.

- Performance Evaluation:

- Calculate standard accuracy and F1-score.

- Apply the LCAD metric to assess the biological severity of misclassifications.

- Biological Consistency Check: Compute the scGraph-OntoRWR score to see if the model's perceived cell-type relationships match the Cell Ontology.
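The label-transfer step of this protocol can be sketched with a small k-NN classifier over embeddings. The example below uses two-dimensional toy vectors in place of real scFM embeddings; the function name knn_transfer, Euclidean distance, majority voting, and k=3 are assumptions chosen for clarity rather than a recommended configuration.

```python
import math
from collections import Counter

def knn_transfer(ref_embeddings, ref_labels, query_embeddings, k=3):
    """Assign each query cell the majority label of its k nearest reference cells."""
    predictions = []
    for query in query_embeddings:
        # Distance from this query cell to every reference cell, nearest first.
        neighbors = sorted(
            (math.dist(query, ref), label)
            for ref, label in zip(ref_embeddings, ref_labels)
        )
        votes = Counter(label for _, label in neighbors[:k])
        predictions.append(votes.most_common(1)[0][0])
    return predictions

# Toy embeddings: two well-separated reference populations.
ref_embeddings = [[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]]
ref_labels = ["T cell"] * 3 + ["B cell"] * 3
query_embeddings = [[0.2, 0.3], [5.5, 5.2]]

predicted = knn_transfer(ref_embeddings, ref_labels, query_embeddings)
```

Keeping the classifier this simple is deliberate: differences in accuracy between models then reflect the quality of the embeddings rather than the capacity of the classifier.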

Protocol 2: Tuning scFMs for Drug Sensitivity Prediction

Objective: Fine-tune a pretrained scFM to predict cancer cell drug response from single-cell transcriptomic data.

Materials:

- Pretrained scFM: Such as scGPT or Geneformer.

- Drug Screening Data: A dataset linking single-cell profiles to drug response metrics (e.g., IC50).

- Software: PyTorch with scFM-specific fine-tuning scripts.

Methodology:

- Data Encoding: Use the scFM to encode each cell's transcriptome into an embedding.

- Task-Specific Head: Append a regression head on top of the frozen base model.

- Fine-Tuning:

- Stage 1: Train only the regression head for 50 epochs.

- Stage 2: Unfreeze the entire model and conduct full fine-tuning with a low learning rate (1e-5) for 100 epochs.

- Validation: Use 5-fold cross-validation, ensuring cells from the same patient are in the same fold.

- Interpretation: Perform attention analysis to identify genes and pathways the model uses for prediction.
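The validation step requires folds that never split a patient between training and test data. The sketch below shows one way to build such folds by assigning whole patients to folds greedily; the function name group_kfold and the balancing heuristic are assumptions, and scikit-learn's GroupKFold offers the same guarantee in a maintained implementation.

```python
from collections import defaultdict

def group_kfold(patient_ids, n_splits=5):
    """Yield (train_idx, test_idx) pairs in which no patient appears on both sides."""
    cells_by_patient = defaultdict(list)
    for index, patient in enumerate(patient_ids):
        cells_by_patient[patient].append(index)

    folds = [[] for _ in range(n_splits)]
    # Place the largest patients first, each into the currently smallest fold.
    ordered = sorted(cells_by_patient, key=lambda p: (-len(cells_by_patient[p]), p))
    for patient in ordered:
        smallest = min(range(n_splits), key=lambda f: len(folds[f]))
        folds[smallest].extend(cells_by_patient[patient])

    for test_fold in range(n_splits):
        test_idx = sorted(folds[test_fold])
        train_idx = sorted(i for f in range(n_splits) if f != test_fold for i in folds[f])
        yield train_idx, test_idx

# One patient label per cell (toy example with six patients).
patient_ids = ["P1"] * 4 + ["P2"] * 3 + ["P3"] * 3 + ["P4"] * 2 + ["P5"] * 2 + ["P6"]
splits = list(group_kfold(patient_ids, n_splits=5))
```

Splitting by cell instead of by patient would let the model see cells from test patients during training and would typically inflate the estimated drug-response accuracy.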

Model Performance & Configuration Data

Table 1: Benchmarking scFM Performance Across Biological Tasks

This table summarizes the relative performance of different scFMs across common tasks, based on a comprehensive benchmark study. Performance is ranked from highest (1) to lowest (6) for each task [2].

| Model | Parameters | Pretraining Dataset Size | Batch Integration | Cell Type Annotation | Drug Sensitivity Prediction | Novel Cell Type Discovery |

|---|---|---|---|---|---|---|

| scFoundation | 100 M | 50 M cells | 2 | 1 | 1 | 3 |

| UCE | 650 M | 36 M cells | 1 | 3 | 2 | 4 |

| scGPT | 50 M | 33 M cells | 3 | 2 | 3 | 2 |

| Geneformer | 40 M | 30 M cells | 4 | 4 | 4 | 1 |

| LangCell | 40 M | 27.5 M cells | 5 | 5 | 5 | 5 |

| scCello | Info Missing | Info Missing | 6 | 6 | 6 | 6 |

Table 2: Decision Matrix for scFM Selection

Use this guide to select the most appropriate model based on your project's specific constraints and goals [2].

| Scenario | Primary Constraint | Recommended Model(s) | Rationale |

|---|---|---|---|

| Large-scale clinical prediction | Task accuracy | scFoundation, UCE | Superior on complex tasks like drug sensitivity prediction due to large scale pretraining [2]. |

| Novel cell type identification | Biological discovery | Geneformer, scGPT | More robust at generalizing to unseen cell types, potentially due to architectural choices [2]. |

| Limited computational resources | Efficiency | Geneformer, simpler ML baselines | Smaller models adapt more efficiently to specific datasets with lower computational cost [2]. |

| Batch integration of diverse data | Technical performance | UCE, scFoundation | Excel at removing technical artifacts while preserving biological variation [2]. |

| High interpretability needed | Biological plausibility | Models with high scGraph-OntoRWR scores | Choose a model whose internal representations best align with established biological knowledge [2]. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example/Note |

|---|---|---|

| CellxGene Platform | Source for high-quality, curated single-cell datasets for benchmarking and validation. | Asian Immune Diversity Atlas (AIDA) v2 is recommended as an independent test set [2]. |

| scGraph-OntoRWR Metric | A novel metric to evaluate if a model's learned cell relationships are consistent with the Cell Ontology. | Measures biological meaningfulness of embeddings beyond simple accuracy [2]. |

| Lowest Common Ancestor Distance (LCAD) | Evaluates the biological severity of cell type misclassifications. | A smaller LCAD indicates a less severe, more biologically plausible error [2]. |

| Roughness Index (ROGI) | An unsupervised metric that acts as a proxy for downstream task performance. | Estimates the smoothness of the cell-property landscape in the latent space [2]. |

| Benchmarking Framework | A standardized pipeline for holistic model evaluation across multiple tasks and metrics. | Should include both gene-level and cell-level tasks with clinical relevance [2]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the main challenges in cell type annotation when aiming to discover novel cell types?

Automated annotation using reference label transfer methods limits the discovery of novel cell types unique to smaller datasets, as it requires comprehensive, high-quality reference labels that are often unavailable [23]. Methods that rely solely on existing references can mask previously uncharacterized cell populations.

FAQ 2: How can I assess the reliability of my automated cell type annotations?

An objective credibility evaluation strategy can be implemented. This involves using a tool to generate representative marker genes for each predicted cell type, then analyzing the expression of these genes within the corresponding cell clusters in your input dataset. An annotation is considered reliable if more than four marker genes are expressed in at least 80% of cells within the cluster [24].

FAQ 3: My dataset has low heterogeneity (e.g., stromal cells). Why do annotation tools perform poorly, and how can I improve results?

Low-heterogeneity datasets, such as stromal cells, present a challenge because annotation tools often rely on distinct, well-separated marker gene expression [24]. Performance can be improved by using a multi-model integration strategy that leverages the complementary strengths of multiple large language models (LLMs) to reduce uncertainty and increase annotation reliability [24].

FAQ 4: What is the benefit of using single-cell foundation models (scFMs) over traditional methods for annotation?

Pretrained scFMs capture biological insights into the relational structure of genes and cells during their training on massive and diverse datasets [2]. This endows them with strong generalization capabilities for various downstream tasks, including cell type annotation, and can provide a smoother latent space that reduces the difficulty of training task-specific models [2].

FAQ 5: How can I integrate multiple single-cell datasets without losing rare cell populations or novel cell types?

Conventional batch correction methods tend to favor predominant cell types and may over-integrate dataset-specific rare cell populations [23]. To address this, prior-informed integration methods like cellhint-prior and scanorama-prior incorporate preliminary annotation information to enhance batch correction while actively preserving biological diversities, including rare cell types [23].

Troubleshooting Guides

Issue 1: Inconsistent or Unreliable Cell Type Annotations

Problem: Automated cell type annotation results are inconsistent with manual expert knowledge, or different tools provide conflicting labels.

Solution:

- Step 1: Implement a multi-model integration strategy. Instead of relying on a single model, use a tool that integrates multiple top-performing LLMs (such as GPT-4, Claude 3, or Gemini) to leverage their complementary strengths and reduce annotation uncertainty [24].

- Step 2: Apply a "talk-to-machine" iterative feedback loop. If the initial annotation is ambiguous, use a tool that can query the model for marker genes of the predicted cell type, validate their expression in your dataset, and then feed the validation results back to the model for a refined annotation [24].

- Step 3: Perform an objective credibility evaluation. For any annotation (whether manual or automated), objectively check its reliability by verifying that the purported marker genes are actually expressed in the annotated cluster. This helps to identify potentially erroneous labels from any source [24].

- Recommended Tool: Consider using a framework like LICT (LLM-based Identifier for Cell Types), which incorporates the above strategies [24].
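The credibility check in Step 3 can be sketched in a few lines of Python. This is a minimal illustration, not the LICT implementation: the function name `marker_credibility`, the dict-per-cell input format, and the two thresholds (`min_fraction`, `min_markers`) are assumptions chosen for the example and should be tuned for your own data.

```python
from typing import Dict, List


def marker_credibility(
    cells: List[Dict[str, float]],
    markers: List[str],
    min_fraction: float = 0.8,  # assumed threshold, not taken from the source
    min_markers: int = 4,       # assumed threshold, not taken from the source
) -> dict:
    """Check whether enough marker genes are detected in an annotated cluster.

    `cells` holds one {gene: expression} dict per cell in the cluster.
    A marker passes when it is detected (value > 0) in at least
    `min_fraction` of the cells.
    """
    n_cells = len(cells)
    fractions = {}
    for gene in markers:
        detected = sum(1 for cell in cells if cell.get(gene, 0.0) > 0)
        fractions[gene] = detected / n_cells if n_cells else 0.0
    passing = [g for g in markers if fractions[g] >= min_fraction]
    return {
        "fractions": fractions,
        "passing_markers": passing,
        "credible": len(passing) >= min_markers,
    }


# Toy cluster of five cells labelled "fibroblast" (values are illustrative).
cluster = [
    {"COL1A1": 5.0, "DCN": 3.0, "LUM": 2.0},
    {"COL1A1": 4.0, "DCN": 1.0, "LUM": 0.0},
    {"COL1A1": 6.0, "DCN": 2.0, "LUM": 1.0},
    {"COL1A1": 3.0, "DCN": 0.0, "LUM": 0.0},
    {"COL1A1": 2.0, "DCN": 4.0, "LUM": 0.0},
]
report = marker_credibility(cluster, ["COL1A1", "DCN", "LUM"], min_markers=2)
print(report["passing_markers"], report["credible"])
```

The same function applies to manual and automated labels alike, since it only looks at whether the claimed markers are actually detected in the cluster.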

Issue 2: Failure to Identify Novel Cell Populations

Problem: Your dataset likely contains unknown or novel cell states, but standard reference-based annotation methods are forcing all cells into known categories.

Solution:

- Step 1: Use a flexible, context-aware annotation pipeline. Employ a tool like scExtract that can process data based on information extracted from the original research article. This allows the clustering granularity to align with the authors' biological understanding, which may hint at novel populations [23].

- Step 2: Bypass or carefully select reference datasets. When using automated annotation, choose methods that do not strictly depend on reference datasets, or use references that are broad and not overly specific, to allow for the discovery of uncharacterized cells [24] [23].

- Step 3: Employ prior-informed data integration. When integrating your dataset with others, use methods like cellhint-prior and scanorama-prior that are designed to preserve dataset-specific biological diversities, including rare and novel cell populations, during the batch correction process [23].

- Recommended Tool: scExtract framework for automated processing and prior-informed integration [23].

Issue 3: Poor Annotation Performance in Low-Heterogeneity Cell Populations

Problem: Annotation accuracy drops significantly when working with datasets containing low-heterogeneity cell types, such as fibroblasts or specific embryonic cells.

Solution:

- Step 1: Enrich the contextual information provided to the model. The performance drop is often due to a lack of distinguishing features. Use an interactive strategy that provides the model with additional differentially expressed genes (DEGs) from your dataset and the results of marker gene validation to give it more context for a precise annotation [24].

- Step 2: Manually verify marker gene expression. For clusters that are difficult to annotate, do not rely solely on automated labels. Generate a list of known marker genes for suspected cell types and visually confirm their expression patterns using feature plots and violin plots in your analysis environment [25].

- Recommended Tool: Frameworks that support an interactive "talk-to-machine" approach, like LICT [24].
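To supply the extra differentially expressed genes mentioned in Step 1, a simple ranking by log2 fold change is often enough as a starting point. The sketch below is a hedged illustration: `rank_degs`, the pseudocount of 1, and the toy expression values are assumptions for the example, and a real analysis would normally use a statistical test from an established toolkit.

```python
import math
from typing import Dict, List, Tuple


def rank_degs(
    cluster_cells: List[Dict[str, float]],
    other_cells: List[Dict[str, float]],
    top_n: int = 10,
    pseudocount: float = 1.0,  # assumed value to avoid division by zero
) -> List[Tuple[str, float]]:
    """Rank genes by log2 fold change of mean expression (cluster vs. rest)."""
    genes = set()
    for cell in cluster_cells + other_cells:
        genes.update(cell)

    def mean_expr(cells: List[Dict[str, float]], gene: str) -> float:
        return sum(c.get(gene, 0.0) for c in cells) / len(cells)

    scores = {}
    for gene in genes:
        inside = mean_expr(cluster_cells, gene)
        outside = mean_expr(other_cells, gene)
        scores[gene] = math.log2((inside + pseudocount) / (outside + pseudocount))
    # Highest fold change first; ties broken alphabetically for determinism.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:top_n]


# Toy data: the cluster of interest versus all remaining cells.
in_cluster = [{"PDGFRA": 7.0, "ACTA2": 1.0}, {"PDGFRA": 7.0, "ACTA2": 1.0}]
rest = [{"PDGFRA": 1.0, "ACTA2": 3.0}, {"PDGFRA": 1.0, "ACTA2": 3.0}]
top = rank_degs(in_cluster, rest, top_n=2)

# Gene names that could be appended to the context given to the model.
context = ", ".join(gene for gene, _ in top)
print(top, context)
```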

Experimental Protocols & Workflows

Protocol 1: Benchmarking scFMs for Cell-Level Tasks

This protocol is based on holistic benchmarking studies designed to evaluate the performance of single-cell foundation models (scFMs) [2].

1. Model Selection:

- Select multiple scFMs representing different pretraining settings (e.g., Geneformer, scGPT, scFoundation) [2].

- Include established baseline methods for comparison (e.g., Seurat, Harmony, scVI) [2].

2. Task Design:

- Prepare datasets for cell-level tasks, such as:

- Pre-clinical batch integration.

- Cell type annotation.

- Cancer cell identification.

- Investigation of cross-tissue homogeneity and intra-tumor heterogeneity [2].

3. Feature Extraction:

- Extract zero-shot cell embeddings from the pretrained scFMs without further fine-tuning to evaluate the intrinsic quality of the learned representations [2].

4. Performance Evaluation:

- Apply both standard unsupervised and supervised metrics.

- Implement biology-informed metrics such as:

- scGraph-OntoRWR: Measures the consistency of cell type relationships captured by scFMs with prior biological knowledge from cell ontologies.

- Lowest Common Ancestor Distance (LCAD): Assesses the severity of annotation errors by measuring the ontological proximity between misclassified cell types [2].

5. Analysis:

- Use a non-dominated sorting algorithm to aggregate multiple evaluation metrics and generate task-specific and overall model rankings [2].

- Quantitatively estimate the roughness of the cell-property landscape in the pretrained latent space, using the roughness index (ROGI), as a proxy for model performance on your specific dataset [2].
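The non-dominated sorting used in the analysis step can be sketched as follows. This is a generic Pareto-front ranking, not the benchmark's own code: the model names and scores are placeholders rather than reported results, and the sketch assumes every metric has been oriented so that higher is better.

```python
from typing import Dict, List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` is at least as good as `b` on every metric and better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def non_dominated_sort(scores: Dict[str, Sequence[float]]) -> List[List[str]]:
    """Group models into successive Pareto fronts (front 0 is the best tier)."""
    remaining = dict(scores)
    fronts = []
    while remaining:
        front = sorted(
            name
            for name, vec in remaining.items()
            if not any(
                dominates(other, vec)
                for other_name, other in remaining.items()
                if other_name != name
            )
        )
        fronts.append(front)
        for name in front:
            del remaining[name]
    return fronts


# Placeholder scores on two metrics (illustrative only, not benchmark results).
metric_scores = {
    "model_A": [0.9, 0.6],
    "model_B": [0.7, 0.8],
    "model_C": [0.6, 0.5],
    "model_D": [0.5, 0.4],
}
fronts = non_dominated_sort(metric_scores)
print(fronts)
```

Models in the same front are not ordered relative to each other: neither beats the other on all metrics, which is why this approach avoids collapsing several metrics into a single weighted score.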

Protocol 2: LLM-Based Automated Annotation and Integration

This protocol outlines the use of large language models (LLMs) for fully automated single-cell data processing and integration, as implemented in the scExtract framework [23].

1. Input:

- Provide the raw expression matrix and the full text of the associated research article.

2. Automated Preprocessing and Clustering:

- The LLM agent extracts preprocessing parameters (e.g., mitochondrial gene filter thresholds) and clustering preferences directly from the article's methods section [23].

- If the number of clusters is not explicitly stated, the LLM infers it from the granularity of cell populations discussed in the text [23].

3. Cell Population Annotation:

- The LLM performs initial annotation based on marker gene lists for each cluster and the background knowledge from the article [23].

- The annotation is optimized by having the LLM autonomously query the expression levels of a set of characteristic marker genes it generates, and then refining the labels based on this validation [23].

4. Prior-Informed Data Integration:

- Cell Type Harmonization: Use cellhint-prior to harmonize annotations across different datasets, correcting for nomenclature inconsistencies from LLM outputs [23].

- Embedding Integration: Apply scanorama-prior for batch correction. This method uses the prior annotation information to adjust weighted distances between cells and applies adjustment vectors based on cell group centers, leading to more accurate integration while preserving biological diversity [23].
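As an illustration of step 2, the sketch below applies preprocessing parameters of the kind an LLM agent might extract from a methods section. It is not scExtract code: the `extracted_params` dictionary, its keys, and the `filter_cells` helper are hypothetical, and only the "MT-" prefix for human mitochondrial genes follows common convention.

```python
from typing import Dict, List

# Hypothetical parameters, shaped as an LLM agent might return them.
extracted_params = {"min_genes": 3, "max_mito_pct": 20.0, "mito_prefix": "MT-"}


def filter_cells(cells: Dict[str, Dict[str, int]], params: dict) -> List[str]:
    """Return IDs of cells passing gene-count and mitochondrial-fraction filters."""
    kept = []
    for cell_id, counts in cells.items():
        total = sum(counts.values())
        n_genes = sum(1 for v in counts.values() if v > 0)
        mito = sum(v for g, v in counts.items() if g.startswith(params["mito_prefix"]))
        # Cells with no counts at all are treated as failing the mito filter.
        mito_pct = 100.0 * mito / total if total else 100.0
        if n_genes >= params["min_genes"] and mito_pct <= params["max_mito_pct"]:
            kept.append(cell_id)
    return kept


# Toy raw counts per cell (illustrative values).
raw_counts = {
    "cell_1": {"MT-CO1": 1, "CD3E": 5, "CD8A": 4, "GZMB": 2},
    "cell_2": {"MT-CO1": 6, "MT-ND1": 4, "CD3E": 1, "ACTB": 1},
    "cell_3": {"CD19": 3, "MS4A1": 2},
}
kept_cells = filter_cells(raw_counts, extracted_params)
print(kept_cells)
```

Here `cell_2` is dropped for a high mitochondrial fraction and `cell_3` for too few detected genes. Keeping the extracted thresholds in a plain dictionary makes it easy to review them against the article before they are applied.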

Data Presentation

Table 1: Evaluation of LLM-Based Annotation Strategies on Diverse Datasets

This table summarizes the performance of different strategies for large language model (LLM)-based cell type annotation across datasets with varying cellular heterogeneity [24].

| Strategy | PBMC (High Heterogeneity) | Gastric Cancer (High Heterogeneity) | Human Embryo (Low Heterogeneity) | Stromal Cells (Low Heterogeneity) |
|---|---|---|---|---|
| Single Top LLM (e.g., GPT-4) | Mismatch Rate: ~21.5% | Mismatch Rate: ~11.1% | Full Match Rate: ~3% (Baseline) | Full Match Rate: ~Baseline |
| Multi-Model Integration | Mismatch Rate: 9.7% | Mismatch Rate: 8.3% | Match Rate (Full+Partial): 48.5% | Match Rate (Full+Partial): 43.8% |
| "Talk-to-Machine" Iterative Feedback | Mismatch Rate: 7.5%; Full Match: 34.4% | Mismatch Rate: 2.8%; Full Match: 69.4% | Full Match Rate: 48.5% | Full Match Rate: 43.8% (Mismatch: 56.2%) |

Table 2: Key Single-Cell Foundation Models (scFMs) and Their Pretraining Characteristics

This table compares the core architectural and pretraining features of several prominent single-cell foundation models, which is crucial for model selection [2].

| Model Name | Model Parameters | # Input Genes | Value Embedding | Positional Embedding | Primary Pretraining Task |
|---|---|---|---|---|---|
| Geneformer [2] | 40 M | 2048 ranked genes | Ordering | ✓ | Masked Gene Modeling (MGM) with CE loss |
| scGPT [2] | 50 M | 1200 HVGs | Value binning | × | Iterative MGM with MSE loss |
| scFoundation [2] | 100 M | ~19,264 genes | Value projection | × | Read-depth-aware MGM with MSE loss |
| UCE [2] | 650 M | 1024 non-unique genes | / | ✓ | Modified MGM: binary CE loss for gene expression |
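The "Value Embedding" column is easier to interpret with a toy example. The sketch below contrasts an ordering-based encoding with a value-binning encoding for a single cell. Both functions are deliberately simplified illustrations and are not the models' actual tokenizers: real rank encodings typically normalize expression across the pretraining corpus before ranking, and the bin count and helper names here are assumptions.

```python
from typing import Dict, List


def rank_encode(cell: Dict[str, float], max_len: int = 2048) -> List[str]:
    """Ordering-style input: expressed genes sorted from highest to lowest value."""
    expressed = [(g, v) for g, v in cell.items() if v > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [g for g, _ in expressed[:max_len]]


def bin_values(cell: Dict[str, float], n_bins: int = 3) -> Dict[str, int]:
    """Binning-style input: nonzero values mapped to per-cell rank bins 1..n_bins."""
    nonzero = [v for v in cell.values() if v > 0]
    binned = {}
    for gene, value in cell.items():
        if value <= 0:
            binned[gene] = 0  # bin 0 is reserved for undetected genes
            continue
        at_or_below = sum(1 for v in nonzero if v <= value)
        # Integer ceiling of (at_or_below / len(nonzero)) * n_bins.
        binned[gene] = (at_or_below * n_bins + len(nonzero) - 1) // len(nonzero)
    return binned


# Toy expression profile for one cell (illustrative values).
toy_cell = {"CD3E": 9.0, "ACTB": 30.0, "GZMB": 0.0, "CD8A": 3.0}
tokens = rank_encode(toy_cell)
bins = bin_values(toy_cell)
print(tokens, bins)
```

The ordering scheme discards absolute magnitudes and keeps only relative rank, while binning keeps a coarse magnitude per gene. This difference is one reason the models in Table 2 can behave differently on the same dataset.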

Workflow and Relationship Visualizations

[Figure: Automated Annotation and Integration Workflow]

[Figure: scFM Benchmarking and Selection Logic]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Advanced Cell Type Annotation

This table lists key software tools and their primary functions for tackling challenges in novel cell discovery and annotation accuracy.

| Tool / Resource | Primary Function | Relevance to Novel Discovery & Accuracy |
|---|---|---|
| scExtract [23] | Fully automated scRNA-seq data processing and prior-informed integration | Extracts article context to guide clustering; integration methods preserve rare populations. |
| LICT [24] | LLM-based cell type identification with reliability assessment | Uses multi-model integration & credibility evaluation for reliable annotations in low-heterogeneity data. |
| CellTypist [23] | Automated cell type annotation | An established reference-based method; useful as a baseline for comparison. |
| scGraph-OntoRWR Metric [2] | Biology-informed model evaluation | Measures if scFMs capture biologically consistent cell relationships, aiding model selection. |
| cellhint-prior / scanorama-prior [23] | Prior-informed data integration | Leverages preliminary annotations for improved batch correction while protecting biological diversity. |

Troubleshooting Guides & FAQs

Common Problem: Loss of Biological Variation After Integration

Q: After integrating my single-cell datasets, I've successfully removed batch effects, but my analysis now shows a loss of biologically meaningful variation, particularly within cell types. What strategies can I use to better preserve this intra-cell-type structure?

A: This is a common challenge where batch correction methods can over-correct and remove genuine biological signals. Based on recent benchmarking studies, several approaches can help:

- Implement Biologically Informed Loss Functions: Incorporate a correlation-based loss function during model training. This specifically aims to preserve the biological structure within cell types that standard integration metrics might overlook [26].

- Utilize Multi-Layer Annotations for Validation: When available, use datasets with hierarchical or multi-layer cell annotations (e.g., from the Human Lung Cell Atlas) to validate that your integration method preserves variation at multiple levels of biological resolution [26].

- Adopt the scIB-E Framework: Move beyond standard benchmarking metrics by using the extended scIB-E metrics, which are designed to better capture intra-cell-type biological conservation. This provides a more accurate assessment of whether your integration has preserved these subtle biological differences [26].
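One way to picture a correlation-based structure-preservation term is to compare pairwise distances among cells of one type before and after embedding. The sketch below is a possible formulation under that assumption, not the loss defined in the cited benchmark: `structure_loss` and the toy coordinates are hypothetical, and a trainable version would be written in a deep learning framework rather than plain Python.

```python
import math
from itertools import combinations
from typing import List, Sequence


def pairwise_distances(points: Sequence[Sequence[float]]) -> List[float]:
    """Euclidean distance for every unordered pair of points."""
    return [math.dist(a, b) for a, b in combinations(points, 2)]


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 when either input has no variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)


def structure_loss(original, latent) -> float:
    """1 - correlation between pre- and post-integration pairwise distances."""
    return 1.0 - pearson(pairwise_distances(original), pairwise_distances(latent))


# Four cells of one cell type in a toy two-gene space.
cells_original = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 3.0)]
# An embedding that rescales but preserves relative structure.
cells_preserved = [(0.0, 0.0), (0.5, 0.0), (0.0, 1.0), (1.5, 1.5)]
# An over-corrected embedding that collapses all cells to one point.
cells_collapsed = [(1.0, 1.0)] * 4

loss_preserved = structure_loss(cells_original, cells_preserved)
loss_collapsed = structure_loss(cells_original, cells_collapsed)
print(loss_preserved, loss_collapsed)
```

The preserved embedding gives a loss near zero and the collapsed one a loss of one. Over-correction is penalized even when batch mixing looks perfect.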

Experimental Protocol for Validation:

- Integrate your data using your chosen method (e.g., a variational autoencoder framework like scVI or scANVI).

- Apply the scIB-E metrics to the integrated output, paying close attention to metrics related to biological conservation.

- Perform differential abundance testing on the integrated data to check if the relative abundances of cell states have been artificially altered by the batch correction process [26].

Common Problem: Poor Integration of Heterogeneous Datasets

Q: My datasets have highly unbalanced cell type compositions across batches. Standard integration methods are failing to align similar cell types correctly. What advanced methods are designed for this scenario?

A: Heterogeneous datasets with unbalanced cell types require methods that go beyond simple neighbor matching.

- Use Robust Cross-Batch Cluster Identification: Frameworks like scBCN (single-cell Batch Correction Network) employ a two-stage clustering strategy. This involves identifying mutual nearest neighbors (MNNs) and then expanding these connections using a random walk approach to build a more robust cluster-level similarity graph across batches. This helps prevent incorrect alignment of different cell types [27].

- Leverage Semi-Supervised Learning: If you have some pre-defined cell type labels, use semi-supervised methods like scANVI. These methods incorporate known cell-type information to guide the integration process, ensuring that biological labels are consistent across batches [26].

- Explore Advanced Deep Learning Loss Functions: In a unified VAE framework, Level-3 methods that combine both batch labels and cell-type labels using techniques like Domain Class Triplet loss can simultaneously achieve strong batch-effect removal and biological conservation [26].

Experimental Protocol for scBCN Application:

- Pre-process data following a standard Scanpy workflow (quality control, normalization, highly variable gene selection, PCA) [27].

- Perform cross-batch cell clustering: For each batch, perform initial high-resolution clustering using the Leiden algorithm. Then, use the expanded MNN pairs to construct a cluster-level similarity graph and apply spectral clustering to connect similar clusters across batches [27].