Overcoming the Low-Heterogeneity Challenge: Advanced Strategies for Robust Single-Cell Data Annotation

Abstract

This comprehensive review addresses the critical challenge of annotating low-heterogeneity single-cell datasets, where conventional methods often fail. We explore the fundamental causes of annotation difficulty in homogeneous cellular populations and present cutting-edge computational strategies, including large language model integration, ensemble machine learning, and multi-resolution variational inference. Through systematic validation frameworks and real-world case studies from recent research (2025), we provide researchers and drug development professionals with practical troubleshooting guidelines and optimization techniques to enhance annotation accuracy, reliability, and biological relevance in computationally challenging scenarios.

Understanding Low-Heterogeneity Datasets: Why Conventional Annotation Fails

Frequently Asked Questions (FAQs)

Q1: Why is cell type annotation particularly challenging in low-heterogeneity datasets, such as stromal cells or early embryonic cells?

Automated annotation tools, including many machine learning models, are primarily trained on and perform best with highly heterogeneous cell populations, like Peripheral Blood Mononuclear Cells (PBMCs), where distinct lineage markers are clearly expressed. In low-heterogeneity environments, such as stromal compartments in tumors or developing embryos, cells share highly similar transcriptional profiles. This lack of starkly divergent marker genes leads to significantly higher annotation errors and inconsistencies between automated methods and manual expert annotation [1]. One study found that even advanced Large Language Models (LLMs) showed consistency rates as low as 33.3-39.4% on embryonic and stromal datasets, compared to much higher accuracy on PBMCs [1].

Q2: What strategies can improve the reliability of annotations for low-heterogeneity cell populations?

Three key strategies can enhance reliability:

- Multi-Model Integration: Leveraging multiple annotation models or LLMs and selecting the best-performing consensus result can compensate for the weaknesses of any single tool [1].

- Iterative "Talk-to-Machine" Validation: This involves an interactive process where an initial annotation is validated by checking the expression of known marker genes for that cell type within your dataset. If validation fails, the model is queried again with additional information (e.g., more differentially expressed genes) to refine its prediction [1].

- Objective Credibility Evaluation: After annotation, systematically assess the reliability of each label by verifying that established marker genes for the assigned cell type are robustly expressed in the cluster. An annotation is considered credible if more than four marker genes are expressed in at least 80% of the cells in the cluster [1].
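The credibility rule in the last bullet can be sketched in a few lines of Python. This is a minimal illustration, not LICT's implementation: it assumes a gene counts as "expressed" in a cell when its value is above zero, and the function names and toy expression values are hypothetical.

```python
def fraction_expressing(values, threshold=0.0):
    """Fraction of cells whose expression value exceeds `threshold`."""
    if not values:
        return 0.0
    return sum(v > threshold for v in values) / len(values)


def is_credible(cluster_expr, marker_genes, min_fraction=0.8, min_markers=4):
    """Credible if MORE THAN `min_markers` markers are expressed
    in AT LEAST `min_fraction` of the cluster's cells.

    cluster_expr maps gene -> list of per-cell expression values.
    Returns (credible, list_of_supporting_markers).
    """
    supporting = [
        gene for gene in marker_genes
        if fraction_expressing(cluster_expr.get(gene, [])) >= min_fraction
    ]
    return len(supporting) > min_markers, supporting


# Toy cluster of five cells (made-up values, for illustration only).
cluster = {
    "COL1A1": [3, 2, 4, 1, 2],
    "COL1A2": [1, 1, 2, 3, 1],
    "DCN":    [2, 0, 1, 1, 3],  # expressed in 4 of 5 cells (80%)
    "LUM":    [1, 2, 2, 1, 1],
    "PDGFRA": [1, 1, 0, 2, 1],  # expressed in 4 of 5 cells (80%)
    "ACTA2":  [0, 0, 1, 0, 0],  # expressed in 1 of 5 cells (20%)
}
credible, support = is_credible(cluster, list(cluster))
print(credible, support)
```

Note that exactly four supporting markers is not enough under this rule, because the threshold is "more than four".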

Q3: Beyond annotation, what unique analytical opportunities do low-heterogeneity datasets offer?

While presenting annotation challenges, low-heterogeneity datasets are ideal for dissecting subtle cellular dynamics. In embryonic development, trajectory inference analysis can reconstruct the continuous lineage paths from a zygote to the epiblast, hypoblast, and trophectoderm, revealing key transcription factors driving differentiation [2]. In cancer biology, subclustering stromal cells (fibroblasts, endothelial cells) can reveal functionally distinct subtypes with specific roles in tumor progression and therapy response [3] [4]. This allows researchers to move beyond broad cell types and investigate nuanced cellular states.

Q4: How can I use scRNA-seq data to explore genetic heterogeneity in addition to transcriptomic heterogeneity?

The sequence data from scRNA-seq can be leveraged to call Single Nucleotide Variants (SNVs). A genotype-centric analysis of these transcribed variants can reveal genetic subpopulations within a tumor that may be corroborated by gene expression-based clustering. This approach can quantify genetic heterogeneity, showing, for example, that lymph node metastases can have lower levels of functional genetic heterogeneity than their primary tumors [5].

Troubleshooting Guides

Problem: Low Concordance with Manual Annotation in Stromal or Embryonic Cells

Symptoms: Your automated cell annotation tool outputs labels that do not match expert knowledge or known lineage markers. This is especially common in microenvironments with transcriptionally similar cells.

Solution: Implement a multi-step, validated annotation pipeline.

Steps:

- Initial Multi-Model Annotation: Do not rely on a single tool. Run your data through multiple supervised classifiers or LLMs (e.g., GPT-4, Claude 3) and integrate the results [1].

- Subclustering and Marker Gene Analysis: Isolate the poorly annotated population (e.g., all stromal cells) and perform subclustering at a higher resolution. Identify differentially expressed genes for each subcluster.

- Iterative Validation with LICT Strategy: Use a tool like LICT (LLM-based Identifier for Cell Types) that employs the "talk-to-machine" strategy. It will automatically check marker gene expression for its predictions and iteratively refine them [1].

- Credibility Scoring: Assign a confidence score to each final annotation based on the expression of known marker genes. Flag low-confidence labels for manual review [1].

Problem: Identifying Rare but Functionally Critical Subpopulations

Symptoms: Standard clustering identifies major cell types but may mask rare subtypes (e.g., a specific fibroblast subtype with unique function).

Solution: Increase clustering resolution and conduct focused functional analysis.

Steps:

- Optimize Clustering Parameters: Systematically increase the clustering resolution parameter and observe the stability of new subclusters.

- Functional Enrichment on Subclusters: Perform gene set enrichment analysis (GSEA) on the marker genes of each subcluster to uncover unique biological functions [4]. For example, in breast cancer, subclustering fibroblasts can reveal subtypes like CXCR4+ fibroblasts with distinct spatial localization and immune-modulatory functions [4].

- Cross-Reference with Spatial Data: If available, use spatial transcriptomics to validate the spatial localization of the putative rare subset, which can confirm its unique niche and identity [4].
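The source does not say how to measure the "stability" of new subclusters. One common option, used here as an assumption, is the adjusted Rand index (ARI) between two clusterings of the same cells, for example two runs at the same resolution with different random seeds. The sketch below implements ARI with the standard library only; the label lists are hypothetical.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand index between two clusterings of the same cells."""
    if len(labels_a) != len(labels_b):
        raise ValueError("both labelings must cover the same cells")
    n = len(labels_a)
    if n < 2:
        return 1.0
    # Pairs of cells grouped together in both clusterings.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    # Pairs grouped together within each clustering separately.
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. a single cluster in both runs
    return (sum_cells - expected) / (max_index - expected)


# Two hypothetical runs at the same resolution: same grouping, renamed labels.
run_1 = [0, 0, 1, 1, 2, 2]
run_2 = [2, 2, 0, 0, 1, 1]
print(adjusted_rand_index(run_1, run_2))
```

An ARI near 1 across repeated runs suggests the subclusters are reproducible; values near 0 suggest the extra resolution is mostly splitting noise.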

Problem: Integrating scRNA-seq Data from Different Studies or Modalities

Symptoms: Batch effects and technical variation obscure biological signals when combining datasets.

Solution: Use standardized processing and dedicated batch-integration methods.

Steps:

- Standardize Processing: Reprocess raw data from different studies using a unified pipeline (e.g., same alignment tool, genome reference, and gene annotation) to minimize batch effects from the start [2].

- Employ Robust Integration Algorithms: Use methods like FastMNN, Harmony, or Seurat's CCA to align datasets in a shared low-dimensional space [2].

- Leverage Metadata for Governance: Maintain rigorous metadata management to track the origin, processing steps, and transformation history of each dataset, which is crucial for reproducibility and troubleshooting integration issues [6].

Protocol 1: Single-Cell RNA Sequencing of PBMCs for Immune Profiling

This protocol outlines the process for generating data similar to the jellyfish envenomation study, which revealed a dramatic shift from lymphocytes to CD14+ monocytes [7].

- Sample Collection: Collect peripheral blood in heparin or EDTA tubes.

- PBMC Isolation: Isolate PBMCs using density gradient centrifugation (e.g., Ficoll-Paque).

- Cell Viability and Counting: Assess viability (trypan blue) and count cells. Aim for >90% viability.

- Single-Cell Library Preparation: Use a droplet-based system (e.g., 10x Genomics). Key steps include:

  - Cell suspension loading into a chip.
  - Co-encapsulation of single cells with barcoded beads in droplets.
  - Cell lysis, reverse transcription, and barcoding of cDNA within droplets.
  - Breaking droplets, cDNA purification, and amplification.
  - Library construction and quality control (Bioanalyzer).

- Sequencing: Sequence on an Illumina platform to a recommended depth of 20,000-50,000 reads per cell.
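The recommended depth translates directly into a total read budget for the run. The short calculation below is a worked example only; the target of 10,000 cells is a hypothetical figure, not one taken from the protocol.

```python
def total_reads_needed(n_cells, reads_per_cell):
    """Total reads required to reach a given mean depth per cell."""
    return n_cells * reads_per_cell


n_cells = 10_000  # hypothetical number of recovered cells
low = total_reads_needed(n_cells, 20_000)   # lower end of recommended depth
high = total_reads_needed(n_cells, 50_000)  # upper end of recommended depth
print(f"{low / 1e6:.0f}-{high / 1e6:.0f} million reads")
```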

Protocol 2: Subclustering Analysis to Uncover Cellular Subtypes

This methodology is critical for dissecting heterogeneity within broad cell classes like monocytes or stromal cells [7] [4].

- Data Subsetting: Extract the cell population of interest from the main Seurat object.

- Re-run Dimensionality Reduction and Clustering: Re-process the subset as a standalone object.

  - Normalize data: `monocytes <- NormalizeData(monocytes)`
  - Find variable features: `monocytes <- FindVariableFeatures(monocytes)`
  - Scale data: `monocytes <- ScaleData(monocytes)`
  - Run PCA: `monocytes <- RunPCA(monocytes)`
  - Find neighbors and clusters: `monocytes <- FindNeighbors(monocytes, dims = 1:15)` and `monocytes <- FindClusters(monocytes, resolution = 0.5)`
  - Run UMAP: `monocytes <- RunUMAP(monocytes, dims = 1:15)`

- Find Cluster Markers: Identify genes defining each new subcluster.

- Functional Annotation: Use marker genes to assign biological identities to subclusters (e.g., "MMP9+ pro-inflammatory monocytes") and perform pathway enrichment analysis [7].

Quantitative Data on Annotation Challenges in Low-Heterogeneity Datasets

Table 1: Performance of Automated Annotation on Different Biological Contexts. Consistency scores reflect agreement with manual expert annotation [1].

| Biological Context | Dataset Type | Example Cell Types | Top LLM Performance (Consistency) | After Multi-Model Integration |
|---|---|---|---|---|
| Normal Physiology | High Heterogeneity | PBMCs (T cells, B cells, Monocytes) | High (Best model: Claude 3) | Mismatch reduced from 21.5% to 9.7% |
| Disease State (Cancer) | High Heterogeneity | Gastric Cancer Cells | High | Mismatch reduced from 11.1% to 8.3% |
| Developmental Stage | Low Heterogeneity | Human Embryo Cells | Low (Best model: Gemini 1.5 Pro, 39.4%) | Match rate increased to 48.5% |
| Tissue Microenvironment | Low Heterogeneity | Mouse Stromal Cells | Low (Best model: Claude 3, 33.3%) | Match rate increased to 43.8% |
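To compare the rows of Table 1 on a common scale, the before and after figures can be expressed as percentage-point and relative changes. The snippet below is a worked calculation on the values reported in the table; for the two low-heterogeneity rows it treats the best single-model consistency as the "before" value, which is an assumption about how the figures are paired.

```python
def relative_change(before, after):
    """Relative change in percent, measured against the starting value."""
    return (after - before) / before * 100


# (before, after) percentages taken from Table 1.
rows = {
    "PBMC mismatch": (21.5, 9.7),
    "Gastric cancer mismatch": (11.1, 8.3),
    "Embryo match": (39.4, 48.5),
    "Stromal match": (33.3, 43.8),
}

summary = {
    name: (round(after - before, 1), round(relative_change(before, after), 1))
    for name, (before, after) in rows.items()
}
for name, (points, relative) in summary.items():
    print(f"{name}: {points:+.1f} points ({relative:+.1f}% relative)")
```

Read this way, multi-model integration roughly halves the PBMC mismatch rate, while the low-heterogeneity datasets gain about ten percentage points and still leave more than half of the clusters unmatched.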

Key Cell Type Proportions in Different Environments

Table 2: Comparative Immune Cell Composition in Health and Disease. Data demonstrates how cellular heterogeneity shifts dramatically in a severe immune response [7].

| Immune Cell Type | Healthy Control Proportion (%) | Severe Jellyfish Envenomation Patient Proportion (%) | Key Marker Genes |
|---|---|---|---|
| CD14+ Monocytes | 16.58 | 81.86 | CD14, LYZ, S100A family |
| T Cells | 37.68 | Significantly Reduced | CD3E, CD3D, CD3G |
| B Cells | 18.80 | Significantly Reduced | CD19, MS4A1, CD79A |
| Neutrophils | 2.62 | 6.42 (Immature) | FCGR3B, S100A8, S100A9, LTF |
| Natural Killer (NK) Cells | 17.80 | Significantly Reduced | NKG7, GNLY, KLRD1 |

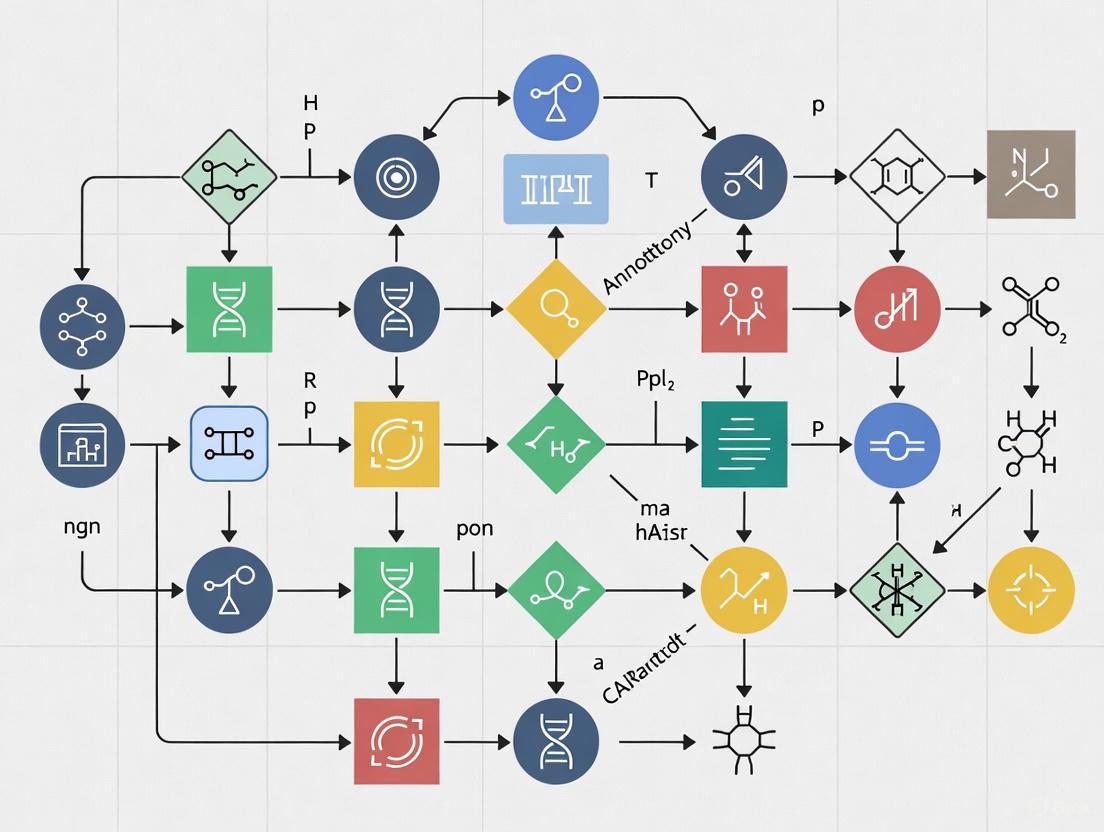

Visualizing Workflows and Signaling Pathways

Single-Cell Analysis Workflow for Low-Heterogeneity Datasets

Workflow for analyzing low-heterogeneity datasets, highlighting the critical subclustering and validation steps.

Credibility Evaluation Strategy for Cell Annotation

Decision workflow for the Objective Credibility Evaluation strategy, which assesses annotation reliability based on marker gene expression [1].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for scRNA-seq Heterogeneity Research

| Item Name | Function / Application | Example Use Case |
|---|---|---|
| 10x Genomics Chromium | High-throughput single-cell partitioning and barcoding. | Profiling thousands of cells from a tumor or PBMC sample [7] [4]. |
| UMI (Unique Molecular Identifier) Oligonucleotides | Molecular barcoding to correct for PCR amplification bias and enable accurate transcript counting. | Quantifying absolute transcript numbers in each cell [8]. |
| Ficoll-Paque Premium | Density gradient medium for isolation of viable PBMCs from whole blood. | Preparing samples for immune profiling studies [7]. |
| Anti-human CD14 Antibody | Cell surface marker for identification and isolation of classical monocytes. | Validating the expansion of the CD14+ monocyte population via FACS [7]. |
| Seurat R Toolkit | Comprehensive software package for single-cell genomics data analysis, including clustering, integration, and visualization. | Performing subclustering analysis on stromal cells and running UMAP [7] [4]. |
| LICT (LLM-based Identifier) | Software tool using multiple large language models for automated, reference-free cell type annotation with credibility scoring. | Improving annotation accuracy in low-heterogeneity datasets like embryos or stromal cells [1]. |
| FastMNN Algorithm | Computational method for integrating multiple scRNA-seq datasets and correcting for batch effects. | Combining data from different patients or studies into a unified analysis [2]. |

Frequently Asked Questions

FAQ 1: What is the "performance gap" in the context of cell type annotation? The "performance gap" refers to the significant drop in annotation accuracy that automated methods, including advanced AI and large language models (LLMs), experience when processing low-heterogeneity cellular datasets compared to highly heterogeneous ones. In highly diverse samples like Peripheral Blood Mononuclear Cells (PBMCs), LLMs can achieve high consistency with expert annotations. However, in low-heterogeneity environments like stromal cells or embryonic cells, the consistency of even top-performing LLMs can fall dramatically, with match rates to manual annotations dropping to as low as 33.3% to 39.4% [1]. This gap poses a major challenge for research in areas like developmental biology and specialized tissue studies.

FAQ 2: Why does annotation accuracy drop in low-heterogeneity environments? Accuracy drops primarily because the informational context in low-heterogeneity data is less rich, which can limit the model's ability to distinguish between subtly different cell types [1]. In highly heterogeneous data, the vast differences between cell populations provide strong signals for the model. In contrast, low-heterogeneity datasets feature cells that are more similar to one another, making it difficult for models to identify robust, distinguishing features without more sophisticated analysis strategies.

FAQ 3: How can I objectively verify the reliability of automated annotations for my low-heterogeneity dataset? You can implement an Objective Credibility Evaluation strategy. This involves:

- For each predicted cell type, query the model to retrieve a list of representative marker genes.

- Analyze the expression of these marker genes within the corresponding cell clusters in your input dataset.

- Classify an annotation as reliable if more than four marker genes are expressed in at least 80% of the cells within the cluster. This provides a reference-free method to validate results and can sometimes show that LLM-generated annotations are more credible than manual ones for challenging low-heterogeneity data [1].

FAQ 4: Our research relies on consistent annotations across multiple labs. How can we mitigate inconsistencies? Annotation inconsistencies often stem from inter-annotator variability, which is a well-documented challenge even among highly experienced experts [9]. To mitigate this:

- Establish clear and detailed annotation guidelines.

- Implement structured feedback loops and review processes.

- Utilize computational frameworks designed to harmonize heterogeneous data sources. For instance, approaches like the "talk-to-machine" strategy can iteratively refine annotations based on marker gene validation, improving alignment with manual annotations [1].

Quantitative Analysis of the Performance Gap

The following table summarizes the performance disparity of top LLMs in annotating different types of scRNA-seq datasets, highlighting the challenge of low-heterogeneity environments [1].

Table 1: Annotation Consistency of LLMs Across Dataset Types

| Dataset Type | Biological Example | Performance in High-Heterogeneity Data (e.g., PBMCs, Gastric Cancer) | Performance in Low-Heterogeneity Data (e.g., Embryo, Stromal Cells) |
|---|---|---|---|
| Normal Physiology | Peripheral Blood Mononuclear Cells (PBMCs) | High performance, low mismatch rates | Not applicable |
| Disease State | Gastric Cancer | High performance, low mismatch rates | Not applicable |
| Developmental Stage | Human Embryos | Not applicable | Low consistency (e.g., 39.4% with Gemini 1.5 Pro) |
| Low-Heterogeneity Environment | Stromal Cells in Mouse Organs | Not applicable | Low consistency (e.g., 33.3% with Claude 3) |

Table 2: Impact of Mitigation Strategies on Annotation Accuracy

| Mitigation Strategy | Key Mechanism | Effect on Low-Heterogeneity Datasets | Effect on High-Heterogeneity Datasets |
|---|---|---|---|
| Multi-Model Integration | Combines outputs from multiple LLMs (e.g., GPT-4, Claude 3) to leverage complementary strengths [1] | Increases match rates (e.g., to 48.5% for embryo data) | Reduces mismatch rates (e.g., to 9.7% for PBMCs) |
| "Talk-to-Machine" Interaction | Iterative human-computer feedback loop using marker gene expression for validation [1] | Boosts full match rate (e.g., 16-fold improvement for embryo data vs. GPT-4 alone) | Achieves high full match rates (e.g., 69.4% for gastric cancer) |

Troubleshooting Guides

Problem 1: Poor Automated Annotation of Subtle Cell Types

Symptoms: Your automated annotation tool runs without error, but the resulting cell types are too broad, miss rare populations, or have low confidence scores for clusters you know should be distinct.

Solutions:

- Implement a Multi-Model Strategy: Do not rely on a single LLM. Use a framework like LICT that integrates several top-performing models (e.g., GPT-4, Claude 3, Gemini) to generate a consensus annotation, which significantly improves accuracy in low-heterogeneity settings [1].

- Employ the "Talk-to-Machine" Protocol: Engage in an interactive validation loop.

- Step 1: Run the initial automated annotation.

- Step 2: For each predicted cell type, command the model to output a list of canonical marker genes.

- Step 3: Validate the expression of these markers in your dataset. If four or fewer markers are expressed in at least 80% of the cells in the cluster, the annotation is likely unreliable.

- Step 4: Feed this validation result, along with the top differentially expressed genes (DEGs) from your dataset, back to the model and request a revised annotation [1].

- Utilize Advanced Graph-Based Models: For a non-LLM approach, consider tools like scGraphformer. This method uses a graph transformer network to learn cell-cell relationships directly from the data without relying on predefined graphs, which can better capture subtle cellular heterogeneity [10].

Problem 2: Discrepancies Between Automated and Manual Annotations

Symptoms: You find significant disagreements between the labels generated by your automated pipeline and the annotations performed by your domain experts, causing uncertainty about which result to trust.

Solutions:

- Apply Objective Credibility Evaluation: Use the marker-gene-based credibility assessment described in FAQ #3. This provides a data-driven metric to determine which annotation—automated or manual—is more reliable for a given cluster. In some cases, the automated annotation may be more credible based on marker evidence [1].

- Audit for Inter-Annotator Variability: Recognize that expert manual annotation is not a perfect gold standard. Studies show that models trained on annotations from different experts can perform inconsistently on external validation sets, with low pairwise agreement (average Cohen’s κ = 0.255) [9]. If possible, use annotations from multiple experts and assess their consensus.

- Check for Data Heterogeneity: Use a tool like scGraphformer to visualize the learned cell-cell relationship network. This can help you understand if the model is failing to distinguish subpopulations that experts can identify, indicating a potential weakness in the model's learning for your specific data type [10].
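When auditing inter-annotator variability, pairwise agreement between two experts can be quantified with Cohen's κ, the statistic cited above. The function below is a standard-library sketch of the usual formula, κ = (p_o - p_e) / (1 - p_e); the expert labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical cluster labels from two experts.
expert_1 = ["fibroblast", "fibroblast", "endothelial", "endothelial", "pericyte", "fibroblast"]
expert_2 = ["fibroblast", "endothelial", "endothelial", "endothelial", "pericyte", "pericyte"]
kappa = cohens_kappa(expert_1, expert_2)
print(round(kappa, 2))
```

The two experts agree on four of six clusters, yet κ is only 0.52 once chance agreement is discounted, which is why raw percent agreement tends to overstate consensus.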

Experimental Protocols

Protocol 1: Benchmarking Annotation Tools on a Low-Heterogeneity Dataset

This protocol is adapted from the validation methodology used in [1].

1. Objective: To quantitatively evaluate and compare the performance of different automated cell type annotation tools on a low-heterogeneity scRNA-seq dataset.

2. Materials:

- A well-annotated, public low-heterogeneity scRNA-seq dataset (e.g., stromal cells from mouse organs [1] or human embryo data [1]).

- Software Tools: The annotation tools to be benchmarked (e.g., LICT, scGraphformer, scBERT, CellTypist).

- Computing Environment: A server or computing cluster with sufficient memory and processing power to run the selected tools.

3. Procedure:

- Step 1 - Data Preprocessing: Download the chosen dataset and perform standard quality control and normalization using a pipeline like Seurat or Scanpy.

- Step 2 - Ground Truth Definition: Use the original manual annotations from the dataset publication as the ground truth for benchmarking.

- Step 3 - Tool Execution: Run each annotation tool according to its official documentation. For LLM-based tools like LICT, provide standardized prompts that include the top differentially expressed genes for each cell cluster.

- Step 4 - Performance Metric Calculation: For each tool, calculate the following:

- Annotation Consistency: The percentage of cells where the tool's label matches the manual label.

- Mismatch Rate: The percentage of cells with conflicting labels.

- Credibility Score: The percentage of annotations deemed reliable by the Objective Credibility Evaluation (see FAQ #3).

4. Analysis: Compare the metrics across all tested tools to identify the best-performing solution for your specific low-heterogeneity data context.
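The consistency and mismatch metrics in Step 4 can be computed as below. This is a sketch under stated assumptions: it splits matches into full and partial, where "partial" means one label is the direct parent of the other in a user-supplied hierarchy. The `parent` mapping and all labels are hypothetical, and a given benchmark may define partial matches differently.

```python
def score_annotations(predicted, manual, parent=None):
    """Percent of full matches, partial matches, and mismatches.

    parent maps a fine-grained label to its broader parent label
    (hypothetical hierarchy supplied by the user).
    """
    if len(predicted) != len(manual) or not manual:
        raise ValueError("label lists must be non-empty and of equal length")
    parent = parent or {}
    counts = {"full": 0, "partial": 0, "mismatch": 0}
    for pred, truth in zip(predicted, manual):
        if pred == truth:
            counts["full"] += 1
        elif parent.get(pred) == truth or parent.get(truth) == pred:
            counts["partial"] += 1
        else:
            counts["mismatch"] += 1
    total = len(manual)
    return {kind: 100 * count / total for kind, count in counts.items()}


predicted = ["Fibroblast", "Endothelial cell", "Myofibroblast", "Pericyte"]
manual = ["Fibroblast", "Endothelial cell", "Fibroblast", "Smooth muscle cell"]
scores = score_annotations(predicted, manual, parent={"Myofibroblast": "Fibroblast"})
print(scores)
```

Labels here are per cluster; passing per-cell label lists instead gives the cell-level percentages described in Step 4.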

Benchmarking Experimental Workflow

Protocol 2: Implementing the "Talk-to-Machine" Refinement Loop

This protocol details the steps for the iterative refinement strategy proven to enhance annotation accuracy [1].

1. Objective: To iteratively improve the initial annotations of an LLM-based tool by incorporating marker gene expression validation from the dataset.

2. Materials:

- Your preprocessed scRNA-seq dataset (cell clusters and DEGs).

- Access to an LLM-based annotation tool (e.g., as implemented in LICT).

3. Procedure:

- Step 1 - Initial Annotation: Submit the top marker genes for each cell cluster to the LLM and request an initial cell type prediction.

- Step 2 - Marker Retrieval: For each LLM-predicted cell type, prompt the model to provide a list of known, representative marker genes.

- Step 3 - Expression Validation: Check the expression of these retrieved marker genes in the corresponding cell cluster of your dataset.

- Step 4 - Decision Point:

- PASS: If more than four marker genes are expressed in at least 80% of the cells in the cluster, accept the annotation.

- FAIL: If not, proceed to Step 5.

- Step 5 - Iterative Feedback: Generate a structured prompt for the LLM that includes: (i) the initial prediction, (ii) the list of marker genes that failed validation, and (iii) the top DEGs from your dataset. Request a new, refined annotation.
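The structured prompt in Step 5 could be assembled as follows. The wording of the prompt is purely illustrative and is not the template used by LICT; the function name and the example inputs are hypothetical.

```python
def build_feedback_prompt(cluster_id, initial_prediction, failed_markers, top_degs):
    """Assemble a feedback prompt from the three elements listed in Step 5."""
    failed = ", ".join(failed_markers) if failed_markers else "none"
    degs = ", ".join(top_degs)
    lines = [
        # (i) the initial prediction
        f"Cluster {cluster_id} was previously annotated as '{initial_prediction}'.",
        # (ii) marker genes that failed validation
        f"Marker genes that failed expression validation: {failed}.",
        # (iii) top differentially expressed genes from the dataset
        f"Top differentially expressed genes in this cluster: {degs}.",
        "Please propose a revised cell type and list its representative marker genes.",
    ]
    return "\n".join(lines)


prompt = build_feedback_prompt(
    cluster_id=3,
    initial_prediction="Endothelial cell",
    failed_markers=["PECAM1", "VWF"],
    top_degs=["COL1A1", "COL1A2", "DCN", "LUM"],
)
print(prompt)
```

Keeping the prompt structure fixed across clusters and models makes the revised annotations easier to compare.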

Talk-to-Machine Refinement Loop

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Type | Function / Application | Relevant Context |
|---|---|---|---|
| LICT (LLM-based Identifier for Cell Types) | Software Tool | Integrates multiple LLMs for robust, reference-free cell type annotation. Crucial for low-heterogeneity data. | Core method for multi-model integration and "talk-to-machine" [1]. |
| scGraphformer | Software Tool | A graph transformer network that learns cell-cell relationships directly from data, capturing subtle heterogeneity. | An alternative to methods that rely on predefined kNN graphs [10]. |
| Objective Credibility Evaluation | Analytical Protocol | A method to assess annotation reliability by validating marker gene expression, providing an objective quality score. | Used to resolve conflicts between automated and manual annotations [1]. |
| Stromal Cell Dataset | Reference Data | A scRNA-seq dataset from mouse organs, used as a benchmark for low-heterogeneity environments. | Used to quantify the performance gap of LLMs [1]. |
| Human Embryo Dataset | Reference Data | A scRNA-seq dataset representing developmental stages, characterized by low heterogeneity. | Used to validate annotation tools on developmental biology questions [1]. |

The table below summarizes the key quantitative findings from the evaluation of Large Language Models (LLMs) on low-heterogeneity cell type annotation tasks, including embryo data.

Table 1: LLM Performance on Low-Heterogeneity Annotation Tasks

| Model/Dataset | Performance Metric | Score | Context |
|---|---|---|---|
| Gemini 1.5 Pro on Embryo Data | Consistency with Manual Annotations | 39.4% | Initial performance on low-heterogeneity human embryo dataset [1] |
| Claude 3 on Fibroblast Data | Consistency with Manual Annotations | 33.3% | Performance on low-heterogeneity mouse stromal cells [1] |
| Multi-Model Integration on Embryo Data | Match Rate (Full + Partial) | 48.5% | Performance after applying Strategy I [1] |
| "Talk-to-Machine" on Embryo Data | Full Match Rate | 48.5% | Performance after applying Strategy II [1] |
| LLM-generated Annotations on Embryo Data | Credible Annotations in Mismatches | 50.0% | Proportion of LLM annotations deemed reliable per Strategy III [1] |
| Expert Annotations on Embryo Data | Credible Annotations in Mismatches | 21.3% | Proportion of manual annotations deemed reliable per Strategy III [1] |

Frequently Asked Questions (FAQs)

Q1: Why does LLM performance drop significantly on low-heterogeneity datasets like embryo cells? LLMs struggle with low-heterogeneity data because the informational context is limited and the distinguishing features are subtle. These models are trained on highly diverse data and excel at identifying clear, distinct patterns. In low-heterogeneity environments, where cell subpopulations share many characteristics, the models lack sufficient signal to separate them, and consistency with manual annotations falls as low as 39.4% on embryo data [1].

Q2: What is the evidence that the problem is with the data rather than the models? Objective credibility evaluations reveal that LLM-generated annotations for embryo data show higher reliability (50% credible) than expert manual annotations (21.3% credible) when validated against marker gene expression patterns. This suggests that discrepancies often reflect inherent ambiguities in the biological data itself rather than purely model deficiencies [1].

Q3: How can researchers determine if their dataset suffers from low heterogeneity? Low-heterogeneity datasets typically exhibit minimal variance in gene expression profiles, high cellular similarity, poor cluster separation in dimensionality-reduction plots (UMAP/t-SNE), and consistent failure of multiple algorithms to achieve satisfactory annotation accuracy. As a rough benchmark, several LLMs agreeing with manual annotations less than 40% of the time, as observed for embryo data, suggests that low heterogeneity is a contributing factor [1].

Q4: What are the main sources of annotation inconsistency in biological data? Annotation inconsistencies arise from four primary sources: (1) insufficient information for reliable labeling, (2) insufficient domain expertise, (3) human error and cognitive slips, and (4) inherent subjectivity in the labeling task. Studies show even highly experienced clinical experts exhibit significant inter-rater variability (Fleiss' κ = 0.383, indicating only fair agreement) [9].
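For readers who want to compute the same kind of multi-rater agreement statistic on their own annotations, Fleiss' κ can be sketched with the standard library. The input is a table with one row per item (for example, per cell cluster) and one column per category, holding the number of raters who chose that category. The example table is hypothetical.

```python
def fleiss_kappa(table):
    """Fleiss' kappa for a table of per-item category counts.

    Every row must sum to the same number of raters (at least two).
    """
    n_items = len(table)
    n_raters = sum(table[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in table):
        raise ValueError("each item needs the same number (>= 2) of ratings")
    n_categories = len(table[0])
    # Overall proportion of ratings falling in each category.
    p_cat = [
        sum(row[j] for row in table) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    # Agreement among raters for each item.
    p_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in table
    ]
    p_bar = sum(p_item) / n_items
    p_exp = sum(p * p for p in p_cat)
    if p_exp == 1.0:
        return 1.0  # every rating fell in a single category
    return (p_bar - p_exp) / (1 - p_exp)


# Three hypothetical raters, four clusters, two candidate labels.
ratings = [[3, 0], [0, 3], [2, 1], [1, 2]]
kappa = fleiss_kappa(ratings)
print(round(kappa, 3))
```

Here two clusters are labeled unanimously and two are split 2-to-1, giving κ of about 0.33.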

Troubleshooting Guides

Problem: Poor LLM Performance on Low-Heterogeneity Cell Annotation

Symptoms:

- Consistent annotation accuracy below 40% on embryo or stromal cell data

- High mismatch rates between LLM predictions and manual annotations

- Low inter-annotator agreement across multiple models

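Solution: Implement the three-strategy framework: multi-model integration (Strategy I), "talk-to-machine" iterative refinement (Strategy II), and objective credibility evaluation (Strategy III) [1].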

Verification: After implementation, researchers should observe:

- Increase in embryo data annotation match rates from 39.4% to approximately 48.5%

- Reduction in mismatches for high-heterogeneity datasets to below 10%

- Improved reliability scores for LLM-generated annotations

Problem: Handling Discrepancies Between LLM and Expert Annotations

Symptoms:

- Contradictory annotations between LLMs and domain experts

- Uncertainty about which annotations to trust for downstream analysis

- Inconsistent validation results

Solution: Implement Objective Credibility Evaluation

Verification:

- Credibility assessment showing that a substantial share of mismatched LLM annotations are still reliable (50.0% for embryo data [1])

- Identification of cases where both LLM and manual annotations are reliable but different (14% of cases)

- Clear prioritization of cell clusters for downstream analysis based on reliability scores

Experimental Protocols

Protocol 1: Multi-Model Integration for Enhanced Annotation

Purpose: Leverage complementary strengths of multiple LLMs to improve annotation accuracy on low-heterogeneity datasets.

Materials:

- Top-performing LLMs (GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0)

- Standardized prompts incorporating top marker genes

- scRNA-seq dataset with preliminary clustering

Methodology:

- Model Selection: Evaluate 77 publicly available LLMs on a benchmark PBMC dataset to identify the top performers [1]

- Parallel Annotation: Submit standardized prompts with cluster-specific marker genes to all selected models

- Result Integration: Select best-performing annotations from each model rather than using majority voting

- Validation: Compare integrated annotations with manual benchmarks using consistency metrics

Expected Outcomes:

- Match rate improvement from 39.4% to 48.5% for embryo data

- Mismatch rate reduction from 21.5% to 9.7% for high-heterogeneity data

- More comprehensive coverage of diverse cell types
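The result-integration step selects the best annotation per cluster instead of taking a majority vote. The source does not spell out the selection criterion, so the sketch below assumes one: keep the candidate whose marker genes are best supported in the cluster (expressed above zero in at least 80% of cells). The model names, labels, and expression values are hypothetical.

```python
def marker_support(cluster_expr, markers, min_fraction=0.8):
    """Count markers expressed (value > 0) in at least `min_fraction` of cells."""
    supported = 0
    for gene in markers:
        values = cluster_expr.get(gene, [])
        if values and sum(v > 0 for v in values) / len(values) >= min_fraction:
            supported += 1
    return supported


def integrate_models(candidates, cluster_expr):
    """Pick the candidate annotation with the highest marker support.

    candidates maps model name -> (label, marker_genes).
    Ties go to the candidate listed first.
    """
    scored = [
        (marker_support(cluster_expr, markers), model, label)
        for model, (label, markers) in candidates.items()
    ]
    support, model, label = max(scored, key=lambda item: item[0])
    return {"model": model, "label": label, "support": support}


cluster_expr = {
    "COL1A1": [2, 3, 1, 4, 2],
    "COL1A2": [1, 2, 2, 1, 3],
    "DCN":    [1, 1, 2, 0, 1],
    "LUM":    [2, 1, 1, 1, 2],
    "PDGFRA": [1, 0, 1, 1, 1],
    "PECAM1": [0, 0, 1, 0, 0],
    "VWF":    [0, 0, 0, 0, 0],
}
candidates = {
    "model_a": ("Endothelial cell", ["PECAM1", "VWF", "CDH5"]),
    "model_b": ("Fibroblast", ["COL1A1", "COL1A2", "DCN", "LUM", "PDGFRA"]),
    "model_c": ("Pericyte", ["PDGFRB", "RGS5", "COL1A1"]),
}
best = integrate_models(candidates, cluster_expr)
print(best)
```

Unlike majority voting, this keeps a correct minority answer when only one model identifies the cell type, which is the situation multi-model integration is meant to exploit.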

Protocol 2: "Talk-to-Machine" Iterative Optimization

Purpose: Enhance annotation precision through human-computer interaction and iterative feedback.

Materials:

- Pre-annotated dataset using multi-model integration

- Differentially expressed genes (DEGs) analysis pipeline

- Validation threshold parameters (80% expression in clusters)

Methodology:

- Initial Annotation: Generate preliminary annotations using multi-model integration

- Marker Gene Retrieval: Query LLM for representative marker genes for each predicted cell type

- Expression Validation: Validate marker gene expression in corresponding clusters

- Iterative Feedback: For validation failures, generate structured feedback prompts with expression results and additional DEGs

- Re-query LLM: Use feedback prompts to obtain revised annotations

- Repeat steps 2-5 until validation criteria are met or maximum iterations reached

Validation Criteria:

- Annotation considered valid if >4 marker genes expressed in ≥80% of cluster cells

- Maximum of 3 iteration cycles to prevent over-optimization

Expected Outcomes:

- Full match rate of 34.4% for PBMC and 69.4% for gastric cancer data

- Significant reduction in mismatches (7.5% for PBMC, 2.8% for gastric cancer)

- 16-fold improvement in full match rate for embryo data compared to single-model approach
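The methodology and validation criteria of this protocol can be sketched as a bounded loop. This is a minimal illustration and not LICT's code: `ask_model` stands in for any LLM call and is a hypothetical callable, "expressed" is assumed to mean a value above zero, and the stub model and expression values exist only to make the example runnable.

```python
def expressed_fraction(cluster_expr, gene):
    """Fraction of cells in the cluster with a non-zero value for `gene`."""
    values = cluster_expr.get(gene, [])
    return sum(v > 0 for v in values) / len(values) if values else 0.0


def refine_annotation(cluster_expr, top_degs, ask_model, max_iterations=3,
                      min_fraction=0.8, min_markers=4):
    """Query, validate, and re-query until validation passes or iterations run out.

    ask_model(top_degs, feedback) must return (label, marker_genes);
    feedback is None on the first call.
    """
    feedback, label = None, None
    for iteration in range(1, max_iterations + 1):
        label, markers = ask_model(top_degs, feedback)
        passed = [g for g in markers
                  if expressed_fraction(cluster_expr, g) >= min_fraction]
        if len(passed) > min_markers:  # more than four markers validated
            return {"label": label, "validated": True, "iterations": iteration}
        # Validation failed: report which markers did not hold up.
        feedback = {
            "previous_label": label,
            "failed_markers": [g for g in markers if g not in passed],
        }
    return {"label": label, "validated": False, "iterations": max_iterations}


def stub_model(top_degs, feedback):
    """Stand-in for an LLM: wrong on the first try, corrected after feedback."""
    if feedback is None:
        return "Endothelial cell", ["PECAM1", "VWF", "CDH5", "KDR", "TEK"]
    return "Fibroblast", ["COL1A1", "COL1A2", "DCN", "LUM", "PDGFRA"]


cluster_expr = {
    "COL1A1": [2, 3, 1, 4, 2],
    "COL1A2": [1, 2, 2, 1, 3],
    "DCN":    [1, 1, 2, 0, 1],
    "LUM":    [2, 1, 1, 1, 2],
    "PDGFRA": [1, 0, 1, 1, 1],
}
result = refine_annotation(cluster_expr, list(cluster_expr), stub_model)
print(result)
```

Annotations that still fail after the final cycle are returned with `validated` set to `False`, so they can be flagged for manual review instead of being silently accepted.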

The Scientist's Toolkit

Table 2: Essential Research Reagents and Solutions

| Tool/Reagent | Function | Application Note |
|---|---|---|
| LICT (LLM-based Identifier for Cell Types) | Integrates multiple LLMs with three core strategies for reliable cell annotation | Specifically designed to address low-heterogeneity challenges [1] |
| Benchmark scRNA-seq Dataset (PBMC) | Standardized evaluation of LLM performance using peripheral blood mononuclear cells | Serves as initial screening tool for model selection [1] |
| Standardized Prompt Templates | Ensure consistent query structure across different LLMs | Incorporates top ten marker genes for each cell subset [1] |
| Objective Credibility Evaluation Framework | Validates annotation reliability based on marker gene expression | Reference-free validation method [1] |
| Multi-gate Mixture-of-Experts (MMoE) | Coordinates co-optimization of shared and local tasks in distributed learning | Helps address data heterogeneity in collaborative settings [11] |
| HeteroSync Learning (HSL) Framework | Privacy-preserving distributed learning for heterogeneous medical data | Useful for multi-institutional collaborations [11] |

Troubleshooting Guide: Low Heterogeneity Dataset Annotation

Common Problems & Solutions

| Problem | Possible Cause | Solution | Reference |
|---|---|---|---|
| Low annotation match rate with manual labels | Inherent low cellular diversity; limited marker gene variety. | Implement a multi-model integration strategy to leverage complementary LLM strengths. | [1] |
| Ambiguous or biased cell type predictions | Standardized LLM data formats struggle with dynamic biological data. | Apply the iterative "talk-to-machine" strategy to enrich model input with contextual data. | [1] |
| Uncertainty in annotation reliability | Lack of an objective, reference-free method for validation. | Employ an objective credibility evaluation based on marker gene expression patterns. | [1] |
| Inconsistent data labeling across the project | Unclear annotation guidelines; subjective interpretations by different annotators. | Define precise annotation rules and implement a cross-validation process between annotators. | [12] |
| Bias in the annotated dataset | Homogeneous group of annotators; unbalanced dataset classes. | Diversify annotators and apply data rebalancing techniques for underrepresented classes. | [12] |

Frequently Asked Questions (FAQs)

Conceptual & Biological Basis

Q1: What defines a "low-heterogeneity" cellular environment in developmental biology? A low-heterogeneity environment consists of cells that are very similar to each other in state, function, and gene expression profile. This is common in early embryonic stages and within specialized tissues such as certain stromal cell populations, where cells have not yet undergone extensive diversification or have converged on a highly specific function. In these contexts, the limited diversity makes it difficult for automated annotation tools to distinguish subtle differences between cell subpopulations [1].

Q2: How do fundamental developmental processes like cell differentiation contribute to heterogeneity? Cell differentiation is the process by which a less specialized cell becomes a specific, functional cell type (e.g., neuron, muscle fiber). This process is driven by specific transcription factors (like NeuroD for neurons) that activate unique sets of genes, giving the cell its characteristic appearance and function [13]. The progression of cells through different states of commitment toward these differentiated fates is a primary source of cellular heterogeneity within a tissue [14].

Technical & Computational Challenges

Q3: Why do automated annotation tools, including LLMs, perform poorly on low-heterogeneity data? These tools often rely on identifying distinct patterns in marker gene expression. In low-heterogeneity populations, the differences in gene expression between cell subtypes are subtler and less pronounced. The informational context is poorer, providing fewer robust signals for the models to latch onto, which leads to higher rates of discrepancy compared to expert manual annotation [1].

Q4: What is an objective credibility evaluation for cell type annotation? This is a reference-free method to assess the reliability of an annotation. After an LLM predicts a cell type, it is queried for a list of representative marker genes for that type. The annotation is deemed credible if more than four of these marker genes are expressed in at least 80% of the cells within the cluster. This provides a data-driven measure of confidence independent of manual labels [1].

Q5: How can semi-automated labeling improve our workflow for these difficult datasets? A hybrid AI/human approach is often most effective. An AI model can perform the initial "pre-annotation," handling the bulk of the data quickly. Human annotators then validate or correct these results, adding nuance and understanding that algorithms may miss. This combines speed with accuracy, ensuring reliable annotations for model training [12].

Experimental Protocols for Enhanced Annotation

Protocol 1: Multi-Model Integration Strategy

Purpose: To increase annotation accuracy and consistency by leveraging the complementary strengths of multiple large language models (LLMs), especially for low-heterogeneity datasets [1].

Methodology:

- Input Preparation: For each cell cluster, compile a list of top marker genes (e.g., the top 10 most differentially expressed genes).

- Model Selection & Query: Submit a standardized prompt containing the marker gene list to five top-performing LLMs (e.g., GPT-4, Claude 3, Gemini, LLaMA-3, ERNIE 4.0).

- Result Integration: Instead of using a simple majority vote, select the best-performing annotation result from among the five LLMs for each cluster. This approach capitalizes on the unique strengths of each model for different cell types.
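
A minimal Python sketch of the three steps follows. It rests on several assumptions: the prompt wording is invented, each entry in `models` is a hypothetical callable wrapping one LLM, and `score` is a hypothetical function that rates a candidate label (for example, the number of retrieved marker genes that pass expression validation). The source does not specify how the "best-performing" result is chosen, so the scoring rule here is a placeholder.

```python
from typing import Callable, Dict, Sequence, Tuple

# Assumed prompt wording; the same template is sent to every model.
PROMPT_TEMPLATE = (
    "Identify the cell type of a {tissue} cell cluster from its top marker "
    "genes: {genes}. Reply with the cell type name only."
)


def build_prompt(tissue: str, marker_genes: Sequence[str], top_n: int = 10) -> str:
    """Fill the standardized template with the top-N marker genes."""
    return PROMPT_TEMPLATE.format(tissue=tissue, genes=", ".join(marker_genes[:top_n]))


def integrate(prompt: str,
              models: Dict[str, Callable[[str], str]],
              score: Callable[[str], float]) -> Tuple[str, str, Dict[str, str]]:
    """Query every model and keep the highest-scoring label, not the majority vote."""
    candidates = {name: ask(prompt) for name, ask in models.items()}
    best_model = max(candidates, key=lambda name: score(candidates[name]))
    return best_model, candidates[best_model], candidates
```

Returning all candidate labels alongside the selected one keeps the per-model answers available for later inspection of inter-model variability.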

Protocol 2: Iterative "Talk-to-Machine" Refinement

Purpose: To iteratively improve annotation precision for ambiguous or incorrect predictions through a structured human-computer feedback loop [1].

Methodology:

- Initial Annotation & Marker Retrieval: Obtain an initial cell type prediction from an LLM. Then, query the same LLM for a list of known marker genes for the predicted cell type.

- Expression Validation: Evaluate the expression of these retrieved marker genes in the original dataset's corresponding cell cluster.

- Validation Check:

- PASS: If >4 marker genes are expressed in ≥80% of cells in the cluster, accept the annotation.

- FAIL & REFINE: If the condition is not met, generate a feedback prompt for the LLM. This prompt includes the validation results and additional differentially expressed genes (DEGs) from the dataset. Use this prompt to re-query the LLM, asking it to revise or confirm its annotation.

- Iteration: Repeat steps 1-3 until a validated annotation is achieved or a maximum number of iterations is reached.

Protocol 3: Objective Credibility Evaluation

Purpose: To provide a reference-free, unbiased assessment of annotation reliability, distinguishing methodological limitations from intrinsic data ambiguity [1].

Methodology:

- For any given annotation (whether from an LLM or a manual expert), retrieve a set of representative marker genes for that cell type.

- Analyze the expression pattern of these markers within the annotated cell cluster in your scRNA-seq dataset.

- Apply Credibility Threshold: The annotation is classified as "reliable" if more than four marker genes are expressed in at least 80% of the cells in the cluster. Annotations not meeting this threshold are classified as "unreliable" for downstream analysis.
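
The threshold can be expressed as a short Python check. This is a sketch under assumptions: `cluster_counts` is a hypothetical mapping from gene name to the per-cell counts within one cluster, and a gene is treated as "expressed" in a cell when its count is greater than zero, a cutoff the source does not specify.

```python
from typing import Dict, Sequence, Tuple


def expressed_fraction(counts: Sequence[float], threshold: float = 0.0) -> float:
    """Fraction of cells whose count exceeds `threshold` (assumed cutoff)."""
    if not counts:
        return 0.0
    return sum(1 for c in counts if c > threshold) / len(counts)


def is_credible(markers: Sequence[str],
                cluster_counts: Dict[str, Sequence[float]],
                min_markers: int = 4,
                min_fraction: float = 0.80) -> Tuple[bool, Dict[str, float]]:
    """Reliable if MORE than `min_markers` markers reach `min_fraction` of cells."""
    # Markers absent from the dataset count as not expressed.
    fractions = {g: expressed_fraction(cluster_counts.get(g, [])) for g in markers}
    n_pass = sum(1 for f in fractions.values() if f >= min_fraction)
    return n_pass > min_markers, fractions
```

Because the check needs only the marker list and the cluster's own expression data, it applies equally to LLM-generated and manual labels.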

Experimental Workflow Visualization

LICT Annotation Workflow

Talk-to-Machine Refinement Loop

Research Reagent Solutions

Essential Materials for scRNA-seq Annotation Research

| Item | Function / Description | Application in Low-Heterogeneity Context |
|---|---|---|
| Peripheral Blood Mononuclear Cells (PBMCs) | A benchmark dataset of highly heterogeneous immune cells. | Serves as a positive control to validate annotation pipeline performance on well-defined cell types. [1] |
| Human Embryo scRNA-seq Data | Represents a lower-heterogeneity dataset from early developmental stages. | Used to test and optimize annotation strategies for challenging, less diverse cellular environments. [1] |
| Stromal Cell scRNA-seq Data | Data from specialized, low-heterogeneity tissues like mouse organ fibroblasts. | Provides a model for annotating dedicated tissue-specific cell populations with subtle differences. [1] |
| GPT-4, Claude 3, Gemini | Top-performing Large Language Models (LLMs) for biological inference. | Core engines for initial cell type prediction. A multi-model integration approach leverages their complementary strengths. [1] |
| LICT (LLM-based Identifier for Cell Types) | A software package integrating multiple LLMs and strategies. | The primary tool for implementing the multi-model, "talk-to-machine," and credibility evaluation protocols. [1] |
| Data Annotation Platforms (e.g., Labelbox, V7) | Tools for creating ergonomic interfaces for manual and semi-automated data labeling. | Facilitates the human-in-the-loop validation and correction essential for refining AI-generated annotations. [12] |

This technical support center provides troubleshooting guides for researchers addressing annotation errors in biological data analysis. Annotation—the process of labeling biological data such as cell types, genes, or genomic features—is a critical step in bioinformatics pipelines. When performed inaccurately, these errors propagate through downstream analyses, leading to flawed biological interpretations and reduced reproducibility. This guide focuses specifically on the challenges of low-heterogeneity datasets, where subtle annotation errors can have disproportionately large effects, and provides actionable solutions for researchers and drug development professionals.

Quantitative Impact of Annotation Errors

The tables below summarize key quantitative findings from recent studies on how annotation and segmentation errors distort downstream biological analyses.

Table 1: Impact of Segmentation Errors on Clustering and Phenotyping Consistency

| Perturbation Level | k-Means Clustering Consistency | Leiden Clustering Consistency | Cell Phenotyping Accuracy |
|---|---|---|---|
| Low Error | Minimal reduction | Minimal reduction (with larger neighborhood sizes) | >95% for distinct cell types |
| Moderate Error | Significant reduction | Significant reduction (with smaller neighborhood sizes) | 85-95% for distinct cell types |
| High Error | Severe reduction | Severe reduction | Notable misclassification between closely related cell types [15] [16] |

Table 2: Annotation Tool Performance Across Dataset Types

| Dataset Heterogeneity | Manual Annotation | Single LLM Tool (e.g., GPT-4) | Multi-Model Integration (LICT) |
|---|---|---|---|
| High Heterogeneity (e.g., PBMCs) | High accuracy, but subjective and time-consuming | 78.5% match rate | 90.3% match rate |
| Low Heterogeneity (e.g., Embryonic cells) | Considered benchmark, but potential for bias | 39.4% match rate | 48.5% match rate [1] |

Troubleshooting Guides & FAQs

FAQ 1: How do annotation errors specifically affect the analysis of low-heterogeneity datasets?

Answer: In low-heterogeneity datasets, where cell populations have similar molecular profiles, annotation errors cause more severe consequences than in highly heterogeneous data.

- Mechanism: The feature space—the mathematical representation of cellular characteristics—is inherently compressed in low-heterogeneity data. Minor errors in assigning cell boundaries or labels introduce noise that is large relative to the subtle biological differences between cell states. This noise directly obscures these critical distinctions [15] [1].

- Downstream Impact: The result is a significant drop in the performance of automated annotation tools. For example, one study showed that even top-performing Large Language Models (LLMs) like Gemini 1.5 Pro achieved only a 39.4% consistency with manual annotations on embryo data, a low-heterogeneity scenario [1]. This leads to unreliable cell type identification and flawed conclusions about cellular functions and relationships.

FAQ 2: My clustering results are unstable and change with different algorithm parameters. Could this be caused by annotation quality?

Answer: Yes, instability in clustering results is a classic symptom of underlying annotation or segmentation errors.

- Mechanism: Annotation errors distort the fundamental input to clustering algorithms: the single-cell expression profiles. As segmentation inaccuracies increase, they alter the computed protein expression levels for each cell. This "feature distortion" changes the distances between cells in the feature space, making the neighborhoods used by algorithms like k-Means and Leiden inherently unstable [15] [16].

- Diagnosis: If your clustering results are highly sensitive to small changes in parameters like the number of clusters (k) or the neighborhood size, you should first investigate the quality of your input data and annotations before further tuning the algorithms.

FAQ 3: What are the most effective strategies to improve annotation reliability for difficult datasets?

Answer: A multi-layered strategy that combines computational checks with expert knowledge is most effective.

- Implement a Multi-Model Integration Strategy: Instead of relying on a single annotation tool, leverage the complementary strengths of multiple models. One study used five different LLMs (including GPT-4, Claude 3, and Gemini) and selected the best-performing result for each cell type, which significantly reduced the mismatch rate in low-heterogeneity data [1].

- Adopt a "Talk-to-Machine" Feedback Loop: Create an interactive process where an initial annotation is validated against the dataset's own evidence.

- The tool suggests an annotation and provides a list of expected marker genes.

- The expression of these genes is automatically checked in the corresponding cell cluster.

- If validation fails (i.e., four or fewer markers are expressed in at least 80% of cells), the tool re-queries with the new evidence to refine its annotation [1].

- Apply Rigorous Quality Control Metrics: Use established metrics to quantify annotation quality.

- F1 Score: Balances precision (how many annotations are correct) and recall (how many correct annotations were found) [17].

- Inter-Annotator Agreement (IAA): Measures consistency between different annotators or tools. Use metrics like Fleiss' kappa (for multiple annotators) or Krippendorff's alpha (which can handle missing data and partial agreement) [17].
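
Both metrics can be computed with a few lines of plain Python. The sketch below is illustrative rather than taken from the cited sources: `f1_score` treats one cell type as the positive class, and `fleiss_kappa` assumes every item was labelled by the same number of annotators (or tools) with no missing ratings.

```python
from typing import Sequence


def f1_score(truth: Sequence[str], predicted: Sequence[str], positive: str) -> float:
    """F1 for one label: harmonic mean of precision and recall."""
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def fleiss_kappa(ratings: Sequence[Sequence[str]]) -> float:
    """Fleiss' kappa; ratings[i] holds the labels all raters gave to item i."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")
    categories = sorted({label for item in ratings for label in item})
    counts = [[list(item).count(c) for c in categories] for item in ratings]
    # Per-item agreement, then its mean across items.
    p_items = [(sum(n * n for n in row) - n_raters) / (n_raters * (n_raters - 1))
               for row in counts]
    p_bar = sum(p_items) / n_items
    # Chance agreement from the overall share of each category.
    p_cat = [sum(row[j] for row in counts) / (n_items * n_raters)
             for j in range(len(categories))]
    p_e = sum(p * p for p in p_cat)
    if p_e == 1.0:
        return float("nan")  # undefined when only one category is ever used
    return (p_bar - p_e) / (1 - p_e)
```

A kappa of 1 indicates perfect agreement, values near 0 indicate agreement no better than chance, and negative values indicate systematic disagreement.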

FAQ 4: What are the best practices for preparing data to minimize annotation errors from the start?

Answer: Preventing errors at the source is the most efficient troubleshooting strategy. Adhere to the following best practices:

- Define Clear Guidelines: Before annotation begins, create detailed, unambiguous instructions for annotators. Use simple language, provide visual examples of "do's" and "don'ts," and explicitly describe how to handle edge cases [18].

- Establish Golden Standards: Have domain experts create a small, "ground truth" dataset that reflects the ideal annotation. This serves as a benchmark for training annotators and evaluating the quality of all other annotations [17].

- Implement Systematic Review Cycles: Build quality control into your workflow. This includes periodic double-checks, having multiple annotators label the same data point to measure consistency, and holding regular meetings to resolve ambiguities [18] [19].

- Ensure Ongoing Training and Support: Annotation is not a one-time task. Provide continuous training for your team and maintain a clear channel for annotators to ask questions and get timely feedback [18] [19].

Experimental Protocols for Error Mitigation

Protocol 1: Benchmarking Segmentation Robustness

This methodology allows you to quantitatively evaluate how sensitive your analysis is to segmentation errors.

- Input Ground Truth Data: Start with a high-quality, manually validated segmentation mask.

- Apply Controlled Perturbations: Use the Affine transform from the Albumentations library to simulate realistic segmentation errors. Systematically apply combinations of translation, rotation, scaling, and shearing to each cell mask. Sample the parameters for these transformations from uniform distributions to create a range of perturbation strengths [15] [16].

- Generate Perturbed Masks:

- Initialize an output array matching the input mask size.

- For each cell, extract its mask, set non-cell pixels to zero, and apply padding.

- Apply the sampled affine transformations.

- Write the transformed non-zero pixels back to the output array.

- Use binary opening (erosion followed by dilation) to clean up the resulting fuzzy masks.

- Detect and resolve any overlapping masks by randomly removing border pixels to maintain a one-pixel separation [15].

- Run Downstream Analysis: Execute your standard clustering (e.g., k-Means, Leiden) and phenotyping (e.g., Gaussian Mixture Models) pipelines on both the ground truth and the series of perturbed datasets.

- Quantify Impact: Calculate the consistency between the results from the perturbed data and the ground truth. Use the F1 score to compare clustering outputs and track metrics like misclassification rates for cell phenotyping [15].
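
The perturbation step can be sketched as follows. To keep the example dependency-light, it swaps in a plain NumPy nearest-neighbour warp in place of the Albumentations transform, and it simplifies two steps: binary opening is omitted, and overlaps are resolved by letting the first-written cell keep contested pixels instead of randomly removing border pixels. All parameter ranges are assumed defaults, and `foreground_f1` is a pixel-level stand-in for gauging perturbation strength, not the clustering comparison itself.

```python
import numpy as np


def random_affine(rng, max_shift=2.0, max_rot_deg=10.0,
                  scale_range=(0.9, 1.1), max_shear_deg=5.0):
    """Sample a 2x2 linear map and a translation from uniform distributions."""
    theta = np.deg2rad(rng.uniform(-max_rot_deg, max_rot_deg))
    shear = np.tan(np.deg2rad(rng.uniform(-max_shear_deg, max_shear_deg)))
    scale = rng.uniform(scale_range[0], scale_range[1])
    rotation = np.array([[np.cos(theta), -np.sin(theta)],
                         [np.sin(theta), np.cos(theta)]])
    shearing = np.array([[1.0, shear], [0.0, 1.0]])
    shift = rng.uniform(-max_shift, max_shift, size=2)
    return scale * (rotation @ shearing), shift


def perturb_mask(labels, rng, **affine_kwargs):
    """Apply an independent random affine transform to every labelled cell."""
    out = np.zeros_like(labels)
    rows, cols = np.indices(labels.shape)
    grid = np.stack([rows.ravel(), cols.ravel()]).astype(float)  # shape (2, P)
    for cell_id in np.unique(labels):
        if cell_id == 0:  # 0 is background
            continue
        cell = labels == cell_id
        centroid = np.argwhere(cell).mean(axis=0)[:, None]
        linear, shift = random_affine(rng, **affine_kwargs)
        # Inverse mapping: find the source pixel for each output pixel.
        src = np.linalg.inv(linear) @ (grid - centroid - shift[:, None]) + centroid
        src = np.rint(src).astype(int)
        inside = ((src[0] >= 0) & (src[0] < labels.shape[0]) &
                  (src[1] >= 0) & (src[1] < labels.shape[1]))
        hit = np.zeros(grid.shape[1], dtype=bool)
        hit[inside] = cell[src[0, inside], src[1, inside]]
        hit = hit.reshape(labels.shape)
        out[hit & (out == 0)] = cell_id  # earlier cells keep contested pixels
    return out


def foreground_f1(reference, perturbed):
    """Pixel-level F1 between the foregrounds of two label masks."""
    ref, per = reference > 0, perturbed > 0
    tp = np.sum(ref & per)
    fp = np.sum(~ref & per)
    fn = np.sum(ref & ~per)
    denom = 2 * tp + fp + fn
    return 1.0 if denom == 0 else float(2 * tp / denom)
```

Calling `perturb_mask` repeatedly with progressively wider parameter ranges yields the series of perturbed masks on which the downstream clustering and phenotyping pipelines are rerun.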

Protocol 2: Credibility Evaluation for Cell Type Annotations

This protocol provides an objective framework for assessing the reliability of automated or manual cell type annotations.

- Retrieve Marker Genes: For a given annotated cell type (e.g., "CD4+ T-cell"), query the annotation tool or a reference database to generate a list of representative marker genes (e.g., CD3D, CD4, IL7R).

- Evaluate Expression Patterns: In your single-cell dataset (e.g., scRNA-seq or multiplexed imaging), analyze the expression of these marker genes within the cluster of cells that received the annotation.

- Assess Credibility: Apply a predefined, objective threshold to determine reliability. For example, an annotation can be deemed "credible" if more than four of the suggested marker genes are expressed in at least 80% of the cells within the cluster. Annotations failing this threshold should be flagged for manual review or re-annotation [1].

Visualization of Error Propagation & Mitigation

Diagram 1: Annotation Error Propagation Pathway

Diagram 2: Strategy for Robust Annotation

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Annotation and Quality Control

| Tool / Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| CellSeg / Cellpose / Stardist | Segmentation Algorithm | Delineates individual cell boundaries in imaging data | Highly multiplexed tissue imaging (CODEX, MIBI, IMC) [15] [16] |
| LICT (LLM-based Identifier) | Annotation Tool | Automated cell type annotation for scRNA-seq data using multi-LLM integration | Single-cell RNA sequencing analysis, especially for low-heterogeneity data [1] |
| PubTator 3.0 | Database & NER Tool | Validates and normalizes biomedical entities (genes, chemicals) via canonical IDs | Grounding LLM outputs to reduce hallucinations in metadata annotation [20] |
| Albumentations Library | Python Library | Applies affine transformations (scale, rotate, shear) to simulate segmentation errors | Benchmarking segmentation robustness and pipeline error tolerance [15] [16] |
| FastQC / MultiQC | Quality Control Tool | Provides initial quality assessment of raw sequencing data (e.g., base quality, GC content) | First step in bioinformatics pipeline to identify issues before they propagate [21] [22] |
| F1 Score / Fleiss' Kappa | Quality Metric | Quantifies annotation precision/recall (F1) and inter-annotator agreement (Fleiss' Kappa) | Objectively measuring the consistency and accuracy of annotations [15] [17] |

Advanced Computational Frameworks for Low-Heterogeneity Annotation

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using multiple LLMs over a single model for annotating low-heterogeneity cell types? Using multiple LLMs leverages their complementary strengths, which is crucial for low-heterogeneity datasets where single models often struggle. For example, while Claude 3 might excel in annotating highly heterogeneous cell subpopulations, Gemini 1.5 Pro or GPT-4 could provide better results for specific low-heterogeneity contexts. Multi-model integration improves match rates with manual annotations: on embryo data, where single models were inconsistent with manual labels in over 50% of cases, the match rate rose from 39.4% to 48.5% [1].

Q2: My multi-LLM pipeline is producing inconsistent annotations for similar cell clusters. How can I resolve this? Inconsistency often arises from ambiguous marker gene expression in low-heterogeneity environments. Implement the "talk-to-machine" strategy: query the LLM to provide representative marker genes for its predicted cell type, then validate if these genes are expressed in your dataset. If validation fails, provide this feedback with additional differentially expressed genes to the LLM for re-annotation. This iterative process significantly improves annotation consistency [1].

Q3: What methods can I use to objectively evaluate which LLM annotations are most reliable? Use an objective credibility evaluation strategy. For each LLM-predicted cell type, retrieve representative marker genes and assess their expression pattern in your dataset. An annotation is considered reliable if more than four marker genes are expressed in at least 80% of cells within the cluster. This reference-free validation provides quantitative assessment of annotation reliability independent of manual annotations [1].

Q4: How can I efficiently compare and integrate outputs from different LLMs without constantly switching interfaces? Use specialized systems like LLMartini that provide unified interfaces for comparing multiple LLM outputs. These systems automatically segment responses into semantically-aligned units, merge consensus content, and highlight discrepancies through color coding. This approach significantly reduces cognitive load and operational friction compared to manual multi-tab workflows [23].

Q5: What are the most effective technical frameworks for implementing multi-LLM pipelines in biomedical research? For entity recognition, consider cache-augmented generation approaches that integrate GPT-4o with specialized tools like PubTator 3.0. This combines LLM analysis with validated biomedical databases. For systematic evaluation, frameworks like DeepEval provide metrics specifically designed for LLM assessment, including faithfulness, contextual relevancy, and answer relevancy metrics [20] [24].

Troubleshooting Guides

Problem: High Discrepancy Between LLM and Manual Annotations

Symptoms:

- Over 50% inconsistency between LLM-generated and manual annotations for low-heterogeneity cell types

- LLM annotations flagged as unreliable by credibility evaluation

- Significant inter-model variability in annotation results

Resolution Steps:

- Implement Multi-Model Integration: Instead of relying on a single LLM, deploy a panel of complementary models (GPT-4, Claude 3, Gemini, LLaMA-3, ERNIE 4.0) and select the best-performing results for each cell type [1].

- Apply "Talk-to-Machine" Strategy:

- Step 1: Obtain initial annotations from your LLM panel

- Step 2: Query each LLM for representative marker genes for its predicted cell types

- Step 3: Validate expression patterns in your dataset

- Step 4: For failed validations, provide structured feedback with additional DEGs

- Step 5: Iterate until convergence or maximum iterations reached [1]

- Objective Credibility Assessment:

- For each annotation, calculate the fraction of cluster cells that express each retrieved marker gene

- Classify the annotation as reliable only if more than four marker genes are each expressed in at least 80% of cells

- Prioritize annotations meeting credibility criteria for downstream analysis [1]

Problem: LLM Hallucinations in Biomedical Entity Recognition

Symptoms:

- LLM generates plausible but incorrect biomedical entities

- Entities not validated in reference databases

- Inconsistent entity identification across similar datasets

Resolution Steps:

- Implement Cache-Augmented Generation:

- Step 1: GPT-4o-based full-text analysis for candidate entity generation

- Step 2: PubTator 3.0 validation of suggested terms

- Step 3: Schema-constrained full-text analysis using domain-specific metadata

- Step 4: Combined evaluation of validated and schema-related terms [20]

- Domain Schema Integration:

- Develop dedicated metadata schema for your research area

- Constrain LLM output to schema-defined entities

- Combine universal entities (via PubTator) with project-specific concepts [20]

- Validation Workflow:

- Use PubTator 3.0 for high-precision normalization with canonical IDs

- Maintain project-specific schema for in-house concepts

- Merge results with clear provenance tracking [20]

Experimental Protocols & Data

Quantitative Performance of Multi-LLM Strategies

Table 1: Annotation Performance Across Dataset Types Using Multi-Model Integration

| Dataset Type | Single Model Mismatch Rate | Multi-Model Mismatch Rate | Improvement | Key Performing Models |
|---|---|---|---|---|
| High Heterogeneity (PBMC) | 21.5% | 9.7% | 55% reduction | Claude 3, GPT-4 |
| High Heterogeneity (Gastric Cancer) | 11.1% | 8.3% | 25% reduction | Claude 3, Gemini 1.5 Pro |
| Low Heterogeneity (Embryo) | >50% inconsistency | 48.5% match rate | 16x improvement | Gemini 1.5 Pro, GPT-4 |
| Low Heterogeneity (Stromal Cells) | >50% inconsistency | 43.8% match rate | Significant improvement | Claude 3, LLaMA-3 |

Source: Validation across four scRNA-seq datasets representing diverse biological contexts [1]

Table 2: Credibility Assessment Results for LLM vs. Manual Annotations

| Dataset | LLM Annotations Deemed Reliable | Manual Annotations Deemed Reliable | Advantage |
|---|---|---|---|
| Gastric Cancer | Comparable to manual | Benchmark | Comparable reliability |
| PBMC | Higher than manual | Lower than LLM | LLM outperformed manual |
| Embryo (Low Heterogeneity) | 50% of mismatched annotations credible | 21.3% credible | 2.3x more credible |
| Stromal Cells (Low Heterogeneity) | 29.6% credible | 0% credible | Significant LLM advantage |

Source: Objective credibility evaluation based on marker gene expression patterns [1]

Detailed Methodological Protocols

Protocol 1: Multi-Model Integration for scRNA-seq Annotation

Model Selection: Identify top-performing LLMs for your specific domain through benchmarking (e.g., GPT-4, LLaMA-3, Claude 3, Gemini, ERNIE 4.0 for cell typing) [1].

Standardized Prompting:

- Format: Incorporate top ten marker genes for each cell subset

- Structure: Use consistent prompt templates across all models

- Context: Provide equivalent biological context for all queries

Output Integration:

- Method: Select best-performing results from each model rather than simple voting

- Validation: Compare against benchmark datasets with known annotations

- Metrics: Calculate consistency rates with manual annotations

Iterative Refinement:

- Identify low-performance scenarios (e.g., low-heterogeneity cells)

- Implement additional strategies for challenging cases

- Re-benchmark improved pipeline [1]

Protocol 2: Cache-Augmented Generation for Biomedical Entities

Initial Entity Generation:

- Tool: GPT-4o with full-text analysis capability

- Scope: Analyze complete manuscript text excluding discussion and bibliography

- Instruction: Generate relevant biomedical entities without restrictions

PubTator 3.0 Validation:

- Method: Custom GPT with PubTator 3.0 augmentation

- Process: Query PubTator for standardized entity IDs for each generated term

- Output: Retain only validated entities with canonical identifiers

Schema-Constrained Extraction:

- Input: Dedicated metadata schema in tree-like structure

- Task: Re-analyze full text identifying schema-defined entities

- Output: Project-specific entities not in universal databases

Combined Evaluation:

- Merge: Schema-related and PubTator-validated entities

- Deduplicate: Prioritize schema-derived entities

- Finalize: Comprehensive entity list with provenance tracking [20]
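
The final merge step can be sketched in Python. The example works on plain data only and makes no PubTator or LLM call: `schema_entities` and `pubtator_entities` are hypothetical inputs representing the outputs of the earlier steps, the case-insensitive name matching is an assumed deduplication rule, and identifiers such as "ID:0001" are placeholders rather than real canonical IDs.

```python
from typing import Dict, Iterable, List


def merge_entities(schema_entities: Iterable[str],
                   pubtator_entities: Dict[str, str]) -> List[dict]:
    """Merge both sources; the schema-derived entry wins on name collisions."""
    merged: Dict[str, dict] = {}
    for name in schema_entities:
        clean = name.strip()
        merged[clean.lower()] = {"name": clean, "source": "schema",
                                 "canonical_id": None}
    for name, canonical_id in pubtator_entities.items():
        clean = name.strip()
        key = clean.lower()
        if key in merged:
            # Keep the schema spelling, but record the validated ID as provenance.
            merged[key]["canonical_id"] = canonical_id
            merged[key]["source"] = "schema+pubtator"
        else:
            merged[key] = {"name": clean, "source": "pubtator",
                           "canonical_id": canonical_id}
    return sorted(merged.values(), key=lambda entry: entry["name"].lower())
```

Keeping a `source` field on every entry makes it possible to trace each entity in the final list back to the step that produced it.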

Workflow Diagrams

Multi-Model LLM Integration Workflow for Low-Heterogeneity Data

Objective Credibility Evaluation Protocol

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-LLM Experiments

| Tool/Resource | Function | Application Context | Key Features |
|---|---|---|---|
| PubTator 3.0 | Biomedical entity validation and normalization | Step 2 validation in cache-augmented generation | Provides canonical IDs for entities, reduces hallucinations [20] |
| Domain-Specific Metadata Schema | Constrains LLM output to project-relevant concepts | Schema-constrained entity extraction | Captures in-house cell lines, endpoints not in universal databases [20] |
| LLMartini System | Visual comparison and fusion of multiple LLM outputs | Multi-model comparison and selection | Segments responses, merges consensus, highlights differences [23] |
| DeepEval Framework | LLM evaluation metrics and testing | Validation of multi-LLM pipeline performance | Provides hallucination, bias, relevance metrics [24] |
| Cache-Augmented Generation | Proprietary data integration without retrieval latency | Full-text analysis with extended context | Eliminates retrieval errors, handles large documents [20] |
| RAGAs Framework | Retrieval-Augmented Generation assessment | Evaluation of knowledge-grounded LLM systems | Measures faithfulness, contextual relevancy, answer relevancy [24] |
| Objective Credibility Evaluation | Reference-free annotation validation | Assessing reliability of LLM vs manual annotations | Uses marker gene expression patterns as ground truth [1] |

Frequently Asked Questions

Q1: My genetic algorithm fails when converting binary data back to float values, showing an "unpack requires a buffer of 4 bytes" error. What's wrong?

This error typically occurs when the binary data buffer size doesn't match the expected 4 bytes for a float conversion. The function binary_to_float might be receiving a binary list of incorrect length.

- Solution: Verify that every binary string representing a float is exactly 32 bits (4 bytes) long before unpacking. Debug by checking the exact value of binary_list when the error occurs and ensure the byte conversion creates a buffer of precisely 4 bytes [25].
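
A minimal sketch of a length-safe conversion is shown below. It assumes `binary_list` is a list of 0/1 integers encoding a big-endian IEEE 754 single-precision float; the original `binary_to_float` is not shown in the source, so this is one plausible version, not a reconstruction. One frequent cause of the error is computing the byte length from the integer value, which drops leading zero bytes; fixing the length at 4 avoids that.

```python
import struct
from typing import List


def float_to_binary(value: float) -> List[int]:
    """Encode a float as 32 bits (big-endian IEEE 754 single precision)."""
    packed = struct.pack(">f", value)
    bits = "".join(f"{byte:08b}" for byte in packed)
    return [int(b) for b in bits]


def binary_to_float(binary_list: List[int]) -> float:
    """Decode exactly 32 bits back into a float."""
    if len(binary_list) != 32:
        raise ValueError(f"expected 32 bits, got {len(binary_list)}")
    as_int = int("".join(str(b) for b in binary_list), 2)
    # A fixed length of 4 keeps leading zero bytes, so unpack always gets 4 bytes.
    buffer = as_int.to_bytes(4, byteorder="big")
    return struct.unpack(">f", buffer)[0]
```

Note that crossover or mutation can also produce bit patterns that decode to infinity or NaN, so the fitness function may need to guard against non-finite values.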

Q2: How can I prevent data leakage when preprocessing data for the ensemble model?

Data leakage causes overly optimistic performance estimates and models that fail on unseen data.

- Solution: Always split your data into training and test sets before applying any preprocessing steps. Use pipelines to ensure preprocessing steps (like imputation and scaling) are fitted only on the training data and then applied to the test data. Never preprocess the full dataset before splitting [26].
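
The ordering matters more than the tooling, as this standard-library sketch shows: the data are split first, the scaling parameters are estimated from the training portion only, and the same parameters are then applied to the test portion. The helper names are hypothetical, and a single numeric feature is used for brevity.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def split_rows(values: Sequence[float], test_fraction: float = 0.25,
               seed: int = 0) -> Tuple[List[float], List[float]]:
    """Shuffle and split BEFORE any preprocessing is fitted."""
    rng = random.Random(seed)
    shuffled = list(values)
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_scaler(train: Sequence[float]) -> Tuple[float, float]:
    """Estimate mean and standard deviation from the training data only."""
    mean = statistics.fmean(train)
    std = statistics.pstdev(train) or 1.0  # avoid division by zero
    return mean, std


def apply_scaler(values: Sequence[float], params: Tuple[float, float]) -> List[float]:
    """Standardize values with previously fitted parameters."""
    mean, std = params
    return [(v - mean) / std for v in values]


values = [float(i) for i in range(20)]
train, test = split_rows(values)
params = fit_scaler(train)                 # the test set never influences the fit
train_scaled = apply_scaler(train, params)
test_scaled = apply_scaler(test, params)   # reuses the training parameters
```

In scikit-learn, wrapping the preprocessing steps and the model in a `Pipeline` achieves the same effect and also keeps cross-validation folds isolated.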

Q3: My feature selection process seems unstable—different runs select different features. How can I improve consistency?

Instability in feature selection can arise from high-dimensional data and correlated features, especially with limited samples.

- Solution: Implement a robust ensemble feature selection approach. Aggregate results from multiple feature selectors and use a pseudo-variables assisted tuning strategy. This method uses permuted copies of features as known irrelevant controls; only features consistently outperforming these pseudo-variables across multiple permutations are selected [27].
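
The pseudo-variable idea can be illustrated with a simplified, single-selector sketch. Here the relevance score is the absolute Pearson correlation with the target, which is an assumption made for brevity; the approach described above aggregates several feature selectors. The function names, the number of rounds, and the win-rate cutoff are likewise illustrative choices.

```python
import random
from typing import Dict, List, Sequence


def abs_pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Absolute Pearson correlation; 0.0 if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return abs(cov) / (vx * vy) ** 0.5


def select_with_pseudo_variables(features: Dict[str, Sequence[float]],
                                 target: Sequence[float],
                                 n_rounds: int = 50,
                                 min_win_rate: float = 0.95,
                                 seed: int = 0) -> List[str]:
    """Keep features that beat the best permuted control in most rounds."""
    rng = random.Random(seed)
    real_scores = {name: abs_pearson(vals, target) for name, vals in features.items()}
    wins = {name: 0 for name in features}
    for _ in range(n_rounds):
        # Permuted copies have no real link to the target: known-irrelevant controls.
        best_pseudo = 0.0
        for vals in features.values():
            shuffled = list(vals)
            rng.shuffle(shuffled)
            best_pseudo = max(best_pseudo, abs_pearson(shuffled, target))
        for name, score in real_scores.items():
            if score > best_pseudo:
                wins[name] += 1
    return [name for name in features if wins[name] / n_rounds >= min_win_rate]
```

Fixing the seed makes the selection reproducible across runs, which directly addresses the instability described in the question.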

Q4: What is the most common mistake in machine learning projects that I should avoid?

A common mistake is insufficient data understanding and preprocessing. Real-world datasets are rarely usable in their native form and require extensive cleaning.

- Solution: Perform thorough exploratory data analysis (EDA) before modeling. Use summary statistics and visualizations to understand distributions, identify outliers, and handle missing values appropriately before proceeding to feature engineering and model building [26].

Q5: When should I use knowledge-based versus data-driven feature selection?

The choice depends on your data context and goals. Knowledge-based feature selection leverages prior biological knowledge, while data-driven methods rely on patterns in the experimental data.

- Solution: For drug response prediction with transcriptome data, knowledge-based methods (like using drug target pathways) often yield more interpretable models and can be highly predictive for drugs targeting specific genes and pathways. Data-driven methods may perform better for drugs affecting general cellular mechanisms [28] [29].

Troubleshooting Guides

Issue 1: Poor Annotation Accuracy on Low-Heterogeneity Datasets

Problem: The ensemble model with genetic feature selection performs poorly when annotating single-cell RNA sequencing data with low cellular heterogeneity.

Diagnosis Steps:

- Check if the genetic algorithm's feature selection is too aggressive, removing biologically relevant but low-expression markers.

- Verify whether batch effects or technical variations are confounding the genetic optimizer.

- Evaluate if the ensemble learners are overfitting to the majority cell types.

Resolution:

- Adjust Genetic Algorithm Parameters: Incorporate prior biological knowledge into the fitness function. Penalize feature sets that exclude genes from known, biologically relevant pathways [29].

- Implement Advanced Normalization: Apply techniques like SCTransform to handle technical noise before feature selection.

- Utilize Pseudo-Variables for Tuning: Integrate pseudo-variables (known irrelevant features) into the genetic algorithm's selection process. This helps ensure selected features show consistently stronger signals than noise [27].
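One way to fold prior knowledge into the fitness function is a penalty proportional to the fraction of pathway genes a candidate feature set leaves out. The sketch below is an assumption about how such a penalty might look; the function name, the penalty_weight parameter, and the gene identifiers are all hypothetical.

```python
def knowledge_aware_fitness(selected_genes, base_score, pathway_genes,
                            penalty_weight=0.2):
    """Return base_score minus a penalty for excluded pathway genes.

    base_score: model performance for this feature set (e.g. validation accuracy).
    pathway_genes: genes from known, biologically relevant pathways.
    """
    selected = set(selected_genes)
    pathway = set(pathway_genes)
    if not pathway:
        return base_score  # nothing to enforce
    missing_fraction = len(pathway - selected) / len(pathway)
    return base_score - penalty_weight * missing_fraction


# A feature set that keeps one of two pathway genes loses half the penalty weight.
score = knowledge_aware_fitness(
    selected_genes=["GENE_A", "GENE_B"],
    base_score=0.9,
    pathway_genes=["GENE_A", "GENE_C"],
)
```

The penalty weight controls how strongly the optimizer is pushed to retain low-expression but biologically relevant markers, and it would need tuning per dataset.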

Issue 2: Genetic Algorithm Convergence Problems

Problem: The genetic optimizer fails to converge or gets stuck in local optima during feature selection.

Diagnosis Steps:

- Check population diversity metrics across generations.

- Analyze fitness score progression over iterations.

- Verify mutation and crossover rates are appropriately set.

Resolution:

- Parameter Adjustment: Implement adaptive mutation rates that increase when population diversity drops below a threshold. For feature selection, typical mutation rates range from 0.001 to 0.1 [25] [30].

- Alternative Selection Methods: Experiment with different parent selection strategies.

- Implement Elitism: Preserve a small percentage of top-performing solutions unchanged in the next generation so that the best fitness never decreases from one generation to the next [30].
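Adaptive mutation and elitism can be sketched together. The example assumes binary chromosomes (1 = feature selected), measures diversity as the normalized mean pairwise Hamming distance, and uses illustrative rates inside the 0.001 to 0.1 range quoted above. Crossover and parent selection are omitted for brevity, and all names and thresholds are hypothetical.

```python
import random


def population_diversity(population):
    """Mean pairwise Hamming distance divided by chromosome length (0 = identical)."""
    n = len(population)
    if n < 2:
        return 0.0
    length = len(population[0])
    total = 0
    for i in range(n):
        for j in range(i + 1, n):
            total += sum(a != b for a, b in zip(population[i], population[j]))
    pairs = n * (n - 1) / 2
    return total / (pairs * length)


def adaptive_mutation_rate(population, base_rate=0.01, max_rate=0.1,
                           diversity_threshold=0.1):
    """Raise the mutation rate when diversity drops below the threshold."""
    if population_diversity(population) < diversity_threshold:
        return max_rate
    return base_rate


def mutate(chromosome, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - gene if rng.random() < rate else gene for gene in chromosome]


def next_generation(population, fitness, n_elite=1, seed=0):
    """Copy the n_elite best chromosomes unchanged; mutate copies of the rest."""
    rng = random.Random(seed)
    ranked = sorted(population, key=fitness, reverse=True)
    rate = adaptive_mutation_rate(population)
    elites = [chrom[:] for chrom in ranked[:n_elite]]
    offspring = [mutate(chrom, rate, rng) for chrom in ranked[n_elite:]]
    return elites + offspring
```

Because the elites bypass mutation, the best fitness in the new generation is at least as high as in the previous one, regardless of the mutation rate chosen.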

Issue 3: Handling High-Dimensional Data with Limited Samples

Problem: The ensemble model struggles with datasets where the number of features (genes) vastly exceeds the number of samples (cells), common in scRNA-seq studies.

Diagnosis Steps:

- Determine if the feature selection process is retaining too many variables.

- Check for overfitting by comparing training and validation performance.

- Evaluate if the chosen ML models are appropriate for high-dimensional data.

Resolution:

- Knowledge-Based Feature Pre-Filtering: Before applying genetic algorithm-based selection, reduce feature space using biological knowledge. For drug response prediction, start with features related to drug targets or their pathways [29].

- Consider Feature Transformation: Instead of selecting gene subsets, use methods like Pathway Activities or Transcription Factor Activities, which transform many gene expressions into fewer, biologically meaningful scores [28].

- Apply Regularization: Use models with built-in regularization like Ridge regression or Elastic Net, which have been shown to perform well on high-dimensional biological data [28].
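The feature transformation idea can be illustrated with a deliberately simple score. This sketch assumes a pathway activity defined as the mean z-score of the pathway's member genes; published methods may use weighted or footprint-based scores instead, so treat the formula, the function name, and the gene and pathway identifiers as hypothetical.

```python
import statistics


def pathway_activity(expression, pathways):
    """Collapse gene-level expression into one score per pathway and sample.

    expression: {gene: [value per sample]}
    pathways:   {pathway name: [member genes]}
    Returns {pathway name: [score per sample]}; genes absent from
    `expression` are ignored, and pathways with no measured genes are skipped.
    """
    zscores = {}
    for gene, values in expression.items():
        mean = statistics.fmean(values)
        std = statistics.pstdev(values)
        zscores[gene] = [(v - mean) / std if std > 0 else 0.0 for v in values]
    n_samples = len(next(iter(expression.values())))
    scores = {}
    for name, genes in pathways.items():
        members = [g for g in genes if g in zscores]
        if not members:
            continue
        scores[name] = [
            statistics.fmean([zscores[g][s] for g in members])
            for s in range(n_samples)
        ]
    return scores


expression = {"G1": [1.0, 3.0], "G2": [2.0, 6.0], "G3": [5.0, 5.0]}
scores = pathway_activity(expression, {"P": ["G1", "G2", "NOT_MEASURED"]})
```

Here three genes are reduced to a single pathway feature, which is the kind of dimensionality reduction that helps when genes far outnumber cells.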

Experimental Protocols & Data

Protocol 1: Benchmarking Ensemble-Genetic Framework Against Established Methods

Objective: Evaluate the performance of the Ensemble Machine Learning with Genetic Optimization framework against existing annotation tools like scMRA, ItClust, Scmap, and Seurat [31].

Methodology:

- Data Preparation: Obtain well-annotated scRNA-seq reference datasets and corresponding query datasets with known cell type labels.

- Performance Metrics: Measure annotation accuracy under varying conditions: different levels of data scarcity (mild, moderate, severe reduction in training data) and increasing number of cell type clusters [31].

- Experimental Runs: For each method and condition, execute multiple runs so that run-to-run variability can be estimated and differences between methods can be tested for statistical significance.
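The benchmarking loop described above can be sketched as a small harness. It assumes each cell is a (profile, label) pair and that every method is wrapped as a function annotate(train, profiles) returning predicted labels. The majority_annotator is a toy stand-in, not one of the tools named in the objective, and the reduction levels are illustrative.

```python
import random
import statistics


def accuracy(predicted, truth):
    """Fraction of query cells whose predicted label matches the known label."""
    return sum(p == t for p, t in zip(predicted, truth)) / len(truth)


def benchmark(annotate, reference, query, keep_fractions=(1.0, 0.5, 0.1),
              n_runs=5, seed=0):
    """Return {fraction: (mean accuracy, std)} over repeated reference subsamples."""
    rng = random.Random(seed)
    truth = [label for _, label in query]
    profiles = [profile for profile, _ in query]
    results = {}
    for fraction in keep_fractions:
        n_keep = max(1, int(len(reference) * fraction))
        scores = []
        for _ in range(n_runs):
            subset = rng.sample(reference, n_keep)  # simulate data scarcity
            scores.append(accuracy(annotate(subset, profiles), truth))
        results[fraction] = (statistics.fmean(scores), statistics.pstdev(scores))
    return results


def majority_annotator(train, profiles):
    """Toy baseline: assign every query cell the most common training label."""
    labels = [label for _, label in train]
    top = max(set(labels), key=labels.count)
    return [top] * len(profiles)
```

Reporting the standard deviation alongside the mean makes it visible when an apparent difference between methods is within run-to-run noise.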

Expected Outcome: The proposed ensemble-genetic framework is expected to demonstrate superior accuracy and generalization, particularly under conditions of limited reference data and increasing dataset complexity [31].

Protocol 2: Evaluating Feature Reduction Methods for Drug Response Prediction

Objective: Compare the performance of knowledge-based and data-driven feature reduction methods for predicting drug sensitivity from transcriptome data [28].

Methodology:

- Feature Reduction Methods: Apply nine different methods to cell line gene expression data.

- Machine Learning Models: Feed reduced features to multiple ML models (Ridge regression, Lasso, SVM, Random Forest, etc.) [28].

- Validation: Perform both cross-validation on cell lines and validation on clinical tumor data [28].

Key Results Summary:

Table: Comparative Performance of Feature Reduction Methods for Drug Response Prediction

| Feature Reduction Method | Type | Typical Feature Count | Best-Performing ML Model | Key Strengths |
|---|---|---|---|---|
| Transcription Factor Activities | Knowledge-based | Varies | Ridge Regression | Effectively distinguishes sensitive/resistant tumors [28] |
| Pathway Activities | Knowledge-based | ~14 | Ridge Regression | High interpretability, minimal features [28] |
| Drug Pathway Genes | Knowledge-based | ~3,704 | Ridge Regression | Incorporates known biological mechanisms [28] |
| Autoencoder Embedding | Data-driven | User-defined | Ridge Regression | Captures non-linear patterns [28] |
| Principal Components | Data-driven | User-defined | Ridge Regression | Maximizes variance explained [28] |

Protocol 3: Robust Ensemble Feature Selection with Pseudo-Variables

Objective: Implement a robust ensemble feature selection approach integrated with group Lasso to identify impactful features from high-dimensional data with survival outcomes [27].

Methodology:

- Feature Aggregation: Apply multiple feature selectors to the dataset and aggregate their results to create a ranked feature set [27].

- Group Lasso Application: Fit a group Lasso model on the ranked features, where groups are defined based on correlation structure [27].

- Pseudo-Variable Tuning: Incorporate permuted copies of features (pseudo-variables) as known irrelevant controls. Select only features that consistently show stronger signals than the strongest pseudo-variable across multiple permutations [27].

Application: This method has been successfully applied to colorectal cancer data from TCGA, generating a composite score based on selected genes that correctly distinguishes patient subtypes [27].

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Tools

| Item | Function/Application | Example/Notes |
|---|---|---|
| scRNA-seq Datasets | Provide single-cell resolution transcriptome data for model training and validation. | Human Cell Atlas, Mouse Cell Atlas [31] |
| Drug Sensitivity Databases | Source of drug response data for building predictive models. | GDSC, CCLE, PRISM [28] [29] |
| Pathway Databases | Provide biological knowledge for knowledge-based feature selection. | Reactome, KEGG, MSigDB [28] |
| Genetic Algorithm Framework | Optimizes feature selection by evolving solutions over generations. | Custom implementation in Python; key parameters: mutation rate (0.001-0.1), crossover type (one-point/two-point), selection method [25] [30] |
| Ensemble Machine Learning Models | Combines multiple models to improve prediction accuracy and robustness. | Gradient Boosting, Random Forest, Stacking of LSTM/BiLSTM/GRU [31] [32] |
| Pseudo-Variables | Act as negative controls during feature selection to reduce false discoveries. | Created by permuting original features; only features outperforming pseudo-variables are selected [27] |

Workflow and System Diagrams

[Diagram: Ensemble Genetic Feature Selection Workflow]

[Diagram: Troubleshooting Process Flow]

Troubleshooting Guides

Guide 1: Annotation Inconsistency in Low Heterogeneity Datasets

Issue or Problem Statement

Researchers encounter inconsistent annotation results despite working with low heterogeneity datasets where data originates from similar sources, formats, and collection environments [6] [33].

Symptoms or Error Indicators

- High inter-annotator disagreement despite clear guidelines

- Model performance variance with different annotation batches

- Inconsistent ground truth labels for visually similar samples

- Poor model generalization despite high training accuracy

Environment Details

- Low heterogeneity datasets (structured/semi-structured formats: CSV, JSON, Parquet) [6]

- Multiple annotators working simultaneously

- Standardized annotation platforms (LabelBox, CVAT, Prodigy)

- Homogeneous data sources (single institution, consistent imaging protocols) [11]

Possible Causes

- Subtle Data Variations: Minor differences in data characteristics not captured in heterogeneity assessment [33]

- Annotation Fatigue: Repetitive labeling tasks leading to decreased attention [34]

- Guideline Ambiguity: Unclear boundaries for similar-looking classes

- Tooling Limitations: Annotation interface not optimized for fine-grained distinctions

Step-by-Step Resolution Process

- Data Quality Assessment: Verify dataset homogeneity using statistical tests (KS-test, χ²)

- Annotation Validation: Implement cross-annotation with expert review

- Guideline Refinement: Clarify edge cases with visual examples

- Tool Optimization: Configure interface to highlight distinguishing features

- Quality Metrics: Establish consistency metrics (Cohen's κ > 0.8)
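The consistency metric in the last step can be computed directly. Below is a minimal sketch of Cohen's κ for two annotators labeling the same items, using the standard definition κ = (p_o − p_e) / (1 − p_e); the example labels are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["T", "T", "B", "B"]
annotator_2 = ["T", "T", "B", "T"]
kappa = cohens_kappa(annotator_1, annotator_2)  # 0.5, below the 0.8 target
```

In this example the annotators agree on three of four items (75%), yet κ is only 0.5 because half of that agreement is expected by chance.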

Escalation Path or Next Steps

If consistency metrics remain below threshold after two refinement cycles, escalate to the data science lead for protocol revision and additional annotator training.

Validation or Confirmation Step

Confirm that inter-annotator agreement reaches κ ≥ 0.85 across three consecutive annotation batches.

Guide 2: Model Performance Discrepancies with Homogeneous Data

Issue or Problem Statement

AI models show unexpected performance variations when trained on apparently homogeneous datasets, contradicting expectations of stable learning curves [11].

Symptoms or Error Indicators

- Fluctuating validation accuracy despite data consistency

- Overfitting on homogeneous training data

- Poor cross-validation performance

- Inconsistent model predictions across similar test samples

Environment Details

- Homogeneous data sources (single collection protocol) [11]

- Standardized preprocessing pipelines

- Consistent feature extraction methods

- Fixed model architectures and hyperparameters

Possible Causes

- Hidden Heterogeneity: Undetected variations in data subpopulations [33]

- Annotation Noise: Imperfect ground truth labels

- Feature Sensitivity: Model over-emphasizing minor data variations

- Evaluation Bias: Test set not representing true data distribution

Step-by-Step Resolution Process

- Data Auditing: Cluster analysis to identify hidden subpopulations

- Annotation Verification: Expert review of uncertain labels

- Feature Analysis: Ablation studies to identify sensitive features

- Cross-Validation: Implement stratified k-fold validation

- Regularization: Adjust dropout, weight decay to prevent overfitting
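The stratified k-fold step can be sketched without external libraries. The function below is a hypothetical helper that deals the indices of each class across folds in round-robin order, so every fold keeps roughly the same class proportions as the full dataset.

```python
import random
from collections import defaultdict


def stratified_k_fold(labels, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs with class balance preserved per fold."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class across the folds like cards, one index at a time.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, test


labels = ["A"] * 8 + ["B"] * 4
splits = list(stratified_k_fold(labels, k=4))
```

With 8 cells of class A and 4 of class B split into four folds, each test fold holds two A cells and one B cell, so a rare subpopulation is never absent from evaluation.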

Escalation Path or Next Steps

For persistent performance issues despite regularization, escalate to the ML lead for architecture modification or data augmentation strategy development.

Frequently Asked Questions (FAQs)

Q1: What defines a truly low heterogeneity dataset in drug discovery research?

A low heterogeneity dataset exhibits minimal variance across four dimensions: data sources (single institution), collection protocols (standardized equipment/settings), formats (consistent structured formats like Parquet, CSV), and annotation schemes (uniform labeling criteria). True homogeneity requires verification through statistical testing of feature distributions and label consistency metrics [6] [33] [11].