Preventing Data Leakage in scFM Benchmarking: A Guide for Robust and Reproducible Drug Discovery

Abstract

This article addresses the critical challenge of data leakage in single-cell foundation model (scFM) benchmarking for drug discovery. As machine learning becomes integral to analyzing compound-protein interactions, unbiased and reproducible benchmarks are essential. We explore the causes and consequences of data leakage, drawing parallels from computational chemistry benchmarks. The content provides methodological guidance for constructing leakage-free datasets, troubleshooting common pitfalls in experimental design, and establishing rigorous validation protocols. Aimed at researchers and drug development professionals, this guide synthesizes best practices to safeguard the integrity of predictive models in biomedical research and to foster trust in AI-driven discovery.

Understanding Data Leakage: Why It Undermines scFM Benchmarking in Biomedical Research

FAQs: Data Leakage in Computational Drug Discovery

What is data leakage in the context of machine learning for drug discovery? Data leakage occurs when information from outside the training dataset is used to create a machine learning model. This leads to overly optimistic performance estimates during testing because the model has, in effect, already "seen" the test data. When this happens, the model memorizes the training data instead of learning generalizable properties, resulting in poor performance when applied to real-world, out-of-distribution data [1].

Why is data leakage a critical problem for drug discovery and scFM benchmarking? Data leakage compromises the reliability of model evaluations. In fields like molecular property prediction or single-cell perturbation effect prediction, a model that has experienced data leakage will fail to generalize to new, unseen molecules or cellular states. For example, in single-cell foundation model (scFM) benchmarking, PertEval-scFM found that models offered limited improvement over baselines in zero-shot settings, particularly under distribution shift, highlighting the need for rigorous, leakage-free evaluation to assess true model capability [2] [3].

How can data leakage lead to the exposure of proprietary chemical structures? Publishing neural networks trained on confidential datasets poses a significant privacy risk. Adversaries can use Membership Inference Attacks (MIAs) to determine whether a specific molecule was part of the model's training data. In a black-box setting, similar to making models available as a web service, these attacks can successfully identify training data molecules, thereby exposing proprietary chemical structures. This risk is especially high for molecules from minority classes and for models trained on smaller datasets [4].

What are the common technical causes of data leakage in biomedical ML?

- Inappropriate Data Splitting: Randomly splitting datasets that contain highly similar data points (e.g., molecules with similar structures or proteins from the same family) can lead to leakage. If similar samples are in both training and test sets, the model is not truly tested on novel data [1].

- Feature Selection Before Splitting: Performing feature selection or normalization on the entire dataset before splitting it can allow information from the test set to influence the training process.

- Model Sharing: As explored in the "Publishing neural networks in drug discovery might compromise training data privacy" study, the act of sharing a trained model itself can be a source of leakage, as the model's outputs can be queried to infer the training data [4].

Troubleshooting Guides

Issue: Inflated Model Performance During Training with a Sharp Drop on Real-World Data

This is a classic symptom of data leakage, where your model performs exceptionally well in validation but fails in practice.

Investigation and Resolution Protocol:

Audit Your Data Splitting Strategy:

- Action: Verify that your data splitting method accounts for the inherent similarities in your biological data. For molecular data, this means ensuring that structurally similar compounds are not spread across training and test sets. For protein-related tasks, ensure that proteins with high sequence homology are not in different splits.

- Tool: Use a tool like DataSAIL to perform similarity-aware data splitting. DataSAIL formulates the splitting problem to minimize information leakage as a combinatorial optimization problem, ensuring that your test set is truly out-of-distribution relative to your training set [1].

Check for Preprocessing Errors:

- Action: Confirm that all preprocessing steps (e.g., normalization, imputation, feature selection) were fit only on the training data and then applied to the validation and test sets. Any step that uses global dataset statistics contaminates the training process.
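
The fit-on-training-only rule can be illustrated with a minimal sketch that uses only the Python standard library. The function names `fit_standardizer` and `apply_standardizer` and the toy values are hypothetical and chosen for illustration; a real pipeline would apply the same idea to every preprocessing step.

```python
import statistics

def fit_standardizer(train_values):
    """Estimate mean and standard deviation from the training split only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values)
    if std == 0:
        std = 1.0  # guard against a constant feature (avoids division by zero)
    return mean, std

def apply_standardizer(values, params):
    """Scale any split with parameters that were fit on the training split."""
    mean, std = params
    return [(v - mean) / std for v in values]

# Toy feature values (illustrative only).
train = [1.0, 2.0, 3.0, 4.0]
test = [10.0, 12.0]

params = fit_standardizer(train)                 # statistics come from train only
train_scaled = apply_standardizer(train, params)
test_scaled = apply_standardizer(test, params)   # test data never influences params
```

Because the parameters are computed before the test split is touched, the test values cannot shift the mean or variance that the model sees during training.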

Evaluate Privacy Risks Before Model Sharing:

- Action: If you plan to publish your model, assess its vulnerability to privacy attacks.

- Tool: Implement a framework like the one provided in the cited study (available at https://github.com/FabianKruger/molprivacy) to run Membership Inference Attacks against your own model [4].

- Mitigation: Consider using molecular representations that are less susceptible to leakage. The same study found that representing molecules as graphs and using message-passing neural networks resulted in the least information leakage across all evaluated datasets [4].

Issue: Membership Inference Attacks Successfully Identify Training Set Molecules

If your proprietary model is found to be leaking information about its training data, take these steps to understand and mitigate the risk.

Investigation and Resolution Protocol:

Quantify the Risk:

- Action: Use Likelihood Ratio Attacks (LiRA) and Robust Membership Inference Attacks (RMIA) to measure the True Positive Rate (TPR) at a very low False Positive Rate (FPR), such as 0 or 0.001. This will tell you how many of your training molecules can be confidently identified [4].

- Interpretation: The table below summarizes the privacy risks found across different molecular property prediction tasks, showing that smaller datasets are at higher risk [4].

Table 1: Privacy Risk from Membership Inference Attacks

| Dataset | Training Set Size | Key Finding |
|---|---|---|
| Blood-Brain Barrier (BBB) | 859 molecules | Median TPR between 0.01-0.03 at FPR=0 (9-26 molecules identified) |
| Ames Mutagenicity | 3,264 molecules | Significantly higher TPR than random guessing |
| DNA Encoded Library (DEL) | 48,837 molecules | TPRs decreased with larger dataset size; one attack performed significantly better |
| hERG Inhibition | 137,853 molecules | TPRs decreased with larger dataset size; one attack performed significantly better |

Choose a Safer Model Architecture:

- Action: Transition from using simpler molecular representations (like fingerprints or SMILES) to graph-based representations with message-passing neural networks.

- Evidence: Research shows that the graph representation consistently had the lowest TPRs across all datasets and attacks, with a median TPR that was on average 66% ± 6% lower than other representations. For larger datasets, it was the only representation that sometimes prevented attackers from identifying more molecules than by random guessing [4].

Understand Attacker Advantages:

- Action: Be aware that combining different MIAs (LiRA and RMIA) can identify a wider range of training data molecules than using a single attack method, as they do not always identify the same molecules [4].

Experimental Protocols for Data Leakage Assessment

Protocol: Assessing Model Privacy with Membership Inference Attacks (MIA)

Objective: To evaluate the risk that a trained machine learning model will leak information about its proprietary training data.

Methodology:

- Model Training: Train your target model (e.g., a neural network for molecular property prediction) on your confidential dataset.

- Attack Setup: In a black-box setting, assume the adversary has access to the model's output logits (e.g., via a web service). The adversary does not need access to the model's internal weights.

- Execute Attacks:

- Apply two state-of-the-art attacks: the Likelihood Ratio Attack (LiRA) and the Robust Membership Inference Attack (RMIA) [4].

- The attacks query the model with samples from the training set and from a hold-out set not seen during training.

- Evaluation Metric: Calculate the True Positive Rate (TPR) at a fixed, low False Positive Rate (FPR), such as 0 or 0.001. A TPR significantly above the random guessing baseline indicates a successful attack and information leakage [4].
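
The evaluation metric in the last step can be sketched as follows. This is a minimal, standard-library illustration, not the implementation used in the cited study: the helper name `tpr_at_fpr` and the attack scores are hypothetical, and a higher score is assumed to mean that the attack is more confident a sample was in the training set.

```python
def tpr_at_fpr(member_scores, nonmember_scores, max_fpr):
    """TPR at the strictest threshold whose FPR does not exceed max_fpr."""
    n_non = len(nonmember_scores)
    allowed_fp = int(max_fpr * n_non)  # non-members we may wrongly flag
    ranked = sorted(nonmember_scores, reverse=True)
    # Samples are flagged only if their score is strictly above the threshold,
    # so at most `allowed_fp` non-members can be flagged.
    threshold = ranked[allowed_fp] if allowed_fp < n_non else float("-inf")
    true_pos = sum(score > threshold for score in member_scores)
    return true_pos / len(member_scores)

# Hypothetical attack scores for training members and held-out non-members.
members = [0.9, 0.8, 0.4, 0.3]
nonmembers = [0.7, 0.5, 0.2, 0.1]

tpr_strict = tpr_at_fpr(members, nonmembers, max_fpr=0.0)
tpr_loose = tpr_at_fpr(members, nonmembers, max_fpr=0.5)
```

At FPR = 0 the threshold sits at the highest non-member score, so every flagged sample is a true training member. This is why the low-FPR regime is the relevant one for judging whether individual proprietary molecules can be confidently exposed.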

Protocol: Creating Leakage-Reduced Data Splits with DataSAIL

Objective: To split a dataset into training, validation, and test sets in a way that minimizes information leakage and enables a realistic evaluation of a model's performance on out-of-distribution data.

Methodology:

- Problem Formulation: DataSAIL treats data splitting as a combinatorial optimization problem that is NP-hard. It uses a scalable heuristic based on clustering and integer linear programming (ILP) to find a solution [1].

- Input: Provide your dataset and a similarity or distance measure for the data points (e.g., molecular similarity, protein sequence homology).

- Splitting Mode: Choose the appropriate splitting task. For drug-target interaction prediction (a 2D problem), use similarity-based two-dimensional splitting (S2) to ensure that neither similar drugs nor similar targets are shared between splits [1].

- Output: DataSAIL returns data splits where the similarity between data points in the training set and the test set is minimized, providing a more realistic and challenging benchmark for your models [1].
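
To make the idea of similarity-aware splitting concrete, the sketch below clusters items by Tanimoto similarity and assigns whole clusters to one split. It is a simplified stand-in and does not reproduce DataSAIL's clustering-plus-ILP procedure or its API; the function names, the toy fingerprints (sets of feature IDs), and the greedy assignment rule are all assumptions made for illustration.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of feature IDs."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def similarity_clusters(fingerprints, threshold):
    """Single-linkage clusters: items with similarity >= threshold are linked."""
    parent = {name: name for name in fingerprints}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for a, b in combinations(fingerprints, 2):
        if tanimoto(fingerprints[a], fingerprints[b]) >= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for name in fingerprints:
        clusters.setdefault(find(name), []).append(name)
    return list(clusters.values())

def cluster_split(fingerprints, threshold=0.5, test_fraction=0.5):
    """Greedily move whole clusters (smallest first) into the test set."""
    clusters = sorted(similarity_clusters(fingerprints, threshold), key=len)
    target = test_fraction * len(fingerprints)
    train, test = [], []
    for cluster in clusters:
        if len(test) + len(cluster) <= target:
            test.extend(cluster)
        else:
            train.extend(cluster)
    return train, test

# Toy fingerprints (illustrative only).
fps = {
    "mol_a": {1, 2, 3, 4},
    "mol_b": {1, 2, 3, 5},
    "mol_c": {1, 2, 4, 5},
    "mol_d": {10, 11, 12},
    "mol_e": {20, 21, 22},
    "mol_f": {20, 21, 23},
}
train, test = cluster_split(fps, threshold=0.5, test_fraction=0.5)
```

Because any two items with similarity at or above the threshold end up in the same cluster, no train/test pair can exceed that threshold. A random split of the same six items gives no such guarantee.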

Table 2: DataSAIL Splitting Schemes for Different Data Types

| Data Type | Splitting Scheme | Description | Goal |

|---|---|---|---|

| 1D (e.g., Small Molecules) | Similarity-based (S1) | Splits data so that samples in the test set are dissimilar to those in the training set. | Prevents models from exploiting molecular similarity shortcuts. |

| 2D (e.g., Drug-Target Pairs) | Similarity-based (S2) | Splits data so that neither the drugs nor the targets in the test set are highly similar to those in the training set. | Forces the model to learn generalizable interaction rules, not rely on similarities in either dimension. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools for Data Leakage Prevention

| Tool / Solution | Function | Relevance to Data Leakage |

|---|---|---|

| DataSAIL [1] | A Python package for computing similarity-aware data splits for 1D and 2D biomolecular data. | Reduces information leakage at the data splitting stage, a common source of leakage. |

| MolPrivacy Framework [4] | A framework for assessing the privacy risks of classification models and molecular representations via Membership Inference Attacks. | Allows researchers to proactively test their models' vulnerability before publication. |

| Message-Passing Neural Networks (MPNN) [4] | A neural network architecture that operates directly on graph representations of molecules. | A safer architecture that demonstrates significantly less information leakage compared to models using other molecular representations. |

| PertEval-scFM Benchmark [2] [3] | A standardized framework for evaluating single-cell foundation models on perturbation effect prediction. | Provides a rigorous, standardized testing ground that helps identify model limitations and over-optimism potentially caused by data leakage. |

| Dark Web Scanning Tools [5] | Proactive security tools that search hacker forums and ransomware blogs for leaked data. | Protects the underlying training data from being stolen and used to attack models or compromise intellectual property. |

FAQs on Data Leakage in Computational Drug Discovery

Q1: What is data leakage in the context of compound activity prediction? Data leakage occurs when information from the test dataset inadvertently influences the training process of a model. This leads to overly optimistic, unrealistic performance estimates that do not translate to real-world applications. In compound activity prediction, a common form of leakage is compound overlap, where the same molecule appears in both the training and test sets due to inadequate splitting procedures [6].

Q2: Why is data leakage a critical issue for benchmarking single-cell foundation models (scFMs) and activity prediction models? For both scFMs and activity prediction models, data leakage creates a false impression of a model's capability to generalize to new, unseen data. This undermines the fairness of model comparisons and can misdirect research efforts. Preventing leakage is a foundational step in creating trustworthy benchmarks, as it ensures that performance metrics reflect true predictive power rather than the model's ability to "remember" training data [7] [8].

Q3: How can I identify potential data leakage in my experimental setup? Be vigilant for these warning signs:

- Unusually High Performance: If your model achieves near-perfect accuracy on a complex task with limited data, it may be memorizing data rather than learning generalizable patterns [6].

- Compound or Sample Overlap: Inspect your training and test splits to ensure no individual compound (for activity prediction) or cell (for scRNA-seq analysis) is present in both sets [6].

- Temporal or Procedural Confounding: Ensure that data from a later experiment (e.g., a confirmatory assay) is not used to train a model meant to predict results from an earlier screening stage [9].
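
The overlap check in the second warning sign can be automated with a few lines. The sketch below is only an illustration: `find_overlap` is a hypothetical helper and the identifiers are placeholders. It assumes each compound or cell already has a canonical identifier, since the same molecule written in two different notations would otherwise go undetected.

```python
def find_overlap(train_ids, test_ids):
    """Return identifiers present in both splits (ideally an empty list)."""
    return sorted(set(train_ids) & set(test_ids))

# Placeholder identifiers (illustrative only).
train_ids = ["CHEMBL25", "CHEMBL112", "CHEMBL521"]
test_ids = ["CHEMBL521", "CHEMBL1201"]

overlap = find_overlap(train_ids, test_ids)
if overlap:
    print(f"Leakage risk: {len(overlap)} shared identifier(s): {overlap}")
```

Running such a check as an assertion in the benchmark pipeline turns a silent methodological error into an explicit failure.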

Q4: What are the best practices for splitting data to prevent leakage in compound activity datasets? Standard random splitting is often insufficient. For rigorous benchmarking, use advanced cross-validation (AXV) or hold-out methods that operate at the compound level rather than the data point level [6]. This means that before generating data points (such as matched molecular pairs), a hold-out set of compounds is first removed. Any data point derived from a compound in this hold-out set is exclusively assigned to the test set, guaranteeing no compound overlap [6].
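
A compound-level hold-out for pair-based data might look like the sketch below. The function name, the toy pairs, and the random hold-out selection are hypothetical. In particular, the handling of "mixed" pairs (one compound in the hold-out set, one outside it) is an assumption: here they are discarded, which is one way to guarantee that no compound occurs on both sides.

```python
import random

def compound_level_split(pairs, holdout_fraction=0.2, seed=0):
    """Split compound pairs so that no compound occurs in both splits."""
    compounds = sorted({c for pair in pairs for c in pair})
    rng = random.Random(seed)
    rng.shuffle(compounds)
    n_holdout = max(1, round(holdout_fraction * len(compounds)))
    holdout = set(compounds[:n_holdout])  # compounds are held out first

    train, test, dropped = [], [], []
    for a, b in pairs:
        n_in_holdout = (a in holdout) + (b in holdout)
        if n_in_holdout == 0:
            train.append((a, b))
        elif n_in_holdout == 2:
            test.append((a, b))
        else:
            # Assumption: mixed pairs are discarded to avoid compound overlap.
            dropped.append((a, b))
    return train, test, dropped

# Toy compound pairs (illustrative only).
pairs = [("c1", "c2"), ("c1", "c3"), ("c2", "c3"),
         ("c4", "c5"), ("c4", "c6"), ("c5", "c6"), ("c3", "c4")]
train, test, dropped = compound_level_split(pairs, holdout_fraction=0.5)
```

The key design choice is the order of operations: compounds are partitioned before pairs are assigned, so the split is defined on the entities that carry the information, not on the derived data points.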

Case Studies & Experimental Protocols

Case Study 1: Large-Scale Prediction of Activity Cliffs

This study benchmarked machine and deep learning methods for predicting activity cliffs (ACs)—pairs of structurally similar compounds with large differences in potency. It highlighted how data leakage through compound overlap can significantly inflate perceived model performance [6].

Experimental Protocol:

- Data Extraction: 100 compound activity classes were extracted from ChEMBL (version 29), focusing on Ki or Kd measurements [6].

- AC Definition: Activity cliffs were defined using the Matched Molecular Pair (MMP) formalism. A critical step was using activity class-dependent potency difference criteria (mean potency plus two standard deviations) instead of a fixed threshold, making AC identification more statistically robust [6].

- Data Splitting (Leakage Prevention): The study implemented two splitting strategies to quantify the leakage effect:

- Data Leakage Possibly Included: Standard random 80/20 split of all MMPs.

- Data Leakage Excluded (AXV): A hold-out set of 20% of compounds was first created. MMPs were then assigned to training or test sets based on whether their constituent compounds were in the training or hold-out set [6].

- Molecular Representation: MMPs were represented using concatenated ECFP4 fingerprints for the core structure and the chemical transformation [6].

- Model Training & Evaluation: A range of models, from simple k-nearest neighbors to support vector machines and deep neural networks, were trained and evaluated on both data splits. Performance was measured using AUC (Area Under the Curve) [6].

Quantitative Results: The table below summarizes the performance impact of data leakage in this study [6].

| Model Complexity | Model Type | Average Performance (AUC, Leakage Included) | Average Performance (AUC, Leakage Excluded) | Performance Gap Due to Leakage |

|---|---|---|---|---|

| Low | k-Nearest Neighbors | 0.91 | 0.78 | 0.13 |

| Medium | Support Vector Machine | 0.93 | 0.80 | 0.13 |

| High | Deep Neural Network | 0.90 | 0.78 | 0.12 |

Case Study 2: The CARA Benchmark for Real-World Drug Discovery

The Compound Activity benchmark for Real-world Applications (CARA) was designed to address biases in existing benchmarks, including data leakage. It emphasizes the importance of distinguishing between different assay types—Virtual Screening (VS) and Lead Optimization (LO)—which have fundamentally different data distributions that can lead to inadvertent leakage if not handled properly [9].

- Experimental Protocol:

- Data Source & Assay Classification: Data was curated from the ChEMBL database. Assays were classified as VS (diverse compounds) or LO (congeneric compounds with high similarity) based on the pairwise similarity of their compounds [9].

- Task-Specific Splitting: Different data splitting schemes were designed for VS and LO tasks to reflect real-world application scenarios and prevent unrealistic information transfer [9].

- Evaluation under Low-Data Scenarios: The benchmark evaluates models in both few-shot and zero-shot scenarios, which are critical for assessing a model's generalizability without leakage [9].

- Key Finding: The study found that sophisticated training strategies like meta-learning were effective for VS tasks, but simpler QSAR models performed well for LO tasks, highlighting that benchmark design directly influences model selection and perceived performance [9].

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key computational tools and data resources used in the featured case studies.

| Item Name | Function / Application | Relevance to Leakage Prevention |

|---|---|---|

| ChEMBL Database | A large-scale, open-source bioactivity database containing compound-property data from scientific literature [9] [6]. | Serves as the primary data source for building robust benchmarks. Requires careful preprocessing to avoid inherent biases. |

| Matched Molecular Pair (MMP) Formalism | A method to represent pairs of compounds that differ by a single chemical transformation [6]. | The fundamental data structure for AC prediction studies. Leakage prevention requires splitting at the compound level, not the MMP level. |

| Extended Connectivity Fingerprints (ECFP4) | A type of circular fingerprint that encodes molecular substructures and is widely used for chemical similarity searches and machine learning [6]. | A standard method for numerically representing molecules and MMPs for model input. |

| Advanced Cross-Validation (AXV) | A data splitting protocol that ensures no compounds in the training set are in the test set by using a compound-level hold-out [6]. | A core methodological tool for explicitly preventing data leakage in compound-centric prediction tasks. |

Experimental Workflow & Data Partitioning Diagrams

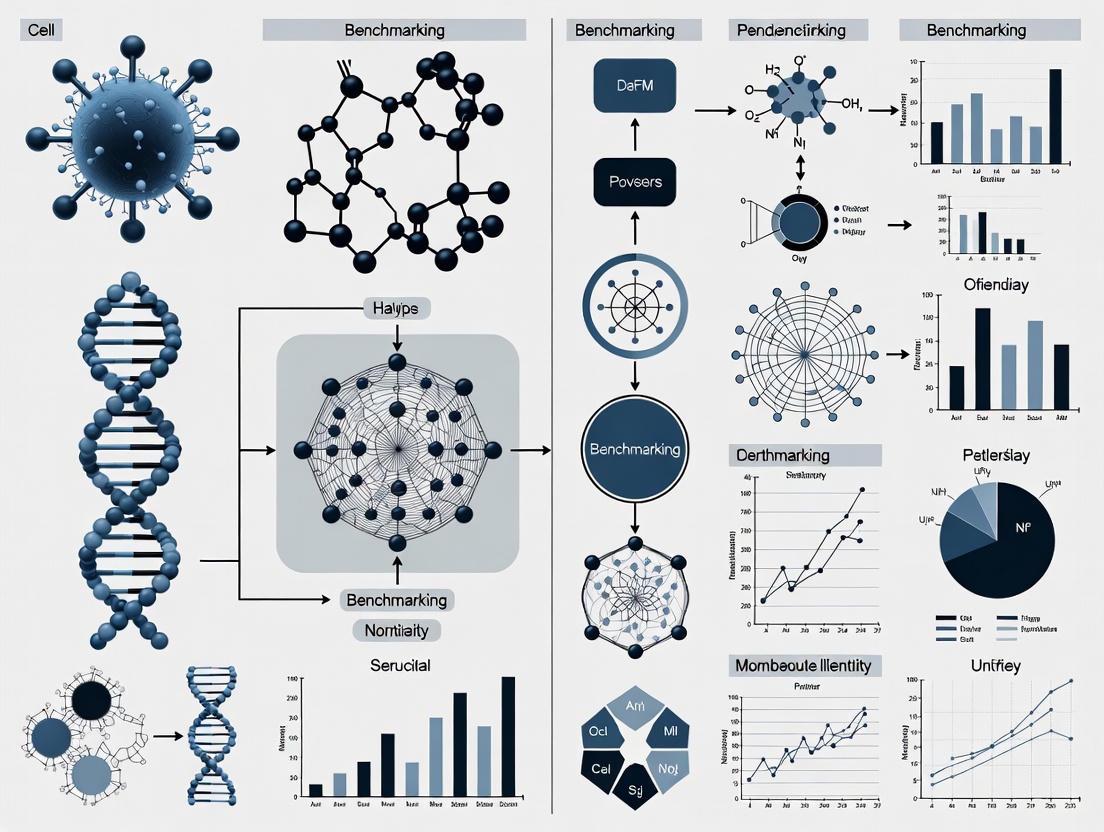

The following diagram illustrates the rigorous data partitioning strategy used to prevent data leakage in activity cliff prediction studies [6].

Data Splitting to Prevent Leakage

This workflow ensures that no compound appears in both the training and test sets, so that test performance is not inflated by compound overlap.

The diagram below outlines the high-level process for creating a robust, leakage-free benchmark for computational models, integrating lessons from both case studies.

Leakage-Aware Benchmark Creation

FAQs on Data Leakage and Reproducibility

What is data leakage in the context of single-cell foundation model (scFM) benchmarking? Data leakage refers to the unintentional sharing of information between the training and evaluation phases of a model. In scFM benchmarking, this can severely compromise the validity of perturbation effect predictions. For instance, if information about a perturbation is indirectly learned during pre-training, the model's "zero-shot" prediction is no longer a true test of its understanding, but a reflection of this leaked information. The PertEval-scFM benchmark has highlighted that such issues can lead to models that fail to generalize, especially when faced with data that differs from their training set (a distribution shift) or when predicting strong/atypical perturbations [2].

Why is the distinction between reproducibility and replicability critical? These terms are often used interchangeably, but they refer to different validation stages. Reproducibility means using the original data and code to obtain the same results. Replicability means conducting a new, independent experiment and arriving at the same conclusions [10]. A study may be reproducible but not replicable if the original findings were a product of hidden data leakage or sampling error. True scientific rigor requires both.

How does data leakage contribute to the broader "replication crisis"? The replication crisis is the growing observation that many published scientific findings cannot be reproduced by other researchers [11]. Data leakage is a direct technical cause of this problem. It creates overly optimistic performance metrics during the initial study, leading to published results that cannot be replicated in real-world settings or independent labs. This wastes resources, as noted in reports that landmark findings in preclinical research have replication rates as low as 11-20% [11], and undermines trust in scientific literature [12].

What are common sources of data leakage in computational biology?

- Temporal Leakage: Using data from a "future" experiment to train a model meant to predict it.

- Preprocessing Leakage: Applying normalization or feature selection to the entire dataset before splitting it into training and test sets.

- Cross-Contamination in Splits: Inadequate splitting of single-cell data, where cells from the same donor or batch end up in both training and test sets, allowing the model to "memorize" donor-specific noise.

- Benchmarking Leakage: In scFM research, a model's pre-training data may inadvertently include information that is supposed to be held out for final evaluation on a benchmark task [2] [13].

Troubleshooting Guide: Preventing Data Leakage

| Problem | Symptom | Solution |

|---|---|---|

| Over-optimistic model performance | Model performs nearly perfectly on test data but fails on new, external data [2]. | Implement strict, domain-aware data splitting (e.g., by patient or batch). Use standardized frameworks like BioLLM for consistent evaluation [13]. |

| Failure to generalize | Model cannot predict effects under distribution shift or for strong perturbations [2]. | Apply rigorous cross-validation. Use holdout sets that are truly novel. Benchmark against simple baseline models to gauge true added value [2]. |

| Irreproducible results | Inability to obtain the same results from the original data and code [10]. | Practice open science: share all data, code, and analysis scripts. Use tools like the Open Science Framework for preregistration and sharing [10]. |

| High false positive rates | Findings are statistically significant in initial study but not in follow-up work [12]. | Preregister your study protocol and statistical analysis plan. Avoid p-hacking and HARKing (Hypothesizing After Results are Known) [14] [12]. |

Experimental Protocol: A Leakage-Free scFM Benchmarking Workflow

This protocol provides a methodology for conducting a robust, leakage-free benchmark of single-cell foundation models for perturbation effect prediction, based on frameworks like PertEval-scFM and BioLLM [2] [13].

1. Pre-Experimental Planning: Preregistration

- Action: Before any analysis, write and register a study protocol.

- Details: The protocol must specify the exact research question, the scFMs to be evaluated, the source and version of all benchmarking datasets, the primary outcome metric (e.g., accuracy in predicting perturbation effects), and the precise statistical tests to be used [14].

- Purpose: This prevents HARKing and outcome switching, safeguarding against conscious or unconscious bias.

2. Data Preparation and Curation

- Action: Establish a clean, held-out evaluation dataset.

- Details: For perturbation prediction, this involves compiling a dataset of post-perturbation gene expression profiles. Crucially, ensure that no information from these specific perturbation experiments was used in the pre-training data of any scFM being tested. Meticulous documentation of data provenance is key.

- Purpose: Creates a pure test set, free from pre-training contamination.

3. Model Integration and Zero-Shot Setup

- Action: Integrate scFMs using a unified framework and extract embeddings.

- Details: Use a framework like BioLLM, which provides standardized APIs for diverse scFMs (e.g., scGPT, Geneformer, scFoundation), eliminating architectural and coding inconsistencies [13]. In a zero-shot setup, the model's pre-trained embeddings are used directly without any further training on the benchmark task.

- Purpose: Ensures a fair and consistent comparison between different model architectures.

4. Evaluation and Baseline Comparison

- Action: Train a simple predictive model on the scFM embeddings and compare against baselines.

- Details: Train a simple classifier (e.g., logistic regression) on the extracted embeddings to predict perturbation effects. Compare its performance against simpler, non-contextualized baselines (e.g., models using raw gene expression counts). The PertEval-scFM benchmark found that scFM embeddings often do not provide consistent improvements over these baselines [2].

- Purpose: Determines whether the complex scFM provides tangible benefits over simpler, more interpretable methods.

5. Robustness and Sensitivity Analysis

- Action: Test model performance under varied conditions.

- Details: Evaluate the model on different subsets of data, such as strong vs. weak perturbations, or on data from a different laboratory or technology platform (distribution shift). Document where performance drops significantly.

- Purpose: Reveals the limitations and failure modes of the models, providing a more complete picture than a single aggregate performance score [2].
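
Steps 4 and 5 can be combined into a per-stratum report that compares a model against a simple baseline. The sketch below is a hedged illustration and not part of PertEval-scFM or BioLLM: the record layout, the stratum labels, and all numeric values are made up, and mean squared error stands in for whatever primary metric was preregistered.

```python
from collections import defaultdict

def mse(pred, truth):
    """Mean squared error between two equally long sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth)

def stratified_report(records):
    """Compare model and baseline error separately for each stratum."""
    by_stratum = defaultdict(list)
    for rec in records:
        by_stratum[rec["stratum"]].append(rec)

    report = {}
    for stratum, rows in by_stratum.items():
        truth = [r["truth"] for r in rows]
        model_mse = mse([r["model"] for r in rows], truth)
        baseline_mse = mse([r["baseline"] for r in rows], truth)
        report[stratum] = {
            "n": len(rows),
            "model_mse": model_mse,
            "baseline_mse": baseline_mse,
            "model_beats_baseline": model_mse < baseline_mse,
        }
    return report

# Made-up effect sizes and predictions (illustrative only).
records = [
    {"stratum": "weak", "truth": 0.1, "model": 0.1, "baseline": 0.15},
    {"stratum": "weak", "truth": 0.2, "model": 0.25, "baseline": 0.15},
    {"stratum": "strong", "truth": 2.0, "model": 1.0, "baseline": 1.5},
    {"stratum": "strong", "truth": 3.0, "model": 1.5, "baseline": 2.0},
]
report = stratified_report(records)
```

Reporting each stratum separately keeps a failure on strong perturbations from being hidden inside a favorable aggregate score.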

The following workflow diagram illustrates the key stages of this protocol, highlighting the critical points where data integrity must be enforced to prevent leakage.

The following table details essential computational tools and resources for conducting rigorous scFM benchmarking research.

| Item | Function in Research |

|---|---|

| BioLLM Framework | A unified system that simplifies the process of using, comparing, and improving diverse single-cell foundation models (scFMs) by providing standardized APIs and comprehensive documentation [13]. |

| PertEval-scFM Benchmark | A standardized framework designed specifically for the evaluation of models for perturbation effect prediction, enabling systematic comparison against simpler baseline models [2]. |

| Open Science Framework (OSF) | An infrastructure for supporting the research workflow, facilitating preregistration of study protocols, sharing of data and analysis code, and collaboration [10]. |

| Registered Reports | A publication format where peer review happens before data collection and results are known. This incentivizes high-quality research design and reduces publication bias for null results [14]. |

| Data Management Plan | A formal document that outlines what data will be collected, and how it will be organized, stored, handled, and protected during and after the research project to ensure long-term integrity and accessibility [14]. |

Quantitative Impact of the Reproducibility Crisis

The tables below summarize key quantitative findings that highlight the scale and impact of the reproducibility crisis across scientific fields.

Table 1: Replication Rates in Scientific Research

| Field | Replication Rate | Source / Context |

|---|---|---|

| Psychology | ~40% | AI-predicted likelihood of replicability for over 40,000 articles [10]. |

| Preclinical Cancer Research | <50% | Reproducibility Project: Cancer Biology found fewer than half of experiments were reproducible [12]. |

| Landmark Preclinical Studies | 11-20% | Reports from biotech companies Amgen and Bayer Healthcare [11]. |

Table 2: Perverse Incentives and Problematic Practices

| Issue | Statistic | Implication |

|---|---|---|

| Publication Bias for Positive Results | ~85% of published literature reports positive results [12]. | Creates a distorted, falsely successful scientific record. |

| Prevalence of HARKing | 43% of researchers admitted to doing it at least once [12]. | Increases the likelihood that false hypotheses are published. |

| Financial Cost in the U.S. | $28 Billion USD annually [12]. | Massive waste of research funding on non-reproducible work. |

Detailed Methodologies from Key Studies

1. PertEval-scFM: Benchmarking for Perturbation Effect Prediction

- Objective: To evaluate whether zero-shot embeddings from single-cell foundation models (scFMs) enhance prediction of transcriptional responses to perturbations compared to simpler baselines.

- Methodology:

- Framework Setup: A standardized evaluation framework (PertEval-scFM) was established to ensure consistent model comparison.

- Model Embedding Extraction: Multiple scFMs were used to generate contextualized embeddings for single-cell data in a zero-shot manner (no fine-tuning on the benchmark tasks).

- Baseline Model Training: Simpler, non-contextualized models (e.g., based on raw gene expression counts) were trained for the same perturbation prediction task.

- Performance Comparison: The predictive performance of classifiers trained on scFM embeddings was directly compared to the baseline models across various conditions, including distribution shifts and different perturbation strengths.

- Key Finding: The benchmark revealed that scFM embeddings did not provide consistent improvements over the simpler baseline models, and all models struggled with predicting strong or atypical perturbation effects [2].

2. AI-Powered Replicability Prediction

- Objective: To develop a procedure to accurately predict whether a given scientific study could be replicated without undertaking costly new experiments.

- Methodology:

- Model Training: A machine learning algorithm was trained to read the full text of scientific papers, analyzing cues in the writing (5,000-12,000 words) that correlate with replicability.

- Validation: The model was validated against known replication outcomes.

- Large-Scale Survey: The trained algorithm was applied to over 40,000 articles in top psychology journals published over 20 years to estimate the field's overall replicability rate.

- Key Finding: The model correctly predicted replication outcomes 65-78% of the time, and its survey suggested only about 40% of the studied psychology articles were likely to replicate [10].

Quick Reference: Types of Data Leakage

| Leakage Type | Brief Description | Common Example in scFM Benchmarking |

|---|---|---|

| Improper Data Splitting | Test data contaminates the training process, leading to over-optimistic performance. | Splitting cell data randomly by observation instead of by donor or batch, causing highly similar cells in both training and test sets [15]. |

| Feature Leakage | Using information for training that would not be available at the time of prediction in a real-world scenario. | In time-series prediction, using a future measurement (e.g., a later time point) to predict a past or present state [16] [15]. |

| Target Leakage | The training data includes a variable that is a direct proxy for the target itself. | A feature like "payment_status" is used to predict "loan_default"; the status is a direct consequence of the target [16]. |

| Preprocessing Leakage | Preprocessing steps (e.g., normalization, imputation) are applied to the entire dataset before splitting. | Calculating the mean and variance for normalization from the entire dataset (including test data) before splitting into train and test sets [16]. |

| Temporal Dependencies | Ignoring the time-dependent structure of data, violating the causal order of events. | In perturbation prediction, training on data collected after the time point you are trying to predict [16] [15]. |

Frequently Asked Questions

Q1: During scFM benchmarking, our model achieves near-perfect accuracy on the test set but fails completely on new experimental data. What could be the cause?

This is a classic sign of data leakage, most likely from Improper Data Splitting [15]. If your data splitting strategy does not account for the underlying biological structure, you will get optimistically biased performance. For example, in single-cell data, if cells from the same donor, culture, or sequencing batch are spread across both training and test sets, the model may learn to recognize technical artifacts or donor-specific signatures instead of the general biological signal of interest. When applied to a new dataset with different technical variations, the model's performance drops significantly [15].

Q2: What is the difference between a data leak and a data breach in the context of AI research?

This is a critical distinction. A data breach is a security incident where sensitive data is intentionally stolen by an unauthorized party, often through a cyberattack [16]. In contrast, data leakage in machine learning is a methodological error where information from outside the training dataset is unintentionally used to create the model [16] [15]. This leads to incorrect and irreproducible performance estimates, which is a primary focus in ensuring robust scFM benchmarking [17].

Q3: Our pipeline uses a standardized preprocessing step (like normalization) before splitting the data. Is this risky?

Yes, this practice, known as Preprocessing Leakage, is a common and serious risk [16] [18]. Any step that calculates statistics (like mean, standard deviation, or principal components) from the entire dataset before splitting will allow information from the test set to influence the training process. The model will be evaluated on data that it has already "seen" in a statistical sense, making it perform better than it would on truly independent, new data [16].

Q4: How can temporal dependencies cause leakage in predicting perturbation effects?

Temporal Dependencies are a major concern in dynamic biological processes. Leakage occurs when you use information from the future to predict the past [16] [15]. For instance, if you are building a model to predict a cell's state at time T, you must ensure that all data used for training was generated only up to time T. If your training data inadvertently includes measurements from time T+1, the model will learn this non-causal relationship and will fail to generalize to real-world scenarios where future data is, by definition, unavailable [16].

Troubleshooting Guide: Diagnosing and Fixing Leakage

Issue: Suspected Improper Splitting

- Symptoms: High performance on the held-out test set that does not replicate on a truly external validation set.

- Diagnosis: Check the splitting unit. Was the data split randomly by individual cells? If so, and your data has multiple cells per donor or batch, leakage is likely [15].

- Solution: Implement a group-based splitting strategy. Split the data by the independent experimental unit (e.g., by donor ID, cell culture plate, or sequencing batch). This ensures that all cells from one independent unit are entirely in either the training or the test set, providing a more realistic estimate of performance on new subjects [15].
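The diagnosis step above can be sketched as a small overlap check. This is an illustration only: the function name `find_group_overlap` and the donor labels are hypothetical, and in practice the labels would come from your own per-cell metadata.

```python
def find_group_overlap(train_groups, test_groups):
    """Return the sorted group labels that appear in both partitions."""
    return sorted(set(train_groups) & set(test_groups))


# Hypothetical per-cell donor labels after a random, cell-level split.
leaky_train = ["donor_A", "donor_A", "donor_B", "donor_C"]
leaky_test = ["donor_B", "donor_D"]

# Hypothetical per-cell donor labels after a donor-level split.
clean_train = ["donor_A", "donor_A", "donor_B"]
clean_test = ["donor_C", "donor_D"]

print(find_group_overlap(leaky_train, leaky_test))  # ['donor_B']
print(find_group_overlap(clean_train, clean_test))  # []
```

A non-empty result means at least one independent unit contributes cells to both partitions, so the split should be redone at the group level.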

Issue: Suspected Feature or Target Leakage

- Symptoms: The model's performance is unrealistically high, and upon inspection, you find a feature that seems to be a perfect predictor.

- Diagnosis: For every feature used in training, ask: "Would this information have been available in a real-world setting at the moment I need to make the prediction?" [16]

- Solution: Perform a thorough causal review of your features. Remove any variable that is set or collected after the event you are trying to predict or that is directly caused by the target variable [16].
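One way to shortlist candidates for the causal review is to flag features that are almost perfectly correlated with the target. The sketch below assumes NumPy is available; the function name, the 0.95 threshold, and the feature names are hypothetical choices, and a high correlation is only a prompt for manual inspection, not proof of leakage.

```python
import numpy as np


def flag_suspicious_features(X, y, names, threshold=0.95):
    """Return names of features whose |Pearson r| with the target exceeds threshold."""
    flagged = []
    for j, name in enumerate(names):
        r = np.corrcoef(X[:, j], y)[0, 1]
        if abs(r) > threshold:
            flagged.append(name)
    return flagged


# Hypothetical data: three noise features plus one that is the target plus tiny noise.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=200).astype(float)
noise = rng.normal(size=(200, 3))
leaky = y + 0.01 * rng.normal(size=200)
X = np.column_stack([noise, leaky])
names = ["gene_a", "gene_b", "gene_c", "post_outcome_flag"]

flagged = flag_suspicious_features(X, y, names)
print(flagged)  # ['post_outcome_flag']
```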

Issue: Suspected Preprocessing Leakage

- Symptoms: The model performs well on the test set but fails when you try to preprocess new data and make predictions.

- Diagnosis: Review your code. Look for steps like normalization, imputation, or feature selection that are performed on the combined dataset.

- Solution: Structure your workflow as a pipeline. Treat all preprocessing steps as part of the model training. Learn the parameters (e.g., mean and variance for scaling) from the training set only, and then apply those learned parameters to the test set and any future data [18].
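A minimal sketch of the pipeline approach, assuming scikit-learn and NumPy are available. The synthetic data, the choice of `StandardScaler` and `LogisticRegression`, and the variable names are illustrative assumptions, not the setup used in the cited work.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical data that has already been split into train and test.
rng = np.random.default_rng(0)
X_train = rng.normal(loc=5.0, scale=2.0, size=(80, 4))
y_train = (X_train[:, 0] > 5.0).astype(int)
X_test = rng.normal(loc=5.0, scale=2.0, size=(20, 4))
y_test = (X_test[:, 0] > 5.0).astype(int)

# Scaling is part of the model, so its statistics come from X_train only.
model = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression()),
])
model.fit(X_train, y_train)

# The scaler's learned mean matches the training mean, not the pooled mean.
learned_mean = model.named_steps["scale"].mean_
print(np.allclose(learned_mean, X_train.mean(axis=0)))  # True

# The test set is transformed with the training statistics inside score().
test_accuracy = model.score(X_test, y_test)
```

Because the scaler lives inside the pipeline, the same object can be passed to cross-validation utilities and the scaling statistics are re-learned on each training fold.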

Experimental Protocol: A Leakage-Resistant scFM Benchmarking Workflow

The following diagram illustrates a robust workflow designed to prevent common data leakage sources in scFM benchmarking.

Step-by-Step Methodology:

- Initial Data Partitioning: This is the most critical step. Immediately after data collection and curation, split your dataset by the fundamental independent biological unit (e.g., donor ID, experimental batch, or cell culture). This prevents improper splitting by ensuring that correlated samples from the same source do not leak between training and test sets [15]. Keep the test set completely untouched and unseen until the final evaluation.

- Preprocessing and Featurization: Perform all data preprocessing steps (e.g., normalization, handling missing values, generating embeddings from an scFM) separately on the training set. Calculate all necessary statistics (mean, variance, etc.) and parameters from the training data only [18].

- Model Training: Train your machine learning model using only the preprocessed training data. Be vigilant for feature leakage; ensure no feature incorporates information that would be unavailable in a practical deployment scenario [16].

- Application to Test Set: Apply the preprocessing parameters and featurization models learned from the training set during Preprocessing and Featurization to the held-out test set, without refitting them. This simulates the arrival of new, unseen data.

- Final Evaluation: Evaluate the trained model's performance on the preprocessed test set. This performance metric provides a realistic estimate of how the model will generalize to new independent data, as all major leakage pathways have been controlled.

The Scientist's Toolkit: Essential Reagents for Robust Benchmarking

| Tool / Reagent | Function in Leakage Prevention |

|---|---|

| Group-Based Splitter | A software function that splits datasets by a grouping variable (e.g., patient ID), preventing improper splitting by ensuring all samples from a group are in the same partition (train or test) [15]. |

| Pipeline Automation Framework | A tool (e.g., from QSPRpred or scikit-learn) that encapsulates preprocessing and model training into a single object. This ensures test data is transformed using parameters learned from the training set alone, preventing preprocessing leakage [19] [18]. |

| Causal Feature Validator | A checklist or review process to vet each feature for target leakage. It forces the researcher to confirm: "Was this feature value known and fixed before the prediction target was determined?" [16] |

| Time-Aware Splitter | A data splitting function designed for temporal data. It ensures the training set only contains data from timepoints strictly before those in the test set, preventing leakage from temporal dependencies [16] [15]. |

| Standardized Benchmarking Framework (e.g., PertEval-scFM) | A standardized framework, like PertEval-scFM, provides a consistent and rigorous method to evaluate models, helping to reveal limitations and ensure that performance claims are not inflated by data leakage [2] [3]. |

Troubleshooting Guide: Data Leakage in scFM Benchmarking

This guide helps researchers identify and correct common data leakage scenarios that compromise single-cell foundation model (scFM) benchmarks and their application in drug discovery.

Q1: Our scFM performs perfectly in validation but fails to predict therapeutic targets in real patient samples. What went wrong? This classic sign of data leakage often stems from an incomplete separation of training and test data. In single-cell research, this can occur when cells from the same biological source or experimental batch are split across training and test sets. The model then learns to recognize technical artifacts rather than underlying biology [8]. To prevent this, ensure a strict, study-level split where all cells from an entire independent study or donor are assigned to either the training or test set, never both.

Q2: We incorporated a public dataset for fine-tuning. How can we be sure we haven't introduced leakage? Using public data requires vigilance. First, meticulously audit the metadata of the public dataset to ensure it does not contain any samples, donors, or cell lines that are also present in your test set, even if the sample identifiers are different [7]. Second, apply the same rigorous pre-processing and normalization pipeline to both your internal and external datasets to prevent the model from learning to distinguish sets based on technical, non-biological features [20].

Q3: Our model identifies strong biomarkers, but they are not biologically plausible. Could leakage be the cause? Yes. Highly significant but biologically nonsensical findings can be a red flag for a subtle form of temporal leakage. This happens when the model inadvertently accesses future or concurrent information that would not be available in a real predictive scenario [8]. Re-evaluate your feature selection process to ensure that only information available at the time of "prediction" is used during training. For perturbation prediction, this means the model should not be exposed to any post-perturbation data from the test set during its training phase [2].

Q4: What is the minimum number of perturbation examples needed to fine-tune an scFM without causing target leakage? Recent research indicates that even a modest number of experimental perturbations can significantly improve a model's predictive accuracy without inducing leakage, provided the data is handled correctly. Studies have shown that incorporating as few as 10-20 validated perturbation examples during fine-tuning can dramatically improve key metrics like sensitivity and specificity for predicting therapeutic targets [21]. The critical factor is that these perturbation examples must be distinct and properly excluded from the zero-shot evaluation of the model's general capabilities [21].

Quantitative Impact of Data Leakage on scFM Performance

The table below summarizes findings from benchmark studies that reveal how data leakage and distribution shifts can degrade model performance, leading to overly optimistic initial results.

Table 1: Performance Gaps in scFM Benchmarking Revealed by Rigorous Evaluation

| Evaluation Scenario | Metric | Reported Performance | Context & Caveats |

|---|---|---|---|

| Perturbation Effect Prediction (Zero-Shot) [2] [3] | General Performance | Limited improvement over simple baselines | Fails to provide consistent gains, especially under distribution shift. |

| Perturbation Effect Prediction (Zero-Shot) [2] [3] | Prediction of Strong/Atypical Effects | All models struggle | Highlights limitation in generalizability beyond training data distribution. |

| T-cell Activation (Open-loop ISP) [21] | Positive Predictive Value (PPV) | 3% | Very low PPV for open-loop in silico perturbation (ISP) predictions. |

| T-cell Activation (Open-loop ISP) [21] | Negative Predictive Value (NPV) | 98% | Open-loop ISP excels at identifying true negatives. |

| T-cell Activation (Closed-loop ISP) [21] | Positive Predictive Value (PPV) | 9% | 3-fold increase in PPV after fine-tuning with experimental perturbation data. |
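For readers less familiar with the metrics in the table, PPV is TP / (TP + FP) and NPV is TN / (TN + FN). The sketch below uses hypothetical confusion-matrix counts chosen only to reproduce the 3% and 9% PPV values; they are not the counts reported in [21].

```python
def ppv(tp, fp):
    """Positive predictive value: fraction of predicted positives that are true."""
    return tp / (tp + fp)


def npv(tn, fn):
    """Negative predictive value: fraction of predicted negatives that are true."""
    return tn / (tn + fn)


# Hypothetical counts per 100 predicted positives (not taken from the source).
open_loop_ppv = ppv(tp=3, fp=97)    # 0.03
closed_loop_ppv = ppv(tp=9, fp=91)  # 0.09

# Hypothetical counts per 100 predicted negatives (not taken from the source).
open_loop_npv = npv(tn=98, fn=2)    # 0.98

fold_change = closed_loop_ppv / open_loop_ppv  # approximately 3
```

A high NPV alongside a low PPV is typical when true positives are rare: most negative calls are right simply because most candidates are negatives.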

Experimental Protocols for Leakage-Free scFM Benchmarking

Protocol 1: Implementing a Rigorous Train-Test Splitting Strategy for scFM Evaluation

- Objective: To create training and test sets that accurately reflect a model's ability to generalize to new, unseen biological conditions.

- Materials: Aggregated single-cell dataset (e.g., from CELLxGENE), computational environment.

- Methodology:

- Split by Study/Donor: Partition the data such that all cells from an entire independent study, patient donor, or experimental batch are assigned exclusively to the training or test set [7]. This prevents the model from learning study-specific technical biases.

- Hold-Out for Final Validation: Reserve one or more entire studies that are not used in any training or hyperparameter tuning phases. This serves as the final, unbiased test of generalizability [7].

- Stratification (if necessary): If the data partition leads to imbalanced cell type distributions between train and test sets, consider stratifying the splits at the study level to ensure all major cell types are represented in the test set.

- Expected Outcome: A significant drop in performance metrics (e.g., accuracy, F1-score) on the held-out test set compared to a leaky validation set, providing a realistic assessment of the model's utility.
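The splitting steps above can be sketched as follows, assuming scikit-learn and NumPy. One study is set aside for final validation, and the remaining studies are rotated through leave-one-study-out folds for development. The study labels, array contents, and the choice of `LeaveOneGroupOut` are illustrative assumptions rather than the protocol used in [7].

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut

# Hypothetical toy data: 10 cells drawn from 4 studies.
X = np.arange(20).reshape(10, 2)
y = np.array([0, 1, 0, 1, 0, 1, 0, 1, 0, 1])
study = np.array(["s1", "s1", "s1", "s2", "s2", "s3", "s3", "s3", "s4", "s4"])

# Reserve one entire study for the final, untouched evaluation.
final_study = "s4"
dev_mask = study != final_study
X_dev, y_dev, study_dev = X[dev_mask], y[dev_mask], study[dev_mask]

# Rotate the remaining studies through train/validation roles.
folds = []
for train_idx, val_idx in LeaveOneGroupOut().split(X_dev, y_dev, groups=study_dev):
    held_out = set(study_dev[val_idx])
    # No study may contribute cells to both sides of a fold.
    assert held_out.isdisjoint(study_dev[train_idx])
    folds.append((sorted(held_out), len(train_idx), len(val_idx)))

print(len(folds))  # 3
```

Hyperparameters would be tuned on the development folds only; the reserved study is scored once at the end.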

Protocol 2: Closed-Loop Fine-Tuning for Enhanced Therapeutic Target Prediction

- Objective: To improve the predictive accuracy of a pre-trained scFM for a specific disease context (e.g., RUNX1-Familial Platelet Disorder) by incorporating a small number of experimental perturbations without causing data leakage [21].

- Materials: Pre-trained scFM (e.g., Geneformer), scRNA-seq data from disease model (e.g., RUNX1-knockout HSCs), scRNA-seq data from a limited set of genetic perturbations (e.g., Perturb-seq) in a relevant cell type.

- Methodology:

- Initial Fine-tuning: Fine-tune the scFM to distinguish the disease state (e.g., RUNX1-knockout) from the healthy control state using the available scRNA-seq data.

- Perturbation Incorporation: Further fine-tune this model using the labeled perturbation dataset. Critically, the labels should indicate the cellular state (e.g., "shifted-toward-healthy"), not the identity of the perturbed gene, to force the model to learn the phenotypic effect [21].

- In Silico Perturbation (ISP) Screening: Use the final fine-tuned model to perform in silico knockout or overexpression of genes across the genome. The model will predict which perturbations shift the disease state toward a healthy state.

- Validation: The top predicted therapeutic targets must be validated using orthogonal experimental methods (e.g., with specific small-molecule inhibitors) to confirm the model's predictions [21].

- Expected Outcome: A significant increase in the Positive Predictive Value (PPV) for identifying true therapeutic targets, as demonstrated by a three-fold improvement in a T-cell activation model [21].

Workflow Visualization

Diagram 1: A closed-loop framework for scFM fine-tuning incorporates experimental data to improve prediction accuracy for therapeutic target discovery [21].

Diagram 2: The cascade of consequences from data leakage in scFM pipelines, ultimately leading to costly R&D failures [7] [2] [21].

The Scientist's Toolkit: Key Research Reagents for scFM Benchmarking

Table 2: Essential Materials and Resources for Rigorous scFM Evaluation

| Resource / Reagent | Function in scFM Benchmarking |

|---|---|

| CELLxGENE Atlas [7] [20] | A primary source of standardized, annotated single-cell datasets used for large-scale model pretraining and for creating unbiased benchmark test sets. |

| Asian Immune Diversity Atlas (AIDA) v2 [7] | An independent, unbiased dataset used to mitigate the risk of data leakage and provide rigorous external validation of model generalizability. |

| Perturb-seq Data [21] | Single-cell RNA sequencing data from genetic perturbation screens (e.g., CRISPRi/a). Used for fine-tuning scFMs in a "closed-loop" framework to improve prediction accuracy. |

| Geneformer / scGPT [7] [20] [21] | Examples of prominent single-cell foundation models with different architectures and pretraining strategies, used as base models for fine-tuning and benchmarking. |

| PertEval-scFM Framework [2] [3] | A standardized benchmarking framework specifically designed to evaluate the performance of scFMs and other models for perturbation effect prediction. |

| scGraph-OntoRWR Metric [7] | A novel, knowledge-based evaluation metric that measures the consistency of cell type relationships captured by scFMs with prior biological knowledge from cell ontologies. |

Building Leakage-Proof scFM Benchmarks: A Step-by-Step Methodological Framework

Troubleshooting Guide: Common Data Preprocessing Pitfalls in Benchmark Creation

1. Issue: Data Leakage Between Training and Test Sets

- Problem: Model performance is optimistically biased because information from the test set inadvertently influences the training process.

- Solution: Implement a temporal split where the test set contains only cases that start after a defined separation time. For ongoing cases, events occurring before this time can be used to construct test prefixes, but their targets should be marked as "NAN" and excluded from inference [22].

- Advanced Check: Use data versioning tools like lakeFS to create isolated branches for each preprocessing run, ensuring the exact data snapshot used for training is preserved and can be audited [23].

2. Issue: Inconsistent Dataset Documentation Leads to Irreproducible Results

- Problem: Preprocessing steps are inadequately documented, making it impossible to replicate the benchmark or compare results across studies.

- Solution: Adopt a principled, standardized preprocessing workflow and document all steps meticulously. Publicly share the scripts used to convert raw datasets into benchmarks [22].

3. Issue: Benchmark Suffers from Temporal and Representation Bias

- Problem: The test set is not representative of real-world scenarios, potentially favoring models that perform well only on specific case durations or types.

- Solution: Curate test sets to be unbiased concerning the mix of case durations and the number of running cases. Ensure the split is representative of the underlying process [22].

4. Issue: High Cardinality Categorical Variables and Complex Data Types

- Problem: Single-cell data or process mining events contain numerous categories or high-dimensional features, complicating the analysis.

- Solution: For categorical data, use encoding techniques like one-hot, label, or binary encoding. For high-dimensional data, employ dimensionality reduction techniques like Principal Component Analysis (PCA) [24]. Foundation models can be leveraged to extract meaningful features from such complex data [25].

Frequently Asked Questions (FAQs)

Q1: Why is principled data preprocessing so critical for creating public benchmarks in machine learning? Principled preprocessing is the foundation of reliable and unbiased research. It ensures that datasets are of high quality, models are trained and evaluated on correctly separated data, and results are reproducible. Inconsistent or flawed preprocessing leads to data leakage, biased performance metrics, and ultimately, a failure to compare different algorithms fairly, hindering scientific progress [22] [23].

Q2: What is the single most important rule to prevent data leakage in predictive process monitoring? The most critical rule is to enforce a strict temporal separation between training and test sets. The test set should only contain cases (or parts of cases) that started after all the data in the training set. This prevents the model from having access to future information during training, which is a common form of data leakage in temporal data [22].

Q3: How can I handle missing values in a benchmark dataset without introducing bias? Common techniques include:

- Removal: Deleting rows or columns with missing values, suitable for large datasets where removal doesn't cause significant data loss [23] [24].

- Imputation: Replacing missing values with a statistical measure like the mean, median, or mode of the available data [23] [24].

- Advanced Imputation: Using more sophisticated methods like k-nearest neighbors (KNN) imputation or multiple imputations by chained equations (MICE) for a more accurate estimate [24]. The choice depends on the data amount and the nature of the missingness.
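Whichever technique is chosen, the imputation statistics should be learned from the training set only. A minimal sketch with scikit-learn's `SimpleImputer`, using hypothetical toy arrays:

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Hypothetical feature matrices with missing entries, already split.
X_train = np.array([[1.0, 10.0], [2.0, np.nan], [3.0, 30.0]])
X_test = np.array([[np.nan, 100.0], [4.0, np.nan]])

imputer = SimpleImputer(strategy="median")
X_train_imp = imputer.fit_transform(X_train)  # medians computed on training rows only
X_test_imp = imputer.transform(X_test)        # test gaps filled with training medians

print(imputer.statistics_)  # [ 2. 20.]
```

The value 100.0 in the test set has no influence on the fill value for the second column, which is what keeps the test set independent.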

Q4: What is the purpose of scaling and normalization in data preprocessing? Many machine learning algorithms are sensitive to the scale of input features. If features are on different scales, a feature with a larger range can disproportionately influence the model. Scaling and normalization transform all features to a comparable range, which helps models like Support Vector Machines and k-Nearest Neighbors converge faster and perform better [23] [24].

Q5: In the context of single-cell foundation model benchmarking, what are the key evaluation scenarios?

The scDrugMap framework, for instance, uses two primary evaluation strategies [25]:

- Pooled-Data Evaluation: Models are trained and tested on aggregated data from multiple studies. This tests the model's performance on a large, combined dataset.

- Cross-Data Evaluation: Models are trained on a collection of datasets and then tested independently on held-out datasets from individual studies. This tests the model's ability to generalize to new, unseen data sources.

Quantitative Data on Benchmark Datasets and Preprocessing

The following tables summarize quantitative data from benchmarking efforts and standard preprocessing techniques.

Table 1: Summary of Curated Single-Cell Data Collections for Drug Response Benchmarking [25]

| Data Collection | Number of Cells | Number of Datasets | Number of Studies | Key Characteristics |

|---|---|---|---|---|

| Primary Collection | 326,751 | 36 | 23 | Covers 14 cancer types, 3 therapy types, 5 tissue types, and 21 treatment regimens. |

| Validation Collection | 18,856 | 17 | 6 | Includes 5 cancer types and 3 therapy types; used for external testing. |

Table 2: Common Data Preprocessing Techniques and Their Applications [23] [24]

| Preprocessing Step | Common Techniques | Brief Description | Typical Use Case |

|---|---|---|---|

| Handling Missing Values | Listwise Deletion, Mean/Median Imputation, KNN Imputation | Removes incomplete rows/columns or infers missing values. | Preparing data for algorithms that cannot handle missingness. |

| Categorical Encoding | One-Hot Encoding, Label Encoding | Converts non-numerical categories into numerical format. | Making categorical data understandable for ML algorithms. |

| Feature Scaling | Min-Max Scaler, Standard Scaler, Robust Scaler | Brings all features to a similar scale. | Required for distance-based models (e.g., SVM, KNN). |

| Data Splitting | Temporal Split, Random Split | Divides data into training, validation, and test sets. | Evaluating model performance on unseen data; temporal splits prevent leakage. |

Table 3: Foundation Model Performance in Pooled-Data Evaluation (Primary Collection) [25]

| Foundation Model | Training Strategy | Mean F1 Score (Cell Line Data) | Key Takeaway |

|---|---|---|---|

| scFoundation | Layer Freezing | 0.971 | Top-performing model, significantly outperformed the lowest-performing model. |

| scBERT | Layer Freezing | 0.630 | Example of a lower-performing model in this specific evaluation scenario. |

Experimental Protocols for Benchmark Creation

Protocol 1: Creating Unbiased Benchmark Datasets from Event Logs (e.g., BPIC Challenges)

This protocol is based on principles to prevent data leakage and create representative test sets [22].

- Data Acquisition: Obtain the raw event log (e.g., in `.xes` format).

- Define Separation Time: Choose a specific timestamp that splits the timeline. All cases that start after this point are eligible for the test set.

- Construct Training Set: Include all cases that were completed before the separation time.

- Construct Test Set:

- Identify cases that start after the separation time. These form the core of the test set.

- For ongoing cases that started before and ended after the separation time, events occurring before the separation time can be used to build prefixes. However, the target values for these prefixes must be set to "NAN" and excluded from model training and evaluation to prevent leakage.

- Add Prediction Targets: Augment the dataset with new columns for the specific prediction task:

- Remaining Time: Add a `remain_time` column.

- Outcome: Add binary target columns (e.g., `approved`, `declined`, `canceled`).

- Next Event: Add a `nextEvent` column.

- Data Export: Save the training and test sets as separate files (e.g., CSV or Pickle for practicality with large datasets like BPIC_2017).
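The case assignment in this protocol can be sketched in plain Python. The case records, the function name `temporal_split`, and the handling of cases that start exactly at the separation time (treated here as ongoing) are assumptions made for illustration; the published preprocessing scripts referenced in [22] may differ.

```python
from datetime import datetime

# Hypothetical cases: (case_id, start, end).
cases = [
    ("c1", datetime(2017, 1, 5), datetime(2017, 2, 1)),    # completed before the cut
    ("c2", datetime(2017, 2, 20), datetime(2017, 4, 10)),  # ongoing at the cut
    ("c3", datetime(2017, 3, 15), datetime(2017, 5, 1)),   # starts after the cut
]
separation_time = datetime(2017, 3, 1)


def temporal_split(cases, separation_time):
    """Assign each case to train, test, or ongoing based on the separation time."""
    train, test, ongoing = [], [], []
    for case_id, start, end in cases:
        if end < separation_time:
            train.append(case_id)    # fully observed before the cut
        elif start > separation_time:
            test.append(case_id)     # entirely after the cut
        else:
            ongoing.append(case_id)  # spans the cut: prefixes only, targets excluded
    return train, test, ongoing


train_cases, test_cases, ongoing_cases = temporal_split(cases, separation_time)
print(train_cases, test_cases, ongoing_cases)  # ['c1'] ['c3'] ['c2']
```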

Protocol 2: Benchmarking Foundation Models for Single-Cell Drug Response Prediction

This protocol outlines the methodology for a comprehensive benchmarking study as implemented in scDrugMap [25].

- Data Curation and QC:

- Manually collect a large number of single-cell RNA-seq datasets from public studies.

- Perform quality control to filter out low-quality cells.

- Annotate cells with metadata: cancer type, tissue type, therapy type, and drug response status (sensitive/resistant).

- Split the curated data into a primary collection for main benchmarking and a separate validation collection for external testing.

- Model Selection: Choose a set of foundation models to evaluate, including both domain-specific models (e.g., scFoundation, scGPT, Geneformer) and general-purpose large language models (e.g., LLaMa).

- Model Adaptation:

- Strategy 1 (Layer Freezing): Keep the pre-trained weights of the foundation model frozen and train only a lightweight classification head on top.

- Strategy 2 (Fine-Tuning): Use Low-Rank Adaptation (LoRA) to efficiently fine-tune the foundation model on the specific drug response task.

- Evaluation:

- Pooled-Data Evaluation: Train and test models on the aggregated primary collection to assess overall performance.

- Cross-Data Evaluation: Train models on the primary collection and test them on the held-out validation collection to assess generalization to new data sources.

- Zero-Shot Evaluation: Test some models' pre-trained capabilities without any task-specific fine-tuning.

- Performance Analysis: Compare models based on metrics like F1 score across different data categories (tissue, cancer, drug class).
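To give a sense of why LoRA is described as parameter-efficient in the model adaptation step above, the sketch below counts trainable parameters for a single weight matrix. LoRA replaces the update to a d_out x d_in matrix with the product of a d_out x r and an r x d_in matrix. The layer size and rank are hypothetical and do not describe any specific model in [25].

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters for a rank-r update: B (d_out x r) plus A (r x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 768, 768, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
ratio = lora / full

print(full, lora)  # 589824 12288
```

With these assumed sizes, the low-rank update trains roughly 2% of the parameters that full fine-tuning of the same matrix would.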

Experimental Workflow and Signaling Pathway Diagrams

Benchmark Creation Workflow

scFM Benchmarking Process

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Principled Data Preprocessing and Benchmarking

| Item | Function | Example / Note |

|---|---|---|

| Data Version Control (lakeFS) | Manages data lake versions with Git-like branching, ensuring reproducible preprocessing pipelines and isolating experiment runs [23]. | Critical for preventing non-deterministic pipelines and supporting ML governance. |

| Workflow Management (Apache Airflow) | Orchestrates complex data preprocessing workflows as Directed Acyclic Graphs (DAGs), automating the sequence of tasks [23]. | Ensures preprocessing steps are consistent and repeatable. |

| Single-Cell Foundation Models (scFoundation, scGPT) | Pre-trained models on large-scale scRNA-seq data that can be adapted via transfer learning for downstream tasks like drug response prediction [25]. | Provides a powerful starting point, often outperforming models trained from scratch. |

| Low-Rank Adaptation (LoRA) | A parameter-efficient fine-tuning technique that allows for effective adaptation of large foundation models without the cost of full fine-tuning [25]. | Reduces computational requirements and training time. |

| Python Data Libraries (pandas, scikit-learn) | Provide built-in functions and libraries for data manipulation, imputation, encoding, and scaling, streamlining the preprocessing code [23] [24]. | The standard toolkit for implementing preprocessing steps. |

| Benchmark Creation Scripts | Custom Python scripts (e.g., for converting BPIC datasets) that implement the principled preprocessing steps defined in research papers [22]. | Ensures the benchmark is created exactly as described, aiding reproducibility. |

FAQs: Understanding Data Partitioning

Q1: What is the primary goal of strategic data splitting in single-cell foundation model (scFM) benchmarking?

The primary goal is to prevent data leakage, which occurs when information from the test dataset inadvertently influences the model training process. This breach in the separation between training and test data leads to overly optimistic performance metrics that do not reflect the model's true ability to generalize to unseen data, thereby compromising the validity and reproducibility of the benchmarking study [26].

Q2: How does "assay-wise" partitioning differ from "compound-wise" partitioning?

- Assay-Wise Partitioning involves splitting the data based on experimental batches or technological platforms. This ensures that all data from a particular assay (e.g., a specific scRNA-seq protocol or a distinct drug sensitivity screen) is entirely contained within either the training or the test set. It tests the model's ability to generalize across different experimental conditions [27].

- Compound-Wise Partitioning involves splitting the data based on the entities being studied, such as different chemical compounds or distinct cell lines. This ensures that all data related to a specific compound is confined to a single split (training, validation, or test). It tests the model's ability to predict for entirely new compounds that were not seen during training [28].

Q3: What is a common, hidden source of data leakage in scFM workflows?

A common source is performing feature selection or data preprocessing on the entire dataset before splitting. When steps like Highly Variable Gene (HVG) selection or covariate regression are applied to the combined training and test data, information from the test set leaks into the training process. These operations must be fit on the training split only, after the data has been partitioned, and then applied unchanged to the validation and test splits [26] [29].
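As a rough illustration of leak-free gene selection, the sketch below ranks genes by variance on the training matrix and reuses the same indices for the test matrix. It assumes NumPy; plain variance ranking is a simplified stand-in for a real HVG procedure, and the matrices and function name are hypothetical.

```python
import numpy as np

# Hypothetical expression matrices (cells x genes), already split.
rng = np.random.default_rng(0)
n_genes = 50
train_expr = rng.normal(size=(200, n_genes))
train_expr[:, :5] *= 10  # make the first five genes highly variable in training
test_expr = rng.normal(size=(60, n_genes))


def select_top_variance_genes(expr, n_top):
    """Return sorted column indices of the n_top most variable genes."""
    variances = expr.var(axis=0)
    return np.sort(np.argsort(variances)[-n_top:])


# Selection is learned from the training cells only.
hvg_idx = select_top_variance_genes(train_expr, n_top=5)

# The same gene indices are applied to both partitions.
train_hvg = train_expr[:, hvg_idx]
test_hvg = test_expr[:, hvg_idx]

print(hvg_idx.tolist())  # [0, 1, 2, 3, 4]
```

The test matrix never enters `select_top_variance_genes`, so its variance structure cannot influence which genes the model sees.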

Q4: Why is a simple random split often insufficient for scFM benchmarking?

Simple random splitting does not account for the complex, nested structure of biological data. It can lead to non-representative splits where, for instance, highly similar biological replicates or technical replicates from the same donor end up in both training and test sets. This can artificially inflate performance, as the model is not being tested on a truly independent sample. Structured splits like assay-wise or compound-wise are necessary to rigorously assess generalizability [30] [27].

Troubleshooting Guides

Issue 1: Inflated Performance Metrics Despite Using a Separate Test Set

Problem Your scFM shows excellent performance on the test set during benchmarking, but this performance drastically drops when applied to new, external data. This is a classic symptom of data leakage.

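Diagnosis and Solution Follow this diagnostic workflow to identify and remedy the source of leakage: check the splitting unit (individual cells versus donors, batches, or compounds), check whether any preprocessing or feature selection was fitted before the split, and check whether any feature would be unavailable at prediction time.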

Issue 2: Implementing Compound-Wise Splitting for Drug Sensitivity Prediction

Challenge Creating a test set that contains entirely new compounds to realistically simulate a drug discovery scenario.

Step-by-Step Protocol

- Compound List Extraction: Compile a definitive list of all unique compounds (or cell lines) present in your entire dataset.

- Grouped Splitting: Use scikit-learn's GroupShuffleSplit or a similar function, passing the compound identifier as the group label. This ensures that the data is split by compound group. GroupShuffleSplit does not stratify on its own; if you also need to approximately preserve the distribution of a key variable (e.g., high vs. low sensitivity) in each split, a stratified group splitter such as StratifiedGroupKFold is an option.

- Validation of Splits: Manually verify that no compound appears in more than one data split (training, validation, and test).
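
A minimal sketch of the protocol above, assuming scikit-learn and NumPy are available. The toy arrays, the integer compound IDs, and the roughly 60/20/20 proportions are illustrative assumptions rather than values from the source.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

# Toy data: 20 hypothetical compounds with 10 measurements each
compounds = np.repeat(np.arange(20), 10)
rng = np.random.default_rng(0)
X = rng.normal(size=(len(compounds), 8))
y = rng.normal(size=len(compounds))

# Step 1: hold out about 20% of the compounds as the test set
outer = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
trainval_idx, test_idx = next(outer.split(X, y, groups=compounds))

# Step 2: split the remaining compounds into training and validation sets
inner = GroupShuffleSplit(n_splits=1, test_size=0.25, random_state=0)
tr_rel, val_rel = next(
    inner.split(X[trainval_idx], y[trainval_idx], groups=compounds[trainval_idx])
)
train_idx, val_idx = trainval_idx[tr_rel], trainval_idx[val_rel]

# Step 3: verify that no compound appears in more than one split
train_c = set(compounds[train_idx].tolist())
val_c = set(compounds[val_idx].tolist())
test_c = set(compounds[test_idx].tolist())
assert train_c.isdisjoint(val_c)
assert train_c.isdisjoint(test_c)
assert val_c.isdisjoint(test_c)
```

The same pattern applies to cell lines or assays: only the array passed as `groups` changes.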

Issue 3: Managing Batch Effects with Assay-Wise Splitting

Challenge: When splitting data by assay or batch, strong technical batch effects can make it difficult for the model to learn the underlying biology, causing poor performance.

Solution Strategy

- Acknowledge the Challenge: A drop in performance with a proper assay-wise split is a more honest reflection of the model's capability to handle batch variation.

- Consider Integration Tools: As part of the training process only, you can use batch integration methods (e.g., Harmony, Seurat, scVI) to align the training data from different assays [7]. It is critical that the integration model is learned only on the training data and then applied to the test data.

- Benchmark Robustly: Evaluate your scFM's "zero-shot" integration capabilities by comparing its performance on unintegrated test data against traditional integration methods applied in a leak-free manner [7].

Quantitative Impact of Data Leakage

The table below summarizes experimental data on how different types of data leakage inflate model performance, underscoring the importance of rigorous partitioning.

Table 1: Performance Inflation Caused by Data Leakage in Predictive Modeling (adapted from [26])

| Type of Data Leakage | Phenotype / Task | Baseline Performance (r) | Performance with Leakage (r) | Inflation (Δr) |
|---|---|---|---|---|
| Feature Leakage (feature selection done on entire dataset) | Attention Problems Prediction | 0.01 | 0.48 | +0.47 |
| Feature Leakage (feature selection done on entire dataset) | Matrix Reasoning Prediction | 0.30 | 0.47 | +0.17 |
| Subject Leakage, 20% (data duplicates across splits) | Attention Problems Prediction | ~0.01 | 0.29 | +0.28 |
| Subject Leakage, 20% (data duplicates across splits) | Matrix Reasoning Prediction | ~0.30 | 0.44 | +0.14 |
| Family Leakage (related subjects in different splits) | Attention Problems Prediction | ~0.01 | 0.03 | +0.02 |
| Family Leakage (related subjects in different splits) | Matrix Reasoning Prediction | ~0.30 | 0.30 | 0.00 |
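
The feature-leakage mechanism behind the first rows of the table can be reproduced on pure noise. The simulation below is a hedged sketch and not the experiment from [26]: all data are synthetic, the helper names are hypothetical, and the sample and feature counts are arbitrary assumptions. It selects the features most correlated with the target either on all rows (leaky) or on training rows only (clean), then compares held-out correlation.

```python
import numpy as np

def top_k_by_correlation(X, y, k):
    """Indices of the k features with the largest absolute Pearson correlation with y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0)) * np.sqrt((yc ** 2).sum())
    corr = (Xc * yc[:, None]).sum(axis=0) / denom
    return np.argsort(np.abs(corr))[-k:]

def heldout_correlation(X_tr, y_tr, X_te, y_te):
    """Fit ordinary least squares on the training rows; return Pearson r on held-out rows."""
    A_tr = np.column_stack([X_tr, np.ones(len(X_tr))])
    A_te = np.column_stack([X_te, np.ones(len(X_te))])
    coef, *_ = np.linalg.lstsq(A_tr, y_tr, rcond=None)
    return np.corrcoef(A_te @ coef, y_te)[0, 1]

rng = np.random.default_rng(0)
tr, te = np.arange(100), np.arange(100, 200)
leaky_rs, clean_rs = [], []
for _ in range(5):
    X = rng.normal(size=(200, 5000))  # noise features, unrelated to the target
    y = rng.normal(size=200)          # noise target
    leaky = top_k_by_correlation(X, y, 20)          # selection sees the test rows
    clean = top_k_by_correlation(X[tr], y[tr], 20)  # selection sees training rows only
    leaky_rs.append(heldout_correlation(X[tr][:, leaky], y[tr], X[te][:, leaky], y[te]))
    clean_rs.append(heldout_correlation(X[tr][:, clean], y[tr], X[te][:, clean], y[te]))

leaky_r, clean_r = float(np.mean(leaky_rs)), float(np.mean(clean_rs))
print(f"mean held-out r with leaky selection: {leaky_r:.2f}")
print(f"mean held-out r with clean selection: {clean_r:.2f}")
```

Because the target is unrelated to every feature, any clearly positive held-out correlation in the leaky condition is produced by the selection step alone.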

The Scientist's Toolkit

Table 2: Essential Reagents and Computational Tools for Robust scFM Benchmarking

| Item / Tool Name | Type | Primary Function in Data Splitting & Leakage Prevention |
|---|---|---|
| GroupShuffleSplit (scikit-learn) | Computational Tool | Implements compound-wise or assay-wise splitting by ensuring data groups are not split across training and test sets. |
| scGPT / Geneformer | Single-Cell Foundation Model | Benchmarking targets; their zero-shot embeddings are tested on data partitioned with the strategies described here [7]. |
| Stratified Splitting | Computational Technique | Maintains the distribution of a key categorical variable (e.g., cell type, sensitivity class) across all data splits, preventing biased splits. |
| Harmony / scVI | Computational Tool | Batch integration methods used during training to correct for technical variation across assays, improving model learning on assay-wise split data [7]. |
| CellxGene Atlas | Data Resource | Provides high-quality, public single-cell datasets that can serve as an independent, unbiased test set for final validation of model performance, mitigating leakage risks [7]. |
| PAC-MAN | Computational Pipeline | A scalable analysis method for multi-sample cytometry data that handles batch effects and aligns clusters across samples, illustrating the importance of cross-sample partitioning [27]. |

Frequently Asked Questions (FAQs)

Q1: What is the primary goal of the CARA benchmark? The CARA (Compound Activity benchmark for Real-world Applications) benchmark is designed to provide a high-quality dataset and framework for developing and evaluating computational models that predict compound activity against target proteins. Its key goal is to offer a more realistic and practical evaluation by considering the biased distribution of real-world compound activity data, thereby preventing the overestimation of model performance that can occur with other benchmarks [9] [31].

Q2: How does CARA help prevent data leakage in benchmarking? CARA incorporates carefully designed train-test splitting schemes tailored to different drug discovery tasks, such as Virtual Screening (VS) and Lead Optimization (LO). This rigorous separation of training and test data helps prevent data leakage by ensuring that models are evaluated on assays and compound distributions that are not represented in the training set, mirroring real-world application scenarios and yielding more reliable performance estimates [9].

Q3: Why does CARA distinguish between Virtual Screening (VS) and Lead Optimization (LO) assays? This distinction is crucial because these two stages of drug discovery generate data with fundamentally different characteristics. VS assays typically contain compounds with a diffused distribution and lower pairwise similarities, representing diverse chemical libraries. In contrast, LO assays contain congeneric compounds with highly similar scaffolds, representing optimized chemical series. Models may perform differently on these tasks, so evaluating them separately provides more meaningful insights for real-world applications [9].

Q4: What are the consequences of data leakage in a benchmark? Data leakage, where information from the test set inadvertently influences the training process, leads to over-optimistic and biased performance estimates [8]. This makes a model appear more capable than it actually is, hinders fair comparison between different methods, and ultimately results in models that fail when deployed in real-world drug discovery settings [8] [32].

Q5: Which evaluation metrics are most relevant for real-world performance in CARA? CARA emphasizes evaluation metrics that align with practical utility. For VS tasks, this includes metrics that assess a model's ability to successfully rank active compounds. For LO tasks, the accurate prediction of activity cliffs (where small structural changes lead to large potency changes) is critical. The benchmark moves beyond simple binary classification to ensure recommendations are relevant for practice [9].
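
The answer above does not name specific metrics. As one hedged illustration of a ranking-oriented measure for VS-style tasks, the sketch below computes an enrichment factor: the hit rate among the top-ranked compounds divided by the hit rate in the whole library. Using this metric here is an assumption for illustration, not a statement about which metrics CARA reports; the function name and toy scores are hypothetical.

```python
def enrichment_factor(scores, labels, top_fraction=0.1):
    """Hit rate in the top-ranked fraction divided by the hit rate in the full library."""
    if not 0 < top_fraction <= 1:
        raise ValueError("top_fraction must be in (0, 1]")
    n = len(scores)
    n_top = max(1, int(round(n * top_fraction)))
    # Rank compounds from highest to lowest predicted score
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    total_hits = sum(labels)
    if total_hits == 0:
        return 0.0  # no actives in the library, enrichment is undefined; report 0
    return (hits_top / n_top) / (total_hits / n)

# Toy library of 10 compounds with 2 actives (label 1), both ranked at the top
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(scores, labels, top_fraction=0.2)
print(ef_20)
```

A value of 1 corresponds to random ranking; larger values mean actives are concentrated near the top of the list.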

Troubleshooting Guides

Issue 1: Poor Model Generalization on New Assays

This issue occurs when a model performs well on its training data but fails to generalize to new, unseen assays, often due to biased data or incorrect data splitting.

- Problem: Model performance drops significantly on test assays.

- Solution: Adopt the CARA data splitting protocol.

- Procedure:

  - Assay Classification: First, classify your assays as either VS-type (diffused compound distribution) or LO-type (congeneric compounds) [9].

  - Assay-Wise Splitting: Ensure that during train-test splitting, all data from a single assay (representing a specific experimental condition and target) is entirely contained within either the training set or the test set. This prevents information leakage from assay-specific biases [9].

  - Task-Specific Evaluation: Evaluate your model's performance on VS and LO assays separately to identify its specific strengths and weaknesses [9].
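
The classification step can be sketched with mean pairwise Tanimoto similarity over per-compound fingerprints. This is a hypothetical illustration: the source does not state CARA's exact classification rule, so the 0.5 threshold, the function names, and the representation of fingerprints as Python sets of bit positions are all assumptions.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bit positions."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto similarity over all compound pairs in one assay."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

def classify_assay(fingerprints, threshold=0.5):
    """Label an assay 'LO' if its compounds are mutually similar, else 'VS'.

    The threshold is an illustrative assumption, not a value from CARA.
    """
    return "LO" if mean_pairwise_similarity(fingerprints) >= threshold else "VS"

# Toy assays: one congeneric series and one diverse library (hypothetical bits)
assays = {
    "assay_congeneric": [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}],
    "assay_diverse": [{1, 2}, {3, 4}, {5, 6}],
}
assay_types = {name: classify_assay(fps) for name, fps in assays.items()}
print(assay_types)
```

Once each assay carries a type label, the assay ID can serve as the group key for splitting, and metrics can be reported per type.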

Issue 2: Managing Few-Shot and Zero-Shot Learning Scenarios

In real-world discovery, you may have very few or no measured activities for a new target. Standard models often fail in these low-data regimes.

- Problem: Your model is unable to make accurate predictions when only a few (few-shot) or no (zero-shot) task-related data points are available.

- Solution: Implement meta-learning or multi-task learning strategies.

- Procedure:

  - Strategy Selection: Based on CARA's findings, for VS tasks, leverage training strategies like meta-learning or multi-task learning across many different assays to build a more generalizable model [9].

  - Model Design: For few-shot prediction of continuous compound activities, consider architectures specifically designed for this purpose, which aggregate encodings from known compounds to capture assay information [33].

  - Validation: Rigorously validate the model in a hold-out setting that simulates the few-shot or zero-shot scenario before applying it to a truly new target.
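
The validation step can be simulated by carving each held-out assay into a small support set (the few measured activities the model may see) and a query set (the compounds it must predict). The sketch below is a minimal illustration; the function name `few_shot_episode`, the record layout, and the toy activity values are assumptions.

```python
import random

def few_shot_episode(assay_records, k, seed=0):
    """Split one held-out assay into k support records and the remaining query records."""
    if k < 0 or k > len(assay_records):
        raise ValueError("k must be between 0 and the number of records")
    rng = random.Random(seed)
    shuffled = list(assay_records)  # copy so the caller's list is not modified
    rng.shuffle(shuffled)
    return shuffled[:k], shuffled[k:]

# Hypothetical held-out assay: (compound ID, measured activity) pairs
held_out_assay = [(f"cmpd_{i}", 5.0 + 0.1 * i) for i in range(10)]

# Few-shot: the model may adapt on 3 labeled compounds, then predicts the other 7
support, query = few_shot_episode(held_out_assay, k=3)

# Zero-shot: no labeled compounds from the new assay are available
zero_support, zero_query = few_shot_episode(held_out_assay, k=0)
```

The held-out assay itself must not appear in the training data, otherwise the episode no longer simulates a new target.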

Issue 3: Detecting and Mitigating Data Contamination

Data contamination happens when test data, or data very similar to it, is present in the training set, invalidating the benchmark results.

- Problem: You suspect your benchmark results are unrealistically high due to data contamination.

- Solution: Proactively construct contamination-free benchmarks.

- Procedure:

  - Automated Screening: Use automated frameworks like AntiLeakBench to screen for and exclude data points that contain knowledge likely present in the training sets of modern AI models [32].

  - Knowledge Recency: Construct benchmark samples using explicitly new knowledge (e.g., from recent scientific literature published after the model's training cutoff date) to ensure a strictly contamination-free evaluation [32].

  - Audit Training Data: Maintain meticulous records of your training data sources and versions to trace the origin of potential contamination.
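
The recency and screening ideas can be combined in a simple filter, sketched below. This is not AntiLeakBench's interface, which is not described in the source; the function names, the record fields (`text`, `date`), and the example sentences are hypothetical. The check only catches exact duplicates after case and whitespace normalization, so near-duplicates would need a similarity-based comparison.

```python
import hashlib
from datetime import date

def fingerprint(text):
    """SHA-256 hash of the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def filter_benchmark(samples, training_texts, cutoff):
    """Keep samples dated after the cutoff whose normalized text is absent from training."""
    seen = {fingerprint(t) for t in training_texts}
    return [
        s for s in samples
        if s["date"] > cutoff and fingerprint(s["text"]) not in seen
    ]

# Hypothetical training corpus and candidate benchmark samples
training_texts = ["Compound A inhibits kinase X."]
samples = [
    {"text": "compound a  inhibits kinase x.", "date": date(2025, 1, 10)},  # duplicate of training text
    {"text": "Compound B binds receptor Y.", "date": date(2023, 5, 1)},     # older than the cutoff
    {"text": "Compound C degrades protein Z.", "date": date(2025, 3, 2)},   # new and unseen
]
cutoff = date(2024, 6, 1)  # assumed training cutoff of the model under test

clean_samples = filter_benchmark(samples, training_texts, cutoff)
```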

Experimental Protocols & Data

Core Experimental Workflow of the CARA Benchmark

The following diagram illustrates the key steps in constructing and applying the CARA benchmark to ensure a realistic and leakage-free evaluation.

Quantitative Data from the CARA Benchmark

The table below summarizes key characteristics of the two main assay types defined in the CARA benchmark, which necessitate different modeling approaches [9].

Table 1: Characteristics of VS and LO Assays in the CARA Benchmark

| Assay Type | Discovery Stage | Compound Distribution | Pairwise Similarity | Typical Modeling Goal |
|---|---|---|---|---|
| Virtual Screening (VS) | Hit Identification | Diffused, Widespread | Low | Identify active compounds from large, diverse libraries. |
| Lead Optimization (LO) | Hit-to-Lead / Lead Optimization | Aggregated, Concentrated | High (Congeneric) | Accurately rank and predict activities of similar compounds. |

Essential Reagents for Computational Experimentation

This table lists key resources and their functions for researchers looking to work with or build upon benchmarks like CARA.

Table 2: Key Research Reagent Solutions for Benchmarking

| Item Name | Function / Application |
|---|---|
| ChEMBL Database | A primary, publicly available source of bioactive, drug-like molecules providing curated compound activity data for training and evaluation [9]. |
| CARA Benchmark Dataset | Provides a pre-processed, high-quality dataset with assay classifications and splitting schemes designed for real-world drug discovery applications [9] [31]. |
| Meta-Learning Algorithms | Training strategies (e.g., MAML, Prototypical Networks) used to improve model performance in few-shot learning scenarios for VS tasks [9]. |
| AntiLeakBench Framework | An automated tool for constructing and updating benchmarks with new knowledge to prevent data contamination and ensure fair model evaluation [32]. |

Data Leakage Prevention Framework

A robust benchmarking workflow must incorporate specific steps to prevent data leakage, as shown in the following protocol.

Data leakage occurs when information from outside the training dataset—particularly from the target variable or validation/test sets—is unintentionally used during the feature engineering process [34]. This problem creates overly optimistic performance metrics during model development but leads to significant performance degradation when models are deployed in real-world scenarios where the leaked information is unavailable [34] [35].

In the context of single-cell foundation model (scFM) benchmarking research, data leakage poses a particularly critical challenge. When evaluating scFMs for tasks like perturbation effect prediction, leakage can invalidate benchmark results and lead to incorrect conclusions about model capabilities [3] [2]. Understanding and preventing data leakage is therefore essential for producing reliable, reproducible research that accurately reflects model performance.

FAQs: Understanding Data Leakage

What are the most common types of data leakage in feature engineering?

The most prevalent types of data leakage in feature engineering include:

- Target Leakage: Occurs when features include information that directly reveals the target variable [36] [35]. For example, in a model predicting loan repayment, including a feature like "repayment_status" would leak the answer [36].

- Temporal Leakage: Occurs with time-series data when future information is used to predict past events [34] [36]. For instance, using stock prices from next week to predict today's price.

- Train-Test Contamination: Occurs when information from the test set leaks into the training process, often through improper preprocessing [36] [35]. This can happen when scaling or normalization is applied before data splitting.

- Feature Leakage: Occurs when features indirectly contain information too closely related to the target variable [36]. For example, using "total sales in last 30 days" to predict whether a product will sell next period.
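
For temporal leakage in particular, the usual safeguard is to split by time rather than at random, so that every training record precedes every test record. The sketch below is a minimal illustration; the function name `temporal_split`, the record fields, and the dates are hypothetical.

```python
from datetime import date

def temporal_split(records, cutoff):
    """Train on records dated strictly before the cutoff; test on the rest."""
    train = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return train, test

# Hypothetical dated measurements
records = [
    {"id": "s1", "date": date(2023, 1, 5)},
    {"id": "s2", "date": date(2023, 6, 20)},
    {"id": "s3", "date": date(2024, 2, 11)},
    {"id": "s4", "date": date(2024, 9, 30)},
]
train, test = temporal_split(records, cutoff=date(2024, 1, 1))

# No training record is newer than any test record
assert max(r["date"] for r in train) < min(r["date"] for r in test)
```

Features derived from time windows need the same care: each window should end before the prediction time of the record it describes.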

Why is data leakage particularly problematic for scFM benchmarking?