scGPT vs Geneformer: A Critical Benchmarking Review for Biomedical Researchers


Abstract

This article provides a comprehensive, evidence-based comparison of two prominent single-cell foundation models, scGPT and Geneformer, tailored for researchers and drug development professionals. We synthesize recent benchmarking studies to evaluate their performance across key tasks like cell type annotation, batch integration, and perturbation prediction. The analysis covers foundational principles, practical applications, optimization strategies, and rigorous validation, revealing that while both models show promise, their zero-shot performance often lags behind simpler methods. This review offers actionable insights for model selection and discusses the future trajectory of foundation models in clinical and biomedical research.

Understanding scGPT and Geneformer: Architectures and Pretraining Paradigms

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the investigation of cellular heterogeneity, developmental trajectories, and disease mechanisms at unprecedented resolution. However, the high dimensionality, sparsity, and technical noise inherent to scRNA-seq data present significant analytical challenges. Inspired by the remarkable success of transformer architectures in natural language processing, computational biologists have developed specialized foundation models to harness the vast amounts of emerging single-cell data. These models, pretrained on millions of cells, promise to learn universal biological representations that can be adapted to diverse downstream tasks with minimal fine-tuning. Among the most prominent architectures in this rapidly evolving field are scGPT and Geneformer, which embody contrasting philosophical approaches to modeling transcriptomic data. This article provides a comprehensive comparison of these two pioneering models, examining their core architectures, pretraining strategies, and performance across key biological tasks to guide researchers in selecting appropriate tools for their specific analytical needs.

Architectural Philosophies: A Technical Comparison

scGPT: Generative Pretrained Transformer for Single-Cell Biology

scGPT adopts a decoder-only transformer architecture similar to the GPT series, treating single-cell transcriptomes as sequences of gene-expression pairs. Each input token combines two embeddings: a gene identity embedding and an expression value embedding (often computed from binned values). No positional embedding is added, which reflects the assumption that gene interactions are non-sequential and permutation-invariant. scGPT is pretrained with a masked gene modeling objective: randomly selected genes are masked, and the model learns to reconstruct their expression values from the context provided by the other genes. This approach allows scGPT to learn complex, context-dependent relationships between genes across diverse cell types and tissues. With 50 million parameters pretrained on approximately 33 million human cells, scGPT aims to be a general foundation model that extends to multiple omics modalities, including scRNA-seq, scATAC-seq, and spatial transcriptomics [1] [2].
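
The input scheme described above can be sketched in a few lines of NumPy. This is a minimal illustration, not scGPT's code. The vocabulary size, number of bins, and embedding width are hypothetical toy values, and the lookup tables are random rather than learned. The sketch shows that each token is the sum of a gene identity embedding and a binned-value embedding. Because there is no positional term, presenting the genes in a different order only reorders the rows of the input.

```python
import numpy as np

rng = np.random.default_rng(0)

N_GENES = 100   # hypothetical gene vocabulary size
N_BINS = 51     # hypothetical number of expression bins (bin 0 = not expressed)
D_MODEL = 16    # toy embedding width; the text reports 512 for scGPT

# Lookup tables: random here, learned during pretraining in a real model
gene_table = rng.normal(size=(N_GENES, D_MODEL))
value_table = rng.normal(size=(N_BINS, D_MODEL))


def embed_cell(gene_ids, bin_ids):
    """Return one row per token: gene-identity embedding + binned-value embedding.

    No positional embedding is added, so token order carries no information.
    """
    return gene_table[np.asarray(gene_ids)] + value_table[np.asarray(bin_ids)]


genes = [5, 17, 42]   # hypothetical gene token ids
bins = [3, 0, 50]     # hypothetical expression bin ids
x = embed_cell(genes, bins)

# Presenting the same (gene, bin) pairs in another order only permutes the rows
perm = [2, 0, 1]
x_perm = embed_cell([genes[i] for i in perm], [bins[i] for i in perm])
print(x.shape, np.allclose(x_perm, x[perm]))
```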

Geneformer: Context-Aware Encoder Architecture

In contrast, Geneformer utilizes a transformer encoder architecture similar to BERT, with a distinctive rank-based input representation. Rather than using raw expression values, Geneformer employs "rank value encoding," where genes are sorted by expression level to create a cell-specific "sentence" of genes. This approach prioritizes the relative importance of genes within each cell while reducing technical variability. Geneformer incorporates both gene identity embeddings and positional embeddings, with the latter reflecting the ranked order of genes. Its pretraining utilizes a masked language modeling objective with a key distinction: instead of predicting continuous expression values, it predicts the identities of masked genes based on their context. With 40 million parameters pretrained on 30 million human cells, Geneformer is designed to capture gene-gene relationships and hierarchical regulatory networks, with a particular emphasis on context-aware representations that can illuminate biological mechanisms [1] [3].

Table 1: Core Architectural Comparison of scGPT and Geneformer

| Architectural Feature | scGPT | Geneformer |
|---|---|---|
| Transformer Type | Decoder-only | Encoder-only |
| Primary Input Representation | Gene + value embeddings | Rank-based gene ordering |
| Value Embedding | Value binning | Ordering (implicit) |
| Positional Embedding | × | ✓ |
| Pretraining Dataset Size | ~33 million cells | ~30 million cells |
| Model Parameters | 50 million | 40 million |
| Pretraining Objective | Masked gene modeling with MSE loss | Masked gene modeling with cross-entropy (CE) loss |
| Gene Tokenization | 1200 HVGs | 2048 ranked genes |
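
The "Pretraining Objective" row contrasts two different losses. The NumPy sketch below is a minimal version that assumes scGPT-style models emit one predicted value per position and Geneformer-style models emit one logit vector over the gene vocabulary per position. Shapes and values are illustrative only. MSE compares predicted and true expression at masked positions. Cross-entropy measures how much probability the model assigns to the correct gene identity at each masked position.

```python
import numpy as np


def masked_mse(pred_values, true_values, mask):
    """scGPT-style objective: regress expression values at masked positions only."""
    pred_values = np.asarray(pred_values, dtype=float)
    true_values = np.asarray(true_values, dtype=float)
    mask = np.asarray(mask, dtype=bool)
    diff = pred_values[mask] - true_values[mask]
    return float(np.mean(diff ** 2))


def masked_cross_entropy(logits, true_gene_ids, mask):
    """Geneformer-style objective: classify which gene token sits at each masked position."""
    logits = np.asarray(logits, dtype=float)        # shape (seq_len, vocab_size)
    true_gene_ids = np.asarray(true_gene_ids)
    mask = np.asarray(mask, dtype=bool)
    z = logits[mask]
    z = z - z.max(axis=1, keepdims=True)            # shift for numerical stability
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    picked = log_probs[np.arange(len(z)), true_gene_ids[mask]]
    return float(-picked.mean())


mask = np.array([True, False, True])
mse = masked_mse([1.0, 5.0, 3.0], [2.0, 0.0, 3.0], mask)      # only positions 0 and 2 count
ce = masked_cross_entropy(np.zeros((3, 4)), [0, 1, 2], mask)  # uniform logits over 4 genes
print(mse, ce)
```

With uniform logits, the cross-entropy equals the log of the vocabulary size, which is the loss of a model that has learned nothing about gene context.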

Performance Benchmarking: Quantitative Comparisons

Zero-Shot Capability Assessment

Zero-shot performance, where models are applied without task-specific fine-tuning, is crucial for exploratory biological research where labeled data may be unavailable. Recent evaluations reveal significant limitations in both models' zero-shot capabilities. In cell type clustering tasks measured by Average BIO (AvgBIO) score, both scGPT and Geneformer underperformed compared to simpler methods like highly variable genes (HVG) selection and established algorithms such as Harmony and scVI. Geneformer demonstrated particularly high variance across different datasets, while scGPT showed more consistent but still suboptimal performance. In batch integration tasks, which aim to remove technical artifacts while preserving biological signals, both models struggled to correct for batch effects, with Geneformer consistently ranking last across most evaluation metrics. Surprisingly, selecting HVGs alone often outperformed both transformer-based approaches in batch integration scores calculated in full dimensions [4] [5].
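
The HVG baseline mentioned above is conceptually simple. The sketch below shows the idea under simplifying assumptions: counts are normalised to a fixed library size, log-transformed, and the genes with the highest variance across cells are kept. The function name `select_hvgs` is hypothetical, and plain variance is a stand-in criterion. Scanpy's `highly_variable_genes` offers dispersion-based and Seurat v3 flavors that rank genes differently.

```python
import numpy as np


def select_hvgs(counts, n_top, target_sum=1e4):
    """Indices of the n_top most variable genes after normalisation and log1p.

    counts: cells x genes raw count matrix. Simplified stand-in for HVG selection.
    """
    counts = np.asarray(counts, dtype=float)
    lib_size = counts.sum(axis=1, keepdims=True)
    lib_size[lib_size == 0] = 1.0              # guard against empty cells
    logn = np.log1p(counts / lib_size * target_sum)
    variances = logn.var(axis=0)
    # argsort is ascending: take the last n_top, then reverse for descending order
    return np.argsort(variances)[-n_top:][::-1]


# Toy cells x genes matrix: gene 2 switches on and off across cells
counts = np.array([
    [10, 5, 0, 1],
    [10, 5, 50, 1],
    [10, 5, 0, 2],
    [10, 5, 60, 1],
])
hvg_idx = select_hvgs(counts, n_top=2)
print(hvg_idx)
```

In a zero-shot comparison, the HVG-restricted expression matrix (or a PCA of it) is then clustered and scored in place of a foundation model's embedding.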

Task-Specific Performance Variations

Comprehensive benchmarking across diverse biological applications reveals a complex performance landscape where neither model consistently outperforms the other. Instead, each demonstrates strengths in specific domains. scGPT generally excels in perturbation prediction and multi-omic integration, leveraging its generative architecture to model cellular responses to genetic and chemical perturbations. Geneformer typically shows advantages in cell type annotation and in silico perturbation experiments, where its rank-based input representation appears to capture biologically meaningful hierarchies. However, benchmarking studies consistently note that performance is highly dependent on dataset characteristics and task requirements, with neither model establishing clear overall superiority [1] [6].

Table 2: Performance Comparison Across Key Biological Tasks

| Task Category | Superior Model | Key Performance Notes | Primary Metric |
|---|---|---|---|
| Zero-shot Cell Type Clustering | HVG (baseline) | Both models underperformed vs. simpler methods | AvgBIO Score |
| Batch Integration | scVI/Harmony (baseline) | Geneformer consistently ranked last | iLISI, PCR |
| Cell Type Annotation | Geneformer | Better captures cell-type hierarchies | Accuracy |
| Perturbation Prediction | scGPT | Superior response modeling | MSE |
| Cross-Species Generalization | Geneformer | Mouse-Geneformer validated cross-species | Accuracy |
| Multi-omic Integration | scGPT | Handles diverse modalities | Integration Score |

Experimental Protocols and Evaluation Methodologies

Standardized Evaluation Frameworks

Rigorous benchmarking of single-cell foundation models requires standardized evaluation protocols across diverse datasets and tasks. The most comprehensive evaluations employ multiple datasets representing different tissues, technologies, and biological conditions. For cell type clustering assessment, models generate cell embeddings that are evaluated with metrics such as Average Silhouette Width (ASW) and Average BIO (AvgBIO) score, which measure the separation and purity of known cell types in the latent space. Batch integration performance is quantified with metrics such as the Integration Local Inverse Simpson's Index (iLISI), which measures batch mixing, and the principal component regression (PCR) score, which measures how much of the embedding's variance is explained by batch. For perturbation tasks, models are evaluated on their ability to predict expression changes after genetic or chemical perturbations, typically using mean squared error (MSE) or correlation coefficients between predicted and observed expression changes [4] [1] [6].
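
As a concrete reference for the cell-type separation metrics above, this NumPy sketch computes the average silhouette width of an embedding given known labels. It assumes Euclidean distance and toy data. Benchmark suites such as scIB compute it with optimised library code and typically rescale cell-type ASW to [0, 1] via (ASW + 1) / 2.

```python
import numpy as np


def average_silhouette_width(embeddings, labels):
    """Mean silhouette over cells using known cell-type labels (Euclidean distance).

    s(i) = (b(i) - a(i)) / max(a(i), b(i)), where a(i) is the mean distance to the
    other cells of the same type and b(i) the smallest mean distance to another type.
    """
    X = np.asarray(embeddings, dtype=float)
    labels = np.asarray(labels)
    dists = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    uniq = np.unique(labels)
    scores = []
    for i in range(len(X)):
        same = labels == labels[i]
        same[i] = False                      # exclude the cell itself
        if not same.any():                   # singleton type: silhouette 0 by convention
            scores.append(0.0)
            continue
        a = dists[i, same].mean()
        b = min(dists[i, labels == other].mean() for other in uniq if other != labels[i])
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))


emb = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
cell_types = np.array(["T", "T", "B", "B"])
asw = average_silhouette_width(emb, cell_types)
print(round(asw, 3), round((asw + 1) / 2, 3))  # raw ASW and [0, 1]-rescaled ASW
```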

Zero-Shot Evaluation Protocol

The zero-shot evaluation protocol is particularly important for assessing the fundamental biological knowledge captured during pretraining. In this setting, models generate embeddings without any task-specific fine-tuning, and these embeddings are directly used for downstream analyses. This approach tests the model's ability to extract biologically meaningful representations without additional training, which is especially valuable for exploratory research where labeled data may be unavailable or incomplete. Studies implementing this protocol have revealed significant limitations in current foundation models, demonstrating that their pretraining objectives do not necessarily translate to high-quality representations for all downstream tasks [4].

Technical Implementation: Research Reagent Solutions

Table 3: Essential Research Reagents for Single-Cell Foundation Model Experiments

| Research Reagent | Function/Purpose | Implementation Example |
|---|---|---|
| CELLxGENE Datasets | Curated single-cell data for pretraining and benchmarking | 33M human cells for scGPT pretraining |
| Highly Variable Genes (HVG) | Feature selection to reduce dimensionality | 1200 HVGs for scGPT input |
| Rank Value Encoding | Input representation method for Geneformer | 2048 ranked genes per cell |
| Masked Language Modeling | Self-supervised pretraining objective | Randomly mask 15% of genes |
| Harmony | Batch integration benchmark algorithm | Compare against foundation models |
| scVI | Variational autoencoder benchmark | Baseline for clustering and integration |
| Perturb-Seq Data | Genetic perturbation datasets for evaluation | Evaluate perturbation prediction accuracy |

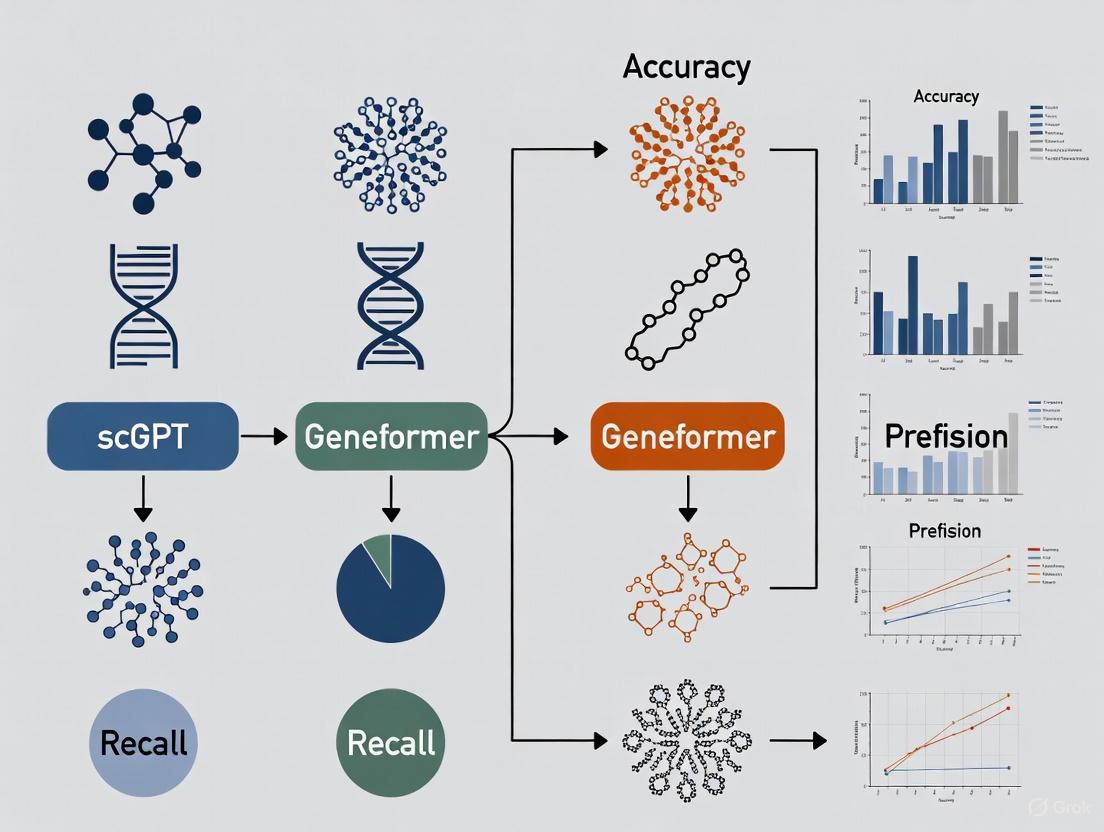

Architectural Visualizations

Model Architecture Comparison

Evaluation Workflow Diagram

The comparative analysis of scGPT and Geneformer reveals that neither model establishes universal superiority across all tasks and applications. Instead, each exhibits distinct strengths aligned with their architectural philosophies. scGPT's generative, value-based approach demonstrates advantages in perturbation modeling and multi-omic integration, while Geneformer's rank-based, context-aware encoder architecture shows stronger performance in cell type annotation and hierarchical biological reasoning. Critically, both models face reliability challenges in zero-shot settings, where simpler methods like HVG selection or traditional algorithms like scVI and Harmony can sometimes outperform these complex foundation models. This suggests that while the transformer architecture provides substantial modeling power, the pretraining objectives and strategies for single-cell data require further refinement to consistently extract biologically meaningful representations.

For researchers and drug development professionals, model selection should be guided by specific analytical needs rather than presumed general capability. scGPT may be preferable for studies focusing on cellular responses to perturbations or integrating multimodal data, while Geneformer might better serve projects requiring fine-grained cell type discrimination or exploration of gene regulatory hierarchies. Future developments in this rapidly evolving field will likely address current limitations through improved pretraining strategies, more biologically informed architectures, and enhanced evaluation frameworks that better capture performance in real-world research scenarios. As both approaches continue to mature, they hold tremendous promise for advancing our understanding of cellular biology and accelerating therapeutic discovery.

In the analysis of single-cell RNA sequencing (scRNA-seq) data, foundation models like scGPT and Geneformer have emerged as powerful tools for decoding cellular heterogeneity. These models employ a critical preprocessing step called tokenization, which transforms raw gene expression data into a structured format that deep learning models can process. The choice of tokenization strategy fundamentally shapes how a model perceives and interprets biological information, influencing its performance across diverse tasks such as cell type annotation, batch integration, and perturbation prediction. scGPT utilizes a value binning approach, converting continuous expression values into discrete categories, whereas Geneformer adopts a gene ranking method, representing each cell by the relative ordering of gene expression levels. This guide provides a detailed, evidence-based comparison of these two strategies, examining their technical implementations, performance characteristics, and suitability for different research applications within the life sciences.

Technical Foundations of Tokenization Strategies

Value Binning in scGPT

The value binning strategy employed by scGPT is designed to convert continuous, high-dimensional gene expression data into a discrete, sequence-like format compatible with transformer architectures.

Process Overview: scGPT's tokenization begins by treating each gene as a distinct token, assigned a unique identifier. The raw count data from the cell-by-gene matrix undergoes normalization before the continuous expression values are discretized into a fixed number of bins [7]. This binning process transforms the inherently continuous measurement of gene expression into categorical values, effectively creating a vocabulary of expression levels.
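
The binning step can be sketched as follows, loosely following the per-cell quantile binning described for scGPT. The function name, the default of 51 bins, and the choice to keep zeros in bin 0 are illustrative assumptions. scGPT's own preprocessing code may handle edge cases differently. Because the bin edges are quantiles of the cell's own non-zero values, scaling a cell's expression by a constant leaves its bins unchanged. This is what makes the bins relative rather than absolute.

```python
import numpy as np


def bin_expression(row, n_bins=51):
    """Map one cell's normalised expression vector to integer bins 0..n_bins-1.

    Zeros stay in bin 0. Non-zero values go to bins 1..n_bins-1 using quantile
    edges computed from this cell's own non-zero values (hypothetical helper).
    """
    row = np.asarray(row, dtype=float)
    binned = np.zeros(row.shape, dtype=int)
    nonzero = row > 0
    if not nonzero.any():
        return binned
    values = row[nonzero]
    # n_bins - 2 interior quantile edges split the non-zero values into n_bins - 1 bins
    edges = np.quantile(values, np.linspace(0, 1, n_bins)[1:-1])
    binned[nonzero] = np.digitize(values, edges, right=True) + 1
    return binned


cell = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
print(bin_expression(cell, n_bins=5))
print(bin_expression(cell * 10, n_bins=5))  # same bins: binning is relative to the cell
```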

Technical Implementation: The model uses an embedding size of 512 and processes data through 12 transformer blocks with 8 attention heads each [7]. A key technical aspect is its use of value binning to convert all expression counts into relative values, facilitating the model's ability to learn from the discretized expression spectrum [7]. During pretraining, scGPT employs an iterative masked gene modeling objective with mean squared error (MSE) loss, where certain genes are masked and the model must reconstruct their binned expression values [1].

Architectural Considerations: In natural language, word order carries critical information, but the genes measured in a cell have no inherent order. scGPT therefore omits positional embeddings and relies on the attention mechanism to learn gene-gene relationships without presuming sequential dependencies [1].
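
Without positional terms, single-head self-attention is permutation-equivariant. That property is what makes omitting positional embeddings a natural choice for unordered genes. The NumPy sketch below uses random weights and toy sizes, all hypothetical. It checks that shuffling the gene tokens only shuffles the outputs, so a mean-pooled cell embedding does not change. Real transformer layers add multiple heads, per-token feed-forward blocks, and normalization, none of which introduce order dependence on their own.

```python
import numpy as np


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention with no positional encoding."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)   # stabilise the softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return weights @ V


rng = np.random.default_rng(0)
d = 8
X = rng.normal(size=(5, d))        # 5 gene tokens, e.g. summed gene + value embeddings
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))

perm = rng.permutation(5)
out = self_attention(X, Wq, Wk, Wv)
out_perm = self_attention(X[perm], Wq, Wk, Wv)

# Shuffled inputs give shuffled outputs (equivariance); pooled output is unchanged (invariance)
print(np.allclose(out_perm, out[perm]), np.allclose(out_perm.mean(axis=0), out.mean(axis=0)))
```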

Gene Ranking in Geneformer

Geneformer implements a rank-based tokenization strategy that emphasizes relative gene expression patterns over absolute values, focusing on the most biologically informative genes for distinguishing cell states.

Process Overview: Geneformer represents each cell's transcriptome as a rank value encoding where genes are sorted by their expression level in that specific cell, normalized by their median expression across the entire pretraining corpus [8]. This approach creates a nonparametric representation that prioritizes genes that best distinguish cell states, effectively deprioritizing ubiquitously highly-expressed housekeeping genes while promoting transcription factors and other regulatory elements that may be lowly expressed but highly informative [8].
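
The rank value encoding can be sketched as follows. The sketch assumes one cell's raw counts, a gene list, and precomputed per-gene medians from a reference corpus. The function name, the 10,000 target sum, and the toy gene names and medians are illustrative assumptions. Geneformer's own tokenizer works on Ensembl IDs and medians derived from its pretraining corpus. The example shows how dividing by the corpus median pushes a highly but ubiquitously expressed gene below a lowly expressed gene that has a small median.

```python
import numpy as np


def rank_value_encode(counts, gene_ids, gene_medians, target_sum=1e4, max_len=2048):
    """Return gene ids of one cell, ordered by median-normalised expression.

    counts:       raw counts for one cell, aligned with gene_ids
    gene_medians: per-gene median expression across a reference corpus
                  (hypothetical input here; Geneformer derives it from its corpus)
    """
    counts = np.asarray(counts, dtype=float)
    gene_ids = np.asarray(gene_ids)
    medians = np.asarray(gene_medians, dtype=float)
    expressed = counts > 0                                  # undetected genes are not tokenised
    norm = counts[expressed] / counts.sum() * target_sum / medians[expressed]
    order = np.argsort(-norm, kind="stable")                # highest normalised value first
    return gene_ids[expressed][order][:max_len].tolist()


# Toy cell: the housekeeping-like gene has the most counts but also a high corpus median
genes = ["HK_GENE", "TF_GENE", "MARKER_GENE", "ABSENT_GENE"]
counts = [100, 5, 40, 0]
medians = [50.0, 0.5, 10.0, 1.0]
print(rank_value_encode(counts, genes, medians))
```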

Technical Implementation: The tokenization process requires raw counts scRNA-seq data with Ensembl IDs for genes and total read counts (n_counts) for cells [9] [10]. For the V2 model series, the input size is 4096 genes, with special tokens (CLS and EOS) added to the rank value encoding [10]. The model is pretrained using a masked learning objective where 15% of genes in each transcriptome are masked, and the model predicts which gene belongs in each masked position based on the contextual information from the remaining unmasked genes [8].
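
Wrapping the ranked genes with special tokens and masking 15% of them can be sketched as below. All token ids (CLS, EOS, MASK, and the gene ids) are hypothetical. The masking is simplified: every selected token is replaced by the mask token. The -100 label value follows the common PyTorch convention for positions that the cross-entropy loss should ignore. This is not Geneformer's own data collator.

```python
import numpy as np


def build_input(ranked_tokens, cls_id, eos_id, max_len=4096):
    """Wrap a rank value encoding with CLS/EOS so the total length fits max_len."""
    body = list(ranked_tokens)[: max_len - 2]
    return [cls_id] + body + [eos_id]


def mask_tokens(tokens, mask_id, special_ids, mask_frac=0.15, seed=0):
    """Mask ~mask_frac of non-special tokens; labels keep originals, -100 elsewhere."""
    rng = np.random.default_rng(seed)
    tokens = np.asarray(tokens)
    candidates = np.flatnonzero(~np.isin(tokens, list(special_ids)))
    n_mask = max(1, int(round(mask_frac * len(candidates))))
    chosen = rng.choice(candidates, size=n_mask, replace=False)
    inputs = tokens.copy()
    labels = np.full_like(tokens, -100)
    labels[chosen] = tokens[chosen]       # targets: the true gene id at masked positions
    inputs[chosen] = mask_id
    return inputs, labels


MASK, CLS, EOS = 1, 2, 3                  # hypothetical special-token ids
ranked = list(range(10, 110))             # 100 hypothetical gene ids in rank order
seq = build_input(ranked, CLS, EOS)
inputs, labels = mask_tokens(seq, MASK, {CLS, EOS})
print(len(seq), int((inputs == MASK).sum()))
```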

Biological Rationale: The ranking approach leverages the massive scale of the pretraining corpus (approximately 30 million cells for V1, 104 million for V2) to normalize gene expression across diverse cellular contexts [8]. This strategy is theoretically more robust to technical artifacts that systematically bias absolute transcript counts while preserving the relative ranking of genes within each cell [8].

Table 1: Technical Specifications of scGPT and Geneformer Tokenization Approaches

| Feature | scGPT (Value Binning) | Geneformer (Gene Ranking) |
|---|---|---|
| Input Data | Raw count matrix [7] | Raw counts without feature selection [9] |
| Gene Identification | Gene tokens with unique identifiers [7] | Ensembl IDs [9] |
| Value Processing | Binning into discrete categories [7] | Ranking by expression level [8] |
| Normalization | Custom binning technique [7] | Median expression across pretraining corpus [8] |
| Model Input Size | 1,200 highly variable genes [1] | 4,096 genes (V2 series) [10] |
| Positional Encoding | Not used [1] | Used in encoder [1] |
| Pretraining Objective | Masked gene modeling with MSE loss [1] | Masked gene prediction with cross-entropy loss [8] |

Performance Comparison in Downstream Tasks

Zero-Shot Evaluation Evidence

Recent rigorous evaluations of foundation models in zero-shot settings—where models are applied without task-specific fine-tuning—reveal critical insights into the real-world performance of these tokenization strategies.

Cell Type Clustering Performance: In comprehensive benchmarking, both scGPT and Geneformer showed limitations in zero-shot cell type separation compared with established methods. Across multiple datasets, both models generally performed worse than selecting highly variable genes (HVG) and than established methods such as Harmony and scVI in cell type clustering, as measured by average BIO (AvgBio) score [4] [11]. Notably, the simple HVG baseline outperformed both Geneformer and scGPT on most datasets and metrics [4] [11].

Batch Integration Capabilities: Batch integration, which corrects for technical variation across datasets while preserving biological signals, poses significant challenges for both tokenization approaches. Evaluation on the Pancreas benchmark dataset revealed that while Geneformer and scGPT can integrate experiments that use the same technique, they generally fail to correct for batch effects between different techniques [4] [11]. Geneformer's embeddings struggled in particular, with clustering driven primarily by batch effects rather than biological information [4] [11].

Contextual Performance Variability: Performance varies significantly based on dataset characteristics and match with pretraining data. scGPT showed better performance on the PBMC (12k) dataset compared to scVI, Harmony, and HVG, but underperformed on other datasets [4] [11]. Surprisingly, models did not consistently outperform baselines even on datasets that were included in their pretraining corpus, indicating an unclear relationship between pretraining objectives and downstream task performance [4] [11].

Table 2: Zero-Shot Performance Metrics Across Evaluation Studies

| Task & Metric | scGPT | Geneformer | HVG Baseline | scVI Baseline |
|---|---|---|---|---|
| Cell Type Clustering (AvgBIO) | Variable (Best: PBMC) | Underperforms baselines | Outperforms both models | Outperforms both models |
| Batch Integration (Pancreas) | Partial success | Primarily batch-driven | Effective integration | Effective integration |
| PCR Score | Moderate | Consistently ranks last | Varies by dataset | Second best overall |
| ASW Metric | Comparable to scVI on some datasets | Underperforms baselines | Strong performance | Strong performance |

Biological Insight Capture

Beyond technical metrics, the ability of tokenization strategies to capture meaningful biological relationships represents a crucial dimension for evaluation.

Gene Network Inference: Geneformer's ranking approach demonstrates particular strength in capturing gene-gene relationships and network hierarchy. During pretraining, Geneformer gains a fundamental understanding of network dynamics, encoding network hierarchy in the model's attention weights in a completely self-supervised manner [8]. This capability enabled the identification of a novel transcription factor in cardiomyocytes that was experimentally validated as critical to contractile force generation [8].

Perturbation Prediction: In predicting cellular responses to genetic and chemical perturbations, both tokenization approaches face challenges. In a benchmark against large perturbation models (LPM), both Geneformer and scGPT were consistently and significantly outperformed by the specialized LPM approach across multiple experimental settings, regardless of preprocessing methodology [12].
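
Perturbation benchmarks commonly score predictions on expression changes relative to an unperturbed control, using MSE or correlation. The sketch below computes both metrics on such deltas. The function name and toy vectors are hypothetical, and individual benchmarks differ in which genes and aggregations they use. Scoring deltas rather than raw post-perturbation profiles matters because most genes barely change. Raw-profile correlations can therefore look high even for a model that simply predicts the control state.

```python
import numpy as np


def perturbation_scores(pred_post, obs_post, control):
    """MSE and Pearson r between predicted and observed changes vs. control.

    All inputs are per-gene mean expression vectors in the same gene order.
    """
    control = np.asarray(control, dtype=float)
    pred_delta = np.asarray(pred_post, dtype=float) - control
    obs_delta = np.asarray(obs_post, dtype=float) - control
    mse = float(np.mean((pred_delta - obs_delta) ** 2))
    pearson = float(np.corrcoef(pred_delta, obs_delta)[0, 1])
    return mse, pearson


control = [1.0, 1.0, 1.0, 1.0]
observed = [2.0, 0.0, 1.0, 3.0]     # observed change: [1, -1, 0, 2]
predicted = [1.5, 0.5, 1.0, 2.0]    # right direction, half the magnitude
mse, r = perturbation_scores(predicted, observed, control)
print(mse, r)
```

The example shows why both metrics are reported: the predicted changes are perfectly correlated with the observed ones but underestimate their size, which only the MSE reveals.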

Knowledge Representation: Alternative approaches like GenePT suggest that combining textual gene information with expression data may enhance biological insight capture. GenePT utilizes ChatGPT embeddings of gene summaries from NCBI, achieving comparable or better performance than Geneformer and scGPT on many downstream tasks despite requiring no single-cell data curation or pretraining [13]. This indicates that textual gene representations effectively capture biological relationships relevant to single-cell analysis.

Experimental Protocols for Performance Evaluation

Standardized Benchmarking Methodology

To ensure fair comparison between tokenization strategies, researchers have developed standardized evaluation protocols that assess model performance across multiple biological tasks.

Dataset Selection and Preparation: Benchmarking studies employ diverse datasets representing different tissues, technologies, and biological conditions. Key datasets include Tabula Sapiens, Pancreas datasets with five different sources, PBMC (12k), and Immune datasets [4] [11]. These datasets are selected to represent both technical variation (different experimental protocols) and biological variation (different cell types, tissues, and donors). Standard preprocessing includes quality control, normalization, and filtering using tools like Scanpy [13].

Evaluation Metrics: Multiple complementary metrics provide a comprehensive performance assessment:

- Cell Type Separation: Average BIO (AvgBio) score and average silhouette width (ASW) evaluate how well embeddings separate known cell types [4] [11].

- Batch Integration: Batch integration scores and principal component regression (PCR) quantify the removal of technical artifacts while preserving biological variation [4] [11].

- Biological Consistency: Novel metrics like scGraph-OntoRWR measure consistency of captured cell type relationships with prior biological knowledge from cell ontologies [1].
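
For the AvgBio score, a common definition averages three biological-conservation metrics: ARI and NMI between known cell types and clusters, and the cell-type ASW. The sketch below implements ARI and NMI from a contingency table and assumes the scIB-style rescaling of ASW to [0, 1]. The function names are hypothetical. The cluster labels would come from a clustering step such as Leiden, which is not shown. On the same inputs, these functions should agree with scikit-learn's `adjusted_rand_score` and `normalized_mutual_info_score` (arithmetic normalisation).

```python
from math import comb

import numpy as np


def _contingency(a, b):
    """Count table of co-occurrences between two labelings of the same cells."""
    _, ai = np.unique(np.asarray(a), return_inverse=True)
    _, bi = np.unique(np.asarray(b), return_inverse=True)
    ai, bi = ai.ravel(), bi.ravel()
    table = np.zeros((ai.max() + 1, bi.max() + 1), dtype=int)
    np.add.at(table, (ai, bi), 1)
    return table


def adjusted_rand_index(a, b):
    t = _contingency(a, b)
    n = int(t.sum())
    sum_ij = sum(comb(int(x), 2) for x in t.ravel())
    sum_a = sum(comb(int(x), 2) for x in t.sum(axis=1))
    sum_b = sum(comb(int(x), 2) for x in t.sum(axis=0))
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:        # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (sum_ij - expected) / (max_index - expected)


def normalized_mutual_info(a, b):
    p = _contingency(a, b).astype(float)
    p /= p.sum()
    pa, pb = p.sum(axis=1), p.sum(axis=0)
    nz = p > 0
    mi = float((p[nz] * np.log(p[nz] / np.outer(pa, pb)[nz])).sum())
    ha = -float((pa * np.log(pa)).sum())
    hb = -float((pb * np.log(pb)).sum())
    denom = (ha + hb) / 2            # arithmetic-mean normalisation
    return 1.0 if denom == 0 else mi / denom


def avg_bio(true_labels, cluster_labels, asw_celltype):
    """Mean of ARI, NMI and cell-type ASW rescaled from [-1, 1] to [0, 1]."""
    ari = adjusted_rand_index(true_labels, cluster_labels)
    nmi = normalized_mutual_info(true_labels, cluster_labels)
    return (ari + nmi + (asw_celltype + 1) / 2) / 3


true_types = ["T", "T", "B", "B"]
clusters = [1, 1, 0, 0]              # hypothetical cluster assignments
print(avg_bio(true_types, clusters, asw_celltype=0.8))
```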

Experimental Controls: Studies include multiple baselines for comparison, including simple methods (highly variable genes), established algorithms (Harmony, scVI), and ablations of the foundation models themselves. For scGPT, variants include randomly initialized models and models pretrained on different tissue-specific subsets to disentangle the effects of pretraining data size versus composition [4] [11].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Tokenization Strategy Evaluation

| Tool/Resource | Function | Relevance to Tokenization Comparison |
|---|---|---|
| CELLxGENE Census | Large-scale single-cell data repository | Provides standardized pretraining data for scGPT [7] |
| Genecorpus-30M/104M | Curated single-cell transcriptome collection | Pretraining corpus for Geneformer [8] |
| Scanpy | Single-cell analysis in Python | Standardized data preprocessing pipeline [13] |
| Harmony | Batch effect correction algorithm | Performance baseline for integration tasks [4] [11] |
| scVI | Probabilistic modeling of scRNA-seq | Generative model baseline for comparison [4] [11] |
| HVG Selection | Feature selection method | Simple baseline for cell type separation [4] [11] |
| NCBI Gene Database | Gene summary information | Source for text-based embeddings in GenePT [13] |

The comparative analysis of value binning (scGPT) and gene ranking (Geneformer) tokenization strategies reveals a complex performance landscape where neither approach consistently dominates across all tasks and contexts. The gene ranking method employed by Geneformer demonstrates particular strength in capturing gene network hierarchies and biological relationships, making it well-suited for discovery tasks focused on understanding regulatory mechanisms and identifying key drivers of cell state changes. Conversely, the value binning approach of scGPT offers advantages in certain integration tasks and provides a more direct representation of expression levels that may benefit quantitative prediction tasks.

Current evidence suggests that foundation models with both tokenization strategies underperform simpler methods in zero-shot settings for basic tasks like cell type clustering and batch integration [4] [11]. This indicates that the biological understanding captured during pretraining does not necessarily translate to robust out-of-the-box performance for standard analytical tasks. However, both models show value in more specialized applications, particularly when fine-tuned with task-specific data.

For researchers selecting between these approaches, considerations should include:

- Task Objectives: Gene ranking may better support network biology and mechanistic insights, while value binning may suit quantitative prediction tasks.

- Data Characteristics: Gene ranking's intended robustness to technical artifacts may benefit heterogeneous data integration, although Geneformer's zero-shot batch integration results have so far been weak. Value binning preserves more quantitative information.

- Computational Resources: scGPT's shorter input (1,200 HVGs per cell) reduces computational requirements compared with Geneformer's longer ranked-gene input (2,048 genes in V1, 4,096 in V2), because self-attention cost grows quadratically with sequence length.

- Biological Interpretability: Geneformer's attention weights have been reported to encode network hierarchy, potentially offering more transparent biological insights.
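
The computational point about input length can be made concrete with a short calculation. Each attention head scores every pair of tokens, so the score matrix grows with the square of sequence length. This is a back-of-the-envelope sketch only. It ignores layer count, model width, head count, and memory-efficient attention kernels. It also assumes full-length inputs, whereas Geneformer cells with fewer detected genes produce shorter sequences. Under those assumptions, 2,048-token and 4,096-token inputs need roughly 2.9x and 11.7x the attention-matrix entries of a 1,200-token input.

```python
def relative_attention_cost(seq_len, reference_len):
    """Ratio of self-attention score-matrix sizes, which scale as seq_len ** 2."""
    return (seq_len / reference_len) ** 2


inputs = [
    ("scGPT (1,200 HVGs)", 1200),
    ("Geneformer V1 (2,048 genes)", 2048),
    ("Geneformer V2 (4,096 genes)", 4096),
]
for name, length in inputs:
    ratio = relative_attention_cost(length, reference_len=1200)
    print(f"{name}: {ratio:.2f}x the attention-matrix entries of a 1,200-token input")
```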

Future development will likely benefit from hybrid approaches that combine the strengths of both tokenization strategies, potentially incorporating external biological knowledge from textual sources to enhance model performance and biological relevance.

The emergence of single-cell RNA sequencing (scRNA-seq) has generated vast amounts of transcriptomic data, creating an unprecedented opportunity for applying deep learning models to decipher cellular language. Inspired by breakthroughs in natural language processing (NLP), researchers have developed foundation models pretrained on millions of single-cell transcriptomes using masked language modeling (MLM) objectives. Among these, scGPT and Geneformer represent two prominent architectures with distinct approaches to tokenization, model structure, and pretraining strategies. This guide provides an objective comparison of their performance across key biological tasks, supported by experimental data and standardized evaluation protocols, to inform researchers and drug development professionals selecting appropriate models for their specific applications.

Core Architectural Frameworks and Pretraining Approaches

Fundamental Model Architectures

scGPT and Geneformer both utilize transformer architectures but differ significantly in their implementation details and pretraining methodologies. The table below summarizes their core architectural characteristics:

Table 1: Architectural Comparison of scGPT and Geneformer

| Feature | scGPT | Geneformer |
|---|---|---|
| Model Type | Decoder-style transformer | Encoder-style transformer |
| Parameters | ~50 million | ~40 million (6-layer) |
| Pretraining Data | 33 million human cells [4] [14] | 30 million human cells [4] [14] |
| Tokenization | Value binning of 1200 highly variable genes [1] | Ranking of 2048 genes by expression [1] |
| Value Representation | Discrete expression bins [14] | Relative gene ranking [14] |
| Positional Embedding | Not used [1] | Used [1] |
| Pretraining Task | Iterative MLM with MSE loss [1] | MLM with gene ID prediction [1] |

[Figure: Pretraining workflows of scGPT and Geneformer, highlighting their methodological differences]

Performance Comparison Across Key Biological Tasks

Zero-Shot Cell Type Clustering and Annotation

Zero-shot performance is critical for biological discovery where labeled data is scarce. Recent evaluations reveal significant limitations in both models when used without fine-tuning:

Table 2: Zero-Shot Cell Type Clustering Performance (AvgBIO Score)

| Dataset | scGPT | Geneformer | HVG Baseline | scVI Baseline | Harmony Baseline |
|---|---|---|---|---|---|
| PBMC (12k) | 0.62 | 0.45 | 0.58 | 0.59 | 0.55 |
| Tabula Sapiens | 0.51 | 0.38 | 0.56 | 0.54 | 0.49 |
| Pancreas | 0.48 | 0.41 | 0.55 | 0.53 | 0.52 |
| Immune | 0.53 | 0.43 | 0.57 | 0.56 | 0.54 |

Data source: Genome Biology evaluation [4] - Higher scores indicate better performance.

Both models underperform compared to simpler methods like Highly Variable Genes (HVG) selection and established algorithms like scVI and Harmony across most datasets [4] [15]. scGPT shows relatively better performance on the PBMC dataset, while Geneformer consistently ranks lowest across evaluation metrics.

Batch Integration Capabilities

Batch effect correction is essential for integrating datasets from different sources. The performance varies significantly between technical and biological batch effects:

Table 3: Batch Integration Performance (Batch Mixing Score)

| Dataset | Batch Type | scGPT | Geneformer | HVG Baseline | scVI Baseline |
|---|---|---|---|---|---|
| Pancreas | Technical | 0.52 | 0.38 | 0.61 | 0.59 |
| PBMC | Technical | 0.55 | 0.41 | 0.63 | 0.61 |
| Tabula Sapiens | Biological | 0.58 | 0.45 | 0.59 | 0.55 |
| Immune | Biological | 0.57 | 0.43 | 0.60 | 0.53 |

Data source: Genome Biology evaluation [4] - Higher scores indicate better batch mixing.

Qualitative assessment reveals that while Geneformer's embeddings primarily separate by batch effects with minimal cell type information, scGPT provides some cell type separation but still exhibits batch-driven clustering [4]. Both models struggle with technical batch effects between different experimental techniques.

Performance on Specialized Tasks

Beyond standard evaluations, both models show distinct strengths in specialized applications:

Table 4: Performance on Specialized Biological Tasks

| Task | scGPT | Geneformer | Evaluation Context |
|---|---|---|---|
| Gene Network Inference | Moderate | Moderate | scPRINT outperforms both [16] |
| Drug Response Prediction | Strong | Moderate | Comprehensive benchmark [1] |
| Cell Type Annotation (Fine-tuned) | Strong | Strong | BioLLM framework evaluation [17] |
| Perturbation Prediction | Strong | Moderate | Multi-task benchmark [1] |

Notably, a comprehensive benchmark evaluating six foundation models against established baselines found that no single scFM consistently outperforms others across all tasks, emphasizing the need for task-specific model selection [1].

Experimental Protocols and Evaluation Methodologies

Standardized Evaluation Workflow

To ensure fair comparison, researchers have developed standardized evaluation protocols in which every model is scored with the same set of metrics.

Key Evaluation Metrics Explained

- AvgBIO Score: Summarizes biological conservation, i.e., how well known cell types remain grouped and separated in the embedding; higher values indicate better performance [4]

- ASW (Average Silhouette Width): Evaluates clustering quality, values range from -1 (poor) to 1 (strong) [4]

- Batch Mixing Score: Quantifies how well batches are integrated, higher values indicate better mixing [4]

- PCR (Principal Component Regression): Measures proportion of variance explained by batch effects, lower values indicate better batch correction [4]

- scGraph-OntoRWR: Novel metric measuring consistency of cell type relationships with prior biological knowledge [1]

- LCAD (Lowest Common Ancestor Distance): Measures ontological proximity between misclassified cell types, assessing severity of annotation errors [1]
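The LCAD idea can be illustrated on a toy hierarchy. This is a hedged sketch, not the implementation used in [1]: it assumes LCAD is the number of edges on the path between the true and predicted cell types through their lowest common ancestor, and the child-to-parent map is a hypothetical fragment rather than the Cell Ontology (which is a directed acyclic graph, not a strict tree).

```python
# Hypothetical child -> parent map for a tiny cell-type hierarchy (not the Cell Ontology).
PARENT = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "immune cell",
    "monocyte": "immune cell",
    "immune cell": None,  # root
}


def ancestors(node):
    """Return the path from `node` up to the root, including `node` itself."""
    path = []
    while node is not None:
        path.append(node)
        node = PARENT[node]
    return path


def lca_distance(true_type, predicted_type):
    """Edges from each label up to their lowest common ancestor, summed."""
    true_path = ancestors(true_type)
    pred_depth = {node: i for i, node in enumerate(ancestors(predicted_type))}
    for i, node in enumerate(true_path):
        if node in pred_depth:  # first shared node is the lowest common ancestor
            return i + pred_depth[node]
    raise ValueError("labels do not share a root")


def mean_lcad(true_labels, predicted_labels):
    """Average LCA distance over misclassified cells only (0.0 if there are none)."""
    errors = [lca_distance(t, p) for t, p in zip(true_labels, predicted_labels) if t != p]
    return sum(errors) / len(errors) if errors else 0.0


if __name__ == "__main__":
    # Confusing two T-cell subsets is a near miss; T cell vs monocyte is a worse error.
    print(lca_distance("CD4 T cell", "CD8 T cell"))  # 2
    print(lca_distance("CD4 T cell", "monocyte"))    # 4
```

Two annotations with the same accuracy can therefore differ in LCAD: a model whose mistakes stay within a lineage scores lower (better) than one that confuses distant lineages.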

Essential Research Reagent Solutions

The following table details key computational tools and resources essential for reproducing foundation model comparisons:

Table 5: Essential Research Reagents for scFM Evaluation

| Resource | Type | Function | Availability |
|---|---|---|---|
| CELLxGENE Census | Data Resource | Standardized single-cell datasets for training and evaluation [18] | Public |
| BioLLM Framework | Software Tool | Unified interface for diverse single-cell foundation models [17] | Open Source |
| scGraph-OntoRWR | Evaluation Metric | Novel ontology-informed metric for biological relevance [1] | Custom Implementation |
| Harmony | Baseline Algorithm | Batch integration baseline for performance comparison [4] | Open Source |
| scVI | Baseline Algorithm | Probabilistic modeling baseline for performance comparison [4] | Open Source |
| BenGRN Benchmark | Evaluation Suite | Specialized benchmark for gene network inference [16] | Open Source |

The comparative analysis reveals that neither scGPT nor Geneformer consistently outperforms simpler baseline methods in zero-shot settings, challenging the assumption that larger pretrained models automatically provide superior biological insights [4] [15]. However, both models show value in specific applications: scGPT demonstrates robust performance across multiple tasks including drug response prediction, while Geneformer's rank-based approach provides distinctive embeddings for certain gene-level tasks [1] [17].

For researchers and drug development professionals, selection should be guided by specific use cases: scGPT may be preferable for multi-task applications requiring flexible fine-tuning, while established baselines like HVG selection or scVI remain competitive for standard clustering and batch correction tasks. Future development should focus on improving zero-shot capabilities through better pretraining objectives and incorporating biological prior knowledge to move beyond pattern recognition toward genuine biological understanding.

Defining 'Zero-Shot' Performance and Its Critical Role in Biological Discovery

In the evolving field of single-cell biology, foundation models like scGPT and Geneformer represent a transformative approach to analyzing cellular data. These models are pretrained on massive datasets comprising millions of single-cell gene expression profiles, with the goal of learning universal biological patterns that can generalize across diverse applications. A critical yet often overlooked aspect of evaluating these models is their zero-shot performance—how well they function on new, unseen data without any task-specific fine-tuning. Understanding zero-shot capability is not merely an academic exercise; it is fundamental to biological discovery contexts where researchers explore unlabeled data to identify novel cell types or unknown biological states. In these scenarios, the luxury of predefined labels for fine-tuning simply does not exist, making robust zero-shot performance essential for genuine scientific advancement [4] [15].

Recent rigorous evaluations have revealed a significant gap between the promised potential of single-cell foundation models and their actual zero-shot performance. Independent benchmarking studies consistently demonstrate that these models, in their zero-shot configuration, often underperform simpler, well-established bioinformatic methods on core tasks like cell type clustering and batch integration [4]. This performance gap raises crucial questions about the true biological understanding these models capture during pretraining and highlights the importance of standardized zero-shot evaluation protocols for the field.

The Critical Importance of Zero-Shot Evaluation

Why Zero-Shot Capability Matters for Discovery

Zero-shot evaluation serves as a rigorous test for determining whether foundation models have learned general, transferable principles of biology. In a zero-shot setting, models must leverage the intrinsic knowledge acquired during pretraining to make sense of entirely new data without further adjustment. This capability is paramount for exploratory biological research, where the objective is often to discover previously unknown patterns—such as novel cell states or disease-specific pathways—without the guidance of pre-existing labels. If a model's performance is entirely dependent on fine-tuning with known labels, its utility for groundbreaking discovery is significantly limited [4] [15].

Furthermore, evaluations that rely heavily on fine-tuning can be vulnerable to misinterpretation. Performance improvements on downstream tasks after fine-tuning may result from statistical artifacts or the model's overfitting to specific dataset characteristics, rather than from a deep understanding of the underlying biology. Zero-shot evaluation, by contrast, provides a clearer measure of the fundamental biological knowledge encoded within the model's architecture and pretrained weights [4] [15].

Key Zero-Shot Tasks in Single-Cell Analysis

- Cell Type Clustering: The model must generate embeddings (numerical representations) for individual cells that group together cells of the same type, even when those types were not explicitly labeled during training. This is crucial for annotating new datasets or discovering rare cell populations [4].

- Batch Integration: The model must correct for technical variations (e.g., differences between labs, sequencing technologies, or donors) while preserving true biological differences. Effective zero-shot batch integration allows for the meaningful combination and comparison of datasets from diverse sources [4].

- Biological State Representation: More advanced models aim to disentangle and represent a cell's biological state (e.g., disease, perturbation) from its core cell type identity, enabling the study of processes like disease progression or drug response across different datasets [19].

Experimental Protocols for Evaluating Zero-Shot Performance

Standardized Benchmarking Workflows

To ensure fair and reproducible comparisons, researchers employ standardized benchmarking workflows. A typical zero-shot evaluation protocol involves the following steps:

- Model Loading: A pretrained foundation model (e.g., scGPT or Geneformer) is loaded with its publicly available weights. No further training on the target benchmark dataset is performed.

- Embedding Generation: The model processes the gene expression matrix of the benchmark dataset to generate a lower-dimensional embedding vector for each cell.

- Downstream Task Application: These embeddings are directly used for specific analytical tasks:

  - For clustering, algorithms like K-means or Leiden are applied to the embeddings, and the resulting clusters are compared to known cell type labels.

  - For batch integration, visualization tools like UMAP are used to inspect whether cells from different batches mix well within the same cell type.

- Quantitative Scoring: The results are evaluated against ground truth labels using established metrics that quantitatively measure success [4] [1].

This workflow emphasizes that the model is used as a fixed feature extractor, mirroring how a researcher would apply it to a truly novel dataset in a discovery setting.
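The fixed-feature-extractor protocol above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the benchmark code used in [4] or [1]: the embeddings are synthetic stand-ins for frozen model outputs, K-means (one of the two algorithms named above) replaces Leiden so that only NumPy and scikit-learn are needed, and AvgBIO is assumed to be the unweighted mean of ARI, NMI and a cell-type ASW rescaled to [0, 1].

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import (
    adjusted_rand_score,
    normalized_mutual_info_score,
    silhouette_score,
)


def evaluate_zero_shot_clustering(cell_embeddings, cell_type_labels, seed=0):
    """Cluster frozen embeddings and score the clusters against known labels.

    No model weights are touched: the embeddings are treated as fixed
    features, and the labels are used only for scoring.
    """
    n_types = len(np.unique(cell_type_labels))
    clusters = KMeans(n_clusters=n_types, n_init=10, random_state=seed).fit_predict(
        cell_embeddings
    )
    ari = adjusted_rand_score(cell_type_labels, clusters)
    nmi = normalized_mutual_info_score(cell_type_labels, clusters)
    # Cell-type silhouette rescaled from [-1, 1] to [0, 1].
    asw = (silhouette_score(cell_embeddings, cell_type_labels) + 1) / 2
    # Assumed AvgBIO definition: unweighted mean of the three scores.
    return {"ARI": ari, "NMI": nmi, "ASW": asw, "AvgBIO": (ari + nmi + asw) / 3}


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-in for model output: 3 well-separated "cell types" in 16-D.
    centers = rng.normal(scale=5.0, size=(3, 16))
    labels = np.repeat(np.arange(3), 100)
    embeddings = centers[labels] + rng.normal(size=(300, 16))
    print(evaluate_zero_shot_clustering(embeddings, labels))
```

With real data, `cell_embeddings` would be the per-cell vectors exported from the pretrained model and `cell_type_labels` the curated annotations of the benchmark dataset; the same function can score a baseline embedding, which keeps the comparison like-for-like.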

Key Evaluation Metrics

The following metrics are central to quantifying zero-shot performance in the tasks described above:

- Average BIO (AvgBIO) Score: A comprehensive metric for evaluating cell type clustering. It assesses both the purity of clusters (how well cells of one type are grouped together) and their separation from other cell types. A higher score indicates better clustering [4].

- Average Silhouette Width (ASW): Measures how similar a cell is to its own cluster compared to other clusters. It is used both for cell type separation (ASW_celltype) and for batch integration (ASW_batch) [4] [1].

- Principal Component Regression (PCR) Score: Quantifies the proportion of variance in the embeddings that can be explained by batch effects. A lower PCR score indicates better batch effect correction [4].
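The ASW and PCR definitions above can be made concrete with a short sketch. It assumes embeddings arrive as a NumPy array with one row per cell. `pcr_batch` is a simplified, variance-weighted regression of the top principal components on one-hot batch indicators, written in the spirit of the PCR score described above; published implementations (for example in the scIB ecosystem) may differ in details such as the number of components used.

```python
import numpy as np
from sklearn.metrics import silhouette_score


def asw(embeddings, labels):
    """Mean silhouette width in [-1, 1] for a grouping of cells.

    With cell-type labels (ASW_celltype), higher values mean better-separated
    cell types. With batch labels (unscaled ASW_batch), values near or below 0
    mean that batches are well mixed.
    """
    return float(silhouette_score(embeddings, labels))


def pcr_batch(embeddings, batch_labels, n_pcs=10):
    """Variance-weighted share of top-PC variance explained by batch (0 to 1).

    Each principal component is regressed on one-hot batch indicators and the
    per-component R^2 values are averaged, weighted by component variance.
    Lower values indicate better batch correction.
    """
    x = embeddings - embeddings.mean(axis=0)
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    n_pcs = min(n_pcs, s.size)
    scores = u[:, :n_pcs] * s[:n_pcs]  # principal-component scores per cell
    variances = s[:n_pcs] ** 2
    batches = np.unique(batch_labels)
    # One-hot design; its columns sum to 1, so it also spans an intercept.
    design = (np.asarray(batch_labels)[:, None] == batches[None, :]).astype(float)
    r2 = np.zeros(n_pcs)
    for k in range(n_pcs):
        y = scores[:, k]
        if y.var() < 1e-12:  # skip degenerate components
            continue
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        residual = y - design @ coef
        r2[k] = 1.0 - residual.var() / y.var()
    return float((variances * r2).sum() / variances.sum())
```

An embedding whose leading components simply encode "which batch a cell came from" drives `pcr_batch` toward 1, while a well-integrated embedding keeps it near 0.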

The diagram below illustrates the logical relationship between the core concepts of zero-shot evaluation, its importance, and the methods used to assess it.

Performance Comparison: scGPT vs. Geneformer

Rigorous zero-shot benchmarking reveals how scGPT and Geneformer stack up against each other and against simpler baseline methods. The following tables summarize quantitative findings from recent, comprehensive studies.

Table 1: Zero-shot performance in cell type clustering (AvgBIO Score). Higher scores are better. Data adapted from [4].

| Model / Method | Pancreas Dataset | Immune Dataset | Tabula Sapiens | PBMC (12k) |
|---|---|---|---|---|
| HVG (Baseline) | 0.771 | 0.732 | 0.681 | 0.639 |
| Harmony | 0.759 | 0.702 | 0.661 | 0.647 |
| scVI | 0.768 | 0.691 | 0.673 | 0.658 |
| scGPT | 0.692 | 0.599 | 0.619 | 0.652 |
| Geneformer | 0.542 | 0.501 | 0.523 | 0.521 |

Table 2: Zero-shot performance in batch integration (Batch ASW). Scores are scaled between 0 (poor) and 1 (good). Data adapted from [4] [1].

| Model / Method | Technical Batch Effects | Biological Batch Effects | Overall Ranking |
|---|---|---|---|
| HVG (Baseline) | 0.851 | 0.819 | 1 |
| scVI | 0.862 | 0.801 | 2 |
| Harmony | 0.841 | 0.787 | 3 |
| scGPT | 0.823 | 0.812 | 4 |
| Geneformer | 0.801 | 0.794 | 5 |

Analysis of Comparative Performance

The data leads to several critical conclusions:

- Underperformance Against Simpler Methods: Both scGPT and Geneformer are consistently outperformed in cell type clustering by established methods like Harmony, scVI, and even the simple HVG selection method. In some cases, the foundation models perform worse than a randomly initialized model [4] [15].

- scGPT vs. Geneformer: scGPT generally demonstrates stronger zero-shot capabilities than Geneformer across both clustering and integration tasks. However, its performance is inconsistent and remains below that of the top baselines [4] [17].

- Task-Dependent Strengths: scGPT shows a relative strength in handling complex batch effects that include biological variation (e.g., differences between donors), sometimes matching or slightly exceeding simpler methods on these specific datasets. Geneformer, however, consistently ranks last in batch integration metrics [4].

- Limitations in Gene Expression Modeling: A core hypothesis for the poor performance is that the masked language model pretraining objective may not be effectively teaching models the underlying gene-gene relationships. For instance, scGPT struggles to predict held-out gene expression, often defaulting to predicting median expression levels instead of context-specific values [15].
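The median-prediction failure mode reported in [15] can be illustrated with a toy masked-value task. Everything here is an assumption made for illustration: a synthetic log-like expression matrix in which each cell carries a shared offset (its "context"), a 15% masking rate, and two hand-written predictors instead of a transformer. A predictor that returns each gene's median ignores the unmasked genes of the same cell, so it cannot recover the cell-specific offset, whereas a predictor that uses that context achieves a lower loss on the masked positions.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_genes, mask_rate = 500, 50, 0.15

# Synthetic expression: gene baseline + shared per-cell offset + noise.
gene_baseline = rng.uniform(0.5, 3.0, size=n_genes)
cell_offset = rng.normal(scale=0.5, size=(n_cells, 1))
expr = gene_baseline + cell_offset + rng.normal(scale=0.3, size=(n_cells, n_genes))

# Mask a random subset of entries; the loss is computed on these only.
mask = rng.random(expr.shape) < mask_rate
visible = np.where(mask, np.nan, expr)


def masked_mse(predictions):
    """Mean squared error restricted to masked positions."""
    return float(((expr - predictions)[mask] ** 2).mean())


# Predictor 1: each gene's median over visible entries, identical for every cell.
gene_median = np.nanmedian(visible, axis=0)
median_pred = np.broadcast_to(gene_median, expr.shape)

# Predictor 2: the gene median plus the cell's mean deviation on its visible genes.
cell_context = np.nanmean(visible - gene_median, axis=1, keepdims=True)
context_pred = gene_median + cell_context

median_loss = masked_mse(median_pred)
context_loss = masked_mse(context_pred)
print(f"median-only loss: {median_loss:.3f}, context-aware loss: {context_loss:.3f}")
```

The gap between the two losses is what a model that has genuinely learned gene-gene context should exploit; a model whose predictions collapse toward gene medians leaves that gap on the table.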

The Scientist's Toolkit: Key Research Reagents and Solutions

To conduct rigorous zero-shot evaluations, researchers rely on a suite of computational tools and benchmark resources. The following table details the essential components of this toolkit.

Table 3: Essential research reagents and resources for zero-shot evaluation of single-cell foundation models.

| Tool / Resource | Type | Function in Evaluation | Key Features |
|---|---|---|---|
| scGPT [4] [1] | Foundation Model | The model under evaluation; generates cell and gene embeddings. | 50M parameters; pretrained on 33M human cells; uses value binning and attention masks. |
| Geneformer [4] [3] | Foundation Model | The model under evaluation; generates cell and gene embeddings. | 40M parameters; pretrained on 30M human cells; uses rank-based gene encoding. |
| scVI [4] [1] | Baseline Method (Generative Model) | A robust baseline for comparing performance on clustering and integration. | Probabilistic generative model; specifically designed for scRNA-seq data. |
| Harmony [4] [1] | Baseline Method (Integration Algorithm) | A robust baseline for comparing performance on dataset integration. | Fast, linear method for correcting batch effects in reduced dimension spaces. |
| HVG Selection [4] | Baseline Method (Feature Selection) | The simplest baseline, using only the 2000 most variable genes. | Provides a performance floor; computationally trivial. |
| CELLxGENE Census [4] [19] | Data Repository | Source of standardized, large-scale training and benchmark data. | Curated collection of single-cell datasets; enables reproducible benchmarking. |
| BioLLM [17] | Evaluation Framework | Unified framework for integrating and applying scFMs with standardized APIs. | Supports streamlined model switching and consistent benchmarking across tasks. |

Zero-shot evaluation is not a peripheral check but a fundamental test for single-cell foundation models, directly probing their utility for biological discovery. Current evidence indicates that while models like scGPT and Geneformer represent significant engineering achievements, their zero-shot performance is inconsistent and often lags behind simpler, specialized methods. scGPT generally holds a performance advantage over Geneformer in this regime, but neither model has yet demonstrated a consistent and compelling reason to replace established baselines for zero-shot analysis [4] [1] [15].

The path forward requires a concerted effort from the community. Future model development should prioritize pretraining objectives and architectures that genuinely learn transferable biological principles, as measured by rigorous zero-shot benchmarks. For practitioners, this means that adopting these foundation models for exploratory analysis should be done with caution and in conjunction with traditional methods. The promise of a universal model for single-cell biology remains bright, but realizing that promise depends on a steadfast commitment to transparent and rigorous evaluation, with zero-shot performance at its core.

Practical Performance in Core Single-Cell Analysis Tasks

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the transcriptomic profiling of individual cells, uncovering cellular heterogeneity with unprecedented precision. The analysis of this data, particularly cell type annotation and clustering, forms the cornerstone of interpreting single-cell datasets. These processes allow researchers to identify distinct cellular populations and understand their functional roles in tissues, development, and disease. Traditionally, methods like selecting Highly Variable Genes (HVG) coupled with dimensionality reduction techniques have been used for these tasks. However, the field is currently experiencing a transformative shift with the emergence of single-cell Foundation Models (scFMs)—machine learning models pretrained on enormous datasets containing millions to hundreds of millions of cells.

Models like scGPT and Geneformer represent this new paradigm. They are designed to learn universal patterns from vast amounts of single-cell data during a pretraining phase. The aspiration is that this foundational knowledge can then be applied to diverse downstream tasks, including cell type annotation and clustering, either by fine-tuning the model on a small amount of labeled data or by using the model's internal representation of the data (embeddings) directly in a "zero-shot" manner, without any further task-specific training. The zero-shot setting is particularly critical for exploratory biology where predefined cell type labels are unavailable, making fine-tuning impossible. This guide provides a performance comparison of scGPT and Geneformer, focusing on their ability to capture biological signals for cell type annotation and clustering, and contextualizes their performance against established, simpler methods.

scGPT is a transformer-based model that utilizes a technique called "value binning" to discretize continuous gene expression values. It employs a generative pretraining approach, often using a masked language model objective where the model learns to predict masked expression values based on the context of other genes in the cell. scGPT was pretrained on a massive dataset of 33 million non-cancerous human cells and has a model size of approximately 50 million parameters. Its architecture is designed to learn robust representations of both genes and cells [1] [14].
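Value binning can be sketched as follows. This is a simplified, hypothetical re-implementation rather than scGPT's own preprocessing code: it keeps zeros in bin 0 and splits each cell's non-zero values into equal-frequency bins defined by that cell's own quantiles, so the resulting tokens depend on the ordering of values within a cell rather than on the cell's overall scale (for example, sequencing depth). The default of 51 bins is an assumption, and the official code handles ties and bin edges somewhat differently.

```python
import numpy as np


def bin_expression(cell_expr, n_bins=51):
    """Map one cell's expression vector to integer bins 0..n_bins-1.

    Zeros stay in bin 0; non-zero values are split into (n_bins - 1)
    equal-frequency bins using quantiles of this cell's non-zero values.
    """
    binned = np.zeros(cell_expr.shape, dtype=np.int64)
    nonzero = cell_expr > 0
    if not nonzero.any():
        return binned
    # n_bins quantile edges from min to max define n_bins - 1 intervals.
    edges = np.quantile(cell_expr[nonzero], np.linspace(0, 1, n_bins))
    # Interior edges only; digitize gives 0..n_bins-2, shifted to 1..n_bins-1.
    binned[nonzero] = np.digitize(cell_expr[nonzero], edges[1:-1], right=True) + 1
    return binned


if __name__ == "__main__":
    cell = np.array([0, 1, 2, 3, 4, 0, 5.0])
    print(bin_expression(cell, n_bins=3).tolist())  # [0, 1, 1, 1, 2, 0, 2]
```

Because bin boundaries are recomputed per cell, the same raw value can fall into different bins in different cells; this is the trade-off that lets the model compare relative expression across cells sequenced at different depths.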

Geneformer, in contrast, uses a "rank value encoding" strategy. Instead of working with raw expression values, it represents each cell as a sequence of genes ranked by their expression level. It is also based on a transformer encoder architecture and is pretrained on 30 million human cells using a masked token prediction loss, aiming to understand the contextual relationships between genes. Geneformer has a smaller architecture, with 40 million parameters [4] [3].
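Rank value encoding can be sketched in the same spirit. The sketch follows the published description of Geneformer, in which each gene's depth-normalized count is divided by that gene's non-zero median across the pretraining corpus before ranking, so ubiquitously high housekeeping genes move down and cell-specific genes move up. The medians below are made-up values, the 2,048-token limit (the input length of the original Geneformer release) should be treated as an assumption, and this is not Geneformer's tokenizer code.

```python
import numpy as np


def rank_value_encode(cell_counts, gene_ids, gene_medians, max_len=2048):
    """Return gene IDs ordered from highest to lowest scaled expression.

    cell_counts:  raw counts for one cell (1-D array, one entry per gene)
    gene_ids:     token identifiers aligned with cell_counts
    gene_medians: per-gene reference medians (hypothetical values here)
    """
    expressed = np.flatnonzero(cell_counts > 0)  # unexpressed genes are dropped
    if expressed.size == 0:
        return []
    depth_normalized = cell_counts[expressed] / cell_counts.sum()
    scaled = depth_normalized / gene_medians[expressed]
    order = np.argsort(-scaled, kind="stable")  # descending; ties keep input order
    return [gene_ids[i] for i in expressed[order][:max_len]]


if __name__ == "__main__":
    counts = np.array([10, 0, 5, 20])
    genes = ["A", "B", "C", "D"]
    medians = np.array([1.0, 1.0, 0.1, 10.0])  # "D" behaves like a housekeeping gene
    print(rank_value_encode(counts, genes, medians))  # ['C', 'A', 'D']
```

Gene D has the highest raw count but ends up last, because this cell expresses it only modestly relative to its (hypothetical) corpus-wide median; the exact count values are discarded, and only the order is passed to the model.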

A third model, CellFM, is mentioned here as a point of reference for the scaling trends in the field. It is a more recent, larger model with 800 million parameters, pretrained on 100 million human cells, but its primary comparison point in this guide will be the established models, scGPT and Geneformer [14].

Performance Comparison in Key Tasks

Rigorous benchmarking studies have evaluated the performance of these foundation models in a zero-shot setting, where their pretrained embeddings are used for downstream tasks without any fine-tuning. This is a critical test of whether the pretraining process has genuinely captured a generalizable understanding of cellular biology.

Cell Type Clustering

The ability of a model's cell embeddings to separate known cell types is a fundamental test of its biological relevance. Evaluations across multiple datasets reveal a nuanced picture.

Table 1: Zero-Shot Cell Type Clustering Performance (AvgBIO Score)

| Dataset | scGPT | Geneformer | HVG Baseline | scVI Baseline | Harmony Baseline |
|---|---|---|---|---|---|
| PBMC (12k) | Outperforms Baselines | Underperforms | Strong Performance | Strong Performance | Strong Performance |
| Tabula Sapiens | Comparable to scVI | Underperforms | Outperforms | Comparable to scGPT | Outperformed by scGPT |
| Pancreas | Comparable to scVI | Underperforms | Outperforms | Comparable to scGPT | Underperforms |
| Immune | Underperforms | Underperforms | Outperforms | Outperforms scGPT | Outperformed by scVI |

Data adapted from [4]. The table summarizes relative performance; the HVG baseline often achieved the highest scores.

Key findings from these evaluations include:

- Inconsistent Performance: Both scGPT and Geneformer demonstrate variable performance across different datasets. In many cases, they are outperformed by the simple method of selecting Highly Variable Genes (HVG) and by established integration methods like scVI and Harmony [4].

- scGPT's Situational Strength: scGPT shows more robust performance than Geneformer in clustering, sometimes matching or slightly exceeding the performance of scVI on specific datasets like Tabula Sapiens and PBMC. However, this is not consistent across all benchmarks [4].

- Geneformer's Limitations: Geneformer consistently underperforms in zero-shot cell type clustering across the evaluated datasets and metrics when compared to both baselines and scGPT [4].

Batch Integration

Batch integration, which removes technical variations between datasets while preserving biological differences, is another critical task for single-cell analysis.

Table 2: Batch Integration Performance Summary

| Model | Overall Performance | Strengths | Weaknesses |
|---|---|---|---|
| scGPT | Moderate | Effective on complex datasets with combined technical/biological batch effects (e.g., Immune, Tabula Sapiens) [4]. | Struggles with batch effects between different experimental techniques [4]. |
| Geneformer | Poor | Limited qualitative separation of techniques [4]. | Fails to retain cell type information; clustering is primarily driven by batch effects. Consistently ranks last quantitatively [4]. |
| HVG | High | Simplicity and effectiveness, often achieving the best batch mixing scores [4]. | - |
| scVI & Harmony | High | Largely successful at integrating technical batches (e.g., Pancreas) [4]. | Can struggle with specific complex datasets (e.g., Harmony on Tabula Sapiens) [4]. |

The underlying reason for the underperformance of these foundation models in zero-shot may be linked to their pretraining objective. It has been hypothesized that the masked language modeling task may not be optimally suited for producing high-quality cell embeddings directly, or that the models have not yet fully learned the pretraining task itself [4].

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies follow rigorous experimental protocols. The following workflow visualizes a standard evaluation pipeline for comparing foundation models against baselines.

Data Preprocessing and Model Inputs

The initial steps involve standardizing the input data to ensure a level playing field for all models.

- Quality Control: Cells and genes are filtered based on metrics like the number of detected genes per cell, total counts per cell, and the percentage of mitochondrial reads. This removes low-quality cells and technical outliers [14].

- Normalization: The gene expression matrix is normalized, typically by the total count per cell (e.g., to 10,000 transcripts), followed by a log(1+x) transformation. This accounts for differences in sequencing depth between cells [13].

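- Model-Specific Tokenization: Expression profiles are converted into each model's input format: binned expression values for scGPT and a rank-ordered sequence of gene tokens for Geneformer.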
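The quality-control and normalization steps above can be sketched with NumPy on a dense cells-by-genes count matrix. The thresholds, the `MT-` prefix used to flag mitochondrial genes (the human gene-naming convention) and the function name are illustrative assumptions rather than settings taken from the cited benchmarks; tokenization is model-specific and is left out.

```python
import numpy as np


def qc_and_normalize(counts, gene_names, min_genes=200, min_cells=3,
                     max_pct_mito=20.0, target_sum=1e4):
    """Filter cells and genes, then apply total-count normalization and log1p.

    Returns the log-normalized matrix plus boolean masks of kept cells and genes.
    """
    counts = np.asarray(counts, dtype=float)
    genes_detected = (counts > 0).sum(axis=1)
    total_counts = counts.sum(axis=1)
    is_mito = np.char.startswith(np.asarray(gene_names, dtype=str), "MT-")
    pct_mito = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total_counts, 1.0)

    # Cell-level QC: enough detected genes and not dominated by mitochondrial reads.
    cell_keep = (genes_detected >= min_genes) & (pct_mito <= max_pct_mito)
    # Gene-level QC: expressed in at least `min_cells` of the retained cells.
    gene_keep = (counts[cell_keep] > 0).sum(axis=0) >= min_cells

    kept = counts[np.ix_(cell_keep, gene_keep)]
    # Scale every cell to the same total (e.g. 10,000); guard against empty rows.
    row_totals = np.maximum(kept.sum(axis=1, keepdims=True), 1e-12)
    normalized = kept / row_totals * target_sum
    return np.log1p(normalized), cell_keep, gene_keep
```

In Scanpy, the same steps map onto `sc.pp.filter_cells`, `sc.pp.filter_genes`, `sc.pp.normalize_total` and `sc.pp.log1p`.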

Evaluation Metrics and Methodology

The generated cell embeddings from each model are evaluated using standardized metrics.

- Cell Type Clustering:

  - Average BIO (AvgBIO) Score: A comprehensive metric for evaluating clustering accuracy.

  - Average Silhouette Width (ASW): Measures how similar a cell is to its own cluster compared to other clusters. Higher values indicate better-defined clusters [4].

- Batch Integration:

  - Batch Mixing Metrics: Assess how well cells from different batches are intermixed within the embedding space.

  - Principal Component Regression (PCR) Score: Quantifies the proportion of variance in the embeddings that can be explained by batch effects. A lower score indicates better batch correction [4].

- Protocol: Embeddings are generated in a zero-shot fashion. For clustering, a graph-based clustering algorithm (e.g., Leiden) is applied directly to the embeddings, and the results are compared to ground-truth cell type labels. For batch integration, visual inspection (UMAP plots) is combined with the quantitative metrics above [4] [1].

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and resources essential for conducting evaluations of single-cell foundation models.

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function / Application | Relevance in Evaluation |
|---|---|---|
| Benchmarking Datasets (e.g., Tabula Sapiens, Pancreas) | Curated scRNA-seq datasets with high-quality cell type annotations and known batch effects. | Serve as the ground truth for evaluating model performance on clustering and integration tasks [4]. |
| Baseline Algorithms (e.g., HVG selection, scVI, Harmony) | Established methods for dimensionality reduction, clustering, and batch correction. | Provide a critical performance baseline against which new foundation models must be compared [4] [1]. |
| Evaluation Metrics (e.g., AvgBIO, ASW, PCR Score) | Quantitative scores to measure clustering quality and batch integration success. | Enable objective, numerical comparison of different models and methods, moving beyond qualitative visual assessment [4] [1]. |
| Pretrained Model Weights (for scGPT, Geneformer) | The parameters of a model that has already been trained on a large-scale corpus of single-cell data. | Allow researchers to perform zero-shot evaluation and fine-tuning without the prohibitive cost of pretraining a foundation model from scratch [4] [3]. |

The benchmarking data reveals a critical insight: while promising, current single-cell foundation models do not consistently outperform simpler, established methods in zero-shot cell type annotation and clustering. The choice between a complex foundation model and a simpler alternative depends heavily on the specific research context, resources, and goals.

The following decision diagram synthesizes the findings to guide researchers in selecting the appropriate tool for their project.

Summary of Recommendations:

- For Zero-Shot Exploratory Analysis: If you are exploring a new dataset with unknown cell types, start with simple baselines like HVG selection or scVI. The consistent and high performance of these methods makes them reliable first choices. While scGPT can be tried, its performance is not guaranteed to be better and may be inferior.

- When Fine-Tuning is Possible: If you have a small amount of labeled data for your specific cell types, fine-tuning a foundation model like scGPT or Geneformer can unlock their potential and may lead to superior performance, as they can adapt their general knowledge to the specific task.

- For Batch Integration: Rely on established methods like Harmony and scVI for correcting technical batch effects. Geneformer should be avoided for this task in its zero-shot form, and scGPT's performance is highly dataset-dependent.

- Looking Forward: The field is rapidly evolving. Newer and larger models like CellFM (800M parameters) are emerging, showing that scaling model and data size can improve performance across various tasks [14]. Furthermore, innovative approaches like scNET, which integrates protein-protein interaction networks, and GenePT, which uses textual gene descriptions from scientific literature, offer complementary strategies that may address some of the limitations of expression-only models [20] [13].
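For readers who want to follow the "start with simple baselines" advice, an HVG-plus-PCA embedding takes only a few lines. This sketch ranks genes by the plain variance of log-normalized expression, which is a simplification: Scanpy's `sc.pp.highly_variable_genes` defaults to a dispersion-based criterion computed within mean-expression bins, and the benchmarks in [4] may use different settings. The 2,000-gene default mirrors the HVG baseline described earlier; the 50 principal components are an assumption.

```python
import numpy as np


def hvg_pca_embedding(log_expr, n_top_genes=2000, n_pcs=50):
    """Select the most variable genes, then project cells onto the top PCs.

    log_expr: cells x genes log-normalized expression (dense array).
    Returns (cell embedding, indices of the selected genes).
    """
    n_top = min(n_top_genes, log_expr.shape[1])
    gene_var = log_expr.var(axis=0)
    hvg_idx = np.argsort(gene_var)[::-1][:n_top]  # highest variance first
    x = log_expr[:, hvg_idx]
    x = x - x.mean(axis=0)  # center genes before PCA
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    k = min(n_pcs, s.size)
    return u[:, :k] * s[:k], hvg_idx
```

The resulting embedding can be scored with exactly the same clustering and metric pipeline as a foundation model's embeddings, which keeps the comparison like-for-like.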

Batch integration is a fundamental task in single-cell RNA sequencing (scRNA-seq) analysis, aimed at eliminating non-biological technical variations (batch effects) arising from multiple data sources—such as different experiments, sequencing technologies, or donors—while preserving meaningful biological differences [4]. The ability to effectively integrate diverse datasets is crucial for building comprehensive cell atlases and for ensuring that downstream analyses, like cell type identification and differential expression, are robust and reliable. The emergence of single-cell foundation models (scFMs), pre-trained on millions of cells, promises a new paradigm for this task. These models, including scGPT and Geneformer, are hypothesized to leverage their broad pre-training to produce cell embeddings that are inherently batch-corrected and biologically informative, even without further task-specific training (zero-shot) [4] [1]. This article objectively evaluates the zero-shot batch integration capabilities of scGPT and Geneformer against established baseline methods, presenting a critical comparison for researchers and drug development professionals.

Performance Comparison: scGPT vs. Geneformer vs. Baselines

Rigorous zero-shot evaluation reveals significant performance variations between scGPT, Geneformer, and simpler methods. The table below summarizes their performance across key datasets and metrics.

Table 1: Zero-shot Batch Integration Performance Comparison

| Model / Method | Pancreas Dataset (Technical Variation) | Immune & Tabula Sapiens Datasets (Technical + Biological Variation) | Key Characteristics |
|---|---|---|---|
| scGPT | Underperforms against scVI and Harmony [4]. | Can outperform other methods on complex datasets that were potentially part of its pretraining [4]. | Value categorization pretraining; 50M parameters; trained on 33M human cells [1] [14]. |
| Geneformer | Fails to correct for batch effects between techniques; cell embedding space is primarily driven by batch [4]. | Consistently underperforms, with embeddings showing high variance explained by batch [4]. | Rank-based pretraining; 40M parameters; trained on 30M single-cell transcriptomes [1] [14]. |
| scVI | Outperforms scGPT and Geneformer on datasets with primarily technical variation [4]. | Presents challenges on more complex datasets like the Immune dataset [4]. | Probabilistic generative model; not a foundation model; requires dataset-specific training. |
| Harmony | Successfully integrates datasets like Pancreas [4]. | Faces significant challenges with datasets like Tabula Sapiens [4]. | Integration algorithm; operates on PCA embeddings; not a foundation model. |
| Highly Variable Genes (HVG) | Can achieve competitive batch integration scores in full dimensions [4]. | A simple, often robust baseline for batch integration [4]. | Simple feature selection method (e.g., top 2,000 most variable genes). |

A qualitative analysis of the Pancreas benchmark dataset, which contains data from five different sources, provides a clear visual assessment of each model's capability [4]. In this dataset:

- Geneformer's cell embedding space fails to retain information about cell type, with any clustering being primarily driven by batch effects [4].

- While scGPT's embedding space offers some separation between cell types, the primary structure remains strongly influenced by batch effects [4].

- In contrast, Harmony and scVI largely succeed in integrating the Pancreas dataset, demonstrating more effective batch correction [4].

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies follow rigorous protocols. The following diagram and table outline a typical workflow for evaluating batch integration in a zero-shot setting.

Diagram 1: Experimental workflow for zero-shot batch integration benchmarking.

Table 2: Key Research Reagents and Computational Tools

| Item / Resource | Function in Evaluation | Examples / Notes |
|---|---|---|
| Benchmark Datasets | Provide standardized ground truth for evaluating batch correction and bio-conservation. | Pancreas dataset [4], Immune datasets [4], Tabula Sapiens [4] [1]. |
| Pre-trained Models | Source of zero-shot cell embeddings. | scGPT (various checkpoints) [4], Geneformer (6L architecture) [4] [15]. |
| Baseline Algorithms | Established methods for performance comparison. | scVI [4] [1], Harmony [4], Highly Variable Genes (HVG) selection [4]. |
| Evaluation Metrics | Quantify the success of batch integration. | Batch mixing scores (e.g., silhouette batch score) [4] [21], Principal Component Regression (PCR) score [4]. |
| Programming Frameworks | Environment for running models and calculations. | Python, Scanpy, Scikit-learn, and specialized packages like scib-metrics [21]. |

Detailed Methodology

- Data Preparation: Publicly available scRNA-seq datasets with known batch and cell type labels are collected. The data undergoes standard preprocessing, including quality control, normalization, and log-transformation [21]. The datasets are selected to represent different challenges, such as pure technical variation (e.g., different experiments on the same tissue) and a mix of technical and biological variation (e.g., data from different donors or tissues) [4].

- Embedding Generation: In a zero-shot setting, the pre-trained foundation models (scGPT and Geneformer) are applied to the preprocessed data without any further fine-tuning. The cell embeddings are extracted directly from the models' output layers. For baseline methods, scVI is trained on each target dataset, while Harmony is applied to the principal components of the input data [4].

- Quantitative Evaluation: The resulting embeddings are evaluated using metrics that balance two competing goals:

  - Batch Correction: The effectiveness in removing batch effects is measured by how well cells from different batches mix. This is quantified by metrics like the silhouette batch score (where a lower score indicates better mixing) and entropy of batch mixing [21]. The PCR score specifically quantifies the proportion of variance in the embedding explained by batch effects, where a lower score indicates superior batch correction [4].

  - Bio-conservation: The success in preserving true biological variation is measured by how well known cell types remain separable in the integrated embedding. This is assessed using metrics like the silhouette label score and clustering metrics such as Normalized Mutual Information (NMI) or Adjusted Rand Index (ARI) with respect to cell type labels [21].
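The sketch below makes the two goals concrete on a toy embedding. It computes a silhouette score, which can be applied to either batch labels or cell type labels, and the fraction of embedding variance explained by batch. It is a minimal, dependency-free illustration and not the scib-metrics implementation. The function names are hypothetical, and the silhouette uses Euclidean distance. `batch_variance_fraction` is a simplified stand-in for the PCR score. It regresses each embedding dimension on batch (one-hot) and weights by variance. With all components retained, this matches a variance-weighted PC regression; published implementations typically use a truncated PCA.

```python
import math
from collections import defaultdict


def silhouette(embedding, labels):
    """Mean silhouette width (Euclidean) of an embedding w.r.t. labels.

    Use cell type labels for bio-conservation (higher = better separation)
    or batch labels for batch mixing (lower = better mixing).
    Requires at least two distinct labels.
    """
    n = len(embedding)
    scores = []
    for i in range(n):
        # Sum and count distances from cell i to all other cells, per label.
        sums, counts = defaultdict(float), defaultdict(int)
        for j in range(n):
            if j != i:
                sums[labels[j]] += math.dist(embedding[i], embedding[j])
                counts[labels[j]] += 1
        own = labels[i]
        if counts[own] == 0:  # singleton cluster: silhouette defined as 0
            scores.append(0.0)
            continue
        a = sums[own] / counts[own]  # mean distance within own label
        b = min(sums[l] / counts[l] for l in counts if l != own)  # nearest other label
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / n


def batch_variance_fraction(embedding, batches):
    """Share of total embedding variance explained by batch (simplified PCR-like score).

    Per dimension: between-batch sum of squares vs. total sum of squares,
    pooled across dimensions (i.e. variance-weighted). Lower = less batch signal.
    """
    n, dims = len(embedding), len(embedding[0])
    total_ss = between_ss = 0.0
    for d in range(dims):
        col = [v[d] for v in embedding]
        grand = sum(col) / n
        groups = defaultdict(list)
        for x, b in zip(col, batches):
            groups[b].append(x)
        total_ss += sum((x - grand) ** 2 for x in col)
        between_ss += sum(len(g) * (sum(g) / len(g) - grand) ** 2 for g in groups.values())
    return between_ss / total_ss if total_ss > 0 else 0.0


# Toy embedding: dimension 0 separates cell types, dimension 1 carries a small batch shift.
emb = [[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]]
print(silhouette(emb, ["A", "A", "B", "B"]))      # cell type separation
print(silhouette(emb, ["b1", "b2", "b1", "b2"]))  # batch mixing
print(batch_variance_fraction(emb, ["b1", "b2", "b1", "b2"]))
```

In practice, these scores are computed on each method's embedding with the same labels, so that the two goals can be compared side by side across scGPT, Geneformer, and the baselines.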

Interpreting the Results and Performance Drivers

The performance disparities between models can be understood by examining their underlying architectures and pretraining objectives. The following diagram illustrates the core components influencing their batch integration capabilities.

Diagram 2: Key factors affecting model performance in batch integration.

Benchmarking studies suggest two primary hypotheses for the observed limitations of scGPT and Geneformer in zero-shot batch integration [4] [15]:

- Pretraining Objective Mismatch: Both models use a Masked Gene Modeling (MGM) pretraining objective, where the model learns to predict randomly masked genes based on the context of other genes [4]. While this task is designed to teach the model gene-gene relationships, it may not directly translate to learning batch-invariant representations. The model might become proficient at imputation without developing a high-level, batch-agnostic understanding of cell state [15].

- Ineffective Learning: An alternative explanation is that the models simply have not adequately learned the intended pretraining task. For instance, evaluations of scGPT's gene expression prediction capability showed that, without conditioning on its cell embedding, it often predicted the median expression value for every gene, demonstrating limited contextual understanding [15].
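The masked-gene objective behind both hypotheses can be sketched in a few lines. This is a hypothetical, regression-style illustration, closer in spirit to scGPT's expression-value prediction. Geneformer instead masks gene tokens in a rank-ordered sequence and predicts their identities. The toy predictor deliberately ignores which gene is masked and fills every masked position with one value (the median of the visible genes). This resembles the median-like behavior reported for scGPT in [15]. Comparing a trained model's masked loss against such a constant baseline is one way to check whether it has learned any context. All names here are assumptions for illustration.

```python
import random
import statistics


def mask_genes(expression, mask_fraction=0.15, seed=0):
    """Hide a random subset of genes; returns (masked_input, masked_positions).

    Masked values are replaced by None (a stand-in for a [MASK] token).
    """
    rng = random.Random(seed)
    n_mask = max(1, int(round(mask_fraction * len(expression))))
    positions = sorted(rng.sample(range(len(expression)), n_mask))
    masked = list(expression)
    for p in positions:
        masked[p] = None
    return masked, positions


def context_blind_predictor(masked):
    """Hypothetical baseline 'model': fills every masked gene with the median of visible values.

    It ignores which gene is masked, so it captures no gene-gene context.
    Visible positions are copied through unchanged for convenience.
    """
    fill = statistics.median([x for x in masked if x is not None])
    return [fill if x is None else x for x in masked]


def masked_mse(expression, predictions, positions):
    """MGM-style loss: squared error averaged over the masked positions only."""
    return sum((predictions[p] - expression[p]) ** 2 for p in positions) / len(positions)


expr = [0.0, 0.0, 1.2, 3.5, 0.0, 2.1, 0.7, 0.0, 4.0, 1.1]  # toy log-expression for one cell
masked, pos = mask_genes(expr, mask_fraction=0.2, seed=1)
print(pos, masked_mse(expr, context_blind_predictor(masked), pos))
```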

Notably, pretraining does confer some benefit: pretrained versions of scGPT show a clear improvement in cell-type clustering over randomly initialized models [4]. However, the relationship between pretraining data diversity and batch integration performance remains complex, as larger and more diverse pretraining datasets do not always yield proportional gains in performance [4].

The current evidence indicates that in zero-shot batch integration, both scGPT and Geneformer are frequently outperformed by established methods like scVI, Harmony, and even the simple selection of Highly Variable Genes (HVG) [4]. Geneformer, in particular, shows significant limitations in this task, with its embeddings often failing to correct for batch effects [4]. scGPT shows more promise, especially on complex datasets that may fall within the distribution of its pretraining data, but its performance is not consistently superior across datasets.

For researchers and drug development professionals, this argues for caution: single-cell foundation models should not be adopted for batch integration without validation. When integrating batches without the opportunity for fine-tuning, practitioners are advised to:

- Rigorously benchmark scGPT and Geneformer embeddings against traditional baselines like scVI and Harmony on their specific data.

- Not disregard simpler methods, as HVG selection can sometimes provide a strong, computationally efficient baseline.

- Consider the nature of the batches in their data, as model performance varies significantly between datasets dominated by technical effects versus those with mixed technical and biological sources of variation.
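HVG selection recurs as a strong baseline, so a minimal, dependency-free sketch of one common recipe follows. The recipe is library-size normalization to 10,000 counts, a log1p transform, and ranking genes by dispersion (variance divided by mean). The function names and the plain-dispersion ranking are assumptions for illustration. Scanpy's `sc.pp.highly_variable_genes` offers several flavors (`seurat`, `cell_ranger`, `seurat_v3`) that use more careful variance modeling, such as normalizing dispersions within mean-expression bins, so its results will differ. In an HVG baseline, the selected columns of the log-normalized matrix are typically used directly as the cell representation.

```python
import math


def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell (row) to target_sum total counts, then apply log1p."""
    out = []
    for row in counts:
        total = sum(row)
        scale = target_sum / total if total > 0 else 0.0
        out.append([math.log1p(x * scale) for x in row])
    return out


def select_hvg(log_expr, n_top):
    """Return indices of the n_top genes with the largest dispersion (variance / mean)."""
    n_cells, n_genes = len(log_expr), len(log_expr[0])
    dispersion = []
    for g in range(n_genes):
        col = [row[g] for row in log_expr]
        mean = sum(col) / n_cells
        var = sum((x - mean) ** 2 for x in col) / (n_cells - 1)
        dispersion.append(var / mean if mean > 0 else 0.0)
    return sorted(range(n_genes), key=lambda g: dispersion[g], reverse=True)[:n_top]


# Toy counts: 4 cells x 3 genes; gene 1 switches on and off between cells.
counts = [[10, 0, 5], [10, 20, 5], [10, 0, 5], [10, 20, 5]]
log_expr = normalize_log1p(counts)
hvg = select_hvg(log_expr, n_top=1)
hvg_embedding = [[row[g] for g in hvg] for row in log_expr]  # HVG baseline representation
```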

The field continues to evolve rapidly with the introduction of new models like CellFM and GeneMamba [14] [22], and novel interpretability techniques are being developed to understand what these models learn [23]. Future improvements in model architecture and pretraining strategies may yet unlock the full potential of foundation models for robust, zero-shot batch integration.

Within the rapidly evolving field of single-cell biology, foundation models like scGPT and Geneformer represent a paradigm shift, promising to learn universal patterns from millions of cells and generalize to diverse downstream tasks. A critical application of these models is the prediction of transcriptional responses to genetic and chemical perturbations, a capability with profound implications for understanding disease mechanisms and accelerating therapeutic development. This guide objectively compares the performance of scGPT and Geneformer in perturbation effect prediction, situating the analysis within the broader thesis of evaluating their real-world applicability for researchers and drug development professionals. Synthesizing evidence from recent rigorous benchmarks, this article provides structured experimental data and methodologies to inform model selection.

Performance Comparison: scGPT vs. Geneformer vs. Baselines

Recent independent benchmarks have consistently revealed a significant gap between the promised potential of single-cell foundation models and their actual effectiveness in predicting perturbation effects, in both zero-shot and fine-tuned settings.

Performance on Double Genetic Perturbations

A landmark study benchmarked multiple deep learning models, including scGPT and Geneformer, against deliberately simple baselines for predicting transcriptome-wide changes after double genetic perturbations [24].

Table 1: Performance on Double Perturbation Prediction (Norman et al. data) [24]

| Model | Prediction Error (L2 Distance) | Notes |
|---|---|---|
| Additive Baseline | Lowest | Sum of individual logarithmic fold changes; uses no double perturbation data [24] |
| No Change Baseline | Medium | Always predicts control condition expression [24] |
| GEARS | Higher than additive baseline | [24] |
| scGPT | Higher than additive baseline | [24] |
| Geneformer* | Higher than additive baseline | Repurposed with a linear decoder [24] |
| scBERT* | Higher than additive baseline | Repurposed with a linear decoder [24] |
| UCE* | Higher than additive baseline | Repurposed with a linear decoder [24] |

Note: Models marked with an asterisk were not originally designed for perturbation prediction and were repurposed for the benchmark by combining them with a linear decoder [24].

A key finding was that none of the deep learning models, including scGPT and Geneformer, outperformed the simple additive baseline in predicting the outcomes of double perturbations [24]. Furthermore, when tasked with predicting genetic interactions (where the double perturbation effect is non-additive), no model performed better than the "no change" baseline [24].
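The two simple baselines and the interaction criterion can be written down directly. The sketch assumes expression vectors are already on a log scale. The additive baseline therefore adds the two single-perturbation log fold changes to the control profile, and predictions are scored with the L2 distance used in [24]. The function names are hypothetical. Treating the residual from additivity as the genetic-interaction signal is a simplification of how [24] identifies interactions.

```python
import math


def additive_prediction(control, lfc_a, lfc_b):
    """Additive baseline: control log-expression plus both single-perturbation LFCs."""
    return [c + a + b for c, a, b in zip(control, lfc_a, lfc_b)]


def no_change_prediction(control):
    """'No change' baseline: always predict the control profile."""
    return list(control)


def l2_distance(pred, obs):
    """Euclidean (L2) distance between predicted and observed expression vectors."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)))


def interaction_residual(observed, control, lfc_a, lfc_b):
    """Part of the observed double-perturbation profile that additivity does not explain."""
    expected = additive_prediction(control, lfc_a, lfc_b)
    return [o - e for o, e in zip(observed, expected)]


# Toy example with three genes (log scale).
control = [1.0, 2.0, 3.0]
lfc_a = [0.5, 0.0, -1.0]    # single perturbation A vs. control
lfc_b = [0.5, 1.0, 0.0]     # single perturbation B vs. control
observed = [2.0, 3.0, 2.0]  # observed double perturbation A+B
print(l2_distance(additive_prediction(control, lfc_a, lfc_b), observed))
print(l2_distance(no_change_prediction(control), observed))
```

A model only adds value over these baselines if it lowers the L2 distance on held-out pairs, and above all if it captures the non-zero interaction residuals that the additive model cannot.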

Performance on Single-Gene Perturbations and Unseen Genes

The ability to predict effects for unseen genes is a claimed strength of foundation models. However, benchmarks on single-gene perturbation datasets (e.g., from Adamson et al. and Replogle et al.) tell a similar story.

Table 2: Performance on Single-Gene Perturbation Prediction [24] [25]

| Model | Average Pearson Correlation (PCC) | Ability to Generalize to Unseen Genes |
|---|---|---|
| scLAMBDA (New Method) | 0.786 | Yes [25] |
| GenePert | 0.775 | Yes [25] |
| Linear Model with Pretrained Embeddings | Performance rivaling scGPT/GEARS | Yes [24] |
| GEARS | 0.692 | Limited [25] |
| scGPT | 0.661 | Limited [25] |
| Mean Prediction Baseline | Competitive with deep learning models | Not Applicable [24] |

Notably, a simple linear model using pretrained gene embeddings from scGPT or scFoundation could match or exceed the performance of the full deep learning models from which the embeddings were extracted [24]. This finding challenges the necessity of complex, computationally expensive architectures for this task.
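A linear baseline of this kind can be sketched as a bilinear model. Given gene embeddings `G` (genes x k) and perturbation embeddings `P` (perturbations x l), it fits `Y ≈ G @ W @ P.T + b`, where `Y` holds expression changes for the training perturbations and `b` is a per-gene offset. The estimate below is a ridge-stabilized version of the least-squares solution and is exact least squares when `ridge` is zero. It is written in the spirit of the linear model in [24], but the formulation there may differ, and the function names are hypothetical. In this sketch, the offset `b` on its own is the mean-prediction baseline.

```python
import numpy as np


def fit_linear_model(Y, G, P, ridge=0.1):
    """Fit Y ~ G @ W @ P.T + b.

    Y: genes x perturbations matrix of expression changes (training perturbations).
    G: genes x k gene embeddings (e.g. taken from a pretrained model).
    P: perturbations x l perturbation embeddings.
    Returns W (k x l) and the per-gene offset b (genes x 1).
    """
    b = Y.mean(axis=1, keepdims=True)  # per-gene mean over training perturbations
    Yc = Y - b
    k, l = G.shape[1], P.shape[1]
    # Two-sided ridge-stabilized least squares: W = (G'G + rI)^-1 G' Yc P (P'P + rI)^-1
    left = np.linalg.solve(G.T @ G + ridge * np.eye(k), G.T @ Yc @ P)
    W = np.linalg.solve(P.T @ P + ridge * np.eye(l), left.T).T
    return W, b


def predict(W, b, G, P_new):
    """Predicted expression changes (genes x new perturbations)."""
    return G @ W @ P_new.T + b


def mean_baseline(b, n_new):
    """Mean-prediction baseline: the same per-gene average for every new perturbation."""
    return np.repeat(b, n_new, axis=1)


# Usage with random stand-in data (hypothetical shapes).
rng = np.random.default_rng(0)
G = rng.normal(size=(200, 16))
P_train = rng.normal(size=(40, 8))
Y = rng.normal(size=(200, 40))
W, b = fit_linear_model(Y, G, P_train)
Y_hat = predict(W, b, G, rng.normal(size=(5, 8)))
```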

Underperformance in Zero-Shot Settings

Evaluations of scGPT and Geneformer indicate that their limitations are most apparent in zero-shot settings, which are critical for discovery-driven biology where labels are unknown [4] [15]. On tasks such as cell type clustering and batch integration, both models are often outperformed by established, simpler methods like scVI, Harmony, or even simple selection of Highly Variable Genes (HVG) [4] [21] [15].

Detailed Experimental Protocols

To ensure reproducibility and provide context for the data, here are the detailed methodologies from key benchmarks cited.

Double Perturbation Benchmark (Norman et al.)

- Data Source: CRISPR activation data from Norman et al., involving 100 single-gene and 124 double-gene perturbations in K562 cells.

- Training/Test Split: Models were fine-tuned on all 100 single perturbations and 62 of the double perturbations. Performance was assessed on the remaining 62 held-out double perturbations.

- Evaluation Metric: The primary metric was the L2 distance between predicted and observed expression values for the top 1,000 most highly expressed genes. Robustness was checked using five different random train-test splits.

- Baselines: The "additive" model (sum of the individual log fold changes, or LFCs) and the "no change" model (predicts control expression) were used as simple, non-deep-learning baselines.
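The split and gene-selection steps of this protocol can be sketched as follows. All single perturbations stay in training. Only the double perturbations are shuffled and halved, this is repeated with five seeds, and the L2 metric is then restricted to the most highly expressed genes. The identifiers, the seeding scheme, and ranking genes by a precomputed mean expression are assumptions for illustration.

```python
import random


def random_double_splits(double_perturbations, n_splits=5, seed=0):
    """Yield (train_doubles, test_doubles) halves for each random split.

    All single perturbations are assumed to stay in the training set; only the
    double perturbations are partitioned.
    """
    rng = random.Random(seed)
    for _ in range(n_splits):
        shuffled = list(double_perturbations)
        rng.shuffle(shuffled)
        half = len(shuffled) // 2
        yield shuffled[:half], shuffled[half:]


def top_expressed_genes(mean_expression, n_top=1000):
    """Indices of the n_top genes with the highest mean expression."""
    order = sorted(range(len(mean_expression)), key=lambda g: mean_expression[g], reverse=True)
    return order[:n_top]


doubles = [f"pair_{i}" for i in range(124)]  # hypothetical identifiers for 124 double perturbations
for train, test in random_double_splits(doubles):
    print(len(train), len(test))
```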

Single-Gene Perturbation Benchmark (Adamson et al.; Replogle et al.)

- Data Sources: CRISPRi Perturb-seq datasets from Adamson et al. (K562 cells) and Replogle et al. (K562 and RPE1 cells).

- Task: Predict transcriptome-wide changes for single-gene perturbations, including for genes not seen during training.

- Evaluation Metrics:

  - Pearson Correlation Coefficient (PCC): Measures correlation between predicted and observed average gene expression changes [25].

  - 2-Wasserstein Distance (W2): Quantifies the similarity between the predicted distribution of perturbed cells and the ground truth distribution, capturing single-cell heterogeneity [25].

- Baselines: A deliberately simple linear model and a "mean prediction" baseline (predicts the average expression across the training set perturbations) were included.
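The two metrics capture different things, and the sketch below makes the difference concrete. PCC compares vectors of mean expression changes, while the Wasserstein term compares distributions of single-cell values. For simplicity, the W2 function works on one gene at a time with equal-size samples, where the optimal transport plan simply pairs sorted values. The benchmark's own W2 computation may operate on multivariate distributions, so treat this as a simplified, per-gene illustration with hypothetical function names.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def w2_1d(samples_a, samples_b):
    """2-Wasserstein distance between two equal-size 1-D empirical distributions.

    With equal sample sizes, the optimal coupling matches the i-th smallest
    value of one sample with the i-th smallest value of the other.
    """
    if len(samples_a) != len(samples_b):
        raise ValueError("this sketch assumes equal sample sizes")
    a, b = sorted(samples_a), sorted(samples_b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


# PCC on mean expression changes across genes (toy values).
print(pearson([0.6, -0.1, 1.2, 0.1], [0.8, -0.2, 1.5, 0.0]))
# W2 on one gene's single-cell values, predicted vs. observed (toy values).
print(w2_1d([0.2, 0.5, 0.9, 2.0], [0.0, 0.4, 1.1, 2.3]))
```

A prediction can score a high PCC on mean changes while still missing cell-to-cell variability, which is why the benchmark reports both metrics.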

Zero-Shot Clustering and Batch Integration Benchmark

- Tasks: Cell type clustering and batch integration across multiple datasets (e.g., Tabula Sapiens, Immune, Pancreas).

- Method: Pre-trained model embeddings were extracted without any further fine-tuning (zero-shot) and used for downstream tasks.

- Metrics:

  - Bio-conservation: Average BIO score (AvgBIO) and Average Silhouette Width (ASW) to measure how well embeddings separate cell types.

  - Batch Correction: Metrics like iLISI, which assess how well cells from different batches mix within local neighborhoods.

- Baselines: Performance was compared against scVI, Harmony, and Highly Variable Genes (HVG).
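iLISI summarizes local batch diversity, and a simplified version is sketched below. For each cell, it takes the k nearest neighbors (excluding the cell itself), computes the inverse Simpson's index of their batch labels, and averages over cells. Values range from 1 (all neighbors from a single batch) up to the number of batches, so higher means better mixing. The published LISI weights neighbors with a perplexity-based Gaussian kernel rather than uniformly. The uniform-kNN variant and its function name are therefore assumptions for illustration.

```python
import math


def simplified_ilisi(embedding, batches, k=15):
    """Mean inverse Simpson's index of batch labels over each cell's k nearest neighbours.

    Uses uniform kNN weights (the published LISI uses perplexity-based Gaussian weights).
    Ranges from 1 (neighbours all from one batch) up to min(k, number of batches).
    """
    n = len(embedding)
    scores = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        others.sort(key=lambda j: math.dist(embedding[i], embedding[j]))
        neighbours = others[:k]
        counts = {}
        for j in neighbours:
            counts[batches[j]] = counts.get(batches[j], 0) + 1
        # Simpson's index: probability that two random neighbours share a batch.
        simpson = sum((c / len(neighbours)) ** 2 for c in counts.values())
        scores.append(1.0 / simpson)
    return sum(scores) / n


emb = [[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]]
print(simplified_ilisi(emb, ["b1", "b2", "b1", "b2"], k=2))  # batches interleaved
print(simplified_ilisi(emb, ["b1", "b1", "b2", "b2"], k=1))  # batches segregated
```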

Benchmarking Workflows

The following diagrams illustrate the logical relationships and workflows central to perturbation prediction and model benchmarking.

Perturbation Prediction Benchmarking Workflow

scGPT/Geneformer vs. Simple Baseline Performance

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational tools and datasets essential for conducting rigorous perturbation prediction benchmarks.

Table 3: Essential Research Reagents for Perturbation Prediction Studies

| Reagent / Resource | Type | Function in Evaluation | Example Source |
|---|---|---|---|
| Perturb-seq Datasets | Biological Data | Provides ground-truth gene expression measurements following genetic perturbations; essential for training and testing models. | Norman et al.; Adamson et al.; Replogle et al. [24] [25] |
| scGPT | Foundation Model | A transformer-based model pre-trained on single-cell data; evaluated for its ability to predict perturbation effects zero-shot or after fine-tuning. | Cui et al. [4] [24] |
| Geneformer | Foundation Model | A transformer-based model pre-trained on single-cell data; evaluated for its ability to predict perturbation effects zero-shot or after fine-tuning. | Theodoris et al. [4] [24] |
| GEARS | Deep Learning Model | A deep learning model specifically designed for perturbation prediction; often used as a state-of-the-art comparator. | Roohani et al. [24] [25] |
| scVI | Generative Model | A robust probabilistic model for single-cell data; frequently used as a high-performing baseline for tasks like integration and clustering. | Lopez et al. [4] [26] |
| Harmony | Integration Algorithm | A fast and effective method for data integration; used as a baseline for assessing batch correction and cell type separation. | Korsunsky et al. [4] |
| Linear Model / Additive Model | Mathematical Baseline | A deliberately simple model that serves as a critical sanity check; its strong performance highlights the challenges in this field. | N/A [24] |
| Benchmarking Frameworks (e.g., scib-metrics) | Software | Provides standardized integration metrics (e.g., ASW, iLISI, NMI/ARI) to ensure fair and consistent comparison across different models and studies. | Luecken et al. [21] |