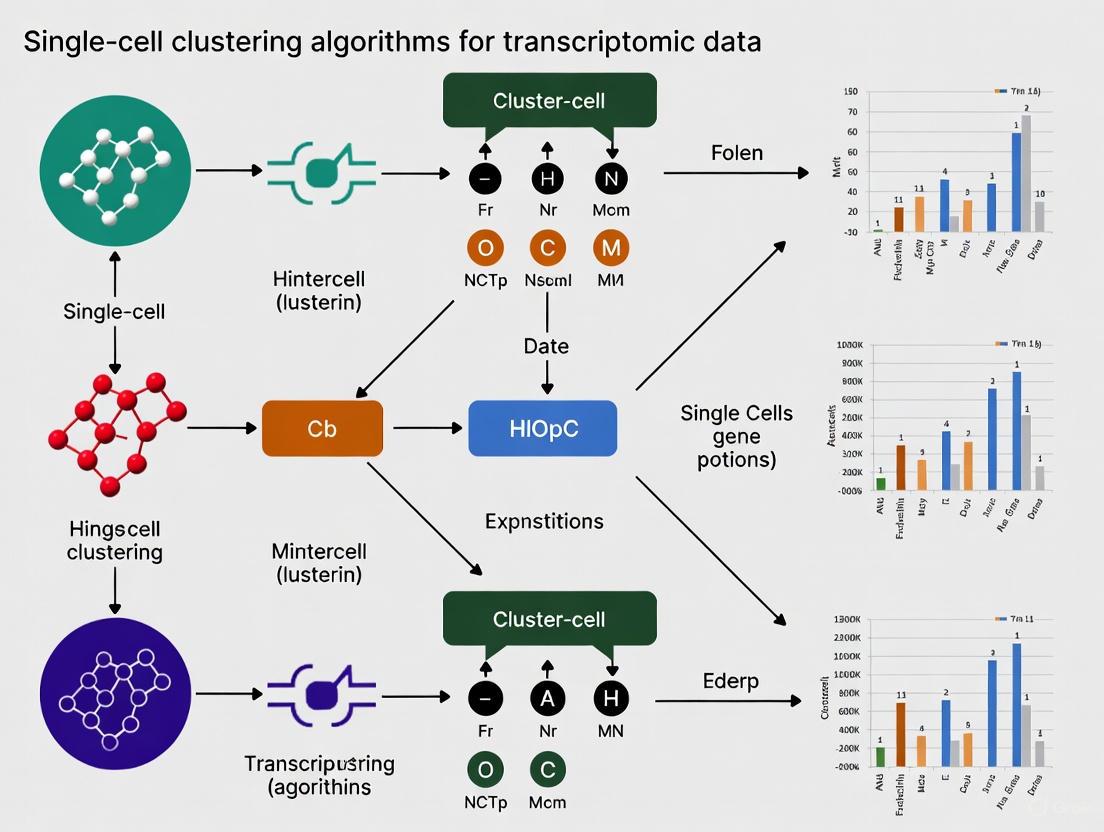

Single-Cell Clustering Algorithms for Transcriptomic Data: A 2025 Benchmarking and Practical Guide

Abstract

This article provides a comprehensive overview of single-cell RNA sequencing (scRNA-seq) clustering algorithms, essential tools for unraveling cellular heterogeneity. We explore the foundational concepts of cell identity annotation via clustering and detail the landscape of methodological approaches, from classical graph-based to modern deep learning techniques. Drawing on the latest 2025 benchmarking studies, we offer actionable insights for algorithm selection, parameter optimization, and troubleshooting common issues like stochastic inconsistency. A comparative analysis of top-performing methods, including scAIDE, scDCC, and FlowSOM, equips researchers and drug development professionals with the knowledge to generate robust, reliable clustering results for downstream biological discovery and clinical application.

Understanding Single-Cell Clustering: The Key to Unlocking Cellular Heterogeneity

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the transcriptomic profiling of individual cells, thereby revealing cellular heterogeneity and identifying novel cell types [1] [2]. A cornerstone of scRNA-seq data analysis is clustering, an unsupervised learning process that groups cells based on similar gene expression patterns. This grouping is fundamental for cell type identification, forming the basis for downstream analyses like differential expression and trajectory inference [3] [4].

However, the path from raw data to confident cell type assignment is fraught with technical and computational challenges. These include the high dimensionality of the data, the impact of technical noise (such as "dropout" events where transcripts fail to be detected), and the inherent stochasticity of clustering algorithms themselves [5] [4]. This article details the current best practices and latest methodologies in scRNA-seq clustering, providing a structured framework for researchers to derive robust and biologically meaningful conclusions from their data.

Key Clustering Algorithms and Performance Benchmarks

A wide array of clustering algorithms has been developed for or applied to scRNA-seq data. These methods can be broadly categorized, each with distinct strengths and weaknesses [1] [4].

The table below summarizes the primary categories of clustering algorithms used in scRNA-seq analysis:

Table 1: Categories of Single-Cell RNA-seq Clustering Algorithms

| Category | Description | Key Examples | Typical Use Case |
|---|---|---|---|
| Community Detection | Operates on a k-nearest neighbour (KNN) graph to find densely connected groups of cells. | Leiden [6], Louvain [6], PARC [7] | Default in many toolkits (e.g., Seurat, Scanpy); fast and efficient. |
| Classical Machine Learning | Traditional clustering methods adapted for high-dimensional data. | K-means [1], Hierarchical Clustering [1], SC3 [8], SIMLR [1] | General-purpose clustering; some (e.g., SC3) offer consensus approaches. |
| Density-Based | Identifies clusters as high-density regions in the data space. | RaceID [8], densityCut [8] | Effective for identifying rare cell types and complex cluster shapes. |
| Deep Learning | Uses neural networks to learn non-linear representations for clustering. | scDCC [7], scAIDE [7], DESC [7] | Handling complex data distributions and large-scale datasets. |

Recent, comprehensive benchmarking studies have evaluated these algorithms across multiple criteria, including the accuracy of estimating the number of cell types, the concordance of cell assignments with known labels, and computational efficiency [8] [7]. One such study evaluated 28 algorithms on 10 paired transcriptomic and proteomic datasets [7].

The following table summarizes the top-performing algorithms from this benchmark based on the Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI), metrics that measure the similarity between computational clustering and ground-truth labels:

Table 2: Top-Performing Clustering Algorithms in Recent Benchmark Studies (2022-2025)

| Algorithm | Category | Performance (Transcriptomics) | Performance (Proteomics) | Computational Notes |
|---|---|---|---|---|
| scAIDE | Deep Learning | Top 3 (Ranked 2nd) [7] | Top 3 (Ranked 1st) [7] | High overall performance across omics. |
| scDCC | Deep Learning | Top 3 (Ranked 1st) [7] | Top 3 (Ranked 2nd) [7] | Also recommended for memory efficiency [7]. |
| FlowSOM | Classical ML | Top 3 (Ranked 3rd) [7] | Top 3 (Ranked 3rd) [7] | Excellent robustness and performance [7]. |
| Leiden | Community Detection | Common default method [6] | Common default method [6] | Good balance of speed and performance [7]. |
| scICE | Ensemble/Stability | High reliability in estimating consistent clusters [5] | Not Evaluated | Up to 30x faster than conventional consensus methods [5]. |

These benchmarks reveal that while deep learning methods like scAIDE and scDCC often achieve top accuracy, community-detection methods like Leiden offer a robust and computationally efficient default choice [6] [7]. Furthermore, newer methods like scICE address the critical issue of clustering consistency, ensuring results are not artifacts of a particular algorithm's random seed [5].
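To make the ARI metric used in these benchmarks concrete, the sketch below computes it from pair counts using only the Python standard library. The function name and the handling of degenerate inputs are illustrative choices, not taken from the benchmark studies; in practice an established implementation from a statistics library is usually preferable.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two flat labelings of the same cells."""
    if len(labels_true) != len(labels_pred):
        raise ValueError("labelings must have the same length")
    n = len(labels_true)
    if n < 2:
        return 1.0  # no pairs to compare; treated as perfect agreement here

    # Contingency counts: cells falling into each (true, predicted) pair.
    pair_counts = Counter(zip(labels_true, labels_pred))
    true_counts = Counter(labels_true)
    pred_counts = Counter(labels_pred)

    # Number of cell pairs grouped together under each view.
    sum_pairs = sum(comb(c, 2) for c in pair_counts.values())
    sum_true = sum(comb(c, 2) for c in true_counts.values())
    sum_pred = sum(comb(c, 2) for c in pred_counts.values())

    expected = sum_true * sum_pred / comb(n, 2)
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both labelings have one cluster
    return (sum_pairs - expected) / (max_index - expected)
```

Because the score depends only on which cells are grouped together, renaming clusters leaves it unchanged: `adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])` evaluates to 1.0, whereas a labeling that splits every true cluster scores below zero.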

Experimental Protocols for Reliable Clustering

A successful clustering analysis is built upon a rigorous pre-processing workflow. Deviations from best practices can lead to misleading clusters driven by technical artifacts rather than biology.

Pre-processing and Quality Control (QC)

The first step is to filter the count matrix to remove low-quality cells and genes.

Quality Control (QC) of Cells: Cells are typically filtered based on three key metrics [2]:

- Count Depth: The total number of UMIs (or reads) per cell. Barcodes with very low counts may represent empty droplets, while those with abnormally high counts could be doublets (multiple cells captured together) [9] [2].

- Number of Genes: The number of genes detected per cell. This correlates with count depth and is used similarly to filter low-quality cells and doublets.

- Mitochondrial Read Fraction: The proportion of reads mapping to mitochondrial genes. A high fraction (>5-10%) often indicates stressed, apoptotic, or low-quality cells due to broken membranes [9] [4]. Thresholds are dataset-specific, and exploratory visualization is crucial.

Gene Filtering: Genes that are detected in only a very small number of cells (e.g., fewer than 10) are often filtered out as they provide little information for clustering.

Normalization: To correct for differences in sequencing depth between cells, the data are normalized. Common methods include log-normalization and more advanced approaches such as `sctransform`, which uses Pearson residuals from a regularized negative binomial regression [4].

Feature Selection: Dimensionality is reduced by selecting Highly Variable Genes (HVGs) that drive cell-to-cell heterogeneity. These genes contain the most informative signal for distinguishing cell types [2].

Dimensionality Reduction: Principal Component Analysis (PCA) is applied to the scaled HVGs to create a lower-dimensional representation that captures the major axes of variation [6] [4]. The top principal components (PCs) are used for downstream graph construction and clustering.
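The cell QC, gene filtering, and log-normalization steps described above can be sketched on a small in-memory count matrix. This is a minimal illustration rather than a replacement for Seurat or Scanpy: the function name, the default thresholds, and the use of an `MT-` gene-symbol prefix to flag mitochondrial genes are all assumptions made for the example, and an upper count cutoff for doublets is omitted for brevity.

```python
from math import log1p


def qc_and_normalize(counts, gene_names, min_counts=500, min_genes=200,
                     max_mito_frac=0.10, min_cells=10, target_sum=1e4):
    """Filter a cells x genes count matrix, then log-normalize it.

    All thresholds are placeholders; real values are dataset-specific.
    """
    # Assumption: mitochondrial genes are recognizable by an "MT-" prefix.
    is_mito = [name.upper().startswith("MT-") for name in gene_names]

    kept_cells = []
    for row in counts:
        total = sum(row)                          # count depth
        n_genes = sum(1 for c in row if c > 0)    # genes detected
        mito = sum(c for c, m in zip(row, is_mito) if m)
        mito_frac = mito / total if total else 1.0
        if (total >= min_counts and n_genes >= min_genes
                and mito_frac <= max_mito_frac):
            kept_cells.append(row)

    # Keep genes detected in at least `min_cells` of the remaining cells.
    kept_genes = [
        j for j in range(len(gene_names))
        if sum(1 for row in kept_cells if row[j] > 0) >= min_cells
    ]

    # Scale each cell to `target_sum` total counts, then apply log(1 + x).
    normalized = []
    for row in kept_cells:
        total = sum(row[j] for j in kept_genes)
        scale = target_sum / total if total else 0.0
        normalized.append([log1p(row[j] * scale) for j in kept_genes])
    return normalized, [gene_names[j] for j in kept_genes]
```

Plotting the distributions of count depth, detected genes, and mitochondrial fraction before fixing any cutoff remains the more reliable way to choose these values.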

The Clustering Workflow and Resolution Parameter

The standard clustering workflow in tools like Seurat and Scanpy involves building a graph from the reduced dimensional space (e.g., the top 30 PCs) and then applying a community detection algorithm [6].

- Graph Construction: A k-Nearest Neighbour (KNN) graph is calculated, where each cell is connected to its k most similar cells in PCA space [6].

- Community Detection: The Leiden (or Louvain) algorithm partitions the KNN graph into highly interconnected "communities," which correspond to cell clusters [6]. The `sc.tl.leiden` function in Scanpy implements this.

A critical parameter is the resolution, which controls the granularity of the clustering [6].

- Lower resolution (e.g., 0.2-0.5) produces fewer, broader clusters.

- Higher resolution (e.g., 1.0-2.0) produces more, finer clusters.

It is considered best practice to cluster the data at several resolution values and to use biological knowledge and consistency metrics to choose the most appropriate result [6]. The following DOT language script visualizes this complete workflow.

Protocol: Evaluating Clustering Consistency with scICE

A major challenge with stochastic clustering algorithms is inconsistency across different runs due to random seeds, which undermines reliability [5]. The recently developed scICE (single-cell Inconsistency Clustering Estimator) provides a protocol to address this [5].

Principle: Instead of relying on a single clustering result, scICE runs the Leiden algorithm multiple times with different random seeds and evaluates the consistency of the resulting labels using the Inconsistency Coefficient (IC). An IC close to 1 indicates highly consistent and reliable clusters [5].

Step-by-Step Protocol:

- Input Preparation: Begin with a pre-processed scRNA-seq dataset (post-QC, normalization, and PCA).

- Parallel Clustering: Distribute the cell-cell graph to multiple processor cores. On each core, run the Leiden algorithm simultaneously with a different random seed. Repeat this for a range of resolution parameters [5].

- Similarity Calculation: For each resolution, calculate the pairwise similarity between all cluster label results using element-centric similarity (ECS) to construct a similarity matrix [5].

- Inconsistency Coefficient (IC) Calculation: Compute the IC from the similarity matrix and the probability of each unique cluster label. Lower IC values indicate more consistent clustering [5].

- Result Identification: Identify the cluster labels (and their corresponding resolution parameters) that yield an IC below a reliability threshold. These consistent results form a compact set of candidate clusterings for downstream biological interpretation, drastically reducing the parameter space a researcher needs to explore manually [5].
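The last steps of the protocol can be illustrated with a short sketch. It assumes one reading of the description above, namely that the IC is the reciprocal of the probability-weighted average similarity between unique labelings, which equals 1 when every run agrees and grows as runs diverge. That formula, the helper names, and the use of a precomputed similarity matrix in place of a real ECS calculation are assumptions of this example, not the scICE implementation.

```python
from collections import Counter


def canonical(labels):
    """Relabel clusters by order of first appearance so that runs which
    differ only in cluster names compare as equal."""
    mapping = {}
    return tuple(mapping.setdefault(label, len(mapping)) for label in labels)


def unique_labelings(runs):
    """Collapse repeated runs into unique labelings and their frequencies."""
    counts = Counter(canonical(run) for run in runs)
    unique = list(counts)
    probabilities = [counts[u] / len(runs) for u in unique]
    return unique, probabilities


def inconsistency_coefficient(similarity, probabilities):
    """Assumed form: IC = 1 / (p S p^T).

    similarity[i][j] is the similarity (1.0 = identical) between unique
    labelings i and j; probabilities[i] is how often labeling i occurred.
    """
    n = len(probabilities)
    if len(similarity) != n or any(len(row) != n for row in similarity):
        raise ValueError("similarity must be an n x n matrix")
    weighted_similarity = sum(
        probabilities[i] * similarity[i][j] * probabilities[j]
        for i in range(n) for j in range(n)
    )
    return 1.0 / weighted_similarity
```

Under this reading, two equally frequent labelings with a pairwise similarity of 0.8 give an IC of about 1.11, while a single labeling reproduced by every seed gives exactly 1.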

Successful scRNA-seq clustering relies on a combination of computational tools, reference data, and biological reagents. The following table lists key resources for planning and executing a clustering analysis.

Table 3: Essential Tools and Resources for scRNA-seq Clustering Analysis

| Item Name | Type | Function in Analysis | Examples & Notes |
|---|---|---|---|
| Cell Ranger | Software Pipeline | Processes raw sequencing data (FASTQ) into a gene-cell count matrix, performs initial clustering and annotation [9]. | 10x Genomics' standard pipeline. A key starting point for data generated on their platform [9]. |
| Reference Atlases | Data Resource | Provides pre-annotated, large-scale scRNA-seq datasets for label transfer and cluster annotation [3]. | Human Cell Atlas, Tabula Muris, Tabula Sapiens [8] [3]. |
| Marker Gene Databases | Data Resource | Provides curated lists of genes known to be associated with specific cell types, guiding manual annotation. | CellMarker 2.0 [3]. |
| Annotation Tools | Software | Automates the process of assigning cell identity to clusters by comparing data to references or marker lists. | SingleR, Garnett, CellTypist [3]. |
| Clustering Algorithms | Software | The core computational methods that group cells. | Leiden (community detection), scDCC (deep learning), scICE (stability) [5] [6] [7]. |
| Analysis Platforms | Software Environment | Integrated toolkits that wrap pre-processing, clustering, and visualization into a unified framework. | Seurat (R), Scanpy (Python) [1] [6] [2]. |
| PBMCs (Peripheral Blood Mononuclear Cells) | Biological Sample | A well-characterized, heterogeneous cell population often used as a positive control or benchmark dataset. | 10x Genomics provides public 5k PBMC datasets for tutorial and method testing purposes [9]. |

Visualizing the Logic of Cluster Annotation

Once clusters are defined, the critical step of annotation begins, where biological identities (e.g., "T-cell," "macrophage") are assigned. This is an iterative process that combines computational prediction with biological validation. The following diagram outlines the logical workflow and decision points involved.

Discussion and Future Directions

While current clustering methods are powerful, several challenges remain. Batch effects can confound analysis, requiring specialized integration tools [3] [2]. Distinguishing between biological variation and technical noise is still non-trivial [4]. Furthermore, identifying rare cell types and transitional cell states requires careful parameter tuning and specialized approaches like over-clustering or trajectory inference [3].

The field is rapidly evolving, with several promising future directions:

- Multi-Omics Integration: Clustering will increasingly leverage data from multiple modalities (e.g., scRNA-seq with ATAC-seq or protein abundance) from the same cells to define cell types with higher resolution and confidence [7] [3].

- AI-Driven Annotation: Machine learning models, including large language models, are being developed to go beyond simple pattern matching. The goal is to infer cell states by integrating gene expression patterns with knowledge from the vast scientific literature, enabling more intelligent and automated annotation [3].

- Enhanced Scalability and Robustness: As datasets grow to millions of cells, algorithms must become more computationally efficient without sacrificing accuracy. Methods like scICE that focus on the stability and reliability of clusters are a critical step in this direction, ensuring that biological discoveries are built on a robust computational foundation [5].

In conclusion, a rigorous and well-informed clustering workflow—incorporating careful pre-processing, method selection informed by benchmarks, and consistency evaluation—is paramount for transforming high-dimensional scRNA-seq data into meaningful biological insights.

The analysis of single-cell transcriptomics data presents significant challenges due to its high-dimensional nature, where each of the thousands of cells is characterized by expression measurements of thousands of genes. K-Nearest Neighbor (K-NN) graphs have emerged as a fundamental computational scaffold for navigating this complexity, serving as the foundational data structure for cellular heterogeneity exploration. In this framework, individual cells are represented as nodes in a graph, with edges connecting each cell to its k most similar counterparts based on transcriptome profiles. The subsequent application of community detection algorithms on these graphs enables the identification of densely connected groups of cells, which correspond to distinct cell types or states. This graph-based approach has become the cornerstone of modern single-cell RNA-sequencing (scRNA-seq) analysis, overcoming limitations of traditional clustering methods that often struggle with the continuous nature of transcriptional states and the reliable identification of rare cell populations.

Theoretical Foundation

Construction of the K-NN Graph

The process of constructing a K-NN graph from single-cell transcriptomic data involves several methodical steps. Initially, feature selection is performed to identify highly variable genes that contribute most to biological heterogeneity, thereby reducing technical noise. The expression matrix is then projected into a lower-dimensional space, typically using principal component analysis, to compute cellular distances efficiently. For each cell, the k cells with the smallest distances (e.g., Euclidean, cosine) in this reduced space are identified as its nearest neighbors [6] [10].

The choice of the parameter k profoundly influences the resulting graph topology. A small k value may produce a fragmented graph unable to capture global population structure, while an excessively large k may create spurious connections between biologically distinct populations. Advanced methods like aKNNO address this challenge by implementing an adaptive k-selection strategy that automatically chooses an appropriate k for each cell based on its local distance distribution, assigning smaller k values to rare cells and larger k values to abundant cell types [11].
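The basic fixed-k construction can be written in a few lines. The sketch below is a brute-force version on Euclidean distance, intended only to show what the graph contains; the function name is illustrative, and it does not implement adaptive k selection. At realistic dataset sizes, toolkits rely on approximate nearest-neighbour search instead of comparing every pair of cells.

```python
from math import dist


def knn_graph(points, k):
    """Map each cell index to the indices of its k nearest neighbours.

    points -- list of equal-length coordinate tuples (e.g. top PCs per cell)
    k      -- number of neighbours per cell, 0 < k < number of cells
    """
    n = len(points)
    if not 0 < k < n:
        raise ValueError("k must be between 1 and len(points) - 1")
    graph = {}
    for i, point in enumerate(points):
        # sorted() is stable and candidates are visited in index order,
        # so distance ties are broken in favour of the lower index.
        candidates = sorted(
            (j for j in range(n) if j != i),
            key=lambda j: dist(point, points[j]),
        )
        graph[i] = candidates[:k]
    return graph
```

For four cells forming two well-separated pairs, `knn_graph(points, 1)` links each cell only to its partner, which shows how a very small k can leave the graph fragmented.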

Community Detection Algorithms

Once the K-NN graph is constructed, community detection algorithms identify groups of cells with denser connections within groups than between them. The Leiden algorithm has emerged as the current standard for this task, outperforming its predecessor, the Louvain algorithm, by guaranteeing well-connected communities [6]. The algorithm optimizes the partition of cells into communities by maximizing a quality function called modularity, which measures the density of connections within communities compared to what would be expected in a random graph [6] [10].

The resolution parameter directly controls the granularity of the resulting clusters, with higher values leading to more fine-grained communities [6]. This parameter enables researchers to explore cellular heterogeneity at multiple biological scales, from major cell types to subtle subpopulations.
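The effect of the resolution parameter is easiest to see in the quality function itself. A common form of modularity with a resolution term sums, over communities, the fraction of edges inside the community minus the resolution times the squared fraction of total degree it holds. The sketch below evaluates that expression for a given partition; it assumes an unweighted, undirected graph without self-loops, and it only scores a partition rather than searching for the best one as Leiden does.

```python
from collections import defaultdict


def modularity(edges, community_of, resolution=1.0):
    """Modularity of a partition of an undirected, unweighted graph.

    edges        -- list of (u, v) pairs, each edge listed once, no self-loops
    community_of -- dict mapping every node to its community label
    resolution   -- gamma; larger values penalize big communities more
    """
    m = len(edges)
    if m == 0:
        raise ValueError("graph has no edges")
    internal_edges = defaultdict(int)  # edges with both ends in the community
    degree_sum = defaultdict(int)      # summed degree of the community's nodes
    for u, v in edges:
        cu, cv = community_of[u], community_of[v]
        degree_sum[cu] += 1
        degree_sum[cv] += 1
        if cu == cv:
            internal_edges[cu] += 1
    return sum(
        internal_edges[c] / m - resolution * (degree_sum[c] / (2 * m)) ** 2
        for c in degree_sum
    )
```

On two triangles joined by a single edge, splitting the graph into the two triangles scores about 0.36 at resolution 1, while keeping everything in one community scores 0. Raising the resolution lowers both scores but penalizes the single large community more, which is why higher values yield finer clusters.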

Comparative Analysis of Methods and Performance

Table 1: Overview of Graph-Based Clustering Methods for Single-Cell Transcriptomics

| Method | K-NN Graph Construction | Graph Refinement | Community Detection | Key Features |
|---|---|---|---|---|
| aKNNO | Adaptive k based on local distance distribution | Shared Nearest Neighbors (SNN) reweighting | Louvain | Specifically designed for simultaneous identification of abundant and rare cell types [11] |
| CosTaL | L2knng algorithm with cosine similarity | Tanimoto coefficient | Leiden | Combines angular and spatial separation; no normalization required for scRNA-seq [10] |
| PhenoGraph | kd-tree or brute force | Jaccard similarity | Louvain/Leiden | Pioneering method adapting Jaccard-Louvain approach for single-cell data [10] |
| Scanpy | PyNNDescent algorithm | Connectivity | Leiden | Comprehensive toolkit with standard preprocessing pipeline [6] [10] |
| PARC | HNSW algorithm | Jaccard similarity with threshold cutoffs | Leiden | Specializes in detecting rare populations [10] |
| Milo | Standard K-NN graph | Not applicable | Not applicable | Models cell states as overlapping neighborhoods for differential abundance testing [12] |
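Several entries in the Graph Refinement column reweight K-NN edges by how many neighbours the two endpoints share. A minimal sketch of Jaccard reweighting follows. The function name is illustrative, and whether a cell counts as a member of its own neighbourhood varies between tools, so the `include_self` switch is an assumption of this example and does not describe any particular package.

```python
def jaccard_weights(knn, include_self=True):
    """Weight each K-NN edge by the Jaccard overlap of its endpoints'
    neighbourhoods.

    knn -- dict mapping each node to an iterable of its neighbours; every
           neighbour must itself be a key of the dict
    Returns a dict {(u, v): weight} with u < v, one entry per distinct edge.
    """
    neighbourhoods = {}
    for node, neighbours in knn.items():
        members = set(neighbours)
        if include_self:
            members.add(node)
        neighbourhoods[node] = members

    weights = {}
    for u, neighbours in knn.items():
        for v in neighbours:
            shared = neighbourhoods[u] & neighbourhoods[v]
            union = neighbourhoods[u] | neighbourhoods[v]
            weights[(min(u, v), max(u, v))] = len(shared) / len(union)
    return weights
```

Edges inside a tight group keep a weight near 1, while an edge that bridges two groups with few shared neighbours is down-weighted, which helps community detection separate the groups.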

Table 2: Performance Benchmarking of Selected Methods

| Method | Accuracy on Abundant Cell Types (ARI) | Accuracy on Rare Cell Types (F1 Score) | Scalability to Large Datasets | Notable Application Strengths |
|---|---|---|---|---|
| aKNNO | High (ARI ≈ 1) | Perfect (F1 = 1) in simulated data with rare cells similar to abundant populations [11] | Good | Identifies known and novel rare cell types without sacrificing abundant type performance [11] |
| CosTaL | Equivalent or higher than state-of-the-art | Equivalent or higher than state-of-the-art | High efficiency with small datasets; acceptable for large datasets [10] | Effective on both cytometry and scRNA-seq data without normalization [10] |
| Scanpy | High | Moderate | Good | Integrated ecosystem with preprocessing and visualization [6] |
| PhenoGraph | High | Moderate | Moderate | Established benchmark method [10] |

Experimental Protocols

Standard Workflow for K-NN Graph-Based Clustering with Scanpy

Purpose: To identify cell populations from single-cell RNA-seq data using community detection on a K-NN graph.

Materials:

- Software: Scanpy Python package (v1.9.0 or higher)

- Input Data: Processed count matrix (cells × genes) with basic quality control applied

- Computational Resources: Standard workstation (8+ GB RAM recommended for datasets >10,000 cells)

Procedure:

- Data Preprocessing:
  - Normalize total counts to 10,000 per cell: `sc.pp.normalize_total(adata, target_sum=1e4)`
  - Logarithmize the data: `sc.pp.log1p(adata)`
  - Identify highly variable genes: `sc.pp.highly_variable_genes(adata, n_top_genes=2000)`
  - Scale data to zero mean and unit variance: `sc.pp.scale(adata, max_value=10)`

- Dimensionality Reduction:
  - Compute principal components: `sc.tl.pca(adata, svd_solver='arpack')`
  - Choose the number of informative PCs using an elbow plot of explained variance: `sc.pl.pca_variance_ratio(adata, log=True)`

- K-NN Graph Construction:
  - Construct K-NN graph using the first 30 PCs: `sc.pp.neighbors(adata, n_neighbors=15, n_pcs=30)`
  - Parameters can be adjusted based on dataset size and complexity

- Community Detection:
  - Apply Leiden algorithm at multiple resolutions, storing each result under its own key, e.g. `sc.tl.leiden(adata, resolution=0.5, key_added="leiden_res0_5")`, and likewise at resolutions 0.25 and 1.0 for the `leiden_res0_25` and `leiden_res1` keys used in the next step

- Visualization and Interpretation:
  - Generate UMAP embedding: `sc.tl.umap(adata)`
  - Visualize clusters: `sc.pl.umap(adata, color=["leiden_res0_25", "leiden_res0_5", "leiden_res1"])`
  - Identify cluster markers: `sc.tl.rank_genes_groups(adata, 'leiden_res0_5', method='wilcoxon')` [6]

Specialized Protocol for Rare Cell Type Identification with aKNNO

Purpose: To simultaneously identify both abundant and rare cell types using an adaptive K-NN graph approach.

Materials:

- Software: aKNNO implementation (available from original publication)

- Input Data: Normalized and log-transformed expression matrix

- Computational Resources: Similar to standard workflow

Procedure:

- Feature Selection:
  - Follow the Data Preprocessing and Dimensionality Reduction steps of the standard Scanpy workflow above
  - Select highly variable genes with emphasis on preserving potential rare population markers

- Adaptive K-NN Graph Construction:
  - For each cell, compute distances to Kmax nearest neighbors (default Kmax=10)
  - Sort distances in ascending order: d₁ < d₂ < ... < d_Kmax
  - Determine adaptive k for each cell based on local distance distribution and cutoff parameter σ
  - Automatically assign smaller k for rare cells and larger k for abundant cells [11]

- Graph Optimization:
  - Apply shared nearest neighbor (SNN) reweighting to refine graph connectivity
  - Perform grid search to identify optimal σ parameter that balances sensitivity and specificity

- Community Detection:
  - Apply Louvain community detection on the optimized adaptive K-NN graph
  - The method automatically identifies communities corresponding to both abundant and rare cell types without requiring specialized rare-cell detection modules [11]

- Validation:
  - Compare clustering results with known markers for rare populations
  - Validate using simulated datasets with ground truth where available

Figure 1: Standard workflow for K-NN graph-based clustering in single-cell transcriptomics, highlighting key computational steps and parameters that influence clustering outcomes.

The Scientist's Toolkit

Table 3: Essential Computational Tools and Resources

| Tool/Resource | Type | Purpose | Application Context |
|---|---|---|---|
| Scanpy [6] | Python package | Comprehensive single-cell analysis | End-to-end workflow from preprocessing to clustering and visualization |
| Seurat [10] | R package | Single-cell analysis suite | Alternative comprehensive ecosystem with sophisticated normalization |
| Leiden Algorithm [6] | Community detection | Graph partitioning | Preferred over Louvain for guaranteed well-connected communities |
| MetaCell [13] | R/C++ package | Metacell partitioning | Creating granular groups of profiles that could be resampled from same cell |
| COMSE [14] | Feature selection | Community detection-based gene selection | Identifying informative genes for improved cell sub-state identification |
| CosTaL [10] | Python implementation | Cosine-based clustering | Effective clustering without requiring normalization for scRNA-seq data |

Advanced Applications and Integration

Spatial Transcriptomics Integration

The K-NN graph framework extends beyond dissociated single-cell data to spatial transcriptomics, where it enables the identification of spatially coherent domains and niches. Methods like SCGP enhance this approach by constructing dual graphs incorporating both spatial edges (based on physical proximity via Delaunay triangulation) and feature edges (connecting cells with similar expression profiles) [15]. This combined approach ensures spatial continuity while maintaining consistency in tissue structure interpretation across samples. Applications in diabetic kidney disease tissue have demonstrated superior performance (median ARI = 0.60) in identifying anatomical structures compared to alternative methods [15].

Differential Abundance Testing

Milo represents a novel adaptation of K-NN graphs that moves beyond discrete clustering to model cellular states as overlapping neighborhoods on the graph [12]. This approach enables differential abundance testing between experimental conditions without relying on predefined clusters, particularly valuable for identifying subtle abundance changes along continuous trajectories or in response to perturbations. The method uses a negative binomial generalized linear model framework to test for abundance differences in these overlapping neighborhoods while controlling for false discovery rates.
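To illustrate what controlling the false discovery rate involves when many neighborhoods are tested at once, the sketch below applies the plain Benjamini-Hochberg adjustment to a list of p-values. It is a generic stand-in: the text above does not specify which FDR procedure Milo uses, and this example makes no attempt to account for the overlap between neighborhoods.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted values
    # never increase as the raw p-values decrease.
    for rank in range(n, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * n / rank)
        adjusted[index] = running_min
    return adjusted
```

Neighborhoods whose adjusted value falls below the chosen FDR level (for example 0.1) would then be reported as differentially abundant.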

Troubleshooting and Optimization Guidelines

Parameter Selection

- Number of neighbors (k): Start with k = 15-30 for datasets of 10,000-100,000 cells. Increase k for larger datasets to improve connectivity. Consider adaptive methods like aKNNO for populations with high size disparity [11] [6].

- Resolution parameter: Begin with resolution = 0.8 for broad cell type identification. Use resolution = 1.5-2.0 for finer subpopulation distinction. Multiple resolutions should be explored in parallel [6].

- Number of principal components: Typically 20-50 PCs capture sufficient biological variation. Use elbow plot of explained variance to determine optimal number [6].
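Reading an elbow plot is a visual judgment, so a simple numeric cross-check can help. The sketch below picks the smallest number of PCs whose cumulative explained-variance ratio reaches a threshold. This is a cumulative-variance heuristic, not an elbow detector, and the function name and default threshold are illustrative assumptions; the input would be the per-PC variance ratios, such as those shown in a variance-ratio plot.

```python
def n_components_for_variance(variance_ratios, threshold=0.9):
    """Smallest number of leading PCs whose cumulative explained-variance
    ratio reaches `threshold`; falls back to all PCs if it is never reached.
    """
    cumulative = 0.0
    for n_components, ratio in enumerate(variance_ratios, start=1):
        cumulative += ratio
        if cumulative >= threshold:
            return n_components
    return len(variance_ratios)
```

Note that when only the top PCs are computed, their ratios do not sum to 1, so the threshold should be set relative to the variance those PCs actually capture.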

Quality Assessment

- Cluster stability: Evaluate consistency across multiple random initializations.

- Rare population detection: Validate using known marker genes and compare performance with specialized methods like PARC or aKNNO [11] [10].

- Biological interpretation: Ensure clusters correspond to meaningful biological states through marker gene identification and functional enrichment analysis.

Figure 2: Troubleshooting guide for common challenges in K-NN graph-based clustering, with corresponding solution strategies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the exploration of cellular heterogeneity from a single-cell perspective, providing unprecedented resolution for identifying cell types, states, and functions [16] [17]. Unsupervised clustering methods form the foundational computational framework for interpreting scRNA-seq data, allowing researchers to delineate distinct cellular subpopulations without prior knowledge of cell identities [4]. The rapid evolution of these algorithms has produced specialized method families with distinct mechanistic approaches and application domains, presenting both opportunities and challenges for researchers and drug development professionals seeking to implement these tools in transcriptomic studies [7].

This overview examines three principal algorithm families—graph-based, deep learning, and biclustering approaches—that represent the current state-of-the-art in single-cell clustering for transcriptomic data. Each paradigm offers unique advantages: graph-based methods excel at capturing complex cellular relationships through network structures; deep learning approaches leverage neural networks to handle high-dimensional, sparse data distributions; and biclustering techniques identify local gene-cell co-expression patterns that may be obscured in global clustering analyses [16] [18] [19]. We provide structured comparisons, detailed protocols, and practical implementation guidelines to facilitate informed method selection within the broader context of single-cell transcriptomic research and drug discovery applications.

Algorithm Families: Core Principles and Representative Methods

Graph-Based Clustering Approaches

Graph-based clustering methods represent single-cell data as networks where nodes correspond to cells and edges represent similarities in gene expression profiles [16]. These approaches typically employ community detection algorithms to identify densely connected groups of cells, effectively partitioning the cellular landscape into distinct subpopulations.

The Seurat toolkit exemplifies graph-based clustering, constructing a Shared Nearest Neighbor (SNN) graph from the single-cell expression matrix and applying modularity optimization techniques to identify cell communities [16]. Similarly, ScGSLC integrates scRNA-seq data with protein-protein interaction networks using Graph Convolutional Networks (GCNs) to embed cellular relationships, while MPSSC employs spectral clustering with multiple similarity matrices to enhance robustness against high noise and missing data [16]. These methods particularly excel at preserving nonlinear structures and complex topological relationships between cells, making them suitable for heterogeneous tissues with continuous developmental trajectories [16] [4].

Deep Learning-Based Clustering Approaches

Deep learning methods utilize neural network architectures to learn low-dimensional representations that are optimized for clustering objectives, simultaneously addressing dimensionality reduction and cell grouping within unified frameworks [18] [19]. These approaches typically employ autoencoder variants or graph neural networks to capture complex, hierarchical patterns in transcriptomic data.

Table 1: Representative Deep Learning Clustering Methods

| Method | Architecture | Key Features | Reported Advantages |
|---|---|---|---|
| scDeepCluster | Denoising Autoencoder | Joint optimization of reconstruction and clustering loss | Enhanced robustness to technical noise [18] |
| scDCC | Deep Clustering Network | Incorporates partial labels as prior information | Improved performance in semi-supervised settings [18] [7] |
| scG-cluster | Dual-topology Graph Convolutional Network | Integrates global and local node distribution information | Mitigates oversmoothing; enhanced stability [18] |
| scSMD | Convolutional Autoencoder with Multi-Dilated Attention Gate | Negative binomial distribution; dynamic feature weighting | Superior clustering accuracy on complex data [19] |
| scBGDL | Graph Attention Networks | Integrates single-cell and bulk transcriptomic data | Identifies clinical cancer subtypes [20] |

Notably, scG-cluster introduces a dual-topology adjacency graph that enriches cellular relationship representation by incorporating both global and local feature information, addressing limitations of conventional Graph Convolutional Networks (GCNs) that often suffer from oversmoothing [18]. The architecture employs residual connections to preserve feature discrimination and an attention mechanism to dynamically weight informative features, significantly enhancing clustering accuracy and stability across diverse datasets [18].

Biclustering Approaches

Biclustering methods simultaneously cluster both cells and genes, identifying local consistency patterns where specific gene sets exhibit similar expression profiles across particular cell subsets [16] [21]. This dual perspective is particularly valuable for detecting functional gene modules that operate in specific cellular contexts, such as disease states or developmental stages.

Table 2: Biclustering Method Categories and Applications

| Method Category | Representative Methods | Mechanism | Typical Applications |
|---|---|---|---|
| Graph-Based | BiSNN-Walk | Iterative cell clustering and candidate gene filtering | Identifying cell-type specific gene programs [16] |
| Information-Theoretic | QUBIC2 | Information-theoretic metric (Kullback-Leibler divergence) | Detecting functional gene modules [16] |
| Sequence Alignment-Based | runibic | Longest Common Subsequence (LCS) method | Finding ordered bimodules in expression data [16] |
| Statistical-Based | GiniClust3 | Gini index and Fano factor measurements | Rare cell type identification [16] |
| Factor Decomposition-Based | SSLB | Factor decomposition with dynamic scaling | Extracting latent features from complex data [16] |

Biclustering approaches demonstrate particular utility for mining partially annotated datasets and identifying local co-expression patterns that might be overlooked by global clustering methods [16]. For example, biclustering has been successfully applied to Alzheimer's disease research, simultaneously capturing gene interactions and cellular heterogeneity to reveal cell-specific transcriptomic perturbations during disease progression [21].

Performance Comparison and Benchmarking Insights

Recent large-scale benchmarking studies provide critical insights into the relative performance of clustering algorithms across diverse transcriptomic datasets. A comprehensive evaluation of 28 clustering methods on 10 paired transcriptomic and proteomic datasets revealed that top-performing methods consistently demonstrate cross-modal applicability, with scAIDE, scDCC, and FlowSOM achieving superior performance for both transcriptomic and proteomic data [7] [22].

Table 3: Performance Benchmarking of Clustering Algorithms (Adapted from Genome Biology, 2025)

| Performance Priority | Recommended Methods | Key Strengths |

|---|---|---|

| Overall Accuracy | scAIDE, scDCC, FlowSOM | High clustering accuracy (ARI, NMI) across modalities [7] |

| Memory Efficiency | scDCC, scDeepCluster | Optimized memory utilization for large datasets [7] |

| Computational Speed | TSCAN, SHARP, MarkovHC | Fast processing suitable for high-throughput data [7] |

| Robustness | FlowSOM, Community detection methods | Consistent performance across noise levels and dataset sizes [7] |

For researchers prioritizing specific performance metrics, method selection requires careful consideration of dataset characteristics and analytical goals. Benchmarking analyses indicate that biclustering methods particularly excel at identifying local consistency in complex data structures, while deep learning approaches generally outperform other paradigms when dealing with unknown datasets or requiring integration of multiple data modalities [16] [7].

Experimental Protocols and Implementation Guidelines

Standardized scRNA-seq Clustering Workflow

The following protocol outlines a comprehensive workflow for single-cell clustering analysis, integrating best practices from multiple methodological approaches:

Protocol 1: Graph-Based Clustering with Seurat

This protocol details the implementation of graph-based clustering following the Seurat workflow, which has emerged as a community standard for single-cell analysis [16] [4]:

Data Preprocessing: Begin with the raw count matrix. Filter out cells expressing fewer than 200 genes or more than 2,500 genes to remove low-quality cells and potential doublets. Exclude cells with mitochondrial content exceeding 5%, indicating compromised cell viability [4].

Normalization and Scaling: Normalize the data using a global-scaling method that adjusts the gene expression measurements for each cell by the total expression, multiplies by a scale factor (10,000), and log-transforms the result. Follow with linear scaling ('z-scoring') to standardize the expression of each gene across cells [18] [4].

Feature Selection: Identify the top 2,000 highly variable genes (HVGs) based on a variance-stabilizing transformation to focus on biologically meaningful genes and reduce computational overhead [18] [4].

Linear Dimension Reduction: Perform Principal Component Analysis (PCA) on the scaled data of HVGs. Select the optimal number of principal components (typically 10-50) based on the elbow point in a scree plot of standard deviations [4].

Graph Construction and Clustering: Construct a k-Nearest Neighbor (k-NN) graph based on Euclidean distance in PCA space (default k=20). Refine this into a Shared Nearest Neighbor (SNN) graph to quantify the overlap in local neighborhoods between cell pairs. Apply the Louvain or Leiden algorithm to partition the SNN graph into distinct cell communities, typically using a resolution parameter between 0.4-1.2 for most datasets [16] [4].

Visualization and Interpretation: Generate 2D embeddings using UMAP or t-SNE based on the PCA reduction to visualize clustering results. Identify cluster-specific marker genes through differential expression analysis and annotate cell types using known marker genes or reference datasets [4].
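The graph-construction step can be illustrated in miniature. The sketch below is a plain-Python toy, not Seurat's implementation: it builds k-NN sets from Euclidean distances and weights each pair of cells by the Jaccard overlap of their neighbor sets, which is the idea behind the SNN graph. The function names and the six 2-D "cells" are illustrative assumptions.

```python
import math

def knn_sets(points, k):
    """Return, for each point, the set of its k nearest neighbours (self included)."""
    neighbours = []
    for p in points:
        order = sorted(range(len(points)), key=lambda j: math.dist(p, points[j]))
        neighbours.append(set(order[:k]))  # the point itself sits at distance 0
    return neighbours

def snn_weights(neighbours):
    """Jaccard overlap of neighbour sets for every pair of cells sharing a neighbour."""
    weights = {}
    for i in range(len(neighbours)):
        for j in range(i + 1, len(neighbours)):
            shared = len(neighbours[i] & neighbours[j])
            if shared:
                weights[(i, j)] = shared / len(neighbours[i] | neighbours[j])
    return weights

# Two well-separated groups of three "cells" in a 2-D PCA-like space.
cells = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
snn = snn_weights(knn_sets(cells, k=3))
```

Pairs inside a group receive a weight, while pairs across groups share no neighbors and get no edge. Community detection (Louvain or Leiden) then partitions this weighted graph.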

Protocol 2: Deep Learning Clustering with scG-cluster

For researchers requiring enhanced accuracy on complex datasets, the scG-cluster framework provides a sophisticated deep learning alternative [18]:

Data Preparation: Follow standard preprocessing steps (quality control, normalization) as in Protocol 1. The scG-cluster model specifically benefits from Z-score scaling of log-transformed gene expression data to standardize the expression of each gene across cells (mean=0, standard deviation=1) [18].

Dual Adjacency Graph Construction: Construct two complementary adjacency matrices representing cellular relationships:

- Global topology: Compute cell-cell similarities using the entire gene expression profile.

- Local topology: Calculate neighborhood relationships based on local feature distributions.

Integrate both matrices to form a comprehensive graph representation that captures multi-scale cellular relationships [18].

Model Configuration: Implement the Topology Adaptive Graph Convolutional Network (TAGCN) architecture with residual concatenation connections. Configure the network with attention mechanisms to dynamically weight node features during message passing, enhancing focus on informative genes [18].

Multi-task Training: Train the model using a combined objective function including:

- Reconstruction loss: Minimize discrepancy between input and decoded expression profiles.

- Clustering loss: Optimize cluster assignment purity using self-supervised objectives.

- Topological preservation: Maintain consistency with the dual adjacency structure.

Implement iterative cluster center updates during training to adapt to evolving data distributions [18].

Inference and Evaluation: Extract the latent embeddings from the trained encoder and assign cluster labels based on proximity to learned cluster centers. Evaluate clustering quality using internal validation metrics (Silhouette Index, Davies-Bouldin Index) and biological consistency through marker gene enrichment [18].
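As a concrete view of the internal validation step, the following minimal sketch computes the mean Silhouette Index on a latent embedding using only the standard library. It is a generic textbook formulation rather than code from scG-cluster, and the function name and toy embedding are assumptions for illustration.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette width; values near 1 indicate compact, well-separated clusters."""
    clusters = {}
    for idx, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(idx)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:  # singleton clusters score 0 by convention
            scores.append(0.0)
            continue
        # a: mean distance to the cell's own cluster; b: mean distance to the nearest other cluster
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom else 0.0)
    return sum(scores) / len(scores)

embedding = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
good = silhouette_score(embedding, [0, 0, 1, 1])  # matches the geometry
bad = silhouette_score(embedding, [0, 1, 0, 1])   # cuts across it
```

The pairwise loop is quadratic in the number of cells, so for real datasets a subsample or an optimized library routine is the more practical choice.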

Table 4: Essential Computational Tools for Single-Cell Clustering Analysis

| Resource Category | Specific Tools/Packages | Primary Function | Application Context |

|---|---|---|---|

| Comprehensive Analysis Platforms | Seurat (R), SCANPY (Python) | End-to-end scRNA-seq analysis | Standardized workflows; community detection clustering [16] [4] |

| Deep Learning Frameworks | TensorFlow, PyTorch | Neural network implementation | Custom deep clustering models (scDeepCluster, scDCC) [18] [19] |

| Graph Analysis Libraries | igraph (R/python), NetworkX (Python) | Graph manipulation and community detection | Graph-based clustering implementations [16] |

| Benchmarking Suites | scIB (Python), clustree (R) | Clustering method evaluation | Performance comparison and method selection [7] |

| Visualization Tools | ggplot2 (R), matplotlib (Python) | Data visualization and plotting | Result interpretation and publication-quality figures [4] |

Successful implementation of single-cell clustering analyses requires appropriate computational infrastructure, particularly for deep learning approaches which benefit significantly from GPU acceleration. Memory requirements vary substantially by method, with graph-based approaches typically requiring 8-16GB RAM for datasets of ~10,000 cells, while deep learning methods may utilize 16-32GB RAM for comparable data sizes [7].

Applications in Drug Discovery and Development

Single-cell clustering algorithms have become indispensable tools in pharmaceutical research, enabling unprecedented resolution for understanding disease mechanisms and therapeutic responses [17] [23]. In target identification, clustering analysis of patient tissues reveals novel cell subtypes and disease-associated cellular states, highlighting promising therapeutic targets [17]. For example, clustering of tumor microenvironments has identified rare cell populations driving therapy resistance, enabling targeted intervention strategies [17] [23].

In preclinical development, clustering methods applied to complex tissue models help validate the physiological relevance of experimental systems and assess compound effects across diverse cellular compartments [17] [23]. The integration of single-cell clustering with CRISPR screening technologies (e.g., Perturb-seq) enables systematic mapping of gene regulatory networks and identification of synthetic lethal interactions at single-cell resolution [17]. Additionally, clustering analysis of clinical samples facilitates biomarker discovery and patient stratification by identifying transcriptionally defined cell subtypes associated with treatment response or disease progression [23] [20].

The evolving landscape of single-cell clustering algorithms offers researchers diverse analytical paradigms tailored to specific experimental questions and data characteristics. Graph-based methods provide intuitive, computationally efficient approaches for standard analyses; deep learning techniques deliver enhanced accuracy on complex datasets through integrated representation learning; and biclustering approaches uncover local gene-cell relationships often missed by global clustering methods [16] [18] [7].

Method selection should be guided by dataset properties, analytical goals, and computational resources, with emerging benchmarking studies providing evidence-based guidance for optimal algorithm choice [7]. As single-cell technologies continue to advance, integrating clustering approaches with multi-omic measurements and spatial context will further enhance our ability to decipher cellular heterogeneity in health and disease, ultimately accelerating therapeutic development and precision medicine initiatives [17] [23] [20].

A Practical Workflow: From Data Preprocessing to Algorithm Implementation

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the characterization of gene expression at unprecedented resolution. This technology allows researchers to uncover cellular heterogeneity, identify rare cell populations, and understand developmental trajectories and disease mechanisms in a way that was previously impossible with bulk sequencing approaches. Clustering analysis stands as a fundamental step in scRNA-seq data analysis, serving to group cells with similar expression profiles together, thereby facilitating cell type identification and characterization.

This protocol article provides detailed, step-by-step methodologies for performing single-cell clustering using two of the most widely adopted frameworks in the field: Scanpy (Python-based) and Seurat (R-based). Both frameworks offer comprehensive toolkits for the entire single-cell analysis workflow, from quality control to advanced downstream analyses. The clustering algorithms implemented in these frameworks, particularly graph-based methods such as Leiden and Louvain, have been extensively benchmarked and validated across diverse dataset types and sizes [7] [24].

Within the broader context of single-cell clustering algorithm research for transcriptomic data, this guide focuses on the practical application of established methods that have demonstrated robust performance in comparative benchmarking studies. Recent evaluations of 28 computational clustering algorithms have identified several top-performing methods for transcriptomic data, including scDCC, scAIDE, and FlowSOM, which can be used alongside the graph-based defaults of these frameworks [7]. By providing standardized protocols for these validated approaches, we aim to support reproducible and biologically meaningful clustering analyses in transcriptomic research and drug development applications.

Experimental Design and Workflow

The clustering workflows for both Scanpy and Seurat follow similar conceptual steps, though their implementations differ due to their respective programming environments and data structures. The overall process can be divided into three main phases: (1) data preprocessing and quality control, (2) dimensionality reduction and feature selection, and (3) clustering and visualization. Benchmarking studies have demonstrated that consistent application of these preprocessing steps significantly improves clustering performance and biological interpretability [7].

Figure: Parallel workflows for Scanpy and Seurat, highlighting their analogous processing steps.

Materials and Reagents

| Category | Item | Function/Specification |

|---|---|---|

| Hardware | Computational Workstation | Minimum 16GB RAM (32GB+ recommended for large datasets); Multi-core processor |

| Software Environment | R (v4.0+) | Programming language for Seurat workflow [25] [26] |

| Software Environment | Python (v3.7+) | Programming language for Scanpy workflow [27] [28] |

| Single-Cell Analysis Packages | Seurat R package | Comprehensive toolkit for single-cell analysis in R [25] [24] |

| Single-Cell Analysis Packages | Scanpy Python package | Scalable toolkit for single-cell analysis in Python [27] [28] |

| Data Structures | Seurat Object | Container for single-cell data storing count matrix, metadata, and analyses [25] |

| Data Structures | AnnData Object | Container for single-cell data with annotated data matrices [27] [28] |

| Input Data | Count Matrix | Gene expression matrix (cells × genes) in MTX, H5, or CSV format [25] [29] |

| Input Data | Feature File | Gene annotations (genes.tsv) [29] |

| Input Data | Barcode File | Cell identifiers (barcodes.tsv) [29] |

| Quality Control Metrics | Mitochondrial Gene Percentage | QC metric identifying low-quality cells using MT- prefix genes [25] [27] |

| Quality Control Metrics | nFeature_RNA / n_genes | Number of genes detected per cell [25] [27] |

| Quality Control Metrics | nCount_RNA / total_counts | Total molecules detected per cell [25] [27] |

Step-by-Step Protocol

Scanpy Workflow for Single-Cell Clustering

Scanpy provides a comprehensive Python-based framework for analyzing single-cell gene expression data, building upon the AnnData data structure which efficiently handles large, sparse matrices typical of scRNA-seq datasets [27] [28].

Data Loading and Quality Control

Begin by importing the count matrix and creating an AnnData object, then perform comprehensive quality control:
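A minimal sketch of this step is shown below, assuming a human dataset (mitochondrial genes prefixed with MT-) and reusing the thresholds quoted in Protocol 1 as defaults. The helper name `run_qc` and the input path in the usage comment are placeholders; the thresholds should be tuned per dataset.

```python
def run_qc(adata, min_genes=200, max_genes=2500, max_pct_mt=5.0, min_cells=3):
    """Filter low-quality cells and rarely detected genes from an AnnData object."""
    import scanpy as sc  # imported here so the helper can be defined without Scanpy installed

    adata.var_names_make_unique()
    sc.pp.filter_cells(adata, min_genes=min_genes)  # drop near-empty barcodes
    sc.pp.filter_genes(adata, min_cells=min_cells)  # drop genes seen in very few cells
    adata.var["mt"] = adata.var_names.str.startswith("MT-")  # flag mitochondrial genes
    sc.pp.calculate_qc_metrics(
        adata, qc_vars=["mt"], percent_top=None, log1p=False, inplace=True
    )
    keep = (adata.obs["n_genes_by_counts"] < max_genes) & (
        adata.obs["pct_counts_mt"] < max_pct_mt
    )
    return adata[keep].copy()

# Typical use (the directory is a placeholder for a 10x-style output folder):
# import scanpy as sc
# adata = sc.read_10x_mtx("path/to/filtered_feature_bc_matrix/", var_names="gene_symbols")
# adata = run_qc(adata)
```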

The quality control step filters out low-quality cells and genes, which is crucial for obtaining reliable clustering results. Cells with too few or too many genes detected may represent empty droplets or multiplets, while high mitochondrial percentage often indicates apoptotic or damaged cells [27] [28].

Normalization, Feature Selection, and Dimensionality Reduction

Proceed with data normalization, identification of highly variable genes, and dimensionality reduction:
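One way to express these steps with Scanpy is sketched below. The helper name and its defaults (2,000 highly variable genes, 50 components, a target sum of 10,000) are assumptions chosen to match the values quoted elsewhere in this guide, not fixed requirements.

```python
def normalize_and_reduce(adata, n_top_genes=2000, n_comps=50, target_sum=1e4):
    """Log-normalize counts, keep highly variable genes, scale, and run PCA."""
    import scanpy as sc  # lazy import keeps the helper definable without Scanpy

    sc.pp.normalize_total(adata, target_sum=target_sum)  # per-cell library-size scaling
    sc.pp.log1p(adata)
    adata.raw = adata  # keep log-normalized values for later marker-gene tests
    sc.pp.highly_variable_genes(adata, n_top_genes=n_top_genes)
    adata = adata[:, adata.var["highly_variable"]].copy()
    sc.pp.scale(adata, max_value=10)  # z-score each gene, clipping extreme values
    sc.tl.pca(adata, n_comps=n_comps)  # result is stored in adata.obsm["X_pca"]
    return adata
```

Note that `n_comps` must be smaller than both the number of cells and the number of retained genes.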

The selection of highly variable genes focuses the analysis on biologically informative features, while PCA reduces dimensionality and computational complexity for subsequent steps [27].

Neighborhood Graph Construction and Clustering

Construct a k-nearest neighbor graph and perform clustering using the Leiden algorithm:
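The sketch below shows how this might look with Scanpy, assuming PCA has already been computed on the object. The helper name, the default values, and the `leiden` column name are illustrative choices; `sc.tl.leiden` additionally needs a Leiden backend (leidenalg or igraph) to be installed.

```python
def cluster_leiden(adata, n_neighbors=15, n_pcs=30, resolution=1.0, seed=0):
    """Build the k-NN graph, run Leiden, and compute a UMAP for plotting."""
    import scanpy as sc  # lazy import keeps the helper definable without Scanpy

    sc.pp.neighbors(adata, n_neighbors=n_neighbors, n_pcs=n_pcs, random_state=seed)
    sc.tl.leiden(adata, resolution=resolution, random_state=seed, key_added="leiden")
    sc.tl.umap(adata, random_state=seed)  # 2-D embedding for visual inspection only
    return adata.obs["leiden"]

# Typical use:
# labels = cluster_leiden(adata, resolution=0.8)
# print(labels.value_counts())
```

Fixing the seed does not change the algorithm, but it makes repeated runs comparable when tuning the resolution.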

The resolution parameter controls the granularity of clustering, with higher values resulting in more clusters. The optimal resolution depends on the specific dataset and biological question [27] [30].

Seurat Workflow for Single-Cell Clustering

Seurat provides an equally comprehensive R-based framework for single-cell analysis, utilizing a specialized object structure to store all data and analysis results [25] [26].

Data Loading and Quality Control

Begin by loading the count matrix and creating a Seurat object, then perform quality control:

The Seurat object automatically calculates basic QC metrics during creation, including the number of features (genes) and counts per cell [25] [26].

Normalization, Feature Selection, and Dimensionality Reduction

Proceed with normalization, identification of variable features, and scaling:

The FindVariableFeatures function implements the variance stabilizing transformation ("vst") method, which models the mean-variance relationship inherent in single-cell data to select biologically informative genes [25].

Neighborhood Graph Construction and Clustering

Construct shared nearest neighbor graph and perform clustering:

For larger datasets, the Leiden algorithm (algorithm = 4) may provide improved performance. The resolution parameter should be adjusted based on the expected complexity of the dataset, with values typically ranging from 0.4-1.2 for most applications [24].

Performance Benchmarking and Method Selection

Recent comprehensive benchmarking of single-cell clustering algorithms provides valuable guidance for method selection. The following table summarizes key performance metrics from a study evaluating 28 computational algorithms on 10 paired transcriptomic and proteomic datasets:

Clustering Algorithm Performance Comparison

| Method | Framework | ARI (Transcriptomic) | NMI (Transcriptomic) | ARI (Proteomic) | NMI (Proteomic) | Computational Efficiency | Recommended Use Case |

|---|---|---|---|---|---|---|---|

| scDCC | Deep Learning | 0.713 | 0.745 | 0.685 | 0.712 | Memory Efficient | Top performance across omics |

| scAIDE | Deep Learning | 0.705 | 0.738 | 0.692 | 0.720 | Moderate | Top performance across omics |

| FlowSOM | Classical ML | 0.698 | 0.731 | 0.681 | 0.708 | Excellent Robustness | Proteomic data, robust performance |

| Leiden | Community Detection | 0.642 | 0.681 | 0.623 | 0.659 | Time Efficient | Standard transcriptomic clustering |

| Louvain | Community Detection | 0.635 | 0.674 | 0.615 | 0.651 | Time Efficient | Standard transcriptomic clustering |

| TSCAN | Classical ML | 0.628 | 0.667 | 0.591 | 0.629 | Time Efficient | Large datasets, trajectory analysis |

| SHARP | Classical ML | 0.621 | 0.662 | 0.598 | 0.635 | Time Efficient | Large-scale clustering |

Metrics based on benchmarking across 10 paired datasets using Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI) with values closer to 1.0 indicating better performance [7].
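For readers unfamiliar with ARI, the following standard-library sketch computes it from two label vectors using the usual pair-counting formula. It is a generic implementation for illustration (NMI is not shown), and the function name is an assumption.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Pair-counting ARI: 1.0 for identical partitions, about 0 for random agreement."""
    n = len(labels_true)
    # Pairs of cells grouped together in both labelings, in the truth, and in the prediction.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:  # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

same = adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])     # relabeled but identical
crossed = adjusted_rand_index([0, 0, 1, 1], [0, 1, 0, 1])  # systematically discordant
```

Because ARI is invariant to label names, the first comparison scores a perfect 1.0 even though the cluster IDs are swapped; the second falls below zero, meaning worse than chance.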

Practical Guidance for Method Selection

Based on the benchmarking results and practical considerations:

For most standard applications: The Leiden algorithm (implemented in both Scanpy and Seurat) provides an excellent balance of performance and computational efficiency [7] [27] [24].

For specialized applications requiring top performance: Consider implementing scDCC or scAIDE, particularly when analyzing both transcriptomic and proteomic data simultaneously [7].

For memory-constrained environments: scDCC and scDeepCluster offer memory-efficient alternatives without significant performance compromises [7].

For very large datasets: TSCAN, SHARP, and MarkovHC provide excellent time efficiency for datasets exceeding 100,000 cells [7].

The benchmarking study also highlighted that performance can be influenced by data characteristics, including cell type granularity and the use of highly variable genes. Therefore, researchers should validate clustering results using biological markers regardless of the algorithm selected [7].

Troubleshooting and Optimization

Common Issues and Solutions

Poor cluster separation: Increase the number of highly variable genes or adjust the resolution parameter. Check that an appropriate number of PCs was used for graph construction [27] [24].

Over-clustering (too many clusters): Decrease the resolution parameter (typically between 0.4-1.2) or increase the `k.param` in `FindNeighbors` (Seurat) or `n_neighbors` in `pp.neighbors` (Scanpy) [24].

Under-clustering (too few clusters): Increase the resolution parameter or check whether too stringent filtering removed biologically relevant cell populations [24].

Batch effects between samples: Use integration methods such as Harmony, BBKNN, or Seurat's CCA integration before clustering when analyzing datasets comprising multiple samples [27] [24].

Computational performance issues: For large datasets (>50,000 cells), consider using the `igraph` implementation in Scanpy (`flavor='igraph'`) or the Leiden algorithm in Seurat (`algorithm=4`) [27] [24].

Validation of Clustering Results

Always validate clustering results using biological knowledge:

Identify marker genes for each cluster using `FindAllMarkers` in Seurat or `sc.tl.rank_genes_groups` in Scanpy [25] [27].

Compare expression of known cell type markers across clusters.

Check for clusters defined by technical artifacts (e.g., high mitochondrial percentage, low complexity) rather than biological variation [27] [24].

Consider using automated cell type identification tools (e.g., SingleR, scCATCH) or manual annotation based on marker gene expression.

The iterative process of clustering, validation, and potential re-clustering is normal and often necessary to obtain biologically meaningful results that faithfully represent the cellular heterogeneity in the dataset.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the characterization of gene expression at the level of individual cells. A critical step in analyzing this data is clustering, which groups cells with similar expression profiles to identify distinct cell types and states. Among the plethora of clustering methods available, four algorithms have demonstrated particular utility: Leiden (a graph-based community detection method), scDCC (a deep learning approach that incorporates prior knowledge), DESC (a deep embedding method that removes batch effects), and FlowSOM (a self-organizing map-based method popular in cytometry data analysis) [31] [32] [33].

The performance of these algorithms is highly dependent on both their underlying principles and the specific parameters chosen during implementation. Despite the availability of numerous clustering tools, researchers often face challenges in selecting appropriate methods and optimizing their parameters for specific datasets [34]. This protocol provides detailed application notes for implementing these four key algorithms, with a focus on practical considerations for researchers working with single-cell transcriptomic data.

Algorithm Characteristics and Performance

Key Algorithm Features

Table 1: Characteristics of single-cell clustering algorithms

| Algorithm | Underlying Method | Key Features | Prior Knowledge Integration | Scalability |

|---|---|---|---|---|

| Leiden | Graph-based community detection | Optimizes modularity; guarantees connected communities | Limited to graph structure | Highly scalable [35] |

| scDCC | Deep constrained clustering | Uses must-link/cannot-link constraints; handles dropouts | Directly integrates pairwise constraints | Suitable for large datasets (tested on 10,000+ cells) [36] [33] |

| DESC | Deep embedding clustering | Learns feature representation and clusters simultaneously; reduces batch effects | Unsupervised | Handles large datasets efficiently [34] |

| FlowSOM | Self-organizing maps | Two-step clustering with meta-clustering; good for high-dimensional data | Limited | Fast execution suitable for large datasets [31] [32] |

Performance Benchmarking

Recent comprehensive benchmarking studies have evaluated clustering algorithms across multiple metrics including Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), clustering accuracy, and computational efficiency [31]. In evaluations across 10 paired single-cell transcriptomic and proteomic datasets, these algorithms demonstrated varying strengths:

- scDCC and FlowSOM ranked among the top performers for both transcriptomic and proteomic data [31]

- DESC has demonstrated superior performance in terms of clustering specific cell types and capturing cell type heterogeneity compared to other deep learning methods [34]

- Leiden clustering forms the foundation for many single-cell analysis pipelines and can be extended with spatial awareness for spatial transcriptomics applications [35]

Table 2: Performance benchmarking of algorithms across omics data types

| Algorithm | Transcriptomic Data (ARI) | Proteomic Data (ARI) | Memory Efficiency | Time Efficiency |

|---|---|---|---|---|

| scDCC | High | High | Medium | Medium |

| FlowSOM | High | High | High | High |

| DESC | Medium-High | Not fully evaluated | Medium | Medium |

| Leiden | Medium | Medium | High | High |

Experimental Protocols and Implementation

General Single-Cell Clustering Workflow

Figure: Single-cell clustering workflow, the common sequence of steps upon which the algorithm-specific protocols are built.

Leiden Clustering Protocol

Leiden clustering is widely used in single-cell analysis due to its ability to guarantee well-connected communities and its computational efficiency [34] [35].

Basic Implementation

Parameter Optimization

Critical parameters requiring optimization:

- Resolution: Higher values (0.8-2.0) yield more clusters; lower values (0.2-0.8) yield fewer clusters

- n_neighbors: Balances local vs. global structure (typical range: 5-50)

- n_pcs: Number of principal components (typical range: 10-50)

A robust linear mixed regression model analysis demonstrated that using UMAP for neighborhood graph generation combined with increased resolution has a beneficial impact on accuracy, particularly when using a reduced number of nearest neighbors which creates sparser, more locally sensitive graphs [34].
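Because these parameters interact, a small grid search is often easier than tuning one value at a time. The sketch below is framework-agnostic: `cluster_fn` and `score_fn` are hypothetical callables supplied by the user (for example, a wrapper around a Leiden run and an intrinsic or ARI-based score), and the grid values are taken from the typical ranges listed above.

```python
from itertools import product

def sweep_parameters(cluster_fn, score_fn, grid):
    """Evaluate every parameter combination; return (score, params) pairs, best first."""
    names = list(grid)
    results = []
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        labels = cluster_fn(**params)  # user-supplied clustering run
        results.append((score_fn(labels), params))
    return sorted(results, key=lambda item: item[0], reverse=True)

grid = {"resolution": [0.4, 0.8, 1.2], "n_neighbors": [10, 30], "n_pcs": [20, 40]}

# Stand-in callables so the sketch runs on its own; replace them with real ones.
def toy_cluster(resolution, n_neighbors, n_pcs):
    return list(range(int(resolution * 10)))  # pretend higher resolution gives more labels

ranked = sweep_parameters(toy_cluster, len, grid)
best_score, best_params = ranked[0]
```

With real data, the score should come from a metric that does not simply reward more clusters, otherwise the sweep will always favor the highest resolution.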

SpatialLeiden Extension

For spatial transcriptomics data, Leiden can be extended to SpatialLeiden by incorporating spatial information:

SpatialLeiden significantly improves performance over non-spatial Leiden, with performance comparable to specialized spatial clustering tools like SpaGCN and BayesSpace [35].

scDCC Clustering Protocol

scDCC (Single-Cell Deep Constrained Clustering) integrates domain knowledge through pairwise constraints to improve clustering performance [36] [33].

Constraint Integration

The key innovation of scDCC is its use of must-link (ML) and cannot-link (CL) constraints:

- Must-link constraints: Force pairs of cells to be in the same cluster

- Cannot-link constraints: Force pairs of cells to be in different clusters
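When a small subset of cells has trusted labels, constraints of both kinds can be derived from it by sampling cell pairs. The following sketch illustrates the idea with the standard library; the function name and the dictionary-based input are assumptions for illustration and do not reproduce the scDCC code base.

```python
import random

def sample_pairwise_constraints(labels, n_pairs, seed=0):
    """Sample random pairs among labeled cells and split them into must-link / cannot-link.

    `labels` maps cell index -> known label and covers only the labeled subset.
    """
    rng = random.Random(seed)
    labeled = sorted(labels)
    must_link, cannot_link = [], []
    for _ in range(n_pairs):
        i, j = rng.sample(labeled, 2)  # two distinct labeled cells
        if labels[i] == labels[j]:
            must_link.append((i, j))
        else:
            cannot_link.append((i, j))
    return must_link, cannot_link

known = {0: "T cell", 1: "T cell", 2: "B cell", 3: "B cell", 7: "NK cell"}
ml_pairs, cl_pairs = sample_pairwise_constraints(known, n_pairs=20)
```

Pairs are drawn with replacement across iterations, so duplicates can occur; deduplicate if the downstream tool expects unique constraints.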

Implementation Steps

Parameter Guidelines

- --n_clusters: Number of expected cell populations

- --gamma: Weight of clustering loss (default: 1.0)

- --ml_weight: Weight of must-link loss (default: 1.0, range: 0.5-2.0)

- --cl_weight: Weight of cannot-link loss (default: 1.0, range: 0.5-2.0)

- --n_pairwise: Number of pairwise constraints to generate

Experiments show that using just 10% of cells with known labels to generate constraints can significantly improve clustering performance on the remaining 90% of cells [33]. Performance improves consistently as more constraint information is incorporated.
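To make the role of the must-link and cannot-link weights concrete, the sketch below computes a weighted pairwise penalty on soft cluster assignments. The specific form (negative log of the probability that two cells share a cluster for must-link pairs, and of its complement for cannot-link pairs) is an assumption about how such losses are commonly defined in constrained deep clustering; consult the scDCC source for its exact formulation.

```python
import math

def pairwise_constraint_loss(q, must_link, cannot_link,
                             ml_weight=1.0, cl_weight=1.0, eps=1e-10):
    """Weighted must-link + cannot-link penalty on soft assignments.

    q[i] is the list of cluster probabilities for cell i (each row sums to 1).
    """
    def same_cluster_prob(i, j):
        return sum(a * b for a, b in zip(q[i], q[j]))

    ml = -sum(math.log(same_cluster_prob(i, j) + eps) for i, j in must_link)
    cl = -sum(math.log(1.0 - same_cluster_prob(i, j) + eps) for i, j in cannot_link)
    return ml_weight * ml + cl_weight * cl

q = [[0.9, 0.1], [0.8, 0.2], [0.1, 0.9]]  # cells 0 and 1 agree, cell 2 differs
consistent = pairwise_constraint_loss(q, must_link=[(0, 1)], cannot_link=[(0, 2)])
violated = pairwise_constraint_loss(q, must_link=[(0, 2)], cannot_link=[(0, 1)])
```

Assignments that respect the constraints yield a small penalty, while violating them increases it; raising `ml_weight` or `cl_weight` strengthens that pressure relative to the clustering loss.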

DESC Clustering Protocol

DESC (Deep Embedding for Single-Cell Clustering) simultaneously learns feature representations and cluster assignments while effectively handling batch effects [34].

Implementation Code

Parameter Optimization Strategy

- dims: Network dimensions, typically [input_dim, 64, 32, ...] based on dataset size

- n_neighbors: Balance between local and global structure (default: 10)

- louvain_resolution: Initial clustering resolution (default: 0.8)

- batch_size: 256 for datasets <10,000 cells; 512 for larger datasets

DESC has demonstrated superior performance in clustering specific cell types and capturing cell type heterogeneity compared to other deep learning methods [34]. It is particularly effective for datasets with complex batch effects.

FlowSOM Clustering Protocol

FlowSOM uses self-organizing maps followed by hierarchical meta-clustering, making it particularly suitable for large-scale single-cell data [31] [32].

Implementation Steps

Critical Parameters

- nClus: Number of primary clusters in SOM (typically 10-20)

- maxMeta: Maximum number of meta-clusters (typically 10-30)

- colsToUse: Features/markers for clustering

FlowSOM ranks among top performers for both transcriptomic and proteomic data in benchmarking studies and offers excellent robustness and memory efficiency [31].
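The first stage of this approach, training a self-organizing map, can be sketched in a few lines. The toy below is a simplified standard-library SOM written for illustration only; it is not the FlowSOM implementation, it omits the meta-clustering stage, and all names and defaults are assumptions.

```python
import math
import random

def nearest_node(codebook, cell):
    """Index of the best-matching unit (closest codebook vector) for one cell."""
    return min(range(len(codebook)), key=lambda n: math.dist(codebook[n], cell))

def train_som(data, xdim=2, ydim=2, epochs=20, lr=0.5, seed=0):
    """Train a tiny rectangular SOM; returns one codebook vector per grid node."""
    rng = random.Random(seed)
    grid = [(x, y) for x in range(xdim) for y in range(ydim)]
    codebook = [list(rng.choice(data)) for _ in grid]  # initialise nodes from random cells
    radius0 = max(xdim, ydim) / 2
    for epoch in range(epochs):
        frac = 1 - epoch / epochs  # decays from 1 toward 0
        radius, rate = max(radius0 * frac, 0.5), lr * frac
        for cell in rng.sample(data, len(data)):  # shuffled pass over the cells
            bmu = nearest_node(codebook, cell)
            for node, pos in enumerate(grid):
                # Nodes close to the winner on the grid are pulled harder toward the cell.
                influence = math.exp(-math.dist(pos, grid[bmu]) ** 2 / (2 * radius ** 2))
                codebook[node] = [
                    w + rate * influence * (v - w) for w, v in zip(codebook[node], cell)
                ]
    return codebook

cells = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2), (5.0, 5.1), (5.2, 5.0), (5.1, 5.2)]
codebook = train_som(cells)
assignments = [nearest_node(codebook, c) for c in cells]
```

In a full FlowSOM-style analysis, the SOM nodes would then be grouped by a second, hierarchical meta-clustering step to obtain the final populations.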

Research Reagent Solutions

Table 3: Essential research reagents and computational tools for single-cell clustering

| Item | Function/Purpose | Examples/Specifications |

|---|---|---|

| CellTypist Atlas | Provides ground-truth annotations for benchmarking | Manually curated cell annotations; datasets from MacParland liver model (GSE115469), De Micheli skeletal muscle (GSE143704) [34] |

| Scanpy | Python-based single-cell analysis toolkit | Provides implementation of Leiden clustering; integrates with other algorithms [35] |

| Seurat | R-based single-cell analysis platform | Alternative to Scanpy; comprehensive preprocessing and clustering capabilities |

| Apache Spark | Distributed computing framework | Enables scalable analysis of large datasets (>100,000 cells) via scSPARKL [37] |

| Squidpy | Spatial omics analysis library | Spatial neighborhood graph generation for SpatialLeiden [35] |

| 10x Genomics Data | Standardized single-cell datasets | PBMC, Jurkat-293T mixtures for benchmarking [37] |

Applications and Case Studies

Liver Cell Atlas Analysis

In a study optimizing clustering parameters using intrinsic goodness metrics, researchers utilized the MacParland liver model (GSE115469) containing 8,444 cells from five healthy donors [34]. The original annotation of this dataset comprises 20 hepatic cell populations, including six hepatocyte populations, three endothelial cell populations, cholangiocytes, hepatic stellate cells, macrophages, T-cells, NK cells, B-cells, and erythroid cells.

Key Findings:

- The combination of UMAP for neighborhood graph generation with increased resolution parameters significantly improved accuracy

- Within-cluster dispersion and Banfield-Raftery index served as effective proxies for accuracy in parameter optimization

- Testing different numbers of principal components was crucial due to high sensitivity to data complexity
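Both intrinsic metrics mentioned above can be computed without ground-truth labels. The sketch below uses the common textbook definitions (sum of squared distances to cluster centroids, and the Banfield-Raftery index as the size-weighted sum of log mean dispersions); these definitions and the function names are assumptions and may differ in detail from what the cited study used.

```python
import math

def cluster_dispersions(points, labels):
    """Per-cluster (size, sum of squared distances to the cluster centroid)."""
    groups = {}
    for p, lab in zip(points, labels):
        groups.setdefault(lab, []).append(p)
    out = {}
    for lab, members in groups.items():
        centroid = [sum(col) / len(members) for col in zip(*members)]
        out[lab] = (len(members), sum(math.dist(p, centroid) ** 2 for p in members))
    return out

def within_cluster_dispersion(points, labels):
    """Total squared distance of cells to their own cluster centroid (lower is tighter)."""
    return sum(ss for _, ss in cluster_dispersions(points, labels).values())

def banfield_raftery(points, labels):
    """Sum over clusters of n_k * log(dispersion_k / n_k); lower is better."""
    return sum(
        n * math.log(ss / n)
        for n, ss in cluster_dispersions(points, labels).values()
        if ss > 0  # clusters with zero dispersion are skipped to avoid log(0)
    )

pts = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
tight = within_cluster_dispersion(pts, [0, 0, 1, 1])
loose = within_cluster_dispersion(pts, [0, 1, 0, 1])
```

Comparing these values across parameter settings gives a label-free signal for which configuration produces more compact clusters.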

Cross-Modality Benchmarking

A comprehensive benchmark of 28 clustering algorithms across 10 paired transcriptomic and proteomic datasets revealed that:

- scDCC, scAIDE, and FlowSOM achieved top performance for both transcriptomic and proteomic data [31]

- FlowSOM offered excellent robustness in addition to high performance

- Community detection-based methods (including Leiden) provided a good balance between performance and computational efficiency

Spatial Transcriptomics Application

SpatialLeiden was applied to a 10x Visium spatial transcriptomics dataset of the human dorsolateral prefrontal cortex (DLPFC) [35]. The implementation demonstrated:

- Substantial improvement over non-spatial Leiden when using spatially aware dimensionality reduction (msPCA)

- Performance comparable to specialized spatial clustering tools (SpaGCN, BayesSpace) with significantly faster processing times

- Effective application across multiple technologies including Stereo-Seq, MERFISH, and STARmap

The implementation of Leiden, scDCC, DESC, and FlowSOM algorithms requires careful consideration of both methodological foundations and parameter optimization strategies. This protocol provides comprehensive guidance for researchers applying these methods to single-cell transcriptomic data.

Key recommendations emerging from recent studies include:

- Leiden should be considered for general-purpose clustering, particularly when computational efficiency is important

- scDCC offers superior performance when prior knowledge is available to generate constraints

- DESC is particularly effective for datasets with batch effects or complex heterogeneity

- FlowSOM provides robust performance across both transcriptomic and proteomic modalities

Future development in single-cell clustering will likely focus on improved integration of multi-omics data, enhanced scalability for increasingly large datasets, and more sophisticated incorporation of spatial information. The algorithms detailed in this protocol represent the current state-of-the-art and provide a solid foundation for biological discovery through single-cell transcriptomics.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to deconstruct cellular heterogeneity within complex tissues. For the human bone marrow (BM), the primary site of hematopoiesis, this technology is key to understanding the intricate cellular crosstalk in the bone marrow microenvironment (BME) that controls blood production [38]. This case study details the application and benchmarking of clustering algorithms on scRNA-seq data from human bone marrow, providing a structured protocol for researchers. The findings are placed in the context of the broader discussion of clustering algorithms for transcriptomic data, highlighting how method selection directly impacts biological interpretation in a clinically relevant tissue.

Background: The Human Bone Marrow Microenvironment

The BME is composed of non-hematopoietic stromal cells that constitute less than 1-2% of the bone marrow, presenting a significant technical challenge for their comprehensive study [38]. These cells are vital for hematopoietic support and include several key populations:

- Mesenchymal Stromal Cells (MSC): The predominant stromal population, characterized by high expression of CXCL12 and LEPR, responsible for supporting hematopoietic stem and progenitor cells (HSPCs) [38].

- Osteolineage Cells (OLC): Include osteoblasts at various differentiation stages, from immature (SP7, SPP1) to mature (BGLAP), influencing hematopoietic stem cell quiescence and retention [38].

- Endothelial Cells (EC): Form the vascular network, defined by markers like PECAM1 (CD31) and CD34 [38].

- Smooth Muscle Cells (SMC) and Fibroblasts: SMCs express MYH11 and ACTA2, while fibroblasts are identified by S100A genes and play a role in extracellular matrix production [38].
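The marker signatures listed above can be encoded as a simple lookup table and used to score clusters. The sketch below is a deliberately simplified illustration: the scoring rule (mean expression of a population's markers), the function name, and the per-cluster expression values are all assumptions, and fibroblasts are omitted because the source only names the S100A gene family rather than specific genes.

```python
# Marker genes taken from the population descriptions above.
MARKERS = {
    "MSC": ["CXCL12", "LEPR"],
    "OLC": ["SP7", "SPP1", "BGLAP"],
    "EC": ["PECAM1", "CD34"],
    "SMC": ["MYH11", "ACTA2"],
}


def annotate_cluster(mean_expression, markers=MARKERS):
    """Score each population by the mean expression of its markers; return the best match."""
    scores = {
        cell_type: sum(mean_expression.get(gene, 0.0) for gene in genes) / len(genes)
        for cell_type, genes in markers.items()
    }
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical per-cluster mean expression values (not real data).
cluster_means = {
    "c0": {"CXCL12": 5.1, "LEPR": 3.8, "SPP1": 0.4, "PECAM1": 0.1},
    "c1": {"PECAM1": 4.2, "CD34": 3.0, "CXCL12": 0.6},
}

annotations = {name: annotate_cluster(expr)[0] for name, expr in cluster_means.items()}
print(annotations)
```

In practice, annotation would rest on differential expression against all other clusters and comparison with reference atlases. A mean-marker score like this one is only a first-pass sanity check.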

Aging and disease states are associated with significant transcriptional remodeling of the BME, including a pro-inflammatory shift and downregulation of key hematopoietic factors like CXCL12 and KITLG [38].

Benchmarking Clustering Algorithms for Bone Marrow Data

Performance of Top Algorithms

A comprehensive 2025 benchmark study of 28 single-cell clustering algorithms on 10 paired transcriptomic and proteomic datasets provides critical insights for method selection. The study evaluated algorithms based on the Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), clustering accuracy, computational efficiency, and robustness [7] [22].
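For readers unfamiliar with the Adjusted Rand Index, the following self-contained sketch computes it from two label vectors using the standard pair-counting formula. It is meant to show what the metric measures, not to reproduce the benchmark's evaluation code; the function name and the toy labels are illustrative assumptions.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Chance-corrected agreement between two partitions of the same cells."""
    n = len(labels_true)
    if n < 2:
        return 1.0  # no cell pairs to compare

    contingency = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())

    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both partitions are a single cluster
    return (sum_cells - expected) / (max_index - expected)


# Cluster IDs are arbitrary, so a relabelled but identical partition scores 1.0.
truth = ["MSC", "MSC", "MSC", "EC", "EC", "EC"]
predicted = [1, 1, 1, 0, 0, 0]
print(adjusted_rand_index(truth, predicted))

# Splitting one true group into two lowers the score.
print(adjusted_rand_index([0, 0, 1, 1], [0, 0, 1, 2]))
```

An ARI of 1 indicates identical partitions, while values near 0 indicate agreement no better than chance.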

Table 1: Top-Performing Clustering Algorithms for Single-Cell Data

| Algorithm | Overall Performance (Transcriptomics) | Overall Performance (Proteomics) | Key Strengths |
|---|---|---|---|
| scAIDE | Ranked 2nd | Ranked 1st | Top overall performance across omics |
| scDCC | Ranked 1st | Ranked 2nd | Excellent performance, memory-efficient |
| FlowSOM | Ranked 3rd | Ranked 3rd | Top robustness, fast running time |

For researchers prioritizing specific operational needs, the study further recommends:

- Memory Efficiency: scDCC and scDeepCluster [7].

- Time Efficiency: TSCAN, SHARP, and MarkovHC [7].

- Balanced Approach: Community detection-based methods [7].

Impact of Analysis Parameters

The benchmark also highlighted two factors that strongly influence clustering outcomes and should inform how resolution parameters are set:

- Highly Variable Genes (HVGs): The selection of HVGs significantly impacts the resulting clusters and should be carefully optimized [7].

- Cell Type Granularity: The ability of an algorithm to resolve fine-grained versus broad cell populations varies, and should be matched to the biological question [7].

Experimental Protocol: Clustering of Human Bone Marrow Stromal Cells

Sample Preparation and Single-Cell RNA Sequencing

This protocol is adapted from a 2025 study that established a detailed atlas of the human BME [38].

- Donor and Sample Source: Bone marrow aspirates were obtained from young, healthy allogeneic transplantation donors.

- Cell Dissociation: Bone marrow samples were digested with collagenase and DNase I.

- Stromal Cell Enrichment:

  - Deplete CD45+ hematopoietic cells using a RosetteSep antibody cocktail.

  - Using fluorescence-activated cell sorting (FACS), enrich for live (7AAD-), nucleated (Vybrant DyeCycle+) cells that lack expression of hematopoietic (CD45), erythroid (CD235a, CD71), and plasma cell (SLAMF7) markers.

- Library Preparation and Sequencing: Prepare single-cell RNA libraries using the 10x Genomics 3'-end capture platform and sequence.

Computational Clustering Workflow

Figure 1: A standard computational workflow for clustering human bone marrow single-cell data.

- Data Preprocessing:

  - Quality Control: Filter out cells with a high mitochondrial gene percentage or low unique gene counts.

  - Normalization: Normalize the raw count matrix to account for varying sequencing depth (e.g., using log-normalization).

  - Highly Variable Gene Selection: Identify the most variable genes across cells to reduce noise and computational load [7].

- Dimensionality Reduction and Clustering:

  - Perform linear dimensionality reduction using Principal Component Analysis (PCA).

  - Apply a top-performing clustering algorithm such as scDCC or FlowSOM [7]. The resolution parameter, or the target number of clusters for methods that take one, should be tuned based on the expected cellular heterogeneity. For the sparse BME stroma, a higher resolution may be needed to resolve rare populations.

- Visualization and Annotation:

  - Generate non-linear embeddings (UMAP or t-SNE) for visualization of clusters.

  - Annotate cell types by identifying cluster-specific marker genes and comparing them to known signatures from references [38] [39]. For example, an MSC cluster will express CXCL12 and LEPR, while OLCs will express SPP1 and BGLAP.
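The preprocessing steps in the workflow above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the pipeline used in the cited study: the thresholds (`max_mito_frac`, `min_genes`), the scale factor of 10,000, the function names, and the toy counts are all hypothetical, and a real analysis would typically rely on an established single-cell toolkit.

```python
from math import log1p


def qc_filter(cells, mito_genes, max_mito_frac=0.2, min_genes=2):
    """Keep cells with enough detected genes and an acceptable mitochondrial fraction."""
    kept = {}
    for cell_id, counts in cells.items():
        total = sum(counts.values())
        if total == 0:
            continue  # empty barcode
        n_genes = sum(1 for c in counts.values() if c > 0)
        mito_frac = sum(c for g, c in counts.items() if g in mito_genes) / total
        if n_genes >= min_genes and mito_frac <= max_mito_frac:
            kept[cell_id] = counts
    return kept


def log_normalize(counts, scale=10_000):
    """Scale counts to a common depth, then apply log(1 + x)."""
    total = sum(counts.values())
    return {gene: log1p(c / total * scale) for gene, c in counts.items()}


# Hypothetical raw counts for three cells (illustrative values only).
raw = {
    "cell_a": {"CXCL12": 30, "LEPR": 10, "MT-CO1": 5, "ACTB": 55},
    "cell_b": {"CXCL12": 2, "MT-CO1": 18},  # 90% mitochondrial reads: removed
    "cell_c": {"ACTB": 7},                  # only one detected gene: removed
}

filtered = qc_filter(raw, mito_genes={"MT-CO1"})
normalized = {cell_id: log_normalize(counts) for cell_id, counts in filtered.items()}
print(sorted(normalized))
```

Appropriate thresholds depend on tissue and protocol, so they are usually chosen after inspecting the distributions of these quality metrics rather than fixed in advance.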

Table 2: Essential Research Reagents and Resources for Human BME scRNA-seq

| Item | Function / Description | Example or Note |
|---|---|---|
| Collagenase & DNase I | Enzymatic digestion of bone marrow tissue to create a single-cell suspension. | Critical for releasing rare stromal cells [38]. |
| CD45 Depletion Kit | Negative selection to enrich for non-hematopoietic stromal cells. | RosetteSep antibody cocktail [38]. |
| Viability Stain (7AAD) | Identifies and allows for the exclusion of dead cells during sorting. | Improves data quality by reducing background noise. |
| Nucleated Cell Stain | Labels DNA to identify and sort nucleated cells. | Vybrant DyeCycle+ [38]. |
| Fluorescence-Activated Cell Sorter (FACS) | Isolation of highly pure populations of target cells based on surface markers. | Used for enriching live, nucleated, CD45- cells [38]. |
| 10x Genomics Platform | High-throughput single-cell RNA sequencing library preparation. | 3'-end kit is widely used for cell atlas construction [38]. |
| BMDB (Bone Marrow Database) | An integrated database for exploring single-cell transcriptomic profiles of the BME. | Publicly available web resource for data validation [40]. |

Results and Biological Validation

Application of this protocol to human bone marrow successfully identified five distinct stromal populations: MSC, OLC, SMC, fibroblasts, and EC [38]. Further analysis revealed significant sub-structure, including:

- Inflammatory MSC Subpopulation (MSC1): Characterized by upregulated expression of CXCL2, CCL2, CEBPB, and AP-1 complex genes (FOSB, JUND), suggesting a role in mediating inflammation in the BME [38].

- Adipo-primed MSC Subpopulation (MSC2): Displayed a transcriptomic profile suggesting adipocyte differentiation potential, supported by upregulation of LPL and APOE [38].

This refined clustering allows for the investigation of novel cellular interactions. For instance, receptor-ligand analysis suggests fibroblasts may indirectly regulate hematopoiesis by producing DPP4, a peptidase that modulates the availability of the key HSC retention factor CXCL12 produced by MSCs [38].

Figure 2: A simplified network of cellular crosstalk in the human bone marrow niche, as revealed by high-resolution clustering.

Concluding Remarks

This case study demonstrates that the choice of clustering algorithm and parameters is not merely a computational decision but a critical biological one. Applying robust, benchmarked methods like scDCC and FlowSOM to human bone marrow scRNA-seq data enables the resolution of rare and novel cellular subsets, such as pro-inflammatory MSCs. This refined view of the BME is essential for understanding its functional plasticity in aging and disease, directly informing future research in hematologic malignancies and stem cell biology. The integration of systematic benchmarking with detailed biological protocols provides a powerful framework for advancing single-cell transcriptomic research.

Solving Common Clustering Challenges: Parameters, Consistency, and Performance

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the investigation of cellular heterogeneity at unprecedented resolution. A crucial step in scRNA-seq data analysis is unsupervised clustering, which identifies distinct cell populations based on transcriptomic similarity. The performance of clustering algorithms is highly sensitive to several critical parameters, including resolution, number of neighbors, and number of principal components (PCs). This Application Note provides a comprehensive framework for optimizing these parameters to ensure biologically meaningful clustering results. We present structured protocols, quantitative benchmarks, and visualization tools to guide researchers in making informed decisions during scRNA-seq data analysis, ultimately enhancing the reliability of downstream biological interpretations in transcriptomic research and drug development.

Single-cell clustering represents a foundational analytical procedure in transcriptomic research, enabling the identification of novel cell types, characterization of cellular states, and understanding of disease mechanisms. Despite the proliferation of sophisticated clustering algorithms, the accurate subdivision of cell subpopulations remains challenging and heavily dependent on parameter selection [34]. The efficacy of unsupervised clustering hinges on three pivotal parameters that govern how cellular relationships are defined and partitioned: the resolution parameter, which controls the granularity of clustering; the number of neighbors, which determines local connectivity in graph-based methods; and the number of principal components, which defines the feature space for analysis. Inappropriate selection of these parameters can lead to either over-clustering (partitioning homogeneous populations) or under-clustering (failing to distinguish biologically distinct populations), potentially obscuring meaningful biological insights [4]. This Application Note addresses these challenges by providing evidence-based protocols for parameter optimization, grounded in empirical benchmarking studies and statistical validation approaches.

Background and Significance

The Clustering Parameter Challenge

Single-cell RNA-seq data are characterized by high dimensionality, sparsity, and technical noise, which complicate clustering analysis. The clustering process typically involves multiple steps: normalization, feature selection, dimensionality reduction, graph construction, and community detection. At each stage, parameter choices accumulate and interact, making it difficult to intuit optimal settings [4]. For instance, graph-based clustering algorithms like Leiden and Louvain first construct a k-nearest neighbor (k-NN) graph where cells are connected to their most similar counterparts, then partition this graph into communities. The number of neighbors (k) parameter determines the connectivity of this graph, while the resolution parameter influences the partition granularity. Simultaneously, the number of PCs defines the dimensionality of the space in which distances between cells are calculated, directly impacting which cells appear similar [41]. These parameters do not operate in isolation; they exhibit complex interactions that can significantly alter clustering outcomes and subsequent biological interpretations.
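The effect of the number of neighbors can be seen on a toy example. The sketch below builds a brute-force k-NN graph over a handful of points and compares the resulting edges for two values of k. It illustrates the graph-construction step only and is not how Leiden or Louvain implementations build their graphs (those typically use approximate neighbor search and weighted edges); the function names and coordinates are illustrative assumptions.

```python
from math import dist


def knn_graph(points, k):
    """For each cell index, return the indices of its k nearest neighbours."""
    neighbours = {}
    for i, p in enumerate(points):
        others = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: (dist(p, points[j]), j),  # ties broken by index
        )
        neighbours[i] = others[:k]
    return neighbours


def undirected_edges(neighbours):
    """Collapse directed neighbour lists into a set of undirected edges."""
    return {tuple(sorted((i, j))) for i, js in neighbours.items() for j in js}


# Hypothetical PC coordinates: two well-separated groups of three cells each.
pcs = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (10.0, 10.0), (11.0, 10.0), (10.0, 11.0)]

edges_k2 = undirected_edges(knn_graph(pcs, 2))
edges_k3 = undirected_edges(knn_graph(pcs, 3))

# Edges joining the two groups (indices 0-2 versus 3-5).
bridges_k2 = [e for e in edges_k2 if (e[0] < 3) != (e[1] < 3)]
bridges_k3 = [e for e in edges_k3 if (e[0] < 3) != (e[1] < 3)]
print(len(bridges_k2), len(bridges_k3))
```

With k = 2 the two groups form disconnected components, whereas k = 3 forces each cell to reach into the other group. Larger k values therefore tend to merge populations, while smaller ones tend to fragment them.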

Impact on Biological Discovery