Single-Cell Foundation Models: A Comprehensive Review of Concepts, Applications, and Future Directions in Biomedical Research

Abstract

This review provides a comprehensive examination of single-cell foundation models (scFMs), large-scale AI systems pretrained on massive single-cell datasets that are revolutionizing cellular biology and drug discovery. We explore the fundamental concepts behind scFMs, their transformer-based architectures, and self-supervised pretraining strategies that enable them to learn universal biological patterns. The article critically assesses current methodologies, practical applications in drug development and clinical research, significant technical challenges, and rigorous validation approaches. Through comparative analysis of emerging models like scGPT and Geneformer, we identify performance limitations in zero-shot settings and provide evidence-based guidance for model selection. This resource equips researchers and drug development professionals with the knowledge to effectively leverage scFMs while understanding their current constraints and future potential in advancing precision medicine.

Demystifying Single-Cell Foundation Models: Core Concepts and Architectural Principles

The advent of high-throughput single-cell sequencing has fundamentally transformed biological research, enabling the exploration of cellular heterogeneity, developmental trajectories, and complex regulatory networks at single-cell resolution. As reported in Experimental & Molecular Medicine, vast collections of single-cell data have become available across diverse tissues and conditions, with public archives like CZ CELLxGENE now providing unified access to annotated single-cell datasets containing over 100 million unique cells [1]. This data explosion has created an urgent need for unified computational frameworks capable of integrating and comprehensively analyzing these rapidly expanding data repositories. Inspired by the success of transformer architectures in natural language processing (NLP) and computer vision, researchers have begun developing foundation models specifically designed for single-cell biology, giving rise to single-cell foundation models (scFMs) [1].

A foundation model is a large-scale deep learning model pretrained on vast datasets and then adapted to a wide range of downstream tasks. These models are characterized by self-supervised learning through objectives such as predicting masked segments, enabling them to learn generalizable patterns without extensive manual labeling [1]. The core premise of scFMs is that by exposing a model to millions of cells encompassing many tissues and conditions, the model can learn fundamental principles of cellular behavior and gene regulation that generalize to new datasets or analytical tasks. In these scFMs, individual cells are treated analogously to sentences, while genes or other genomic features along with their expression values are treated as words or tokens, creating what can be conceptualized as a "language of cells" [1] [2]. This represents a fundamental change in how we approach computational cell biology, moving from specialized analytical tools to unified frameworks that can leverage the collective knowledge embedded in massive single-cell datasets.

Core Concepts: How Language Models Interpret Cellular Data

Fundamental Analogies Between Language and Biology

The application of language models to single-cell biology relies on establishing conceptual parallels between natural language and biological systems. In this framework, the "vocabulary" consists of genes or genomic features, while the "sentences" are individual cells represented by their molecular profiles [1] [2]. The grammatical rules that govern how words combine to form meaningful sentences correspond to the gene regulatory networks and biological pathways that define cellular identity and function. This analogy enables researchers to leverage sophisticated transformer architectures originally developed for NLP tasks to decipher the complex "language" of cellular biology.

The self-supervised learning approaches used in large language models translate remarkably well to single-cell data. Just as language models learn by predicting masked words in sentences, scFMs learn by predicting masked gene expressions in cells, capturing the complex dependencies and correlations between genes across diverse cellular contexts [1]. Through this process, scFMs develop a deep understanding of cellular syntax—the patterns and relationships between genes that define specific cell types, states, and responses. The model's attention mechanisms allow it to learn which genes in a cell are most informative of the cell's identity or state, how they covary across cells, and how they have regulatory or functional connections [1].

Architectural Foundations: Transformer Models in Biology

Most successful scFMs are built on the transformer architecture, which has become the backbone of modern foundation models across domains [1]. Transformers are neural network architectures characterized by attention mechanisms that allow the model to learn and weight the relationships between any pair of input tokens. In the context of single-cell biology, this enables the model to identify which genes are most relevant for understanding specific cellular functions or states, effectively learning the contextual relationships between different genomic features [1].
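
For reference, the pairwise weighting described above is usually written as scaled dot-product attention. The symbols below follow the conventional transformer notation and are not taken from the cited scFM papers: each row of $Q$, $K$, and $V$ is assumed to correspond to one gene token in a cell, and $d_k$ is the dimension of the key vectors.

```latex
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```

Under this reading, entry $(i, j)$ of the softmax output is the weight that gene token $i$ places on gene token $j$ when its representation is updated.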

Two primary architectural approaches have emerged in scFM development. The first adopts a BERT-like encoder architecture with bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1] [2]. The second approach, exemplified by scGPT, uses an architecture inspired by the decoder of the Generative Pretrained Transformer (GPT), with a unidirectional masked self-attention mechanism that iteratively predicts masked genes conditioned on known genes [1]. While both architectures have demonstrated success in single-cell applications, no single design has emerged as clearly superior, and hybrid approaches are currently being explored to optimize performance for specific biological tasks.

Table 1: Comparison of Major Single-Cell Foundation Model Architectures

| Model Name | Base Architecture | Pretraining Data Scale | Key Features | Primary Applications |
|---|---|---|---|---|
| Geneformer | Transformer-based | 30 million cells [3] | Context-aware gene embeddings | Network biology, predictions |
| scGPT | GPT-inspired decoder | 100 million cells [3] | Generative modeling | Multi-omics integration, perturbation prediction |
| scBERT | BERT-like encoder | Not specified | Bidirectional attention | Cell type annotation |
| scFoundation | Transformer-based | 100 million cells [3] | Large-scale pretraining | General-purpose representations |
| scPlantLLM | Transformer-based | Plant-specific data [3] | Species-specific optimization | Plant single-cell genomics |

Technical Implementation: From Raw Data to Biological Insights

Data Tokenization Strategies for Single-Cell Data

Tokenization represents a critical preprocessing step that converts raw single-cell data into a structured format suitable for transformer models. Unlike words in natural language, gene expression data lacks inherent sequential ordering, presenting unique challenges for applying sequential models like transformers [1] [4]. To address this fundamental discrepancy, researchers have developed several tokenization strategies that impose artificial structure on single-cell data while preserving biological meaning.

The most common approach involves ranking genes within each cell by their expression levels and feeding the ordered list of top genes as a "sentence" representing that cell [1]. This provides a deterministic sequence based on expression magnitude, allowing the model to learn relationships between highly expressed genes. Alternative methods partition genes into bins according to their expression values or simply use normalized counts without complex ranking schemes [1]. Each gene is typically represented as a token embedding that combines a gene identifier with its expression value in the given cell. Positional encoding schemes are then adapted to represent the relative order or rank of each gene in the cell, providing the model with information about the artificial sequence structure [1]. Special tokens may also be incorporated to represent cell-level metadata, experimental conditions, or multimodal information, enriching the contextual information available to the model.
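
The rank-based "cell sentence" described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the tokenizer of any particular model: the function name `cell_to_sentence`, the `<cls>` special token, the `top_k` cutoff, and the toy expression values are all hypothetical.

```python
def cell_to_sentence(expression, top_k=4, cls_token="<cls>"):
    """Turn one cell's {gene: expression} profile into an ordered token list."""
    # Keep only expressed genes; unexpressed genes carry no rank information.
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    # Sort by descending expression; ties are broken by gene name so the
    # resulting sequence is deterministic.
    expressed.sort(key=lambda item: (-item[1], item[0]))
    genes = [gene for gene, _ in expressed[:top_k]]
    # A special token (hypothetical here) is prepended to carry cell-level context.
    return [cls_token] + genes


# Toy expression profile for a single cell (illustrative values only).
cell = {"CD3E": 12.0, "GAPDH": 40.0, "MS4A1": 0.0, "IL7R": 5.0, "ACTB": 33.0, "CCR7": 5.0}
sentence = cell_to_sentence(cell)
print(sentence)  # ['<cls>', 'GAPDH', 'ACTB', 'CD3E', 'CCR7']
```

The index of each gene in the returned list is the rank that a positional encoding would represent.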

Pretraining Strategies and Objectives

Pretraining scFMs involves training models on self-supervised tasks across large, unlabeled single-cell datasets. The most common pretraining objective is masked language modeling, where random subsets of gene tokens are masked, and the model must predict the missing values based on the remaining context [1]. This approach forces the model to learn the complex dependencies and correlations between genes, effectively capturing the underlying structure of gene regulatory networks. Through this process, the model develops a comprehensive understanding of how genes co-vary across different cell types and states, enabling it to form robust representations of cellular identity and function.
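
The masking objective can be illustrated with a toy example. The sketch below assumes binned expression values, a sentinel `MASK` value standing in for a learned mask token, and a mean squared error computed only on masked positions. Real scFMs differ in their loss functions and masking rates, and the "model" here is a trivial placeholder rather than a transformer.

```python
import random

MASK = -1  # hypothetical sentinel standing in for a learned [MASK] token


def mask_cell(values, mask_fraction=0.15, seed=0):
    """Hide a random subset of expression values; return masked input and targets."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(values)))
    masked_positions = sorted(rng.sample(range(len(values)), n_mask))
    masked = list(values)
    for pos in masked_positions:
        masked[pos] = MASK
    # The targets are the original values at the hidden positions.
    targets = {pos: values[pos] for pos in masked_positions}
    return masked, targets


def masked_mse(predictions, targets):
    """Mean squared error computed only over the masked positions."""
    return sum((predictions[pos] - true) ** 2 for pos, true in targets.items()) / len(targets)


binned_expression = [3, 0, 5, 1, 0, 2, 4, 0, 1, 3]
masked_input, targets = mask_cell(binned_expression, mask_fraction=0.3)

# Placeholder "model": predict the mean of the visible values at every position.
visible = [v for v in masked_input if v != MASK]
baseline = sum(visible) / len(visible)
predictions = [baseline] * len(binned_expression)
loss = masked_mse(predictions, targets)
```

During pretraining, a transformer would replace the placeholder predictor, and the loss on masked positions would drive the parameter updates.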

Additional pretraining objectives may include next-gene prediction (similar to next-word prediction in language models), contrastive learning to bring similar cells closer in embedding space, and multi-task learning that combines several self-supervised objectives [1] [4]. The scale of pretraining data is substantial, with modern scFMs training on datasets ranging from 30 to 100 million cells from diverse tissues, species, and experimental conditions [3]. This extensive exposure to varied cellular contexts enables the models to learn universal principles of cellular biology that transfer effectively to new datasets and biological questions.

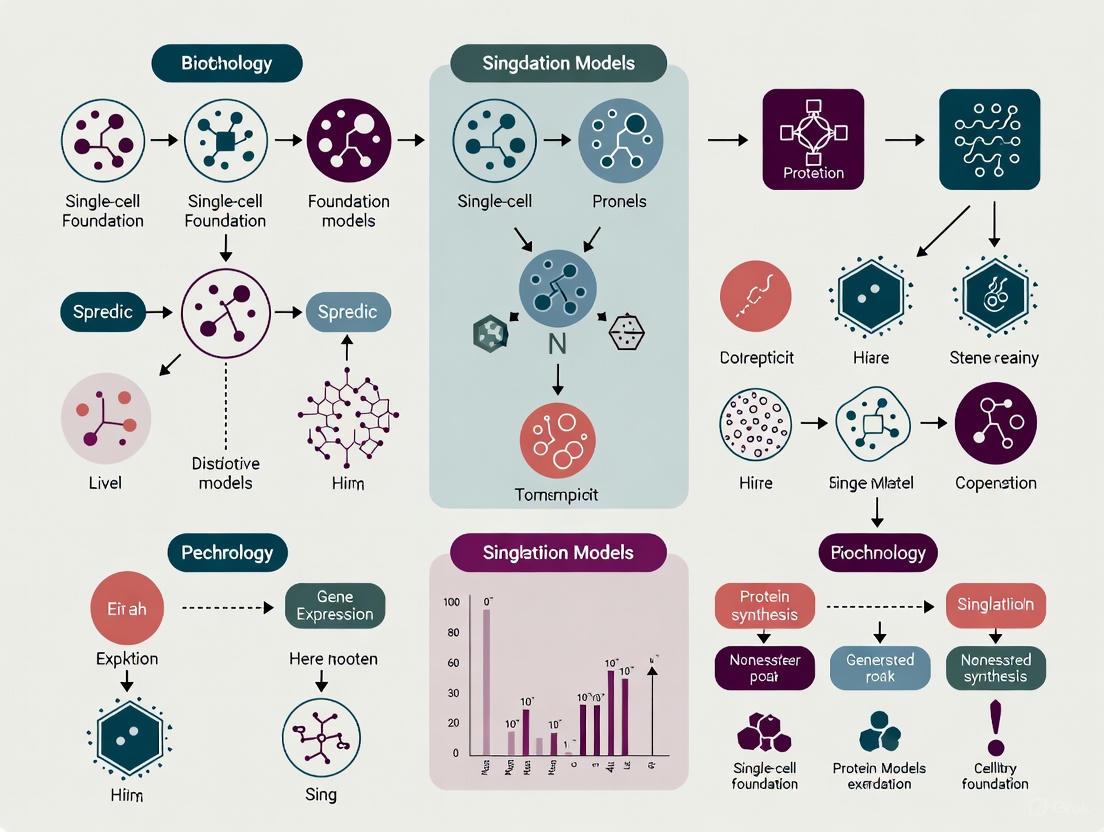

Single-Cell Foundation Model Workflow

Experimental Framework: Benchmarking and Evaluation

Standardized Evaluation Metrics and Protocols

Comprehensive benchmarking of scFMs requires standardized evaluation protocols that assess model performance across diverse biological tasks. Recent benchmarking studies have employed multiple metrics spanning unsupervised, supervised, and knowledge-based approaches to provide holistic assessment of model capabilities [4]. These evaluations typically examine performance across two primary categories: gene-level tasks and cell-level tasks, each targeting different aspects of biological understanding.

Gene-level tasks focus on evaluating the quality of gene embeddings and their ability to capture known biological relationships. Standard protocols include predicting gene functions based on Gene Ontology (GO) terms, identifying tissue-specific genes, and reconstructing known biological pathways [4]. Performance is measured using standard classification metrics such as area under the receiver operating characteristic curve (AUROC) and area under the precision-recall curve (AUPRC), as well as specialized metrics that assess the semantic similarity between gene embeddings and established functional annotations. Cell-level tasks evaluate the model's understanding of cellular identity and function, including cell type annotation, batch integration, identification of rare cell populations, and prediction of cellular responses to perturbations [4]. These tasks employ metrics that measure both technical performance (such as clustering accuracy and batch correction efficiency) and biological relevance (such as the preservation of known cellular hierarchies).
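
As a concrete reference for the classification metrics mentioned above, AUROC can be computed directly from its probabilistic definition. The sketch below uses only the standard library and a toy set of scores and labels; benchmark studies would typically rely on an established statistics library instead, and the quadratic pairwise loop is chosen for clarity rather than speed.

```python
def auroc(scores, labels):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Toy example: model scores for six genes and whether each truly carries a GO term.
scores = [0.9, 0.8, 0.35, 0.6, 0.2, 0.1]
labels = [1, 1, 1, 0, 0, 0]
value = auroc(scores, labels)  # 8 of 9 positive-negative pairs are ordered correctly
```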

Table 2: Standard Evaluation Metrics for Single-Cell Foundation Models

| Metric Category | Specific Metrics | Biological Interpretation | Ideal Value |
|---|---|---|---|
| Gene-Level Evaluation | GO Term AUROC | Functional relationship capture | >0.8 |
| | Pathway Reconstruction Accuracy | Biological pathway identification | Higher is better |
| | Tissue Specificity AUPRC | Tissue-specific gene detection | >0.7 |
| Cell-Level Evaluation | Cell Type Annotation F1 | Cell classification accuracy | >0.9 |
| | Batch Integration ASW | Technical effect removal | 0-1 (context dependent) |
| | Biological Conservation LISI | Biological variation preservation | Higher is better |
| Ontology-Based Evaluation | scGraph-OntoRWR | Biological consistency with prior knowledge | Higher is better |
| | LCAD (Lowest Common Ancestor Distance) | Severity of misclassification errors | Lower is better |

Comparative Performance Across Model Architectures

Recent comprehensive benchmarking studies have revealed distinct performance patterns across different scFM architectures. Notably, no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [4] [5]. Evaluation of six prominent scFMs (Geneformer, scGPT, UCE, scFoundation, LangCell, and scCello) against established baseline methods has provided insights into the relative strengths and limitations of each approach.

The BioLLM framework, which provides a unified interface for diverse scFMs, has revealed that scGPT demonstrates robust performance across multiple tasks, including both zero-shot learning and fine-tuning scenarios [5]. Geneformer and scFoundation show particularly strong capabilities in gene-level tasks, benefiting from effective pretraining strategies that capture functional gene relationships [5]. In contrast, scBERT often lags behind larger models, likely due to its smaller architecture and more limited training data [5]. Importantly, simpler machine learning models with carefully selected features (such as highly variable genes, HVGs) can sometimes outperform complex foundation models on specific tasks, particularly when data are limited or computational resources are constrained [4]. This suggests that while scFMs offer powerful general-purpose capabilities, task-specific considerations should guide model selection in practical applications.

Advanced Applications: From Basic Research to Therapeutic Discovery

Drug Discovery and Development Applications

Single-cell foundation models are increasingly playing transformative roles in multiple stages of drug discovery and development. In target identification, scFMs enable improved disease understanding through precise cell subtyping and characterization of disease-associated cellular states [6] [7]. Highly multiplexed functional genomics screens incorporating scRNA-seq are enhancing target credentialing and prioritization by revealing the cellular contexts in which potential targets operate and their functional relationships within broader biological networks [6].

During preclinical development, scFMs aid the selection of relevant disease models by comparing their cellular compositions and states to human disease references [6]. They also provide new insights into drug mechanisms of action by characterizing cellular responses to perturbations at single-cell resolution [6] [7]. In clinical development, scFMs can inform critical decision-making through improved biomarker identification for patient stratification and more precise monitoring of drug response and disease progression [6]. The ability to integrate single-cell data across platforms, tissues, and species positions scFMs as powerful tools for bridging translational gaps in pharmaceutical development.

Emerging Multimodal and Interactive Approaches

Recent advances have extended scFMs beyond basic transcriptomic analysis to multimodal and interactive applications. The CellWhisperer framework represents a groundbreaking approach that establishes a multimodal embedding of transcriptomes and their textual annotations using contrastive learning on over 1 million RNA sequencing profiles with AI-curated descriptions [8]. This embedding informs a large language model that answers user-provided questions about cells and genes in natural-language conversations, enabling researchers to interactively explore single-cell data through intuitive chat interfaces.

Commercial implementations are also emerging, such as 10x Genomics' integration with Anthropic's Claude for Life Sciences, which provides natural-language interfaces to single-cell analysis pipelines through the Model Context Protocol (MCP) [9]. These developments lower the barrier to sophisticated single-cell analysis, allowing non-computational researchers to perform complex analytical tasks through natural language queries rather than specialized programming. The convergence of single-cell technologies with conversational AI represents a significant step toward truly interactive biological discovery systems that can serve as collaborative partners in scientific investigation.

Interactive Single-Cell Analysis Architecture

Successful implementation of scFMs requires both biological and computational resources that collectively enable robust model development and application. The table below details key components of the scFM research toolkit, including their specific functions and representative examples from current literature and practice.

Table 3: Essential Research Reagents and Computational Resources for Single-Cell Foundation Models

| Resource Category | Specific Item/Platform | Function/Purpose | Representative Examples |
|---|---|---|---|
| Data Resources | CELLxGENE Census | Standardized single-cell data access | >100 million curated cells [1] |
| | GEO/SRA Archives | Raw sequencing data repository | 705,430 human transcriptomes [8] |
| | Human Cell Atlas | Reference cell maps | Multiorgan coverage [1] |
| Computational Frameworks | BioLLM | Unified scFM interface | Standardized APIs for model integration [5] |
| | Transformer Architectures | Model backbone | BERT-like encoders, GPT-style decoders [1] |
| | Cloud Analysis Platforms | Scalable computation | 10x Genomics Cloud [9] |
| Specialized Models | Geneformer | Gene embedding generation | 30 million cell pretraining [3] |
| | scGPT | Generative modeling | 100 million cell scale [3] |
| | scPlantLLM | Species-specific adaptation | Plant single-cell genomics [3] |
| Evaluation Tools | scGraph-OntoRWR | Biological consistency metric | Cell ontology alignment [4] |
| | ROGI (Roughness Index) | Model selection proxy | Dataset-dependent recommendation [4] |

Future Directions and Challenges in Single-Cell Foundation Models

Despite rapid progress, several significant challenges remain in the development and application of scFMs. A primary limitation is the nonsequential nature of omics data, which does not naturally align with the sequential processing of transformer architectures [1]. Additional challenges include inconsistency in data quality across studies, the computational intensity required for training and fine-tuning large models, and the difficulty of interpreting the biological relevance of latent embeddings and model representations [1] [4].

Future research directions are likely to focus on several key areas. Improved multimodal integration will combine transcriptomic data with epigenetic, proteomic, and spatial information to create more comprehensive cellular representations [1] [3]. Enhanced interpretability methods will be crucial for translating model insights into biologically actionable knowledge, potentially through attention mechanism analysis and concept-based explanations [4]. Species-specific and context-specific adaptations, exemplified by scPlantLLM for plant genomics, will address the unique characteristics of different biological systems [3]. Finally, more efficient architectures and training methods will be needed to make scFMs accessible to broader research communities with limited computational resources [4] [5].

As these challenges are addressed, scFMs are poised to become increasingly central to biological discovery and therapeutic development, potentially evolving into true collaborative partners in scientific investigation through enhanced natural language interfaces and reasoning capabilities. The ongoing integration of single-cell technologies with artificial intelligence represents a transformative frontier in computational biology, with foundation models serving as the cornerstone of this paradigm shift.

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized biology by enabling the profiling of gene expression at single-cell resolution, uncovering vast cellular heterogeneity. However, the high dimensionality, sparsity, and technical noise inherent to single-cell data present significant challenges for traditional analytical methods [1] [4]. Inspired by their success in natural language processing (NLP), transformer architectures have recently been adapted to single-cell genomics, giving rise to single-cell foundation models (scFMs). These models use attention mechanisms to interpret the complex "language" of biology, mapping intricate gene relationships and regulatory networks from millions of cells [1]. This technical guide explores the core architectural adaptations of transformers for single-cell data, detailing how attention mechanisms are engineered to decipher the fundamental principles of cellular function.

Core Architectural Adaptations for Single-Cell Data

Applying transformer architectures to single-cell transcriptomics requires significant modifications to handle the unique structure and properties of biological data.

Tokenization Strategies for Non-Sequential Data

A fundamental challenge is that gene expression data lacks the inherent sequential order of words in a sentence. To apply transformers, which process ordered sequences, genes must be artificially structured. Several tokenization strategies have been developed:

- Rank-based Tokenization: Genes are ordered by their expression levels within each cell, creating a deterministic sequence where the top-expressed genes form the input "sentence" [1]. Geneformer is a prominent example of this approach, treating the ordered list of gene tokens as the cellular representation [1] [10].

- Value Categorization: Continuous gene expression values are binned into discrete categories or "buckets," converting the regression problem of predicting expression into a classification task. scBERT is a prominent example that uses this strategy [10].

- Value Projection: This strategy aims to preserve the full resolution of the data by representing the gene expression vector as a sum of a projection of the expression value and a positional or gene embedding. scFoundation and CellFM utilize this approach to predict raw gene expression values directly [10].
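
Of the three strategies above, value projection is the least intuitive, so a minimal sketch may help. It assumes the simplest possible form, in which a scalar expression value scales a shared projection vector that is then added to a per-gene embedding. The function names, the embedding dimension, and the random initialisation are hypothetical, and published models may use richer projections such as small neural networks.

```python
import random


def init_vector(dim, rng):
    """Random vector standing in for learned parameters."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def value_projection_embedding(value, projection, gene_embedding):
    """Token embedding = expression value * projection vector + gene embedding."""
    return [value * w + g for w, g in zip(projection, gene_embedding)]


rng = random.Random(0)
dim = 4
projection = init_vector(dim, rng)  # shared across all genes
gene_embeddings = {gene: init_vector(dim, rng) for gene in ["CD3E", "GAPDH"]}

# The same gene at two expression levels yields two distinct token embeddings,
# so the continuous value is preserved rather than binned.
low = value_projection_embedding(1.0, projection, gene_embeddings["CD3E"])
high = value_projection_embedding(2.0, projection, gene_embeddings["CD3E"])
```

Because the value enters linearly in this sketch, the difference between `high` and `low` equals the projection vector itself, which is what lets a model recover expression magnitude from the embedding.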

The following diagram illustrates a typical tokenization and embedding workflow for single-cell data.

Attention Mechanisms for Gene Interaction Mapping

The self-attention mechanism is the cornerstone of the transformer, allowing the model to dynamically weigh the importance of different parts of the input sequence. In the context of single-cell data, this translates to learning the contextual relationships between genes.

- Self-Attention: In models with a BERT-like encoder architecture (e.g., scBERT), bidirectional self-attention allows each gene to attend to all other genes in the cell simultaneously. This enables the model to learn co-expression patterns and potential regulatory relationships by capturing how the expression of one gene influences the context of others [1] [11].

- Masked Self-Attention: In decoder-based models like scGPT, a unidirectional masked self-attention mechanism is used. The model iteratively predicts masked genes conditioned on the known, unmasked genes in the sequence. This forces the model to learn the dependencies between genes and build an internal representation of gene-gene interactions [1].

- Multi-Head Attention: By employing multiple attention heads in parallel, the model can jointly attend to information from different representation subspaces. For example, different heads might specialize in capturing relationships between genes involved in different biological pathways or processes, such as metabolism, immune response, or cell cycle regulation [11]. This parallels the use of multi-head attention in other domains, such as financial modeling, where it helps capture diverse temporal patterns [12].
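
The difference between bidirectional and unidirectional (masked) self-attention can be made concrete with a single-head sketch. For brevity it assumes the token embeddings serve directly as queries, keys, and values, omitting the learned projection matrices a real transformer would apply. A multi-head layer would run several such computations in parallel on different projections. The toy embeddings are illustrative only.

```python
import math


def softmax(row):
    """Numerically stable softmax over one row of attention scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values, causal=False):
    """Single-head scaled dot-product attention; returns (outputs, weights)."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                # Unidirectional mask: token i may not attend to later tokens.
                scores.append(float("-inf"))
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k))
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(w[j] * values[j][c] for j in range(len(values)))
                        for c in range(len(values[0]))])
    return outputs, weights


# Three gene tokens with 2-dimensional toy embeddings.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
bi_out, bi_w = attention(tokens, tokens, tokens)               # BERT-like
ca_out, ca_w = attention(tokens, tokens, tokens, causal=True)  # GPT-like
```

In the bidirectional case every gene receives a nonzero weight from every other gene, whereas in the causal case the weight matrix is lower triangular.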

Quantitative Performance of Single-Cell Foundation Models

Benchmarking studies have evaluated the performance of various scFMs across a range of biological tasks. The table below summarizes the performance of several prominent models in key applications, demonstrating their utility in gene relationship mapping and other downstream tasks.

Table 1: Performance Benchmarking of Selected Single-Cell Foundation Models

| Model | Pretraining Scale | Key Architecture | Cell Type Annotation (Accuracy Metrics) | Perturbation Prediction (Performance) | Batch Integration (Metrics) | Gene Function Prediction |
|---|---|---|---|---|---|---|
| CellFM | 100M human cells [10] | ERetNet (Linear Attention) [10] | High performance across datasets [10] | Outperforms existing models [10] | Effective integration [10] | Improved accuracy [10] |
| scGPT | 33M+ cells [1] [10] | Transformer Decoder [1] | Robust performance [4] | Accurate prediction [4] | High efficiency [4] | Captures functional relationships [1] |
| Geneformer | 30M cells [10] [3] | Transformer Encoder [10] | Context-aware annotations [1] | Network dynamics insights [1] | Preserves biological variation [4] | Learns rank-based embeddings [10] |
| scBERT | Millions of cells [1] | BERT-like Encoder [1] [10] | Specialized for annotation [1] | N/A | N/A | N/A |
| scPlantLLM | Plant-specific data [3] | Transformer [3] | High zero-shot accuracy [3] | N/A | Effective in plants [3] | Plant-specific adaptations [3] |

A comprehensive benchmark study evaluating six scFMs against traditional baselines revealed that no single model consistently outperforms all others across every task. The choice of model depends on factors such as dataset size, task complexity, and computational resources. Notably, scFMs demonstrate a remarkable ability to capture biological relevance, with their learned representations showing high consistency with known gene ontology (GO) terms and cell-type relationships [4].

Experimental Protocols for Validating Gene Relationships

Validating the gene relationships and regulatory networks inferred by transformer models requires rigorous experimental and computational protocols. The following workflow outlines a standard process for training a model and validating its predictions.

Detailed Methodology for Key Tasks

Gene Function Prediction

- Protocol: Gene embeddings are extracted from the input layer of the pretrained scFM. The similarity between these embedding vectors is computed (e.g., using cosine similarity) to predict functional relationships [4] [10].

- Validation: The model's predictions are benchmarked against known biological databases, such as Gene Ontology (GO) terms and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathways. Performance is measured by the model's ability to cluster genes with similar functions together in the embedding space and retrieve known functional annotations [4] [10].
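
The similarity step in this protocol can be sketched as follows. The embeddings are hypothetical toy vectors, not values extracted from any pretrained model, and `nearest_genes` is an illustrative helper rather than part of any scFM library.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest_genes(query, embeddings, k=2):
    """Return the k genes whose embeddings are most similar to the query gene."""
    scored = [(cosine_similarity(embeddings[query], vec), gene)
              for gene, vec in embeddings.items() if gene != query]
    scored.sort(reverse=True)
    return [gene for _, gene in scored[:k]]


# Hypothetical 3-dimensional embeddings: two T-cell genes and two B-cell genes.
toy_embeddings = {
    "CD3E": [0.9, 0.1, 0.0],
    "CD3D": [0.8, 0.2, 0.1],
    "MS4A1": [0.1, 0.9, 0.0],
    "CD79A": [0.2, 0.8, 0.1],
}
t_cell_neighbour = nearest_genes("CD3E", toy_embeddings, k=1)
b_cell_neighbour = nearest_genes("MS4A1", toy_embeddings, k=1)
```

In a real evaluation, the retrieved neighbours would be checked against shared GO terms or KEGG pathway membership rather than inspected by eye.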

Perturbation Response Prediction

- Data Generation: Single-cell CRISPR screening technologies are used to generate knockout or perturbation data. In these experiments, guide RNAs (gRNAs) targeting specific genes are introduced into cells via lentiviral transduction, and the transcriptional outcomes are measured using scRNA-seq [13].

- Modeling Protocol: The pretrained foundation model is fine-tuned or prompted with data from perturbed cells. The model's task is to predict the transcriptional state of a cell given a specific gene perturbation.

- Validation: Predictions are compared to held-out experimental data. Accuracy is assessed by correlating the predicted expression changes with the observed ones for all genes in the genome. Successful models can identify both direct and indirect targets of the perturbed gene [4] [13].
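
The correlation-based validation step can be sketched as below. The expression vectors are made-up toy values for four genes, and the use of Pearson correlation on expression changes relative to control is one common choice assumed here for illustration. The cited benchmarks may use other or additional metrics.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def expression_change(perturbed, control):
    """Per-gene change in expression relative to unperturbed control cells."""
    return [p - c for p, c in zip(perturbed, control)]


# Toy mean expression for four genes (illustrative values only).
control = [5.0, 2.0, 8.0, 1.0]
observed_perturbed = [1.0, 2.5, 8.0, 3.0]   # held-out experimental measurement
predicted_perturbed = [2.0, 2.0, 7.5, 2.5]  # model output

observed_delta = expression_change(observed_perturbed, control)
predicted_delta = expression_change(predicted_perturbed, control)
r = pearson(predicted_delta, observed_delta)
```

Correlating changes rather than raw expression avoids rewarding a model that simply reproduces the control profile.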

Analyzing Attention Maps for Network Inference

- Protocol: Attention weights from the transformer's self-attention layers are extracted. These weights form an attention map where high scores between two genes indicate a strong model-predicted relationship [1] [14].

- Validation: The inferred relationships are compared to experimentally derived protein-protein interaction networks (e.g., from STRING database) or chromatin interaction data (e.g., from Hi-C). For example, the CREaTor model demonstrated that its attention weights could prioritize functional enhancer-gene interactions validated by CRISPR screens [14].
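
A minimal sketch of this protocol is shown below. It assumes that attention is symmetrised by averaging the two directions and that predictions are scored by precision among the top-ranked pairs. Both are simplifying choices made for illustration, and the gene names, attention matrix, and reference edges are hypothetical.

```python
def top_attention_edges(attention, genes, k):
    """Rank unordered gene pairs by symmetrised attention and keep the top k."""
    pairs = []
    for i in range(len(genes)):
        for j in range(i + 1, len(genes)):
            # Average both directions so each pair gets a single score.
            score = (attention[i][j] + attention[j][i]) / 2
            pairs.append((score, frozenset((genes[i], genes[j]))))
    pairs.sort(key=lambda pair: pair[0], reverse=True)
    return [edge for _, edge in pairs[:k]]


def precision_at_k(predicted_edges, known_edges):
    """Fraction of predicted edges that appear in the reference network."""
    hits = sum(1 for edge in predicted_edges if edge in known_edges)
    return hits / len(predicted_edges)


# Hypothetical attention map over four gene tokens (each row sums to 1).
genes = ["TF1", "TargetA", "TargetB", "Unrelated"]
attention_map = [
    [0.10, 0.50, 0.30, 0.10],
    [0.60, 0.20, 0.10, 0.10],
    [0.40, 0.10, 0.40, 0.10],
    [0.25, 0.25, 0.25, 0.25],
]
# Hypothetical reference interactions, standing in for a curated database.
known = {frozenset(("TF1", "TargetA")), frozenset(("TargetA", "TargetB"))}

edges = top_attention_edges(attention_map, genes, k=2)
precision = precision_at_k(edges, known)
```

High attention between two genes is a model-derived association, so agreement with a reference network supports but does not by itself establish a regulatory relationship.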

The development and application of single-cell foundation models rely on a suite of computational tools, datasets, and resources. The following table details key components of the research ecosystem.

Table 2: Key Research Reagent Solutions for scFM Development and Application

| Category | Item | Function and Utility |
|---|---|---|
| Data Resources | CZ CELLxGENE [1] | Provides unified access to standardized, annotated single-cell datasets; essential for sourcing diverse pretraining data. |
| | Human Cell Atlas [1] | A broad coverage atlas of cell types and states; serves as a foundational data corpus. |
| | NCBI GEO / SRA [1] [10] | Public repositories hosting thousands of single-cell studies; primary sources for raw data. |
| Computational Tools & Models | scGPT [1] | A versatile foundation model based on a generative transformer decoder; used for multi-omic integration and perturbation prediction. |
| | CellFM [10] | A large-scale foundation model with 800M parameters pretrained on 100M human cells; excels in gene function prediction. |
| | CREaTor [14] | An attention-based model for zero-shot modeling of cis-regulatory patterns; links CREs to target genes. |
| Experimental Validation | CRISPR/Cas9 Screens [13] | Enables large-scale gene perturbation; generates ground-truth data for validating model-predicted gene relationships. |
| | Single-cell Perturbation-seq [13] | Measures transcriptomic readout of CRISPR perturbations in single cells; key for testing causal predictions. |
| | ChIP-seq & ATAC-seq [14] | Provides data on transcription factor binding and chromatin accessibility; used to validate regulatory insights from models. |

The adaptation of transformer architectures and attention mechanisms for single-cell transcriptomics represents a paradigm shift in computational biology. By treating cells as sentences and genes as words, scFMs like scGPT, Geneformer, and CellFM leverage self-supervised learning on massive datasets to infer the complex, context-dependent relationships between genes. The attention mechanism is particularly powerful as it provides a computationally efficient and biologically intuitive way to model gene interactions, potentially uncovering novel regulatory circuits and functional modules. While challenges remain—including the need for better interpretability, handling of multi-omic data, and reduction of computational cost—these models are robust and versatile tools poised to unlock deeper insights into cellular function and disease mechanisms, accelerating discovery in basic research and drug development.

In the development of single-cell foundation models (scFMs), tokenization serves as the critical first step that transforms raw gene expression data into a structured format understandable by deep learning architectures. Single-cell RNA sequencing (scRNA-seq) data presents unique computational challenges, including high dimensionality, significant sparsity, and technical noise [1] [4]. Tokenization addresses these challenges by converting continuous, high-dimensional expression profiles into discrete tokens that preserve biological meaning while enabling efficient model processing. This process draws inspiration from natural language processing (NLP), where words are converted into tokens for language models, but requires specialized adaptations to handle the unique characteristics of biological data [1] [15]. In scFMs, individual cells are treated analogously to sentences, while genes and their expression values become the words or tokens that constitute these cellular sentences [1]. The effectiveness of tokenization directly impacts a model's ability to capture gene-gene interactions, cell-type specificity, and regulatory relationships, making it a fundamental component in building powerful and generalizable scFMs [16].

Key Tokenization Strategies in Single-Cell Analysis

Rank-Based Discretization

Rank-based discretization transforms gene expression values into ordinal rankings within each cell, effectively capturing relative expression levels while maintaining robustness to batch effects and technical noise. This approach, utilized by models including Geneformer and GeneCompass, operates on the biological rationale that the relative ranking of gene importance often carries more information than absolute expression values for determining cell state [17] [1]. The implementation involves normalizing expression values to account for sequencing depth, then ranking genes in descending order based on their normalized expression within each cell. This method naturally deprioritizes universally high-expression housekeeping genes while highlighting genes that distinguish cell states [17]. A key advantage of rank-based discretization is its robustness to technical variations across experiments, as it focuses on relative rather than absolute expression patterns. However, this approach may discard information about the magnitude of expression differences between genes and can be sensitive to the choice of how many top-ranked genes are included for analysis [17] [1].
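The ranking step described above can be sketched in a few lines, assuming the expression values for one cell have already been normalized. The function name `rank_tokenize`, the dict-based input, and the tie-breaking rule are illustrative assumptions, not the Geneformer or GeneCompass implementation.

```python
def rank_tokenize(cell_expr, top_k):
    """Return gene names ordered by descending normalized expression.

    cell_expr: dict mapping gene name -> normalized expression for one cell.
    Unexpressed genes (value 0) are dropped; ties are broken by gene name
    so that the output is deterministic (an illustrative choice).
    """
    expressed = [(gene, value) for gene, value in cell_expr.items() if value > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [gene for gene, _ in expressed[:top_k]]


cell = {"CD3E": 4.2, "ACTB": 1.1, "MS4A1": 0.0, "IL7R": 2.7, "GAPDH": 0.9}
tokens = rank_tokenize(cell, top_k=3)
print(tokens)  # ['CD3E', 'IL7R', 'ACTB']
```

Because only the order matters, multiplying every value in a cell by a constant (for example, a different sequencing depth) leaves the token sequence unchanged. The magnitude of the gap between neighbouring genes is not visible in the output.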

Bin-Based Discretization

Bin-based discretization groups continuous expression values into predefined categorical bins, preserving aspects of the absolute value distribution while simplifying sequence modeling. This approach is employed by models including scBERT, scGPT, and scMulan [17] [1]. The implementation typically involves establishing expression value thresholds that define bin boundaries, then assigning each gene to a specific bin based on its expression level in a given cell. Bins may represent expression levels such as "unexpressed," "low," "medium," and "high," with the number of bins and their boundaries being key parameters. The primary advantage of bin-based methods is their ability to maintain some information about expression magnitude while still converting continuous values into manageable categories. Limitations include inevitable information loss, particularly for genes with subtle but biologically significant expression differences, and sensitivity to parameter selection which can significantly impact downstream results [17].
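As a rough illustration of the binning step, the sketch below reserves token 0 for unexpressed genes and assigns expressed genes to quantile bins computed within the cell. The per-cell quantile edges, the function name `bin_tokenize`, and the bin count are assumptions chosen for the example; the models named above may define their bin boundaries differently.

```python
from bisect import bisect_right


def bin_tokenize(values, n_bins):
    """Map one cell's expression values to integer bin tokens.

    Token 0 marks unexpressed genes (value <= 0). Expressed values receive
    tokens 1..n_bins using quantile edges taken from the cell's own
    non-zero values (an illustrative choice).
    """
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    # n_bins - 1 interior cut points taken at evenly spaced quantiles
    edges = [nonzero[(i * len(nonzero)) // n_bins] for i in range(1, n_bins)]
    return [0 if v <= 0 else 1 + bisect_right(edges, v) for v in values]


values = [0, 1, 2, 3, 4, 5, 6, 0, 7, 8]
tokens = bin_tokenize(values, n_bins=4)
print(tokens)  # [0, 1, 1, 2, 2, 3, 3, 0, 4, 4]
```

The example shows the information loss mentioned above: the values 1 and 2 receive the same token, so any difference between them is invisible to the model.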

Value Projection Methods

Value projection methods represent a hybrid approach that projects gene expression values into continuous embeddings rather than discrete categories. This strategy, adopted by scFoundation and its backbone model xTrimoGene, maintains full data resolution by applying a linear transformation to the gene expression vector, which is then combined with gene-specific embeddings [17] [4]. The implementation typically involves creating separate embeddings for gene identity and expression values, then combining them through element-wise multiplication or concatenation before feeding them into the model architecture. This continuous representation avoids the information loss inherent in discretization methods and can capture more subtle expression patterns. However, value projection diverges from traditional tokenization strategies in NLP and may require more sophisticated model architectures and training approaches to effectively process the continuous embeddings [17].
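The sketch below shows one way to read the description above: a linear map turns the scalar expression value into a vector, which is then combined with a per-gene embedding by element-wise multiplication or concatenation. All parameters here are random stand-ins for weights a real model would learn, and the names `init_params` and `embed_gene` are hypothetical; this is not the scFoundation or xTrimoGene code.

```python
import random


def init_params(genes, dim, seed=0):
    """Random stand-ins for parameters that a real model would learn."""
    rng = random.Random(seed)
    gene_emb = {g: [rng.gauss(0.0, 1.0) for _ in range(dim)] for g in genes}
    value_w = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # linear projection weights
    value_b = [0.0] * dim                                 # linear projection bias
    return gene_emb, value_w, value_b


def embed_gene(gene, value, gene_emb, value_w, value_b, combine="multiply"):
    """Embed one (gene, expression) pair without discretizing the value."""
    value_vec = [w * value + b for w, b in zip(value_w, value_b)]
    if combine == "multiply":   # element-wise product, same dimension
        return [g * v for g, v in zip(gene_emb[gene], value_vec)]
    if combine == "concat":     # concatenation, doubles the dimension
        return gene_emb[gene] + value_vec
    raise ValueError(f"unknown combine mode: {combine}")


gene_emb, value_w, value_b = init_params(["CD3E", "IL7R"], dim=4)
e_low = embed_gene("CD3E", 1.25, gene_emb, value_w, value_b)
e_high = embed_gene("CD3E", 1.30, gene_emb, value_w, value_b)
e_cat = embed_gene("CD3E", 1.25, gene_emb, value_w, value_b, combine="concat")
print(len(e_low), len(e_cat))  # 4 8
```

Unlike the discretized strategies, the two nearby values 1.25 and 1.30 produce different embeddings here, which is the sense in which value projection keeps full resolution.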

Table 1: Comparison of Major Tokenization Strategies for Single-Cell Foundation Models

| Strategy | Key Models Using This Approach | Advantages | Limitations |

|---|---|---|---|

| Rank-Based Discretization | Geneformer, GeneCompass, LangCell | Robust to batch effects and noise; captures relative expression importance | Discards magnitude information; sensitive to number of genes included |

| Bin-Based Discretization | scBERT, scGPT, scMulan | Preserves some absolute expression information; simplifies sequence modeling | Introduces information loss; sensitive to bin parameter selection |

| Value Projection | scFoundation, xTrimoGene | Maintains full expression resolution; avoids discretization artifacts | Diverges from NLP traditions; requires more complex architecture |

Experimental Protocols and Methodologies

Data Preprocessing Workflow

A standardized data preprocessing pipeline is essential for effective tokenization across diverse single-cell datasets. The initial processing of single-cell data typically begins with quality control to remove low-quality cells and genes, followed by normalization to account for varying sequencing depths between cells [17]. For rank-based tokenization, the normalized expression matrix is further processed by computing the median of non-zero expression values for each gene across all cells using efficient algorithms like t-digest. The final normalized expression value for gene j in cell i is calculated as $M_{ij}^{\text{norm}} = \dfrac{M_{ij} / \sum_{k=1}^{n} M_{ik}}{\text{t-digest}\{M_{kj} \mid M_{kj} > 0\}}$ [17], where the sum in the numerator runs over the n genes measured in cell i and the denominator is the t-digest estimate of the median non-zero value of gene j across cells. Genes are then ranked within each cell in descending order based on their normalized expression values, with the top k genes typically selected for model input. For bin-based approaches, normalized expression values are mapped to discrete bins based on predefined thresholds, which may be determined empirically or through statistical methods. Value projection methods require careful scaling of expression values to ensure consistent embedding generation across datasets with different expression ranges [17] [4].
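The normalization for rank-based tokenization can be sketched as follows. Two assumptions are worth flagging: the exact median from Python's `statistics` module stands in for the streaming t-digest estimate, and the per-gene median is taken over the depth-normalized values, following the prose description (the printed formula writes the raw matrix entries inside the median). The function name `median_scale` is hypothetical.

```python
from statistics import median


def median_scale(counts):
    """Depth-normalize each cell, then divide each gene by its non-zero median.

    counts: list of cells, each a list of raw counts in a fixed gene order.
    """
    depth = [sum(row) for row in counts]
    norm = [[x / d if d > 0 else 0.0 for x in row]
            for row, d in zip(counts, depth)]
    gene_median = []
    for j in range(len(counts[0])):
        nonzero = [row[j] for row in norm if row[j] > 0]
        # Genes never detected keep a divisor of 1.0 to avoid division by zero
        gene_median.append(median(nonzero) if nonzero else 1.0)
    return [[x / m for x, m in zip(row, gene_median)] for row in norm]


counts = [[10, 0, 90],
          [50, 50, 0],
          [20, 20, 60]]
scaled = median_scale(counts)
print([round(x, 3) for x in scaled[0]])  # [0.5, 0.0, 1.2]
```

In the first cell, the third gene has the largest share of reads and still ranks first after scaling, but its lead shrinks because that gene is highly expressed wherever it is detected. This is how the median divisor deprioritizes broadly high genes before ranking.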

Integration with Model Architectures

Tokenization strategies must be carefully aligned with model architectures to optimize performance. Transformer-based models typically incorporate token embeddings along with positional encodings to represent the order of genes in the input sequence [1]. For models using the Mamba architecture (a state space model), such as GeneMamba, tokenized inputs are processed through bidirectional computation to capture both upstream and downstream contextual dependencies [17]. The integration often includes special tokens analogous to those used in NLP, such as [CLS] tokens for cell-level representation or modality indicators for multi-omics data [1] [4]. In graph neural network approaches like scNET, tokenized gene expressions are integrated with protein-protein interaction networks to learn context-specific gene and cell embeddings through a dual-view architecture [18]. These integrations demonstrate how tokenization serves as the bridge between raw biological data and sophisticated model architectures, enabling the capture of complex biological patterns.
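To make the hand-off from tokens to a transformer input concrete, the sketch below prepends a `[CLS]` token, pads to a fixed length, and returns the position indices that a positional encoding would consume. The `[PAD]` token, the toy vocabulary, and the function name `build_model_input` are assumptions for illustration rather than details taken from any specific model.

```python
def build_model_input(gene_tokens, vocab, max_len):
    """Prepend [CLS], truncate or pad to max_len, and return model inputs."""
    seq = ["[CLS]"] + gene_tokens[: max_len - 1]
    n_real = len(seq)
    seq = seq + ["[PAD]"] * (max_len - n_real)
    input_ids = [vocab[token] for token in seq]
    position_ids = list(range(max_len))  # indices fed to the positional encoding
    attention_mask = [1] * n_real + [0] * (max_len - n_real)
    return input_ids, position_ids, attention_mask


vocab = {"[PAD]": 0, "[CLS]": 1, "CD3E": 2, "IL7R": 3, "ACTB": 4}
ids, pos, mask = build_model_input(["CD3E", "IL7R", "ACTB"], vocab, max_len=6)
print(ids)   # [1, 2, 3, 4, 0, 0]
print(mask)  # [1, 1, 1, 1, 0, 0]
```

With rank-based tokens the position index carries the expression rank, so the positional encoding is what tells the model that the first gene outranks the second.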

Diagram 1: Tokenization Workflow for Single-Cell Foundation Models. This diagram illustrates the comprehensive pipeline from raw single-cell data to model-ready tokenized inputs, highlighting the three major tokenization strategies.

Comparative Analysis of Tokenization Approaches

Performance Across Downstream Tasks

The effectiveness of tokenization strategies must be evaluated through performance on key biological tasks. Recent benchmarking studies have assessed various approaches across multiple applications including cell type annotation, batch integration, and gene-gene relationship identification [4]. Rank-based methods have demonstrated particular strength in capturing cellular hierarchies and developmental trajectories, as their focus on relative expression aligns well with biological processes like differentiation [17] [1]. Bin-based approaches have shown robust performance in cell type classification tasks, where distinct expression categories effectively discriminate between cell states [4]. Value projection methods excel in scenarios requiring fine-grained expression analysis, such as predicting subtle perturbation effects or identifying rare cell populations, where continuous expression information provides critical sensitivity [17] [4]. Notably, no single tokenization strategy consistently outperforms all others across every task, highlighting the importance of selecting approaches based on specific biological questions and data characteristics [4].

Computational Considerations

Tokenization strategies significantly impact computational efficiency and scalability, crucial factors given the rapidly increasing scale of single-cell datasets. Rank-based tokenization typically produces the most compact representations, as only the top k genes are included for each cell, substantially reducing sequence length [17]. Bin-based approaches offer intermediate computational efficiency, with sequence length determined by the number of genes included but requiring additional embedding dimensions to represent different bins [1]. Value projection methods generally have the highest computational demands, as they maintain full gene sets and continuous values, though techniques like gene sampling can mitigate this burden [19]. The computational complexity of subsequent model architectures is directly influenced by tokenization choices; for example, transformer-based models with self-attention mechanisms scale quadratically with sequence length, making reduction of token sequence length particularly important [17] [19]. Emerging architectures like Mamba with linear scaling complexity offer potential to accommodate longer token sequences more efficiently [17].
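A quick back-of-the-envelope calculation shows why sequence length matters under quadratic scaling. The head count (8) and 4-byte floats are assumptions for the example, and the estimate covers only the attention score matrices of a single layer for a single cell, not the full memory footprint of a model.

```python
def attention_scores_bytes(seq_len, n_heads=8, bytes_per_value=4):
    """Memory for one layer's attention score matrices for a single cell."""
    return n_heads * seq_len * seq_len * bytes_per_value


for seq_len in (2_048, 20_000):
    mib = attention_scores_bytes(seq_len) / 2**20
    print(f"{seq_len} tokens -> {mib:,.0f} MiB")
```

Under these assumptions a 2,048-gene input needs 128 MiB for the score matrices, while a 20,000-gene input needs roughly 12,207 MiB, about 95 times more for a sequence that is about 10 times longer.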

Table 2: Computational Characteristics of Tokenization Methods for Large-Scale Single-Cell Data

| Tokenization Method | Sequence Length | Memory Usage | Scalability to >1M Cells | Compatibility with Model Architectures |

|---|---|---|---|---|

| Rank-Based Discretization | Short (top 1,000-5,000 genes) | Low | Excellent | Transformers, Mamba, RNNs |

| Bin-Based Discretization | Medium (all expressed genes) | Medium | Good | Transformers, RNNs |

| Value Projection | Long (all genes) | High | Moderate with sampling | Transformers, Specialized architectures |

Advanced Tokenization Frameworks and Integrations

Multi-Modal and Integrated Tokenization

Advanced tokenization frameworks have evolved to integrate multiple data types and biological prior knowledge. Multi-omic models incorporate special tokens to indicate modality, such as scATAC-seq for chromatin accessibility or spatial transcriptomics for positional information [1] [20]. The scNET framework demonstrates how protein-protein interaction networks can be integrated with gene expression tokenization through a dual-view architecture that simultaneously learns gene-gene and cell-cell relationships [18]. This approach uses graph neural networks to propagate gene expression information across PPI networks, effectively refining token representations with functional context. Another emerging trend incorporates biological knowledge bases directly into tokenization, such as adding gene ontology terms or pathway information as additional tokens or metadata [1] [18]. These integrated approaches demonstrate how tokenization can evolve beyond simple expression value conversion to incorporate rich biological context, significantly enhancing the biological relevance of model representations.

Emerging Architectures and Tokenization Innovations

Recent architectural innovations have driven corresponding advances in tokenization strategies. The GeneMamba model incorporates a BiMamba module that processes token sequences bidirectionally, capturing both upstream and downstream gene context with linear computational complexity [17]. This approach enables efficient processing of ultra-long sequences, potentially accommodating complete transcriptomes rather than subsets. Another innovation involves dynamic tokenization that adapts to cellular context, similar to how contemporary language models create dynamic token embeddings based on surrounding context [15]. For spatial transcriptomics, tokenization schemes incorporate positional information through absolute or relative coordinate encodings, enabling models to learn spatial expression patterns [15] [20]. These innovations demonstrate the ongoing co-evolution of tokenization strategies and model architectures, working in concert to extract increasingly sophisticated biological insights from single-cell data.

Table 3: Key Research Resources for Implementing Tokenization in Single-Cell Foundation Models

| Resource Category | Specific Tools/Datasets | Function in Tokenization Research | Access Information |

|---|---|---|---|

| Benchmark Datasets | CZ CELLxGENE, Human Cell Atlas, PanglaoDB | Provide standardized, annotated single-cell data for developing and evaluating tokenization methods | Publicly available through respective portals |

| Pre-trained Models | Geneformer, scGPT, scFoundation | Offer pre-trained tokenization modules and embeddings that can be transferred to new datasets | Model weights and code typically available via GitHub repositories |

| Biological Networks | STRING, BioGRID, Human Protein Reference Database | Source of protein-protein interaction data for integrated tokenization approaches | Publicly available databases with programmatic access |

| Evaluation Frameworks | BioLLM, scBenchmark, scGraph-OntoRWR | Provide standardized metrics and protocols for assessing tokenization quality | Open-source implementations available |

| Processing Pipelines | Scanpy, Seurat, scanny | Offer preprocessing workflows that can be adapted for various tokenization strategies | Open-source packages with extensive documentation |

Tokenization strategies form the critical foundation upon which single-cell foundation models are built, serving as the essential bridge between raw biological data and powerful computational architectures. The three primary approaches—rank-based discretization, bin-based discretization, and value projection—each offer distinct advantages and limitations, making them suitable for different biological questions and data characteristics. As the field progresses, emerging trends point toward more integrated tokenization schemes that incorporate multiple data modalities, biological prior knowledge, and dynamic context-aware representations. Future developments will likely focus on adaptive tokenization that automatically optimizes strategies based on data characteristics, cross-modal tokenization that seamlessly integrates diverse data types, and interpretable tokenization that provides biological insights into the representation learning process. As single-cell technologies continue to evolve, producing increasingly large and complex datasets, advances in tokenization will remain essential for unlocking the full potential of foundation models to decipher cellular complexity and drive biomedical discovery.

Self-supervised learning (SSL) has emerged as a transformative paradigm in single-cell genomics, enabling researchers to leverage vast, unlabeled datasets to build foundation models with remarkable generalization capabilities. These models learn meaningful biological representations by solving pretext tasks designed to capture inherent structures and relationships within the data. Among these tasks, masked gene prediction has established itself as a cornerstone objective, drawing inspiration from successful applications in natural language processing. However, the biological complexity of single-cell data has spurred the development of numerous complementary pretraining strategies that extend beyond this foundational approach.

This technical guide provides a comprehensive overview of the self-supervised pretraining objectives powering the next generation of single-cell foundation models (scFMs). We examine the technical specifications, implementation considerations, and relative performance of these methods within the context of a broader review of single-cell foundation model concepts. For researchers, scientists, and drug development professionals, understanding these core mechanisms is essential for selecting appropriate models, designing novel architectures, and interpreting results in biological and clinical applications.

Core Pretraining Objectives in Single-Cell Foundation Models

Self-supervised pretraining objectives equip models with generalized biological knowledge before fine-tuning for specific downstream tasks. The table below summarizes the primary objectives used in contemporary single-cell foundation models.

Table 1: Core Self-Supervised Pretraining Objectives in Single-Cell Foundation Models

| Objective | Mechanism | Key Variants | Representative Models | Primary Strengths |

|---|---|---|---|---|

| Masked Gene Prediction | Randomly masks portions of the input gene expression vector and trains the model to reconstruct the original values [21] [10]. | Random masking, Gene-programme masking, Isolated masking (GP to GP, GP to TF) [21]. | scGPT [1] [20], scFoundation [10] [4], CellFM [10] | Excels in transfer learning; effective for gene-expression reconstruction and cross-modality prediction [21]. |

| Contrastive Learning | Learns representations by maximizing agreement between differently augmented views of the same cell while distinguishing them from other cells [21]. | BYOL (Bootstrap Your Own Latent), Barlow Twins [21]. | UCE (Universal Cell Embedding) [4] | Addresses data sparsity and batch effects; effective for learning cell-level representations [21]. |

| Gene Ranking Prediction | Treats a cell as a sequence of genes ordered by expression level and trains the model to predict gene rank or position [1] [10]. | N/A | Geneformer [10] [4], scBERT [1] [10] | Captures context-dependent gene importance; robust to technical noise [10]. |

| Value Categorization | Bins continuous gene expression values into discrete buckets, transforming regression into a classification task [10]. | N/A | scBERT [10], scGPT [10] | Handles high technical variance in expression measurements [10]. |

Masked Gene Prediction: The Foundational Objective

The masked gene prediction objective, often implemented via a masked autoencoder (MAE) architecture, treats individual cells as sets of genes and their expression values. During pretraining, a random subset of a cell's gene expression values is masked (typically set to zero). The model is then tasked with reconstructing the original values based on the context provided by the remaining, unmasked genes [21] [10]. This forces the model to learn the complex, non-linear dependencies and co-expression patterns that define cellular states.

Evidence suggests that the specific masking strategy influences the quality of the learned representations. While random masking introduces minimal inductive bias, more biologically-informed strategies like gene programme (GP) masking—which masks groups of genes known to function in coordinated pathways—can guide the model toward more meaningful biological insights [21]. Empirical analyses underscore the nuanced role of SSL, showing that models pretrained on large auxiliary datasets (e.g., over 20 million cells) using masked autoencoders demonstrate significant improvements in downstream tasks like cell-type prediction and gene-expression reconstruction, particularly in transfer learning scenarios [21].
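The random-masking variant can be sketched as follows. The sketch only covers the corruption step and the loss; the encoder and decoder are omitted, and the names `mask_cell` and `masked_mse` as well as the 30% masking ratio are illustrative assumptions.

```python
import random


def mask_cell(values, mask_ratio, rng):
    """Zero out a random subset of positions; return corrupted copy and indices."""
    n_mask = max(1, round(mask_ratio * len(values)))
    masked_idx = sorted(rng.sample(range(len(values)), n_mask))
    corrupted = list(values)
    for i in masked_idx:
        corrupted[i] = 0.0
    return corrupted, masked_idx


def masked_mse(pred, target, masked_idx):
    """Reconstruction loss evaluated only at the masked positions."""
    return sum((pred[i] - target[i]) ** 2 for i in masked_idx) / len(masked_idx)


rng = random.Random(0)
cell = [2.1, 0.0, 1.4, 3.3, 0.7, 0.0, 1.9, 2.6, 0.4, 1.1]
corrupted, masked_idx = mask_cell(cell, mask_ratio=0.3, rng=rng)

# A real model would predict from `corrupted`; here the corrupted input itself
# stands in as a trivial "prediction" to show how the loss is computed.
loss = masked_mse(corrupted, cell, masked_idx)
print(len(masked_idx), loss >= 0.0)  # 3 True
```

Gene-programme masking would replace the random draw with the indices of a curated gene set, leaving the loss unchanged. Note that setting masked values to zero makes them indistinguishable from true zeros in the input, so implementations that need the distinction typically pass the mask to the model as well.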

Beyond Masking: Complementary Pretraining Strategies

While powerful, masked gene prediction is often combined with or supplemented by other objectives to create more robust foundation models.

- Contrastive Learning: This approach learns effective cell embeddings by creating two augmented views of each cell (e.g., through subsampling, noise addition, or masking) and training an encoder to make their representations agree with each other while disagreeing with representations of other cells. Negative-pair-free methods like BYOL and Barlow Twins have been adapted for single-cell data to avoid the computational expense of defining negative pairs [21].

- Gene Ranking Prediction: Instead of predicting exact expression values, models like Geneformer treat the gene expression profile of a cell as a sequence of genes ranked by expression level. The pretext task masks a subset of positions in this sequence and asks the model to predict which gene belongs at each masked rank, teaching the model about relative gene importance in different cellular contexts [10].

- Value Categorization: This strategy discretizes continuous gene expression values into a finite number of bins or "buckets," converting the reconstruction problem into a classification task. This can improve robustness to technical noise and platform-specific effects [10].
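For the negative-pair-free contrastive objectives mentioned above, the sketch below computes a Barlow Twins style loss on two batches of embeddings. It is written in plain Python for readability and covers only the loss; the encoder and the augmentations are omitted. The off-diagonal weight of 0.005 and the synthetic embeddings are assumptions for the example, and a practical implementation would use an array library.

```python
import random
from statistics import mean, pstdev


def standardize_columns(z):
    """Z-score each embedding dimension across the batch."""
    out_cols = []
    for col in zip(*z):
        mu, sd = mean(col), pstdev(col)
        out_cols.append([(v - mu) / (sd if sd > 0 else 1.0) for v in col])
    return [list(row) for row in zip(*out_cols)]


def barlow_twins_loss(z_a, z_b, off_diag_weight=0.005):
    """Push the cross-correlation matrix of two views toward the identity."""
    a, b = standardize_columns(z_a), standardize_columns(z_b)
    n, d = len(a), len(a[0])
    loss = 0.0
    for i in range(d):
        for j in range(d):
            c_ij = sum(a[k][i] * b[k][j] for k in range(n)) / n
            # Diagonal: views should agree. Off-diagonal: dimensions should decorrelate.
            loss += (1 - c_ij) ** 2 if i == j else off_diag_weight * c_ij ** 2
    return loss


rng = random.Random(0)
z = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(64)]
z_aug = [[v + rng.gauss(0, 0.05) for v in row] for row in z]         # second view of the same cells
z_other = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(64)]   # unrelated cells
print(barlow_twins_loss(z, z_aug) < barlow_twins_loss(z, z_other))  # True
```

The loss is low when two views of the same cells produce matching embeddings and high when the views are unrelated, and it never compares a cell against explicit negative examples.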

Experimental Protocols and Benchmarking

Rigorous benchmarking is essential for evaluating the performance of different pretraining objectives. The following protocol outlines a standardized workflow for this purpose.

Protocol for Benchmarking Pretraining Objectives

1. Data Curation and Preprocessing

- Data Aggregation: Compile a large-scale, diverse single-cell dataset from public repositories such as CELLxGENE, NCBI GEO, and the Human Cell Atlas [10] [22]. A high-quality corpus, such as the 100 million human cells used to train CellFM, is ideal [10].

- Quality Control: Filter cells and genes based on standard QC metrics (e.g., number of genes per cell, mitochondrial read percentage).

- Gene Name Standardization: Standardize gene nomenclature according to HUGO Gene Nomenclature Committee (HGNC) guidelines [10].

- Normalization: Apply standard normalization and log-transformation to count data.

2. Model Pretraining

- Objective Implementation: Implement the target pretraining objectives (masked prediction, contrastive learning, etc.) using a consistent base architecture (e.g., Transformer) to ensure fair comparison.

- Hyperparameter Setting: Utilize consistent training hyperparameters (learning rate, batch size, masking ratio) across objectives. For masked autoencoders, a masking ratio of 15-40% is common.

3. Downstream Task Evaluation

Evaluate the pretrained models in both zero-shot and fine-tuned settings on critical biological tasks:

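- Cell-type annotation: Assess prediction accuracy using the macro F1 score, which averages per-class F1 with equal weight and therefore exposes poor performance on rare cell types that overall accuracy can hide [21] [4].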

- Batch integration: Use metrics like Local Inverse Simpson's Index (LISI) to evaluate how well the model removes technical artifacts while preserving biological variation [4].

- Perturbation response prediction: Measure the model's ability to predict cellular responses to genetic or chemical perturbations [10] [4].

- Gene function prediction: Evaluate learned gene embeddings on tasks like Gene Ontology term prediction [10] [4].
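As a small worked example of the cell-type annotation metric, the sketch below computes macro F1 from scratch on a deliberately imbalanced toy label set. The labels and the function name `macro_f1` are illustrative; in practice an established library implementation would normally be used.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all classes seen in either list."""
    labels = sorted(set(y_true) | set(y_pred))
    f1_scores = []
    for c in labels:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# A classifier that labels every cell as the majority type
y_true = ["T cell"] * 8 + ["B cell"] * 2
y_pred = ["T cell"] * 10
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(accuracy)                            # 0.8
print(round(macro_f1(y_true, y_pred), 3))  # 0.444
```

Accuracy looks acceptable at 0.8, while macro F1 drops to about 0.44 because the B cell class contributes an F1 of zero.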

Table 2: Comparative Performance of Pretraining Objectives on Key Downstream Tasks

| Pretraining Objective | Cell-Type Annotation (Macro F1) | Batch Integration (LISI Score) | Perturbation Prediction (Accuracy) | Gene Function Prediction (AUPRC) |

|---|---|---|---|---|

| Masked Gene Prediction | 0.7466 (PBMC) [21] | 0.89 (Pancreas) [4] | 0.81 (Geneformer) [4] | 0.72 (CellFM) [10] |

| Contrastive Learning | 0.7013 (PBMC) [21] | 0.85 (Pancreas) [4] | 0.76 (UCE) [4] | 0.68 (UCE) [4] |

| Gene Ranking Prediction | 0.7310 (Geneformer) [4] | 0.87 (Pancreas) [4] | 0.83 (Geneformer) [4] | 0.75 (Geneformer) [10] |

| Supervised Baseline | 0.7120 (PBMC) [21] | 0.82 (Pancreas) [4] | 0.79 (MLP) [4] | 0.65 (Logistic Regression) [4] |

Key Findings from Benchmarking Studies

Recent benchmarking studies have yielded several critical insights:

- Transfer Learning Superiority: The primary strength of self-supervised pretraining, particularly masked autoencoders, emerges in transfer learning scenarios where models pretrained on large auxiliary datasets (e.g., >20 million cells) are applied to smaller target datasets. Performance improvements of 4-13% in macro F1 scores have been observed for cell-type annotation on datasets like PBMC and Tabula Sapiens [21].

- Zero-Shot Capabilities: Models pretrained with masked objectives demonstrate notable zero-shot capabilities, successfully annotating cell types without task-specific fine-tuning [21] [23].

- Objective-Dependent Performance: No single pretraining objective consistently outperforms all others across every task. Masked autoencoders generally excel in gene-expression reconstruction and cross-modality prediction, while contrastive methods can be more effective for certain cell-level representation tasks [21] [4].

- Architectural Considerations: While transformer architectures dominate the scFM landscape, masked autoencoders based on fully connected networks have shown competitive performance, suggesting that pretraining objective may be as important as model architecture [21].

Visualization of Pretraining Workflows and Relationships

The following diagrams illustrate the core workflows for the primary pretraining objectives and their relationships to downstream tasks.

Diagram 1: Masked Gene Prediction Workflow. This objective trains the model to reconstruct randomly masked portions of the gene expression vector, forcing it to learn co-expression patterns and biological dependencies.

Diagram 2: Relationship Between Pretraining Objectives and Downstream Applications. Different self-supervised objectives produce representations with particular strengths for specific biological tasks.

Successfully developing and applying single-cell foundation models requires access to specific data, computational resources, and software tools.

Table 3: Essential Resources for Single-Cell Foundation Model Research

| Resource Category | Specific Resource | Function/Purpose | Key Features |

|---|---|---|---|

| Data Repositories | CZ CELLxGENE [1] [22] | Provides unified access to standardized single-cell datasets. | Over 100 million unique cells; standardized analysis format [22]. |

| Data Repositories | NCBI GEO / SRA [1] [10] | Archives raw and processed single-cell sequencing data. | Extensive collection of diverse studies and technologies. |

| Data Repositories | Human Cell Atlas [21] [1] | Reference map of all human cells. | Broad coverage of cell types and states across tissues. |

| Computational Platforms | BioLLM [20] | Standardized framework for benchmarking scFMs. | Universal interface for >15 foundation models [20]. |

| Computational Platforms | DISCO [20] | Single-cell data portal for federated analysis. | Aggregates data from multiple studies with query interface. |

| Computational Platforms | scGPT [1] [20] | End-to-end foundation model framework. | Pretrained on 33M+ cells; supports multiple downstream tasks [20]. |

| Analysis Frameworks | Scanpy [10] | Python-based toolkit for single-cell analysis. | Standard preprocessing, visualization, and analysis workflows. |

| Analysis Frameworks | Seurat [4] | R toolkit for single-cell genomics. | Comprehensive suite for analysis, integration, and discovery. |

| Analysis Frameworks | Harmony [4] | Integration method for single-cell data. | Fast, sensitive batch effect correction without compromising biology. |

Self-supervised pretraining objectives, with masked gene prediction at the forefront, have fundamentally transformed the analysis of single-cell genomic data. These methods enable models to learn transferable biological knowledge from vast, unlabeled datasets, forming the foundation for powerful, generalizable tools. While masked autoencoding has proven particularly effective, especially in transfer learning scenarios, the diversity of objectives—from contrastive learning to gene ranking—provides researchers with a rich toolkit for addressing specific biological questions.

The ongoing benchmarking of these approaches reveals a nuanced landscape: no single objective dominates across all tasks, emphasizing the importance of task-specific model selection. As the field progresses, the integration of multiple objectives, the development of more biologically-informed pretext tasks, and improved evaluation metrics will further enhance the capabilities of single-cell foundation models. For researchers and drug development professionals, understanding these core mechanisms is no longer optional but essential for leveraging the full potential of single-cell technologies in basic research and translational applications.

The emergence of single-cell genomics has fundamentally transformed biological research by enabling the characterization of cellular heterogeneity at unprecedented resolution. A critical driver of this transformation has been the development of large-scale, publicly accessible data repositories that serve as foundational resources for the scientific community. These repositories provide the vast, diverse datasets necessary for training sophisticated computational models, including single-cell foundation models (scFMs), which require massive amounts of standardized data to learn universal biological patterns. Platforms such as CZ CELLxGENE Discover and initiatives like the Human Cell Atlas (HCA) have become indispensable pillars in this ecosystem, aggregating and standardizing single-cell data from thousands of studies worldwide [24] [25].

The scale of these resources is substantial. As of 2025, CZ CELLxGENE Discover hosts over 33 million unique cells from 436 datasets, characterizing more than 2,700 cell types across healthy human and mouse tissues [24]. Concurrently, the Human Cell Atlas consortium—a global collaborative effort involving over 3,900 members from more than 100 countries—is executing its mission to create comprehensive reference maps of all human cells [25]. These repositories do not merely serve as data archives; they provide standardized, analysis-ready data that has been processed through uniform computational pipelines, enabling robust comparative analyses and meta-analyses across diverse studies and experimental conditions. For researchers developing and applying single-cell foundation models, these resources provide the critical pretraining corpora necessary to build models that can generalize across tissues, species, and biological contexts.

Comprehensive Landscape of Major Data Repositories

Core Repository Specifications and Features

Table 1: Major Public Single-Cell Data Repositories

| Repository Name | Primary Content | Scale (as of 2025) | Key Features | Common Applications |

|---|---|---|---|---|

| CZ CELLxGENE Discover [24] | Standardized single-cell transcriptomics data from healthy human and mouse tissues | 33M+ cells, 436 datasets, 2.7K+ cell types [24] | Differential expression tool, Census API, Cell Guide, interactive Explorer, Collections & Datasets | Cell type annotation, differential expression analysis, dataset exploration, model pretraining |

| Human Cell Atlas (HCA) [25] | Comprehensive reference maps of all human cells from multiple tissues and organs | Global consortium with 3,900+ members from 1,700+ institutes [25] | Open global initiative, organized biological networks, partnership with UNESCO for open science | Reference atlas construction, cross-tissue integration, cell ontology development |

| DISCO [20] | Aggregated single-cell data across multiple studies and modalities | 100M+ cells (aggregated) [20] | Federated analysis capabilities, query interfaces across diverse datasets | Large-scale integrative analysis, cross-study validation |

| NCBI GEO/SRA [1] | Archival repository for high-throughput sequencing data | Thousands of single-cell sequencing studies [1] | Primary data submission hub, raw and processed data, links to original publications | Data preservation, method benchmarking, reanalysis |

Beyond the primary repositories, several specialized resources have emerged to address specific analytical needs. The Census component of CZ CELLxGENE provides programmatic access to any custom slice of standardized cell data through R and Python interfaces, enabling seamless integration into computational workflows [24]. The Cell Guide offers an interactive encyclopedia of over 700 cell types with detailed definitions, marker genes, lineage information, and relevant datasets in one place [24].

For cross-species comparisons and specialized taxonomic groups, resources like scPlantFormer have been pretrained on approximately 1 million Arabidopsis thaliana cells, facilitating plant single-cell omics analysis [20]. The Asian Immune Diversity Atlas (AIDA) v2, available through CELLxGENE, represents an example of population-specific references that are increasingly important for capturing human genetic diversity [4].

These repositories collectively enable the "mosaic integration" approach, where datasets that do not measure identical features can be aligned by leveraging shared cell neighborhoods or robust cross-modal anchors rather than requiring strict feature overlaps [20]. This capability is particularly valuable for integrative analyses across platforms and modalities.

Data Curation Workflows and Standardization Processes

From Raw Data to Analysis-Ready Corpora

The transformation of raw single-cell sequencing data into analysis-ready resources involves multiple curation steps that are critical for ensuring data quality and interoperability. CELLxGENE employs a standardized processing pipeline that performs key harmonization steps including quality control, normalization, batch effect correction, and annotation [24]. This standardized processing is essential for creating the unified corpora required for scFM pretraining, as it mitigates technical variation across different experimental protocols and platforms.
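As a rough illustration of the quality control and normalization steps mentioned above, the following minimal sketch filters low-quality cells and then applies library-size normalization followed by a log transform. It is not the CELLxGENE pipeline: the function name `qc_and_normalize`, the thresholds, and the target sum of 10,000 counts per cell are illustrative assumptions, and the toy counts are invented.

```python
import math

def qc_and_normalize(counts, min_genes=2, min_total=5, target_sum=1e4):
    """Filter low-quality cells, then library-size normalize and log1p-transform.

    counts: dict mapping cell id -> dict of gene -> raw count (sparse layout).
    All thresholds are illustrative placeholders, not recommended values.
    """
    normalized = {}
    for cell, genes in counts.items():
        detected = sum(1 for c in genes.values() if c > 0)
        total = sum(genes.values())
        if detected < min_genes or total < min_total:
            continue  # drop cells with too few detected genes or too few counts
        scale = target_sum / total
        # Keep only nonzero entries so the result stays sparse.
        normalized[cell] = {
            g: math.log1p(c * scale) for g, c in genes.items() if c > 0
        }
    return normalized

# Toy raw counts (invented for illustration).
raw = {
    "cell_a": {"CD3E": 4, "CD19": 0, "LYZ": 6},
    "cell_b": {"CD3E": 1, "CD19": 0, "LYZ": 0},  # one gene, one count: filtered out
    "cell_c": {"CD3E": 0, "CD19": 5, "LYZ": 5},
}
clean = qc_and_normalize(raw)
print(sorted(clean))
```

In practice, thresholds of this kind are dataset-specific and technology-dependent, so they would be tuned per dataset rather than fixed.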

A fundamental challenge in single-cell data curation is the integration of multimodal data—including transcriptomics, epigenomics, proteomics, and spatial information—measured from the same cell [26]. The curation process must preserve the natural biological relationships between these modalities while removing technical artifacts. Methods for this integration include canonical correlation vectorization (CCV), which identifies shared features across modalities by projecting cells into a common basis space, and manifold alignment, which unravels pseudotemporal relationships between different molecular layers such as gene expression and DNA methylation [26].

Table 2: Data Curation and Integration Methods for Single-Cell Repositories

| Curation Step | Key Methods/Tools | Purpose | Considerations |
|---|---|---|---|
| Quality Control | scran, scater [27] | Filtering low-quality cells/genes, doublet detection | Dataset-specific thresholds, technology-dependent parameters |
| Batch Correction | Harmony [27], Seurat CCA [27], scVI [27] | Removing technical variation while preserving biology | Correction strength tuning, biological signal preservation |
| Multimodal Integration | StabMap [20], TMO-Net [20] | Aligning different omics modalities from same cells | Handling non-overlapping features, preserving inter-modality relationships |
| Cell Type Annotation | SingleR [28], Azimuth [28] | Assigning cell identities using reference datasets | Resolution levels (broad to detailed), consensus approaches |

Metadata Standardization and Ontology Implementation

Effective data curation extends beyond processing molecular measurements to encompass comprehensive metadata standardization. Repositories like CELLxGENE and HCA employ structured ontologies including Cell Ontology (CL), Uberon anatomy ontology, and Gene Ontology (GO) to ensure consistent annotation across datasets [24] [25]. This ontological framework enables precise semantic queries and facilitates cross-dataset integration by establishing common terminologies for cell types, tissues, and biological processes.
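To make the idea of ontology-based metadata standardization concrete, the sketch below maps free-text cell type and tissue labels to ontology term identifiers. The lookup tables are tiny hand-written excerpts for illustration only, the synonym list and the `standardize` helper are hypothetical, and the output field names are assumptions chosen to resemble repository-style metadata columns. A real workflow would load the full Cell Ontology and Uberon releases instead.

```python
# Illustrative excerpts only; real pipelines load the complete ontologies.
CELL_ONTOLOGY = {
    "t cell": "CL:0000084",
    "b cell": "CL:0000236",
    "monocyte": "CL:0000576",
}
UBERON = {
    "lung": "UBERON:0002048",
    "blood": "UBERON:0000178",
}
# Hypothetical synonym table mapping submitter spellings to canonical labels.
SYNONYMS = {
    "t-cell": "t cell",
    "t lymphocyte": "t cell",
    "b lymphocyte": "b cell",
    "peripheral blood": "blood",
}

def _lookup(value, table):
    """Normalize case, underscores, and whitespace, then resolve via synonyms."""
    key = " ".join(value.lower().replace("_", " ").split())
    key = SYNONYMS.get(key, key)
    return table.get(key)  # None flags a label that needs manual curation

def standardize(record):
    """Return a copy of the record with ontology term IDs attached."""
    return {
        **record,
        "cell_type_ontology_term_id": _lookup(record["cell_type"], CELL_ONTOLOGY),
        "tissue_ontology_term_id": _lookup(record["tissue"], UBERON),
    }

records = [
    {"cell_type": "T-cell", "tissue": "Lung"},
    {"cell_type": "B lymphocyte", "tissue": "peripheral  blood"},
    {"cell_type": "mystery cluster 7", "tissue": "blood"},
]
standardized = [standardize(r) for r in records]
for row in standardized:
    print(row)
```

Labels that cannot be resolved are returned as `None` rather than guessed, so that they can be routed to manual curation.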

The implementation of these ontologies is particularly crucial for scFM development, as it provides the biological grounding necessary for models to learn meaningful representations rather than merely technical artifacts. As noted in benchmark studies, scFMs that incorporate ontological relationships in their training objectives demonstrate superior performance in tasks such as cross-species annotation and rare cell type identification [4].

Diagram 1: Single-cell data curation workflow for repository integration and model pretraining.

Experimental Protocols for Repository-Driven Research

Reference-Based Cell Type Annotation

Leveraging curated public repositories enables robust cell type identification through reference-based annotation, a fundamental application in single-cell analysis. The standard protocol involves:

In-depth Preprocessing: Rigorous quality control to filter low-quality cells or genes, followed by doublet detection and batch correction to mitigate technical variation from differences in sample preparation or sequencing runs [28].

Reference Dataset Selection: Careful selection of appropriate reference datasets from repositories based on tissue similarity, species, and experimental protocol. Researchers typically conduct an in-depth review of literature and available cell atlases to identify the most suitable reference datasets [28].

Annotation Transfer: Using tools such as SingleR or Azimuth to align the gene expression profiles of each single cell with references from similar tissues [28]. The Azimuth project provides cell type annotations at different levels—from broad categories to very detailed subtypes—allowing researchers to choose the appropriate resolution.

Manual Refinement: Careful review of preliminary annotations against multiple sources of evidence, including verification of canonical marker gene expression patterns, differential gene expression analyses, and consultation of relevant literature [28]. This step integrates biological expertise to interpret ambiguous clusters or edge cases.

This protocol exemplifies how curated repositories serve not merely as data sources but as knowledge bases that transfer biological understanding from well-characterized reference datasets to novel experimental data.
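The annotation transfer step can be sketched as a correlation-based label assignment. The example below is a simplified illustration only and does not reproduce the algorithms of SingleR or Azimuth: it scores a query cell against reference profiles with a Spearman rank correlation and leaves the cell unassigned when no reference scores above a threshold. The reference profiles, gene order, and the `min_score` cutoff are invented assumptions.

```python
def ranks(values):
    """Return 1-based ranks, assigning tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation computed as the Pearson correlation of ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0

def annotate(cell, references, min_score=0.5):
    """Assign the best-correlated reference label, or 'unassigned'."""
    scores = {label: spearman(cell, prof) for label, prof in references.items()}
    best = max(scores, key=scores.get)
    label = best if scores[best] >= min_score else "unassigned"
    return label, scores

# Gene order: CD3E, CD19, LYZ, NKG7 (toy reference profiles, invented).
references = {
    "T cell": [9.0, 0.5, 1.0, 3.0],
    "B cell": [0.5, 9.0, 1.0, 0.2],
    "Monocyte": [0.3, 0.2, 9.0, 0.5],
}
label, scores = annotate([7.0, 0.0, 2.0, 1.0], references)
flat_label, _ = annotate([1.0, 1.0, 1.0, 1.0], references)
print(label, scores, flat_label)
```

The "unassigned" outcome corresponds to the ambiguous clusters and edge cases that the manual refinement step is meant to resolve.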

Foundation Model Pretraining Using Repository Data

The development of single-cell foundation models relies on carefully designed pretraining protocols using repository-scale data:

Data Sourcing and Selection: Compilation of large and diverse datasets from repositories such as CELLxGENE, which provides unified access to annotated single-cell datasets with over 100 million unique cells standardized for analysis [1]. Effective pretraining requires careful selection of datasets, filtering of cells and genes, balancing dataset compositions, and quality controls [1].

Tokenization Strategy: Conversion of gene expression profiles into discrete tokens that scFMs can process. Common approaches include ranking genes within each cell by expression levels, partitioning genes into expression bins, or using normalized counts directly [1]. Special tokens representing cell identity, metadata, or modality information may be prepended to enrich the input context.

Model Architecture Configuration: Implementation of transformer-based architectures, typically using either bidirectional encoder architectures (BERT-like) for classification tasks or autoregressive decoder architectures (GPT-like) for generation tasks [1]. The attention mechanisms in these architectures enable the model to learn relationships between genes and how they covary across cells.

Self-Supervised Pretraining: Training models using objectives such as masked gene modeling, where a portion of the input genes is masked and the model must predict them from the remaining context [1]. This approach allows the model to learn fundamental biological patterns without requiring labeled data.
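The tokenization and masked gene modeling steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the procedure of any specific scFM: the helper names, the `<cls>` and `<mask>` token strings, the three expression bins, and the 15% masking rate are all hypothetical choices, and the toy expression values are invented.

```python
import random

def rank_tokens(expr, max_len=None):
    """Rank-based encoding: expressed genes ordered by decreasing expression."""
    expressed = [(g, v) for g, v in expr.items() if v > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))  # ties broken alphabetically
    tokens = [g for g, _ in expressed]
    return tokens[:max_len] if max_len else tokens

def bin_tokens(expr, n_bins=3):
    """Value binning: nonzero values map to bins 1..n_bins within the cell."""
    nonzero = sorted(v for v in expr.values() if v > 0)

    def to_bin(v):
        if v <= 0:
            return 0  # bin 0 is reserved for unexpressed genes
        rank = sum(1 for x in nonzero if x <= v)
        return -((-rank * n_bins) // len(nonzero))  # integer ceiling division

    return {g: to_bin(v) for g, v in expr.items()}

def mask_tokens(tokens, mask_rate=0.15, seed=0, mask_token="<mask>",
                protected=("<cls>",)):
    """Hide a random subset of tokens; return model inputs and prediction targets."""
    candidates = [i for i, t in enumerate(tokens) if t not in protected]
    if not candidates:
        return list(tokens), {}
    rng = random.Random(seed)
    n_mask = max(1, round(mask_rate * len(candidates)))
    masked = set(rng.sample(candidates, n_mask))
    inputs = [mask_token if i in masked else t for i, t in enumerate(tokens)]
    targets = {i: tokens[i] for i in masked}
    return inputs, targets

# Toy normalized expression for one cell (invented values).
cell = {"CD3E": 8.0, "CD19": 0.0, "LYZ": 2.0, "NKG7": 5.0, "GAPDH": 8.0}
tokens = ["<cls>"] + rank_tokens(cell)  # special token prepended to the sequence
bins = bin_tokens(cell)
inputs, targets = mask_tokens(tokens)
print(tokens, bins, inputs, targets)
```

During pretraining, the model would receive `inputs` and be scored on how well it recovers the entries in `targets`, which requires no cell type labels.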

Diagram 2: scFM pretraining protocol using public repository data.

Table 3: Essential Computational Tools for Repository-Based Single-Cell Analysis

| Tool Category | Representative Tools | Primary Function | Application in Repository Research |
|---|---|---|---|
| Comprehensive Analysis Platforms | Scanpy [27], Seurat [27] | End-to-end scRNA-seq analysis | Data preprocessing, visualization, and integration of repository datasets |
| Deep Learning Frameworks | scvi-tools [27], scGPT [20] | Probabilistic modeling and foundation models | Batch correction, imputation, and transfer learning on repository data |
| Spatial Analysis Tools | Squidpy [27], Nicheformer [20] | Spatially resolved transcriptomics | Integrating spatial context with repository single-cell data |
| Trajectory Inference | Monocle 3 [27], Velocyto [27] | Pseudotime and cell fate analysis | Mapping developmental trajectories using reference atlases |
| Multimodal Integration | StabMap [20], TMO-Net [20] | Integrating multiple omics modalities | Combining repository datasets across different molecular layers |
| Benchmarking Platforms | BioLLM [20] | Standardized model evaluation | Comparing scFM performance across tasks and datasets |

Public data repositories have evolved from passive archives to active knowledge platforms that drive discovery in single-cell biology. The continued growth of resources like CELLxGENE and Human Cell Atlas, coupled with advances in computational methods that can leverage these vast data collections, promises to accelerate our understanding of cellular mechanisms in both health and disease. For the field of single-cell foundation models, these repositories provide not only the training data necessary for model development but also the reference frameworks for biological interpretation and validation.

Future developments will likely focus on enhancing multimodal integration, improving cross-species generalization, and developing more efficient data structures for querying and analyzing repository-scale data. As these resources continue to expand, they will play an increasingly central role in enabling researchers to translate cellular-level insights into clinical applications and therapeutic developments.

Practical Implementation: scFM Workflows, Drug Discovery Applications, and Multi-Omic Integration

Single-cell RNA sequencing (scRNA-seq) has transformed biological research by enabling the investigation of gene expression at single-cell resolution, revealing cellular heterogeneity and complex biological processes that are obscured in bulk sequencing data [29]. Concurrently, single-cell foundation models (scFMs), large-scale deep learning models pretrained on vast datasets through self-supervised learning, have emerged as a new way to interpret these data and can be adapted to a variety of downstream tasks [1]. Together, these advances have created a need for unified analytical frameworks capable of integrating and comprehensively analyzing rapidly expanding data repositories.

The volume and complexity of data generated by modern single-cell technologies necessitate robust, standardized, yet flexible end-to-end analysis pipelines that guide researchers from raw sequencing data to meaningful biological insights. These pipelines must address multiple challenges inherent to single-cell data, including high dimensionality, technical noise, batch effects, and the integration of multimodal measurements [30] [31]. This technical guide examines the architecture, components, and implementation of these pipelines within the broader context of single-cell foundation model research, providing researchers and drug development professionals with a comprehensive framework for navigating this rapidly evolving landscape.

Foundational Concepts: From Single-Cell Data to Foundation Models

The Single-Cell Data Landscape

Single-cell technologies have progressed from measuring just the transcriptome to simultaneously capturing multiple molecular layers from the same cells. Modern multi-omics assays can measure gene expression, chromatin accessibility, DNA methylation, and protein abundance in tandem, creating datasets of immense value and complexity [31]. The fundamental computational challenge lies in integrating these different omics layers with distinct feature spaces—for example, accessible chromatin regions in scATAC-seq versus genes in scRNA-seq [32]. Effective pipelines must bridge these modality gaps while preserving biological signals and removing technical artifacts.

Single-cell data are characterized by high sparsity, high dimensionality, and a low signal-to-noise ratio [4]. Gene expression matrices typically contain thousands of cells measured across tens of thousands of genes, with most genes showing zero counts in most cells due to both biological and technical factors. This sparsity poses particular challenges for analytical methods and requires specialized statistical approaches distinct from those used for bulk sequencing data.
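The sparsity described above is easy to quantify on a toy example. The sketch below computes the fraction of zero entries in a small cells-by-genes count matrix and stores only the nonzero entries as (cell, gene, count) triplets, which is the idea behind the sparse formats commonly used for single-cell data. The matrix and the `sparsity_summary` helper are invented for illustration.

```python
def sparsity_summary(matrix):
    """Summarize a dense cells-by-genes count matrix given as a list of rows."""
    n_cells, n_genes = len(matrix), len(matrix[0])
    # Coordinate-style sparse representation: keep only the nonzero entries.
    triplets = [
        (i, j, v)
        for i, row in enumerate(matrix)
        for j, v in enumerate(row)
        if v != 0
    ]
    return {
        "shape": (n_cells, n_genes),
        "zero_fraction": 1 - len(triplets) / (n_cells * n_genes),
        "genes_detected_per_cell": [sum(1 for v in row if v != 0) for row in matrix],
        "triplets": triplets,
    }

# Toy count matrix: 4 cells (rows) by 5 genes (columns), invented values.
toy = [
    [0, 3, 0, 0, 1],
    [0, 0, 0, 2, 0],
    [5, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
summary = sparsity_summary(toy)
print(summary["zero_fraction"], summary["genes_detected_per_cell"])
```

Here 16 of the 20 entries are zero, so the sparse representation stores 4 triplets instead of 20 values. The savings grow with matrix size when most entries are zero.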

The Rise of Single-Cell Foundation Models

Inspired by successes in natural language processing (NLP) and computer vision, researchers have begun developing scFMs that learn from extensive single-cell datasets and can be fine-tuned for various biological analyses [1]. A foundation model is defined as a large-scale, self-supervised artificial intelligence model trained on diverse datasets that can be adapted to a wide range of tasks [1]. These models typically employ transformer architectures that use attention mechanisms to learn relationships between genes, analogous to how language models learn relationships between words [1].
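To illustrate how attention relates genes to one another, the following sketch implements single-head scaled dot-product self-attention over a few gene embeddings. It is a simplified assumption-laden example: the two-dimensional embeddings are invented, and the query, key, and value projections are taken to be the identity, whereas real transformer layers learn these projections and use many heads.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(embeddings):
    """Scaled dot-product self-attention with identity Q/K/V projections."""
    d = len(embeddings[0])
    weights, outputs = [], []
    for q in embeddings:
        # Similarity of this gene's query to every gene's key, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in embeddings]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(wj * v[c] for wj, v in zip(w, embeddings)) for c in range(d)]
        )
    return outputs, weights

# Hypothetical gene embeddings: CD3E and CD3D point in similar directions.
genes = ["CD3E", "CD3D", "LYZ"]
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(embeddings)
for gene, row in zip(genes, weights):
    print(gene, [round(w, 3) for w in row])
```

In this toy setting, CD3E places more attention weight on CD3D than on LYZ because their embeddings are more similar, which is the sense in which attention weights can reflect relationships between genes.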