Single-Cell Foundation Models Architectures Compared: A 2025 Guide for Biomedical Researchers

Abstract

Single-cell foundation models (scFMs) are revolutionizing biological research by providing unified AI frameworks for analyzing cellular heterogeneity. This article offers a comprehensive comparison of leading scFM architectures, including transformer-based models like scGPT, Geneformer, and scFoundation. It explores their core concepts, methodological applications in drug discovery and clinical research, common optimization challenges, and performance across key benchmarks. Designed for researchers and drug development professionals, this guide synthesizes the latest findings to inform model selection and application, highlighting future directions for integrating these powerful tools into biomedical and clinical workflows.

Demystifying Single-Cell Foundation Models: Core Architectures and Pretraining Strategies

What are Foundation Models? From NLP to Cell Biology

Foundation models represent a revolutionary approach in artificial intelligence, defined as large-scale machine learning models pre-trained on vast, diverse datasets that can be adapted to a wide range of downstream tasks through fine-tuning [1] [2]. This "pre-train then fine-tune" paradigm has fundamentally transformed natural language processing (NLP) and computer vision, with models like GPT and BERT demonstrating remarkable capabilities in understanding context, generating text, and transferring knowledge across domains [1] [3].

The single-cell genomics field, generating massive amounts of transcriptomic data from technologies like single-cell RNA sequencing (scRNA-seq), has emerged as fertile ground for foundation model development [1] [4]. Single-cell foundation models (scFMs) represent a convergence of AI and biology, aiming to capture the fundamental principles of cellular behavior that can generalize across tissues, conditions, and even species [1] [5]. This guide provides an objective comparison of scFM architectures, their performance across biological tasks, and the experimental frameworks used to evaluate them—critical knowledge for researchers and drug development professionals navigating this rapidly evolving landscape.

Architectural Landscape of Single-Cell Foundation Models

Core Architectural Components

Single-cell foundation models adapt transformer architectures and other neural network designs to the unique challenges of gene expression data. Unlike natural language, gene expression data lacks inherent sequential ordering and contains continuous values rather than discrete tokens [1] [4]. scFMs address these challenges through several key components:

Tokenization Strategies: Converting continuous gene expression values into model-processable tokens represents a fundamental design choice. Bin-based discretization (used by scBERT, scGPT) groups expression values into predefined categories, while rank-based discretization (used by Geneformer) transforms expressions into ordinal rankings. Value projection approaches (used by scFoundation, CellFM) maintain continuous representations by projecting expression values into embedding spaces [6] [7].
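The three strategies can be contrasted on a single toy cell. The sketch below assumes NumPy. The gene names, counts, bin count, quantile-based bin edges and random projection weights are all hypothetical, and none of the functions reproduces the exact preprocessing of scGPT, Geneformer or scFoundation.

```python
import numpy as np

# Toy cell: raw counts for six hypothetical genes (illustrative values only).
genes = np.array(["G1", "G2", "G3", "G4", "G5", "G6"])
counts = np.array([0.0, 12.0, 3.0, 0.0, 40.0, 7.0])

def rank_tokens(genes, values):
    """Rank-based: expressed genes ordered by decreasing value; the gene IDs are the tokens."""
    expressed = values > 0
    order = np.argsort(-values[expressed], kind="stable")
    return genes[expressed][order].tolist()

def bin_tokens(values, n_bins=4):
    """Bin-based: each nonzero value gets a bin index (1..n_bins) from per-cell quantile edges; zeros stay 0."""
    tokens = np.zeros(values.shape, dtype=int)
    nonzero = values > 0
    edges = np.quantile(values[nonzero], np.linspace(0, 1, n_bins + 1)[1:-1])
    tokens[nonzero] = np.digitize(values[nonzero], edges) + 1
    return tokens

def value_projection(values, d_model=8, seed=0):
    """Value projection: each scalar value is mapped linearly to a d_model vector (no discretization).
    The weights are random here; in a real model they would be learned."""
    rng = np.random.default_rng(seed)
    weight = rng.normal(size=(1, d_model))
    bias = rng.normal(size=d_model)
    return values[:, None] @ weight + bias  # shape: (n_genes, d_model)

print(rank_tokens(genes, counts))      # ['G5', 'G2', 'G6', 'G3']
print(bin_tokens(counts).tolist())     # [0, 3, 1, 0, 4, 2]
print(value_projection(counts).shape)  # (6, 8)
```

Note the trade-offs the text describes: the rank output keeps only order, the bin output keeps coarse magnitude, and the projection keeps the full value. A full value-projection model would also add a learned gene-identity embedding to each projected value.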

Attention Mechanisms: Most scFMs utilize transformer architectures with self-attention mechanisms that learn relationships between genes. The bidirectional attention in encoder-style models (like BERT) processes all genes simultaneously, while unidirectional attention in decoder-style models (like GPT) processes genes sequentially [1].
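The difference between the two attention styles comes down to a mask. Below is a minimal single-head sketch assuming NumPy, with illustrative sizes and random weights. The causal mask shown is the generic GPT-style one; scGPT and other decoder-style scFMs use their own mask designs, which this sketch does not reproduce.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v, causal=False):
    """Single-head scaled dot-product self-attention over gene tokens x of shape (n_tokens, d_model).

    causal=False: every token attends to every token (encoder/BERT-style, bidirectional).
    causal=True: token i attends only to tokens 0..i (generic decoder/GPT-style mask).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    if causal:
        future = np.triu(np.ones(scores.shape, dtype=bool), k=1)  # positions j > i
        scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 8))  # 5 toy gene tokens, 8-dim embeddings
w_q, w_k, w_v = (rng.normal(size=(8, 8)) / np.sqrt(8) for _ in range(3))
_, bidirectional = self_attention(x, w_q, w_k, w_v)
_, causal = self_attention(x, w_q, w_k, w_v, causal=True)
print(np.allclose(np.triu(causal, k=1), 0))  # True: no attention to later tokens
```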

Positional Encoding: Since genes lack natural ordering, scFMs implement various schemes to represent gene position, most commonly using expression magnitude rankings to determine sequence position [1] [2].

Model Architecture Comparison

Table 1: Architectural Overview of Major Single-Cell Foundation Models

| Model | Architecture Type | Tokenization Strategy | Parameters | Training Scale | Key Innovations |
|---|---|---|---|---|---|
| Geneformer [3] [6] | Transformer (BERT-like) | Rank-based discretization | 52 million | 30 million cells | Predicts gene positions within cellular context |
| scGPT [8] [3] | Transformer (GPT-like) | Bin-based discretization | 51 million | 33 million human cells | Attention mask mechanism for autoregressive prediction |
| scBERT [3] [9] | Performer architecture | Bin-based discretization | 8 million | PanglaoDB | Early transformer adaptation for single-cell data |
| scFoundation [6] | Transformer encoder | Value projection | ~100 million | ~50 million human cells | Direct prediction of raw gene expression values |
| CellFM [6] | Modified RetNet (ERetNet) | Value projection | 800 million | 100 million human cells | Linear complexity architecture for scalability |
| GeneMamba [7] | State Space Model (BiMamba) | Rank-based discretization | Not specified | 50 million cells | Linear computational complexity; bidirectional processing |

Experimental Benchmarking: Methodologies and Performance

Standardized Evaluation Frameworks

Rigorous benchmarking of scFMs requires standardized protocols across diverse biological tasks. Leading evaluations typically assess models in zero-shot settings (using pre-trained embeddings without task-specific fine-tuning) and fine-tuning paradigms (updating model parameters on labeled task data) [4] [8]. The BioLLM framework provides standardized APIs for consistent model evaluation, revealing distinct performance trade-offs across architectures [8].
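A zero-shot evaluation leaves the model untouched and asks how useful its embeddings already are. One common readout is a k-nearest-neighbour probe, sketched below with NumPy. The embeddings stand in for what a pretrained model would return, and this particular probe is an illustrative choice, not the protocol used by BioLLM or the benchmarks cited above.

```python
import numpy as np

def knn_probe_accuracy(train_emb, train_labels, test_emb, test_labels, k=3):
    """Classify test cells by majority vote of their k nearest training cells in a frozen
    embedding space (cosine similarity). No model weights are updated."""
    def unit(a):
        a = np.asarray(a, dtype=float)
        return a / np.linalg.norm(a, axis=1, keepdims=True)
    sims = unit(test_emb) @ unit(train_emb).T  # (n_test, n_train)
    nearest = np.argsort(-sims, axis=1)[:, :k]
    train_labels = np.asarray(train_labels)
    preds = []
    for row in nearest:
        values, counts = np.unique(train_labels[row], return_counts=True)
        preds.append(values[np.argmax(counts)])
    return float(np.mean(np.array(preds) == np.asarray(test_labels)))

# Toy 2-D "embeddings" for two well-separated cell types.
train = [[1, 0], [0.9, 0.1], [0.95, 0.05], [0, 1], [0.1, 0.9], [0.05, 0.95]]
labels = ["T", "T", "T", "B", "B", "B"]
print(knn_probe_accuracy(train, labels, [[0.8, 0.2], [0.2, 0.8]], ["T", "B"]))  # 1.0
```

Fine-tuning, by contrast, would update the model's parameters on the labeled cells before any such readout.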

Comprehensive benchmarks, such as the one reported in [4], evaluate models across multiple task categories:

- Cell-level tasks: Batch integration, cell type annotation, cancer cell identification, drug sensitivity prediction

- Gene-level tasks: Gene function prediction, gene-gene relationship analysis, tissue specificity prediction

- Interpretability analysis: Attention mechanism analysis to identify biologically relevant gene interactions

Quantitative Performance Comparison

Table 2: Performance Comparison of scFMs Across Key Biological Tasks

| Model | Cell Type Annotation (Accuracy) | Batch Integration (ASW) | Perturbation Prediction | Gene Function Prediction | Computational Efficiency |
|---|---|---|---|---|---|
| Geneformer | Moderate [4] | Moderate [4] | Strong [3] [6] | Strong [8] | Moderate [7] |
| scGPT | High [8] | High [4] [8] | Strong [2] [8] | Moderate [8] | Low [7] |
| scBERT | Lower [4] [8] | Lower [4] | Moderate [9] | Lower [8] | High [9] |
| scFoundation | High [4] | High [4] | Strong [6] | Strong [8] | Low [6] |
| CellFM | Highest [6] | High [6] | Strongest [6] | Strongest [6] | Lowest [6] |
| GeneMamba | High [7] | High [7] | Not specified | High [7] | Highest [7] |

Performance rankings based on comprehensive benchmarking studies [4] [8] [6]. Metrics are relative comparisons within each task category.

Key Benchmarking Insights

Recent benchmarking reveals several critical insights for scFM selection and application:

No single model dominates all tasks: Each architecture demonstrates distinct strengths, with performance highly dependent on specific task requirements and dataset characteristics [4].

Trade-offs between simplicity and power: In some scenarios, particularly with limited data or specific tasks, simpler machine learning models can compete with or outperform complex foundation models [4] [9].

Biological relevance varies: Models capture biological relationships with varying fidelity, with some architectures demonstrating better alignment with established biological knowledge [4].

Computational requirements differ significantly: Architectural choices dramatically impact training and inference costs, with newer models like GeneMamba offering substantially improved efficiency [7].
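A back-of-the-envelope calculation shows why sequence length drives cost. Full self-attention builds an n × n score matrix per head, so its cost grows with the square of the number of gene tokens, whereas linear-complexity designs such as RetNet-style or state space layers grow roughly in proportion to n. The sketch uses the input lengths listed elsewhere in this guide for Geneformer (2,048 genes) and scFoundation (~19,000 genes) purely as example values, and it ignores architecture-specific savings such as attending only to nonzero genes.

```python
def cost_growth(n_short, n_long, exponent):
    """Factor by which a cost proportional to n**exponent grows when n goes from n_short to n_long."""
    return (n_long / n_short) ** exponent

n_short, n_long = 2_048, 19_000              # example token counts (genes per cell)
quadratic = cost_growth(n_short, n_long, 2)  # full self-attention
linear = cost_growth(n_short, n_long, 1)     # linear-complexity layer
print(f"attention: x{quadratic:.1f}, linear: x{linear:.1f}")  # attention: x86.1, linear: x9.3
```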

Experimental Protocols and Methodologies

Standardized Preprocessing Workflow

Diagram: Single-cell data preprocessing pipeline.

Reproducible evaluation of scFMs requires standardized data processing protocols. The typical workflow includes:

Quality Control: Filtering cells and genes based on quality metrics (mitochondrial content, number of detected genes, total counts) [6].

Gene Name Standardization: Converting gene identifiers to standardized nomenclature (e.g., HGNC guidelines) to ensure consistency across datasets [6].

Normalization: Correcting for differences in sequencing depth using methods like counts per million (CPM), or more advanced normalization techniques that also account for gene-specific variation [7].

Tokenization: Applying model-specific tokenization strategies (binning, ranking, or value projection) to convert continuous expression values into model-processable inputs [1] [7].
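The first three steps can be sketched in a few lines of NumPy. This is a minimal illustration, not the pipeline of any cited study: it filters cells only (not genes), the alias table and QC thresholds are hypothetical, and it assumes human gene symbols, where mitochondrial genes carry the "MT-" prefix. Model-specific tokenization would follow on the returned matrix.

```python
import numpy as np

def preprocess(counts, gene_names, alias_map, min_genes=200, max_mito_frac=0.2):
    """Minimal QC -> gene-name standardization -> CPM normalization for a (cells x genes) count matrix.

    alias_map is a hypothetical dict from legacy/alias symbols to standardized symbols;
    thresholds are illustrative, not recommendations. Assumes min_genes >= 1.
    """
    counts = np.asarray(counts, dtype=float)
    names = np.array([alias_map.get(g, g) for g in gene_names])  # standardize gene symbols
    is_mito = np.char.startswith(names, "MT-")                   # human mitochondrial genes
    total = counts.sum(axis=1)
    n_detected = (counts > 0).sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total, 1)
    keep = (n_detected >= min_genes) & (mito_frac <= max_mito_frac)  # QC filter on cells
    kept = counts[keep]
    cpm = kept / kept.sum(axis=1, keepdims=True) * 1e6             # counts per million
    return cpm, names, keep

counts = [[1, 4, 3, 2],   # passes QC
          [0, 0, 1, 0],   # too few detected genes
          [6, 1, 1, 2]]   # mitochondrial fraction too high
cpm, names, keep = preprocess(counts, ["MT-CO1", "OLDSYM", "GENEB", "GENEC"],
                              alias_map={"OLDSYM": "NEWSYM"}, min_genes=2)
print(keep.tolist(), names.tolist())  # [True, False, False] ['MT-CO1', 'NEWSYM', 'GENEB', 'GENEC']
```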

Benchmarking Experimental Design

Diagram: scFM benchmarking methodology.

Comprehensive benchmarking follows standardized protocols to ensure fair model comparison:

Zero-Shot Evaluation: Extracting model embeddings without task-specific fine-tuning to assess inherent representation quality [4].

Fine-Tuning Protocol: Updating model parameters on task-specific labeled data with careful hyperparameter optimization [9].

Task-Specific Evaluation:

- Cell type annotation: Measuring agreement with manual annotations using metrics such as accuracy and F1-score [4].

- Batch integration: Assessing batch effect removal while preserving biological variation using metrics like Average Silhouette Width (ASW) [4].

- Perturbation prediction: Evaluating model ability to predict cellular responses to genetic or chemical perturbations [2] [6].

- Gene function prediction: Measuring accuracy in predicting Gene Ontology terms and functional relationships [4] [6].
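The Average Silhouette Width used for batch integration can be computed directly from its definition. The NumPy sketch below returns the raw mean silhouette; published benchmarks often rescale it and compute it separately on cell-type labels (higher is better) and batch labels (values near or below zero indicate well-mixed batches). The toy embedding is hypothetical.

```python
import numpy as np

def average_silhouette_width(emb, labels):
    """Mean silhouette s(i) = (b - a) / max(a, b) with Euclidean distances, where a is the mean
    distance to the cell's own group and b the mean distance to the nearest other group.
    Assumes every group has at least two cells."""
    emb = np.asarray(emb, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
    groups = np.unique(labels)
    scores = []
    for i in range(len(emb)):
        same = labels == labels[i]
        same[i] = False
        a = dist[i, same].mean()
        b = min(dist[i, labels == g].mean() for g in groups if g != labels[i])
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))

emb = [[0, 0], [0, 1], [10, 0], [10, 1]]
print(round(average_silhouette_width(emb, ["T", "T", "B", "B"]), 3))      # 0.9 (cell types separated)
print(round(average_silhouette_width(emb, ["b1", "b2", "b1", "b2"]), 3))  # -0.448 (batches mixed)
```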

Biological Ground Truthing: Novel metrics like scGraph-OntoRWR evaluate whether model-derived cell relationships align with established biological knowledge in cell ontology [4].
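The exact scGraph-OntoRWR procedure is not reproduced here. As background only, the sketch shows generic random walk with restart (RWR) on a hypothetical five-node fragment of a cell ontology: the resulting visiting probabilities give an ontology-based proximity of every cell type to a seed type, the kind of reference that embedding-derived similarities can be compared against. It assumes NumPy and a connected graph with no isolated nodes.

```python
import numpy as np

def random_walk_with_restart(adj, seed, restart=0.3, tol=1e-10, max_iter=1000):
    """Stationary visiting probabilities of a walk that jumps back to `seed` with probability
    `restart` at each step: p = (1 - restart) * W @ p + restart * e_seed,
    where W is the column-normalized adjacency matrix."""
    adj = np.asarray(adj, dtype=float)
    W = adj / adj.sum(axis=0, keepdims=True)
    e = np.zeros(len(adj))
    e[seed] = 1.0
    p = e.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * W @ p + restart * e
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p

nodes = ["T cell", "CD4 T cell", "CD8 T cell", "B cell", "lymphocyte"]
adj = np.zeros((5, 5))
for i, j in [(0, 1), (0, 2), (0, 4), (3, 4)]:  # undirected ontology edges
    adj[i, j] = adj[j, i] = 1.0
p = random_walk_with_restart(adj, seed=1)  # seed: "CD4 T cell"
print([nodes[i] for i in np.argsort(-p)])
# ['CD4 T cell', 'T cell', 'lymphocyte', 'CD8 T cell', 'B cell']
```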

Table 3: Essential Research Reagents and Computational Resources for scFM Applications

| Resource Category | Specific Tools/Platforms | Function/Purpose | Key Features |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE [1], GEO [1], Single-Cell Data Portals | Standardized access to annotated single-cell datasets | Curated collections with uniform formatting |
| Model Frameworks | BioLLM [8], scGPT Pipeline [8], Geneformer Codebase | Standardized APIs for model application and switching | Reduces implementation barriers |
| Preprocessing Tools | Scanpy, Seurat, SynEcoSys Database [6] | Quality control, normalization, gene name standardization | Prepares raw data for model input |
| Evaluation Metrics | scGraph-OntoRWR [4], LCAD [4], Traditional ML metrics | Assess biological relevance and task performance | Connects model outputs to biological knowledge |
| Computational Infrastructure | MindSpore Framework [6], PyTorch, GPU/NPU Clusters | Enables training and inference of large-scale models | Handles massive parameter counts and datasets |

Single-cell foundation models represent a transformative development in computational biology, offering unprecedented capabilities for analyzing cellular heterogeneity and function. However, current benchmarking reveals a nuanced landscape where model selection requires careful consideration of task requirements, dataset characteristics, and computational resources [4].

The field is rapidly evolving with several promising directions:

Architectural innovations: New paradigms like state space models (GeneMamba) and hybrid architectures address computational limitations of pure transformer approaches [7].

Scale expansion: Models like CellFM demonstrate the potential of extreme scaling in both training data (100M+ cells) and parameters (800M+) [6].

Multimodal integration: Future models will incorporate additional data modalities including epigenomics, proteomics, and spatial information [5].

Specialized domain adaptation: Models like scPlantLLM demonstrate the value of domain-specific adaptation, particularly for non-animal systems [5].

For researchers and drug development professionals, the current scFM landscape offers powerful tools but requires informed selection based on specific use cases rather than assuming universal superiority of any single approach. As standardization improves and biological interpretability deepens, these models promise to become increasingly indispensable for extracting insights from the complex language of cellular biology.

Transformer architectures have fundamentally reshaped the landscape of single-cell genomics, emerging as foundational infrastructure for next-generation biological discovery. Originally developed for natural language processing (NLP), these models have been adapted to decode the complex "language" of cellular biology, in which cells are treated as sentences and genes as words [1] [10]. The self-attention mechanism at the core of transformers captures long-range dependencies in gene expression data, mirroring its success in modeling contextual relationships in text [11]. This capability has catalyzed the development of single-cell foundation models (scFMs): large-scale deep learning models pretrained on vast datasets that can be adapted to numerous downstream analytical tasks [1] [12].

The transition to transformer-based models addresses critical limitations in traditional single-cell analysis methods, which often struggled with the high dimensionality, technical noise, and complex heterogeneity inherent in single-cell omics data [13] [4]. By training on millions of cells across diverse tissues, conditions, and species, scFMs learn fundamental biological principles that generalize across experimental contexts [1] [10]. This review provides a comprehensive comparison of leading transformer-based scFM architectures, their performance across specialized tasks, and the experimental frameworks validating their biological utility, offering researchers evidence-based guidance for model selection and implementation.

Core Transformer Components in scFMs

Transformer architectures adapted for single-cell analysis retain the fundamental components of their NLP counterparts while incorporating crucial modifications for biological data. The self-attention mechanism serves as the computational core, allowing the model to dynamically weight the importance of different genes when representing a cell's state [1] [11]. This capability enables scFMs to identify which genes are most informative for determining cellular identity, state, and functional relationships [1]. The multi-head attention architecture further enhances this by enabling the model to simultaneously focus on different types of gene-gene relationships across multiple representation subspaces [11].
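Multi-head attention splits the embedding into several subspaces and runs attention independently in each, so different heads can weight different gene-gene relationships. A minimal bidirectional NumPy sketch follows; the sizes and random weights are illustrative assumptions, not any model's configuration.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads):
    """Bidirectional multi-head self-attention over gene tokens.

    x: (n_genes, d_model); all weight matrices: (d_model, d_model); d_model divisible by n_heads.
    Each head attends within its own d_model // n_heads subspace."""
    n, d = x.shape
    d_head = d // n_heads
    def split(m):  # (n, d) -> (n_heads, n, d_head)
        return m.reshape(n, n_heads, d_head).transpose(1, 0, 2)
    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    weights = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(d_head))  # (n_heads, n, n)
    heads = weights @ v                                             # (n_heads, n, d_head)
    concat = heads.transpose(1, 0, 2).reshape(n, d)                 # rejoin the heads
    return concat @ w_o, weights

rng = np.random.default_rng(0)
d_model, n_heads = 16, 4
x = rng.normal(size=(6, d_model))  # 6 toy gene tokens
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) / np.sqrt(d_model) for _ in range(4))
out, attn = multi_head_attention(x, w_q, w_k, w_v, w_o, n_heads)
print(out.shape, attn.shape)  # (6, 16) (4, 6, 6)
```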

Most scFMs utilize either encoder-based or decoder-based transformer variants, each with distinct strengths. Encoder-based models (e.g., scBERT, Geneformer) employ bidirectional attention mechanisms that process all genes in a cell simultaneously, making them particularly effective for classification tasks and embedding generation [1]. In contrast, decoder-based models (e.g., scGPT) use masked self-attention mechanisms that iteratively predict masked genes conditioned on known expressions, excelling in generative tasks [1]. Hybrid architectures that combine encoder and decoder components are also emerging, though no single variant has established clear superiority across all biological tasks [1].

Tokenization Strategies: Converting Biology to Machine-Readable Inputs

A critical adaptation for applying transformers to single-cell data involves tokenization—the process of converting raw gene expression values into discrete units processable by the model [1] [10]. Unlike natural language with its inherent word sequence, gene expression data lacks natural ordering, requiring innovative solutions:

- Rank-based tokenization: Genes are ordered by their expression levels within each cell, creating a deterministic sequence based on expression magnitude [1] [14].

- Value binning: Expression values are partitioned into discrete bins, with each bin representing a different token [1].

- Normalized counts: Some models simply use normalized expression values without complex ranking schemes, reporting comparable performance [1].
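Rank-based tokenization as described for Geneformer goes one step beyond sorting raw values: each gene is first scaled by its median expression across the pretraining corpus, so ubiquitously high genes (such as housekeeping genes) are pushed down the ranking and genes that are distinctively high in this cell move up. The sketch below follows that idea under simplifying assumptions; the corpus medians and gene counts are made-up values, and the default length of 2,048 mirrors the input size listed for Geneformer in Table 1.

```python
import numpy as np

def rank_value_encode(counts, gene_ids, gene_medians, max_len=2048):
    """Rank-based tokenization in the style described for Geneformer:
    1) normalize the cell's counts by its total count,
    2) divide each gene by its (hypothetical) nonzero median across a reference corpus,
    3) sort expressed genes by the result and keep the top `max_len` gene IDs."""
    counts = np.asarray(counts, dtype=float)
    scaled = counts / counts.sum() / np.asarray(gene_medians, dtype=float)
    expressed = np.flatnonzero(counts > 0)
    order = expressed[np.argsort(-scaled[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:max_len]]

gene_ids = ["ACTB", "CD3E", "GAPDH", "MS4A1"]
counts = [50, 5, 40, 0]
medians = [0.2, 0.005, 0.2, 0.01]  # made-up corpus medians
print(rank_value_encode(counts, gene_ids, medians))  # ['CD3E', 'ACTB', 'GAPDH']
```

Although CD3E has the fewest counts among the expressed genes, it ranks first because it is far above its corpus median.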

Additional specialized tokens enrich the biological context, including cell identity tokens that represent a cell's metadata, modality tokens for multi-omics integration, and batch-specific tokens to account for technical variations [1]. After tokenization, all tokens are converted to embedding vectors processed by the transformer layers, ultimately generating latent representations at both the gene and cellular levels [1].
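The sketch below shows how special tokens can be prepended to a cell's gene tokens before embedding lookup. The vocabulary, token names, sequence length and random embedding table are hypothetical; real models define their own special tokens and learn the embedding table during pretraining.

```python
import numpy as np

# Hypothetical vocabulary: special tokens first, then gene tokens.
SPECIAL = ["<cls>", "<pad>", "<rna>", "<atac>", "<batch_0>", "<batch_1>"]

def build_vocab(genes):
    return {tok: i for i, tok in enumerate(SPECIAL + list(genes))}

def build_input(gene_tokens, vocab, modality="<rna>", batch="<batch_0>", max_len=8):
    """Prepend cell-, modality- and batch-level tokens, then truncate or pad to max_len."""
    seq = ["<cls>", modality, batch] + list(gene_tokens)
    seq = seq[:max_len] + ["<pad>"] * max(0, max_len - len(seq))
    return np.array([vocab[t] for t in seq])

def embed(ids, vocab_size, d_model=4, seed=0):
    """Look up one vector per token id (random here, learned in a real model)."""
    table = np.random.default_rng(seed).normal(size=(vocab_size, d_model))
    return table[ids]

vocab = build_vocab(["CD3E", "ACTB", "GAPDH"])
ids = build_input(["CD3E", "ACTB"], vocab)
print(ids.tolist())                   # [0, 2, 4, 6, 7, 1, 1, 1]
print(embed(ids, len(vocab)).shape)   # (8, 4)
```

After the transformer layers, the output vector at the `<cls>` position is one common choice for a cell-level embedding, while the gene positions yield gene-level embeddings.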

Architectural Variations Across Prominent scFMs

Table 1: Architectural Specifications of Leading scFMs

| Model | Architecture Type | Parameters | Pretraining Scale | Input Genes | Embedding Dimension |
|---|---|---|---|---|---|
| Geneformer [13] | Encoder-based | 40M | 30M cells | 2,048 ranked genes | 256/512 |
| scGPT [12] [13] | Decoder-based | 50M | 33M cells | 1,200 HVGs | 512 |
| UCE [13] | Encoder-based | 650M | 36M cells | 1,024 non-unique genes | 1,280 |
| scFoundation [13] | Encoder-decoder | 100M | 50M cells | ~19,000 genes | 3,072 |
| Nicheformer [14] | Encoder-based | 49.3M | 110M cells | 1,500 tokens | 512 |
| CellMemory [15] | Bottlenecked Transformer | - | No pretraining | Flexible | - |

The architectural landscape of scFMs reveals substantial diversity in design choices and scaling approaches. Model sizes range from compact architectures like Geneformer (40M parameters) to substantially larger networks like UCE (650M parameters), reflecting different hypotheses about the optimal complexity for biological representation learning [13]. Pretraining corpora have expanded dramatically, with recent models like Nicheformer utilizing over 110 million cells from both dissociated and spatially-resolved transcriptomics assays [14]. Emerging innovations include specialized architectures like CellMemory, which incorporates a bottlenecked transformer inspired by global workspace theory in cognitive neuroscience to improve interpretability and handle out-of-distribution cells [15].

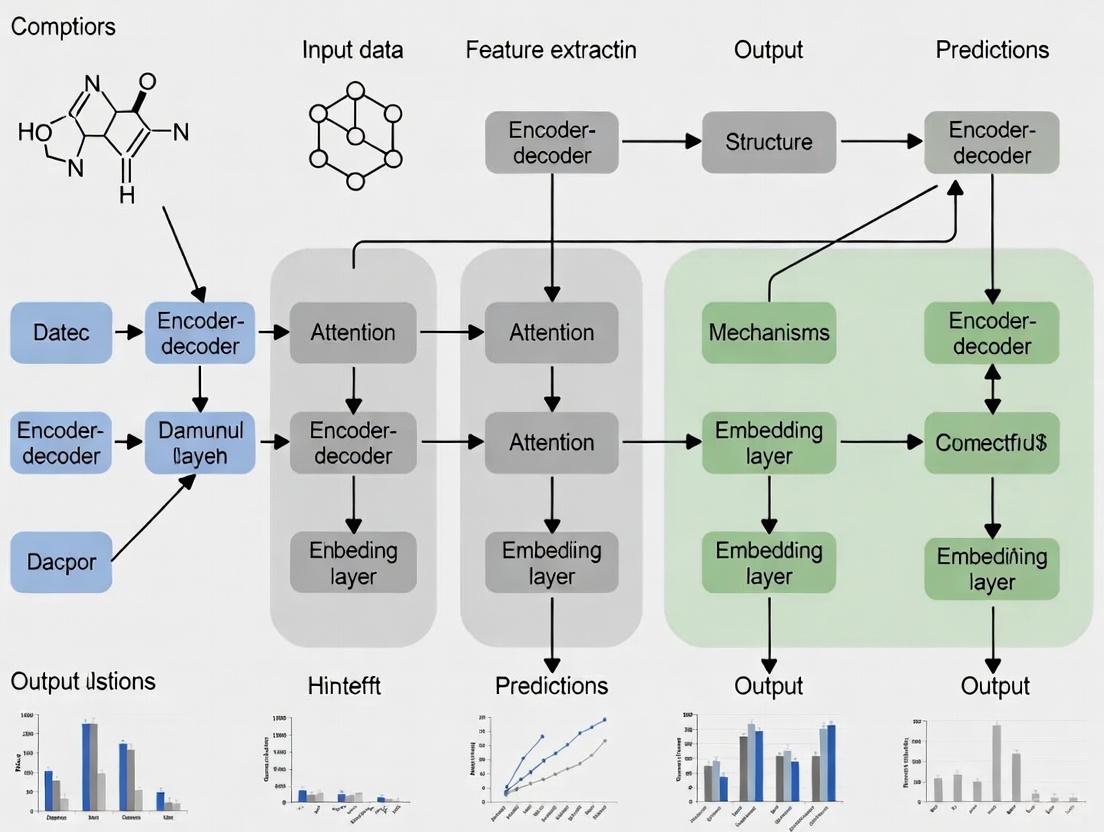

Diagram: Generic scFM Architecture showing the transformation of single-cell data through tokenization, embedding, and transformer layers to generate task-appropriate outputs.

Comparative Performance Analysis Across Biological Tasks

Experimental Frameworks for scFM Evaluation

Rigorous benchmarking studies have established standardized protocols to evaluate scFM performance across diverse biological tasks. A comprehensive 2025 benchmark assessed six prominent scFMs against established baselines using twelve evaluation metrics spanning unsupervised, supervised, and knowledge-based approaches [13] [4]. The evaluation incorporated biologically-informed metrics like scGraph-OntoRWR, which measures consistency between model-derived cell type relationships and established biological ontologies, and LCAD (Lowest Common Ancestor Distance), which quantifies the severity of cell type misannotation errors [13] [4].
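The intuition behind LCAD can be illustrated on a toy ontology: mistaking a CD4 T cell for a CD8 T cell is a milder error than mistaking it for a B cell, because the first pair shares a closer common ancestor. The benchmark's exact formula is not given here. As an assumption, this sketch counts hops from the true and predicted labels up to their lowest common ancestor in a hypothetical, tree-shaped fragment (real cell ontologies are directed acyclic graphs in which a node can have several parents).

```python
def ancestors(node, parent):
    """Path from node up to the root, inclusive; parent maps child -> parent."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path

def lca_distance(true_label, predicted_label, parent):
    """Hops from each label up to their lowest common ancestor, summed; 0 means a correct call."""
    up_true = ancestors(true_label, parent)
    depth_in_pred = {n: i for i, n in enumerate(ancestors(predicted_label, parent))}
    for i, n in enumerate(up_true):
        if n in depth_in_pred:
            return i + depth_in_pred[n]
    raise ValueError("labels do not share a root")

parent = {"CD4 T cell": "T cell", "CD8 T cell": "T cell",
          "T cell": "lymphocyte", "B cell": "lymphocyte"}
print(lca_distance("CD4 T cell", "CD4 T cell", parent))  # 0
print(lca_distance("CD4 T cell", "CD8 T cell", parent))  # 2
print(lca_distance("CD4 T cell", "B cell", parent))      # 3
```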

Experimental designs typically evaluate both zero-shot performance (using pretrained embeddings without task-specific fine-tuning) and fine-tuned performance (after additional task-specific training) [13] [8]. To ensure real-world relevance, benchmarks include clinically oriented tasks such as cancer cell identification and drug sensitivity prediction across multiple cancer types and therapeutic compounds [13]. Independent validation datasets like the Asian Immune Diversity Atlas (AIDA) v2 further mitigate the risk of data leakage and provide unbiased performance assessment [13].

Task-Specific Performance Comparisons

Table 2: Comparative Performance of scFMs Across Key Biological Tasks

| Model | Cell Type Annotation | Batch Integration | Perturbation Prediction | Spatial Task Performance | Multi-Omic Integration |
|---|---|---|---|---|---|
| scGPT | Excellent [8] | Strong [12] | Excellent [12] | Limited [14] | Strong [1] |
| Geneformer | Good [13] | Moderate [13] | Strong [13] | Limited [14] | Limited |
| Nicheformer | Good (spatial) [14] | Strong (spatial) [14] | Not Reported | Excellent [14] | Moderate |
| UCE | Variable [13] | Variable [13] | Good [13] | Limited | Limited |
| scFoundation | Good [13] | Good [13] | Strong [13] | Limited | Limited |
| CellMemory | Excellent (OOD) [15] | Strong [15] | Not Reported | Excellent [15] | Not Reported |

Performance analyses reveal that no single scFM consistently dominates across all tasks, emphasizing the importance of task-specific model selection [13] [4]. In cell type annotation, scGPT demonstrates robust performance, while CellMemory excels particularly at annotating rare and out-of-distribution cell types, achieving 81% accuracy on challenging beta_minor pancreatic cells where other models struggled [15] [8]. For spatially-aware tasks, Nicheformer substantially outperforms models trained solely on dissociated data, accurately predicting spatial context and cellular niche composition by leveraging its training on 53 million spatially resolved cells [14].

In batch integration tasks, which remove technical variations while preserving biological signals, transformer-based approaches generally show strong performance, though simpler methods like Harmony and scVI remain competitive in certain scenarios [13]. For perturbation prediction, models with effective pretraining strategies like Geneformer and scGPT demonstrate notable capabilities in forecasting cellular responses to genetic and chemical perturbations [12] [13]. Benchmarking results consistently indicate that while scFMs provide robust and versatile performance across diverse applications, simpler machine learning models can sometimes outperform them on specific tasks, particularly under computational constraints or with limited dataset sizes [13].

Implementation Considerations and Research Solutions

Computational Ecosystem and Tools

The growing complexity of scFM architectures has spurred development of standardized frameworks to facilitate their application and comparison. BioLLM provides a unified interface for integrating and benchmarking diverse scFMs, offering standardized APIs that eliminate architectural and coding inconsistencies [12] [8]. This framework supports both zero-shot and fine-tuning evaluation, enabling researchers to make informed decisions about model selection based on comprehensive performance data [8].
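The value of a unified interface is that evaluation code is written once and every model is called the same way. The sketch below illustrates that adapter pattern with a hypothetical `CellEmbedder` protocol and a toy baseline; it is not BioLLM's actual API, and a real adapter would wrap a pretrained model's own loading and inference code.

```python
from typing import Dict, List, Protocol, Sequence

class CellEmbedder(Protocol):
    """Hypothetical common interface that each wrapped model implements."""
    name: str
    def embed_cells(self, cells: Sequence[Dict[str, float]]) -> List[List[float]]: ...

class MeanExpressionBaseline:
    """Toy stand-in for a wrapped model: embeds each cell as [mean expression, max expression]."""
    name = "mean-baseline"

    def embed_cells(self, cells):
        return [[sum(c.values()) / max(len(c), 1), max(c.values(), default=0.0)] for c in cells]

def run_zero_shot(models: Sequence[CellEmbedder], cells) -> Dict[str, List[List[float]]]:
    """Call every model through the same method so downstream evaluation code is shared."""
    return {m.name: m.embed_cells(cells) for m in models}

cells = [{"CD3E": 2.0, "ACTB": 4.0}, {"MS4A1": 1.0}]
print(run_zero_shot([MeanExpressionBaseline()], cells))
# {'mean-baseline': [[3.0, 4.0], [1.0, 1.0]]}
```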

Data resources have become equally critical for scFM development and application. Platforms like CZ CELLxGENE provide unified access to over 100 million annotated single cells, while the Human Cell Atlas and other multiorgan atlases offer broad coverage of cell types and states [1] [10]. Computational ecosystems like DISCO further aggregate single-cell data for federated analysis, creating the extensive training corpora essential for effective scFM pretraining [12].

Key Research Reagents and Computational Solutions

Table 3: Essential Research Resources for scFM Implementation

| Resource Category | Specific Tools/Databases | Primary Function | Access Method |
|---|---|---|---|
| Benchmarking Frameworks | BioLLM [8], scGraph-OntoRWR [13] | Standardized model evaluation and comparison | Python packages |
| Data Repositories | CZ CELLxGENE [1], DISCO [12], GEO/SRA [1] | Provide curated single-cell datasets for training and testing | Web portal/API |
| Model Architectures | scGPT [12], Geneformer [13], Nicheformer [14] | Pretrained foundation models for various tasks | GitHub repositories |
| Integration Tools | Seurat [13], Harmony [13], scVI [13] | Baseline methods for performance comparison | R/Python packages |
| Visualization Platforms | CellxGene [13], UCSC Cell Browser [12] | Interactive exploration of model outputs and embeddings | Web applications |

Decision Framework for Model Selection

Based on comprehensive benchmarking results, researchers can apply the following decision framework for scFM selection:

- For general-purpose single-cell analysis: scGPT demonstrates robust performance across multiple task categories and offers strong multi-omic capabilities [12] [8].

- For spatial transcriptomics and niche analysis: Nicheformer systematically outperforms other models on spatially-aware tasks by incorporating spatial context during pretraining [14].

- For analyzing rare or novel cell types: CellMemory shows exceptional performance for out-of-distribution cells, accurately annotating cell populations absent from training data [15].

- For gene-level tasks and regulatory inference: Geneformer and scFoundation provide strong gene embeddings that effectively capture functional relationships [13] [8].

- Under computational constraints: Simpler baseline models (Seurat, Harmony) may provide sufficient performance for standard tasks without requiring extensive computational resources [13].

The roughness index (ROGI) can serve as a practical proxy for model selection, measuring the smoothness of the cell-property landscape in the latent space, which correlates with downstream task performance [13].

Future Directions and Conceptual Limitations

Despite rapid advancement, transformer-based scFMs face several conceptual and technical challenges. Interpretability remains a significant hurdle, as understanding the biological relevance of latent embeddings and attention weights continues to be nontrivial [1] [15]. The nonsequential nature of omics data presents fundamental architectural questions, as genes lack inherent ordering unlike words in natural language [1] [11]. Additionally, computational intensity for training and fine-tuning these models creates accessibility barriers for many research groups [1].

Promising research directions include developing more efficient attention mechanisms to reduce computational complexity, enhancing multimodal integration capabilities for combining transcriptomic, epigenomic, proteomic, and spatial data, and creating more biologically grounded pretraining objectives that incorporate known molecular interactions [12] [11]. Architectural innovations like CellMemory's bottlenecked transformer demonstrate how inspiration from other fields can address limitations in handling long token sequences while improving interpretability [15].

As the field matures, standardized benchmarking, improved model interoperability, and more sophisticated biological evaluation metrics will be crucial for translating computational advances into genuine biological insights and clinical applications [12] [13]. By critically understanding the strengths and limitations of different transformer architectures, researchers can more effectively leverage these powerful tools to unravel cellular complexity and advance precision medicine.

In single-cell biology, foundation models (scFMs) are revolutionizing how researchers interpret the complex language of cellular function. These large-scale deep learning models, pretrained on vast single-cell datasets, can be adapted for diverse downstream tasks from cell type annotation to perturbation prediction [1] [10]. A pivotal preprocessing step that enables this transformation is tokenization—the process of converting raw gene expression data into discrete units that models can process [1] [10]. Unlike natural language, where words naturally segment into tokens, gene expression data presents unique challenges: it's inherently non-sequential, high-dimensional, and sparse [4] [7]. This article provides a comprehensive comparison of prevailing tokenization strategies, their experimental evaluations, and practical considerations for researchers selecting approaches for single-cell analysis.

Main Tokenization Approaches: A Comparative Analysis

Single-cell foundation models employ different tokenization strategies to convert continuous gene expression values into model-readable inputs. The table below summarizes the primary approaches, their methodologies, and representative implementations.

Table 1: Comparison of Primary Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Methodology | Advantages | Limitations | Representative Models |
|---|---|---|---|---|
| Rank-based | Genes are ordered by expression level within each cell; the sequence of gene identifiers serves as tokens [7]. | Captures relative expression patterns; robust to batch effects and technical noise [7]. | Loses information about absolute expression magnitudes [7]. | Geneformer, GeneCompass, LangCell [7] |
| Bin-based | Continuous expression values are discretized into predefined bins or categories; each bin becomes a token [7]. | Preserves information about expression value distributions [7]. | Risk of information loss; sensitivity to bin selection parameters [7]. | scBERT, scGPT, scMulan [7] |
| Value Projection | Applies a linear transformation to the continuous expression vector, combining it with gene embeddings [7]. | Maintains full resolution of continuous data without discretization [7]. | Diverges from standard NLP tokenization; impact on performance not fully established [7]. | scFoundation, xTrimoGene [7] |
| Raw Normalized Counts | Uses normalized count values directly without complex discretization or ranking [1]. | Simple and straightforward implementation; avoids artificial boundaries from binning. | May not optimally structure data for the model's attention mechanisms. | Multiple models [1] |

Enhancing Tokenization with Biological Context

Beyond these core strategies, models often incorporate special tokens to enrich biological context. These include:

- Cell identity tokens prepended to gene sequences to provide cell-level context [1] [10].

- Modality indicators to distinguish between different omics data types (e.g., scRNA-seq vs. scATAC-seq) [1] [10].

- Gene metadata such as Gene Ontology terms or chromosomal locations to provide additional biological context [1] [10].

- Batch information to help account for technical variations across different experiments [1] [10].

Experimental Benchmarking and Performance Evaluation

Rigorous benchmarking studies have evaluated how different tokenization strategies impact model performance across biologically relevant tasks. Experimental protocols typically involve pretraining models with different tokenization approaches on large-scale single-cell atlases, then evaluating their zero-shot or fine-tuned performance on diverse downstream applications [4].

Benchmarking Methodology

Comprehensive evaluations follow standardized protocols:

- Model Selection: Multiple scFMs (e.g., Geneformer, scGPT, UCE, scFoundation) employing different tokenization strategies are selected [4].

- Task Design: Models are evaluated on both gene-level and cell-level tasks. Gene-level tasks include predicting gene functionality and tissue specificity, while cell-level tasks include batch integration, cell type annotation, and clinically relevant applications like cancer cell identification [4].

- Evaluation Metrics: Performance is assessed using multiple metrics including traditional supervised metrics and novel biology-informed measures such as scGraph-OntoRWR, which evaluates whether model-derived cell relationships align with established biological knowledge from cell ontologies [4].

Table 2: Performance Comparison of Models Using Different Tokenization Strategies

| Model | Primary Tokenization Strategy | Cell Type Annotation | Batch Integration | Biological Relevance (scGraph-OntoRWR) | Computational Efficiency |
|---|---|---|---|---|---|
| scGPT | Bin-based [7] | Strong [8] | Strong [8] | Moderate [4] | Moderate [7] |
| Geneformer | Rank-based [7] | Moderate [8] | Moderate [4] | High [4] | High [7] |
| scFoundation | Value Projection [7] | Strong (gene-level) [8] | Moderate [4] | High [4] | Variable [7] |
| scBERT | Bin-based [7] | Weaker [8] | Weaker [4] | Moderate [4] | High [7] |

Key Findings from Benchmarking Studies

Experimental results reveal several important patterns:

- No single superior strategy: No tokenization approach consistently outperforms others across all tasks and datasets [4].

- Task-dependent performance: Rank-based approaches often excel at capturing biological relationships, while bin-based and value projection methods may perform better on specific classification tasks [4] [8].

- Data efficiency considerations: While foundation models show robust performance, simpler traditional methods can be more efficient for dataset-specific applications, particularly under resource constraints [4].

Visualizing Tokenization Workflows

The following diagram illustrates the complete tokenization pipeline from raw single-cell data to model-ready token sequences, highlighting the key decision points for different strategies.

Tokenization Workflow from Raw Data to Model Input

Implementing effective tokenization strategies requires leveraging curated biological datasets and computational resources. The table below outlines key resources for researchers developing or working with single-cell foundation models.

Table 3: Essential Research Resources for Single-Cell Foundation Model Development

| Resource Type | Resource Name | Function and Application | Access Information |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE [1] [10] | Provides unified access to annotated single-cell datasets with over 100 million unique cells standardized for analysis. | Publicly available |
| | Human Cell Atlas [1] [10] | Offers broad coverage of cell types and states across multiple organs and species. | Publicly available |
| | NCBI GEO and SRA [1] [10] | Host thousands of single-cell sequencing studies for assembling diverse training corpora. | Publicly available |
| Curated Compendia | PanglaoDB [1] [10] | Collates single-cell data from multiple sources with standardized annotations. | Publicly available |
| | Human Ensemble Cell Atlas [1] [10] | Integrates data from multiple studies to provide comprehensive cell type references. | Publicly available |
| Evaluation Frameworks | BioLLM [8] | Provides standardized APIs for benchmarking scFMs across diverse tasks and tokenization strategies. | Open source |
| | scGraph-OntoRWR [4] | Novel metric evaluating the biological relevance of embeddings against established ontologies. | Research implementation |

Future Directions in Tokenization Research

As single-cell foundation models evolve, tokenization strategies continue to advance with several promising directions:

- Biologically informed tokenization: Developing methods that incorporate prior biological knowledge about gene interactions, pathways, and regulatory networks [16] [17].

- Adaptive tokenization: Creating tokenizers that dynamically adjust to specific biological contexts or tissue types [18].

- Multi-modal integration: Designing tokenization schemes that seamlessly integrate multiple data modalities (transcriptomics, proteomics, epigenomics) while preserving inter-modality relationships [1] [19].

- Efficiency optimization: Refining tokenization to reduce computational demands while maintaining biological fidelity, particularly important as dataset sizes continue growing [7].

Tokenization serves as the critical bridge connecting raw biological data with powerful analytical models in single-cell research. Comparing the main approaches (rank-based, bin-based, value projection, and normalized counts) shows that each presents distinct tradeoffs in biological relevance, computational efficiency, and task-specific performance. Experimental benchmarking indicates that strategy selection should be guided by specific research goals, dataset characteristics, and computational resources rather than by a search for a universal optimal solution. As the field advances, more biologically grounded tokenization methods and standardized evaluation frameworks will be essential for unlocking deeper insights into cellular function and disease mechanisms through single-cell foundation models.

Single-cell foundation models (scFMs) are revolutionizing biological research by enabling a unified analysis of cellular biology at scale. These models, trained on millions of single-cell transcriptomes, learn the fundamental "language" of cells, where a cell is treated as a sentence and its genes as words [1] [10]. The performance and utility of these models are intrinsically tied to the quality, scale, and diversity of the data on which they are pretrained. This guide provides an objective comparison of the primary data sources and the models they empower, offering researchers a framework for selecting the right resources and tools for their work.

Large-scale, publicly available cell atlases provide the foundational data necessary for pretraining scFMs. These resources aggregate and curate data from thousands of individual studies, though they vary significantly in scope and content. The table below summarizes key characteristics of several prominent atlases.

Cell Atlas Overview

| Atlas Name | # Cells (Millions) | # Species | Key Features & Notes | URL |
|---|---|---|---|---|
| CZ CELLxGENE Discover [20] | 112.8 | 7 | A major unified resource; used for pretraining by multiple scFMs [21]. | https://cellxgene.cziscience.com/ |
| DISCO [20] | 125.6 | 1 (Human) | Deeply Integrated Single-Cell Omics database. | https://www.immunesinglecell.org |
| Single Cell Portal [20] | 57.6 | 18 | Hosted by the Broad Institute. | https://singlecell.broadinstitute.org |
| Human Cell Atlas (HCA) [20] | 65.4 | 1 (Human) | A foundational international consortium. | https://data.humancellatlas.org/ |
| Single Cell Expression Atlas [20] | 13.5 | 21 | Hosted by EMBL-EBI. | https://www.ebi.ac.uk/gxa/sc/home |
| Arc Virtual Cell Atlas [22] | 300+ | 21 | Includes the new Tahoe-100M perturbation dataset and AI-curated scBaseCount. | https://arcinstitute.org/tools/virtualcellatlas |

Comparative Analysis of scFMs Pretrained on Large-Scale Data

Different scFMs leverage these atlases with distinct architectural choices and pretraining strategies, leading to varied performance across downstream tasks. The following table compares several leading models.

Model Comparison

| Model Name | Pretraining Scale | Key Architectural & Data Features | Noted Strengths from Benchmarks |
|---|---|---|---|
| scGPT [8] | 33 million cells [4] | Uses GPT-like decoder architecture; incorporates gene and value embeddings [4]. | Robust performance across all tasks, including zero-shot and fine-tuning [8]. |
| Geneformer [8] | 30 million cells [5] | Pretrained on 30 million cells from the cellxgene database [5]. | Strong capabilities in gene-level tasks [8]. |
| scFoundation [8] | 50 million cells [21] | A large-scale foundation model on single-cell transcriptomics [5]. | Strong performance on gene-level tasks [8]. |
| scPRINT [21] | 50 million cells [21] | Uses protein embeddings (ESM2) for gene IDs; innovative multi-task pretraining [21]. | Superior performance in gene network inference; competitive in denoising and batch correction [21]. |
| scPlantLLM [5] | Plant-specific data [5] | A model specifically trained on plant single-cell data [5]. | High accuracy in cell type annotation and batch integration on plant data [5]. |
| scBERT [8] | Not specified | Smaller model size and limited training data compared to others [8]. | Lagged behind larger models in performance [8]. |

Experimental Protocols for Benchmarking scFMs

To ensure fair and meaningful comparisons, benchmarking studies employ standardized evaluation protocols across diverse biological tasks. The following diagram and table outline a typical benchmarking workflow and the key metrics used.

Benchmarking scFM Performance

Key Evaluation Metrics

| Task Category | Evaluation Metric | Description | What It Measures |
|---|---|---|---|
| Gene-Level Tasks | GO Term Prediction Accuracy [4] | Assesses whether gene embeddings can predict known Gene Ontology biological functions. | Biological relevance of gene representations. |
| Cell-Level Tasks | Batch Effect Removal (ASW_batch) [4] [23] | Average silhouette width computed on batch labels. Raw values near zero indicate better batch mixing; scIB-style implementations report one minus the absolute silhouette, so that higher is better. | Technical effect removal. |
| Cell-Level Tasks | Biological Conservation (ASW_cell) [4] [23] | Average silhouette width computed on cell type labels. A higher score indicates better preservation of cell identity. | Biological variation preservation. |
| Cell-Level Tasks | Cell Ontology-informed Metrics (scGraph-OntoRWR) [4] | Measures consistency of cell type relationships in the model with prior knowledge in cell ontologies. | Biological plausibility of latent space. |
| Cell-Level Tasks | Lowest Common Ancestor Distance (LCAD) [4] | Measures ontological proximity between misclassified cell types. | Meaningfulness of model errors. |
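A minimal pure-Python sketch of the two silhouette-based metrics in the table, assuming Euclidean distances on a toy 2-D embedding. The batch score follows a simplified version of the scIB convention (silhouette on batch labels computed within each cell type, rescaled as 1 - |s| so that higher means better mixing). The data and function names are hypothetical; production benchmarks rely on library implementations such as scikit-learn's `silhouette_score`.

```python
import math
from statistics import mean


def silhouette_per_cell(points, labels):
    """Per-cell silhouette s(i) = (b - a) / max(a, b) using Euclidean distance."""
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        same = [math.dist(p, q) for j, (q, lab_j) in enumerate(zip(points, labels))
                if lab_j == lab and j != i]
        if not same:  # singleton group: silhouette defined as 0 (scikit-learn convention)
            scores.append(0.0)
            continue
        a = mean(same)  # mean distance to the cell's own group
        b = min(        # mean distance to the nearest other group
            mean([math.dist(p, q) for q, lab_j in zip(points, labels) if lab_j == other])
            for other in set(labels) if other != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return scores


def batch_asw_scib_style(points, batches, cell_types):
    """Simplified scIB-style batch ASW: 1 - |s| on batch labels within each cell type."""
    per_type = []
    for ct in sorted(set(cell_types)):
        idx = [i for i, c in enumerate(cell_types) if c == ct]
        if len({batches[i] for i in idx}) < 2:
            continue  # a cell type seen in only one batch carries no mixing information
        sil = silhouette_per_cell([points[i] for i in idx], [batches[i] for i in idx])
        per_type.append(mean(1 - abs(s) for s in sil))
    return mean(per_type)


# Toy embedding: two well-separated cell types, each with its two batches interleaved.
emb = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 0), (10, 1), (11, 0), (11, 1)]
cell_type = ["T"] * 4 + ["B"] * 4
batch = ["b1", "b2", "b2", "b1", "b1", "b2", "b2", "b1"]

asw_cell = mean(silhouette_per_cell(emb, cell_type))   # high: cell identity preserved
asw_batch = batch_asw_scib_style(emb, batch, cell_type)  # high: batches well mixed
print(round(asw_cell, 3), round(asw_batch, 3))
```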

The Scientist's Toolkit: Essential Research Reagents

Working with scFMs and large atlases requires a suite of computational "reagents" and resources. The following table details key tools and their functions in the model development and analysis pipeline.

Research Reagent Solutions

| Item / Resource | Function | Example / Note |
|---|---|---|
| Unified Data Portals | Provide centralized, uniformly processed single-cell data for pretraining and fine-tuning. | CZ CELLxGENE, HCA Data Portal [1] [20]. |
| Standardized Metadata & Ontologies | Enable automated processing and ensure interoperability across datasets by providing a structured vocabulary for cell types. | Cell Ontology (CL) [20]. |
| Unified Framework Tools | Simplify model access and benchmarking by providing standardized APIs for diverse scFMs, mitigating challenges from heterogeneous architectures. | BioLLM framework [8]. |
| Transfer Learning Tools | Enable efficient mapping of new query datasets to large reference atlases without sharing raw data, facilitating iterative reference building. | scArches (single-cell architectural surgery) [23]. |
| Computational Hardware | Running and fine-tuning large scFMs requires significant GPU resources. Efficient hardware is critical for practical application. | GPUs with sufficient memory (e.g., A40 GPU used for scPRINT training) [21]. |

The construction of powerful single-cell foundation models is fundamentally driven by the million-cell atlases that serve as their training corpora. While general-purpose atlases like CELLxGENE and the Arc Virtual Cell Atlas provide the broad data foundation for models like scGPT and Geneformer, the emergence of specialized models like scPlantLLM and scPRINT highlights a trend towards purpose-built solutions. Benchmarks reveal that no single scFM dominates all tasks; selection must be guided by the specific biological question, whether it requires robust all-around performance (scGPT), specialized gene network inference (scPRINT), or analysis of non-animal data (scPlantLLM). As the field evolves, the synergy between ever-larger, higher-quality data atlases and more refined model architectures will continue to deepen our computational understanding of cellular biology.

The explosion of single-cell genomics data has created an urgent need for computational frameworks capable of integrating and analyzing cellular information at unprecedented scales. Self-supervised learning (SSL) has emerged as a transformative approach, enabling models to learn the fundamental "language of cells" by pretraining on vast, unlabeled datasets. These single-cell foundation models (scFMs) treat individual cells as sentences and genes as words, creating a powerful paradigm for deciphering cellular heterogeneity and function. As the field rapidly evolves, researchers and drug development professionals face the critical challenge of selecting appropriate models for specific biological questions. This guide provides an objective comparison of leading scFM architectures, synthesizing performance data from recent benchmarks to inform model selection for research and clinical applications.

scFM Architectures: Tokenization, Pretraining, and Adaptation

Fundamental Architecture and Tokenization Strategies

Single-cell foundation models adapt transformer architectures to the unique challenges of genomic data. Unlike natural language, gene expression data lacks inherent sequence, requiring innovative tokenization approaches. Most scFMs represent genes or genomic features as tokens, with each cell comprising a "sentence" of these tokens [1] [10]. Three predominant tokenization strategies have emerged:

- Expression-based ranking orders genes by expression level within each cell to create a deterministic sequence [1].

- Expression binning partitions genes into bins based on expression values [1].

- Normalized counts directly uses normalized expression values without complex ranking [1].

Special tokens are often incorporated to enrich biological context, including cell identity metadata, modality indicators for multi-omics data, and gene annotations from resources like Gene Ontology [1] [10]. After tokenization, genes are converted to embedding vectors processed by transformer layers, typically producing two types of output: gene-level embeddings and a dedicated cell-level embedding [1].
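The first two strategies can be sketched for a single cell in a few lines. This is a simplified illustration, not any model's actual tokenizer: it ranks expressed genes by their normalized value (Geneformer's published scheme additionally scales each gene by its expression across the pretraining corpus before ranking) and assigns non-zero values to equal-frequency bins within the cell, in the spirit of scGPT-style binning. Gene names, values, and bin counts are hypothetical.

```python
# Toy tokenizers for one cell's expression profile (gene -> normalized value).
# Loosely modeled on rank-based and bin-based schemes; NOT the code of any specific scFM.


def rank_tokens(expr, max_len=None):
    """Rank-based: expressed genes ordered by descending value (ties broken by gene name)."""
    expressed = [(gene, value) for gene, value in expr.items() if value > 0]
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    genes = [gene for gene, _ in expressed]
    return genes if max_len is None else genes[:max_len]


def bin_tokens(expr, n_bins=5):
    """Bin-based: zeros -> bin 0; non-zero values -> equal-frequency bins 1..n_bins within the cell."""
    nonzero = sorted(v for v in expr.values() if v > 0)

    def bin_of(value):
        if value <= 0:
            return 0
        below = sum(1 for x in nonzero if x < value)  # rank among this cell's non-zero values
        return 1 + min(n_bins - 1, below * n_bins // len(nonzero))

    return {gene: bin_of(value) for gene, value in expr.items()}


cell = {"CD3E": 5.0, "CD8A": 2.0, "MS4A1": 0.0, "GAPDH": 9.0, "IL7R": 1.0}
print(rank_tokens(cell))           # ['GAPDH', 'CD3E', 'CD8A', 'IL7R']
print(bin_tokens(cell, n_bins=4))  # {'CD3E': 3, 'CD8A': 2, 'MS4A1': 0, 'GAPDH': 4, 'IL7R': 1}
```

In the rank-based scheme the gene order itself carries the expression signal, so the value is discarded; in the bin-based scheme every gene keeps its identity token and is paired with a discrete value token. The normalized-counts strategy skips both steps and feeds the continuous values to a value embedding directly.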

The transformer architecture itself has been implemented in both encoder-based (BERT-like) and decoder-based (GPT-like) variants for single-cell data [1]. scBERT employs a bidirectional encoder architecture that learns from all genes in a cell simultaneously [1] [24], while scGPT uses a decoder-style architecture with masked self-attention that predicts masked genes conditioned on known genes [1]. Hybrid designs are also being explored, though no single architecture has emerged as clearly superior across all tasks [1].
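The difference between the two attention patterns can be illustrated with boolean attention masks, where `mask[i][j]` is True if position i may attend to position j. The second mask is a simplified reading of the masked-prediction idea described above (genes whose values must be predicted see the known genes and themselves, but not each other); it is an assumption for illustration, not scGPT's actual implementation.

```python
def bidirectional_mask(n):
    """Encoder-style (BERT-like): every gene position may attend to every other position."""
    return [[True] * n for _ in range(n)]


def known_gene_mask(is_known):
    """Simplified masked-prediction mask: position i may attend to position j
    if gene j is known (its value is visible) or if j is i itself.
    Unknown genes therefore never see each other's hidden values."""
    n = len(is_known)
    return [[is_known[j] or i == j for j in range(n)] for i in range(n)]


# Four genes: the first two have visible values, the last two must be predicted.
mask = known_gene_mask([True, True, False, False])
for row in mask:
    print(row)
```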

Diagram: The scFM architecture pipeline shows how raw single-cell data is processed through tokenization strategies and transformer models to produce gene and cell embeddings.

Self-Supervised Pretraining Objectives

scFMs employ self-supervised pretraining objectives that enable learning without labeled data. The most successful approaches include:

- Masked Autoencoding: Randomly masking portions of the input gene expression profile and training the model to reconstruct the missing values [25]. Variations include random masking, gene program masking, and isolated masking of biologically meaningful gene sets [25].

- Contrastive Learning: Creating augmented views of cells and training the model to identify representations that are invariant to these augmentations [26]. Methods include BYOL (Bootstrap Your Own Latent) and Barlow Twins, which avoid negative pairs [25].

- Multimodal Alignment: For multi-omics data, aligning representations across different modalities (e.g., RNA and protein) using contrastive objectives [26].

Recent evidence suggests that masked autoencoders may outperform contrastive methods in single-cell genomics, diverging from trends in computer vision [25]. Random masking has emerged as particularly effective, surpassing even domain-specific augmentations across multiple tasks [26].
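The random-masking objective can be sketched as follows: hide a fraction of the genes, let a model predict the full profile, and compute the reconstruction loss only on the hidden positions. The sentinel value, the 20% mask fraction, and the trivial "predict the mean of visible genes" stand-in for a model are all hypothetical; in practice models typically use a learned mask token or embedding and a transformer as the predictor. Gene program masking would differ only in choosing `idx` from a predefined gene set instead of at random.

```python
import random

MASK_VALUE = -1.0  # hypothetical sentinel; real models typically use a learned mask token/embedding


def random_mask(expr, mask_frac=0.15, rng=None):
    """Hide ~mask_frac of the genes; return the masked input and the hidden positions."""
    rng = rng or random.Random(0)
    n_mask = max(1, round(mask_frac * len(expr)))
    idx = sorted(rng.sample(range(len(expr)), n_mask))
    masked = list(expr)
    for i in idx:
        masked[i] = MASK_VALUE
    return masked, idx


def masked_mse(prediction, target, idx):
    """Reconstruction loss evaluated only on the masked positions."""
    return sum((prediction[i] - target[i]) ** 2 for i in idx) / len(idx)


def mean_of_visible(masked):
    """Toy stand-in for a model: fill every hidden gene with the mean visible value."""
    visible = [v for v in masked if v != MASK_VALUE]
    fill = sum(visible) / len(visible)
    return [fill if v == MASK_VALUE else v for v in masked]


expr = [0.0, 1.2, 3.4, 0.0, 2.2, 0.5, 0.0, 4.1, 1.0, 0.3]
masked, idx = random_mask(expr, mask_frac=0.2, rng=random.Random(42))
loss = masked_mse(mean_of_visible(masked), expr, idx)
print(idx, round(loss, 3))
```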

Comparative Performance Benchmarking

Batch Correction and Data Integration

Batch effects represent a fundamental challenge in single-cell genomics, where technical variations can obscure biological signals. Specialized single-cell frameworks such as scVI and CLAIRE, along with fine-tuned scGPT, demonstrate superior performance for uni-modal batch correction [26]. However, for multi-modal batch correction, generic SSL methods such as VICReg and SimCLR outperform domain-specific approaches [26].

Table 1: Batch Correction Performance Across Model Types

| Model Category | Representative Models | Uni-modal Performance | Multi-modal Performance | Key Strengths |
|---|---|---|---|---|
| Specialized Single-cell | scVI, CLAIRE, scGPT | Excellent | Moderate | Domain-specific architecture |
| Generic SSL | VICReg, SimCLR | Good | Excellent | Flexibility across data types |
| Foundation Models | scGPT, Geneformer | Good | Good | Transfer learning capability |

In benchmarking across five datasets with diverse biological conditions, scFMs demonstrated robust integration capabilities, particularly in preserving biological variation while removing technical artifacts [4]. The performance advantage was most pronounced in challenging scenarios involving cross-tissue homogeneity and intra-tumor heterogeneity [4].

Cell Type Annotation Accuracy

Cell type annotation remains a cornerstone of single-cell analysis, with methods ranging from unsupervised clustering to supervised classification. Benchmarking studies reveal that no single scFM consistently outperforms all others across diverse annotation tasks [4]. Instead, performance depends on factors including dataset size, cell type complexity, and annotation specificity.

Table 2: Cell Type Annotation Performance Across Models and Datasets

| Model | Tabula Sapiens (Macro F1) | PBMC SARS-CoV-2 (Macro F1) | Cross-Species Accuracy | Annotation Approach |
|---|---|---|---|---|
| Supervised Baseline | 0.2722 ± 0.0123 | 0.7013 ± 0.0077 | N/A | Traditional supervised learning |
| + SSL Pretraining | 0.3085 ± 0.0040 | 0.7466 ± 0.0057 | N/A | SSL with fine-tuning |
| scGPT | 0.3019 | 0.7412 | 92% (with scPlantFormer) | Zero-shot and fine-tuning |
| scBERT | 0.2955 | 0.7328 | Moderate | Fine-tuning required |
| Geneformer | 0.2872 | 0.7234 | Good | Contextual learning |

Notably, SSL pretraining on large auxiliary datasets (e.g., 20 million cells from the CELLxGENE Census) significantly boosts performance on smaller target datasets, with macro F1 scores increasing from 0.7013 to 0.7466 on PBMC data and from 0.2722 to 0.3085 on Tabula Sapiens [25]. This improvement is especially pronounced for underrepresented cell types, demonstrating SSL's value for imbalanced datasets [25].

Performance Across Downstream Tasks

The utility of scFMs extends beyond basic annotation to diverse downstream applications. A comprehensive benchmark of six scFMs against established baselines evaluated performance across two gene-level and four cell-level tasks [4]:

- Gene-level tasks: Tissue specificity prediction and Gene Ontology term prediction

- Cell-level tasks: Batch integration, cell type annotation, cancer cell identification, and drug sensitivity prediction

Performance was assessed using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches [4]. The introduction of cell ontology-informed metrics like scGraph-OntoRWR (measuring consistency of cell type relationships with biological knowledge) and LCAD (Lowest Common Ancestor Distance for error severity assessment) provided biologically grounded evaluation perspectives [4].
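LCAD is described above only as an error-severity measure based on the lowest common ancestor of the true and predicted cell types. A minimal sketch, assuming a tree-shaped toy ontology and defining the distance as the number of edges from each label up to their lowest common ancestor; the benchmark's exact definition may differ, and the real Cell Ontology is a directed acyclic graph rather than a tree. The ontology fragment and function names are hypothetical.

```python
def path_to_root(term, parent):
    """Follow child -> parent links from `term` up to the root (inclusive)."""
    path = [term]
    while term in parent:
        term = parent[term]
        path.append(term)
    return path


def lca_distance(true_type, predicted_type, parent):
    """Edges from each label up to their lowest common ancestor, summed; 0 for a correct call."""
    up_true = path_to_root(true_type, parent)
    depth_pred = {term: d for d, term in enumerate(path_to_root(predicted_type, parent))}
    for d_true, term in enumerate(up_true):
        if term in depth_pred:  # first shared term walking upward = lowest common ancestor
            return d_true + depth_pred[term]
    raise ValueError("labels do not share an ancestor")


# Hypothetical, tree-shaped fragment of a cell ontology (child -> parent).
parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
}
print(lca_distance("CD4 T cell", "CD8 T cell", parent))  # 2: near miss via "T cell"
print(lca_distance("CD4 T cell", "monocyte", parent))    # 4: distant error via "leukocyte"
```

Averaged over misclassified cells, a lower value means the model's mistakes tend to fall between closely related cell types.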

Diagram: The evaluation workflow for scFMs encompasses pretraining objectives, downstream applications, and multiple performance metrics.

Experimental Protocols and Methodologies

Standardized Benchmarking Frameworks

Rigorous evaluation of scFMs requires standardized benchmarking frameworks. Leading efforts include:

- scSSL-Bench: Evaluates 19 SSL methods across 9 datasets, focusing on batch correction, cell type annotation, and missing modality prediction [26]. Employs metrics like kNN accuracy for cell type annotation and average silhouette width for batch mixing [26].

- Biology-Driven Benchmark: Assesses 6 scFMs against traditional baselines using 12 metrics across gene-level and cell-level tasks [4]. Incorporates novel ontology-informed metrics like scGraph-OntoRWR [4].

- Transfer Learning Evaluation: Measures performance gains when models pretrained on large datasets (e.g., scTab with 20M+ cells) are fine-tuned on smaller target datasets [25].

These benchmarks consistently employ k-fold cross-validation, with common practices including 5-fold validation for cell type annotation tasks [24]. Evaluation typically occurs in both zero-shot settings (where models predict without fine-tuning) and fine-tuned scenarios [25] [4].
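For annotation tasks, cross-validation folds are usually stratified so that every cell type, including rare ones, is spread across folds. The helper below is a hypothetical sketch of that idea, not code from any of the cited benchmarks; scikit-learn's `StratifiedKFold` provides an equivalent, well-tested implementation.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Assign each cell index to one of k folds so that every cell type is spread across folds."""
    by_label = defaultdict(list)
    for i, lab in enumerate(labels):
        by_label[lab].append(i)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    offset = 0
    for lab in sorted(by_label):
        idx = by_label[lab]
        rng.shuffle(idx)
        for j, i in enumerate(idx):
            folds[(offset + j) % k].append(i)  # round-robin assignment within each cell type
        offset += len(idx)  # continue the round-robin so small classes don't all start at fold 0
    return [sorted(f) for f in folds]


labels = ["T"] * 10 + ["B"] * 5 + ["NK"] * 3
folds = stratified_kfold(labels, k=5)
for held_out, test_idx in enumerate(folds):
    train_idx = [i for j, fold in enumerate(folds) if j != held_out for i in fold]
    # A real run would fit the annotator on train_idx and score it on test_idx here.
    print(held_out, len(train_idx), len(test_idx))
```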

Data Processing and Normalization

Consistent data processing is critical for fair model comparison. Standard protocols include:

- Quality Control: Filtering cells based on gene counts, mitochondrial percentage, and other quality metrics [1].

- Normalization: Library size normalization followed by log transformation [27].

- Feature Selection: Identifying highly variable genes (HVGs) [4].

- Scaling: Z-score normalization or other scaling approaches [27].

For multi-omic data, additional processing steps include modality-specific normalization and cross-modal alignment [26] [12]. Batch-aware processing techniques are particularly important given the prevalence of batch effects in single-cell data [26].
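The four standard steps can be sketched on a toy count matrix in plain Python. In practice they are usually run with Scanpy (`sc.pp.filter_cells`, `sc.pp.normalize_total`, `sc.pp.log1p`, `sc.pp.highly_variable_genes`, `sc.pp.scale`). The thresholds, the `MT-` prefix rule for mitochondrial genes, and the simple top-variance HVG rule below are simplifying assumptions; Scanpy's HVG methods use dispersion-based or variance-stabilized criteria.

```python
import math
from statistics import mean, pstdev, pvariance


def preprocess(counts, genes, min_genes=2, max_mito_frac=0.2, target_sum=1e4, n_hvg=2):
    """Toy QC -> library-size normalization + log1p -> HVG selection -> per-gene z-scoring."""
    # 1. QC: keep cells with enough detected genes and a low mitochondrial read fraction.
    mito = [g.startswith("MT-") for g in genes]
    kept = []
    for row in counts:
        total = sum(row)
        detected = sum(1 for v in row if v > 0)
        mito_frac = sum(v for v, m in zip(row, mito) if m) / total if total else 1.0
        if detected >= min_genes and mito_frac <= max_mito_frac:
            kept.append(row)
    # 2. Scale each cell to target_sum total counts, then apply log(1 + x).
    logn = [[math.log1p(v * target_sum / sum(row)) for v in row] for row in kept]
    # 3. Keep the n_hvg genes whose log-normalized values vary most across cells (simplified HVG).
    variances = [pvariance(col) for col in zip(*logn)]
    hvg = sorted(sorted(range(len(genes)), key=lambda j: -variances[j])[:n_hvg])
    # 4. Z-score each selected gene across cells (constant genes are set to 0).
    cols = []
    for j in hvg:
        col = [r[j] for r in logn]
        mu, sd = mean(col), pstdev(col)
        cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    scaled = [list(cell) for cell in zip(*cols)]
    return scaled, [genes[j] for j in hvg]


genes = ["CD3E", "MS4A1", "GAPDH", "MT-CO1"]
counts = [
    [10, 0, 50, 5],
    [0, 12, 48, 4],
    [1, 0, 2, 30],  # mostly mitochondrial reads: removed by QC
    [8, 1, 45, 6],
]
scaled, hvg_names = preprocess(counts, genes)
print(hvg_names, len(scaled))
```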

Key Research Reagent Solutions

Table 3: Essential Resources for scFM Development and Application

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Data Repositories | CZ CELLxGENE (100M+ cells), Human Cell Atlas, DISCO, PanglaoDB | Provide standardized, annotated single-cell data for model training and validation |
| Benchmarking Platforms | scSSL-Bench, BioLLM | Enable standardized model evaluation and comparison across diverse tasks |
| Computational Tools | Scanpy, AnnData, AnnDictionary | Facilitate data preprocessing, analysis, and multimodal data management |
| Model Architectures | scGPT, Geneformer, scBERT, scVI, scReformer-BERT | Offer specialized architectures optimized for single-cell data challenges |

Computational Considerations

Training and applying scFMs requires substantial computational resources. Key considerations include:

- Memory Requirements: Standard transformers have quadratic complexity with sequence length, challenging for full gene sets (>10,000 genes) [24]. Efficient variants like Reformer reduce complexity through locality-sensitive hashing [24].

- Pretraining Infrastructure: Training on millions of cells typically requires GPU clusters and distributed training strategies [1].

- Fine-tuning Efficiency: While pretraining is computationally intensive, fine-tuning for specific tasks is more accessible [4].
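The quadratic cost can be made concrete with a back-of-the-envelope calculation of the memory needed just to hold one layer's attention-score matrices. The head count (8), half-precision storage (2 bytes per value), and sequence lengths are hypothetical choices for illustration, and memory-efficient attention kernels that avoid materializing the full matrix change this picture.

```python
def attention_scores_bytes(seq_len, n_heads=8, batch_size=1, bytes_per_value=2):
    """Bytes needed to hold one layer's attention-score matrices (batch x heads x L x L)."""
    return batch_size * n_heads * seq_len * seq_len * bytes_per_value


for n_genes in (2_048, 20_000):
    gb = attention_scores_bytes(n_genes) / 1e9
    print(f"{n_genes:>6} gene tokens -> {gb:.2f} GB per layer per cell")
```

Under these assumptions, going from 2,048 to 20,000 gene tokens (about 10 times longer) raises the per-layer, per-cell score memory from roughly 0.07 GB to 6.4 GB (about 95 times larger), which is why many scFMs cap the number of input genes or use efficient attention variants.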

The landscape of single-cell foundation models offers diverse solutions with complementary strengths. Specialized frameworks excel in domain-specific tasks like uni-modal batch correction, while generic SSL methods demonstrate superior performance in multi-modal integration [26]. Model selection should be guided by specific application requirements rather than seeking a universal winner [4].

For resource-constrained environments or focused applications, simpler machine learning models may provide more efficient adaptation to specific datasets [4]. However, for large-scale integration, transfer learning scenarios, and complex multimodal analysis, scFMs pretrained on diverse cellular atlases offer clear advantages [25]. As the field matures, standardized benchmarking and biological interpretability will be crucial for translating computational advances into mechanistic insights and clinical applications [12] [4].

From Model to Insight: Practical Applications in Drug Discovery and Biology

Cell type annotation is a fundamental task in single-cell genomics that involves classifying individual cells into specific biological categories based on their gene expression profiles. Traditional methods rely heavily on manual comparison to reference datasets and marker genes, making the process time-consuming and subjective, especially with the increasing scale of single-cell atlases now encompassing millions of cells [1]. The emergence of single-cell foundation models (scFMs) represents a paradigm shift toward automated, standardized, and reproducible cell type annotation [28] [1].

These scFMs are large-scale deep learning models pre-trained on vast single-cell datasets using self-supervised objectives. They learn transferable representations of cellular states that can be adapted to various downstream tasks, including annotation, with minimal additional labeled examples [1]. This guide provides a comprehensive comparison of current scFM architectures for cell type annotation, evaluating their performance, technical approaches, and practical implementation requirements to assist researchers in selecting appropriate methodologies for their specific annotation challenges.

Foundational Concepts and Model Taxonomy

Architectural Foundations of Single-Cell Foundation Models

Single-cell foundation models typically employ transformer-based architectures, which utilize attention mechanisms to weight relationships between genes within a cell [1]. The key conceptual innovation lies in treating single-cell data as a "language" where:

- Cells are analogous to sentences or documents

- Genes or genomic features serve as words or tokens

- Gene expression values provide the semantic content [1]

This conceptual framework enables models to learn the fundamental "grammar" of cell states from large-scale datasets, capturing complex gene-gene relationships and regulatory patterns that generalize across tissues, species, and experimental conditions.

Data Tokenization Strategies

A critical technical challenge involves converting non-sequential gene expression data into structured inputs for transformer models. Different approaches have emerged:

- Rank-based tokenization: Genes are ordered by expression levels within each cell, creating a deterministic sequence [1]

- Binning approaches: Expression values are partitioned into discrete bins or categories [1]

- Normalized counts: Some models use directly normalized expression values without complex ranking [1]

Gene tokens typically combine identifier embeddings with expression value information, while positional encoding schemes represent the relative order or rank of each gene. Special tokens may be added to represent cell-level metadata, batch information, or modality indicators [1].
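To make the token composition concrete, the sketch below builds the input rows for one cell by adding a gene-identity vector and an expression-bin vector for each gene, after prepending special tokens for the cell (CLS), batch, and modality. The random lookup tables stand in for learned embedding layers; the token names, the 4-dimensional width, and the choice to give special tokens bin 0 are illustrative assumptions rather than any specific model's design. In many models, the transformer output at the CLS position is then read out as the cell-level embedding.

```python
import random

DIM = 4  # toy embedding width (real models use hundreds of dimensions)
rng = random.Random(0)


def lookup_table(keys):
    """Stand-in for a learned embedding layer: one random vector per key."""
    return {k: [rng.gauss(0, 1) for _ in range(DIM)] for k in keys}


# Hypothetical vocabularies: special tokens plus a few gene identifiers, and 6 value bins.
token_emb = lookup_table(["<cls>", "<batch:b1>", "<rna>", "CD3E", "CD8A", "GAPDH"])
value_emb = lookup_table(range(6))


def embed_cell(gene_bins, batch, modality="<rna>"):
    """Return (input rows, token names) for [CLS, batch, modality, gene_1, ..., gene_n].
    Each row is the element-wise sum of an identity vector and a value-bin vector."""
    tokens = [("<cls>", 0), (f"<batch:{batch}>", 0), (modality, 0)] + list(gene_bins)
    rows = [[t + v for t, v in zip(token_emb[tok], value_emb[b])] for tok, b in tokens]
    return rows, [tok for tok, _ in tokens]


inputs, names = embed_cell([("GAPDH", 5), ("CD3E", 3), ("CD8A", 2)], batch="b1")
print(names)
```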

Model Taxonomy and Methodological Families

The landscape of single-cell foundation models can be categorized into five methodological families based on their core design and data modality:

- Foundation Models: Learn transferable cell and gene embeddings directly from large-scale scRNA-seq data without explicit labels (e.g., scGPT, Geneformer, scFoundation) [28]

- Text-Bridge LLMs: Connect biological text knowledge with molecular patterns

- Spatial/Multimodal Models: Incorporate spatial organization and multiple data types

- Epigenomic Models: Focus on chromatin accessibility and regulatory elements

- Agentic Frameworks: Extend capabilities toward reasoning and autonomous analysis [28]

The following workflow diagram illustrates the typical cell type annotation process using these foundation models:

Comparative Performance Analysis

Benchmarking Framework and Evaluation Metrics

Rigorous evaluation of annotation performance requires comprehensive benchmarking across multiple dimensions. The LLM4Cell survey analyzed 58 foundation and agentic models using a ten-dimension rubric covering biological grounding, multi-omics alignment, fairness, privacy, and explainability [28]. Additional benchmarking studies have employed metrics including:

- Annotation Accuracy: Proportion of correctly classified cells

- F1-Score: Harmonic mean of precision and recall

- Normalized Mutual Information (NMI): Information-theoretic similarity measure

- Adjusted Rand Index (ARI): Similarity measure between clusterings [19]

These metrics evaluate both the classification performance and the biological plausibility of annotation results, with particular attention to performance on rare cell populations and cross-species generalization.
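The four metrics can be written out in plain Python to show exactly what each one compares. This is an illustrative sketch with hypothetical labels; scikit-learn's `accuracy_score`, `f1_score(average="macro")`, `adjusted_rand_score`, and `normalized_mutual_info_score` are the usual library equivalents, and the NMI here uses the arithmetic-mean normalization that scikit-learn applies by default.

```python
import math
from collections import Counter


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 = 2TP / (2TP + FP + FN), so rare types count equally."""
    scores = []
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        scores.append(2 * tp / (2 * tp + fp + fn))
    return sum(scores) / len(scores)


def comb2(n):
    return n * (n - 1) / 2


def adjusted_rand_index(a, b):
    """Pair-counting agreement between two partitions, corrected for chance."""
    sum_ij = sum(comb2(c) for c in Counter(zip(a, b)).values())
    sum_a = sum(comb2(c) for c in Counter(a).values())
    sum_b = sum(comb2(c) for c in Counter(b).values())
    expected = sum_a * sum_b / comb2(len(a))
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate partitions (e.g. everything in one cluster)
        return 1.0
    return (sum_ij - expected) / (max_index - expected)


def nmi(a, b):
    """Mutual information divided by the mean of the two label entropies."""
    n = len(a)
    ca, cb, cab = Counter(a), Counter(b), Counter(zip(a, b))

    def entropy(counts):
        return -sum(c / n * math.log(c / n) for c in counts.values())

    mi = sum(c / n * math.log(c * n / (ca[x] * cb[y])) for (x, y), c in cab.items())
    denom = (entropy(ca) + entropy(cb)) / 2
    return mi / denom if denom > 0 else 1.0


y_true = ["T", "T", "B", "B", "NK", "NK"]
y_pred = ["T", "T", "B", "T", "NK", "NK"]   # one B cell mislabeled as T
clusters = [1, 1, 0, 0, 2, 2]               # unsupervised clusters (IDs are arbitrary)
print(accuracy(y_true, y_pred), round(macro_f1(y_true, y_pred), 3))
print(adjusted_rand_index(y_true, clusters), round(nmi(y_true, clusters), 3))
```

ARI and NMI ignore label names and only compare groupings, which is why they suit clustering-based annotation, whereas accuracy and macro F1 require predictions in the reference vocabulary.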

Quantitative Performance Comparison

The following table summarizes the performance characteristics of major single-cell foundation models for cell type annotation tasks:

Table 1: Performance Comparison of Single-Cell Foundation Models for Annotation Tasks

| Model | Architecture Type | Primary Modality | Annotation Accuracy Range | Scalability | Key Strengths |
|---|---|---|---|---|---|
| scGPT [28] | Decoder (GPT-style) | scRNA-seq | High (varies by dataset) | Millions of cells | Generative capabilities, strong zero-shot learning |
| Geneformer [28] | Transformer | scRNA-seq | High (varies by dataset) | Millions of cells | Context-aware embeddings, transfer learning |
| scBERT [1] | Encoder (BERT-style) | scRNA-seq | High (varies by dataset) | Millions of cells | Bidirectional context, fine-tuning efficiency |
| scFoundation [28] | Transformer | scRNA-seq | High (varies by dataset) | Millions of cells | Multi-tissue generalization |
| scANVI [29] | Variational Autoencoder | Multi-omic | High (varies by dataset) | Hundreds of thousands of cells | Semi-supervised learning, multi-modal integration |

Performance metrics vary significantly across datasets and tissue types. Models like scANVI demonstrate particular strength in semi-supervised scenarios where limited labeled data is available, while scGPT excels in generative annotation tasks [28] [29].

Integration Capabilities and Multi-omic Performance

As single-cell technologies evolve to measure multiple modalities simultaneously, annotation models must integrate diverse data types. The following table compares model performance across data modalities:

Table 2: Multi-omic Integration Capabilities for Cell Type Annotation

| Model | RNA Handling | ATAC-seq Compatibility | Protein Integration | Spatial Context | Cross-Modal Alignment |
|---|---|---|---|---|---|
| scGPT [28] | Excellent | Limited | Limited | Limited | Moderate |
| Geneformer [28] | Excellent | Limited | Limited | No | Moderate |
| scBERT [1] | Excellent | Limited | Limited | No | Moderate |
| scANVI [29] | Excellent | Good | Good | Limited | Good |
| scVI [30] | Excellent | Good | Good (via totalVI) | Limited | Good |

Models with strong multi-omic integration capabilities like scANVI and scVI demonstrate enhanced annotation accuracy, particularly for complex tissues and disease states where multiple data types provide complementary biological information [29].

Experimental Protocols and Methodologies

Standardized Annotation Workflow

The following diagram illustrates the complete experimental workflow for model-based cell type annotation, from data preprocessing to final validation:

Model Training and Fine-tuning Protocols

Effective implementation of scFMs for annotation requires careful attention to training procedures:

Pretraining Phase:

- Models are initially pretrained on large-scale single-cell corpora (e.g., CELLxGENE Census) using self-supervised objectives like masked gene prediction [1]

- Training typically uses the AdamW optimizer with learning rate warmup and decay schedules

- Large batch sizes (8,192-16,384 cells) are employed for stability [28]
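A minimal sketch of the optimizer setup described above: linear learning-rate warmup followed by cosine decay, plus one AdamW update written out for a scalar parameter to show the decoupled weight decay. The peak learning rate, warmup length, total steps, and decay shape are hypothetical values, not settings reported by the cited work; real training would use a library optimizer such as PyTorch's `torch.optim.AdamW`.

```python
import math


def lr_at(step, peak_lr=1e-4, warmup_steps=1000, total_steps=10000, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay to min_lr (hypothetical values)."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))


def adamw_step(theta, grad, m, v, t, lr, beta1=0.9, beta2=0.999, eps=1e-8, weight_decay=0.01):
    """One AdamW update for a scalar parameter; t is the 1-based step count."""
    theta -= lr * weight_decay * theta                 # decoupled weight decay (not part of the gradient)
    m = beta1 * m + (1 - beta1) * grad                 # first-moment (mean) estimate
    v = beta2 * v + (1 - beta2) * grad * grad          # second-moment (uncentered variance) estimate
    m_hat = m / (1 - beta1 ** t)                       # bias corrections for zero initialization
    v_hat = v / (1 - beta2 ** t)
    theta -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta, m, v


print([f"{lr_at(s):.2e}" for s in (0, 500, 999, 5500, 10000)])
theta, m, v = adamw_step(0.0, grad=-6.0, m=0.0, v=0.0, t=1, lr=1e-3)
print(theta)  # the first Adam step moves the parameter by roughly lr against the gradient
```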

Fine-tuning Phase:

- Pretrained models are adapted to specific annotation tasks using limited labeled data

- Transfer learning techniques help mitigate catastrophic forgetting of general biological knowledge

- Semi-supervised approaches (e.g., scANVI) leverage both labeled and unlabeled cells [29]

Validation Procedures:

- Strict train-validation-test splits preserve generalization assessment

- Multiple random seeds ensure result stability

- Cross-dataset validation tests biological generalization beyond technical batches [29]

Benchmarking Methodologies

Comprehensive benchmarking requires standardized evaluation protocols:

Data Selection:

- Diverse tissue types and biological conditions

- Multiple sequencing technologies and protocols

- Variation in dataset size and cell type complexity [19]

Performance Assessment:

- Cross-validation within datasets

- Cross-dataset generalization tests

- Rare cell type detection capability

- Computational efficiency metrics [19]

Baseline Comparisons:

- Traditional methods (differential expression, clustering)

- Reference-based approaches (Seurat label transfer)

- Alternative machine learning classifiers [29]
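Overall accuracy can hide poor rare-cell detection. When a cell type is scarce, a classifier that never predicts it still scores well. The sketch below uses hypothetical helper names and toy labels. It computes per-class precision, recall, and F1 together with macro-averaged F1, which weights every cell type equally.

```python
import numpy as np

def per_class_scores(y_true, y_pred):
    """Return {label: (precision, recall, f1)} for every label in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = {}
    for label in np.unique(y_true):
        tp = np.sum((y_pred == label) & (y_true == label))
        fp = np.sum((y_pred == label) & (y_true != label))
        fn = np.sum((y_pred != label) & (y_true == label))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[str(label)] = (float(precision), float(recall), float(f1))
    return scores

def macro_f1(y_true, y_pred):
    """Unweighted mean F1 across classes, so rare types count as much as common ones."""
    return float(np.mean([f1 for _, _, f1 in per_class_scores(y_true, y_pred).values()]))

y_true = ["T cell"] * 95 + ["pDC"] * 5  # pDCs as a rare population
y_pred = ["T cell"] * 100               # a classifier that never predicts the rare type
accuracy = float(np.mean(np.array(y_true) == np.array(y_pred)))
print(accuracy, round(macro_f1(y_true, y_pred), 3))
```

Here accuracy is 0.95, but macro F1 falls below 0.5 because the rare population is never recovered.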

Successful implementation of automated cell type annotation requires both computational tools and biological resources. The following table outlines key components of the annotation toolkit:

Table 3: Essential Research Reagents and Computational Tools for Cell Type Annotation

| Resource Category | Specific Tools/Resources | Primary Function | Application Context |
|---|---|---|---|
| Reference Atlases | Tabula Sapiens, Human Cell Atlas | Biological ground truth | Training data, reference standards |
| Analysis Ecosystems | Scanpy, Seurat, scvi-tools | Data handling and preprocessing | Primary analysis environments |
| Model Repositories | scvi-hub, Hugging Face | Model sharing and deployment | Access to pretrained models |
| Benchmarking Frameworks | LLM4Cell, scIB, scIB-E | Performance evaluation | Method comparison and validation |
| Visualization Tools | UCSC Cell Browser, SCope | Result exploration and interpretation | Biological validation and hypothesis generation |

High-quality reference datasets form the foundation for effective annotation systems:

- Tabula Sapiens: Multi-organ, multi-modal reference with carefully annotated cell types [28]

- Human Cell Atlas: Ongoing international effort to map all human cell types, with standardized annotations [28]

- CELLxGENE Census: Curated collection of standardized single-cell datasets [30]

- CellBlast: Specialized resources for query-to-reference mapping [28]

Computational Infrastructure Requirements

Deploying foundation models requires substantial computational resources:

- GPU Memory: 16-80 GB for model inference and fine-tuning

- System RAM: 32-128 GB for handling large reference datasets

- Storage: TB-scale for raw data and model checkpoints

- Software: Python/R ecosystems with specialized libraries (scvi-tools, transformers) [28] [30]
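The memory figures above can be sanity-checked with back-of-the-envelope arithmetic. The sketch assumes full fine-tuning in fp32 with AdamW. Under that assumption, four values are stored per parameter (weight, gradient, and two moment estimates, 4 bytes each). Inference is assumed to use 16-bit weights, and the parameter counts are hypothetical. Activation memory grows with batch size and with the number of gene tokens per cell. It is excluded here, even though it can be substantial.

```python
def estimate_training_state_gb(n_params, bytes_per_param=16):
    """Weights + gradients + two AdamW moments, all fp32 (4 x 4 bytes per parameter).

    Activations are NOT included.
    """
    return n_params * bytes_per_param / 1e9

def estimate_weights_gb(n_params, bytes_per_param=2):
    """Weights only, e.g. 2 bytes per parameter in fp16/bf16 for inference."""
    return n_params * bytes_per_param / 1e9

for n in (100e6, 650e6):  # hypothetical model sizes
    print(f"{n / 1e6:.0f}M params: ~{estimate_weights_gb(n):.1f} GB weights (16-bit), "
          f"~{estimate_training_state_gb(n):.1f} GB training state (fp32 + AdamW)")
```

For a hypothetical 100-million-parameter model, this gives about 0.2 GB of 16-bit weights and about 1.6 GB of fp32 training state before activations are counted.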

Implementation Considerations and Best Practices

Model Selection Guidelines

Choosing the appropriate foundation model depends on specific research requirements:

For maximum accuracy with abundant labeled data:

- Fine-tuned encoder models (scBERT, Geneformer)

- Strong supervision with comprehensive reference datasets

For limited labeled data scenarios:

- Semi-supervised approaches (scANVI)

- Few-shot learning capabilities (scGPT)

For multi-omic integration:

- Specialized architectures (scVI, totalVI)

- Cross-modal alignment techniques

For exploratory analysis:

- Generative models with interactive capabilities

- Explainable AI approaches for biological interpretation

Quality Control and Validation Frameworks

Robust annotation requires comprehensive quality assessment:

Technical Quality Metrics:

- Batch effect correction evaluation

- Integration quality scores

- Label transfer confidence metrics

Biological Validation:

- Marker gene expression verification

- Cellular composition plausibility

- Differential expression confirmation

- Spatial validation (when available)
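Marker gene verification can be partly automated. For each predicted label, the hypothetical `marker_check` below tests whether that label's own marker set is the most highly expressed among the cells assigned to it. The marker lists used here are canonical examples: CD3E and CD3D for T cells, MS4A1 and CD79A for B cells. Real analyses need curated, tissue-appropriate marker panels.

```python
import numpy as np

def marker_check(expr, gene_names, predicted_labels, markers):
    """Return {label: passed} for every predicted label.

    passed is True when, among cells assigned to the label, the label's own
    marker set has the highest mean expression of all marker sets.
    """
    expr = np.asarray(expr, dtype=float)
    predicted_labels = np.asarray(predicted_labels)
    gene_index = {g: i for i, g in enumerate(gene_names)}
    results = {}
    for label in np.unique(predicted_labels):
        cells = expr[predicted_labels == label]
        set_scores = {}
        for name, genes in markers.items():
            cols = [gene_index[g] for g in genes if g in gene_index]
            if cols:  # skip marker sets with no measured genes
                set_scores[name] = cells[:, cols].mean()
        results[str(label)] = bool(set_scores) and max(set_scores, key=set_scores.get) == str(label)
    return results

genes = ["CD3E", "CD3D", "MS4A1", "CD79A"]
markers = {"T cell": ["CD3E", "CD3D"], "B cell": ["MS4A1", "CD79A"]}
expr = np.array([[5, 4, 0, 0], [6, 5, 1, 0], [0, 0, 7, 6], [0, 1, 5, 5]])
good_labels = ["T cell", "T cell", "B cell", "B cell"]
swapped_labels = ["B cell", "B cell", "T cell", "T cell"]
print(marker_check(expr, genes, good_labels, markers))
print(marker_check(expr, genes, swapped_labels, markers))
```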

Reproducibility Safeguards:

- Version control for models and data

- Containerized analysis environments

- Automated reproducibility tests
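Below is a minimal sketch of two reproducibility safeguards. The first fingerprints input data with SHA-256, so that a run can be tied to an exact data version. The second is an automated test that reruns a seeded analysis and requires identical output. The function names and manifest fields are illustrative assumptions, not part of any specific tool.

```python
import hashlib
import json

import numpy as np

def fingerprint_array(arr):
    """SHA-256 of an array's dtype, shape, and raw bytes, for data versioning."""
    arr = np.ascontiguousarray(arr)
    h = hashlib.sha256()
    h.update(str(arr.dtype).encode())
    h.update(str(arr.shape).encode())
    h.update(arr.tobytes())
    return h.hexdigest()

def run_manifest(data, config, seed):
    """Record what an analysis run depended on, so it can be re-checked later."""
    return {
        "data_sha256": fingerprint_array(data),
        "config": config,
        "seed": seed,
        "numpy_version": np.__version__,
    }

def assert_reproducible(analysis, data, seed=0):
    """Same input and same seed must give identical output; raises otherwise."""
    first = analysis(data, np.random.default_rng(seed))
    second = analysis(data, np.random.default_rng(seed))
    np.testing.assert_array_equal(first, second)
    return True

def subsample_analysis(data, rng):
    """Hypothetical analysis step that depends on randomness."""
    return data[rng.permutation(len(data))][:3]

data = np.arange(10.0)
reproducible = assert_reproducible(subsample_analysis, data, seed=42)
manifest_json = json.dumps(run_manifest(data, {"model": "demo"}, seed=42), sort_keys=True)
print(manifest_json)
```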

Future Directions and Emerging Trends

The field of automated cell type annotation is rapidly evolving with several promising directions:

- Multi-modal foundation models that simultaneously process RNA, ATAC, protein, and spatial data [28]

- Agentic systems that perform autonomous experimental design and hypothesis testing [28]

- Interpretable AI approaches that provide biological insights beyond black-box predictions [1]

- Federated learning frameworks that enable model training across institutions while preserving data privacy [28]

- Continuous learning systems that adapt to new data without catastrophic forgetting [30]

Platforms like scvi-hub represent the infrastructure direction, providing version-controlled model repositories with standardized evaluation metrics and substantially reduced computational requirements through data minification techniques [30].

As these technologies mature, automated cell type annotation will become increasingly accurate, efficient, and accessible, ultimately enabling researchers to focus more on biological interpretation and less on manual curation tasks.

The rapid expansion of single-cell genomics has generated vast repositories of data from diverse tissues, species, and experimental conditions. However, integrating these heterogeneous datasets presents a significant challenge due to batch effects—systematic technical variations arising from differences in sample preparation, sequencing platforms, or laboratory conditions. These non-biological variations can obscure true biological signals, compromise downstream analyses, and hinder the development of robust biological insights [1]. In the context of single-cell foundation models (scFMs), which are large-scale artificial intelligence models pretrained on massive single-cell datasets, effective batch effect correction becomes paramount for building accurate and generalizable representations of cellular biology [1] [4].

The field currently faces a methodological choice: researchers can apply traditional batch correction algorithms or adopt the emerging paradigm of foundation models that implicitly learn to harmonize data during pretraining. This comparison guide assesses both approaches using published benchmarking studies, offering scientists an evidence-based framework for selecting methods based on their research needs, dataset characteristics, and computational resources.

Comparative Analysis of Correction Methodologies

Traditional Computational Approaches

Traditional batch effect correction methods employ explicit statistical and algorithmic strategies to remove technical artifacts while preserving biological variation. These approaches range from relatively simple linear models to complex deep learning architectures, each with distinct strengths and limitations [31].

Table 1: Traditional Batch Effect Correction Methods

| Method | Core Algorithm | Preserves Data Structure | Handles Missing Data | Scalability |
|---|---|---|---|---|
| ComBat | Empirical Bayes | Order-preserving [31] | Limited [32] | Moderate |
| limma | Linear models | Order-preserving [31] | Limited [32] | High |
| Harmony | Iterative clustering | No (embeddings only) [31] | Moderate | High |
| Seurat v3 | CCA + MNN | No | Limited | Moderate |
| BERT | Tree-based ComBat/limma | Yes [32] | Excellent [32] | High |
| Order-Preserving DL | Monotonic deep learning | Order-preserving [31] | Moderate | Moderate |

Notably, the recently introduced Batch-Effect Reduction Trees (BERT) method (unrelated to the BERT language model) represents a significant advancement for large-scale data integration. BERT employs a tree-based framework that decomposes an integration task into a series of binary (pairwise) correction steps. Compared with alternative methods such as HarmonizR, it retains up to five orders of magnitude more numeric values and runs up to 11× faster [32]. It performs particularly well in scenarios with severely imbalanced or sparsely distributed conditions, improving average silhouette width (ASW) scores by up to 2× [32].
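The binary decomposition idea can be illustrated by planning pairwise merges as a balanced binary tree. The sketch below only computes the merge schedule, and the function name is hypothetical. It does not reproduce the correction performed at each node (ComBat or limma in BERT's case) or BERT's handling of missing values. Merges within the same level are independent of one another, so they could in principle run in parallel.

```python
def binary_merge_schedule(batches):
    """Plan pairwise merges of batches level by level, like a balanced binary tree.

    Returns a list of levels; each level is a list of (left, right) groups to
    correct against each other. An odd group out is carried to the next level.
    """
    level_nodes = [(b,) for b in batches]
    schedule = []
    while len(level_nodes) > 1:
        merges, next_nodes = [], []
        for i in range(0, len(level_nodes) - 1, 2):
            left, right = level_nodes[i], level_nodes[i + 1]
            merges.append((left, right))
            next_nodes.append(left + right)  # merged group moves up one level
        if len(level_nodes) % 2 == 1:
            next_nodes.append(level_nodes[-1])  # carried over unchanged
        schedule.append(merges)
        level_nodes = next_nodes
    return schedule

schedule = binary_merge_schedule(["A", "B", "C", "D", "E"])
for depth, merges in enumerate(schedule, start=1):
    print(depth, merges)
```

For five batches this yields four pairwise merges over three levels. In general, n batches always need n - 1 binary merges, because each merge reduces the number of groups by one.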

Order-preserving methods represent another important innovation, specifically designed to maintain the relative rankings of gene expression levels within each batch after correction. This property ensures that biologically meaningful patterns, such as relative expression levels between genes or cells, remain intact throughout the integration process [31]. As demonstrated in comparative studies, methods with order-preserving capabilities like ComBat and specialized monotonic deep learning networks show superior performance in maintaining inter-gene correlations and preserving differential expression information [31].
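A simplified, ComBat-like correction shows why per-gene location/scale adjustments are order-preserving. This sketch deliberately omits ComBat's empirical Bayes shrinkage. Within each batch, every gene undergoes an increasing affine map, which aligns batch-specific means and variances to pooled values. Because the map is increasing, the ranking of cells by that gene's expression is preserved. The function name and the simulated batch effect are assumptions for illustration.

```python
import numpy as np

def location_scale_adjust(expr, batches):
    """Per-gene location/scale batch adjustment (ComBat-like, WITHOUT empirical
    Bayes shrinkage).

    Each gene's values in each batch are standardized with that batch's mean
    and standard deviation, then mapped onto the pooled mean and standard
    deviation. Within a batch this is an increasing affine map per gene.
    """
    expr = np.asarray(expr, dtype=float)
    batches = np.asarray(batches)
    pooled_mean = expr.mean(axis=0)
    pooled_sd = expr.std(axis=0)
    out = np.empty_like(expr)
    for b in np.unique(batches):
        rows = batches == b
        mu = expr[rows].mean(axis=0)
        sd = expr[rows].std(axis=0)
        sd[sd == 0] = 1.0  # constant genes in this batch: only shift them
        out[rows] = (expr[rows] - mu) / sd * pooled_sd + pooled_mean
    return out

rng = np.random.default_rng(0)
batches = np.repeat(["run1", "run2"], 20)
expr = rng.normal(size=(40, 5))
expr[batches == "run2"] = expr[batches == "run2"] * 2.0 + 3.0  # simulated batch effect
corrected = location_scale_adjust(expr, batches)
print(corrected[batches == "run1"].mean(axis=0).round(3))
print(corrected[batches == "run2"].mean(axis=0).round(3))
```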

Foundation Model Approaches

Single-cell foundation models (scFMs) represent a paradigm shift in how batch effects are addressed. Rather than applying explicit correction algorithms as a preprocessing step, these large-scale models learn to implicitly harmonize data during self-supervised pretraining on millions of cells [1]. The transformer architecture underlying most scFMs enables them to capture complex relationships between genes and cells, potentially learning biological invariants that transcend batch-specific technical variations [1].

Table 2: Single-Cell Foundation Models with Batch Integration Capabilities

| Model | Architecture | Pretraining Scale | Multi-omics Support | Zero-shot Batch Integration |
|---|---|---|---|---|
| scGPT | Transformer decoder | 30+ million cells [5] | Yes [1] | Yes [4] |
| Geneformer | Transformer encoder | 30 million cells [5] | Limited | Yes [4] |
| scFoundation | Transformer | 50+ million cells (100 million parameters) | Limited | Yes [4] |
| scPlantLLM | Transformer | Plant-specific [5] | Limited | Yes [5] |
| LangCell | Transformer | Large-scale [4] | Limited | Yes [4] |

These foundation models typically employ innovative tokenization strategies to represent single-cell data in a format suitable for transformer architectures. Individual cells are treated analogously to sentences, with genes or genomic features and their expression values represented as words or tokens [1]. Some models rank genes by expression levels to create deterministic sequences, while others use binning strategies or normalized counts directly [1]. The resulting latent representations have demonstrated remarkable robustness to batch-dependent technical biases without requiring explicit batch correction in some applications [1].
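A short sketch contrasts the two tokenization strategies. `rank_value_tokens` loosely follows the rank-based idea, as in Geneformer, which also scales each gene by its median expression across the pretraining corpus. `binned_value_tokens` loosely follows per-cell value binning, as in scGPT. Both functions are simplified illustrations with hypothetical names and parameters, not the models' actual preprocessing code.

```python
import numpy as np

def rank_value_tokens(expr, gene_ids, gene_medians=None, max_len=2048):
    """Order a cell's expressed genes by (optionally median-scaled) expression
    and return the ordered gene IDs as the token sequence."""
    expr = np.asarray(expr, dtype=float)
    if gene_medians is not None:
        expr = expr / np.asarray(gene_medians, dtype=float)
    expressed = np.flatnonzero(expr > 0)
    order = expressed[np.argsort(-expr[expressed], kind="stable")]  # highest first
    return [gene_ids[i] for i in order[:max_len]]

def binned_value_tokens(expr, n_bins=5):
    """Map each expressed gene's value to a per-cell quantile bin (1..n_bins);
    unexpressed genes get bin 0."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.shape, dtype=int)
    nz = expr > 0
    if nz.any():
        edges = np.quantile(expr[nz], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nz] = np.digitize(expr[nz], edges) + 1
    return bins

gene_ids = ["g0", "g1", "g2", "g3"]
cell = [0.0, 5.0, 2.0, 9.0]
print(rank_value_tokens(cell, gene_ids))
print(rank_value_tokens(cell, gene_ids, gene_medians=[1, 10, 1, 1]))
print(binned_value_tokens([0, 1, 2, 3, 4, 10], n_bins=5))
```

Median scaling can reorder genes (here g1 drops behind g2), which is how corpus-level normalization lets rank encoding emphasize genes that are unusually high relative to their typical expression.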

A comprehensive benchmark study evaluating six scFMs against traditional baselines revealed that while foundation models offer robust and versatile performance across diverse applications, simpler machine learning models can sometimes adapt more efficiently to specific datasets, particularly under resource constraints [4]. Notably, no single scFM consistently outperformed others across all tasks, emphasizing the importance of context-dependent model selection [4].

Experimental Benchmarking and Performance Metrics

Evaluation Frameworks and Metrics

Rigorous evaluation of batch effect correction methods requires multidimensional assessment using both technical and biological metrics. The scientific community has developed specialized evaluation protocols that address two critical aspects: batch mixing (removal of technical biases) and biological preservation (retention of meaningful biological variation) [4] [31].

Common technical metrics include:

- Average Silhouette Width (ASW): Measures cluster compactness and separation, with separate calculations for batch labels (lower values desired) and cell type labels (higher values desired) [32].

- Adjusted Rand Index (ARI): Quantifies clustering accuracy by measuring agreement between predicted clusters and known cell type labels [31].

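- Local Inverse Simpson's Index (LISI): Measures label diversity in each cell's neighborhood. It is computed on batch labels (iLISI, where higher values indicate better mixing) and on cell type labels (cLISI, where values near 1 indicate purer neighborhoods) [31].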
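The following dependency-light NumPy sketch shows what these scores compute, using ASW, ARI, and a simplified LISI on toy data. It needs O(n²) memory and is meant for intuition only. Unlike the original LISI, which uses perplexity-based Gaussian weights, this version uses an unweighted k-nearest-neighbor neighborhood. Production benchmarks typically rely on established implementations such as scikit-learn or scIB.

```python
from collections import Counter
from math import comb

import numpy as np

def pairwise_distances(X):
    """Dense Euclidean distance matrix (O(n^2) memory: toy data only)."""
    X = np.asarray(X, dtype=float)
    return np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))

def silhouette(X, labels):
    """Mean of (b - a) / max(a, b): a = mean distance to own group,
    b = mean distance to the nearest other group."""
    D = pairwise_distances(X)
    labels = np.asarray(labels)
    groups = np.unique(labels)
    s = np.zeros(len(labels))
    for i in range(len(labels)):
        same = labels == labels[i]
        if same.sum() == 1:
            continue  # singleton group: silhouette defined as 0
        a = D[i, same].sum() / (same.sum() - 1)  # exclude the zero self-distance
        b = min(D[i, labels == g].mean() for g in groups if g != labels[i])
        s[i] = (b - a) / max(a, b)
    return float(s.mean())

def adjusted_rand_index(labels_a, labels_b):
    """ARI from pair counts of the contingency table (1 = identical partitions)."""
    labels_a, labels_b = list(labels_a), list(labels_b)
    sum_ij = sum(comb(n, 2) for n in Counter(zip(labels_a, labels_b)).values())
    sum_a = sum(comb(n, 2) for n in Counter(labels_a).values())
    sum_b = sum(comb(n, 2) for n in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(len(labels_a), 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:
        return 1.0
    return (sum_ij - expected) / (max_index - expected)

def simple_lisi(X, labels, k=10):
    """Mean over cells of 1 / sum(p_label^2) among the k nearest neighbors."""
    D = pairwise_distances(X)
    labels = np.asarray(labels)
    scores = []
    for i in range(len(labels)):
        d = D[i].copy()
        d[i] = np.inf  # exclude the cell itself
        neighbors = np.argsort(d)[:k]
        _, counts = np.unique(labels[neighbors], return_counts=True)
        p = counts / counts.sum()
        scores.append(1.0 / np.sum(p ** 2))
    return float(np.mean(scores))

# Toy data: two well-separated cell types, two batches interleaved within each type.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0, 0.5, (20, 2)), rng.normal(10, 0.5, (20, 2))])
cell_type = np.array(["A"] * 20 + ["B"] * 20)
batch = np.tile(["b1", "b2"], 20)
print("ASW cell type:", round(silhouette(X, cell_type), 3))
print("ASW batch:", round(silhouette(X, batch), 3))
print("iLISI:", round(simple_lisi(X, batch), 3), "cLISI:", simple_lisi(X, cell_type))
```

In this toy data the two batches are interleaved within each cell type. Cell type ASW should therefore be high and batch ASW close to zero. Correspondingly, cLISI equals 1 and iLISI approaches its maximum of 2.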

Biologically-informed metrics have recently emerged as crucial complements to technical measures:

- scGraph-OntoRWR: A novel metric that evaluates the consistency of cell type relationships captured by scFMs with prior biological knowledge from cell ontologies [4].

- Lowest Common Ancestor Distance (LCAD): Measures ontological proximity between misclassified cell types, providing nuanced assessment of annotation errors [4].

- Inter-gene Correlation Preservation: Quantifies how well correction methods maintain original correlations between functionally related genes [31].
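The two biology-aware ideas above can be sketched on toy inputs. `lca_distance` counts the edges from the true and predicted types up to their lowest common ancestor in a small, hypothetical tree-shaped ontology. The real Cell Ontology is a directed acyclic graph, and the benchmark in [4] may define LCAD differently. `correlation_preservation` compares gene-gene correlation matrices before and after correction. It is one illustrative formulation, not necessarily the one used in [31].

```python
import numpy as np

# Hypothetical, tree-shaped toy ontology (child -> parent); not the real Cell Ontology.
PARENT = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "leukocyte",
    "monocyte": "leukocyte",
}

def ancestors(term):
    """Path from a term up to the root, starting with the term itself."""
    path = [term]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path

def lca_distance(true_type, predicted_type):
    """Edges from each type up to their lowest common ancestor, summed (0 = correct)."""
    up_true, up_pred = ancestors(true_type), ancestors(predicted_type)
    common = next(t for t in up_true if t in up_pred)  # first shared term = LCA in a tree
    return up_true.index(common) + up_pred.index(common)

def correlation_preservation(before, after):
    """Pearson correlation between the upper triangles of the gene-gene
    correlation matrices before and after correction (1.0 = fully preserved)."""
    c_before = np.corrcoef(np.asarray(before, dtype=float), rowvar=False)
    c_after = np.corrcoef(np.asarray(after, dtype=float), rowvar=False)
    upper = np.triu_indices_from(c_before, k=1)
    return float(np.corrcoef(c_before[upper], c_after[upper])[0, 1])

rng = np.random.default_rng(0)
shared = rng.normal(size=(200, 1))
# Genes 0-2 share a common signal; genes 3-4 are independent noise.
before = np.hstack([shared + 0.1 * rng.normal(size=(200, 3)), rng.normal(size=(200, 2))])
after_affine = before * 3.0 + 2.0  # per-gene rescaling
after_shuffled = np.column_stack([rng.permutation(col) for col in before.T])  # independent shuffles
print(lca_distance("CD4 T cell", "CD8 T cell"), lca_distance("CD4 T cell", "monocyte"))
print(round(correlation_preservation(before, after_affine), 6),
      round(correlation_preservation(before, after_shuffled), 3))
```

A sibling error (CD4 vs. CD8 T cell) scores 2, while confusing a T cell subtype with a monocyte scores 4. A per-gene affine rescaling preserves gene-gene correlations exactly, whereas shuffling each gene independently does not.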

Quantitative Performance Comparison

Table 3: Benchmarking Results Across Method Categories (Scale: ★ Poor to ★★★★★ Excellent)

| Method Category | Batch Mixing | Biological Preservation | Computational Efficiency | Ease of Use | Missing Data Handling |
|---|---|---|---|---|---|
| Statistical Methods (ComBat, limma) | ★★★☆☆ | ★★★★☆ [31] | ★★★★★ | ★★★★☆ | ★★☆☆☆ [32] |
| Procedural Methods (Seurat, Harmony) | ★★★★☆ | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ |
| Deep Learning Methods (scVI, etc.) | ★★★★☆ | ★★★★☆ | ★★☆☆☆ | ★★☆☆☆ | ★★★☆☆ |
| Order-Preserving Methods | ★★★☆☆ | ★★★★★ [31] | ★★☆☆☆ | ★★☆☆☆ | ★★★☆☆ |
| Foundation Models (scGPT, etc.) | ★★★★☆ [4] | ★★★★☆ [4] | ★☆☆☆☆ | ★★☆☆☆ | ★★★★☆ |

Recent benchmarking studies have revealed nuanced performance patterns across method categories. Foundation models like scGPT and Geneformer have shown strong performance in some zero-shot settings, where pretrained models are applied to new datasets without task-specific fine-tuning [4]. In batch integration tasks, scFMs were reported to outperform traditional methods in preserving fine-grained biological structure, especially for rare cell populations and cross-tissue integration [4].

However, traditional methods maintain advantages in specific scenarios. For well-controlled experiments with limited batch effects and complete data matrices, established tools like ComBat and Harmony offer excellent performance with substantially lower computational requirements [4] [31]. The order-preserving deep learning method demonstrates superior capability in maintaining inter-gene correlations and differential expression patterns, achieving higher Pearson and Kendall correlation coefficients compared to non-order-preserving approaches [31].