Single-Cell Foundation Models: Revolutionizing Drug Sensitivity Prediction in Precision Oncology

Abstract

This article explores the transformative role of single-cell foundation models (scFMs) in predicting drug sensitivity, a critical challenge in precision medicine. We first establish the foundational concepts of scFMs, inspired by large language models, which learn universal biological knowledge from massive single-cell transcriptomics datasets. The discussion then progresses to the methodological architectures of prominent models like scGPT and Geneformer, and their application in predicting cellular responses to therapeutics. A critical troubleshooting section addresses key challenges such as data sparsity, model selection, and computational demands, providing optimization strategies. Finally, we present a comprehensive validation framework, benchmarking scFMs against traditional machine learning approaches across diverse biological and clinical tasks. This resource is designed for researchers, scientists, and drug development professionals seeking to leverage cutting-edge AI for oncology research and therapy development.

Understanding Single-Cell Foundation Models: The New Paradigm in Cellular Biology

Defining Single-Cell Foundation Models (scFMs) and Their Core Principles

Single-cell foundation models (scFMs) represent a transformative class of artificial intelligence in cellular biology, defined as large-scale deep learning models pretrained on vast single-cell omics datasets using self-supervised learning objectives [1]. These models are designed to learn universal representations of cellular states that can be adapted to a wide array of downstream biological tasks through fine-tuning or zero-shot inference [1] [2]. The development of scFMs marks a paradigm shift from traditional single-task computational models toward unified frameworks capable of integrating and analyzing the rapidly expanding repositories of single-cell data [1].

The core premise of scFMs draws inspiration from the success of foundation models in natural language processing (NLP), where models trained on massive text corpora demonstrate remarkable generalization capabilities [1] [3]. In the biological context, scFMs treat individual cells as analogous to sentences and genes or genomic features as words or tokens, enabling the model to decipher the fundamental "language" of cellular biology [1]. By training on millions of single-cell transcriptomes encompassing diverse tissues, species, and biological conditions, scFMs learn the underlying principles governing cellular identity, state, and function that generalize to novel datasets and biological questions [1] [2].

Core Architectural Principles of scFMs

Foundational Components and Tokenization Strategies

The architecture of single-cell foundation models rests on several key components that enable their remarkable adaptability. Transformer architectures form the computational backbone of most scFMs, leveraging attention mechanisms to model complex dependencies between genes within individual cells [1]. These architectures allow the models to learn and weight relationships between any pair of input tokens (genes), effectively determining which genes are most informative about a cell's identity or state [1]. The implementation of transformer architectures in scFMs typically follows one of two approaches: bidirectional encoder representations (BERT-like) that learn from all genes in a cell simultaneously, or generative pretrained transformer (GPT-like) designs with unidirectional masked self-attention that iteratively predict masked genes conditioned on known genes [1].

Tokenization strategies represent a critical preprocessing step that converts raw single-cell data into structured inputs compatible with transformer architectures [1]. Unlike words in natural language, gene expression data lacks inherent sequential ordering, necessitating carefully designed tokenization approaches:

- Gene identity tokens represent individual genes using unique identifiers, analogous to words in a sentence [1]

- Expression value encoding captures quantitative expression levels through various strategies, including rank-based ordering of genes by expression magnitude or binning approaches that partition expression values into discrete categories [1]

- Positional embeddings provide information about gene order when a deterministic sequence is established, typically through expression ranking [1]

- Special tokens incorporate cell-level metadata, modality indicators for multi-omics data, and batch information to enrich contextual understanding [1]
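The rank-based and binning strategies listed above can be sketched in a few lines of plain Python. This is an illustrative toy, not the tokenizer of any particular model: the function names, the equal-width binning rule, and the five-gene cell are all assumptions made for the example.

```python
from typing import Dict, List, Tuple


def rank_tokenize(expression: Dict[str, float], max_len: int = 4) -> List[str]:
    """Order expressed genes from highest to lowest value and keep the top max_len."""
    expressed = [(g, v) for g, v in expression.items() if v > 0]
    # Sort by descending expression; break ties by gene name so the order is deterministic.
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [g for g, _ in expressed[:max_len]]


def bin_tokenize(expression: Dict[str, float], n_bins: int = 5) -> List[Tuple[str, int]]:
    """Pair each gene with a discrete bin index; bin 0 is reserved for zero expression.

    Assumes n_bins >= 2 and uses equal-width bins over the cell's nonzero range.
    """
    nonzero = [v for v in expression.values() if v > 0]
    if not nonzero:
        return [(g, 0) for g in sorted(expression)]
    lo, hi = min(nonzero), max(nonzero)
    width = (hi - lo) / (n_bins - 1) or 1.0  # fall back to 1.0 if all values are equal
    tokens = []
    for gene in sorted(expression):
        value = expression[gene]
        if value <= 0:
            tokens.append((gene, 0))
        else:
            idx = min(int((value - lo) / width) + 1, n_bins - 1)
            tokens.append((gene, idx))
    return tokens


# Hypothetical toy cell: gene symbol -> normalized expression.
cell = {"CD3E": 5.0, "MS4A1": 0.0, "GAPDH": 9.0, "LYZ": 1.0, "ACTB": 9.0}
rank_tokens = rank_tokenize(cell)
bin_tokens = bin_tokenize(cell)
```

The rank-based output discards magnitudes and keeps only order, while the binned output keeps a coarse magnitude for every gene, including unexpressed ones.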

Pretraining Paradigms and Data Requirements

The development of robust scFMs depends on large-scale diverse datasets that capture the full spectrum of biological variation [1]. Model performance correlates strongly with the breadth and quality of pretraining data, which typically incorporates tens of millions of single-cell profiles from public repositories such as CZ CELLxGENE, the Human Cell Atlas, and NCBI GEO [1]. These aggregated datasets enable scFMs to learn fundamental biological principles across diverse cell types, states, and conditions [1].

Self-supervised pretraining objectives enable scFMs to learn meaningful biological representations without explicit labeling [1]. The most common approaches include:

- Masked gene modeling, where a subset of input genes is randomly masked and the model learns to predict the missing values based on contextual information from the remaining genes [1]

- Contrastive learning objectives that train models to recognize similar cellular states while distinguishing technically or biologically distinct profiles [2]

- Multimodal alignment strategies that learn correspondences between different data types, such as transcriptomic and epigenomic measurements [2]
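As a rough illustration of the masked gene modeling objective described above, the sketch below hides a fraction of a cell's binned expression values and scores a prediction only at the hidden positions. The `MASK` sentinel, the 25% mask fraction, and the trivial mean-value "model" are assumptions for the example; real scFMs use a transformer to produce the predictions.

```python
import random
from typing import List, Tuple

MASK = -1  # hypothetical sentinel standing in for a masked expression bin


def mask_expression(bins: List[int], mask_fraction: float, seed: int) -> Tuple[List[int], List[int]]:
    """Return (masked_input, masked_positions) with a random subset of values hidden."""
    rng = random.Random(seed)
    n_mask = max(1, round(len(bins) * mask_fraction))
    positions = sorted(rng.sample(range(len(bins)), n_mask))
    masked = list(bins)
    for p in positions:
        masked[p] = MASK
    return masked, positions


def masked_mse(predicted: List[float], target: List[int], positions: List[int]) -> float:
    """Mean squared error computed only at the masked positions."""
    return sum((predicted[p] - target[p]) ** 2 for p in positions) / len(positions)


bins = [4, 3, 0, 1, 2, 0, 4, 1]  # toy binned expression for eight genes
masked, positions = mask_expression(bins, mask_fraction=0.25, seed=0)

# Stand-in "model": predict the mean of the visible values at every position.
visible = [b for b in masked if b != MASK]
prediction = [sum(visible) / len(visible)] * len(bins)
loss = masked_mse(prediction, bins, positions)
```

Because the loss is restricted to masked positions, the model gets no credit for copying values it was allowed to see.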

Table 1: Comparative Analysis of Prominent Single-Cell Foundation Models

| Model Name | Architecture Type | Pretraining Scale | Key Strengths | Notable Applications |
|---|---|---|---|---|
| scGPT [2] [4] | Generative Transformer | 33+ million cells | Strong zero-shot annotation, multi-omic integration | Cell type annotation, perturbation modeling |
| Geneformer [5] [4] | Transformer-based | Not specified | Effective gene-level tasks, perturbation prediction | Gene network inference, transcriptional dynamics |
| scFoundation [5] [6] | Transformer-based | Extensive (size not specified) | Gene expression enhancement, drug response | Drug response prediction, expression imputation |
| scBERT [1] [4] | BERT-like Encoder | Smaller scale | Cell type annotation | Classification tasks, pattern recognition |
| EpiAgent [2] | Epigenomic Foundation Model | ~5 million cells | cis-regulatory element reconstruction | ATAC-seq analysis, chromatin accessibility |

Application Notes for Drug Sensitivity Prediction

Experimental Design and Workflow

Drug sensitivity prediction using scFMs leverages the models' capacity to infer transcriptional responses to chemical perturbations based on foundational knowledge of cellular systems [5] [2]. The experimental workflow typically employs a transfer learning approach, where a pretrained scFM is adapted to predict how individual cells or cell populations will respond to therapeutic interventions [5]. This application holds particular promise in oncology for understanding heterogeneous treatment responses within tumor microenvironments and identifying patient-specific therapeutic vulnerabilities [5] [3].

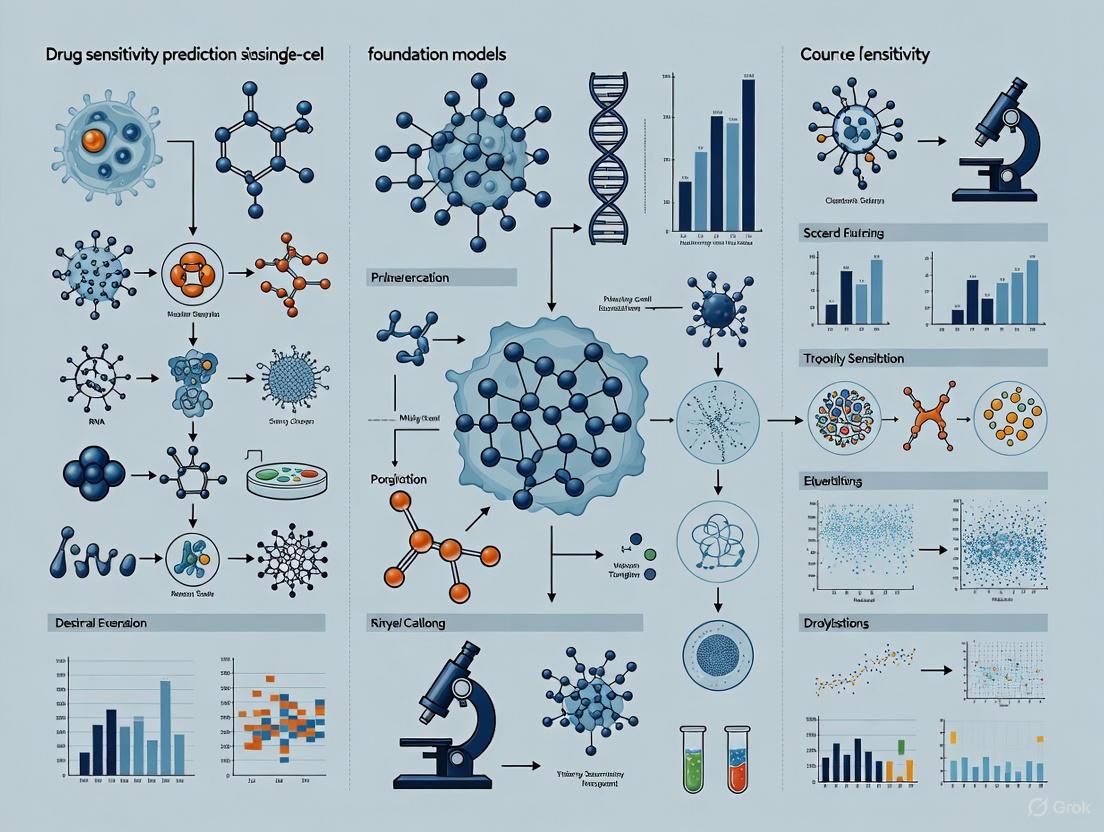

The standard workflow for drug sensitivity prediction involves multiple stages, from data preprocessing through model interpretation.

Implementation Protocols

Protocol 1: Zero-shot drug sensitivity prediction using scFM embeddings

This protocol evaluates the intrinsic capability of scFMs to predict drug responses without task-specific fine-tuning [5] [7]:

- Input Preparation: Extract single-cell RNA-seq profiles from target cell populations (e.g., tumor biopsies) and format according to model-specific tokenization requirements [1]

- Embedding Generation: Process cellular profiles through pretrained scFM to obtain latent representations using zero-shot inference [5]

- Similarity Assessment: Compare query cell embeddings with reference profiles of annotated drug responses using cosine similarity metrics in the latent space [5]

- Response Prediction: Assign sensitivity scores based on proximity to known responsive or resistant cellular states in the embedding space [5] [7]
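The similarity assessment and response prediction steps of Protocol 1 can be sketched as follows. The scoring rule (cosine similarity to the sensitive centroid minus cosine similarity to the resistant centroid) is one plausible choice rather than a method prescribed by the cited work, and the 3-dimensional embeddings are invented placeholders for real scFM outputs.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def centroid(vectors: List[Sequence[float]]) -> List[float]:
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def sensitivity_score(query, sensitive_refs, resistant_refs) -> float:
    """Positive when the query lies closer to the sensitive reference centroid."""
    return cosine(query, centroid(sensitive_refs)) - cosine(query, centroid(resistant_refs))


# Made-up reference embeddings for cells with annotated drug responses.
sensitive = [[1.0, 0.1, 0.0], [0.9, 0.0, 0.1]]
resistant = [[0.0, 1.0, 0.2], [0.1, 0.9, 0.0]]

score_a = sensitivity_score([0.8, 0.1, 0.0], sensitive, resistant)  # resembles sensitive cells
score_b = sensitivity_score([0.1, 1.0, 0.1], sensitive, resistant)  # resembles resistant cells
```

In practice a nearest-neighbor vote over individual reference cells may be more robust than centroids when the responsive or resistant population is itself heterogeneous.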

Protocol 2: Fine-tuned drug sensitivity classification

For enhanced performance on specific drug classes or cellular contexts, supervised fine-tuning is recommended [5] [4]:

- Data Curation: Compile labeled single-cell datasets with documented drug response outcomes (e.g., scRNA-seq of cancer cells pre- and post-treatment) [5]

- Model Adaptation: Append task-specific classification layers to the pretrained scFM and initialize with pretrained weights [4]

- Transfer Learning: Fine-tune the composite model on drug response labels using cross-entropy loss with balanced sampling to address class imbalance [5]

- Validation: Evaluate predictive performance on held-out test sets using multiple metrics including accuracy, AUC-ROC, and precision-recall characteristics [5]
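Two pieces of Protocol 2 lend themselves to a short sketch: weights for balanced sampling and the AUC-ROC metric. The code below is a dependency-free illustration with invented labels and scores; a real pipeline would more likely rely on an established library for both.

```python
from collections import Counter
from typing import List


def balanced_sample_weights(labels: List[int]) -> List[float]:
    """Per-sample weights inversely proportional to class frequency."""
    counts = Counter(labels)
    n_classes = len(counts)
    total = len(labels)
    return [total / (n_classes * counts[y]) for y in labels]


def auc_roc(labels: List[int], scores: List[float]) -> float:
    """AUC as the probability that a random positive outranks a random negative.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data: 1 = sensitive, 0 = resistant; scores are made-up model outputs.
labels = [1, 0, 0, 0, 1, 0]
scores = [0.9, 0.2, 0.4, 0.1, 0.35, 0.3]

weights = balanced_sample_weights(labels)  # minority class gets the larger weight
auc = auc_roc(labels, scores)
```

With these weights each class contributes the same total mass to sampling or to a weighted loss, which is the intent of the balanced sampling step.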

Table 2: Performance Benchmarks of scFMs in Drug Sensitivity Prediction Tasks

| Model | Prediction Approach | Cancer Types Evaluated | Key Performance Metrics | Limitations |
|---|---|---|---|---|
| scGPT [5] [4] | Zero-shot & Fine-tuning | Multiple (Pan-cancer) | Strong overall performance across tasks | Computational intensity for fine-tuning |
| Geneformer [5] [4] | Representation transfer | Four cancer types | Effective gene-level prediction | Limited zero-shot capability |
| scFoundation [5] | Latent space projection | Seven cancer types | State-of-the-art in specific contexts | Inconsistent cross-dataset generalization |
| Baseline ML Models [5] [7] | Standard supervised learning | Benchmark comparisons | Efficient on targeted datasets | Poor transfer across biological contexts |

Essential Research Toolkit

Computational Frameworks and Reagents

Successful implementation of scFMs for drug sensitivity prediction requires specialized computational resources and frameworks:

Table 3: Essential Research Reagents and Computational Solutions for scFM Implementation

| Resource Category | Specific Tools | Functionality | Application Context |
|---|---|---|---|
| Integration Frameworks [2] [4] | BioLLM, DISCO, CZ CELLxGENE | Unified model access, standardized benchmarking | Cross-model comparison, reproducible analysis |
| Pretraining Corpora [1] [2] | Human Cell Atlas, CELLxGENE, GEO | Curated single-cell datasets for model training | Foundation model development, transfer learning |
| Specialized Architectures [2] | scGPT, Geneformer, scFoundation, EpiAgent | Domain-optimized model architectures | Task-specific applications, multimodal integration |
| Analysis Ecosystems [2] | scGNN+, BioLLM | Automated workflow optimization | Accessible implementation for non-specialists |

Critical Analytical Considerations

The effective application of scFMs for drug sensitivity prediction necessitates addressing several analytical challenges:

- Batch effect mitigation: Technical variation across datasets can confound biological signal, requiring careful preprocessing and batch-aware modeling [1] [2]

- Interpretability constraints: The black-box nature of transformer architectures complicates biological insight extraction, necessitating specialized interpretation tools [1] [6]

- Data quality requirements: High sparsity and noise in single-cell data impact model performance, emphasizing the need for rigorous quality control [1] [5]

- Computational resource demands: Training and fine-tuning large scFMs requires substantial GPU memory and processing capacity [1] [3]

The following diagram illustrates the key decision points in selecting an appropriate scFM strategy for drug sensitivity prediction:

Single-cell foundation models represent a powerful paradigm for predicting drug sensitivity at cellular resolution, offering unprecedented opportunities to understand heterogeneous treatment responses and identify novel therapeutic opportunities [5] [2]. The core principles of these models—including transformer architectures, self-supervised pretraining, and flexible adaptation mechanisms—enable them to capture complex biological relationships that traditional methods struggle to discern [1] [2].

While current implementations demonstrate promising capabilities, several challenges remain to be addressed, including improved interpretability, reduced computational requirements, and enhanced generalization across diverse biological contexts [1] [3] [7]. Future developments will likely focus on multimodal integration combining transcriptomic, epigenomic, and proteomic data [2], more biologically-informed architecture designs [5], and streamlined interfaces to broaden accessibility for biological researchers [3]. As these models continue to evolve, they hold substantial promise for accelerating therapeutic discovery and enabling more precise, personalized treatment strategies based on deep molecular profiling of individual cells [5] [2].

The advent of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by enabling the investigation of cellular heterogeneity at unprecedented resolution. However, the data generated by these technologies—characterized by high dimensionality, extreme sparsity, and technical noise—presents significant analytical challenges [8] [1]. Inspired by breakthroughs in natural language processing (NLP), researchers have begun treating single-cell data as a distinct "language" where genes function as words and entire cellular transcriptomes as sentences [1]. This conceptual framework has paved the way for transformer-based foundation models, which leverage self-supervised learning on massive datasets to capture fundamental biological principles that can be adapted to diverse downstream tasks including drug sensitivity prediction, cell type annotation, and mechanistic inference [9] [8] [10].

This Application Note details how transformer architectures process single-cell data through a linguistic lens and provides detailed protocols for applying these models to predict drug sensitivity in cancer research. By framing biological data analysis within this paradigm, researchers can unlock deeper insights into cellular function and therapeutic response.

Foundation Models: Architectural Principles and Tokenization Strategies

Core Architecture Components

Single-cell foundation models (scFMs) predominantly utilize transformer architectures, which employ attention mechanisms to weight relationships between all genes within a cell simultaneously [1]. The self-attention mechanism enables these models to decide which genes in a cellular "sentence" are most informative for predicting the cell's identity or state, capturing complex regulatory relationships without predefined biological pathways [1].
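A minimal sketch of scaled dot-product self-attention over gene tokens may make the mechanism concrete. It assumes the queries, keys, and values are the raw gene vectors themselves; real transformers first apply learned projection matrices and use multiple heads. The three 2-dimensional gene vectors are invented for illustration.

```python
import math
from typing import List, Tuple


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of attention scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(
    queries: List[List[float]], keys: List[List[float]], values: List[List[float]]
) -> Tuple[List[List[float]], List[List[float]]]:
    """Return (outputs, attention_weights) for single-head scaled dot-product attention."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted average of all value vectors.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))])
    return outputs, weights


# Toy embeddings: genes 0 and 1 are similar, gene 2 is different.
gene_vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(gene_vectors, gene_vectors, gene_vectors)
```

Row `i` of `weights` shows how strongly gene `i` attends to every gene in the cell, which is the quantity that attention-based interpretation methods inspect.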

Most scFMs employ either encoder-based architectures (like BERT) for classification and embedding tasks, or decoder-based architectures (like GPT) for generative modeling [1]. Hybrid designs are increasingly being explored to balance the strengths of both approaches for different biological applications. These models typically generate two types of output: gene embeddings that capture functional relationships between genes, and cell embeddings that represent the overall state or identity of a cell [8] [10].

Tokenization: From Biology to Computational Tokens

Tokenization converts raw gene expression data into structured inputs that transformers can process. Unlike words in natural language, genes lack inherent sequential ordering, requiring strategic approaches to sequence definition:

- Rank-based tokenization: Genes are ordered by expression level within each cell, creating a deterministic sequence from highest to lowest expressed genes [11] [1]. Because only the relative order is used, this encoding is less sensitive to technical variation in sequencing depth and scale.

- Value-based tokenization: Gene expression values are binned into discrete ranges, with each bin representing a different "word" in the vocabulary [1].

- Hybrid approaches: Some models incorporate both gene identifiers and their expression values as separate tokens, enabling the model to learn more complex relationships [8].

Additional special tokens are often incorporated to enrich biological context, including:

- Modality tokens indicating data types (e.g., scRNA-seq, spatial transcriptomics)

- Species tokens for cross-species learning

- Batch tokens to account for technical effects

- Cell-type tokens for supervised pretraining [11] [1]

Table 1: Common Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Mechanism | Advantages | Representative Models |
|---|---|---|---|
| Rank-Based | Orders genes by expression level | Robust to batch effects, preserves gene relationships | Geneformer, Nicheformer |
| Value-Binning | Groups expression values into discrete bins | Captures absolute expression differences | scGPT |
| Hybrid | Combines gene ID and expression value tokens | Maximizes contextual information | scFoundation |
| Genomic Position | Orders genes by genomic coordinates | Leverages spatial genome organization | UCE |

Application Protocol: Drug Sensitivity Prediction with scGPT

Experimental Workflow and Design

The following protocol adapts the DeepCDR framework by integrating scGPT to predict drug sensitivity (IC50 values) from bulk RNA-seq of cancer cell lines, demonstrating how foundation models can enhance therapeutic prediction [10].

Table 2: Essential Research Reagents and Computational Resources

| Category | Item | Specification | Function/Purpose |
|---|---|---|---|
| Data Sources | Cancer Cell Line Encyclopedia (CCLE) | Bulk RNA-seq for 561 cancer cell lines | Provides gene expression inputs for model |
| | Genomics of Drug Sensitivity in Cancer (GDSC) | IC50 values for drug-cell line pairs | Ground truth for model training/validation |
| Computational Tools | scGPT | Pretrained foundation model (33M cells) | Generates cell embeddings from expression data |
| | DeepCDR Framework | Hybrid graph convolutional network | Base architecture for drug response prediction |
| | Graph Neural Networks | Molecular structure processing | Encodes drug chemical information |
| Hardware | GPU Resources | NVIDIA recommended (e.g., A100, V100) | Enables efficient model training/inference |

Step-by-Step Procedures

Data Preprocessing and Embedding Generation

Gene Expression Normalization

- Obtain bulk RNA-seq data from CCLE for cancer cell lines of interest.

- Normalize data using Counts Per Million (CPM) followed by log1p transformation to stabilize variance.

- Filter and align genes to match the expected input of scGPT (approximately 20,000 genes).

- Apply zero-padding for genes present in the scGPT reference list but absent from the expression dataset [10].
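The normalization and alignment steps above can be sketched without any external dependency. The function names, the three-gene reference list (a stand-in for the model's roughly 20,000-gene vocabulary), and the raw counts are assumptions for illustration only.

```python
import math
from typing import Dict, List


def cpm_log1p(counts: Dict[str, float]) -> Dict[str, float]:
    """Counts Per Million followed by log1p, computed for one sample."""
    total = sum(counts.values())
    if total == 0:
        return {gene: 0.0 for gene in counts}
    return {gene: math.log1p(c / total * 1e6) for gene, c in counts.items()}


def align_to_reference(expression: Dict[str, float], reference_genes: List[str]) -> List[float]:
    """Order values by the reference gene list.

    Genes missing from the sample are zero-padded; genes absent from the
    reference list are dropped.
    """
    return [expression.get(gene, 0.0) for gene in reference_genes]


# Hypothetical raw counts for one cell line.
raw_counts = {"TP53": 250, "EGFR": 750, "NOVEL1": 0}
reference = ["EGFR", "KRAS", "TP53"]  # stand-in for the model's gene vocabulary

vector = align_to_reference(cpm_log1p(raw_counts), reference)
```

Here `KRAS` is zero-padded because it is missing from the sample, and `NOVEL1` is dropped because the reference list does not contain it.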

scGPT Embedding Generation

- Load the pretrained scGPT-human checkpoint (publicly available).

- Input preprocessed gene expression data into scGPT model.

- Extract the 512-dimensional cell embedding from the model output.

- Store embeddings for integration with drug representation data [10].

Drug Representation Processing

- Represent each drug as a molecular graph with:

  - Feature matrix (75-dimensional) encoding atom attributes

  - Adjacency list specifying bonds between atoms

  - Degree list indicating neighbor counts for each atom

- Process drug graphs through a Graph Neural Network (GNN).

- Apply max pooling operation to summarize the most salient molecular features [10].
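To make the drug representation concrete, the sketch below builds a toy molecular graph, runs one simplified neighborhood-averaging step, and max-pools over atoms. It is not the DeepCDR graph convolution: there are no learned weights, the atoms carry 2 features instead of 75, and the three-atom chain is invented for the example.

```python
from typing import List


def degree_list(adjacency: List[List[int]]) -> List[int]:
    """Number of bonded neighbors for each atom."""
    return [len(neighbors) for neighbors in adjacency]


def message_pass(features: List[List[float]], adjacency: List[List[int]]) -> List[List[float]]:
    """One simplified graph step: each atom averages itself and its neighbors."""
    updated = []
    for i, neighbors in enumerate(adjacency):
        group = [features[i]] + [features[j] for j in neighbors]
        updated.append([sum(col) / len(group) for col in zip(*group)])
    return updated


def max_pool(features: List[List[float]]) -> List[float]:
    """Collapse per-atom features into one drug vector by taking the per-column maximum."""
    return [max(col) for col in zip(*features)]


# Toy 3-atom chain (atom 0 - atom 1 - atom 2) with 2 features per atom.
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
adjacency = [[1], [0, 2], [1]]

degrees = degree_list(adjacency)
drug_vector = max_pool(message_pass(features, adjacency))
```

Max pooling yields a fixed-length vector regardless of how many atoms the molecule has, which is what allows drugs of different sizes to be combined with a cell embedding.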


Model Integration and Training

Feature Integration

- Concatenate the scGPT cell embedding (512-dimensional) with the drug representation from the GNN.

- Pass the concatenated representation through fully connected neural network layers.
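The feature integration step amounts to concatenating two vectors and applying a small feed-forward network. The sketch below shows only the forward pass with random, untrained weights. The 100-dimensional drug embedding, the 16 hidden units, and the single hidden layer are assumptions, since the text specifies only the 512-dimensional cell embedding.

```python
import random
from typing import List, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weights, bias)


def init_layer(n_in: int, n_out: int, rng: random.Random) -> Layer:
    """Small random weights and zero biases for a fully connected layer."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(x: List[float], layer: Layer) -> List[float]:
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: List[float]) -> List[float]:
    return [max(0.0, v) for v in x]


def predict_ic50(cell_emb: List[float], drug_emb: List[float], layers: List[Layer]) -> float:
    """Concatenate cell and drug embeddings, then apply hidden layer -> ReLU -> scalar output."""
    x = list(cell_emb) + list(drug_emb)
    hidden = relu(linear(x, layers[0]))
    return linear(hidden, layers[1])[0]


rng = random.Random(0)
cell_embedding = [rng.gauss(0, 1) for _ in range(512)]  # placeholder for an scGPT embedding
drug_embedding = [rng.gauss(0, 1) for _ in range(100)]  # placeholder; dimension is assumed
layers = [init_layer(612, 16, rng), init_layer(16, 1, rng)]

prediction = predict_ic50(cell_embedding, drug_embedding, layers)
```

In a real implementation these layers would be trained with a deep learning framework; the point here is only the shape of the computation.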

Model Training and Validation

- Partition data into training (95%) and test (5%) sets.

- Use Mean Squared Error (MSE) loss between predicted and observed IC50 values.

- Implement leave-one-drug-out validation to assess generalizability to novel compounds.

- Evaluate performance using Pearson Correlation Coefficient (PCC) across cell lines, cancer types, and specific drugs [10].
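The evaluation steps above (MSE loss, leave-one-drug-out splitting, and Pearson correlation) can be sketched as follows. The record layout, the drug names, and the predicted values are invented for illustration.

```python
import math
from typing import Dict, Iterator, List, Tuple

Record = Dict[str, object]


def mse(predicted: List[float], observed: List[float]) -> float:
    """Mean squared error between predicted and observed IC50 values."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient; assumes neither input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def leave_one_drug_out(records: List[Record]) -> Iterator[Tuple[str, List[Record], List[Record]]]:
    """Yield (held_out_drug, train, test); every pair for one drug goes to the test set."""
    for drug in sorted({r["drug"] for r in records}):
        train = [r for r in records if r["drug"] != drug]
        test = [r for r in records if r["drug"] == drug]
        yield drug, train, test


records = [
    {"cell_line": "A", "drug": "drugX", "ic50": 1.0},
    {"cell_line": "B", "drug": "drugX", "ic50": 2.0},
    {"cell_line": "A", "drug": "drugY", "ic50": 3.0},
    {"cell_line": "B", "drug": "drugY", "ic50": 5.0},
]
folds = list(leave_one_drug_out(records))

observed = [r["ic50"] for r in records]
predicted = [1.5, 2.5, 3.5, 5.5]  # made-up predictions, each offset by 0.5
pcc = pearson(predicted, observed)
loss = mse(predicted, observed)
```

The toy predictions show why both metrics are worth reporting: a constant offset leaves the correlation perfect while the MSE remains nonzero.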

Performance and Validation

The scGPT-enhanced DeepCDR framework demonstrates superior performance compared to both the original DeepCDR and scFoundation-integrated approaches:

- Prediction Accuracy: Achieves higher Pearson Correlation Coefficients (PCC) across cell line-based, cancer type-specific, and drug-specific evaluations [10].

- Generalization: Shows strong performance in leave-one-drug-out tests, indicating robust prediction capability for novel therapeutic compounds [10].

- Training Stability: Exhibits more consistent convergence and validation performance during training compared to alternative approaches [10].

Advanced Applications and Emerging Directions

Spatial Context Integration with Nicheformer

Recent advances incorporate spatial information through models like Nicheformer, trained on both dissociated single-cell and spatial transcriptomics data (SpatialCorpus-110M). This approach enables prediction of spatial context for dissociated cells, transferring rich microenvironmental information to standard scRNA-seq datasets [11]. Key applications include:

- Spatial composition prediction: Forecasting local cellular densities and neighborhood compositions

- Spatial label prediction: Annotating dissociated cells with spatial context (e.g., tissue layer, niche identity) [11]

Interpretability and Mechanistic Insight

Beyond prediction, transformer models enable mechanistic biological discovery through interpretability techniques:

- Attention mapping: Identifying genes with strongest influence on model predictions

- Feature importance: Using methods like SHAP to quantify contribution of individual genes to outcomes [9] [12]

- Rational biomarker discovery: Uncovering novel biomarkers by analyzing model decision patterns, such as the "Down Shift" white blood cell biomarker that complements existing inflammation markers [9]

Table 3: Benchmarking Single-Cell Foundation Models on Key Tasks

| Model | Pretraining Data | Key Applications | Drug Response Performance |
|---|---|---|---|
| scGPT | 33 million cells | Cell annotation, multi-omic integration, drug response | PCC: 0.85 (superior to baseline) |
| scFoundation | 50 million cells | Gene network inference, perturbation prediction | PCC: 0.82 (improved over DeepCDR) |
| Nicheformer | 110 million cells (incl. spatial) | Spatial context prediction, niche identification | N/A (specialized spatial tasks) |
| Geneformer | 30 million cells | Cell state transitions, network inference | N/A (limited drug response data) |

Troubleshooting and Technical Considerations

Common Implementation Challenges

Data Sparsity and Quality

- Challenge: High sparsity of scRNA-seq data impedes model performance.

- Solution: Implement gene embedding blocks to reduce sparsity effects, as demonstrated in scTransSort [13].

Computational Resource Limitations

- Challenge: Memory constraints during training with large datasets.

- Solution: Train on slices (subsets) of the dataset, keeping in mind that subsetting can introduce sampling bias [10].

Batch Effect Integration

- Challenge: Technical variations between datasets affect model generalizability.

- Solution: Incorporate batch information as special tokens during training [1].

Model Selection Guidelines

When selecting a foundation model for drug sensitivity applications, consider:

- Dataset size: Simpler models may outperform foundation models on very small datasets [8]

- Task complexity: Complex tasks with limited training data benefit most from pretrained models [8]

- Interpretability needs: Models with built-in interpretability features (e.g., attention weights) support mechanistic insights [9]

- Computational resources: Larger models require significant GPU memory and training time [1]

Transformer-based foundation models represent a paradigm shift in single-cell data analysis, treating cellular transcriptomes as a language that can be decoded using advanced NLP-inspired architectures. The protocols outlined herein provide researchers with practical frameworks for applying these powerful models to drug sensitivity prediction, potentially accelerating therapeutic discovery and personalized treatment strategies. As these models continue to evolve—incorporating multimodal data, enhanced interpretability, and spatial context—they promise to unlock increasingly sophisticated insights into cellular biology and therapeutic response mechanisms.

The advent of single-cell RNA sequencing (scRNA-seq) has provided an unprecedented lens through which to view cellular heterogeneity, a critical factor in understanding differential drug responses. The computational analysis of this data, however, is fraught with challenges stemming from its high dimensionality, inherent sparsity, and technical noise [14]. Single-cell foundation models (scFMs), pre-trained on millions of cells, have emerged as powerful tools to overcome these hurdles. By learning universal patterns in transcriptomic data, these models provide a robust starting point for various downstream tasks, particularly in the realm of drug sensitivity prediction [8] [15]. Their ability to capture a deep understanding of gene-gene interactions and cellular states makes them uniquely suited for predicting how individual cells or populations will respond to therapeutic interventions. Among the plethora of scFMs, three key architectures—scBERT, scGPT, and Geneformer—exemplify different architectural philosophies and training strategies. Understanding their distinct mechanisms, strengths, and limitations is essential for researchers and drug development professionals aiming to harness their power for precision medicine. This article details the key architectural distinctions between these models and provides practical protocols for their application in predicting drug sensitivity.

The design of a foundation model—specifically, its choice of architecture, gene representation strategy, and pre-training objective—fundamentally shapes its capabilities and performance in downstream applications. The table below summarizes the core characteristics of scBERT, scGPT, and Geneformer.

Table 1: Key Architectural Characteristics of Featured Single-Cell Foundation Models

| Feature | scBERT | scGPT | Geneformer |
|---|---|---|---|
| Core Architecture | Encoder-only Transformer | Encoder-only Transformer (with generative pre-training) | Encoder-only Transformer |
| Primary Pre-training Task | Masked Gene Modeling (Classification) | Masked Gene Modeling (Regression & Generative) | Masked Gene Modeling (Contextual Rank Prediction) |
| Gene Representation | Value Binning (Categorization) | Value Binning & Value Projection | Gene Ranking (Ordering) |
| Model Parameters | ~40 million [8] | ~50 million [8] [16] | ~40 million [8] |
| Pre-training Scale | Millions of human cells [17] | 33 million human cells [17] [16] [18] | 30 million human cells [17] |
| Input Gene Count | 1,200 Highly Variable Genes (HVGs) [8] | 1,200 HVGs [8] | 2,048 ranked genes [8] |

Gene Representation Strategies

A critical differentiator among scFMs is how they convert continuous gene expression values into a format suitable for neural networks.

- Value Binning (scBERT, scGPT): This approach discretizes continuous expression values into a set of predefined "bins" or "buckets," effectively transforming regression into a classification problem [17] [14]. For example, scBERT might assign a gene with a certain expression level to a specific token ID representing "high expression." While this simplifies the modeling process, it can lead to a loss of fine-grained, quantitative information.

- Gene Ranking (Geneformer): Geneformer represents a cell by a sequence of gene names sorted in descending order of their expression value. This rank-based prioritization emphasizes the relative importance of genes within a cell and is inherently robust to batch effects and technical noise [17] [14]. Its pre-training task involves predicting which gene occupies each masked position in this ranked sequence, given the surrounding context.

- Value Projection (scGPT): In addition to binning, scGPT can also use a value projection strategy, where the continuous expression value is linearly projected into an embedding vector. This method preserves the full resolution of the expression data without discretization [17] [14].
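The difference between binning and value projection can be shown with a small sketch. It assumes a single linear map from a scalar to an embedding vector, which follows the description above but is simpler than what a production model may use (for example, additional nonlinear layers). The weights, the 4-dimensional embedding, and the integer-floor binning rule are illustrative assumptions.

```python
import random
from typing import List


def value_projection(value: float, weight: List[float], bias: List[float]) -> List[float]:
    """Linearly project a scalar expression value into an embedding vector."""
    return [value * w + b for w, b in zip(weight, bias)]


def floor_bin(value: float) -> int:
    """Toy binning rule: every value in [k, k+1) falls into bin k."""
    return int(value)


rng = random.Random(0)
dim = 4  # toy embedding size
weight = [rng.uniform(-1, 1) for _ in range(dim)]  # stands in for learned parameters
bias = [0.0] * dim

# Two expression values that land in the same bin but differ quantitatively.
emb_low = value_projection(2.1, weight, bias)
emb_high = value_projection(2.9, weight, bias)
same_bin = floor_bin(2.1) == floor_bin(2.9)
```

Binning treats 2.1 and 2.9 as identical, whereas the projected embeddings remain distinct, which is the sense in which projection preserves the full resolution of the data.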

Encoder-Centric Architectures

All three models—scBERT, scGPT, and Geneformer—are fundamentally based on the encoder-only Transformer architecture. Unlike decoder models that generate sequences autoregressively (like GPT for language), these models are designed to build rich, contextualized representations of their input data.

The encoder is composed of multiple layers of self-attention and feed-forward neural networks. The self-attention mechanism allows each gene in the input sequence to interact with every other gene, enabling the model to learn complex, non-linear gene-gene relationships that are crucial for understanding cellular state and, by extension, drug response [19]. The output is a dense embedding vector for each input gene, or a pooled embedding for the entire cell, which can then be used for classification, regression, or other downstream analyses.

scGPT's architecture is a notable variant, as it employs a generative pre-training objective within its encoder framework. It uses specialized attention masks during pre-training to predict the expression values of masked genes, allowing it to learn a generative understanding of cellular transcriptomes [16].

Diagram 1: Encoder Model Input-Output Workflow

Application Notes and Protocols for Drug Sensitivity Prediction

The following section provides detailed methodologies for applying scBERT, scGPT, and Geneformer to predict cancer drug response, a task critical for personalized medicine.

Protocol: Fine-tuning scGPT for Cell Line Drug Response Classification

This protocol outlines the steps to adapt the pre-trained scGPT model to predict the sensitivity of cancer cell lines to specific drugs.

Research Reagent Solutions:

- Pre-trained scGPT Model: The foundation model pre-trained on 33 million human cells, providing a general understanding of transcriptomics [18].

- Cancer Cell Line Dataset: A labeled dataset such as the Cancer Cell Line Encyclopedia (CCLE) or a proprietary dataset containing scRNA-seq profiles of cell lines and their measured IC50 values or binarized sensitivity labels for a drug of interest.

- Computational Environment: A GPU-equipped workstation (e.g., NVIDIA A100) with Python and the scGPT package installed via `pip install scgpt` [18].

Step-by-Step Procedure:

- Data Preprocessing: Prepare your cell line expression matrix. Normalize the data using scGPT's built-in normalization functions and select the top 1,200 Highly Variable Genes (HVGs) to match the model's expected input dimension [8] [20].

- Model Initialization: Load the pre-trained scGPT "whole-human" model checkpoint using the `load_pretrained` function provided in the scGPT codebase [18].

- Classifier Head Attachment: Replace the model's pre-training head with a task-specific classification head. This is typically a fully connected (linear) layer that maps the final cell embedding to a probability distribution over the output classes (e.g., "sensitive" vs. "resistant").

- Fine-tuning: Train the model on your labeled cell line data. Freeze the initial layers of the transformer encoder if the dataset is small to prevent overfitting, and only fine-tune the later layers and the new classification head. Use a standard cross-entropy loss function and an Adam optimizer with a low learning rate (e.g., 1e-5).

- Evaluation: Assess the model's performance on a held-out test set of cell lines. Report standard metrics such as Area Under the ROC Curve (AUC), accuracy, and F1-score. The GRNFormer study, which built upon scGPT, demonstrated that such integration can lead to significant improvements in drug response prediction tasks [19].
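The evaluation step can be illustrated without any scGPT-specific code. The following is a minimal, dependency-free sketch that computes AUC (using the pairwise-ranking formulation), accuracy, and F1 from predicted probabilities. The function names and the toy labels and scores are illustrative assumptions, not part of the scGPT codebase; in practice a library such as scikit-learn would normally be used.

```python
def roc_auc(y_true, y_score):
    """AUC as the probability that a random positive scores above a random negative."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


def accuracy_and_f1(y_true, y_score, threshold=0.5):
    """Threshold the scores, then compute accuracy and F1 for the positive class."""
    y_pred = [1 if s >= threshold else 0 for s in y_score]
    tp = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(y_true, y_pred) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 0)
    correct = sum(1 for y, p in zip(y_true, y_pred) if y == p)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return correct / len(y_true), f1


# Hypothetical held-out cell lines: 1 = sensitive, 0 = resistant.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
auc = roc_auc(labels, scores)
acc, f1 = accuracy_and_f1(labels, scores)
print(f"AUC={auc:.3f} accuracy={acc:.3f} F1={f1:.3f}")
```

Reporting a threshold-free metric (AUC) alongside thresholded ones (accuracy, F1) helps separate ranking quality from the choice of decision cutoff.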

Protocol: Zero-Shot Cell Embedding with Geneformer for Drug Sensitivity Analysis

This protocol describes how to use Geneformer in a zero-shot setting to generate cell embeddings that can be used as features for a separate drug response prediction model. This is particularly useful in discovery settings where labeled data is scarce or unavailable for fine-tuning [21].

Research Reagent Solutions:

- Pre-trained Geneformer Model: The model pre-trained on 30 million cells to understand gene context via rank-based modeling [8].

- In-house scRNA-seq Data: Unlabeled transcriptomic profiles from patient-derived cells or cell lines.

- External Drug Response Model: A machine learning classifier (e.g., Random Forest, Support Vector Machine) capable of predicting drug response from cell embeddings.

Step-by-Step Procedure:

- Input Preparation: For each cell in your dataset, create an input sequence for Geneformer by ranking the top 2,048 genes by expression level [8].

- Zero-Shot Inference: Pass each cell's gene rank sequence through the pre-trained Geneformer model without updating any of the model's parameters. Extract the cell's embedding from the model's output layer (e.g., the [CLS] token embedding or the mean of all gene embeddings).

- Feature Matrix Construction: Assemble the embeddings from all cells into a feature matrix, where each row is a cell and each column is a dimension of the embedding vector.

- Predictive Modeling: Use this feature matrix to train a separate, external drug response predictor if labels are available. The embeddings can also be used for unsupervised analysis, such as clustering, to identify cell subpopulations with potentially distinct drug sensitivity profiles. It is important to note that benchmarking studies have shown zero-shot performance can be variable, and may be outperformed by simpler methods like using Highly Variable Genes (HVGs) in some cases [21].
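The input preparation and embedding steps above can be sketched as follows. This is a simplified illustration in plain Python, not the Geneformer tokenizer: `rank_encode`, `mean_pool`, and the optional `norm_factors` argument are hypothetical names, and the per-gene embeddings are hard-coded stand-ins for what a frozen model would return.

```python
def rank_encode(cell_counts, max_len=2048, norm_factors=None):
    """Order a cell's expressed genes from highest to lowest expression.

    cell_counts: dict mapping gene name -> count for one cell.
    norm_factors: optional dict of per-gene scaling factors (assumption:
    a corpus-wide normalization may be applied before ranking).
    """
    norm_factors = norm_factors or {}
    scaled = {
        gene: count / norm_factors.get(gene, 1.0)
        for gene, count in cell_counts.items()
        if count > 0  # unexpressed genes are not part of the sequence
    }
    # Descending by value; ties are broken by gene name for determinism.
    ordered = sorted(scaled, key=lambda g: (-scaled[g], g))
    return ordered[:max_len]


def mean_pool(gene_embeddings):
    """Average per-gene embedding vectors into a single cell embedding."""
    n = len(gene_embeddings)
    dim = len(gene_embeddings[0])
    return [sum(vec[d] for vec in gene_embeddings) / n for d in range(dim)]


# Toy cell with four genes; MYC is unexpressed and therefore dropped.
cell = {"TP53": 3, "EGFR": 10, "MYC": 0, "KRAS": 3}
tokens = rank_encode(cell, max_len=3)

# Stand-in for the per-gene outputs of a frozen model (hypothetical values).
fake_gene_embeddings = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
cell_embedding = mean_pool(fake_gene_embeddings)

# One row per cell, one column per embedding dimension.
feature_matrix = [cell_embedding]
```

The resulting `feature_matrix` is what would be handed to the external classifier (for example a random forest) in the predictive modeling step.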

Protocol: Integrating Foundation Models into Multimodal Drug Response Pipelines

For the highest predictive accuracy, foundation models can be integrated as components within larger, multimodal deep learning frameworks that incorporate multiple data types.

Research Reagent Solutions:

- scFM Component: A single-cell foundation model (scGPT, Geneformer, or scBERT) to process transcriptomic data.

- Drug-Target Interaction (DTI) Model: A separately trained model, such as a Graph Neural Network (GNN), that generates embeddings from a drug's molecular structure and its protein targets [15].

- Multimodal Fusion Architecture: A neural network designed to combine embeddings from different modalities (e.g., transcriptomics and drug chemistry).

Step-by-Step Procedure:

- Process Each Modality:

- Cell Representation: Generate a cell embedding for a cell line or patient cell using one of the scFMs, as described in the scGPT fine-tuning or Geneformer zero-shot protocols above.

- Drug Representation: Generate a drug embedding using the DTI model based on the drug's SMILES string or molecular graph.

- Feature Fusion: Concatenate the cell embedding and the drug embedding into a single, combined feature vector. More sophisticated fusion methods, such as cross-attention, can also be employed [19].

- Joint Prediction: Feed the fused feature vector into a final regression head (to predict a continuous value like IC50) or a classification head (to predict sensitive/resistant). The entire pipeline can be trained end-to-end.

- Validation: Rigorously validate the multimodal pipeline using leave-drug-out cross-validation to test its ability to generalize to novel therapeutics not seen during training. The DTLCDR model is an example of this approach, showing that integrating target information and single-cell language models significantly improves generalizability to unseen drugs [15].
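The fusion and validation steps can be sketched in a few lines. The example below is a hedged illustration only: the record layout, the cell and drug identifiers, and the embedding values are all hypothetical, and concatenation is the simplest of the fusion options mentioned above.

```python
def fuse(cell_embedding, drug_embedding):
    """Concatenate a cell embedding and a drug embedding into one feature vector."""
    return list(cell_embedding) + list(drug_embedding)


def leave_drug_out_folds(samples):
    """Yield (held_out_drug, train_indices, test_indices), one fold per drug.

    Every sample involving the held-out drug goes to the test set, so the
    model is always evaluated on a compound it has not seen during training.
    """
    drugs = sorted({s["drug"] for s in samples})
    for held_out in drugs:
        train_idx = [i for i, s in enumerate(samples) if s["drug"] != held_out]
        test_idx = [i for i, s in enumerate(samples) if s["drug"] == held_out]
        yield held_out, train_idx, test_idx


# Hypothetical (cell, drug) pairs with precomputed embeddings.
samples = [
    {"cell": "cell_1", "drug": "drug_A", "cell_emb": [0.1, 0.2], "drug_emb": [1.0]},
    {"cell": "cell_2", "drug": "drug_A", "cell_emb": [0.3, 0.1], "drug_emb": [1.0]},
    {"cell": "cell_1", "drug": "drug_B", "cell_emb": [0.1, 0.2], "drug_emb": [0.5]},
    {"cell": "cell_2", "drug": "drug_C", "cell_emb": [0.3, 0.1], "drug_emb": [0.2]},
]

features = [fuse(s["cell_emb"], s["drug_emb"]) for s in samples]
folds = list(leave_drug_out_folds(samples))
for held_out, train_idx, test_idx in folds:
    print(held_out, "train:", train_idx, "test:", test_idx)
```

Splitting by drug rather than by sample is what makes the evaluation a test of generalization to unseen therapeutics; a random split would leak every drug into training.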

Diagram 2: Multimodal Drug Response Prediction

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Type | Function in Experiment | Example Source/Implementation |
|---|---|---|---|
| Pre-trained scGPT Checkpoint | Software/Model | Provides a foundational understanding of human transcriptomics for transfer learning. | scGPT GitHub repository "whole-human" model [18]. |
| Pre-trained Geneformer Checkpoint | Software/Model | Provides rank-based gene context understanding for zero-shot embedding generation. | Hugging Face Hub or original publication resources [8]. |
| Cancer Cell Line Encyclopedia (CCLE) | Dataset | Provides labeled scRNA-seq and drug sensitivity data for model training and validation. | Broad Institute DepMap Portal. |
| Harmony | Software Algorithm | Used for batch integration of scRNA-seq data from different sources to remove technical artifacts [21]. | R or Python package. |
| scVI | Software Algorithm | A generative model for scRNA-seq data used for normalization, dimensionality reduction, and batch correction [8] [21]. | Python package. |
| Flash-Attention Library | Software Library | Accelerates the self-attention computation in Transformer models, reducing training time and memory usage for scGPT. | Python package (pip install flash-attn) [18]. |
| Ascend/Atlas 800 Servers | Hardware | High-performance computing infrastructure with Ascend910 NPUs for large-scale model training. | Huawei (Used for training CellFM) [17]. |

Choosing the most appropriate single-cell foundation model for a drug sensitivity project depends on the specific task, data availability, and computational constraints. The following guide synthesizes insights from benchmarking studies and application notes to aid in this decision [8] [20] [21].

- Choose scGPT if: Your task requires high accuracy on a well-defined problem (e.g., cell line classification) and you have a labeled dataset for fine-tuning. Its generative pre-training and flexible input representation make it a powerful and versatile choice for supervised downstream tasks [16] [20].

- Choose Geneformer if: You are working in an exploratory setting with limited or no labels, and require robust, zero-shot cell embeddings for clustering or as features for a separate model. Its rank-based representation is highly effective at capturing biological signal amidst noise [17] [21].

- Consider a Simpler Baseline if: Computational resources are extremely limited or a benchmarking study on your specific data type shows that methods like Highly Variable Genes (HVG) selection combined with Harmony or scVI outperform foundation models in a zero-shot setting [21].

In conclusion, encoder-based models like scBERT, scGPT, and Geneformer have established a new paradigm for analyzing single-cell transcriptomic data in drug discovery. Their power lies in their pre-trained understanding of gene networks and cellular states. By following the protocols above (fine-tuning scGPT, using Geneformer in zero-shot mode, or constructing a multimodal pipeline), researchers can leverage these architectures to predict drug sensitivity with greater accuracy and biological insight, ultimately accelerating the development of personalized cancer therapies. Future advancements will likely involve tighter integration of multi-omics data and biological prior knowledge, as seen in models like GRNFormer, to further enhance predictive power and interpretability [19].

Single-cell foundation models (scFMs) represent a revolutionary approach in computational biology, leveraging large-scale deep learning models pretrained on vast single-cell datasets through self-supervised learning [1]. These models have emerged as powerful tools designed to overcome the inherent challenges of single-cell data analysis, including high dimensionality, technical noise, batch effects, and data sparsity [1] [5] [17]. Inspired by the success of transformer architectures in natural language processing, researchers have adapted these techniques to single-cell genomics, treating individual cells as "sentences" and genes or genomic features as "words" or "tokens" [1]. The fundamental premise of scFMs is that by exposing a model to millions of cells encompassing diverse tissues, species, and conditions, the model can learn universal biological principles that generalize effectively to new datasets and downstream tasks [1]. This pretraining paradigm is particularly valuable for drug sensitivity prediction, as it enables the model to capture fundamental aspects of cellular heterogeneity and regulatory mechanisms that underlie differential drug responses [22] [5]. The self-supervised nature of pretraining allows scFMs to learn from the rapidly expanding repositories of public single-cell data without requiring explicit labeling, making them exceptionally well-suited for extracting biologically meaningful representations that can be fine-tuned for specific predictive tasks in oncology and precision medicine [1] [22].

Core Architectures and Tokenization Strategies

Model Architecture Foundations

Most single-cell foundation models are built on transformer architectures, which utilize attention mechanisms to model complex dependencies between genes within individual cells [1] [17]. These architectures can be broadly categorized into encoder-based, decoder-based, and hybrid designs. Encoder-based models like scBERT employ bidirectional attention mechanisms that learn from all genes in a cell simultaneously, making them particularly effective for classification tasks and cell embedding [1]. In contrast, decoder-based models such as scGPT utilize a unidirectional masked self-attention mechanism that iteratively predicts masked genes conditioned on known genes, excelling in generative tasks [1]. Hybrid architectures that combine encoder and decoder components are also being explored to leverage the strengths of both approaches [1]. A recent innovation in this space is CellFM, which employs a modified RetNet framework with gated multi-head attention and Simple Gated Linear Units to achieve training parallelism and cost-effective inference while maintaining high performance [17]. The attention mechanisms in these architectures enable the model to learn which genes in a cell are most informative of cellular identity and state, capturing how genes covary across cells and their potential regulatory relationships [1].

Tokenization Approaches for Single-Cell Data

Tokenization converts raw gene expression data into discrete units that transformer models can process. Unlike words in natural language, genes lack inherent sequential ordering, presenting a unique challenge for applying transformer architectures to single-cell data [1] [5]. Three principal tokenization strategies have emerged, each with distinct advantages for capturing biological information:

Table: Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Method Description | Representative Models | Advantages |
|---|---|---|---|
| Gene Ranking | Genes are ordered by expression levels within each cell to create a deterministic sequence | Geneformer, scGPT | Captures most highly expressed genes; provides natural ordering |
| Value Categorization | Continuous expression values are binned into discrete categories | scBERT, scGPT | Converts regression to classification; handles technical noise |
| Value Projection | Directly predicts raw gene expression values using linear projections | scFoundation, CellFM | Preserves full data resolution; maintains continuous nature of expression |

The gene ranking approach orders genes by expression magnitude, feeding the ordered list as a "sentence" to the model [1] [17]. Value categorization strategies discretize continuous expression values into bins or "buckets," transforming expression prediction into a classification problem [1] [17]. Value projection methods preserve the continuous nature of expression data by directly predicting raw values through linear projections [1] [17]. Beyond these core strategies, models often incorporate special tokens representing cell identity, modality, or batch information, and may enrich gene tokens with additional biological context such as gene ontology terms or chromosomal locations [1]. After tokenization, all tokens are converted to embedding vectors that combine gene identity and expression information, then processed by the transformer layers to produce latent embeddings for both individual genes and entire cells [1].
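To make the value categorization strategy concrete, the sketch below bins one cell's expression values into integer categories. It is a simplified illustration under stated assumptions: zeros are kept in their own bin, nonzero values are split by per-cell quantiles, and the function name `bin_expression` and the default `n_bins` are hypothetical rather than taken from any specific model's implementation.

```python
import bisect


def bin_expression(values, n_bins=5):
    """Map one cell's expression values to integer bins.

    Zeros stay in bin 0. Nonzero values are assigned to bins 1..n_bins
    using quantile edges computed from that cell's nonzero values, so the
    bins adapt to each cell's sequencing depth.
    """
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    edges = []
    for k in range(1, n_bins):
        idx = min(len(nonzero) - 1, (k * len(nonzero)) // n_bins)
        edges.append(nonzero[idx])
    # bisect_right counts how many edges are <= v, giving a 0-based bin offset.
    return [0 if v <= 0 else 1 + bisect.bisect_right(edges, v) for v in values]


cell_values = [0, 1, 2, 3, 4, 0, 5]
binned = bin_expression(cell_values, n_bins=5)
print(binned)
```

Each integer bin can then be looked up in an embedding table, which is how a continuous expression value becomes a discrete token that a transformer can consume.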

Data Curation and Pretraining Protocols

Data Sourcing and Curation

The development of robust scFMs requires massive, diverse, and high-quality single-cell datasets for pretraining. Researchers benefit from organized archives and databases that provide unified access to annotated single-cell data [1]. Platforms such as CZ CELLxGENE offer standardized access to over 100 million unique cells, while the Human Cell Atlas and other multiorgan atlases provide broad coverage of cell types and states [1]. Additional public repositories including the NCBI Gene Expression Omnibus (GEO), Sequence Read Archive (SRA), and EMBL-EBI Expression Atlas host thousands of individual single-cell studies [1]. The curation process involves meticulous data cleaning, quality control, and standardization. For example, CellFM aggregated 19,914 samples totaling approximately 100 million human cells from various public databases, followed by rigorous quality control filtering, gene name standardization according to HUGO Gene Nomenclature Committee guidelines, and conversion to a unified sparse matrix format [17]. This dataset included 46.3 million cells from normal donors and additional cells from diseased donors, with approximately 70 million cells having annotated cell types spanning diverse categories including T cells (19.2 million), mononuclear phagocytes (7.01 million), and neurons (6.29 million) [17]. Such comprehensive data curation ensures that the pretraining corpus captures a wide spectrum of biological variation essential for learning generalizable representations.

Self-Supervised Pretraining Objectives

Self-supervised learning objectives enable scFMs to learn meaningful biological representations without manual labeling. The most common pretraining tasks include:

Masked Gene Modeling: Inspired by masked language modeling in NLP, this approach randomly masks a subset of genes in each cell and trains the model to predict the masked values based on the remaining genes [1] [2]. This task forces the model to learn contextual relationships between genes and their coordinated expression patterns.

Next Gene Prediction: Utilizing decoder-based architectures, this method trains models to autoregressively predict the next gene in a sequence ordered by expression levels [1] [17]. This approach encourages the model to learn probabilistic dependencies between genes.

Contrastive Learning: This strategy trains models to recognize similar cellular states while distinguishing different ones, often by maximizing agreement between augmented views of the same cell while minimizing agreement with other cells [23]. Techniques such as random masking, Gaussian noise addition, or mutual nearest neighbor identification create positive and negative pairs for contrastive learning [23].

These self-supervised objectives allow the model to capture fundamental biological principles, including gene-gene interactions, regulatory relationships, and cellular state transitions, which form a foundational knowledge base transferable to various downstream tasks including drug sensitivity prediction [1] [22] [5].
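The masked gene modeling objective can be sketched as a two-part routine: corrupt the input, then score the model only on the corrupted positions. The snippet below is a minimal illustration in plain Python; `MASK_VALUE`, `mask_genes`, and `masked_mse` are hypothetical names, and the "predictions" are placeholders rather than outputs of a real model.

```python
import random

MASK_VALUE = -1.0  # sentinel standing in for a learned [MASK] token (assumption)


def mask_genes(values, mask_fraction=0.15, seed=0):
    """Replace a random subset of expression values with MASK_VALUE.

    Returns the corrupted vector and the sorted indices that were masked.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(values)))
    masked_idx = sorted(rng.sample(range(len(values)), n_mask))
    corrupted = list(values)
    for i in masked_idx:
        corrupted[i] = MASK_VALUE
    return corrupted, masked_idx


def masked_mse(predictions, targets, masked_idx):
    """Mean squared error computed only over the masked positions."""
    return sum((predictions[i] - targets[i]) ** 2 for i in masked_idx) / len(masked_idx)


expression = [float(i % 5) for i in range(20)]  # toy expression vector
corrupted, masked_idx = mask_genes(expression, mask_fraction=0.15, seed=0)

# A perfect model would reproduce the original values at the masked positions.
perfect_loss = masked_mse(expression, expression, masked_idx)
# A model that always predicts zero is penalized on expressed, masked genes.
zero_loss = masked_mse([0.0] * len(expression), expression, masked_idx)
```

Restricting the loss to masked positions is what forces the model to infer a gene's value from its context instead of copying the input.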

Experimental Protocols for scFM Pretraining

Standardized Pretraining Workflow

The following protocol outlines a comprehensive procedure for pretraining single-cell foundation models, synthesizing best practices from established methods:

Step 1: Data Collection and Curation

- Source diverse single-cell datasets from public repositories (CELLxGENE, GEO, SRA, ENA, GSA, ImmPort) encompassing multiple tissues, conditions, and experimental platforms [1] [17].

- Implement rigorous quality control metrics: filter cells based on gene counts, mitochondrial percentage, and other quality measures; filter genes based on detection rates [1] [17].

- Standardize gene annotations according to HUGO Gene Nomenclature Committee (HGNC) guidelines to ensure consistent gene identity across datasets [17].

- Convert all data to a unified sparse matrix format for efficient storage and processing [17].
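The quality control step can be sketched as a simple per-cell filter. The thresholds below (`min_genes`, `max_mito_fraction`) are placeholder assumptions that should be tuned per dataset and platform, and the "MT-" prefix reflects the usual naming of human mitochondrial genes; production pipelines would typically rely on an established toolkit rather than this plain-Python version.

```python
def qc_filter(cells, min_genes=200, max_mito_fraction=0.2):
    """Keep cells with enough detected genes and a low mitochondrial fraction.

    cells: dict mapping cell id -> dict of gene name -> raw count.
    """
    kept = {}
    for cell_id, counts in cells.items():
        total = sum(counts.values())
        if total == 0:
            continue  # empty droplets carry no information
        n_genes = sum(1 for c in counts.values() if c > 0)
        mito = sum(c for g, c in counts.items() if g.upper().startswith("MT-"))
        if n_genes >= min_genes and mito / total <= max_mito_fraction:
            kept[cell_id] = counts
    return kept


# Toy cells with only a handful of genes, so min_genes is lowered accordingly.
toy_cells = {
    "good": {"GENE1": 5, "GENE2": 3, "MT-CO1": 1},
    "high_mito": {"GENE1": 1, "GENE2": 1, "MT-CO1": 8},
    "low_complexity": {"GENE1": 4, "GENE2": 0},
    "empty": {"GENE1": 0, "GENE2": 0},
}
passed = qc_filter(toy_cells, min_genes=2, max_mito_fraction=0.2)
print(sorted(passed))
```

A high mitochondrial fraction is commonly treated as a sign of stressed or dying cells, which is why it is filtered alongside low gene counts.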

Step 2: Data Preprocessing and Normalization

- Apply appropriate normalization methods to address sequencing depth variations (e.g., library size normalization, log transformation) [1].

- Select highly variable genes to focus on biologically informative features and reduce computational complexity [5].

- For integration of multiple datasets, apply batch correction techniques that preserve biological variation while removing technical artifacts [5] [23].
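As a rough illustration of the normalization and feature selection steps, the sketch below applies library size normalization followed by a log1p transform, then ranks genes by variance. The `target_sum` of 10,000 and the use of plain variance are simplifying assumptions; widely used tools select highly variable genes with mean-adjusted dispersion measures instead.

```python
import math


def normalize_log1p(matrix, target_sum=1e4):
    """Scale each cell (row) to the same total count, then apply log(1 + x)."""
    out = []
    for row in matrix:
        total = sum(row)
        scale = target_sum / total if total > 0 else 0.0
        out.append([math.log1p(v * scale) for v in row])
    return out


def top_variable_genes(matrix, gene_names, n_top):
    """Return the n_top gene names with the largest variance across cells."""
    n_cells = len(matrix)
    scored = []
    for j, name in enumerate(gene_names):
        col = [row[j] for row in matrix]
        mean = sum(col) / n_cells
        var = sum((v - mean) ** 2 for v in col) / n_cells
        scored.append((var, name))
    scored.sort(key=lambda t: (-t[0], t[1]))  # high variance first, then by name
    return [name for _, name in scored[:n_top]]


genes = ["A", "B", "C"]
raw_counts = [
    [10, 0, 10],
    [10, 10, 0],
    [10, 5, 5],
]
normalized = normalize_log1p(raw_counts)
hvgs = top_variable_genes(normalized, genes, n_top=2)
print(hvgs)
```

In this toy matrix gene A is constant across cells after normalization, so only B and C are retained as informative features.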

Step 3: Tokenization Strategy Implementation

- Choose an appropriate tokenization strategy based on model architecture and research goals (gene ranking, value categorization, or value projection) [1] [17].

- Incorporate special tokens for cell identity, modality, or batch information when relevant [1].

- Enhance gene tokens with additional biological context such as gene ontology terms or chromosomal locations where available [1].

Step 4: Model Architecture Configuration

- Select transformer variant based on target applications: encoder architectures (e.g., BERT-like) for classification tasks, decoder architectures (e.g., GPT-like) for generation tasks, or hybrid designs for multifaceted applications [1].

- Configure model dimensions: embedding size, number of attention heads, number of layers, and feed-forward dimensions based on available computational resources and dataset size [1] [17].

- Implement efficient attention variants (e.g., RetNet, sparse attention) for large-scale training to reduce computational complexity [17].

Step 5: Self-Supervised Pretraining

- Implement masked gene modeling by randomly masking 15-30% of genes in each cell and training the model to reconstruct the masked values [1] [2].

- Utilize appropriate loss functions: mean squared error for continuous values, cross-entropy for categorized values, or contrastive losses for representation learning [1] [23].

- Train with large batch sizes and distributed training strategies across multiple GPUs or NPUs to handle the scale of millions of cells [17].

- Implement progressive training strategies: start with smaller subsets of data before scaling to full dataset [1].
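Of the loss functions listed in Step 5, the contrastive loss is the least self-explanatory. The sketch below shows one common form, an InfoNCE-style loss for a single anchor cell; the source does not specify which contrastive loss is used, so this choice, the cosine similarity, and the `temperature` default are assumptions. Embeddings are assumed to be non-zero vectors.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor, positive, negatives, temperature=0.5):
    """Negative log-probability of picking the positive among all candidates."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, neg) / temperature for neg in negatives]
    # Log-sum-exp with the maximum subtracted for numerical stability.
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[0]


anchor = [1.0, 0.0]
augmented_view = [1.0, 0.0]            # e.g. the same cell after random masking
other_cells = [[0.0, 1.0], [0.0, -1.0]]

easy_loss = info_nce(anchor, augmented_view, other_cells)
# Swapping roles makes the "positive" dissimilar, so the loss must increase.
hard_loss = info_nce(anchor, other_cells[0], [augmented_view])
```

Lower temperatures sharpen the softmax and penalize hard negatives more strongly, which is why the temperature is usually treated as a tunable hyperparameter.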

Step 6: Model Validation and Evaluation

- Evaluate reconstruction accuracy on held-out validation datasets [1].

- Assess learned representations through zero-shot performance on downstream tasks such as cell type annotation, batch correction, or perturbation prediction [5] [23].

- Analyze biological relevance of embeddings by examining neighborhood relationships and functional enrichment [5].

Protocol for Fine-Tuning scFMs for Drug Sensitivity Prediction

Once a foundation model is pretrained, it can be adapted for drug sensitivity prediction using the following protocol:

Step 1: Task-Specific Data Preparation

- Collect single-cell RNA-seq data from cancer cells before drug treatment [22].

- Generate binary response labels (sensitive/resistant) based on post-treatment viability assays or established thresholds from databases like GDSC or CCLE [22].

- Address class imbalance using techniques such as SMOTE or oversampling, particularly important for drug response datasets where resistant cells may be underrepresented [22].
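Random oversampling, the simpler of the two balancing techniques named above, can be sketched as follows. This is an illustrative plain-Python version with hypothetical names and toy data; SMOTE, which synthesizes new minority samples by interpolation, is not shown. Oversampling should be applied to the training split only, so that duplicated cells never appear in the evaluation data.

```python
import random


def random_oversample(samples, labels, seed=0):
    """Duplicate minority-class samples at random until all classes are equal in size."""
    rng = random.Random(seed)
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)
    target = max(len(group) for group in by_class.values())

    out_samples, out_labels = [], []
    for label, group in by_class.items():
        extra = [rng.choice(group) for _ in range(target - len(group))]
        for sample in group + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels


# Hypothetical training cells: 1 = sensitive, 0 = resistant (underrepresented).
train_cells = ["cell_1", "cell_2", "cell_3", "cell_4", "cell_5"]
train_labels = [1, 1, 1, 1, 0]
balanced_cells, balanced_labels = random_oversample(train_cells, train_labels)
print(balanced_labels.count(0), balanced_labels.count(1))
```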

Step 2: Model Adaptation

- Extract cell embeddings from the pretrained foundation model [22] [5].

- Add task-specific prediction heads: multilayer perceptrons for direct classification or regression [22].

- Implement transfer learning approaches: fine-tune all parameters or use parameter-efficient methods like LoRA (Low-Rank Adaptation) [17].

- Incorporate attention mechanisms to identify genes critical for drug response prediction, enhancing both interpretability and performance [22].

Step 3: Model Training and Validation

- Split data into training, validation, and test sets, ensuring that cells from the same patient or experiment remain in the same split [22].

- Train with appropriate loss functions: binary cross-entropy for classification or mean squared error for continuous response values [22].

- Implement cross-validation strategies to assess model robustness [22] [5].

- Evaluate using multiple metrics: area under the ROC curve (AUC), average precision (AP), accuracy, and F1-score [22] [5].
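The requirement that cells from the same patient stay in the same split can be sketched as a group-aware partition. The function name, the `test_fraction` default, and the patient identifiers below are illustrative assumptions; libraries such as scikit-learn provide equivalent group-based splitters.

```python
import random


def group_split(groups, test_fraction=0.2, seed=0):
    """Split sample indices so that no group appears in both train and test.

    groups: one group label (e.g. patient id) per cell.
    """
    unique = sorted(set(groups))
    rng = random.Random(seed)
    rng.shuffle(unique)
    n_test = max(1, round(test_fraction * len(unique)))
    test_groups = set(unique[:n_test])
    train_idx = [i for i, g in enumerate(groups) if g not in test_groups]
    test_idx = [i for i, g in enumerate(groups) if g in test_groups]
    return train_idx, test_idx


# Two cells from each of five hypothetical patients.
patient_ids = ["p1", "p1", "p2", "p2", "p3", "p3", "p4", "p4", "p5", "p5"]
train_idx, test_idx = group_split(patient_ids, test_fraction=0.2, seed=0)

train_patients = {patient_ids[i] for i in train_idx}
test_patients = {patient_ids[i] for i in test_idx}
```

Splitting at the cell level instead would place near-identical cells from one patient on both sides of the split and inflate every metric reported in this step.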

Step 4: Interpretation and Biological Validation

- Analyze attention weights to identify genes and pathways important for drug response prediction [22] [5].

- Validate identified genes through differential expression analysis between sensitive and resistant cells [22].

- Visualize the transition from sensitive to resistant states using dimensionality reduction techniques like UMAP [22].

- Correlate model predictions with known biomarkers and pathways to assess biological plausibility [22] [5].

Performance Benchmarking and Evaluation

Quantitative Performance Comparison

Comprehensive benchmarking studies provide critical insights into the performance of scFMs across various biological tasks. The following table summarizes key performance metrics for established foundation models across tasks relevant to drug discovery:

Table: Performance Benchmarking of Single-Cell Foundation Models

| Model | Pretraining Scale | Cell Type Annotation Accuracy | Perturbation Prediction | Drug Response Prediction | Computational Efficiency |
|---|---|---|---|---|---|
| CellFM | 100M cells, 800M parameters | Superior cross-tissue annotation | High accuracy in gene function prediction | Not explicitly reported | Efficient RetNet architecture |
| scGPT | 33M cells | Robust zero-shot annotation | Strong perturbation modeling | Adaptable via fine-tuning | Moderate resource requirements |
| Geneformer | 30M cells | Context-aware embeddings | Good performance on perturbation tasks | Not explicitly reported | Rank-based efficiency |
| ATSDP-NET | (Fine-tuned approach) | Not primary focus | Not primary focus | Superior performance (Recall, ROC, AP) | Attention-based efficiency |

Recent benchmarks evaluating six scFMs against traditional methods reveal that no single model consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [5]. For drug sensitivity prediction, specialized approaches like ATSDP-NET, which combines transfer learning from bulk RNA-seq data with attention mechanisms, demonstrate superior performance, with high correlations between predicted and actual values for both sensitivity gene scores (R = 0.888, p < 0.001) and resistance gene scores (R = 0.788, p < 0.001) [22]. In batch correction tasks, specialized frameworks like scVI and CLAIRE, along with fine-tuned scGPT, excel at removing technical variations while preserving biological signals [23]. For cell type annotation, generic self-supervised methods like VICReg and SimCLR sometimes outperform domain-specific approaches, particularly in cross-species and cross-tissue generalization [5] [23].

Evaluation Metrics and Biological Relevance

Rigorous evaluation of scFMs extends beyond traditional performance metrics to include biologically grounded assessment criteria:

Cell Ontology-Informed Metrics: Novel evaluation approaches like scGraph-OntoRWR measure the consistency of cell type relationships captured by scFMs with established biological knowledge from cell ontologies [5]. The Lowest Common Ancestor Distance (LCAD) metric assesses the severity of cell type misclassifications by measuring ontological proximity between predicted and true cell types [5].

Landscape Roughness Analysis: The Roughness Index (ROGI) quantifies the smoothness of cell property landscapes in the latent space, with smoother landscapes correlating with better generalization and easier training of task-specific models [5].

Knowledge-Based Evaluation: Beyond supervised metrics, evaluating the biological insights captured by models through gene set enrichment analysis, pathway activation patterns, and consistency with known biological hierarchies provides crucial validation of model utility [5].

Zero-Shot Transfer Capability: Assessing model performance on novel cell types, tissues, or species without additional fine-tuning measures the generalizability of learned representations [5] [2].

These multifaceted evaluation strategies ensure that scFMs capture not only statistical patterns but also biologically meaningful representations that can advance drug discovery and therapeutic development.

The Scientist's Toolkit: Essential Research Reagents

Table: Key Research Resources for scFM Development and Application

| Resource Category | Specific Tools/Platforms | Primary Function | Relevance to Drug Sensitivity Prediction |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE, NCBI GEO, ENA, SPDB | Provide standardized access to single-cell datasets | Source of training data and benchmark datasets for model development |
| Computational Frameworks | MindSpore, PyTorch, TensorFlow | Enable model development and training | Support implementation of novel architectures and training strategies |
| Benchmarking Platforms | BioLLM, scSSL-Bench | Standardized evaluation of model performance | Enable comparative assessment of prediction accuracy and robustness |
| Specialized Models | scGPT, Geneformer, CellFM, ATSDP-NET | Pretrained models for specific applications | Provide foundation for transfer learning and fine-tuning approaches |
| Integration Methods | Harmony, scVI, CLAIRE | Batch correction and data integration | Ensure data quality and comparability across experimental conditions |
| Visualization Tools | UMAP, t-SNE, scGraph-OntoRWR | Interpretation and communication of results | Enable visualization of drug response transitions and cellular heterogeneity |

Pretraining strategies for single-cell foundation models have established a new paradigm for analyzing cellular heterogeneity and predicting drug sensitivity. By learning from millions of cells through self-supervised objectives, these models capture fundamental biological principles that enable accurate prediction of therapeutic responses at single-cell resolution [1] [22]. The integration of transformer architectures with biologically informed tokenization strategies creates representations that effectively capture the complex molecular interactions underlying drug sensitivity and resistance mechanisms [1] [22]. As evidenced by comprehensive benchmarking studies, scFMs demonstrate robust performance across diverse tasks but require careful selection based on specific application needs, dataset characteristics, and available computational resources [5] [23].

Future developments in scFMs for drug sensitivity prediction will likely focus on several key areas: enhanced multimodal integration combining transcriptomic, epigenomic, proteomic, and spatial data [2]; improved interpretability through biologically grounded attention mechanisms [22] [5]; federated learning approaches enabling model training across distributed datasets while preserving privacy [2]; and greater incorporation of biological prior knowledge through structured knowledge graphs [5] [2]. As these models continue to evolve, they will play an increasingly vital role in precision oncology and therapeutic development, ultimately enabling more accurate prediction of patient-specific treatment responses and uncovering novel mechanisms of drug resistance.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biomedical research by enabling the investigation of gene expression at unprecedented cellular resolution. This technology provides detailed insight into cellular heterogeneity, revealing hidden cell diversity and complex biological processes that are obscured in bulk sequencing approaches [24]. However, the powerful insights gained from scRNA-seq come with significant computational challenges that must be addressed for meaningful biological interpretation, particularly in the context of drug sensitivity prediction.

The two primary technical challenges in scRNA-seq data analysis are high dimensionality and data sparsity [24]. scRNA-seq datasets typically contain measurements for thousands of genes across thousands to millions of cells, creating a high-dimensional space that is computationally intensive to process and analyze [25]. Furthermore, scRNA-seq data are characterized by exceptionally high sparsity, where a significant proportion of gene-cell combinations (often >90%) contain zero counts [26] [24]. These zeros represent a combination of biological factors (true absence of expression) and technical limitations (failure to detect expressed genes), commonly referred to as "dropout events" [27] [26].
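Sparsity as described here is simply the fraction of zero entries in the cell-by-gene count matrix. The short sketch below computes it, together with a per-gene detection rate, on a toy matrix; the function names and counts are illustrative, and real datasets would use a sparse matrix library rather than nested lists.

```python
def sparsity(matrix):
    """Fraction of entries in a cell-by-gene count matrix that are zero."""
    n_entries = sum(len(row) for row in matrix)
    n_zeros = sum(1 for row in matrix for v in row if v == 0)
    return n_zeros / n_entries


def detection_rate(matrix):
    """Per-gene fraction of cells in which the gene has a nonzero count."""
    n_cells = len(matrix)
    n_genes = len(matrix[0])
    return [sum(1 for row in matrix if row[j] > 0) / n_cells for j in range(n_genes)]


# Toy matrix: 3 cells (rows) x 4 genes (columns).
counts = [
    [0, 0, 3, 0],
    [1, 0, 0, 0],
    [0, 0, 2, 0],
]
overall = sparsity(counts)
per_gene = detection_rate(counts)
print(f"sparsity={overall:.2f}", per_gene)
```

Genes with a detection rate of zero, like the second and fourth columns here, carry no signal and are the first candidates for removal during gene filtering.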

For researchers developing drug sensitivity prediction models, these challenges are particularly acute. Accurate prediction of therapeutic responses requires distinguishing biologically relevant signals from technical artifacts, and the high sparsity can obscure critical gene expression patterns that determine drug sensitivity or resistance [28]. This application note provides detailed protocols and methodologies to overcome these challenges, with specific emphasis on applications in single-cell foundation models for drug sensitivity prediction.

Understanding scRNA-seq Data Characteristics

The sparsity and dimensionality of scRNA-seq data stem from both biological and technical factors. Biologically, individual cells naturally express only a subset of genes in the genome at any given time, creating legitimate zero counts. Technically, limitations in mRNA capture efficiency, reverse transcription, amplification, and sequencing depth contribute to additional zeros where expressed genes fail to be detected [27].

The term "dropout" specifically describes technical failures that cause highly expressed genes to be undetected [26]. However, usage has broadened in the literature to sometimes refer to all observed zeros. Recent evidence suggests that certain genes are consistently under-detected in scRNA-seq due to sequence-specific features. A comprehensive analysis of 53 paired bulk and scRNA-seq samples identified an enrichment of poly(T) motifs in the tails of frequently under-detected genes, which may form hairpin structures with poly(A) tails and impede mRNA capture during library preparation [26].

Quantitative Impact on Drug Response Prediction

The challenges of sparsity and dimensionality directly impact drug sensitivity prediction in several ways. Sparse data can obscure the expression patterns of critical drug response genes, particularly when these genes are expressed at low levels but have substantial biological effects. High dimensionality increases the risk of overfitting in predictive models, especially given the typically limited number of treated samples available for training [28].

Table 1: Characteristics of scRNA-seq Data That Impact Drug Sensitivity Prediction

| Characteristic | Typical Values | Impact on Drug Prediction |
|---|---|---|
| Cell-Gene Matrix Sparsity | >90% zeros [26] [24] | Obscures expression patterns of key drug response genes |
| Dimensionality | 20,000+ genes × 1,000-1,000,000+ cells [24] | Computational burden; high risk of overfitting |
| Dropout Rate Variability | Gene- and technology-dependent [26] | Introduces noise in feature selection for prediction models |
| Batch Effects | Multiple technical sources | Confounds drug response signals with technical variation |
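
The sparsity and per-gene detection figures summarized in Table 1 can be measured directly on a count matrix. The following is a minimal sketch, assuming a dense cells × genes NumPy array; the function name `sparsity_summary` and the toy values are hypothetical and not taken from the cited studies.

```python
import numpy as np

def sparsity_summary(counts):
    """Summarize zeros in a cells x genes count matrix (orientation is an assumption)."""
    counts = np.asarray(counts)
    zero_mask = counts == 0
    return {
        # fraction of all matrix entries that are zero
        "matrix_sparsity": float(zero_mask.mean()),
        # per-gene fraction of cells in which the gene has zero counts
        "gene_zero_fraction": zero_mask.mean(axis=0),
        # number of genes with at least one count in each cell
        "genes_detected_per_cell": (~zero_mask).sum(axis=1),
    }

# Toy matrix: 4 cells x 5 genes (hypothetical values)
toy = np.array([
    [0, 3, 0, 0, 1],
    [0, 0, 0, 2, 0],
    [5, 0, 0, 0, 0],
    [0, 0, 0, 0, 4],
])
summary = sparsity_summary(toy)
print(f"matrix sparsity = {summary['matrix_sparsity']:.2f}")
```

The per-gene zero fraction is the quantity that varies by gene and technology; it does not by itself separate technical dropouts from biological zeros.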

For drug development professionals, these data characteristics necessitate robust preprocessing and analytical strategies. The ATSDP-NET model for single-cell drug response prediction addresses sparsity by combining transfer learning from bulk RNA-seq data with attention mechanisms to focus on informative genes, demonstrating how computational approaches can overcome these limitations [28].

Computational Strategies for Addressing Sparsity and Dimensionality

Dimensionality Reduction Techniques

Dimensionality reduction transforms high-dimensional gene expression data into lower-dimensional representations that retain essential biological information while reducing noise and computational requirements [24]. These techniques are fundamental for visualizing cellular heterogeneity and creating features for downstream predictive modeling.

Principal Component Analysis (PCA) is a linear dimensionality reduction method that identifies orthogonal directions of maximum variance in the data [25] [29]. PCA creates new uncorrelated variables called principal components (PCs), which are linear combinations of the original genes. The top 10-50 PCs that capture the majority of variance are typically retained for downstream analysis [25]. For scRNA-seq data, PCA is often applied after selecting highly variable genes to focus on biologically meaningful variation.
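
As an illustration of how principal components arise as linear combinations of genes, PCA can be sketched with a singular value decomposition of the centered expression matrix. This is a minimal NumPy sketch rather than the Scanpy or Seurat implementation; the function name `pca_svd` and the random stand-in data are assumptions.

```python
import numpy as np

def pca_svd(X, n_components):
    """PCA of a cells x genes matrix via SVD of the gene-centered data."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                          # center each gene
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]  # cell coordinates on each PC
    loadings = Vt[:n_components]                     # gene weights defining each PC
    explained = S ** 2 / np.sum(S ** 2)              # fraction of variance per PC
    return scores, loadings, explained[:n_components]

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 50))   # stand-in for log-normalized highly variable genes
scores, loadings, ratio = pca_svd(X, n_components=10)
```

Each row of `loadings` holds the gene weights of one PC, which is what makes PCA features comparatively easy to interpret.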

t-Distributed Stochastic Neighbor Embedding (t-SNE) is a non-linear technique that projects high-dimensional data into 2D or 3D space. It converts Euclidean distances between data points into Gaussian-based neighbor probabilities and then arranges the points in the low-dimensional space so that a Student t-distribution over their distances reproduces those probabilities [25] [29]. t-SNE excels at revealing local structure and has demonstrated excellent performance in benchmarking studies, though it can be computationally intensive [29].
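
For readers who want the two distributions written out, the standard t-SNE formulation is given below. The symbols are assumptions introduced here: $x_i$ are cells in the high-dimensional space, $y_i$ their low-dimensional coordinates, $\sigma_i$ a per-cell bandwidth set by the perplexity parameter, and $N$ the number of cells.

```latex
% Gaussian neighbor probabilities in the high-dimensional space
p_{j \mid i} = \frac{\exp\!\left(-\lVert x_i - x_j \rVert^2 / 2\sigma_i^2\right)}
                    {\sum_{k \neq i} \exp\!\left(-\lVert x_i - x_k \rVert^2 / 2\sigma_i^2\right)},
\qquad
p_{ij} = \frac{p_{j \mid i} + p_{i \mid j}}{2N}

% Student t (one degree of freedom) similarities in the low-dimensional space
q_{ij} = \frac{\left(1 + \lVert y_i - y_j \rVert^2\right)^{-1}}
              {\sum_{k \neq l} \left(1 + \lVert y_k - y_l \rVert^2\right)^{-1}}

% Objective minimized over the embedding coordinates y_i
C = \mathrm{KL}(P \,\Vert\, Q) = \sum_{i \neq j} p_{ij} \log \frac{p_{ij}}{q_{ij}}
```

The heavy tails of the Student t-distribution let moderately distant points sit far apart in the embedding, which is one reason distances between clusters in a t-SNE plot should be interpreted cautiously.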

Uniform Manifold Approximation and Projection (UMAP) is another non-linear dimensionality reduction method that constructs a high-dimensional graph representation of the dataset and optimizes a low-dimensional graph to be structurally similar [25]. UMAP preserves more global structure than t-SNE while offering superior runtime performance, and has shown the highest stability in comparative evaluations [29].

Table 2: Comparison of Dimensionality Reduction Methods for scRNA-seq Data

| Method | Type | Strengths | Limitations | Best Use Cases |
|---|---|---|---|---|
| PCA [25] [29] | Linear | Computationally efficient; highly interpretable; preserves global structure | Limited to capturing linear relationships; less effective for visualization | Initial feature reduction; preprocessing for downstream algorithms |
| t-SNE [25] [29] | Non-linear | Excellent at revealing local structure and fine-grained clustering | Computationally expensive; loses global structure; sensitive to parameters | Visualization of cell subtypes and local neighborhoods |
| UMAP [25] [29] | Non-linear | Preserves both local and global structure; faster than t-SNE | Can produce overly connected clusters; parameter sensitivity | Visualization for trajectory inference; preprocessing for clustering |
| ZIFA [29] | Model-based | Explicitly models dropout events; handles zero-inflation | Limited to linear transformations; computationally intensive | Data with suspected high technical dropout rates |
| VAE/DCA [29] [24] | Deep learning | Captures complex non-linear patterns; integrates denoising | "Black box" nature; requires substantial computational resources | Large datasets; integration with deep learning pipelines |

Sparsity-Handling Approaches

Imputation methods aim to distinguish technical zeros from biological zeros and estimate values for the technical dropouts. Model-based imputation methods use probabilistic models to identify which observed zeros represent technical artifacts and impute expression values specifically for these cases [27]. For example, deep count autoencoder (DCA) denoises scRNA-seq data using deep learning with zero-inflated negative binomial loss functions, learning parameters of the negative binomial distribution to represent denoised reconstructions [29].
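
To make the zero-inflated negative binomial (ZINB) idea concrete, the sketch below evaluates a ZINB probability mass function and the posterior probability that an observed zero came from the zero-inflation component rather than from the count distribution. It uses only the Python standard library and assumes the common parameterization with mean `mu`, dispersion parameter `theta`, and zero-inflation probability `pi`; the function names are hypothetical and this is not DCA's code.

```python
import math

def nb_log_pmf(x, mu, theta):
    """Log pmf of a negative binomial with mean mu and dispersion parameter theta."""
    return (
        math.lgamma(x + theta) - math.lgamma(theta) - math.lgamma(x + 1)
        + theta * math.log(theta / (theta + mu))
        + x * math.log(mu / (theta + mu))
    )

def zinb_pmf(x, mu, theta, pi):
    """ZINB pmf: with probability pi emit an extra zero, otherwise draw from the NB."""
    nb = math.exp(nb_log_pmf(x, mu, theta))
    return pi * (x == 0) + (1.0 - pi) * nb

def prob_zero_is_dropout(mu, theta, pi):
    """Posterior probability that an observed zero came from the zero-inflation component."""
    nb_zero = math.exp(nb_log_pmf(0, mu, theta))
    return pi / (pi + (1.0 - pi) * nb_zero)

# Hypothetical parameters for one gene in one cell
p_dropout = prob_zero_is_dropout(mu=5.0, theta=2.0, pi=0.3)
```

Under such a model, a zero for a gene with a high expected mean is more likely to be attributed to the inflation component than a zero for a lowly expressed gene, which is the intuition behind imputing only selected zeros.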

Data-smoothing approaches adjust all expression values based on similar cells, functioning as denoising methods rather than strict imputation [27]. These include diffusion-based methods like MAGIC, k-nearest neighbor approaches like knn-smooth, and network diffusion methods like netSmooth [27]. These methods can improve downstream analysis but risk introducing false signals if applied indiscriminately.
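
The basic smoothing idea can be illustrated with plain nearest-neighbor averaging. The sketch below is a deliberately simplified stand-in, not the MAGIC, knn-smooth, or netSmooth algorithm; the function name `knn_smooth`, the choice of Euclidean distance, and the toy data are assumptions.

```python
import numpy as np

def knn_smooth(X, k=5):
    """Replace each cell's profile by the mean over its k+1 nearest cells (normally itself plus k neighbors)."""
    X = np.asarray(X, dtype=float)
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T      # squared Euclidean distances
    neighbors = np.argsort(d2, axis=1)[:, : k + 1]      # indices of the k+1 closest cells
    return X[neighbors].mean(axis=1)                    # average profiles over neighbors

# Two toy populations of three cells each (hypothetical values)
X = np.array([[10.0, 0.0], [12.0, 0.0], [8.0, 0.0],
              [0.0, 10.0], [0.0, 12.0], [0.0, 8.0]])
smoothed = knn_smooth(X, k=2)
```

Because every value is adjusted, including biological zeros, a neighborhood that spans two distinct populations will blend them; this is one way false signals can arise when smoothing is applied indiscriminately.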

Data-reconstruction methods learn latent space representations through matrix factorization or autoencoders, implicitly generating less sparse reconstructions of the data [27]. Methods like ZINB-WaVE use zero-inflated negative binomial factor models, while variational autoencoders like scVI capture non-linear relationships while accounting for zero inflation [27].

Binary representations offer an alternative approach that embraces rather than corrects for sparsity. As datasets grow larger and sparser, several studies have demonstrated that binarized expression data (0 for zero counts, 1 for non-zero) can produce results comparable to count-based analyses for many applications, including dimensionality reduction, cell type identification, and differential expression [30]. Binary representations offer substantial computational efficiency, scaling up to ~50-fold more cells using the same resources [30].
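
A small sketch of binarization and the storage it permits is shown below. It assumes a dense cells × genes NumPy array of simulated counts and compares dense storage only; it is not meant to reproduce the ~50-fold figure reported in [30], which depends on the data structures and workflows used there.

```python
import numpy as np

rng = np.random.default_rng(0)
# Simulated sparse counts: 1,000 cells x 2,000 genes (hypothetical data)
counts = rng.poisson(0.1, size=(1000, 2000)).astype(np.int32)

binary = (counts > 0).astype(np.uint8)   # 1 = gene detected, 0 = not detected
packed = np.packbits(binary, axis=1)     # store 8 genes per byte

print("int32 counts:", counts.nbytes, "bytes")
print("uint8 binary:", binary.nbytes, "bytes")
print("bit-packed:  ", packed.nbytes, "bytes")

# The packed form is lossless with respect to the binary matrix
restored = np.unpackbits(packed, axis=1, count=counts.shape[1])
```

In this toy setting the bit-packed matrix needs 1/32 of the memory of the 32-bit count matrix, at the cost of discarding count magnitudes.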

Experimental Protocols for scRNA-seq Data Processing

Standardized Preprocessing Workflow

This protocol outlines a comprehensive workflow for processing raw scRNA-seq count data to address sparsity and dimensionality challenges, optimized for drug sensitivity prediction applications.

Materials and Reagents

- Raw scRNA-seq count matrix (genes × cells)

- Computational resources: Minimum 16GB RAM, multi-core processor

- Software environment: R (v4.0+) or Python (v3.8+)

- Key packages: Scanpy [25], Seurat [26], or equivalent scRNA-seq analysis toolkit

Procedure

Quality Control and Filtering

- Calculate quality metrics: total counts per cell, number of genes detected per cell, percentage of mitochondrial reads

- Filter out low-quality cells based on thresholds: typically cells with fewer than 200 detected genes or with more than 10-25% mitochondrial reads

- Remove genes expressed in fewer than 10 cells to reduce noise
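
The quality control steps above can be sketched in NumPy as follows. This assumes a dense cells × genes count matrix and a boolean mask marking mitochondrial genes; the function name `qc_filter` and its default thresholds (taken from the ranges listed above) are illustrative and should be tuned per dataset.

```python
import numpy as np

def qc_filter(counts, is_mito, min_genes=200, max_mito_frac=0.2, min_cells=10):
    """Filter low-quality cells, then rarely detected genes, from a cells x genes matrix."""
    counts = np.asarray(counts)
    is_mito = np.asarray(is_mito, dtype=bool)

    total_counts = counts.sum(axis=1)                      # total counts per cell
    n_genes = (counts > 0).sum(axis=1)                     # genes detected per cell
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total_counts, 1)

    keep_cells = (n_genes >= min_genes) & (mito_frac <= max_mito_frac)
    filtered = counts[keep_cells]

    # Gene filter is applied after cell filtering, on the retained cells only
    keep_genes = (filtered > 0).sum(axis=0) >= min_cells
    return filtered[:, keep_genes], keep_cells, keep_genes
```

In Scanpy or Seurat the same steps are available as built-in functions; the sketch only makes the arithmetic behind the thresholds explicit.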

Normalization

- Apply library size normalization to account for varying sequencing depths between cells

- Use methods tailored to scRNA-seq characteristics (e.g., SCTransform, or log1p normalization after size factor calculation)

- For UMI-based technologies, a log1p transformation of the library-size-normalized counts X is often appropriate:

X_normalized = log(1 + X)
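
A minimal sketch of library size normalization followed by the log1p transformation is given below. It assumes a cells × genes count matrix and a target of 10,000 counts per cell; both the function name `normalize_log1p` and the `target_sum` value are assumptions chosen for illustration.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    library_size = counts.sum(axis=1, keepdims=True)          # sequencing depth per cell
    scaled = counts / np.maximum(library_size, 1.0) * target_sum
    return np.log1p(scaled)

# Two cells with identical composition but a 10-fold difference in depth
toy = np.array([[1, 3], [10, 30]])
normalized = normalize_log1p(toy)
```

After normalization the two toy cells have identical profiles, which is the intended effect of removing sequencing depth differences; zeros remain zeros because log(1 + 0) = 0.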

Feature Selection

- Identify highly variable genes using mean-variance relationships

- Select 2,000-5,000 most highly variable genes for downstream analysis

- This step dramatically reduces dimensionality while preserving biological signal
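
As a rough illustration of feature selection, genes can be ranked by their variance-to-mean ratio (dispersion). This is a simplified sketch: the Scanpy and Seurat procedures additionally model the mean-variance trend, which is not reproduced here, and the function name `top_variable_genes` is hypothetical.

```python
import numpy as np

def top_variable_genes(X, n_top=2000):
    """Return sorted indices of the n_top genes with the highest variance-to-mean ratio."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=0)
    var = X.var(axis=0, ddof=1)
    # Genes that are never expressed get dispersion 0 instead of a division by zero
    dispersion = np.where(mean > 0, var / np.maximum(mean, 1e-12), 0.0)
    order = np.argsort(dispersion)[::-1]        # highest dispersion first
    return np.sort(order[:n_top])

# Usage on a cells x genes matrix: X_hvg = X[:, top_variable_genes(X, n_top=2000)]
```

Keeping 2,000 of roughly 20,000 genes reduces the feature space about ten-fold before PCA is applied.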

Dimensionality Reduction

- Apply PCA to normalized, highly variable genes

- Determine number of significant PCs using elbow method or statistical approaches

- Typically retain 10-50 PCs capturing majority of biological variance

- For visualization, apply non-linear methods (UMAP/t-SNE) to PC space
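
One simple statistical alternative to reading an elbow plot by eye is to keep the smallest number of PCs whose cumulative explained variance passes a threshold. The sketch below assumes a cells × genes matrix of normalized highly variable genes; the 90% threshold, the cap of 50 components, and the function name are illustrative assumptions.

```python
import numpy as np

def n_components_for_variance(X, threshold=0.9, max_components=50):
    """Smallest number of PCs whose cumulative explained variance reaches the threshold."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                          # center each gene
    s = np.linalg.svd(Xc, compute_uv=False)          # singular values only
    ratio = s ** 2 / np.sum(s ** 2)                  # explained variance per PC
    cumulative = np.cumsum(ratio)
    n = int(np.searchsorted(cumulative, threshold)) + 1
    return min(n, max_components, len(s)), ratio

# Usage: n_pcs, ratio = n_components_for_variance(X_hvg, threshold=0.9)
```

Plotting `ratio` against the component index gives the usual elbow plot, so both criteria can be checked from the same output.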

Batch Effect Correction (if multiple samples/datasets)

- Apply integration methods (Harmony, BBKNN, or Seurat's CCA) when combining datasets

- Particularly crucial for drug response studies combining multiple experiments

Troubleshooting Tips

- If cellular heterogeneity appears limited, adjust highly variable gene selection

- If batch effects persist, increase neighborhood size in integration methods

- For datasets with rare cell populations, use specialized methods (e.g., SCTransform) to preserve rare cell signals

Protocol for Sparsity-Aware Drug Response Modeling

This protocol specifically addresses drug sensitivity prediction from sparse scRNA-seq data, incorporating strategies to handle sparsity without introducing significant bias.

Materials and Reagents

- Processed scRNA-seq data (post-QC and normalization)

- Drug response measurements (e.g., viability scores, IC50 values)

- Transfer learning resources: Pre-trained models on bulk RNA-seq (e.g., from GDSC/CCLE) [28] [31]

Procedure

Data Representation Selection

- For large, sparse datasets (>50,000 cells), consider binary representation (0/1) for computational efficiency [30]

- For smaller datasets with suspected high technical dropout, apply appropriate imputation (e.g., DCA, MAGIC)

- Validate representation choice by checking correlation between binary and count-based pseudobulk profiles [30]
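
The pseudobulk check in the last step can be sketched as follows: for each cell group, the mean count profile is compared with the binary detection rate across genes. This is a minimal sketch assuming a cells × genes count matrix and one group label per cell; the use of Pearson correlation on log1p-transformed means and the function name `pseudobulk_agreement` are assumptions, not the exact procedure of [30].

```python
import numpy as np

def pseudobulk_agreement(counts, labels):
    """Per-group correlation between count-based and binary pseudobulk profiles."""
    counts = np.asarray(counts, dtype=float)
    labels = np.asarray(labels)
    result = {}
    for group in np.unique(labels).tolist():
        sub = counts[labels == group]
        mean_counts = np.log1p(sub.mean(axis=0))     # count-based pseudobulk profile
        detection_rate = (sub > 0).mean(axis=0)      # binary pseudobulk profile
        result[group] = float(np.corrcoef(mean_counts, detection_rate)[0, 1])
    return result

# Usage: agreement = pseudobulk_agreement(counts, cluster_labels)
```

A low correlation for a given group suggests that count magnitudes carry information the binary representation loses for that population.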

Dimensionality Reduction for Feature Engineering

- Apply PCA to reduced gene set (highly variable or biologically relevant genes)

- For non-linear relationships, consider autoencoder-based reduction (e.g., scVI, DCA)

- For interpretable features, use methods that provide gene weights (PCA, BAE) [32]

Transfer Learning Implementation

- Start from a model pre-trained on bulk RNA-seq drug response data (e.g., GDSC, CCLE) [28] [31]

- Fine-tune the pre-trained model on the single-cell dataset of interest [28]

Model Training and Validation

- Implement cross-validation strategies that preserve cell population structure

- Use appropriate metrics for drug response: area under the ROC curve (AUROC) for classification, R² for continuous outcomes

- Apply regularization techniques to prevent overfitting to sparse features
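
The fold assignment and metrics named above can be sketched without any machine learning library. The code below assumes binary sensitive/resistant labels for AUROC and continuous responses for R²; the function names are hypothetical, and the stratified split is only one reasonable reading of preserving cell population structure.

```python
import numpy as np

def stratified_folds(labels, n_folds=5, seed=0):
    """Assign cells to folds so each cell population is spread evenly across folds."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    folds = np.empty(len(labels), dtype=int)
    for group in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == group))
        folds[idx] = np.arange(len(idx)) % n_folds
    return folds

def auroc(y_true, scores):
    """AUROC from the rank-sum (Mann-Whitney U) statistic, with average ranks for ties."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    order = np.argsort(scores, kind="mergesort")
    ranks = np.empty(len(scores), dtype=float)
    ranks[order] = np.arange(1, len(scores) + 1)
    for value in np.unique(scores):              # tied scores share their mean rank
        tie = scores == value
        ranks[tie] = ranks[tie].mean()
    n_pos, n_neg = y_true.sum(), (~y_true).sum()
    return (ranks[y_true].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def r_squared(y_true, y_pred):
    """Coefficient of determination for continuous outcomes such as IC50 values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot
```

When cells from the same sample or clone are strongly correlated, holding out whole samples rather than individual cells is a stricter alternative to the stratified split shown here.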

Interpretation and Biological Validation

- Identify genes with highest attention weights as potential biomarkers

- Validate predictions using known drug response mechanisms

- Perform pathway enrichment on influential genes to contextualize predictions
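
Pathway enrichment of influential genes is commonly assessed with a one-sided hypergeometric test, sketched below using only the Python standard library (Python 3.8+ for `math.comb`). The function name and the example numbers are hypothetical, and multiple-testing correction across pathways is not shown.

```python
from math import comb

def hypergeom_enrichment_p(n_universe, n_pathway, n_selected, n_overlap):
    """P(overlap >= n_overlap) when n_selected genes are drawn at random from the universe."""
    upper = min(n_pathway, n_selected)
    total = comb(n_universe, n_selected)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_selected - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical example: 2,000 tested genes, a 40-gene pathway,
# 50 high-attention genes of which 6 fall in the pathway
p_value = hypergeom_enrichment_p(2000, 40, 50, 6)
```

The gene universe should be the set of genes the model could have selected (for example, the highly variable genes), not the whole genome, otherwise the p-values will be overly optimistic.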

Validation Methods

- Compare binary vs. count-based representations using silhouette scores in reduced dimension space [30]

- Validate imputation by measuring correlation with matched bulk RNA-seq where available [26]

- Assess transfer learning performance by comparing with and without pre-training

Advanced Methods and Emerging Approaches

Machine Learning and Deep Learning Approaches

Advanced machine learning methods are increasingly being applied to address scRNA-seq sparsity and dimensionality challenges, particularly for drug response prediction.

Transfer learning has emerged as a powerful strategy, leveraging large bulk RNA-seq drug response datasets (e.g., GDSC, CCLE) to improve generalization on smaller scRNA-seq datasets [28] [33]. The ATSDP-NET framework demonstrates how pre-training on bulk data followed by fine-tuning on single-cell data can significantly enhance prediction accuracy, with reported correlation values of R=0.888 for sensitivity gene scores and R=0.788 for resistance gene scores [28].

Attention mechanisms help models focus on the most informative genes and cells, effectively ignoring uninformative zeros in sparse data [28]. Multi-head attention allows models to capture different aspects of gene expression patterns relevant to drug response, improving both accuracy and interpretability.
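
The mechanism can be illustrated with generic scaled dot-product attention split across several heads. This NumPy sketch follows the standard transformer formulation and is not the ATSDP-NET architecture; treating each row of `X` as a gene embedding, the weight matrices, and all function names are assumptions.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)      # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Attention weights are softmax(Q K^T / sqrt(d_k)); output is the weighted sum of V."""
    d_k = Q.shape[-1]
    weights = softmax(Q @ K.swapaxes(-1, -2) / np.sqrt(d_k))
    return weights @ V, weights

def multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads):
    """X: (n_tokens, d_model). Each head attends over all tokens in its own subspace."""
    n_tokens, d_model = X.shape
    d_head = d_model // n_heads

    def split(M):                                # (n_tokens, d_model) -> (heads, n_tokens, d_head)
        return M.reshape(n_tokens, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    heads, weights = scaled_dot_product_attention(Q, K, V)
    concat = heads.transpose(1, 0, 2).reshape(n_tokens, d_model)
    return concat @ Wo, weights

rng = np.random.default_rng(0)
X = rng.normal(size=(6, 8))                      # 6 hypothetical gene tokens, d_model = 8
Wq, Wk, Wv, Wo = (rng.normal(size=(8, 8)) for _ in range(4))
output, weights = multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads=2)
```

Each row of `weights` sums to one, so tokens with near-zero weight contribute little to the output; inspecting these weights is one basis for attention-based gene ranking, although attention weights are not guaranteed to reflect causal importance.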

Autoencoder architectures provide flexible dimensionality reduction while learning meaningful latent representations. Variational autoencoders (VAEs) like scVI explicitly model scRNA-seq noise characteristics, while denoising autoencoders (DAE) like DCA learn to reconstruct clean expression profiles from noisy inputs [29] [24]. The DrugS model employs autoencoders to reduce 20,000 protein-coding genes to just 30 features while retaining predictive power for drug response [31].

Boosting autoencoders (BAE) represent a recent innovation that combines componentwise boosting with neural networks to incorporate structural assumptions [32]. BAE identifies small gene sets that characterize latent dimensions, providing both dimensionality reduction and biological interpretability, which is particularly valuable for understanding drug response mechanisms.

Specialized Applications in Drug Sensitivity Prediction

Several specialized frameworks have been developed specifically for drug response prediction in single-cell data:

scDEAL utilizes bulk-to-single-cell transfer learning to predict drug responses at single-cell resolution, demonstrating the feasibility of leveraging existing large-scale drug screening data [28].

CaDRReS-SC employs latent space algorithms to model the relationship between drug action and cellular transcriptomic profiles, enabling prediction based on transcriptomic similarities [31].

ATSDP-NET combines transfer learning with multi-head attention mechanisms, showing superior performance across multiple metrics (recall, ROC, AP) in predicting sensitivity and resistance to compounds like I-BET-762 and cisplatin [28].

These approaches typically employ specialized preprocessing steps, such as t-SNE-based clustering to exclude assay data that vary widely for the same drug within otherwise homogeneous clusters, ensuring more reliable training data [31].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Addressing scRNA-seq Sparsity and Dimensionality

| Tool/Resource | Function | Application Context | Key Advantages |

|---|---|---|---|

| Scanpy [25] | Comprehensive scRNA-seq analysis | End-to-end processing pipeline | Integration of multiple DR methods; seamless workflow |

| Seurat [26] | scRNA-seq analysis platform | Quality control through visualization | User-friendly; extensive documentation |