Single-Cell Foundation Models: Revolutionizing Multi-Omics Data Integration for Biomedical Research

Abstract

This article provides a comprehensive exploration of single-cell foundation models (scFMs) and their transformative role in multi-omics data integration. Tailored for researchers, scientists, and drug development professionals, it covers the foundational concepts of scFMs, including their transformer-based architectures and pretraining strategies. The piece delves into practical methodologies and applications across areas like cell type annotation and drug response prediction, while also addressing key computational challenges and optimization strategies. Finally, it offers a critical evaluation of current tools through benchmarking studies and validation frameworks, synthesizing how these advanced AI models are bridging the gap between complex cellular data and actionable biological insights for precision medicine.

Understanding Single-Cell Foundation Models: Core Concepts and Architectural Principles

Single-cell foundation models (scFMs) represent a revolutionary class of artificial intelligence tools transforming how researchers analyze cellular biology. Defined as large-scale deep learning models pretrained on vast single-cell datasets, scFMs are designed to be adapted to a wide range of downstream biological tasks through fine-tuning [1]. The development of scFMs marks a significant milestone in computational biology, mirroring the transformative impact that foundation models have had in natural language processing (NLP) and computer vision [1] [2].

The core premise behind scFMs is that by exposing a model to millions of cells encompassing diverse tissues, species, and biological conditions, the model can learn the fundamental principles governing cellular behavior and gene regulation that are generalizable to new datasets and research questions [1]. This approach has become increasingly feasible with the accumulation of massive single-cell datasets in public repositories, with platforms like CZ CELLxGENE now providing unified access to over 100 million unique cells standardized for analysis [1].

Conceptual Framework: scFMs as Large Language Models for Biology

The Core Analogy: Cells as Sentences

The relationship between scFMs and large language models (LLMs) forms the theoretical foundation of this approach. In this conceptual framework, individual cells are treated analogously to sentences, while genes or genomic features along with their expression values are treated as words or tokens [1] [2]. This biological "language" consists of the patterns and relationships between genes that define cellular identity, state, and function.

Just as LLMs learn the statistical relationships between words in vast text corpora, scFMs learn the contextual relationships between genes across millions of cellular contexts [1]. The model learns which genes tend to be co-expressed, how expression patterns correlate with cellular functions, and what gene expression signatures define specific cell types and states.

Architectural Similarities and Differences

Table: Comparison between Large Language Models and Single-Cell Foundation Models

| Aspect | Large Language Models (LLMs) | Single-Cell Foundation Models (scFMs) |
|---|---|---|
| Fundamental Unit | Words/tokens | Genes/features with expression values |
| Sequential Structure | Natural word order | Artificially imposed (e.g., gene ranking) |
| Primary Architecture | Transformer-based | Transformer-based |
| Training Objective | Predict masked words | Predict masked gene expressions |
| Context Learning | Word relationships in sentences | Gene co-expression patterns in cells |
| Output Representations | Word embeddings, sentence embeddings | Gene embeddings, cell embeddings |

Most scFMs utilize some variant of the transformer architecture, which has revolutionized NLP due to its attention mechanisms that allow the model to learn and weight relationships between any pair of input tokens [1]. In scFMs, the attention mechanism learns which genes in a cell are most informative of the cell's identity or state, how they covary across cells, and how they have regulatory or functional connections [1].
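To make the attention mechanism concrete, the following minimal sketch computes scaled dot-product attention over a few gene tokens in plain Python. The toy vectors and the function names `softmax` and `attention` are illustrative assumptions; real scFMs use learned query, key, and value projections and multiple attention heads, which are omitted here.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query token to every key token, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Weighted average of the value vectors.
        outputs.append([sum(wi * v[j] for wi, v in zip(w, values))
                        for j in range(len(values[0]))])
    return outputs, weights

# Hypothetical 2-dimensional embeddings for three gene tokens.
gene_vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(gene_vectors, gene_vectors, gene_vectors)
```

Each row of `weights` sums to one and indicates how strongly a given gene token attends to every other token in the same cell.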

However, a significant challenge in adapting transformers to single-cell data is that gene expression data are not naturally sequential. Unlike words in a sentence, genes in a cell have no inherent ordering [1] [3]. Researchers have developed various strategies to address this, including:

- Ranking genes within each cell by expression levels [1]

- Partitioning genes into bins based on expression values [1]

- Using normalized counts without complex ranking [1]
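The first two strategies above can be sketched in a few lines. This is a simplified illustration, not the preprocessing of any particular model: the gene symbols, expression values, equal-width binning scheme, and function names are all assumptions made for the example.

```python
# Toy cell: gene symbol -> normalized expression (hypothetical values).
cell = {"CD3E": 5.2, "GAPDH": 9.1, "MS4A1": 0.0, "LYZ": 2.4, "ACTB": 7.7}

def rank_tokens(expr, max_len=None):
    """Order expressed genes from highest to lowest expression."""
    expressed = [(g, v) for g, v in expr.items() if v > 0]
    # Sort by descending value; ties are broken alphabetically for determinism.
    ordered = sorted(expressed, key=lambda gv: (-gv[1], gv[0]))
    genes = [g for g, _ in ordered]
    return genes[:max_len] if max_len else genes

def bin_tokens(expr, n_bins=3):
    """Map each value to an integer bin; bin 0 is reserved for zero expression."""
    nonzero = [v for v in expr.values() if v > 0]
    if not nonzero:
        return {g: 0 for g in expr}
    lo, hi = min(nonzero), max(nonzero)
    width = (hi - lo) / n_bins or 1.0  # avoid a zero width when all values match
    binned = {}
    for g, v in expr.items():
        if v <= 0:
            binned[g] = 0
        else:
            # Equal-width bins numbered 1..n_bins over the nonzero range.
            binned[g] = min(int((v - lo) / width), n_bins - 1) + 1
    return binned

ranked = rank_tokens(cell)
binned = bin_tokens(cell)
```

Ranking discards absolute magnitudes but yields a deterministic gene order, whereas binning keeps coarse intensity information without imposing an order.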

Key Technical Components of scFMs

Tokenization Strategies for Single-Cell Data

Tokenization converts raw input data into discrete units called tokens that models can process. For scFMs, this involves defining what constitutes a 'token' from single-cell data, typically representing each gene or feature as a token [1]. The process includes several key considerations:

- Gene Identity Representation: Each gene is represented as a token embedding that may combine a gene identifier and its expression value [1]

- Positional Encoding: Special schemes adapted to represent the relative order or rank of each gene in the cell [1]

- Special Tokens: Additional tokens representing cell identity, metadata, or modality information may be prepended [1] [3]

Model Architectures and Pretraining Strategies

Table: Prominent Single-Cell Foundation Models and Their Characteristics

| Model Name | Architecture Type | Pretraining Data Scale | Key Features |
|---|---|---|---|
| Geneformer | Transformer-based | 30 million cells [4] | Demonstrates transfer learning capabilities |
| scGPT | GPT-inspired decoder | 50+ million cells [5] | Generative pretrained transformer for single-cell data |
| scBERT | BERT-like encoder | Millions of cells [1] | Bidirectional encoder representations |
| scPlantLLM | Transformer-based | Plant-specific data [5] | Specialized for plant single-cell data |
| scFoundation | Transformer-based | 100 million cells [5] | Large-scale foundation model |

Most scFMs adopt either encoder-based architectures (like BERT) for classification tasks or decoder-based architectures (like GPT) for generation tasks [1]. Pretraining typically employs self-supervised learning objectives, often through predicting masked gene expressions, enabling the model to learn generalizable patterns without requiring labeled data [1].
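As a rough illustration of the masked-expression objective, the sketch below hides a random subset of a cell's expression values and scores predictions only at the hidden positions. The mask symbol, the 15% default masking fraction, and the mean-squared-error loss are assumptions chosen for the example; individual models differ (for instance, some predict discrete bins rather than continuous values).

```python
import random

MASK = "<mask>"  # hypothetical placeholder symbol, not tied to any specific model

def mask_expression(values, mask_frac=0.15, seed=0):
    """Hide a random subset of expression values; return (inputs, targets)."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_frac * len(values)))
    masked_idx = rng.sample(range(len(values)), n_mask)
    inputs = list(values)
    targets = {}
    for i in masked_idx:
        targets[i] = values[i]  # ground truth the model must recover
        inputs[i] = MASK        # what the model actually sees
    return inputs, targets

def masked_mse(predictions, targets):
    """Mean squared error computed over masked positions only."""
    return sum((predictions[i] - t) ** 2 for i, t in targets.items()) / len(targets)

values = [5.2, 9.1, 0.0, 2.4, 7.7]
inputs, targets = mask_expression(values, mask_frac=0.4)
# Stand-in predictions; in practice these come from the model's forward pass.
loss = masked_mse([5.0, 9.0, 0.0, 2.0, 8.0], targets)
```

Because the targets are taken from the data itself, no cell type labels are needed, which is what makes the objective self-supervised.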

scFMs in Multi-Omics Data Integration

The Multi-Omics Integration Challenge

Multi-omics integration represents a fundamental challenge and opportunity in single-cell biology. Biological systems are complex, with many regulatory layers, including DNA, mRNA, proteins, metabolites, and epigenetic markers, all influencing one another [6]. However, integrating these diverse data types presents significant technical hurdles due to:

- Different data scales and noise ratios across modalities [7]

- Missing data from technological limitations [7]

- Dynamic range limitations in detection methods [6]

- Temporal mismatches between molecular lifetimes [6]

Integration Frameworks and Strategies

scFMs provide frameworks for multi-omics integration that can be grouped into three strategic approaches:

- Matched (Vertical) Integration: Different omics profiled from the same cell, using the cell itself as an anchor [7]

- Unmatched (Diagonal) Integration: Different omics from different cells, requiring co-embedding in a shared space [7]

- Mosaic Integration: Integrating datasets with various omics combinations through sufficient overlap [7]

Some scFMs, such as scGPT, can incorporate additional modalities beyond transcriptomics, including single-cell ATAC sequencing (scATAC-seq), multiome sequencing, spatial transcriptomics, and single-cell proteomics [1]. These models often include modality-specific tokens and embedding strategies to represent the diverse data types within a unified architecture.

Experimental Protocols and Applications

Protocol: In Silico Perturbation Prediction with Closed-Loop Fine-Tuning

Purpose: To predict cellular responses to genetic perturbations and iteratively improve prediction accuracy through experimental feedback [4].

Step-by-Step Methodology:

Model Selection and Initial Fine-tuning

Open-loop In Silico Perturbation (ISP)

- Perform genome-wide ISP simulations for both gene knockout and overexpression

- Generate initial predictions of genes that shift cellular states toward desired phenotypes

- Compare predictions with differential expression analysis as baseline [4]

Experimental Validation and Closed-loop Refinement

Key Performance Metrics:

- Positive Predictive Value (PPV)

- Negative Predictive Value (NPV)

- Sensitivity and Specificity

- Area Under the Receiver Operating Characteristic Curve (AUROC)
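The metrics listed above follow directly from a confusion matrix and from pairwise score comparisons. The sketch below uses made-up labels and scores (1 = gene validated as a hit, 0 = not a hit); the 0.5 decision threshold and the function names are assumptions for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FP, FN, TN) for binary labels coded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn

def safe_div(a, b):
    return a / b if b else float("nan")

def summary_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return {
        "ppv": safe_div(tp, tp + fp),          # precision of predicted hits
        "npv": safe_div(tn, tn + fn),          # reliability of predicted non-hits
        "sensitivity": safe_div(tp, tp + fn),  # fraction of true hits recovered
        "specificity": safe_div(tn, tn + fp),  # fraction of non-hits rejected
    }

def auroc(y_true, scores):
    """Probability that a random positive outscores a random negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return safe_div(wins, len(pos) * len(neg))

y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]
metrics = summary_metrics(y_true, y_pred)
area = auroc(y_true, scores)
```

PPV is often the most relevant figure when experimental follow-up is expensive, because it describes how many of the predicted hits are expected to validate.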

Protocol: Cross-Species and Cross-Tissue Cell Annotation

Purpose: To leverage scFMs for accurate cell type annotation across species boundaries and tissue types, particularly for rare or novel cell populations.

Methodology:

Embedding Extraction

- Process query single-cell data through scFM to extract cell embeddings

- Generate reference embeddings from well-annotated atlas data

Zero-shot Annotation

- Calculate similarity between query and reference embeddings

- Transfer annotations based on nearest neighbors in embedding space

- Use ontology-informed metrics (e.g., LCAD) to evaluate annotation quality [3]
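A minimal sketch of nearest-neighbor label transfer is shown below. It assumes cell embeddings have already been extracted from a model; the two-dimensional vectors, labels, and the choice of k = 3 with majority voting are illustrative assumptions, not settings taken from the cited benchmarks.

```python
import math
from collections import Counter

def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def knn_annotate(query, ref_embeddings, ref_labels, k=3):
    """Assign the majority label among the k most similar reference cells."""
    sims = [cosine(query, r) for r in ref_embeddings]
    scored = sorted(zip(sims, ref_labels), key=lambda pair: pair[0], reverse=True)
    top_labels = [label for _, label in scored[:k]]
    return Counter(top_labels).most_common(1)[0][0]

# Hypothetical reference embeddings with known annotations.
ref_embeddings = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9], [0.0, 1.0]]
ref_labels = ["T cell", "T cell", "B cell", "B cell", "B cell"]

label = knn_annotate([0.8, 0.3], ref_embeddings, ref_labels, k=3)
```

In practice the reference set is large, so an approximate nearest-neighbor index would typically replace the exhaustive comparison used here.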

Fine-tuning for Domain Adaptation

- For challenging cross-species applications, fine-tune on limited labeled data

- Specialized models like scPlantLLM can adapt to taxonomic-specific challenges [5]

Table: Key Research Reagents and Computational Resources for scFM Research

| Resource Category | Specific Examples | Function/Purpose |
|---|---|---|
| Public Data Repositories | CZ CELLxGENE, Human Cell Atlas, GEO, SRA | Provide curated single-cell datasets for model training and validation [1] |
| Computational Frameworks | BioLLM, scMCs | Standardized APIs for model integration and evaluation [8] [9] |
| Benchmarking Tools | scGraph-OntoRWR, LCAD metrics | Biologically-informed evaluation of model performance [3] |
| Specialized scFMs | scPlantLLM, scGPT, Geneformer | Pretrained models for specific applications and species [5] |
| Multi-omics Integration Tools | MOFA+, GLUE, Seurat v4 | Methods for integrating diverse data modalities [7] |

Performance Benchmarking and Evaluation

Rigorous evaluation of scFMs requires multiple metrics and benchmarking approaches. Recent comprehensive studies reveal that:

- No single scFM consistently outperforms others across all tasks [3]

- Task-specific strengths vary significantly between models [3]

- Simple baselines can sometimes outperform complex foundation models on specific tasks [3]

- Biological relevance of embeddings requires ontology-informed evaluation metrics [3]

Performance evaluation should span gene-level tasks (tissue specificity prediction, Gene Ontology term prediction) and cell-level tasks (batch integration, cell type annotation, perturbation response prediction) [3]. The introduction of biologically-grounded metrics like scGraph-OntoRWR, which measures the consistency of cell type relationships captured by scFMs with prior biological knowledge, represents a significant advance in evaluation methodology [3].

The field of single-cell foundation models is rapidly evolving, with several emerging trends and future directions:

- Multimodal Integration: Combining transcriptomics with epigenomics, proteomics, and spatial information [5] [10]

- Cross-Species Generalization: Developing models that transfer knowledge across evolutionary boundaries [5]

- Interpretability Advances: Making model predictions and representations more biologically interpretable [1]

- Clinical Translation: Applying scFMs to drug discovery, rare disease research, and therapeutic development [4]

In conclusion, single-cell foundation models represent a powerful paradigm shift in computational biology, leveraging the architectural advances of large language models to decode the complex language of cellular biology. When strategically integrated into multi-omics research frameworks, scFMs offer unprecedented opportunities to uncover novel biological insights and accelerate therapeutic development.

Transformer architectures, originally developed for natural language processing (NLP), are revolutionizing the analysis of single-cell omics data by providing a powerful framework for decoding cellular heterogeneity. These models utilize self-attention mechanisms to capture complex, long-range dependencies in biological data, enabling researchers to interpret the "language of life" encoded in cellular transcriptomes. Foundation models pretrained on millions of single-cell transcriptomes learn fundamental biological principles that generalize across diverse tissues, species, and experimental conditions [1] [11].

In biological applications, the self-attention mechanism allows models to dynamically weight the importance of different genes when making predictions about cellular states. Unlike traditional analytical methods that treat all genes equally, transformers learn which gene interactions are most informative for specific biological contexts, effectively modeling the complex regulatory networks that govern cellular function and identity [1] [12]. This capability is particularly valuable for single-cell RNA sequencing (scRNA-seq) data, which exhibits characteristic high dimensionality, technical noise, and sparsity that challenge conventional computational approaches [13].

Tokenization Strategies for Gene Expression Data

Fundamental Concepts and Challenges

Tokenization converts raw gene expression data into structured sequences that transformer models can process. Unlike words in natural language, genes lack inherent sequential ordering, presenting a fundamental challenge for applying transformer architectures to biological data. Researchers have developed multiple strategies to address this limitation, each with distinct advantages for specific analytical tasks [1] [13].

The table below summarizes predominant tokenization approaches used in single-cell foundation models (scFMs):

Table 1: Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Method Description | Advantages | Representative Models |
|---|---|---|---|
| Expression Ranking | Genes ordered by expression magnitude within each cell | Deterministic; preserves high-signal features | Geneformer, LangCell [1] [13] |
| Value Binning | Continuous expression values discretized into bins | Captures expression intensity information | scGPT [1] [13] |
| Genomic Position | Genes ordered by genomic coordinates | Incorporates spatial genome organization | UCE [13] |
| Fixed Gene Set | Uses consistent gene vocabulary across all cells | Standardized input representation | scFoundation [13] |

Specialized Tokens for Biological Context

Beyond basic gene tokenization, scFMs incorporate specialized tokens to enrich biological context. Modality tokens indicate data types (e.g., scRNA-seq, scATAC-seq) in multimodal integration, while batch tokens help mitigate technical variations between experiments. Cell-level tokens capture global cellular states, enabling the model to distinguish between different biological conditions [1]. Positional encoding schemes adapted from NLP represent the relative order or rank of each gene within the processed cell representation, compensating for the lack of natural sequence in omics data [1].

Architectural Implementations and Model Designs

Transformer Variants for Biological Data

Single-cell foundation models employ diverse transformer architectures optimized for specific analytical tasks. The bidirectional encoder architecture, inspired by BERT, processes all genes simultaneously using bidirectional attention to learn rich contextual representations [1]. In contrast, decoder-based models like scGPT use masked self-attention mechanisms to iteratively predict masked genes conditioned on known expression patterns, enabling generative capabilities [1] [11].

Table 2: Transformer Architectures in Single-Cell Foundation Models

| Architecture | Attention Mechanism | Primary Applications | Examples |
|---|---|---|---|
| Encoder-based | Bidirectional | Cell embedding, classification | scBERT, Geneformer [1] [13] |
| Decoder-based | Masked self-attention | Generative modeling, prediction | scGPT [1] [11] |
| Encoder-Decoder | Combination | Multi-task learning, translation | Custom models [1] |
| Bottlenecked | Cross-attention | Interpretability, OOD cells | CellMemory [12] |

Innovative Architectural Adaptations

Recent innovations address computational challenges associated with processing large-scale single-cell datasets. CellMemory introduces a bottlenecked transformer inspired by global workspace theory in cognitive neuroscience, using cross-attention between specialist modules and a limited-capacity "memory" to improve interpretability and handle out-of-distribution (OOD) cells [12]. This architecture reduces computational complexity while maintaining performance, achieving superior annotation accuracy for rare cell types compared to conventional transformers [12].

Hybrid architectures combine transformers with other neural network components to capture specific biological patterns. For example, scMonica integrates LSTM networks with transformer layers to model temporal dynamics in developmental processes, while graph transformers incorporate spatial relationships in tissue context [14]. These specialized architectures demonstrate the flexibility of self-attention mechanisms when adapted to distinct biological questions.

Experimental Protocols for Model Application

Protocol 1: Cross-Species Cell Type Annotation

Purpose: Leverage pretrained scFMs to identify cell types across species boundaries without retraining.

Materials:

- Pretrained foundation model (e.g., scGPT, scPlantFormer)

- Reference single-cell dataset with annotated cell types

- Query dataset from target species

- Computational environment with GPU acceleration

Procedure:

- Data Preprocessing: Normalize query dataset using same parameters as model's training data. Select overlapping gene features between reference and query datasets.

- Feature Extraction: Process query cells through pretrained model to obtain latent embeddings (512-3072 dimensions depending on model architecture).

- Similarity Calculation: Compute cosine similarity between query cell embeddings and reference cell type centroids in latent space.

- Annotation Transfer: Assign query cells to reference cell types based on maximum similarity scores exceeding confidence threshold (typically >0.7).

- Validation: Assess annotation quality using marker gene expression and cross-species conservation patterns.

Applications: This protocol enables rapid cell type identification in non-model organisms, with scPlantFormer achieving 92% cross-species accuracy in plant systems [11] [14].
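The similarity and annotation-transfer steps of this protocol can be sketched as follows. The embeddings are made-up three-dimensional vectors standing in for model outputs, the 0.7 cutoff is the threshold quoted in the procedure, and the function names and the "unassigned" fallback label are assumptions for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def centroids(embeddings, labels):
    """Average the reference embeddings of each annotated cell type."""
    sums, counts = {}, {}
    for emb, lab in zip(embeddings, labels):
        acc = sums.setdefault(lab, [0.0] * len(emb))
        for i, x in enumerate(emb):
            acc[i] += x
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [x / counts[lab] for x in acc] for lab, acc in sums.items()}

def annotate(query, cents, threshold=0.7):
    """Return (label, similarity); label is 'unassigned' below the threshold."""
    best_label, best_sim = max(
        ((lab, cosine(query, c)) for lab, c in cents.items()),
        key=lambda pair: pair[1],
    )
    return (best_label if best_sim > threshold else "unassigned"), best_sim

# Hypothetical reference embeddings and annotations.
ref = [[1.0, 0.0, 0.0], [0.8, 0.2, 0.0], [0.0, 1.0, 0.0], [0.2, 0.8, 0.0]]
labels = ["T cell", "T cell", "B cell", "B cell"]
cents = centroids(ref, labels)

confident = annotate([0.9, 0.2, 0.1], cents)  # close to the T cell centroid
uncertain = annotate([0.1, 0.1, 1.0], cents)  # far from both centroids
```

Leaving low-similarity cells unassigned, rather than forcing a label, gives a simple way to flag candidate novel or species-specific populations for manual review.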

Protocol 2: In Silico Perturbation Prediction

Purpose: Simulate cellular response to genetic or chemical perturbations using generative scFMs.

Materials:

- Generative transformer model (e.g., scGPT)

- Baseline gene expression profile of target cells

- Perturbation specification (gene knockout or drug treatment)

- Differential expression analysis framework

Procedure:

- Baseline Embedding: Encode unperturbed cell state using model's tokenization scheme.

- Perturbation Application: Modify input tokens to represent target gene knockout (zero expression) or drug treatment (modifier tokens).

- Expression Prediction: Generate post-perturbation expression profile through model's forward pass.

- Effect Quantification: Calculate log2 fold changes between predicted and baseline expression values.

- Network Analysis: Identify significantly altered pathways using gene set enrichment analysis on predicted expression changes.

Applications: Predict therapeutic responses and genetic intervention outcomes, reducing experimental costs in drug discovery [11] [14].
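The perturbation-application and effect-quantification steps can be illustrated with the sketch below. The model's forward pass is not reproduced: the "predicted" profile is a made-up stand-in for model output, and the gene names, the pseudocount of 1.0, and the function names are assumptions.

```python
import math

def knock_out(expr, gene):
    """Return a copy of the profile with the target gene set to zero expression."""
    perturbed = dict(expr)
    perturbed[gene] = 0.0
    return perturbed

def log2_fold_change(baseline, predicted, pseudocount=1.0):
    """Per-gene log2 fold change; the pseudocount avoids division by zero."""
    return {
        g: math.log2((predicted[g] + pseudocount) / (baseline[g] + pseudocount))
        for g in baseline
    }

baseline = {"GENE_A": 3.0, "GENE_B": 7.0, "GENE_C": 0.0}
model_input = knock_out(baseline, "GENE_B")  # what would be fed to the model

# Stand-in for the model's predicted post-perturbation profile (hypothetical).
predicted = {"GENE_A": 7.0, "GENE_B": 1.0, "GENE_C": 0.0}

lfc = log2_fold_change(baseline, predicted)
# Rank genes by the magnitude of their predicted change.
top_genes = sorted(lfc, key=lambda g: abs(lfc[g]), reverse=True)
```

The ranked list (or the full fold-change table) is what would then be passed to a gene set enrichment tool in the network analysis step.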

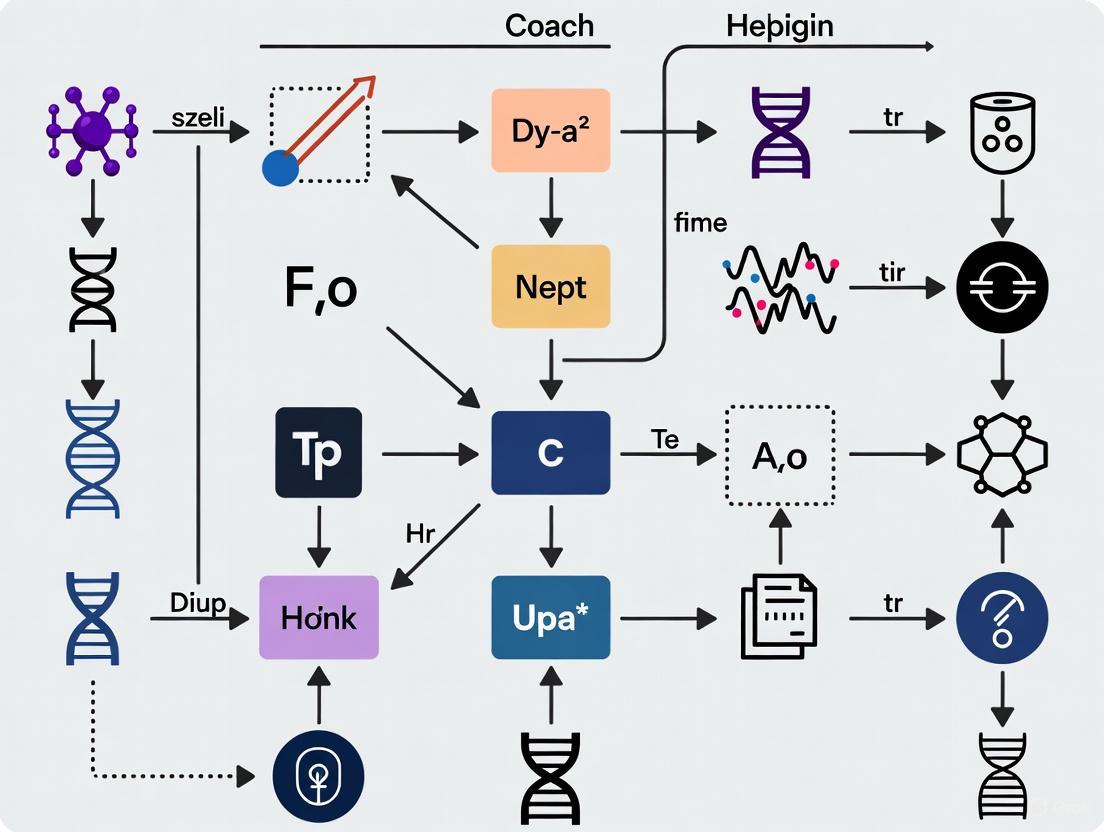

Diagram 1: scFM processing workflow for gene expression data.

Data Integration and Multi-Omic Applications

Multimodal Integration Strategies

Transformers excel at integrating diverse data modalities through shared embedding spaces and cross-attention mechanisms. Advanced scFMs incorporate transcriptomic, epigenomic, proteomic, and spatial imaging data within unified architectures [11] [14]. PathOmCLIP aligns histology images with spatial transcriptomics using contrastive learning, while GIST integrates histology with multi-omic profiles for 3D tissue modeling [11]. These approaches enable comprehensive analysis of regulatory networks across biological scales.

Mosaic integration techniques address the challenge of non-overlapping features across datasets. StabMap aligns datasets measuring different gene panels by leveraging shared cellular neighborhoods rather than strict feature overlaps, while TMO-Net implements pan-cancer multi-omic pretraining to capture context-specific regulatory patterns [11]. These methods enhance data completeness and facilitate discovery of novel biological insights.

Handling Technical Variability

A critical challenge in single-cell analysis involves distinguishing biological signals from technical artifacts. Transformer architectures incorporate several strategies to address batch effects and platform-specific biases:

- Batch Token Integration: Special tokens representing experimental batches enable the model to learn and correct for technical variations [1]

- Domain Adaptation: Fine-tuning protocols adapt models to new experimental conditions with minimal data [14]

- Contrastive Learning: Training objectives that maximize similarity between biological replicates while distinguishing technical artifacts [11]

These approaches maintain biological relevance while harmonizing data from diverse sources, enabling large-scale meta-analyses across thousands of experiments [1] [11].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Resource | Type | Function | Examples |
|---|---|---|---|
| Reference Atlases | Data | Training corpus for foundation models | Human Cell Atlas, Tabula Sapiens [12] |
| Platform Ecosystems | Software | Unified access to scFMs | BioLLM, CZ CELLxGENE Discover [11] [8] |
| Pretrained Models | Model Weights | Transfer learning for new datasets | scGPT, Geneformer, scPlantFormer [11] [13] |
| Benchmarking Suites | Evaluation | Standardized performance assessment | scGraph-OntoRWR, LCAD metrics [13] |
| Annotation Databases | Knowledge Base | Biological context interpretation | Cell Ontology, Gene Ontology [13] |

Diagram 2: Multi-omic data integration via cross-modal attention.

Performance Benchmarking and Interpretation

Evaluation Metrics and Comparative Performance

Rigorous benchmarking reveals distinct performance patterns across scFMs. Comprehensive evaluations using metrics like F1-score, accuracy, and novel biological consistency measures (scGraph-OntoRWR) provide guidance for model selection [13]. The table below summarizes performance characteristics across common tasks:

Table 4: Model Performance Across Biological Tasks

| Model | Cell Annotation (F1) | Perturbation Modeling | Cross-Species Generalization | Computational Efficiency |
|---|---|---|---|---|
| scGPT | 0.89-0.94 | Excellent | Strong | Moderate [13] [8] |
| Geneformer | 0.85-0.91 | Good | Moderate | High [13] |
| scFoundation | 0.87-0.92 | Good | Strong | Moderate [13] |
| CellMemory | 0.91-0.95 | Not reported | Excellent | High [12] |
| scBERT | 0.79-0.86 | Limited | Limited | High [13] [8] |

Notably, no single scFM consistently outperforms others across all tasks, emphasizing the importance of task-specific model selection [13]. Simpler machine learning models sometimes outperform foundation models on specific datasets with limited data, suggesting that dataset size and complexity should guide method selection [13].

Biological Insight Extraction

Beyond quantitative metrics, transformer architectures provide unique opportunities for biological discovery through interpretation of attention mechanisms. Attention weights between genes can reveal potential regulatory relationships, with strongly connected gene pairs in attention maps frequently corresponding to validated biological pathways [1] [12]. CellMemory's hierarchical interpretation provides both feature-level importance scores and pattern-level associations through memory slots, offering multi-scale insights into model decision processes [12].

Benchmarking studies demonstrate that scFMs capture biologically meaningful relationships, with model-derived cell type relationships closely matching established biological knowledge encoded in cell ontologies [13]. This biological consistency validates the utility of transformer-derived representations for hypothesis generation and experimental design.

Future Directions and Implementation Guidelines

Emerging Trends and Challenges

The field of biological transformers is rapidly evolving, with several emerging trends shaping future development. Cross-species adaptation frameworks are improving knowledge transfer between model organisms and humans [14]. Lightweight adapters and parameter-efficient fine-tuning methods are making scFMs more accessible for clinical applications with limited data [14]. Additionally, integration of temporal dynamics through specialized architectures is enabling more accurate modeling of developmental trajectories and disease progression [14].

Significant challenges remain in standardization, interpretability, and clinical translation. Ecosystem fragmentation with inconsistent evaluation metrics and limited model interoperability hinders cross-study comparisons [11] [14]. Model interpretability, while improved through attention visualization, still requires specialized expertise to connect computational findings with mechanistic biology [13] [14].

Practical Implementation Recommendations

For researchers implementing transformer approaches for gene expression data, we recommend:

- Model Selection: Choose the architecture based on the primary task: encoder models for classification, decoder models for generation, and hybrid designs for multi-task applications [1] [13]

- Data Preprocessing: Implement rigorous quality control and normalization consistent with model pretraining protocols [1] [13]

- Validation Strategy: Combine quantitative metrics with biological validation using known pathway associations and experimental follow-up [13] [12]

- Computational Resources: Ensure adequate GPU memory for transformer inference, with model sizes typically ranging from 40 to 650 million parameters [13]

As transformer architectures continue to evolve, their ability to decode the complex language of gene expression will play an increasingly central role in bridging single-cell multi-omics with mechanistic biology and precision medicine.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, enabling the integrated analysis of millions of cells across diverse tissues, species, and experimental conditions [1]. These models, predominantly built on transformer architectures, rely on a critical preprocessing step: tokenization. Tokenization refers to the process of converting raw, unstructured biological data—such as gene expression values, epigenetic features, or DNA sequences—into discrete, numerical units (tokens) that can be processed by deep learning models [1] [15]. In scFMs, individual cells are treated analogously to sentences, while genes, genomic features, or their values become the words or tokens that collectively describe each cell's state [1]. The performance and generalization capability of scFMs across challenging transfer learning settings, including cross-tissue, cross-species, and spatial gene-panel shifts, depend critically on how cells are tokenized into model inputs [16]. Consequently, selecting an appropriate tokenization strategy is not merely a preprocessing detail but a fundamental design choice that significantly influences model performance, interpretability, and biological relevance.

Foundational Tokenization Strategies for Omics Data

Core Concepts and Challenges

Tokenization strategies for omics data must address several challenges that distinguish biological data from natural language. Unlike human language, biological sequences lack delimiters or punctuation and often span lengths far beyond those typical of text corpora [15]. Furthermore, gene expression data derived from single-cell RNA sequencing (scRNA-seq) has no inherent ordering of genes, which is a fundamental challenge for transformer architectures that typically expect ordered input [1]. Effective tokenization must therefore impose meaningful structure while preserving biological information. A key consideration is the token granularity, which ranges from single nucleotides to groups of genes, with each level capturing different biological features [15] [17]. Additionally, the representation of numerical values, such as gene expression levels, requires specialized encoding approaches that maintain quantitative relationships [16].

Classification of Tokenization Approaches

Tokenization methods for omics data can be systematically categorized based on their input type and biological scope. The table below summarizes the predominant strategies employed in scFMs and genomic deep learning:

Table 1: Classification of Tokenization Strategies for Omics Data

| Tokenization Strategy | Biological Scope | Input Features | Model Examples | Advantages | Limitations |
|---|---|---|---|---|---|
| Nucleotide-based | DNA/RNA sequences | Single nucleotides or non-overlapping k-mers | HyenaDNA, Mamba | Preserves complete sequence information; enables novel sequence generation | Computational intensity; loses higher-order motifs without sufficient context [15] |
| Amino Acid-based | Protein sequences | Individual amino acids or short peptides | ESM, ProtTrans | Direct representation of protein primary structure | May miss structural contexts [15] [18] |
| K-mer Tokenization | Genomic sequences | Overlapping nucleotide k-mers | DNABERT, Nucleotide Transformer | Captures short-range motifs and patterns; balances sequence length | Vocabulary size grows exponentially with k; may split functional domains [15] |
| Gene-based Tokenization | Single-cell transcriptomics | Individual genes with expression values | scBERT, Geneformer | Leverages biological prior knowledge; reduces dimensionality | Dependent on gene annotation quality [1] [16] |
| Byte-Pair Encoding (BPE) | Genomic & transcriptomic | Adaptive compression based on sequence frequency | DNABERT-2 | Efficiently handles long sequences; data-driven vocabulary creation | Learned tokens may not align with biological motifs [15] |
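The difference between overlapping and non-overlapping k-mer tokenization, and the exponential vocabulary growth noted in the table, can be shown with a short sketch. The function names and the example sequence are assumptions for illustration and do not reproduce the tokenizer of any listed model.

```python
def kmers(seq, k=3, stride=1):
    """Split a sequence into k-mers; stride=1 overlaps, stride=k does not."""
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]

def vocab_size(k, alphabet_size=4):
    """Number of possible k-mers over a nucleotide alphabet (A, C, G, T)."""
    return alphabet_size ** k

sequence = "ATGCGT"
overlapping = kmers(sequence, k=3, stride=1)
non_overlapping = kmers(sequence, k=3, stride=3)
sizes = {k: vocab_size(k) for k in (3, 6)}
```

Overlapping k-mers produce roughly one token per nucleotide, while non-overlapping k-mers shorten the input by a factor of k at the cost of sensitivity to the reading frame.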

Advanced Tokenization Frameworks for Single-Cell Multi-Omics Integration

Modular Tokenization Frameworks

Recent research has established that tokenization choices show minimal impact on in-distribution performance but become decisive under distribution shifts, such as cross-species or cross-tissue generalization [16]. To address this challenge, modular frameworks like Heimdall have been developed to systematically evaluate tokenization strategies in scFMs. Heimdall decomposes tokenization into three modular components: a gene identity encoder (F_G), an expression encoder (F_E), and a "cell sentence" constructor (F_C) with submodules (order, sequence, and reduce) that enable fine-grained control and attribution [16]. This modular approach allows researchers to recombine existing strategies to enhance generalization, with F_G and ordering strategies driving the largest performance gains under distribution shift, while F_E provides additional improvements [16].

Expression Value Encoding Strategies

A critical aspect of tokenization for scRNA-seq data is how to represent gene expression values. Unlike natural language, where words have categorical identities, gene tokens incorporate both identity and quantitative expression levels. Common expression encoding strategies include:

- Bin-based Encoding: Partitioning expression values into discrete bins or quantiles, then using the resulting bin indices to determine token identity or position [1].

- Rank-based Encoding: Ordering genes within each cell by their expression levels and feeding the ordered list of top genes as the "sentence" [1] [16].

- Value-Integration Approaches: Combining gene identity embeddings with continuous expression values through element-wise multiplication or concatenation before feeding to transformer layers [16].

- Normalized Counts: Some models report no clear advantages for complex ranking strategies and simply use normalized counts as input [1].
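The first three strategies in the list above can be sketched with NumPy as follows. This is an illustrative sketch only: the function names, the quantile-based bin edges, and the choice of multiplication for value integration are assumptions, not the implementation of any particular model.

```python
import numpy as np

def bin_encode(values, n_bins=5):
    """Quantile-bin nonzero values into 1..n_bins; zeros keep bin 0."""
    values = np.asarray(values, dtype=float)
    bins = np.zeros(values.shape, dtype=int)
    nonzero = values > 0
    if nonzero.any():
        inner = np.linspace(0, 1, n_bins + 1)[1:-1]          # interior quantile levels
        edges = np.quantile(values[nonzero], inner)
        bins[nonzero] = np.digitize(values[nonzero], edges, right=True) + 1
    return bins

def rank_encode(values, top_k=None):
    """Gene indices ordered by descending expression, with unexpressed genes dropped."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(-values, kind="stable")
    return order[values[order] > 0][:top_k]

def value_integrate(gene_embeddings, values):
    """Scale each gene identity embedding by its continuous expression value."""
    return gene_embeddings * np.asarray(values, dtype=float)[:, None]

expr = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 0.0])   # one toy cell, six genes
bins = bin_encode(expr, n_bins=2)
ranks = rank_encode(expr, top_k=3)
emb = value_integrate(np.ones((6, 4)), expr)       # placeholder embeddings of ones
```

Bin and rank encodings discard the exact magnitude but are robust to scale differences between datasets, whereas value integration keeps the magnitude and therefore depends more heavily on consistent normalization.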

Multi-Modal Tokenization Strategies

For truly integrative multi-omics analysis, scFMs must incorporate diverse data types beyond transcriptomics, including chromatin accessibility (scATAC-seq), DNA methylation, spatial coordinates, and proteomics [1] [7]. Advanced tokenization approaches for multi-omics integration include:

- Special Modality Tokens: Prepending tokens indicating the data modality (e.g., [ATAC], [RNA], [PROTEIN]) to distinguish between feature types [1] [19].

- Gene Metadata Incorporation: Enriching token representations with additional biological context such as gene ontology terms, chromosome location, or functional annotations [1].

- Batch Token Integration: Incorporating batch information as special tokens to account for technical variations across experiments, though several models report robustness to batch effects without explicit batch tokens [1].
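As a sketch of how special modality and batch tokens might be prepended to a cell's feature tokens, consider the example below. The token spellings (including the `[BATCH:...]` form), the vocabulary layout, and the padding scheme are assumptions made for illustration and do not reproduce any specific model's vocabulary.

```python
SPECIAL_TOKENS = ["[PAD]", "[RNA]", "[ATAC]", "[PROTEIN]"]

def build_vocab(features, batches=()):
    """Assign ids to special tokens first, then batch tokens, then feature tokens."""
    vocab = {tok: i for i, tok in enumerate(SPECIAL_TOKENS)}
    for batch in batches:
        vocab.setdefault(f"[BATCH:{batch}]", len(vocab))
    for feature in features:
        vocab.setdefault(feature, len(vocab))
    return vocab

def encode(modality, features, vocab, batch=None, max_len=8):
    """Prepend the modality token (and optional batch token), then truncate and pad."""
    tokens = [f"[{modality}]"]
    if batch is not None:
        tokens.append(f"[BATCH:{batch}]")
    tokens.extend(features)
    tokens = tokens[:max_len]
    tokens += ["[PAD]"] * (max_len - len(tokens))
    return [vocab[t] for t in tokens]

# Hypothetical features from three modalities.
vocab = build_vocab(["CD3E", "CD19", "chr1:1000-1500", "CD4_protein"], batches=["donor1"])
rna_ids = encode("RNA", ["CD3E", "CD19"], vocab, batch="donor1")
atac_ids = encode("ATAC", ["chr1:1000-1500"], vocab)
```

Keeping the batch token optional mirrors the observation above that some models are reported to be robust to batch effects without it.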

Table 2: Multi-Omics Integration Tools and Their Tokenization Capacities

| Tool Name | Year | Methodology | Integration Capacity | Tokenization Approach |

|---|---|---|---|---|

| Seurat v5 | 2022 | Bridge integration | mRNA, chromatin accessibility, DNA methylation, protein | Gene-based with multimodal anchoring [7] |

| GLUE | 2022 | Graph variational autoencoders | Chromatin accessibility, DNA methylation, mRNA | Uses prior biological knowledge to link omic data [7] |

| MultiVI | 2022 | Probabilistic modeling | mRNA, chromatin accessibility | Mosaic integration of shared and unique features [7] |

| Cobolt | 2021 | Multimodal variational autoencoder | mRNA, chromatin accessibility | Learns joint representation across modalities [7] |

| SCHEMA | 2019 | Metric learning-based method | Chromatin accessibility, mRNA, proteins, immunoprofiling, spatial coordinates | Unified embedding space for diverse data types [7] |

Experimental Protocols for Tokenization Strategy Evaluation

Protocol 1: Benchmarking Tokenization Strategies Under Distribution Shift

Purpose: To systematically evaluate tokenization strategies for cross-species and cross-tissue generalization in scFMs.

Materials:

- Hardware: Configuration with ≥16 GB RAM and multi-core processor (e.g., Intel Core i7-12700F) [20]

- Software: Heimdall framework (open-source toolkit described in [16])

- Datasets: Annotated single-cell datasets from public repositories (CZ CELLxGENE, Human Cell Atlas, PanglaoDB) representing multiple tissues and species [1]

Methodology:

Data Preprocessing:

- Download datasets from CZ CELLxGENE, containing over 100 million unique cells standardized for analysis [1].

- Filter cells and genes using quality control metrics (mitochondrial percentage, gene counts).

- Apply standard normalization and log-transformation to expression matrices.

Modular Tokenization Configuration:

- Implement gene identity encoder (F_G) using either categorical gene identifiers or pretrained gene embeddings.

- Configure expression encoder (F_E) testing bin-based, rank-based, and value-integration approaches.

- Design "cell sentence" constructor (F_C) evaluating different ordering strategies (expression rank, genomic position, random).

Model Training & Evaluation:

- Train transformer models from scratch with identical architectures but varying tokenization strategies.

- Evaluate performance on in-distribution data (same tissue/species as training).

- Assess generalization on out-of-distribution data (unseen tissues or species).

- Use cell type annotation accuracy as primary metric, with attention mechanism analysis to interpret feature importance.

Expected Outcomes: This protocol typically reveals that tokenization choices have minimal impact on in-distribution performance but become critical under distribution shift, with F_G and ordering strategy driving the largest generalization improvements [16].

Protocol 2: Multi-Omics Tokenization for Vertical Integration

Purpose: To develop and validate tokenization strategies for matched multi-omics data from the same single cells.

Materials:

- Reference materials: Quartet multi-omics reference material suites (DNA, RNA, protein, metabolites) [21]

- Platforms: Multi-omics technologies including scRNA-seq, scATAC-seq, and proteomics platforms

- Tools: Multi-omics integration tools (Seurat v5, GLUE, MultiVI) [7]

Methodology:

Data Generation:

- Profile Quartet reference materials across multiple omics platforms using standardized protocols.

- Generate matched multi-omics data from the same set of cells wherever possible.

Tokenization Scheme Design:

- Implement special modality tokens ([RNA], [ATAC], [PROTEIN]) to distinguish feature types.

- Incorporate positional encoding based on genomic coordinates for epigenetic features.

- For sparse epigenomic data (e.g., scATAC-seq), implement binning strategies to create dense tokens.
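The binning step for sparse scATAC-seq data can be illustrated by aggregating peaks into fixed-width genomic windows. The sketch below handles a single chromosome; the 5 kb window size, the use of peak midpoints, and the function name are assumptions for illustration rather than a recommended configuration.

```python
import numpy as np

def bin_peaks(peak_starts, peak_ends, accessibility, window=5000):
    """Sum binary peak accessibility within fixed-width windows on one chromosome."""
    mids = (np.asarray(peak_starts) + np.asarray(peak_ends)) // 2
    window_idx = mids // window                 # window index doubles as a coarse position
    dense = np.zeros(int(window_idx.max()) + 1, dtype=int)
    np.add.at(dense, window_idx, np.asarray(accessibility))
    return dense

# Four toy peaks for one cell (coordinates in base pairs, invented for this example).
starts = [100, 2_000, 5_200, 12_000]
ends = [600, 2_500, 5_800, 12_400]
is_open = [1, 1, 0, 1]
tokens = bin_peaks(starts, ends, is_open)
print(tokens)  # accessible-peak count per 5 kb window
```

Because windows are ordered by coordinate, the window index can also feed a genomic positional encoding, at the cost of losing peak-level resolution.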

Integration and Validation:

- Apply vertical integration methods to combine different omics modalities using the cell as an anchor [7].

- Validate integration quality using built-in truths from the Quartet design, including Mendelian relationships and information flow from DNA to RNA to protein [21].

- Assess cluster separation accuracy for the four Quartet samples (D5, D6, F7, M8) and three genetically driven clusters (daughters, father, mother).

Expected Outcomes: Successful multi-omics tokenization should enable correct classification of Quartet samples and recapitulation of central dogma relationships, with ratio-based profiling approaches demonstrating superior reproducibility compared to absolute quantification [21].
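The reproducibility advantage of ratio-based profiling can be illustrated with a toy simulation: if each batch distorts every feature by its own multiplicative bias, that bias cancels when a sample is expressed relative to a reference sample measured in the same batch. The choice of D6 as the reference, the bias model, and the simulated values are all assumptions for illustration and are not Quartet data.

```python
import numpy as np

rng = np.random.default_rng(0)
n_features = 20
# Simulated "true" abundances for two samples (not real measurements).
truth = {"D5": rng.uniform(5, 50, n_features), "D6": rng.uniform(5, 50, n_features)}

def measure(feature_bias):
    """Simulate one batch: every sample shares the same per-feature multiplicative bias."""
    return {name: values * feature_bias for name, values in truth.items()}

def log2_ratio(batch, sample="D5", reference="D6"):
    """Express a sample relative to the reference sample from the same batch."""
    return np.log2(batch[sample] / batch[reference])

bias_a = np.linspace(0.5, 2.0, n_features)
batch_a = measure(bias_a)
batch_b = measure(bias_a[::-1])                 # a second batch with a different bias

absolute_gap = float(np.abs(batch_a["D5"] - batch_b["D5"]).max())
ratio_gap = float(np.abs(log2_ratio(batch_a) - log2_ratio(batch_b)).max())
print(absolute_gap, ratio_gap)
```

Under this simplified bias model the absolute values disagree between batches while the log-ratios agree up to floating-point error; real data will also contain additive noise that ratios do not remove.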

Visualization of Tokenization Workflows

Single-Cell Multi-Omics Tokenization Pipeline

[Figure: Single-Cell Multi-Omics Tokenization and Processing Workflow]

Modular Tokenization Framework Architecture

[Figure: Modular Architecture for Tokenization Strategy Evaluation]

Table 3: Key Research Reagents and Computational Tools for Tokenization Experiments

| Resource Name | Type | Function in Tokenization Research | Access Information |

|---|---|---|---|

| Quartet Reference Materials | Biological Reference Standards | Provides multi-omics ground truth with built-in familial relationships for validation | https://chinese-quartet.org/ [21] |

| Heimdall Framework | Computational Toolkit | Enables systematic evaluation of tokenization strategies across modular components | Open-source toolkit (reference [16]) |

| CZ CELLxGENE | Data Repository | Provides unified access to annotated single-cell datasets with >100 million cells for pretraining | https://cellxgene.cziscience.com/ [1] |

| DEG (Database of Essential Genes) | Specialized Database | Source of essential and non-essential genes for evaluating gene importance in tokenization | http://tubic.tju.edu.cn/deg/ [20] |

| TCGA (The Cancer Genome Atlas) | Multi-omics Data | Comprehensive cancer genomics dataset for validating multi-omics tokenization approaches | https://cancergenome.nih.gov/ [19] |

| EGP Hybrid-ML | Reference Implementation | Example implementation of hybrid machine learning model with attention mechanism for gene prediction | https://github.com/gnnumsli/EGP-Hybrid-ML [20] |

Tokenization represents a critical frontier in the development of effective single-cell foundation models for multi-omics integration. As this field advances, several emerging trends are shaping its future trajectory: the development of biologically meaningful tokenization that aligns with functional motifs and domains rather than arbitrary sequence segments [17]; dynamic tokenization strategies that adapt to specific biological questions and data types; and context-aware approaches that leverage established bioinformatics tools to provide high-level structured context, enabling models to focus on reasoning rather than low-level sequence interpretation [17]. Furthermore, as spatial transcriptomics and multi-omics technologies mature, tokenization schemes must evolve to incorporate spatial relationships and temporal dynamics. The paradigm is shifting from treating scFMs as direct sequence interpreters to positioning them as powerful reasoning engines over expert-curated biological knowledge [17]. By adopting systematic, modular approaches to tokenization strategy development and evaluation, researchers can unlock the full potential of scFMs to transform our understanding of cellular biology and accelerate therapeutic discovery.

Self-supervised learning (SSL) has emerged as a transformative approach for analyzing single-cell genomics data, enabling researchers to extract meaningful biological representations from vast, unlabeled datasets. By leveraging large-scale single-cell corpora, SSL pretraining provides a powerful mechanism to overcome challenges such as data sparsity, technical noise, and batch effects that commonly plague single-cell technologies. This paradigm is particularly crucial for single-cell Foundation Models (scFMs), which aim to learn universal representations transferable across diverse biological contexts and downstream tasks.

The integration of multi-omics data represents a grand challenge in single-cell genomics, as it requires harmonizing measurements from different molecular layers (transcriptomics, epigenomics, proteomics) with distinct statistical characteristics. SSL pretraining on massive single-cell corpora provides a viable pathway toward this integration by learning joint representations that capture underlying biological signals while mitigating technical variations. This Application Note provides a comprehensive framework for implementing SSL pretraining paradigms with a specific focus on multi-omics data integration using scFMs.

SSL Framework for Single-Cell Genomics

Core Architectural Components

Self-supervised learning for single-cell data typically employs a two-stage framework consisting of pretraining (pretext task) and optional fine-tuning. The pretraining phase learns rich data representations from unlabeled data, producing what is termed "zero-shot SSL" models. The fine-tuning phase further adapts these models to specific downstream tasks such as cell-type annotation or multi-omics integration [22].

The framework incorporates several core components:

Model Architecture: Fully connected autoencoder networks are commonly used as base architectures due to their prevalent application in single-cell genomics tasks and their ability to capture underlying biological variations without introducing complex architectural biases [22].

Pretext Tasks: SSL employs specific pretext tasks to learn from unlabeled data. The dominant approaches include:

- Masked Autoencoders: Randomly mask portions of input features (e.g., gene expressions) and train the model to reconstruct them [22].

- Contrastive Learning: Learn representations by contrasting positive pairs (similar cells) against negative pairs (dissimilar cells) using methods like BYOL (Bootstrap Your Own Latent) and Barlow Twins [22] [23].
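As an illustration of the second family of pretext tasks, the sketch below computes a Barlow Twins style objective in NumPy: embeddings of two views are standardized per dimension, their cross-correlation matrix is formed, and the loss pushes it toward the identity. The off-diagonal weight, the noise-based "views", and the use of raw vectors in place of encoder outputs are assumptions for illustration.

```python
import numpy as np

def barlow_twins_loss(z_a, z_b, off_diag_weight=5e-3):
    """Push the cross-correlation matrix of two views' embeddings toward the identity."""
    n = z_a.shape[0]
    z_a = (z_a - z_a.mean(0)) / (z_a.std(0) + 1e-8)
    z_b = (z_b - z_b.mean(0)) / (z_b.std(0) + 1e-8)
    c = z_a.T @ z_b / n                                   # (dim, dim) cross-correlation
    on_diag = ((np.diag(c) - 1.0) ** 2).sum()             # invariance term
    off_diag = (c ** 2).sum() - (np.diag(c) ** 2).sum()   # redundancy-reduction term
    return float(on_diag + off_diag_weight * off_diag)

rng = np.random.default_rng(0)
cells = rng.normal(size=(256, 16))                        # stand-in for encoder outputs
view_a = cells + 0.05 * rng.normal(size=cells.shape)      # two noisy views of the same cells
view_b = cells + 0.05 * rng.normal(size=cells.shape)
unrelated = rng.normal(size=cells.shape)

loss_matched = barlow_twins_loss(view_a, view_b)
loss_unrelated = barlow_twins_loss(view_a, unrelated)
print(loss_matched, loss_unrelated)
```

Matched views yield a much lower loss than unrelated inputs, which is the signal the encoder is trained on. Unlike contrastive objectives with explicit negatives, this formulation needs no negative pairs.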

Feature Spaces: Models can be trained on all protein-coding genes (approximately 19,000 in human) to maximize generalizability or on selected highly variable genes (HVGs) to focus on biologically informative features [22] [13].

Masking Strategies for Single-Cell Data

Different masking strategies introduce varying levels of biological inductive bias into the pretraining process. The table below summarizes key masking approaches and their characteristics:

Table 1: Masking Strategies for SSL Pretraining in Single-Cell Genomics

| Strategy | Description | Biological Prior | Use Cases |

|---|---|---|---|

| Random Masking | Randomly selects genes for masking | Minimal | General-purpose representation learning |

| Gene Programme (GP) Masking | Masks genes based on functional groupings | Moderate | Learning coordinated biological programs |

| Isolated GP-to-GP Masking | Masks one gene program to predict another | High | Modeling regulatory relationships |

| GP-to-Transcription Factor Masking | Masks gene programs to predict TF expression | High | Inferring regulatory networks |

Notably, empirical analyses have demonstrated that masked autoencoders generally outperform contrastive methods in single-cell genomics, diverging from trends observed in computer vision applications [22]. Random masking has emerged as particularly effective across multiple tasks, surprisingly surpassing more complex domain-specific augmentations [23].
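The difference between random masking and gene programme masking comes down to how the boolean mask over genes is built; the reconstruction loss is then computed only on masked positions. The sketch below is illustrative: the gene programme membership is hypothetical, and the "model" is replaced by the zero-filled input so the loss can be checked by hand.

```python
import numpy as np

def random_mask(n_genes, mask_prob, rng):
    """Mask each gene independently with probability mask_prob."""
    return rng.random(n_genes) < mask_prob

def gene_programme_mask(gene_names, programme):
    """Mask every gene that belongs to one functional grouping."""
    members = set(programme)
    return np.array([g in members for g in gene_names])

def masked_mse(x, x_hat, mask):
    """Reconstruction error restricted to the masked genes."""
    return float(((x - x_hat) ** 2)[:, mask].mean())

genes = ["CD3E", "CD3D", "CD19", "MS4A1", "GNLY", "NKG7"]
t_cell_programme = ["CD3E", "CD3D"]        # hypothetical programme for illustration

rng = np.random.default_rng(0)
x = rng.poisson(2.0, size=(4, len(genes))).astype(float)   # toy cells-by-genes matrix
gp_mask = gene_programme_mask(genes, t_cell_programme)
x_in = np.where(gp_mask, 0.0, x)           # masked genes are zeroed in the model input
loss = masked_mse(x, x_in, gp_mask)        # baseline: "predict zero" for masked genes
rand = random_mask(10_000, 0.15, rng)
```

A trained autoencoder would replace `x_in` in the loss with its reconstruction; the baseline here only shows which entries contribute to the objective.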

Performance Benchmarking

Evaluation Metrics and Tasks

SSL methods for single-cell data are evaluated across multiple downstream tasks using standardized metrics. The table below summarizes the key evaluation dimensions:

Table 2: Evaluation Framework for SSL in Single-Cell Genomics

| Task Category | Specific Tasks | Key Metrics |

|---|---|---|

| Cell-level Analysis | Cell type annotation, Batch correction | ARI, NMI, Macro F1, Micro F1, kBET, ASW, LISI |

| Gene-level Analysis | Gene expression reconstruction, Gene function prediction | Weighted explained variance, Gene set enrichment |

| Multi-omics Integration | Cross-modality prediction, Data integration | Integration accuracy, Missing modality imputation accuracy |

Evaluation should encompass both supervised metrics (e.g., cell-type classification accuracy) and unsupervised metrics (e.g., batch mixing and biological conservation) to provide a comprehensive assessment of model performance [13] [24].
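Macro and micro F1 can diverge sharply when cell types are imbalanced, which is why both appear among the metrics above. The following self-contained sketch computes both from label vectors; the toy labels are invented for illustration.

```python
import numpy as np

def f1_scores(y_true, y_pred):
    """Return (macro F1, micro F1) for single-label classification."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    per_class, tp_sum, fp_sum, fn_sum = [], 0, 0, 0
    for c in np.unique(np.concatenate([y_true, y_pred])):
        tp = int(np.sum((y_pred == c) & (y_true == c)))
        fp = int(np.sum((y_pred == c) & (y_true != c)))
        fn = int(np.sum((y_pred != c) & (y_true == c)))
        denom = 2 * tp + fp + fn
        per_class.append(2 * tp / denom if denom else 0.0)
        tp_sum, fp_sum, fn_sum = tp_sum + tp, fp_sum + fp, fn_sum + fn
    macro = float(np.mean(per_class))                       # every class weighted equally
    micro = 2 * tp_sum / (2 * tp_sum + fp_sum + fn_sum)     # every cell weighted equally
    return macro, micro

# Eight abundant "T" cells and two rare "B" cells; one B cell is misclassified.
y_true = ["T"] * 8 + ["B"] * 2
y_pred = ["T"] * 8 + ["T", "B"]
macro_f1, micro_f1 = f1_scores(y_true, y_pred)
print(round(macro_f1, 3), round(micro_f1, 3))
```

A single error on the rare class lowers macro F1 far more than micro F1, so macro F1 is the more sensitive indicator of performance on rare cell types.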

Comparative Performance

Recent benchmarking studies have revealed task-specific performance patterns across SSL methods:

Batch Correction: Specialized single-cell frameworks (scVI, CLAIRE) and the fine-tuned scGPT foundation model excel at uni-modal batch correction, effectively removing technical variations while preserving biological signals [23] [24].

Cell Type Annotation: Generic SSL methods such as VICReg and SimCLR demonstrate superior performance in cell typing tasks, particularly in zero-shot settings where models must generalize to unseen cell types [23].

Multi-omics Integration: Current methods show varying success in integrating different data modalities. While no single method consistently outperforms others across all tasks, models like scGPT and scMFG show promise for specific integration scenarios [11] [25].

Notably, SSL pretraining on auxiliary data (large-scale single-cell corpora) consistently boosts performance on downstream tasks. For example, pretraining on the scTab dataset (over 20 million cells) improved macro F1 scores for cell-type prediction from 0.701 to 0.747 in PBMC datasets and from 0.272 to 0.309 in the Tabula Sapiens atlas [22].

Experimental Protocols

SSL Pretraining Protocol for Single-Cell Data

Objective: Learn generalizable representations from large-scale single-cell data that can be transferred to various downstream tasks.

Materials:

- Computing resources: High-performance computing cluster with GPU acceleration (recommended minimum 16GB GPU memory)

- Software: Python 3.8+, PyTorch or TensorFlow, single-cell analysis libraries (Scanpy, Scanorama)

- Data: Large-scale single-cell corpora (e.g., CELLxGENE Census, Human Cell Atlas)

Procedure:

Data Preprocessing:

- Quality control: Remove cells with a mitochondrial gene percentage above 20% and genes expressed in fewer than 10 cells

- Normalization: Apply library size normalization (e.g., scaling each cell to 10,000 total counts) followed by log transformation

- Feature selection: Select 3,000-5,000 highly variable genes using Seurat v3 or Scanpy workflow

- Batch information: Collect batch metadata (donor, protocol, laboratory) for evaluation
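The preprocessing steps above can be sketched in plain NumPy as follows. This is a simplified stand-in for a Scanpy or Seurat workflow: in particular, ranking genes by raw variance of the log-normalized values is an assumption made for brevity and is not the Seurat v3 HVG procedure, and the simulated counts are invented.

```python
import numpy as np

def preprocess(counts, is_mito, max_mito_frac=0.20, min_cells=10,
               target_sum=1e4, n_hvg=3000):
    """QC filter, library-size normalize, log1p, then keep the most variable genes."""
    counts = np.asarray(counts, dtype=float)
    is_mito = np.asarray(is_mito, dtype=bool)
    total = counts.sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)
    keep_cells = (total > 0) & (mito_frac <= max_mito_frac)
    keep_genes = (counts[keep_cells] > 0).sum(axis=0) >= min_cells
    x = counts[keep_cells][:, keep_genes]
    size = np.maximum(x.sum(axis=1, keepdims=True), 1.0)
    x = np.log1p(x / size * target_sum)
    # Simplified HVG choice: highest variance after normalization (assumption).
    top = np.sort(np.argsort(-x.var(axis=0), kind="stable")[:n_hvg])
    return x[:, top], keep_cells, keep_genes

rng = np.random.default_rng(0)
counts = rng.poisson(1.0, size=(200, 50))     # simulated cells-by-genes count matrix
is_mito = np.arange(50) < 5                   # pretend the first five genes are mitochondrial
counts[:20, :5] += 50                         # make the first 20 cells mitochondria-heavy
x, keep_cells, keep_genes = preprocess(counts, is_mito, n_hvg=10)
print(x.shape)
```

Batch metadata is intentionally left out of the function: it is collected alongside the matrix for later evaluation rather than used during preprocessing.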

Model Configuration:

- Architecture: Implement fully connected autoencoder with 4-6 encoder layers and symmetric decoder

- Hidden dimensions: Use 512-1024 units per layer with dropout (rate=0.1)

- Masking: Apply random masking with 15-30% masking probability

- Optimization: Use AdamW optimizer with learning rate 1e-4 and weight decay 1e-5

Training Regimen:

- Pretraining: Train for 100-200 epochs with batch size 128-256

- Validation: Monitor reconstruction loss on held-out validation set

- Early stopping: Patience of 10-20 epochs based on validation performance

Evaluation:

- Zero-shot analysis: Extract embeddings and assess using kNN classification

- Transfer learning: Fine-tune on downstream tasks with reduced learning rate (1e-5)
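Zero-shot assessment with kNN classification amounts to labeling each query cell by a majority vote among its nearest reference cells in embedding space. The sketch below uses simulated two-dimensional embeddings as a stand-in for the output of a pretrained encoder; the cluster centers, labels, and k are assumptions for illustration.

```python
import numpy as np

def knn_predict(ref_emb, ref_labels, query_emb, k=5):
    """Label each query embedding by majority vote among its k nearest references."""
    ref_labels = np.asarray(ref_labels)
    d2 = ((query_emb[:, None, :] - ref_emb[None, :, :]) ** 2).sum(-1)  # squared distances
    nearest = np.argsort(d2, axis=1)[:, :k]
    preds = []
    for row in ref_labels[nearest]:
        labels, counts = np.unique(row, return_counts=True)
        preds.append(labels[np.argmax(counts)])
    return np.array(preds)

rng = np.random.default_rng(0)
centers = {"T cell": np.array([0.0, 0.0]), "B cell": np.array([5.0, 5.0])}
ref_emb = np.vstack([c + 0.3 * rng.normal(size=(30, 2)) for c in centers.values()])
ref_labels = np.repeat(list(centers), 30)     # 30 reference cells per type
query_emb = np.array([[0.2, -0.1], [4.8, 5.3]])
pred = knn_predict(ref_emb, ref_labels, query_emb)
print(pred)
```

Because no weights are updated, this evaluation measures the quality of the pretrained representation itself rather than the capacity of a fine-tuned classifier.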

Troubleshooting:

- If model fails to converge, reduce learning rate or increase masking probability

- If overfitting occurs, increase dropout rate or apply stronger regularization

- For computational constraints, reduce hidden dimensions or use stochastic batches

Multi-Omics Integration Protocol

Objective: Integrate paired transcriptomic and epigenomic data to learn joint representations that capture complementary biological information.

Materials:

- Data: Paired single-cell multi-omics data (e.g., from CITE-seq, SHARE-seq, 10x Multiome)

- Software: Specialized integration tools (scMFG, scFPN, MOFA+)

Procedure:

Data Preprocessing:

- Process each modality separately: scRNA-seq (normalization, HVG selection) and scATAC-seq (peak calling, binarization)

- Feature selection: Select 3,000 HVGs for RNA and 10,000 accessible peaks for ATAC

Integration Framework:

- Method selection: Choose appropriate integration method based on data characteristics

- For feature-rich data: Use matrix factorization approaches (MOFA+)

- For complex nonlinear relationships: Use deep learning approaches (scFPN, scMFG)

Model Training:

- Modality-specific encoders: Train separate encoders for each data type

- Integration layer: Fuse representations using feature pyramid network or latent alignment

- Optimization: Jointly train with modality-specific and integration losses

Validation:

- Cluster evaluation: Assess integrated clusters using ARI, NMI with ground truth labels

- Biological conservation: Evaluate preservation of cell-type specific markers

- Batch mixing: Quantify batch integration using LISI or kBET metrics
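For the cluster evaluation step, the Adjusted Rand Index compares a clustering with ground-truth labels while correcting for chance agreement. The sketch below implements the standard contingency-table formula; in practice a library implementation would normally be used, and the toy labelings are invented.

```python
import numpy as np
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """ARI from the contingency table of two labelings (1 = identical partitions)."""
    _, t = np.unique(np.asarray(labels_true), return_inverse=True)
    _, p = np.unique(np.asarray(labels_pred), return_inverse=True)
    table = np.zeros((t.max() + 1, p.max() + 1), dtype=int)
    np.add.at(table, (t, p), 1)
    sum_cells = sum(comb(int(n), 2) for n in table.ravel())
    sum_rows = sum(comb(int(n), 2) for n in table.sum(axis=1))
    sum_cols = sum(comb(int(n), 2) for n in table.sum(axis=0))
    expected = sum_rows * sum_cols / comb(len(t), 2)    # chance-level pair agreement
    max_index = 0.5 * (sum_rows + sum_cols)
    if max_index == expected:                           # degenerate case, e.g. one cluster
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

truth = ["a", "a", "b", "b"]
ari_relabelled = adjusted_rand_index(truth, [1, 1, 0, 0])   # same partition, new names
ari_crossed = adjusted_rand_index(truth, [0, 1, 0, 1])      # every cluster is split
print(ari_relabelled, ari_crossed)
```

ARI is invariant to cluster naming, which matters because clustering algorithms assign arbitrary integer ids.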

Visualization Framework

SSL Pretraining Workflow for Single-Cell Data

Diagram 1: SSL pretraining workflow for single-cell data, showing the progression from raw data to downstream applications.

Multi-Omics Integration Architecture

Diagram 2: Multi-omics integration architecture showing how different data modalities are processed and integrated.

The Scientist's Toolkit

Table 3: Essential Resources for SSL in Single-Cell Multi-Omics Research

| Category | Resource | Specification | Application |

|---|---|---|---|

| Data Resources | CELLxGENE Census | >20M cells, cross-tissue | Large-scale pretraining corpus |

| Data Resources | Human Cell Atlas | Comprehensive reference | Biological ground truth |

| Data Resources | SPDB | Single-cell proteomic database | Multi-omics benchmarking |

| Computational Tools | scGPT | 50M parameters, transformer | Foundation model training |

| Computational Tools | scVI | Variational autoencoder | Probabilistic modeling |

| Computational Tools | Scanpy | Python toolkit | Data preprocessing & analysis |

| Computational Tools | MOFA+ | Statistical framework | Multi-omics integration |

| Benchmarking Frameworks | scSSL-Bench | 19 SSL methods, 9 datasets | Performance evaluation |

| Benchmarking Frameworks | scIB | 14 metrics, multiple tasks | Integration quality assessment |

| Implementation Libraries | PyTorch | Deep learning framework | Model development |

| Implementation Libraries | JAX | Accelerated computing | High-performance training |

Self-supervised learning pretraining on massive single-cell corpora represents a paradigm shift in computational biology, enabling the development of foundation models that capture universal biological principles. The protocols and frameworks outlined in this Application Note provide researchers with practical guidance for implementing these approaches, with particular emphasis on multi-omics integration challenges.

As the field evolves, key considerations include the nuanced role of SSL in transfer learning scenarios, the importance of scalable architectures, and the need for biologically meaningful evaluation metrics. By adopting standardized benchmarking practices and robust experimental protocols, researchers can leverage SSL to advance our understanding of cellular heterogeneity and function across diverse biological contexts and disease states.

Single-cell foundation models (scFMs) represent a transformative advancement in computational biology, leveraging large-scale deep learning to interpret complex single-cell omics data. These models are pretrained on vast datasets through self-supervised learning, enabling exceptional adaptability across diverse downstream tasks without task-specific architectural changes [1]. The development of scFMs addresses a critical need in single-cell genomics for unified frameworks capable of integrating and comprehensively analyzing rapidly expanding data repositories, which now encompass hundreds of millions of cells across diverse tissues, species, and experimental conditions [1] [11].

These models fundamentally transform how researchers approach cellular heterogeneity and complex regulatory networks by treating cells as sentences and genes as words, allowing artificial intelligence to decipher the "language" of cellular function and organization [1]. The transformer architecture, revolutionized in natural language processing, serves as the computational backbone for most scFMs, utilizing attention mechanisms to model complex dependencies between genes within individual cells [1] [26]. This architectural foundation enables scFMs to capture intricate biological patterns that traditional analytical methods often miss.

This article provides a comprehensive technical comparison of four pivotal scFM architectures—scGPT, scBERT, Nicheformer, and scPlantFormer—focusing on their distinctive approaches to multi-omics data integration. We examine their underlying architectures, training methodologies, and performance across specialized tasks, providing researchers with practical protocols for implementation and a clear framework for selecting appropriate models based on specific research objectives in drug development and basic biology.

Comparative Analysis of Model Architectures

The four models represent diverse implementations of transformer-based architectures adapted for single-cell data, each with unique strengths for specific biological applications. scGPT employs a decoder-style architecture inspired by the Generative Pretrained Transformer (GPT), using a unidirectional masked self-attention mechanism that iteratively predicts masked genes conditioned on known genes [1]. This approach excels at generative tasks and perturbation modeling. In contrast, scBERT utilizes a BERT-like encoder architecture with bidirectional attention mechanisms, allowing the model to learn from all genes in a cell simultaneously [1] [27]. This architecture demonstrates particular strength in classification tasks such as cell type annotation.
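The contrast between unidirectional and bidirectional attention can be shown with a generic attention mask. The sketch below applies a standard causal (lower-triangular) mask to random scores; it illustrates the general decoder versus encoder distinction only and is not the exact masking scheme used by scGPT or scBERT.

```python
import numpy as np

def attention_weights(scores, causal):
    """Row-wise softmax over scores; a causal mask hides positions after the query."""
    n = scores.shape[0]
    if causal:
        future = np.triu(np.ones((n, n), dtype=bool), k=1)
        scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
scores = rng.normal(size=(4, 4))             # toy query-key scores for four gene tokens
bidirectional = attention_weights(scores, causal=False)   # encoder style
unidirectional = attention_weights(scores, causal=True)   # decoder style
print(np.round(unidirectional, 2))
```

In the unidirectional case each token attends only to itself and earlier tokens, so the order imposed on genes directly determines what context is visible; in the bidirectional case every gene sees every other gene.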

Nicheformer introduces spatial awareness to foundation models through a transformer encoder architecture specifically designed to integrate both dissociated single-cell and spatial transcriptomics data [26] [28]. Its key innovation lies in incorporating contextual tokens for species, modality, and technology, enabling the model to learn distinct characteristics of each data type. scPlantFormer represents a specialized adaptation for plant systems, integrating phylogenetic constraints into its attention mechanism to achieve exceptional cross-species annotation accuracy [11].

Table 1: Core Architectural Specifications of Single-Cell Foundation Models

| Model | Architecture Type | Pretraining Scale | Embedding Dimension | Key Specialization |

|---|---|---|---|---|

| scGPT | GPT-like Decoder | 33+ million human cells [11] | 512-1024 [1] | Multi-omic integration, perturbation prediction |

| scBERT | BERT-like Encoder | Millions of cells [27] | 200 [27] | Cell type annotation |

| Nicheformer | Transformer Encoder | 110+ million cells (57M dissociated + 53M spatial) [26] | 512 [26] | Spatial context prediction |

| scPlantFormer | Transformer with Phylogenetic Constraints | 1 million Arabidopsis thaliana cells [11] | Not specified | Cross-species plant biology |

Tokenization Strategies and Input Representation

Tokenization, the process of converting raw gene expression data into model-readable tokens, varies significantly across scFMs and fundamentally influences their capabilities. Most models face the challenge that gene expression data lacks a natural sequential order, unlike words in a sentence [1]. A predominant strategy ranks genes within each cell by expression level and feeds the ordered list of top genes to the model as a "sentence" [1] [26].

scGPT and Nicheformer both employ rank-based tokenization, where genes are ordered by expression magnitude relative to dataset-specific means [1] [26]. Nicheformer extends this approach by computing technology-specific nonzero mean vectors to account for systematic biases between spatial and dissociated assays [26]. scBERT utilizes a binning strategy, partitioning gene expression values into discrete bins (default: 7 bins) which are then used as token inputs [27]. Contextual enrichment through special tokens represents another key differentiator; Nicheformer incorporates modality, species, and technology tokens [26], while scGPT can prepend tokens representing cell identity and metadata [1].
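The effect of ranking genes relative to a technology-specific nonzero mean, rather than by raw counts, can be sketched as follows. The function names and the tiny reference matrix are assumptions for illustration; this is not the Nicheformer code.

```python
import numpy as np

def nonzero_mean(counts):
    """Per-gene mean over cells in which the gene is detected."""
    counts = np.asarray(counts, dtype=float)
    n_nonzero = np.maximum((counts > 0).sum(axis=0), 1)
    return counts.sum(axis=0) / n_nonzero

def rank_tokens(cell, reference_mean=None, max_len=None):
    """Order gene indices by (optionally mean-scaled) expression, dropping zeros."""
    scaled = np.asarray(cell, dtype=float)
    if reference_mean is not None:
        safe = np.where(reference_mean > 0, reference_mean, 1.0)
        scaled = scaled / safe
    order = np.argsort(-scaled, kind="stable")
    return order[scaled[order] > 0][:max_len]

# Toy reference cells from one technology (rows = cells, columns = genes).
tech_cells = np.array([[10, 1, 0], [30, 1, 2], [20, 0, 4]])
tech_mean = nonzero_mean(tech_cells)          # per-gene nonzero means
cell = np.array([20, 2, 3])
raw_order = rank_tokens(cell)                 # ranks by raw counts
scaled_order = rank_tokens(cell, tech_mean)   # ranks by expression relative to the mean
print(raw_order, scaled_order)
```

Gene 0 dominates the raw ranking only because it is highly expressed everywhere; after scaling, gene 1, which is twice its typical level, moves to the front of the sentence.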

Multimodal Integration Capabilities

A critical advantage of scFMs lies in their ability to integrate multiple data modalities, though each model exhibits distinct strengths and approaches. scGPT demonstrates robust multi-omic integration capabilities, handling transcriptomic, epigenomic, and proteomic data through modality-specific tokens and embedding strategies [1] [11]. Nicheformer specializes in spatial-transcriptomic integration, creating a joint representation space that enables transfer of spatial context to dissociated single-cell data [26] [28]. This capability allows researchers to infer spatial organization for existing scRNA-seq datasets without additional experiments.

scPlantFormer addresses cross-species integration through phylogenetic constraints in its attention mechanism, enabling effective knowledge transfer between plant species with conserved biological processes [11]. scBERT primarily focuses on transcriptomic data but can incorporate gene metadata such as ontological information to enhance biological context [1].

Table 2: Performance Comparison Across Key Biological Tasks

| Model | Cell Type Annotation | Spatial Prediction | Perturbation Modeling | Cross-Species Transfer | Batch Integration |

|---|---|---|---|---|---|

| scGPT | High accuracy (99.5% F1-score in retina) [29] | Limited | Excellent [1] [11] | Moderate | Variable zero-shot [30] |

| scBERT | Primary strength [27] | Not demonstrated | Limited | Not emphasized | Not reported |

| Nicheformer | Moderate with spatial context | State-of-the-art [26] [28] | Limited | Human-Mouse [26] | Effective for technologies |

| scPlantFormer | 92% cross-species accuracy [11] | Not demonstrated | Not reported | Excellent in plants [11] | Not reported |

Practical Implementation Protocols

scGPT Fine-Tuning Protocol for Retinal Cell Annotation

The following end-to-end protocol demonstrates how to fine-tune scGPT for specialized cell type annotation, achieving 99.5% F1-score for retinal cell identification [29]:

Data Preprocessing Requirements:

- Normalize raw count data using `sc.pp.normalize_total` and `sc.pp.log1p` from Scanpy

- Implement quality control to remove low-quality cells and genes

- Format expression matrices with genes as columns and cells as rows

- For multi-omic integration: include modality-specific tokens and normalization

Fine-Tuning Procedure:

- Load pretrained scGPT model (available through BioLLM benchmarking framework) [11]

- Configure model architecture parameters: 6 transformer layers, 8 attention heads, 512 embedding dimensions

- Set training hyperparameters: batch size 64, learning rate 1e-4, weight decay 1e-5

- Train for 20-50 epochs with early stopping based on validation loss

- Validate using k-fold cross-validation with minimum 5 folds
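The hyperparameters and the k-fold validation step listed above can be captured in a small configuration plus an index generator, as sketched below. The dictionary keys and the helper name are hypothetical and do not correspond to the scGPT package's configuration format; only the numeric values come from the procedure.

```python
import numpy as np

# Values from the procedure above; key names are assumptions for illustration.
FINETUNE_CONFIG = {
    "n_layers": 6, "n_heads": 8, "embedding_dim": 512,
    "batch_size": 64, "learning_rate": 1e-4, "weight_decay": 1e-5,
    "max_epochs": 50, "n_folds": 5,
}

def kfold_indices(n_cells, n_folds=5, seed=0):
    """Yield (train_idx, val_idx) pairs so that every cell is validated exactly once."""
    perm = np.random.default_rng(seed).permutation(n_cells)
    folds = np.array_split(perm, n_folds)
    for i in range(n_folds):
        train = np.concatenate([folds[j] for j in range(n_folds) if j != i])
        yield train, folds[i]

splits = list(kfold_indices(103, n_folds=FINETUNE_CONFIG["n_folds"]))
for fold, (train_idx, val_idx) in enumerate(splits):
    print(fold, len(train_idx), len(val_idx))
```

For imbalanced cell types, a stratified split that preserves label proportions per fold would usually be preferable; the plain split here keeps the sketch short.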

Inference and Evaluation:

- Generate cell embeddings through forward pass of fine-tuned model

- Apply clustering algorithms (Leiden, Louvain) to identify cell populations

- Compare predicted labels with ground truth using F1-score, accuracy, and confusion matrices

- Visualize results using UMAP projection with cell type annotations

Nicheformer Spatial Context Transfer Protocol

Nicheformer enables prediction of spatial context for dissociated single-cell data through these key steps:

SpatialCorpus-110M Pretraining Foundation:

- Curate 57 million dissociated and 53 million spatially resolved cells [26]

- Harmonize human and mouse data using orthologous gene mapping (20,310 gene tokens) [26]

- Implement technology-specific normalization for MERFISH, Xenium, CosMx, and ISS platforms [26]

Spatial Transfer Implementation:

- Generate Nicheformer embeddings for target dissociated cells

- Apply linear probing or fine-tuning with spatial reference datasets

- Predict spatial labels including:

- Human-annotated tissue niches

- Microenvironment compositions

- Local cellular density profiles

- Validate predictions against held-out spatial transcriptomics data
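Linear probing keeps the pretrained embeddings frozen and fits only a linear map to the spatial targets. The sketch below uses closed-form ridge regression on simulated embeddings and targets; the embedding dimension, the three target columns standing in for niche or density values, and the ridge strength are assumptions for illustration.

```python
import numpy as np

def add_bias(emb):
    """Append a constant column so the probe can learn an intercept."""
    return np.hstack([emb, np.ones((emb.shape[0], 1))])

def fit_linear_probe(emb, targets, ridge=1e-3):
    """Closed-form ridge regression from frozen embeddings to spatial targets."""
    x = add_bias(emb)
    gram = x.T @ x + ridge * np.eye(x.shape[1])
    return np.linalg.solve(gram, x.T @ targets)

def predict(emb, weights):
    return add_bias(emb) @ weights

rng = np.random.default_rng(0)
emb = rng.normal(size=(200, 8))                    # stand-in for frozen cell embeddings
true_w = rng.normal(size=(8, 3))
targets = emb @ true_w + 0.01 * rng.normal(size=(200, 3))   # simulated spatial labels

train, held_out = slice(0, 150), slice(150, 200)
weights = fit_linear_probe(emb[train], targets[train])
mse = float(((predict(emb[held_out], weights) - targets[held_out]) ** 2).mean())
print(mse)
```

Evaluating on held-out cells, as in the final step of the list above, indicates whether the spatial signal is linearly recoverable from the embeddings without any fine-tuning.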

Interpretation and Analysis:

- Identify spatially variable genes through attention weight analysis

- Reconstruct cellular neighborhood relationships

- Infer cell-cell communication patterns within predicted niches

- Map disease-specific spatial alterations (e.g., tumor microenvironments)

The Scientist's Toolkit: Essential Research Reagents

Table 3: Critical Computational Tools and Data Resources for scFM Implementation

| Resource Name | Type | Primary Function | Access Information |

|---|---|---|---|

| CZ CELLxGENE | Data Repository | Unified access to 100M+ annotated single cells [1] | https://cellxgene.cziscience.com/ |

| SpatialCorpus-110M | Training Data | 110M dissociated and spatial cells for Nicheformer [26] | Custom compilation [26] |

| BioLLM | Benchmarking Framework | Universal interface for evaluating 15+ foundation models [11] | Open-source platform |

| scGPT Package | Software | End-to-end fine-tuning and inference pipeline [29] | GitHub: RCHENLAB/scGPTfineTuneprotocol |

| Nicheformer Package | Software | Spatial context prediction implementation [31] | GitHub: theislab/nicheformer |

| scBERT Model | Software | BERT-based cell type annotation [27] | GitHub: TencentAILabHealthcare/scBERT |

Performance Benchmarking and Limitations

Quantitative Performance Across Tasks

Recent benchmarking studies reveal distinct performance patterns across the four model architectures. scGPT, pretrained on 33+ million human cells, demonstrates exceptional capability in zero-shot cell type annotation and perturbation response prediction [11]. In specialized applications, fine-tuned scGPT achieves a 99.5% F1-score for retinal cell annotation [29]. However, its zero-shot performance varies significantly across datasets, sometimes underperforming simpler methods such as highly variable gene (HVG) selection combined with Harmony or scVI integration [30].

On spatially aware tasks, Nicheformer consistently outperforms models trained exclusively on dissociated data [26]. In spatial composition prediction and niche identification, it achieves a 15-30% improvement over spatial-agnostic models [26] [28]. scPlantFormer reaches 92% accuracy in cross-species cell type annotation within plant systems, addressing a persistent challenge in comparative genomics [11]. scBERT maintains strong performance in dedicated cell type annotation tasks, though its application to multimodal data remains less explored [27].

Critical Limitations and Considerations

Despite their promise, scFMs face significant challenges that researchers must consider when selecting and implementing these tools:

Zero-Shot Performance Gaps: Both scGPT and Geneformer demonstrate unreliable zero-shot performance in some evaluations, being outperformed by simpler methods like HVG selection combined with established integration tools [30]. This limitation is particularly problematic for discovery settings where labeled data for fine-tuning is unavailable [30].
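To make the "simpler methods" baseline concrete, the following is a minimal sketch of highly variable gene selection by dispersion (variance divided by mean). It is a simplified illustration in plain Python, not the procedure used in the cited benchmark [30]; the gene names, expression values, and the `top_variable_genes` helper are hypothetical. In practice the selected genes would then be passed to an integration tool such as Harmony or scVI.

```python
from statistics import mean, pvariance

def top_variable_genes(matrix, gene_names, n_top=2):
    """Rank genes by dispersion (variance / mean) across cells and keep the top n."""
    dispersions = []
    for g, name in enumerate(gene_names):
        column = [cell[g] for cell in matrix]
        mu = mean(column)
        # Genes with zero mean carry no signal, so give them zero dispersion
        disp = pvariance(column) / mu if mu > 0 else 0.0
        dispersions.append((disp, name))
    dispersions.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in dispersions[:n_top]]

# Toy normalized expression: rows are cells, columns are genes (hypothetical values)
genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]
cells = [
    [5.0, 1.0, 0.0, 2.0],
    [5.0, 1.2, 8.0, 2.1],
    [5.0, 0.9, 0.0, 6.0],
    [5.0, 1.1, 8.0, 1.9],
]
hvgs = top_variable_genes(cells, genes, n_top=2)
print(hvgs)
```

A constantly expressed gene such as `GENE_A` is dropped even though its expression is high, which is the point of the filter: it keeps genes whose variation is likely to separate cell states.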

Data Requirements and Computational Costs: Pretraining scFMs requires massive computational resources and carefully curated data corpora. Inconsistent data quality, batch effects, and technical variability across single-cell datasets introduce additional challenges for model robustness [1] [30]. Nicheformer's spatial capabilities specifically require technology-specific normalization to address platform-specific biases [26].

Interpretability Challenges: The biological relevance of latent embeddings and model representations remains nontrivial to interpret [1]. While attention mechanisms theoretically allow identification of important gene-gene interactions, extracting biologically meaningful insights requires additional validation and specialized interpretation tools.

Spatial Limitations: Even Nicheformer, despite its spatial capabilities, cannot fully reconstruct the complex three-dimensional architecture of native tissue environments. Future "tissue foundation models" incorporating physical relationships between cells represent the next frontier [28].

The comparative analysis of scGPT, scBERT, Nicheformer, and scPlantFormer reveals a rapidly evolving landscape where architectural specialization enables distinct biological applications. scGPT excels as a general-purpose model with strong multi-omic integration capabilities, particularly suited for perturbation modeling and cell type annotation. scBERT provides a focused solution for high-accuracy cell classification tasks. Nicheformer breaks new ground in spatial biology, enabling researchers to infer tissue context for existing single-cell datasets. scPlantFormer addresses the critical need for specialized models in non-mammalian systems.

For drug development professionals, these models offer increasingly sophisticated tools for understanding cellular mechanisms in disease contexts, particularly in complex tissue microenvironments like tumors. The emerging capability to predict how cells respond to perturbations and how they organize spatially provides valuable insights for target identification and therapeutic development.

Future development will likely focus on several key areas: (1) improved zero-shot performance through better pretraining objectives, (2) enhanced multimodal integration spanning transcriptomics, epigenomics, proteomics, and imaging, (3) incorporation of temporal dynamics for developmental and disease progression modeling, and (4) more interpretable architectures that provide biologically meaningful insights into regulatory mechanisms [1] [11] [28]. As these models mature, they will increasingly serve as foundational components in the emerging paradigm of virtual cell and tissue modeling, potentially transforming how we study health and disease and accelerating the development of novel therapeutics.

Practical Implementation: scFM Workflows for Multi-Omics Integration and Analysis

The advent of single-cell multi-omics technologies has revolutionized cellular analysis, enabling the comprehensive exploration of cellular heterogeneity, developmental trajectories, and disease mechanisms at unprecedented resolution. Modern biological datasets often comprise multiple modalities—including transcriptomic, epigenomic, proteomic, and spatial imaging data—each providing complementary insights into cellular states and functions. However, these datasets present significant computational challenges due to their high dimensionality, technical noise, and inherent biological complexity. Multimodal integration frameworks address these challenges by harmonizing disparate data types to construct unified representations of cellular systems, thereby facilitating the discovery of multilayered regulatory networks across biological scales.

Foundation models, originally developed for natural language processing, are now driving transformative approaches to high-dimensional, multimodal single-cell data analysis. Unlike traditional analytical pipelines designed for single-modality data, these advanced computational architectures utilize self-supervised pretraining objectives—including masked gene modeling, contrastive learning, and multimodal alignment—to capture hierarchical biological patterns across diverse data types. The integration of multimodal data has become a cornerstone of next-generation single-cell analysis, fueled by the convergence of multiple molecular profiling technologies that together provide a more comprehensive understanding of cellular function and regulation.

Core Computational Frameworks and Architectures

Contrastive Learning Approaches

Contrastive learning frameworks align disparate data modalities in a unified embedding space. The CellWhisperer framework is a multimodal AI model that connects transcriptomes with their textual annotations through contrastive learning on approximately 1 million RNA sequencing profiles with AI-curated descriptions [32]. It adapts the Contrastive Language-Image Pretraining (CLIP) architecture, encoding transcriptomes with the Geneformer model and textual annotations with the BioBERT model [32]. The resulting vectors are mapped into a 2,048-dimensional multimodal embedding space by conventional feed-forward neural network layers, which are trained so that a transcriptome and its matching annotation lie close together in the joint space.

Similarly, the scPairing framework utilizes a CLIP-inspired approach to embed different modalities from the same single cells onto a common embedding space [33]. This deep learning model enables the integration and generation of novel multiomics data through bridge integration, a method that uses an existing multiomics bridge to link unimodal datasets. Through extensive benchmarking, scPairing demonstrates the capacity to construct an embedding space that fully captures both coarse and fine biological structures, facilitating the generation of new multiomics data from retina, immune, and renal cells [33].
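The CLIP-style objective shared by these frameworks can be sketched as a symmetric cross-entropy over a similarity matrix. The version below is a dependency-free illustration rather than the code of CellWhisperer or scPairing: the function names and the two-dimensional toy embeddings are assumptions, and a real implementation would operate on batched tensors in a deep learning framework.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def cross_entropy_rows(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def clip_loss(rna_emb, text_emb, temperature=0.07):
    """Symmetric contrastive loss: pair i in one modality matches pair i in the other."""
    rna = [l2_normalize(v) for v in rna_emb]
    txt = [l2_normalize(v) for v in text_emb]
    # logits[i][j] = cosine similarity of transcriptome i and text j, scaled by 1/temperature
    logits = [[sum(a * b for a, b in zip(r, t)) / temperature for t in txt] for r in rna]
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_rows(logits) + cross_entropy_rows(logits_t))

# Toy embeddings: matched pairs point in similar directions, shuffled pairs do not
rna_vectors = [[1.0, 0.0], [0.0, 1.0]]
aligned = clip_loss(rna_vectors, [[0.9, 0.1], [0.1, 0.9]])
shuffled = clip_loss(rna_vectors, [[0.1, 0.9], [0.9, 0.1]])
print(f"aligned={aligned:.4f} shuffled={shuffled:.4f}")
```

The loss is low when each transcriptome is most similar to its own annotation and high when the pairing is scrambled, which is what pulls matched pairs together during training.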

Transformer-Based Foundation Models

Transformer-based architectures have demonstrated remarkable success in multimodal single-cell analysis due to their ability to capture complex relationships across diverse data types. The scGPT model represents a landmark advancement, pretrained on over 33 million cells for multi-omic tasks [14] [11]. This foundation model employs self-supervised pretraining objectives including masked gene modeling to learn universal representations that support zero-shot cell type annotation and perturbation response prediction. The model's architecture enables transfer learning across diverse biological contexts, enhancing its robustness and versatility in single-cell analysis.
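Masked gene modeling can be illustrated with a small sketch: a subset of a cell's expression values is hidden, and the model is scored only on how well it recovers them. This is a simplified illustration and not scGPT's actual tokenization or masking scheme; the sentinel value, the masking ratio, and the helper names are assumptions.

```python
import random

MASK_VALUE = -1  # hypothetical sentinel marking a hidden expression value

def mask_expression(values, mask_ratio=0.15, seed=0):
    """Hide a random subset of expression values; return the masked input and the targets."""
    rng = random.Random(seed)
    n_mask = max(1, round(len(values) * mask_ratio))
    masked_idx = sorted(rng.sample(range(len(values)), n_mask))
    masked = list(values)
    targets = {}
    for i in masked_idx:
        targets[i] = values[i]
        masked[i] = MASK_VALUE
    return masked, targets

def masked_mse(predictions, targets):
    """Mean squared error computed only at the masked positions."""
    return sum((predictions[i] - t) ** 2 for i, t in targets.items()) / len(targets)

# Toy binned expression values for 10 genes in one cell (hypothetical)
cell = [0, 3, 1, 0, 7, 2, 0, 5, 1, 4]
masked_cell, targets = mask_expression(cell, mask_ratio=0.3)
print(masked_cell, targets)
```

Because the targets come from the data itself, no cell type labels are needed, which is what allows pretraining on tens of millions of unannotated cells.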

Nicheformer extends this approach to spatial contexts, employing graph transformers to model spatial cellular niches across 53 million spatially resolved cells [14] [11]. This spatial transformer architecture captures niche context and enables spatial integration at unprecedented scale. Another notable implementation, scPlantFormer, demonstrates the adaptability of these approaches across biological systems, integrating phylogenetic constraints into its attention mechanism to achieve 92% cross-species annotation accuracy in plant systems [14] [11].

Specialized Integration Architectures

Beyond general-purpose frameworks, several specialized architectures have been developed to address specific integration challenges. PathOmCLIP implements a contrastive learning model that connects tumor histology with spatial gene expression, validated across five tumor types to enhance gene expression prediction from histology images [14] [11]. This approach aligns histology images with spatial transcriptomics via contrastive learning, demonstrating the power of cross-modal alignment for bridging imaging and molecular profiling data.

StabMap introduces mosaic integration for datasets that do not measure the same features. Rather than requiring strict feature overlap, it aligns datasets through shared cell neighborhoods or robust cross-modal anchors [14] [11]. This approach is particularly valuable for integrating datasets with different gene panels or measurement technologies. Similarly, EpiAgent specializes in epigenomic foundation modeling, focusing on candidate cis-regulatory element (cCRE) reconstruction with ATAC-centric zero-shot capabilities [11].

Table 1: Quantitative Performance Metrics of Multimodal Integration Frameworks

| Framework | Category | Training Scale | Key Performance Metrics | Supported Modalities |
|---|---|---|---|---|
| scGPT [14] [11] | Foundation Model | 33 million+ cells | Superior multi-omic integration; Zero-shot annotation | Transcriptomics, Epigenomics |
| CellWhisperer [32] | Multimodal Embedding | 1 million+ transcriptomes | AUROC: 0.927 for retrieval | Transcriptomics, Text |
| Nicheformer [14] [11] | Spatial Transformer | 53 million spatial cells | Spatial context prediction | Spatial, Transcriptomics |
| scPlantFormer [14] [11] | Lightweight Foundation Model | 1 million plant cells | 92% cross-species accuracy | Transcriptomics, Phylogenetics |
| PathOmCLIP [14] [11] | Cross-modal Alignment | Five tumor types | Histology-gene mapping accuracy | Histology, Spatial Transcriptomics |
| scPairing [33] | Data Generation | Multiple tissue types | Captures biological structures | Multiomics, Unimodal integration |

Experimental Protocols and Methodologies

Protocol 1: Multimodal Embedding with Contrastive Learning

Principle: This protocol establishes a joint embedding space for transcriptomic and textual data using contrastive learning, enabling bidirectional retrieval and semantic search across modalities [32].

Reagents and Solutions:

- Hardware: High-performance computing cluster with GPU acceleration (minimum 16GB VRAM)

- Software: Python 3.8+, PyTorch 1.12+, CellWhisperer software package

- Data: ARCHS4 uniformly reprocessed GEO data; CELLxGENE Census pseudo-bulk transcriptomes

Procedure:

Data Curation and Annotation:

- Obtain approximately 1 million human RNA-seq profiles from GEO and CELLxGENE Census repositories

- Apply LLM-assisted curation to create concise, coherent textual annotations for each sample based on sample-specific metadata

- Standardize annotations to include cell types, organs, tissues, diseases, and experimental methods

Model Architecture Configuration:

- Implement dual-stream architecture with Geneformer (12-layer transformer) for transcriptomes

- Implement BioBERT (biomedical text encoder) for textual annotations

- Configure projection heads with 3 fully connected layers to map both modalities to 2,048-dimensional embedding space

Contrastive Learning Training:

- Initialize with pre-trained weights for both encoders

- Set batch size to 512, learning rate to 5e-5 with cosine decay

- Use symmetric cross-entropy loss function with temperature parameter τ=0.07

- Train for 50 epochs with early stopping based on validation retrieval accuracy
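The learning rate schedule and stopping rule in this step can be sketched as follows. This is an illustrative sketch, not the CellWhisperer training code: the patience of 3 epochs, the per-epoch (rather than per-batch) schedule, and the validation accuracies are made-up assumptions; only the base learning rate of 5e-5 and the 50-epoch budget come from the protocol.

```python
import math

def cosine_lr(step, total_steps, base_lr=5e-5, min_lr=0.0):
    """Cosine decay from base_lr at step 0 down to min_lr at total_steps."""
    progress = min(step, total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

class EarlyStopping:
    """Stop when the validation metric has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.bad_epochs = 0

    def should_stop(self, metric):
        if metric > self.best + self.min_delta:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Toy run with made-up validation retrieval accuracies, one value per epoch
val_accuracy = [0.60, 0.71, 0.78, 0.80, 0.80, 0.79, 0.80, 0.79]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, acc in enumerate(val_accuracy):
    lr = cosine_lr(epoch, total_steps=50)  # would be passed to the optimizer
    if stopper.should_stop(acc):
        stopped_at = epoch
        break
print(f"stopped at epoch {stopped_at}, best accuracy {stopper.best}")
```

In a real run the checkpoint with the best validation retrieval accuracy would be saved whenever the metric improves, so that stopping late does not cost model quality.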

Validation and Benchmarking:

- Evaluate embedding quality through cross-modal retrieval tasks

- Calculate AUROC for text-to-transcriptome and transcriptome-to-text retrieval

- Perform qualitative assessment through semantic search experiments with free-text queries
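One way to compute the retrieval AUROC is to treat each query's true partner as the single positive among all candidates and average the per-query scores. The sketch below makes that assumption explicit; the source does not specify how the reported AUROC is aggregated, and the similarity matrix is a hypothetical toy example.

```python
def auroc(scores, labels):
    """AUROC as the fraction of positive-negative pairs ranked correctly; ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def retrieval_auroc(similarity):
    """Average per-query AUROC, where candidate i is the true match for query i."""
    per_query = []
    for i, row in enumerate(similarity):
        labels = [1 if j == i else 0 for j in range(len(row))]
        per_query.append(auroc(row, labels))
    return sum(per_query) / len(per_query)

# Toy similarity matrix: rows are text queries, columns are transcriptomes
sim = [
    [0.9, 0.2, 0.1],
    [0.3, 0.8, 0.4],
    [0.5, 0.1, 0.45],
]
text_to_rna = retrieval_auroc(sim)
# Transposing swaps the roles of query and candidate
rna_to_text = retrieval_auroc([list(col) for col in zip(*sim)])
print(f"text->RNA {text_to_rna:.3f}, RNA->text {rna_to_text:.3f}")
```

The two directions can disagree, as they do here, which is why the protocol reports both text-to-transcriptome and transcriptome-to-text retrieval.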

Troubleshooting Tips:

- For unstable training, reduce learning rate or increase batch size

- If textual annotations are noisy, implement additional preprocessing with regex filters